mirror of

https://github.com/ByteByteGoHq/system-design-101.git

synced 2026-04-01 16:57:23 -04:00

Adds ByteByteGo guides and links (#106)

This PR adds all the guides from [Visual Guides](https://bytebytego.com/guides/) section on bytebytego to the repository with proper links. - [x] Markdown files for guides and categories are placed inside `data/guides` and `data/categories` - [x] Guide links in readme are auto-generated using `scripts/readme.ts`. Everytime you run the script `npm run update-readme`, it reads the categories and guides from the above mentioned folders, generate production links for guides and categories and populate the table of content in the readme. This ensures that any future guides and categories will automatically get added to the readme. - [x] Sorting inside the readme matches the actual category and guides sorting on production

This commit is contained in:

0

images/banner.jpg → .github/banner.jpg

vendored

0

images/banner.jpg → .github/banner.jpg

vendored

|

Before Width: | Height: | Size: 984 KiB After Width: | Height: | Size: 984 KiB |

3

.gitignore

vendored

3

.gitignore

vendored

@@ -2,6 +2,7 @@

|

|||||||

*.epub

|

*.epub

|

||||||

__pycache__/

|

__pycache__/

|

||||||

*.py[cod]

|

*.py[cod]

|

||||||

|

.idea

|

||||||

|

|

||||||

# C extensions

|

# C extensions

|

||||||

*.so

|

*.so

|

||||||

@@ -41,6 +42,8 @@ htmlcov/

|

|||||||

nosetests.xml

|

nosetests.xml

|

||||||

coverage.xml

|

coverage.xml

|

||||||

|

|

||||||

|

node_modules

|

||||||

|

|

||||||

# Translations

|

# Translations

|

||||||

*.mo

|

*.mo

|

||||||

*.pot

|

*.pot

|

||||||

|

|||||||

@@ -13,7 +13,3 @@ Thank you for your interest in contributing! Here are some guidelines to follow

|

|||||||

### GitHub Pull Requests Docs

|

### GitHub Pull Requests Docs

|

||||||

|

|

||||||

If you are not familiar with pull requests, review the [pull request docs](https://help.github.com/articles/using-pull-requests/).

|

If you are not familiar with pull requests, review the [pull request docs](https://help.github.com/articles/using-pull-requests/).

|

||||||

|

|

||||||

## Translations

|

|

||||||

|

|

||||||

Refer to [TRANSLATIONS.md](translations/TRANSLATIONS.md)

|

|

||||||

|

|||||||

9

data/categories/ai-machine-learning.md

Normal file

9

data/categories/ai-machine-learning.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'AI and Machine Learning'

|

||||||

|

description: 'Learn the basics of AI and Machine Learning, how they work, and some real-world applications with visual illustrations.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/brain.png'

|

||||||

|

sort: 120

|

||||||

|

---

|

||||||

|

|

||||||

|

AI and Machine Learning are two of the most popular technologies in the tech industry. They are used in various applications such as recommendation systems, image recognition, natural language processing, and more. In this guide, we will explore the basics of AI and Machine Learning, how they work, and some real-world applications.

|

||||||

11

data/categories/api-web-development.md

Normal file

11

data/categories/api-web-development.md

Normal file

@@ -0,0 +1,11 @@

|

|||||||

|

---

|

||||||

|

title: 'API and Web Development'

|

||||||

|

description: 'Learn how APIs enable web development by providing standardized protocols for data exchange between different parts of web applications.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/api.png'

|

||||||

|

sort: 100

|

||||||

|

---

|

||||||

|

|

||||||

|

Web development involves building websites and applications by combining frontend UI, backend logic, and databases. APIs are the fundamental building blocks that enable these components to communicate effectively.

|

||||||

|

|

||||||

|

APIs provide standardized protocols for data exchange between different parts of web applications. Using technologies like REST and GraphQL, APIs allow integration of services, database operations, and interactive features while keeping system components cleanly separated.

|

||||||

9

data/categories/caching-performance.md

Normal file

9

data/categories/caching-performance.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Caching & Performance'

|

||||||

|

description: 'Learn to improve the performance of your system by caching data with these visual guides.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/order.png'

|

||||||

|

sort: 150

|

||||||

|

---

|

||||||

|

|

||||||

|

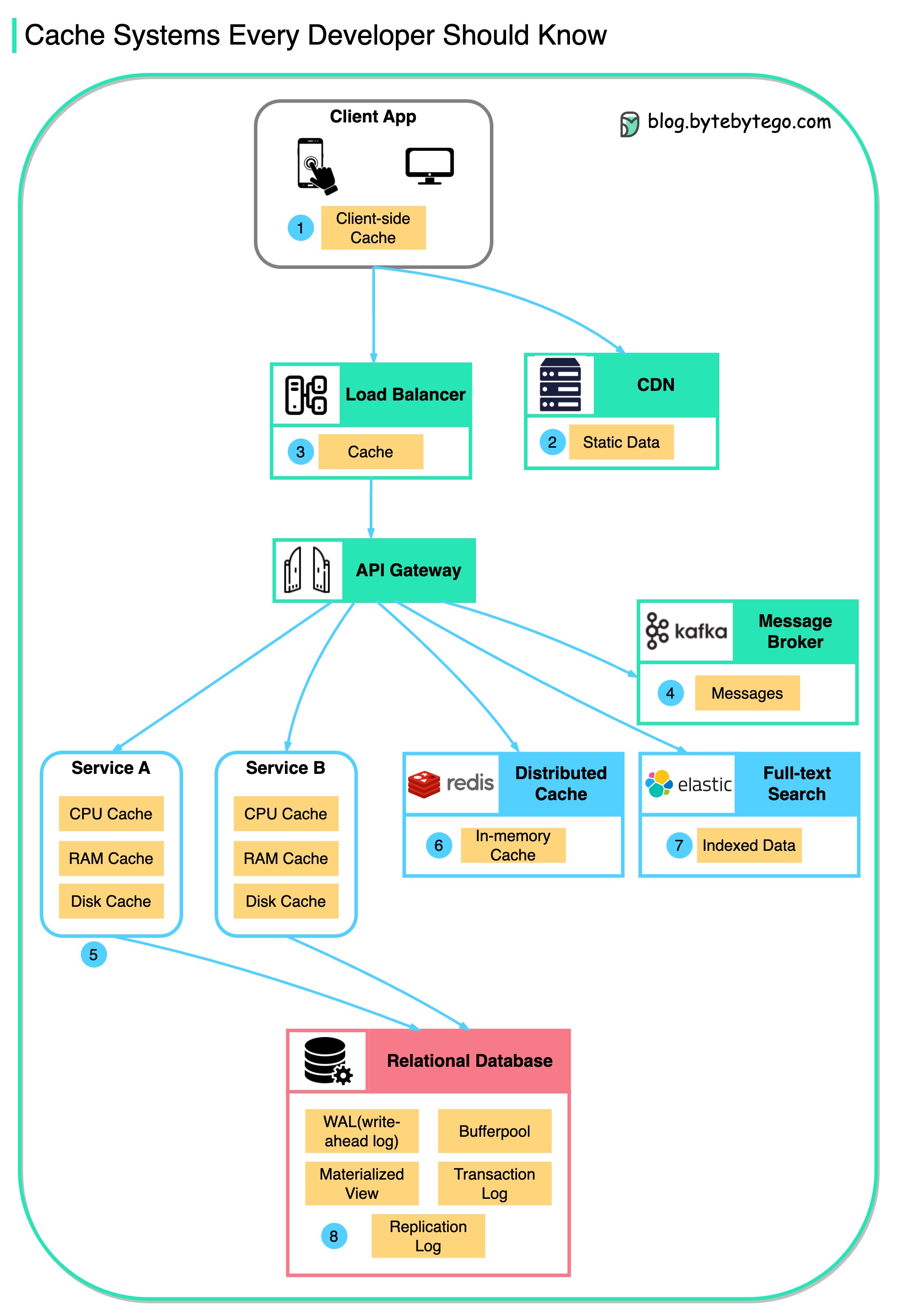

Caching is a technique that stores a copy of a given resource and serves it back when requested. When a web server renders a web page, it stores the result of the page rendering in a cache. The next time the web page is requested, the server serves the cached page without re-rendering the page. This process reduces the time needed to generate the web page and reduces the load on the server.

|

||||||

9

data/categories/cloud-distributed-systems.md

Normal file

9

data/categories/cloud-distributed-systems.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Cloud & Distributed Systems'

|

||||||

|

description: 'Learn the fundamental concepts, best practices, and real-world examples of cloud computing and distributed systems.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/cloud.png'

|

||||||

|

sort: 180

|

||||||

|

---

|

||||||

|

|

||||||

|

Cloud computing and distributed systems are the backbone of modern software architecture. They enable us to build scalable, reliable, and high-performance systems. This category covers the fundamental concepts, best practices, and real-world examples of cloud computing and distributed systems.

|

||||||

9

data/categories/computer-fundamentals.md

Normal file

9

data/categories/computer-fundamentals.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Computer Fundamentals'

|

||||||

|

description: 'Understanding computer fundamentals is essential for software engineers. These guides cover the topics that are fundamental to computer science and software engineering and will help you understand certain system design aspects better.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/laptop.png'

|

||||||

|

sort: 220

|

||||||

|

---

|

||||||

|

|

||||||

|

Understanding computer fundamentals is essential for software engineers. These guides cover the topics that are fundamental to computer science and software engineering and will help you understand certain system design aspects better.

|

||||||

9

data/categories/database-and-storage.md

Normal file

9

data/categories/database-and-storage.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Database and Storage'

|

||||||

|

description: 'Understand the different types of databases and storage solutions and how to choose the right one for your application.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/database.png'

|

||||||

|

sort: 130

|

||||||

|

---

|

||||||

|

|

||||||

|

Databases are the backbone of most modern applications since they store and manage the data that powers the application. There are many types of databases, including relational databases, NoSQL databases, in-memory databases, and key-value stores. Each type of database has its own strengths and weaknesses, and the best choice depends on the specific requirements of the application.

|

||||||

11

data/categories/devops-cicd.md

Normal file

11

data/categories/devops-cicd.md

Normal file

@@ -0,0 +1,11 @@

|

|||||||

|

---

|

||||||

|

title: 'DevOps and CI/CD'

|

||||||

|

description: 'Learn all about DevOps, CI/CD, and how they can help you deliver software faster and more reliably. Understand the best practices and tools to implement DevOps and CI/CD in your organization.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/refresh.png'

|

||||||

|

sort: 200

|

||||||

|

---

|

||||||

|

|

||||||

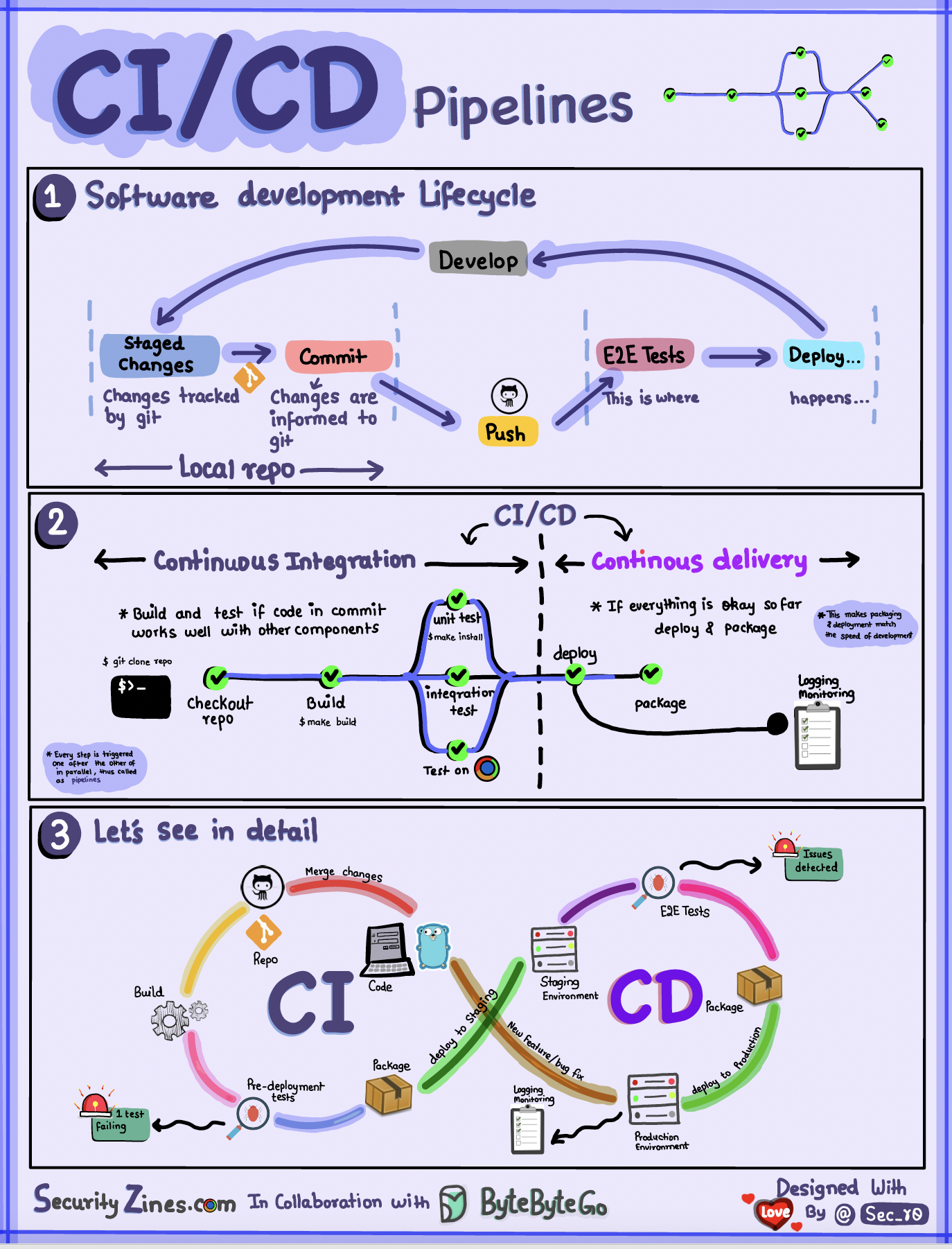

|

DevOps is a set of practices that combines software development (Dev) and IT operations (Ops). It aims to shorten the systems development life cycle and provide continuous delivery with high software quality. DevOps is complementary with Agile software development; several DevOps aspects came from Agile methodology.

|

||||||

|

|

||||||

|

CI/CD stands for Continuous Integration and Continuous Delivery. CI/CD is a method to frequently deliver apps to customers by introducing automation into the stages of app development. The main concepts attributed to CI/CD are continuous integration, continuous delivery, and continuous deployment.

|

||||||

9

data/categories/devtools-productivity.md

Normal file

9

data/categories/devtools-productivity.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'DevTools & Productivity'

|

||||||

|

description: 'Guides on developer tools and productivity techniques to help you become more efficient in your daily work.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/supplement-bottle.png'

|

||||||

|

sort: 170

|

||||||

|

---

|

||||||

|

|

||||||

|

Developer and productivity tools are essential for software engineers to build, test, and deploy software. This collection of guides covers a wide range of tools and techniques to help you become more productive and efficient in your daily work.

|

||||||

9

data/categories/how-it-works.md

Normal file

9

data/categories/how-it-works.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'How it Works?'

|

||||||

|

description: 'Go deep into the internals of how things work with these visual guides ranging from OAuth2 to how the internet works.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/question-mark.png'

|

||||||

|

sort: 190

|

||||||

|

---

|

||||||

|

|

||||||

|

Learning how things work is a great way to understand the world around us. This collection of guides will help you understand how things work in the world of system design.

|

||||||

9

data/categories/payment-and-fintech.md

Normal file

9

data/categories/payment-and-fintech.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Payment and Fintech'

|

||||||

|

description: 'Explore the architecture of a payment system and a fintech system. Look at the real-world examples of payment systems like PayPal, Stripe, and Square.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/medal.png'

|

||||||

|

sort: 160

|

||||||

|

---

|

||||||

|

|

||||||

|

Payment and Fintech are two of the most popular categories in system design interviews. In these guides, we will explore the architecture of a payment system and a fintech system.

|

||||||

9

data/categories/real-world-case-studies.md

Normal file

9

data/categories/real-world-case-studies.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Real World Case Studies'

|

||||||

|

description: 'Understand how popular tech companies have evolved over the years. Dive into case studies of companies like Twitter, Netflix, Uber and more.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/earth-planet.png'

|

||||||

|

sort: 110

|

||||||

|

---

|

||||||

|

|

||||||

|

Real-world case studies are a great way to learn about the architecture, design, and scalability of popular tech companies. Dive into these case studies to understand how companies like Twitter, Netflix, Uber and more have evolved over the years.

|

||||||

9

data/categories/security.md

Normal file

9

data/categories/security.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Security'

|

||||||

|

description: 'Guides on security concepts and best practices for system design. Learn how to protect your system from unauthorized access, data breaches, and other security threats.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/lock.png'

|

||||||

|

sort: 210

|

||||||

|

---

|

||||||

|

|

||||||

|

Security is a critical aspect of system design. It is essential to protect the system from unauthorized access, data breaches, and other security threats. In this set of guides, we will explore some of the key security concepts and best practices that you should consider when designing a system.

|

||||||

9

data/categories/software-architecture.md

Normal file

9

data/categories/software-architecture.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Software Architecture'

|

||||||

|

description: 'Learn about software architecture, the process of converting software characteristics such as flexibility, scalability, feasibility, reusability, and security into a structured solution that meets the technical and the business expectations.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/image.png'

|

||||||

|

sort: 160

|

||||||

|

---

|

||||||

|

|

||||||

|

Software architecture is the process of converting software characteristics such as flexibility, scalability, feasibility, reusability, and security into a structured solution that meets the technical and the business expectations. It is the process of defining a structured solution that meets all the technical and operational requirements, while optimizing common quality attributes such as performance, security, and manageability.

|

||||||

9

data/categories/software-development.md

Normal file

9

data/categories/software-development.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Software Development'

|

||||||

|

description: 'Visual guides to help you understand different aspects of software development including but not limited to software architecture, design patterns, and software development methodologies.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/code.png'

|

||||||

|

sort: 170

|

||||||

|

---

|

||||||

|

|

||||||

|

Software Development is the process of designing, coding, testing, and maintaining software. It is a systematic approach to developing software. Software development is a broad field that includes many different disciplines. Some of the most common disciplines in software development include:

|

||||||

9

data/categories/technical-interviews.md

Normal file

9

data/categories/technical-interviews.md

Normal file

@@ -0,0 +1,9 @@

|

|||||||

|

---

|

||||||

|

title: 'Technical Interviews'

|

||||||

|

description: 'Learn to ace technical interviews with coding challenges, system design questions, and interview tips.'

|

||||||

|

image: 'https://github.com/ByteByteGoHq/system-design-101/raw/main/images/oAuth2.jpg'

|

||||||

|

icon: '/icons/tower.png'

|

||||||

|

sort: 140

|

||||||

|

---

|

||||||

|

|

||||||

|

Technical interviews are a critical part of the hiring process for software engineers. They are designed to test your problem-solving skills, coding abilities, and technical knowledge. In this category, you will find resources to help you prepare for technical interviews, including coding challenges, system design questions, and tips for acing the interview.

|

||||||

44

data/guides/10-books-for-software-developers.md

Normal file

44

data/guides/10-books-for-software-developers.md

Normal file

@@ -0,0 +1,44 @@

|

|||||||

|

---

|

||||||

|

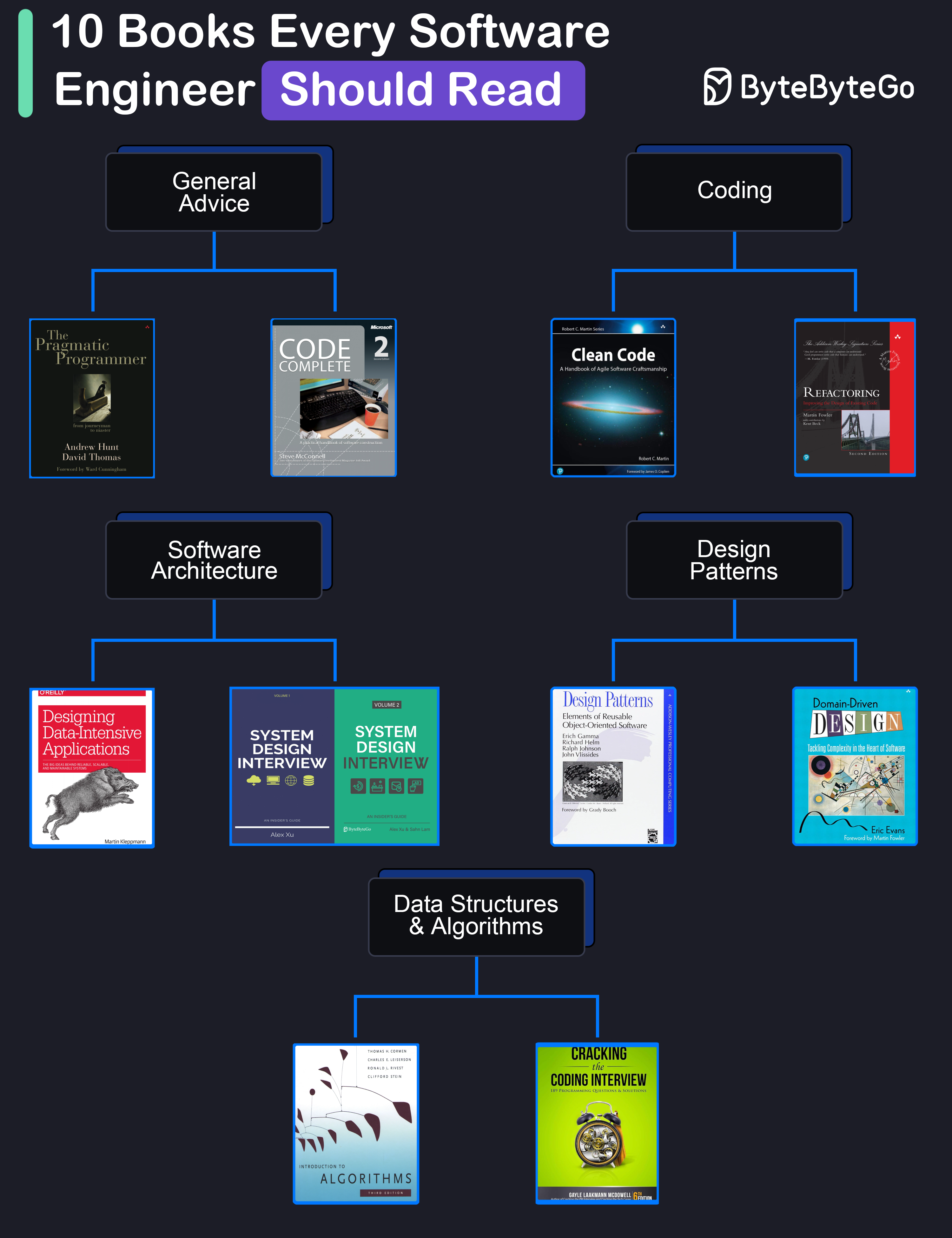

title: "10 Books for Software Developers"

|

||||||

|

description: "A curated list of must-read books for software developers."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0023-10-books-every-software-engineer-should-read.png"

|

||||||

|

createdAt: "2024-02-28"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-development

|

||||||

|

tags:

|

||||||

|

- "Software Development"

|

||||||

|

- "Books"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## General Advice

|

||||||

|

|

||||||

|

* **The Pragmatic Programmer** by Andrew Hunt and David Thomas

|

||||||

|

|

||||||

|

* **Code Complete** by Steve McConnell: Often considered a bible for software developers, this comprehensive book covers all aspects of software development, from design and coding to testing and maintenance.

|

||||||

|

|

||||||

|

## Coding

|

||||||

|

|

||||||

|

* **Clean Code** by Robert C. Martin

|

||||||

|

|

||||||

|

* **Refactoring** by Martin Fowler

|

||||||

|

|

||||||

|

## Software Architecture

|

||||||

|

|

||||||

|

* **Designing Data-Intensive Applications** by Martin Kleppmann

|

||||||

|

|

||||||

|

* **System Design Interview** (our own book :))

|

||||||

|

|

||||||

|

## Design Patterns

|

||||||

|

|

||||||

|

* **Design Patterns** by Eric Gamma and Others

|

||||||

|

|

||||||

|

* **Domain-Driven Design** by Eric Evans

|

||||||

|

|

||||||

|

## Data Structures and Algorithms

|

||||||

|

|

||||||

|

* **Introduction to Algorithms** by Cormen, Leiserson, Rivest, and Stein

|

||||||

|

|

||||||

|

* **Cracking the Coding Interview** by Gayle Laakmann McDowell

|

||||||

@@ -0,0 +1,25 @@

|

|||||||

|

---

|

||||||

|

title: '10 Essential Components of a Production Web Application'

|

||||||

|

description: 'Explore 10 key components for building robust web applications.'

|

||||||

|

image: 'https://assets.bytebytego.com/diagrams/0395-typical-architecture-of-a-web-application.png'

|

||||||

|

createdAt: '2024-02-20'

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- api-web-development

|

||||||

|

tags:

|

||||||

|

- Web Architecture

|

||||||

|

- System Design

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

1. It all starts with CI/CD pipelines that deploy code to the server instances. Tools like Jenkins and GitHub help over here.

|

||||||

|

2. The user requests originate from the web browser. After DNS resolution, the requests reach the app servers.

|

||||||

|

3. Load balancers and reverse proxies (such as Nginx & HAProxy) distribute user requests evenly across the web application servers.

|

||||||

|

4. The requests can also be served by a Content Delivery Network (CDN).

|

||||||

|

5. The web app communicates with backend services via APIs.

|

||||||

|

6. The backend services interact with database servers or distributed caches to provide the data.

|

||||||

|

7. Resource-intensive and long-running tasks are sent to job workers using a job queue.

|

||||||

|

8. The full-text search service supports the search functionality. Tools like Elasticsearch and Apache Solr can help here.

|

||||||

|

9. Monitoring tools (such as Sentry, Grafana, and Prometheus) store logs and help analyze data to ensure everything works fine.

|

||||||

|

10. In case of issues, alerting services notify developers through platforms like Slack for quick resolution.

|

||||||

@@ -0,0 +1,56 @@

|

|||||||

|

---

|

||||||

|

title: "10 Good Coding Principles to Improve Code Quality"

|

||||||

|

description: "Improve code quality with these 10 essential coding principles."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0051-10-good-coding-principles.png"

|

||||||

|

createdAt: "2024-03-15"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-development

|

||||||

|

tags:

|

||||||

|

- "coding practices"

|

||||||

|

- "software quality"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

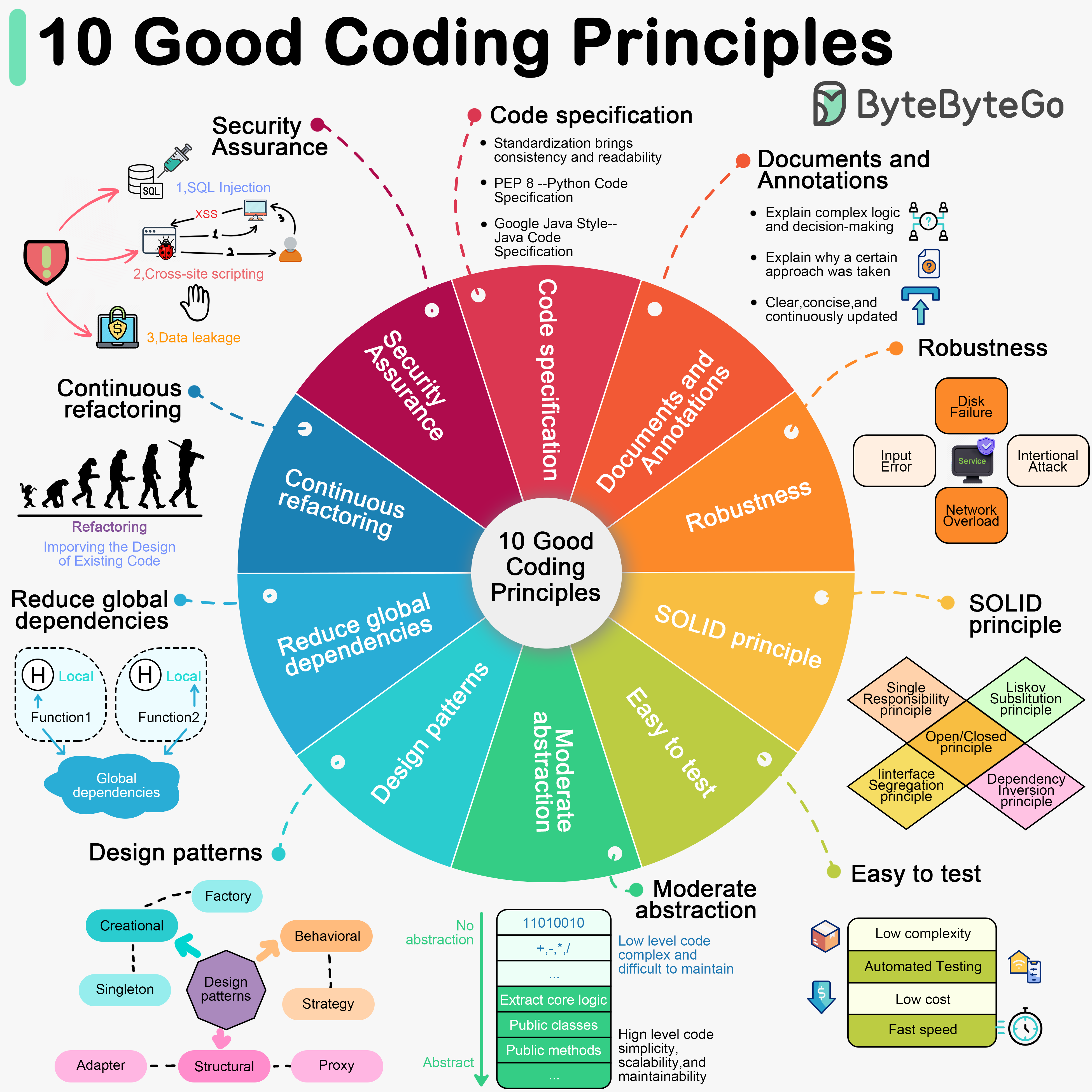

Software development requires good system designs and coding standards. We list 10 good coding principles in the diagram below.

|

||||||

|

|

||||||

|

## 1. Follow Code Specifications

|

||||||

|

|

||||||

|

When we write code, it is important to follow the industry's well-established norms, like “PEP 8”, “Google Java Style”. Adhering to a set of agreed-upon code specifications ensures that the quality of the code is consistent and readable.

|

||||||

|

|

||||||

|

## 2. Documentation and Comments

|

||||||

|

|

||||||

|

Good code should be clearly documented and commented to explain complex logic and decisions. Comments should explain why a certain approach was taken (“Why”) rather than what exactly is being done (“What”). Documentation and comments should be clear, concise, and continuously updated.

|

||||||

|

|

||||||

|

## 3. Robustness

|

||||||

|

|

||||||

|

Good code should be able to handle a variety of unexpected situations and inputs without crashing or producing unpredictable results. Most common approach is to catch and handle exceptions.

|

||||||

|

|

||||||

|

## 4. Follow the SOLID principle

|

||||||

|

|

||||||

|

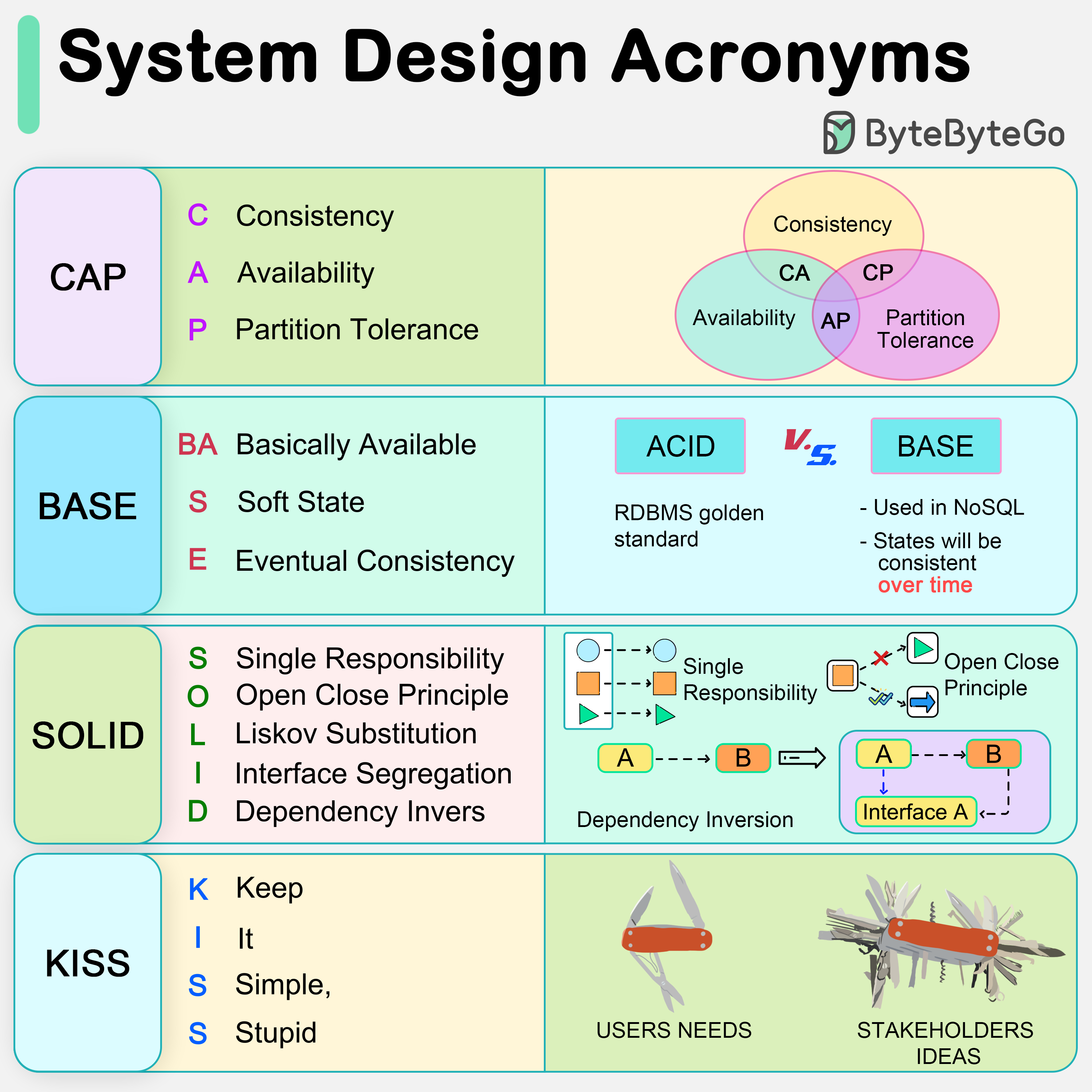

“Single Responsibility”, “Open/Closed”, “Liskov Substitution”, “Interface Segregation”, and “Dependency Inversion” - these five principles (SOLID for short) are the cornerstones of writing code that scales and is easy to maintain.

|

||||||

|

|

||||||

|

## 5. Make Testing Easy

|

||||||

|

|

||||||

|

Testability of software is particularly important. Good code should be easy to test, both by trying to reduce the complexity of each component, and by supporting automated testing to ensure that it behaves as expected.

|

||||||

|

|

||||||

|

## 6. Abstraction

|

||||||

|

|

||||||

|

Abstraction requires us to extract the core logic and hide the complexity, thus making the code more flexible and generic. Good code should have a moderate level of abstraction, neither over-designed nor neglecting long-term expandability and maintainability.

|

||||||

|

|

||||||

|

## 7. Utilize Design Patterns, but don't over-design

|

||||||

|

|

||||||

|

Design patterns can help us solve some common problems. However, every pattern has its applicable scenarios. Overusing or misusing design patterns may make your code more complex and difficult to understand.

|

||||||

|

|

||||||

|

## 8. Reduce Global Dependencies

|

||||||

|

|

||||||

|

We can get bogged down in dependencies and confusing state management if we use global variables and instances. Good code should rely on localized state and parameter passing. Functions should be side-effect free.

|

||||||

|

|

||||||

|

## 9. Continuous Refactoring

|

||||||

|

|

||||||

|

Good code is maintainable and extensible. Continuous refactoring reduces technical debt by identifying and fixing problems as early as possible.

|

||||||

|

|

||||||

|

## 10. Security is a Top Priority

|

||||||

|

|

||||||

|

Good code should avoid common security vulnerabilities.

|

||||||

38

data/guides/10-key-data-structures-we-use-every-day.md

Normal file

38

data/guides/10-key-data-structures-we-use-every-day.md

Normal file

@@ -0,0 +1,38 @@

|

|||||||

|

---

|

||||||

|

title: "10 Key Data Structures We Use Every Day"

|

||||||

|

description: "Explore 10 essential data structures used daily in software development."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0024-10-data-structures-used-in-daily-life.png"

|

||||||

|

createdAt: "2024-03-03"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-development

|

||||||

|

tags:

|

||||||

|

- "Data Structures"

|

||||||

|

- "Algorithms"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

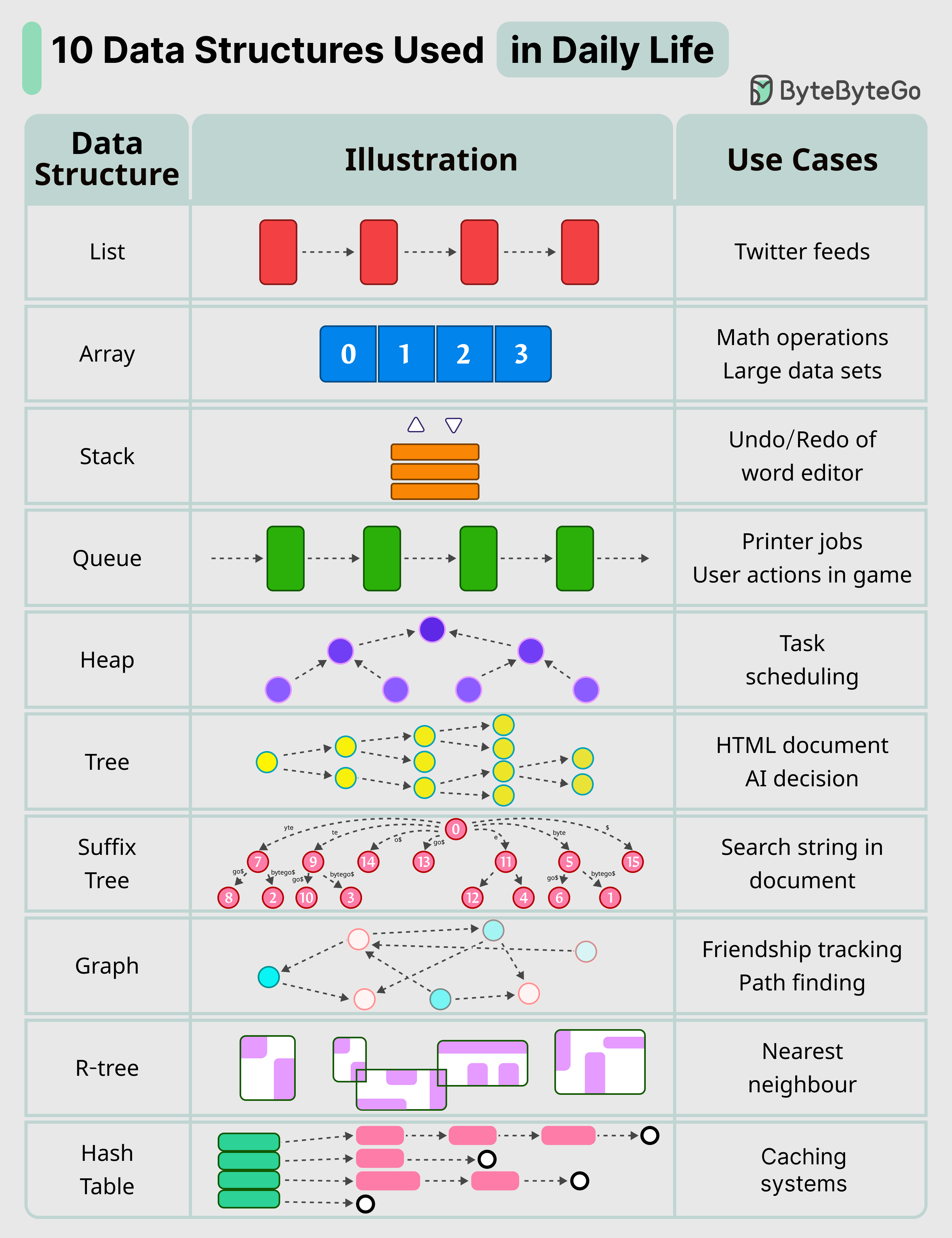

Here are 10 key data structures we use every day:

|

||||||

|

|

||||||

|

* **List**: Keep your Twitter feeds

|

||||||

|

|

||||||

|

* **Stack**: Support undo/redo of the word editor

|

||||||

|

|

||||||

|

* **Queue**: Keep printer jobs, or send user actions in-game

|

||||||

|

|

||||||

|

* **Hash Table**: Caching systems

|

||||||

|

|

||||||

|

* **Array**: Math operations

|

||||||

|

|

||||||

|

* **Heap**: Task scheduling

|

||||||

|

|

||||||

|

* **Tree**: Keep the HTML document, or for AI decision

|

||||||

|

|

||||||

|

* **Suffix Tree**: For searching string in a document

|

||||||

|

|

||||||

|

* **Graph**: For tracking friendship, or path finding

|

||||||

|

|

||||||

|

* **R-Tree**: For finding the nearest neighbor

|

||||||

|

|

||||||

|

* **Vertex Buffer**: For sending data to GPU for rendering

|

||||||

@@ -0,0 +1,56 @@

|

|||||||

|

---

|

||||||

|

title: '10 Principles for Building Resilient Payment Systems'

|

||||||

|

description: '10 principles for building resilient payment systems based on Shopify.'

|

||||||

|

image: 'https://assets.bytebytego.com/diagrams/0336-shopify.png'

|

||||||

|

createdAt: '2024-03-07'

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- real-world-case-studies

|

||||||

|

tags:

|

||||||

|

- payment systems

|

||||||

|

- resilience

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

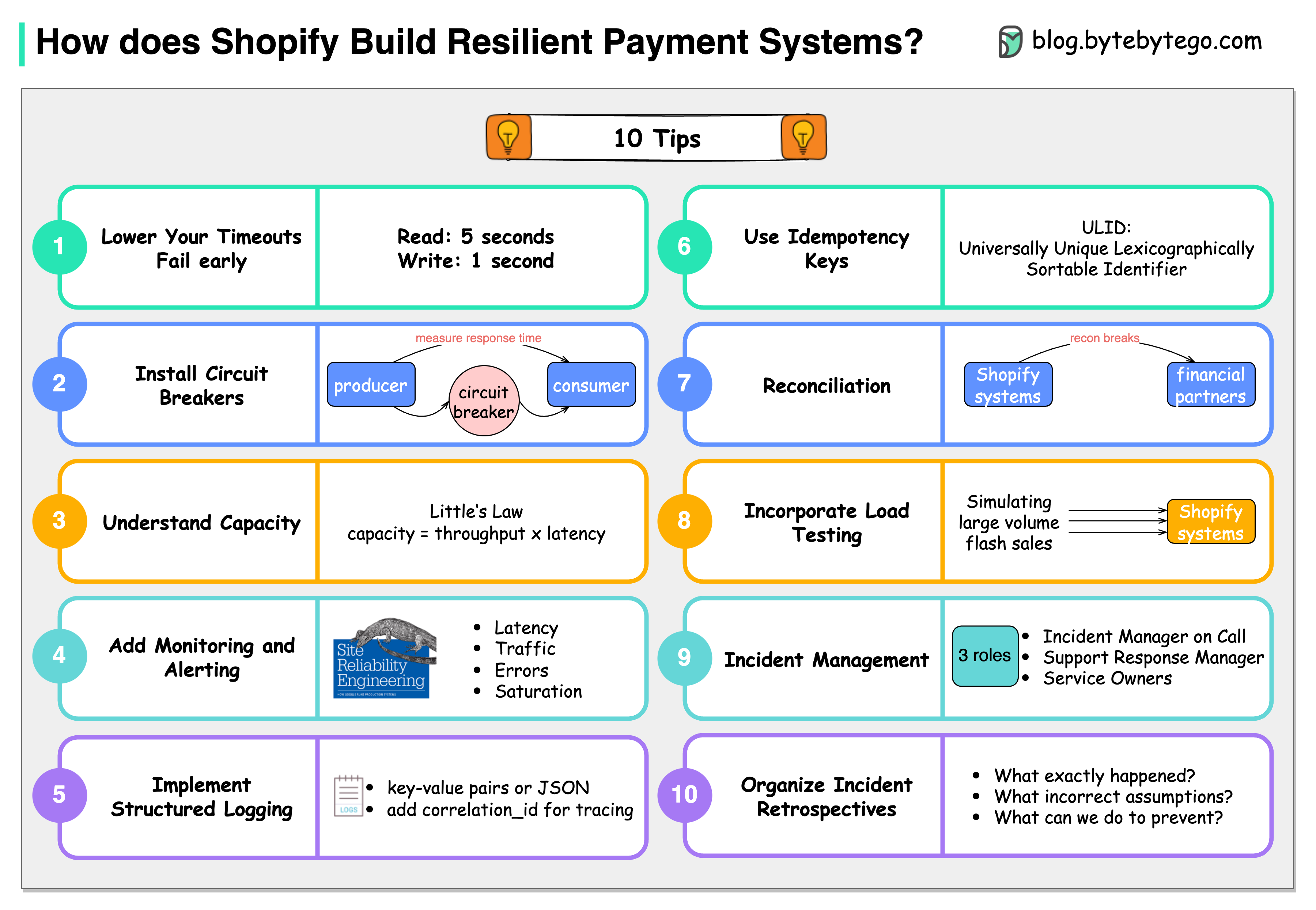

Shopify has some precious tips for building resilient payment systems.

|

||||||

|

|

||||||

|

### Lower the timeouts, and let the service fail early

|

||||||

|

|

||||||

|

The default timeout is 60 seconds. Based on Shopify’s experiences, read timeout of 5 seconds and write timeout of 1 second are decent setups.

|

||||||

|

|

||||||

|

### Install circuit breaks

|

||||||

|

|

||||||

|

Shopify developed Semian to protect Net::HTTP, MySQL, Redis, and gRPC services with a circuit breaker in Ruby.

|

||||||

|

|

||||||

|

### Capacity management

|

||||||

|

|

||||||

|

If we have 50 requests arrive in our queue and it takes an average of 100 milliseconds to process a request, our throughput is 500 requests per second.

|

||||||

|

|

||||||

|

### Add monitoring and alerting

|

||||||

|

|

||||||

|

Google’s site reliability engineering (SRE) book lists four golden signals a user-facing system should be monitored for: latency, traffic, errors, and saturation.

|

||||||

|

|

||||||

|

### Implement structured logging

|

||||||

|

|

||||||

|

We store logs in a centralized place and make them easily searchable.

|

||||||

|

|

||||||

|

### Use idempotency keys

|

||||||

|

|

||||||

|

Use the Universally Unique Lexicographically Sortable Identifier (ULID) for these idempotency keys instead of a random version 4 UUID.

|

||||||

|

|

||||||

|

### Be consistent with reconciliation

|

||||||

|

|

||||||

|

Store the reconciliation breaks with Shopify’s financial partners in the database.

|

||||||

|

|

||||||

|

### Incorporate load testing

|

||||||

|

|

||||||

|

Shopify regularly simulates the large volume flash sales to get the benchmark results.

|

||||||

|

|

||||||

|

### Get on top of incident management

|

||||||

|

|

||||||

|

Each incident channel has 3 roles: Incident Manager on Call (IMOC), Support Response Manager (SRM), and service owners.

|

||||||

|

|

||||||

|

### Organize incident retrospectives

|

||||||

|

|

||||||

|

For each incident, 3 questions are asked at Shopify: What exactly happened? What incorrect assumptions did we hold about our systems? What we can do to prevent this from happening?

|

||||||

76

data/guides/10-system-design-tradeoffs-you-cannot-ignore.md

Normal file

76

data/guides/10-system-design-tradeoffs-you-cannot-ignore.md

Normal file

@@ -0,0 +1,76 @@

|

|||||||

|

---

|

||||||

|

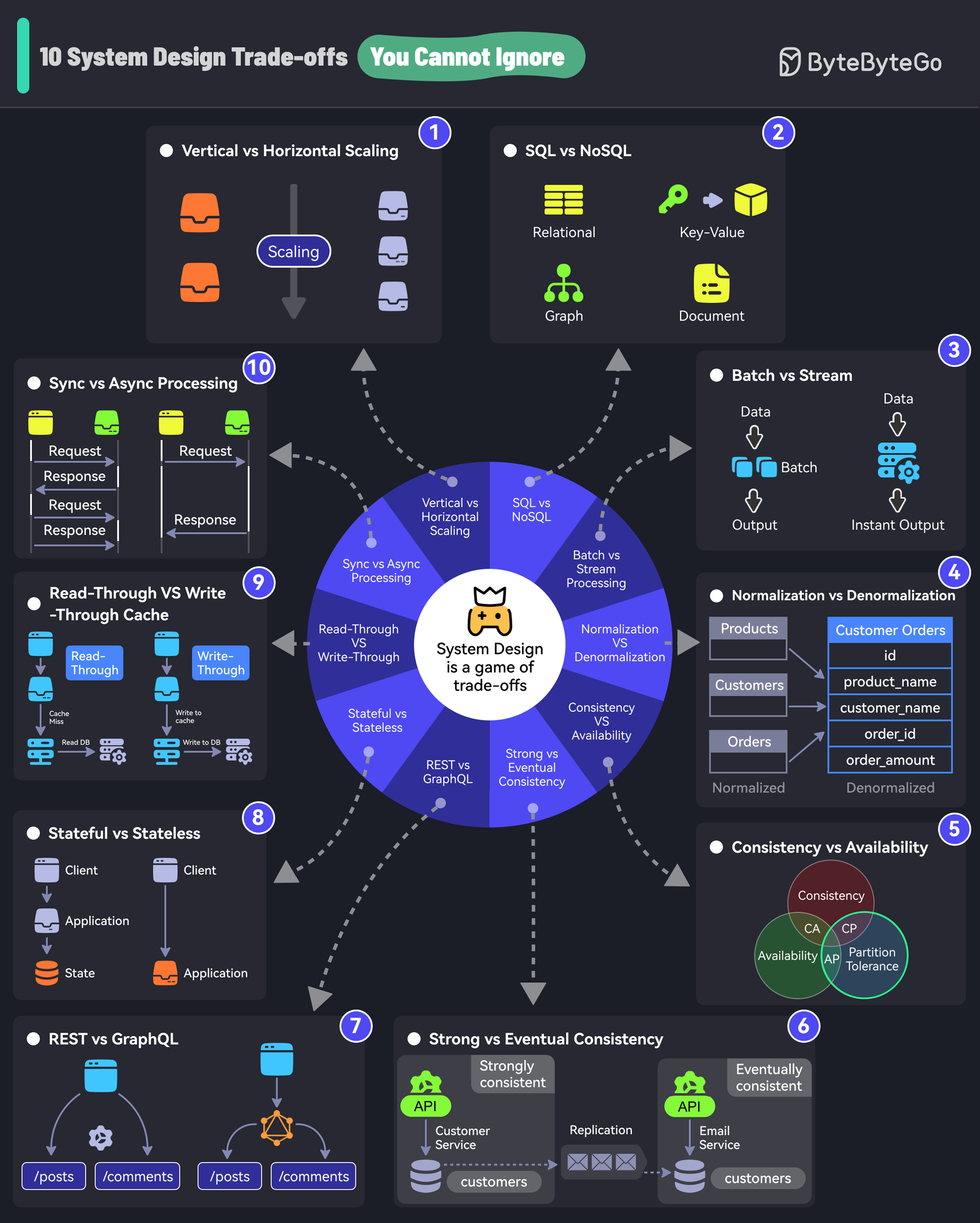

title: "10 System Design Tradeoffs You Cannot Ignore"

|

||||||

|

description: "Explore 10 crucial system design tradeoffs for robust architecture."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0026-10-system-design-trade-offs-you-cannot-ignore.png"

|

||||||

|

createdAt: "2024-03-03"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-architecture

|

||||||

|

tags:

|

||||||

|

- System Design

|

||||||

|

- Tradeoffs

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

If you don’t know trade-offs, you DON'T KNOW system design.

|

||||||

|

|

||||||

|

## 1. Vertical vs Horizontal Scaling

|

||||||

|

|

||||||

|

Vertical scaling is adding more resources (CPU, RAM) to an existing server.

|

||||||

|

|

||||||

|

Horizontal scaling means adding more servers to the pool.

|

||||||

|

|

||||||

|

## 2. SQL vs NoSQL

|

||||||

|

|

||||||

|

SQL databases organize data into tables of rows and columns.

|

||||||

|

|

||||||

|

NoSQL is ideal for applications that need a flexible schema.

|

||||||

|

|

||||||

|

## 3. Batch vs Stream Processing

|

||||||

|

|

||||||

|

Batch processing involves collecting data and processing it all at once. For example, daily billing processes.

|

||||||

|

|

||||||

|

Stream processing processes data in real time. For example, fraud detection processes.

|

||||||

|

|

||||||

|

## 4. Normalization vs Denormalization

|

||||||

|

|

||||||

|

Normalization splits data into related tables to ensure that each piece of information is stored only once.

|

||||||

|

|

||||||

|

Denormalization combines data into fewer tables for better query performance.

|

||||||

|

|

||||||

|

## 5. Consistency vs Availability

|

||||||

|

|

||||||

|

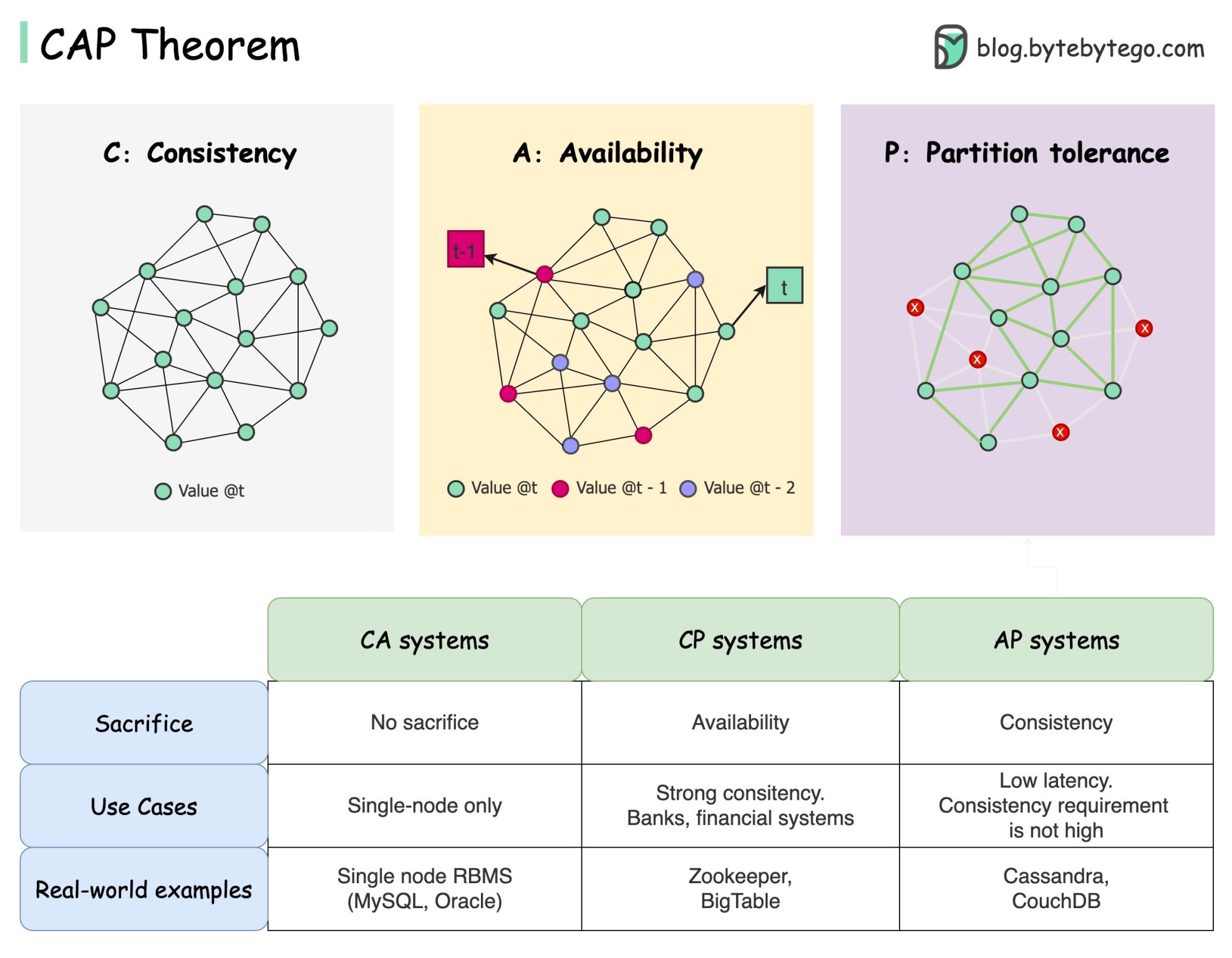

Consistency is the assurance of getting the most recent data every single time.

|

||||||

|

|

||||||

|

Availability is about ensuring that the system is always up and running, even if some parts are having problems.

|

||||||

|

|

||||||

|

## 6. Strong vs Eventual Consistency

|

||||||

|

|

||||||

|

Strong consistency is when data updates are immediately reflected.

|

||||||

|

|

||||||

|

Eventual consistency is when data updates are delayed before being available across nodes.

|

||||||

|

|

||||||

|

## 7. REST vs GraphQL

|

||||||

|

|

||||||

|

With REST endpoints, you gather data by accessing multiple endpoints.

|

||||||

|

|

||||||

|

With GraphQL, you get more efficient data fetching with specific queries but the design cost is higher.

|

||||||

|

|

||||||

|

## 8. Stateful vs Stateless

|

||||||

|

|

||||||

|

A stateful system remembers past interactions.

|

||||||

|

|

||||||

|

A stateless system does not keep track of past interactions.

|

||||||

|

|

||||||

|

## 9. Read-Through vs Write-Through Cache

|

||||||

|

|

||||||

|

A read-through cache loads data from the database in case of a cache miss.

|

||||||

|

|

||||||

|

A write-through cache simultaneously writes data updates to the cache and storage.

|

||||||

|

|

||||||

|

## 10. Sync vs Async Processing

|

||||||

|

|

||||||

|

In synchronous processing, tasks are performed one after another.

|

||||||

|

|

||||||

|

In asynchronous processing, tasks can run in the background. New tasks can be started without waiting for a new task.

|

||||||

44

data/guides/100x-postgres-scaling-at-figma.md

Normal file

44

data/guides/100x-postgres-scaling-at-figma.md

Normal file

@@ -0,0 +1,44 @@

|

|||||||

|

---

|

||||||

|

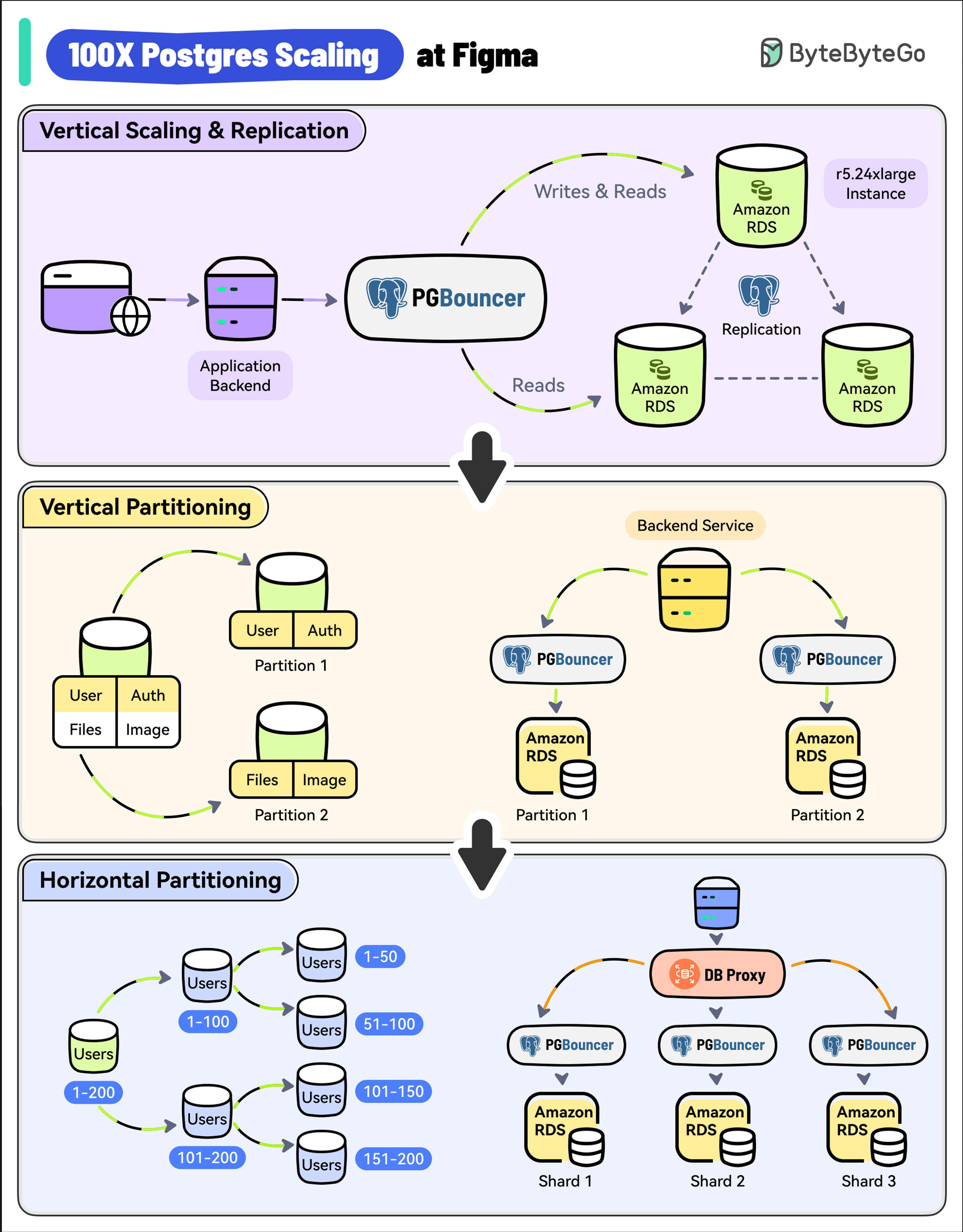

title: '100X Postgres Scaling at Figma'

|

||||||

|

description: 'Learn how Figma scaled its Postgres database by 100x.'

|

||||||

|

image: 'https://assets.bytebytego.com/diagrams/0048-100x-postgres-scaling-at-figma.png'

|

||||||

|

createdAt: '2024-02-12'

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- real-world-case-studies

|

||||||

|

tags:

|

||||||

|

- Postgres

|

||||||

|

- Scaling

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

With 3 million monthly users, Figma’s user base has increased by 200% since 2018.

|

||||||

|

|

||||||

|

As a result, its Postgres database witnessed a whopping 100X growth.

|

||||||

|

|

||||||

|

* **Vertical Scaling and Replication**

|

||||||

|

|

||||||

|

Figma used a single, large Amazon RDS database.

|

||||||

|

|

||||||

|

As a first step, they upgraded to the largest instance available (from r5.12xlarge to r5.24xlarge).

|

||||||

|

|

||||||

|

They also created multiple read replicas to scale read traffic and added PgBouncer as a connection pooler to limit the impact of a growing number of connections.

|

||||||

|

|

||||||

|

* **Vertical Partitioning**

|

||||||

|

|

||||||

|

The next step was vertical partitioning.

|

||||||

|

|

||||||

|

They migrated high-traffic tables like “Figma Files” and “Organizations” into their separate databases.

|

||||||

|

|

||||||

|

Multiple PgBouncer instances were used to manage the connections for these separate databases.

|

||||||

|

|

||||||

|

* **Horizontal Partitioning**

|

||||||

|

|

||||||

|

Over time, some tables crossed several terabytes of data and billions of rows.

|

||||||

|

|

||||||

|

Postgres Vacuum became an issue and max IOPS exceeded the limits of Amazon RDS at the time.

|

||||||

|

|

||||||

|

To solve this, Figma implemented horizontal partitioning by splitting large tables across multiple physical databases.

|

||||||

|

|

||||||

|

A new DBProxy service was built to handle routing and query execution.

|

||||||

@@ -0,0 +1,58 @@

|

|||||||

|

---

|

||||||

|

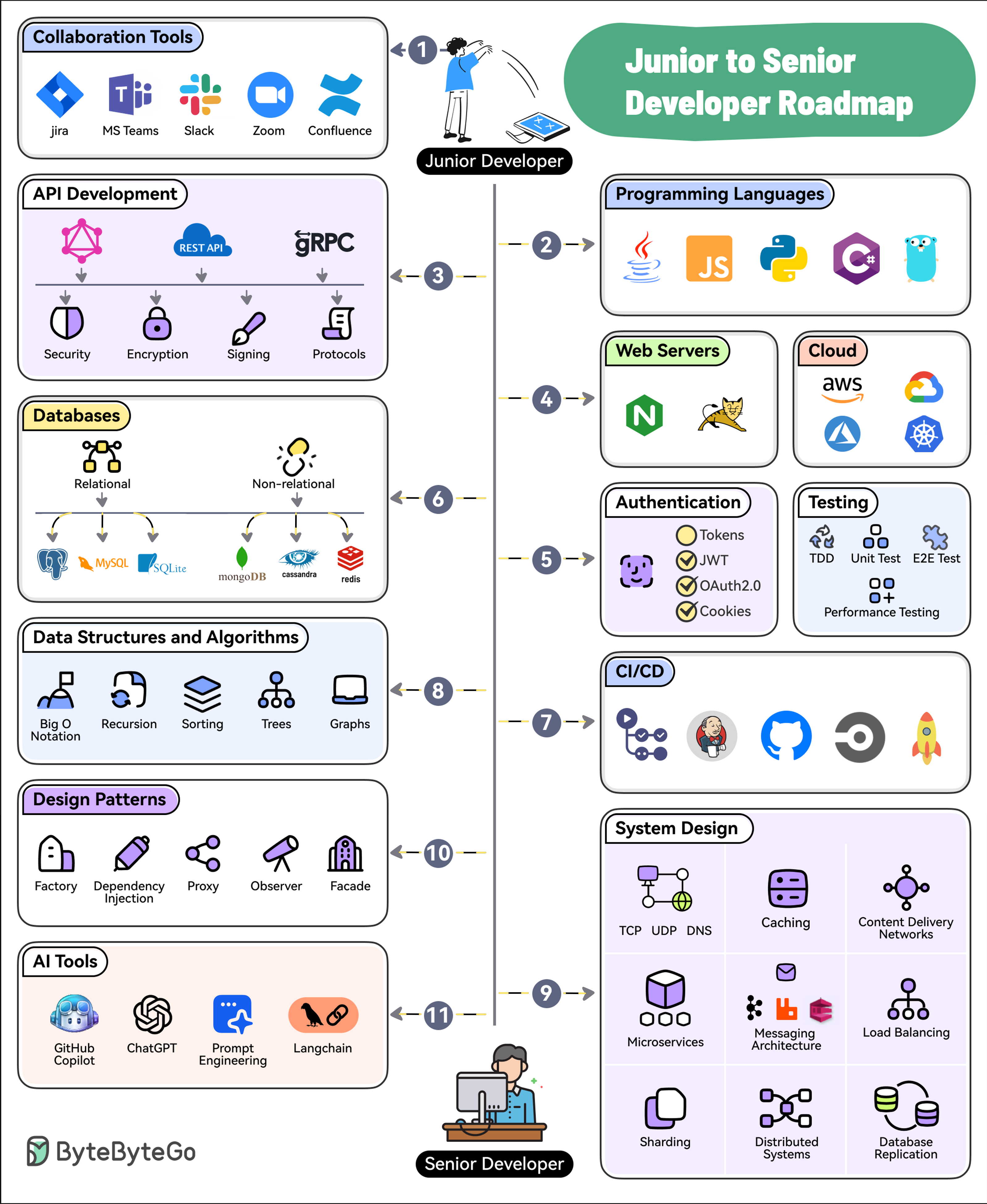

title: "11 Steps to Go From Junior to Senior Developer"

|

||||||

|

description: "Roadmap with steps to transition from junior to senior developer."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0243-junior-to-senior-developer-roadmap.png"

|

||||||

|

createdAt: "2024-03-13"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-development

|

||||||

|

tags:

|

||||||

|

- career-growth

|

||||||

|

- software-engineering

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## 1. Collaboration Tools

|

||||||

|

|

||||||

|

Software development is a social activity. Learn to use collaboration tools like Jira, Confluence, Slack, MS Teams, Zoom, etc.

|

||||||

|

|

||||||

|

## 2. Programming Languages

|

||||||

|

|

||||||

|

Pick and master one or two programming languages. Choose from options like Java, Python, JavaScript, C#, Go, etc.

|

||||||

|

|

||||||

|

## 3. API Development

|

||||||

|

|

||||||

|

Learn the ins and outs of API Development approaches such as REST, GraphQL, and gRPC.

|

||||||

|

|

||||||

|

## 4. Web Servers and Hosting

|

||||||

|

|

||||||

|

Know about web servers as well as cloud platforms like AWS, Azure, GCP, and Kubernetes

|

||||||

|

|

||||||

|

## 5. Authentication and Testing

|

||||||

|

|

||||||

|

Learn how to secure your applications with authentication techniques such as JWTs, OAuth2, etc. Also, master testing techniques like TDD, E2E Testing, and Performance Testing

|

||||||

|

|

||||||

|

## 6. Databases

|

||||||

|

|

||||||

|

Learn to work with relational (Postgres, MySQL, and SQLite) and non-relational databases (MongoDB, Cassandra, and Redis).

|

||||||

|

|

||||||

|

## 7. CI/CD

|

||||||

|

|

||||||

|

Pick tools like GitHub Actions, Jenkins, or CircleCI to learn about continuous integration and continuous delivery.

|

||||||

|

|

||||||

|

## 8. Data Structures and Algorithms

|

||||||

|

|

||||||

|

Master the basics of DSA with topics like Big O Notation, Sorting, Trees, and Graphs.

|

||||||

|

|

||||||

|

## 9. System Design

|

||||||

|

|

||||||

|

Learn System Design concepts such as Networking, Caching, CDNs, Microservices, Messaging, Load Balancing, Replication, Distributed Systems, etc.

|

||||||

|

|

||||||

|

## 10. Design patterns

|

||||||

|

|

||||||

|

Master the application of design patterns such as dependency injection, factory, proxy, observers, and facade.

|

||||||

|

|

||||||

|

## 11. AI Tools

|

||||||

|

|

||||||

|

To future-proof your career, learn to leverage AI tools like GitHub Copilot, ChatGPT, Langchain, and Prompt Engineering.

|

||||||

@@ -0,0 +1,56 @@

|

|||||||

|

---

|

||||||

|

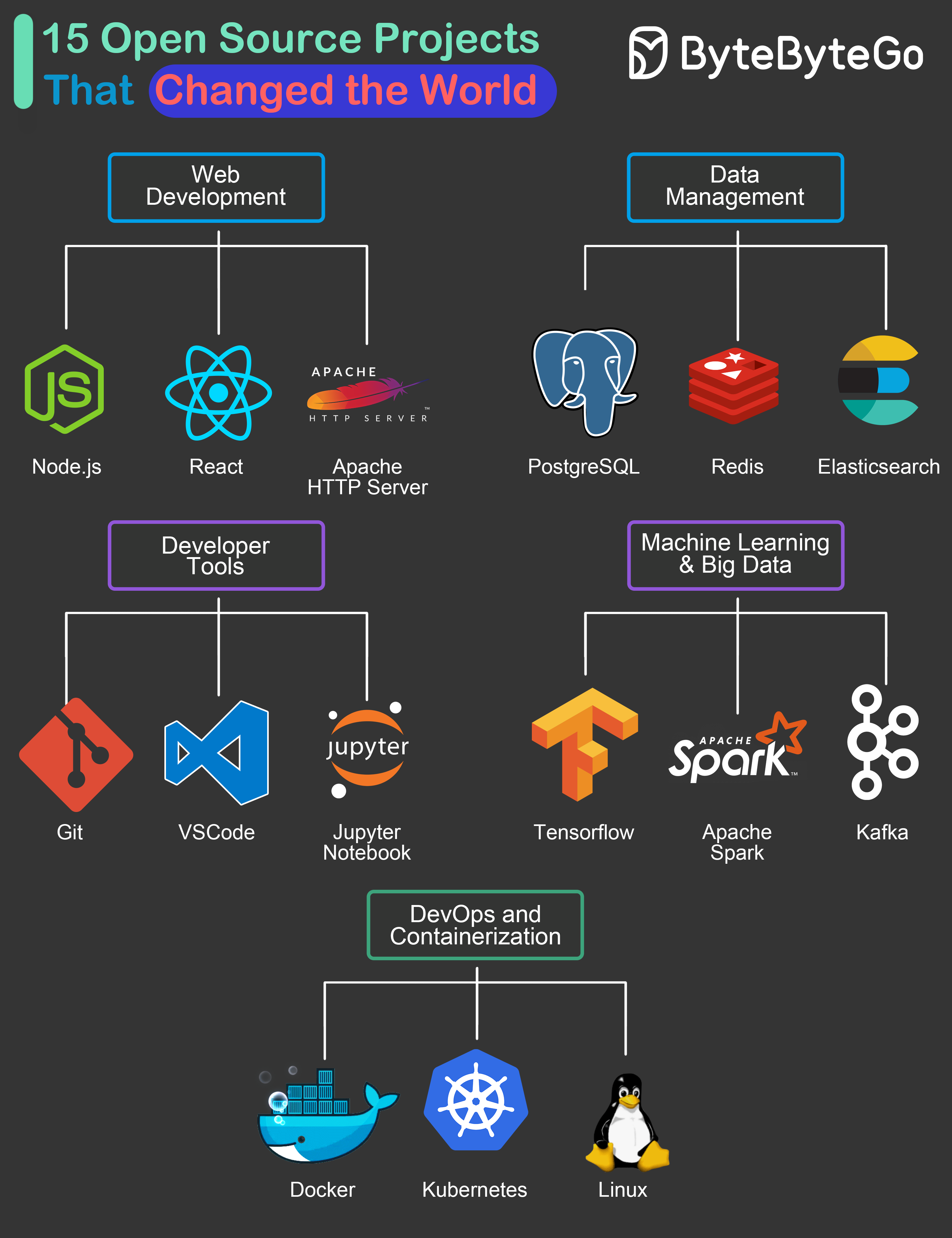

title: "15 Open-Source Projects That Changed the World"

|

||||||

|

description: "Explore 15 open-source projects that revolutionized software development."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0029-15-open-source-projects-that-changed-the-world.png"

|

||||||

|

createdAt: "2024-03-10"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- devtools-productivity

|

||||||

|

tags:

|

||||||

|

- "Open Source"

|

||||||

|

- "Software Development"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

To come up with the list, we tried to look at the overall impact these projects have created on the industry and related technologies. Also, we’ve focused on projects that have led to a big change in the day-to-day lives of many software developers across the world.

|

||||||

|

|

||||||

|

## Web Development

|

||||||

|

|

||||||

|

* **Node.js:** The cross-platform server-side Javascript runtime that brought JS to server-side development

|

||||||

|

|

||||||

|

* **React:** The library that became the foundation of many web development frameworks.

|

||||||

|

|

||||||

|

* **Apache HTTP Server:** The highly versatile web server loved by enterprises and startups alike. Served as inspiration for many other web servers over the years.

|

||||||

|

|

||||||

|

## Data Management

|

||||||

|

|

||||||

|

* **PostgreSQL:** An open-source relational database management system that provided a high-quality alternative to costly systems

|

||||||

|

|

||||||

|

* **Redis:** The super versatile data store that can be used a cache, message broker and even general-purpose storage

|

||||||

|

|

||||||

|

* **Elasticsearch:** A scale solution to search, analyze and visualize large volumes of data

|

||||||

|

|

||||||

|

## Developer Tools

|

||||||

|

|

||||||

|

* **Git:** Free and open-source version control tool that allows developer collaboration across the globe.

|

||||||

|

|

||||||

|

* **VSCode:** One of the most popular source code editors in the world

|

||||||

|

|

||||||

|

* **Jupyter Notebook:** The web application that lets developers share live code, equations, visualizations and narrative text.

|

||||||

|

|

||||||

|

## Machine Learning & Big Data

|

||||||

|

|

||||||

|

* **Tensorflow:** The leading choice to leverage machine learning techniques

|

||||||

|

|

||||||

|

* **Apache Spark:** Standard tool for big data processing and analytics platforms

|

||||||

|

|

||||||

|

* **Kafka:** Standard platform for building real-time data pipelines and applications.

|

||||||

|

|

||||||

|

## DevOps & Containerization

|

||||||

|

|

||||||

|

* **Docker:** The open source solution that allows developers to package and deploy applications in a consistent and portable way.

|

||||||

|

|

||||||

|

* **Kubernetes:** The heart of Cloud-Native architecture and a platform to manage multiple containers

|

||||||

|

|

||||||

|

* **Linux:** The OS that democratized the world of software development.

|

||||||

33

data/guides/18-common-ports-worth-knowing.md

Normal file

33

data/guides/18-common-ports-worth-knowing.md

Normal file

@@ -0,0 +1,33 @@

|

|||||||

|

---

|

||||||

|

title: 18 Common Ports Worth Knowing

|

||||||

|

description: Learn about 18 common network ports and their uses.

|

||||||

|

image: 'https://assets.bytebytego.com/diagrams/0030-18-common-ports-you-must-know.png'

|

||||||

|

createdAt: '2024-02-05'

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- api-web-development

|

||||||

|

tags:

|

||||||

|

- Networking

|

||||||

|

- Ports

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

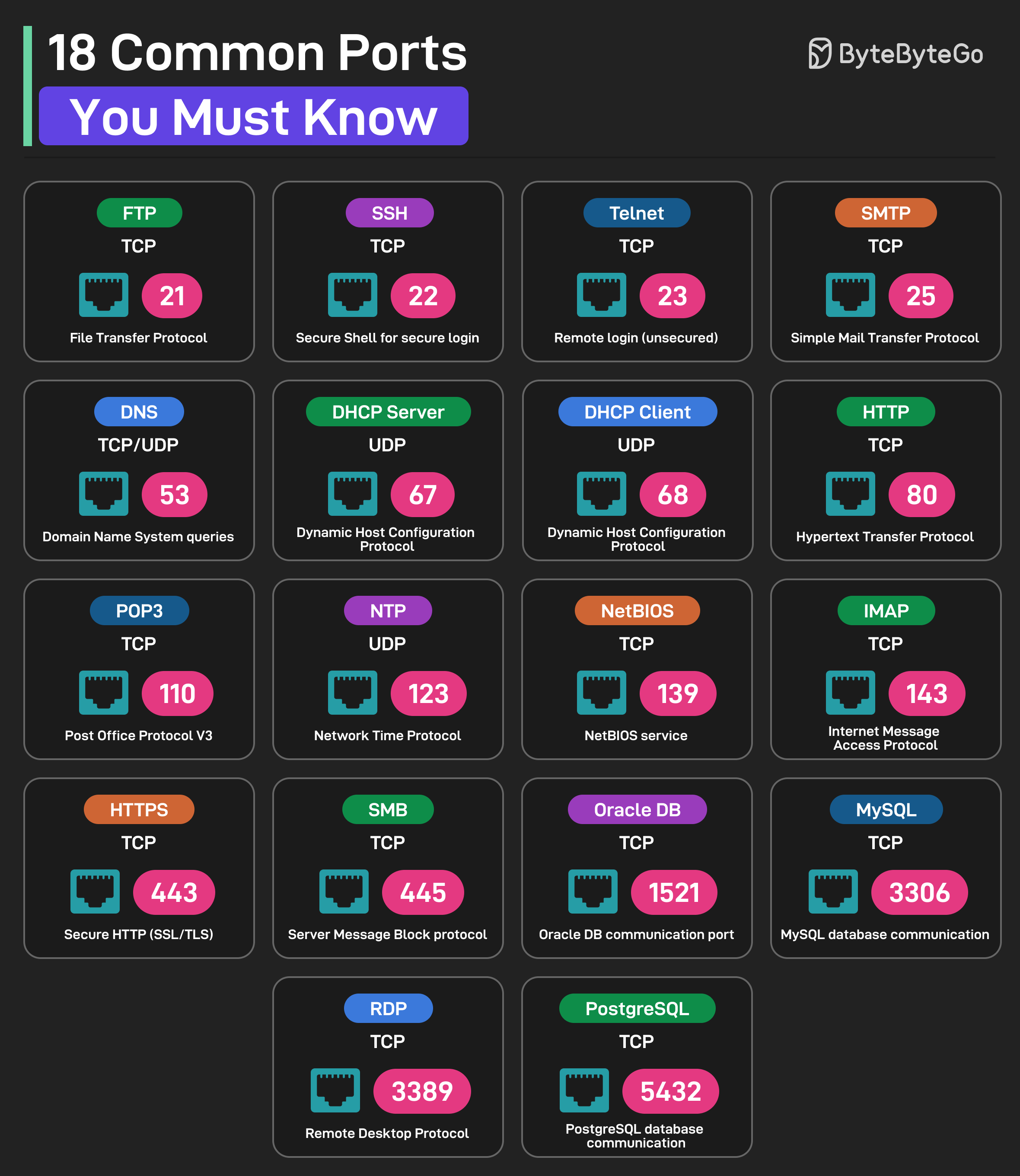

* **FTP (File Transfer Protocol):** Uses TCP Port 21

|

||||||

|

* **SSH (Secure Shell for Login):** Uses TCP Port 22

|

||||||

|

* **Telnet:** Uses TCP Port 23 for remote login

|

||||||

|

* **SMTP (Simple Mail Transfer Protocol):** Uses TCP Port 25

|

||||||

|

* **DNS:** Uses UDP or TCP on Port 53 for DNS queries

|

||||||

|

* **DHCP Server:** Uses UDP Port 67

|

||||||

|

* **DHCP Client:** Uses UDP Port 68

|

||||||

|

* **HTTP (Hypertext Transfer Protocol):** Uses TCP Port 80

|

||||||

|

* **POP3 (Post Office Protocol V3):** Uses TCP Port 110

|

||||||

|

* **NTP (Network Time Protocol):** Uses UDP Port 123

|

||||||

|

* **NetBIOS:** Uses TCP Port 139 for NetBIOS service

|

||||||

|

* **IMAP (Internet Message Access Protocol):** Uses TCP Port 143

|

||||||

|

* **HTTPS (Secure HTTP):** Uses TCP Port 443

|

||||||

|

* **SMB (Server Message Block):** Uses TCP Port 445

|

||||||

|

* **Oracle DB:** Uses TCP Port 1521 for Oracle database communication port

|

||||||

|

* **MySQL:** Uses TCP Port 3306 for MySQL database communication port

|

||||||

|

* **RDP:** Uses TCP Port 3389 for Remote Desktop Protocol

|

||||||

|

* **PostgreSQL:** Uses TCP Port 5432 for PostgreSQL database communication

|

||||||

@@ -0,0 +1,52 @@

|

|||||||

|

---

|

||||||

|

title: "18 Key Design Patterns Every Developer Should Know"

|

||||||

|

description: "Explore 18 essential design patterns for efficient software development."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0032-oo-patterns-you-should-know.png"

|

||||||

|

createdAt: "2024-03-02"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- software-architecture

|

||||||

|

tags:

|

||||||

|

- "design patterns"

|

||||||

|

- "software development"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Patterns are reusable solutions to common design problems, resulting in a smoother, more efficient development process. They serve as blueprints for building better software structures. These are some of the most popular patterns:

|

||||||

|

|

||||||

|

* **Abstract Factory:** Family Creator - Makes groups of related items.

|

||||||

|

|

||||||

|

* **Builder:** Lego Master - Builds objects step by step, keeping creation and appearance separate.

|

||||||

|

|

||||||

|

* **Prototype:** Clone Maker - Creates copies of fully prepared examples.

|

||||||

|

|

||||||

|

* **Singleton:** One and Only - A special class with just one instance.

|

||||||

|

|

||||||

|

* **Adapter:** Universal Plug - Connects things with different interfaces.

|

||||||

|

|

||||||

|

* **Bridge:** Function Connector - Links how an object works to what it does.

|

||||||

|

|

||||||

|

* **Composite:** Tree Builder - Forms tree-like structures of simple and complex parts.

|

||||||

|

|

||||||

|

* **Decorator:** Customizer - Adds features to objects without changing their core.

|

||||||

|

|

||||||

|

* **Facade:** One-Stop-Shop - Represents a whole system with a single, simplified interface.

|

||||||

|

|

||||||

|

* **Flyweight:** Space Saver - Shares small, reusable items efficiently.

|

||||||

|

|

||||||

|

* **Proxy:** Stand-In Actor - Represents another object, controlling access or actions.

|

||||||

|

|

||||||

|

* **Chain of Responsibility:** Request Relay - Passes a request through a chain of objects until handled.

|

||||||

|

|

||||||

|

* **Command:** Task Wrapper - Turns a request into an object, ready for action.

|

||||||

|

|

||||||

|

* **Iterator:** Collection Explorer - Accesses elements in a collection one by one.

|

||||||

|

|

||||||

|

* **Mediator:** Communication Hub - Simplifies interactions between different classes.

|

||||||

|

|

||||||

|

* **Memento:** Time Capsule - Captures and restores an object's state.

|

||||||

|

|

||||||

|

* **Observer:** News Broadcaster - Notifies classes about changes in other objects.

|

||||||

|

|

||||||

|

* **Visitor:** Skillful Guest - Adds new operations to a class without altering it.

|

||||||

32

data/guides/2-decades-of-cloud-evolution.md

Normal file

32

data/guides/2-decades-of-cloud-evolution.md

Normal file

@@ -0,0 +1,32 @@

|

|||||||

|

---

|

||||||

|

title: "2 Decades of Cloud Evolution"

|

||||||

|

description: "Explore the evolution of cloud computing over the past two decades."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0147-cloud-evolution.png"

|

||||||

|

createdAt: "2024-03-02"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- cloud-distributed-systems

|

||||||

|

tags:

|

||||||

|

- "Cloud Computing"

|

||||||

|

- "Cloud Evolution"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

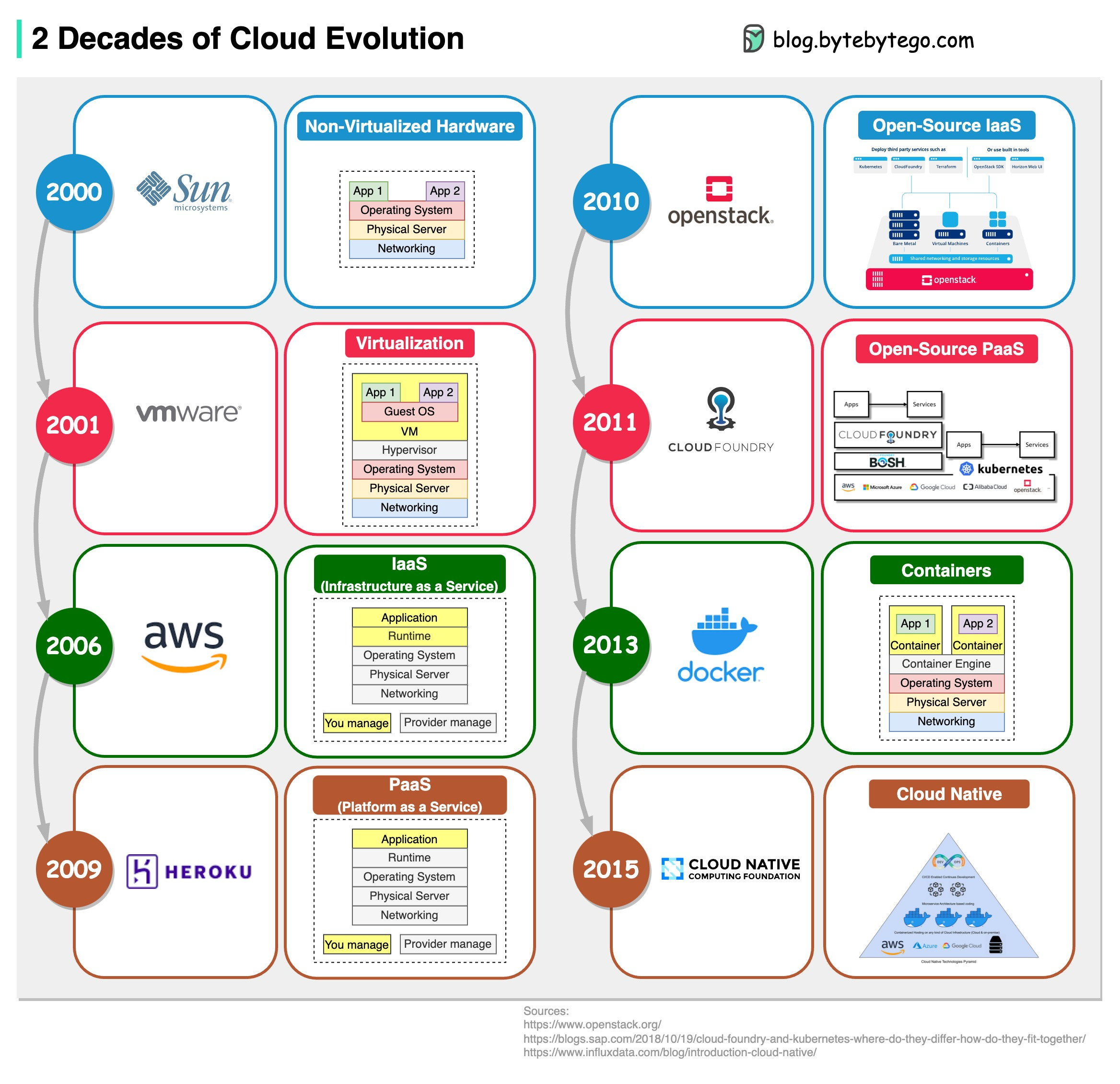

IaaS, PaaS, Cloud Native… How do we get here? The diagram below shows two decades of cloud evolution.

|

||||||

|

|

||||||

|

## Cloud Evolution Timeline

|

||||||

|

|

||||||

|

* 2001 - VMWare - Virtualization via hypervisor

|

||||||

|

|

||||||

|

* 2006 - AWS - IaaS (Infrastructure as a Service)

|

||||||

|

|

||||||

|

* 2009 - Heroku - PaaS (Platform as a Service)

|

||||||

|

|

||||||

|

* 2010 - OpenStack - Open-source IaaS

|

||||||

|

|

||||||

|

* 2011 - CloudFoundry - Open-source PaaS

|

||||||

|

|

||||||

|

* 2013 - Docker - Containers

|

||||||

|

|

||||||

|

* 2015 - CNCF (Cloud Native Computing Foundation) - Cloud Native

|

||||||

@@ -0,0 +1,52 @@

|

|||||||

|

---

|

||||||

|

title: "20 Popular Open Source Projects Started by Big Companies"

|

||||||

|

description: "Explore 20 popular open source projects backed by major tech companies."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0034-20-popular-open-source-projects-by-big-tech.png"

|

||||||

|

createdAt: "2024-03-11"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- devtools-productivity

|

||||||

|

tags:

|

||||||

|

- "Open Source"

|

||||||

|

- "Technology"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

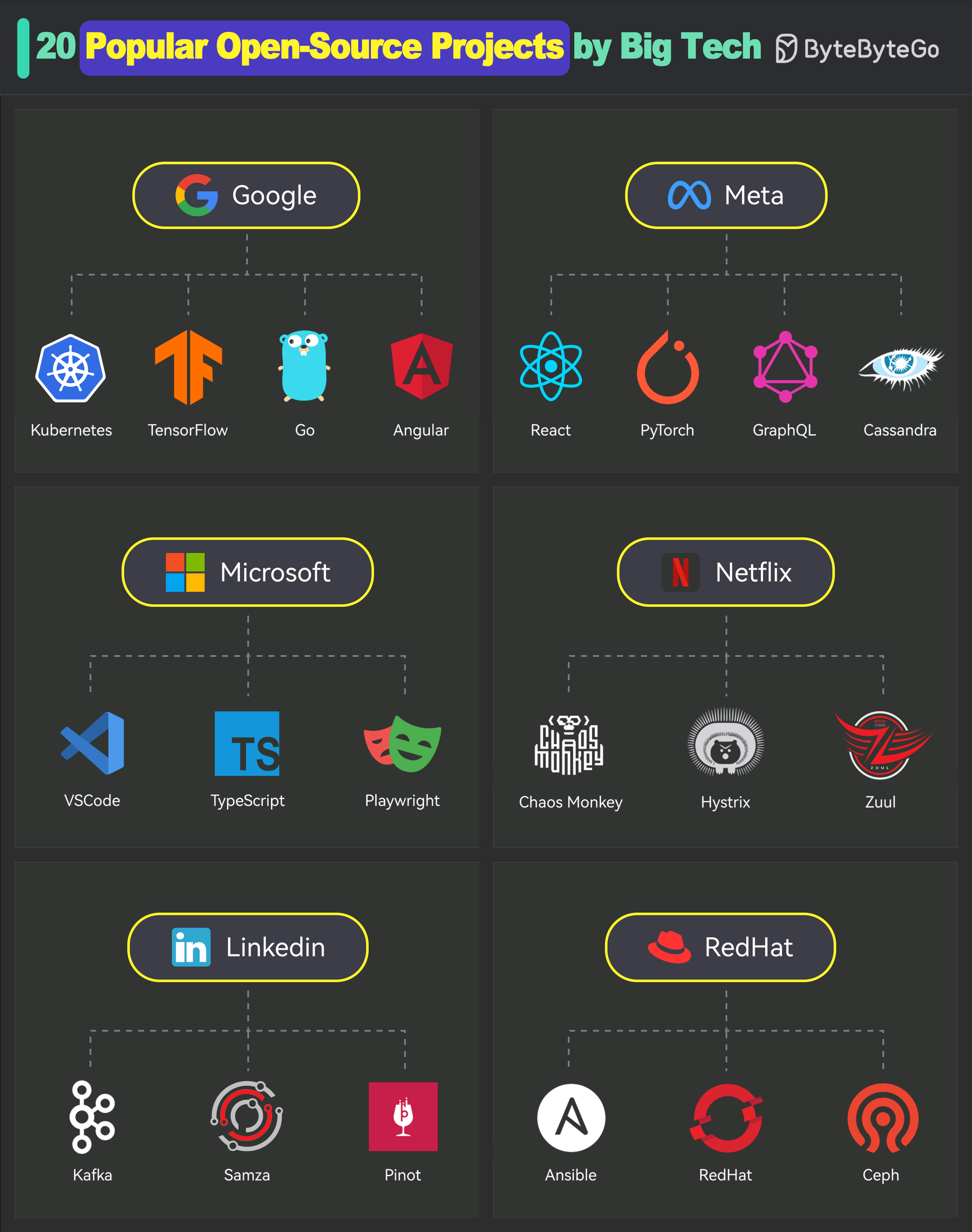

## 1. Google

|

||||||

|

|

||||||

|

* Kubernetes

|

||||||

|

* TensorFlow

|

||||||

|

* Go

|

||||||

|

* Angular

|

||||||

|

|

||||||

|

## 2. Meta

|

||||||

|

|

||||||

|

* React

|

||||||

|

* PyTorch

|

||||||

|

* GraphQL

|

||||||

|

* Cassandra

|

||||||

|

|

||||||

|

## 3. Microsoft

|

||||||

|

|

||||||

|

* VSCode

|

||||||

|

* TypeScript

|

||||||

|

* Playwright

|

||||||

|

|

||||||

|

## 4. Netflix

|

||||||

|

|

||||||

|

* Chaos Monkey

|

||||||

|

* Hystrix

|

||||||

|

* Zuul

|

||||||

|

|

||||||

|

## 5. LinkedIn

|

||||||

|

|

||||||

|

* Kafka

|

||||||

|

* Samza

|

||||||

|

* Pinot

|

||||||

|

|

||||||

|

## 6. RedHat

|

||||||

|

|

||||||

|

* Ansible

|

||||||

|

* OpenShift

|

||||||

|

* Ceph Storage

|

||||||

@@ -0,0 +1,66 @@

|

|||||||

|

---

|

||||||

|

title: "25 Papers That Completely Transformed the Computer World"

|

||||||

|

description: "A curated list of influential papers that shaped the computer world."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0419-25-papers-that-completely-transformed-the-computer-world.png"

|

||||||

|

createdAt: "2024-02-09"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- cloud-distributed-systems

|

||||||

|

tags:

|

||||||

|

- "Distributed Systems"

|

||||||

|

- "Computer Science"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

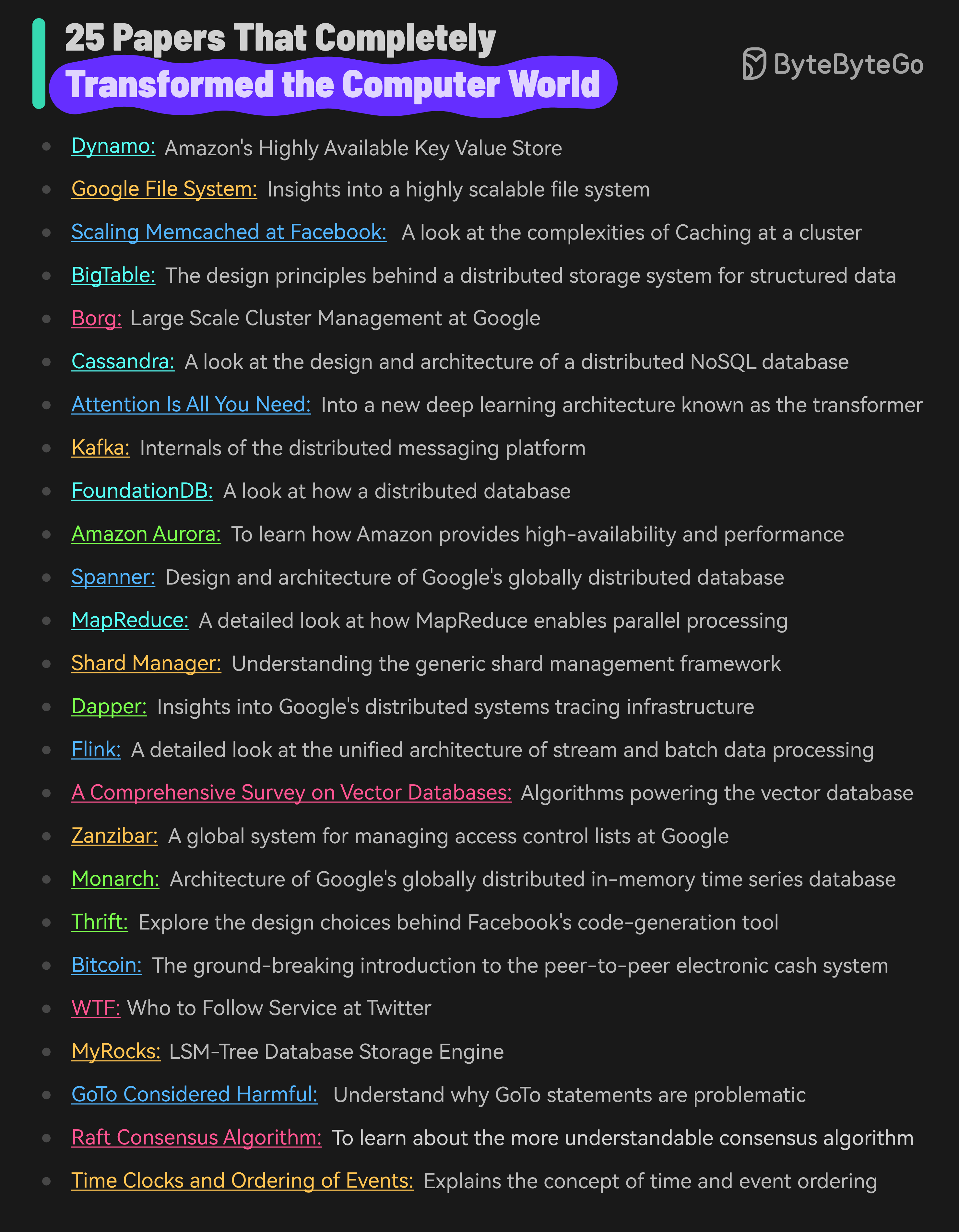

Here are 25 papers that have significantly impacted the field of computer science:

|

||||||

|

|

||||||

|

* [Dynamo - Amazon’s Highly Available Key Value Store](https://www.allthingsdistributed.com/files/amazon-dynamo-sosp2007.pdf)

|

||||||

|

|

||||||

|

* [Google File System](https://static.googleusercontent.com/media/research.google.com/en//archive/gfs-sosp2003.pdf): Insights into a highly scalable file system

|

||||||

|

|

||||||

|

* [Scaling Memcached at Facebook](https://research.facebook.com/file/839620310074473/scaling-memcache-at-facebook.pdf): A look at the complexities of Caching

|

||||||

|

|

||||||

|

* [BigTable](https://static.googleusercontent.com/media/research.google.com/en//archive/bigtable-osdi06.pdf): The design principles behind a distributed storage system

|

||||||

|

|

||||||

|

* [Borg - Large Scale Cluster Management at Google](https://storage.googleapis.com/pub-tools-public-publication-data/pdf/43438.pdf)

|

||||||

|

|

||||||

|

* [Cassandra](https://www.cs.cornell.edu/projects/ladis2009/papers/lakshman-ladis2009.pdf): A look at the design and architecture of a distributed NoSQL database

|

||||||

|

|

||||||

|

* [Attention Is All You Need](https://arxiv.org/abs/1706.03762): Into a new deep learning architecture known as the transformer

|

||||||

|

|

||||||

|

* [Kafka](https://www.microsoft.com/en-us/research/wp-content/uploads/2017/09/Kafka.pdf): Internals of the distributed messaging platform

|

||||||

|

|

||||||

|

* [FoundationDB](https://www.foundationdb.org/files/fdb-paper.pdf): A look at how a distributed database

|

||||||

|

|

||||||

|

* [Amazon Aurora](https://web.stanford.edu/class/cs245/readings/aurora.pdf): To learn how Amazon provides high-availability and performance

|

||||||

|

|

||||||

|

* [Spanner](https://static.googleusercontent.com/media/research.google.com/en//archive/spanner-osdi2012.pdf): Design and architecture of Google’s globally distributed database

|

||||||

|

|

||||||

|

* [MapReduce](https://storage.googleapis.com/pub-tools-public-publication-data/pdf/16cb30b4b92fd4989b8619a61752a2387c6dd474.pdf): A detailed look at how MapReduce enables parallel processing of massive volumes of data

|

||||||

|

|

||||||

|

* [Shard Manager](https://dl.acm.org/doi/pdf/10.1145/3477132.3483546): Understanding the generic shard management framework

|

||||||

|

|

||||||

|

* [Dapper](https://static.googleusercontent.com/media/research.google.com/en//archive/papers/dapper-2010-1.pdf): Insights into Google’s distributed systems tracing infrastructure

|

||||||

|

|

||||||

|

* [Flink](https://www.researchgate.net/publication/308993790_Apache_Flink_Stream_and_Batch_Processing_in_a_Single_Engine): A detailed look at the unified architecture of stream and batch processing

|

||||||

|

|

||||||

|

* [A Comprehensive Survey on Vector Databases](https://arxiv.org/pdf/2310.11703.pdf)

|

||||||

|

|

||||||

|

* [Zanzibar](https://storage.googleapis.com/pub-tools-public-publication-data/pdf/10683a8987dbf0c6d4edcafb9b4f05cc9de5974a.pdf): A look at the design, implementation and deployment of a global system for managing access control lists at Google

|

||||||

|

|

||||||

|

* [Monarch](https://storage.googleapis.com/pub-tools-public-publication-data/pdf/d84ab6c93881af998de877d0070a706de7bec6d8.pdf): Architecture of Google’s in-memory time series database

|

||||||

|

|

||||||

|

* [Thrift](https://thrift.apache.org/static/files/thrift-20070401.pdf): Explore the design choices behind Facebook’s code-generation tool

|

||||||

|

|

||||||

|

* [Bitcoin](https://bitcoin.org/bitcoin.pdf): The ground-breaking introduction to the peer-to-peer electronic cash system

|

||||||

|

|

||||||

|

* [WTF - Who to Follow Service at Twitter](https://web.stanford.edu/~rezab/papers/wtf_overview.pdf): Twitter’s (now X) user recommendation system

|

||||||

|

|

||||||

|

* [MyRocks: LSM-Tree Database Storage Engine](https://www.vldb.org/pvldb/vol13/p3217-matsunobu.pdf)

|

||||||

|

|

||||||

|

* [GoTo Considered Harmful](https://homepages.cwi.nl/~storm/teaching/reader/Dijkstra68.pdf)

|

||||||

|

|

||||||

|

* [Raft Consensus Algorithm](https://raft.github.io/raft.pdf): To learn about the more understandable consensus algorithm

|

||||||

|

|

||||||

|

* [Time Clocks and Ordering of Events](https://lamport.azurewebsites.net/pubs/time-clocks.pdf): The extremely important paper that explains the concept of time and event ordering in a distributed system

|

||||||

64

data/guides/30-useful-ai-apps-that-can-help-you-in-2025.md

Normal file

64

data/guides/30-useful-ai-apps-that-can-help-you-in-2025.md

Normal file

@@ -0,0 +1,64 @@

|

|||||||

|

---

|

||||||

|

title: "30 Useful AI Apps That Can Help You in 2025"

|

||||||

|

description: "Discover 30 AI apps to boost productivity, creativity, and more in 2025."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0039-30-useful-ai-apps-that-can-help-you-in-2025.png"

|

||||||

|

createdAt: "2024-03-01"

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- devtools-productivity

|

||||||

|

tags:

|

||||||

|

- "AI Tools"

|

||||||

|

- "Productivity"

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

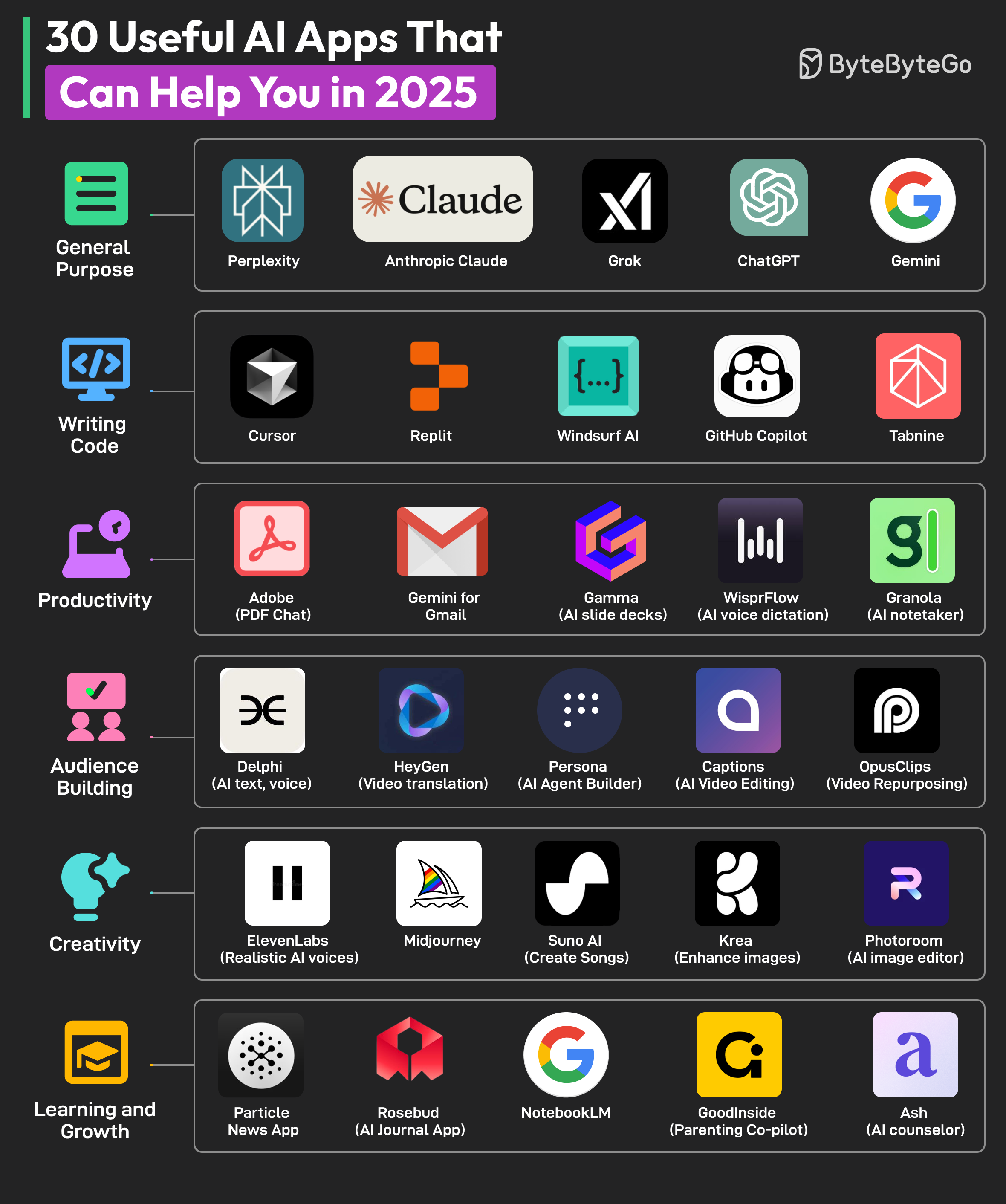

AI apps are taking over the world. There’s an AI app for every conceivable use case. Here are some AI apps for different categories:

|

||||||

|

|

||||||

|

## General Purpose

|

||||||

|

|

||||||

|

* Perplexity

|

||||||

|

* Anthropic Claude

|

||||||

|

* Grok

|

||||||

|

* ChatGPT

|

||||||

|

* Gemini

|

||||||

|

|

||||||

|

## Writing Code

|

||||||

|

|

||||||

|

* Cursor

|

||||||

|

* Replit

|

||||||

|

* Windsurf AI

|

||||||

|

* Github Copilot

|

||||||

|

* Tabnine

|

||||||

|

|

||||||

|

## Productivity

|

||||||

|

|

||||||

|

* Adobe (PDF Chat)

|

||||||

|

* Gemini for Gmail

|

||||||

|

* Gamma (AI slide deck)

|

||||||

|

* WisprFlow (AI voice dictation)

|

||||||

|

* Granola (AI notetaker)

|

||||||

|

|

||||||

|

## Audience Building

|

||||||

|

|

||||||

|

* Delphi (AI text, voice)

|

||||||

|

* HeyGen (video translation)

|

||||||

|

* Persona (AI agent builder)

|

||||||

|

* Captions (AI video editing)

|

||||||

|

* OpusClips (Video repurposing)

|

||||||

|

|

||||||

|

## Creativity

|

||||||

|

|

||||||

|

* ElevenLabs (realistic AI voices)

|

||||||

|

* Midjourney

|

||||||

|

* Suno AI (music generation)

|

||||||

|

* Krea (enhance images)

|

||||||

|

* Photoroom (AI image editing)

|

||||||

|

|

||||||

|

## Learning and Growth

|

||||||

|

|

||||||

|

* Particle News App

|

||||||

|

* Rosebud (AI journal app)

|

||||||

|

* NotebookLM

|

||||||

|

* GoodInside (parenting co-pilot)

|

||||||

|

* Ash (AI counselor)

|

||||||

@@ -0,0 +1,44 @@

|

|||||||

|

---

|

||||||

|

title: '4 Ways Netflix Uses Caching'

|

||||||

|

description: 'Explore how Netflix uses caching to maintain user engagement.'

|

||||||

|

image: 'https://assets.bytebytego.com/diagrams/0007-4-ways-netflix-uses-caching.png'

|

||||||

|

createdAt: '2024-02-25'

|

||||||

|

draft: false

|

||||||

|

categories:

|

||||||

|

- real-world-case-studies

|

||||||

|

tags:

|

||||||

|

- Caching

|

||||||

|

- Netflix

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

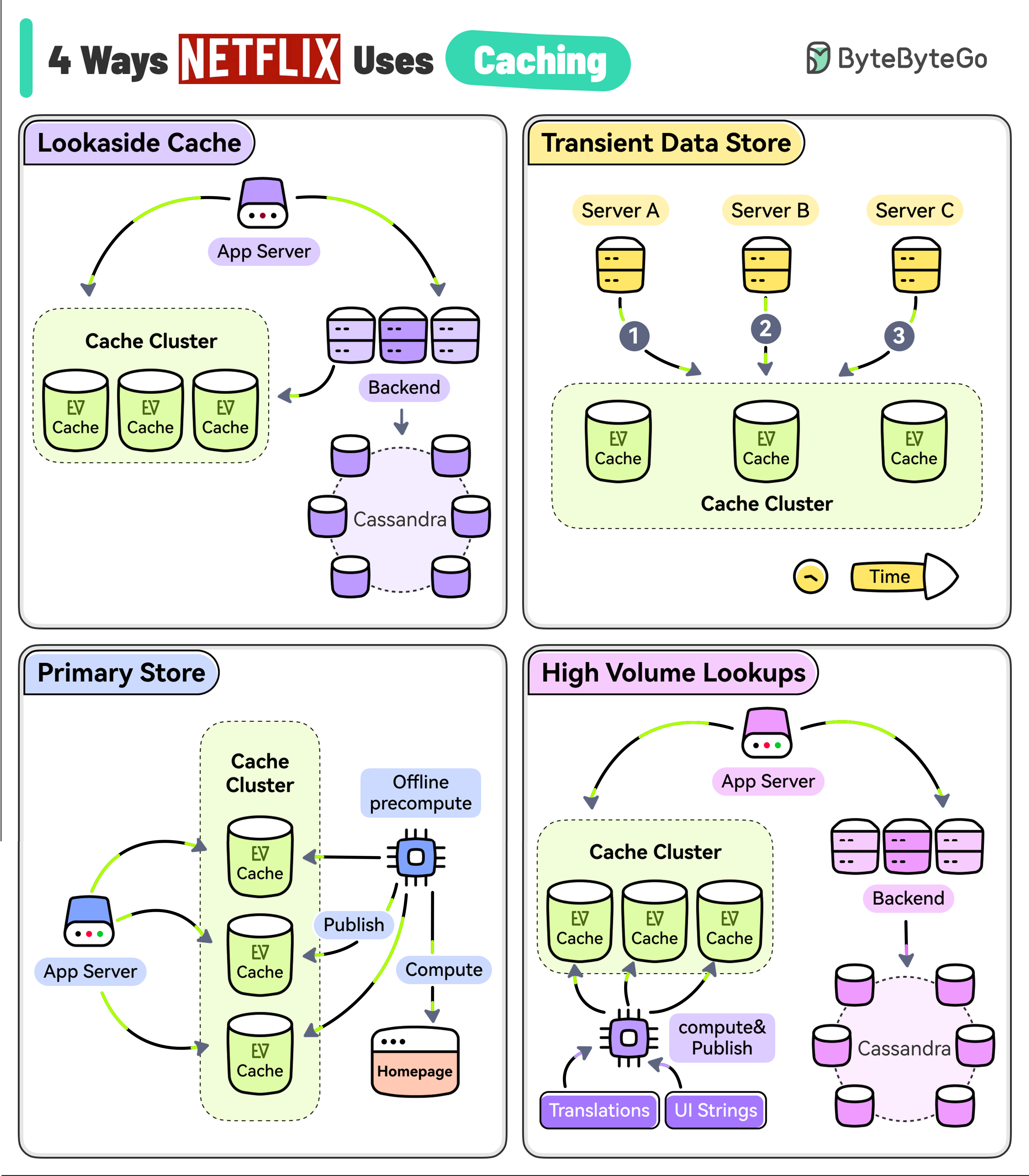

The goal of Netflix is to keep you streaming for as long as possible. But a user’s typical attention span is just 90 seconds.

|

||||||

|

|

||||||

|

They use EVCache (a distributed key-value store) to reduce latency so that the users don’t lose interest.

|

||||||

|

|

||||||

|

However, EVCache has multiple use cases at Netflix.

|

||||||

|

|

||||||

|

* **Lookaside Cache**

|

||||||

|

|

||||||

|

When the application needs some data, it first tries the EVCache client and if the data is not in the cache, it goes to the backend service and the Cassandra database to fetch the data.

|

||||||

|

|

||||||

|

The service also keeps the cache updated for future requests.

|

||||||

|

|

||||||

|

* **Transient Data Store**

|

||||||

|

|

||||||

|

Netflix uses EVCache to keep track of transient data such as playback session information.

|

||||||

|

|

||||||

|

One application service might start the session while the other may update the session followed by a session closure at the very end.

|

||||||

|

|

||||||

|

* **Primary Store**

|

||||||

|

|

||||||

|

Netflix runs large-scale pre-compute systems every night to compute a brand-new home page for every profile of every user based on watch history and recommendations.

|

||||||

|

|

||||||

|

All of that data is written into the EVCache cluster from where the online services read the data and build the homepage.

|

||||||

|

|

||||||

|

* **High Volume Data**

|

||||||

|

|

||||||

|

Netflix has data that has a high volume of access and also needs to be highly available. For example, UI strings and translations that are shown on the Netflix home page.

|

||||||

|

|

||||||

|

A separate process asynchronously computes and publishes the UI string to EVCache from where the application can read it with low latency and high availability.

|

||||||

37

data/guides/4-ways-of-qr-code-payment.md

Normal file

37

data/guides/4-ways-of-qr-code-payment.md

Normal file

@@ -0,0 +1,37 @@

|

|||||||

|

---

|

||||||

|

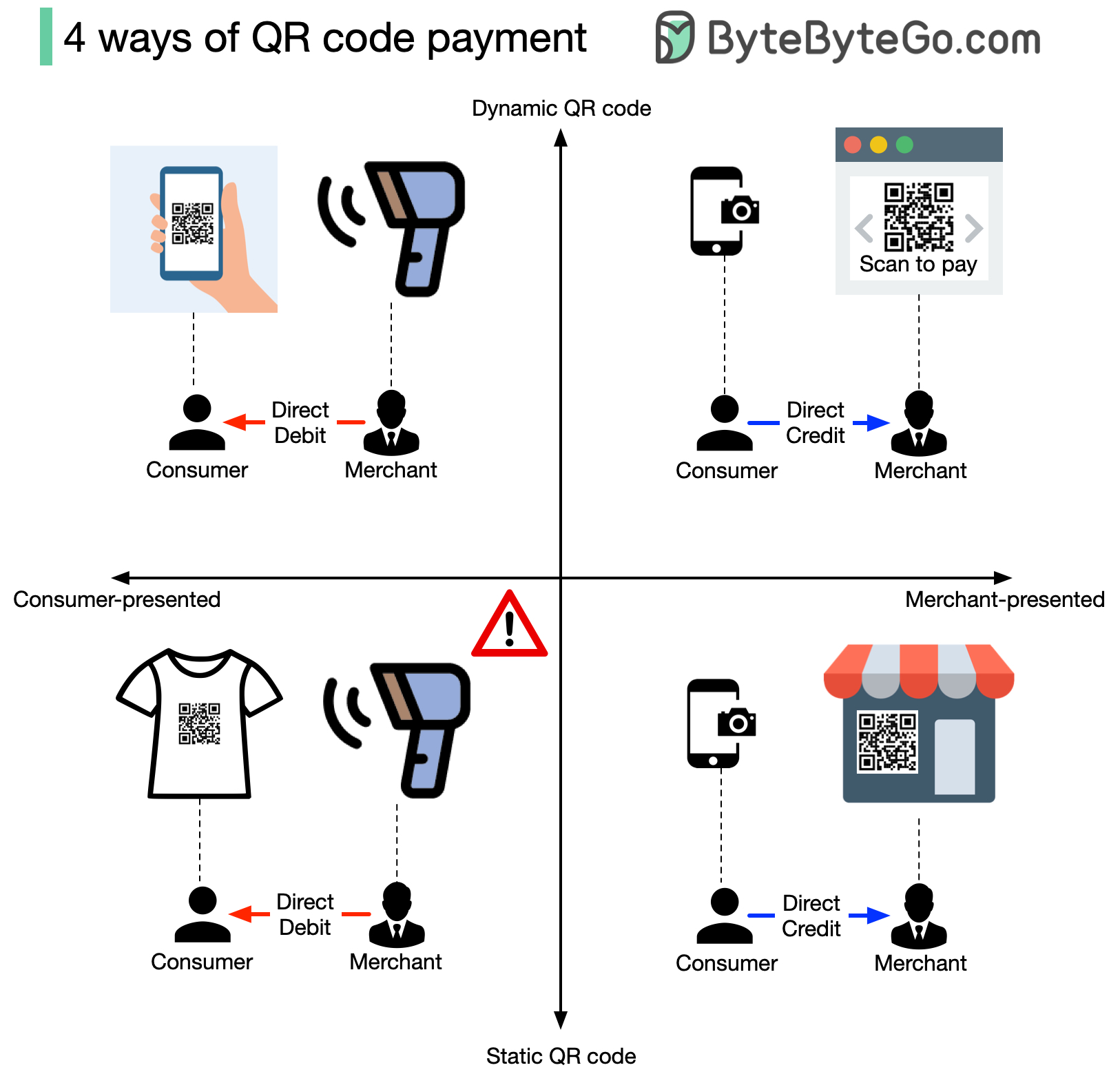

title: "4 Ways of QR Code Payment"

|

||||||

|

description: "Explore the 4 different methods of QR code payments."

|

||||||

|

image: "https://assets.bytebytego.com/diagrams/0310-qr-code.jpg"

|

||||||

|

createdAt: "2024-03-01"

|