diff --git a/images/banner.jpg b/.github/banner.jpg

similarity index 100%

rename from images/banner.jpg

rename to .github/banner.jpg

diff --git a/.gitignore b/.gitignore

index 5ca2fa2..c9fe04b 100644

--- a/.gitignore

+++ b/.gitignore

@@ -2,6 +2,7 @@

*.epub

__pycache__/

*.py[cod]

+.idea

# C extensions

*.so

@@ -41,6 +42,8 @@ htmlcov/

nosetests.xml

coverage.xml

+node_modules

+

# Translations

*.mo

*.pot

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

index 22177a5..da16ad6 100644

--- a/CONTRIBUTING.md

+++ b/CONTRIBUTING.md

@@ -13,7 +13,3 @@ Thank you for your interest in contributing! Here are some guidelines to follow

### GitHub Pull Requests Docs

If you are not familiar with pull requests, review the [pull request docs](https://help.github.com/articles/using-pull-requests/).

-

-## Translations

-

-Refer to [TRANSLATIONS.md](translations/TRANSLATIONS.md)

diff --git a/README.md b/README.md

index f7c801a..0a75df3 100644

--- a/README.md

+++ b/README.md

@@ -1,5 +1,5 @@

-  +

+

@@ -22,1718 +22,431 @@ Whether you're preparing for a System Design Interview or you simply want to und

# Table of Contents

-

+

+

+* [API and Web Development](https://bytebytego.com/guides/api-web-development)

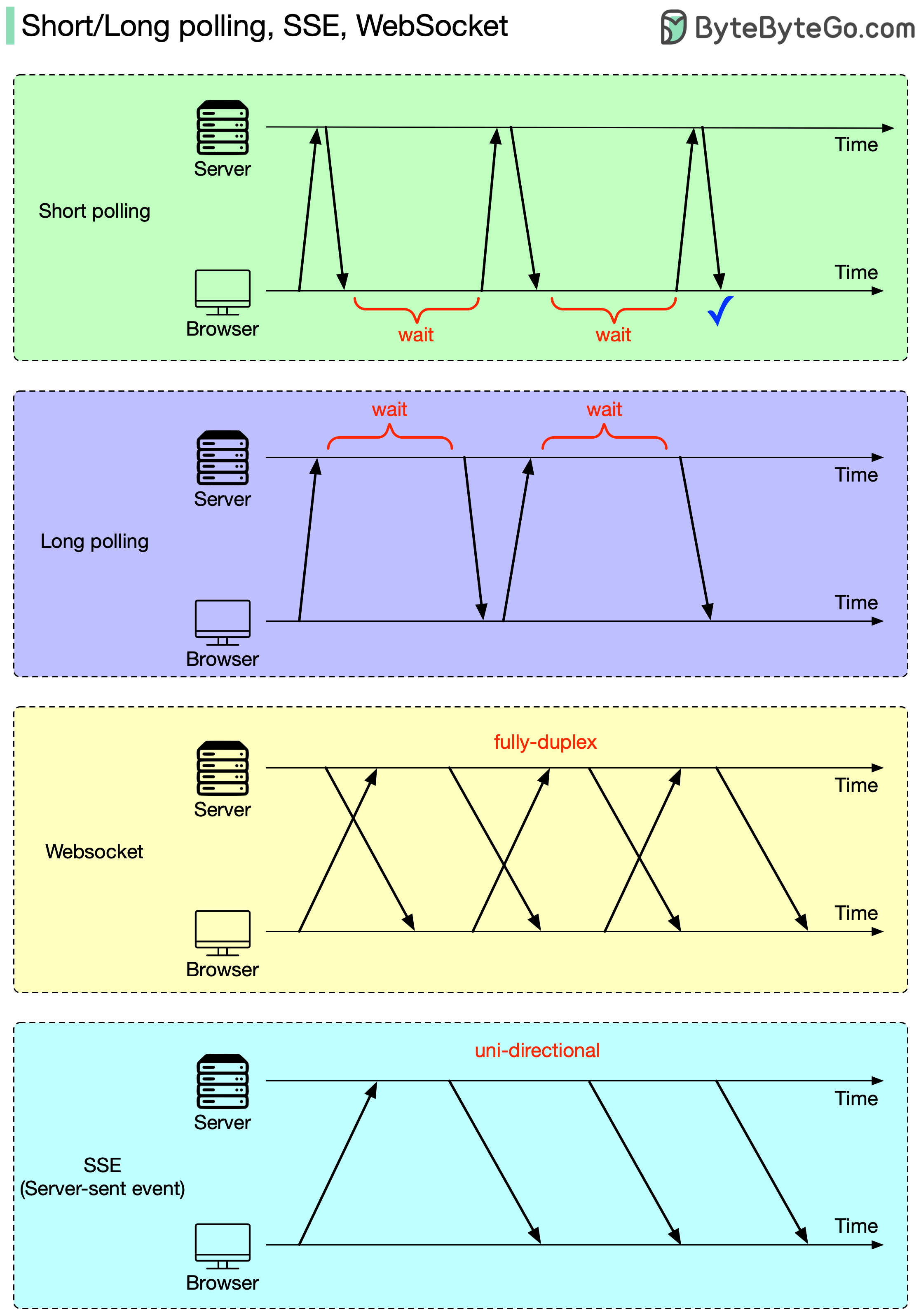

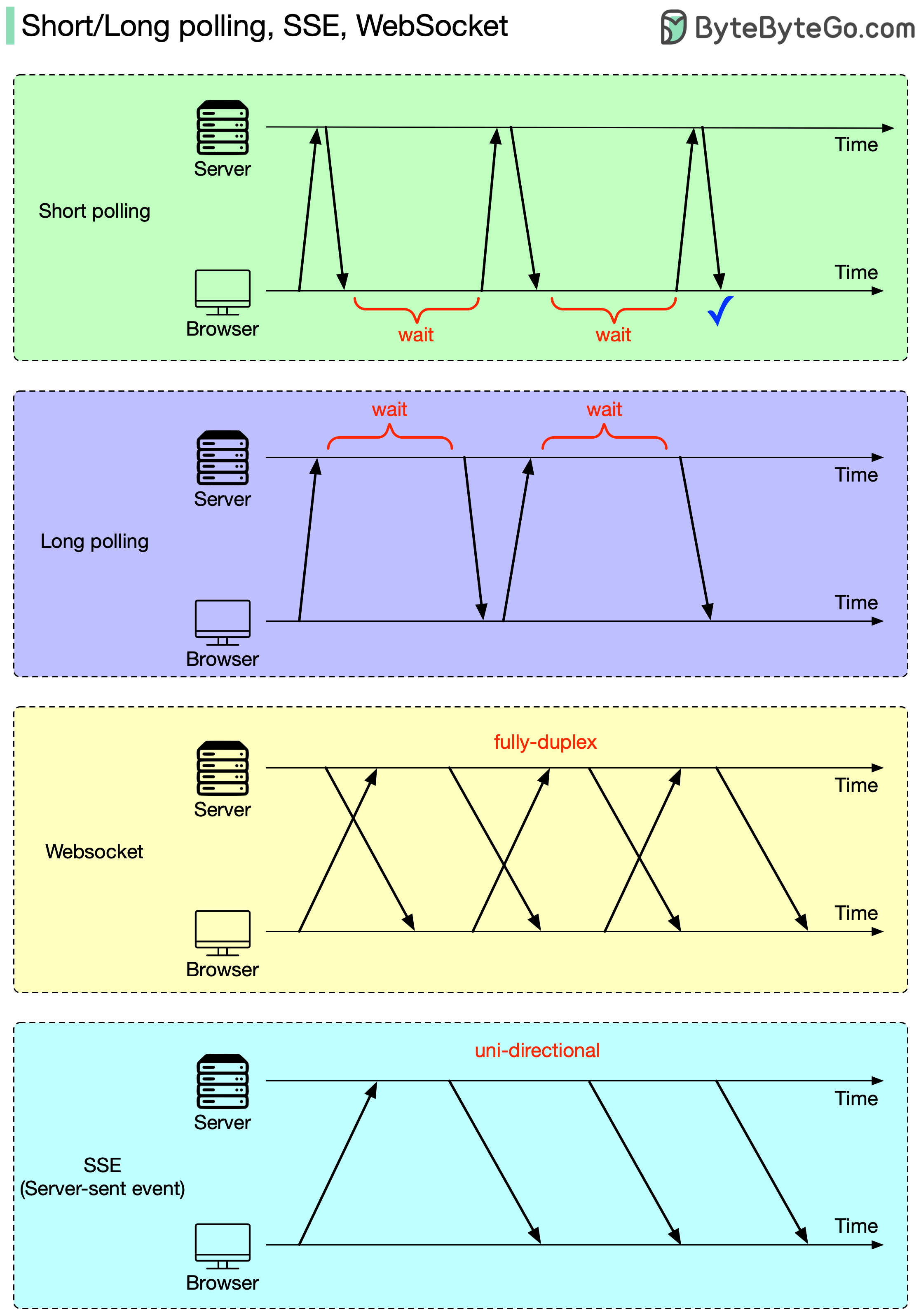

+ * [Short/long polling, SSE, WebSocket](https://bytebytego.com/guides/shortlong-polling-sse-websocket)

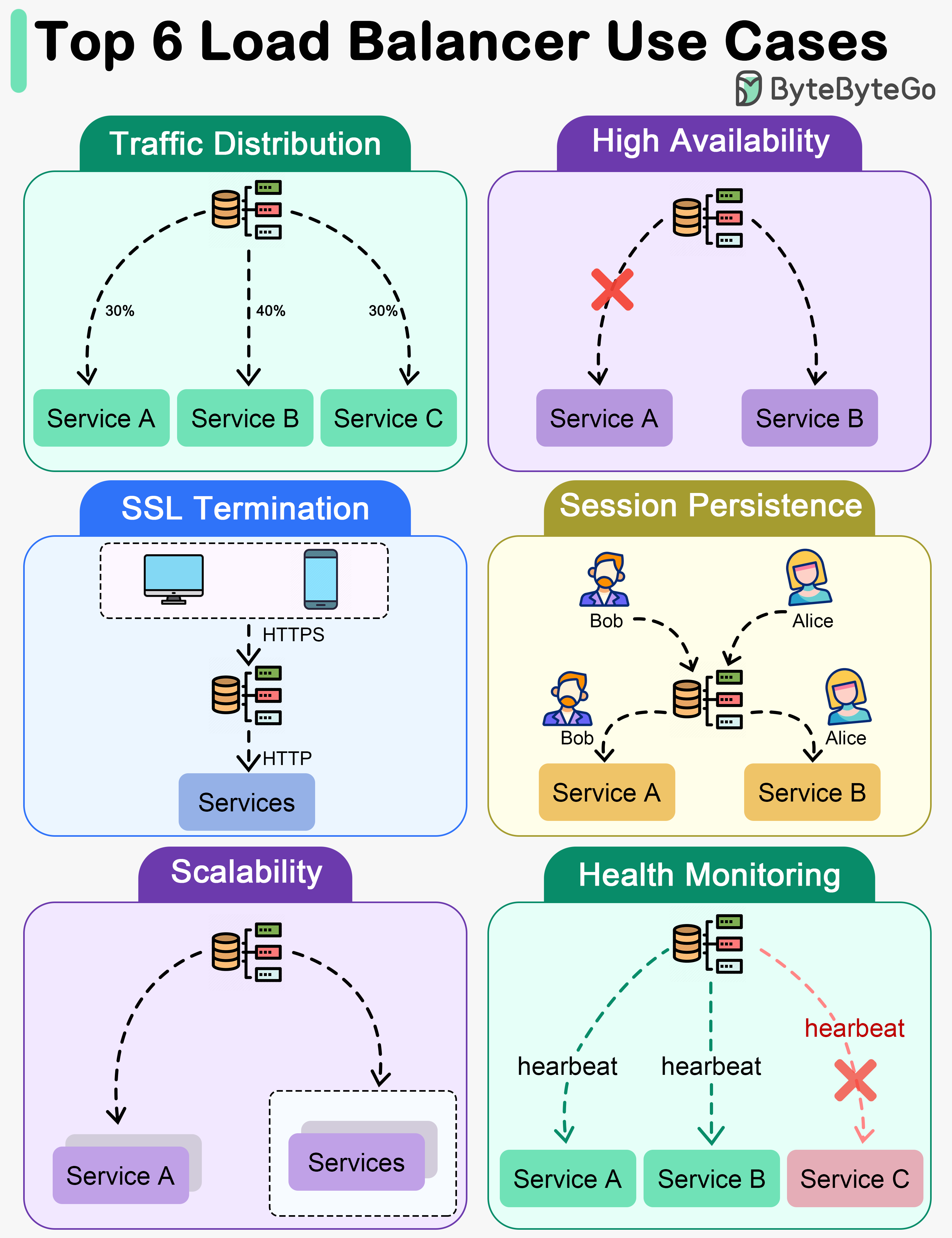

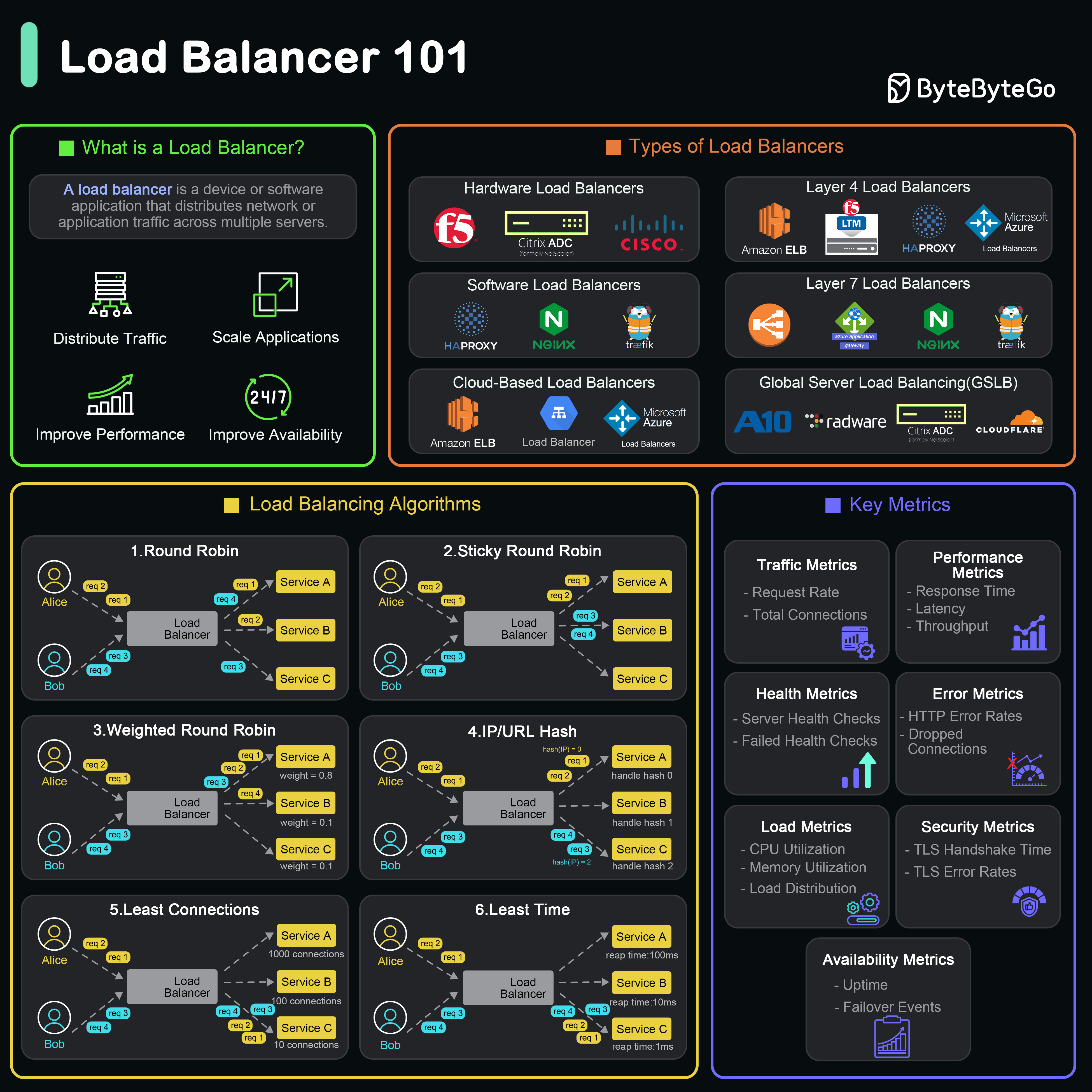

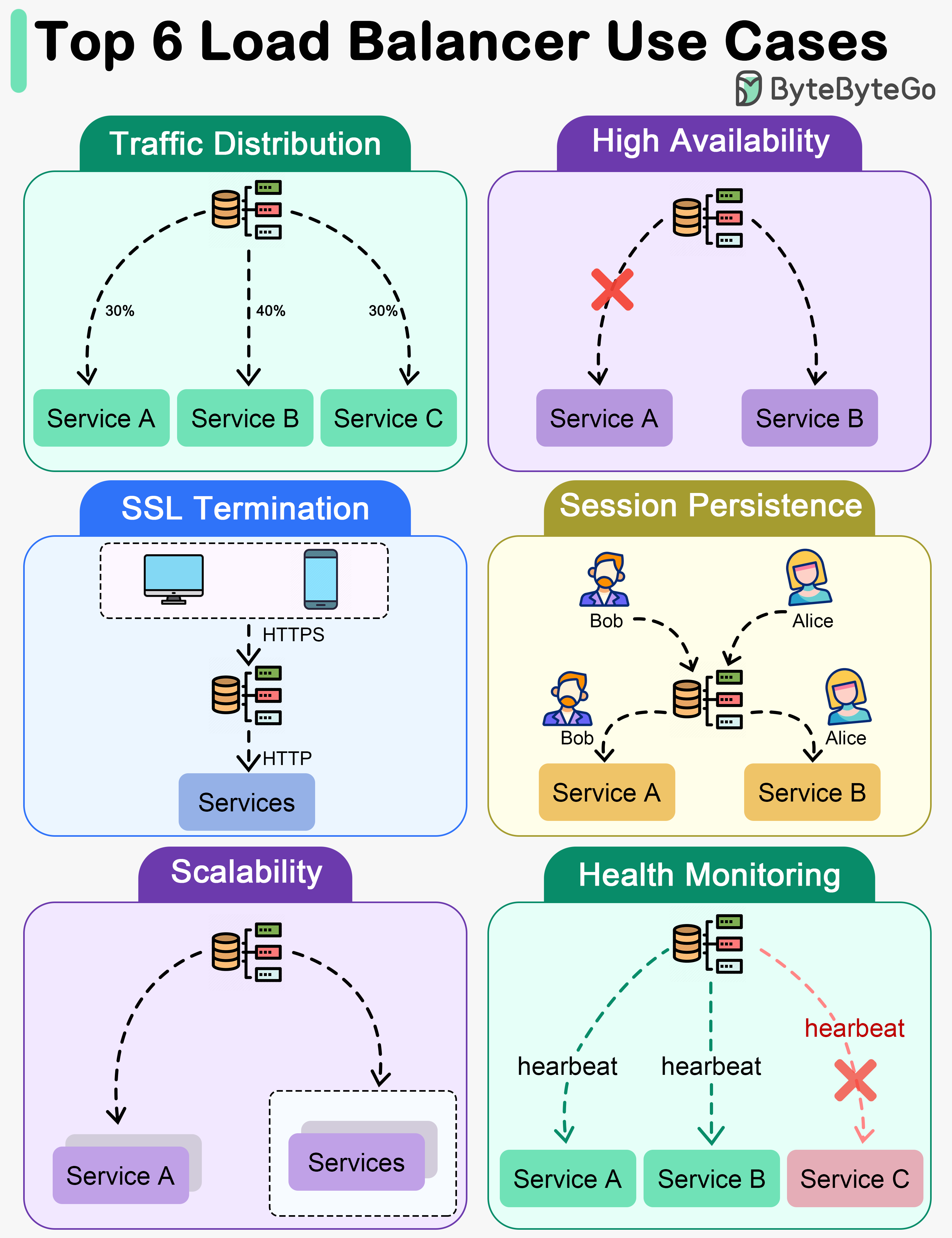

+ * [Load Balancer Realistic Use Cases](https://bytebytego.com/guides/load-balancer-realistic-use-cases-you-may-not-know)

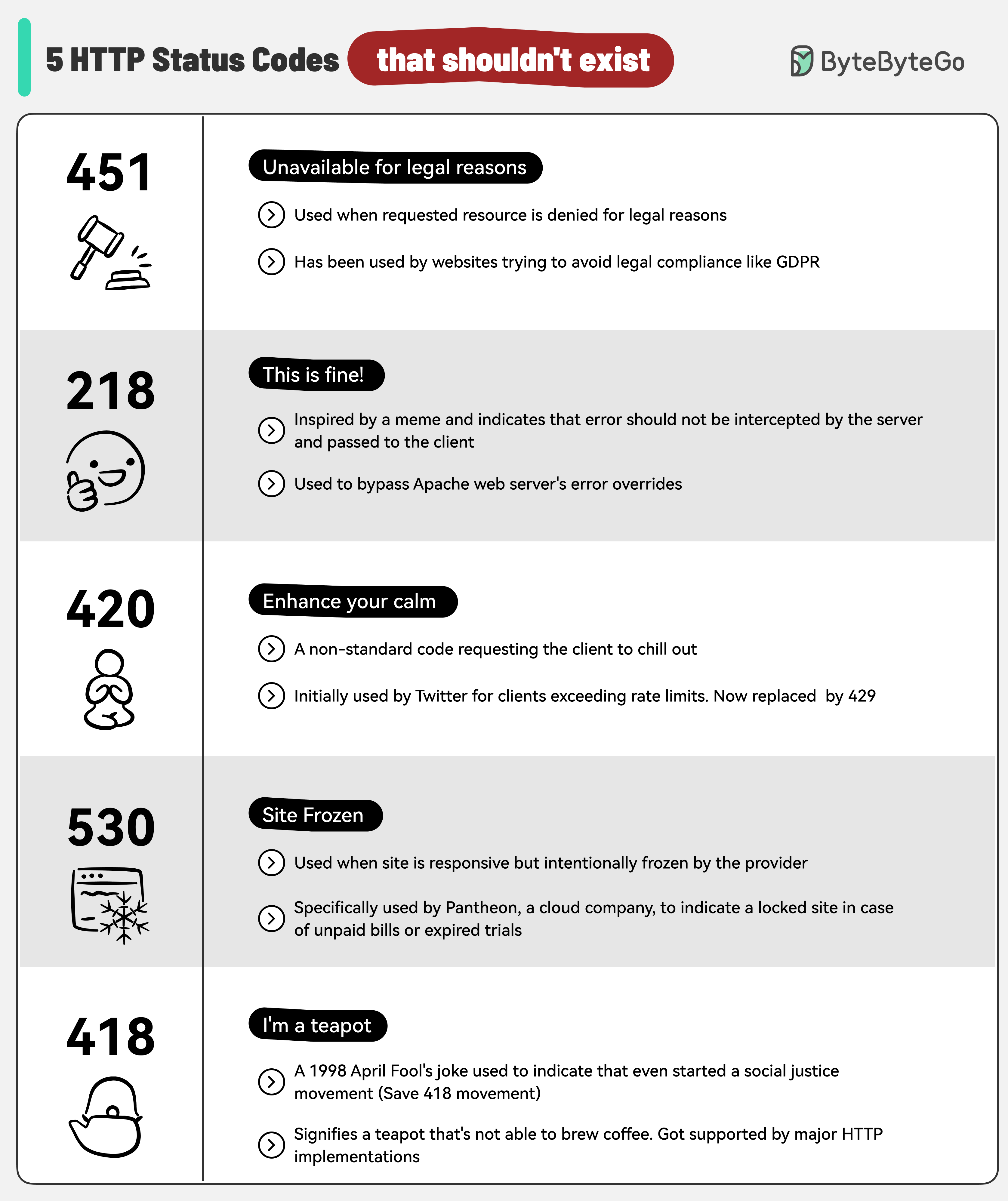

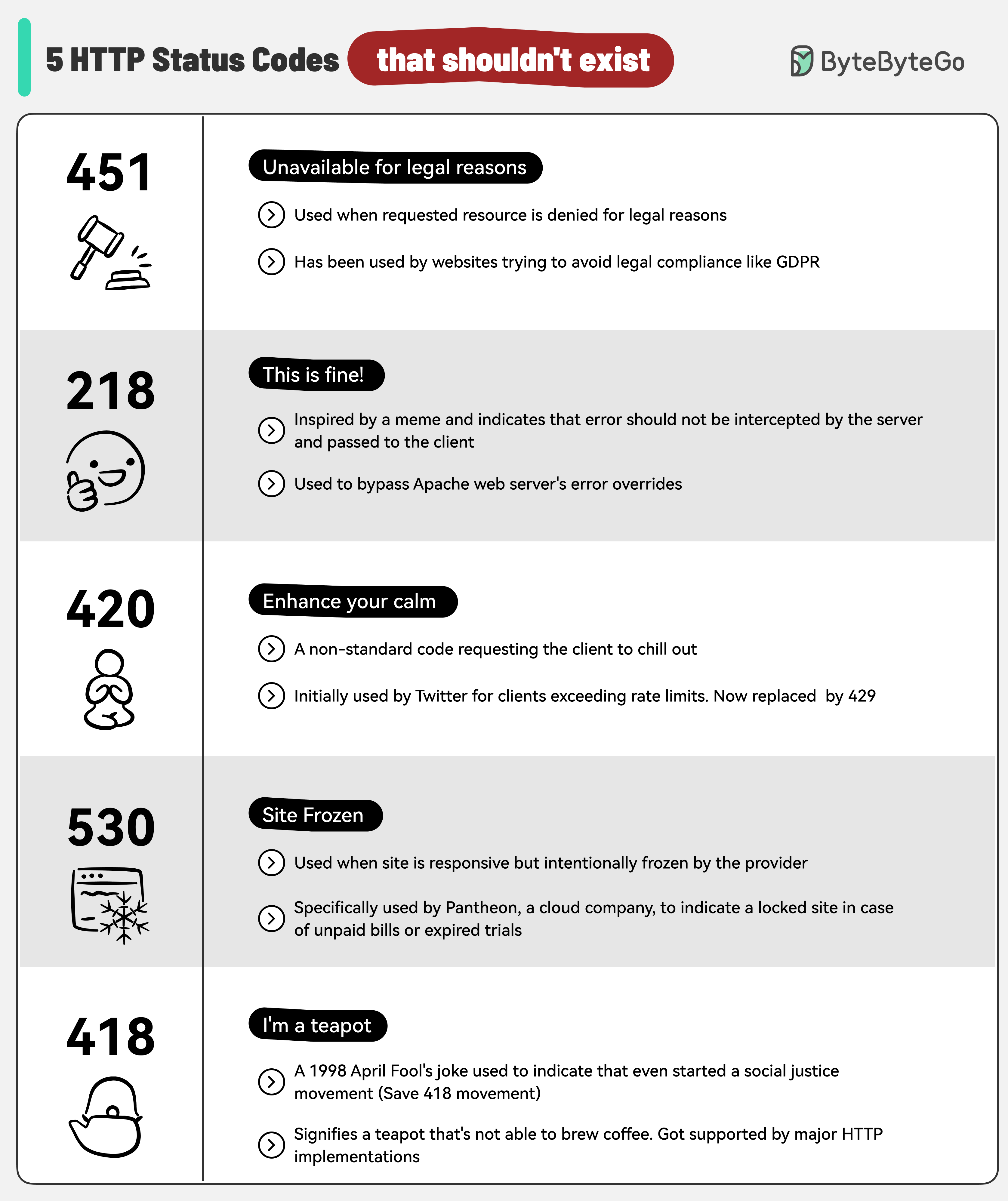

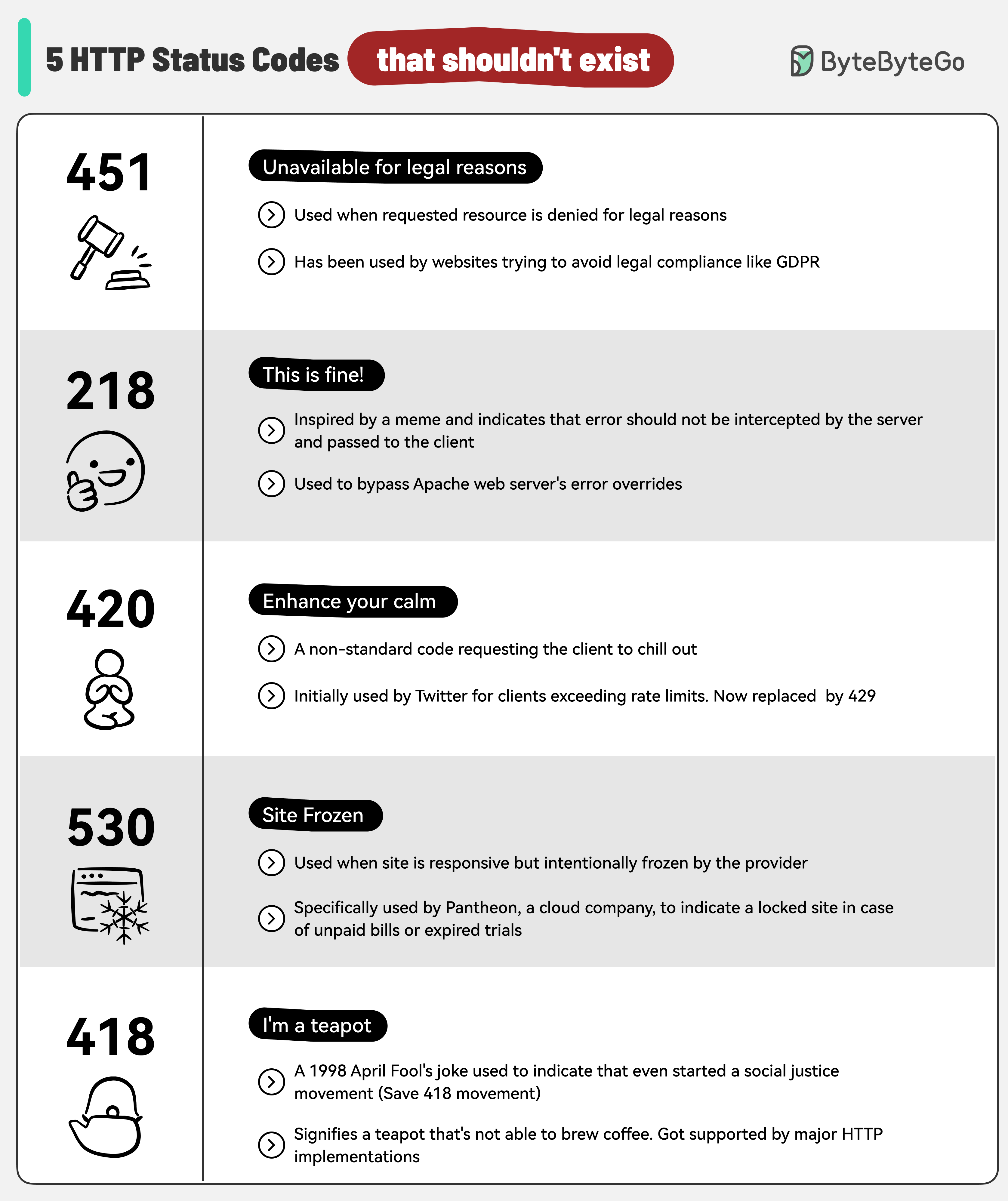

+ * [5 HTTP Status Codes That Should Never Have Been Created](https://bytebytego.com/guides/5-http-status-codes-that-should-never-have-been-created)

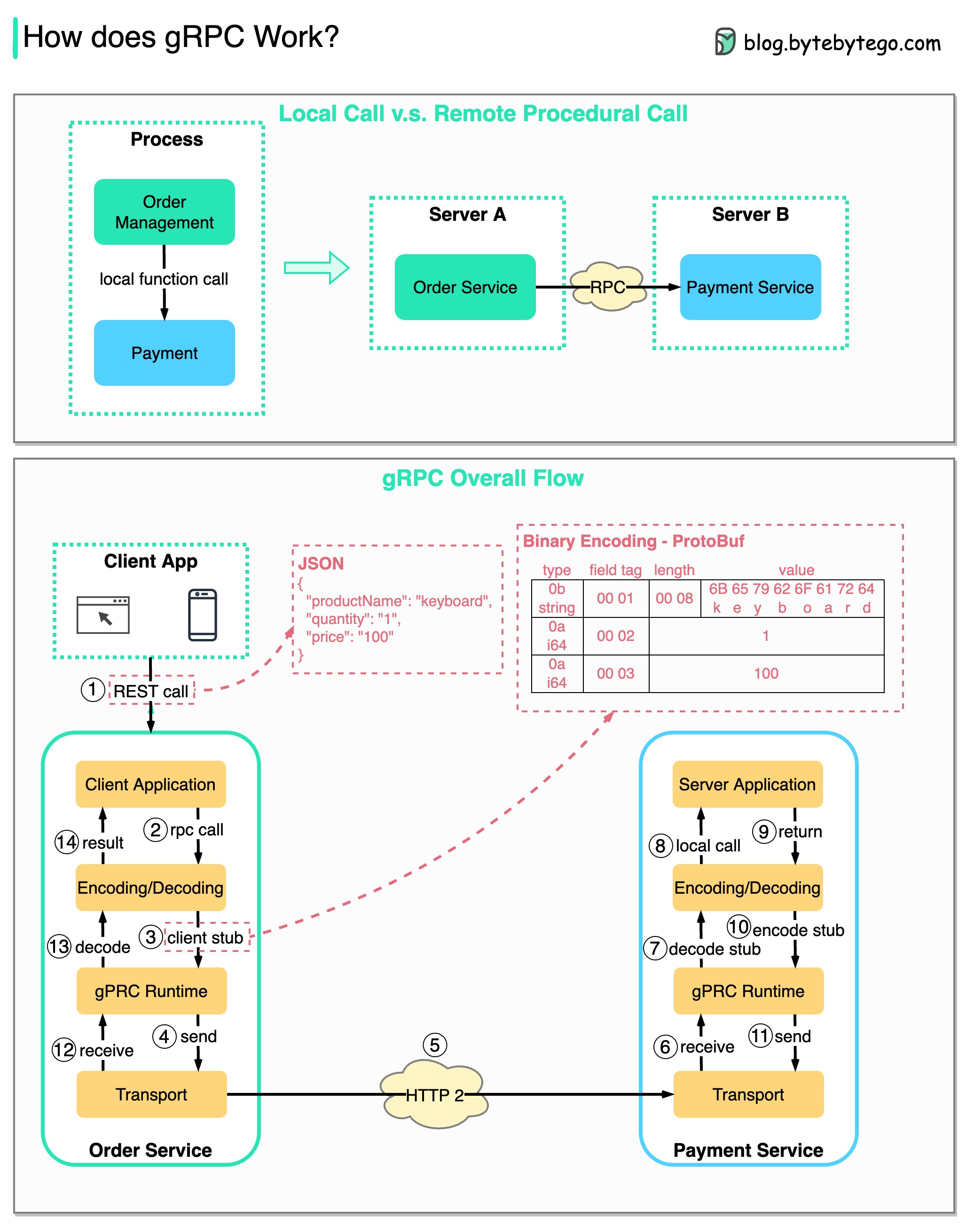

+ * [How does gRPC work?](https://bytebytego.com/guides/how-does-grpc-work)

+ * [How NAT Enabled the Internet](https://bytebytego.com/guides/how-nat-made-the-growth-of-the-internet-possible)

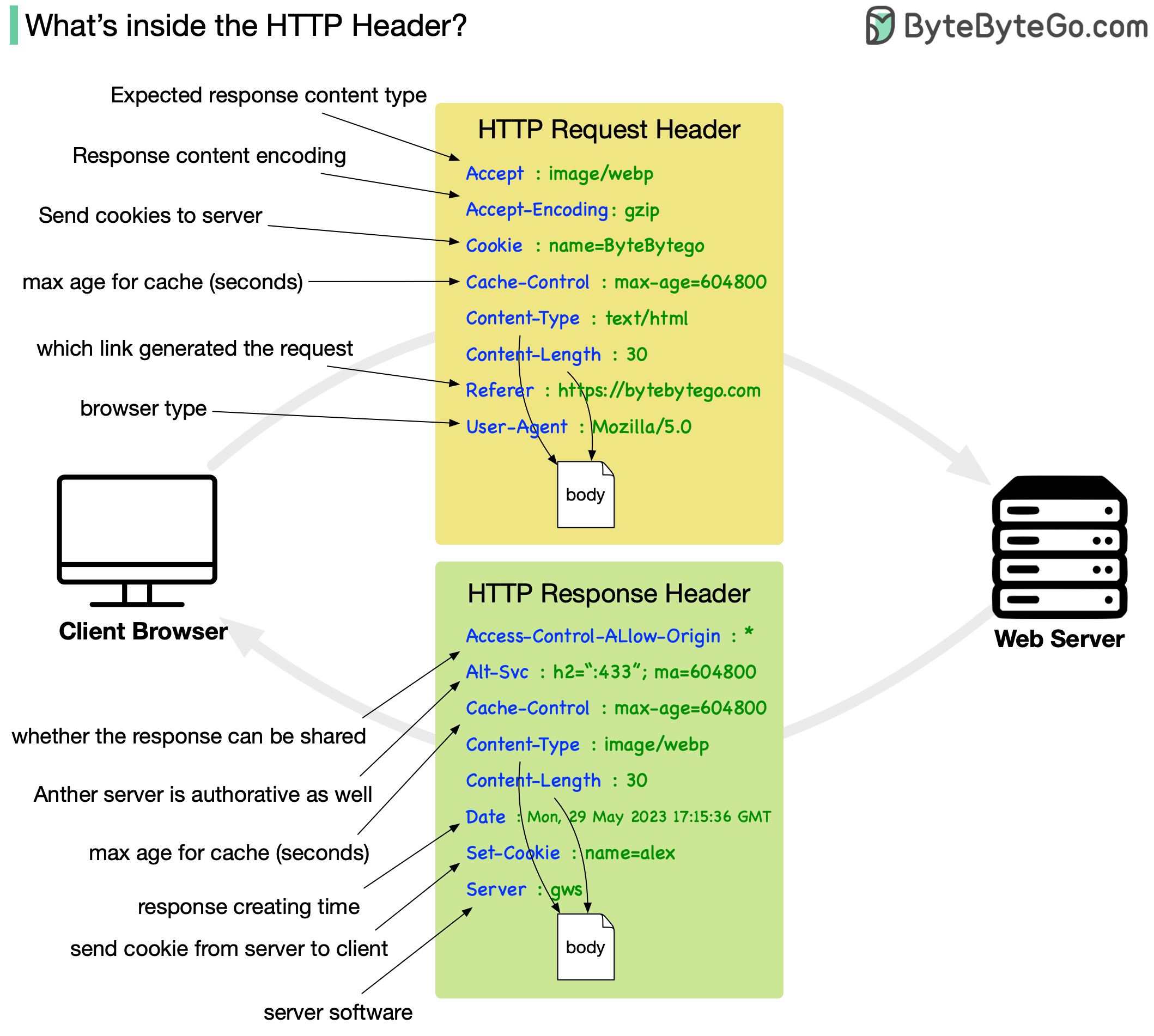

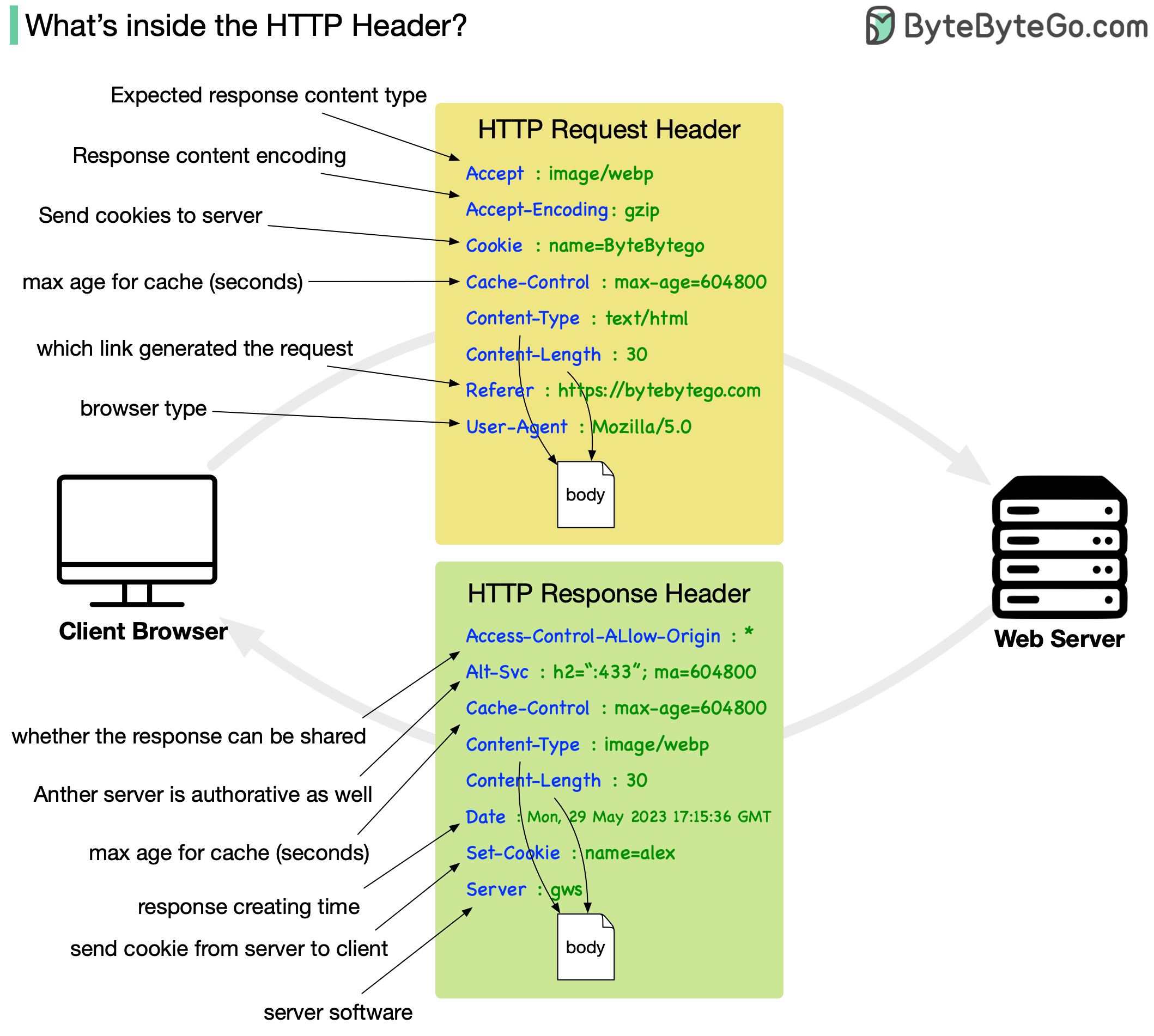

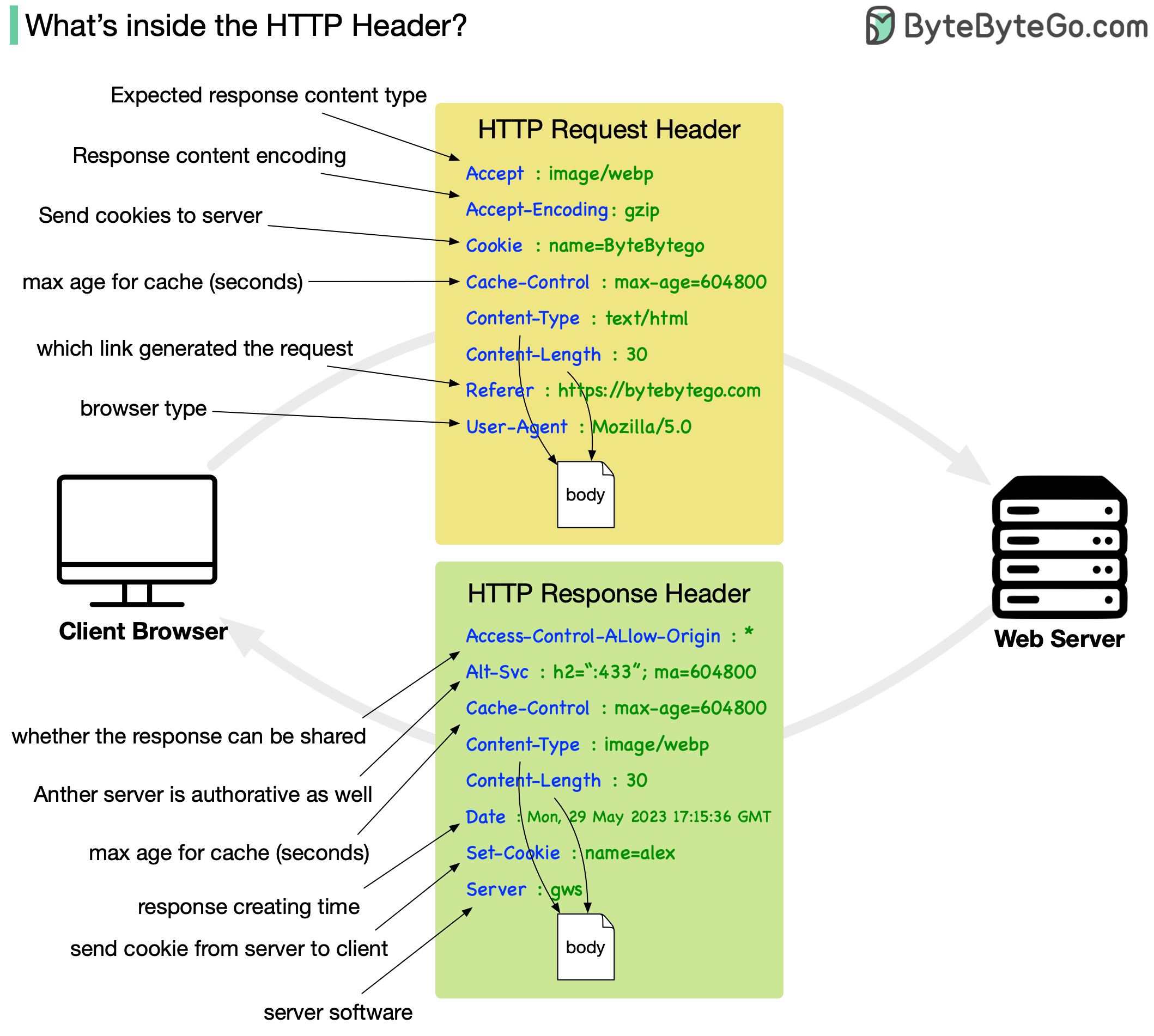

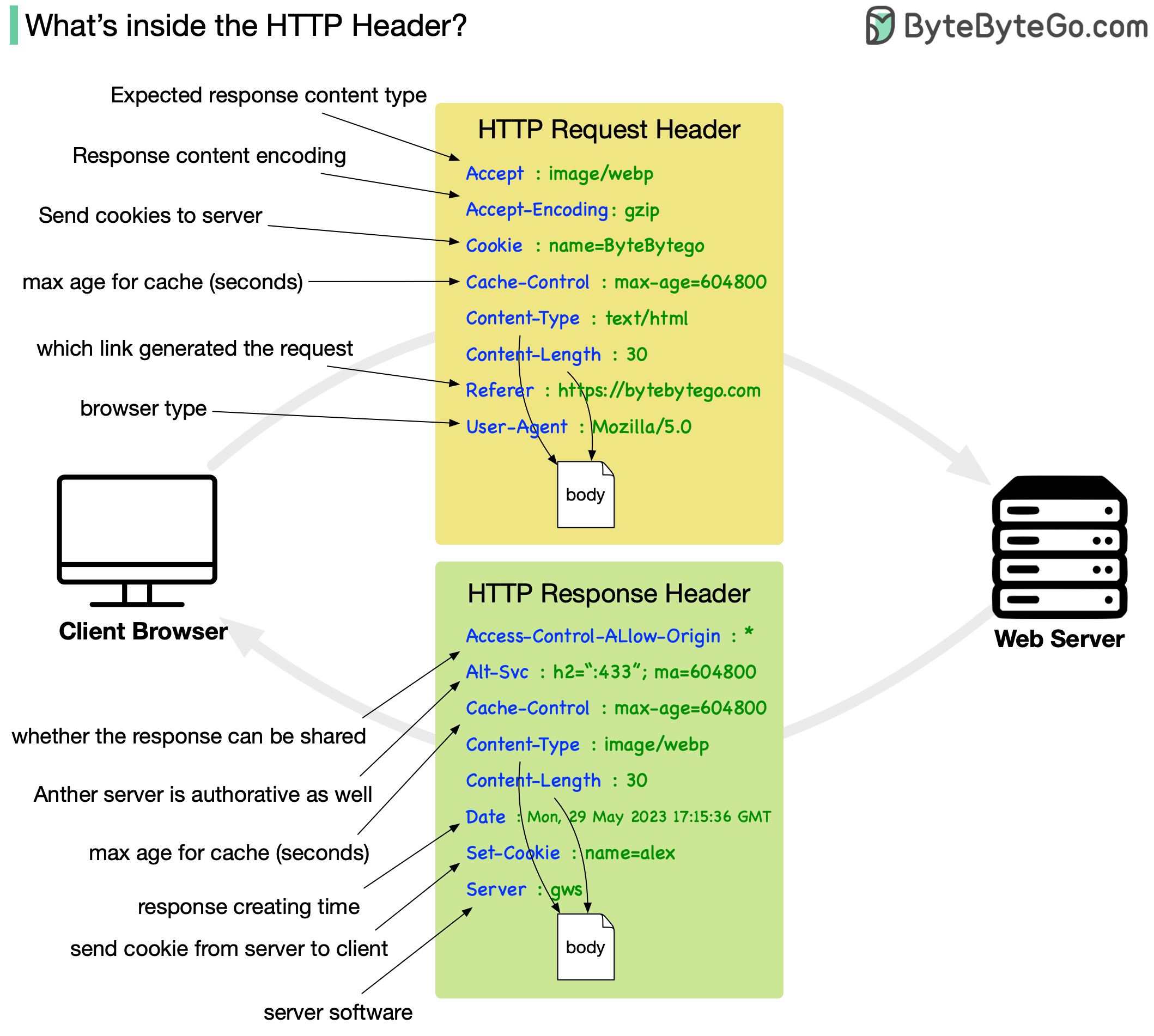

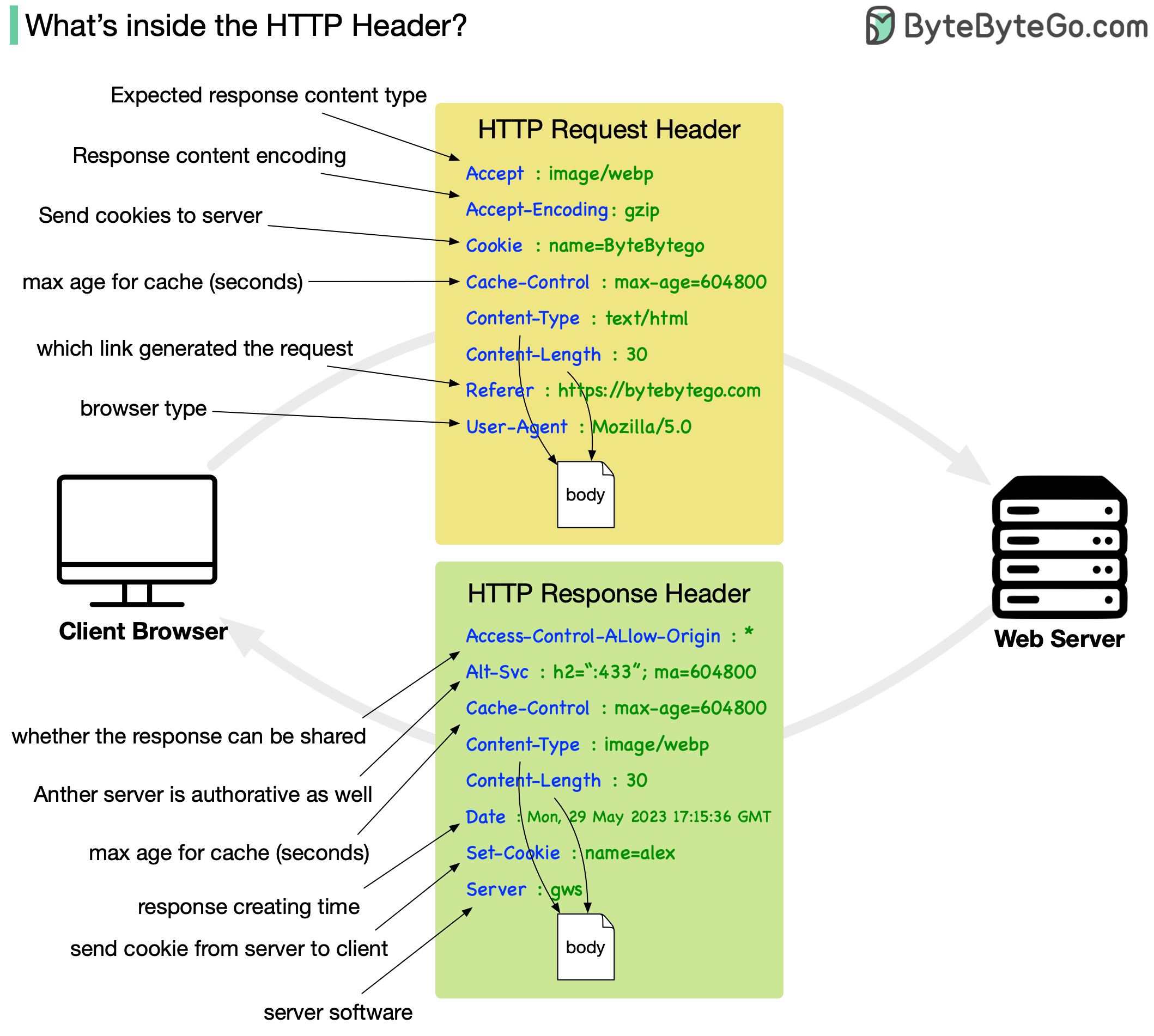

+ * [Important Things About HTTP Headers](https://bytebytego.com/guides/important-things-about-http-headers-you-may-not-know)

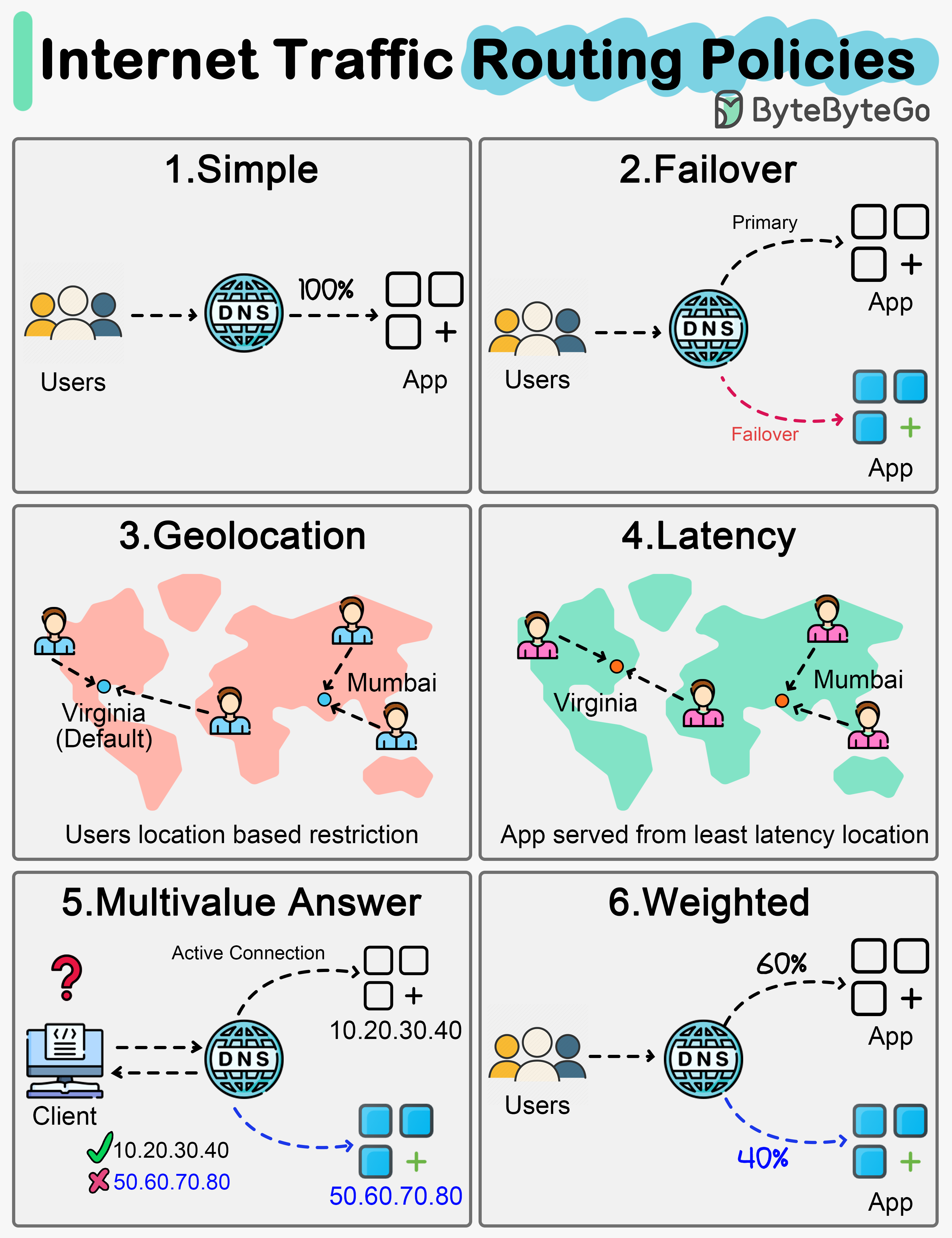

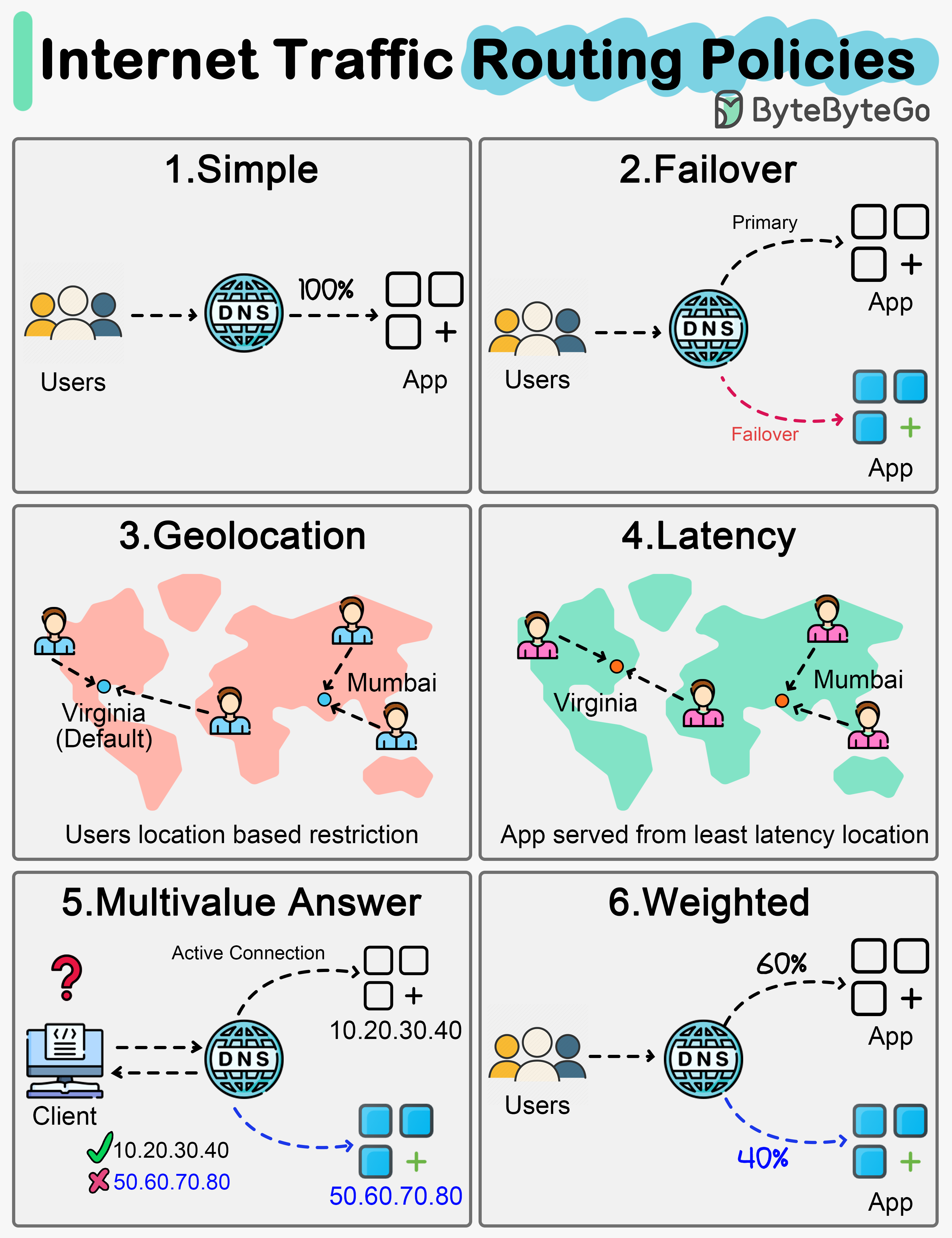

+ * [Internet Traffic Routing Policies](https://bytebytego.com/guides/internet-traffic-routing-policies)

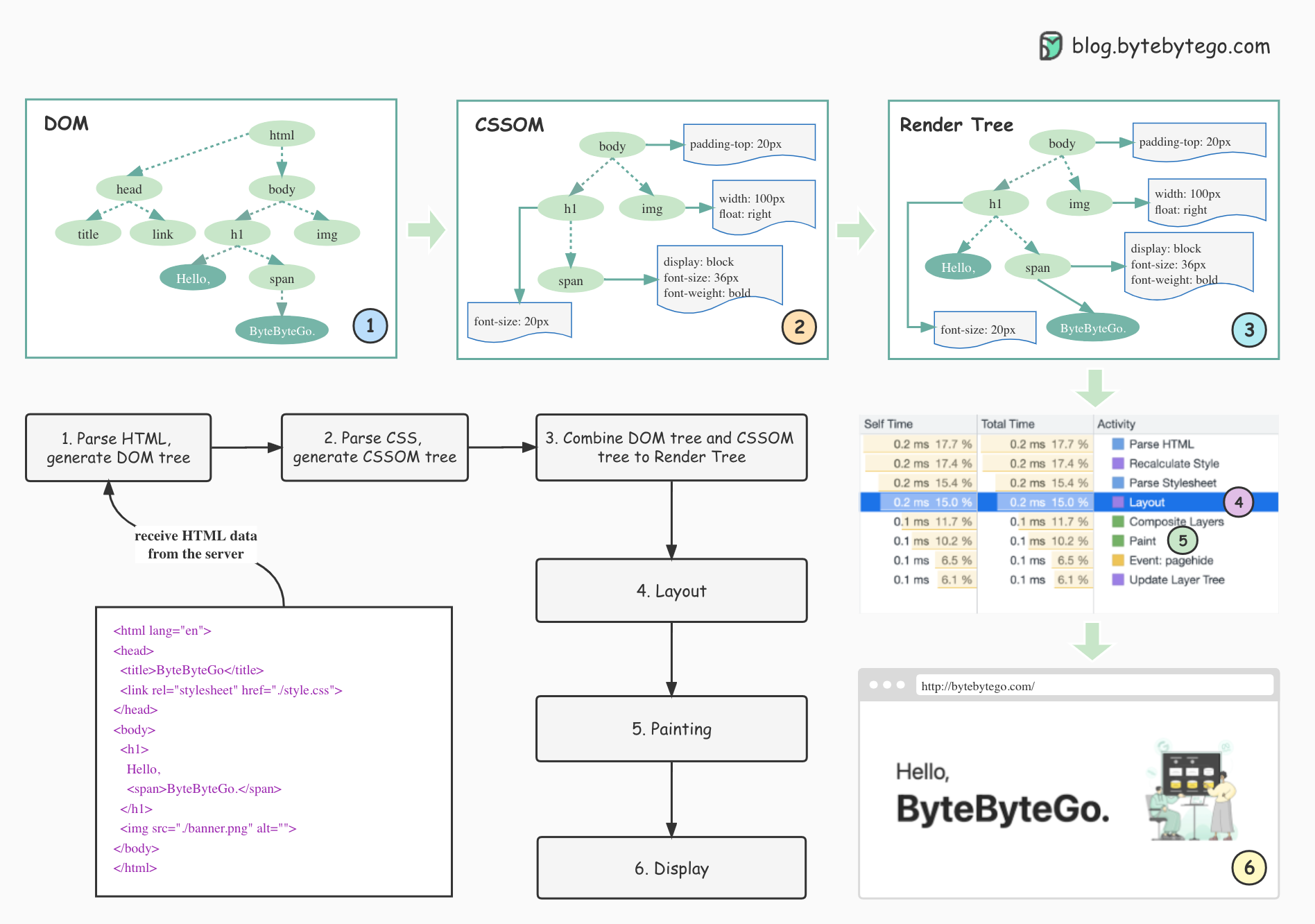

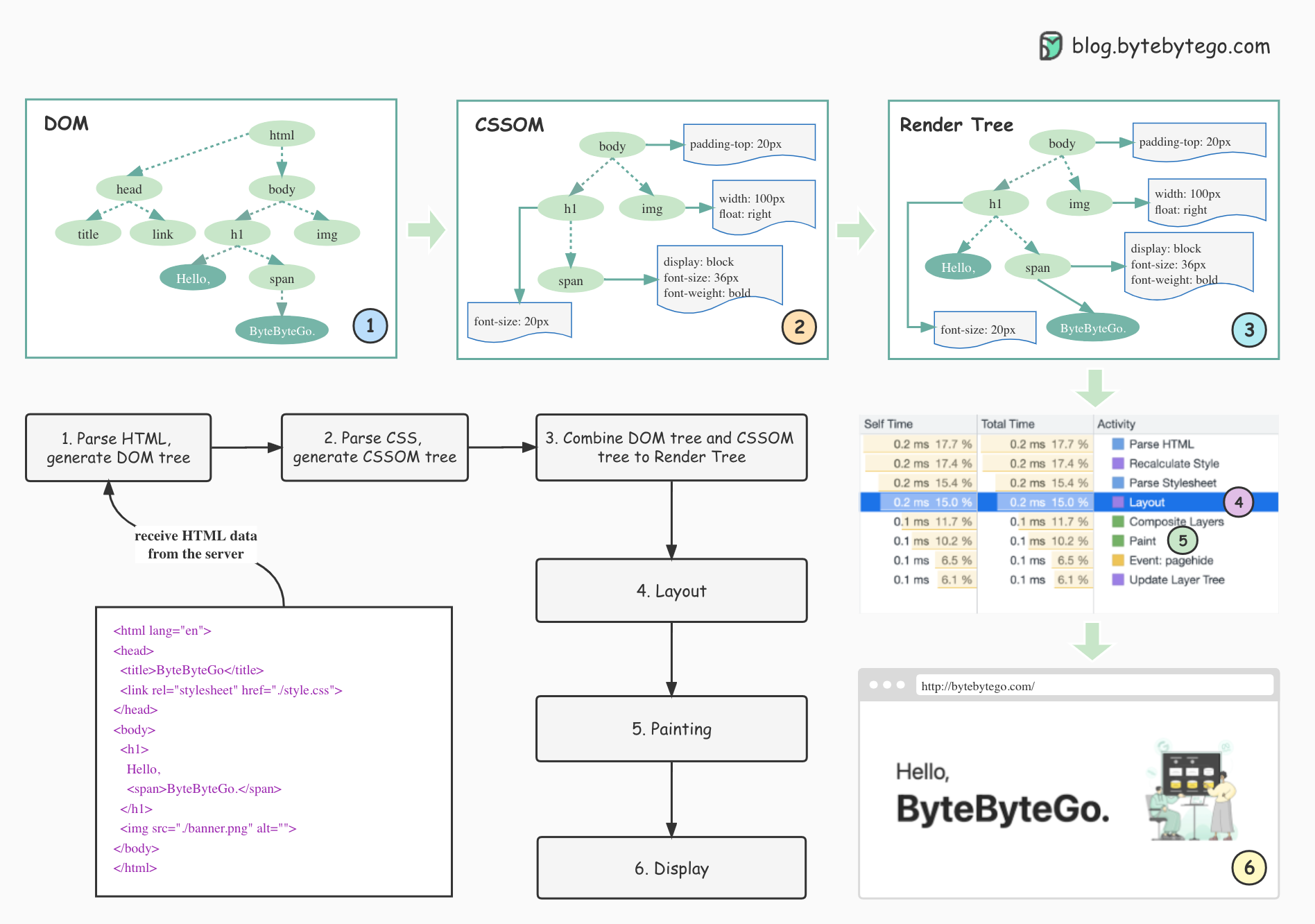

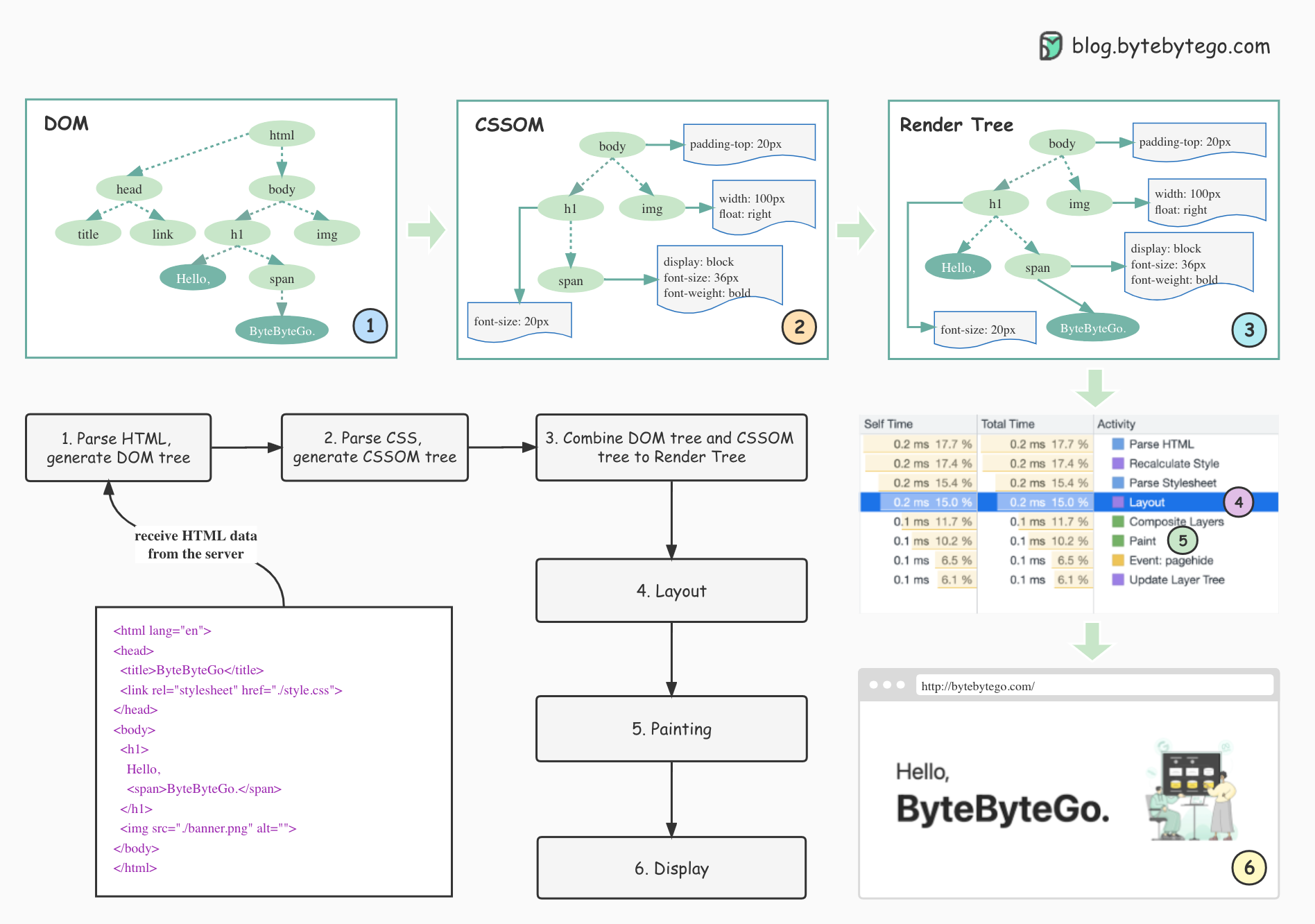

+ * [How Browsers Render Web Pages](https://bytebytego.com/guides/how-does-the-browser-render-a-web-page)

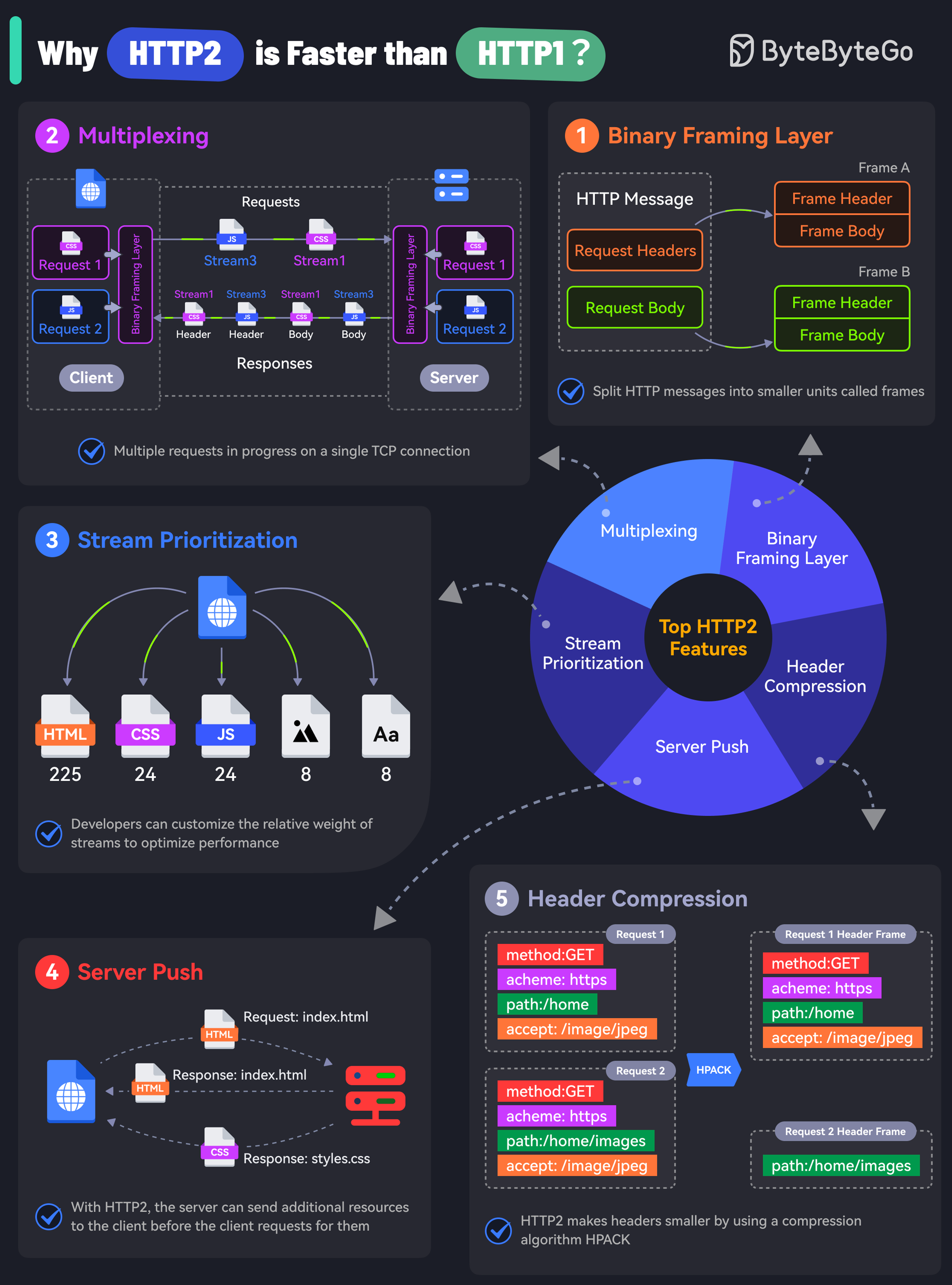

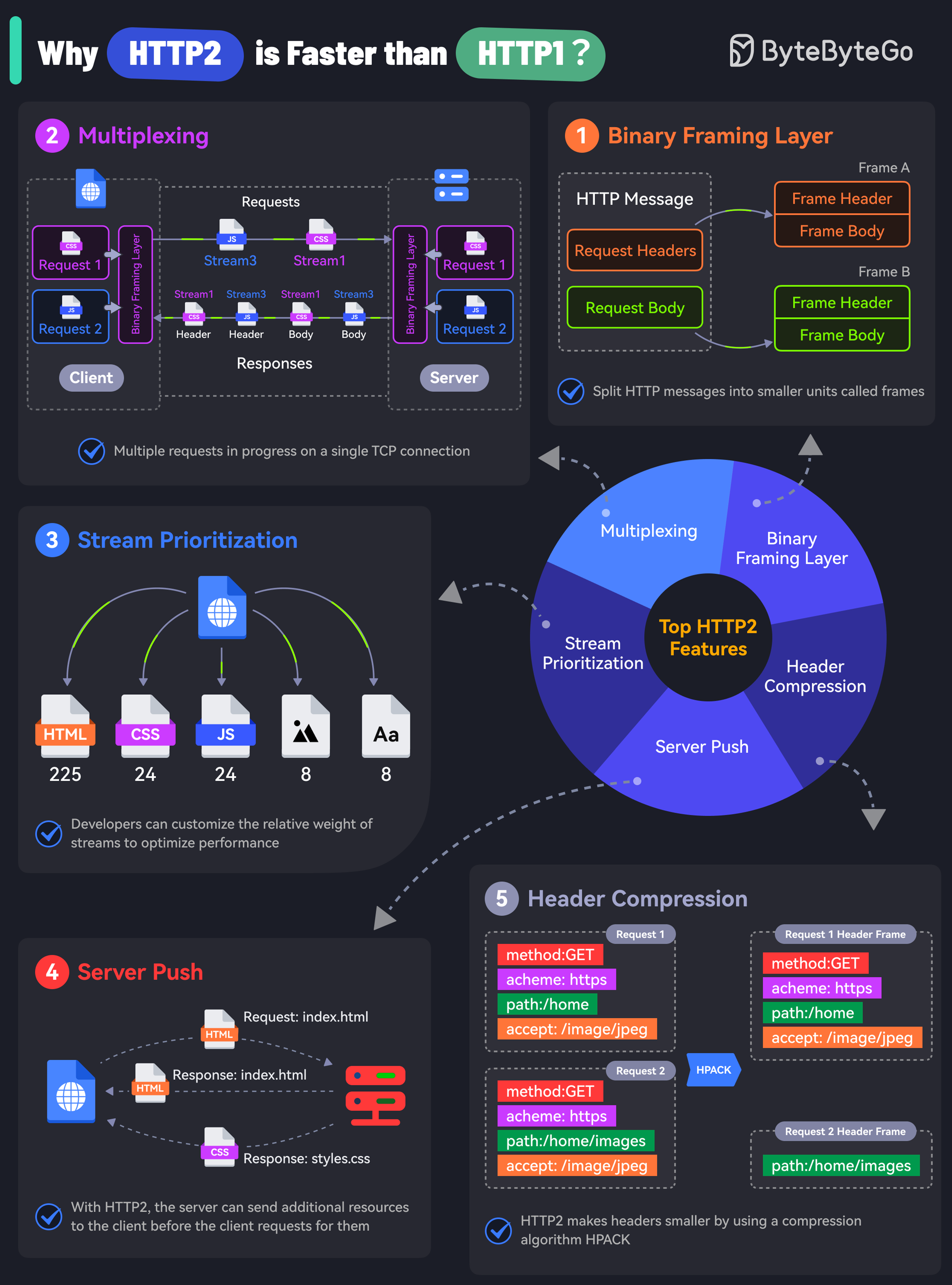

+ * [What makes HTTP2 faster than HTTP1?](https://bytebytego.com/guides/what-makes-http2-faster-than-http1)

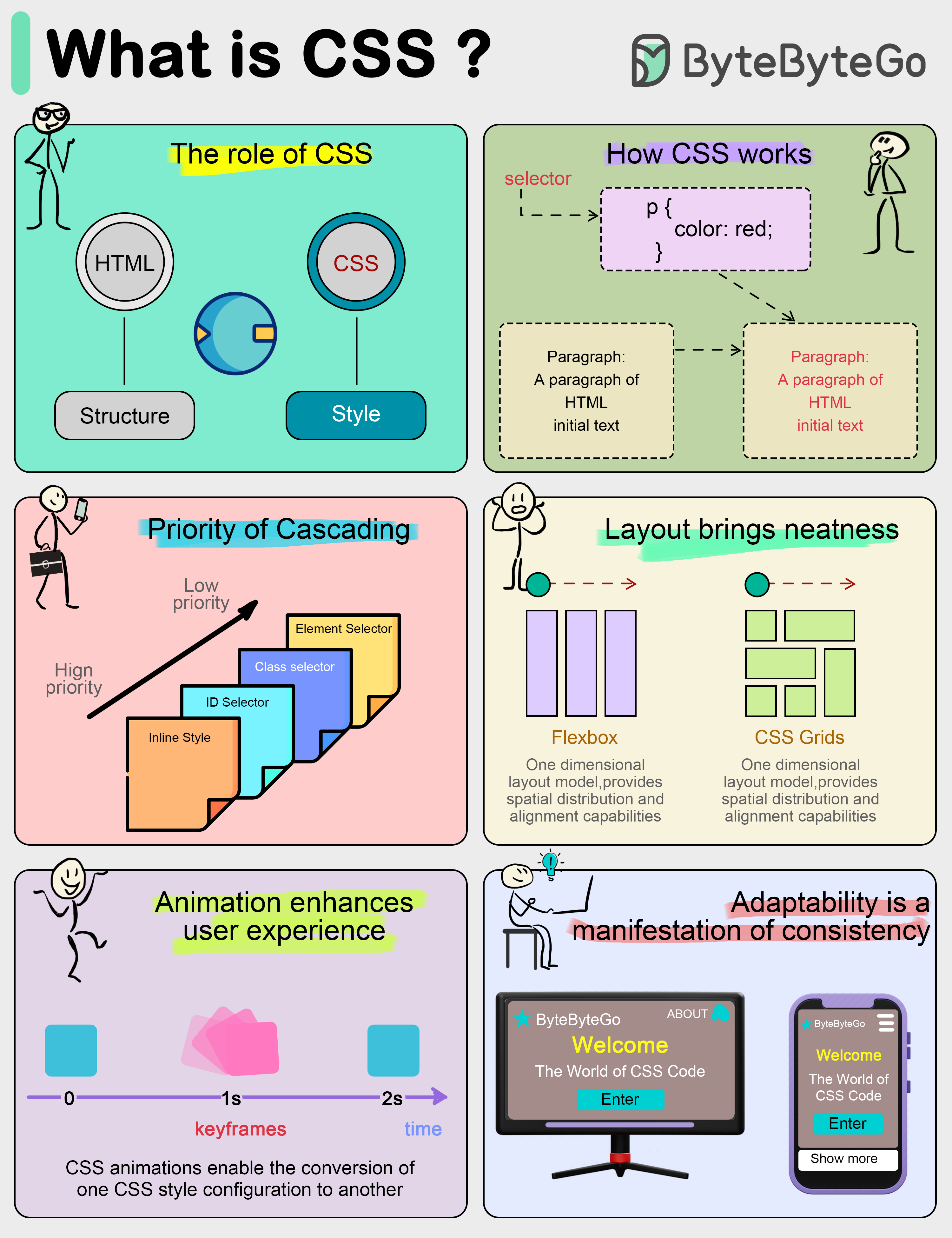

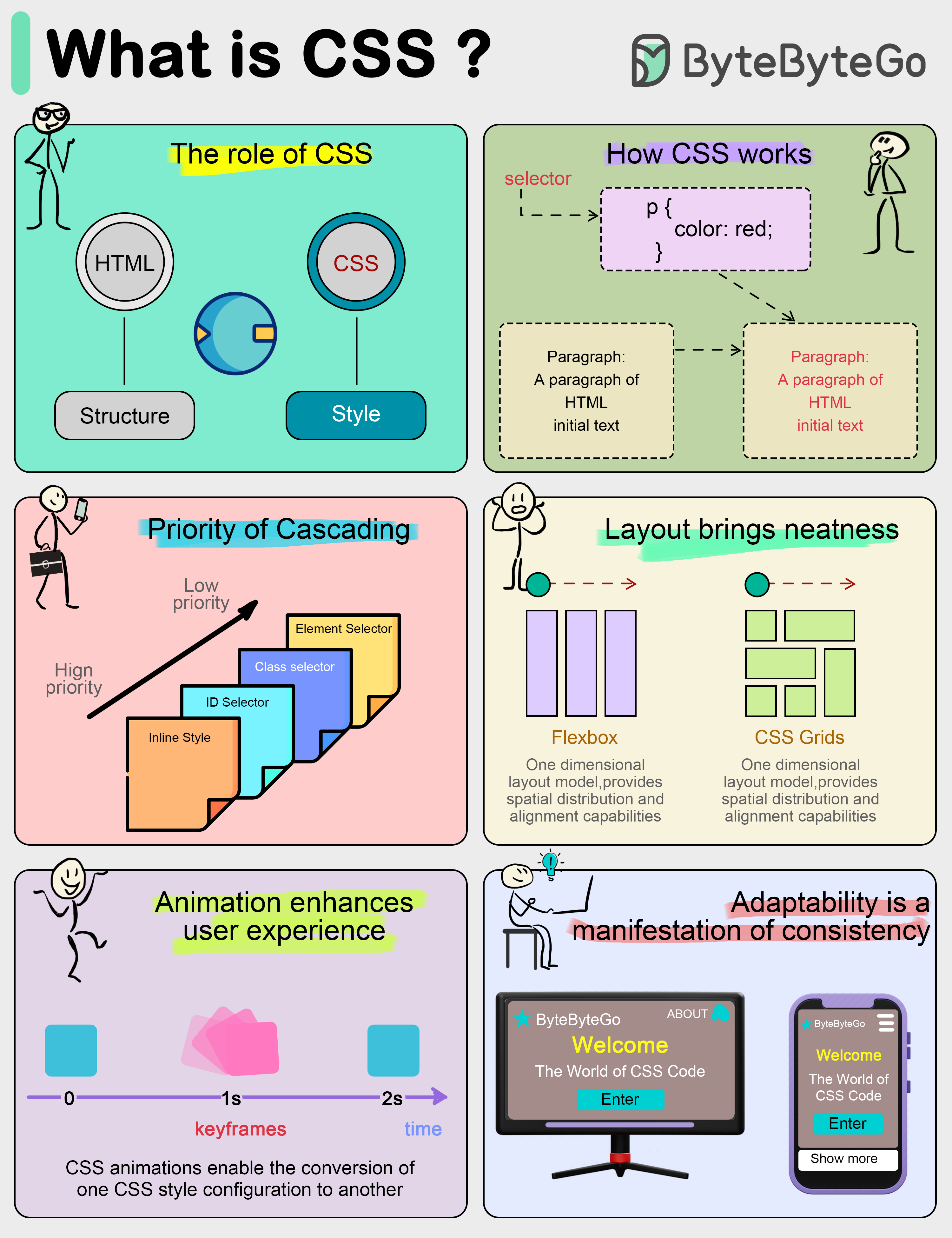

+ * [What is CSS (Cascading Style Sheets)?](https://bytebytego.com/guides/what-is-css-cascading-style-sheets)

+ * [Key Use Cases for Load Balancers](https://bytebytego.com/guides/key-use-cases-for-load-balancers)

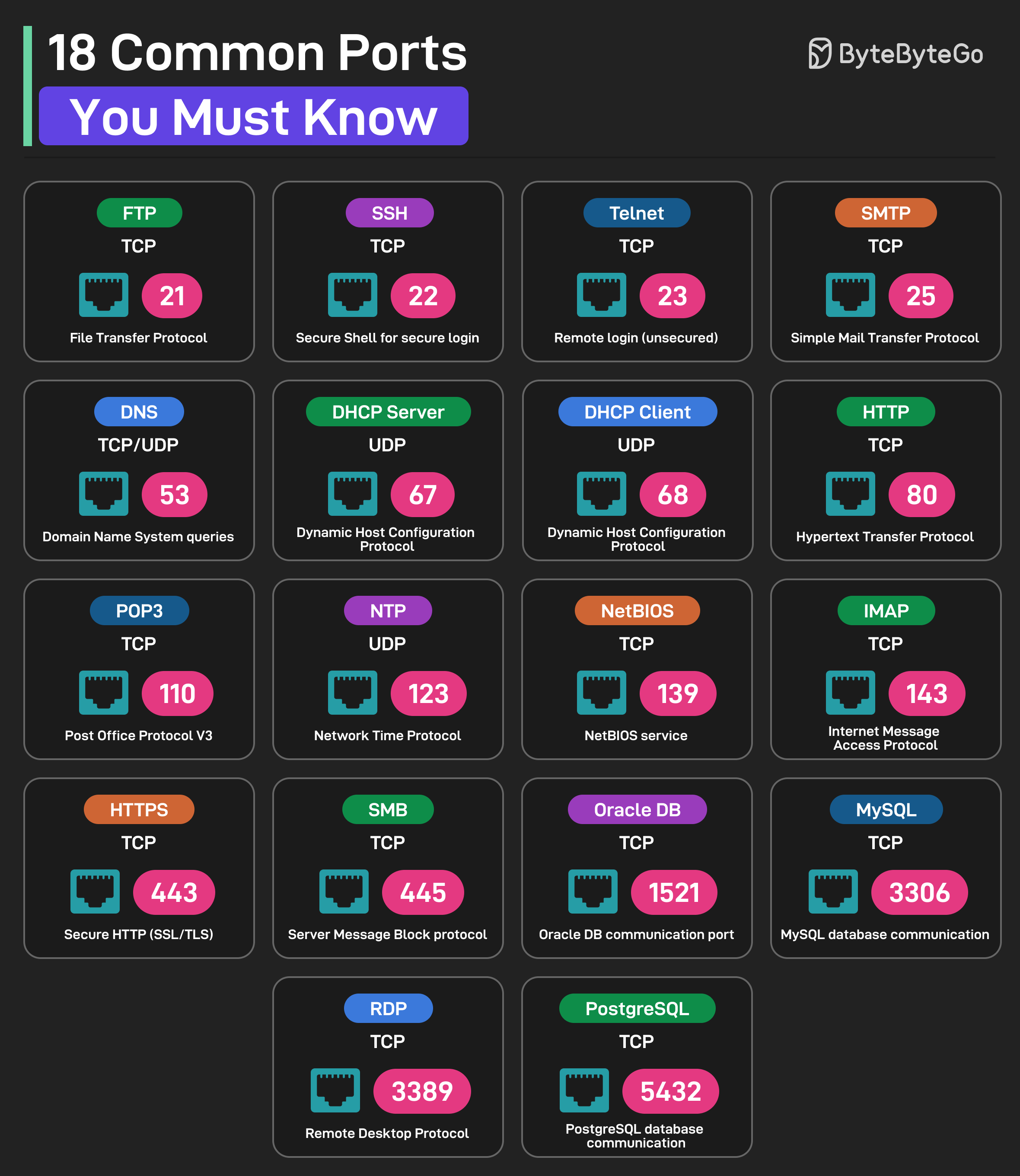

+ * [18 Common Ports Worth Knowing](https://bytebytego.com/guides/18-common-ports-worth-knowing)

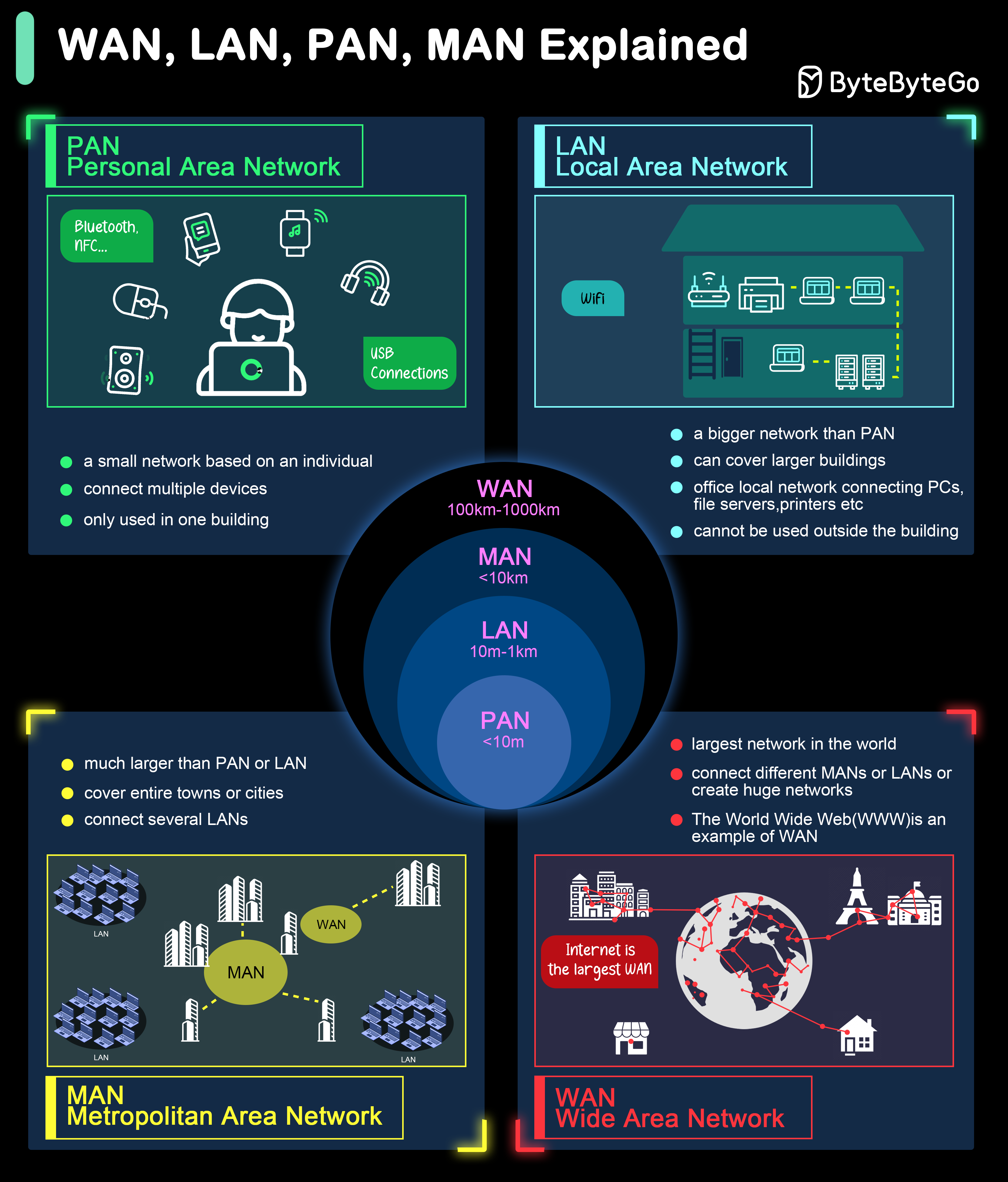

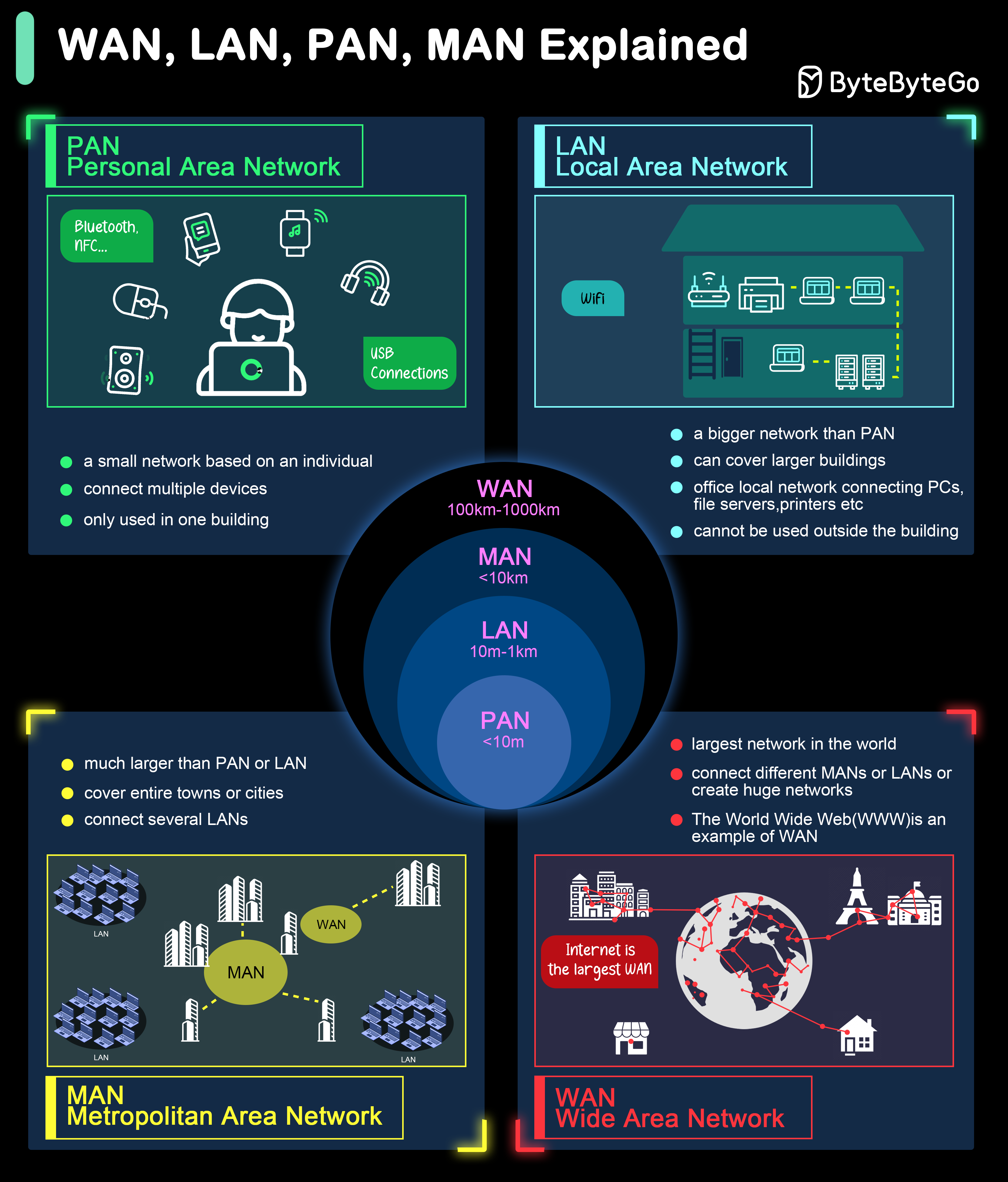

+ * [What are the differences between WAN, LAN, PAN and MAN?](https://bytebytego.com/guides/what-are-the-differences-between-wan-lan-pan-and-man)

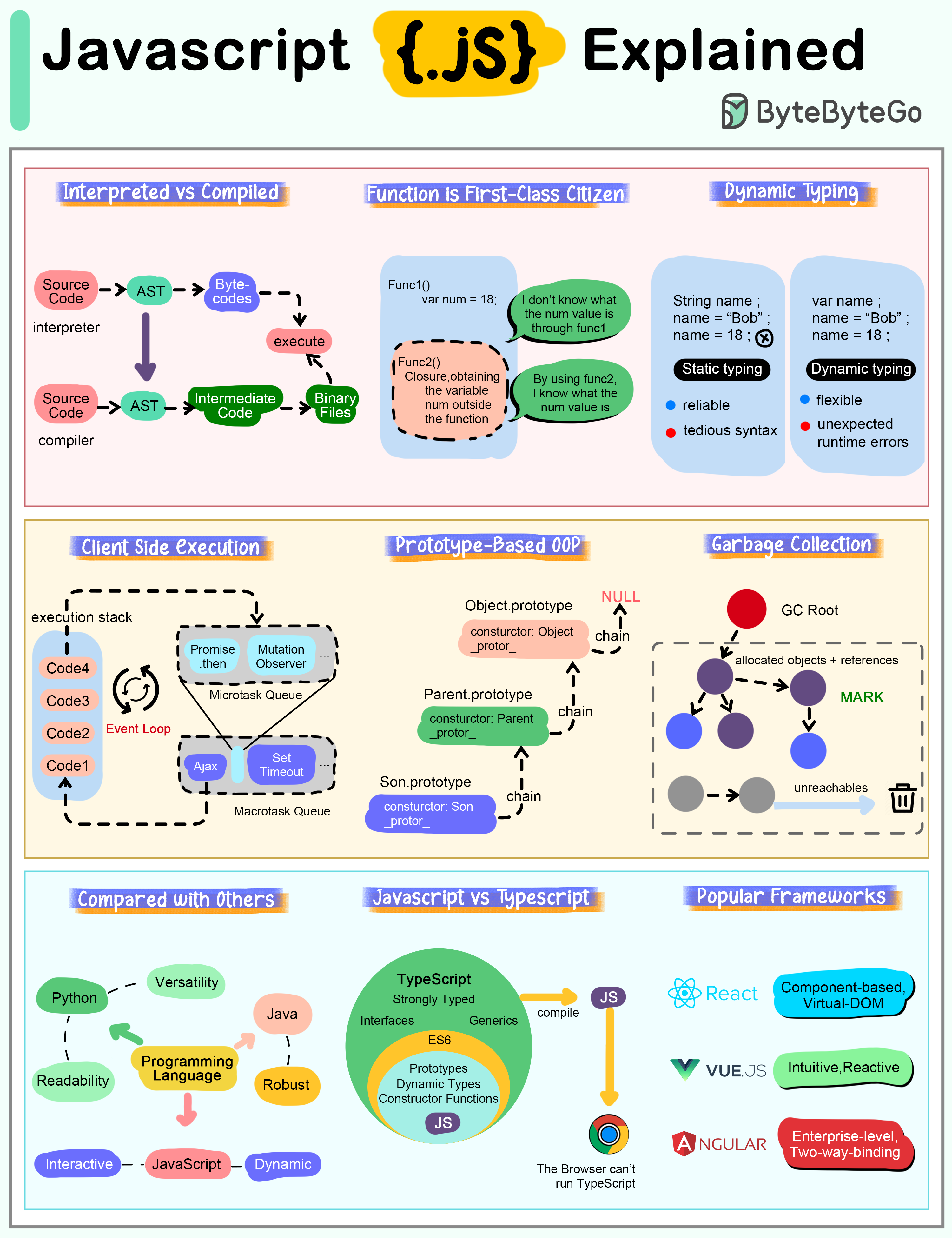

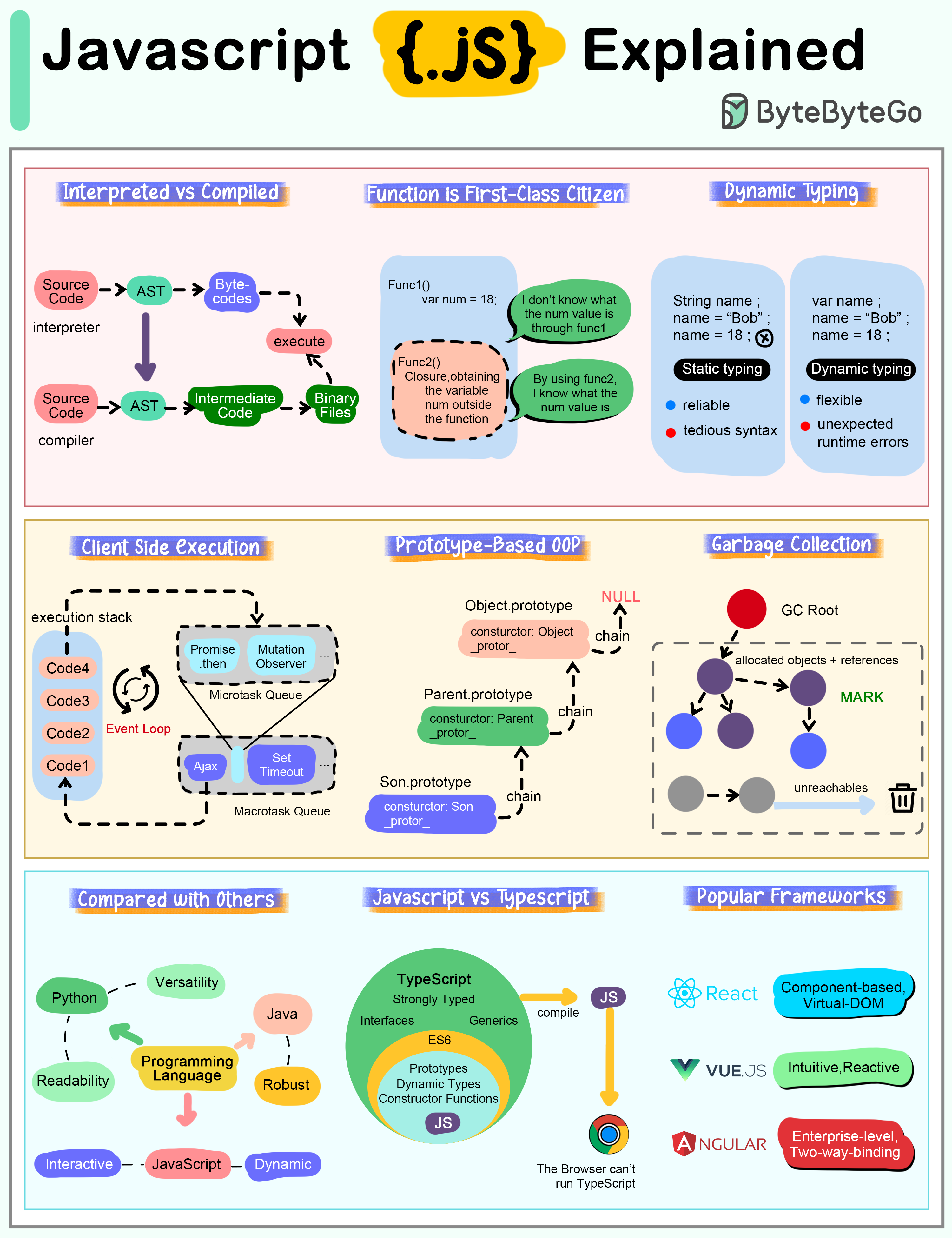

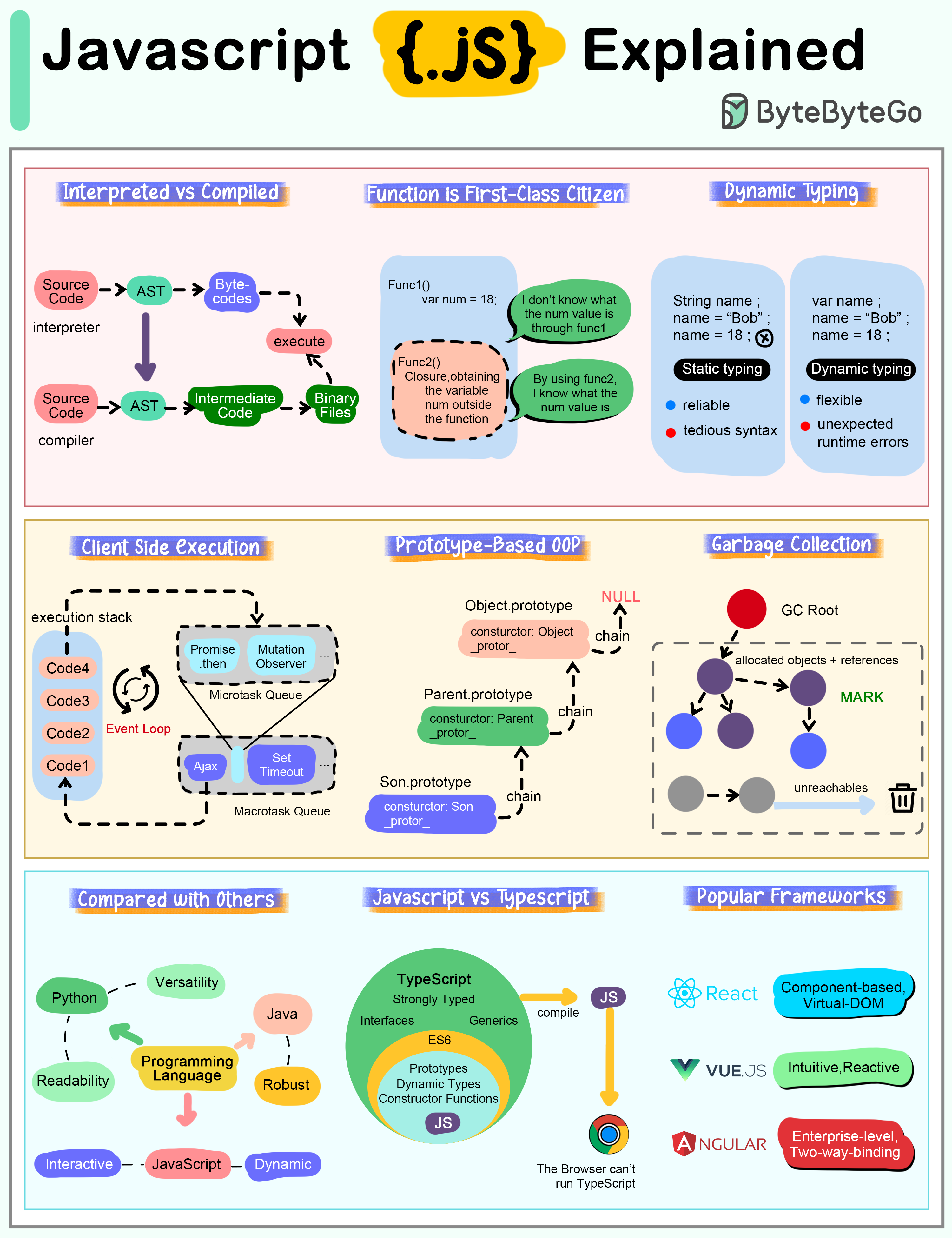

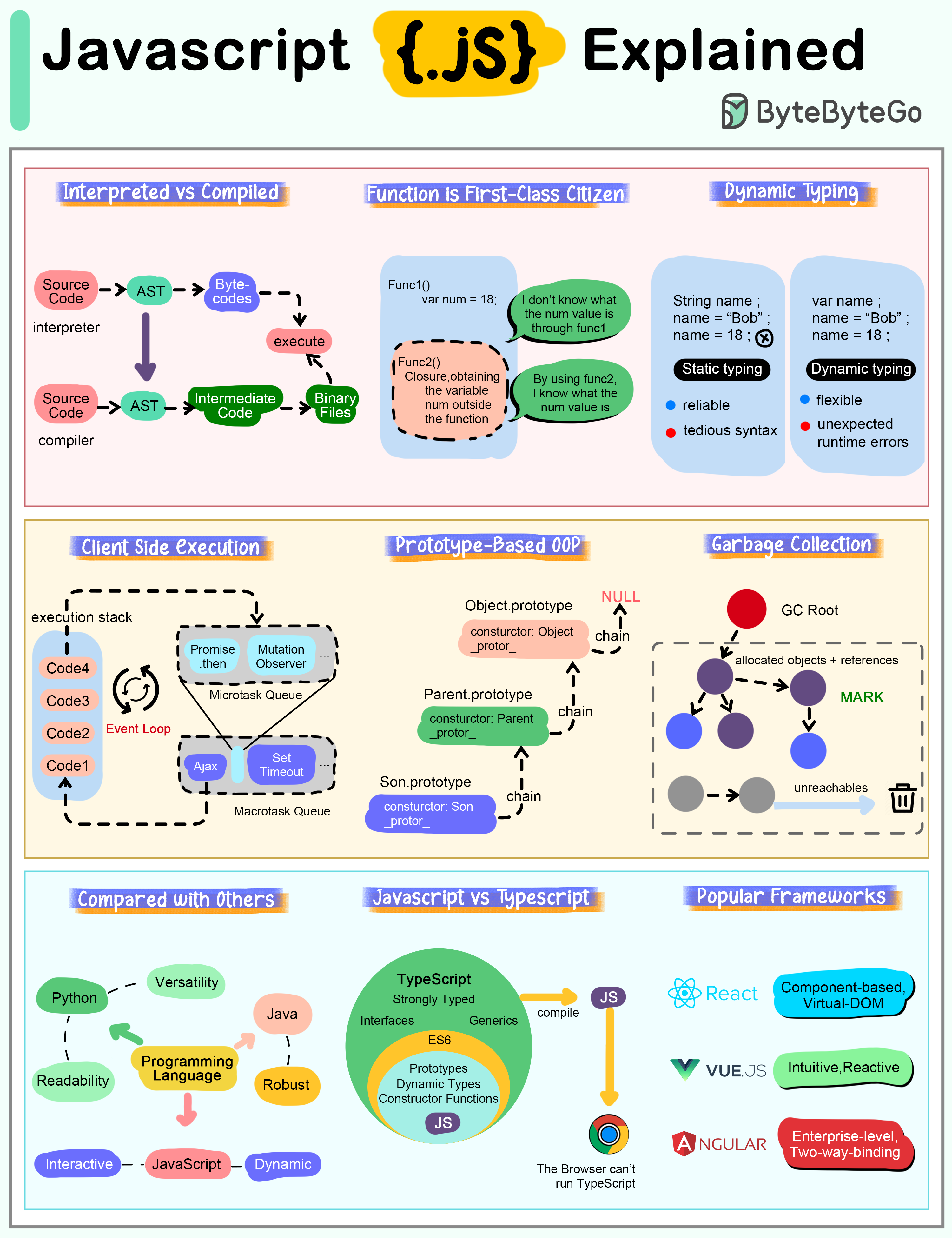

+ * [How does Javascript Work?](https://bytebytego.com/guides/how-does-javascript-work)

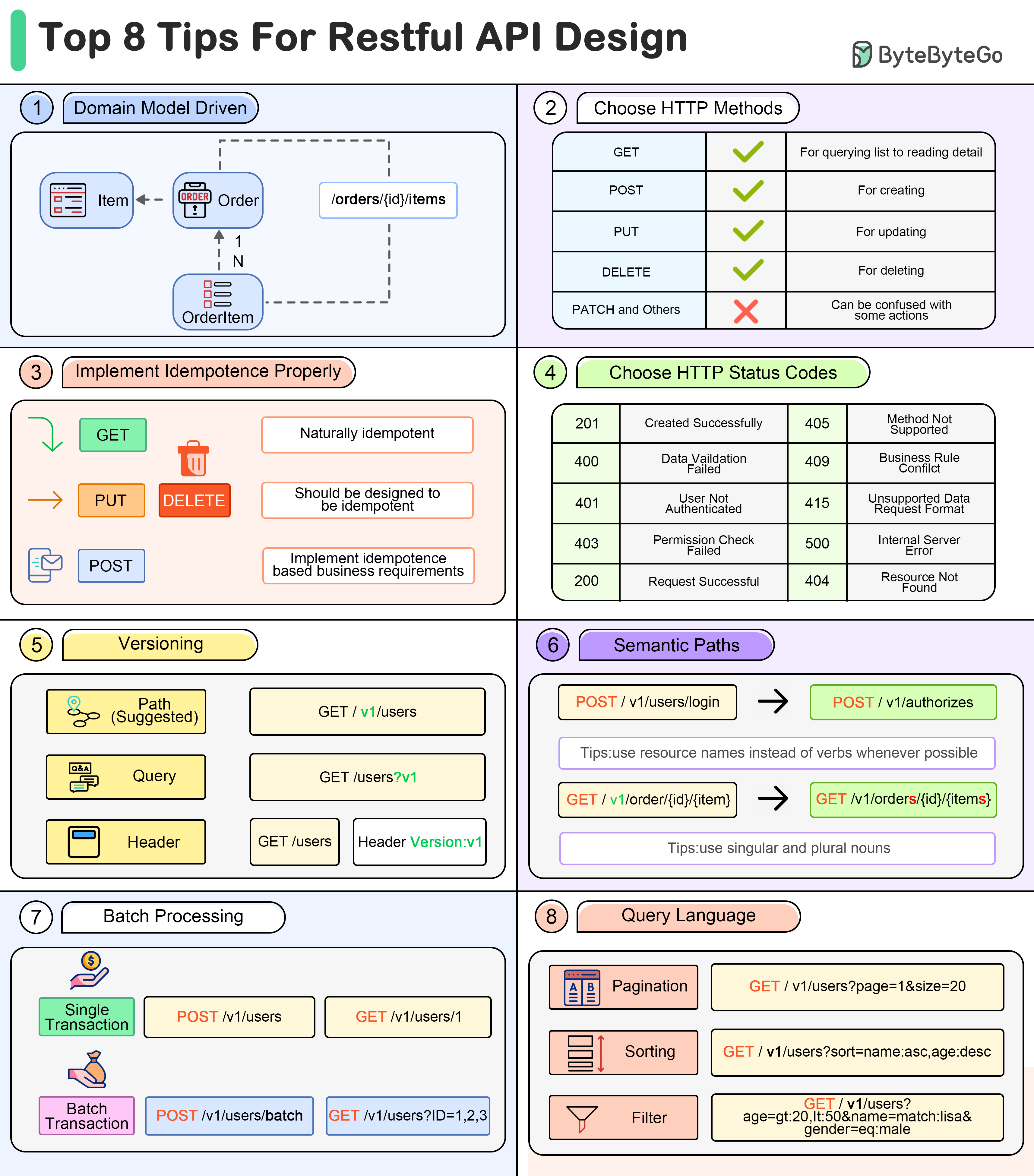

+ * [8 Tips for Efficient API Design](https://bytebytego.com/guides/8-tips-for-efficient-api-design)

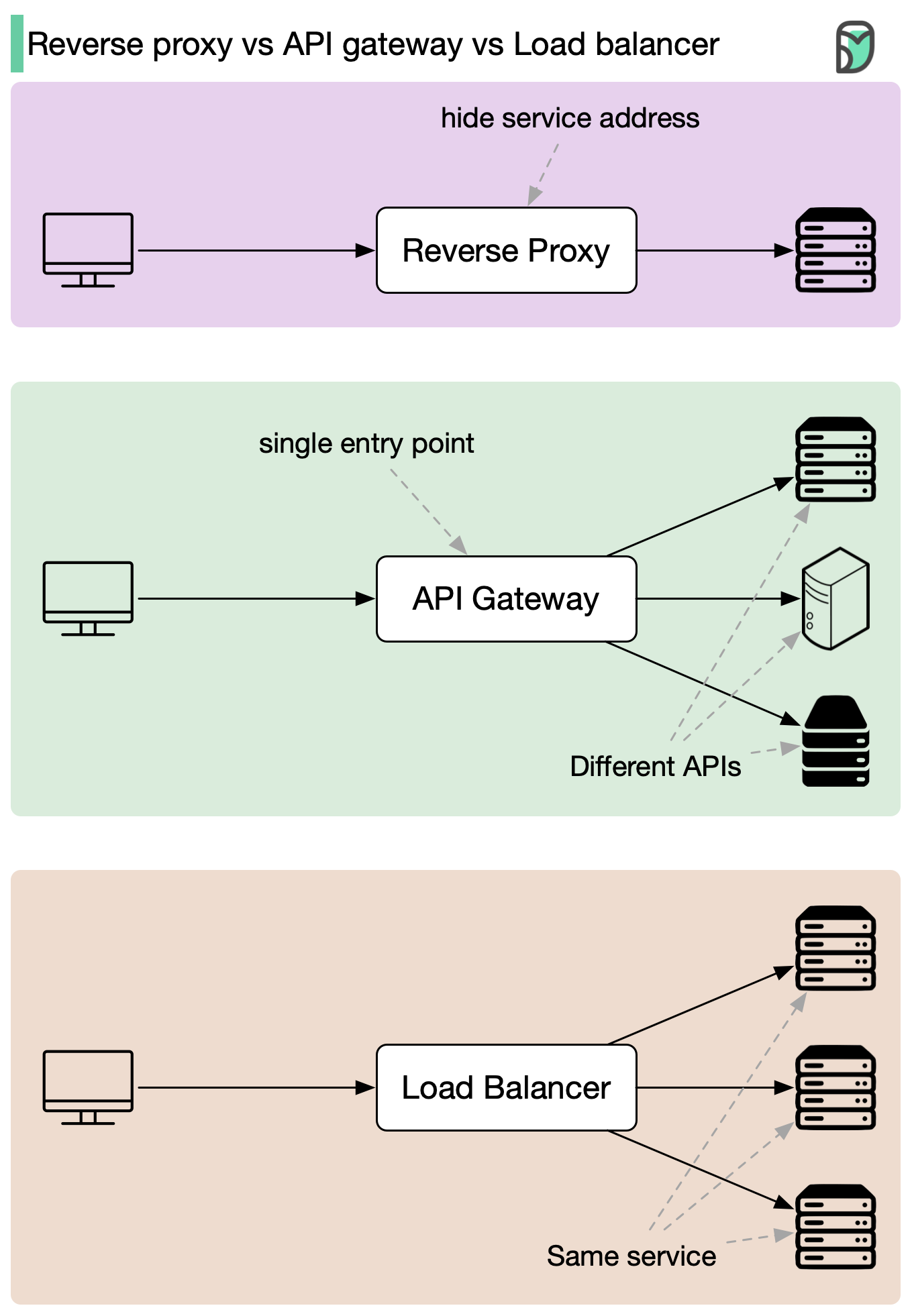

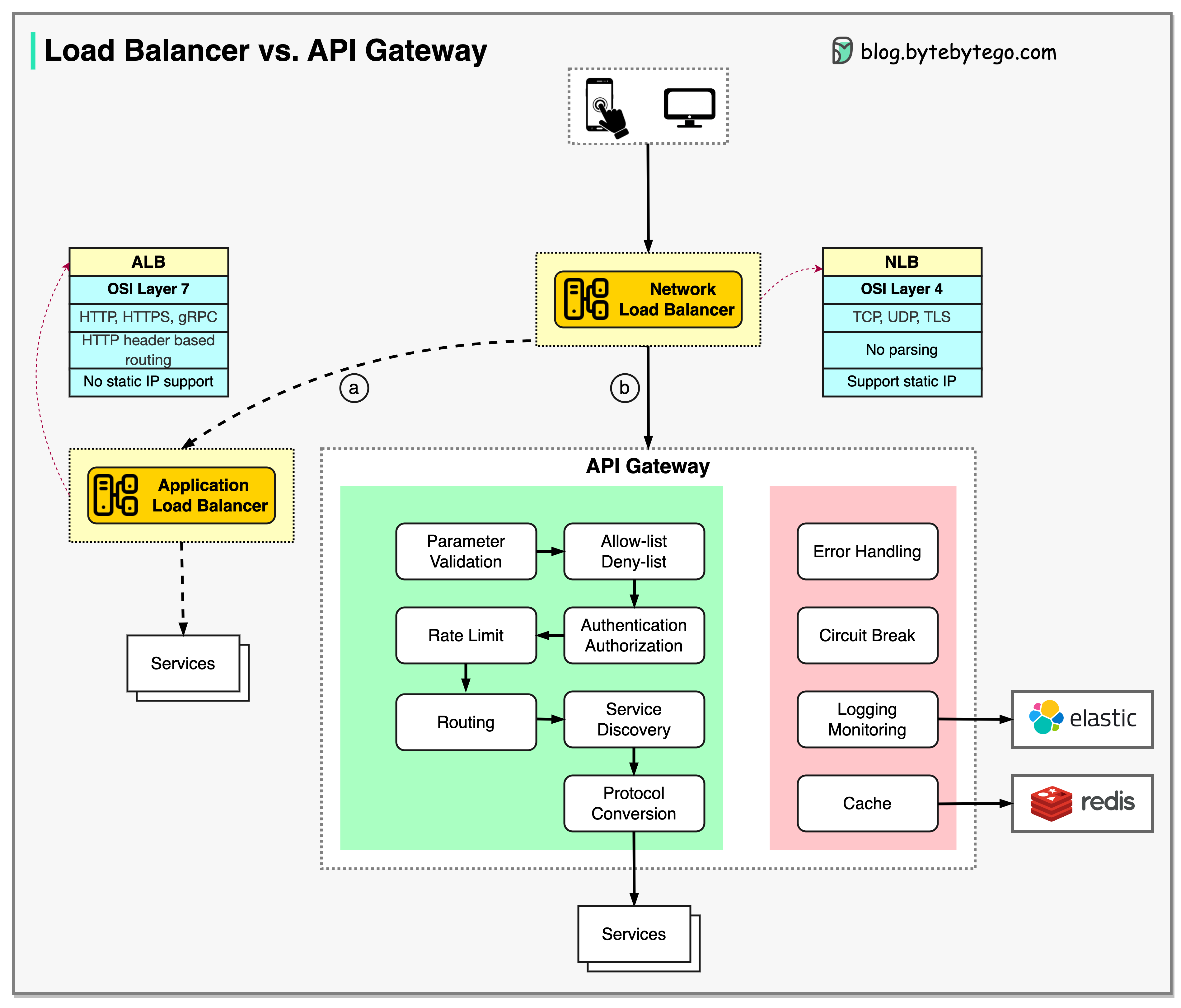

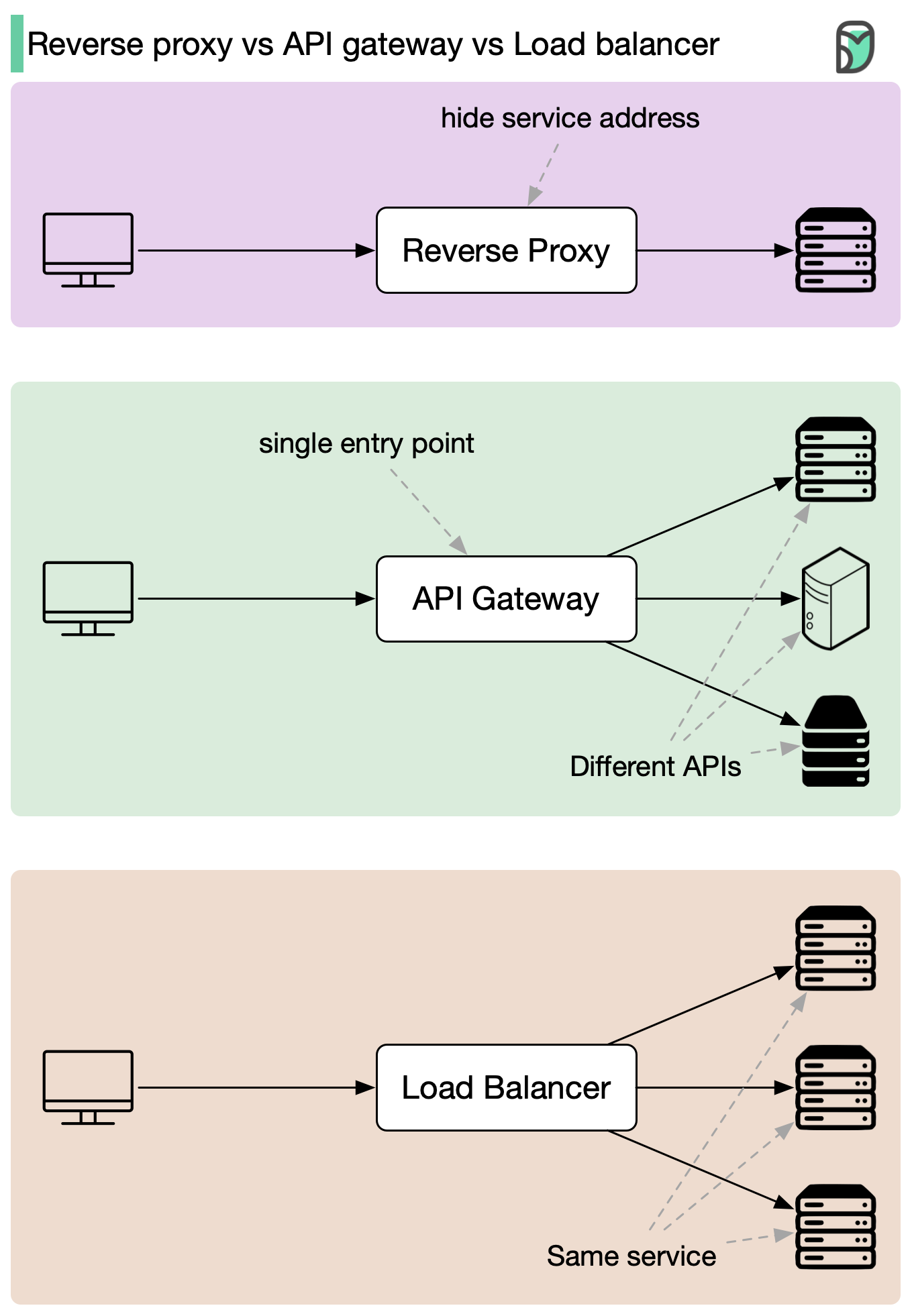

+ * [Reverse Proxy vs. API Gateway vs. Load Balancer](https://bytebytego.com/guides/reverse-proxy-vs-api-gateway-vs-load-balancer)

+ * [How does REST API work?](https://bytebytego.com/guides/how-does-rest-api-work)

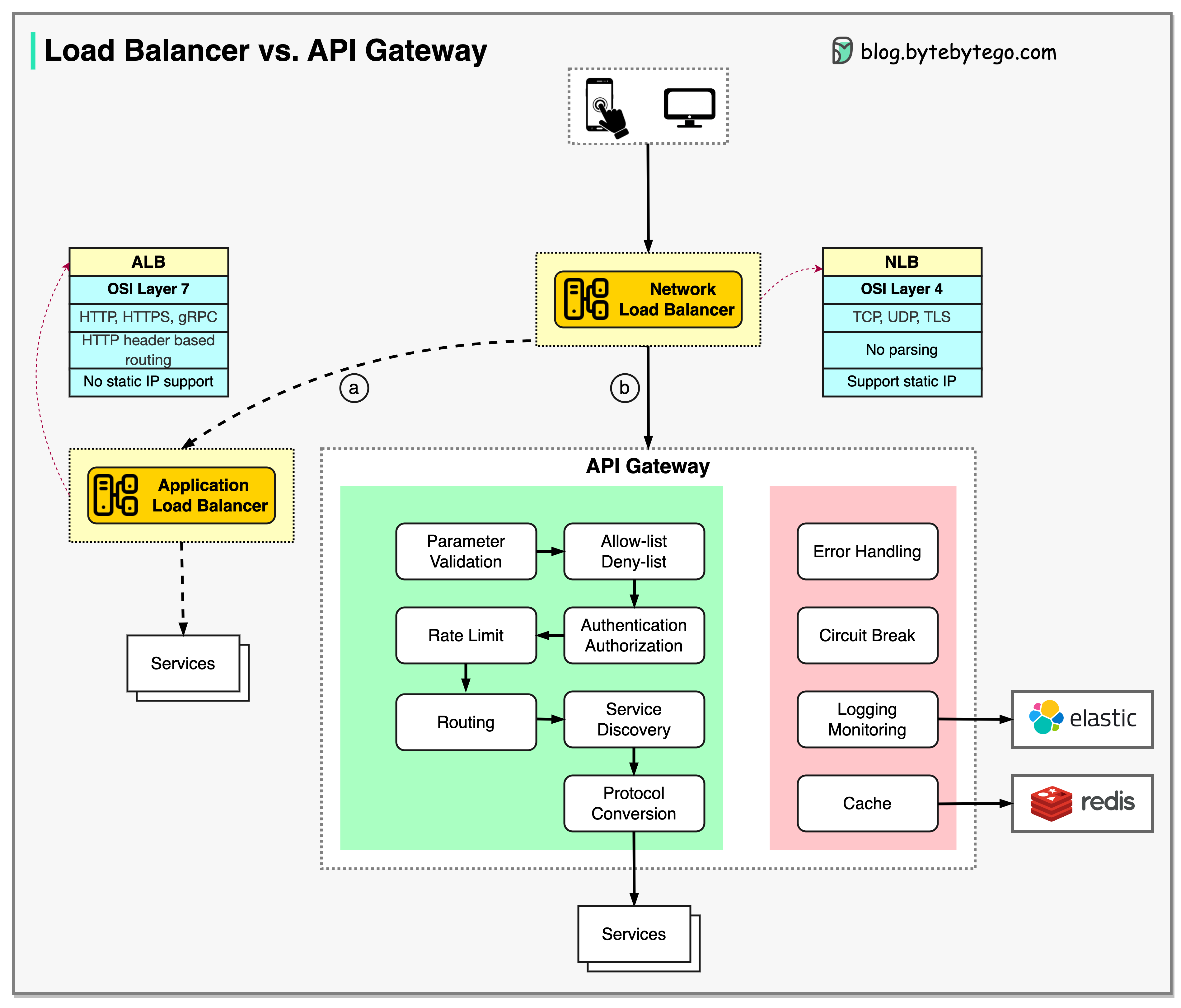

+ * [Load Balancer vs. API Gateway](https://bytebytego.com/guides/what-are-the-differences-between-a-load-balancer-and-an-api-gateway)

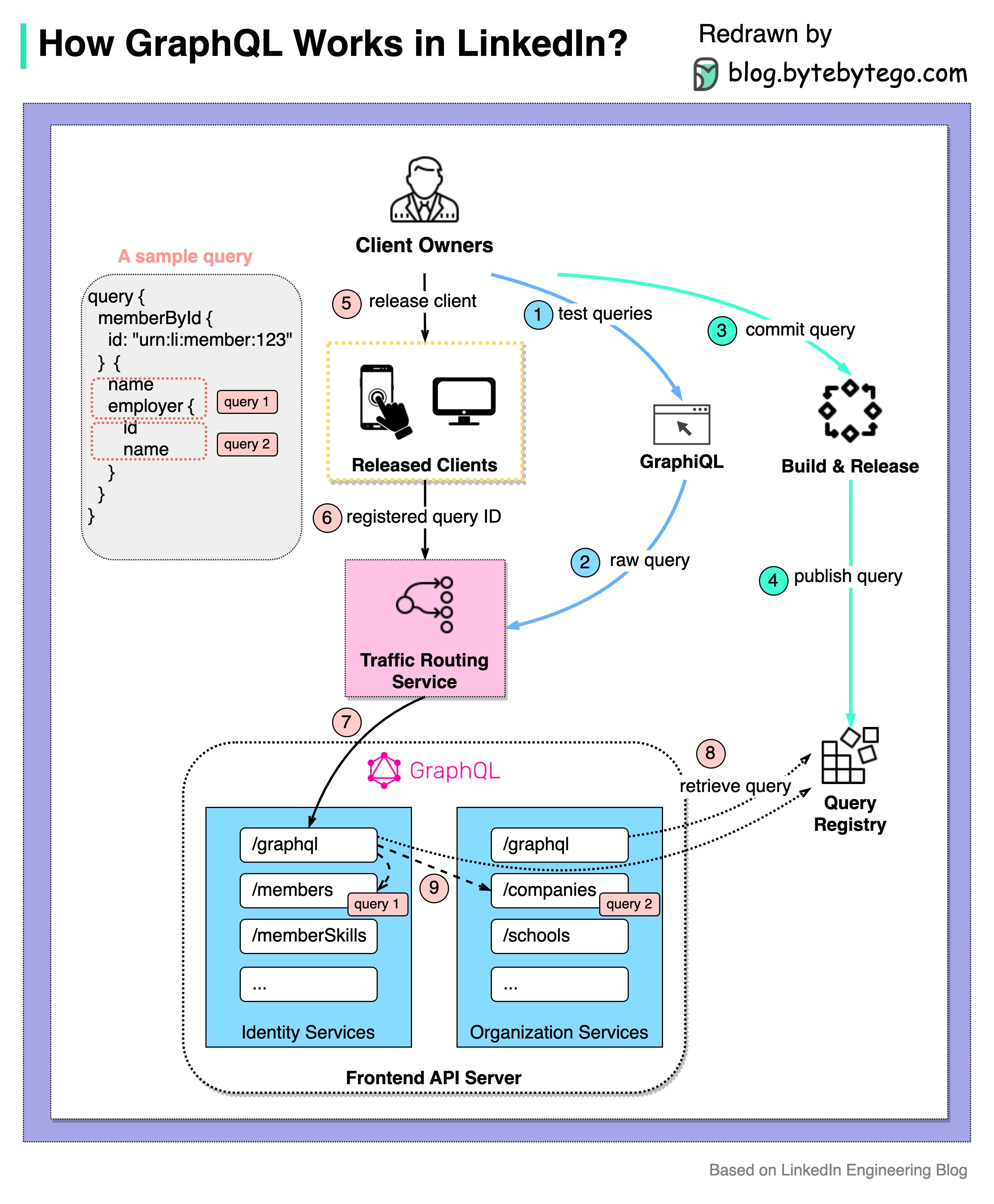

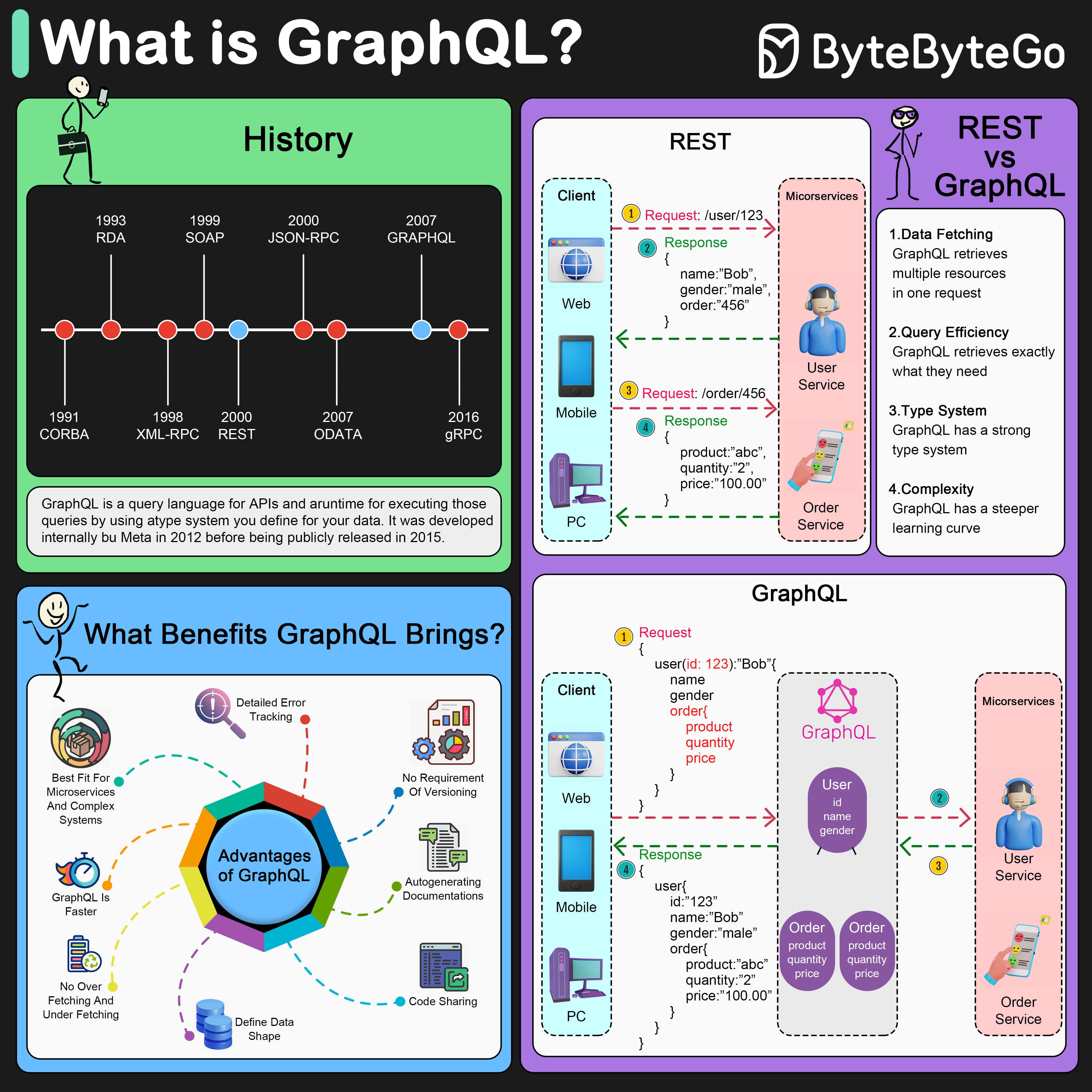

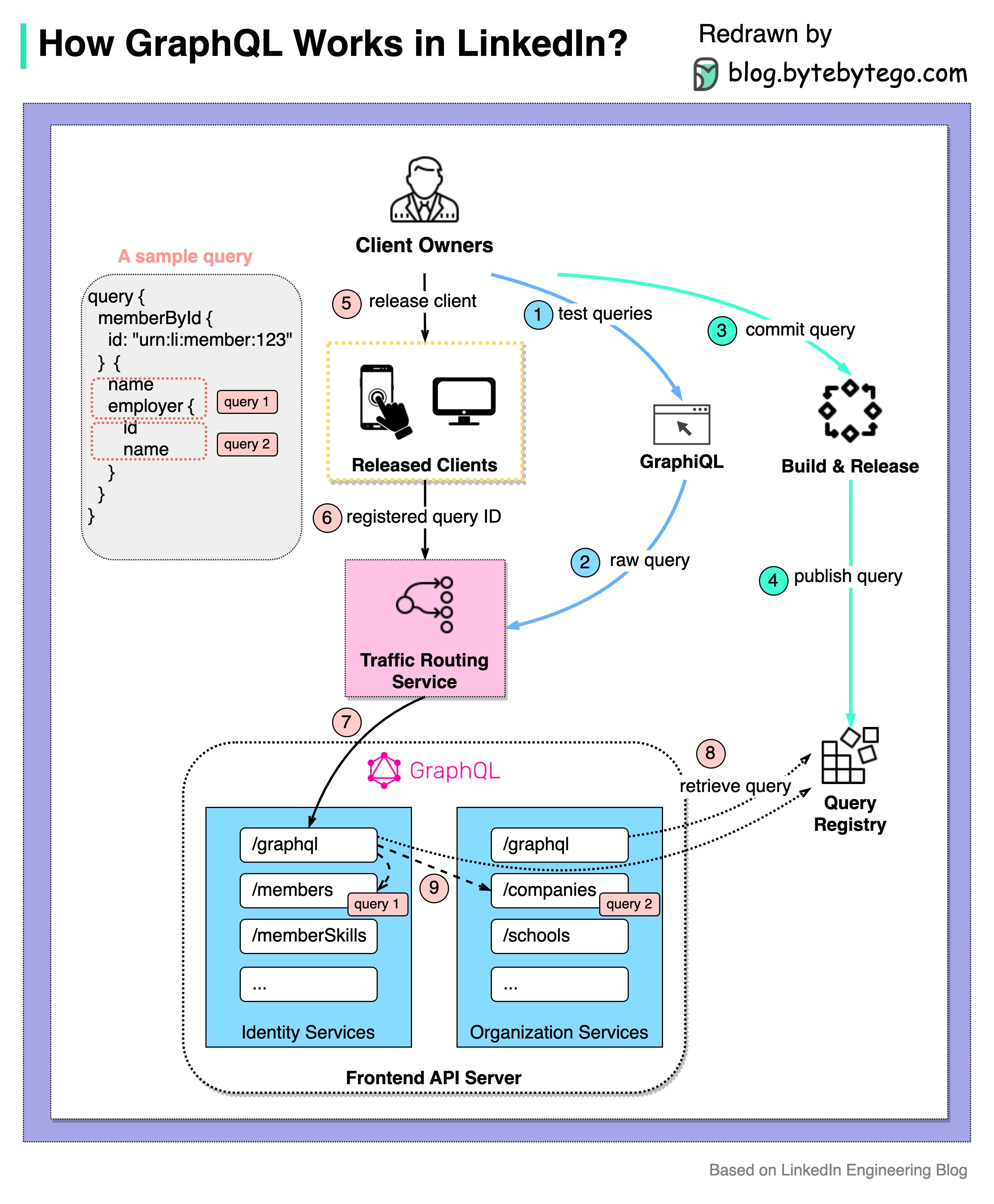

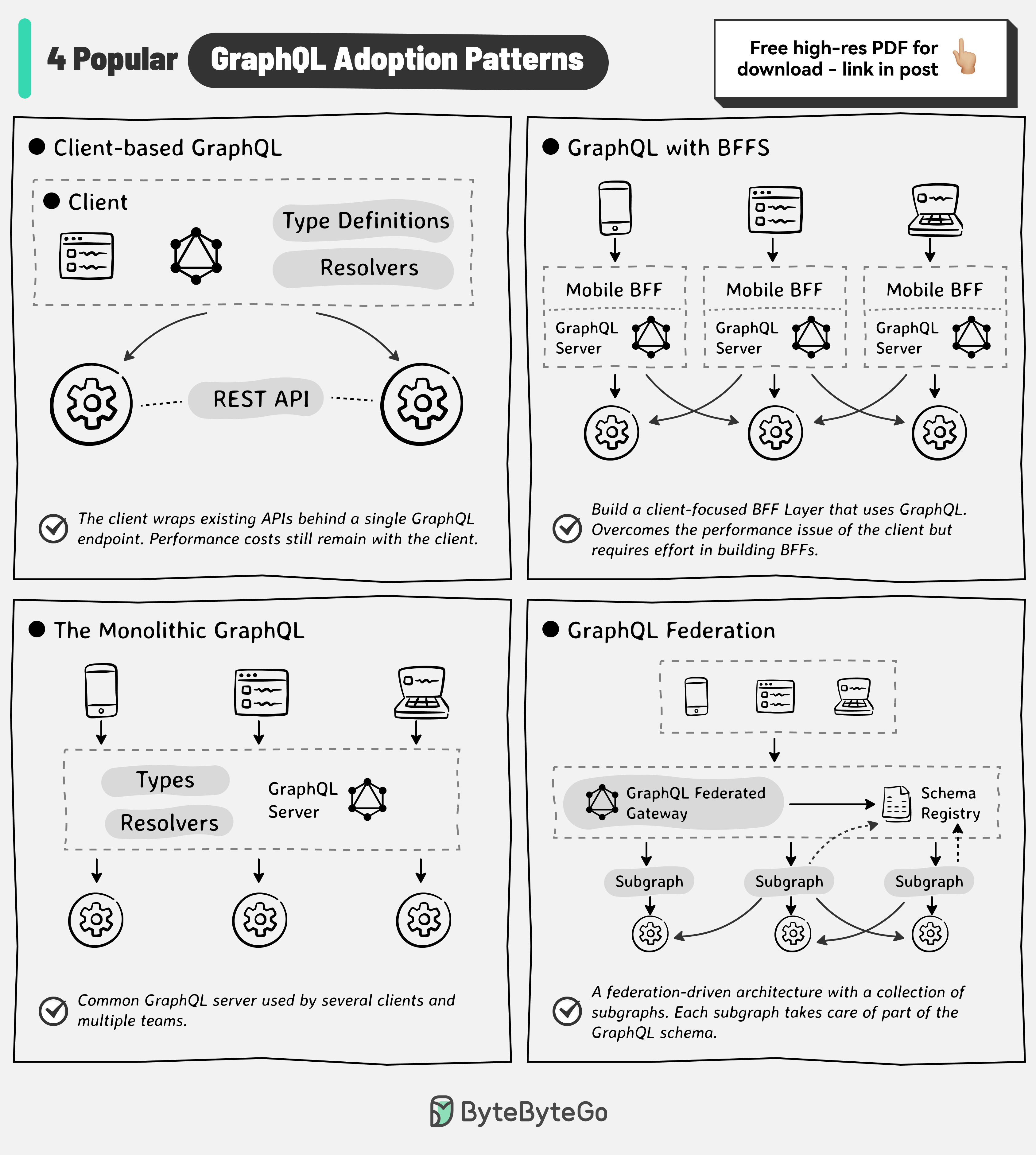

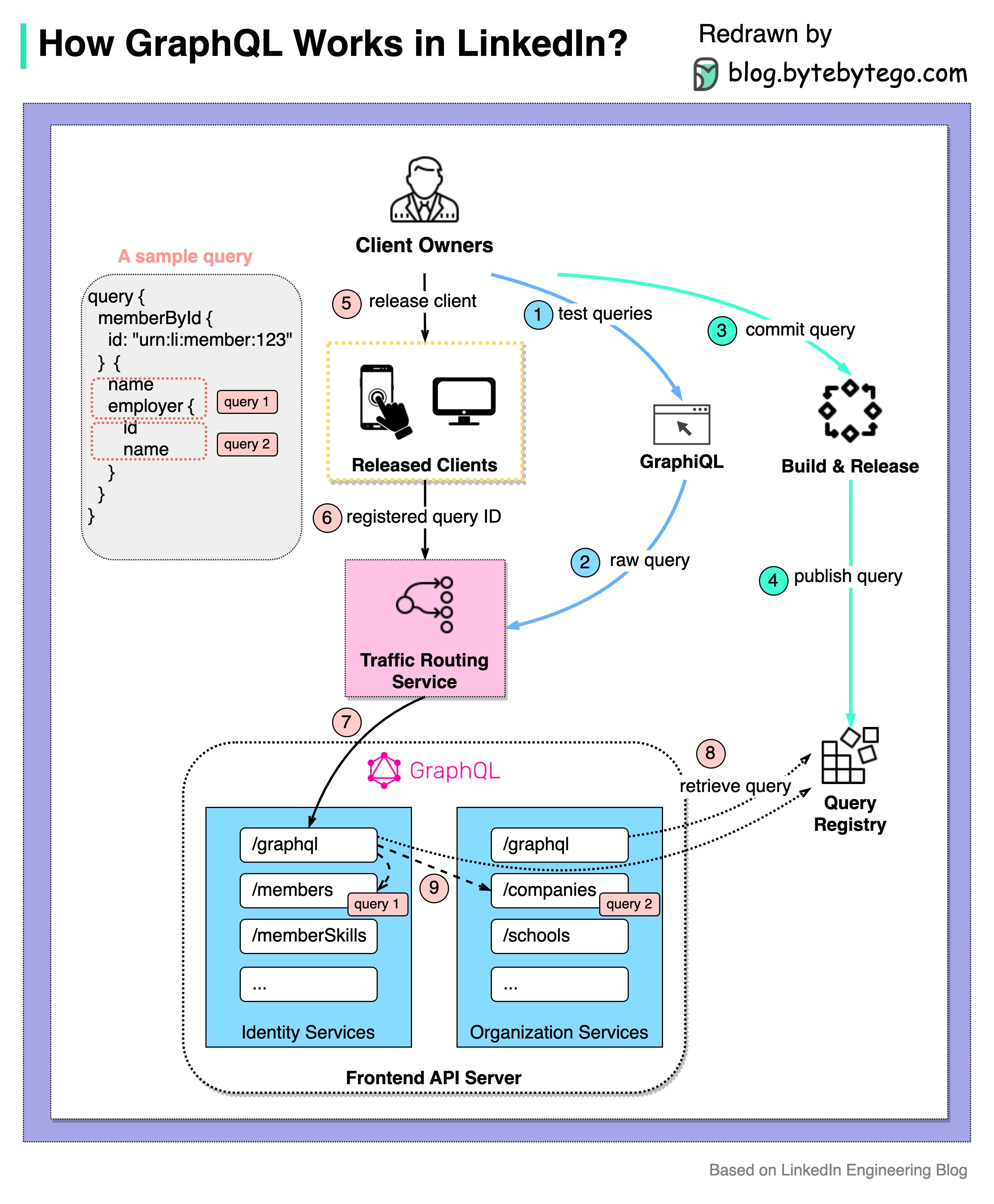

+ * [How GraphQL Works at LinkedIn](https://bytebytego.com/guides/how-does-graphql-work-in-the-real-world)

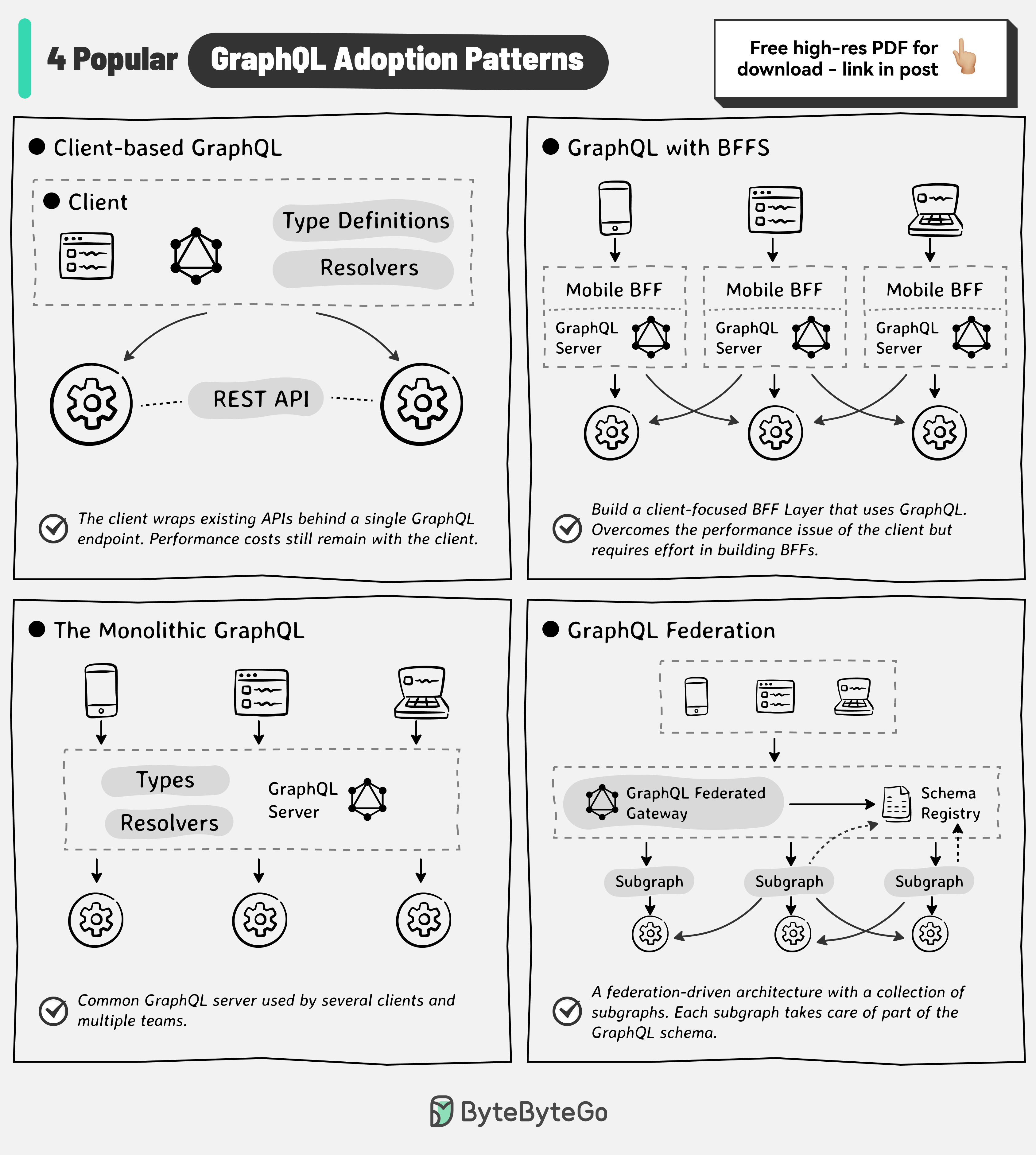

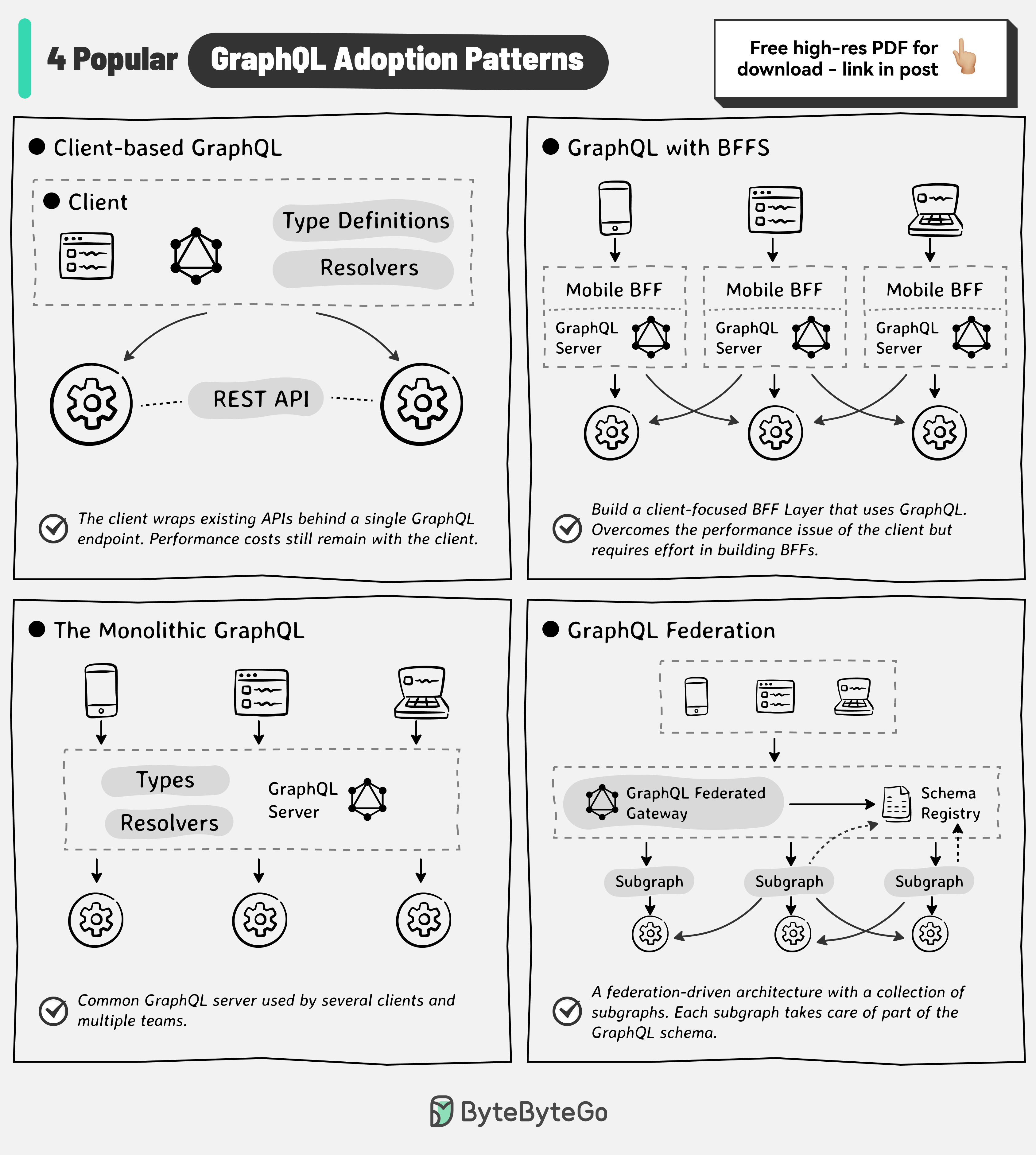

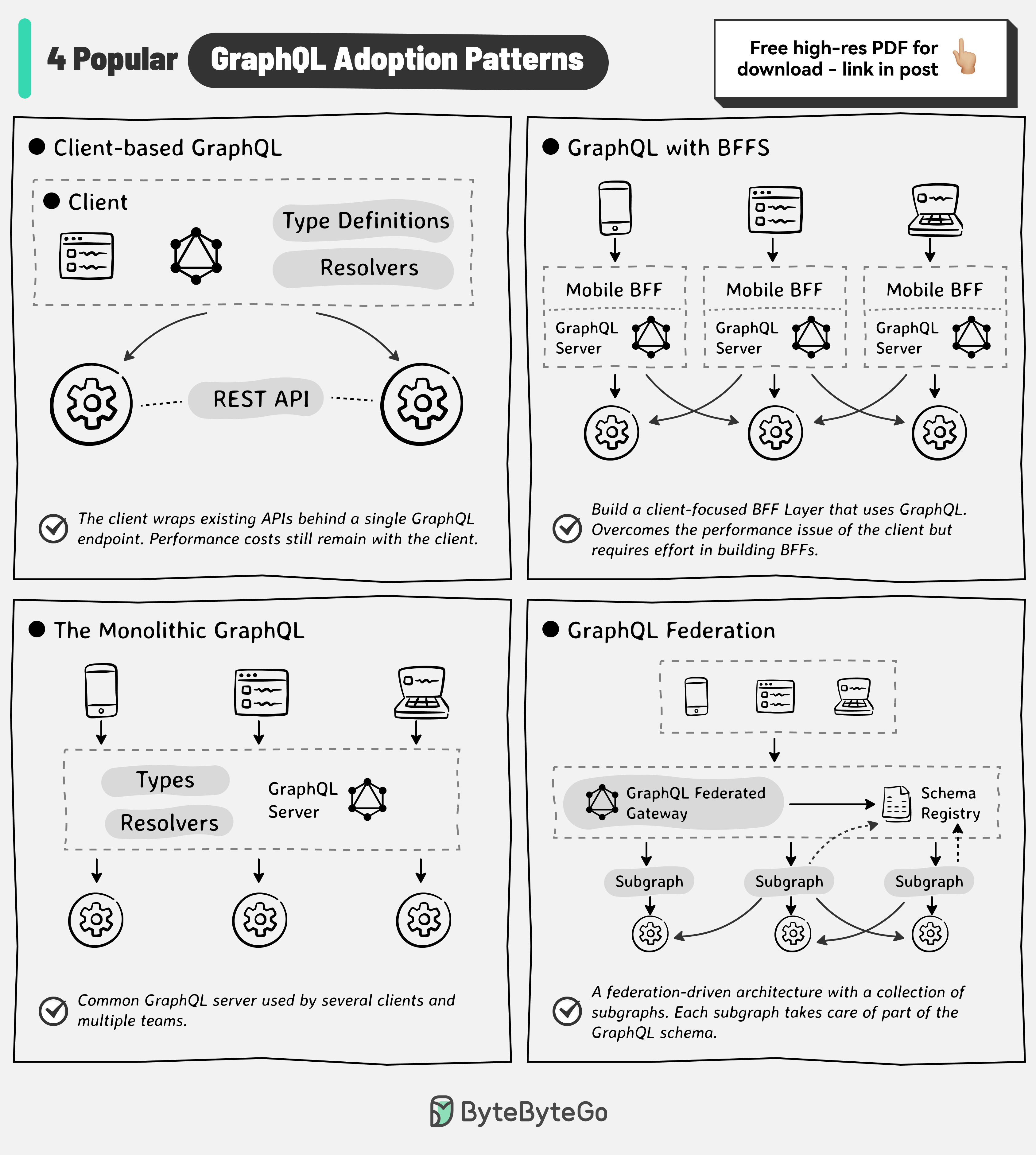

+ * [GraphQL Adoption Patterns](https://bytebytego.com/guides/graphql-adoption-patterns)

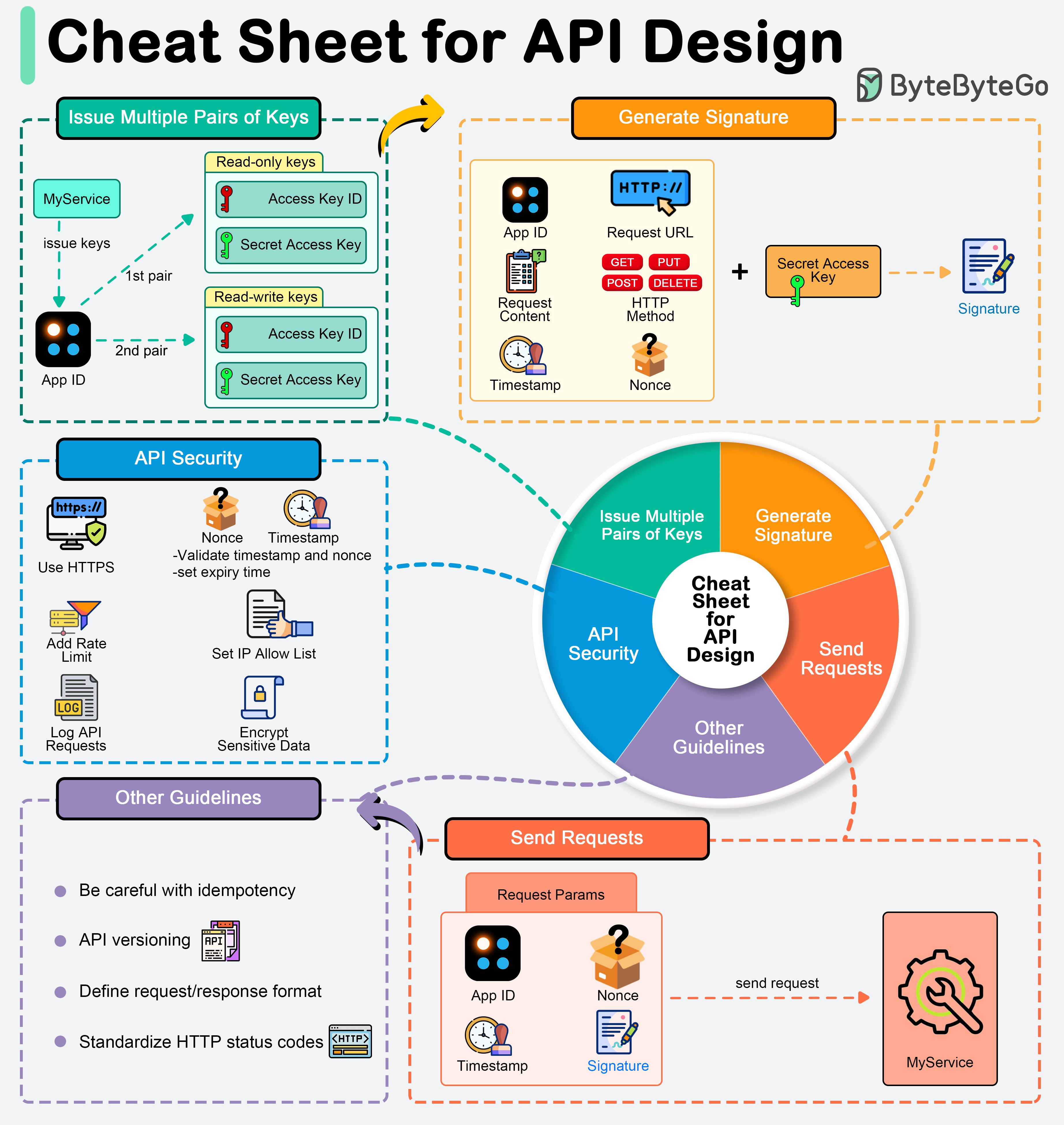

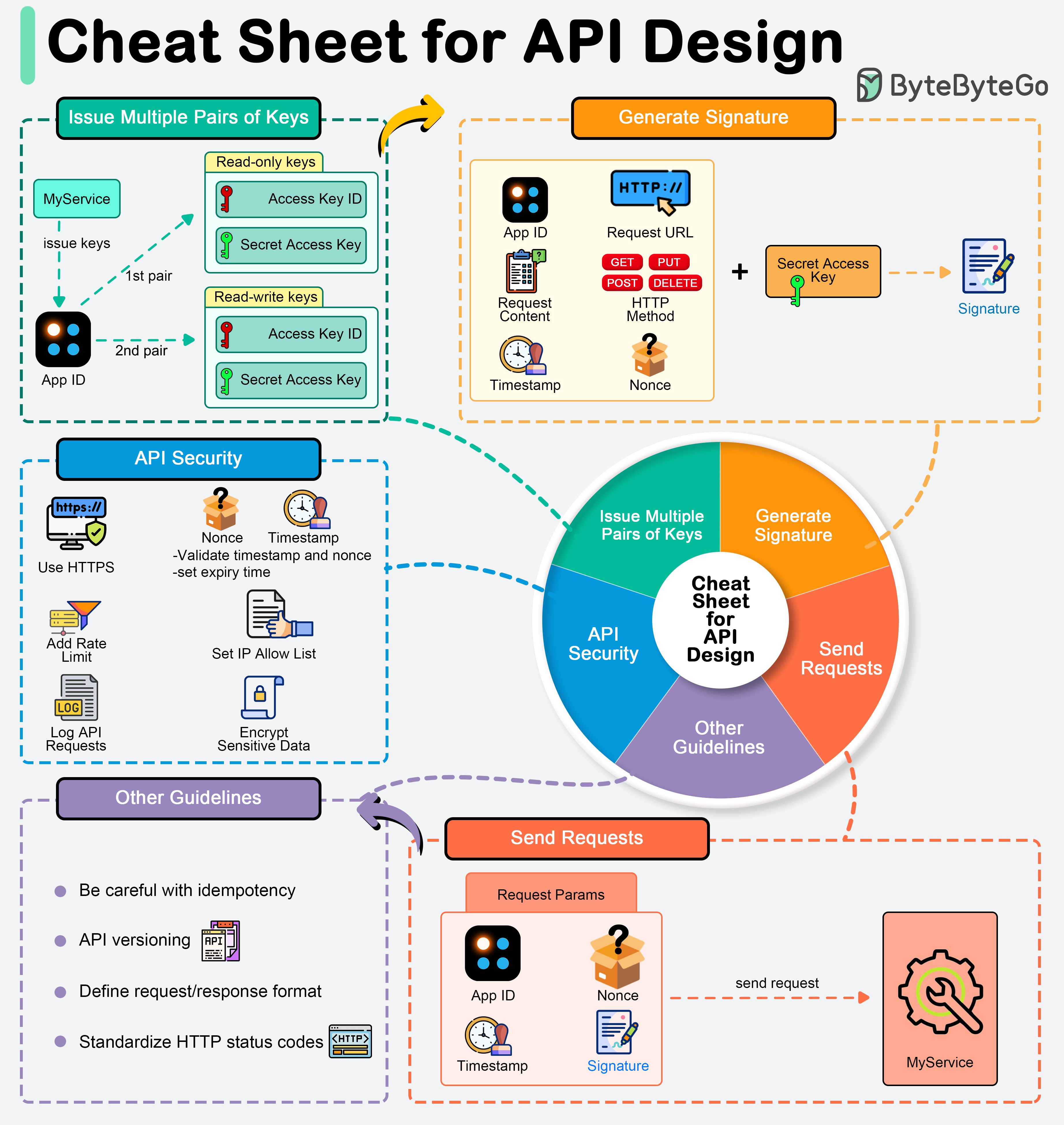

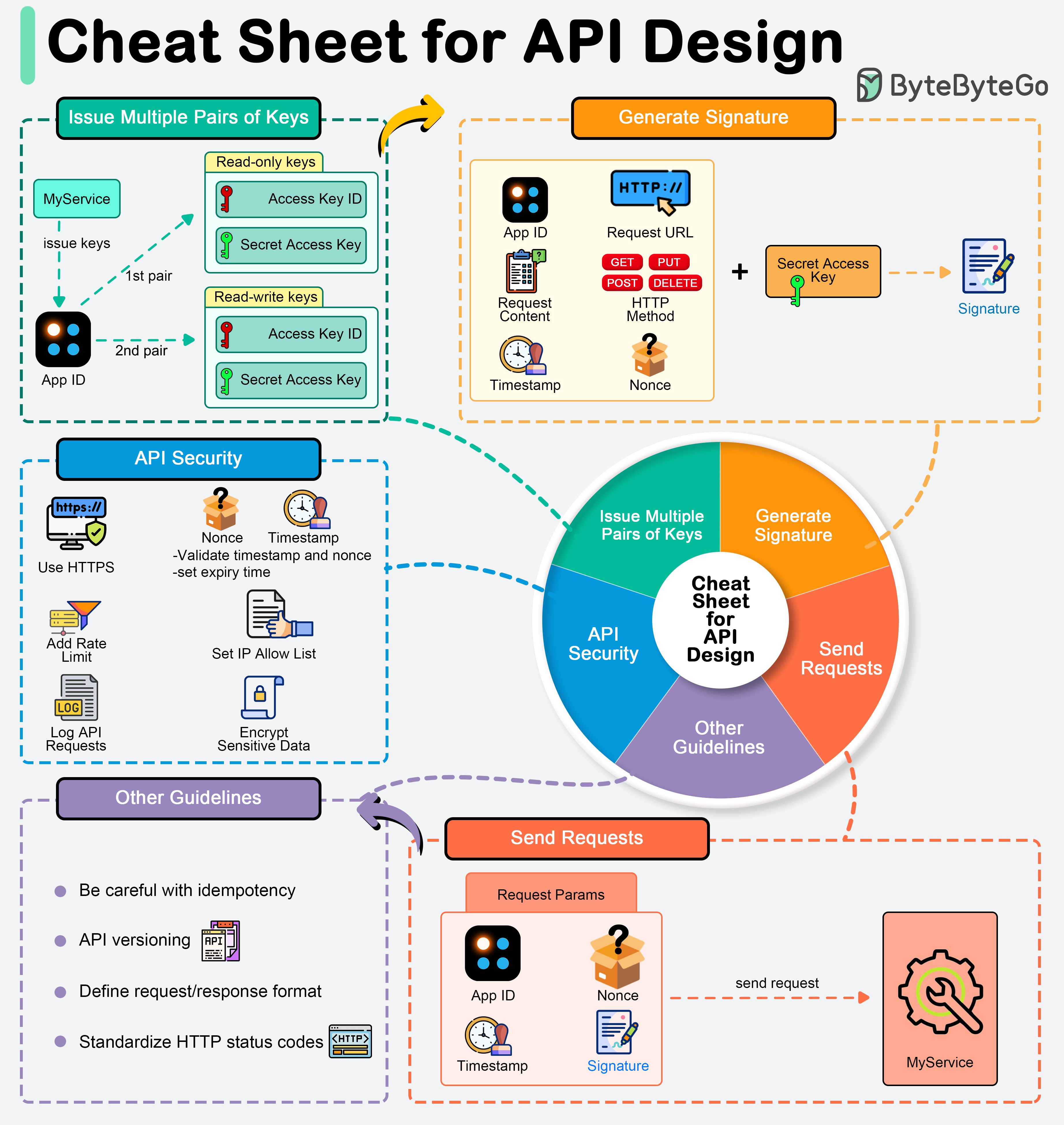

+ * [A cheat sheet for API designs](https://bytebytego.com/guides/a-cheat-sheet-for-api-designs)

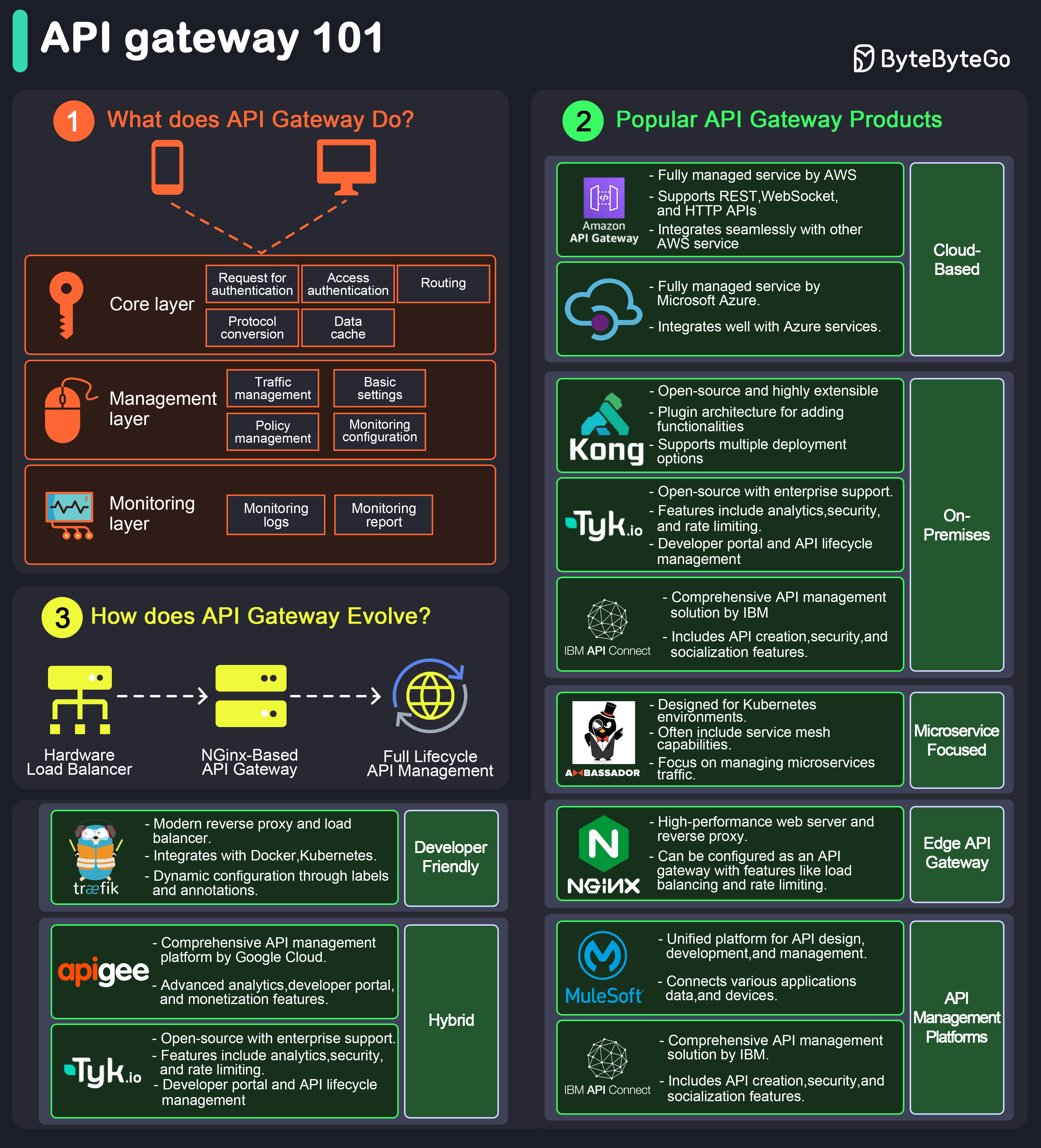

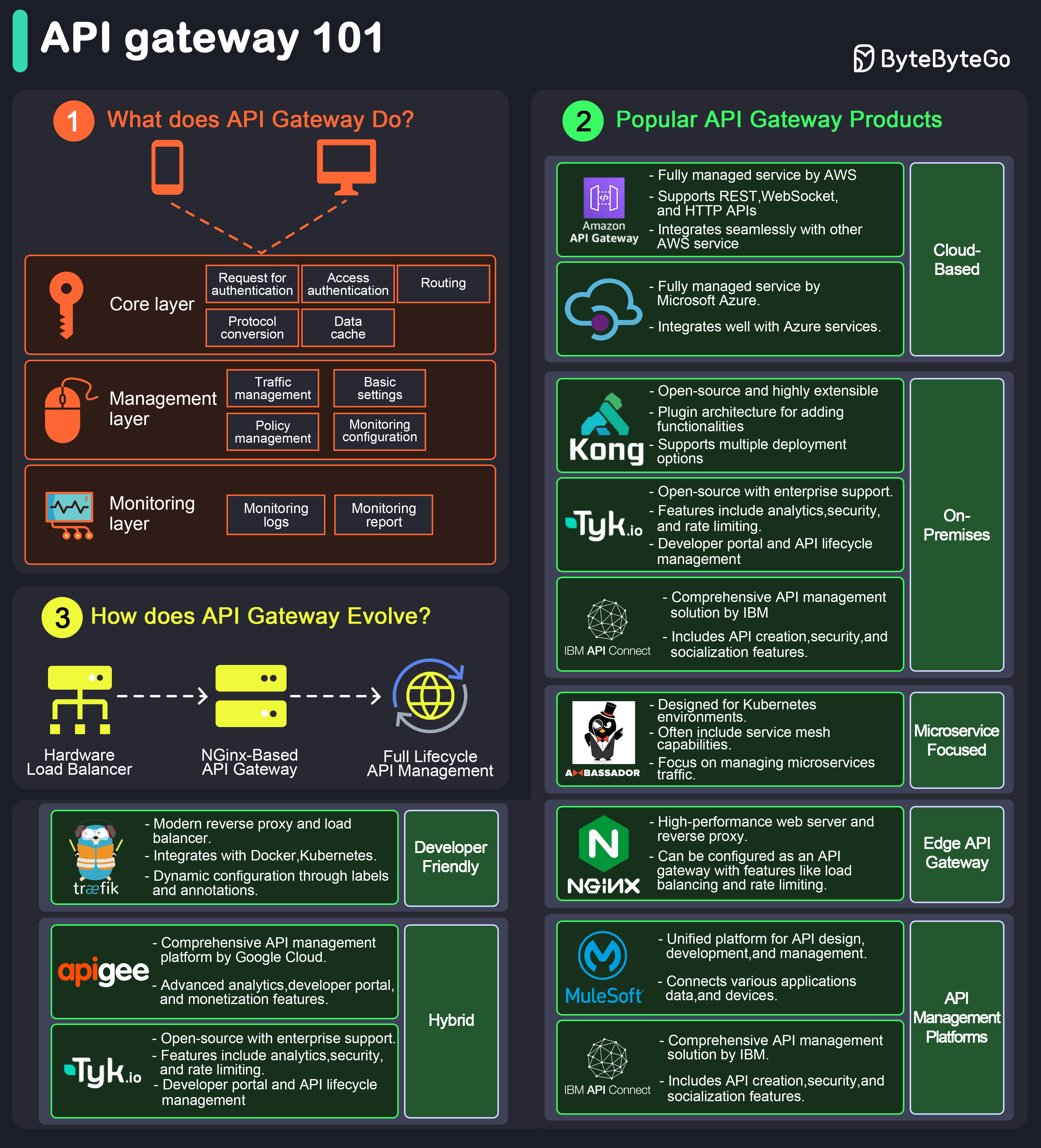

+ * [API Gateway 101](https://bytebytego.com/guides/api-gateway-101)

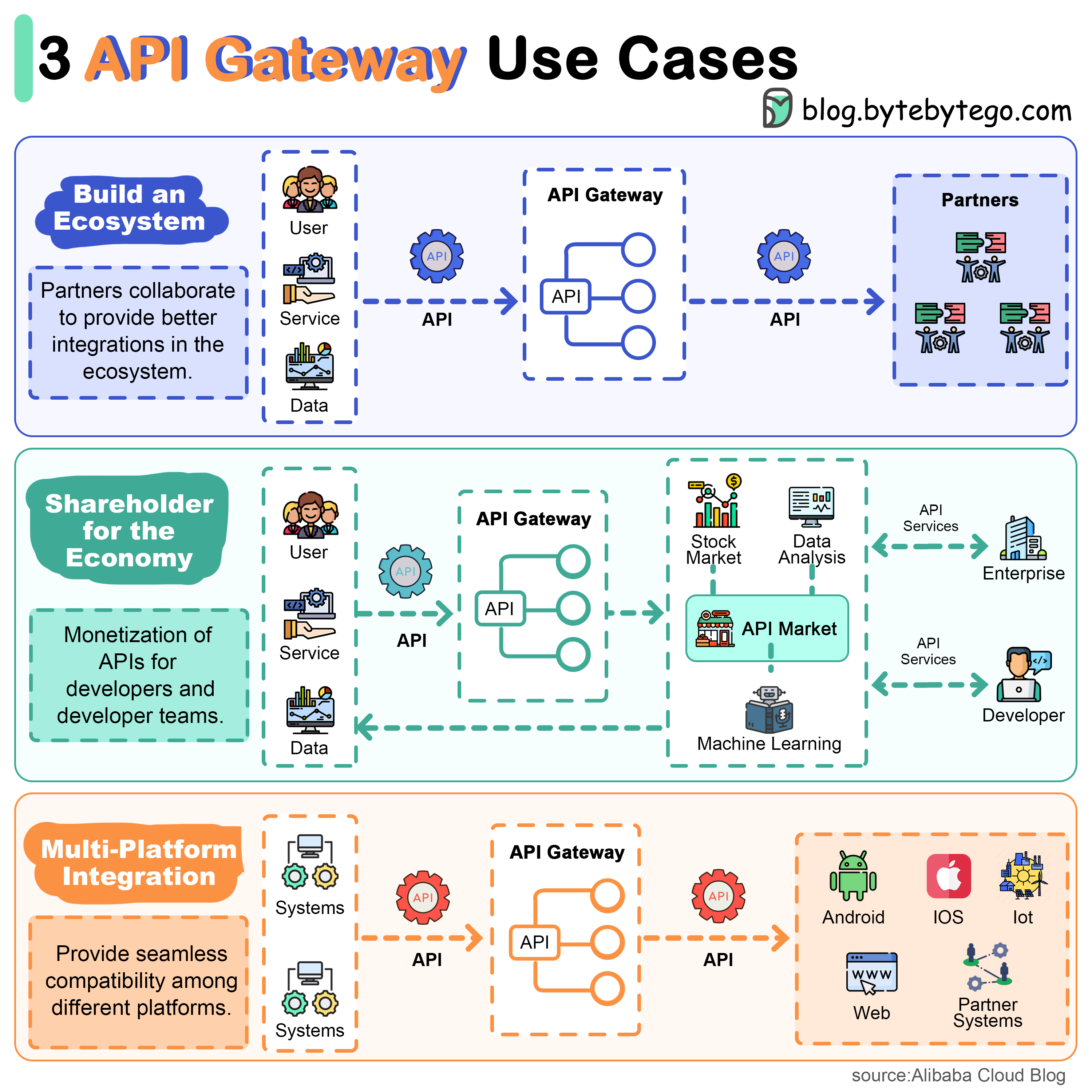

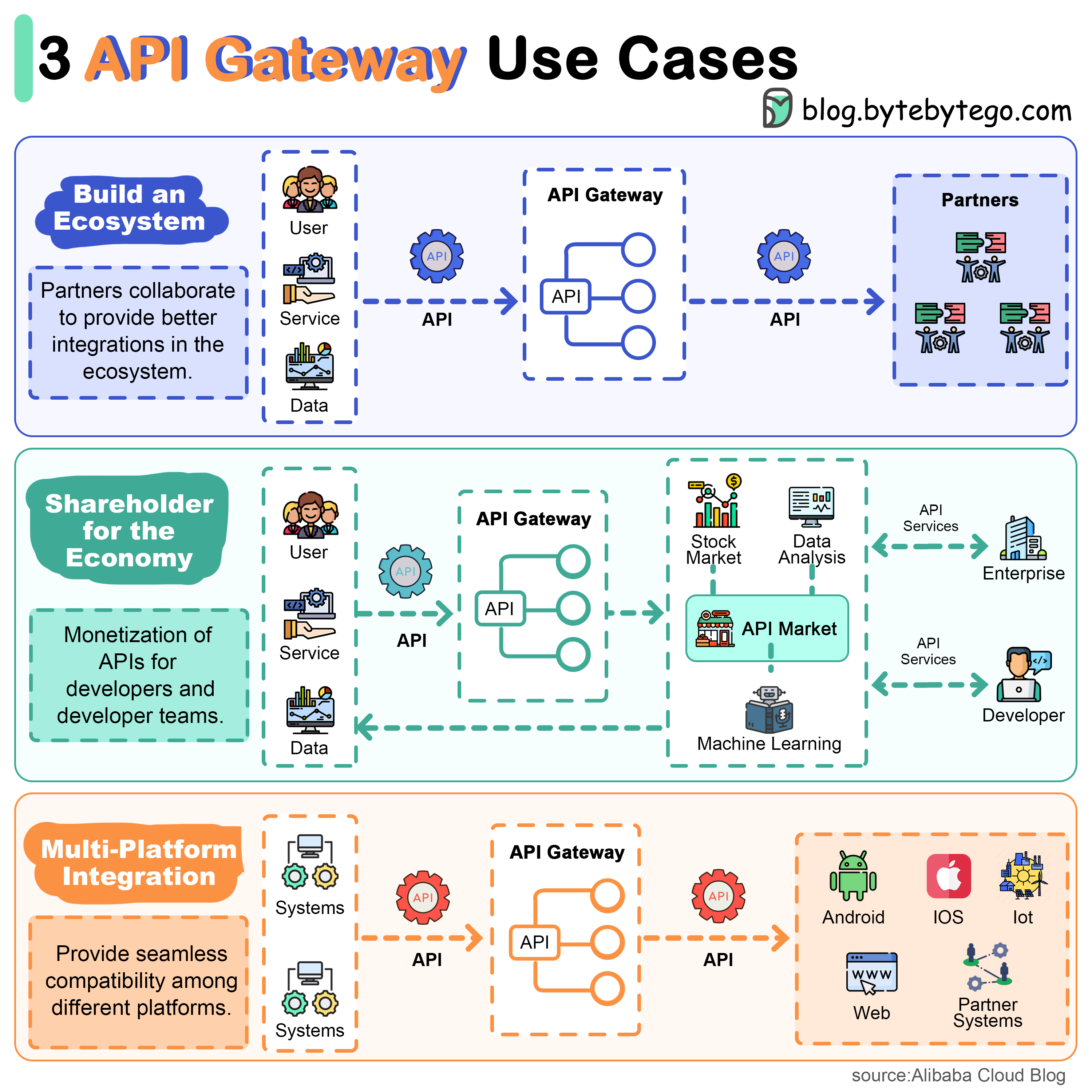

+ * [Top 3 API Gateway Use Cases](https://bytebytego.com/guides/top-3-api-gateway-use-cases)

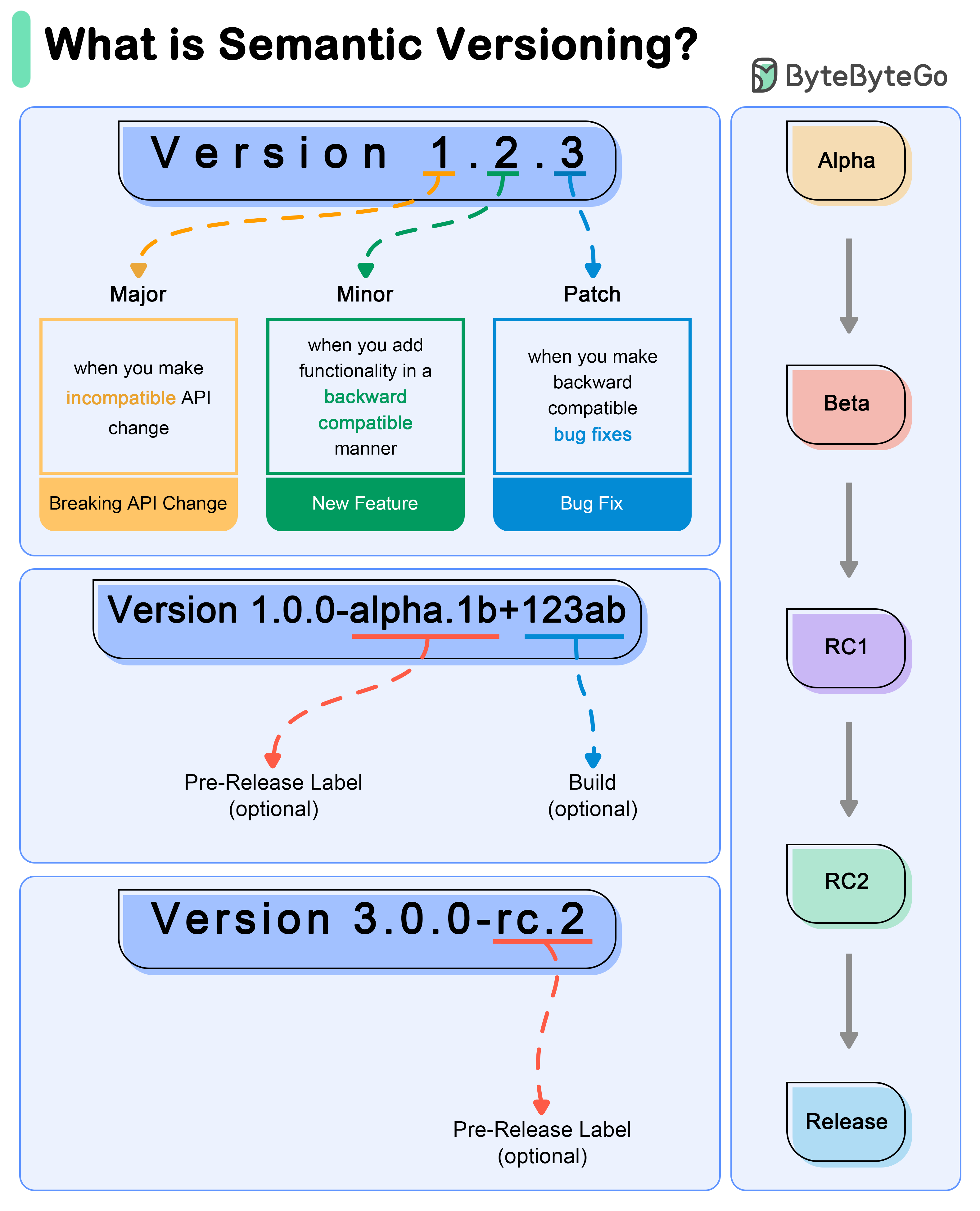

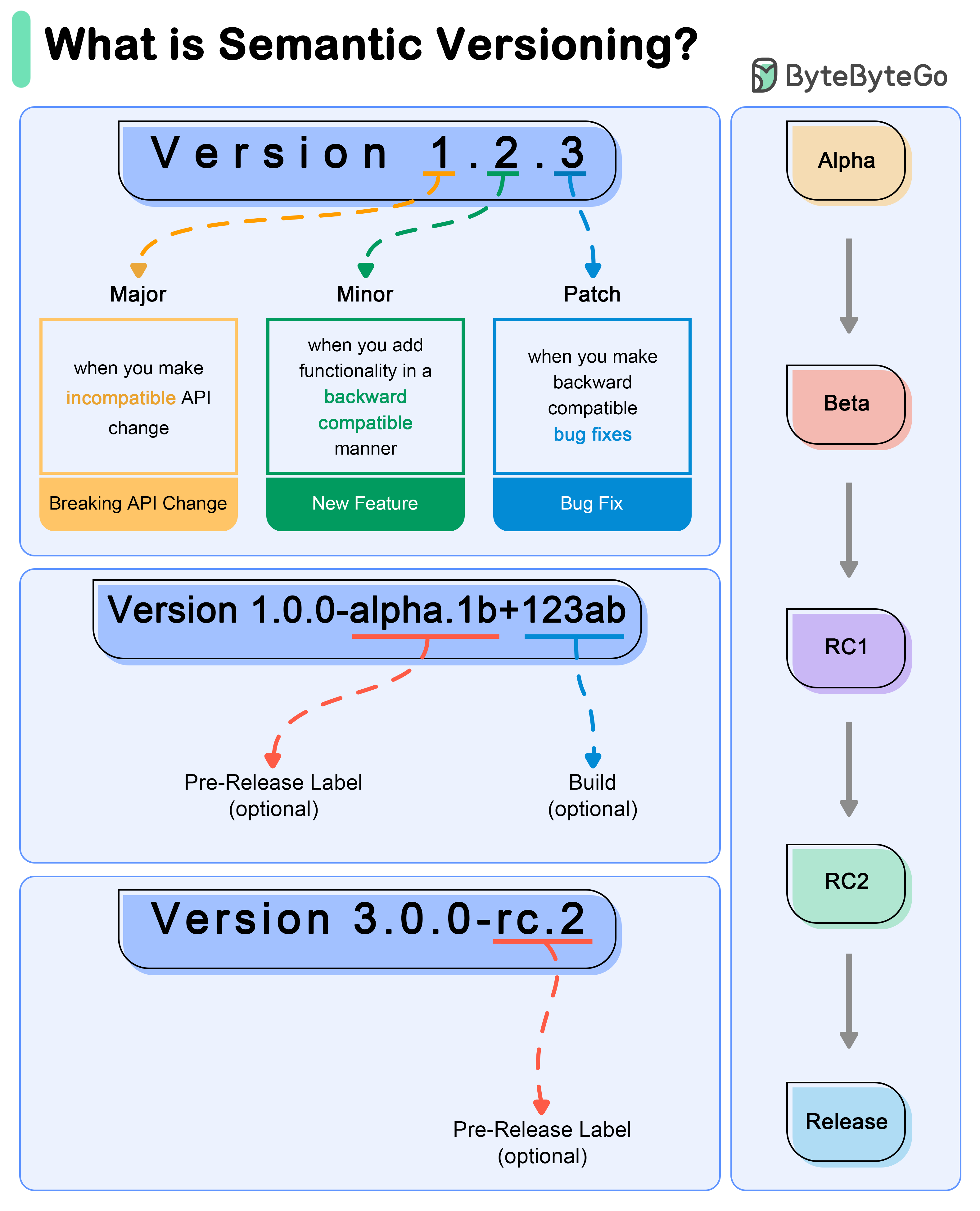

+ * [What do version numbers mean?](https://bytebytego.com/guides/what-do-version-numbers-mean)

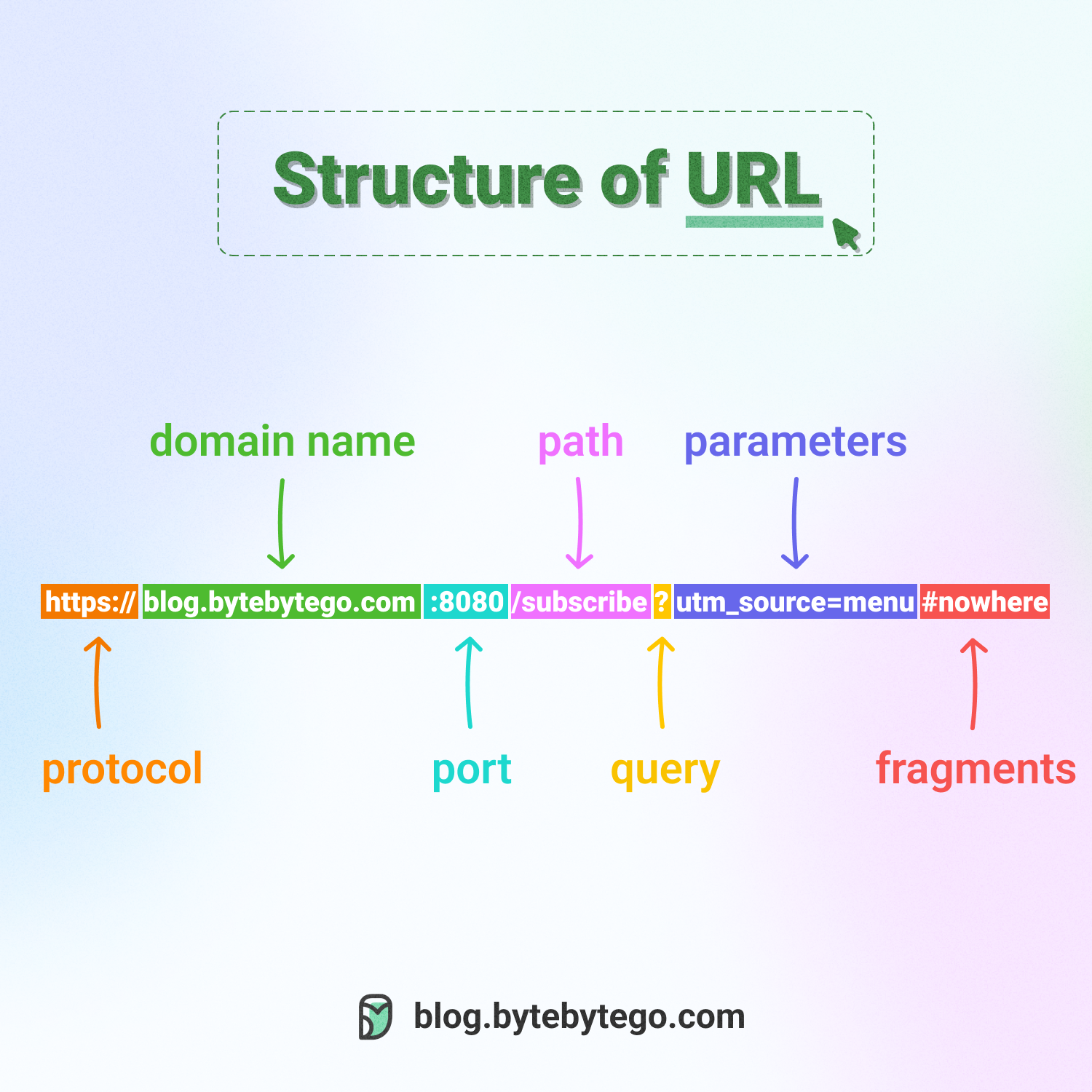

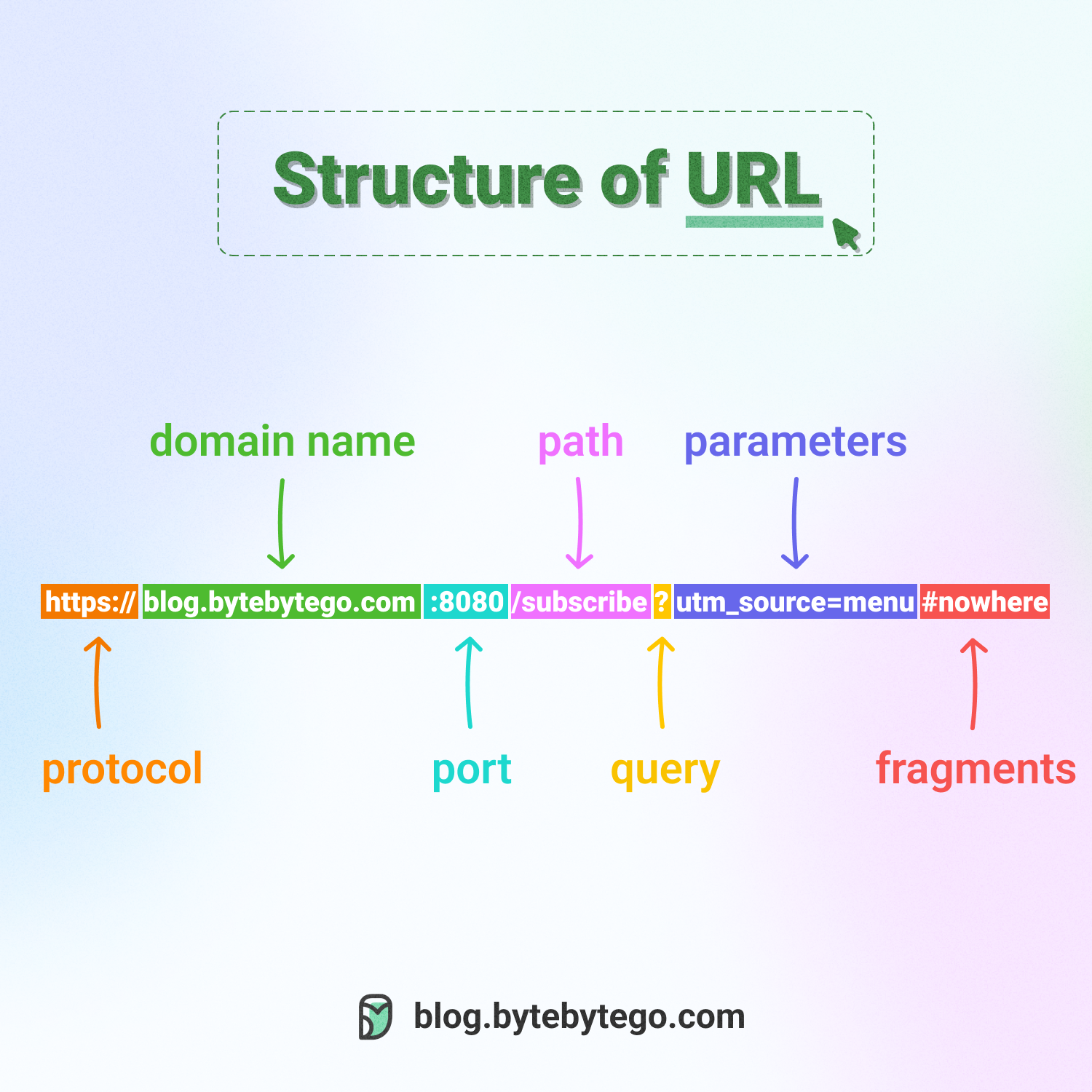

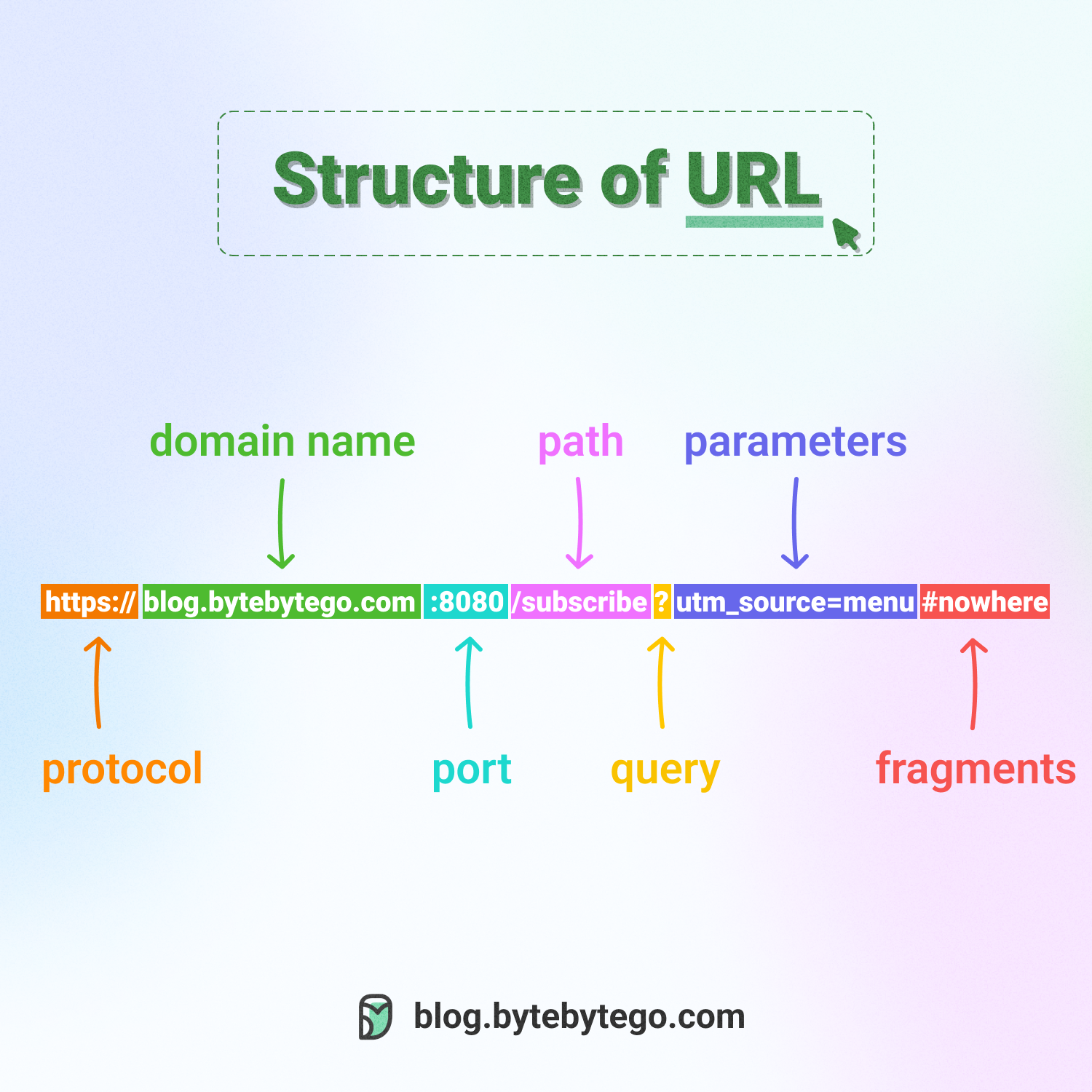

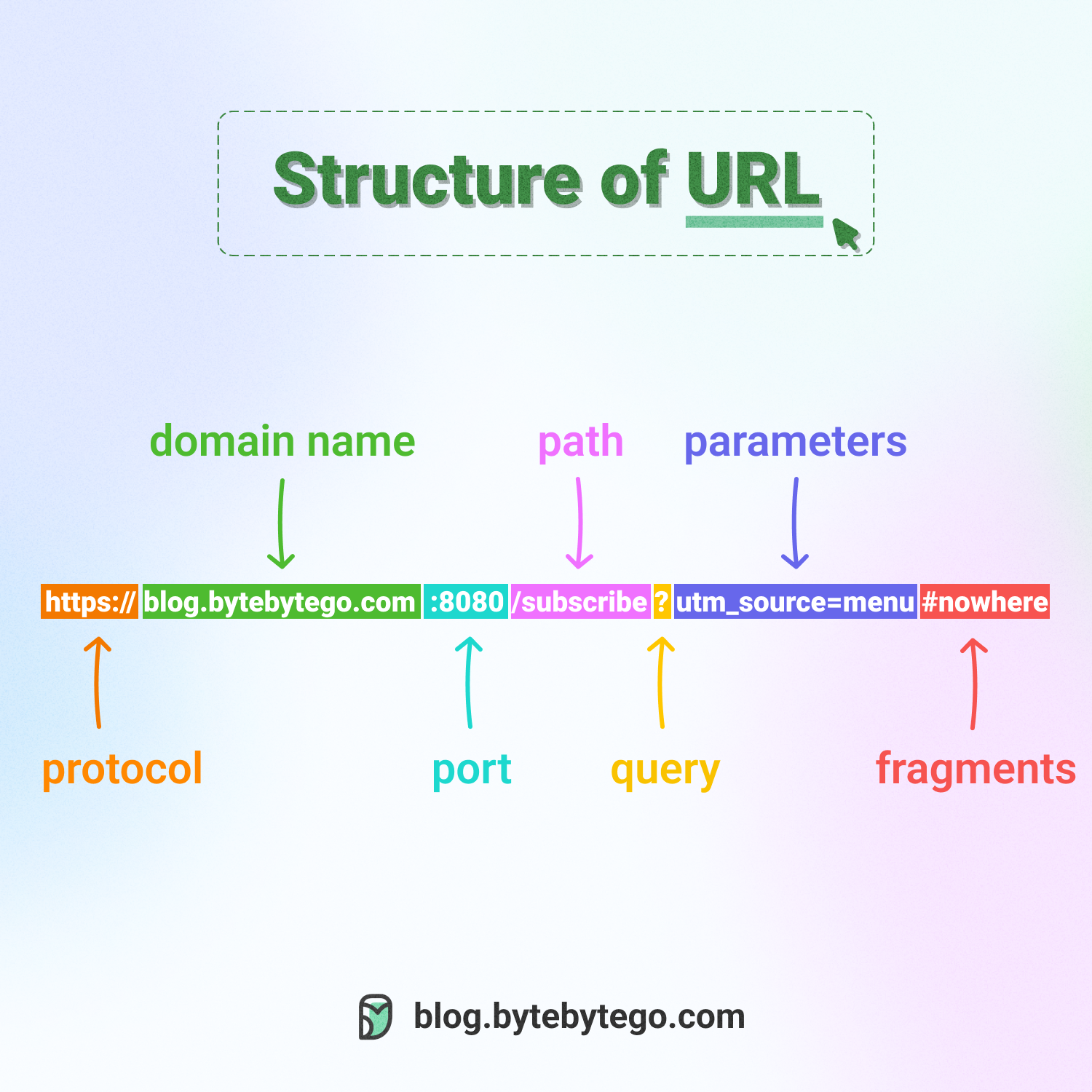

+ * [Do you know all the components of a URL?](https://bytebytego.com/guides/do-you-know-all-the-components-of-a-url)

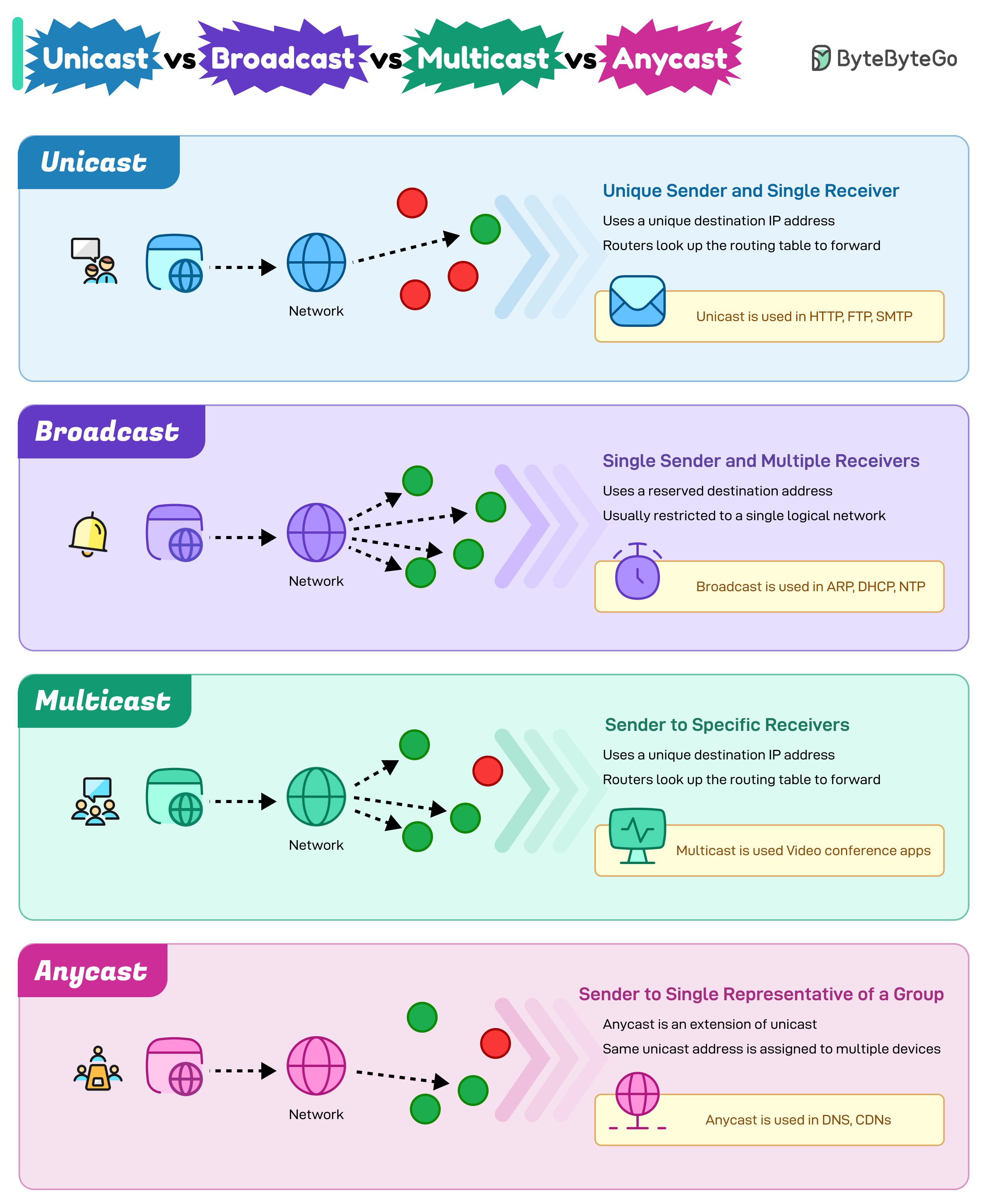

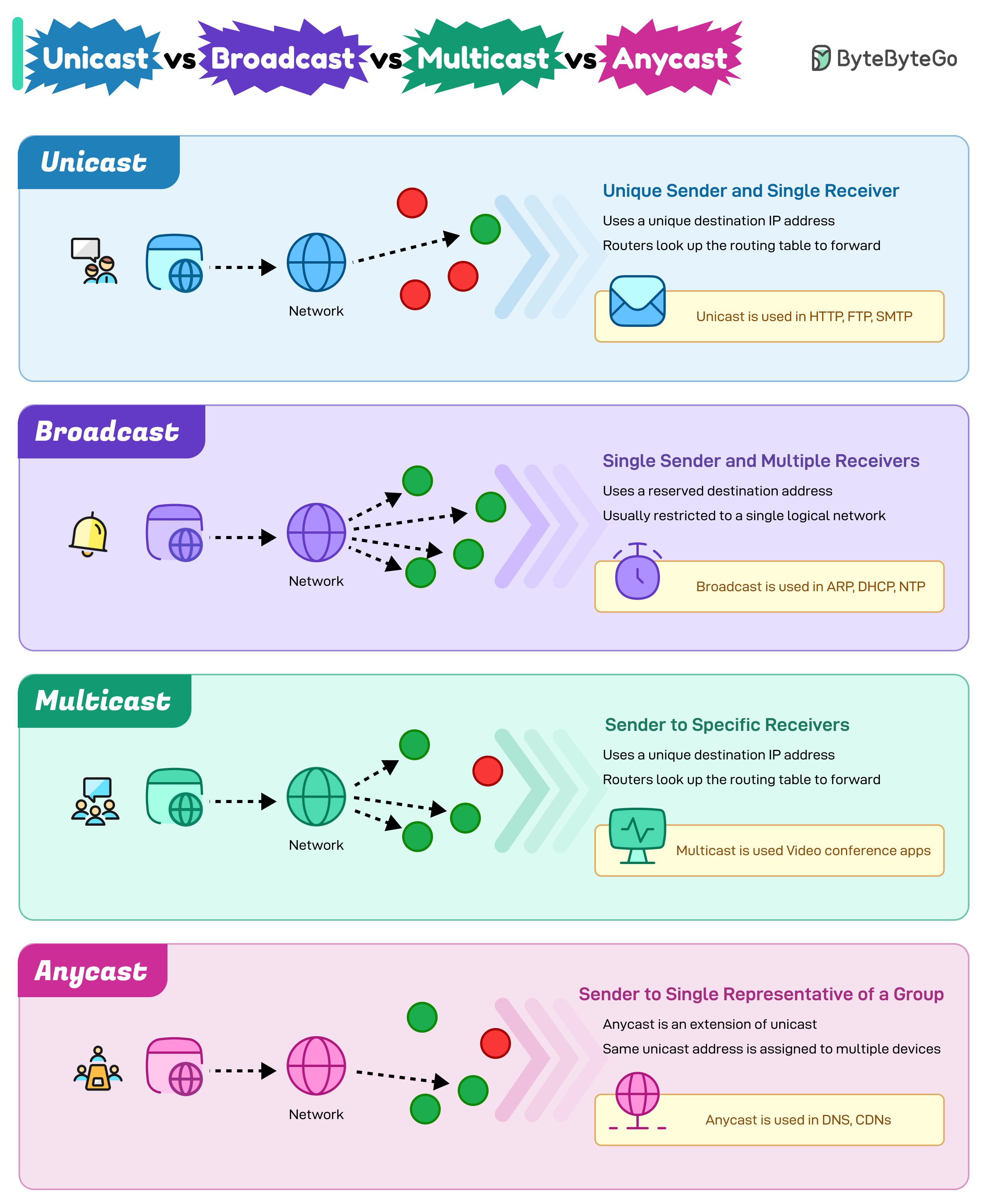

+ * [Unicast vs Broadcast vs Multicast vs Anycast](https://bytebytego.com/guides/unicast-vs-broadcast-vs-multicast-vs-anycast)

+ * [10 Essential Components of a Production Web Application](https://bytebytego.com/guides/10-essential-components-of-a-production-web-application)

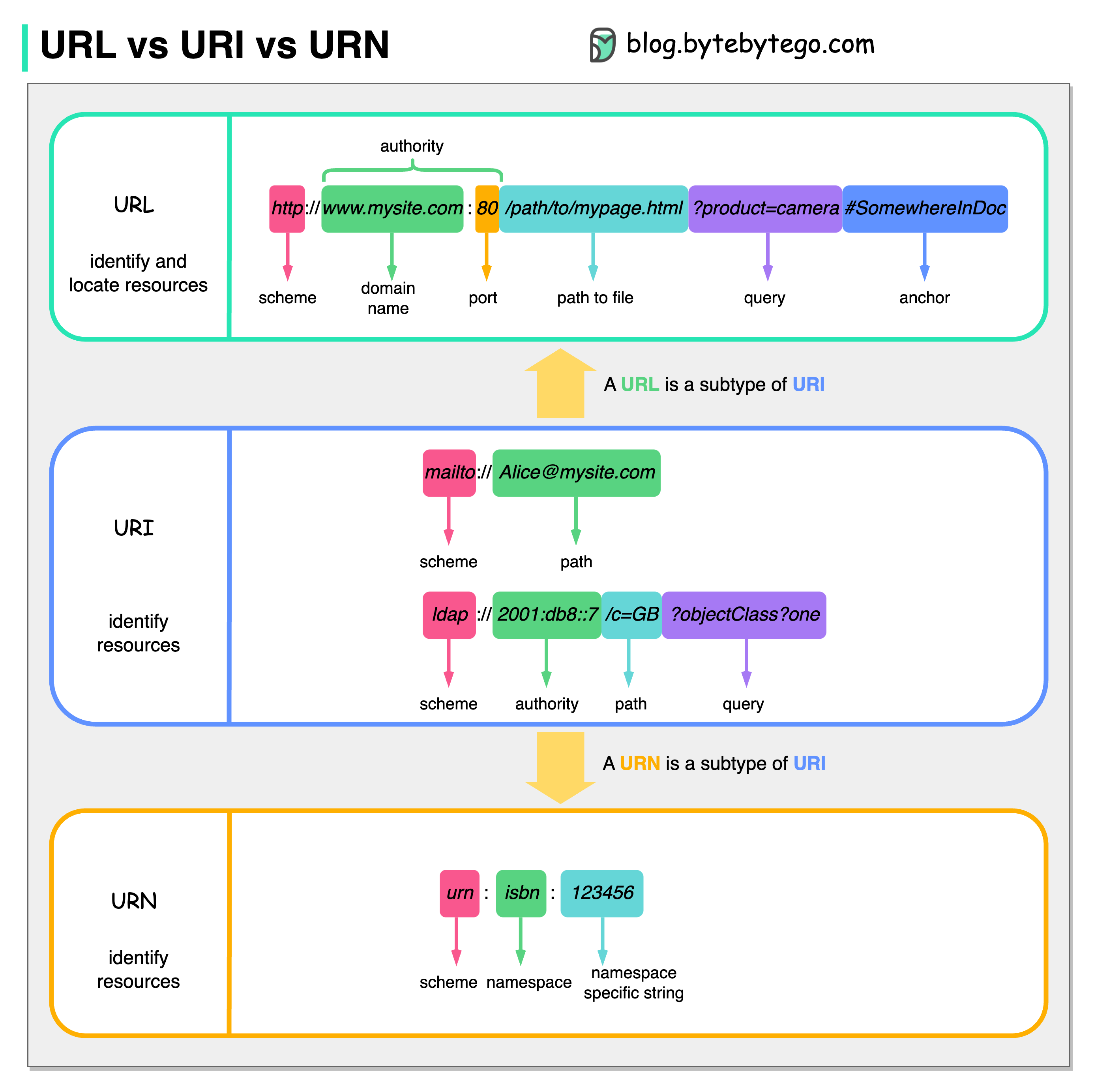

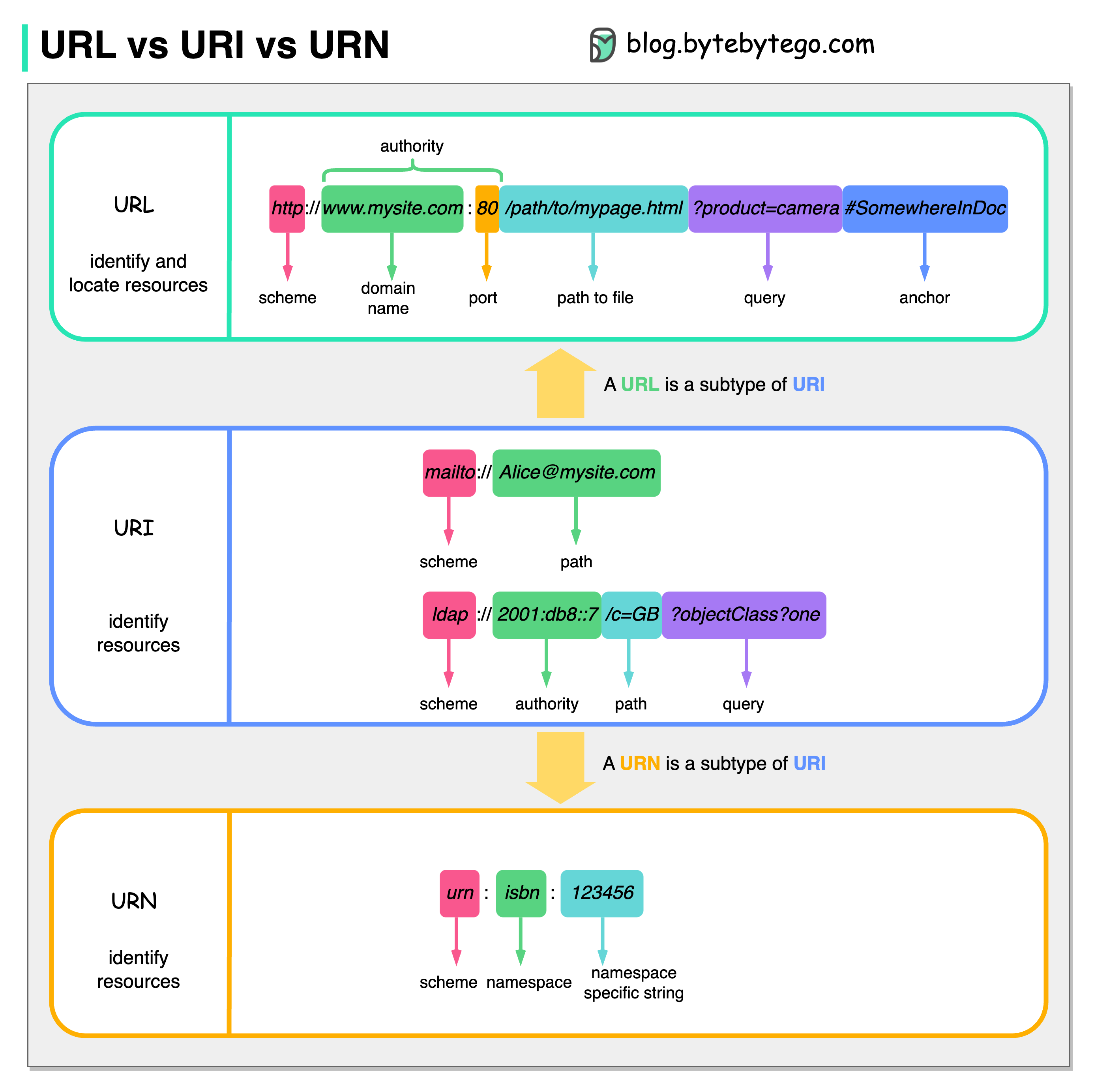

+ * [URL, URI, URN - Differences Explained](https://bytebytego.com/guides/url-uri-urn-do-you-know-the-differences)

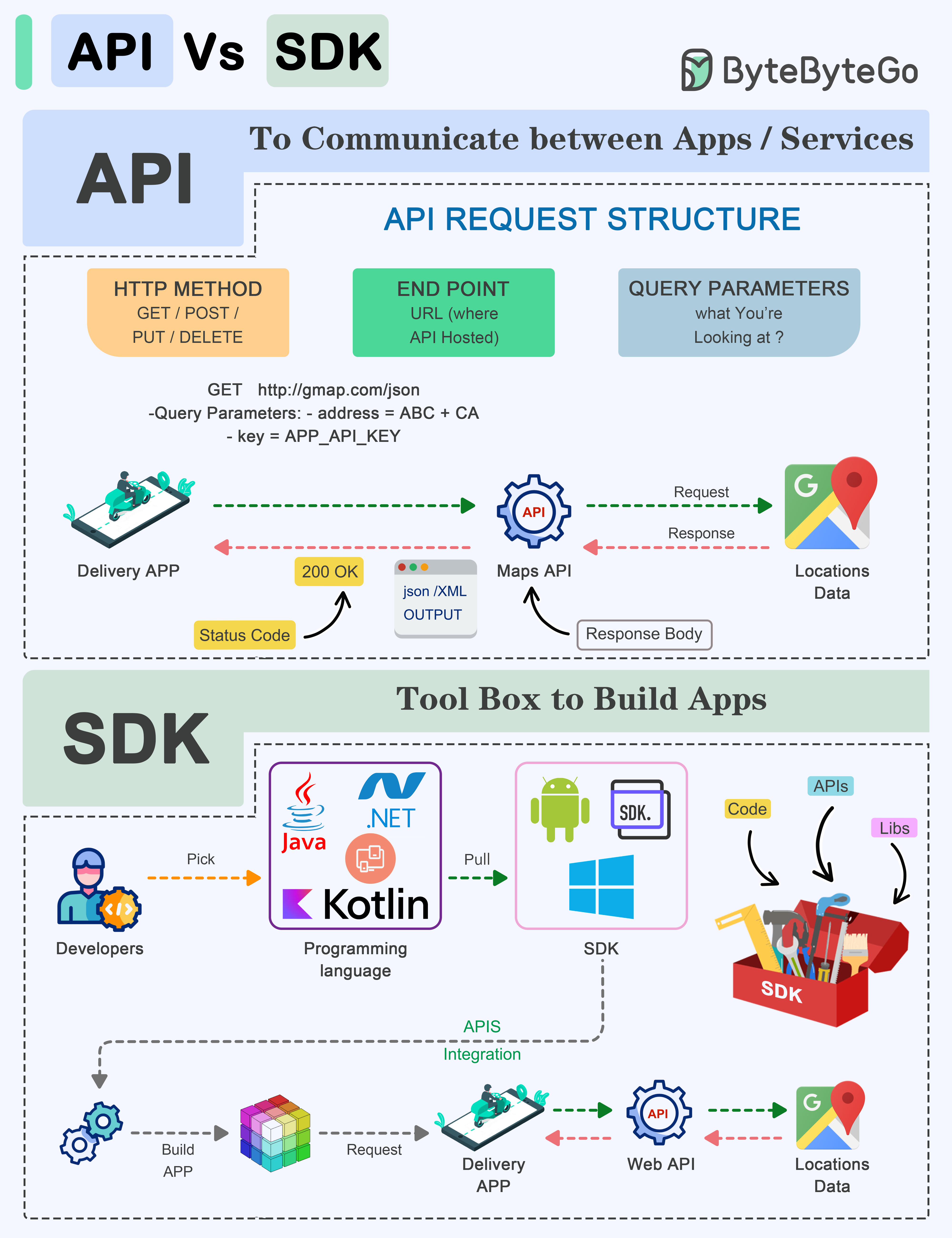

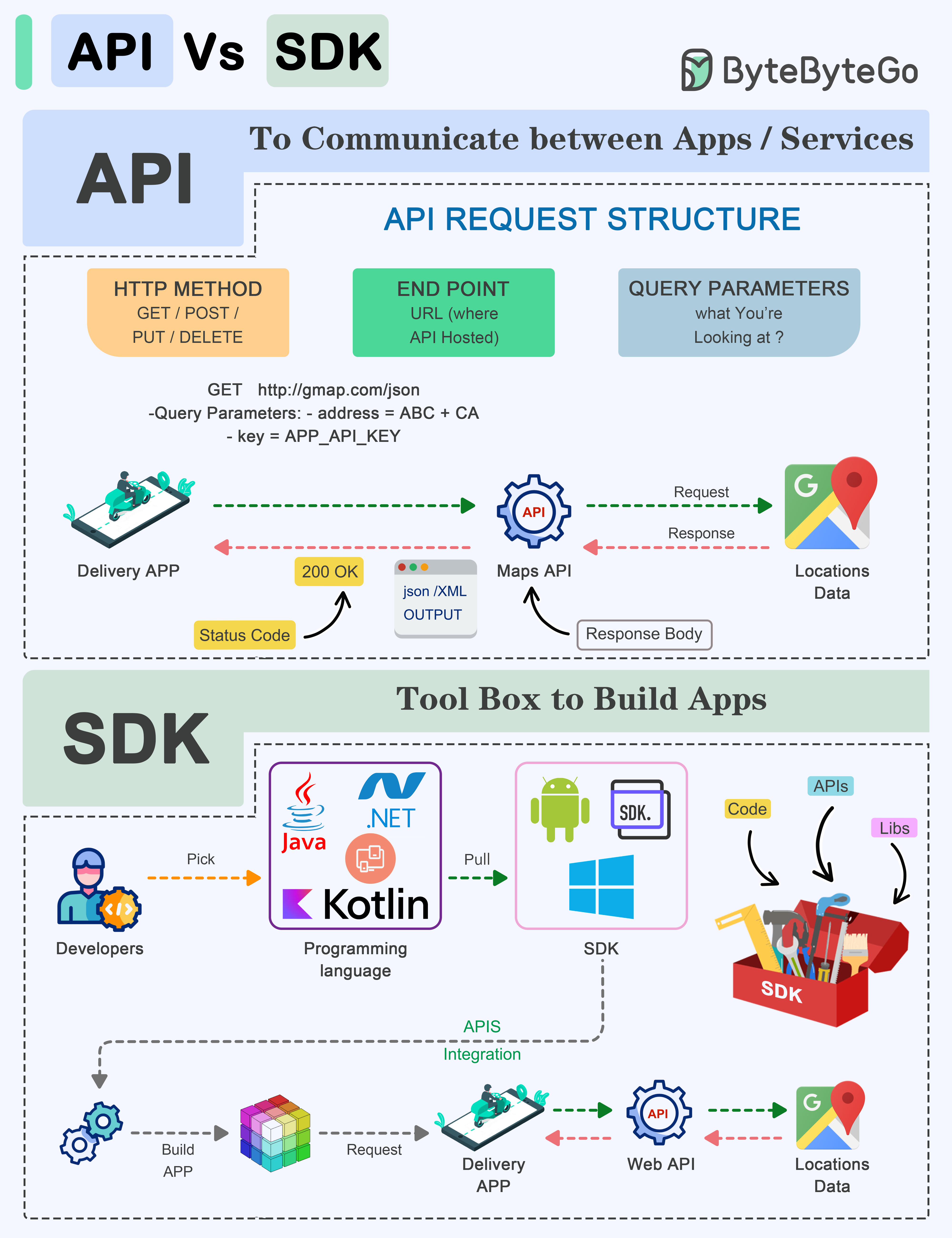

+ * [API vs SDK](https://bytebytego.com/guides/api-vs-sdk)

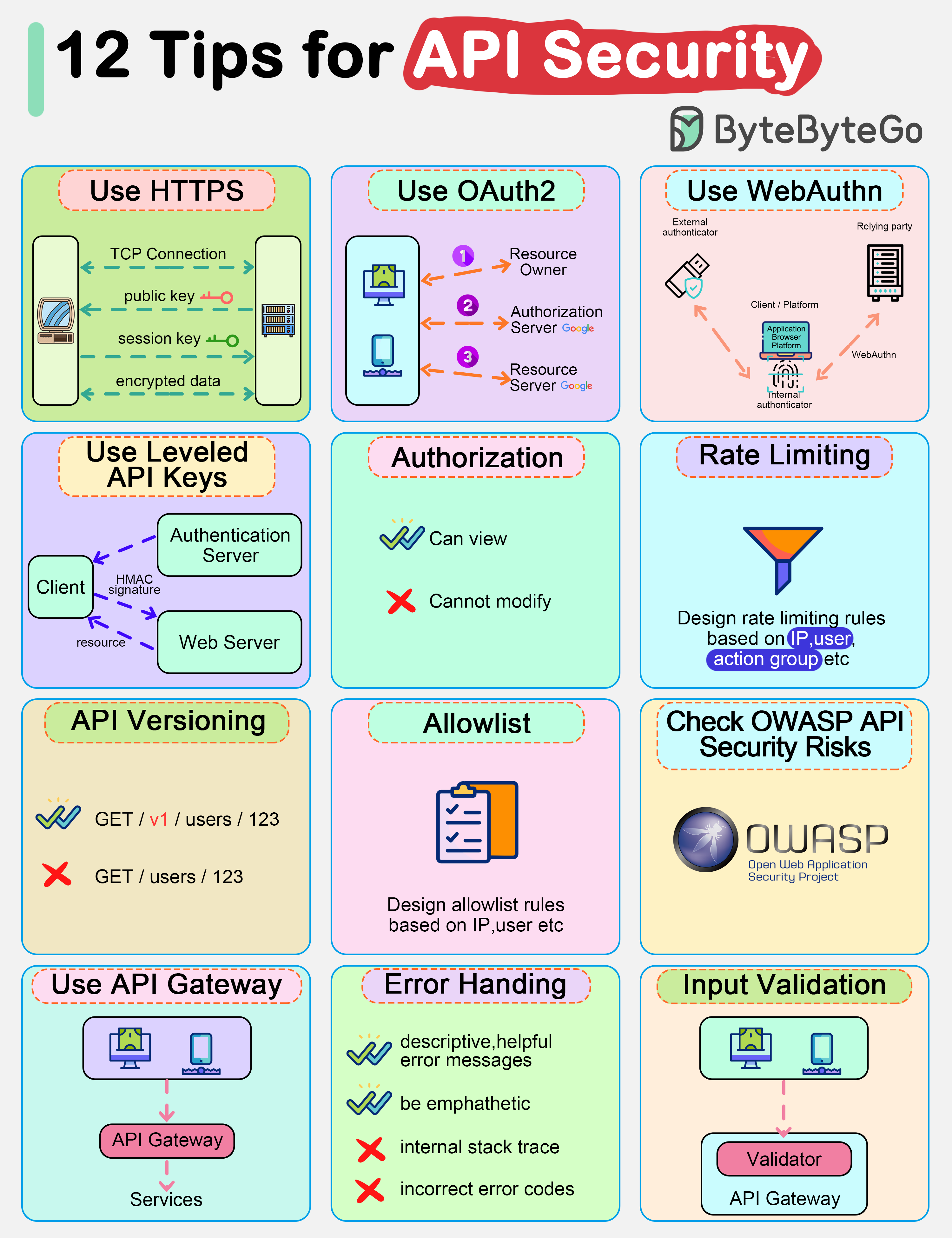

+ * [A Cheatsheet to Build Secure APIs](https://bytebytego.com/guides/a-cheatsheet-to-build-secure-apis)

+ * [HTTP Status Codes You Should Know](https://bytebytego.com/guides/http-status-code-you-should-know)

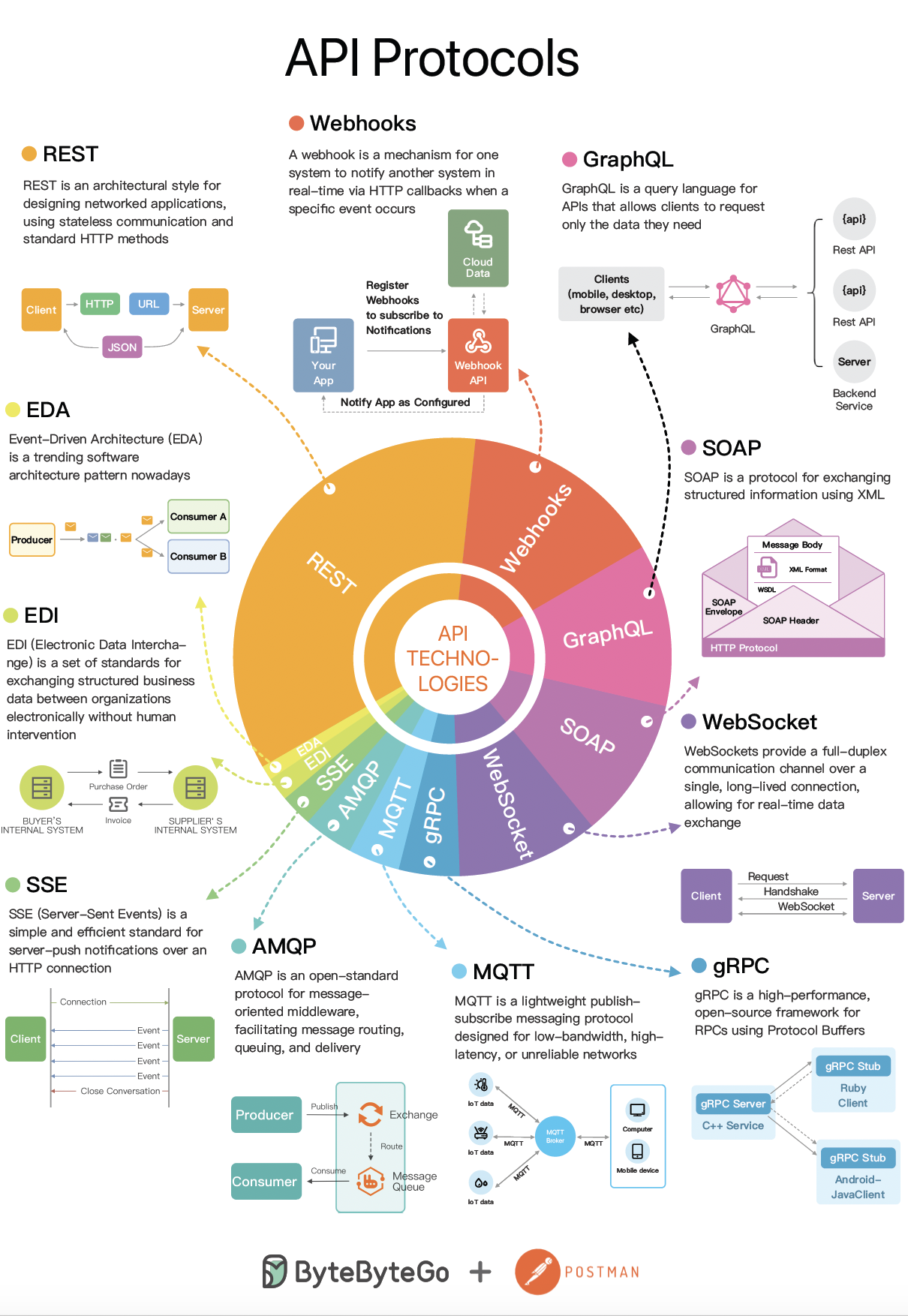

+ * [SOAP vs REST vs GraphQL vs RPC](https://bytebytego.com/guides/soap-vs-rest-vs-graphql-vs-rpc)

+ * [A Cheatsheet on Comparing API Architectural Styles](https://bytebytego.com/guides/a-cheatsheet-on-comparing-api-architectural-styles)

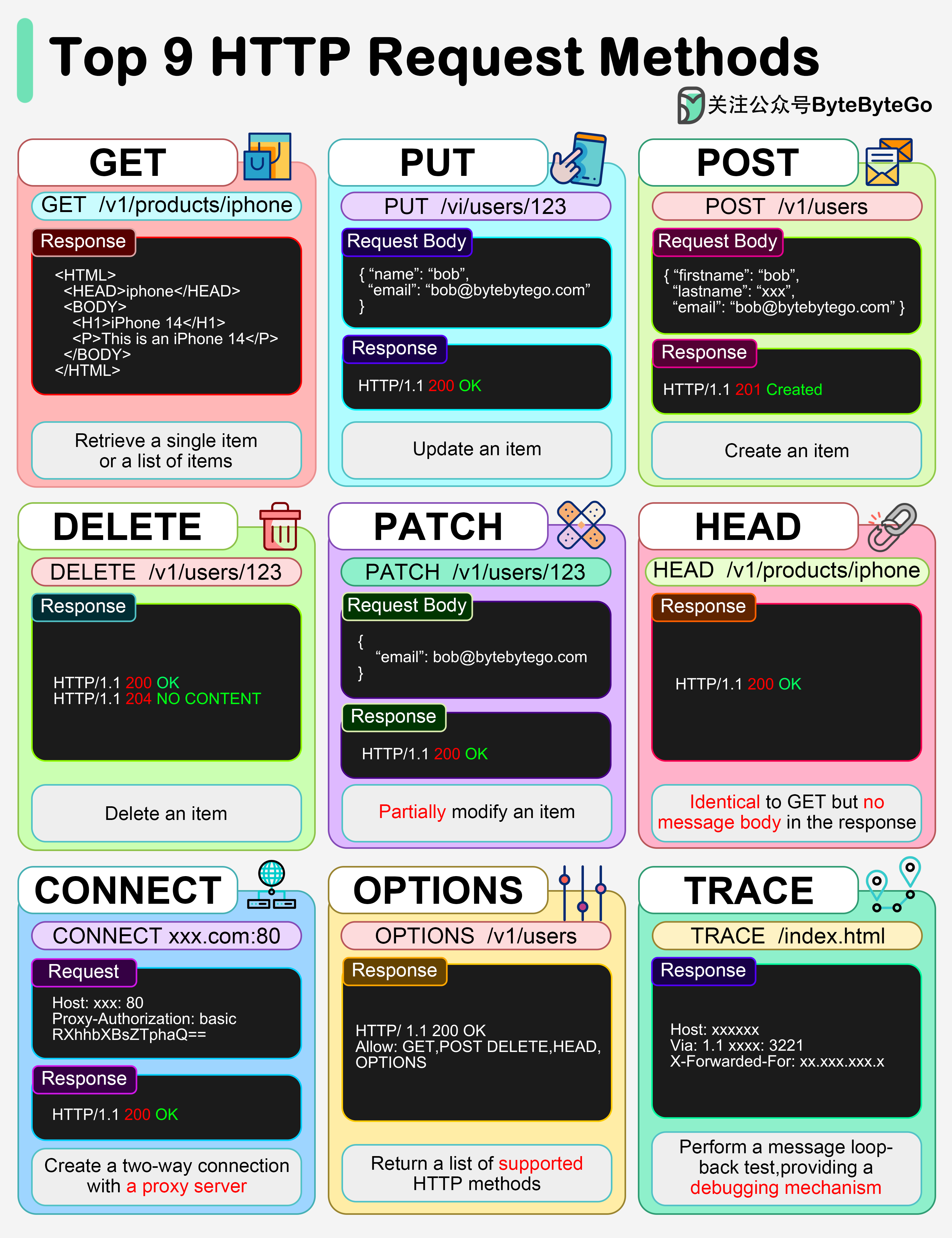

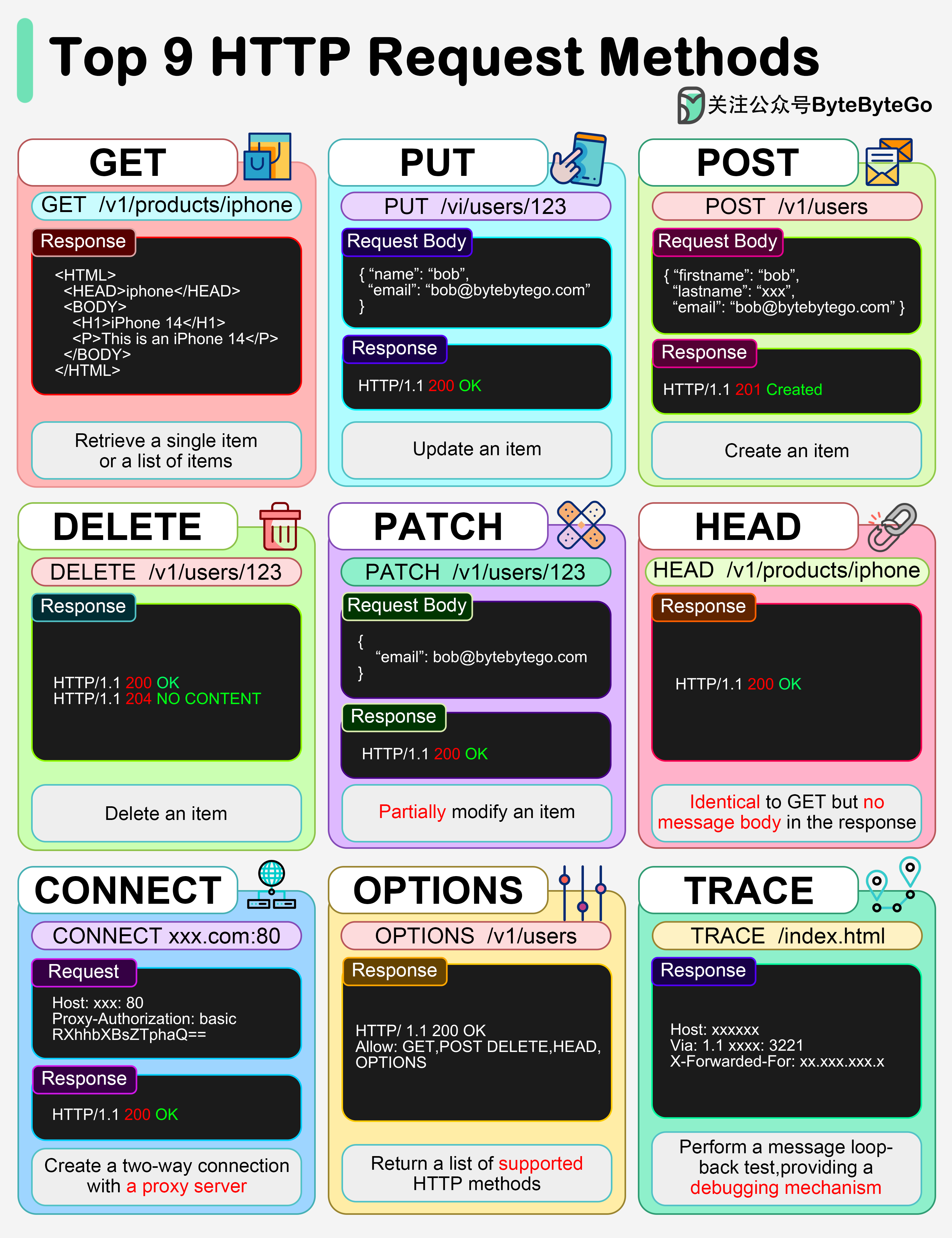

+ * [Top 9 HTTP Request Methods](https://bytebytego.com/guides/top-9-http-request-methods)

+ * [What is a Load Balancer?](https://bytebytego.com/guides/what-is-a-load-balancer)

+ * [Proxy vs Reverse Proxy](https://bytebytego.com/guides/proxy-vs-reverse-proxy)

+ * [HTTP/1 -> HTTP/2 -> HTTP/3](https://bytebytego.com/guides/http1-http2-http3)

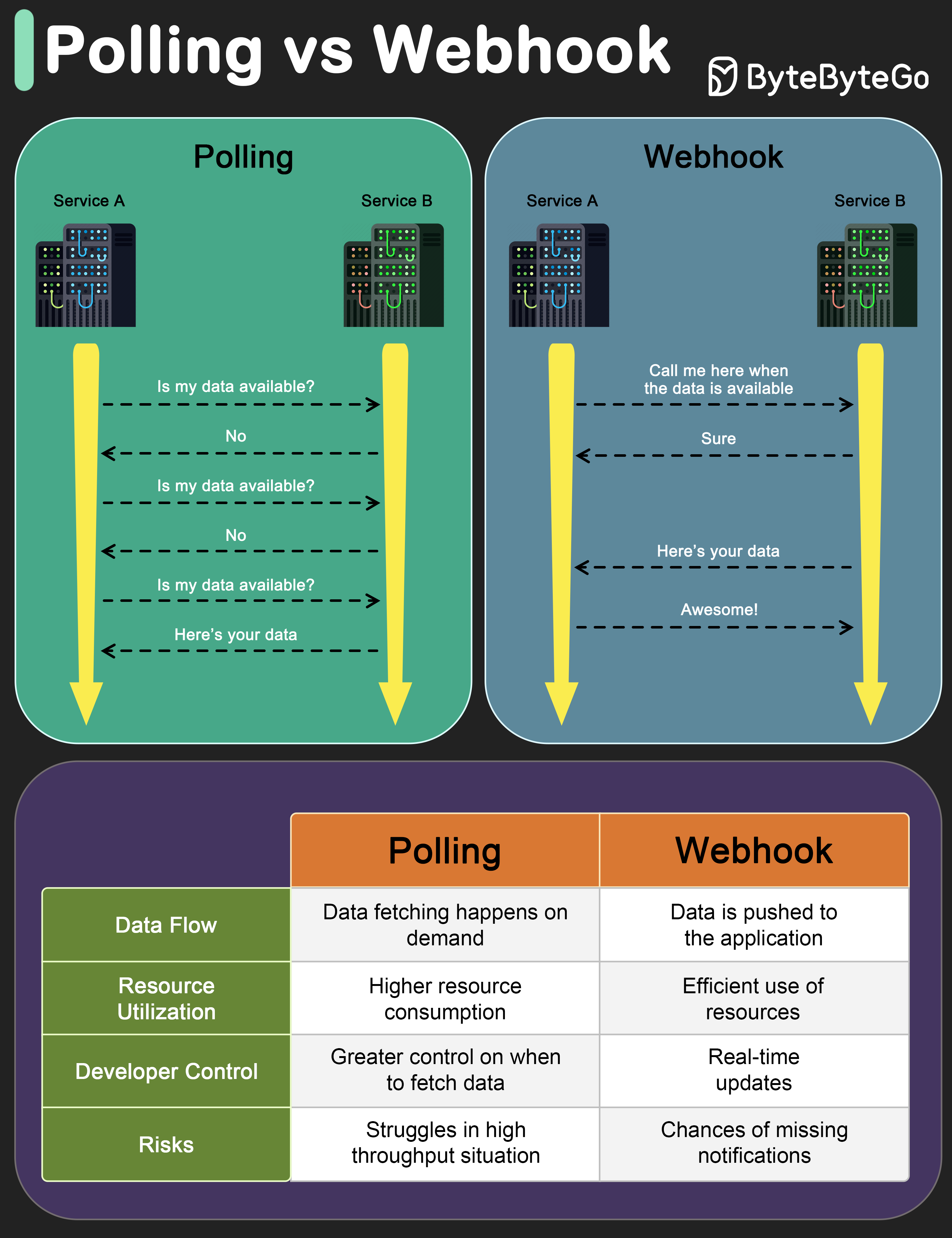

+ * [Polling vs Webhooks](https://bytebytego.com/guides/polling-vs-webhooks)

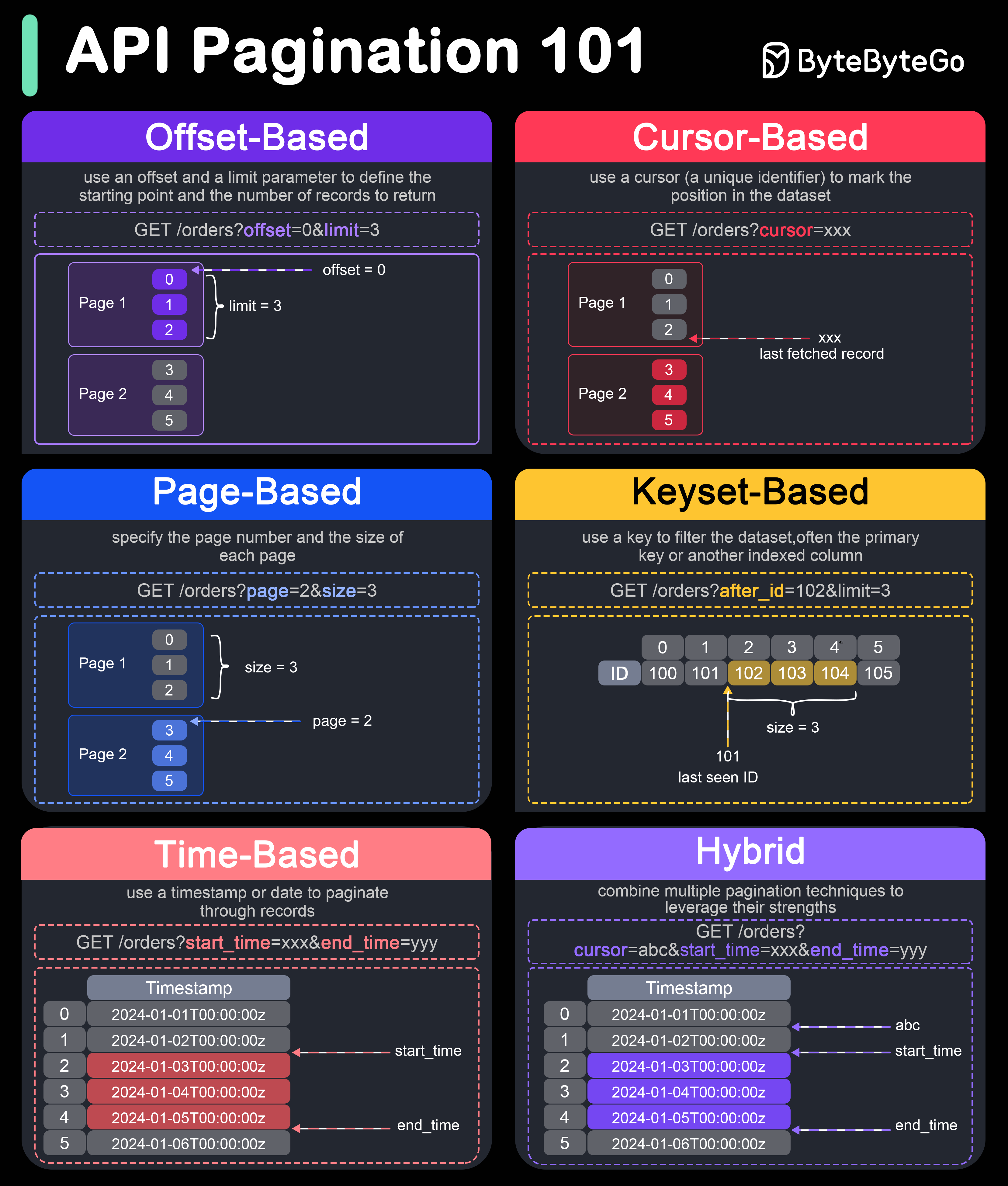

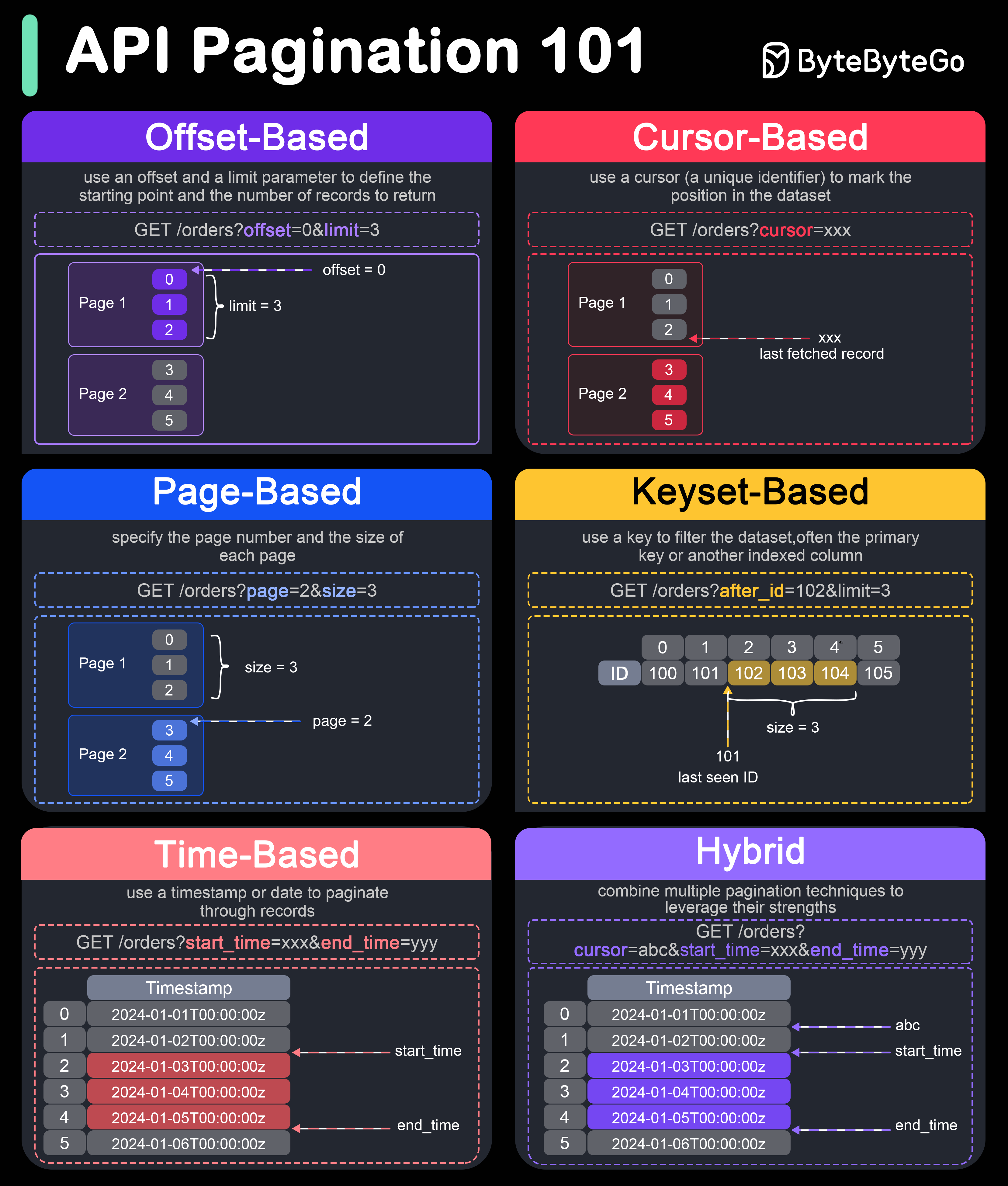

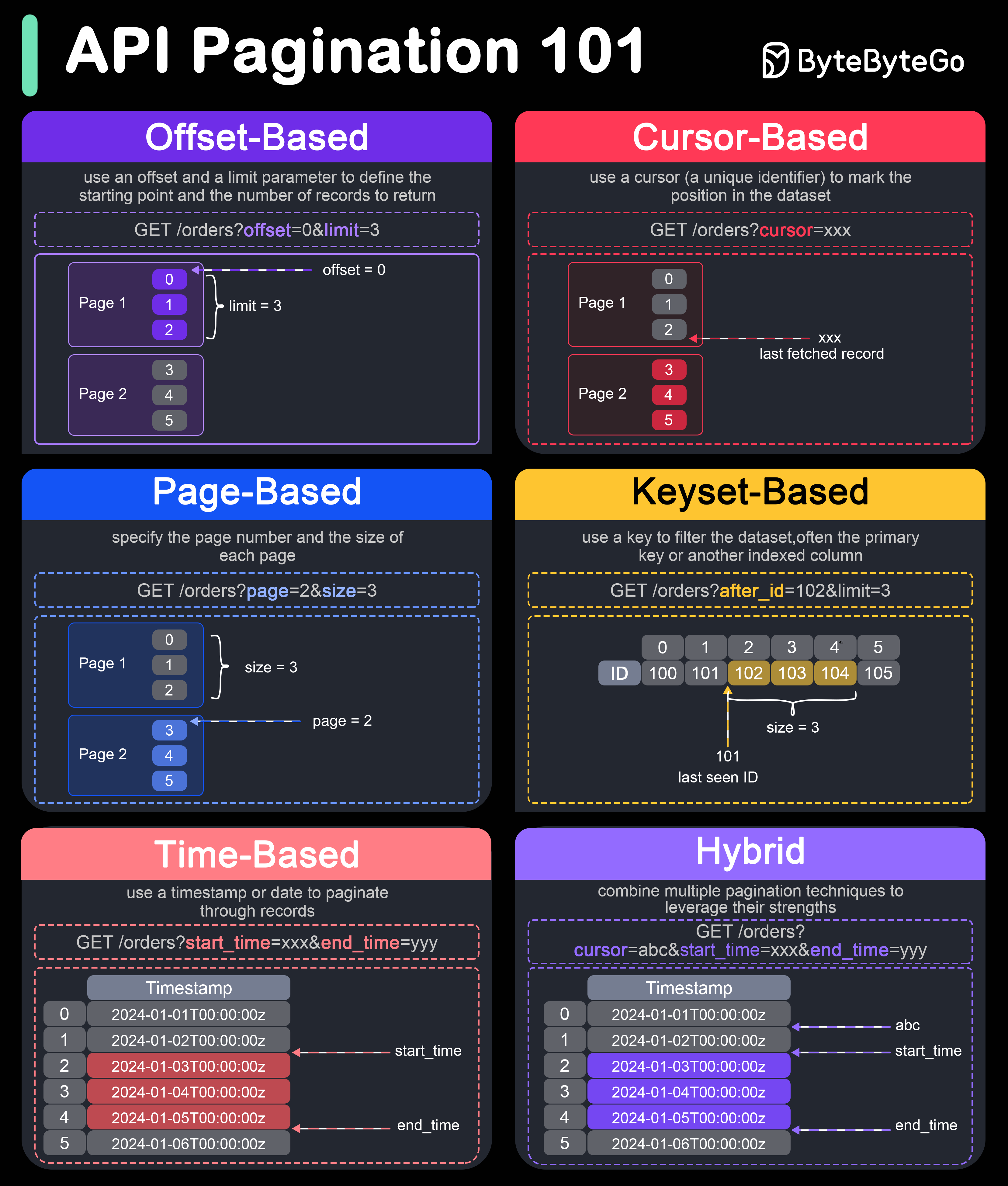

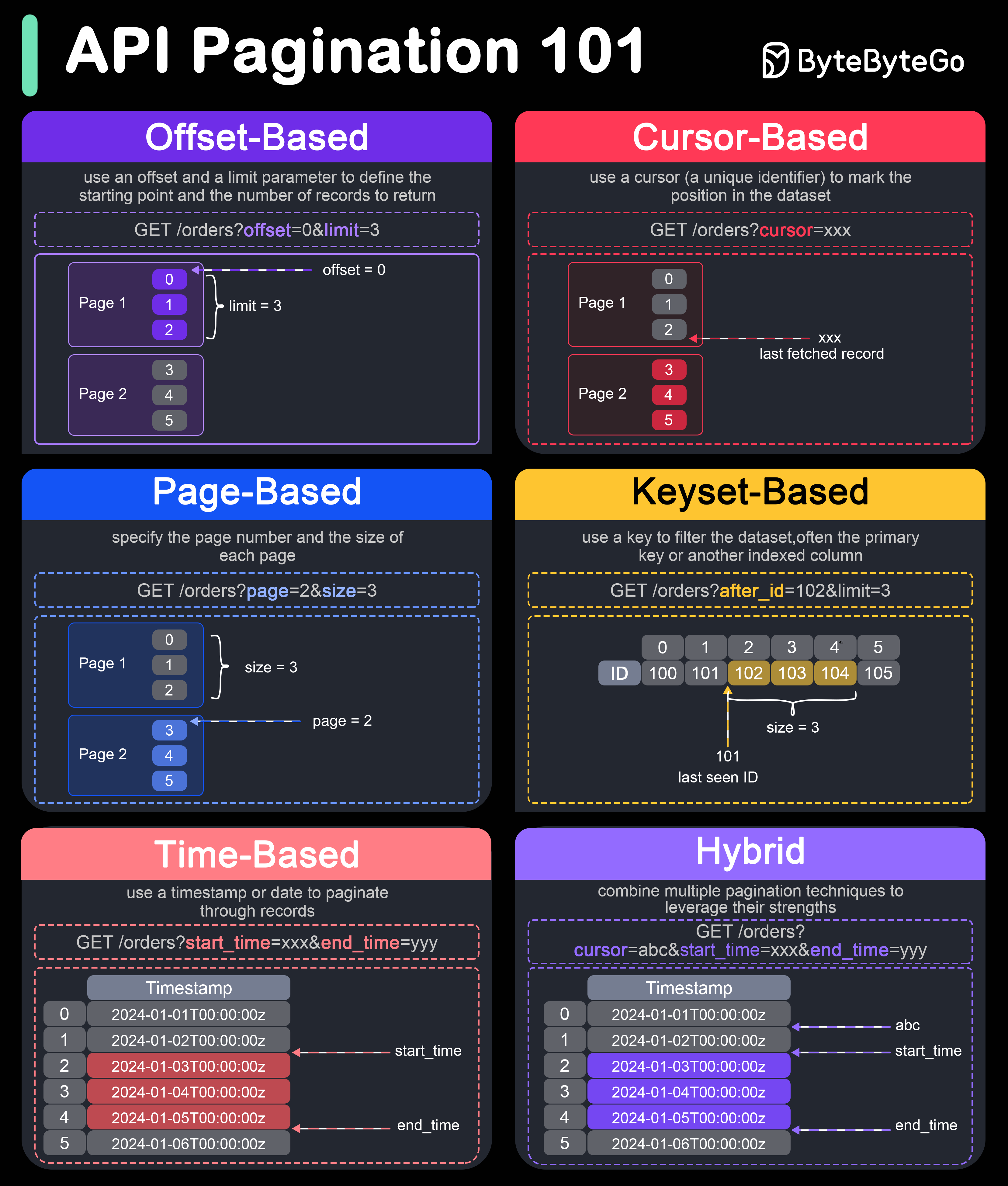

+ * [How do we Perform Pagination in API Design?](https://bytebytego.com/guides/how-do-we-perform-pagination-in-api-design)

+ * [How to Design Effective and Safe APIs](https://bytebytego.com/guides/how-do-we-design-effective-and-safe-apis)

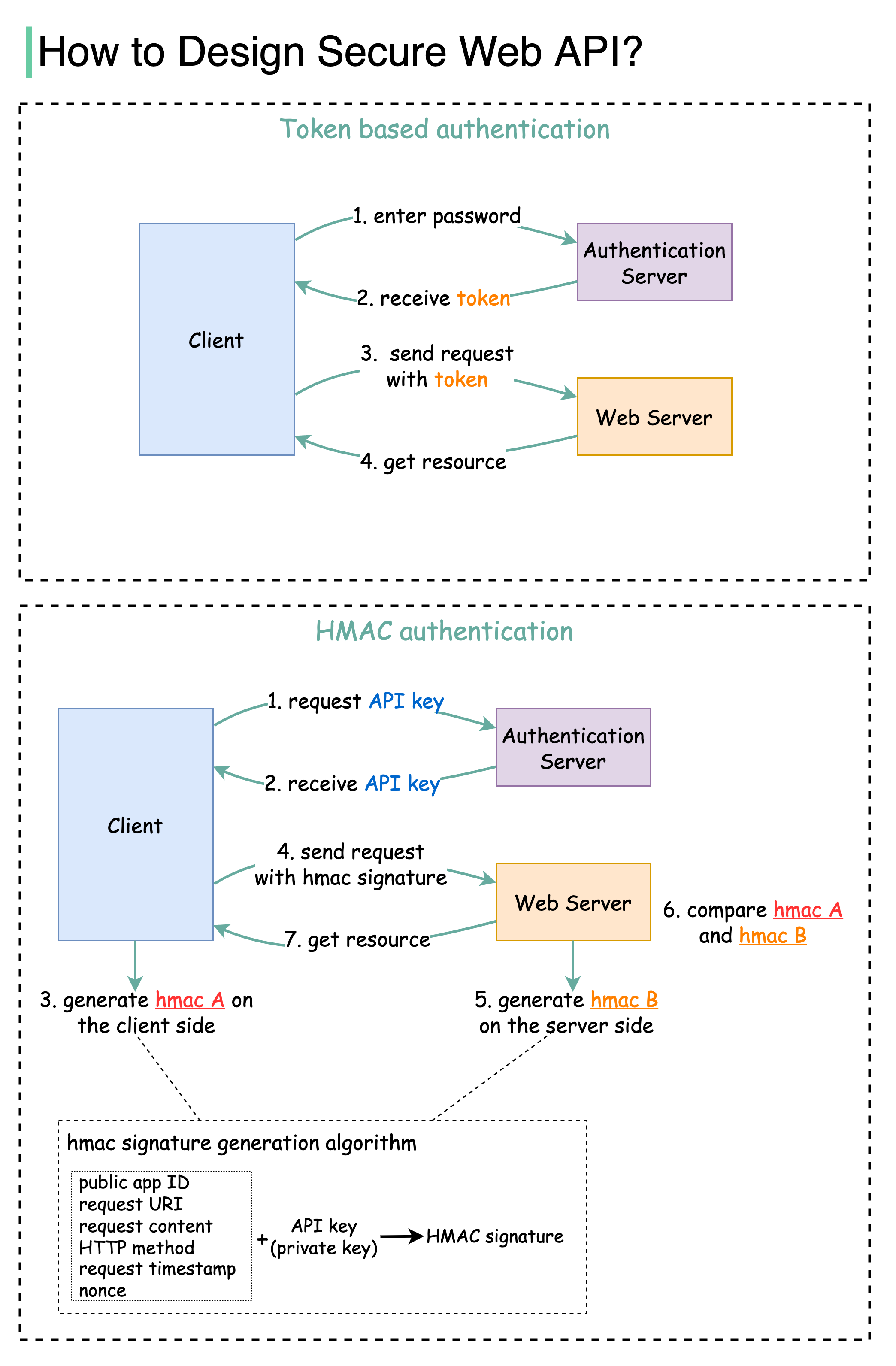

+ * [How to Design Secure Web API Access](https://bytebytego.com/guides/how-to-design-secure-web-api-access-for-your-website)

+ * [What Does an API Gateway Do?](https://bytebytego.com/guides/what-does-api-gateway-do)

+ * [What is gRPC?](https://bytebytego.com/guides/what-is-grpc)

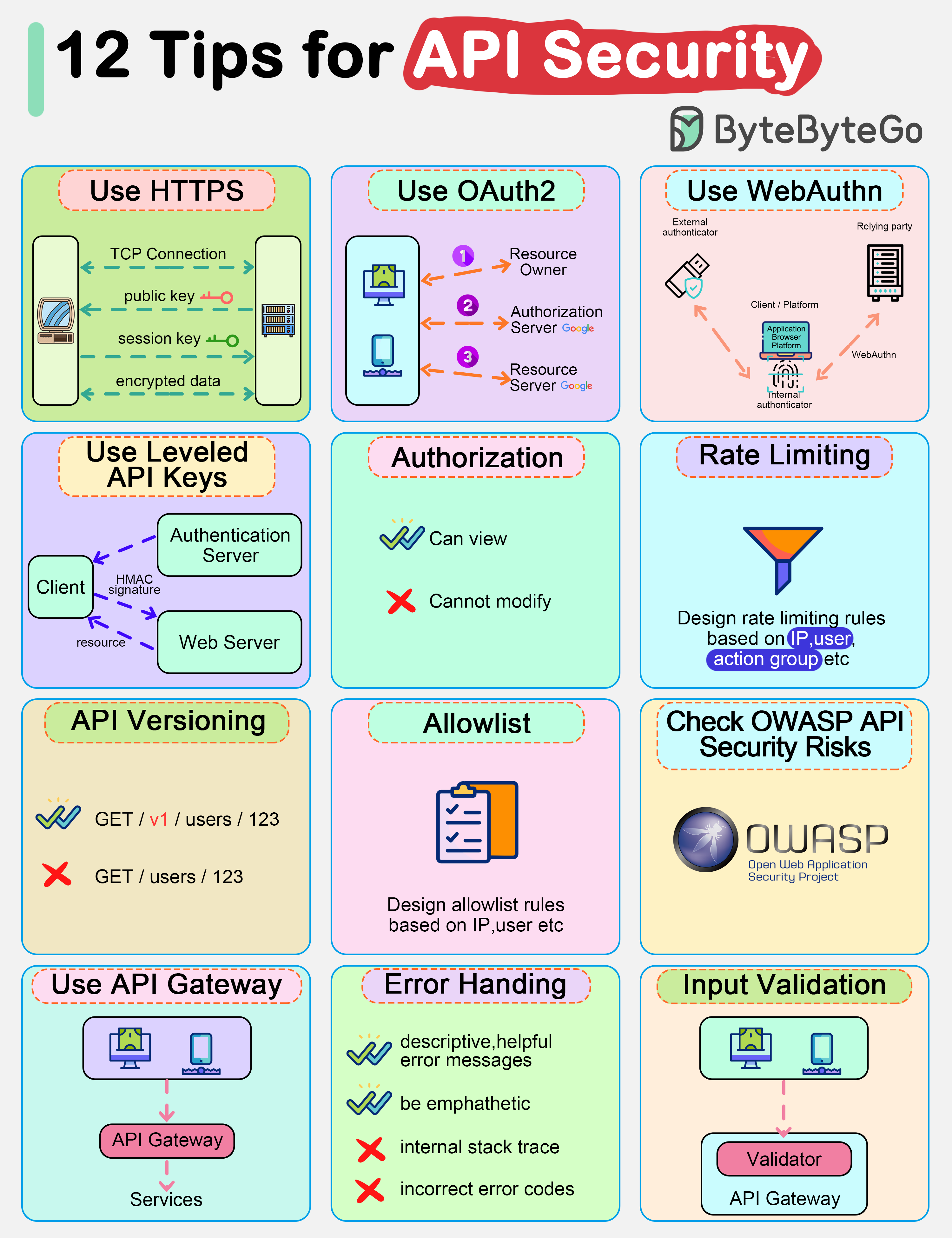

+ * [Top 12 Tips for API Security](https://bytebytego.com/guides/top-12-tips-for-api-security)

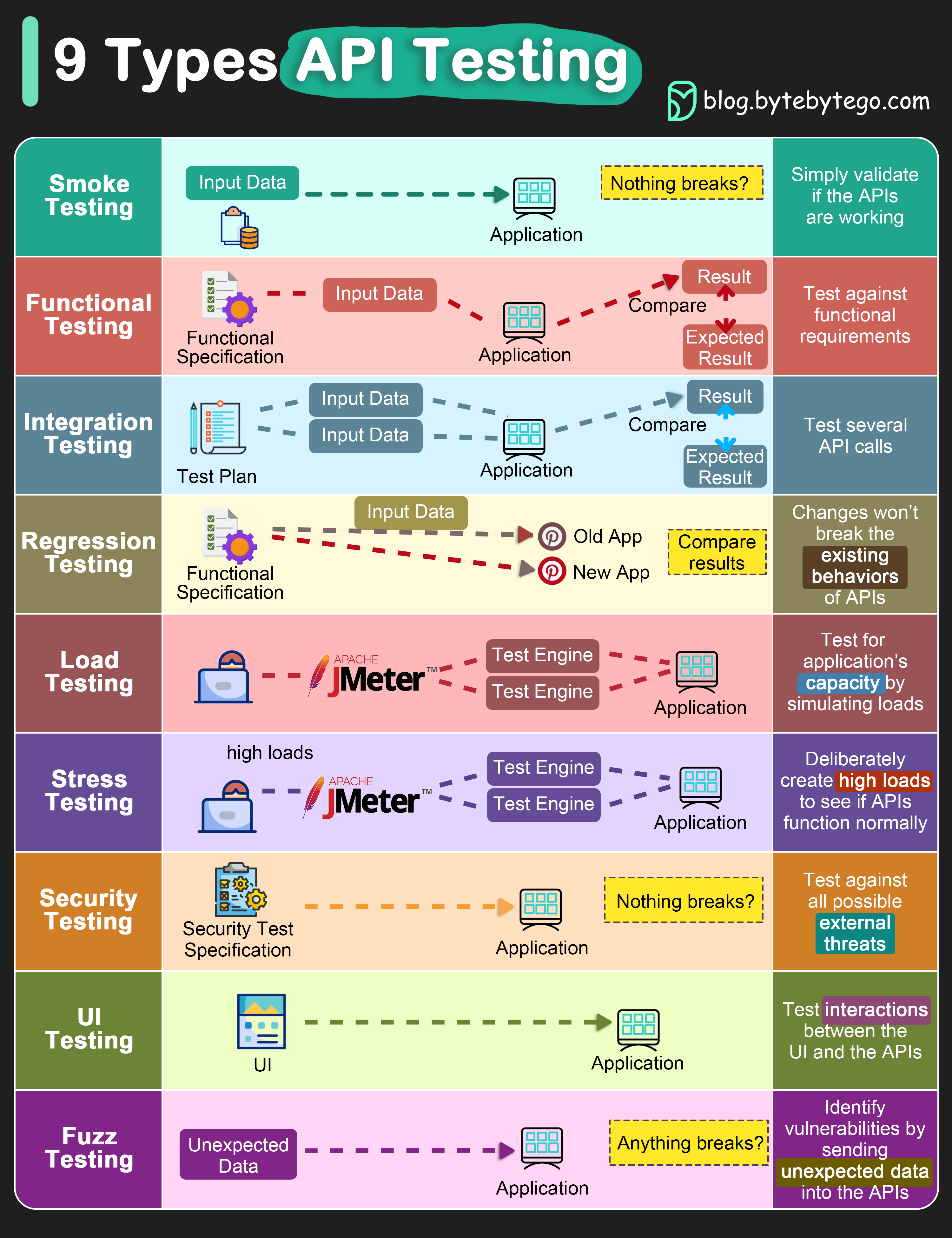

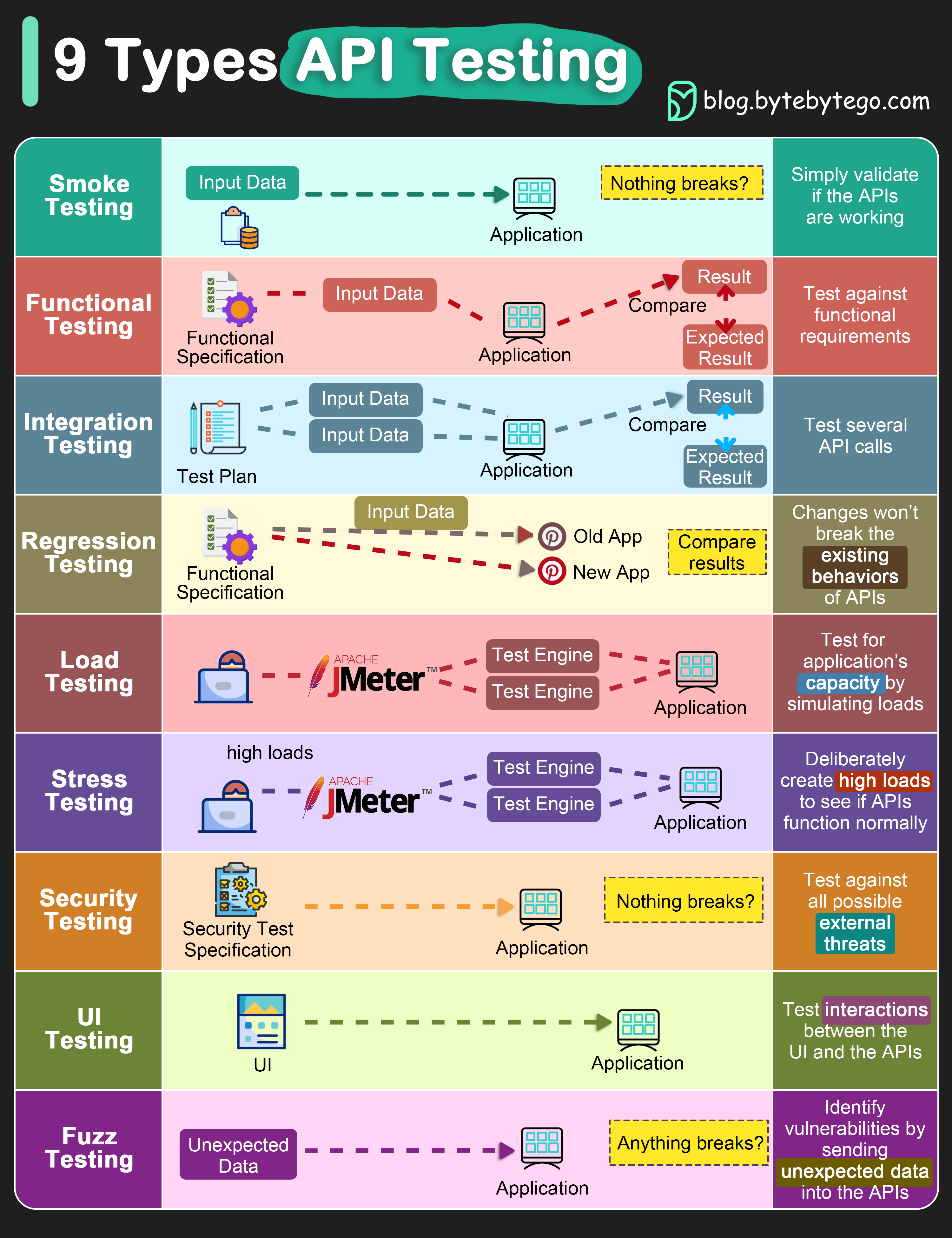

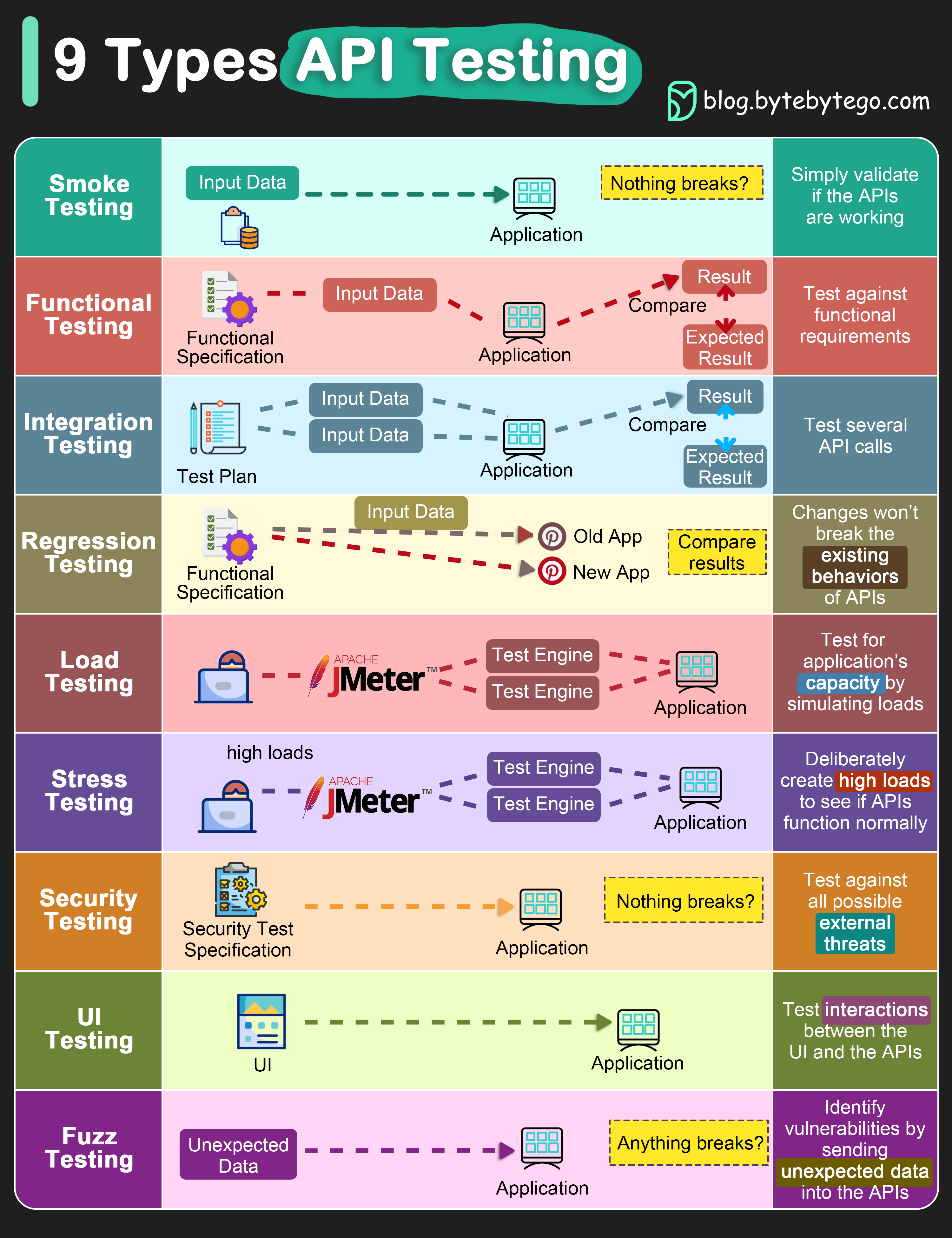

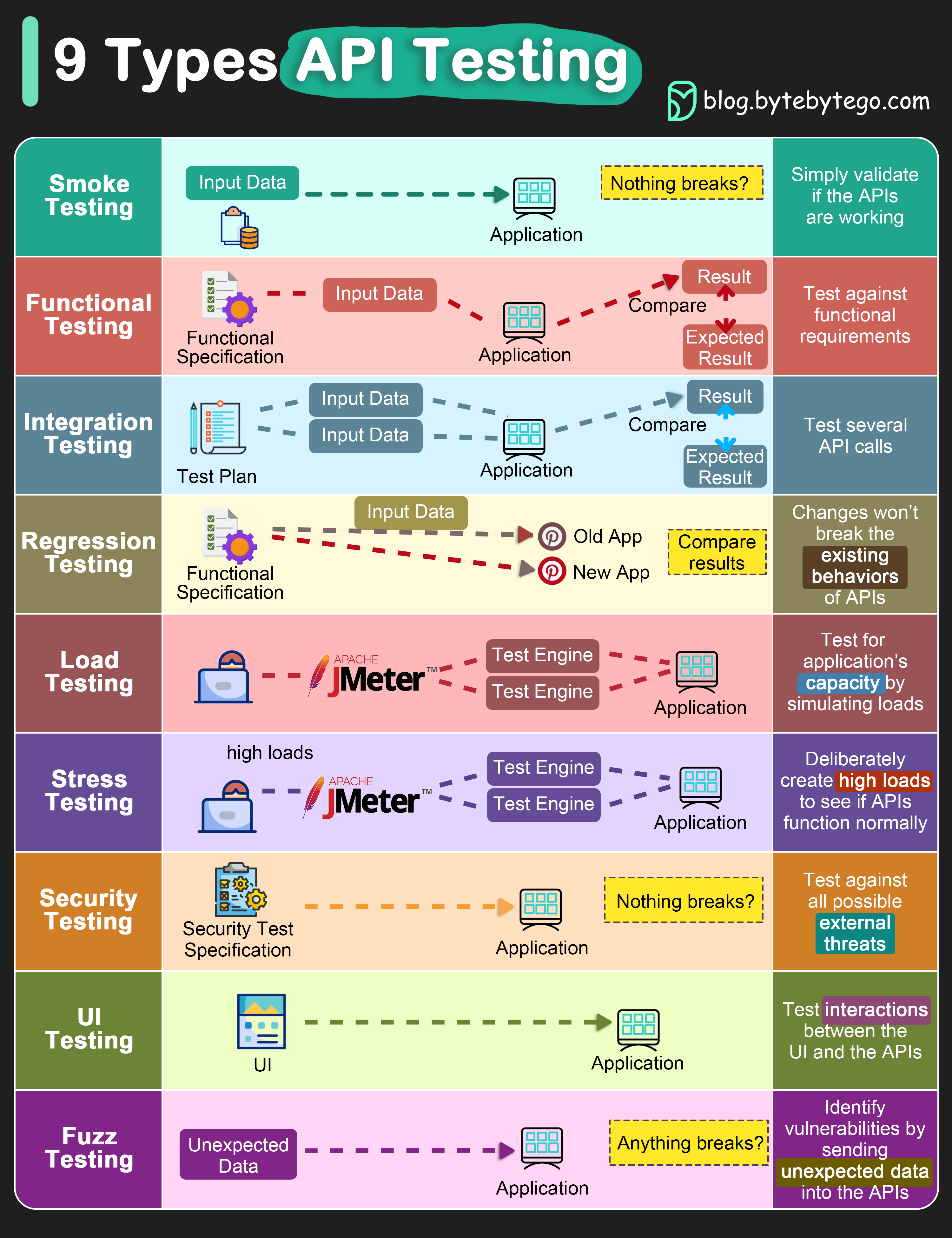

+ * [Explaining 9 Types of API Testing](https://bytebytego.com/guides/explaining-9-types-of-api-testing)

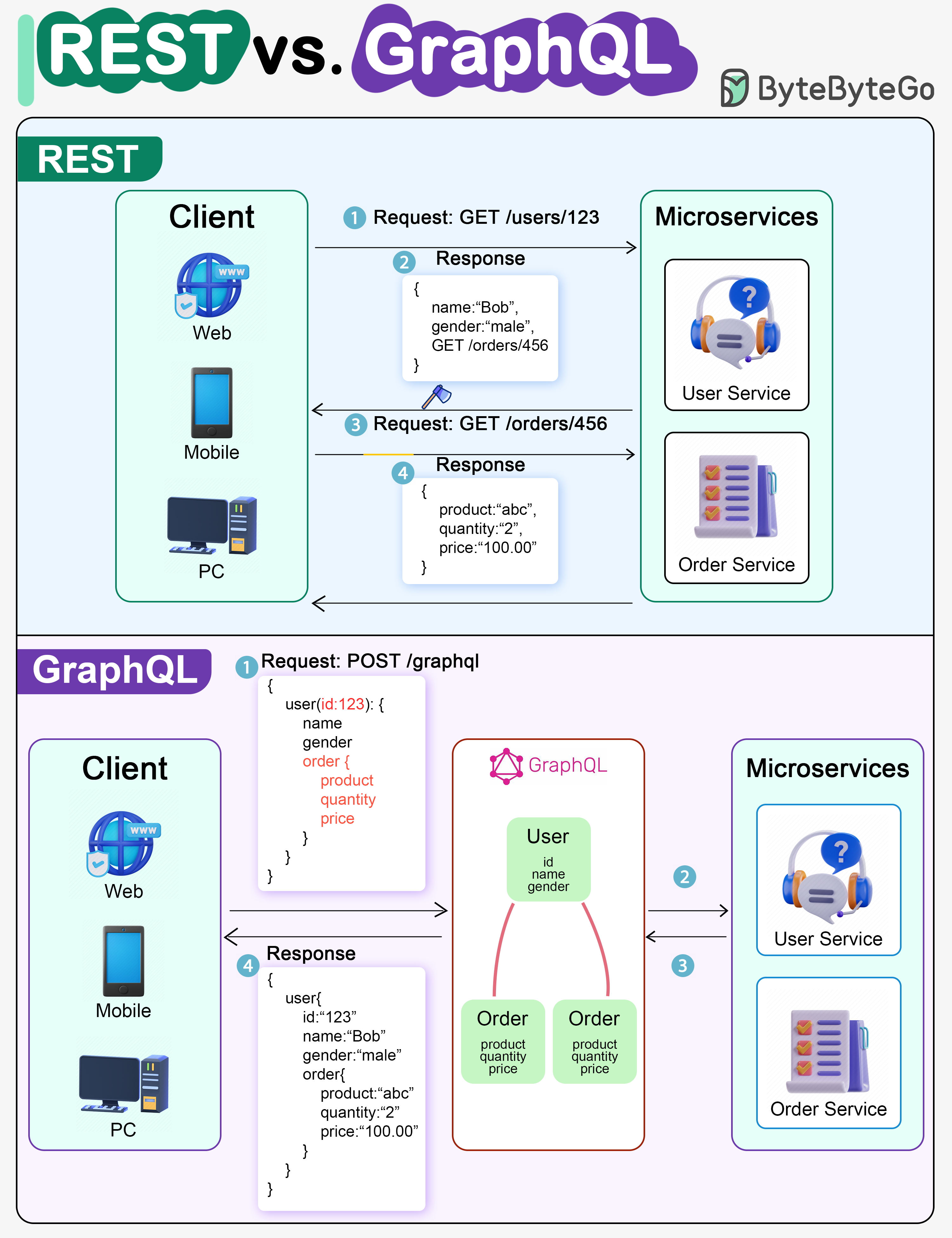

+ * [REST API vs. GraphQL](https://bytebytego.com/guides/rest-api-vs-graphql)

+ * [What is GraphQL?](https://bytebytego.com/guides/what-is-graphql)

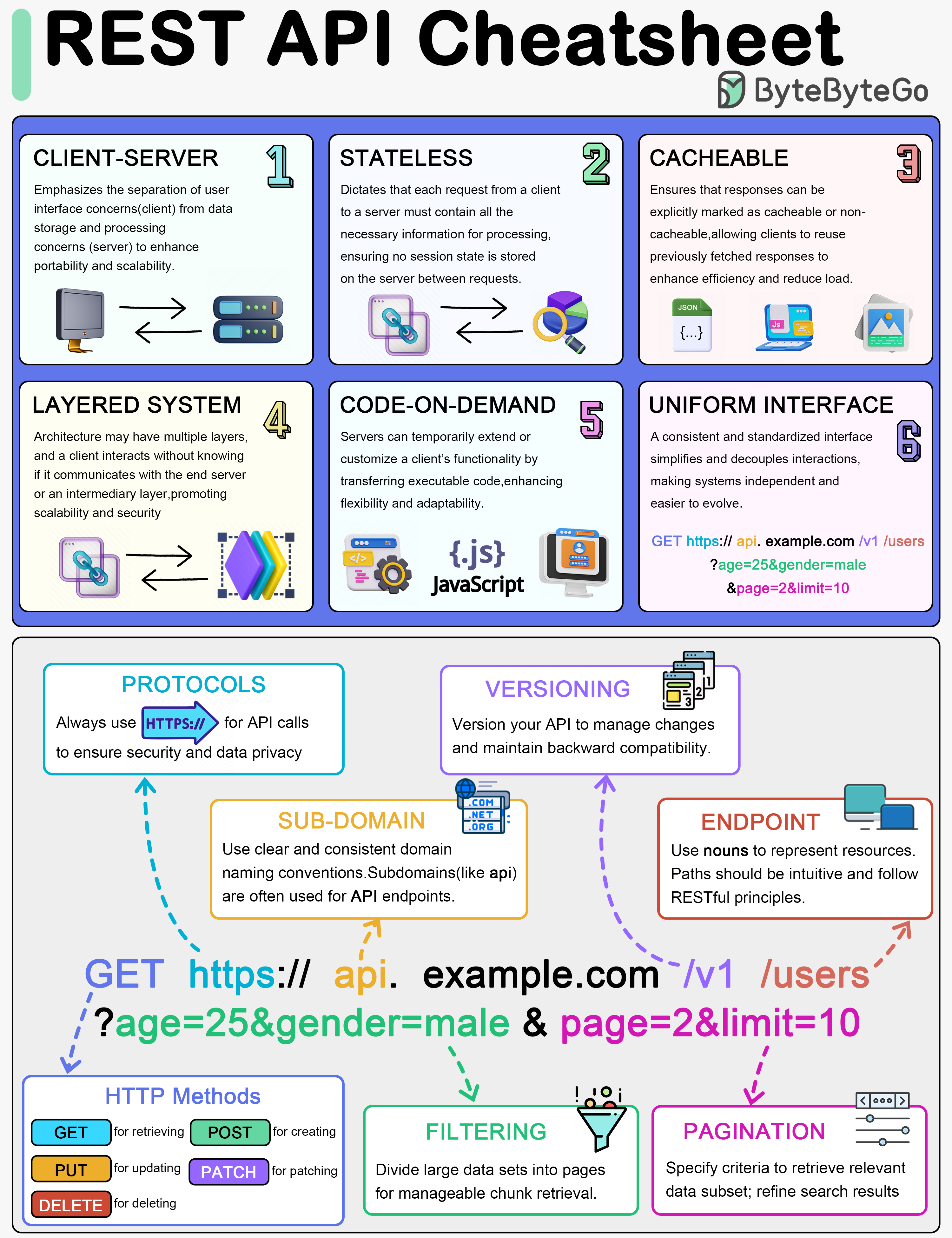

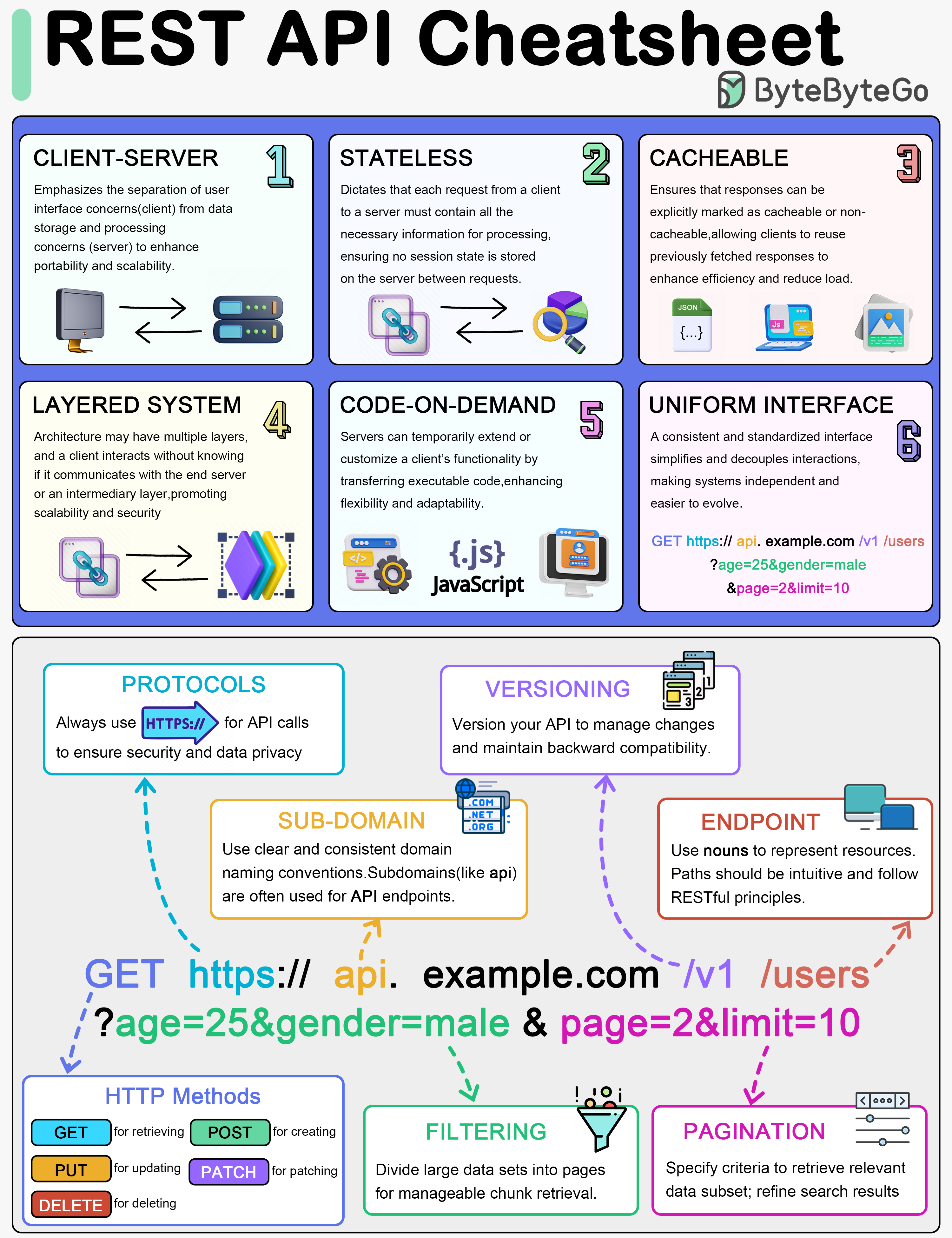

+ * [REST API Cheatsheet](https://bytebytego.com/guides/rest-api-cheatsheet)

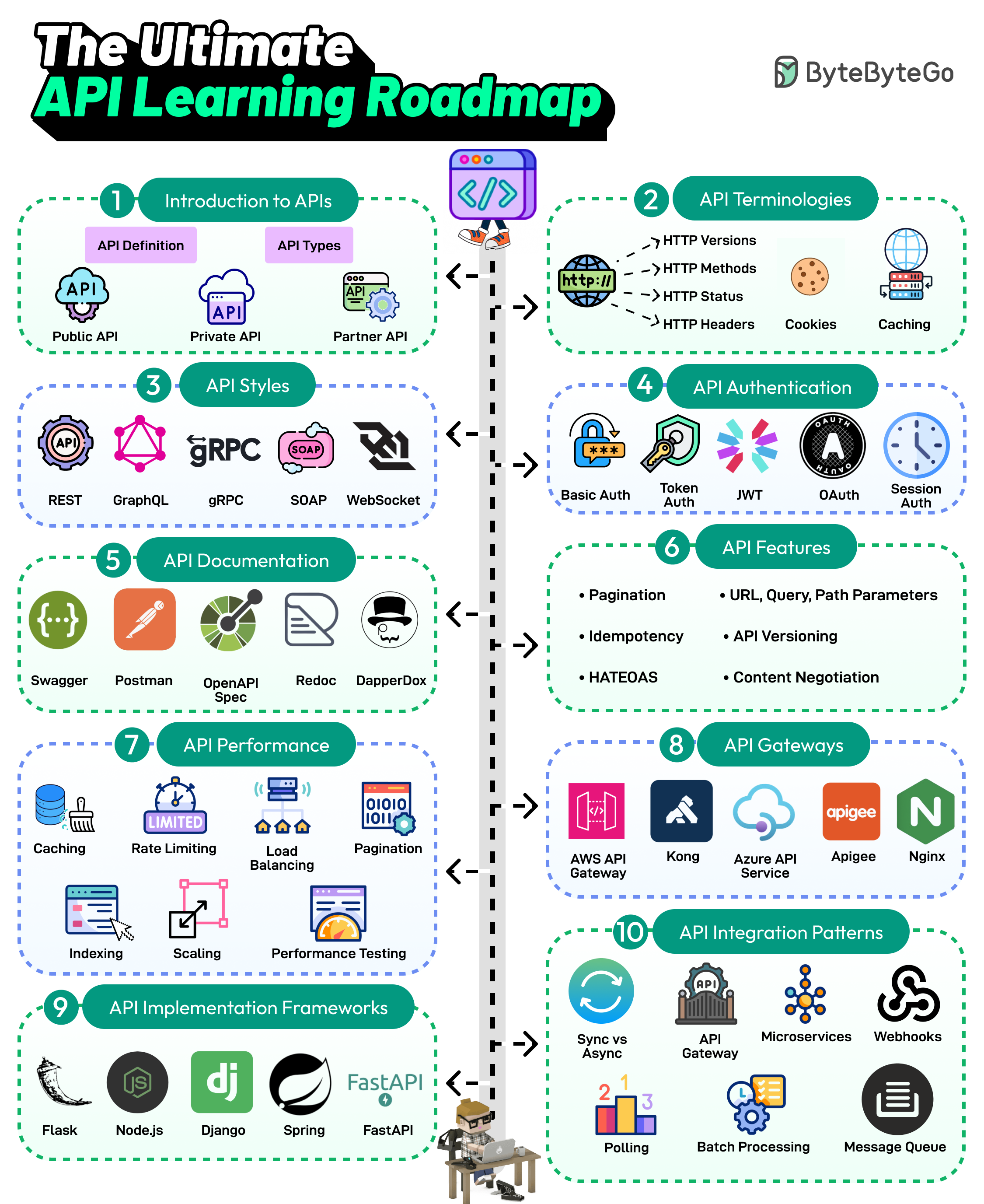

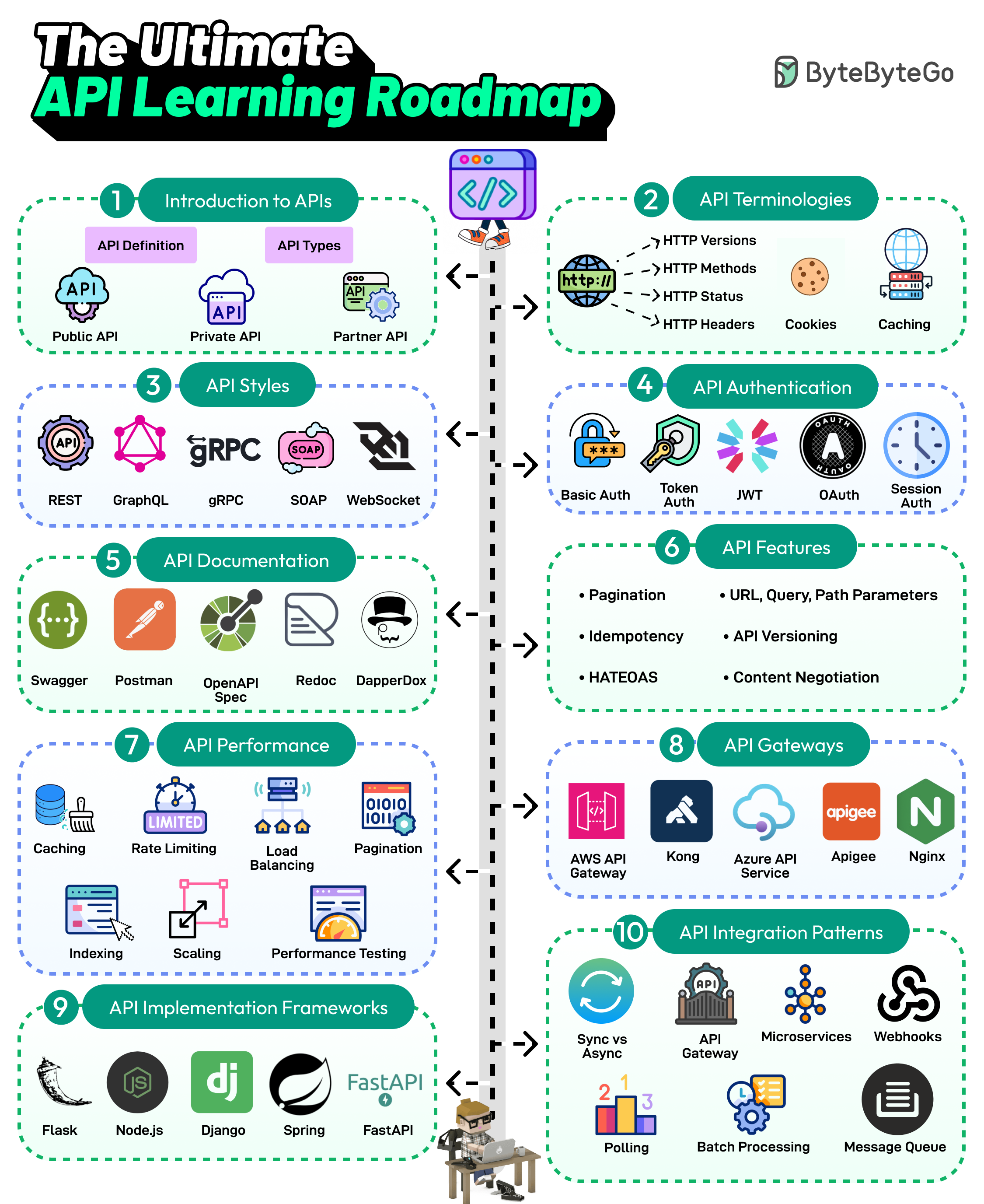

+ * [The Ultimate API Learning Roadmap](https://bytebytego.com/guides/the-ultimate-api-learning-roadmap)

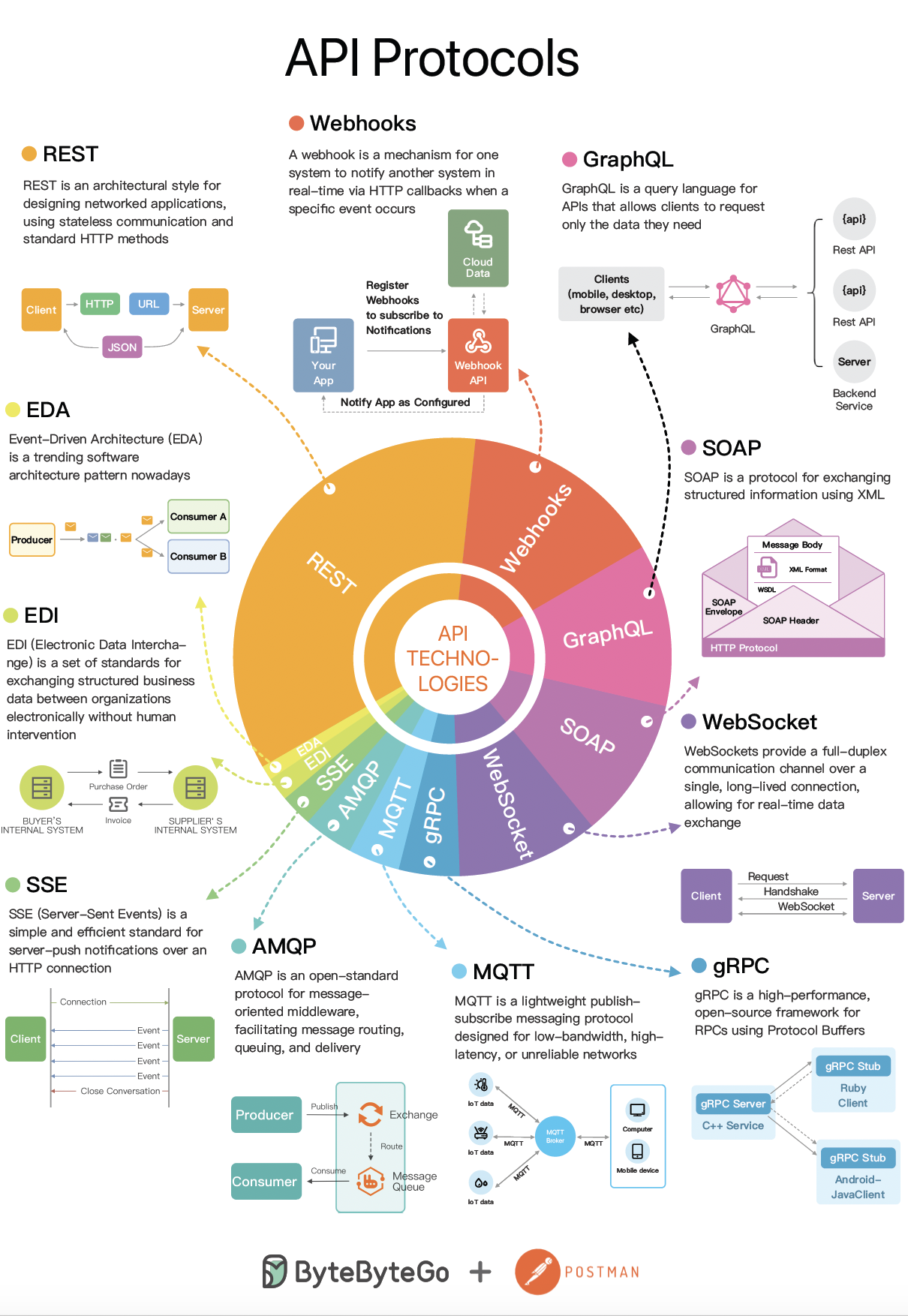

+ * [The Evolving Landscape of API Protocols in 2023](https://bytebytego.com/guides/the-evolving-landscape-of-api-protocols-in-2023)

+* [Real World Case Studies](https://bytebytego.com/guides/real-world-case-studies)

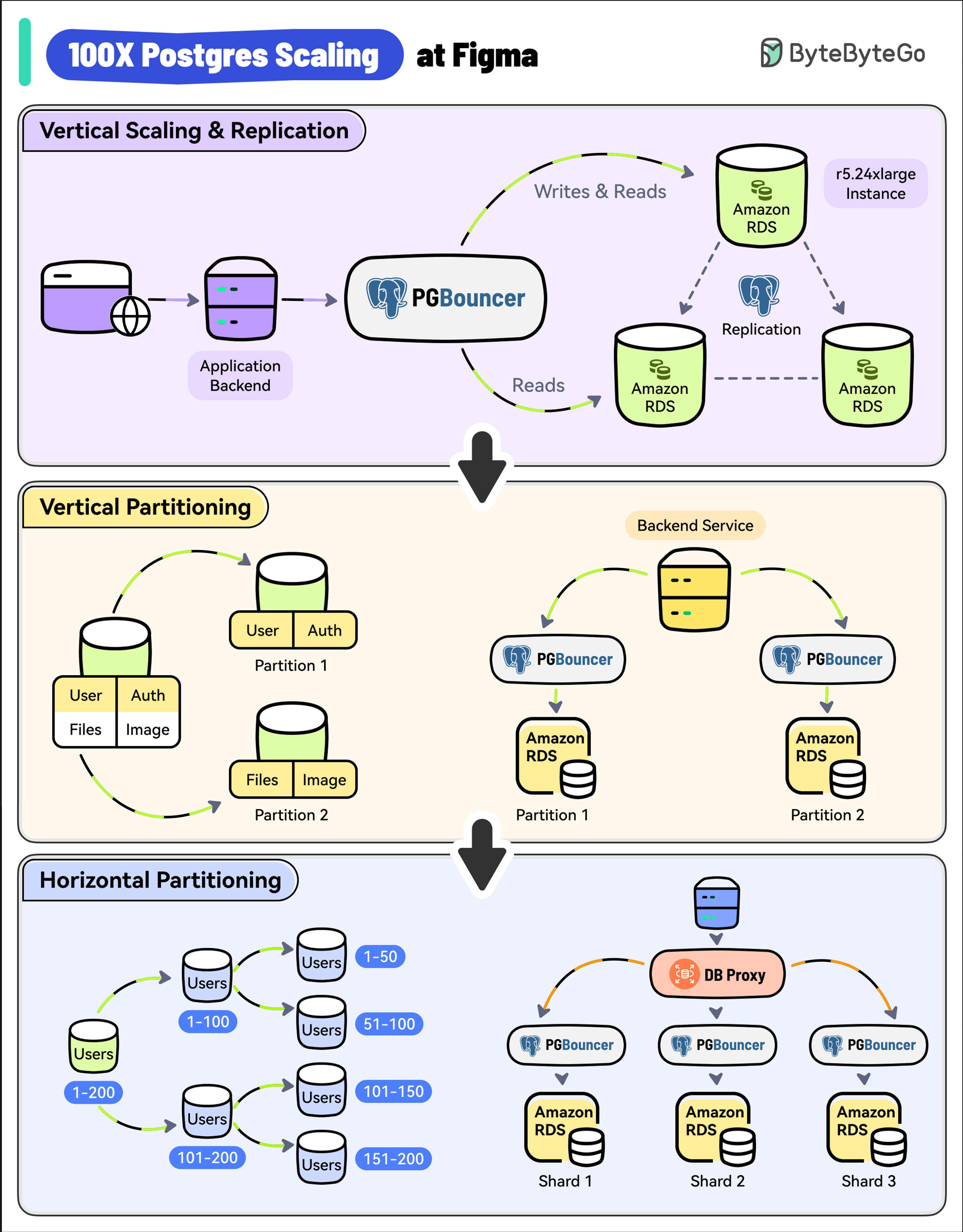

+ * [100X Postgres Scaling at Figma](https://bytebytego.com/guides/100x-postgres-scaling-at-figma)

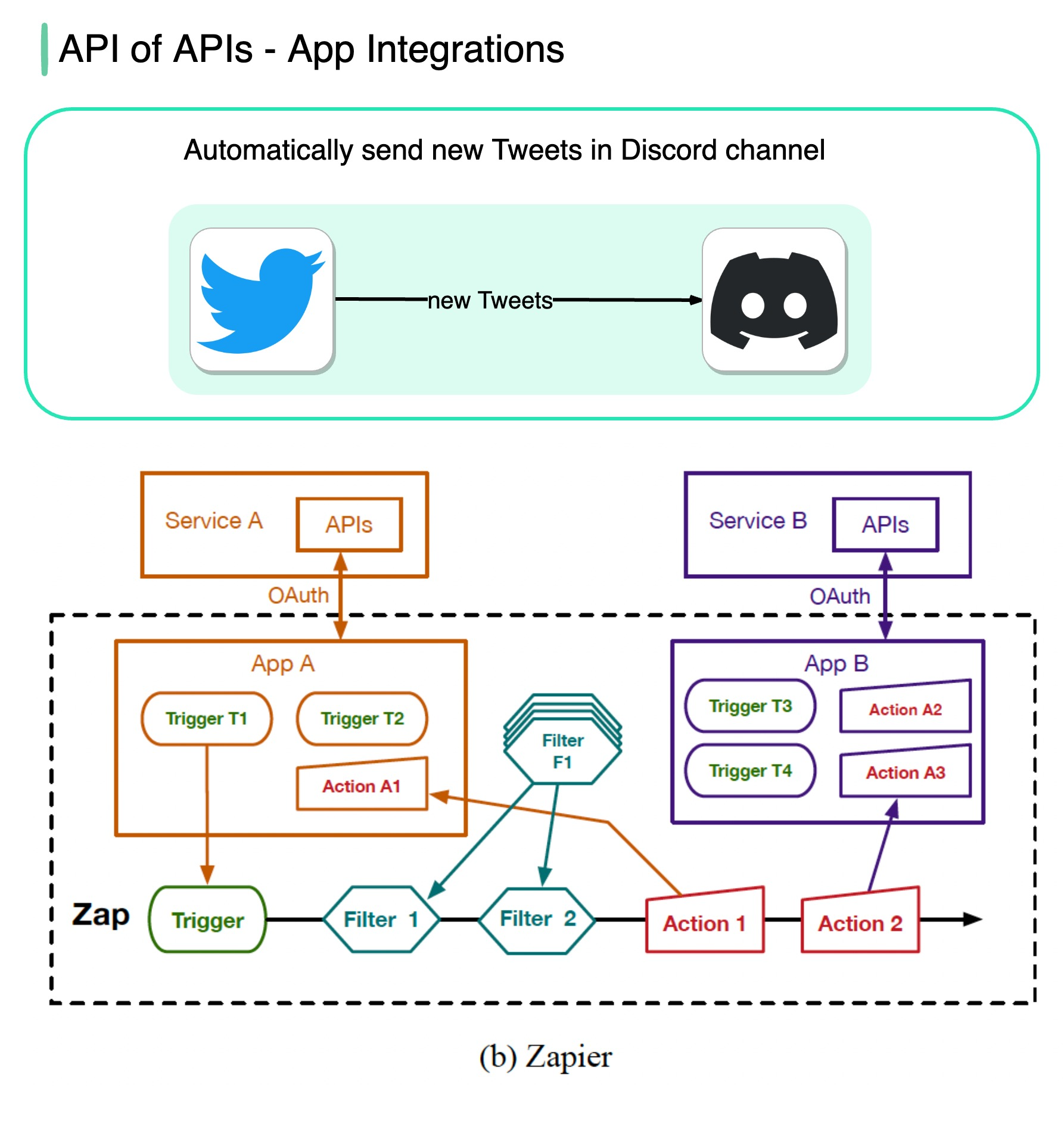

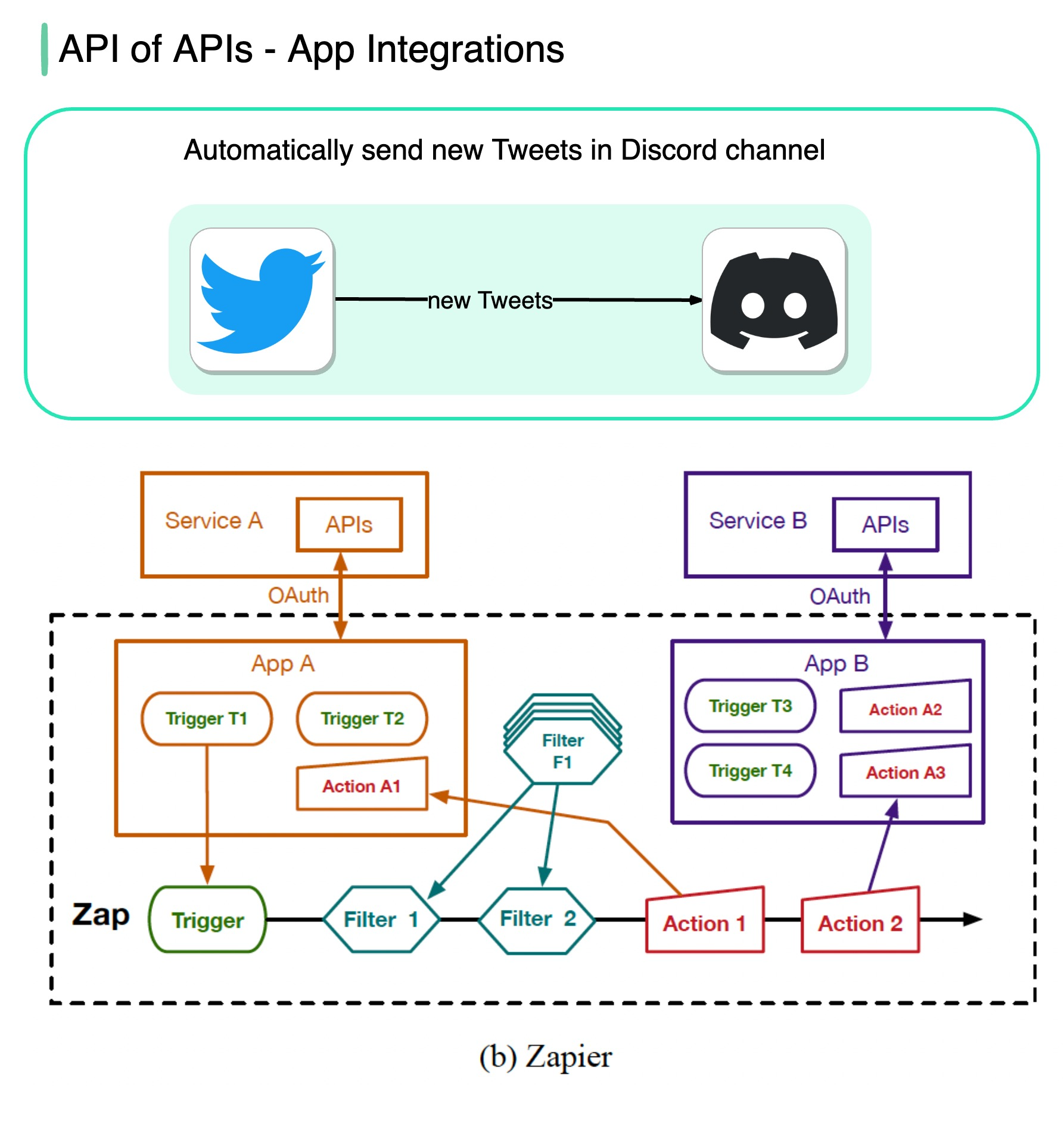

+ * [API of APIs - App Integrations](https://bytebytego.com/guides/api-of-apis-app-integrations)

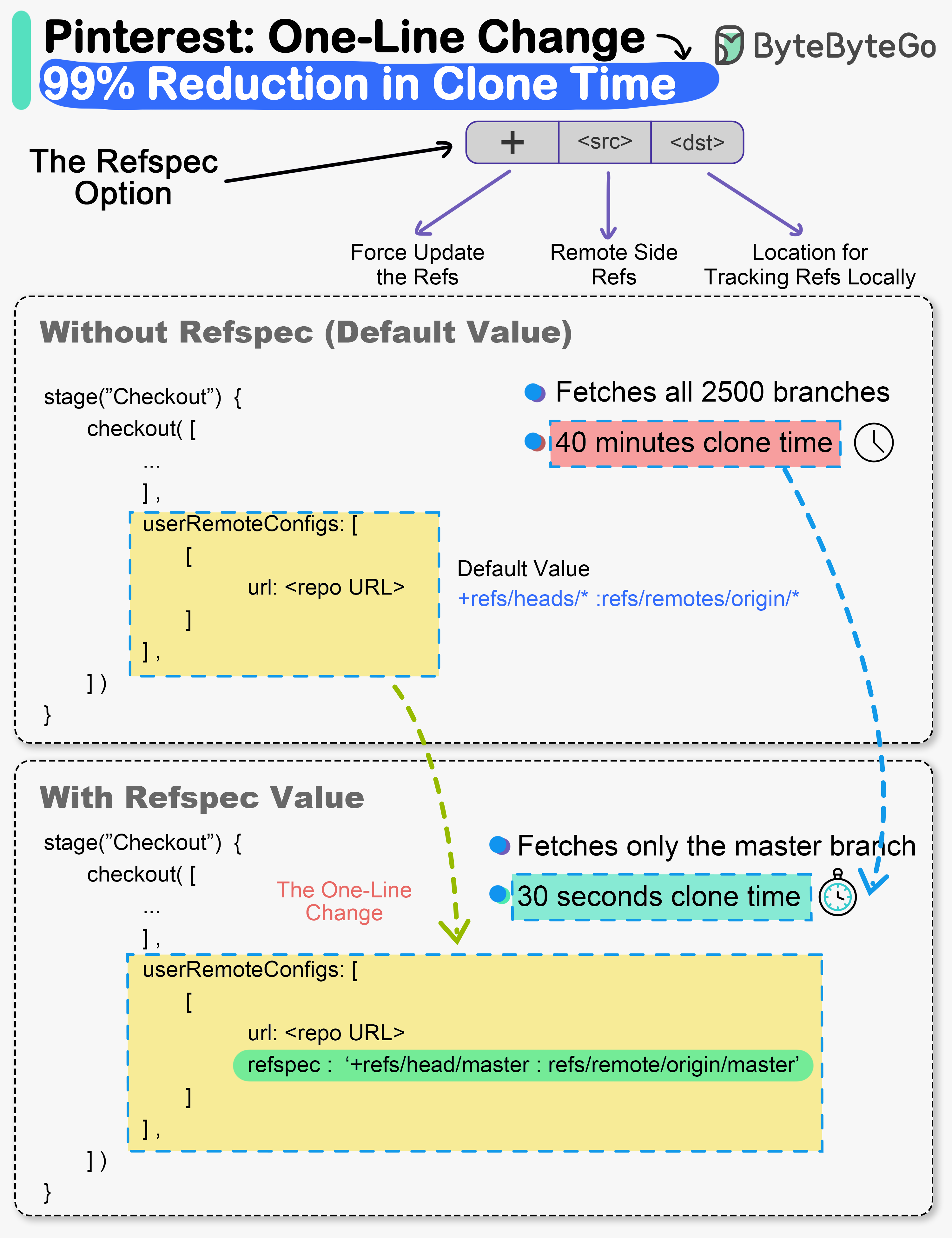

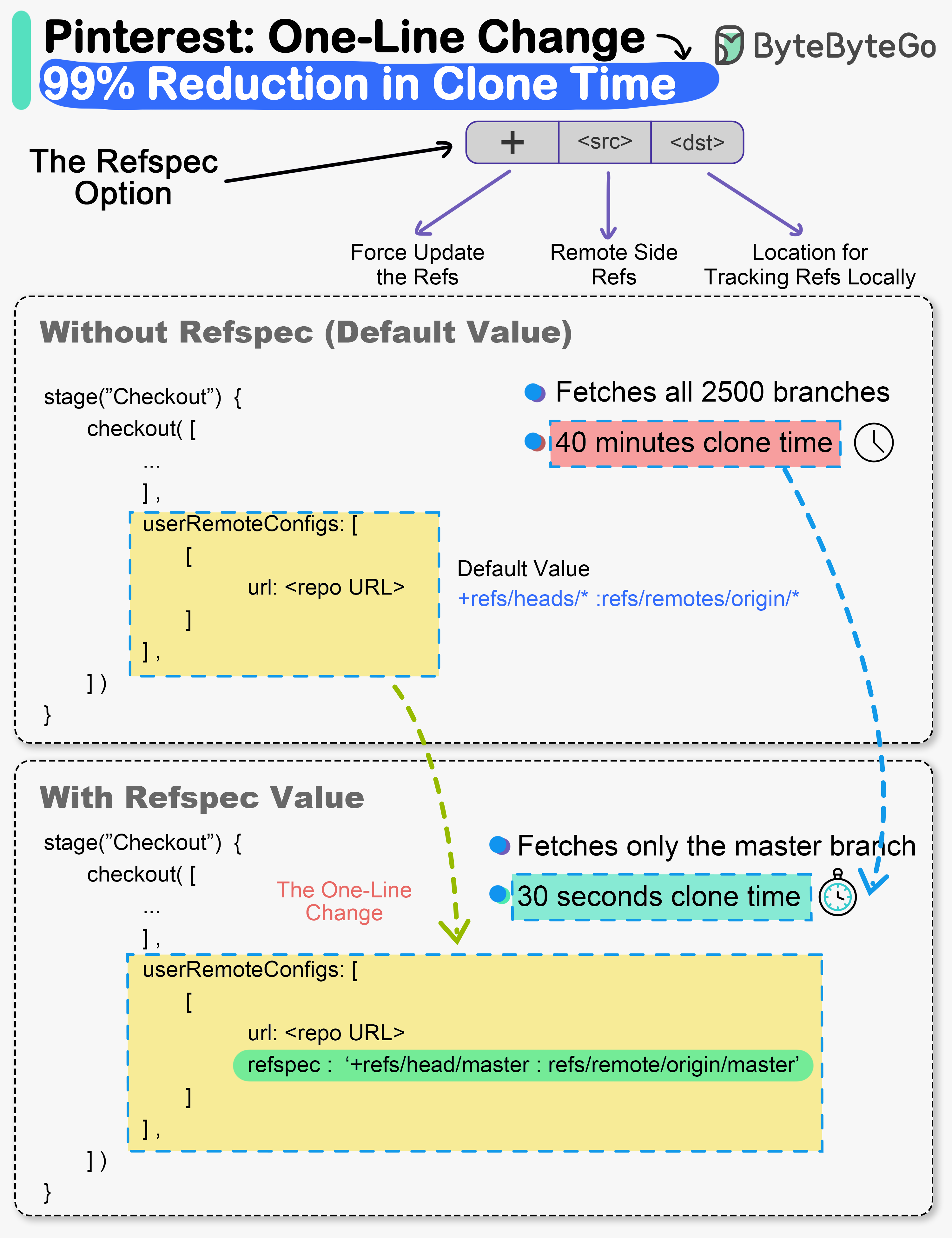

+ * [The one-line change that reduced clone times by 99% at Pinterest](https://bytebytego.com/guides/the-one-line-change-that-reduced-clone-times-by-a-whopping-99-says-pinterest)

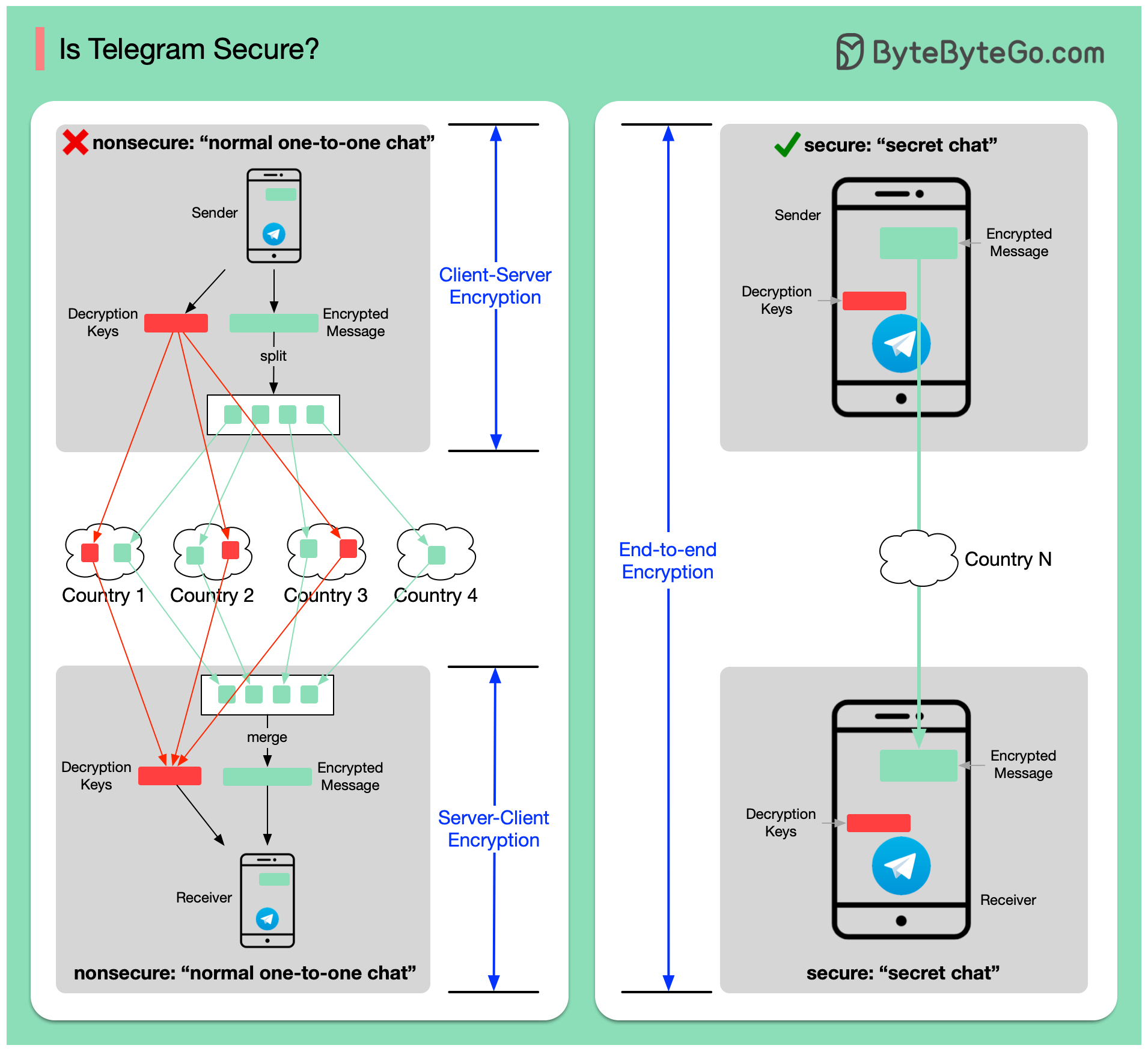

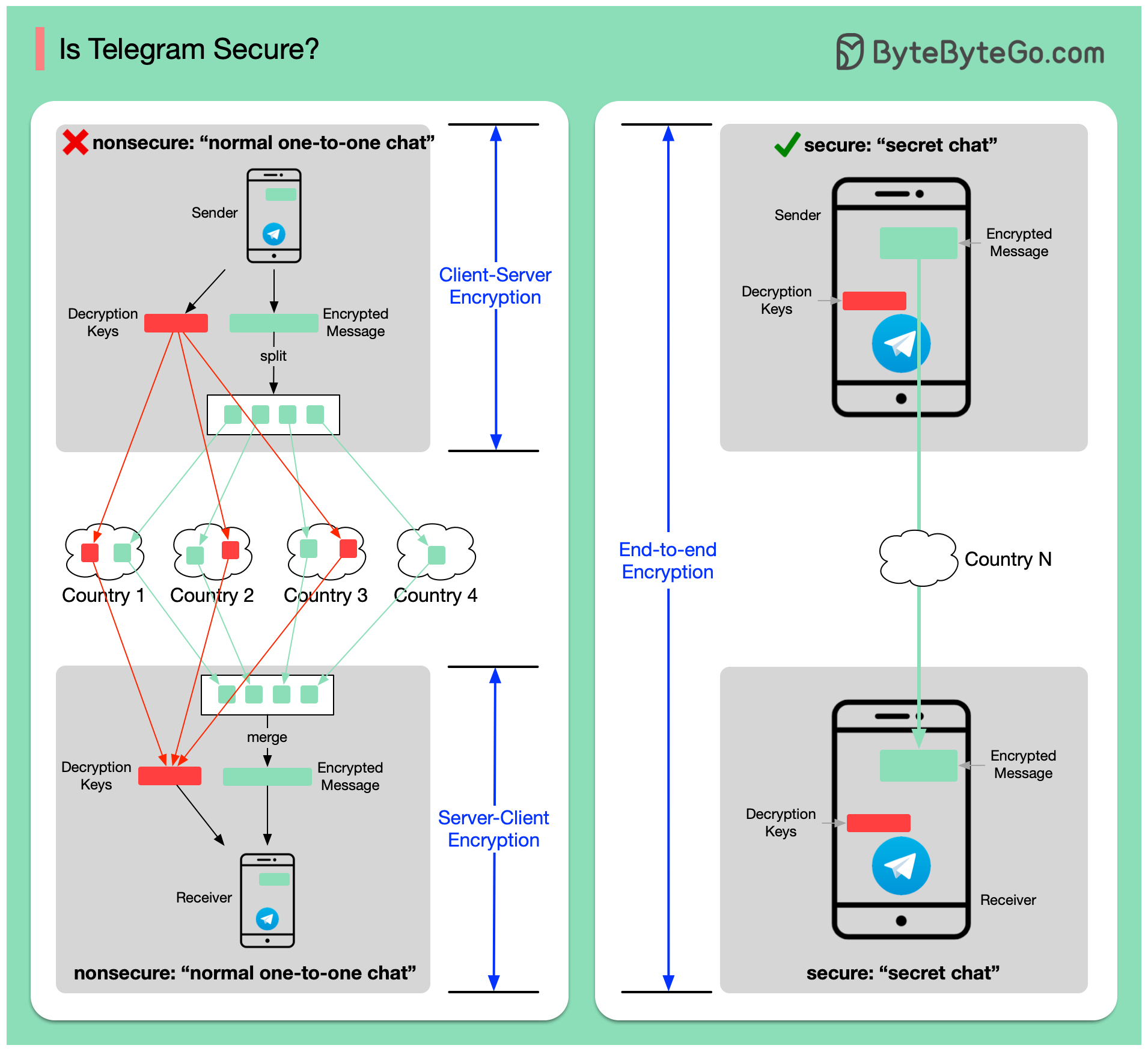

+ * [Is Telegram Secure?](https://bytebytego.com/guides/is-telegram-secure)

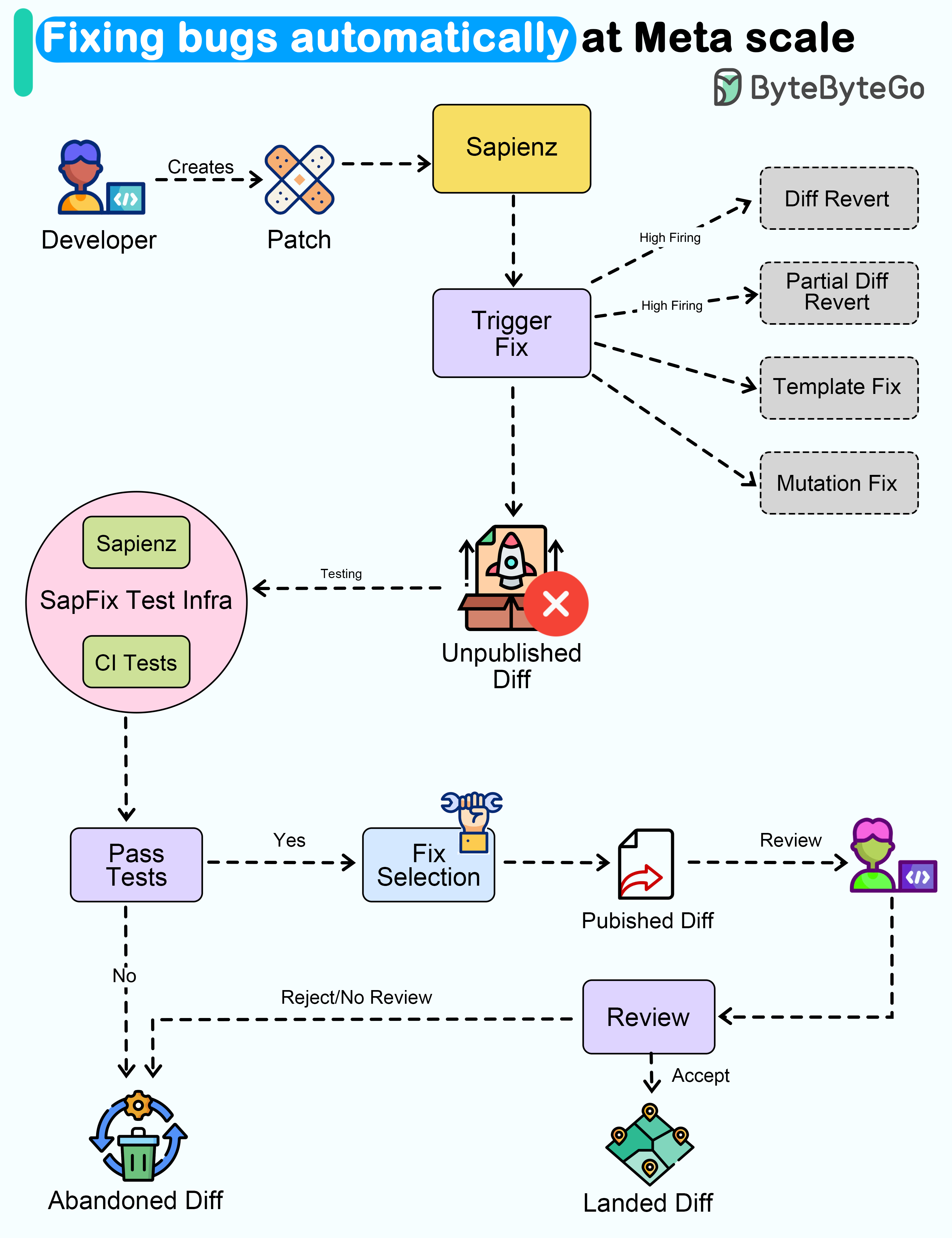

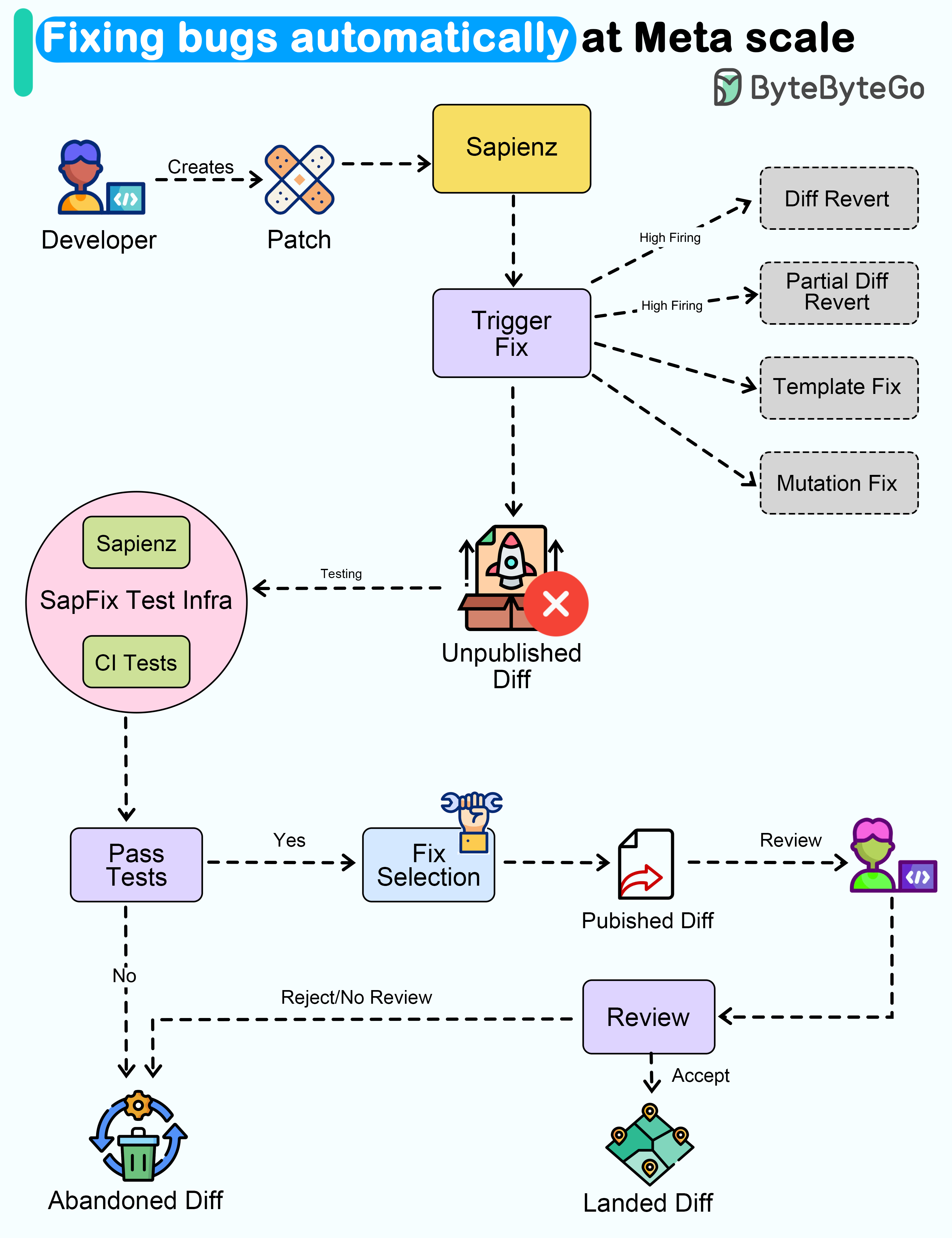

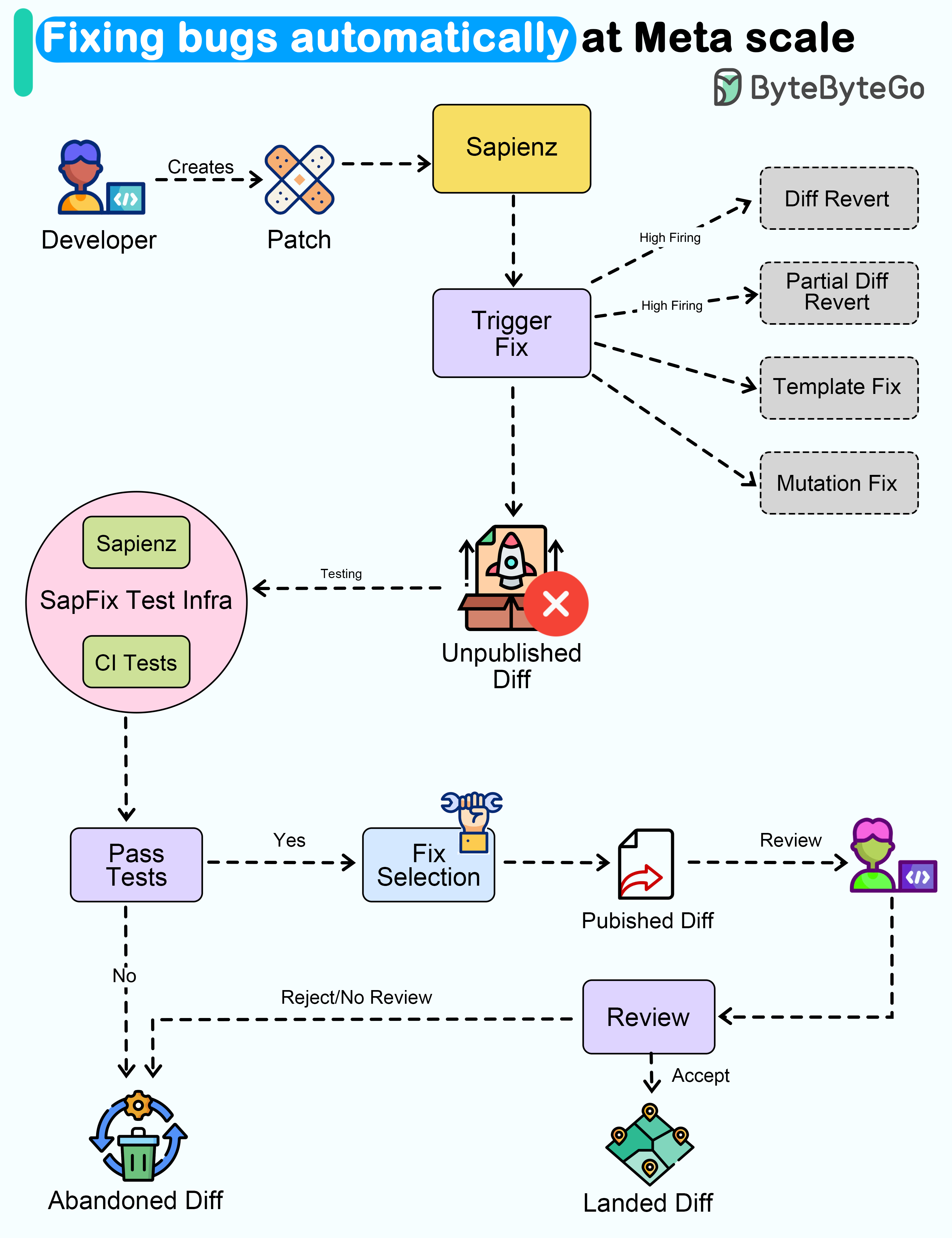

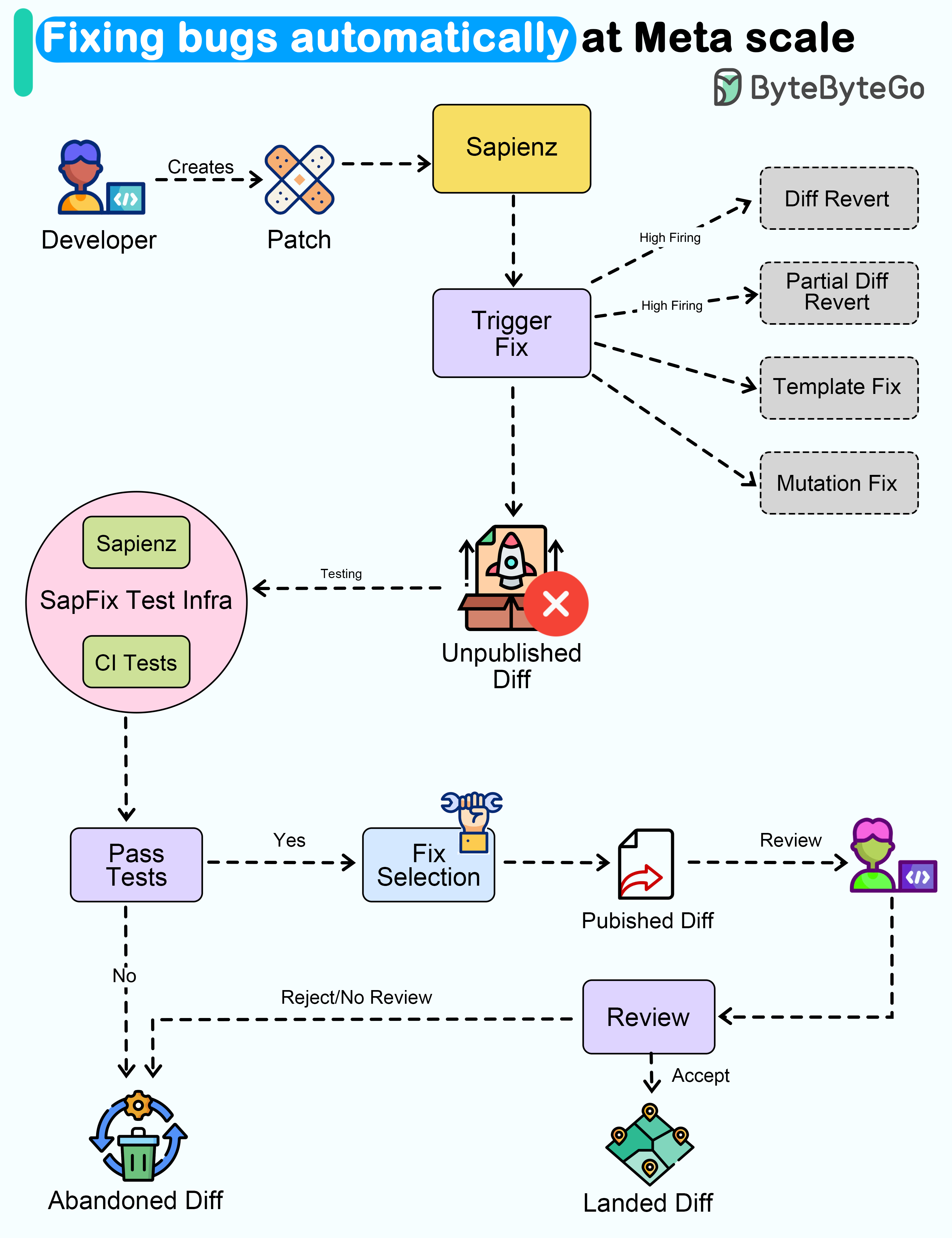

+ * [Fixing Bugs Automatically at Meta Scale](https://bytebytego.com/guides/fixing-bugs-automatically-at-meta-scale)

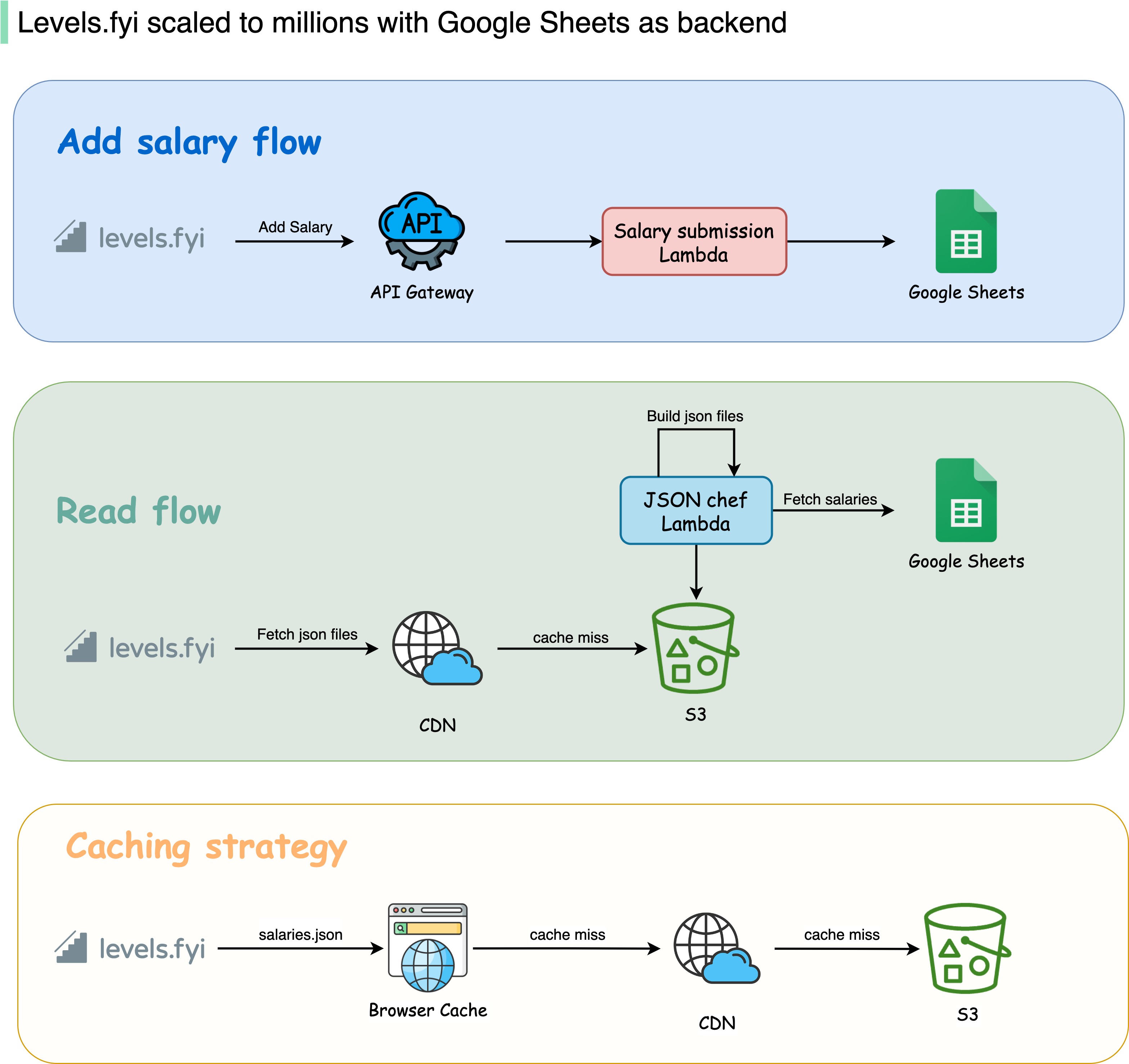

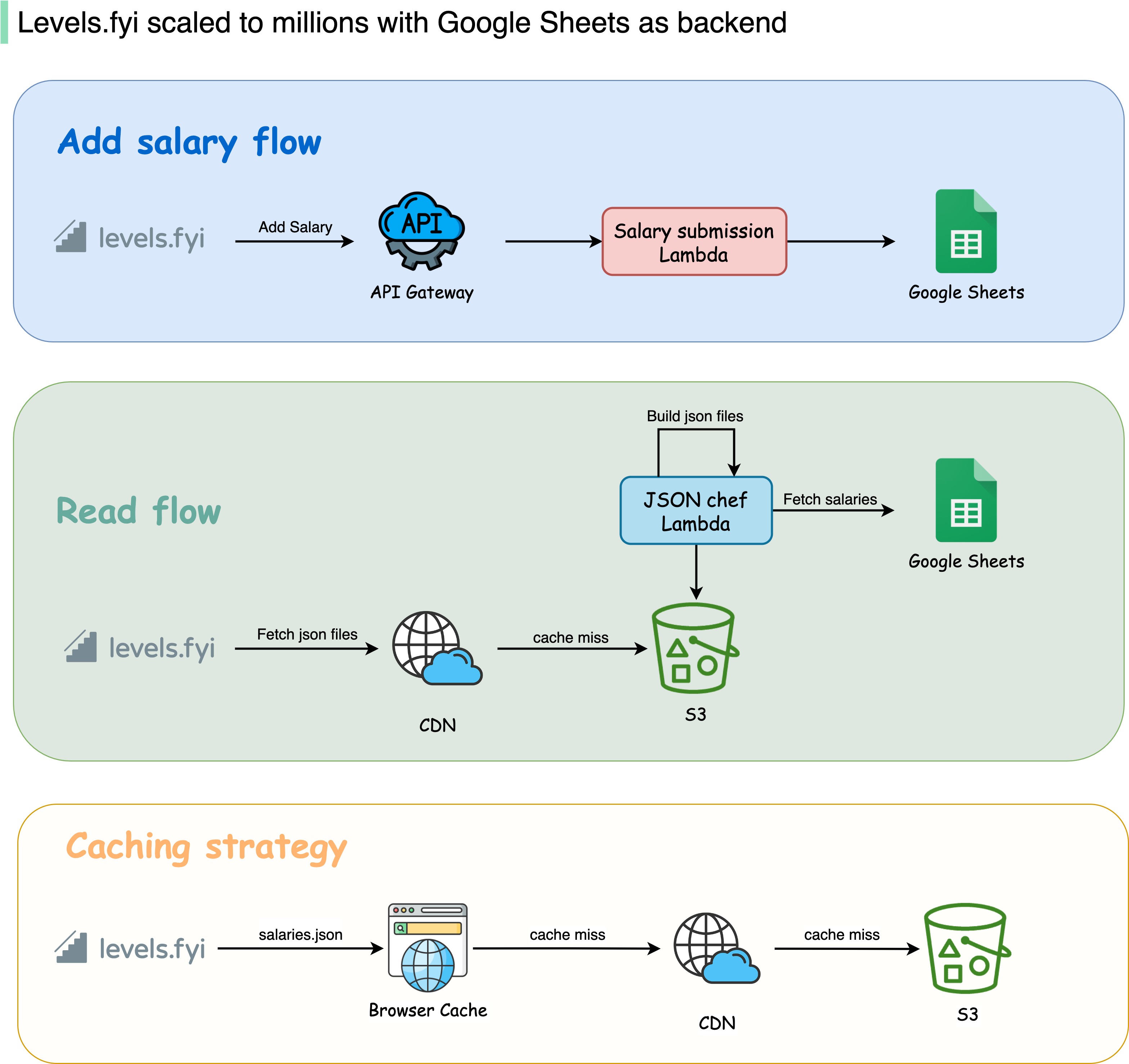

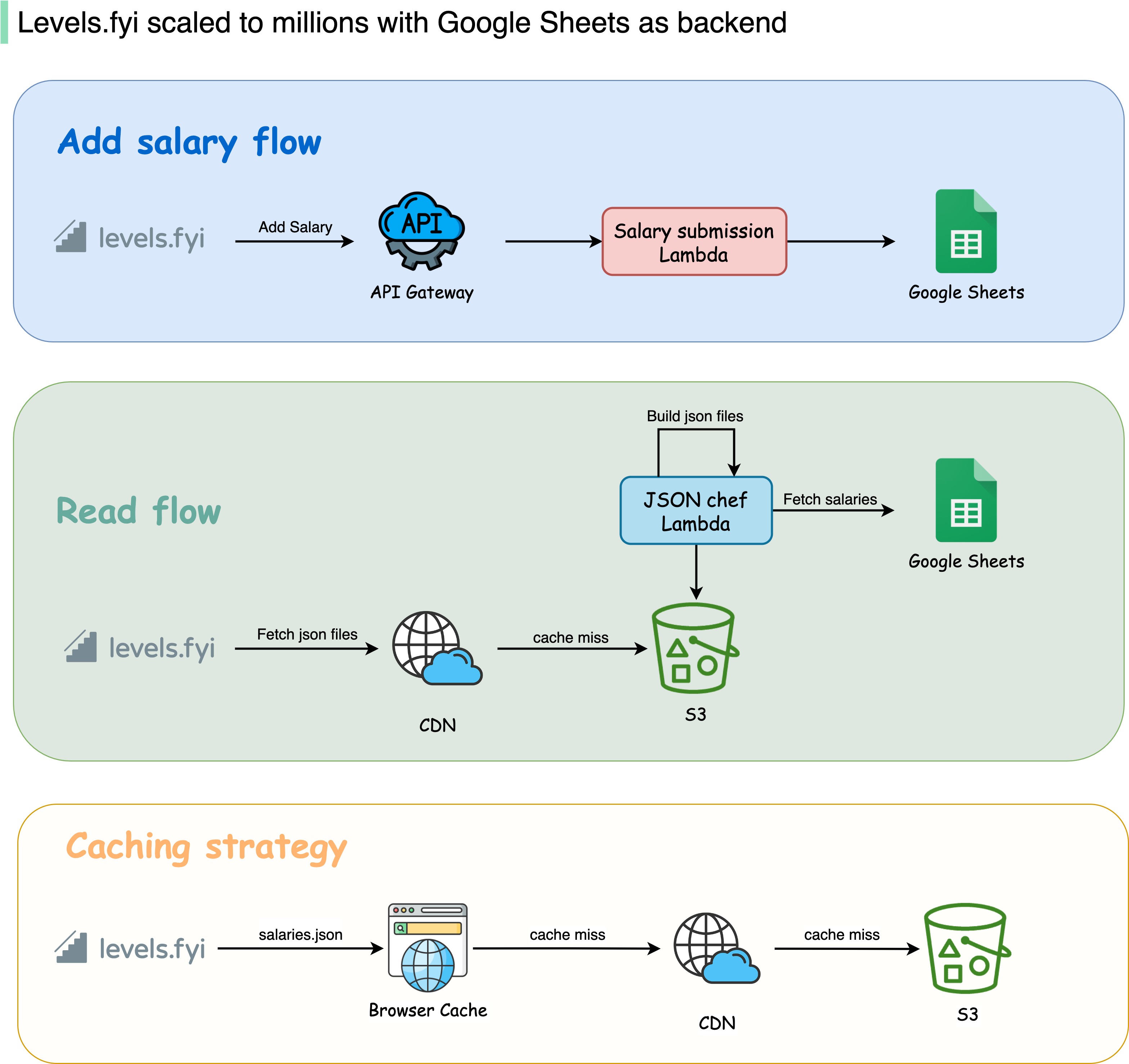

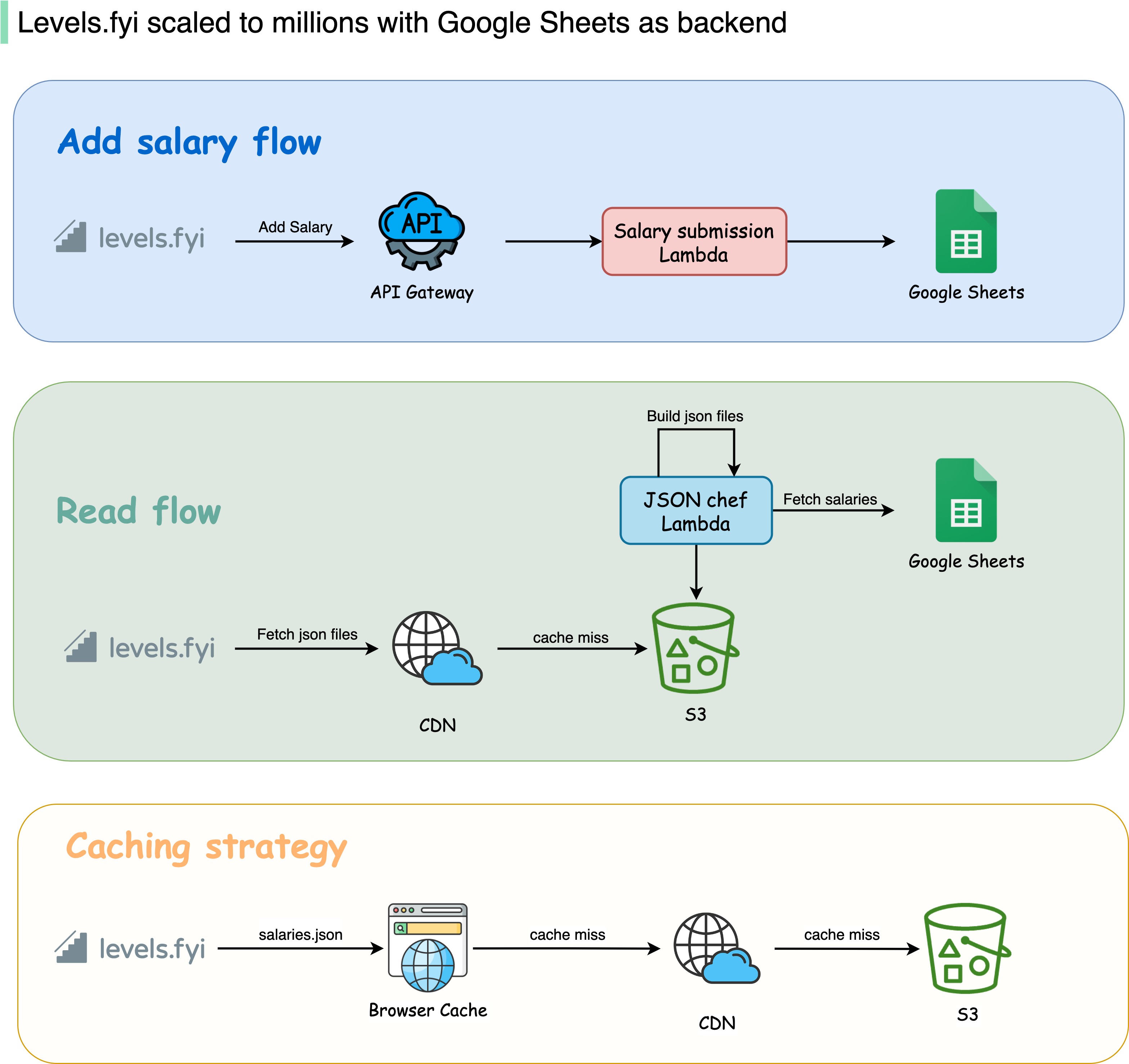

+ * [How Levelsfyi Scaled to Millions of Users with Google Sheets](https://bytebytego.com/guides/how-levelsfyi-scaled-to-millions-of-users-with-google-sheets)

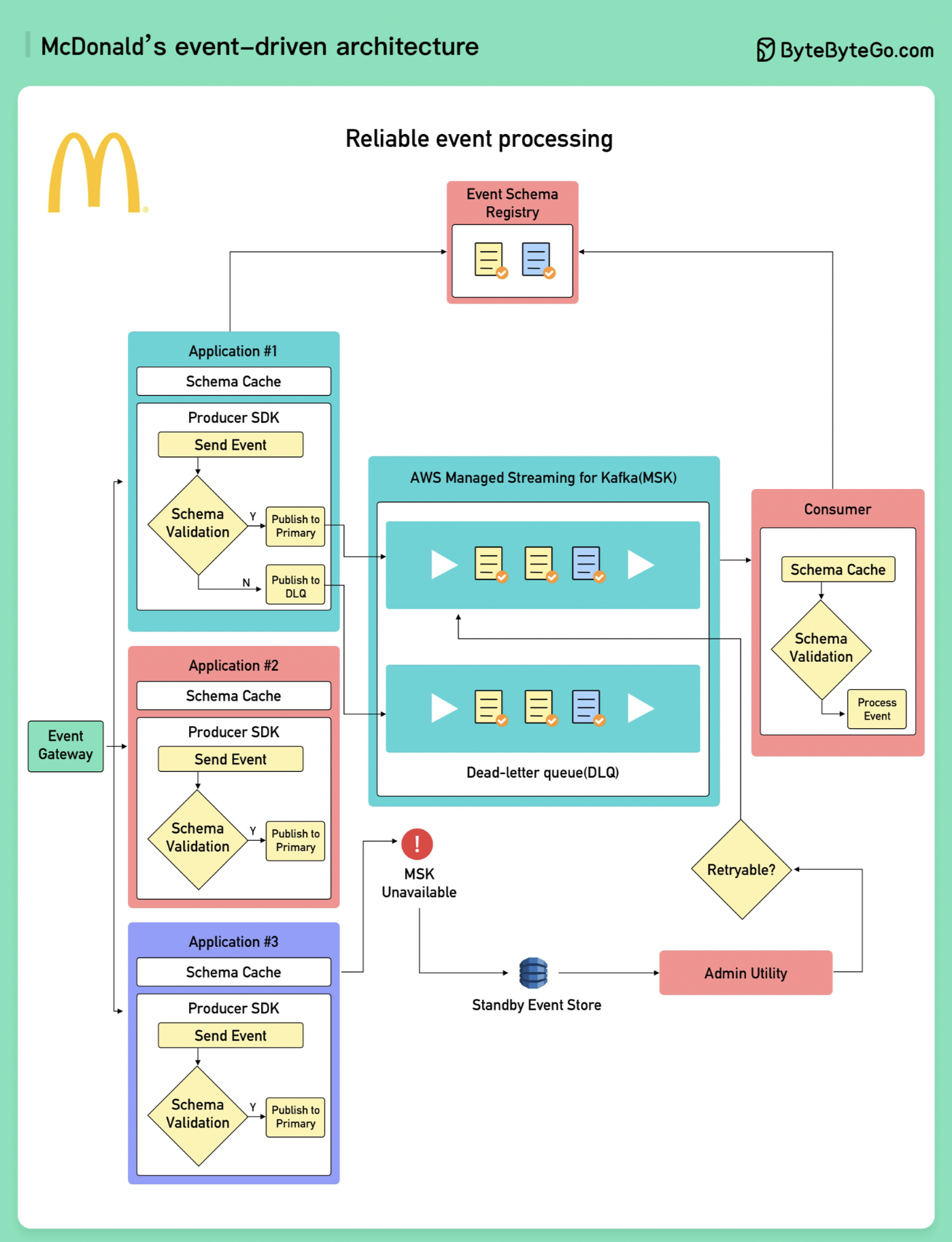

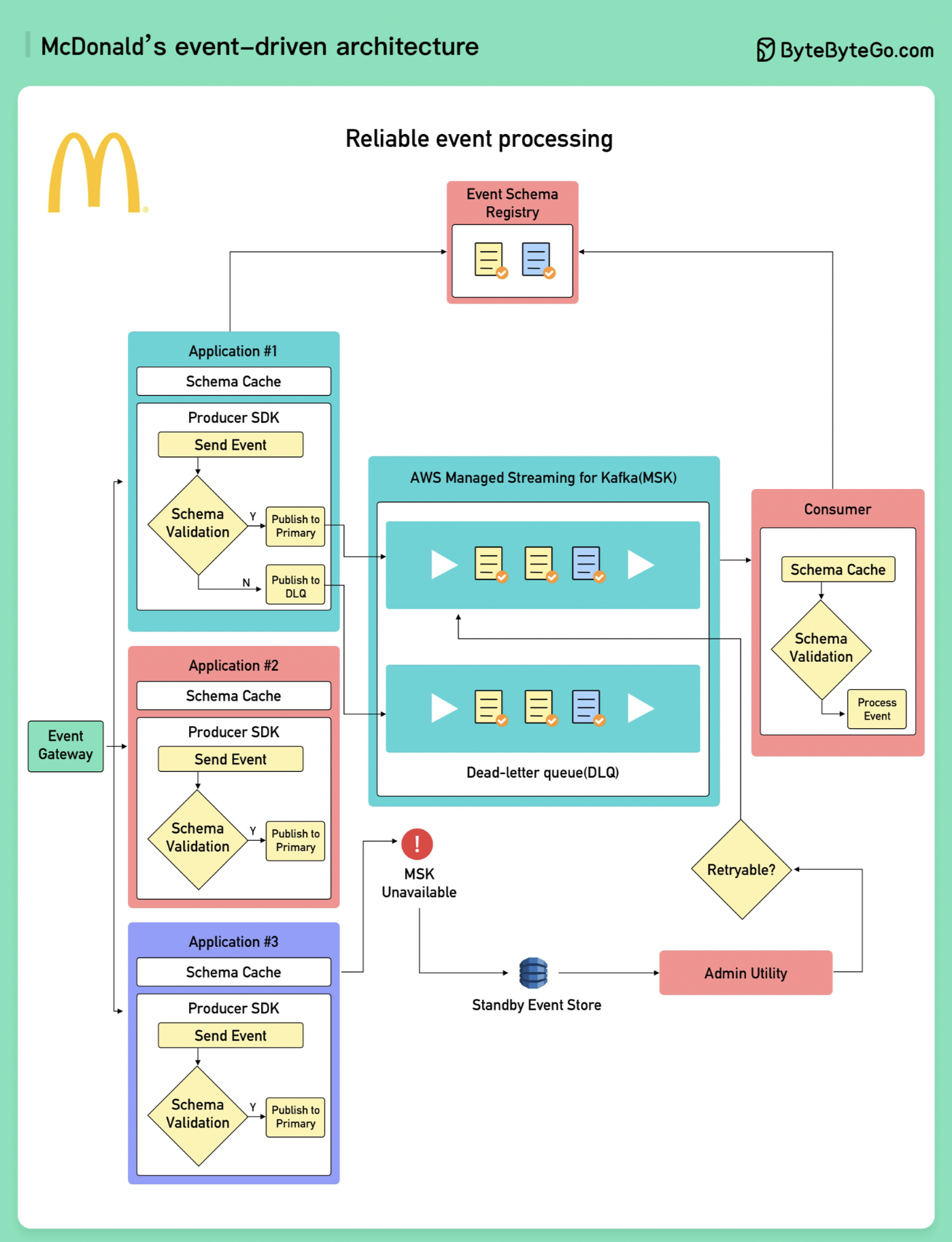

+ * [McDonald’s Event-Driven Architecture](https://bytebytego.com/guides/mcdonald's-event-driven-architecture)

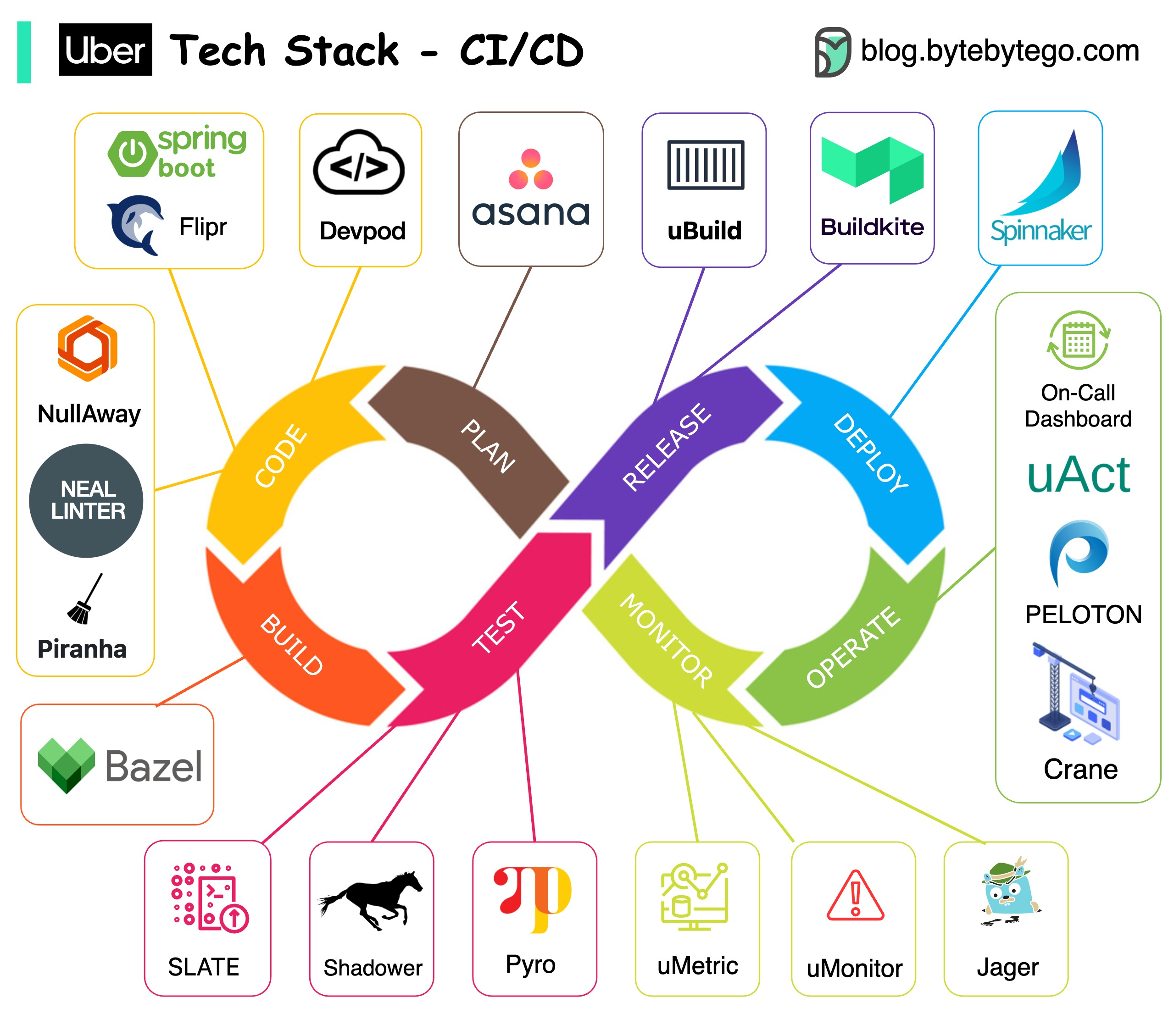

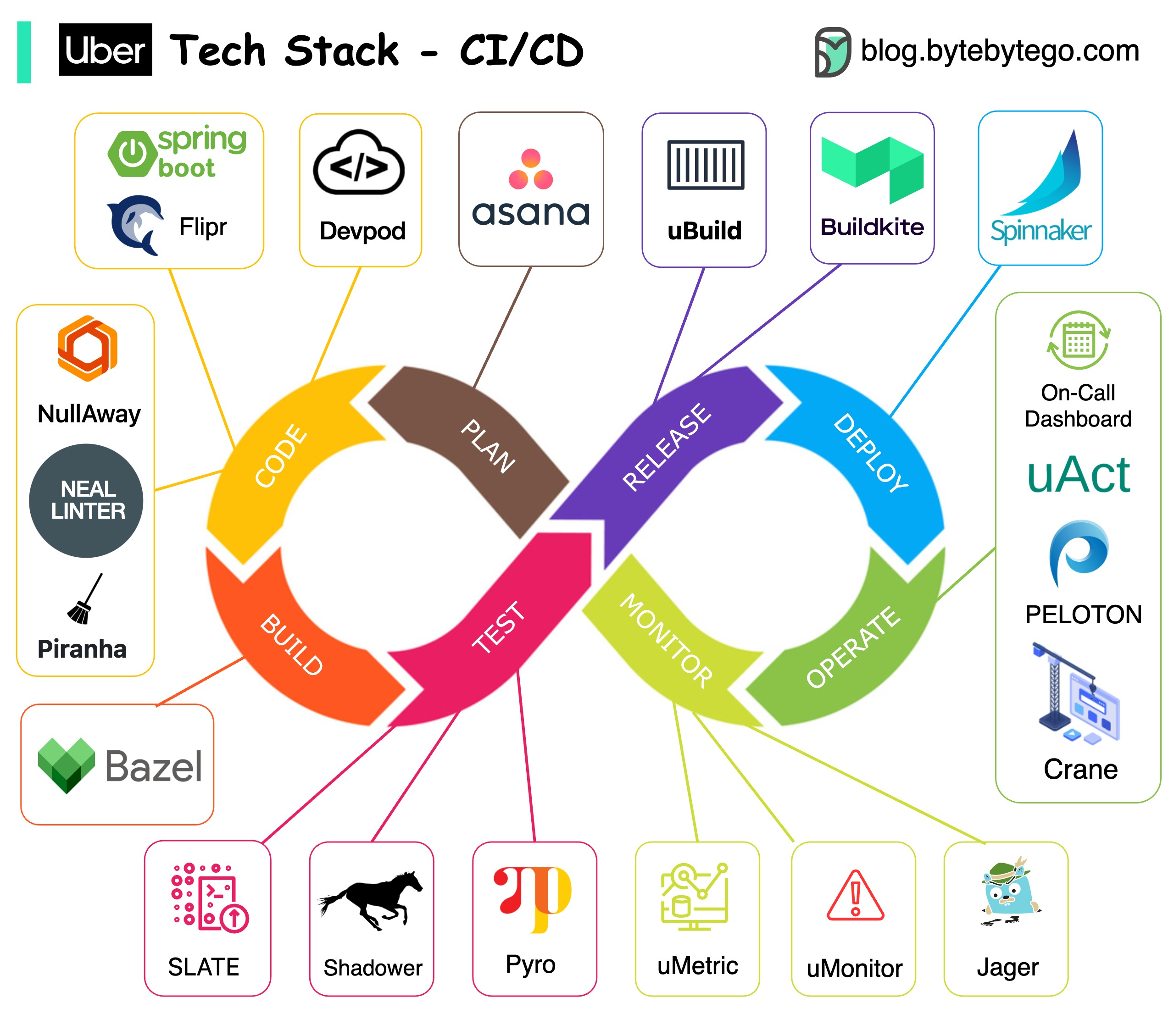

+ * [Uber Tech Stack - CI/CD](https://bytebytego.com/guides/uber-tech-stack-cicd)

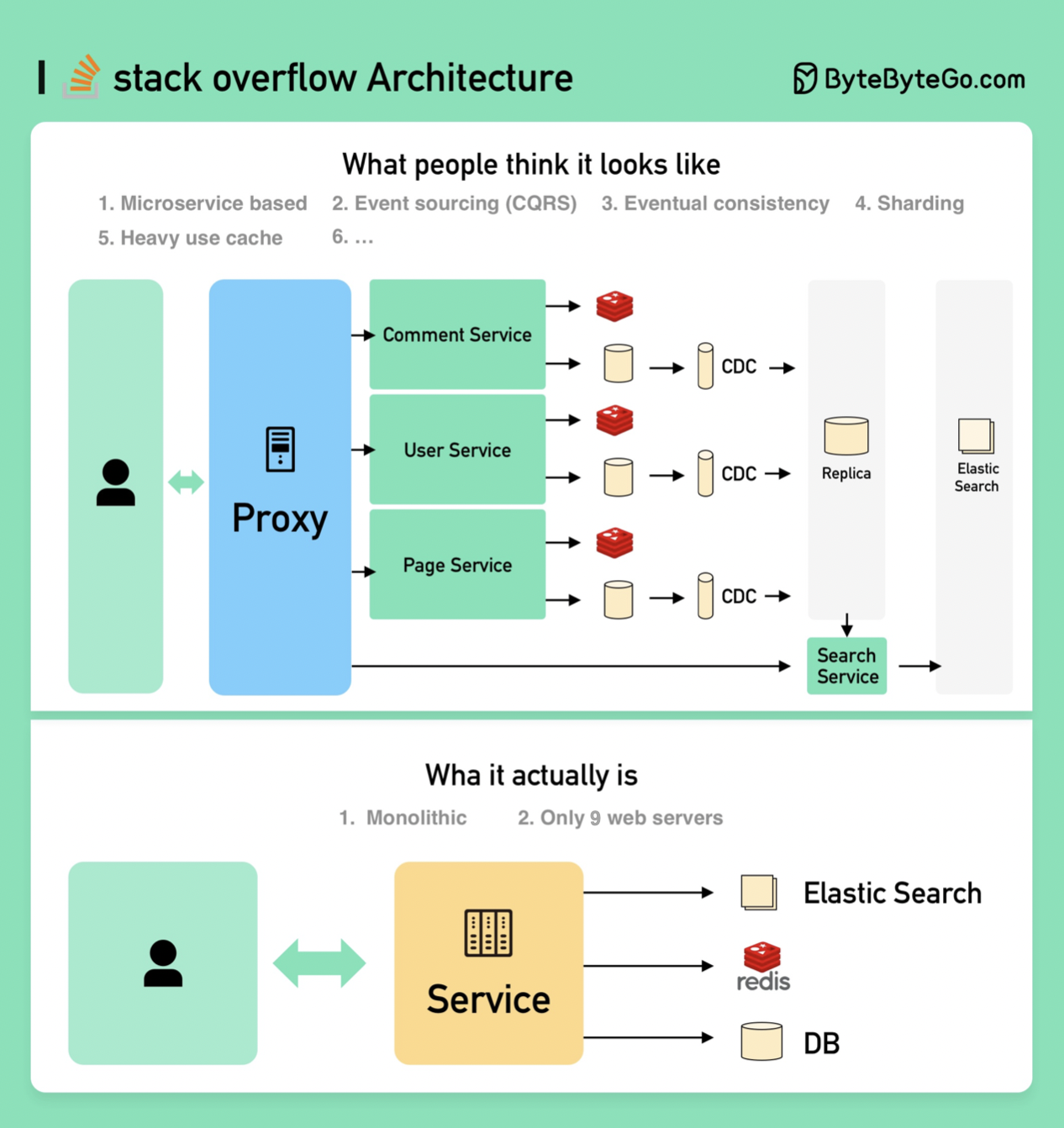

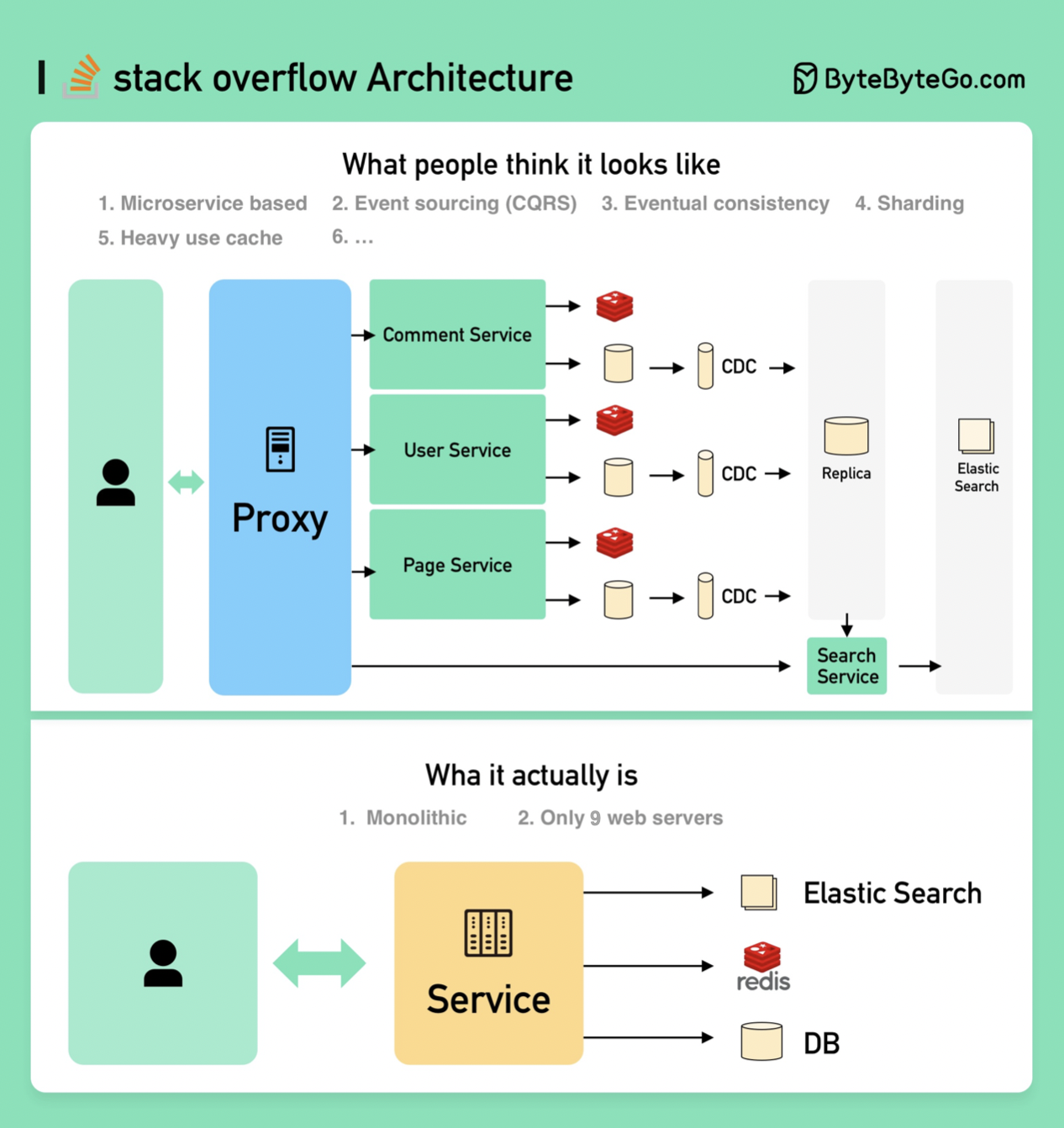

+ * [How to Design Stack Overflow](https://bytebytego.com/guides/how-will-you-design-the-stack-overflow-website)

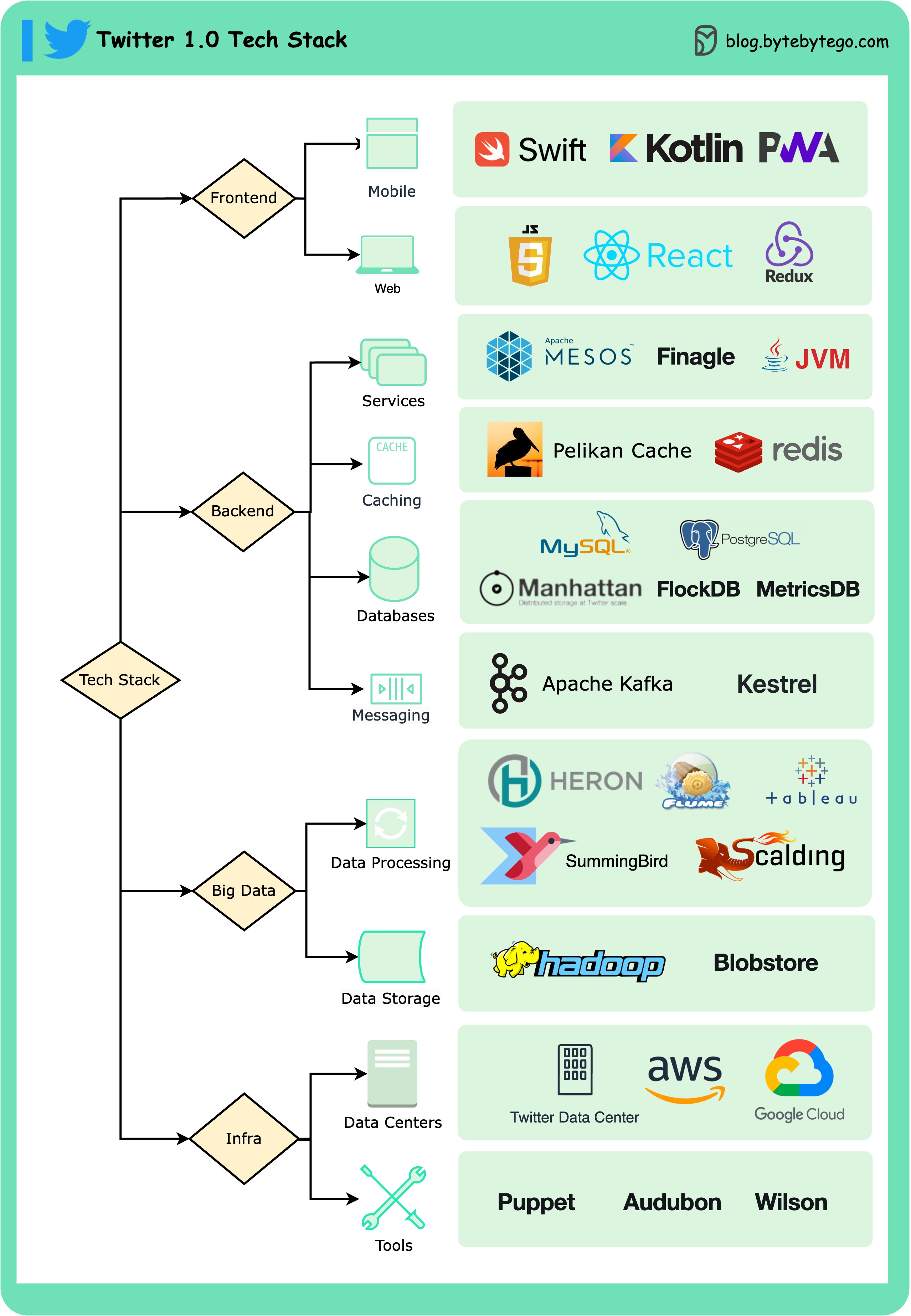

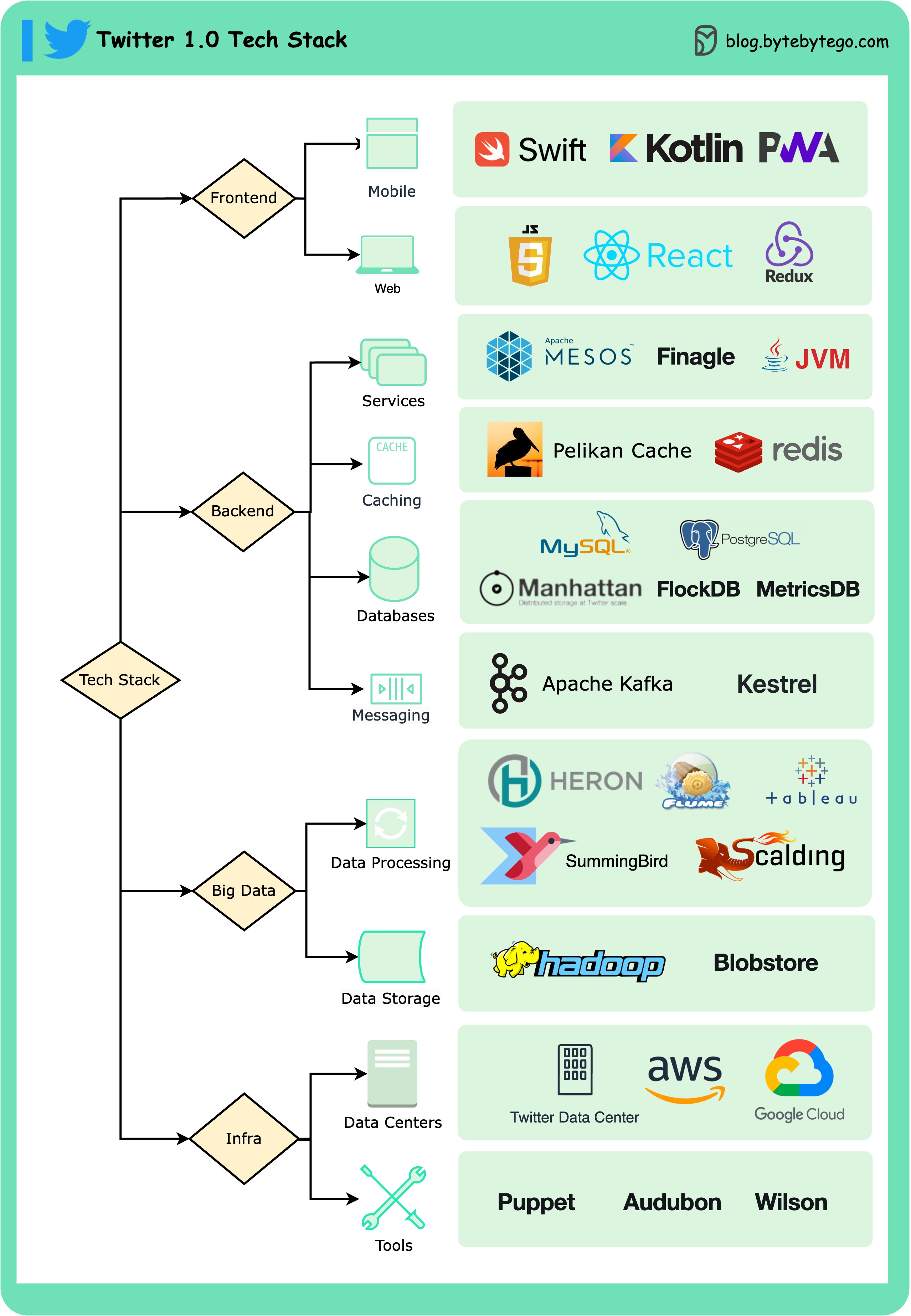

+ * [Twitter 1.0 Tech Stack](https://bytebytego.com/guides/twitter-10-tech-stack)

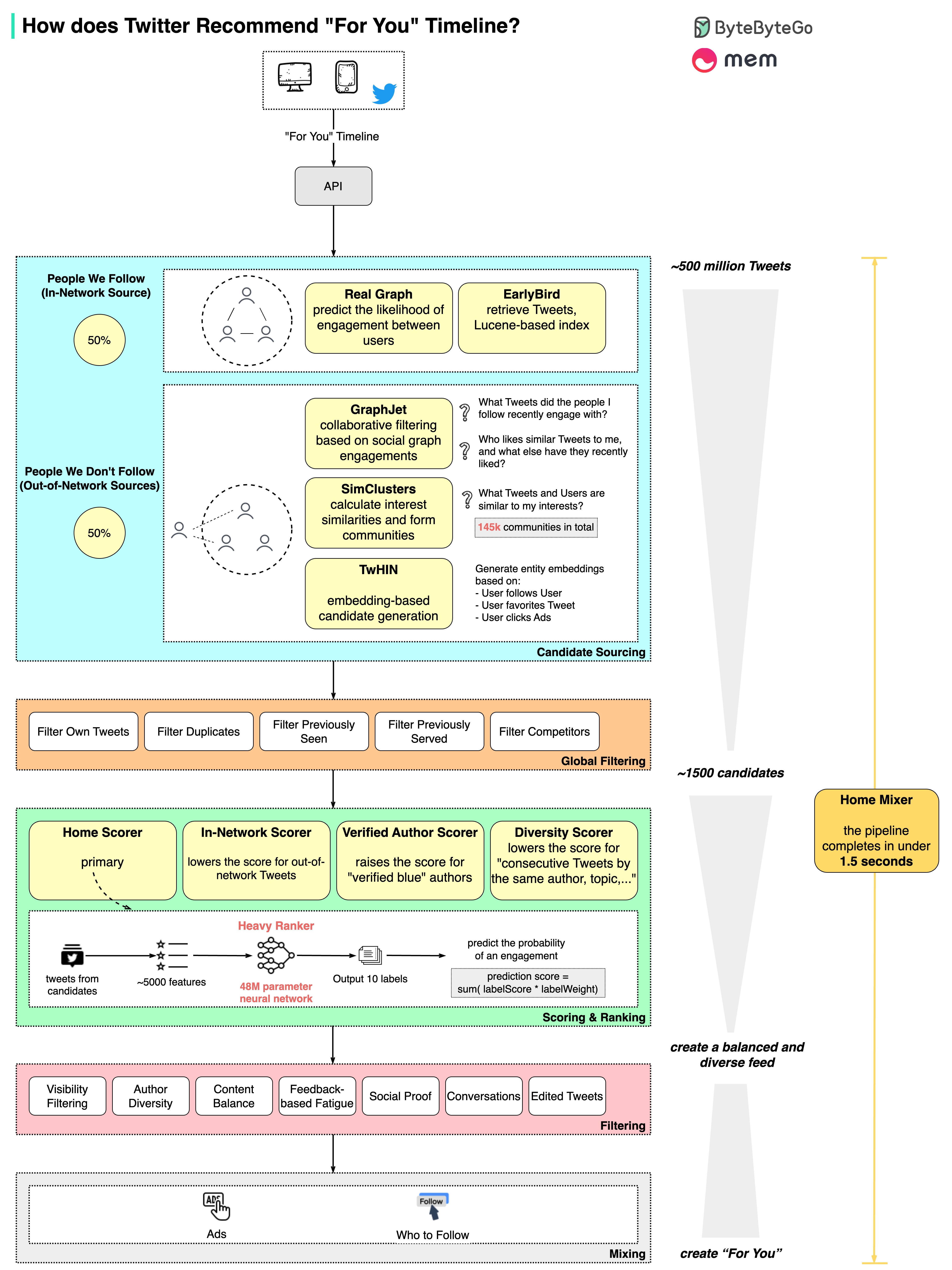

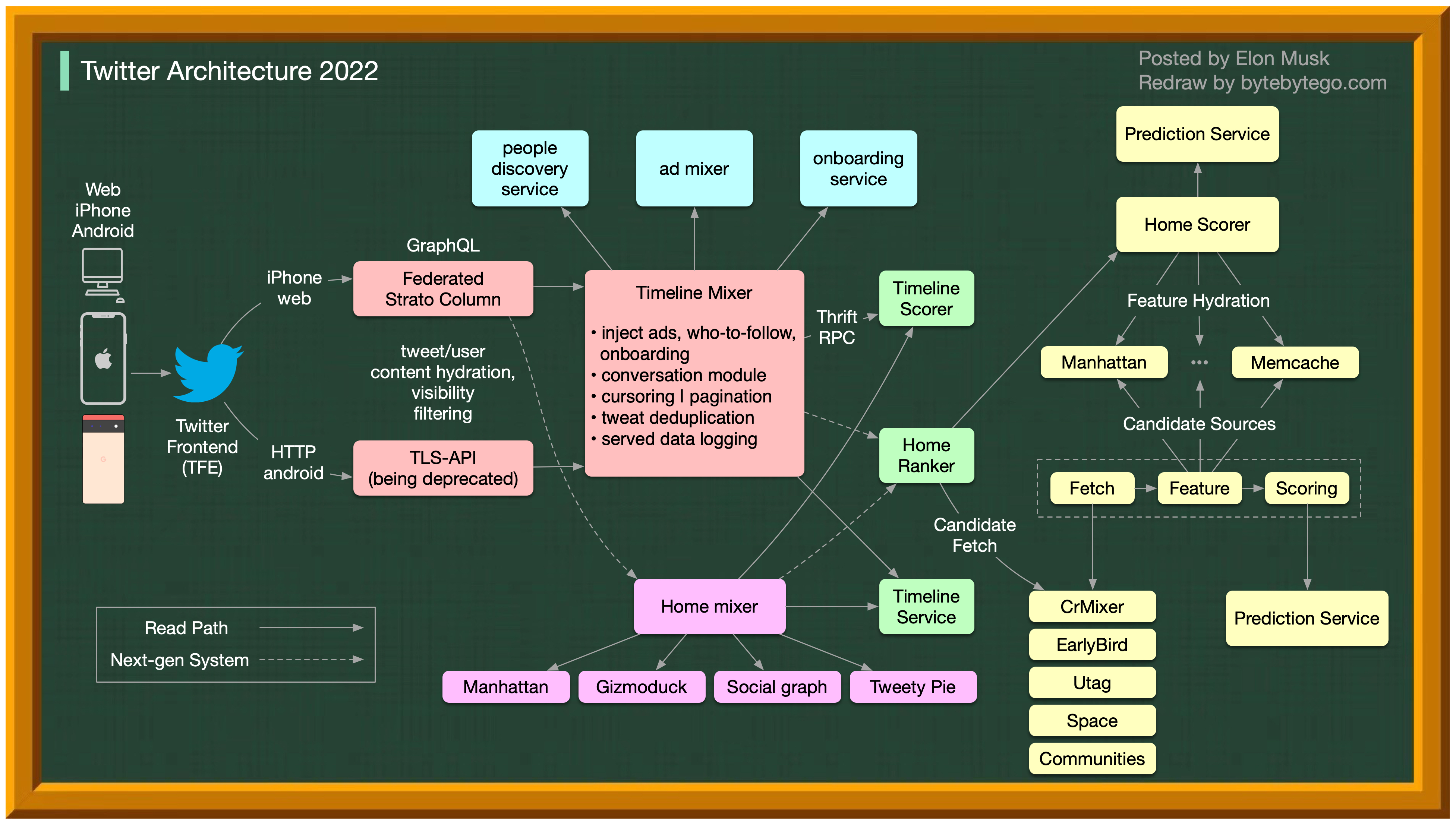

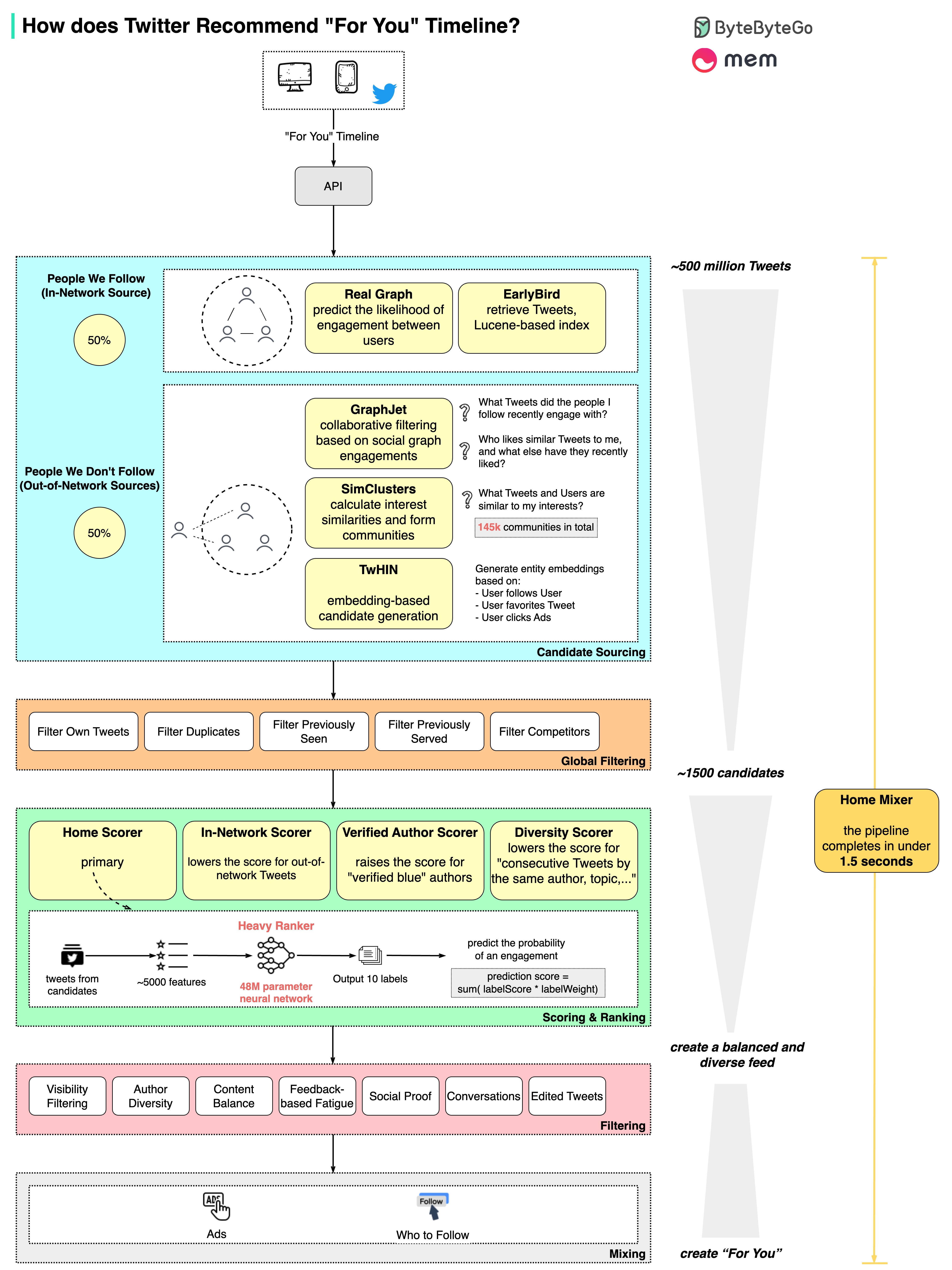

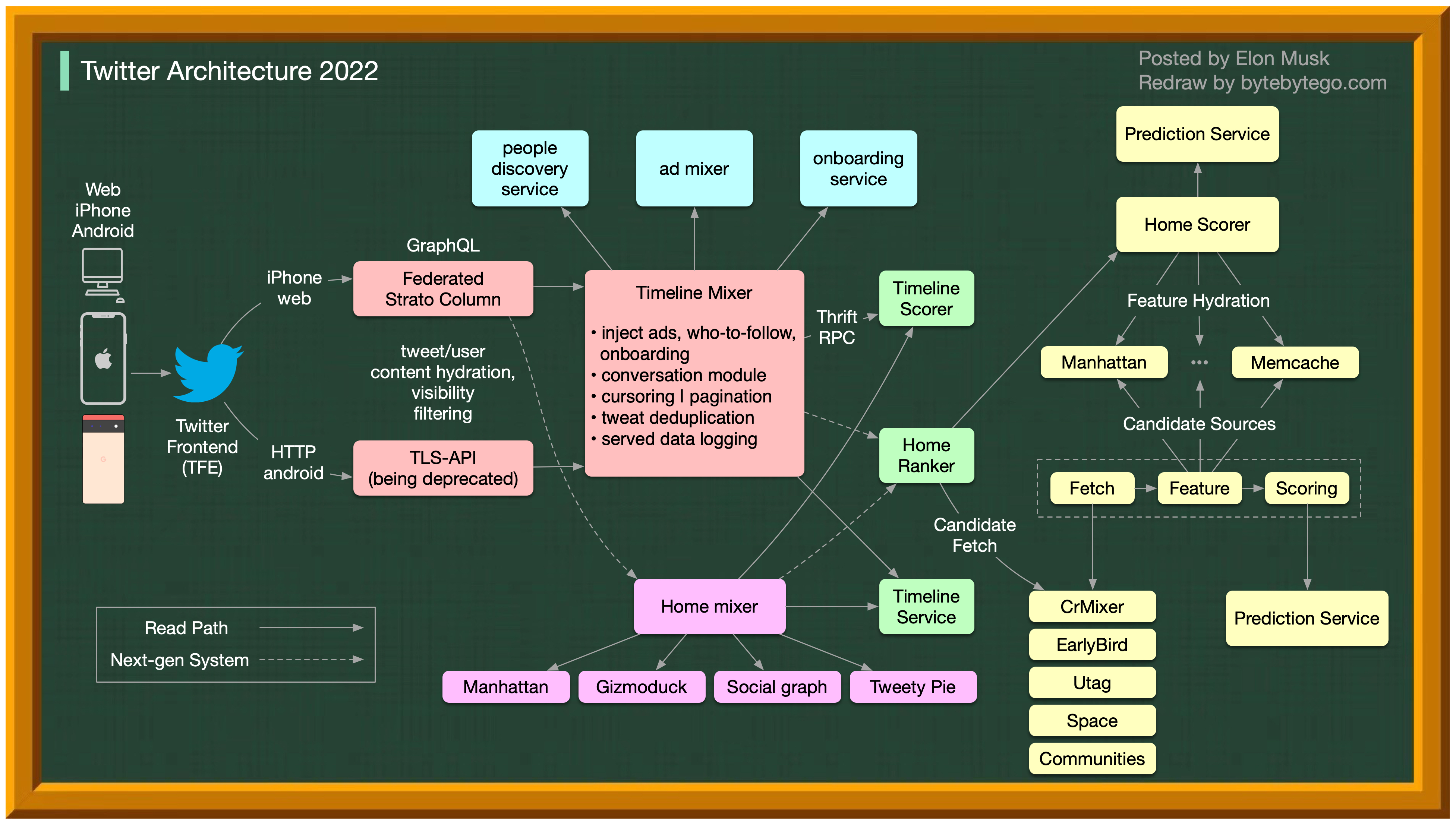

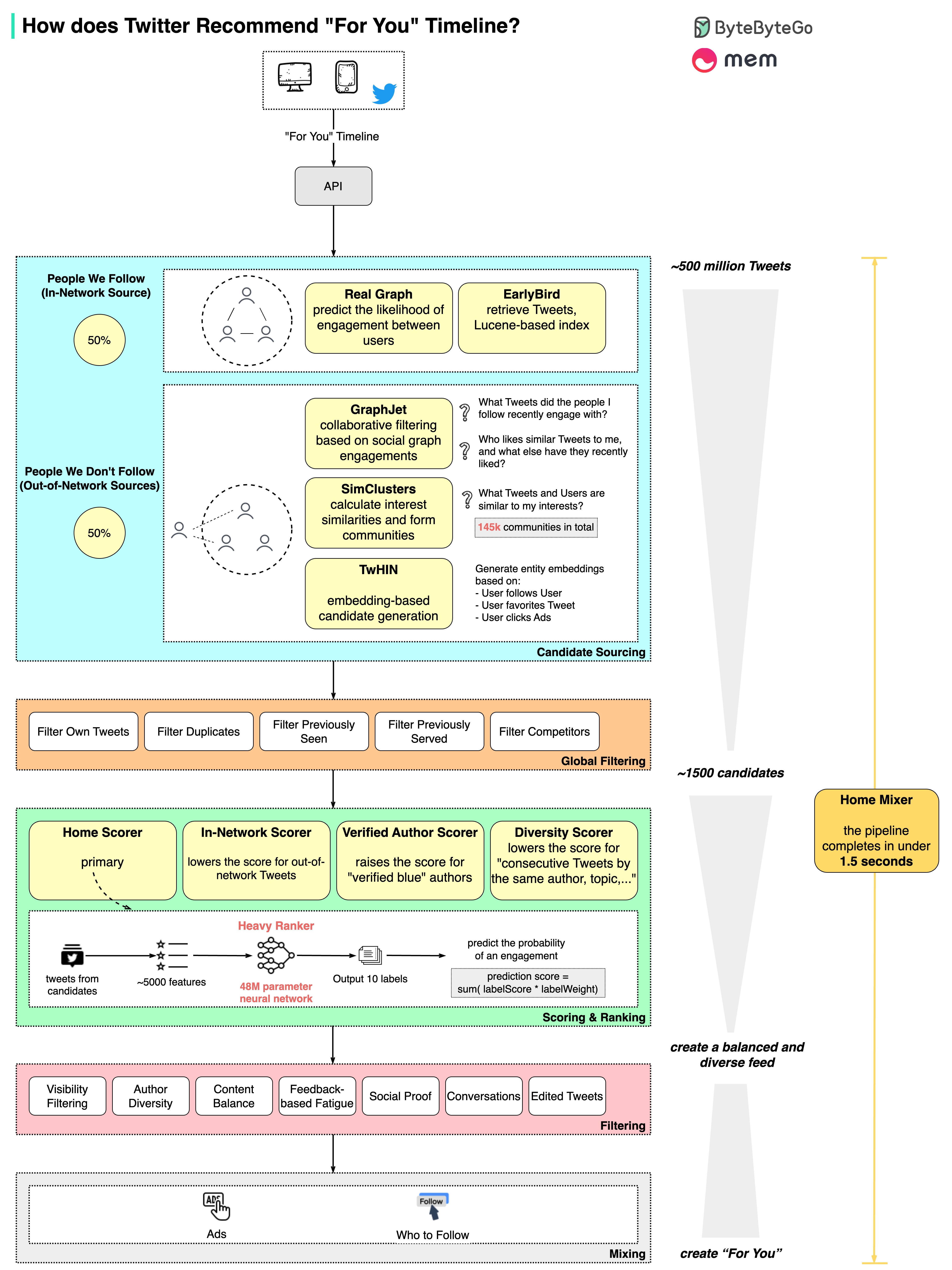

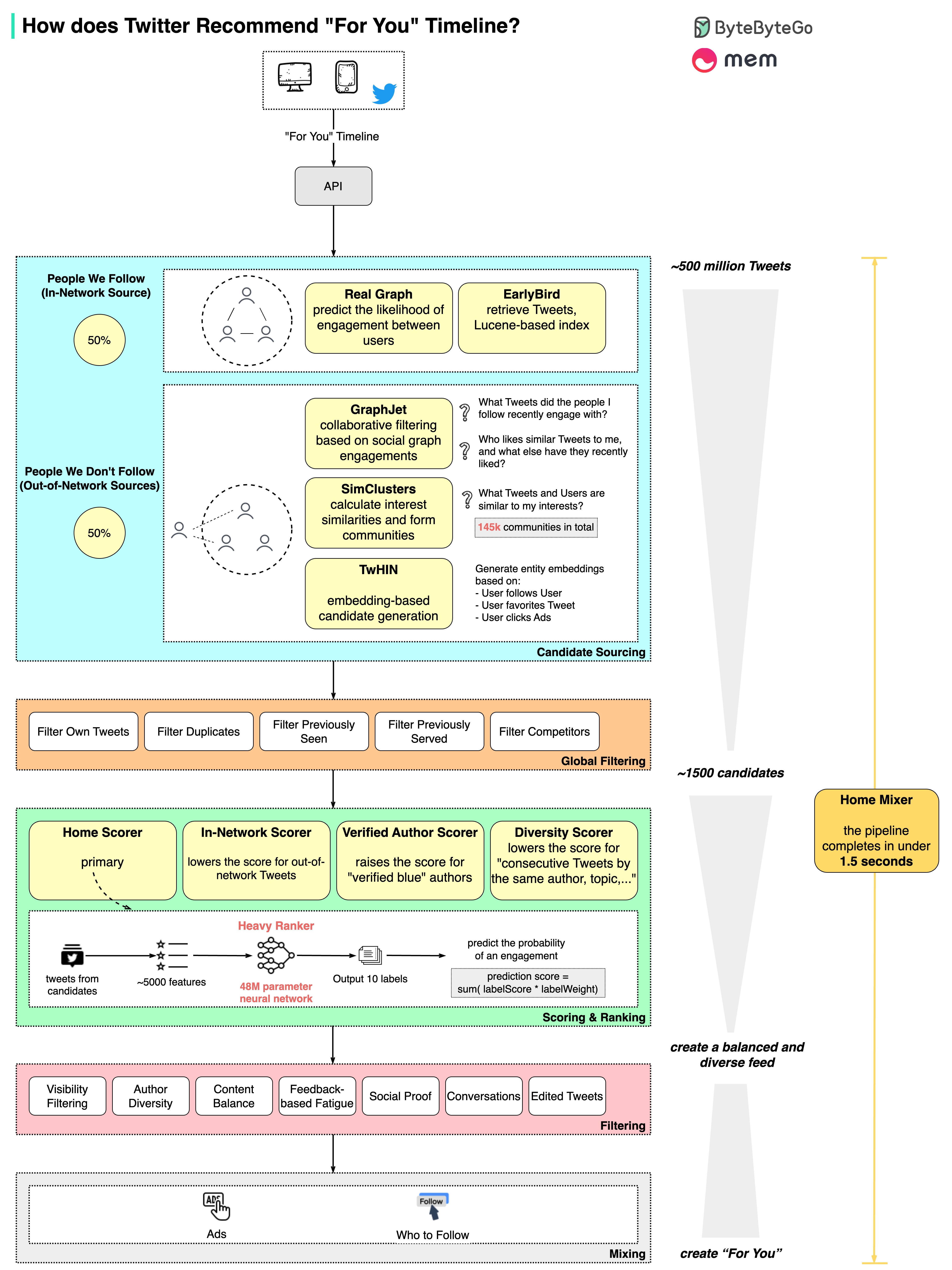

+ * [How does Twitter recommend “For You” Timeline in 1.5 seconds?](https://bytebytego.com/guides/how-does-twitter-recommend-tweets)

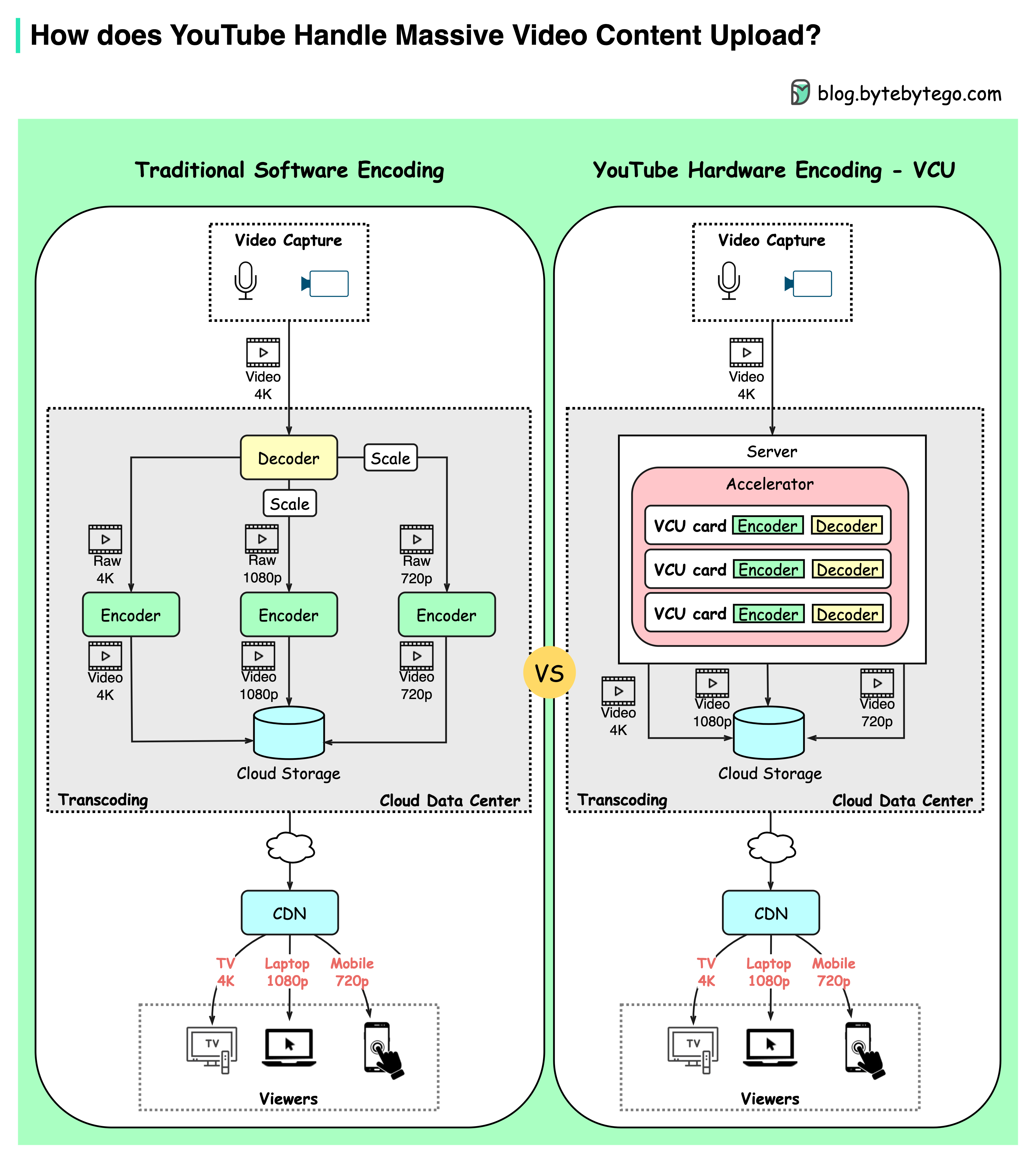

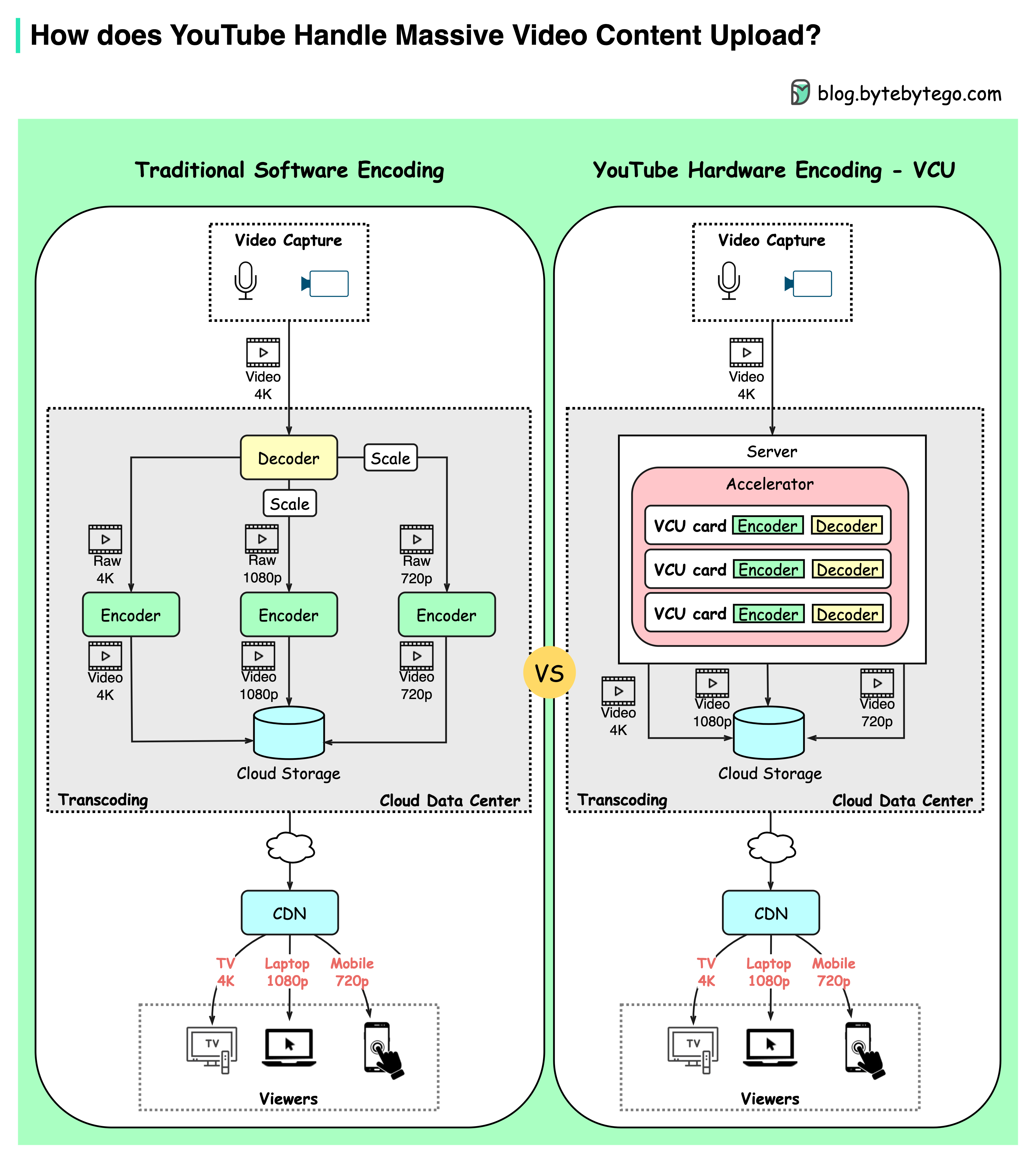

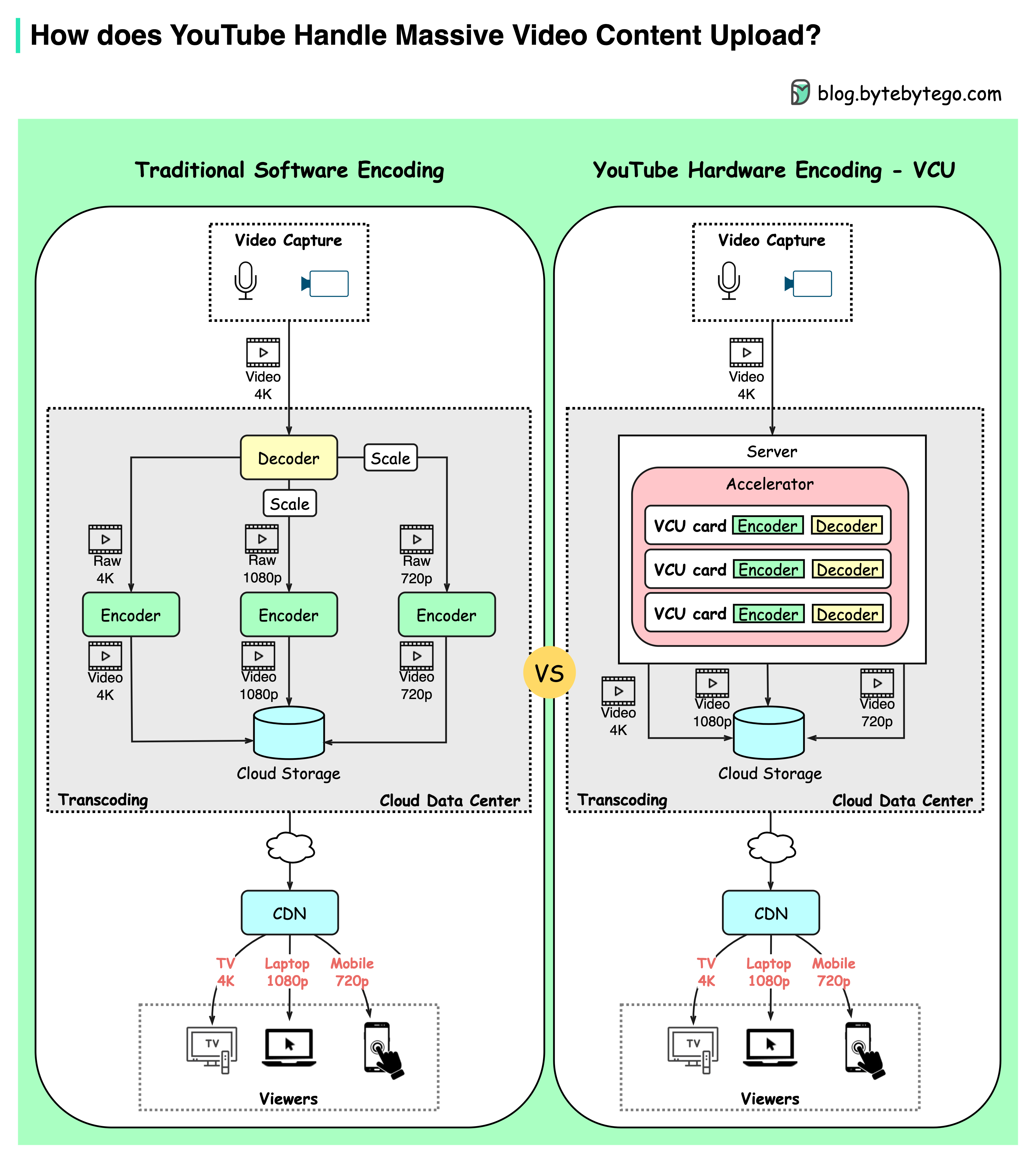

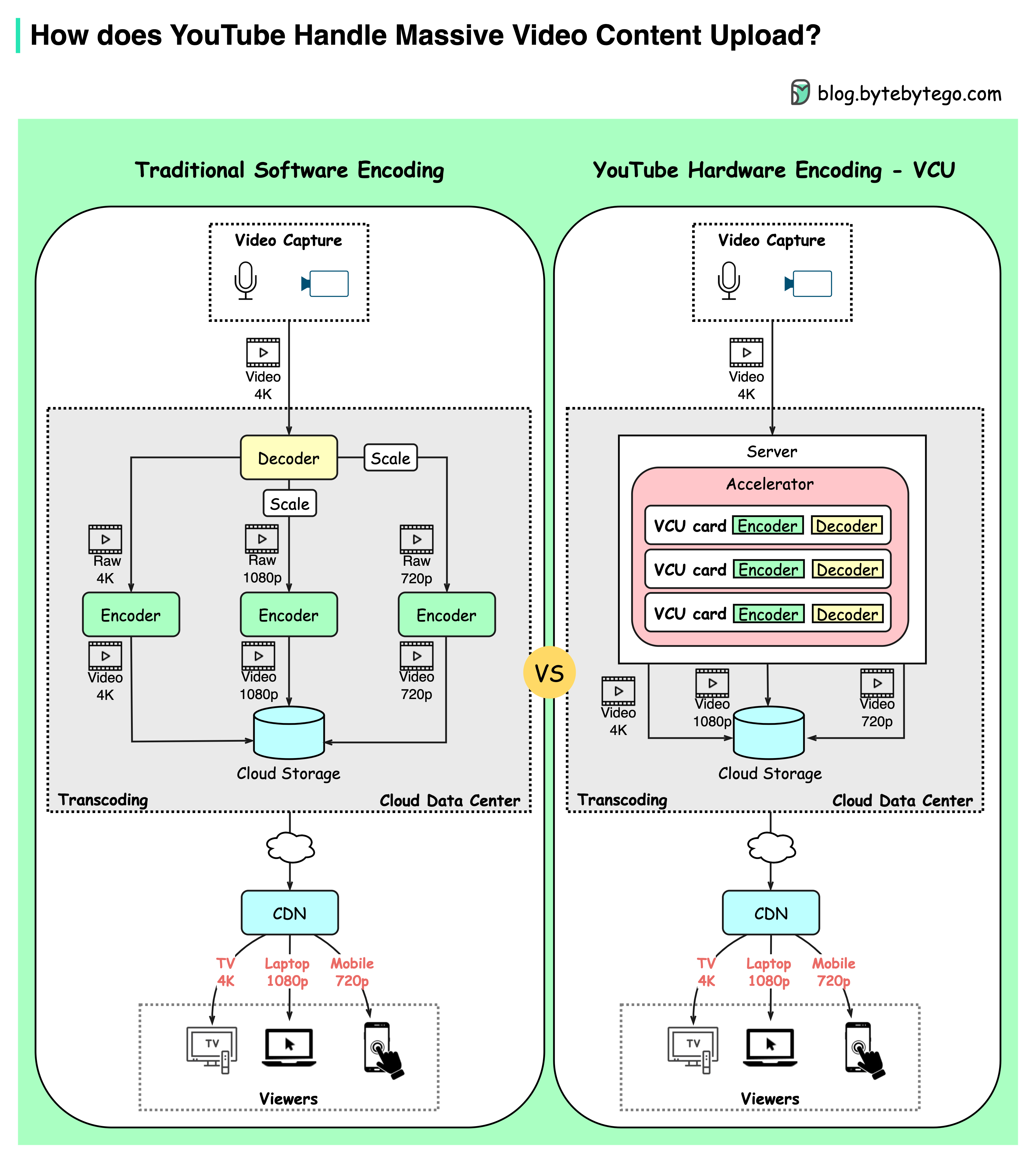

+ * [How YouTube Handles Massive Video Uploads](https://bytebytego.com/guides/how-does-youtube-handle-massive-video-content-upload)

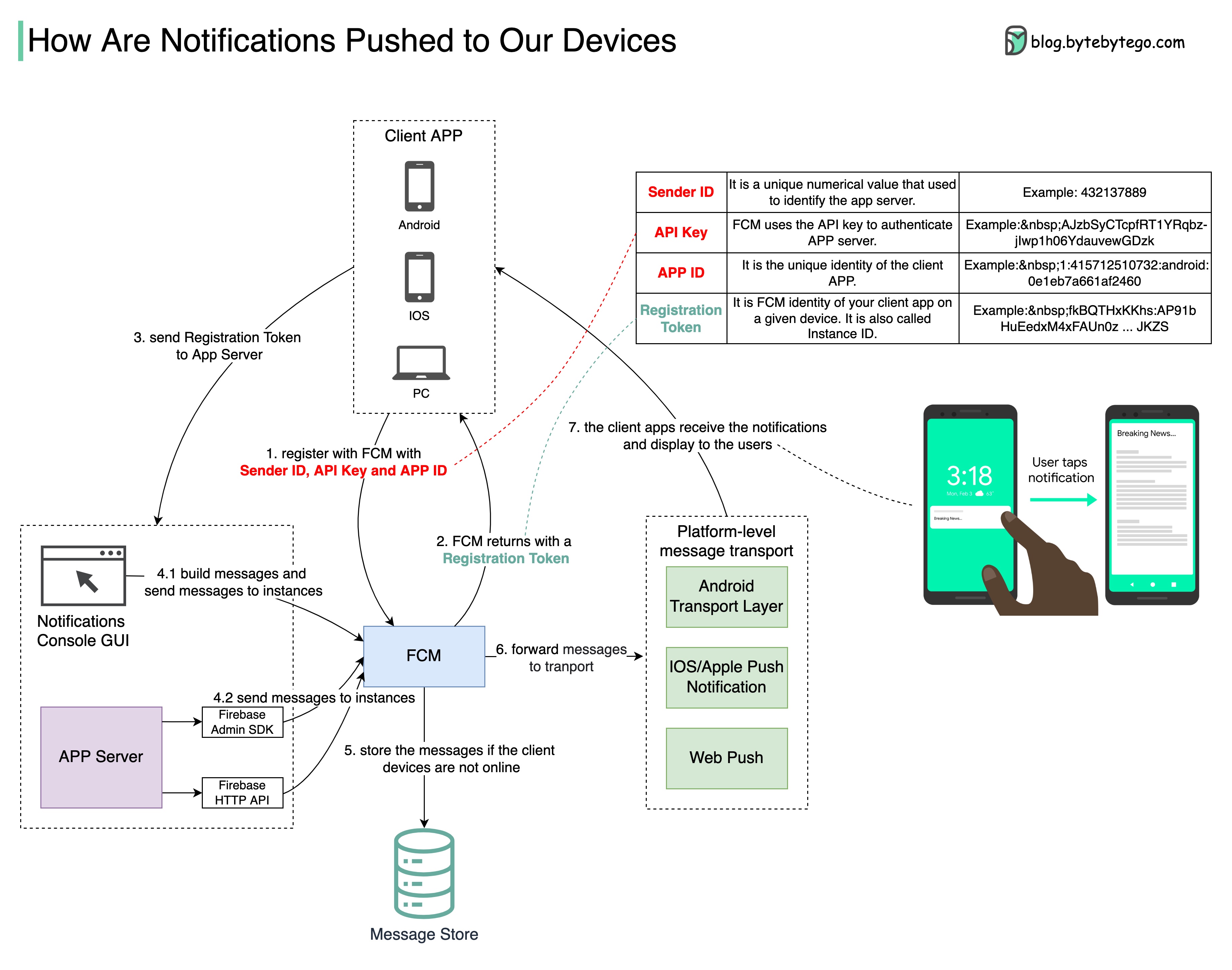

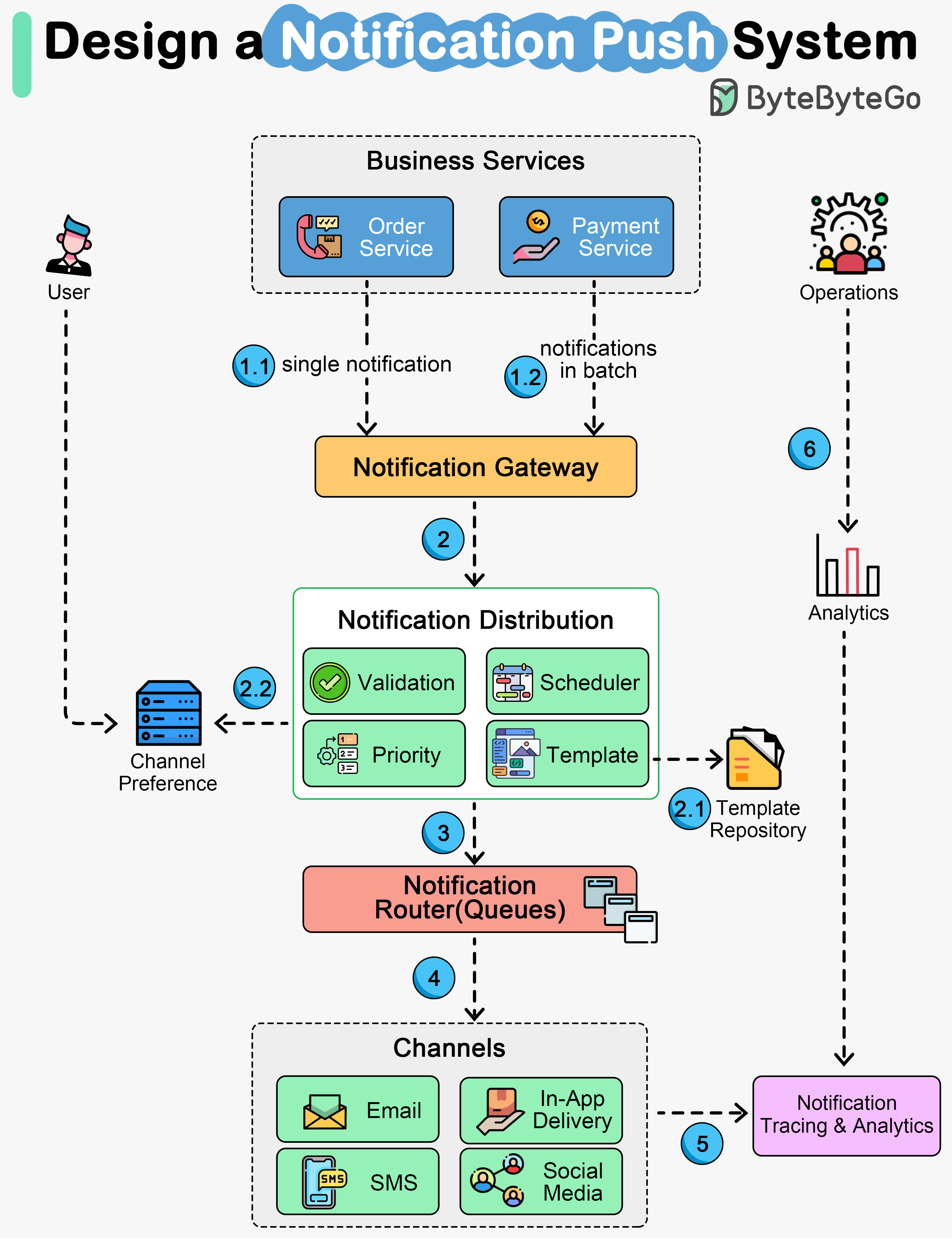

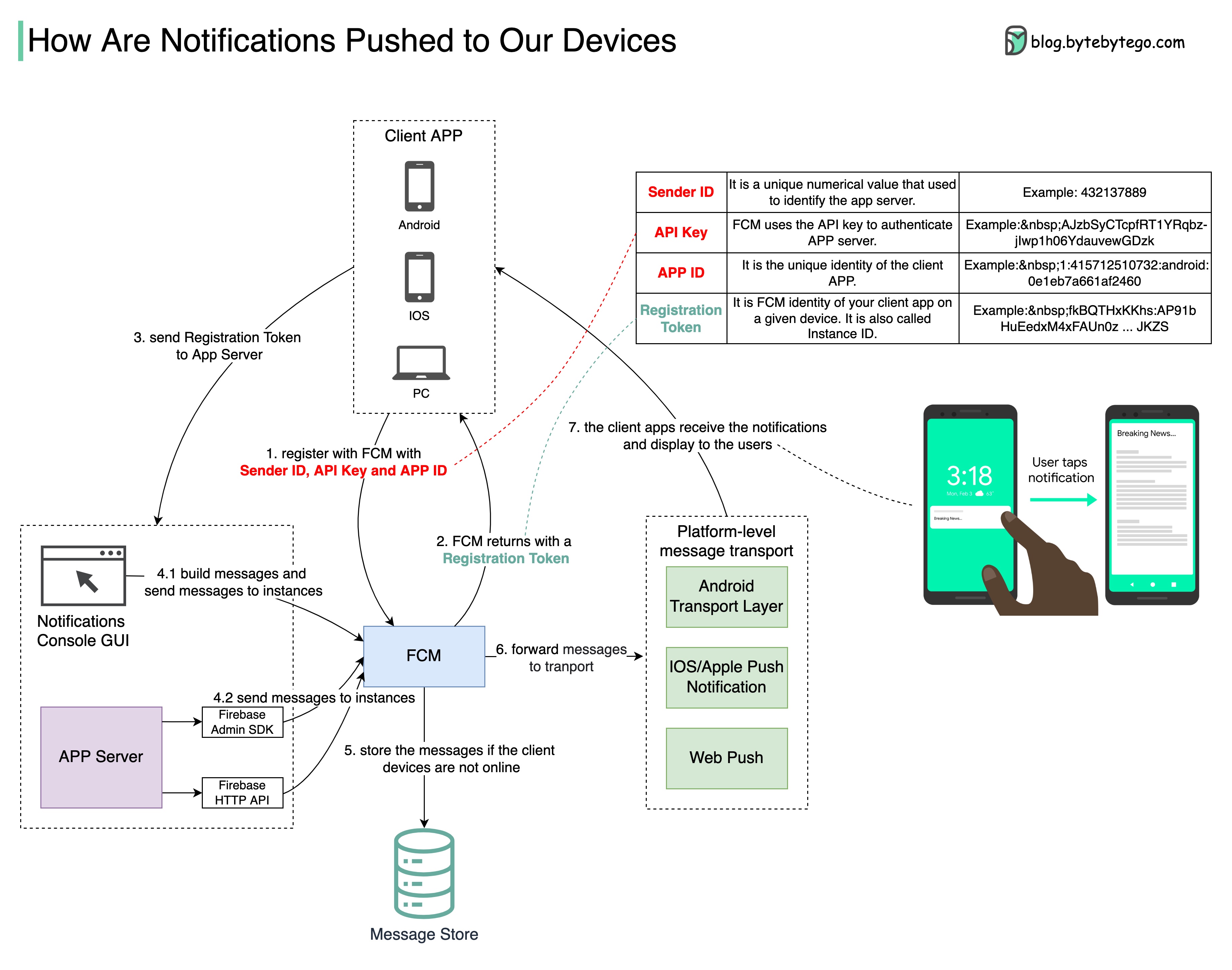

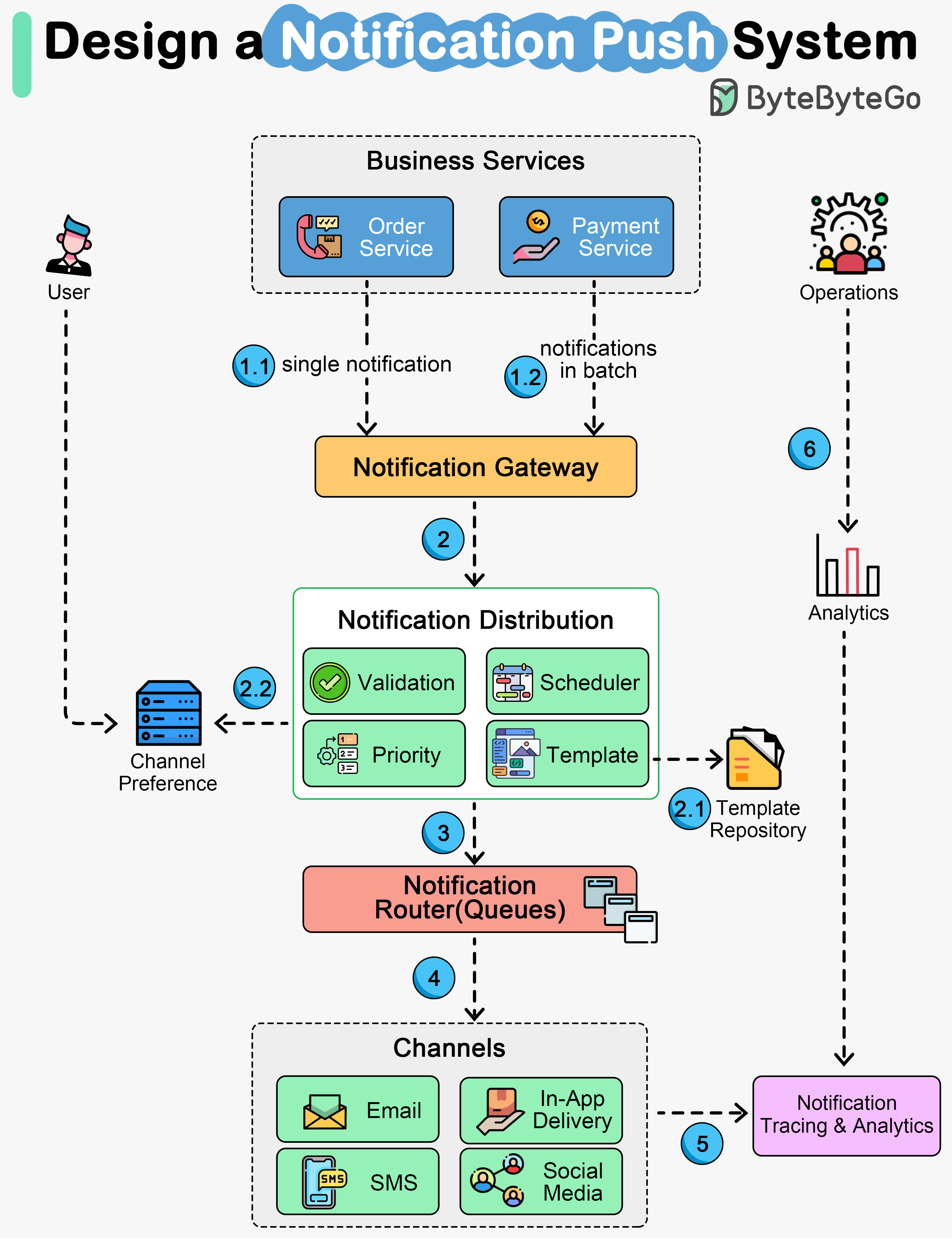

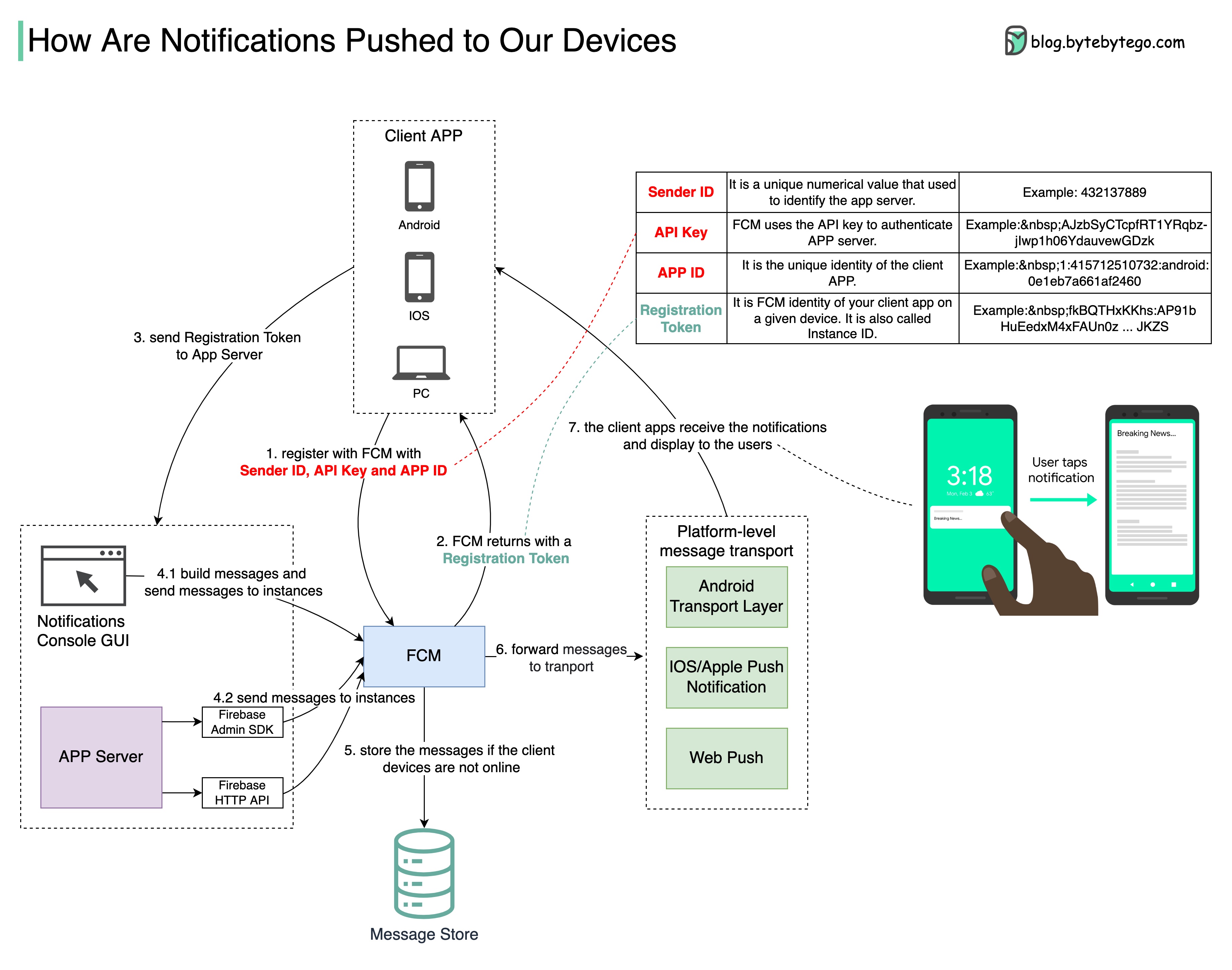

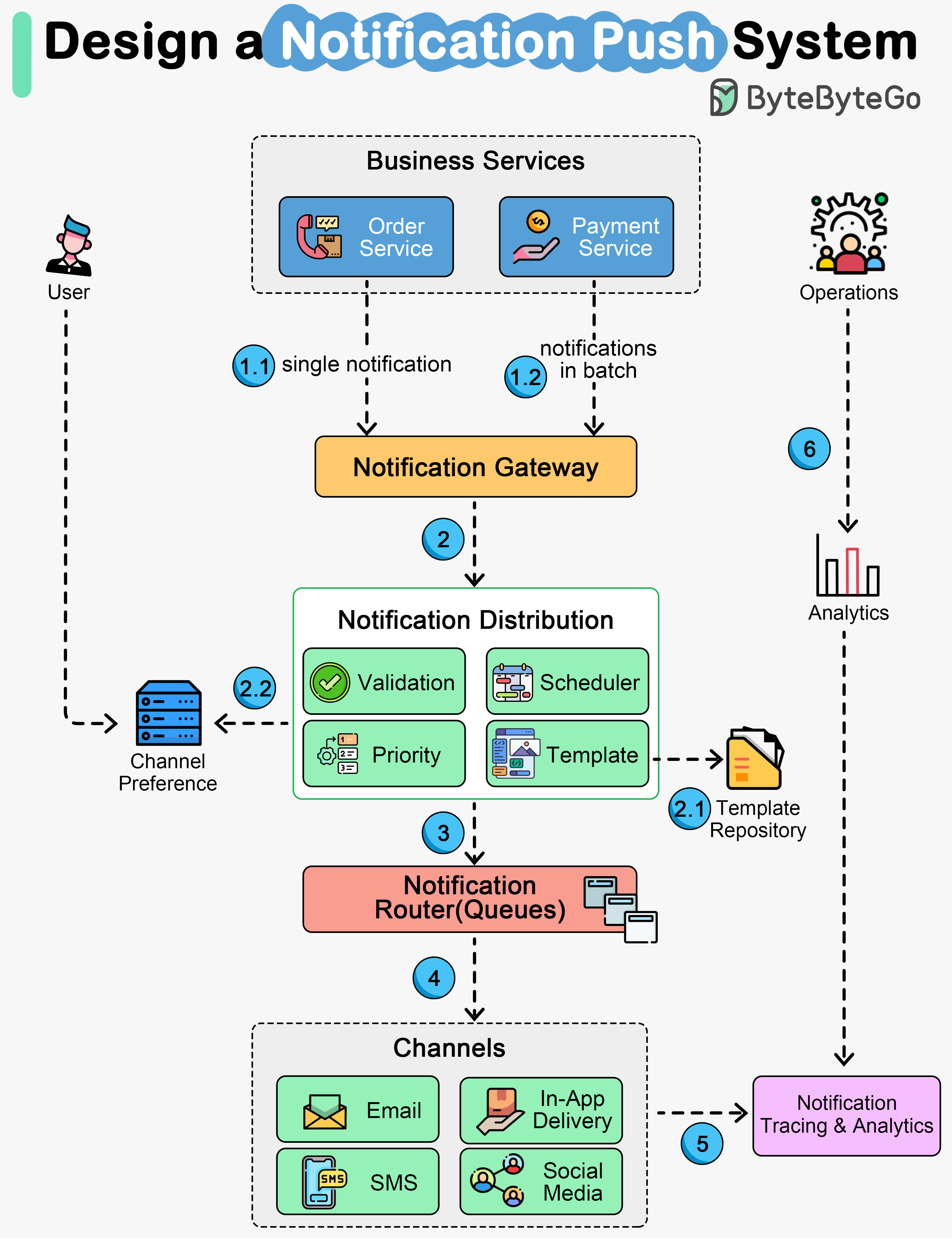

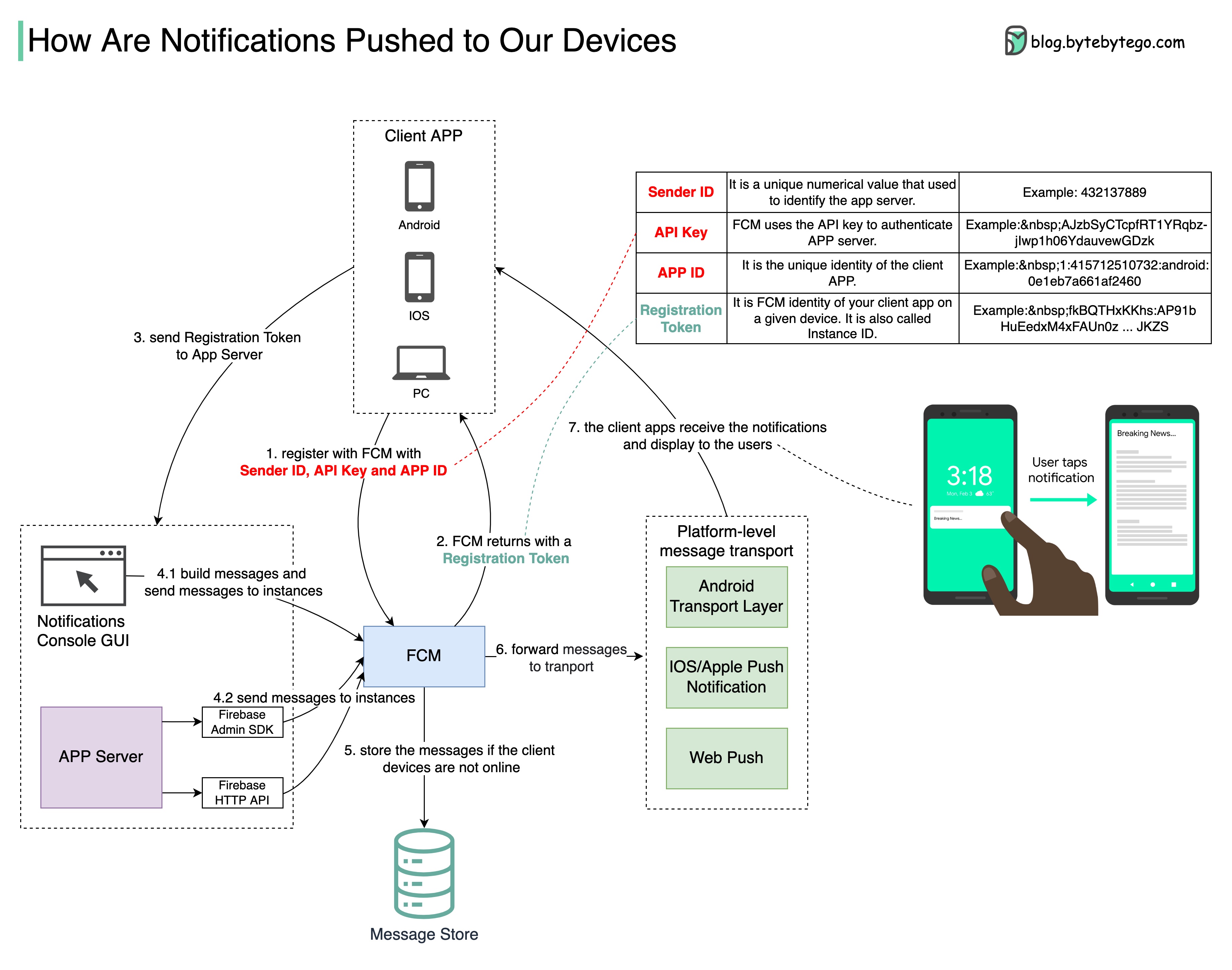

+ * [How Does a Typical Push Notification System Work?](https://bytebytego.com/guides/how-does-a-typical-push-notification-system-work)

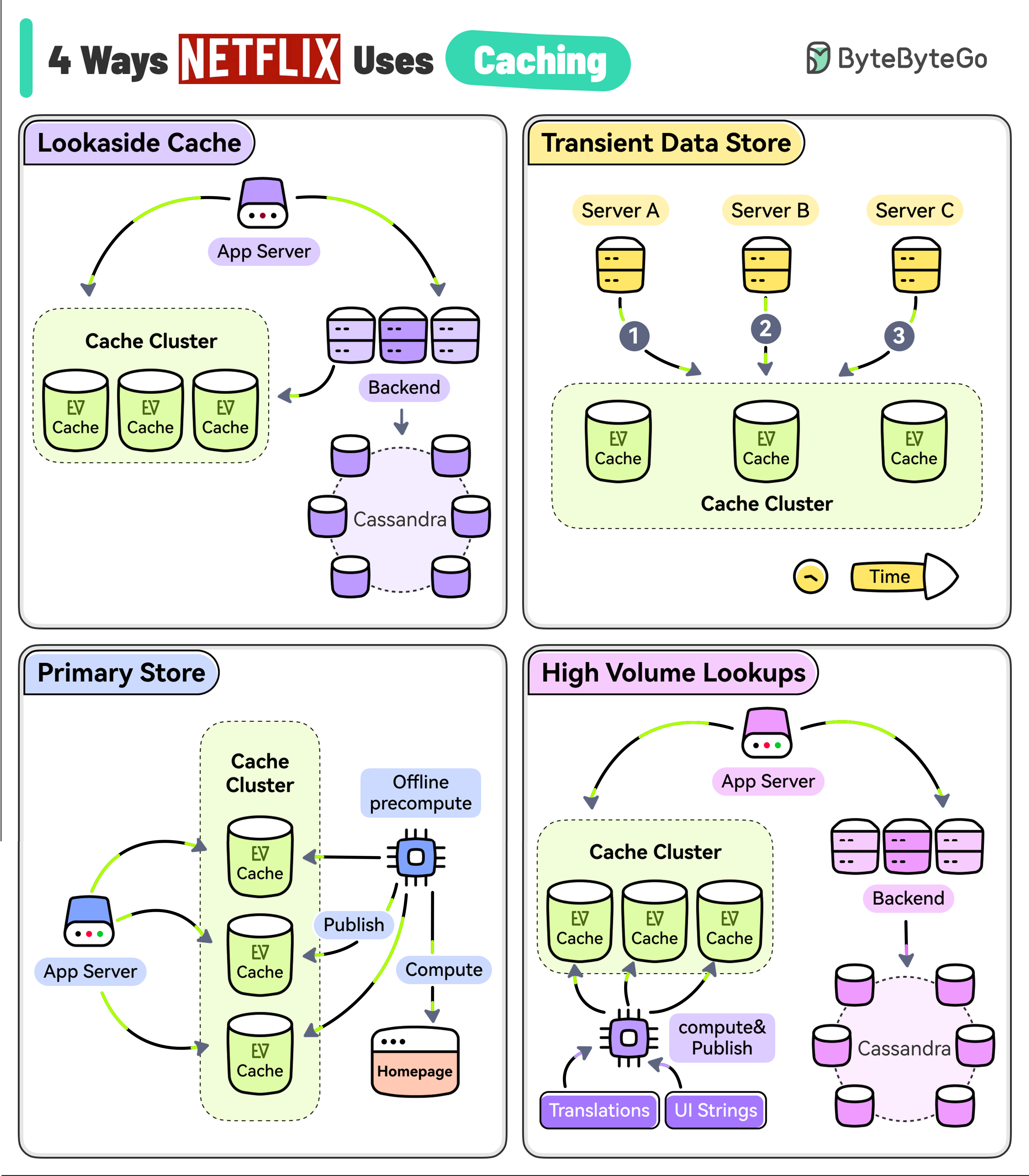

+ * [4 Ways Netflix Uses Caching](https://bytebytego.com/guides/4-ways-netflix-uses-caching-to-hold-user-attention)

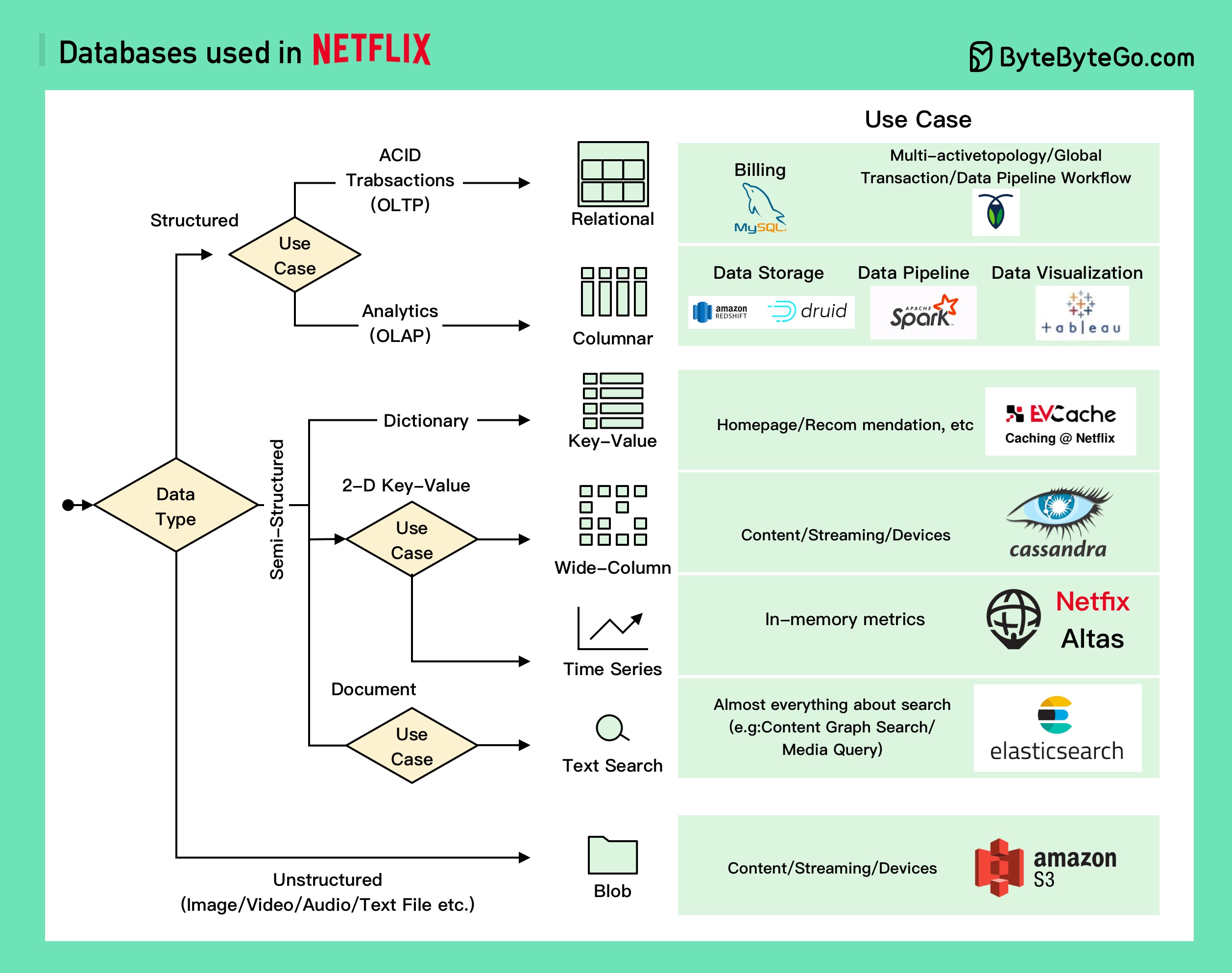

+ * [Netflix Tech Stack - Databases](https://bytebytego.com/guides/netflix-tech-stack-databases)

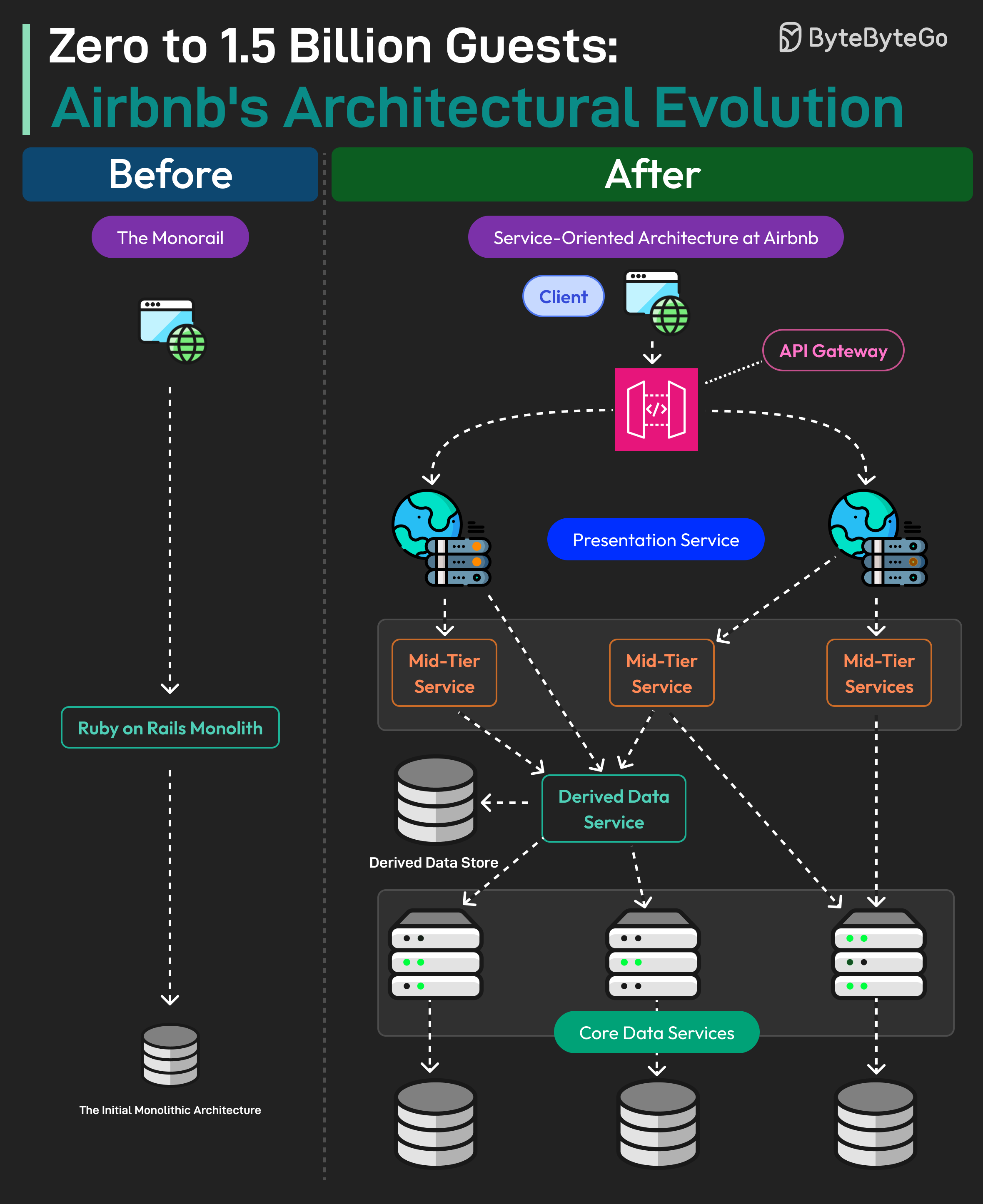

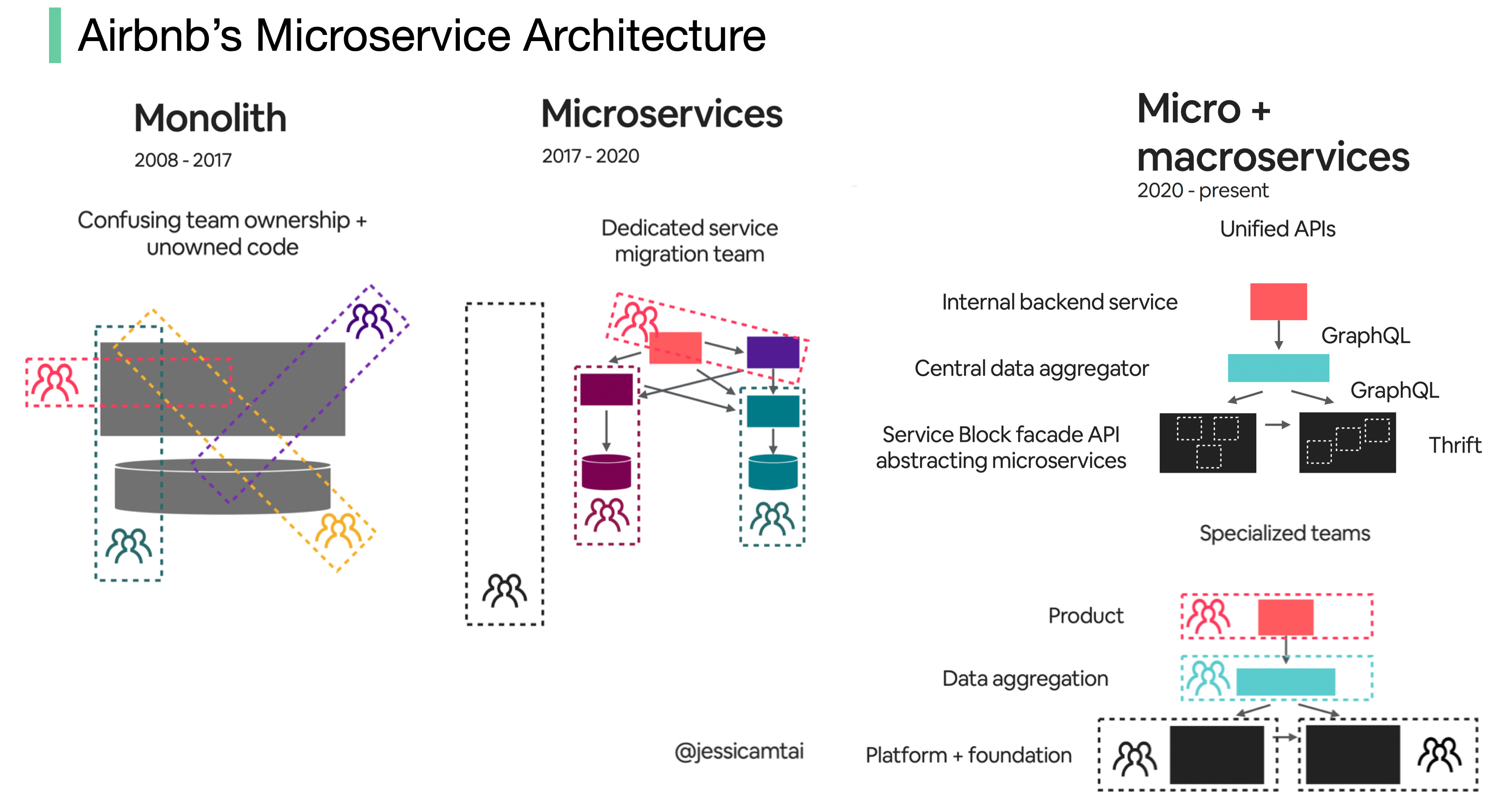

+ * [0 to 1.5 Billion Guests: Airbnb's Architectural Evolution](https://bytebytego.com/guides/airbnb-artchitectural-evolution)

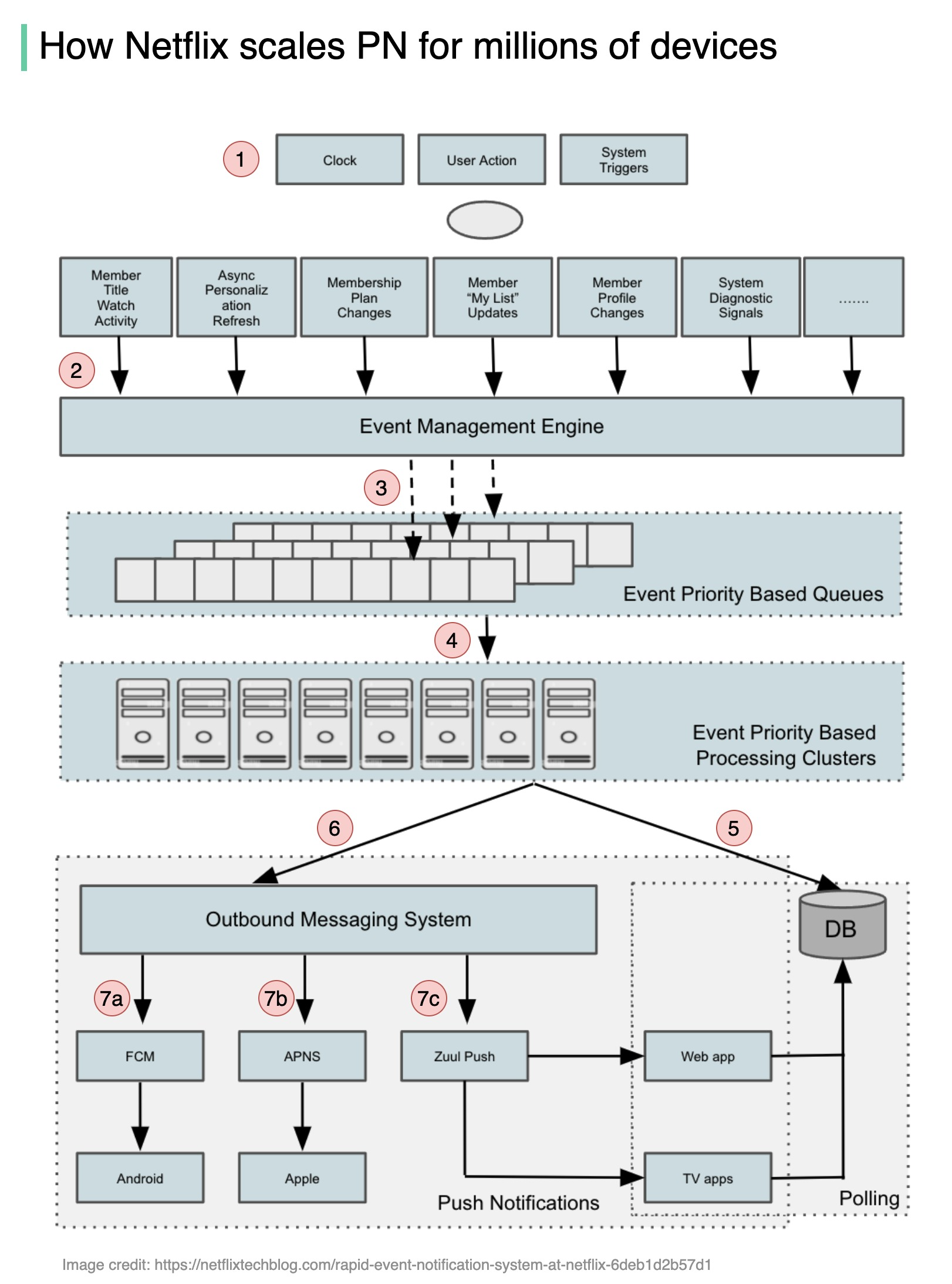

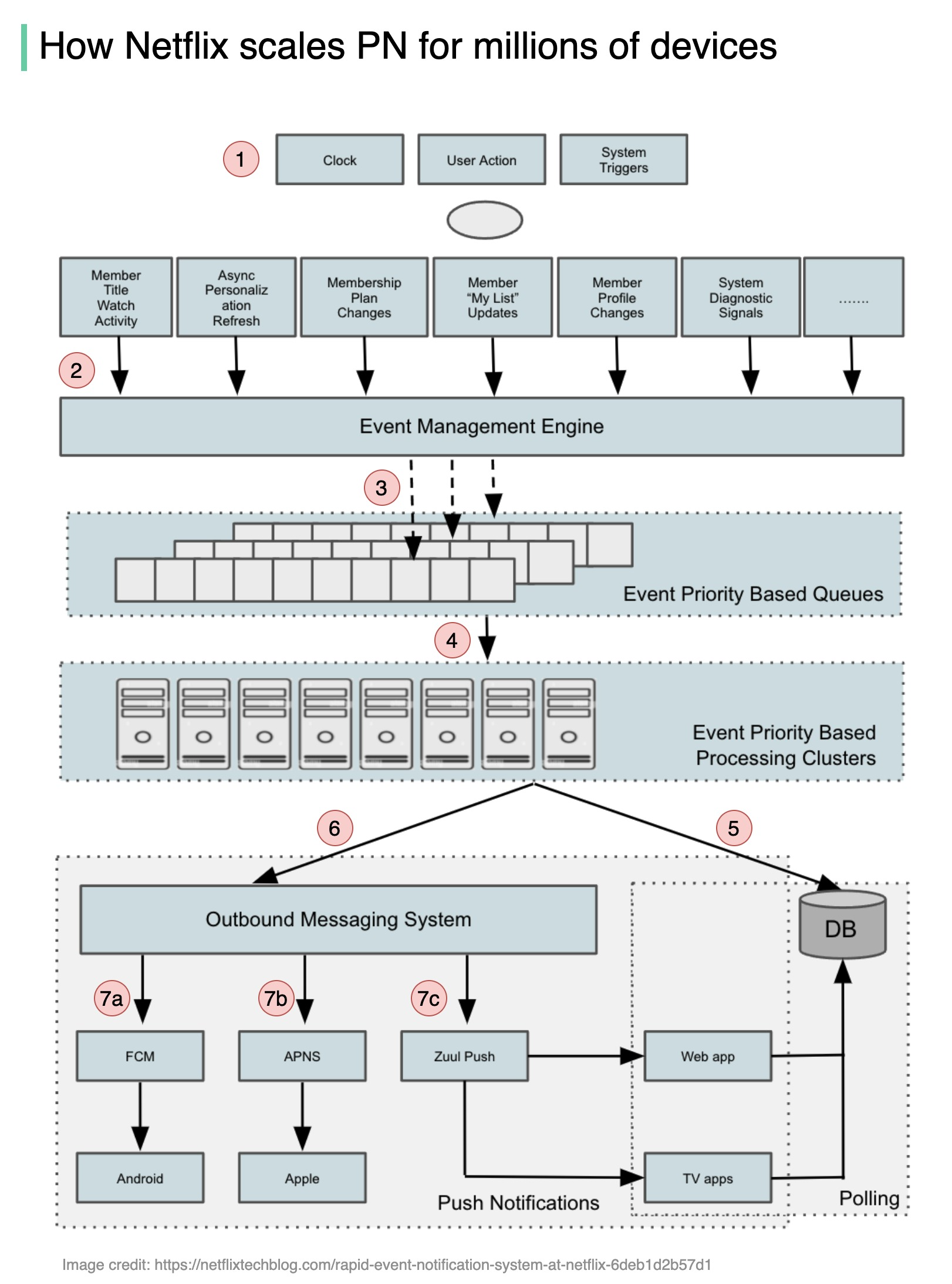

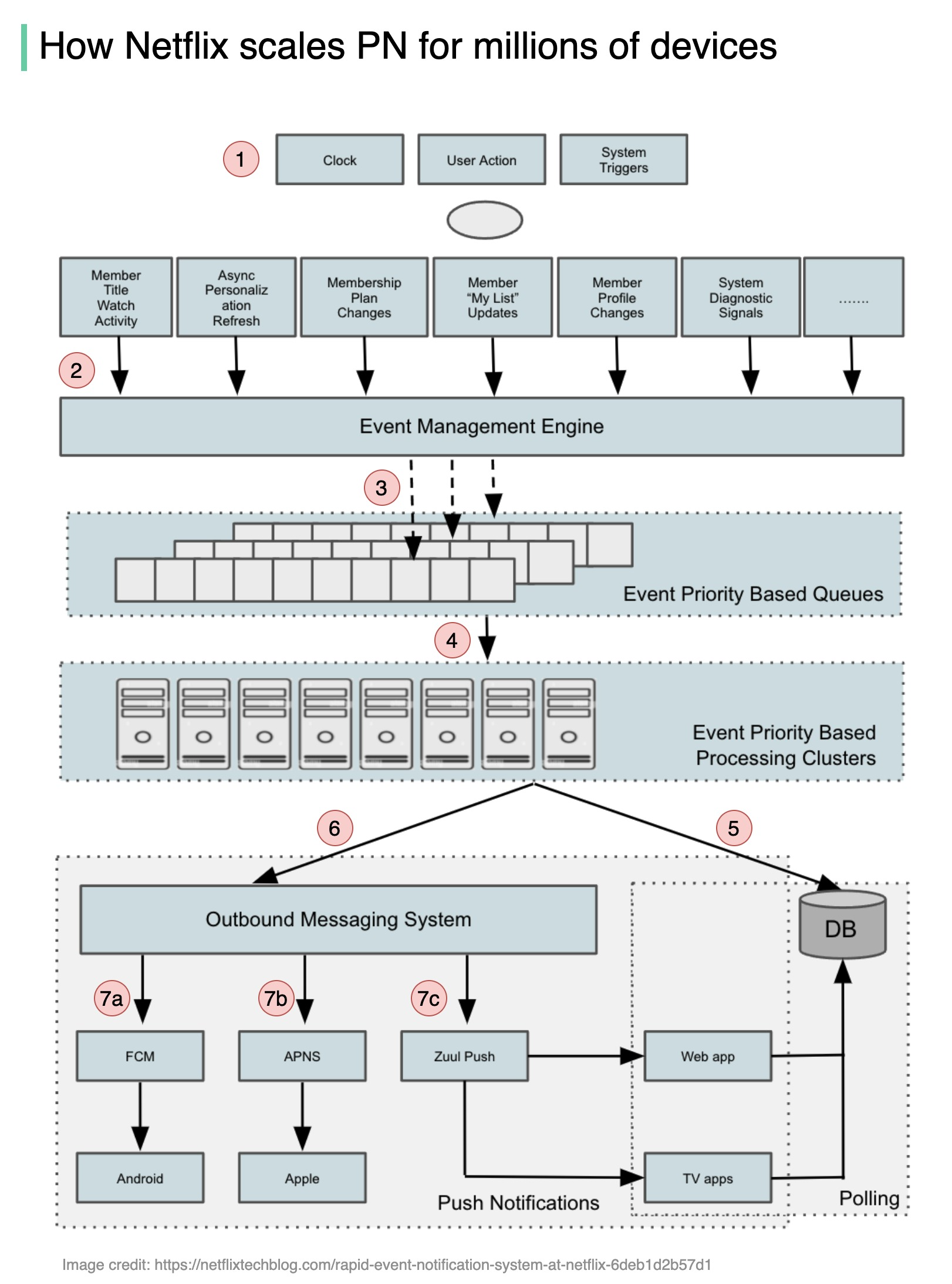

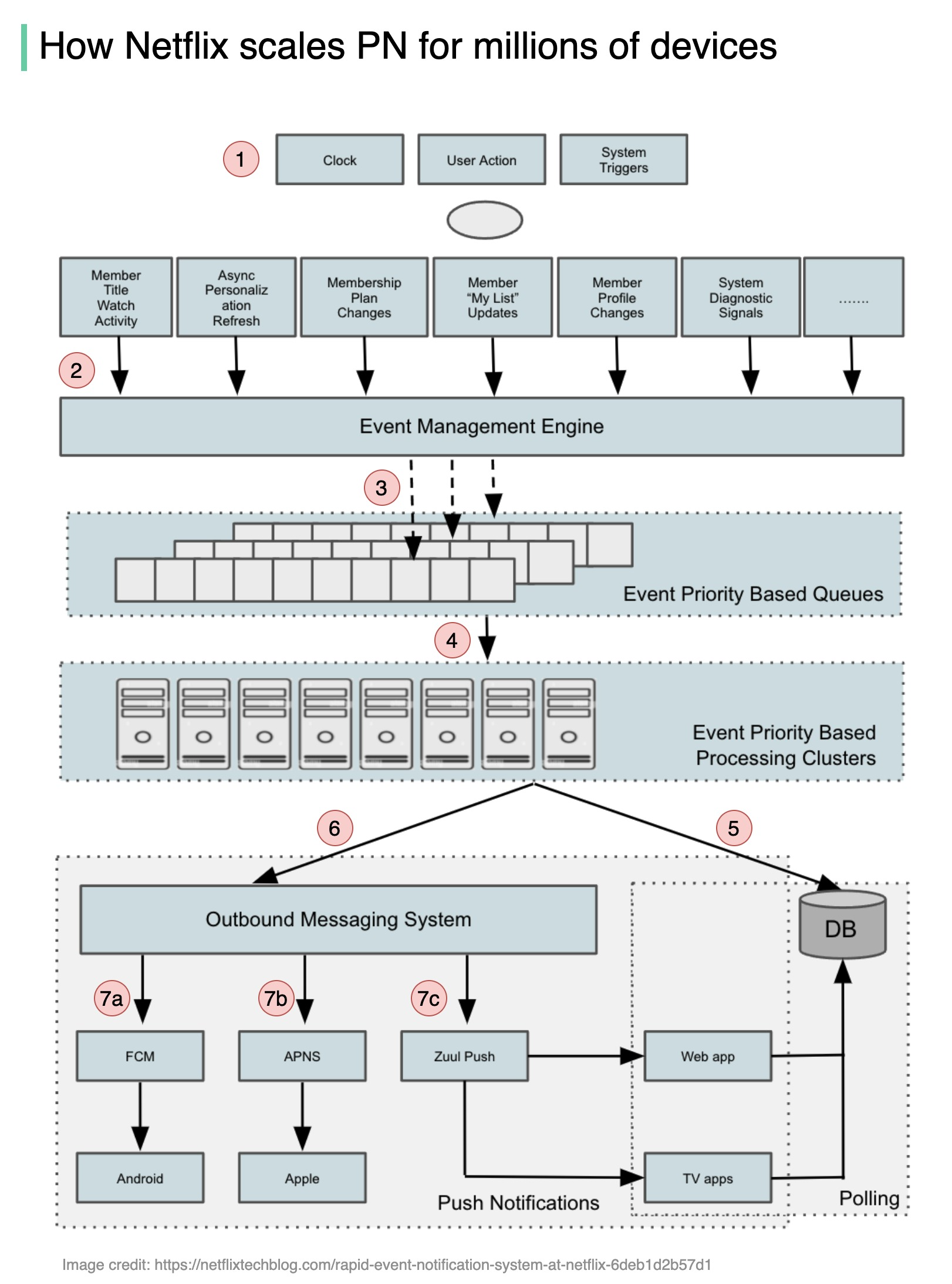

+ * [How Netflix Scales Push Messaging](https://bytebytego.com/guides/how-does-netflix-scale-push-messaging-for-millions-of-devices)

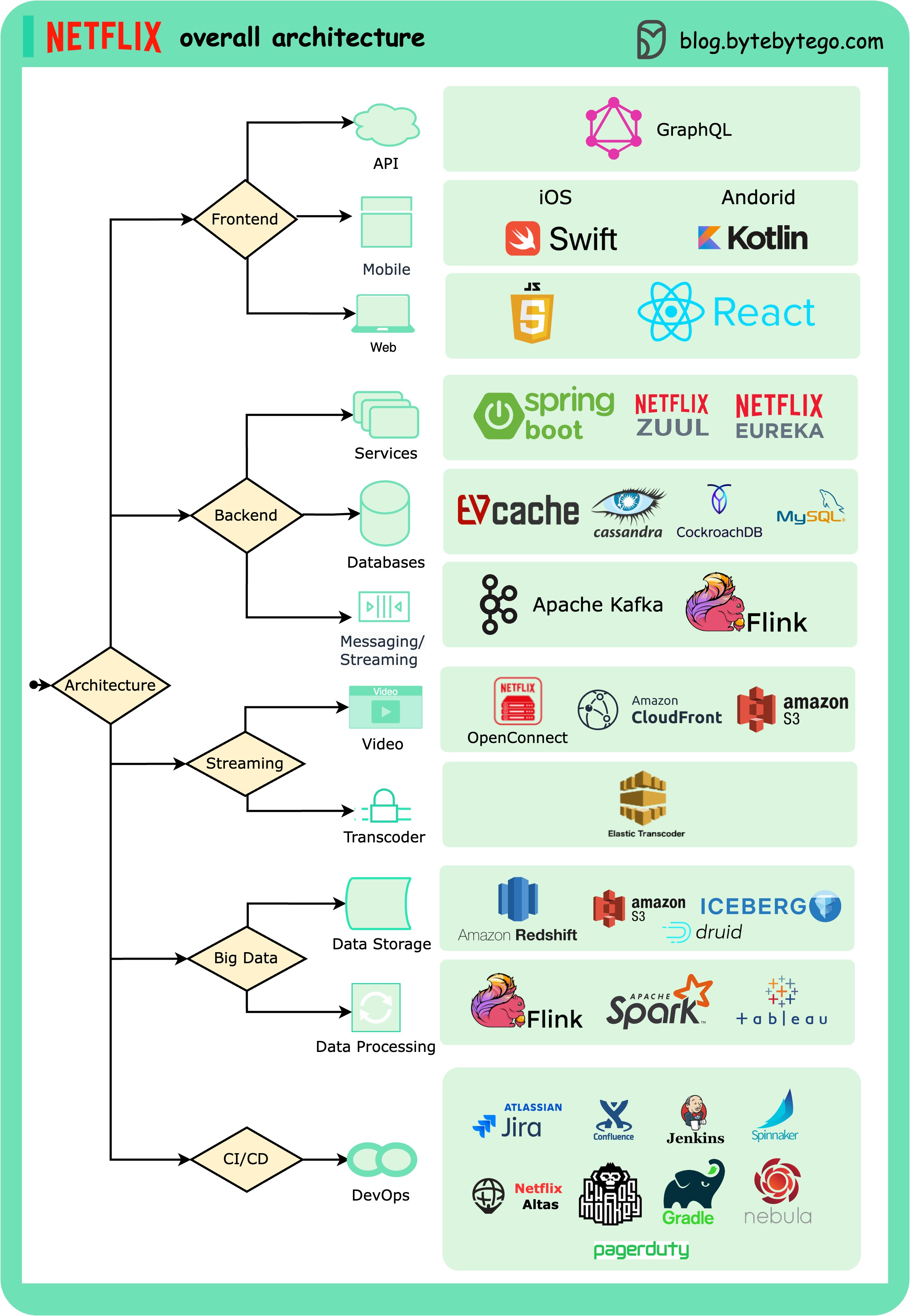

+ * [Netflix's Overall Architecture](https://bytebytego.com/guides/netflixs-overall-architecture)

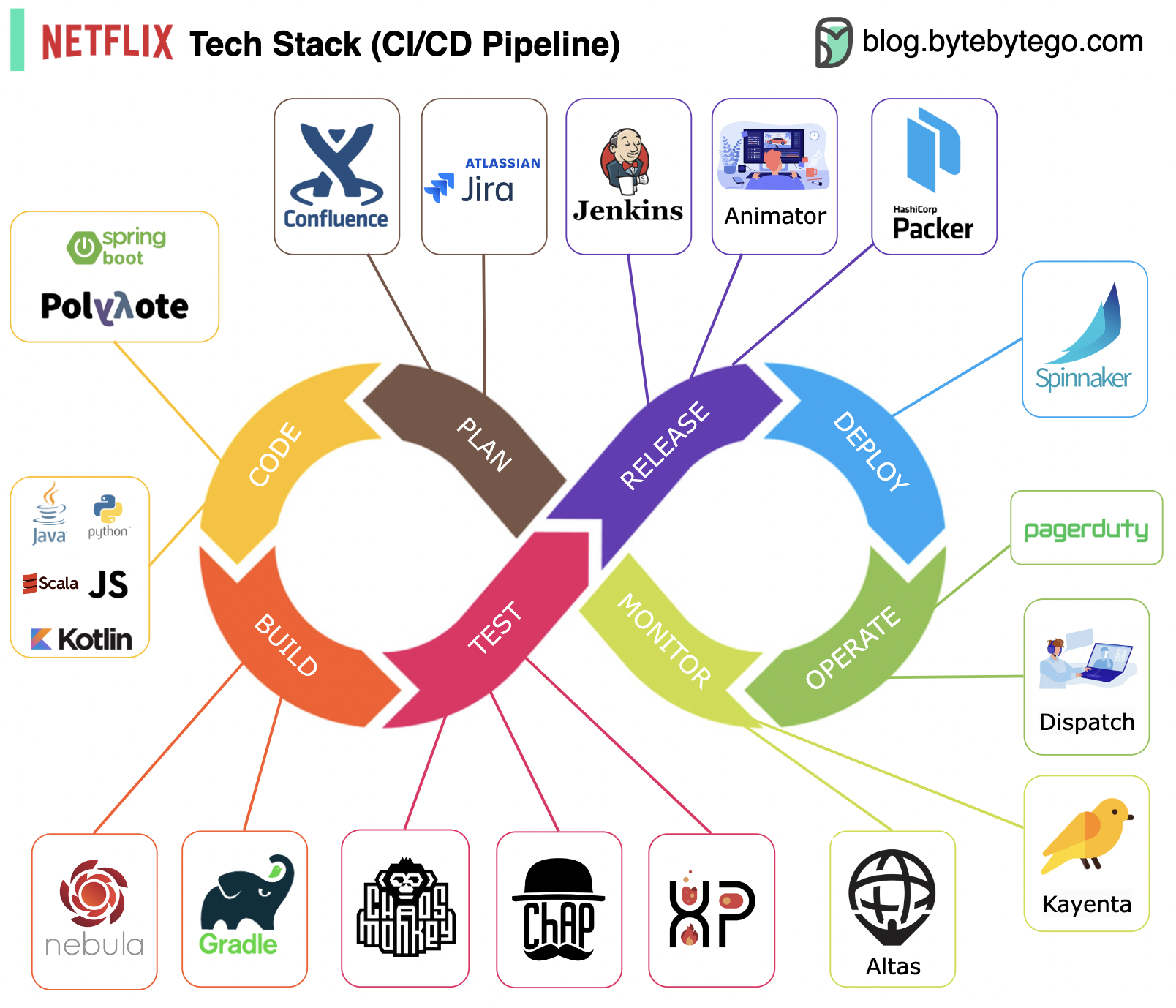

+ * [Netflix Tech Stack - CI/CD Pipeline](https://bytebytego.com/guides/netflix-tech-stack-cicd-pipeline)

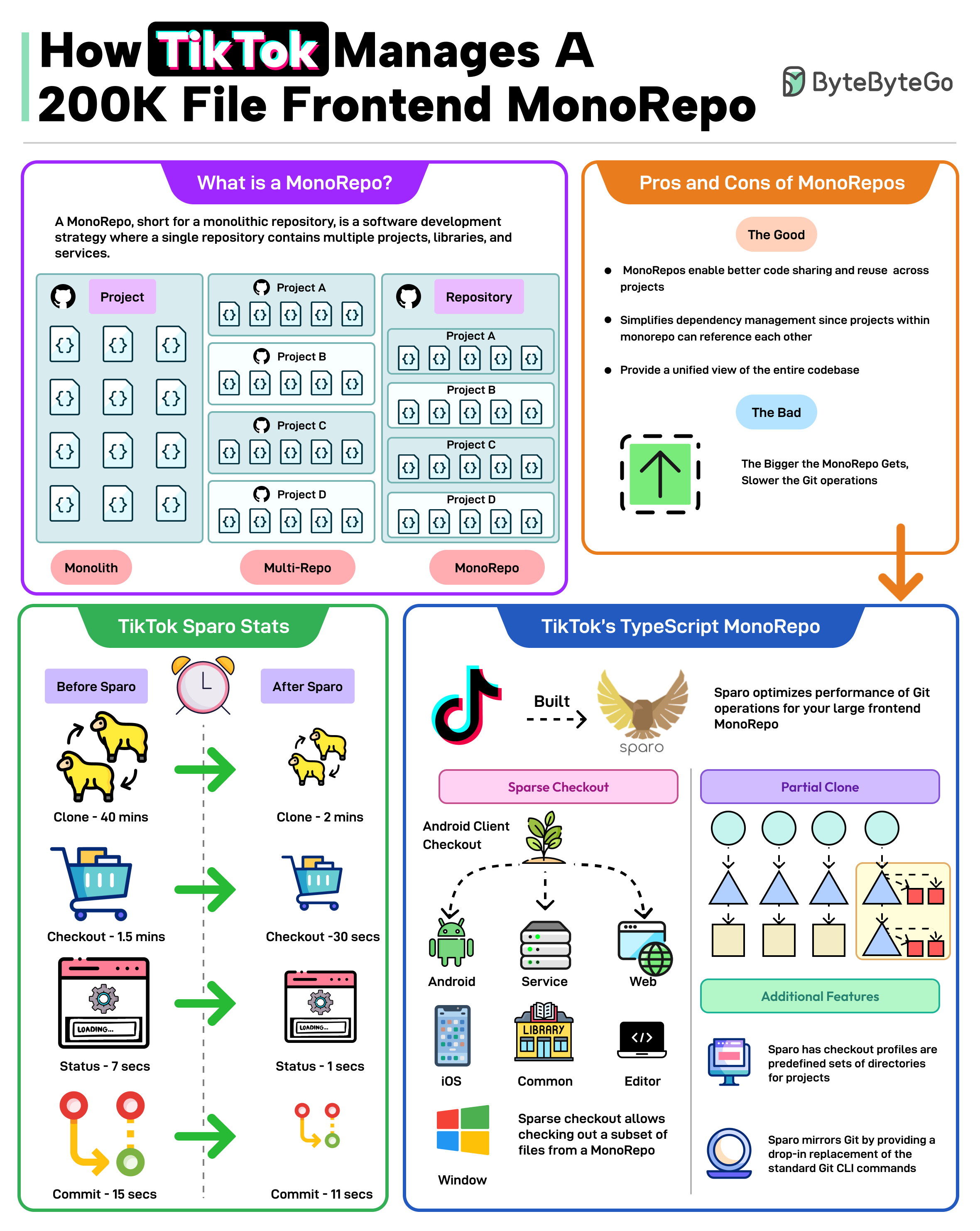

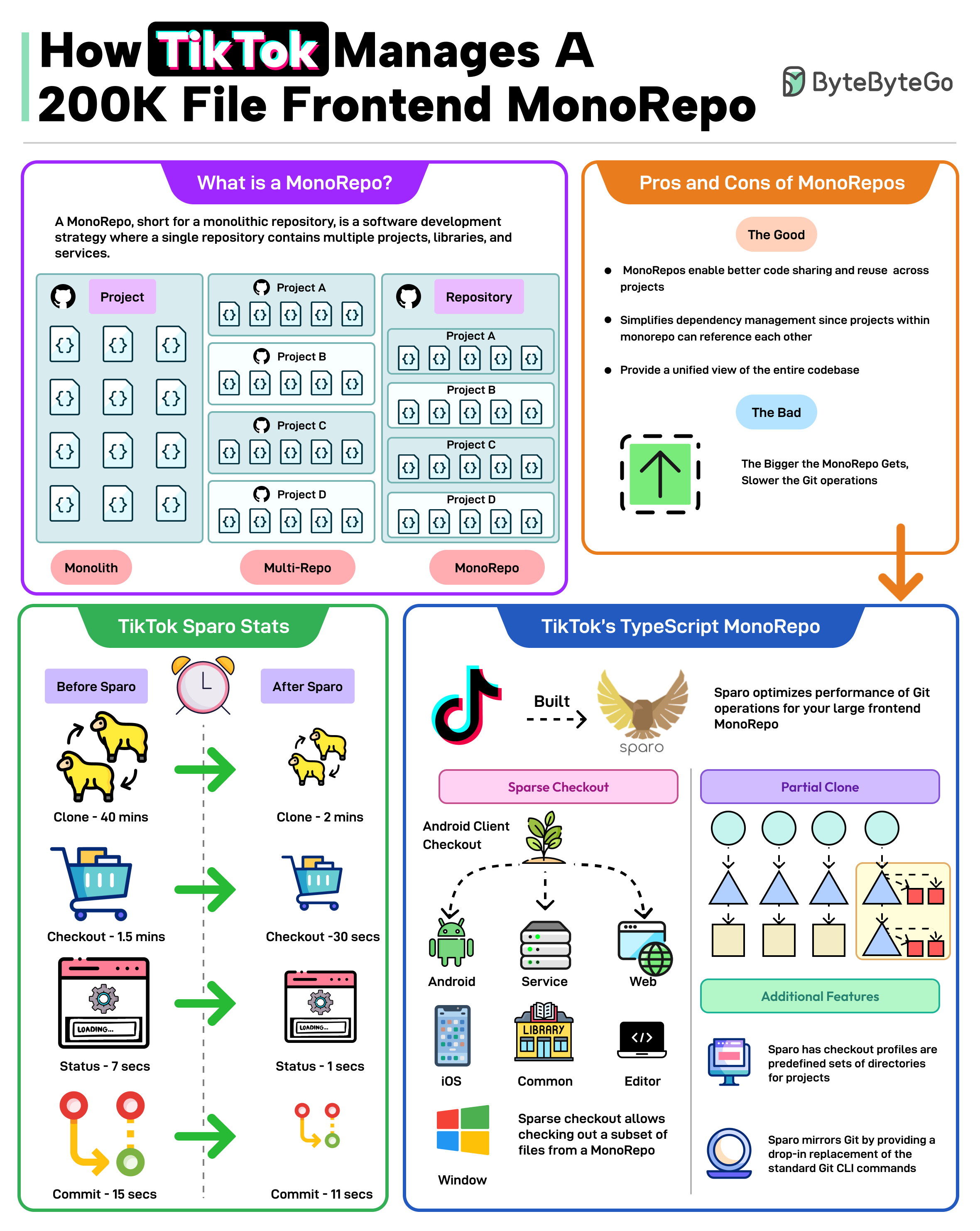

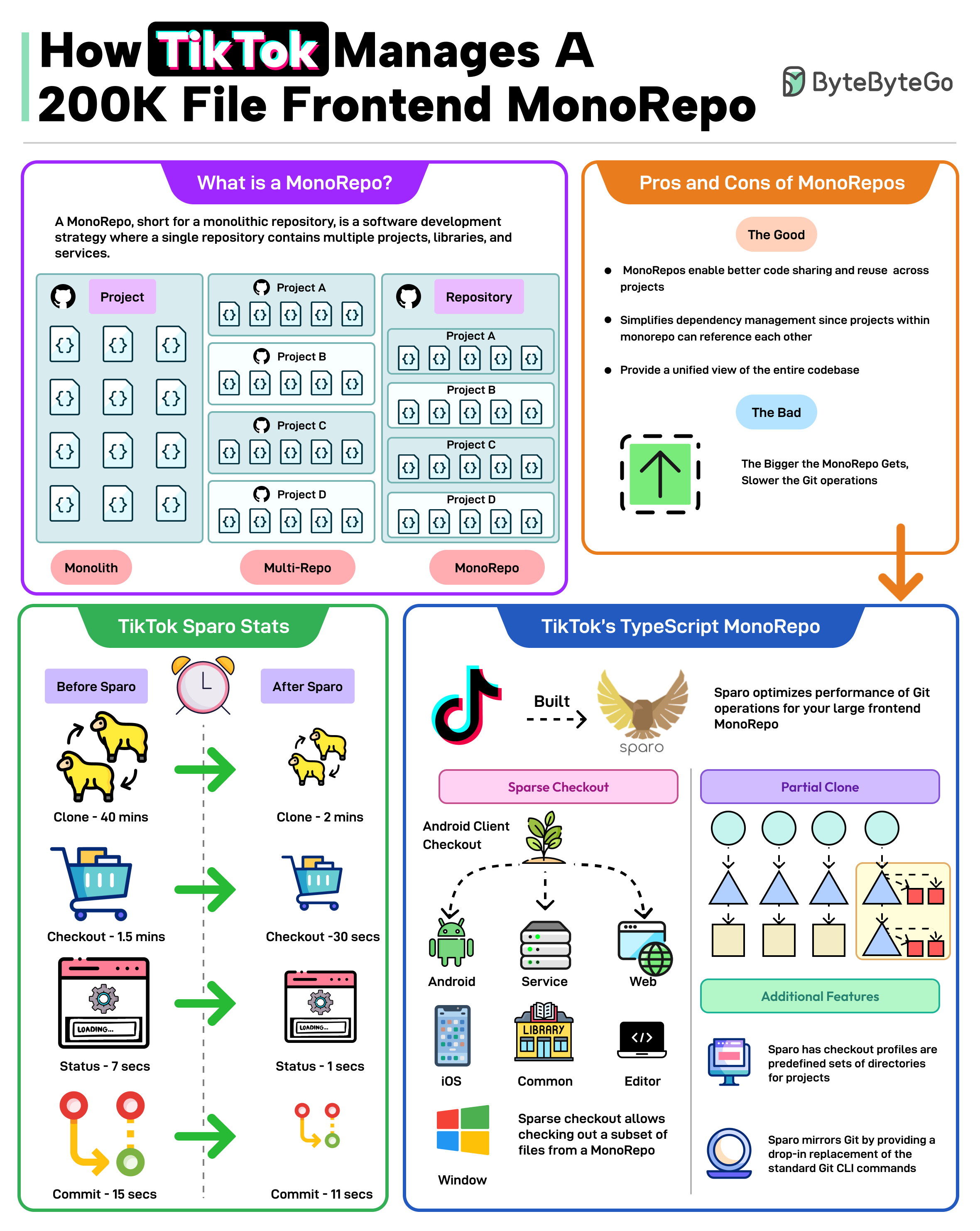

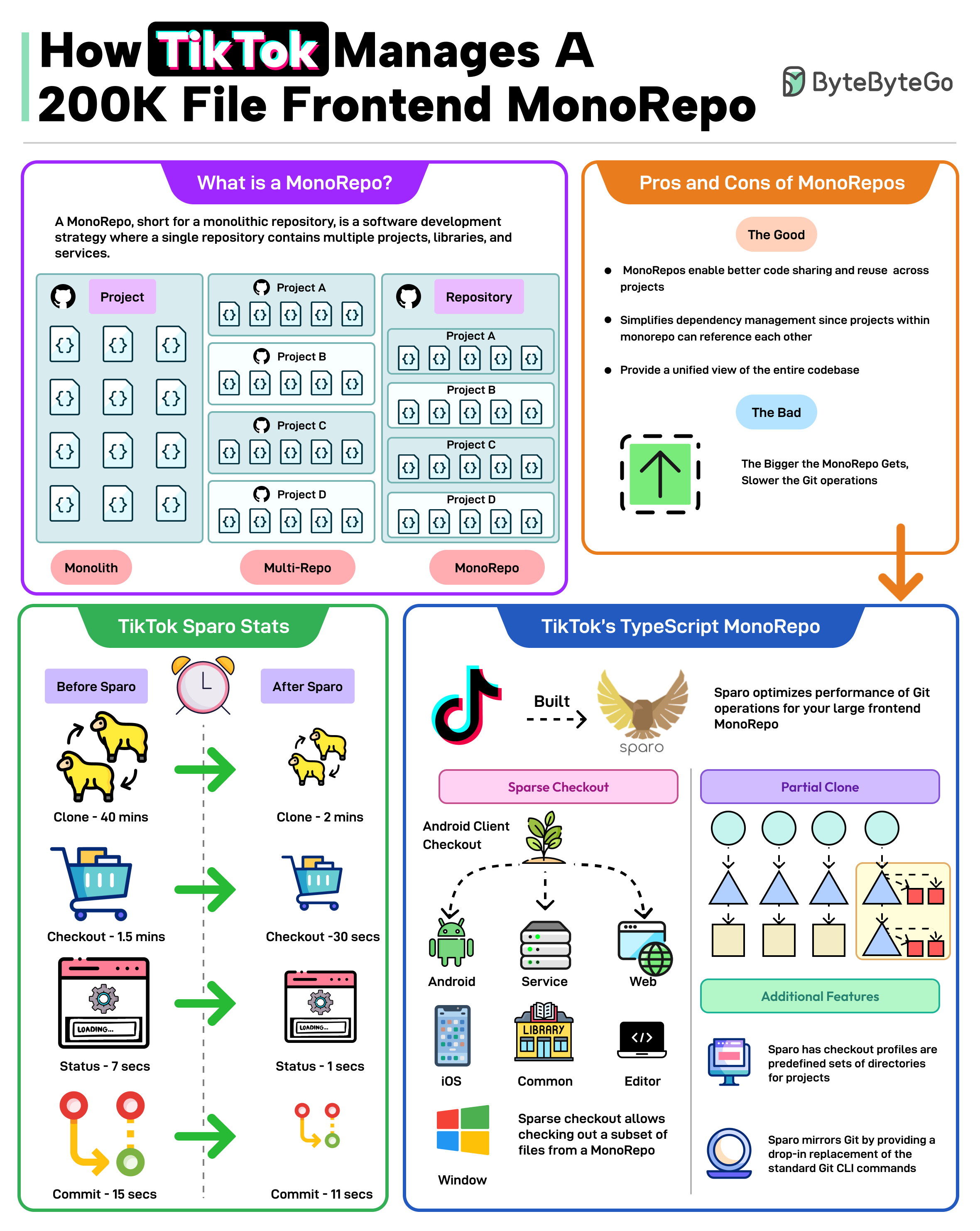

+ * [How TikTok Manages a 200K File Frontend MonoRepo](https://bytebytego.com/guides/how-tiktok-manages-a-200k-file-frontend-monorepo)

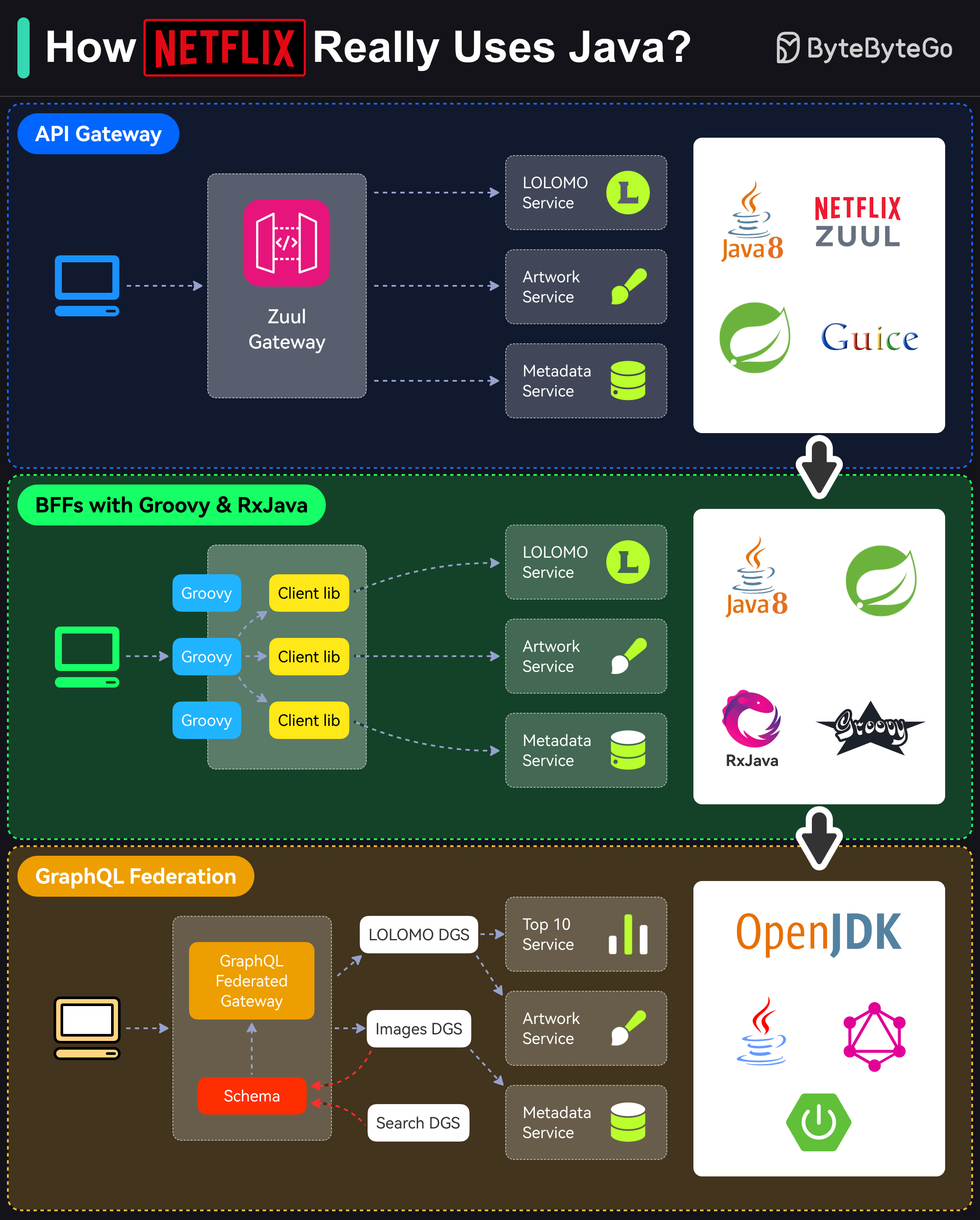

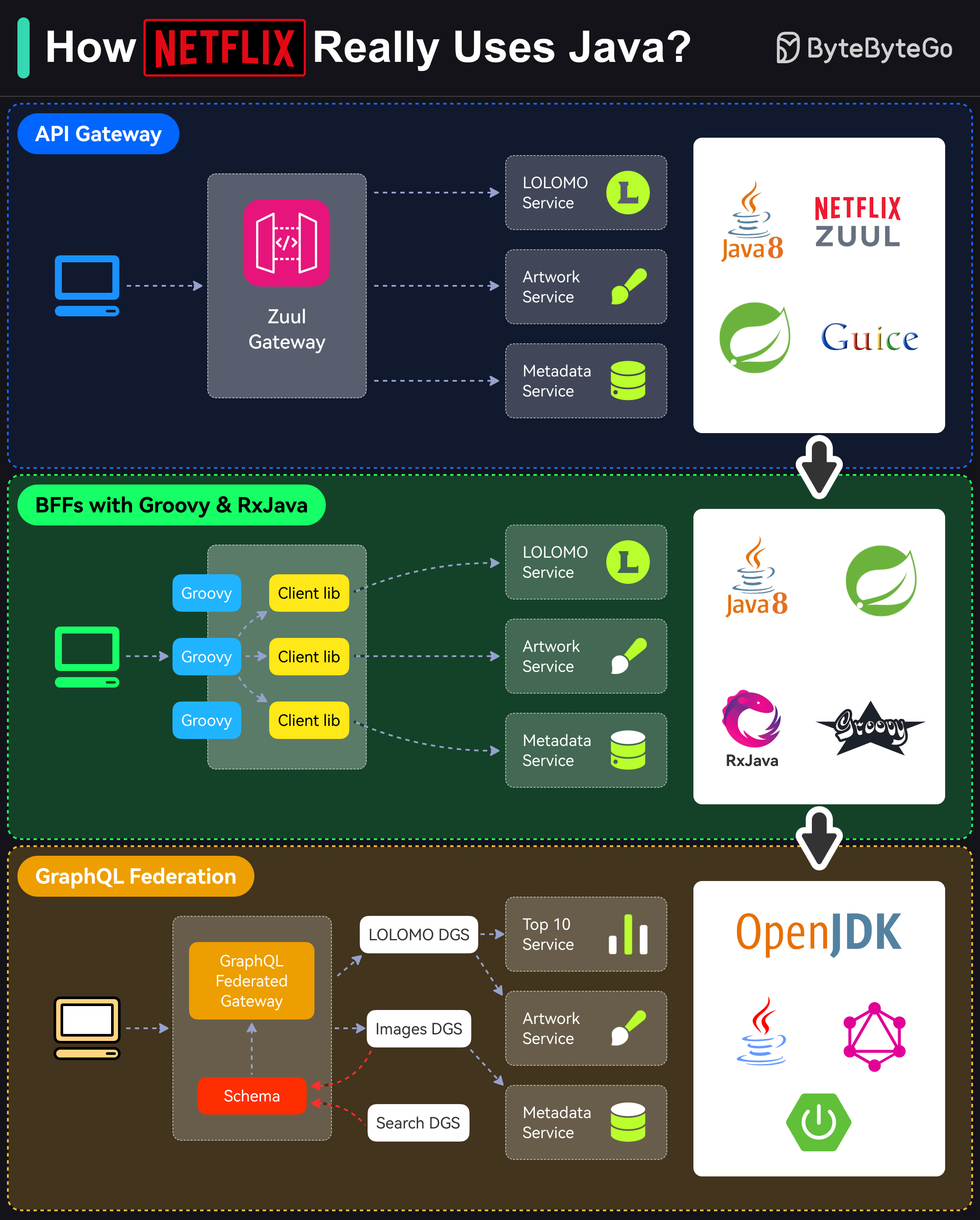

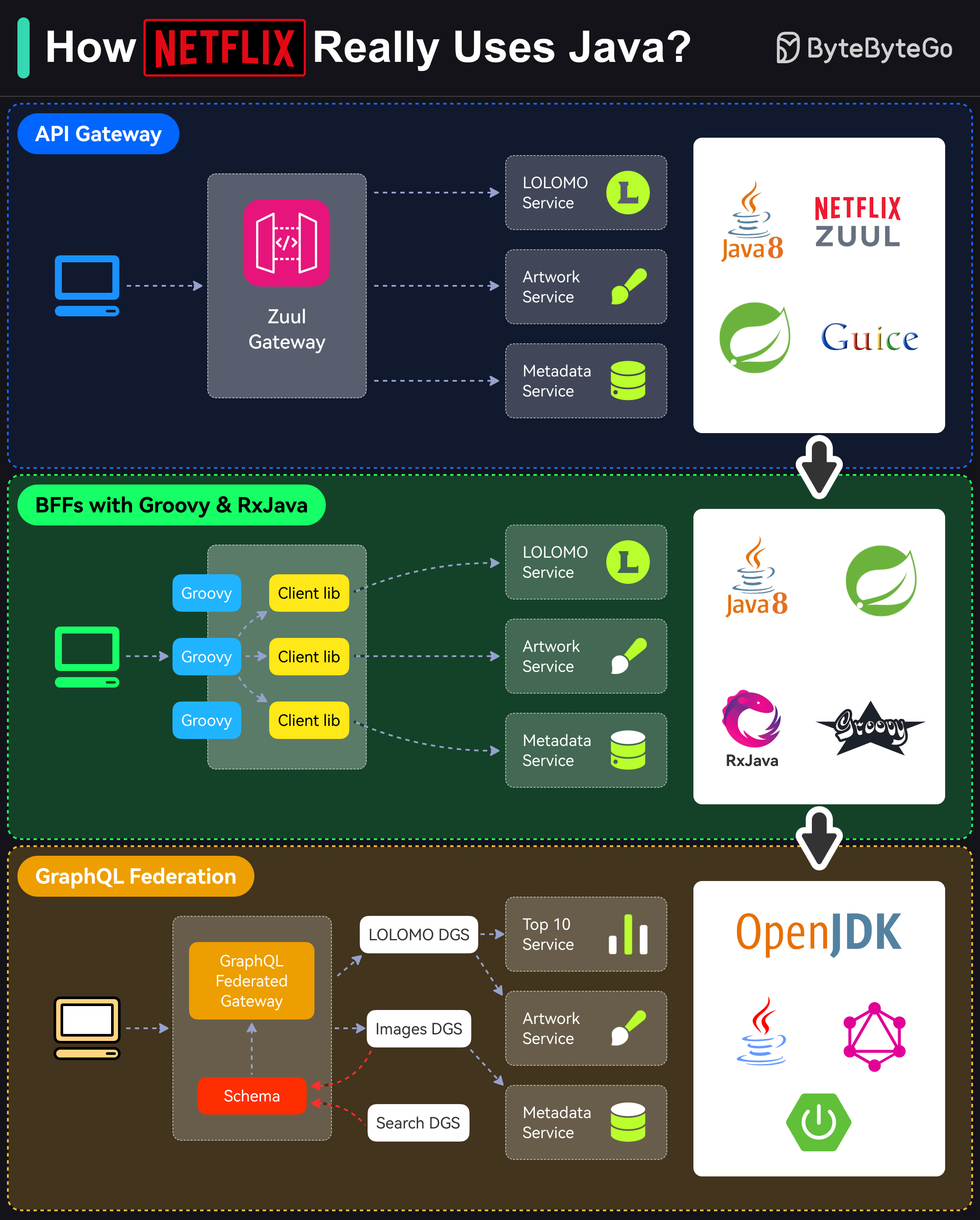

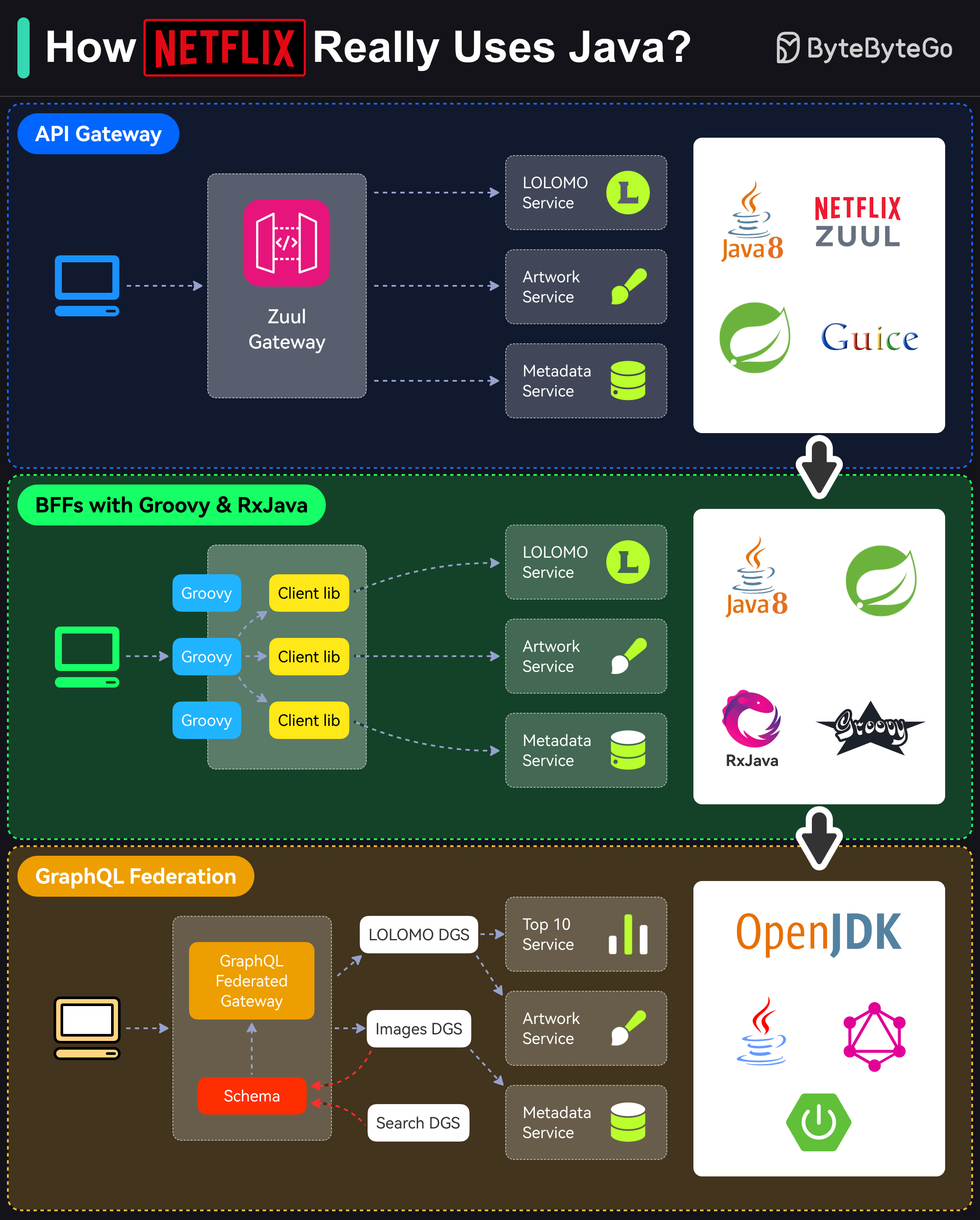

+ * [How Netflix Really Uses Java](https://bytebytego.com/guides/how-netflix-really-uses-java)

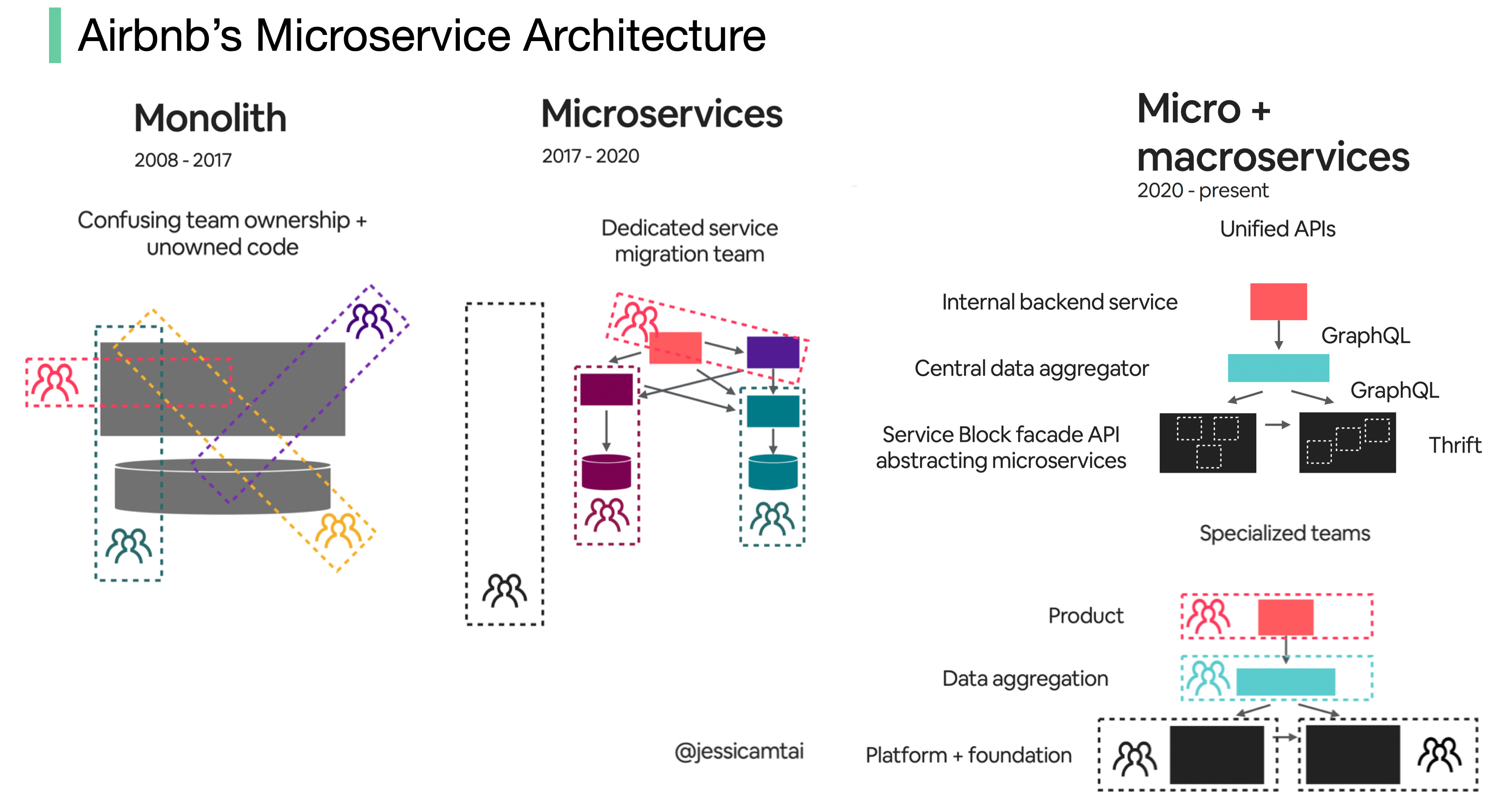

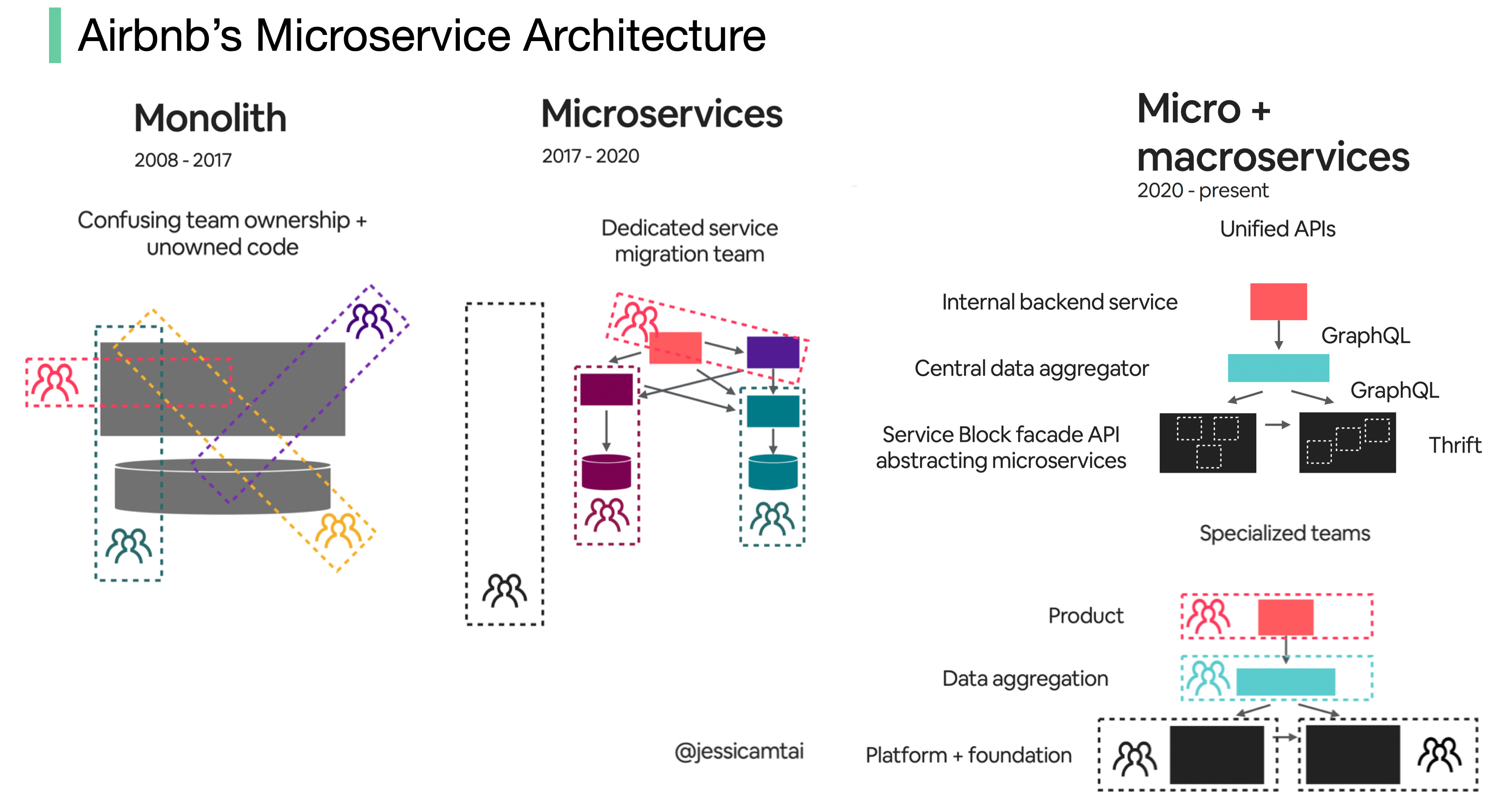

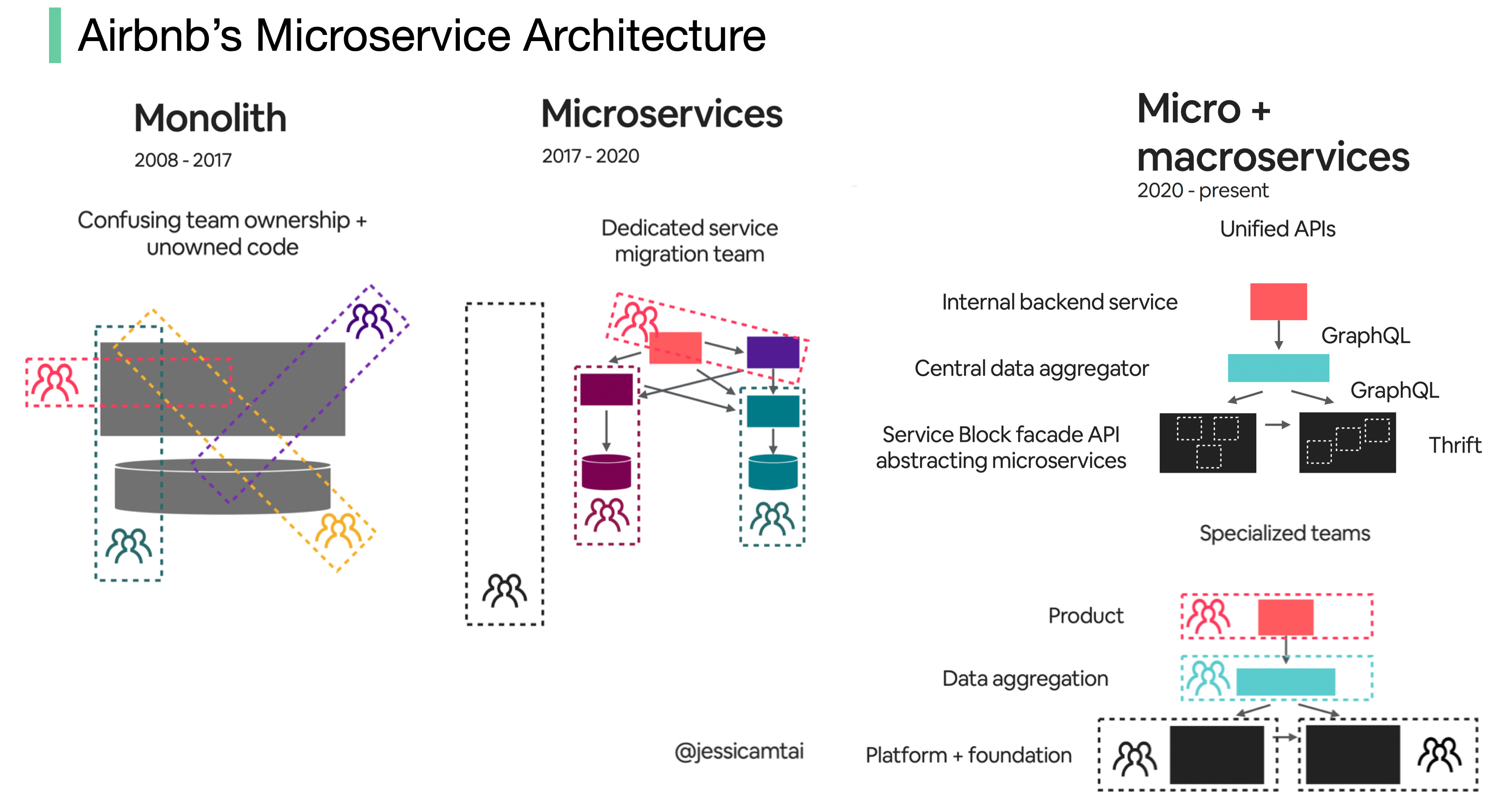

+ * [Evolution of Airbnb’s Microservice Architecture](https://bytebytego.com/guides/evolution-of-airbnb's-microservice)

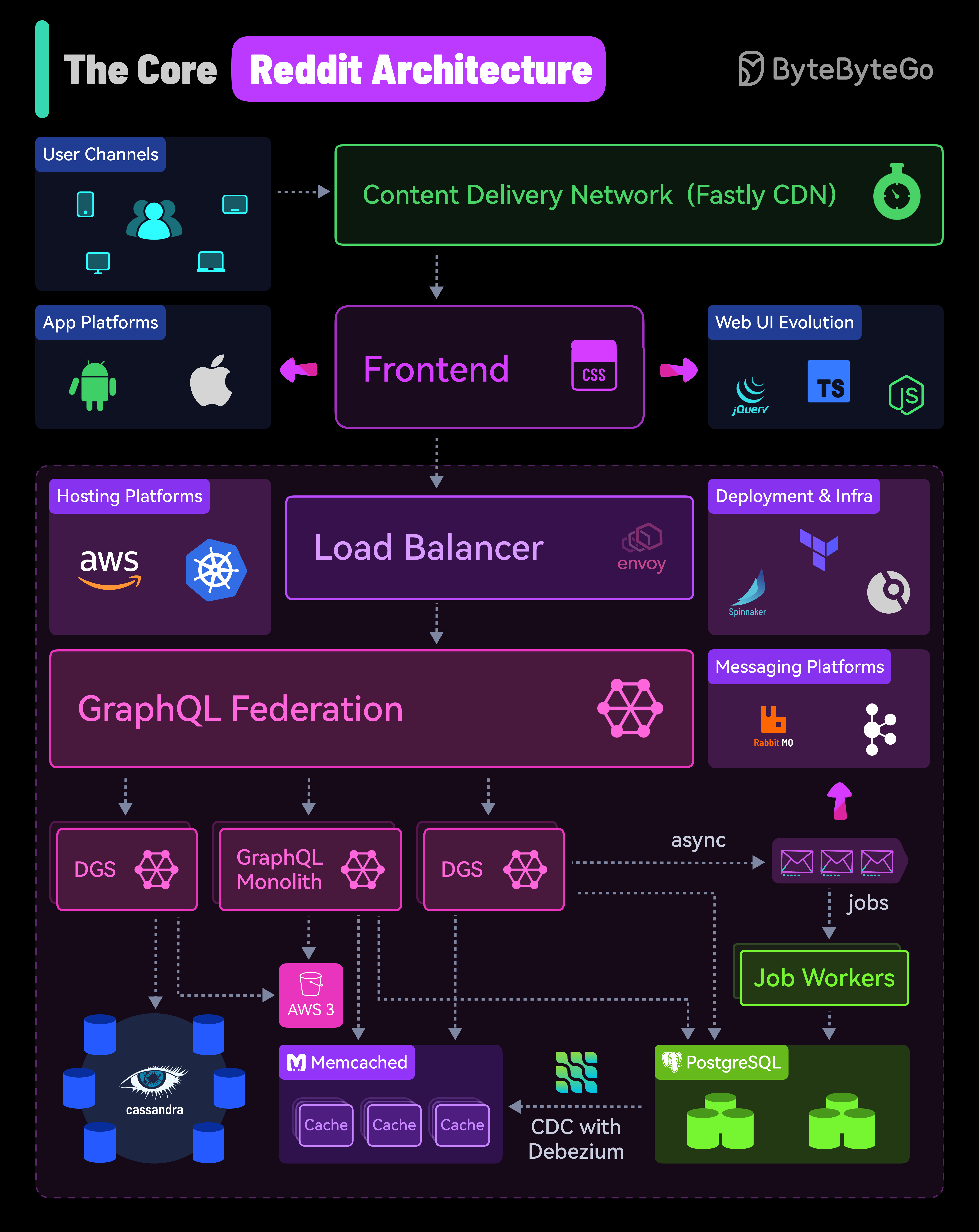

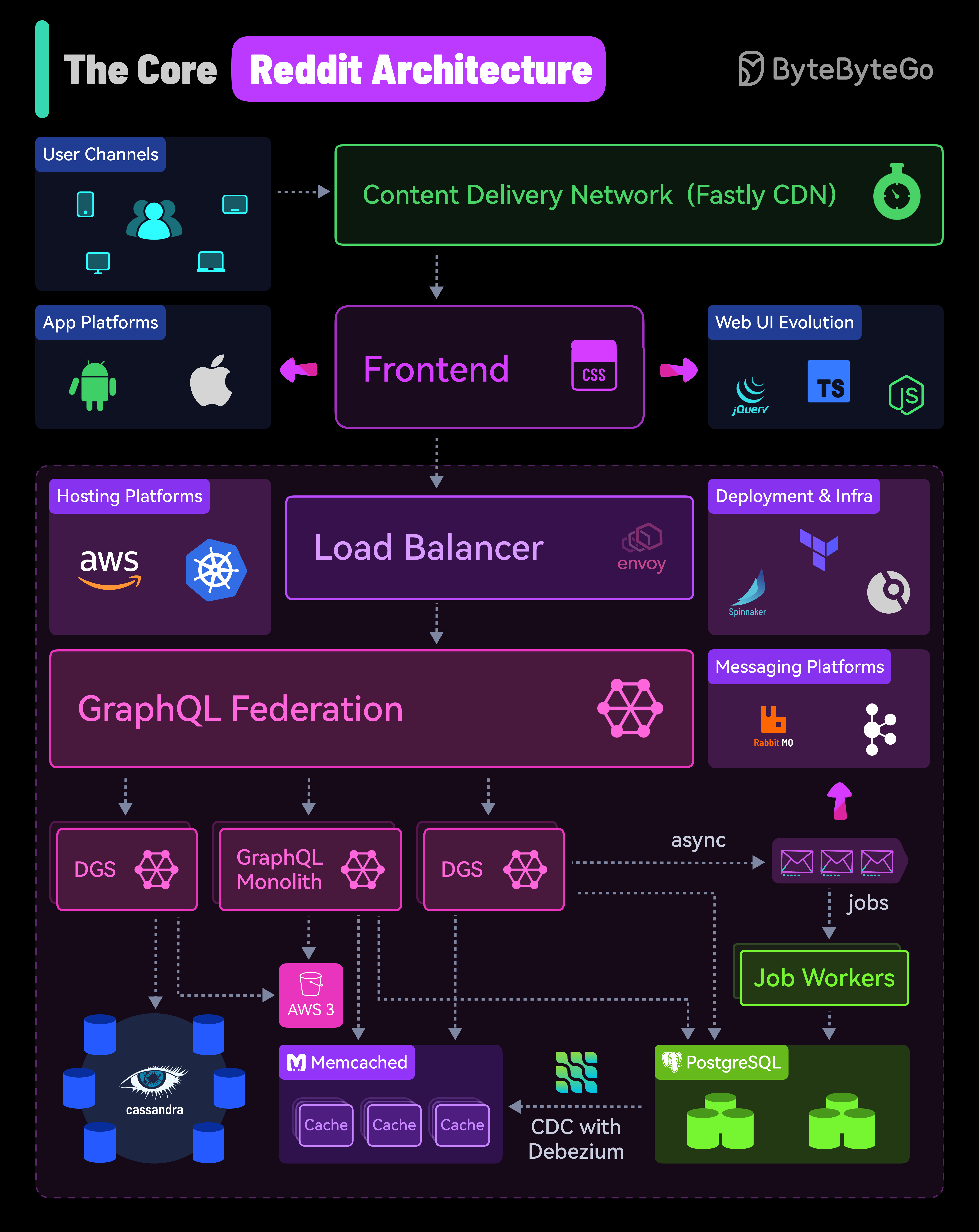

+ * [Reddit's Core Architecture](https://bytebytego.com/guides/reddit's-core-architecture)

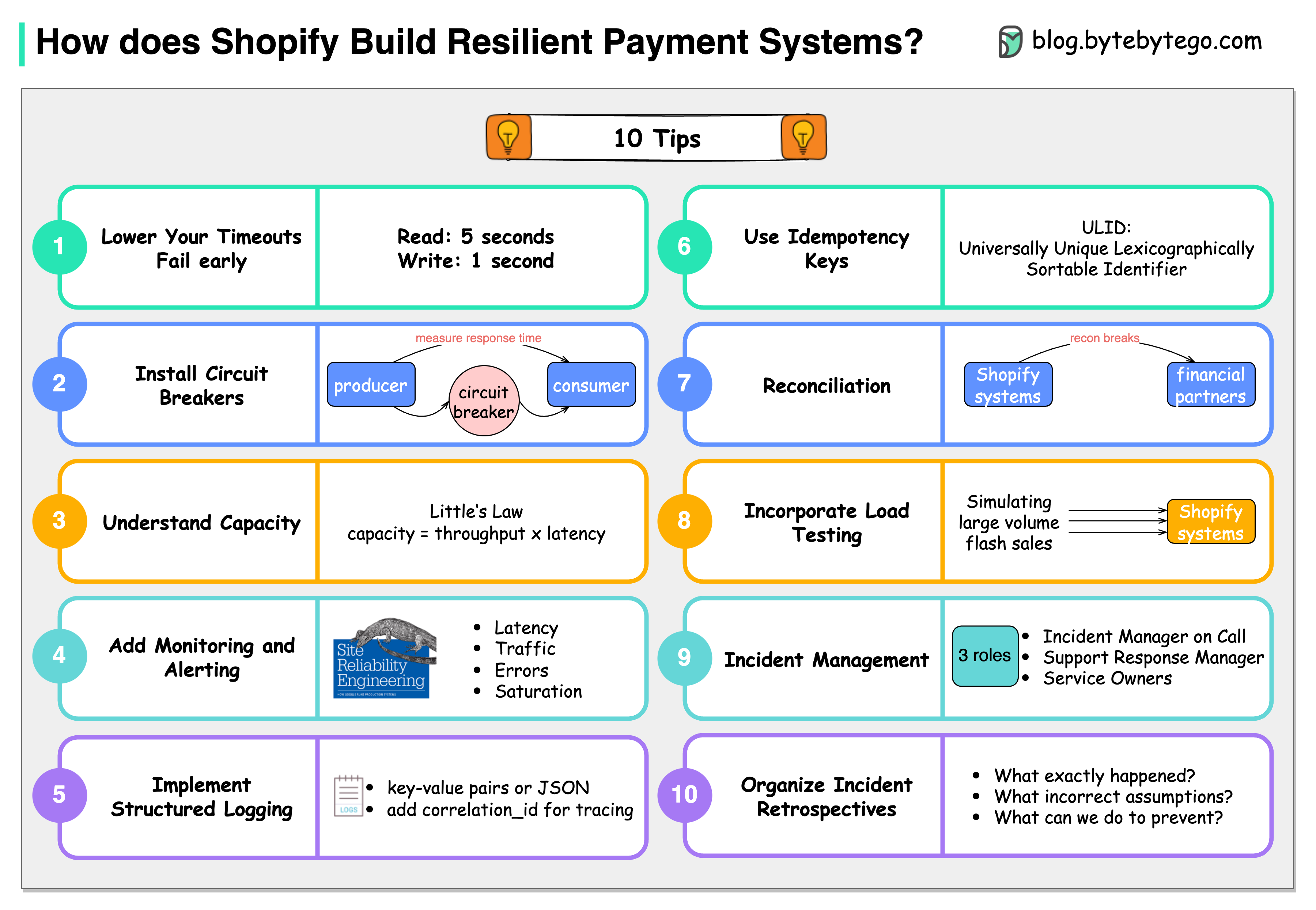

+ * [10 Principles for Building Resilient Payment Systems](https://bytebytego.com/guides/10-principles-for-building-resilient-payment-systems-by-shopify)

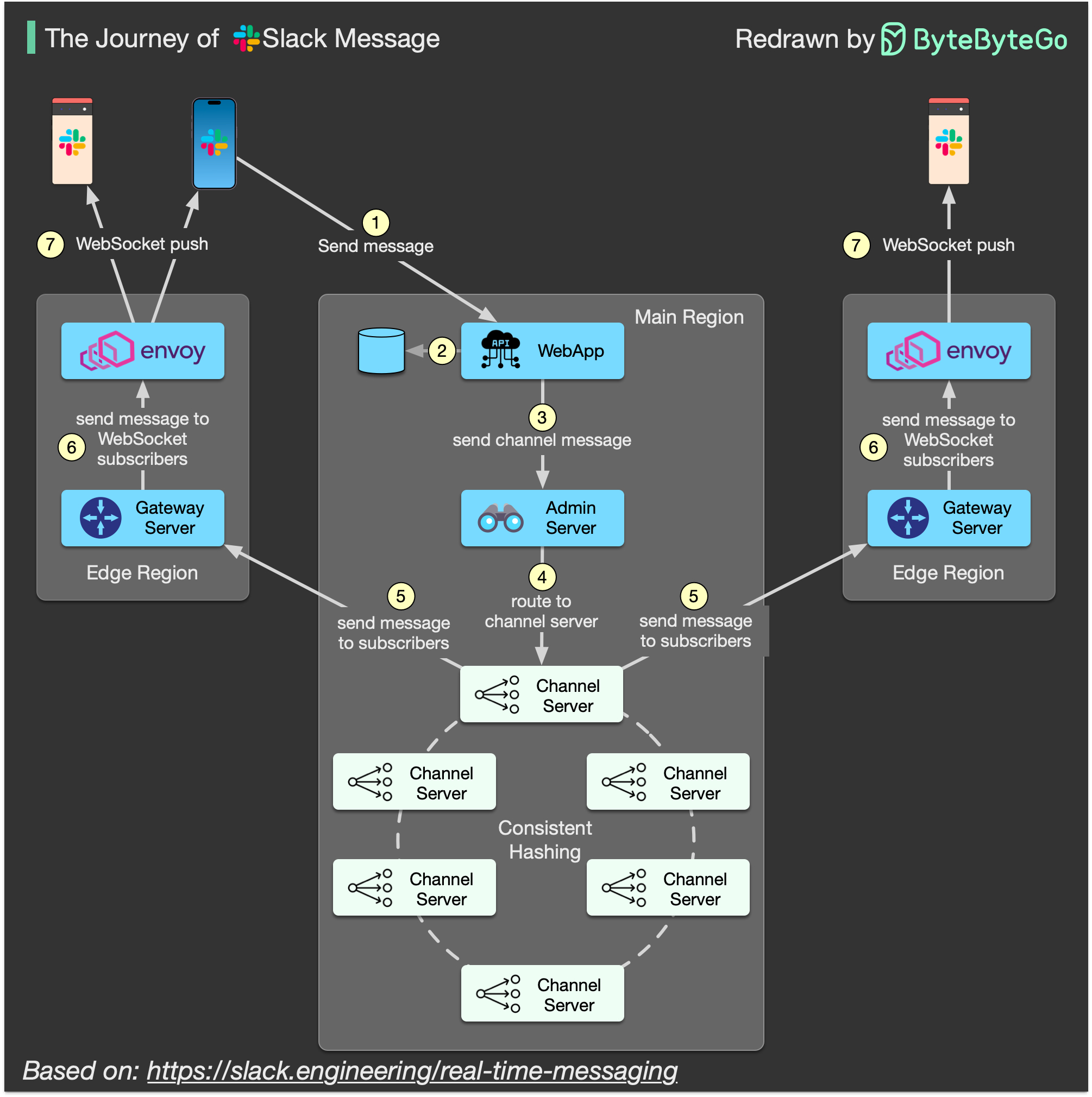

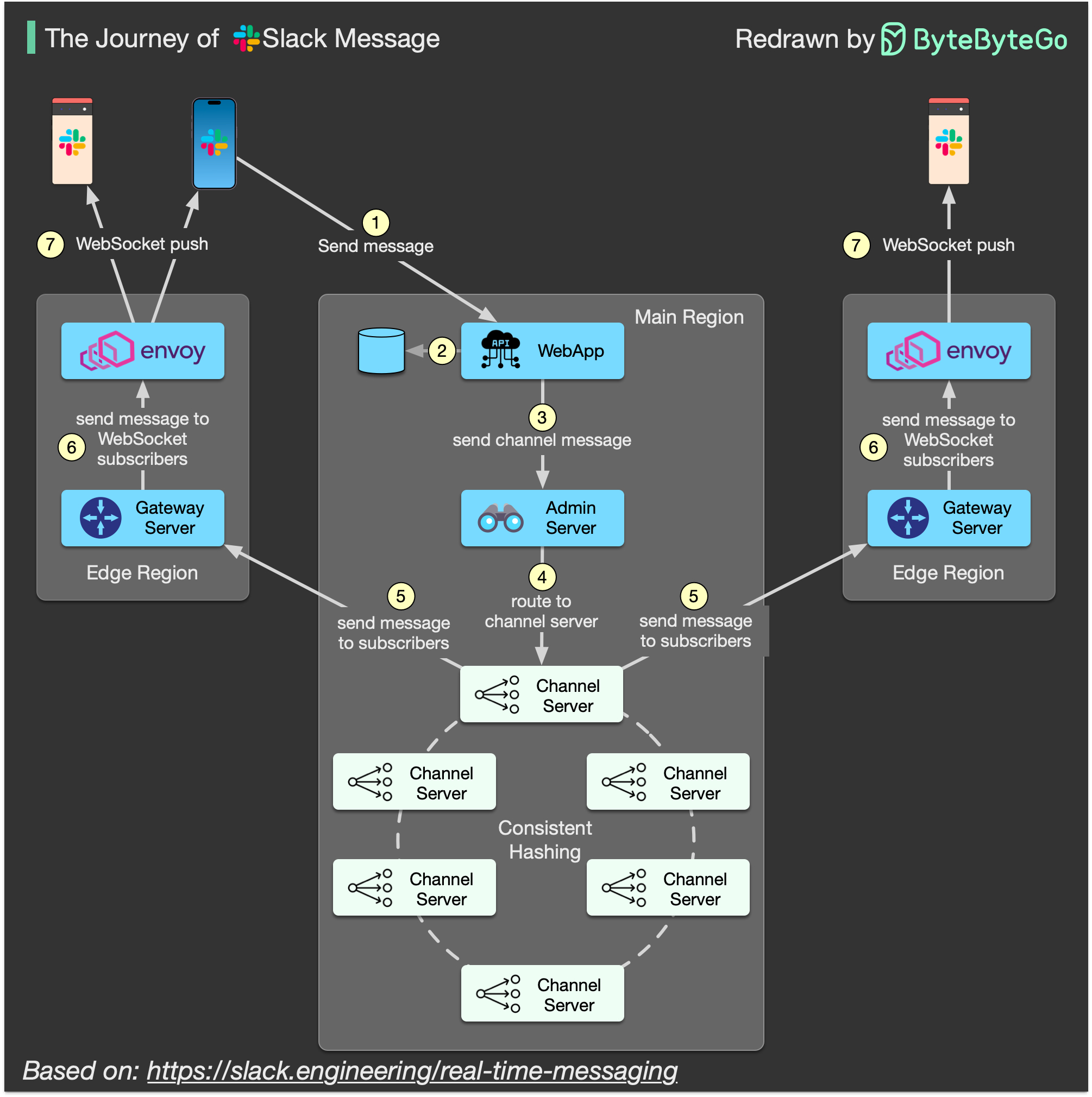

+ * [What is the Journey of a Slack Message?](https://bytebytego.com/guides/what-is-the-journey-of-a-slack-message)

+ * [Top 9 Engineering Blogs](https://bytebytego.com/guides/top-9-engineering-blog-favorites)

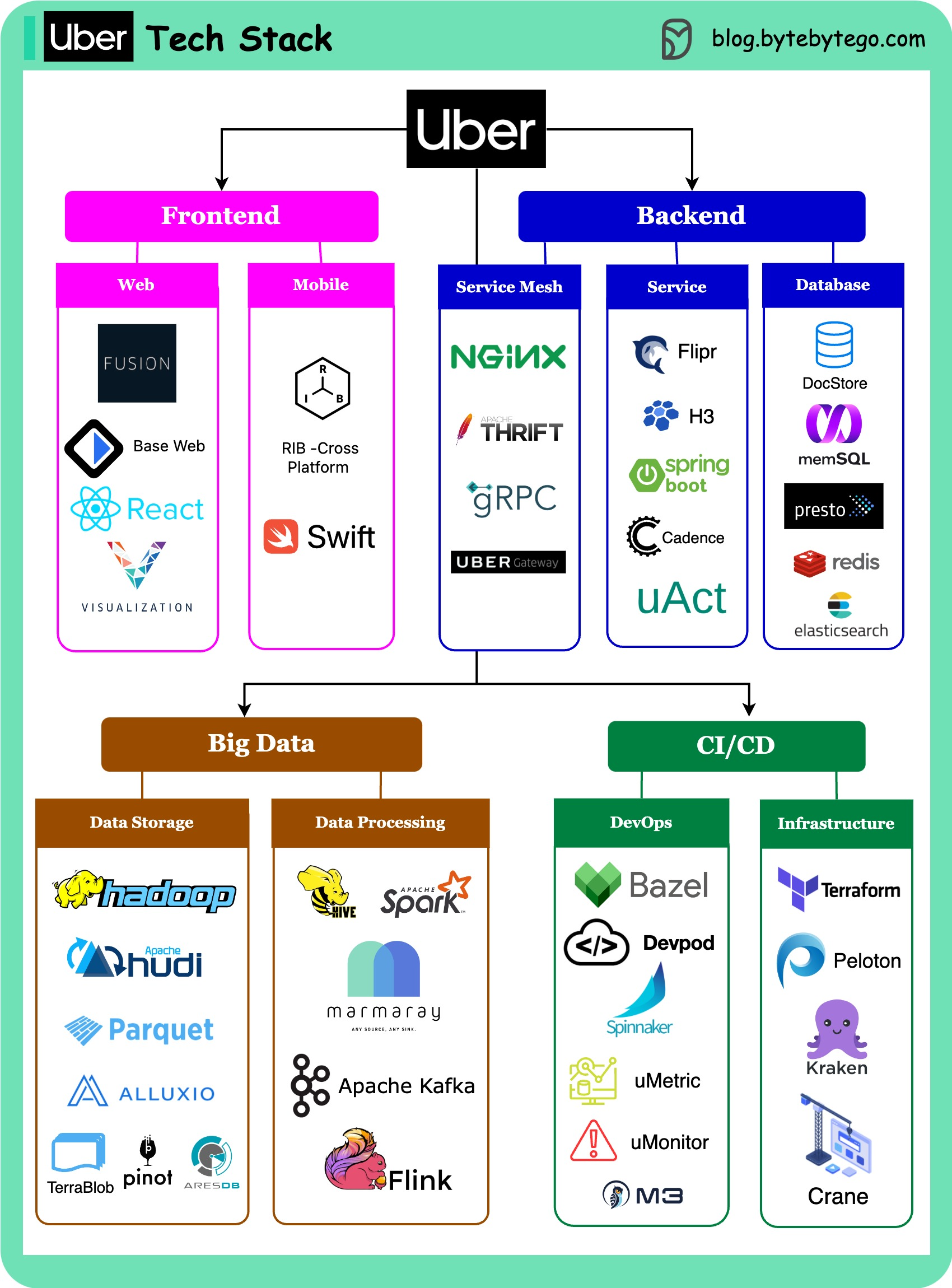

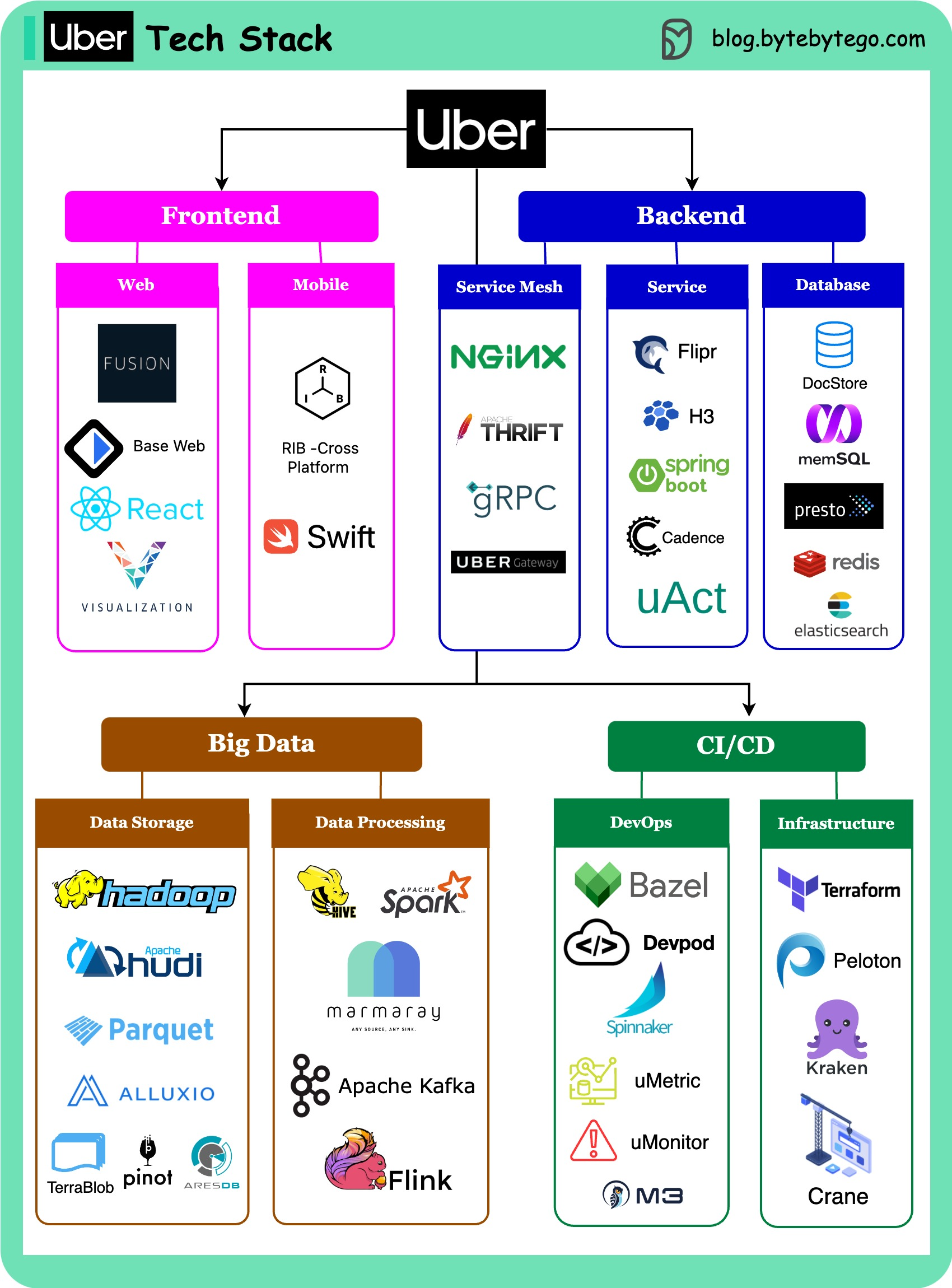

+ * [Uber Tech Stack](https://bytebytego.com/guides/uber-tech-stack)

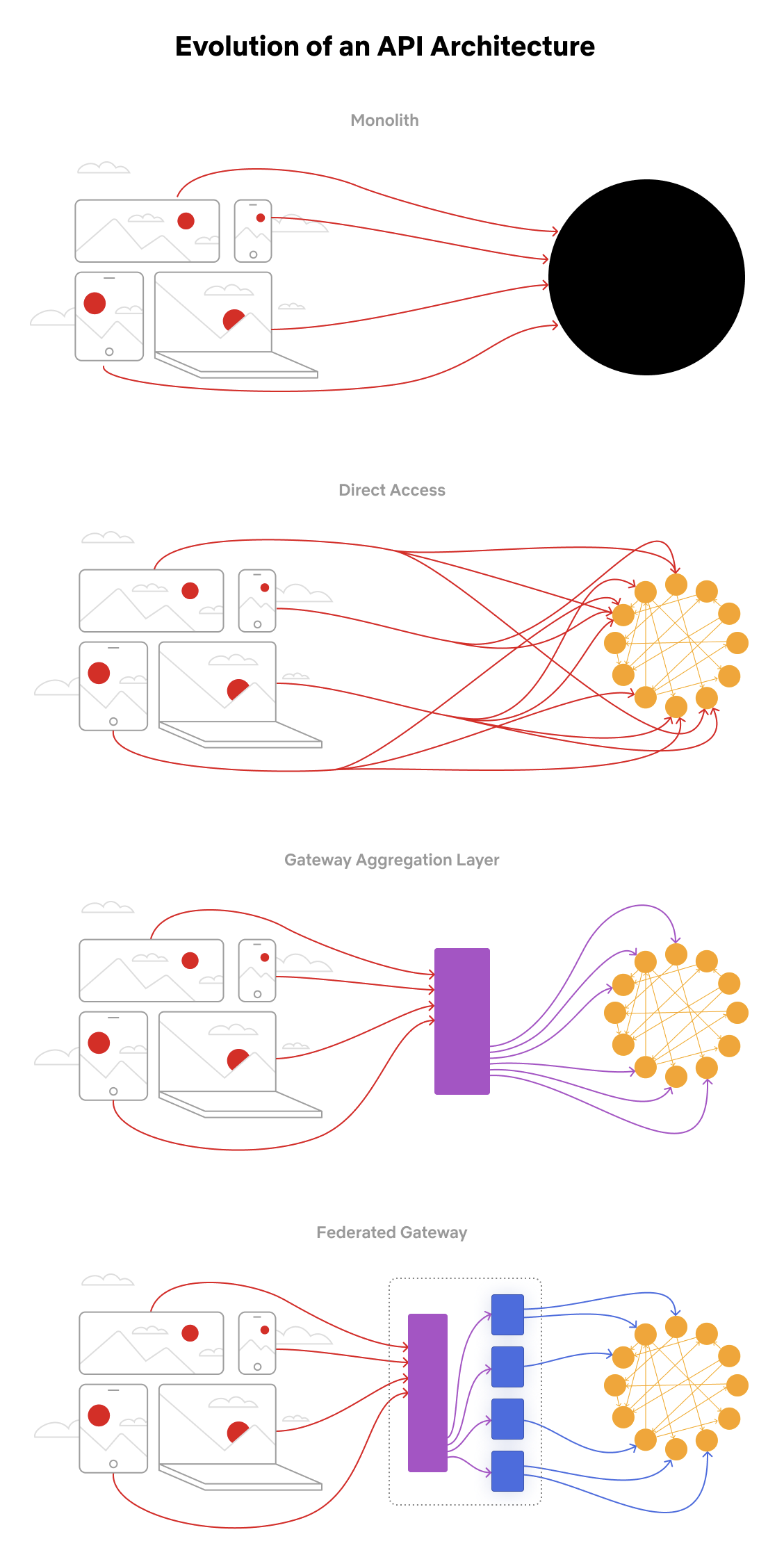

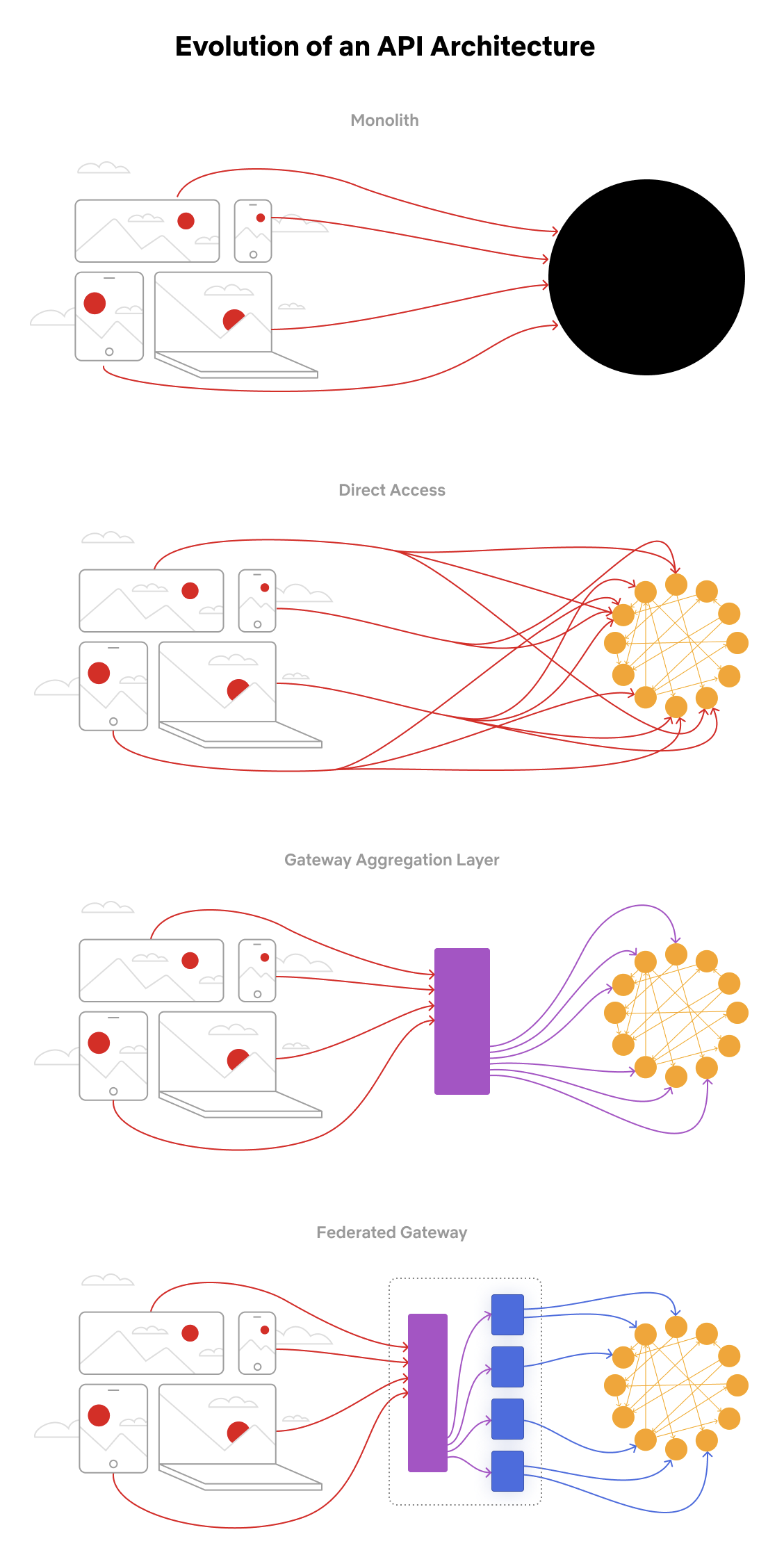

+ * [Evolution of the Netflix API Architecture](https://bytebytego.com/guides/evolution-of-the-netflix-api-architecture)

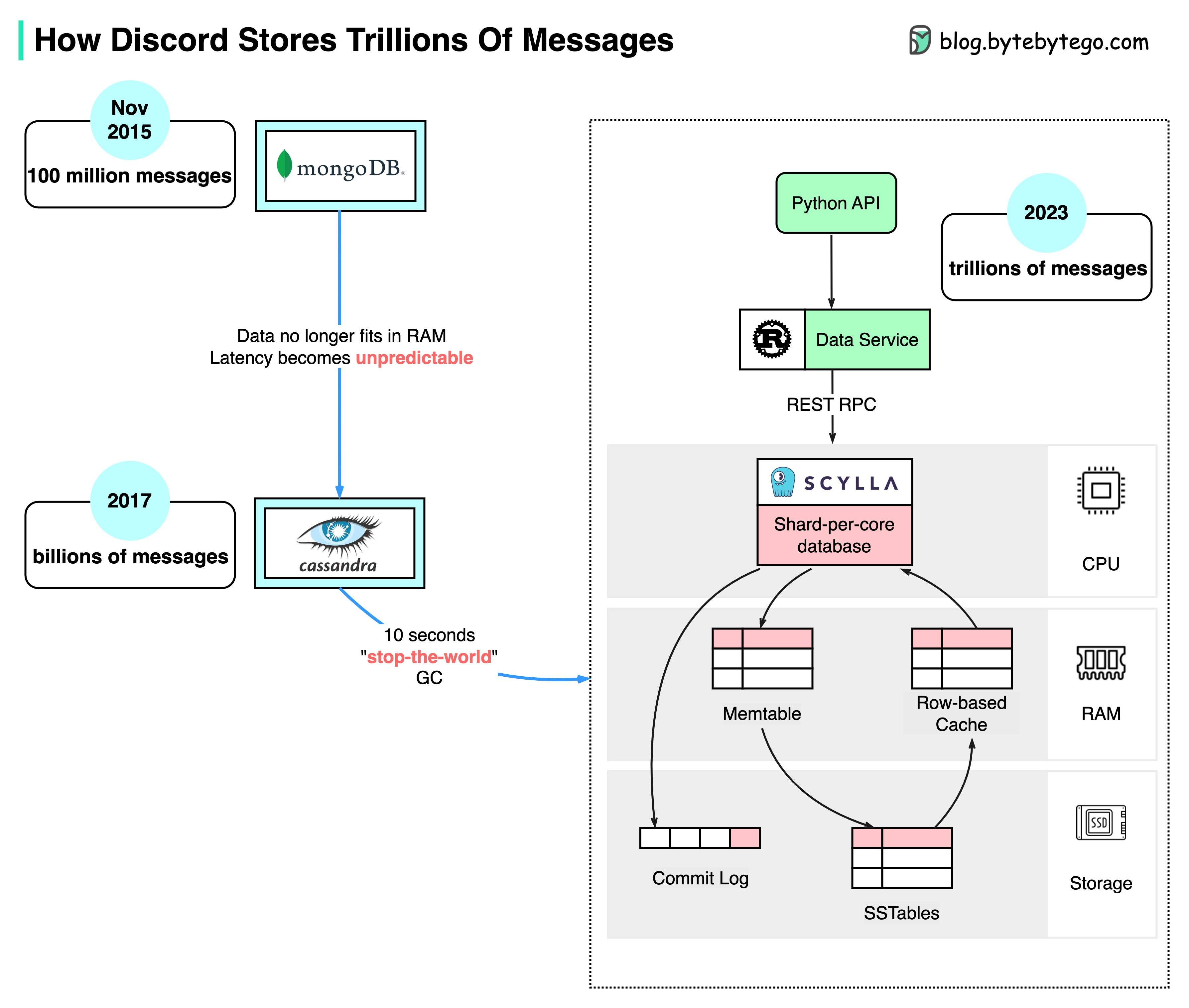

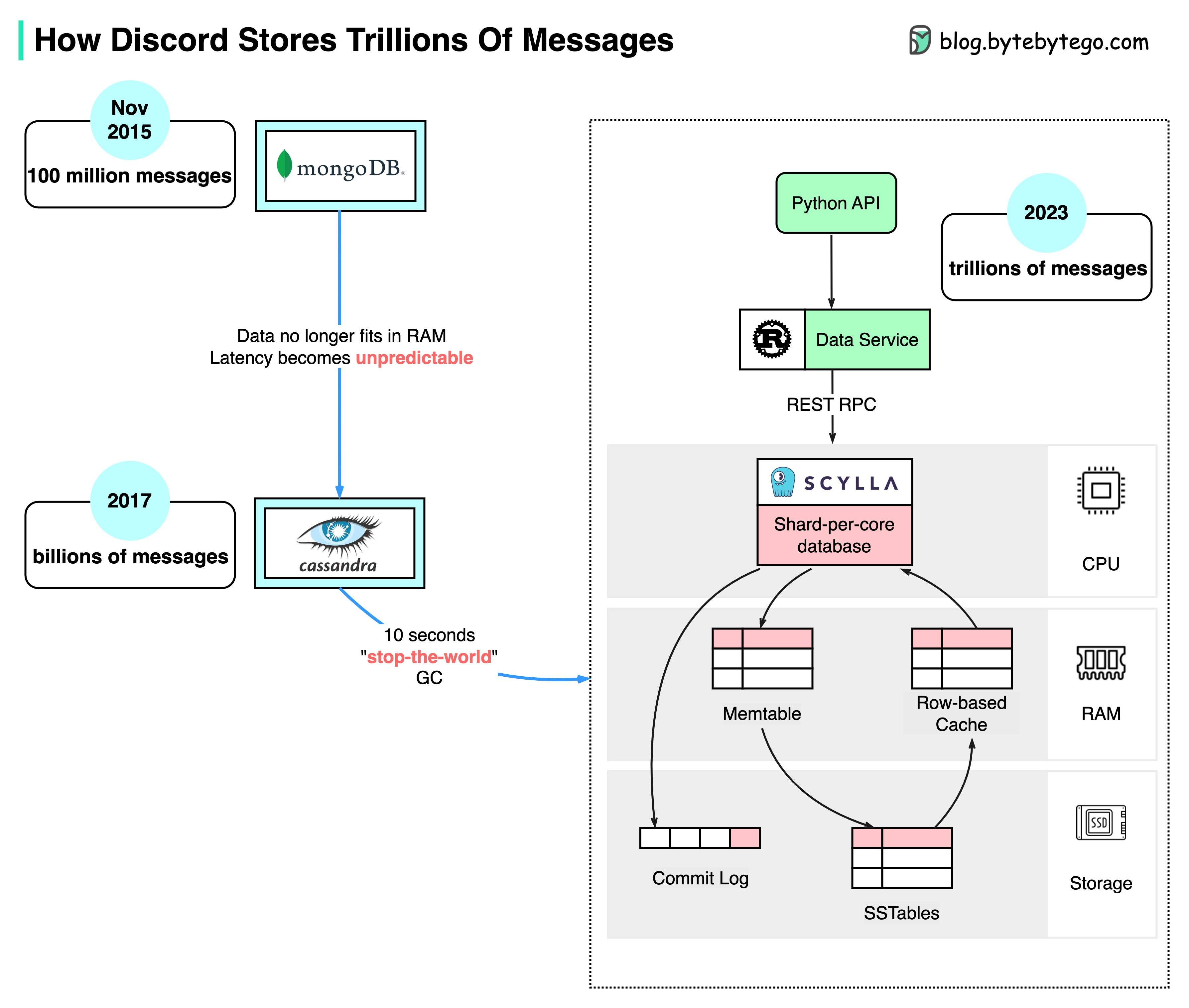

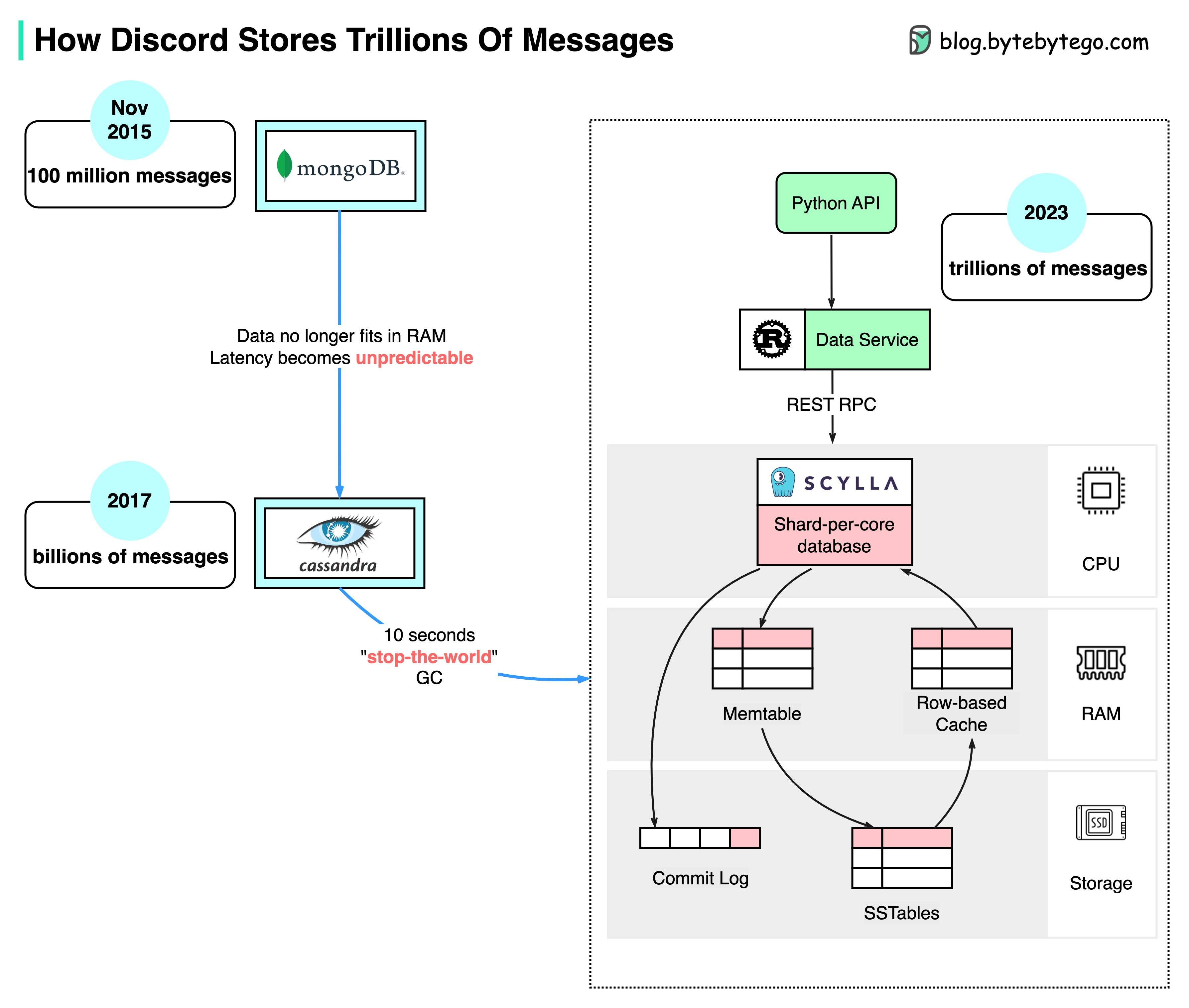

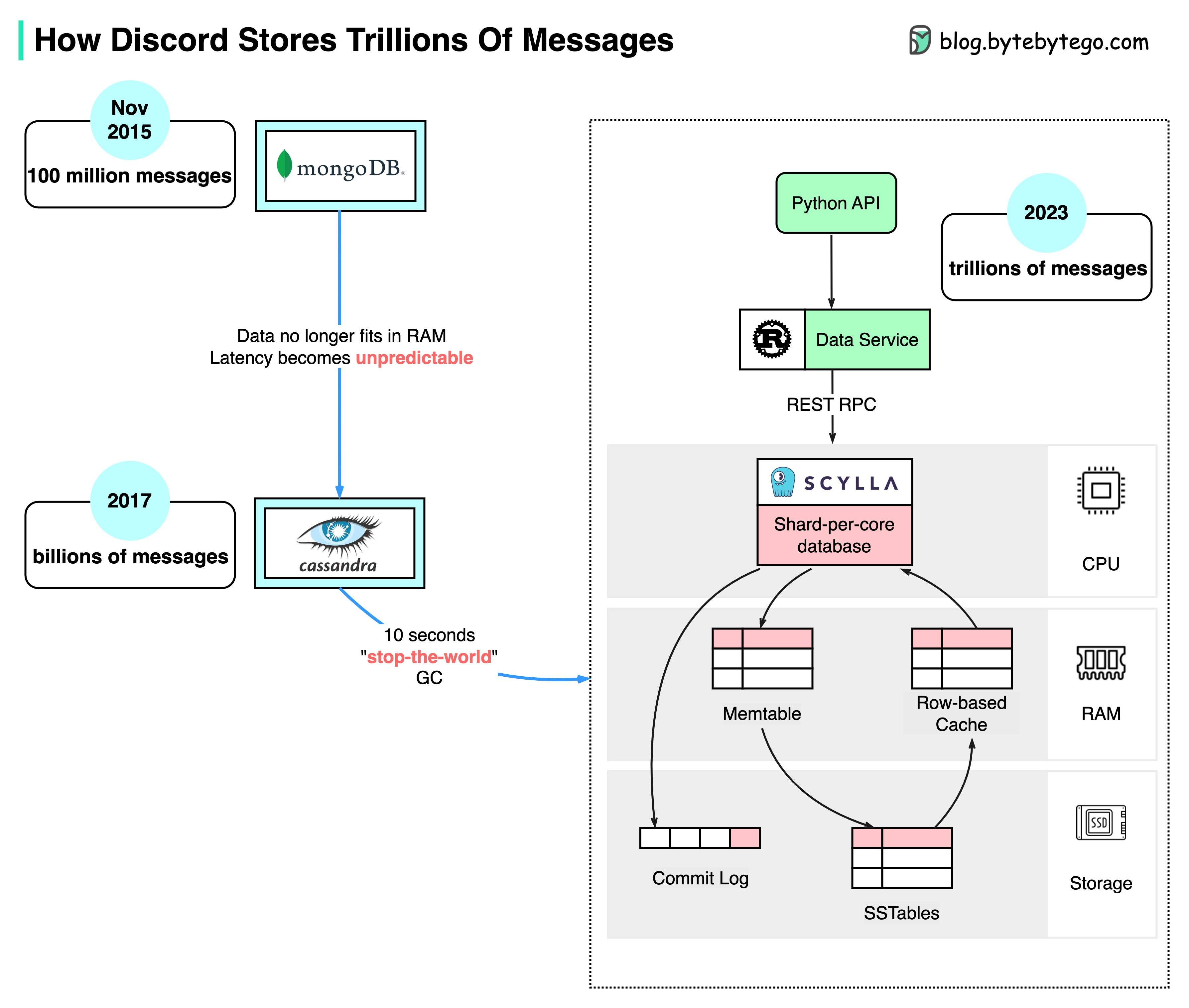

+ * [How Discord Stores Trillions of Messages](https://bytebytego.com/guides/how-discord-stores-trillions-of-messages)

+ * [Twitter Architecture 2022 vs. 2012](https://bytebytego.com/guides/twitter-architecture-2022-vs-2012)

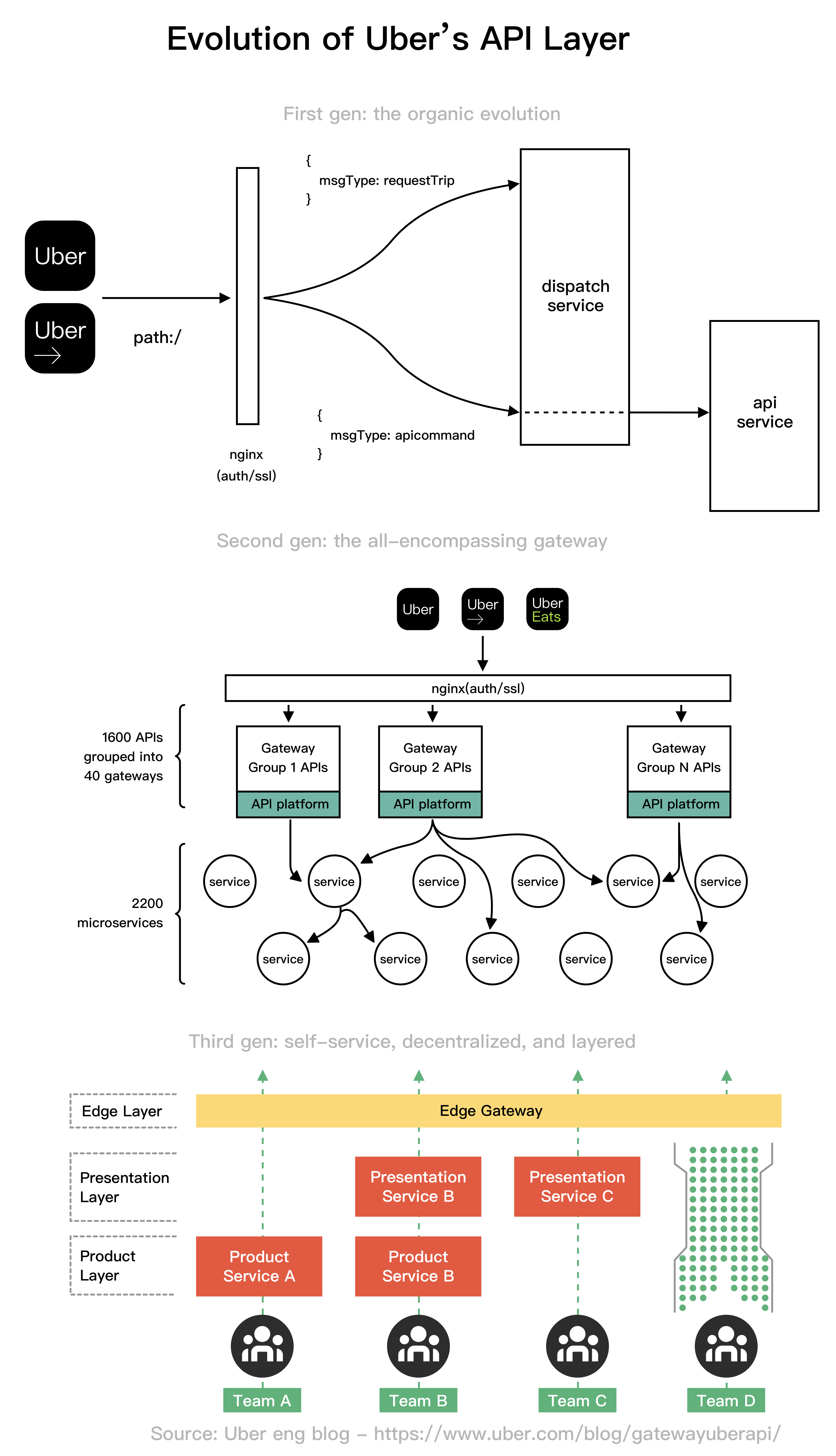

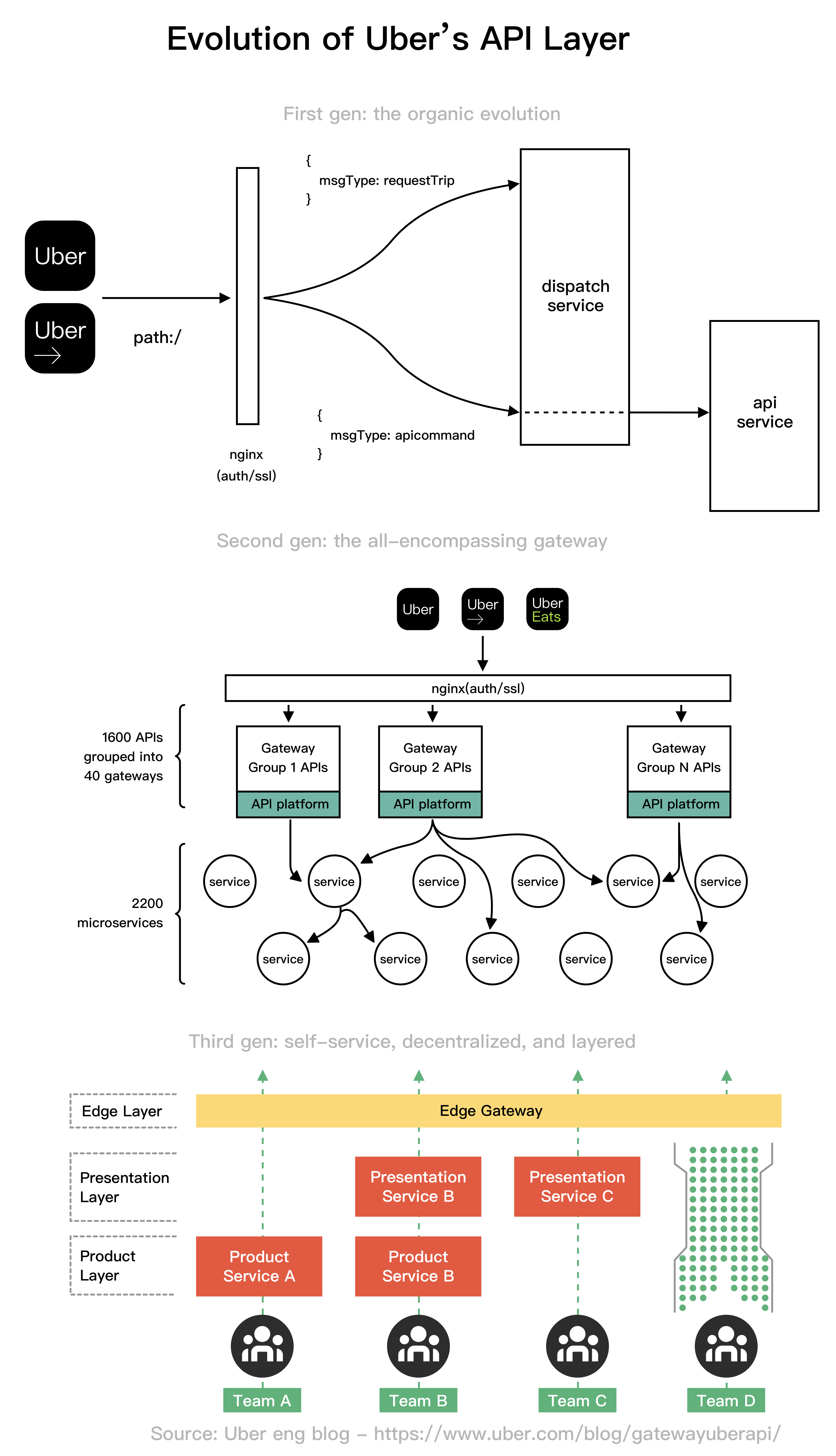

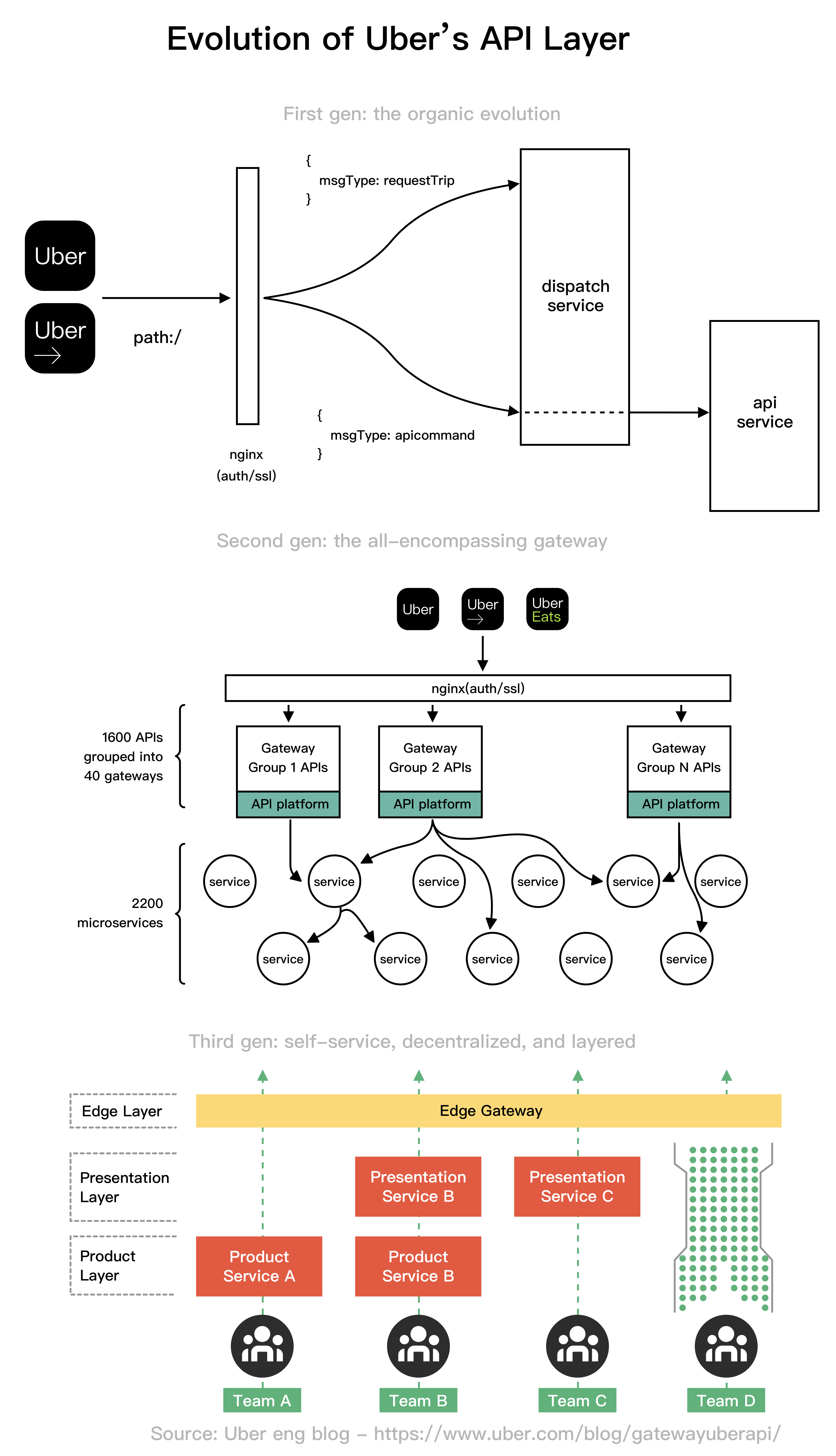

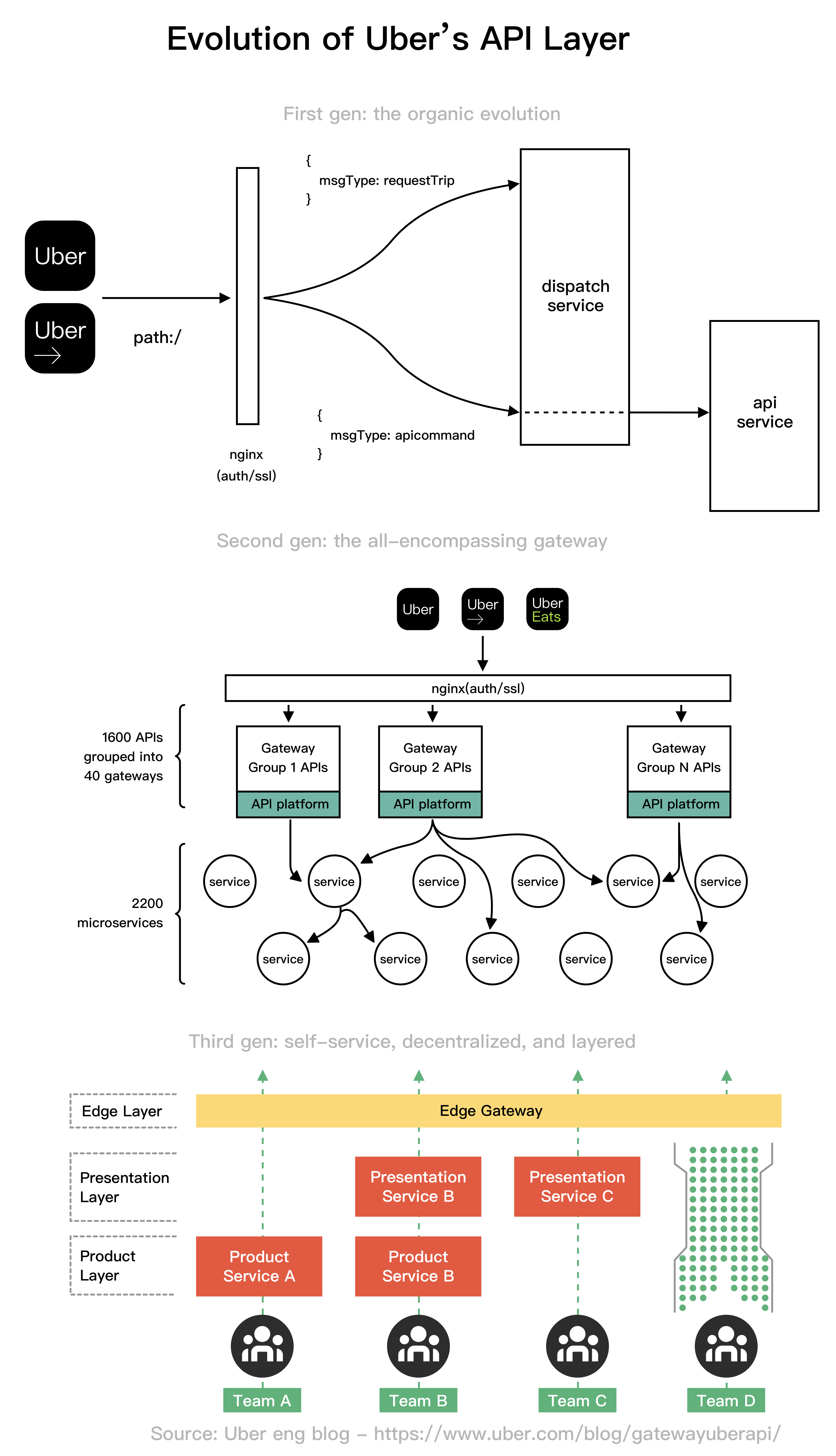

+ * [Evolution of Uber's API Layer](https://bytebytego.com/guides/evolution-of-uber's-api-layer)

+ * [Netflix's Tech Stack](https://bytebytego.com/guides/netflixs-tech-stack)

+* [AI and Machine Learning](https://bytebytego.com/guides/ai-machine-learning)

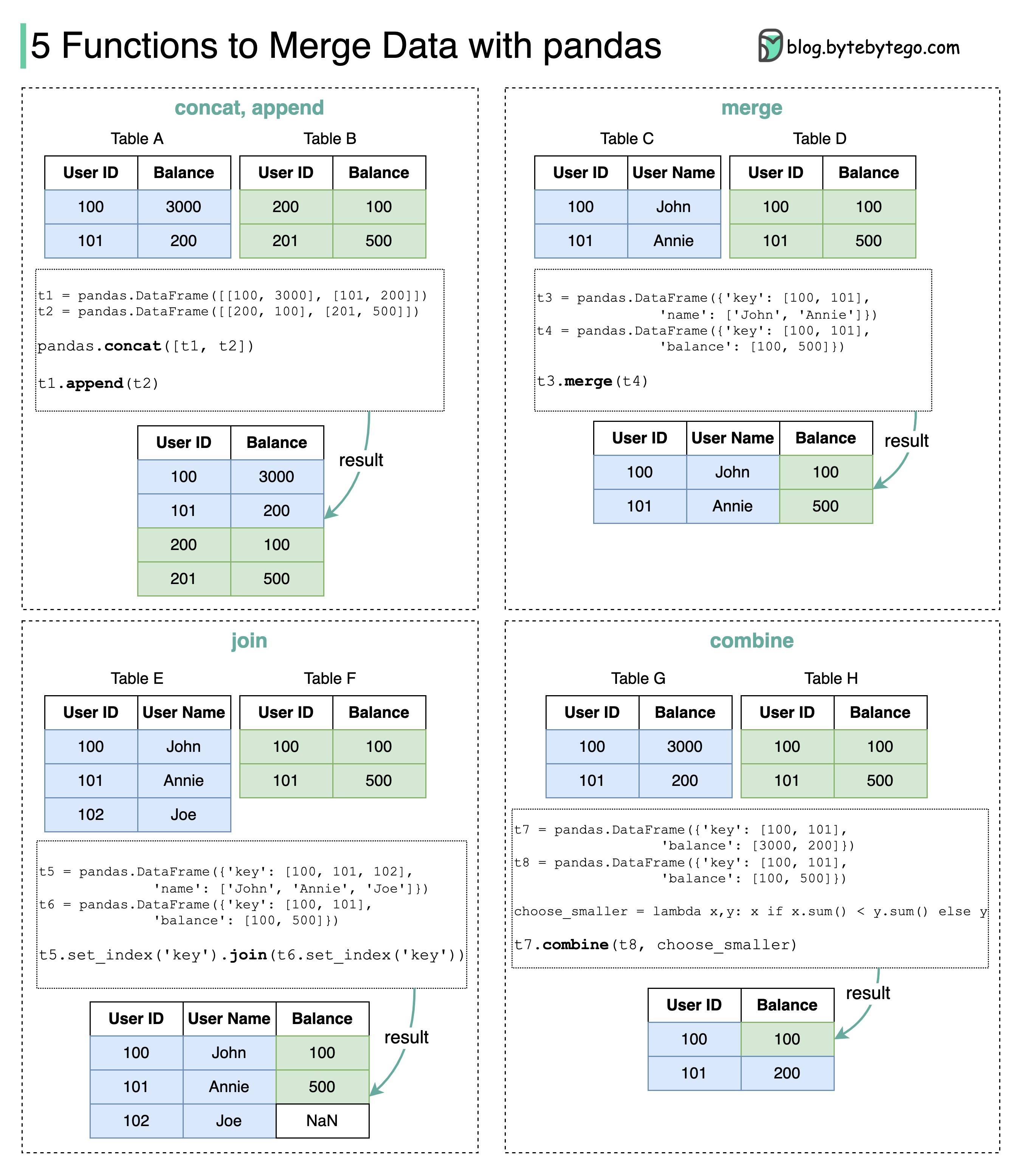

+ * [5 Functions to Merge Data with Pandas](https://bytebytego.com/guides/5-functions-to-merge-data-with-pandas)

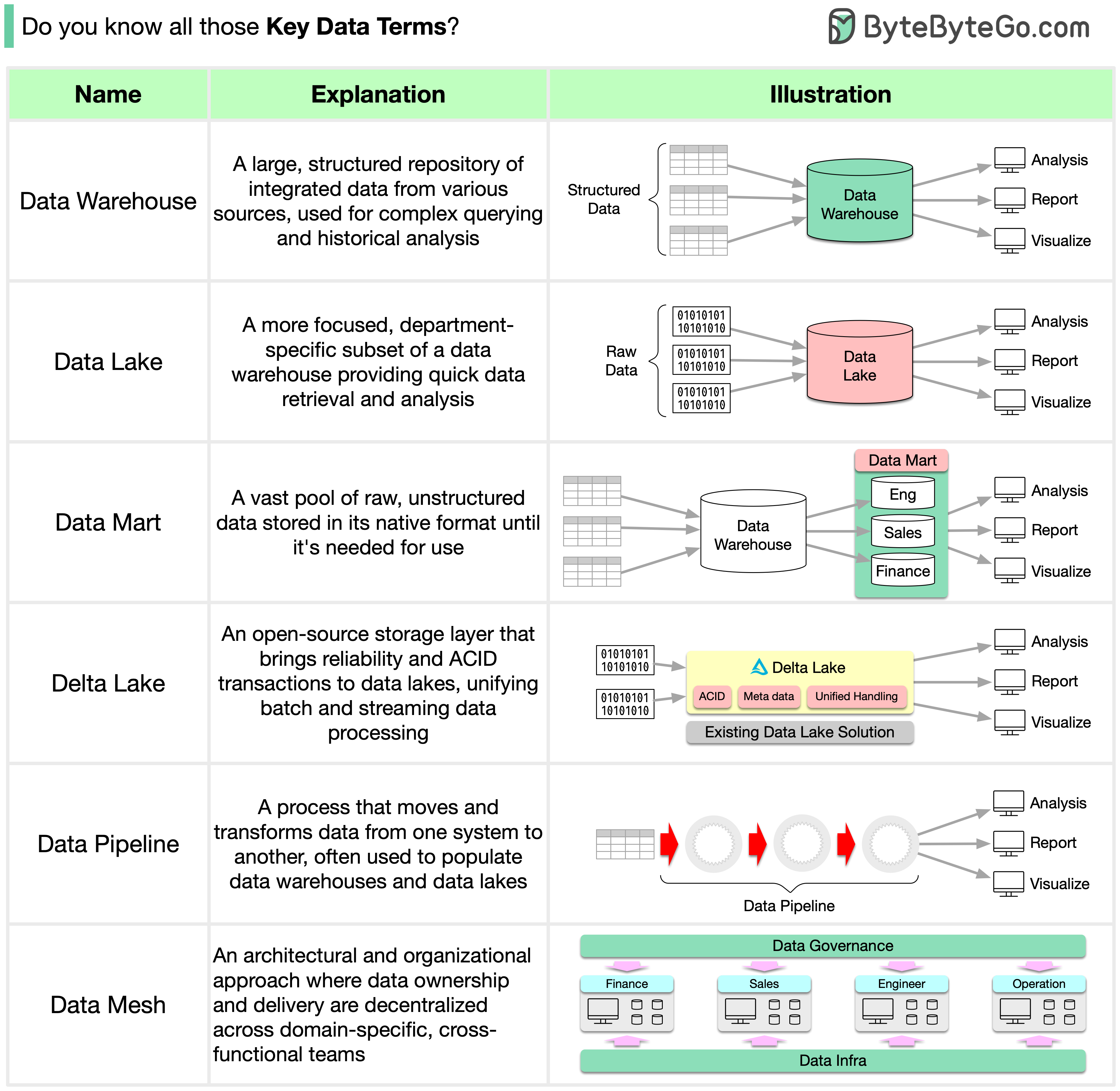

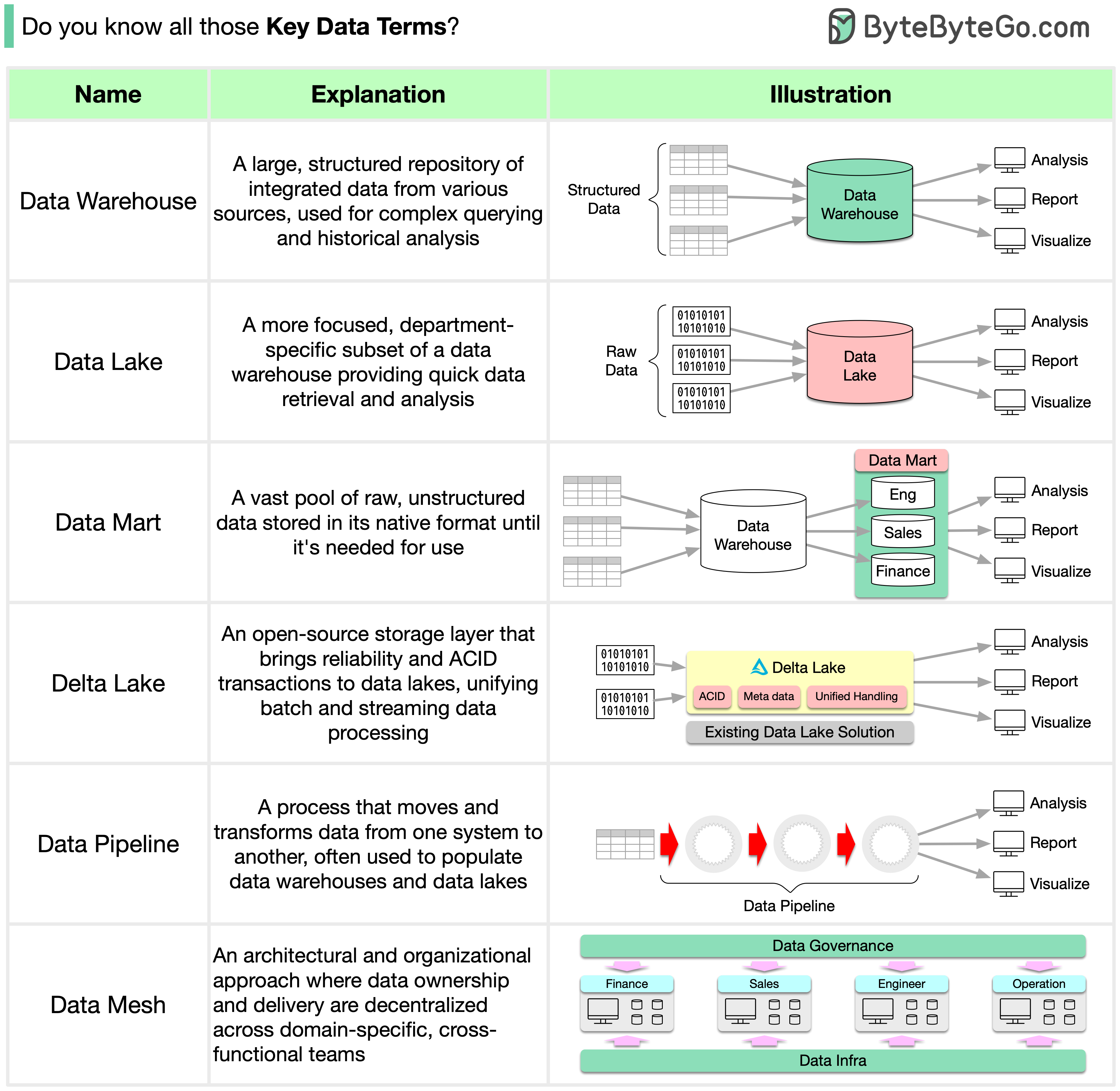

+ * [Key Data Terms](https://bytebytego.com/guides/key-data-terms)

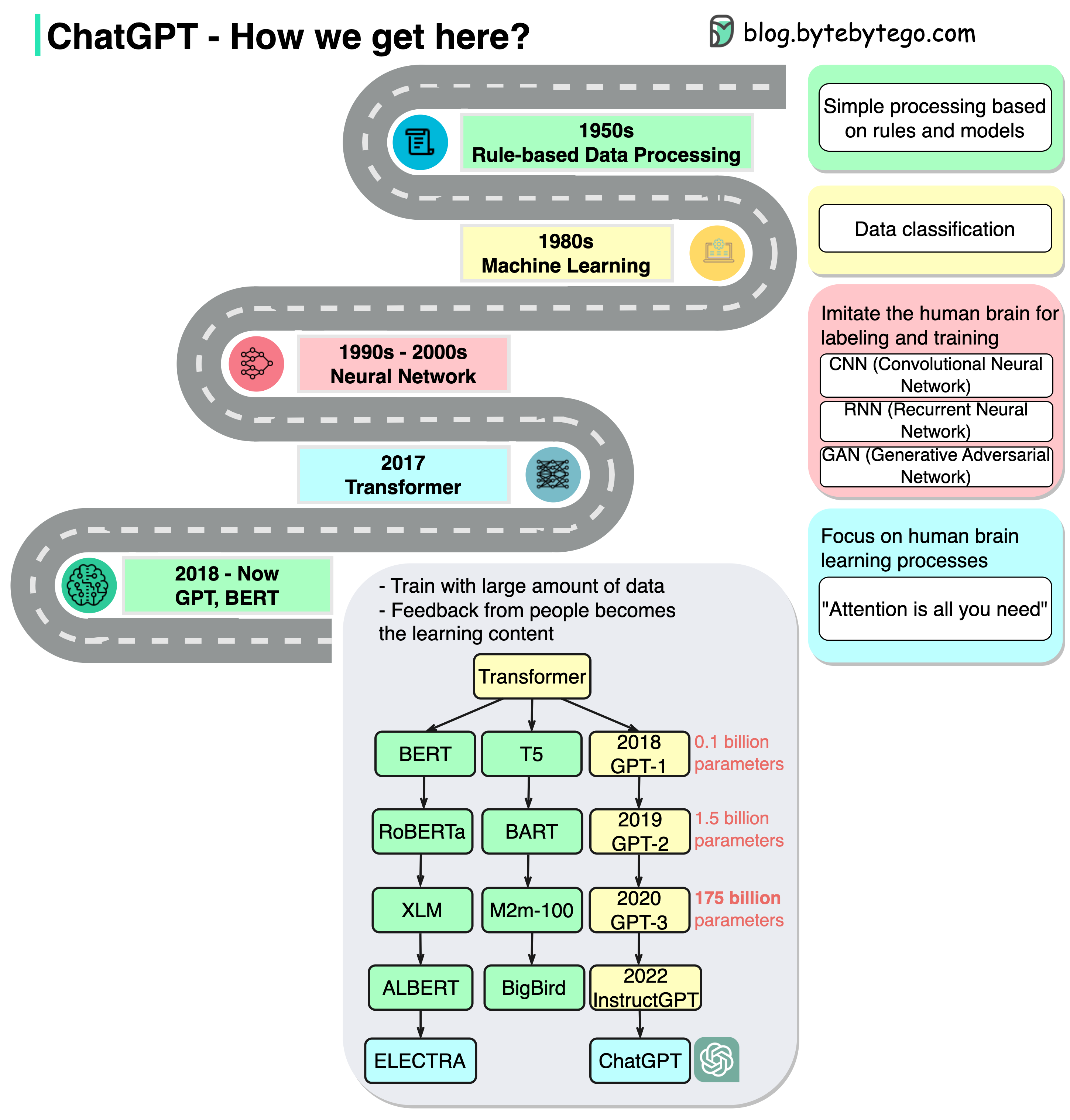

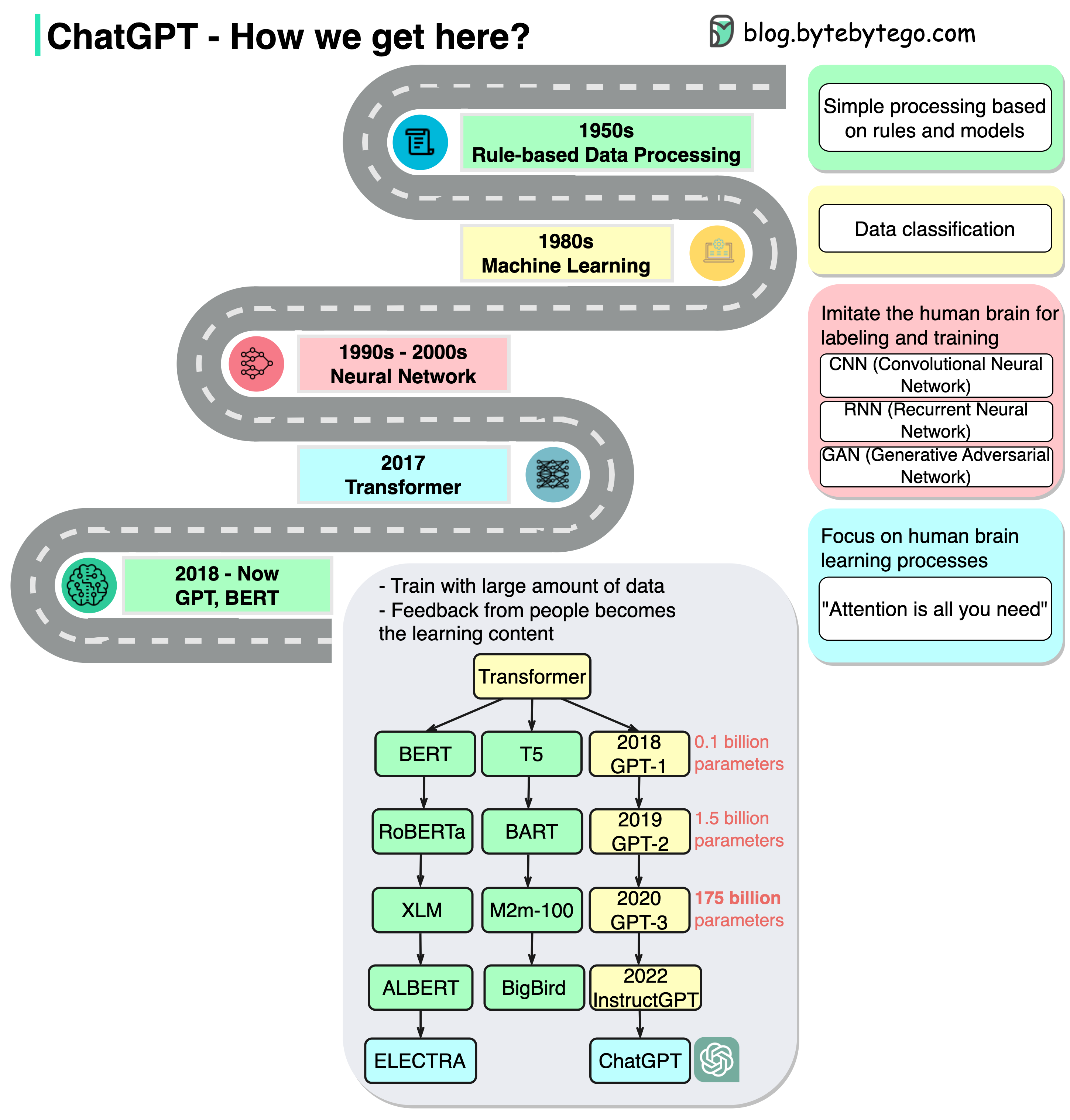

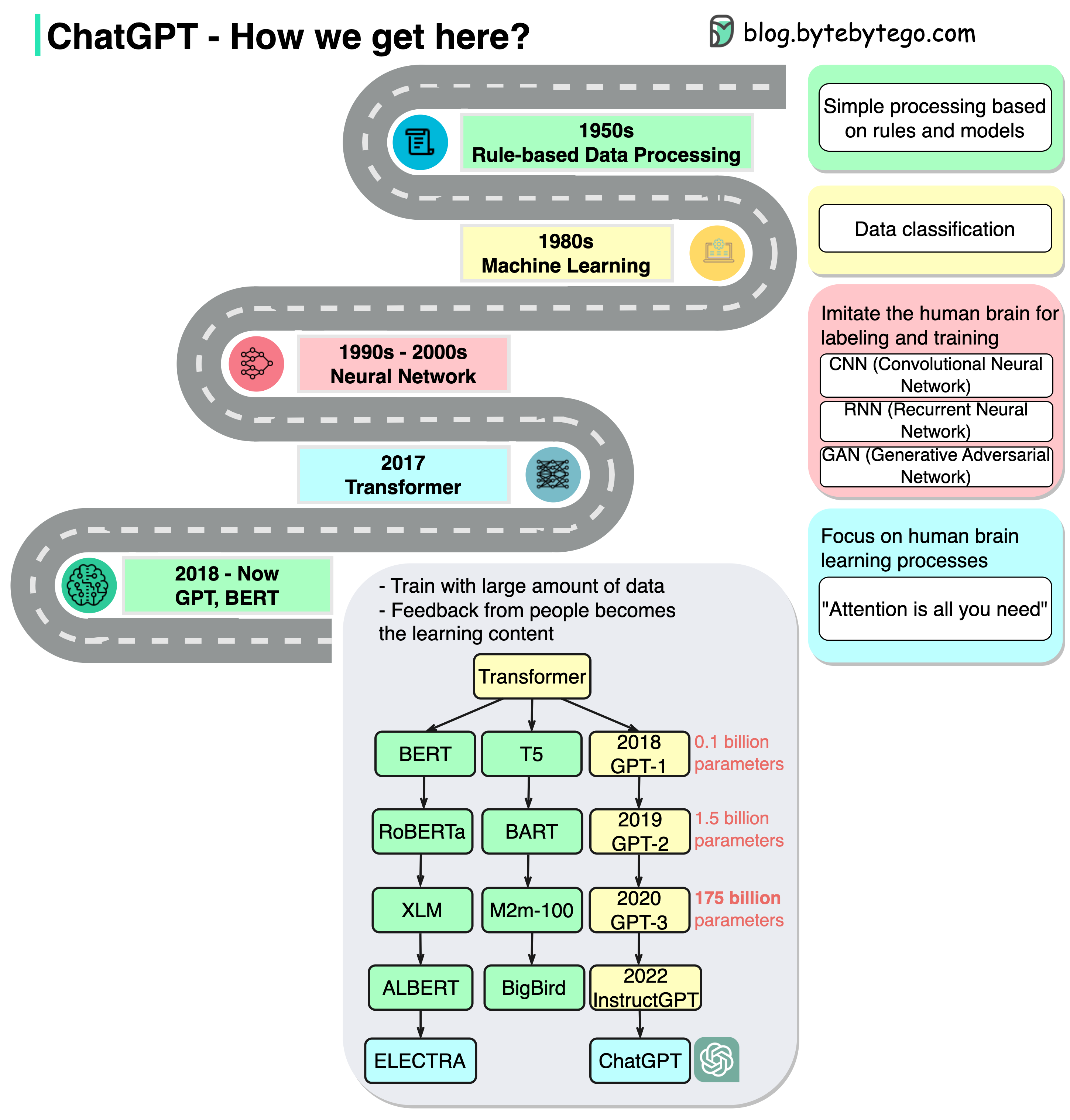

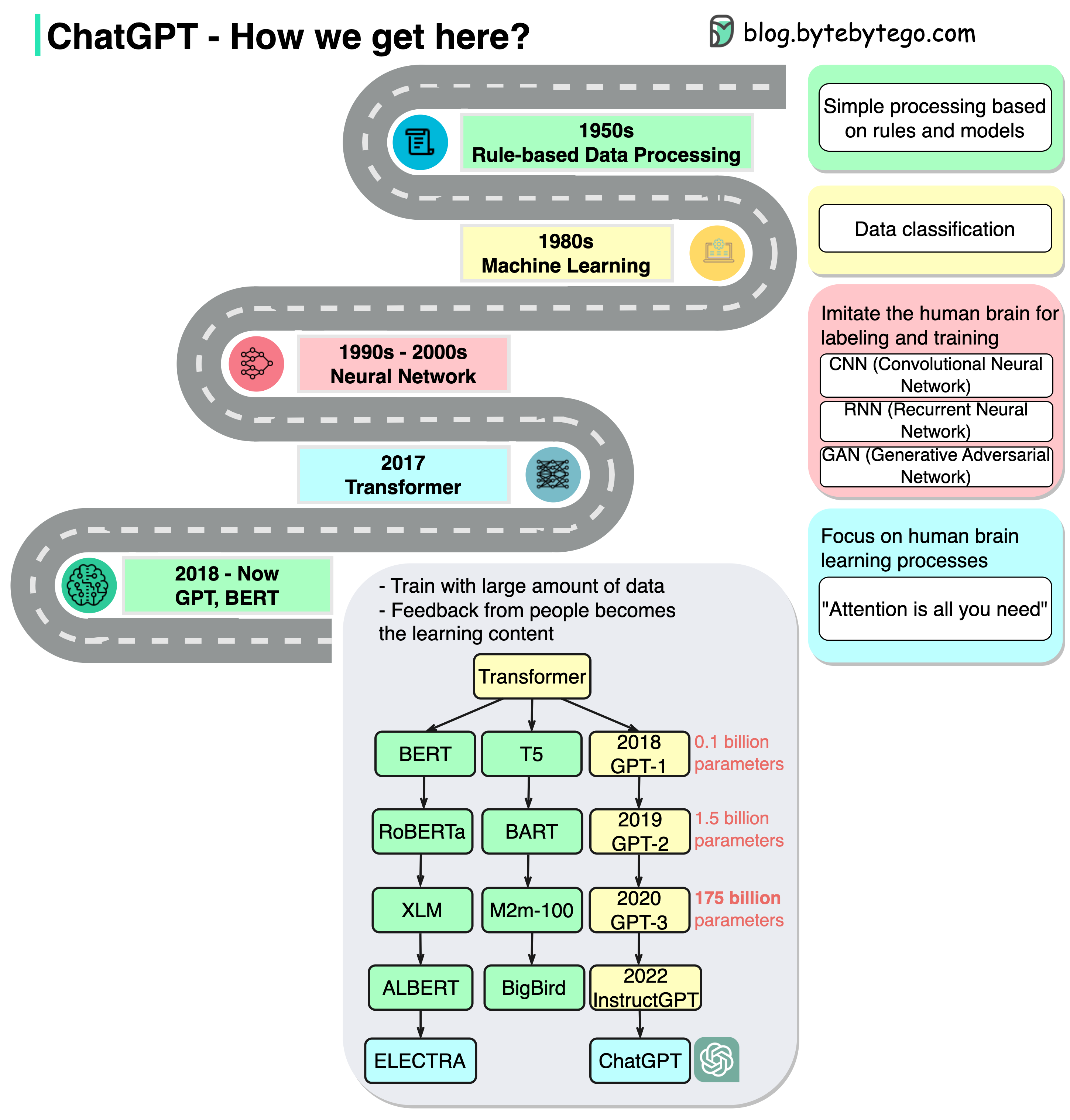

+ * [ChatGPT Timeline](https://bytebytego.com/guides/chatgpt-timeline)

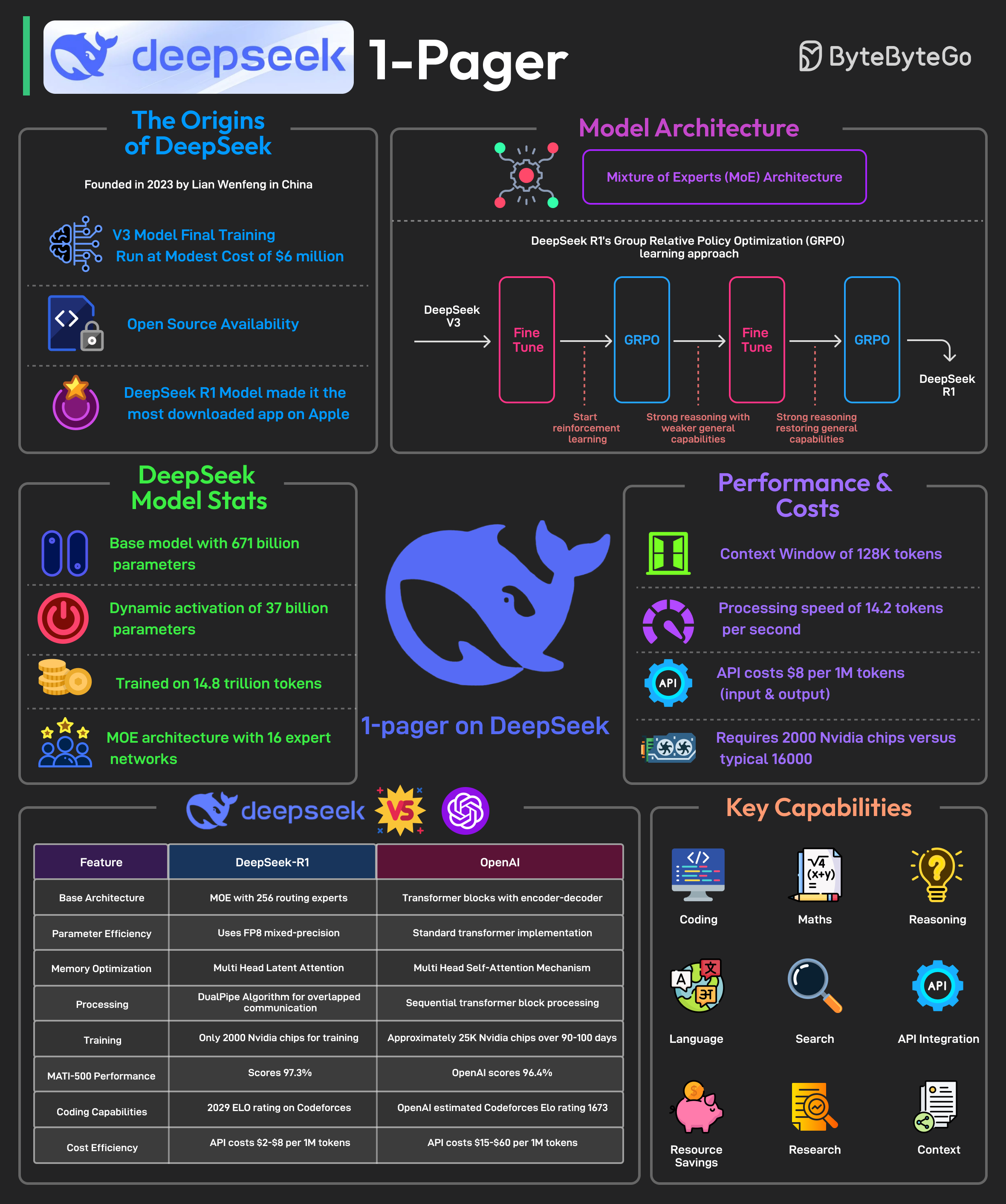

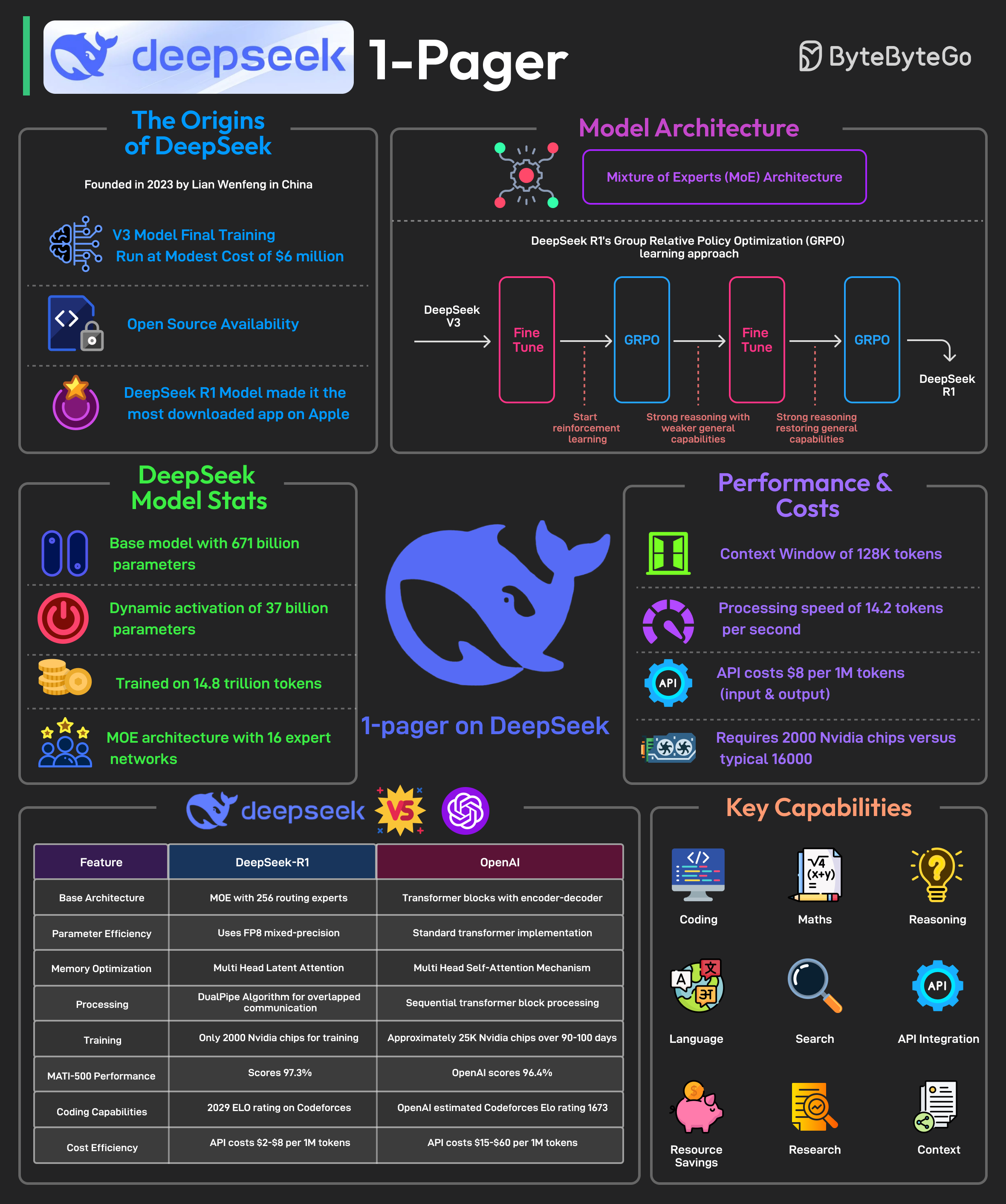

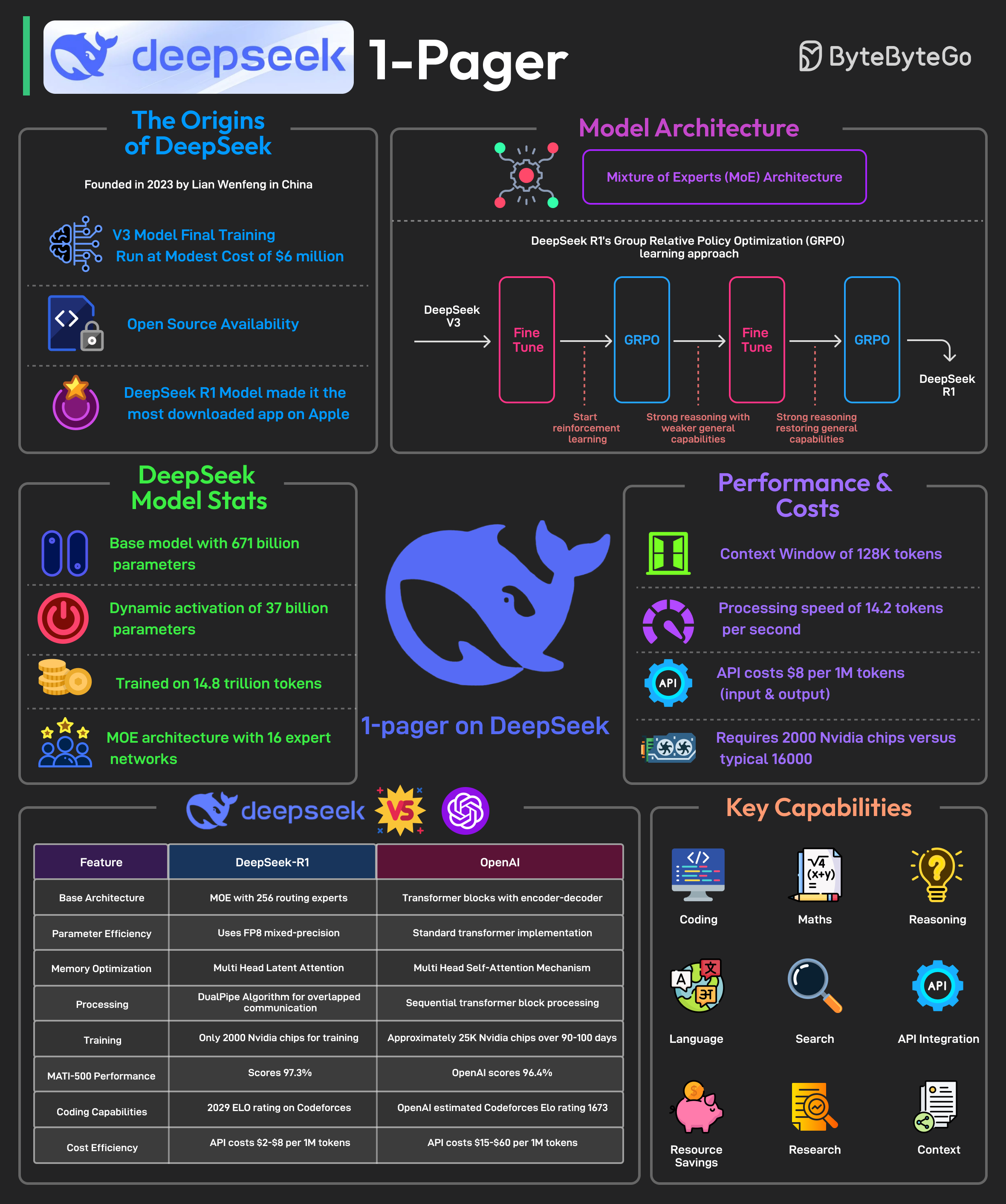

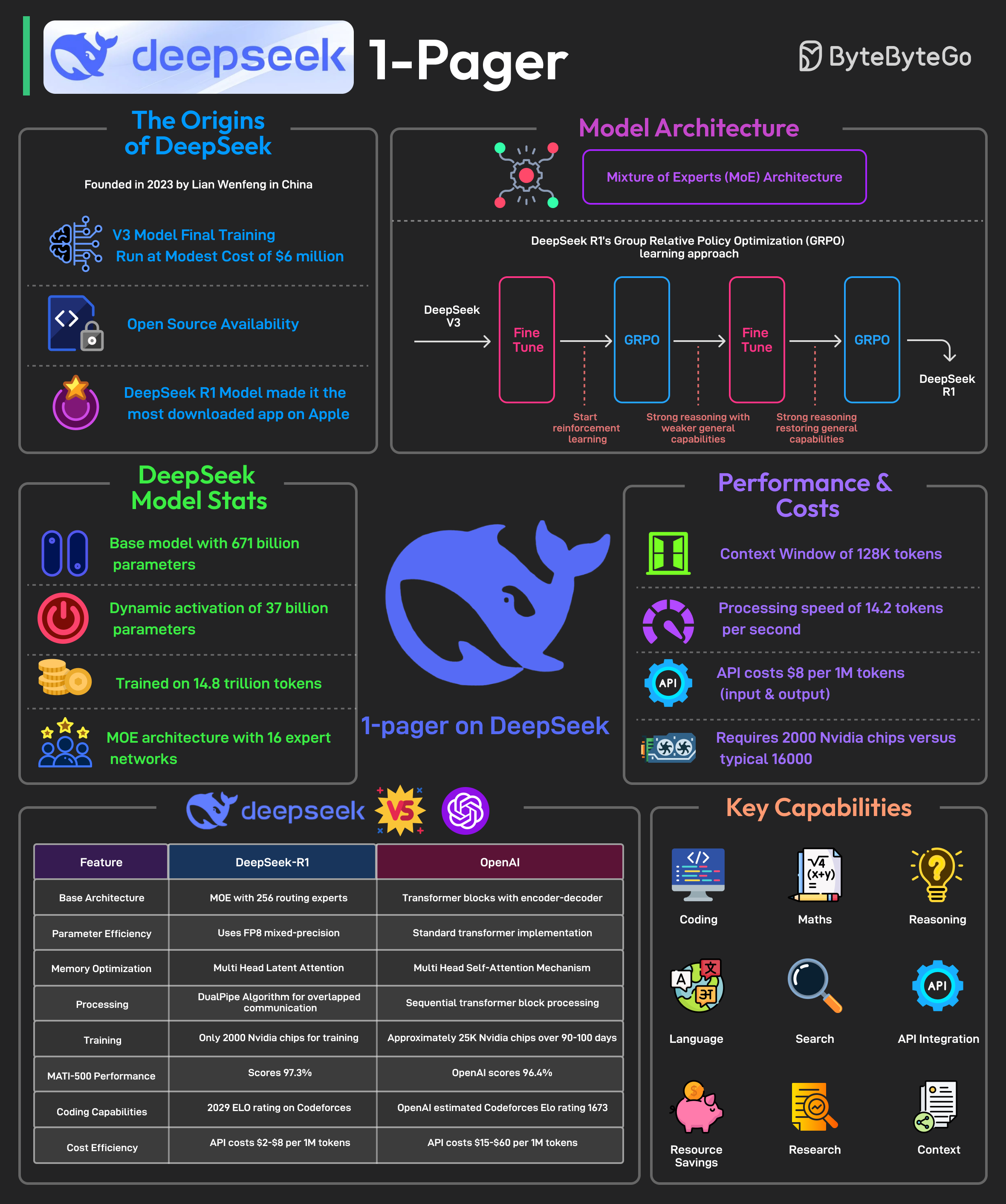

+ * [DeepSeek 1-Pager](https://bytebytego.com/guides/deepseek-1-pager)

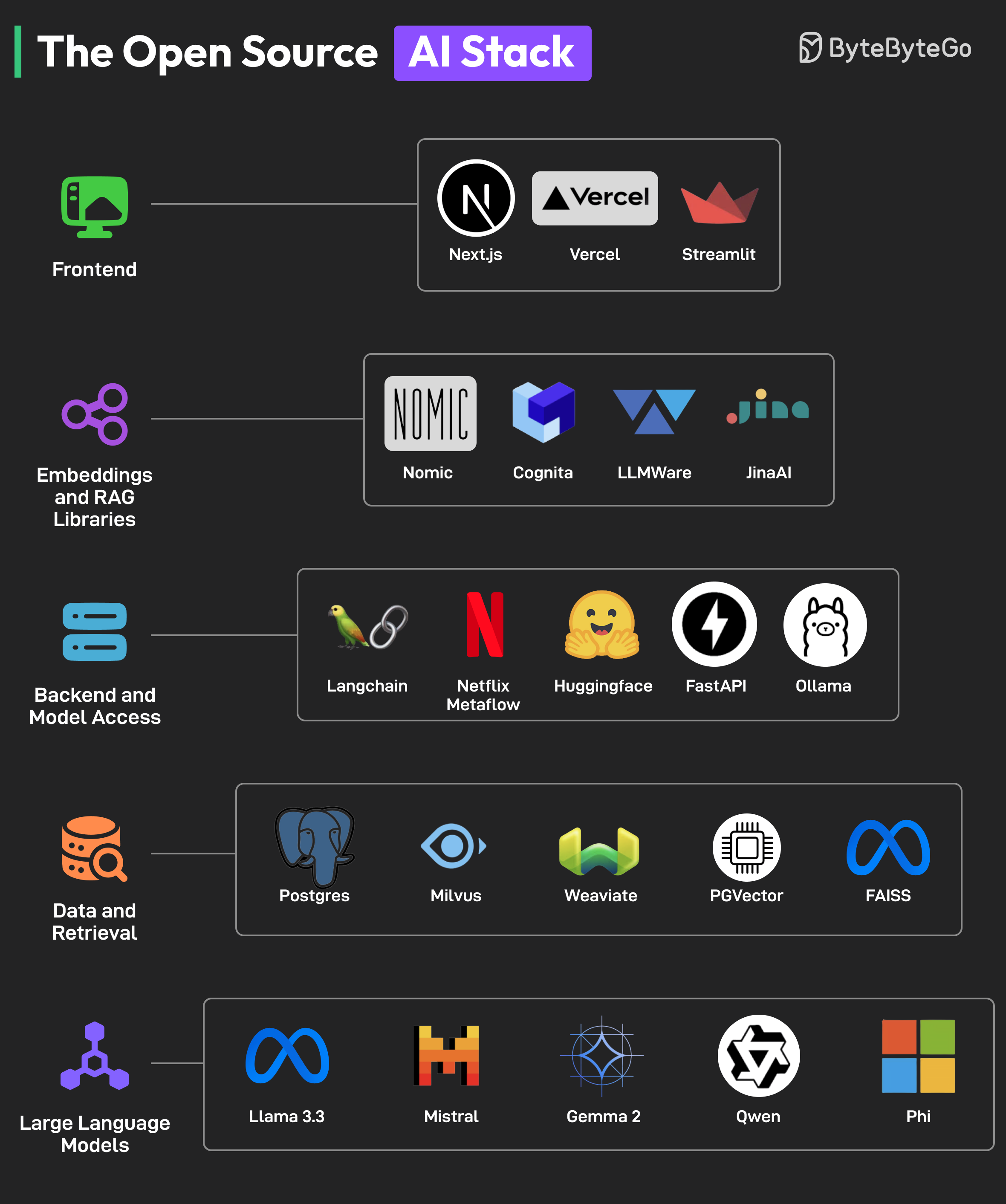

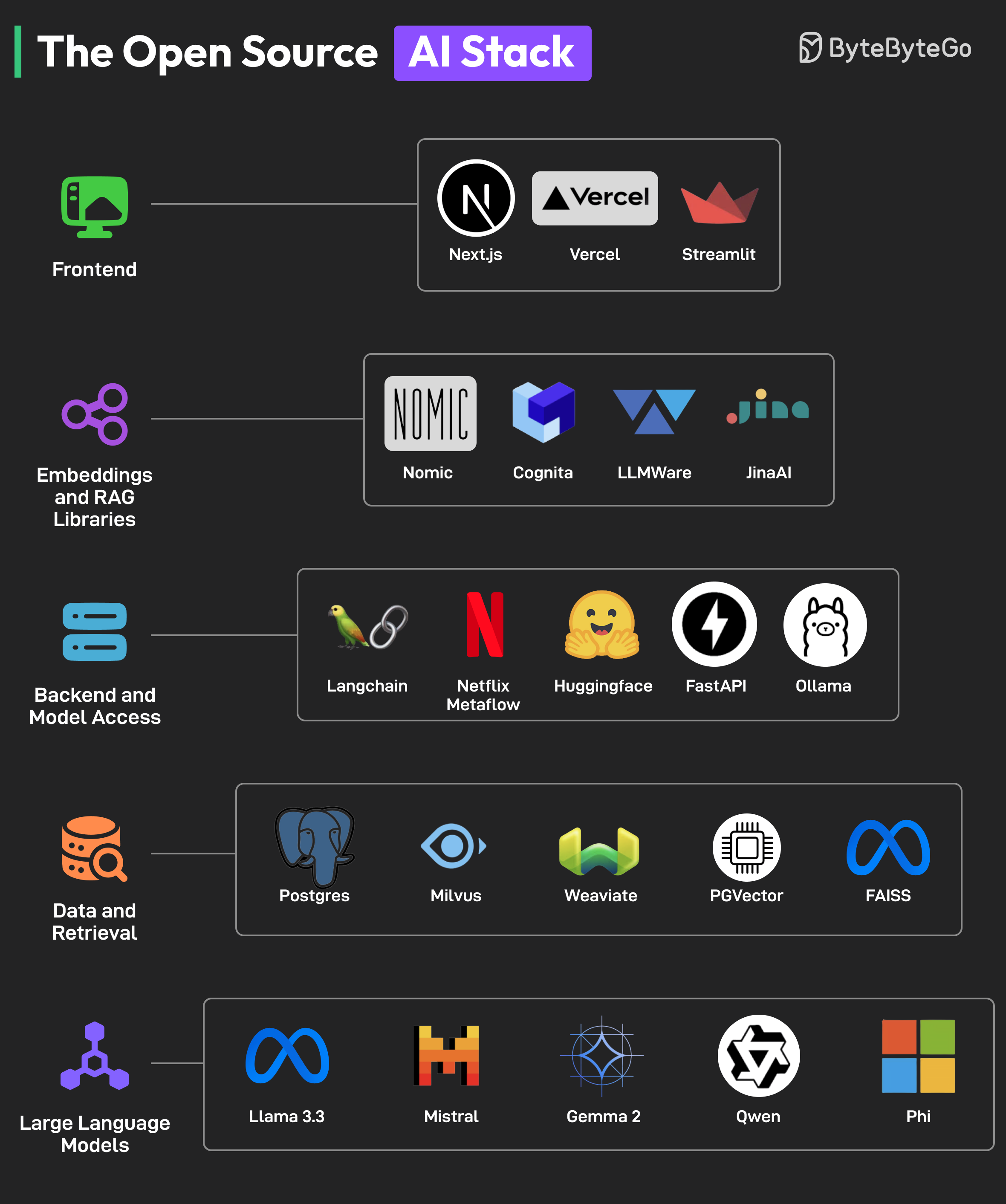

+ * [The Open Source AI Stack](https://bytebytego.com/guides/the-open-source-ai-stack)

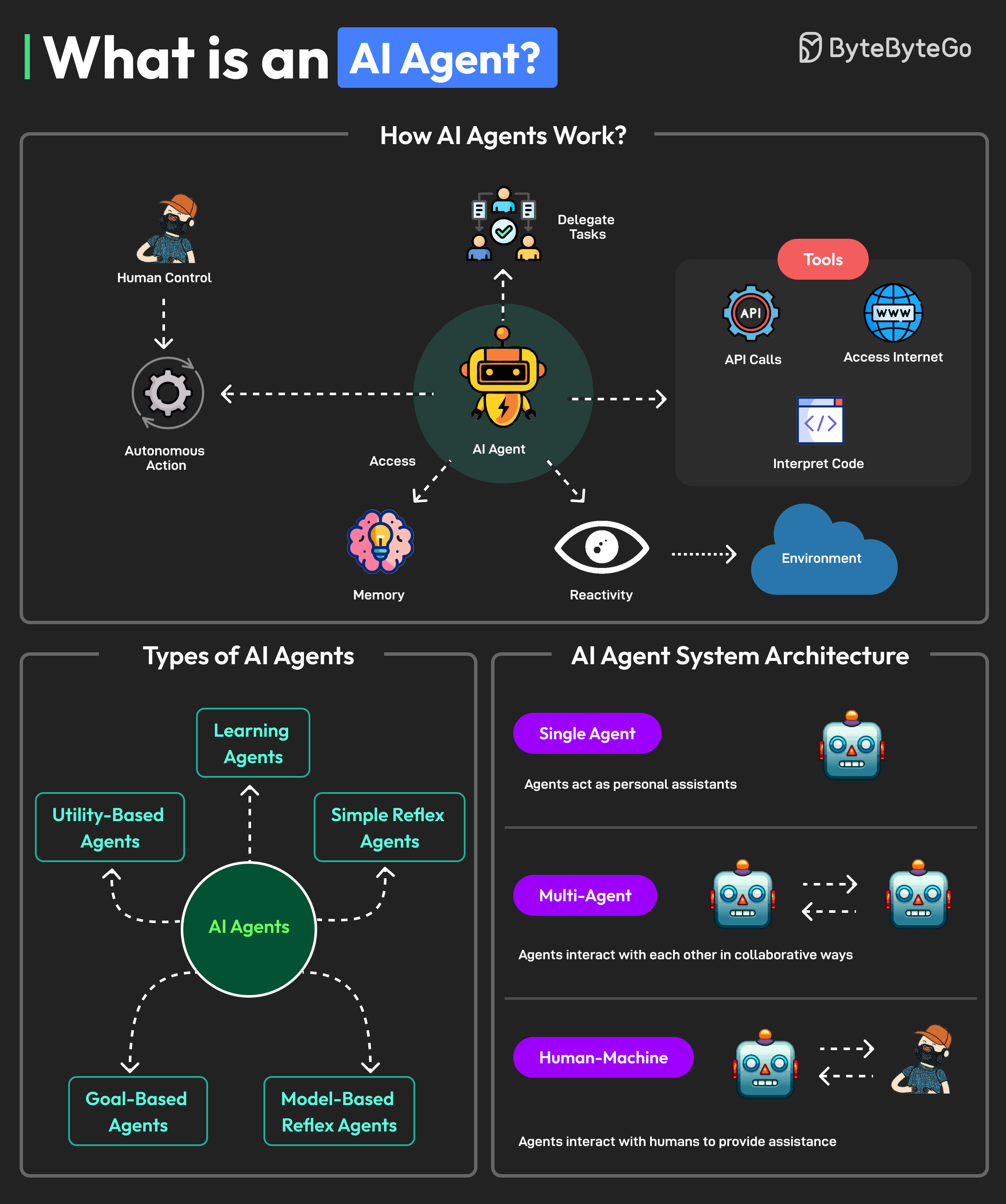

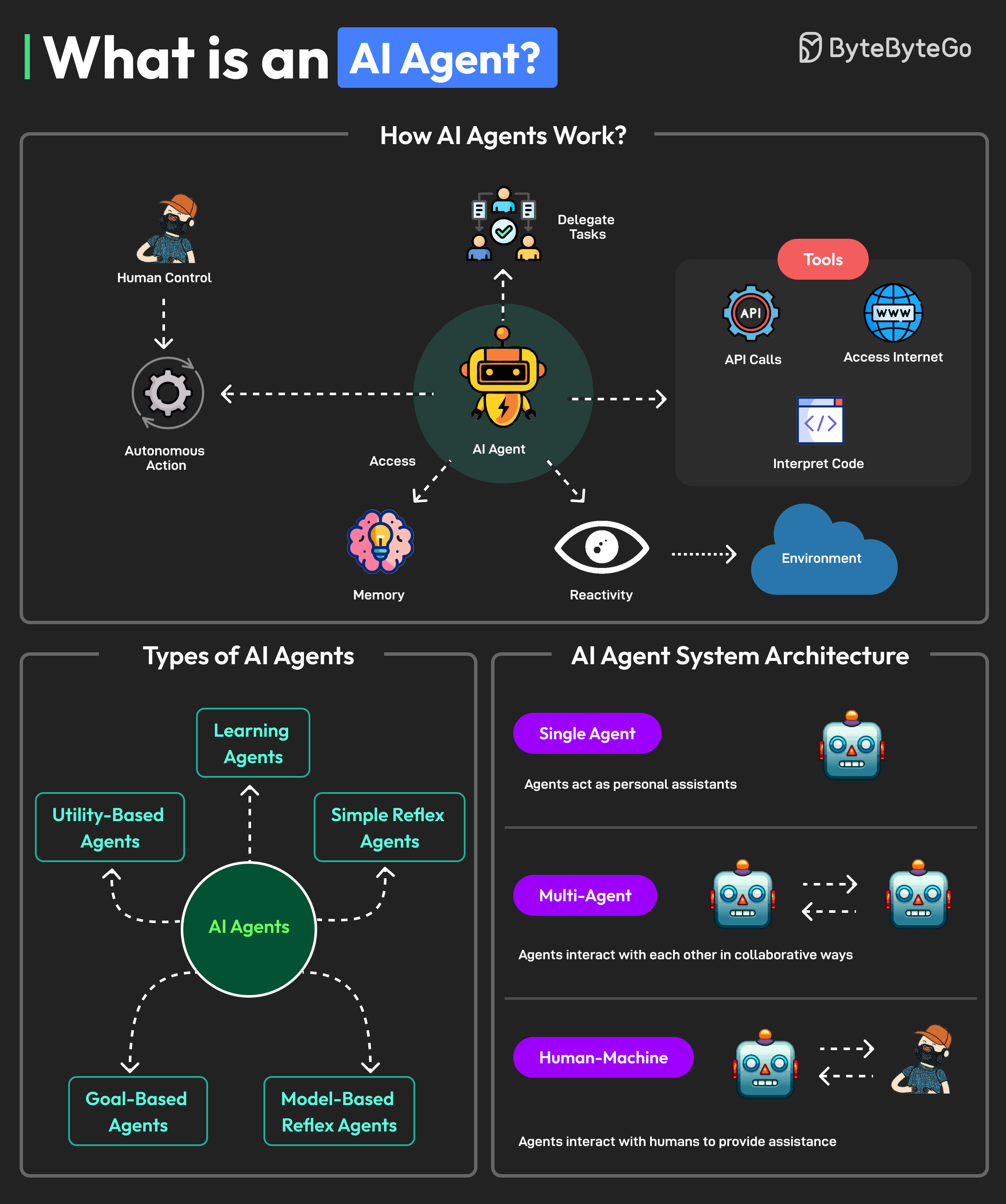

+ * [What is an AI Agent?](https://bytebytego.com/guides/what-is-an-ai-agent)

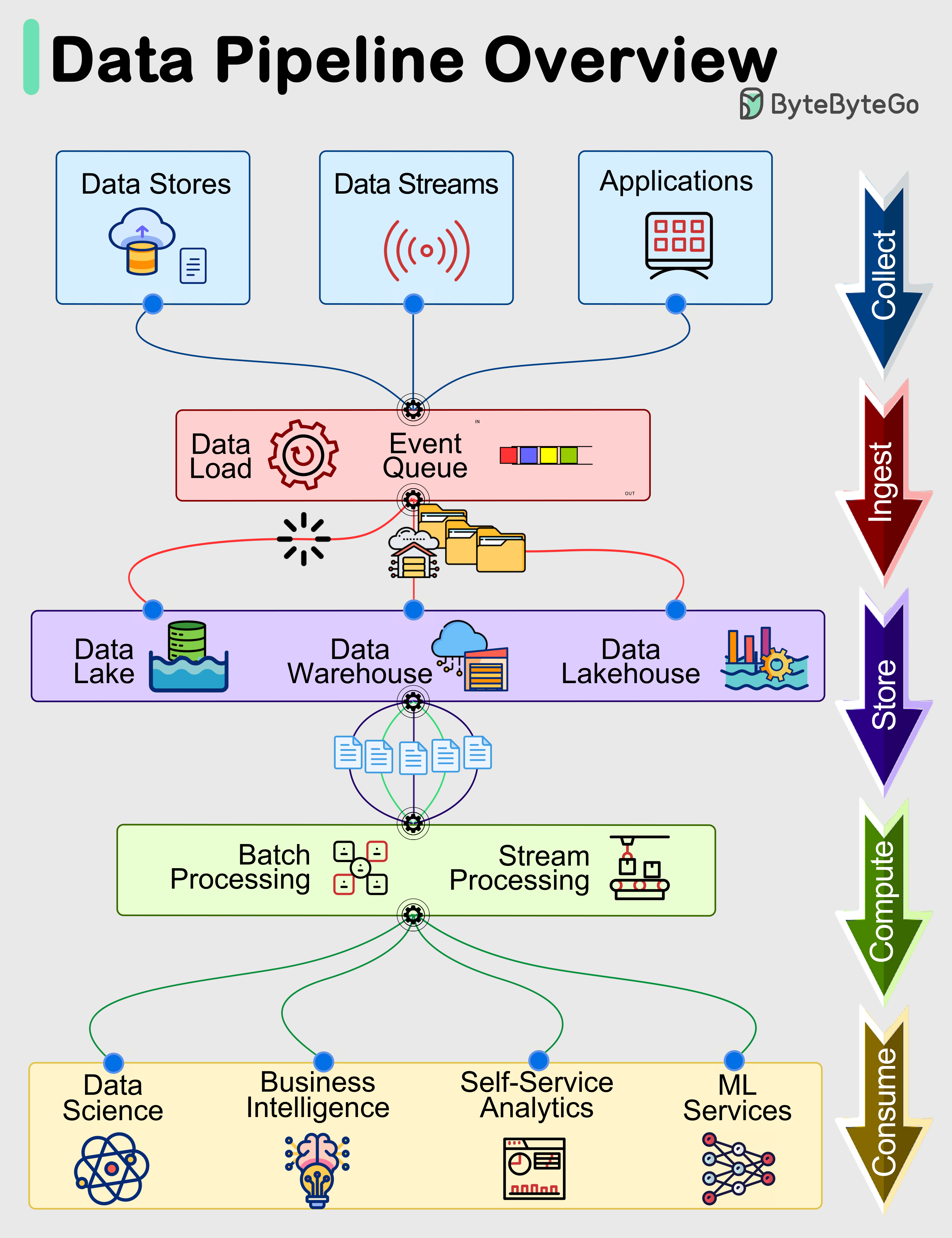

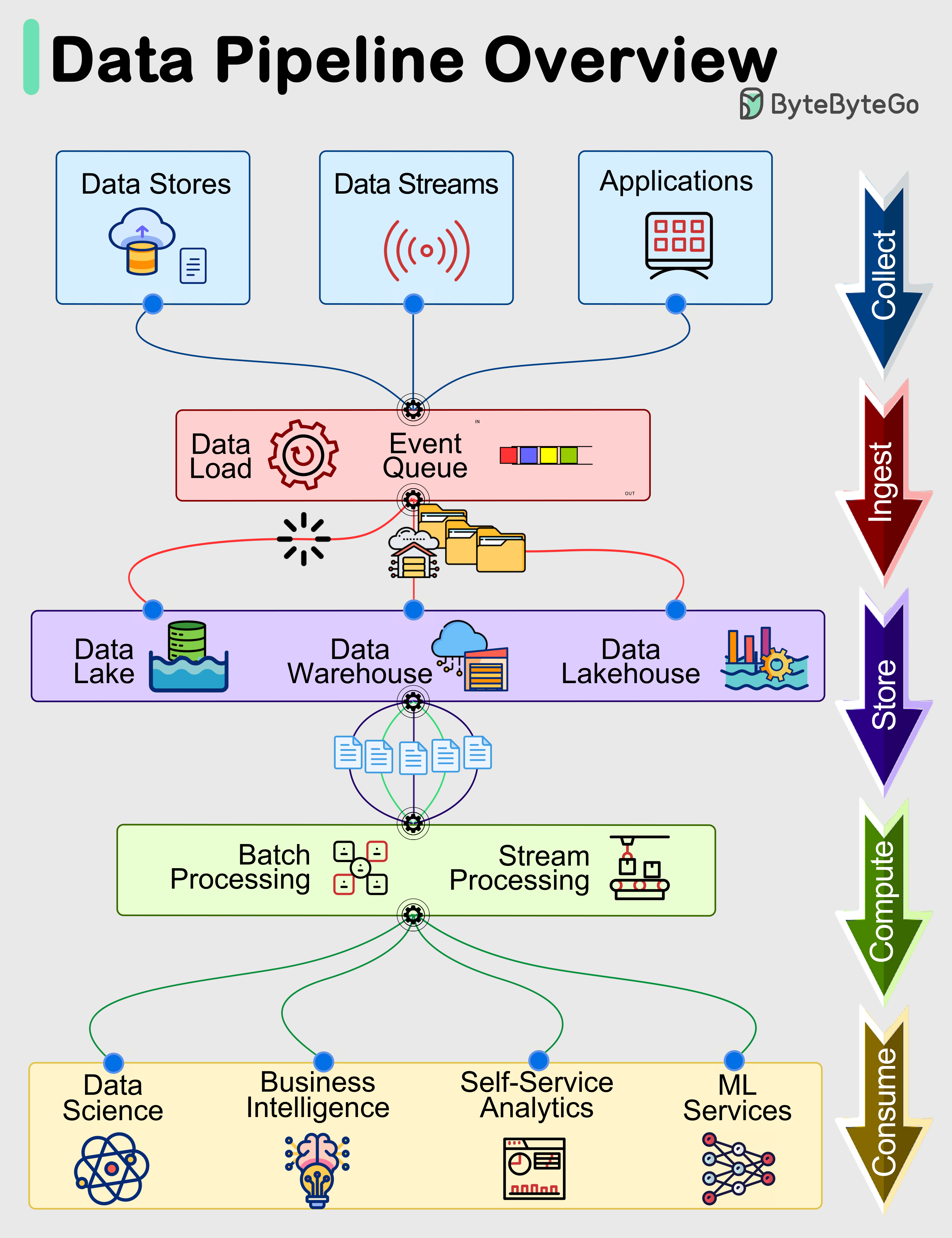

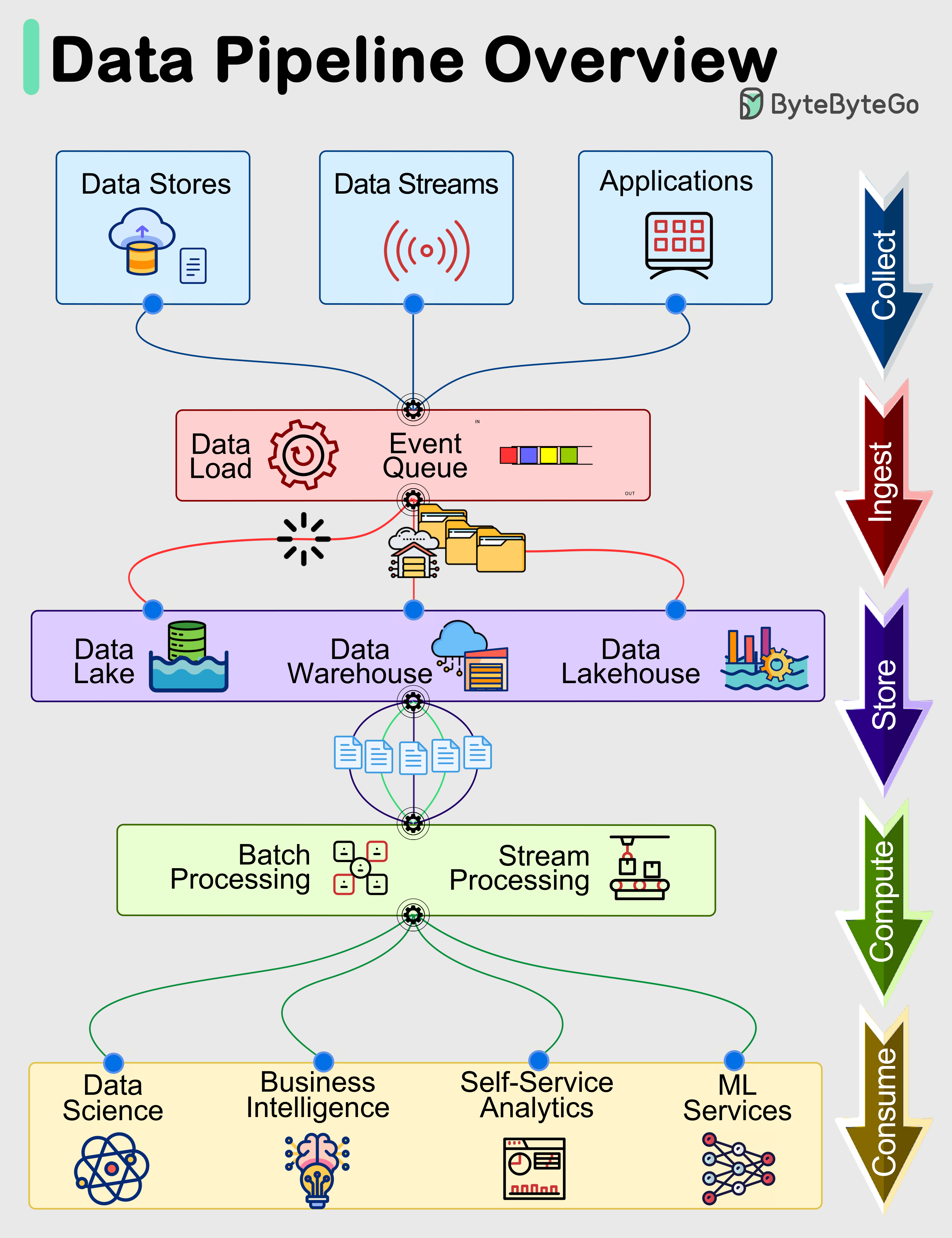

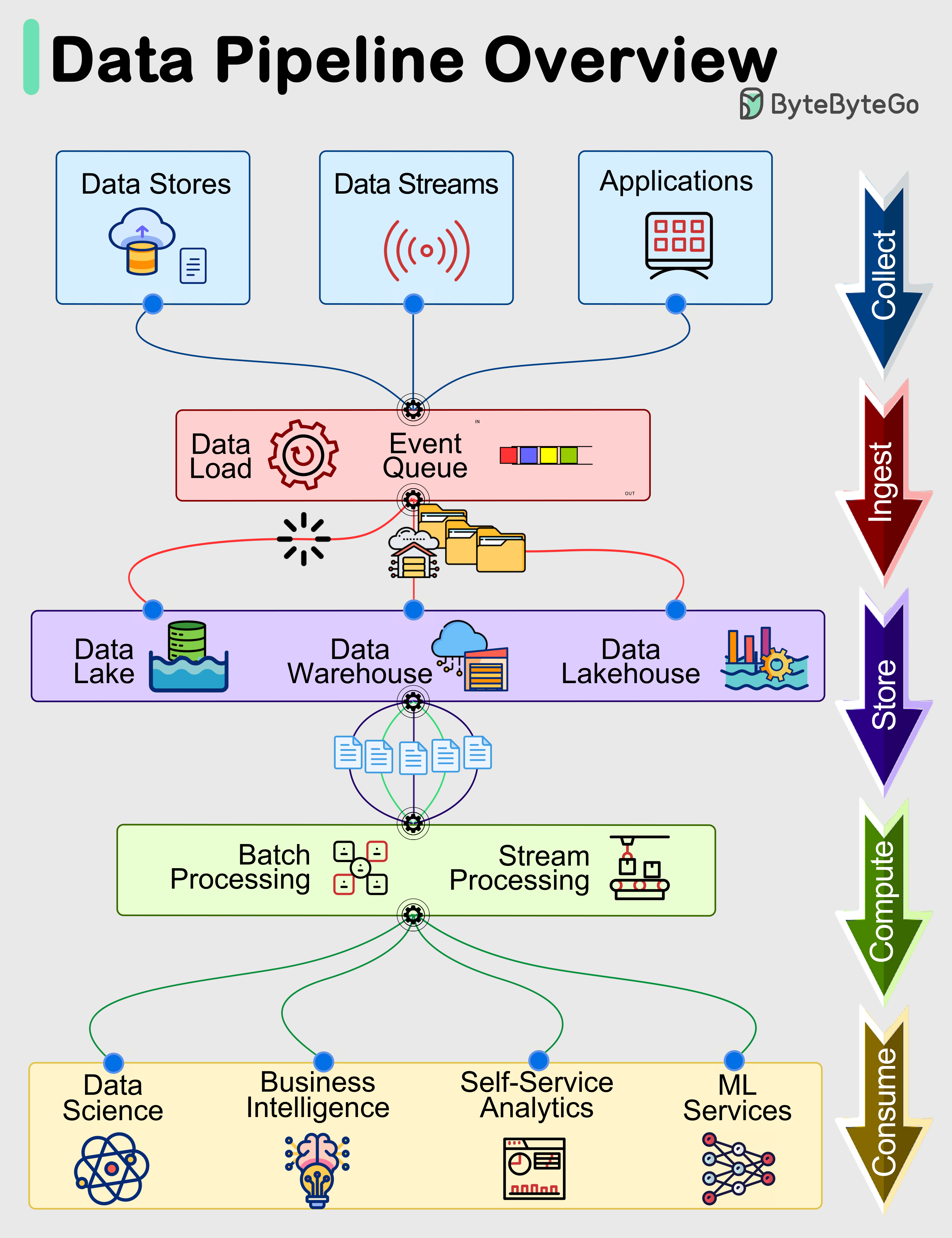

+ * [Data Pipelines Overview](https://bytebytego.com/guides/data-pipelines-overview)

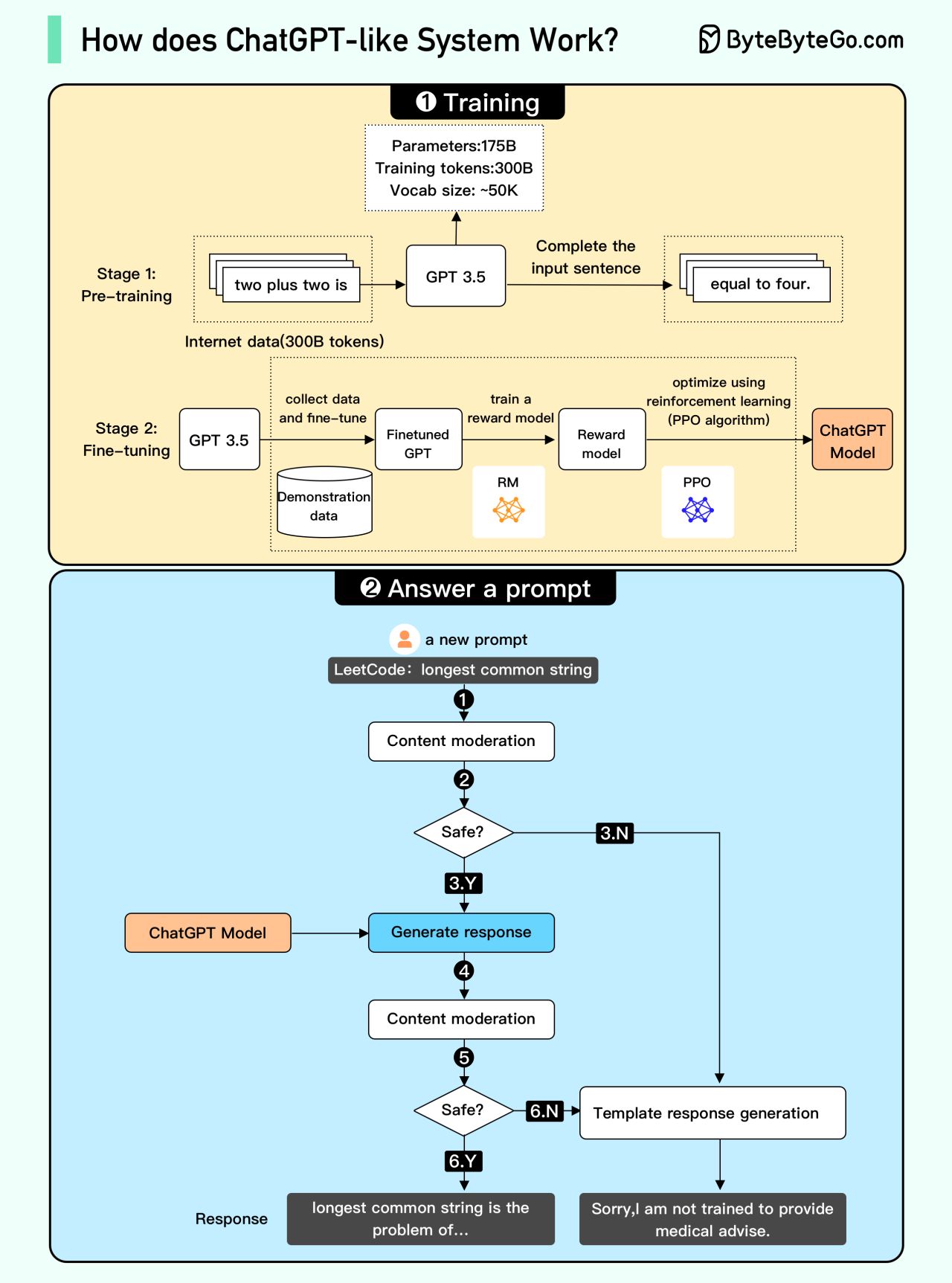

+ * [How does ChatGPT work?](https://bytebytego.com/guides/how-does-chatgpt-work)

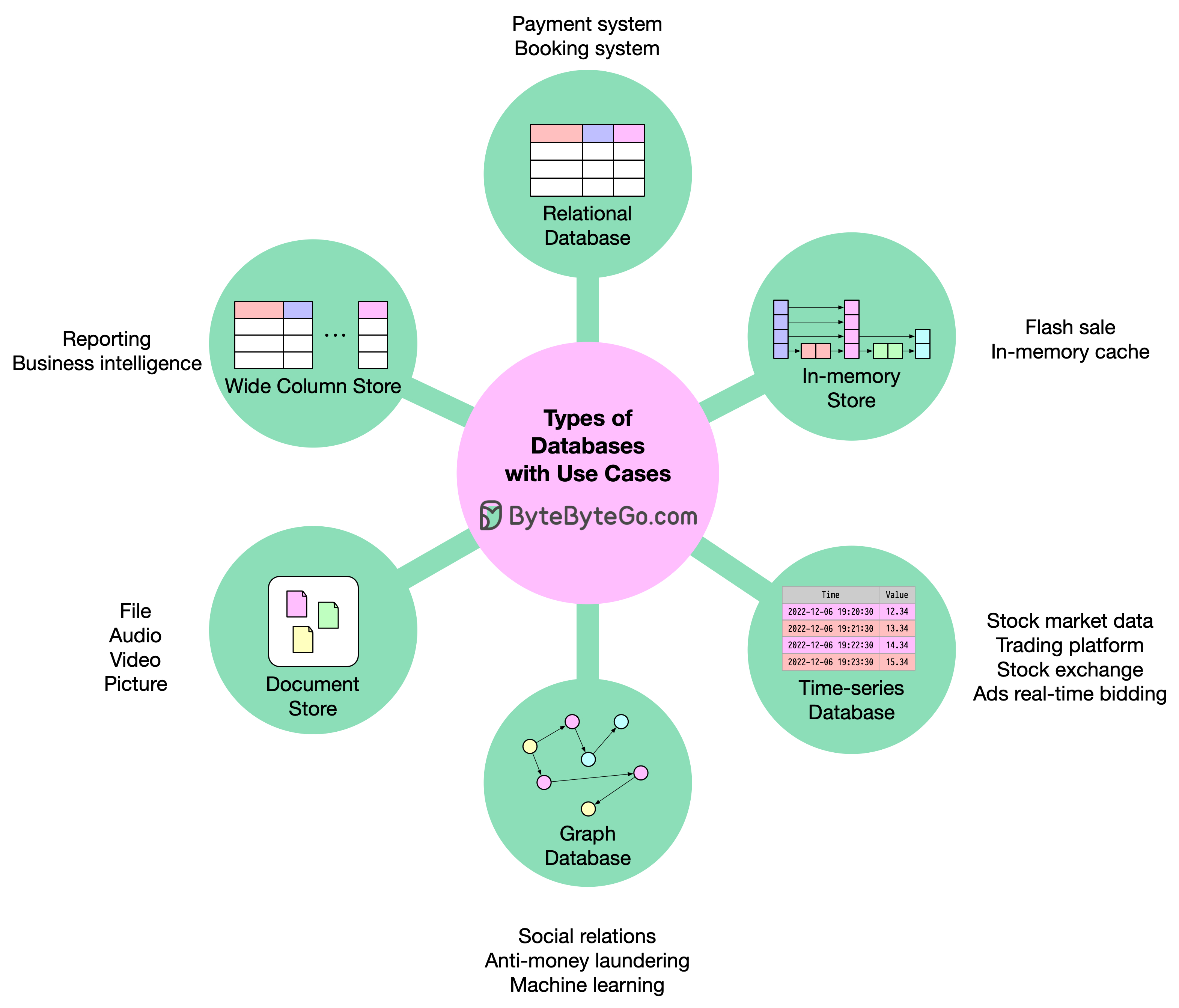

+* [Database and Storage](https://bytebytego.com/guides/database-and-storage)

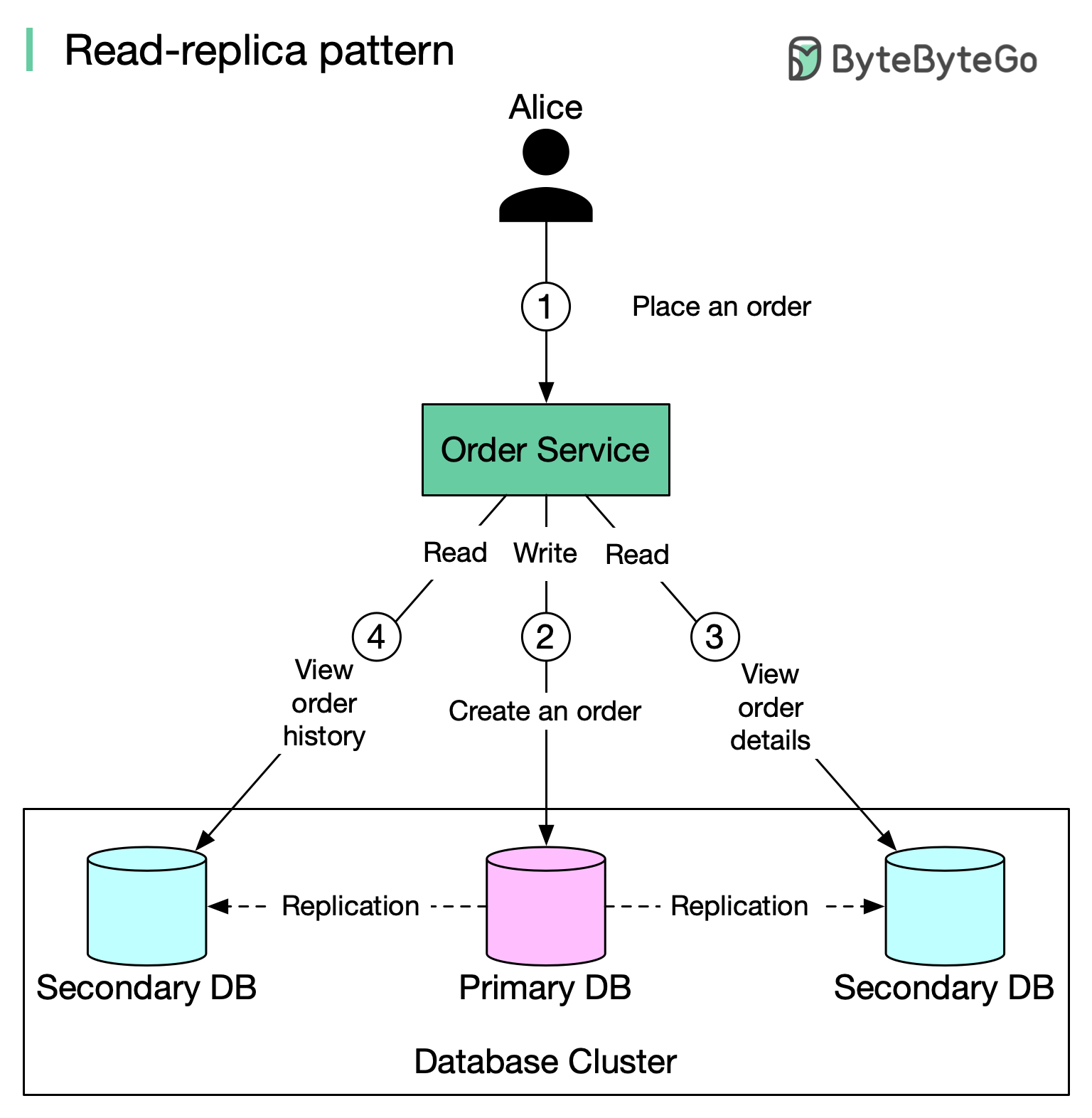

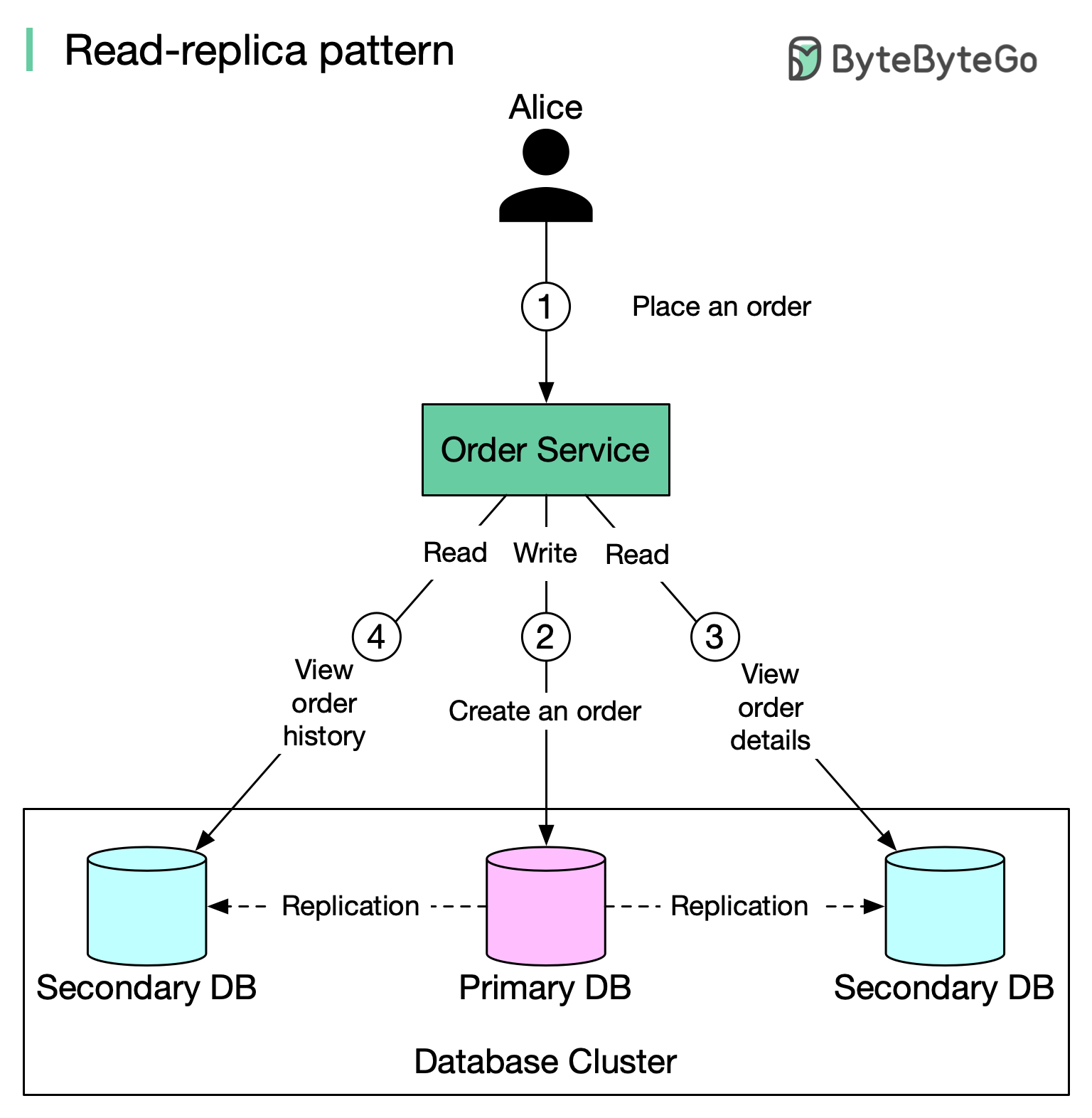

+ * [Read Replica Pattern](https://bytebytego.com/guides/read-replica-pattern)

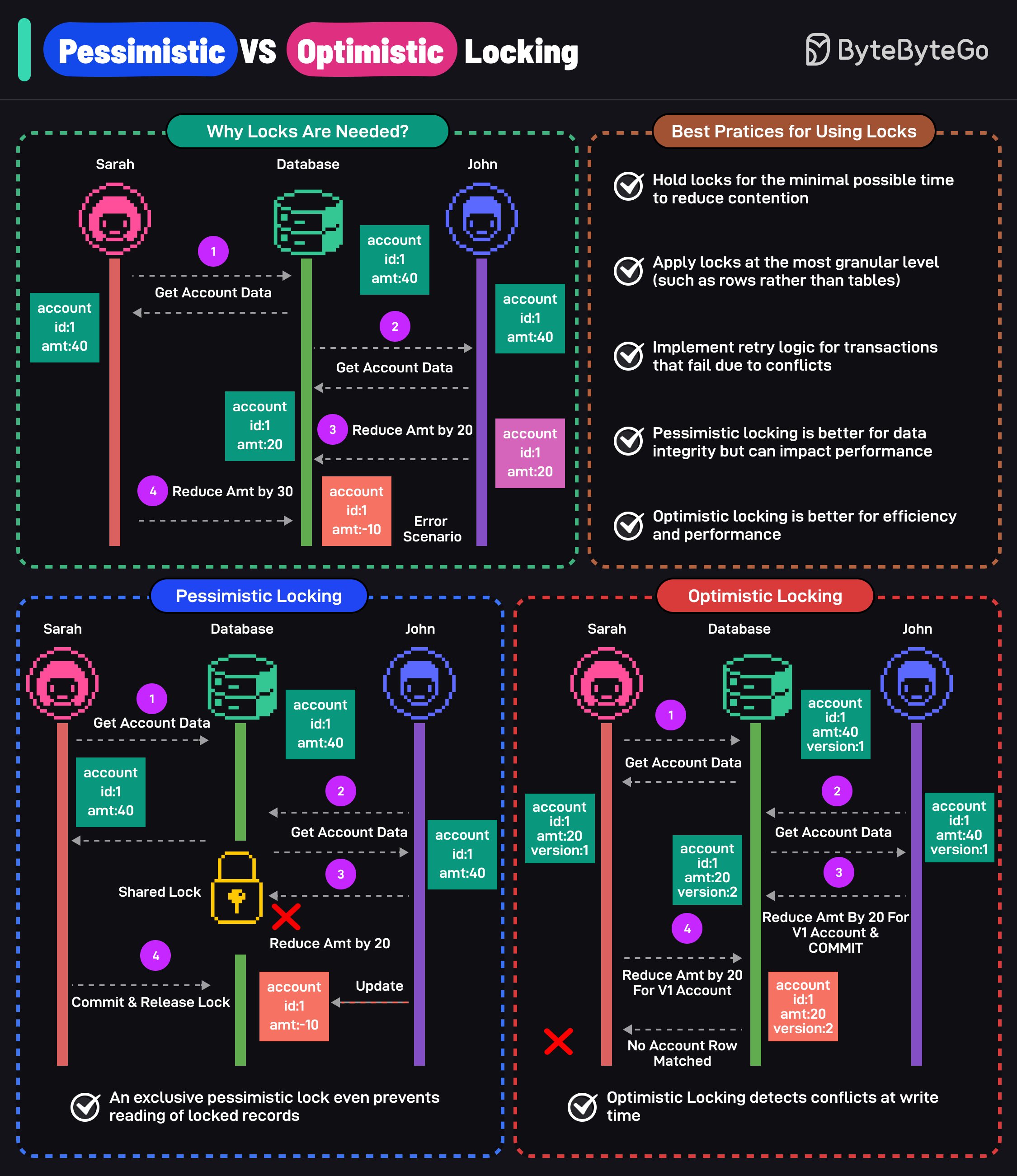

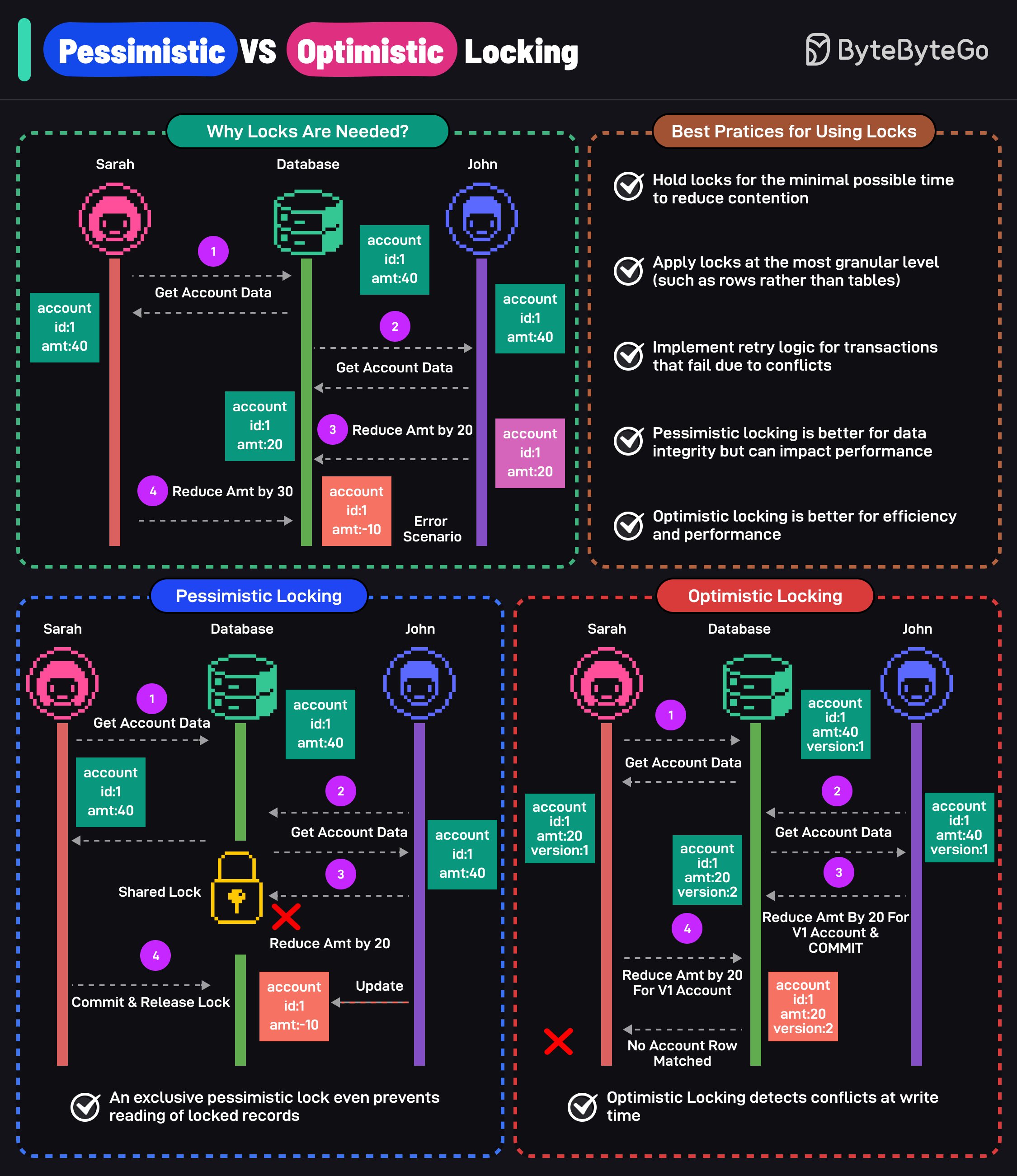

+ * [Pessimistic vs Optimistic Locking](https://bytebytego.com/guides/pessimistic-vs-optimistic-locking)

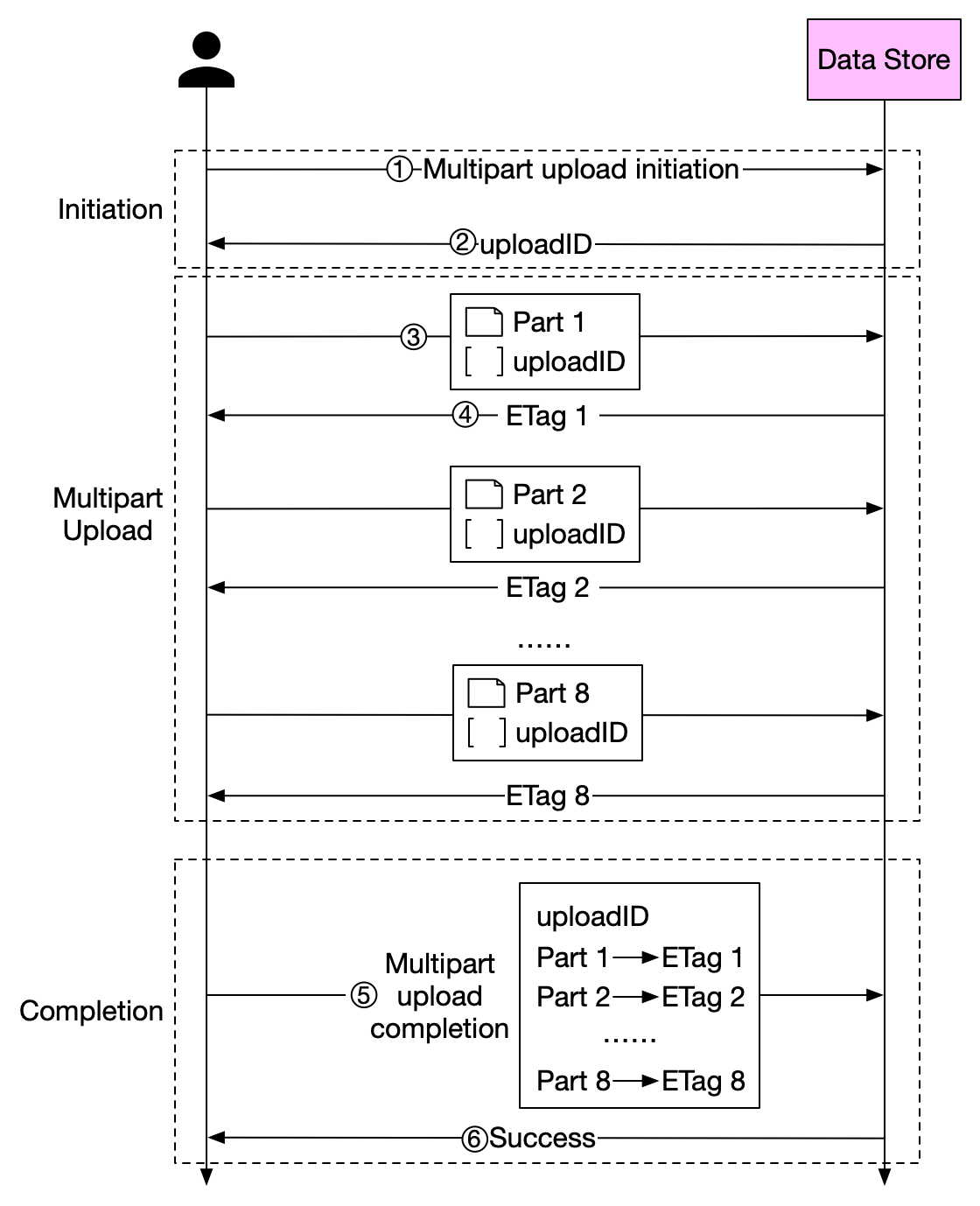

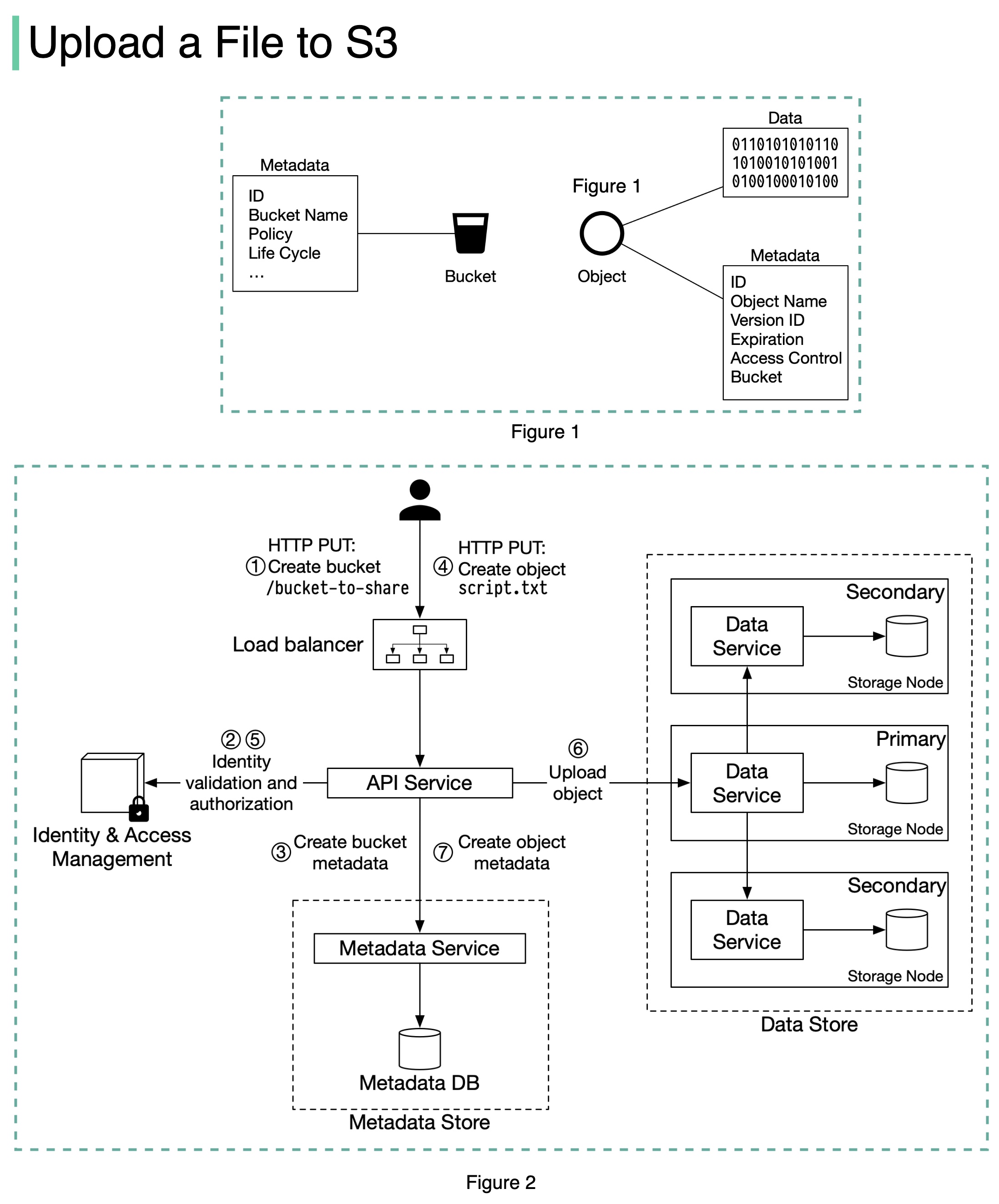

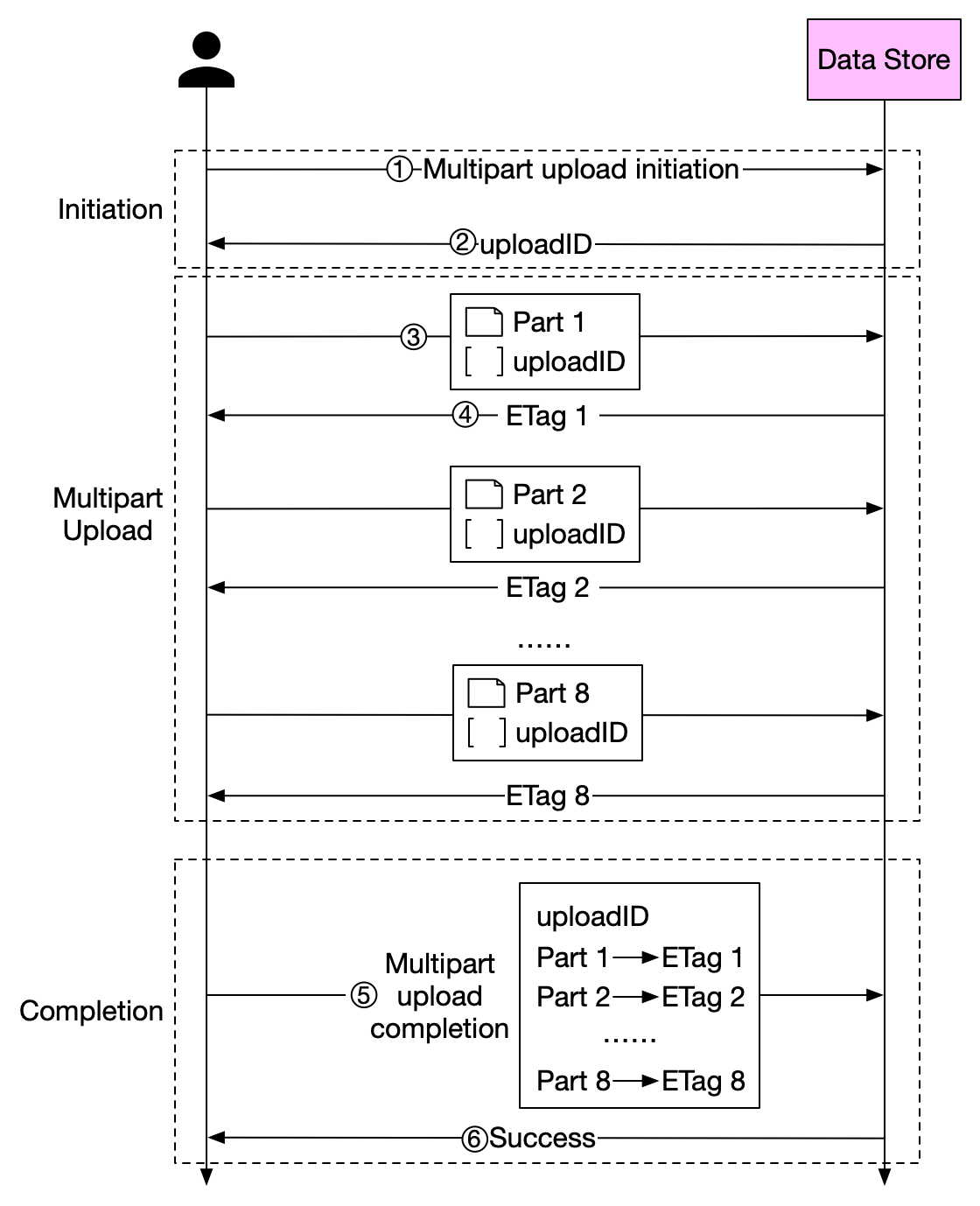

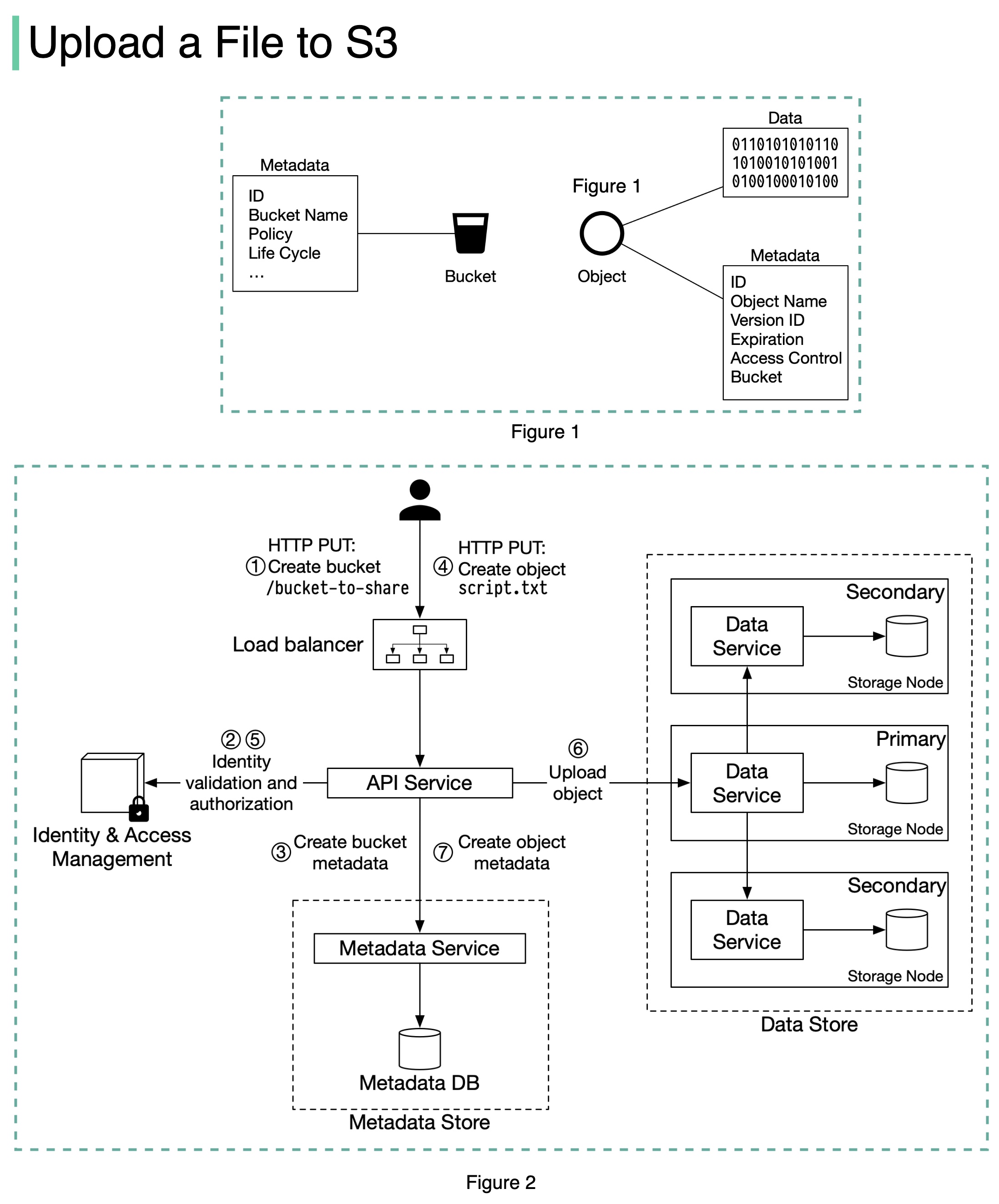

+ * [How to Upload a Large File to S3](https://bytebytego.com/guides/how-to-upload-a-large-file-to-s3)

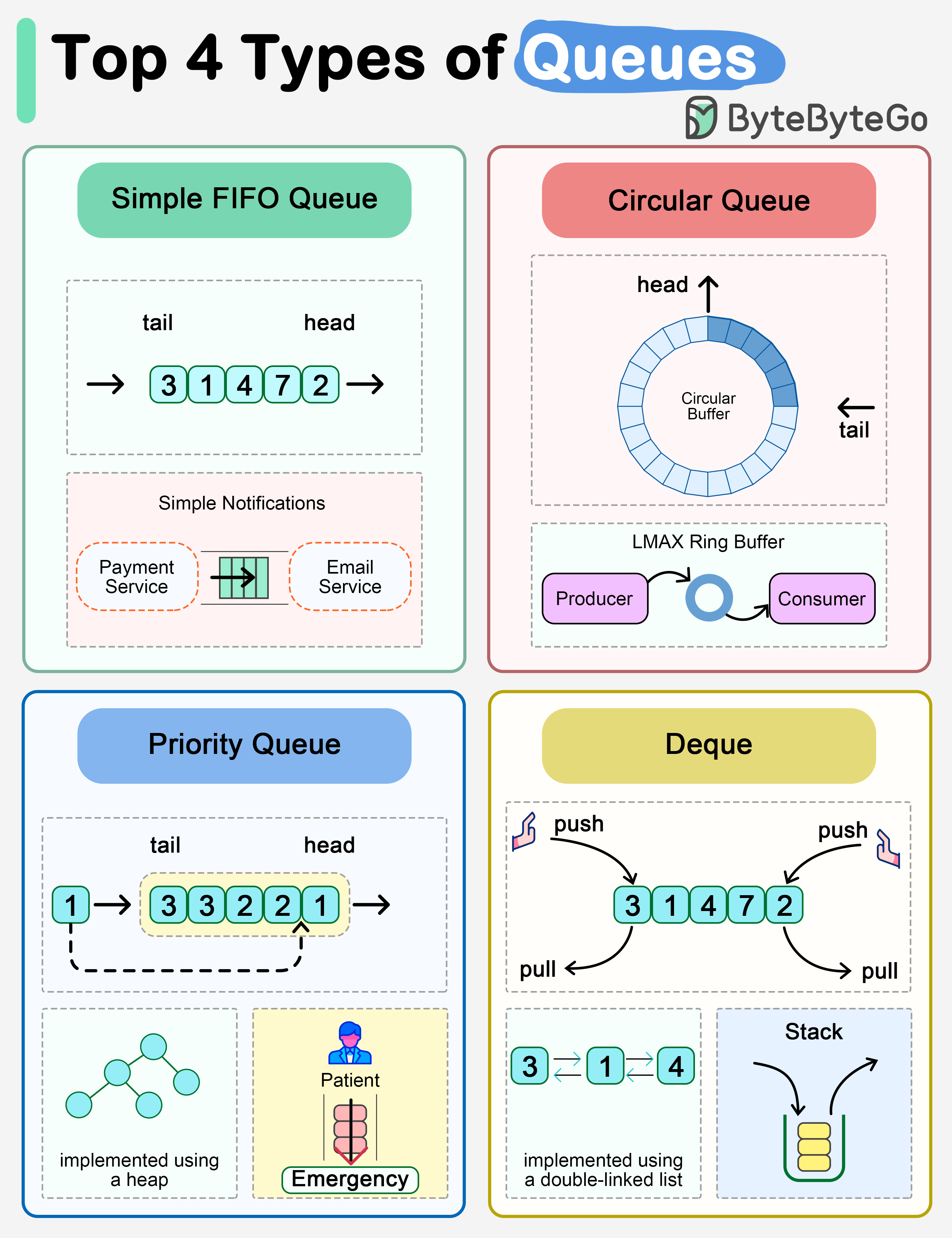

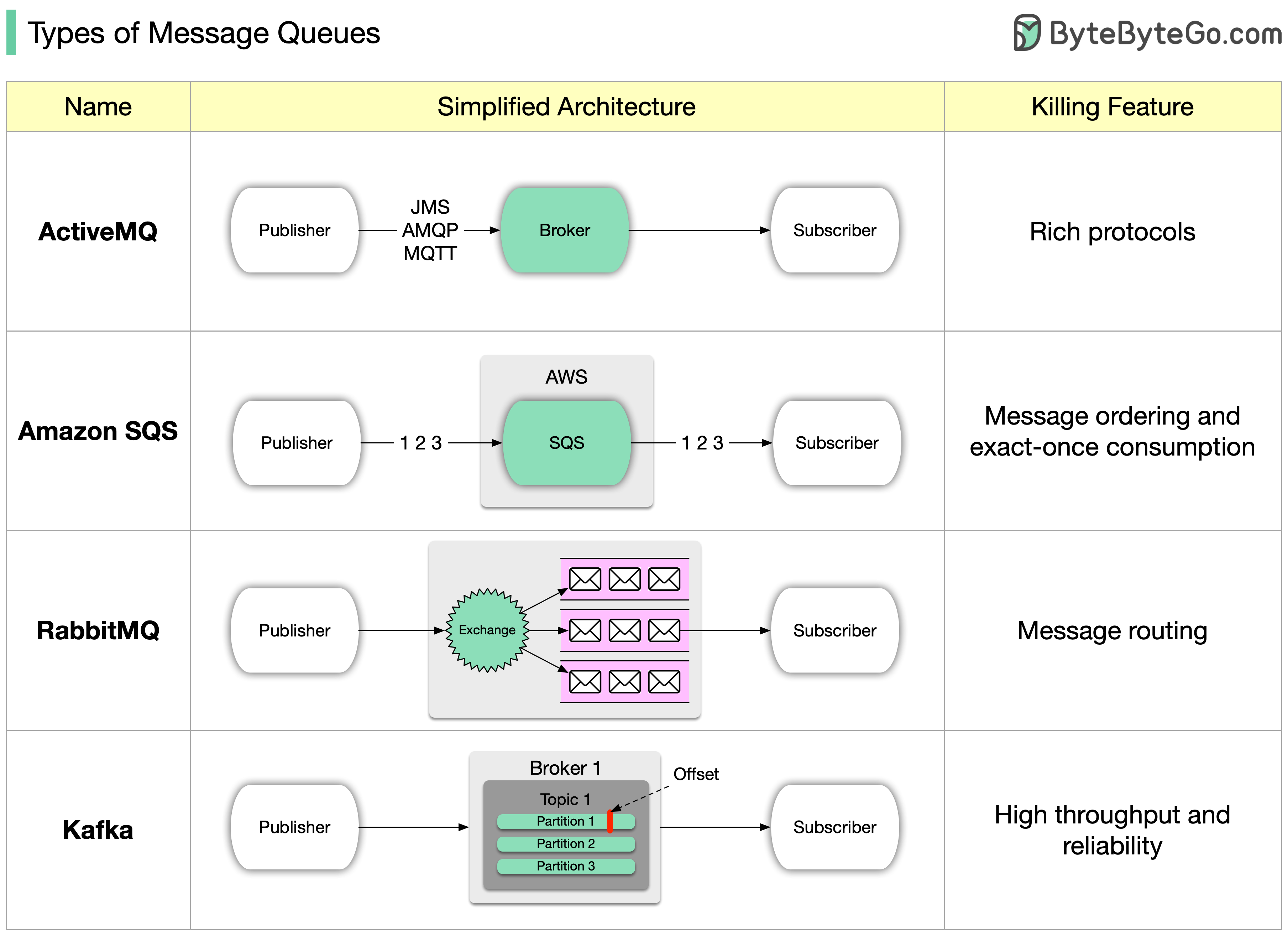

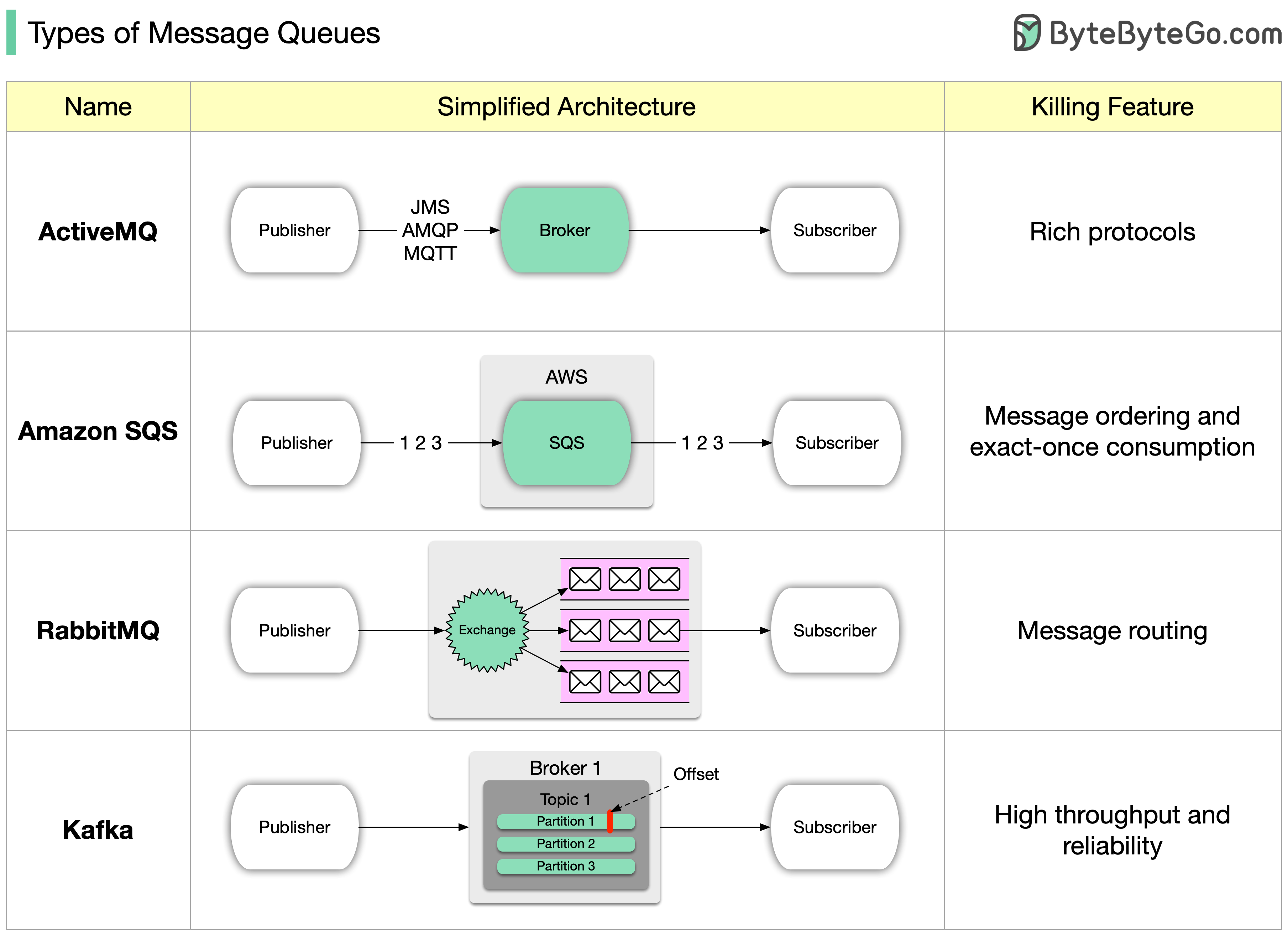

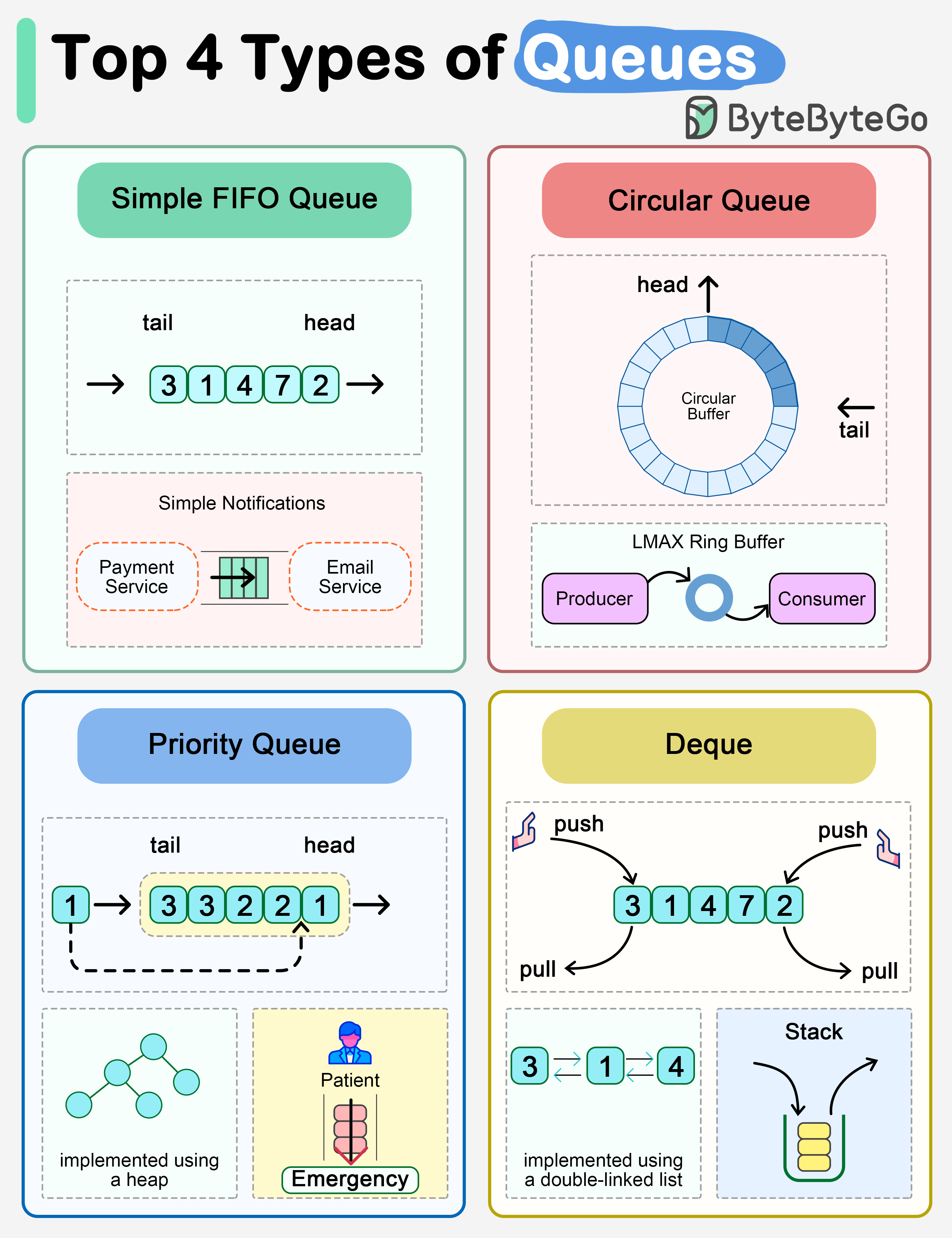

+ * [Types of Message Queues](https://bytebytego.com/guides/types-of-message-queue)

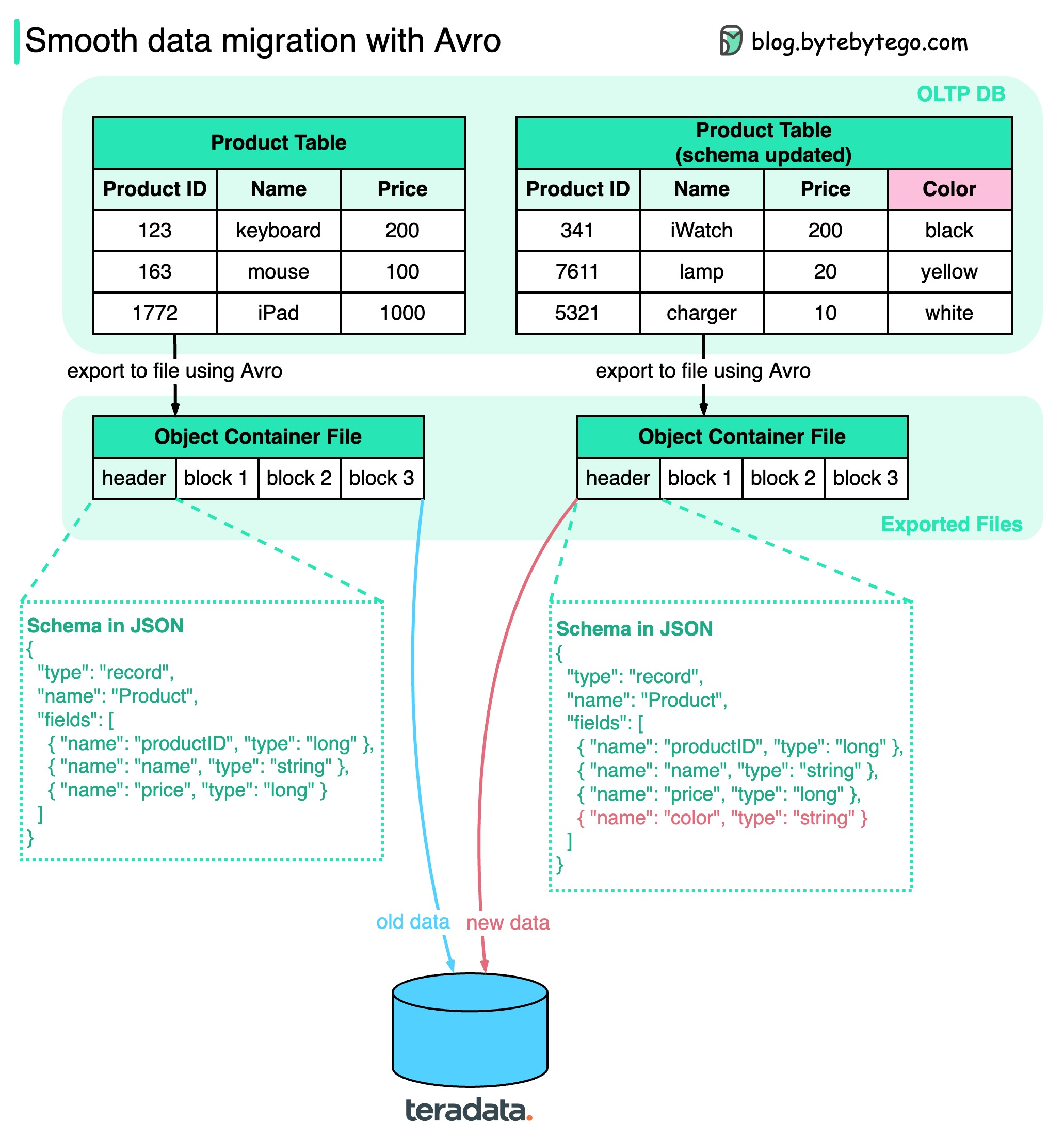

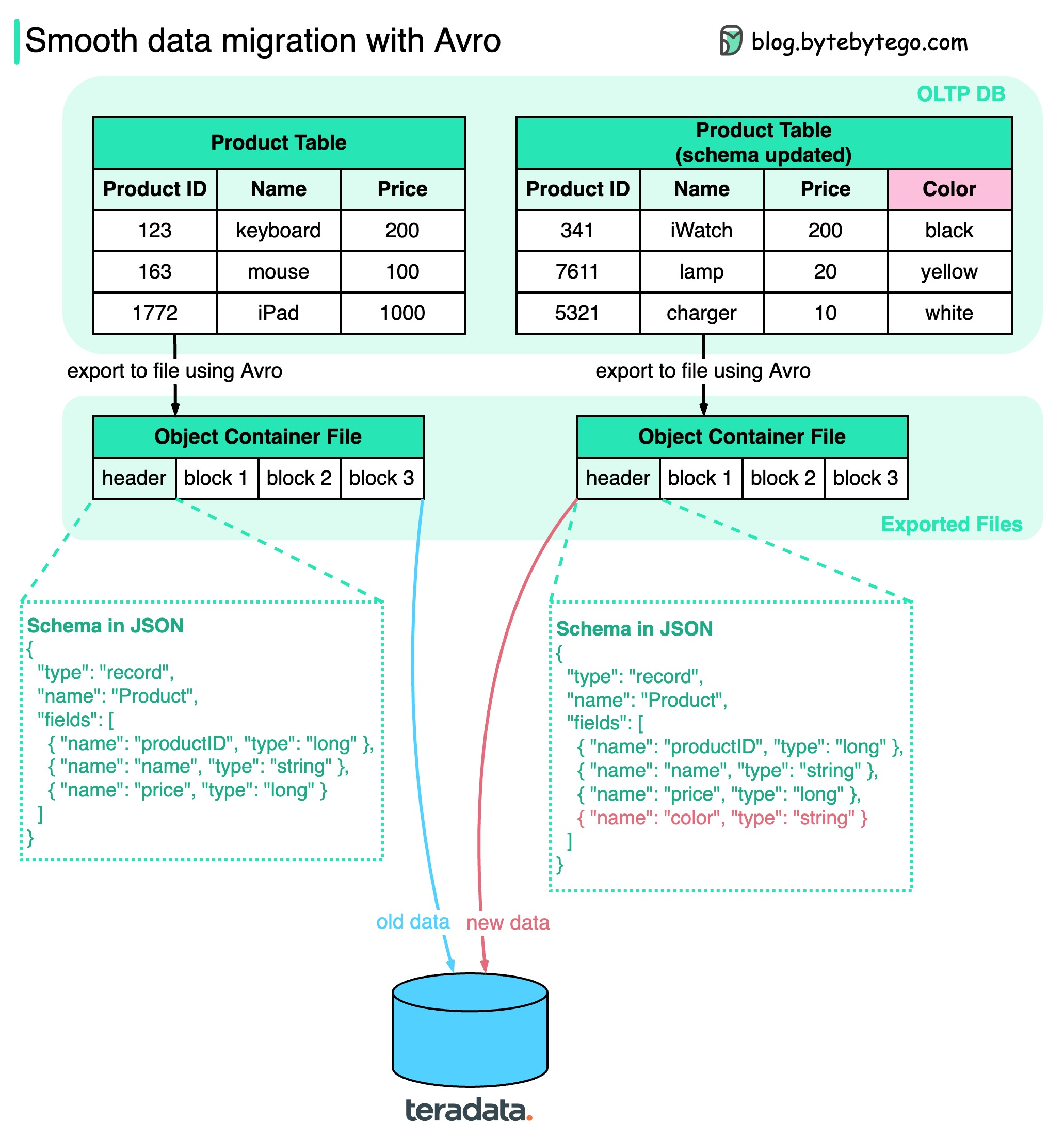

+ * [Smooth Data Migration with Avro](https://bytebytego.com/guides/smooth-data-migration-with-avro)

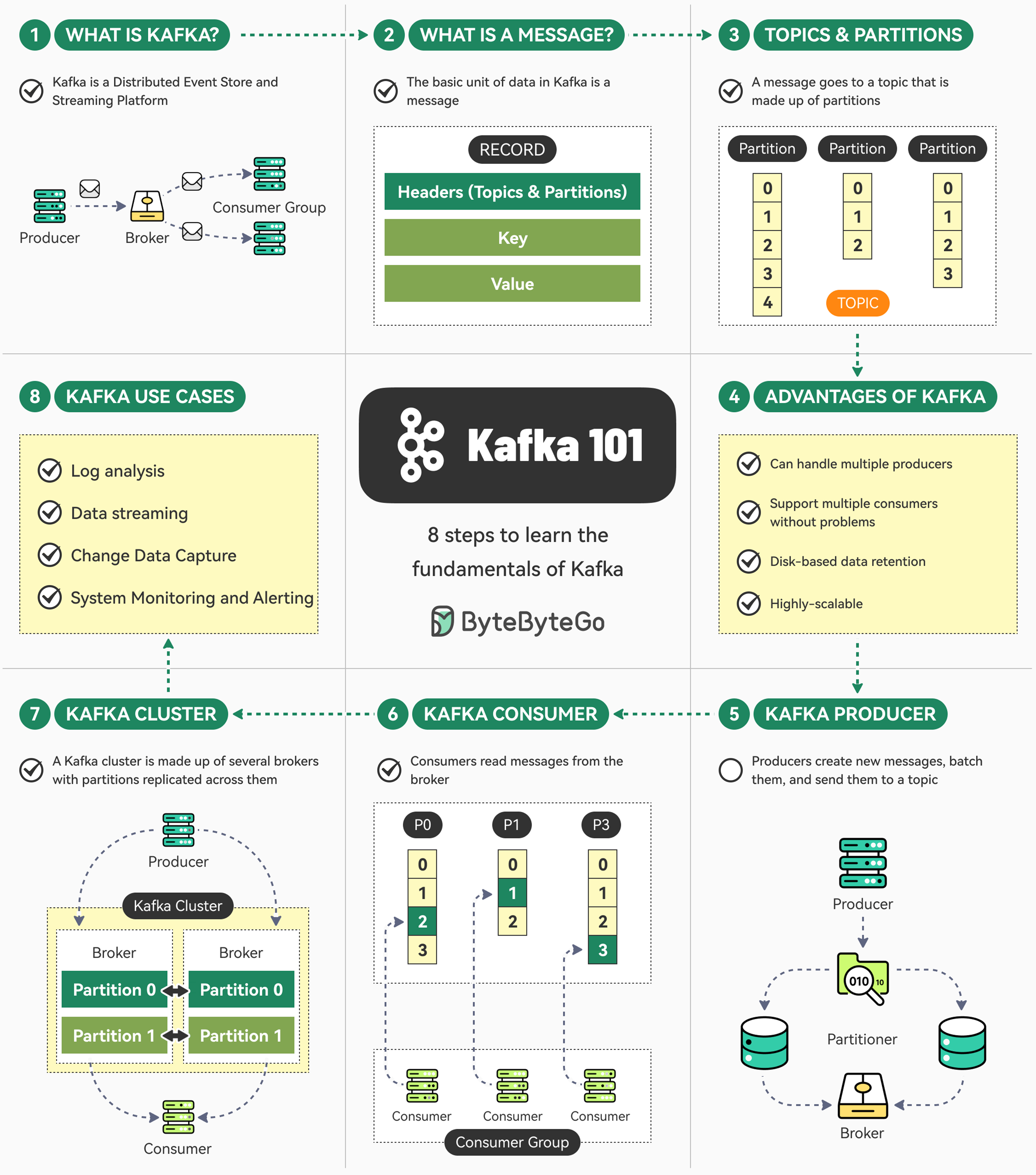

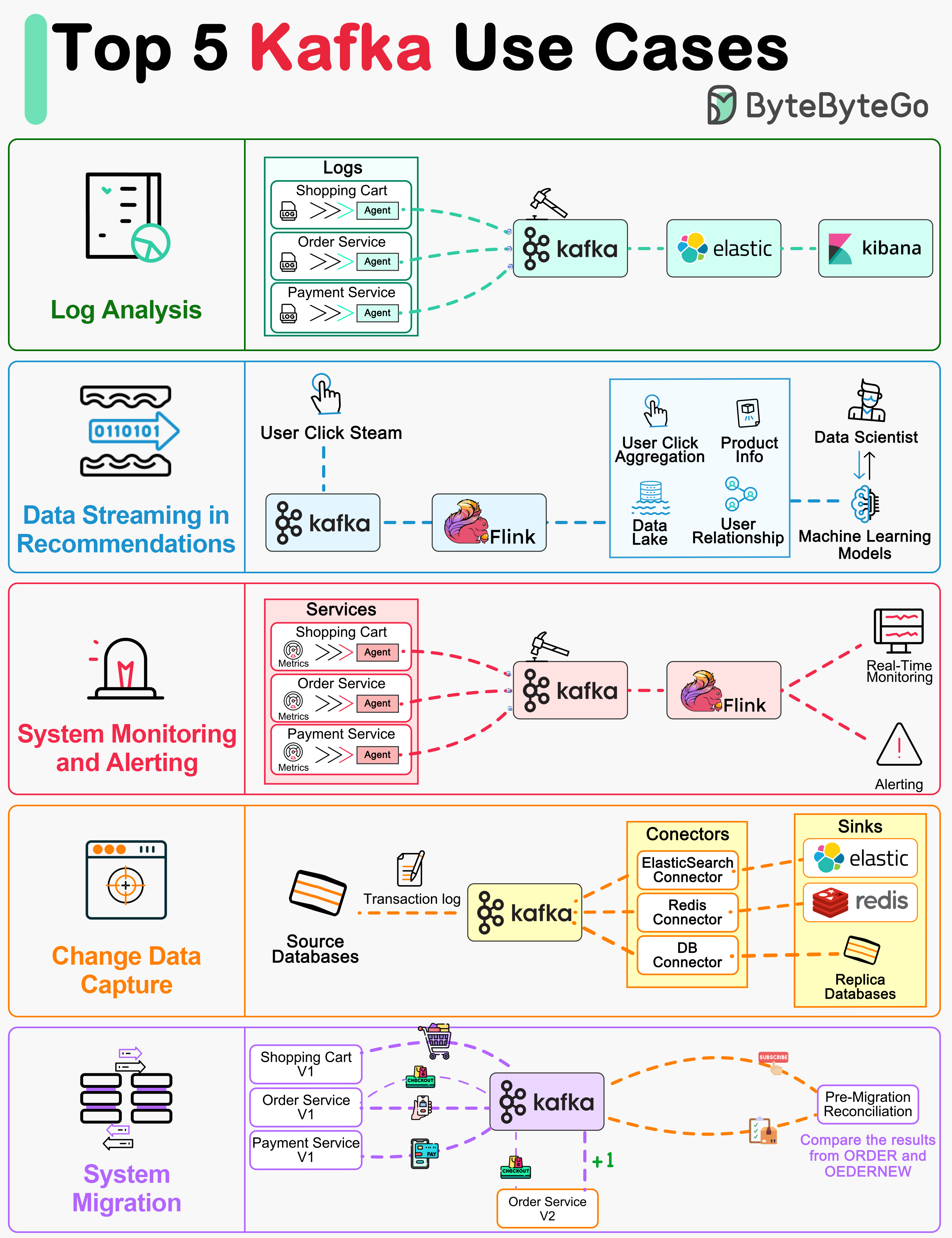

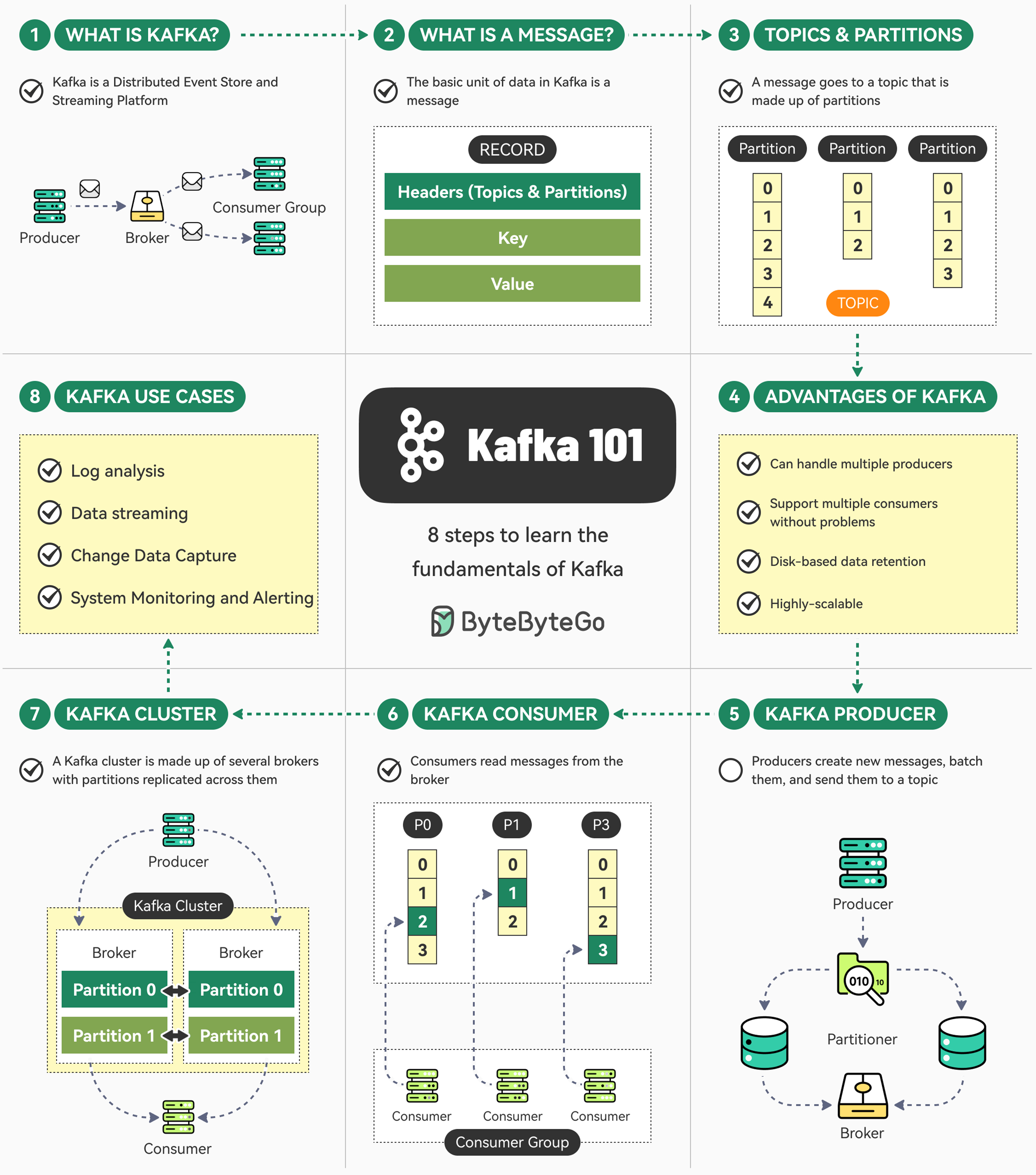

+ * [The Ultimate Kafka 101 You Cannot Miss](https://bytebytego.com/guides/the-ultimate-kafka-101-you-cannot-miss)

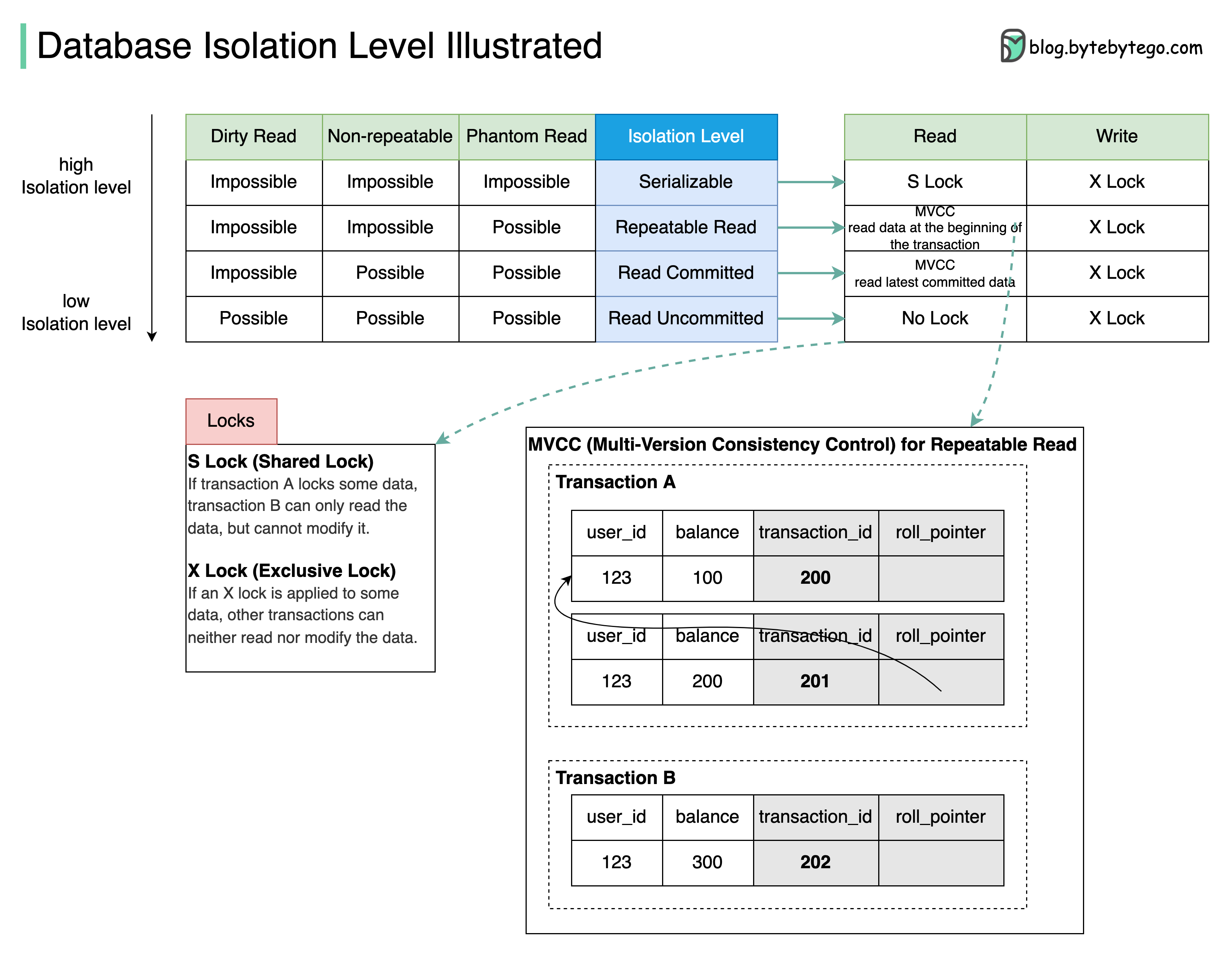

+ * [Database Isolation Levels](https://bytebytego.com/guides/what-are-database-isolation-levels)

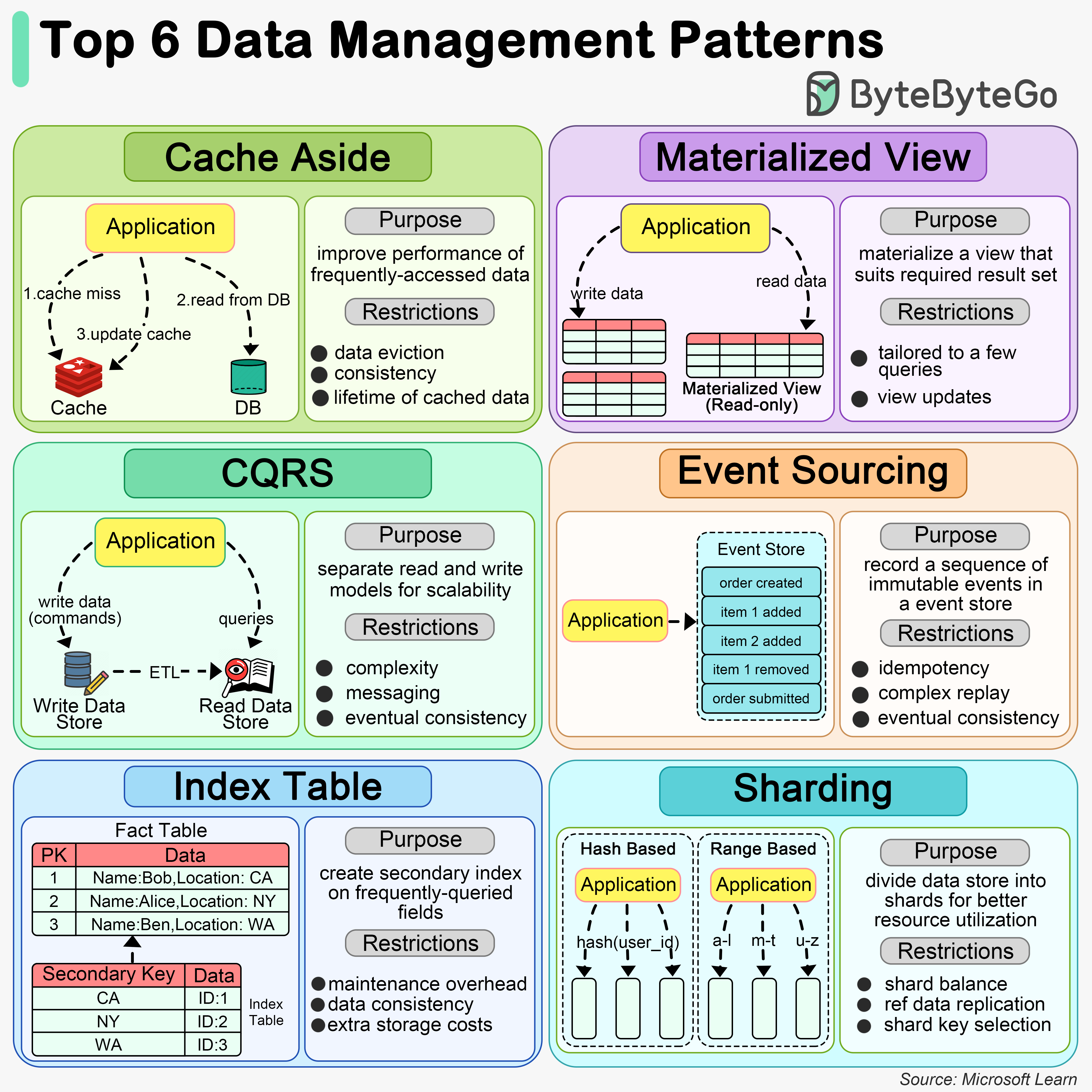

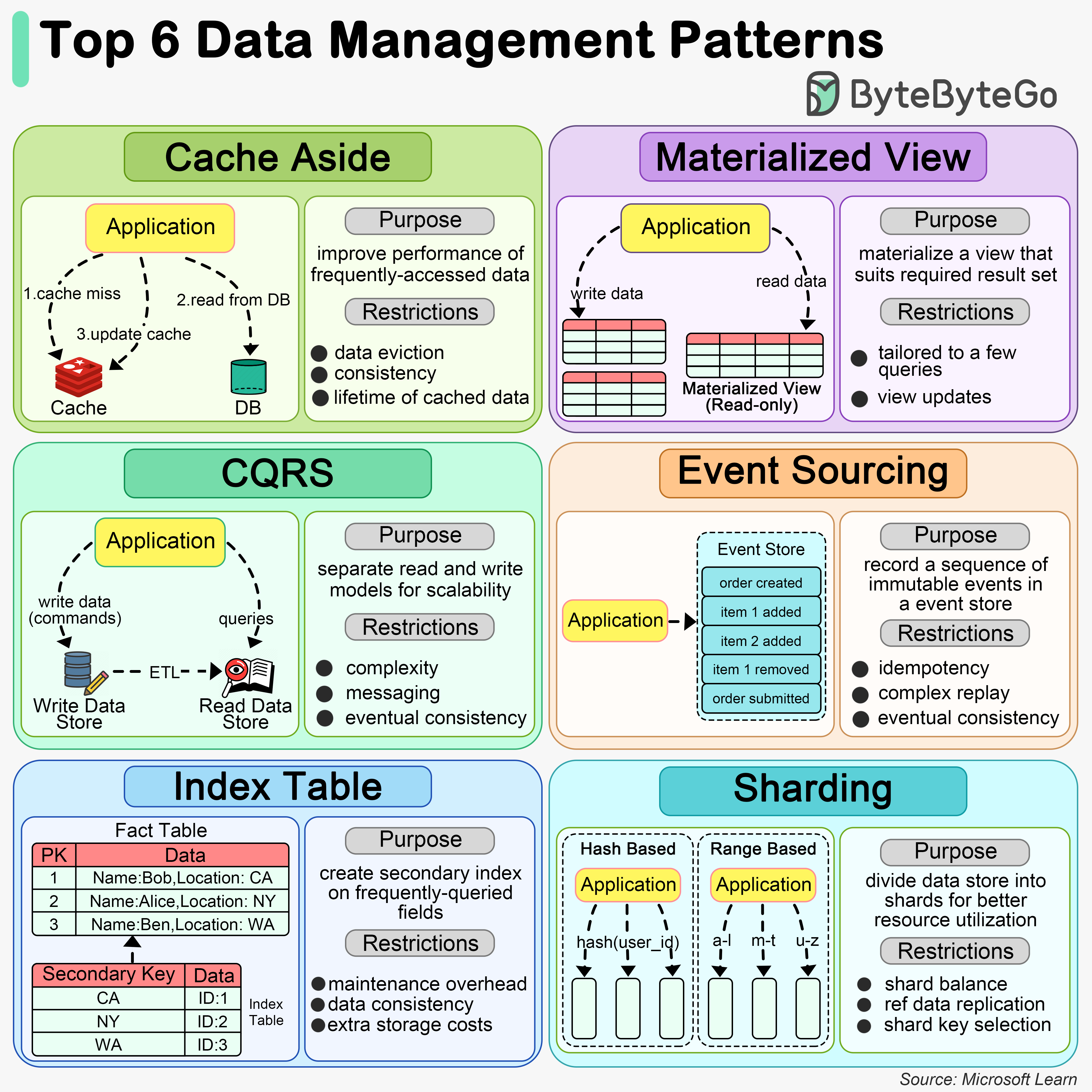

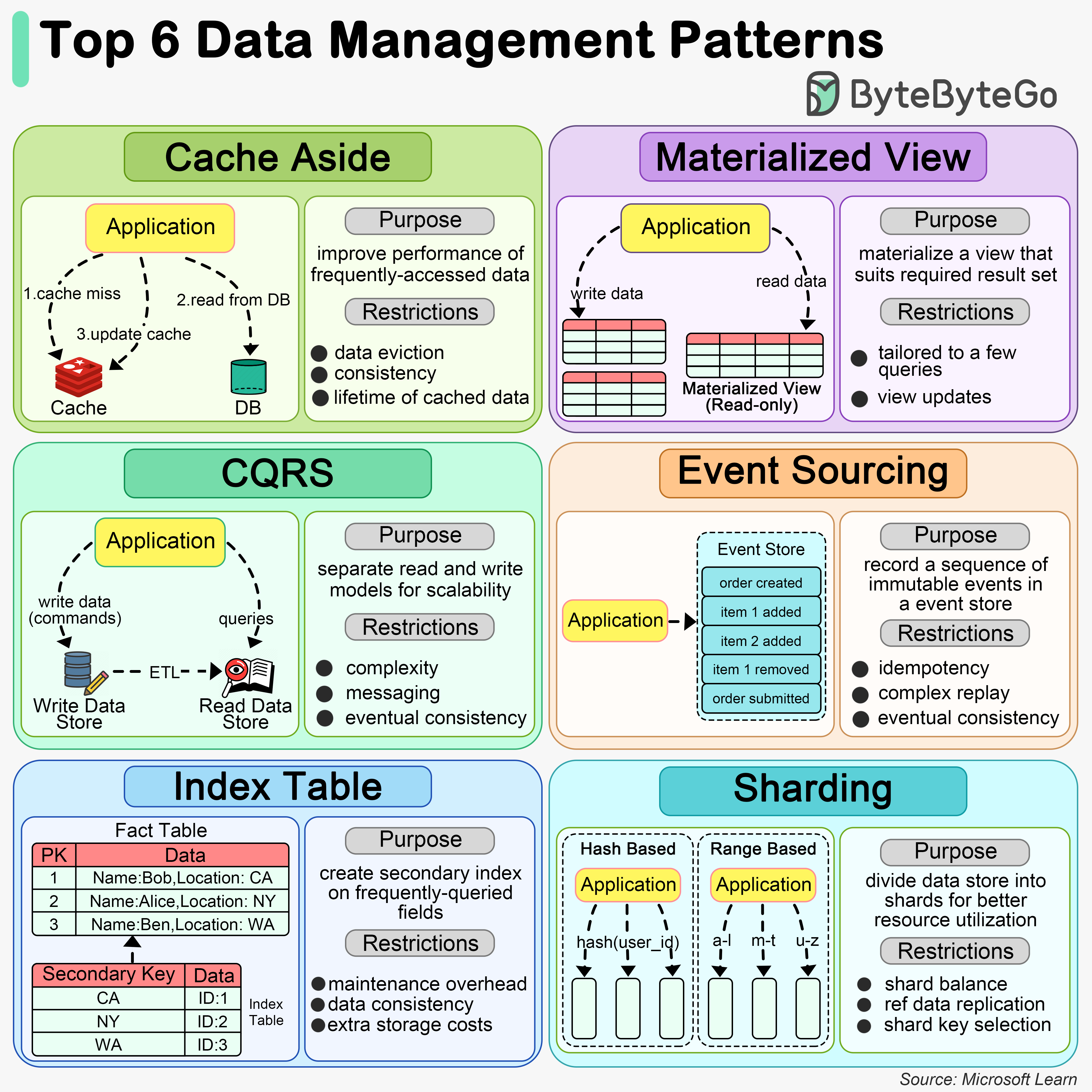

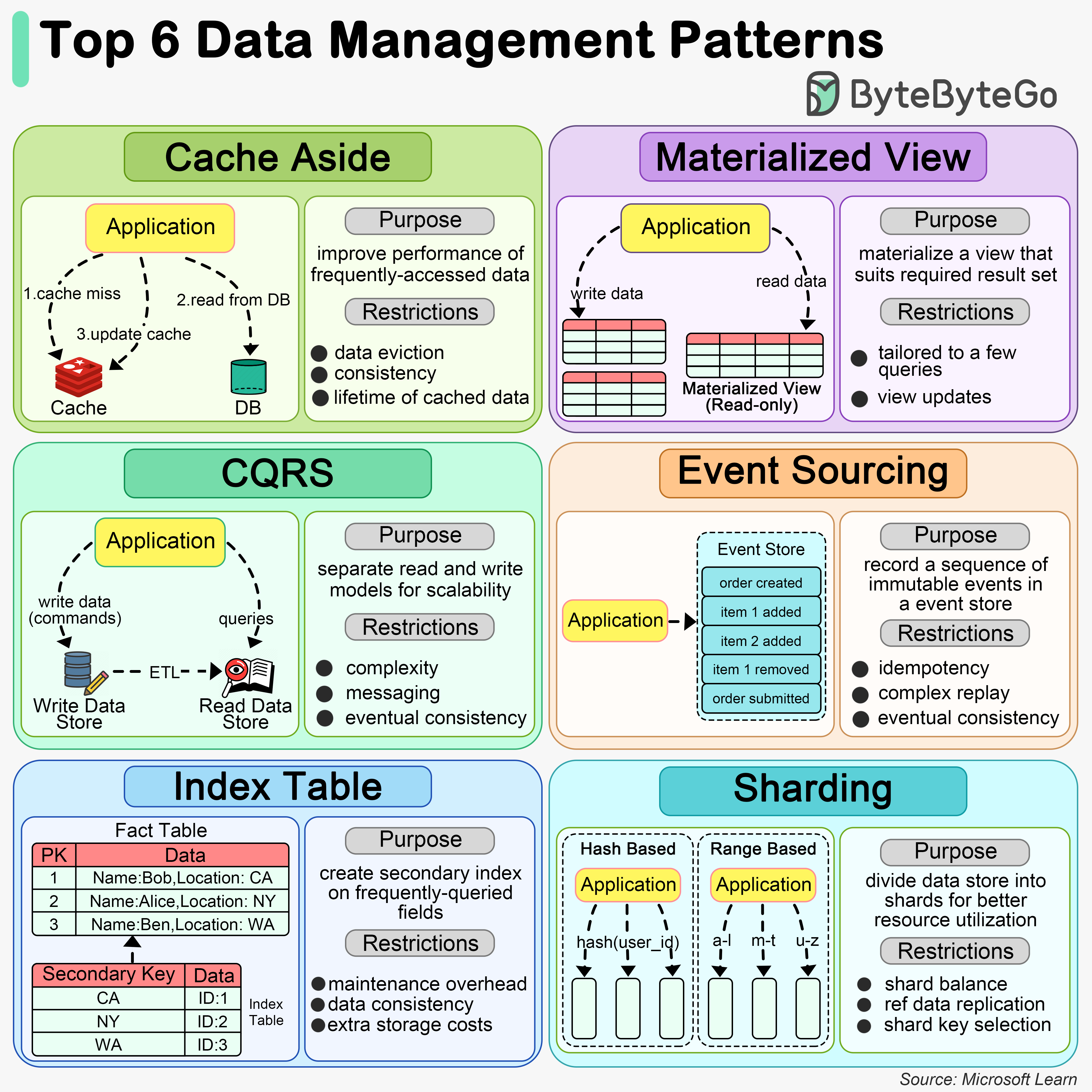

+ * [Top 6 Data Management Patterns](https://bytebytego.com/guides/how-do-we-manage-data)

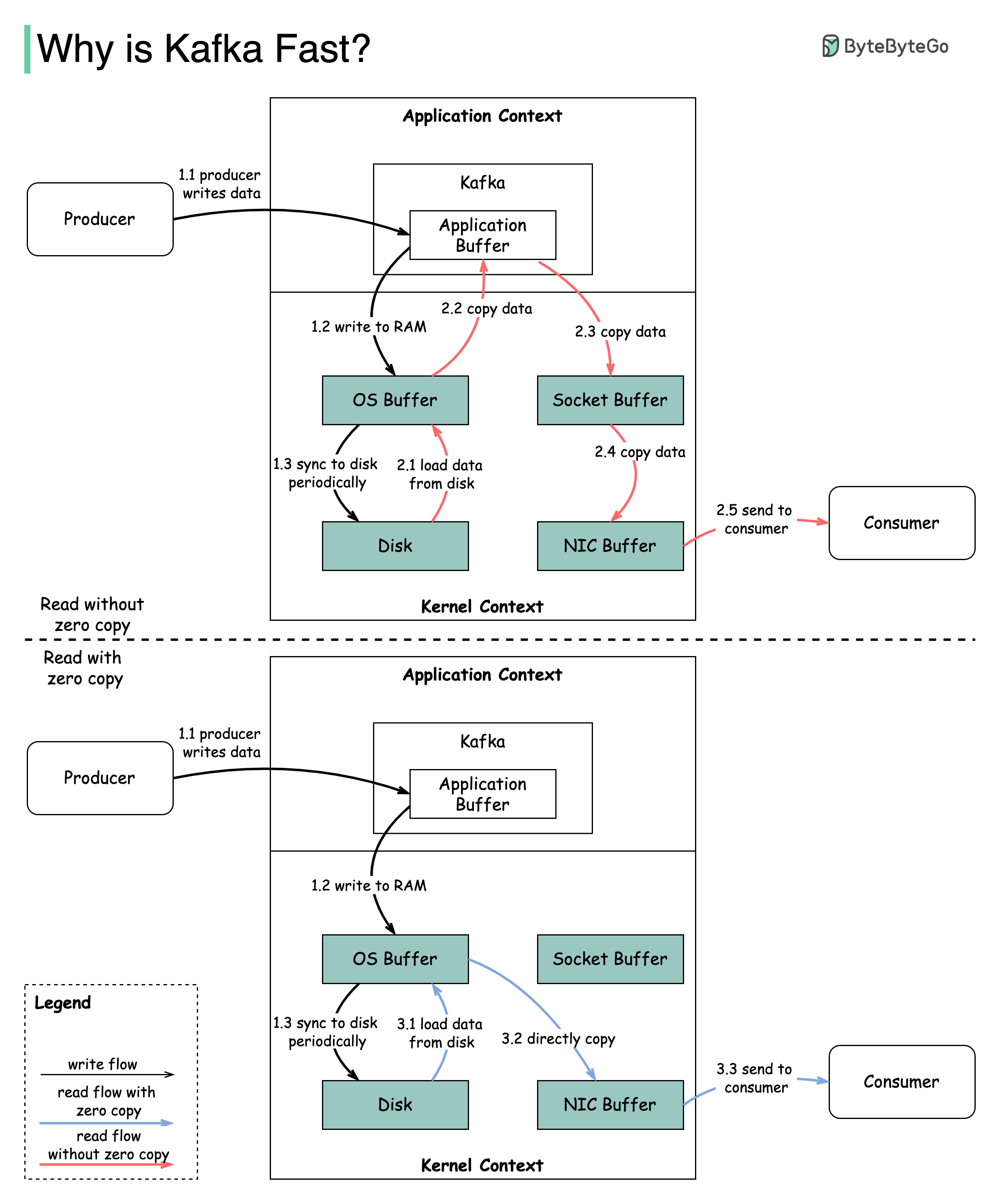

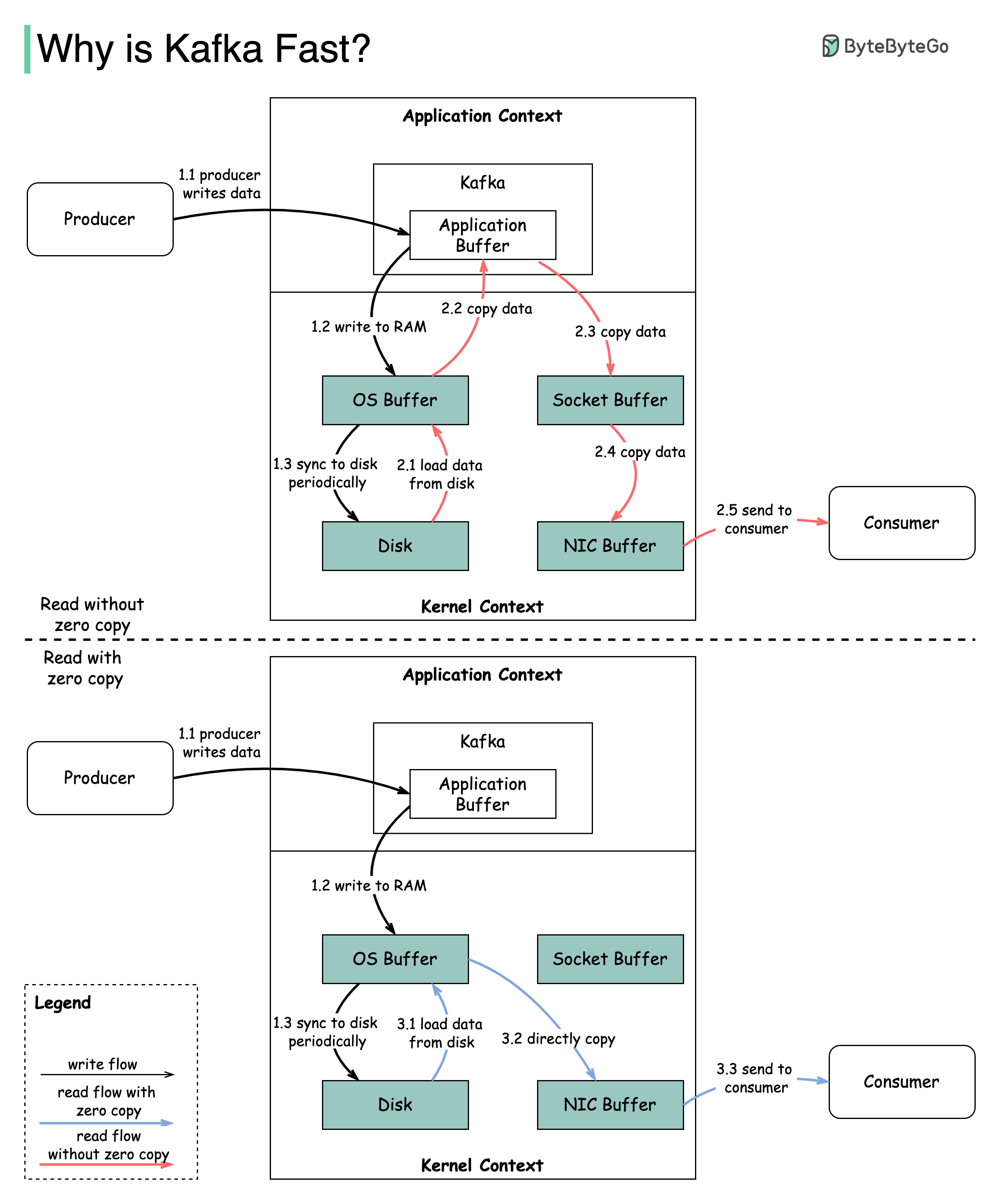

+ * [Why is Kafka Fast?](https://bytebytego.com/guides/why-is-kafka-fast)

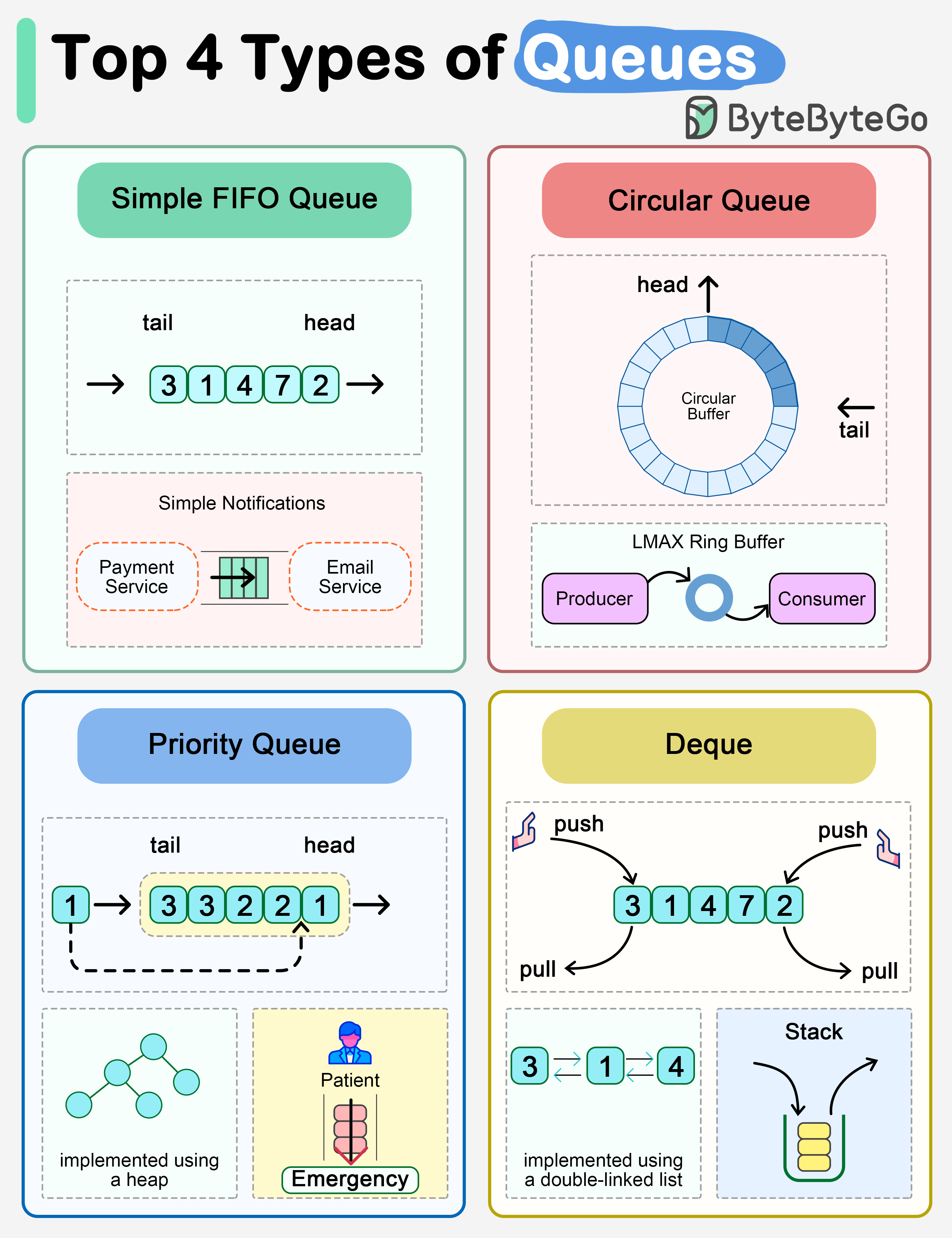

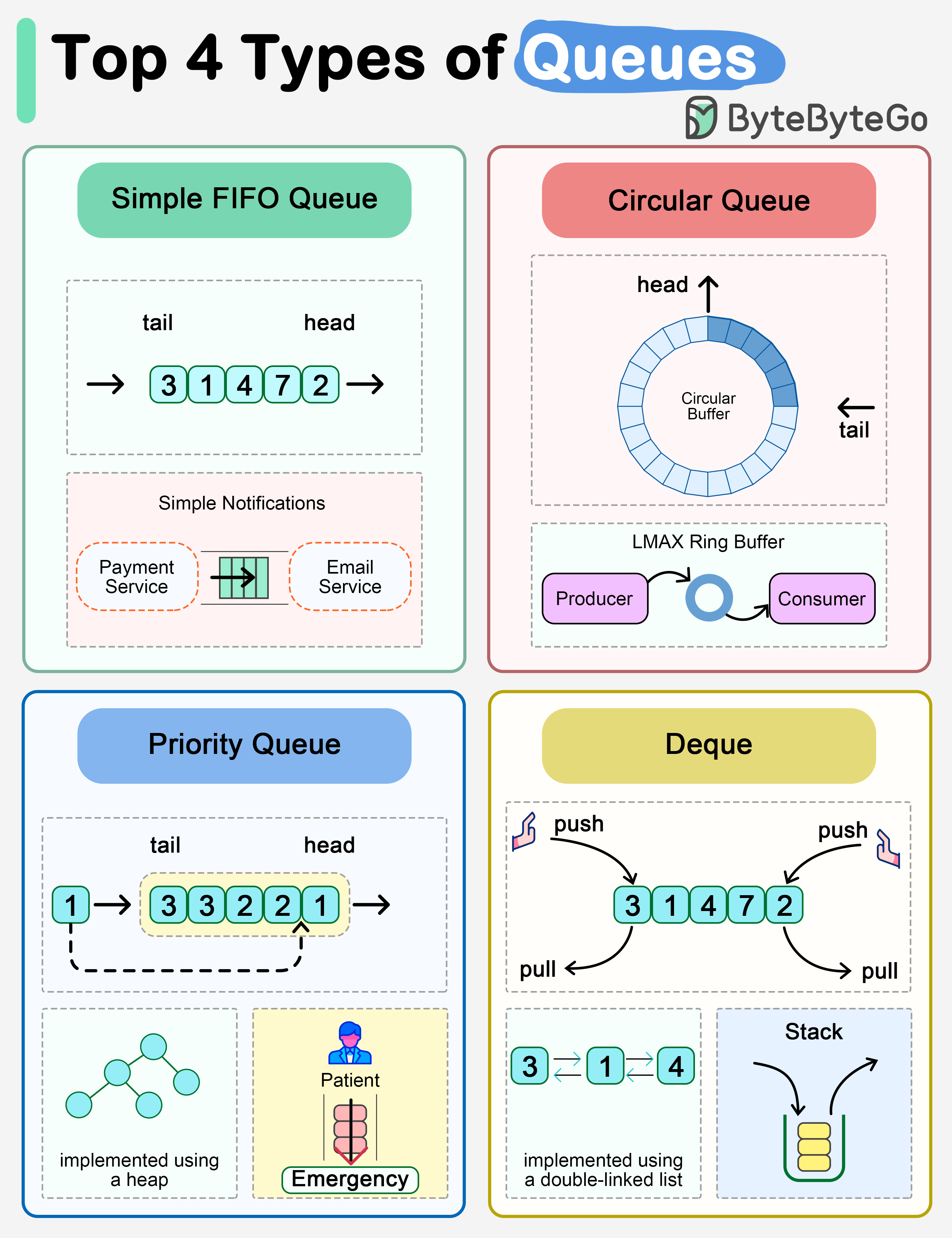

+ * [Explaining the 4 Most Commonly Used Types of Queues](https://bytebytego.com/guides/explaining-the-4-most-commonly-used-types-of-queues-in-a-single-diagram)

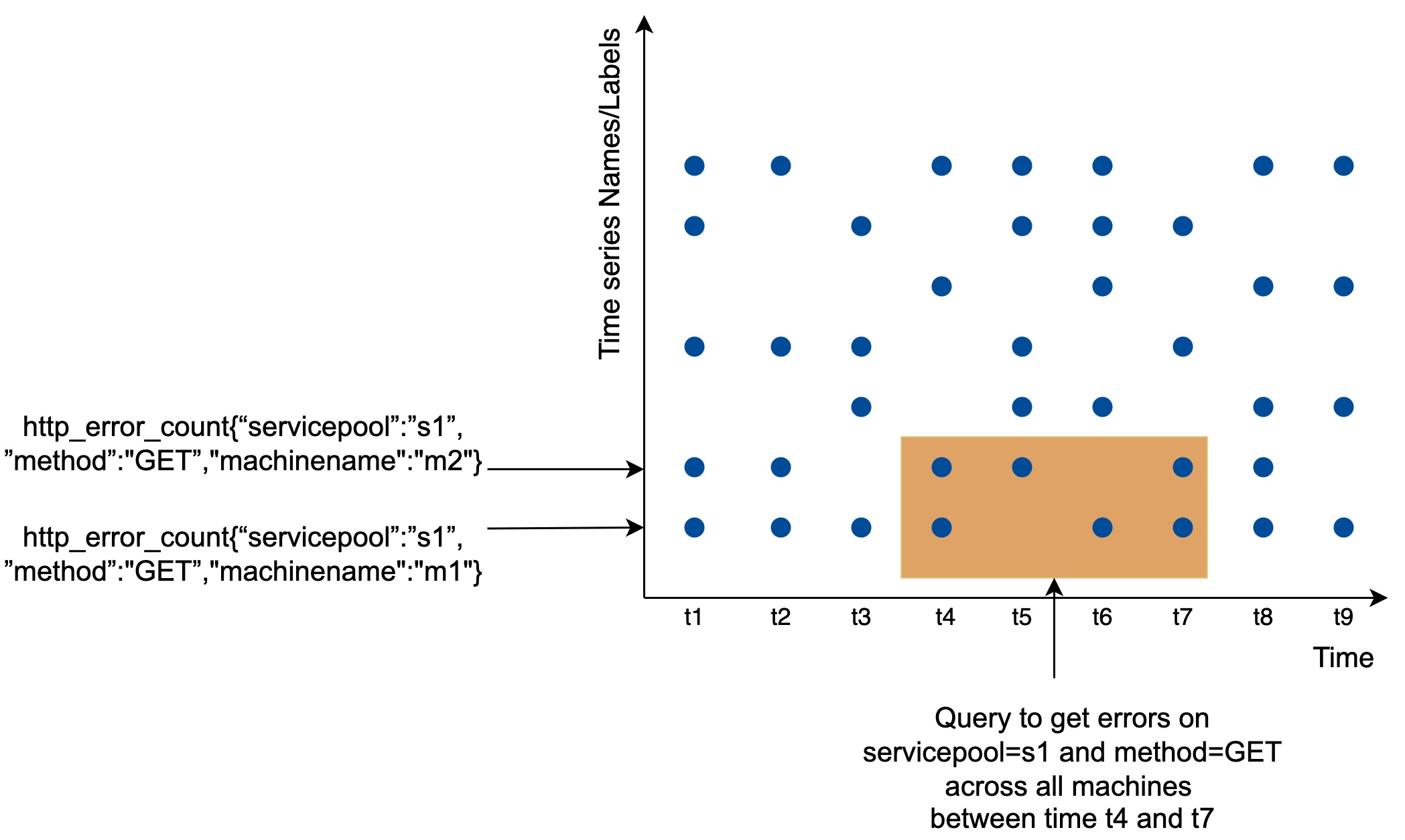

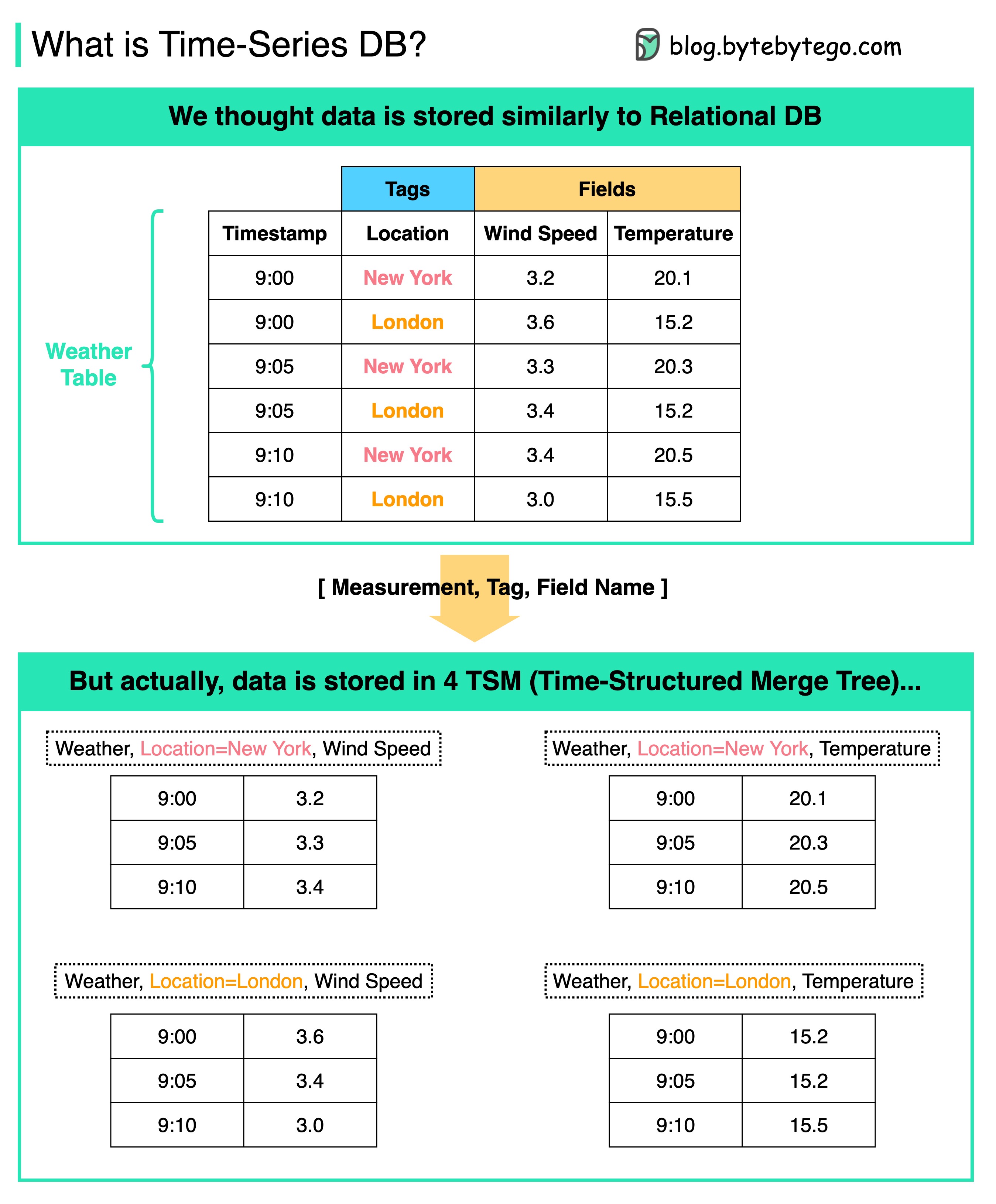

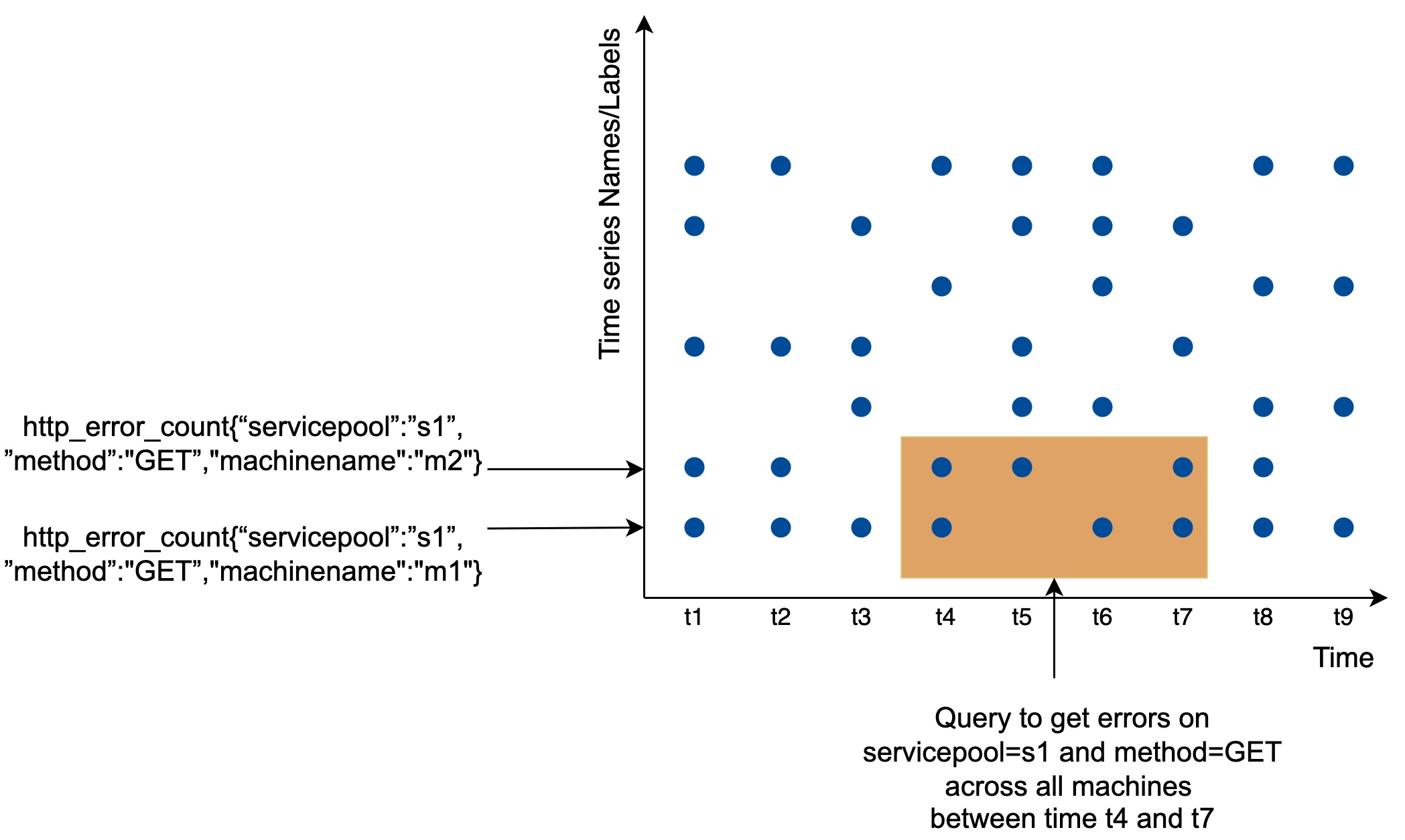

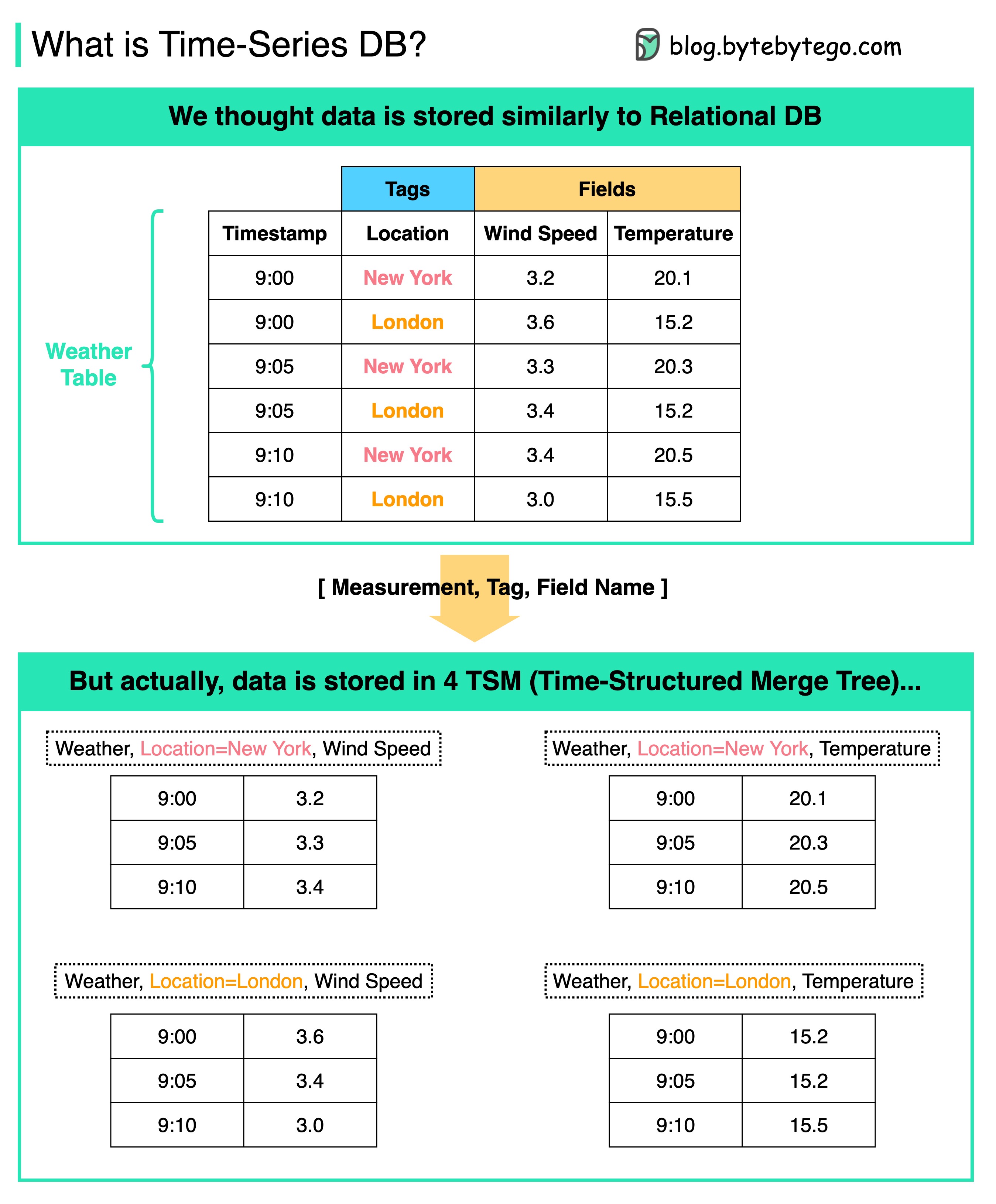

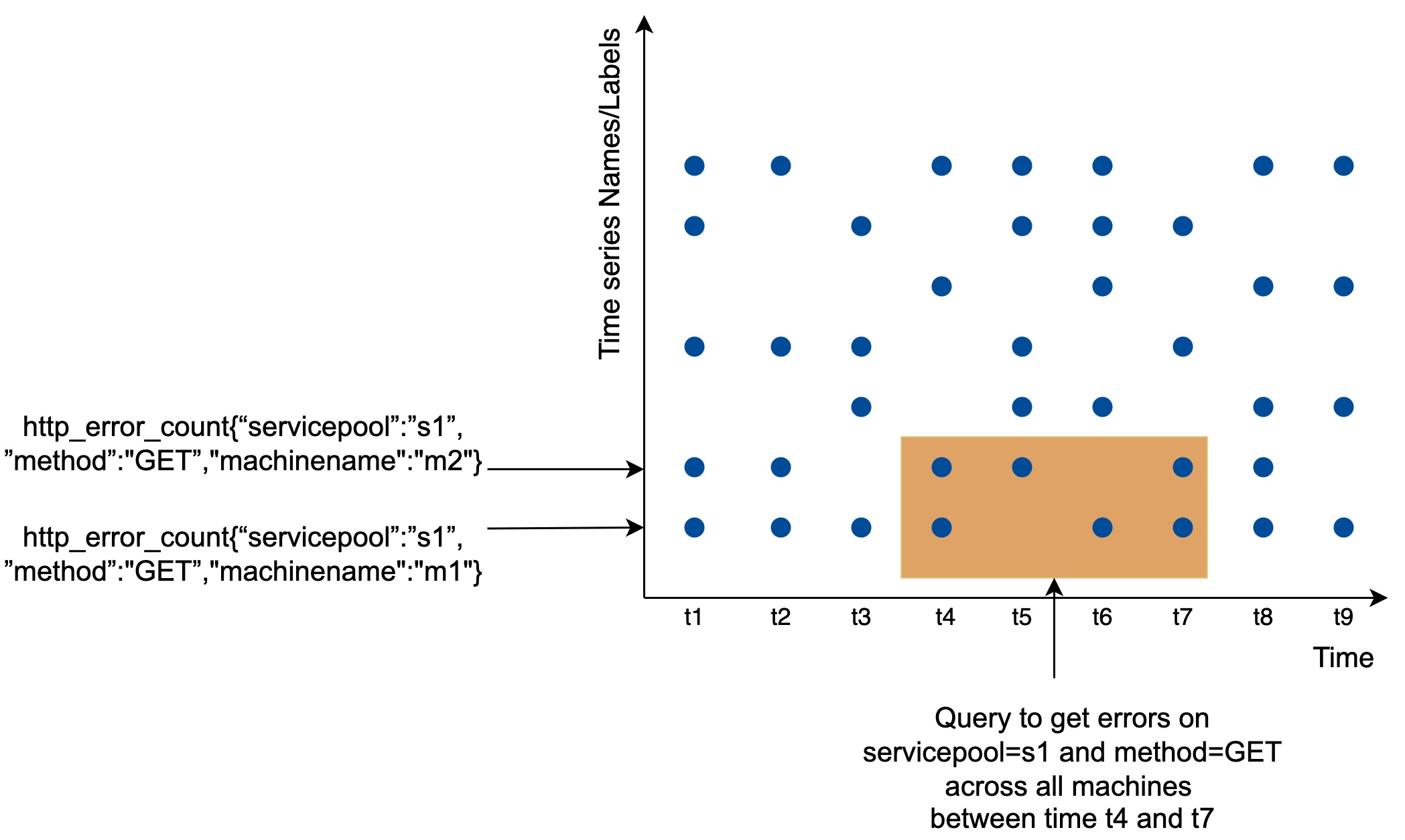

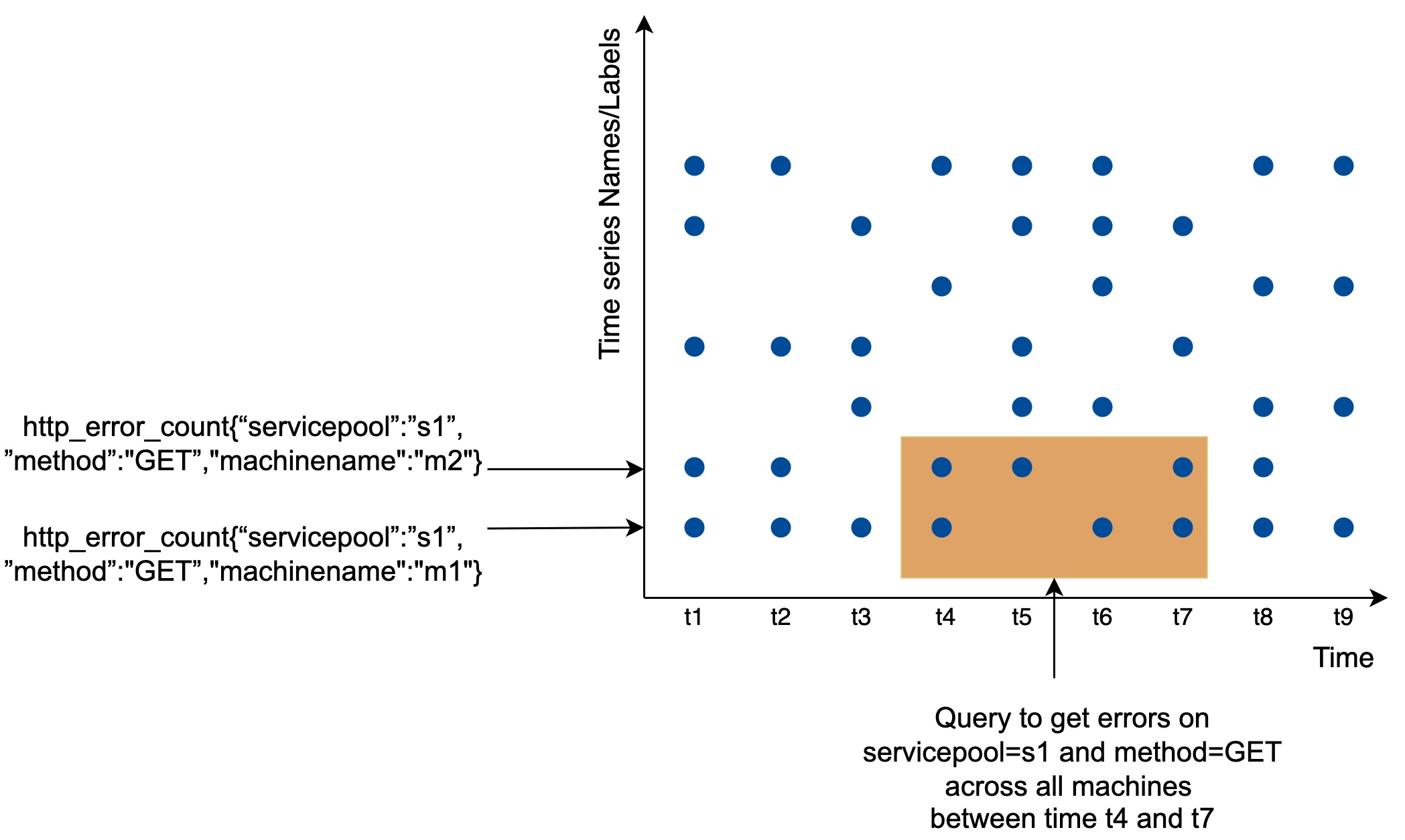

+ * [Time Series DB (TSDB) in 20 Lines](https://bytebytego.com/guides/time-series-db-tsdb-in-20-lines)

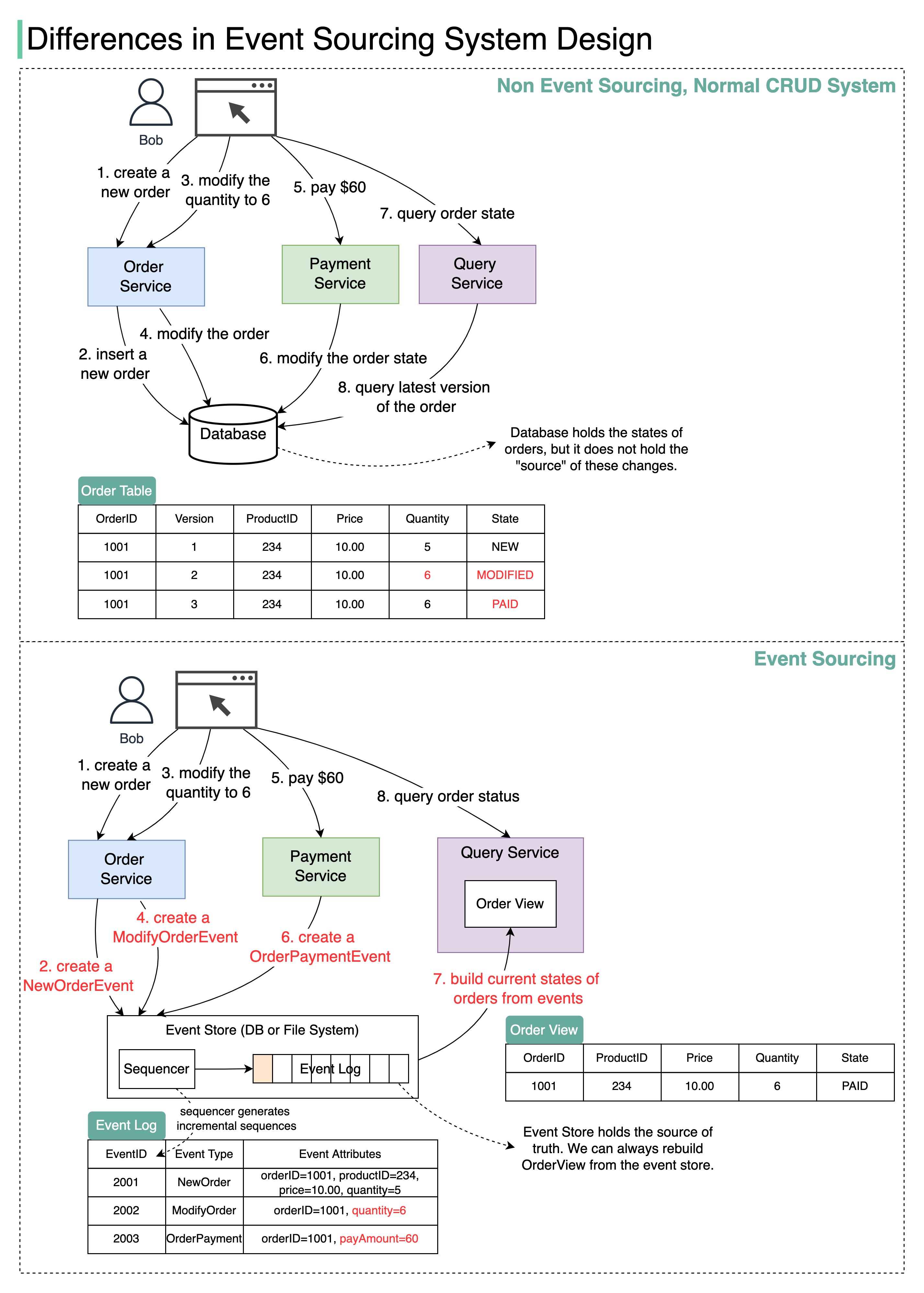

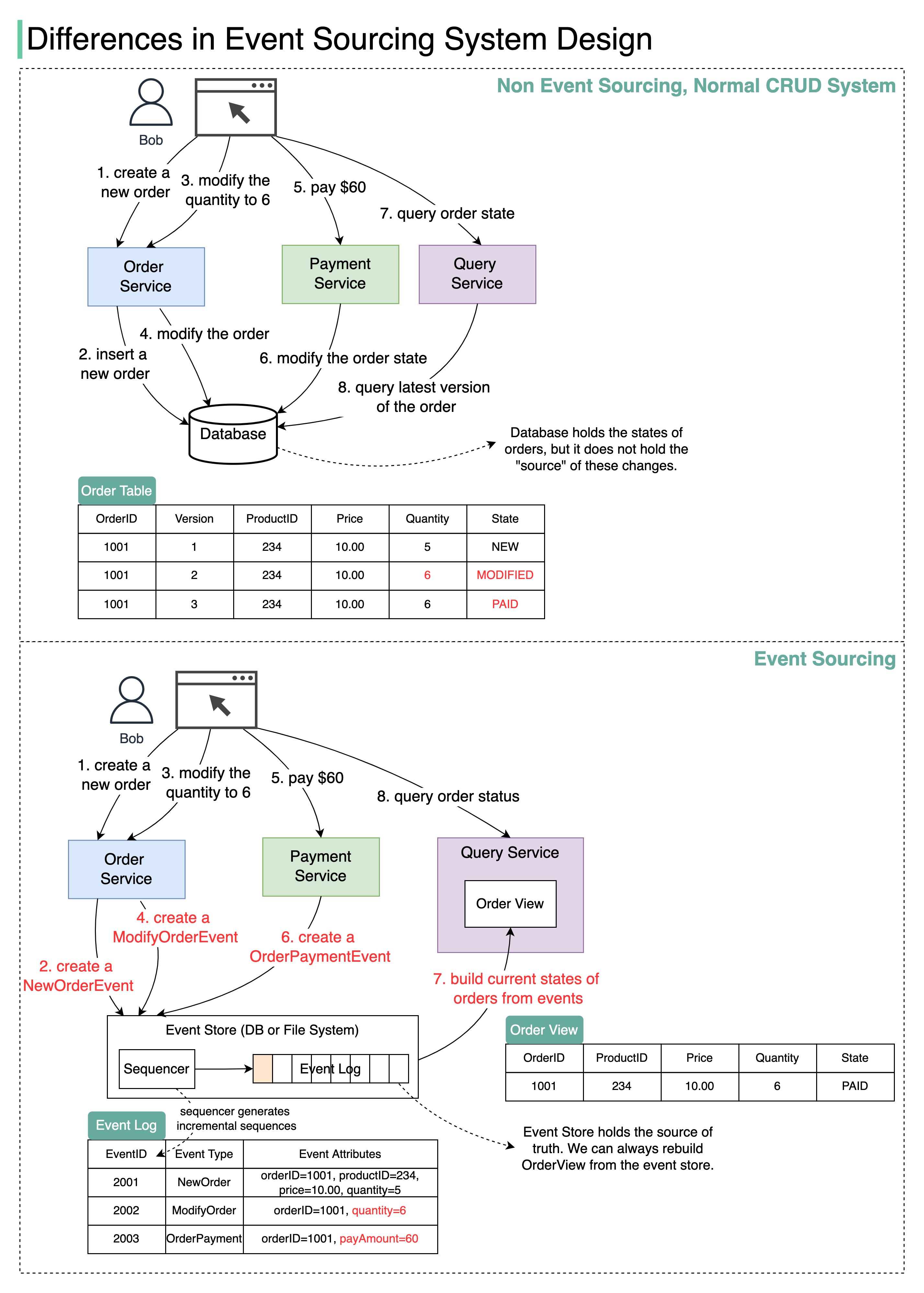

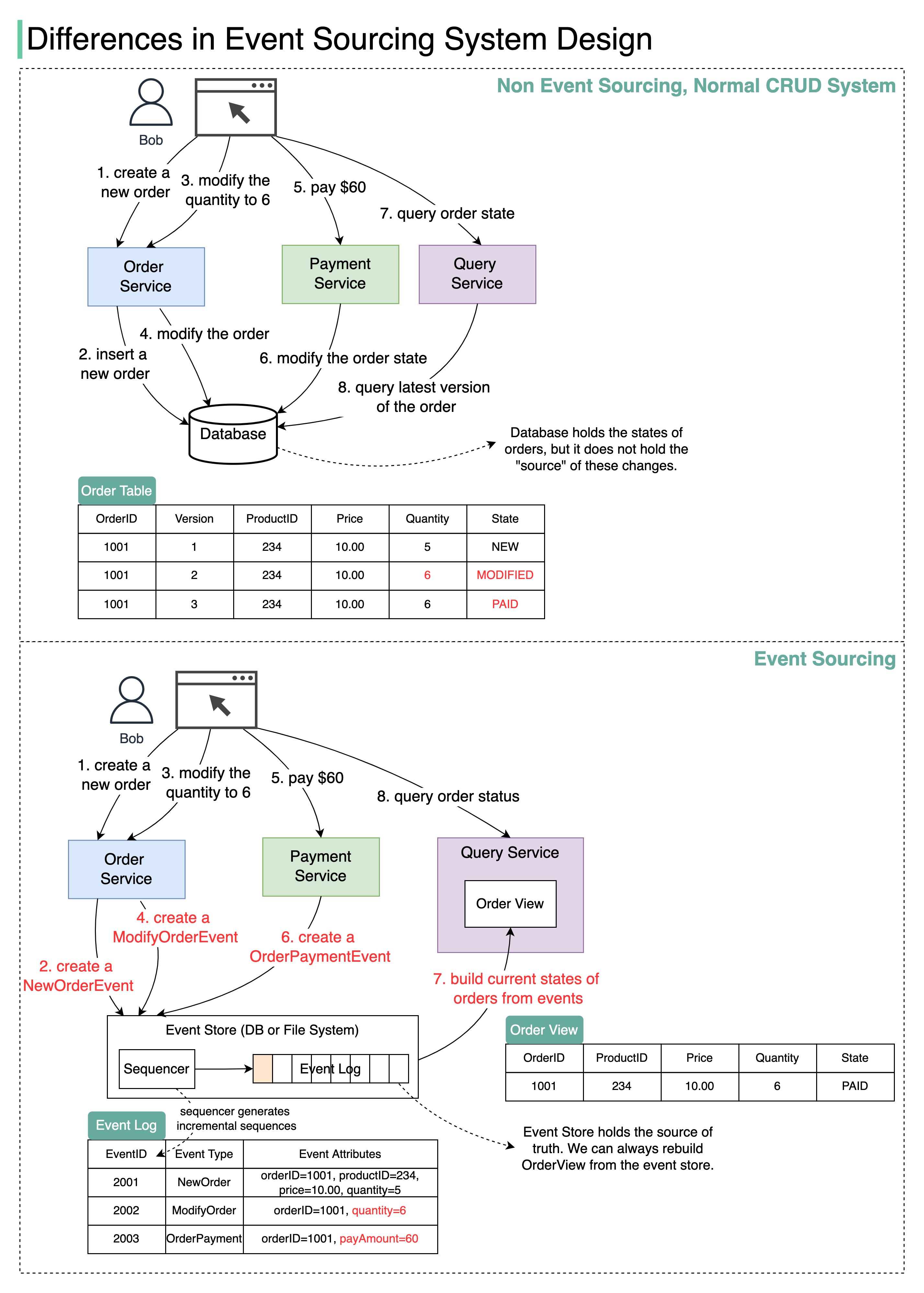

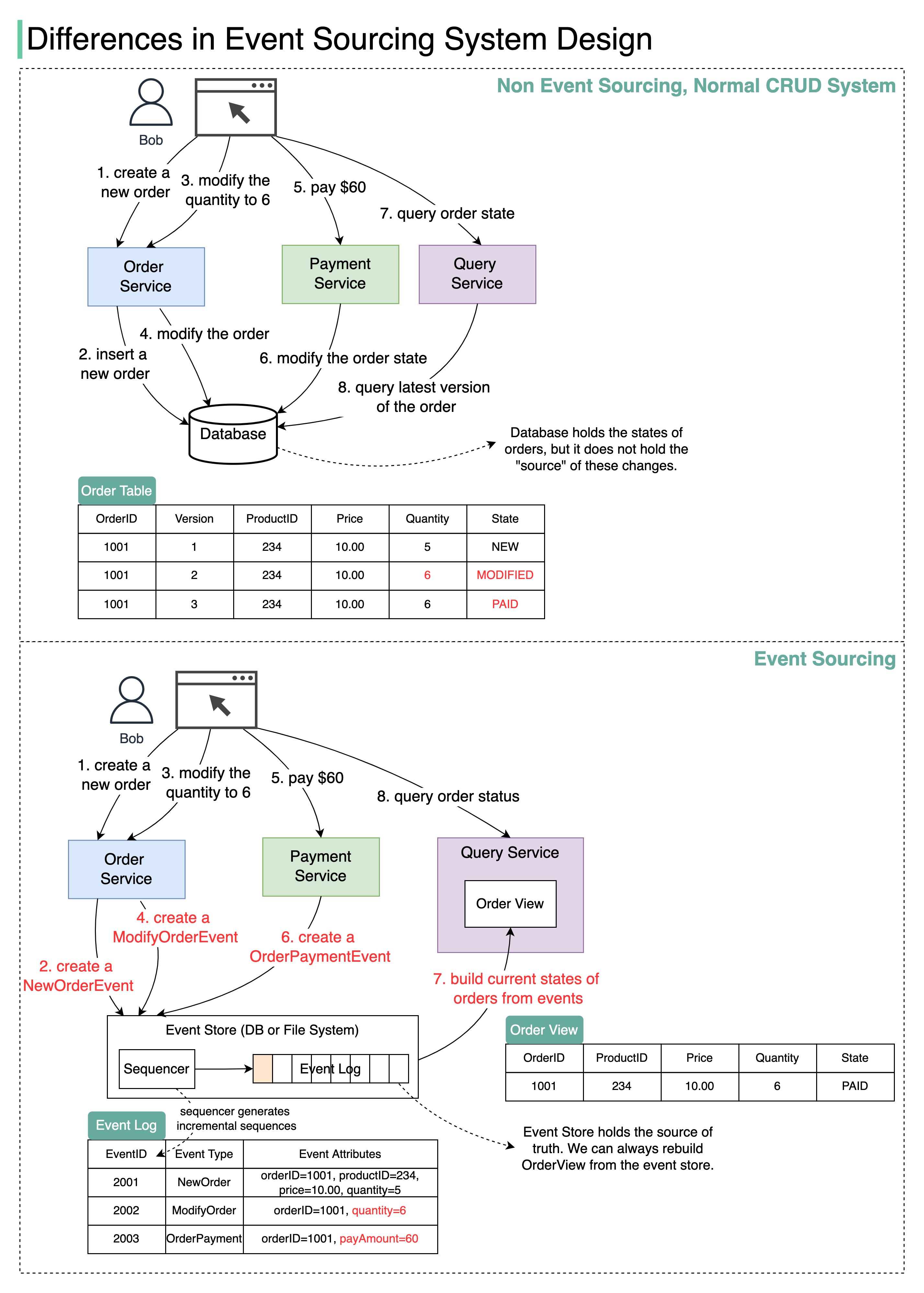

+ * [Differences in Event Sourcing System Design](https://bytebytego.com/guides/differences-in-event-sourcing-system-design)

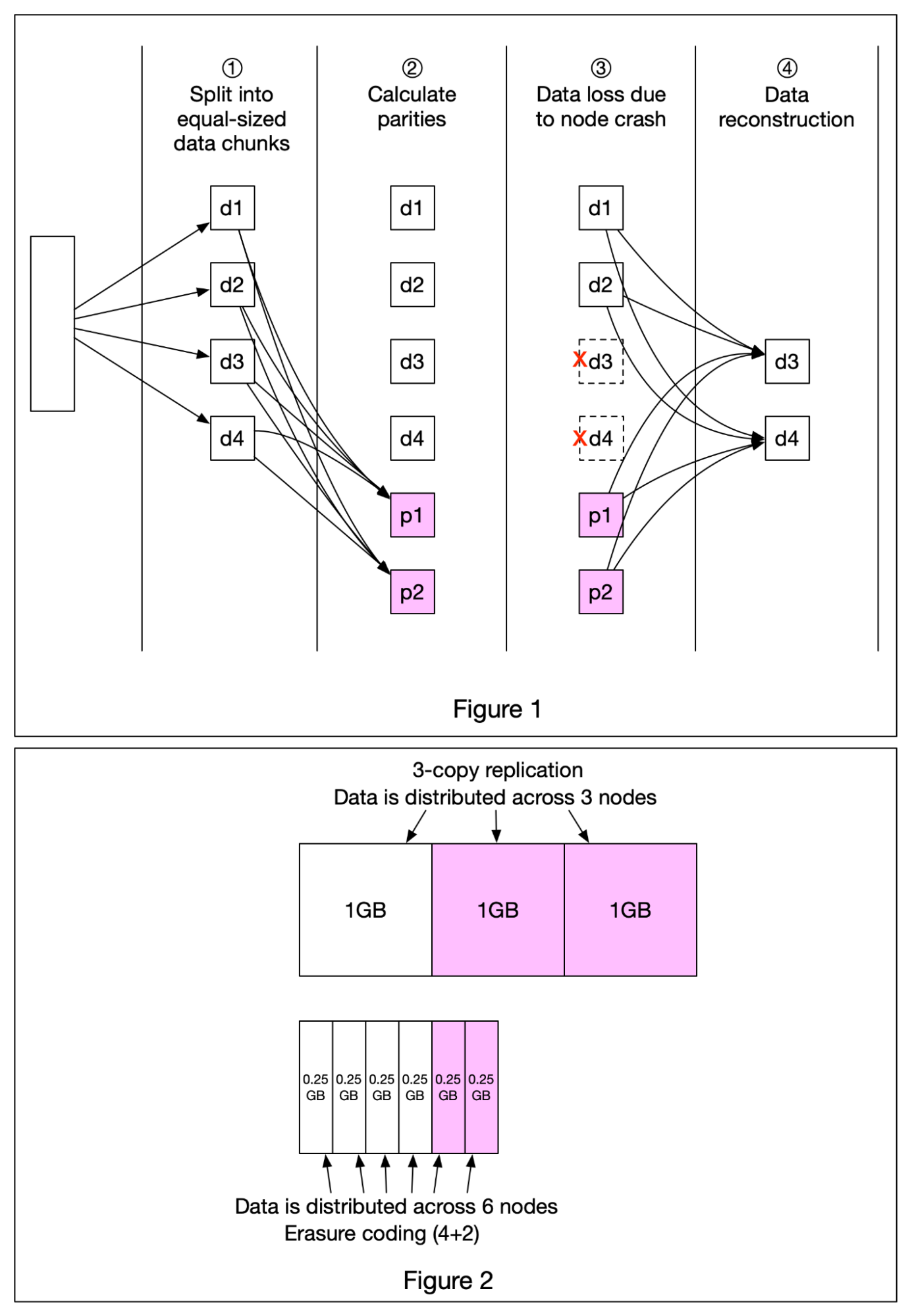

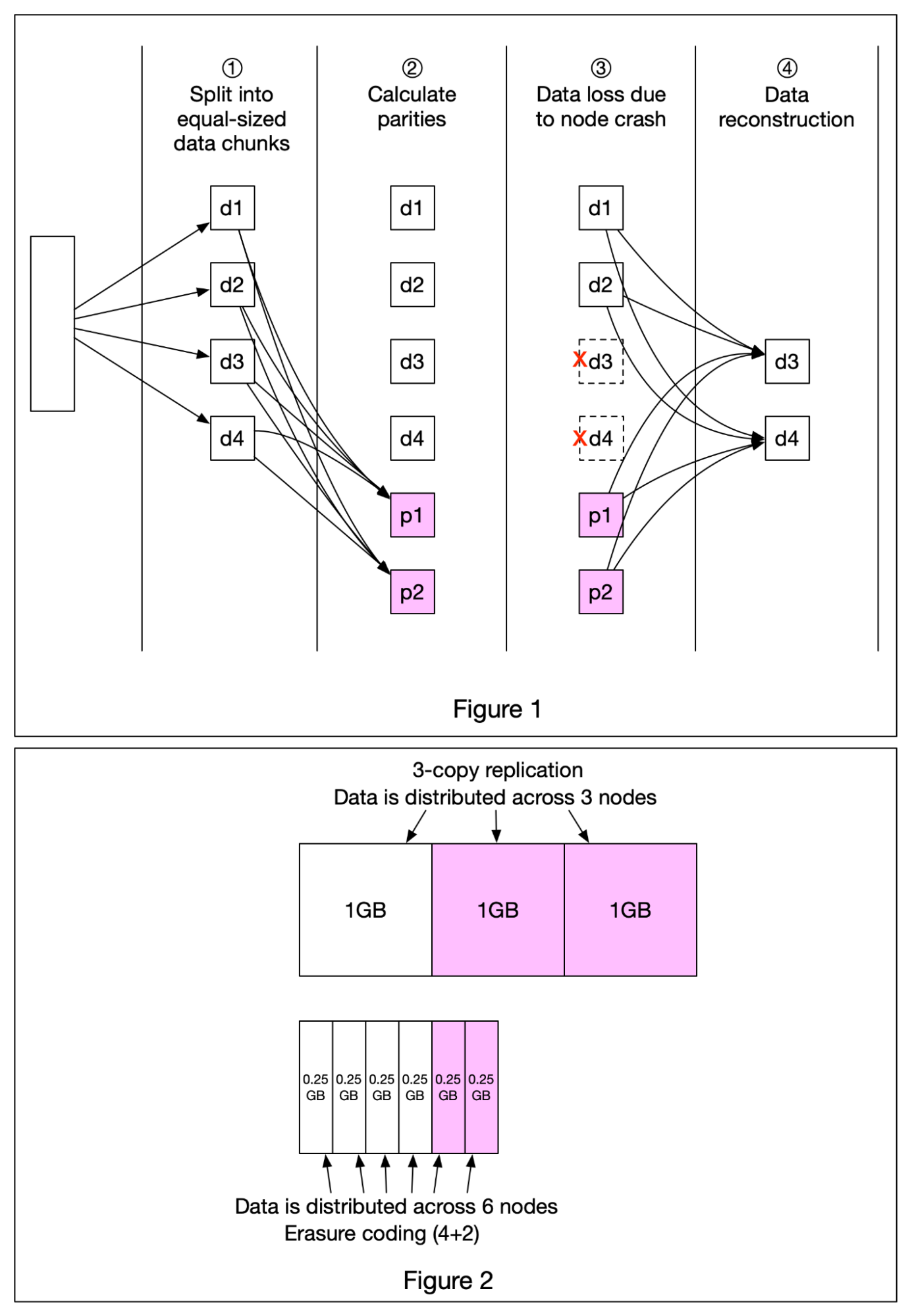

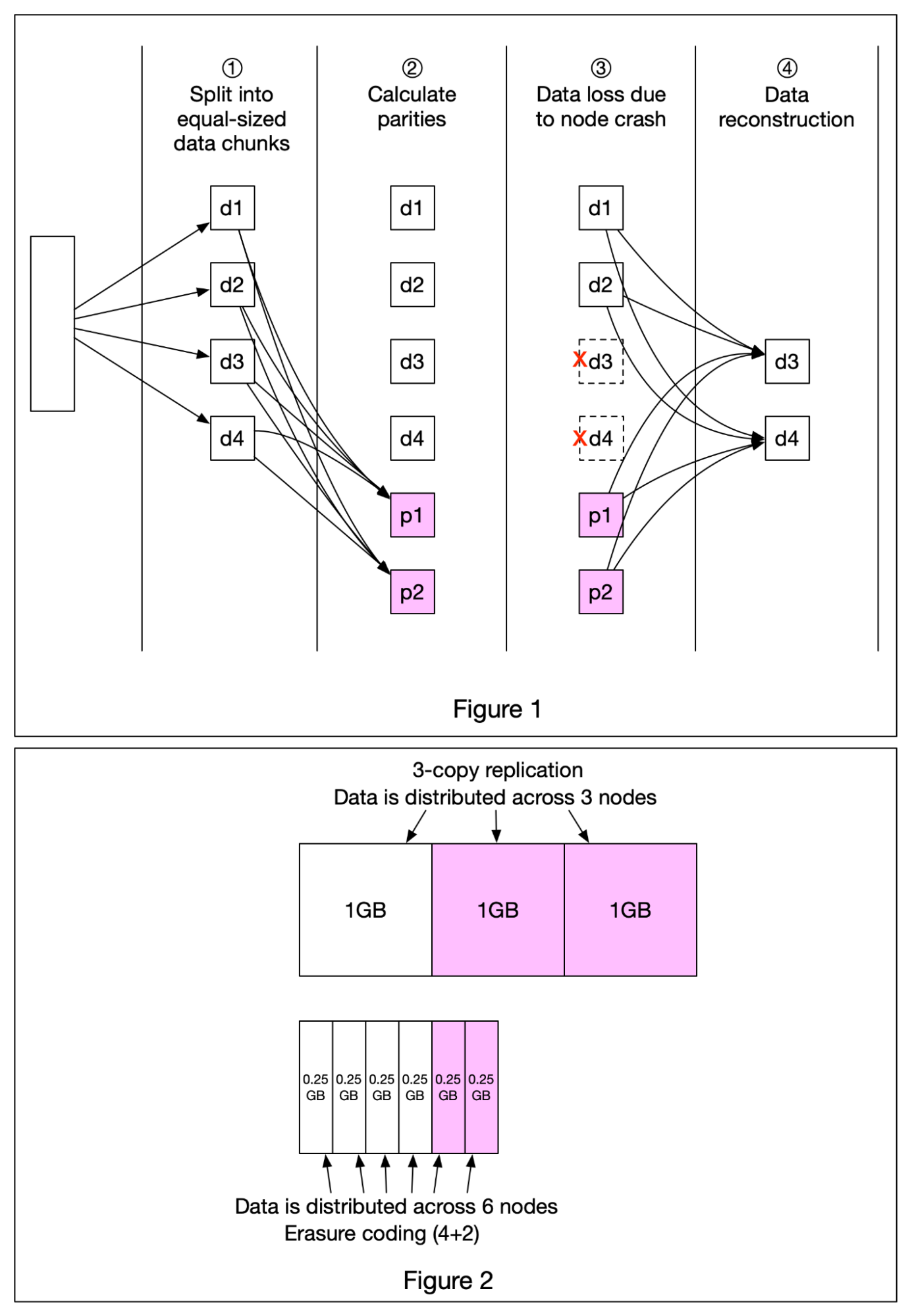

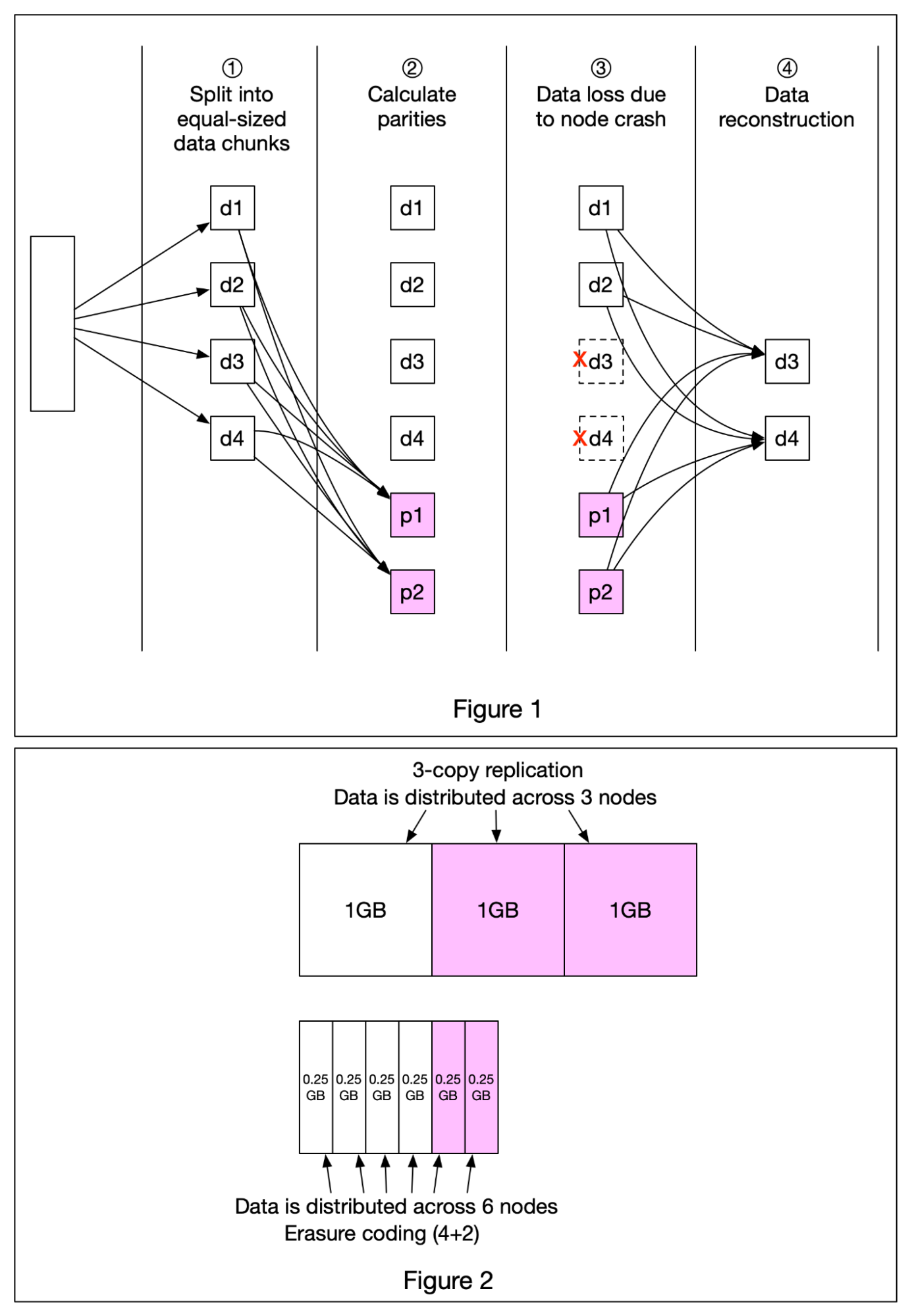

+ * [Erasure Coding](https://bytebytego.com/guides/erasure-coding)

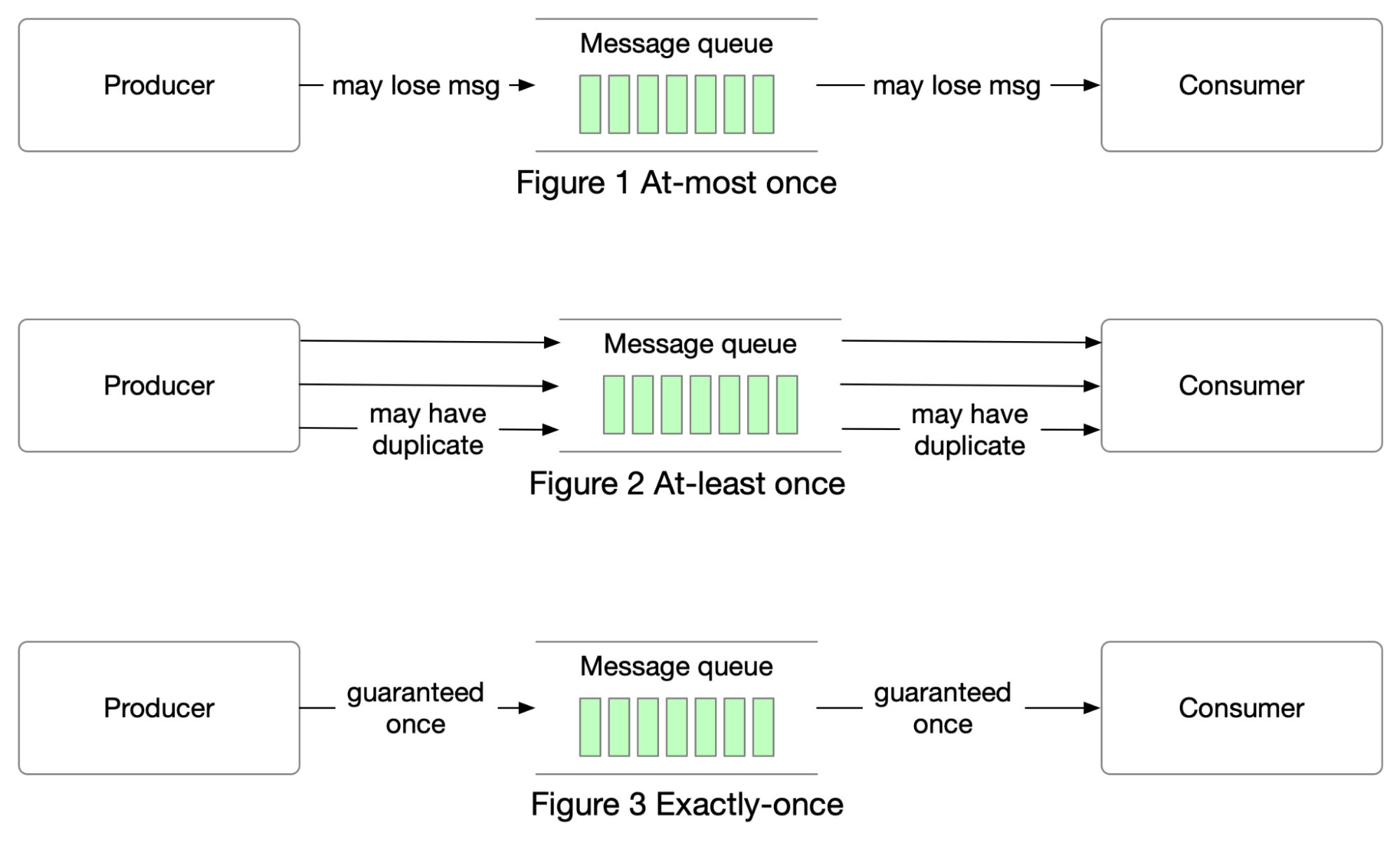

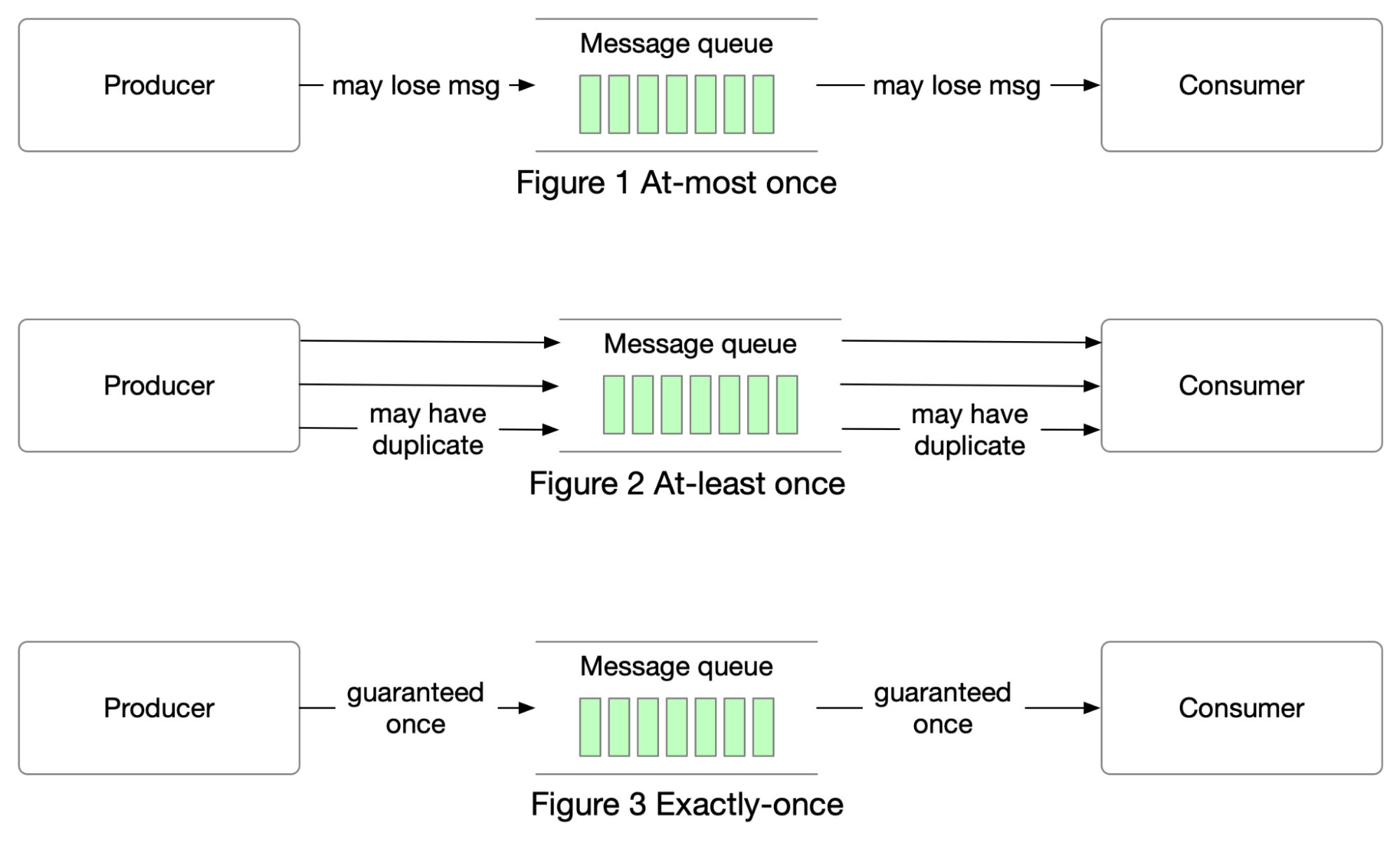

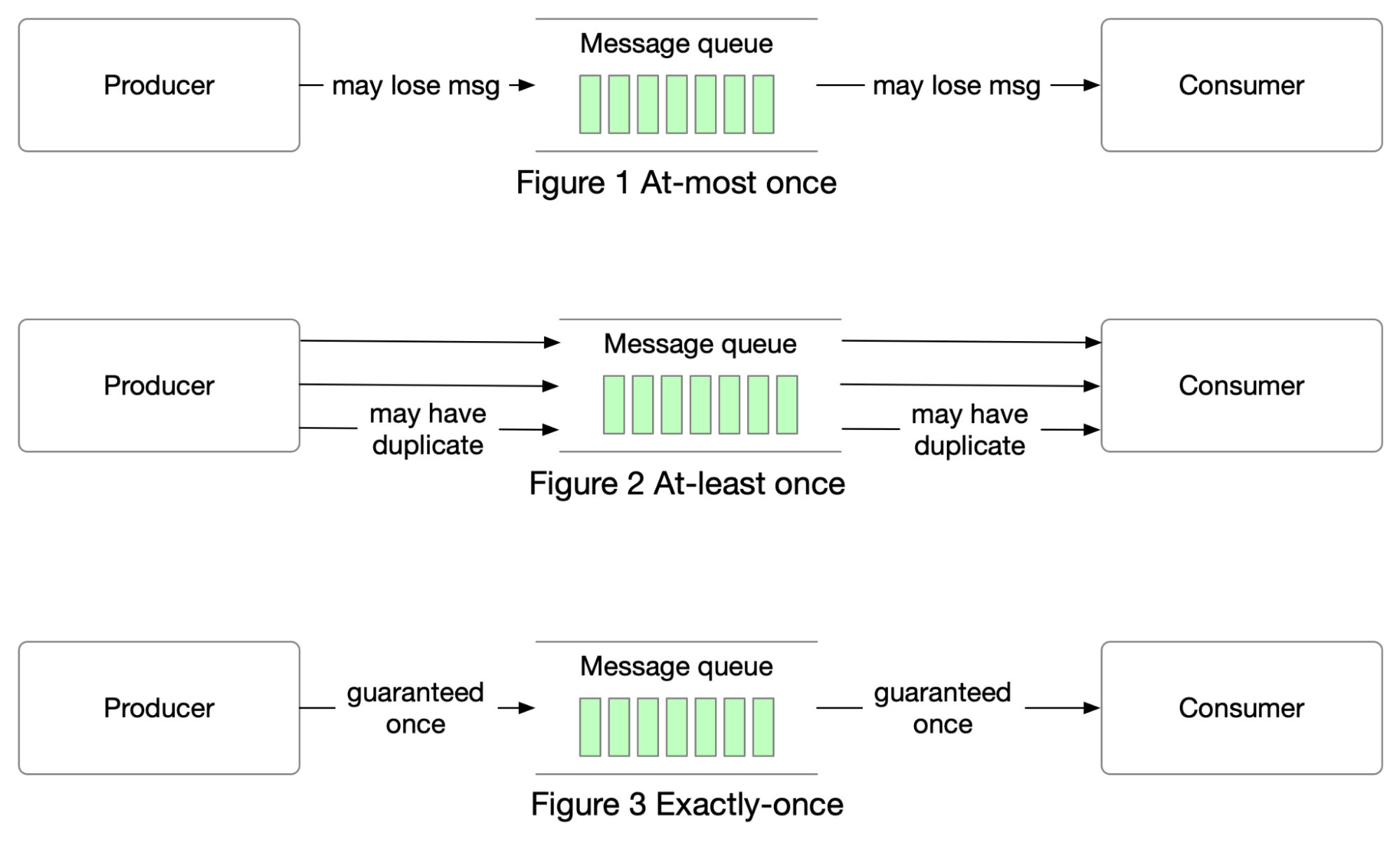

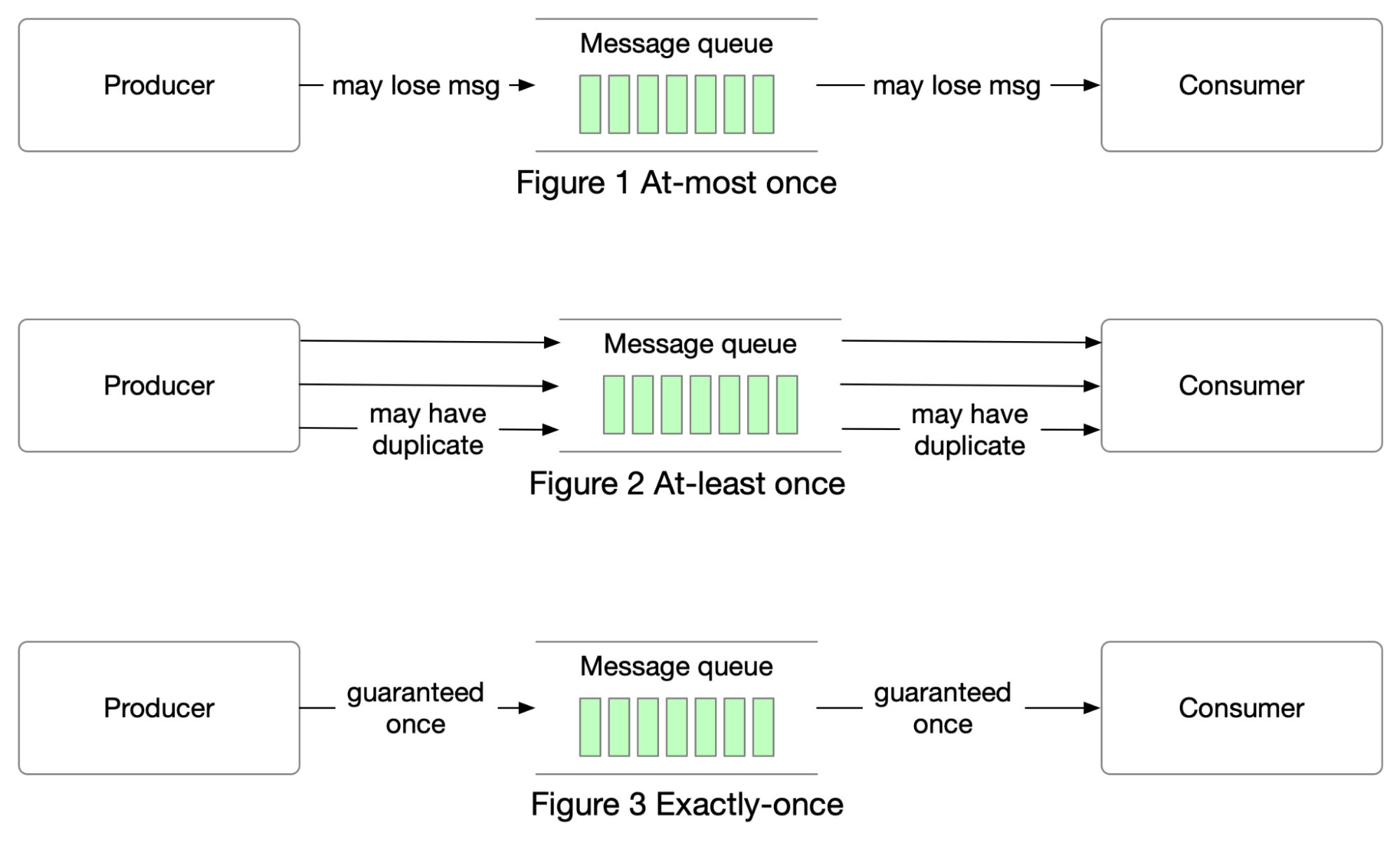

+ * [Delivery Semantics](https://bytebytego.com/guides/delivery-semantics)

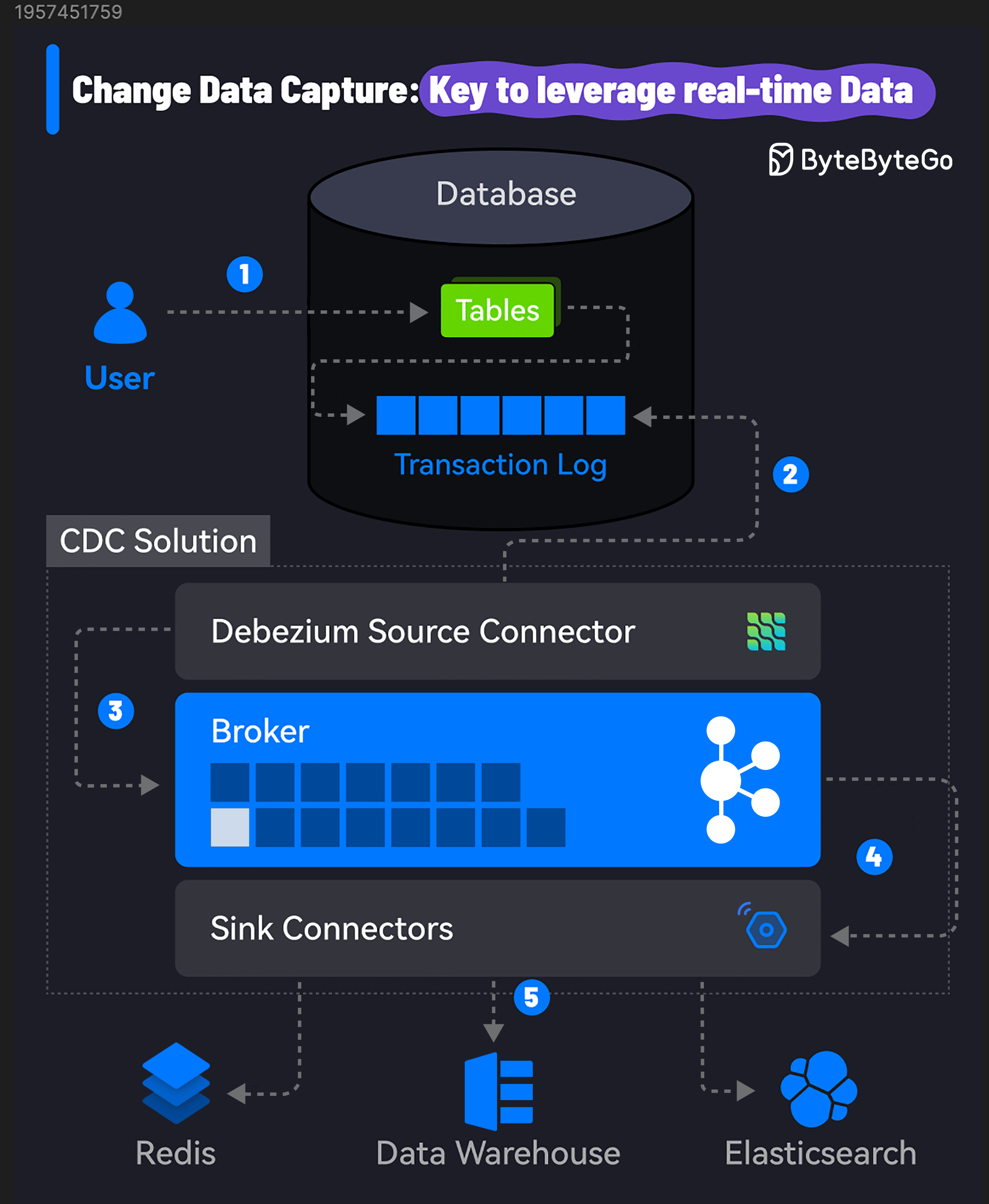

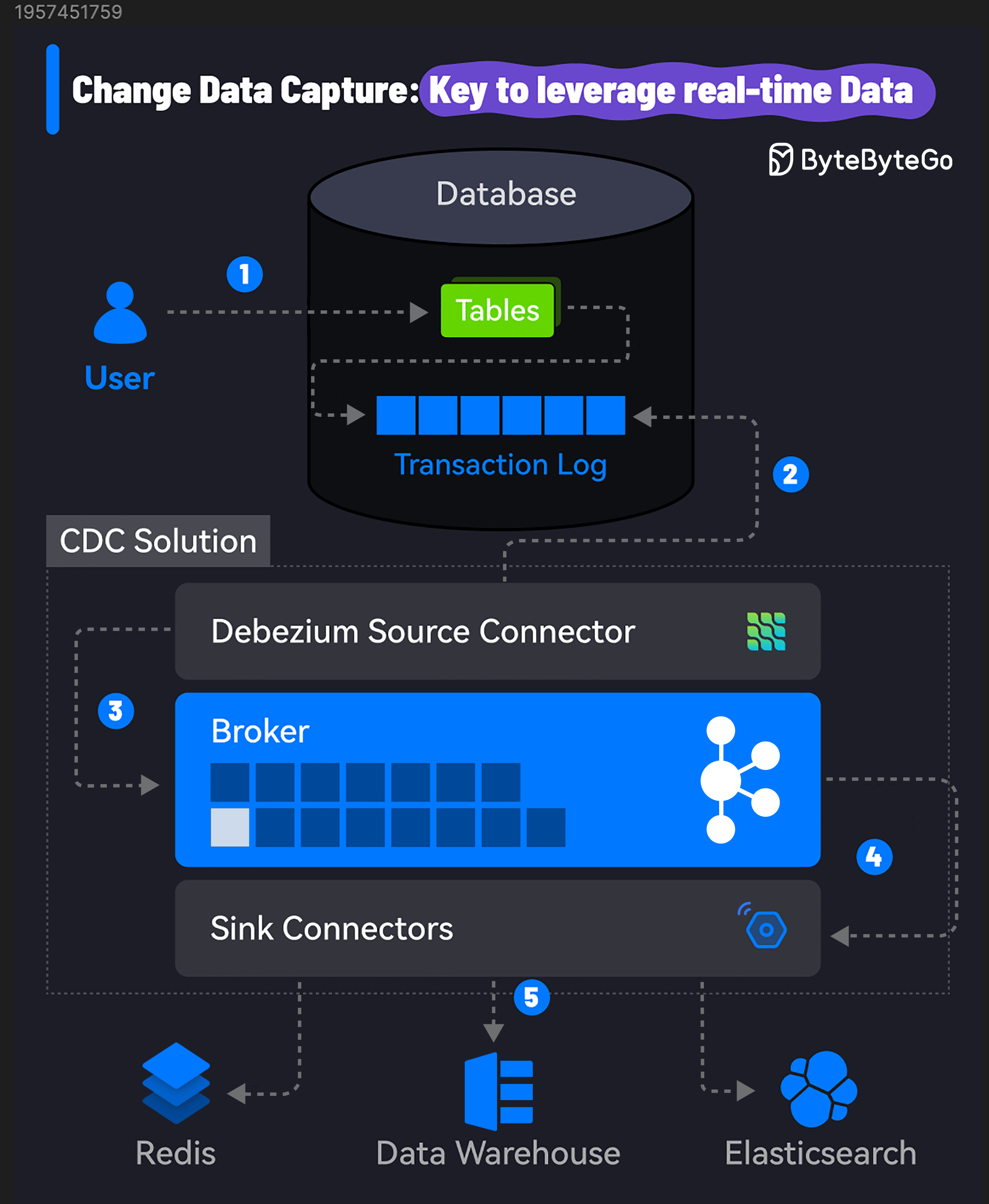

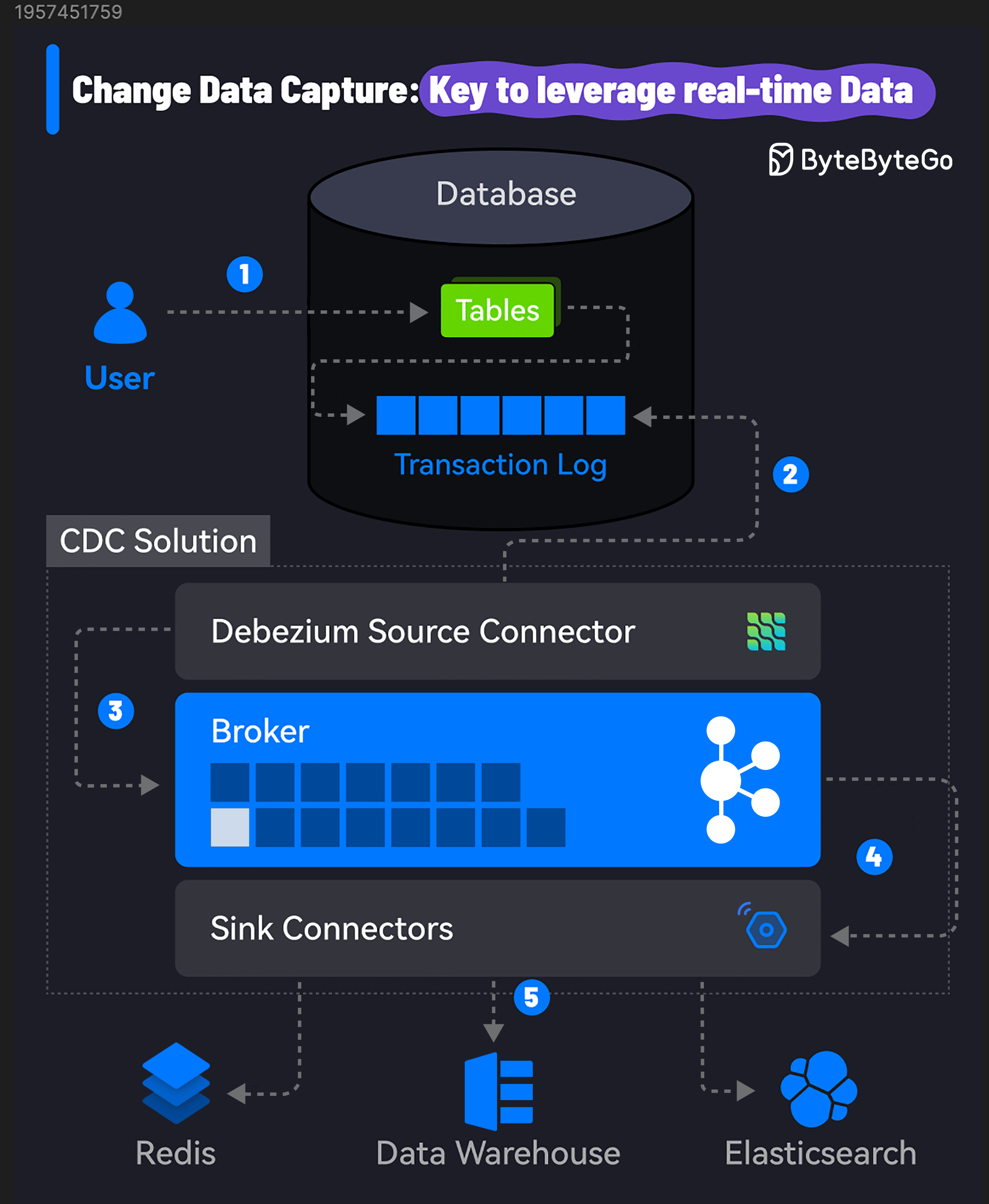

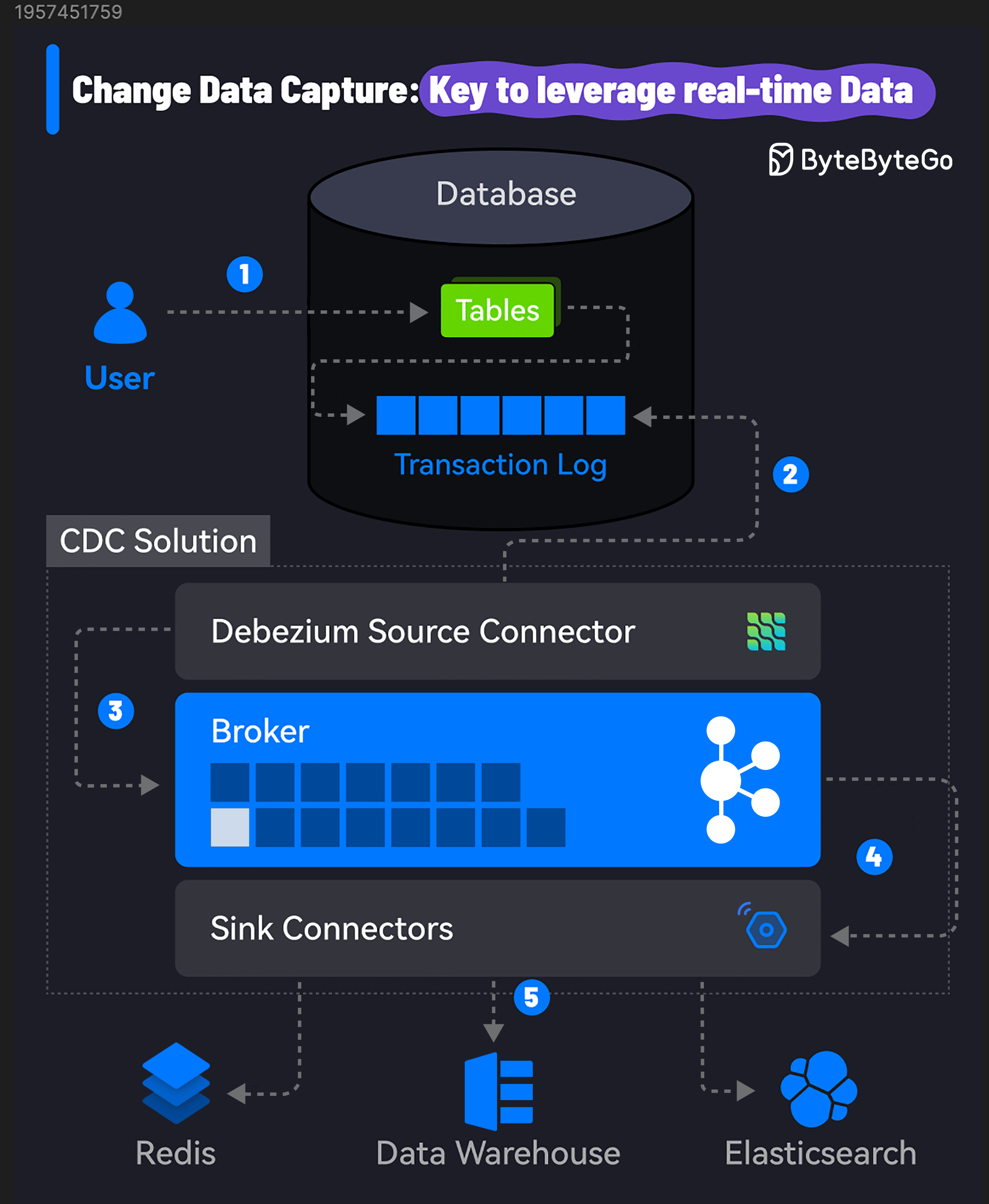

+ * [Change Data Capture: Key to Leverage Real-time Data](https://bytebytego.com/guides/change-data-capture-key-to-leverage-real-time-data)

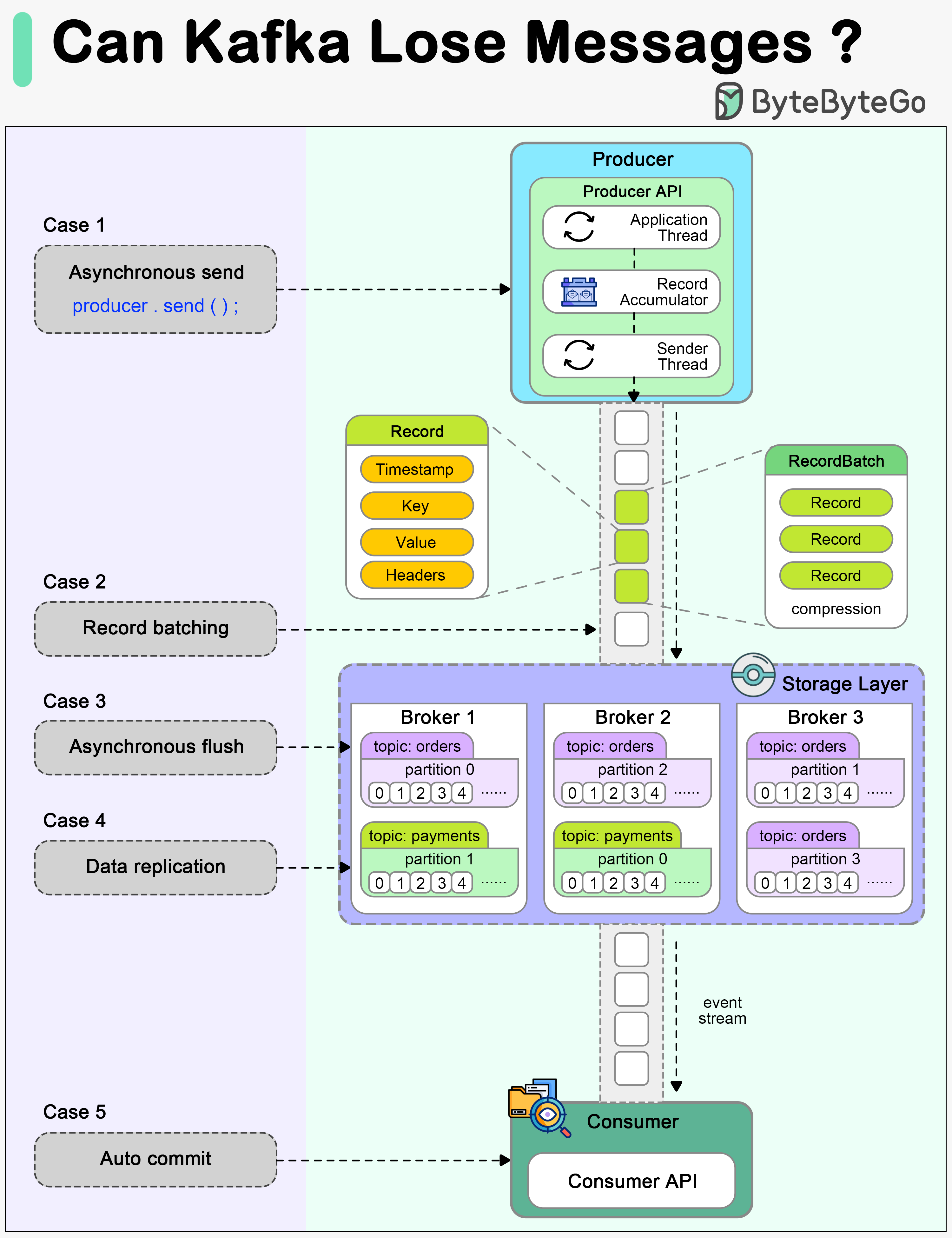

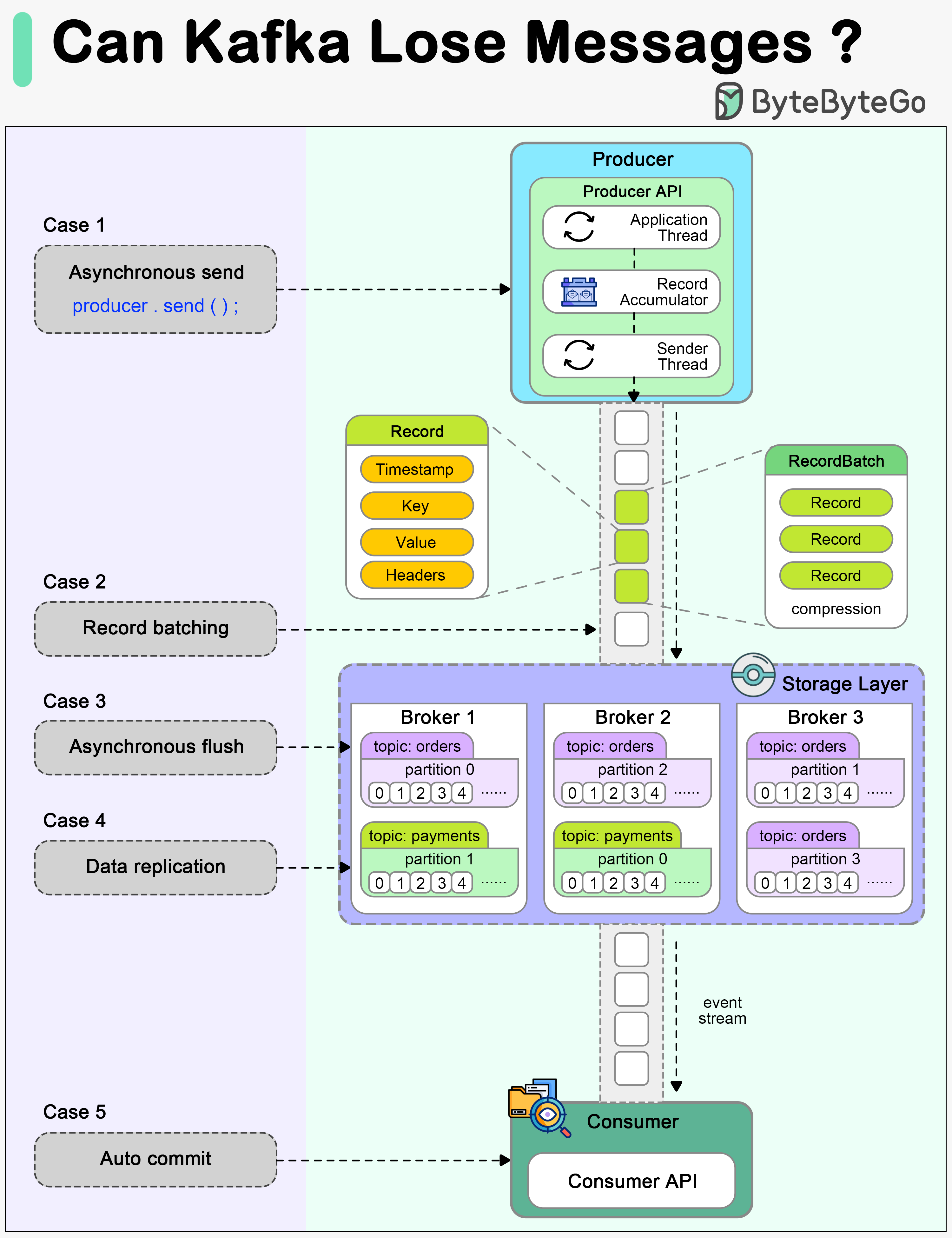

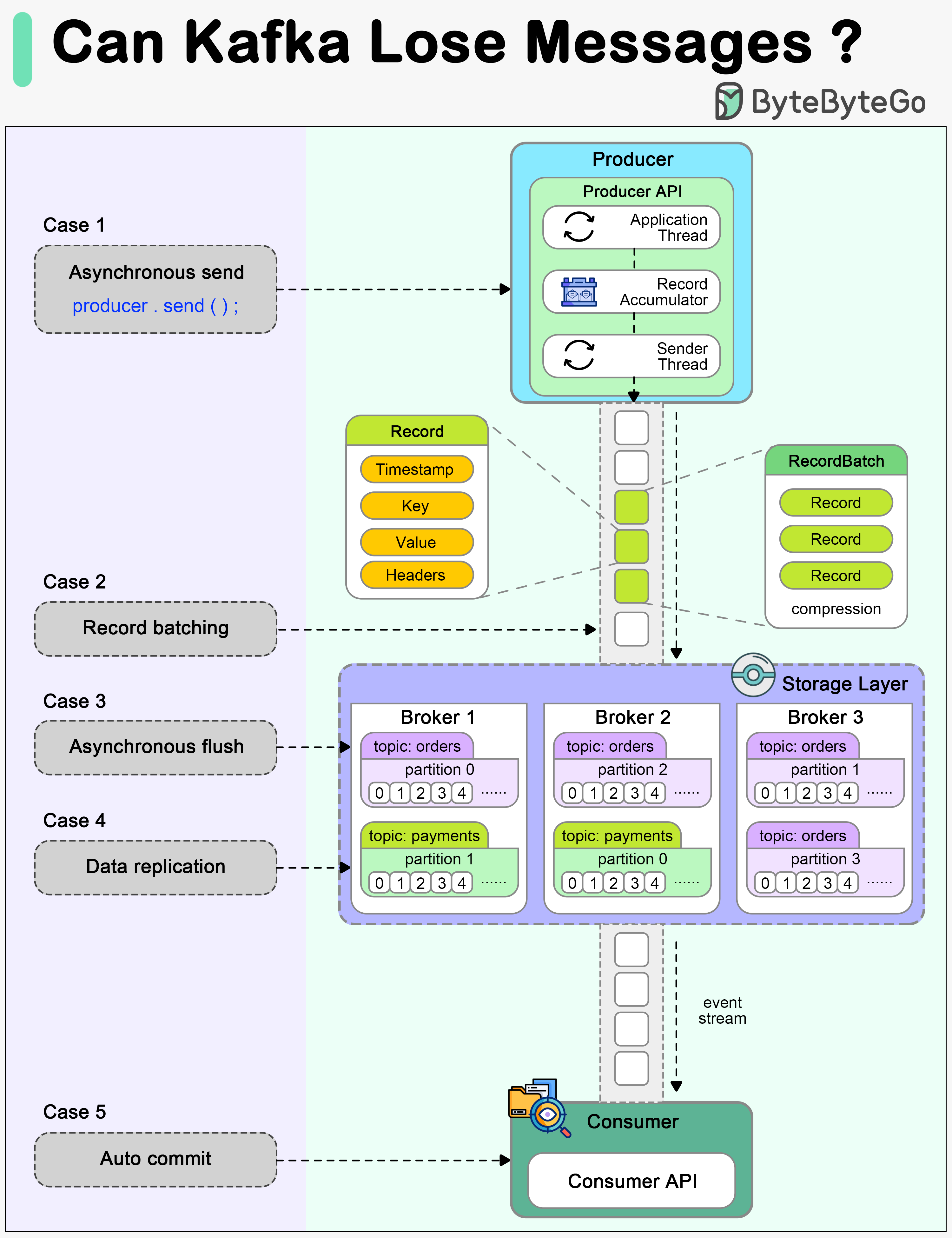

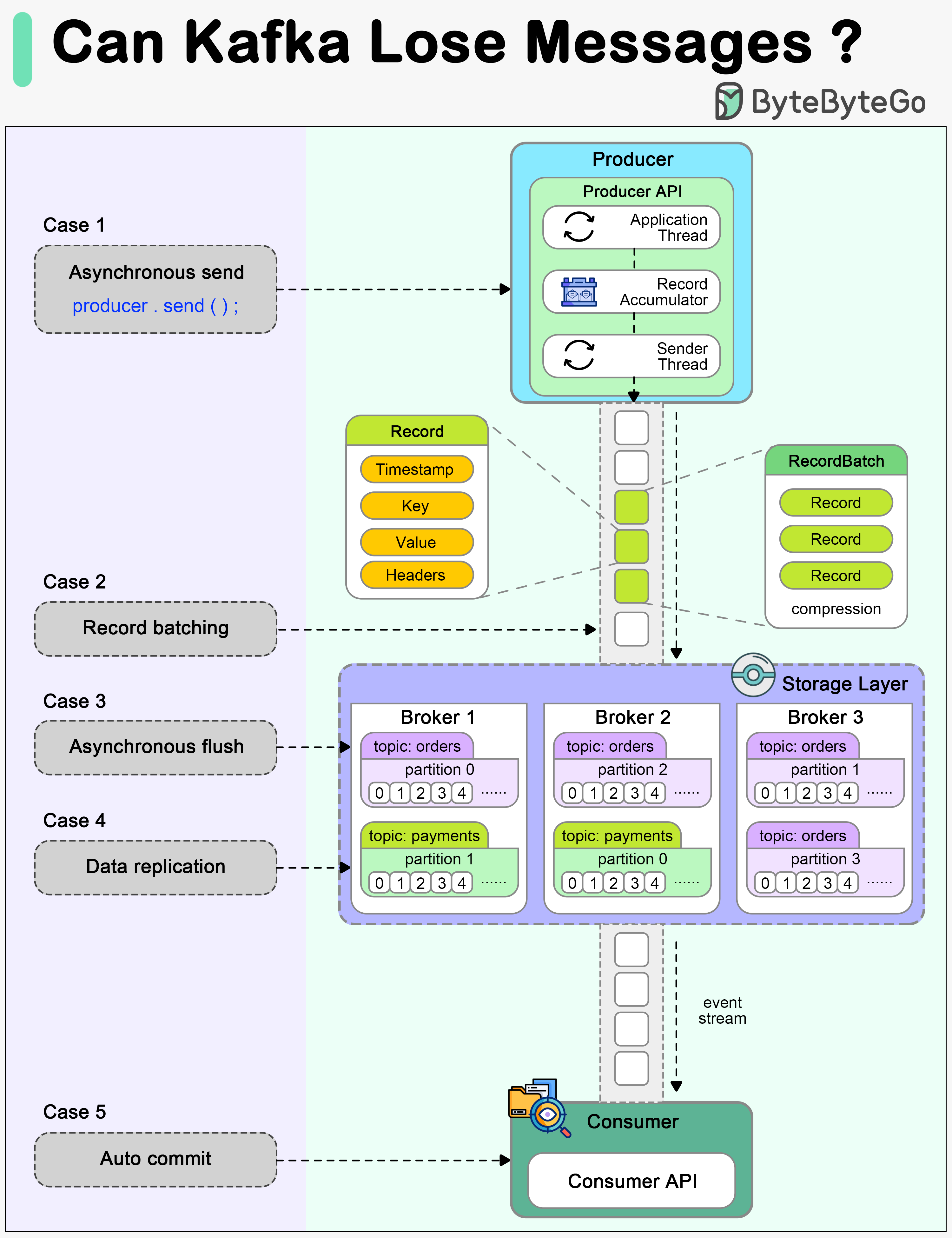

+ * [Can Kafka Lose Messages?](https://bytebytego.com/guides/can-kafka-lose-messages)

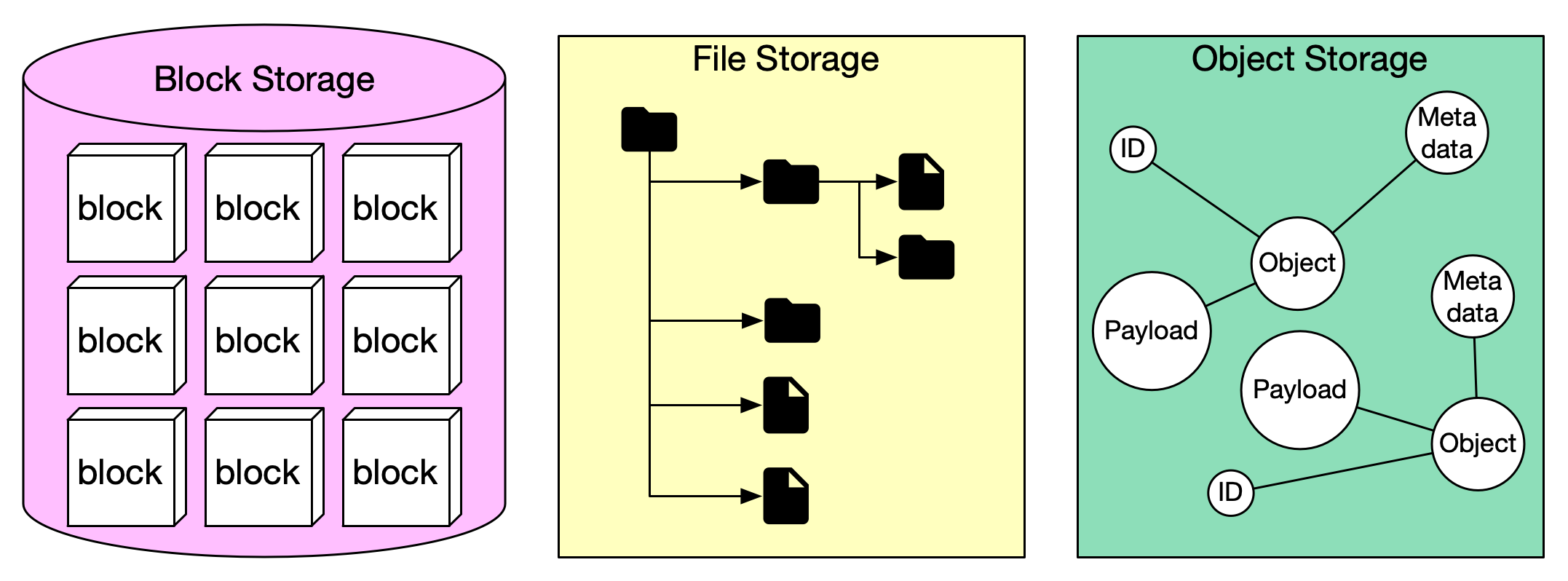

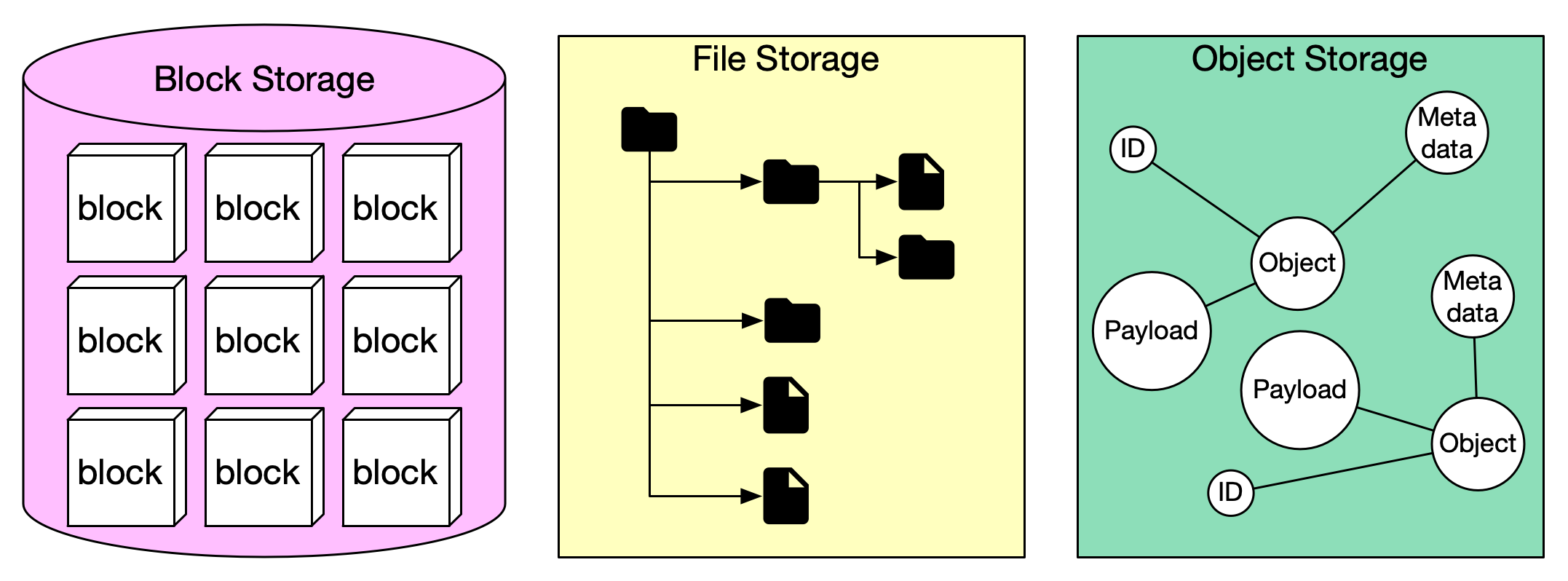

+ * [Storage Systems Overview](https://bytebytego.com/guides/storage-systems-overview)

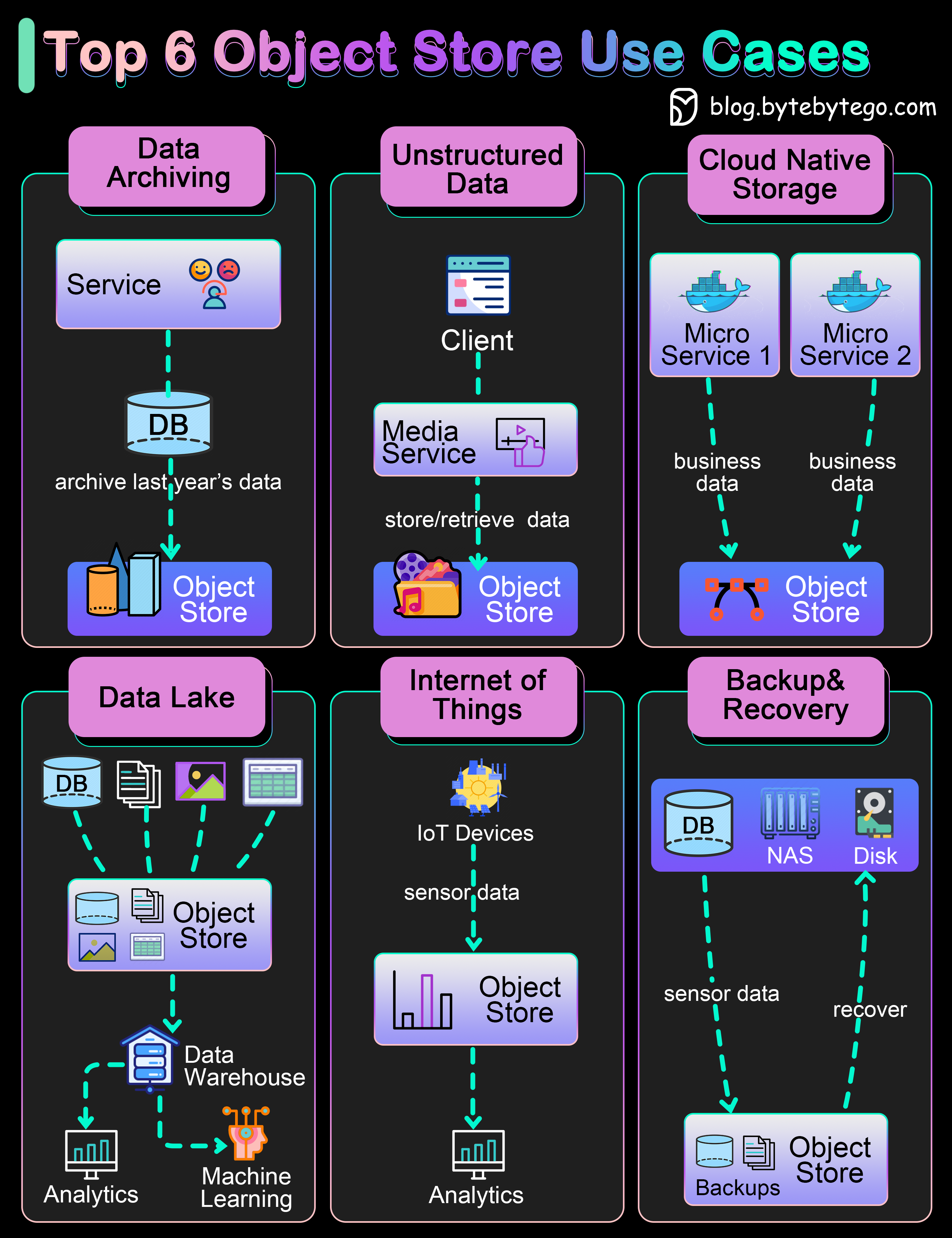

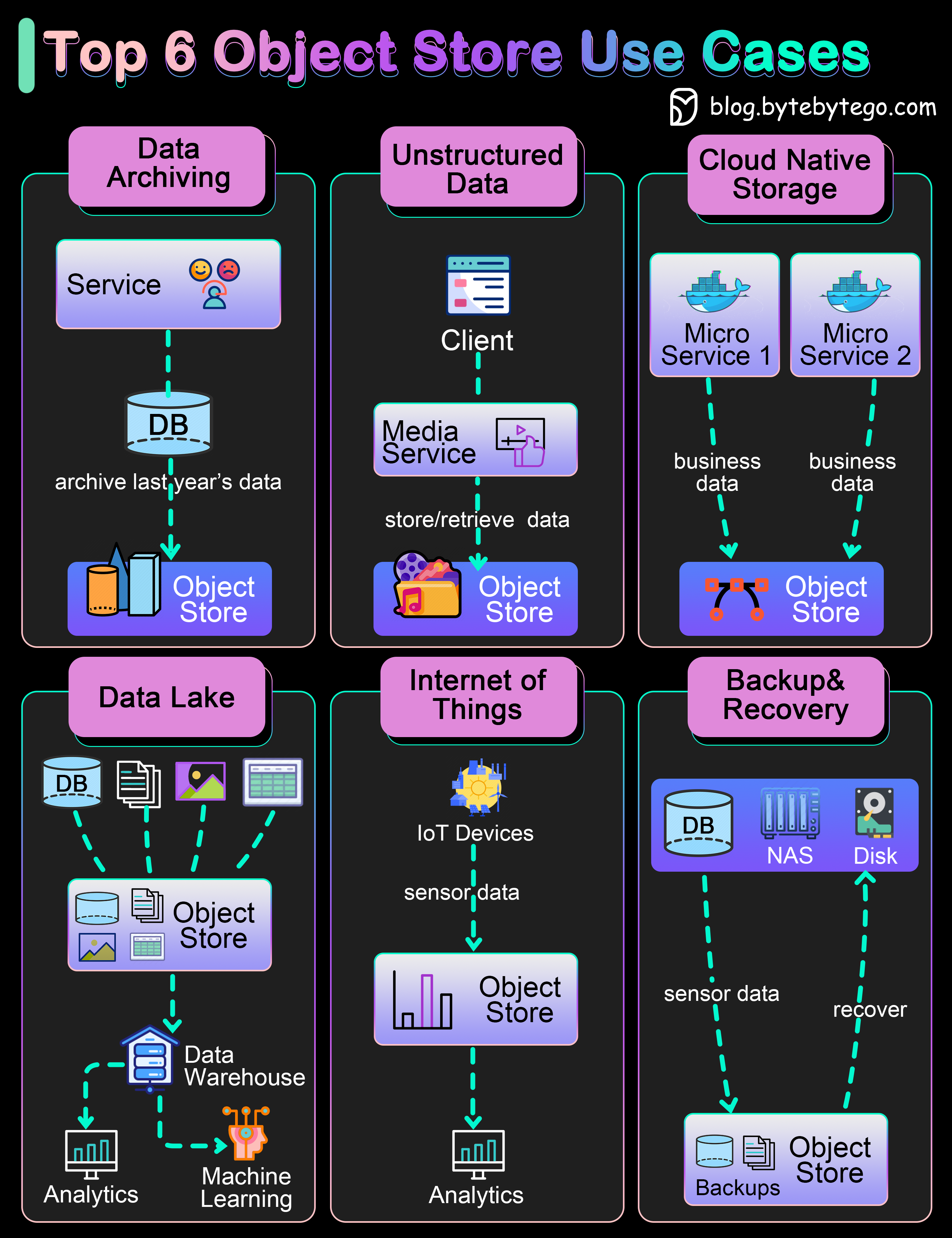

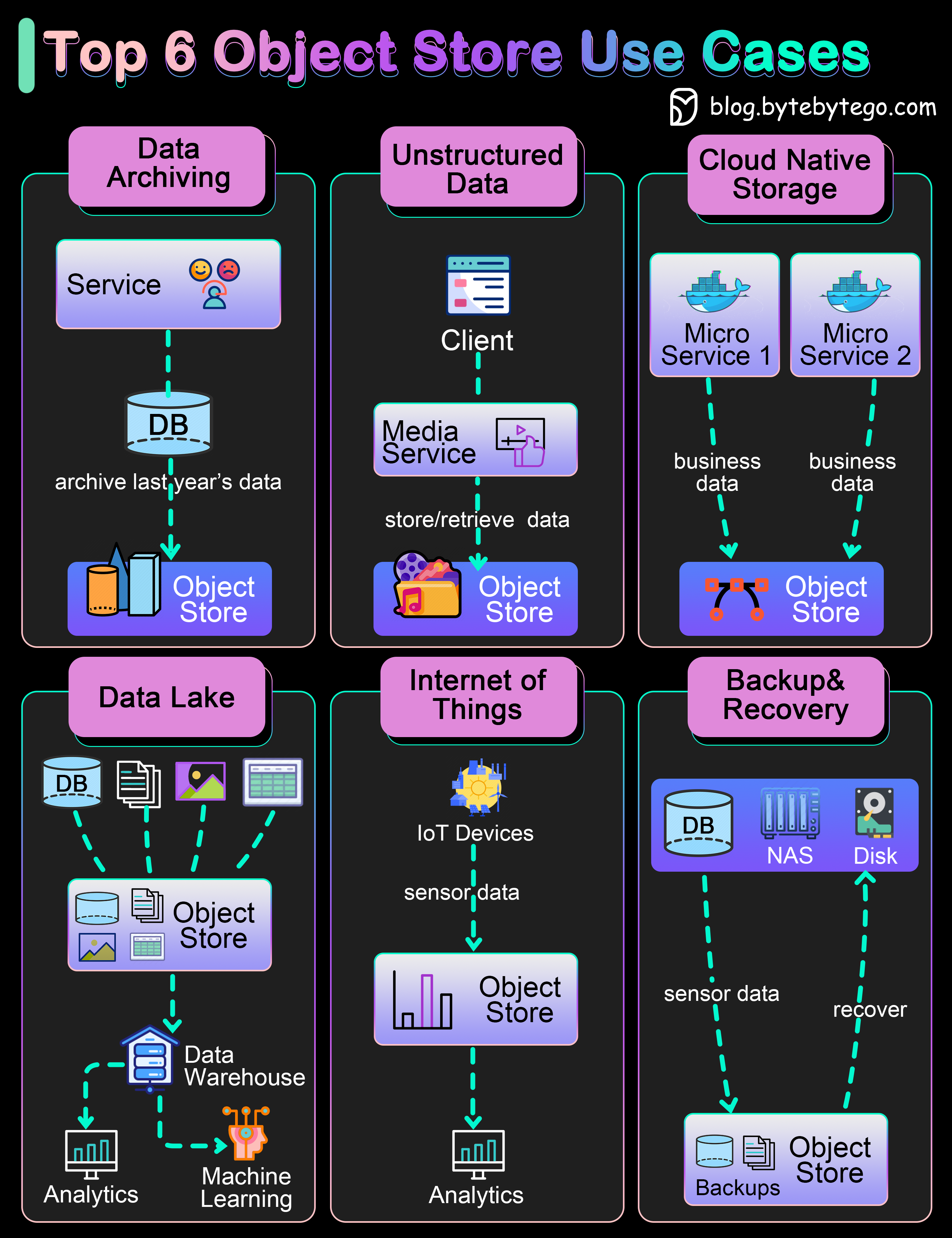

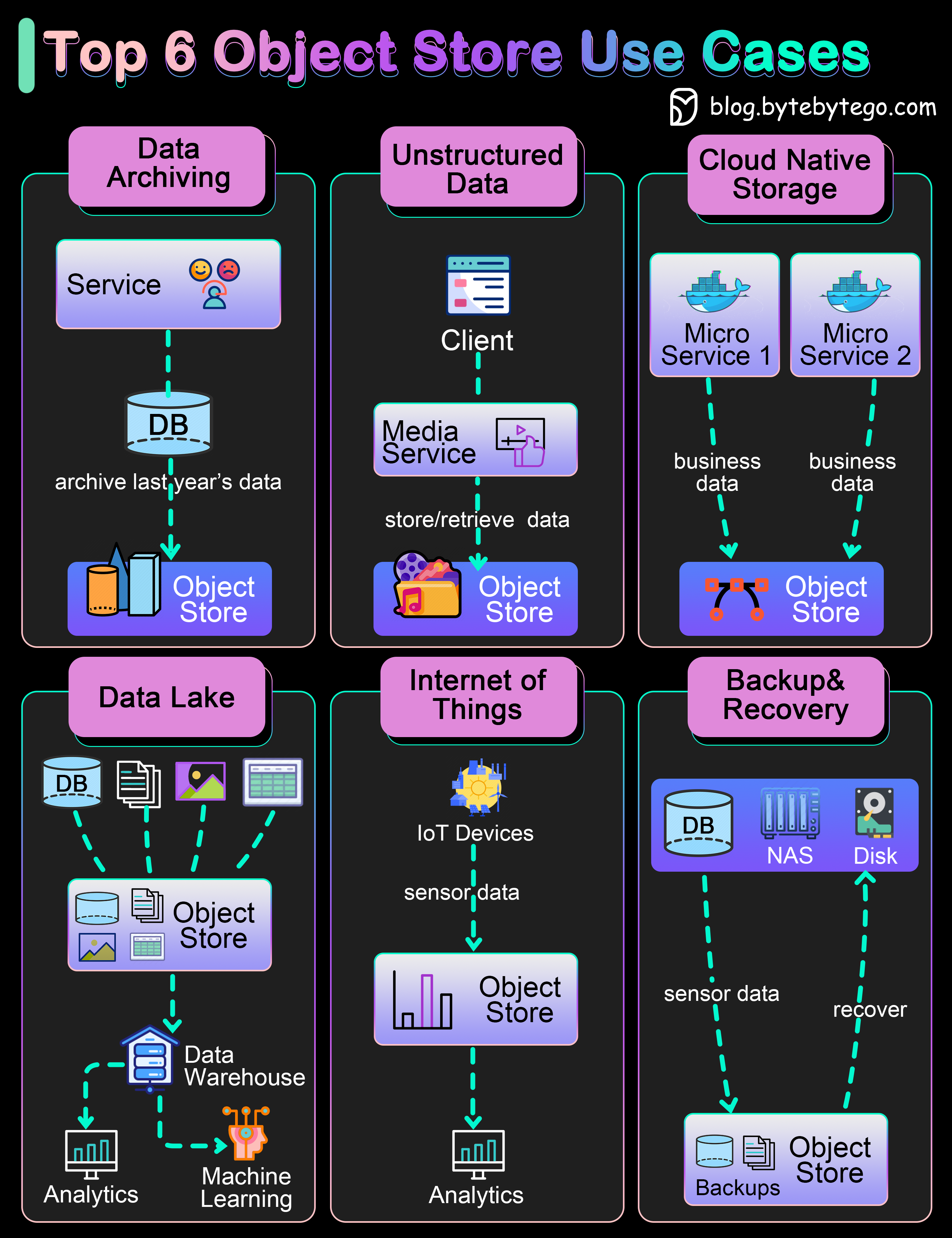

+ * [Explain the Top 6 Use Cases of Object Stores](https://bytebytego.com/guides/explain-the-top-6-use-cases-of-object-stores)

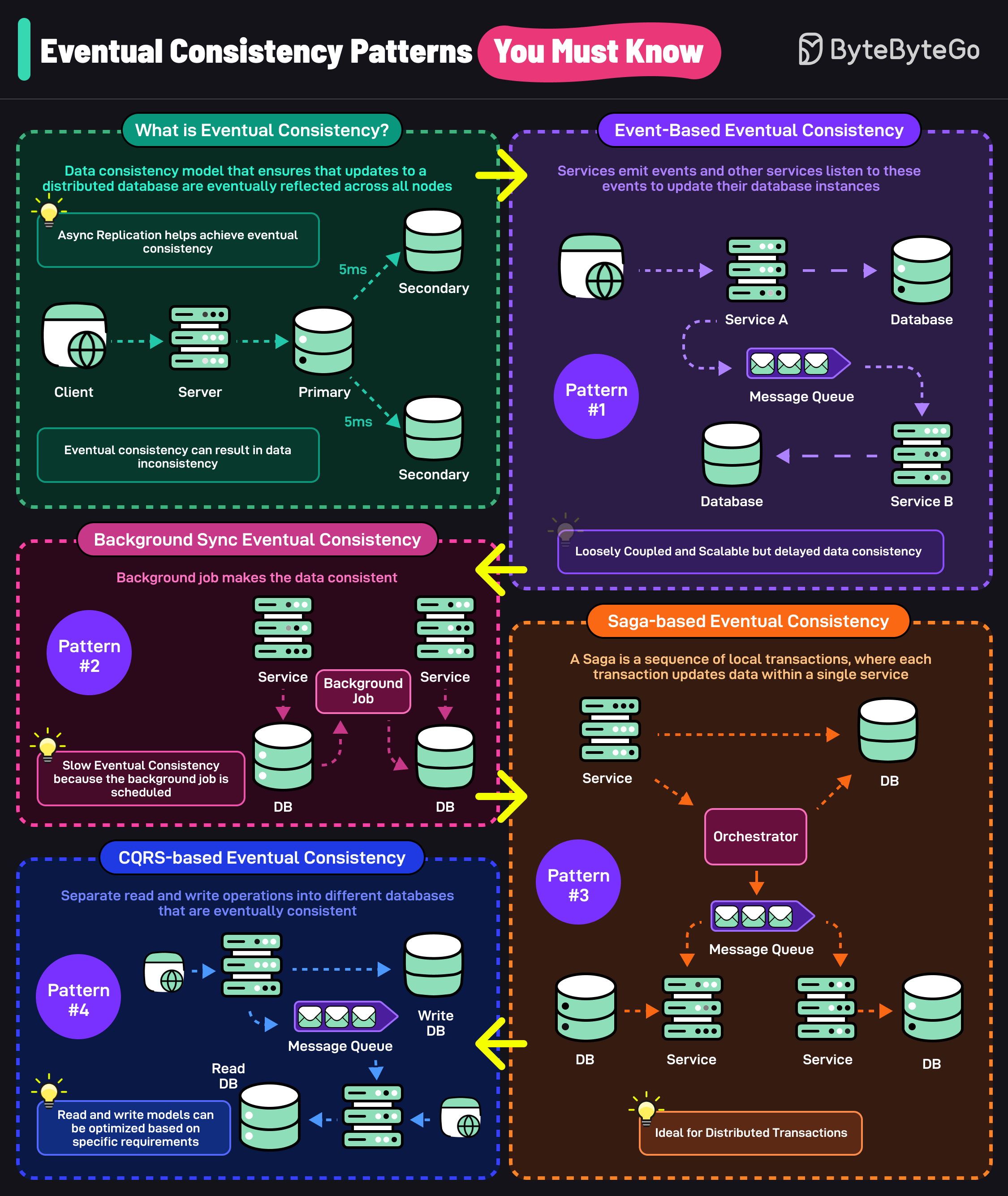

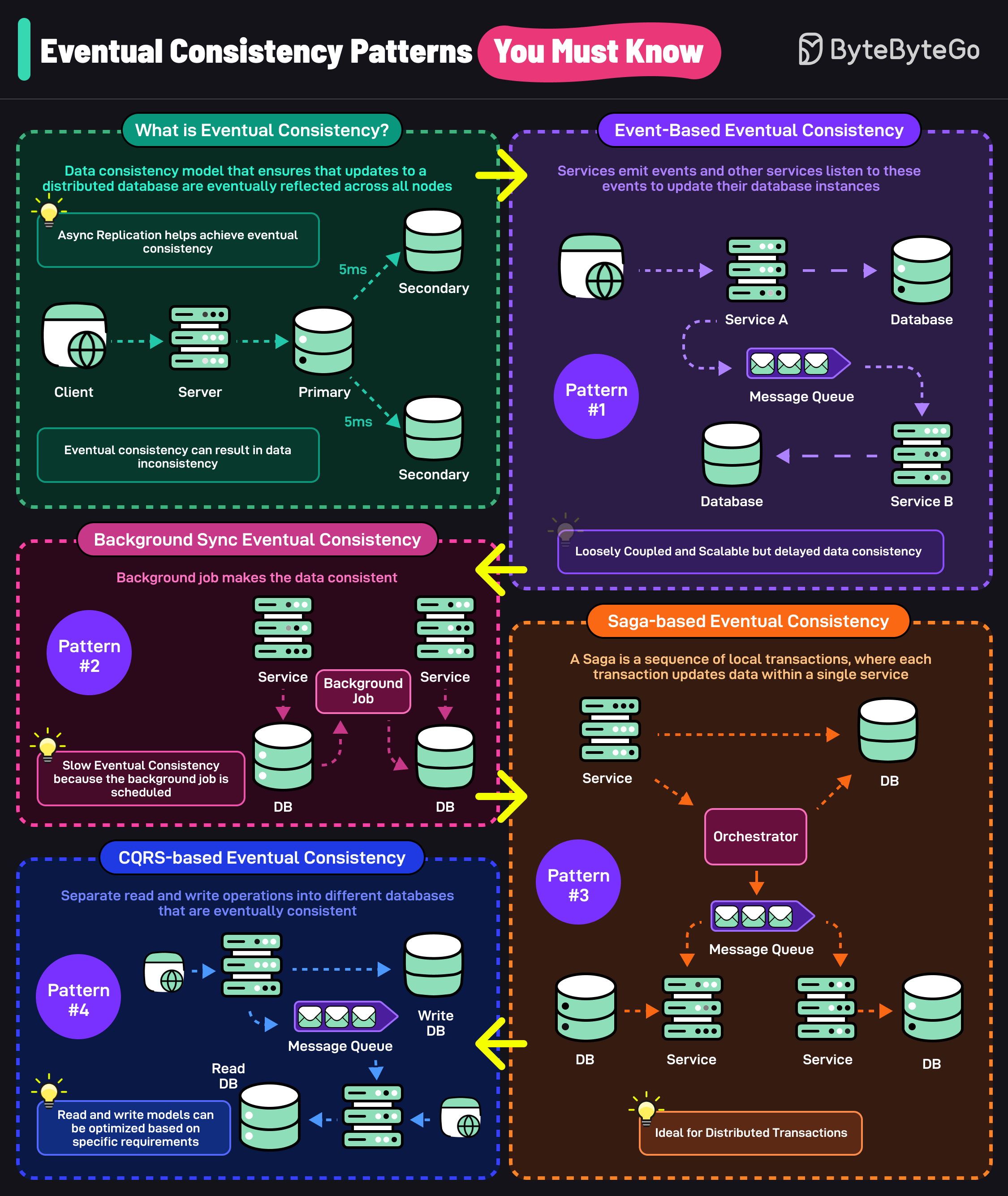

+ * [Top Eventual Consistency Patterns You Must Know](https://bytebytego.com/guides/top-eventual-consistency-patterns-you-must-know)

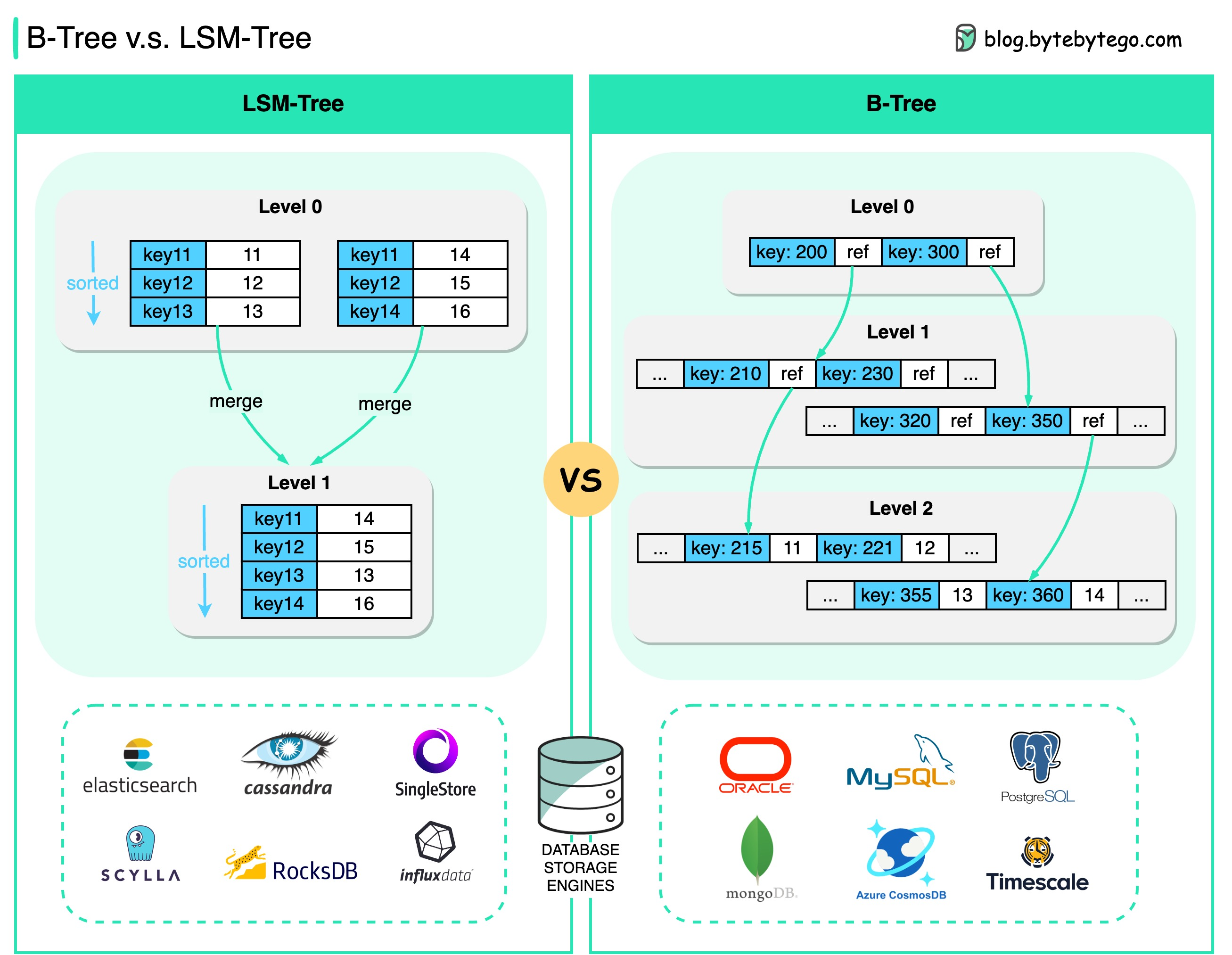

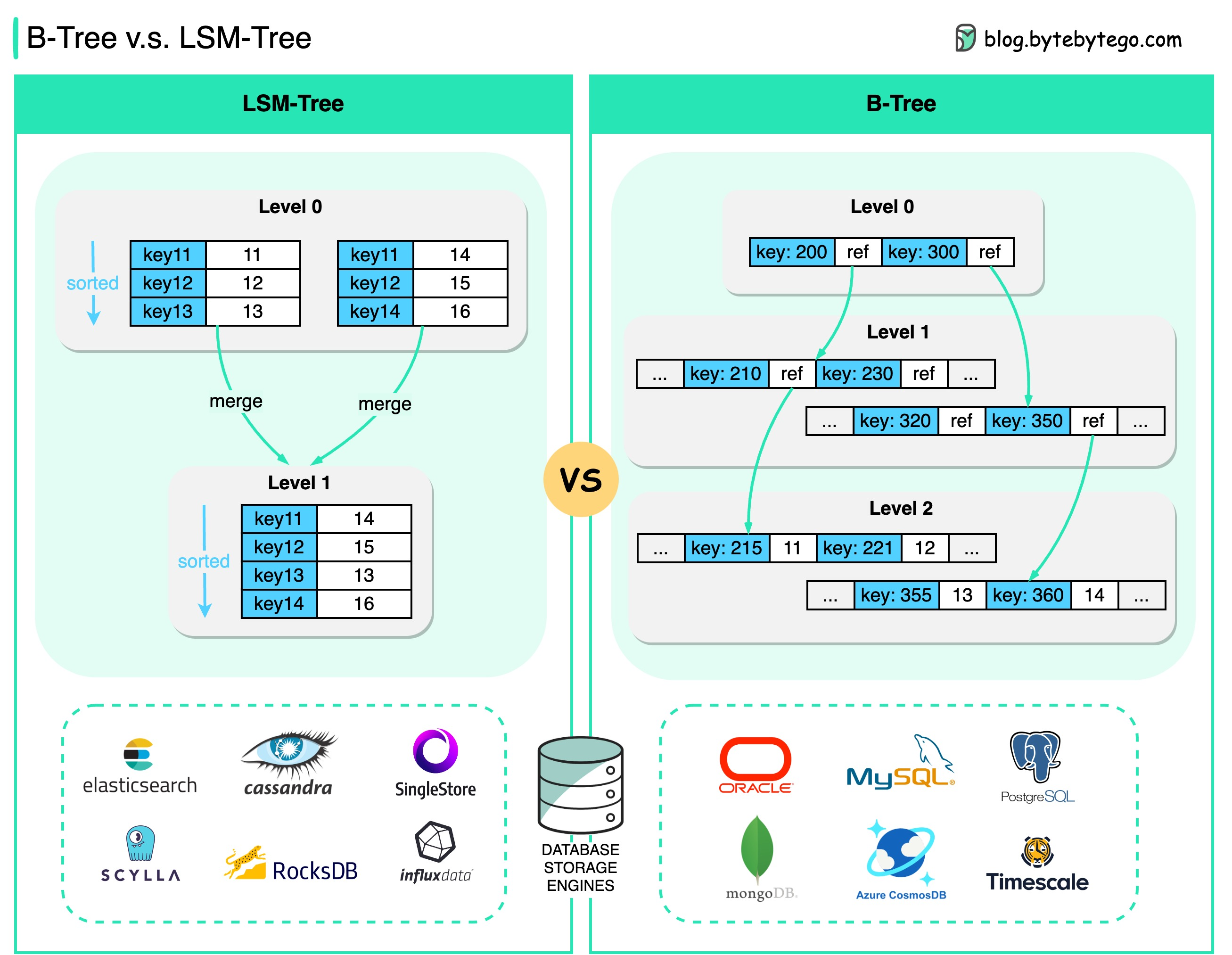

+ * [B-Tree vs. LSM-Tree](https://bytebytego.com/guides/b-tree-vs)

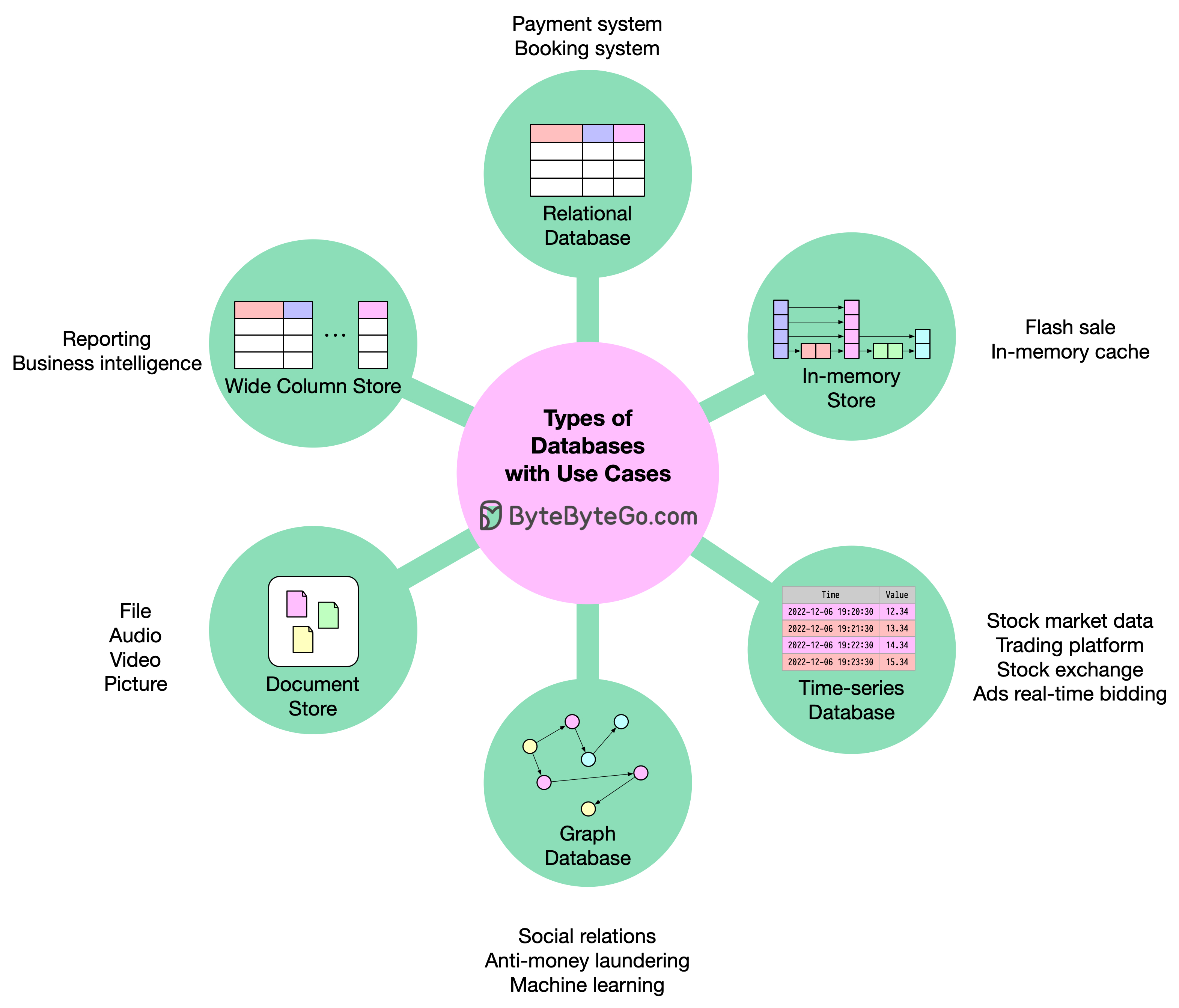

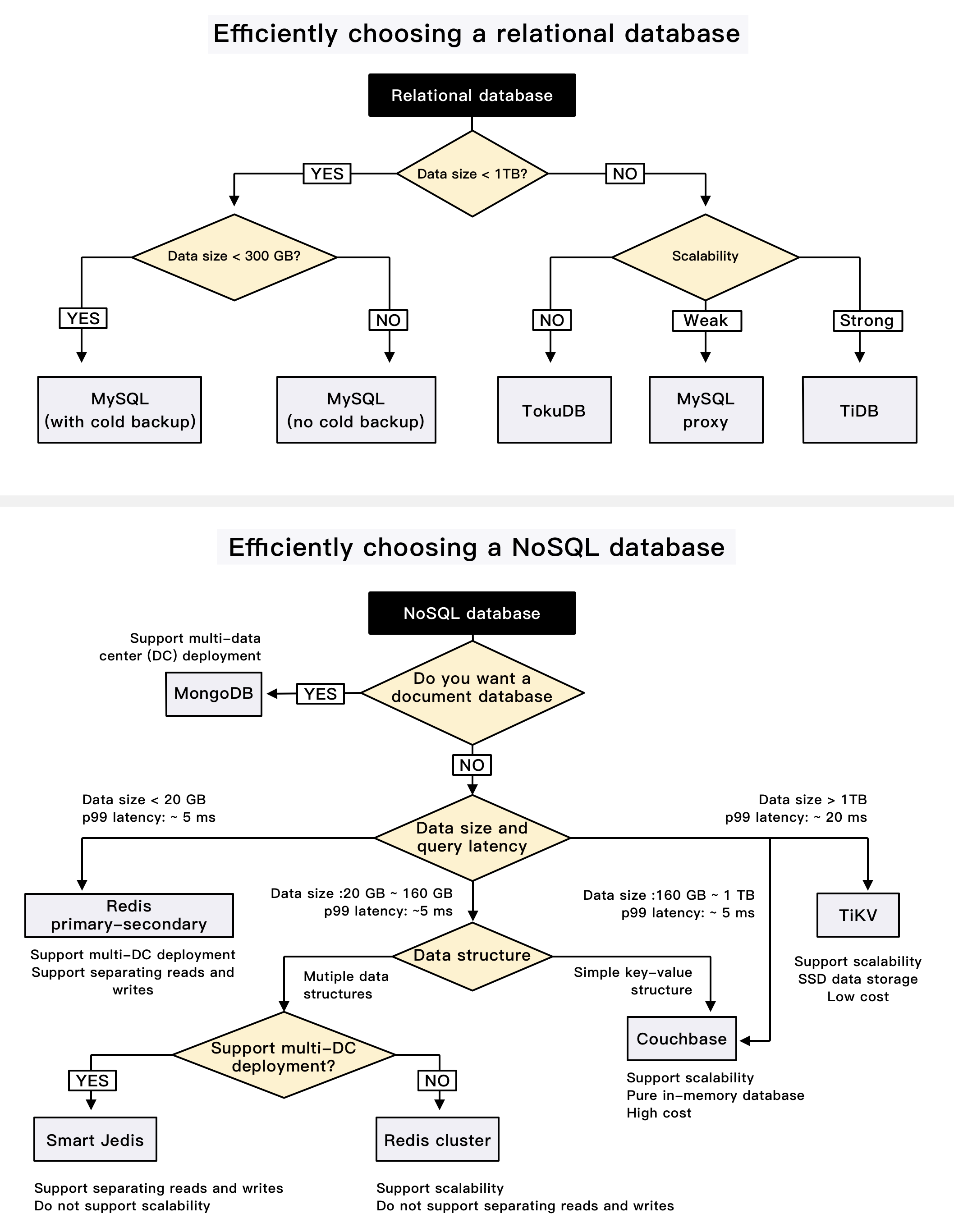

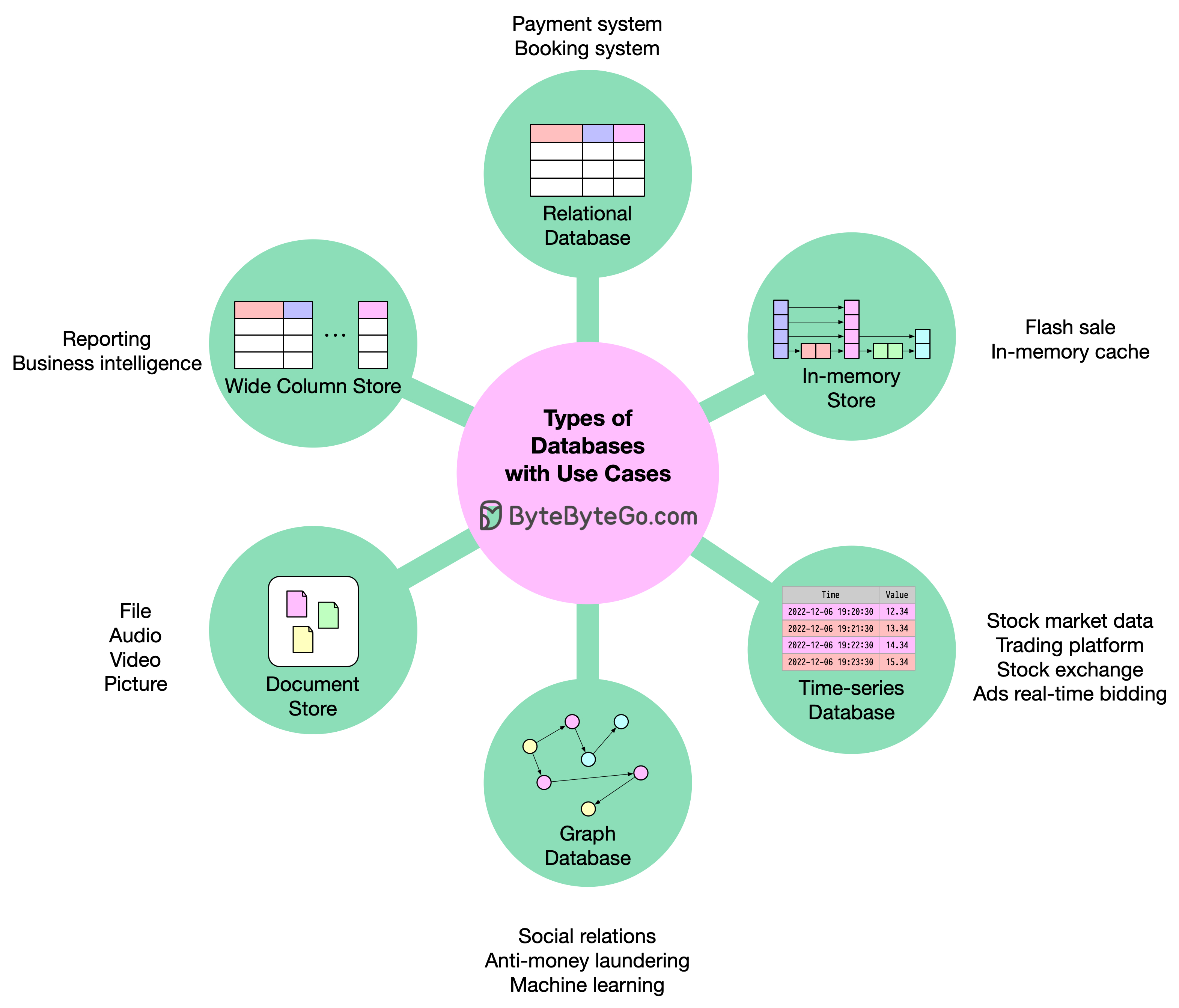

+ * [How to Decide Which Type of Database to Use](https://bytebytego.com/guides/how-do-you-decide-which-type-of-database-to-use)

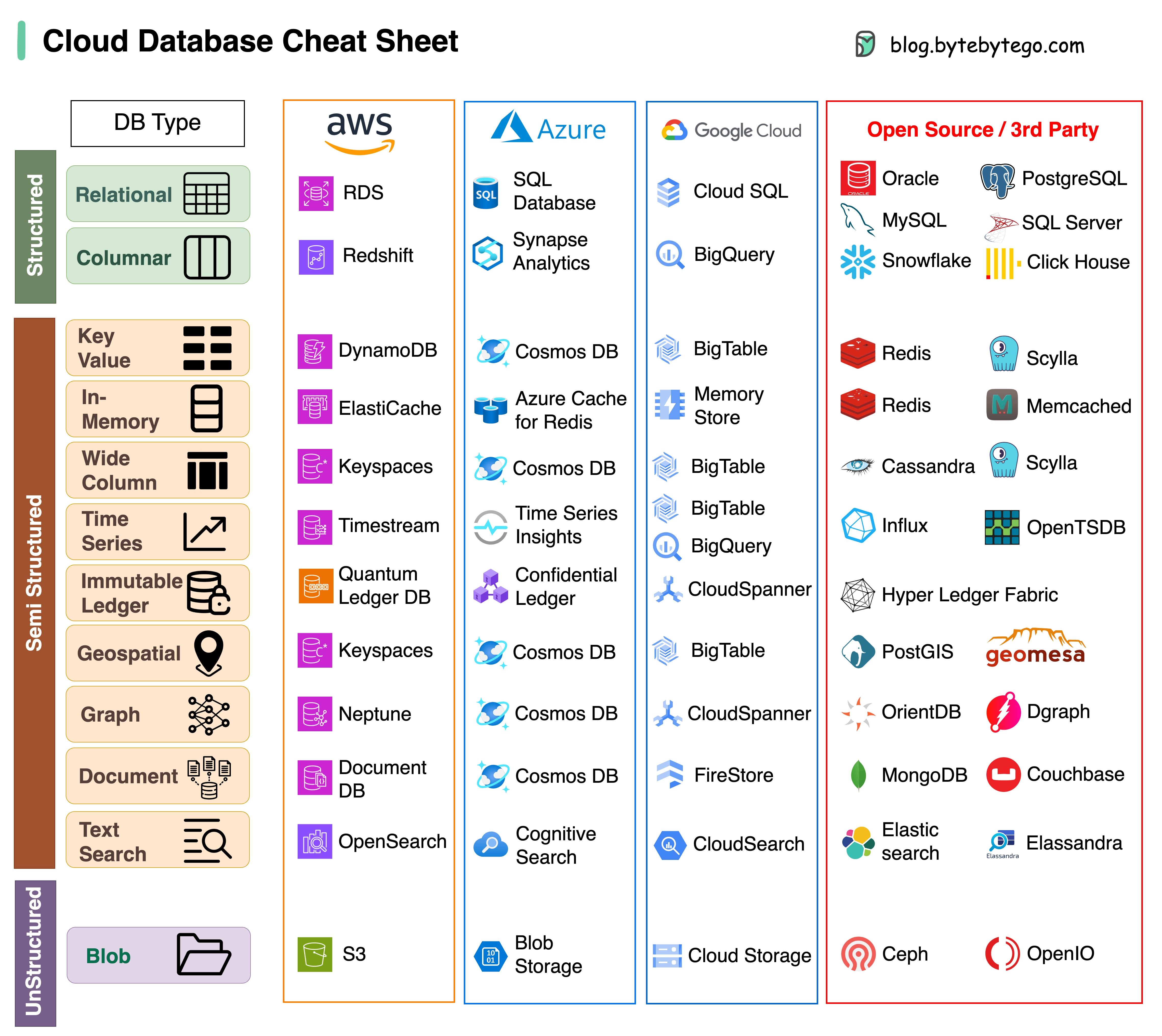

+ * [Cloud Database Cheat Sheet](https://bytebytego.com/guides/cloud-database-cheat-sheet)

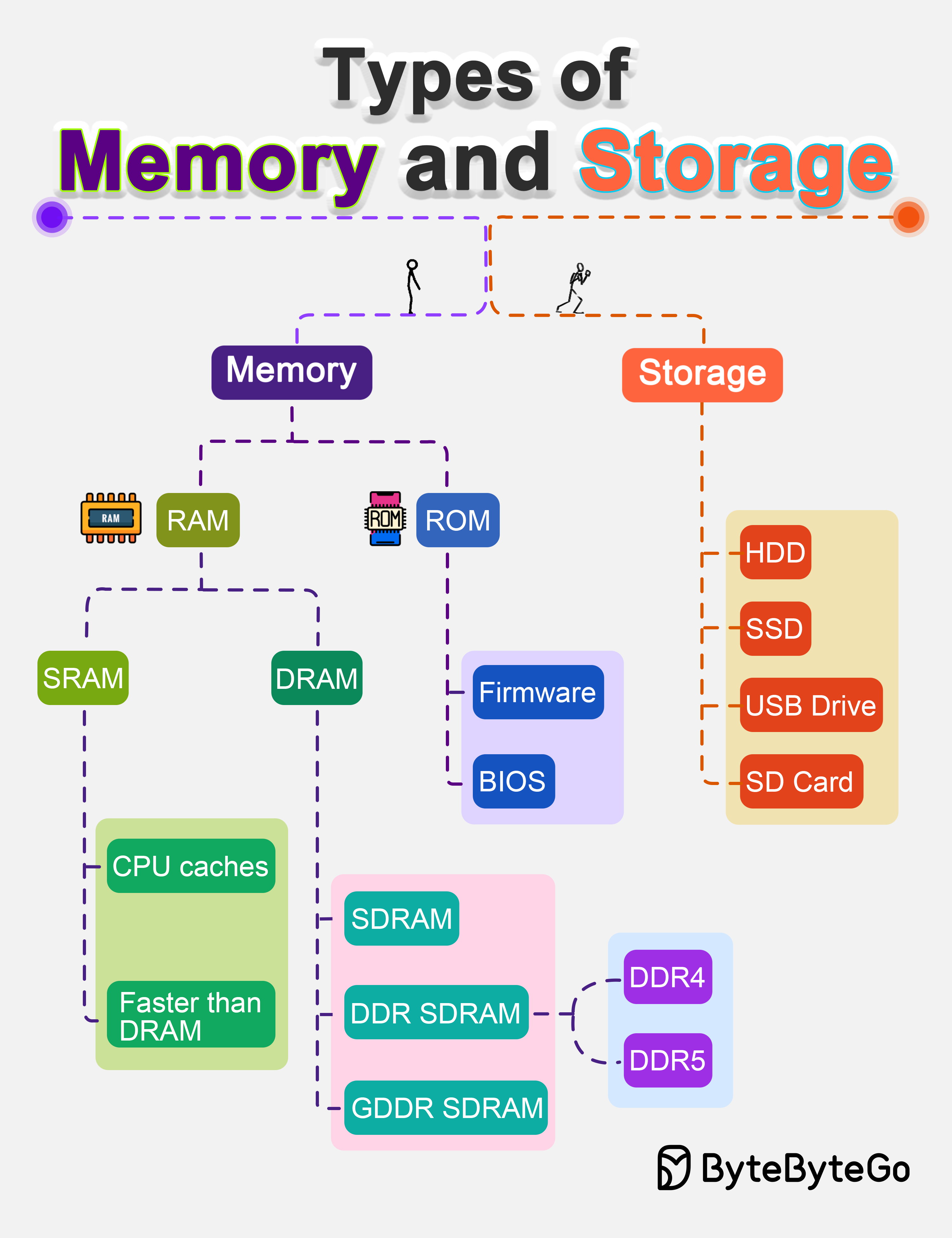

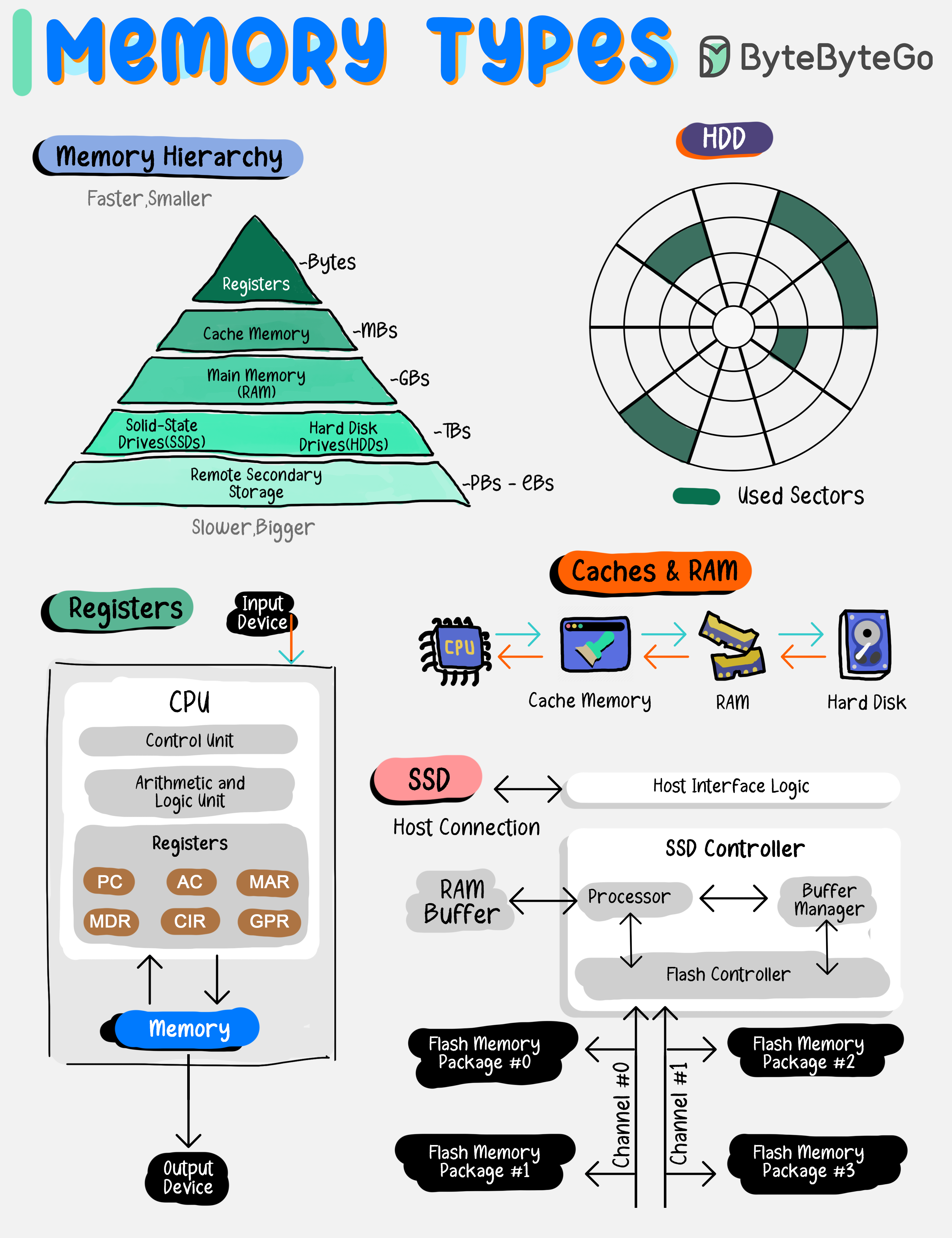

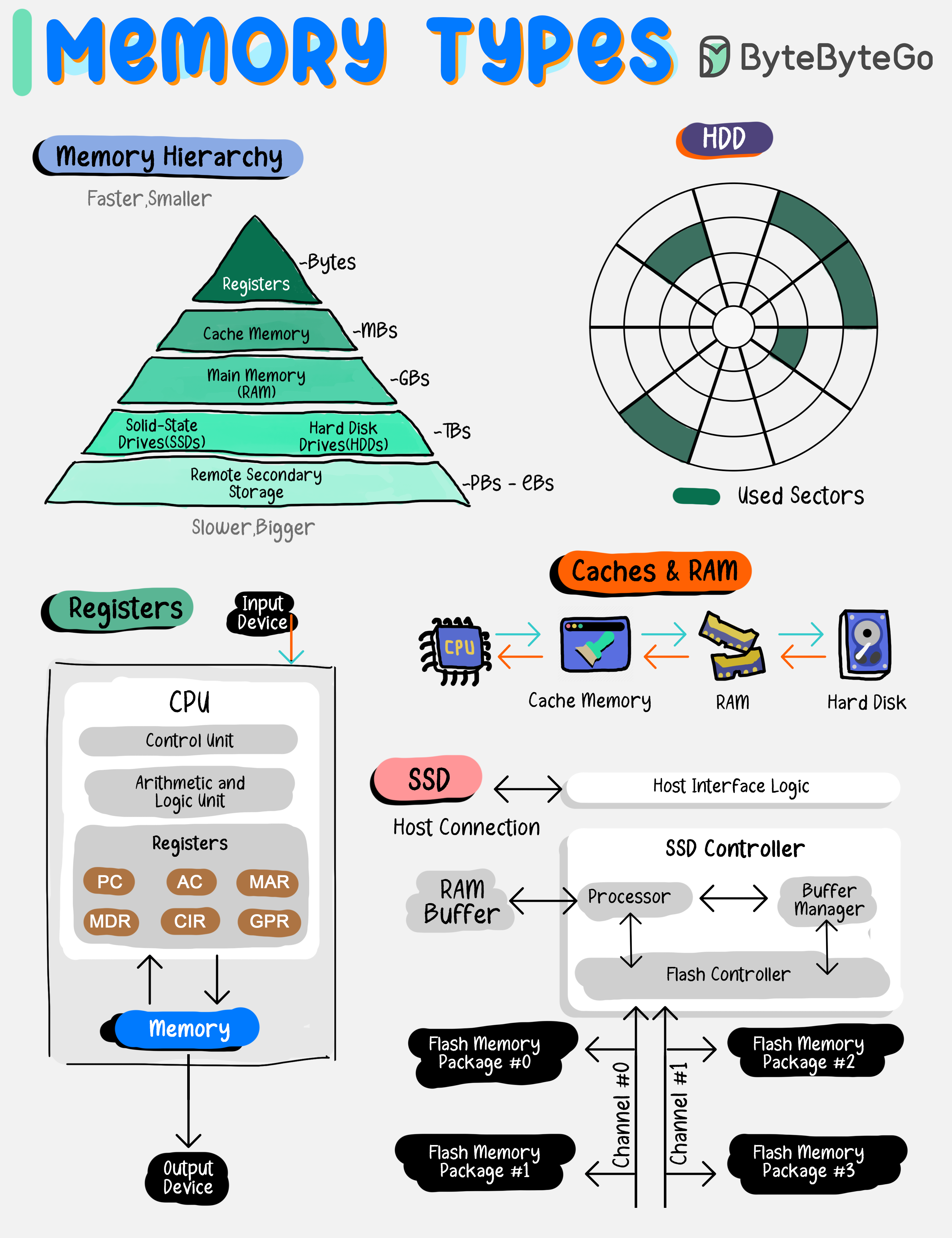

+ * [Types of Memory](https://bytebytego.com/guides/types-of-memory)

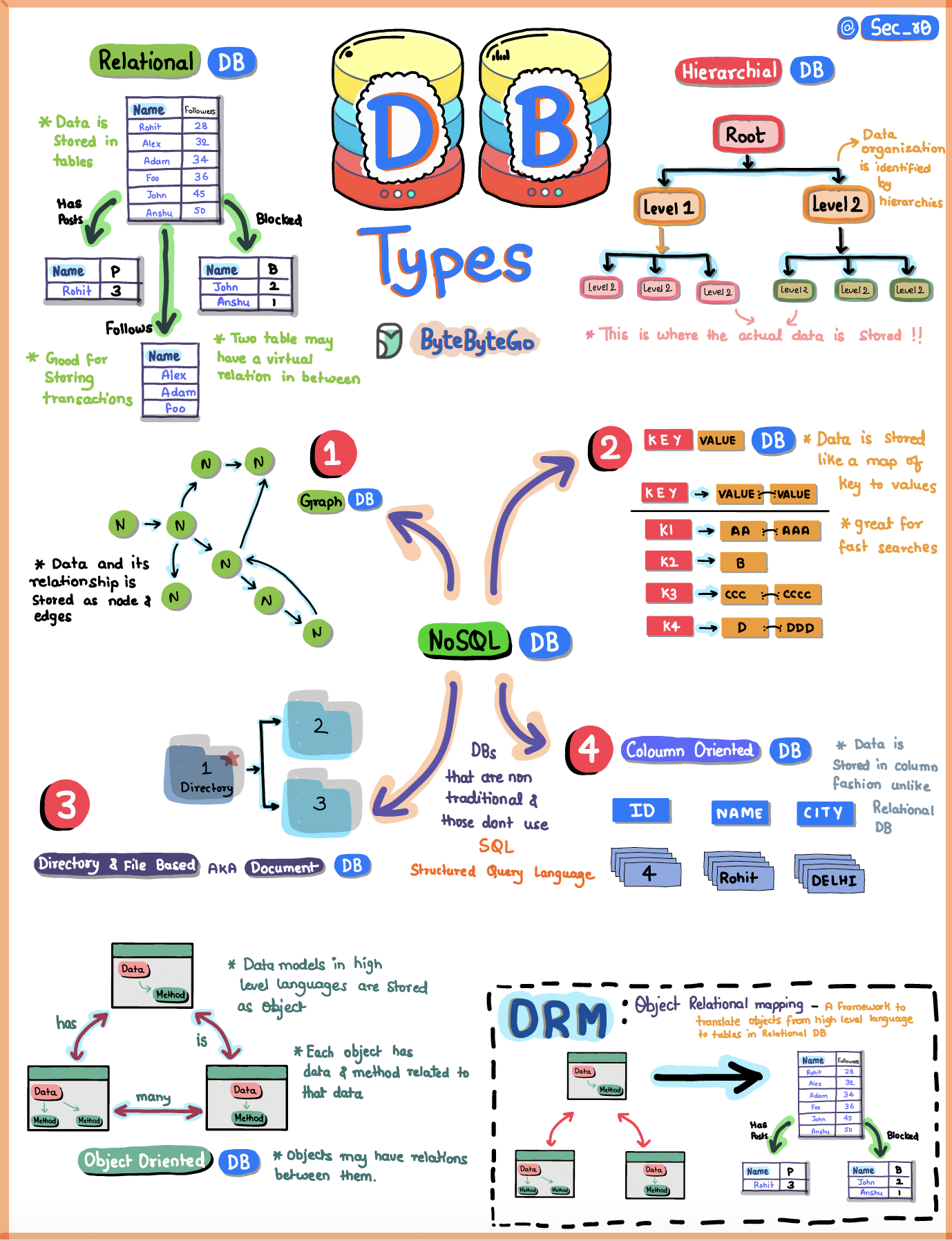

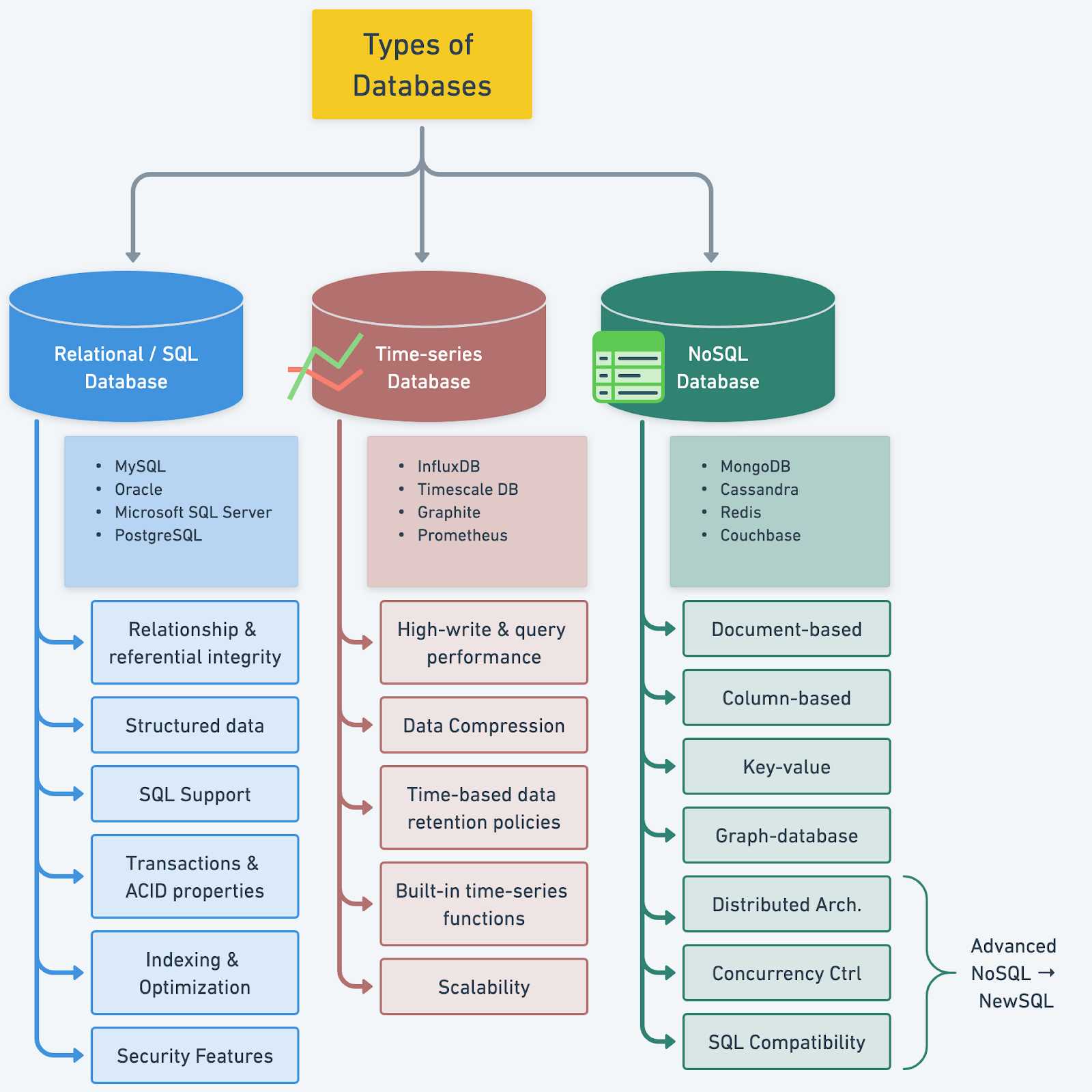

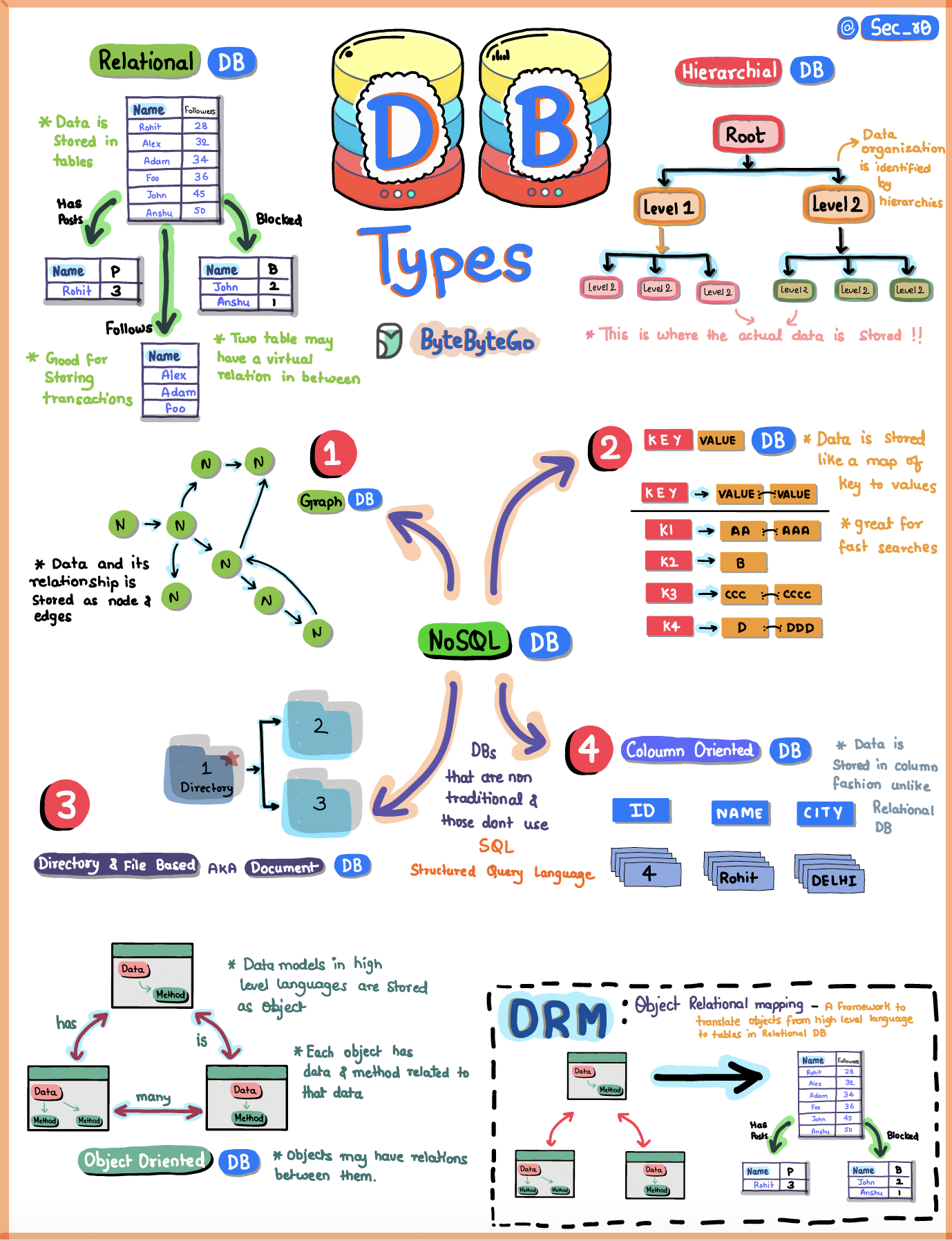

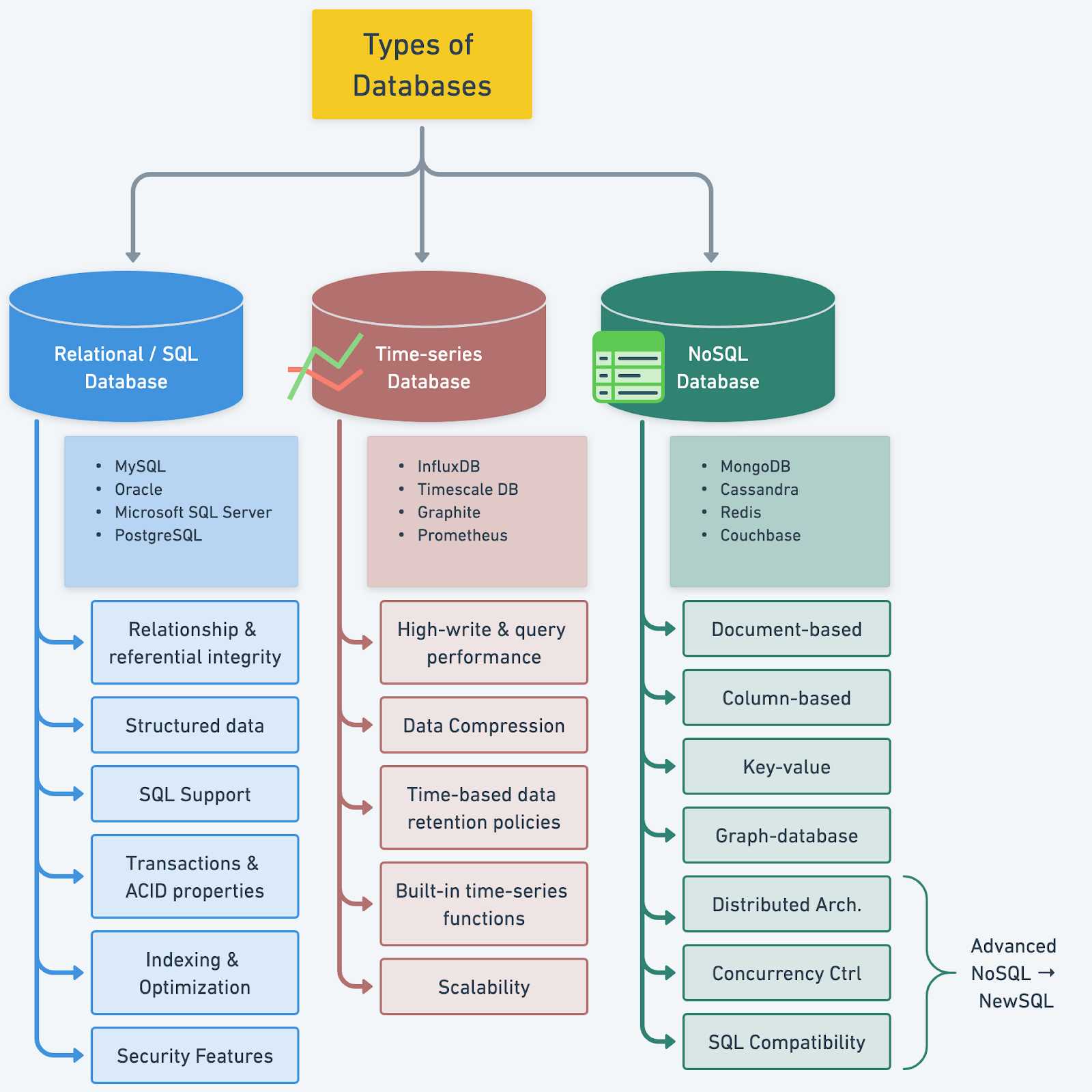

+ * [Understanding Database Types](https://bytebytego.com/guides/understanding-database-types)

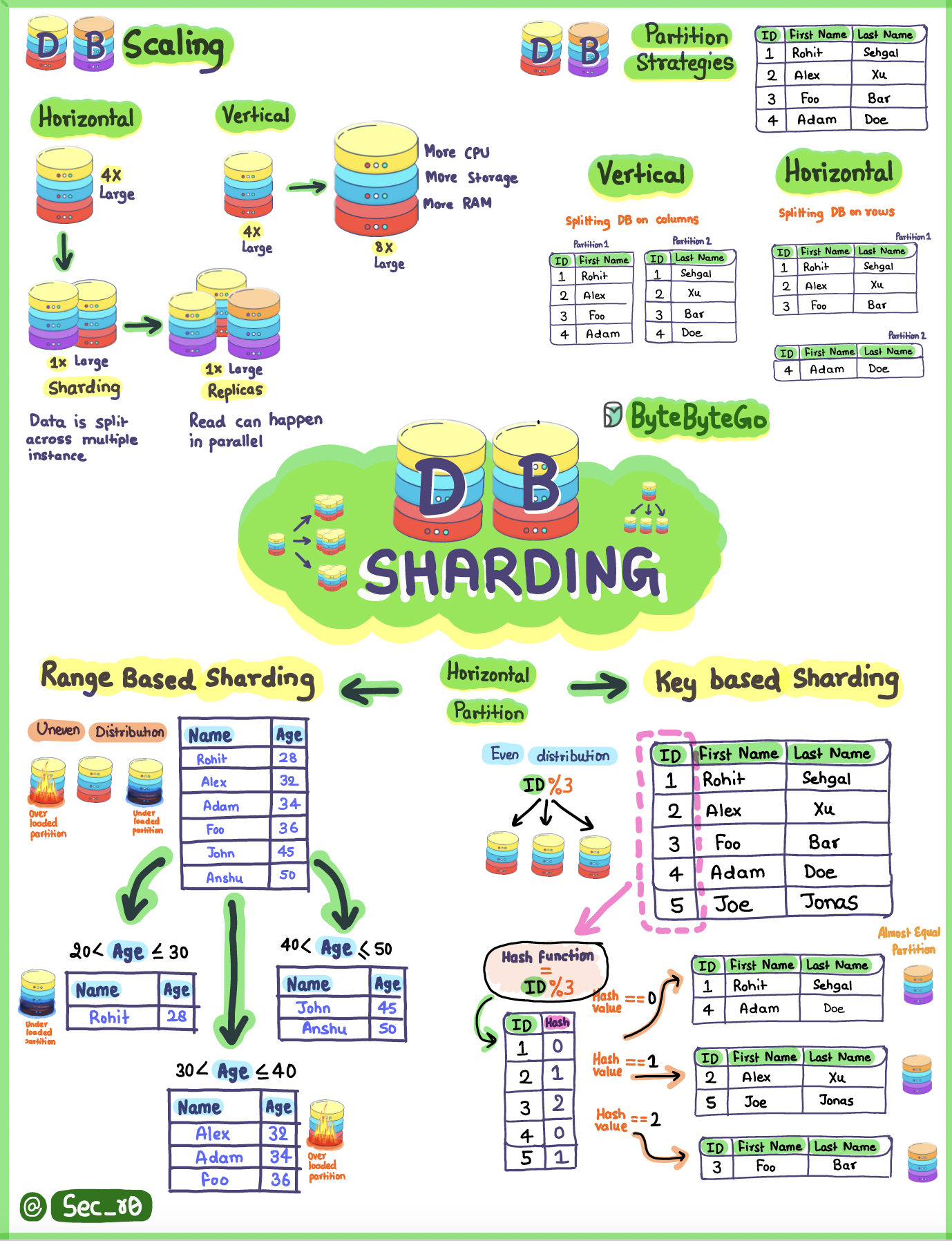

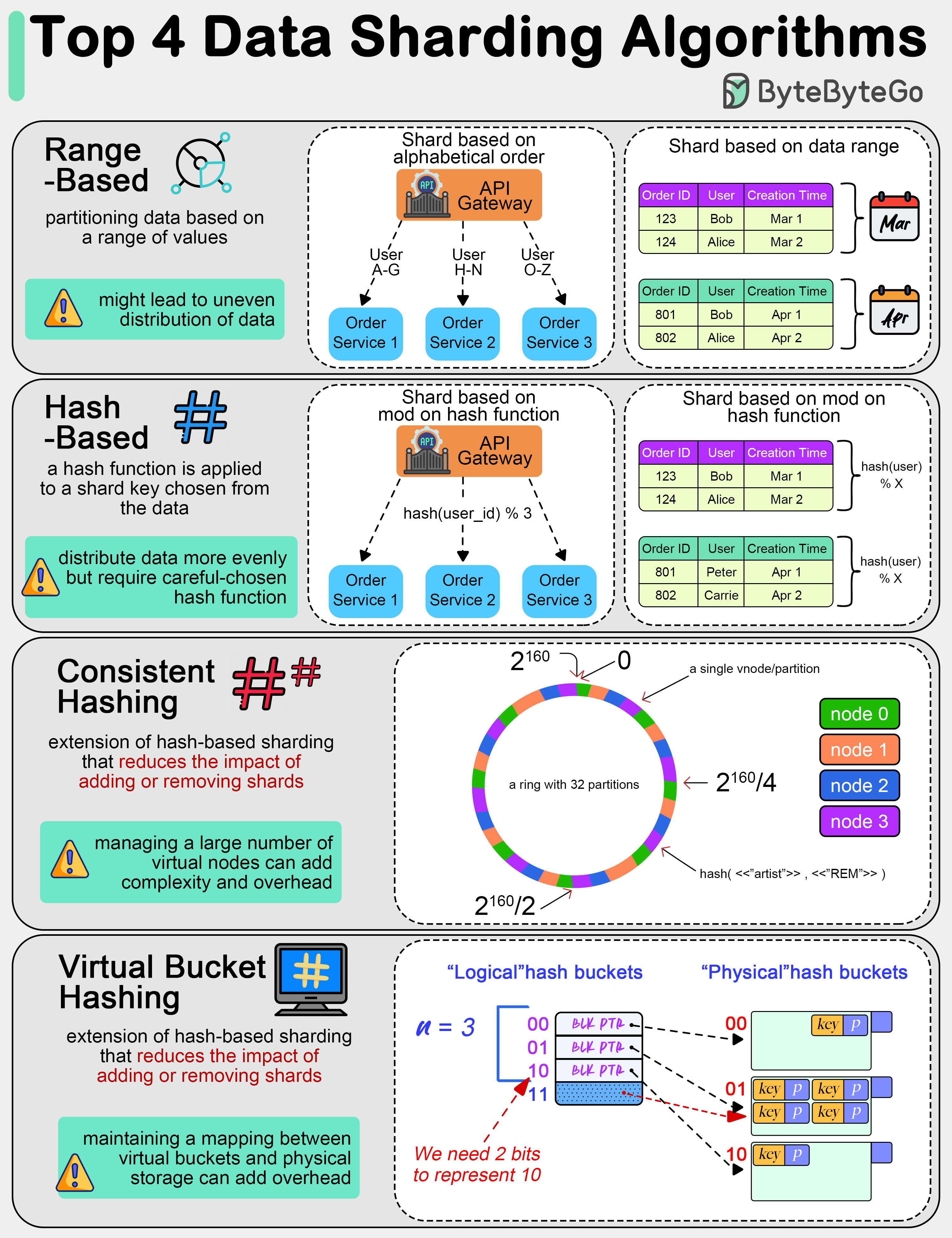

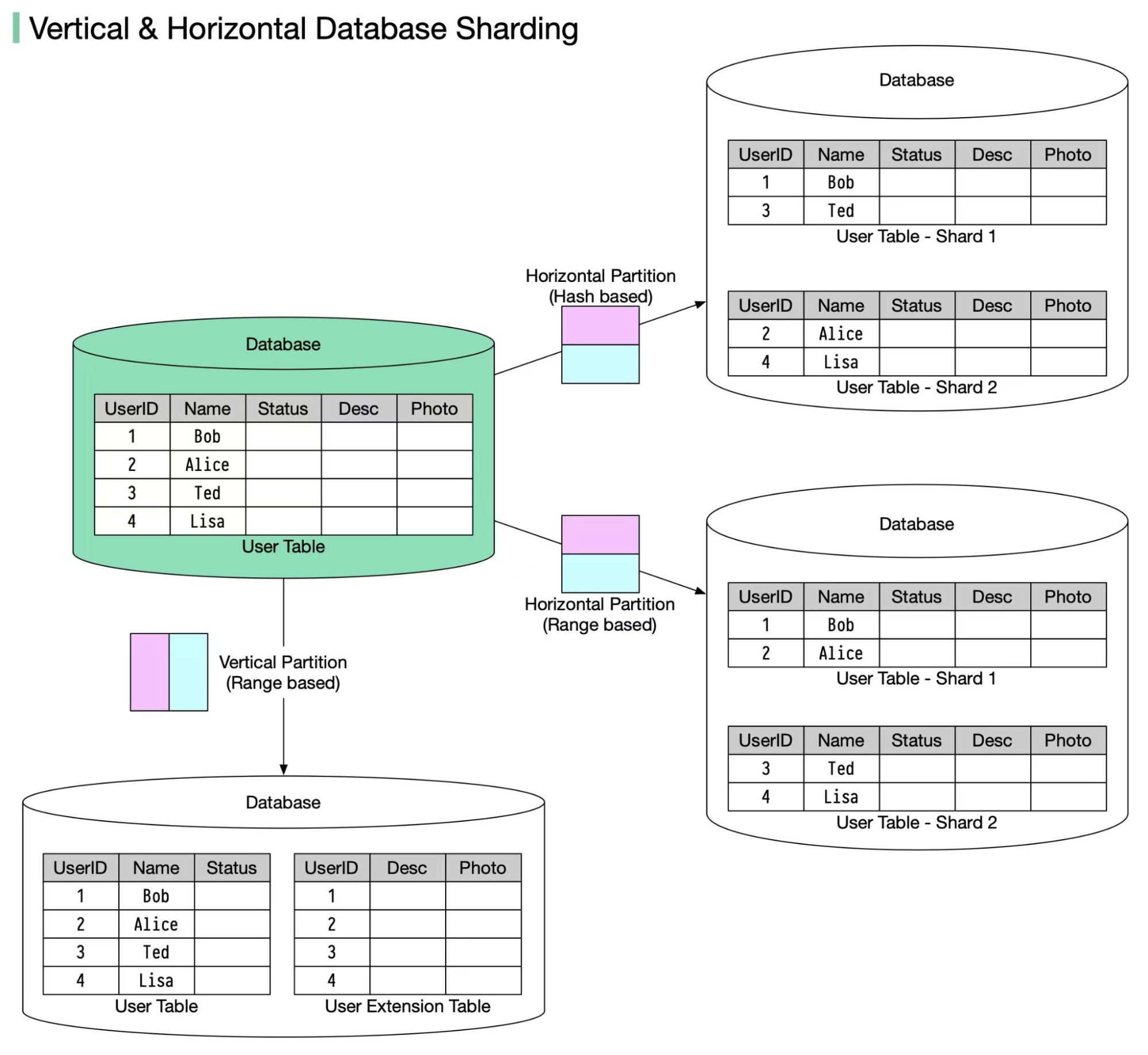

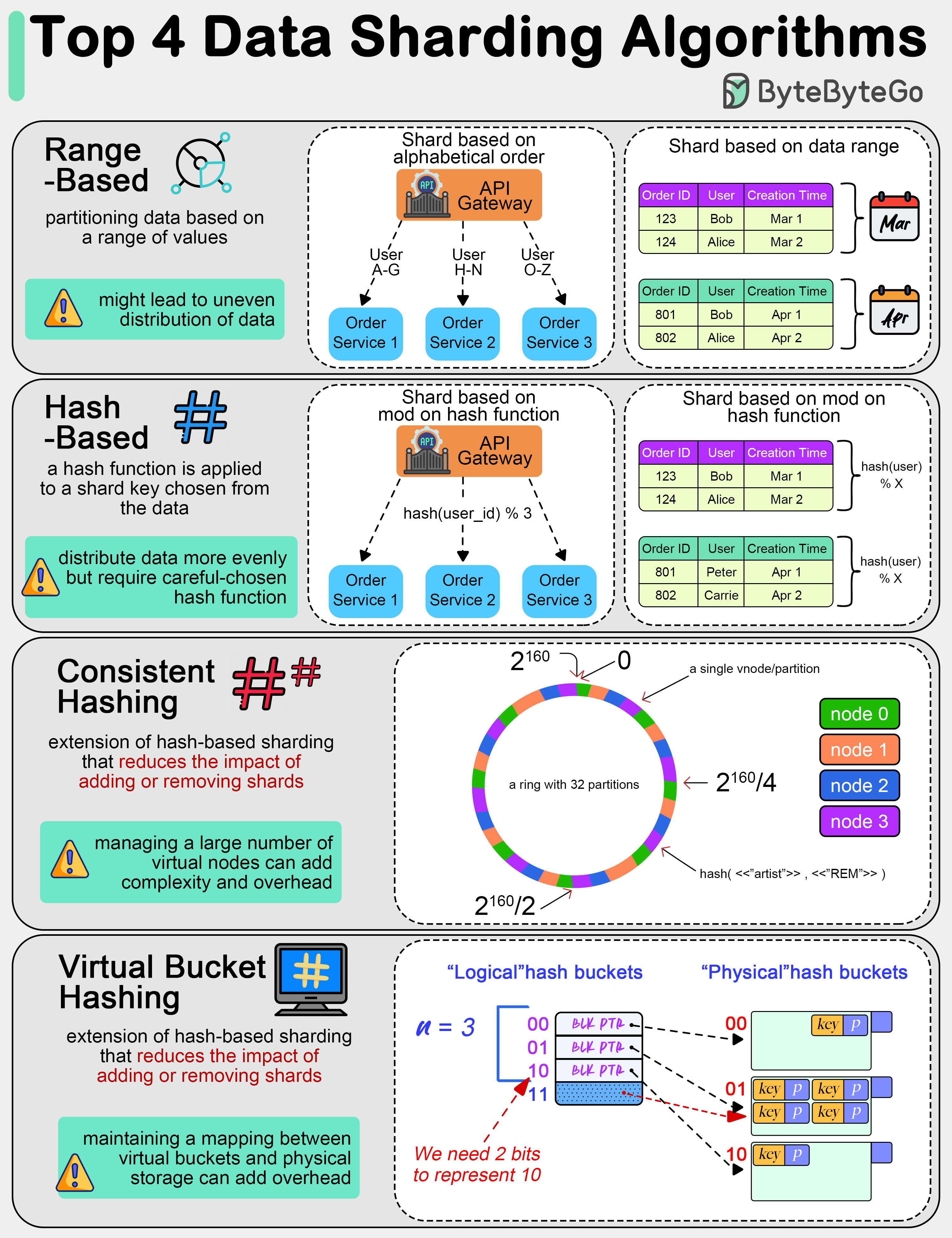

+ * [Top 4 Data Sharding Algorithms Explained](https://bytebytego.com/guides/top-4-data-sharding-algorithms-explained)

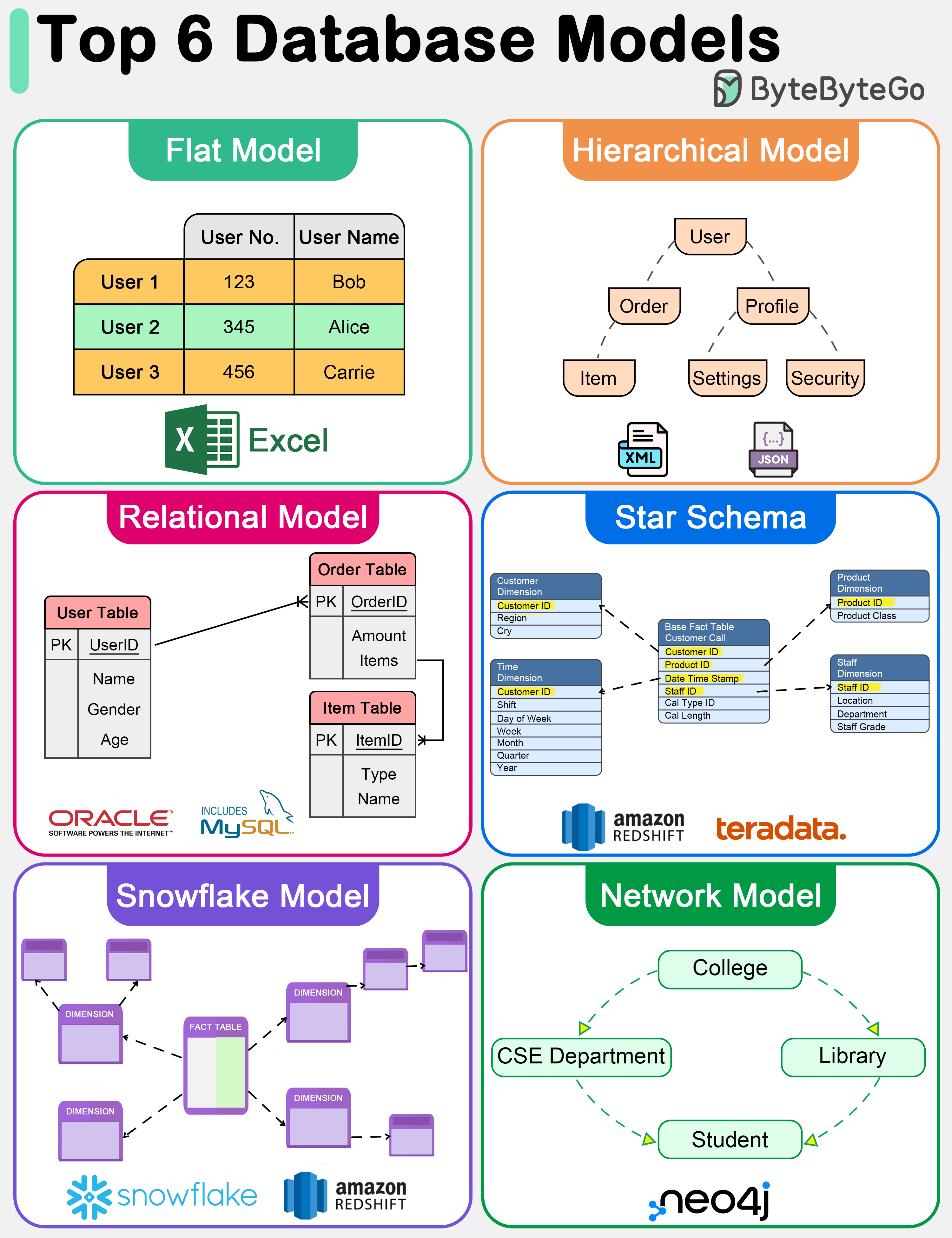

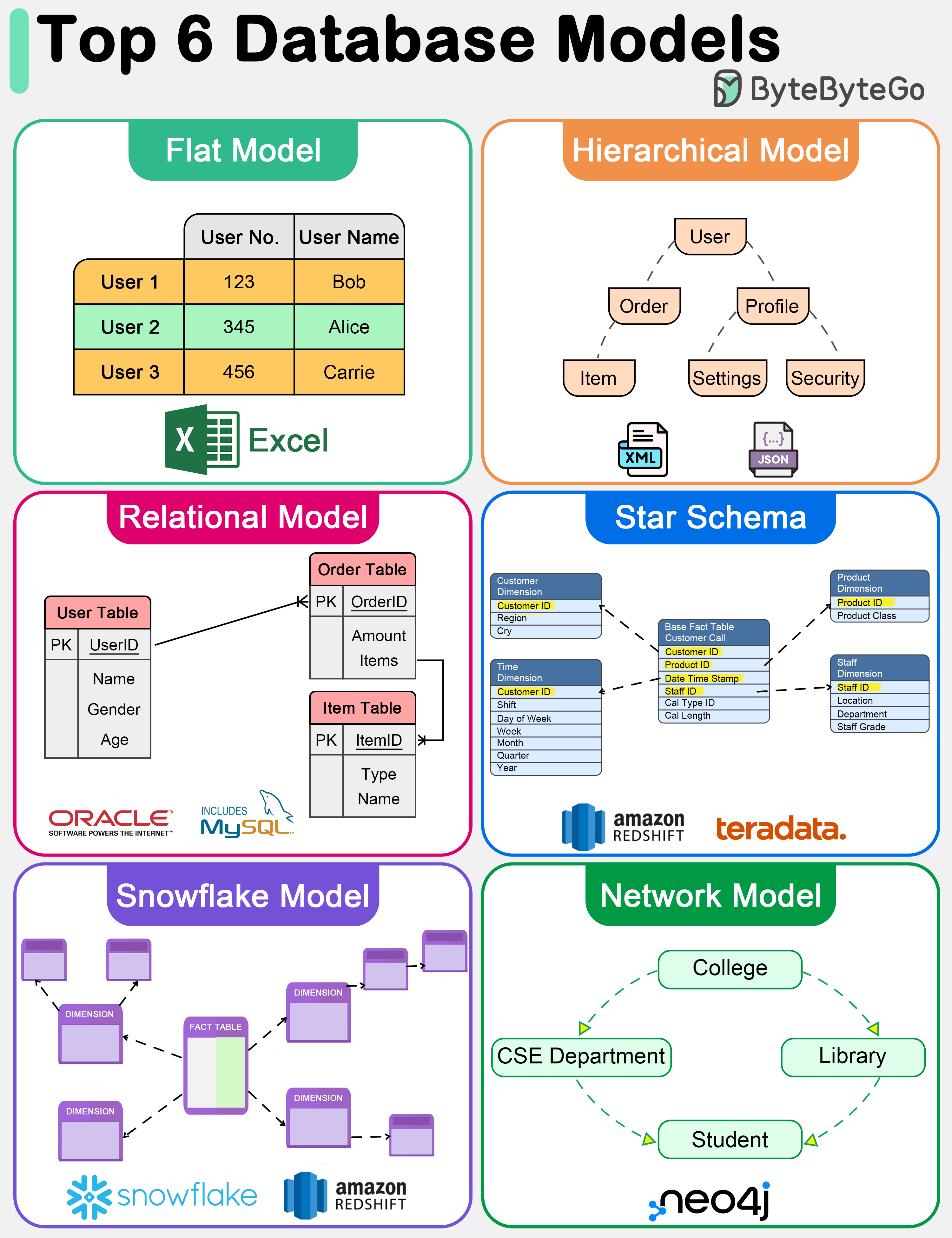

+ * [Top 6 Database Models](https://bytebytego.com/guides/top-6-database-models)

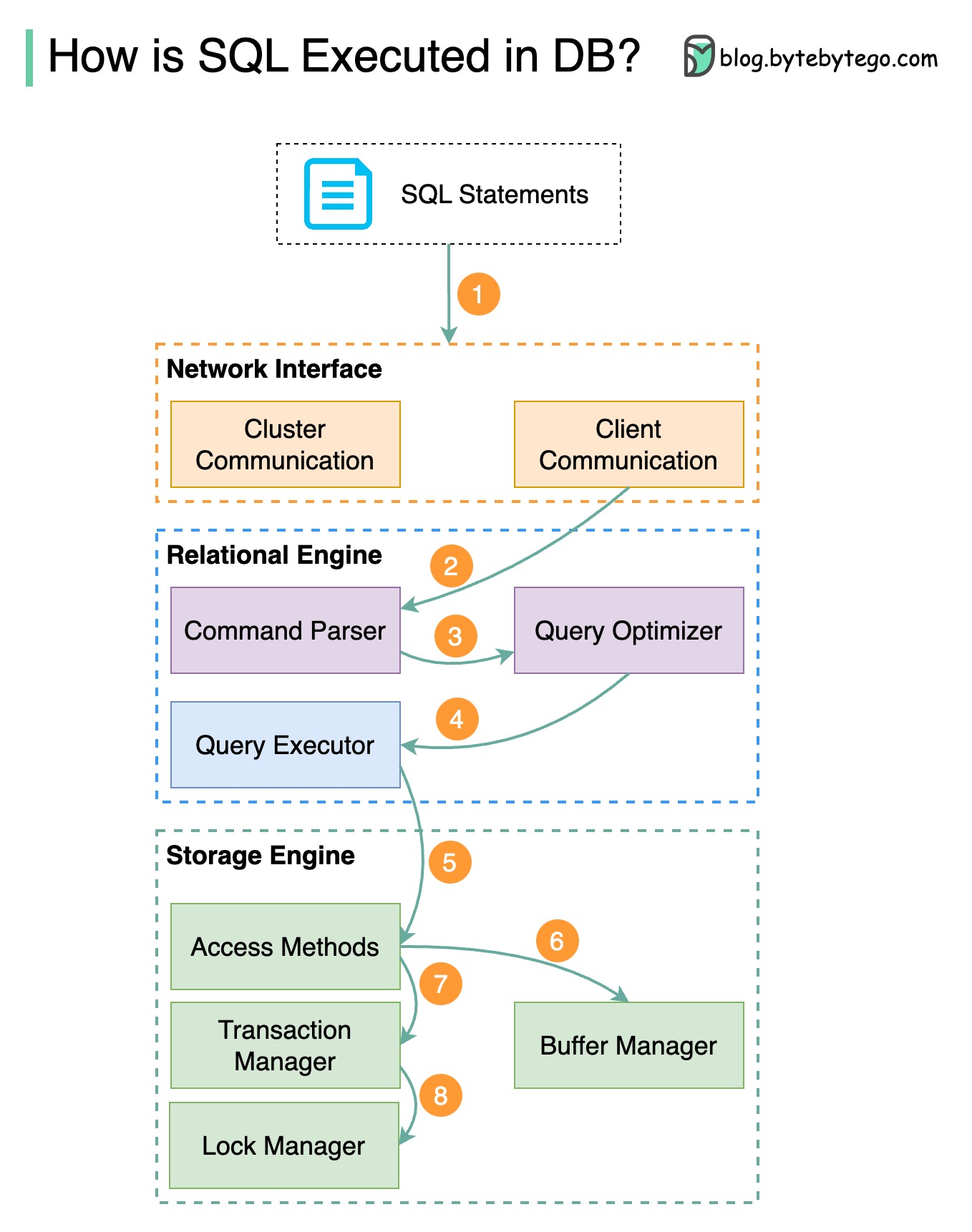

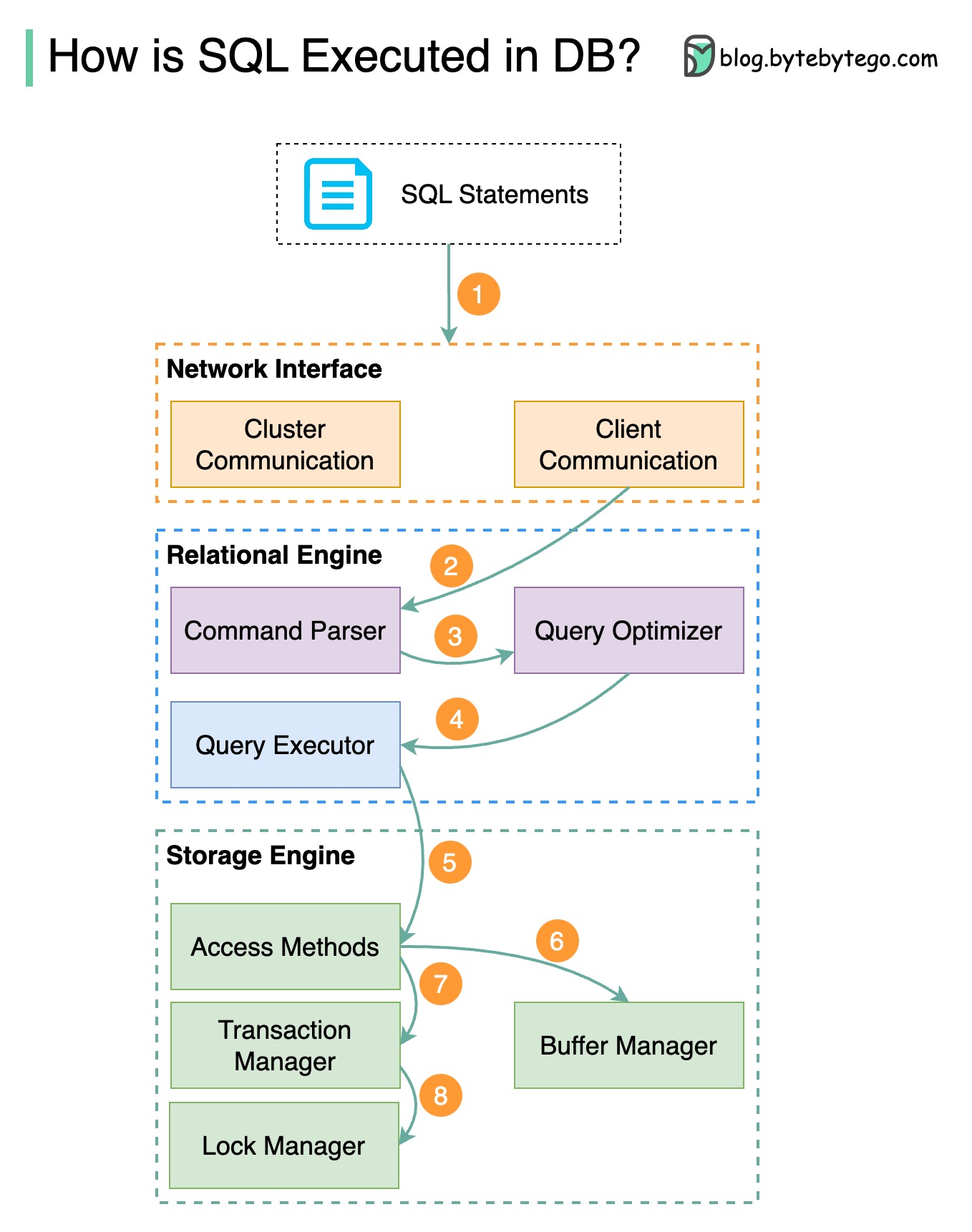

+ * [SQL Statement Execution in Database](https://bytebytego.com/guides/how-is-a-sql-statement-executed-in-the-database)

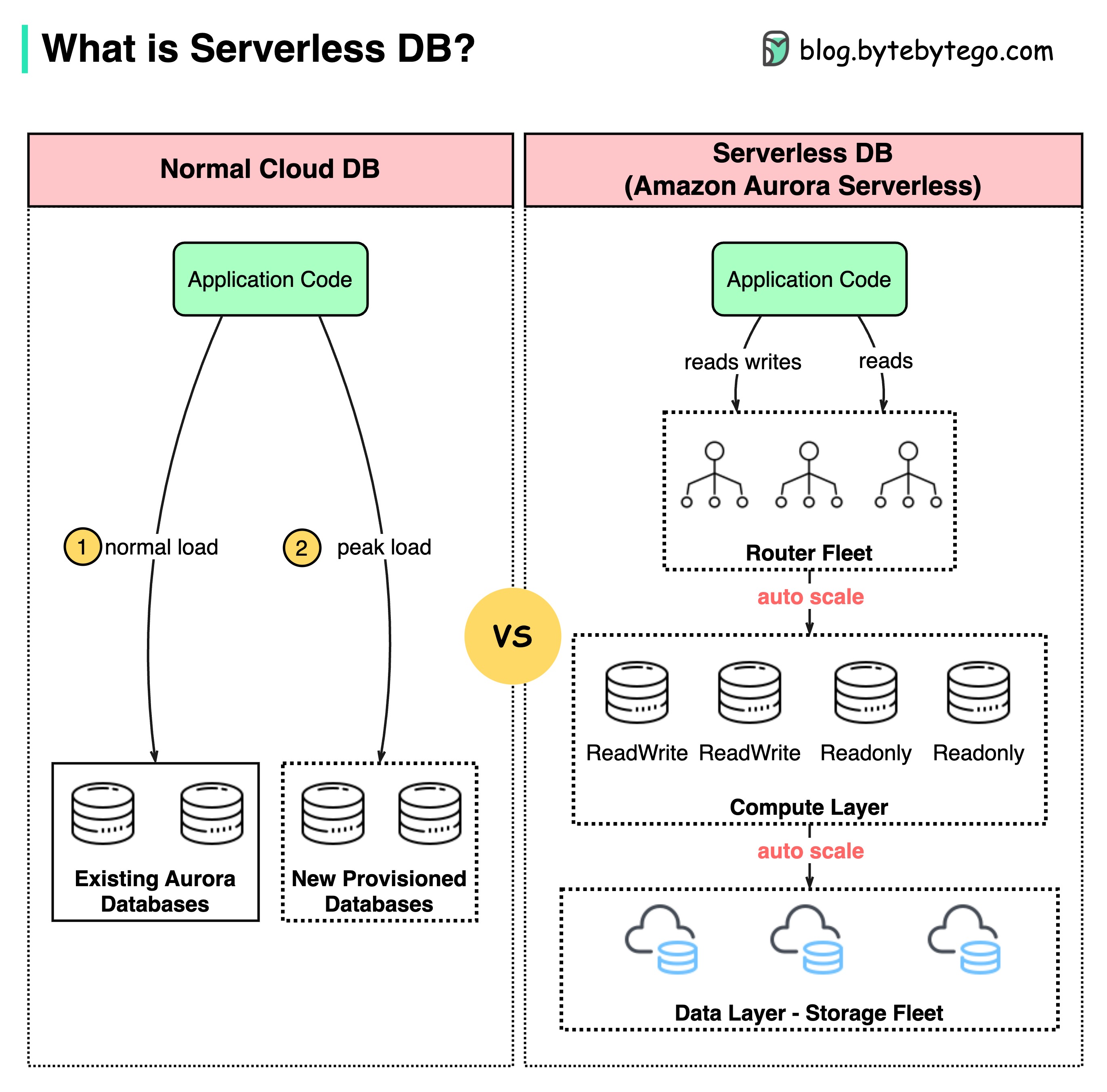

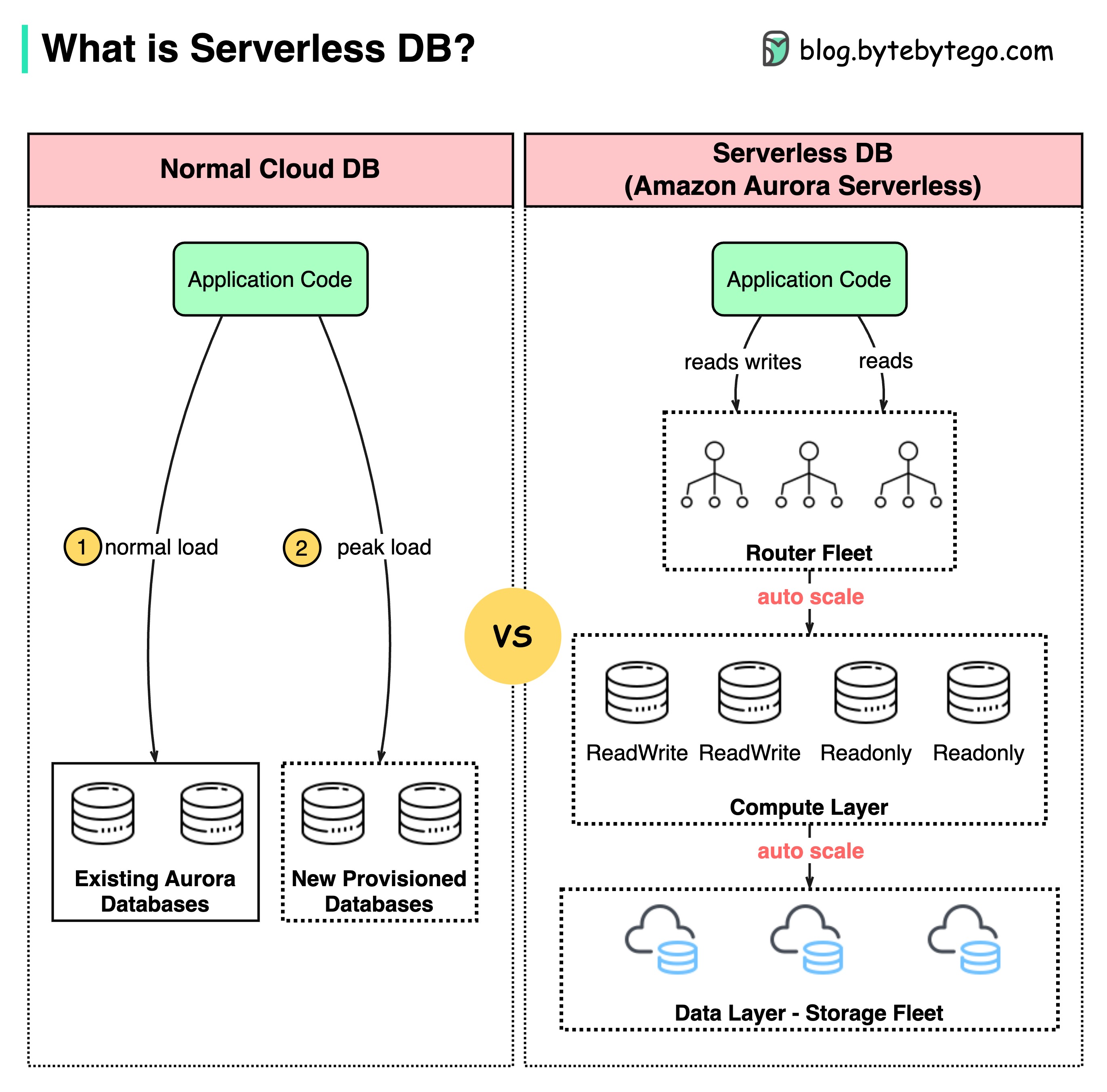

+ * [What is Serverless DB?](https://bytebytego.com/guides/what-is-serverless-db)

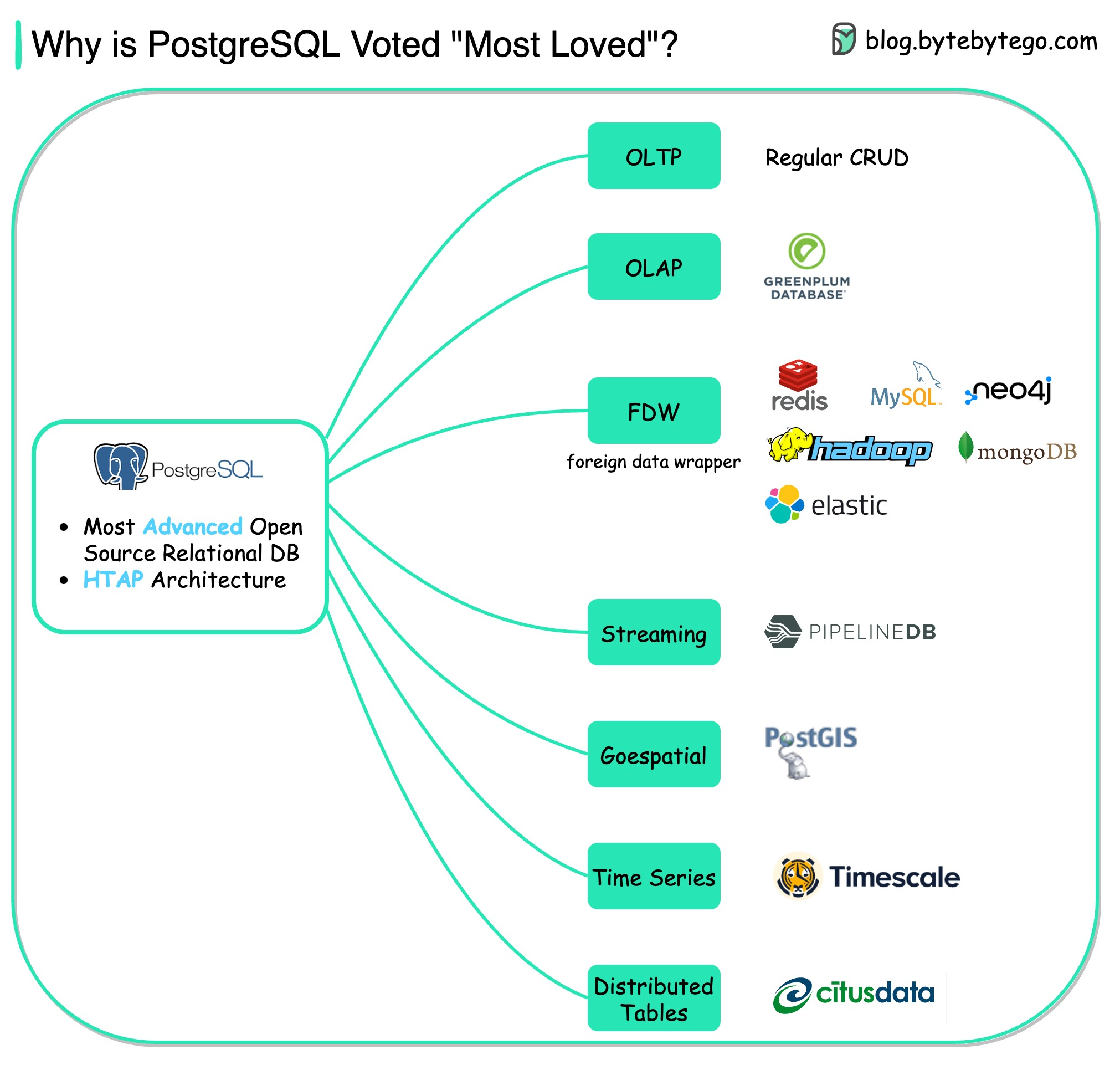

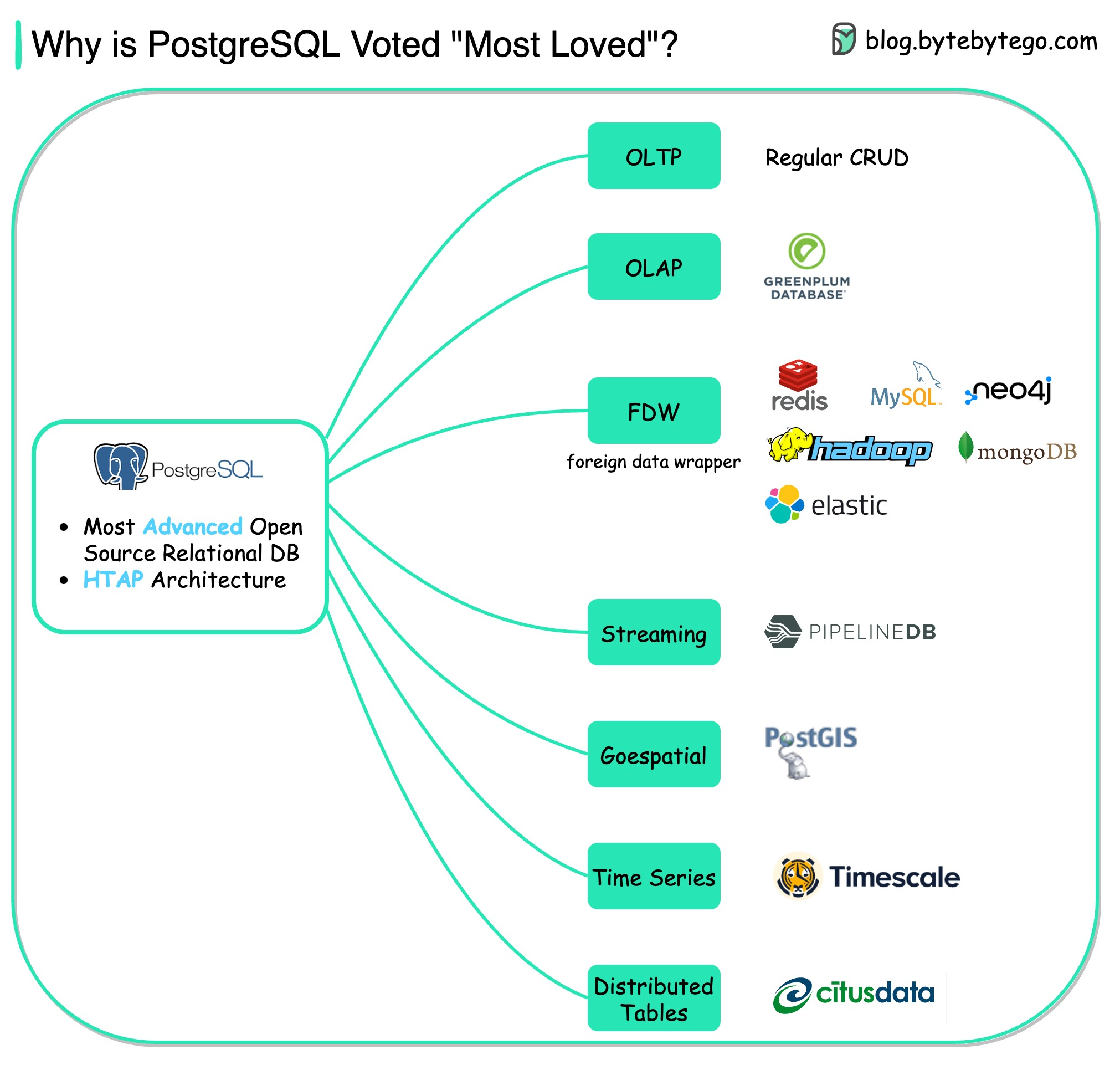

+ * [Why PostgreSQL is the Most Loved Database](https://bytebytego.com/guides/why-is-postgresql-voted-as-the-most-loved-database-by-stackoverflow-2022-developer-survey)

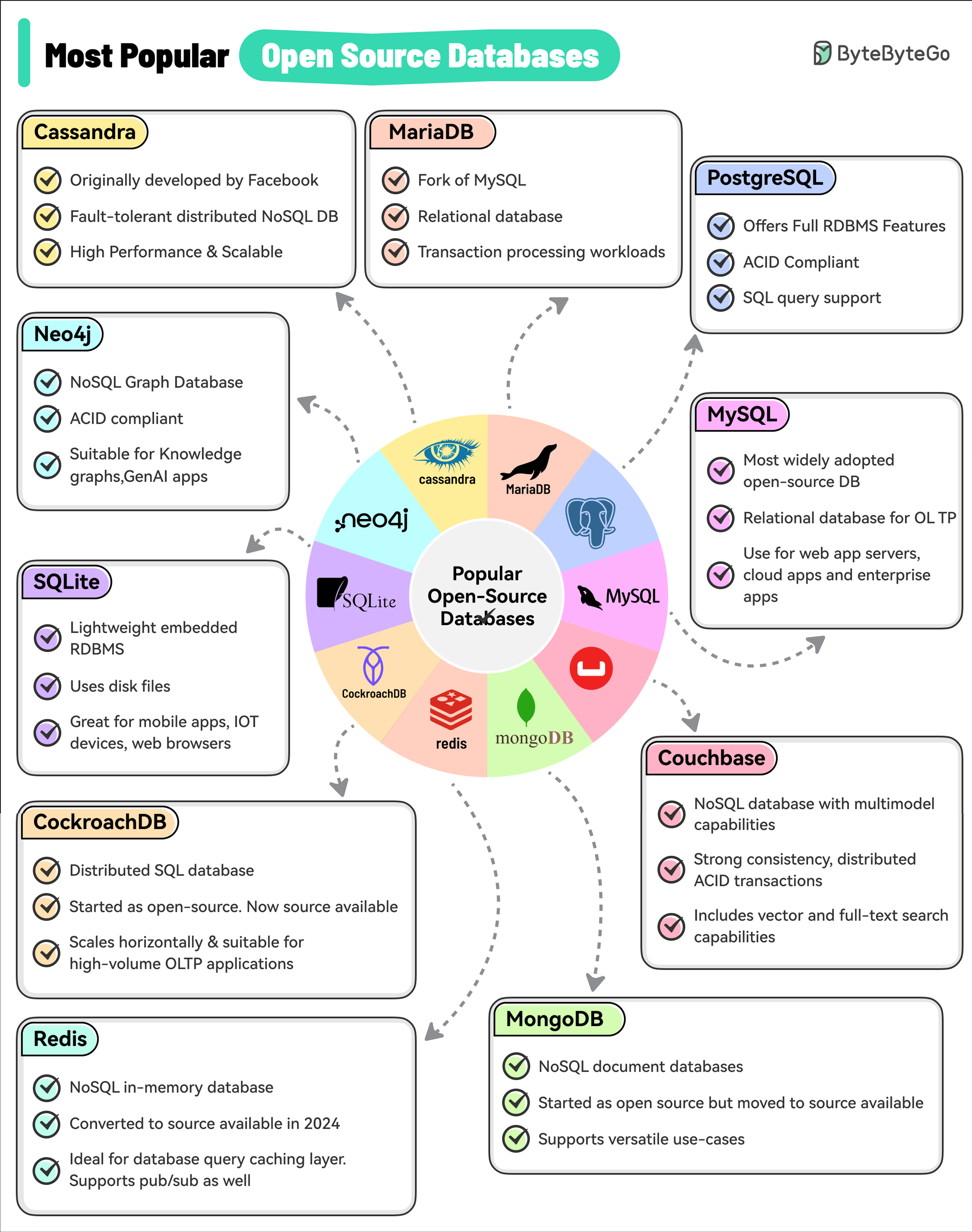

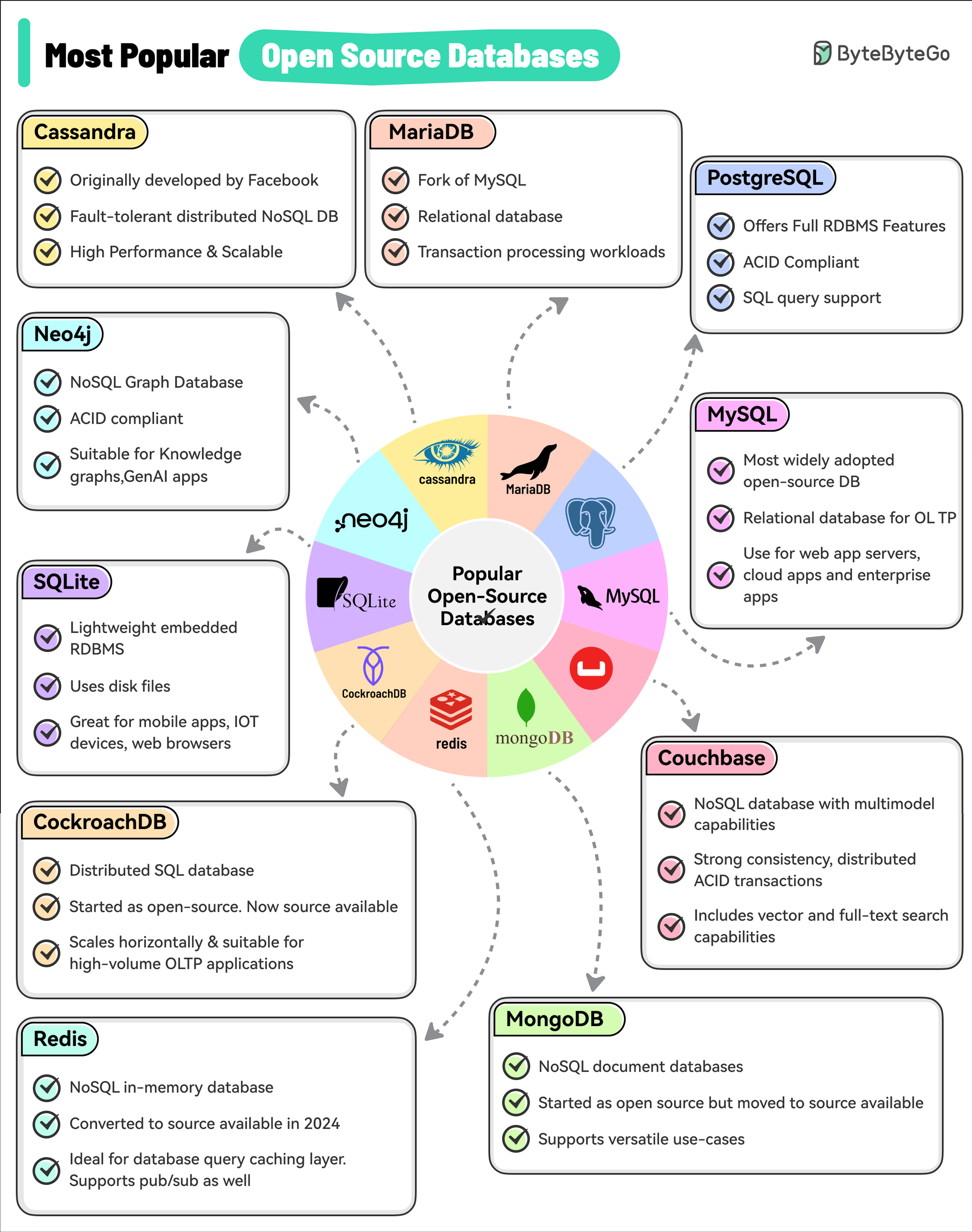

+ * [Top 10 Most Popular Open-Source Databases](https://bytebytego.com/guides/top-10-most-popular-open-source-databases)

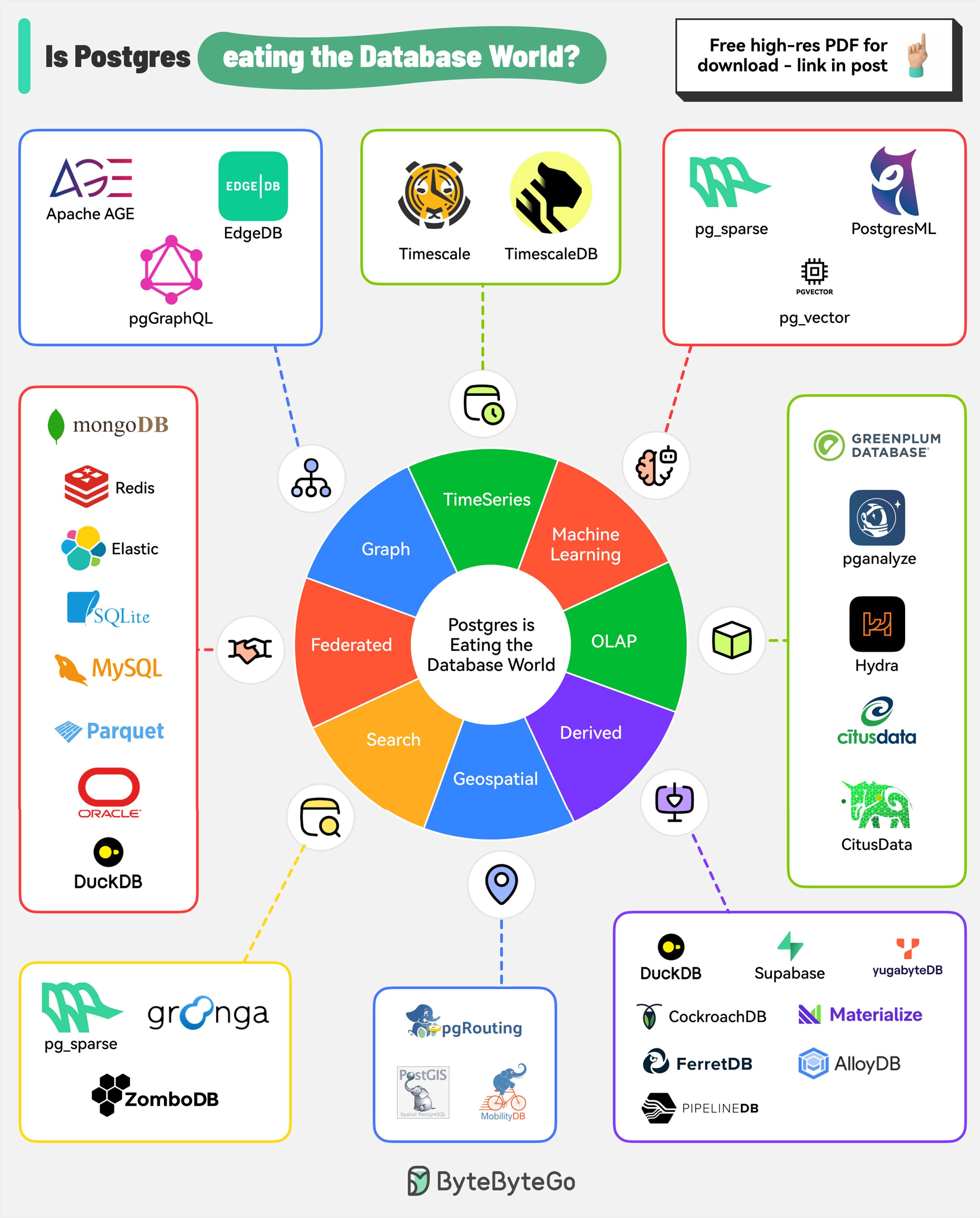

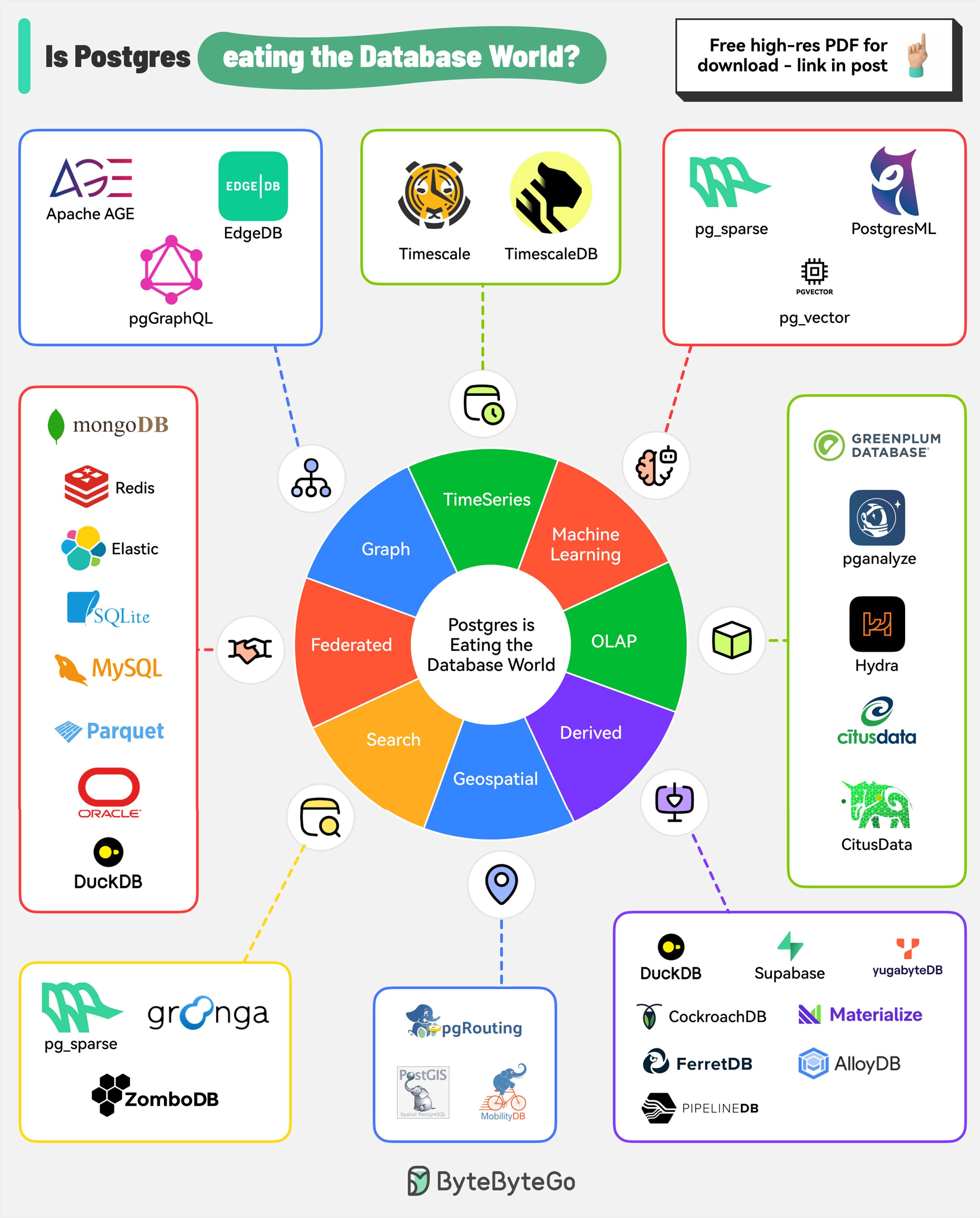

+ * [Is PostgreSQL Eating the Database World?](https://bytebytego.com/guides/is-postgresql-eating-the-database-world)

+ * [How to Choose the Right Database](https://bytebytego.com/guides/how-to-choose-the-right-database)

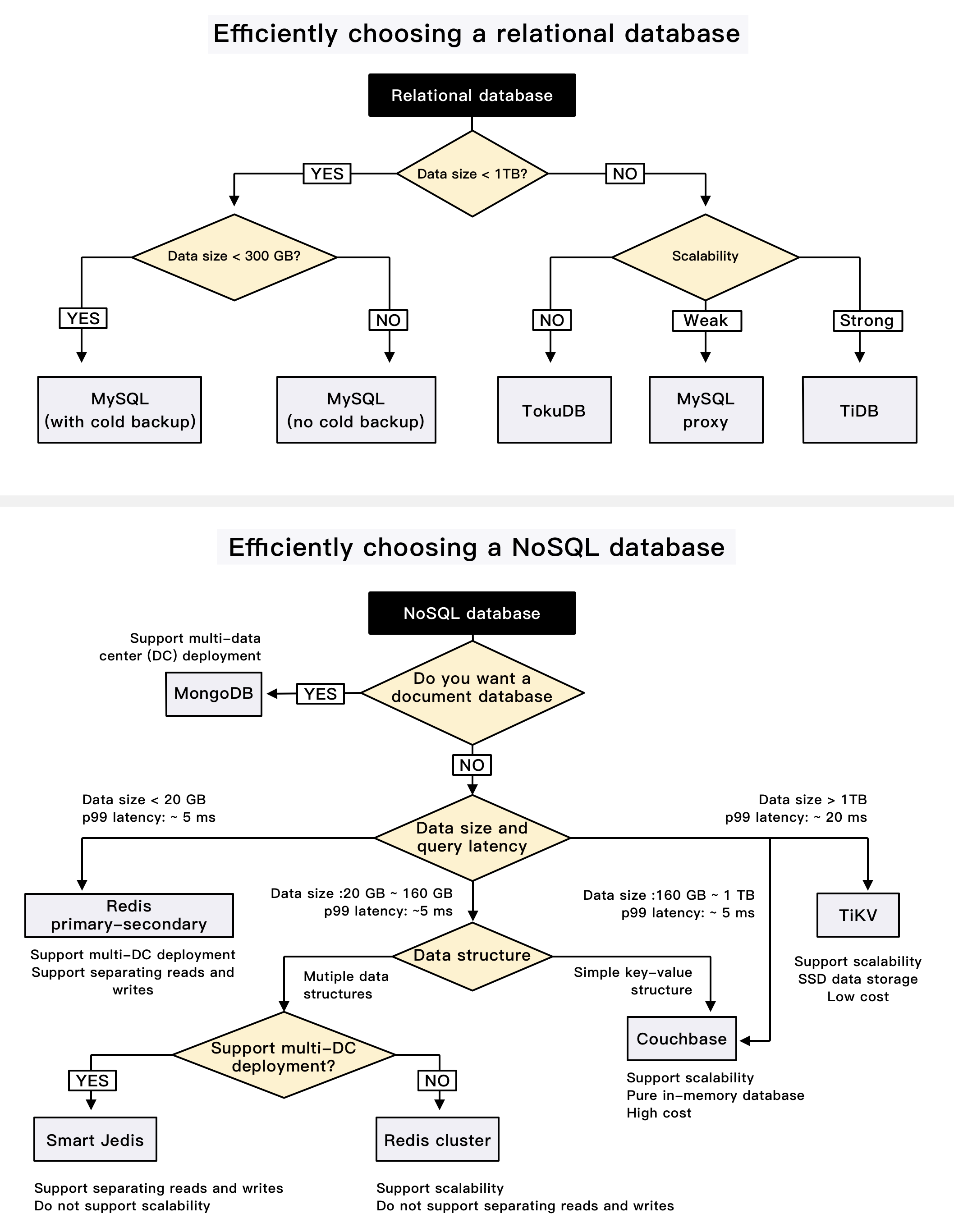

+ * [iQIYI Database Selection Trees](https://bytebytego.com/guides/iqiyi-database-selection-trees)

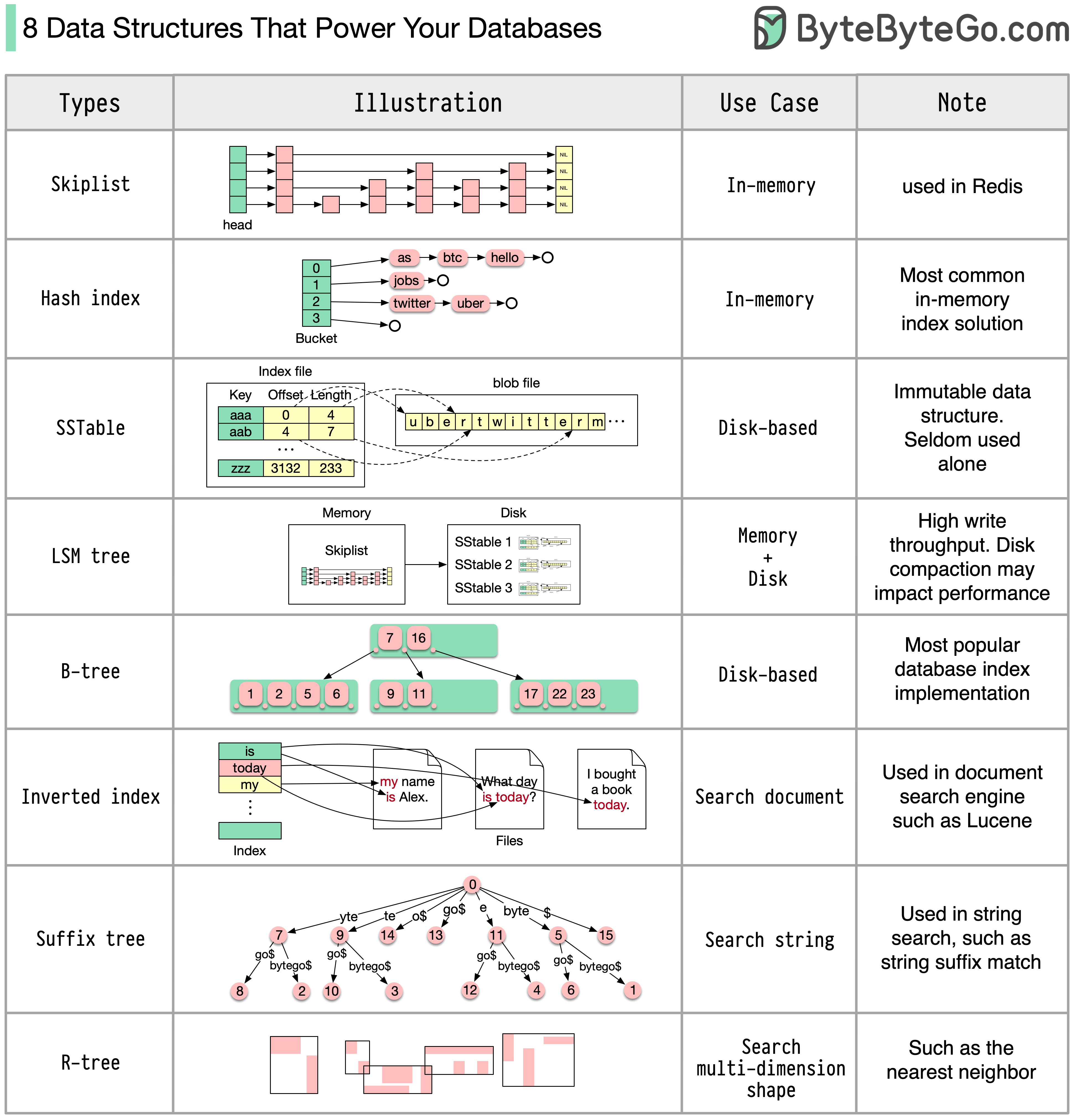

+ * [8 Data Structures That Power Your Databases](https://bytebytego.com/guides/8-data-structures-that-power-your-databases)

+ * [How to Implement Read Replica Pattern](https://bytebytego.com/guides/how-to-implement-read-replica-pattern)

+ * [A Crash Course on Database Sharding](https://bytebytego.com/guides/a-crash-course-in-database-sharding)

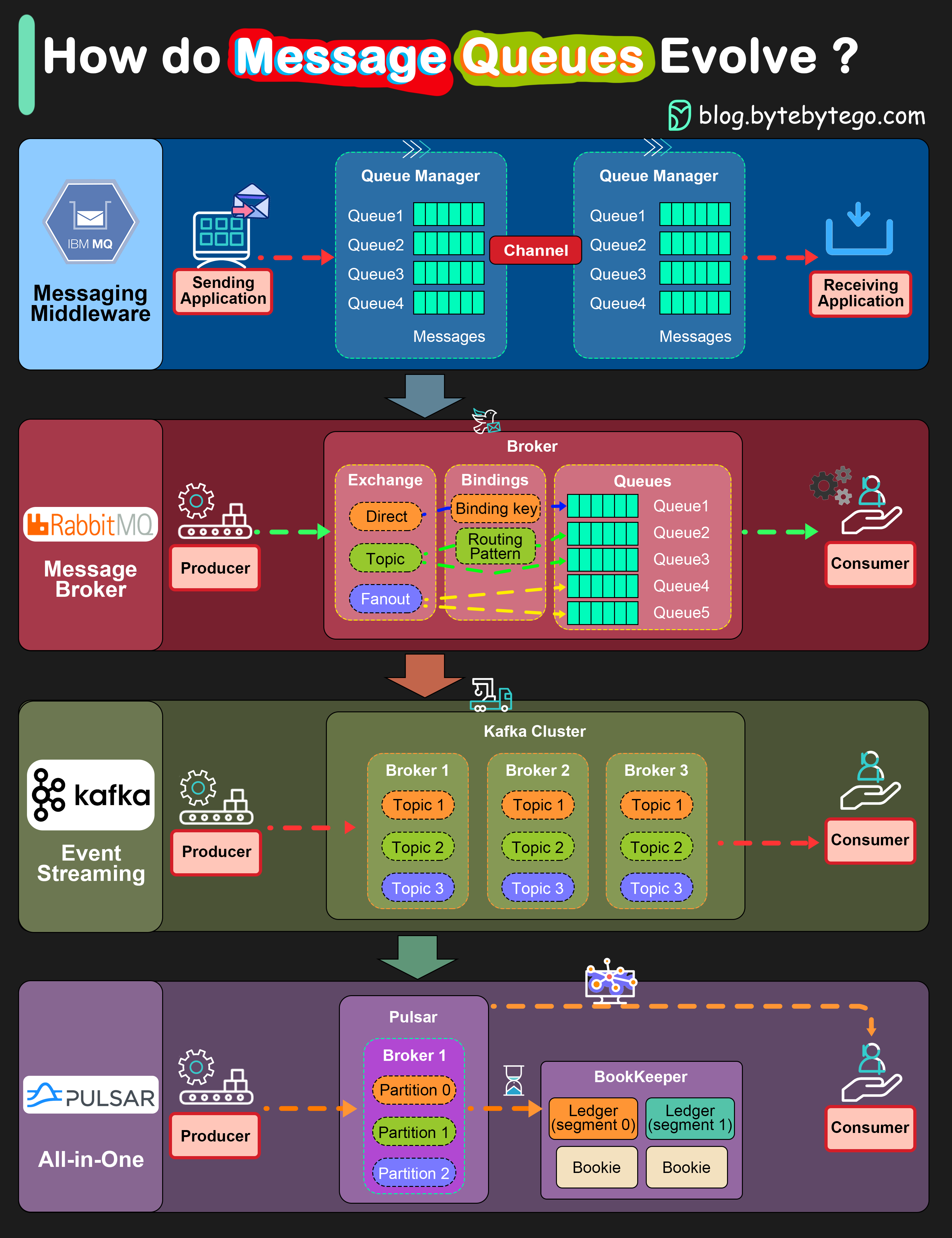

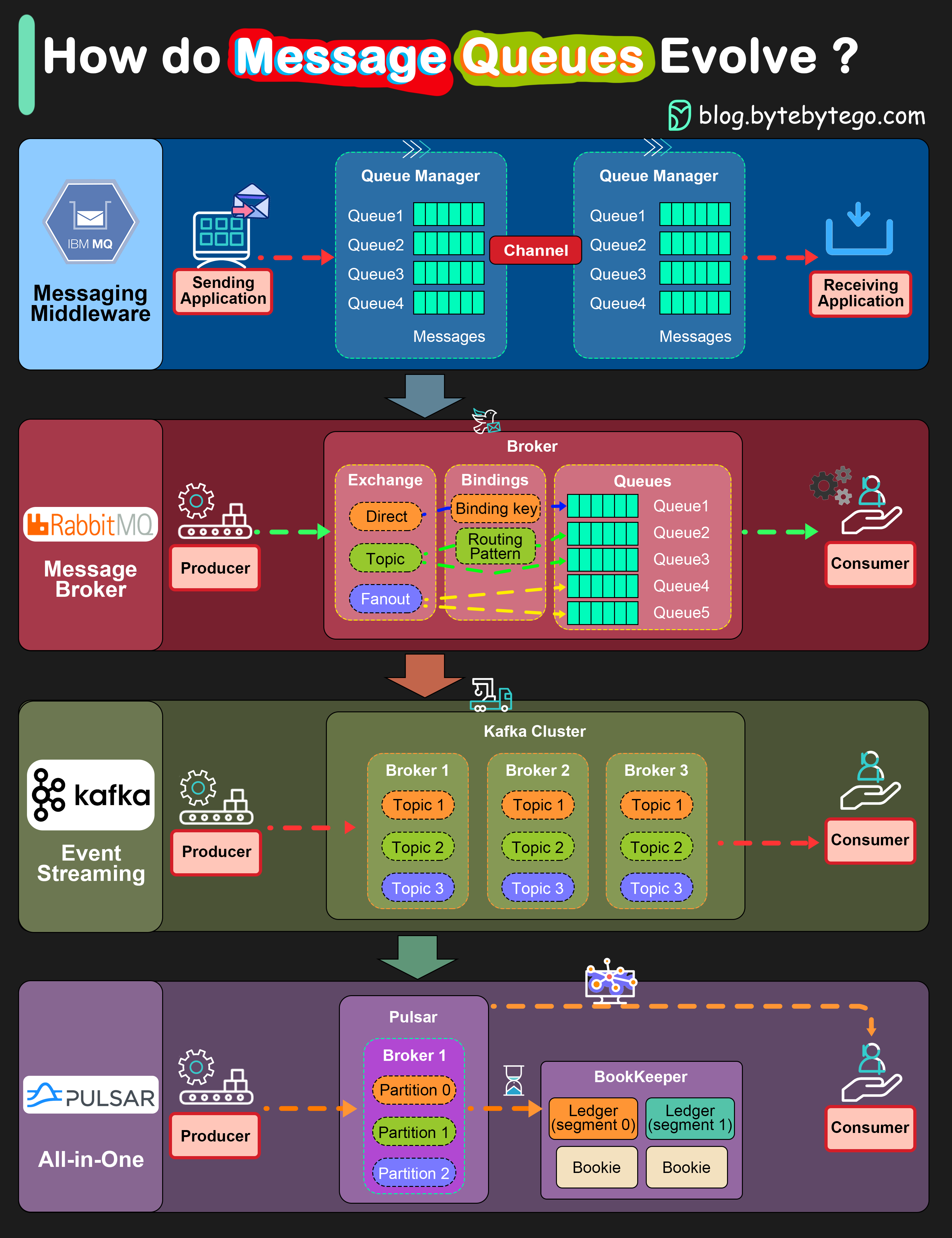

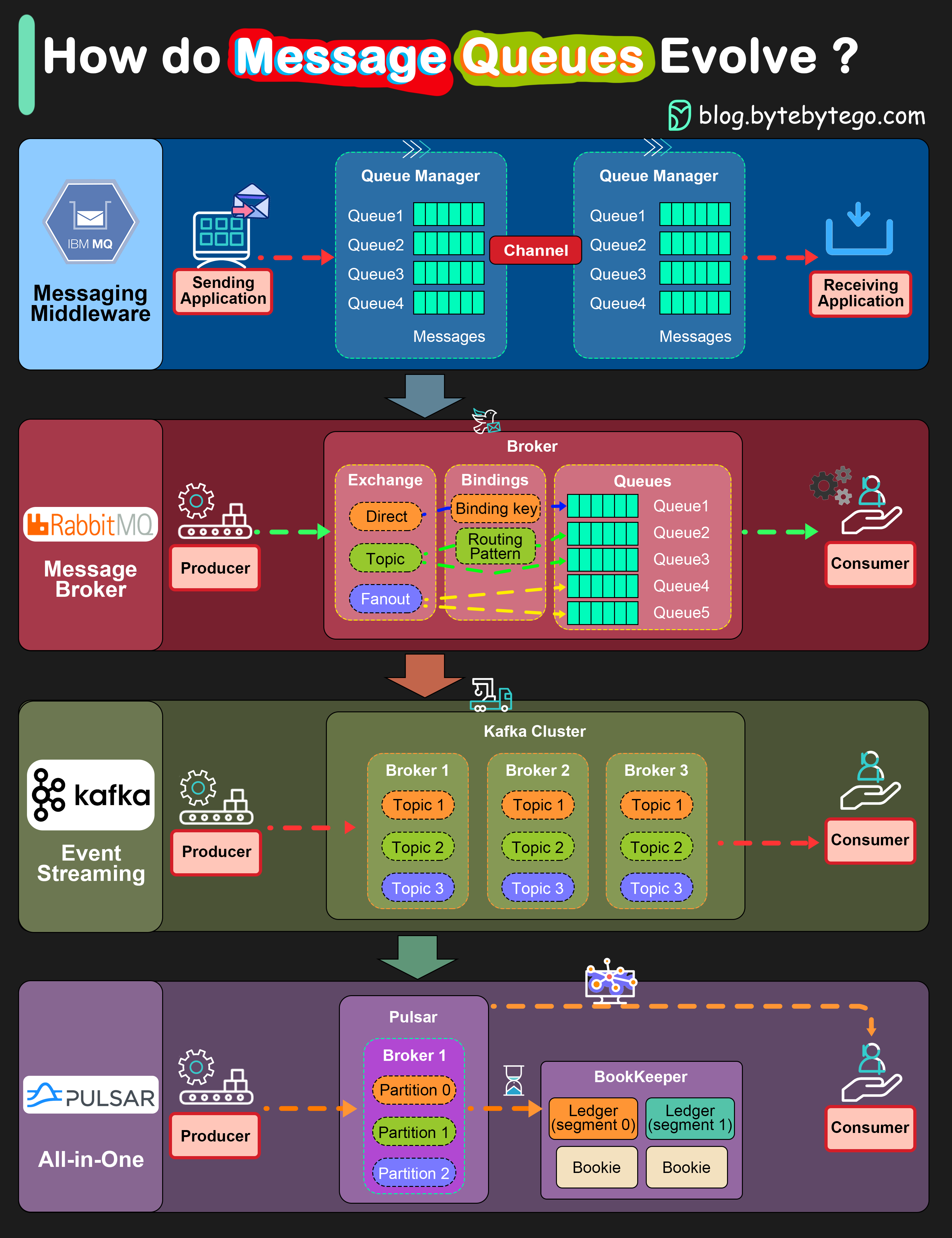

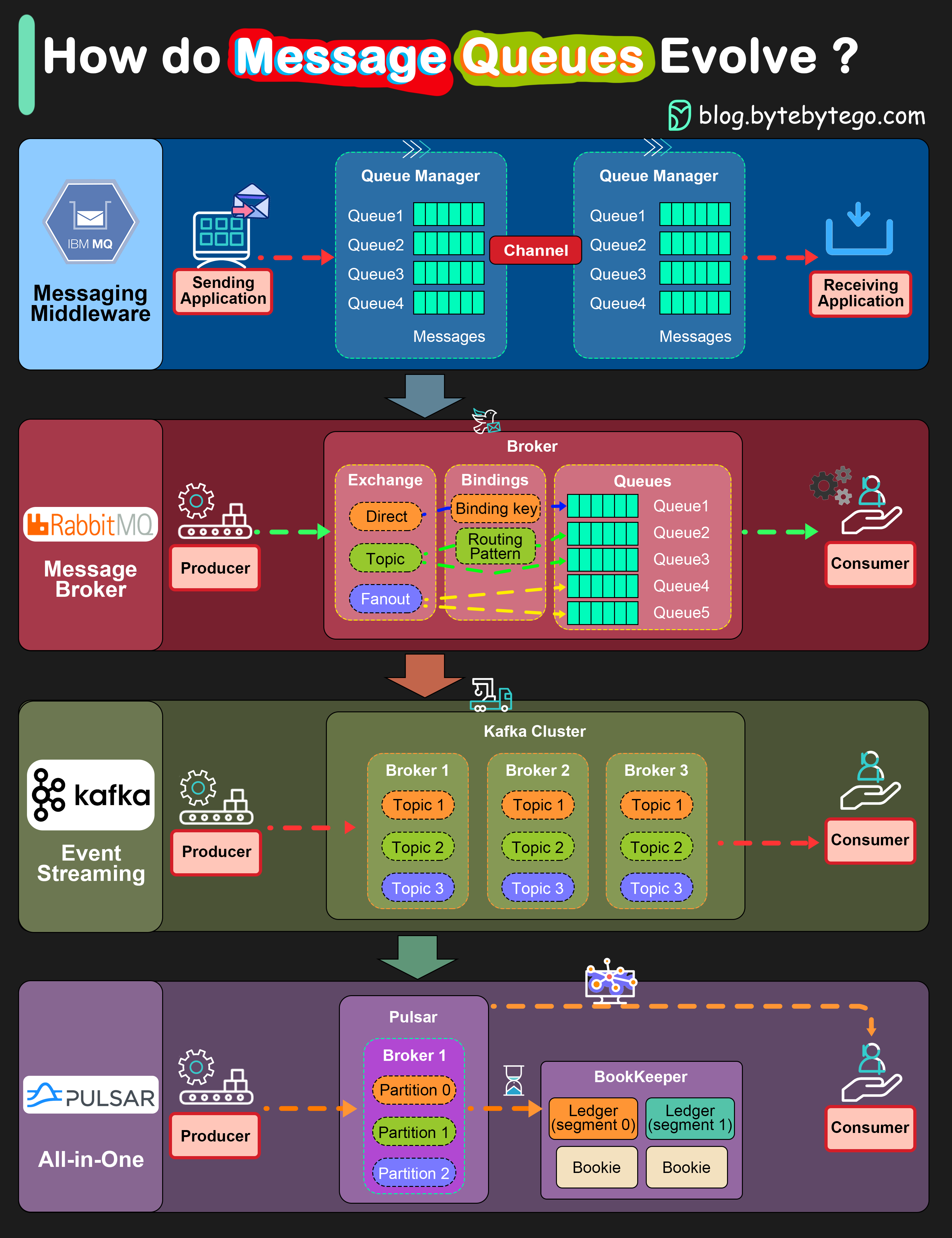

+ * [IBM MQ -> RabbitMQ -> Kafka -> Pulsar: Message Queue Evolution](https://bytebytego.com/guides/how-do-message-queue-architectures-evolve)

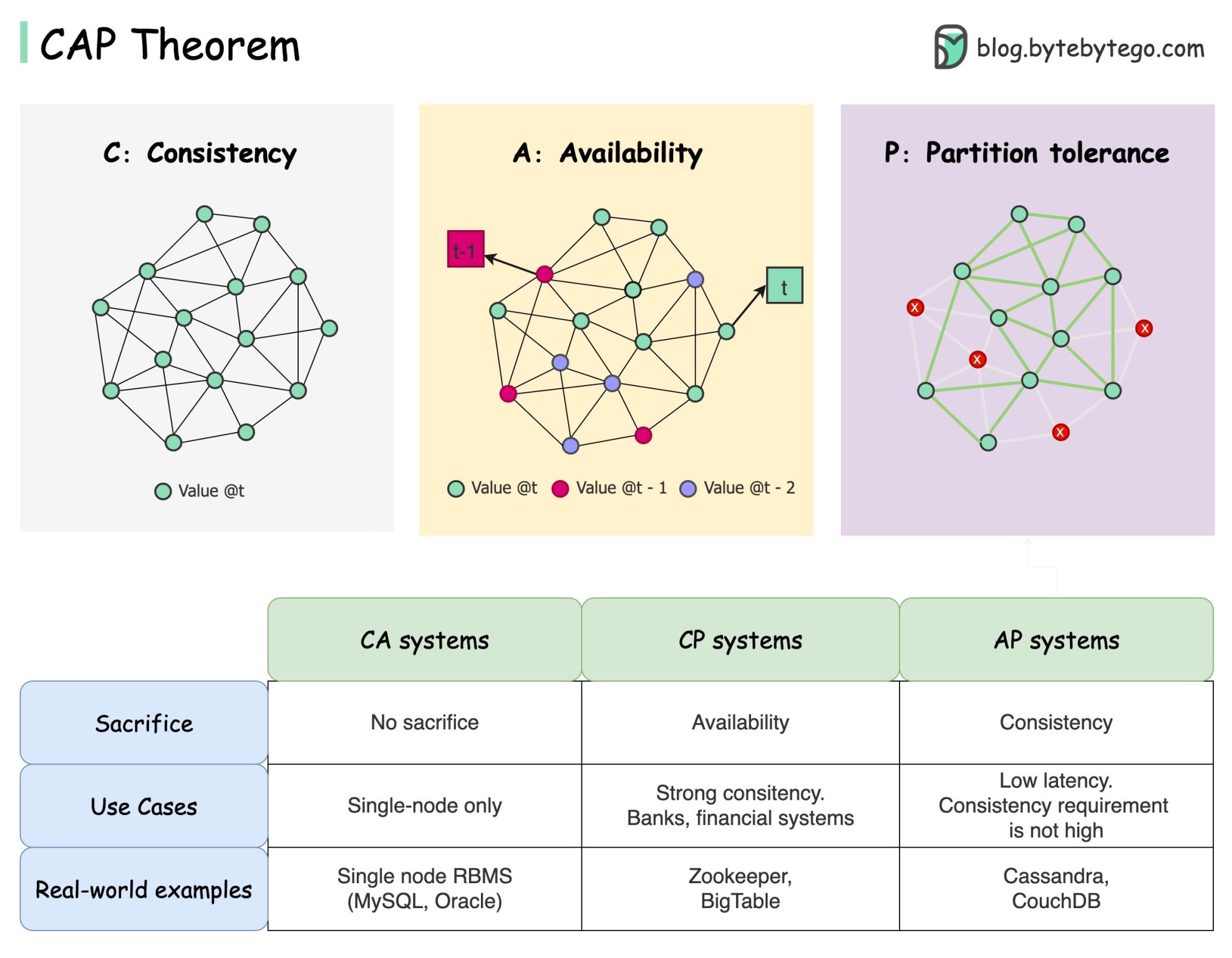

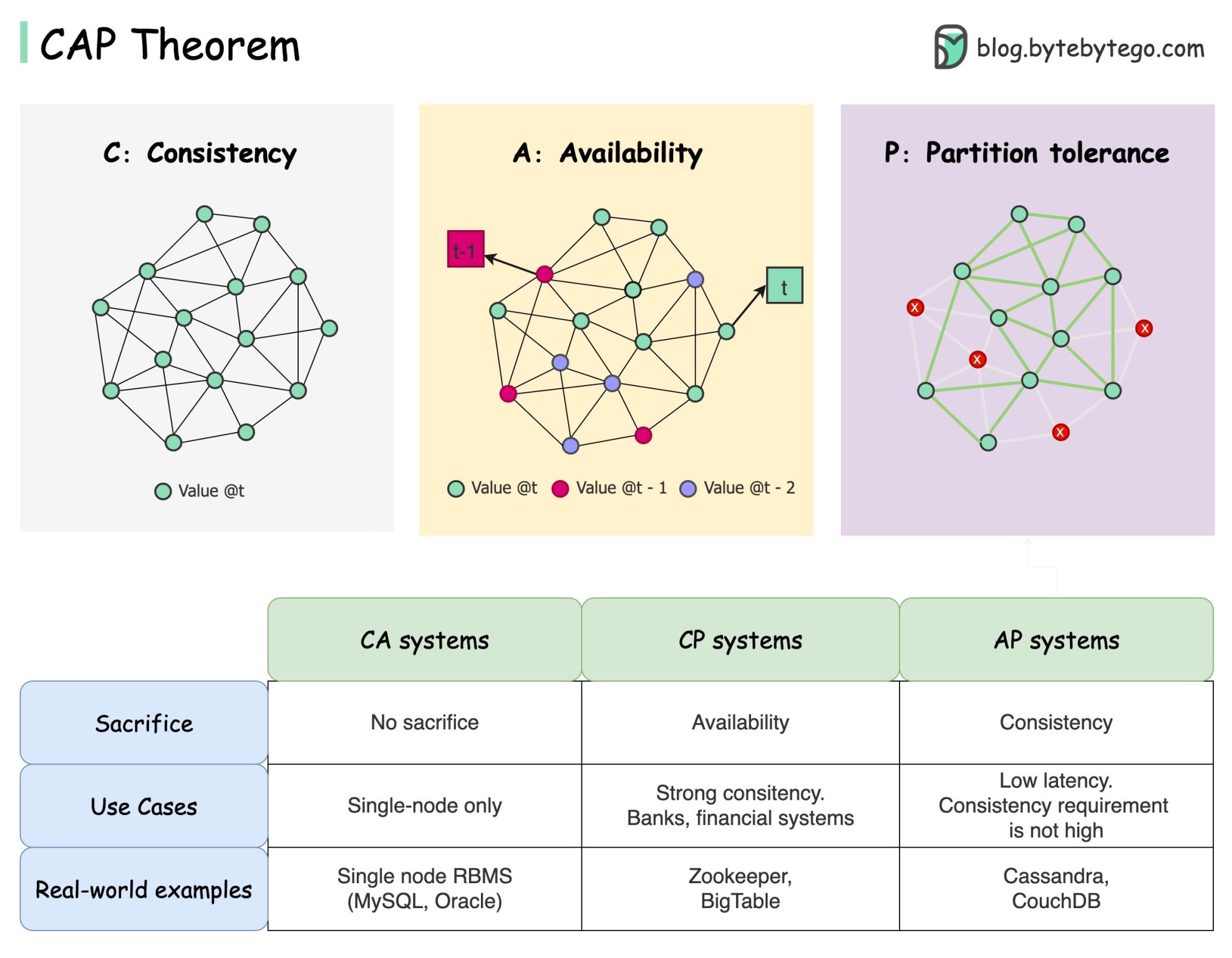

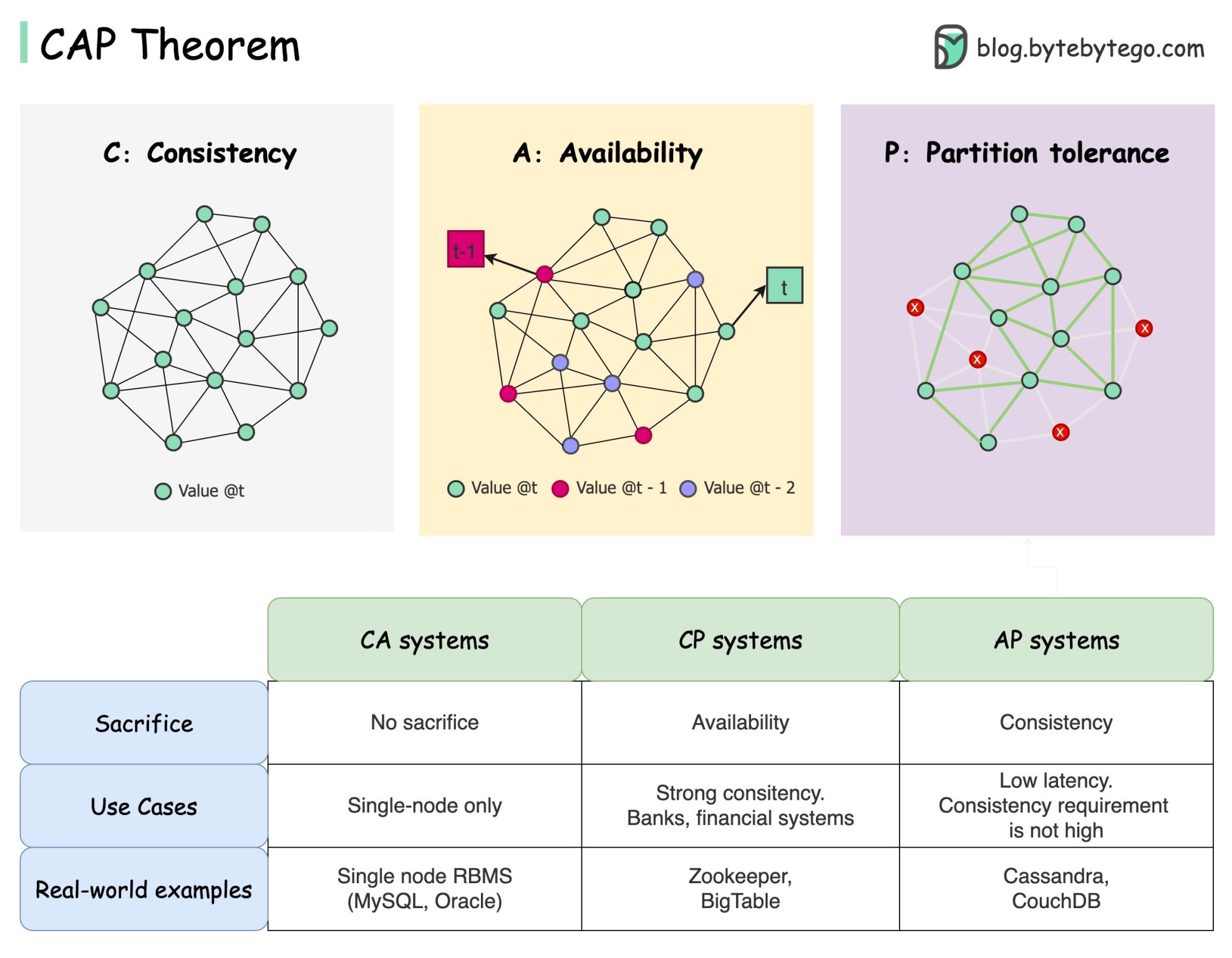

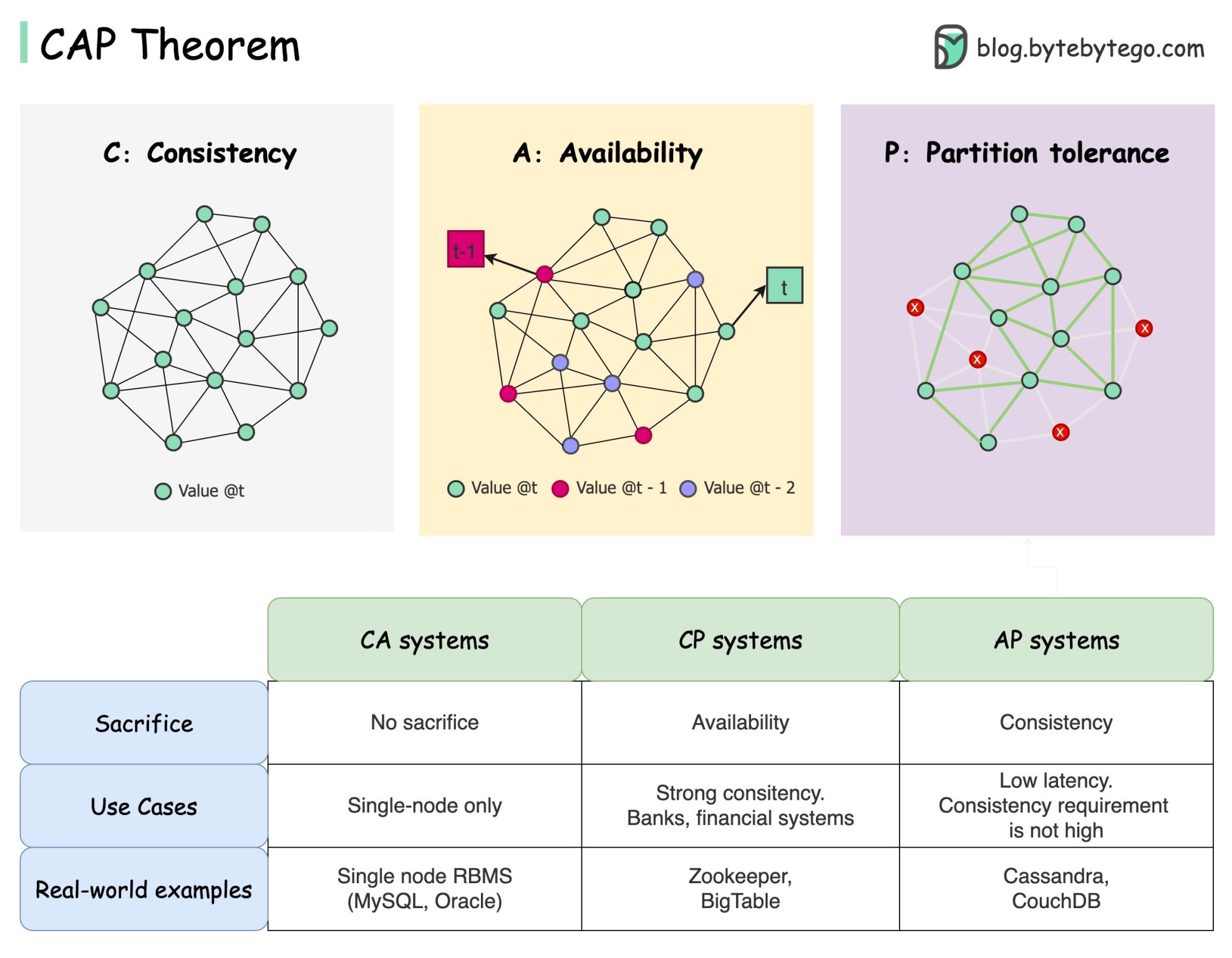

+ * [CAP Theorem: One of the Most Misunderstood Terms](https://bytebytego.com/guides/cap-theorem-one-of-the-most-misunderstood-terms)

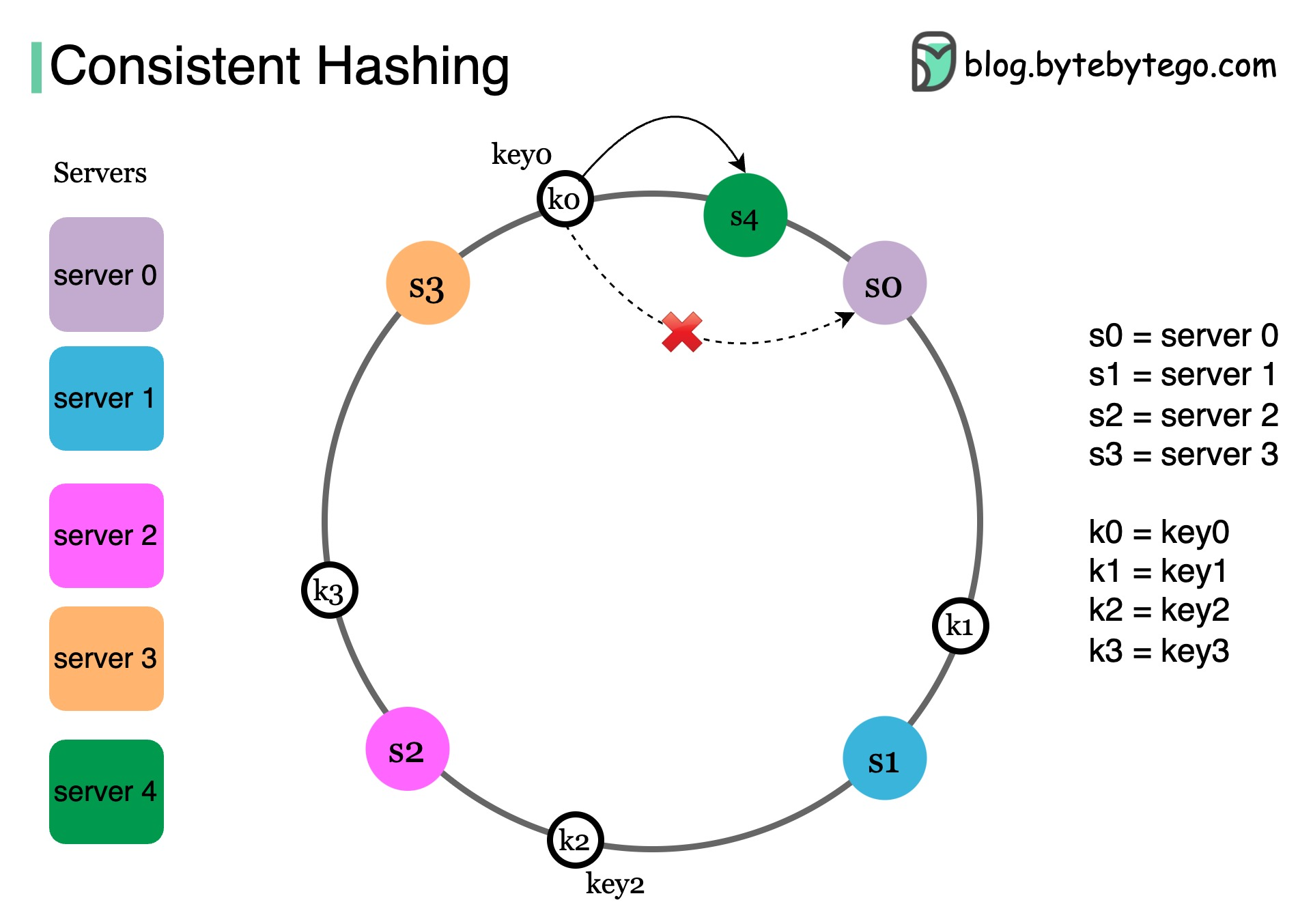

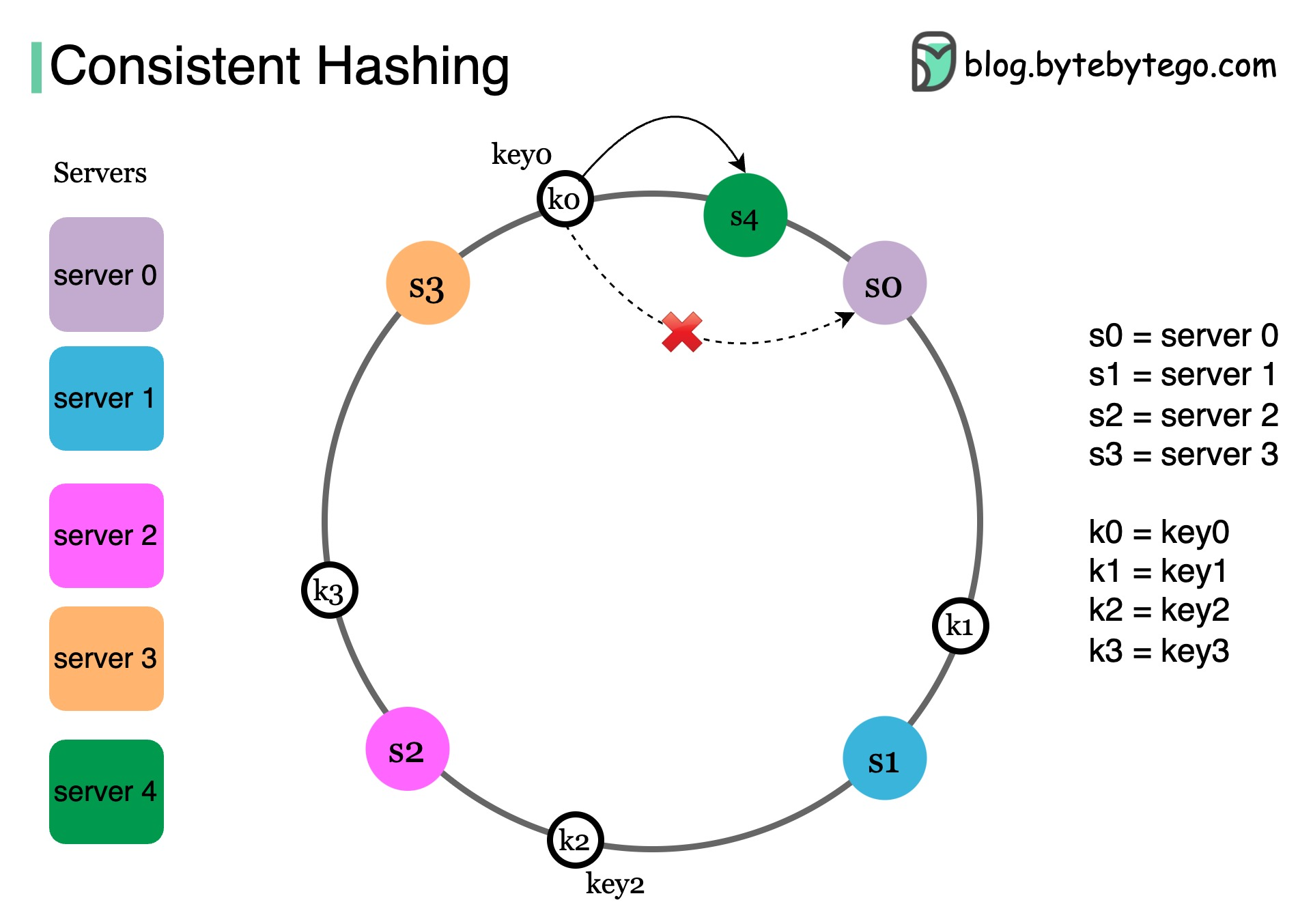

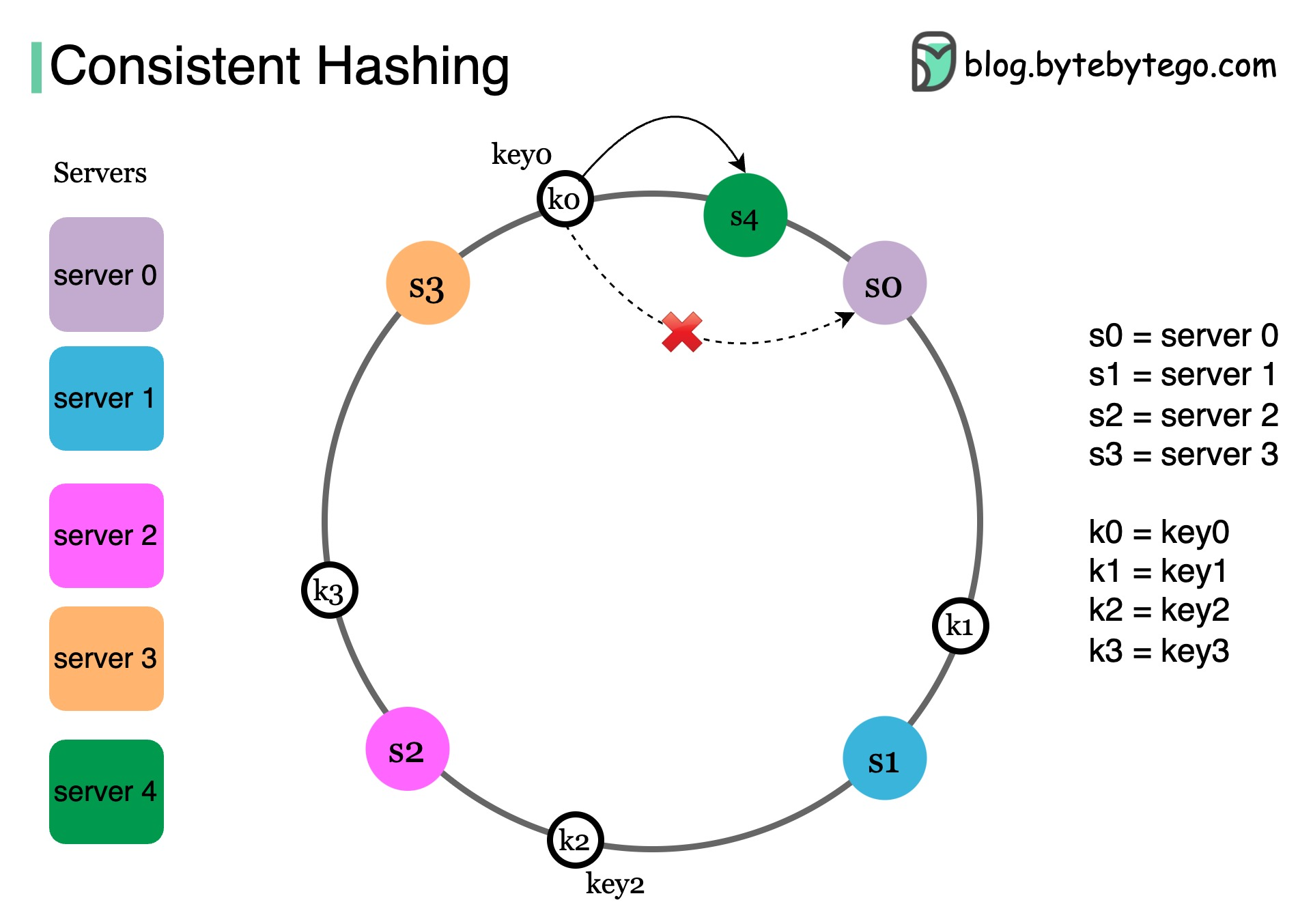

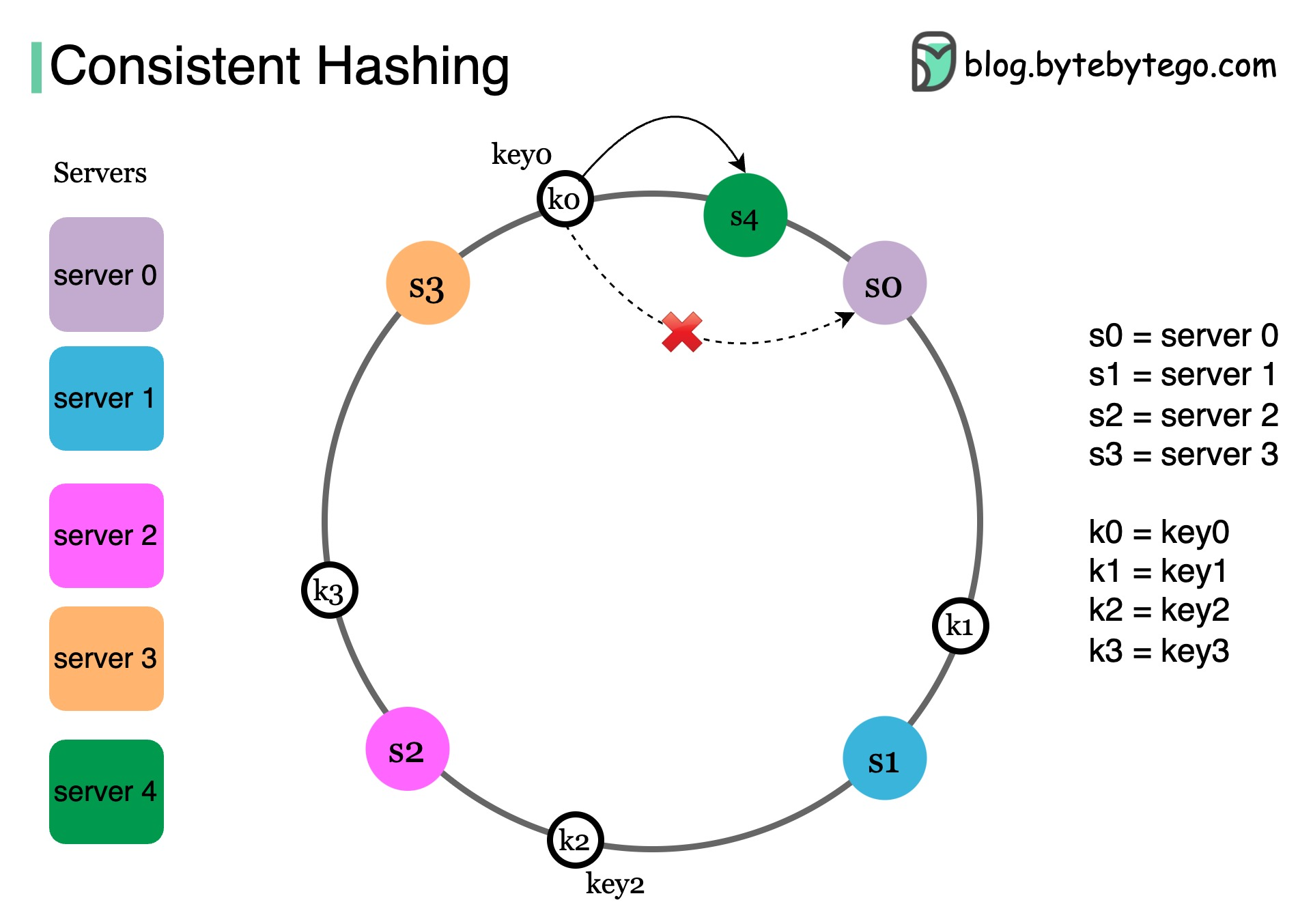

+ * [Consistent Hashing Explained](https://bytebytego.com/guides/consistent-hashing)

+ * [Types of Databases](https://bytebytego.com/guides/types-of-databases)

+ * [Key Concepts to Understand Database Sharding](https://bytebytego.com/guides/key-concepts-to-understand-database-sharding)

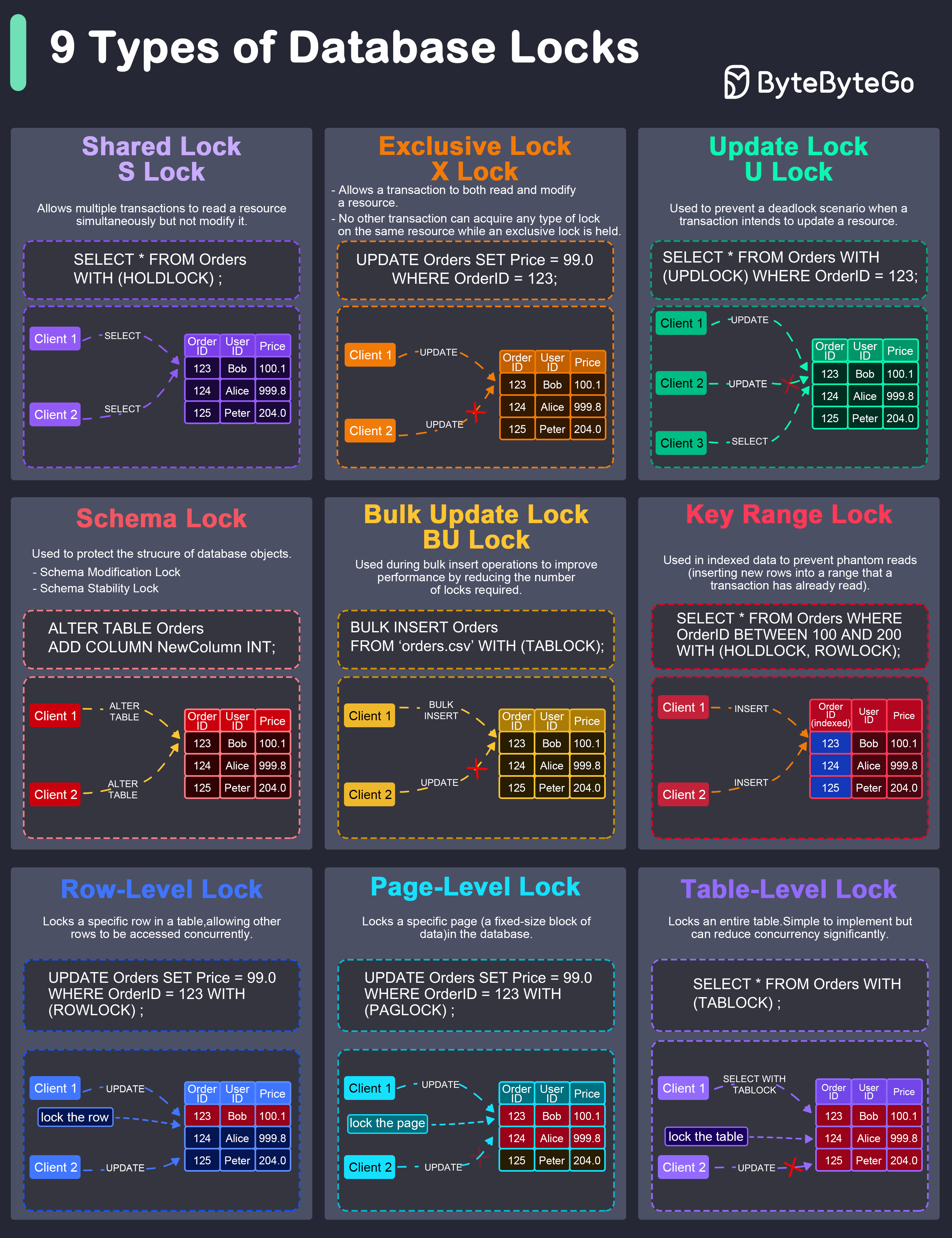

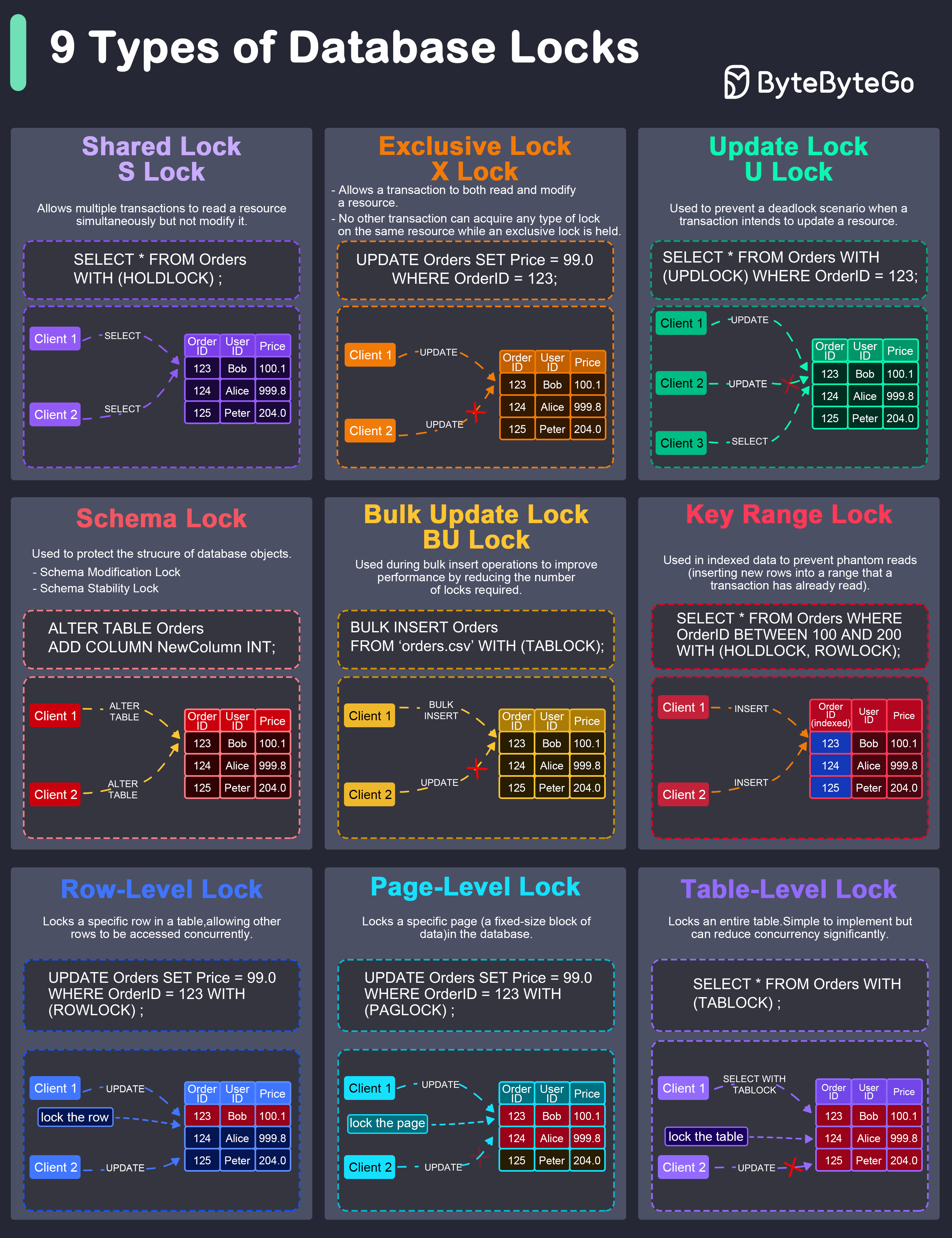

+ * [Database Locks Explained](https://bytebytego.com/guides/what-are-the-differences-among-database-locks)

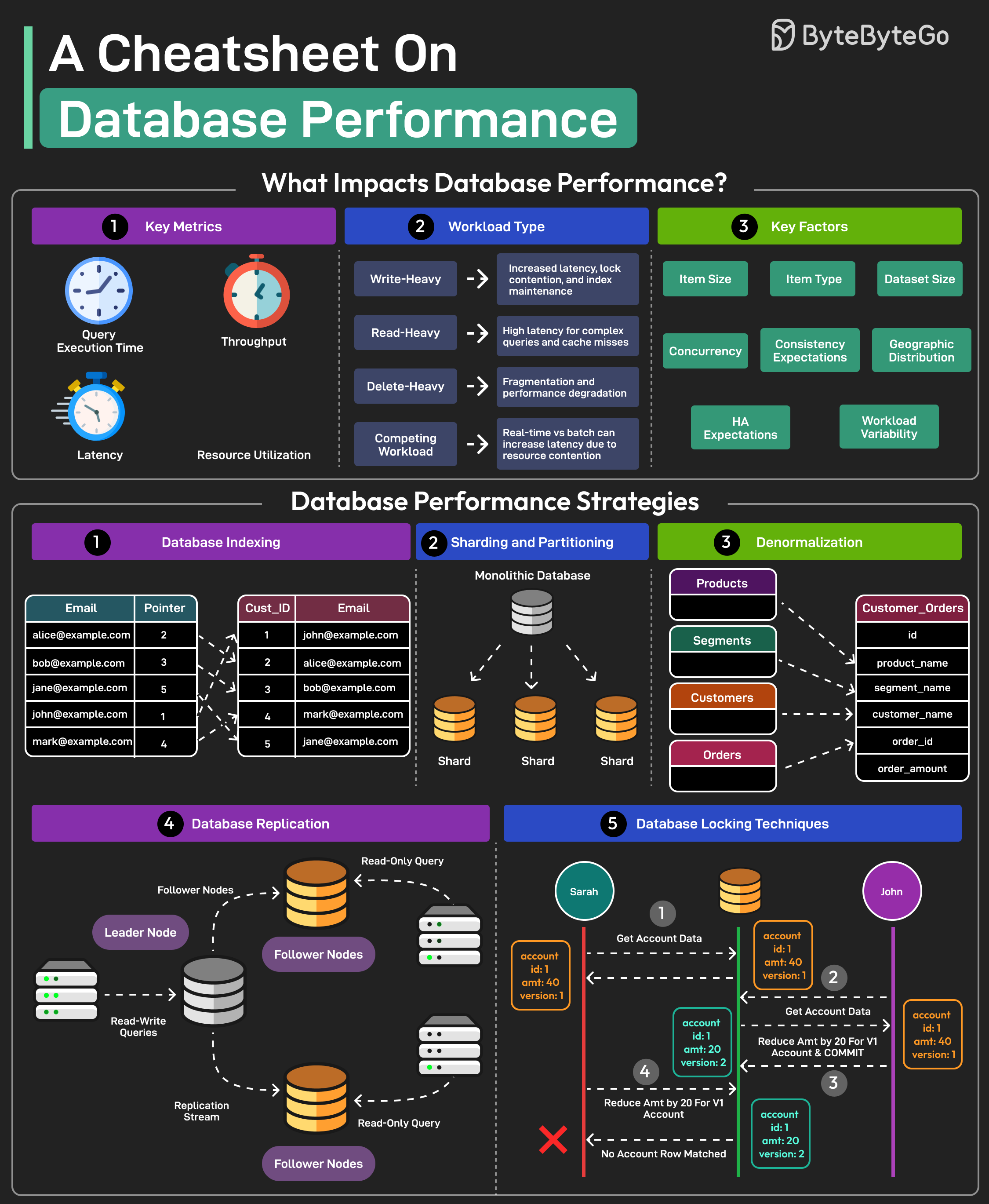

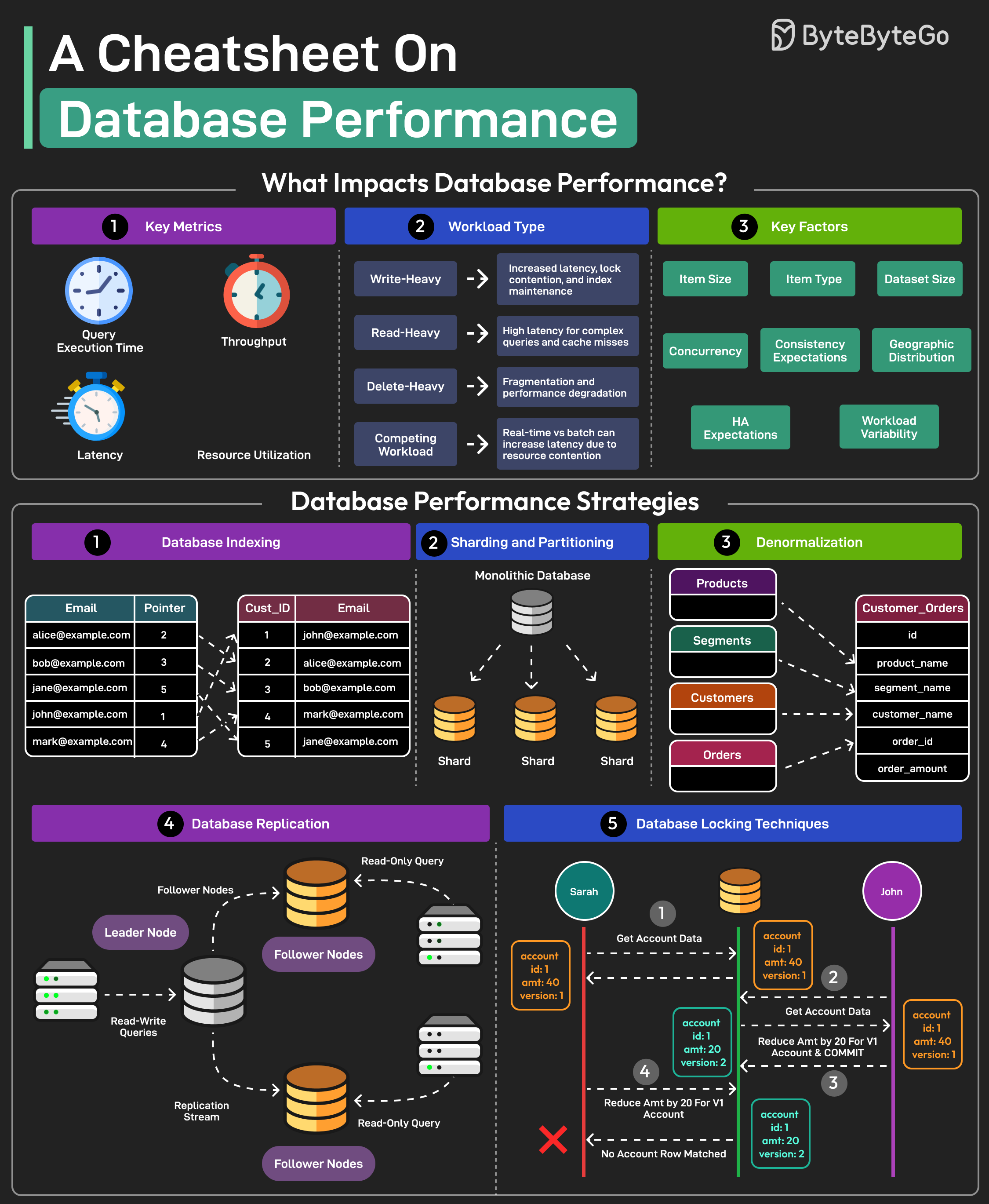

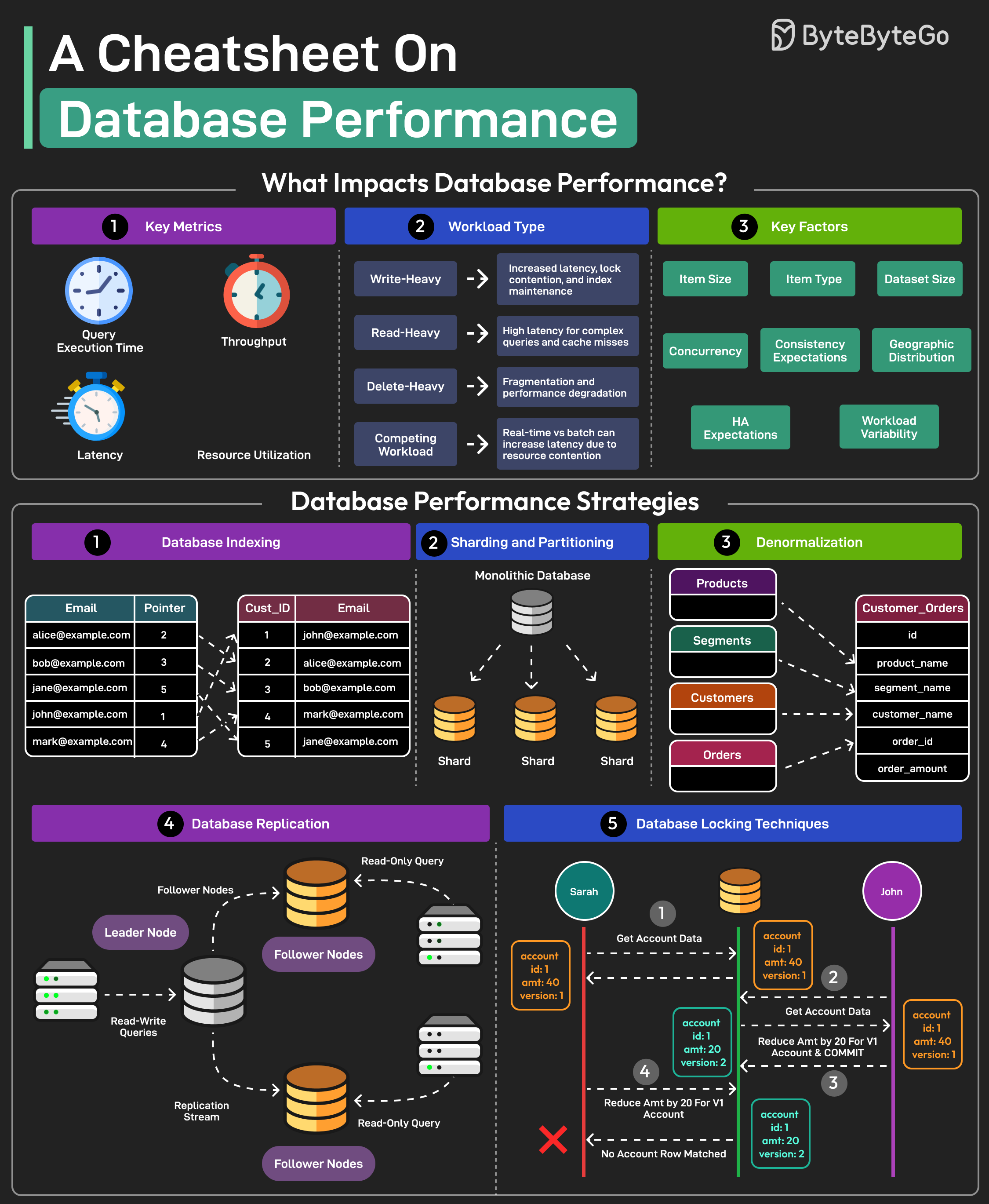

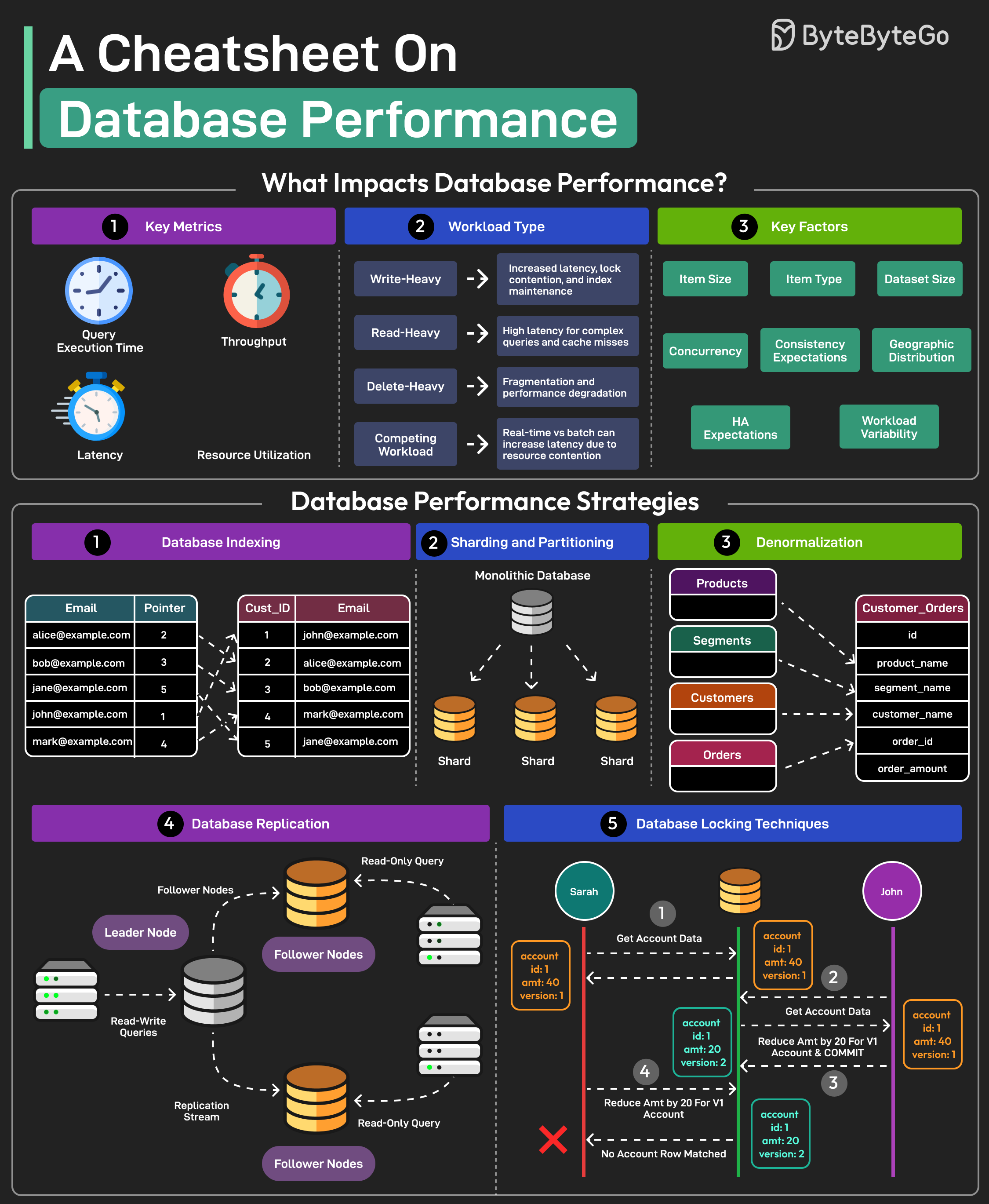

+ * [A Cheatsheet on Database Performance](https://bytebytego.com/guides/a-cheatsheet-on-database-performance)

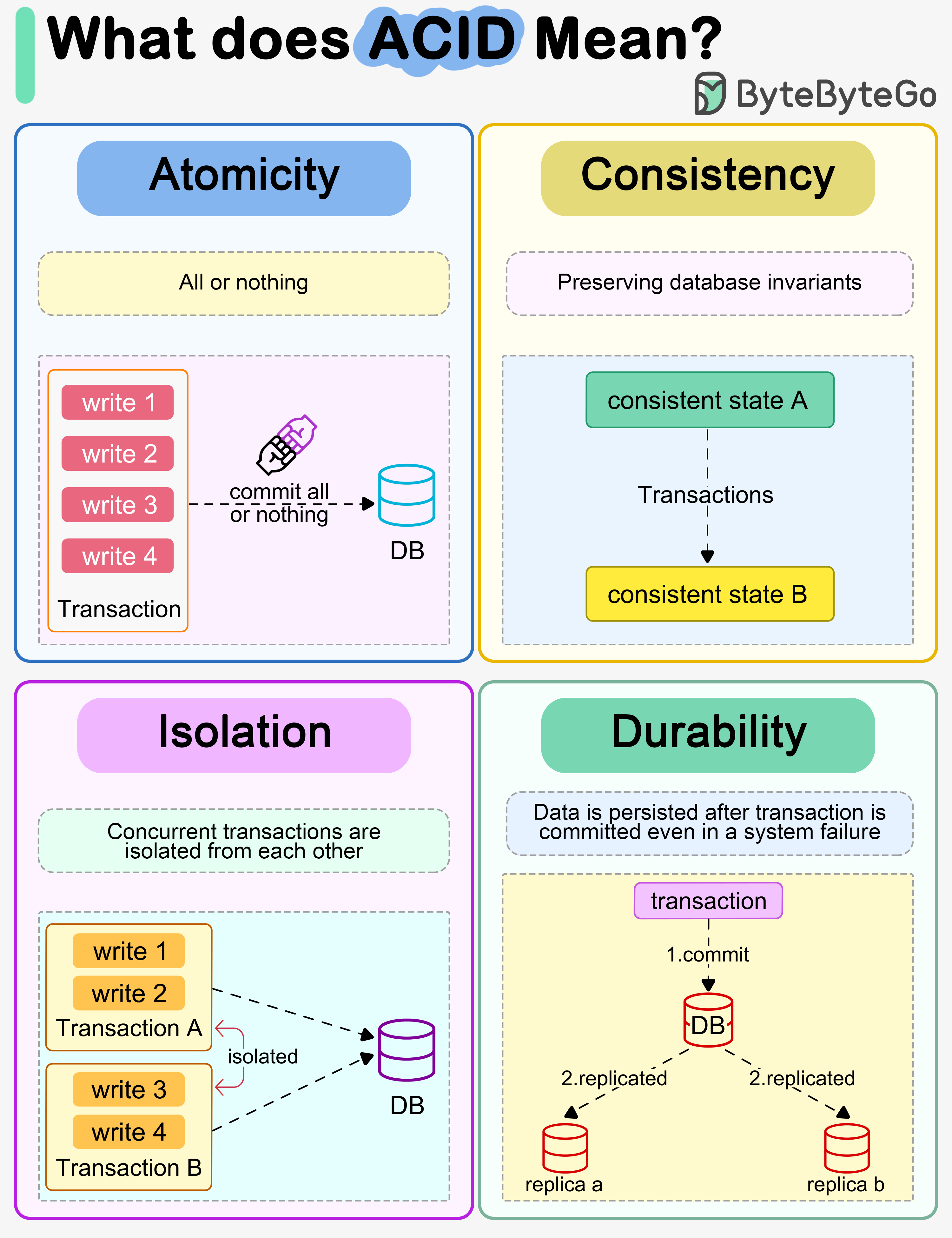

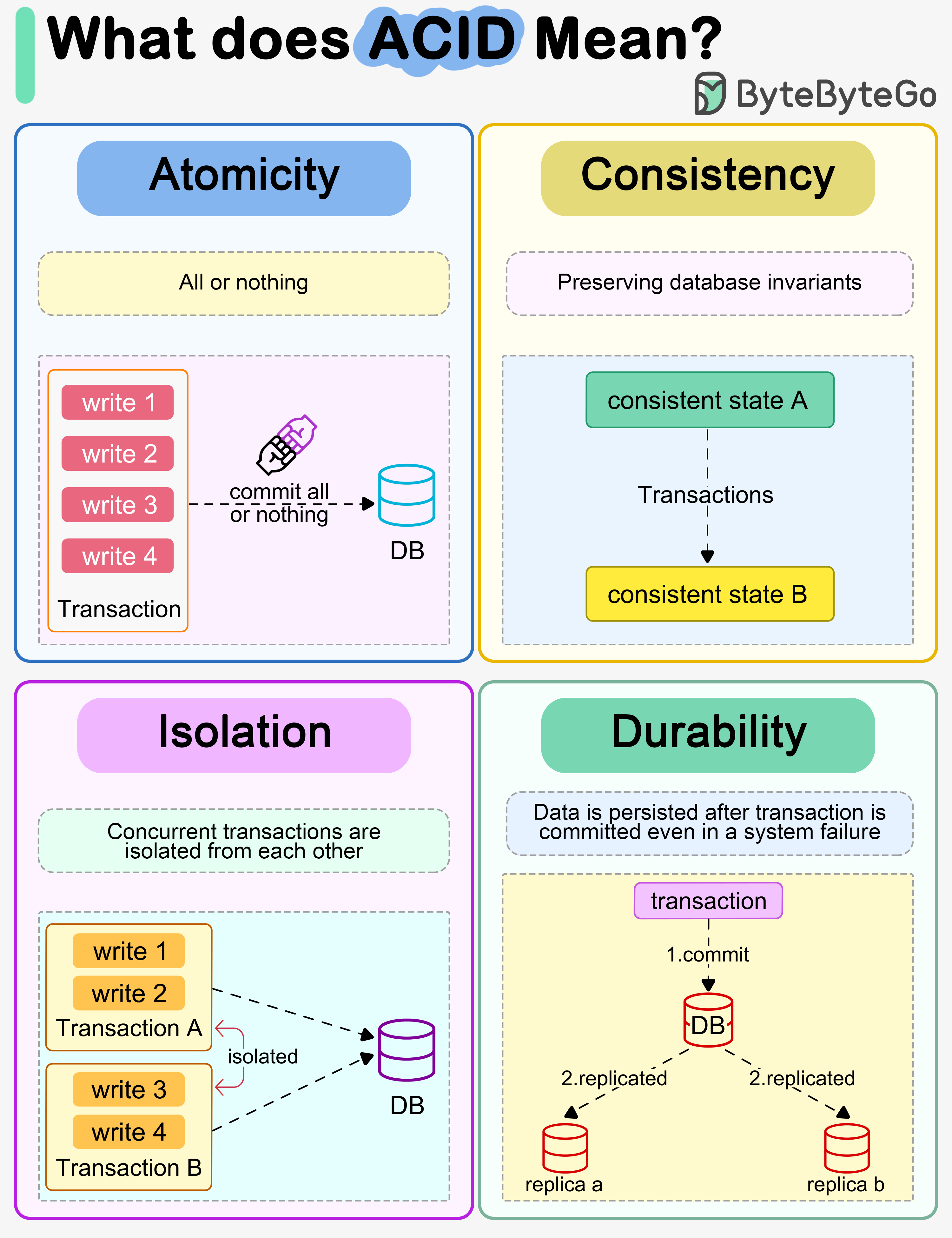

+ * [What does ACID mean?](https://bytebytego.com/guides/what-does-acid-mean)

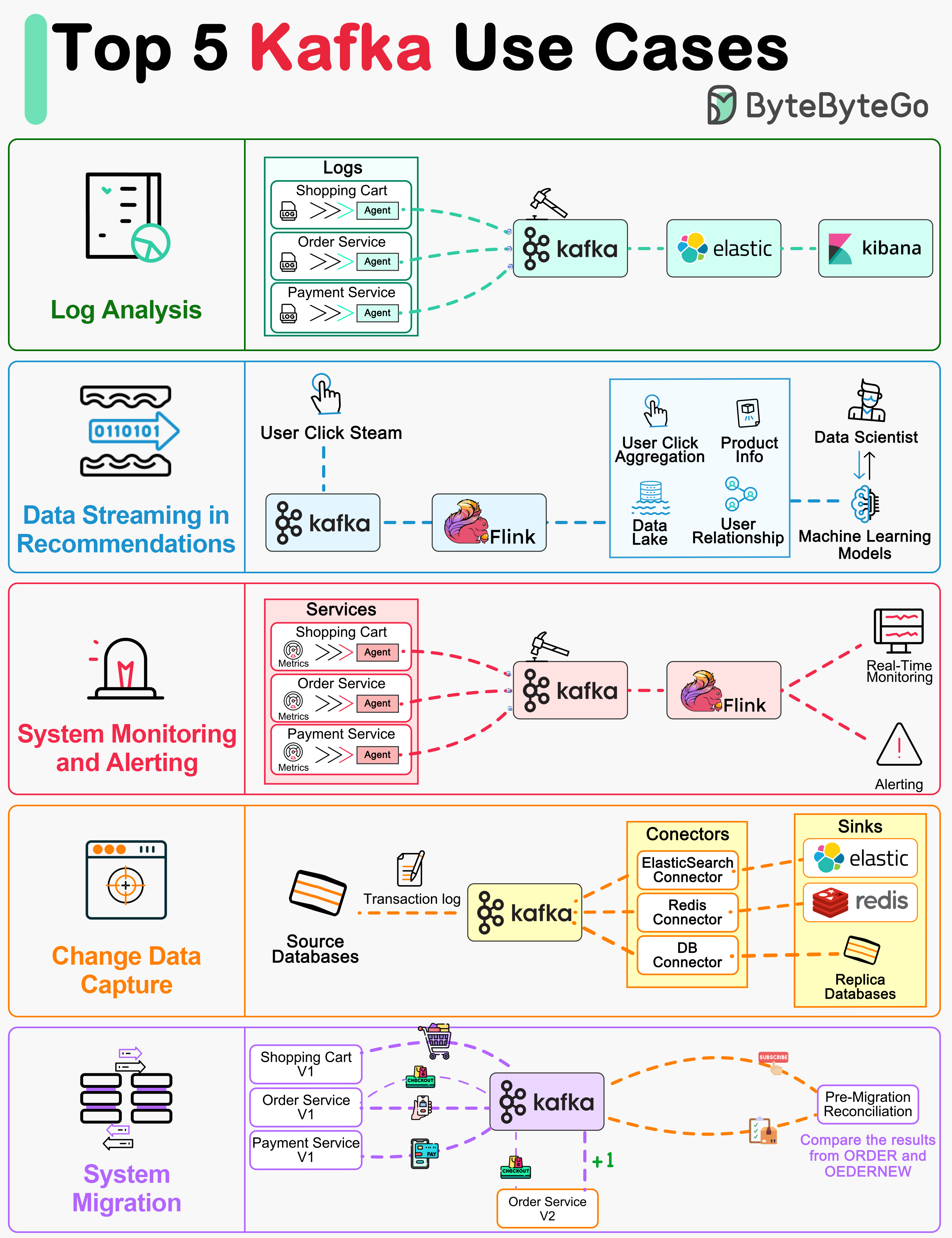

+ * [Top 5 Kafka Use Cases](https://bytebytego.com/guides/top-5-kafka-use-cases)

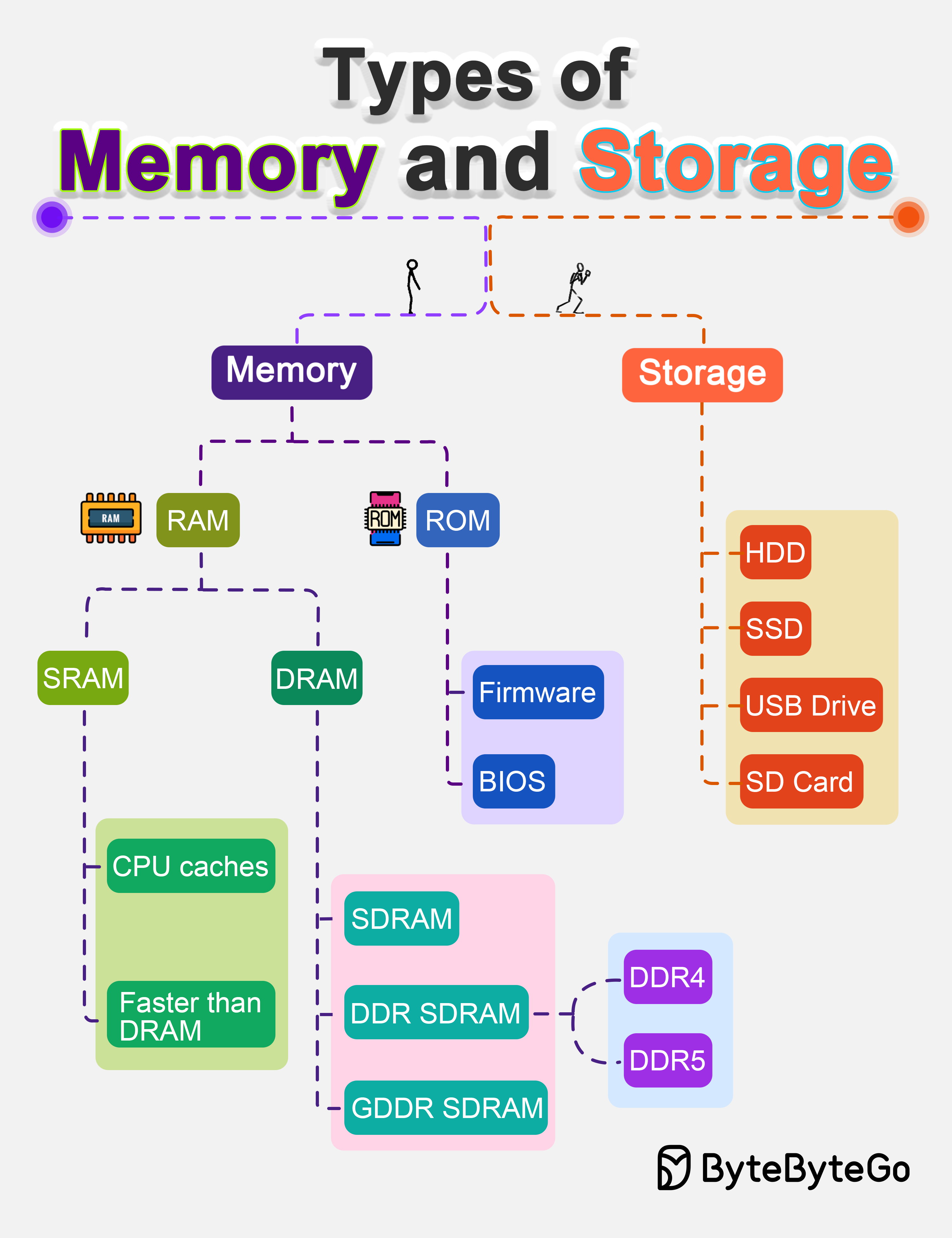

+ * [Types of Memory and Storage](https://bytebytego.com/guides/types-of-memory-and-storage)

+ * [7 Must-Know Strategies to Scale Your Database](https://bytebytego.com/guides/7-must-know-strategies-to-scale-your-database)

+* [Technical Interviews](https://bytebytego.com/guides/technical-interviews)

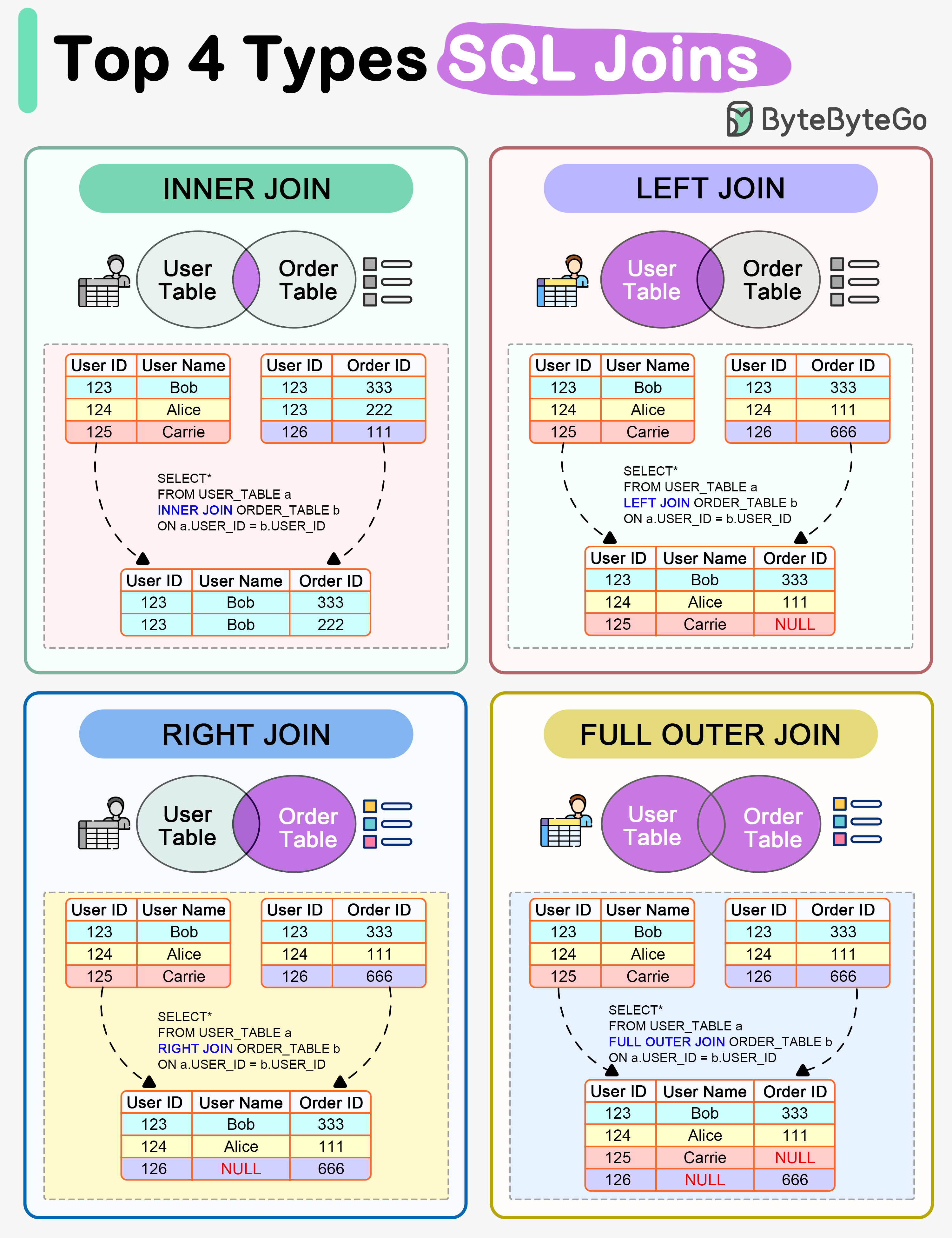

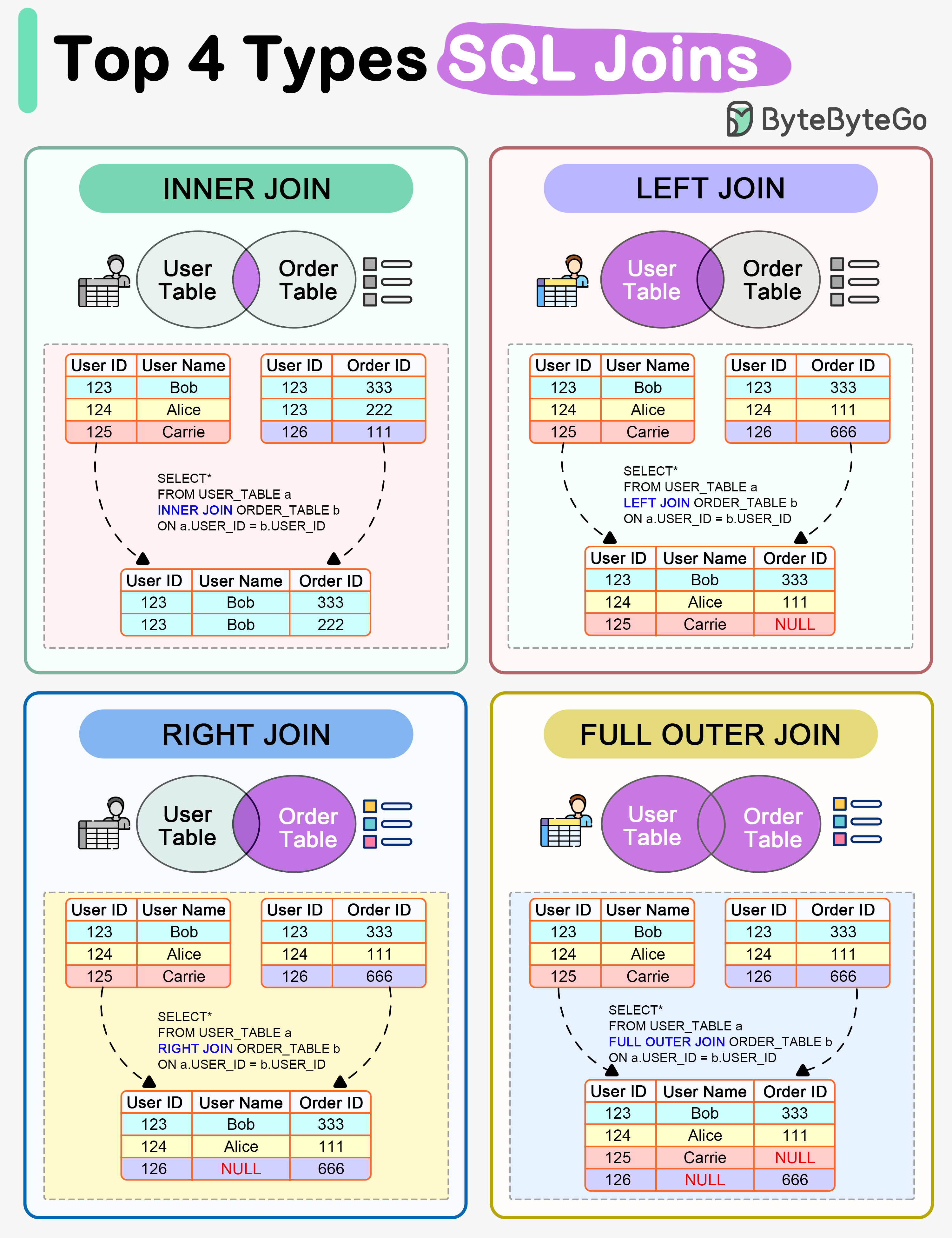

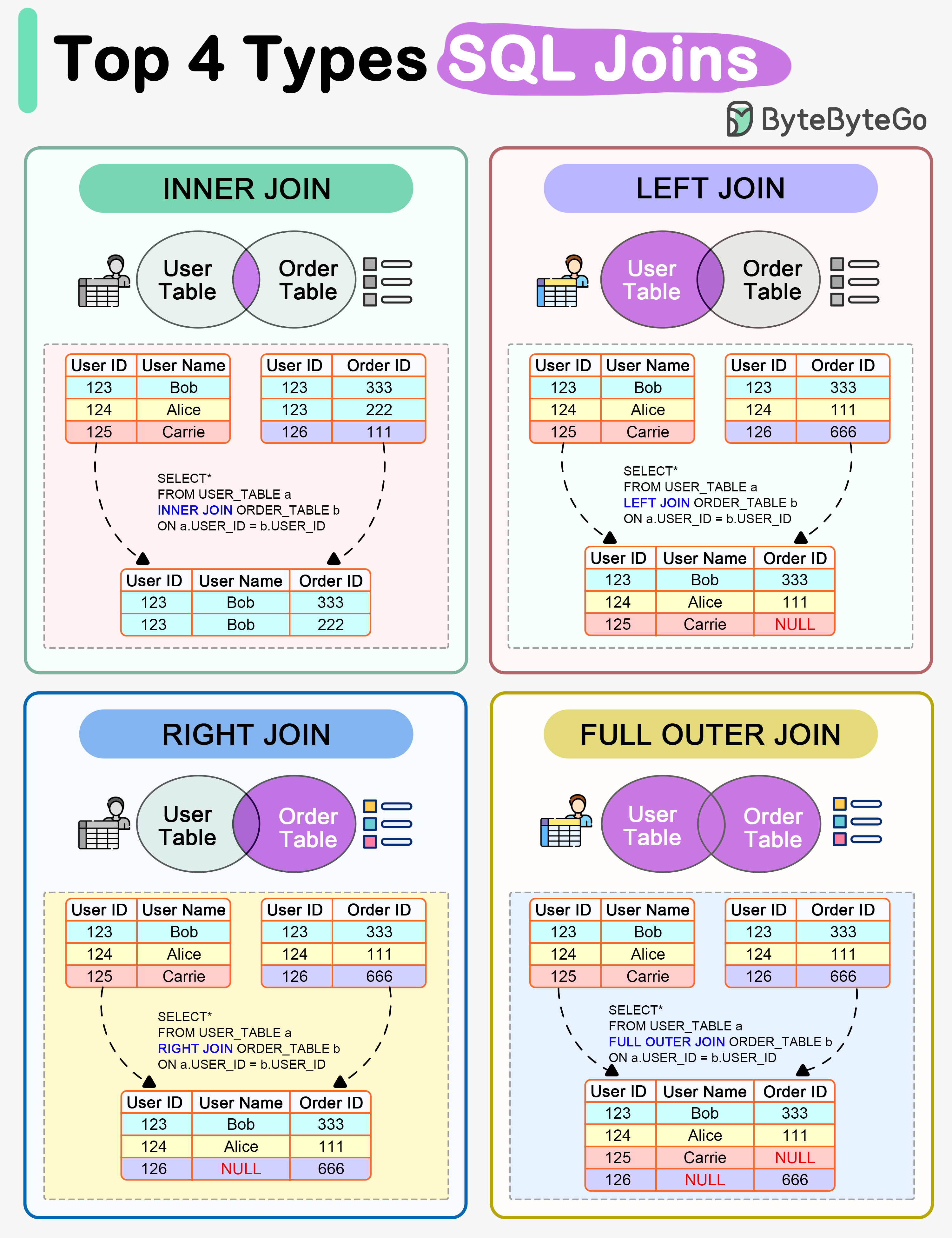

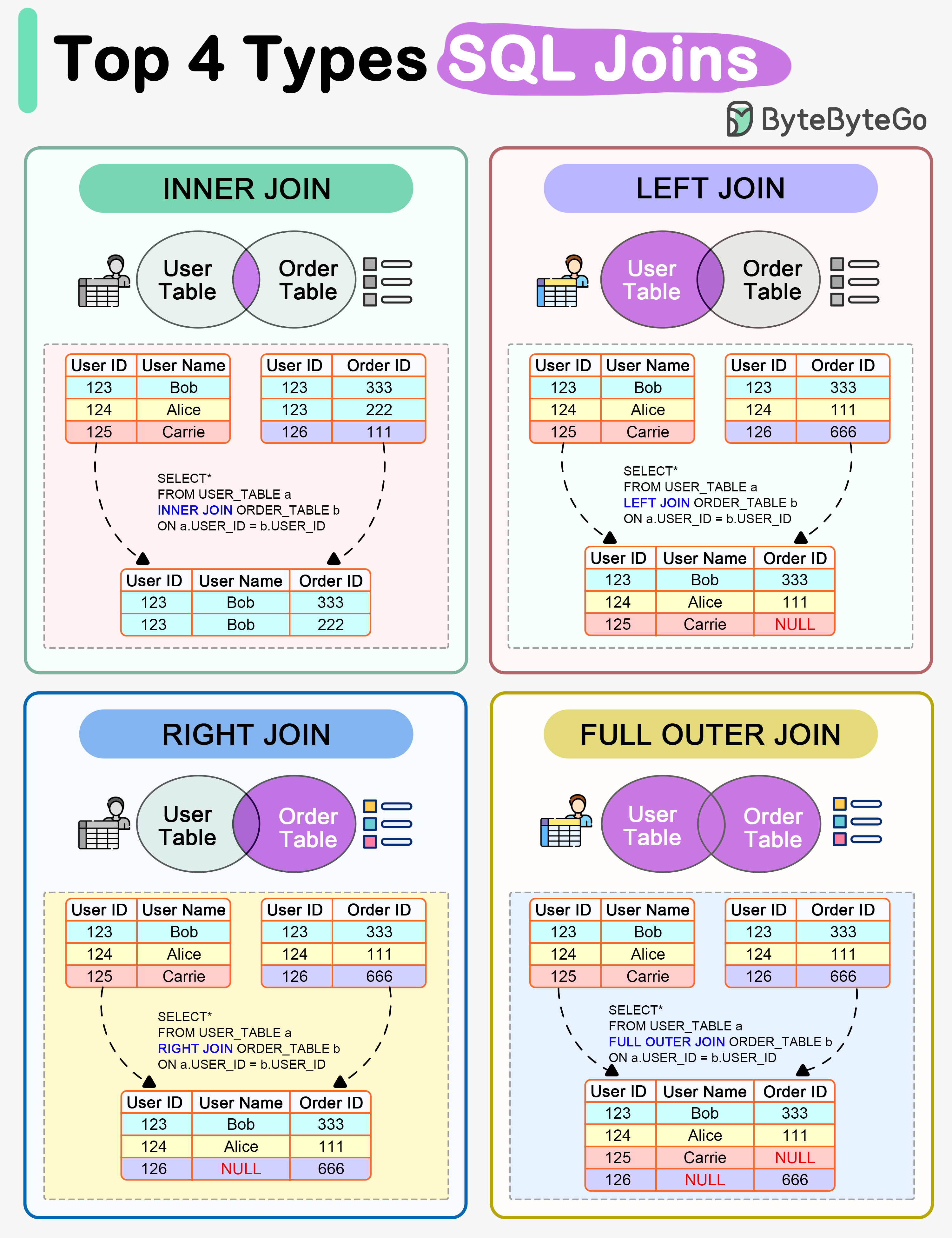

+ * [How do SQL Joins Work?](https://bytebytego.com/guides/how-do-sql-joins-work)

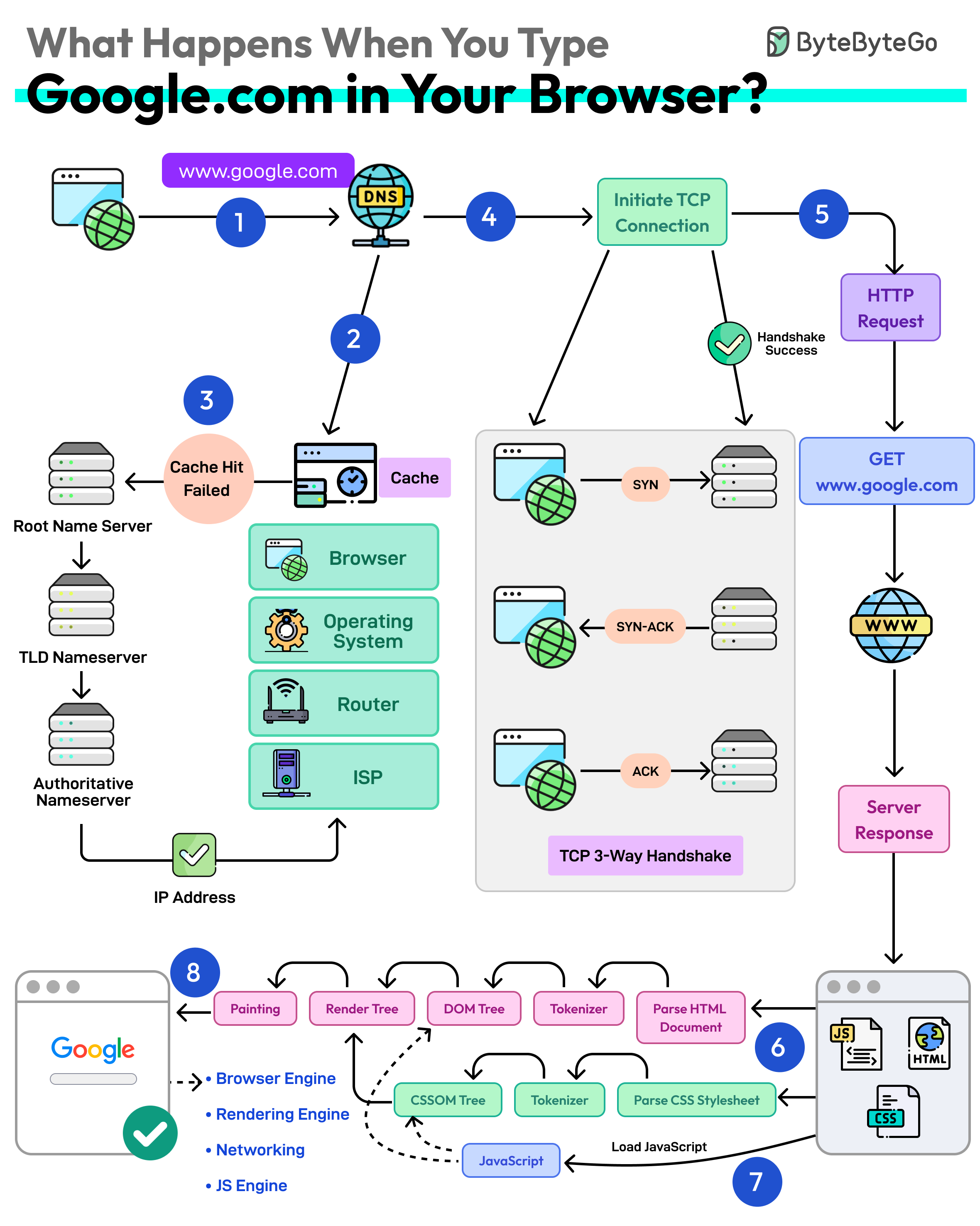

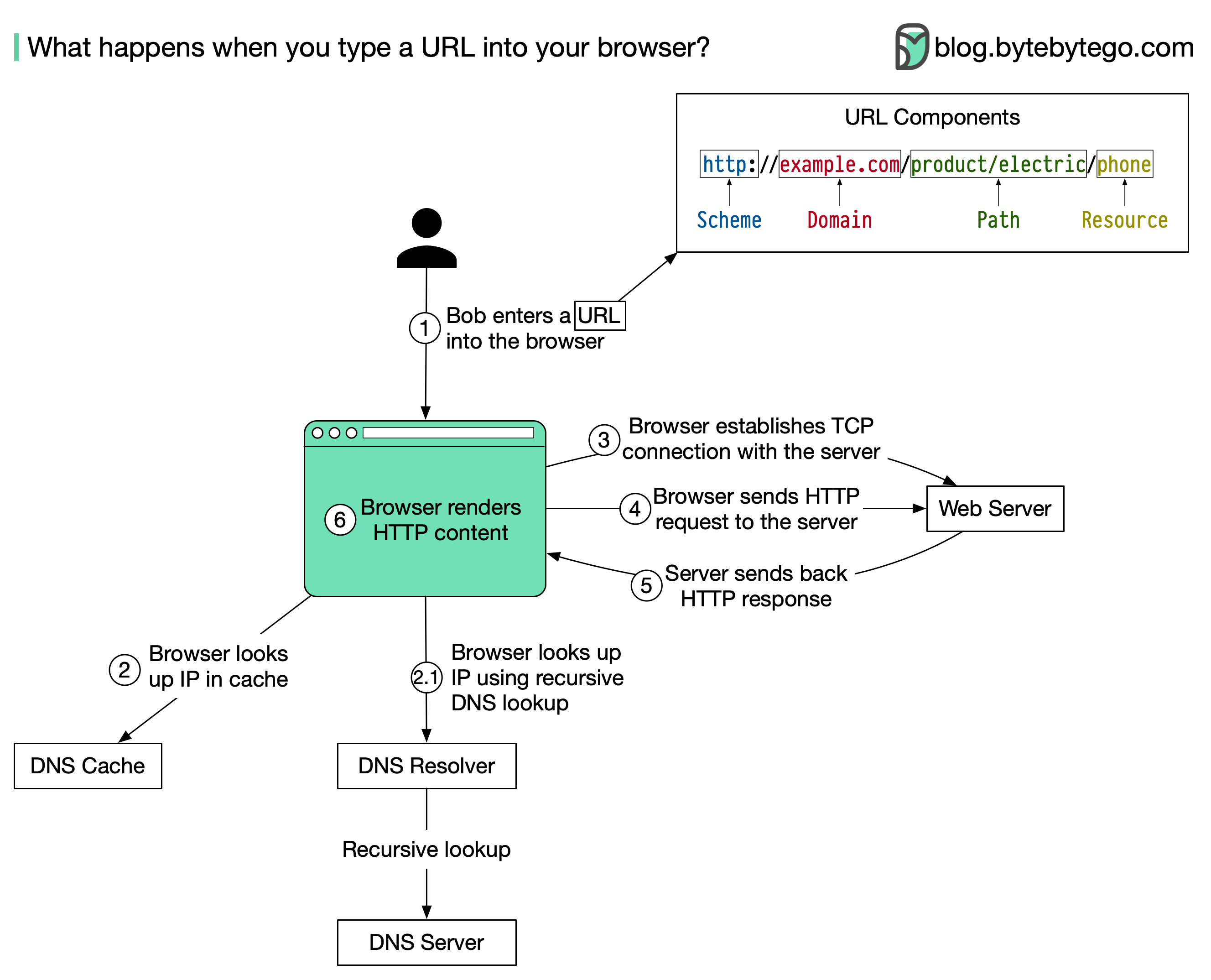

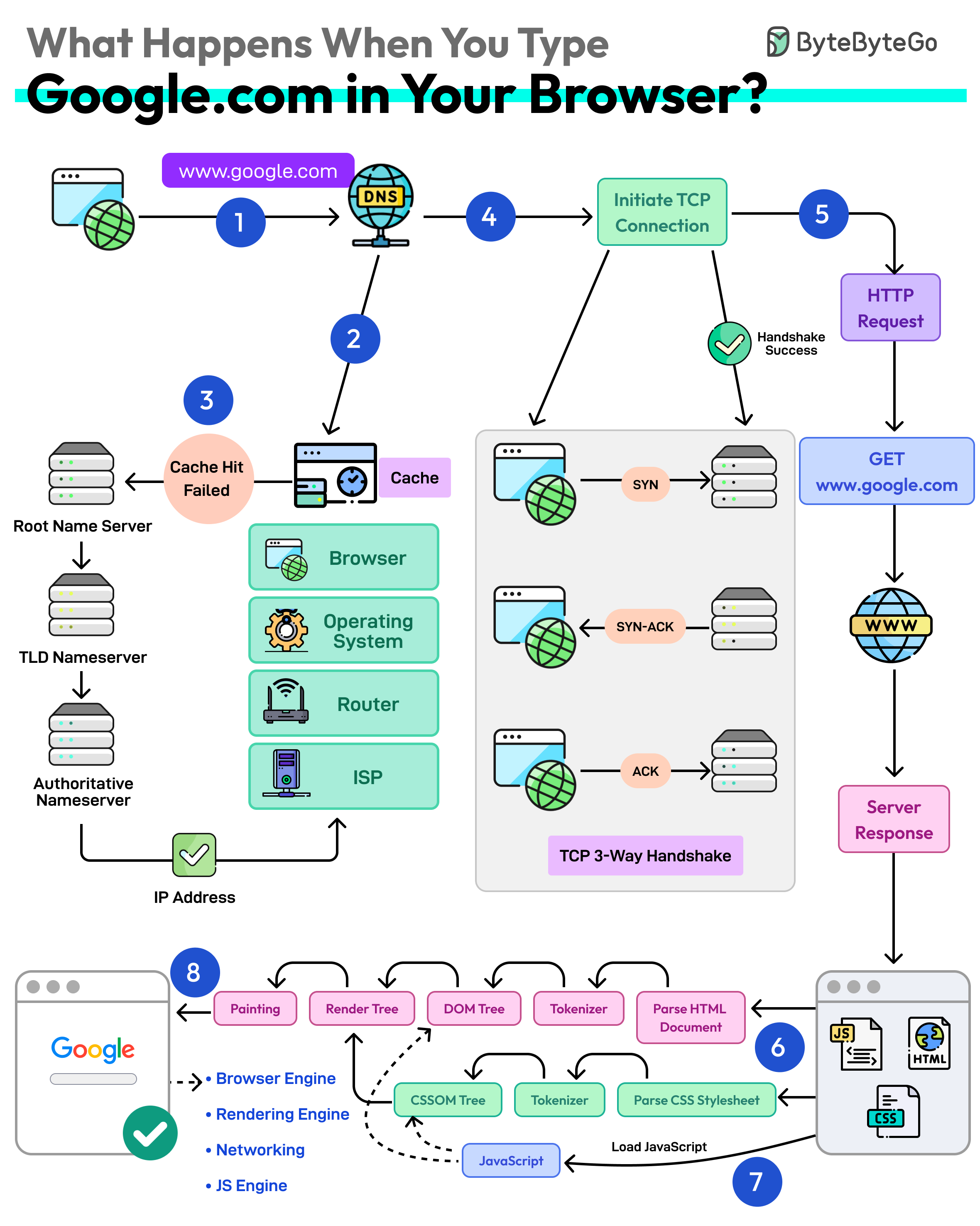

+ * [What Happens When You Type google.com Into a Browser?](https://bytebytego.com/guides/what-happens-when-you-type-google)

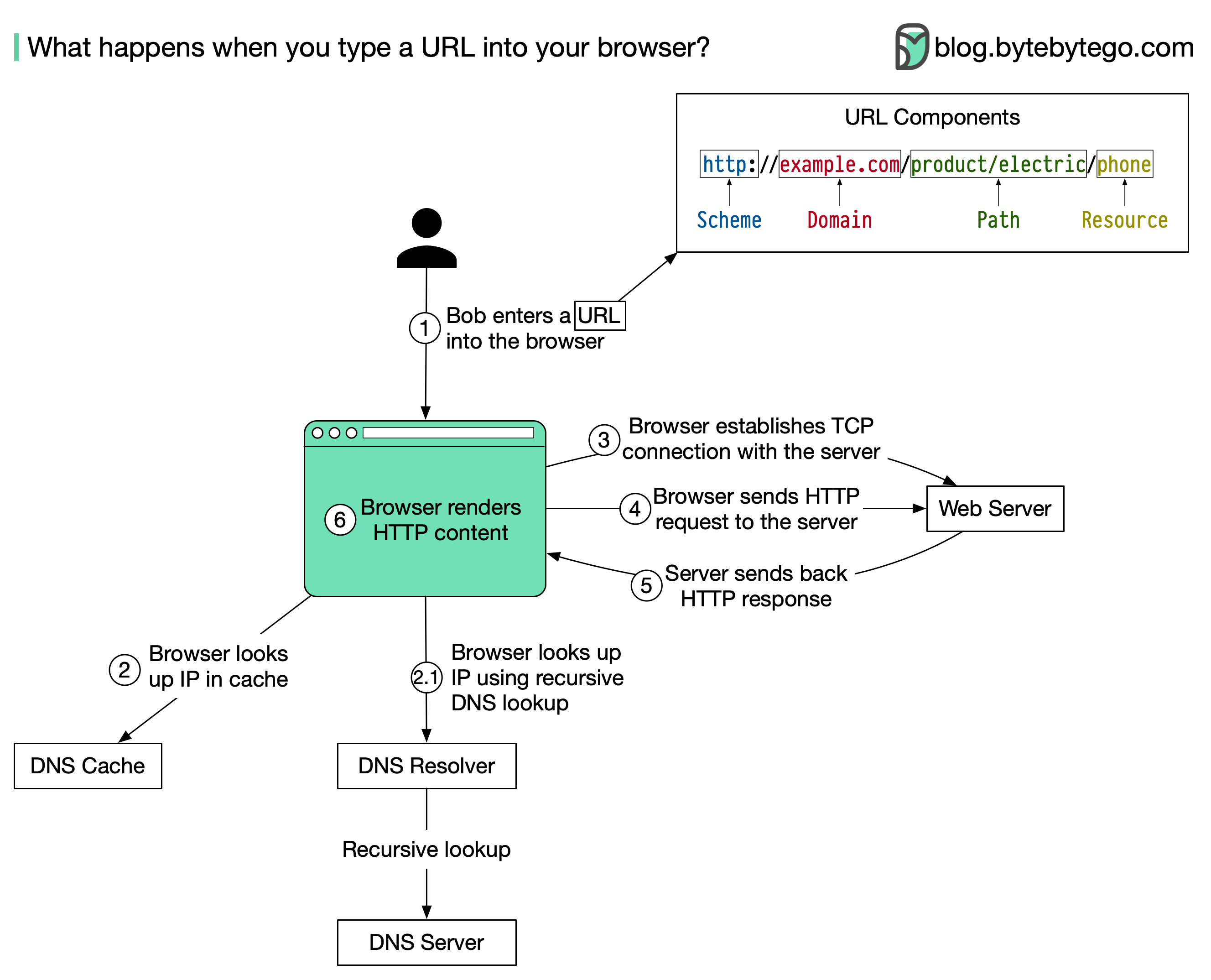

+ * [What Happens When You Type a URL Into Your Browser?](https://bytebytego.com/guides/what-happens-when-you-type-a-url-into-your-browser)

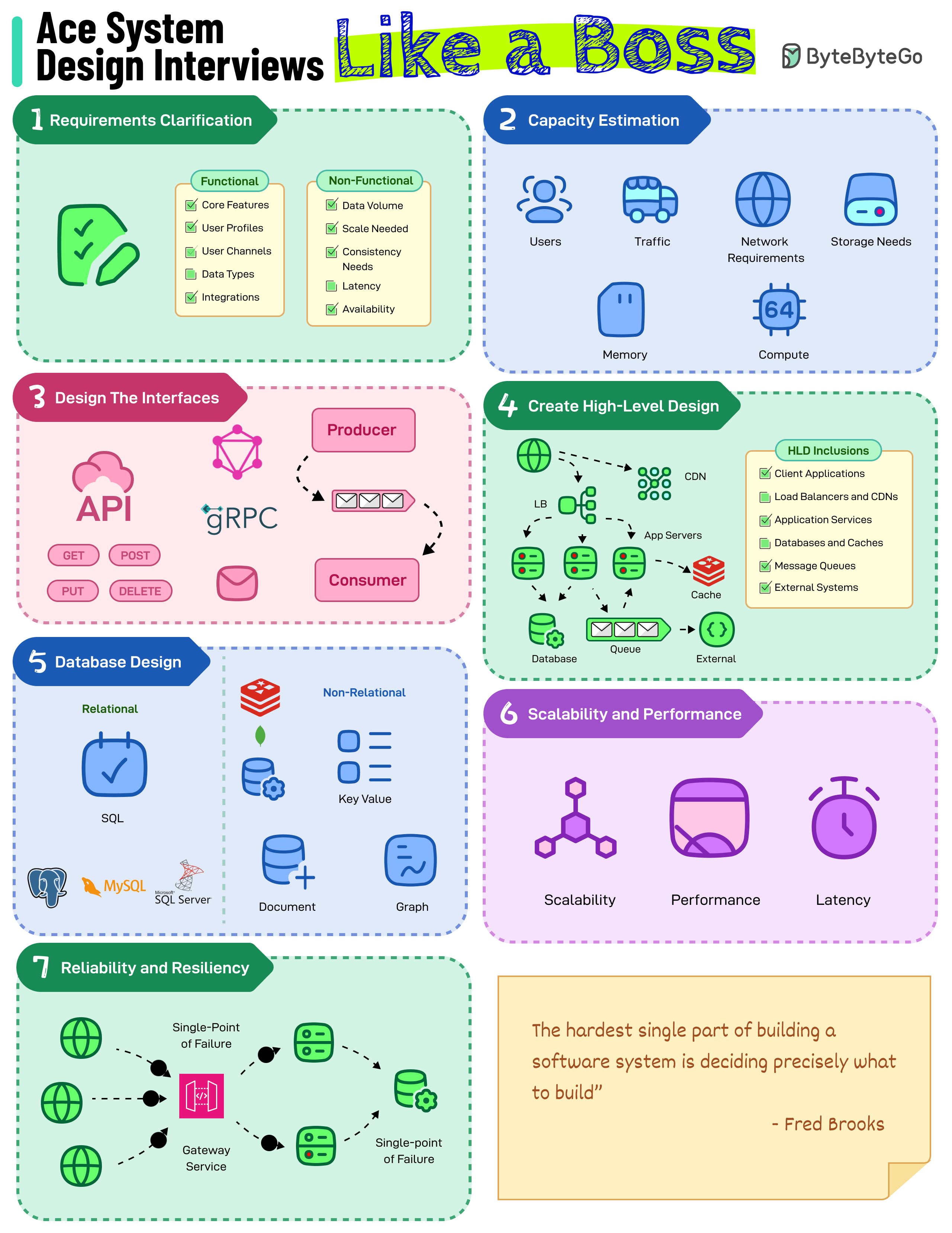

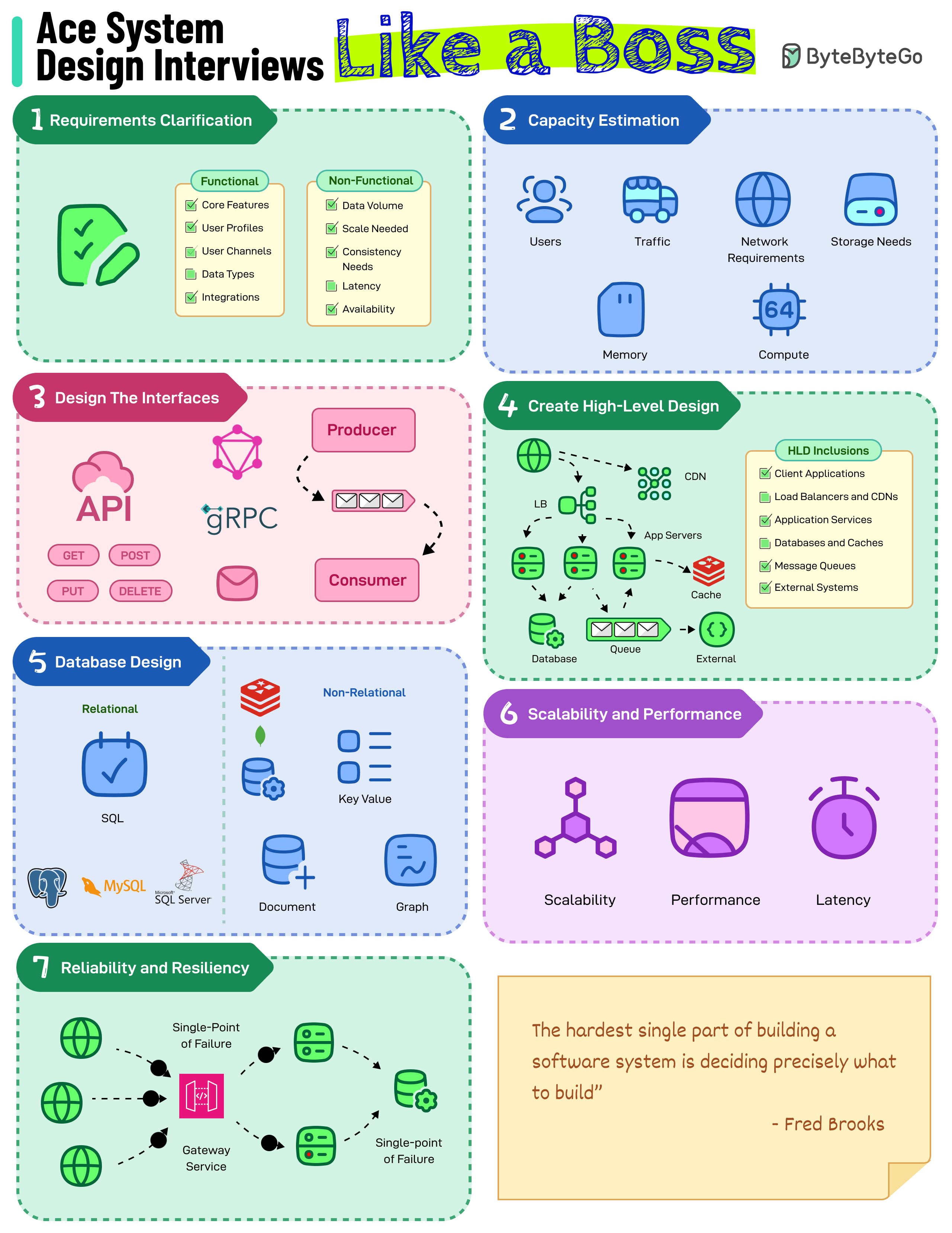

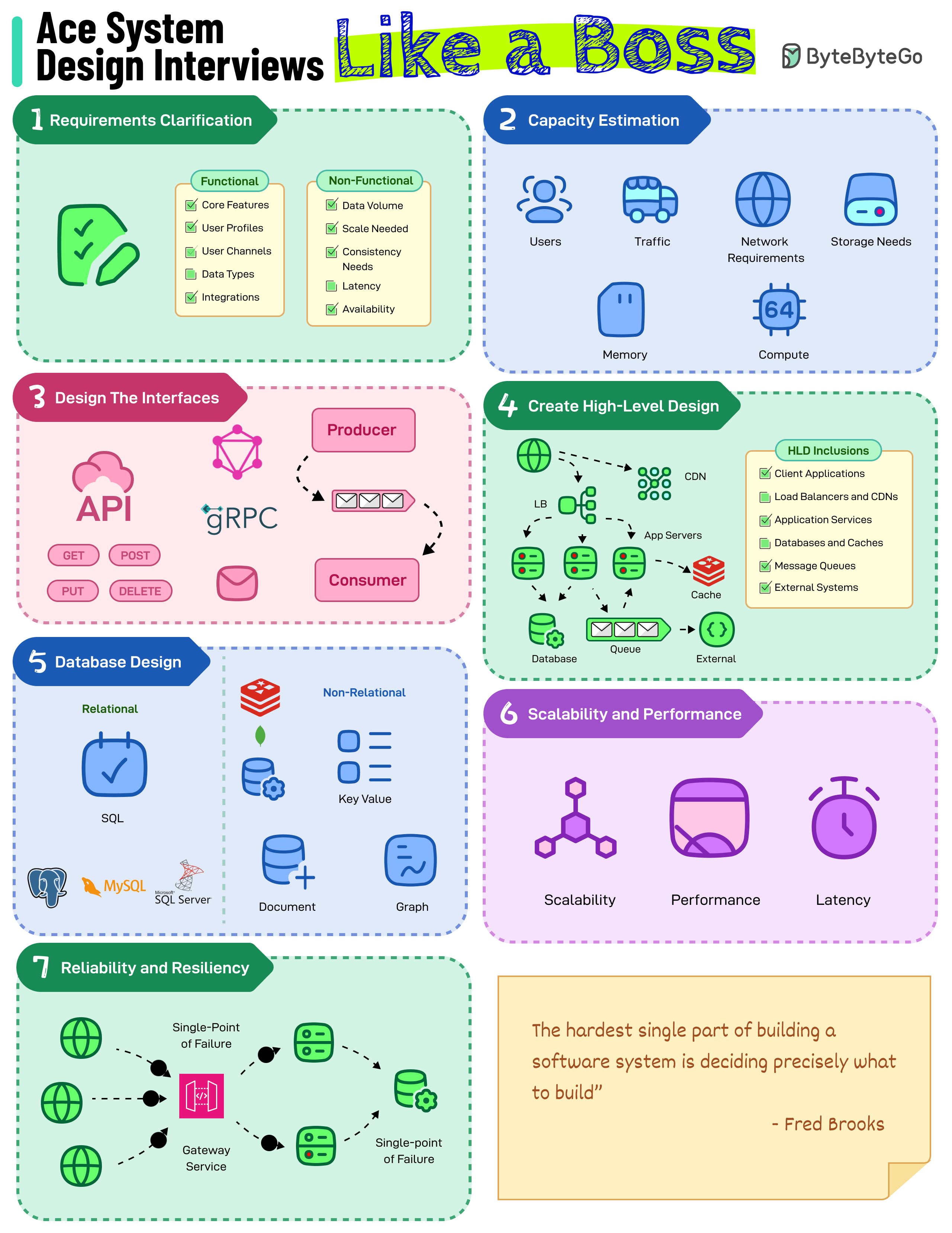

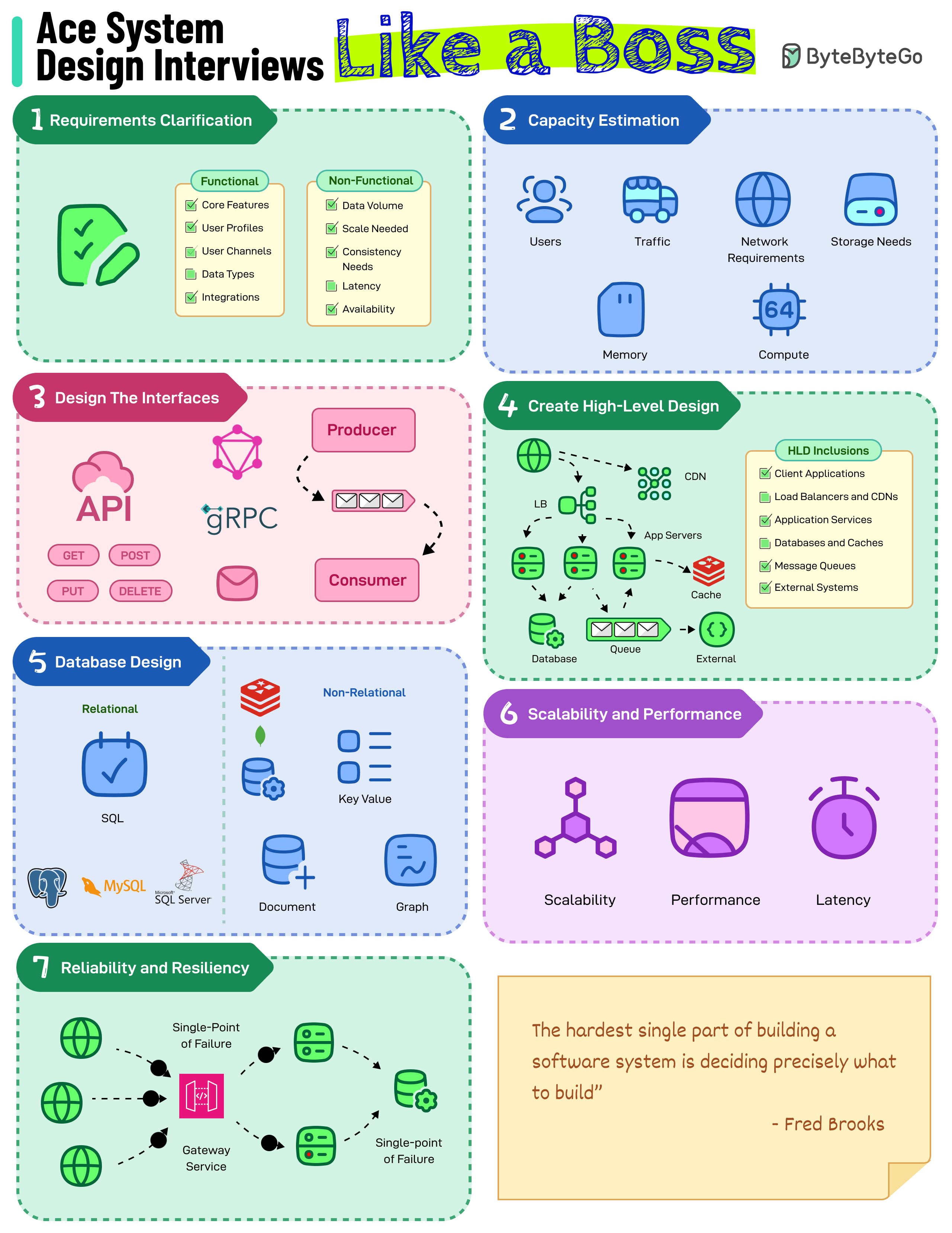

+ * [How to Ace System Design Interviews](https://bytebytego.com/guides/how-to-ace-system-design-interviews-like-a-boss)

+ * [Recommended Materials for Technical Interviews](https://bytebytego.com/guides/my-recommended-materials-for-cracking-your-next-technical-interview)

+* [Caching & Performance](https://bytebytego.com/guides/caching-performance)

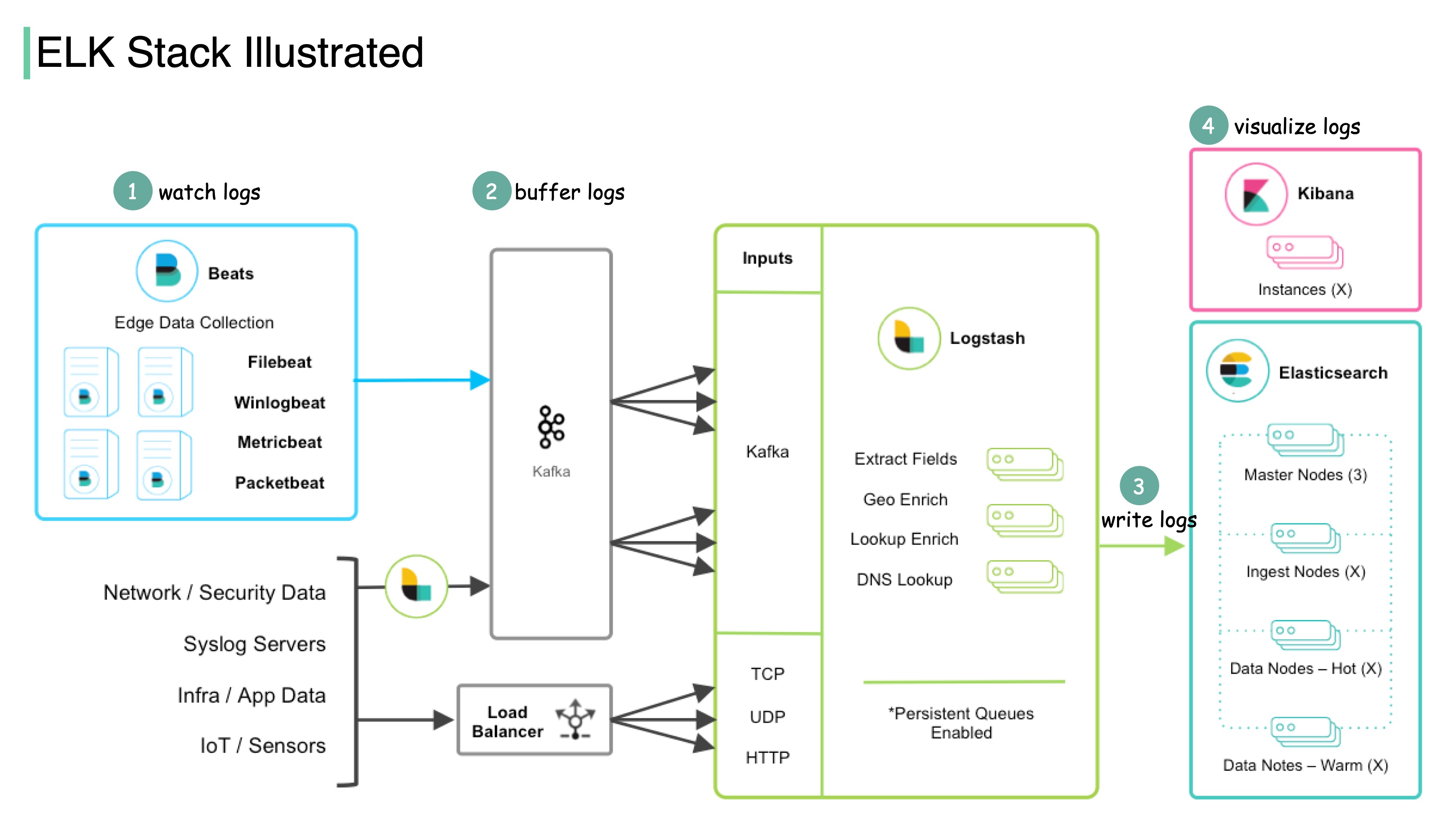

+ * [What is ELK Stack and Why is it Popular?](https://bytebytego.com/guides/what-is-elk-stack-and-why-is-it-so-popular-for-log-management)

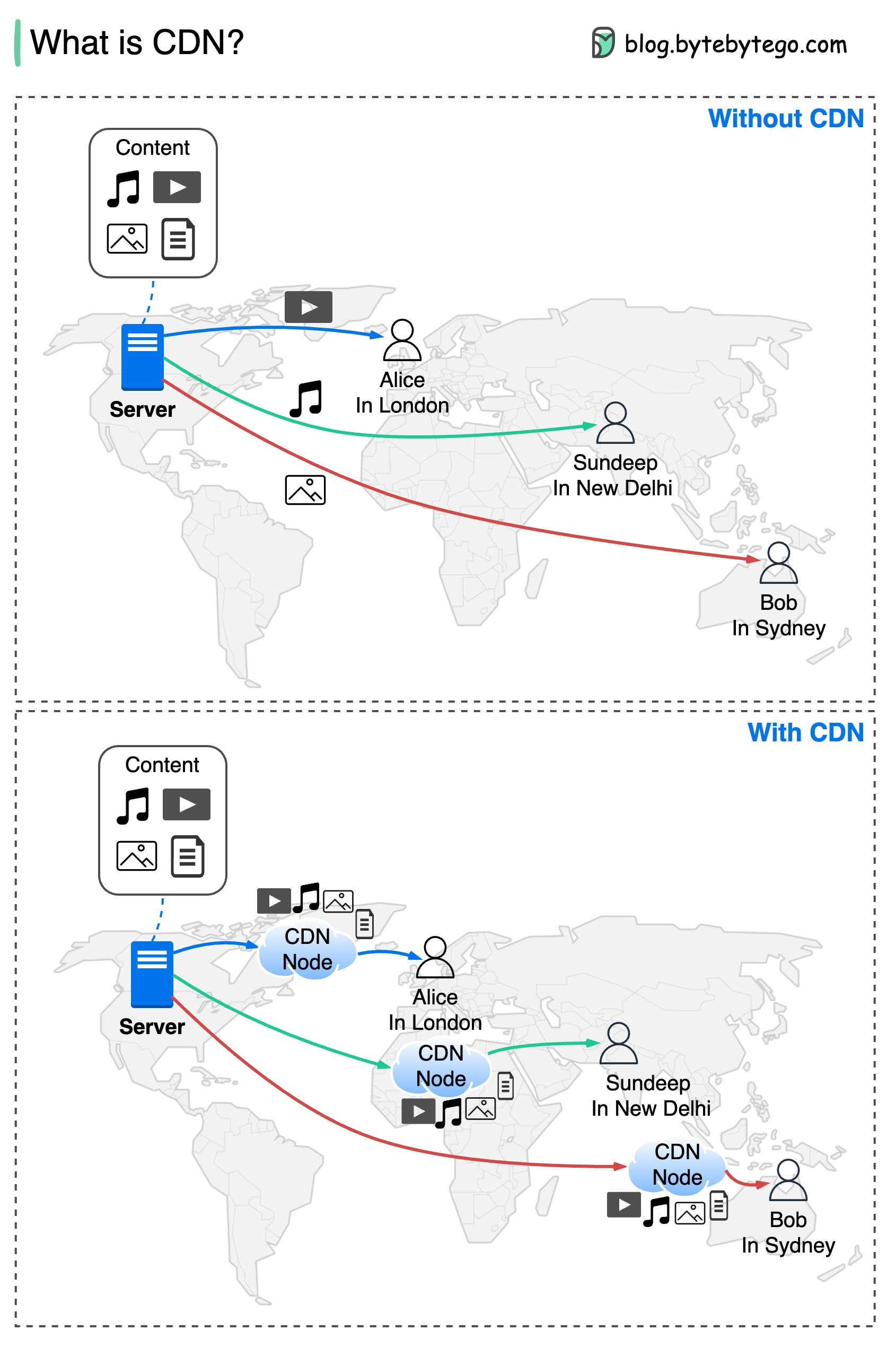

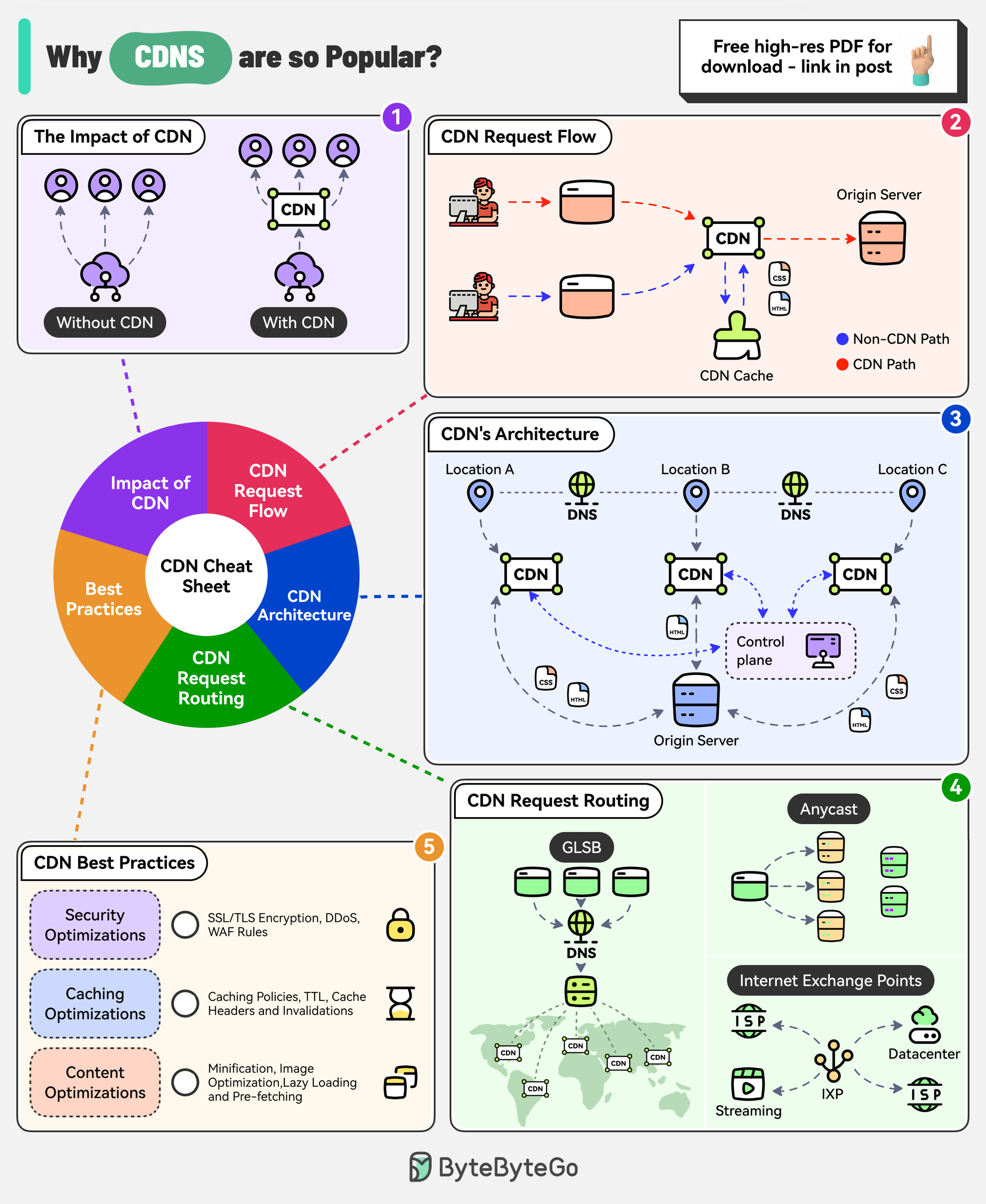

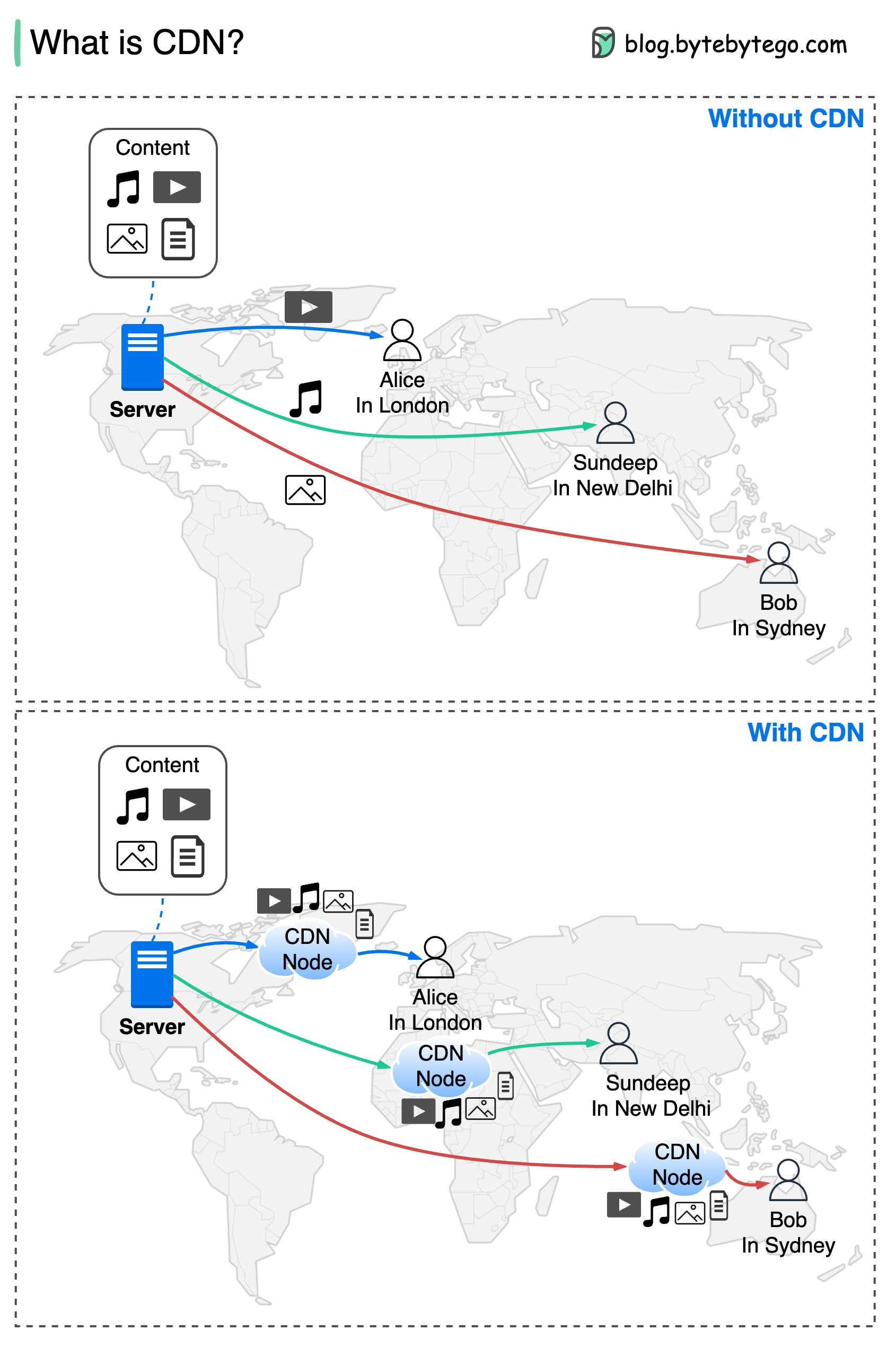

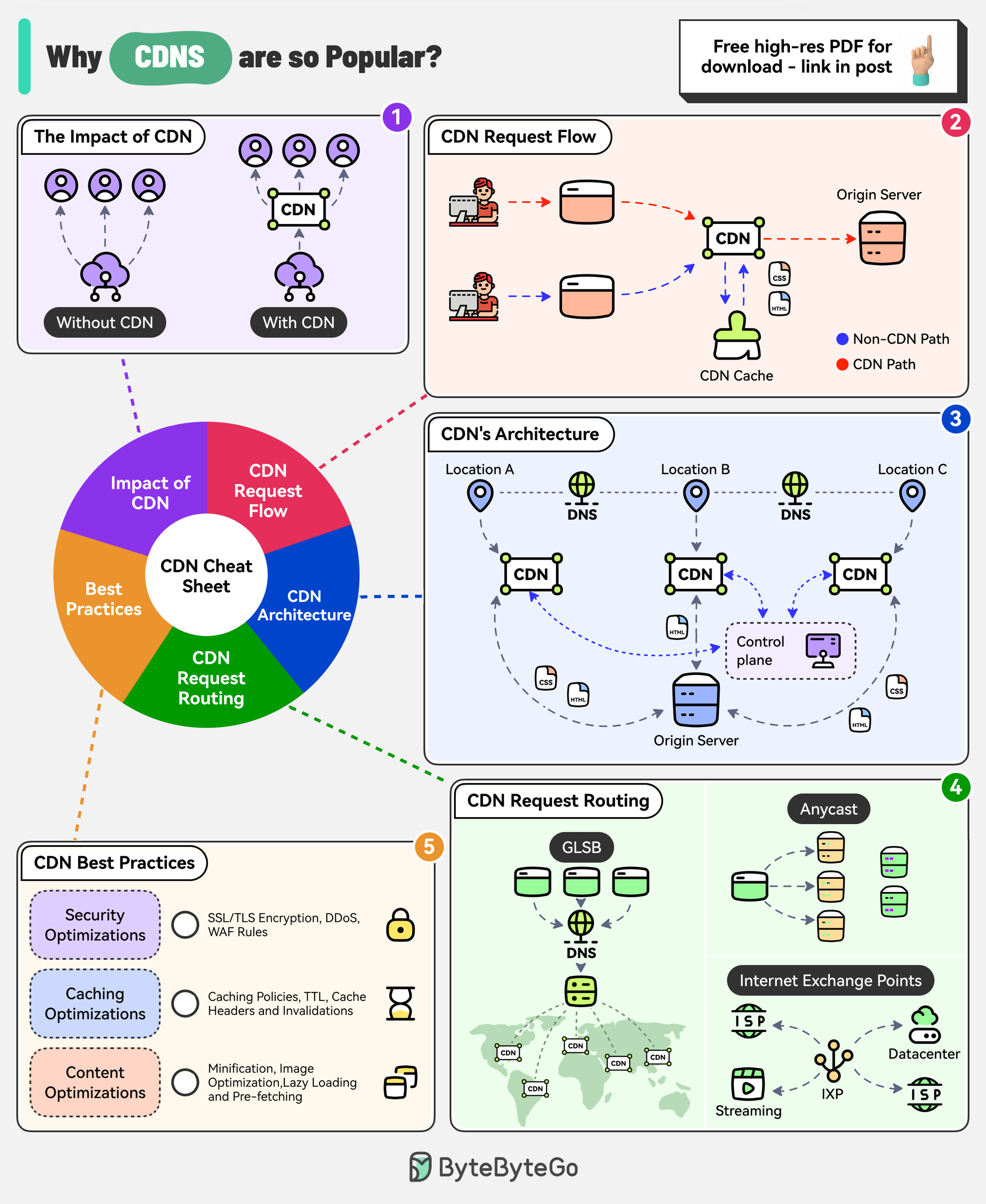

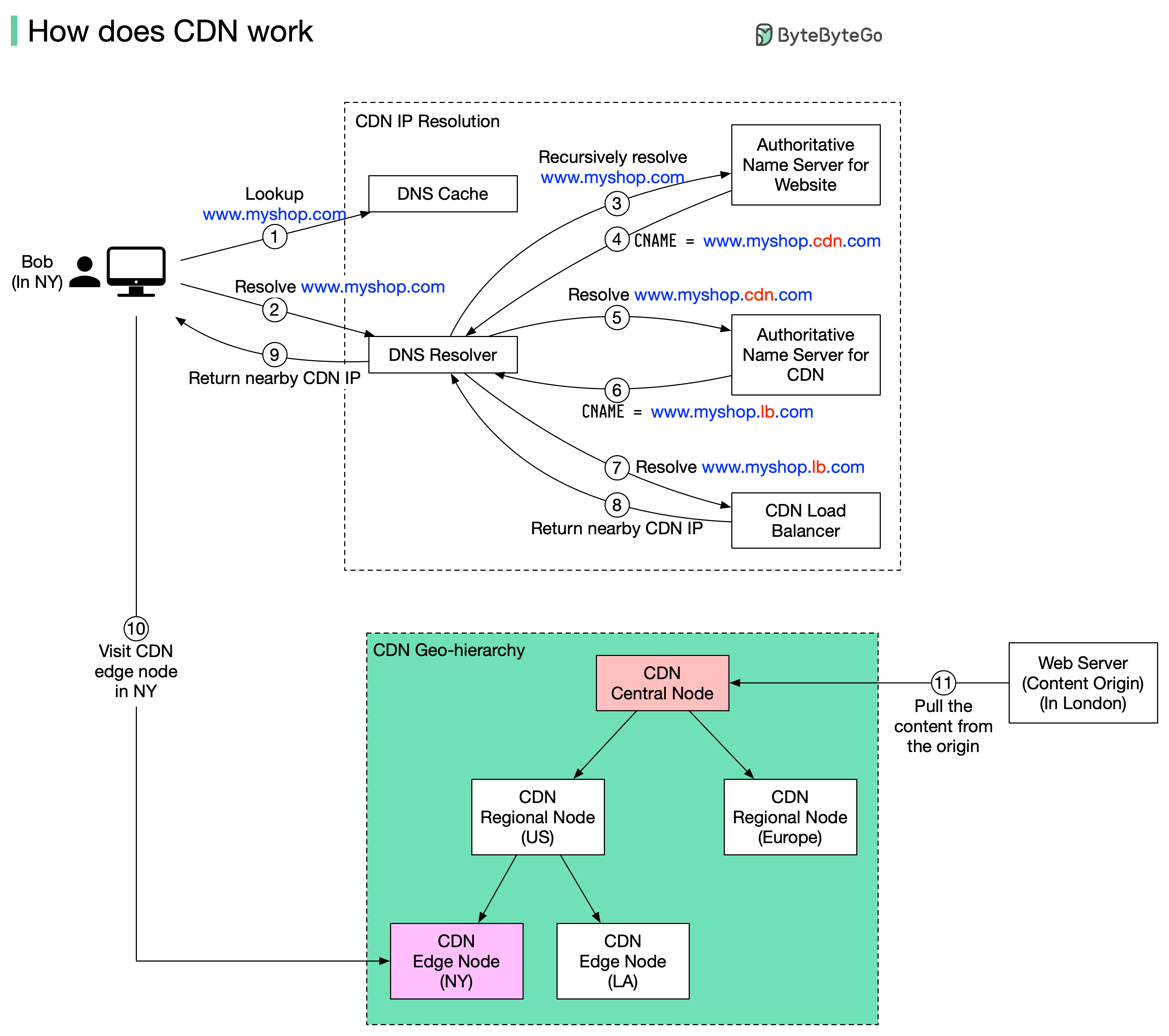

+ * [Why are Content Delivery Networks (CDN) so Popular?](https://bytebytego.com/guides/why-are-content-delivery-networks-cdn-so-popular)

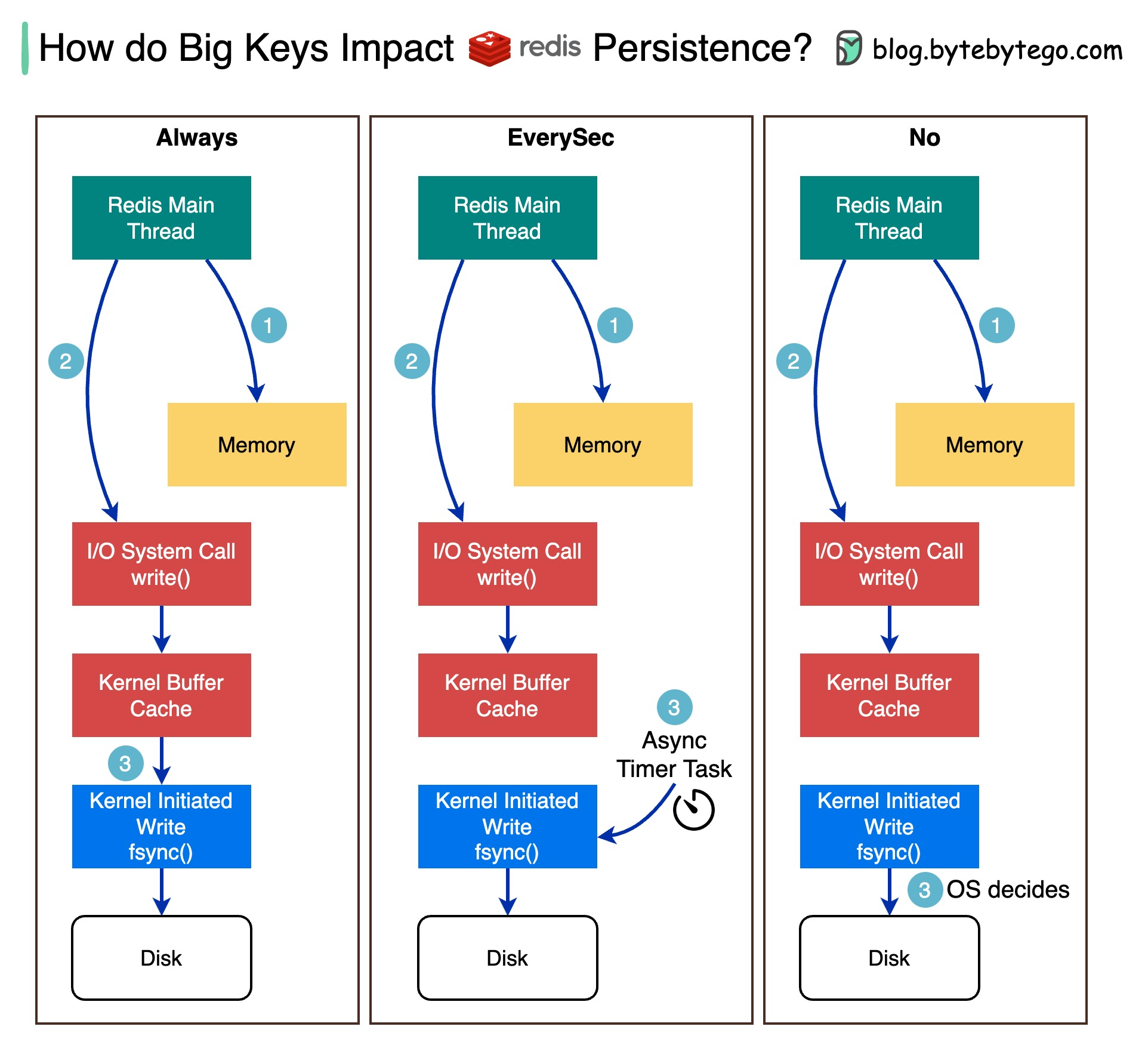

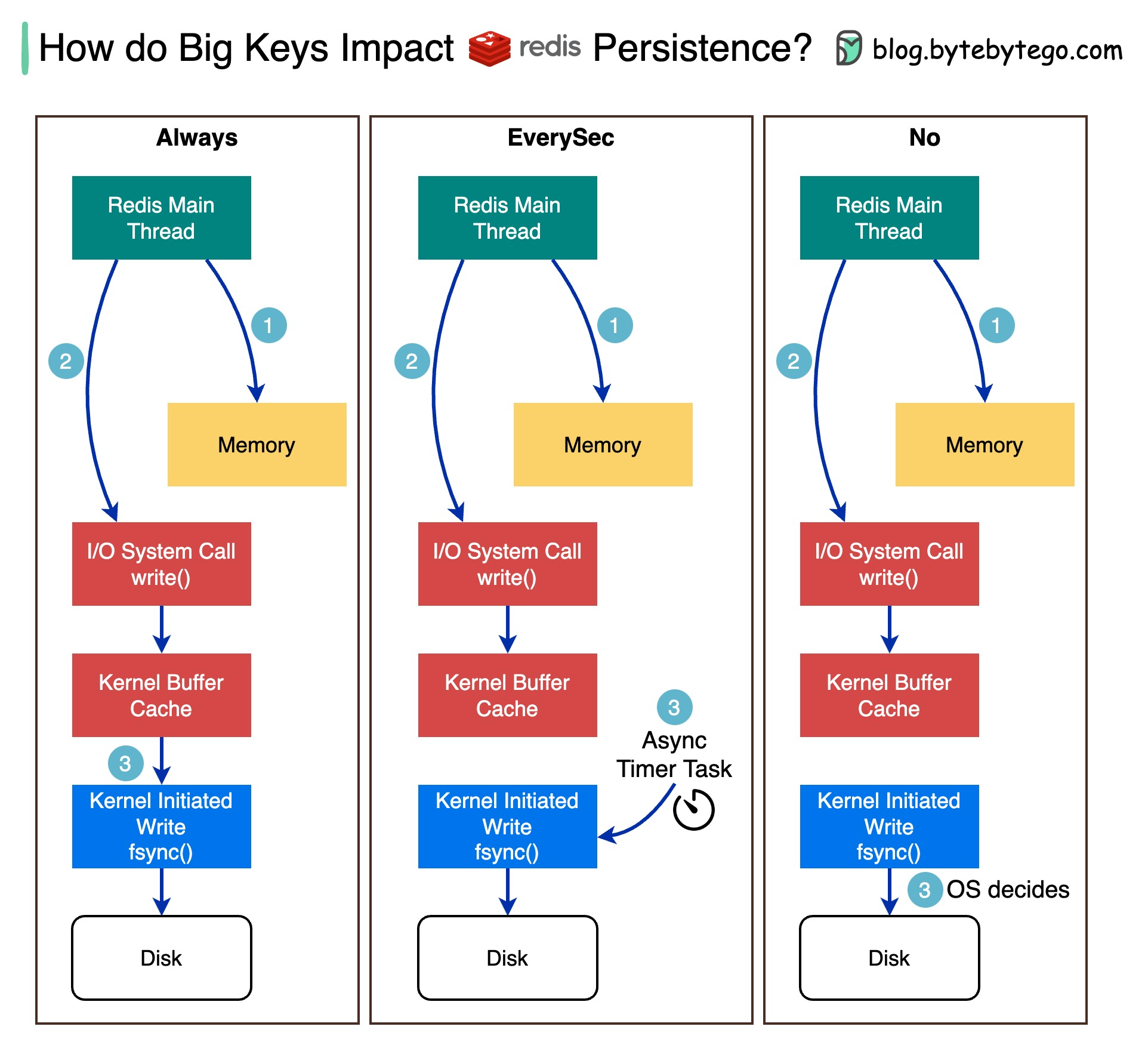

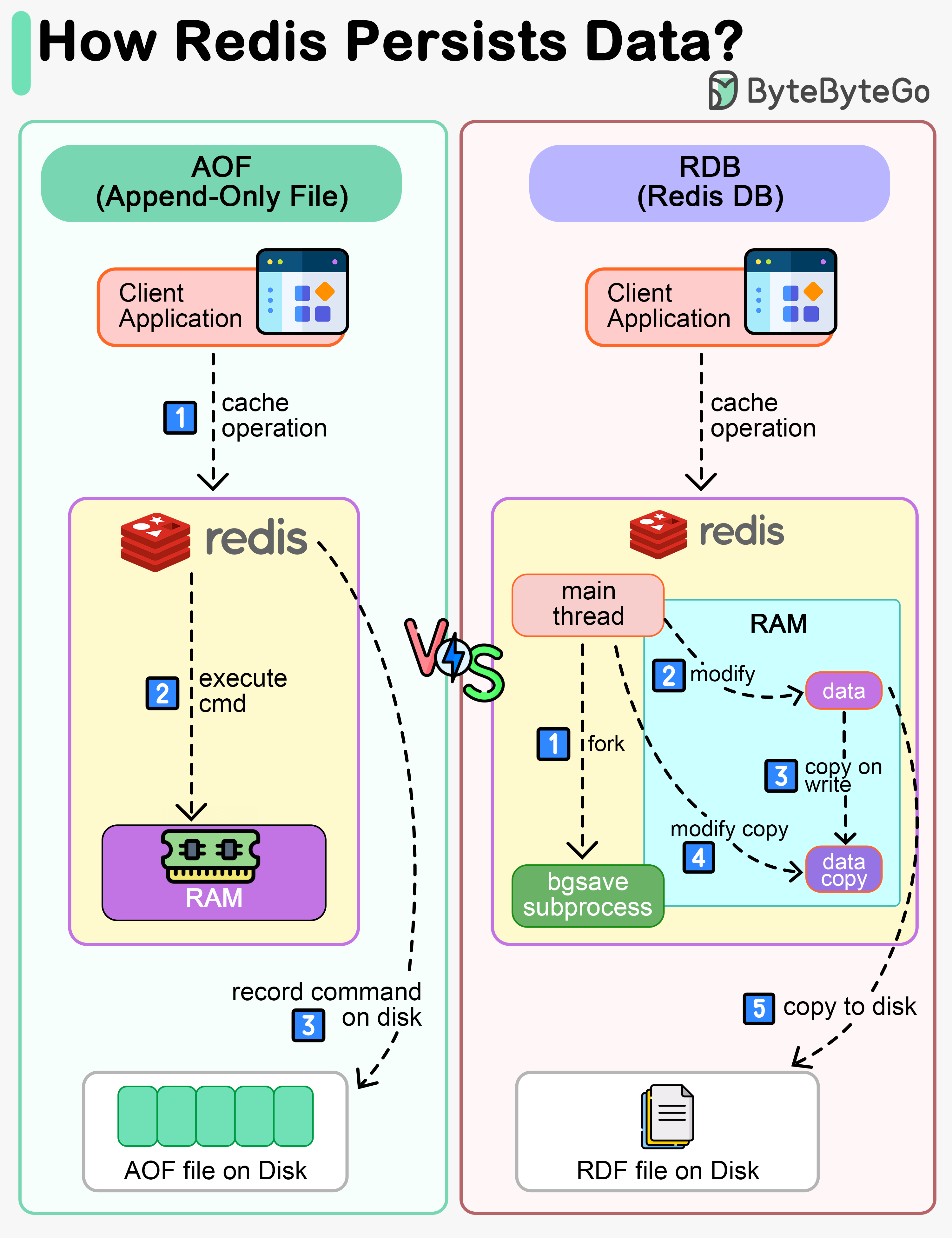

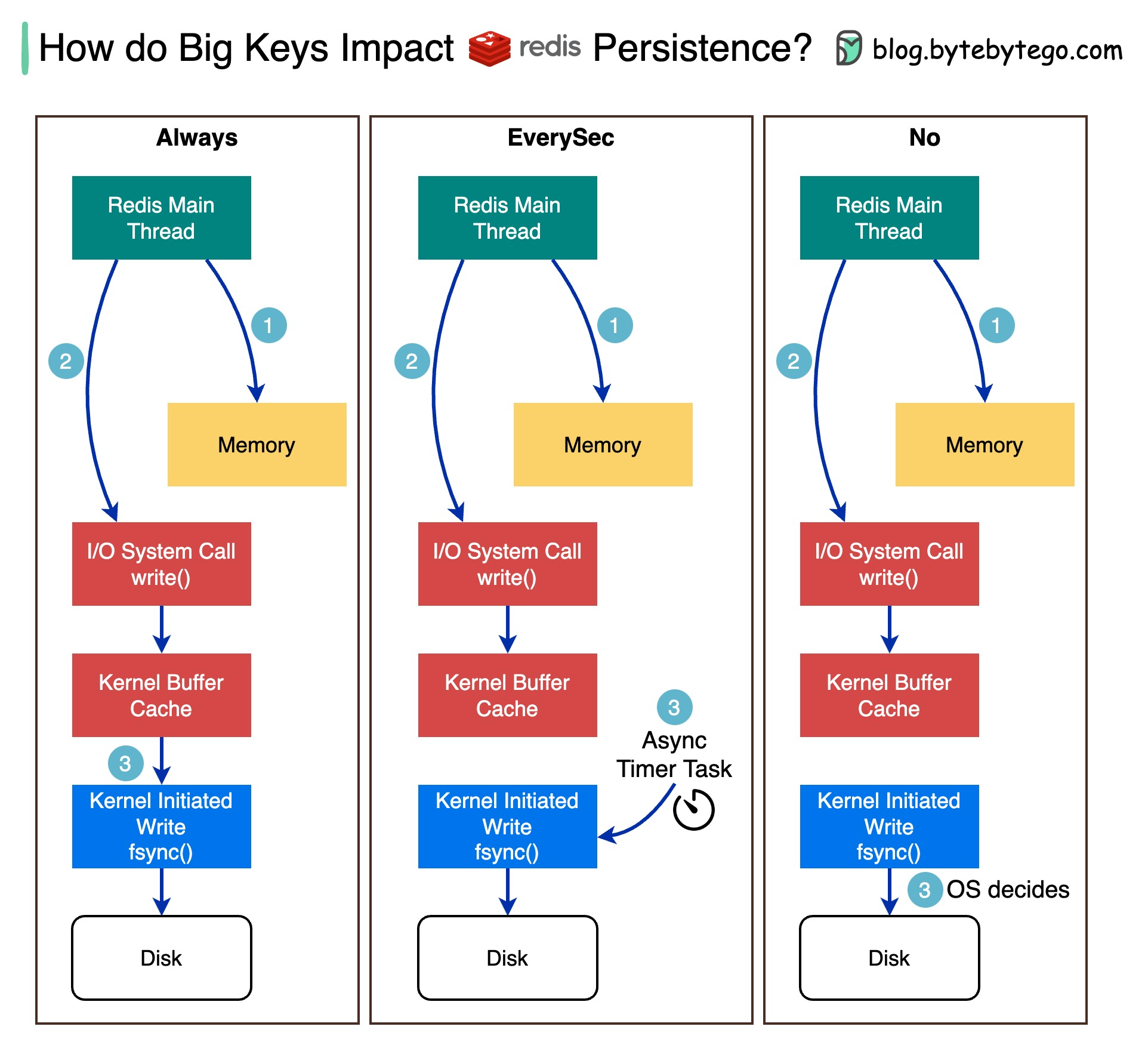

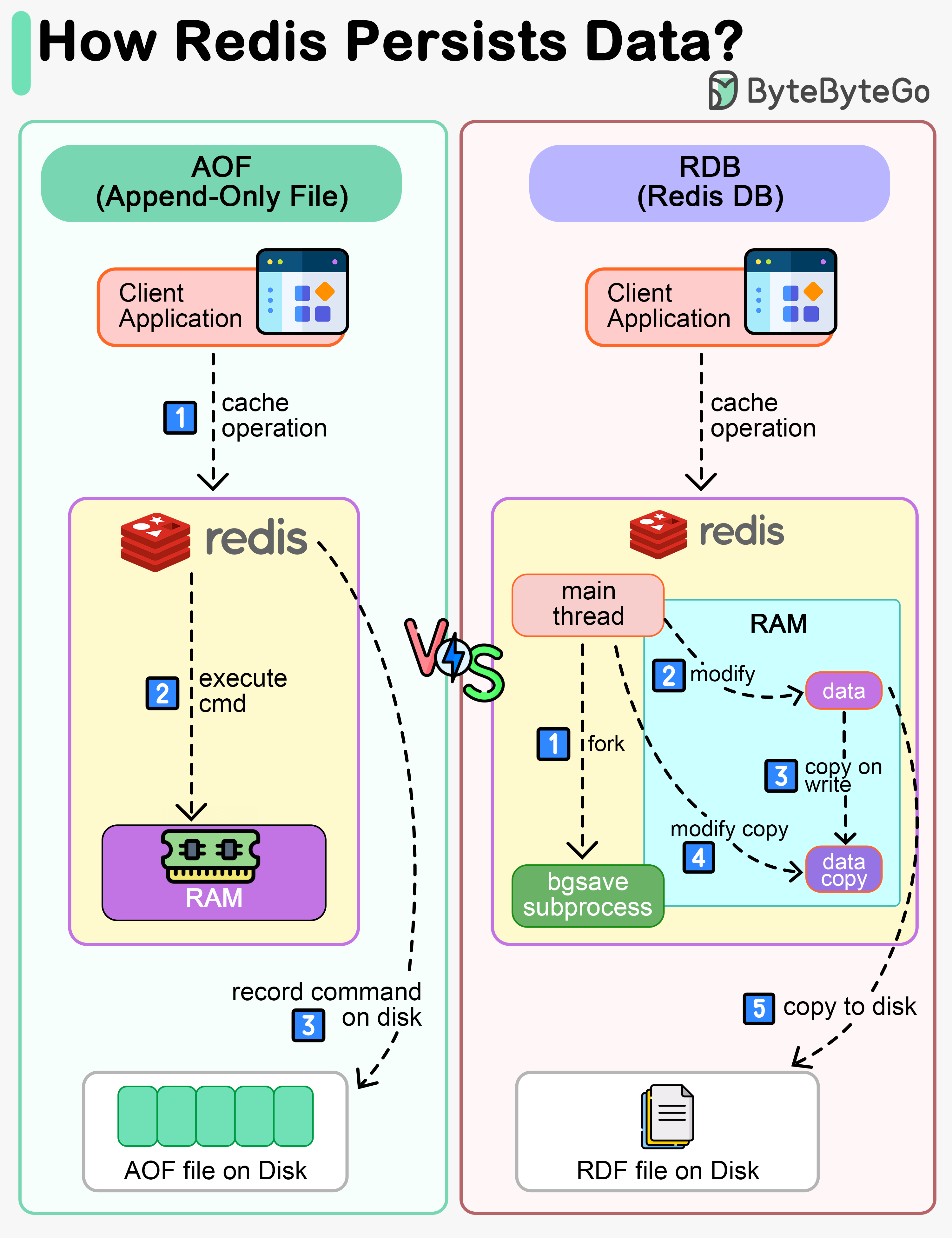

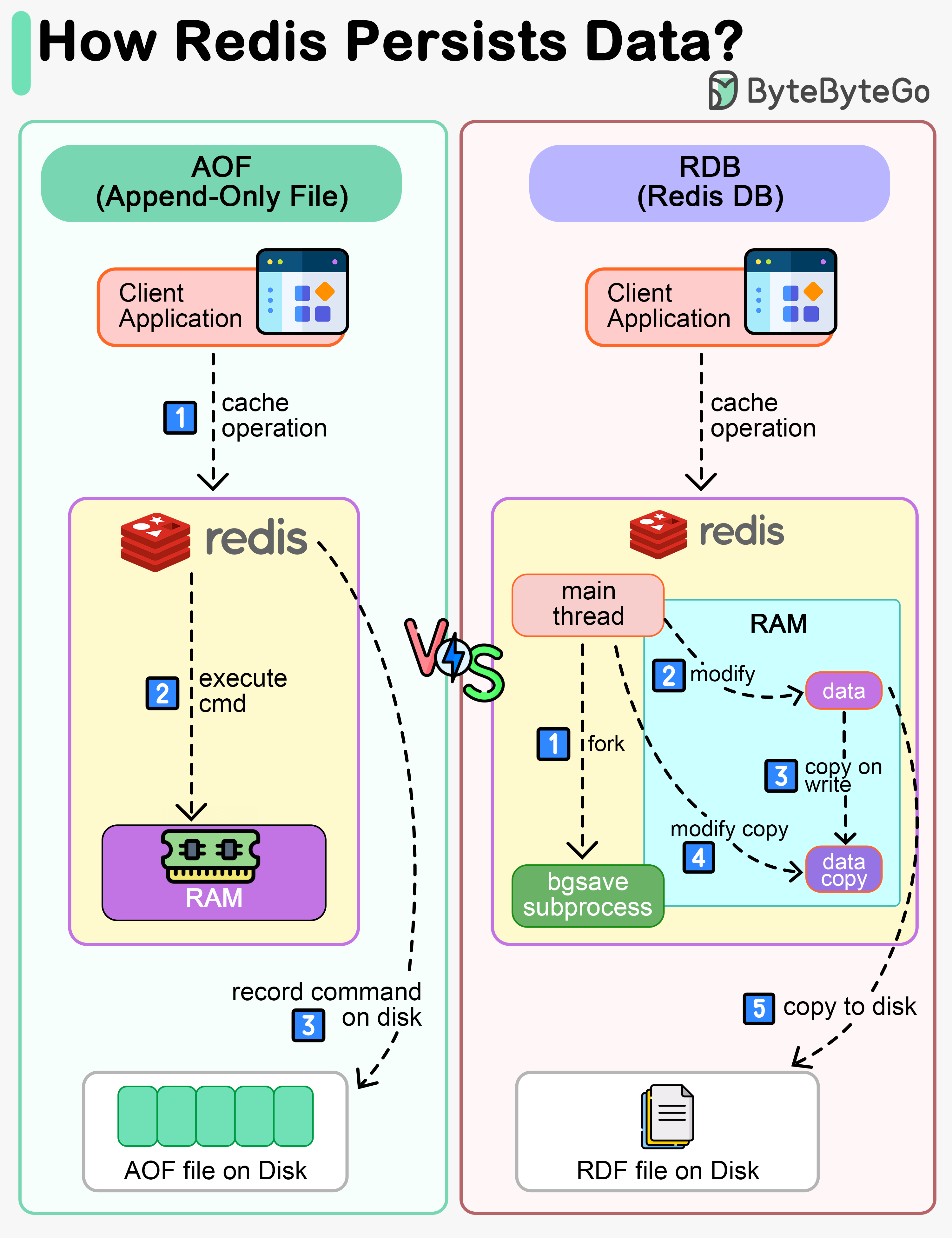

+ * [How Big Keys Impact Redis Persistence](https://bytebytego.com/guides/how-do-big-keys-impact-redis-persistence)

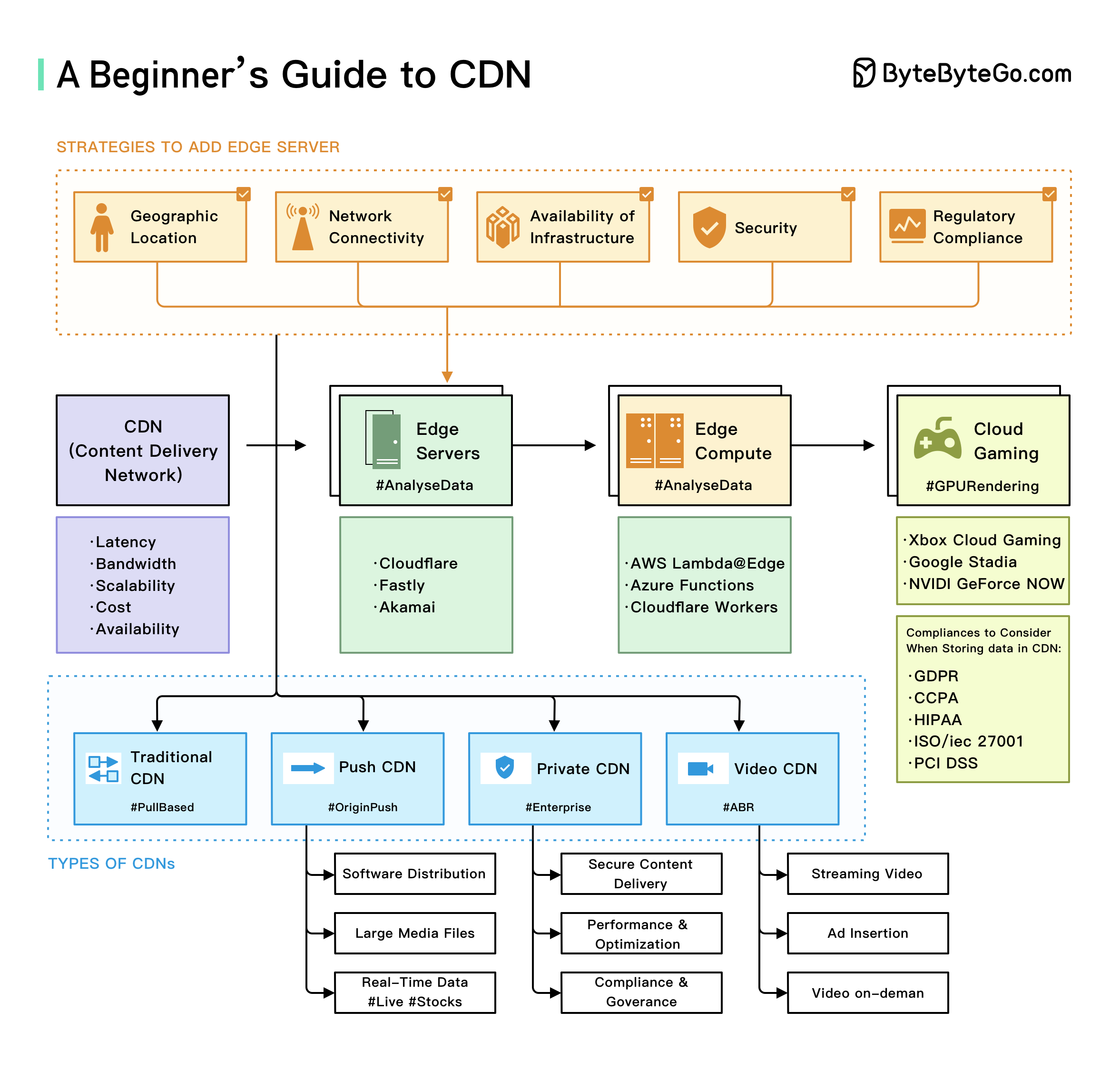

+ * [A Beginner's Guide to CDN](https://bytebytego.com/guides/a-beginner's-guide-to-cdn-content-delivery-network)

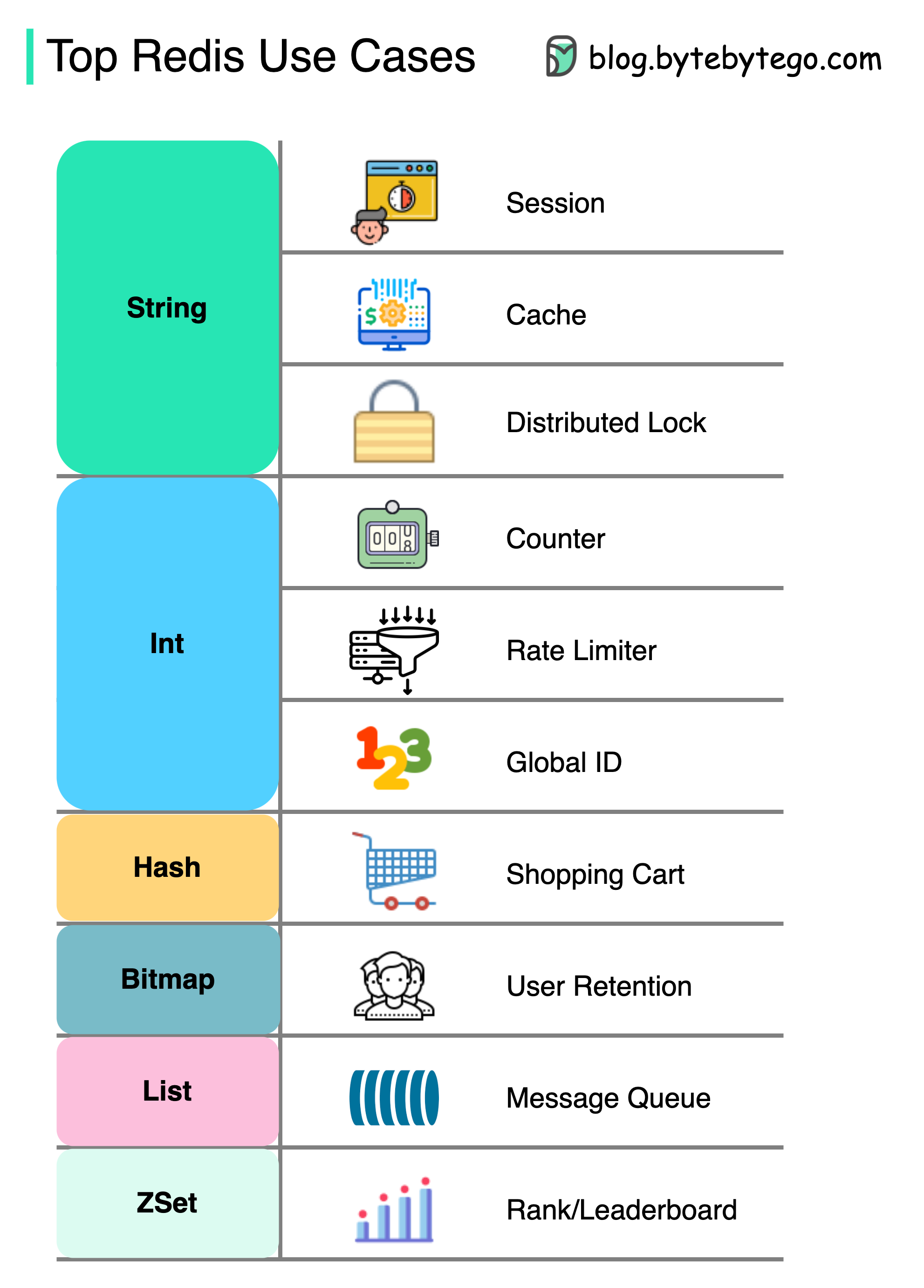

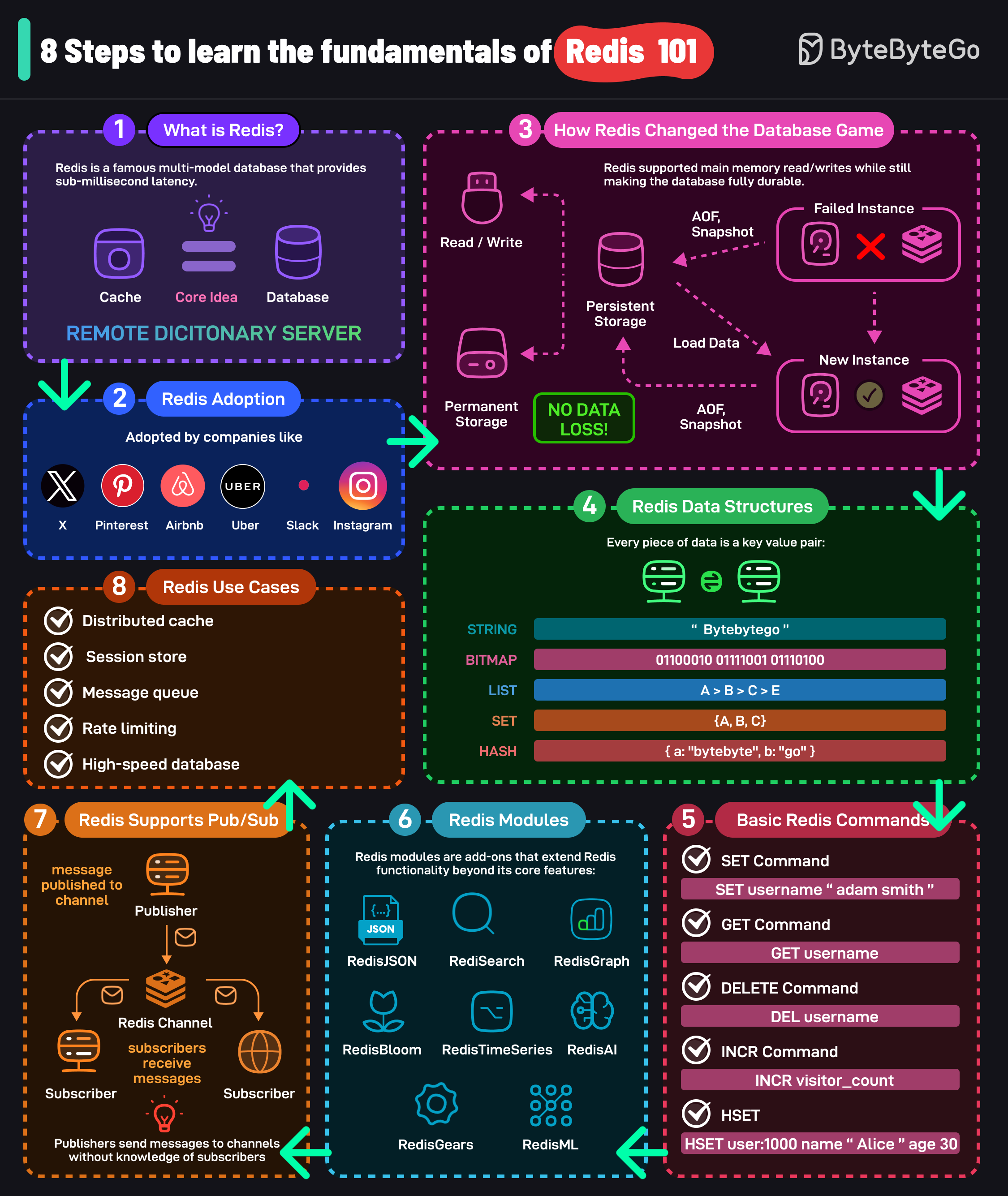

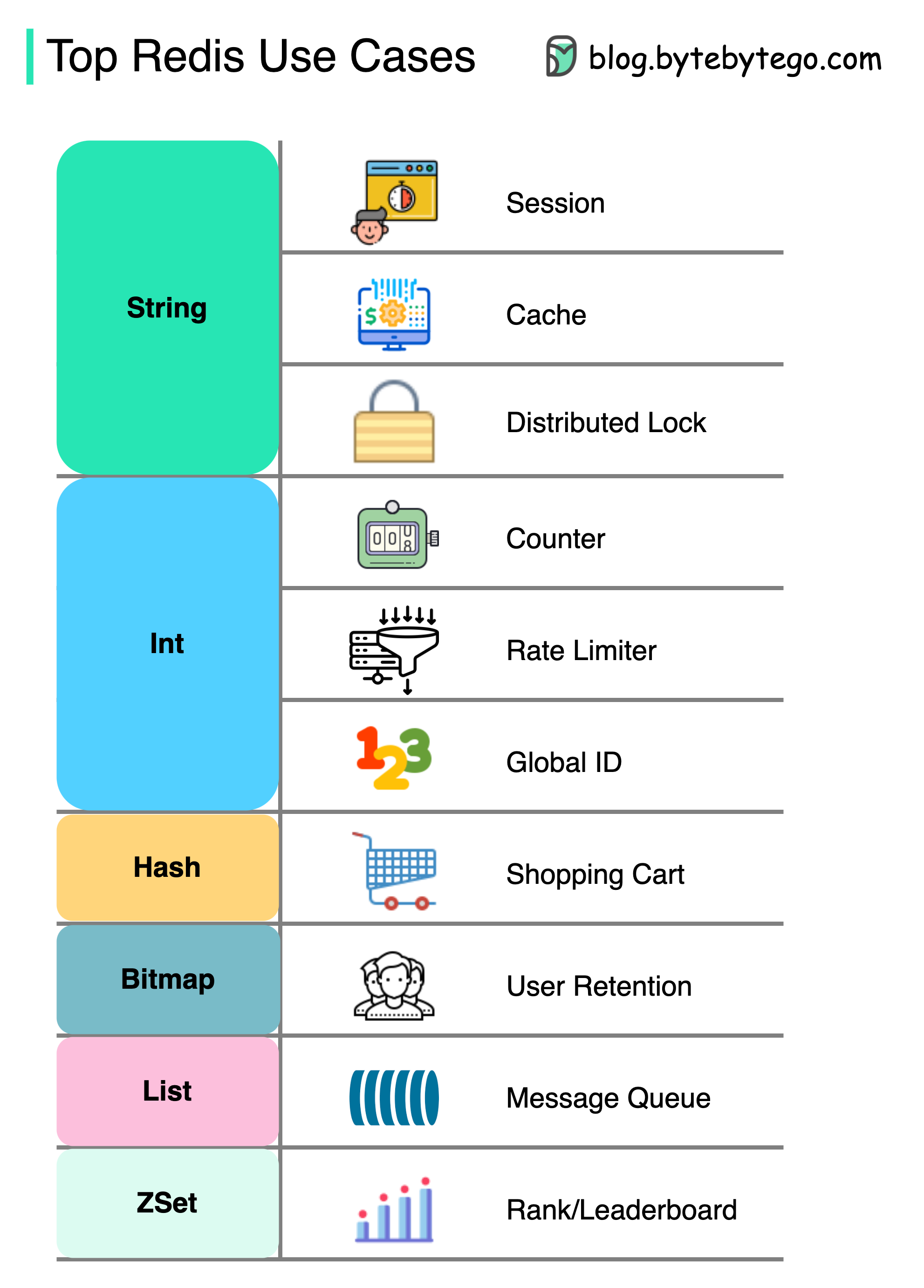

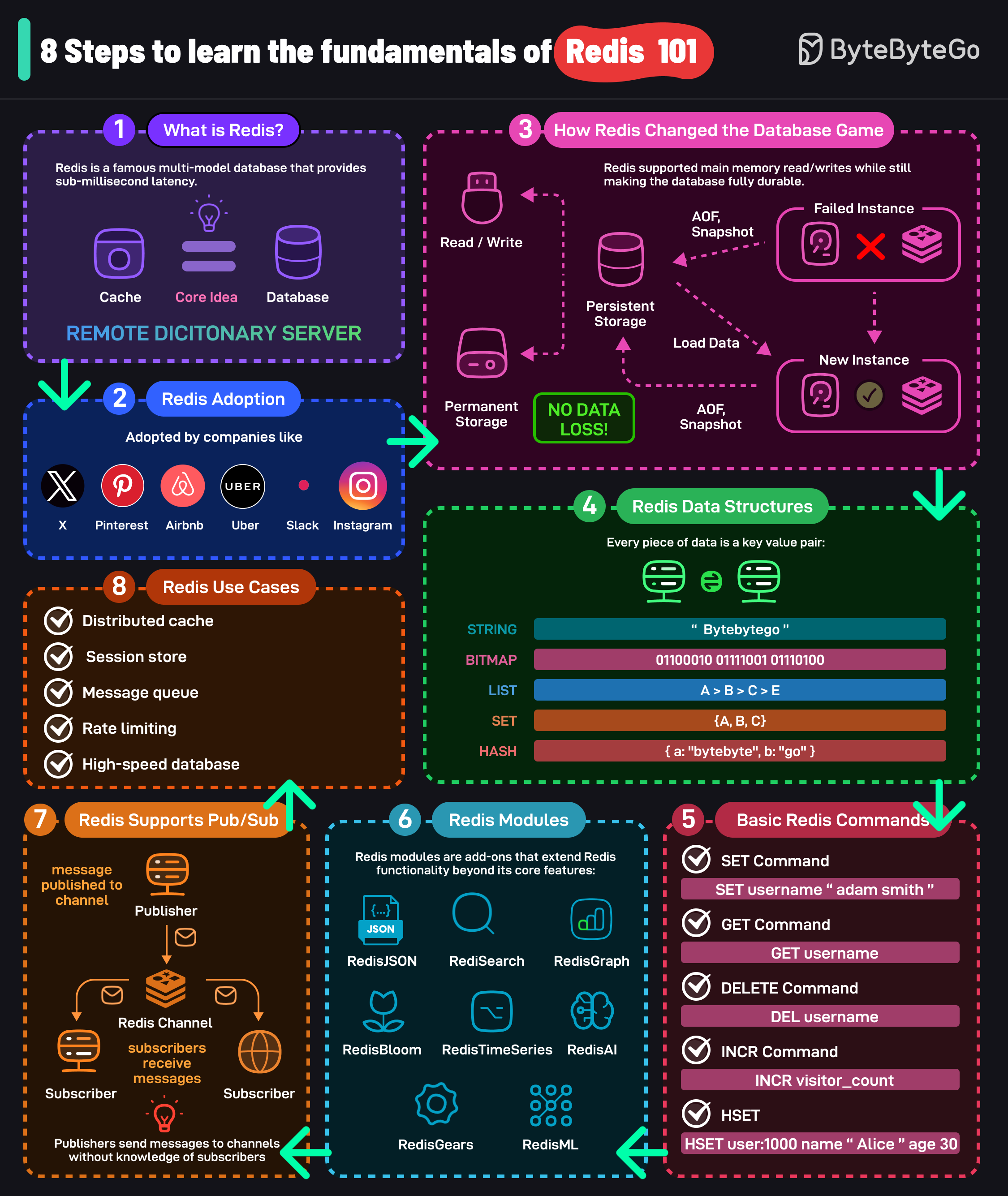

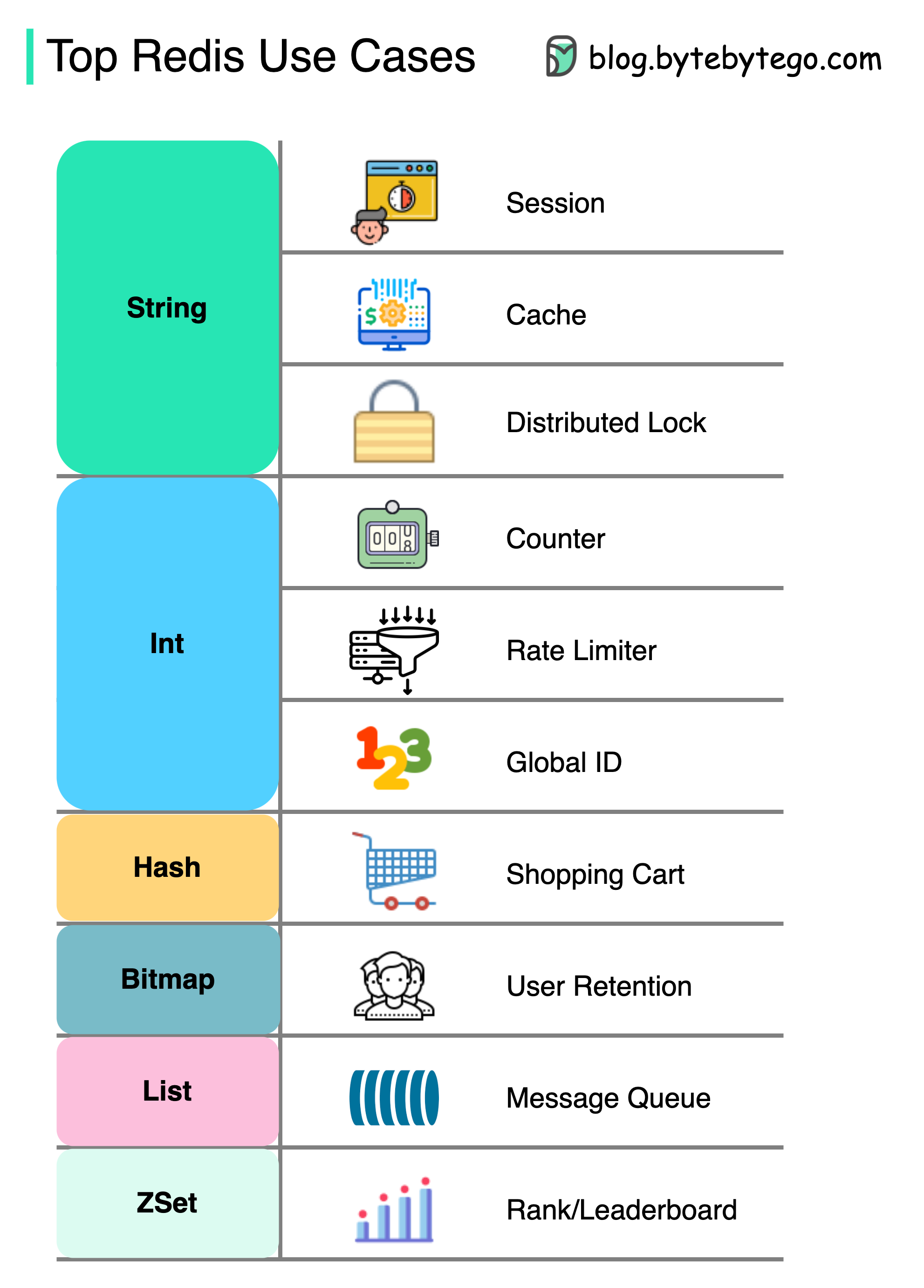

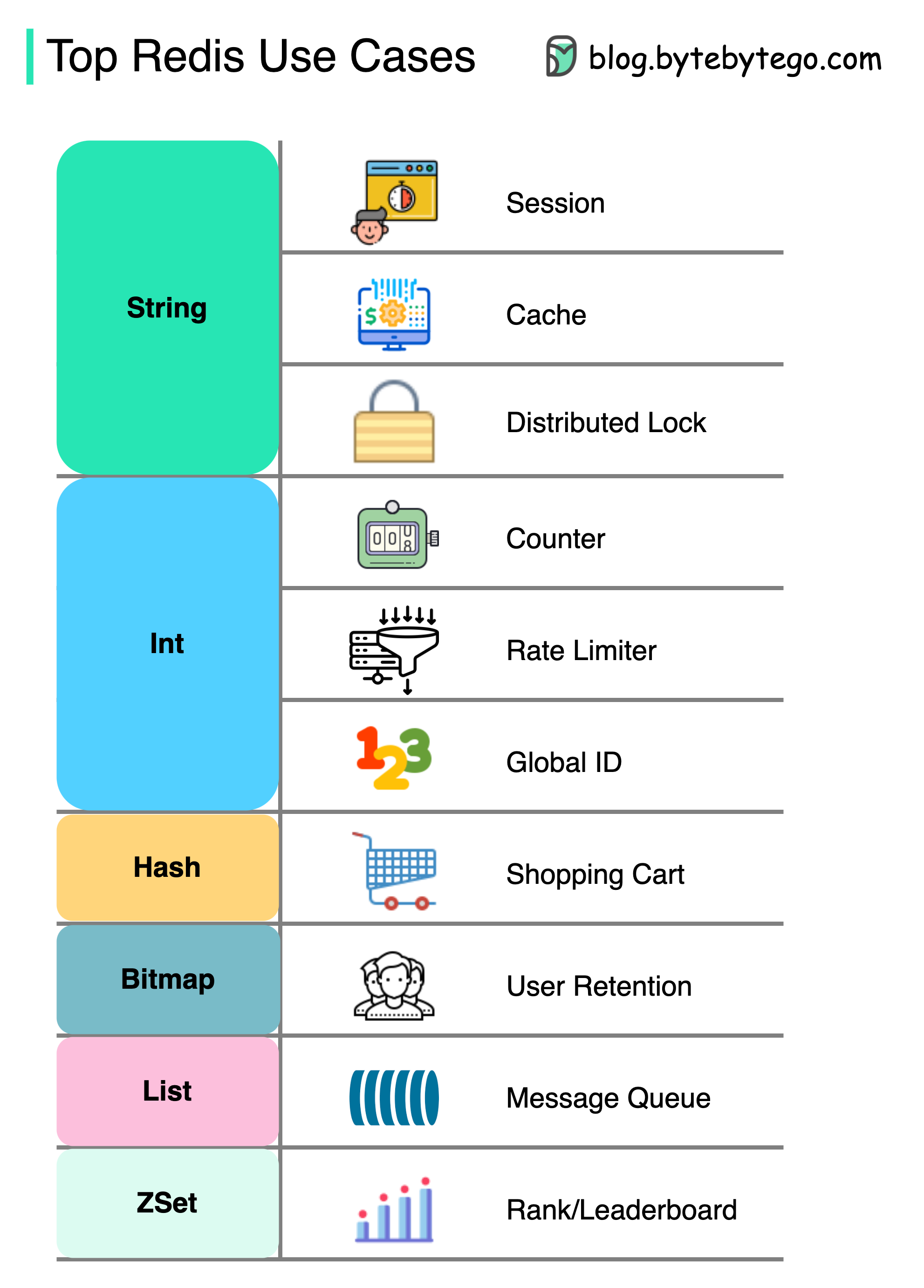

+ * [The Ultimate Redis 101](https://bytebytego.com/guides/the-ultimate-redis-101)

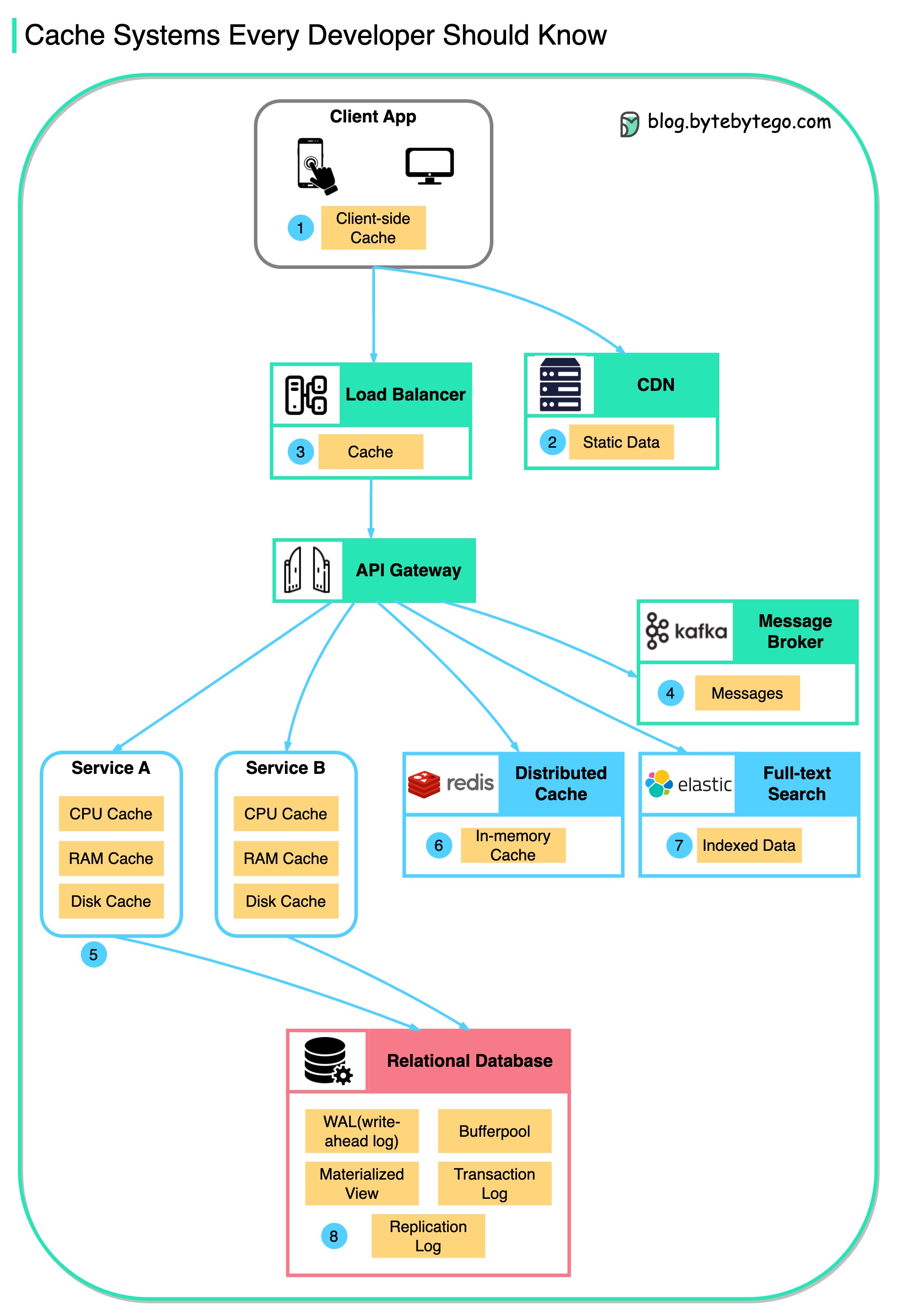

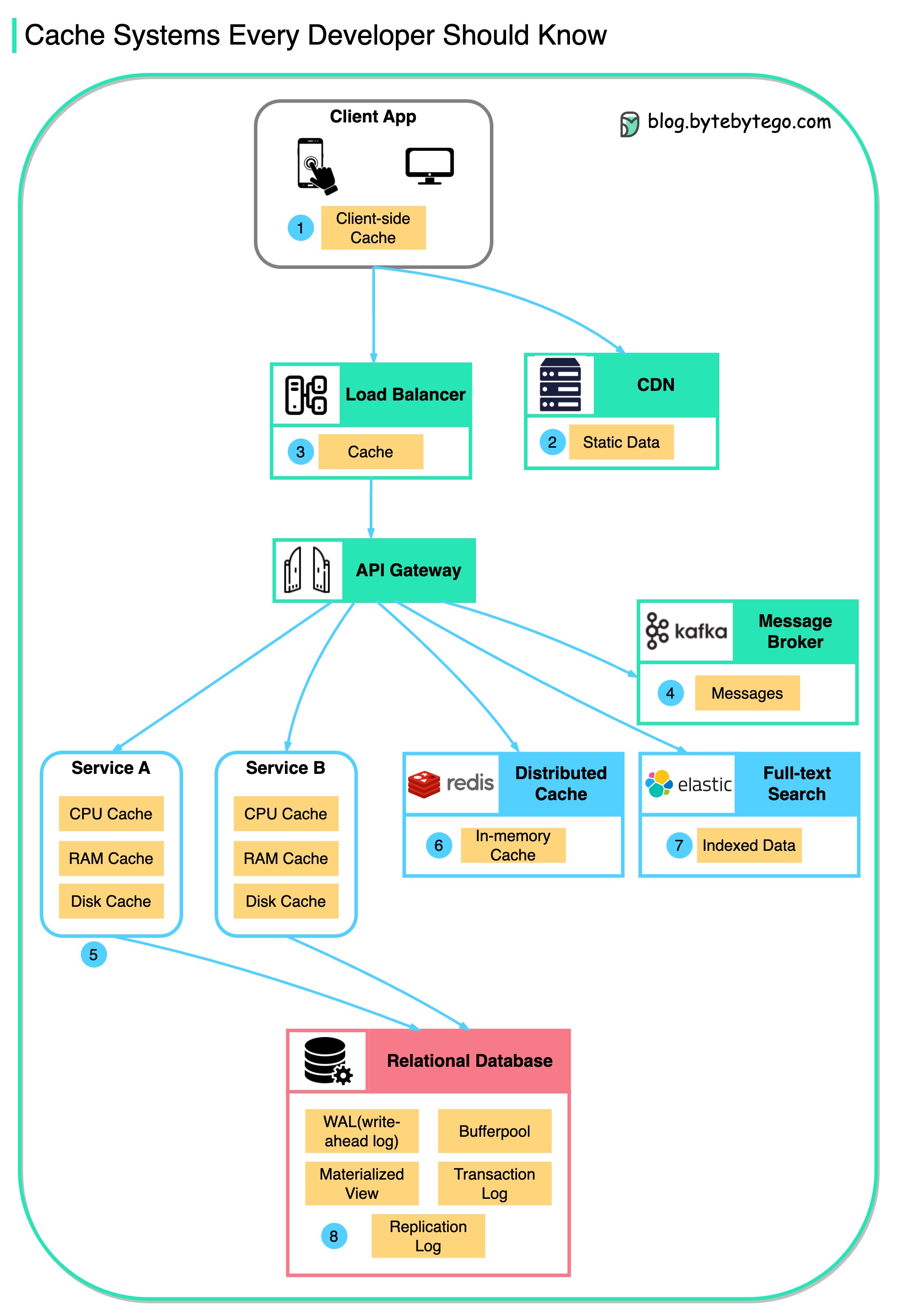

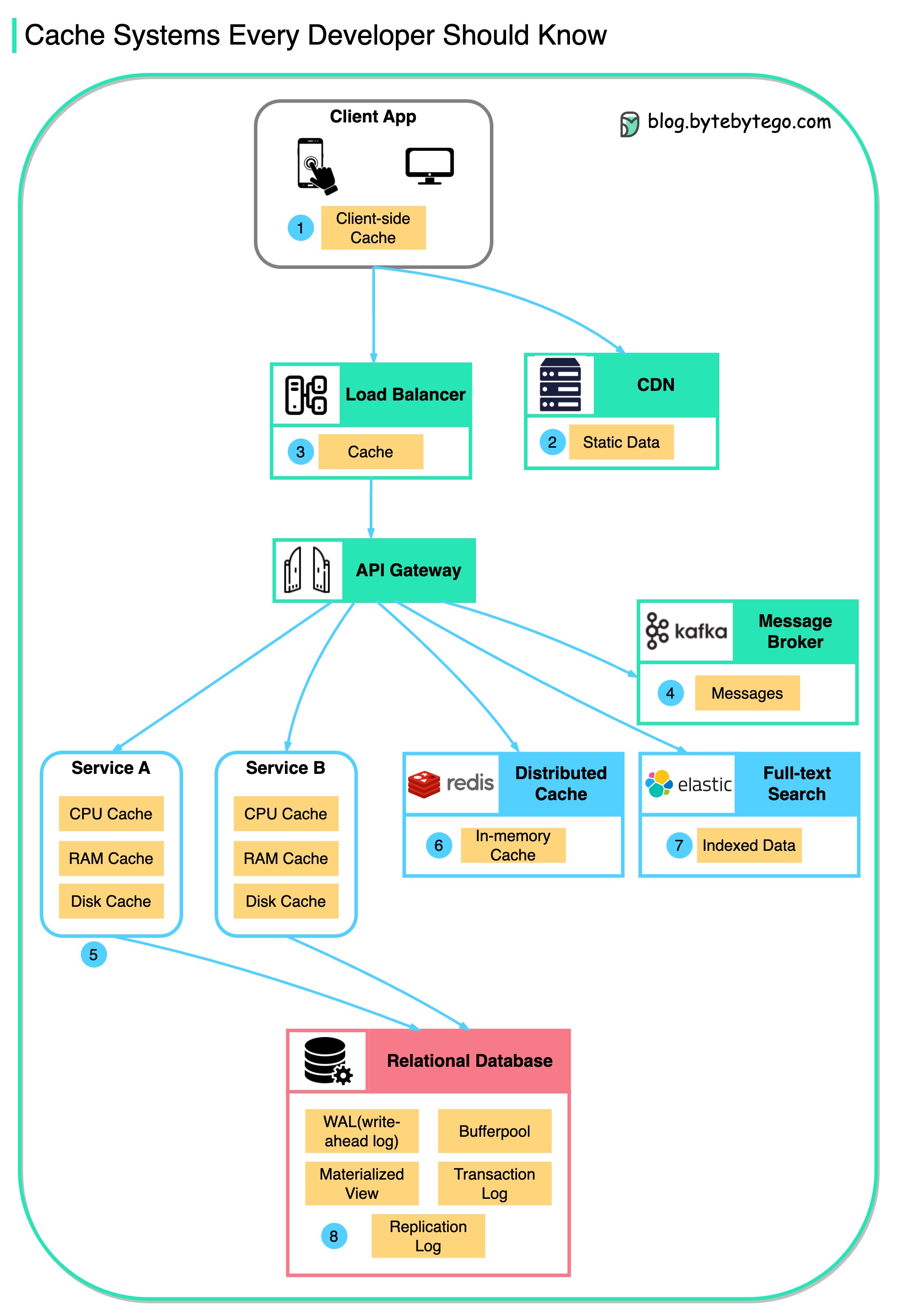

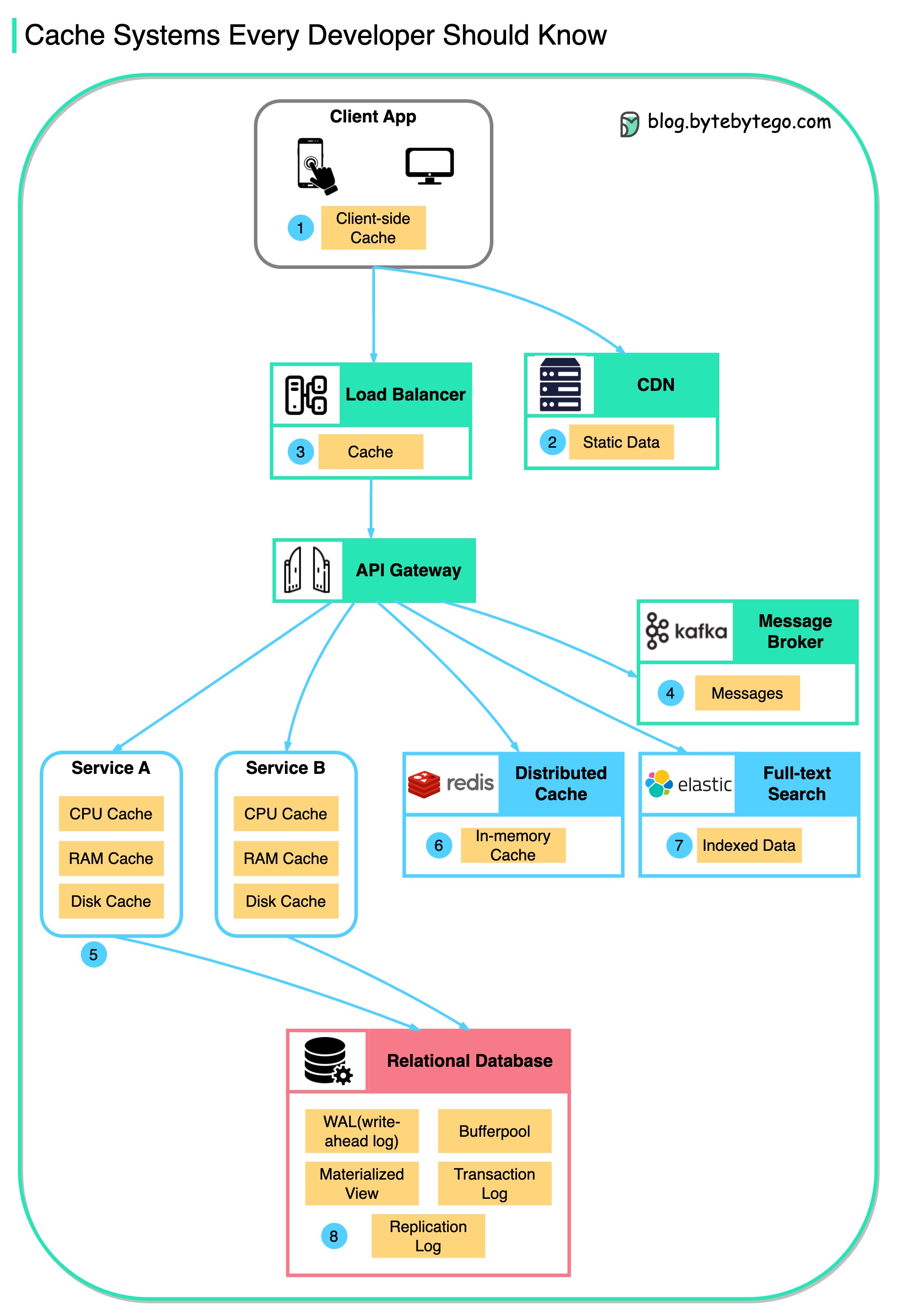

+ * [Cache Systems Every Developer Should Know](https://bytebytego.com/guides/cache-systems-every-developer-should-know)

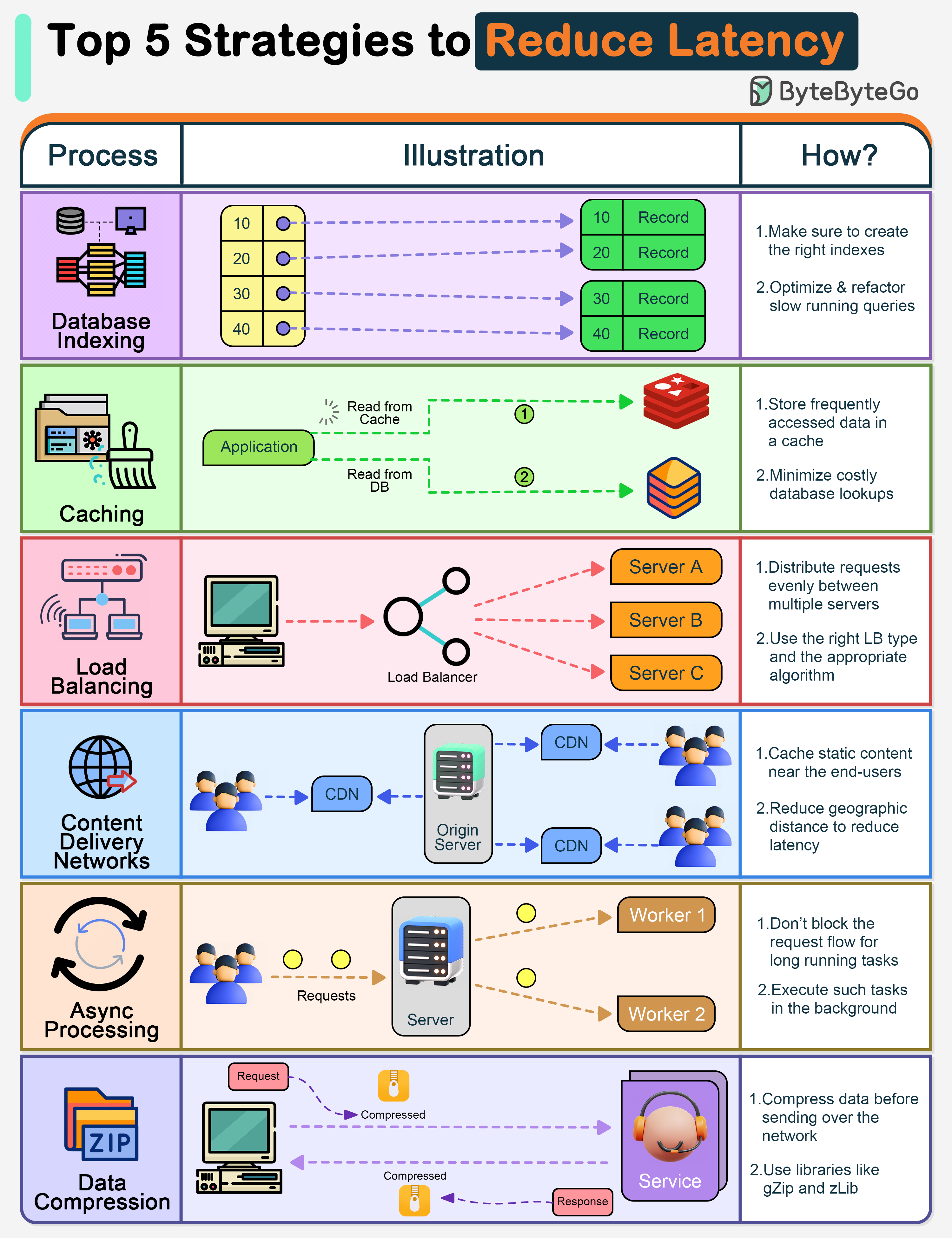

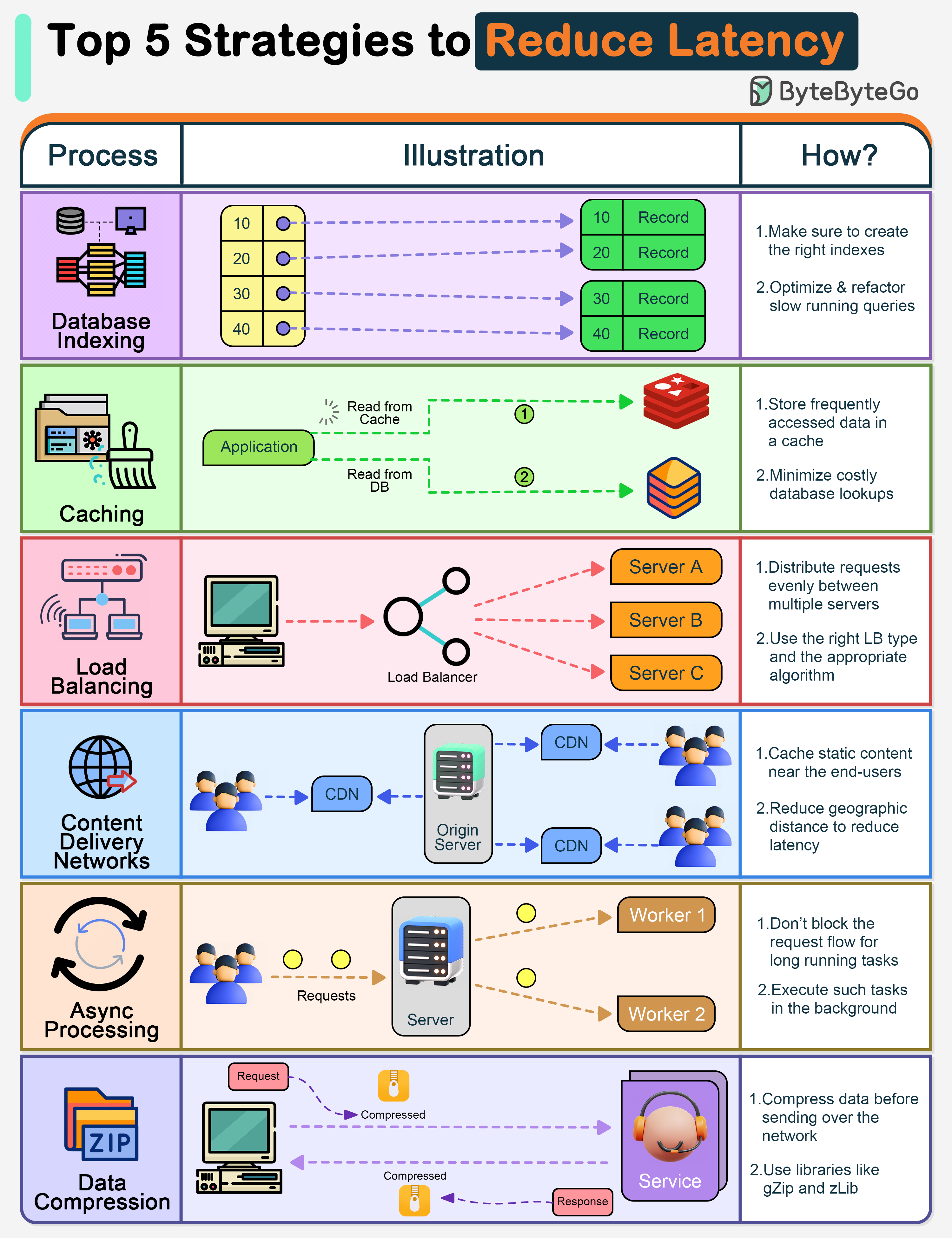

+ * [Top 5 Strategies to Reduce Latency](https://bytebytego.com/guides/top-5-strategies-to-reduce-latency)

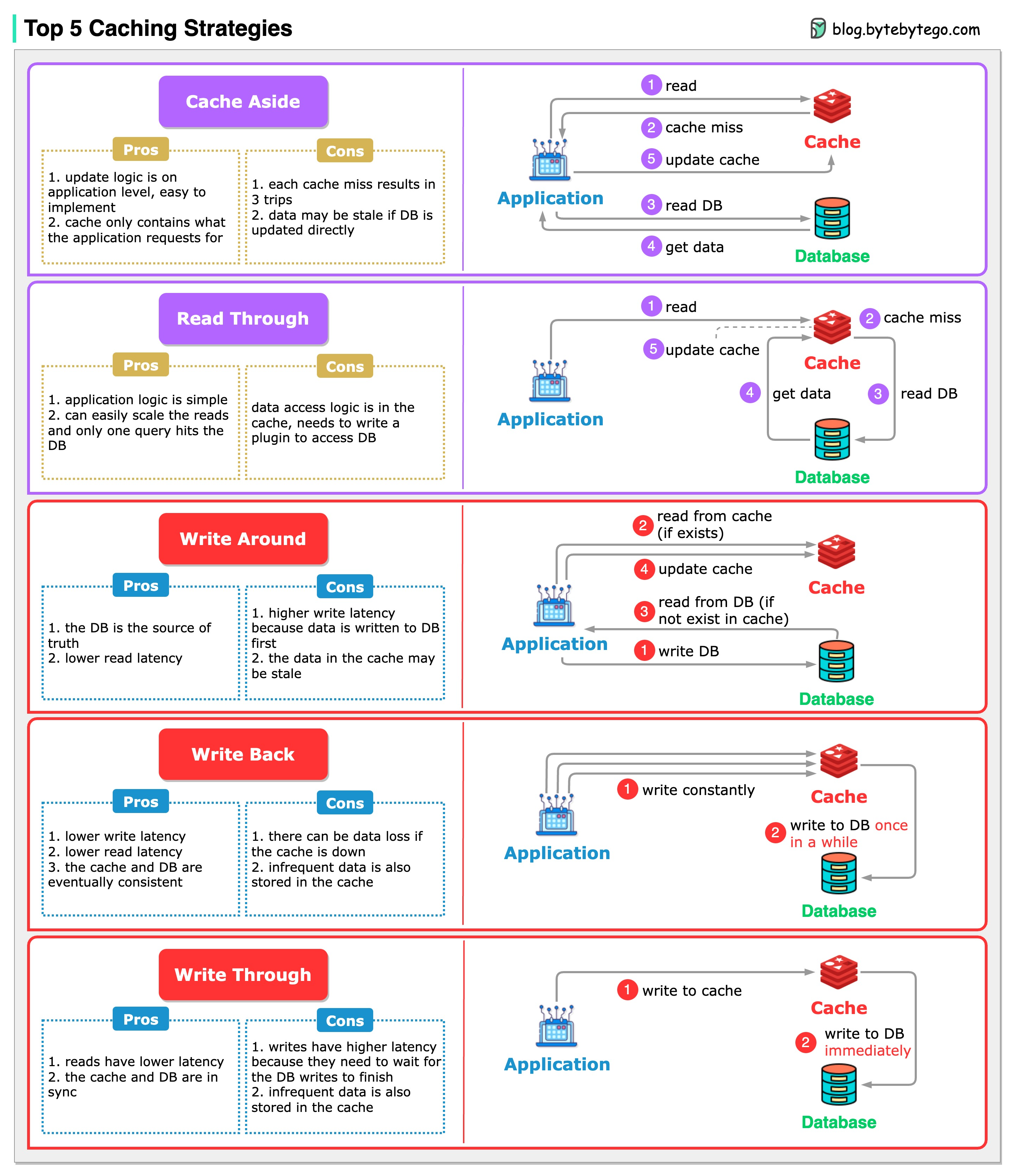

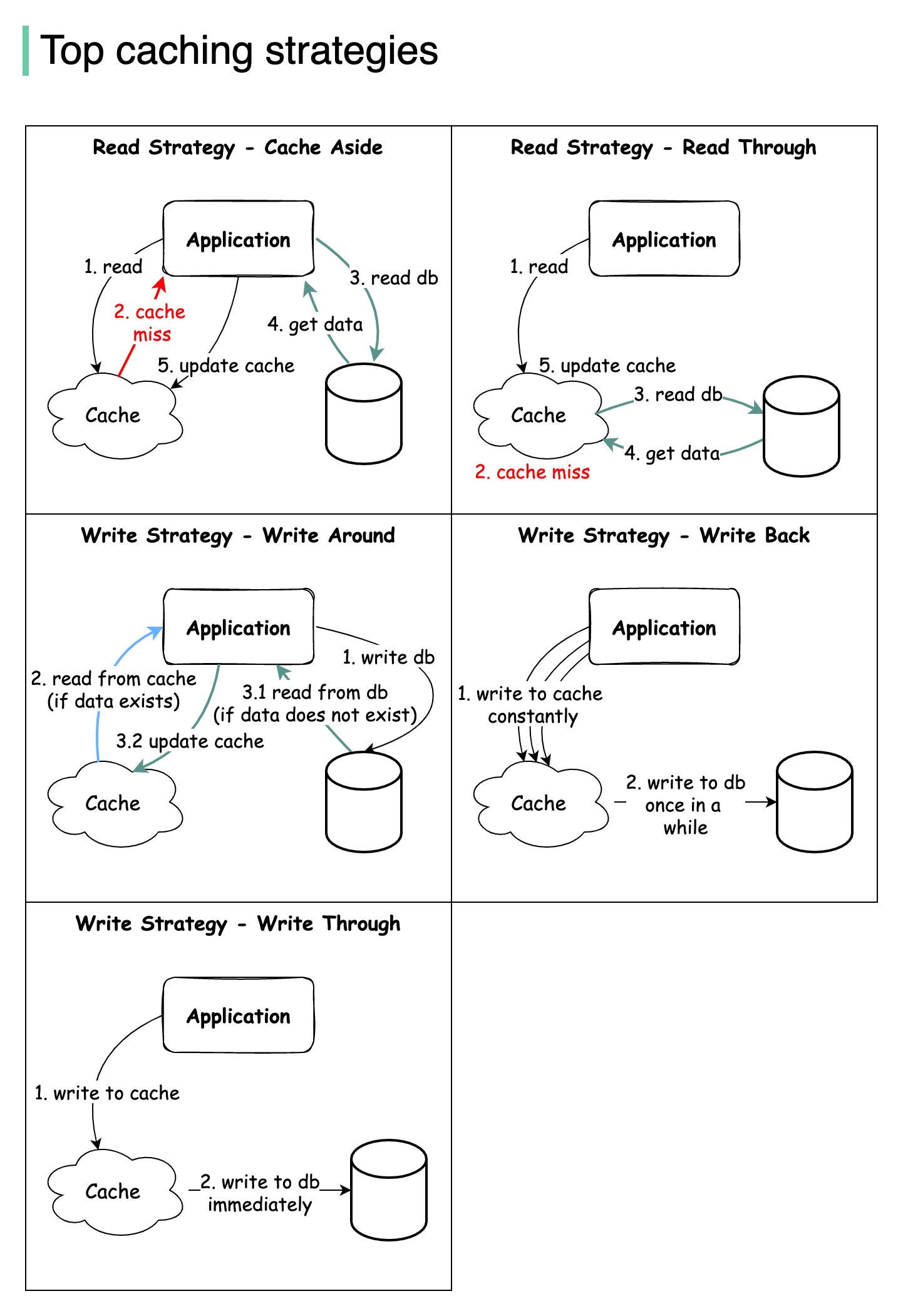

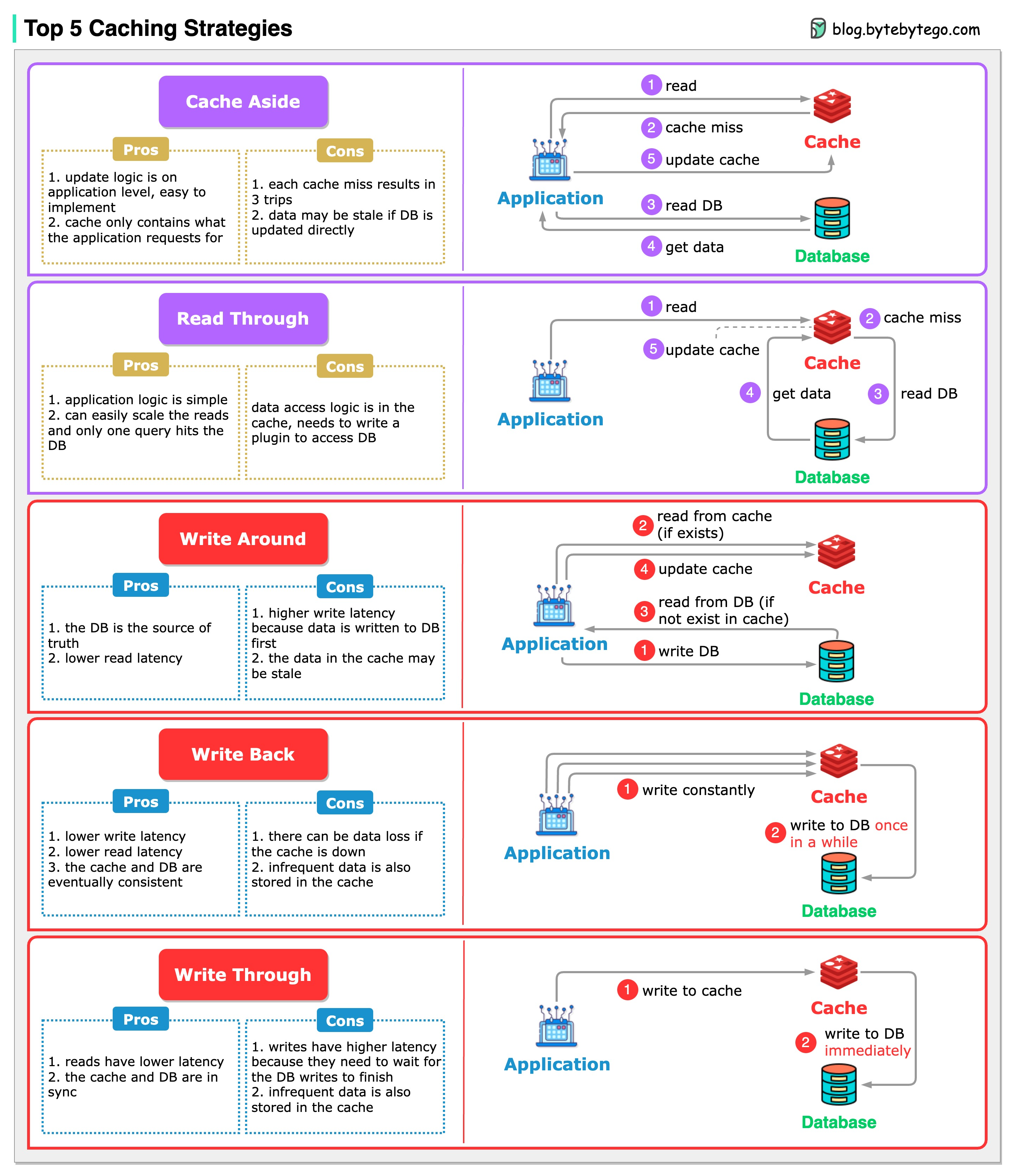

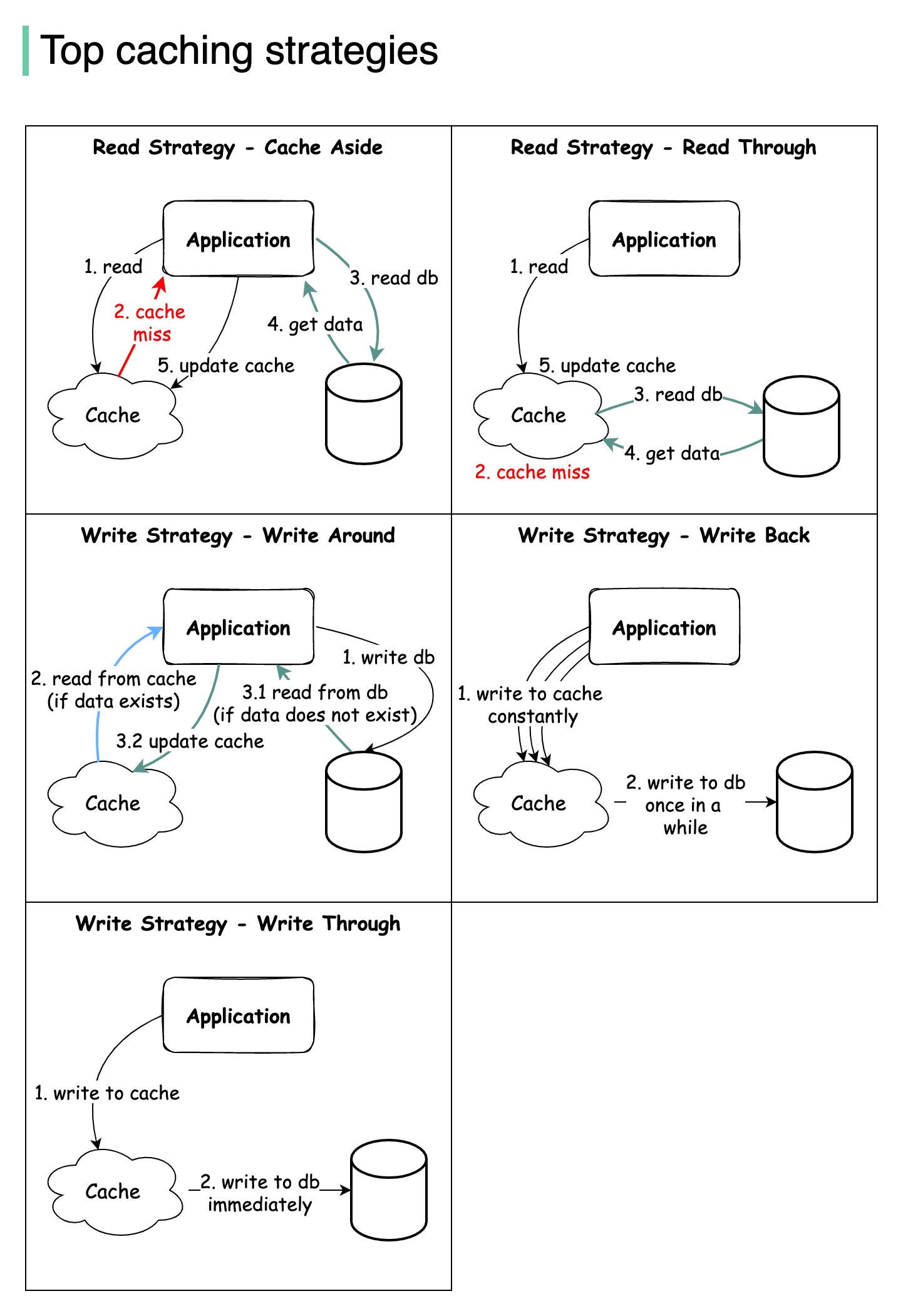

+ * [Top 5 Caching Strategies](https://bytebytego.com/guides/top-5-caching-strategies)

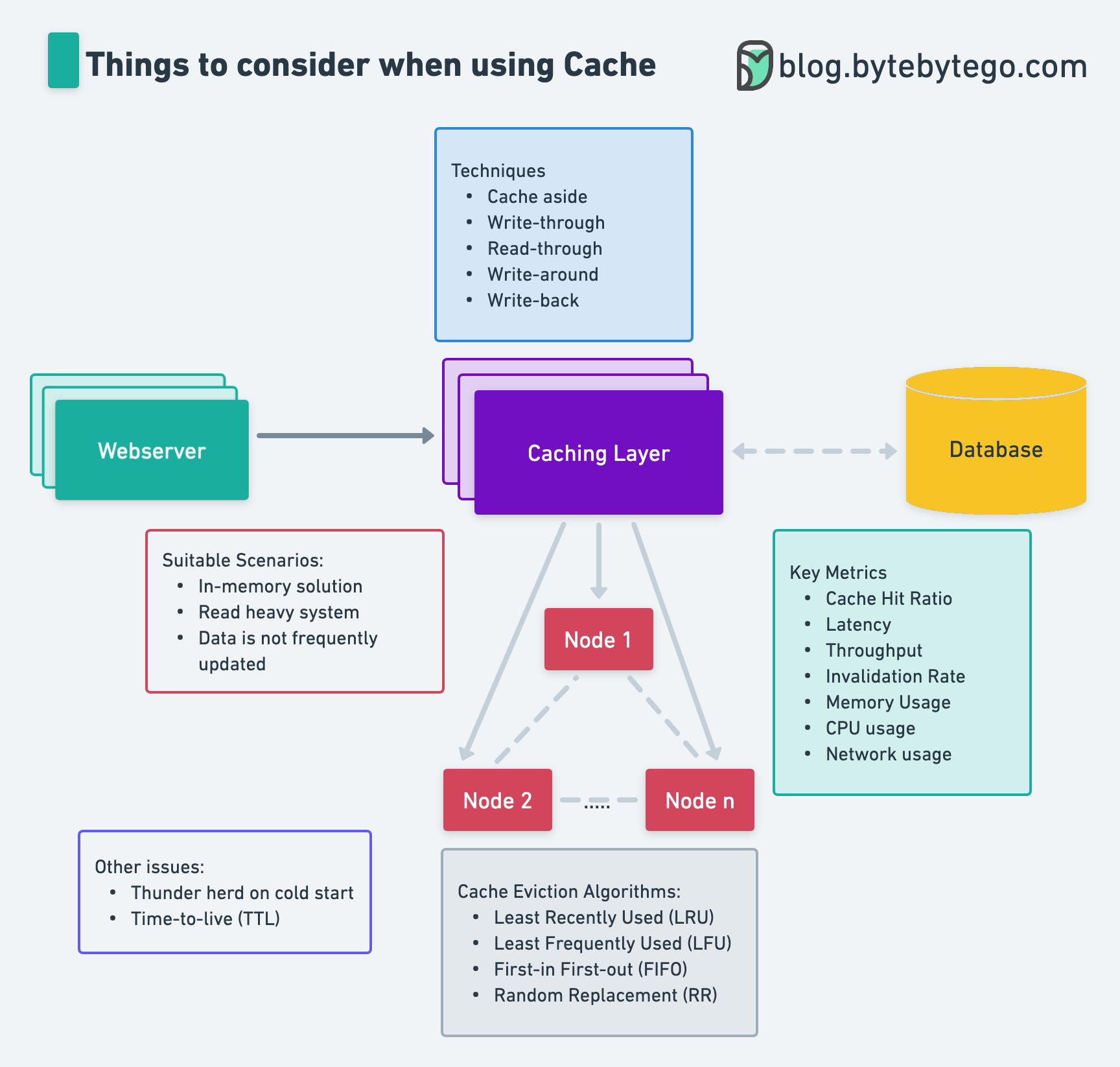

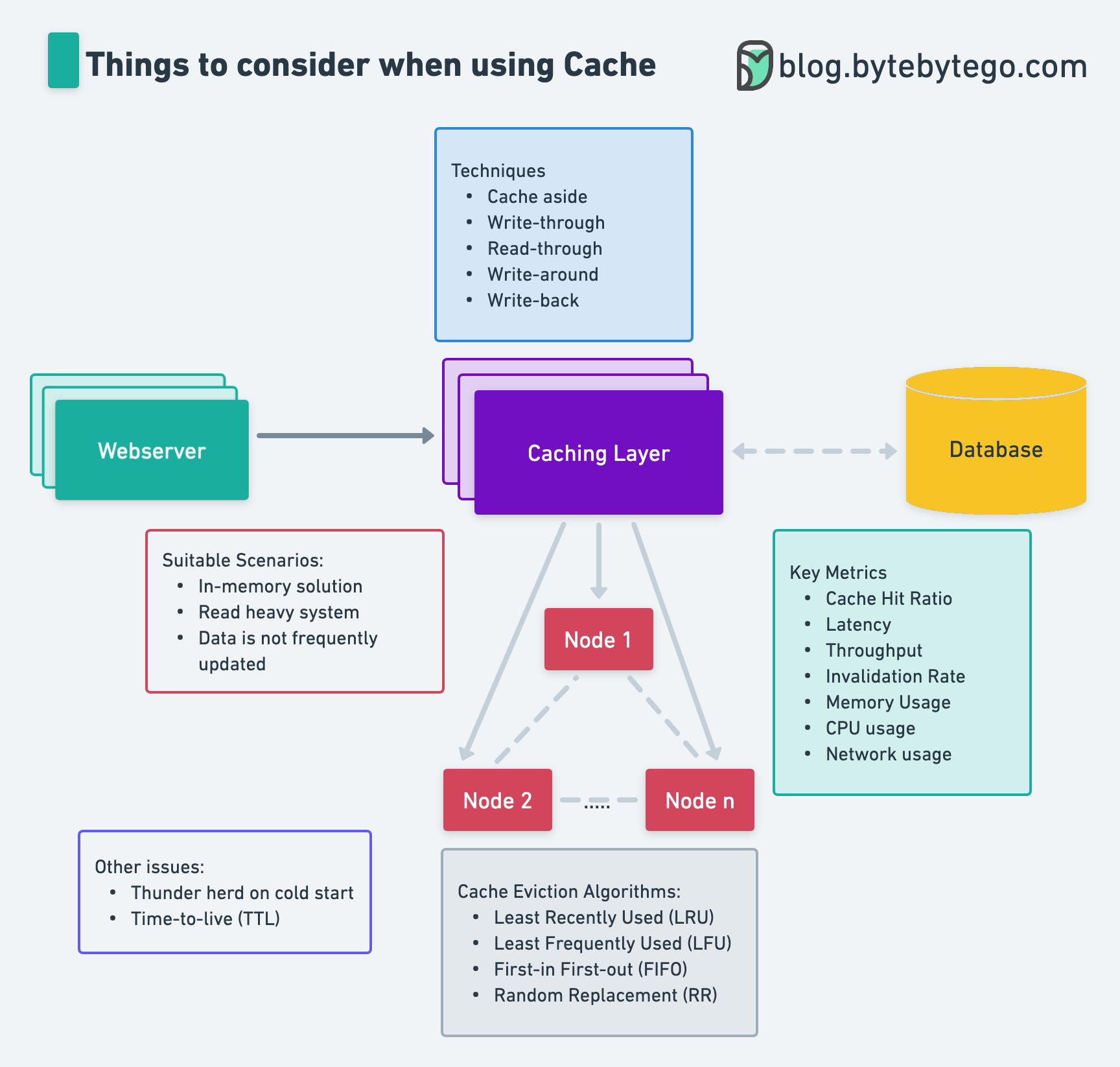

+ * [Things to Consider When Using Cache](https://bytebytego.com/guides/things-to-consider-when-using-cache)

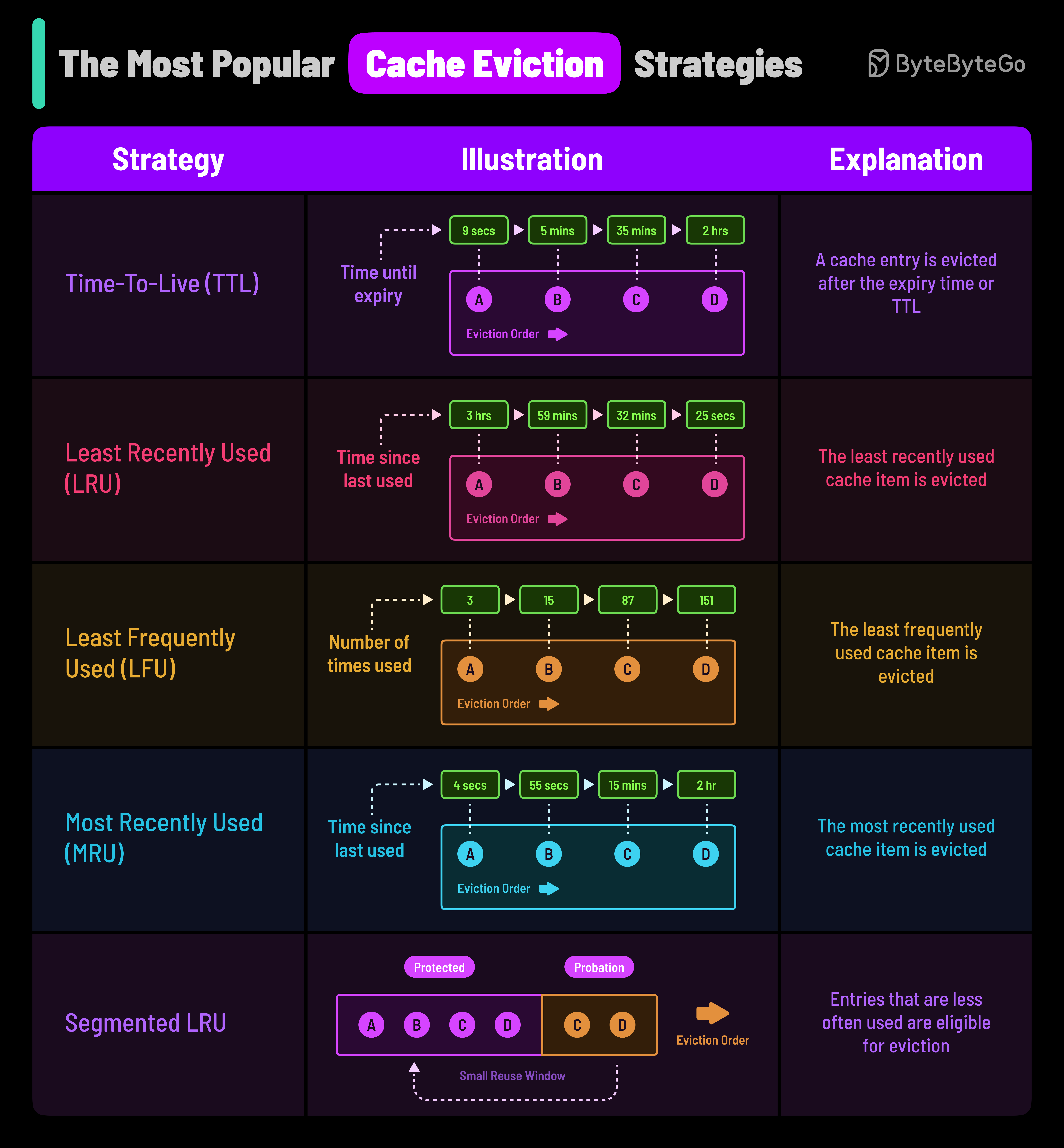

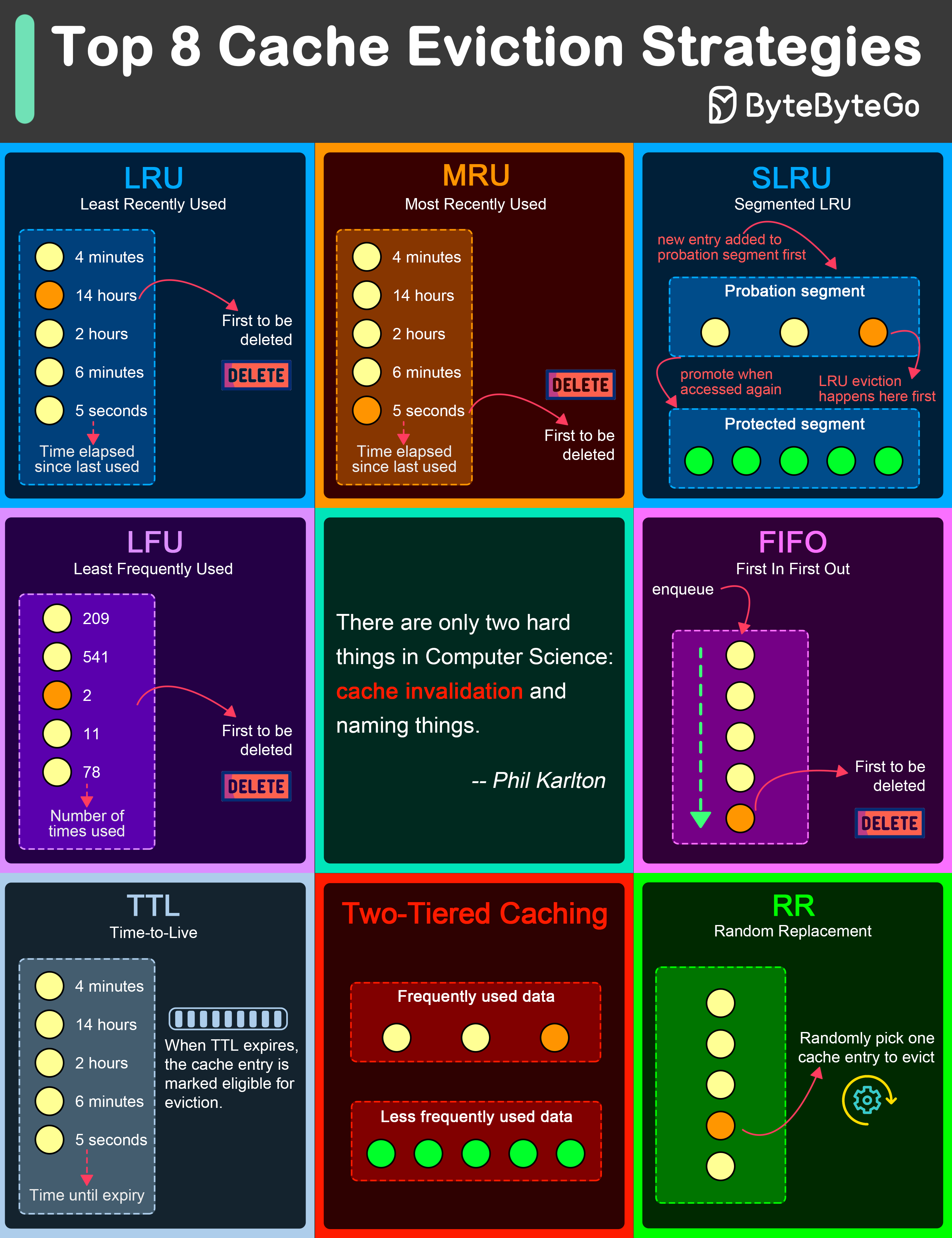

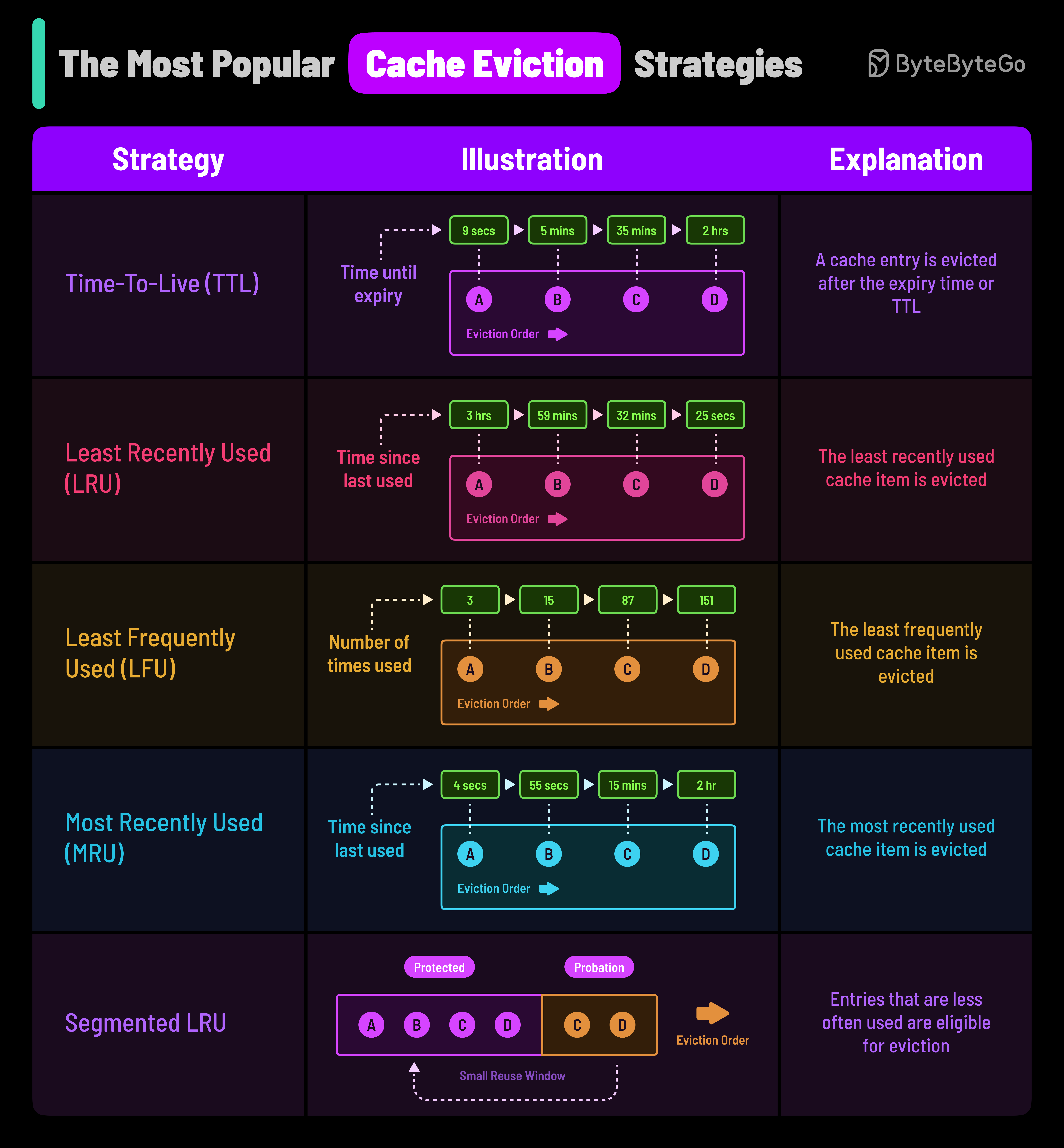

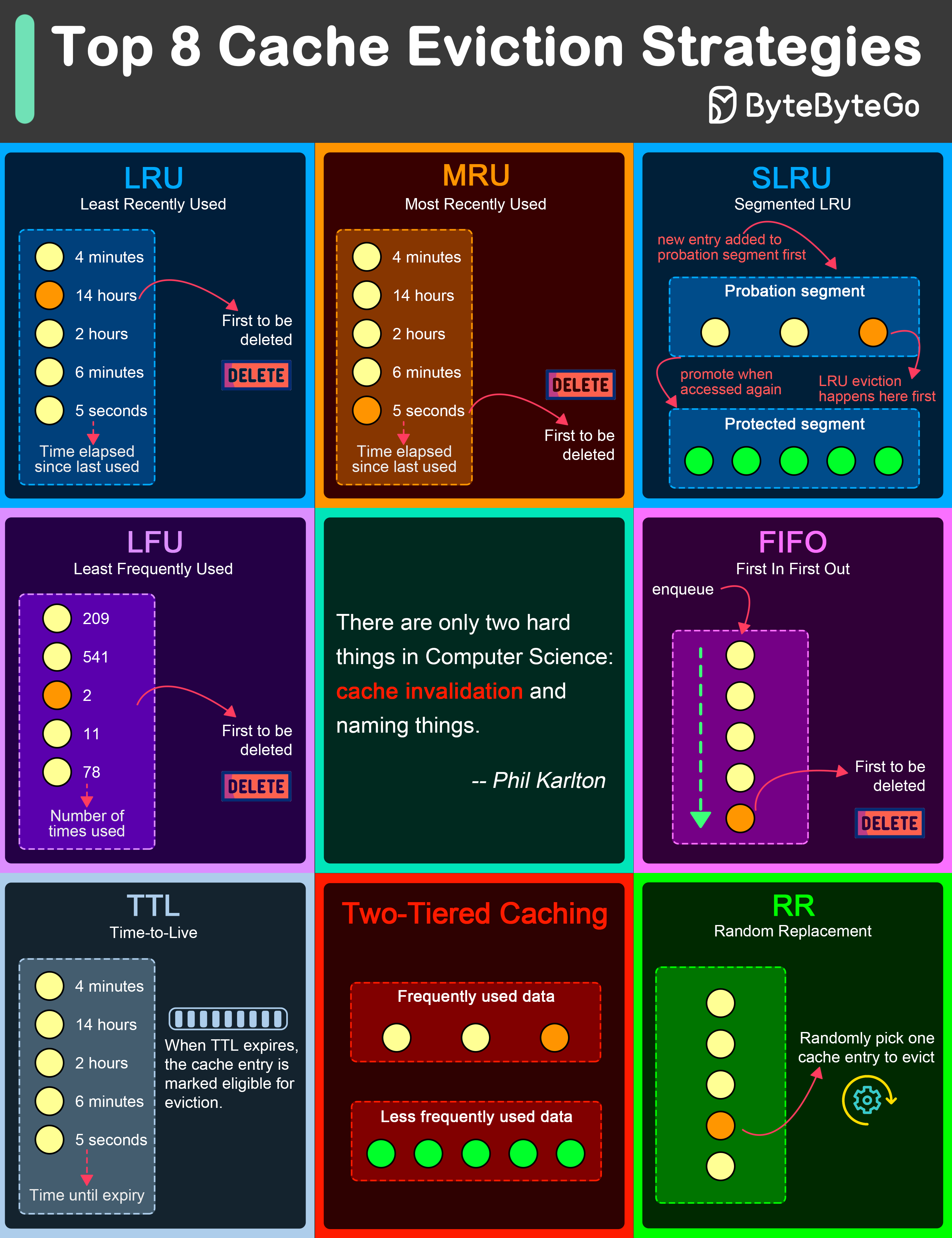

+ * [Cache Eviction Policies](https://bytebytego.com/guides/most-popular-cache-eviction)

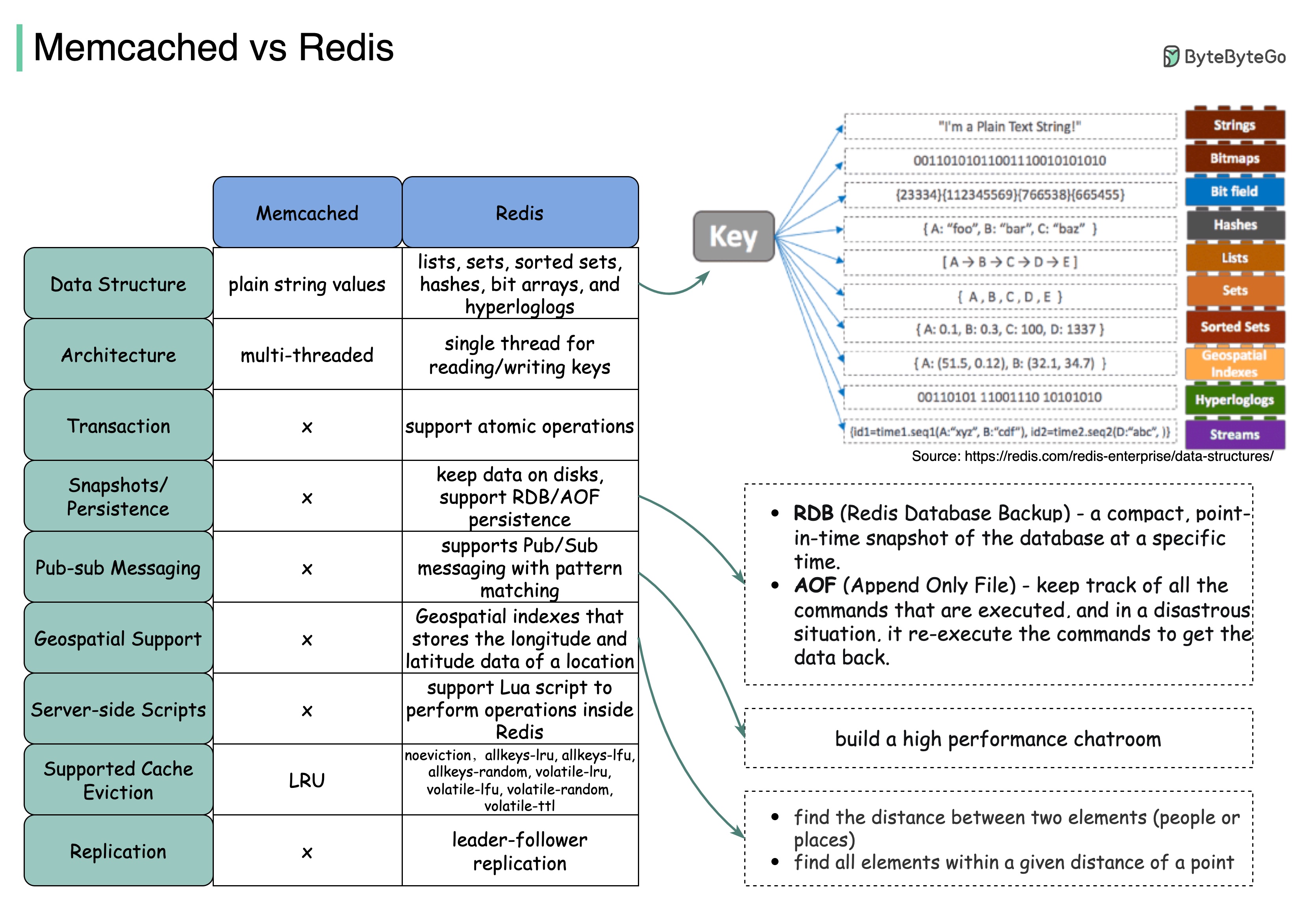

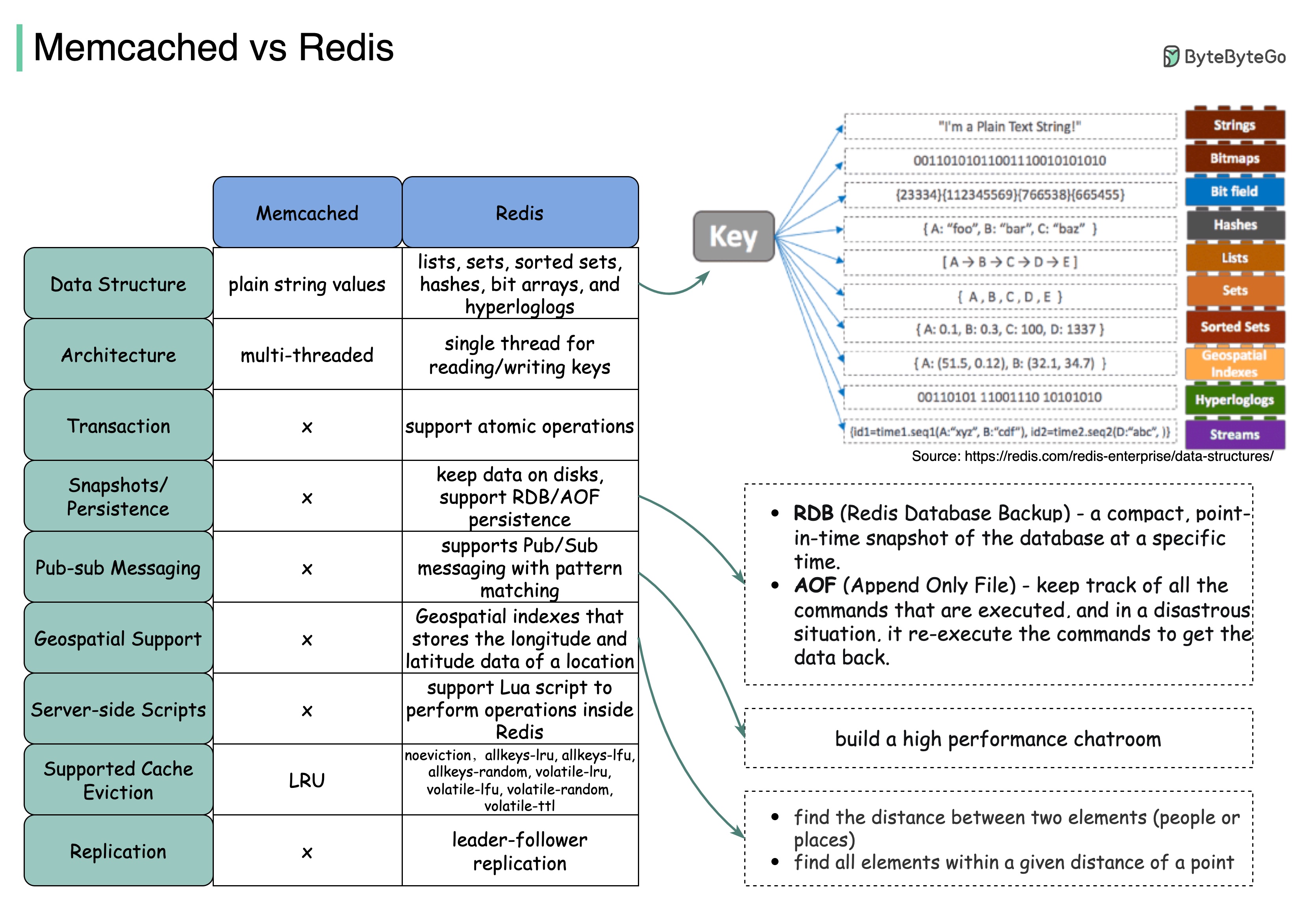

+ * [Memcached vs Redis](https://bytebytego.com/guides/memcached-vs-redis)

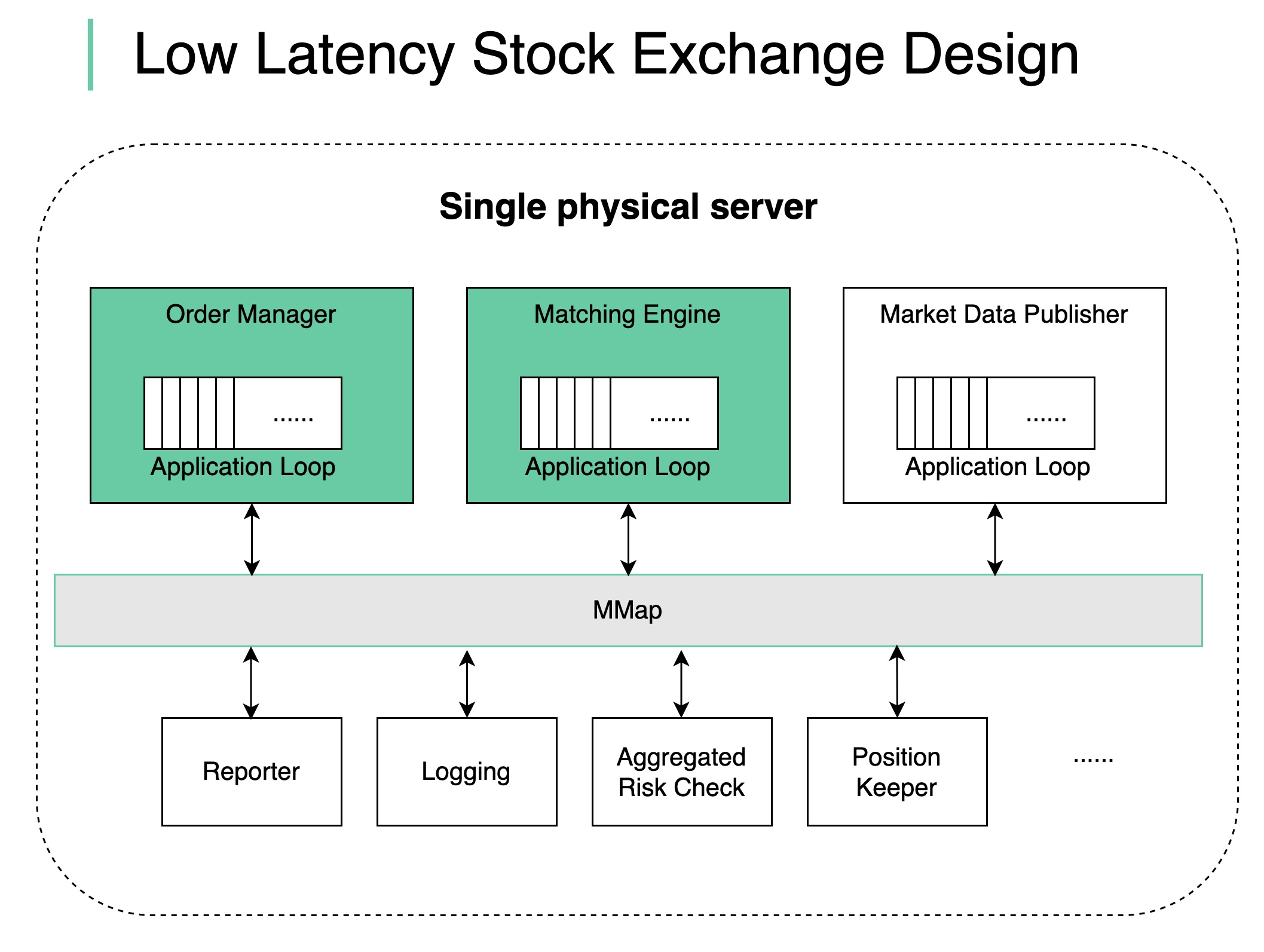

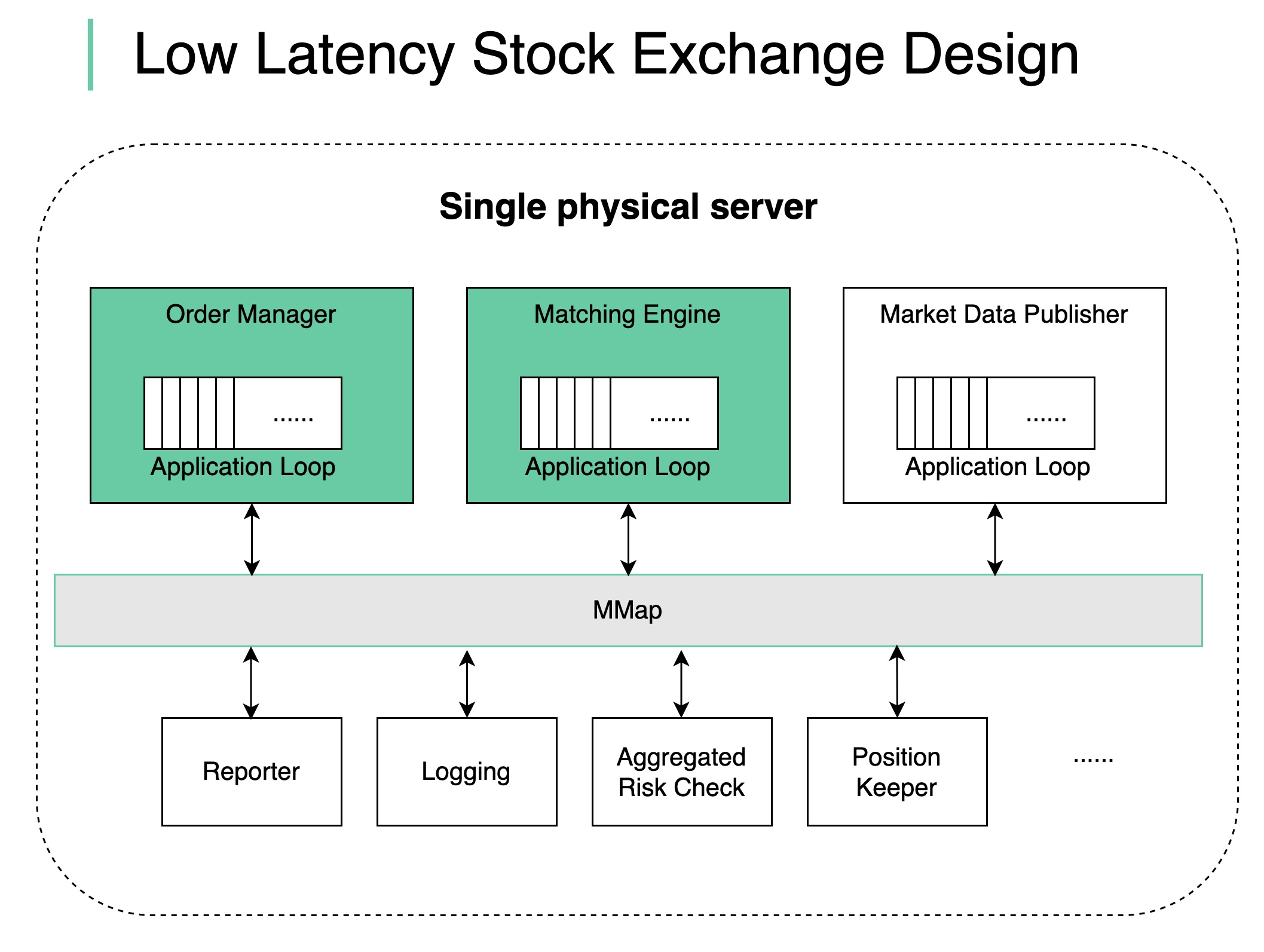

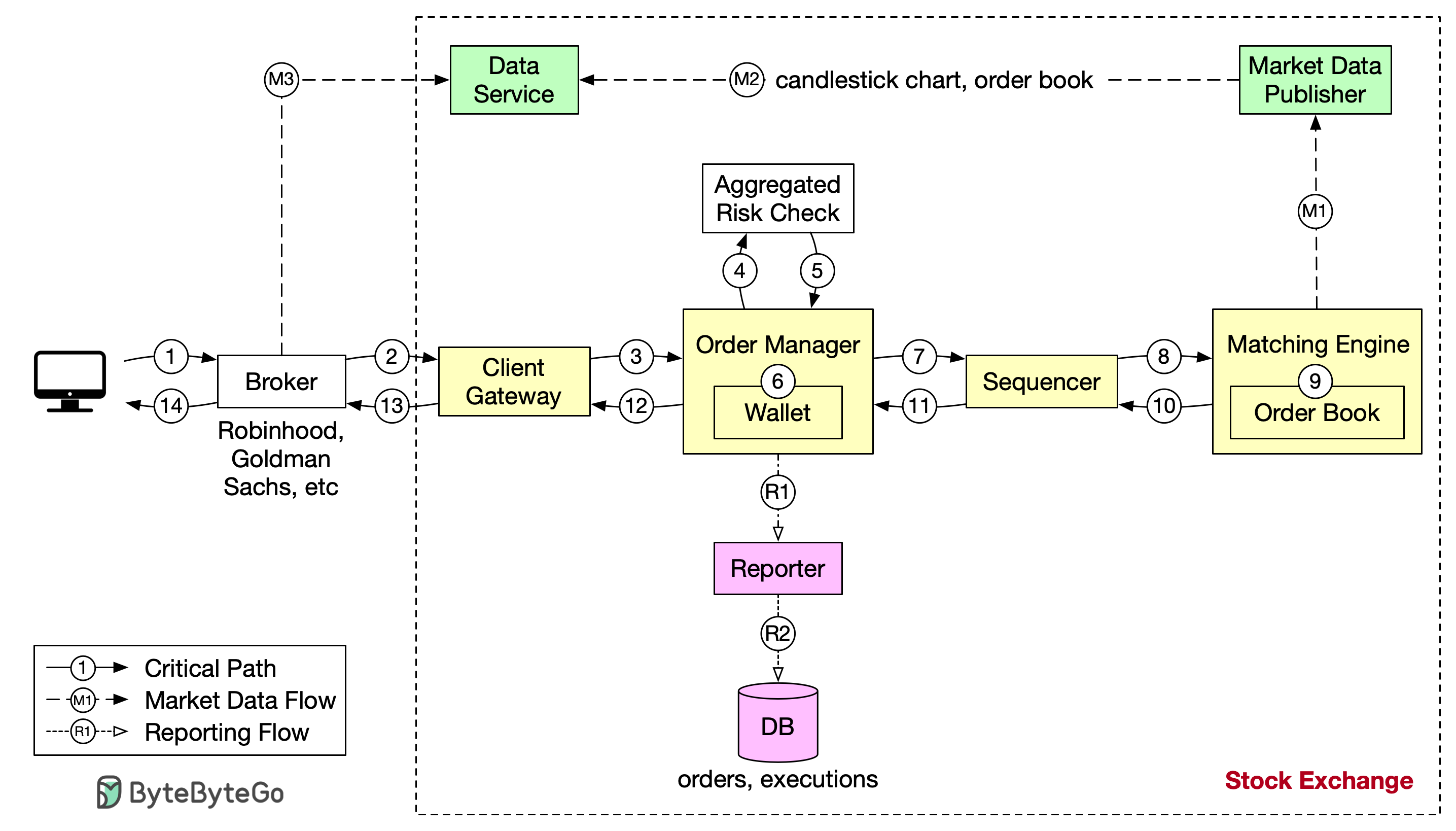

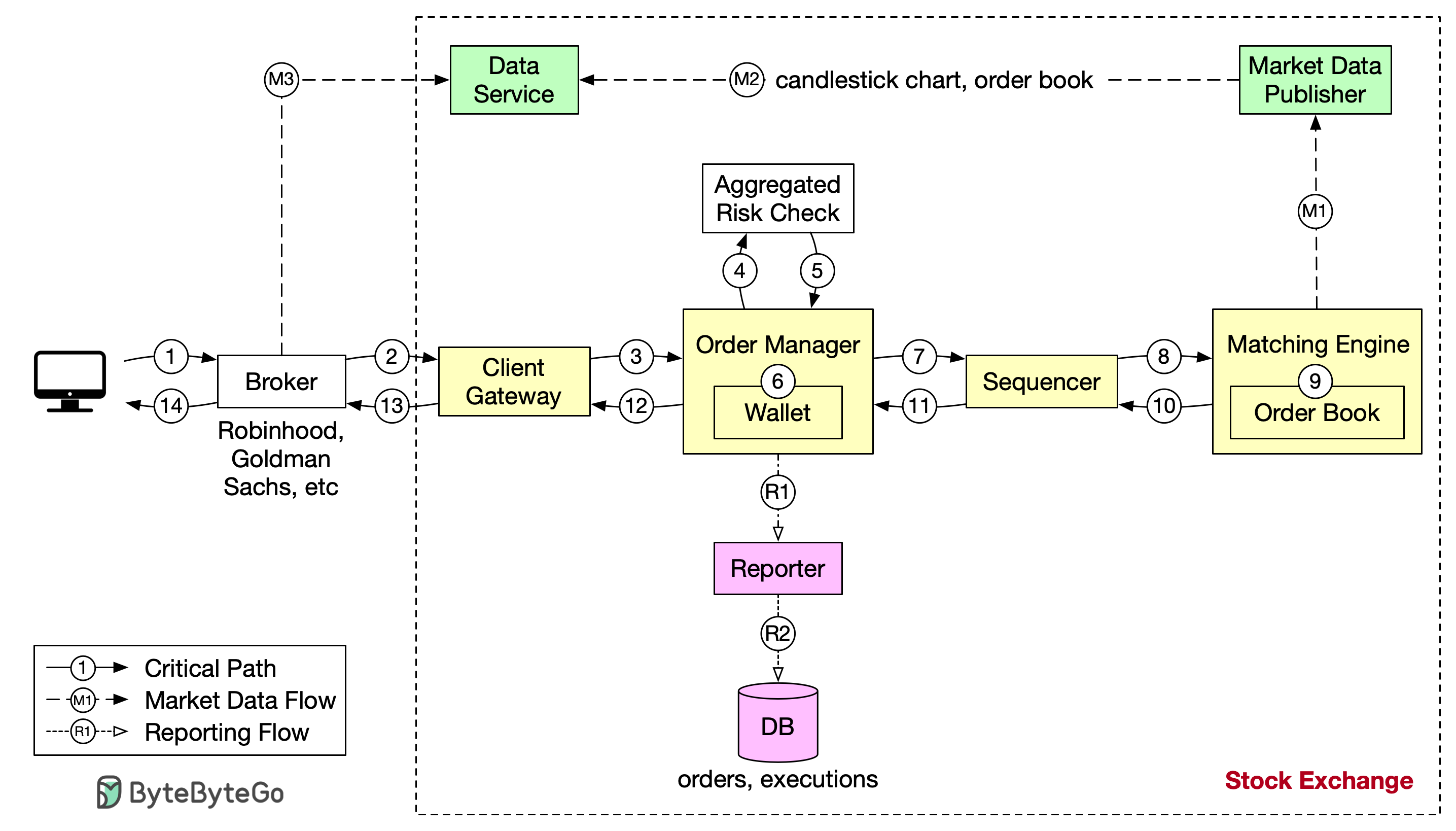

+ * [Low Latency Stock Exchange](https://bytebytego.com/guides/low-latency-stock-exchange)

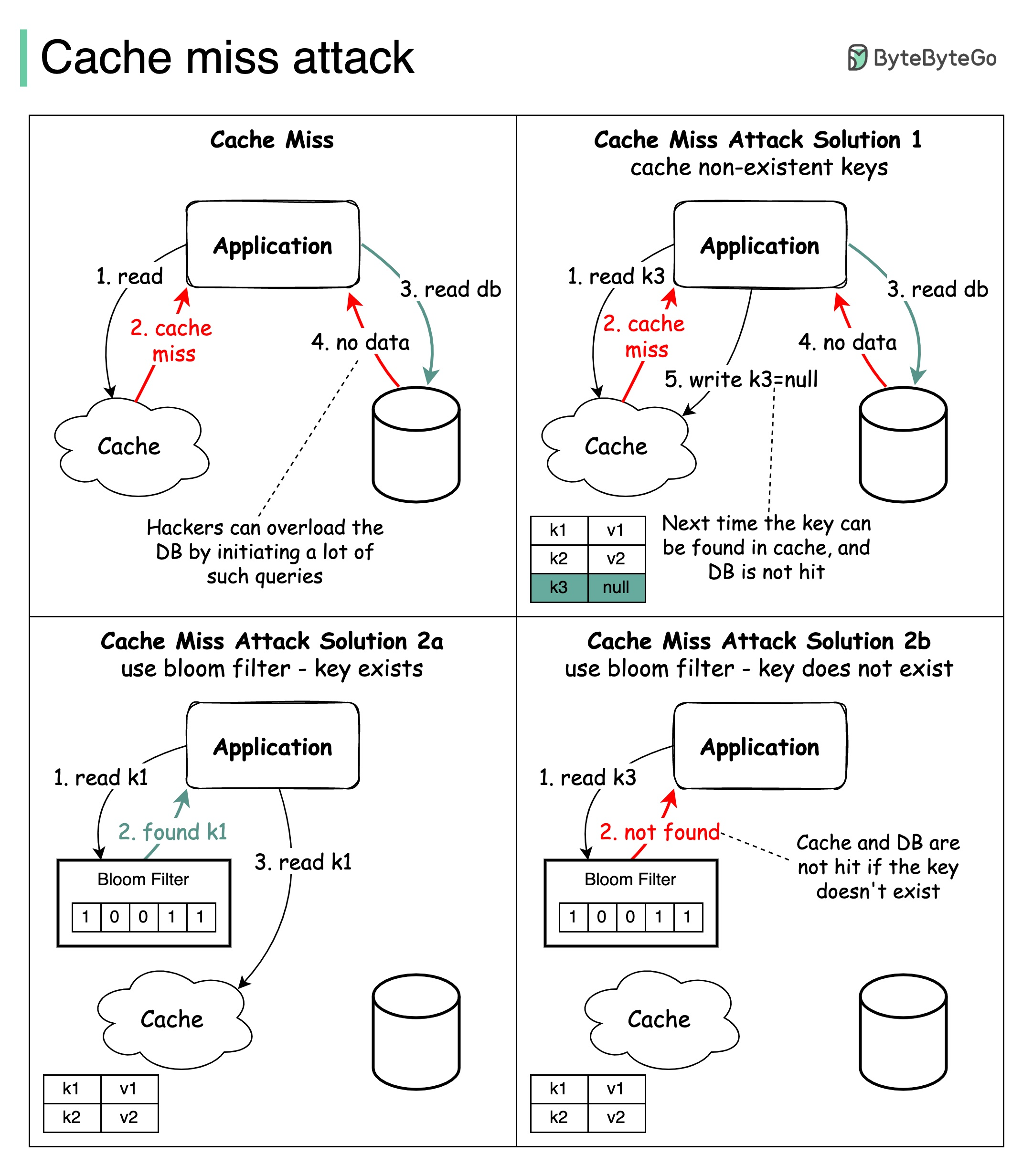

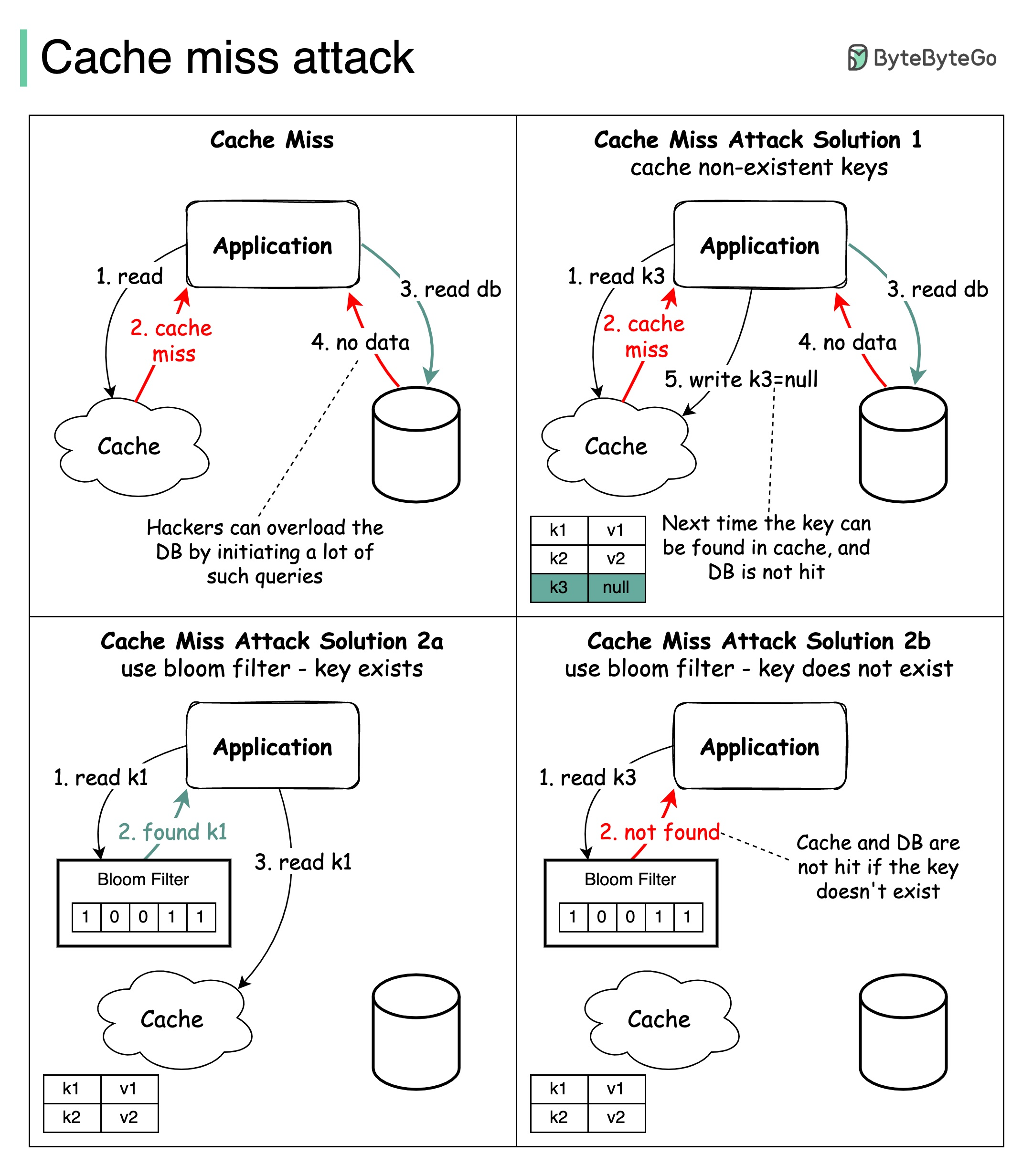

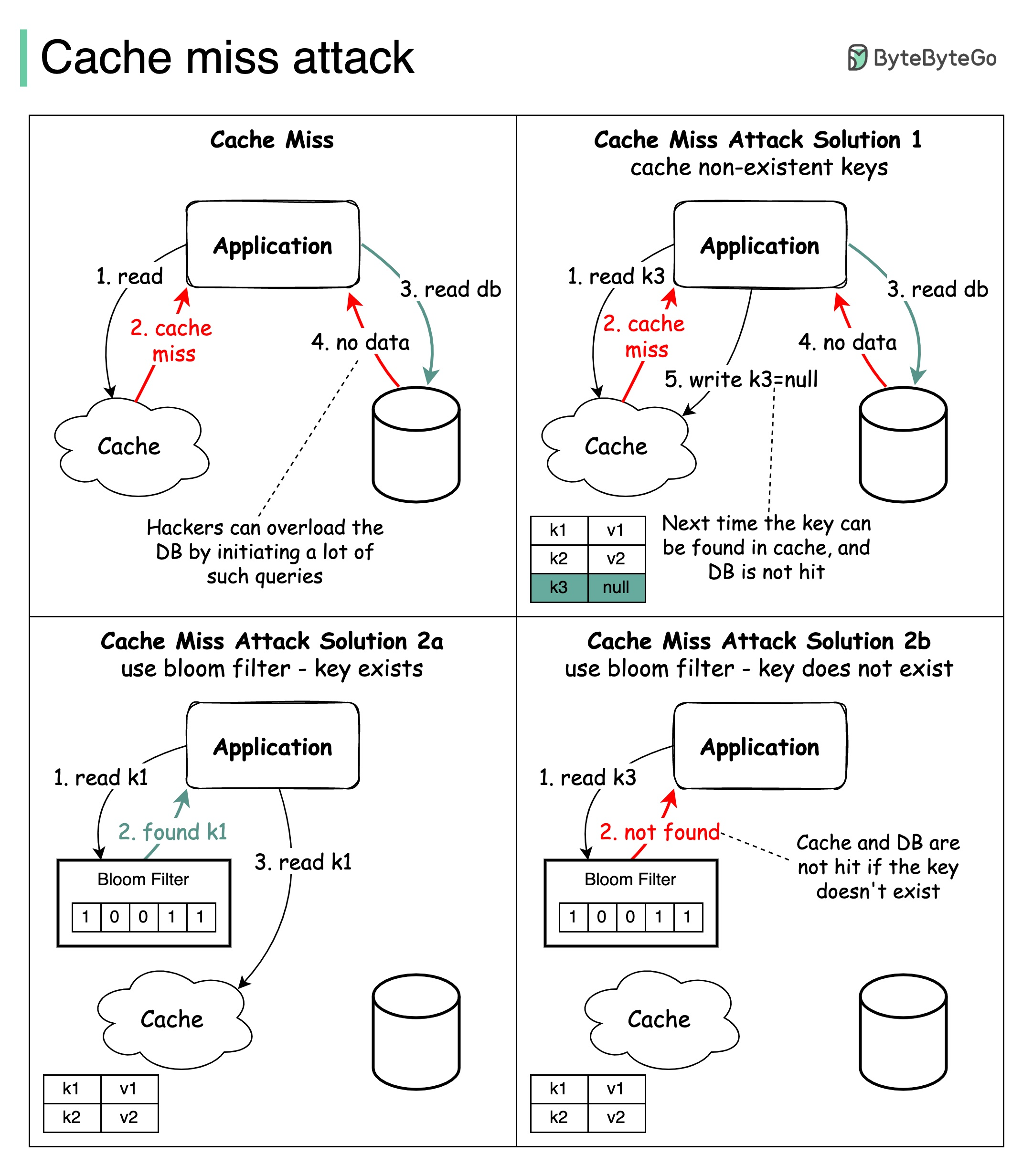

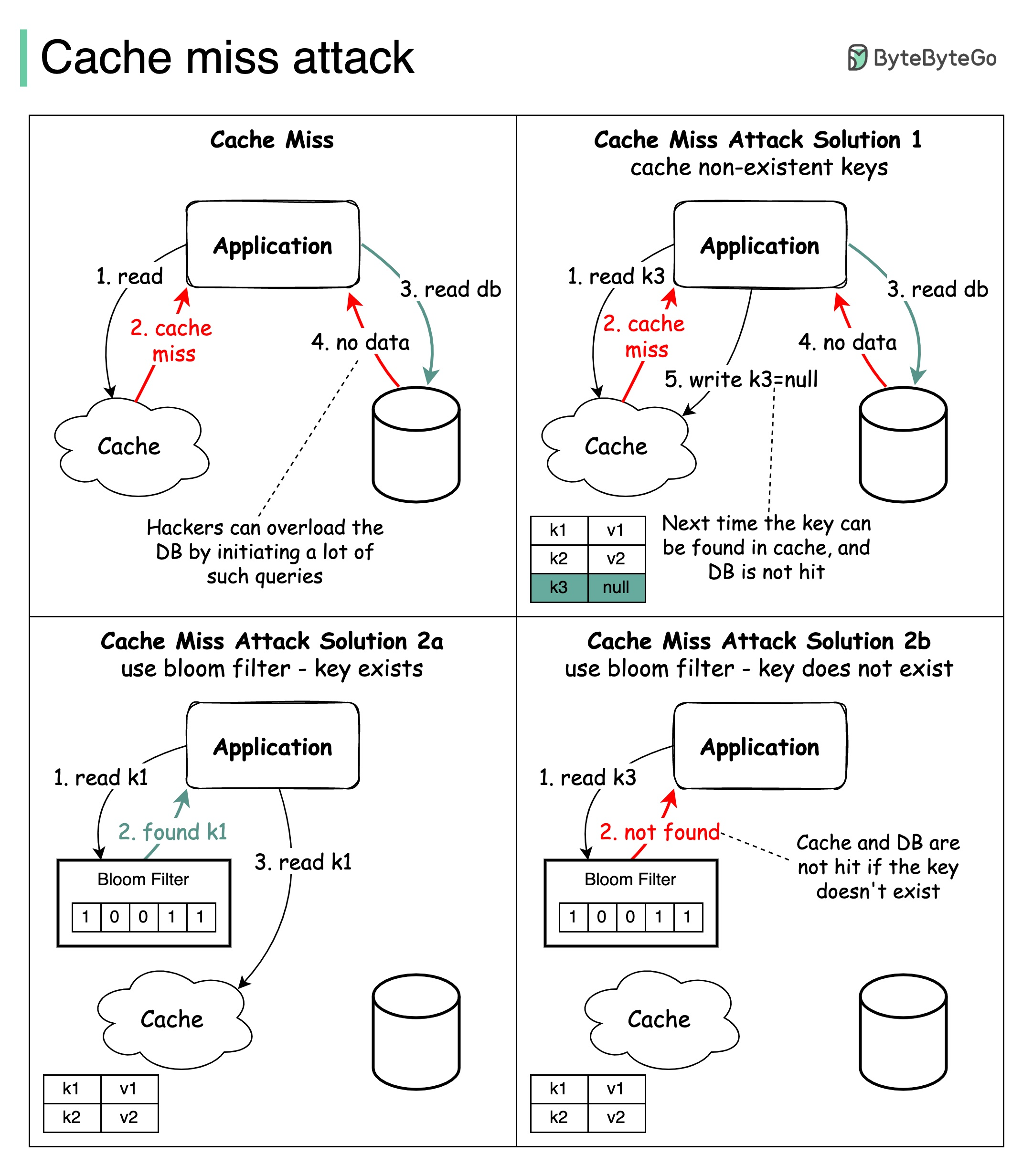

+ * [Cache Miss Attack](https://bytebytego.com/guides/cache-miss-attack)

+ * [Top 8 Cache Eviction Strategies](https://bytebytego.com/guides/top-8-cache-eviction-strategies)

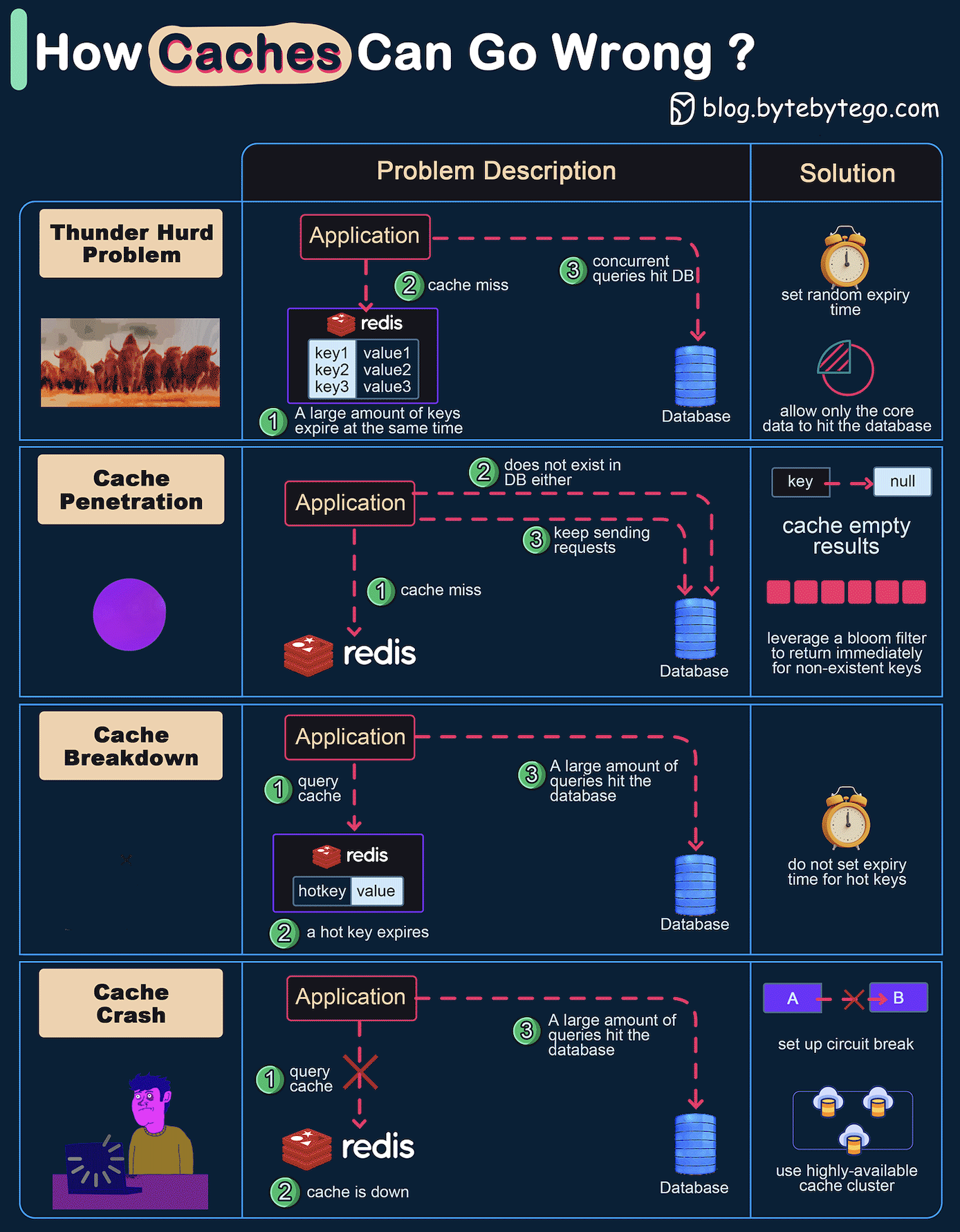

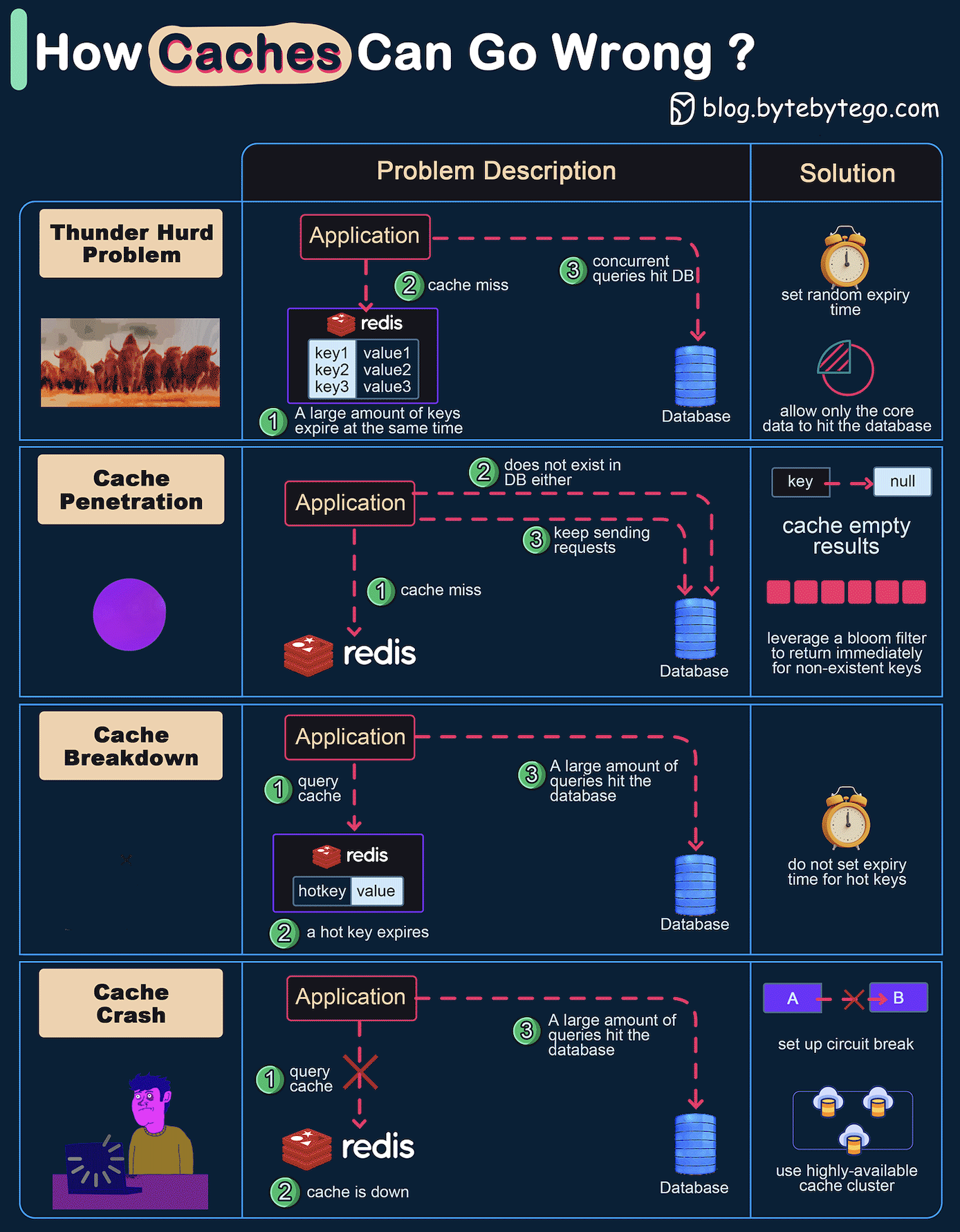

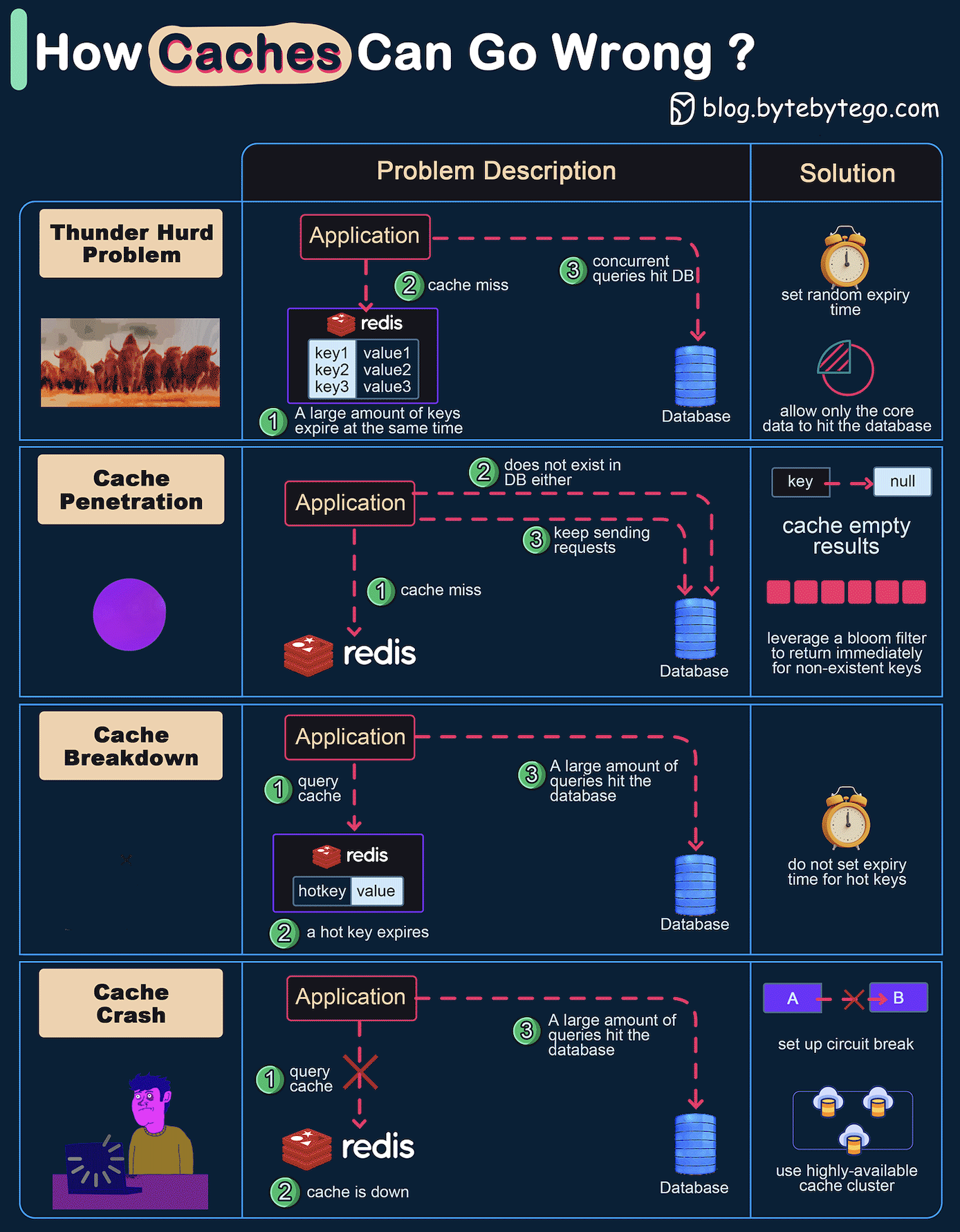

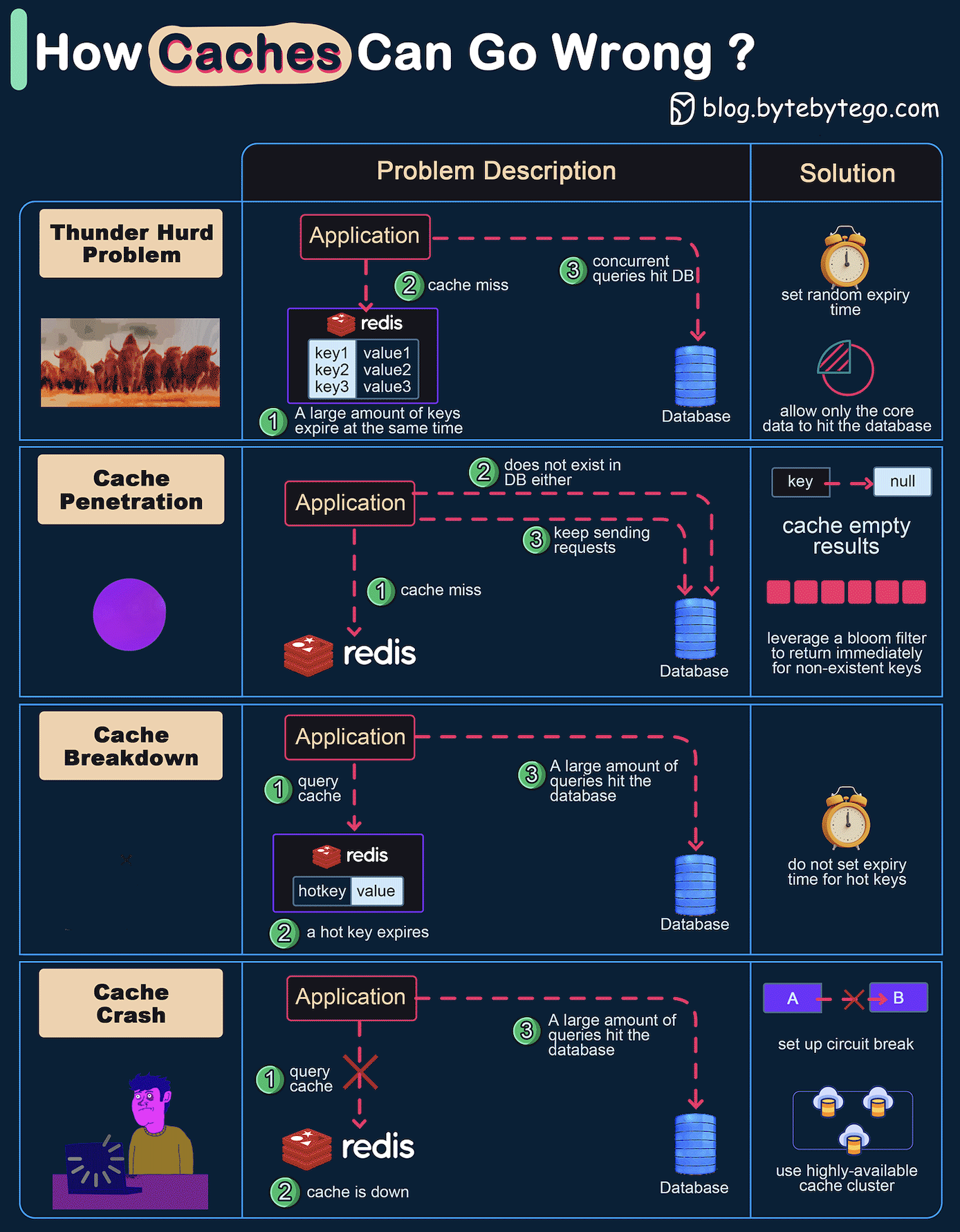

+ * [How Can Cache Systems Go Wrong?](https://bytebytego.com/guides/how-can-cache-systems-go-wrong)

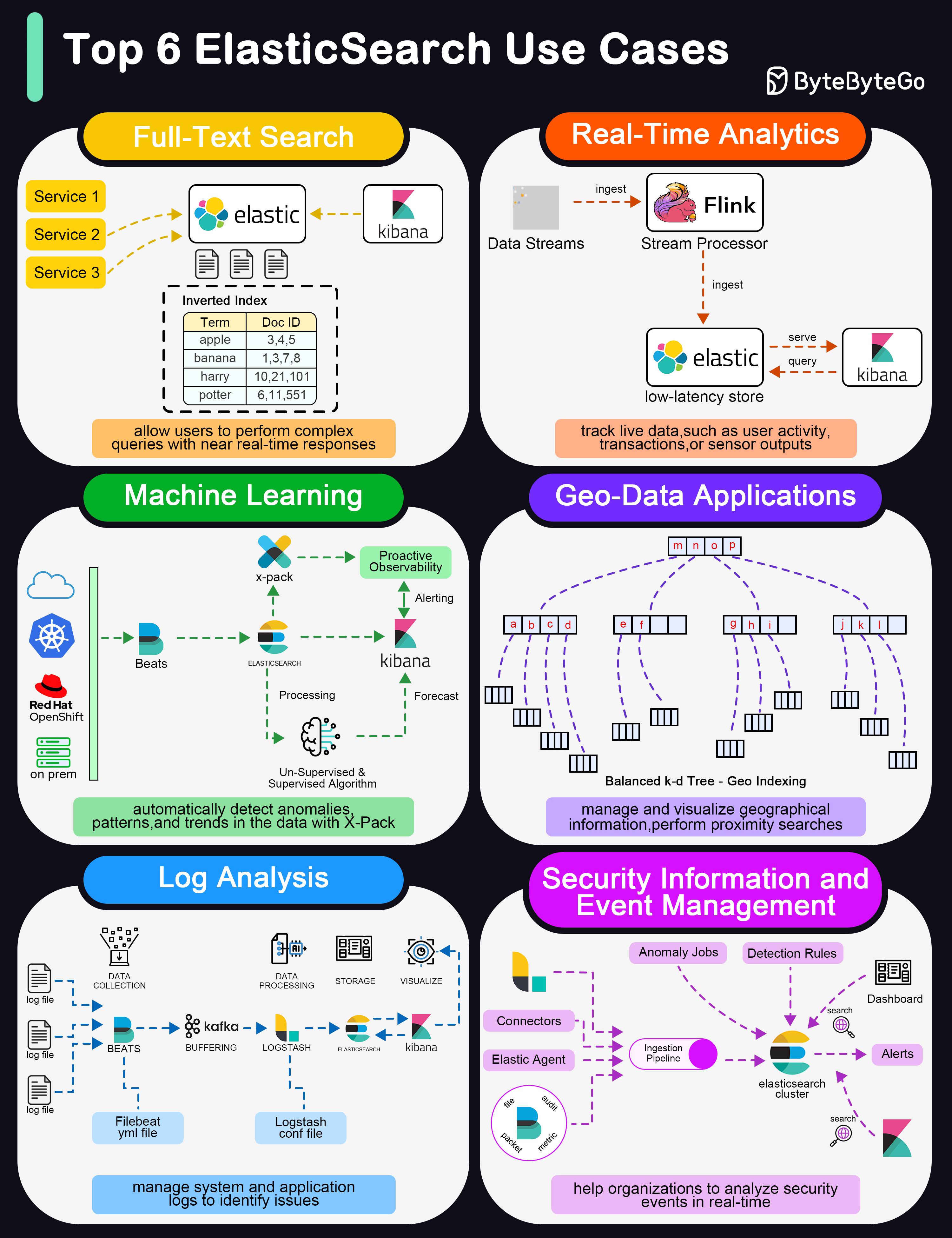

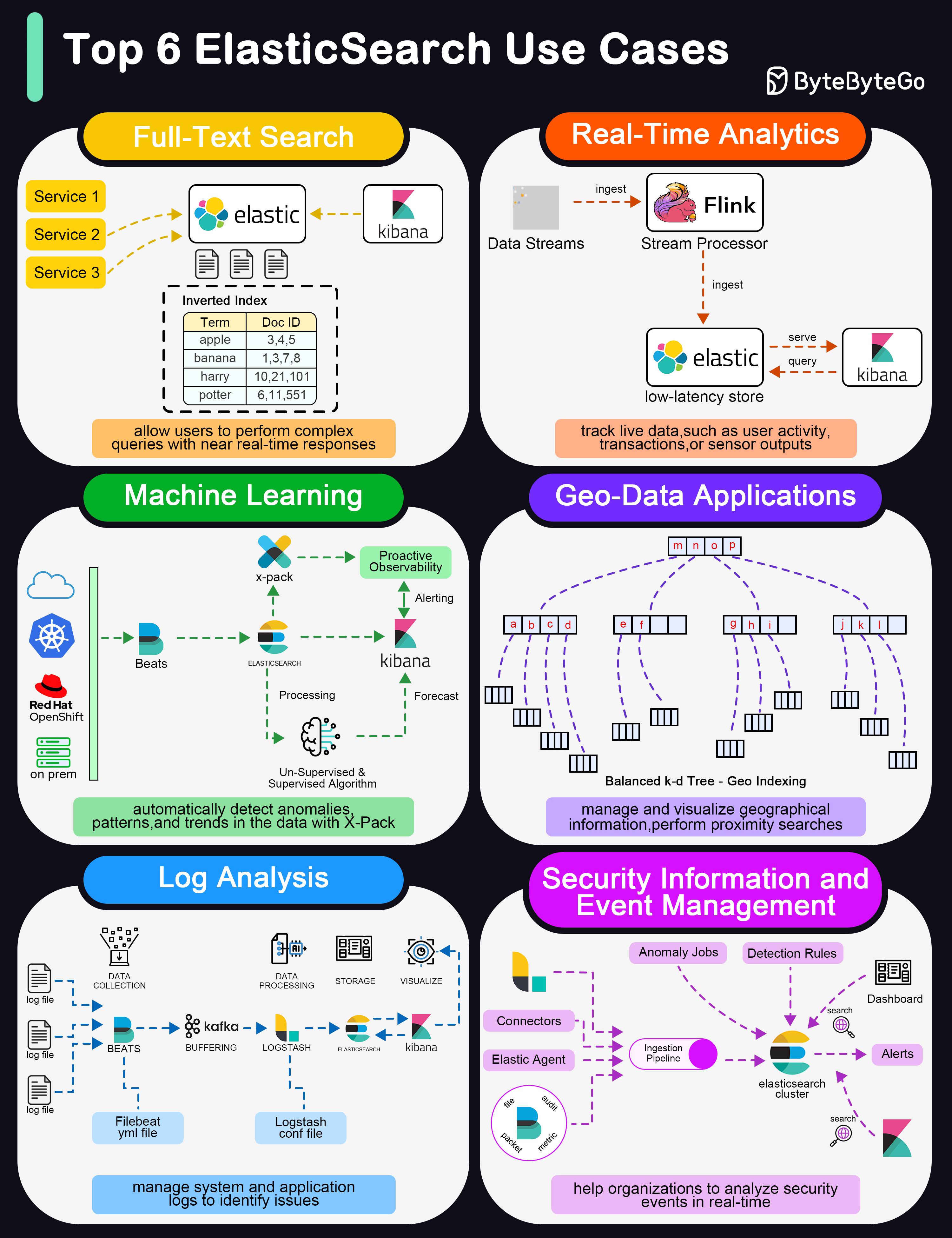

+ * [Top 6 Elasticsearch Use Cases](https://bytebytego.com/guides/top-6-elasticsearch-use-cases)

+ * [How Does CDN Work?](https://bytebytego.com/guides/how-does-cnd-work)

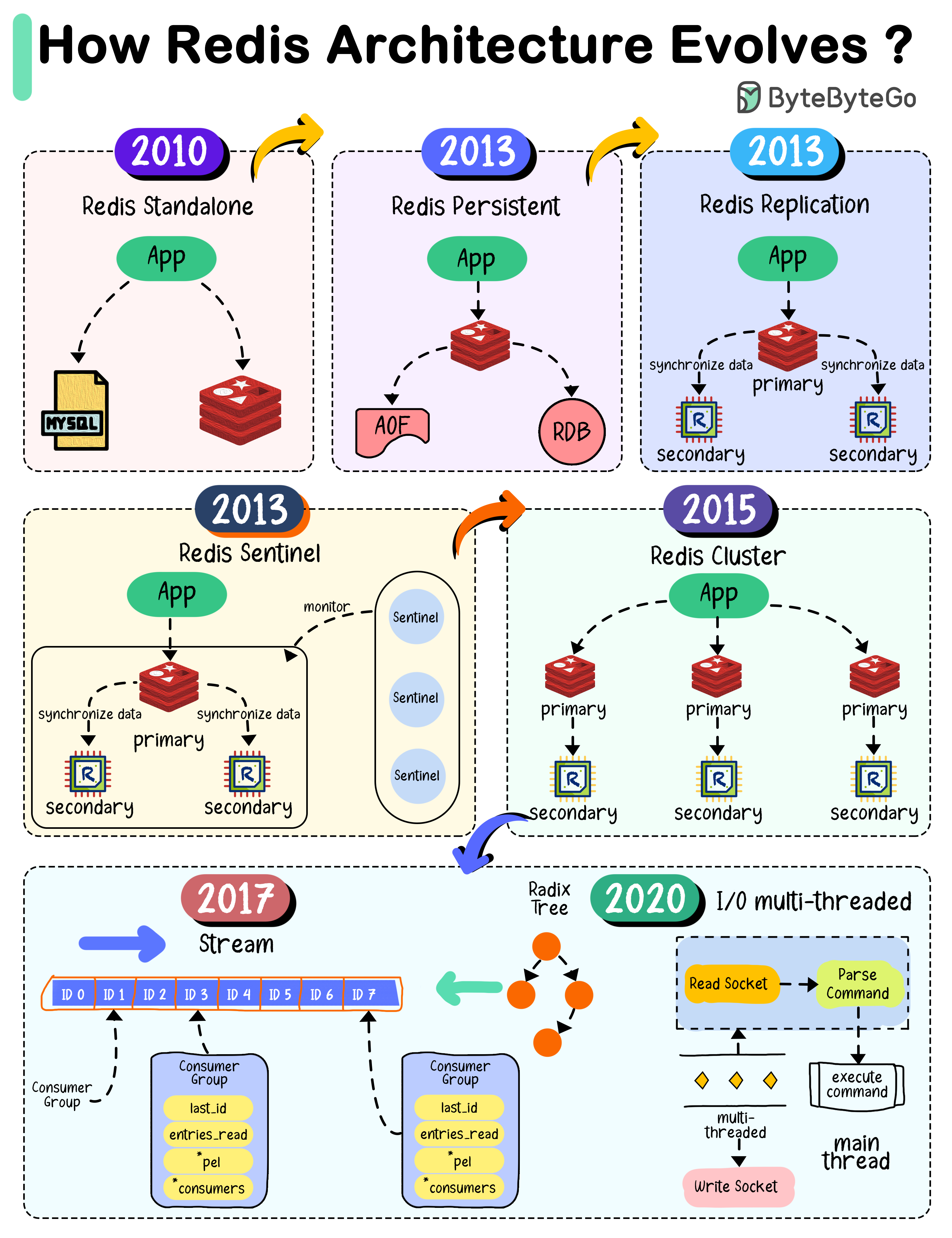

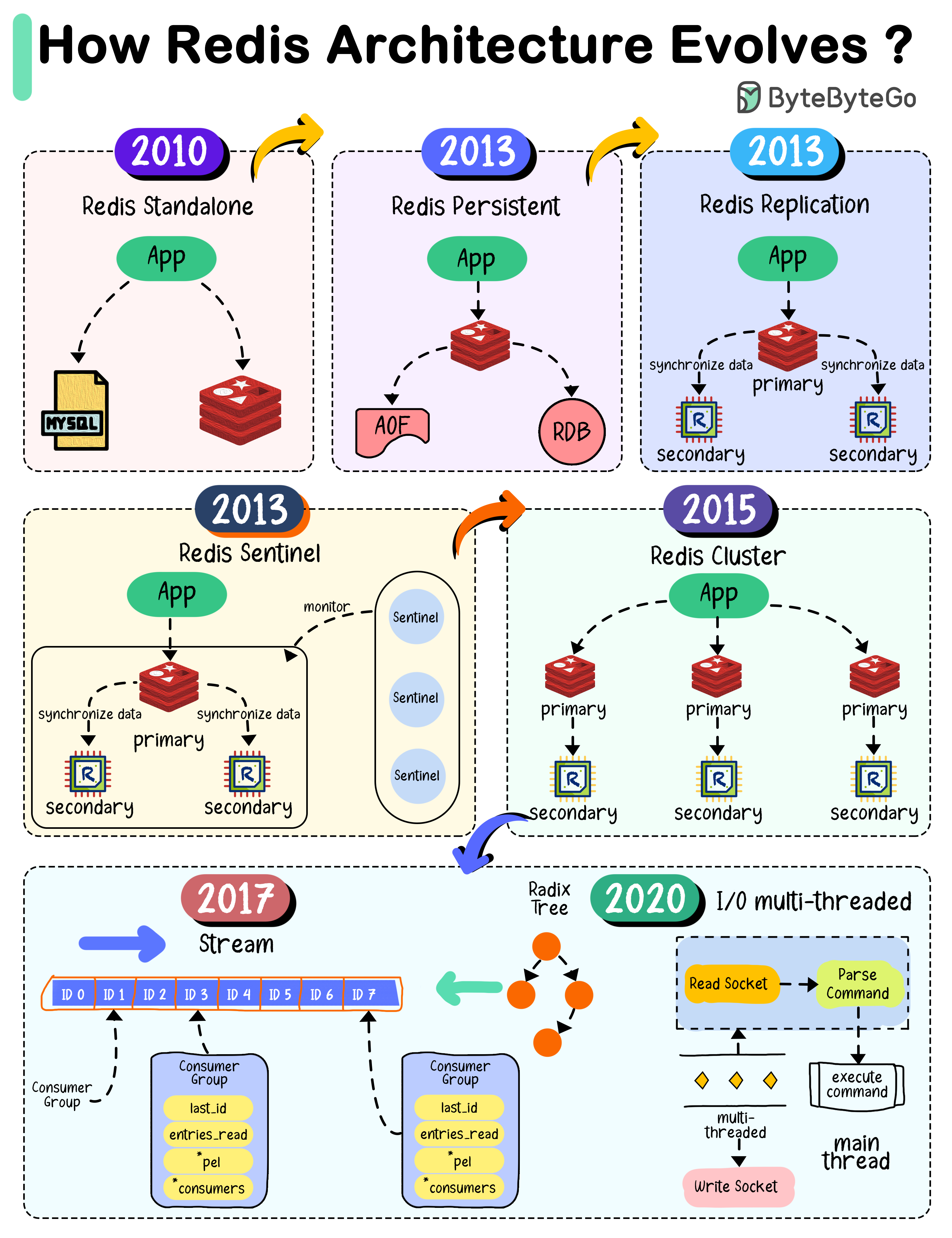

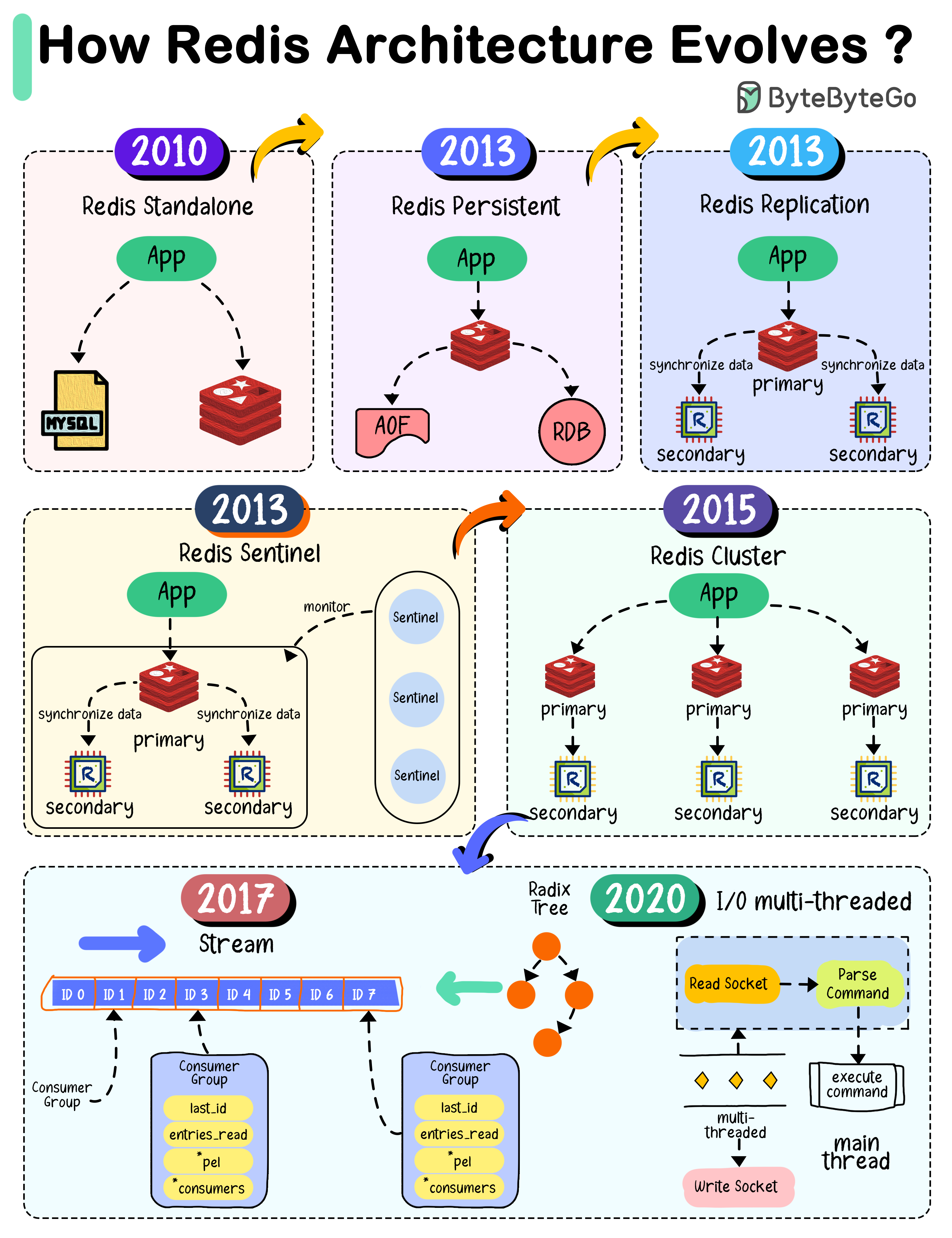

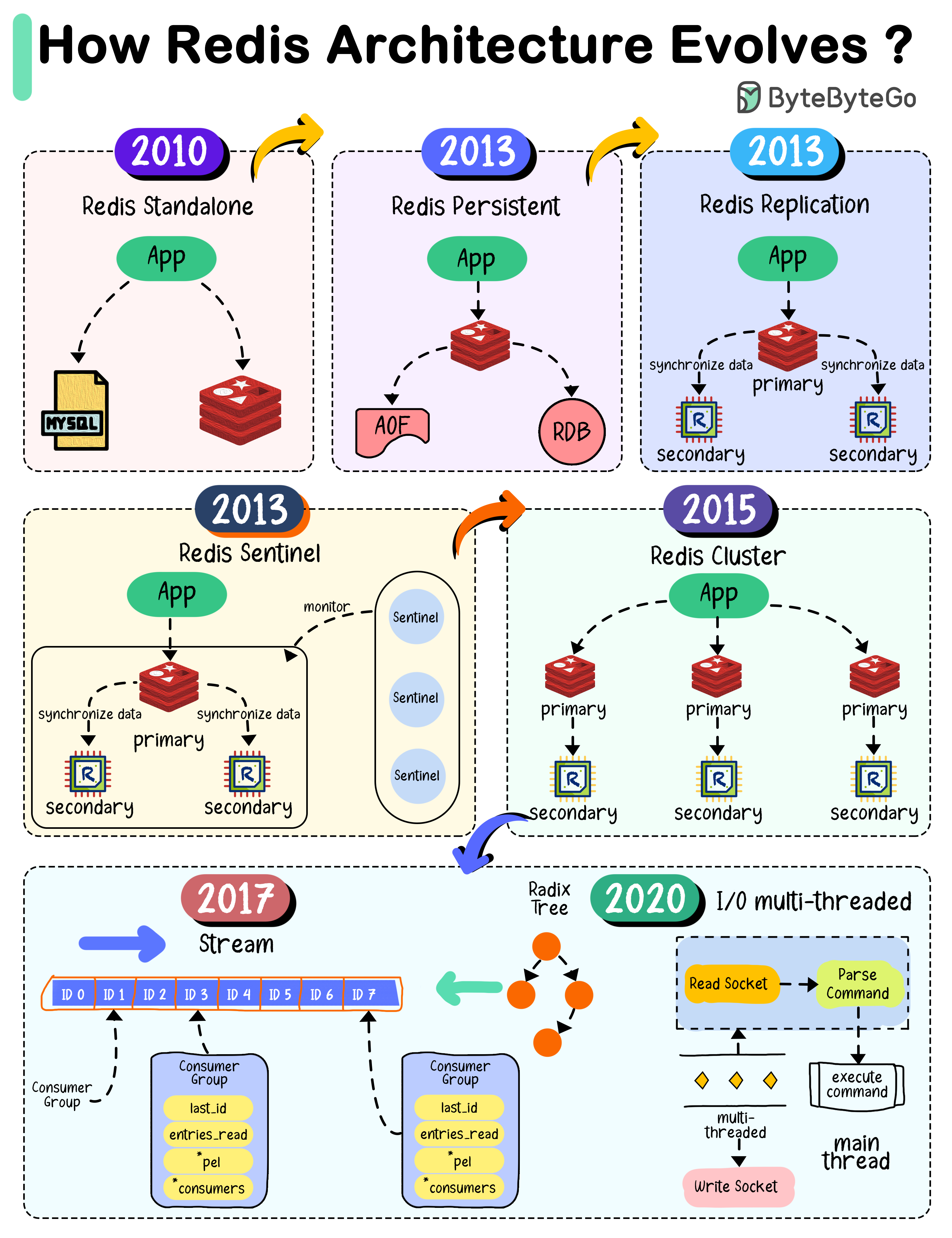

+ * [How Redis Architecture Evolved](https://bytebytego.com/guides/how-redis-architecture-evolve)

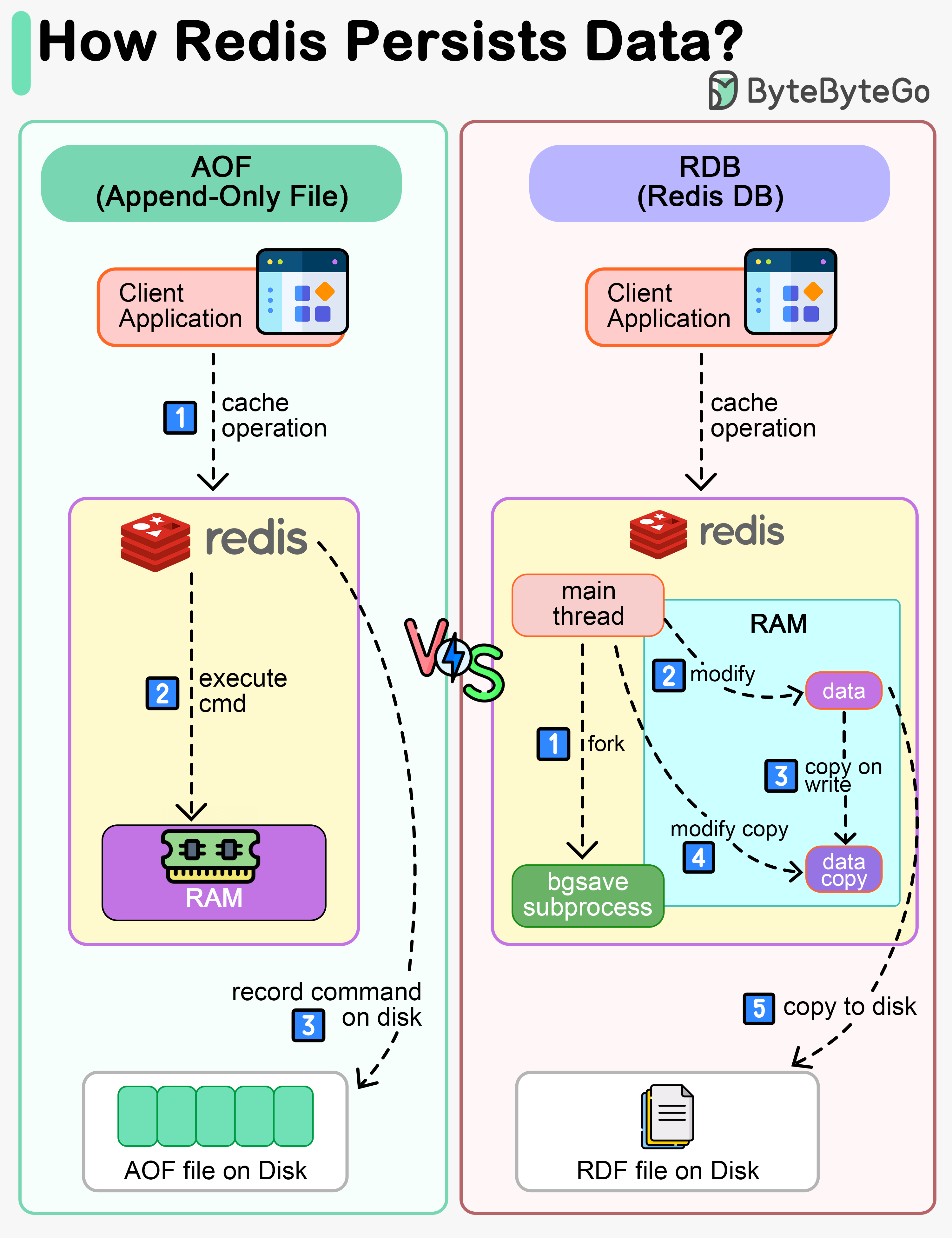

+ * [How Does Redis Persist Data?](https://bytebytego.com/guides/how-does-redis-persist-data)

+ * [How can Redis be used?](https://bytebytego.com/guides/how-can-redis-be-used)

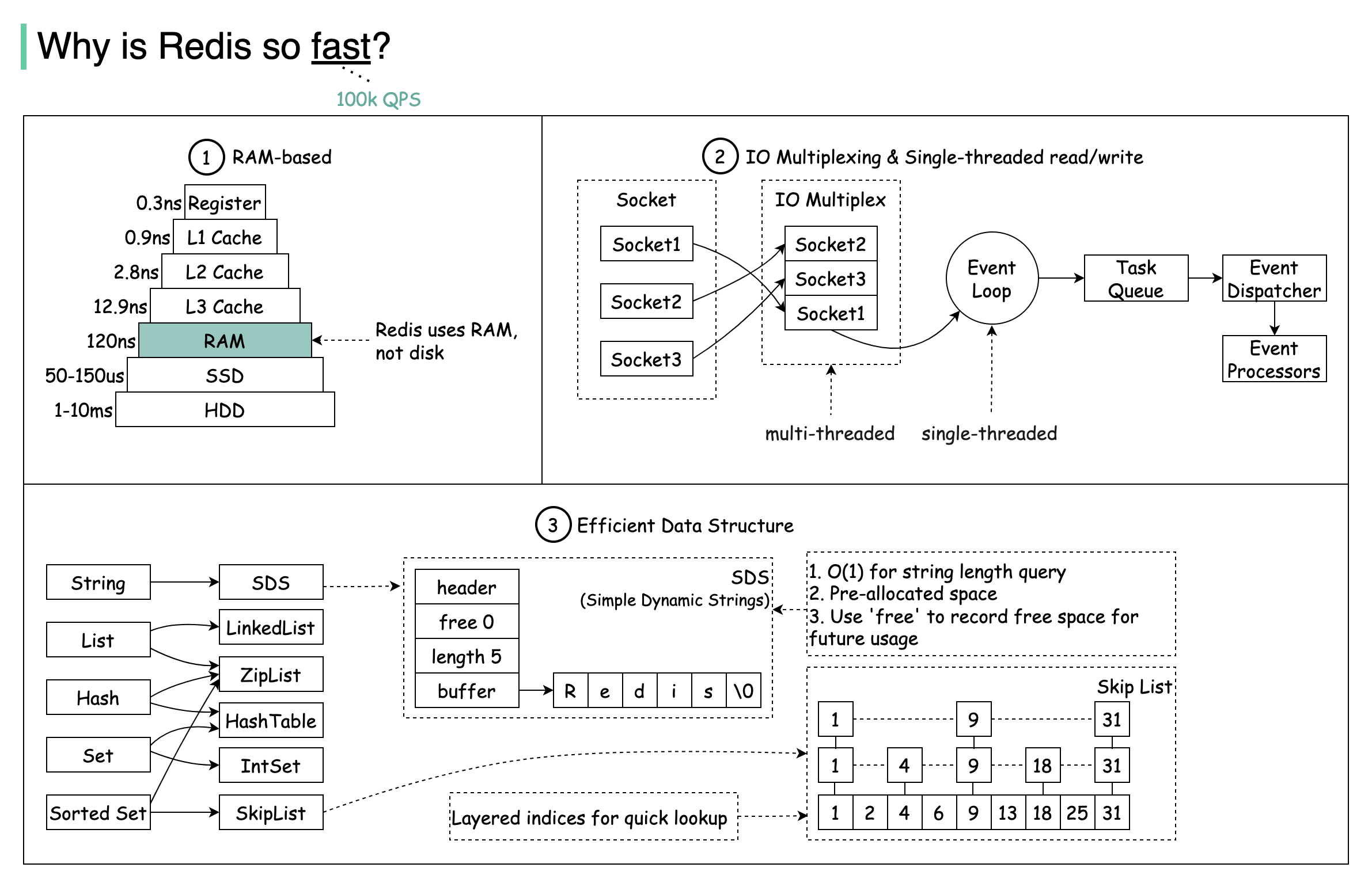

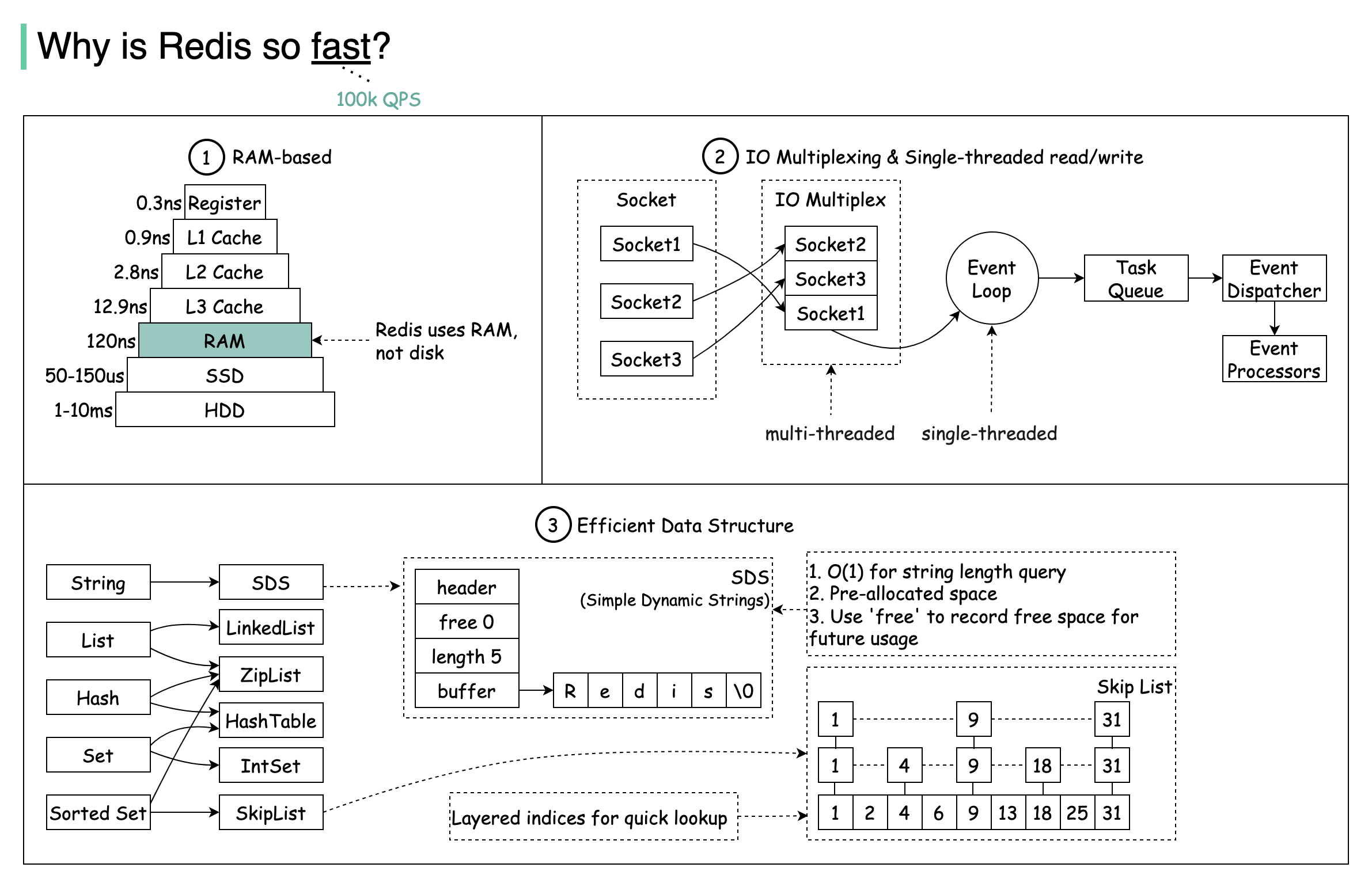

+ * [Why is Redis so Fast?](https://bytebytego.com/guides/why-is-redis-so-fast)

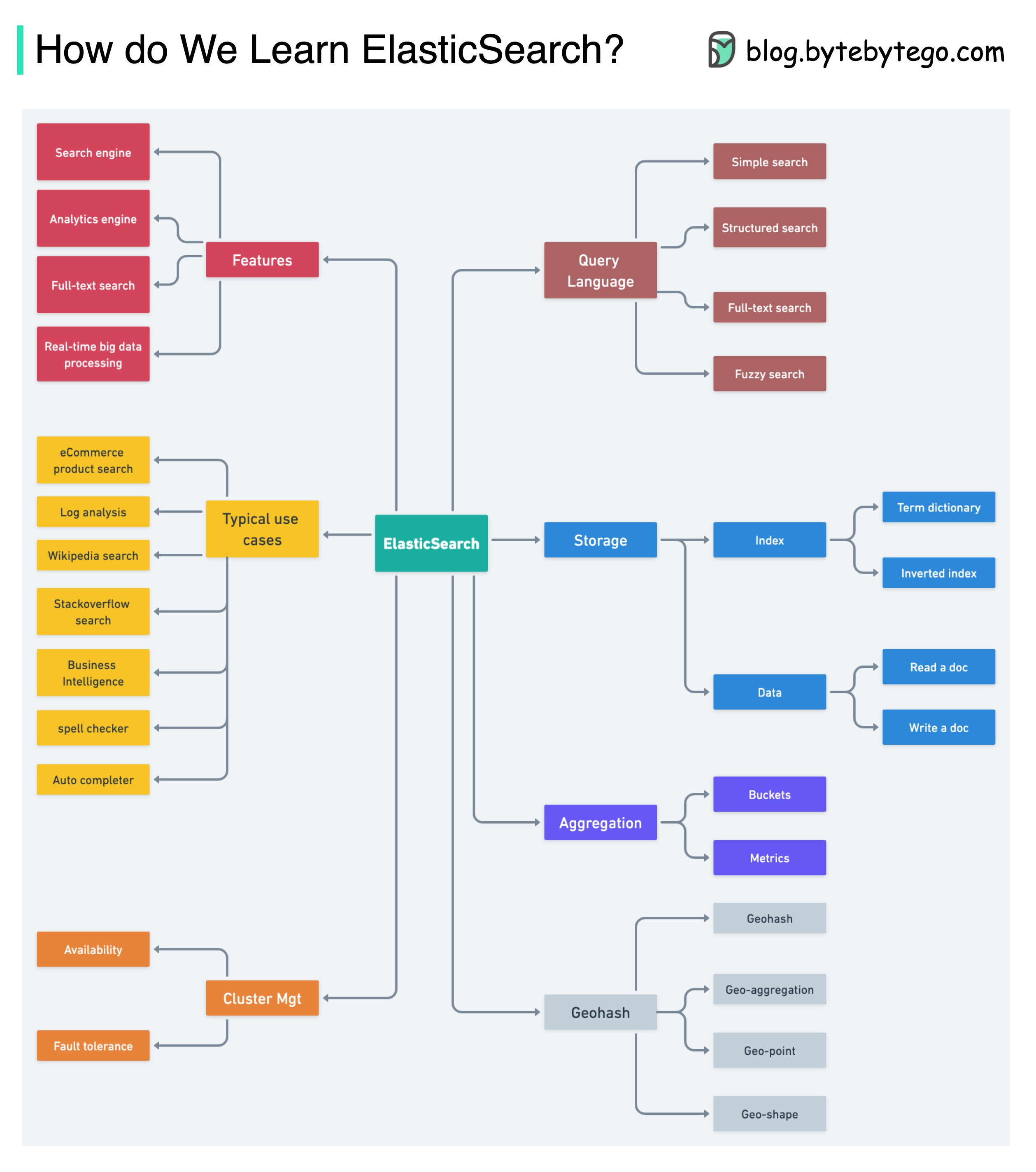

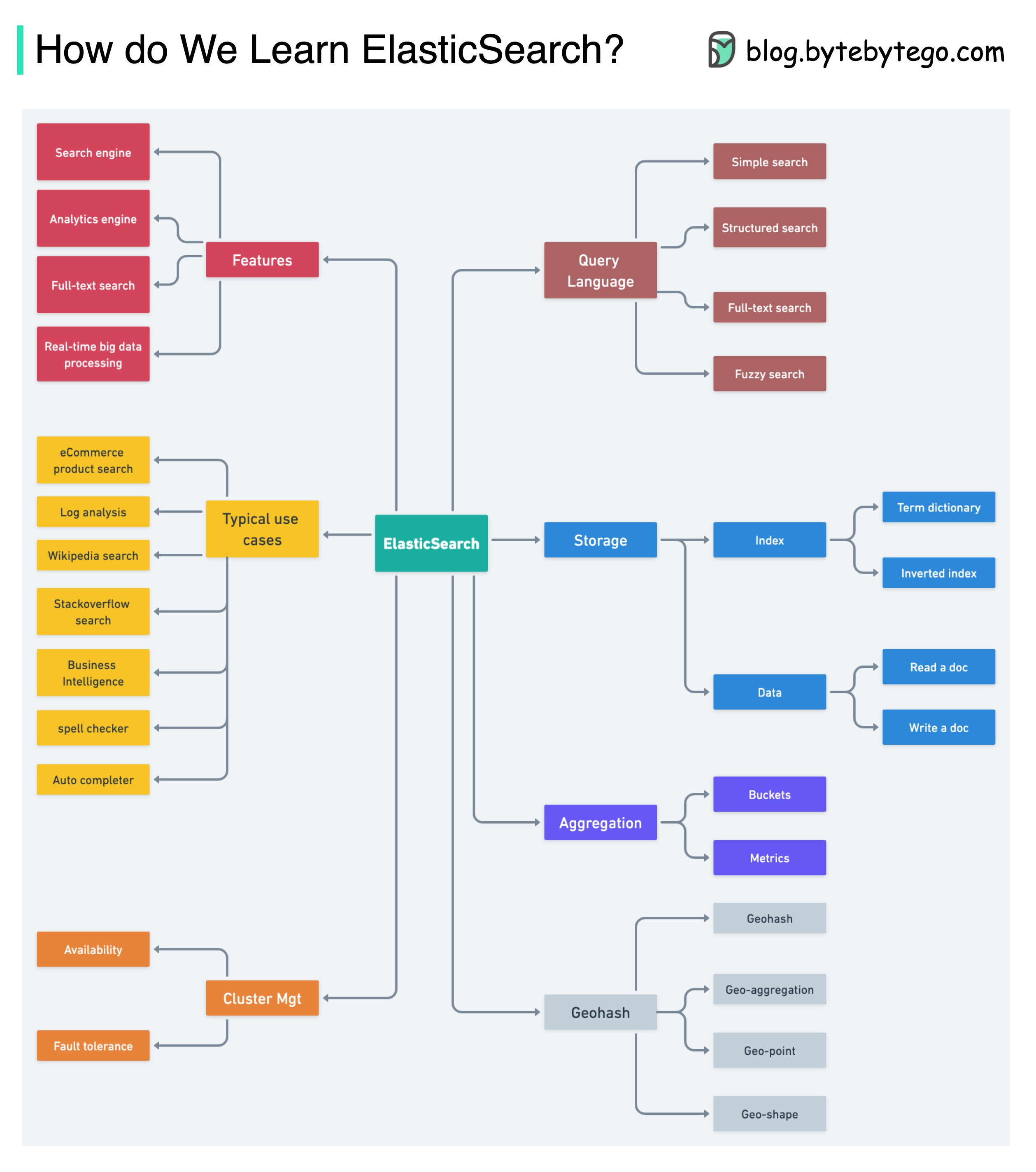

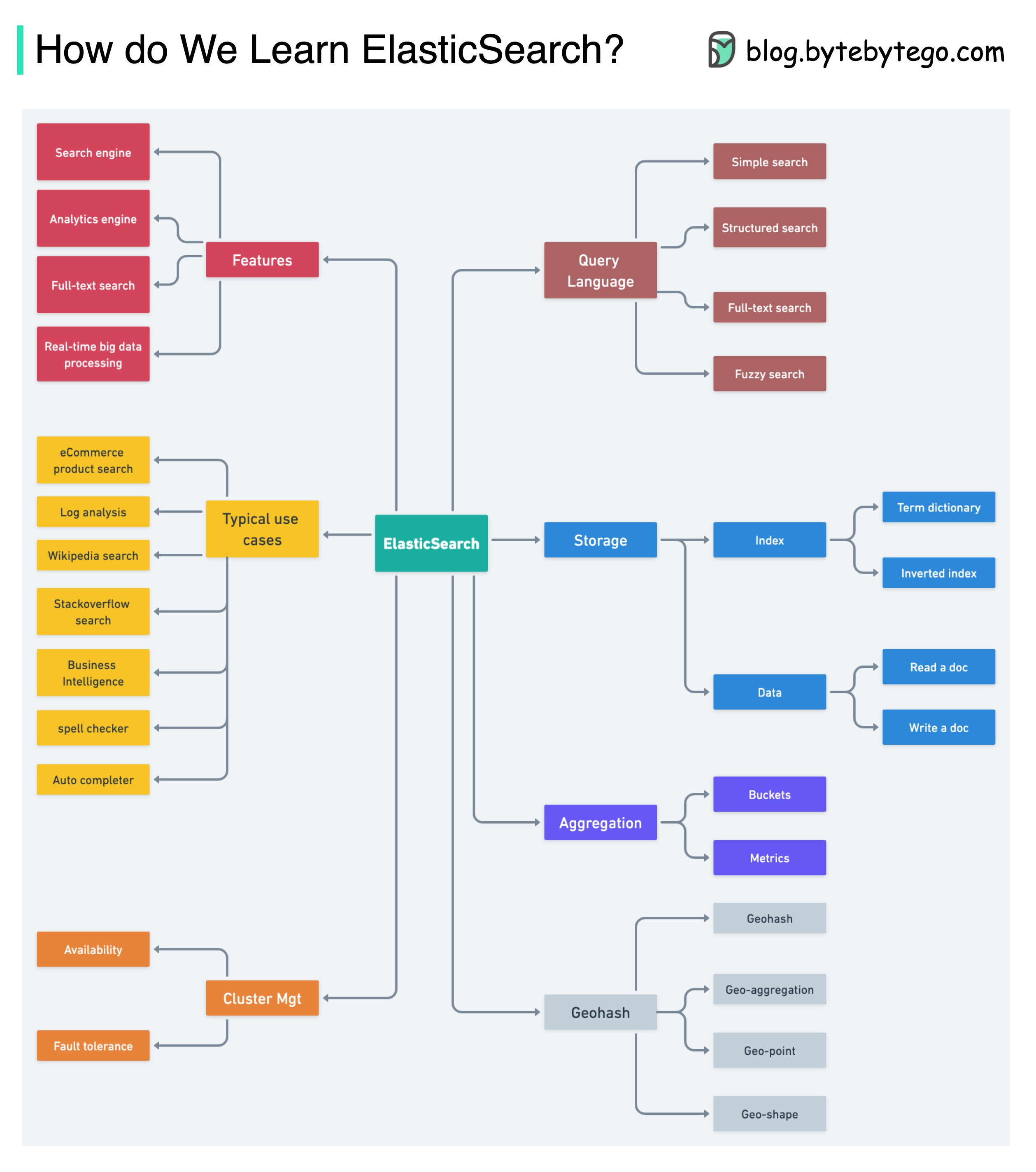

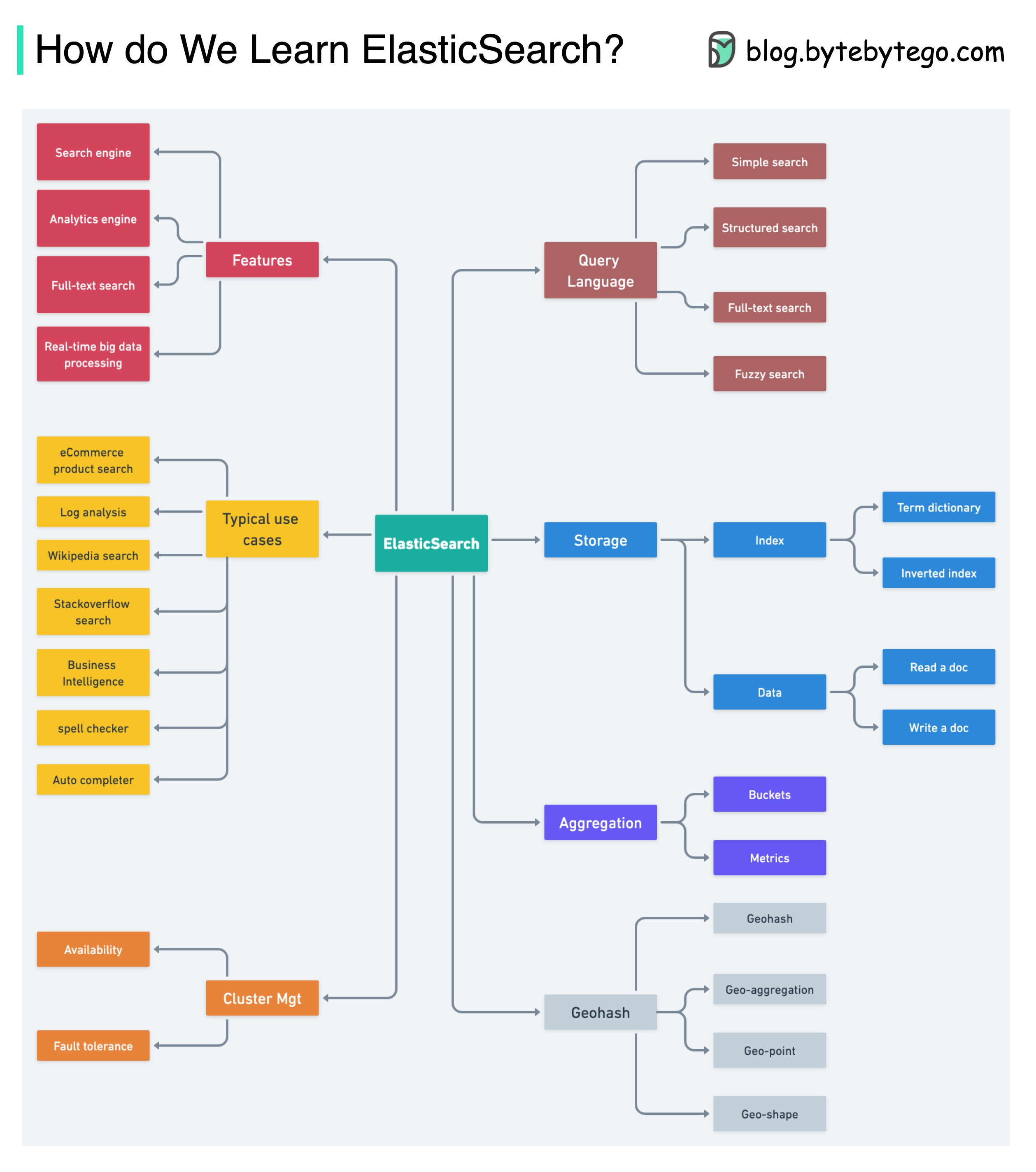

+ * [How to Learn Elasticsearch](https://bytebytego.com/guides/how-do-we-learn-elasticsearch)

+ * [What is CDN (Content Delivery Network)?](https://bytebytego.com/guides/what-is-cdn-content-delivery-network)

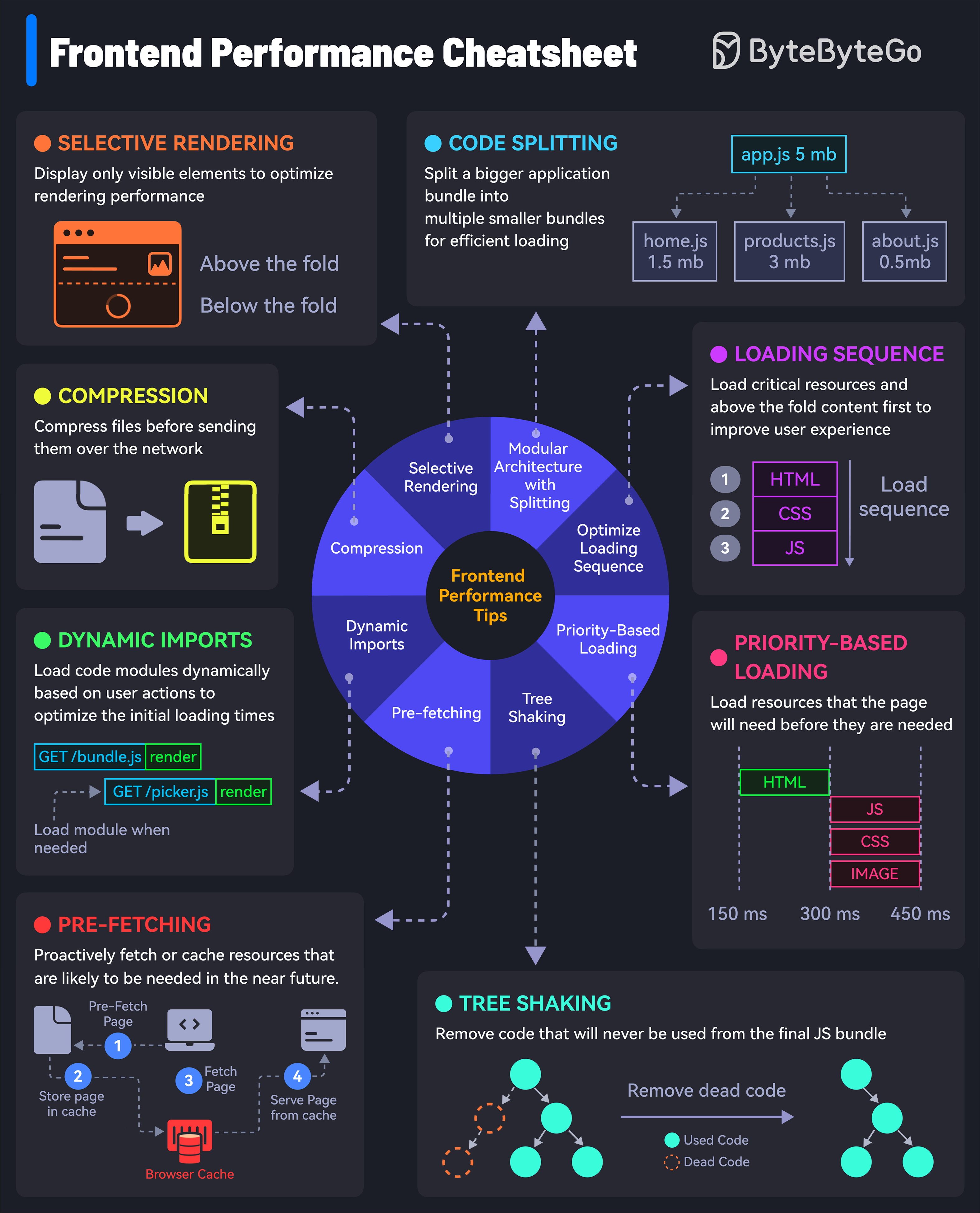

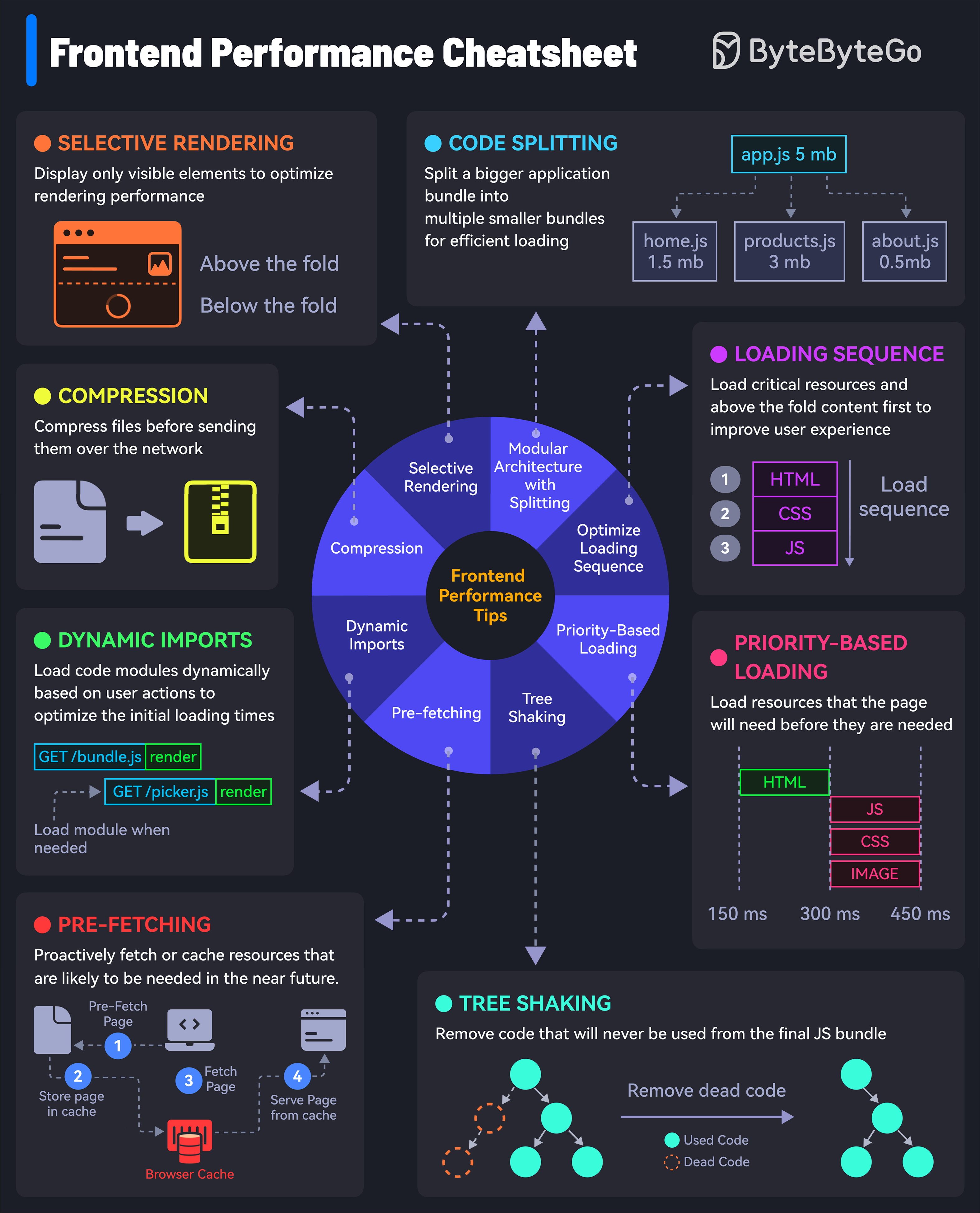

+ * [Frontend Performance Optimization](https://bytebytego.com/guides/how-to-load-your-websites-at-lightning-speed)

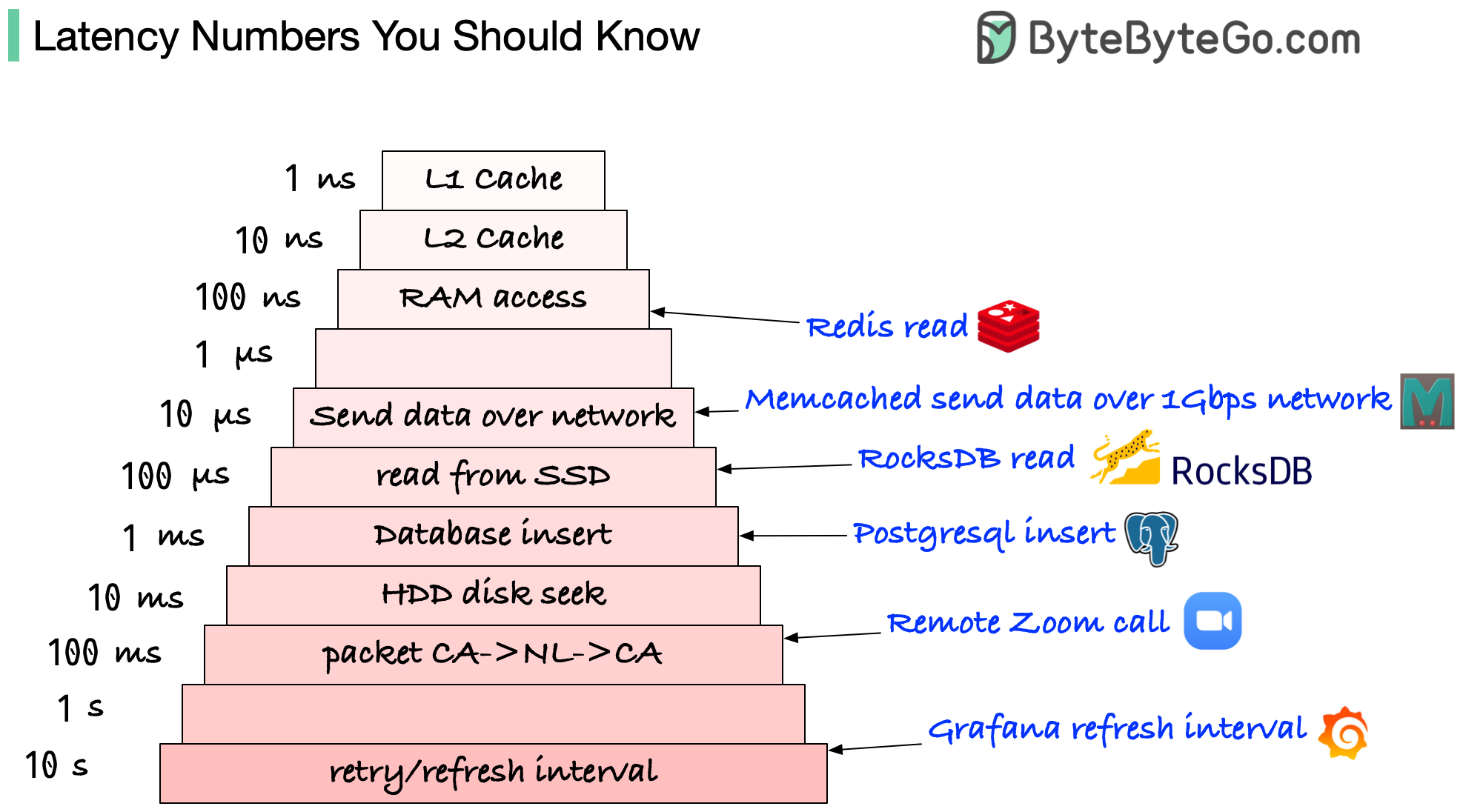

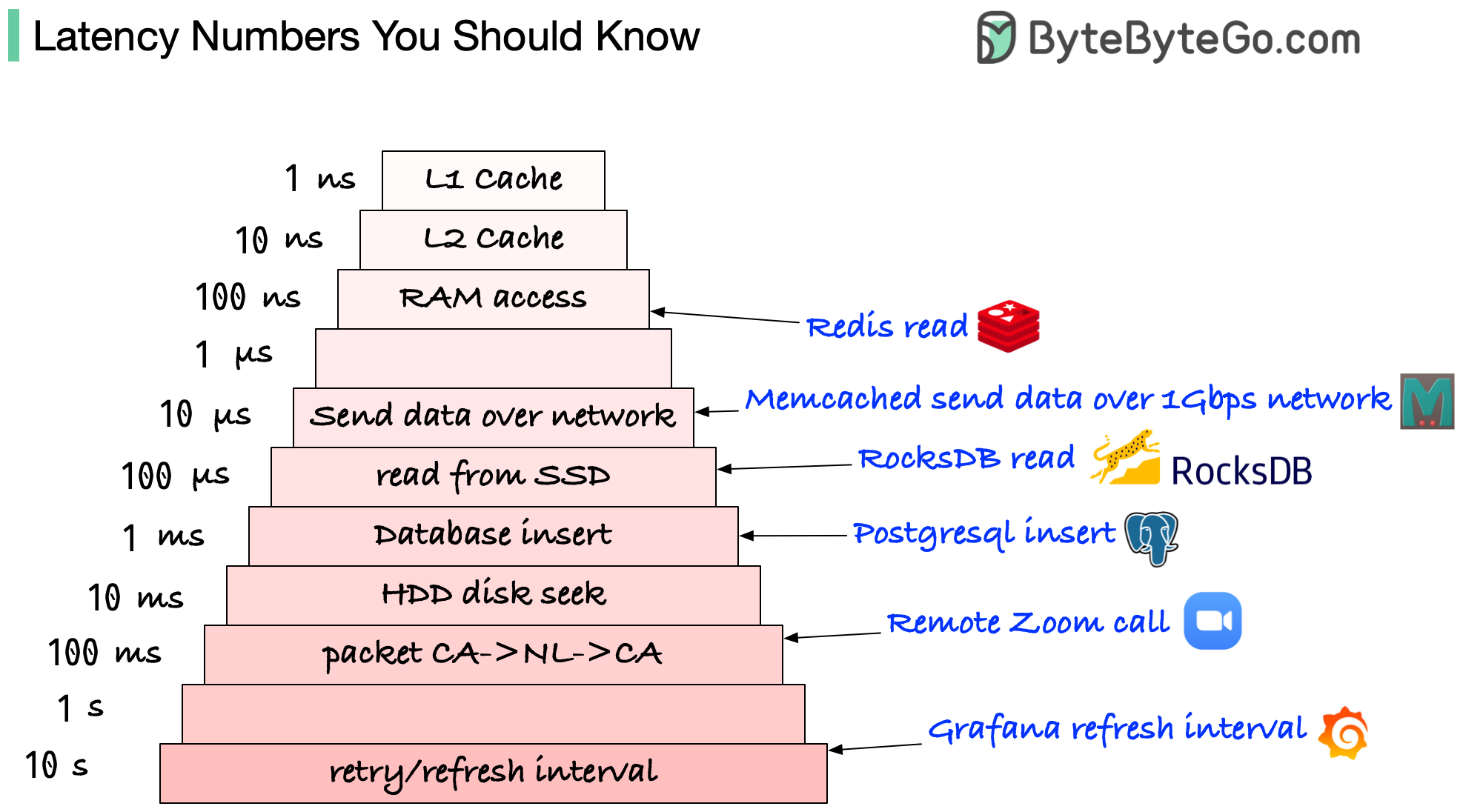

+ * [Which Latency Numbers Should You Know?](https://bytebytego.com/guides/which-latency-numbers-should-you-know)

+ * [Top Caching Strategies](https://bytebytego.com/guides/what-are-the-top-caching-strategies)

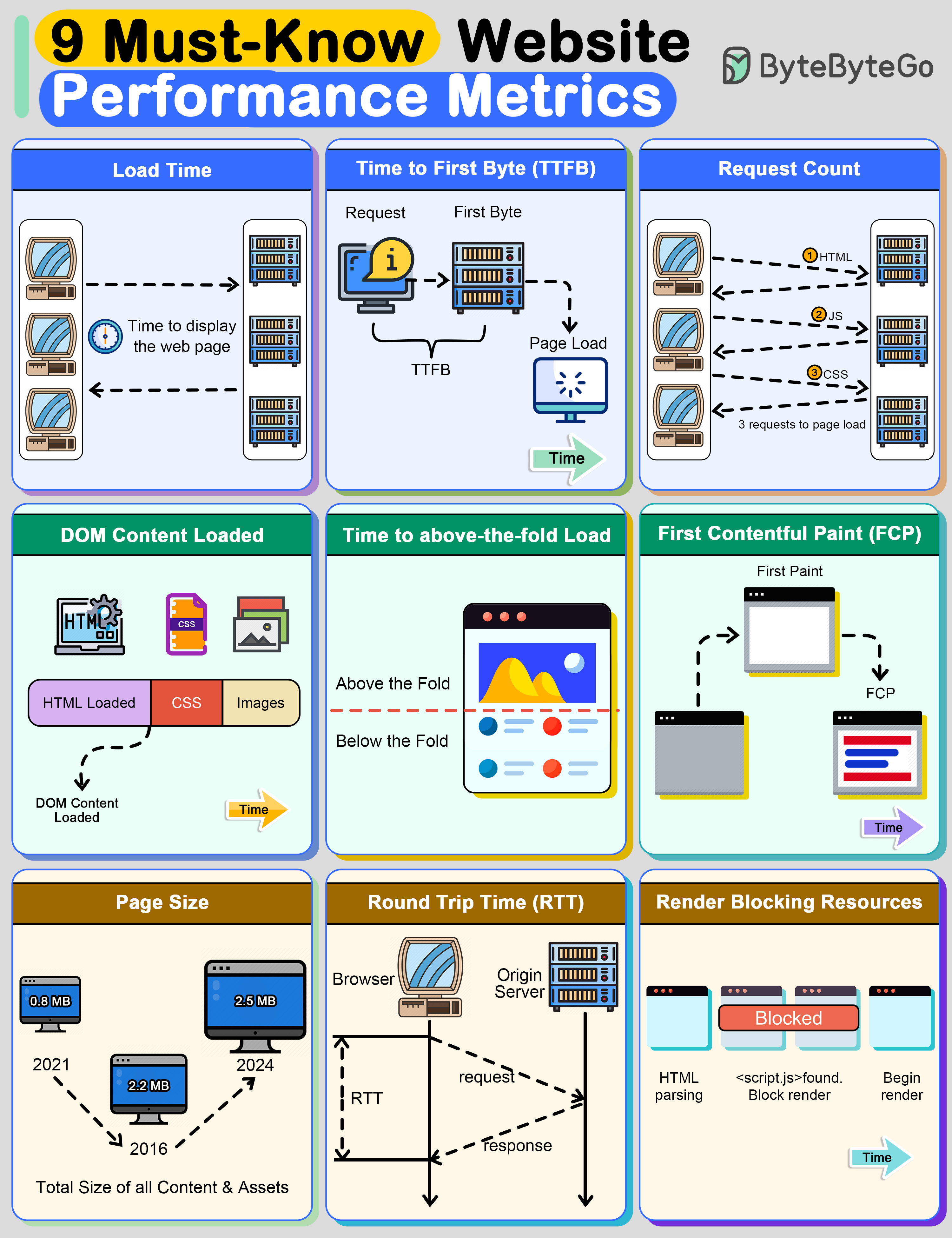

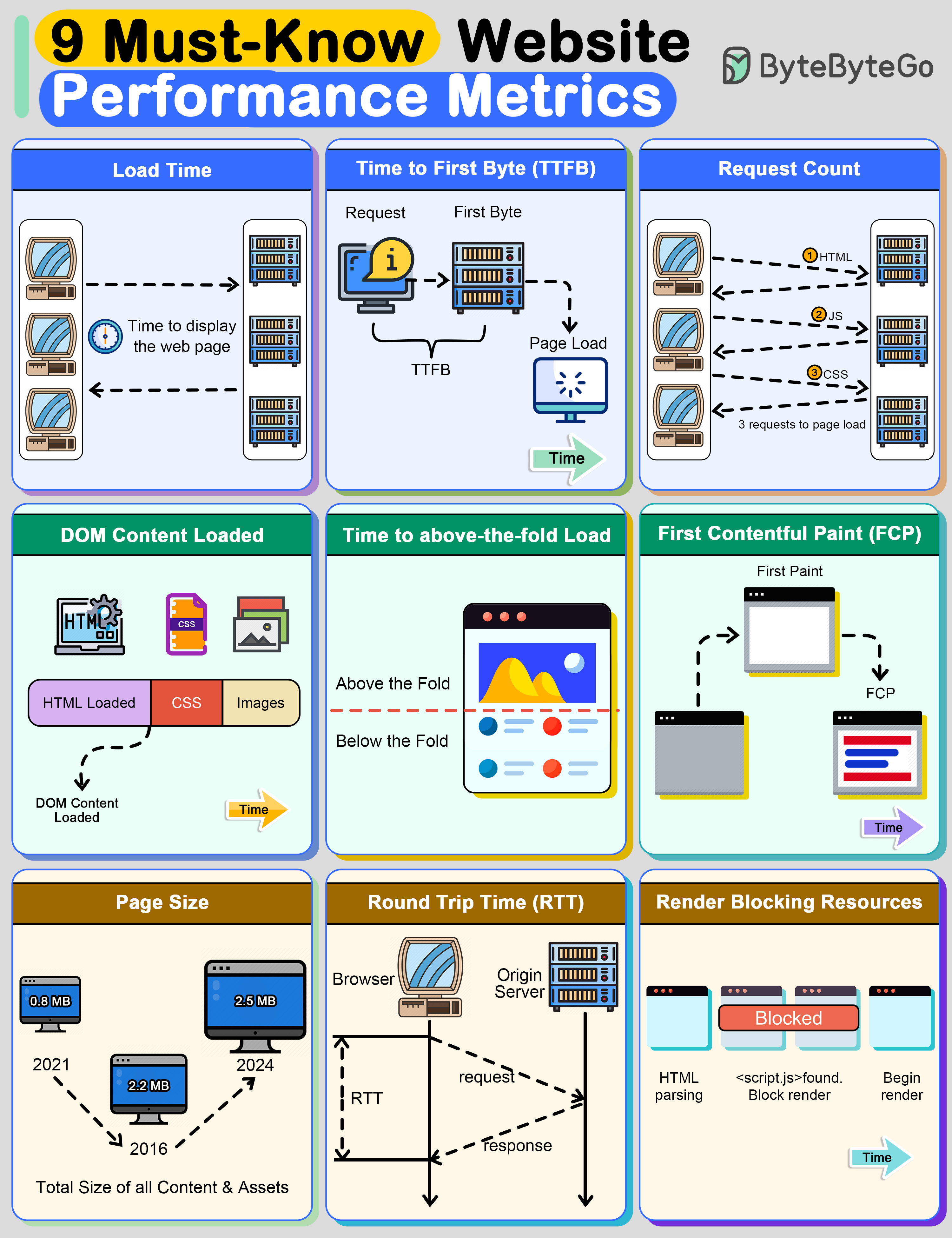

+ * [Top 9 Website Performance Metrics You Cannot Ignore](https://bytebytego.com/guides/top-9-website-performance-metrics-you-cannot-ignore)

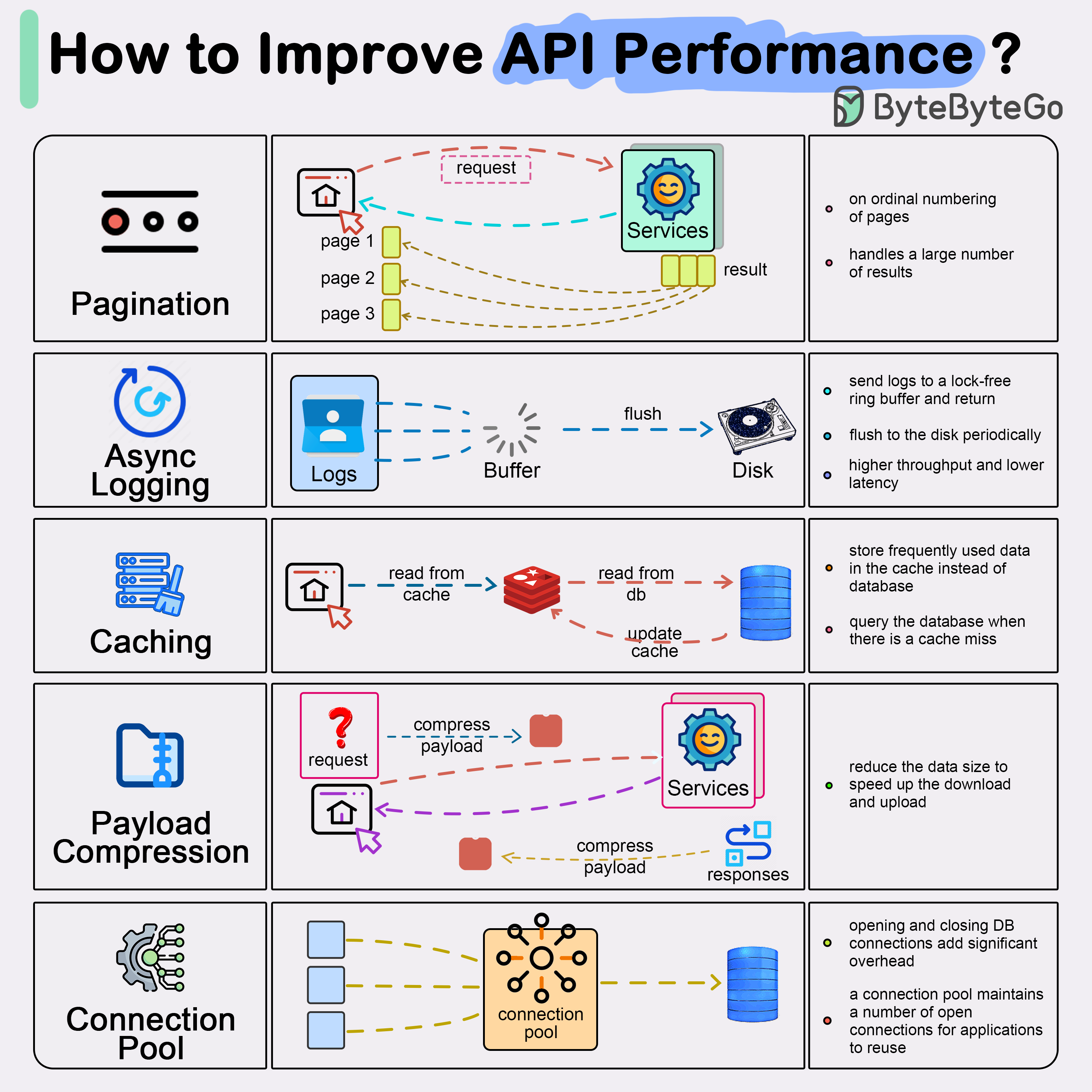

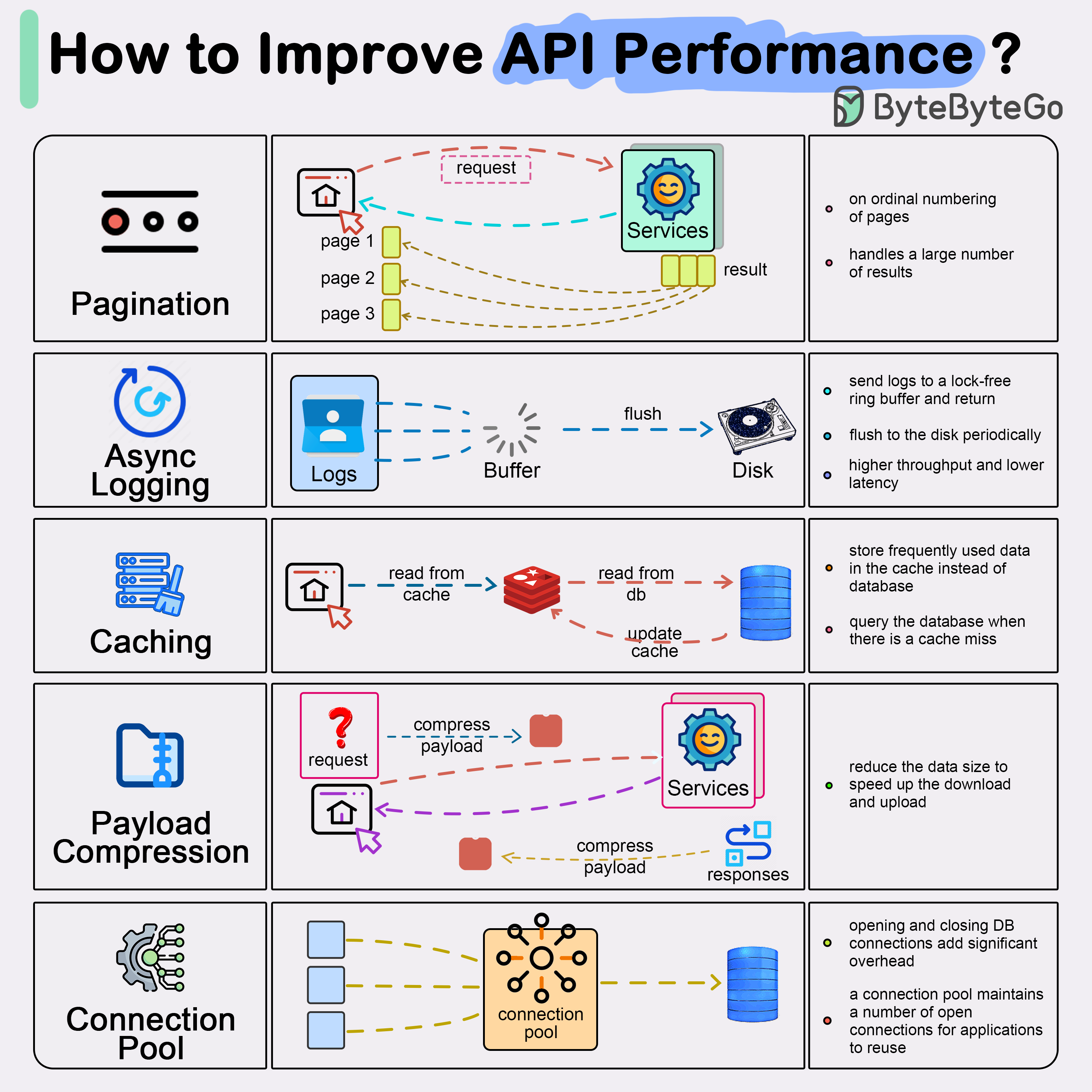

+ * [Top 5 Common Ways to Improve API Performance](https://bytebytego.com/guides/top-5-common-ways-to-improve-api-performance)

+ * [Learn Cache](https://bytebytego.com/guides/learn-cache)

+* [Payment and Fintech](https://bytebytego.com/guides/payment-and-fintech)

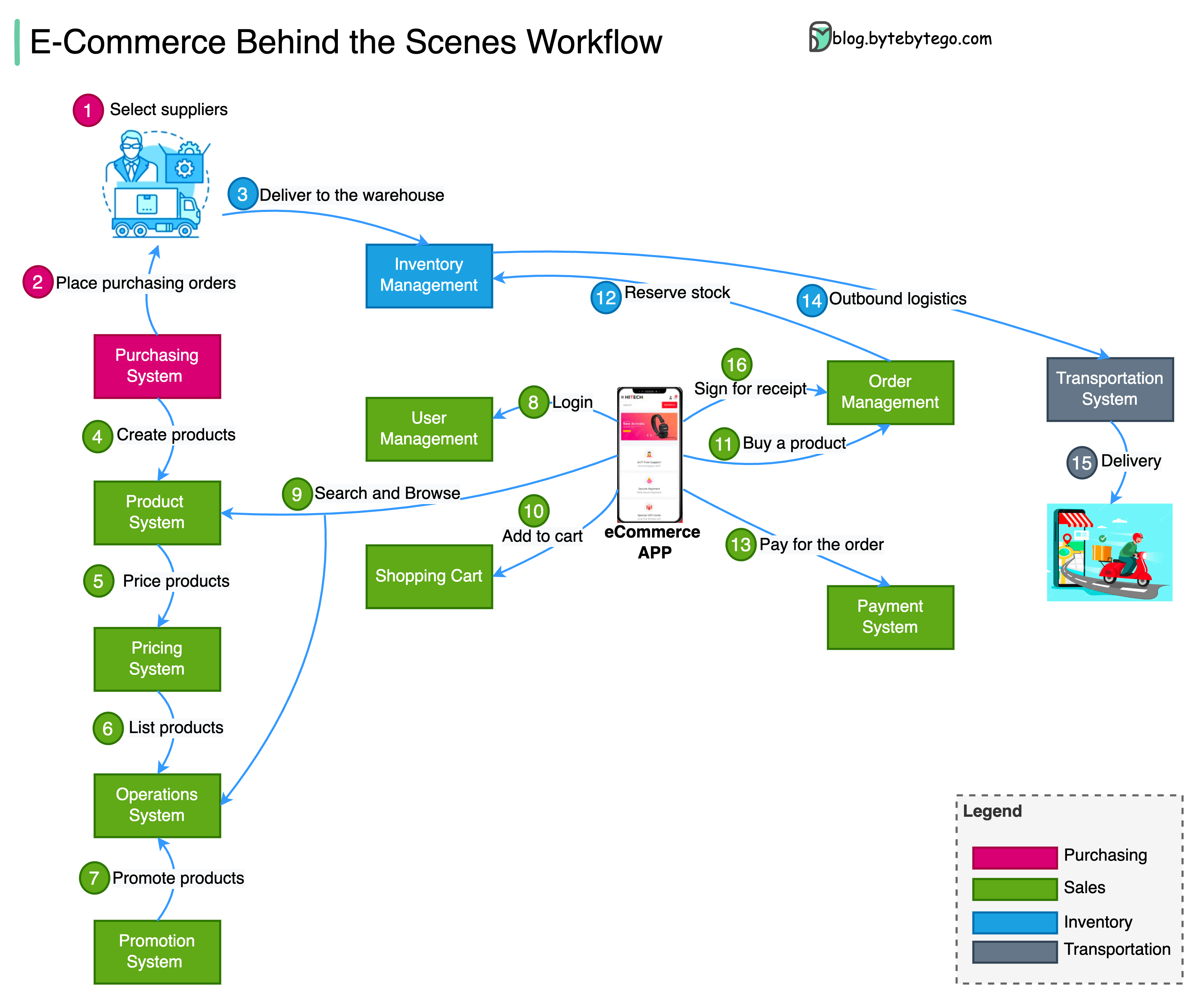

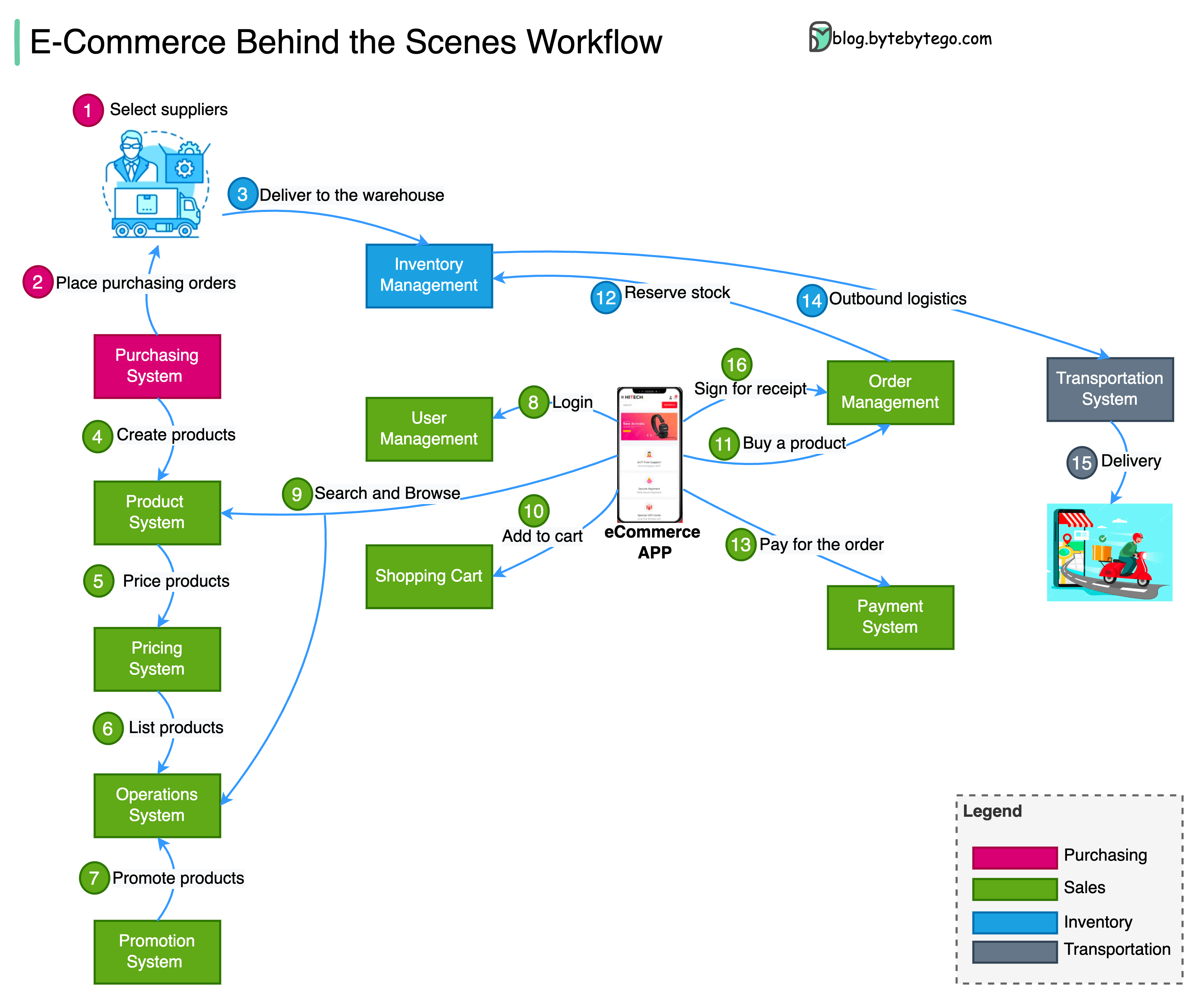

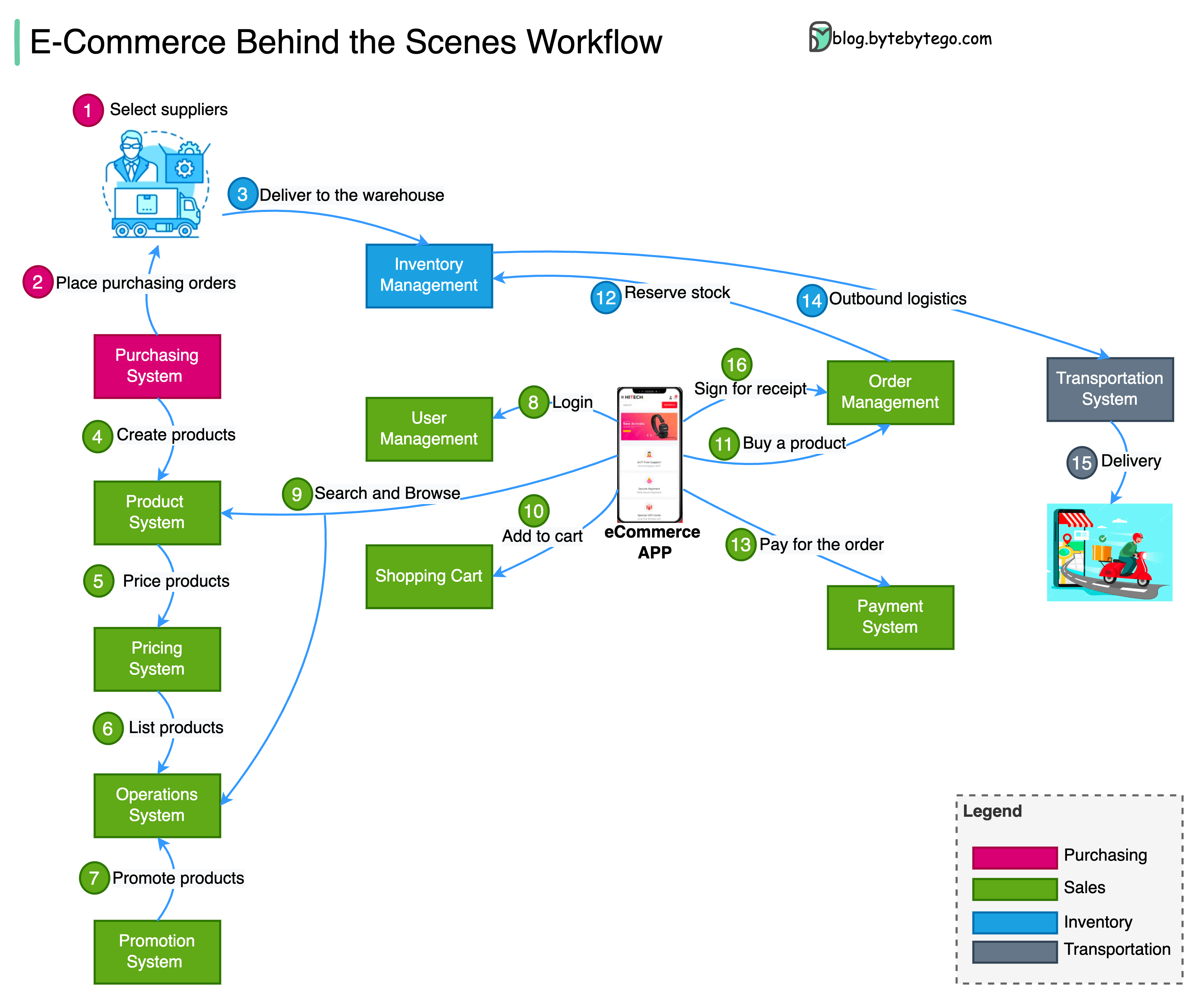

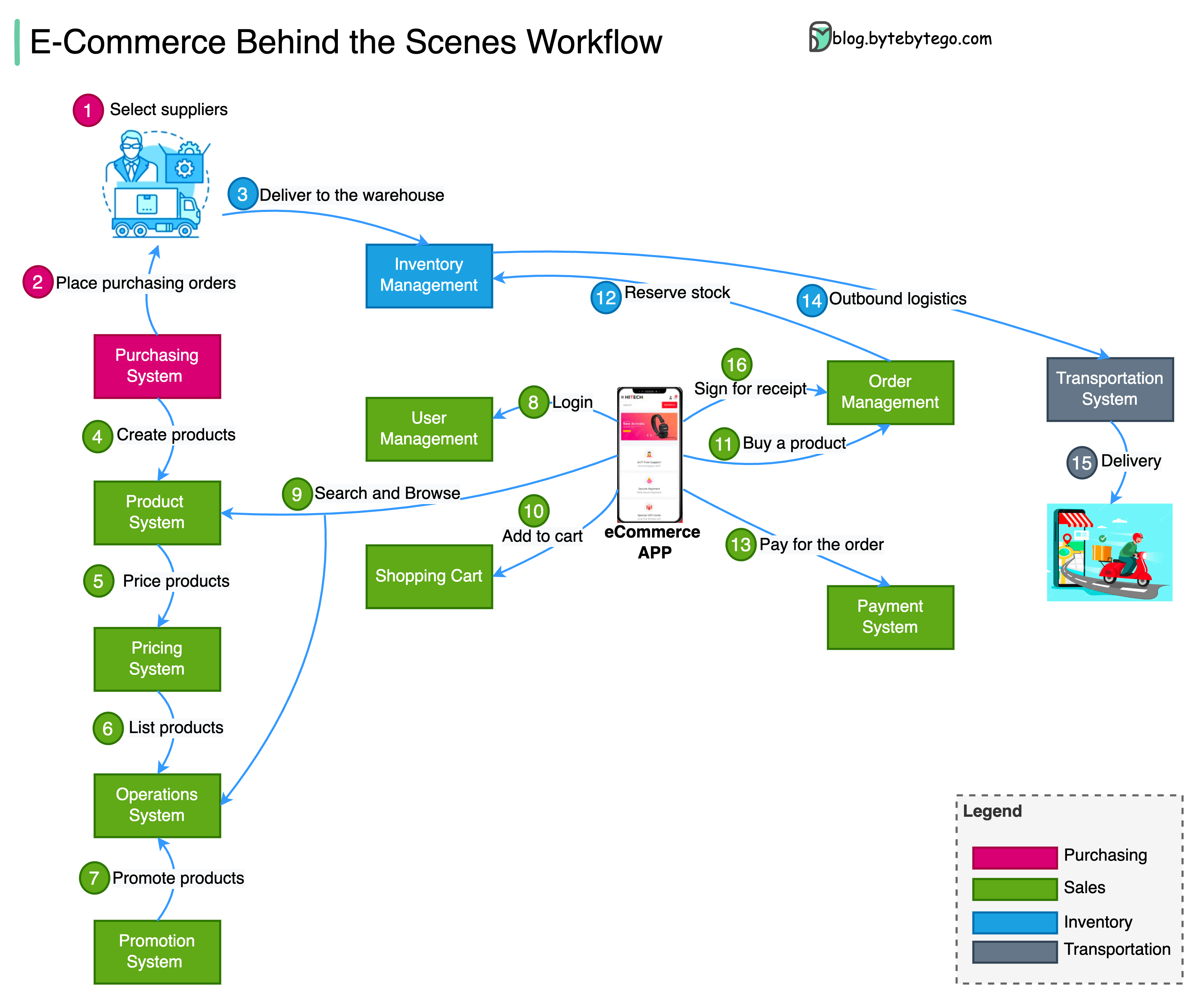

+ * [E-commerce Workflow](https://bytebytego.com/guides/e-commerce-workflow)

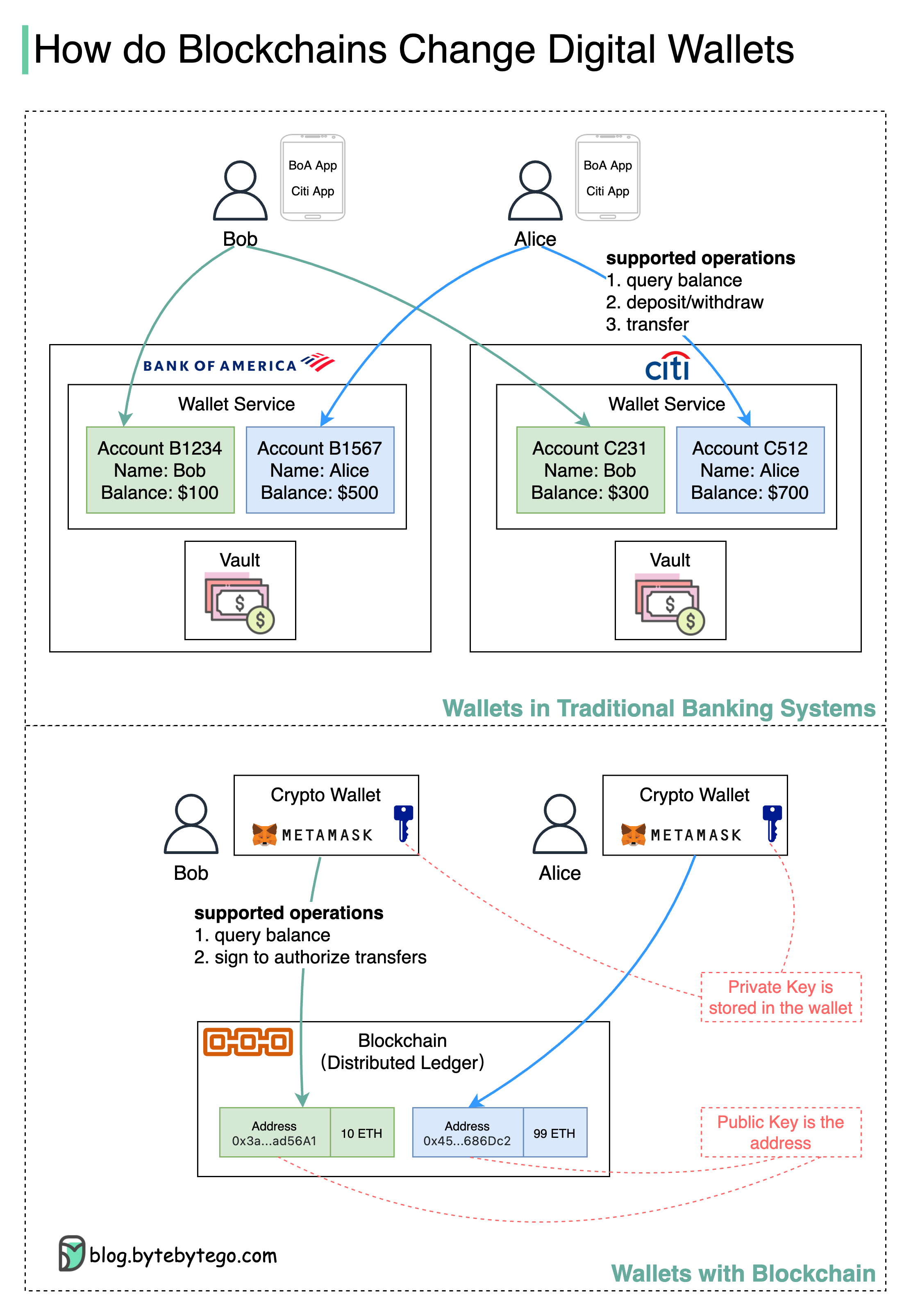

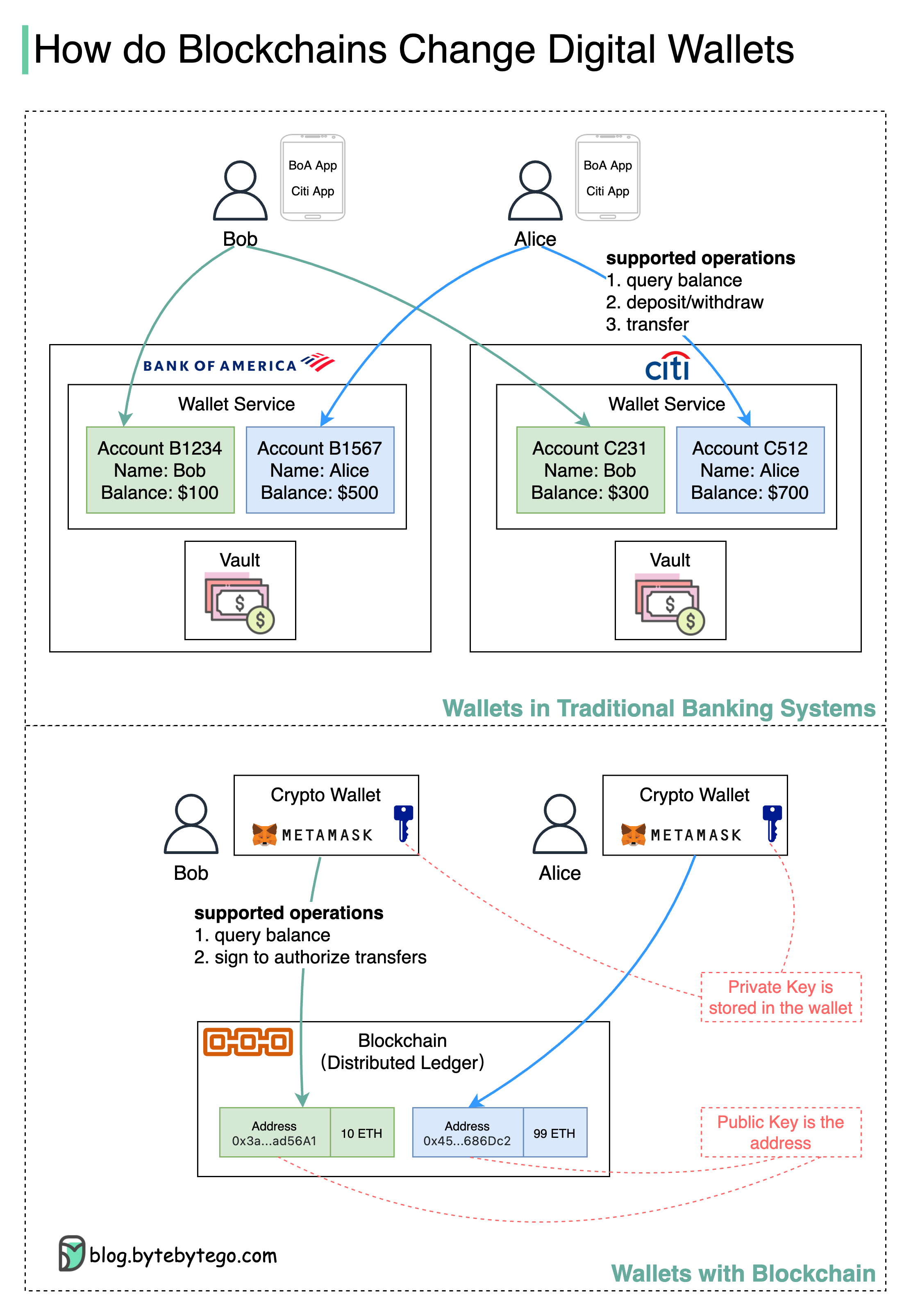

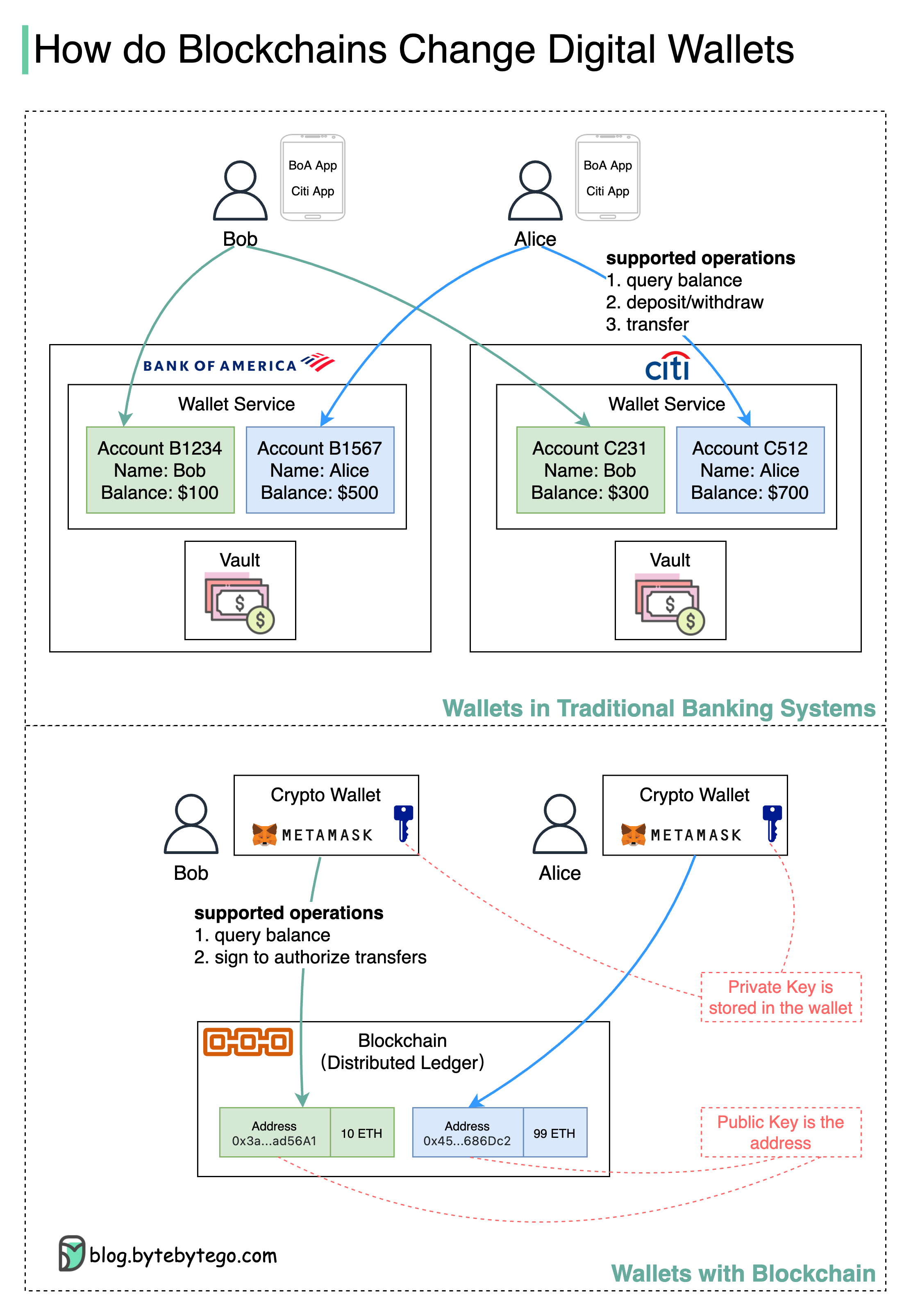

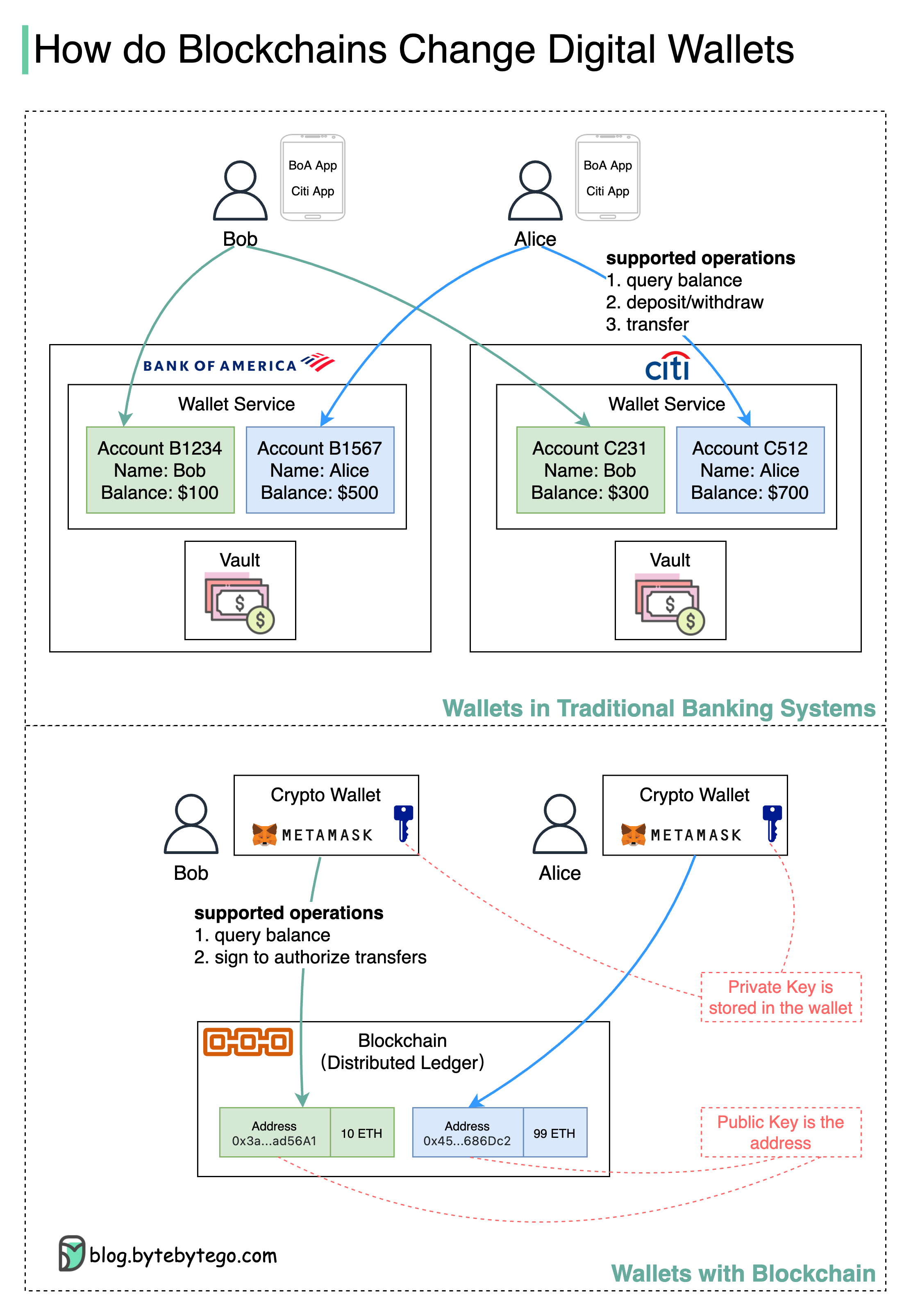

+ * [Digital Wallets: Banks vs. Blockchain](https://bytebytego.com/guides/digital-wallet-in-traditional-banks-vs-wallet-in-blockchain)

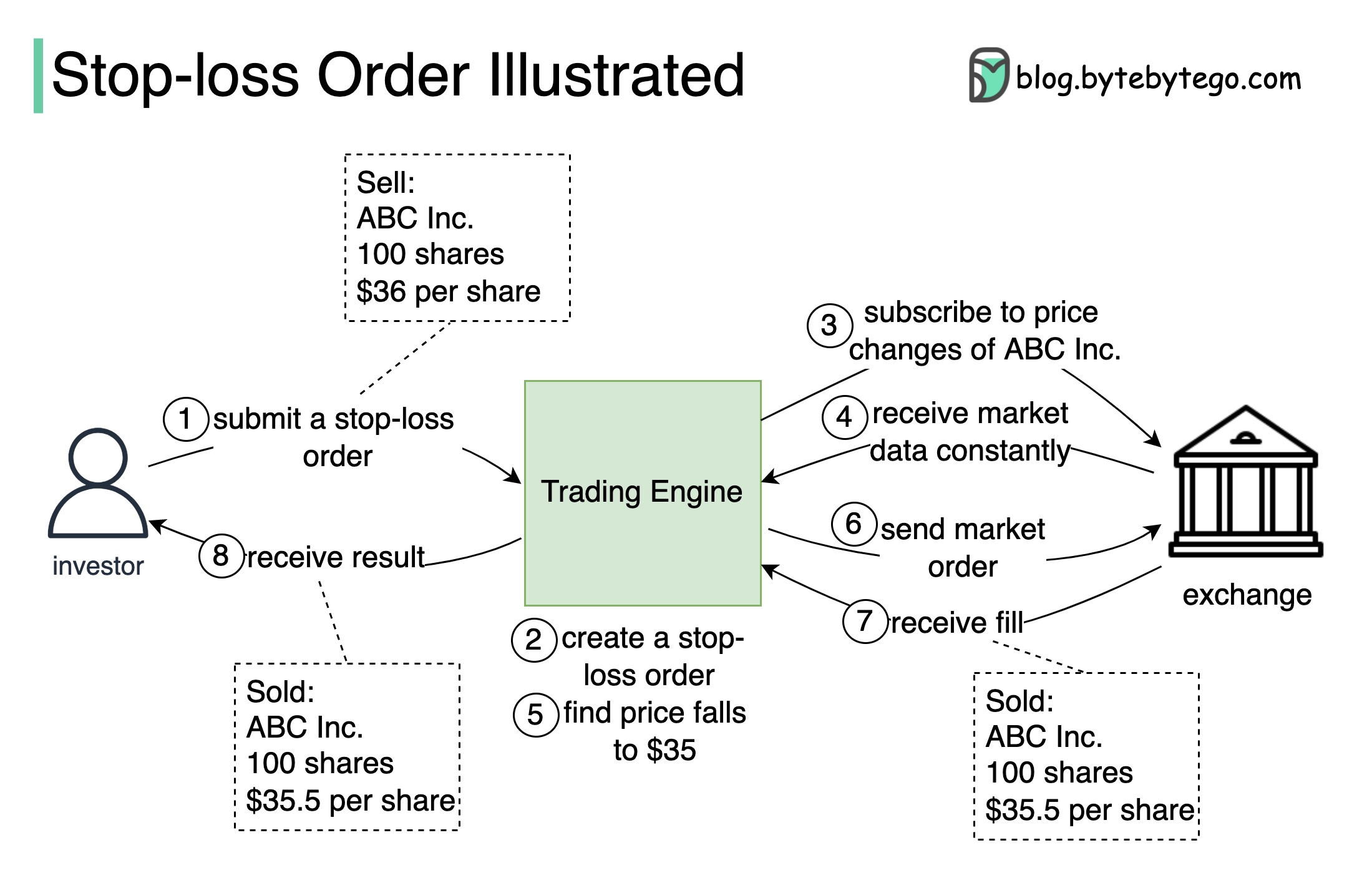

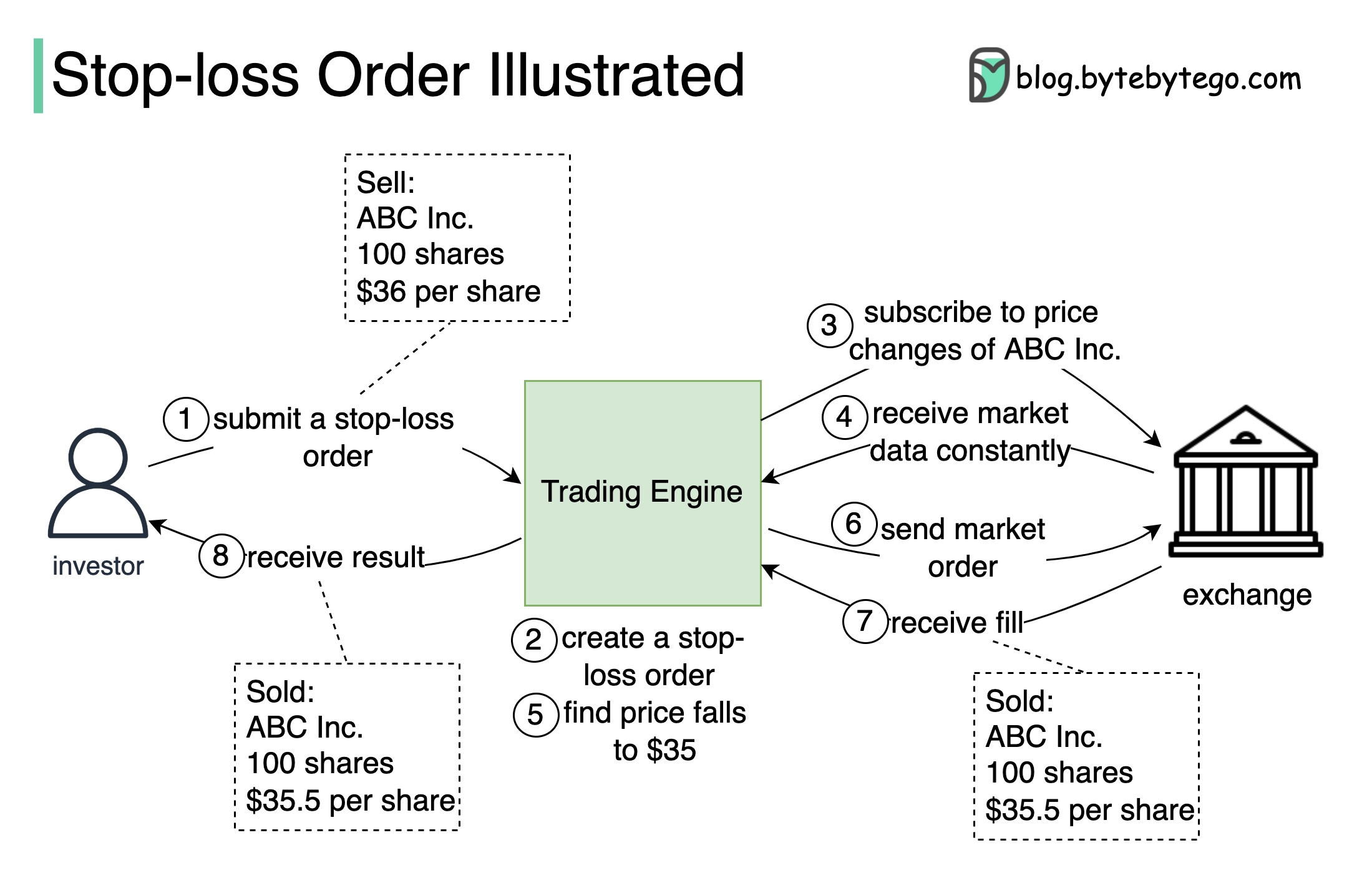

+ * [What is a Stop-Loss Order and How Does it Work?](https://bytebytego.com/guides/what-is-a-stop-loss-order-and-how-does-it-work)

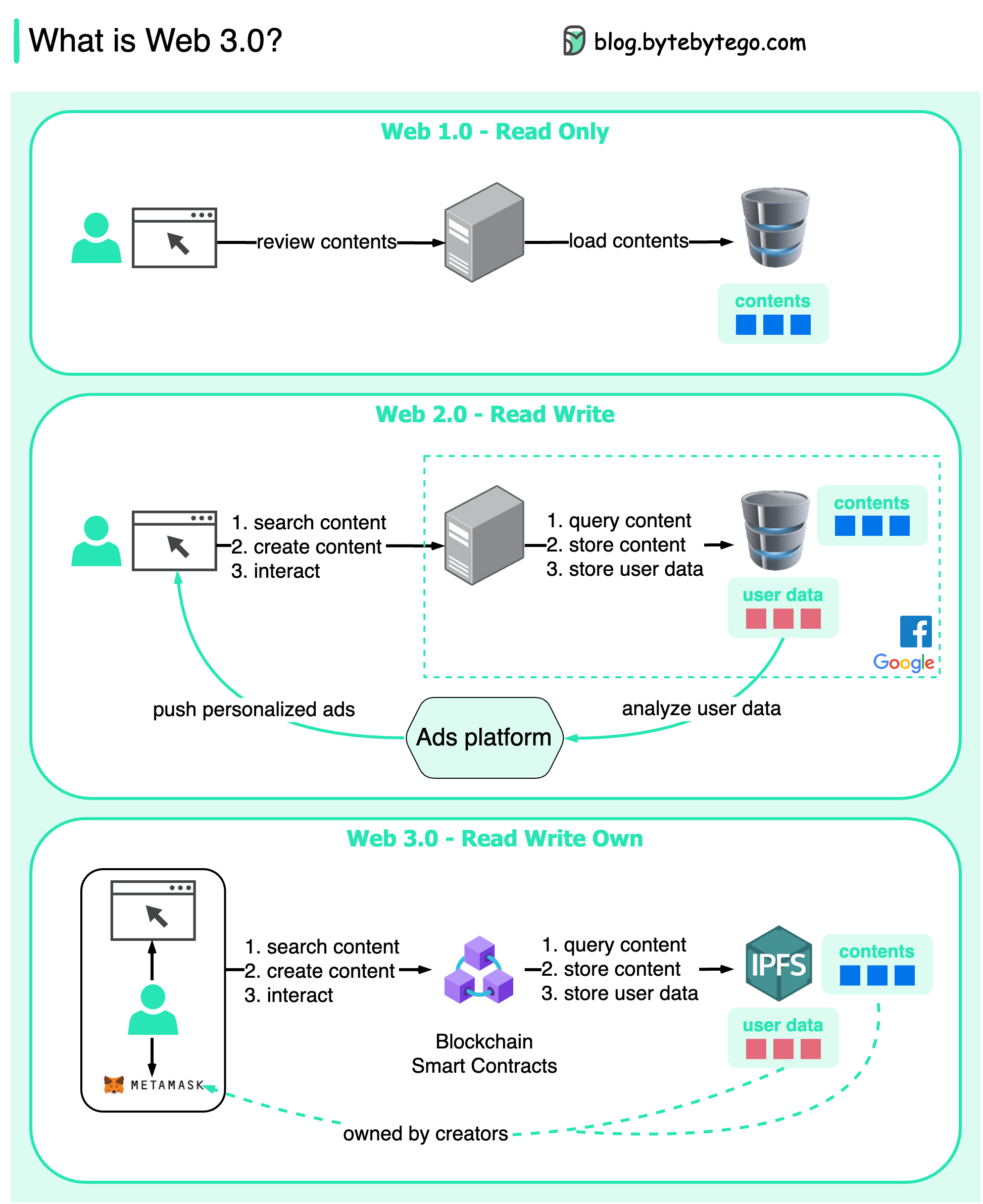

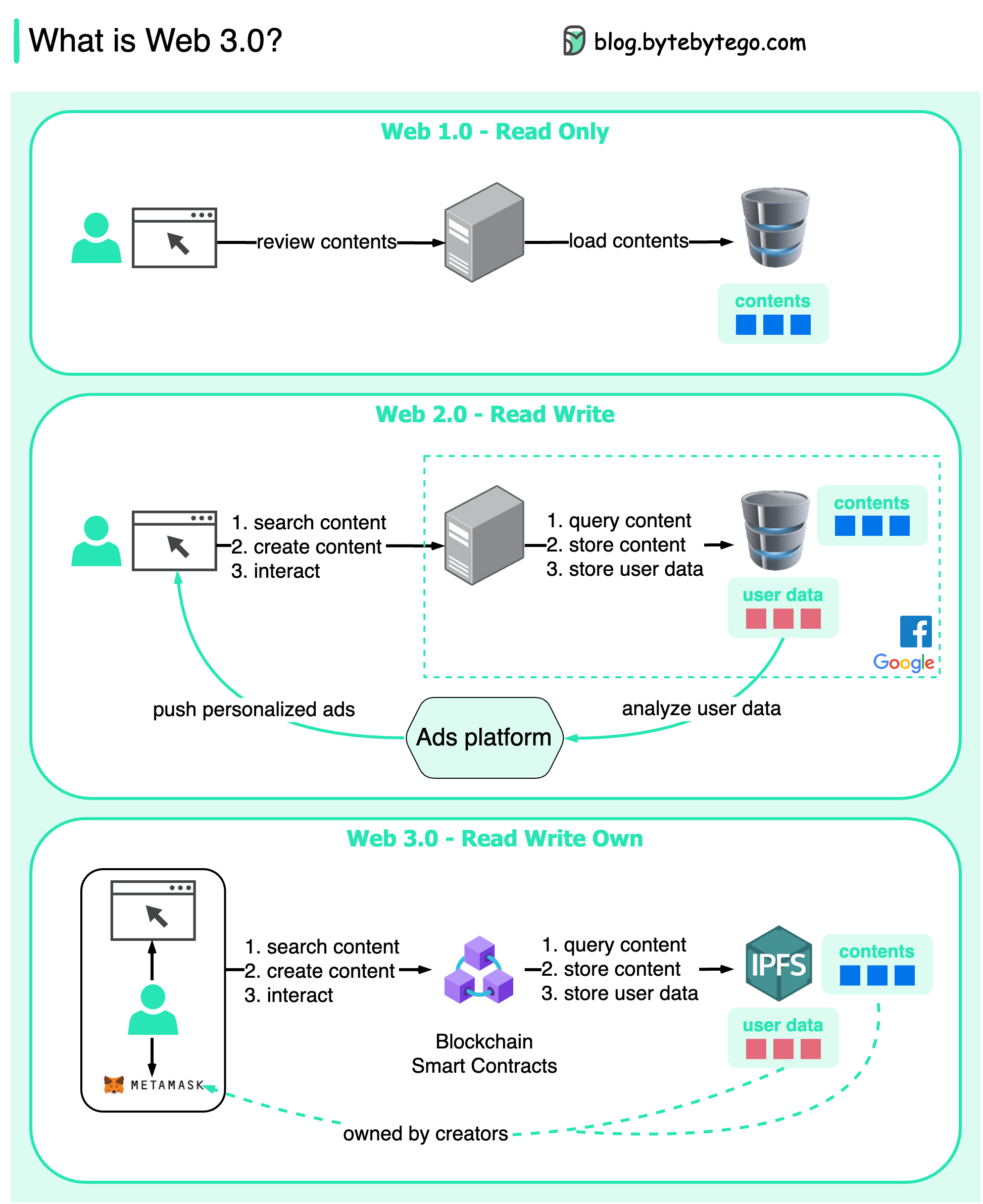

+ * [What is Web 3.0? Why doesn't it have ads?](https://bytebytego.com/guides/what-is-web-3)

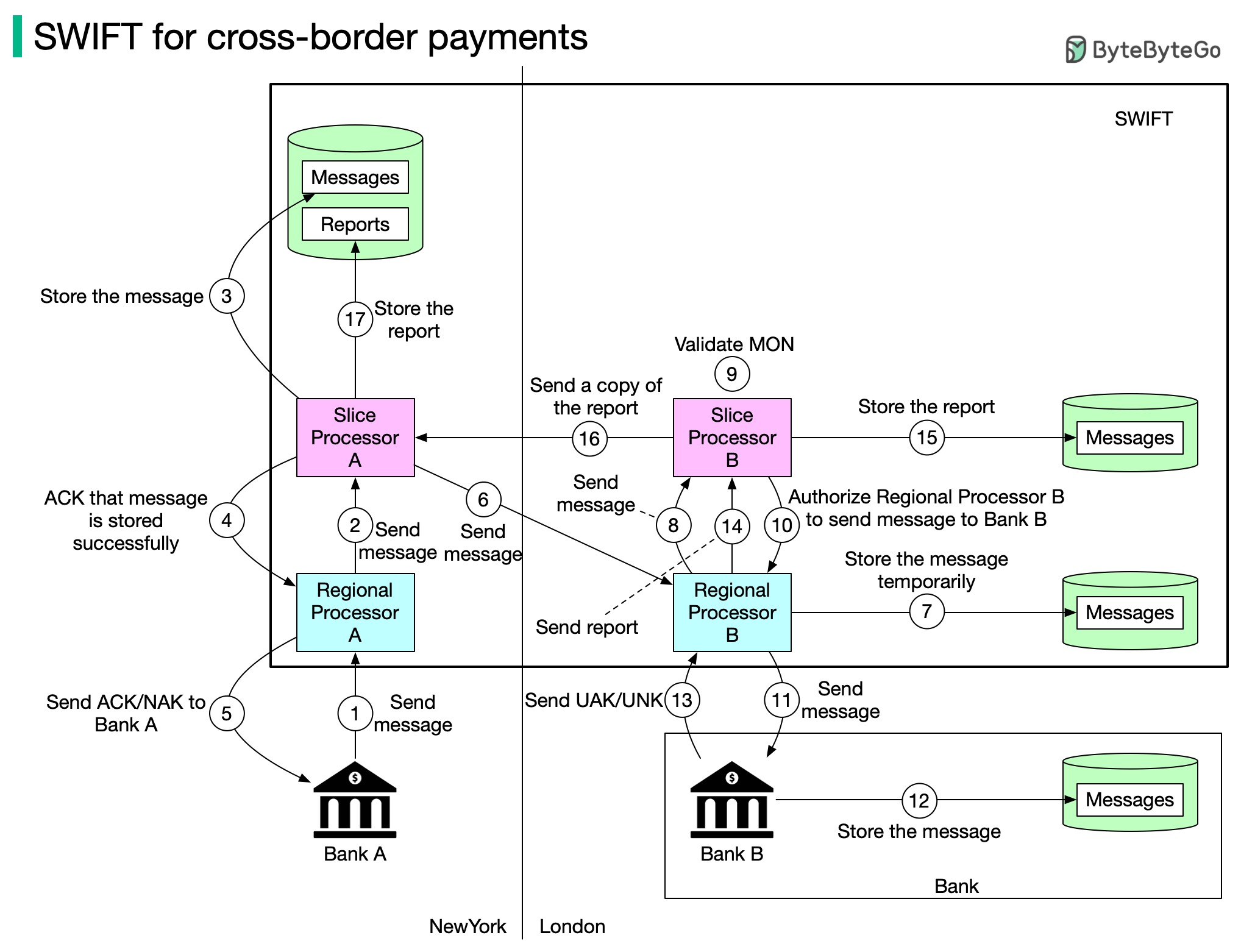

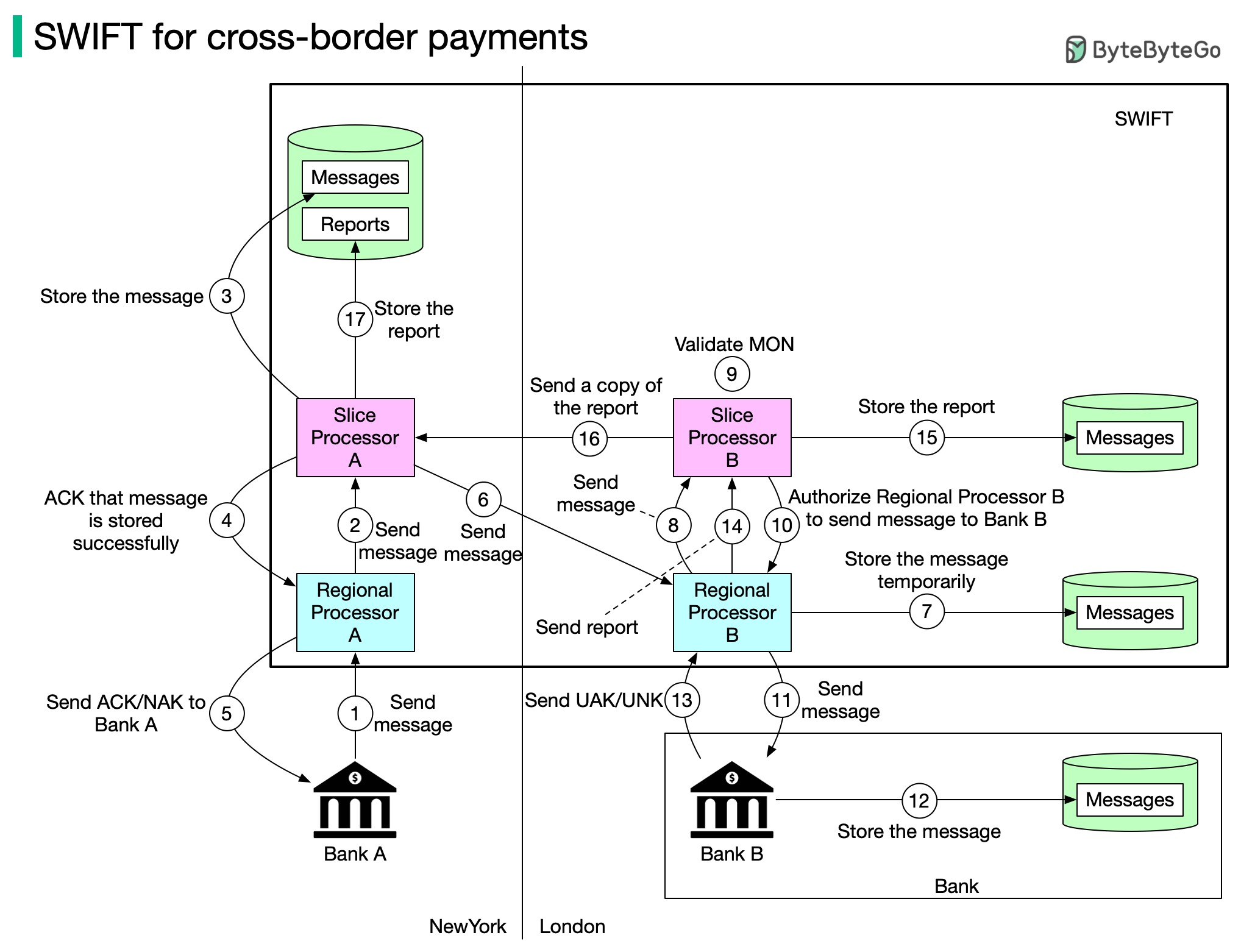

+ * [SWIFT Payment Messaging System](https://bytebytego.com/guides/swift-payment-messaging-system)

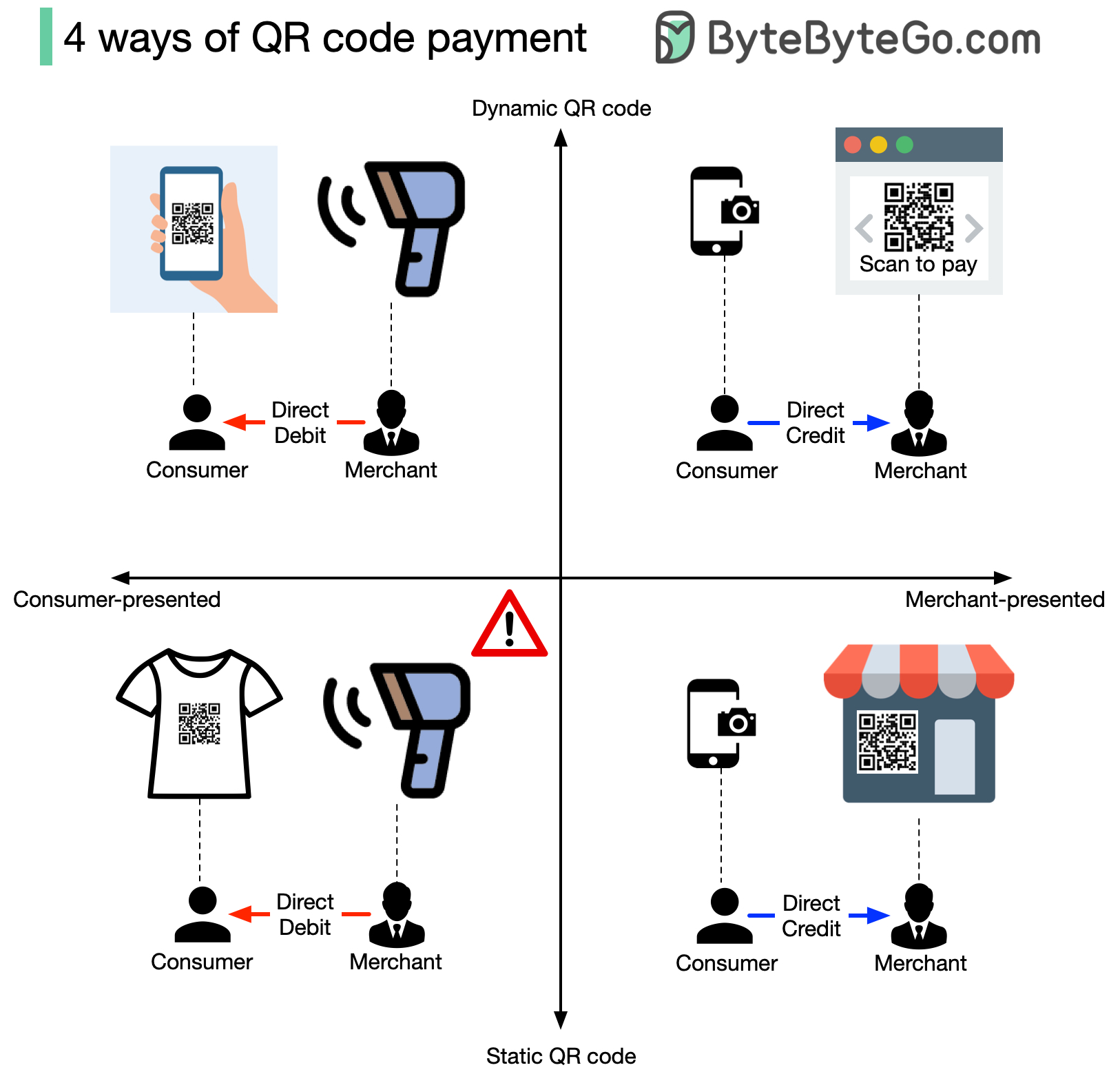

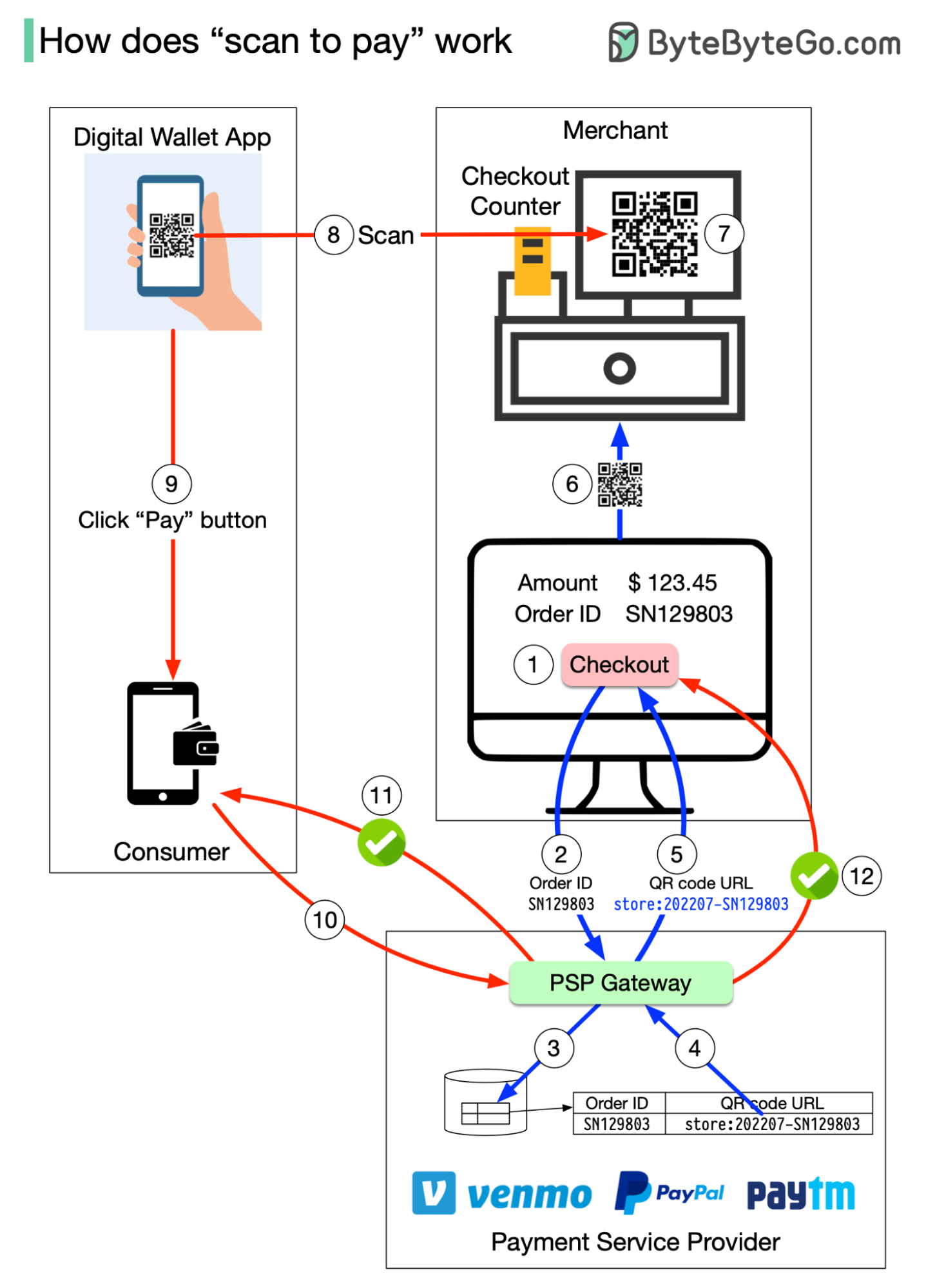

+ * [4 Ways of QR Code Payment](https://bytebytego.com/guides/4-ways-of-qr-code-payment)

+ * [Handling Hotspot Accounts](https://bytebytego.com/guides/handling-hotspot-accounts)

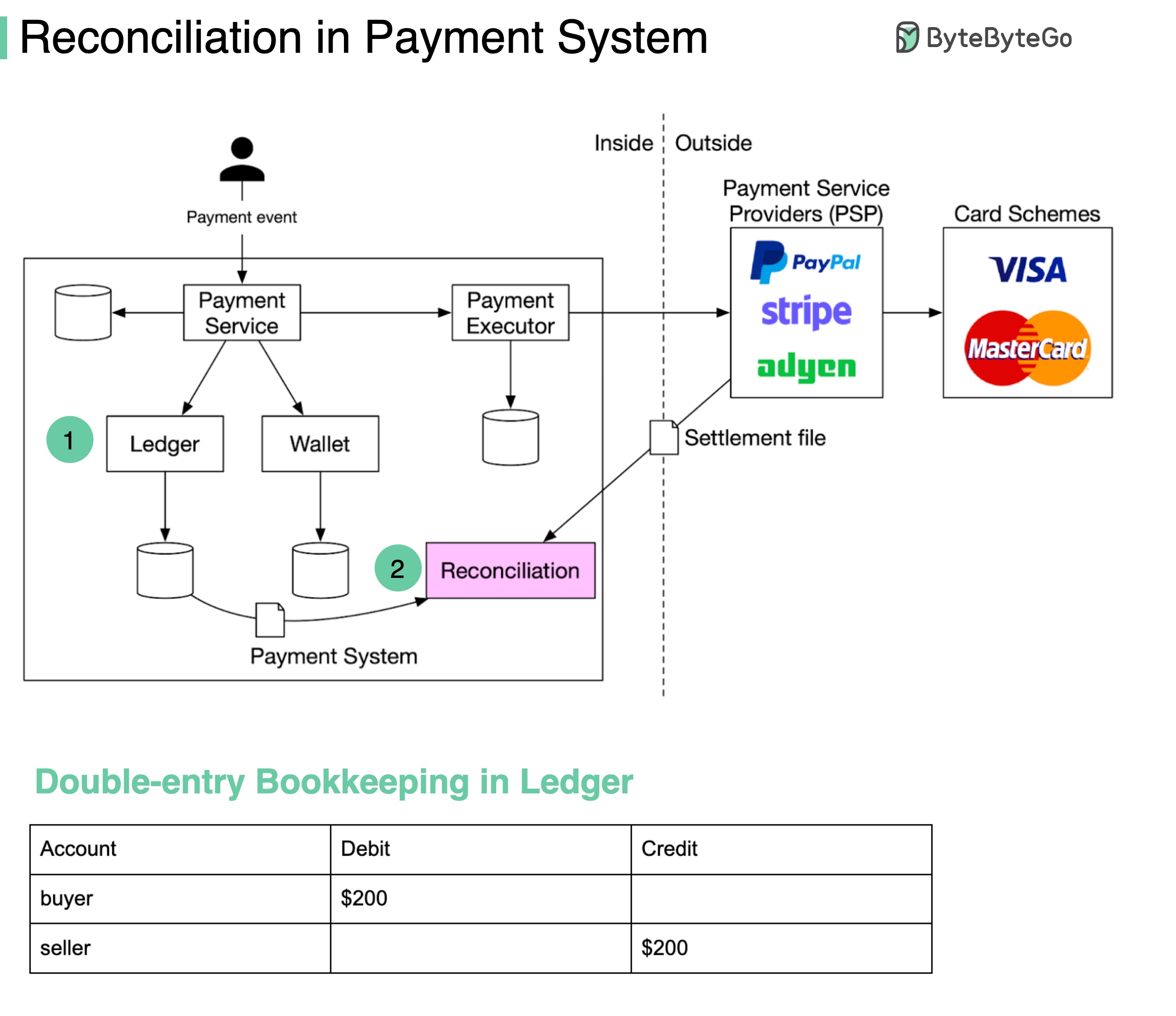

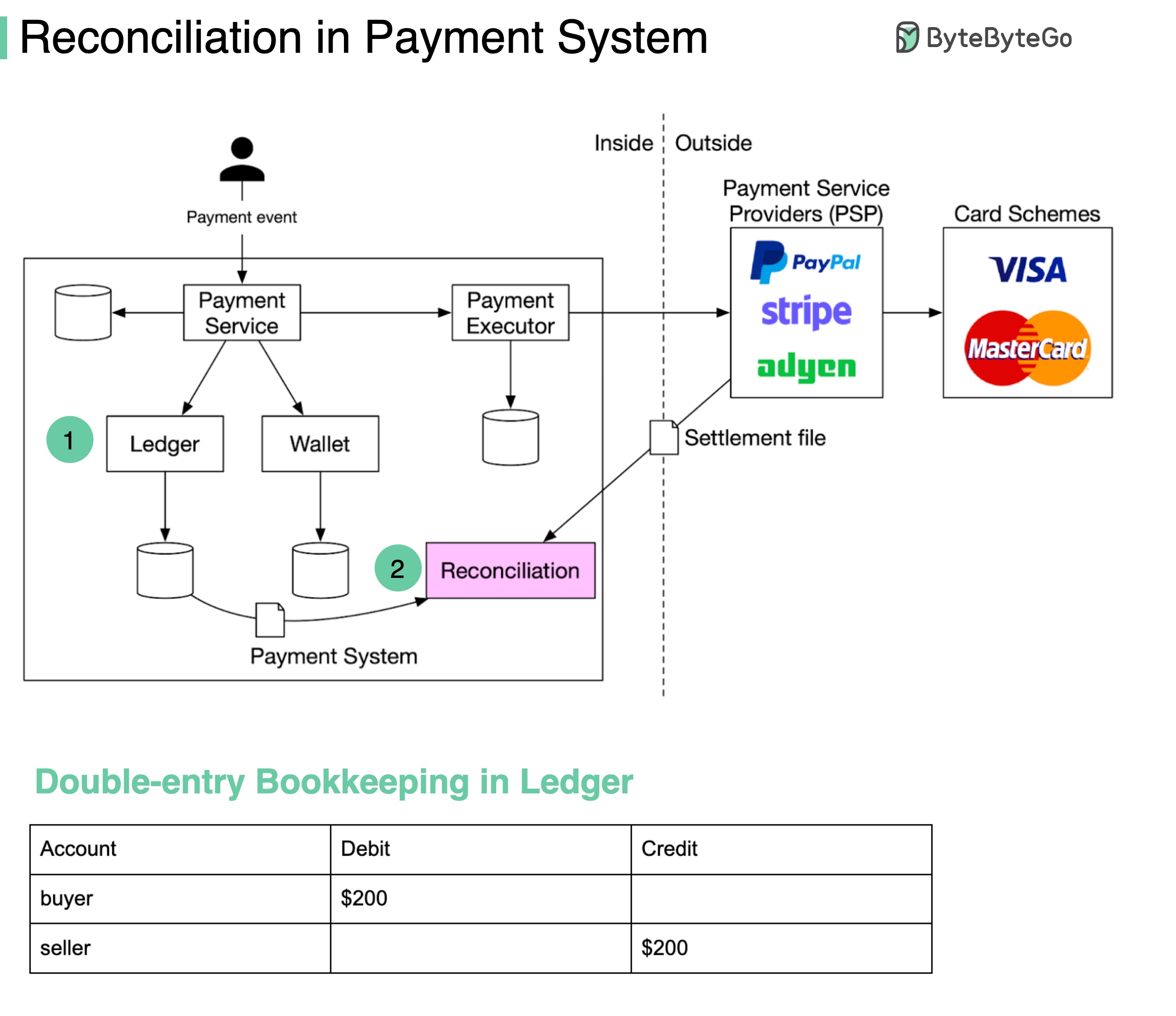

+ * [Reconciliation in Payment](https://bytebytego.com/guides/reconciliation-in-payment)

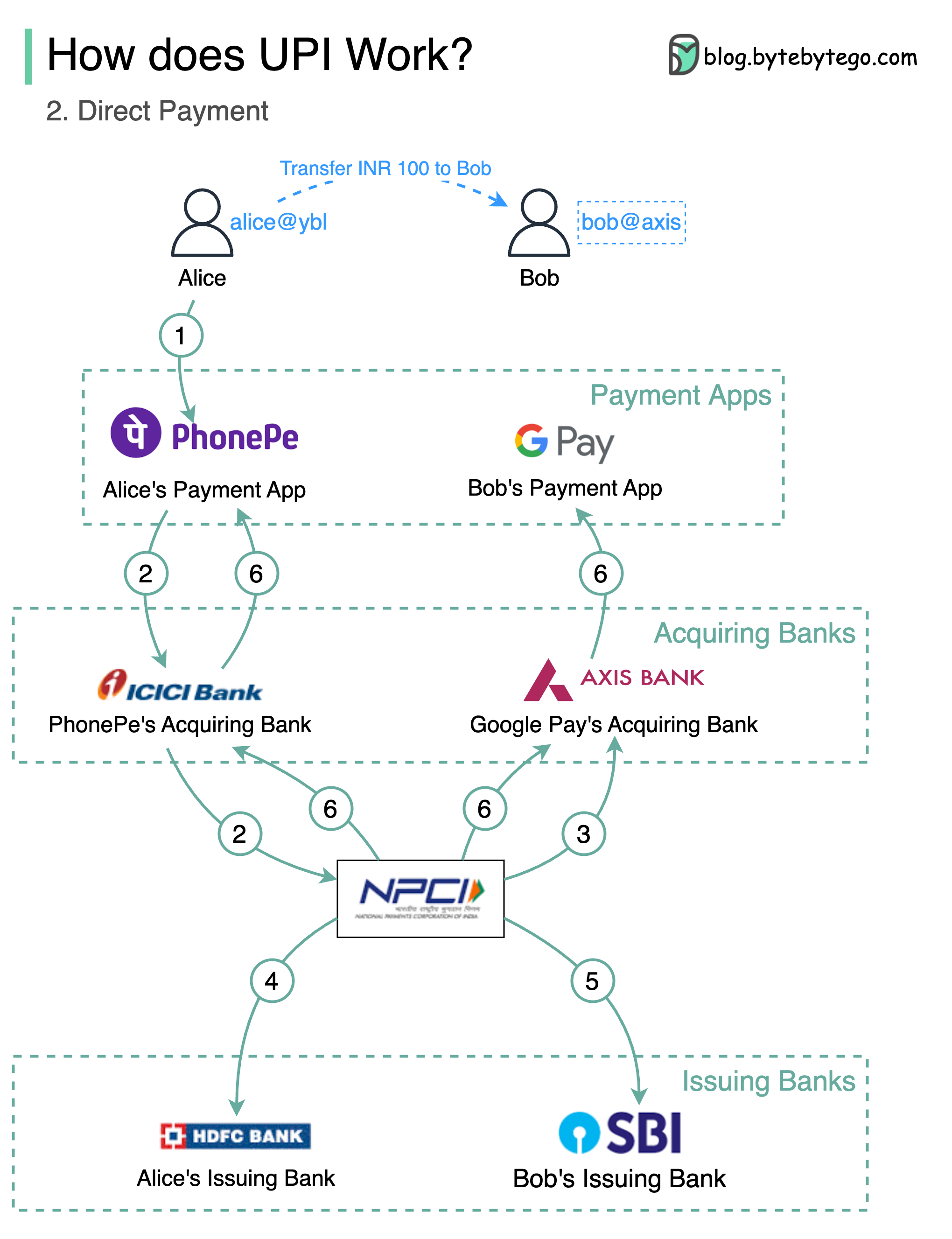

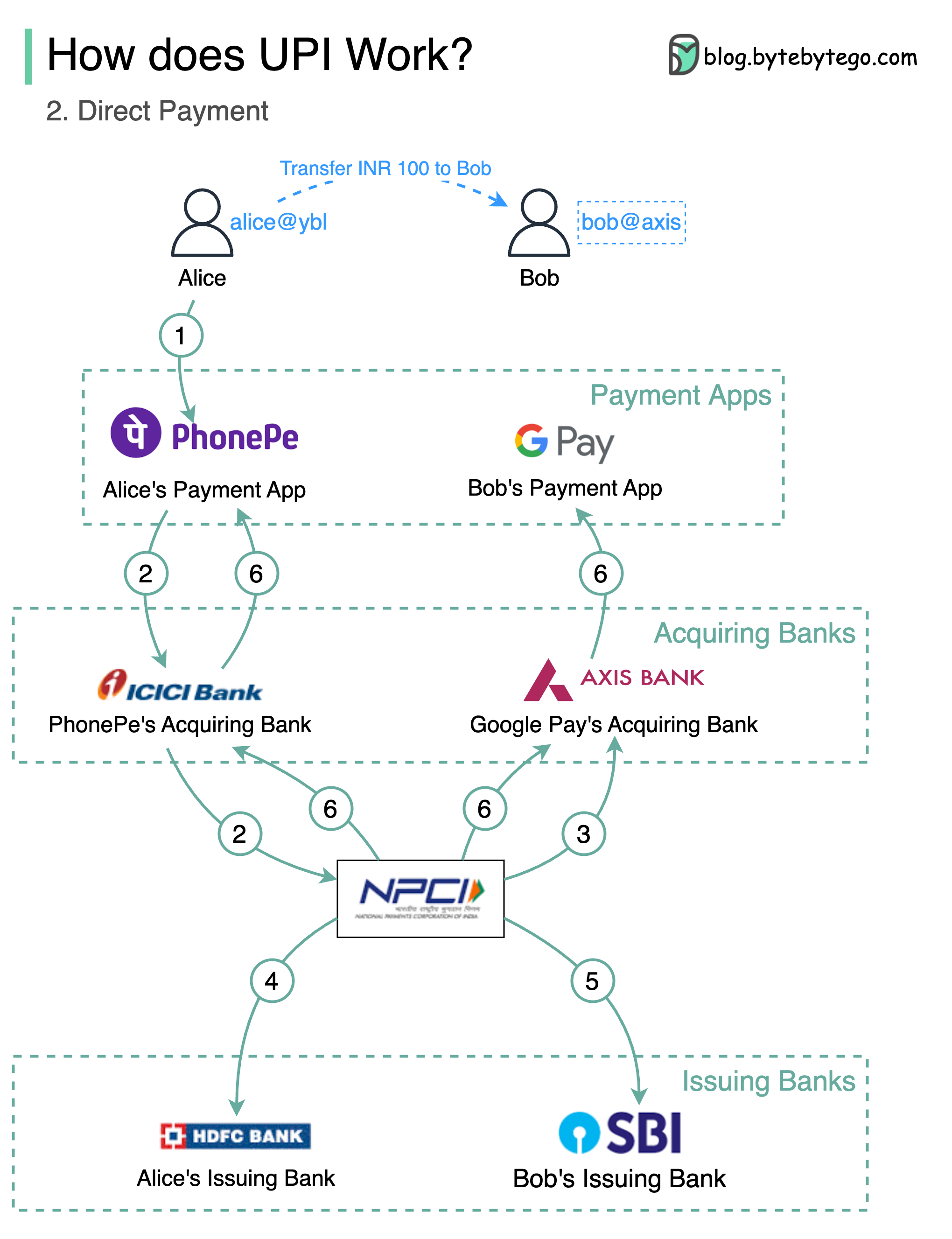

+ * [Unified Payments Interface (UPI)](https://bytebytego.com/guides/unified-payments-interface-upi-in-india)

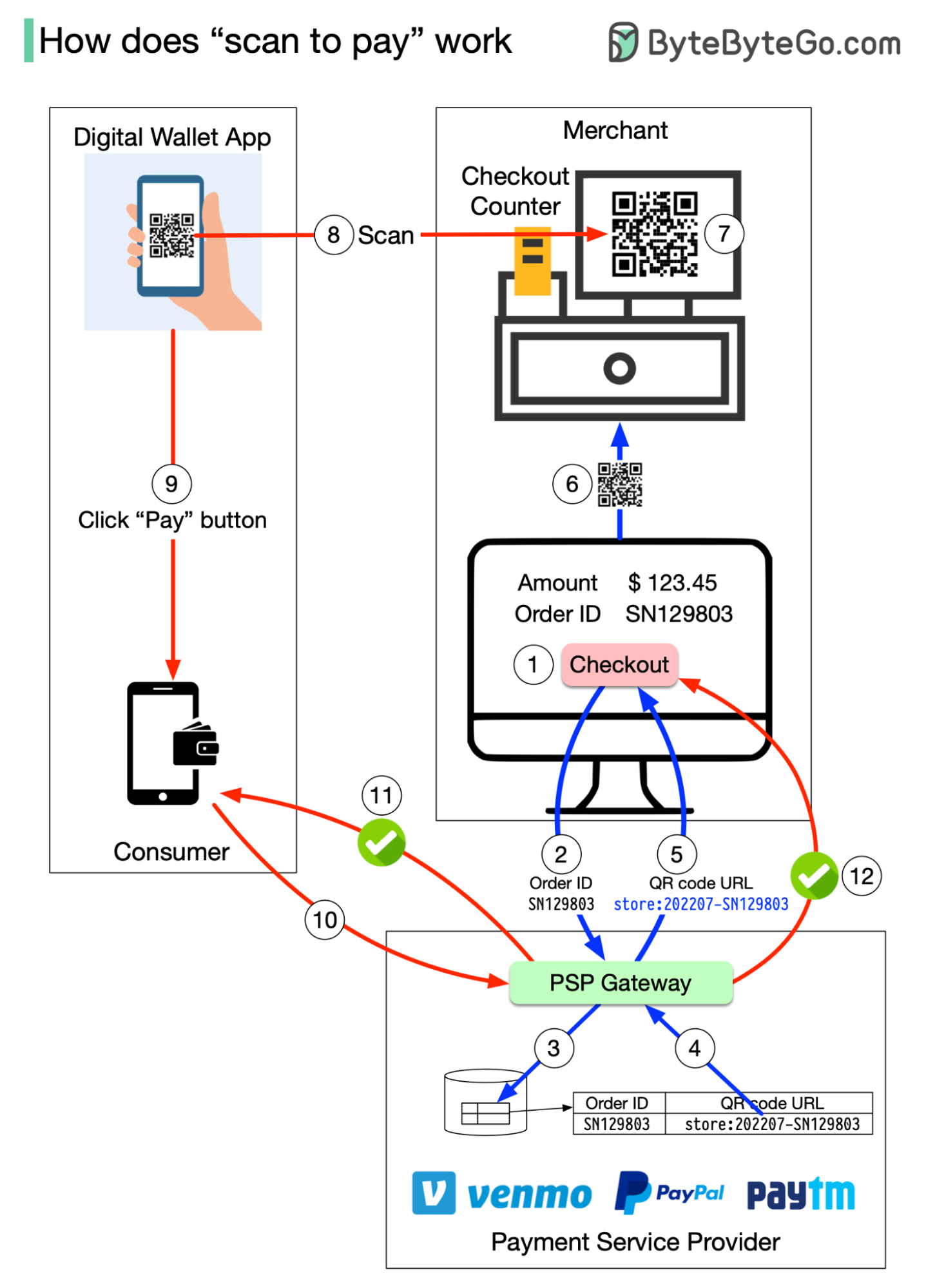

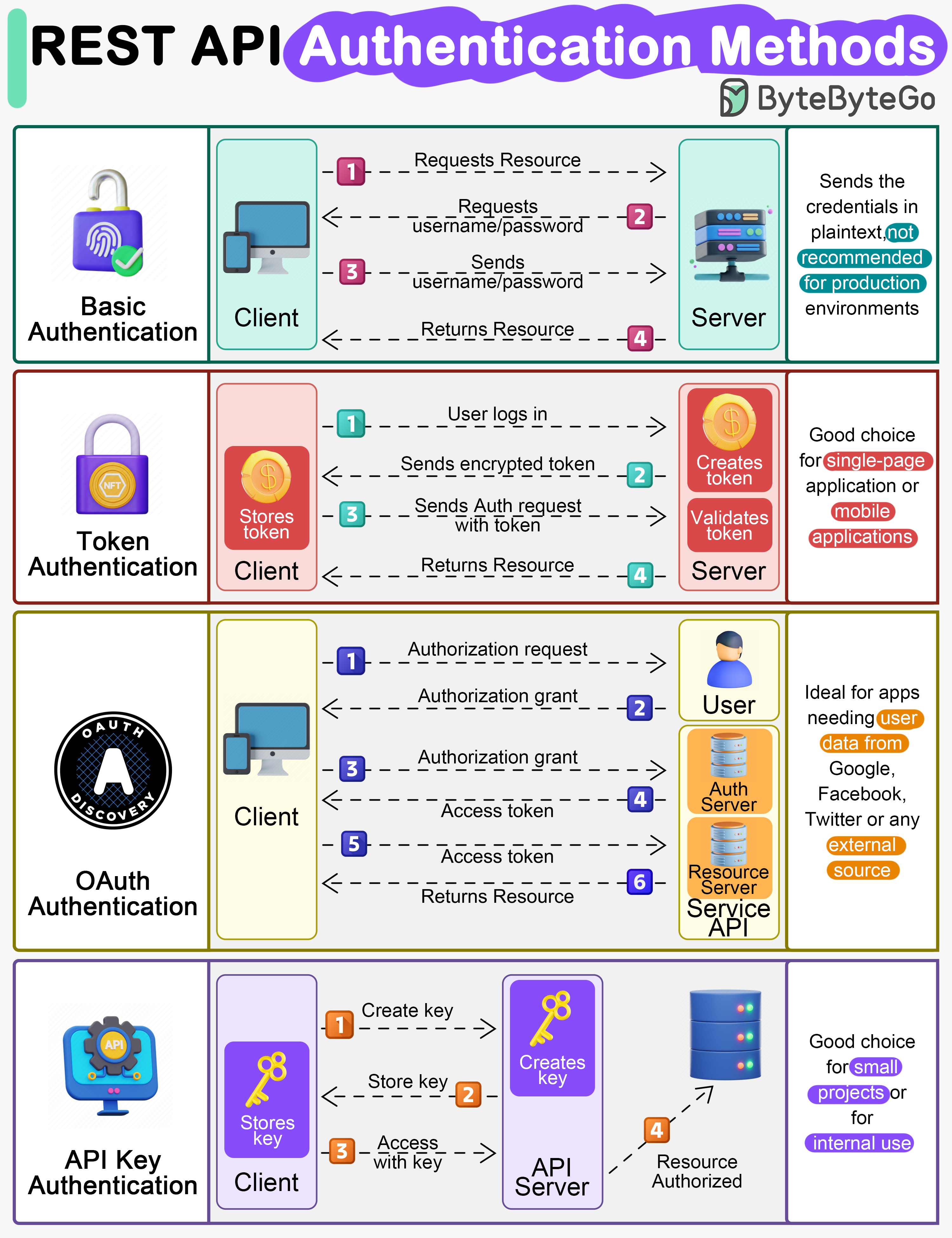

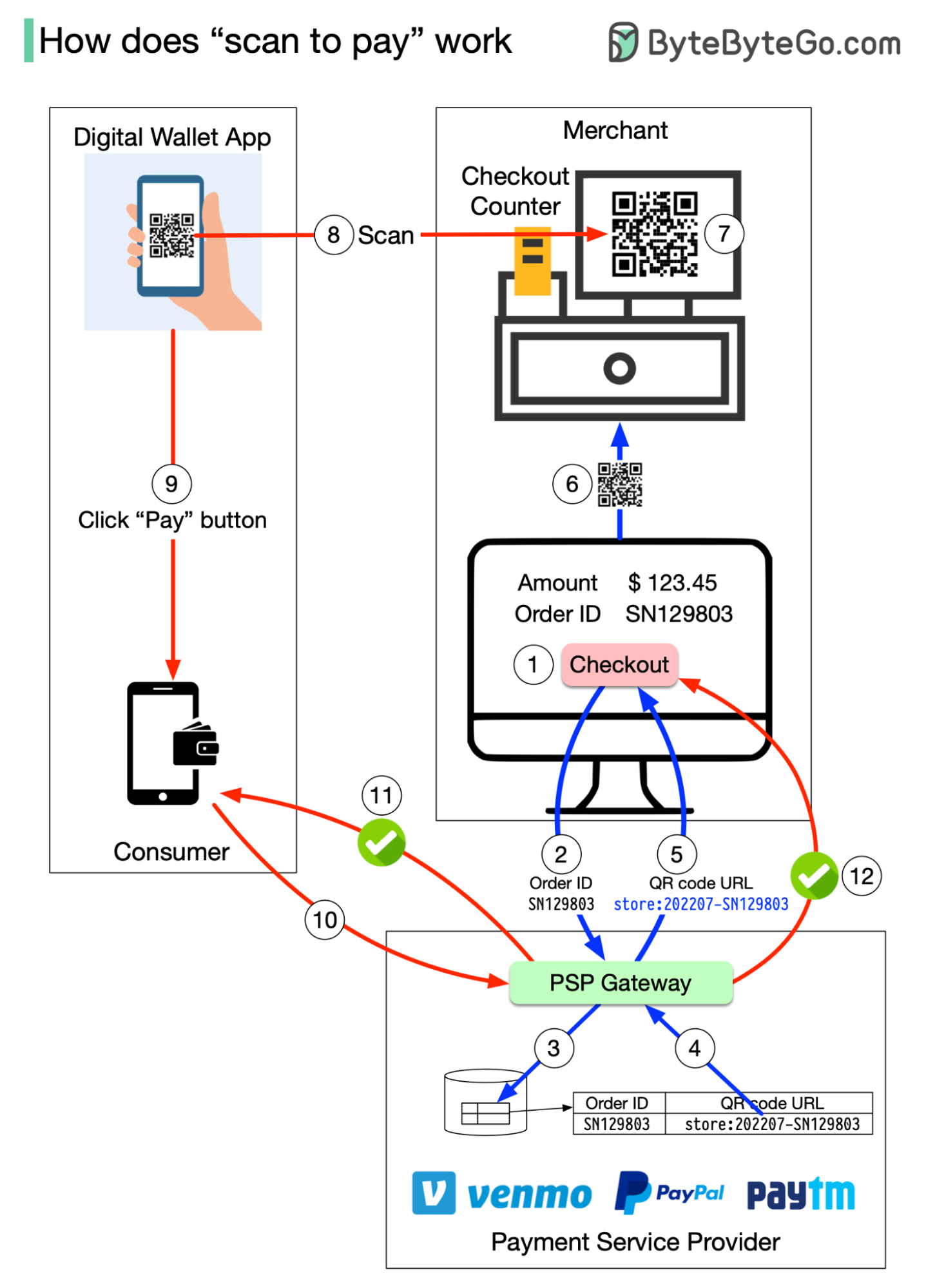

+ * [How Scan to Pay Works](https://bytebytego.com/guides/how-does-scan-to-pay-work)

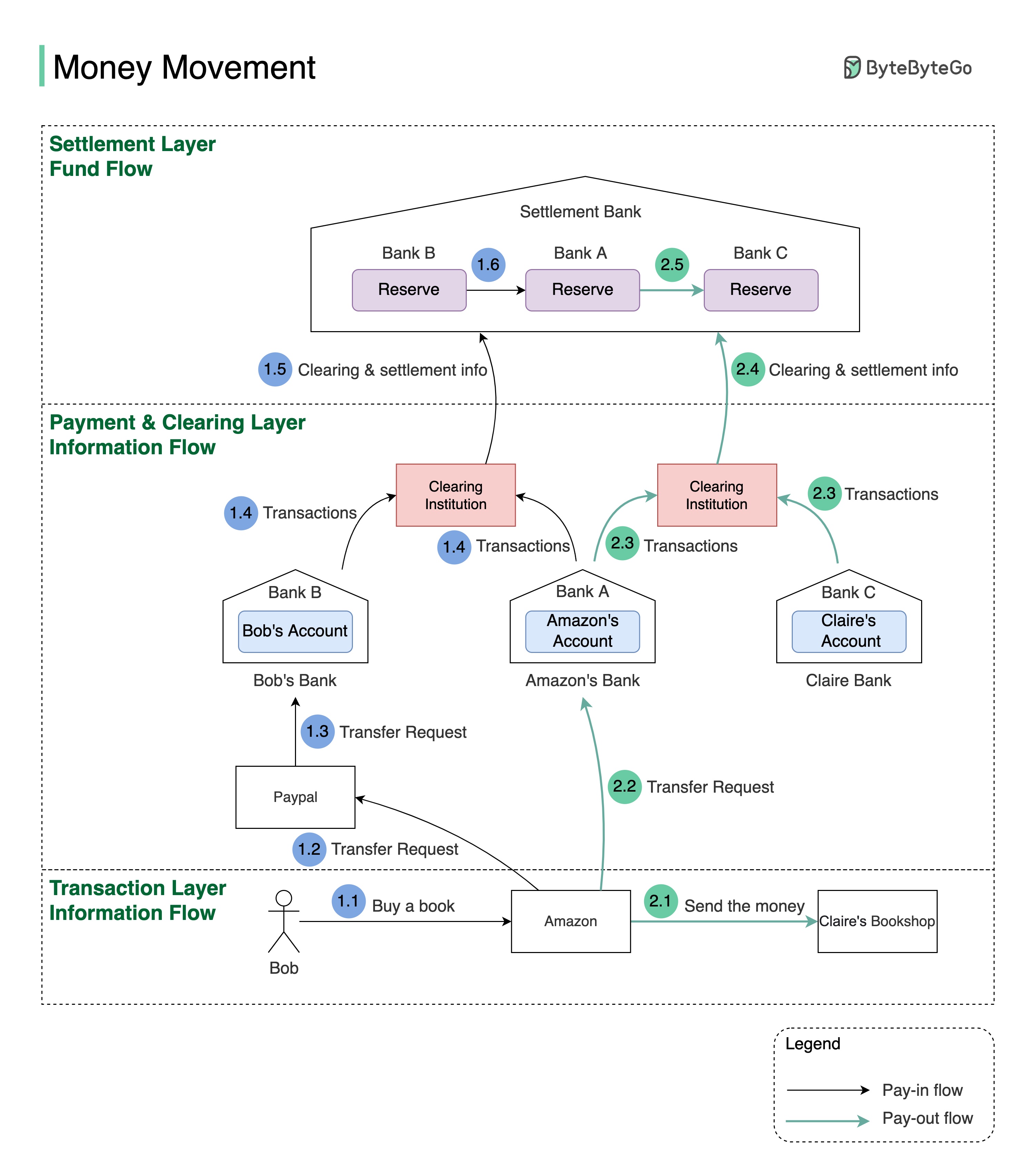

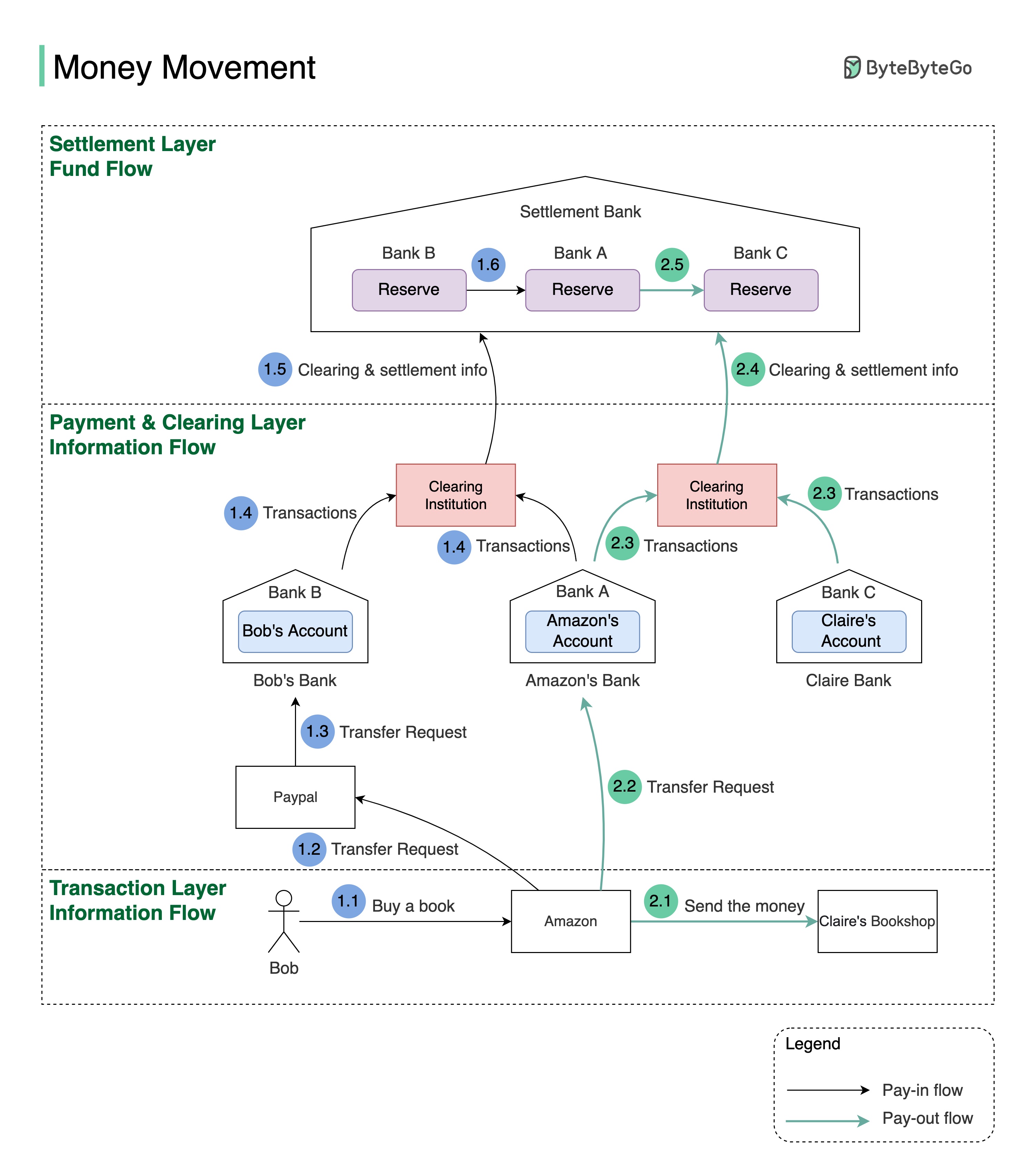

+ * [Money Movement](https://bytebytego.com/guides/money-movement)

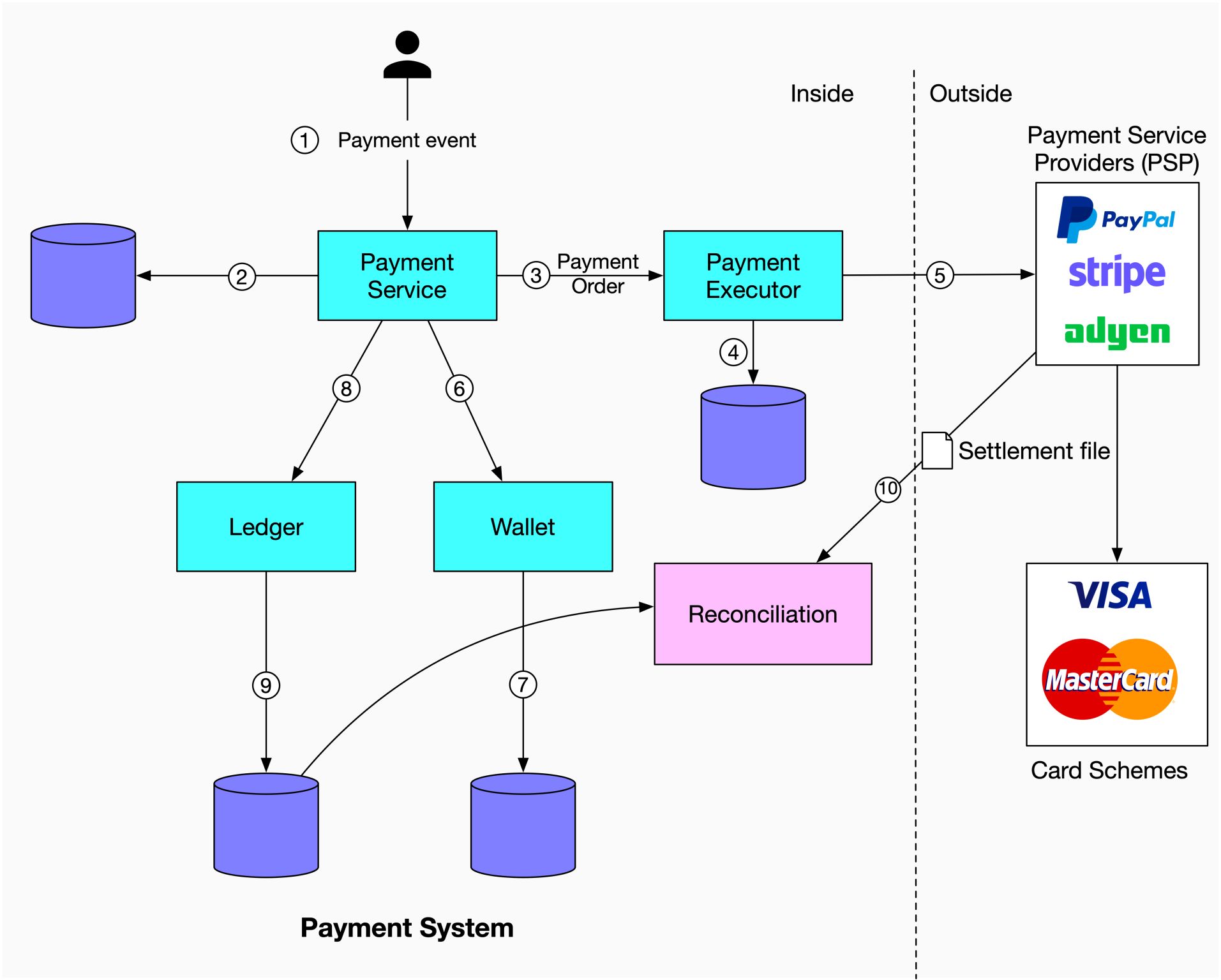

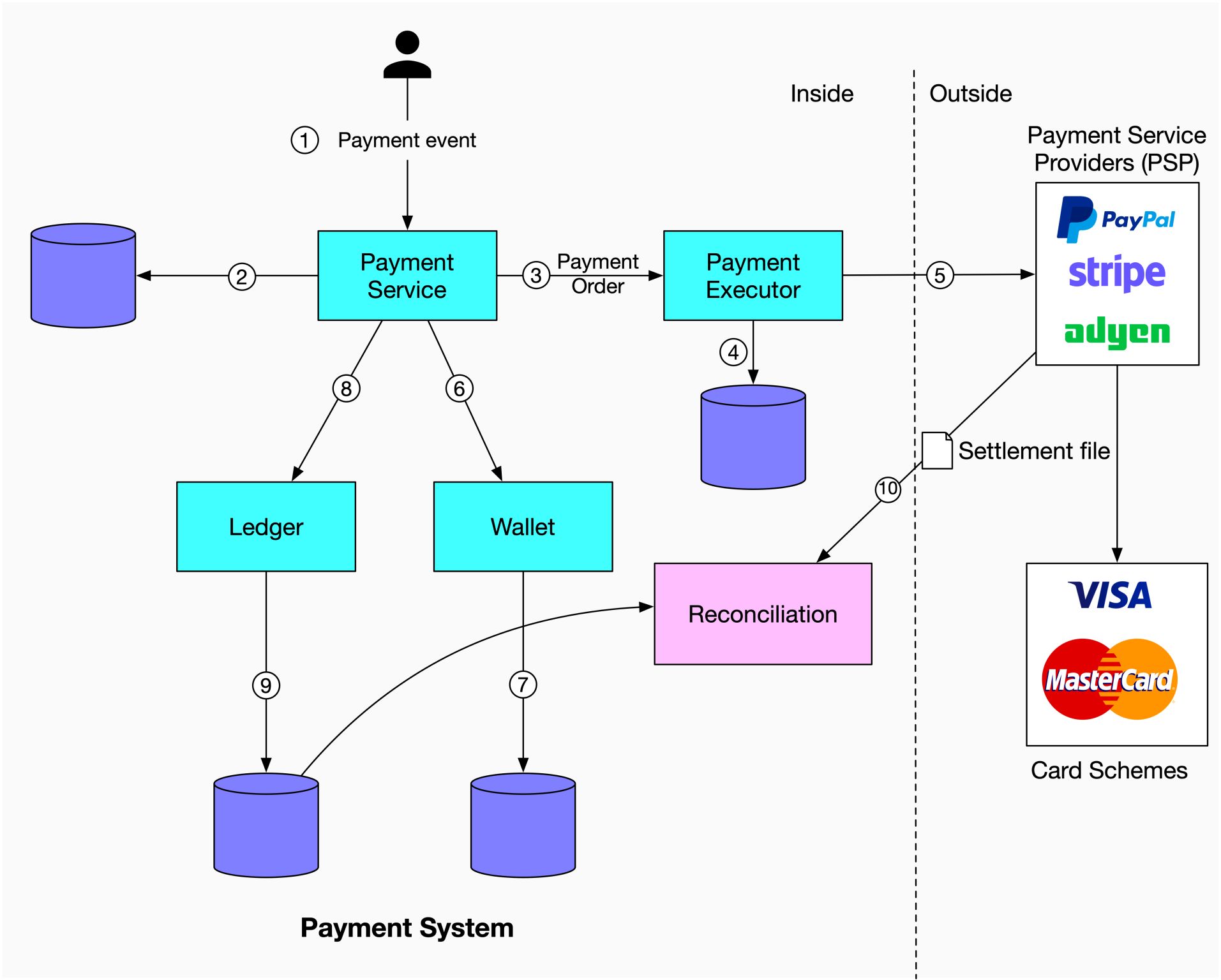

+ * [Payment System](https://bytebytego.com/guides/payment-system)

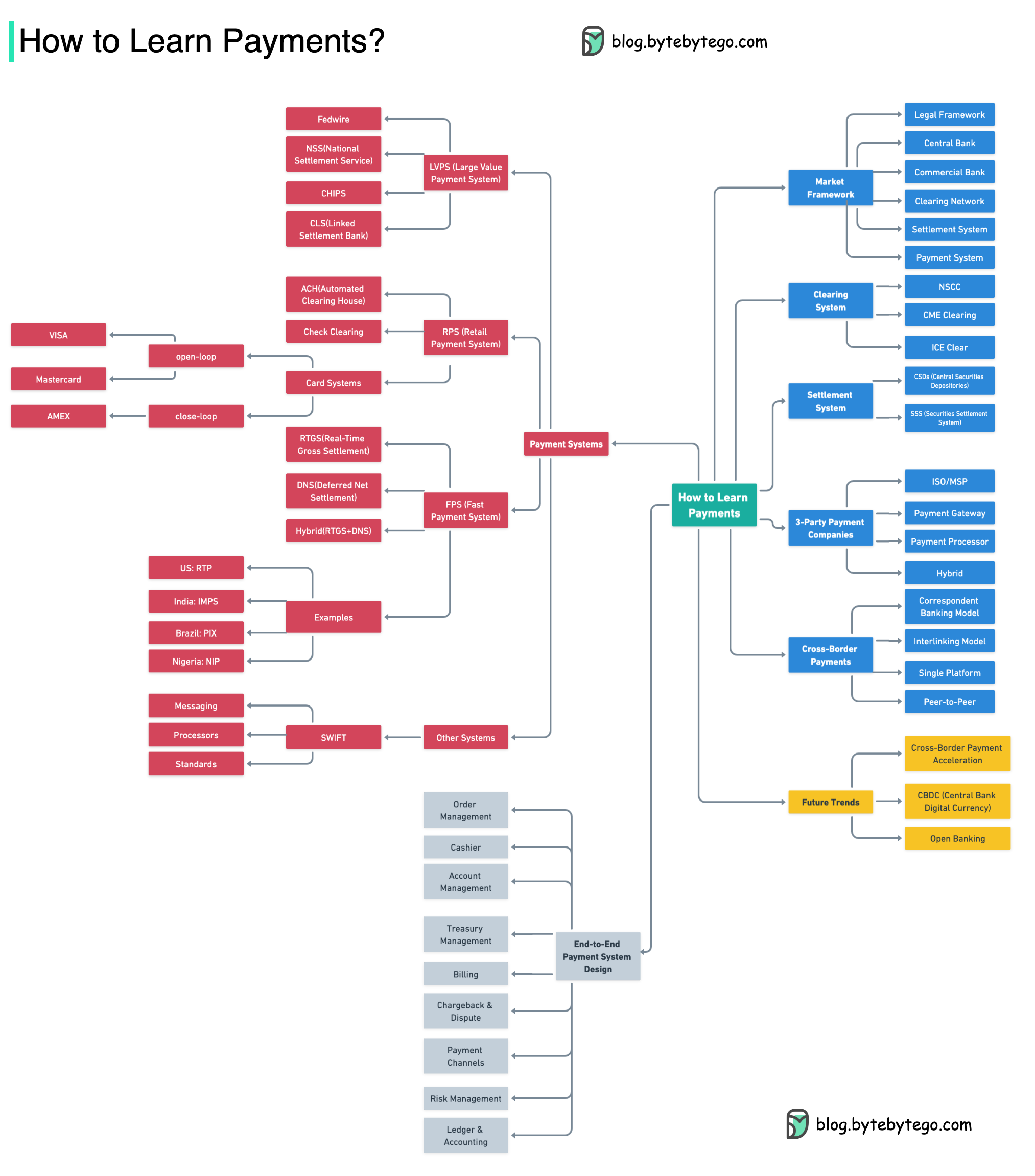

+ * [How to Learn Payments](https://bytebytego.com/guides/how-to-learn-payments)

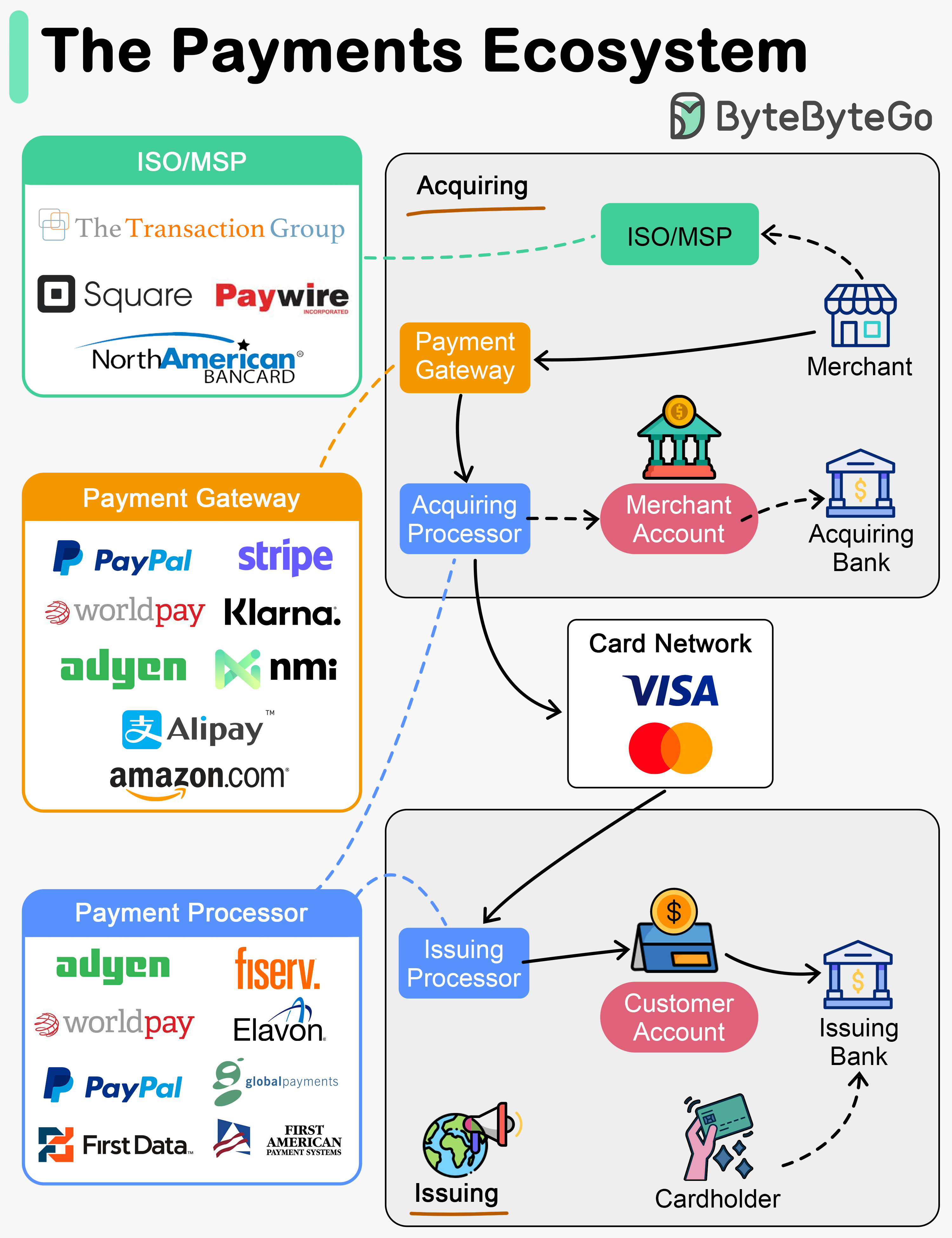

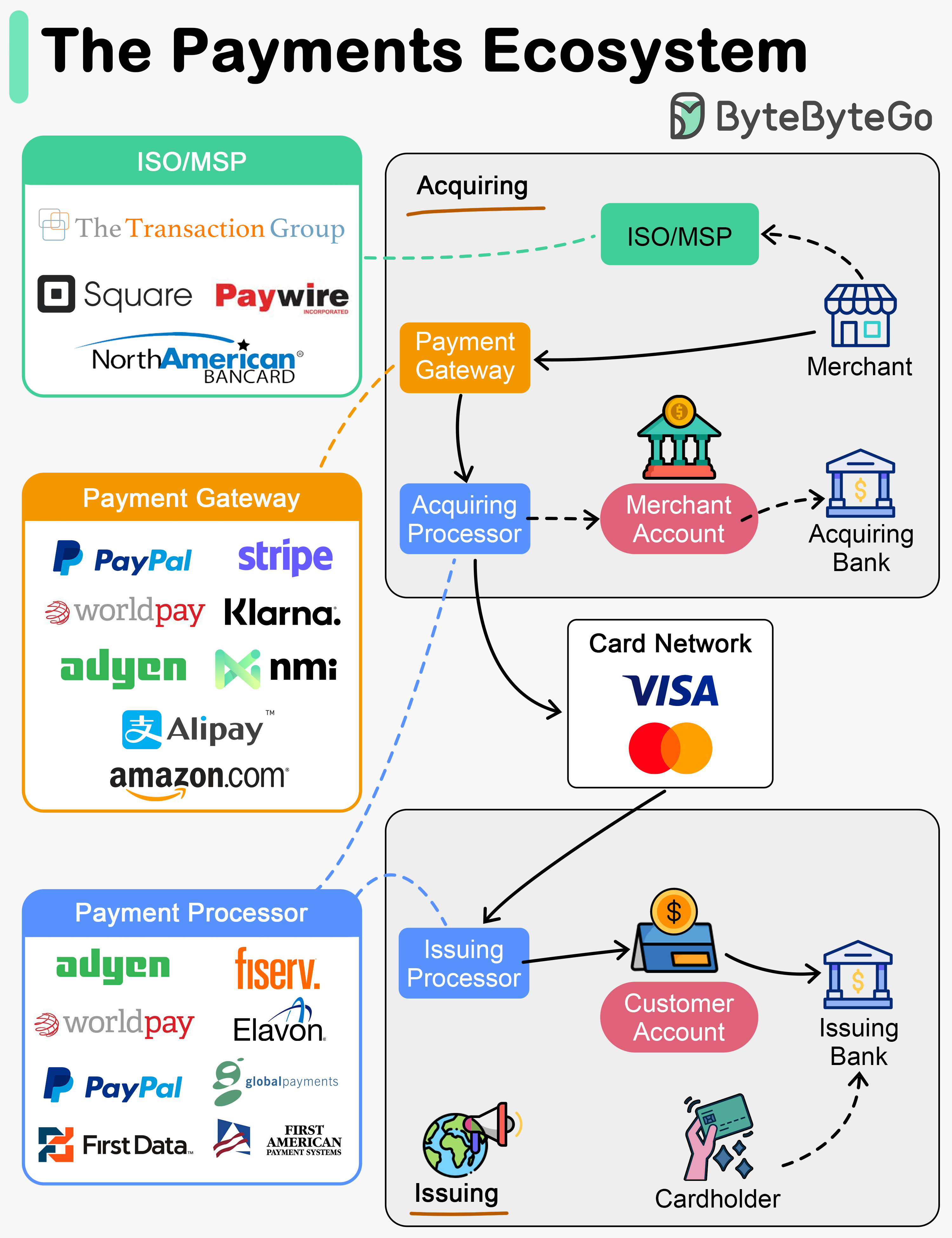

+ * [The Payments Ecosystem](https://bytebytego.com/guides/the-payments-ecosystem)

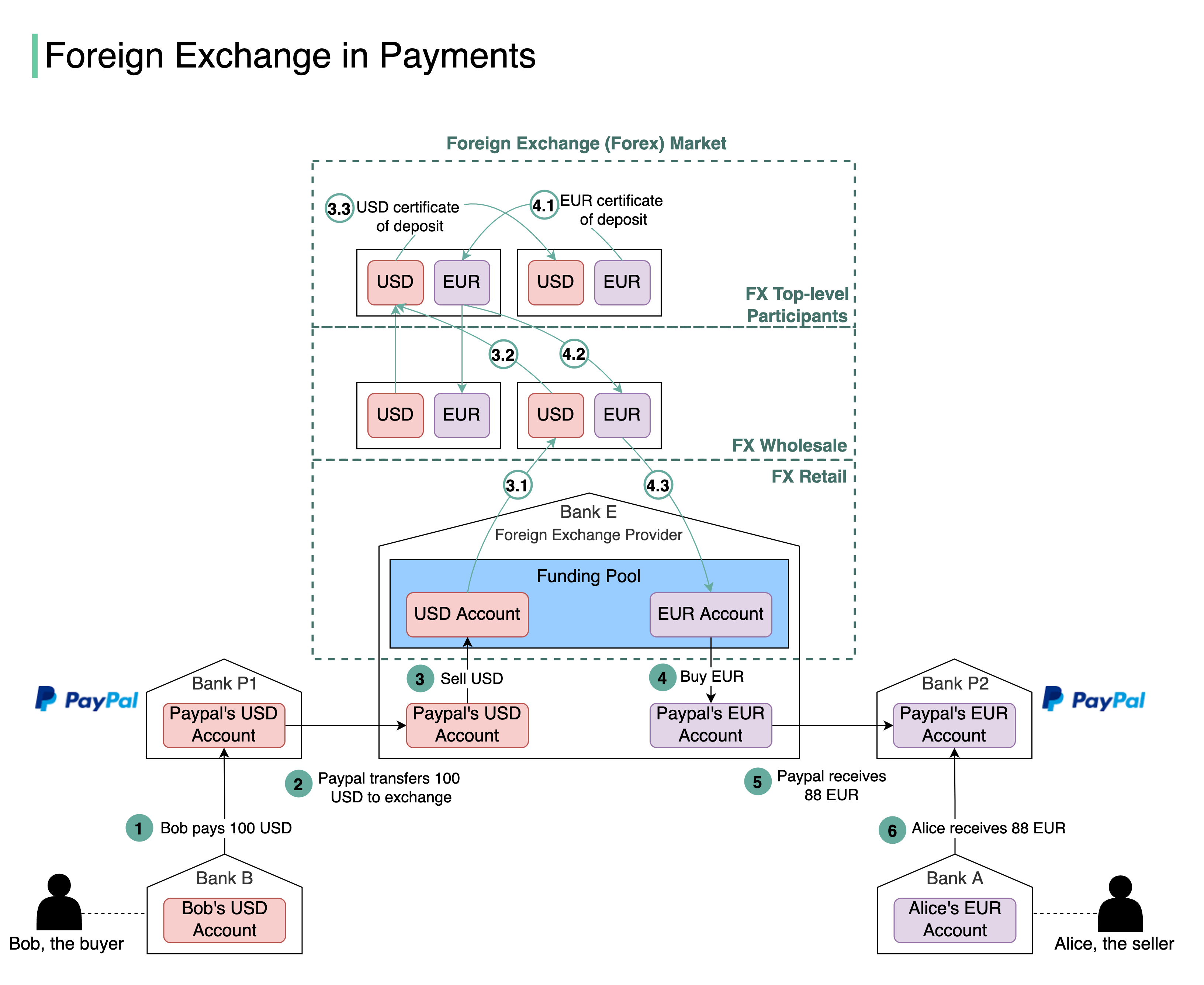

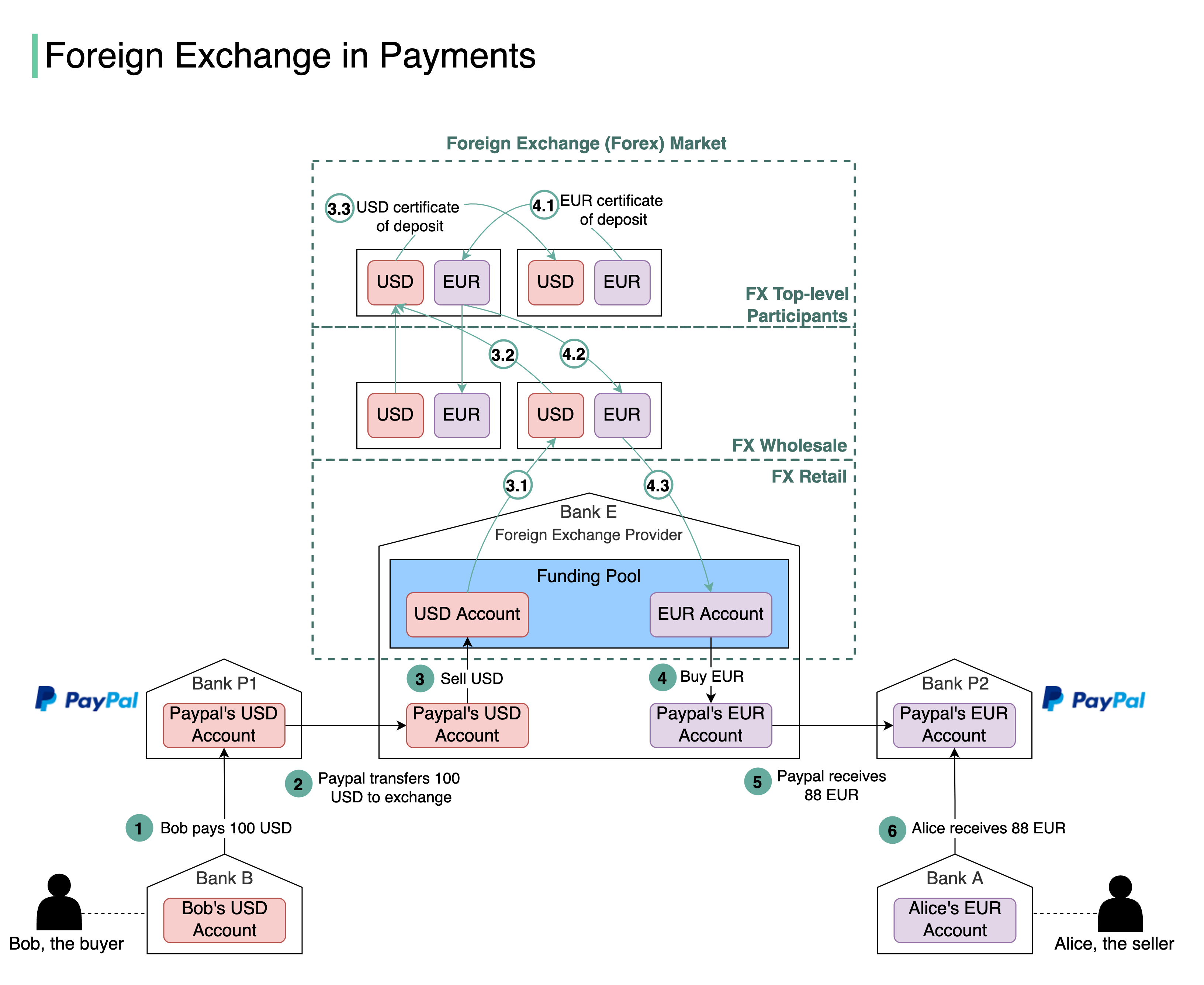

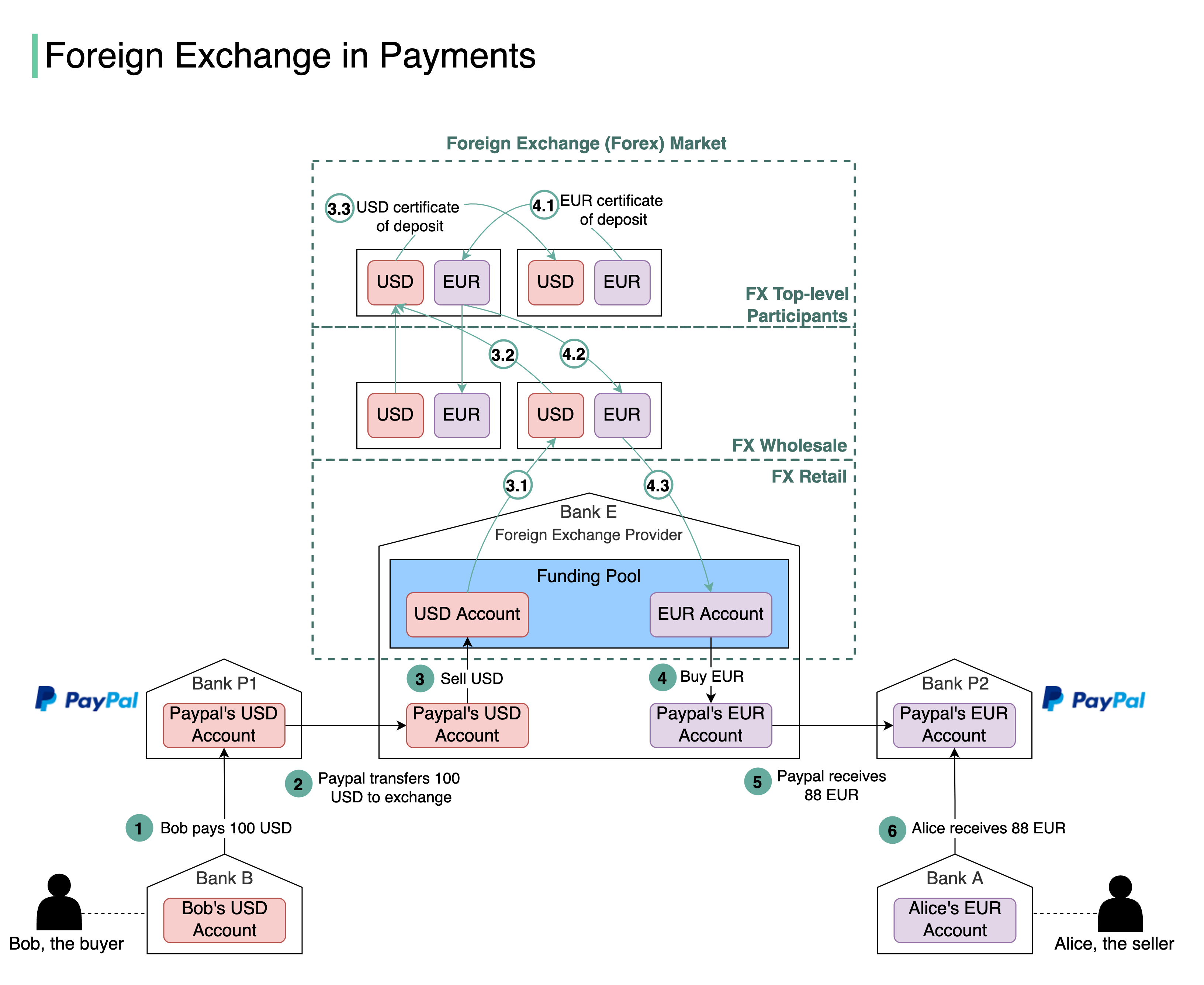

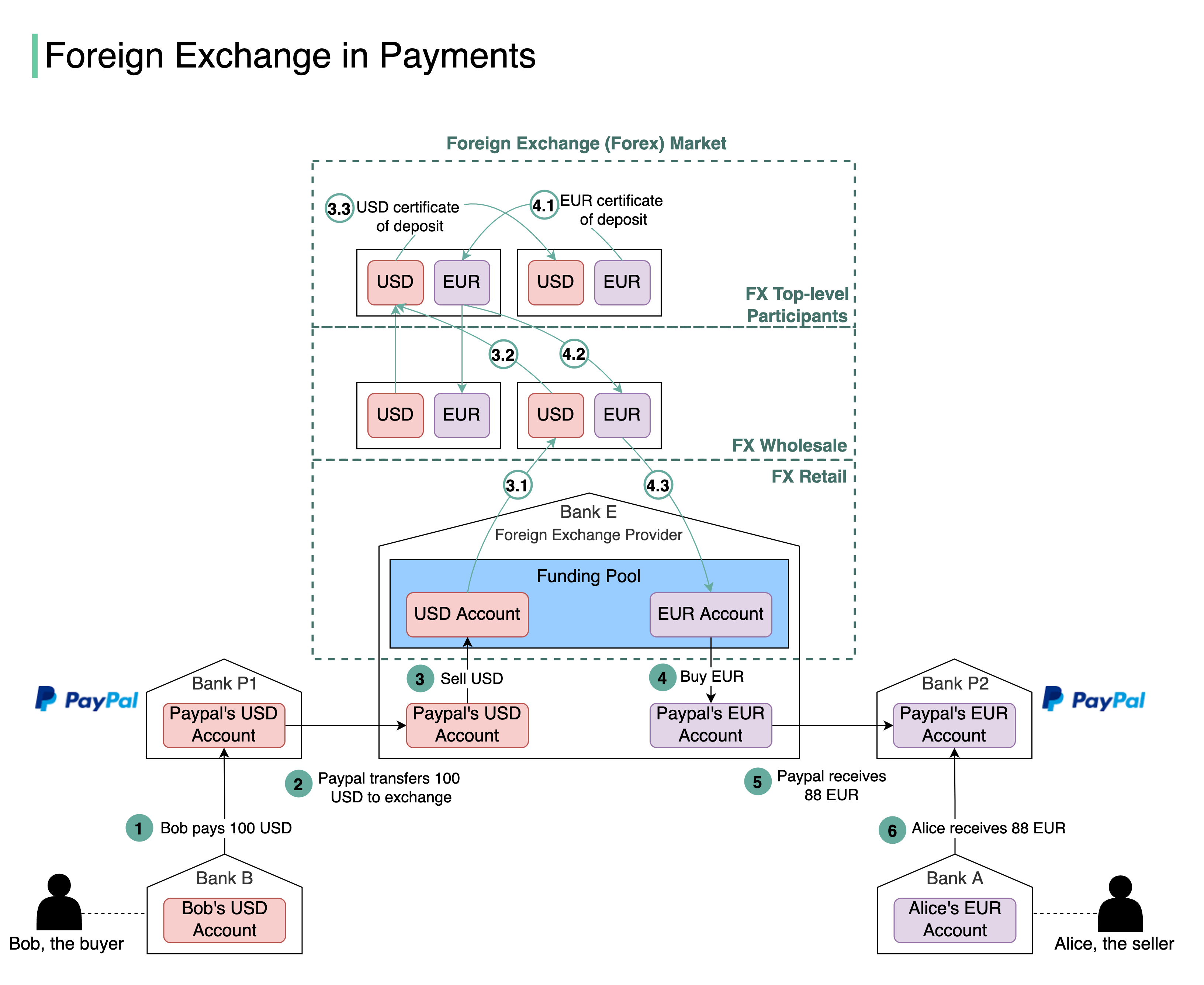

+ * [Foreign Exchange Payments](https://bytebytego.com/guides/foreign-exchange-payments)

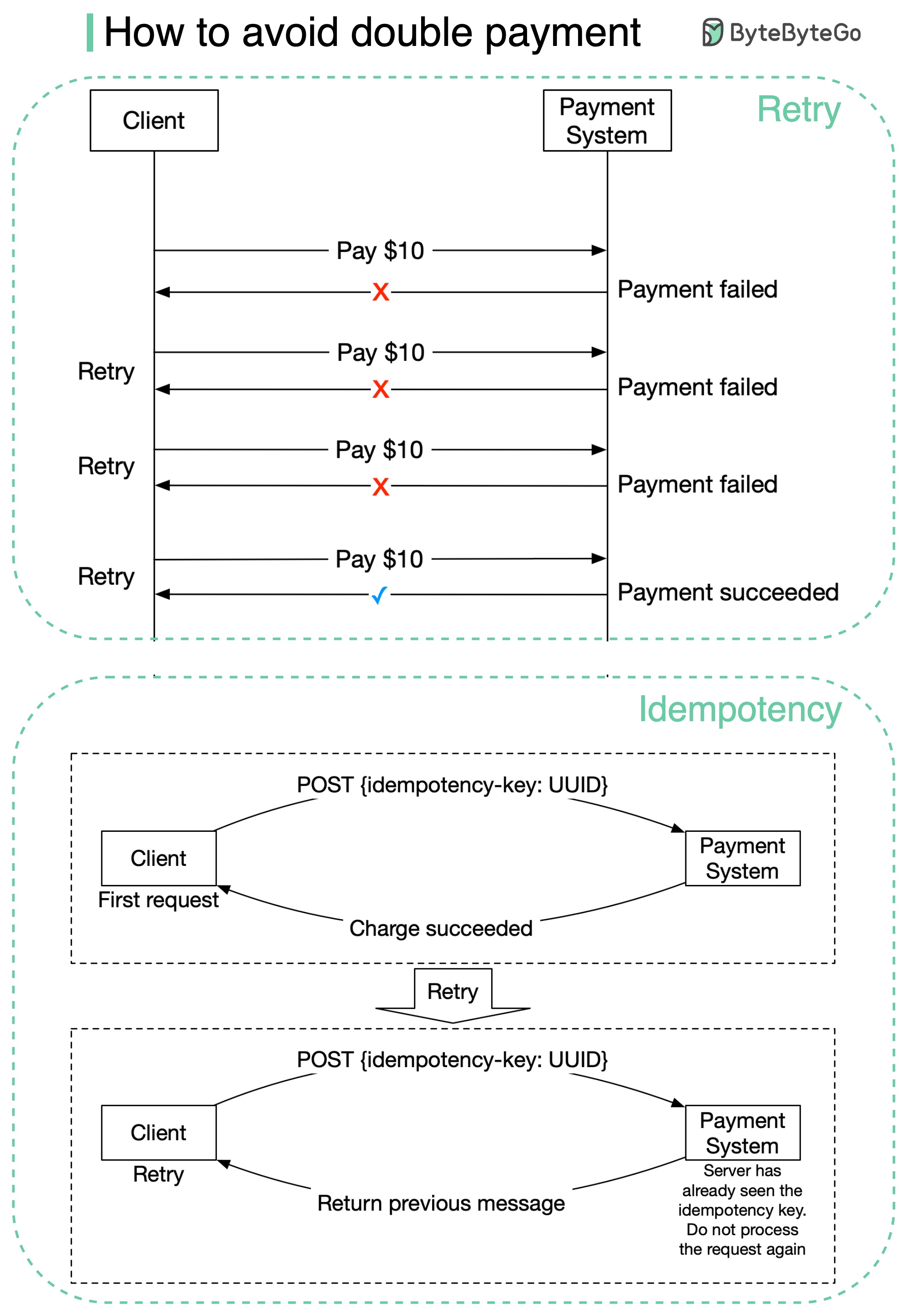

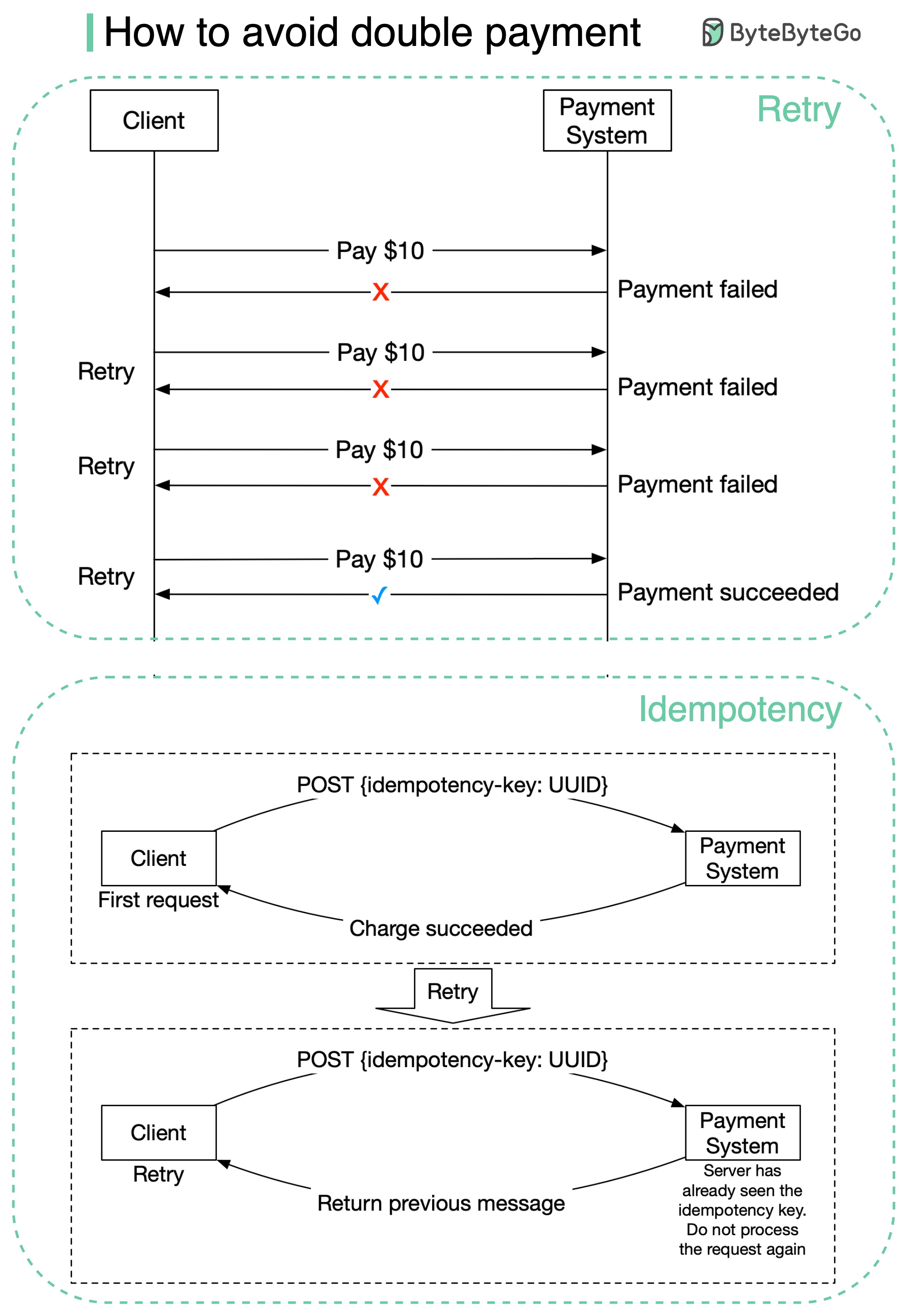

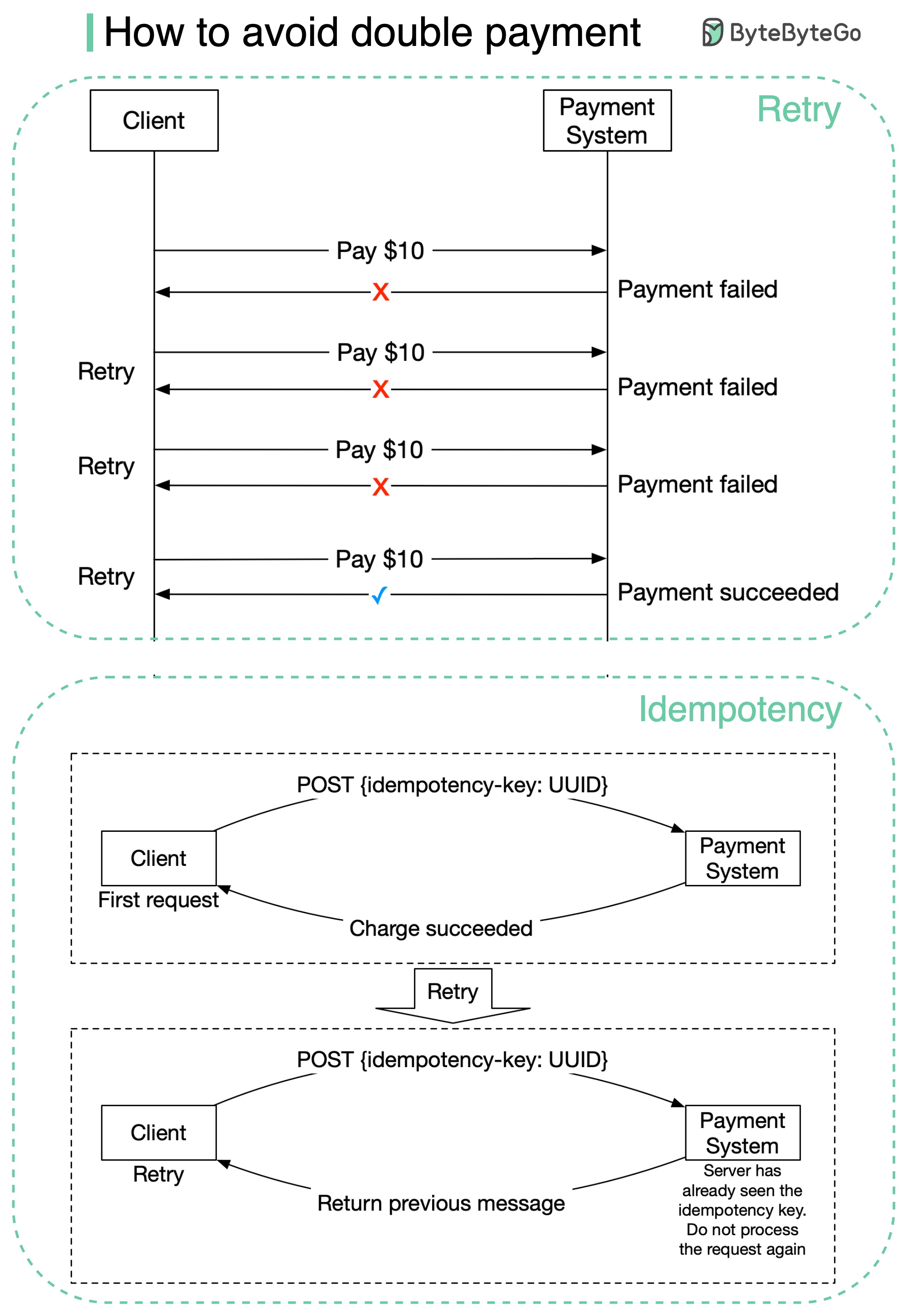

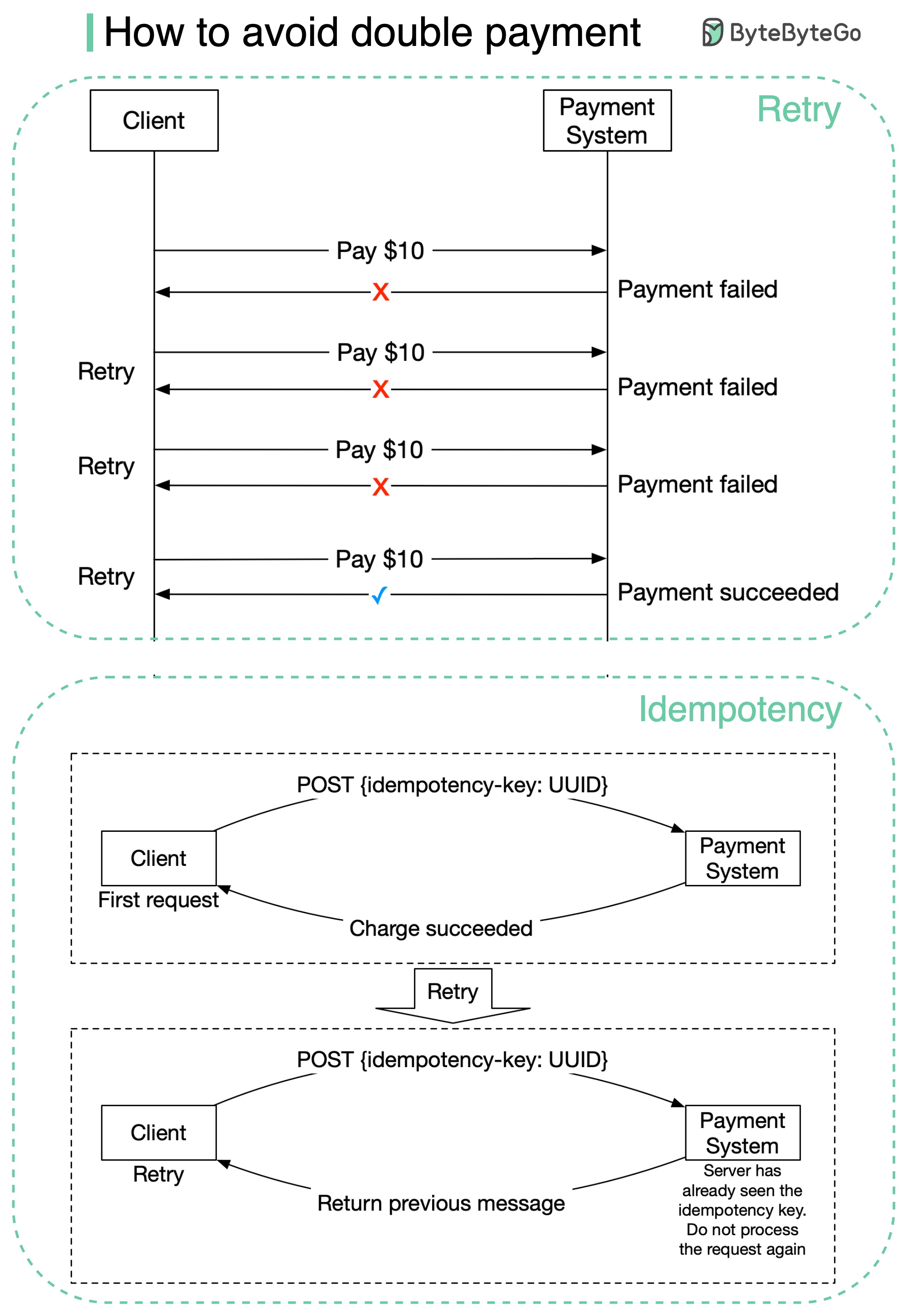

+ * [How to Avoid Double Payment](https://bytebytego.com/guides/how-to-avoid-double-payment)

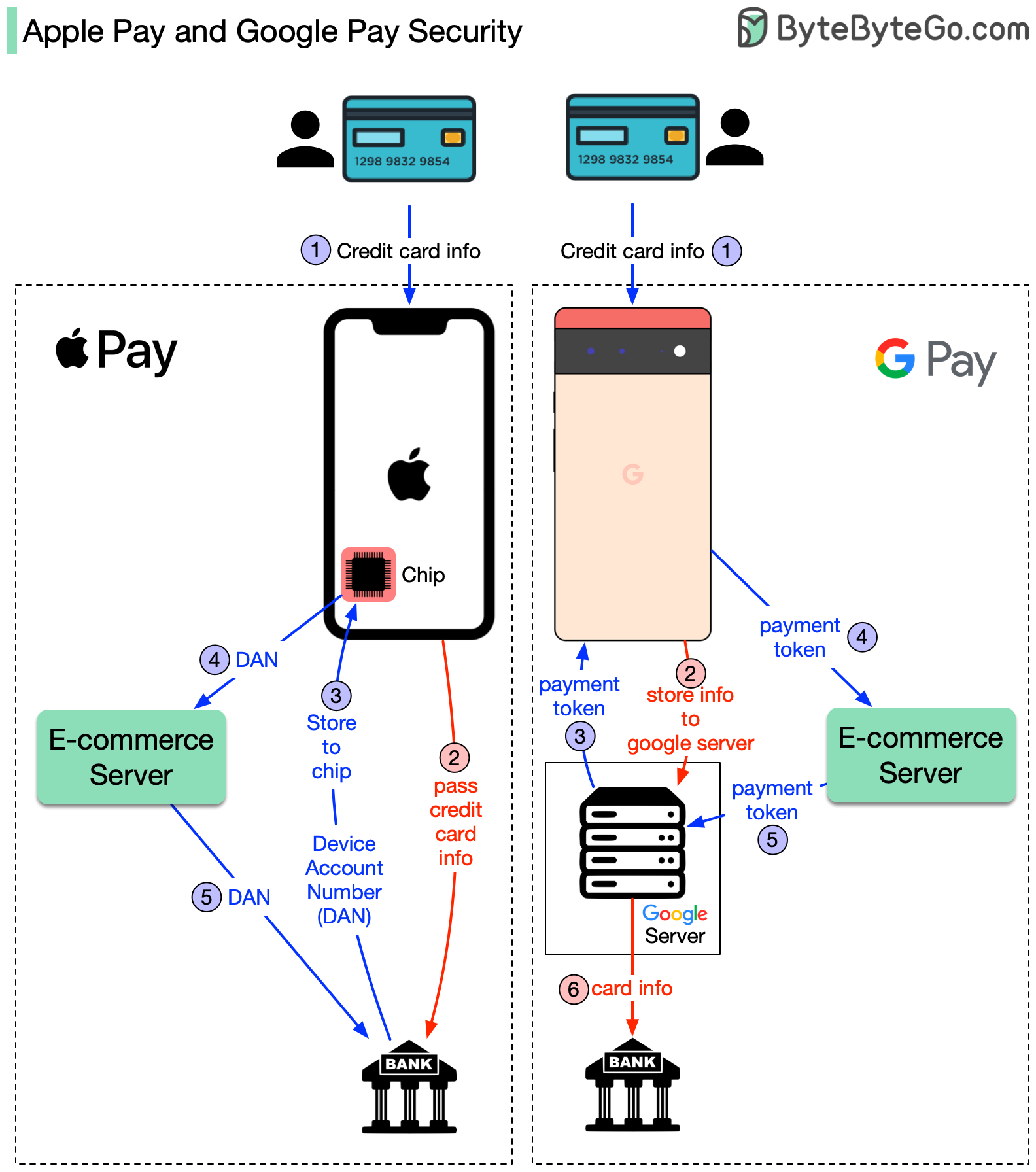

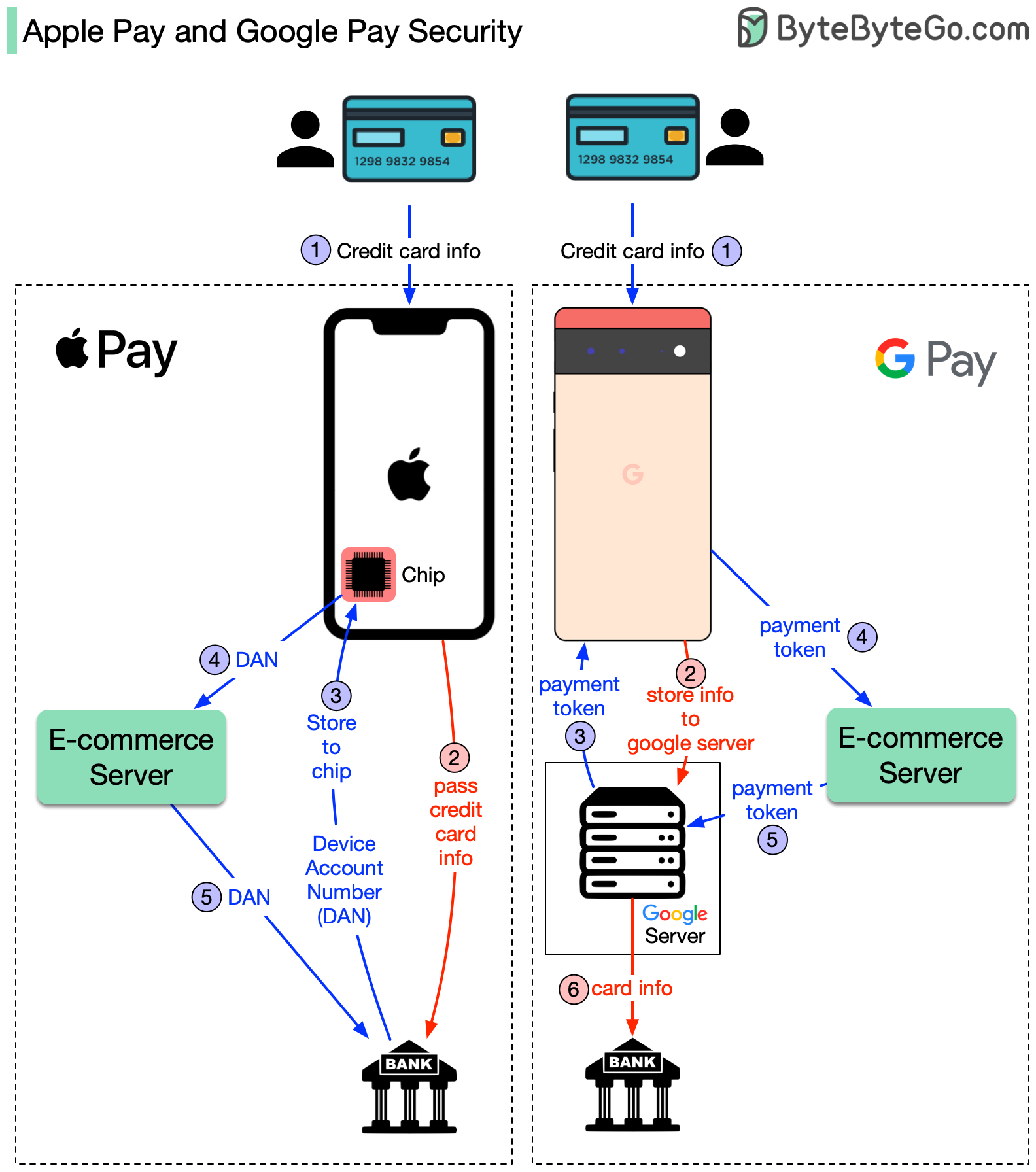

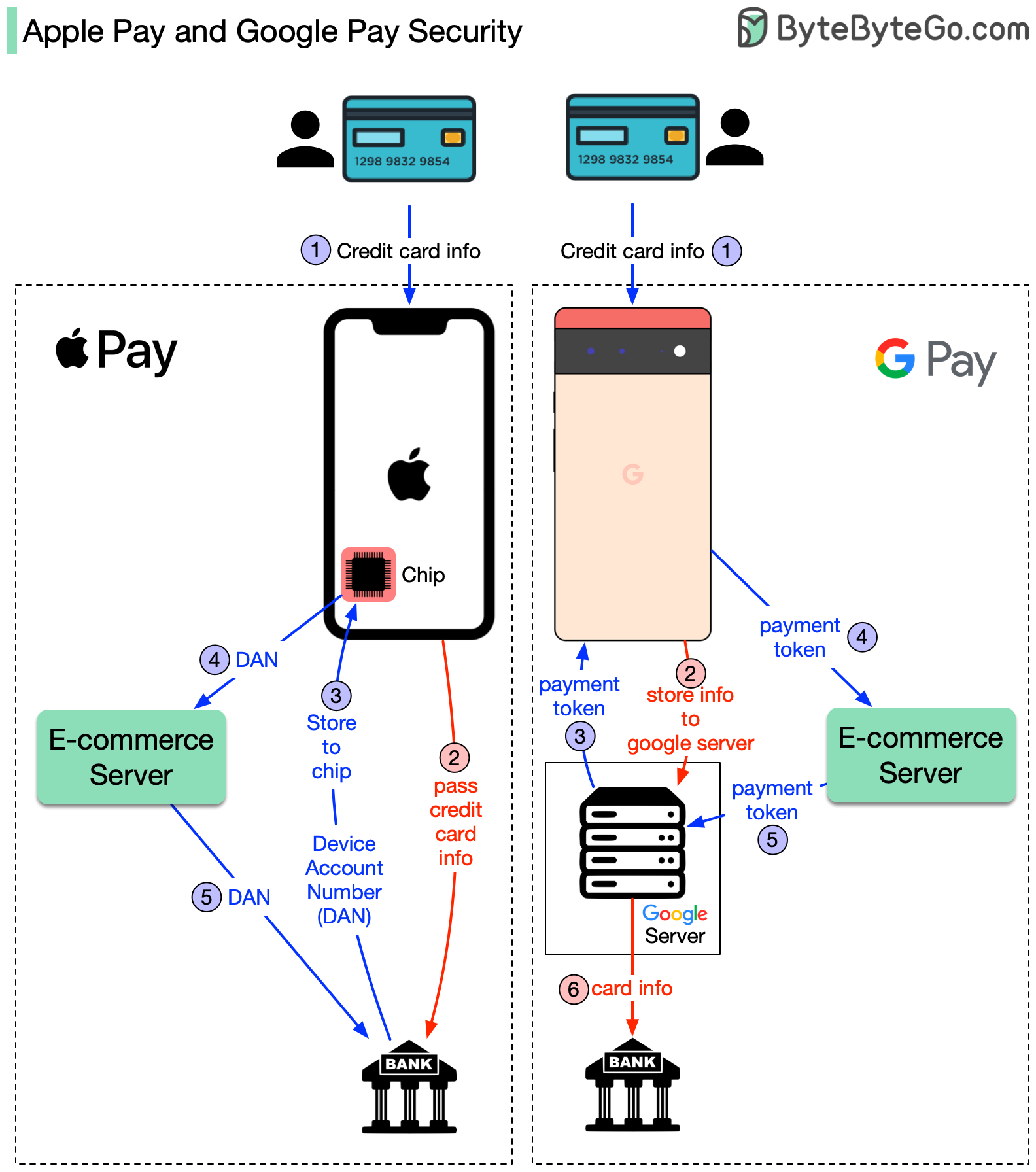

+ * [How do Apple Pay and Google Pay work?](https://bytebytego.com/guides/how-applegoogle-pay-works)

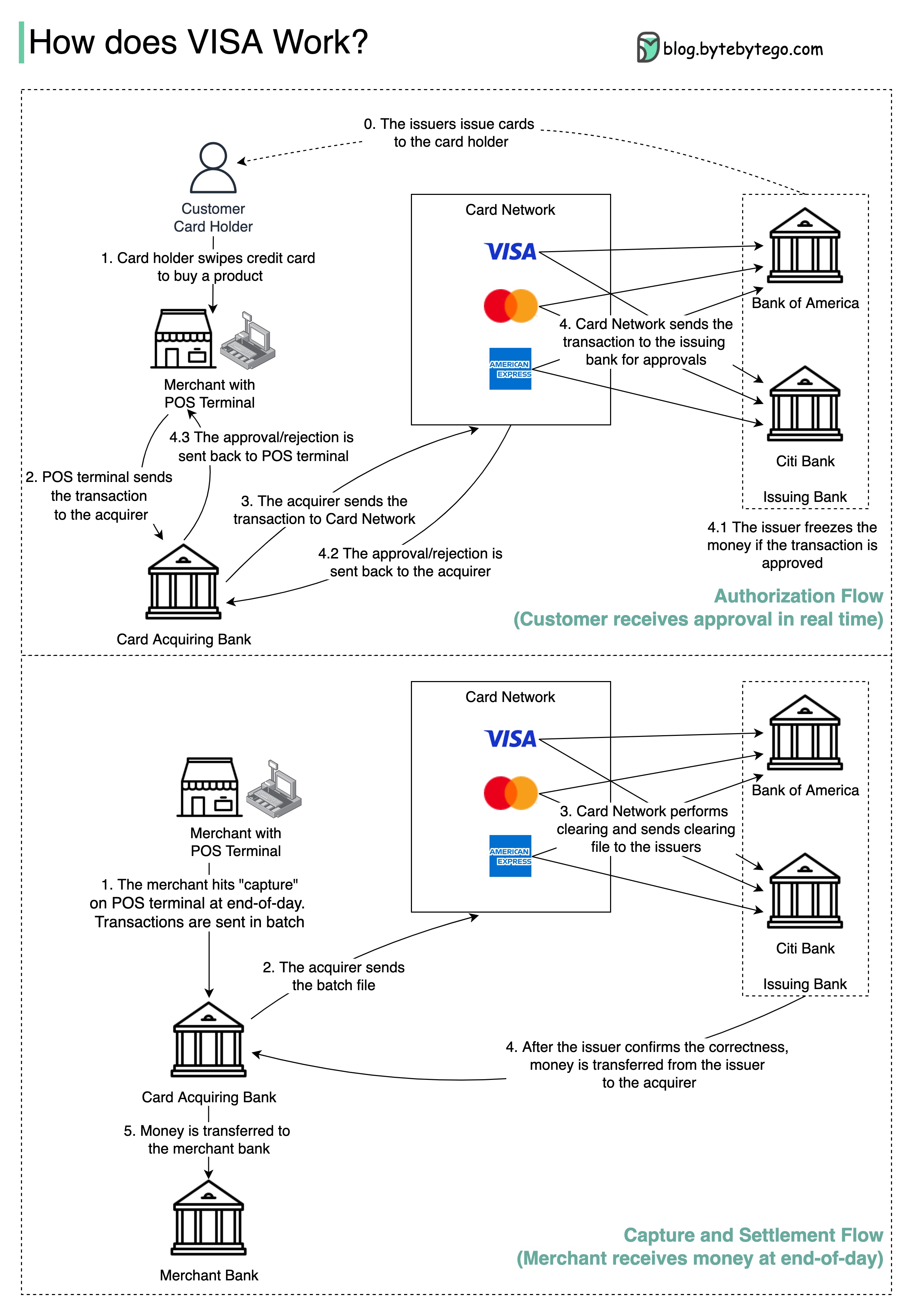

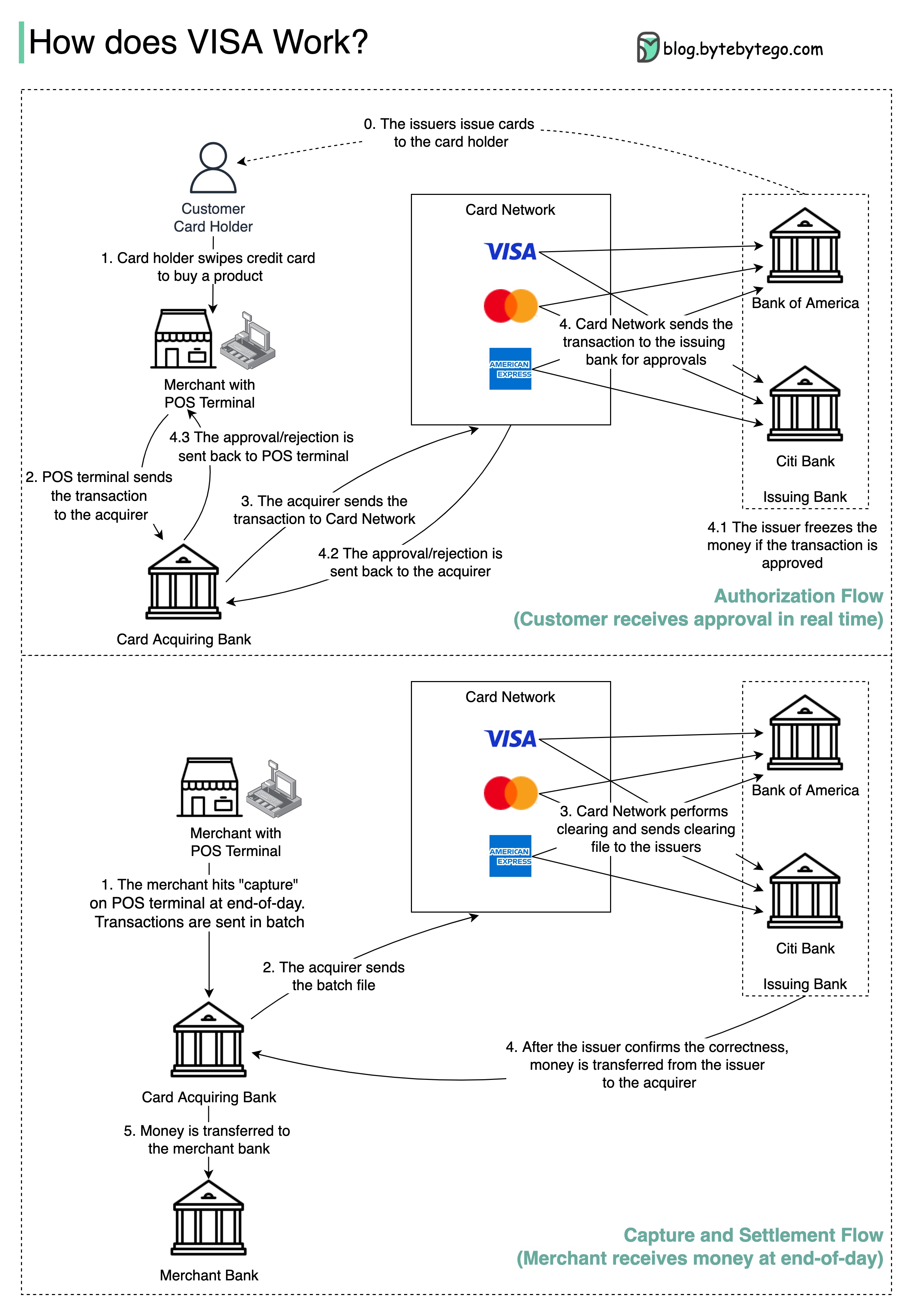

+ * [How VISA Works When Swiping a Credit Card](https://bytebytego.com/guides/how-does-visa-work-when-we-swipe-a-credit-card-at-a-merchant's-shop)

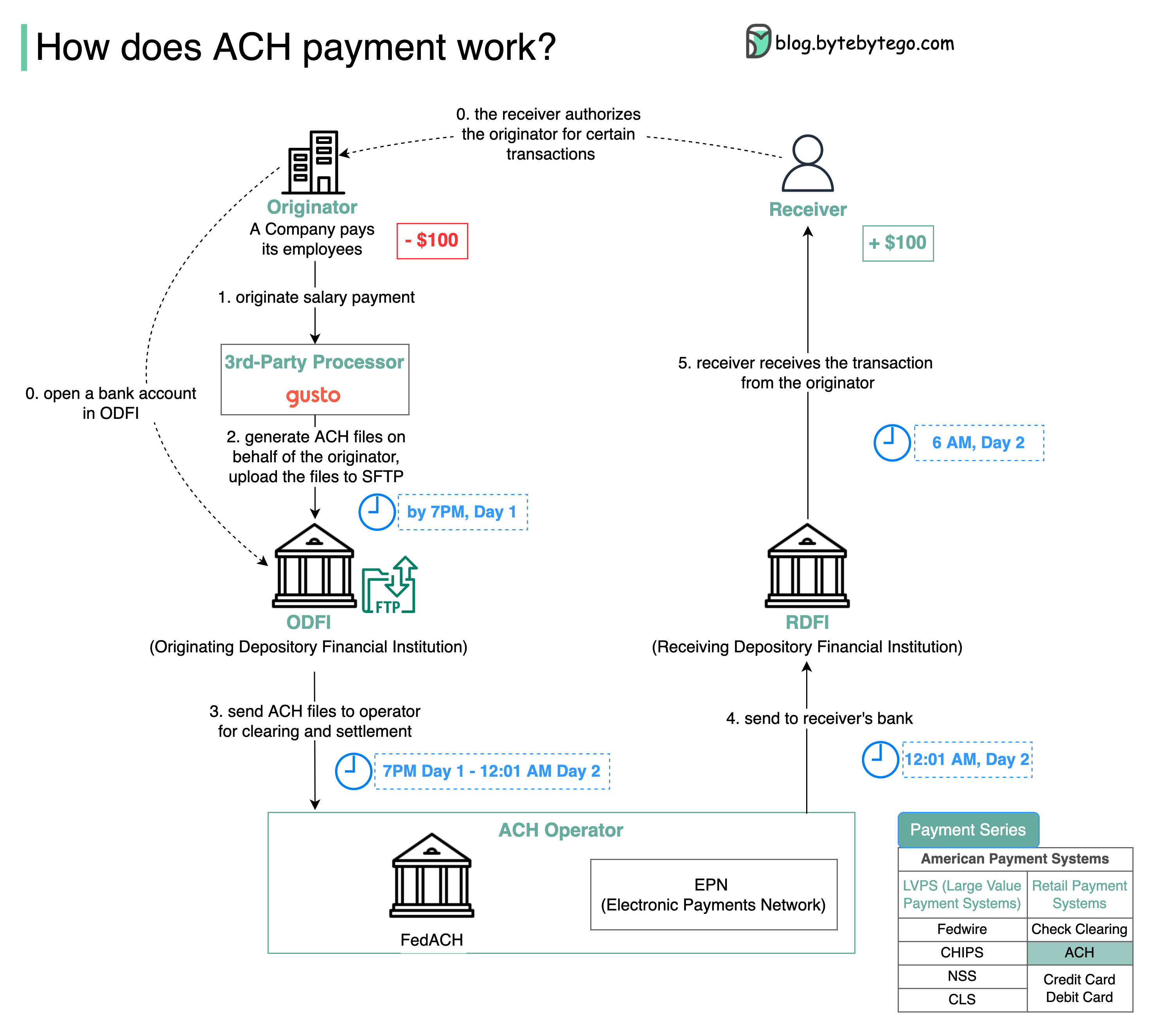

+ * [How ACH Payment Works](https://bytebytego.com/guides/how-does-ach-payment-work)

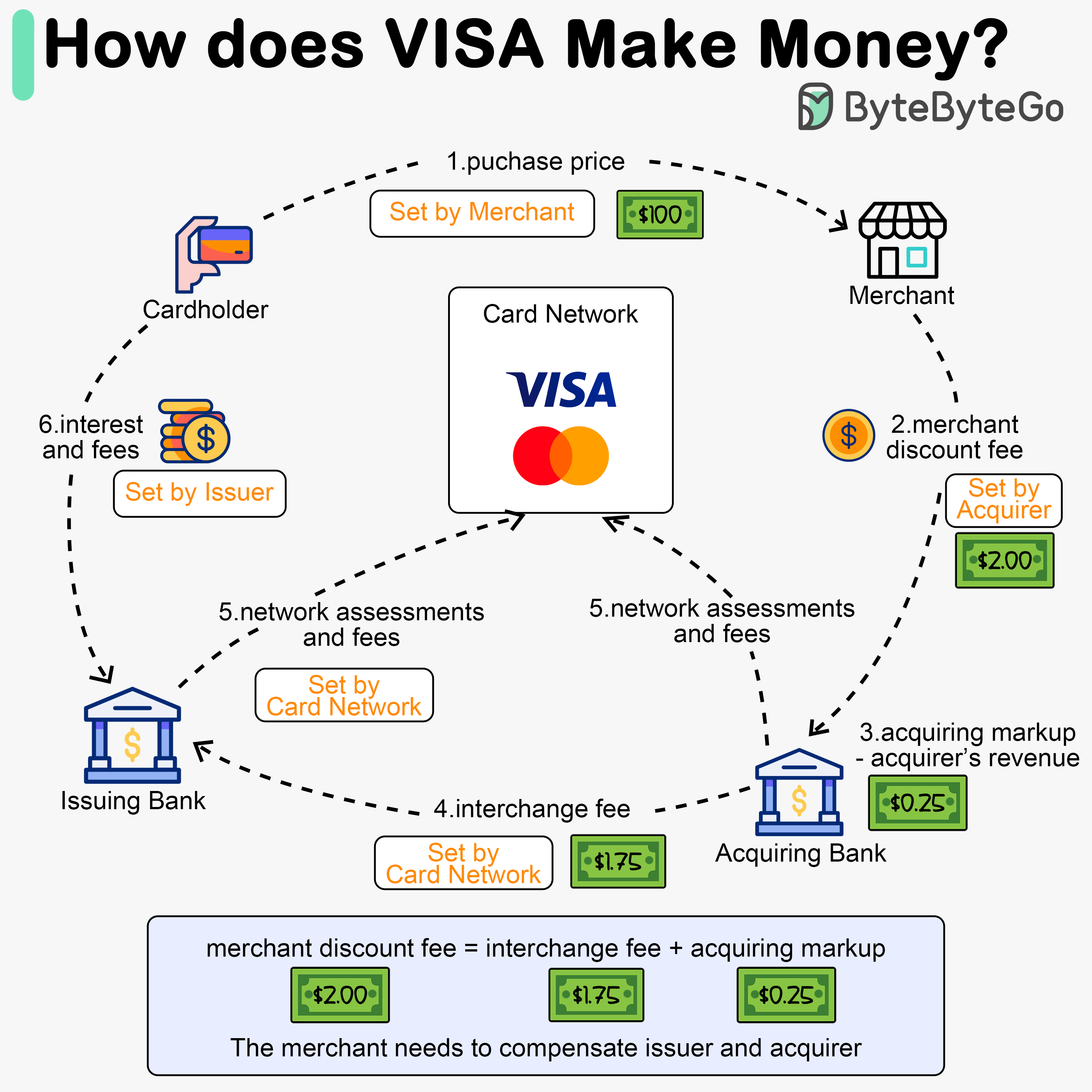

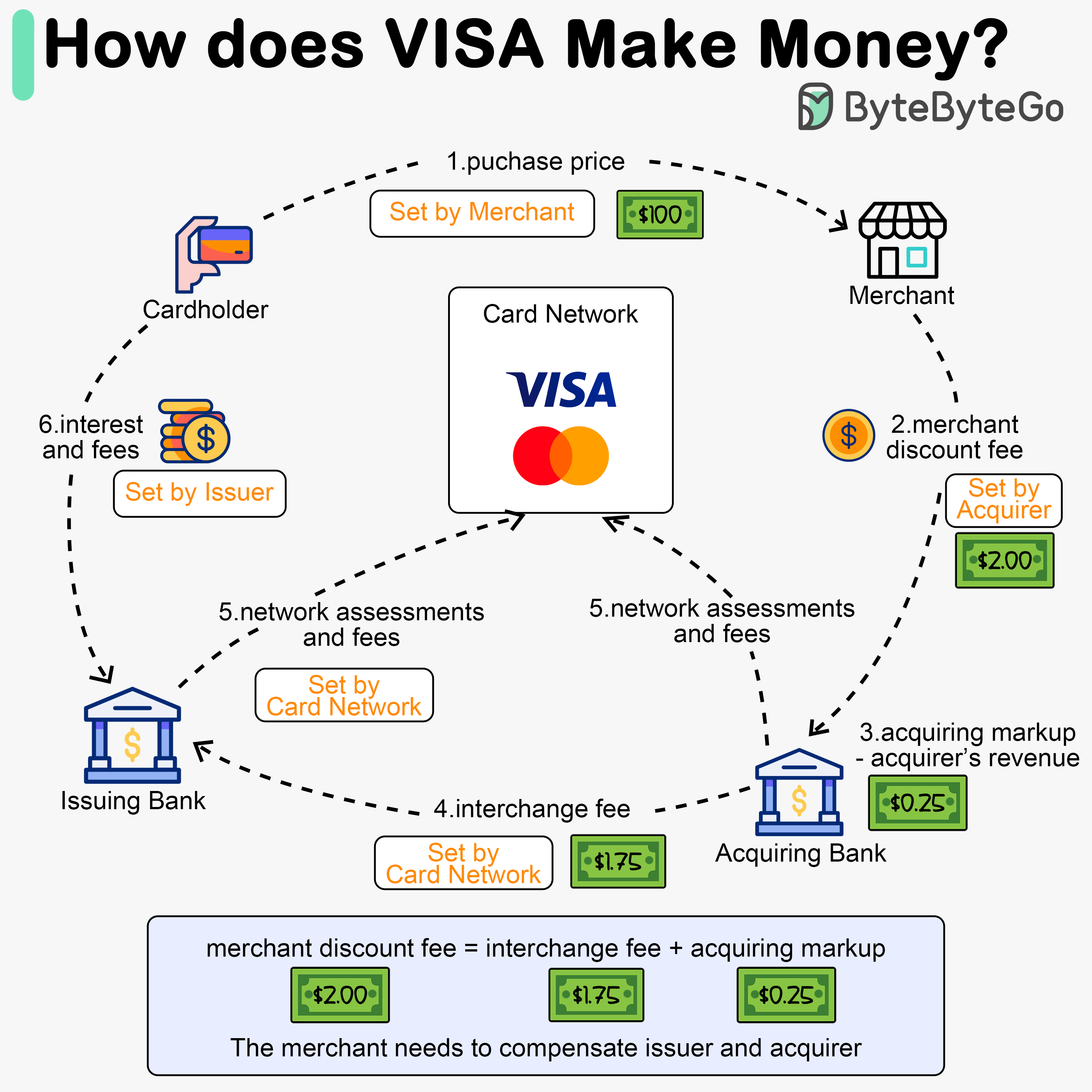

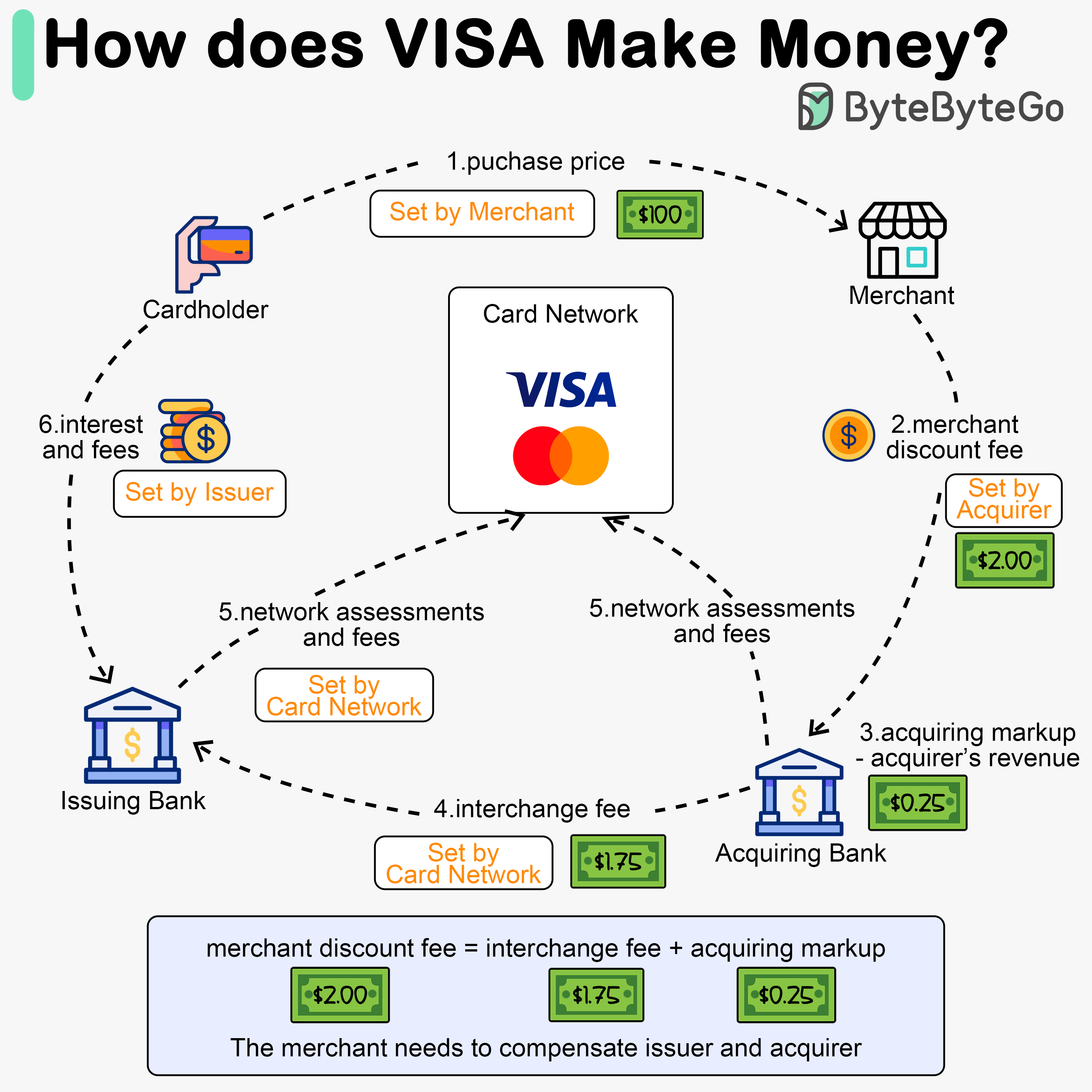

+ * [How does Visa make money?](https://bytebytego.com/guides/how-does-visa-make-money)

+* [Software Architecture](https://bytebytego.com/guides/software-architecture)

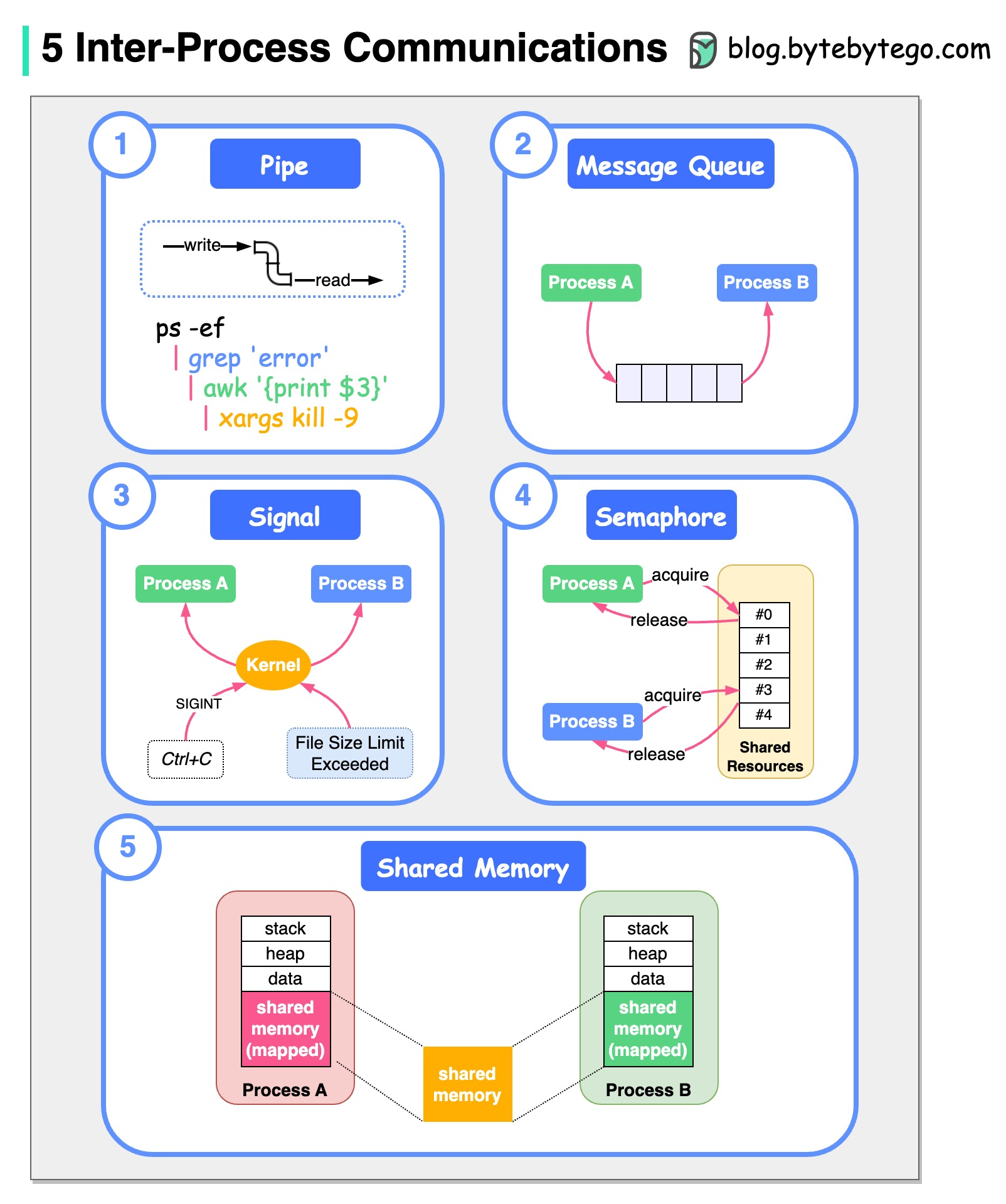

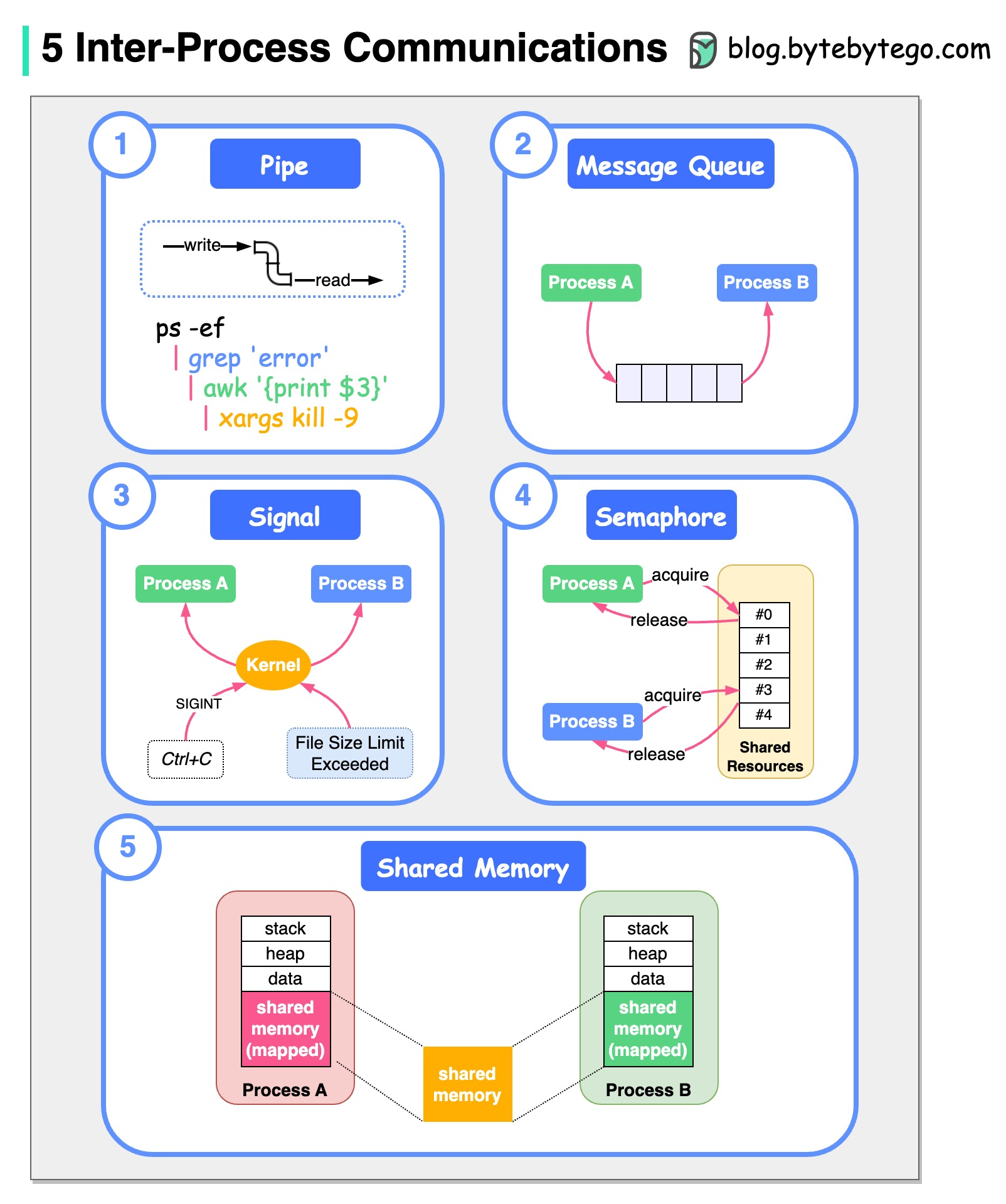

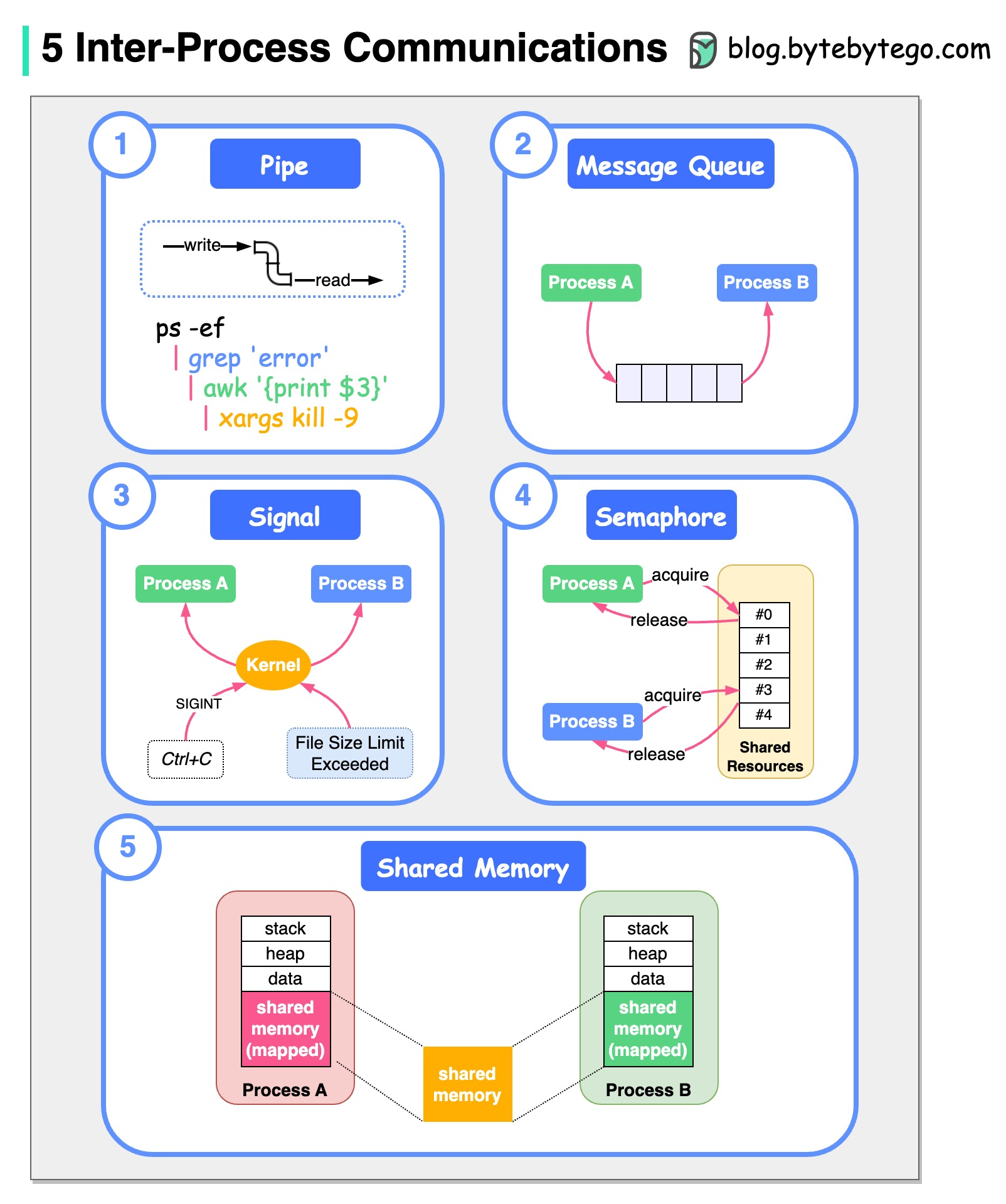

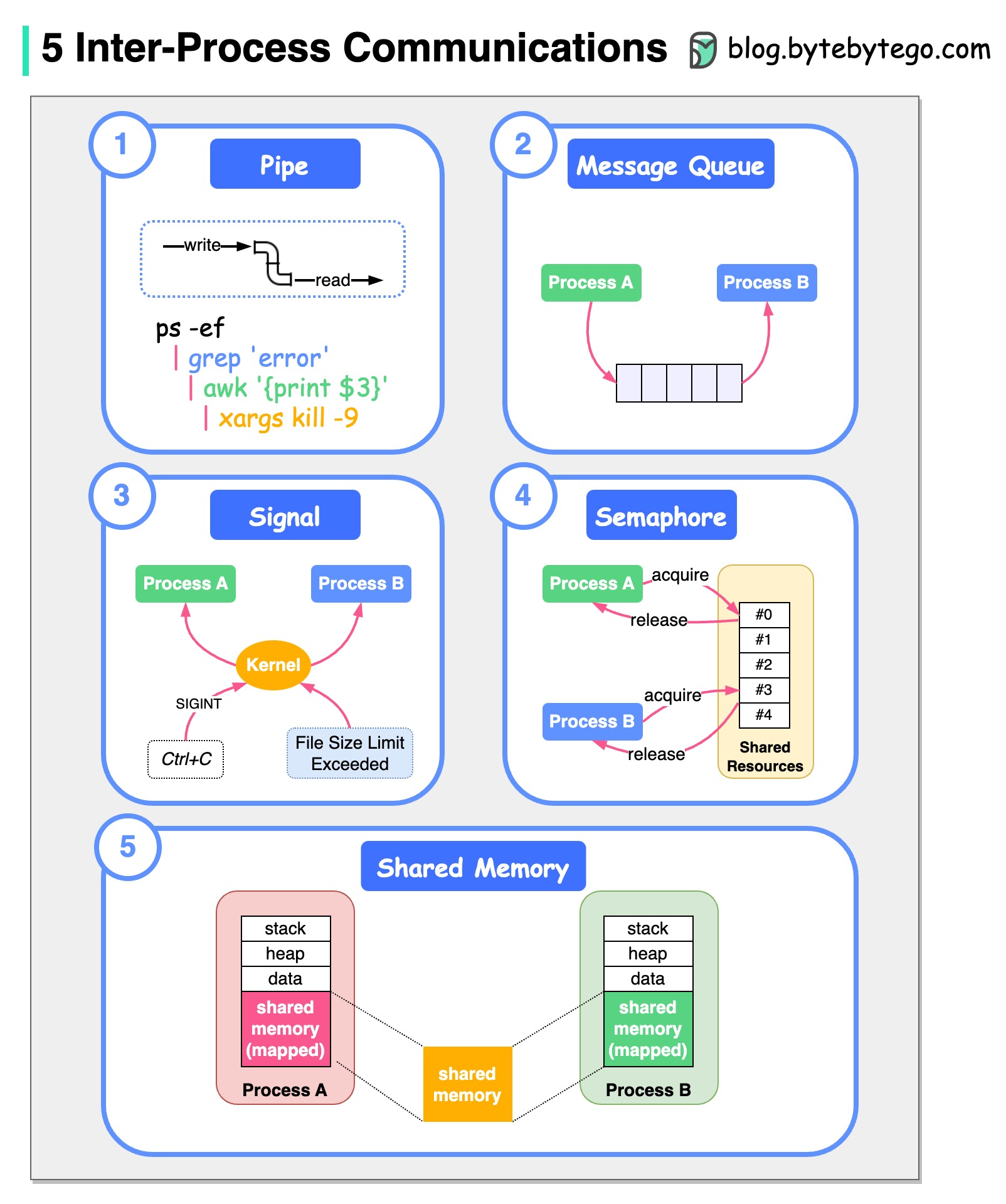

+ * [Inter-Process Communication on Linux](https://bytebytego.com/guides/how-do-processes-talk-to-each-other-on-linux)

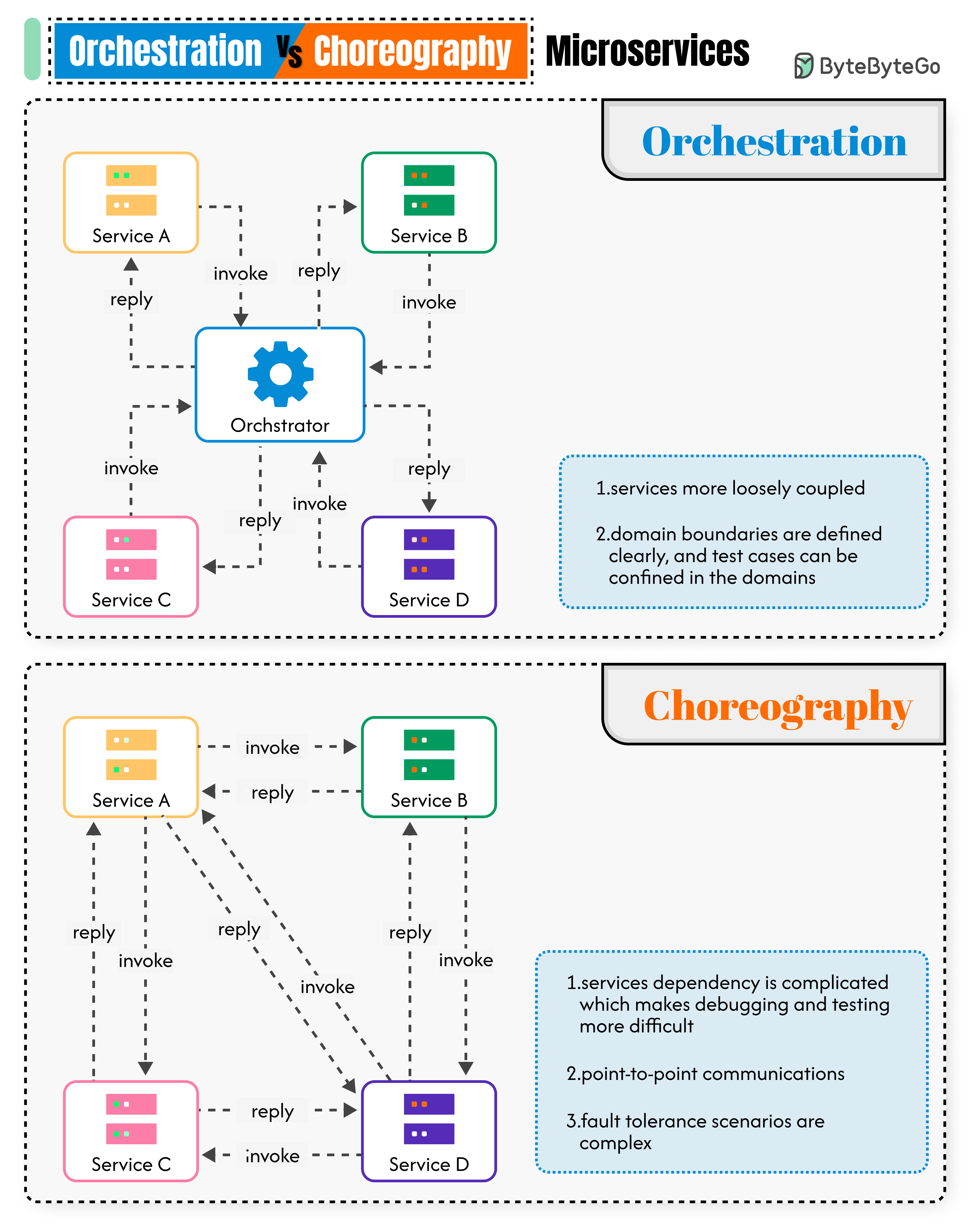

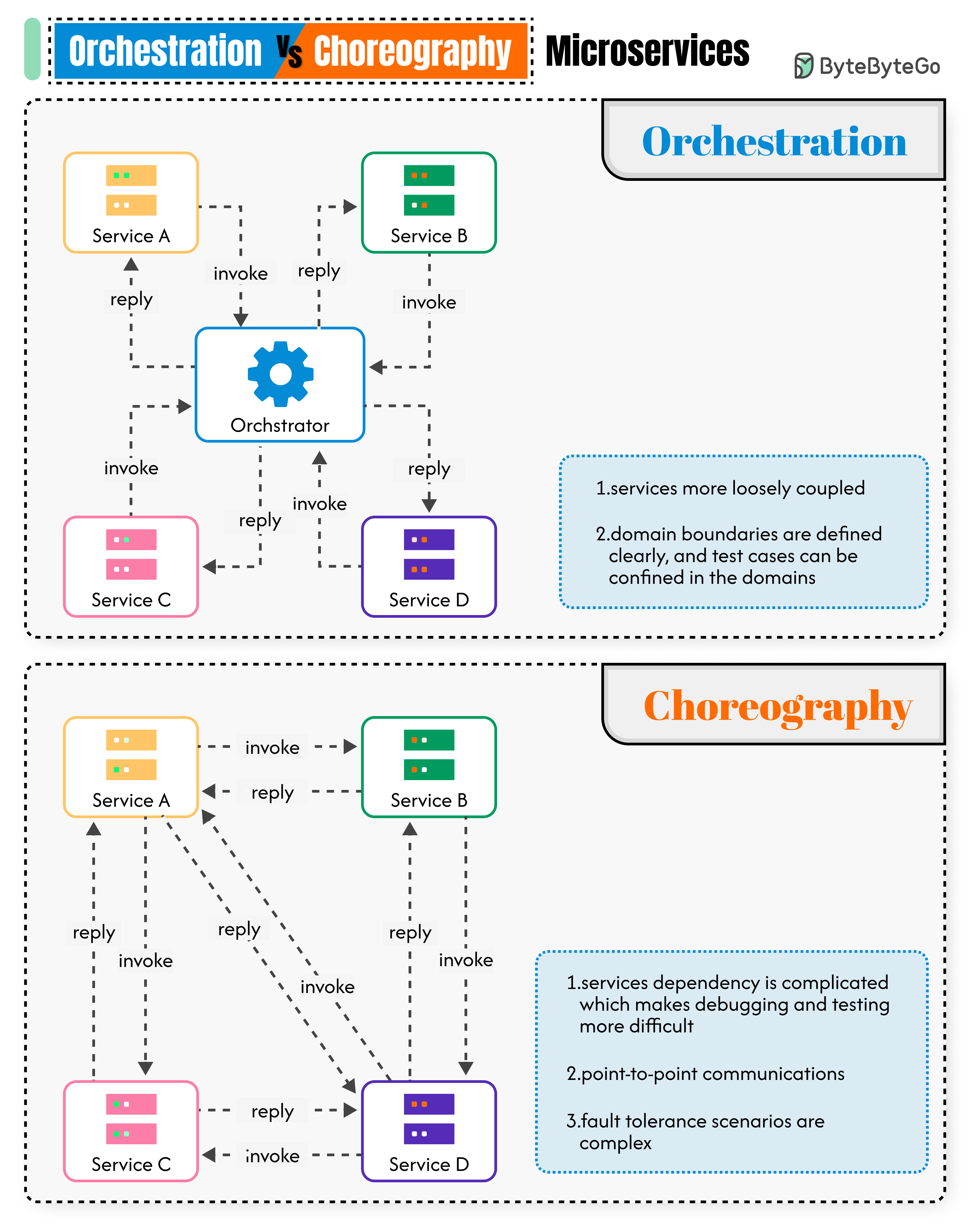

+ * [Orchestration vs. Choreography in Microservices](https://bytebytego.com/guides/orchestration-vs-choreography-microservices)

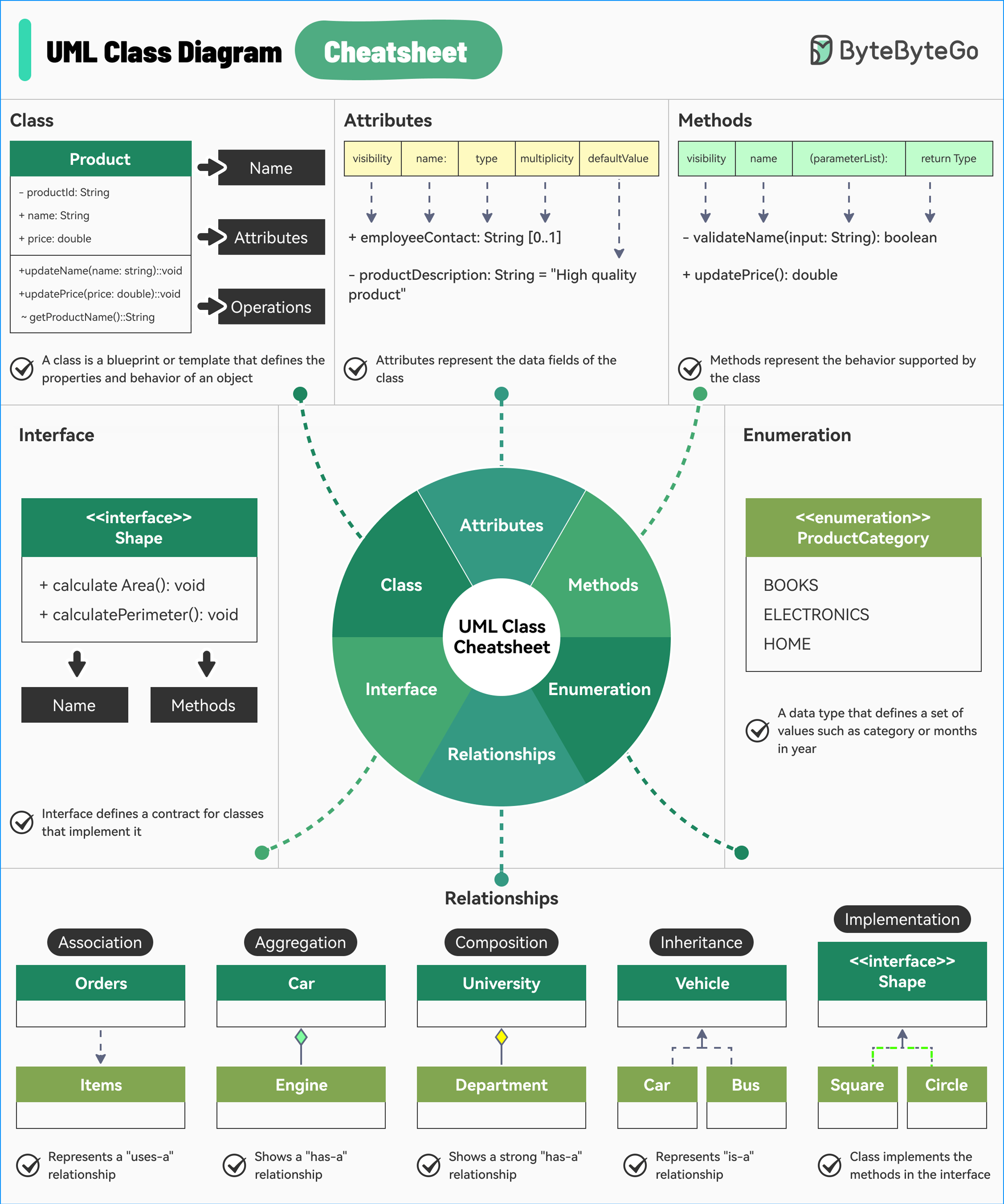

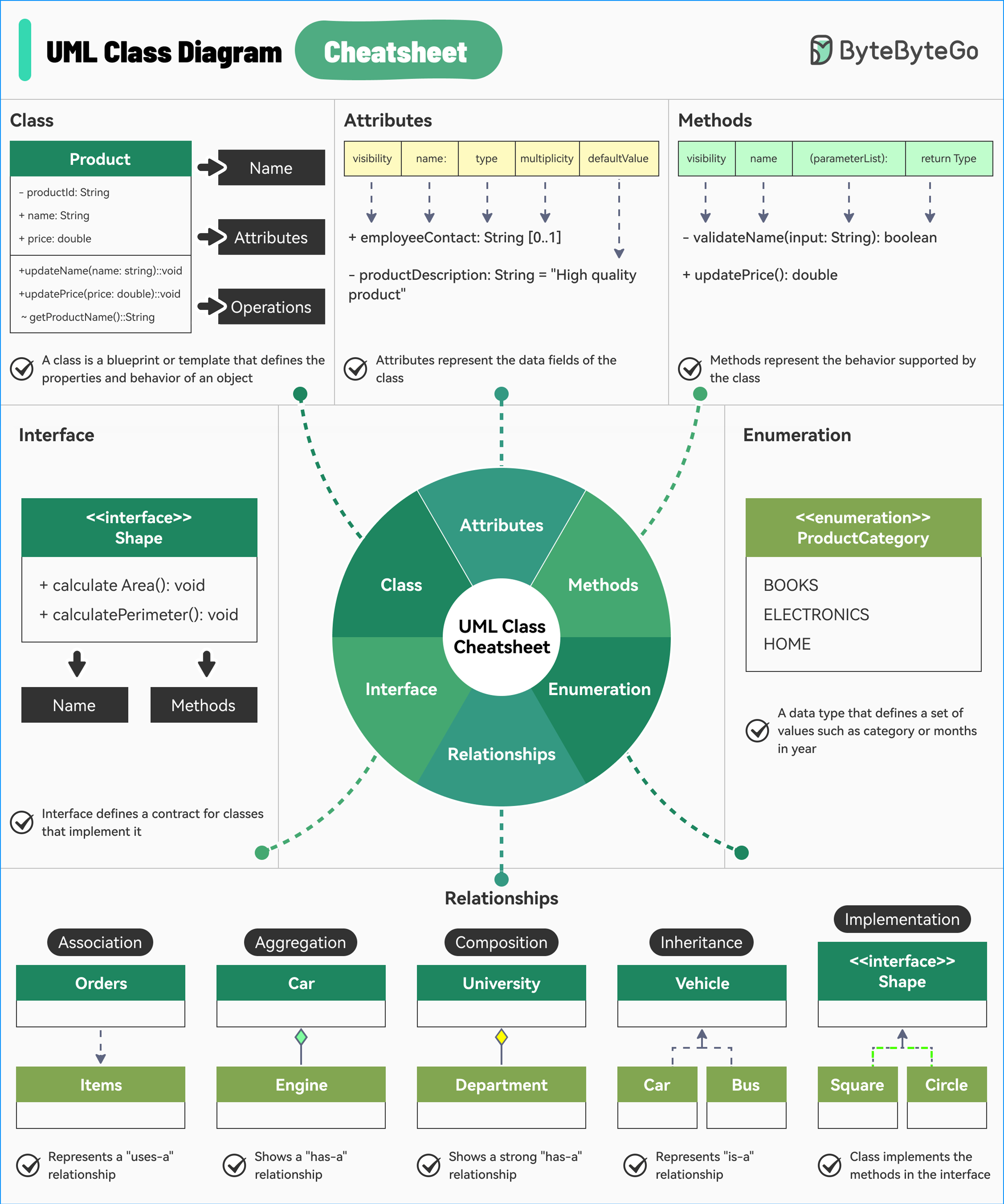

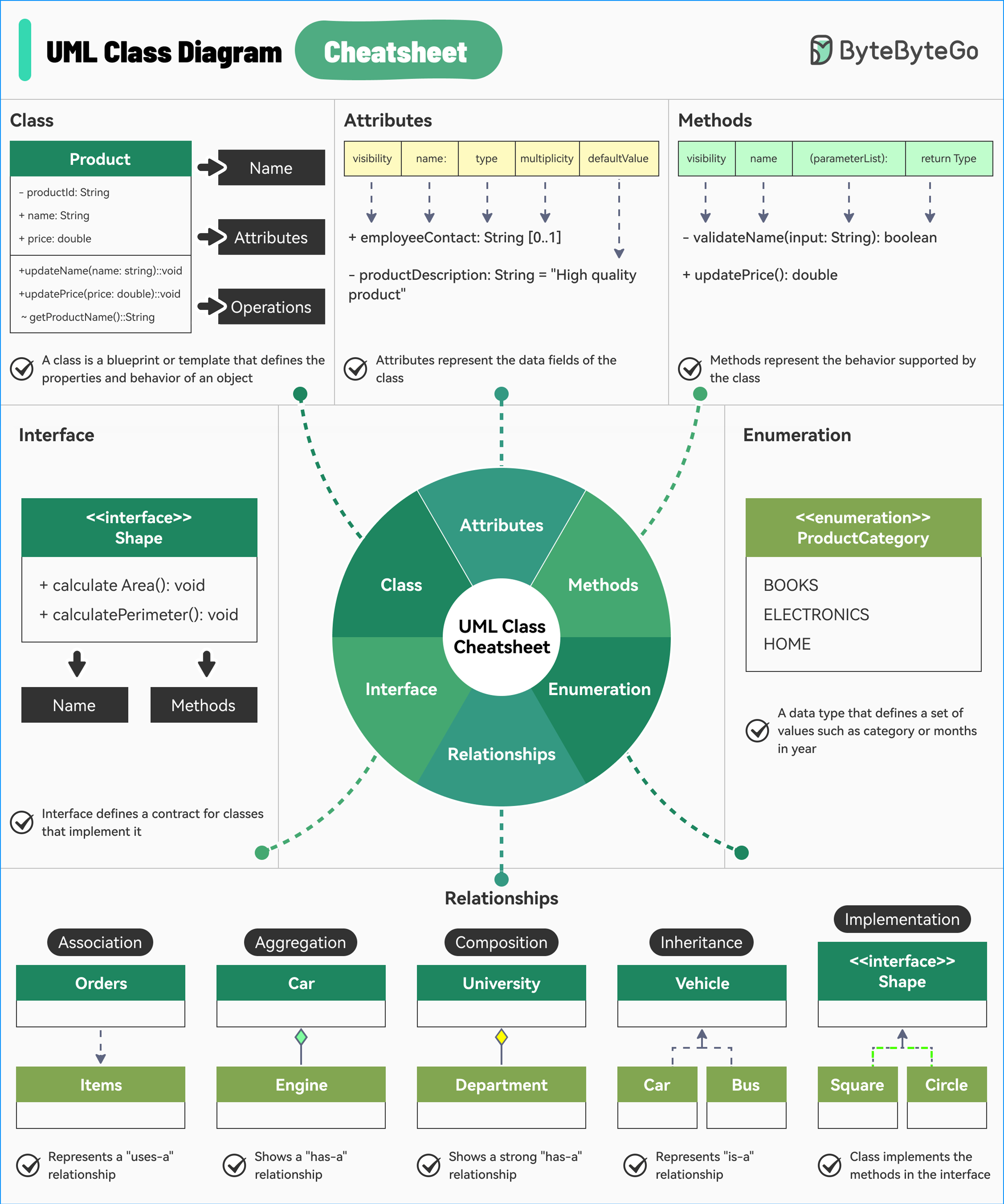

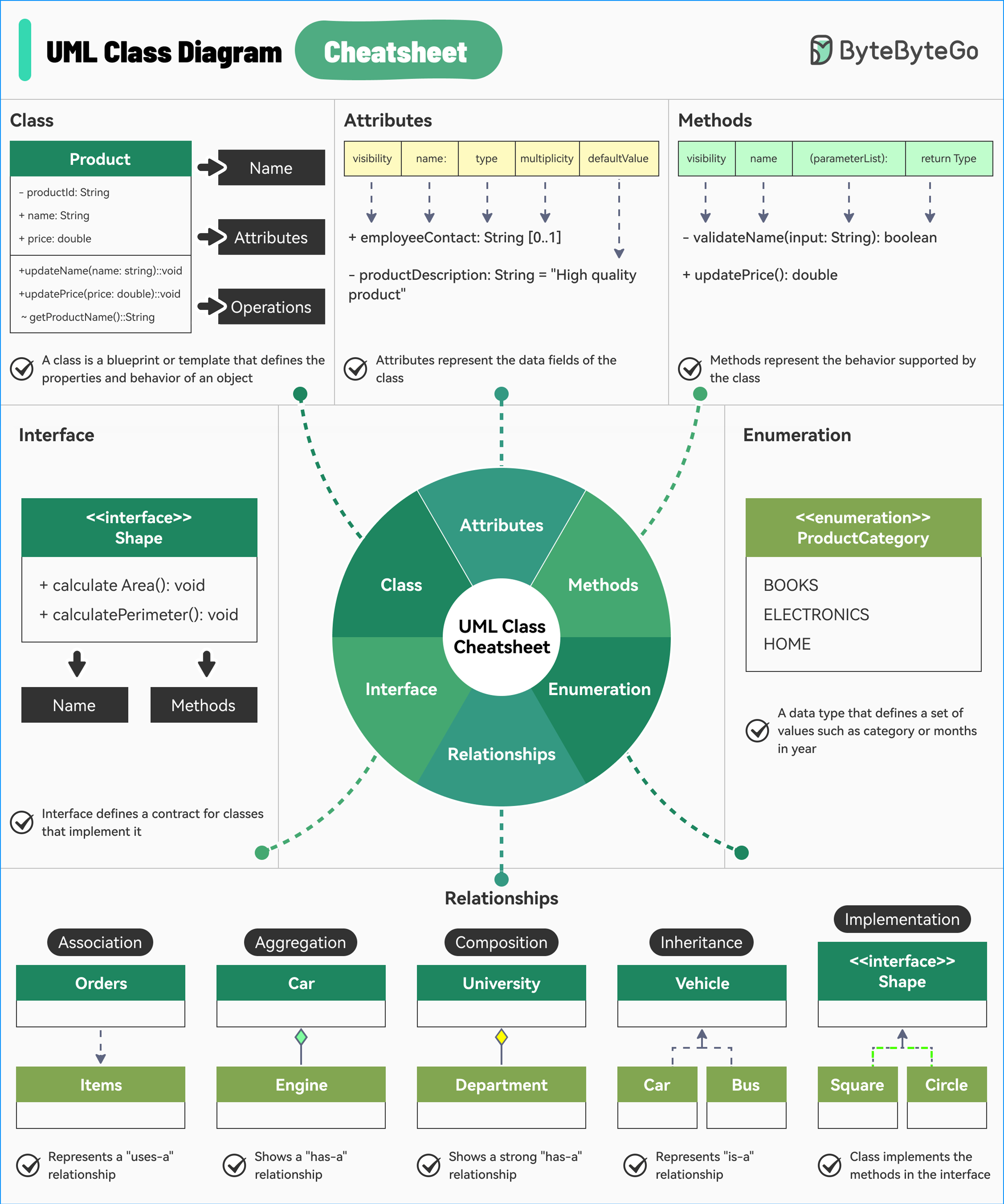

+ * [UML Class Diagrams Cheatsheet](https://bytebytego.com/guides/a-cheatsheet-for-uml-class-diagrams)

+ * [Amazon Prime Video Monitoring Service](https://bytebytego.com/guides/amazon-prime-video-monitoring-service)

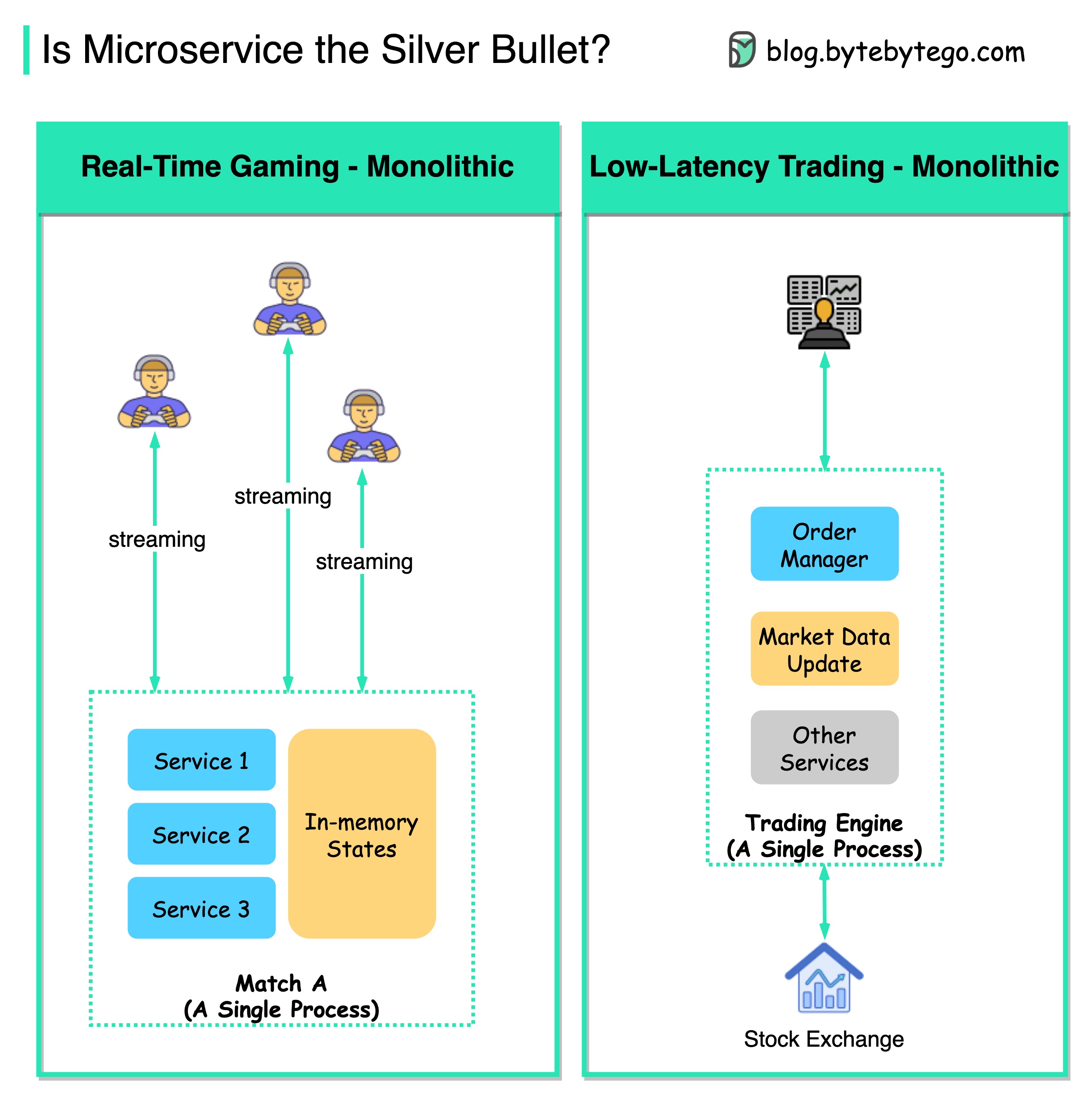

+ * [Is Microservice Architecture the Silver Bullet?](https://bytebytego.com/guides/is-microservice-architecture-the-silver-bullet)

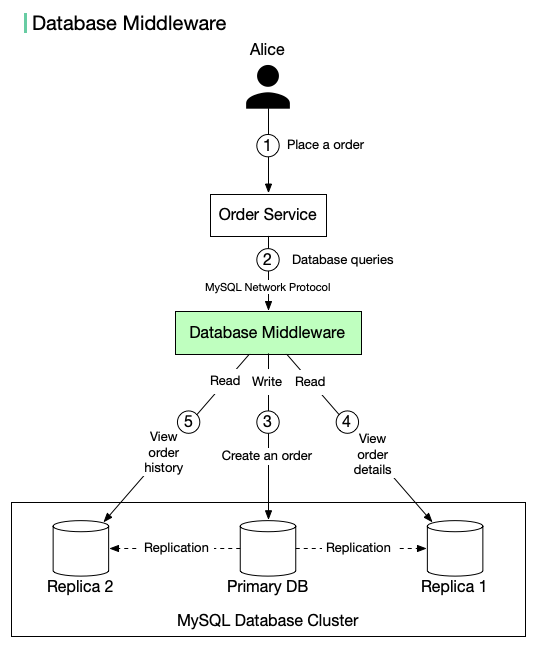

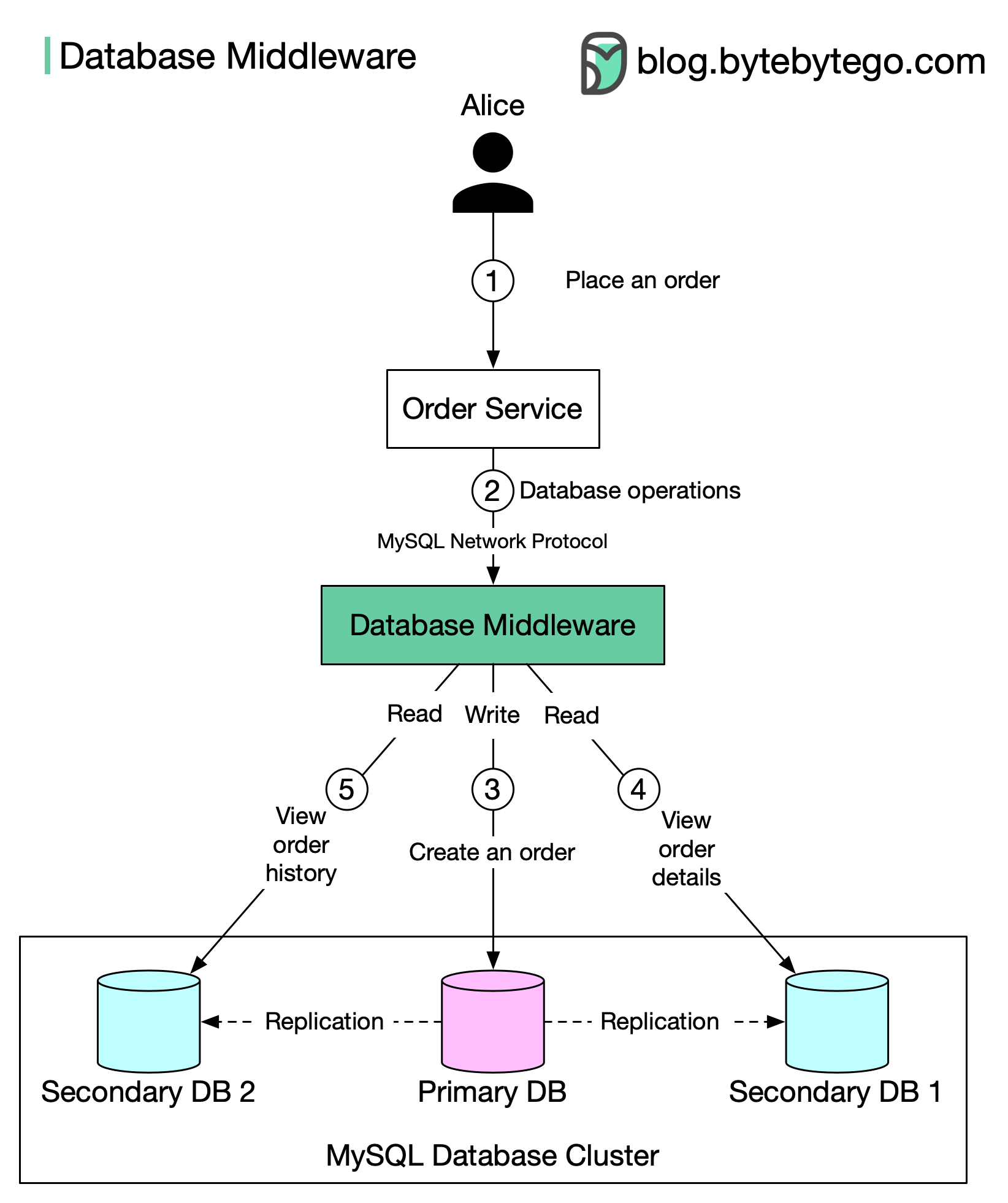

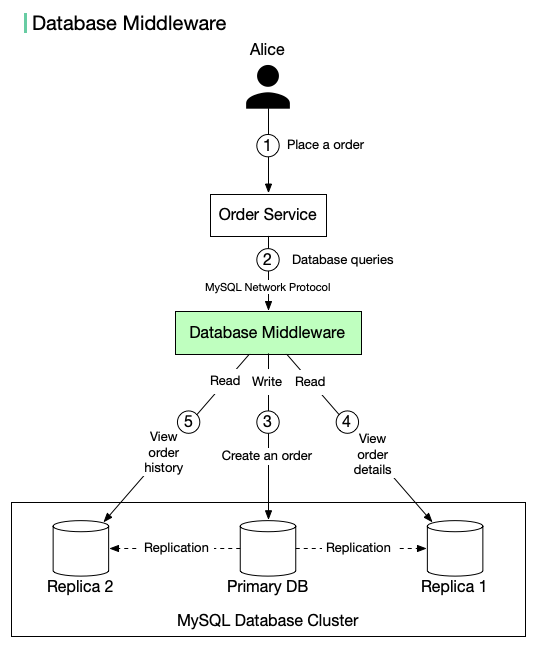

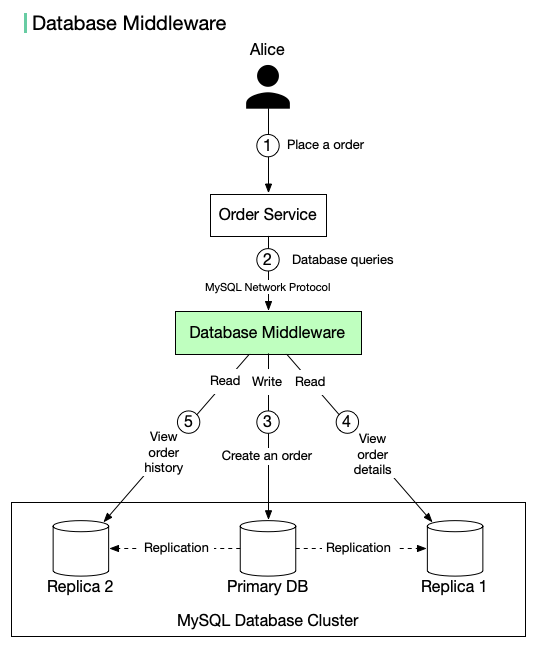

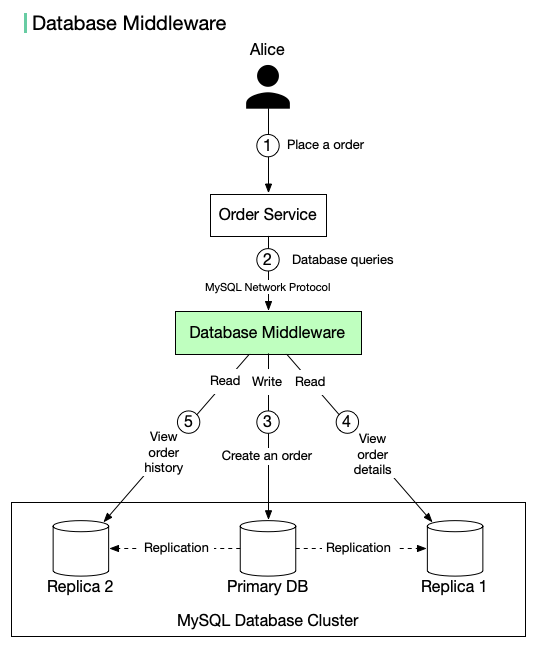

+ * [Database Middleware](https://bytebytego.com/guides/database-middleware)

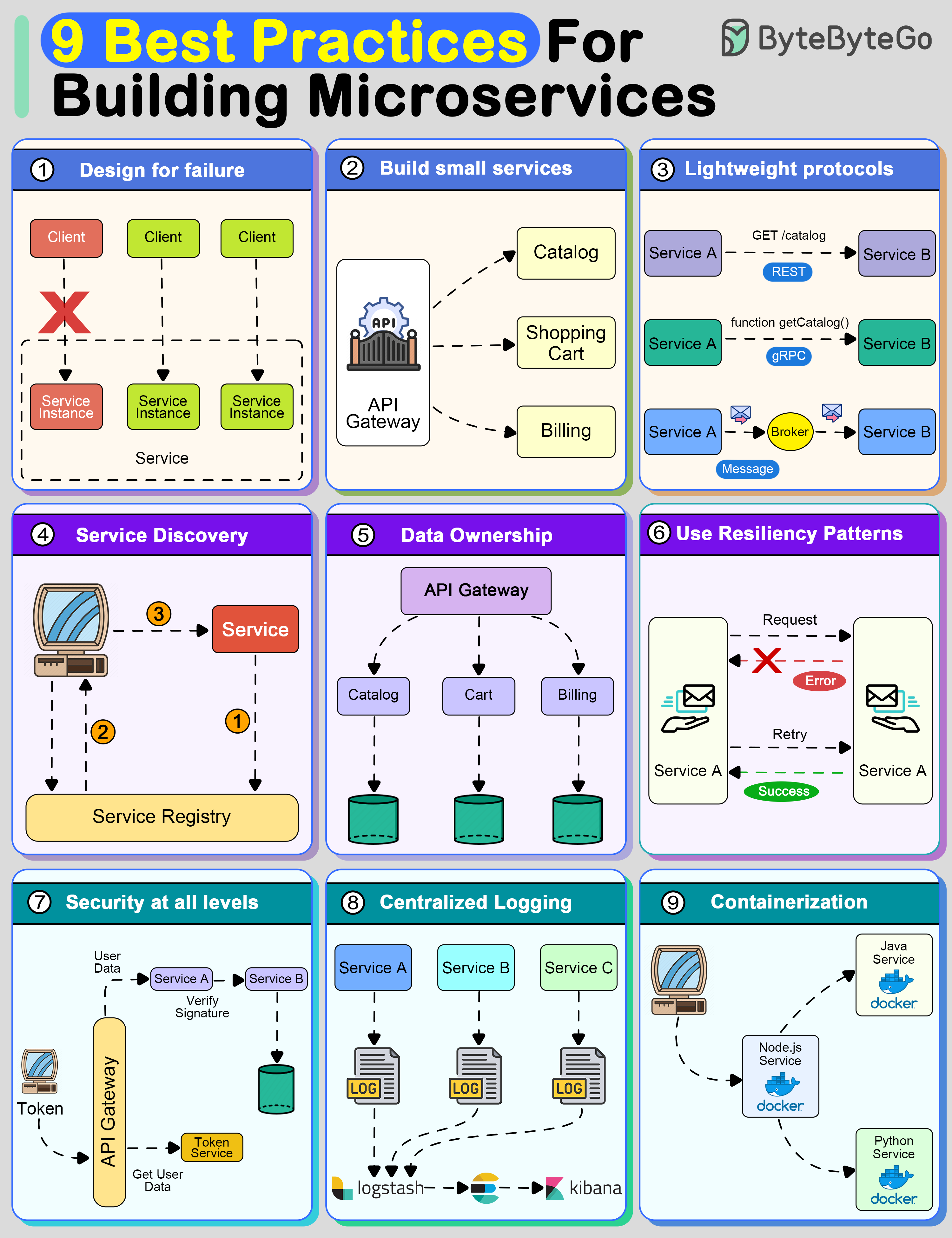

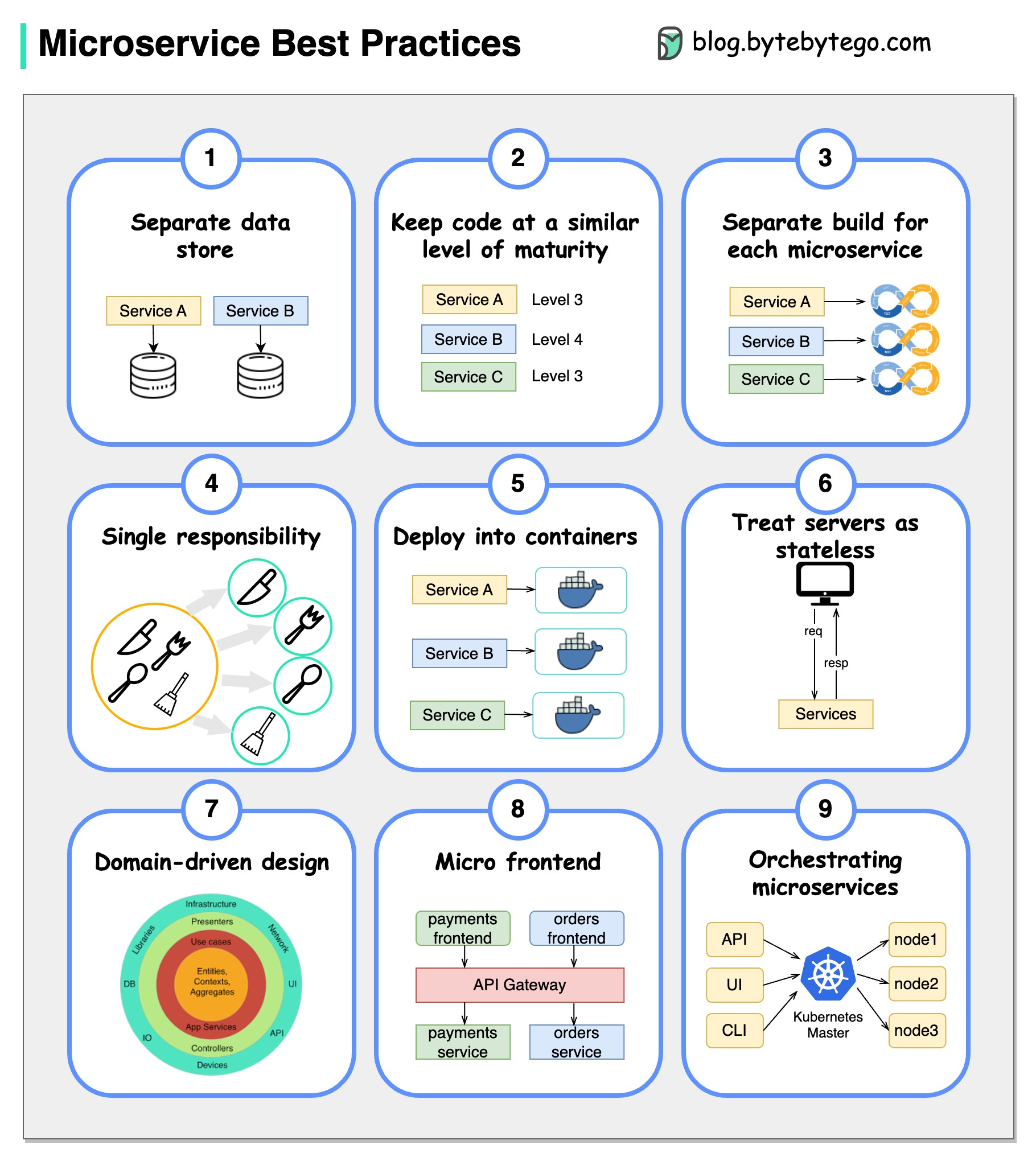

+ * [9 Best Practices for Developing Microservices](https://bytebytego.com/guides/9-best-practices-for-developing-microservices)

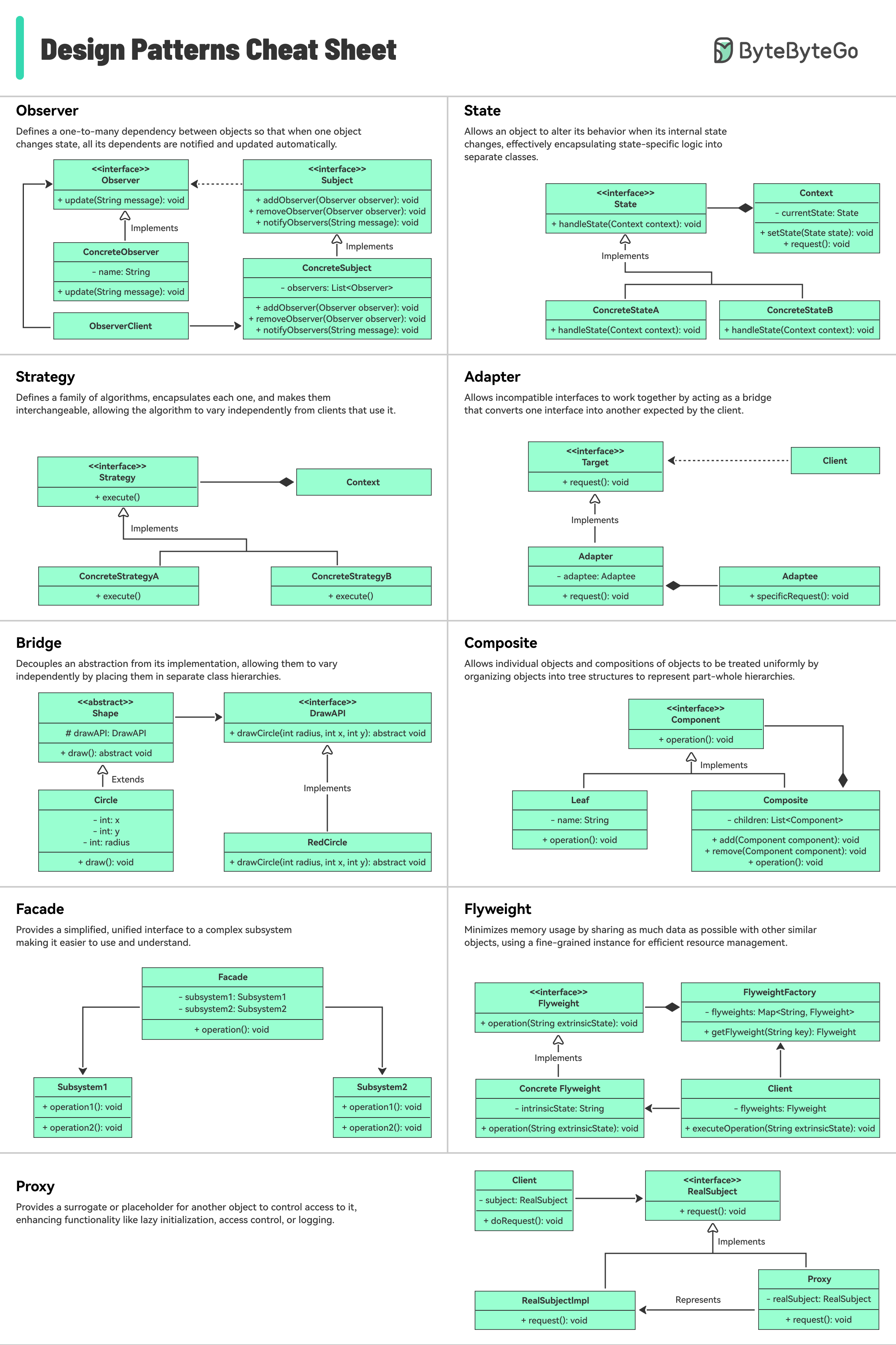

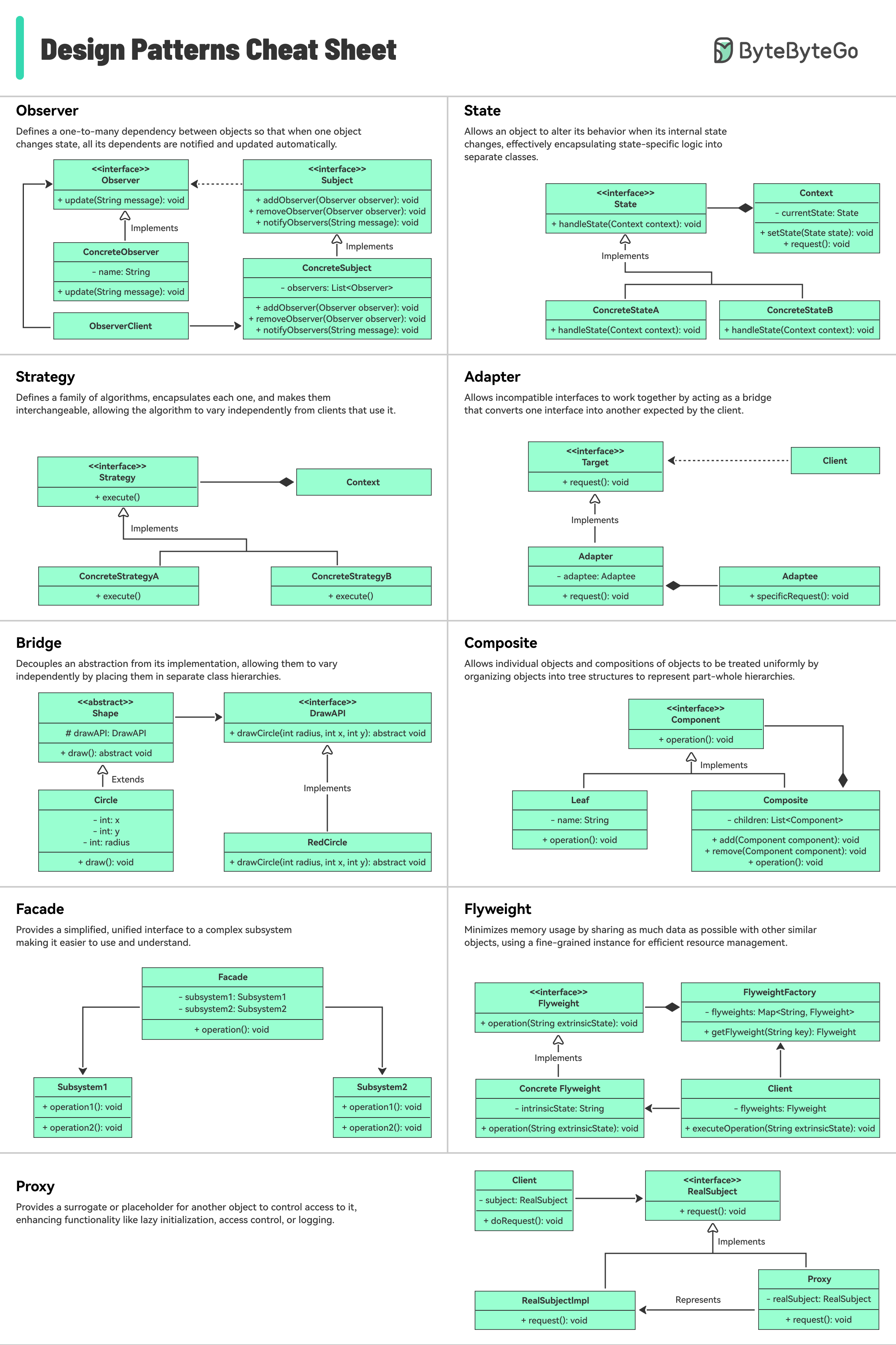

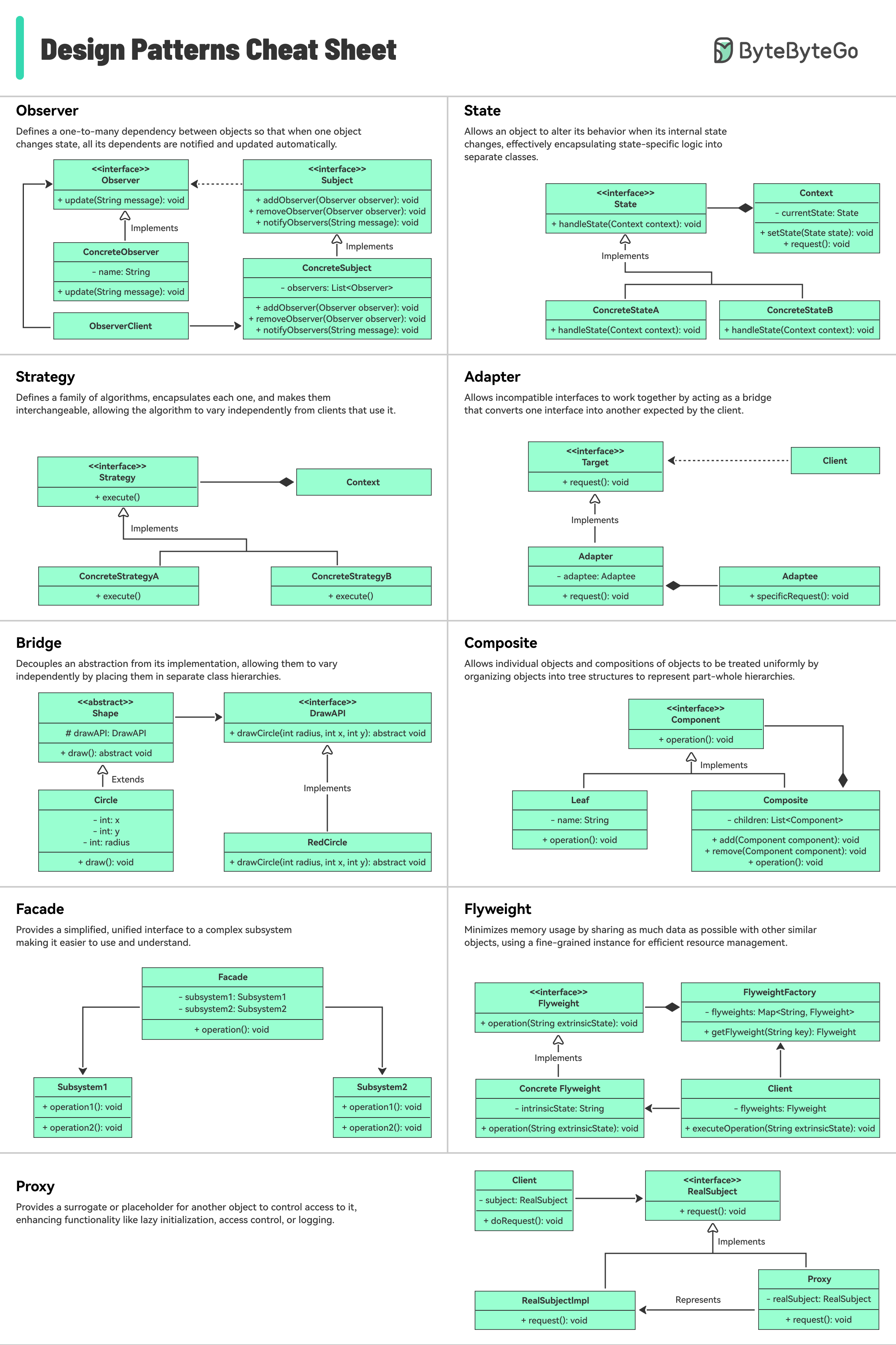

+ * [Design Patterns Cheat Sheet](https://bytebytego.com/guides/design-patterns-cheat-sheet-part-1-and-part-2)

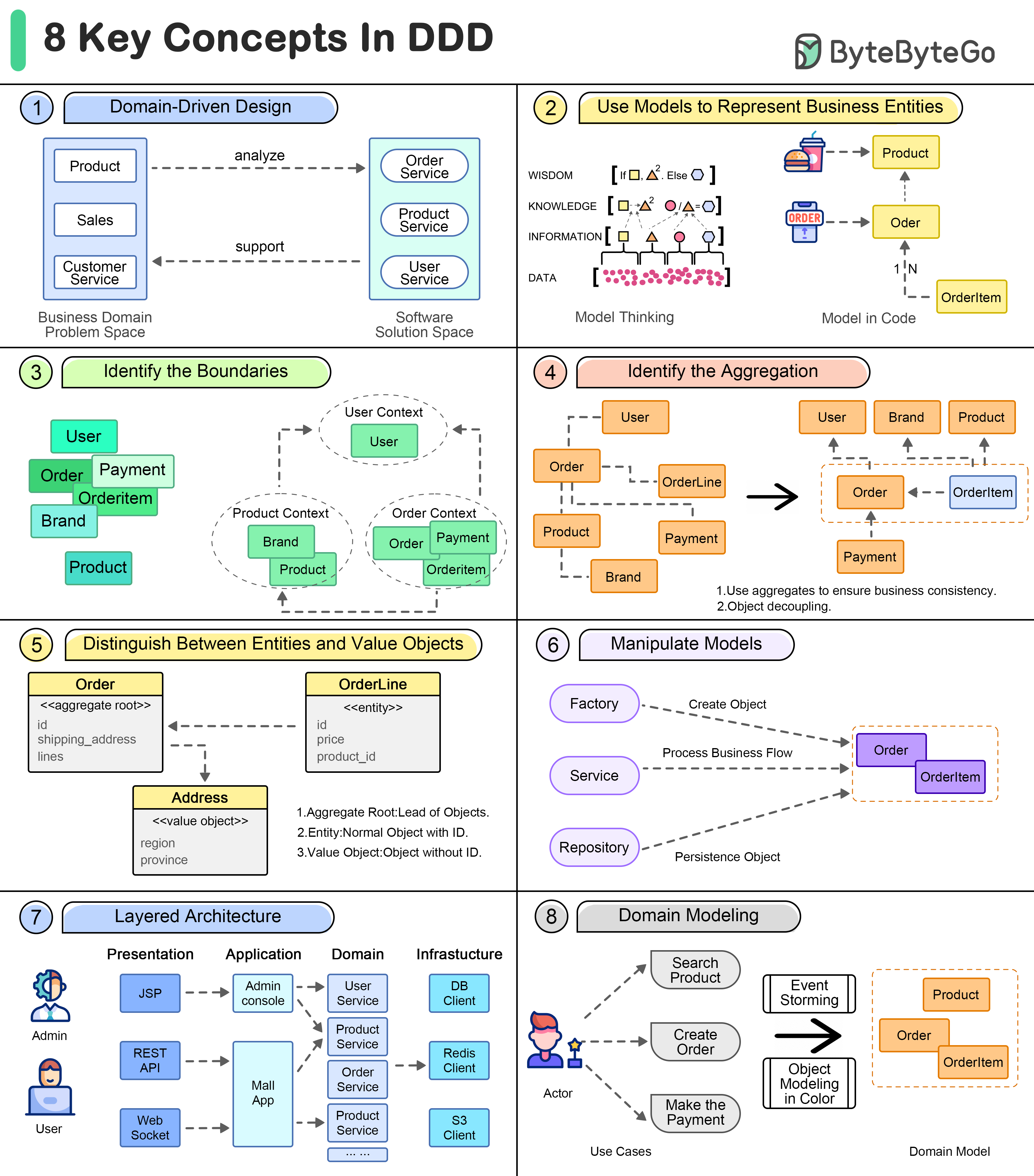

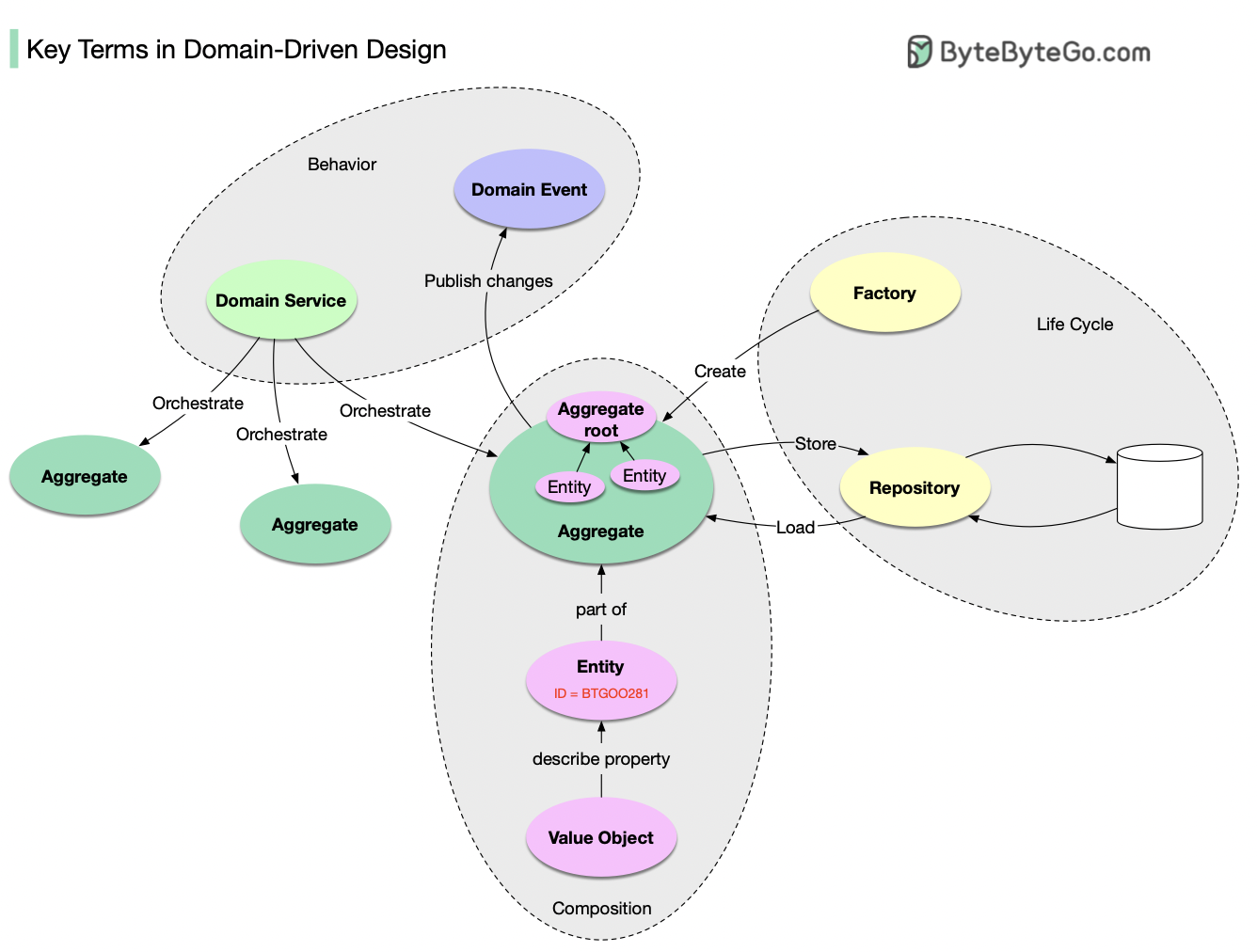

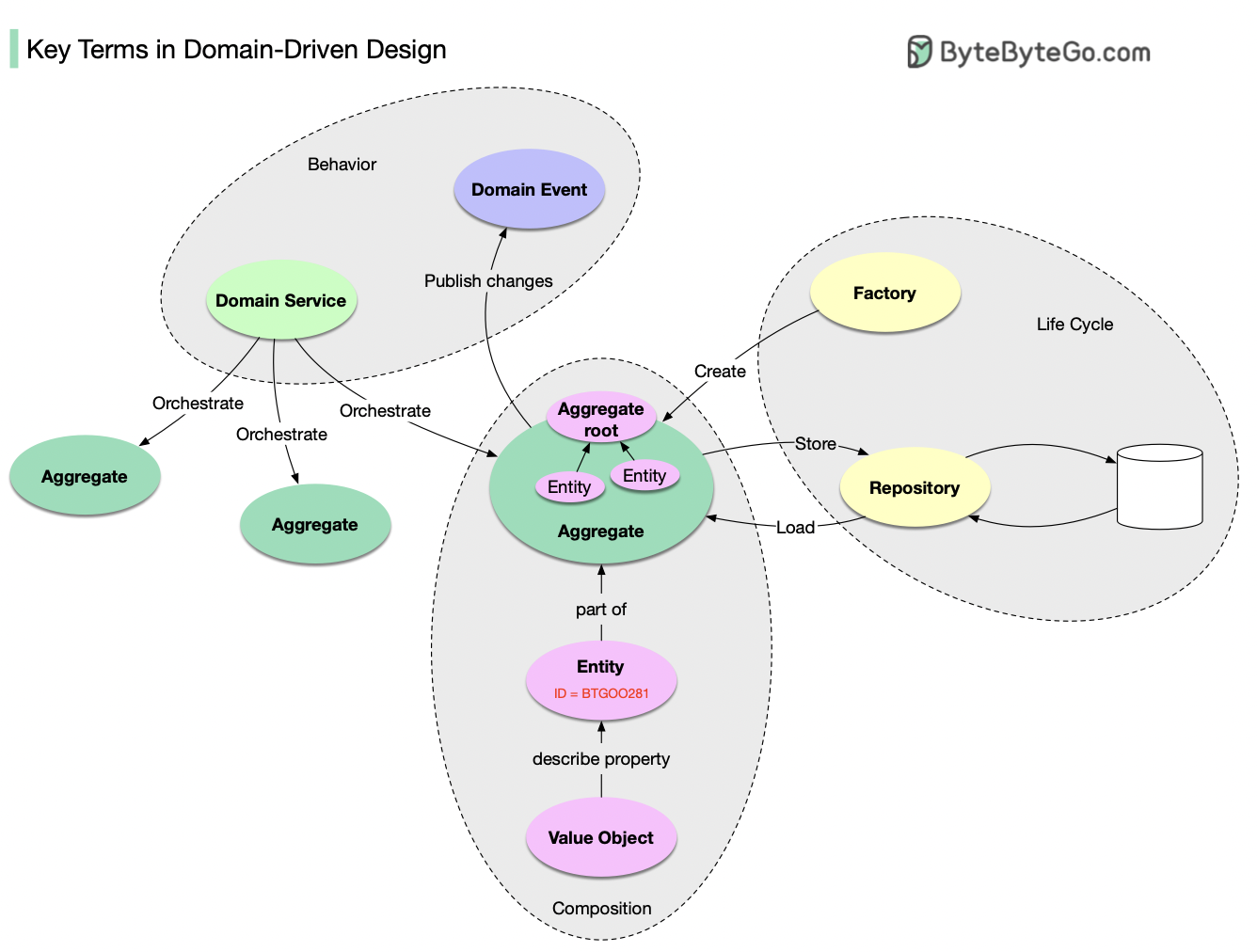

+ * [Key Terms in Domain-Driven Design](https://bytebytego.com/guides/key-terms-in-domain-driven-design)

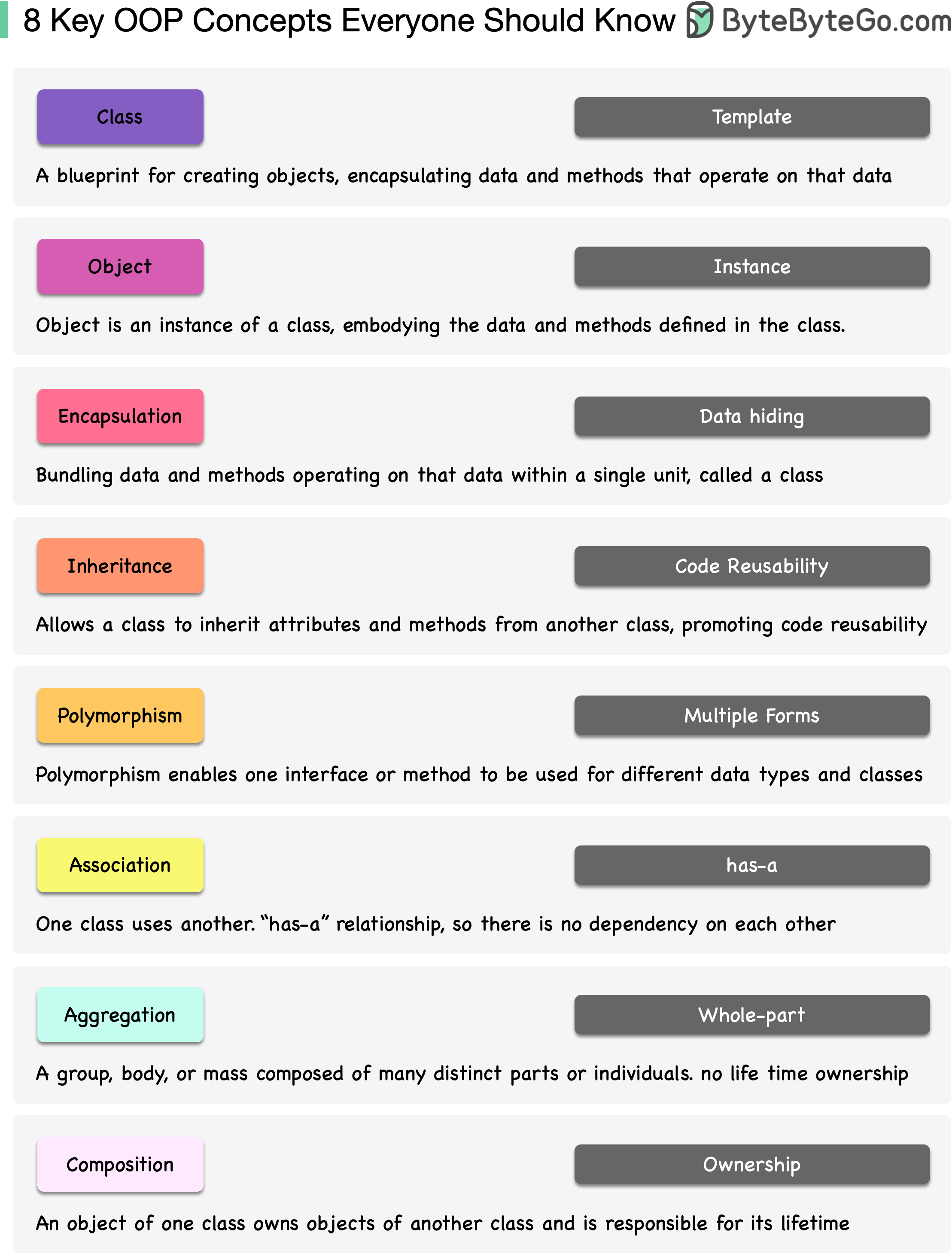

+ * [8 Key OOP Concepts Every Developer Should Know](https://bytebytego.com/guides/8-key-oop-concepts-every-developer-should-know)

+ * [18 Key Design Patterns Every Developer Should Know](https://bytebytego.com/guides/18-key-design-patterns-every-developer-should-know)

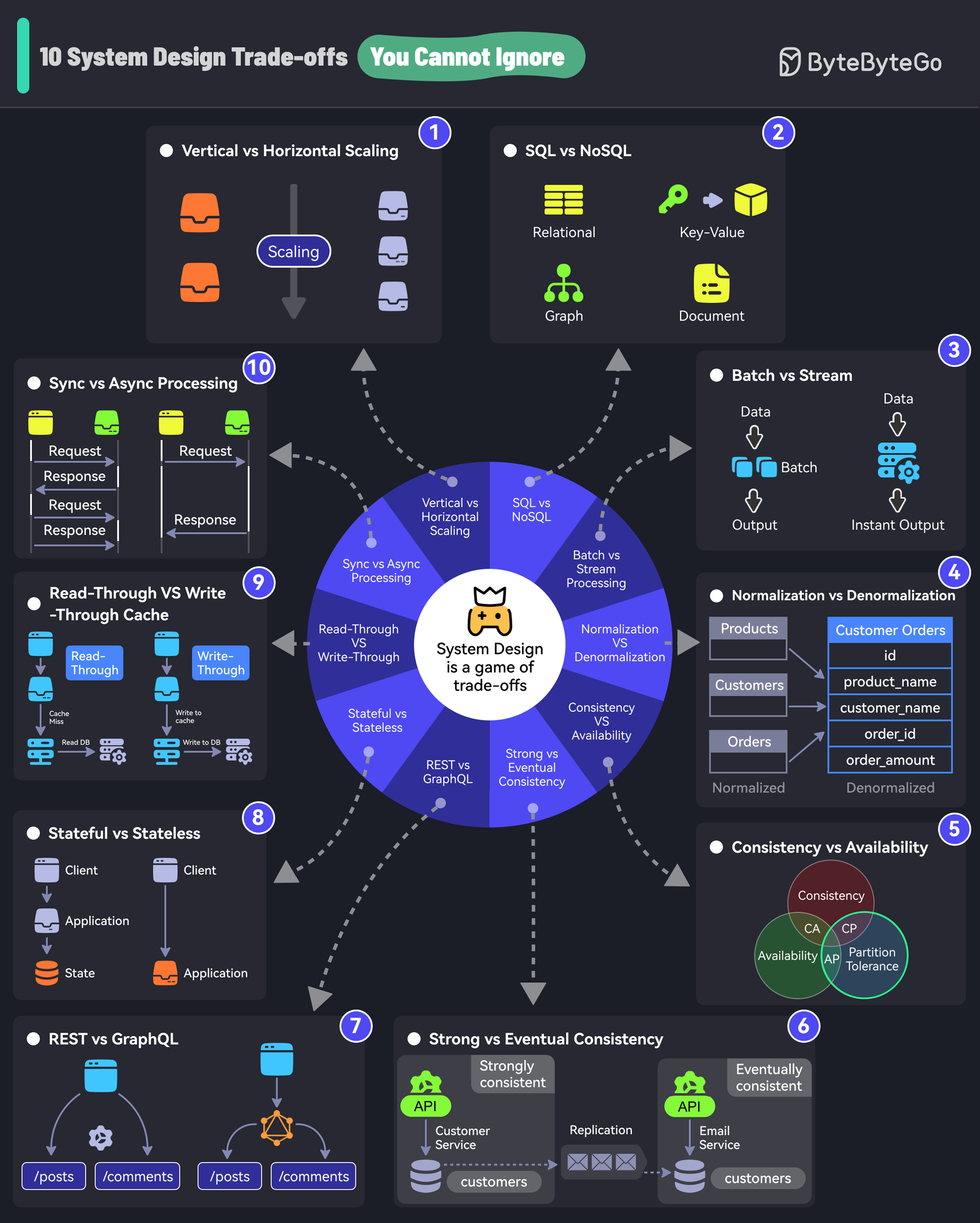

+ * [10 System Design Tradeoffs You Cannot Ignore](https://bytebytego.com/guides/10-system-design-tradeoffs-you-cannot-ignore)

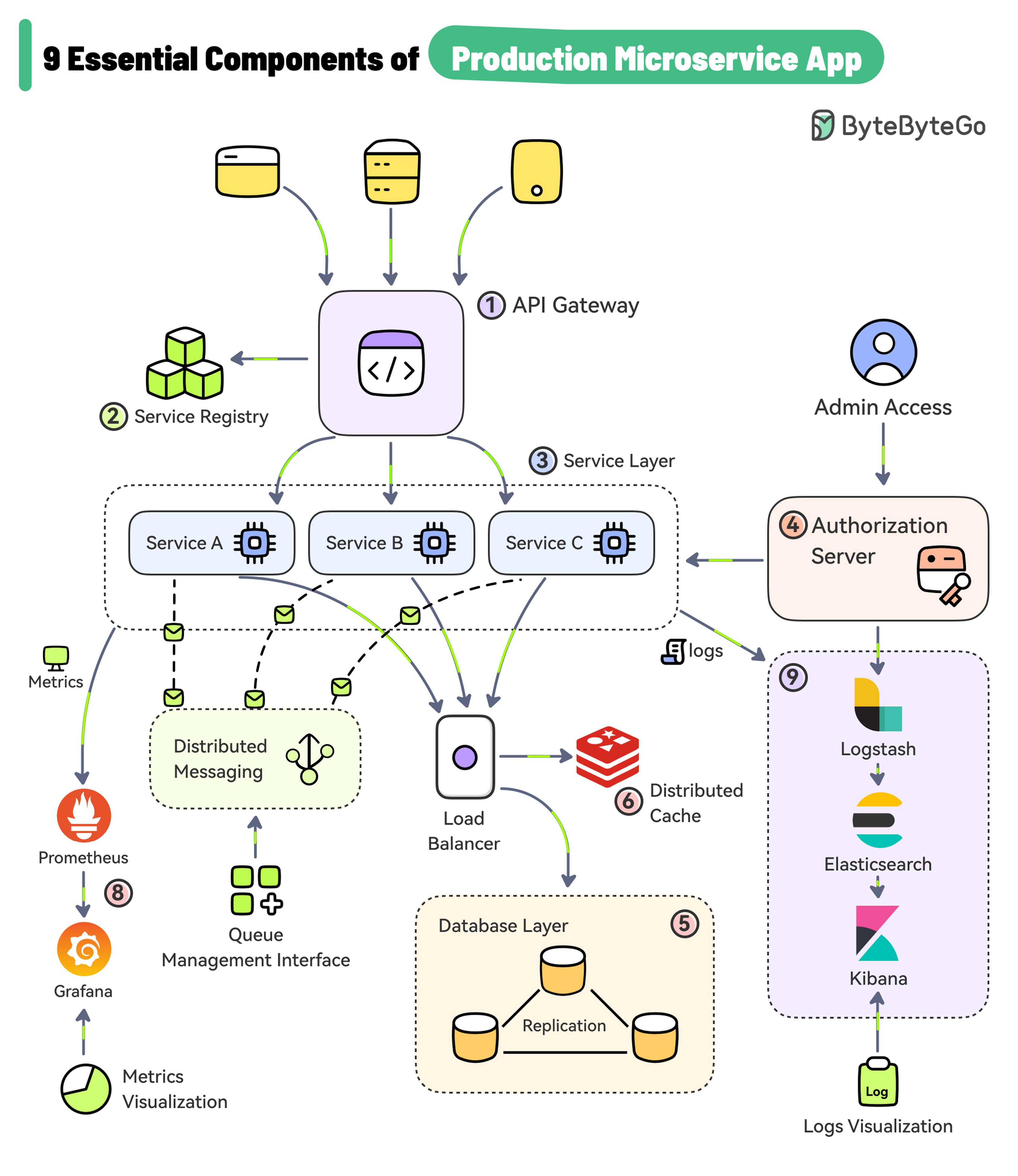

+ * [9 Essential Components of a Production Microservice Application](https://bytebytego.com/guides/9-essential-components-of-a-production-microservice-application)

+ * [9 Best Practices for Building Microservices](https://bytebytego.com/guides/9-best-practices-for-building-microservices)

+ * [8 Key Concepts in Domain-Driven Design](https://bytebytego.com/guides/8-key-concepts-in-ddd)

+ * [8 Common System Design Problems and Solutions](https://bytebytego.com/guides/8-common-system-design-problems-and-solutions)

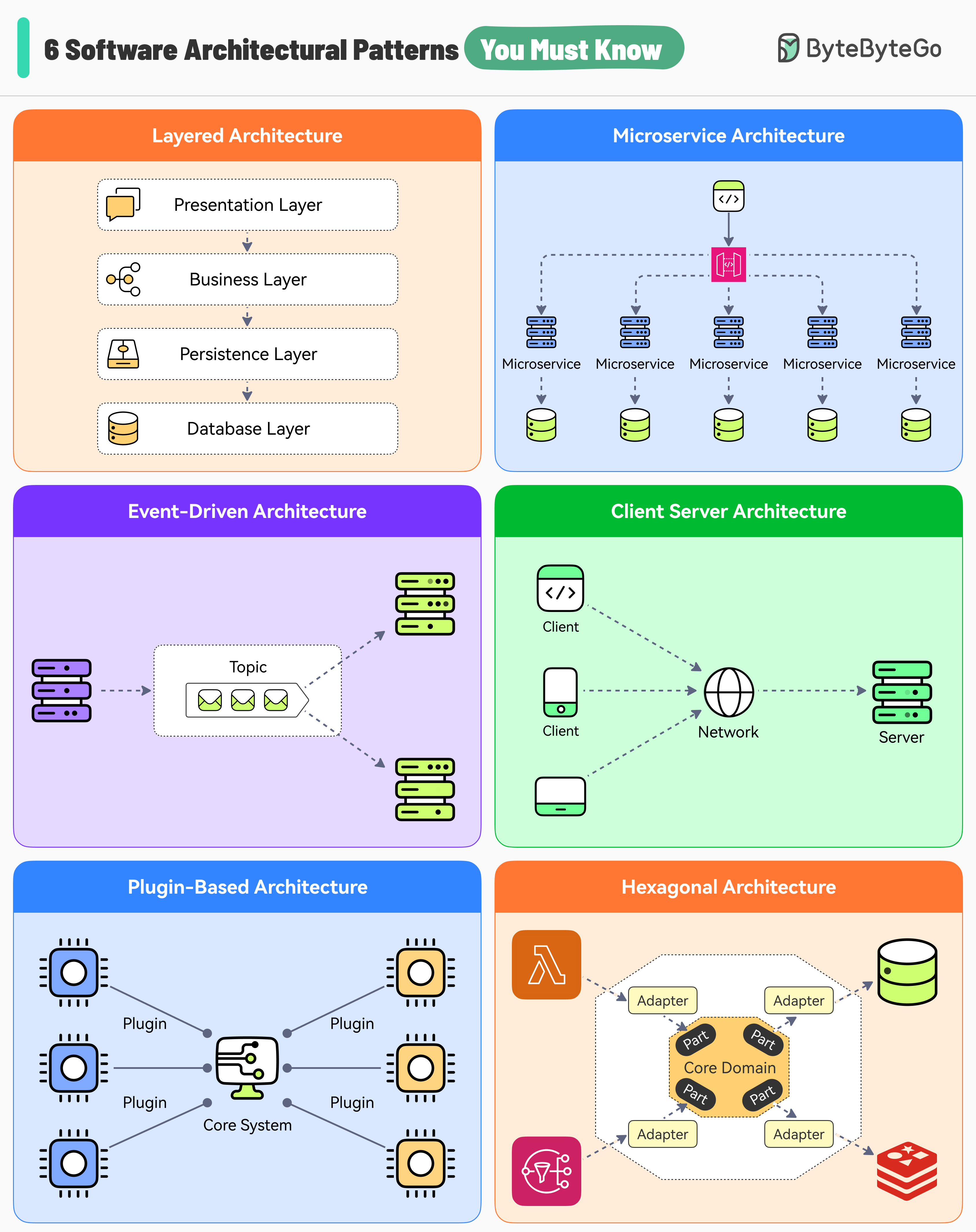

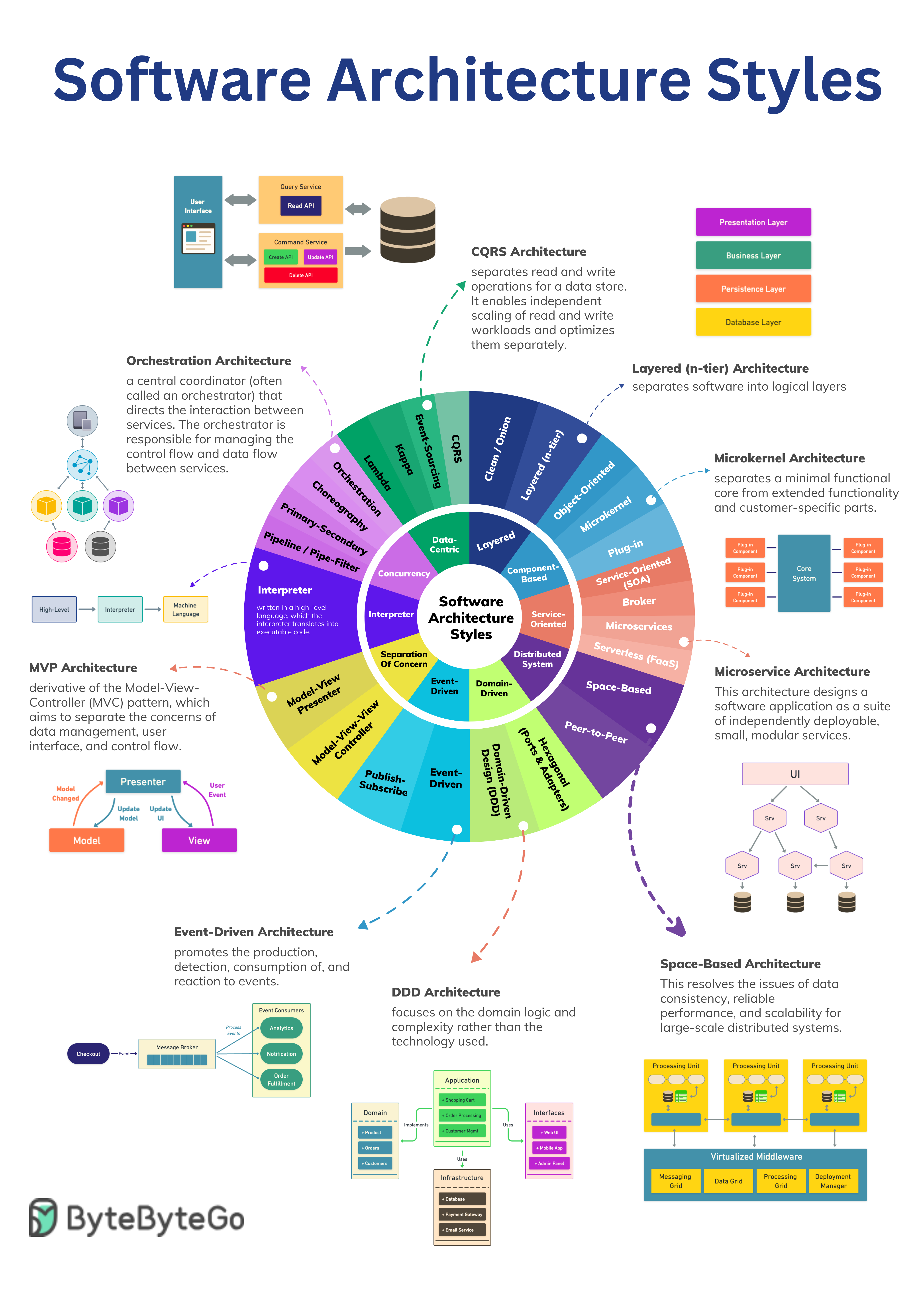

+ * [6 Software Architectural Patterns You Must Know](https://bytebytego.com/guides/6-software-architectural-patterns-you-must-know)

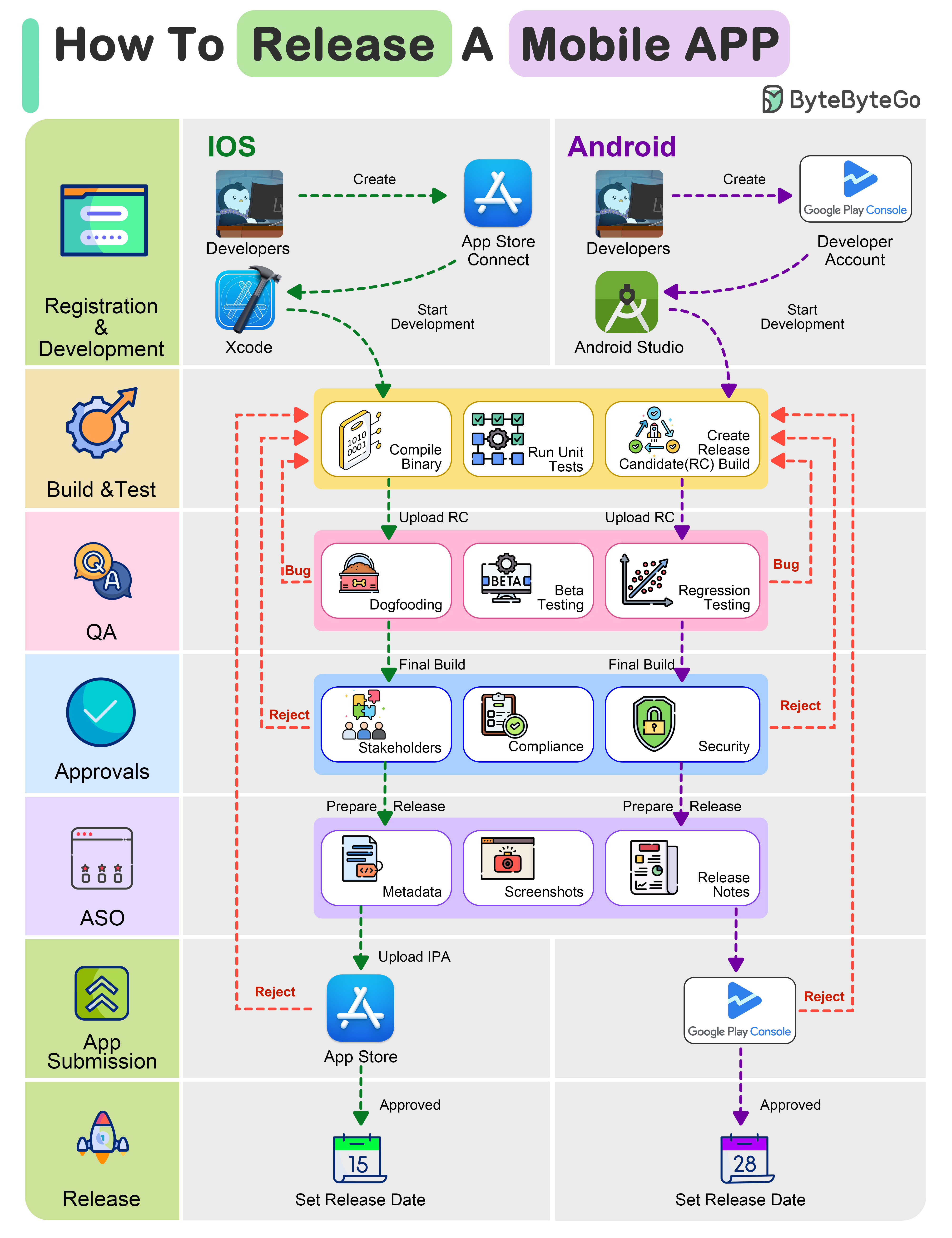

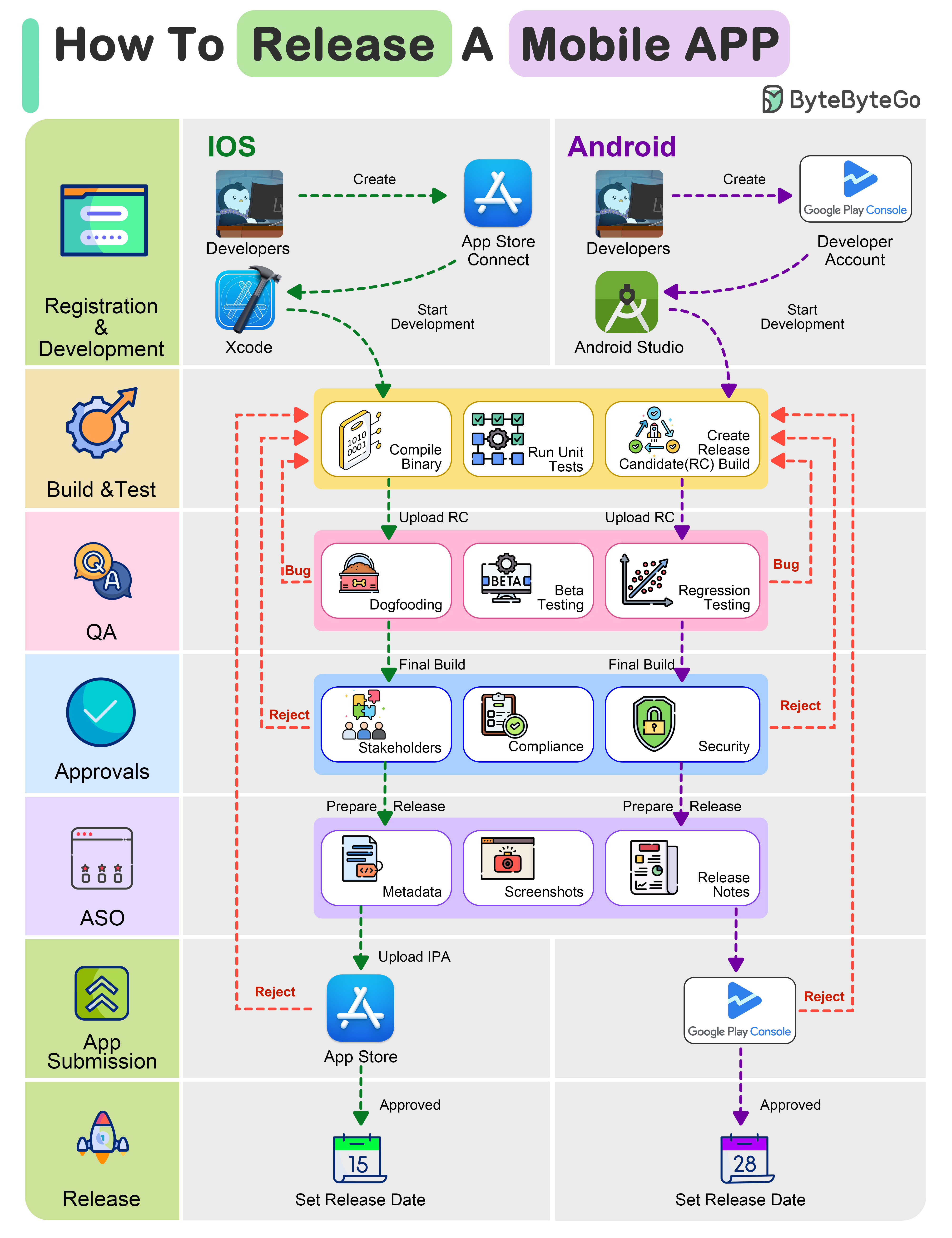

+ * [How To Release A Mobile App](https://bytebytego.com/guides/how-to-release-a-mobile-app)

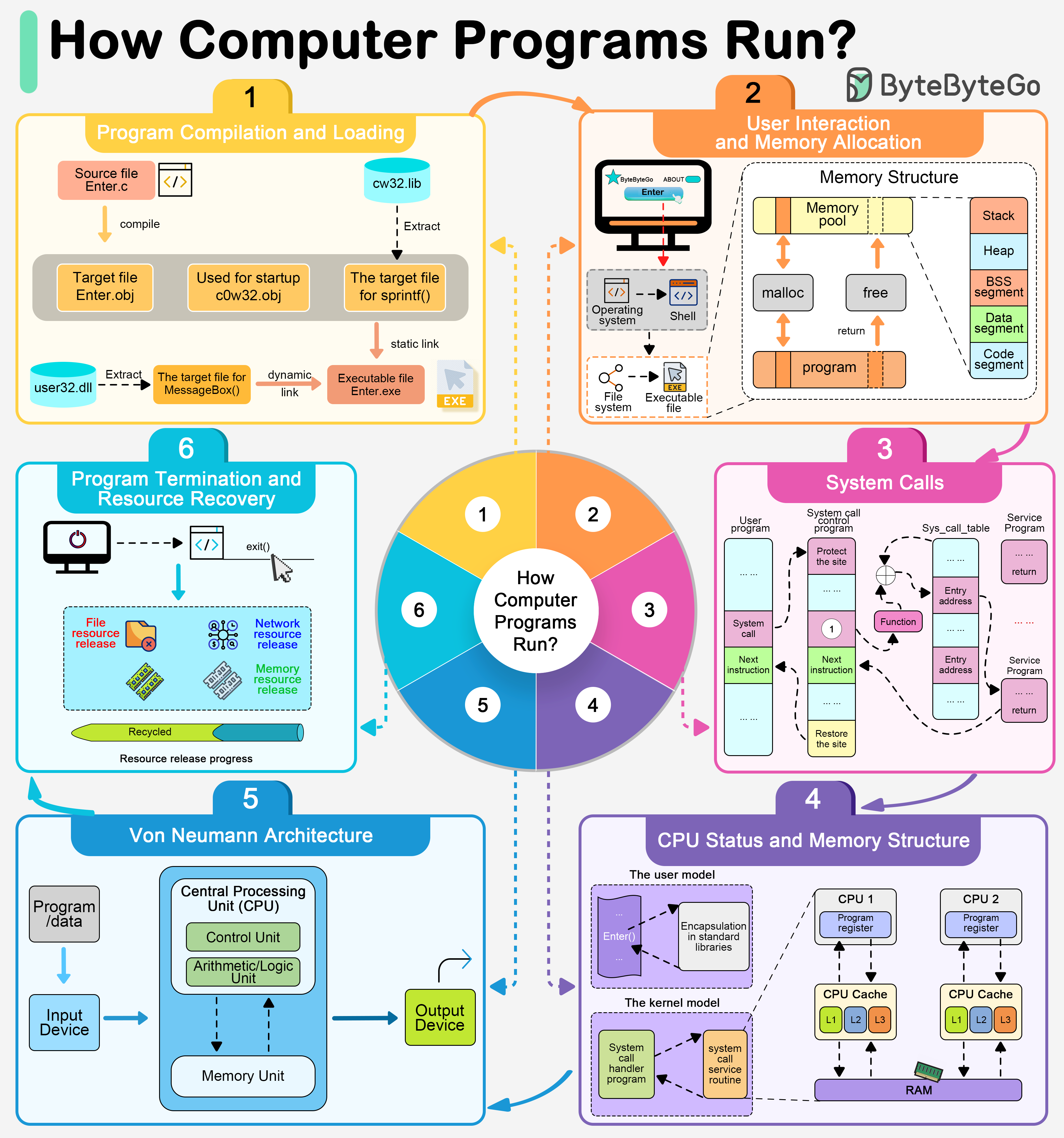

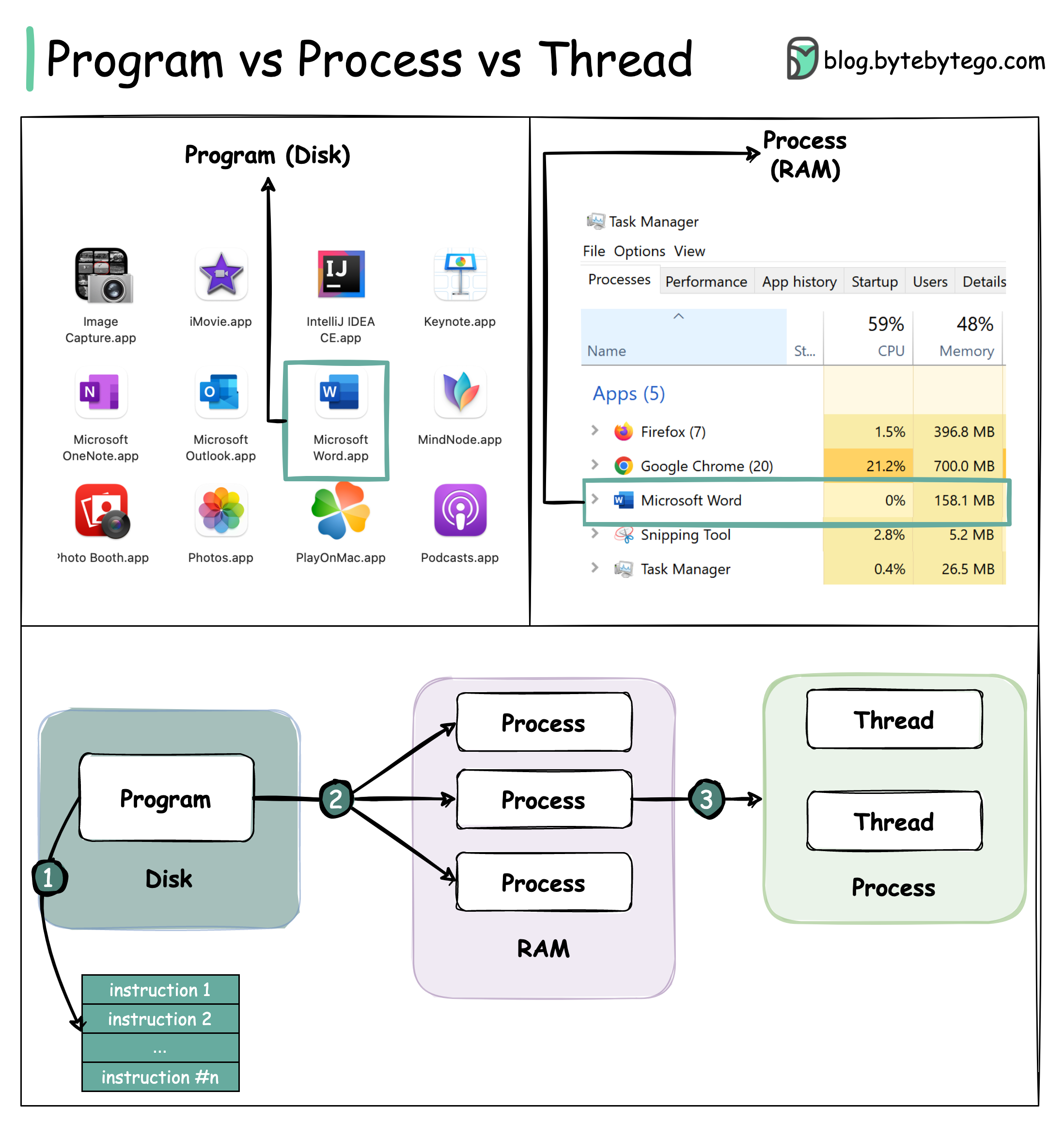

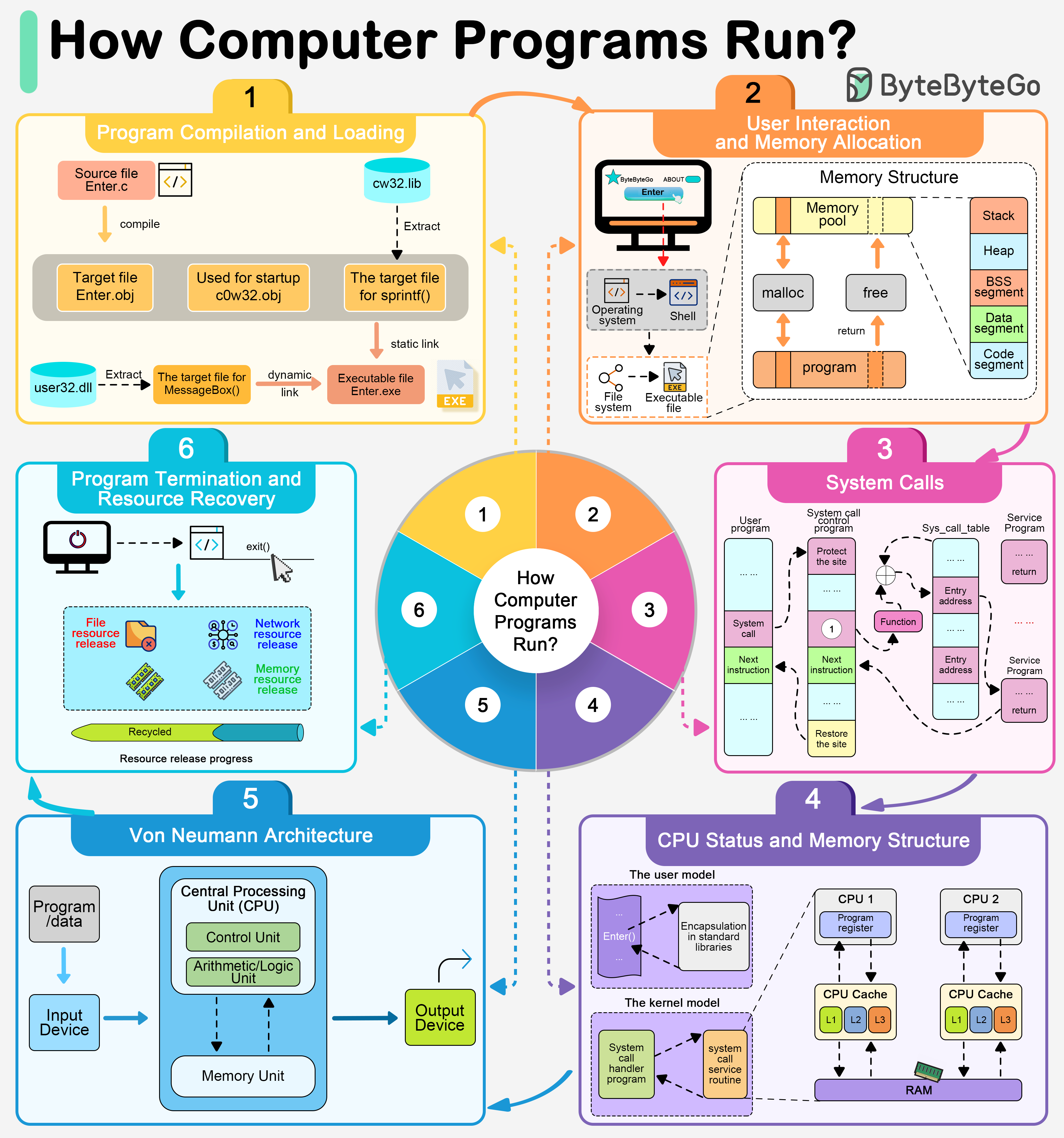

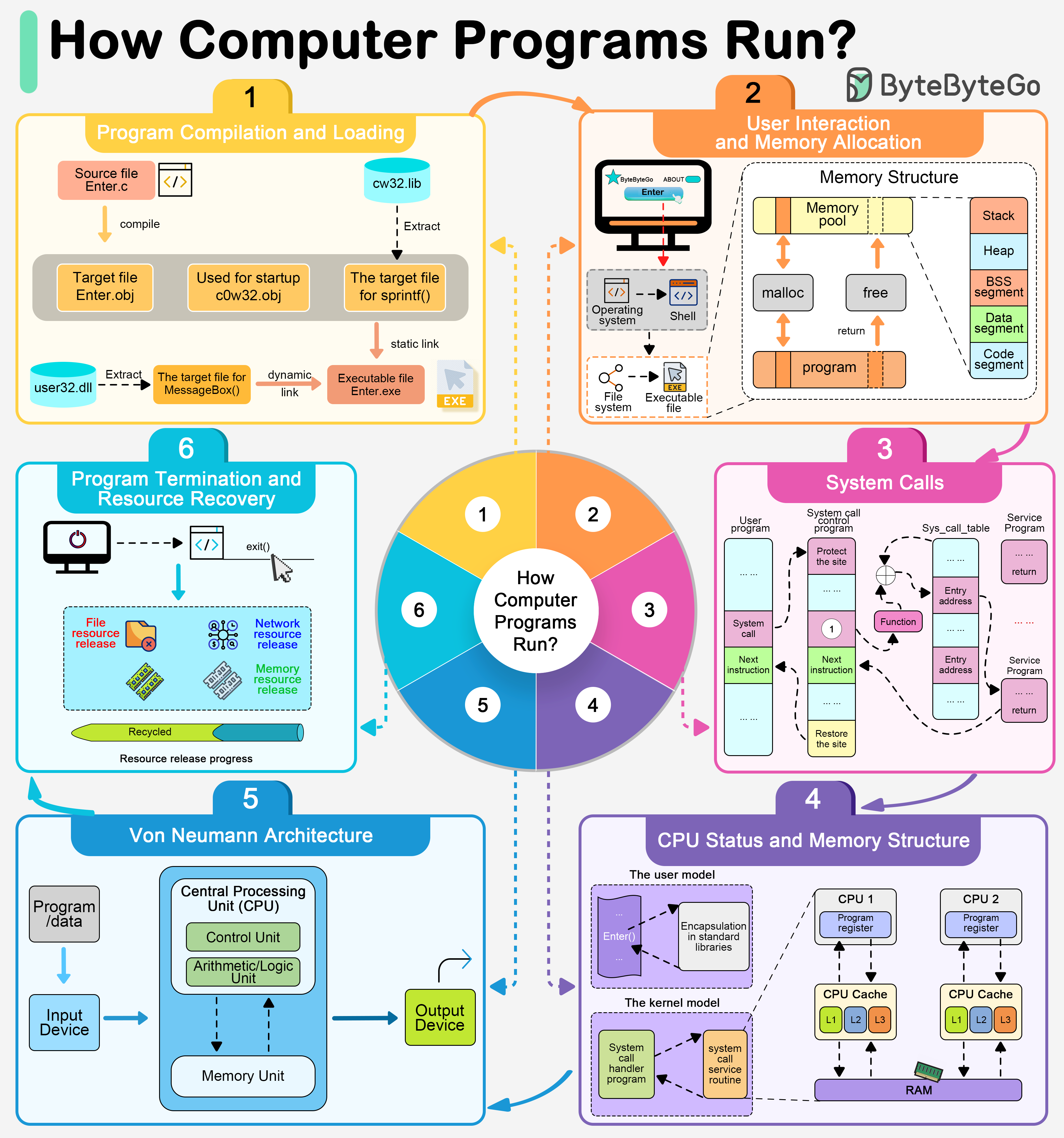

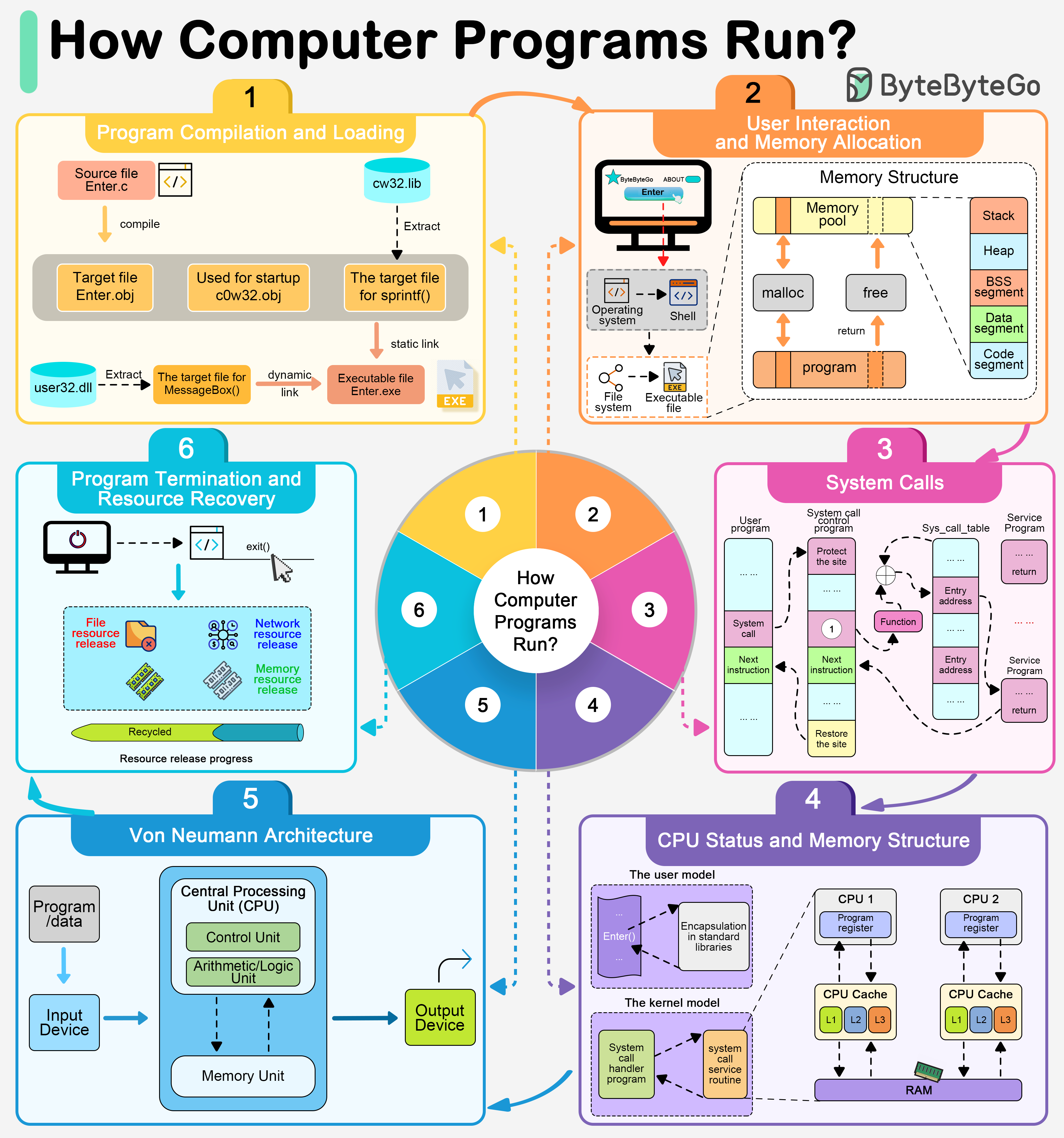

+ * [How Do Computer Programs Run?](https://bytebytego.com/guides/how-do-computer-programs-run)

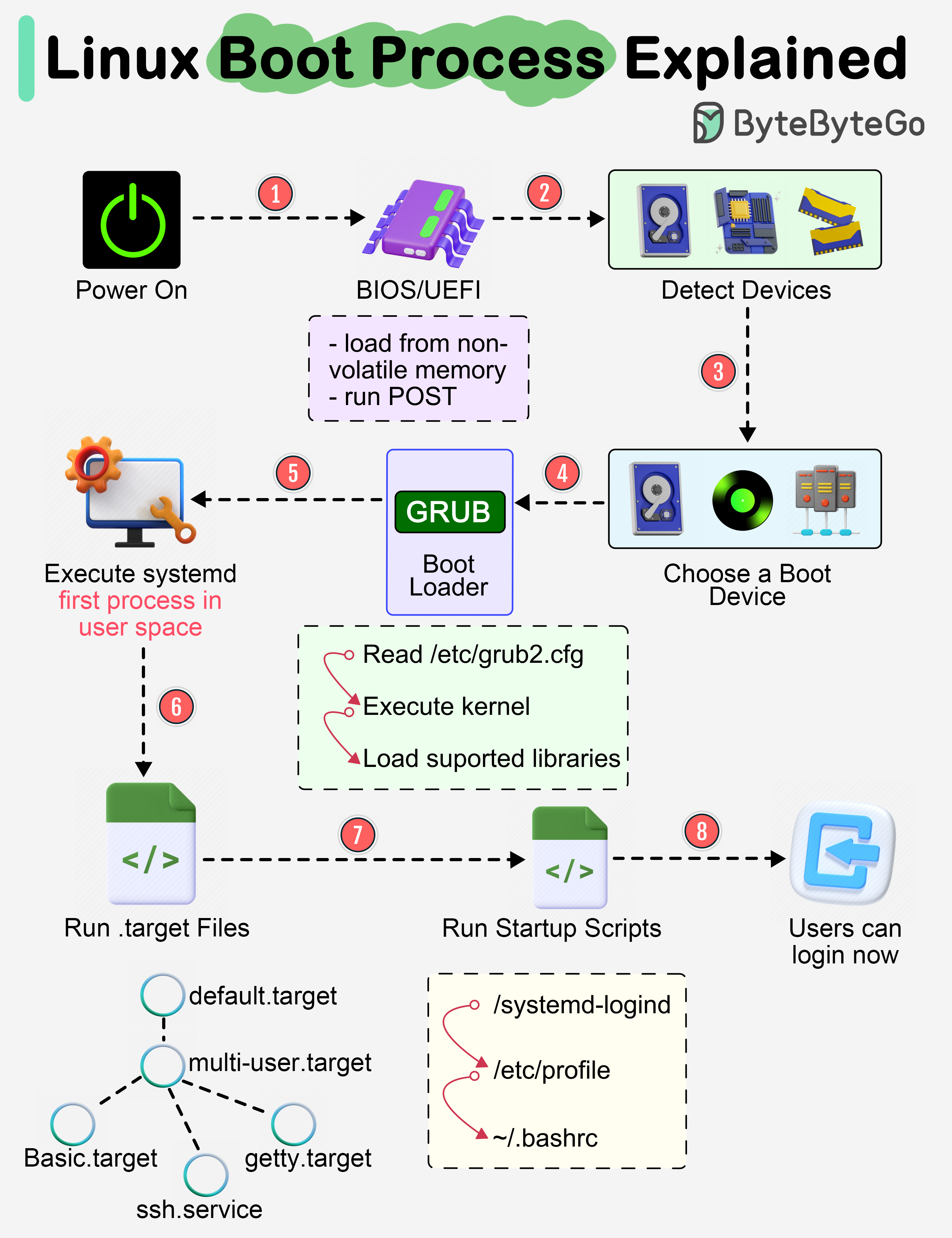

+ * [Linux Boot Process Explained](https://bytebytego.com/guides/linux-boot-process-explained)

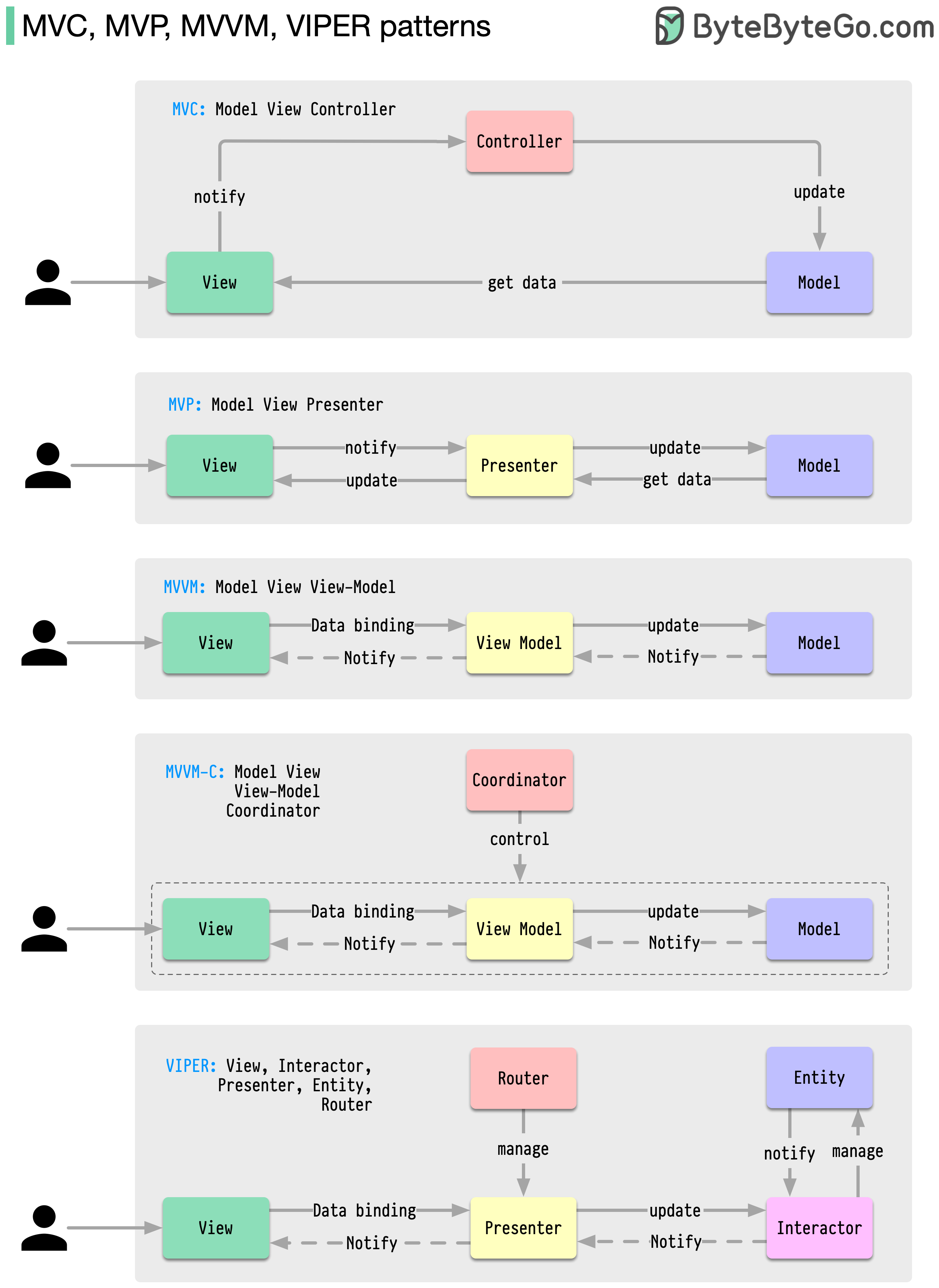

+ * [MVC, MVP, MVVM, VIPER Patterns](https://bytebytego.com/guides/mvc-mvp-mvvm-viper-patterns)

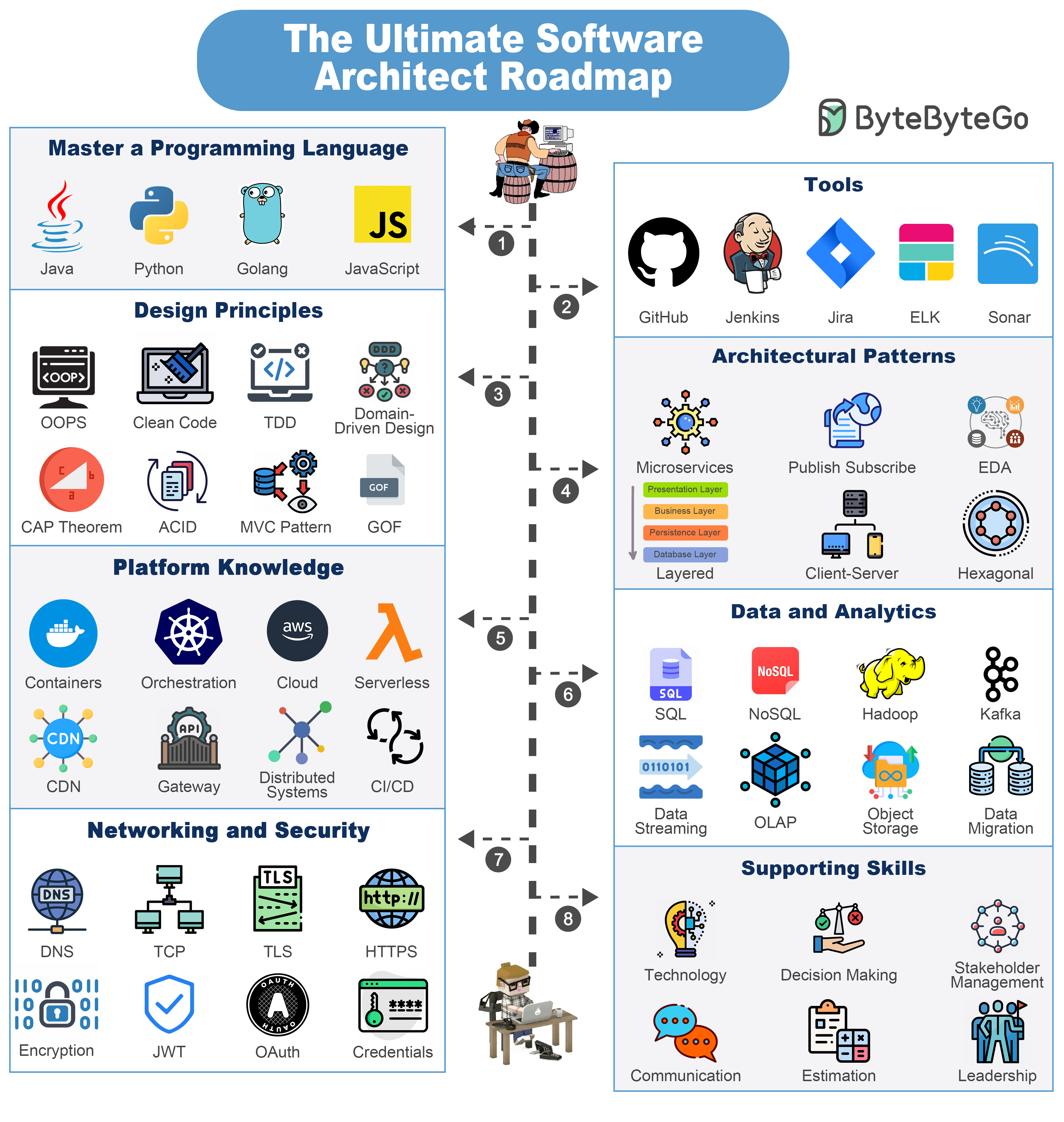

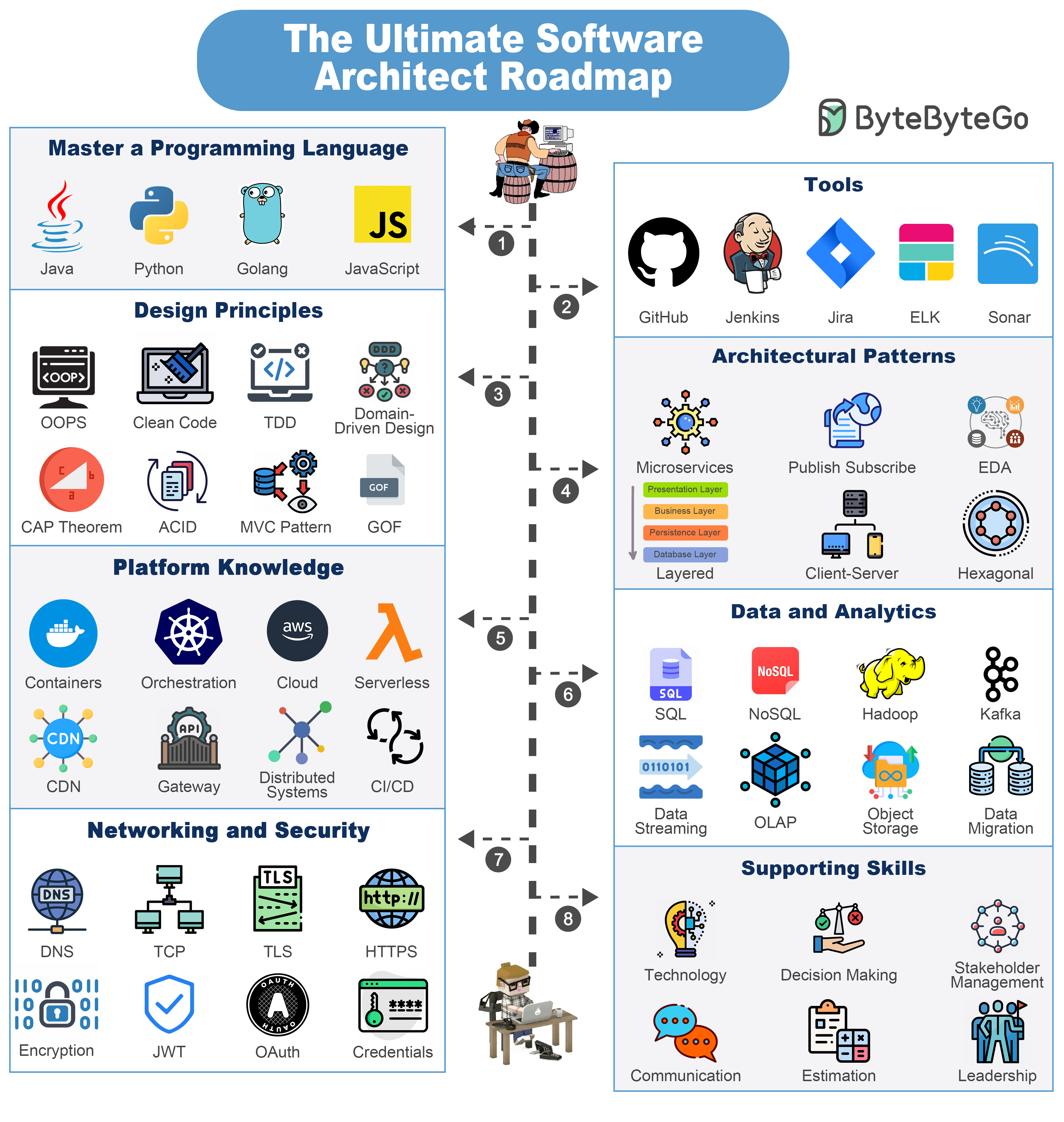

+ * [The Ultimate Software Architect Knowledge Map](https://bytebytego.com/guides/the-ultimate-software-architect-knowledge-map)

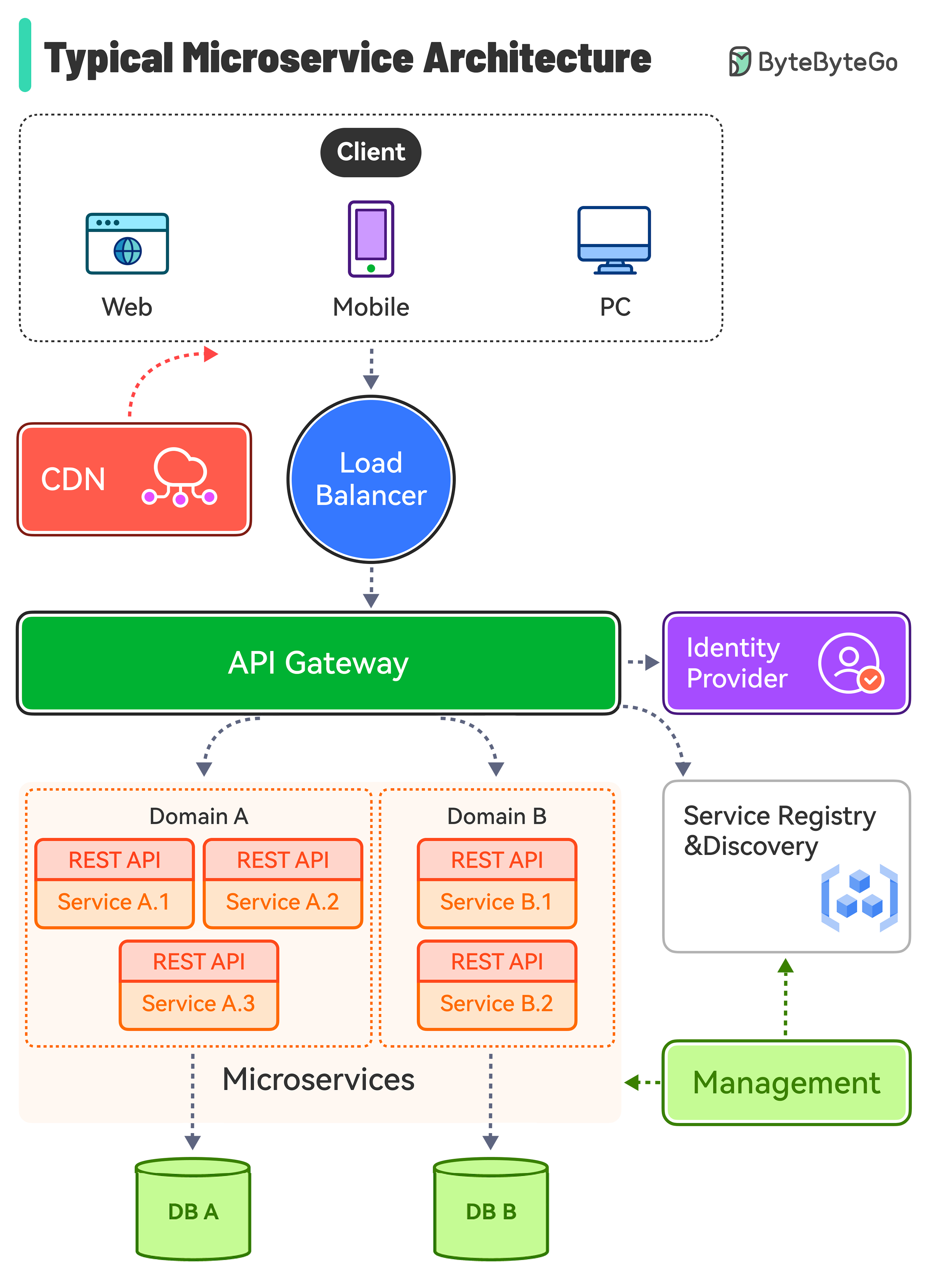

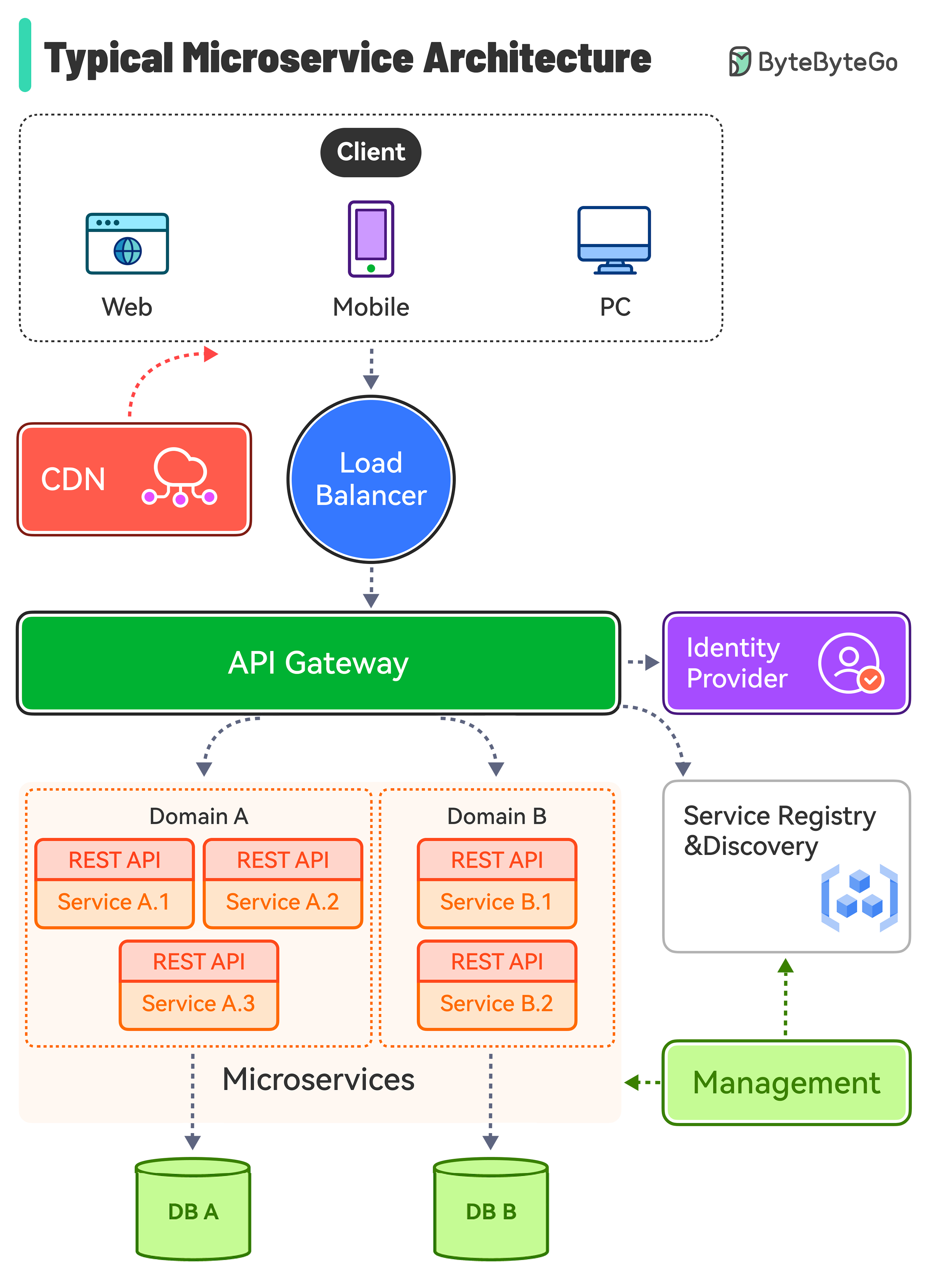

+ * [Typical Microservice Architecture](https://bytebytego.com/guides/what-does-a-typical-microservice-architecture-look-like)

+ * [Top 5 Software Architectural Patterns](https://bytebytego.com/guides/top-5-software-architectural-patterns)

+* [DevTools & Productivity](https://bytebytego.com/guides/devtools-productivity)

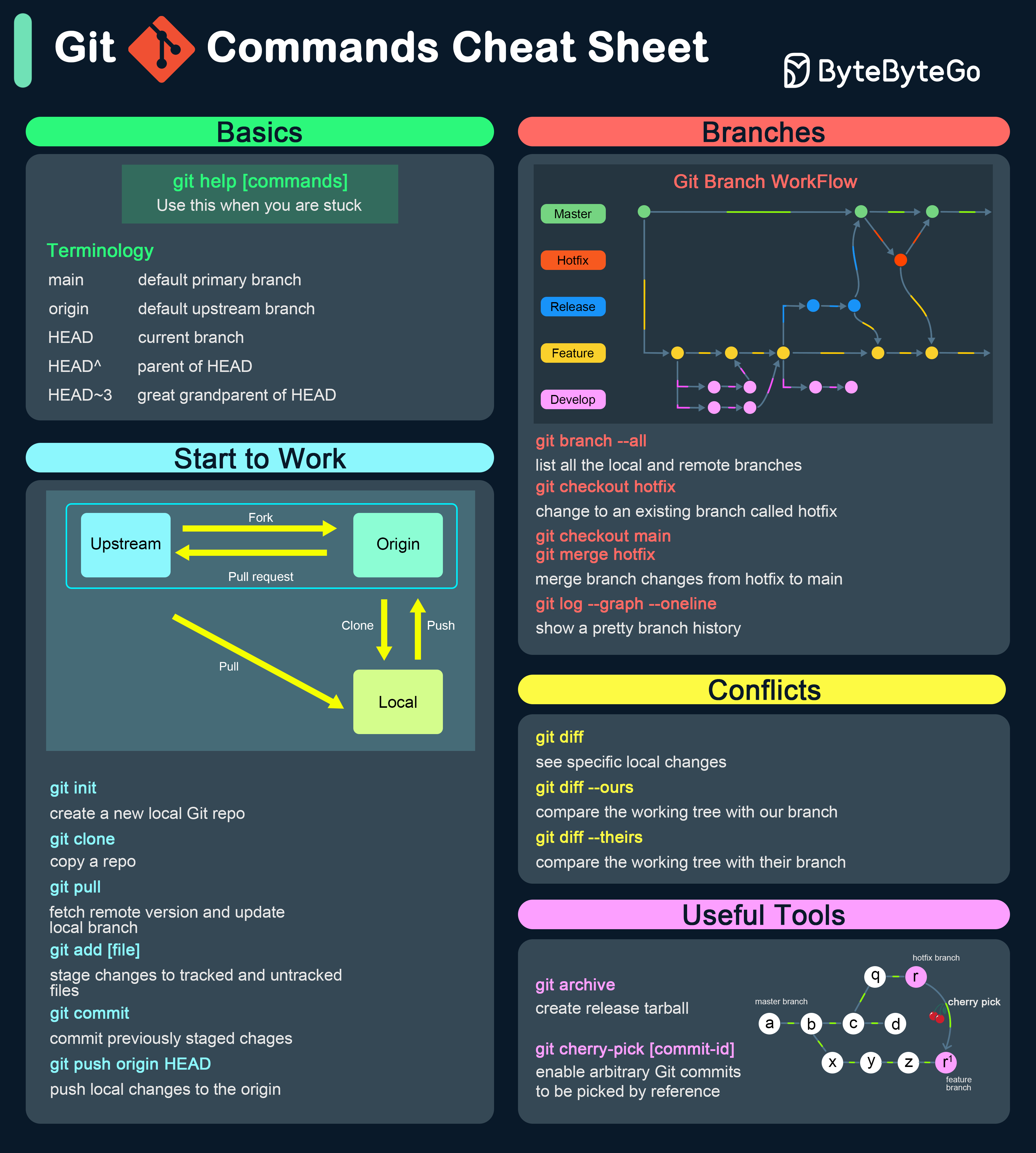

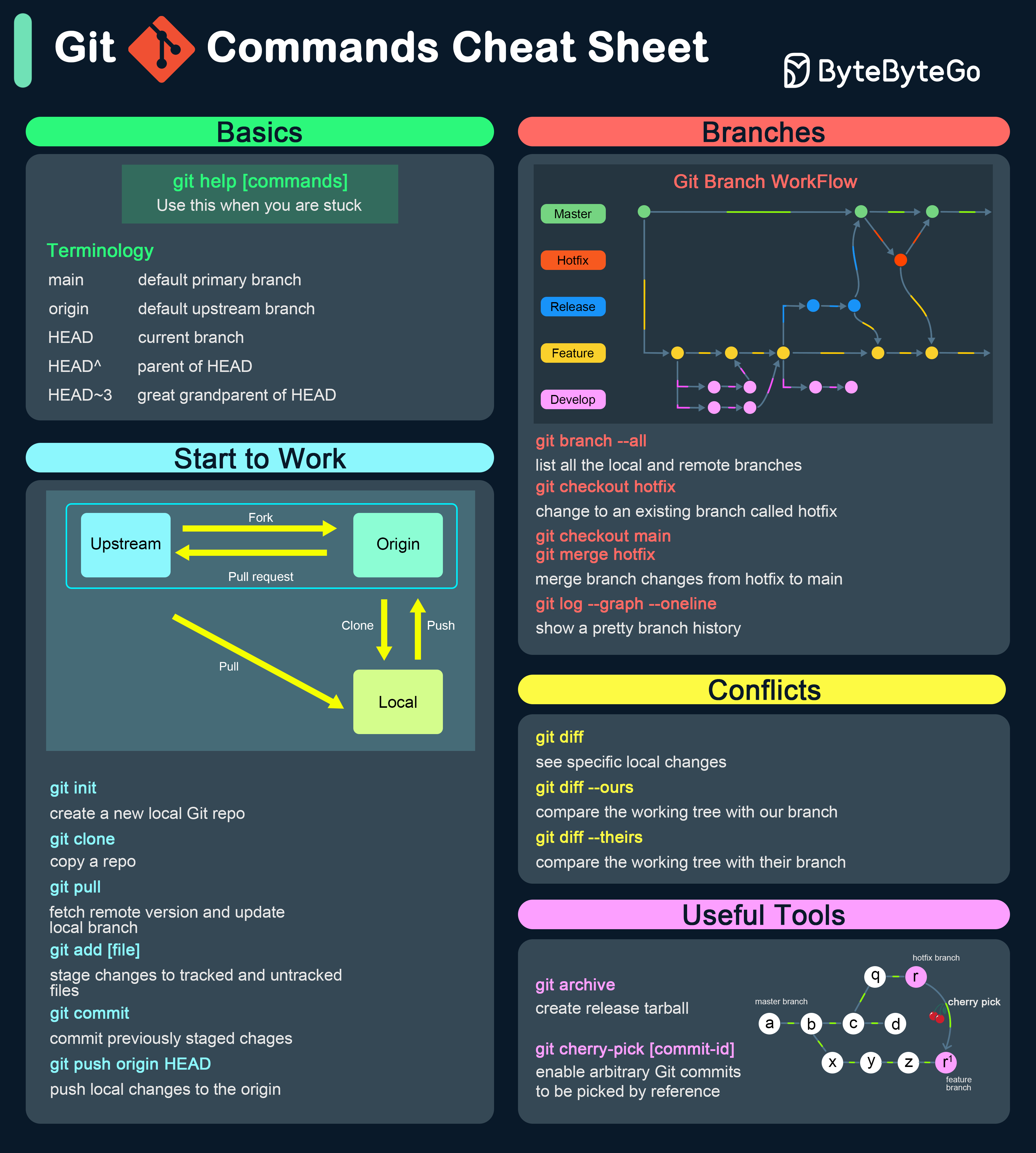

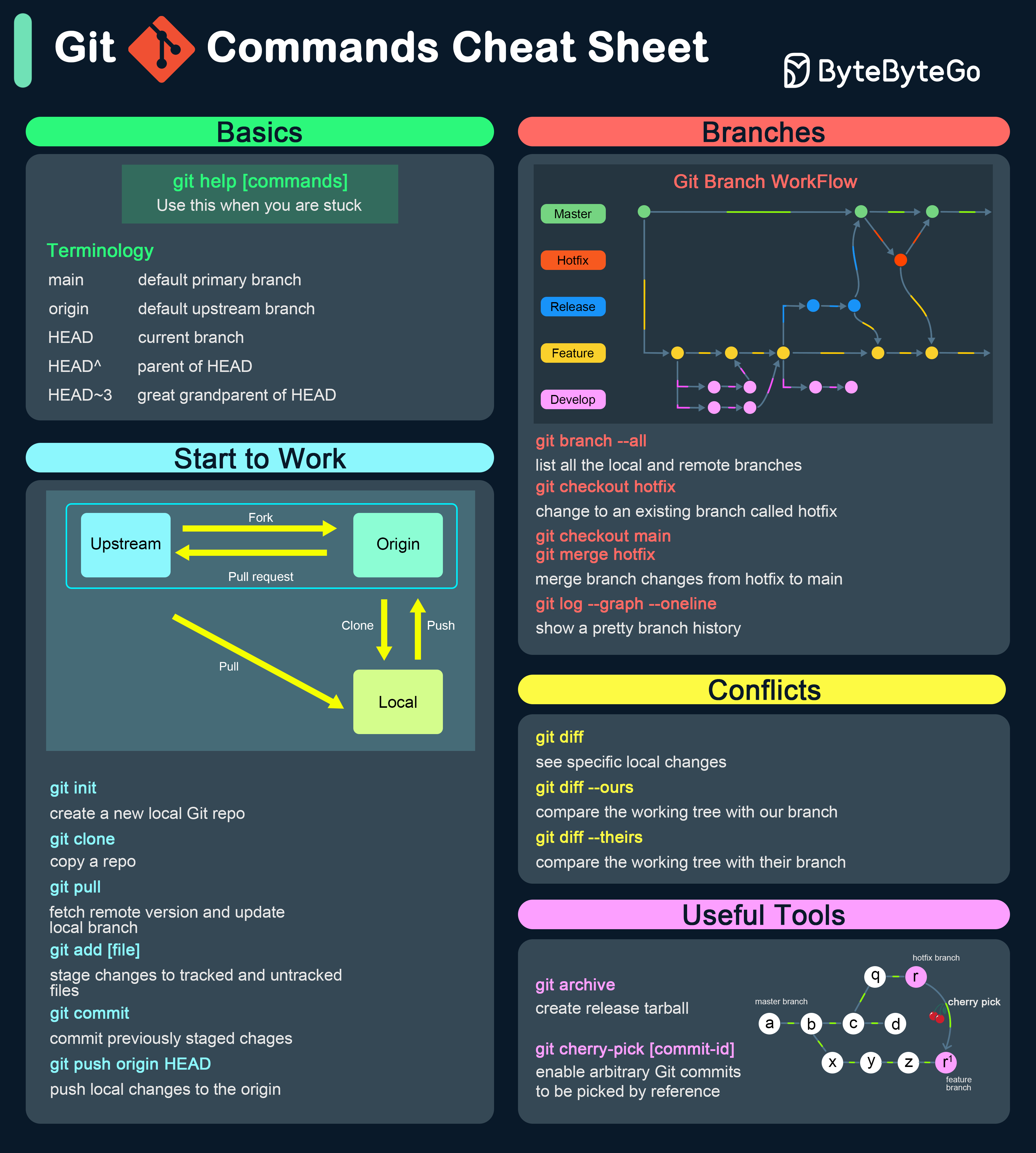

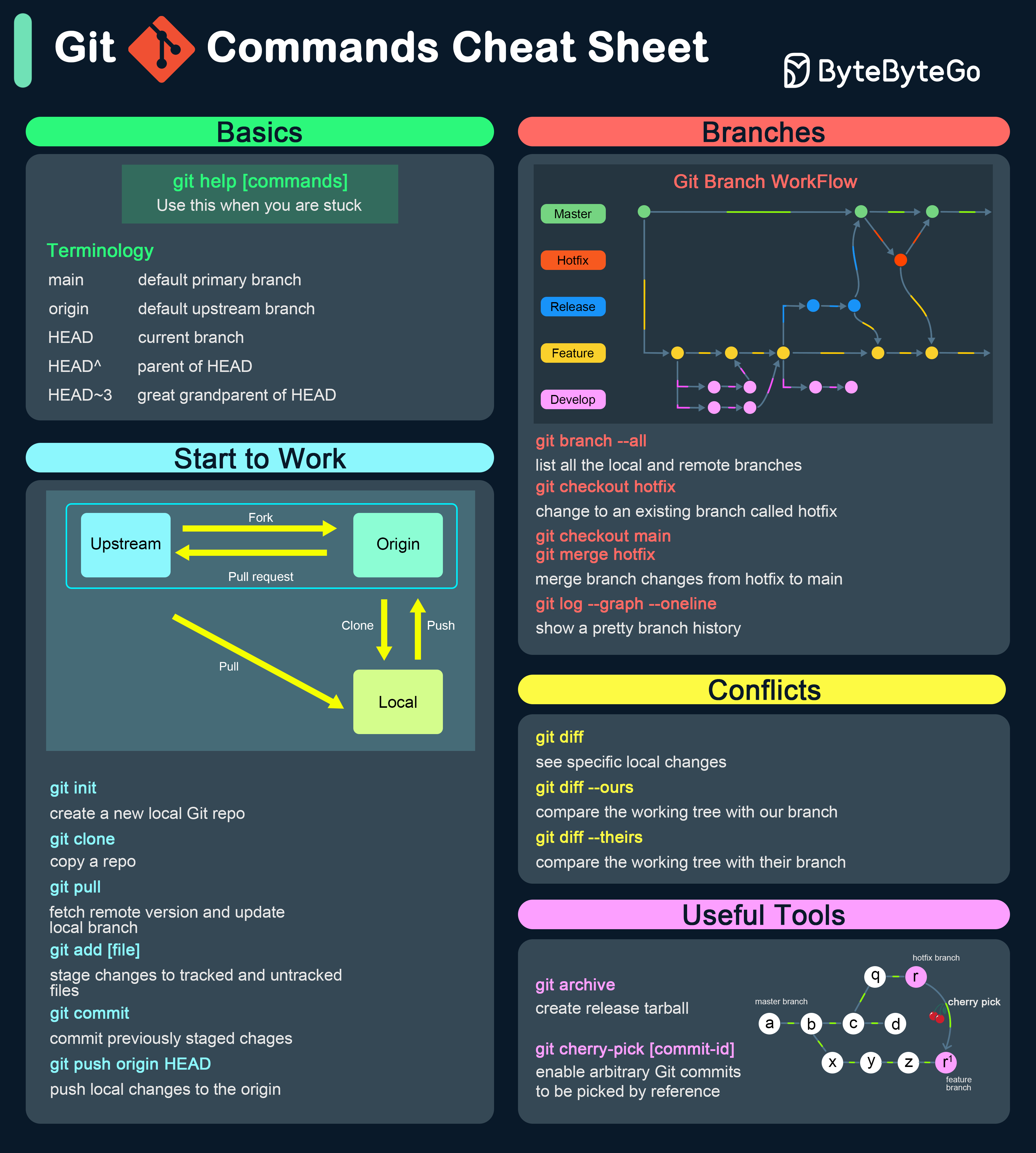

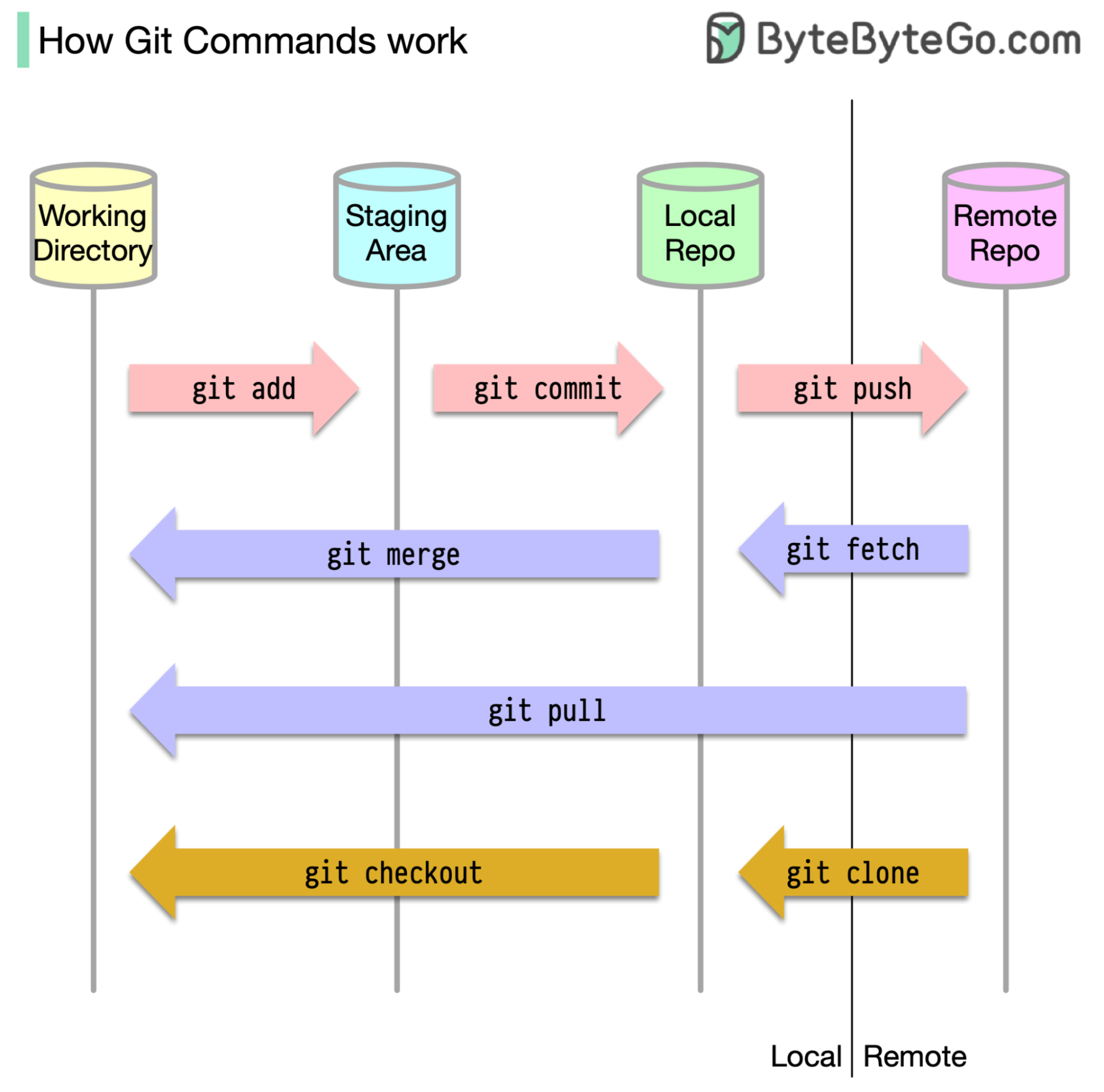

+ * [Git Commands Cheat Sheet](https://bytebytego.com/guides/git-commands-cheat-sheet)

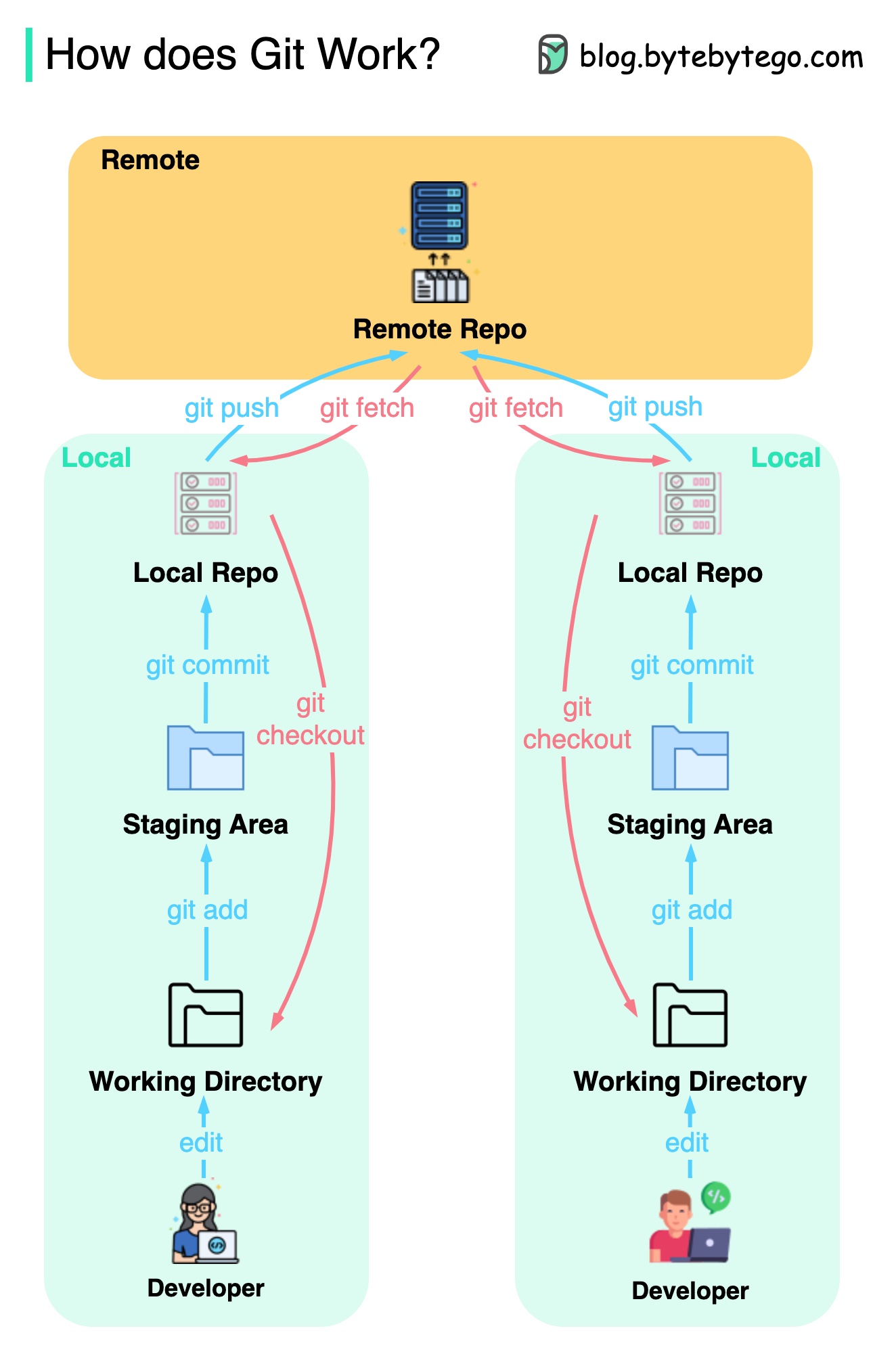

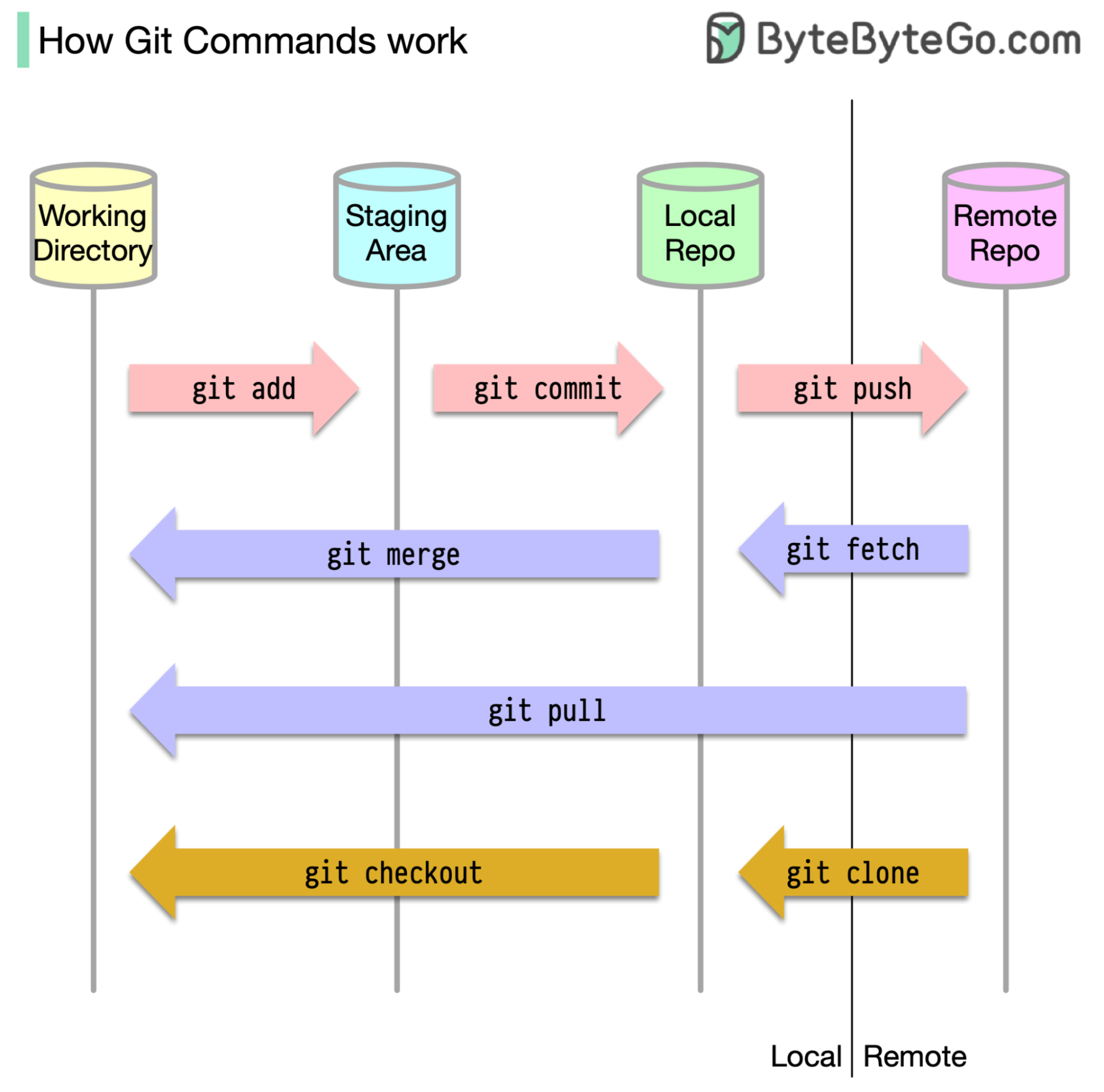

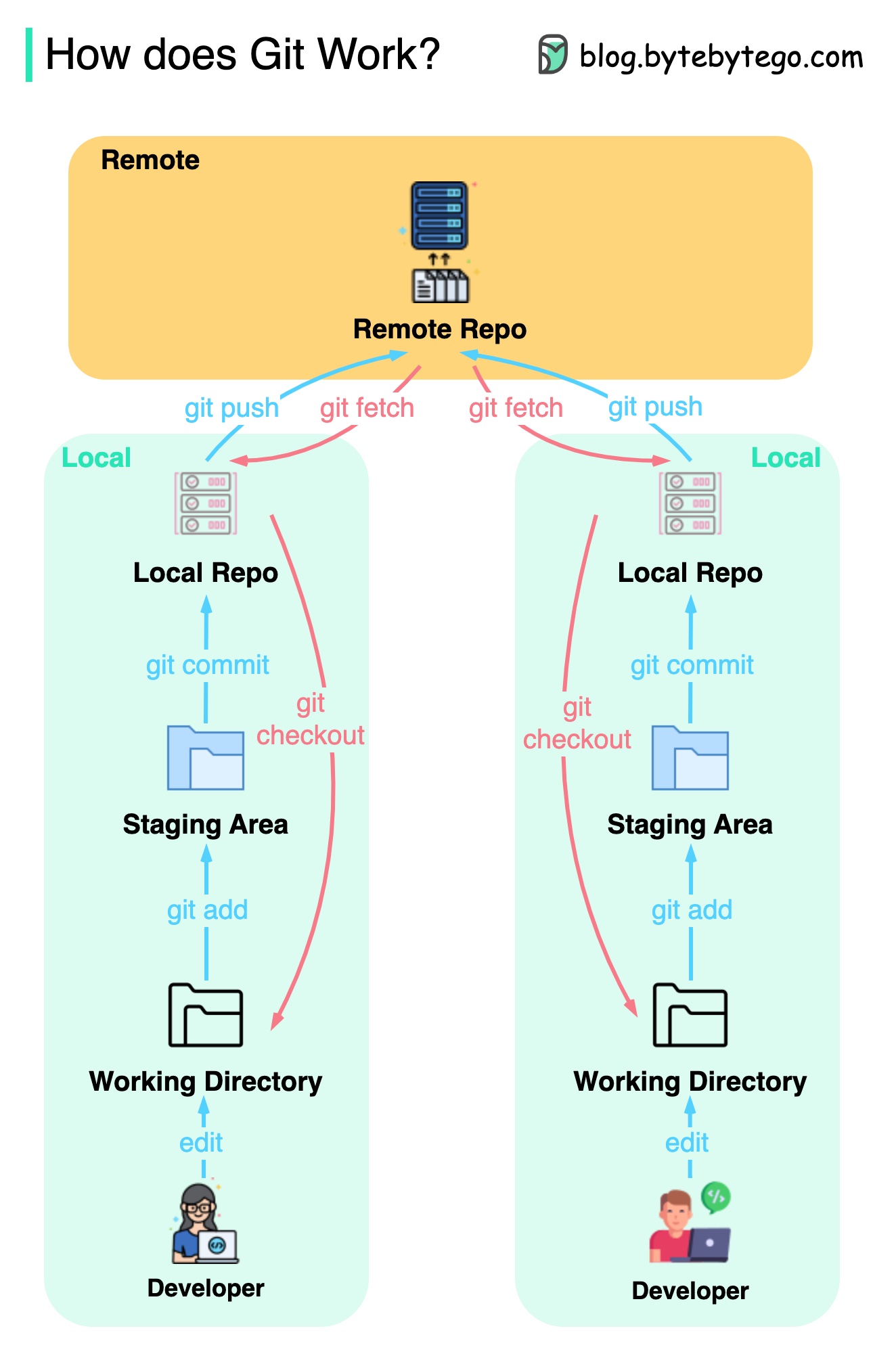

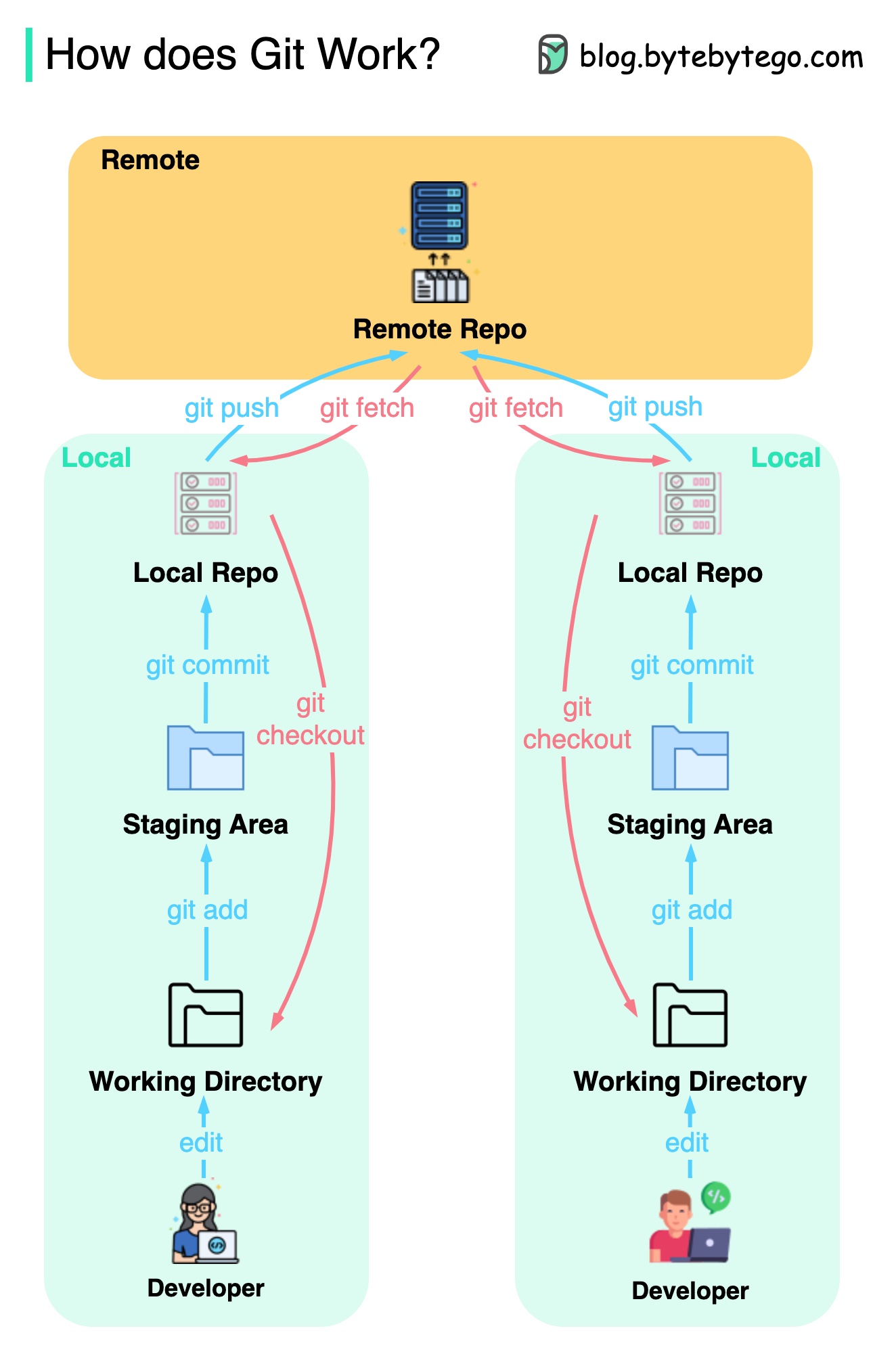

+ * [How does Git Work?](https://bytebytego.com/guides/git-workflow)

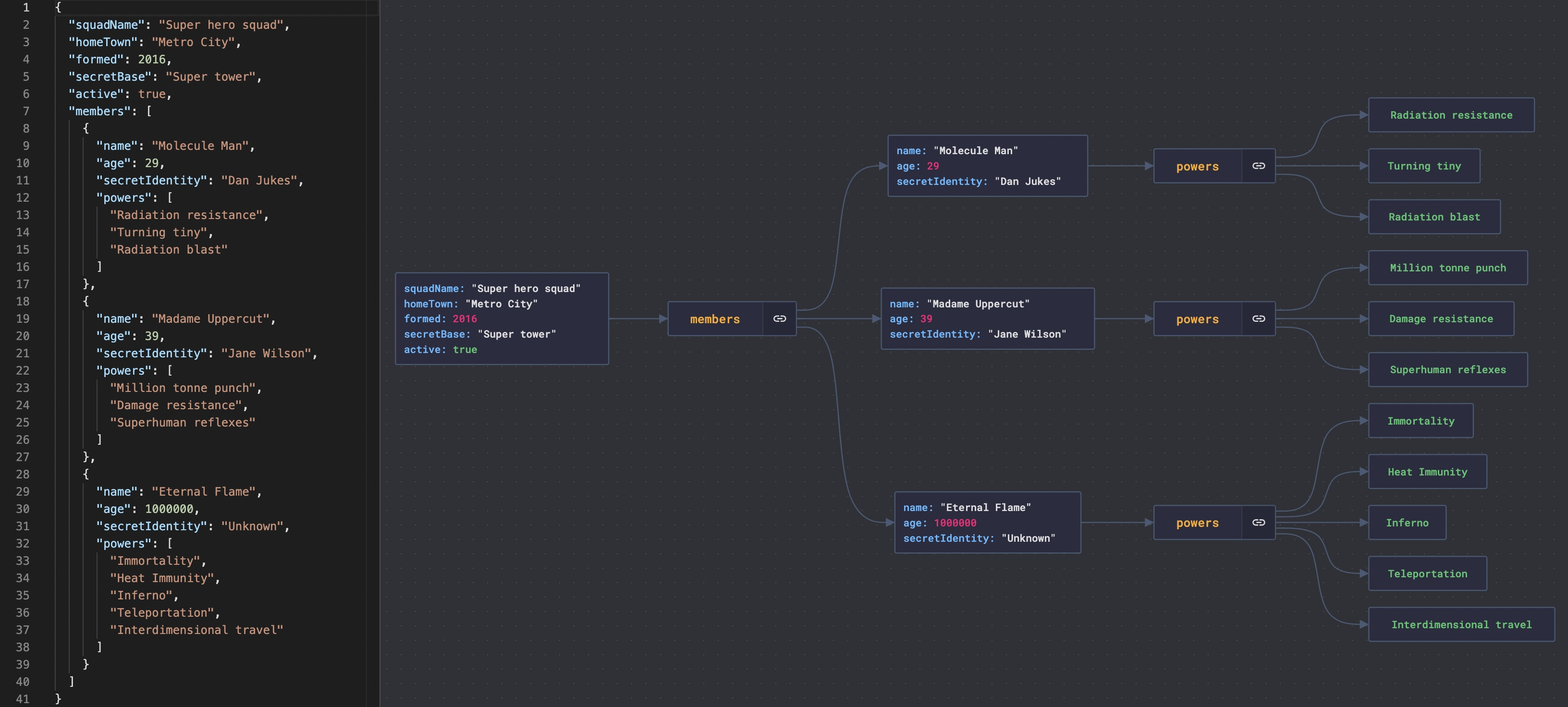

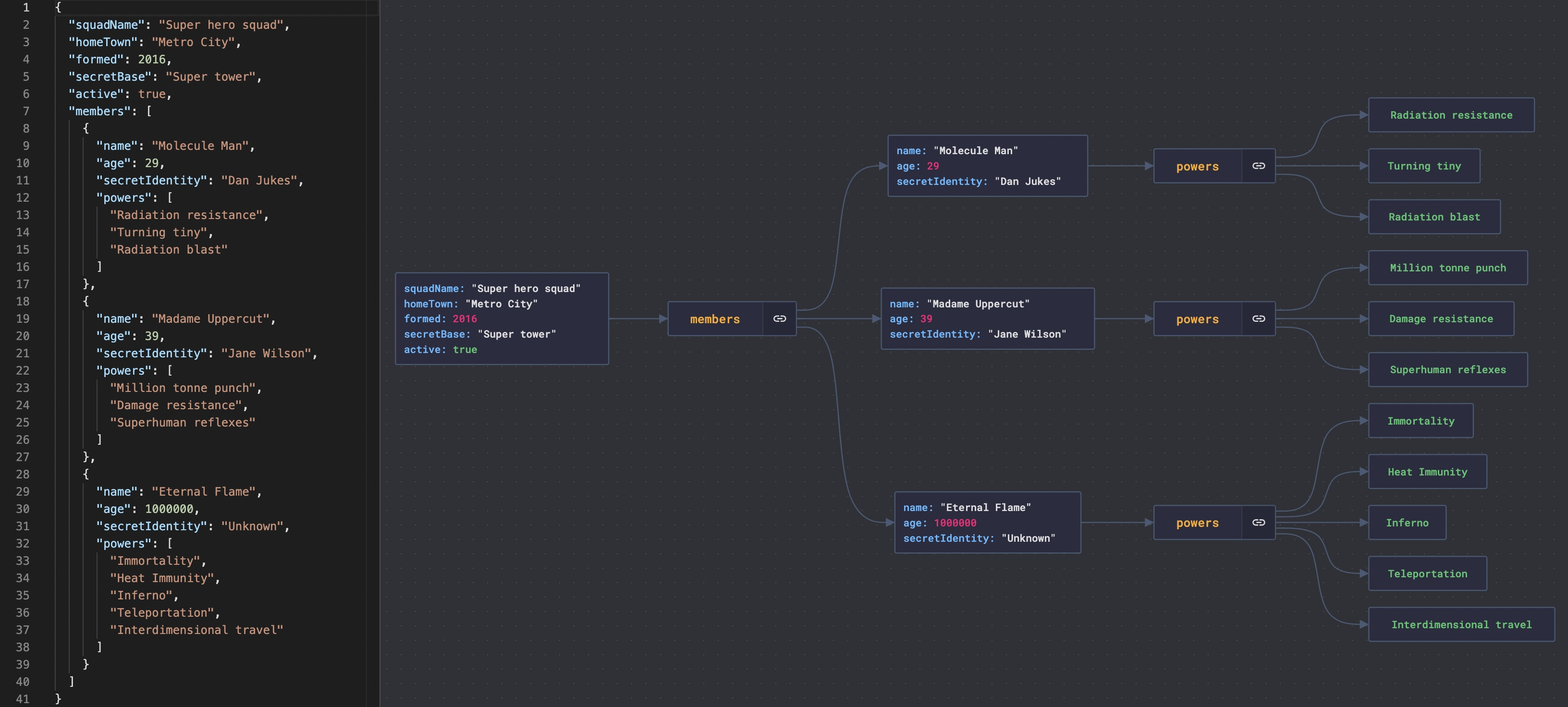

+ * [JSON Crack: Visualize JSON Files](https://bytebytego.com/guides/json-files)

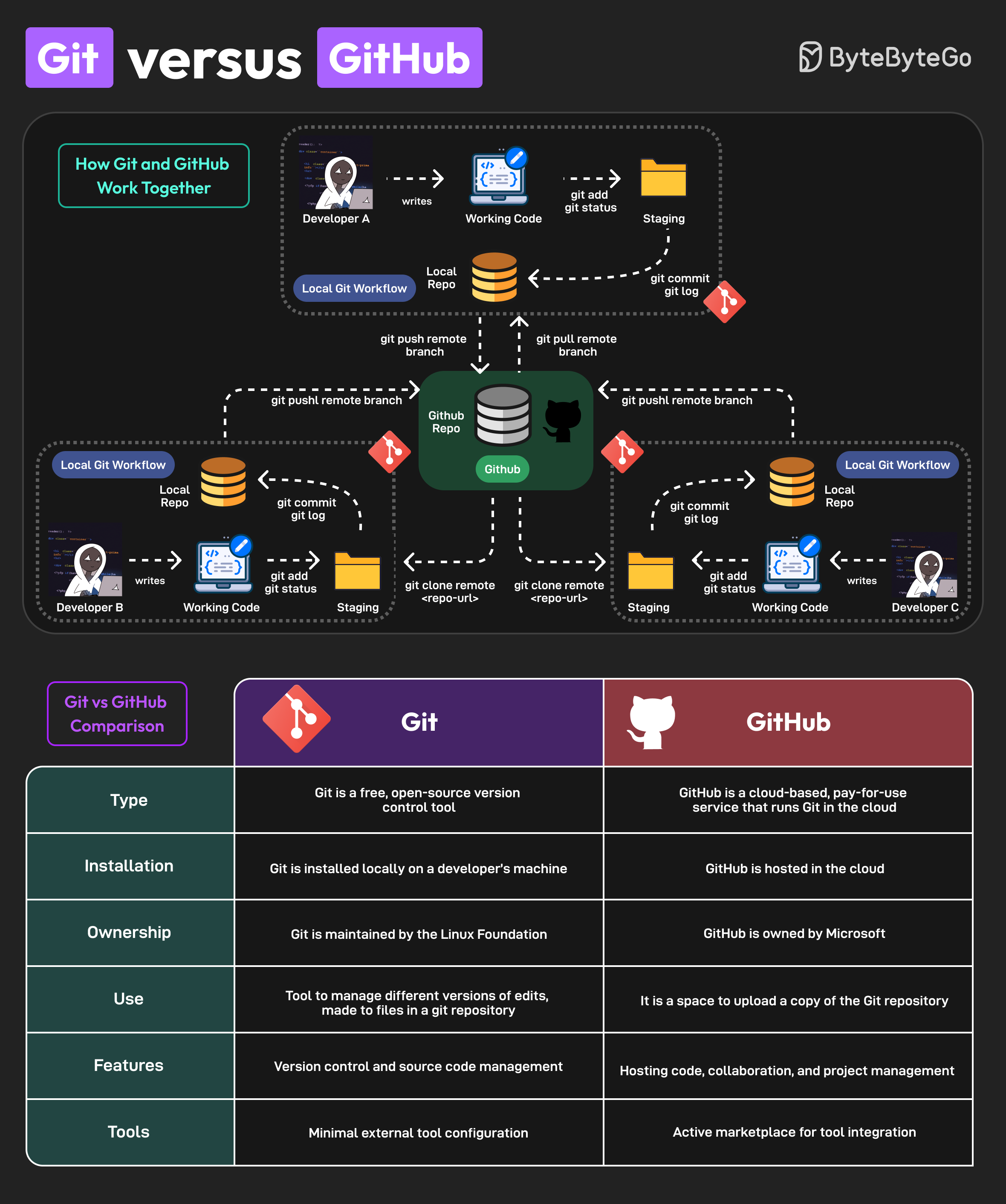

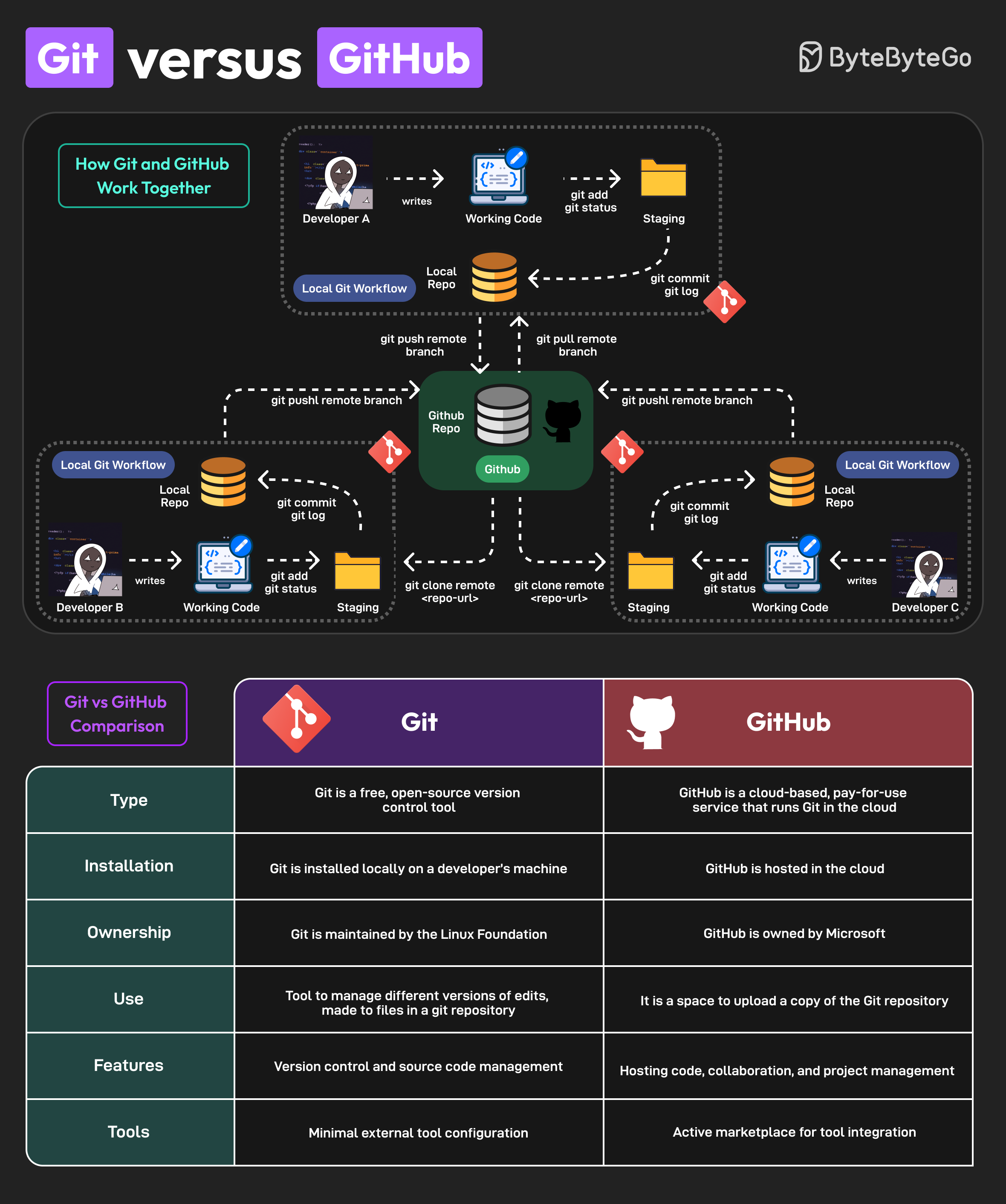

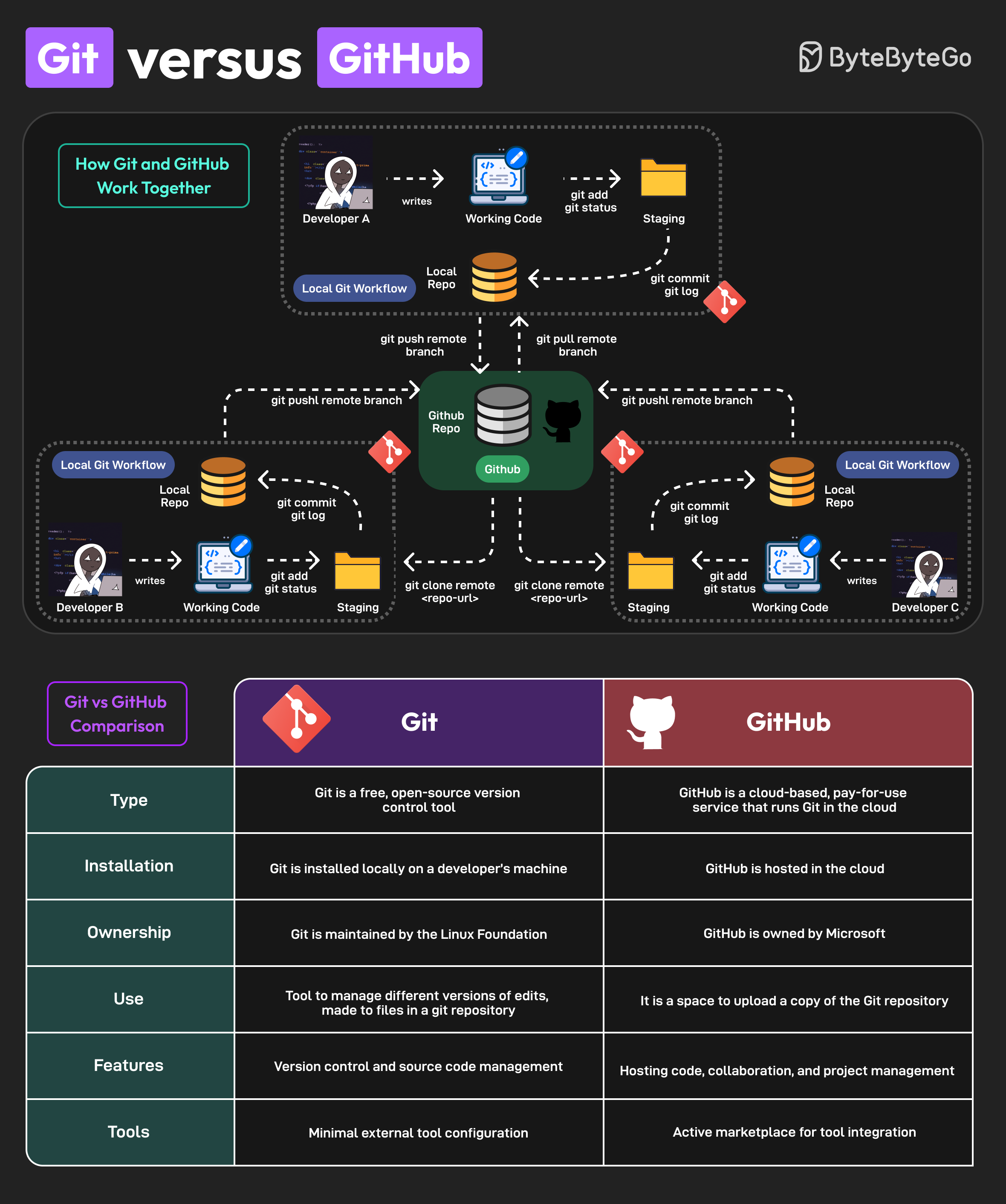

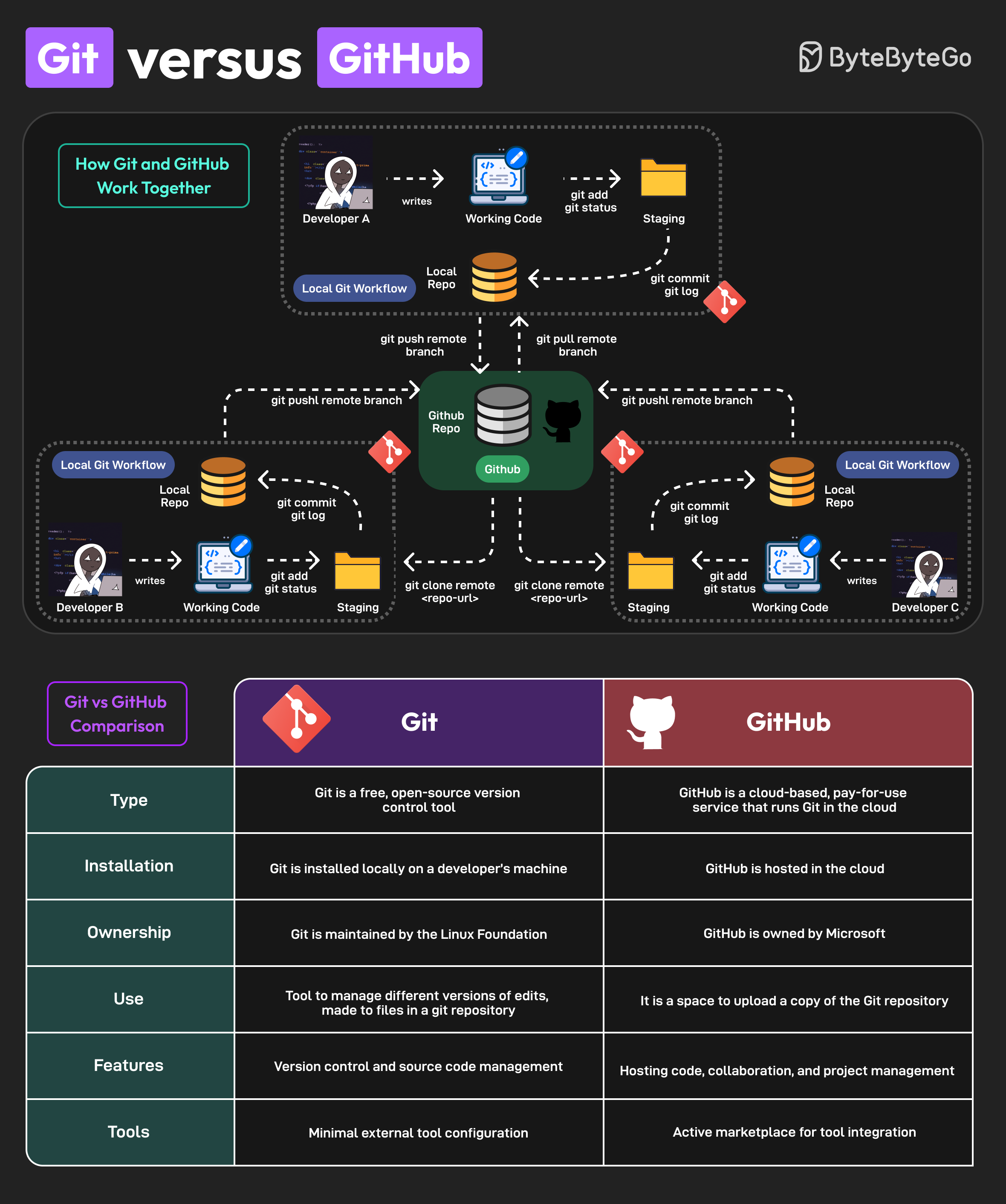

+ * [Git vs GitHub](https://bytebytego.com/guides/git-vs-github)

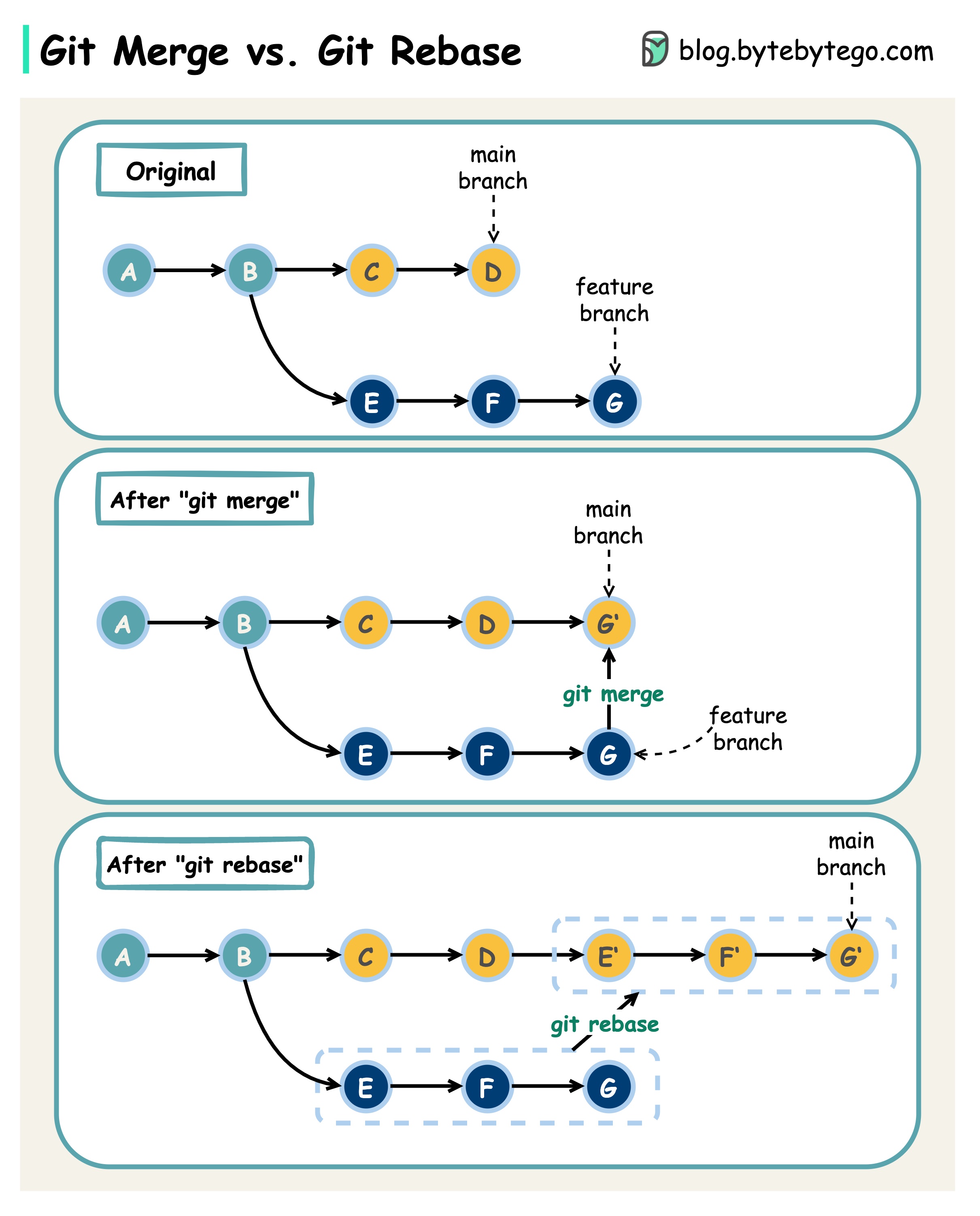

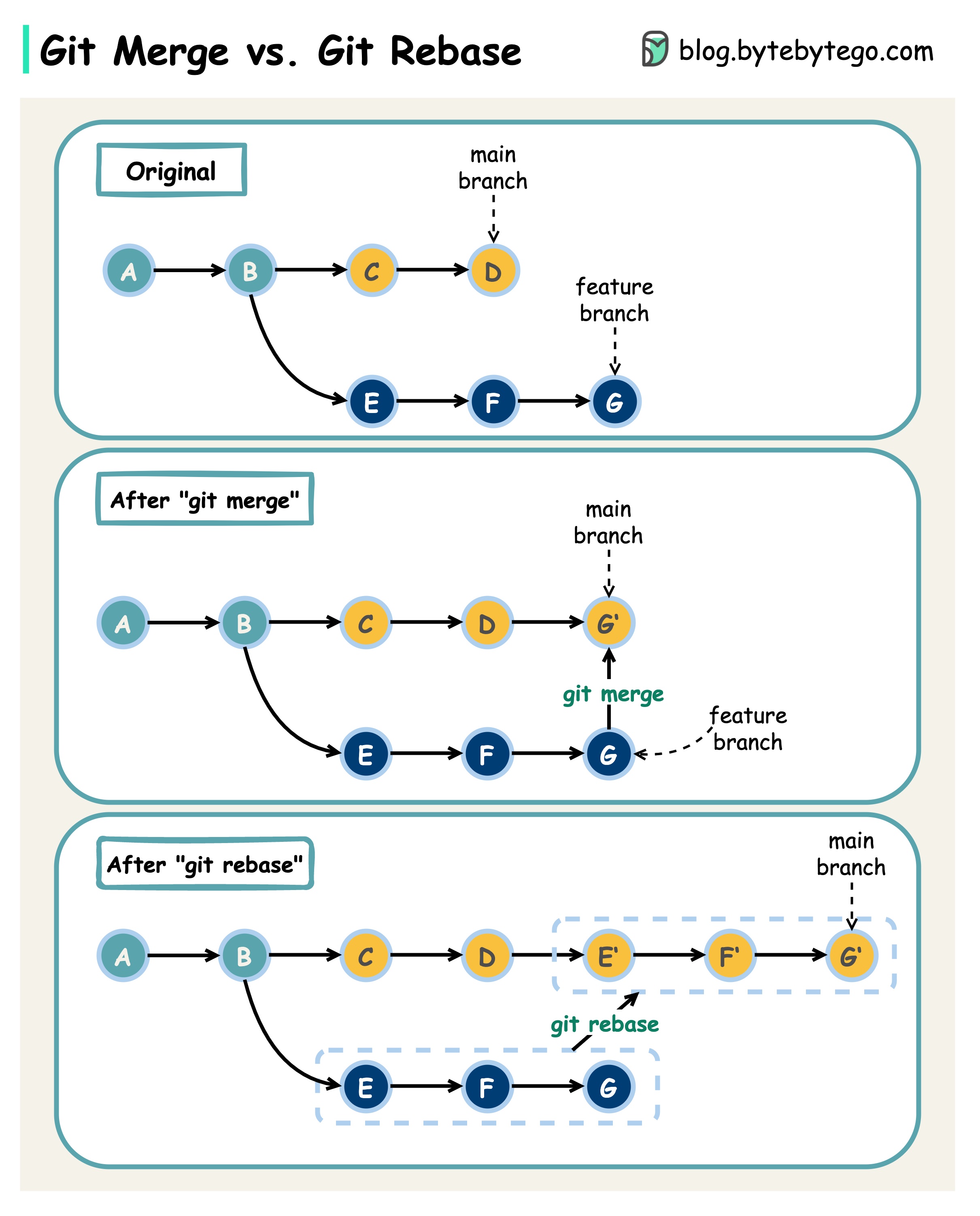

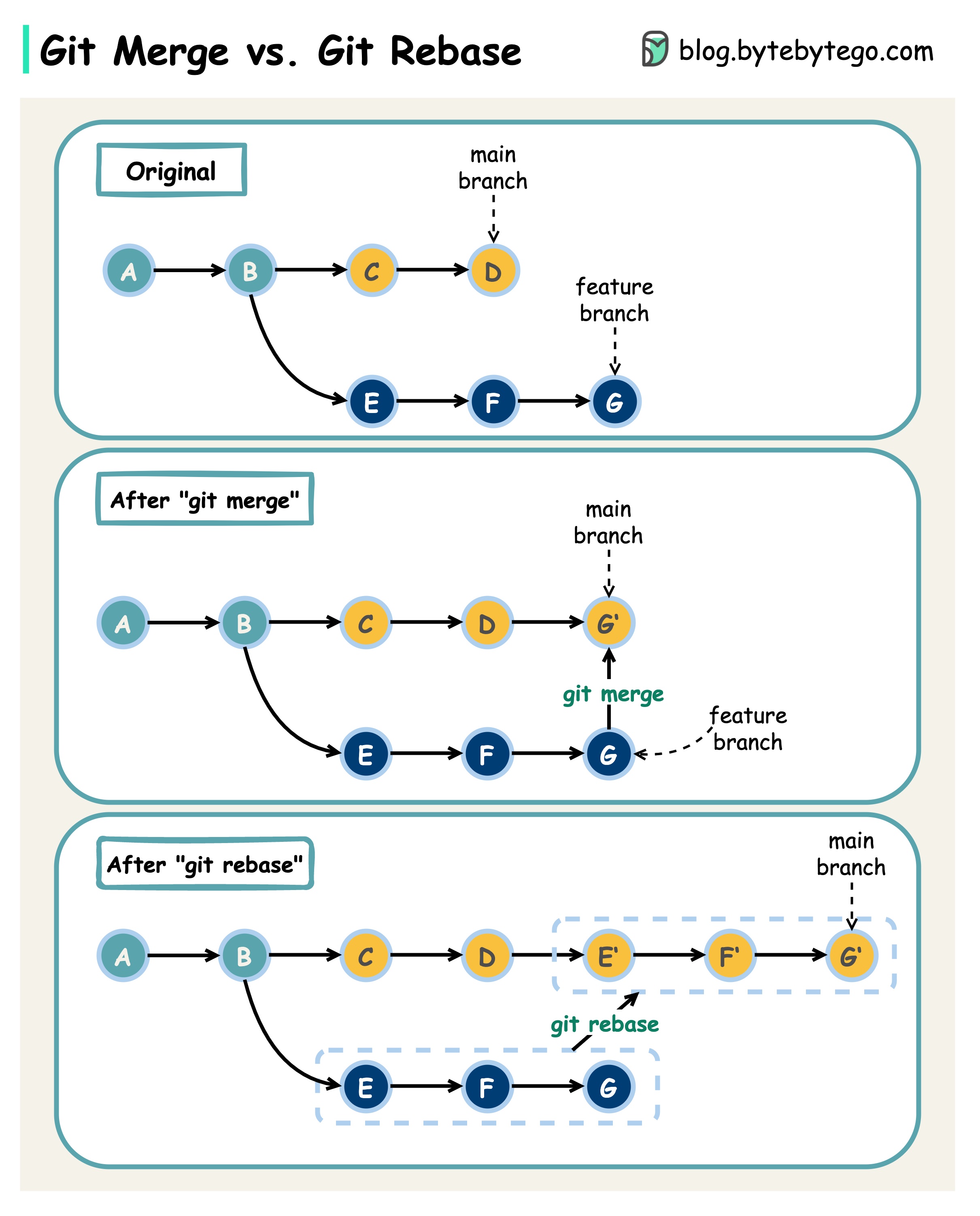

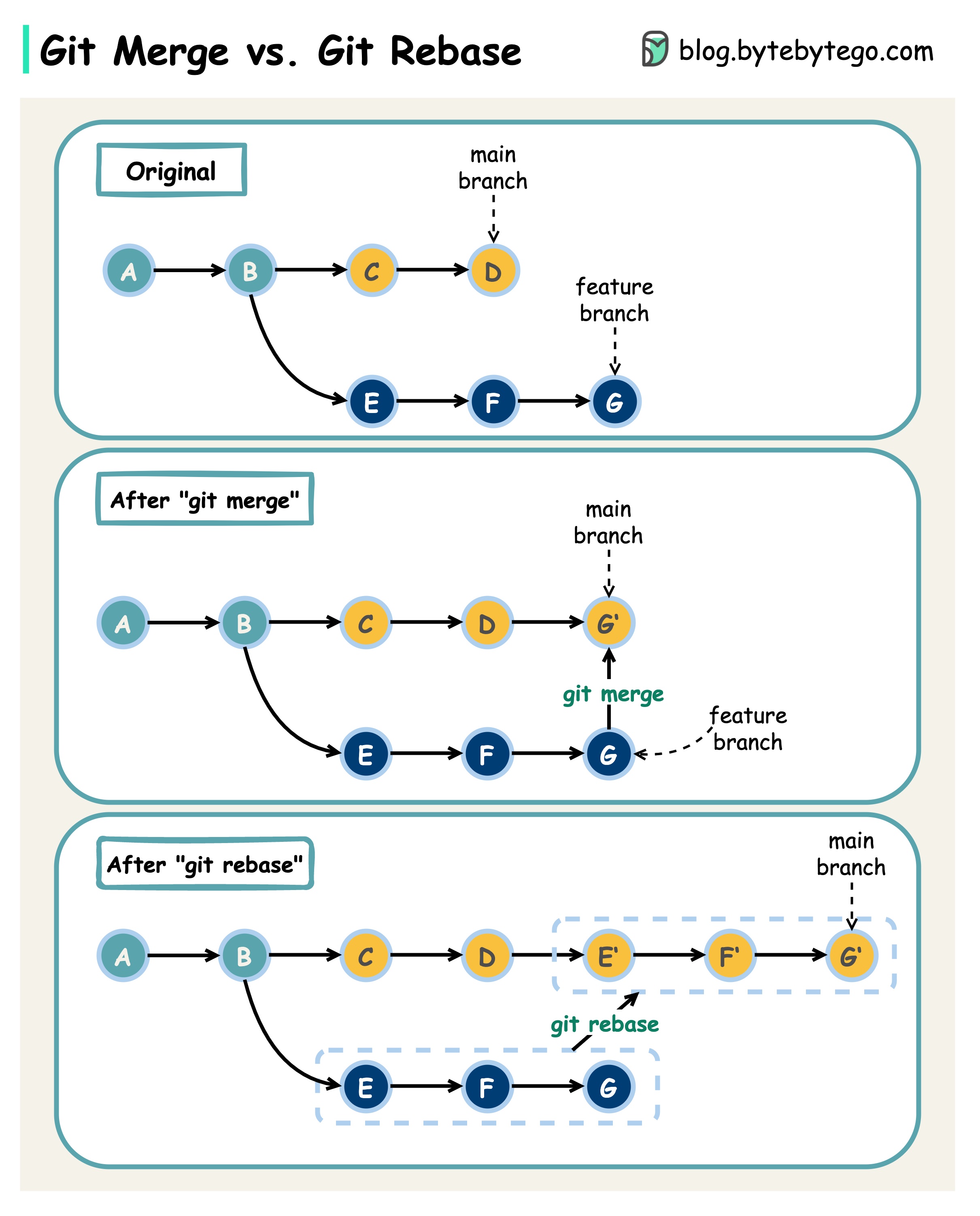

+ * [Git Merge vs. Git Rebase](https://bytebytego.com/guides/git-merge-vs-git-rebate)

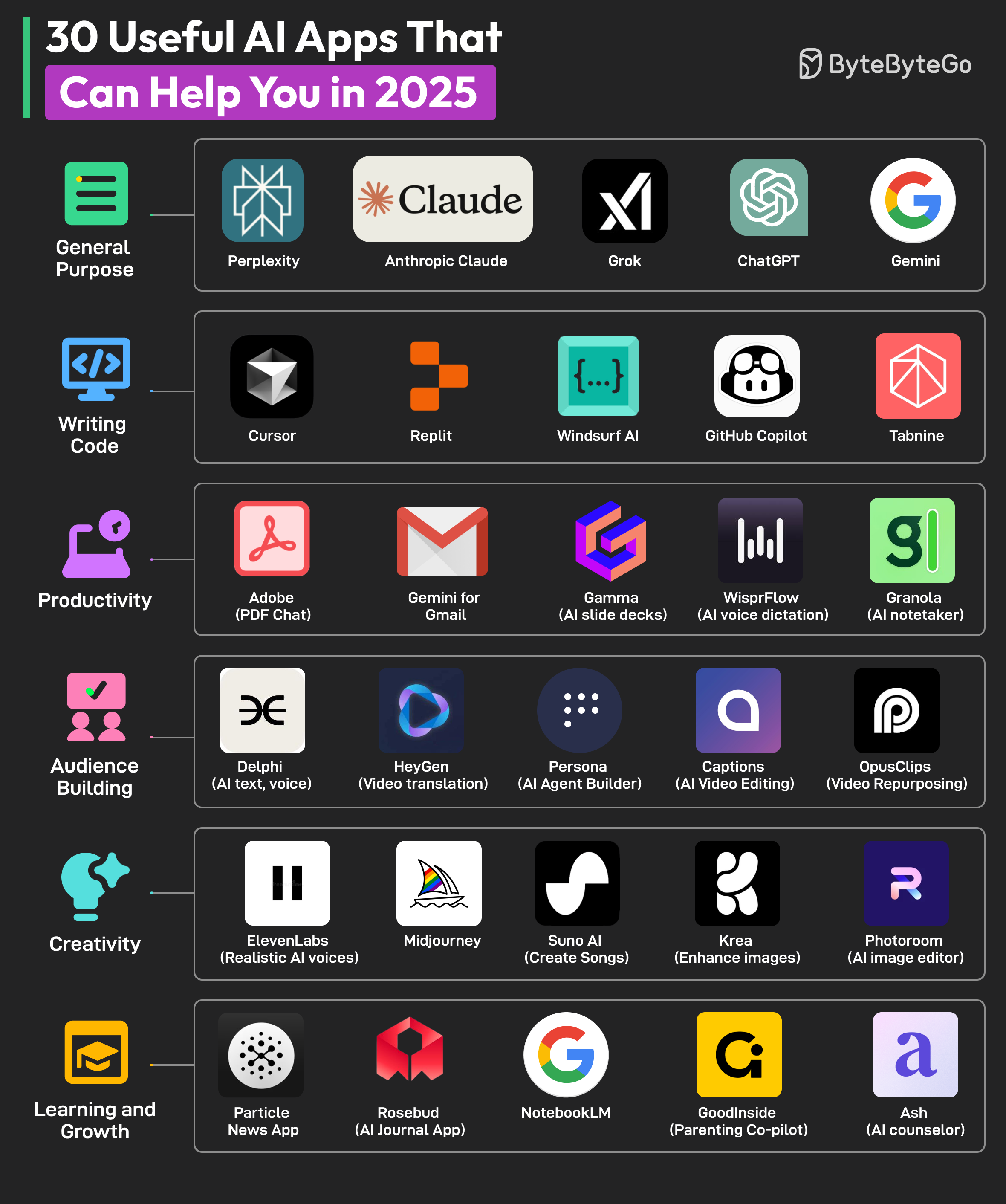

+ * [30 Useful AI Apps That Can Help You in 2025](https://bytebytego.com/guides/30-useful-ai-apps-that-can-help-you-in-2025)

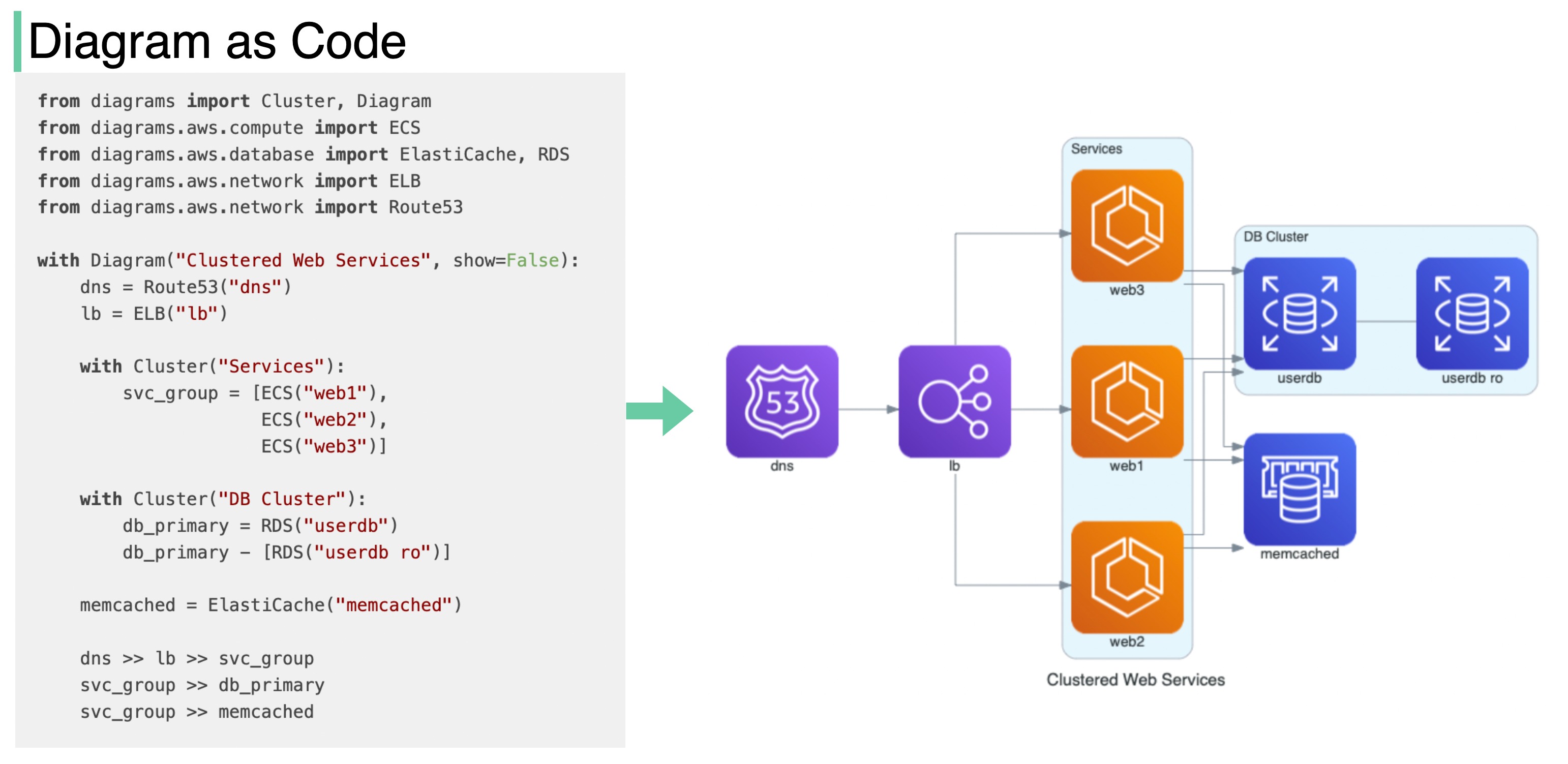

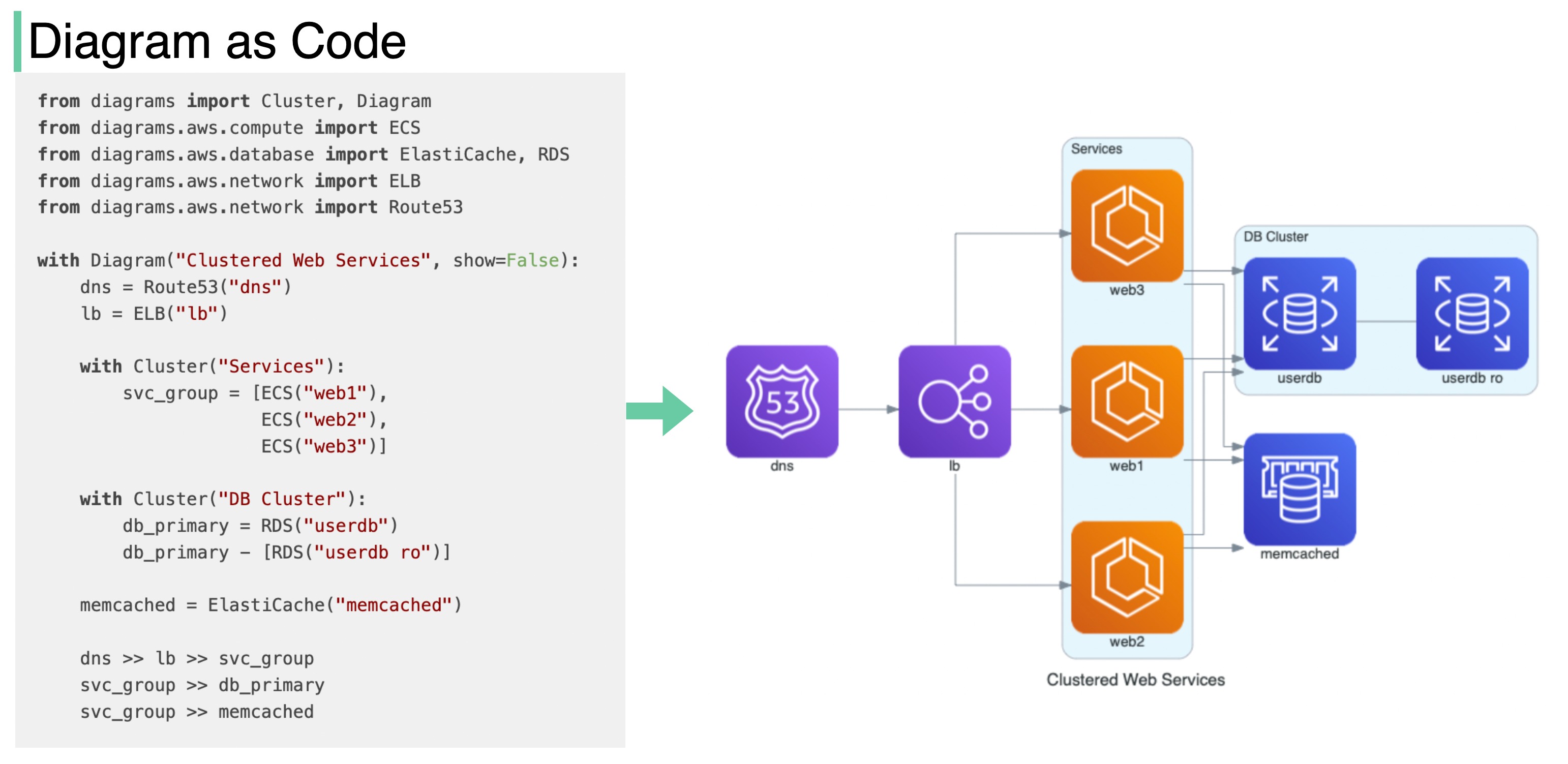

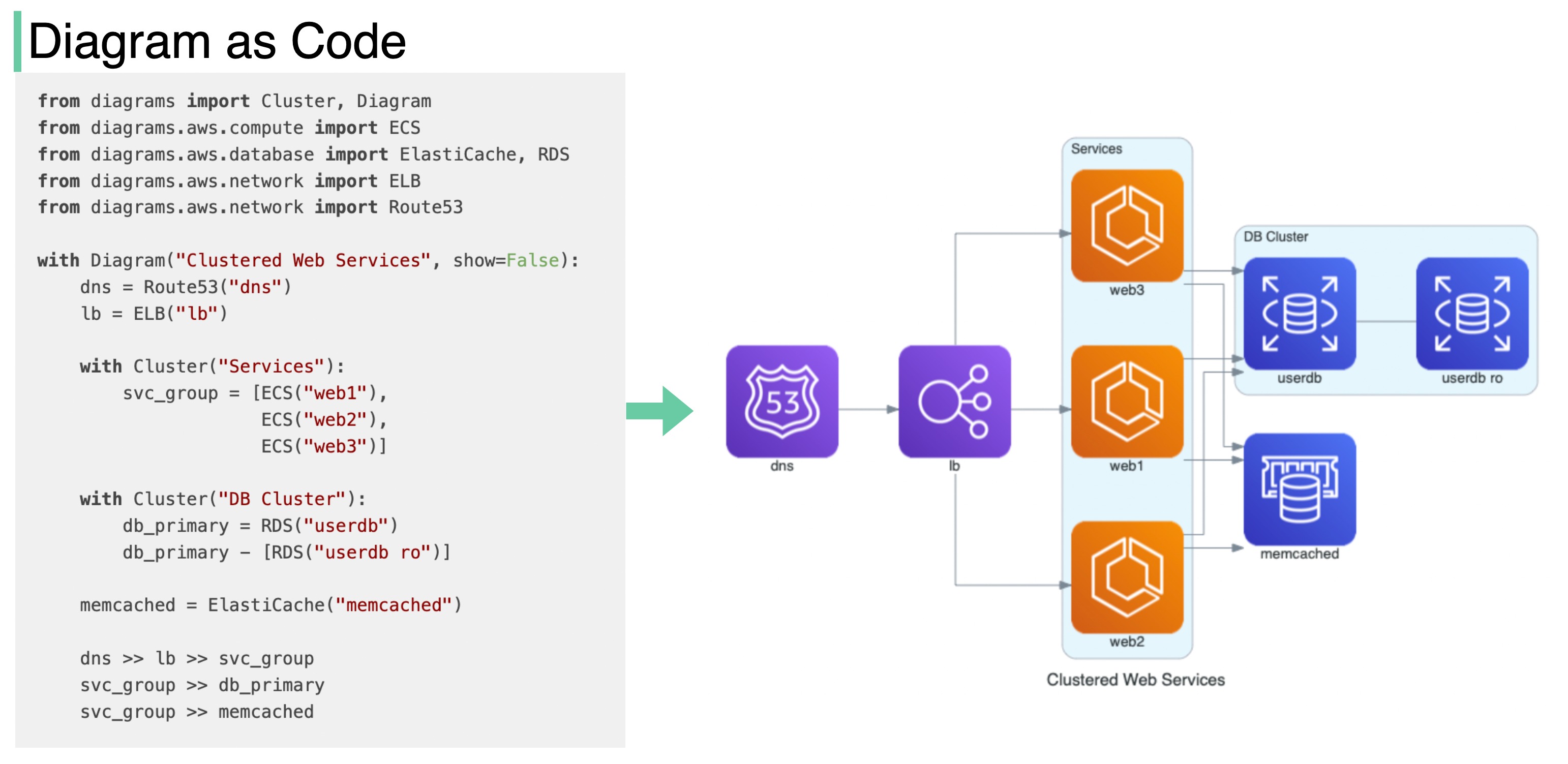

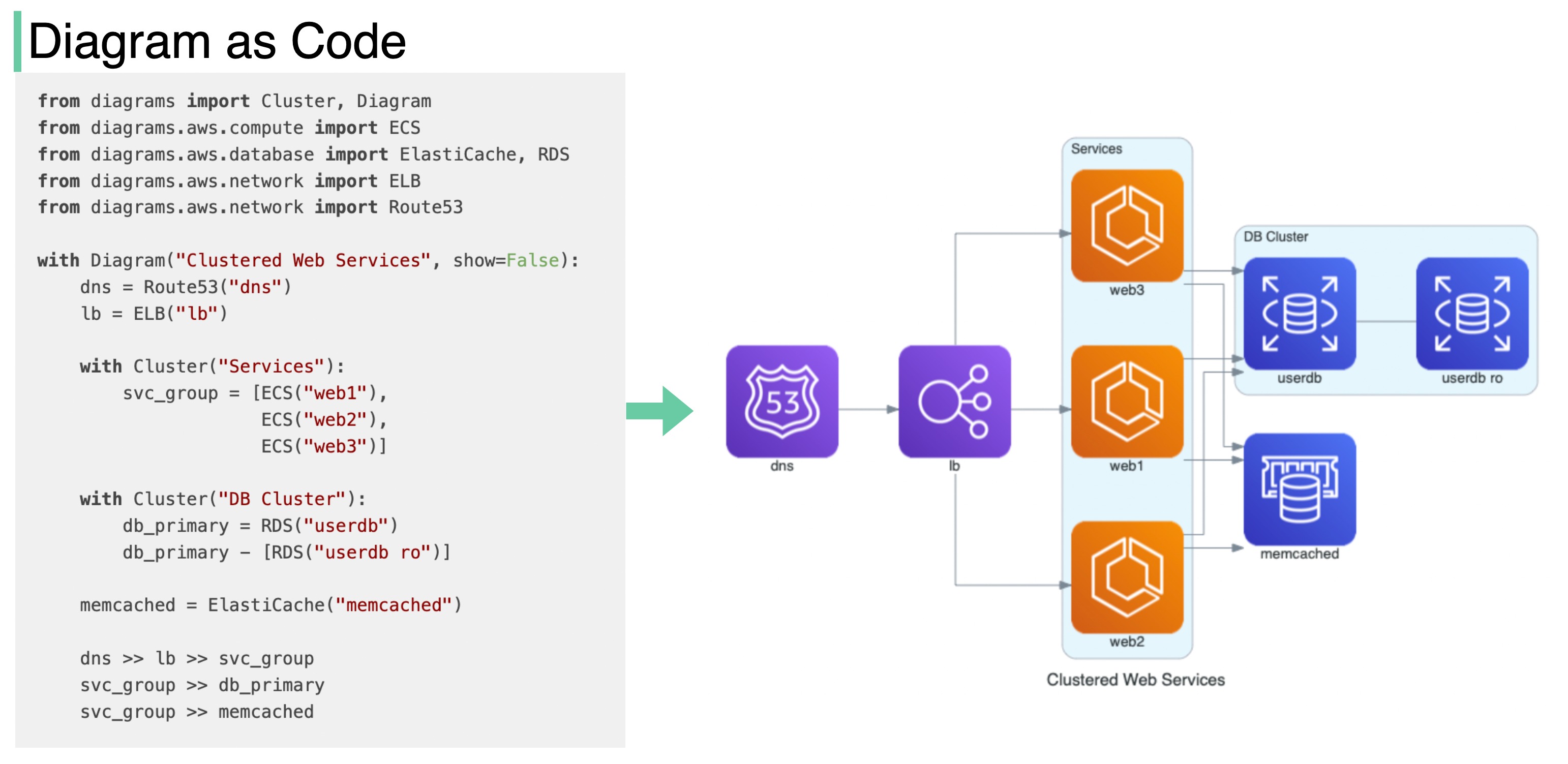

+ * [Diagram as Code](https://bytebytego.com/guides/diagram-as-code)

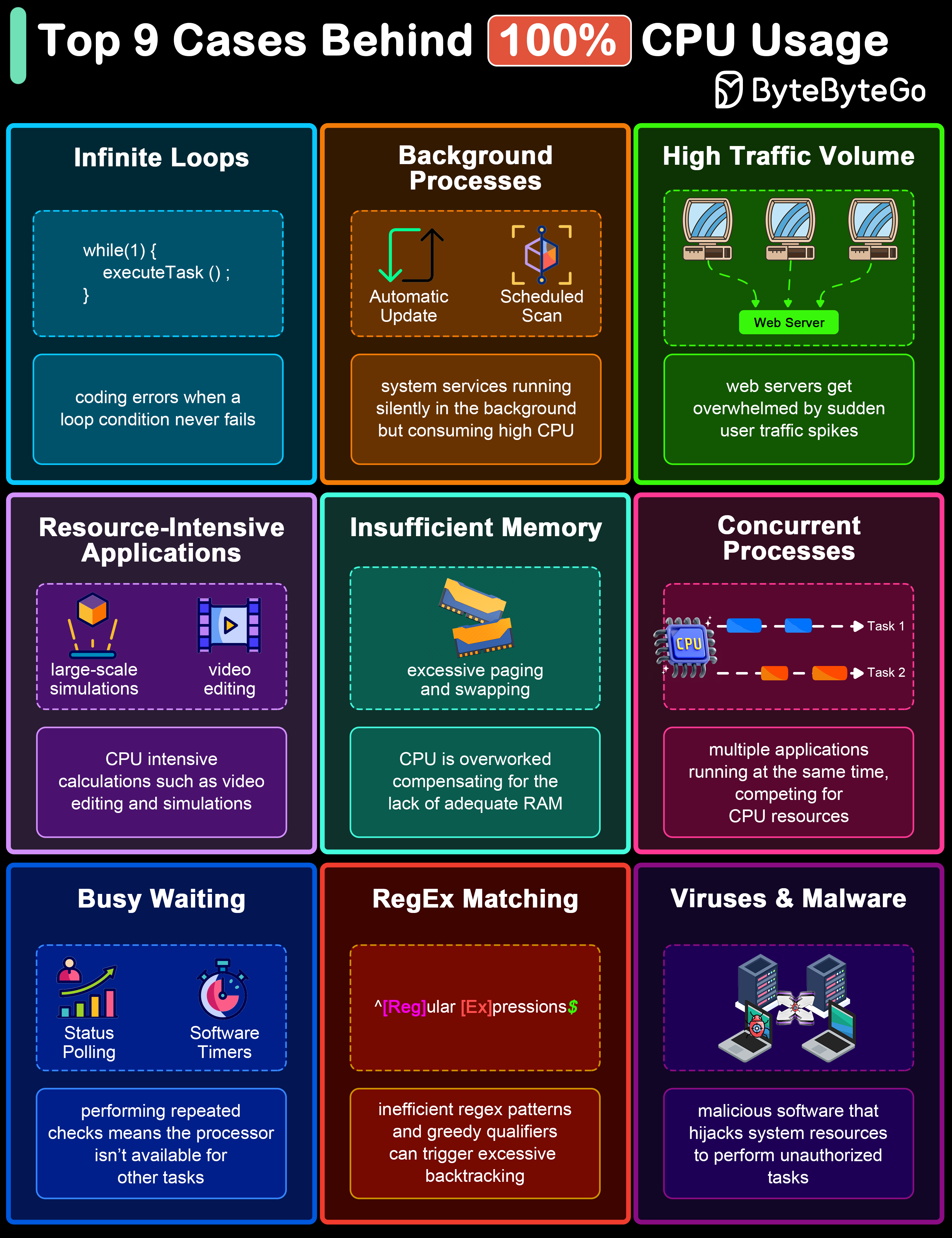

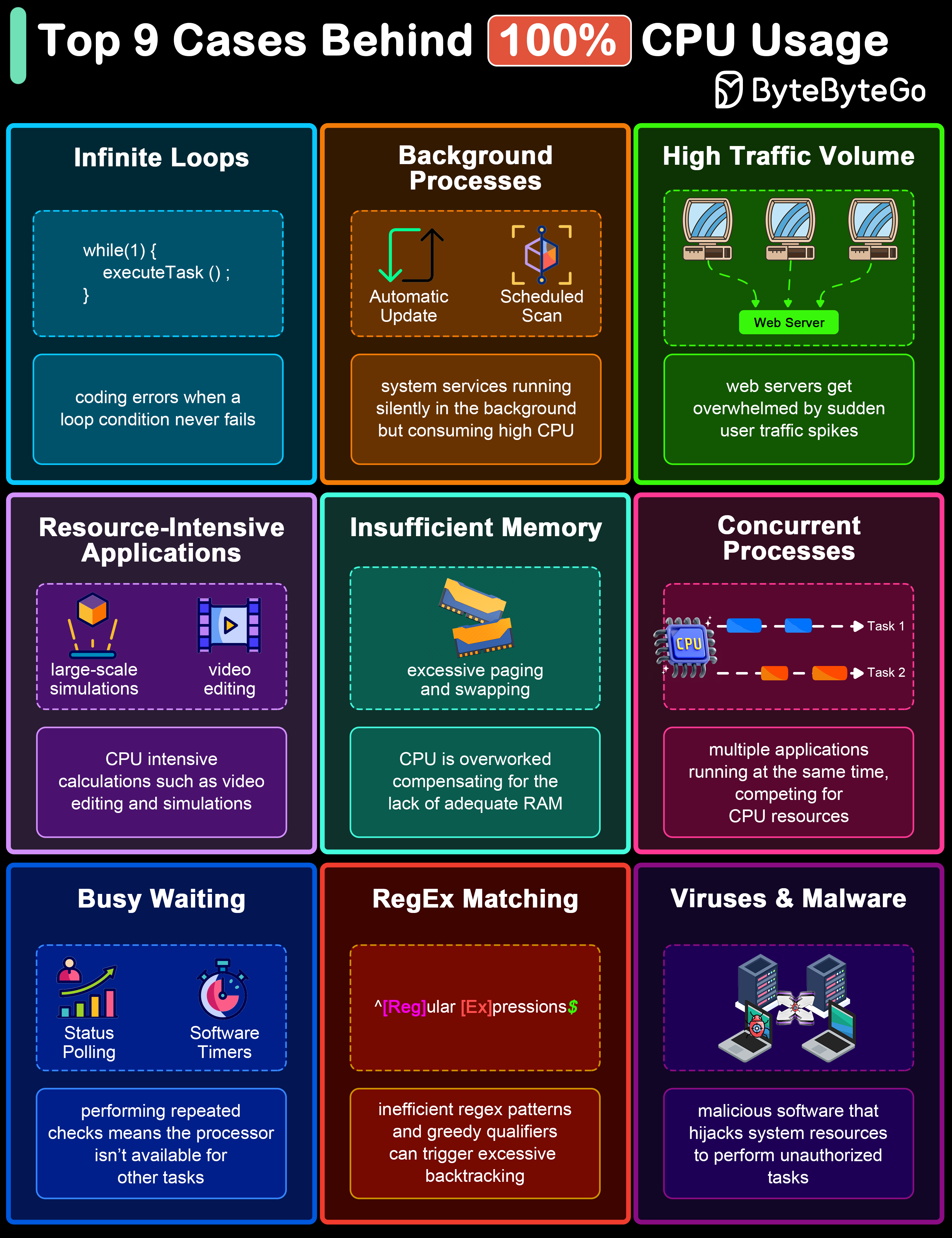

+ * [Top 9 Causes of 100% CPU Usage](https://bytebytego.com/guides/top-9-cases-behind-100-cpu-usage)

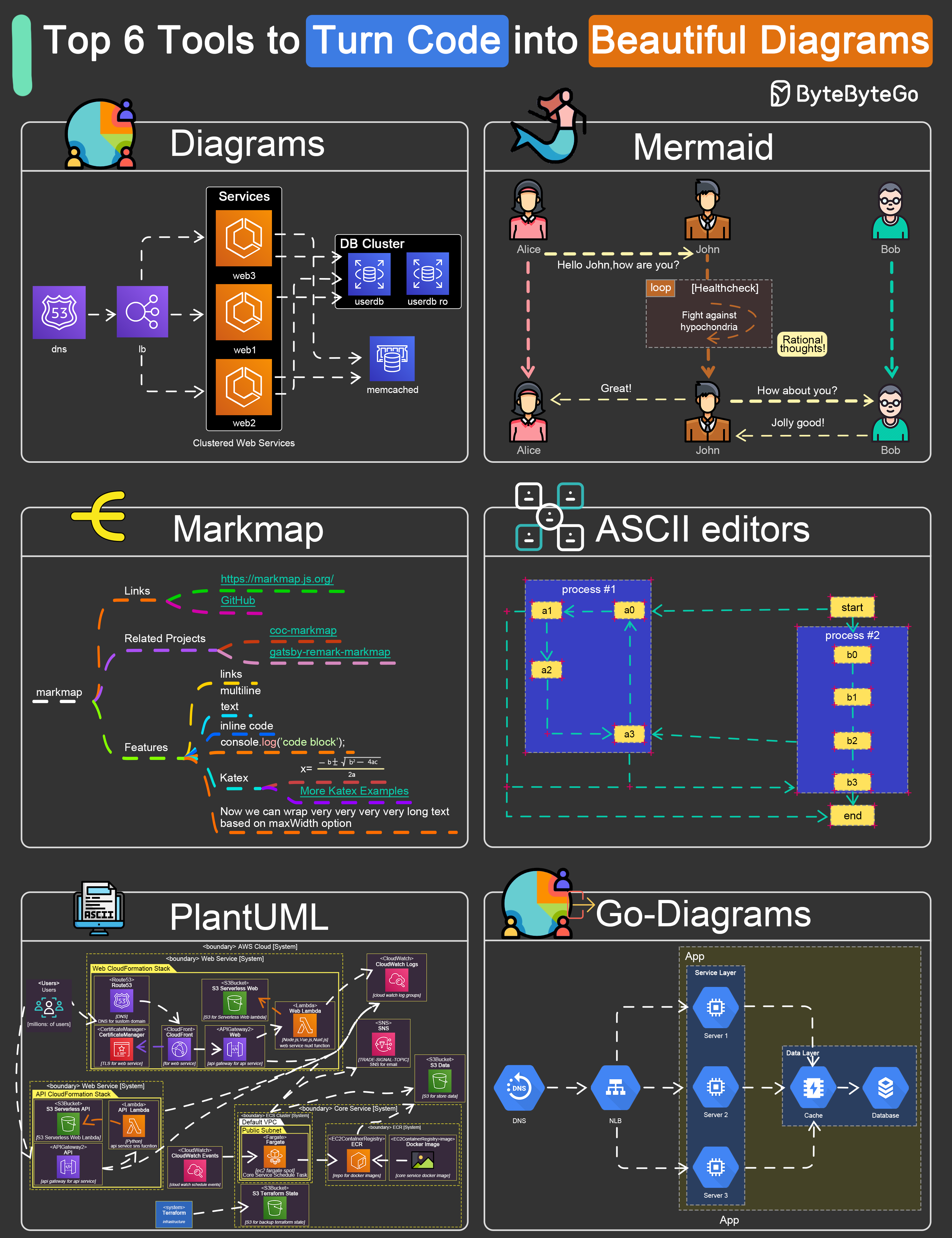

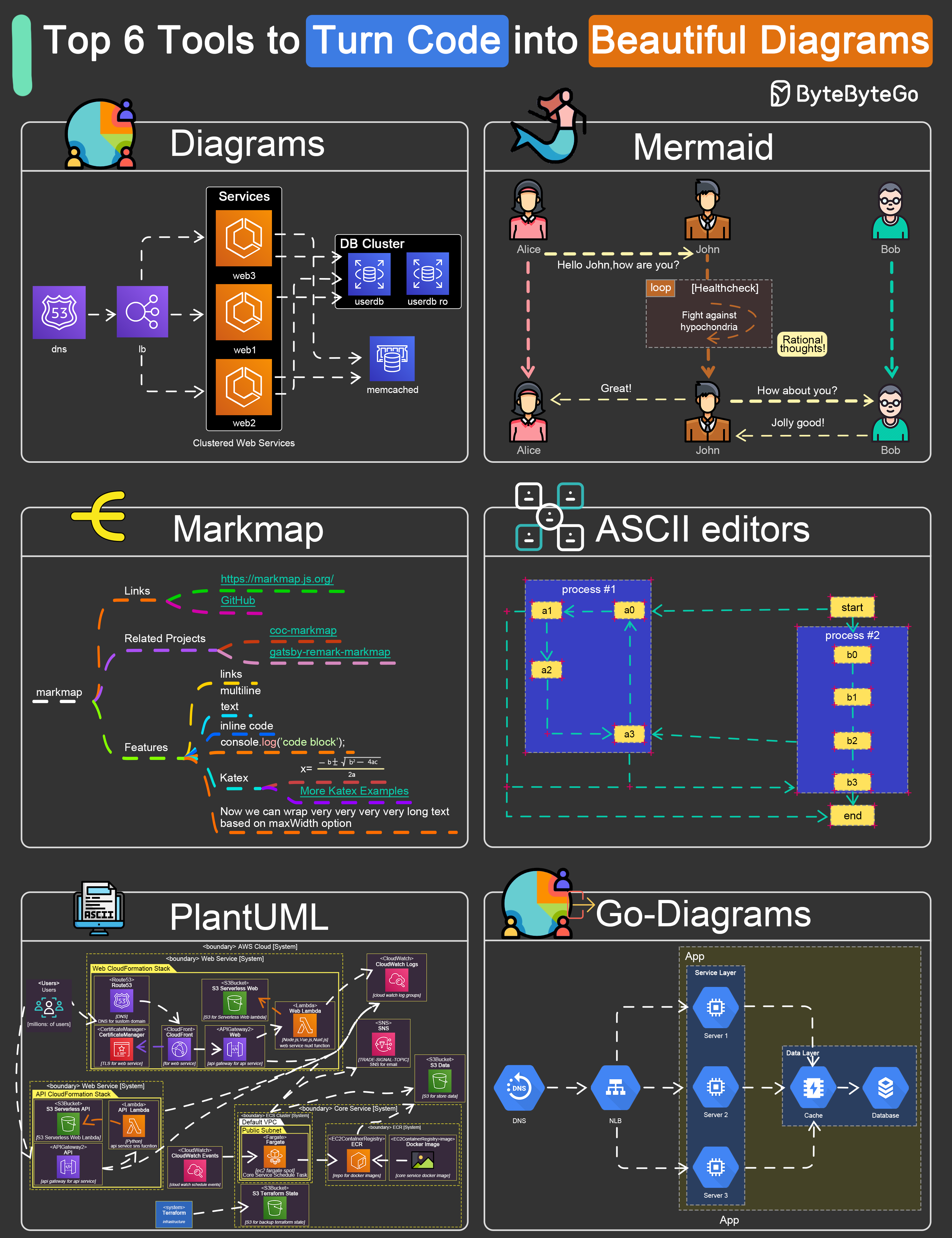

+ * [Top 6 Tools to Turn Code into Beautiful Diagrams](https://bytebytego.com/guides/top-6-tools-to-turn-code-into-beautiful-diagrams)

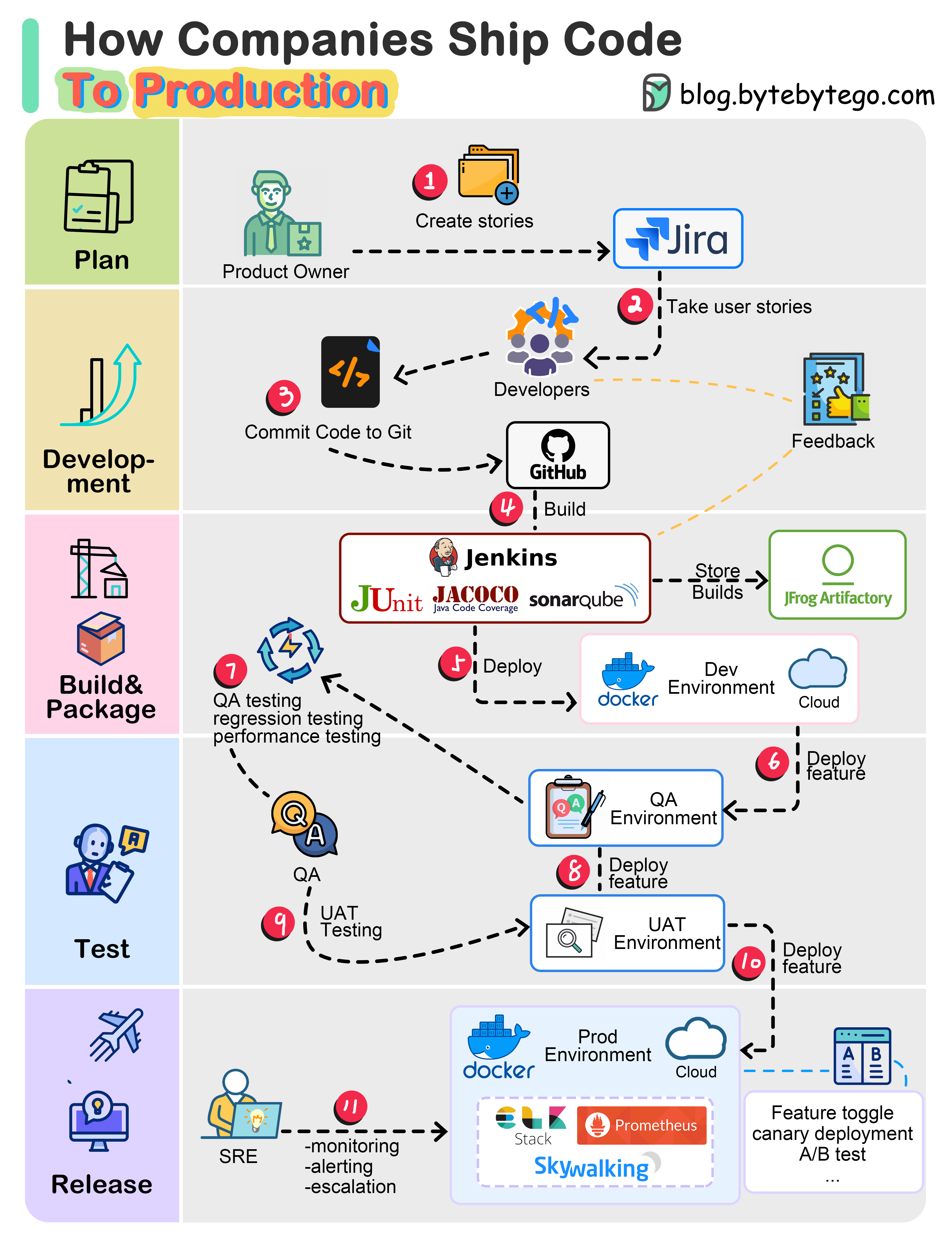

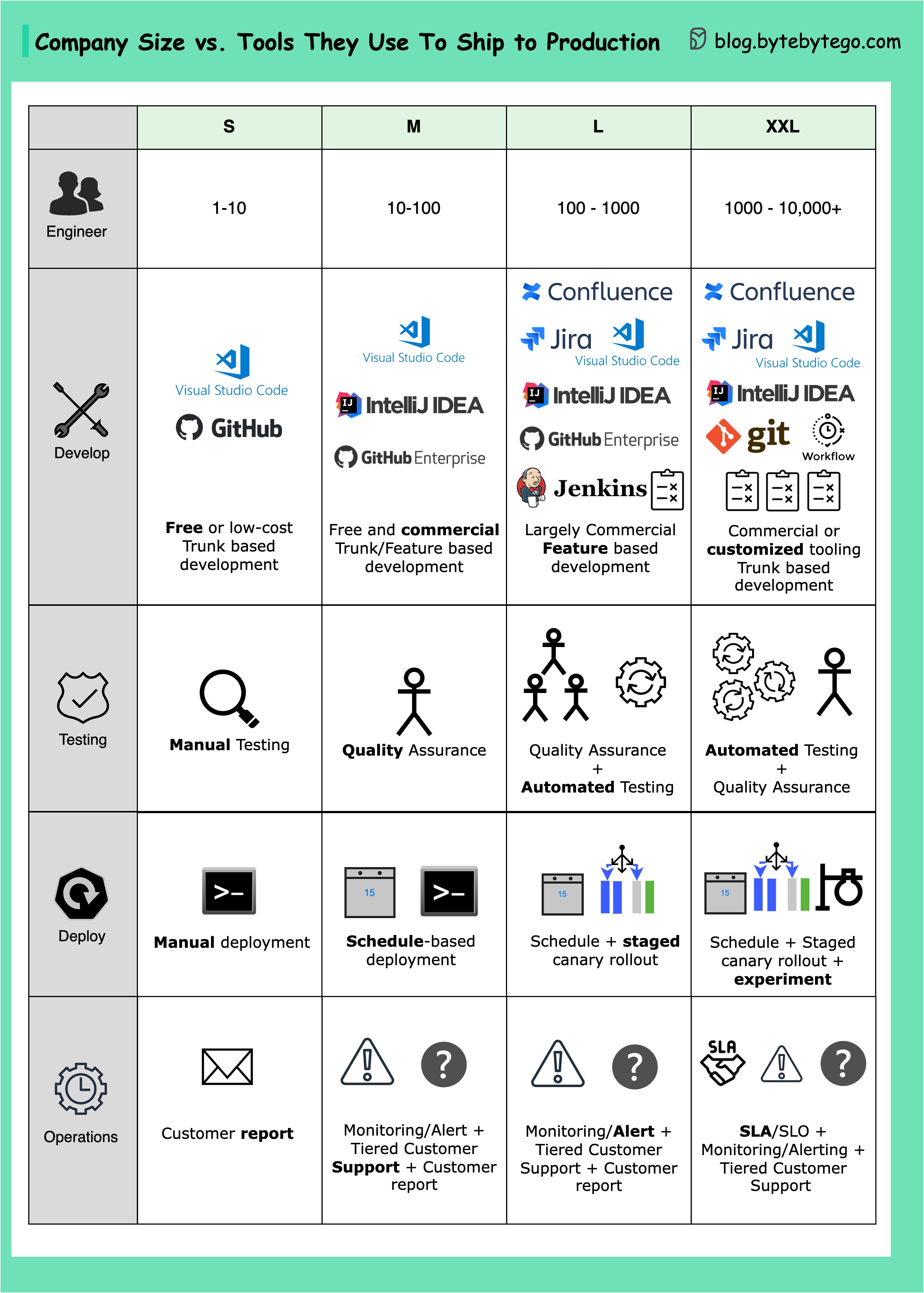

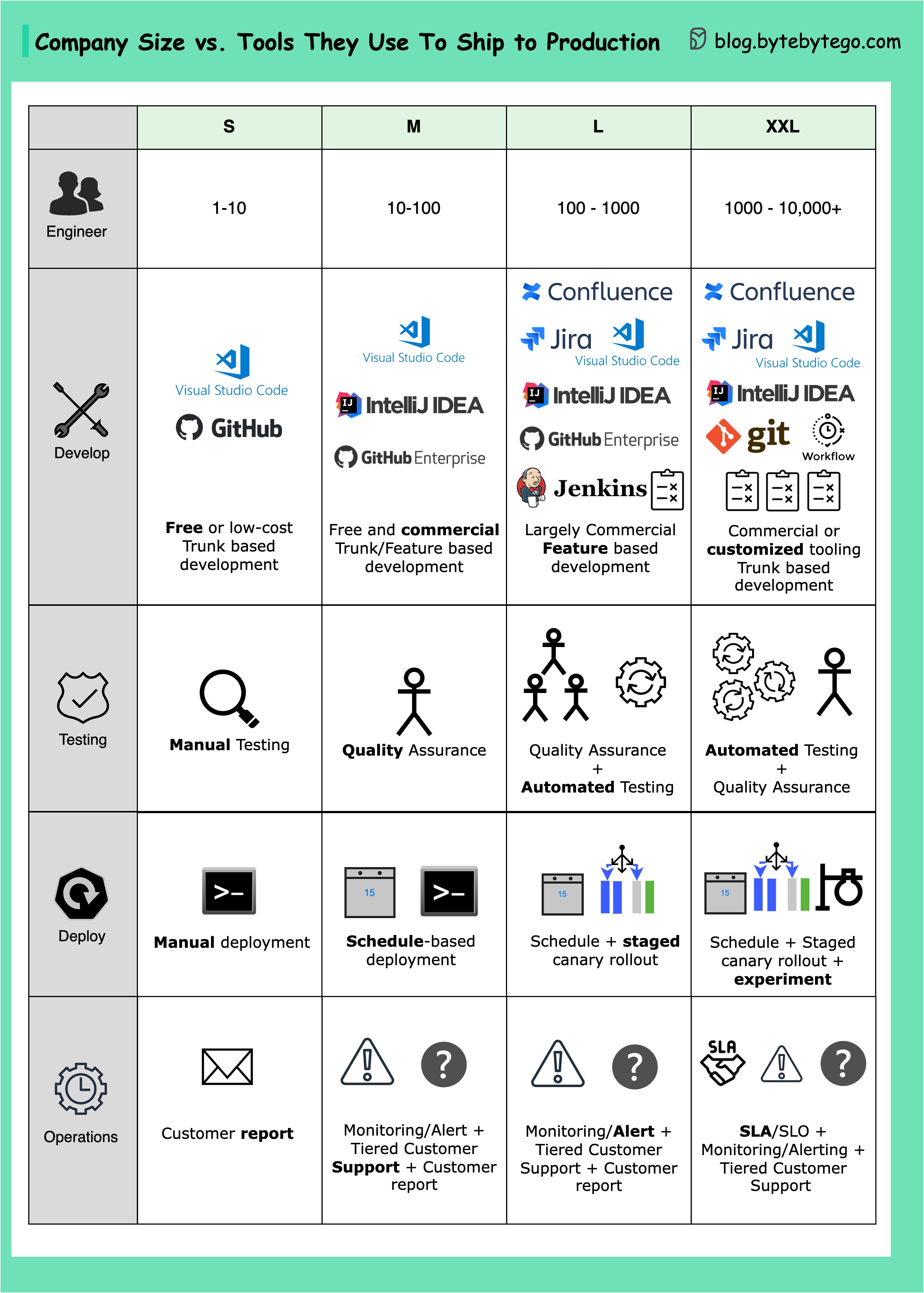

+ * [Tools for Shipping Code to Production](https://bytebytego.com/guides/what-tools-does-your-team-use-to-ship-code-to-production-and-ensure-code-quality)

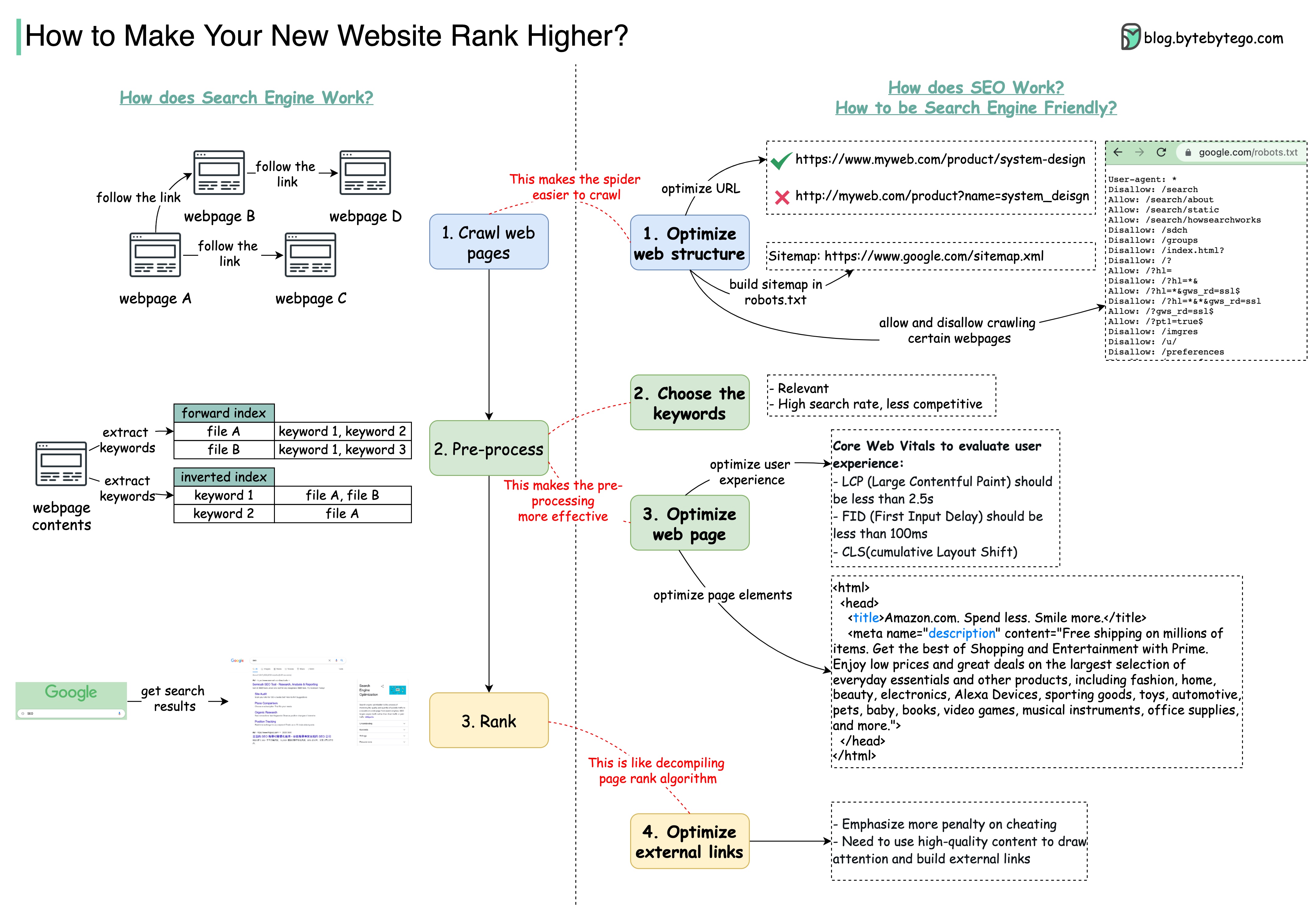

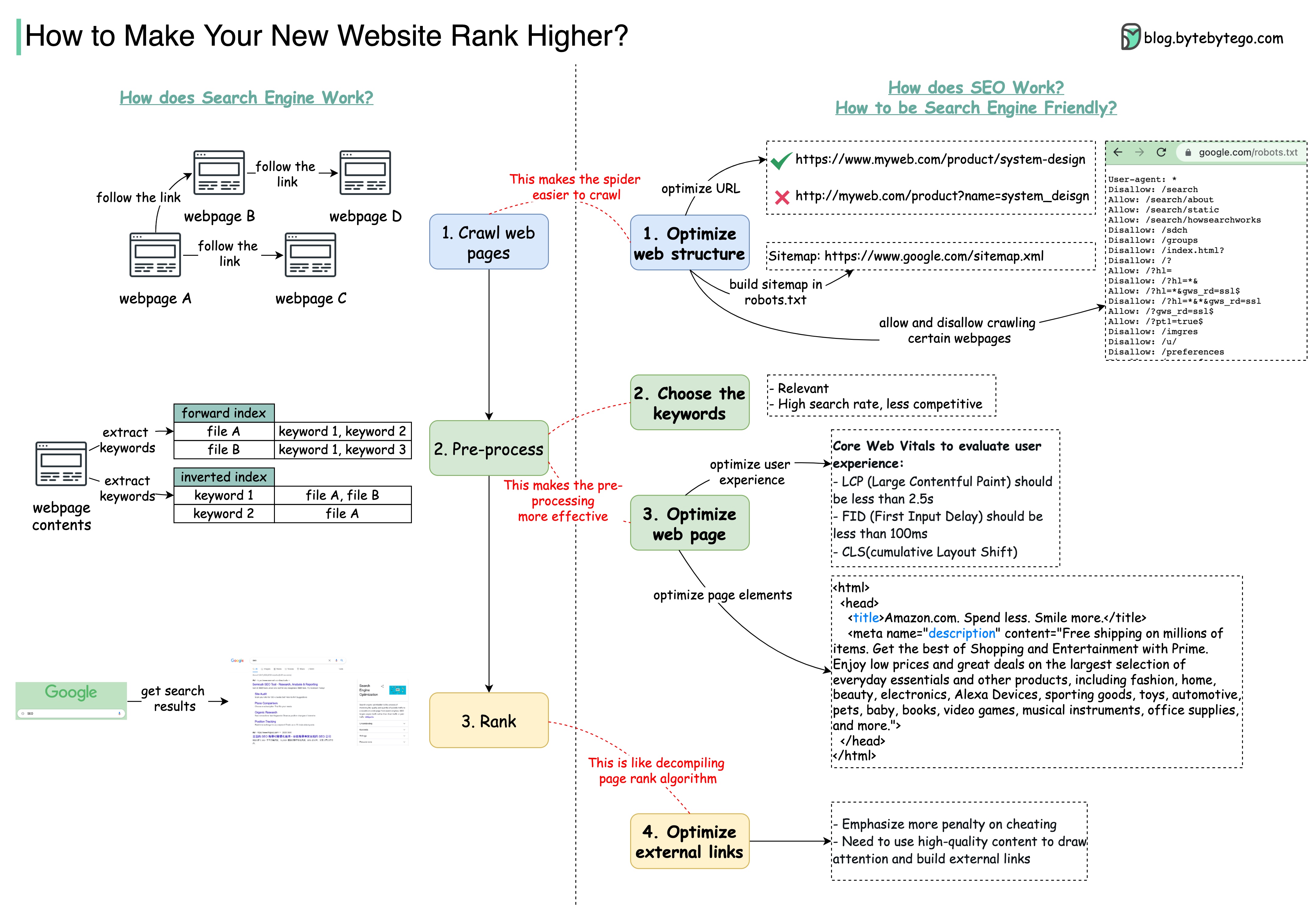

+ * [Making Sense of Search Engine Optimization](https://bytebytego.com/guides/making-sense-of-search-engine-optimization)

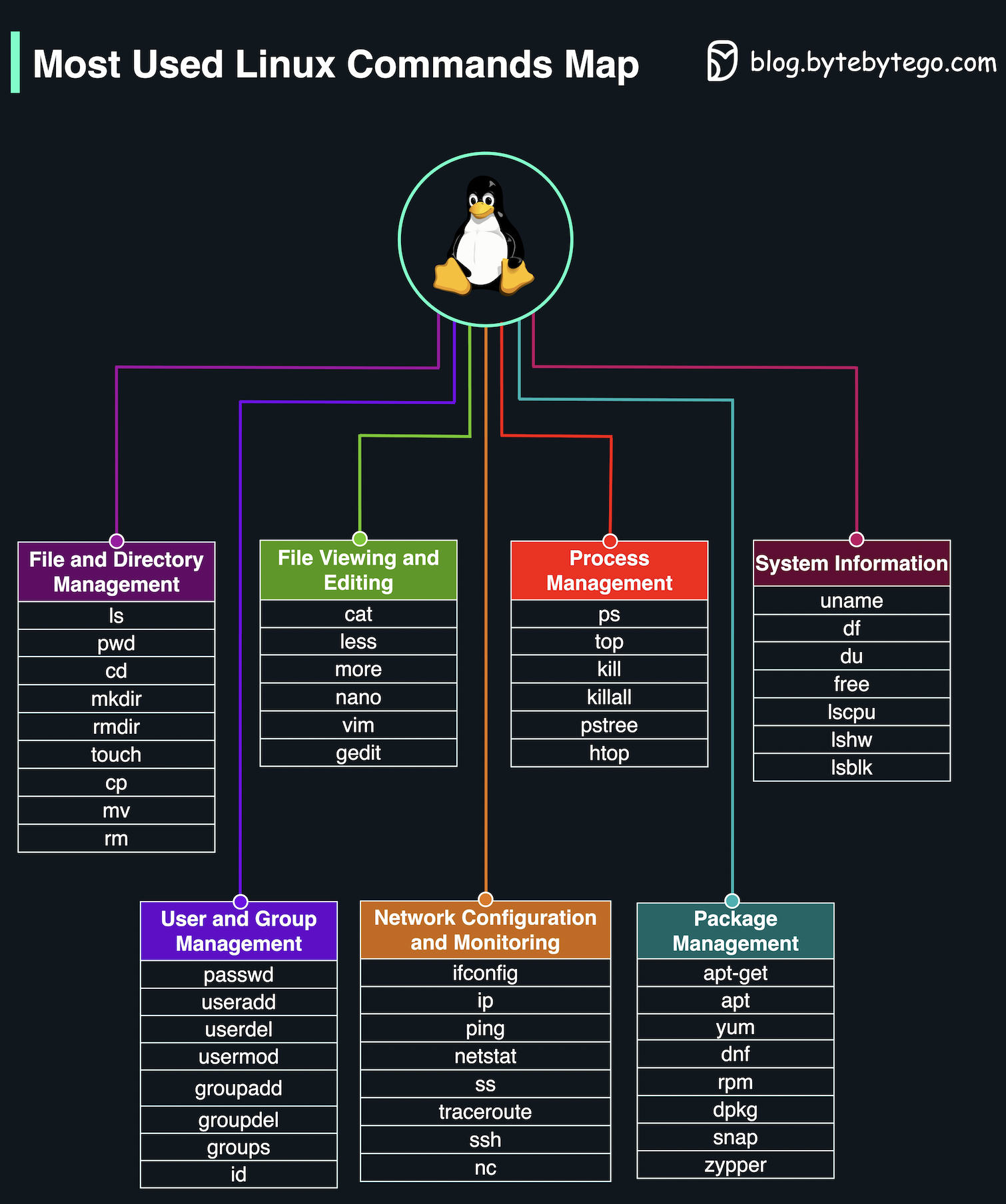

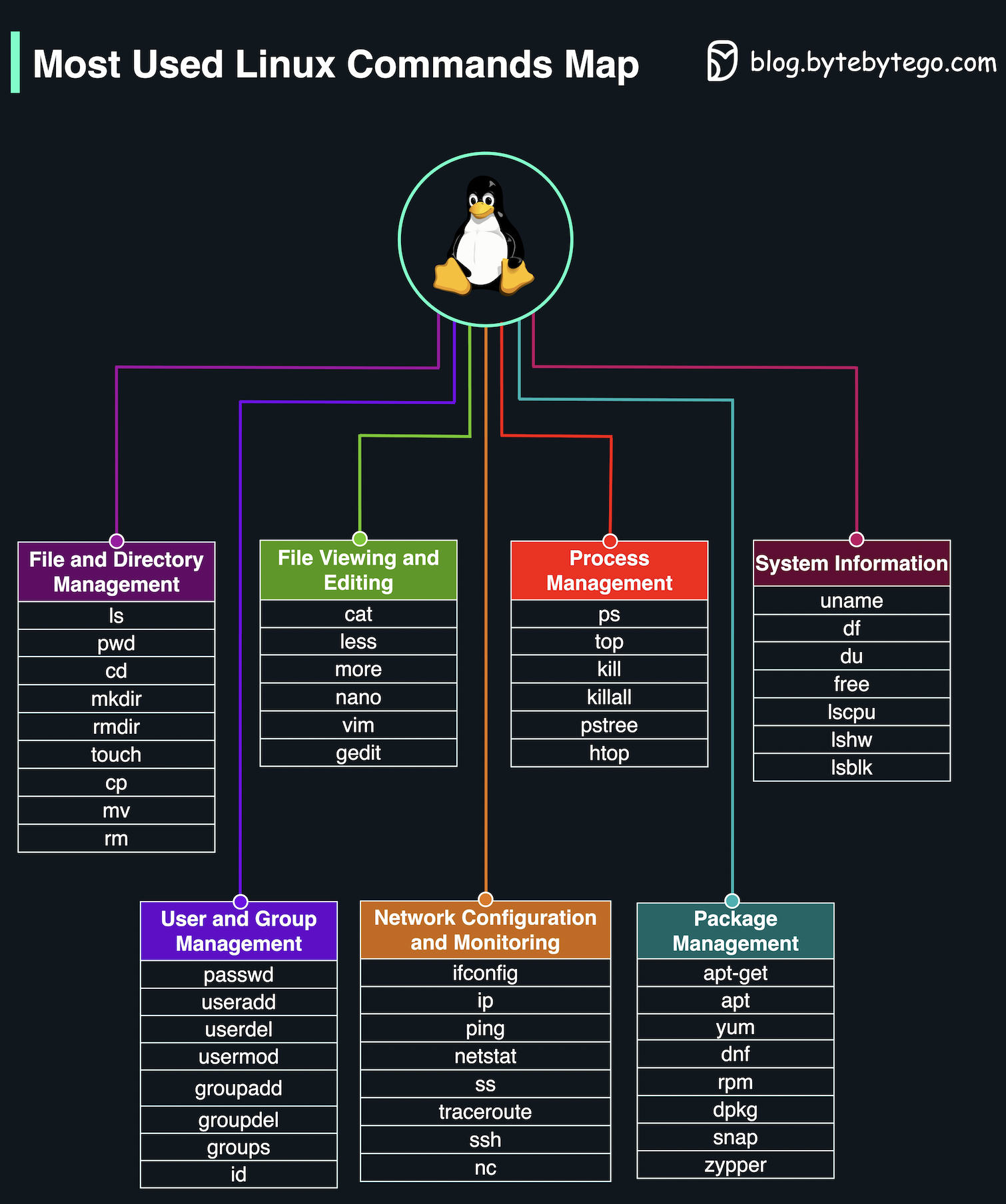

+ * [Most Used Linux Commands Map](https://bytebytego.com/guides/most-used-linux-commands-map)

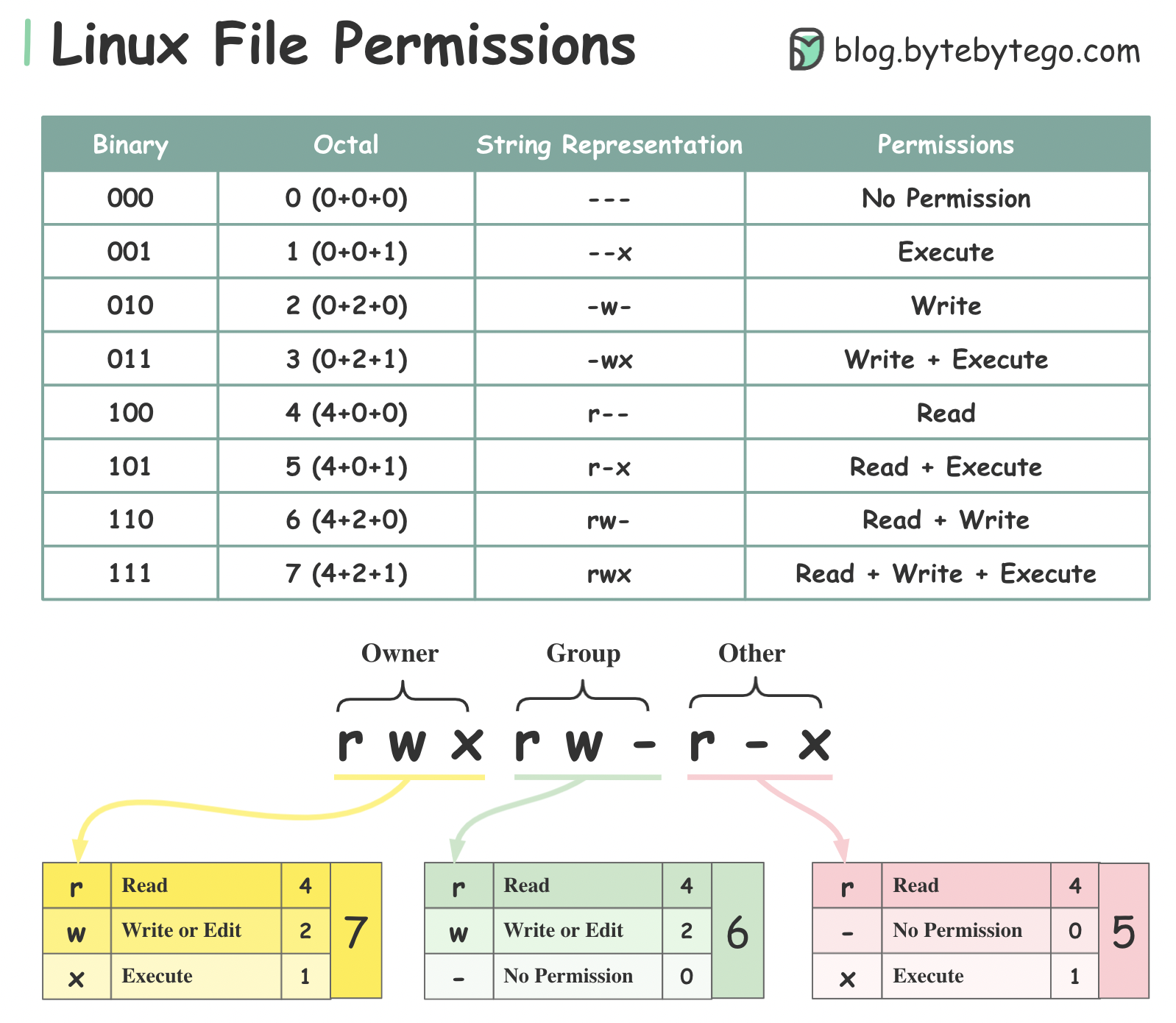

+ * [Linux File Permissions Illustrated](https://bytebytego.com/guides/linux-file-permission-illustrated)

+ * [5 Important Components of Linux](https://bytebytego.com/guides/5-important-components-of-linux)

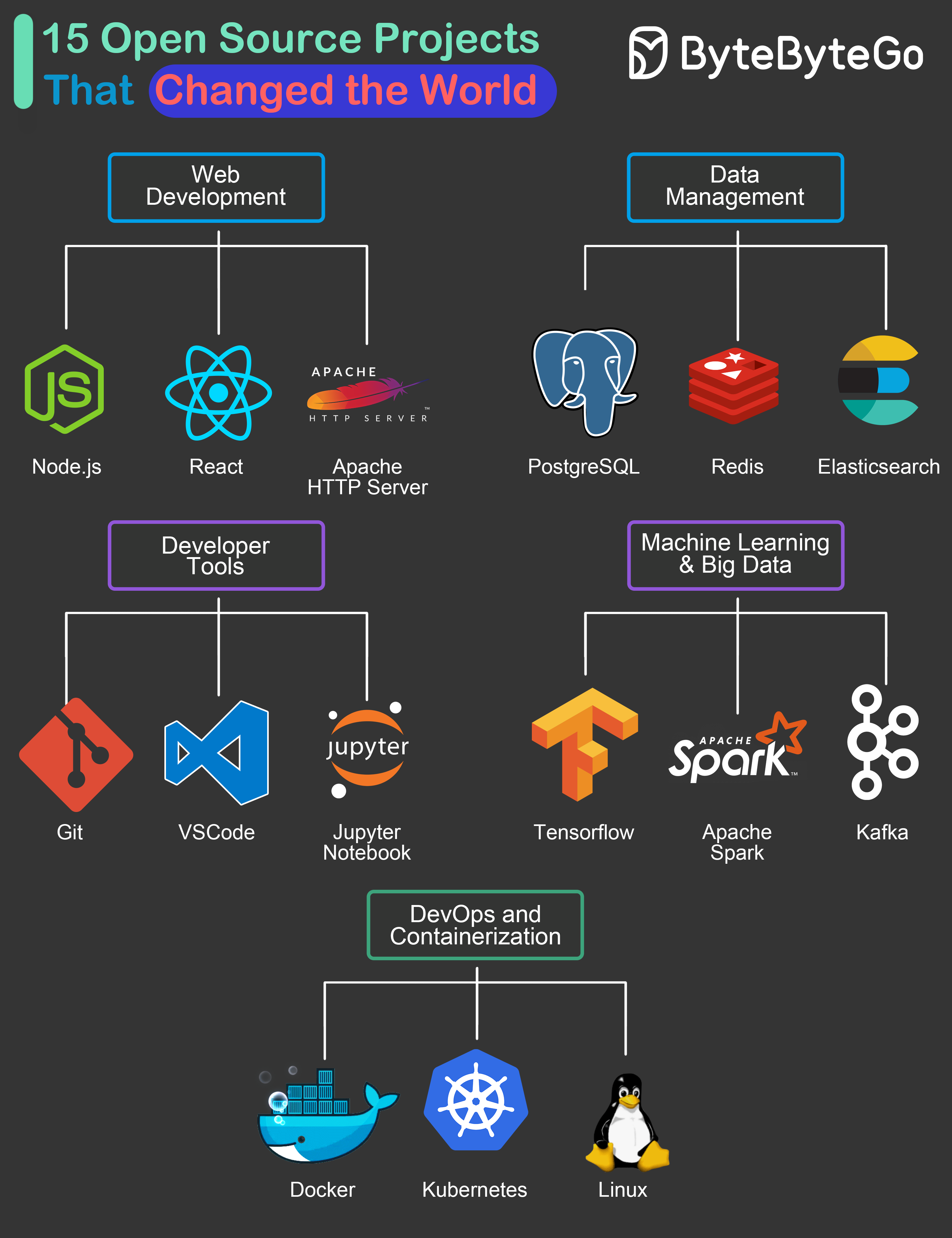

+ * [15 Open-Source Projects That Changed the World](https://bytebytego.com/guides/15-open-source-projects-that-changed-the-world)

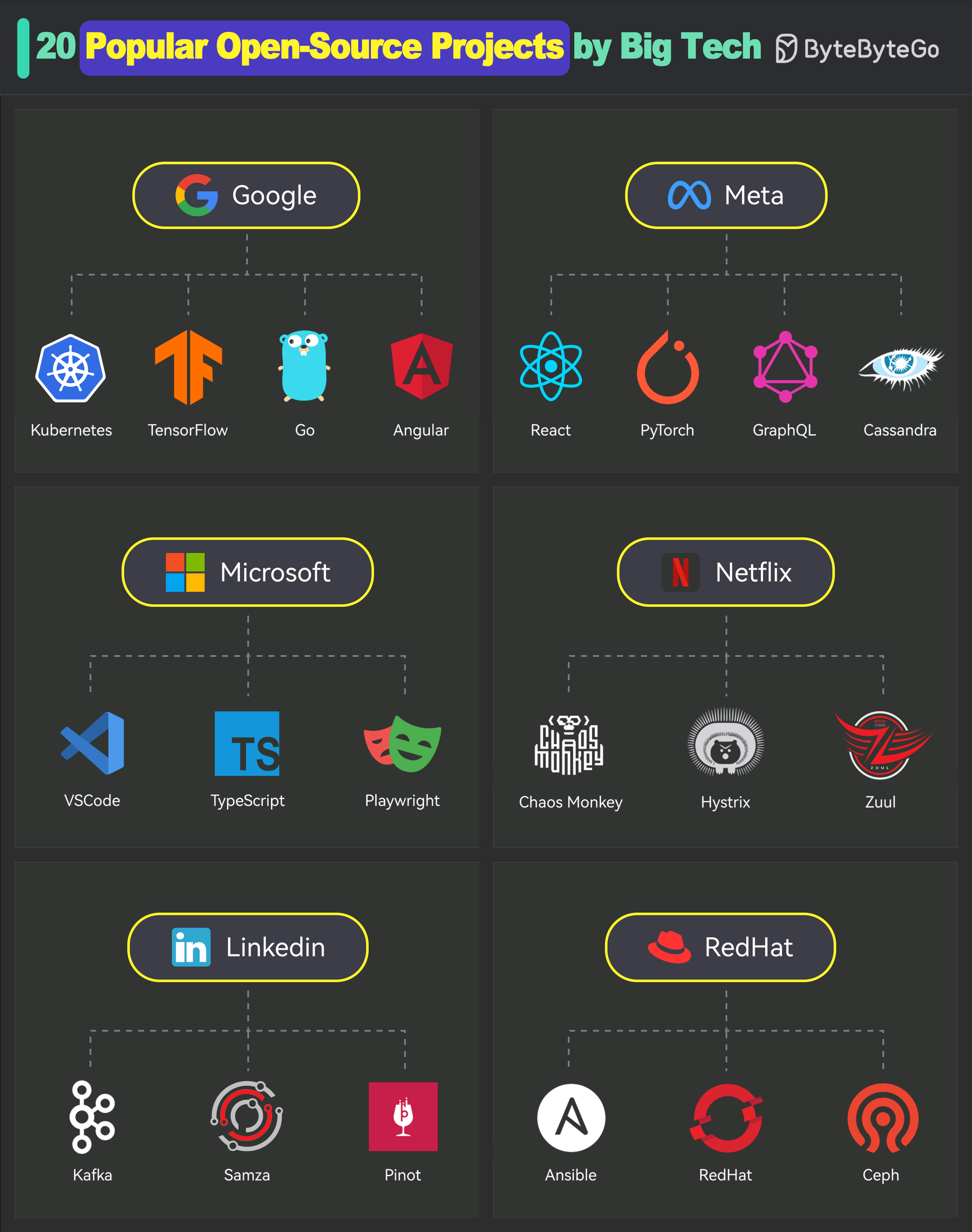

+ * [20 Popular Open Source Projects Started by Big Companies](https://bytebytego.com/guides/20-popular-open-source-projects-started-or-supported-by-big-companies)

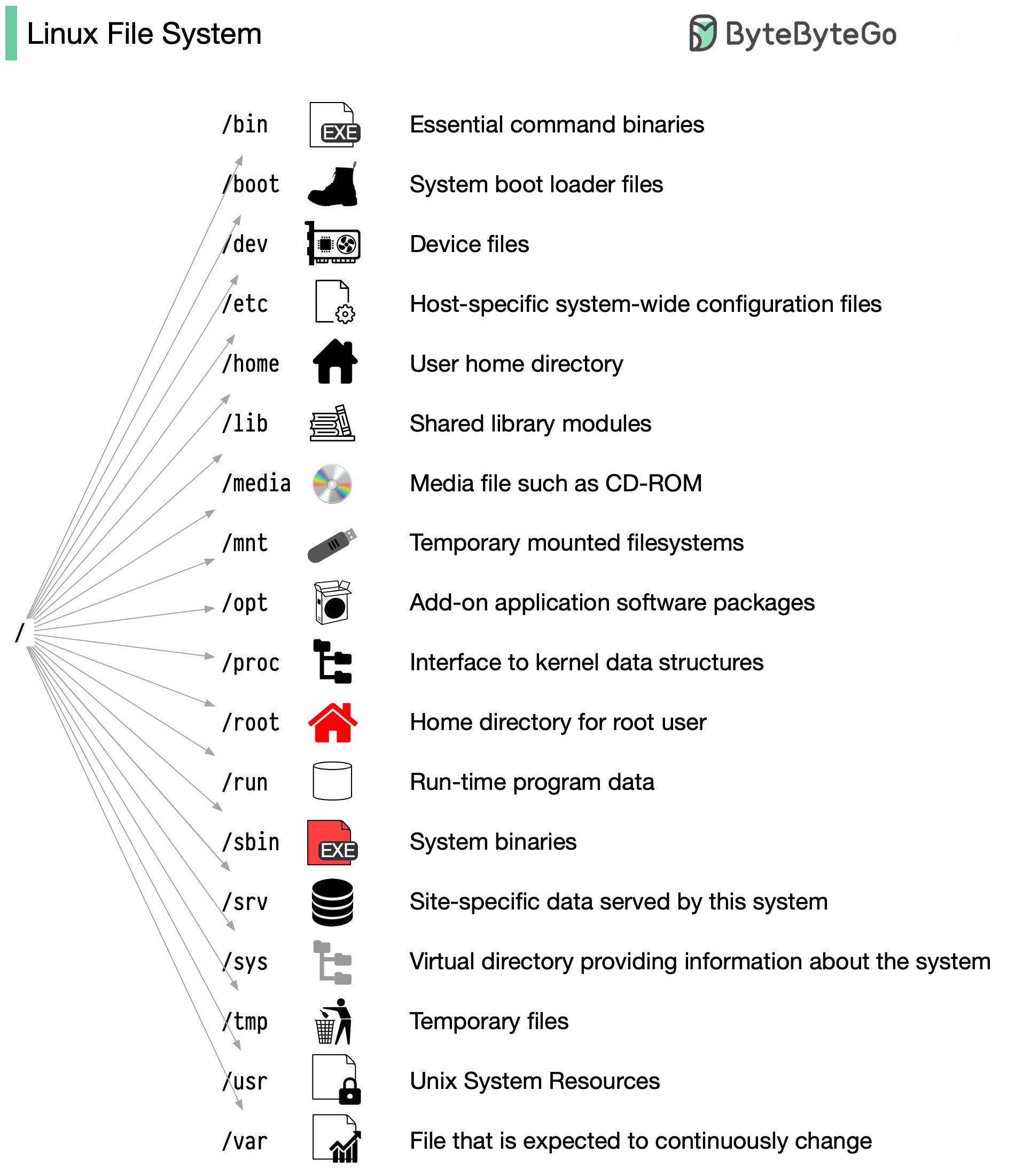

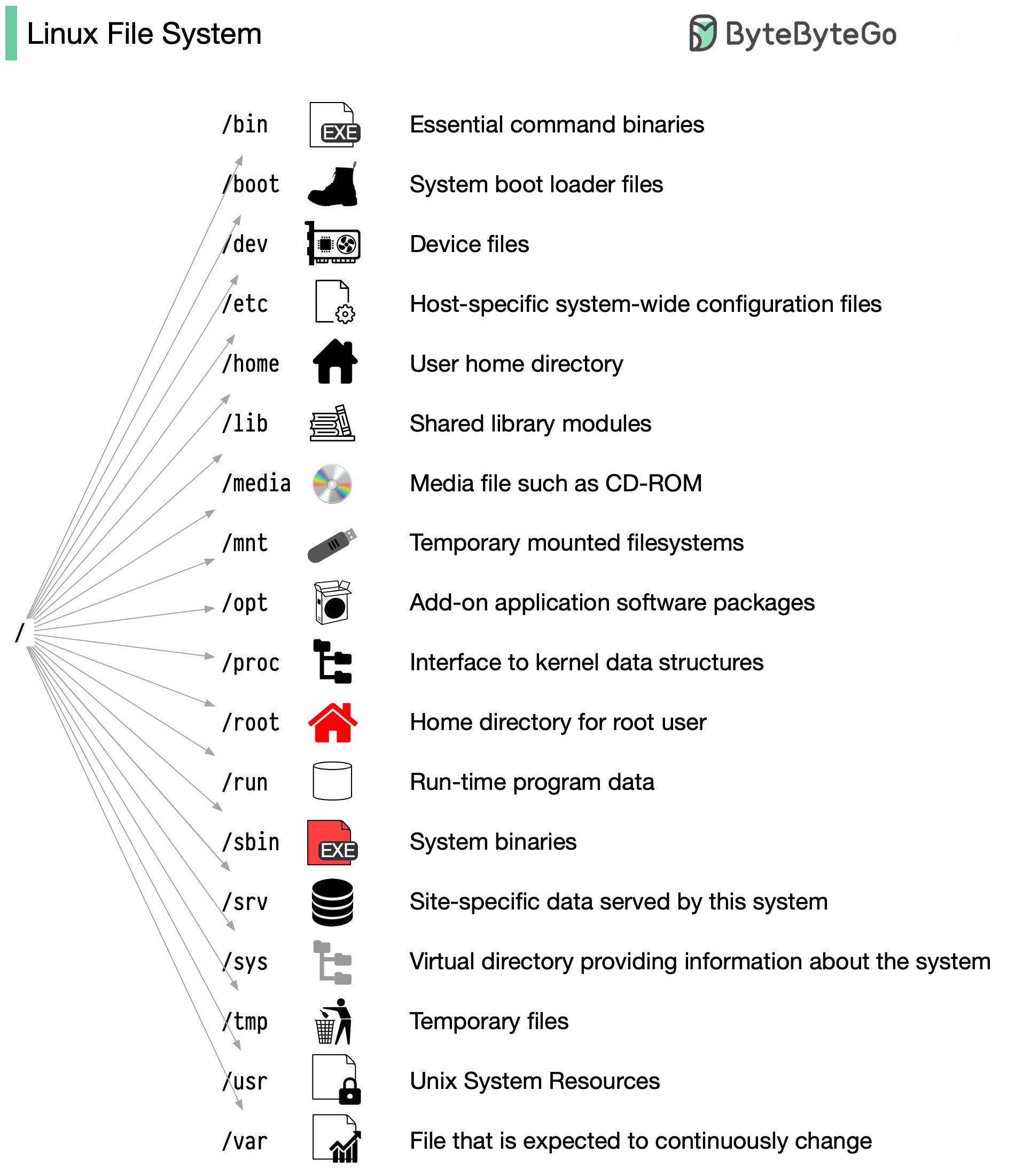

+ * [Linux File System Explained](https://bytebytego.com/guides/linux-file-system-explained)

+ * [Life is Short, Use Dev Tools](https://bytebytego.com/guides/life-is-short-use-dev-tools)

+ * [How Git Works](https://bytebytego.com/guides/how-does-git-work)

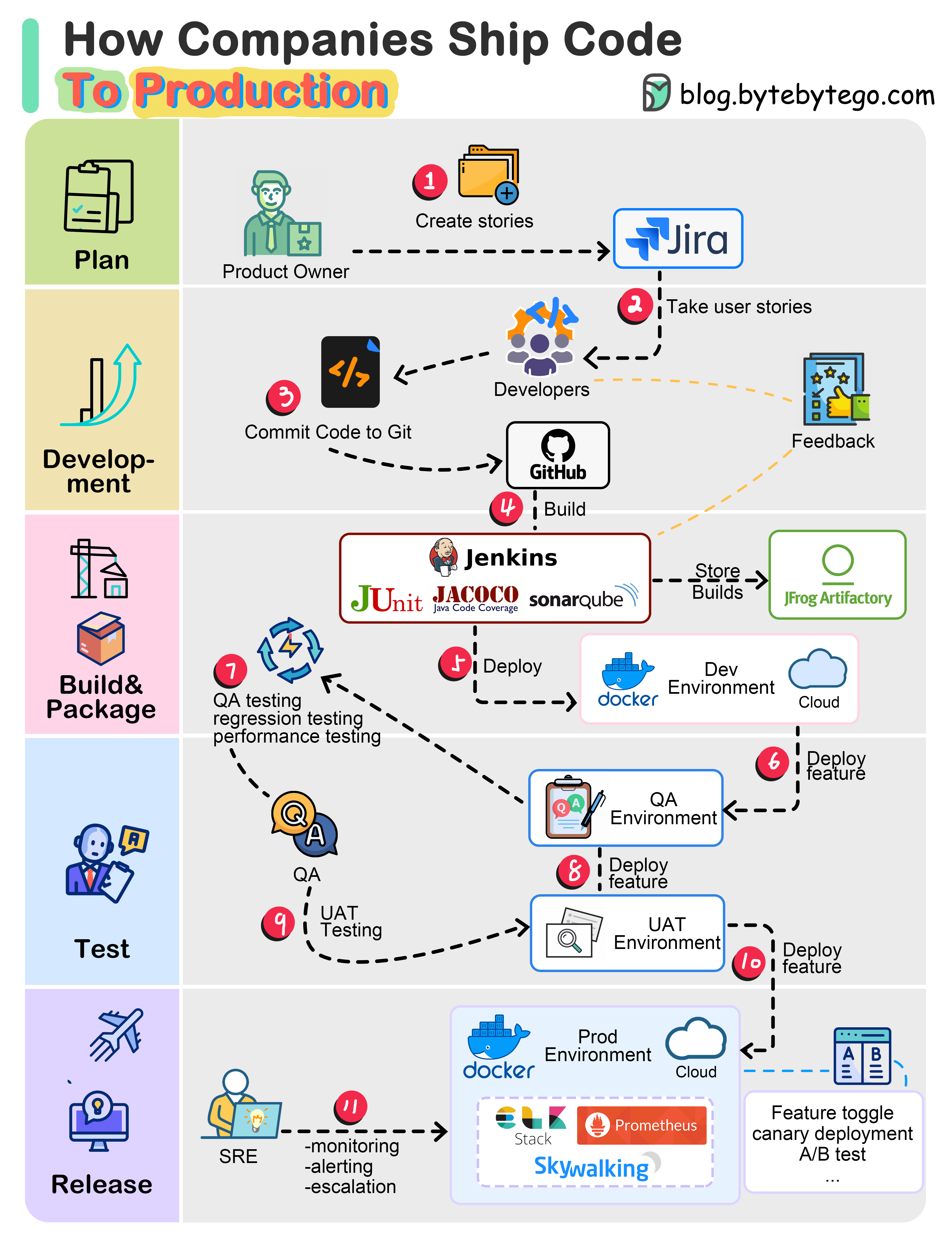

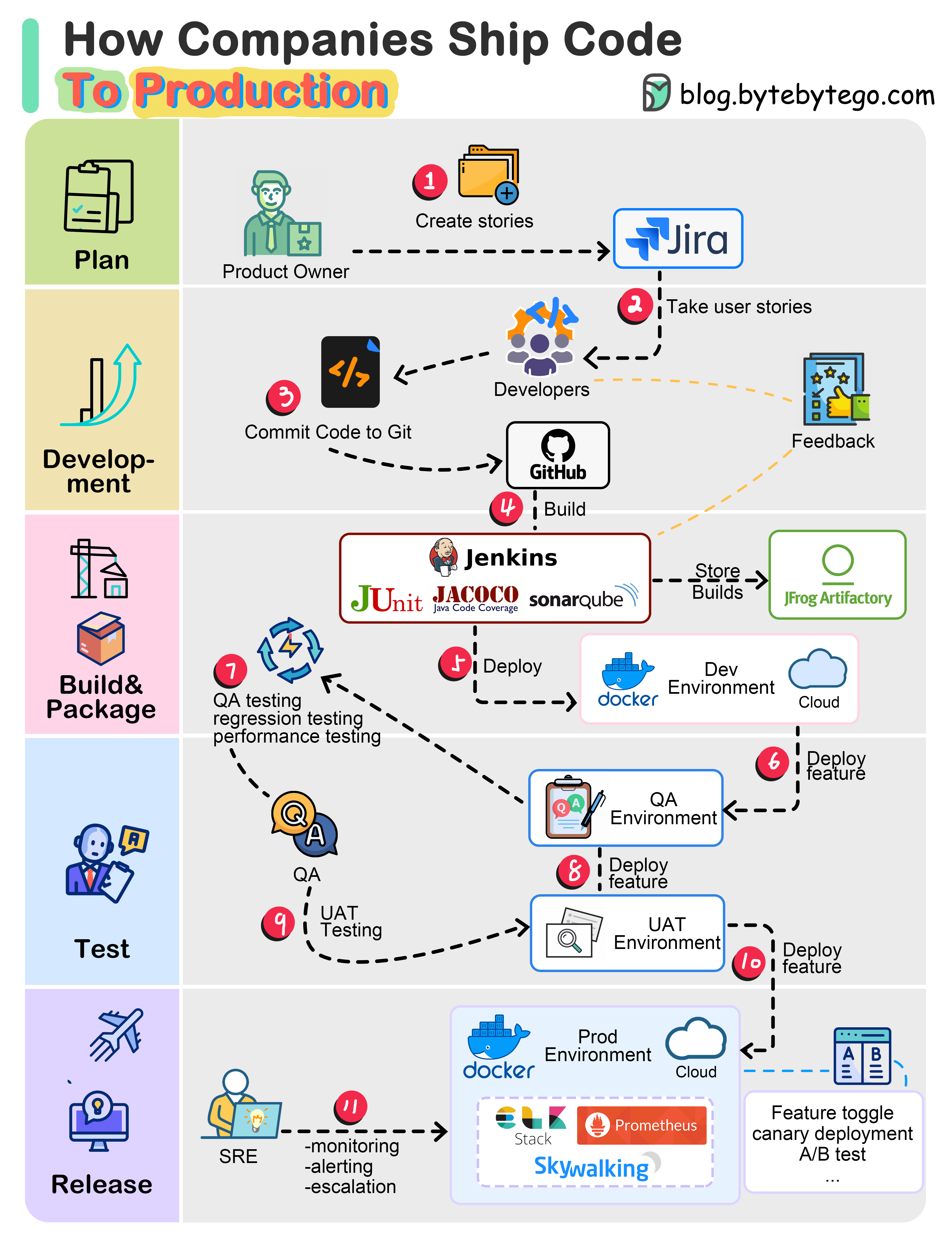

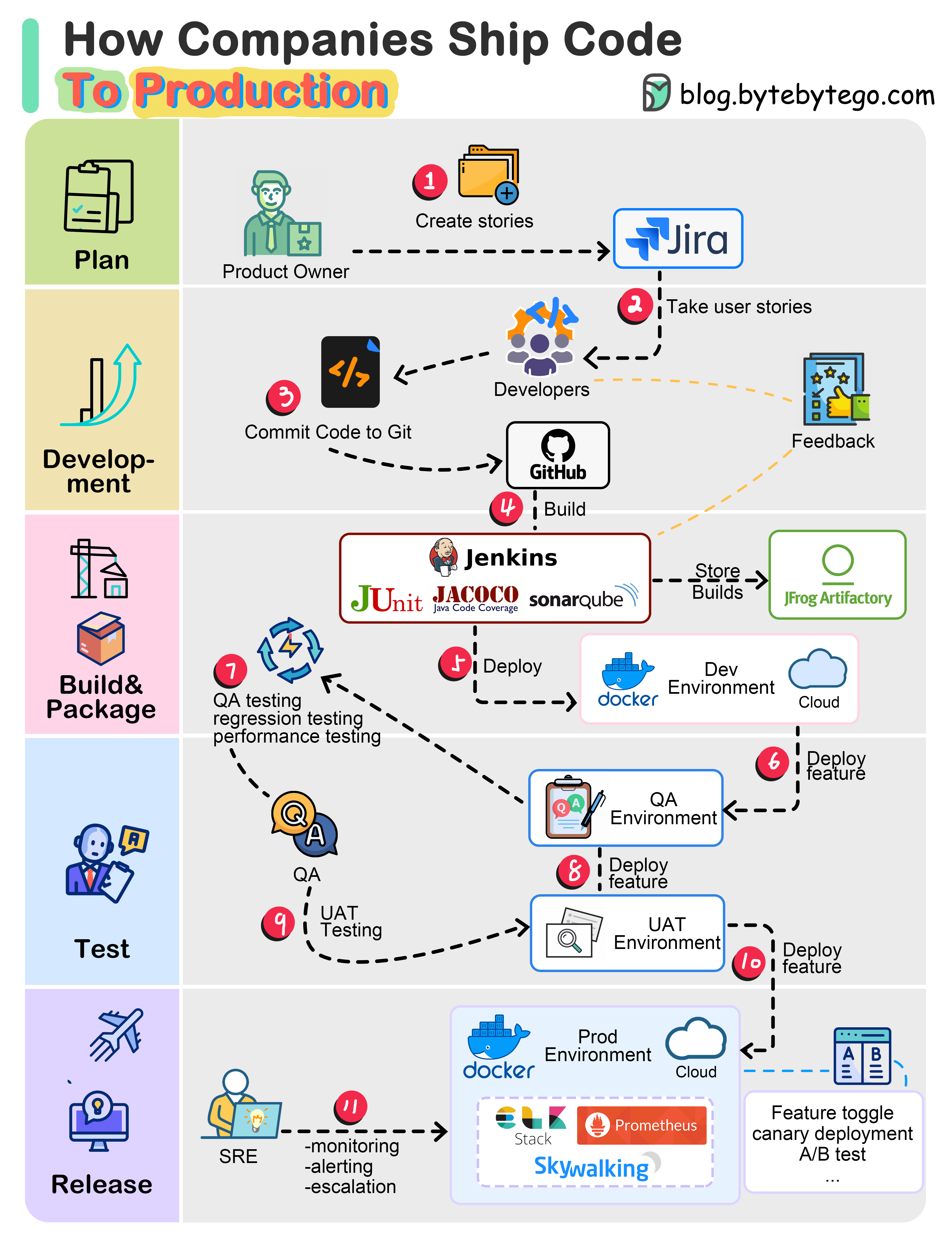

+ * [How do Companies Ship Code to Production?](https://bytebytego.com/guides/how-do-companies-ship-code-to-production)

+* [Software Development](https://bytebytego.com/guides/software-development)

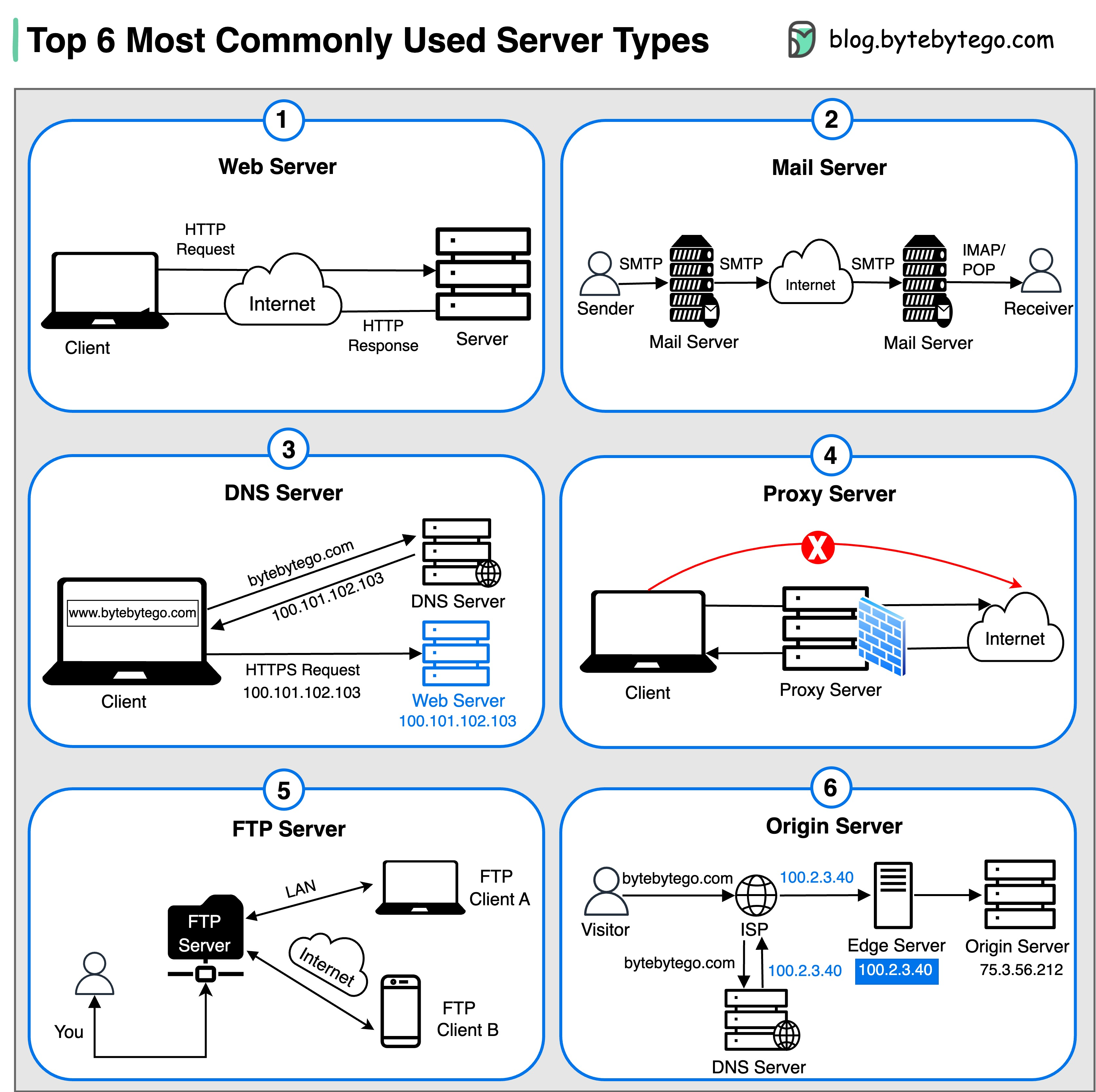

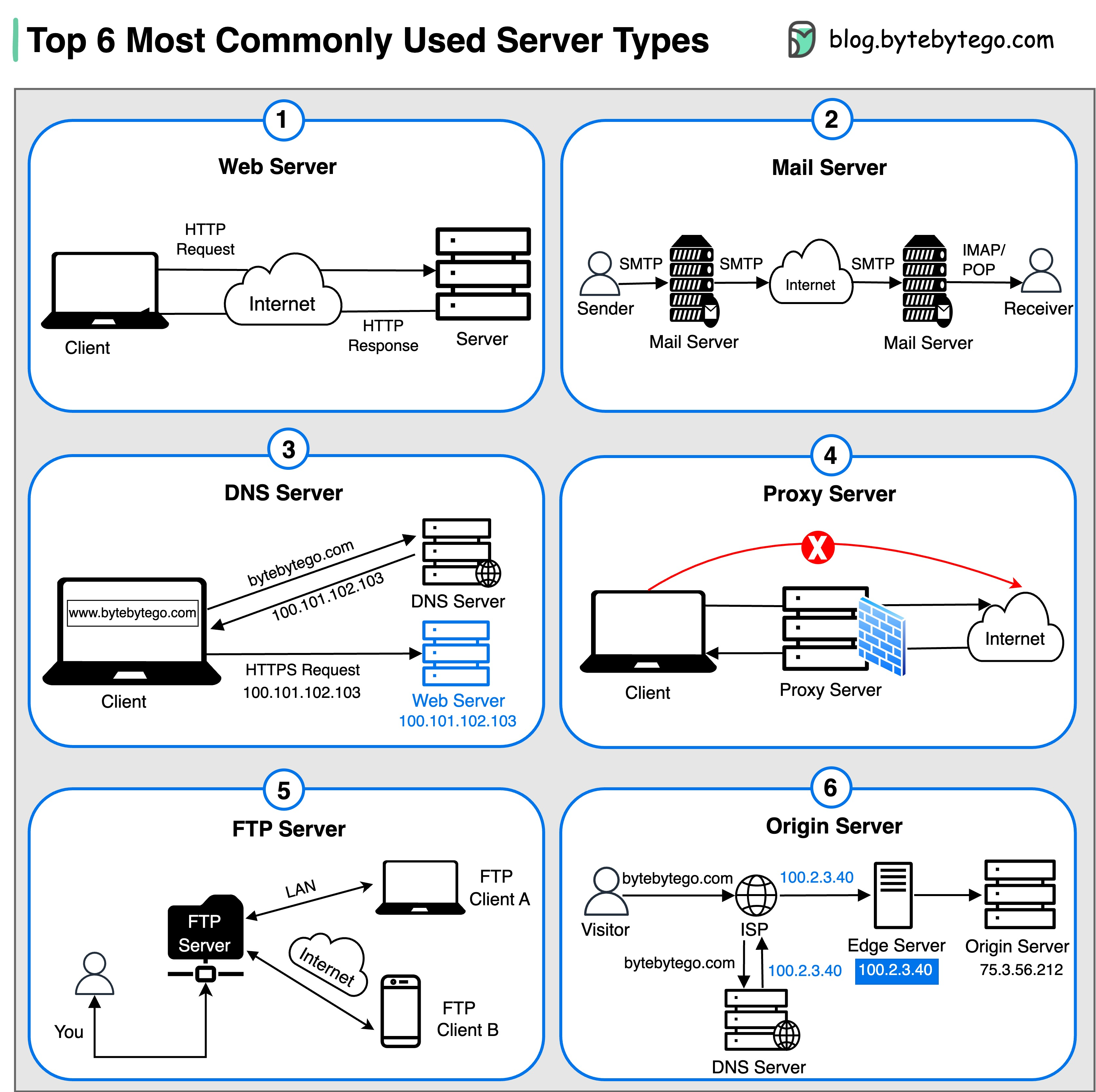

+ * [Top 6 Most Commonly Used Server Types](https://bytebytego.com/guides/top-6-most-commonly-used-server-types)

+ * [How does Garbage Collection work?](https://bytebytego.com/guides/how-does-garbage-collection-work)

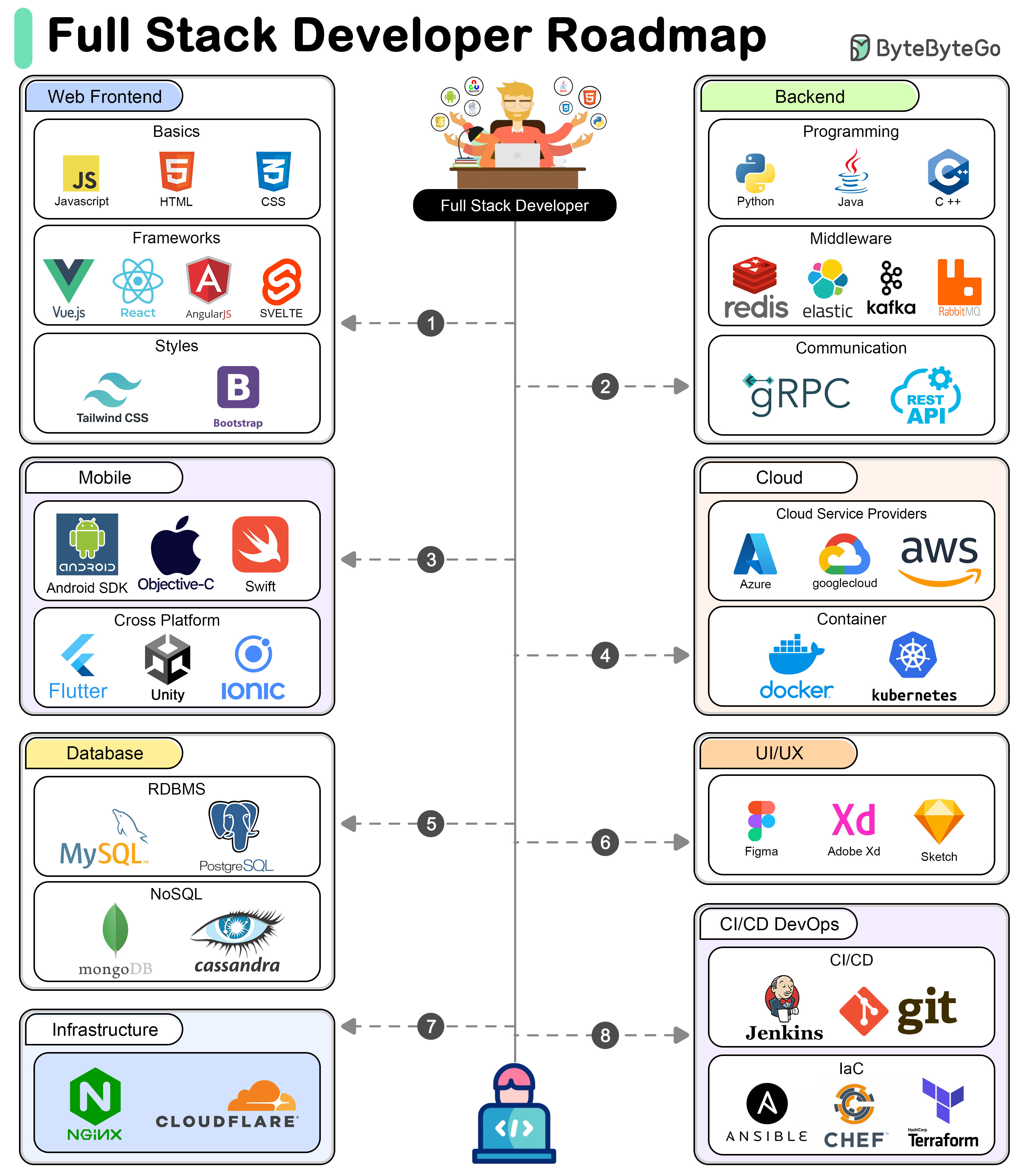

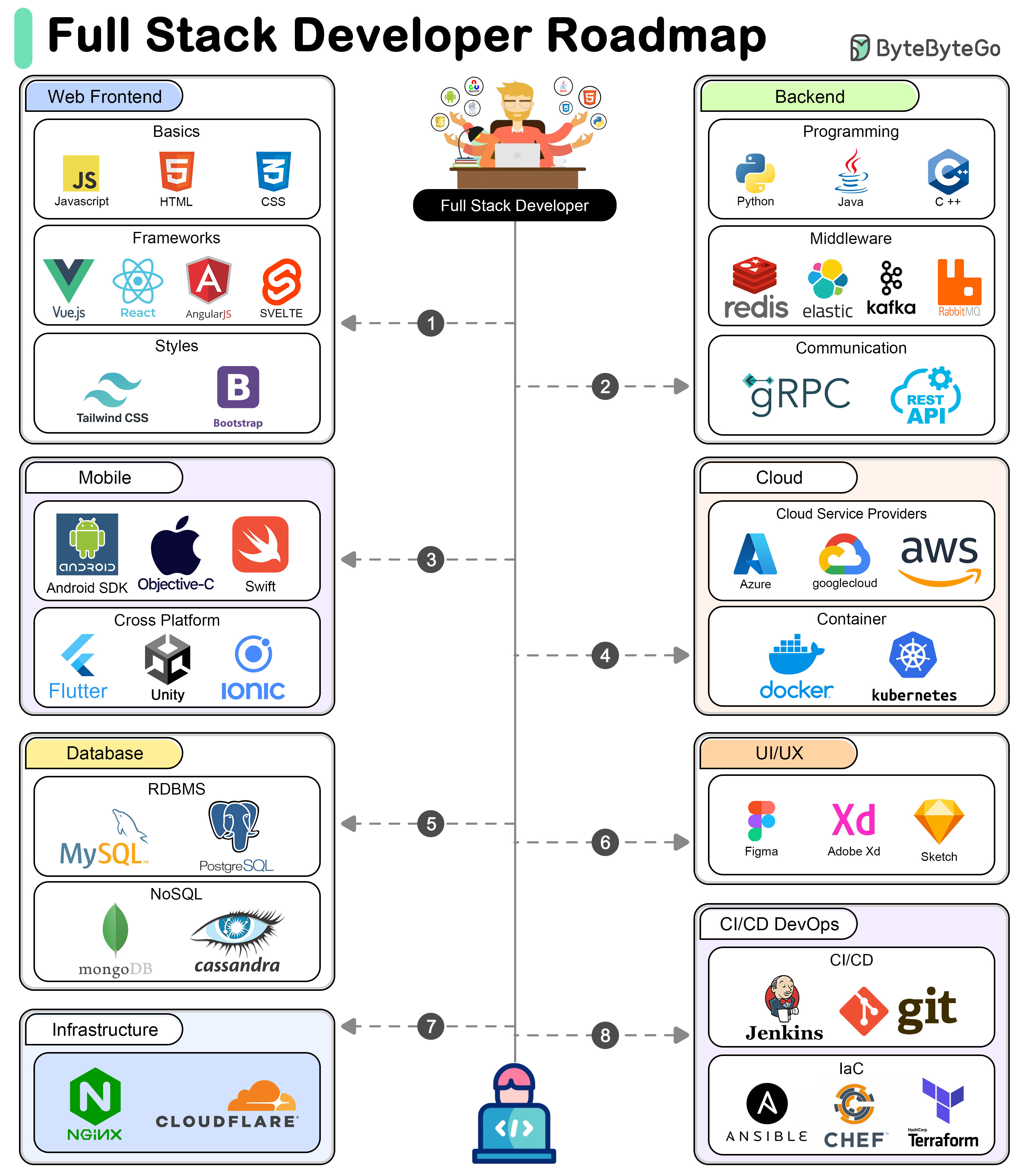

+ * [A Roadmap for Full-Stack Development](https://bytebytego.com/guides/a-roadmap-for-full-stack-development)

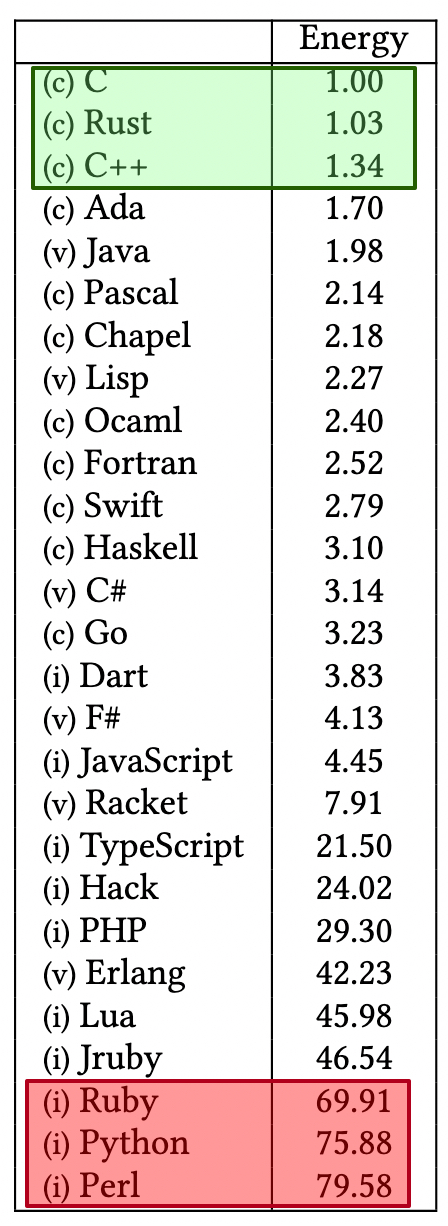

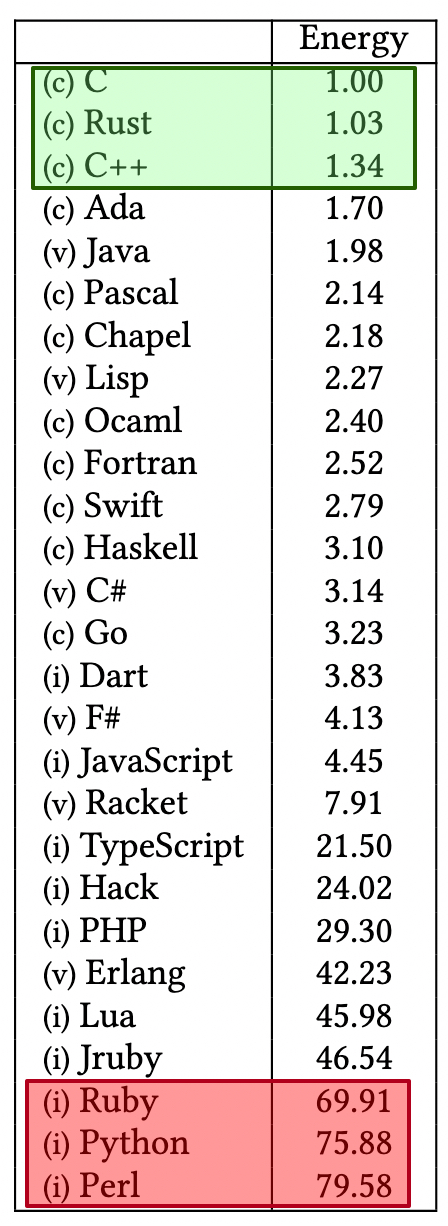

+ * [What Are the Greenest Programming Languages?](https://bytebytego.com/guides/what-are-the-greenest-programming-languages)

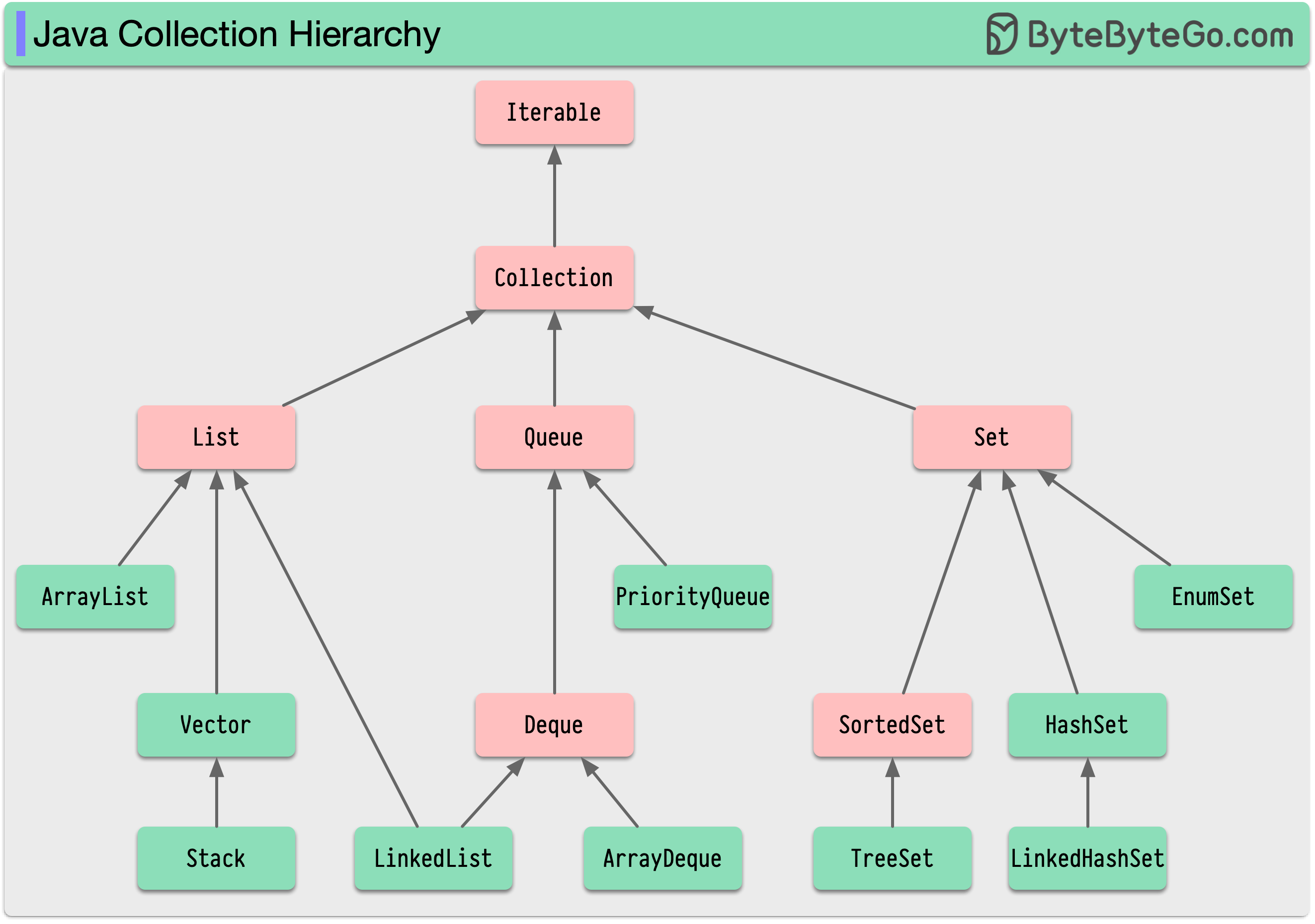

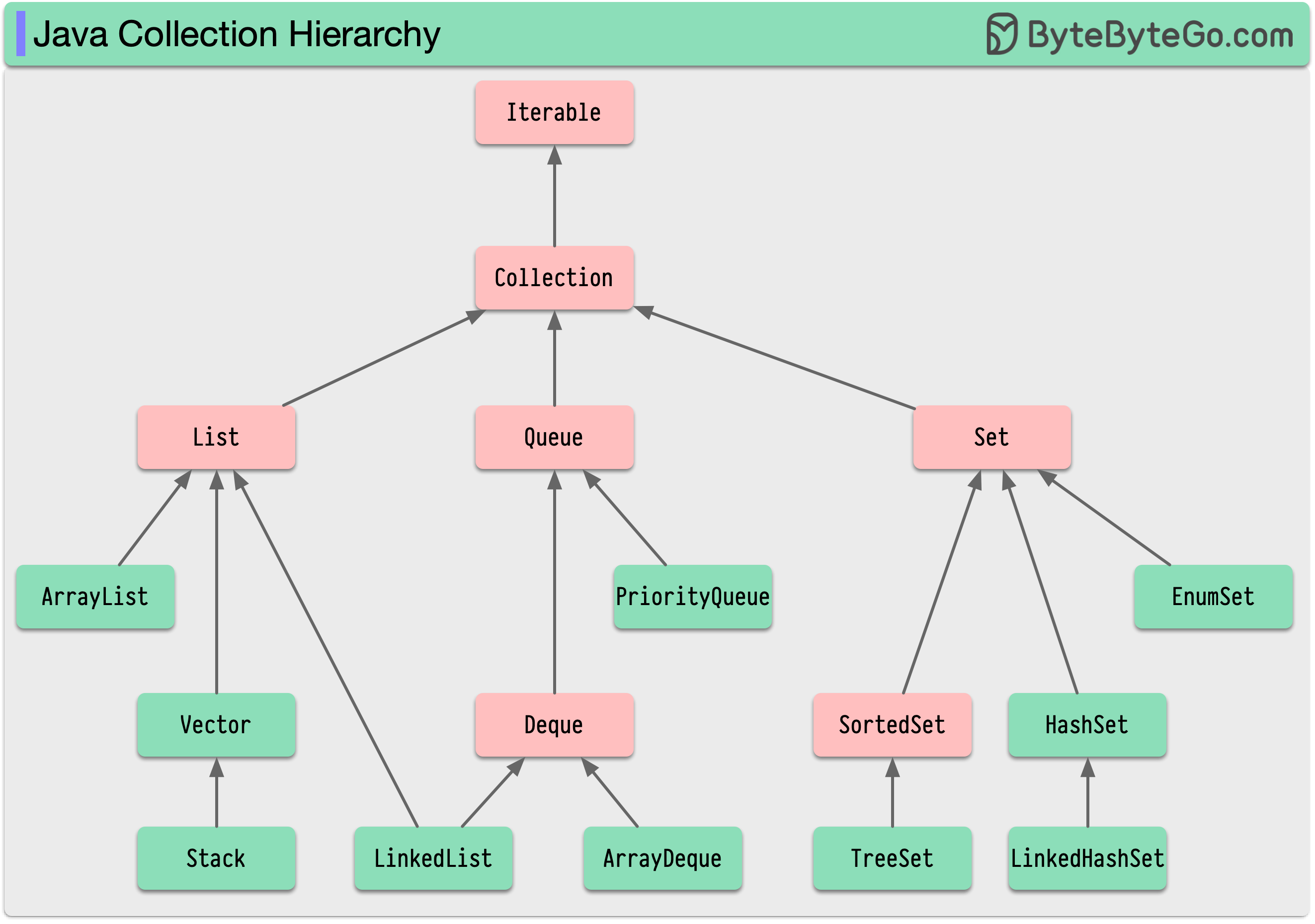

+ * [Java Collection Hierarchy](https://bytebytego.com/guides/java-collection-hierarchy)

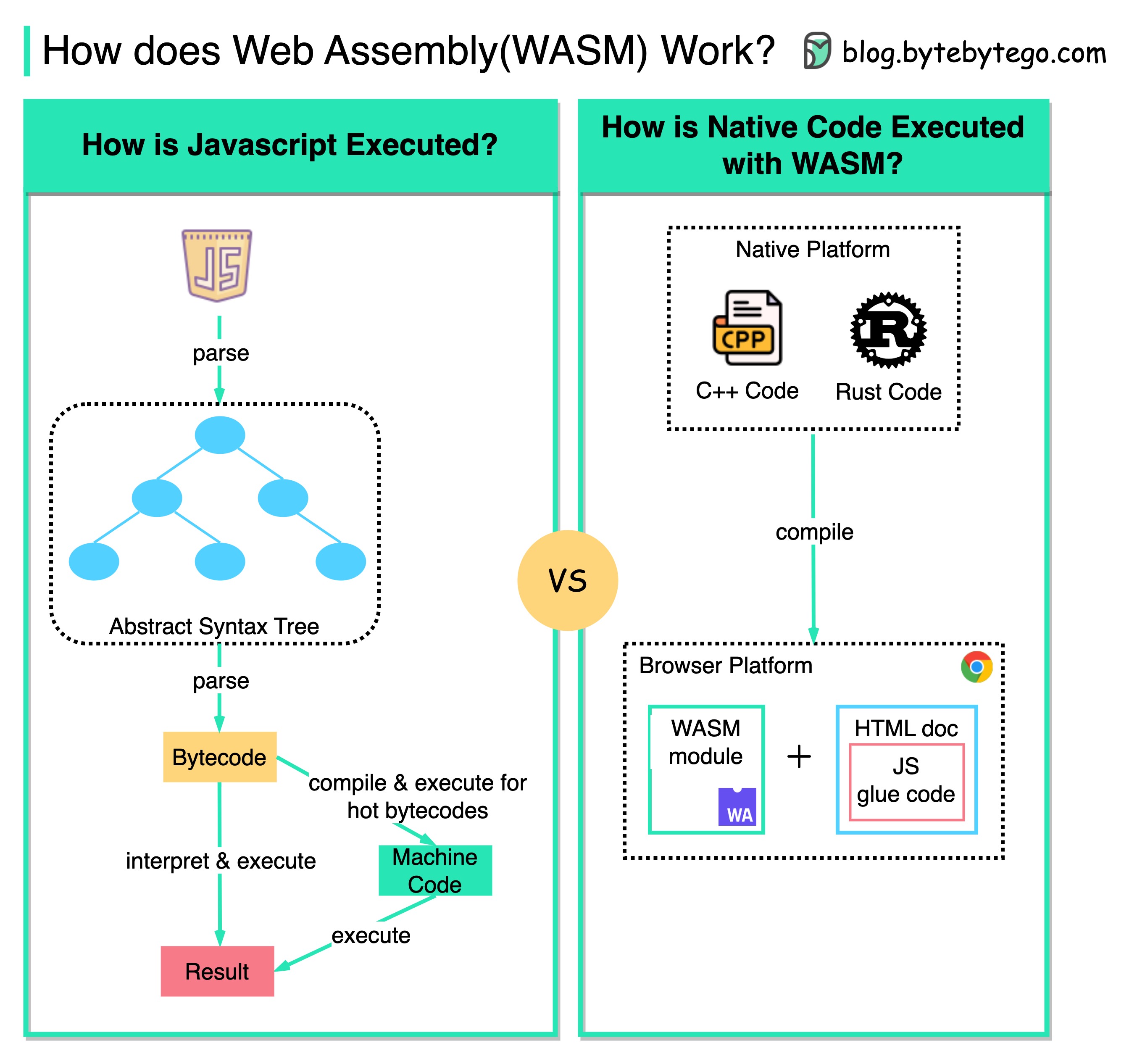

+ * [Running C, C++, or Rust in a Web Browser](https://bytebytego.com/guides/is-it-possible-to-run-c-c++-or-rust-on-a-web-browser)

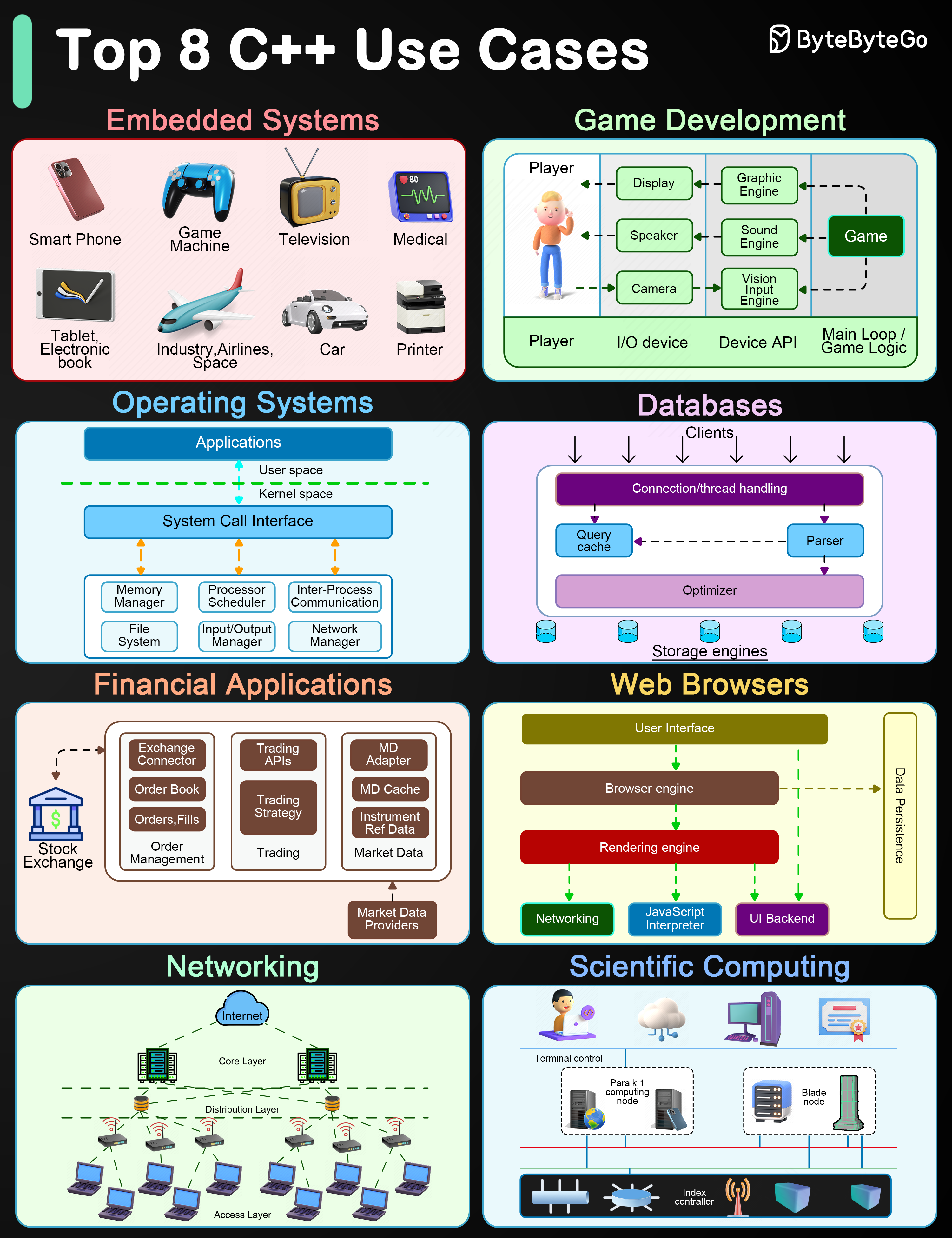

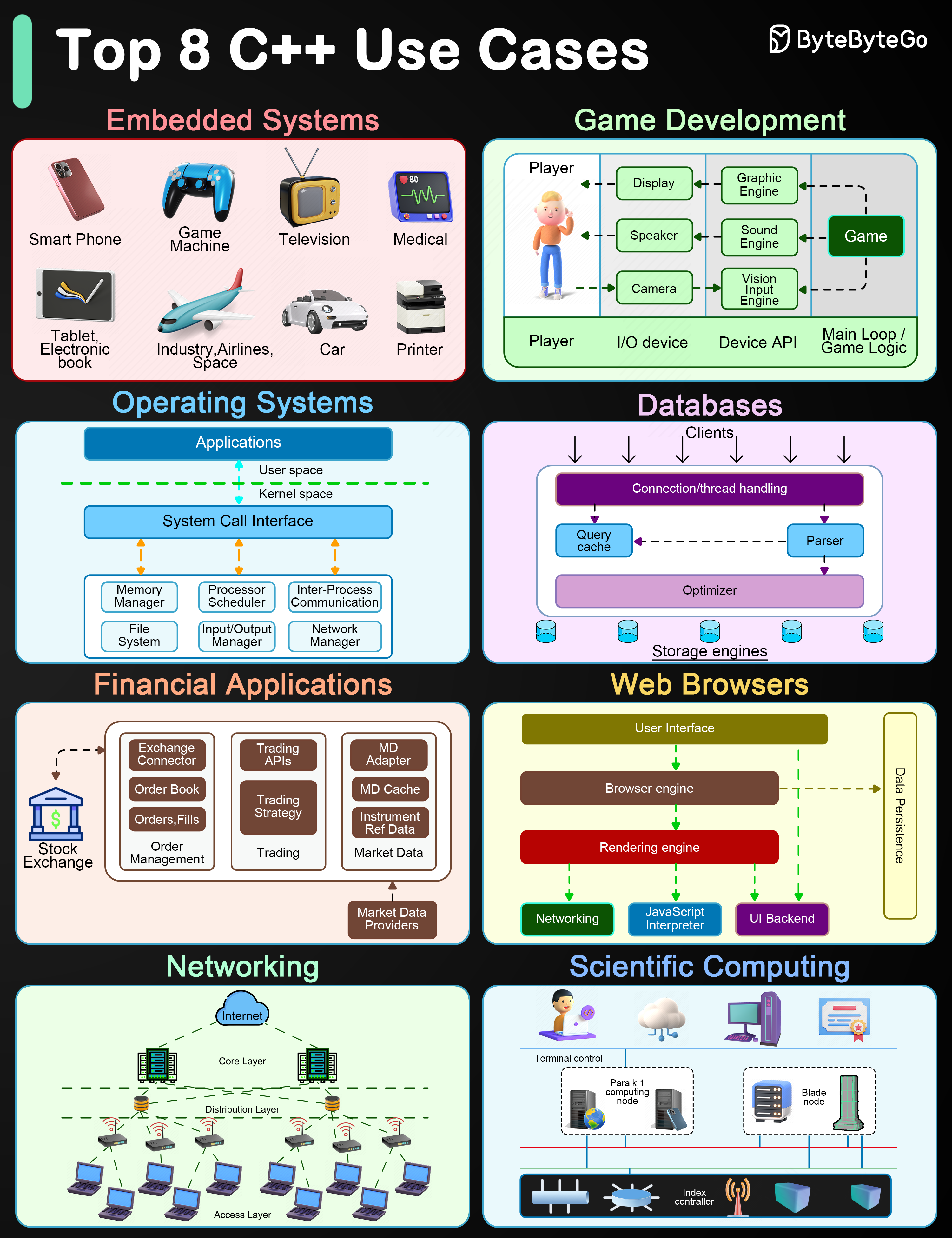

+ * [Top 8 C++ Use Cases](https://bytebytego.com/guides/top-8-c++-use-cases)

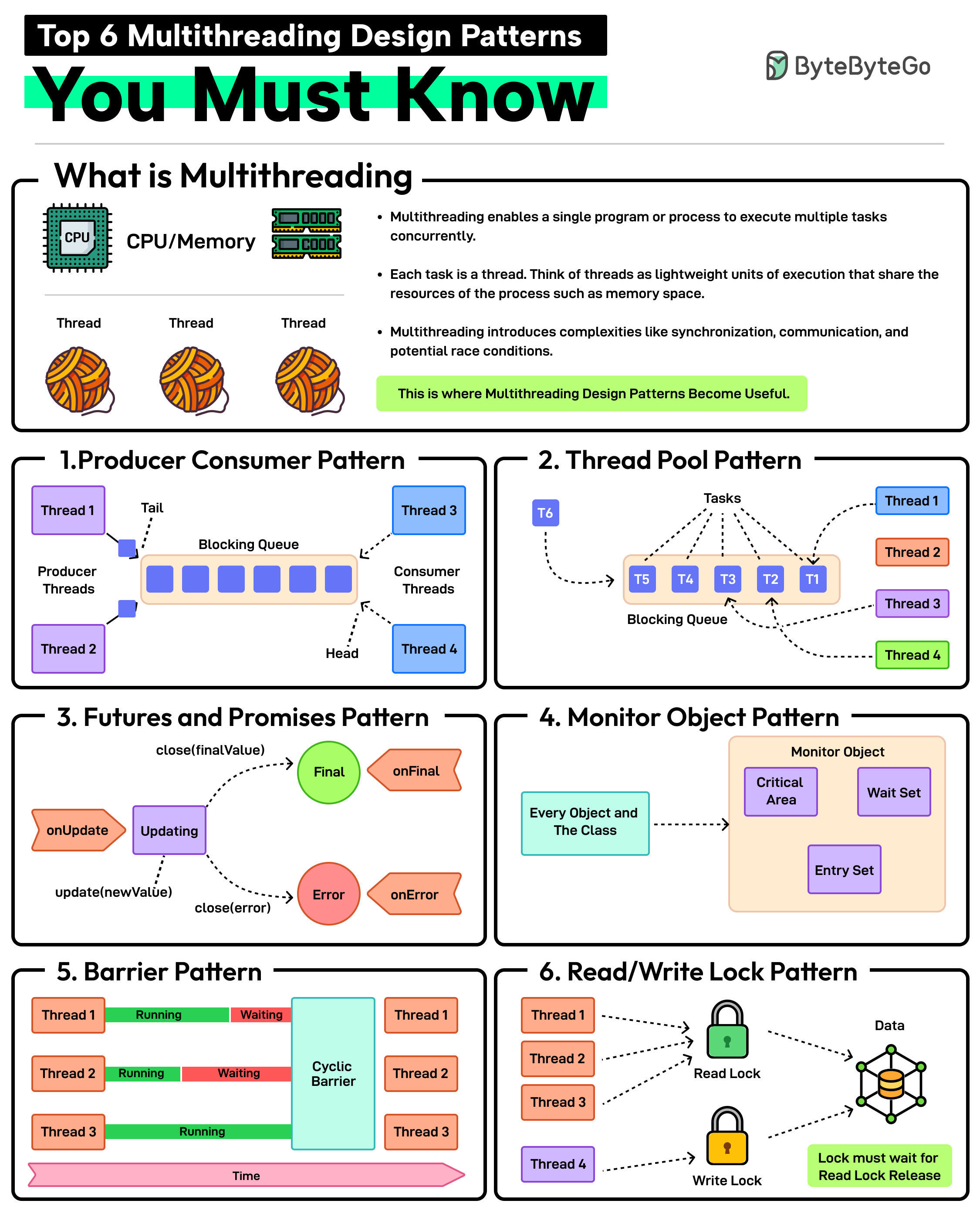

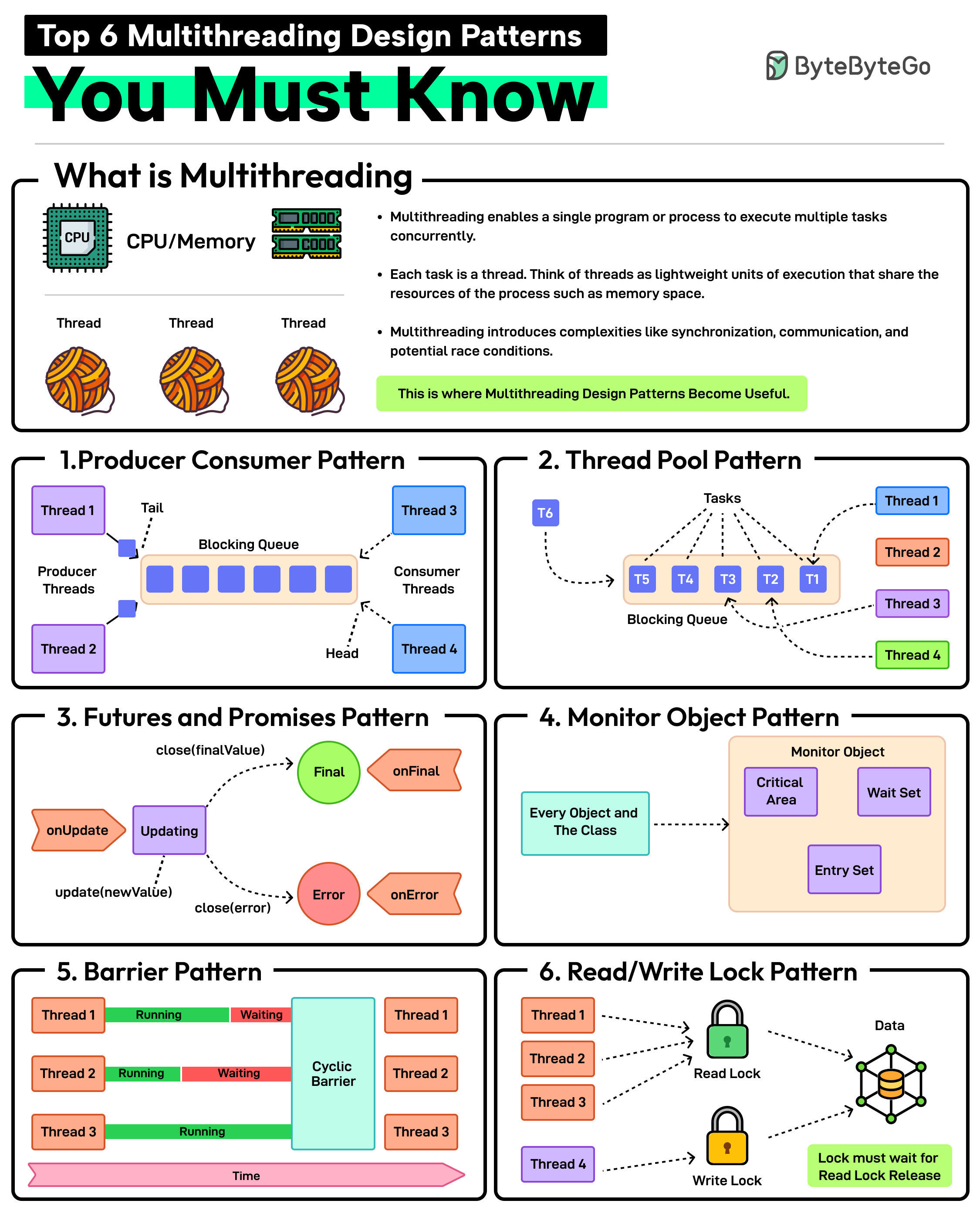

+ * [Top 6 Multithreading Design Patterns You Must Know](https://bytebytego.com/guides/top-6-multithreading-design-patterns-you-must-know)

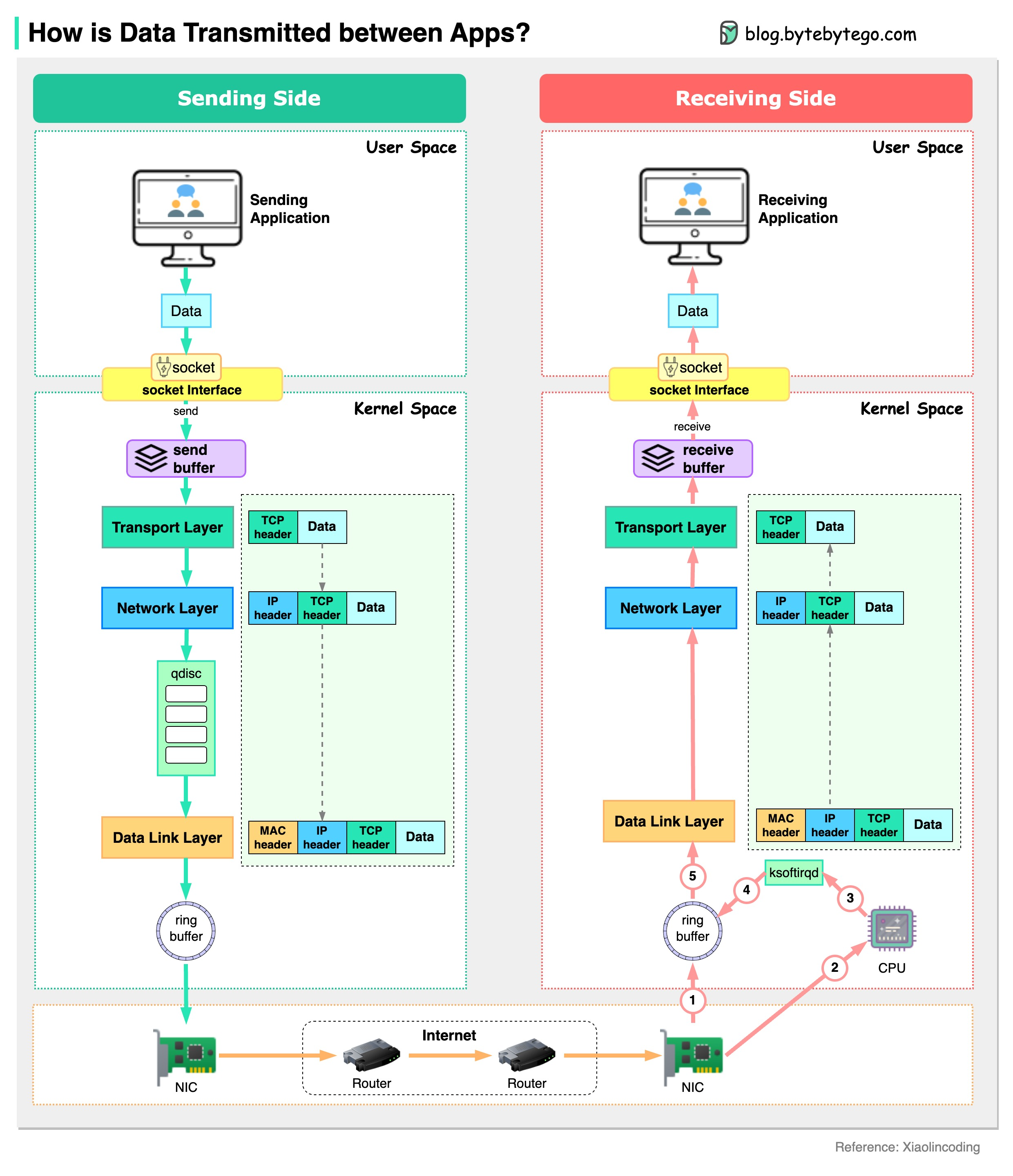

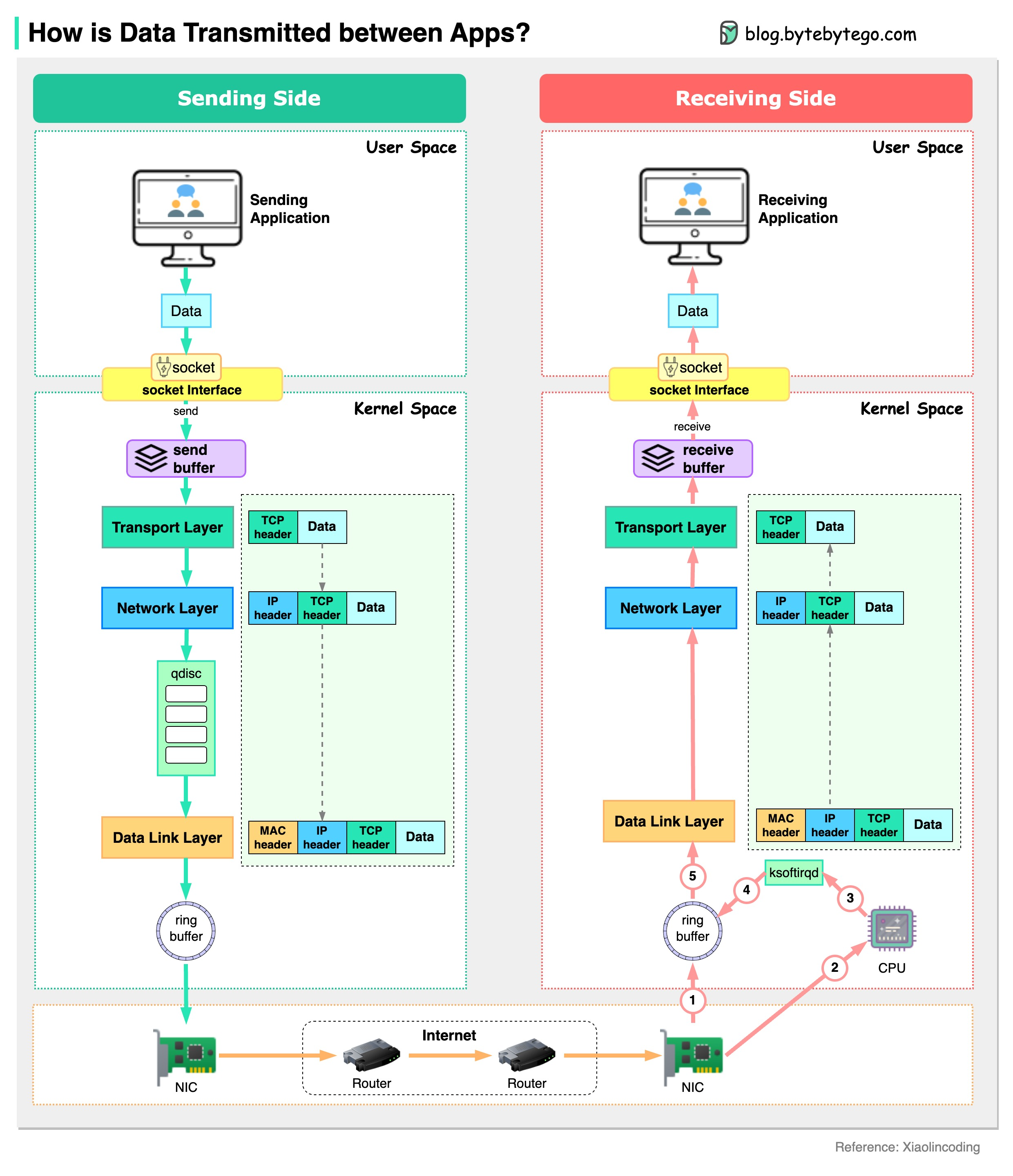

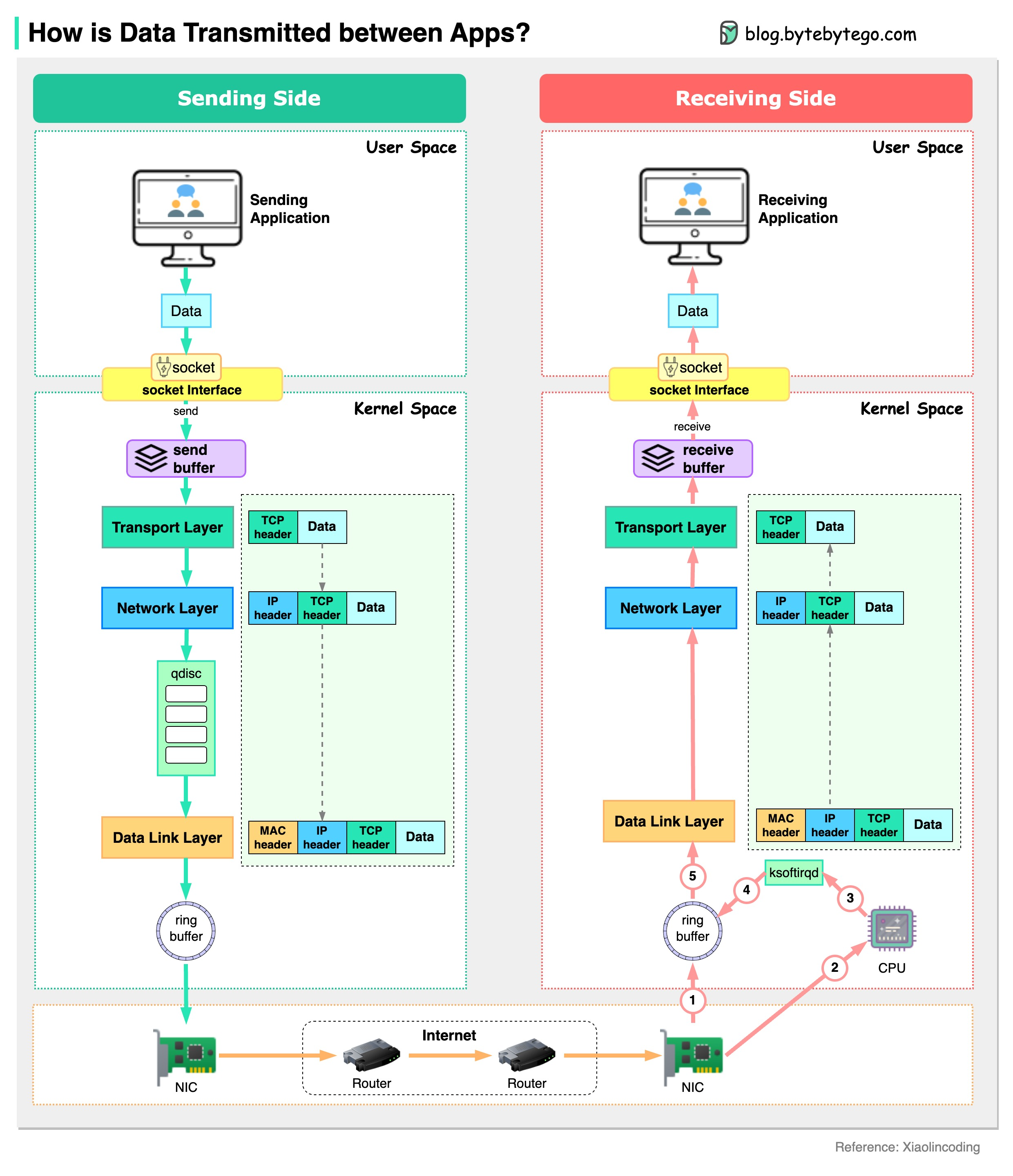

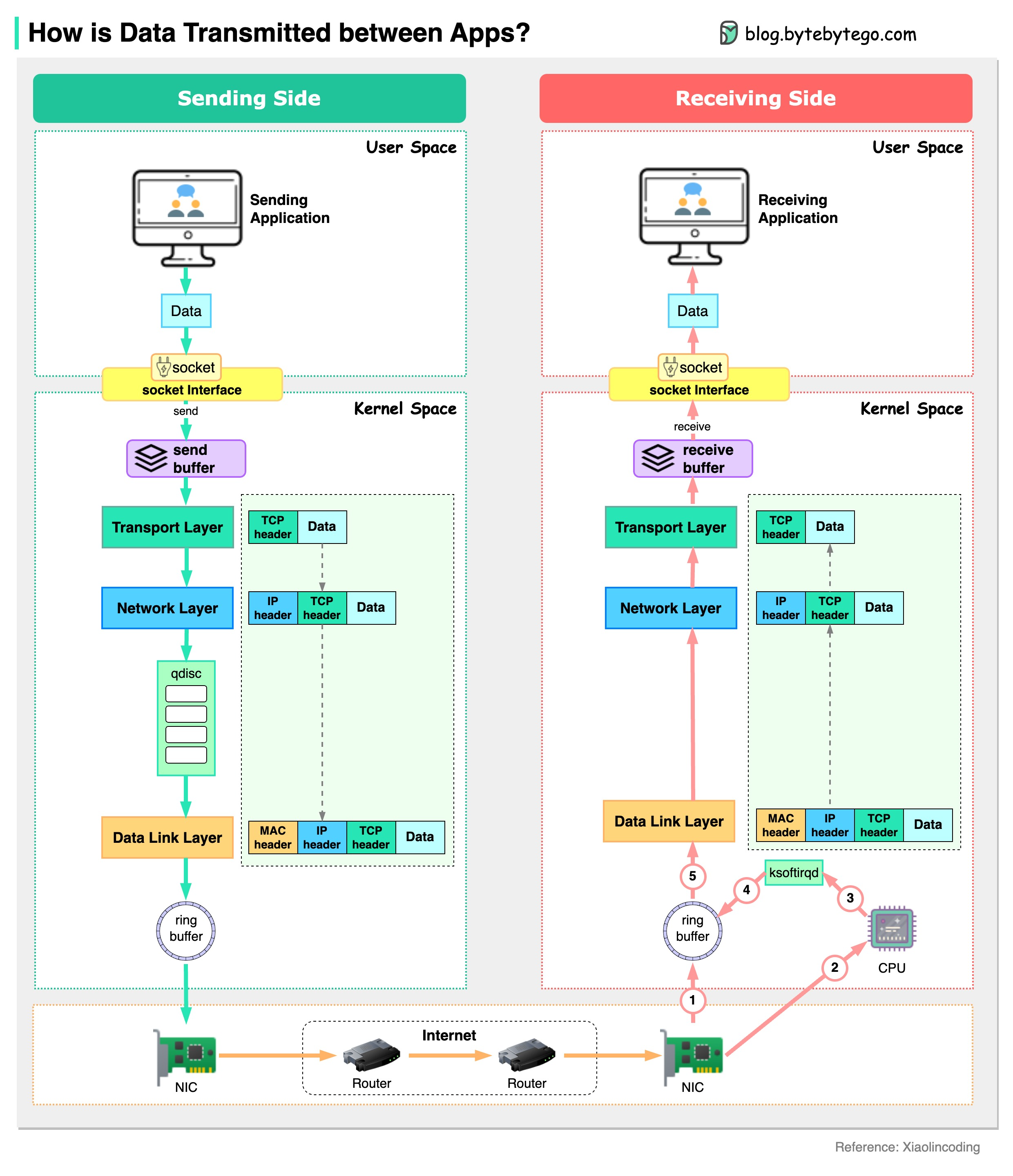

+ * [Data Transmission Between Applications](https://bytebytego.com/guides/how-is-data-transmitted-between-applications)

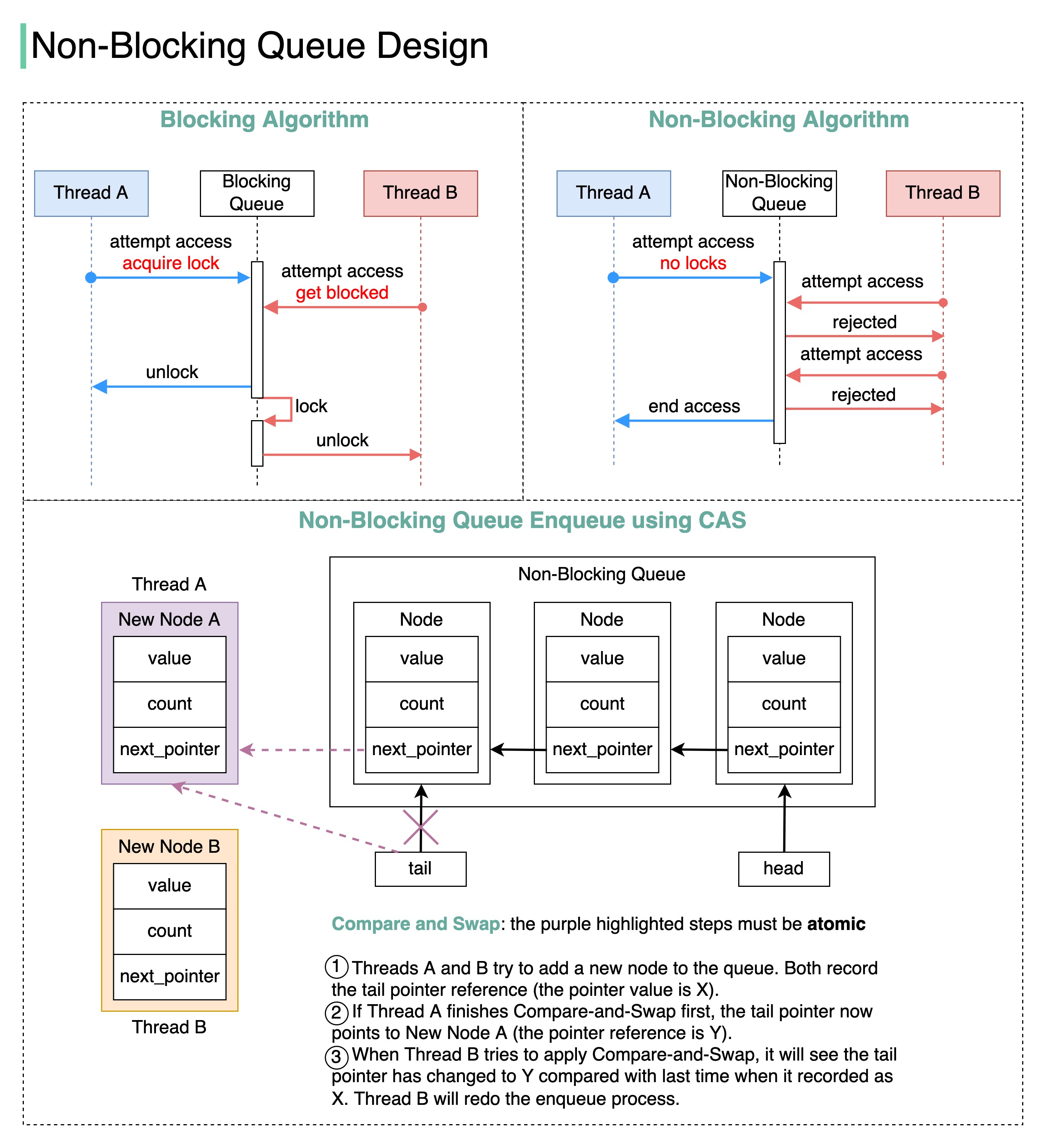

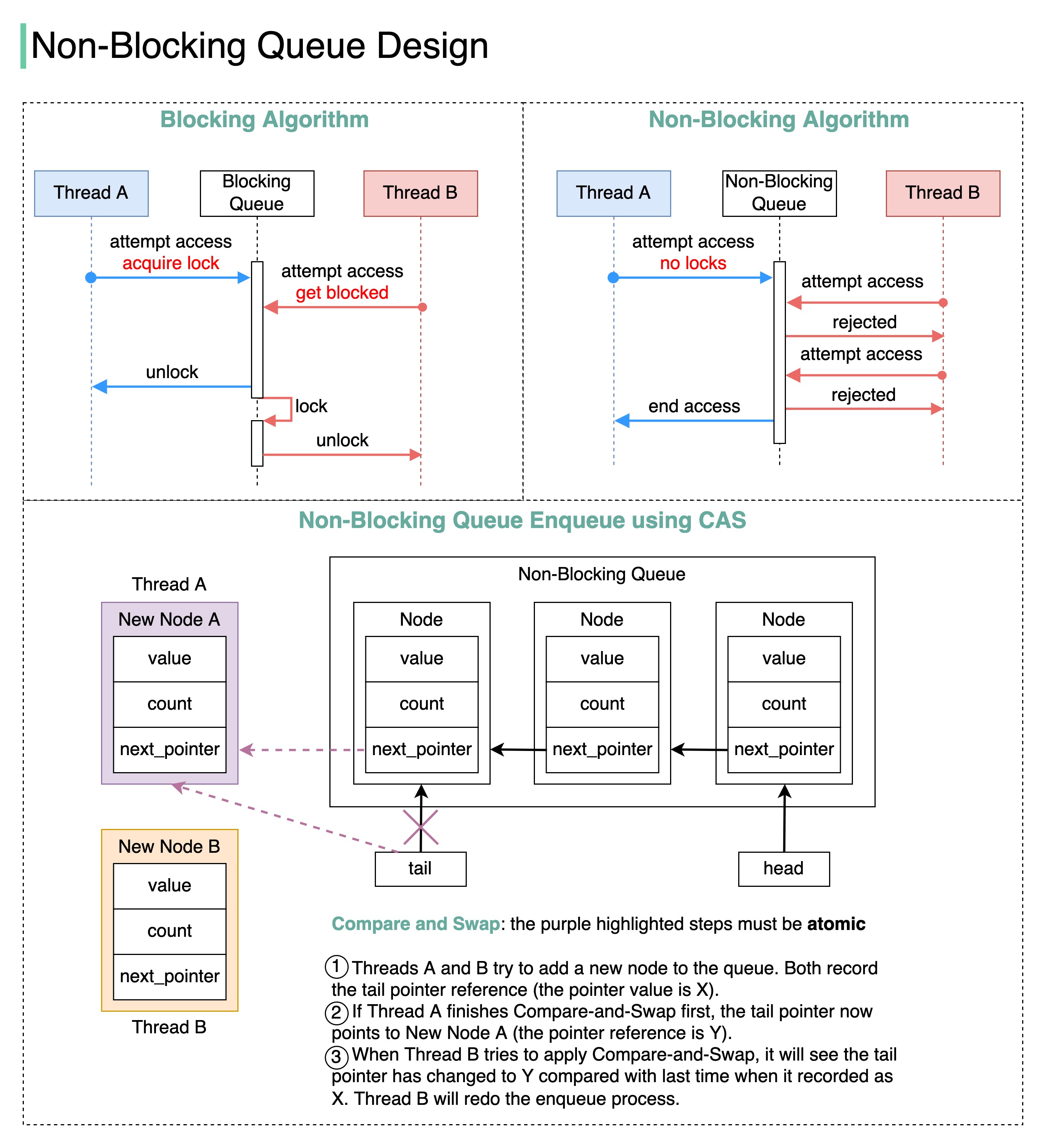

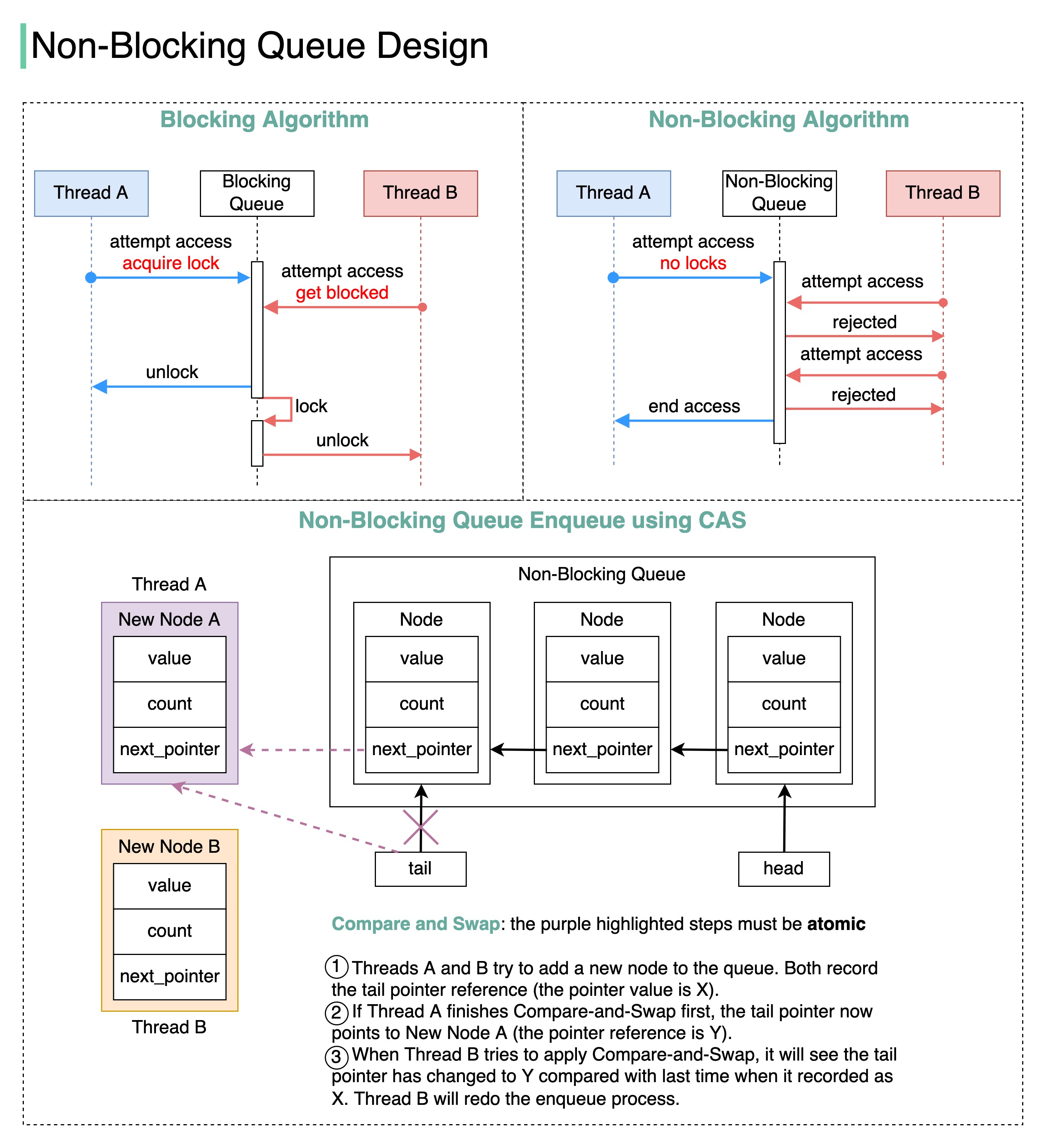

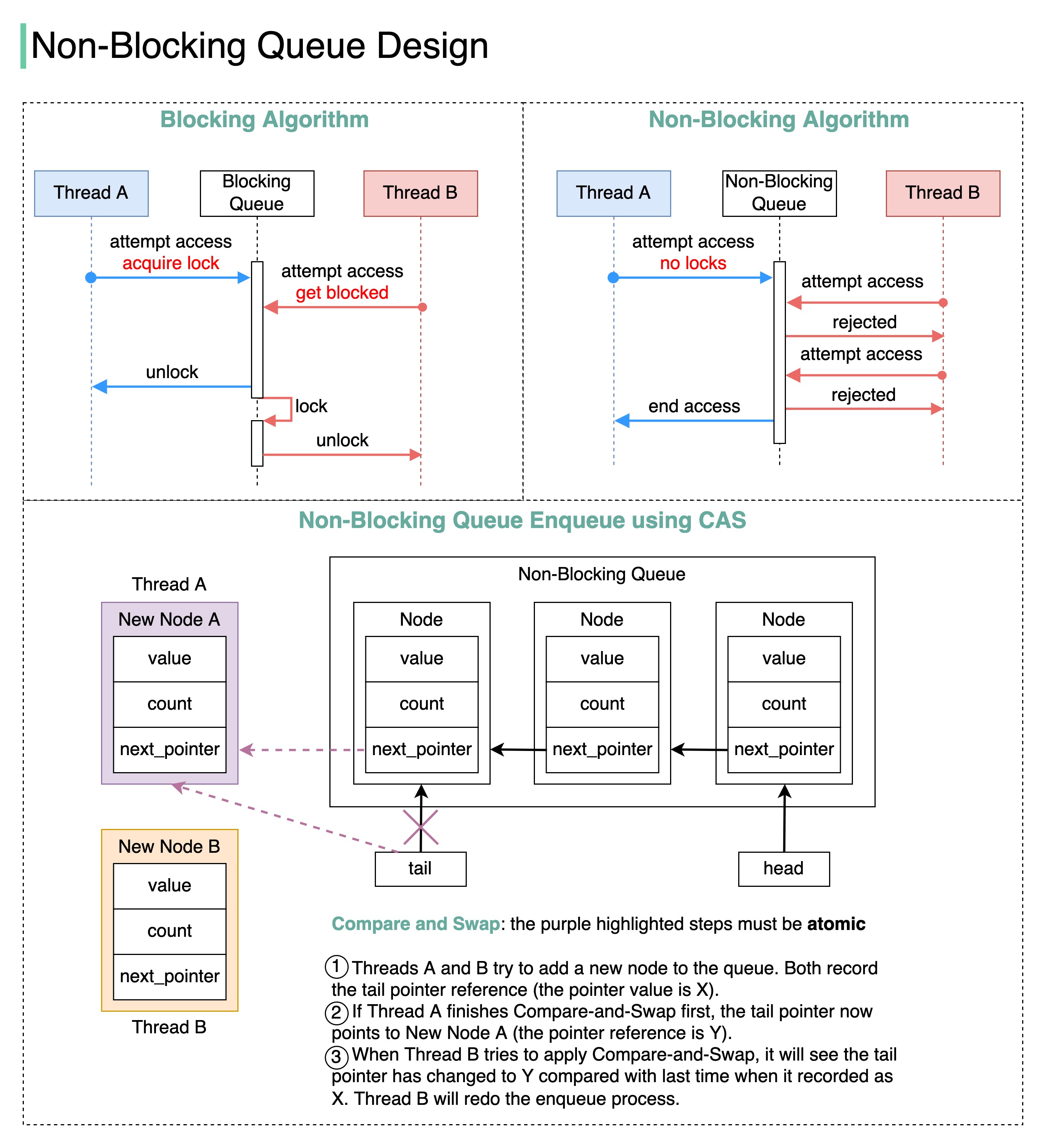

+ * [Blocking vs Non-Blocking Queue](https://bytebytego.com/guides/blocking-vs-non-blocking-queue)

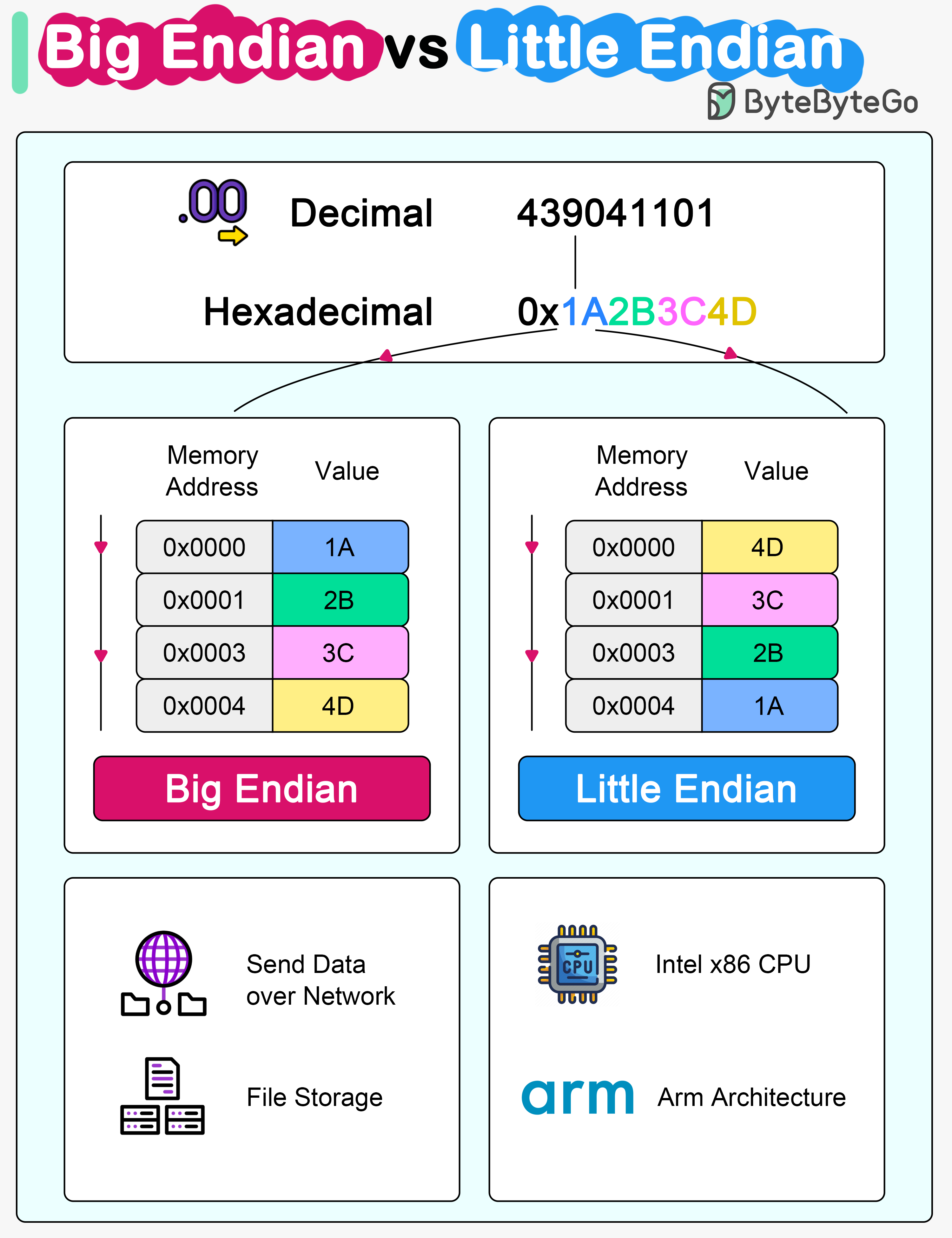

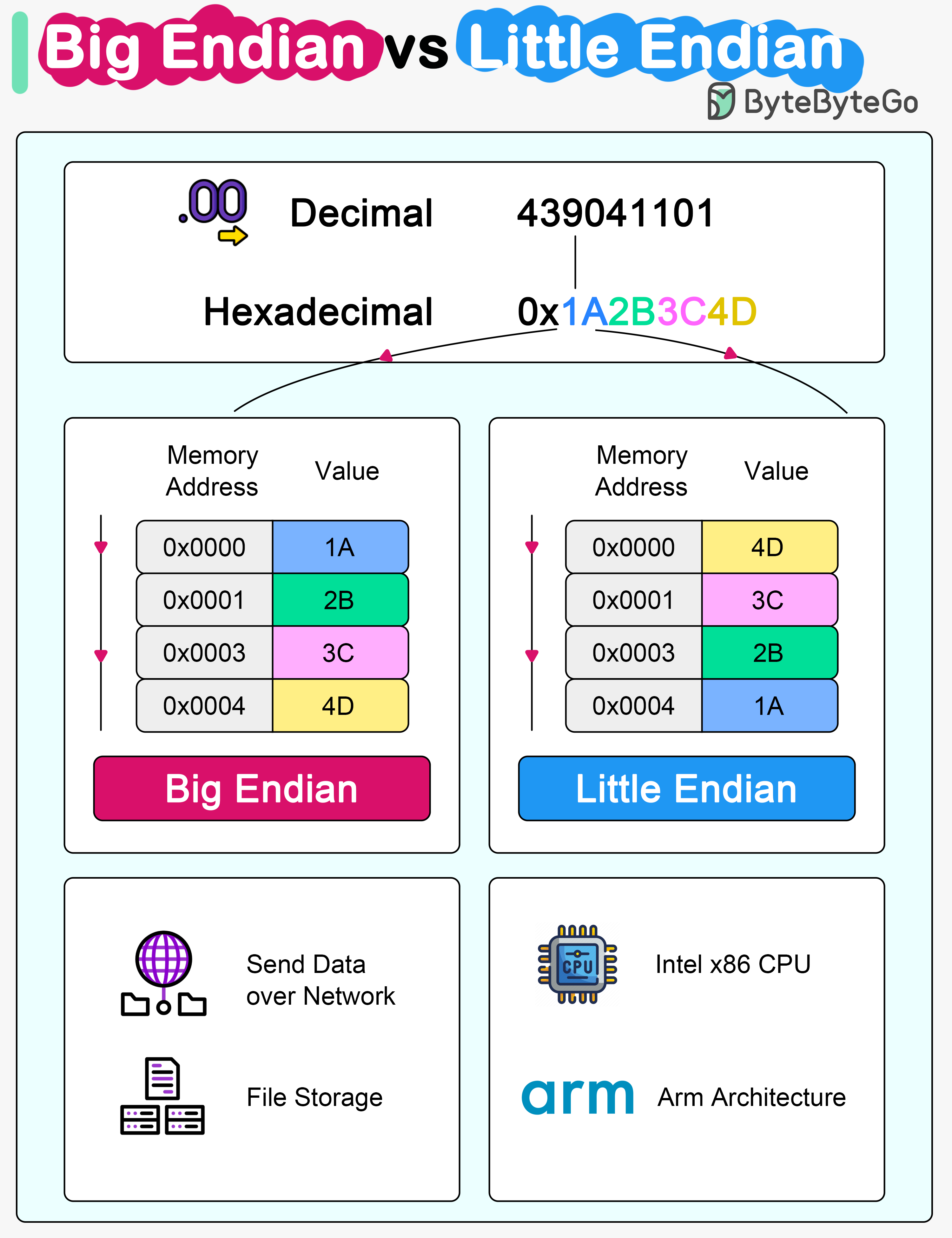

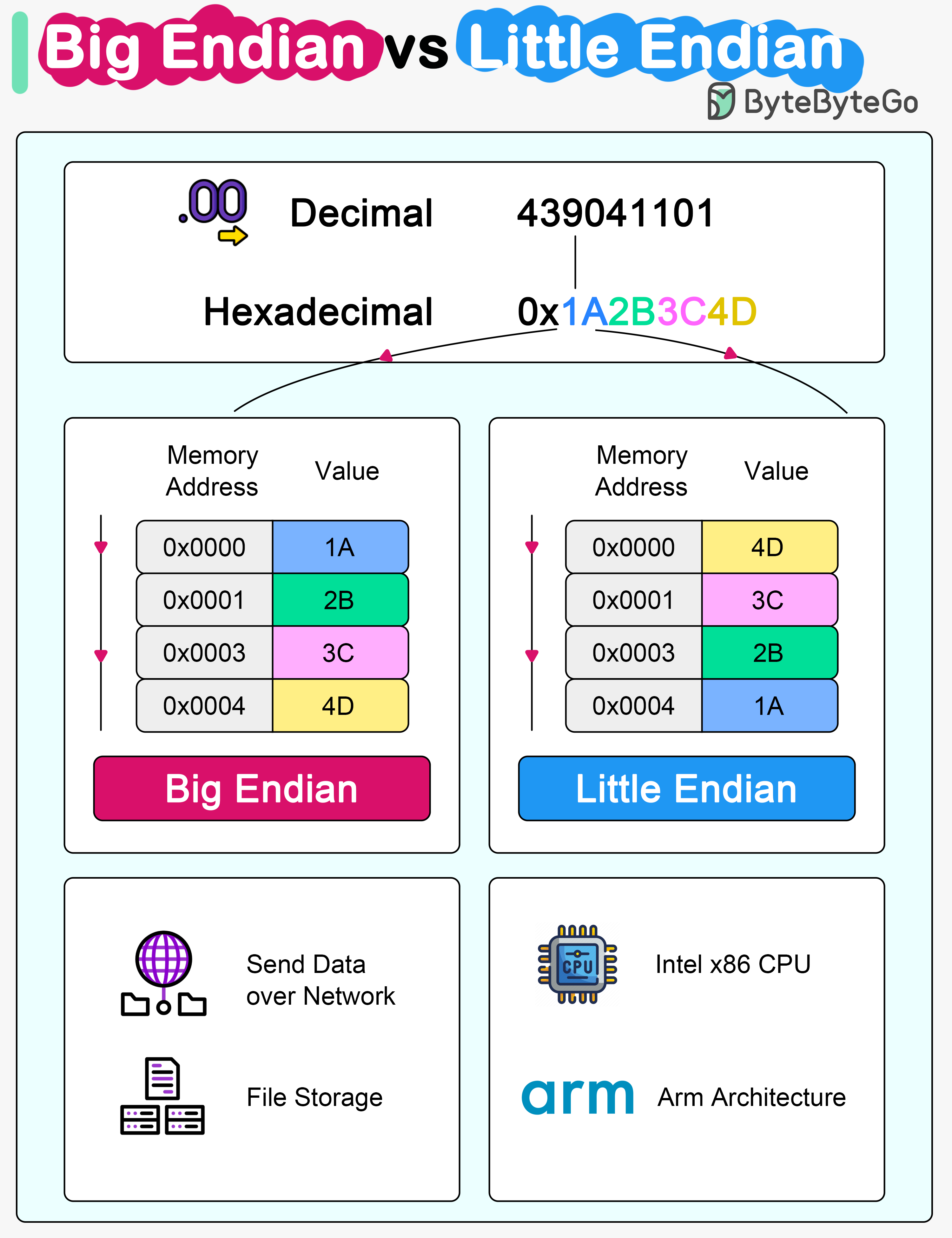

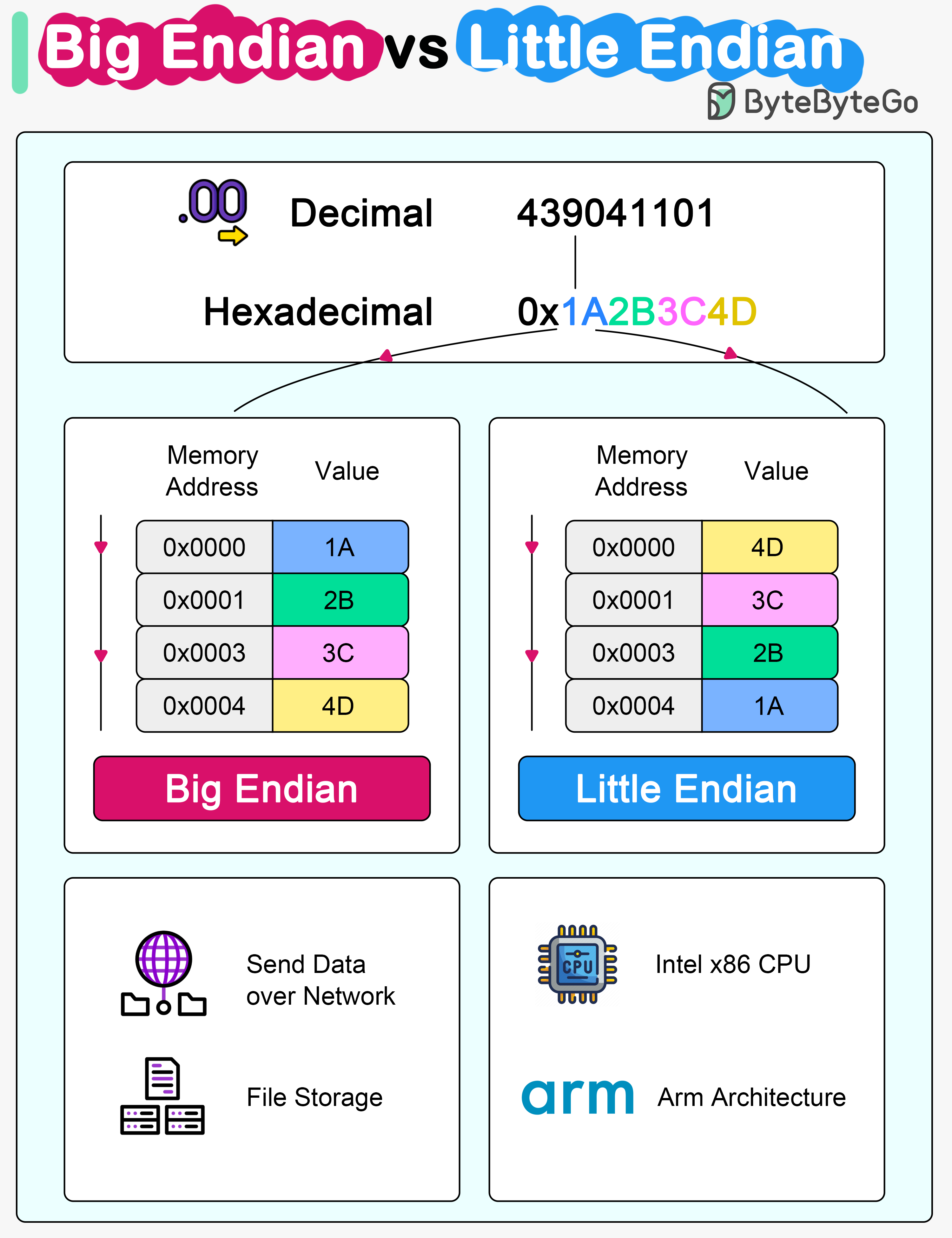

+ * [Big Endian vs Little Endian](https://bytebytego.com/guides/big-endian-vs-little-endian)

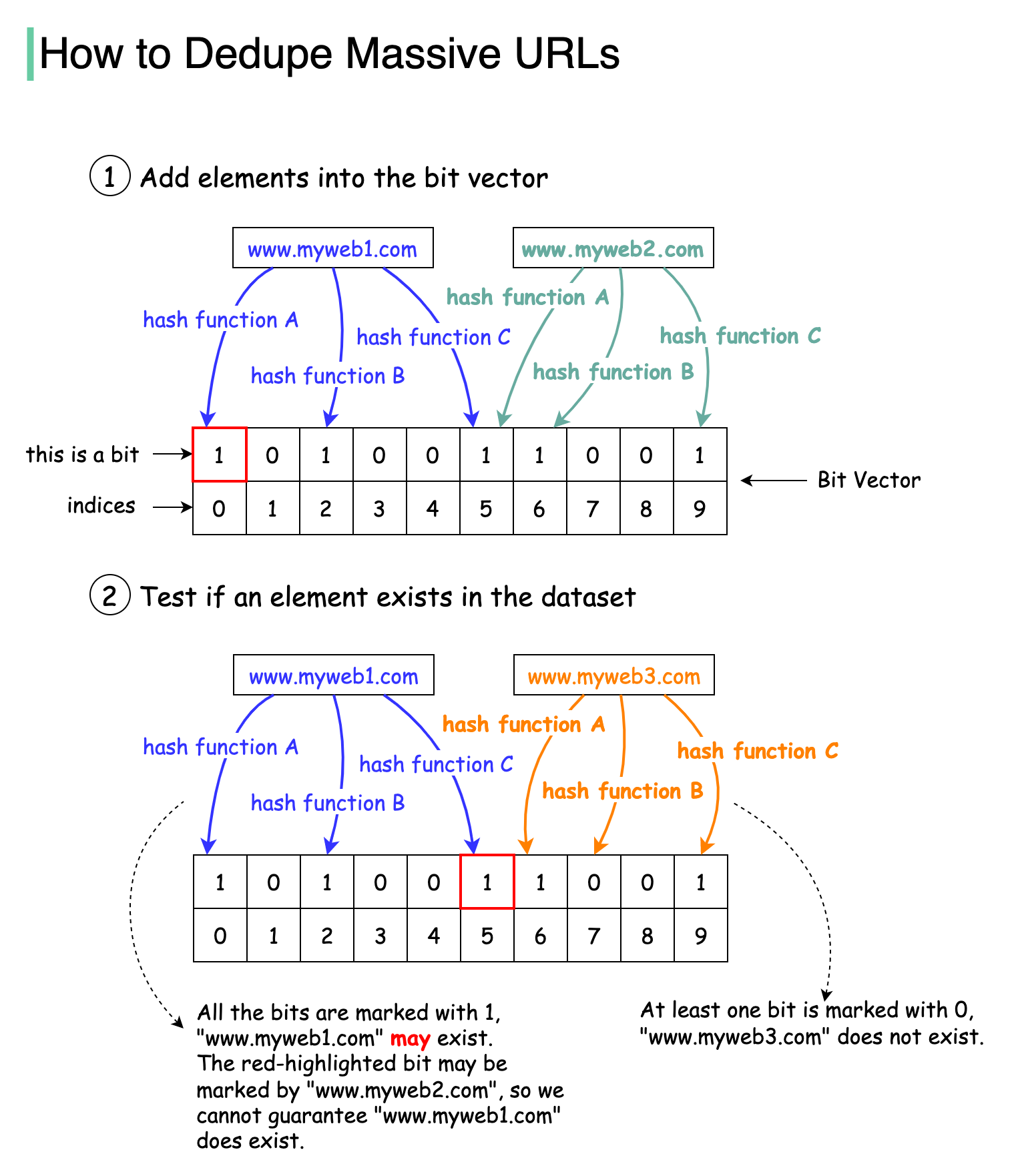

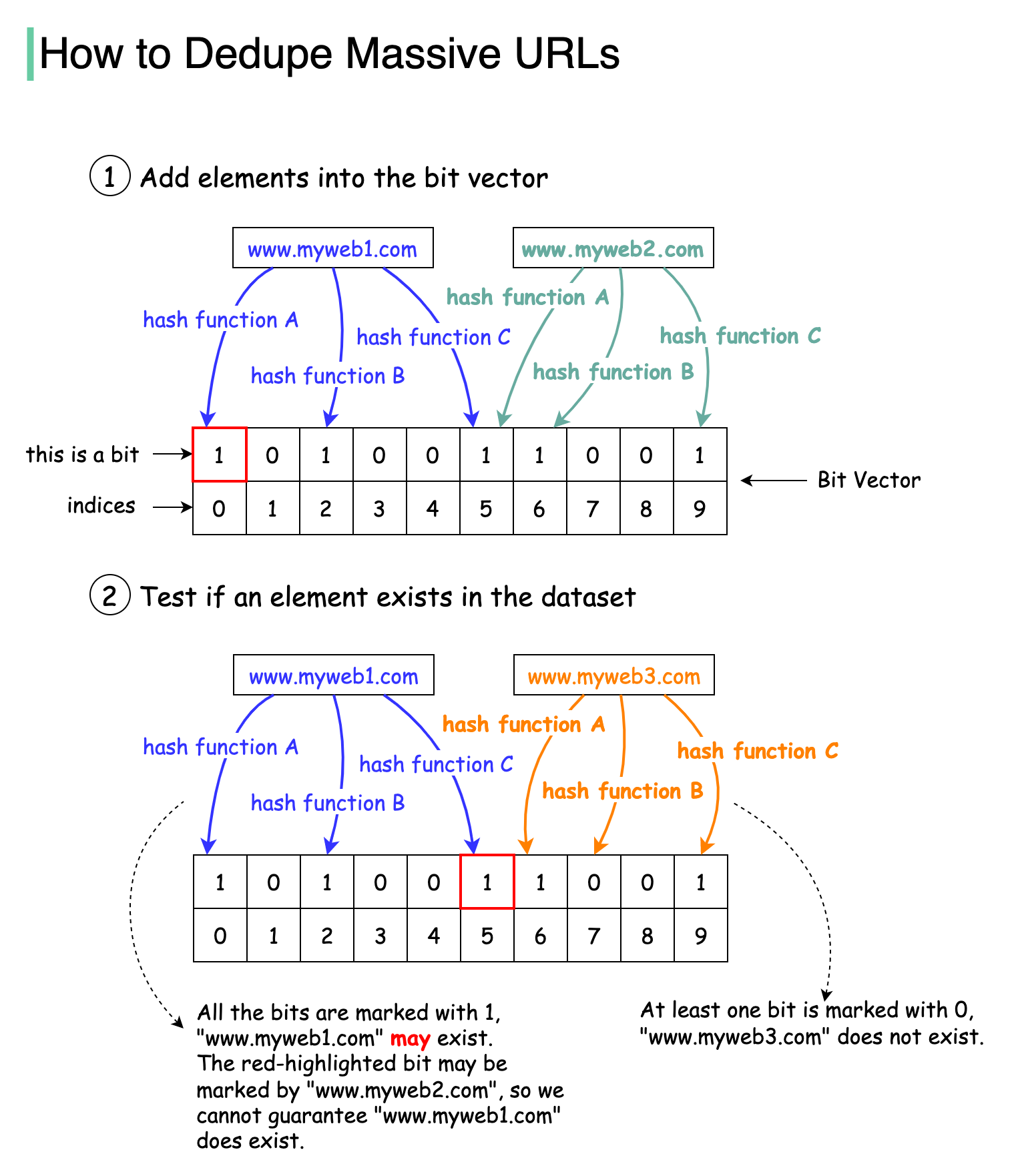

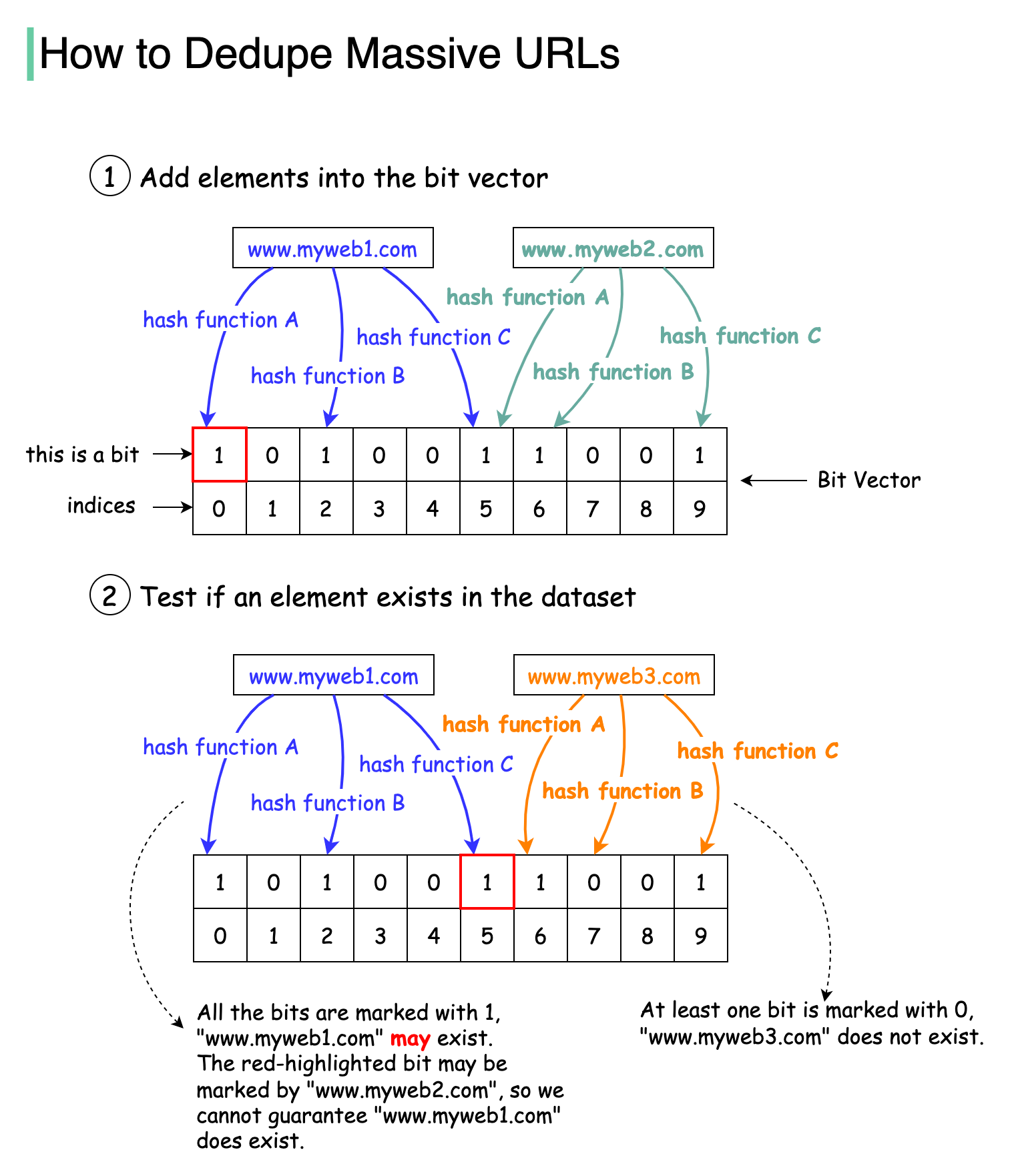

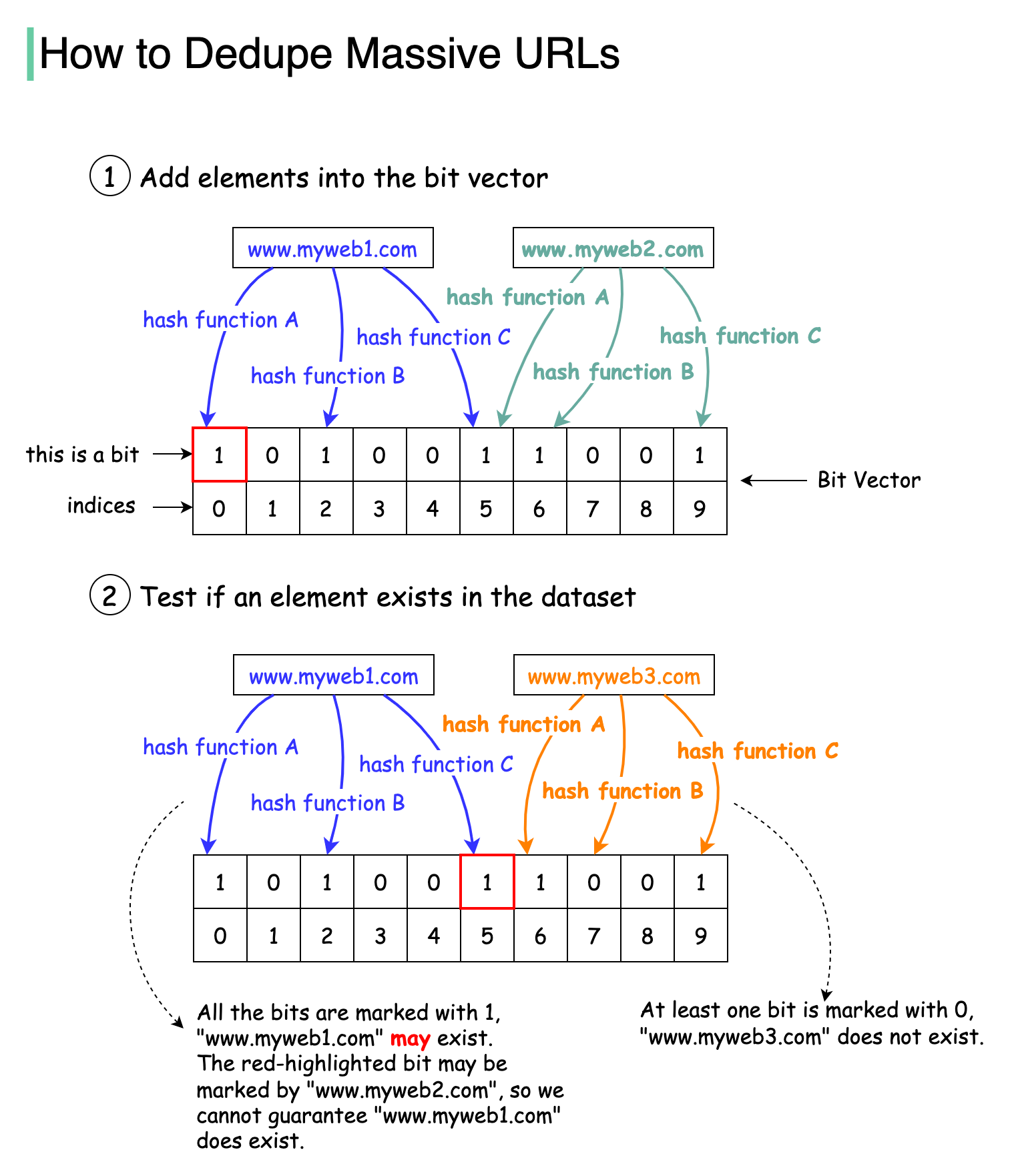

+ * [How to Avoid Crawling Duplicate URLs at Google Scale?](https://bytebytego.com/guides/how-to-avoid-crawling-duplicate-urls-at-google-scale)

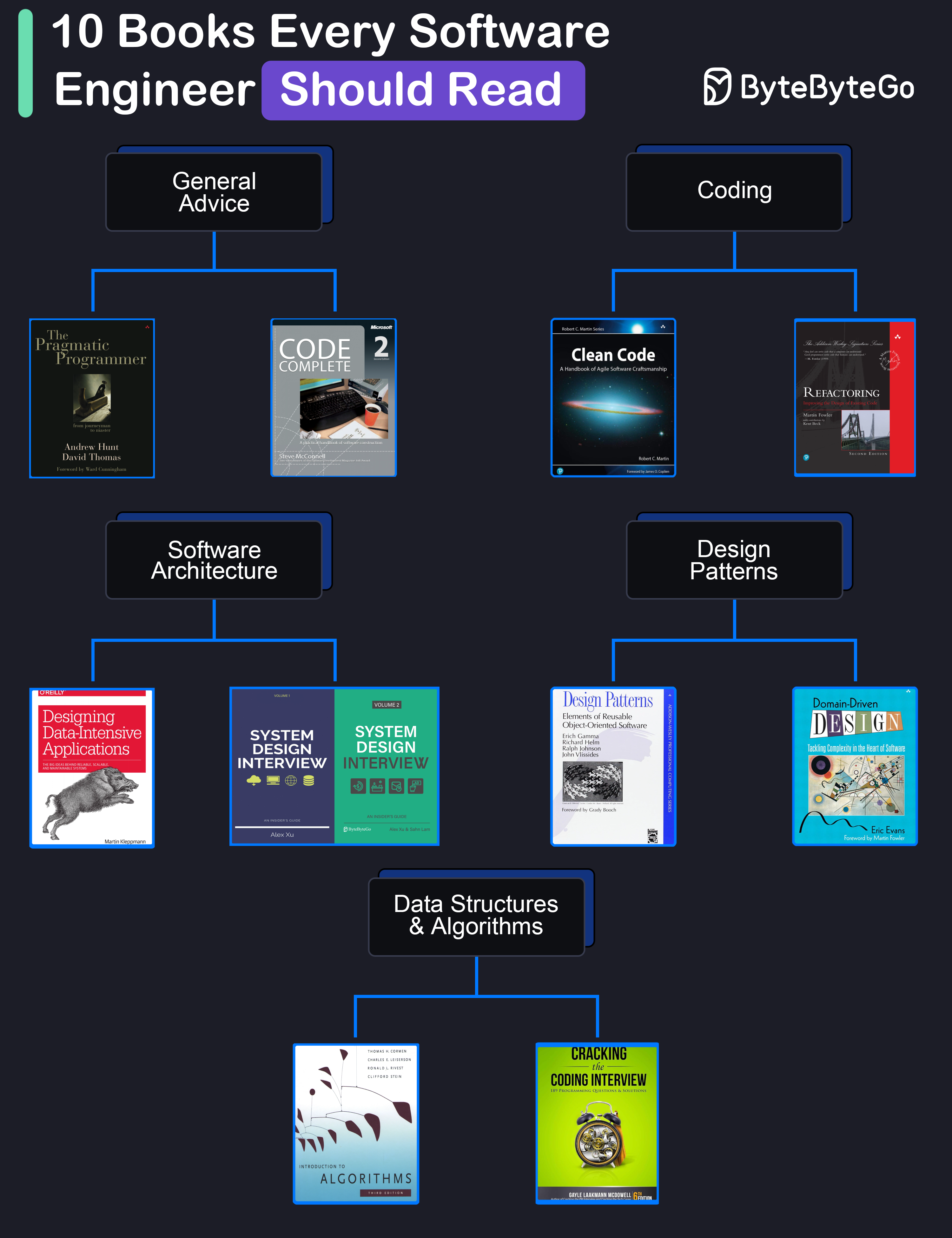

+ * [10 Books for Software Developers](https://bytebytego.com/guides/10-books-for-software-developers)

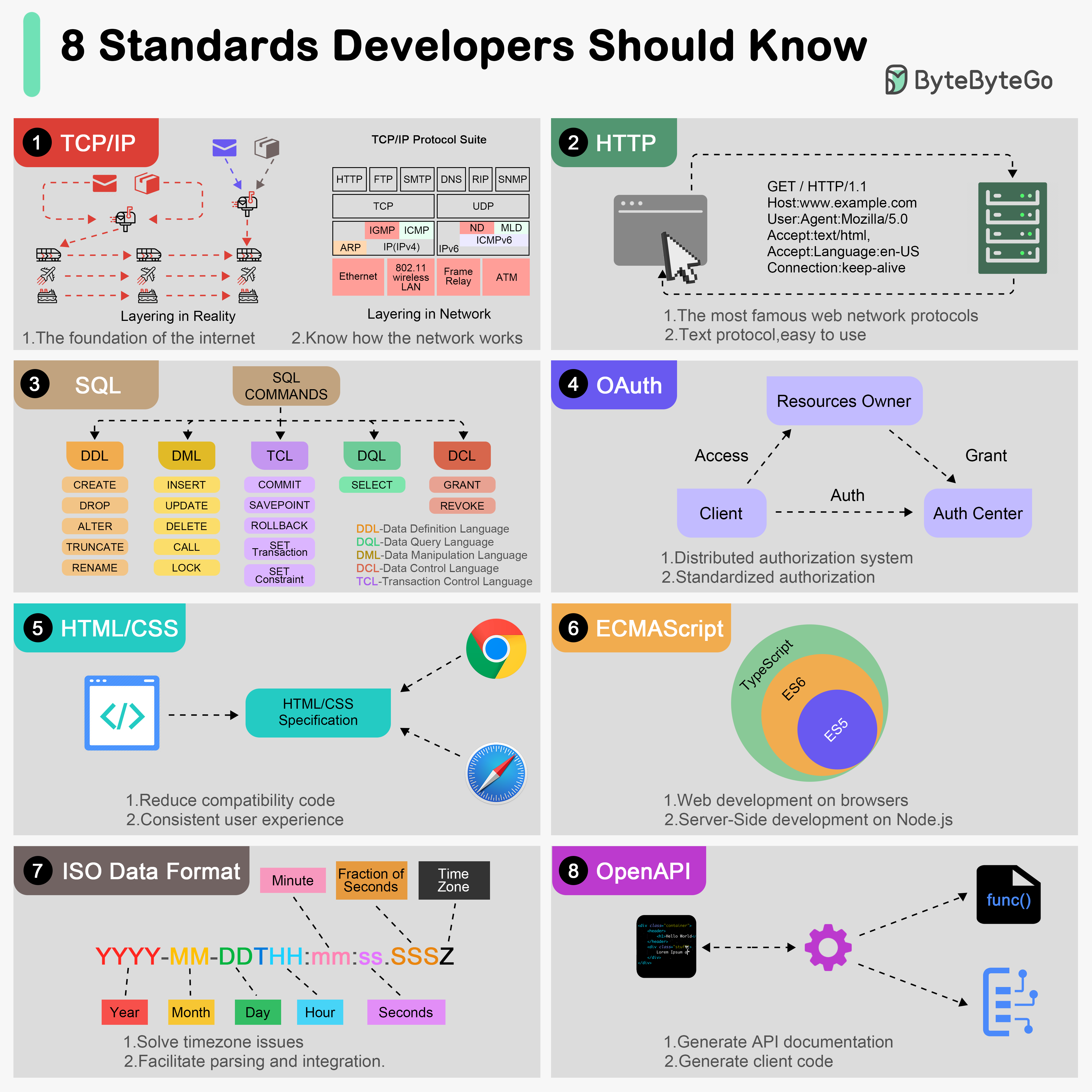

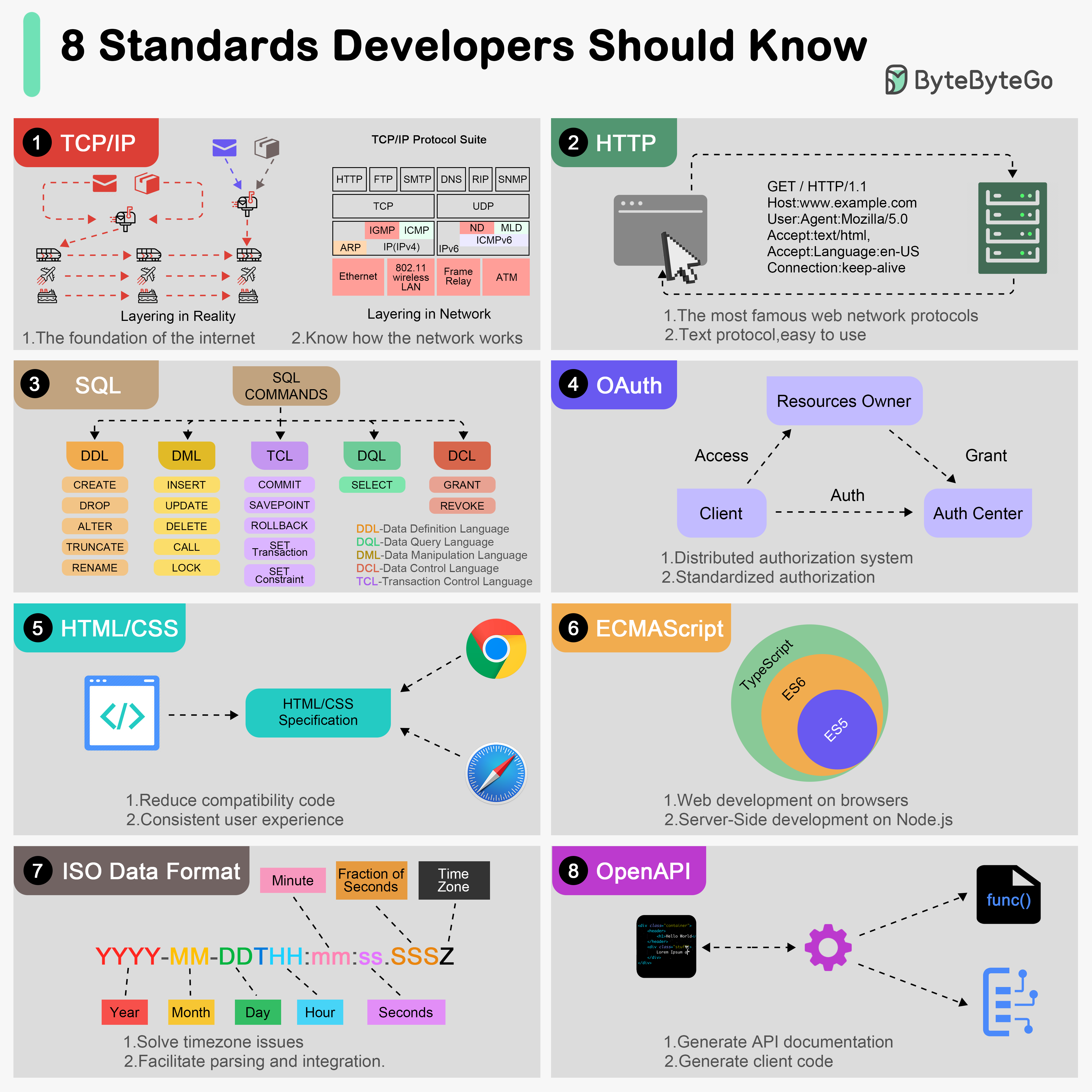

+ * [Top 8 Standards Every Developer Should Know](https://bytebytego.com/guides/top-8-standards-every-developer-should-know)

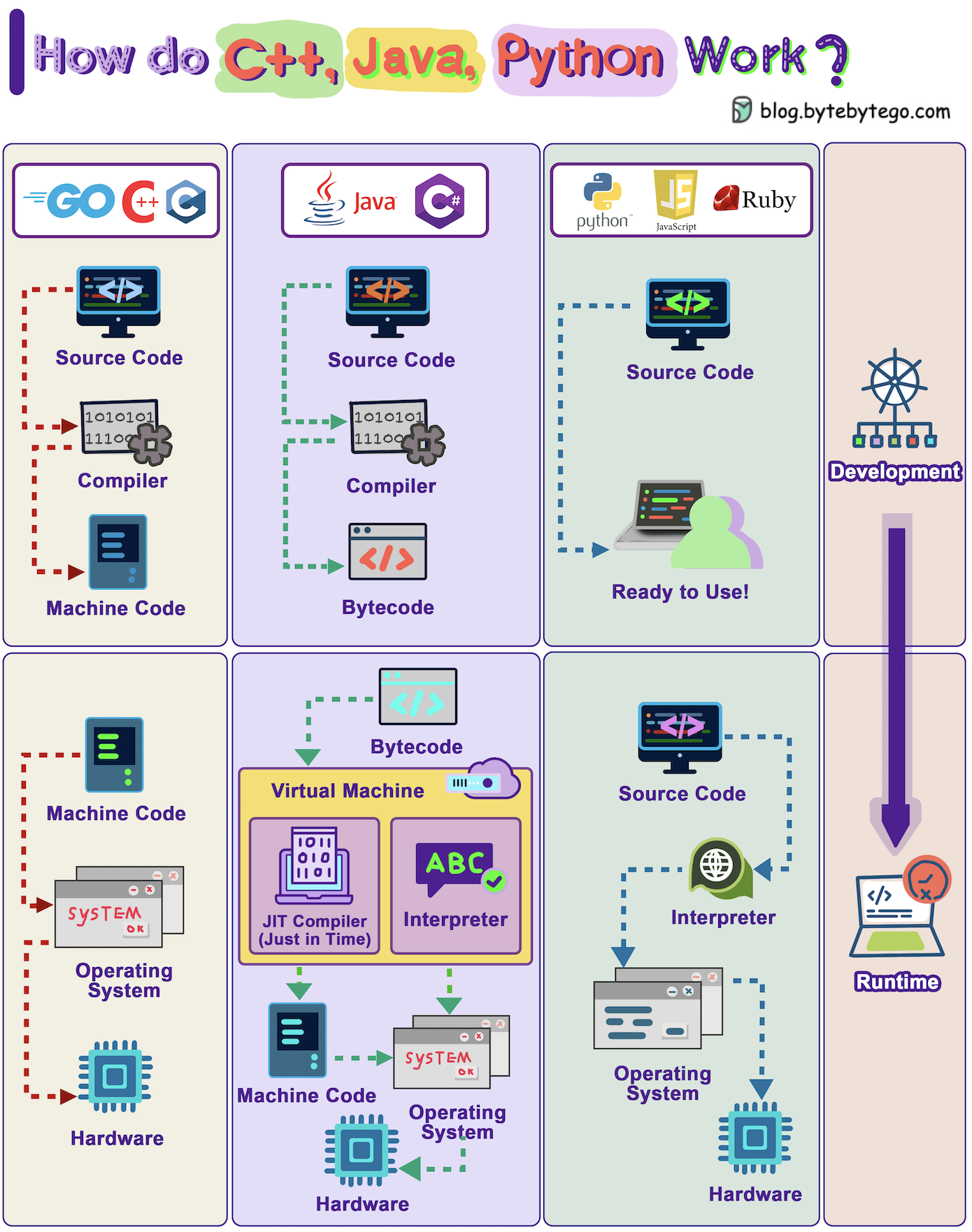

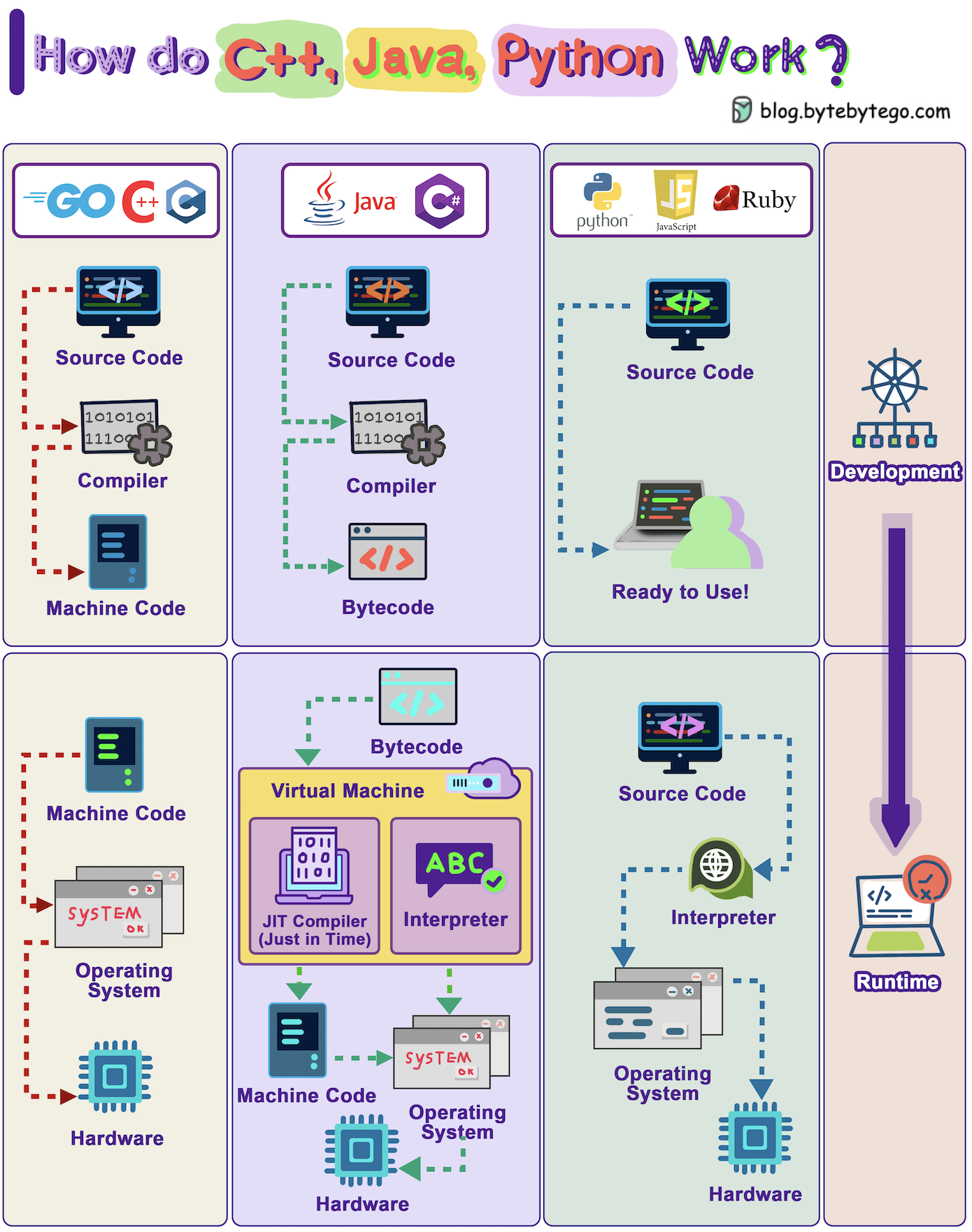

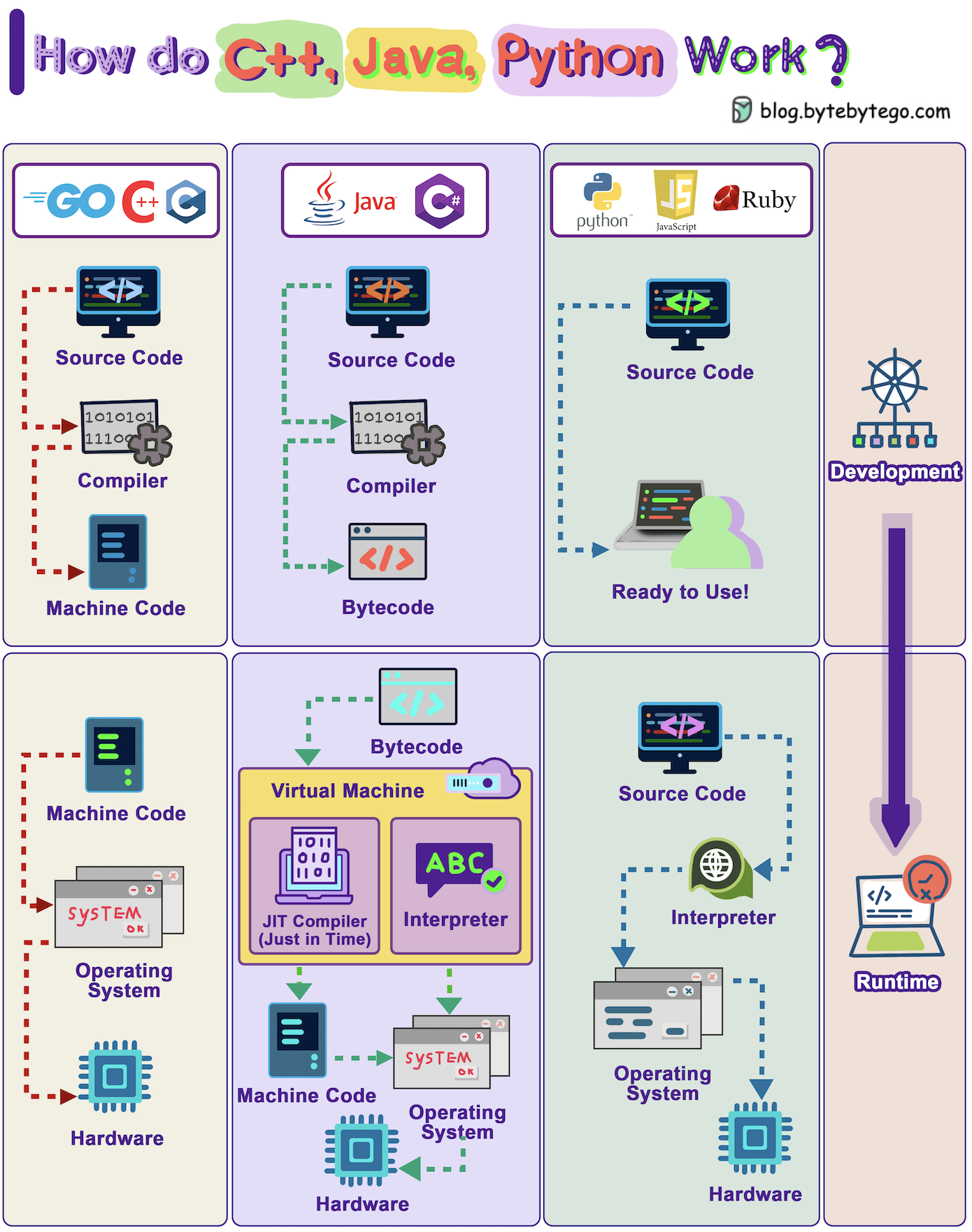

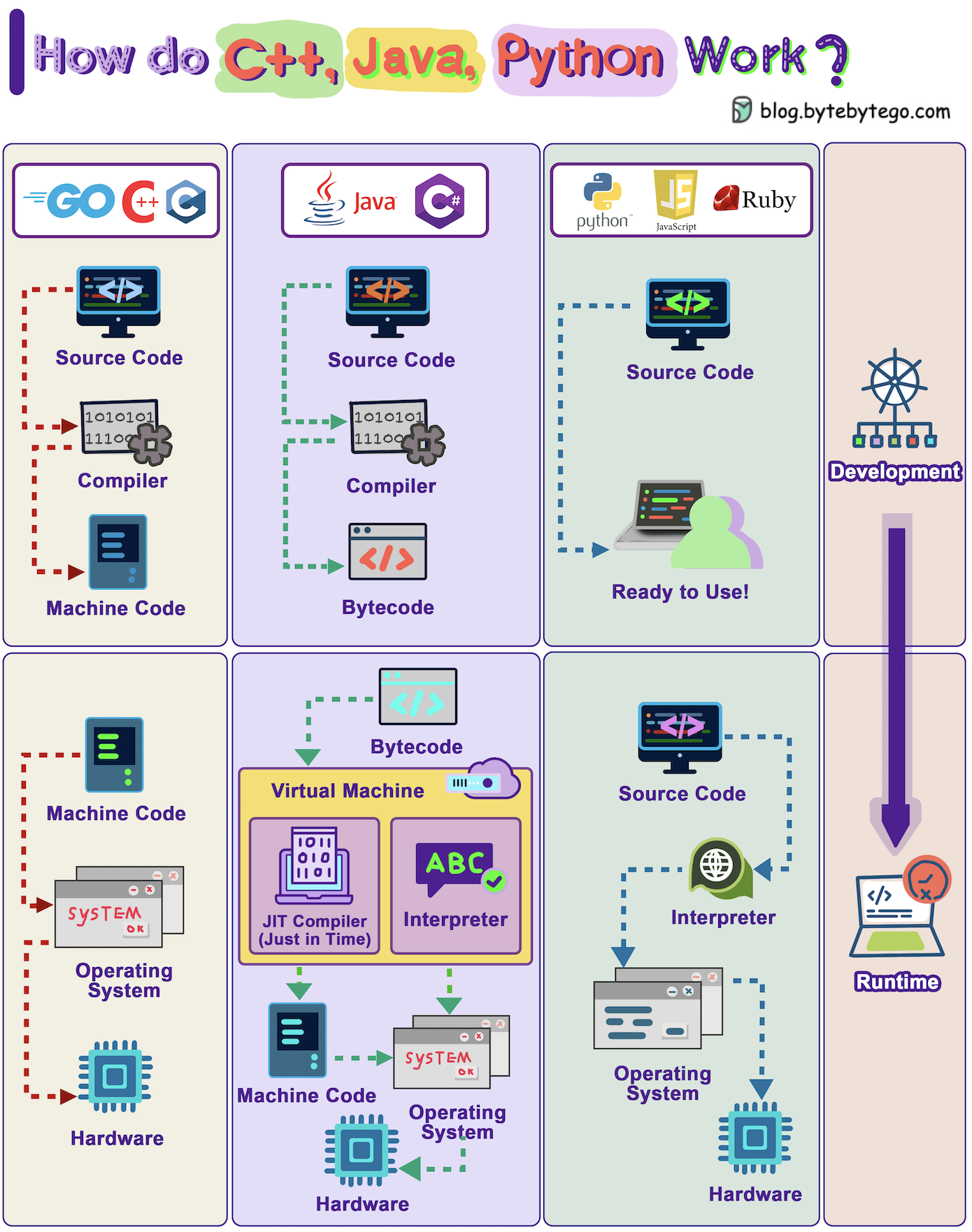

+ * [How Do C++, Java, Python Work?](https://bytebytego.com/guides/how-do-c++-java-python-work)

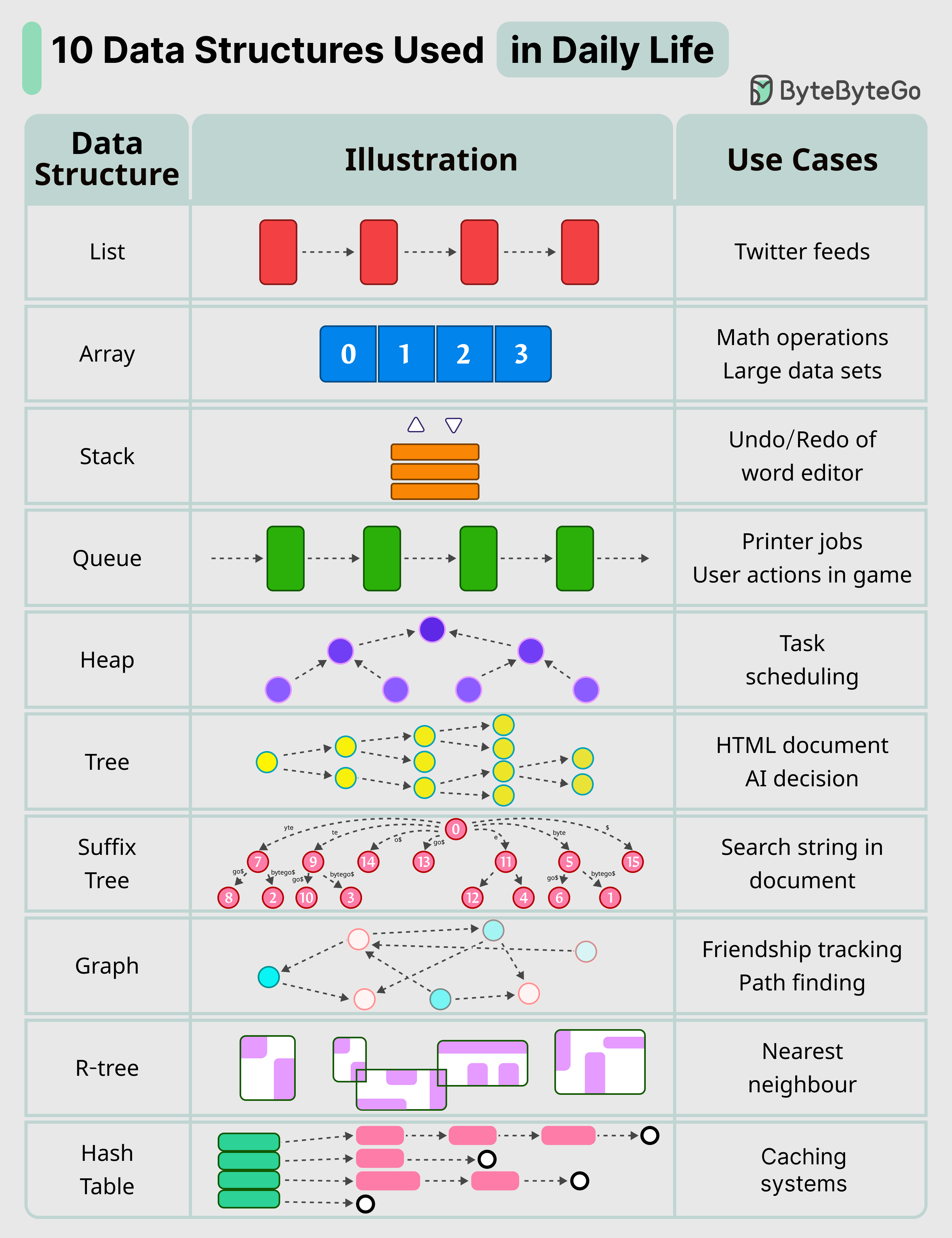

+ * [10 Key Data Structures We Use Every Day](https://bytebytego.com/guides/10-key-data-structures-we-use-every-day)

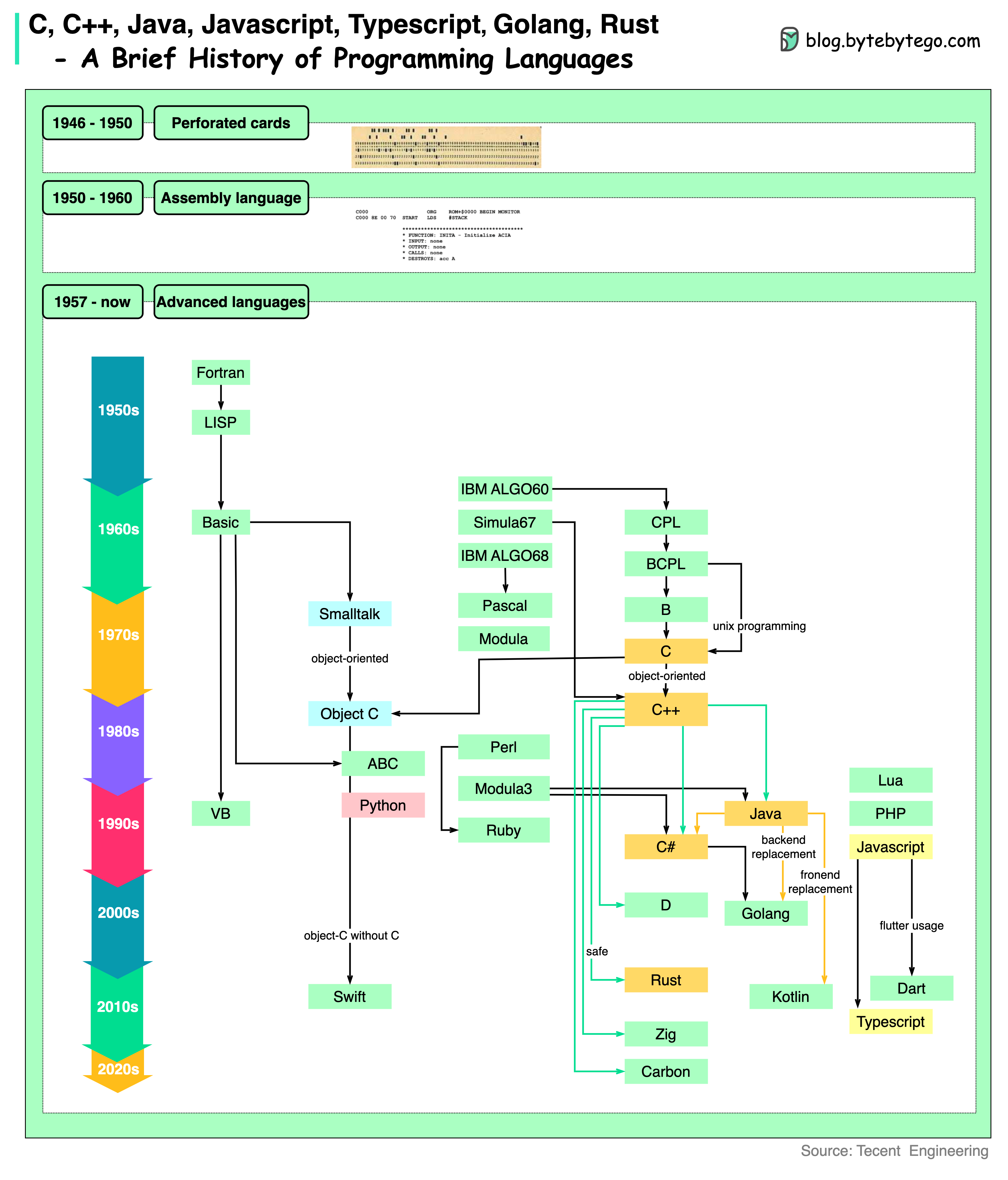

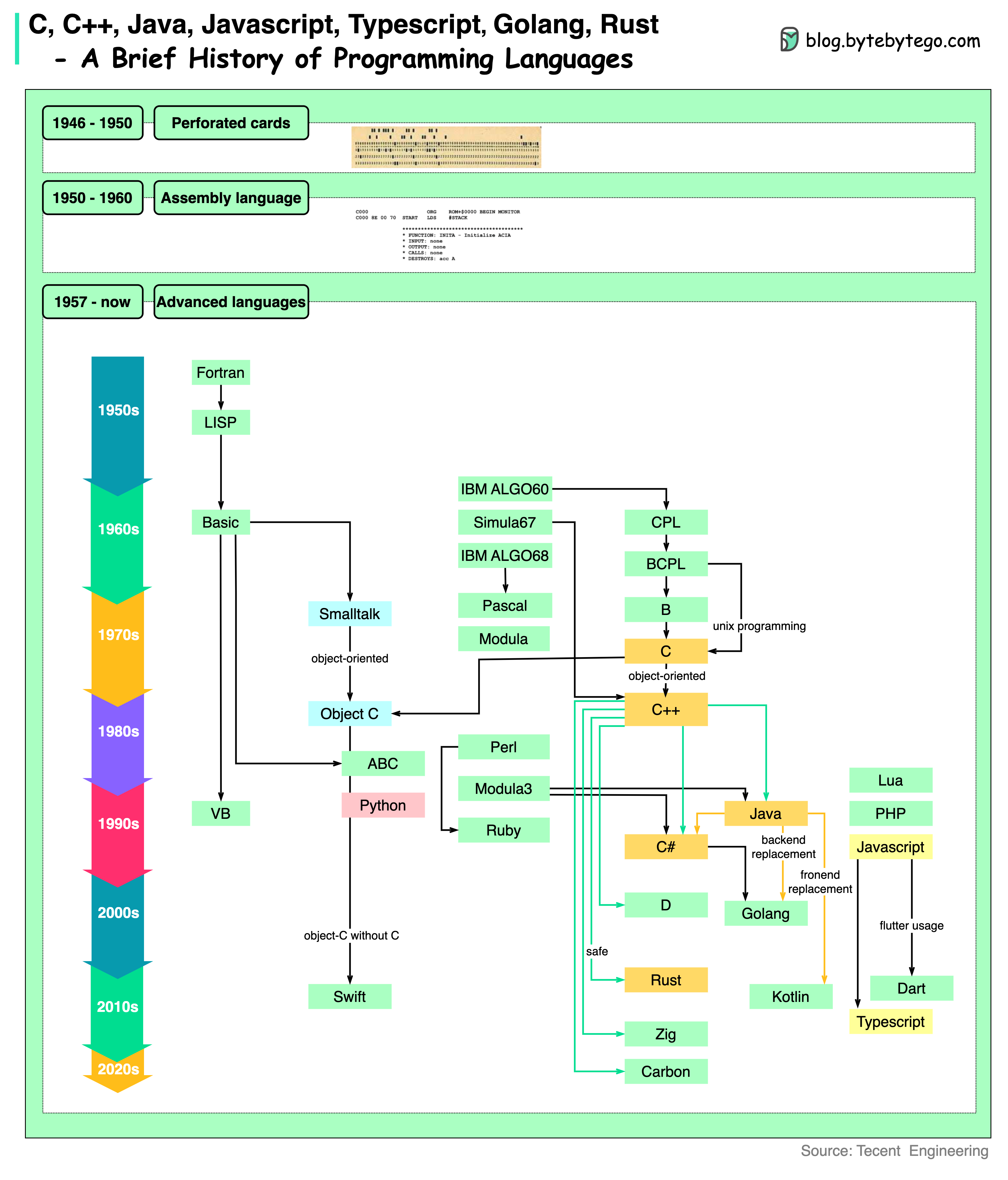

+ * [A Brief History of Programming Languages](https://bytebytego.com/guides/a-brief-history-og-programming-languages)

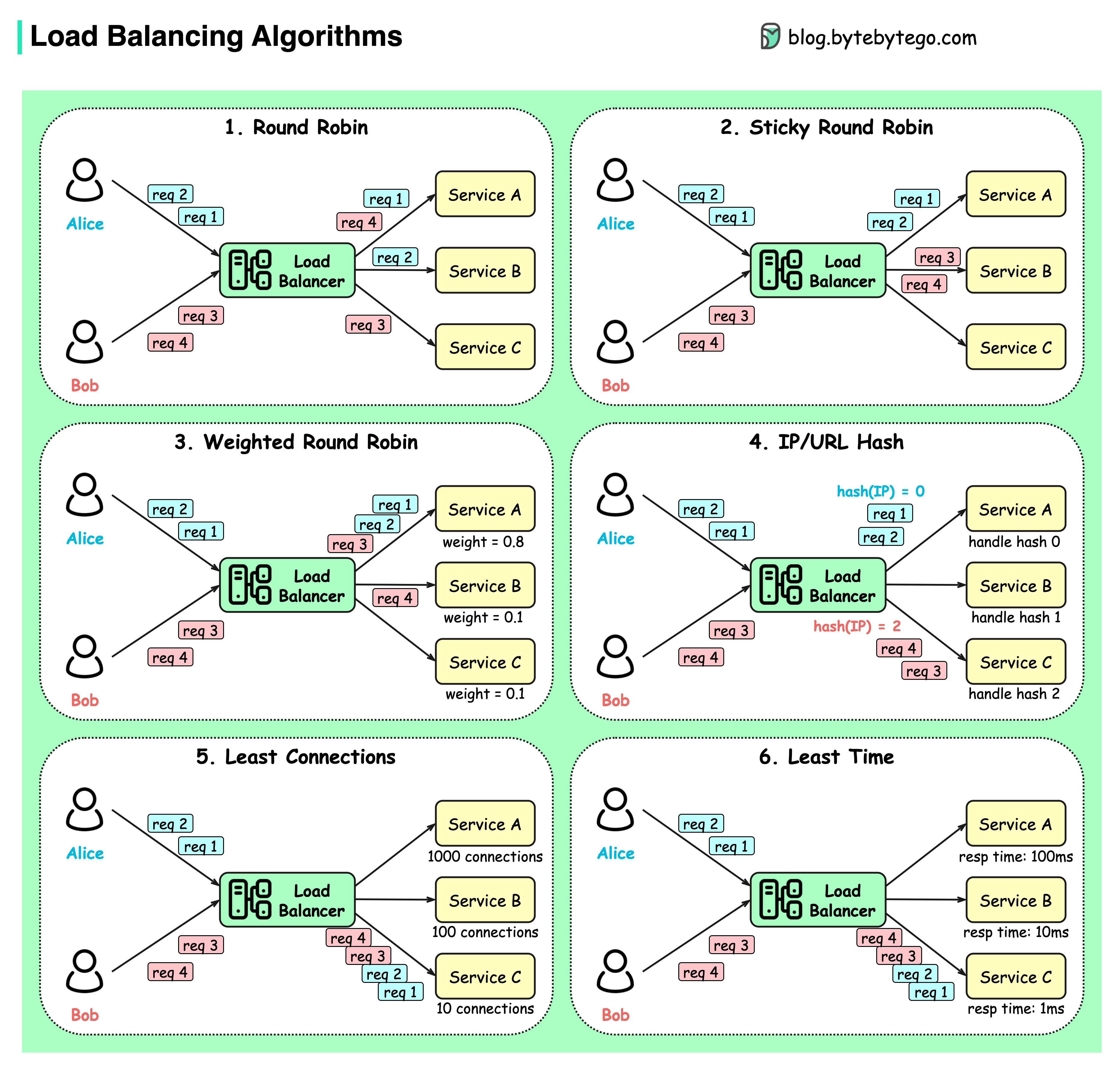

+ * [Top 6 Load Balancing Algorithms](https://bytebytego.com/guides/top-6-load-balancing-algorithms)

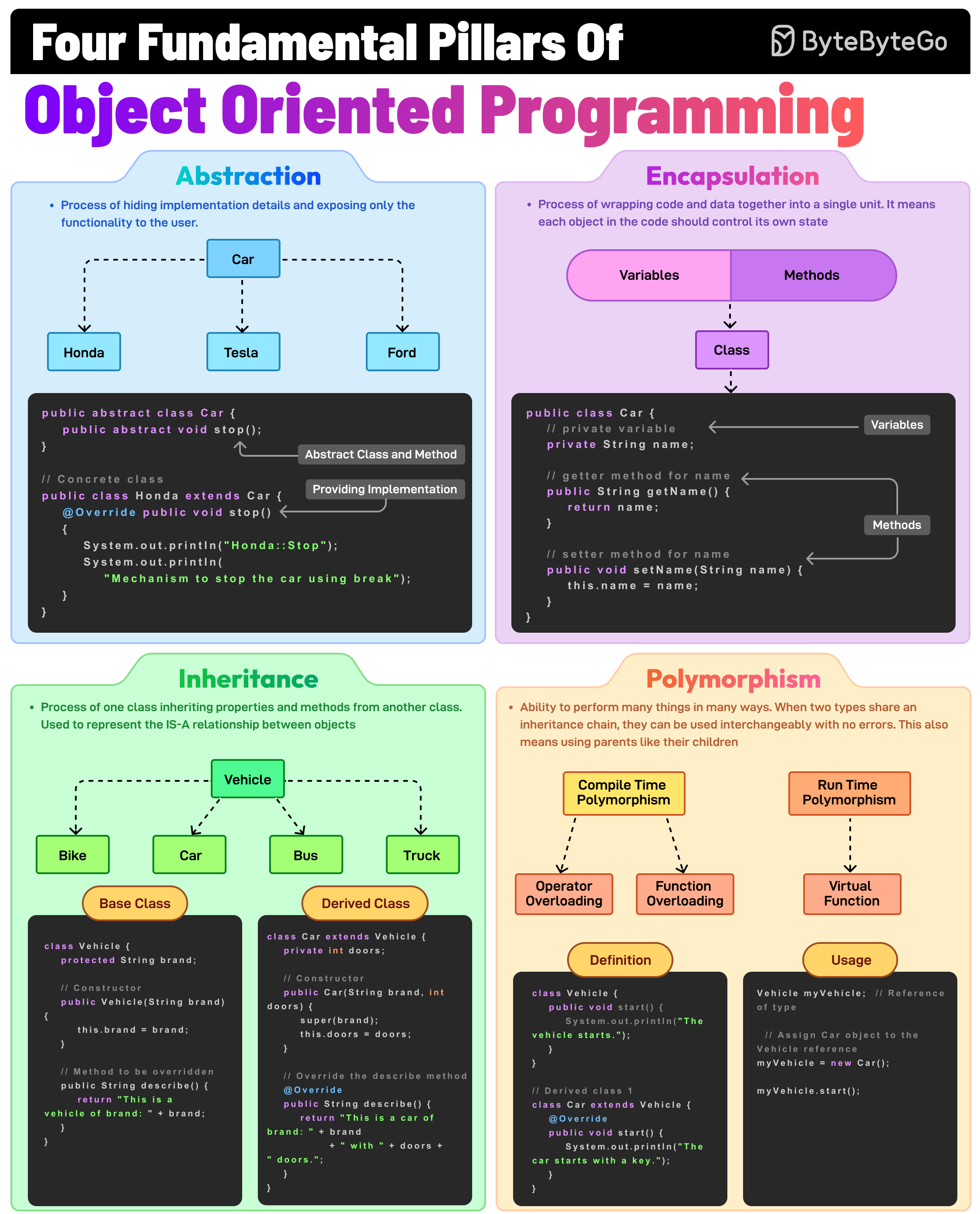

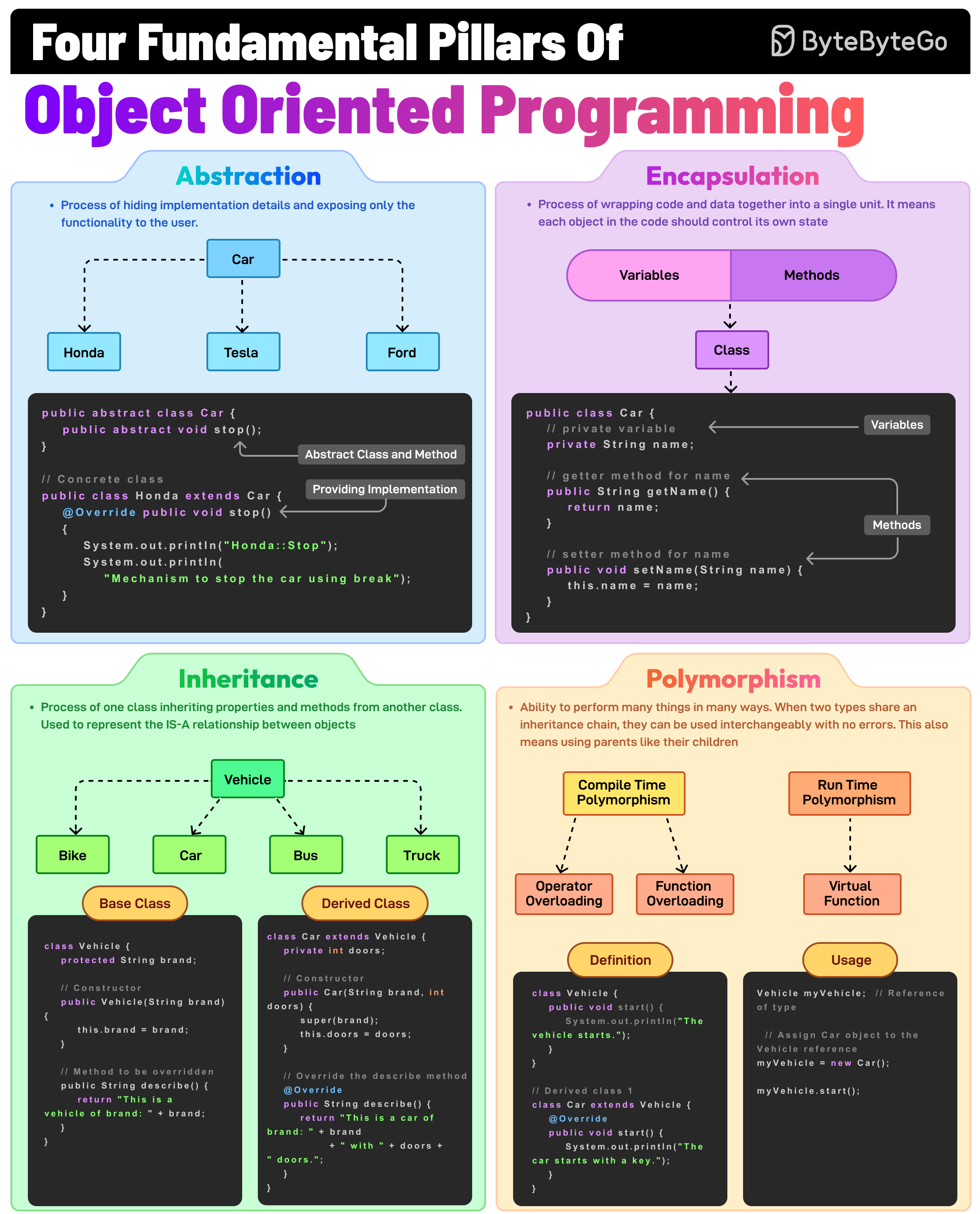

+ * [The Fundamental Pillars of Object-Oriented Programming](https://bytebytego.com/guides/the-fundamental-pillars-of-object-oriented-programming)

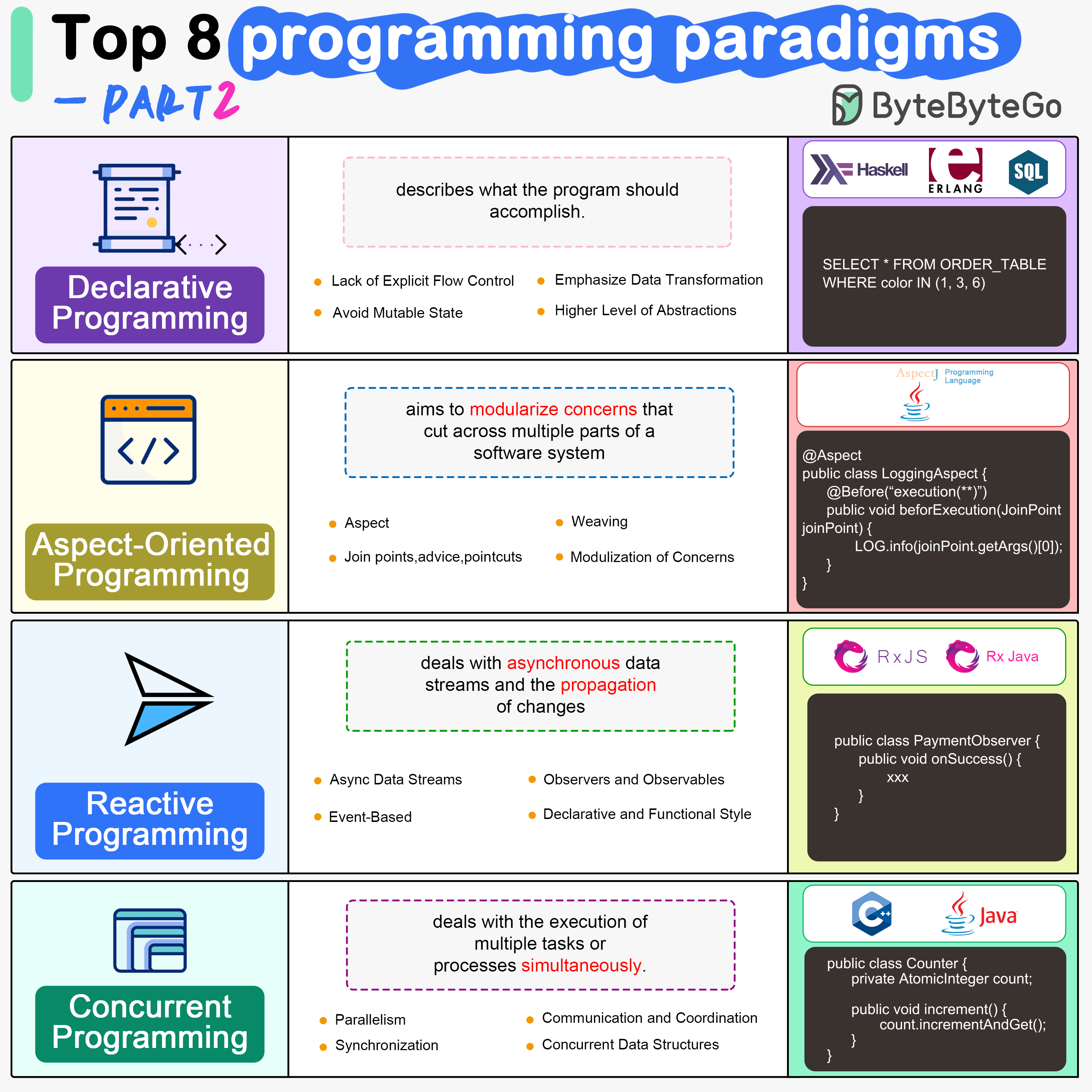

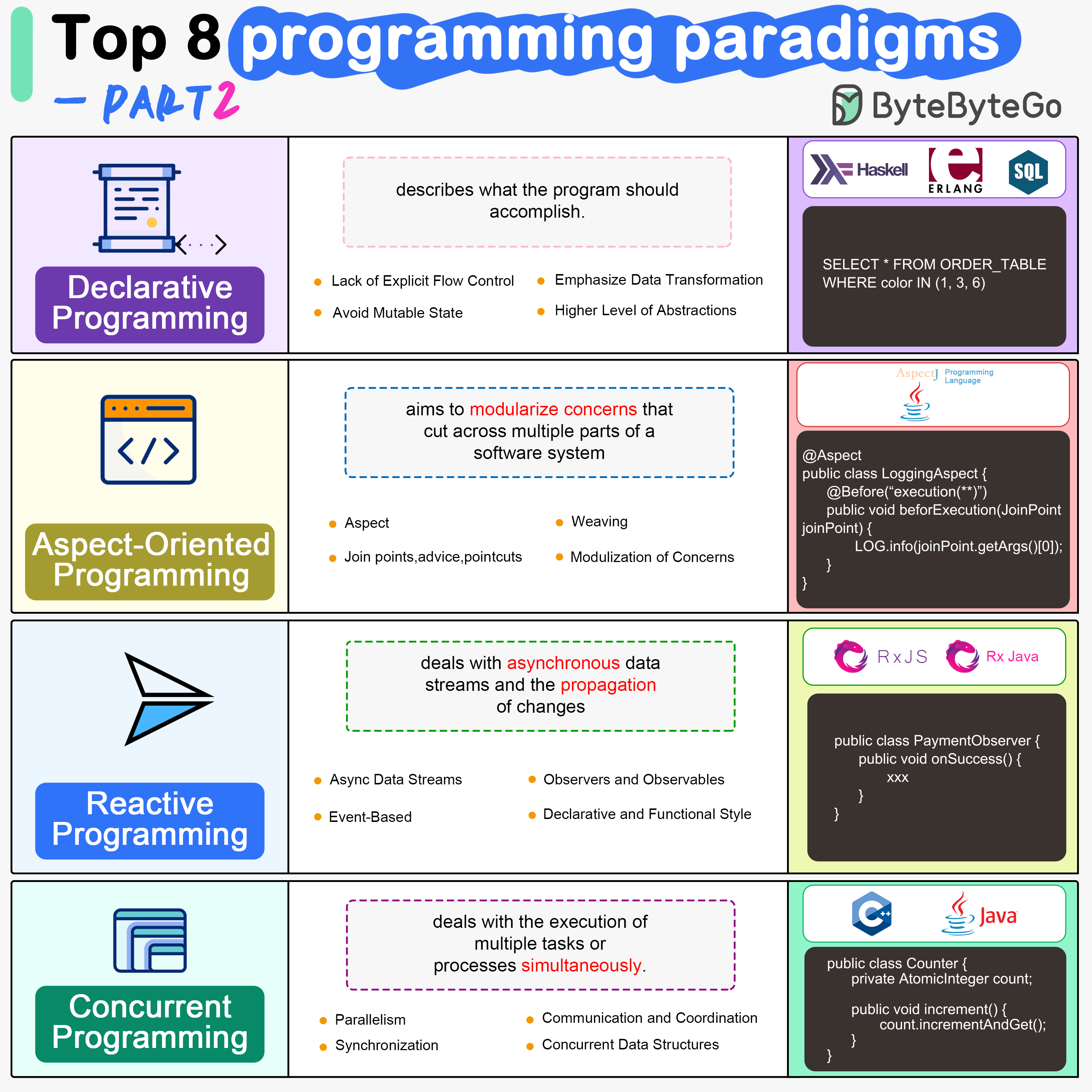

+ * [Top 8 Programming Paradigms](https://bytebytego.com/guides/top-8-programming-paradigms)

+ * [Algorithms for System Design Interviews](https://bytebytego.com/guides/algorithms-you-should-know-before-taking-system-design-interviews)

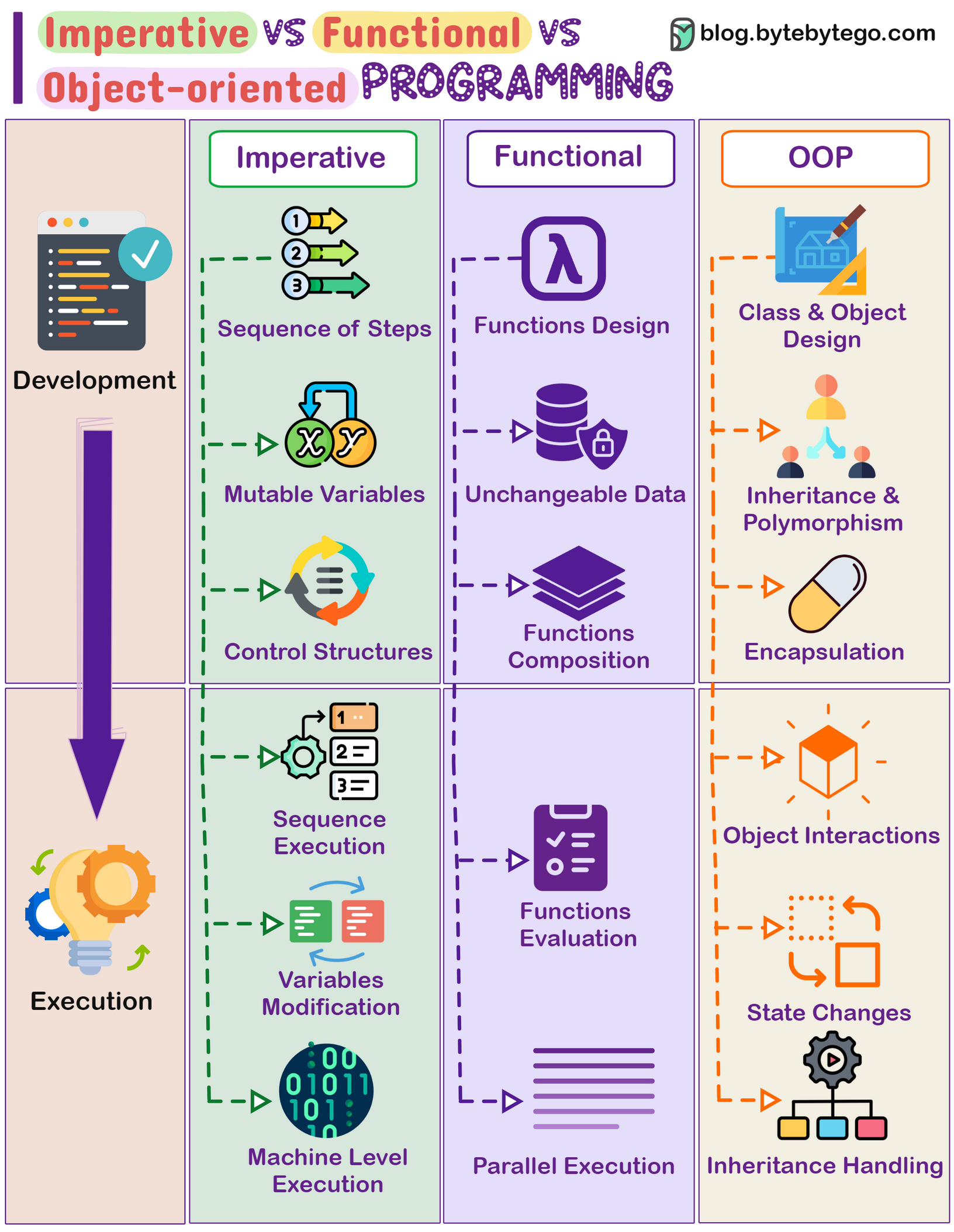

+ * [Imperative vs Functional vs Object-oriented Programming](https://bytebytego.com/guides/imperative-vs-functional-vs-object-oriented-programming)

+ * [Explaining 9 Types of API Testing](https://bytebytego.com/guides/explaining-9-types-of-api-testing)

+ * [The 9 Algorithms That Dominate Our World](https://bytebytego.com/guides/the-9-algorithms-that-dominate-our-world)

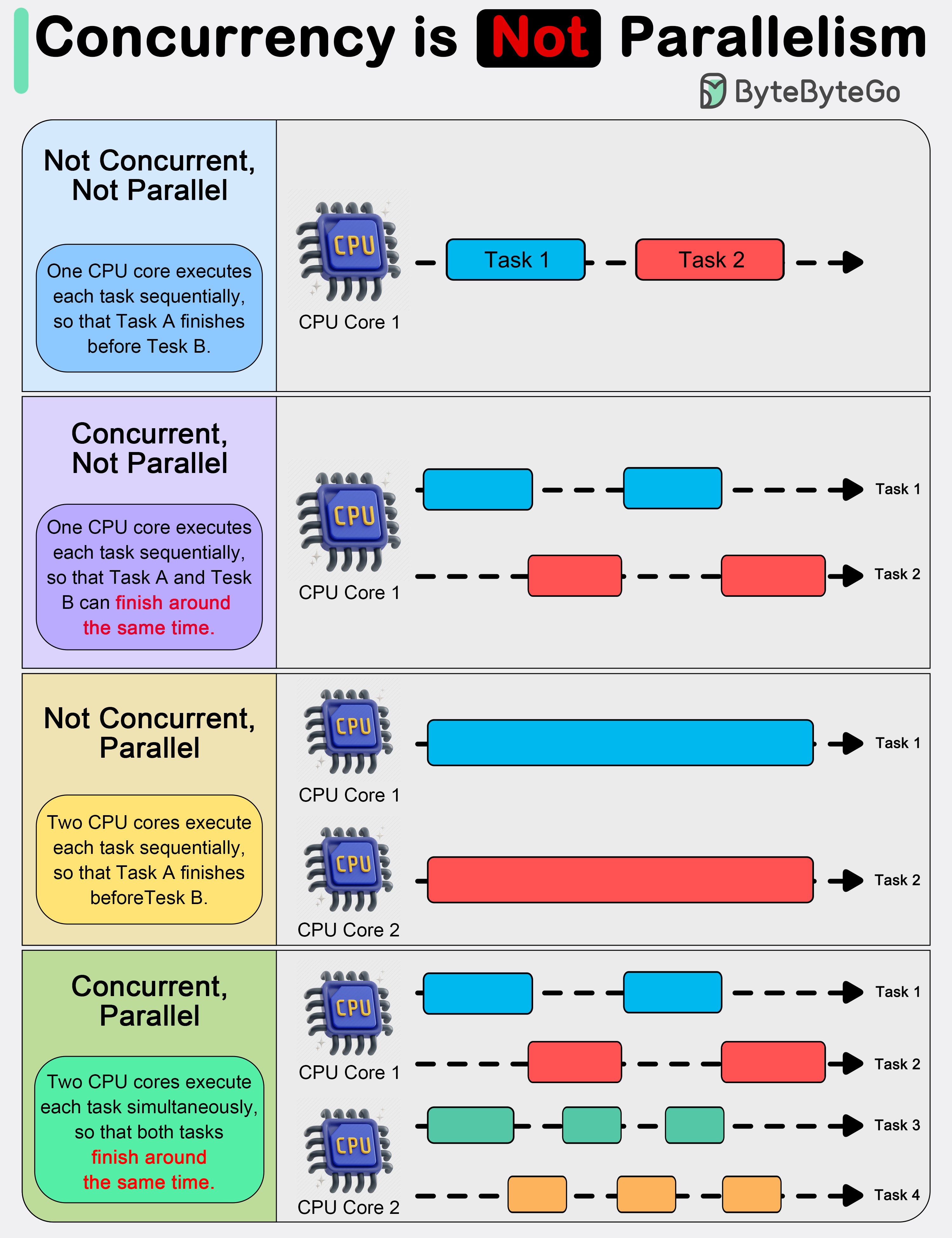

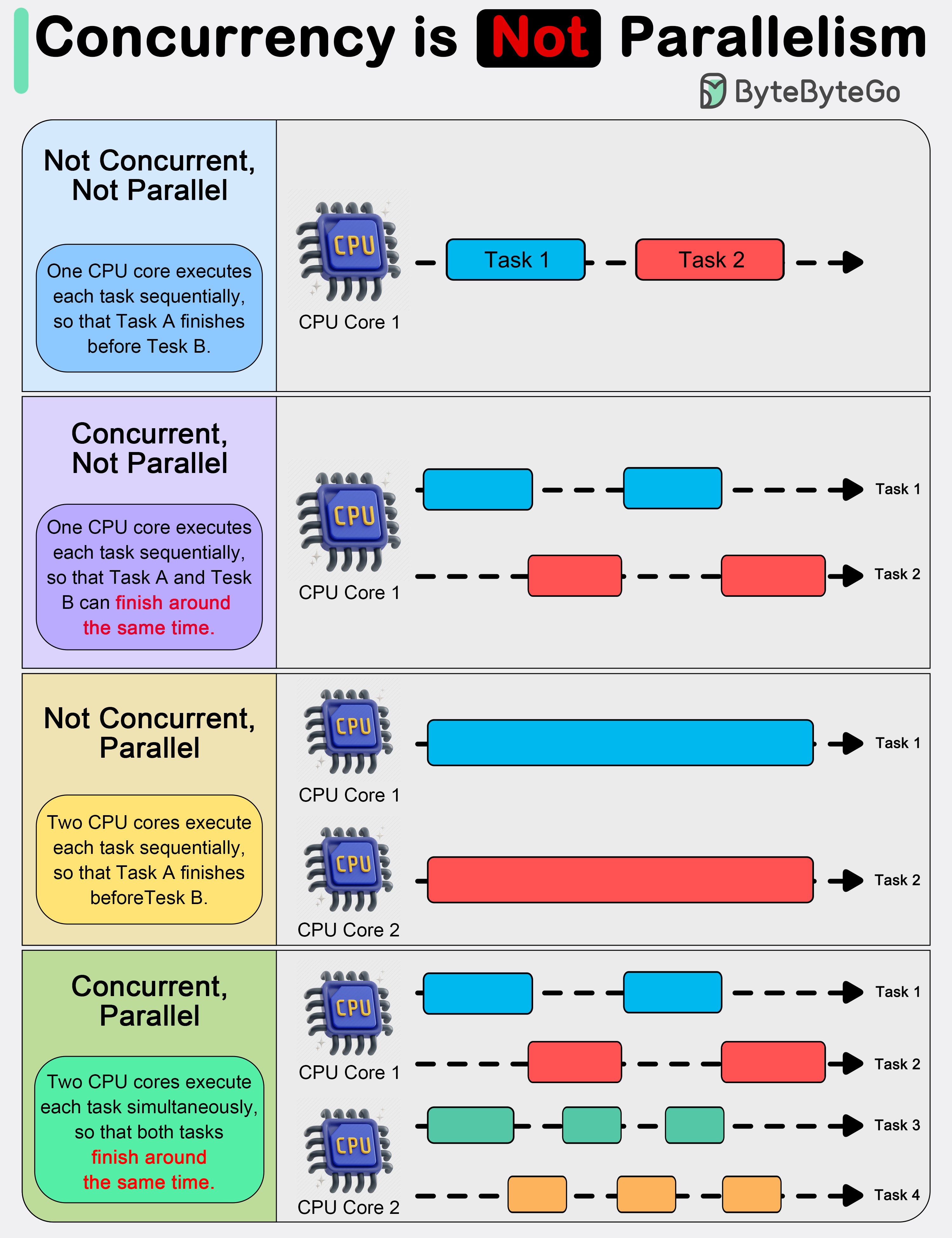

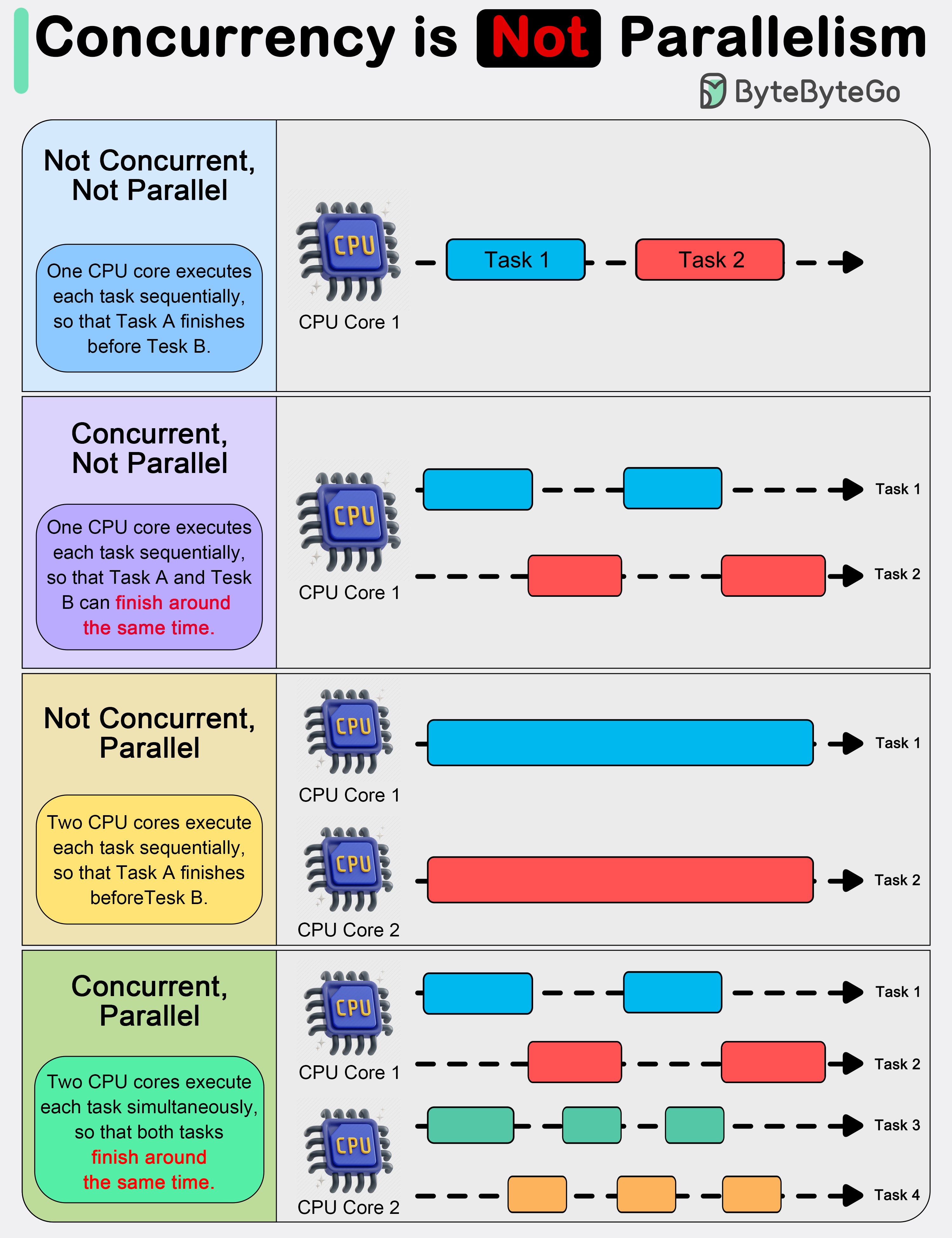

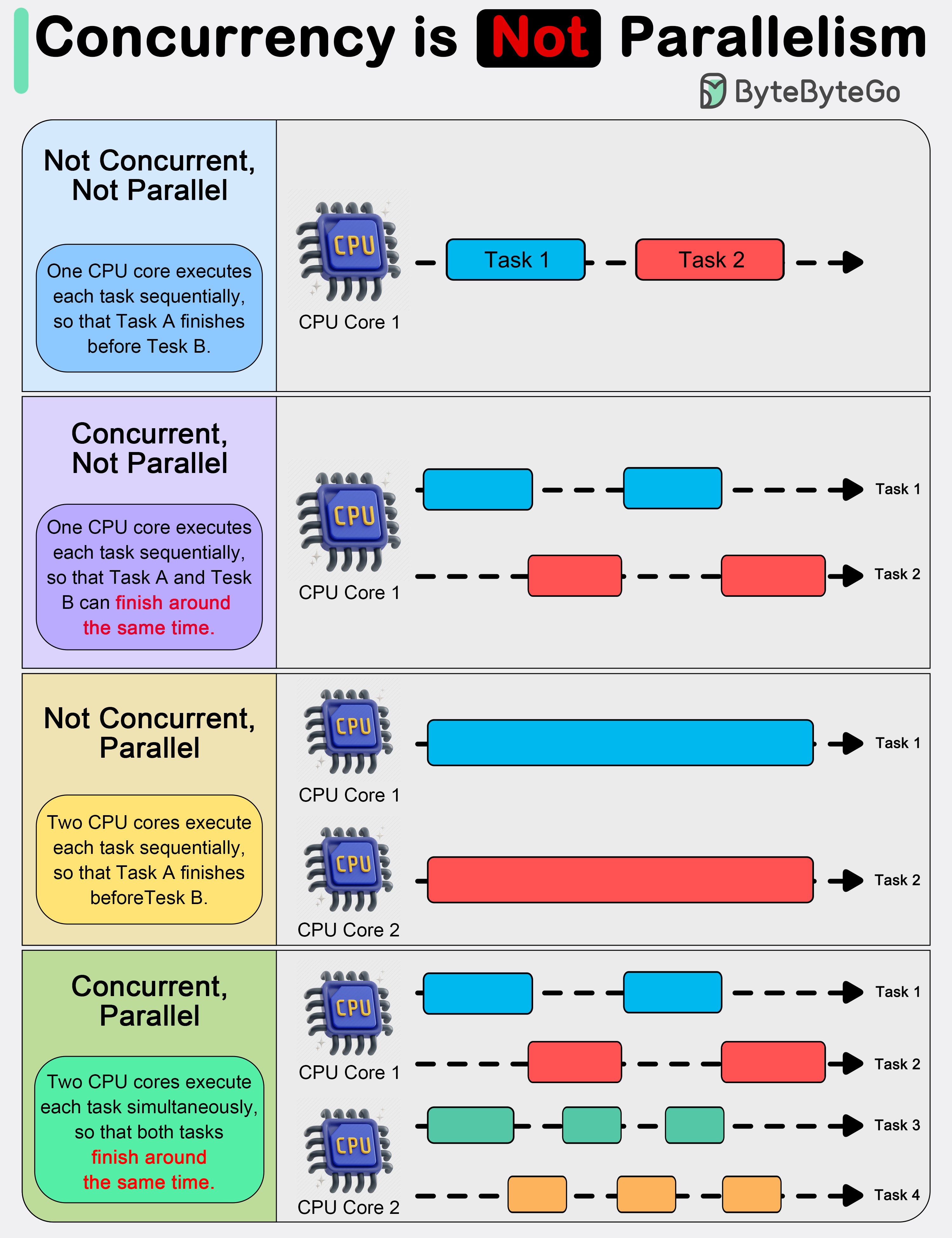

+ * [Concurrency vs Parallelism](https://bytebytego.com/guides/concurrency-is-not-parallelism)

+ * [Linux Boot Process Explained](https://bytebytego.com/guides/linux-boot-process-explained)

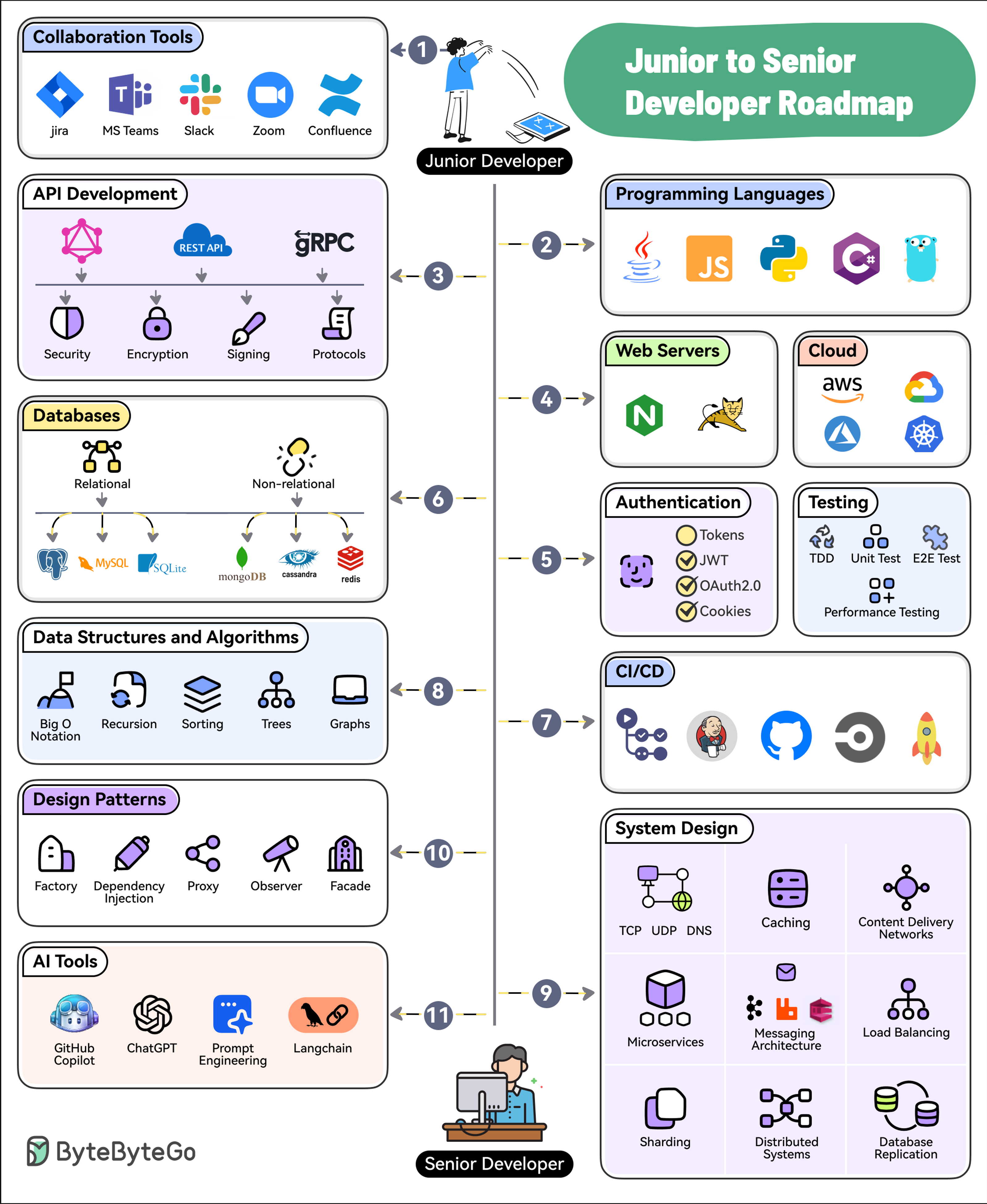

+ * [11 Steps to Go From Junior to Senior Developer](https://bytebytego.com/guides/11-steps-to-go-from-junior-to-senior-developer)

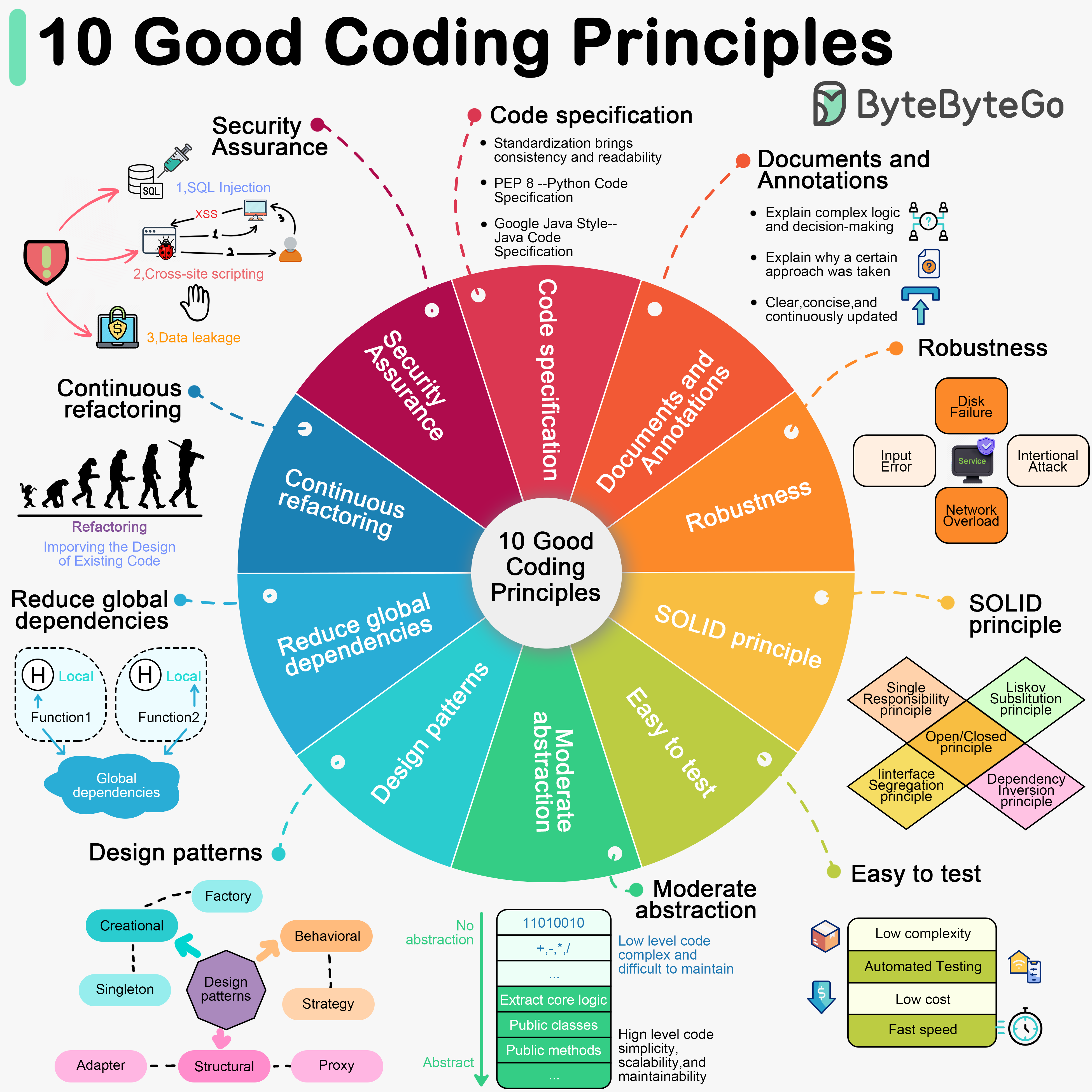

+ * [10 Good Coding Principles to Improve Code Quality](https://bytebytego.com/guides/10-good-coding-principles-to-improve-code-quality)

+* [Cloud & Distributed Systems](https://bytebytego.com/guides/cloud-distributed-systems)

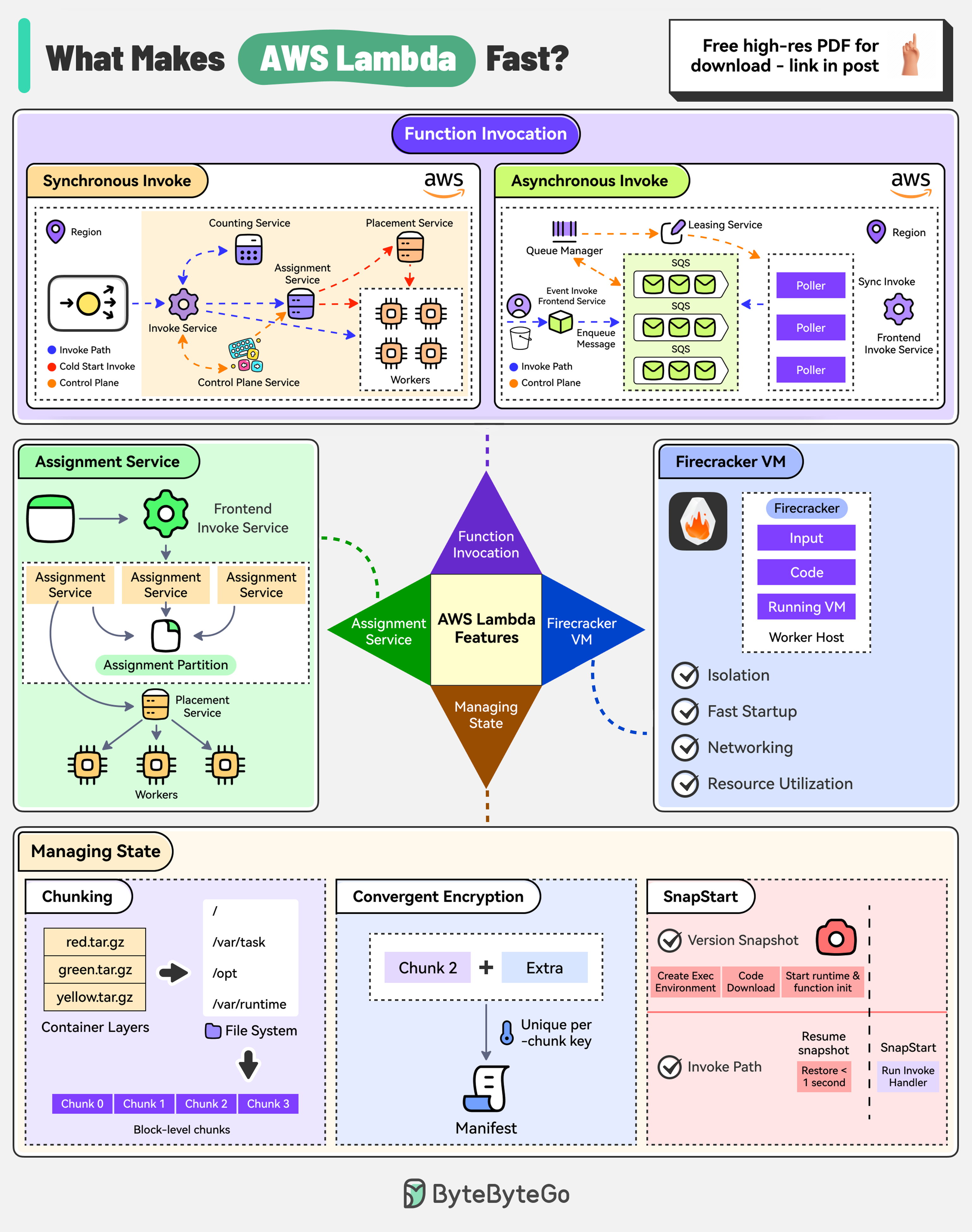

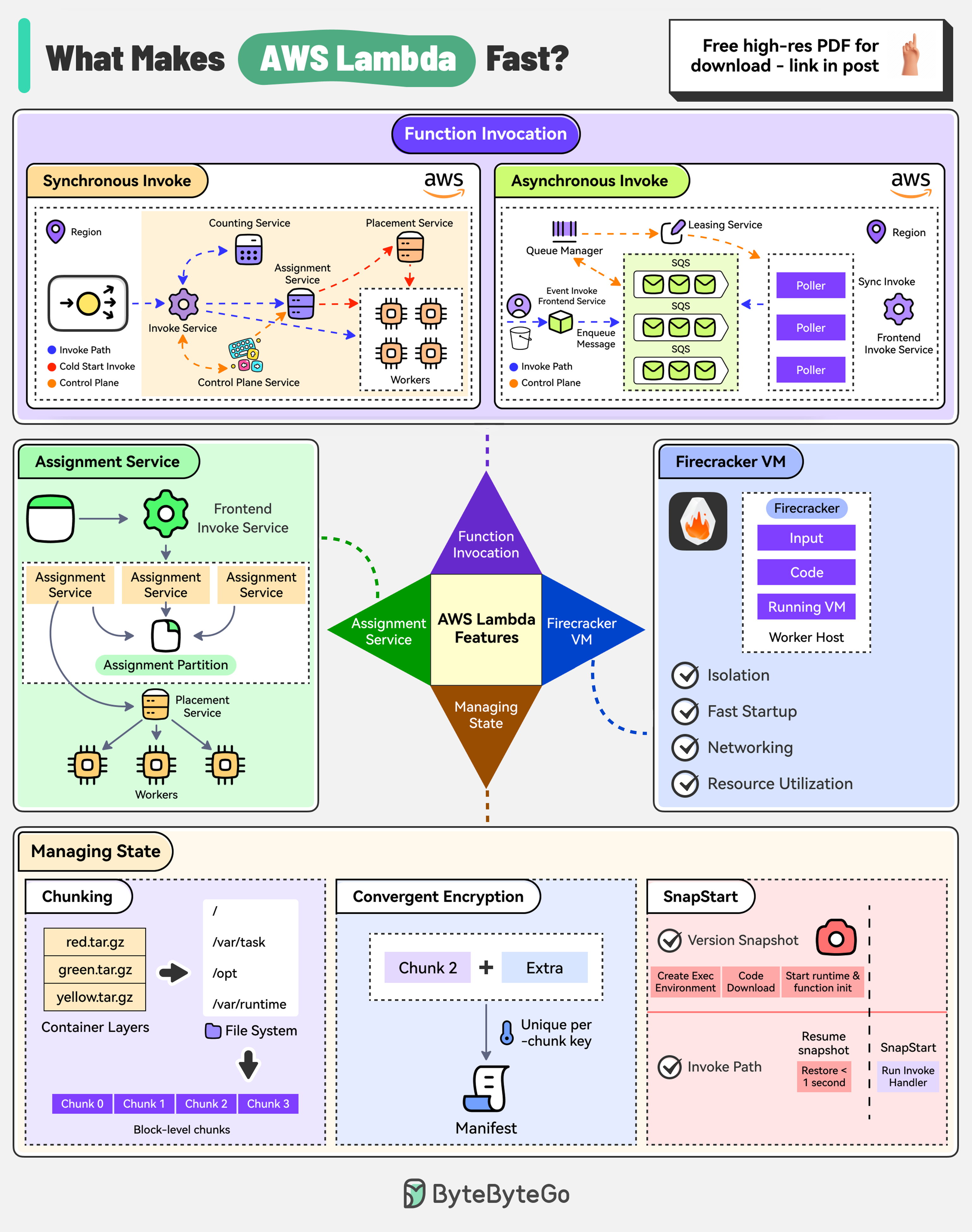

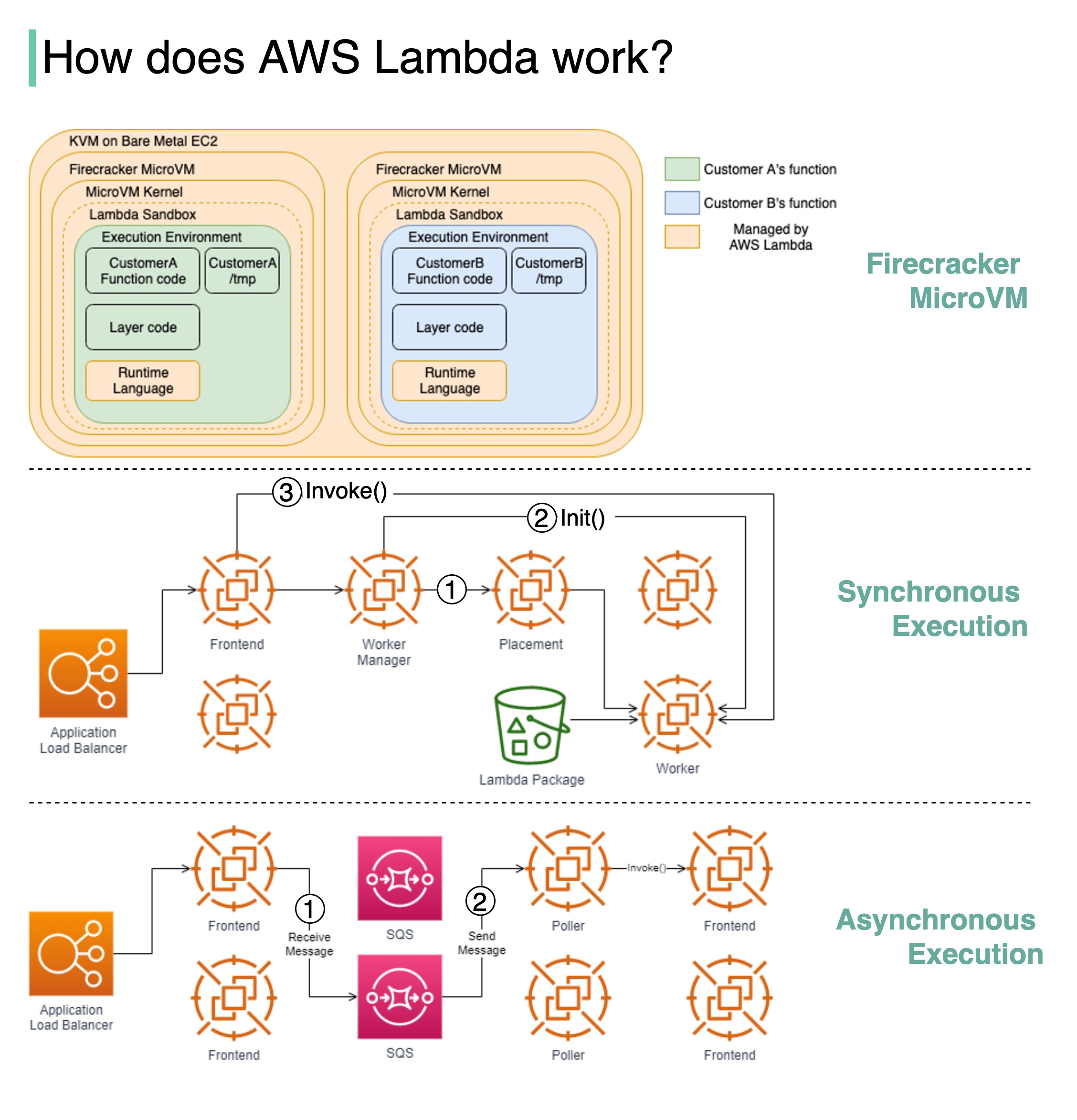

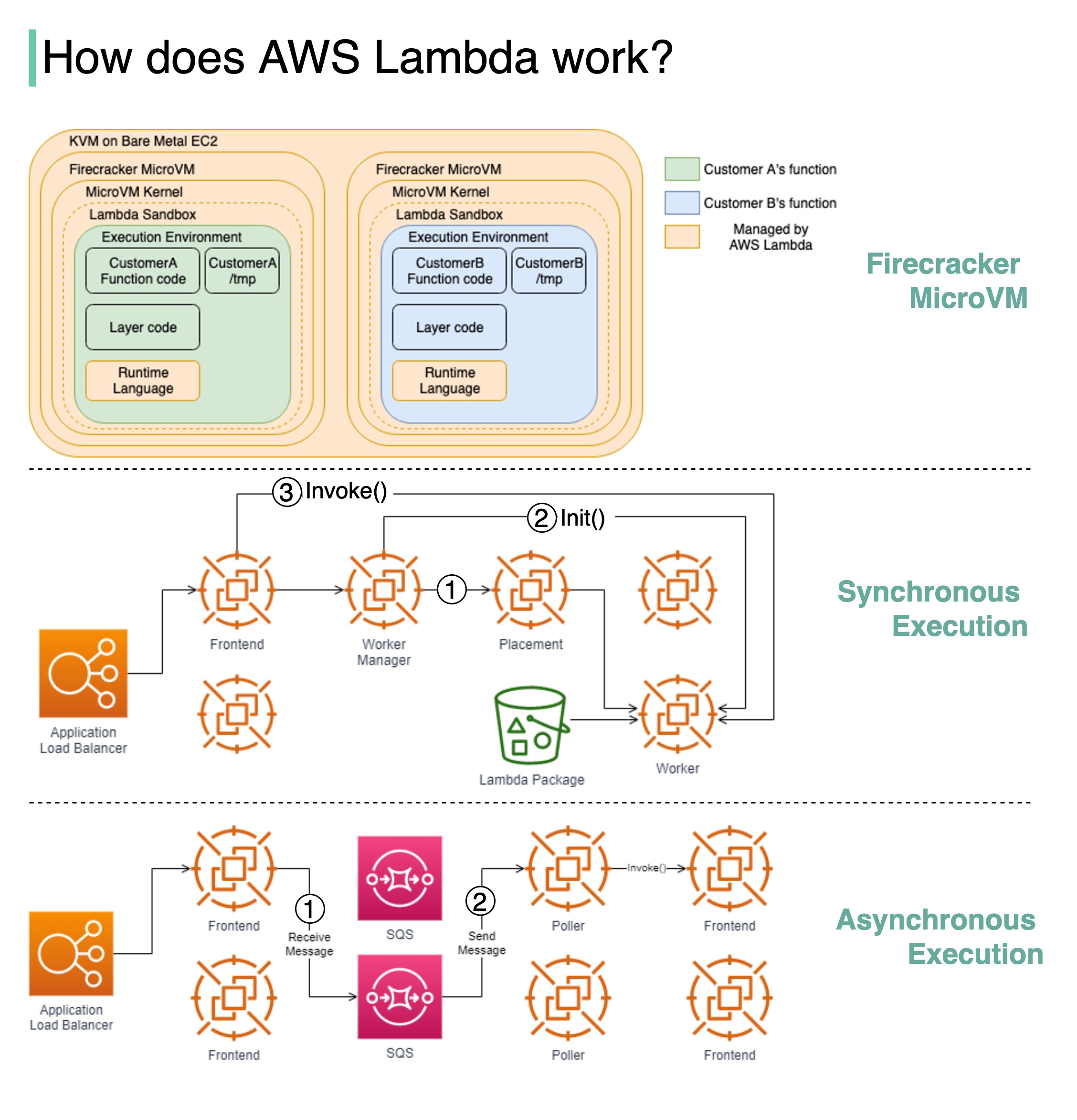

+ * [How AWS Lambda Works Behind the Scenes](https://bytebytego.com/guides/how-does-aws-lambda-work-behind-the-scenes)

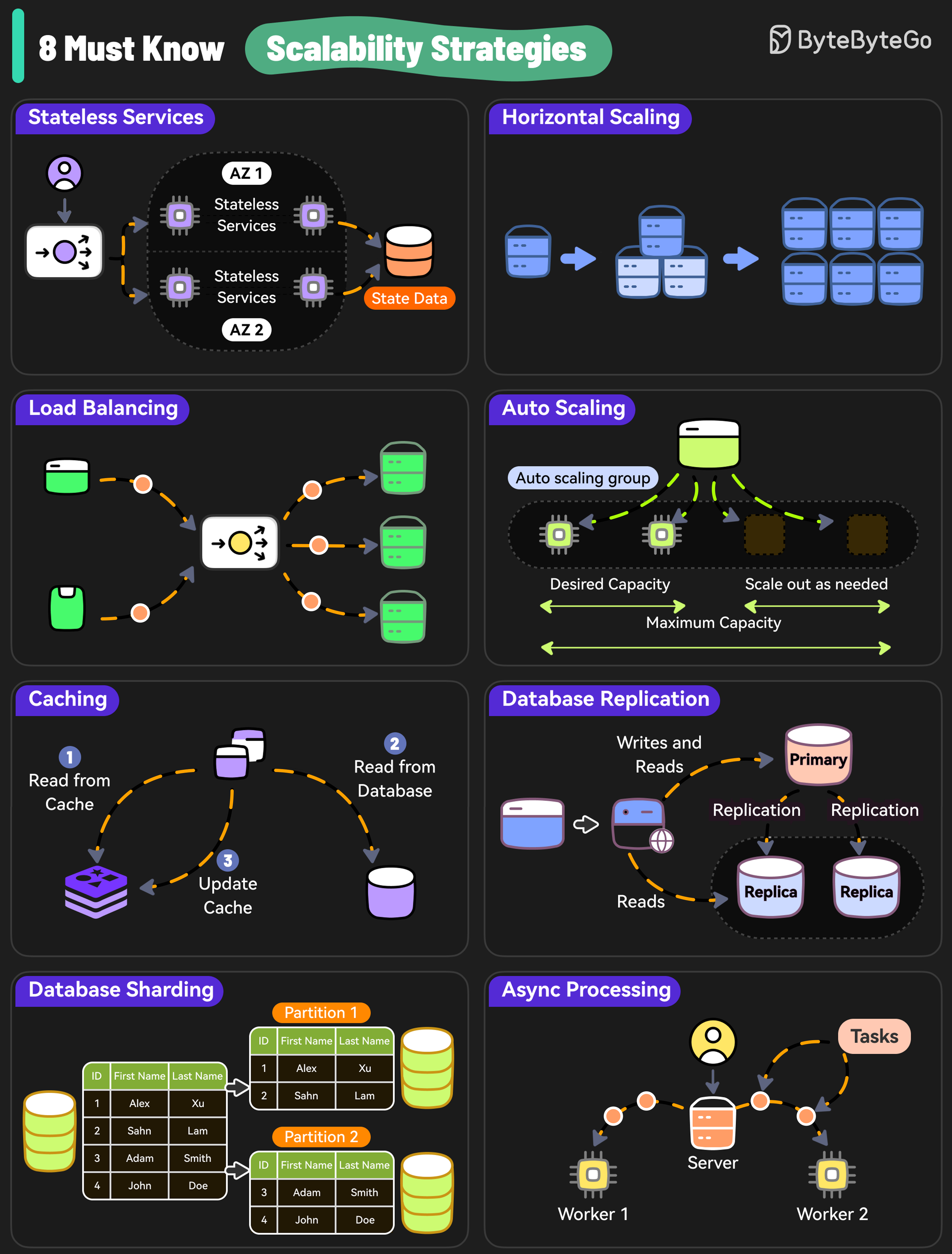

+ * [8 Must-Know Scalability Strategies](https://bytebytego.com/guides/8-must-know-scalability-strategies)

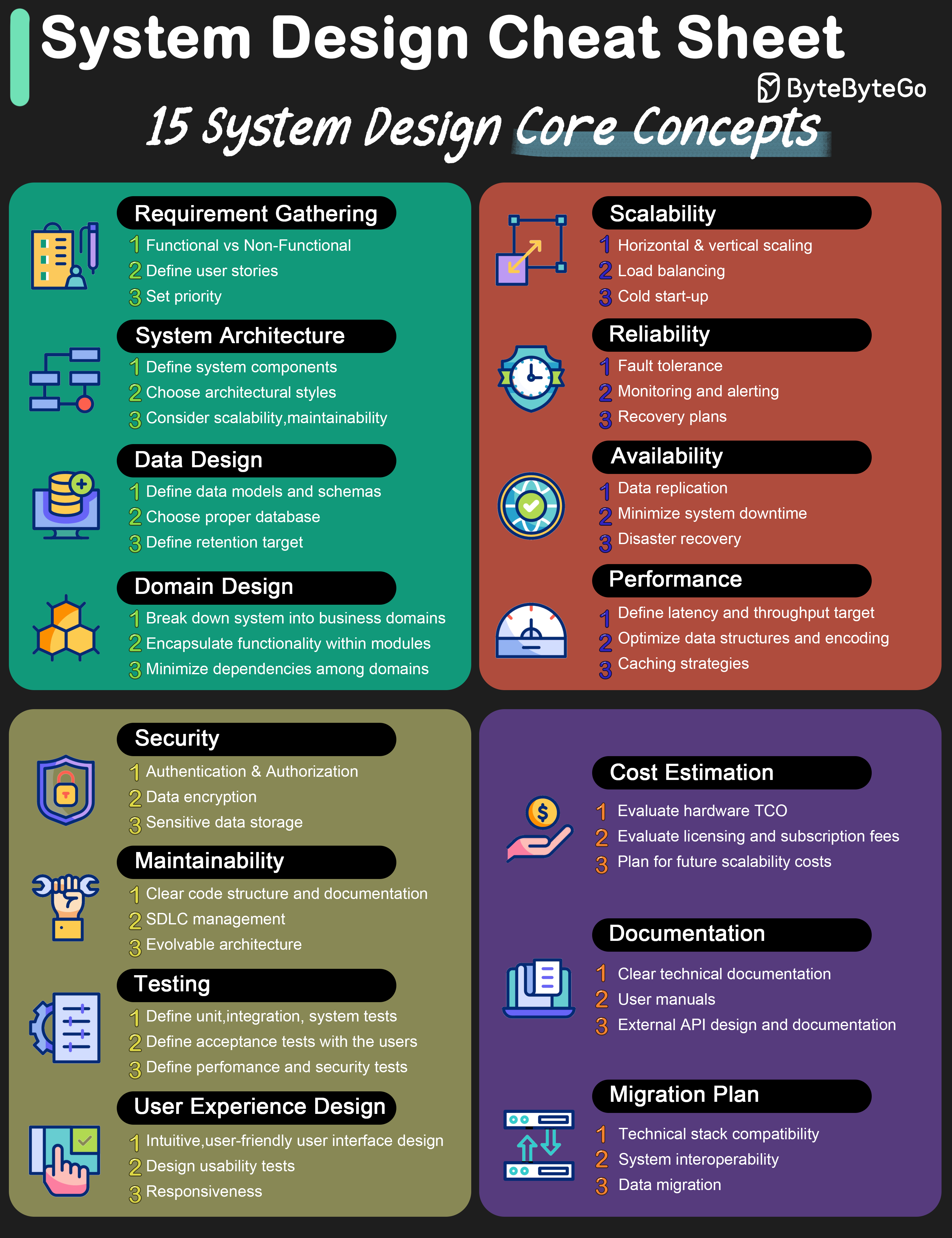

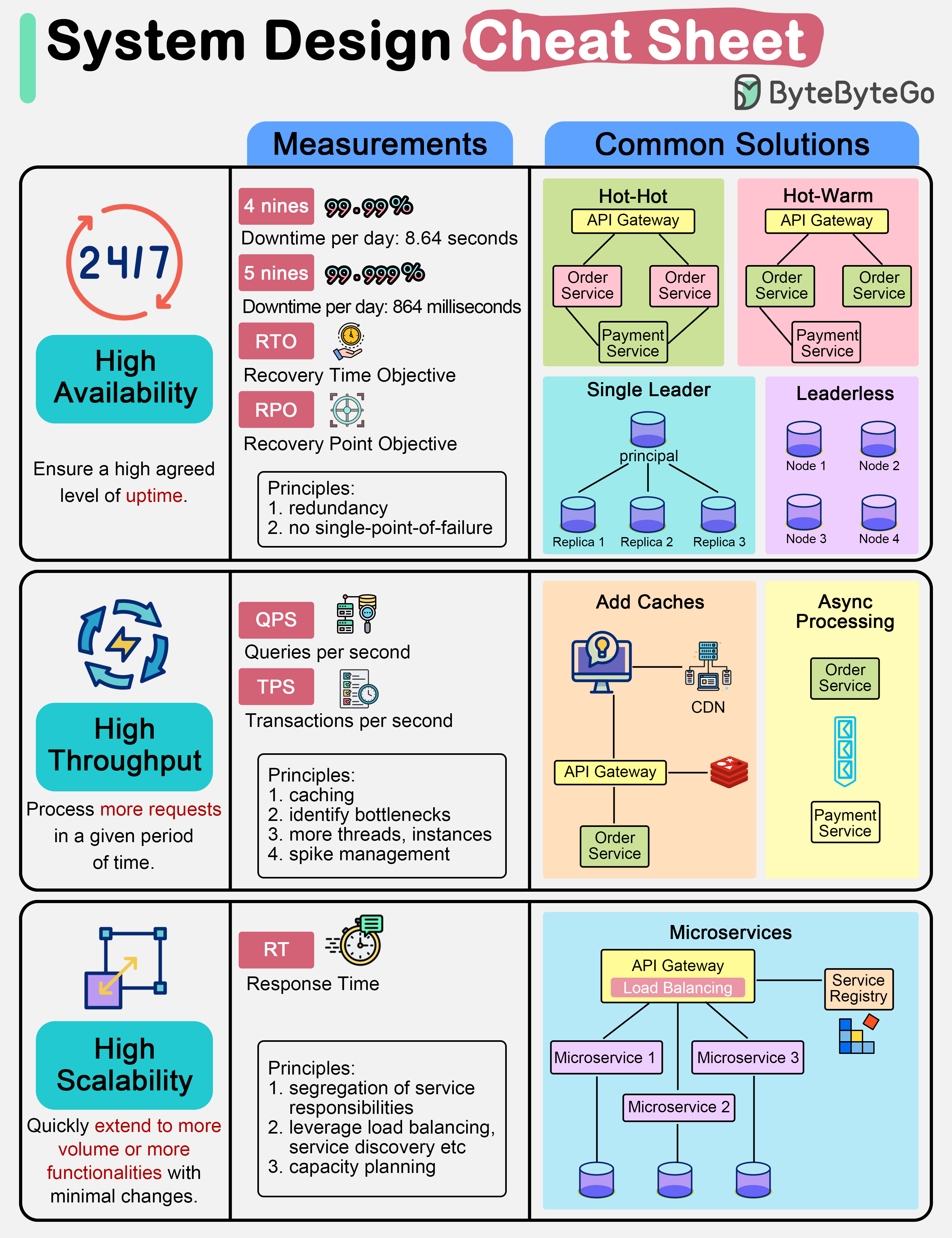

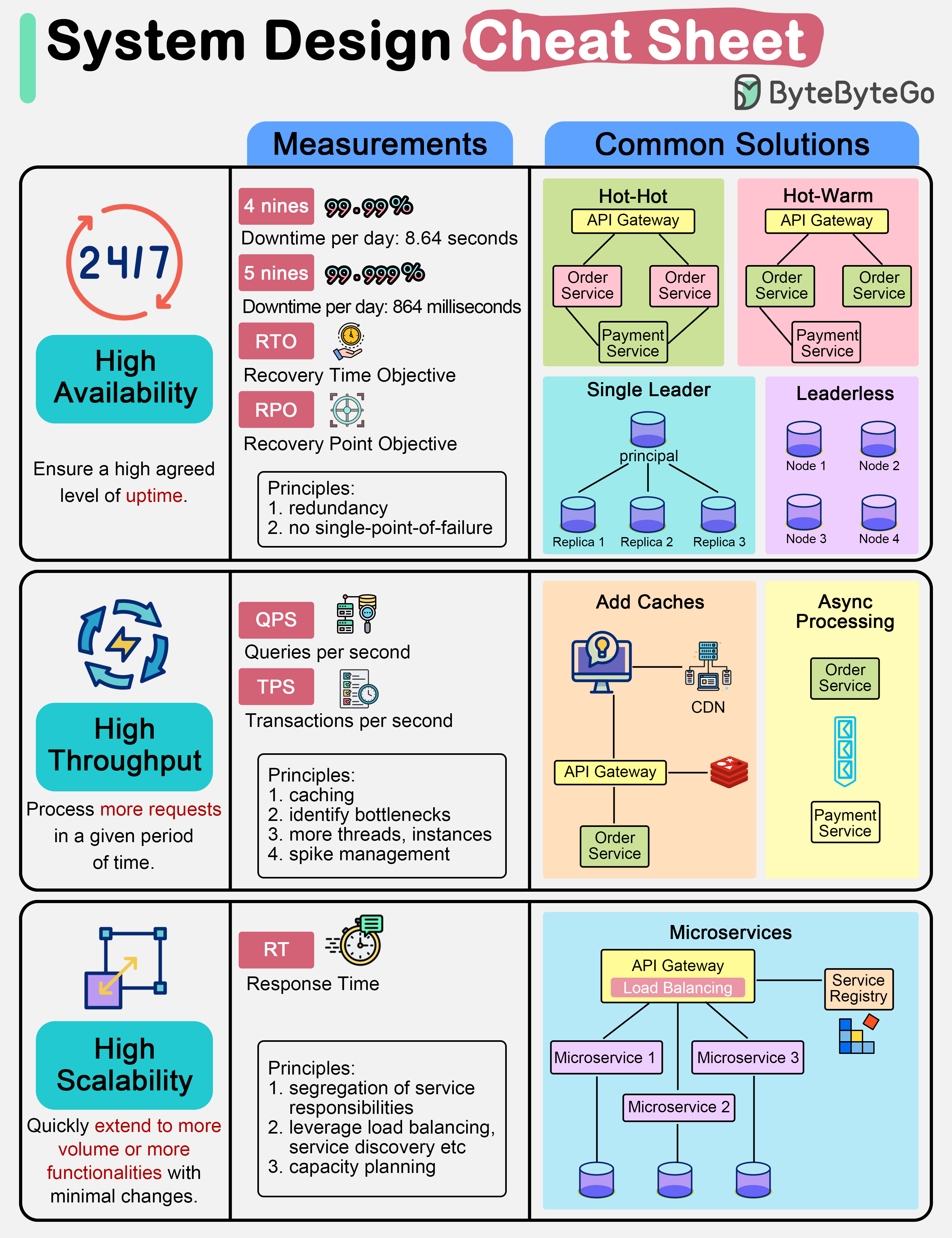

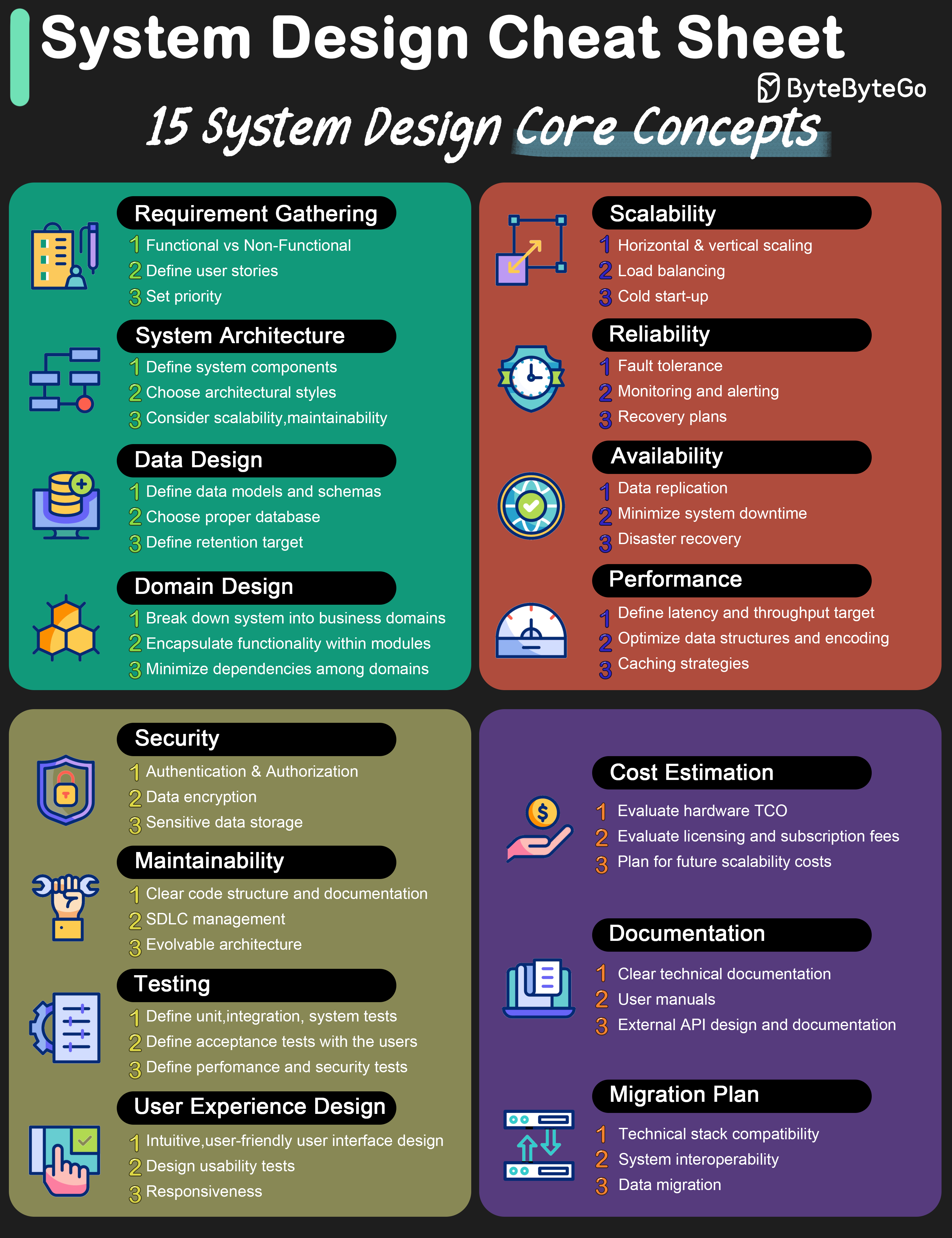

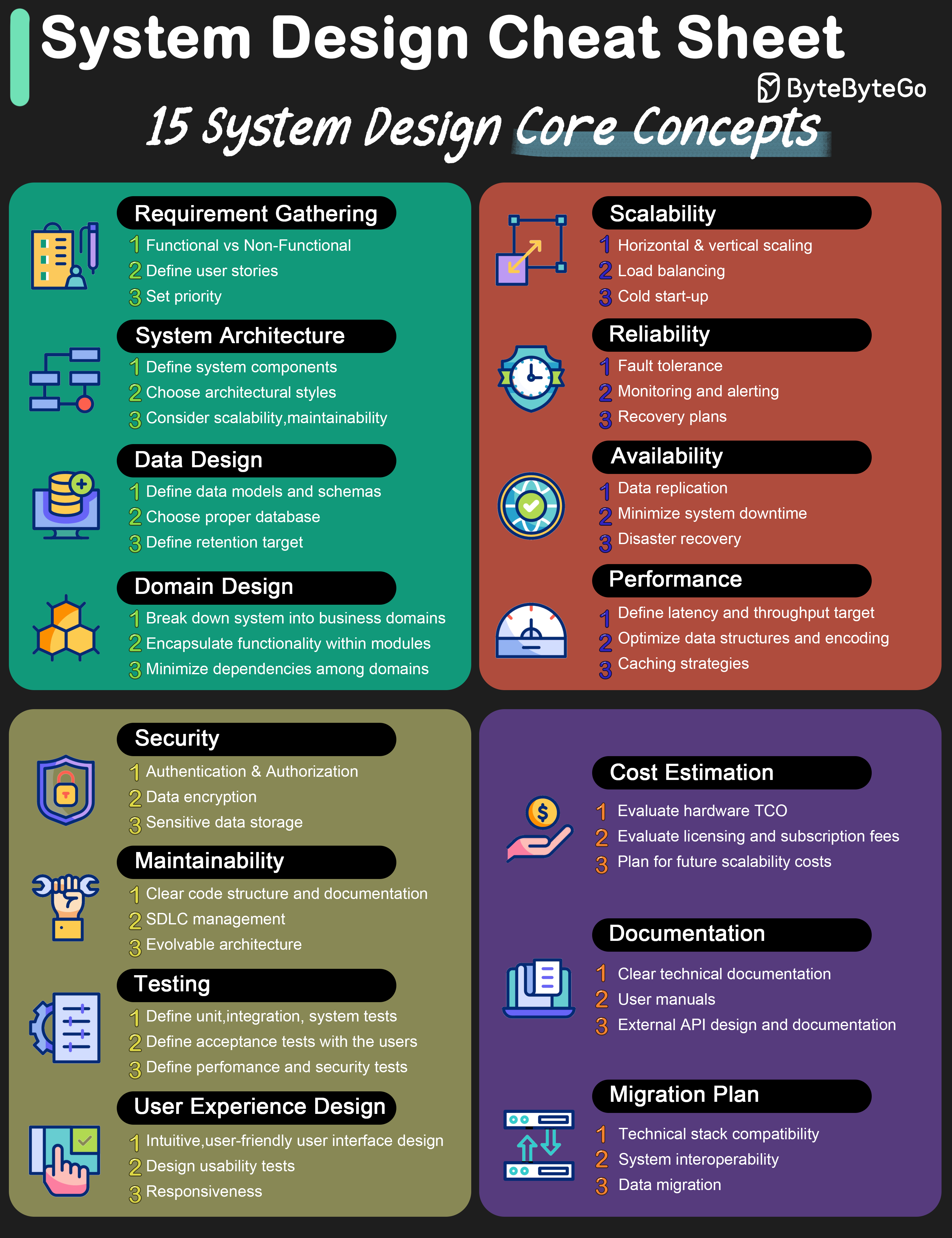

+ * [System Design Cheat Sheet](https://bytebytego.com/guides/system-design-cheat-sheet)

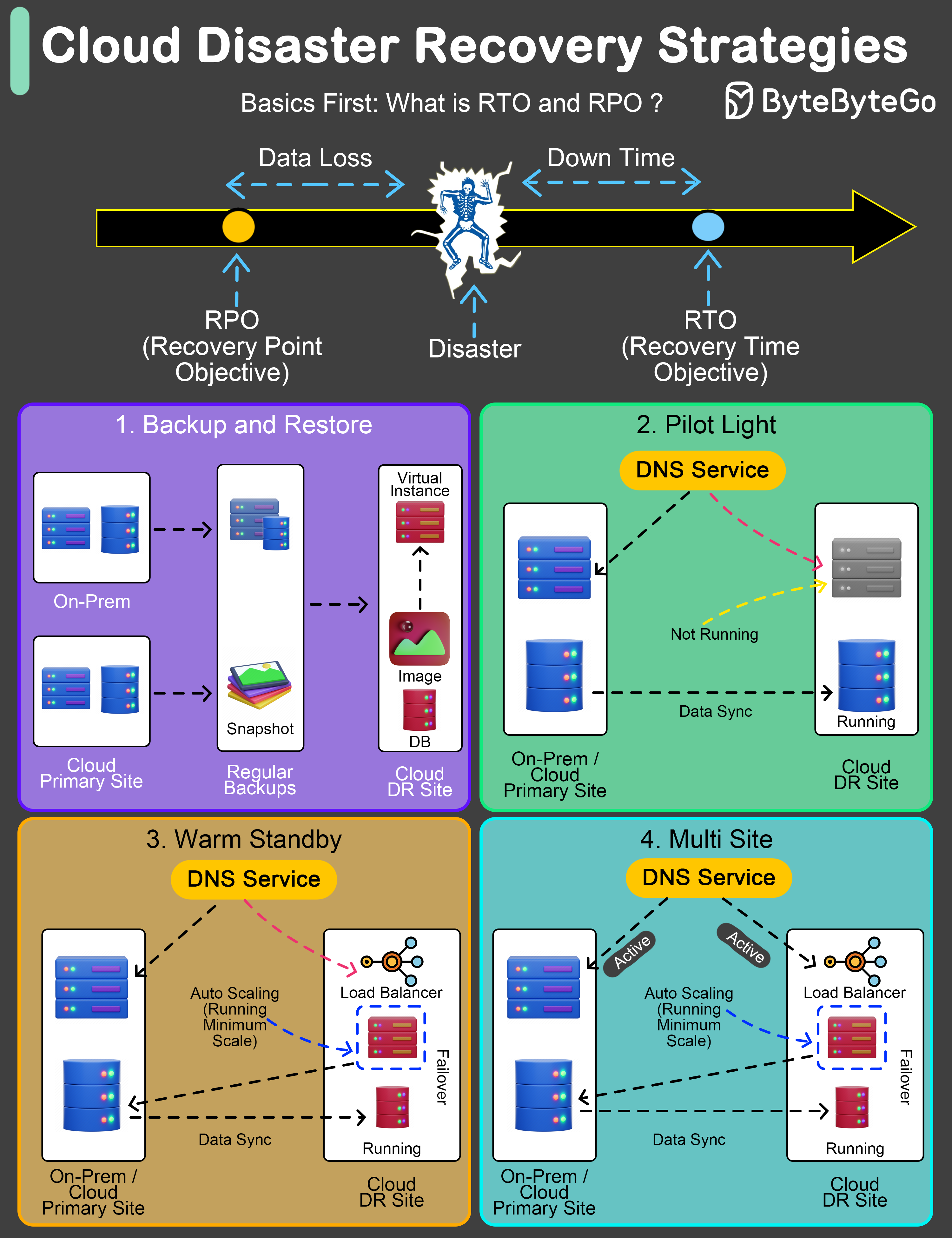

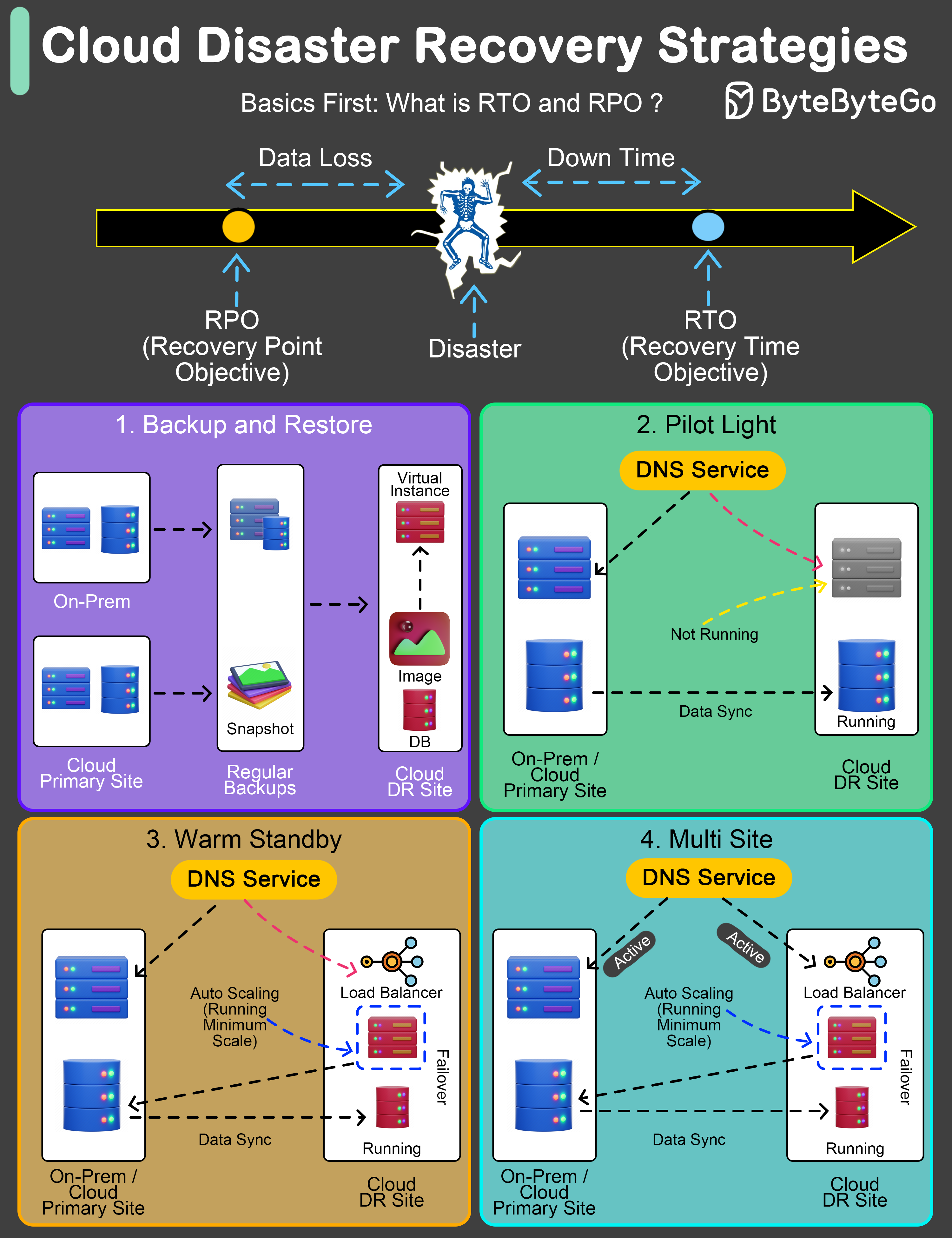

+ * [Cloud Disaster Recovery Strategies](https://bytebytego.com/guides/cloud-disaster-recovery-strategies)

+ * [Vertical vs Horizontal Partitioning](https://bytebytego.com/guides/vertical-partitioning-vs-horizontal-partitioning)

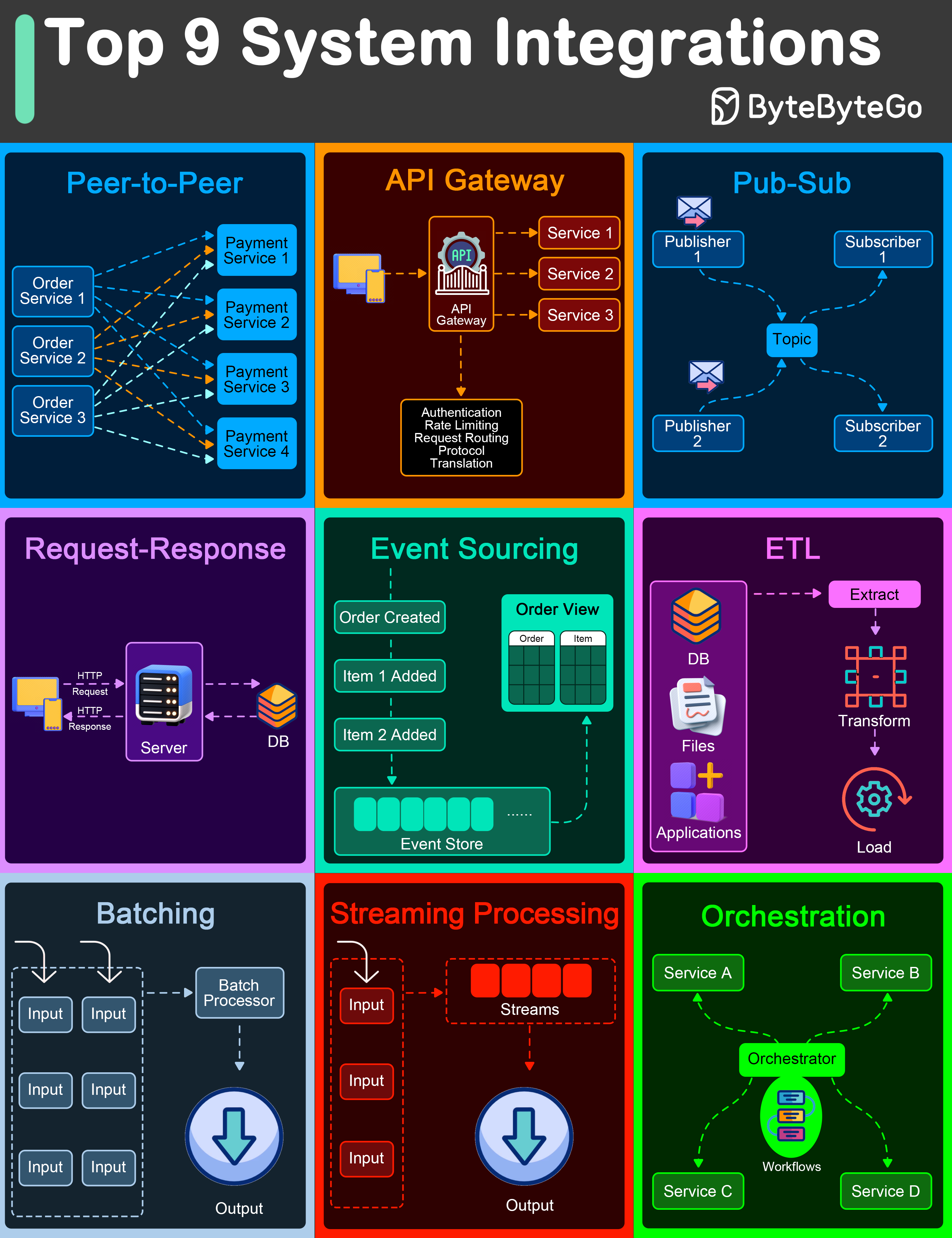

+ * [Top 9 Architectural Patterns for Data and Communication Flow](https://bytebytego.com/guides/top-9-architectural-patterns-for-data-and-communication-flow)

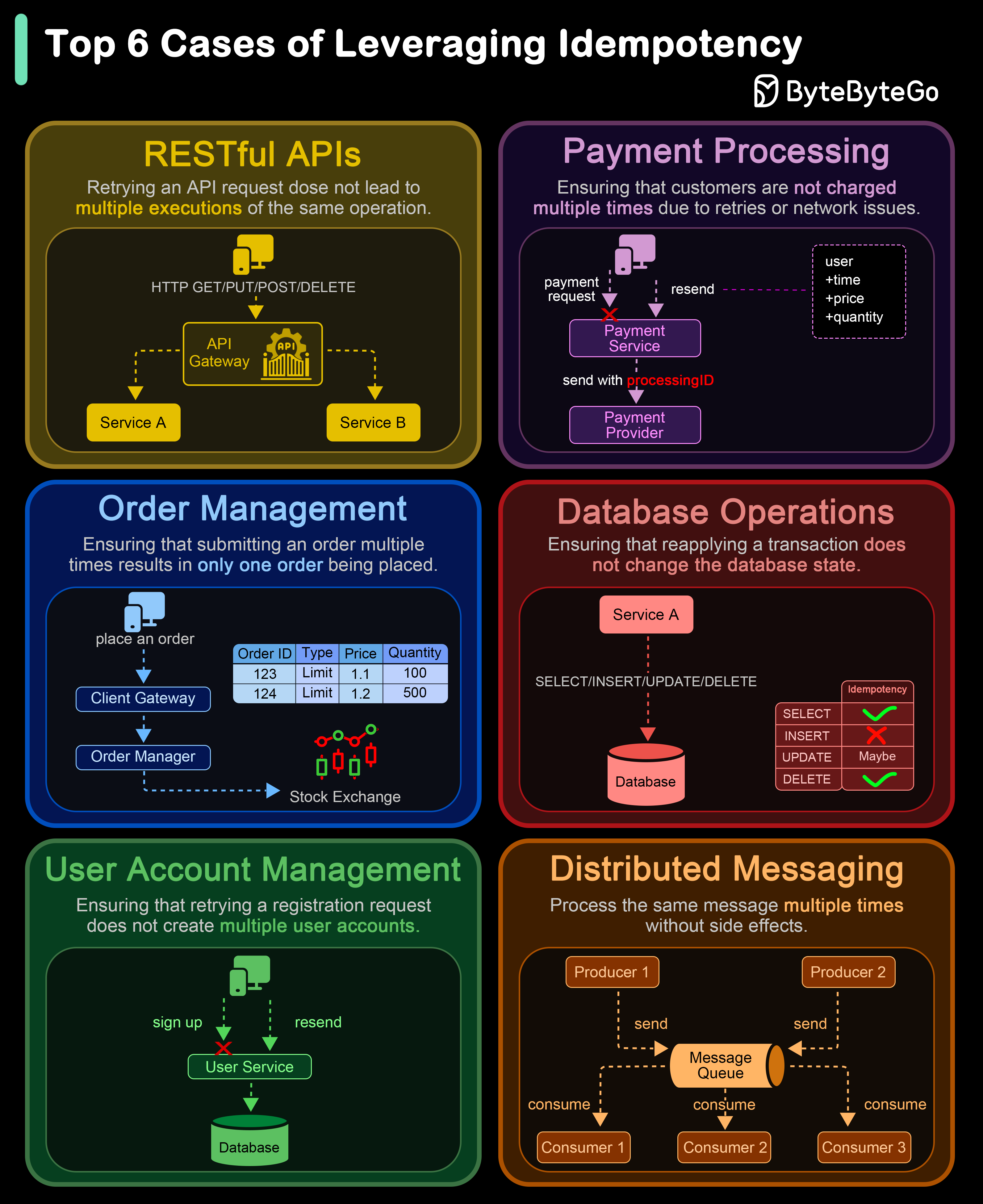

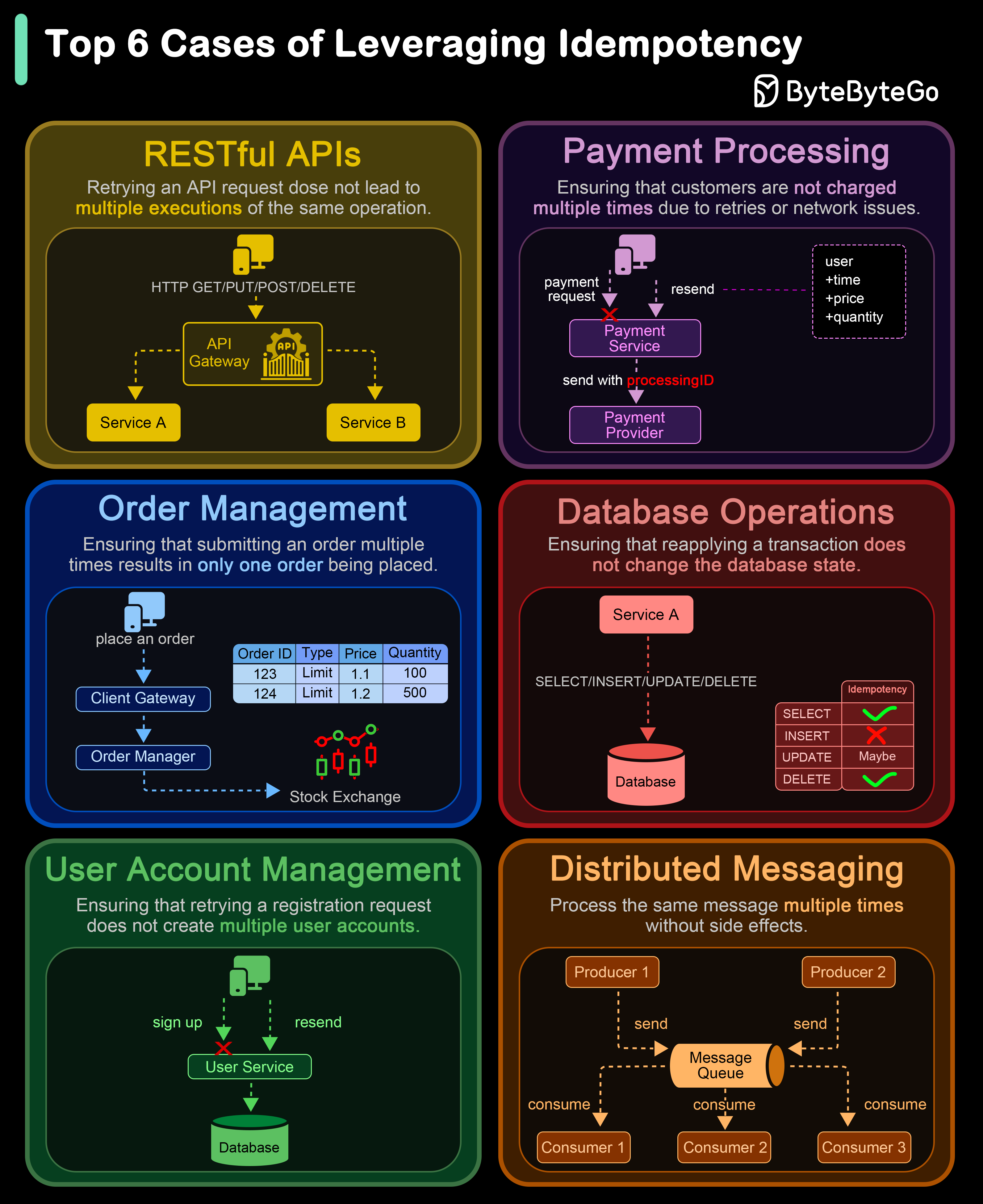

+ * [Top 6 Cases to Apply Idempotency](https://bytebytego.com/guides/top-6-cases-to-apply-idempotency)

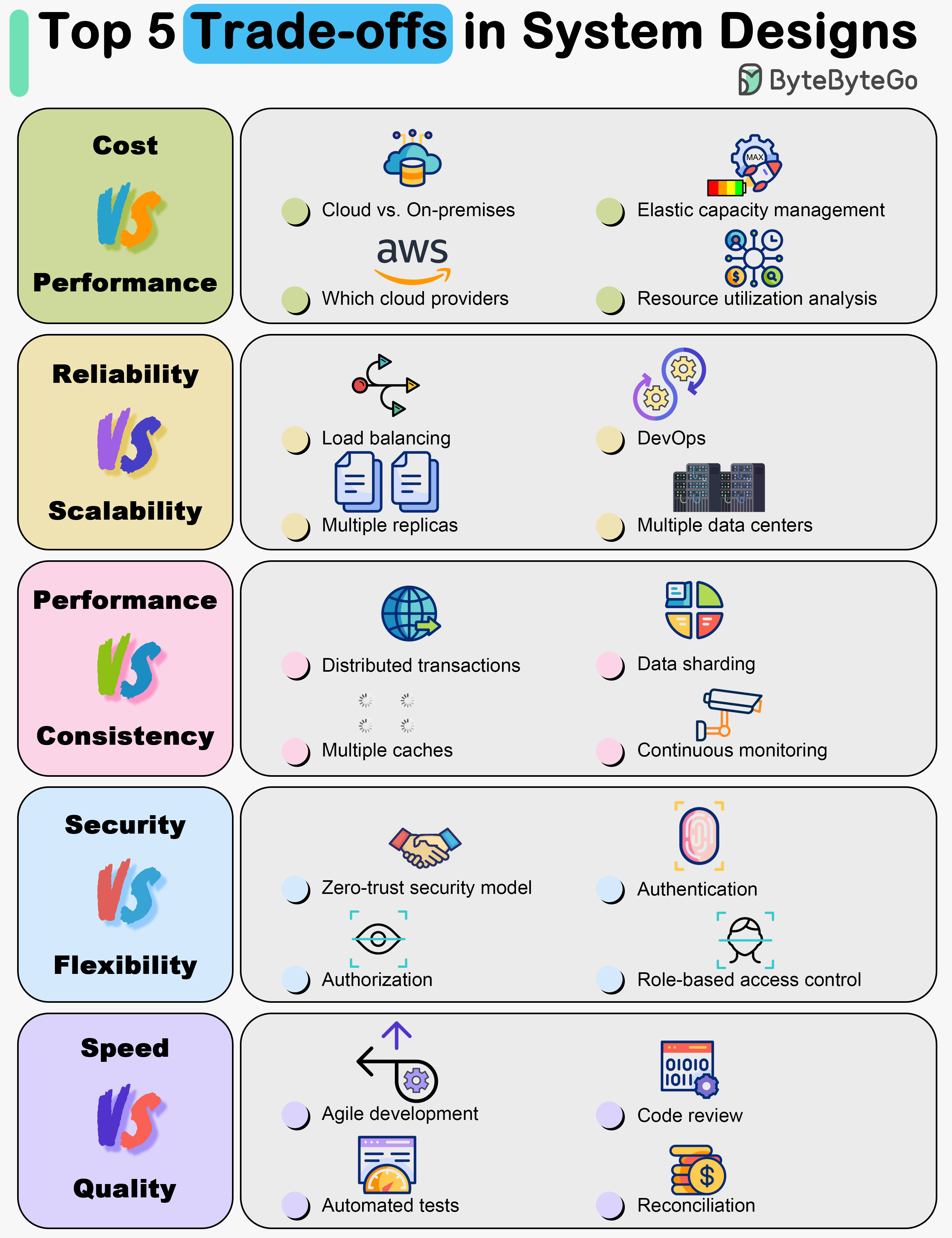

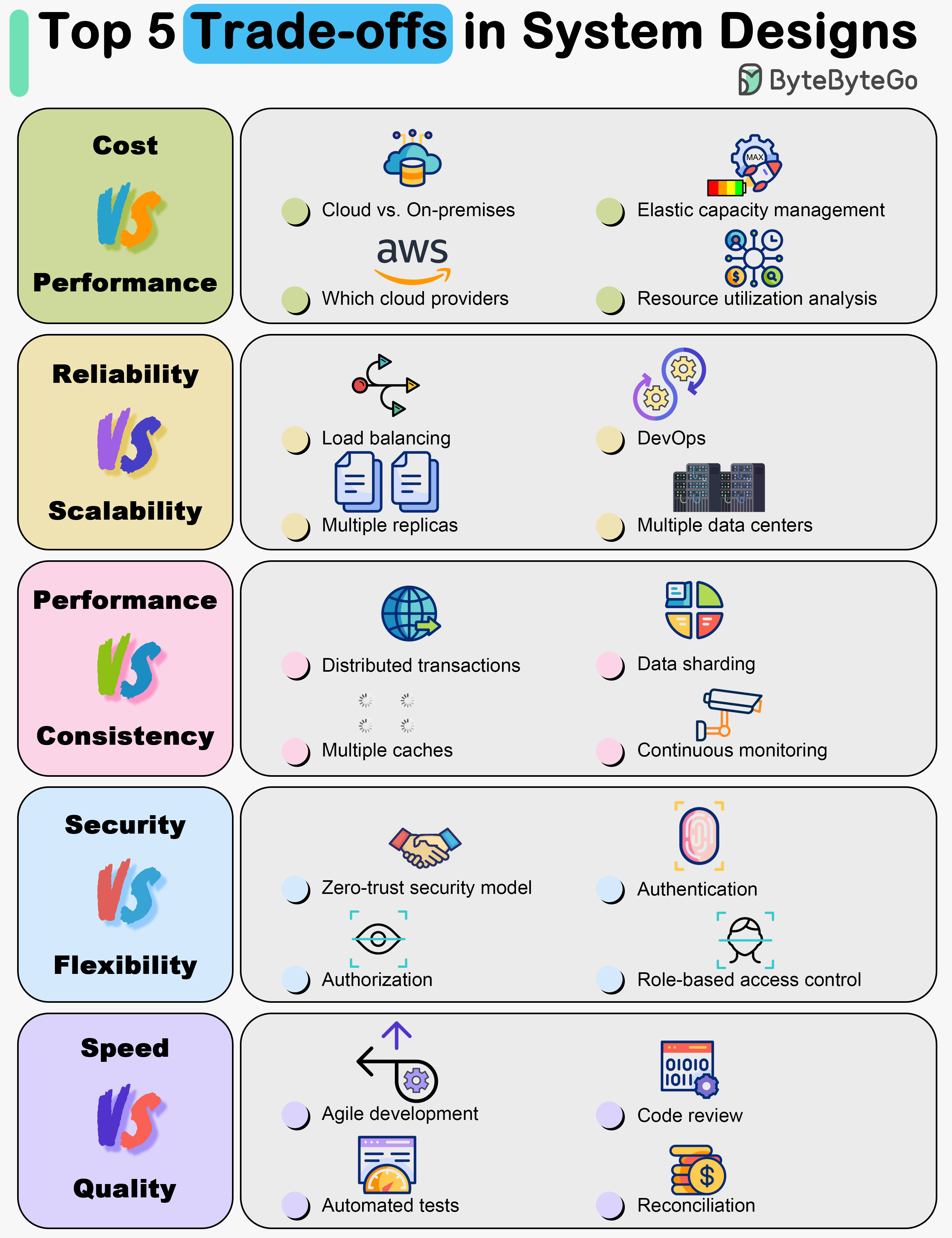

+ * [Top 5 Trade-offs in System Designs](https://bytebytego.com/guides/top-5-trade-offs-in-system-designs)

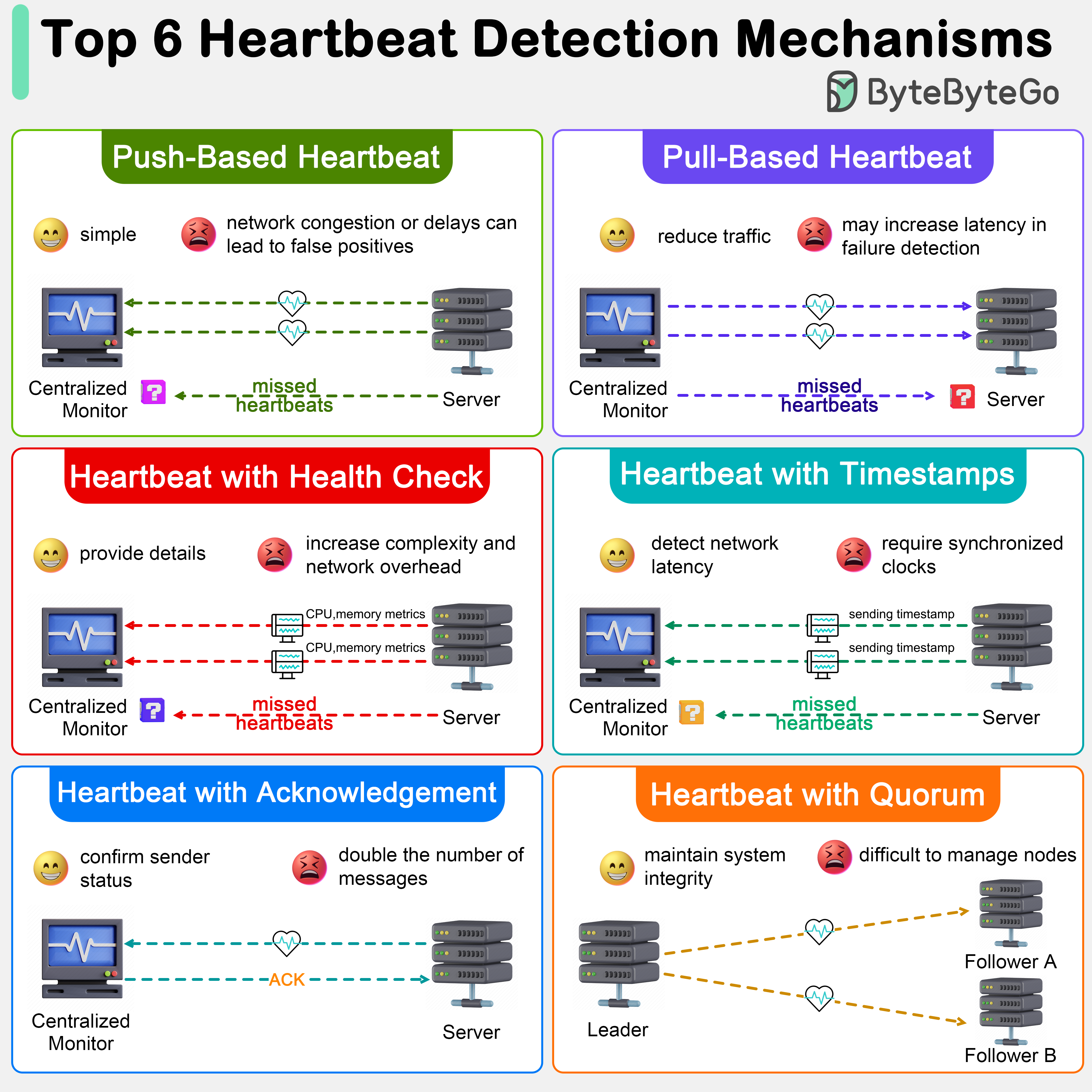

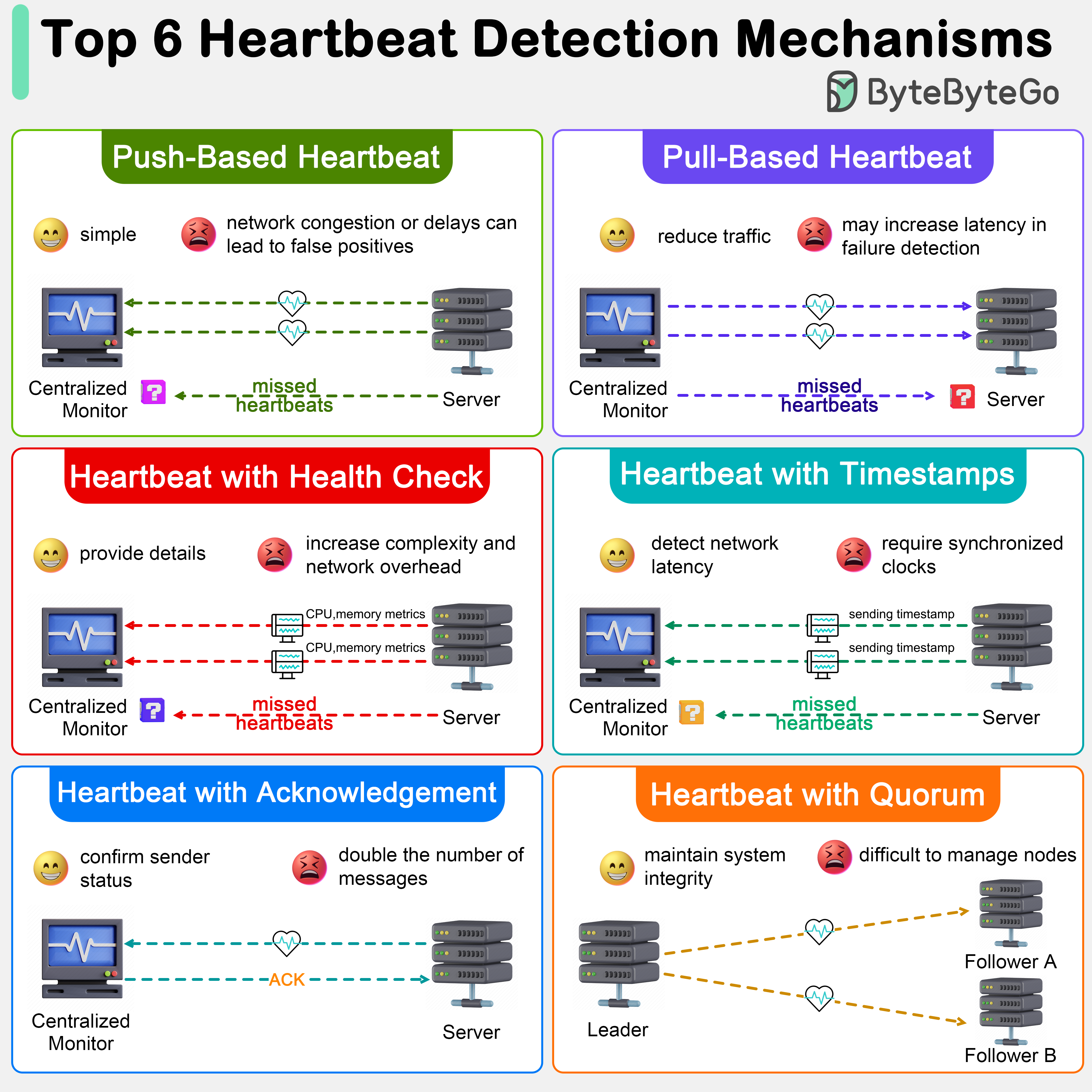

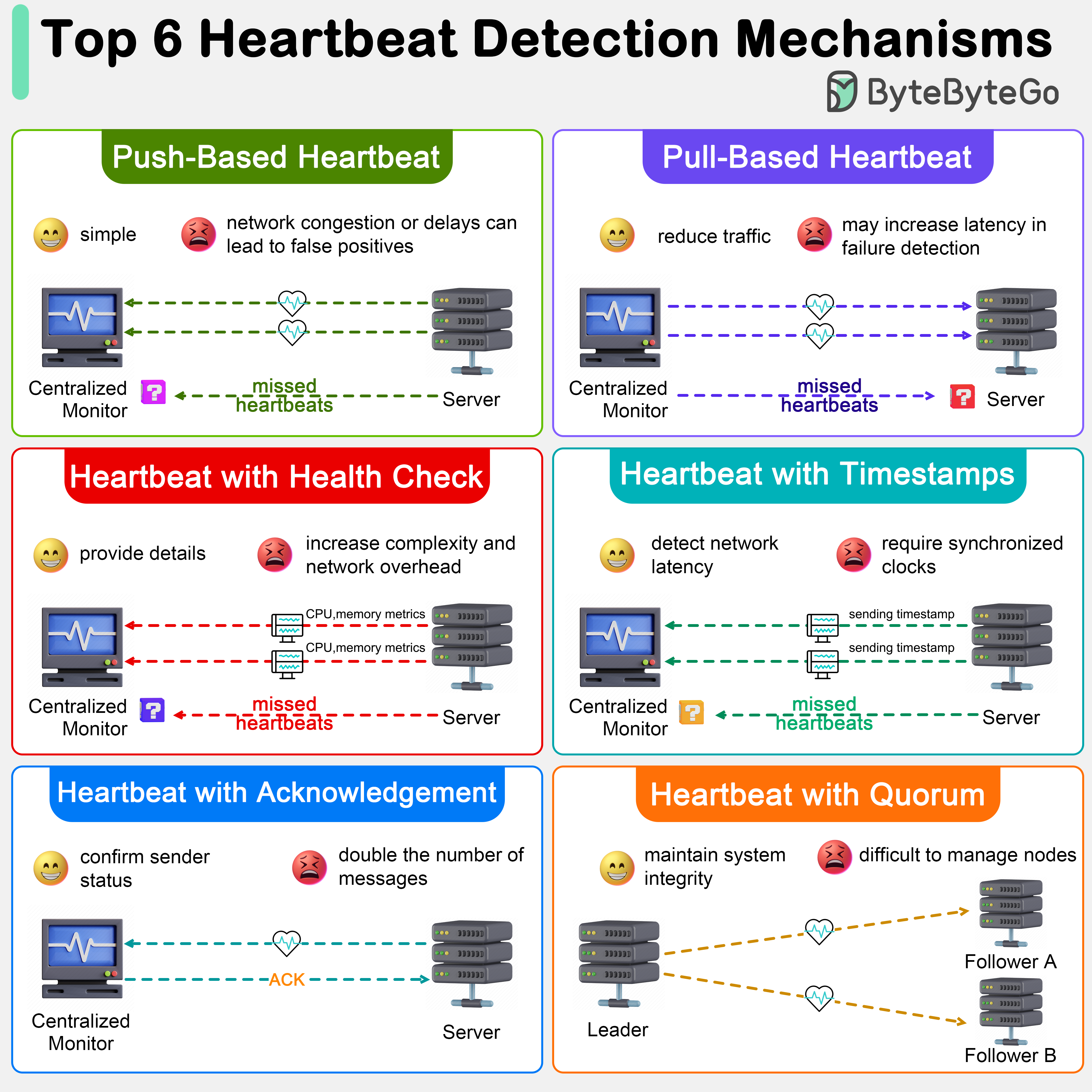

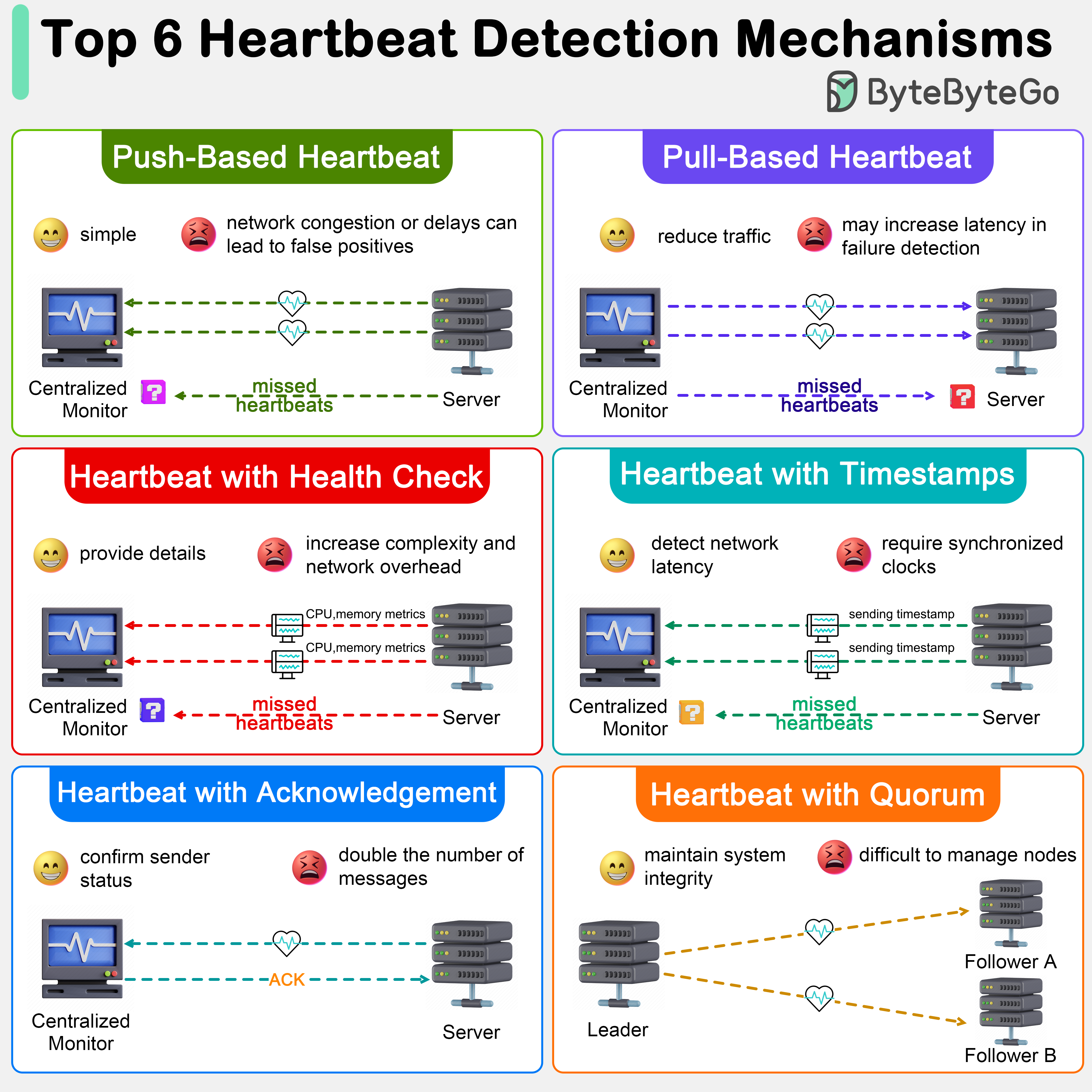

+ * [How to Detect Node Failures in Distributed Systems](https://bytebytego.com/guides/how-do-we-detect-node-failures-in-distributed-systems)

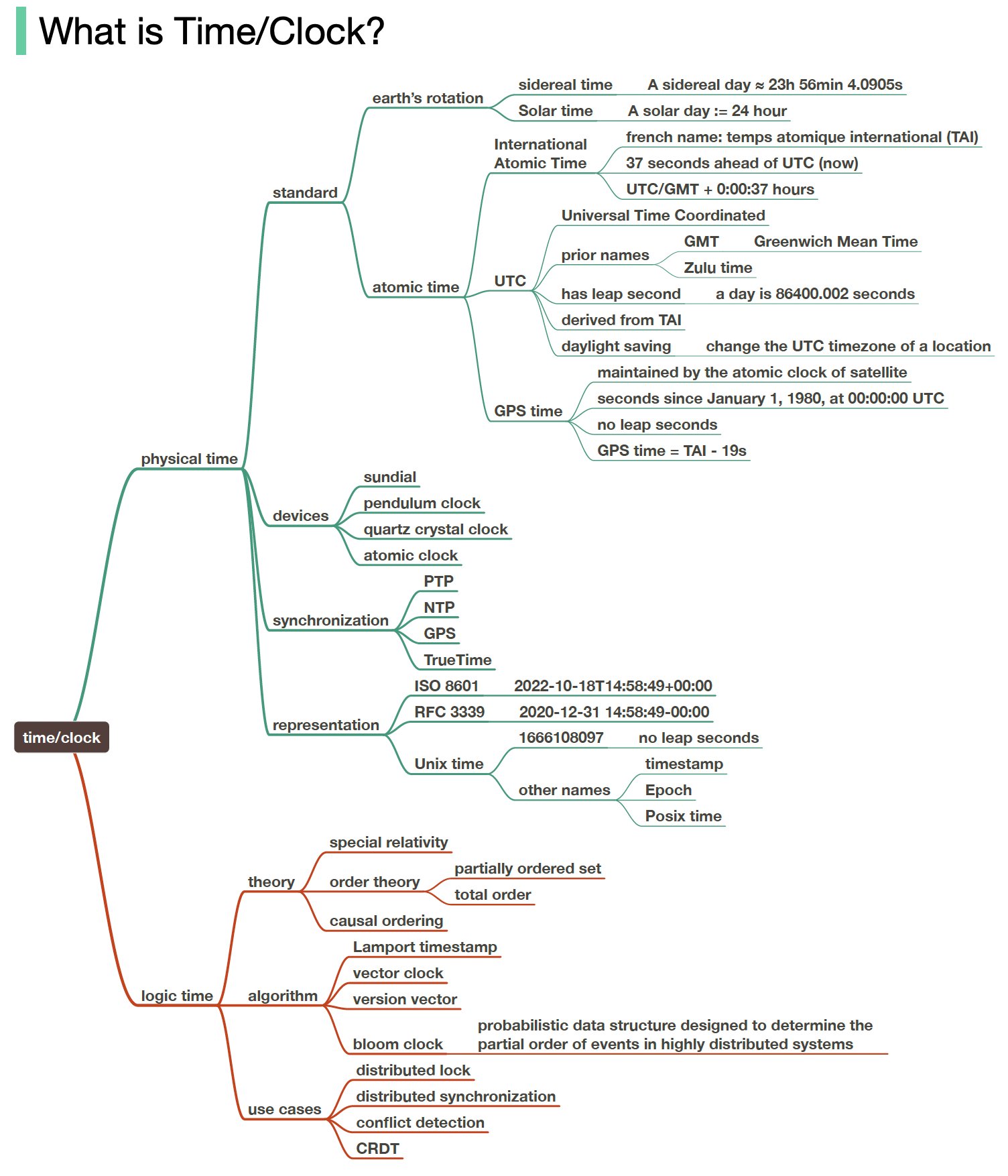

+ * [Why Meta, Google, and Amazon Stop Using Leap Seconds](https://bytebytego.com/guides/do-you-know-why-meta-google-and-amazon-all-stop-using-leap-seconds)

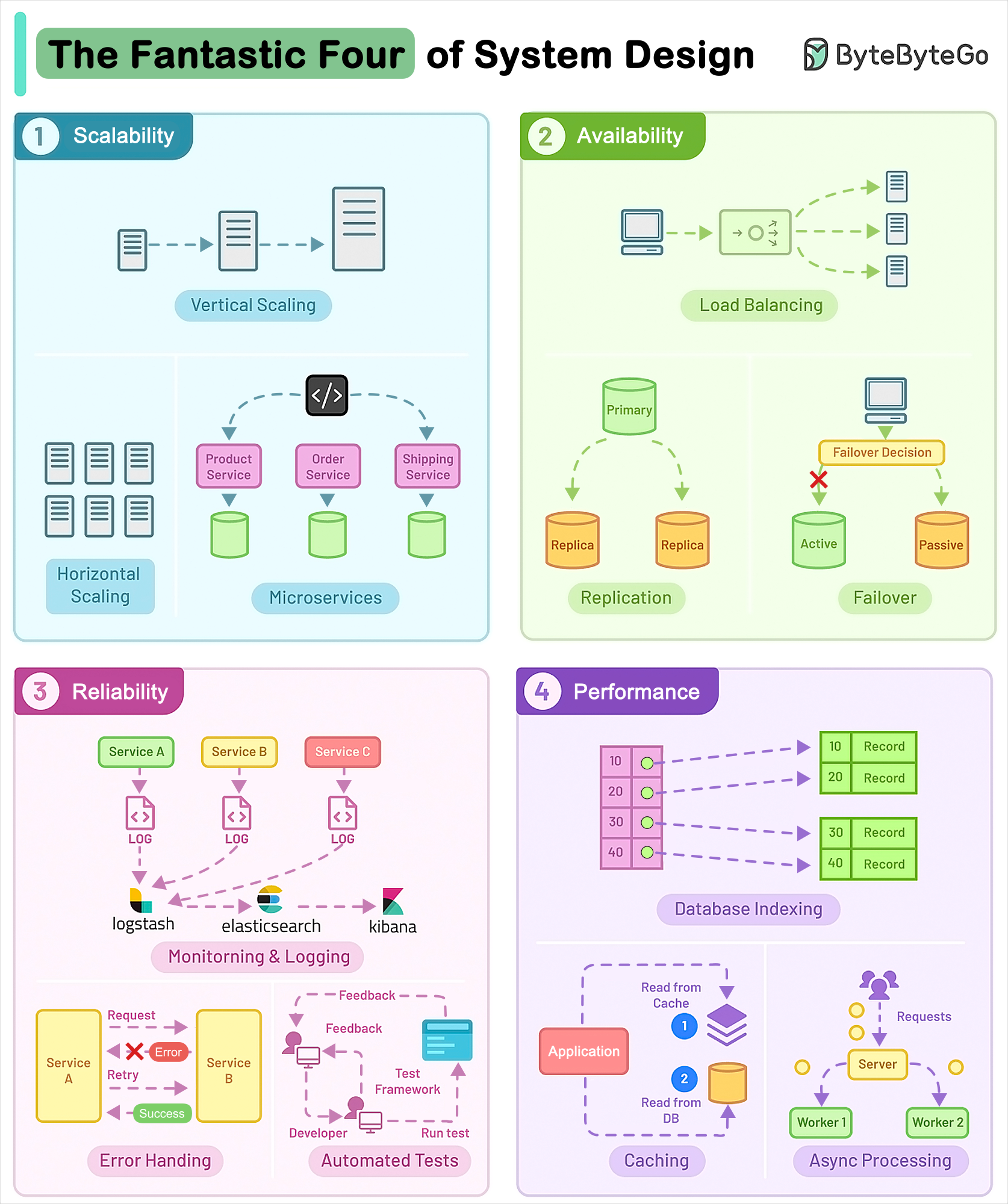

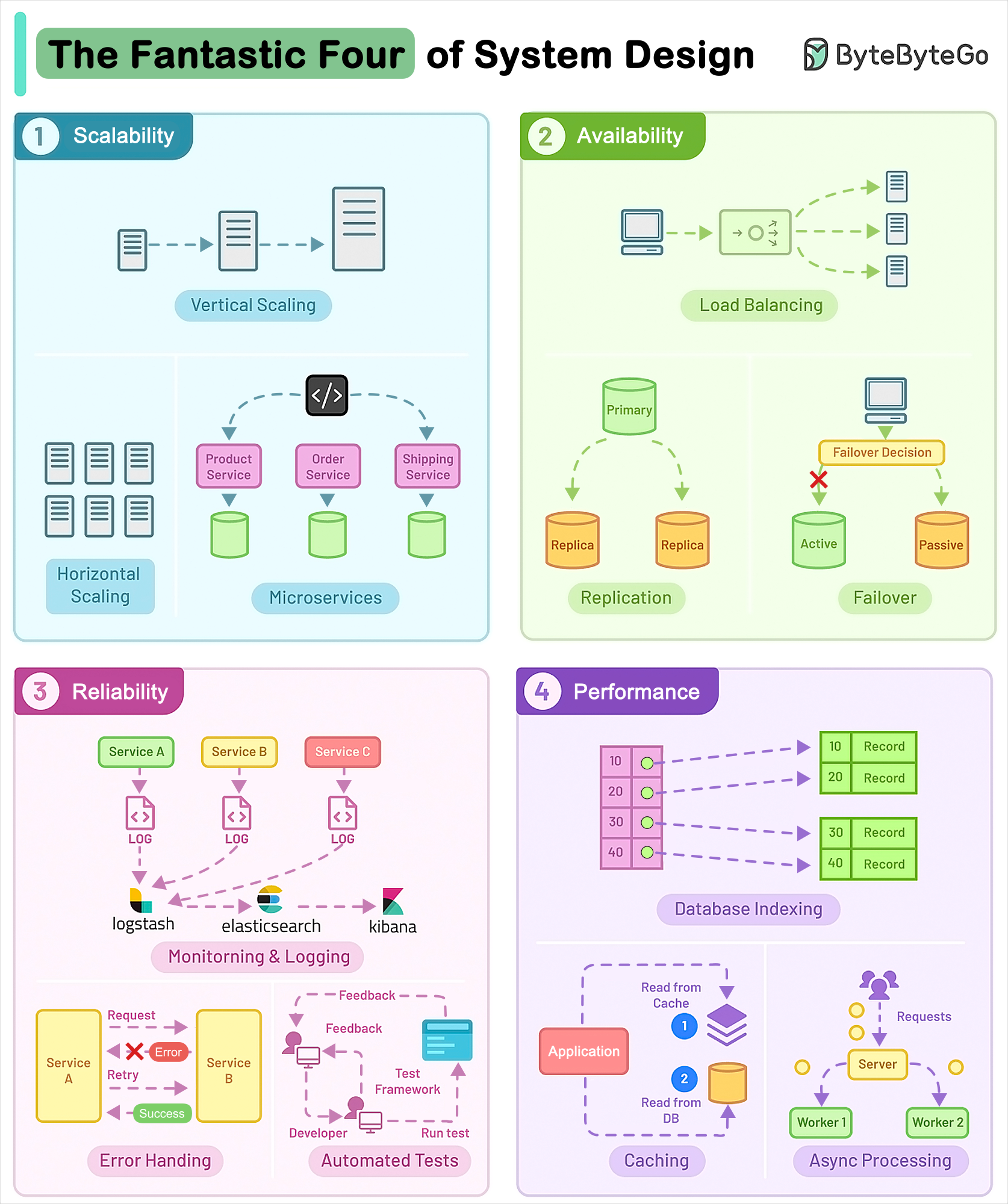

+ * [The Fantastic Four of System Design](https://bytebytego.com/guides/who-are-the-fantastic-four-of-system-design)

+ * [What makes AWS Lambda so fast?](https://bytebytego.com/guides/what-makes-aws-lambda-so-fast)

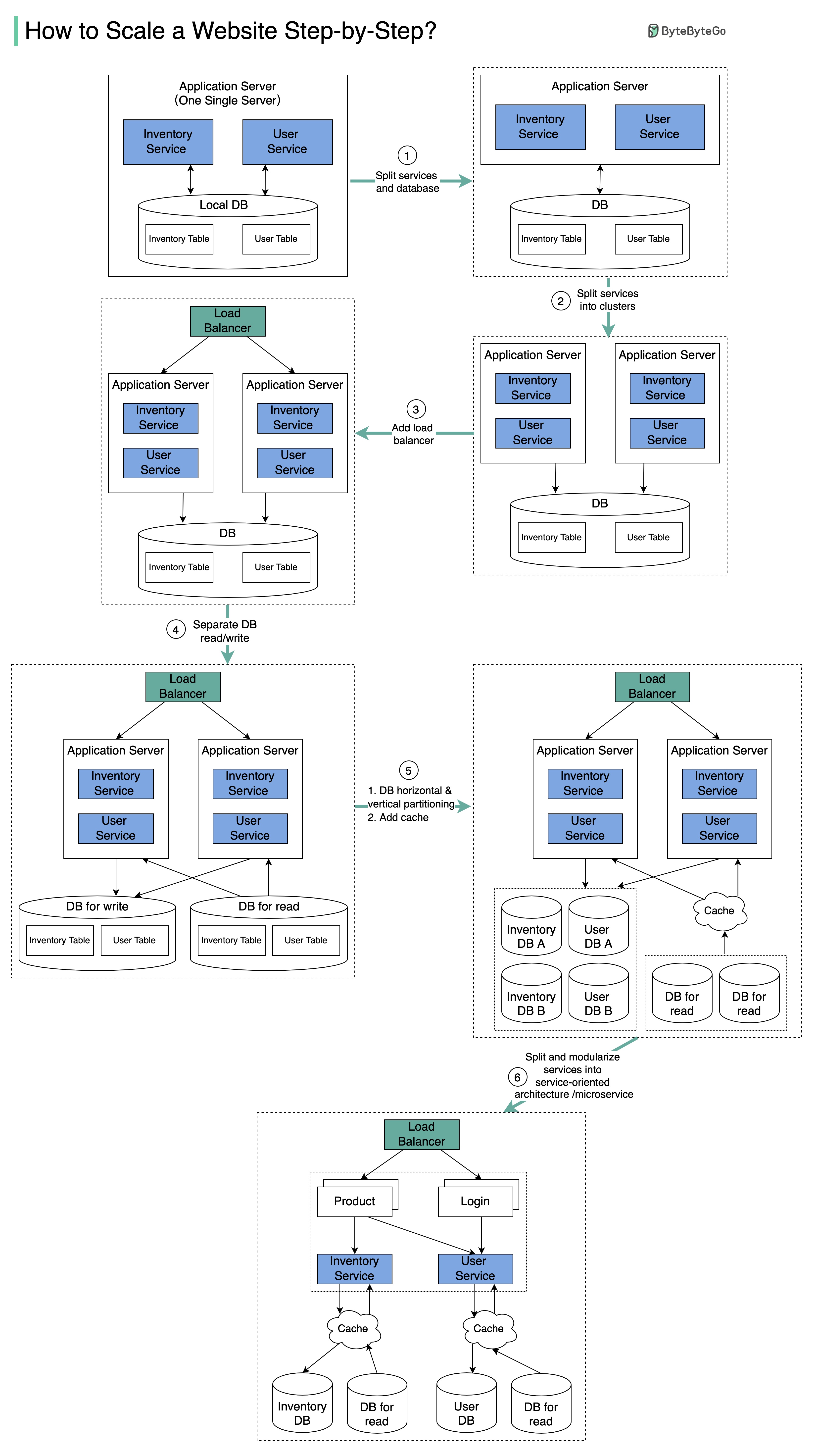

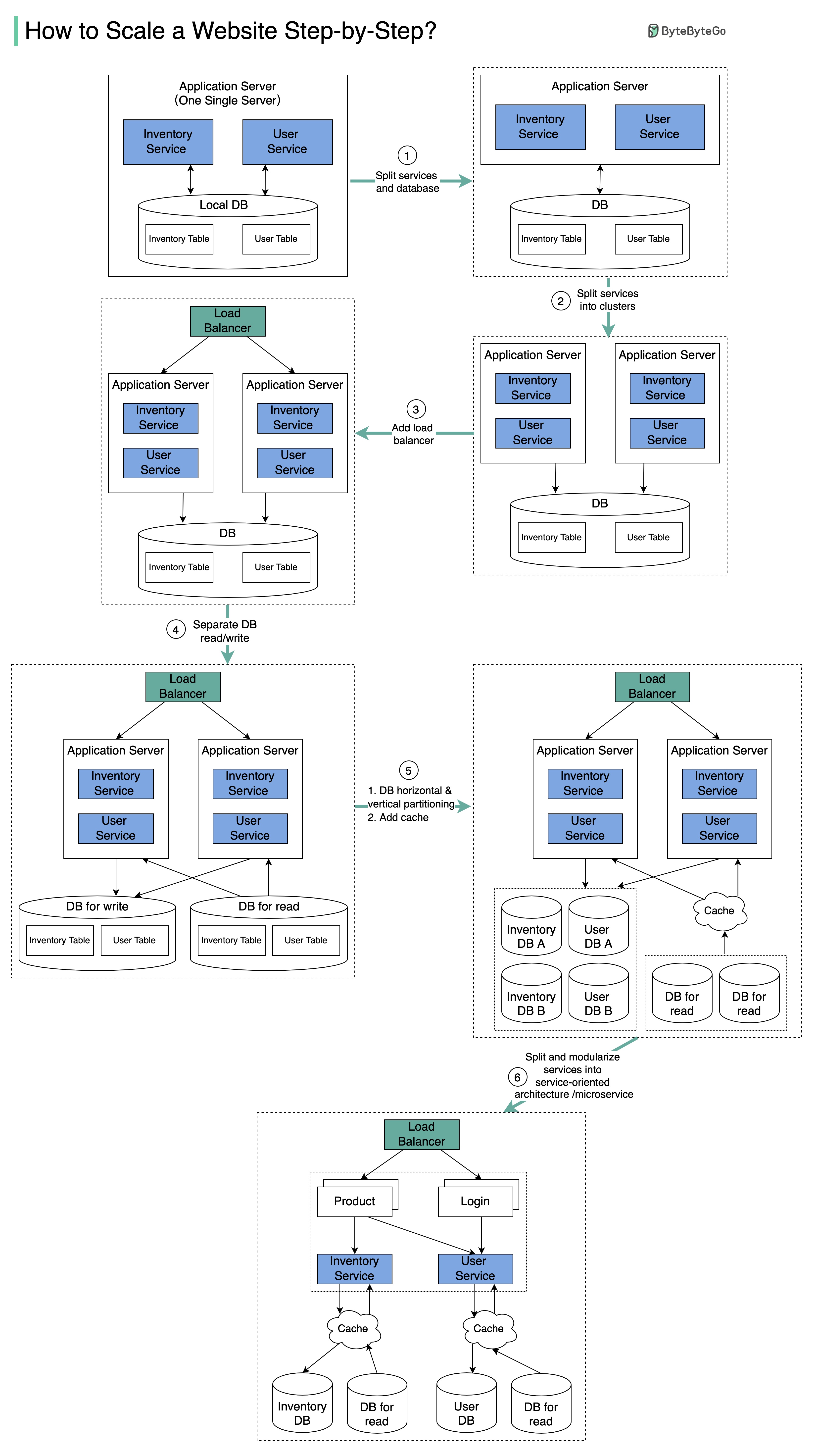

+ * [Scaling Websites for Millions of Users](https://bytebytego.com/guides/how-to-scale-a-website-to-support-millions-of-users)

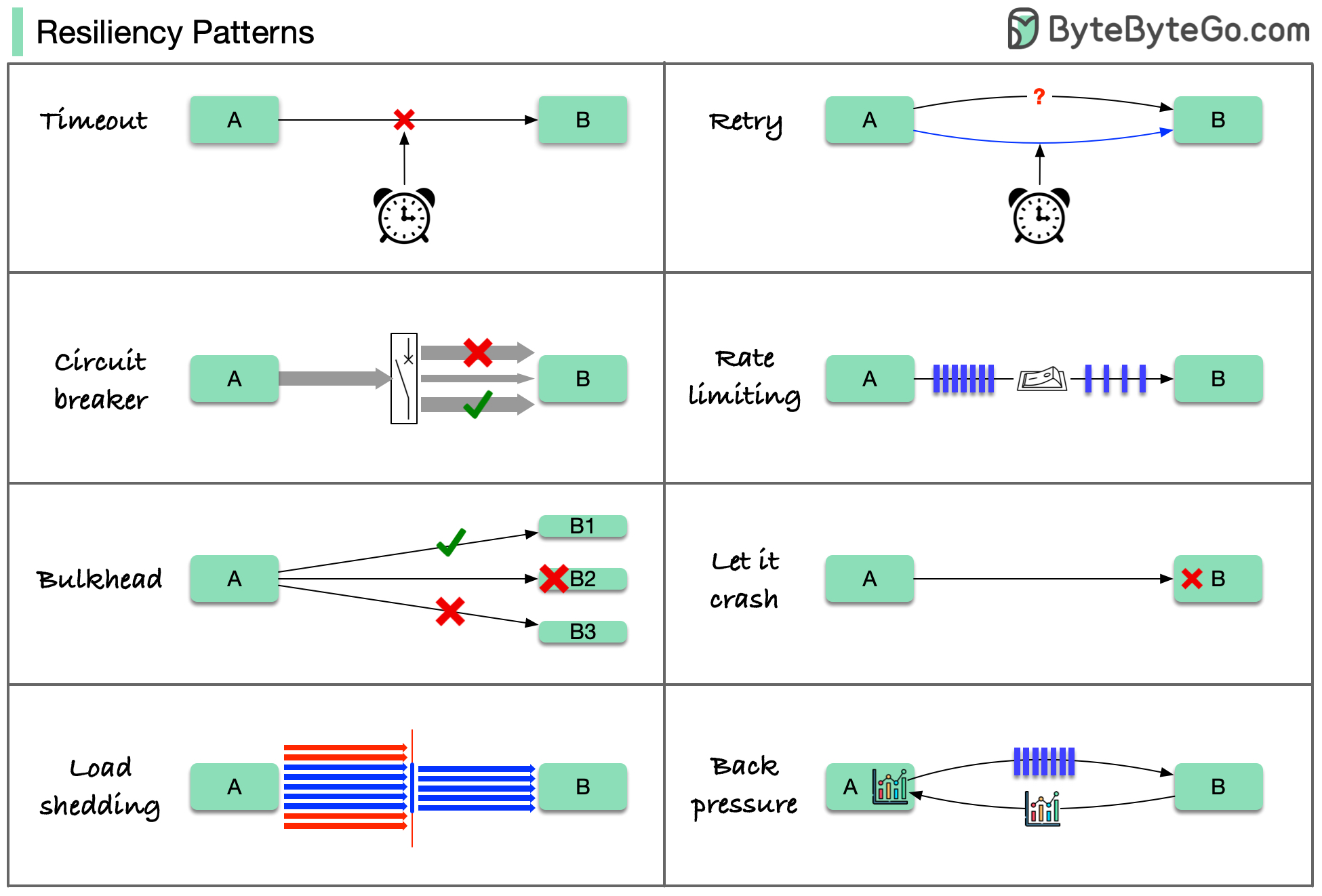

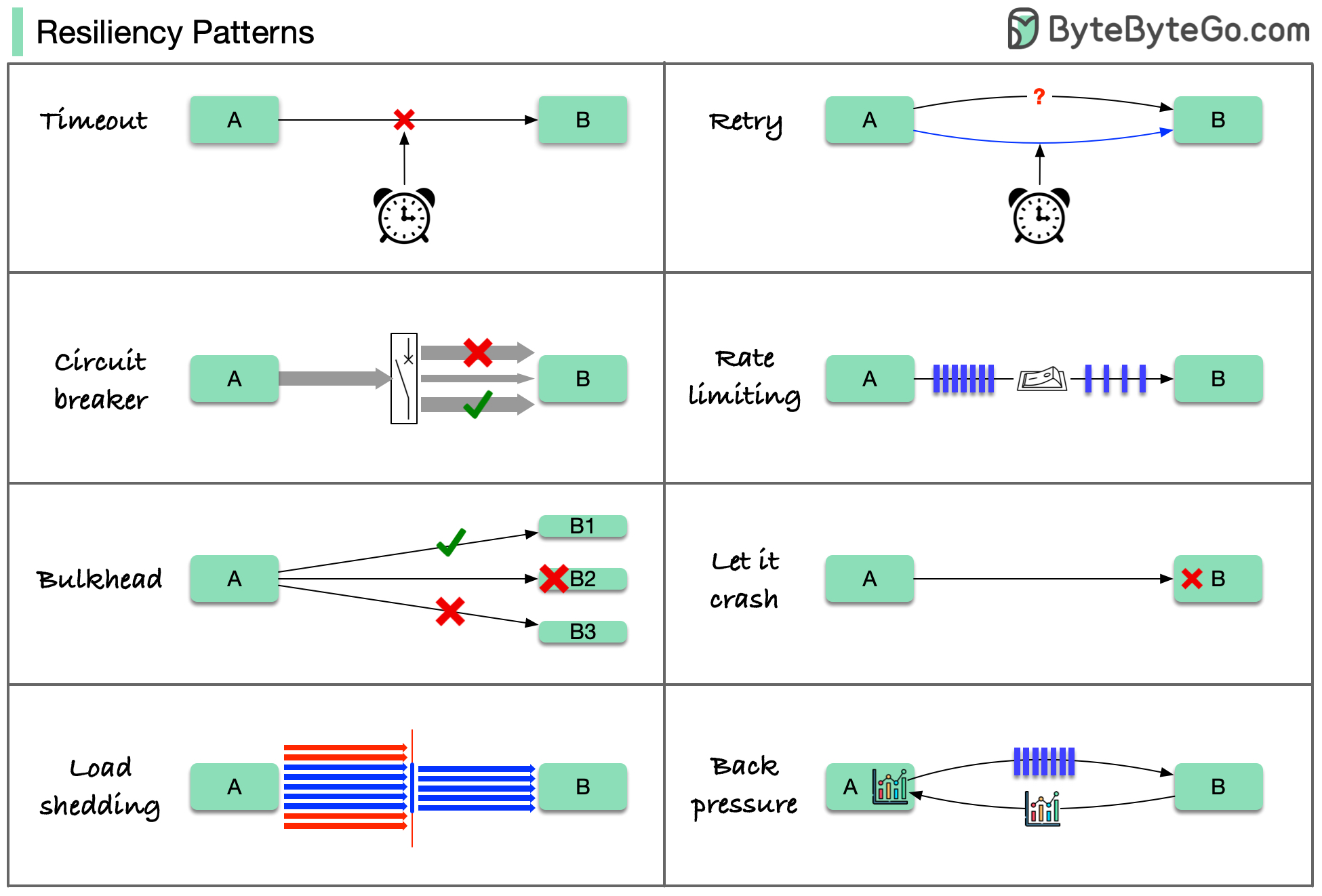

+ * [Resiliency Patterns](https://bytebytego.com/guides/resiliency-patterns)

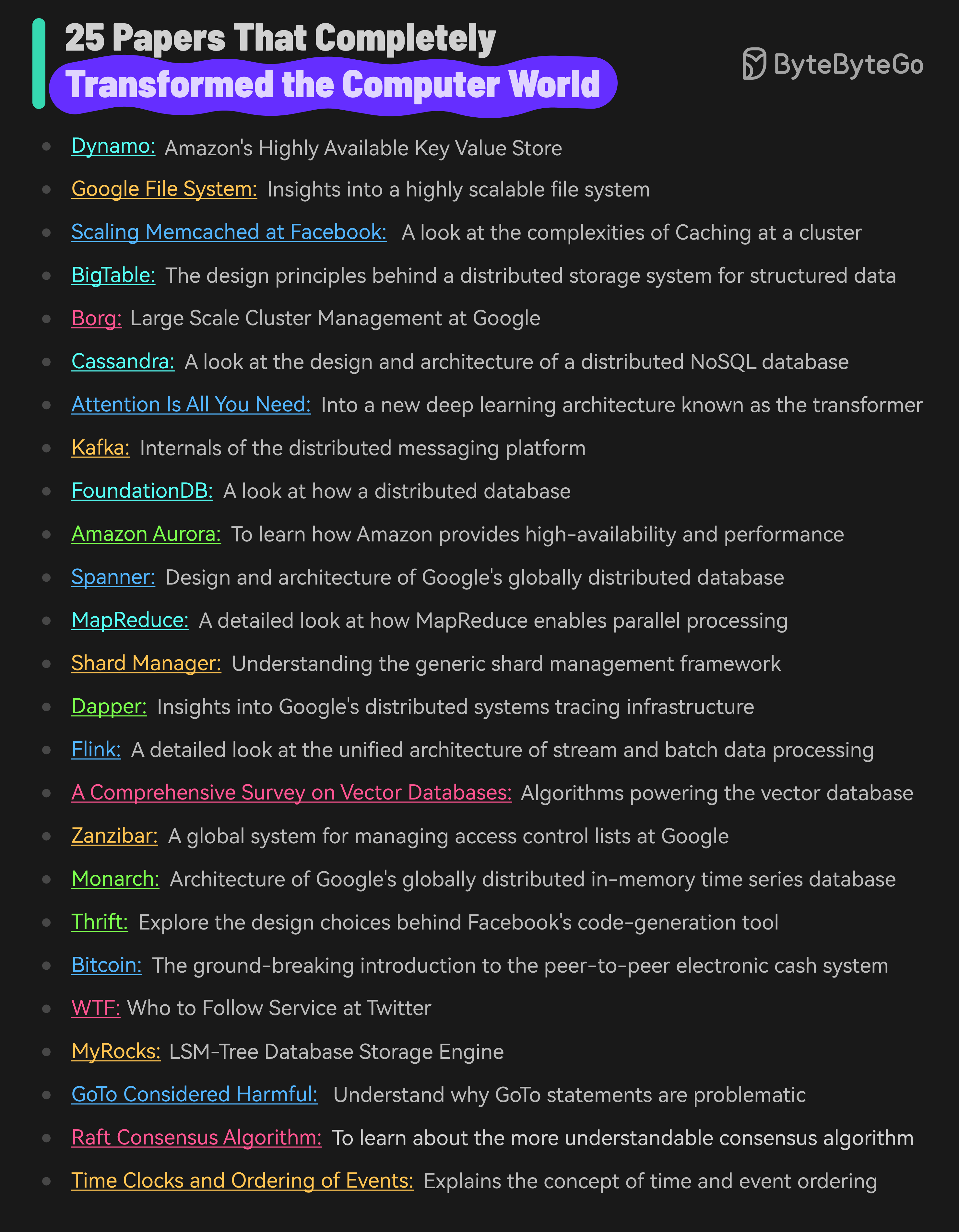

+ * [25 Papers That Completely Transformed the Computer World](https://bytebytego.com/guides/25-papers-that-completely-transformed-the-computer-world)

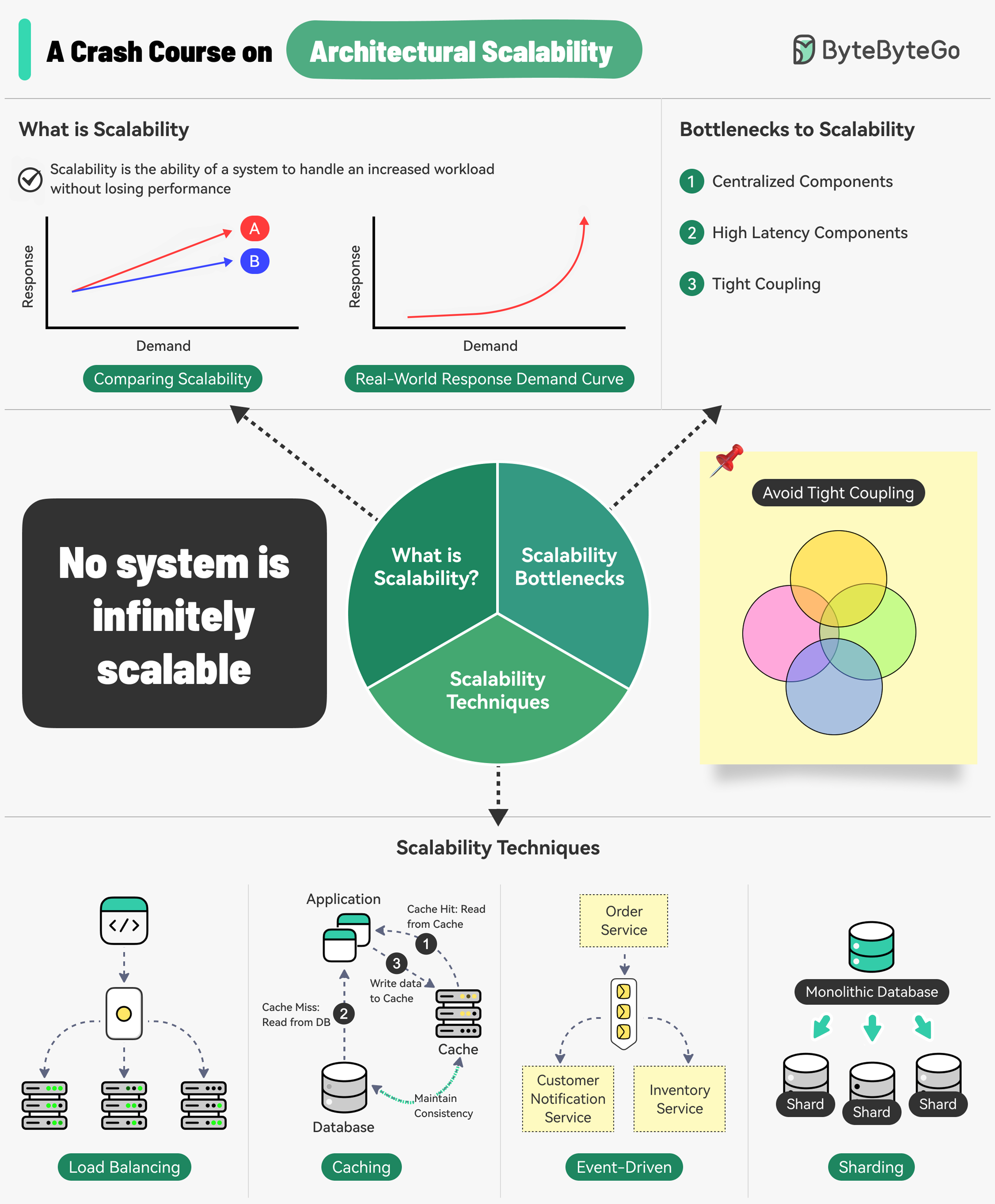

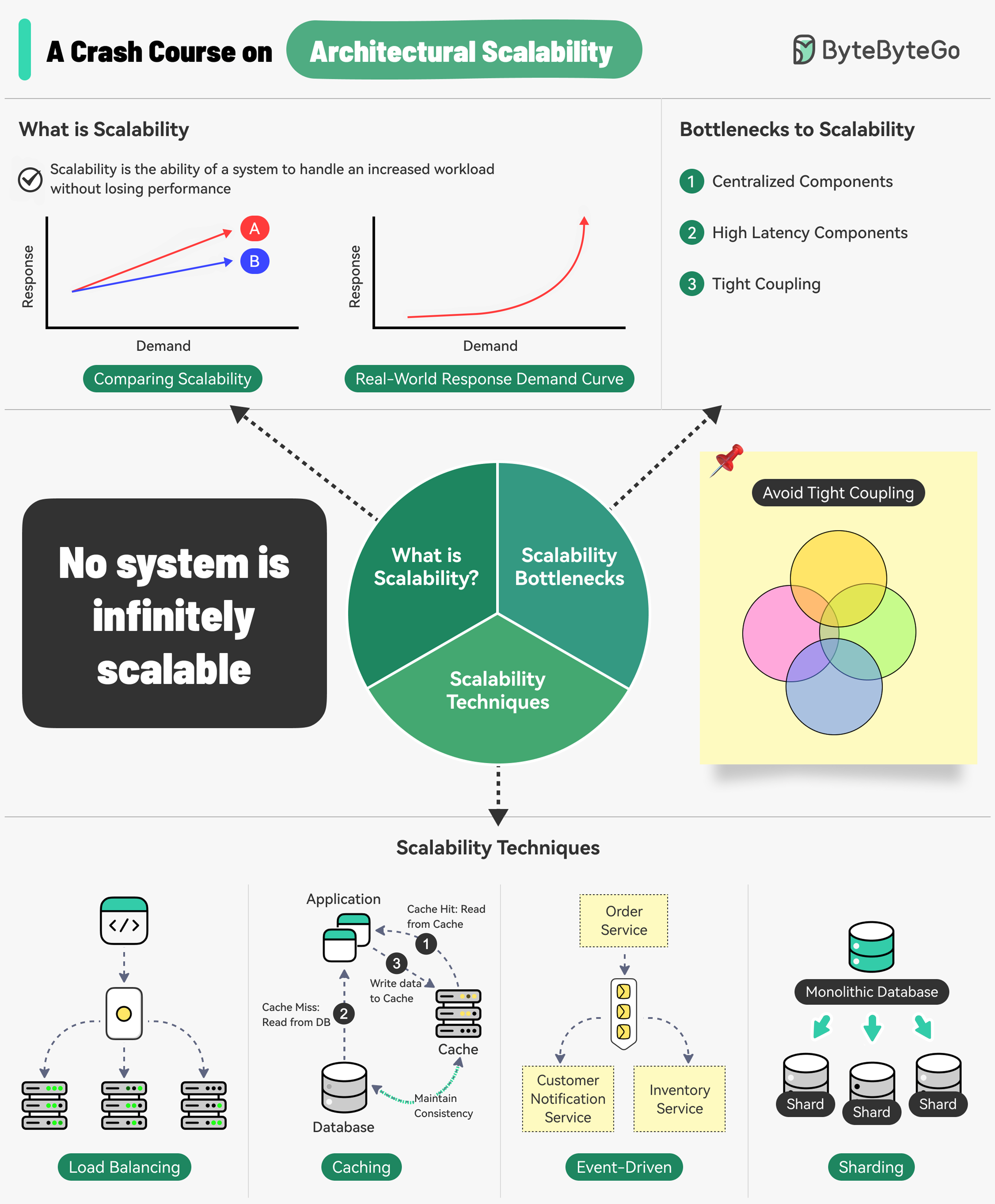

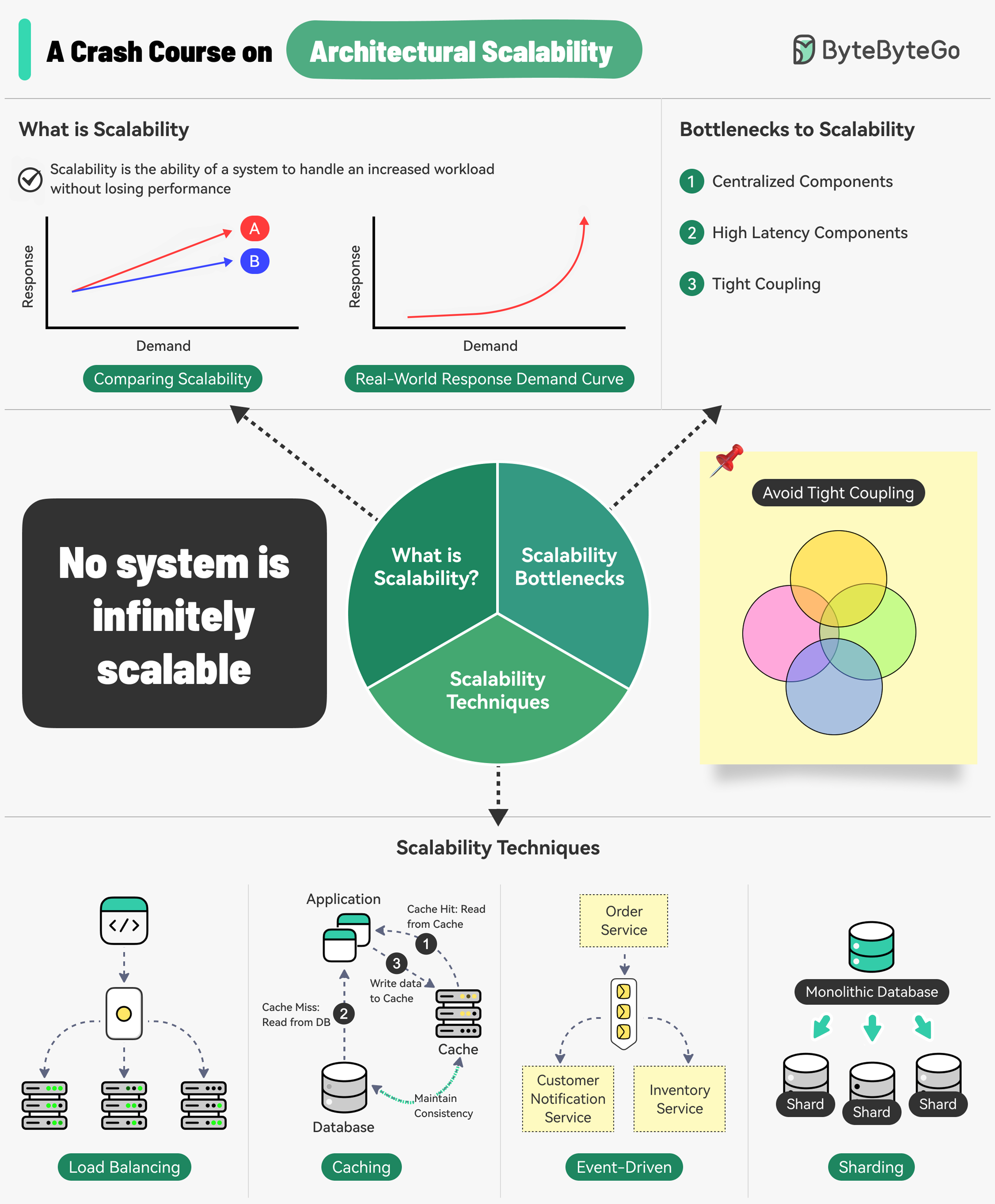

+ * [A Crash Course on Architectural Scalability](https://bytebytego.com/guides/a-crash-course-on-architectural-scalability)

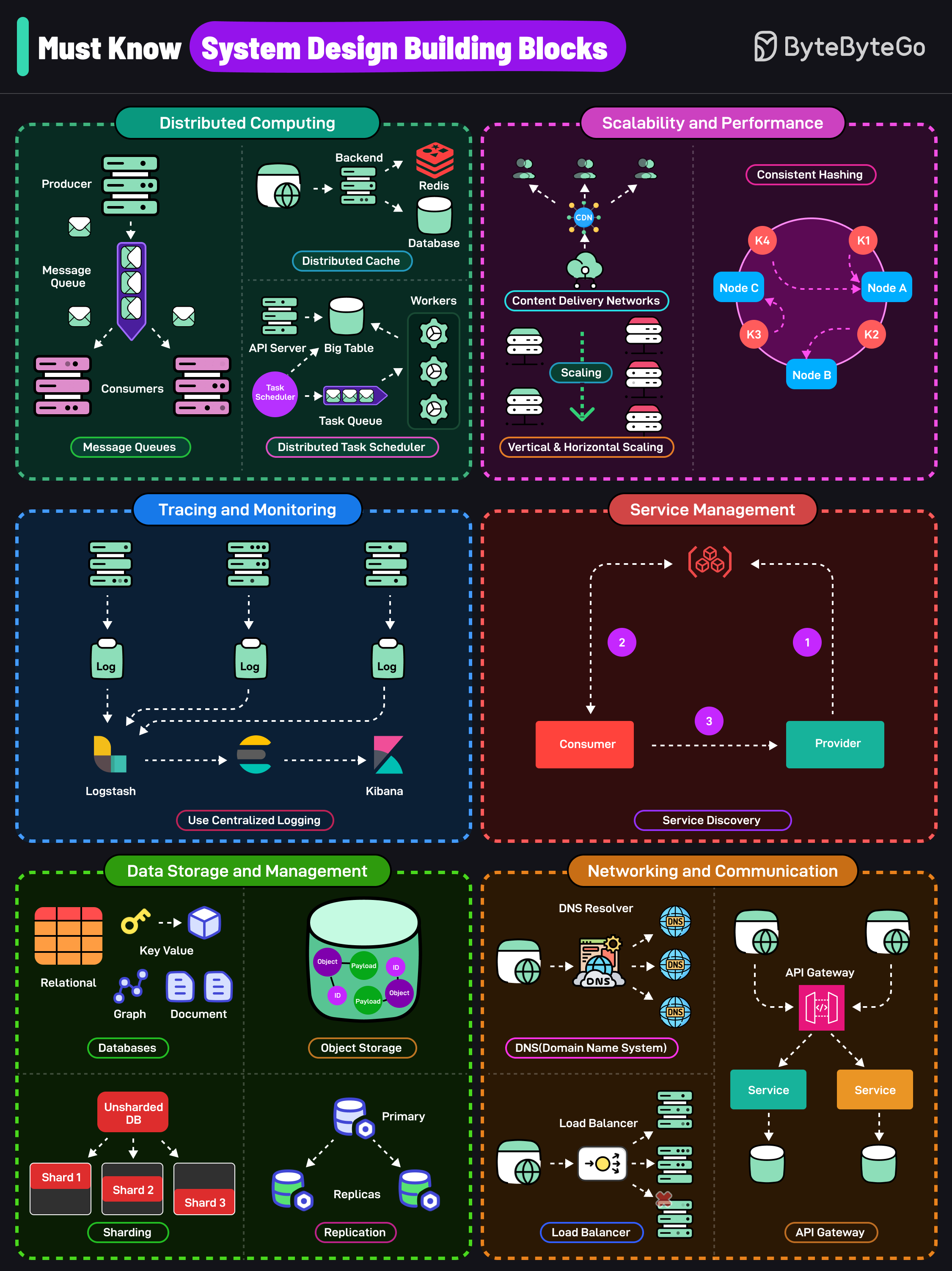

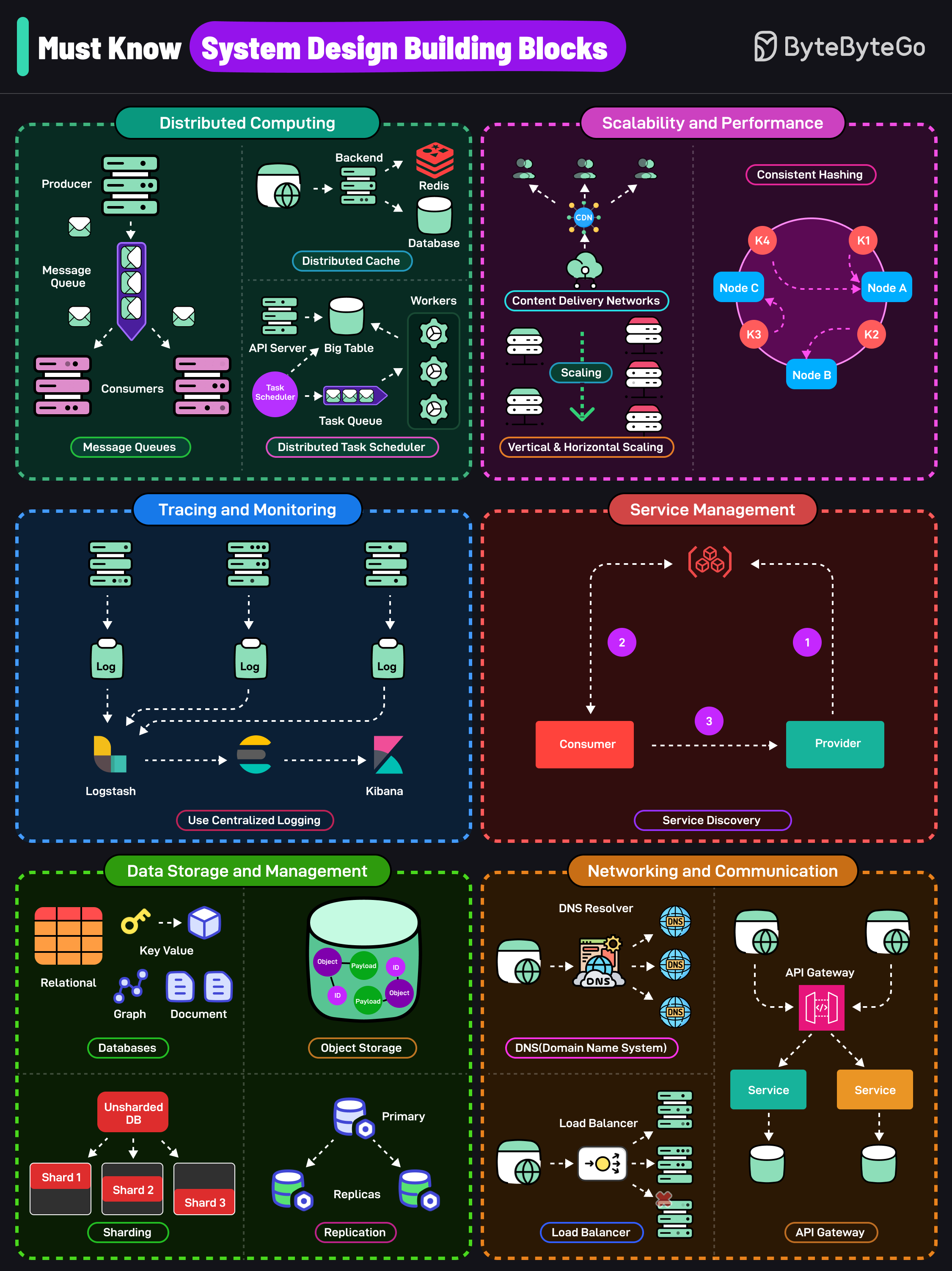

+ * [Must Know System Design Building Blocks](https://bytebytego.com/guides/must-know-system-design-building-blocks)

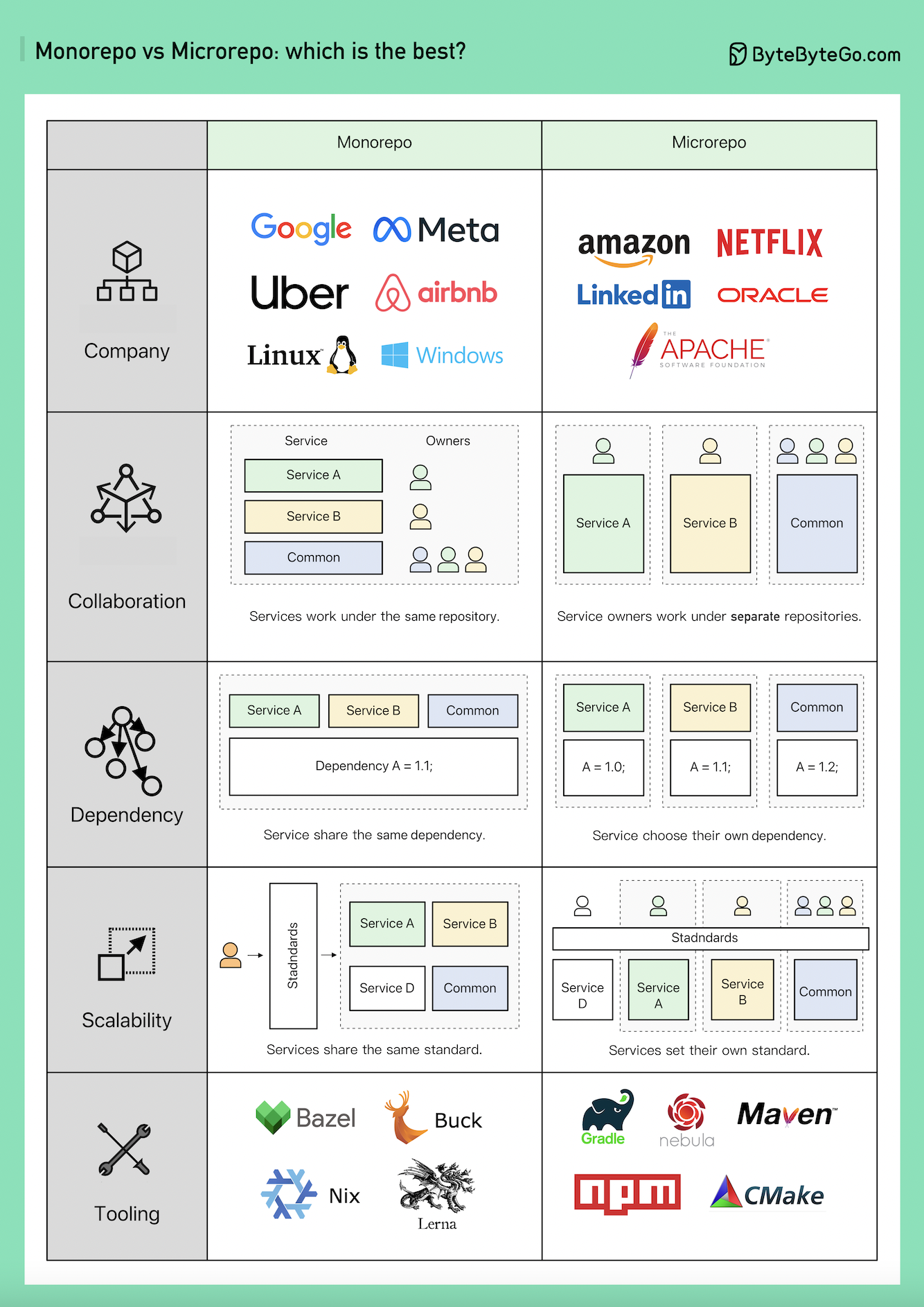

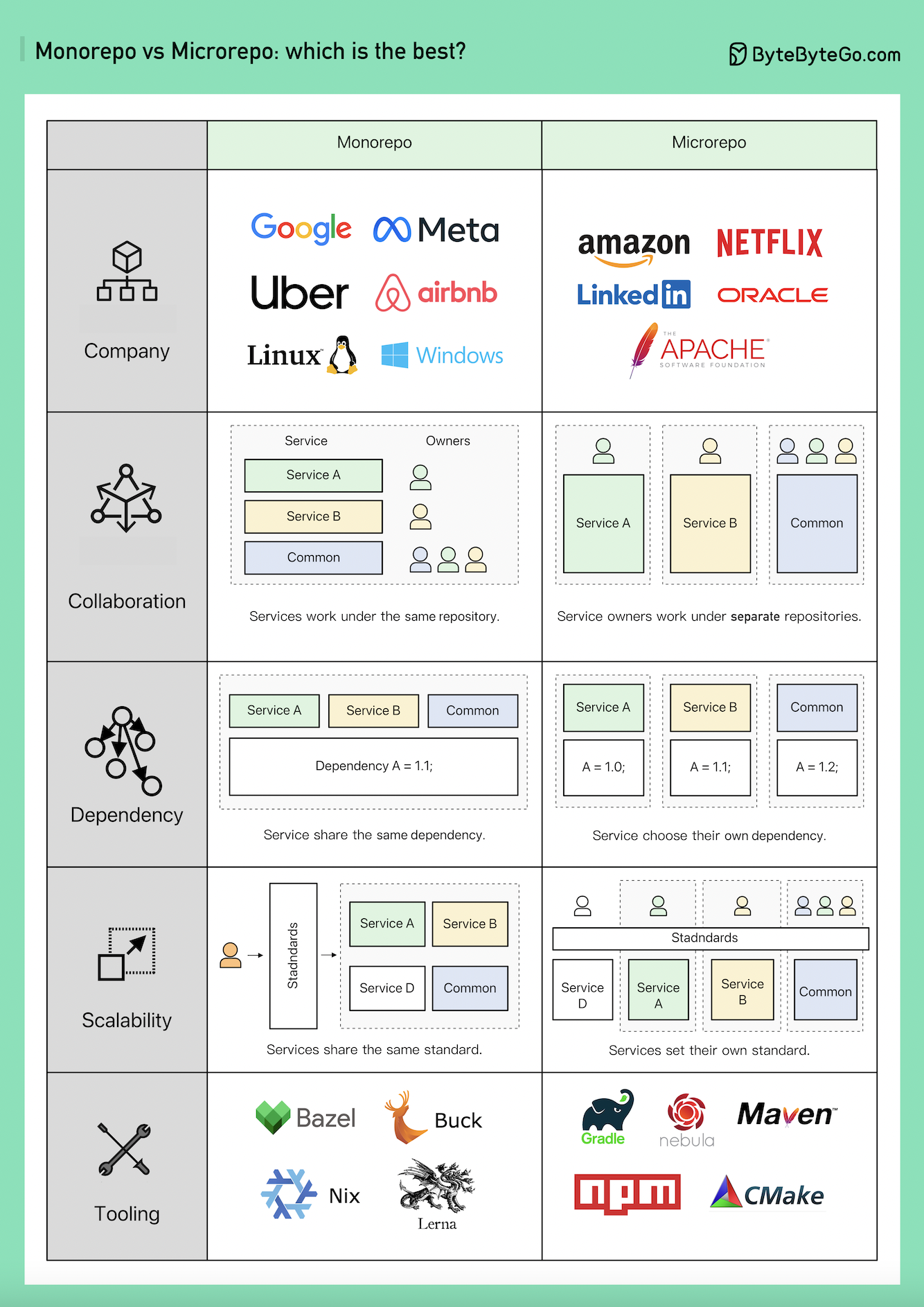

+ * [Monorepo vs. Microrepo: Which is Best?](https://bytebytego.com/guides/monorepo-vs)

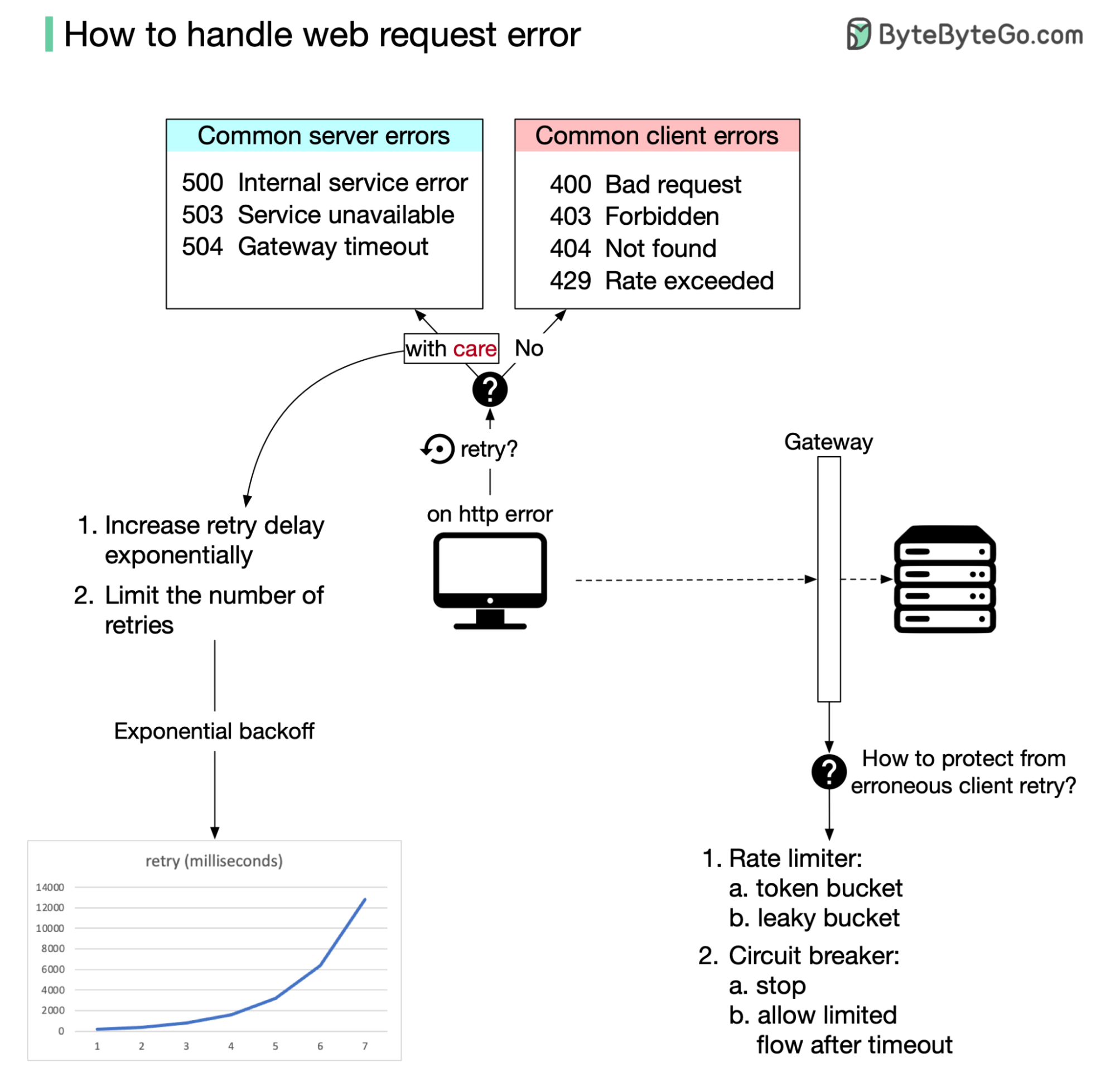

+ * [How to Handle Web Request Errors](https://bytebytego.com/guides/how-to-handle-web-request-error)

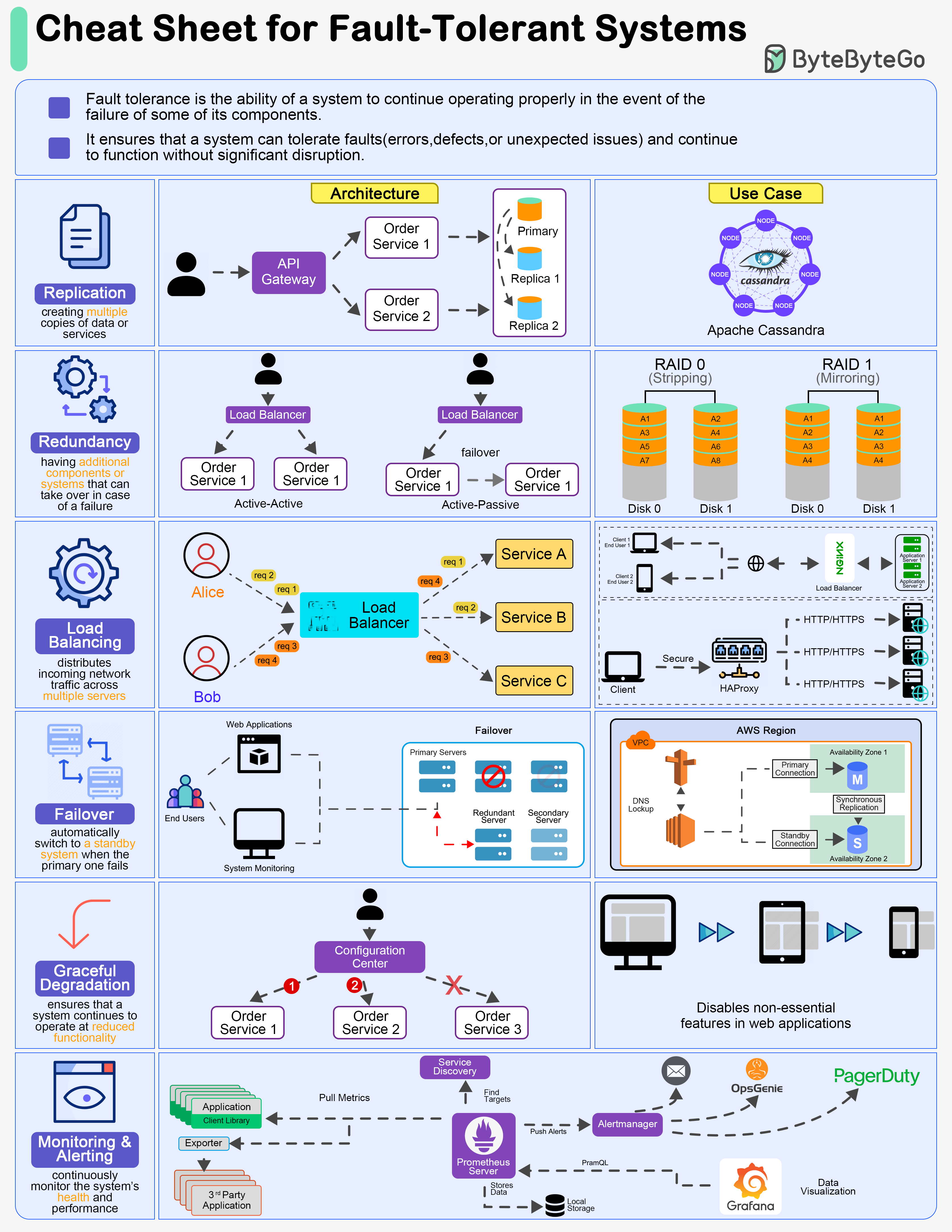

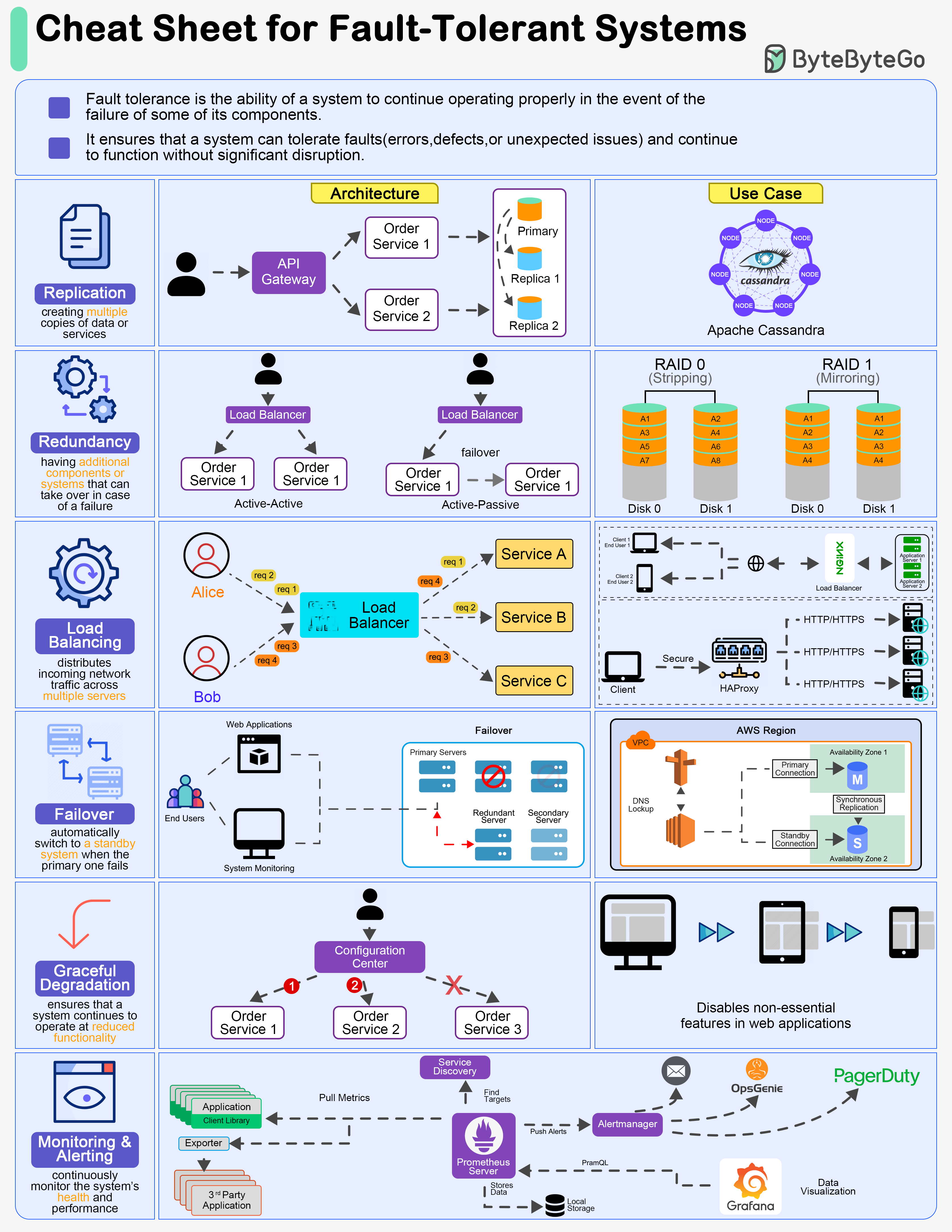

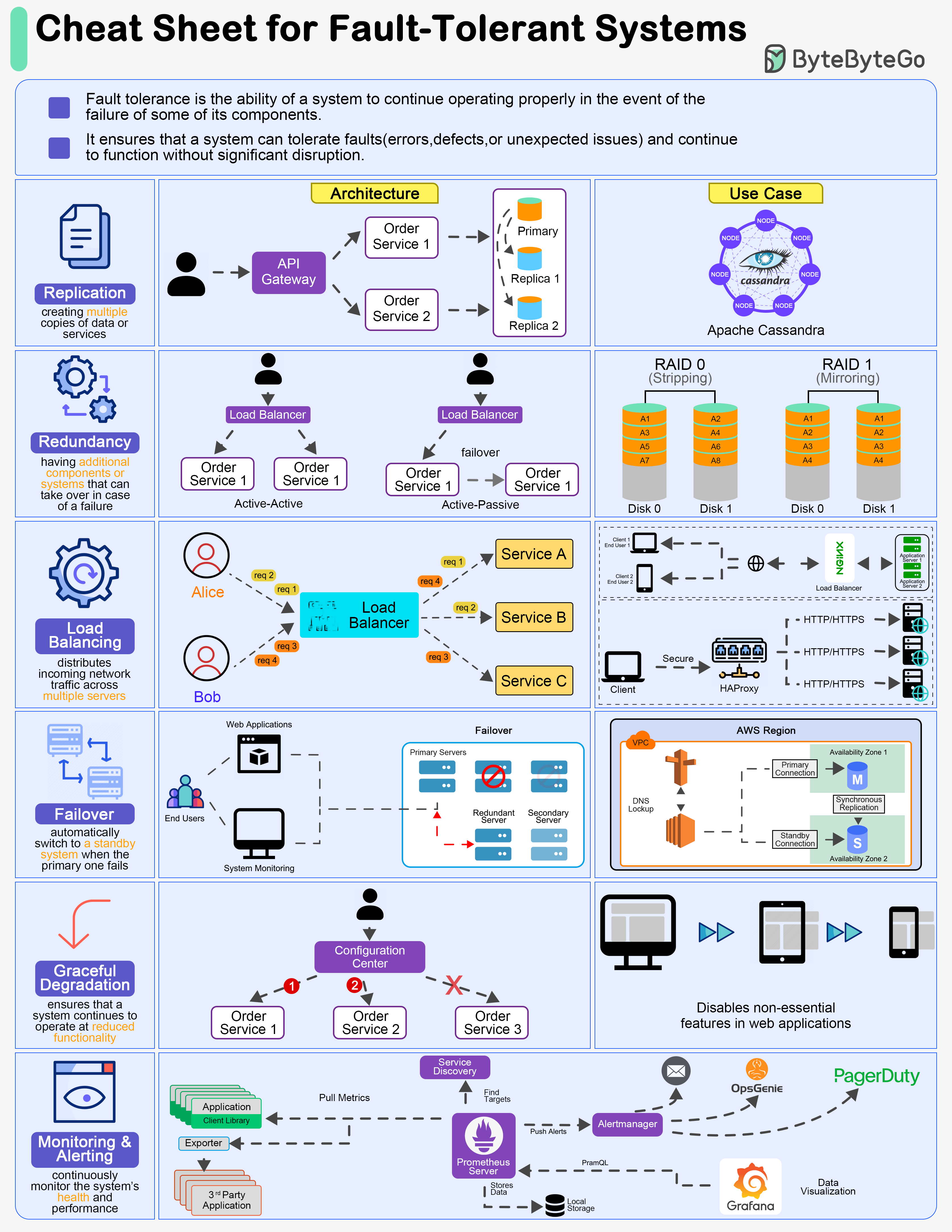

+ * [A Cheat Sheet for Designing Fault-Tolerant Systems](https://bytebytego.com/guides/a-cheat-sheet-for-designing-fault-tolerant-systems)

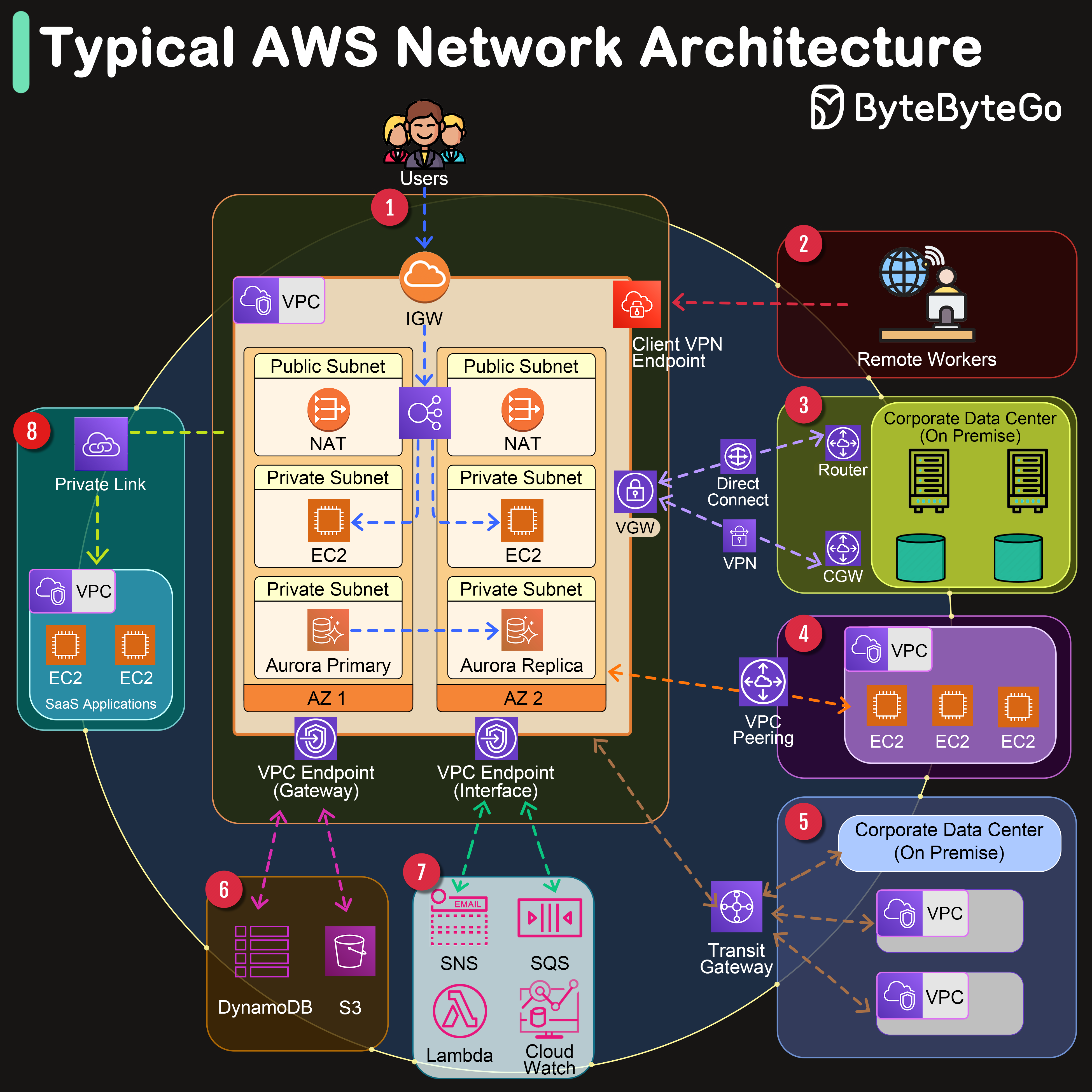

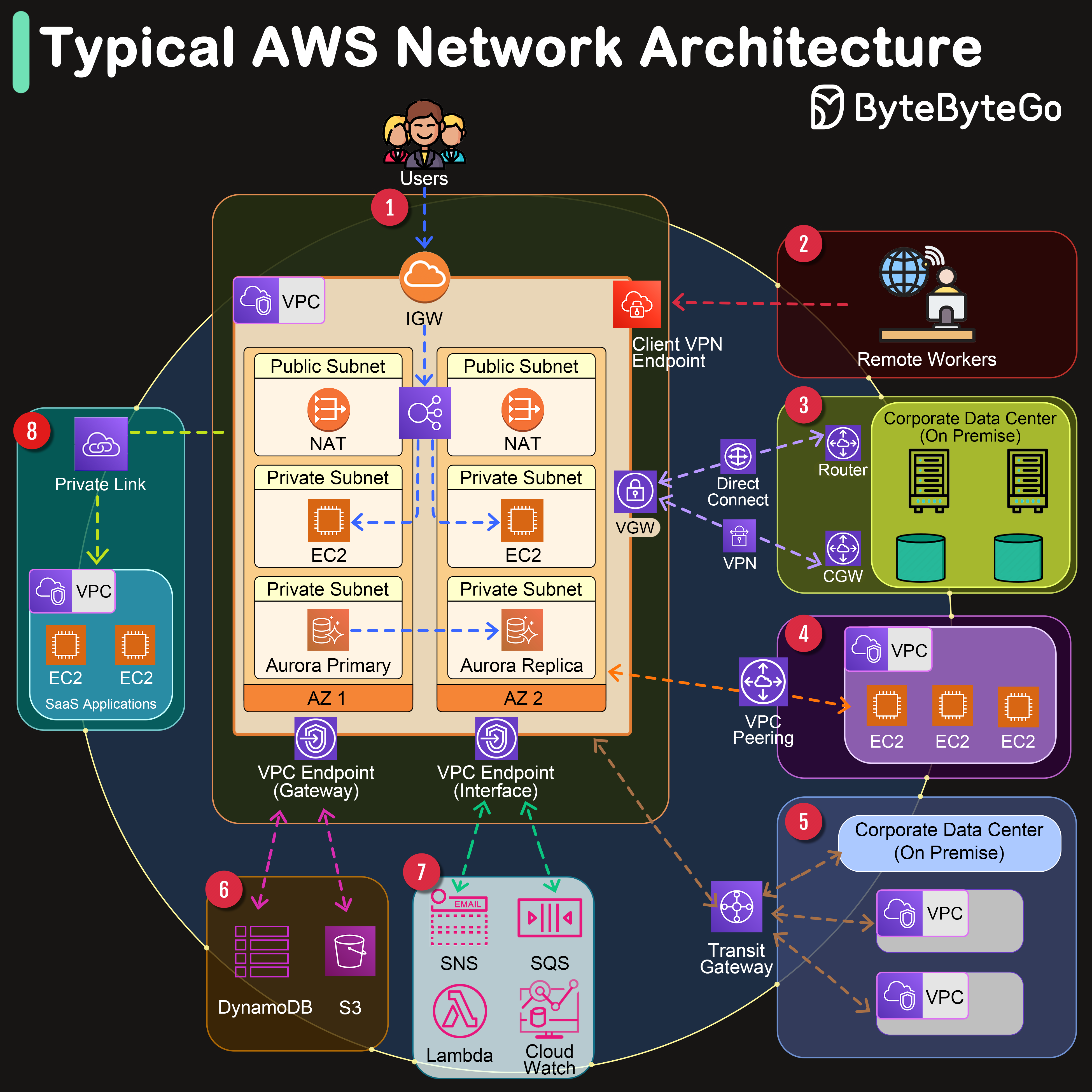

+ * [Typical AWS Network Architecture](https://bytebytego.com/guides/typical-aws-network-architecture-in-one-diagram)

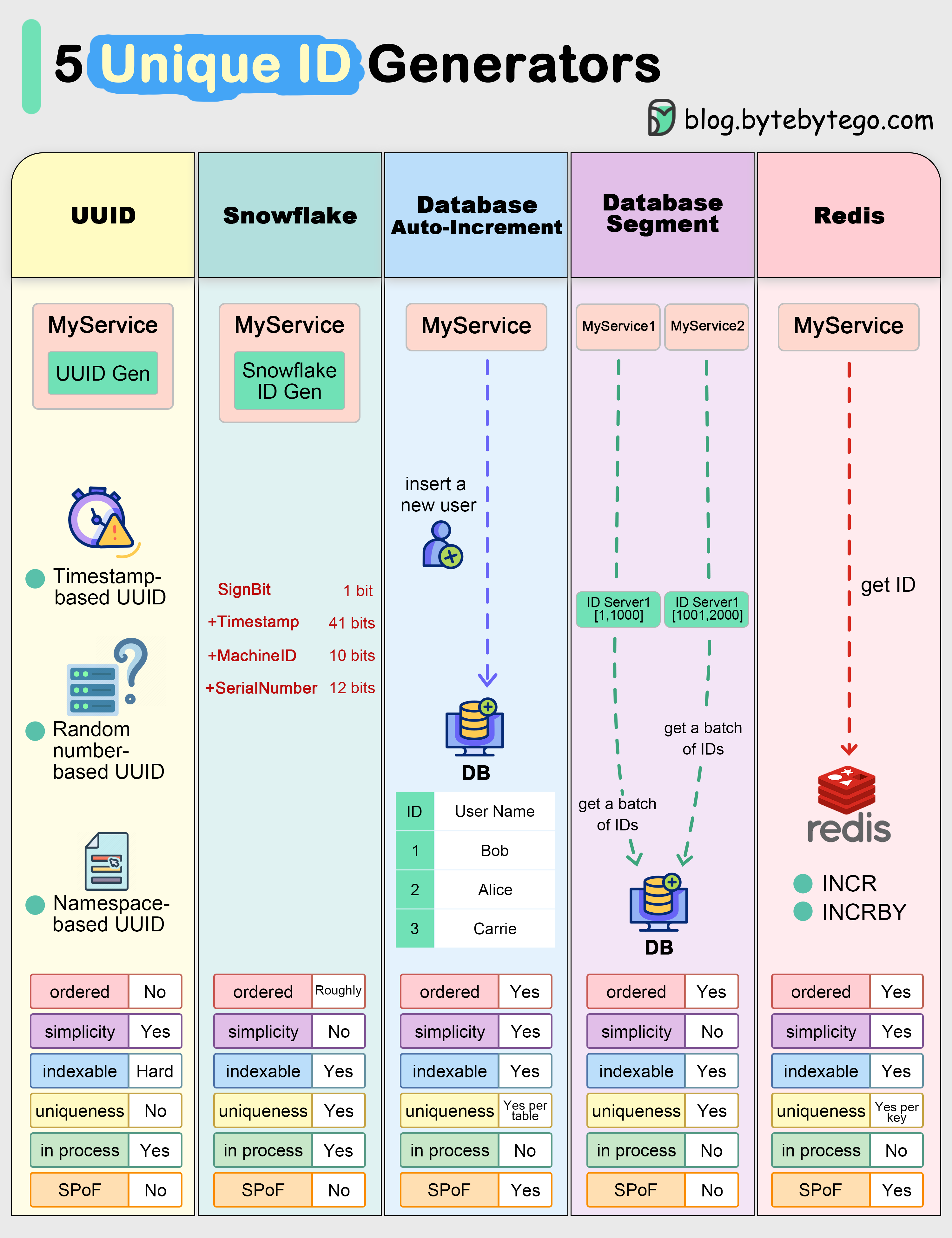

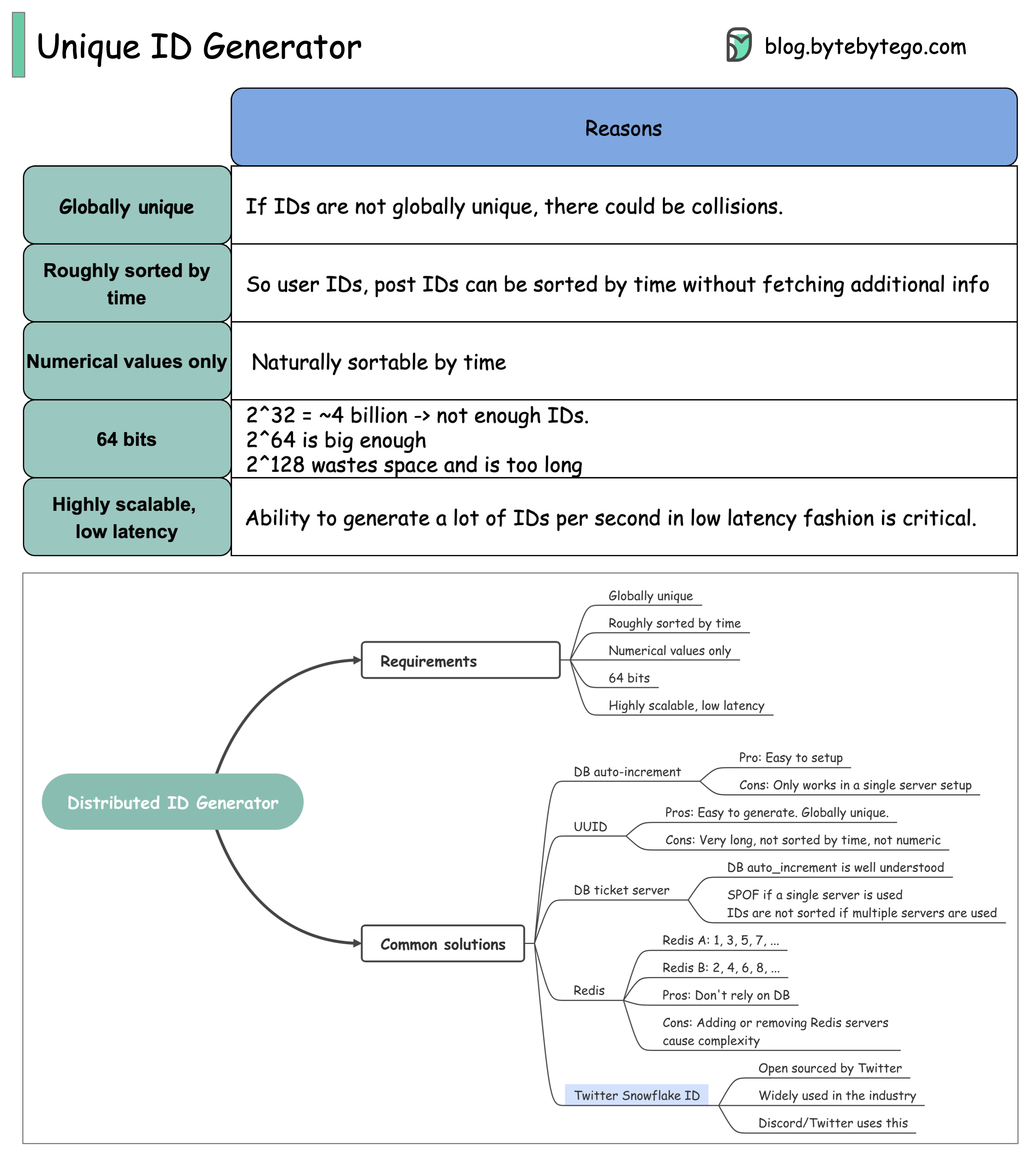

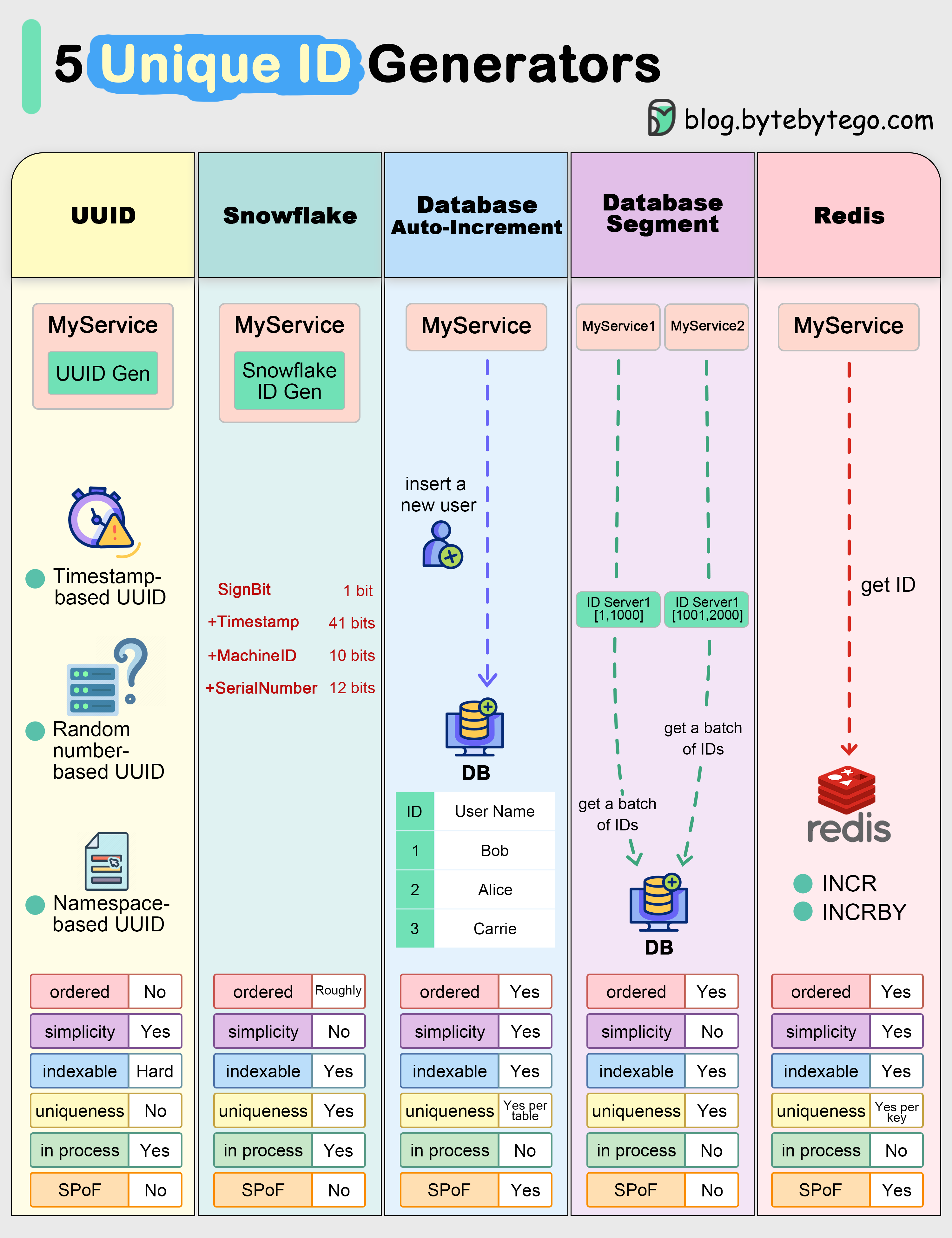

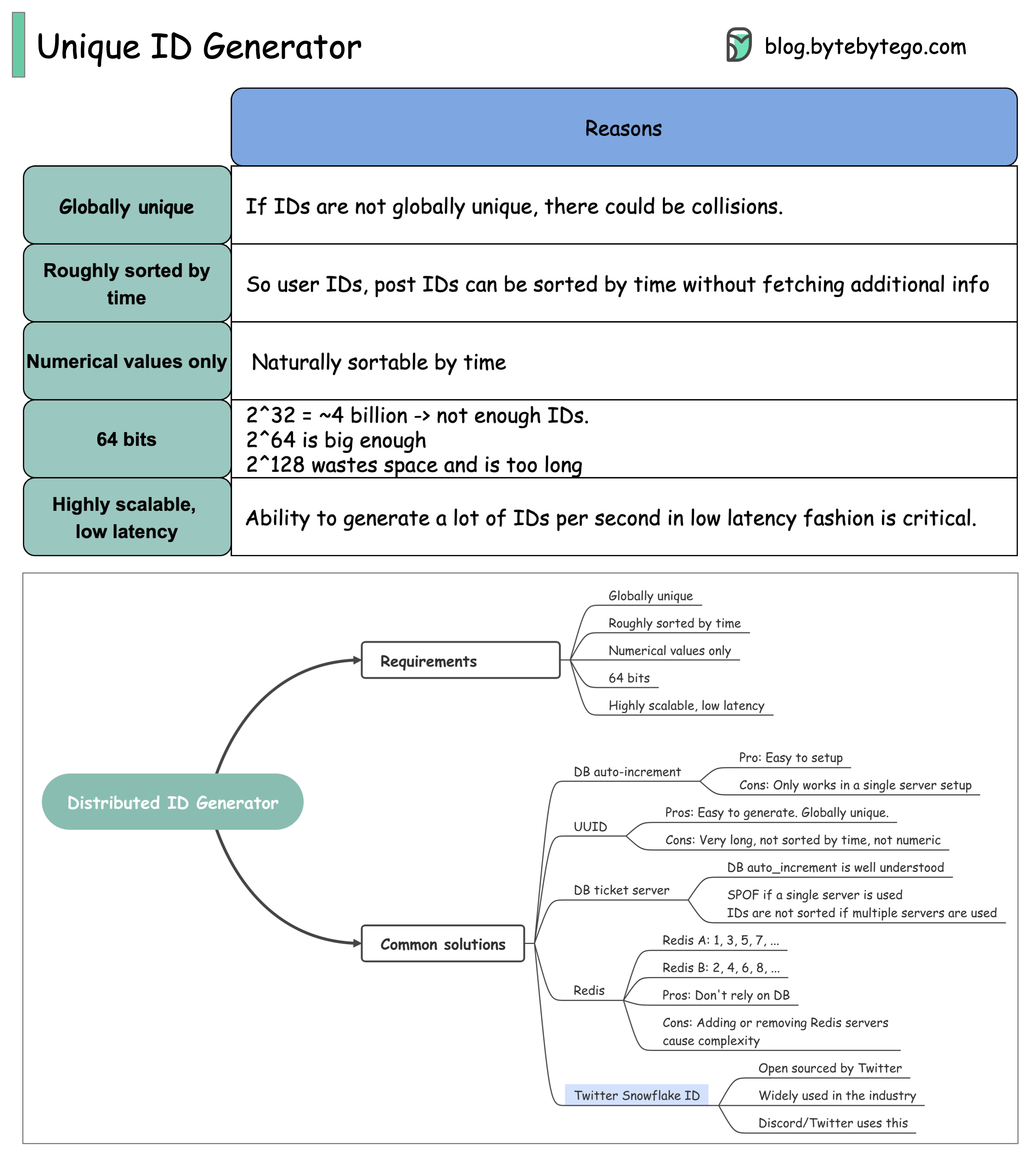

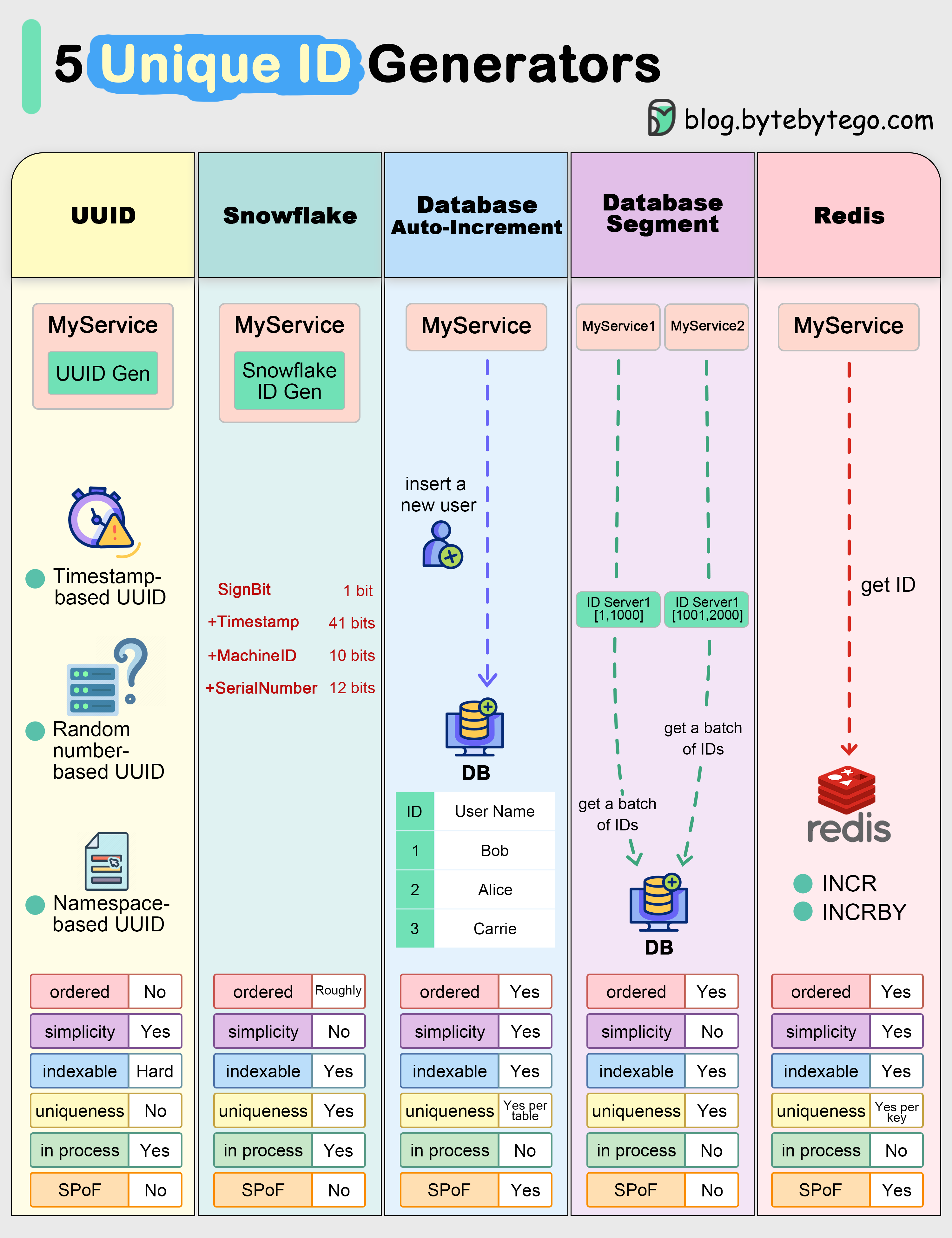

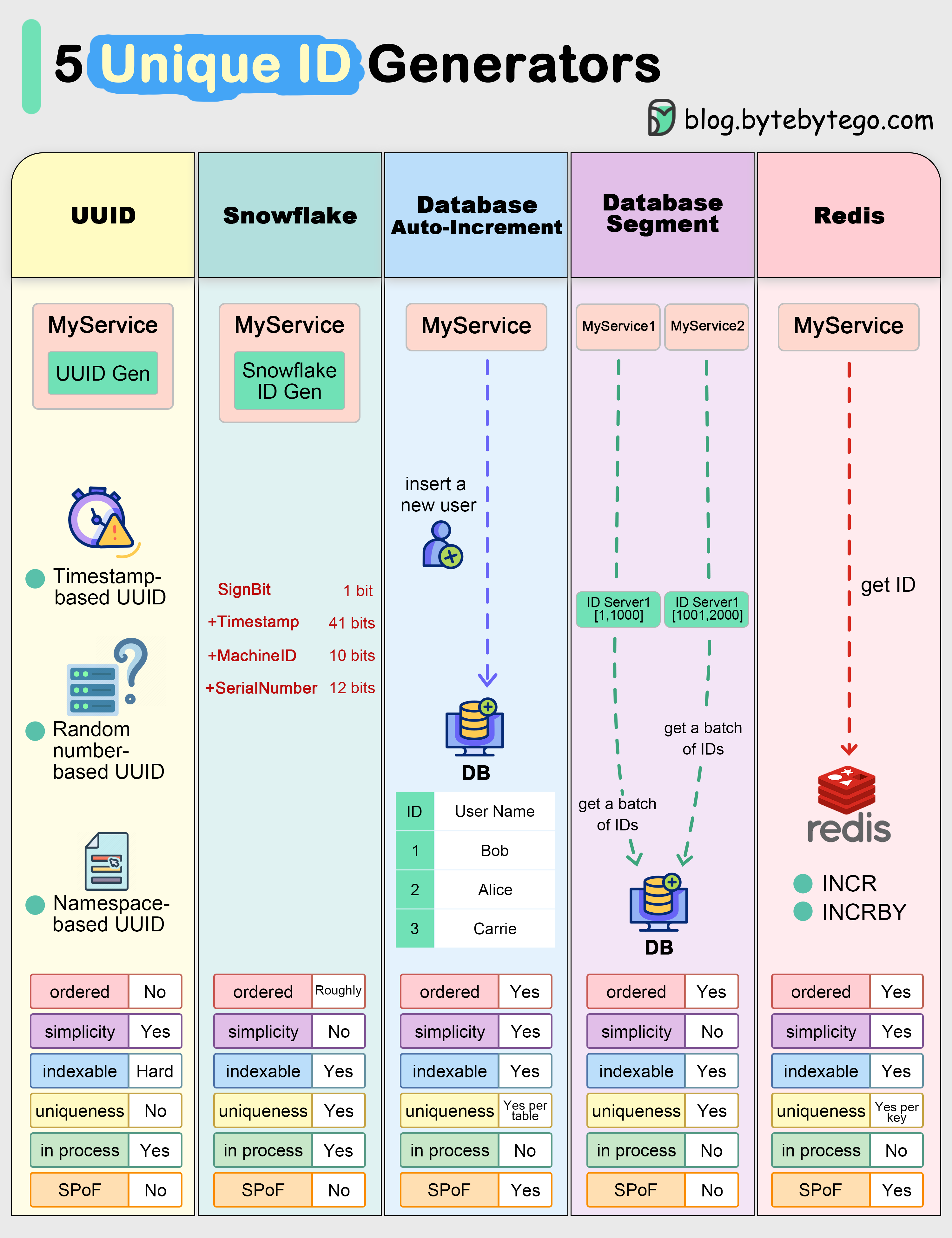

+ * [Unique ID Generator](https://bytebytego.com/guides/unique-id-generator)

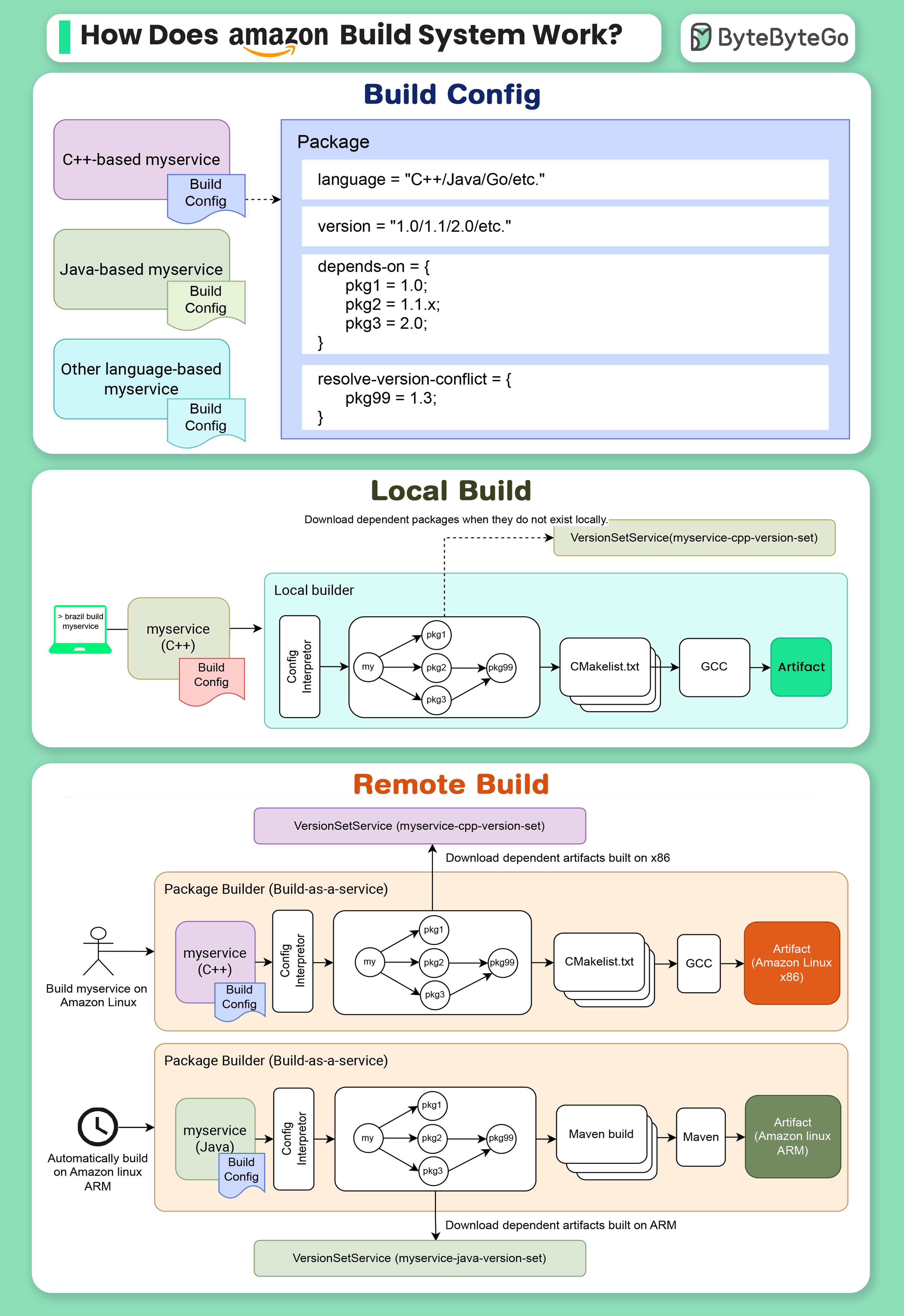

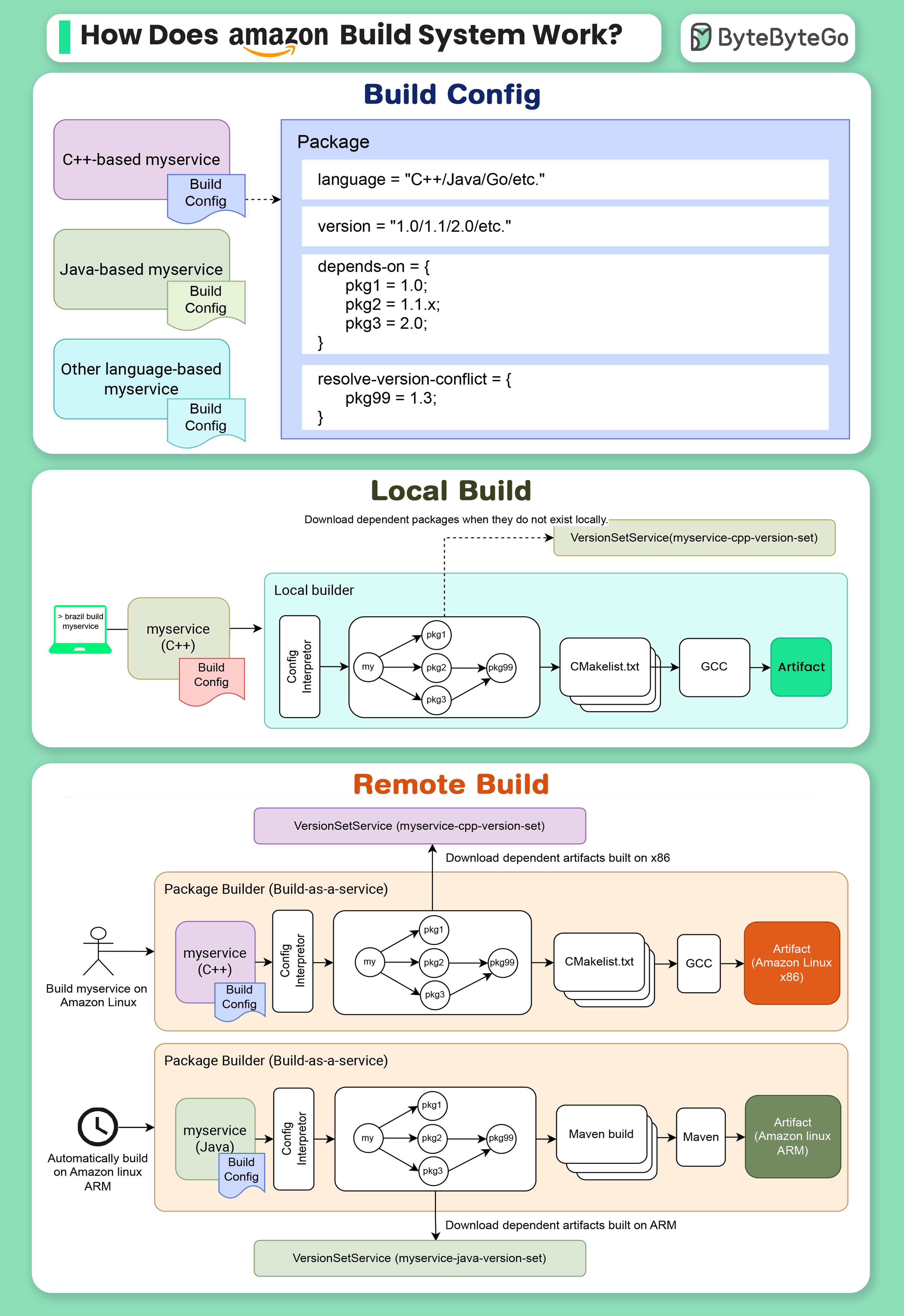

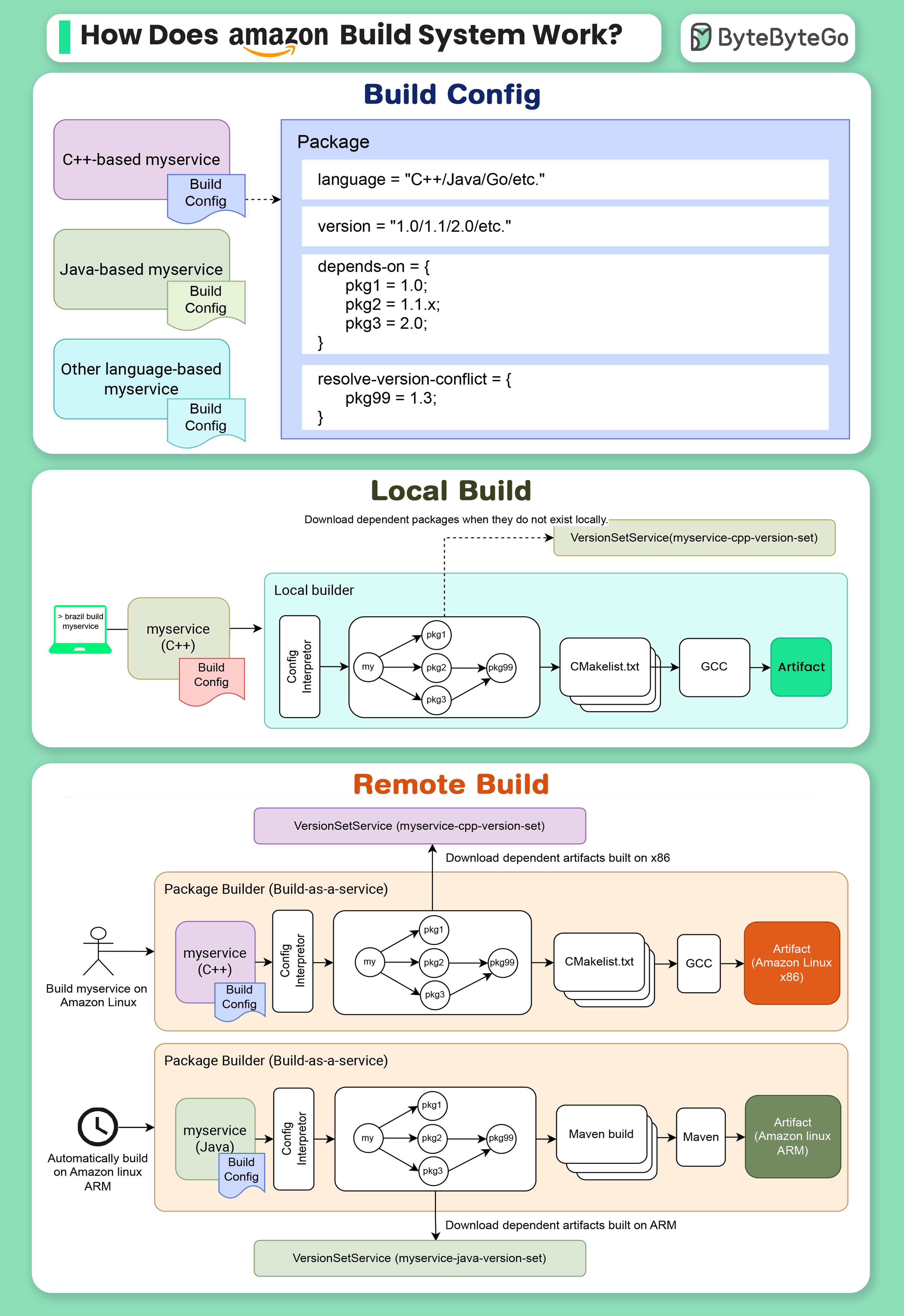

+ * [Amazon's Build System: Brazil](https://bytebytego.com/guides/how-does-amazon-build-system-work)

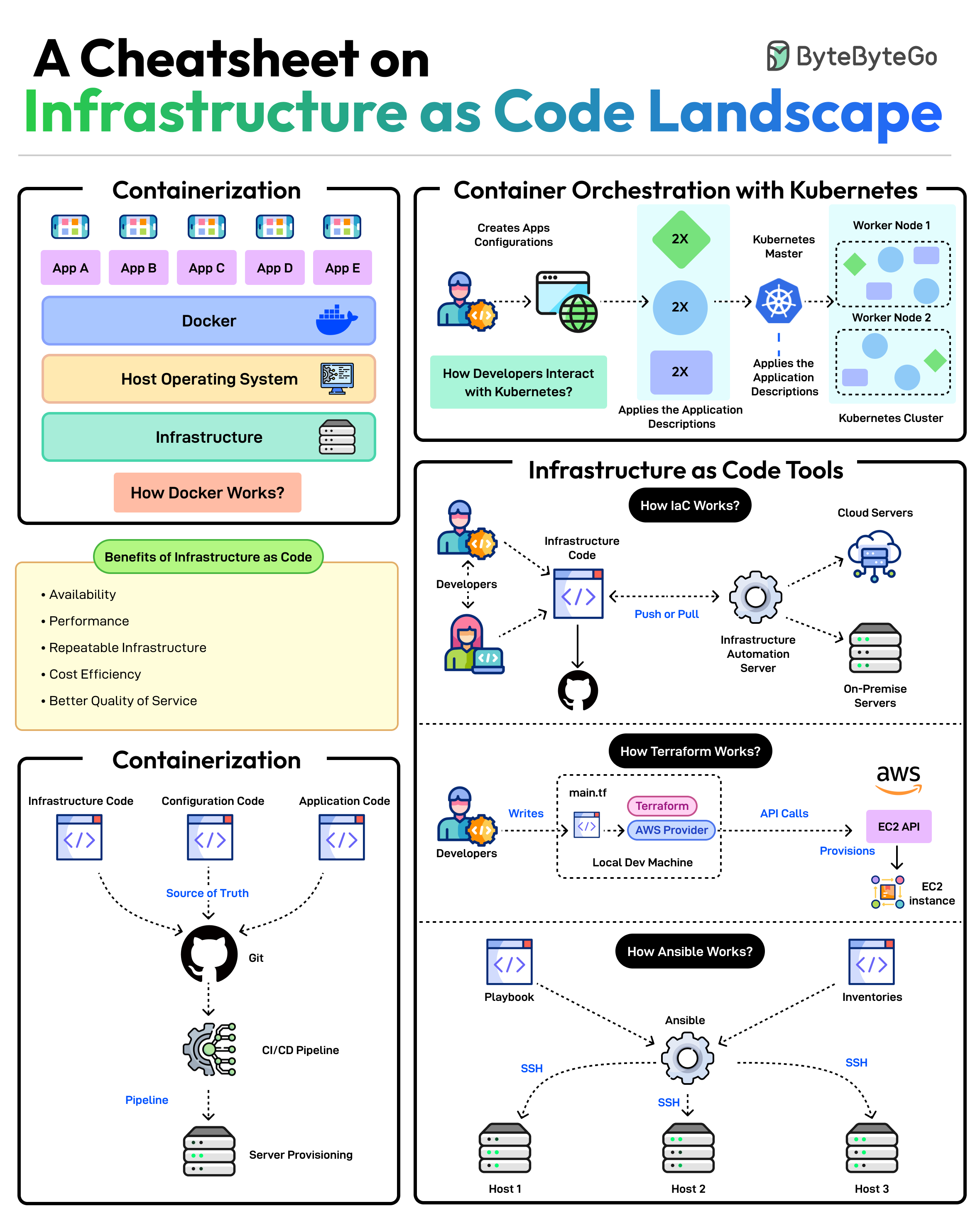

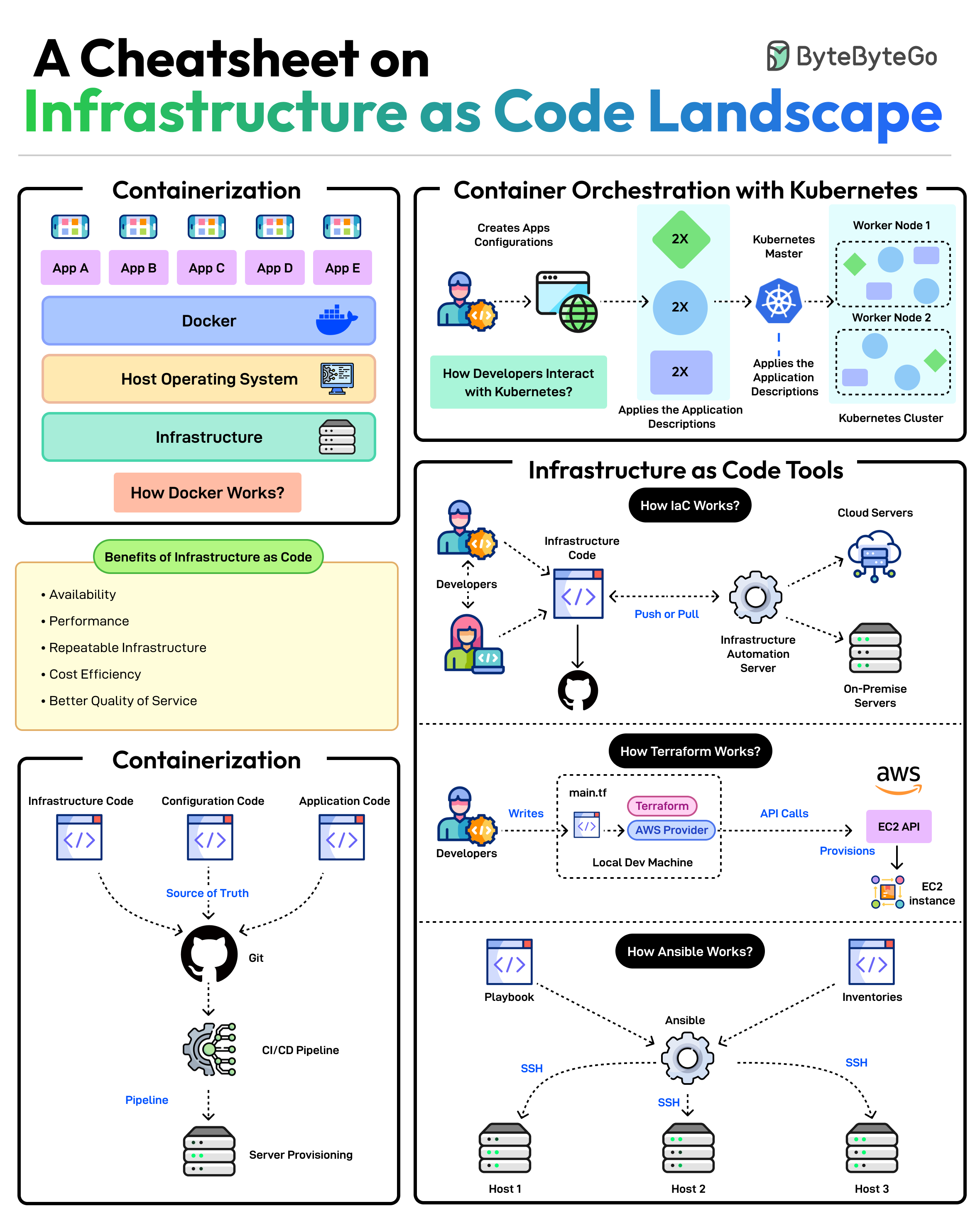

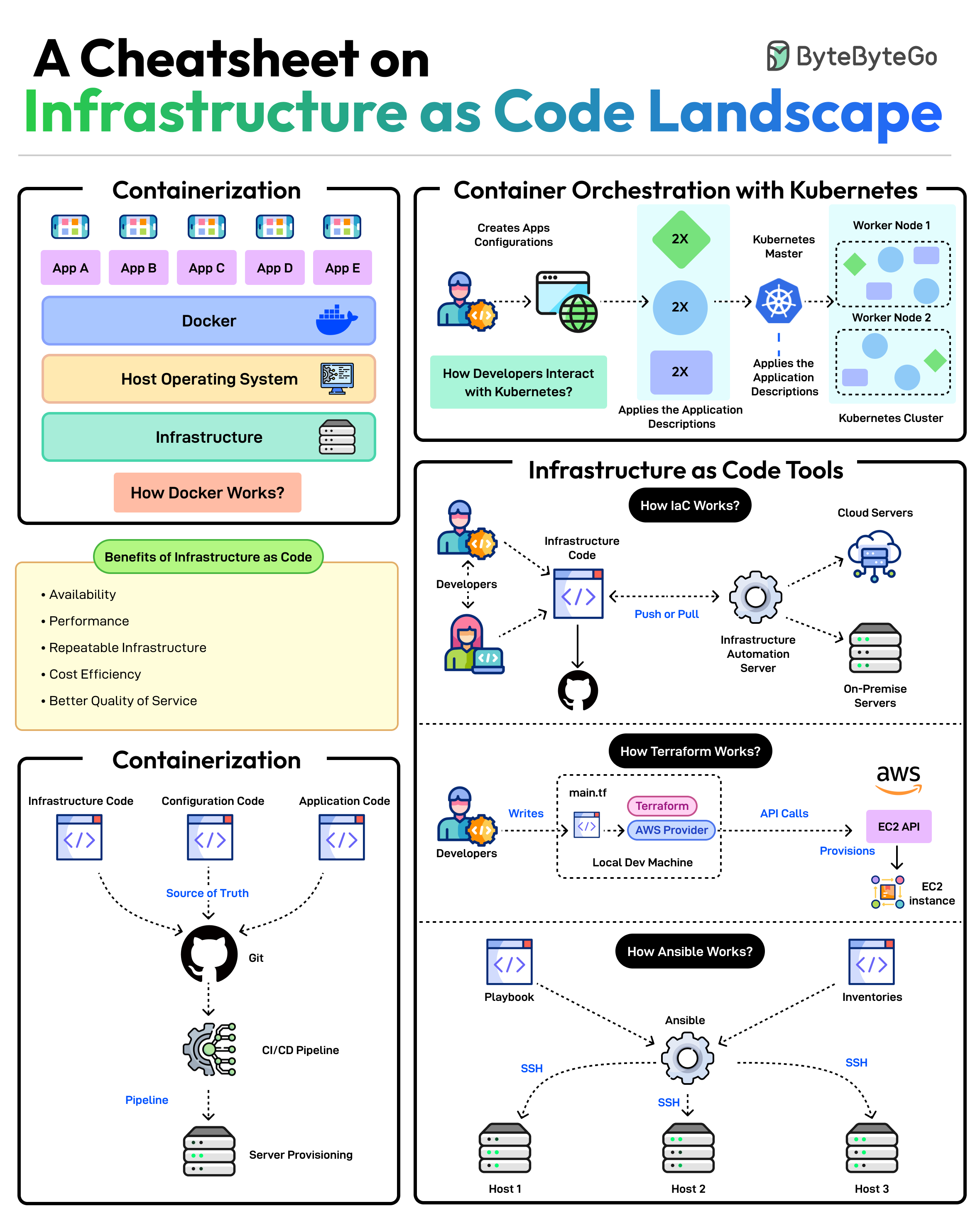

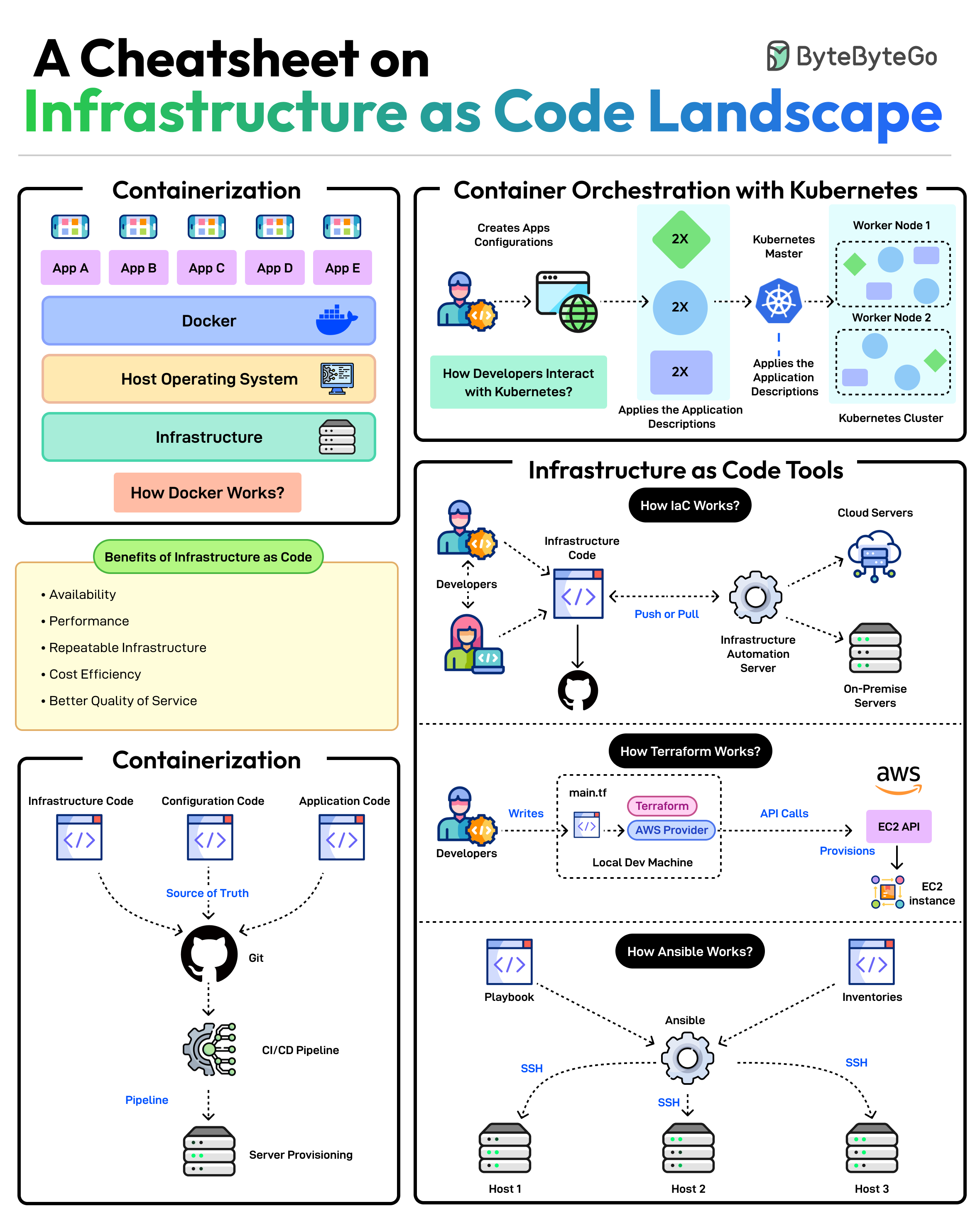

+ * [Infrastructure as Code Landscape Cheatsheet](https://bytebytego.com/guides/a-cheatsheet-on-infrastructure-as-code-landscape)

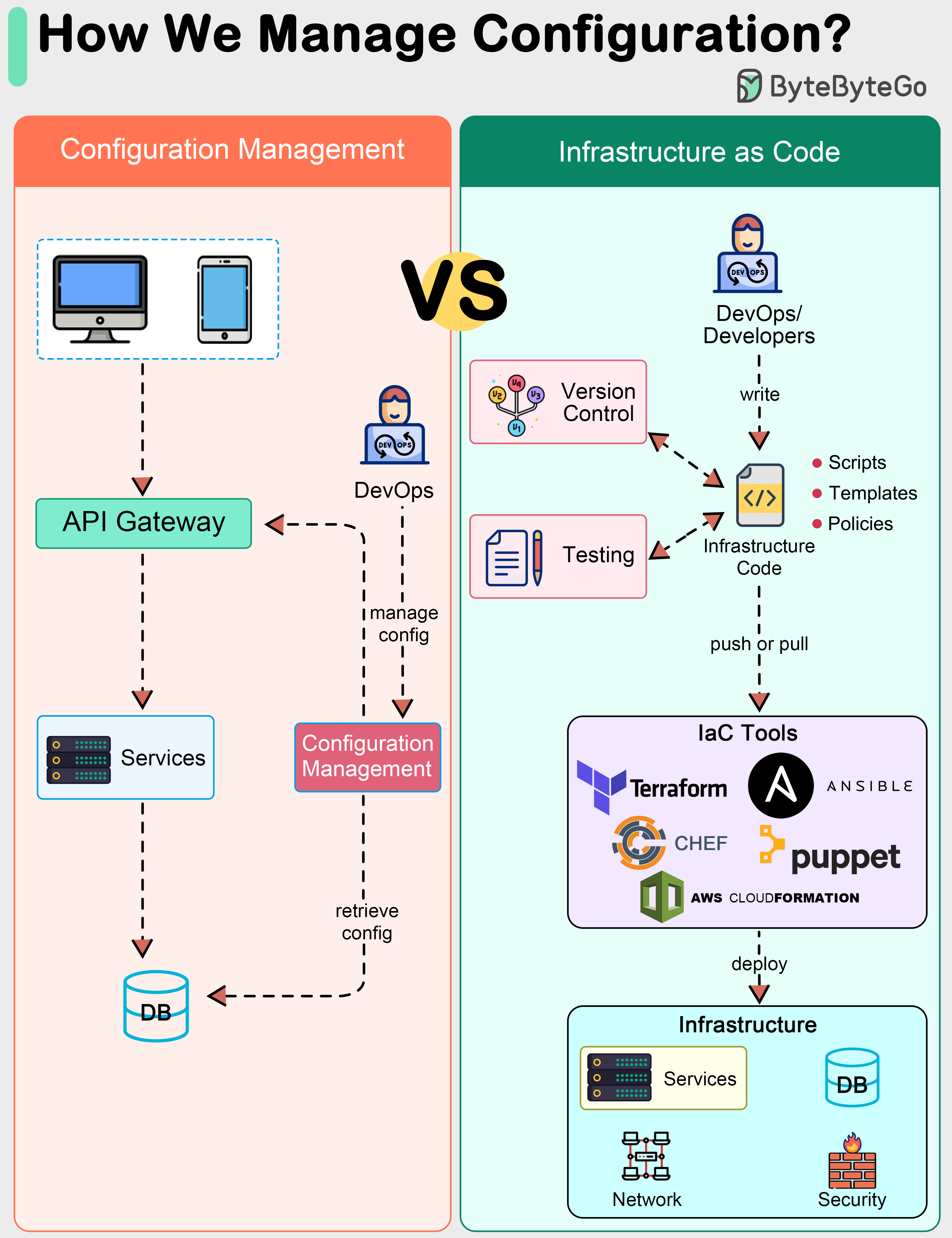

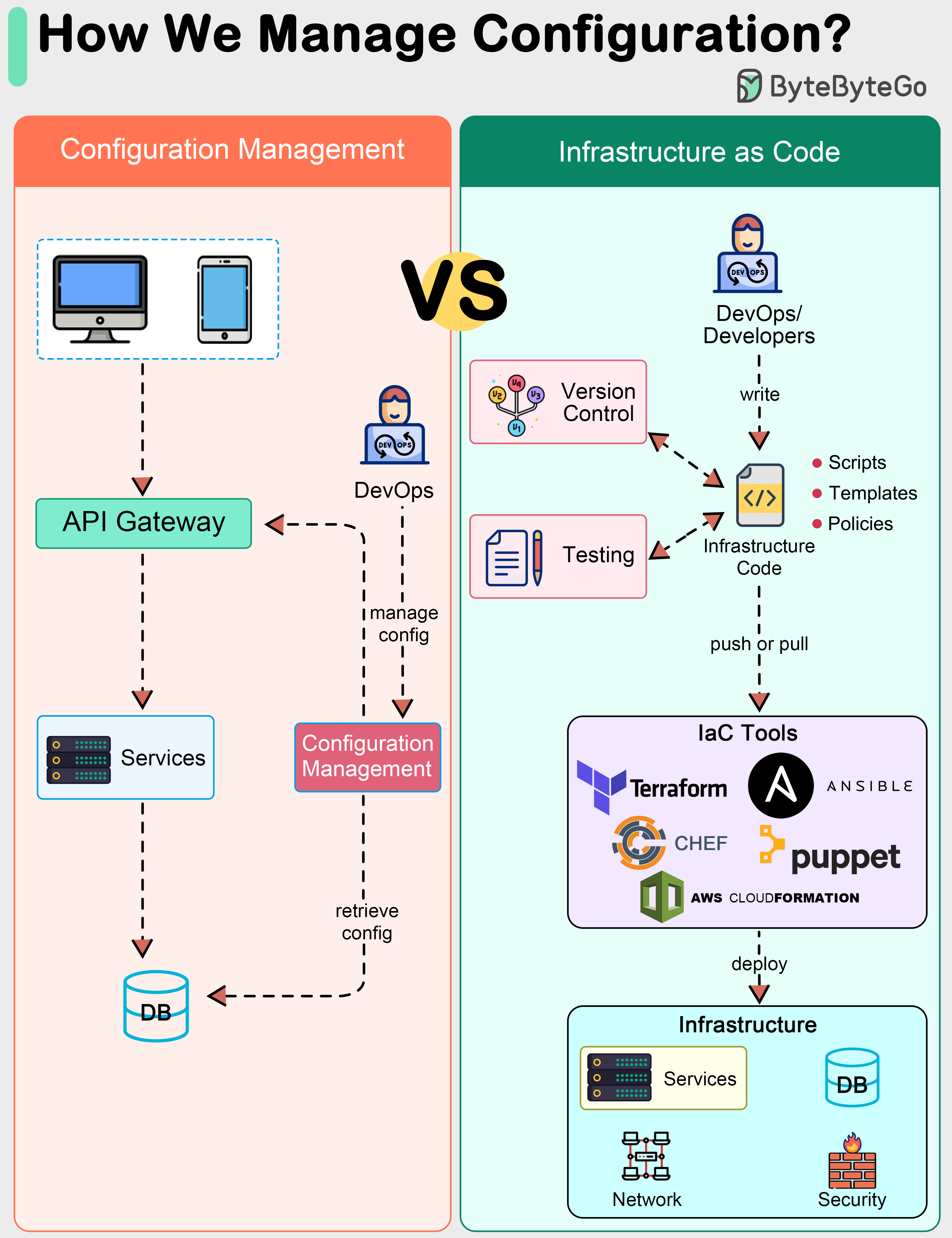

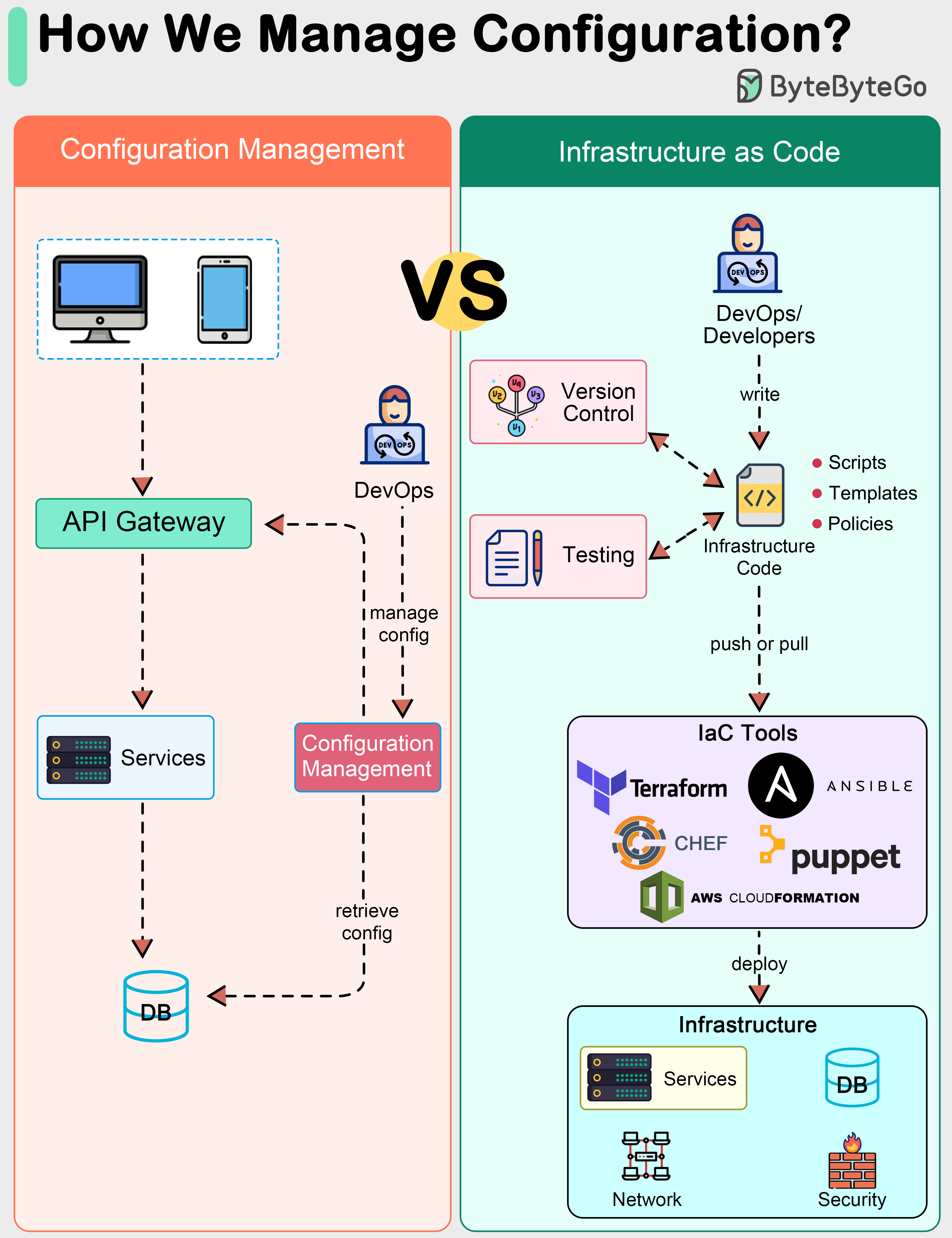

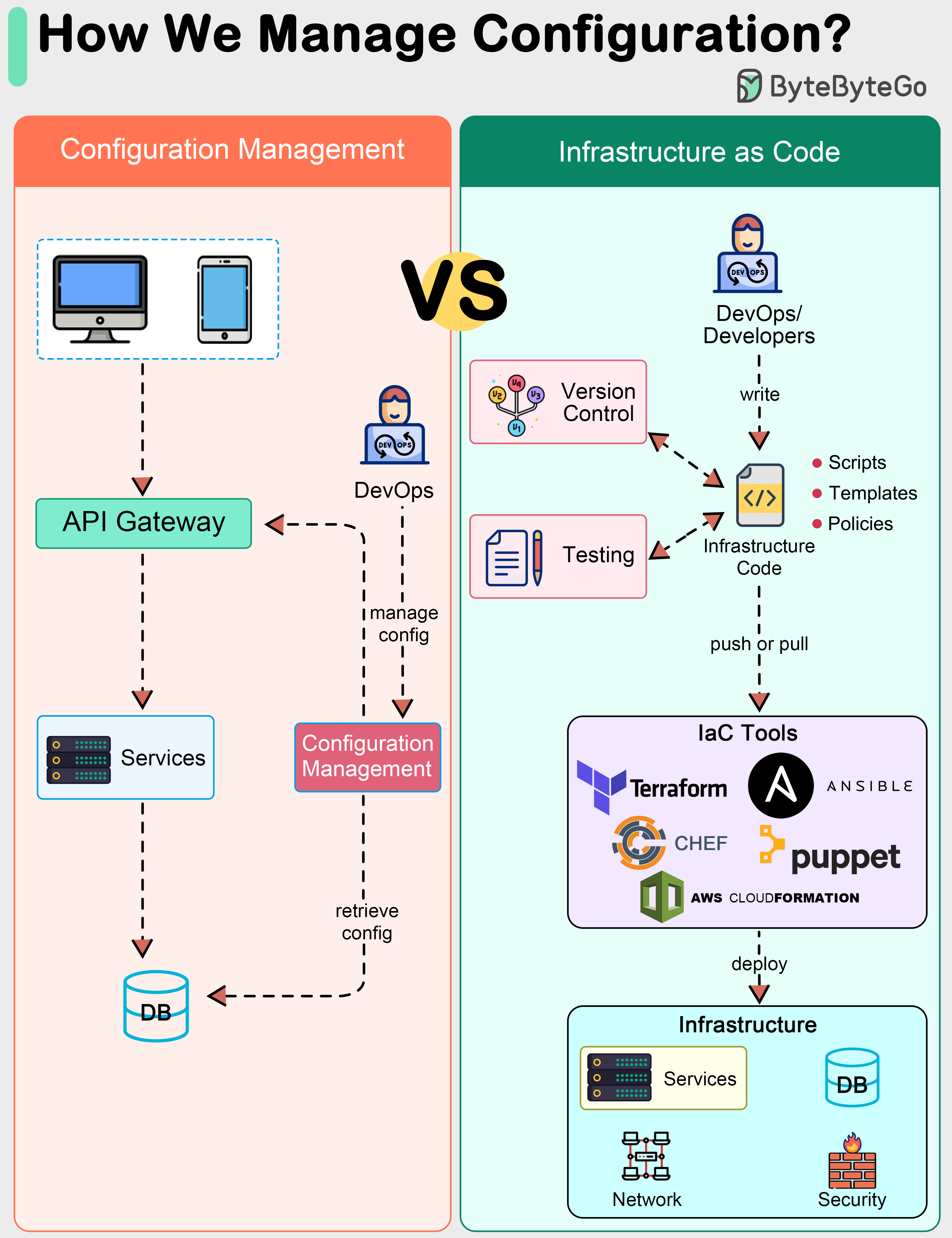

+ * [How do we manage configurations in a system?](https://bytebytego.com/guides/how-do-we-manage-configurations-in-a-system)

+ * [How do we incorporate Event Sourcing into systems?](https://bytebytego.com/guides/how-do-we-incorporate-event-sourcing-into-the-systems)

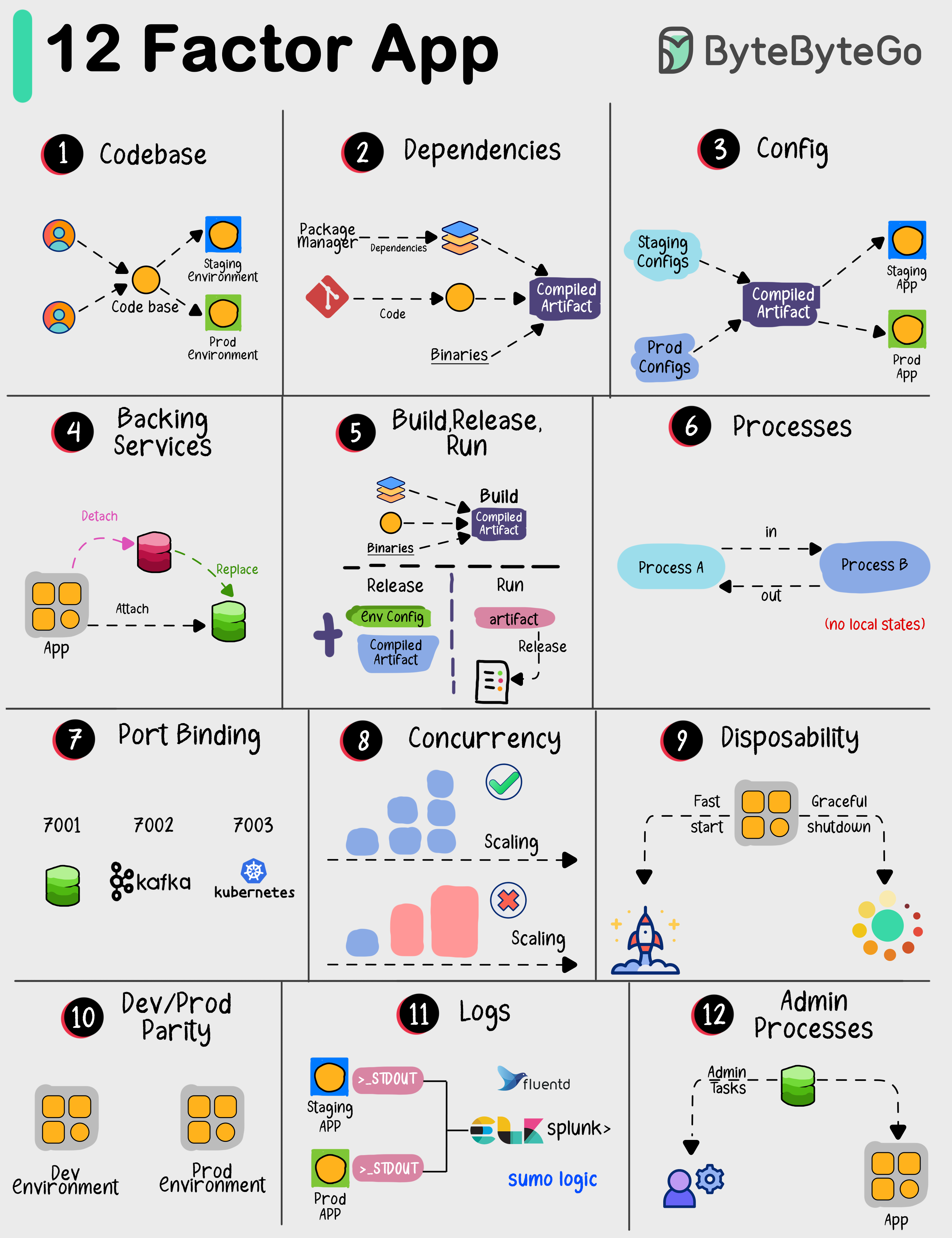

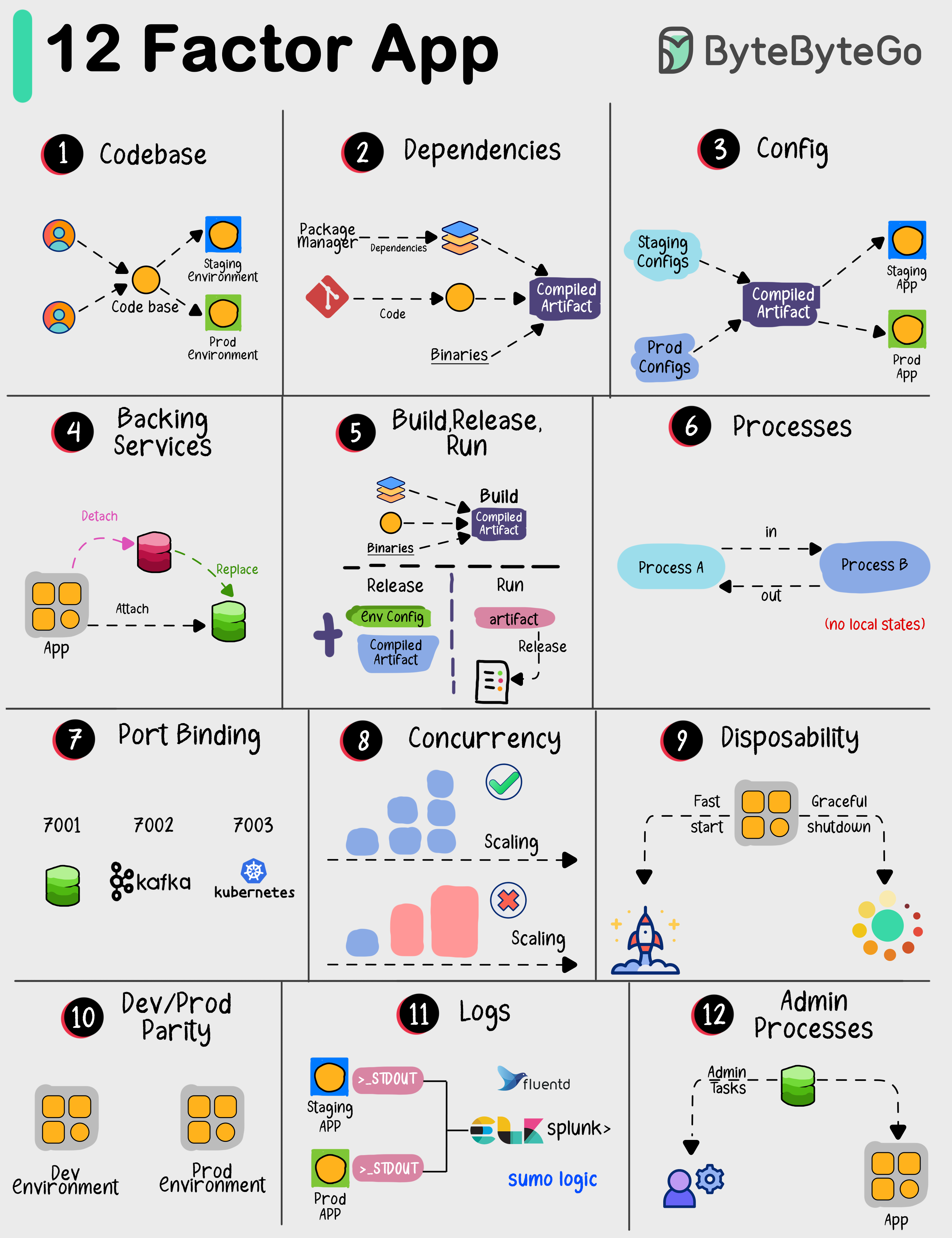

+ * [The 12-Factor App](https://bytebytego.com/guides/the-12-factor-app)

+ * [Explaining 5 Unique ID Generators](https://bytebytego.com/guides/explaining-5-unique-id-generators-in-distributed-systems)

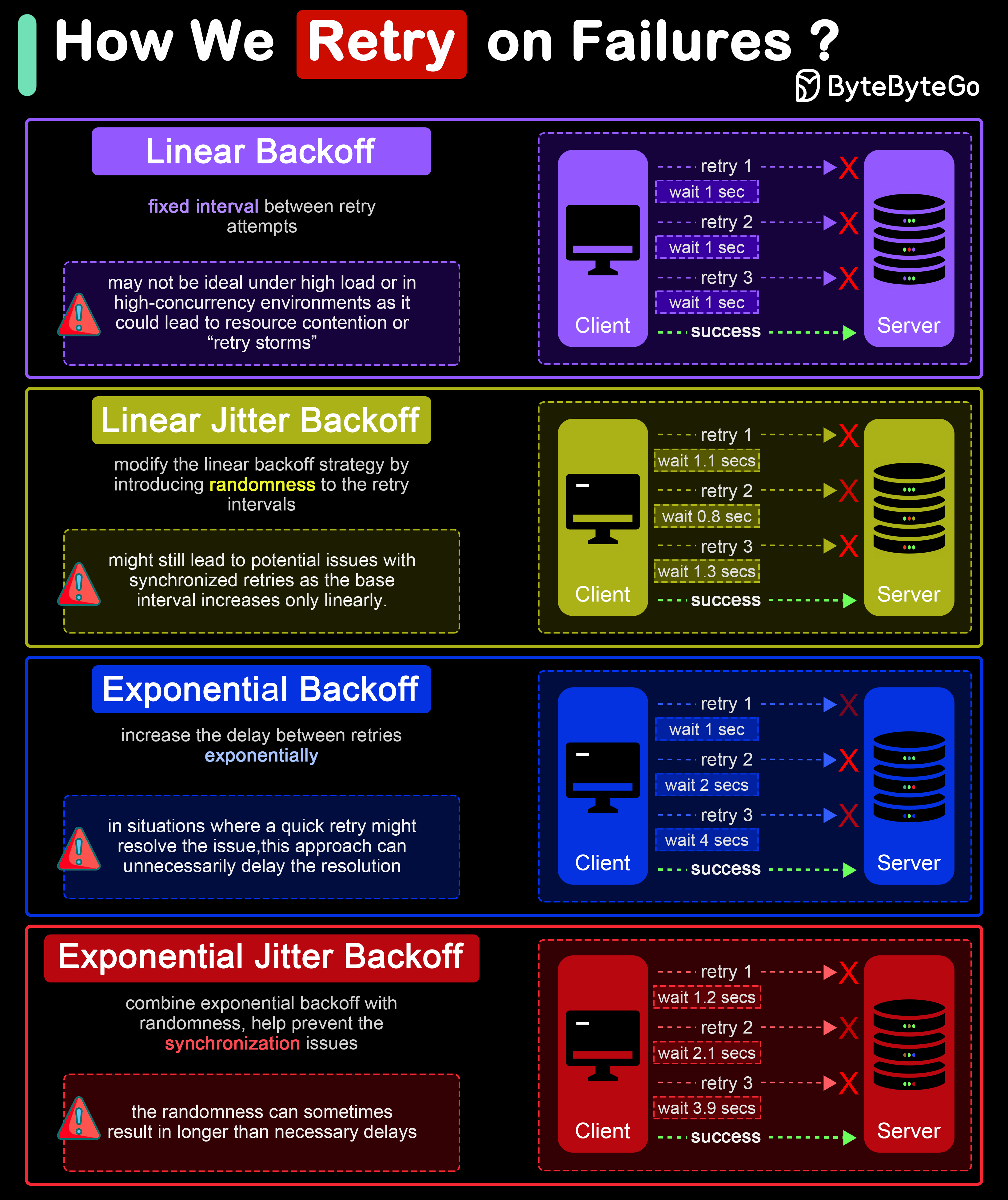

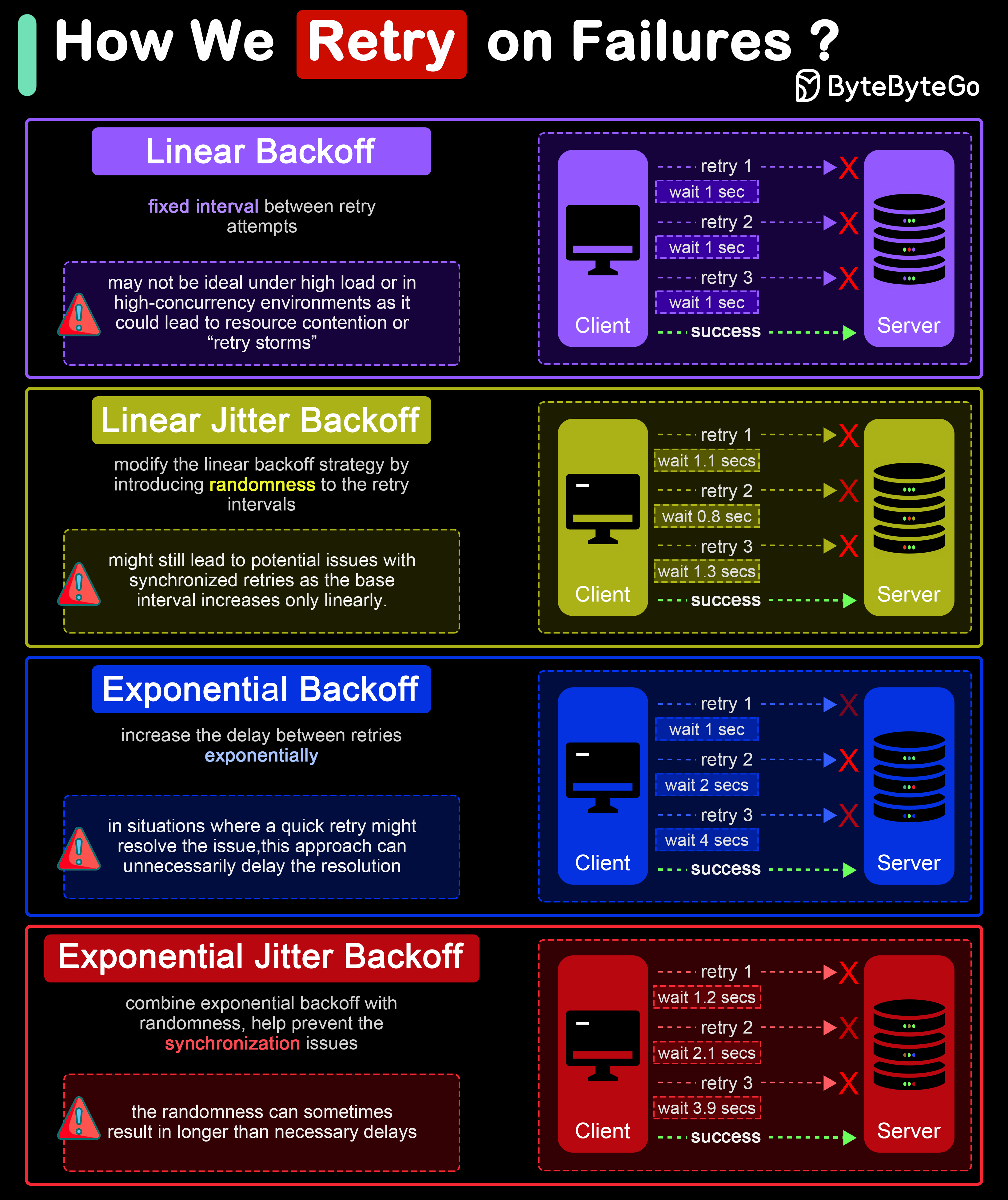

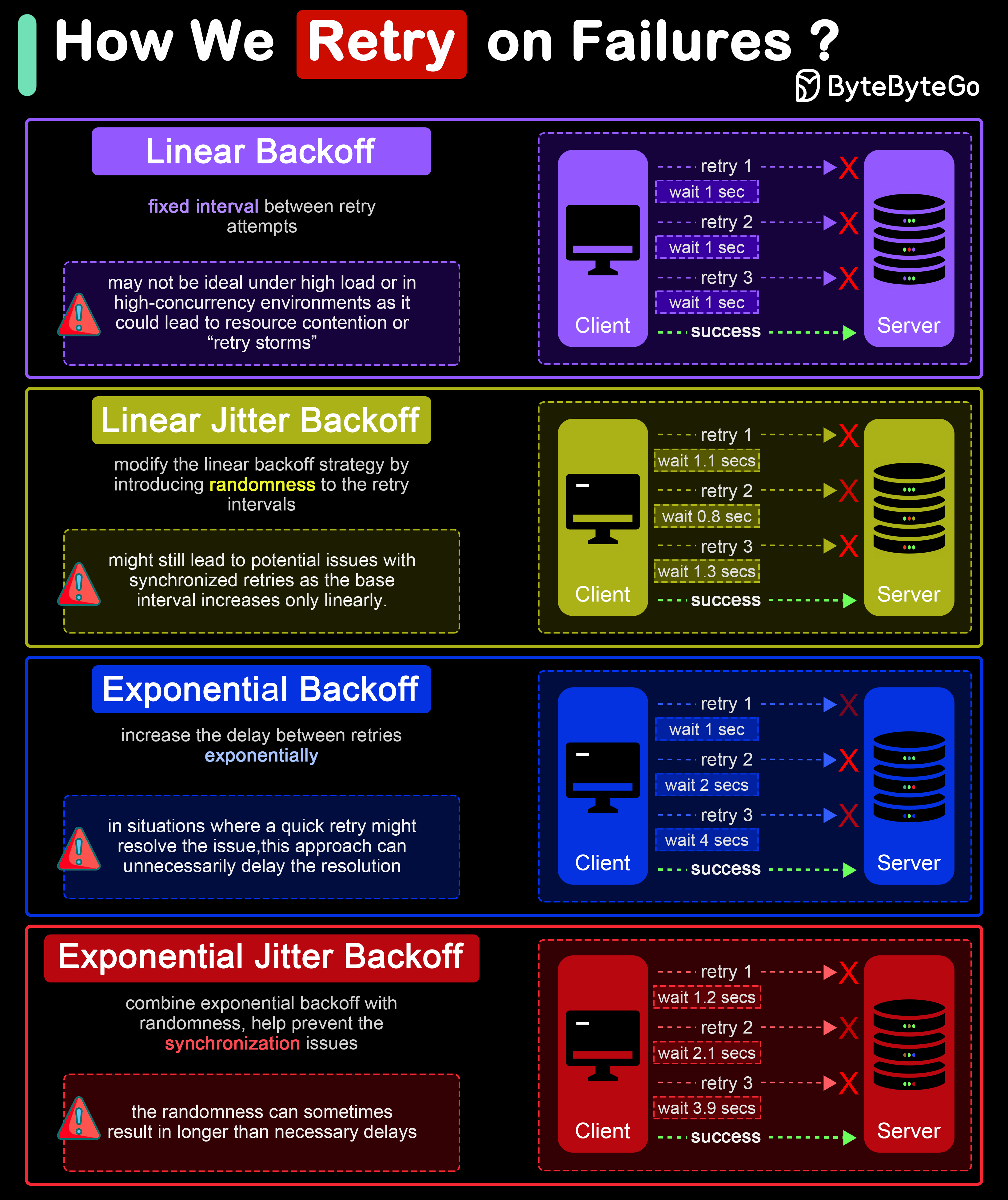

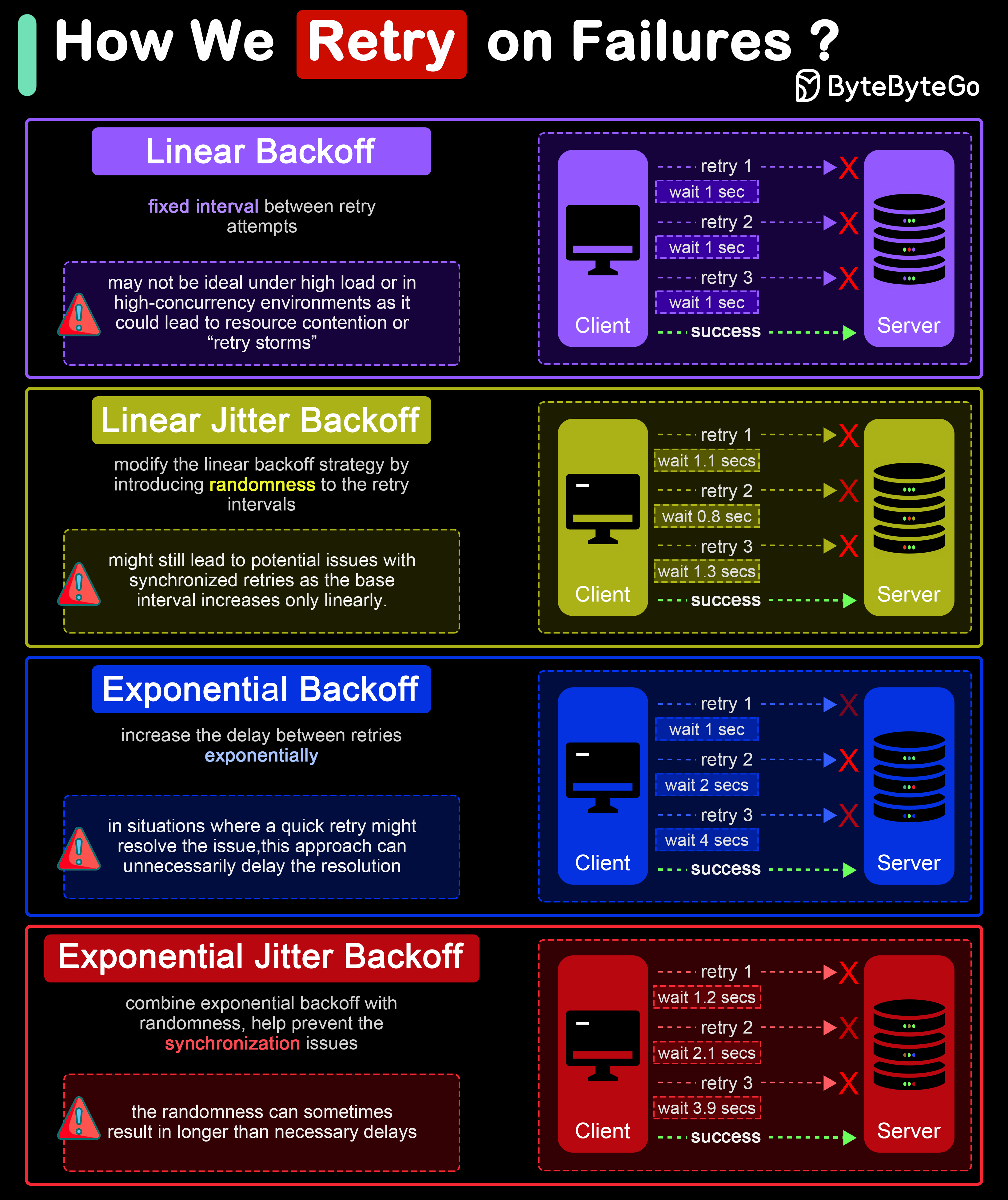

+ * [Retry Strategies for System Failures](https://bytebytego.com/guides/how-do-we-retry-on-failures)

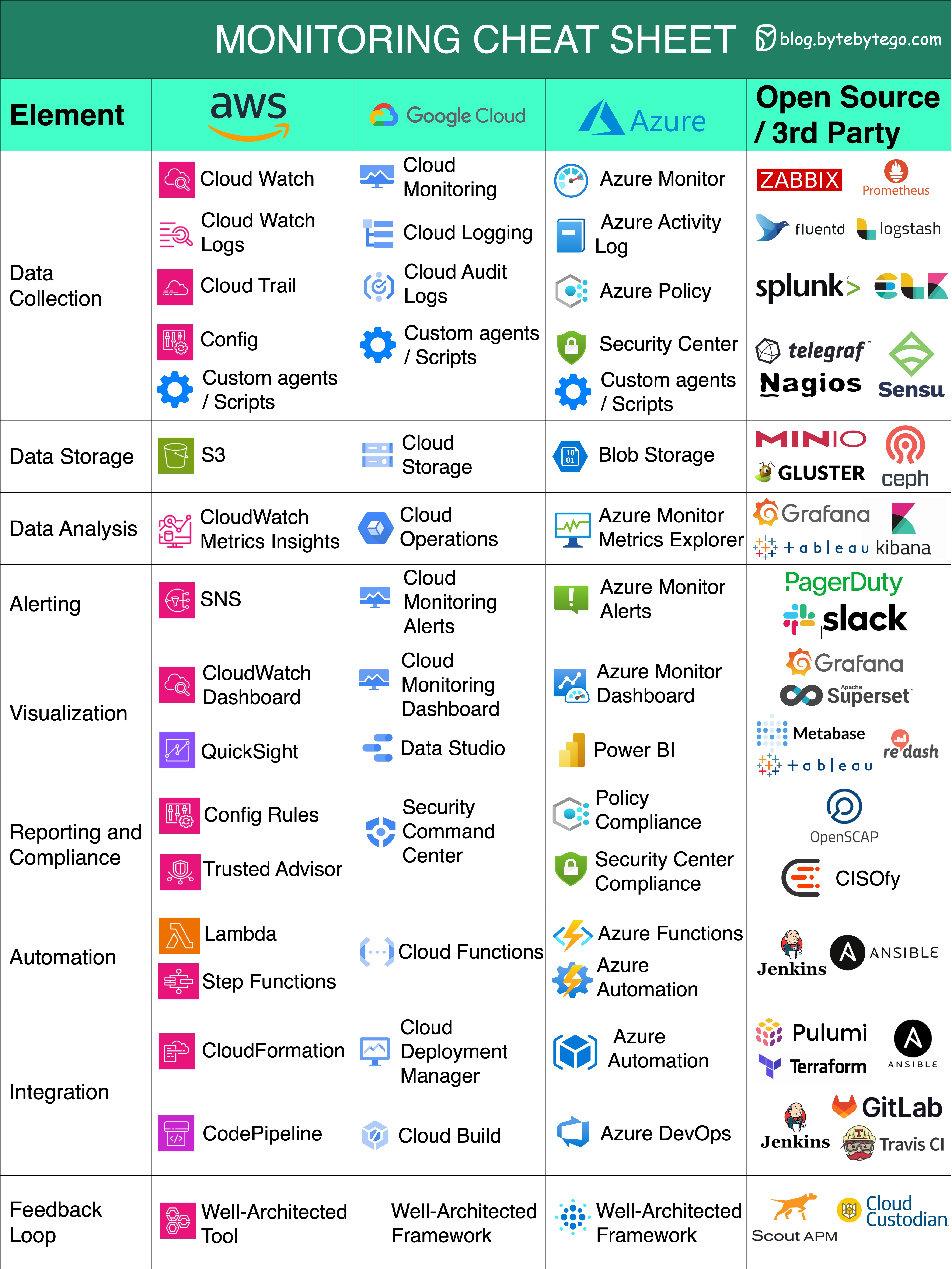

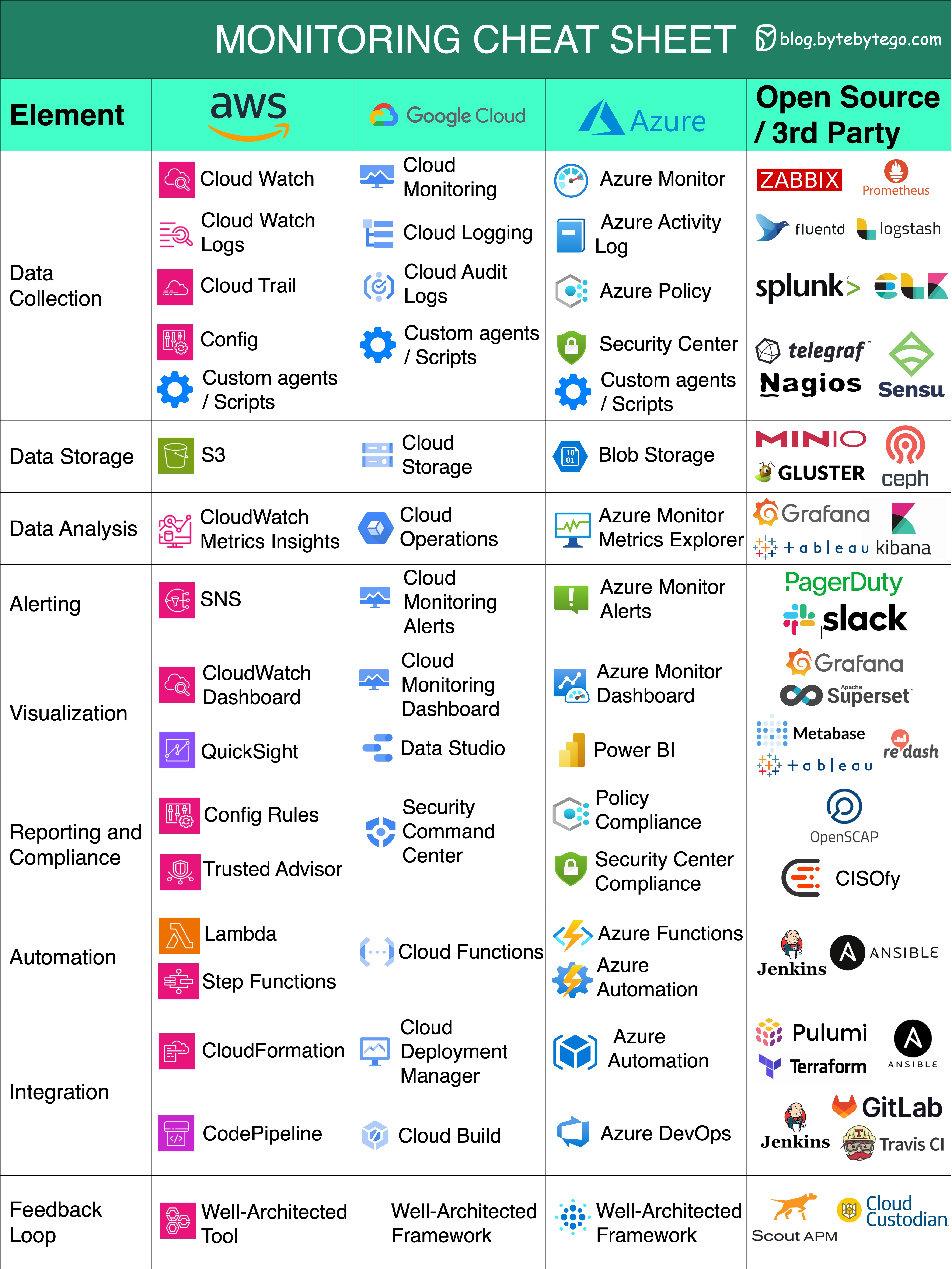

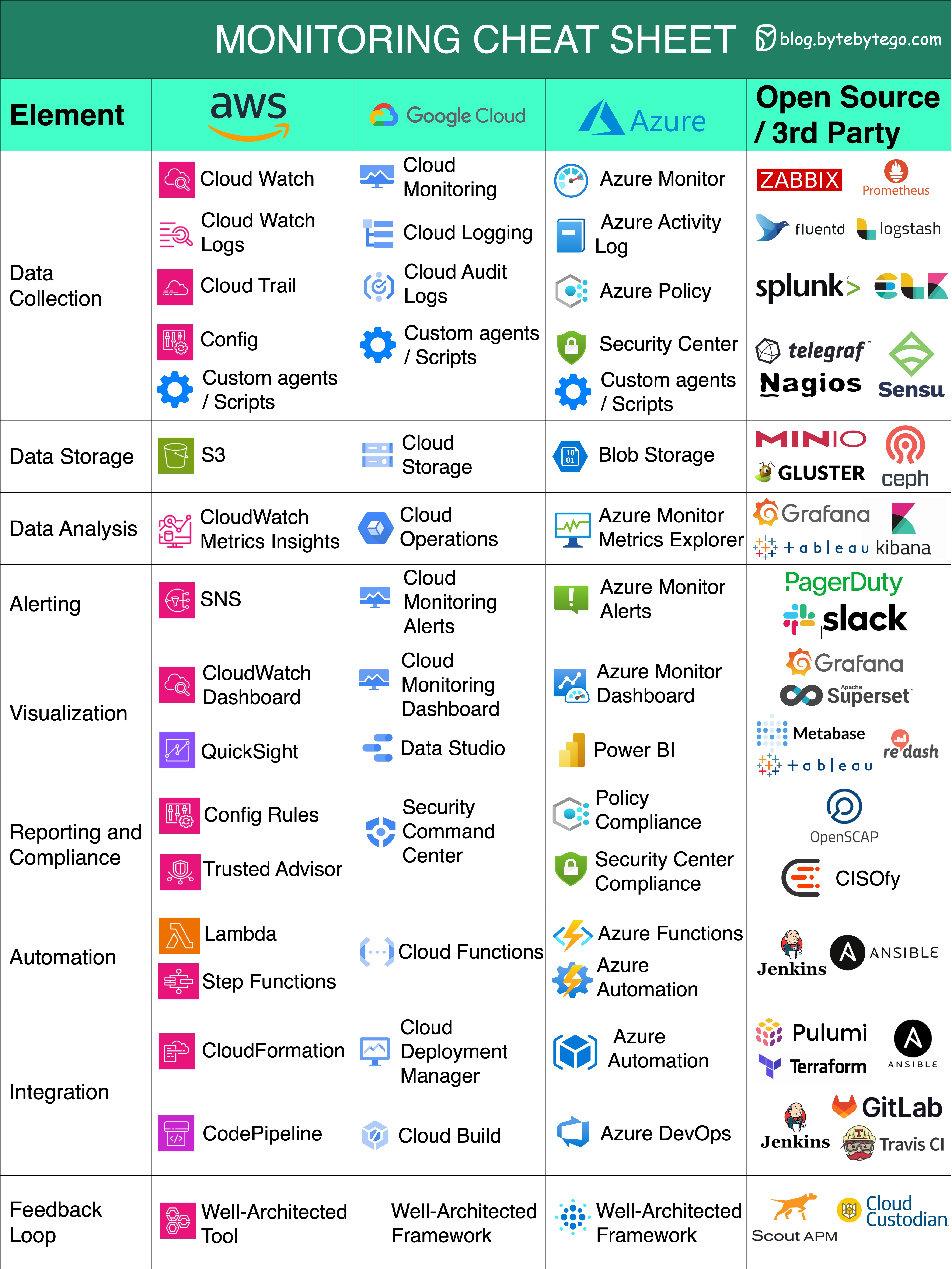

+ * [Cloud Monitoring Cheat Sheet](https://bytebytego.com/guides/cloud-monitoring-cheat-sheet)

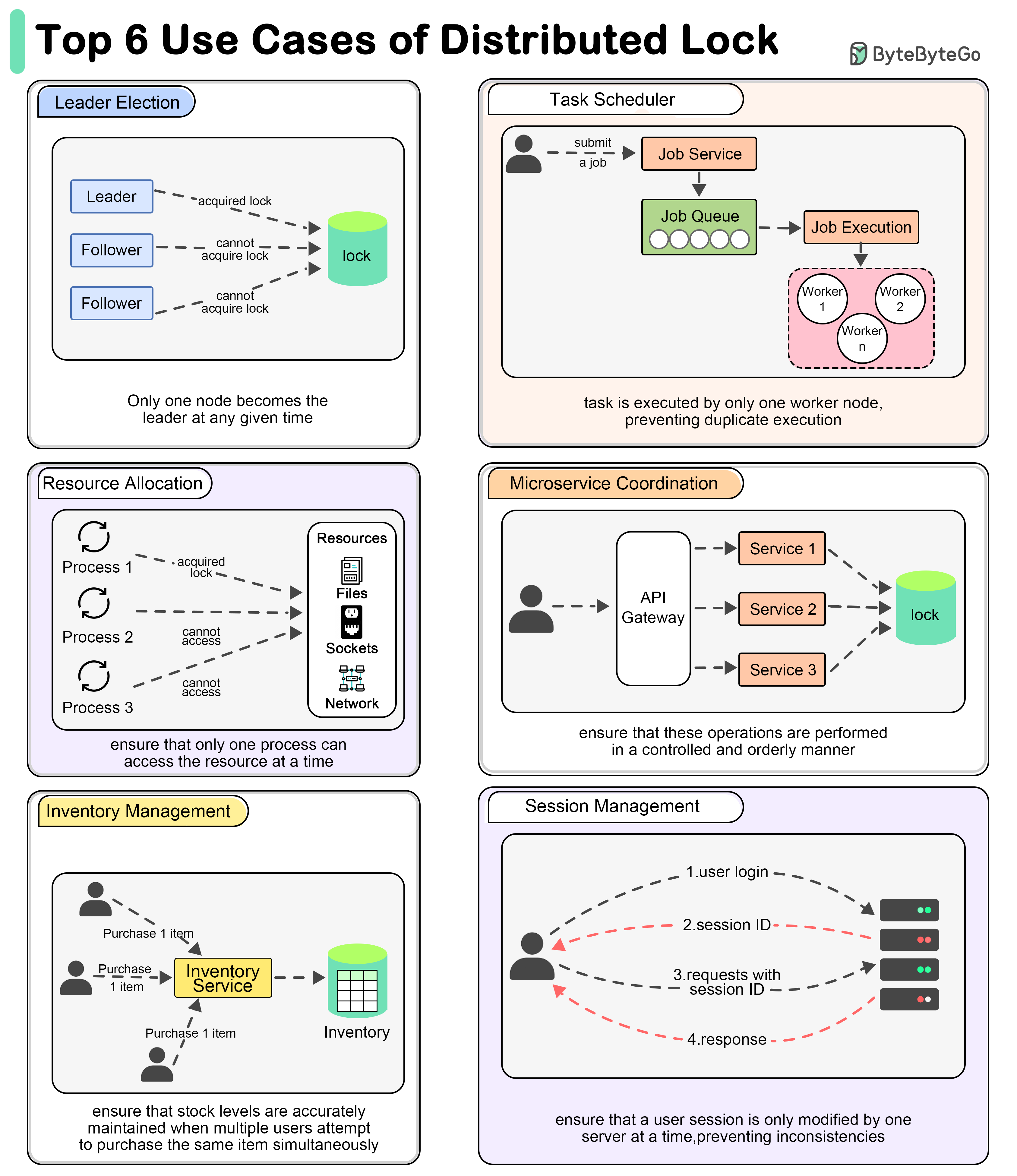

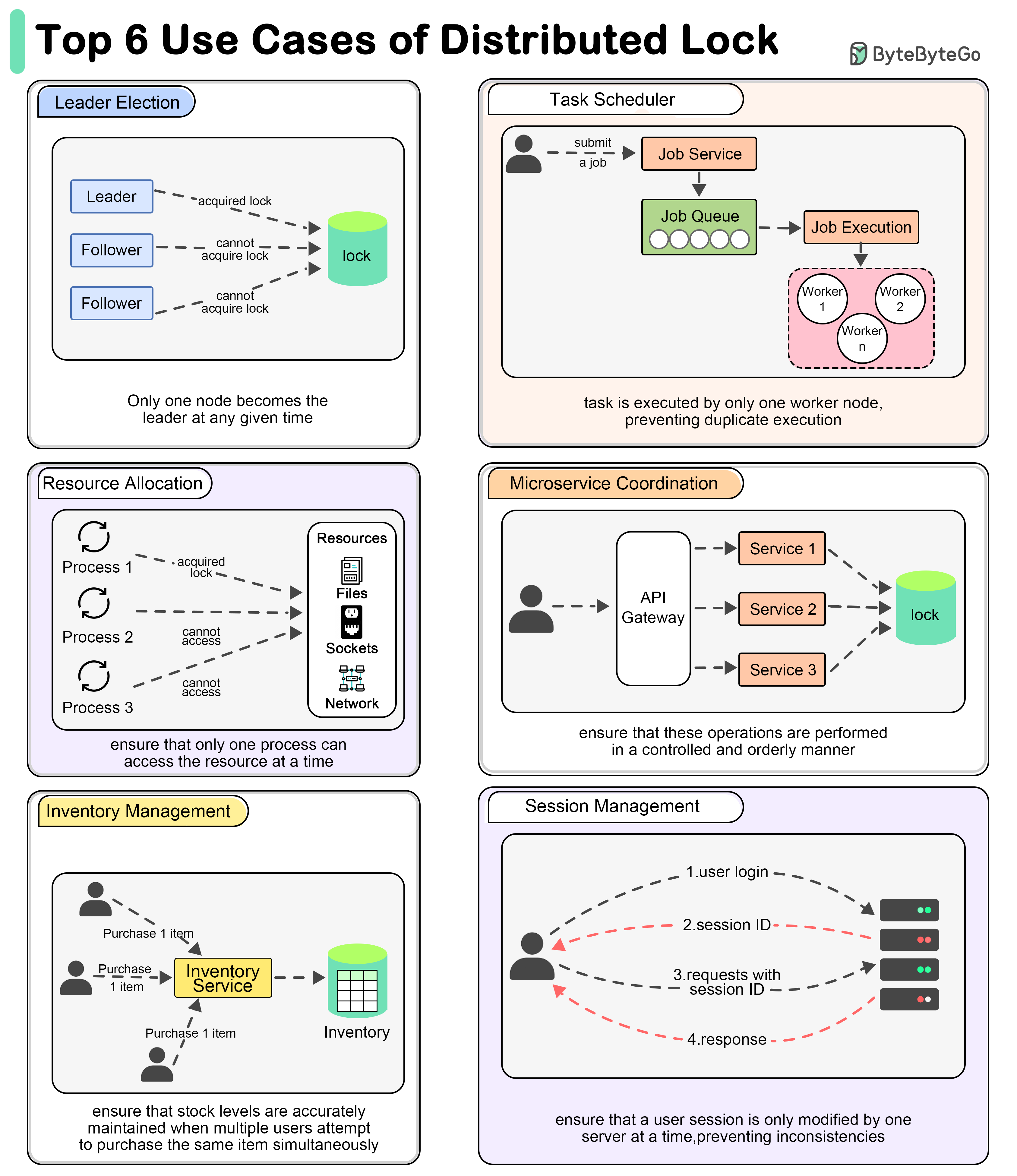

+ * [Why Use a Distributed Lock?](https://bytebytego.com/guides/why-do-we-need-to-use-a-distributed-lock)

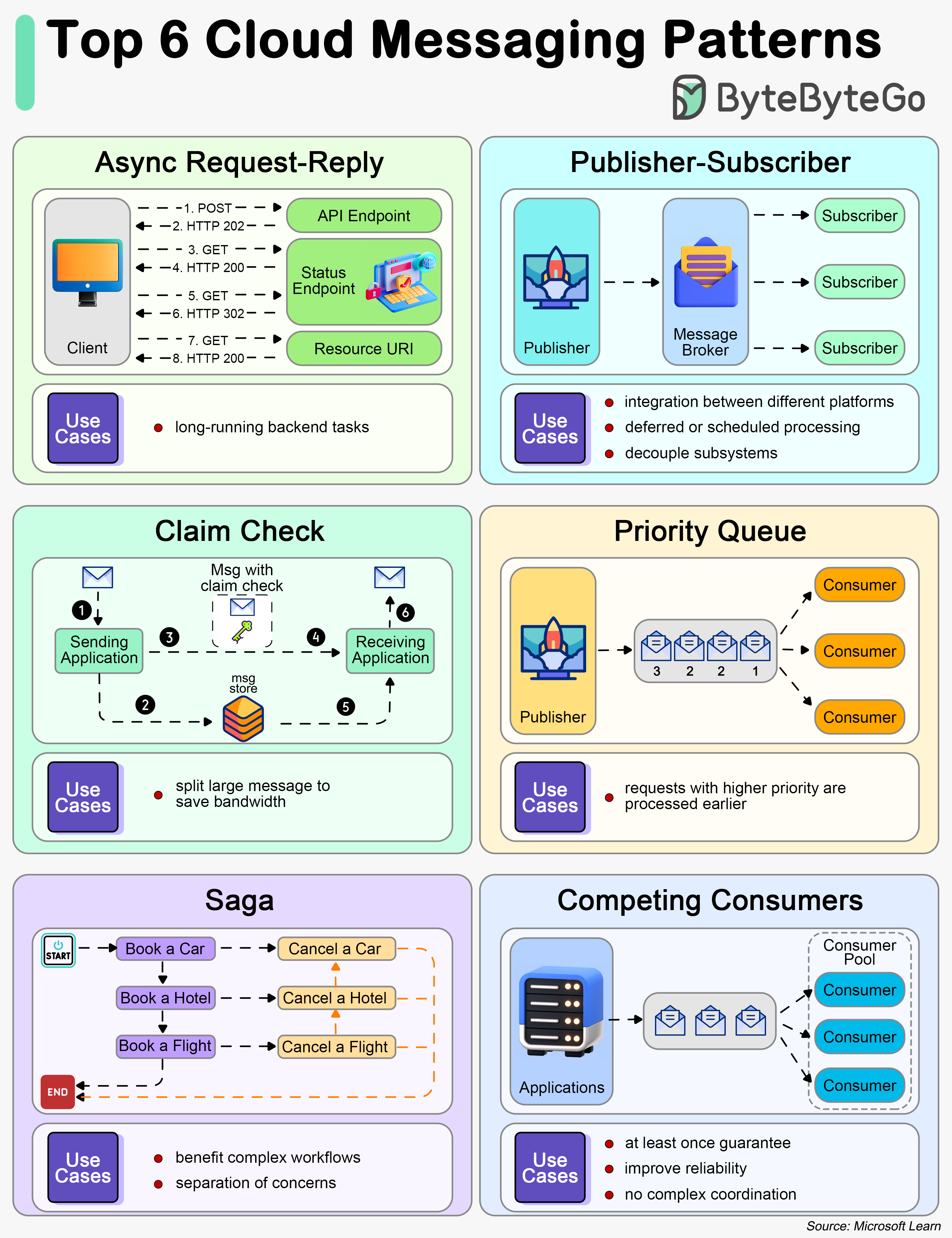

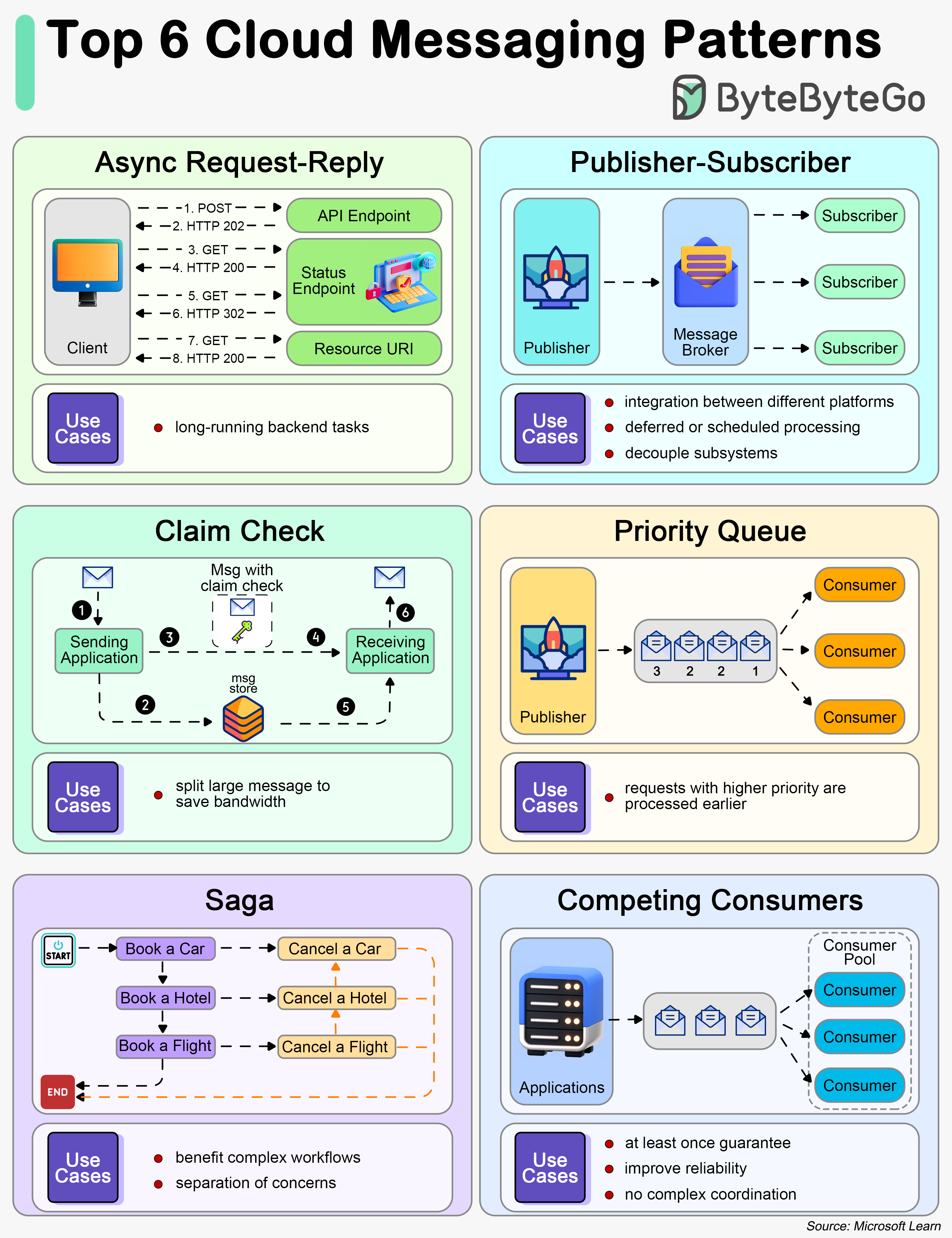

+ * [Top 6 Cloud Messaging Patterns](https://bytebytego.com/guides/top-6-cloud-messaging-patterns)

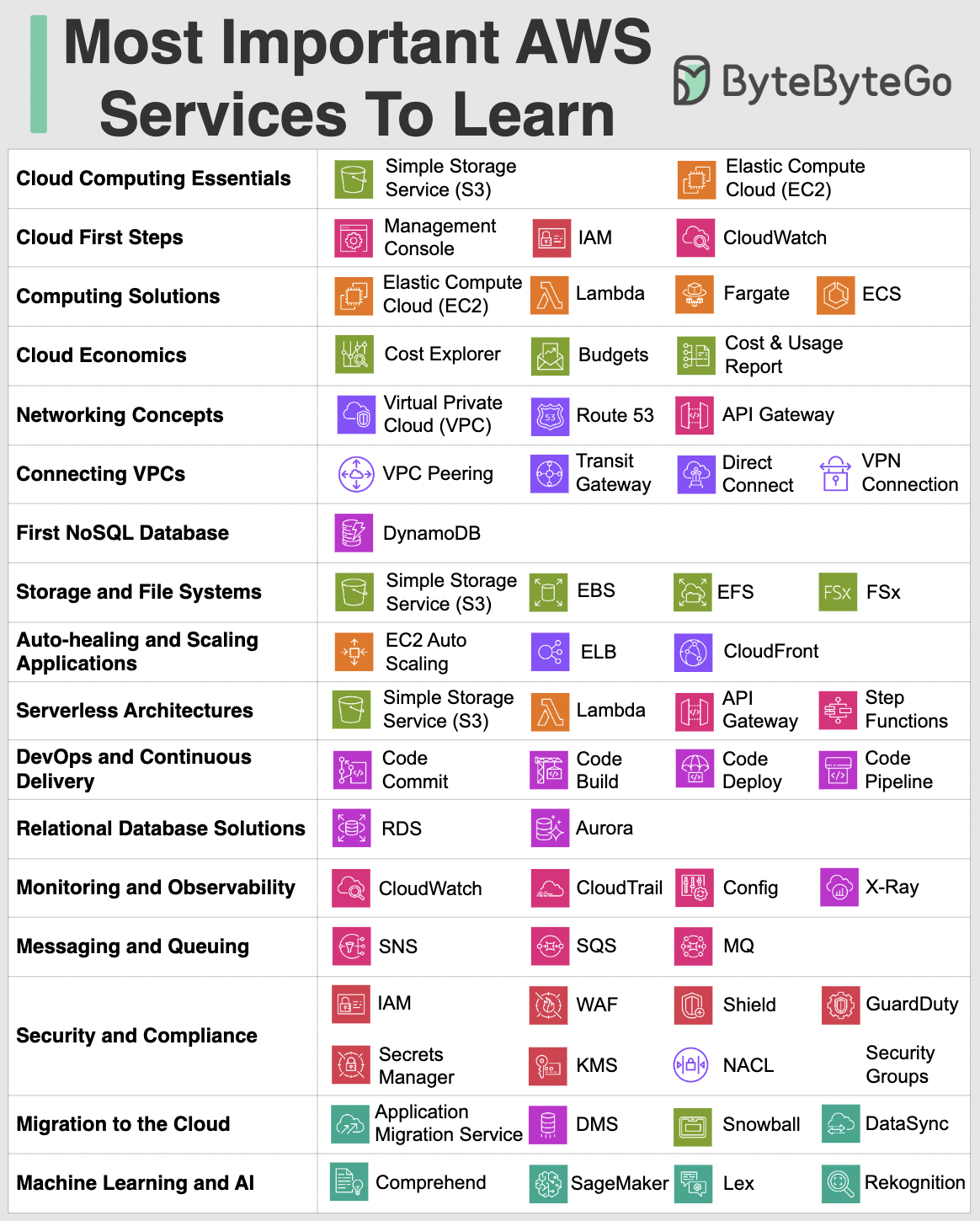

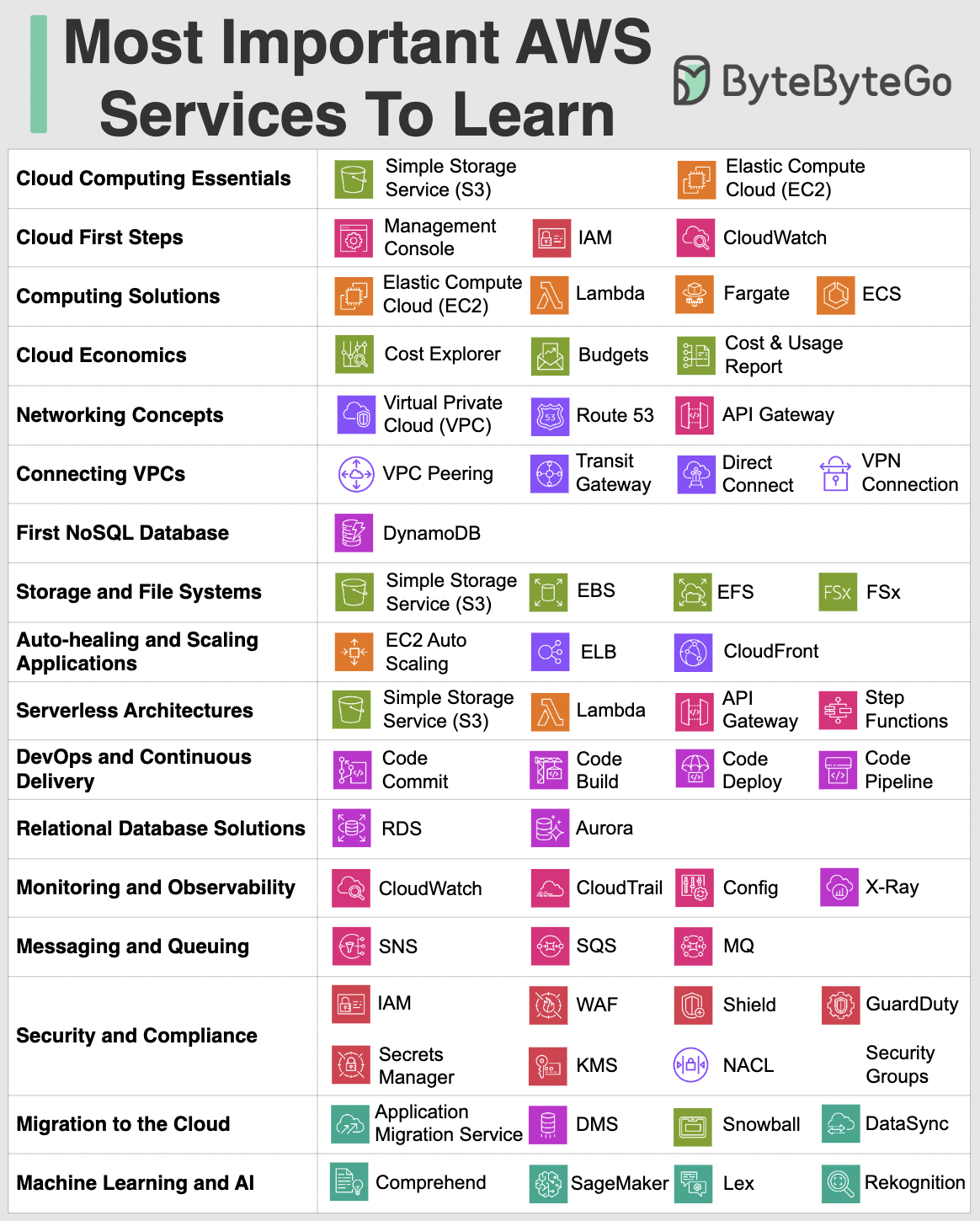

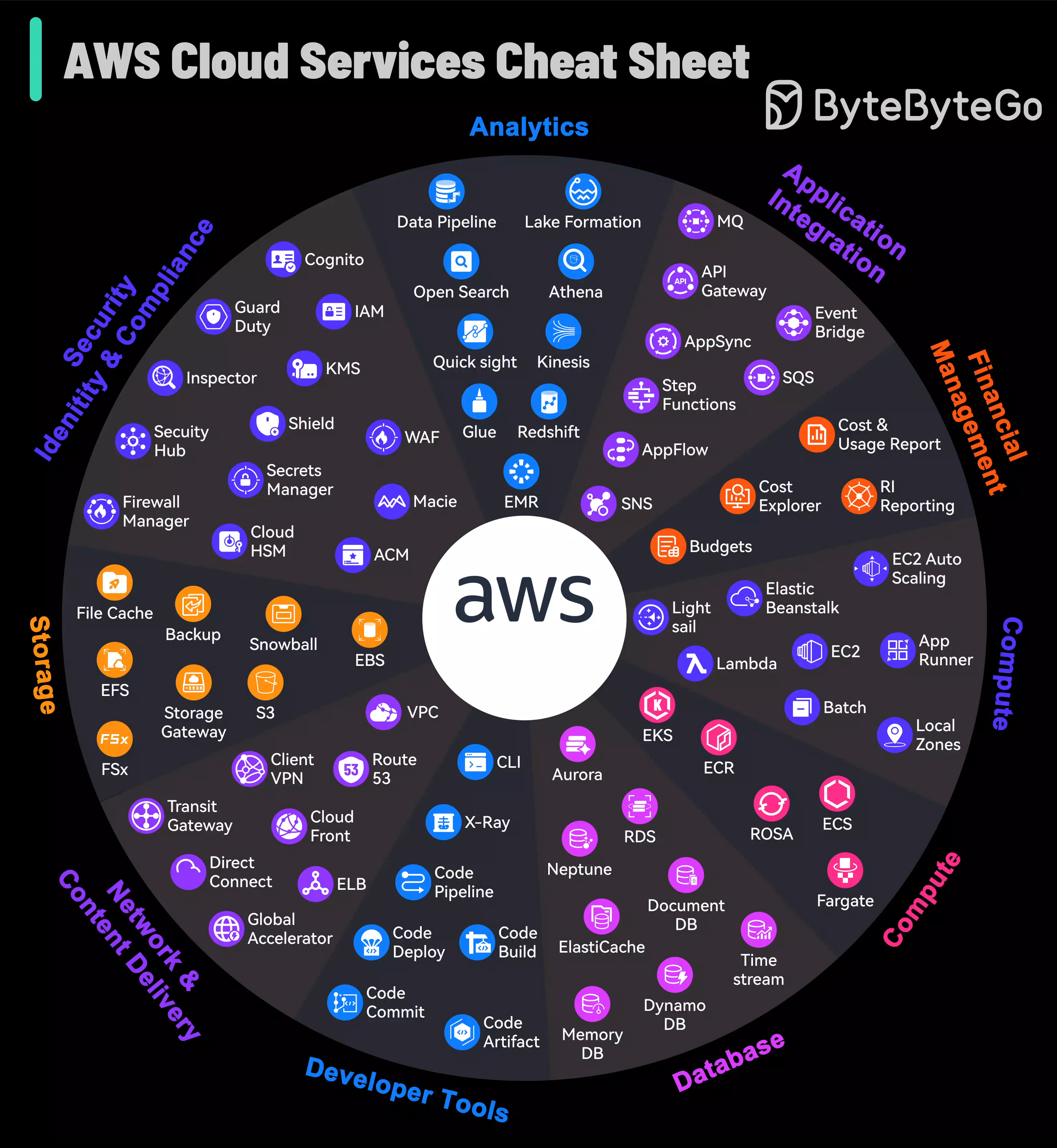

+ * [Most Important AWS Services to Learn](https://bytebytego.com/guides/what-are-the-most-important-aws-services-to-learn)

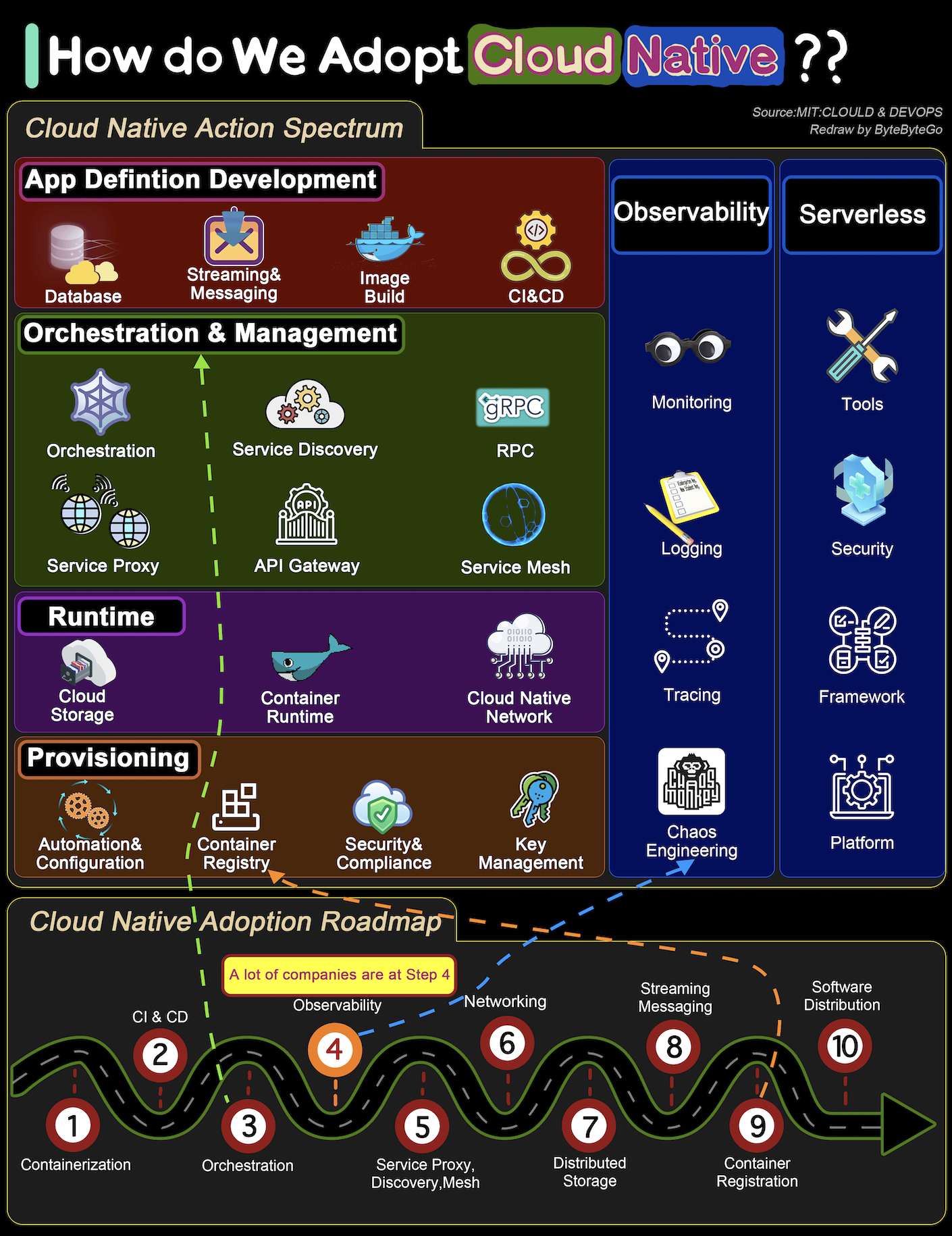

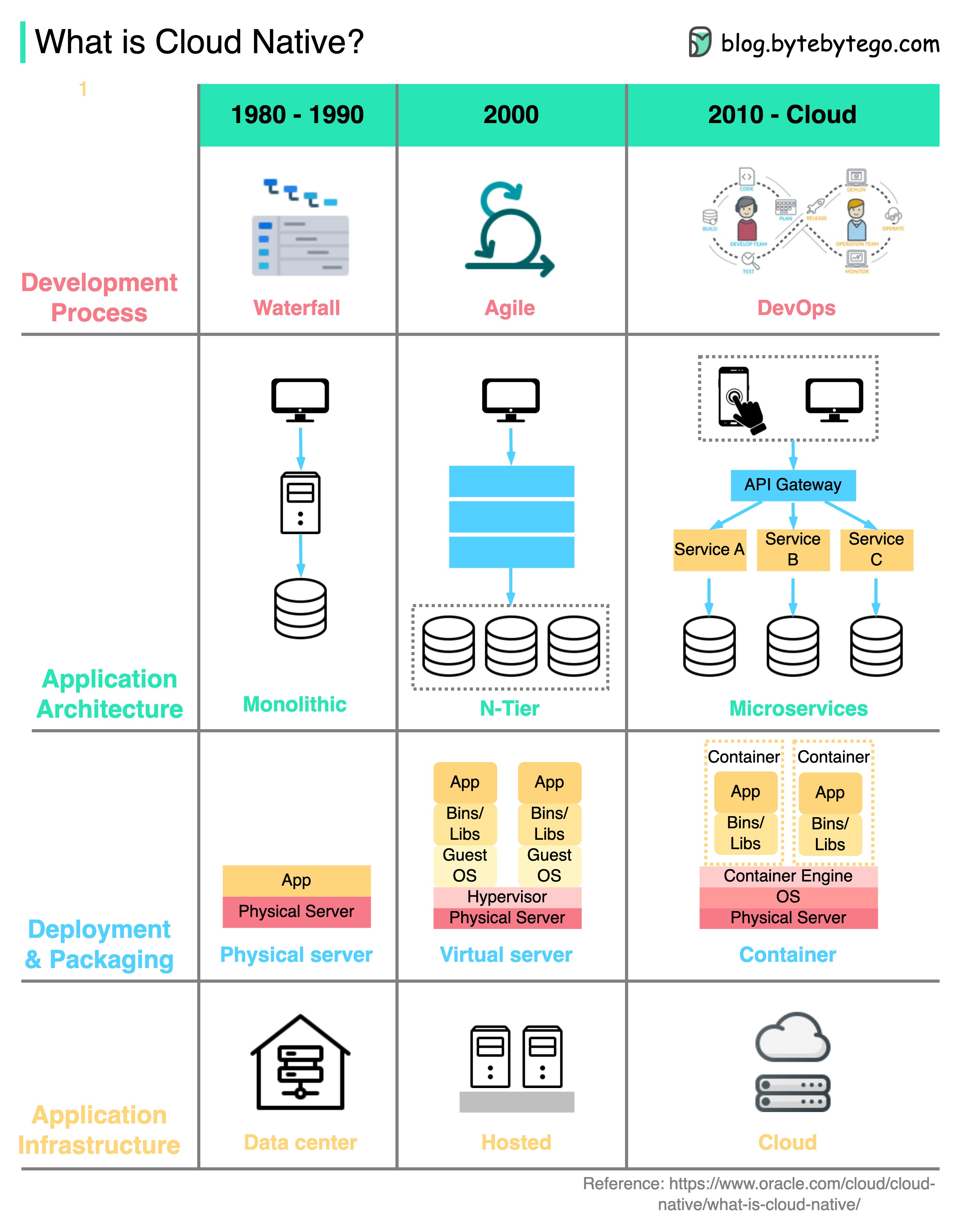

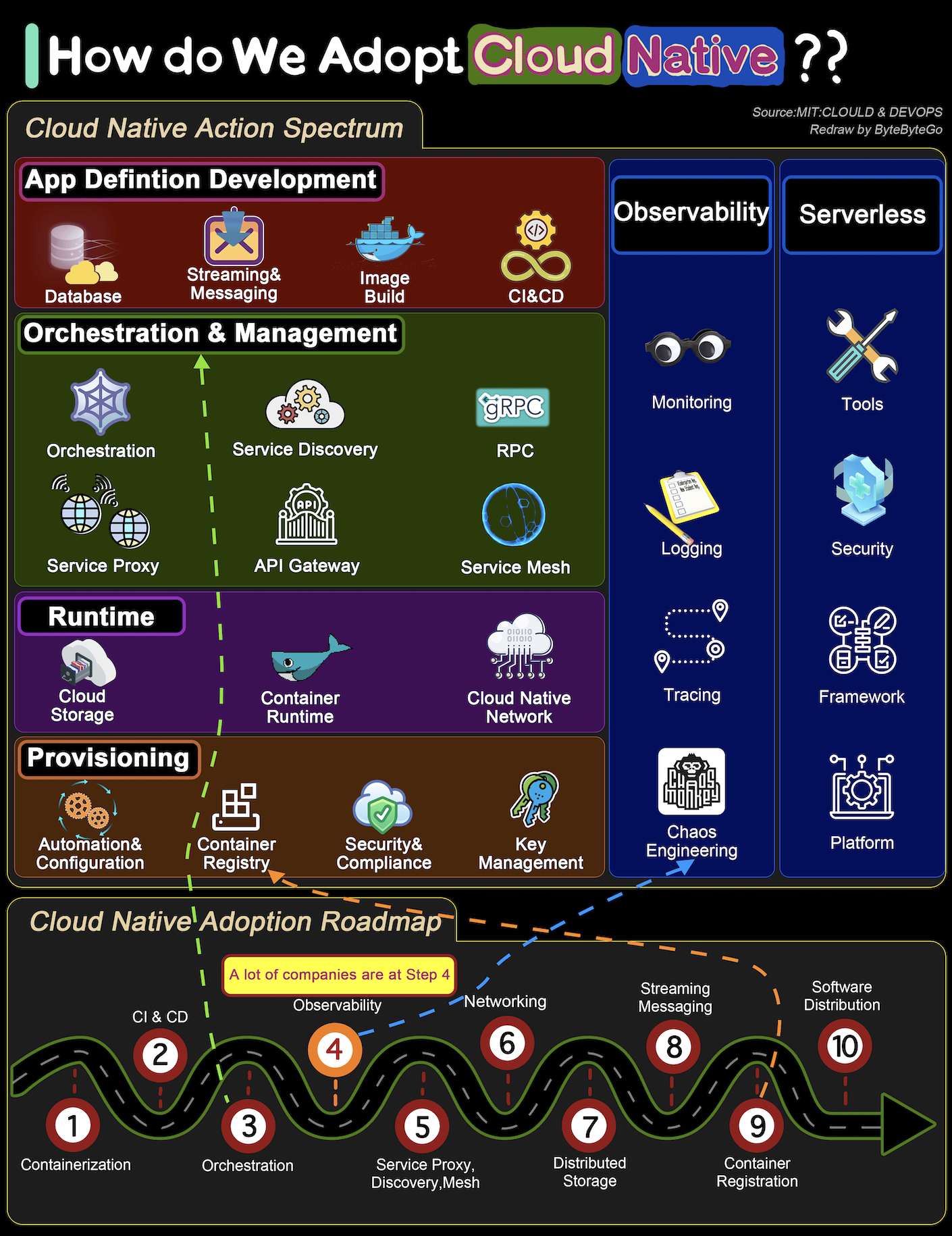

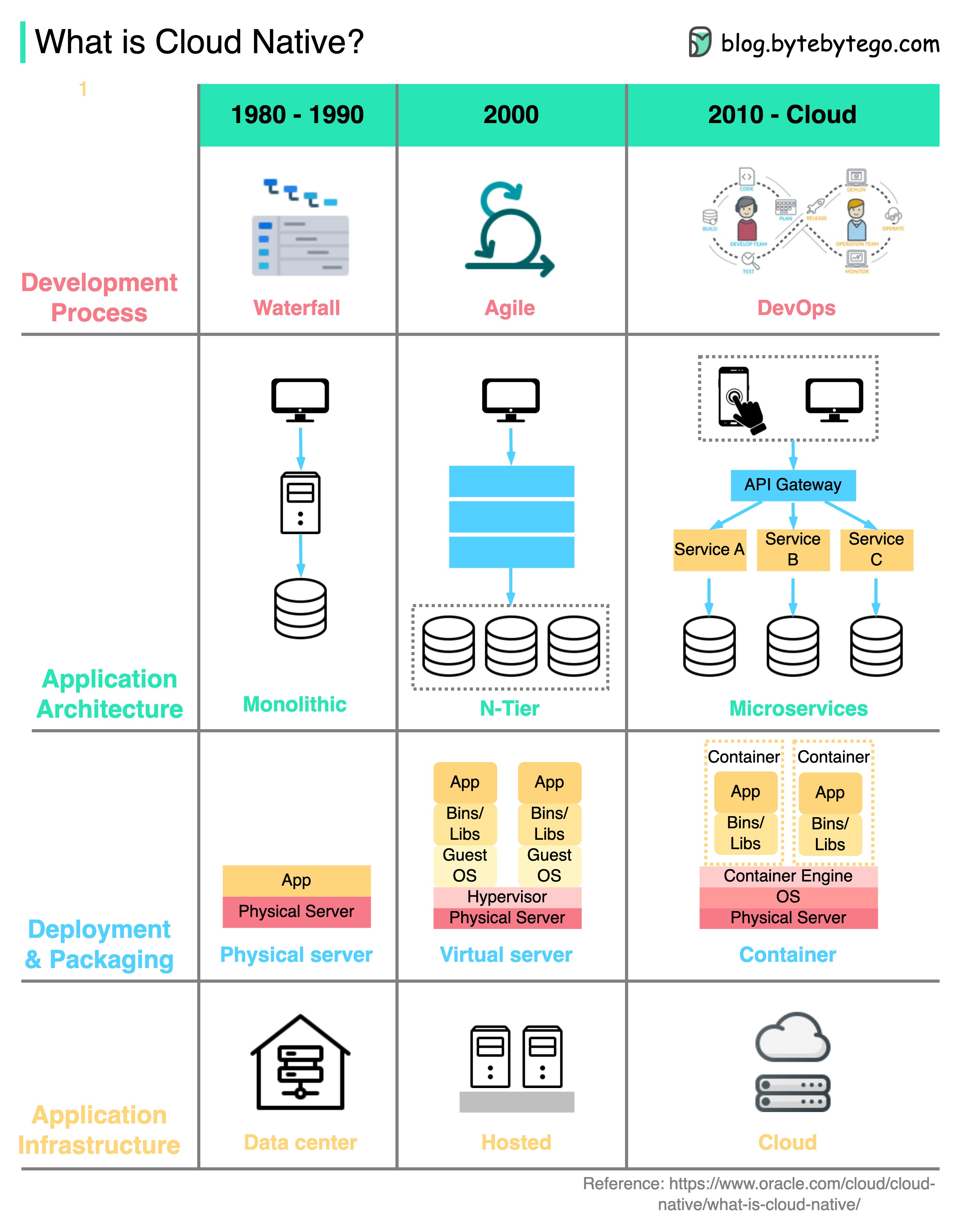

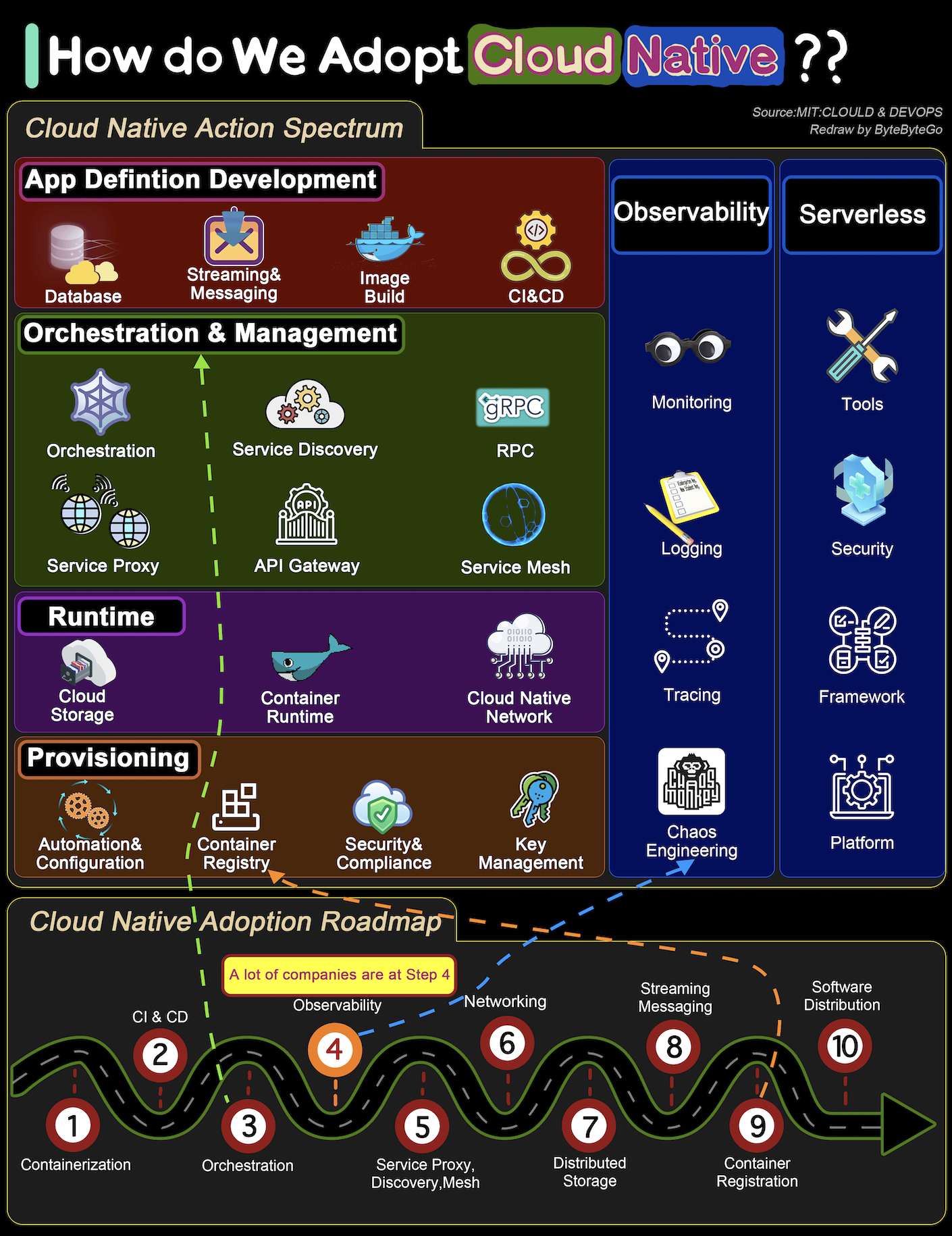

+ * [How to Transform a System to be Cloud Native](https://bytebytego.com/guides/how-do-we-transform-a-system-to-be-cloud-native)

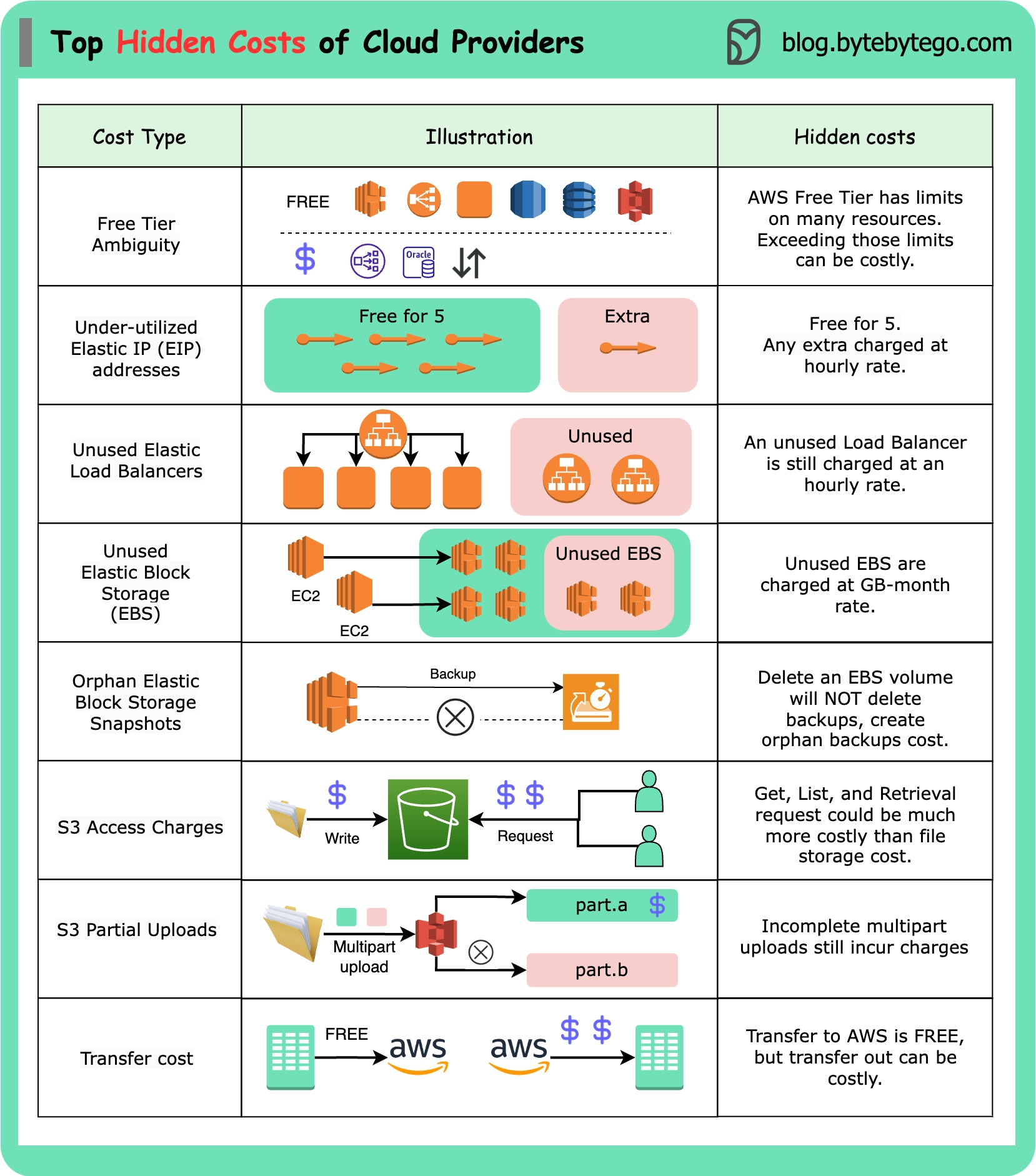

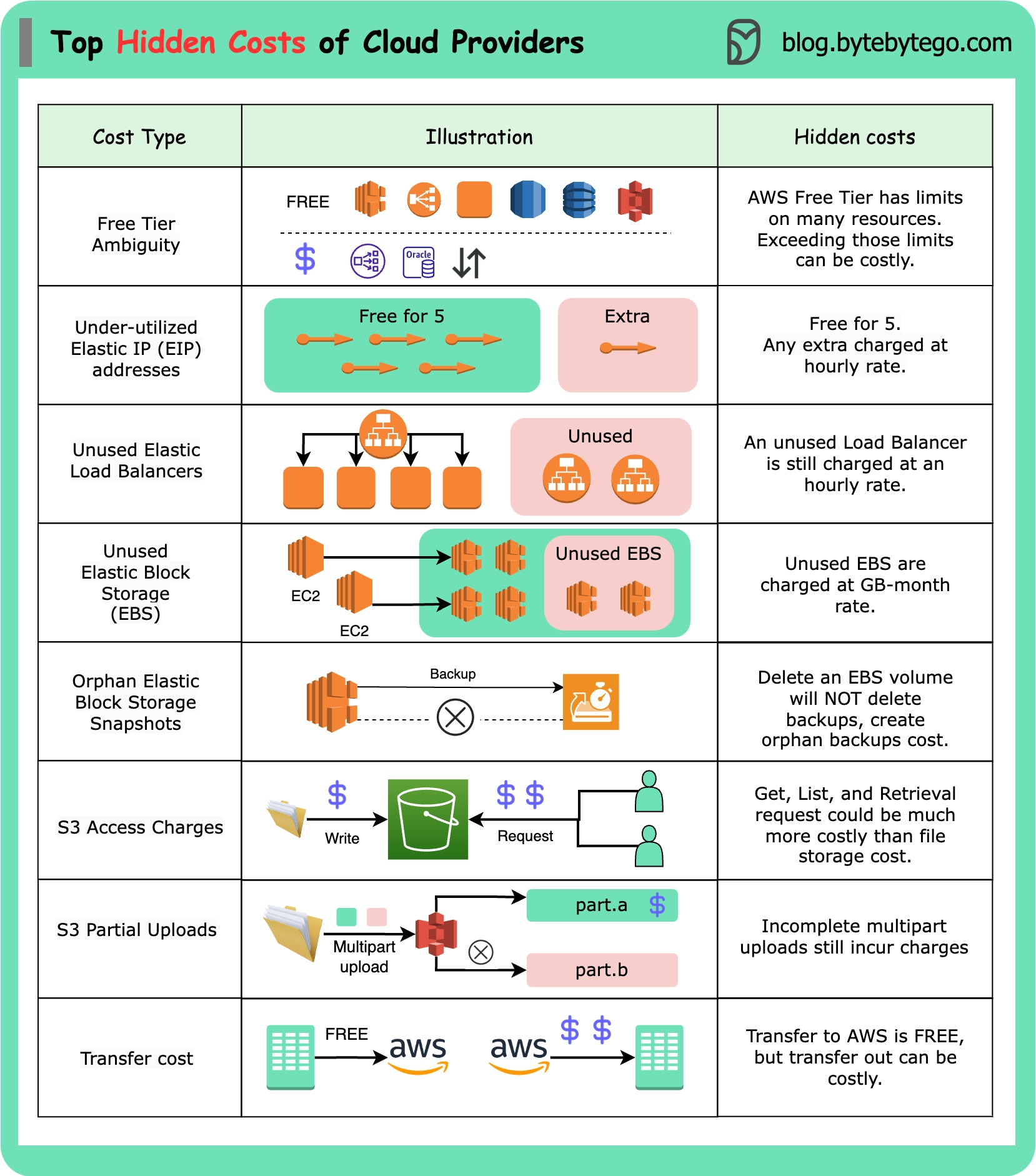

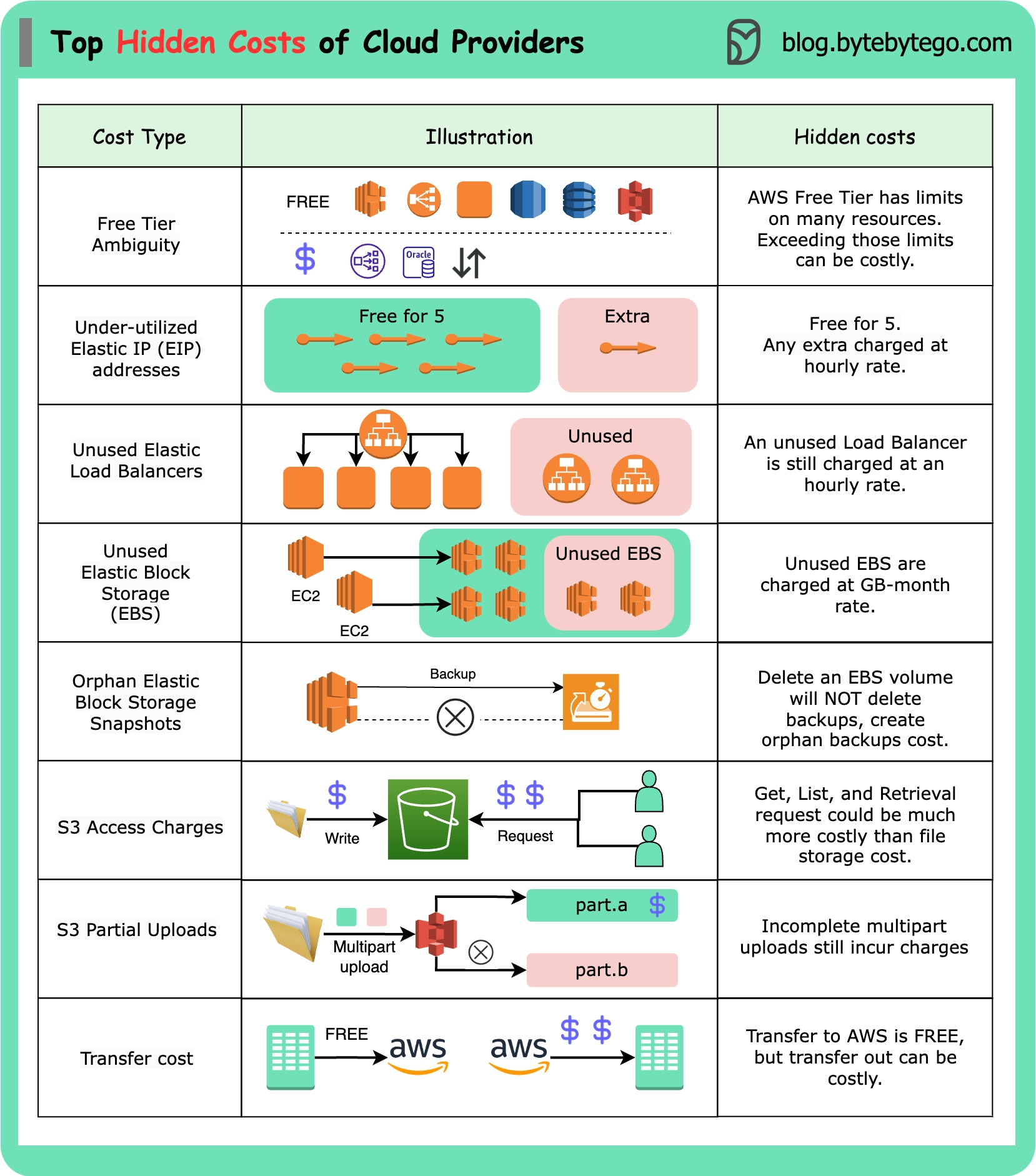

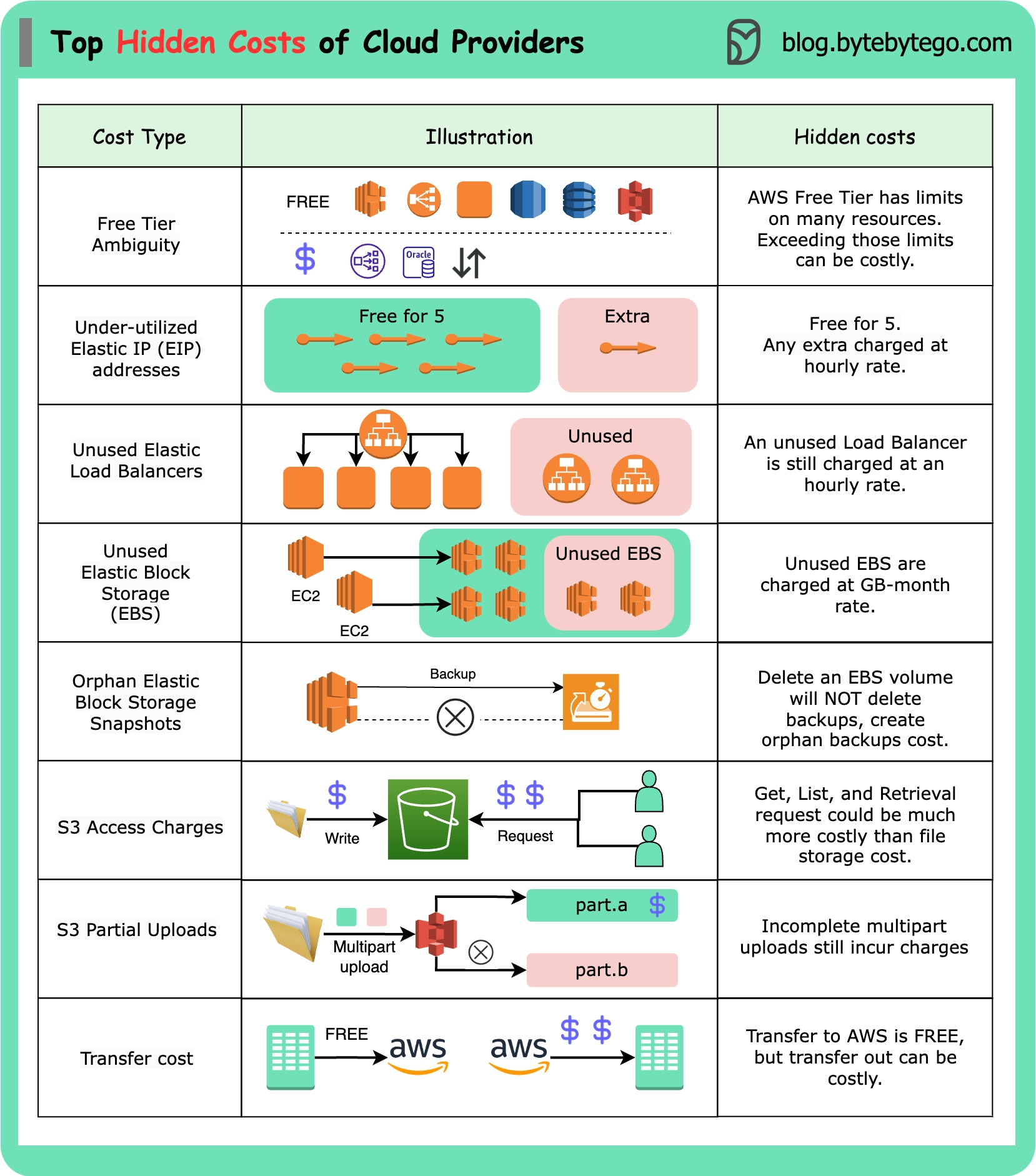

+ * [Hidden Costs of the Cloud](https://bytebytego.com/guides/hidden-costs-of-the-cloud)

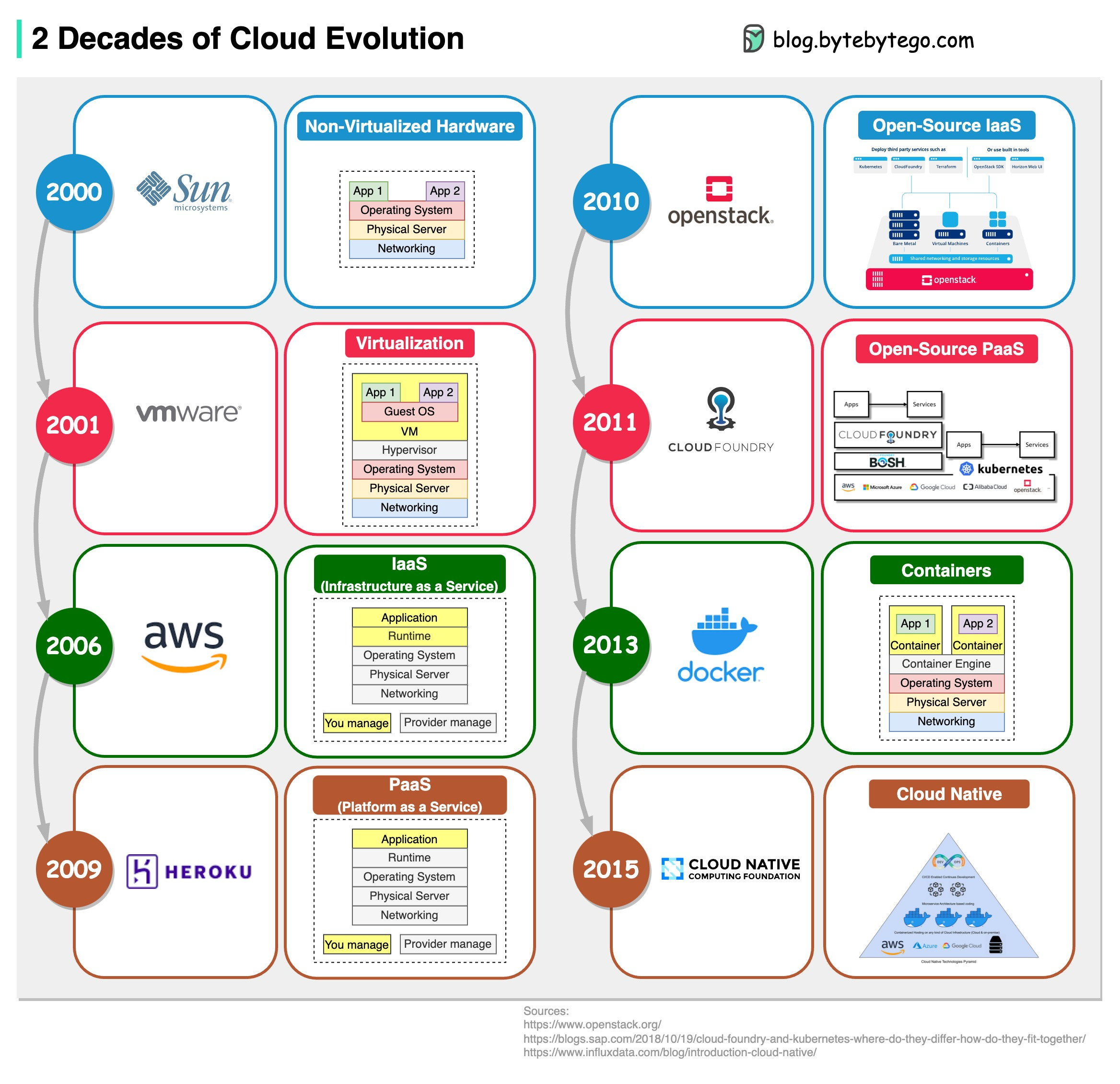

+ * [2 Decades of Cloud Evolution](https://bytebytego.com/guides/2-decades-of-cloud-evolution)

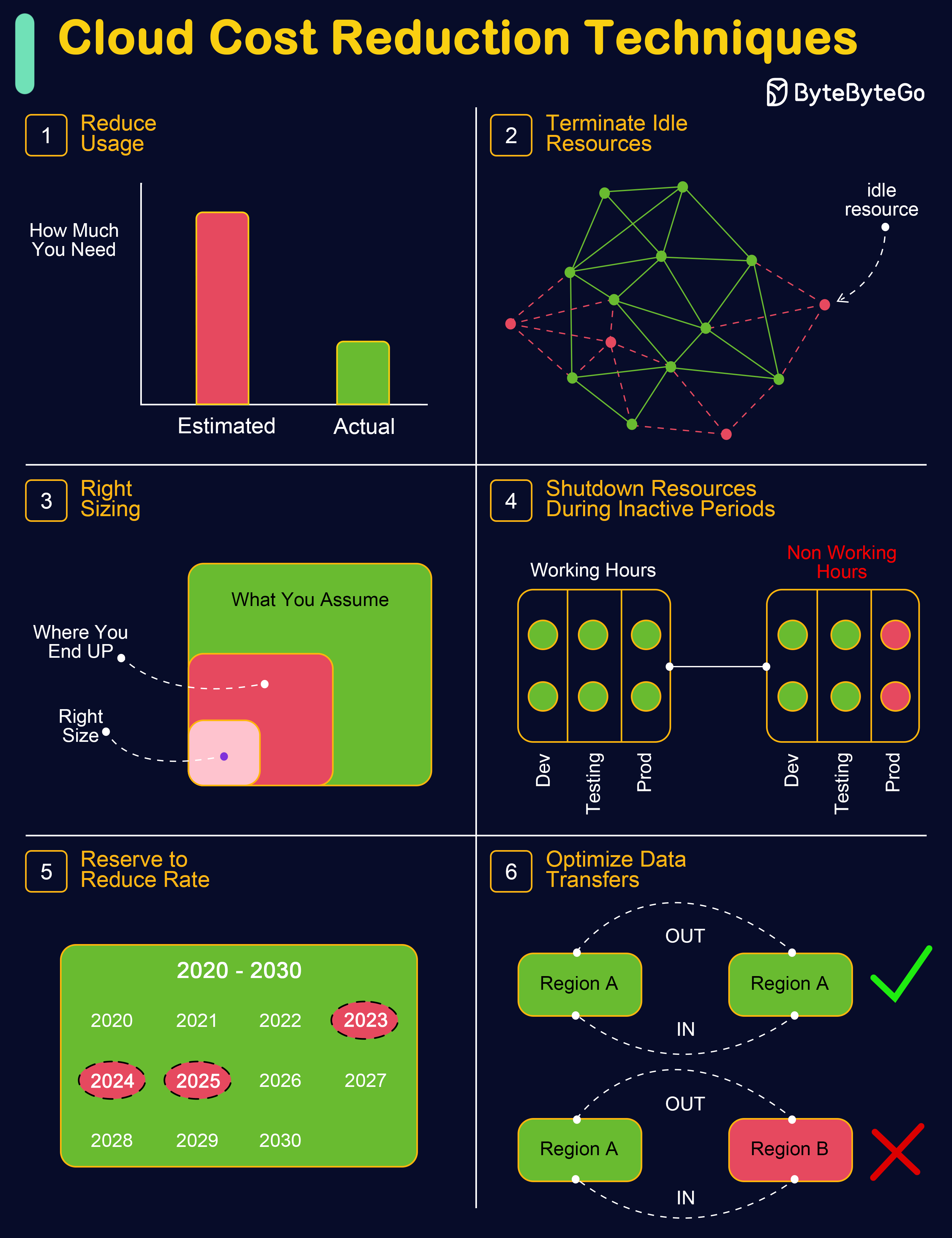

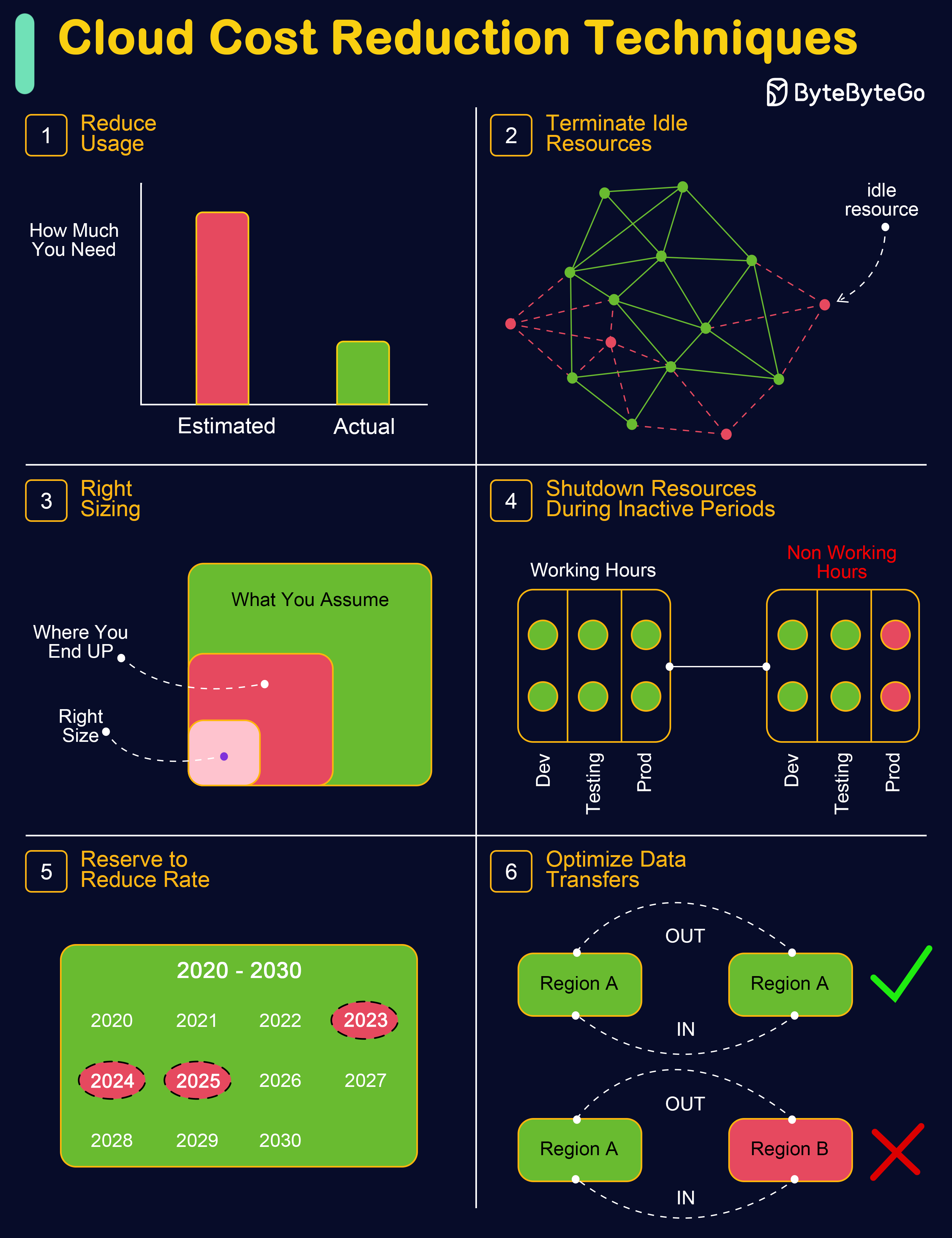

+ * [Cloud Cost Reduction Techniques](https://bytebytego.com/guides/cloud-cost-reduction-techniques)

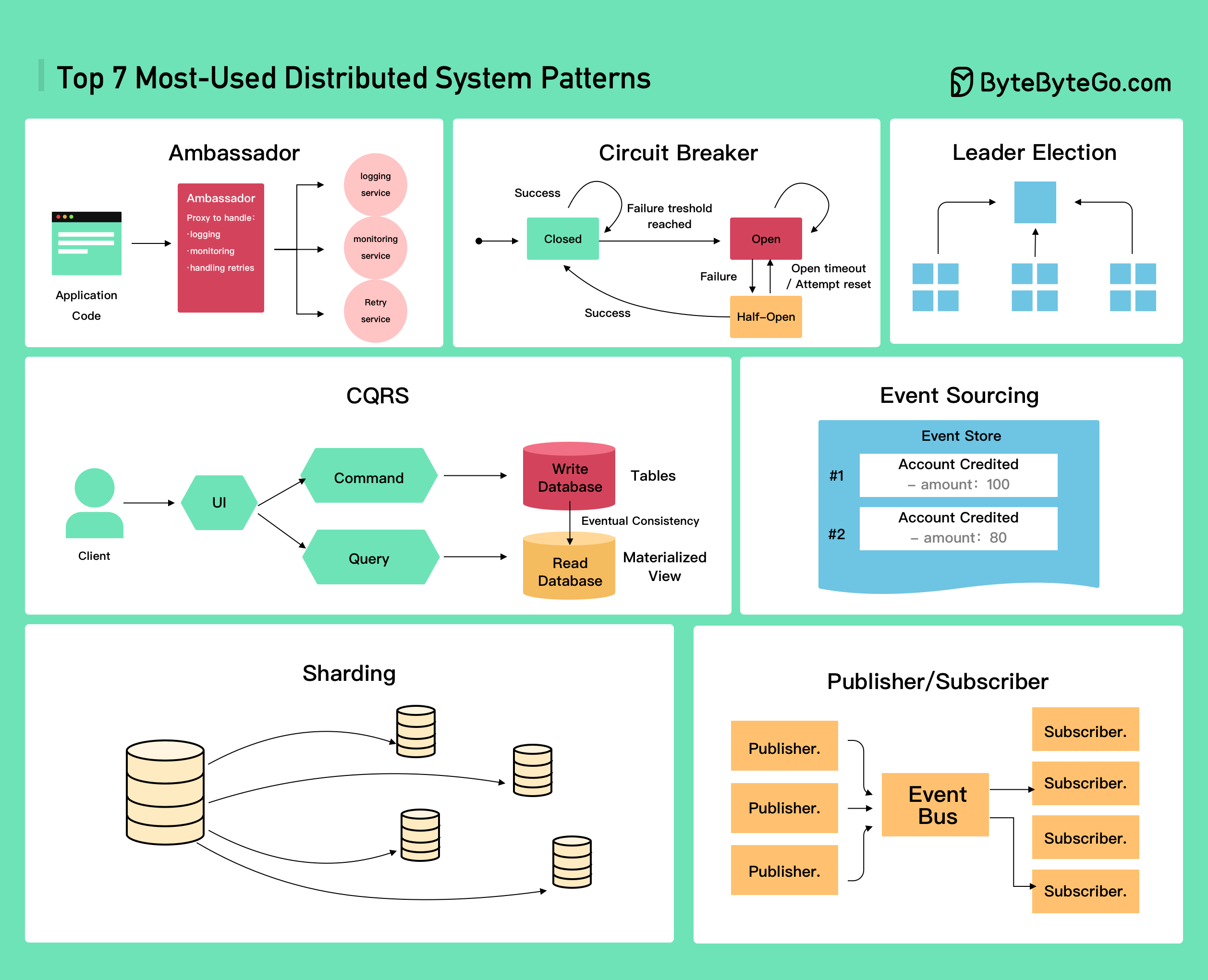

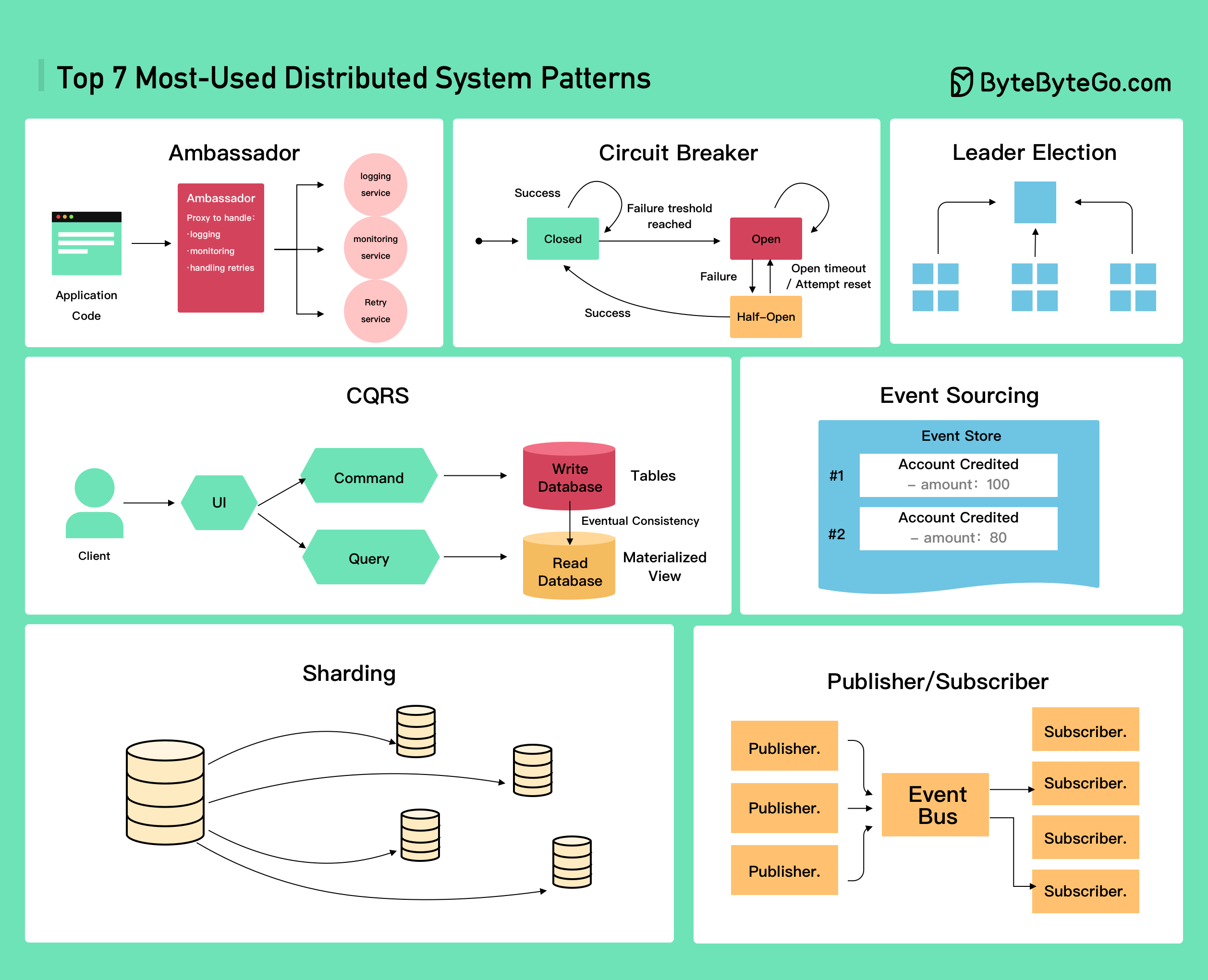

+ * [Top 7 Most-Used Distributed System Patterns](https://bytebytego.com/guides/top-7-most-used-distributed-system-patterns)

+ * [Cloud Load Balancer Cheat Sheet](https://bytebytego.com/guides/cloud-load-balancer-cheat-sheet)

+ * [AWS Services Evolution](https://bytebytego.com/guides/aws-services-evolution)

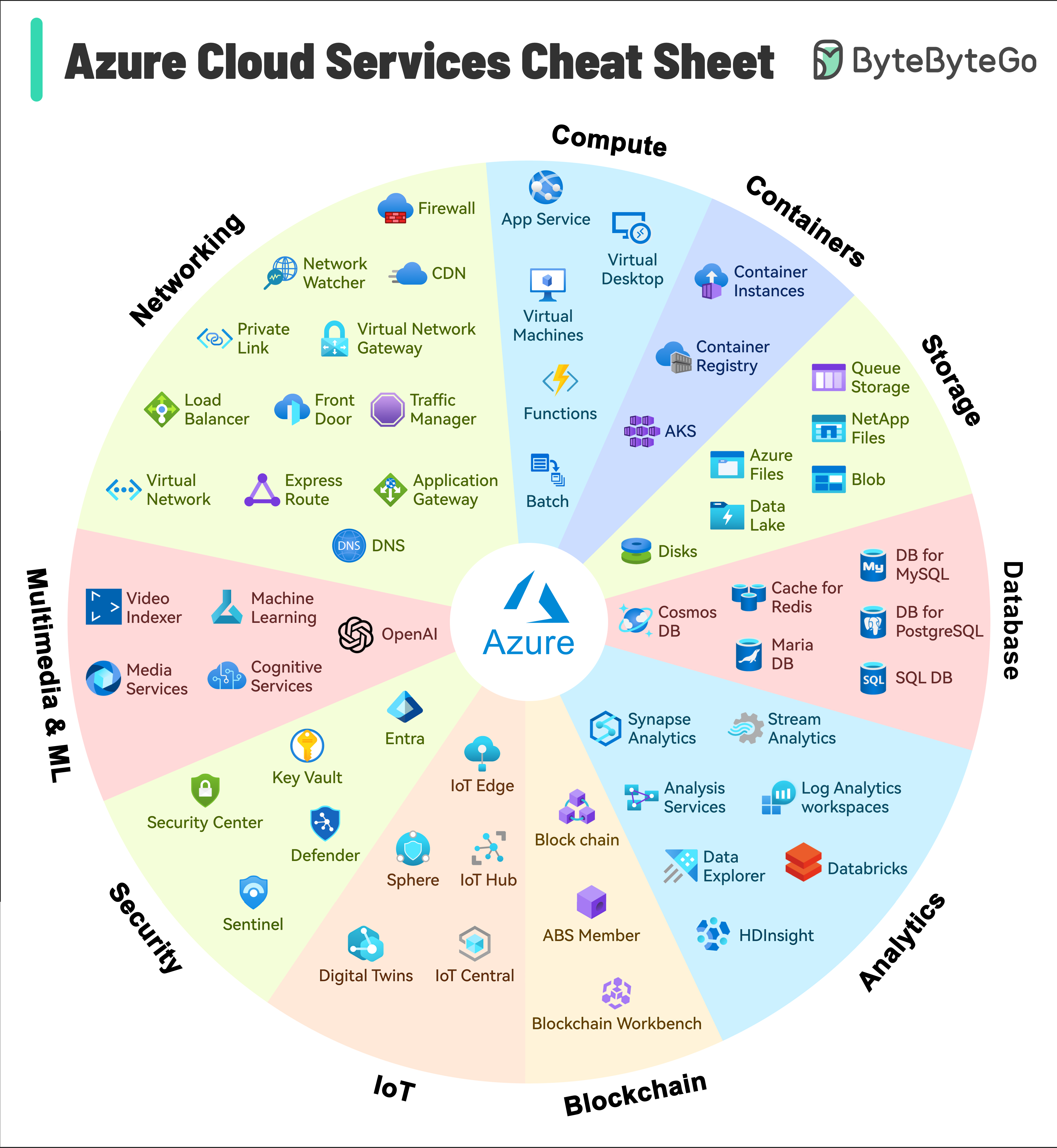

+ * [Azure Services Cheat Sheet](https://bytebytego.com/guides/azure-services-cheat-sheet)

+ * [A cheat sheet for system designs](https://bytebytego.com/guides/a-cheat-sheet-for-system-designs)

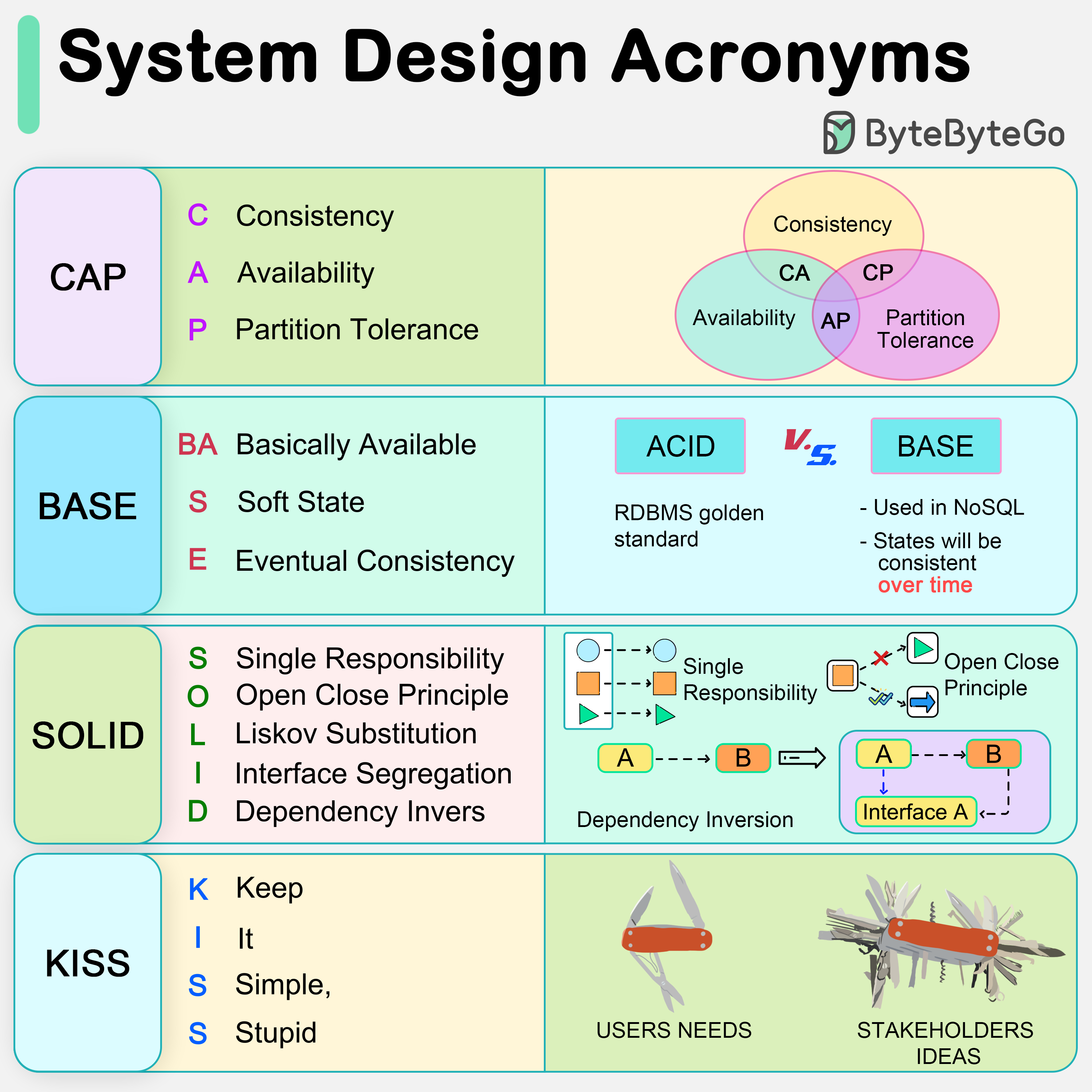

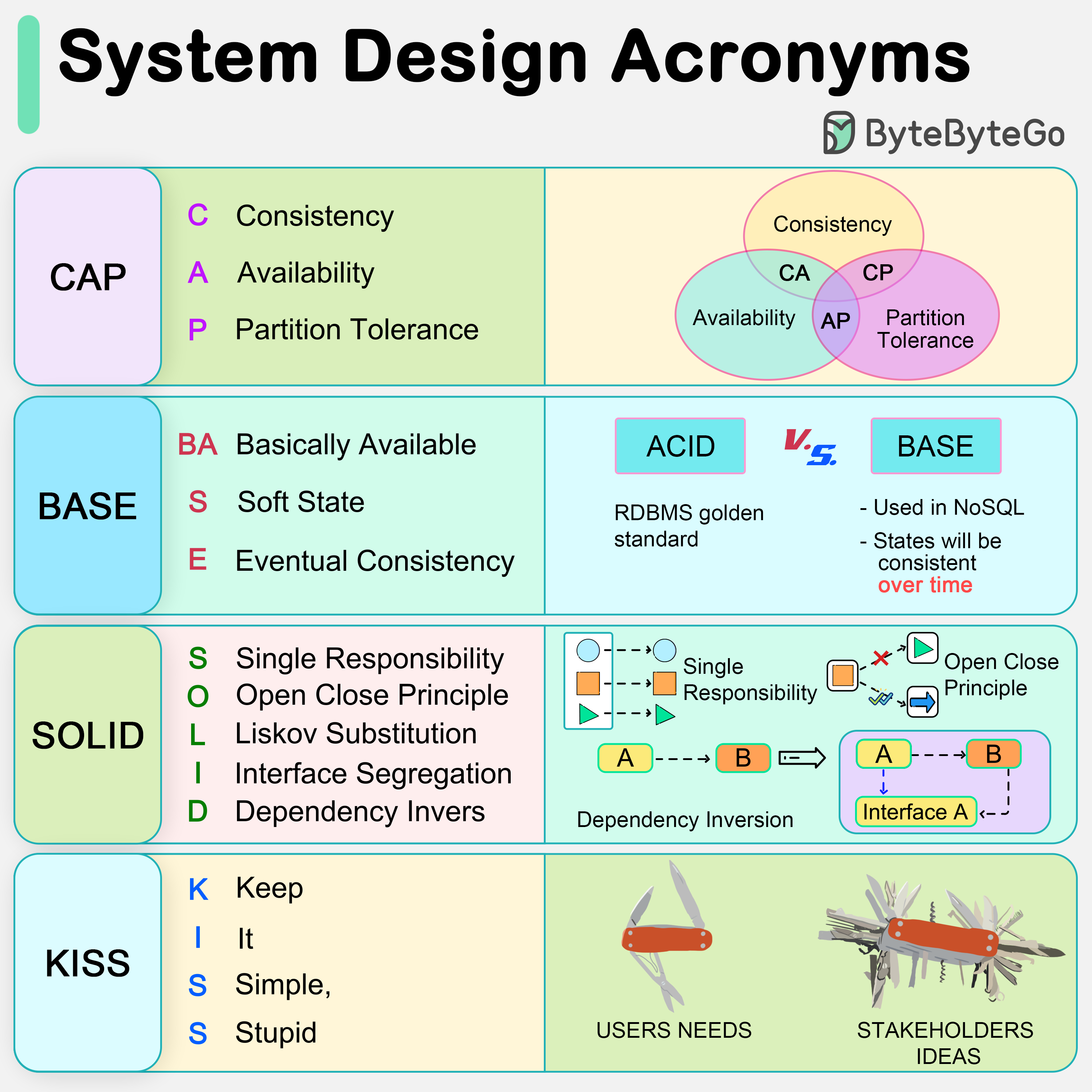

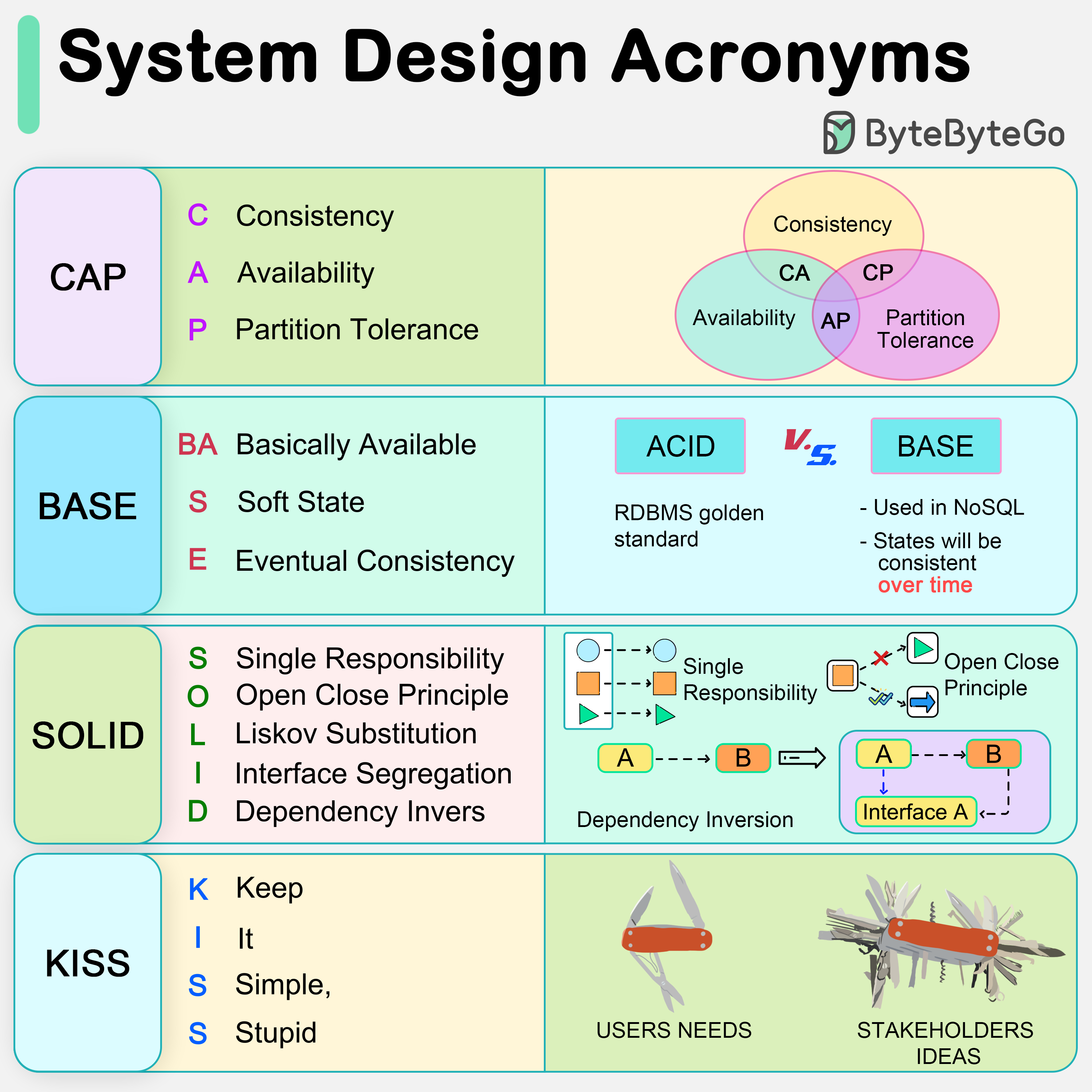

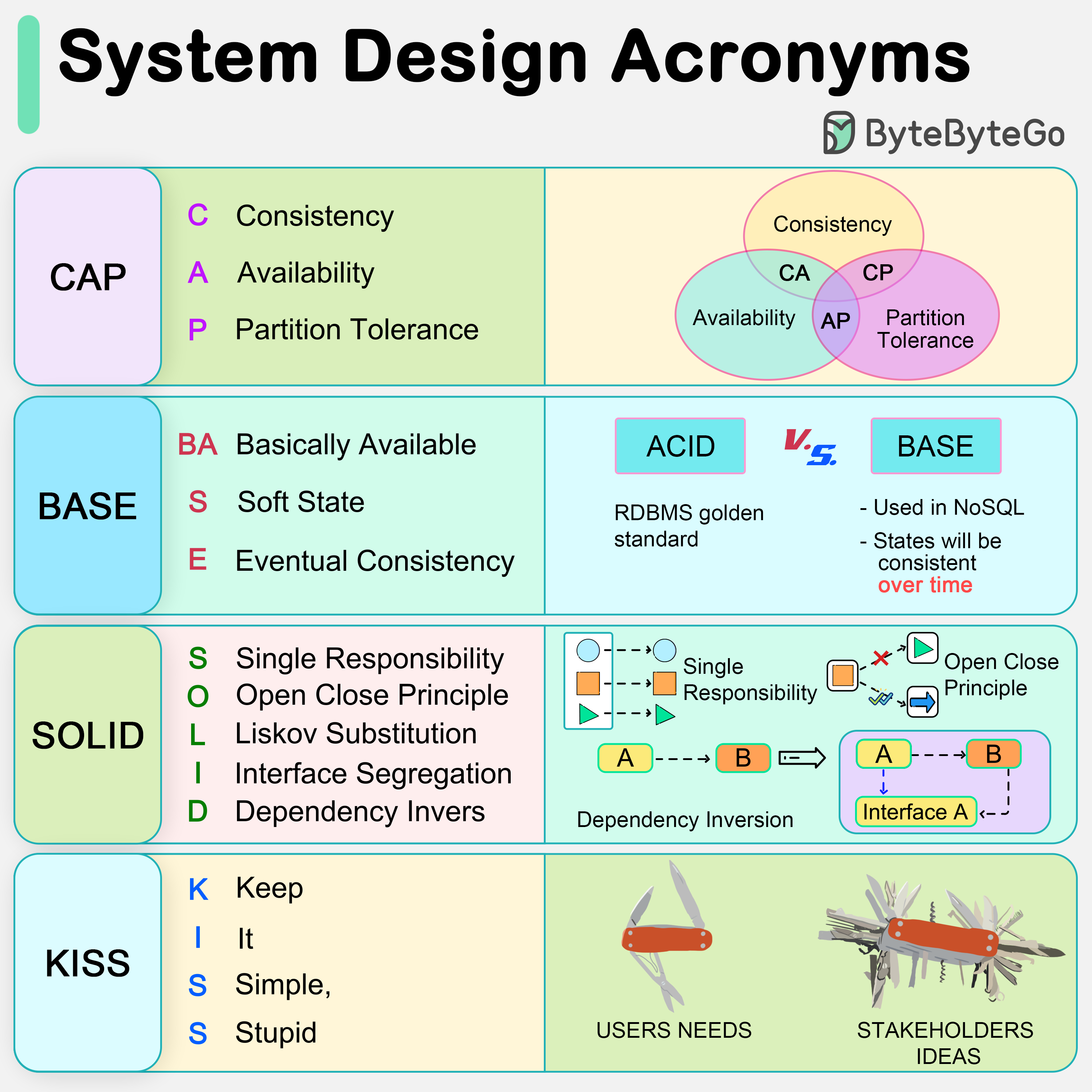

+ * [CAP, BASE, SOLID, KISS, What do these acronyms mean?](https://bytebytego.com/guides/cap-base-solid-kiss-what-do-these-acronyms-mean)

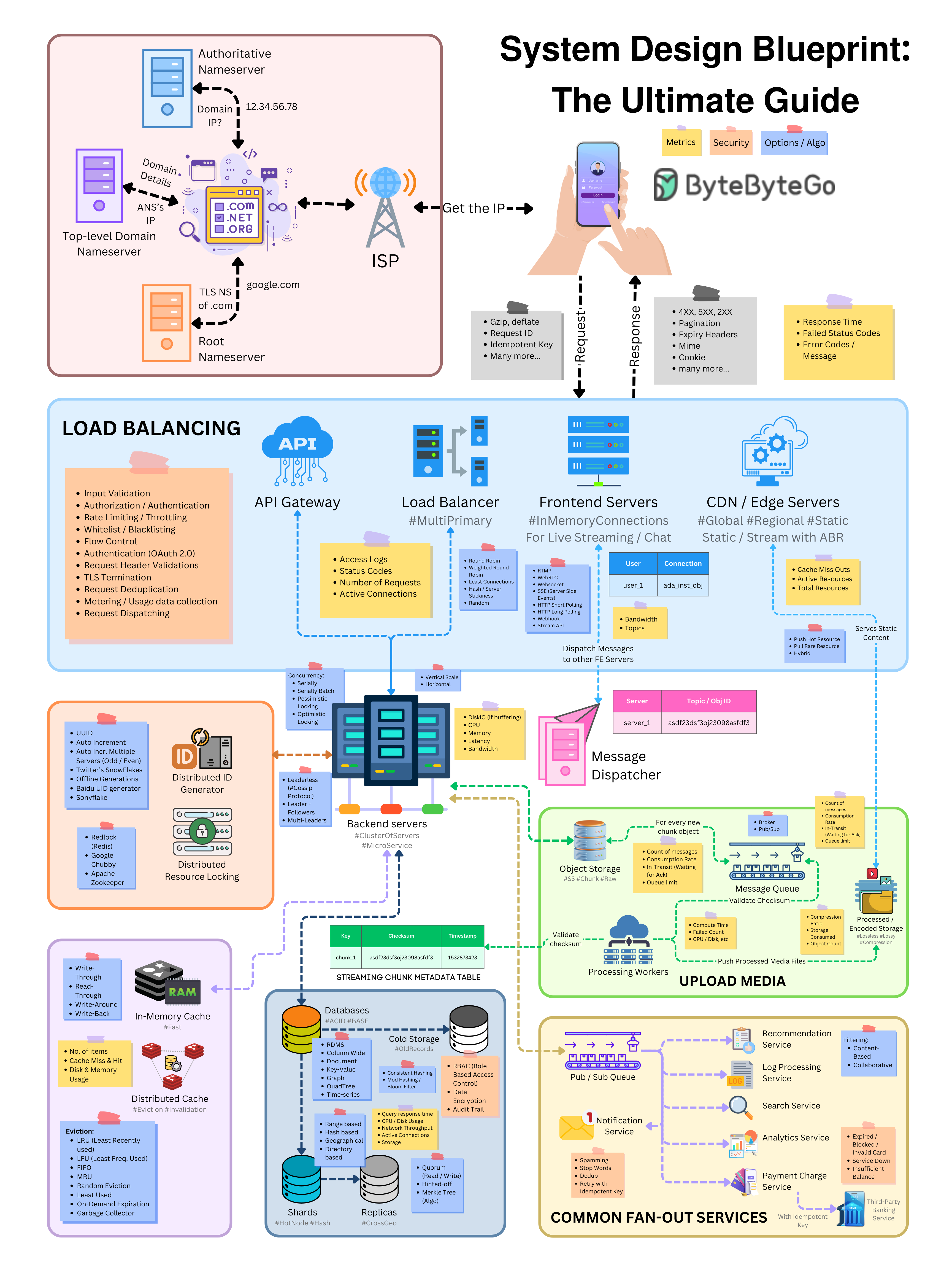

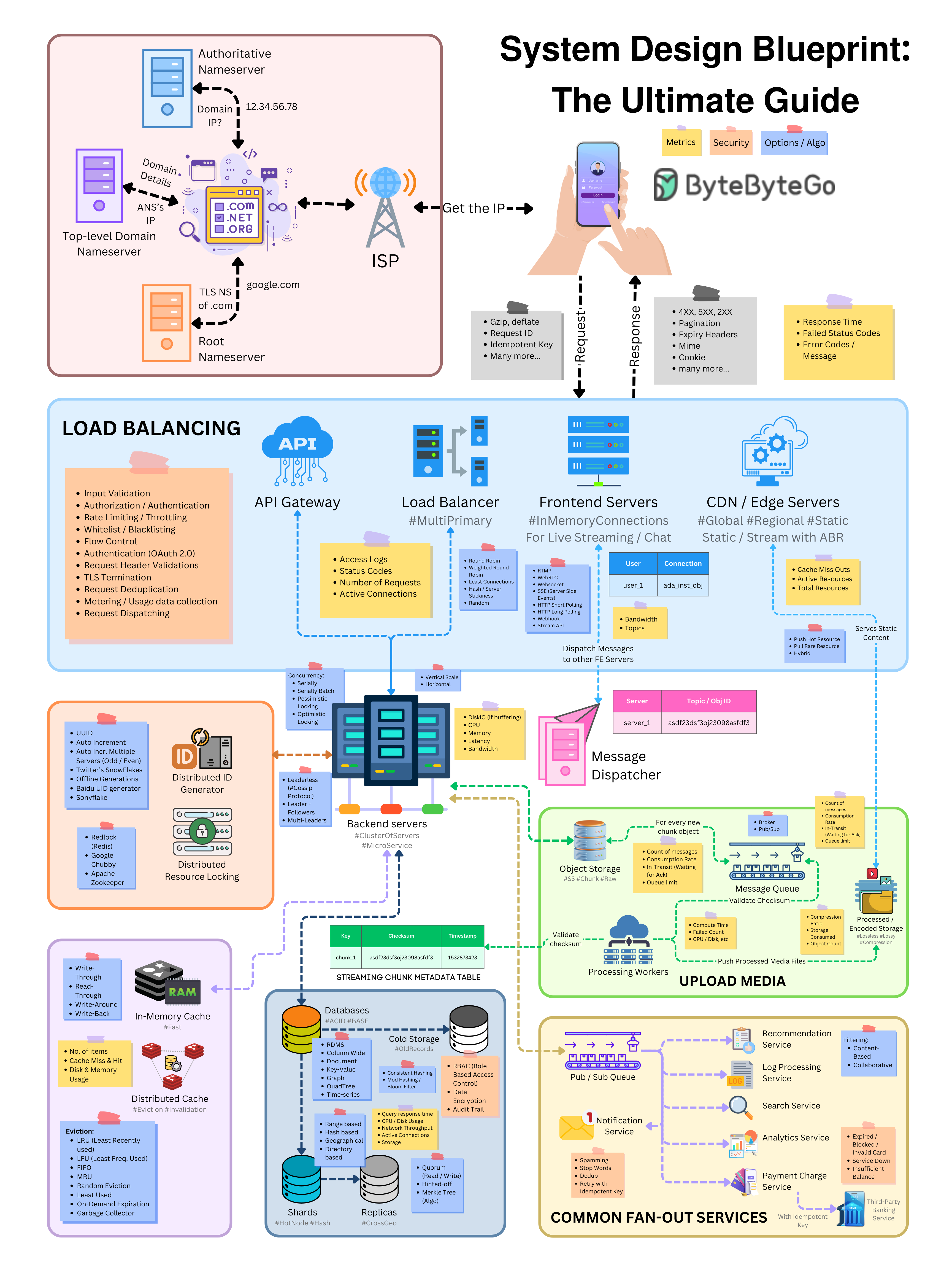

+ * [System Design Blueprint: The Ultimate Guide](https://bytebytego.com/guides/system-design-blueprint-the-ultimate-guide)

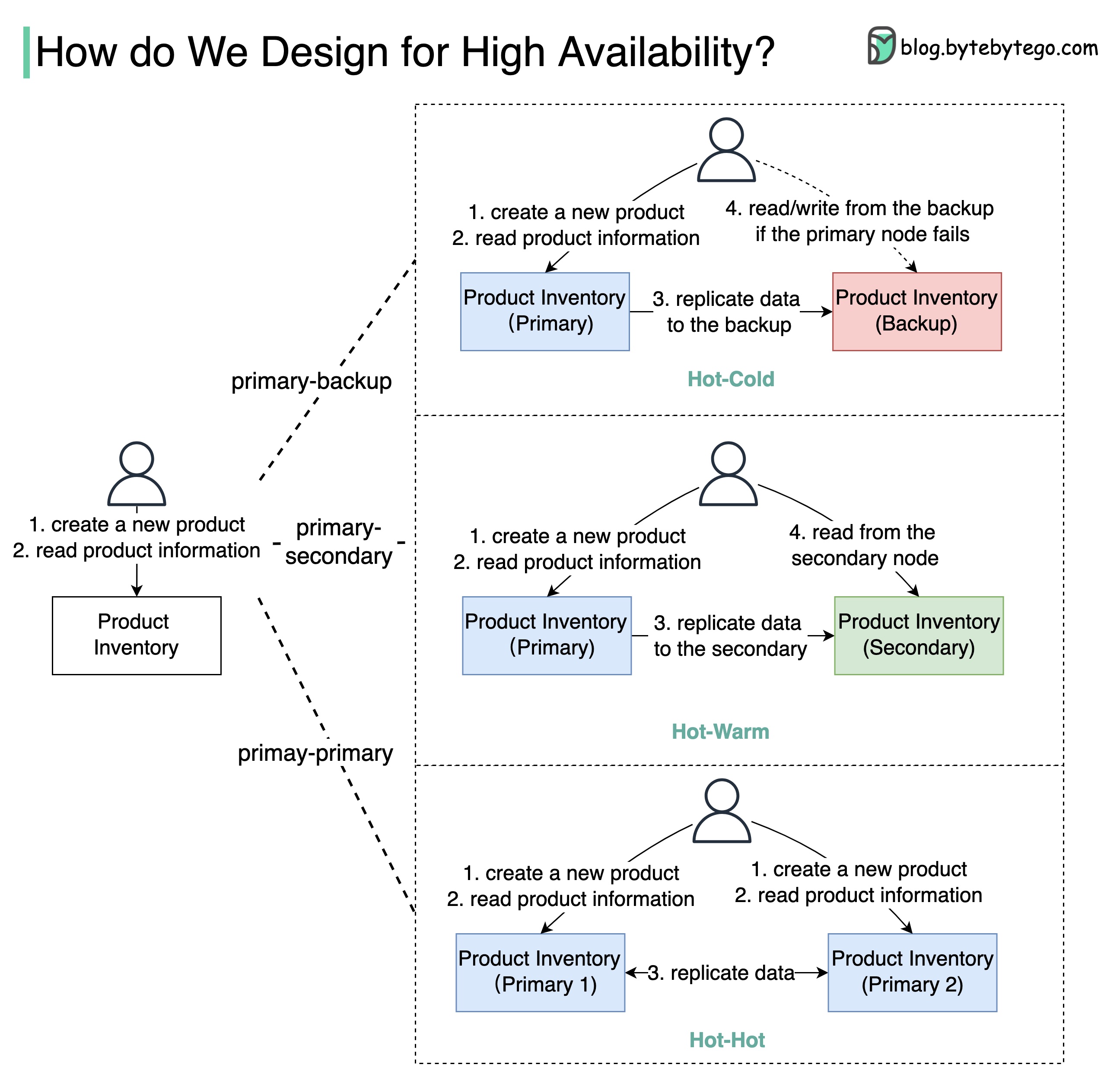

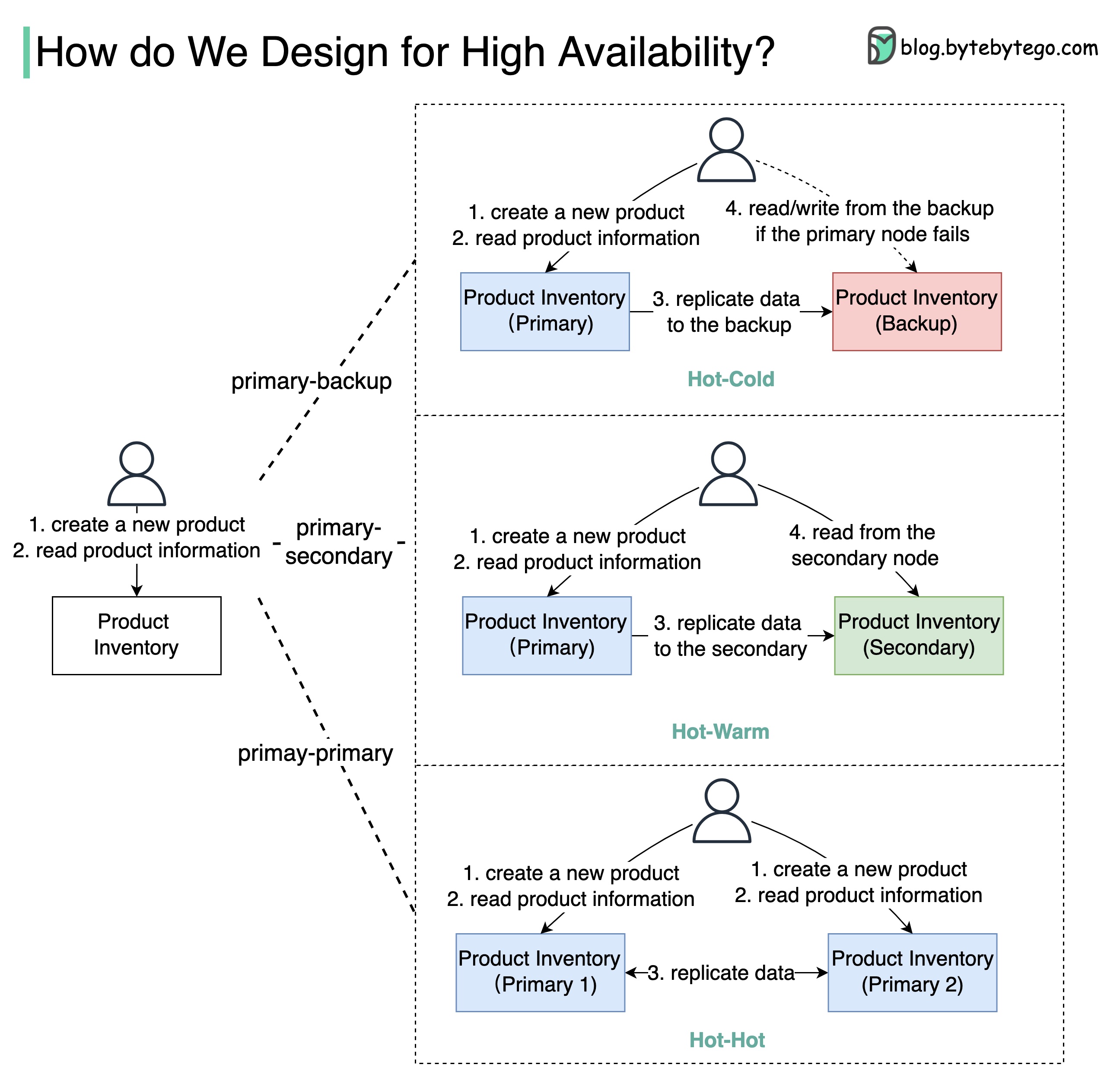

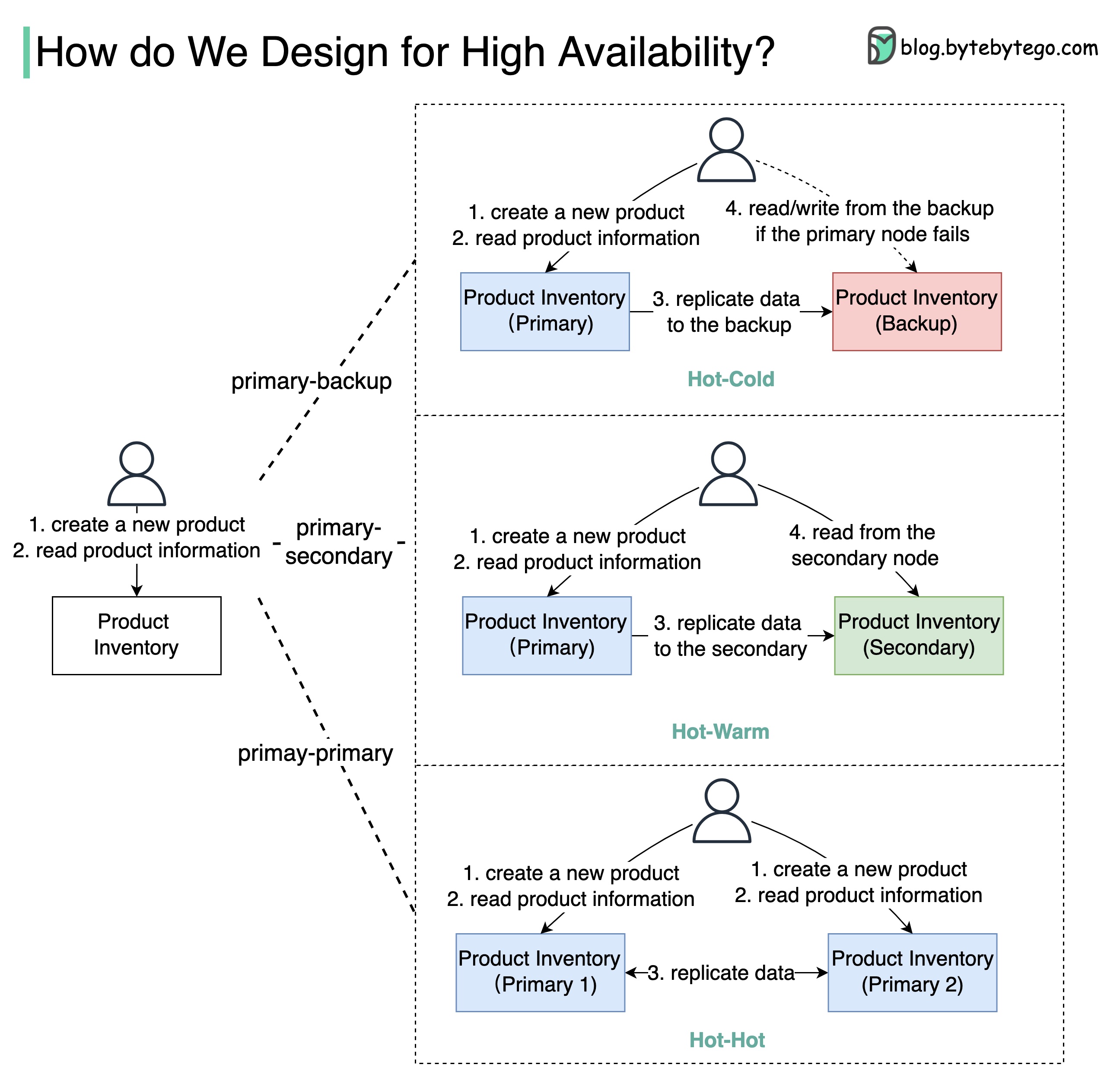

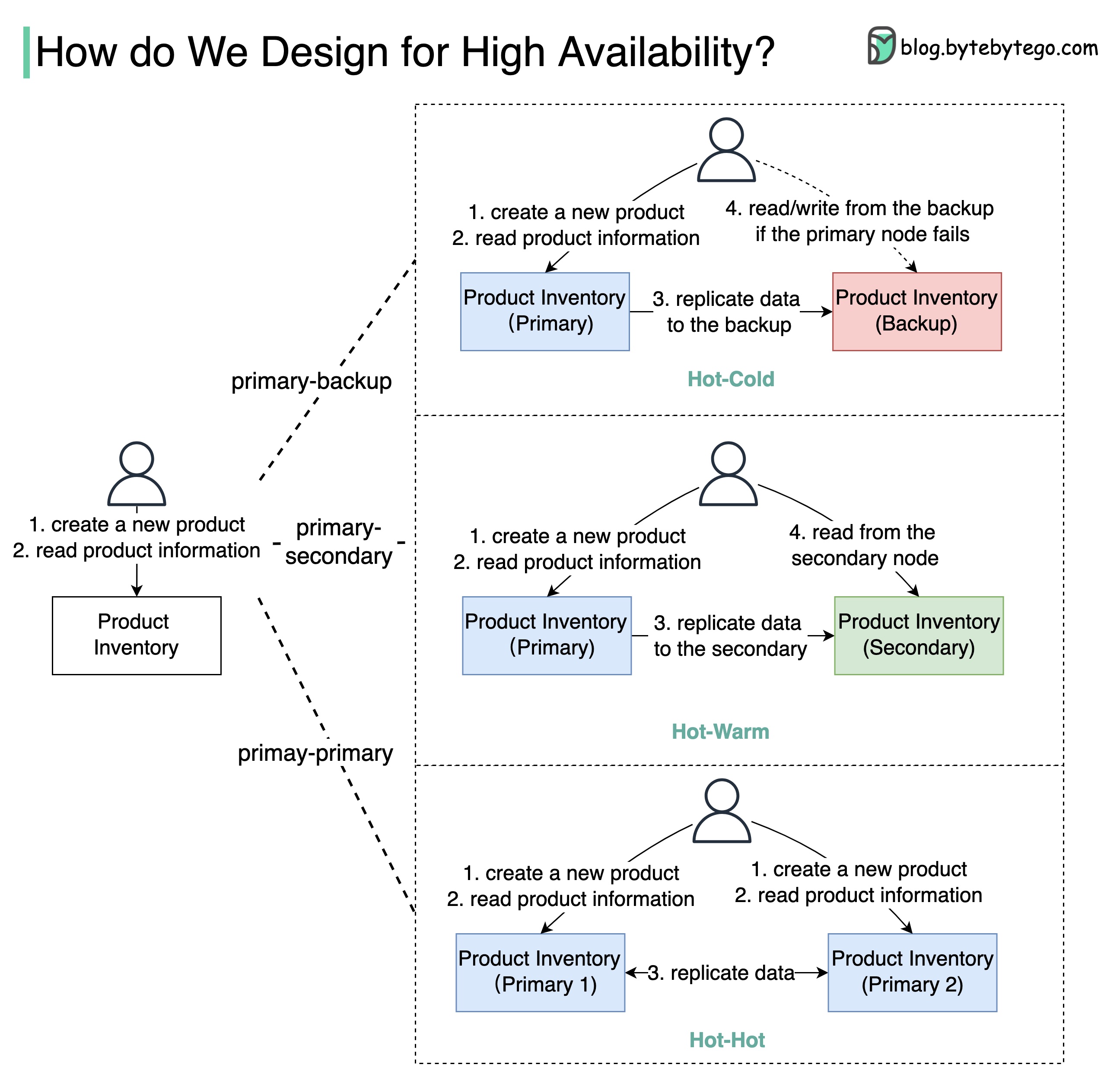

+ * [How to Design for High Availability](https://bytebytego.com/guides/how-do-we-design-for-high-availability)

+ * [What is Cloud Native?](https://bytebytego.com/guides/what-is-cloud-native)

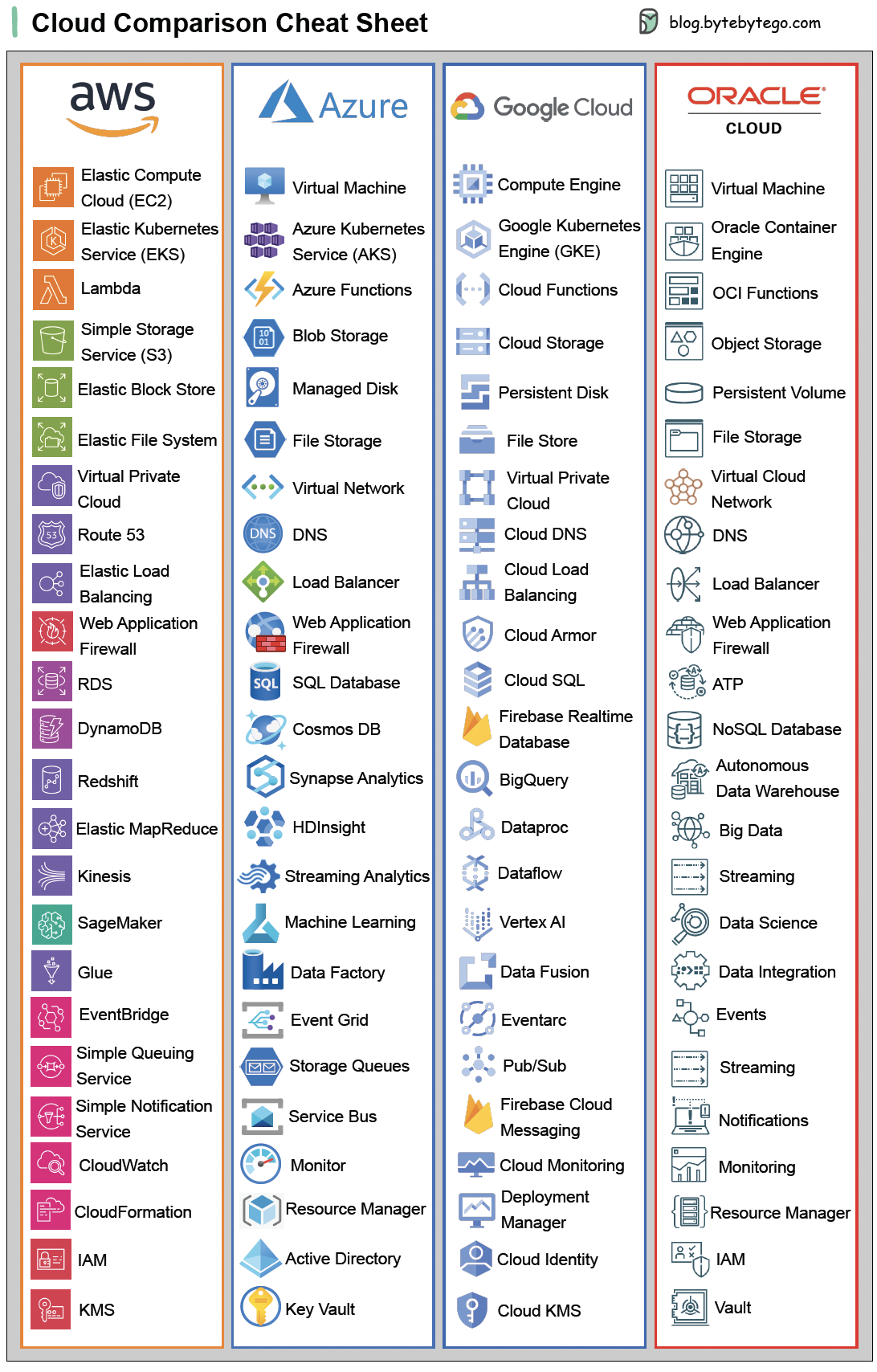

+ * [Cloud Comparison Cheat Sheet](https://bytebytego.com/guides/cloud-comparison-cheat-sheet)

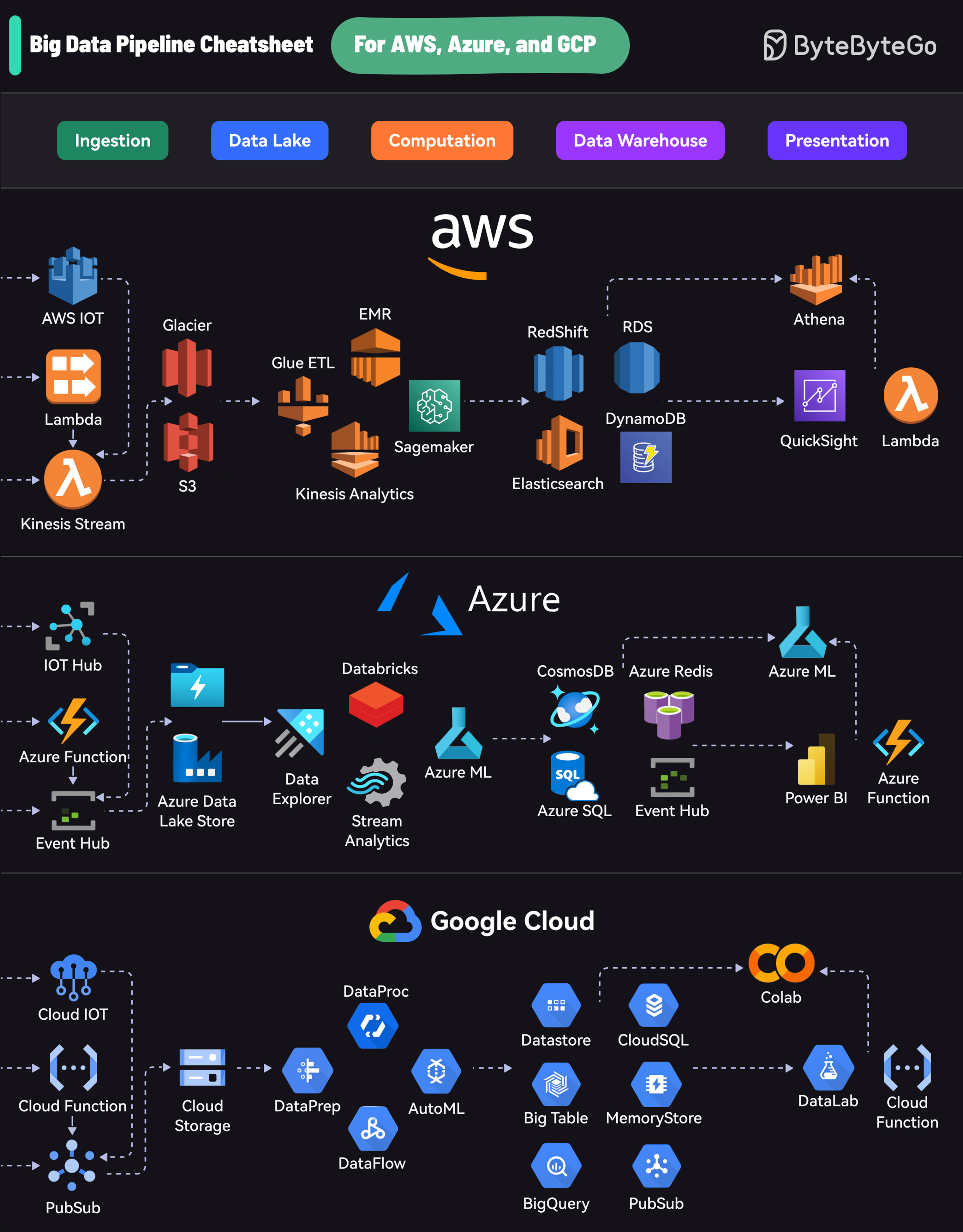

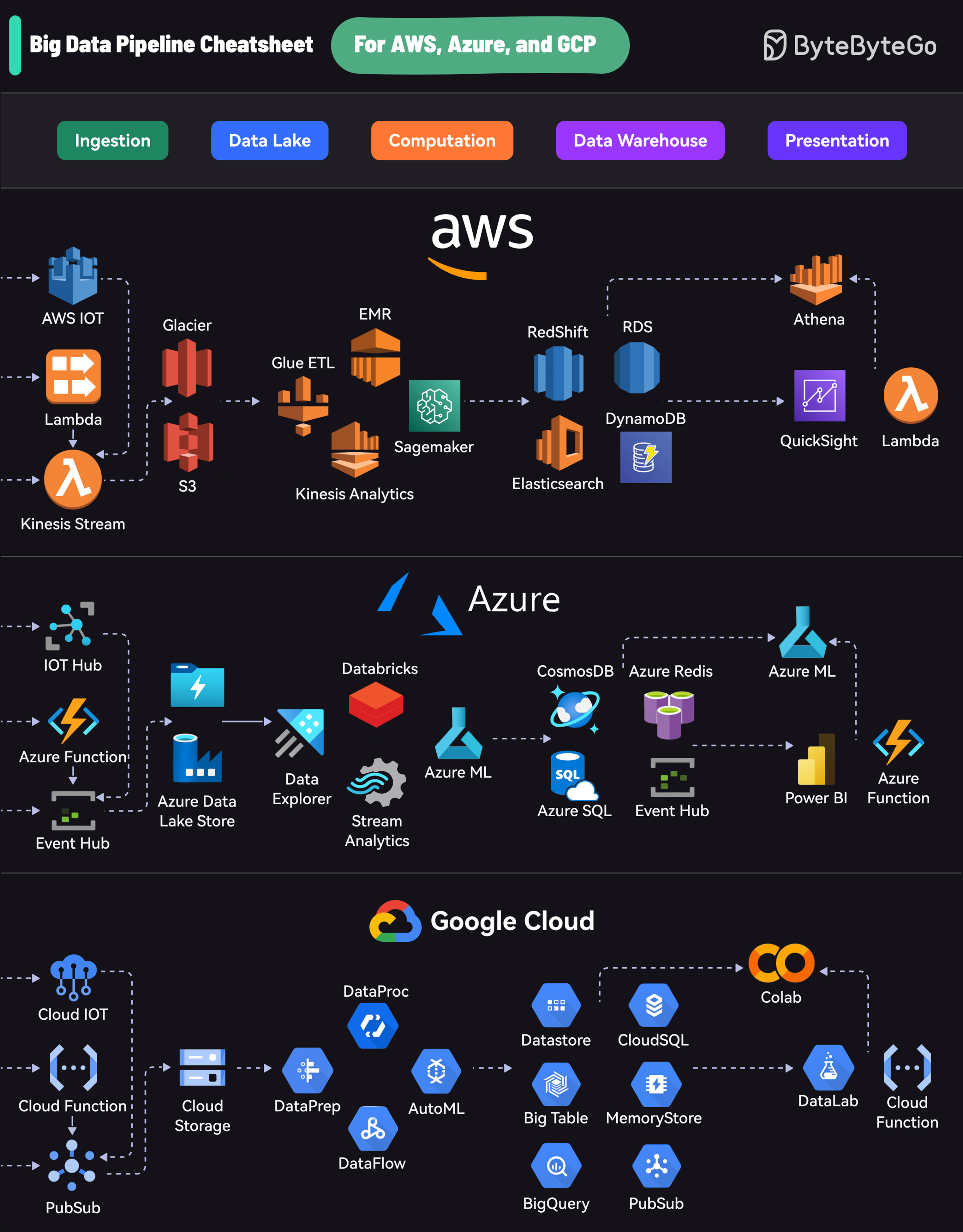

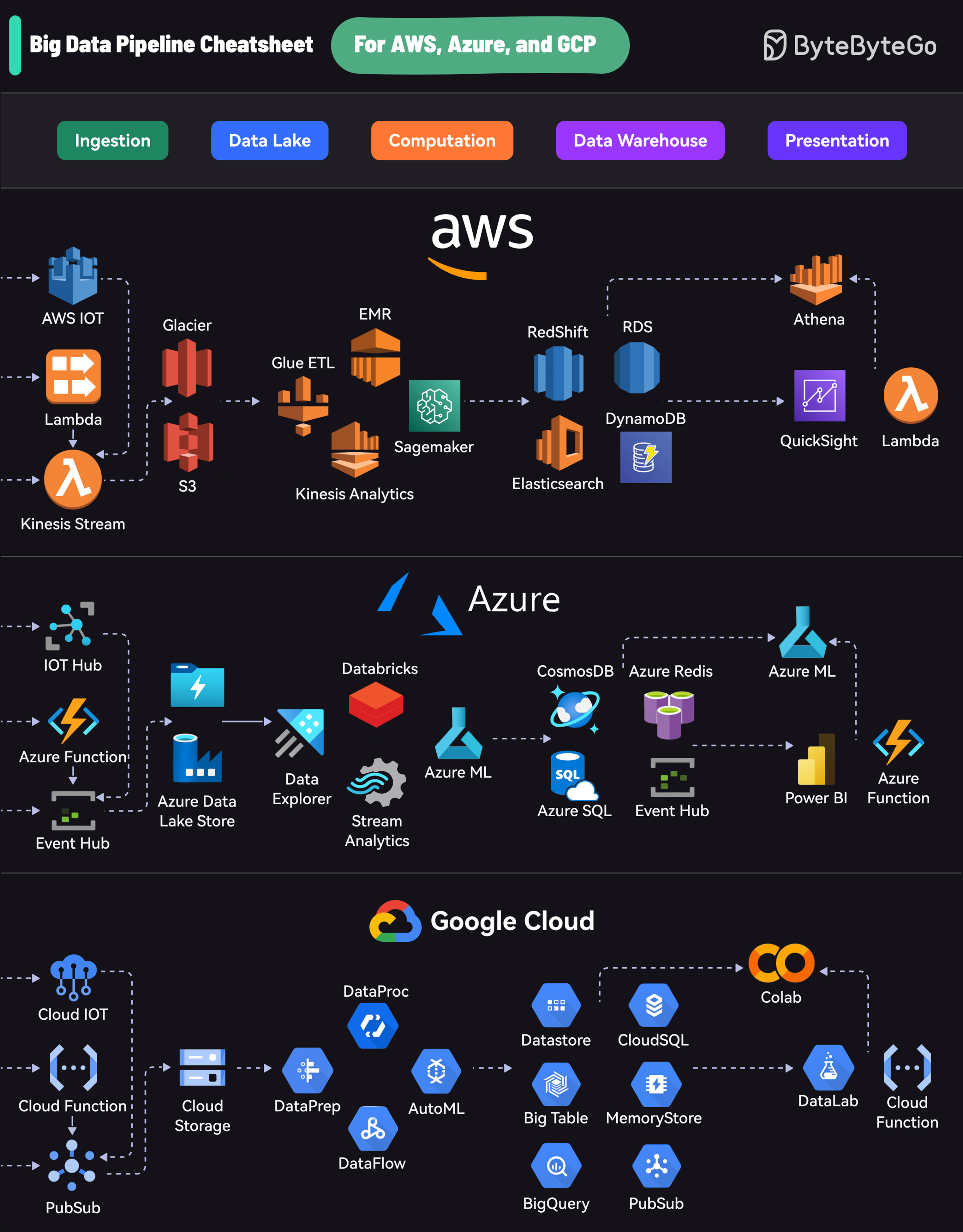

+ * [Big Data Pipeline Cheatsheet for AWS, Azure, and Google Cloud](https://bytebytego.com/guides/big-data-pipeline-cheatsheet-for-aws-azure-and-google-cloud)

+ * [AWS Services Cheat Sheet](https://bytebytego.com/guides/aws-services-cheat-sheet)

+* [How it Works?](https://bytebytego.com/guides/how-it-works)

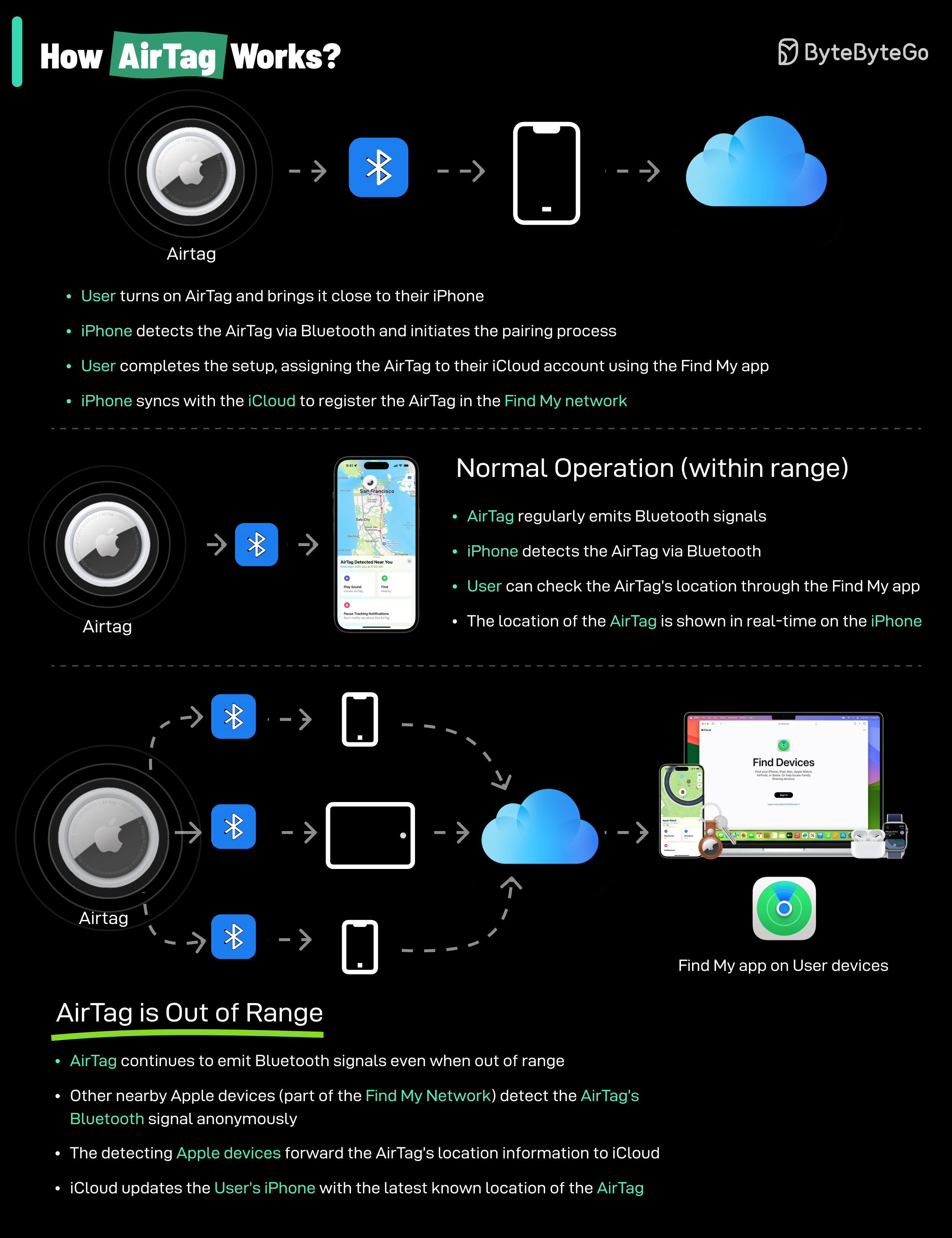

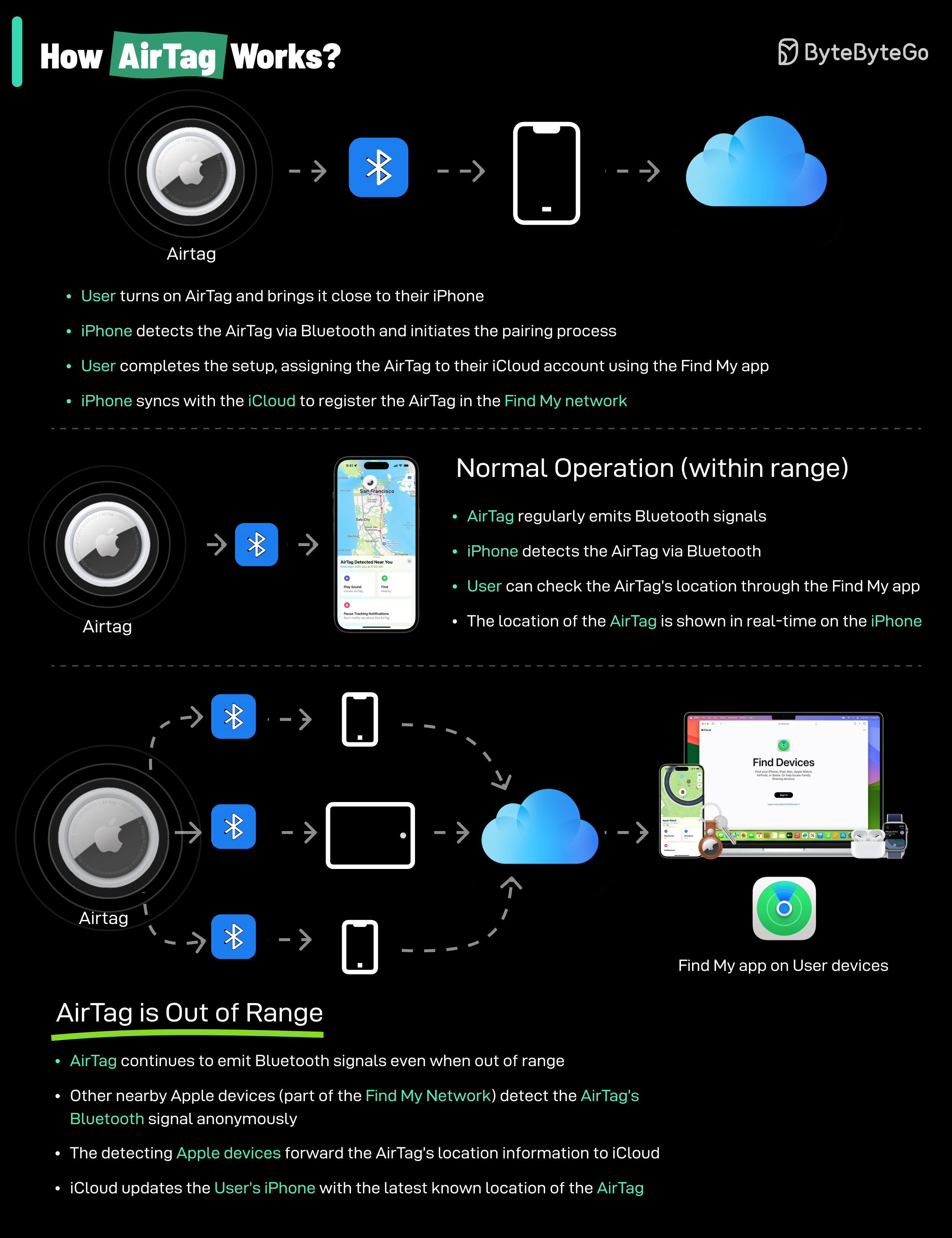

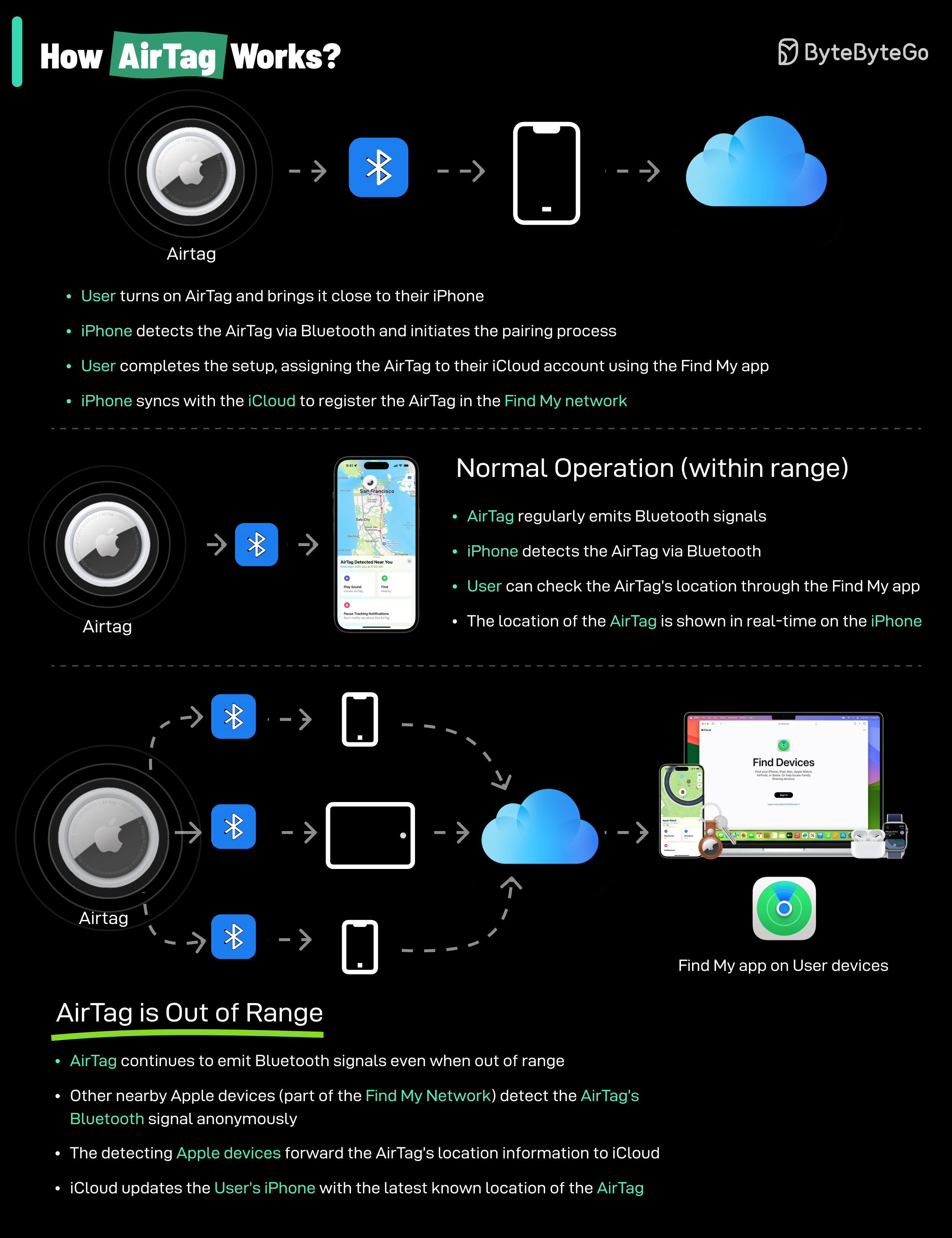

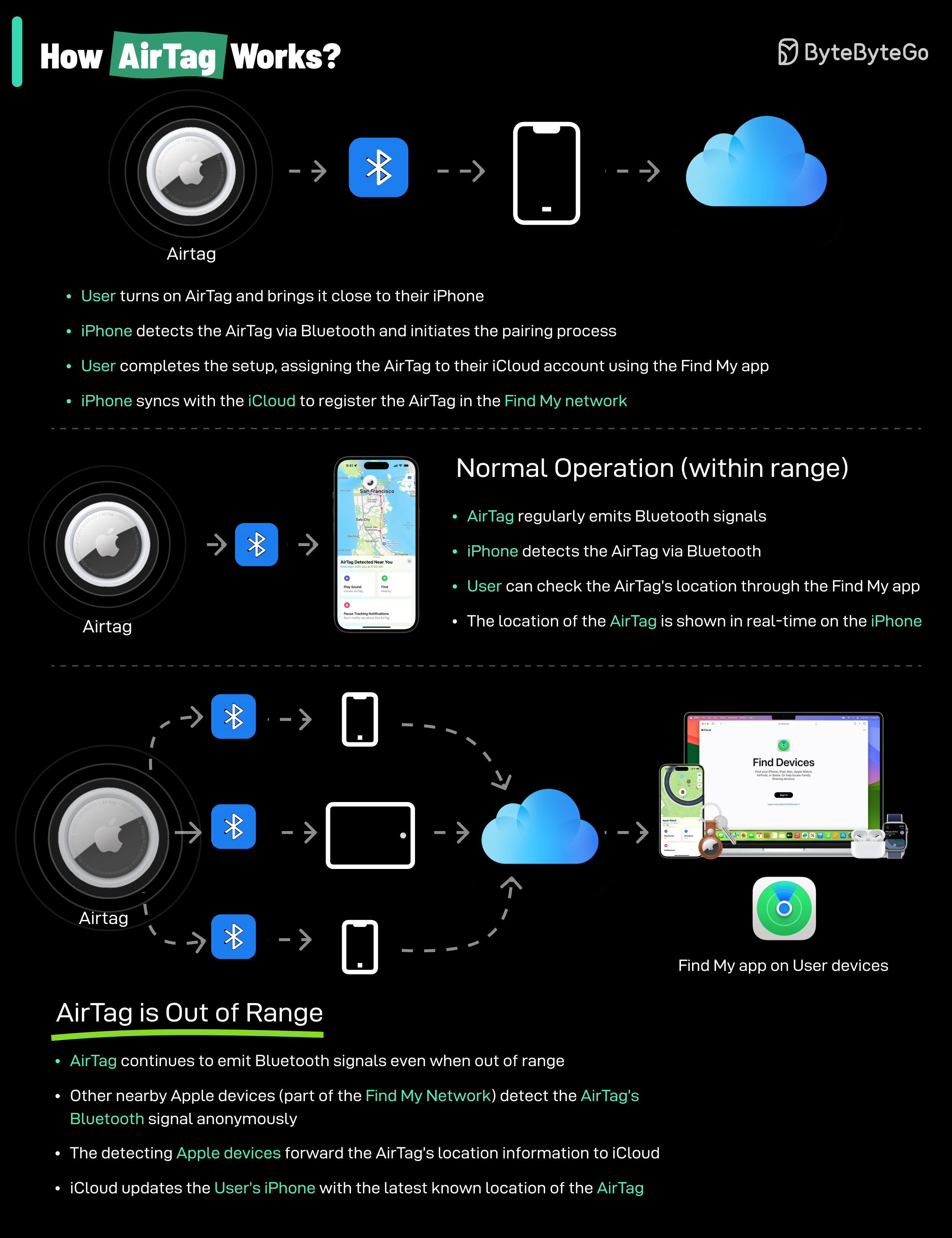

+ * [How do AirTags work?](https://bytebytego.com/guides/how-do-airtags-work)

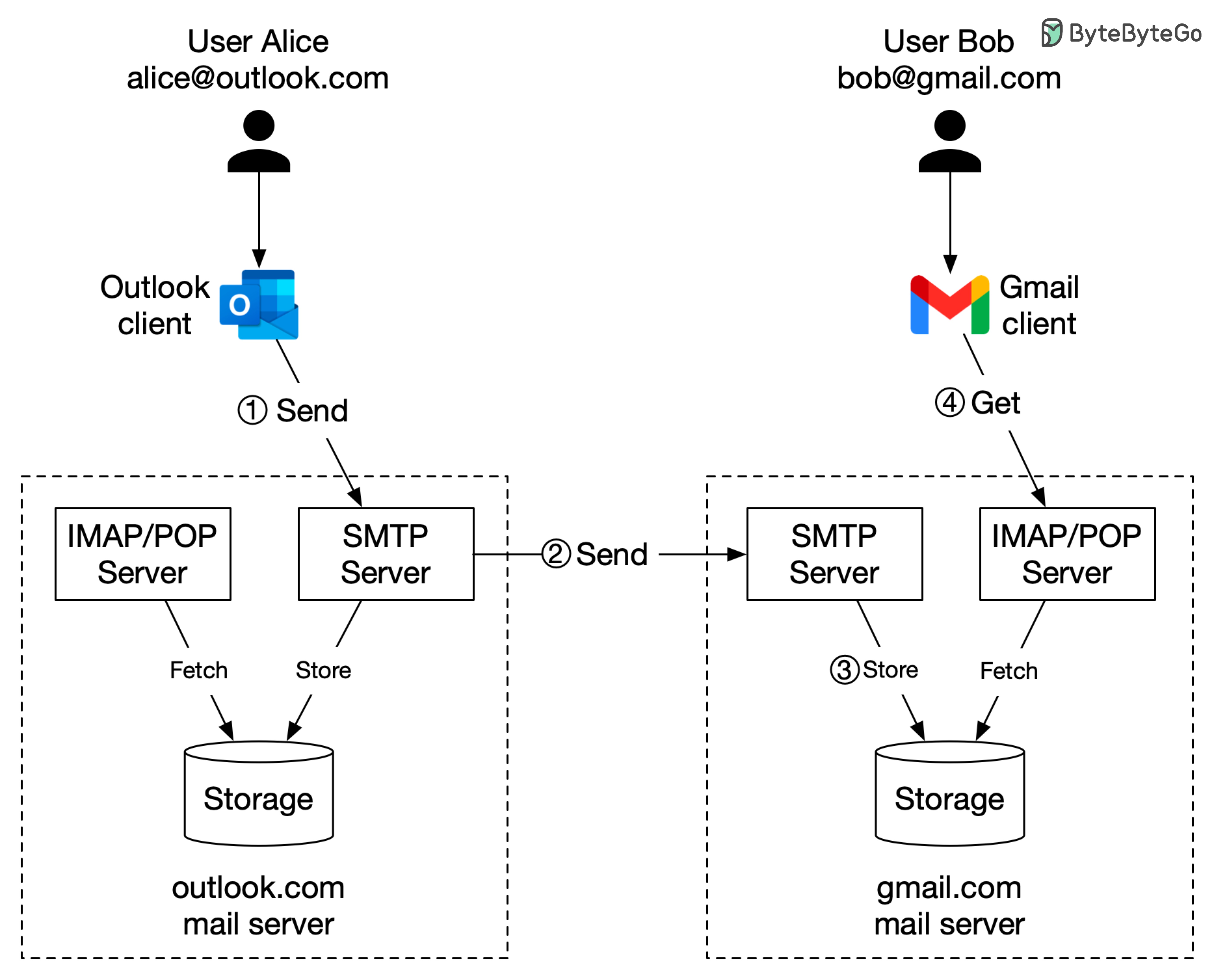

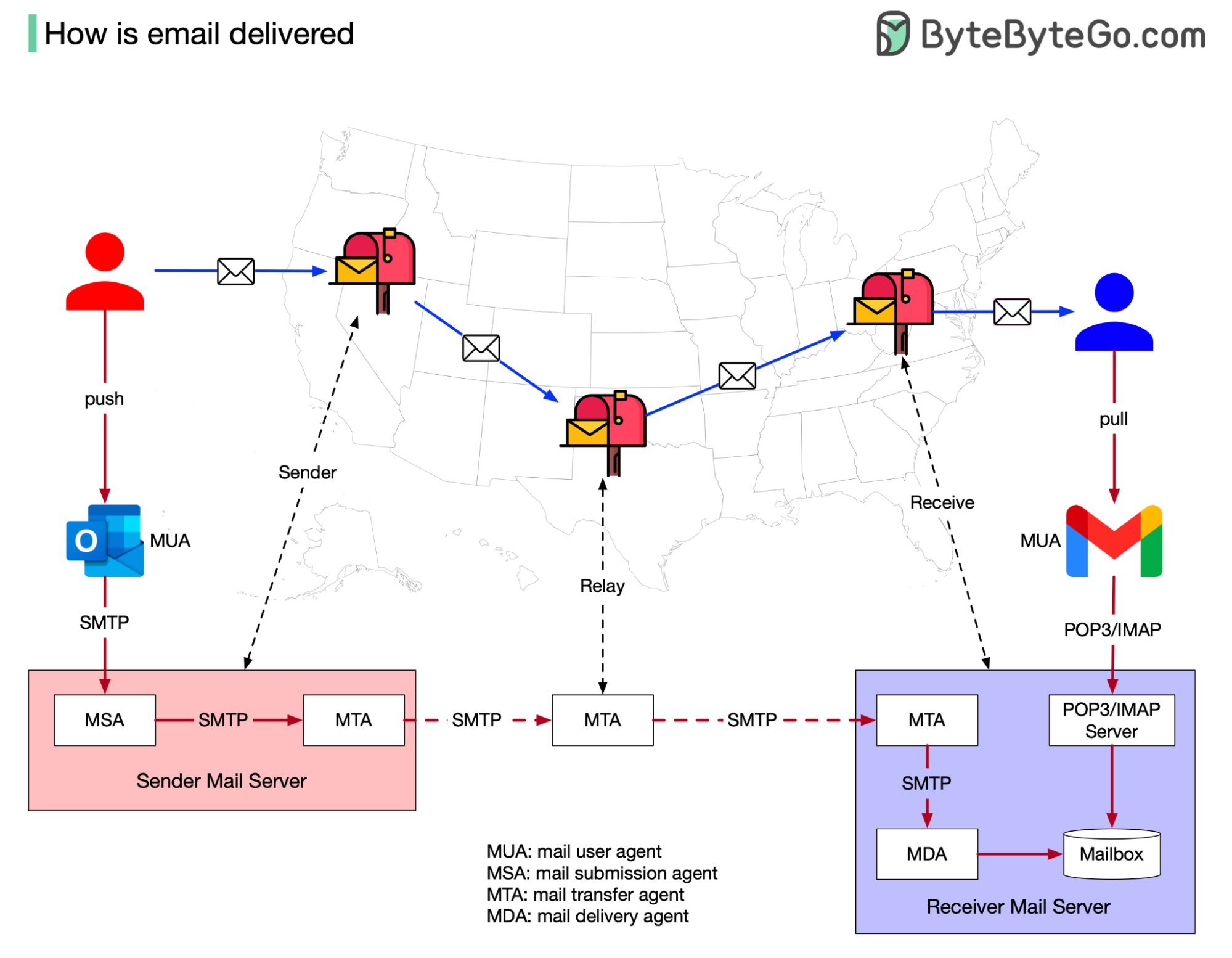

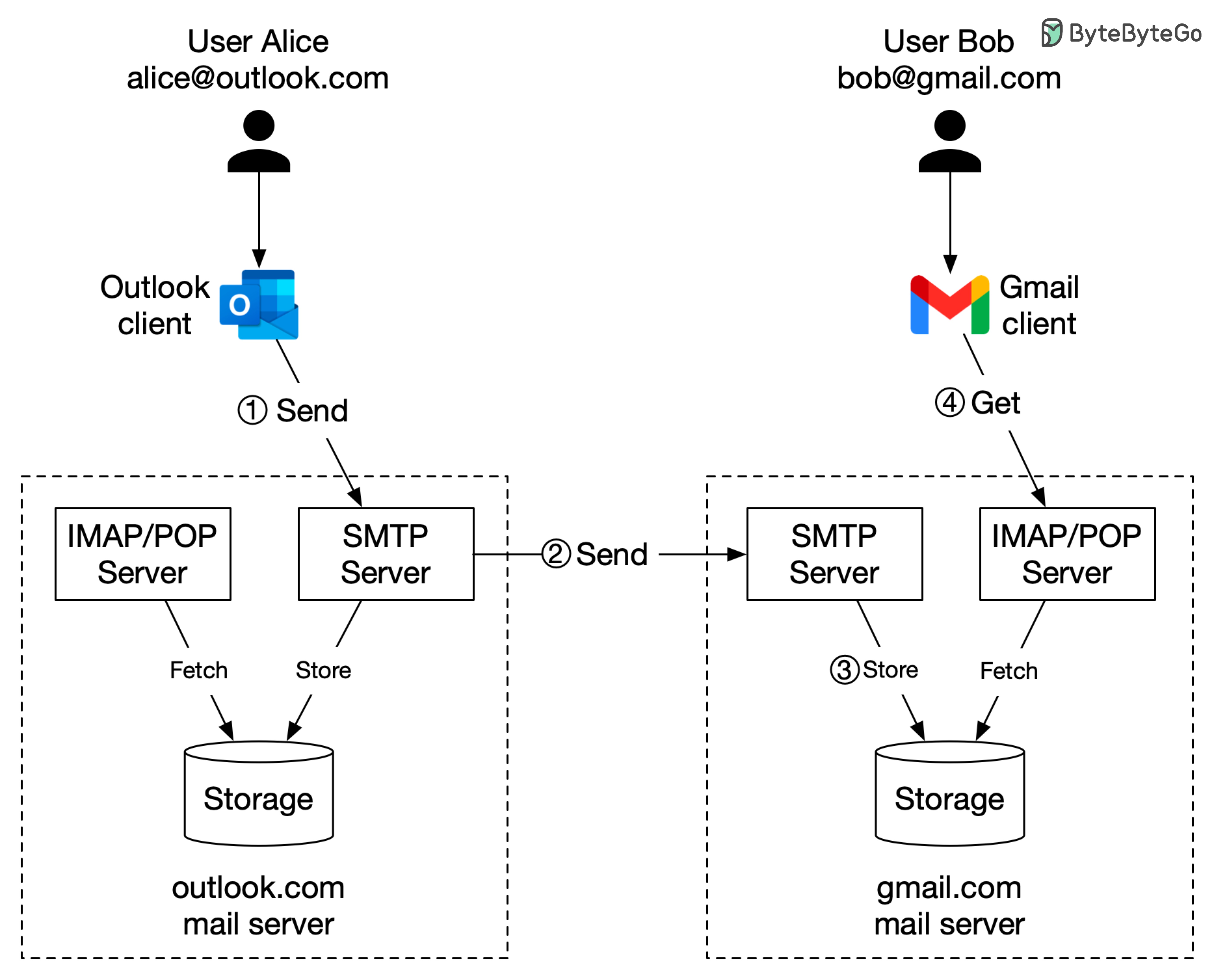

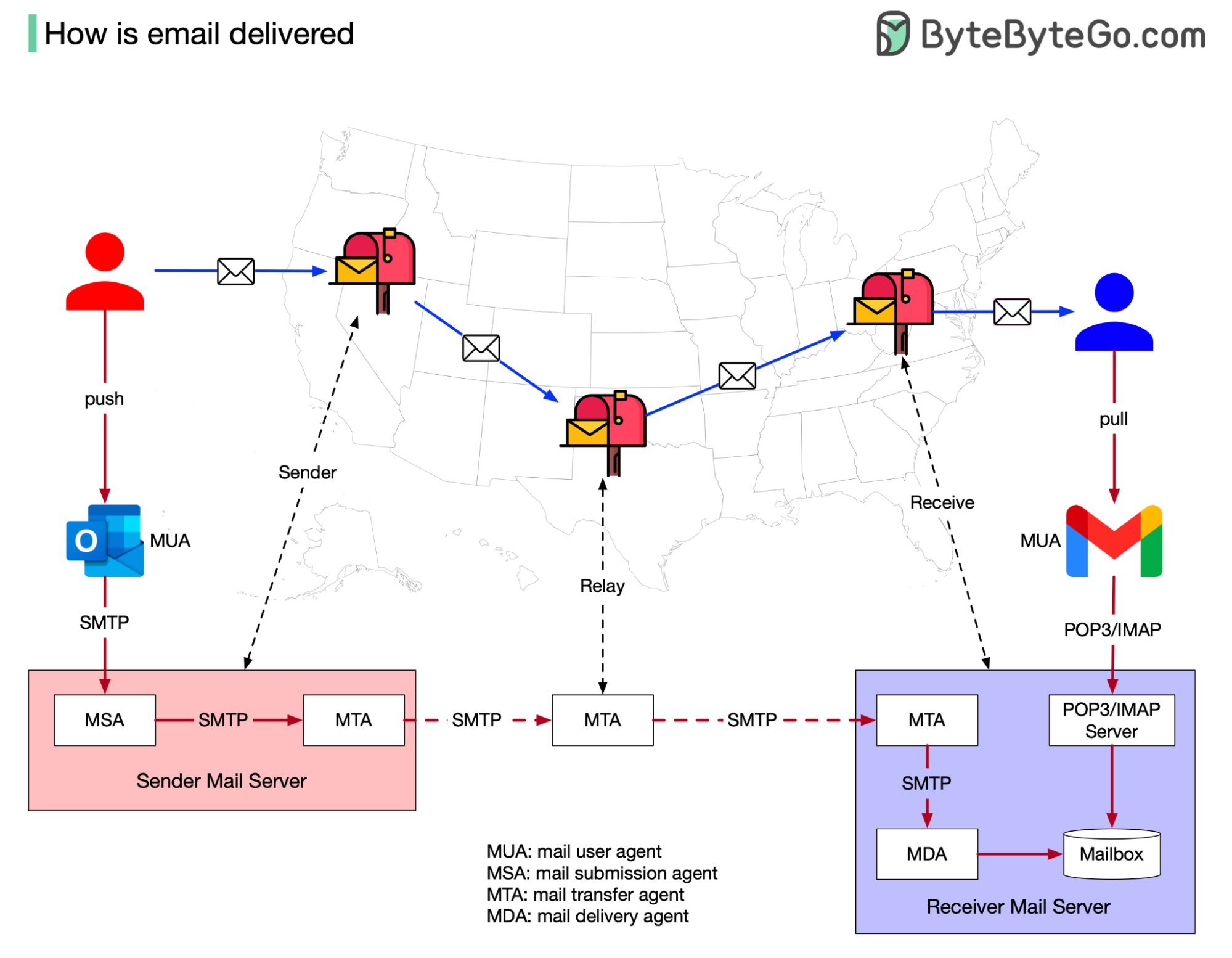

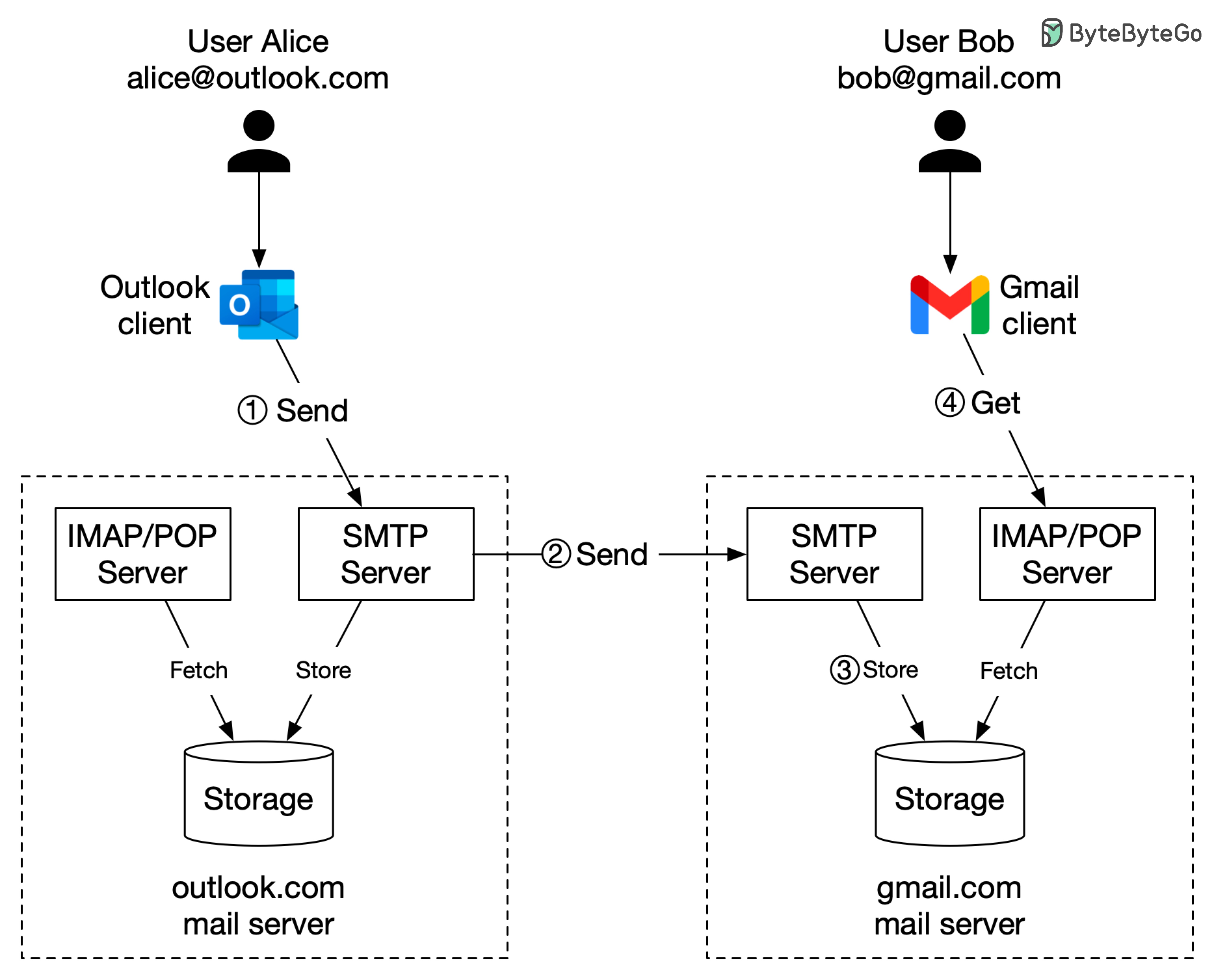

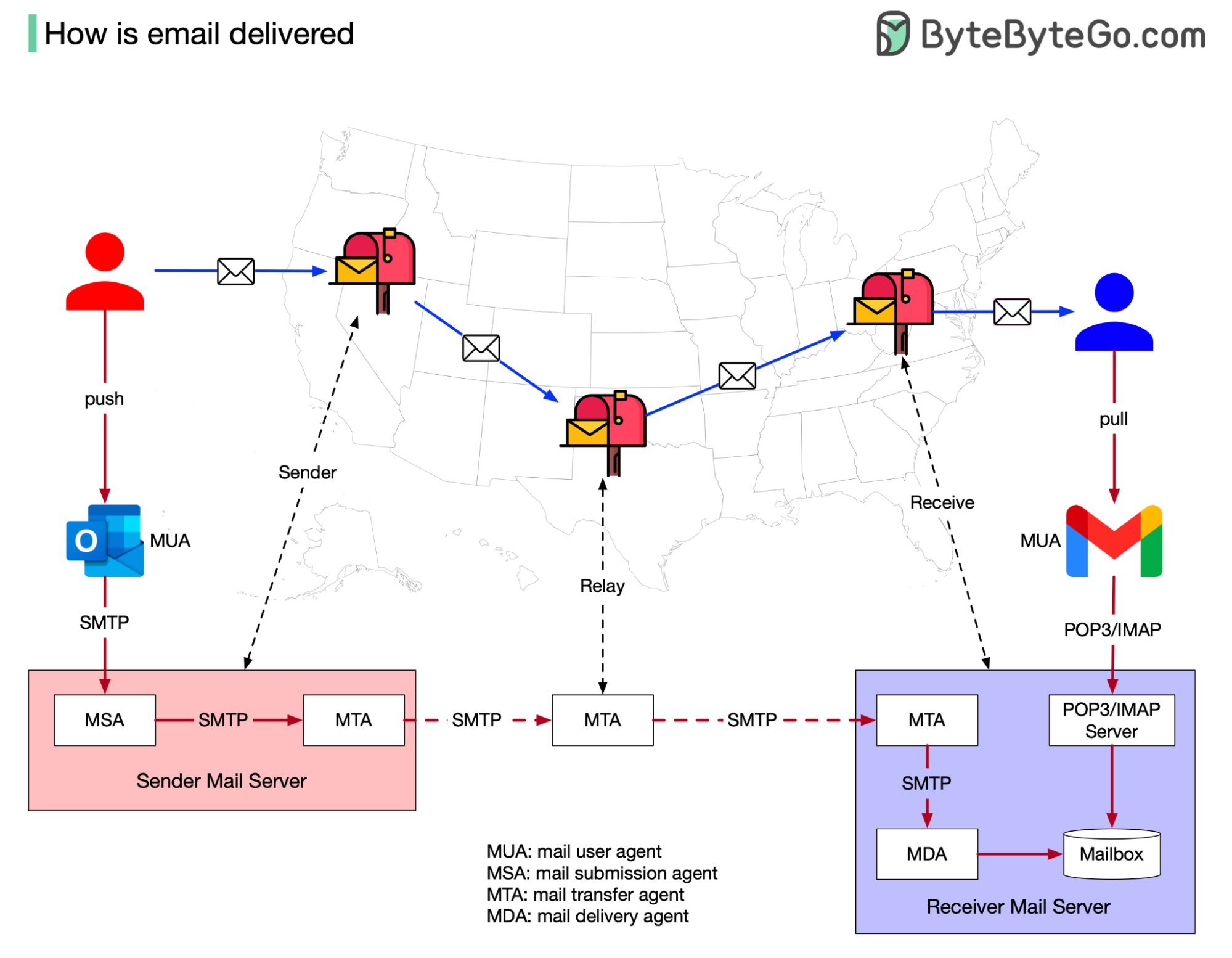

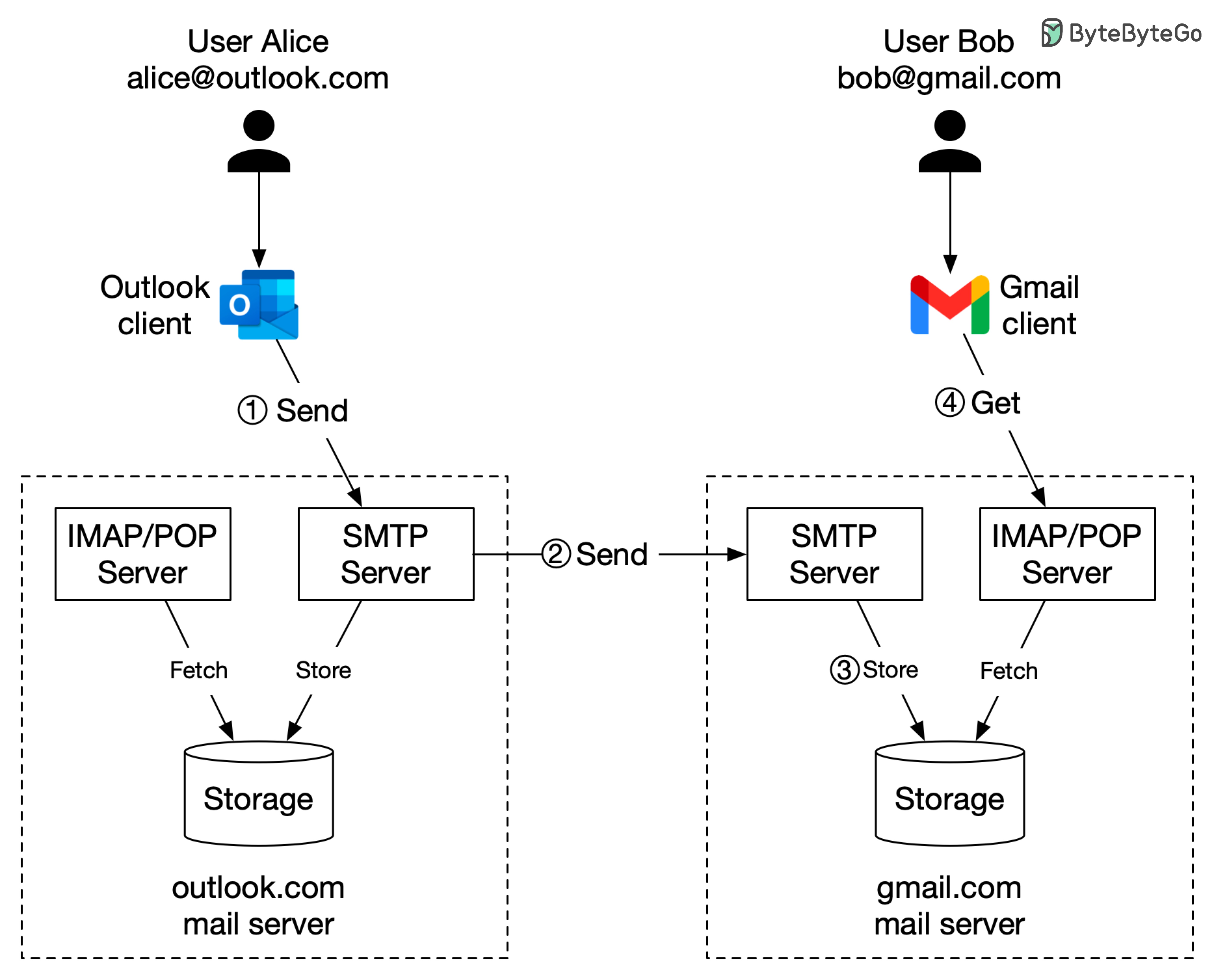

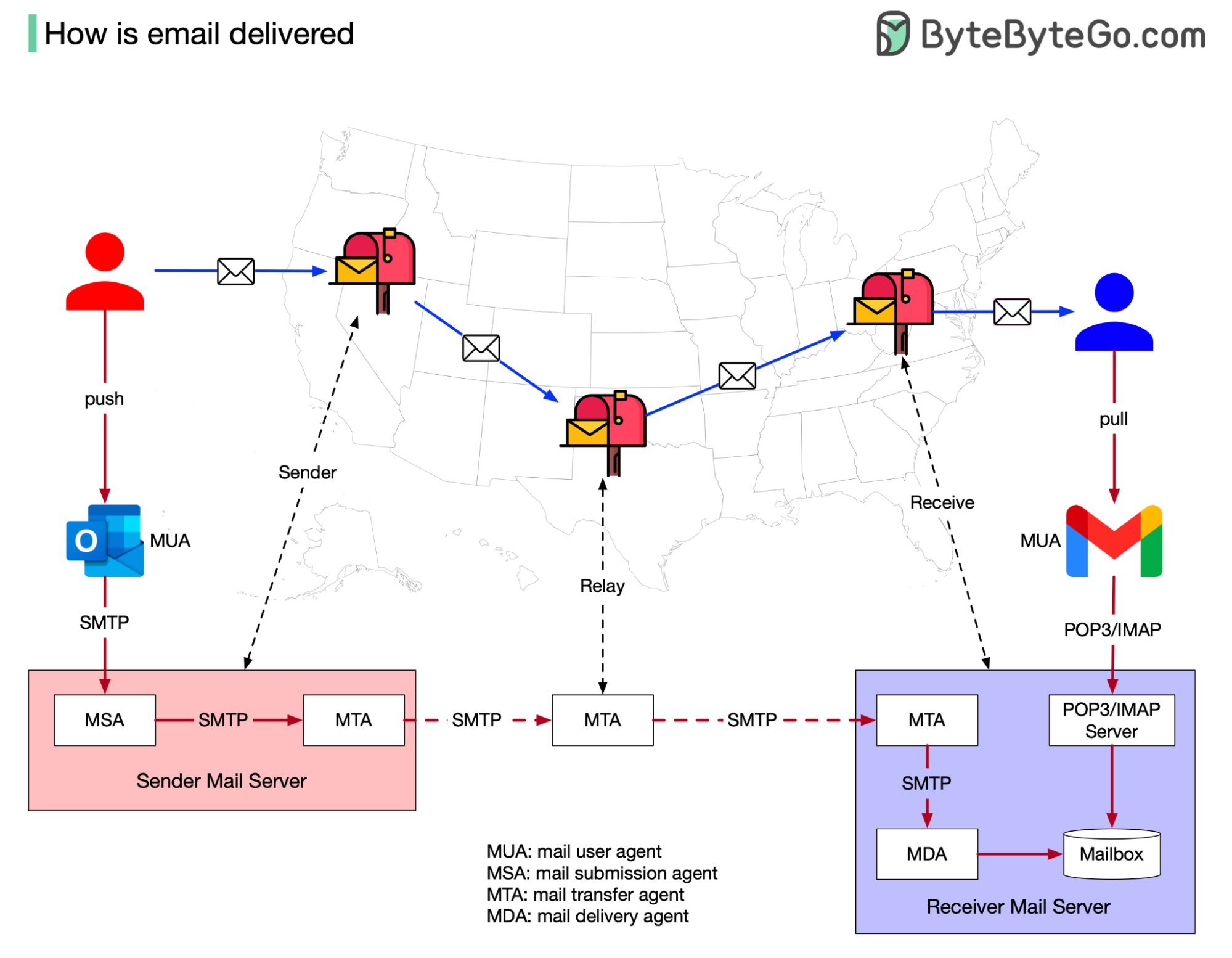

+ * [How is Email Delivered?](https://bytebytego.com/guides/how-is-email-delivered)

+ * [Design Gmail](https://bytebytego.com/guides/design-gmail)

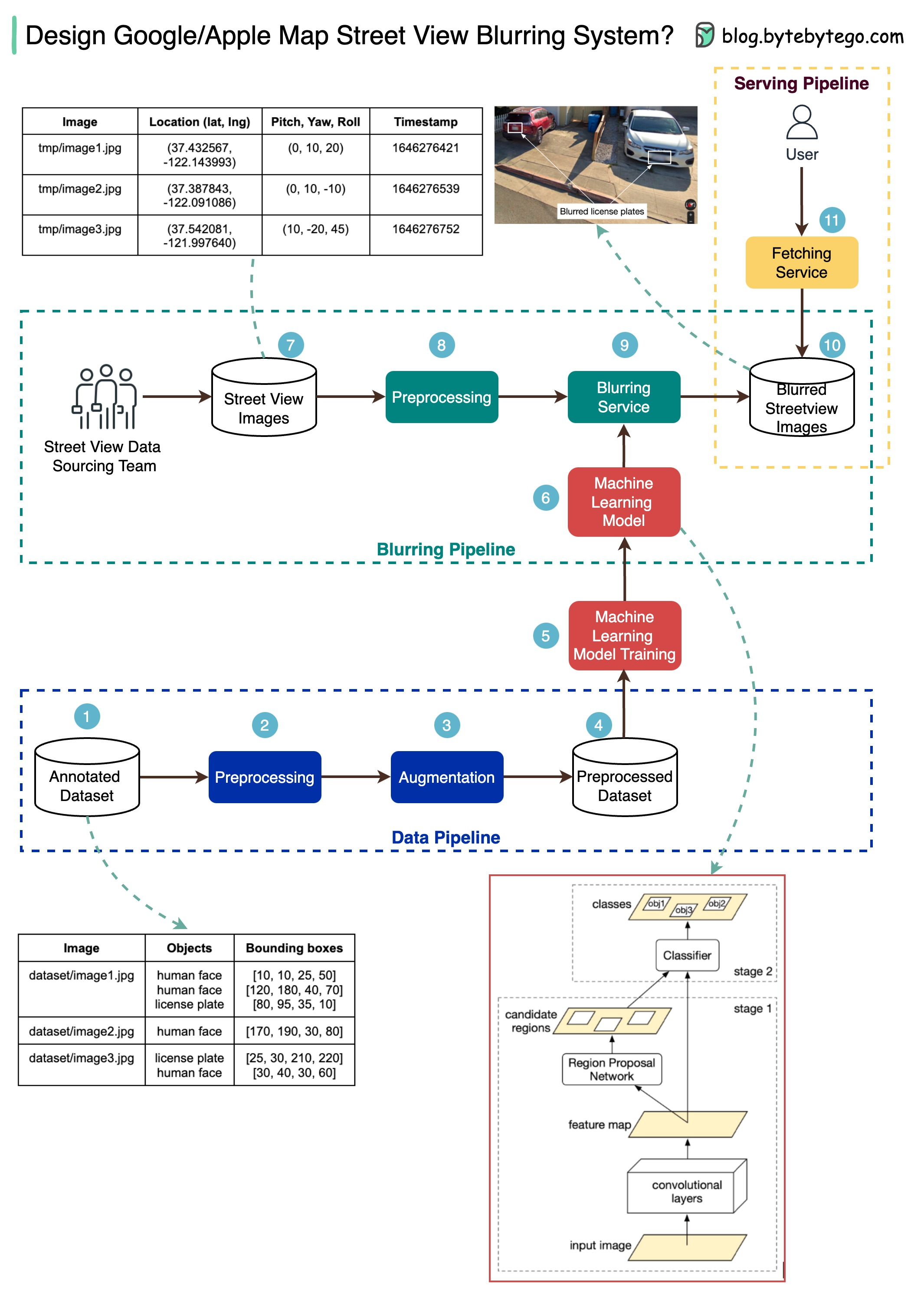

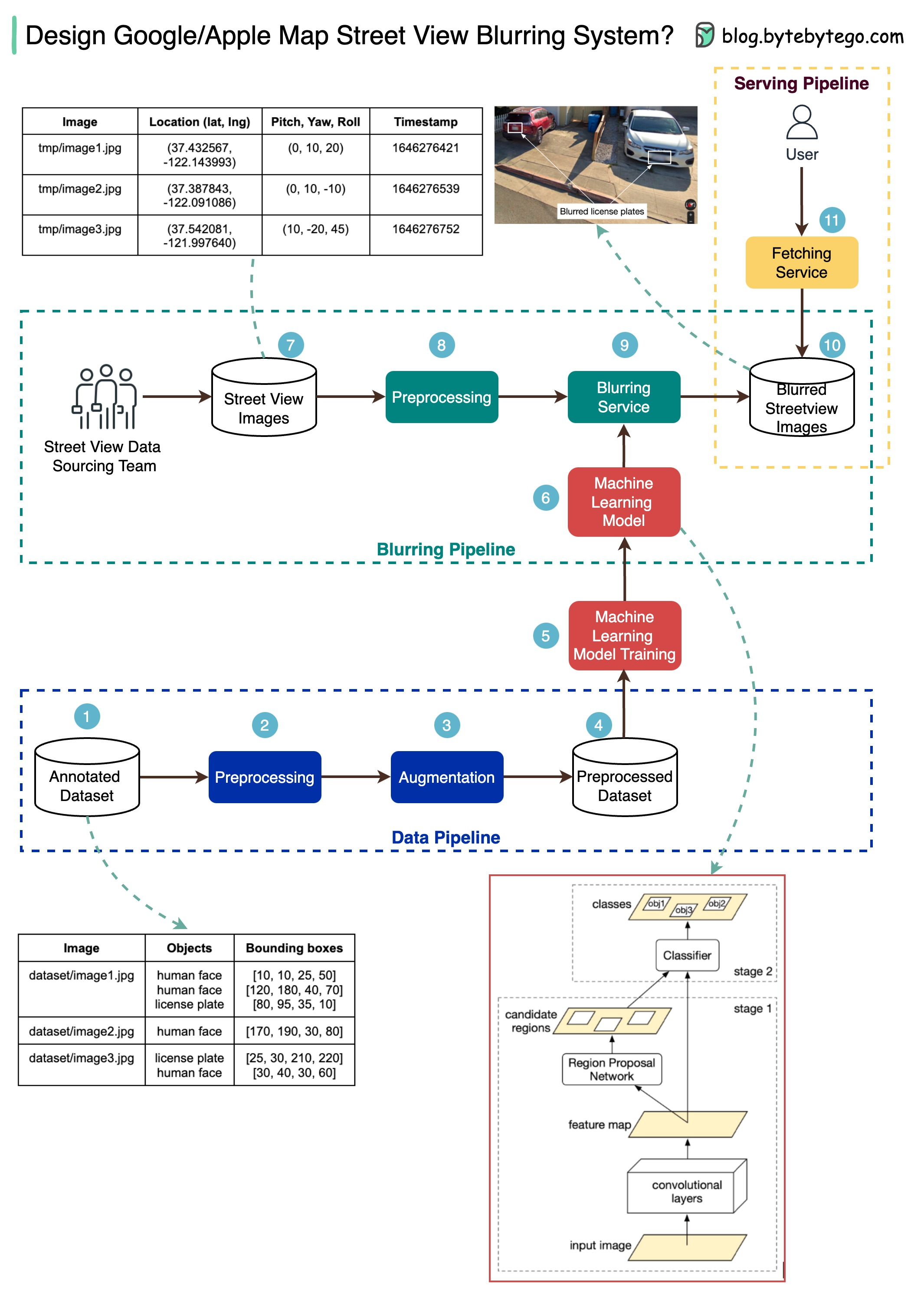

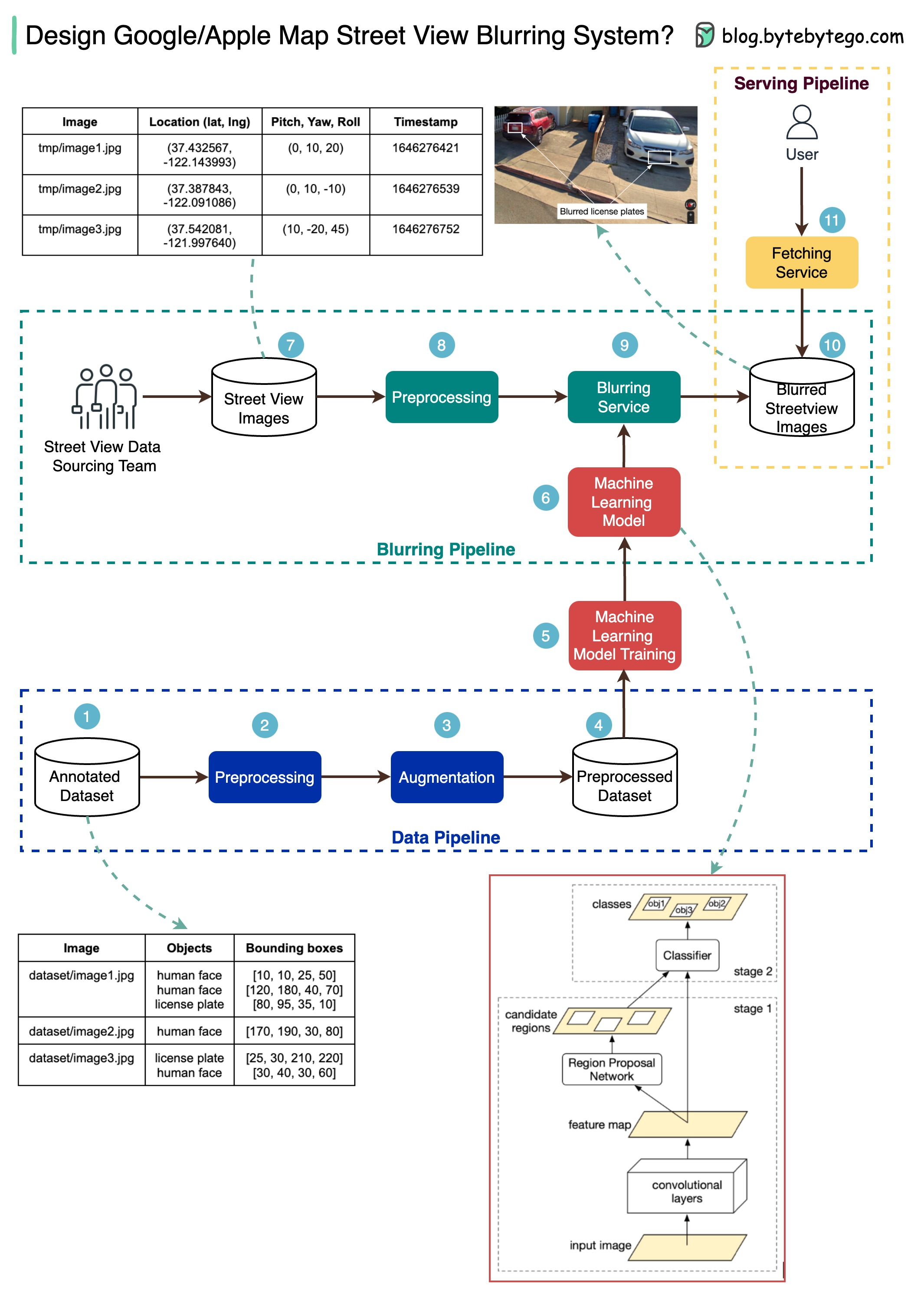

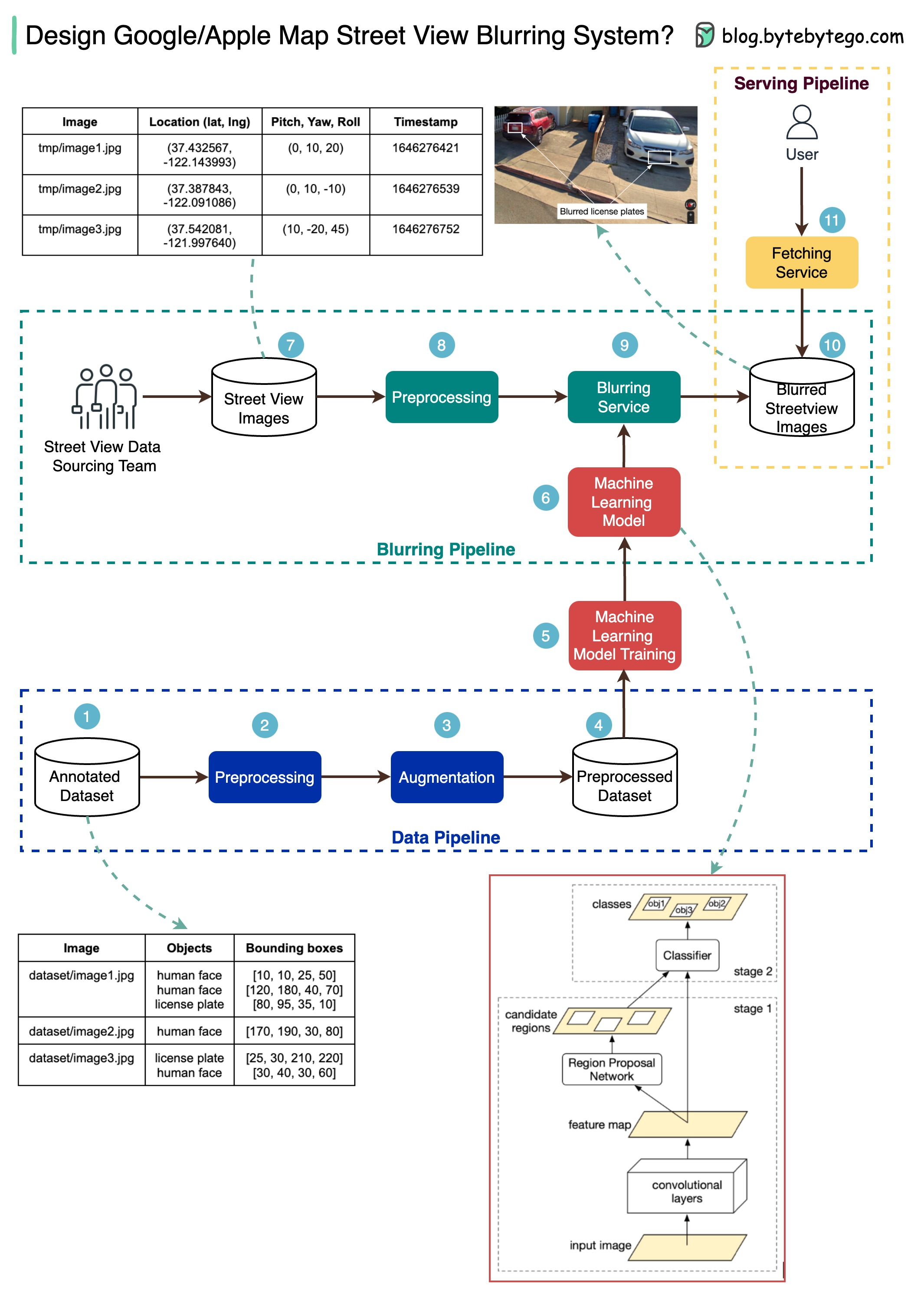

+ * [How Google/Apple Maps Blur License Plates and Faces](https://bytebytego.com/guides/how-do-googleapple-maps-blur-license-plates-and-human-faces-on-street-view)

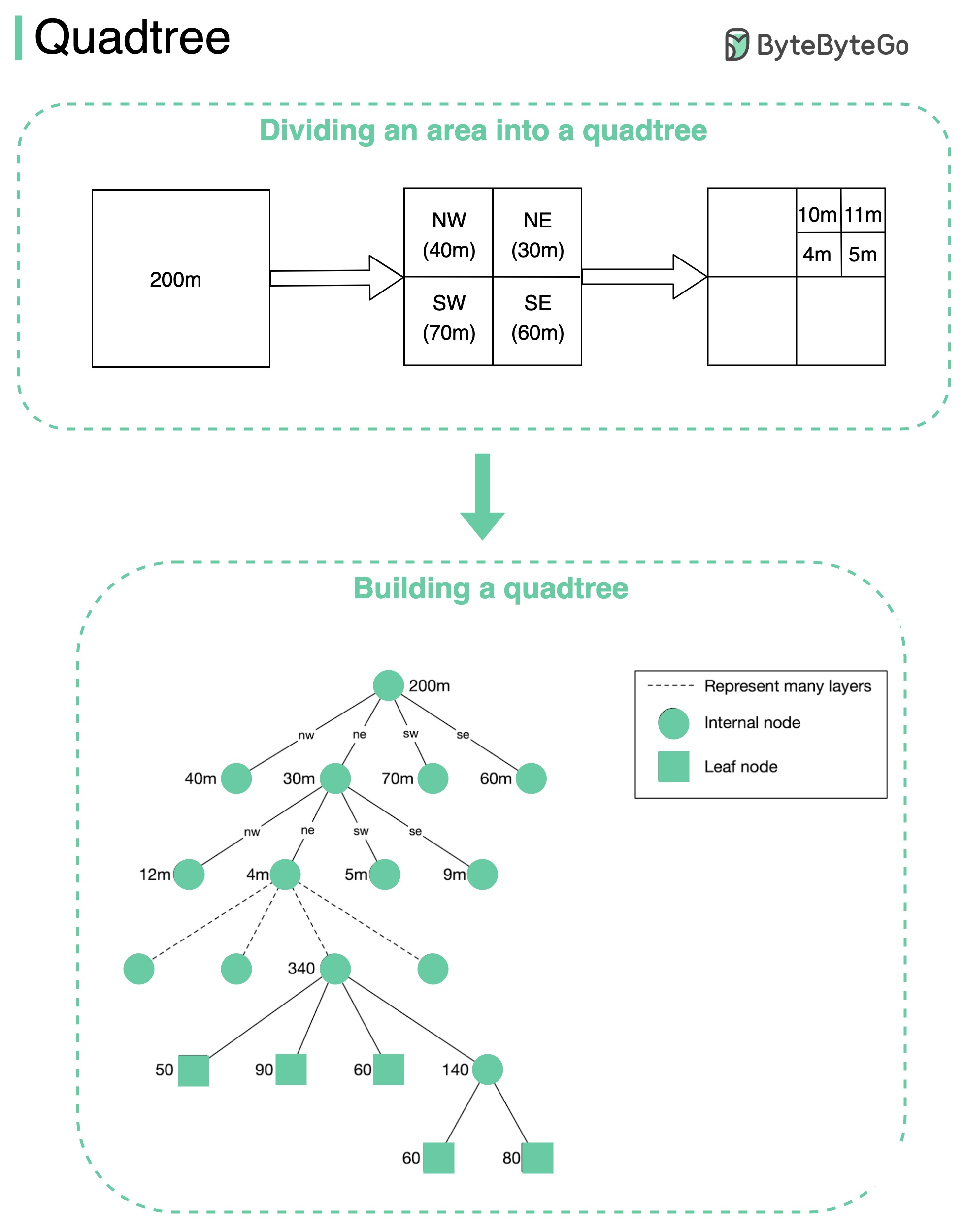

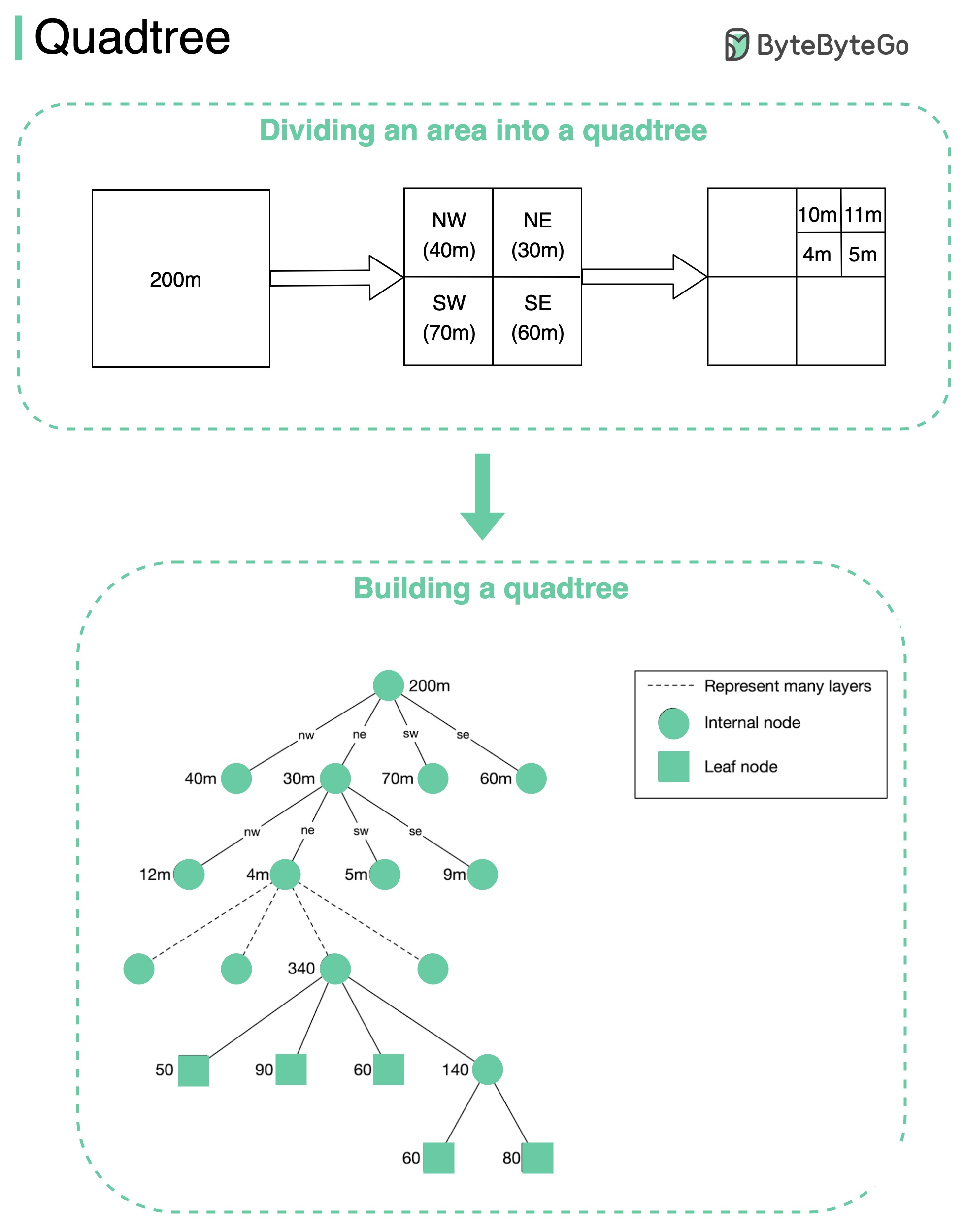

+ * [Quadtree](https://bytebytego.com/guides/quadtree)

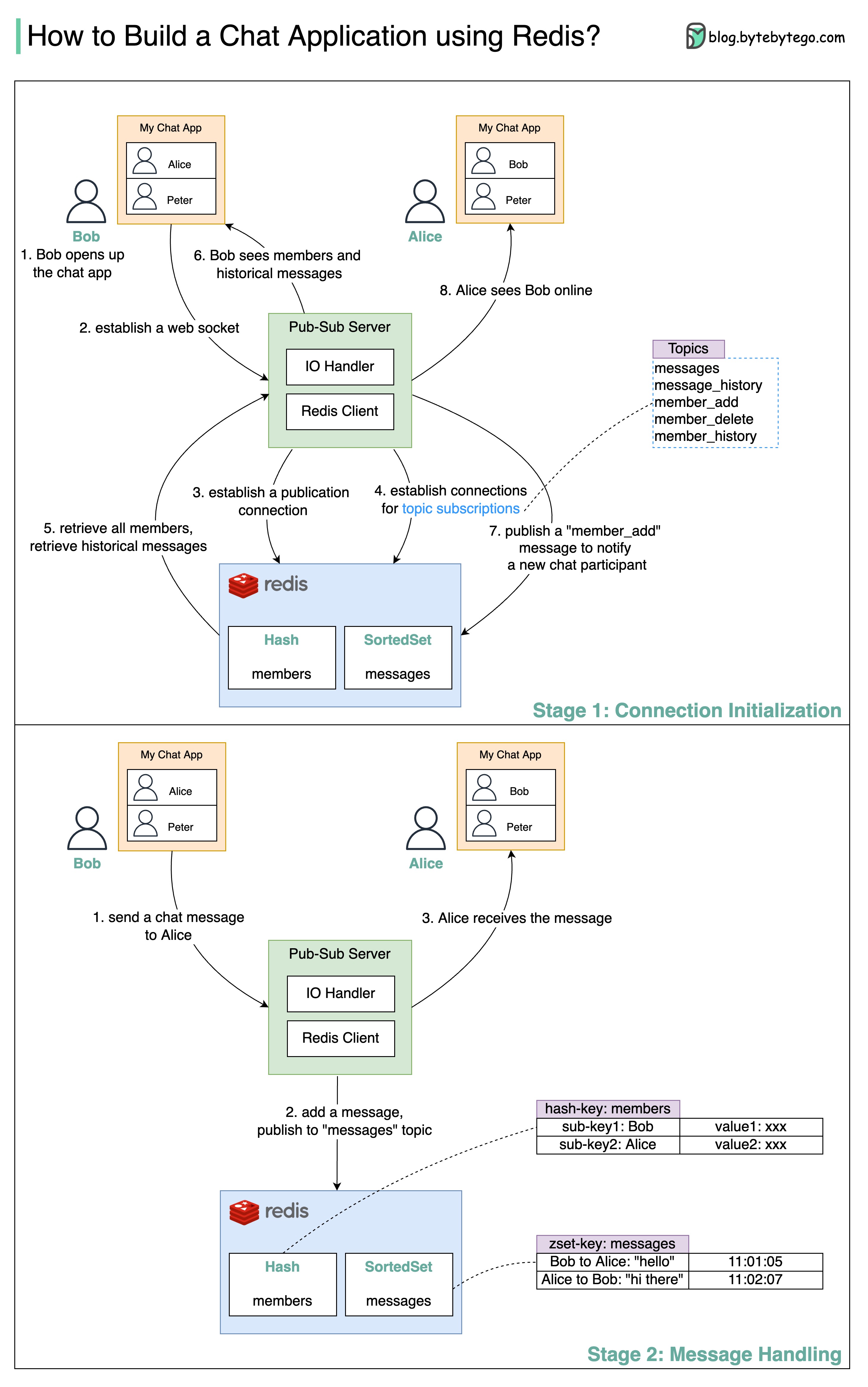

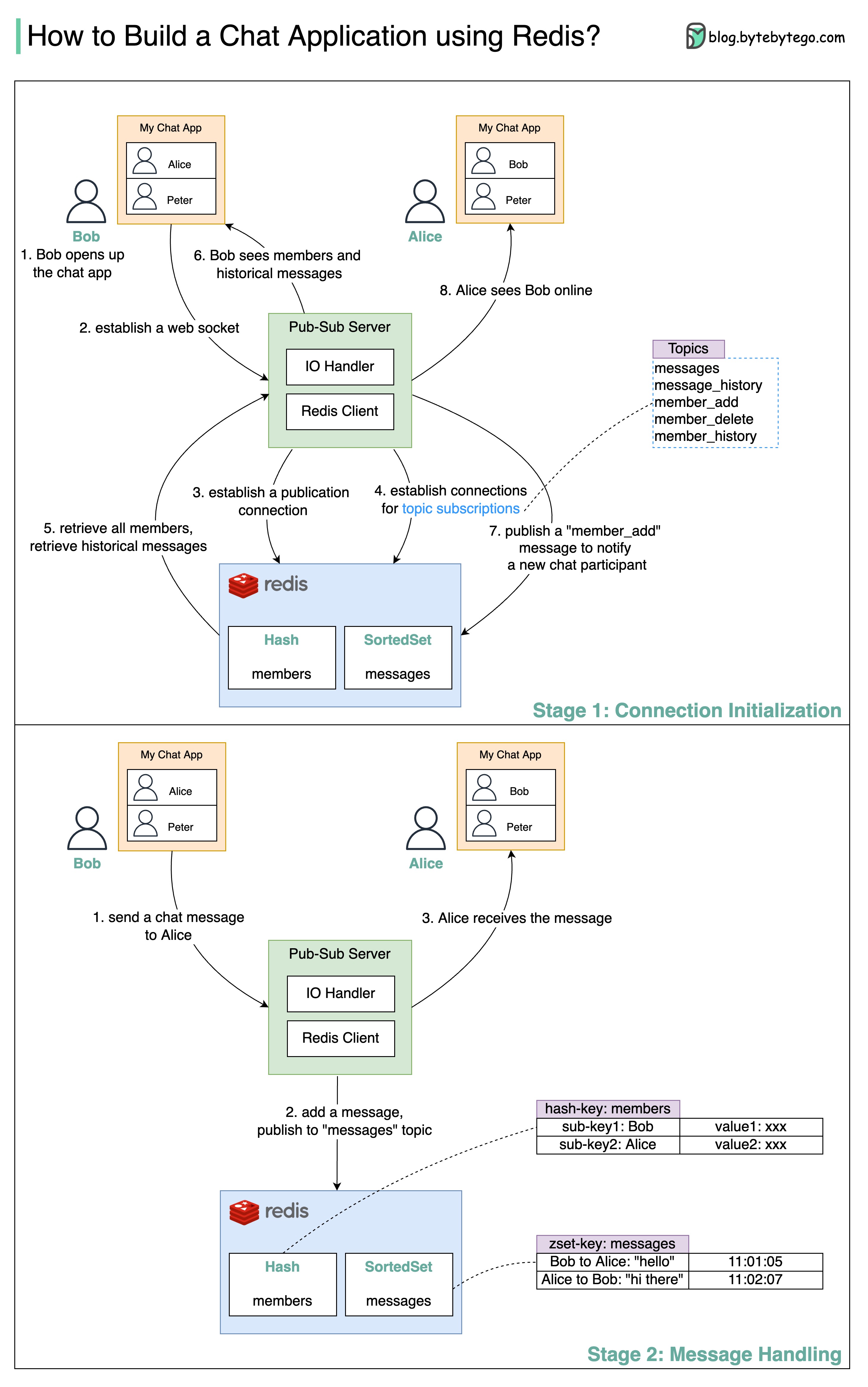

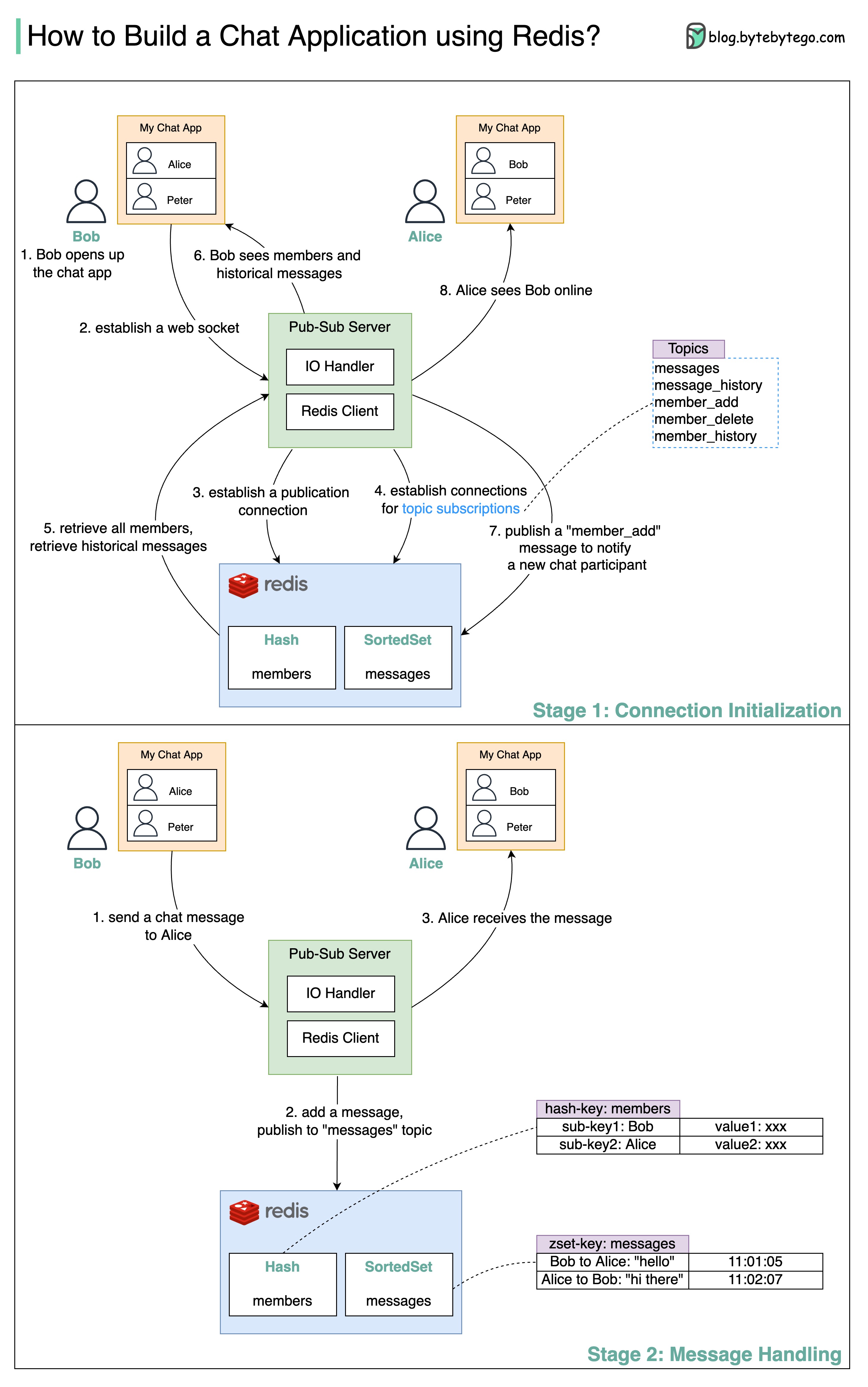

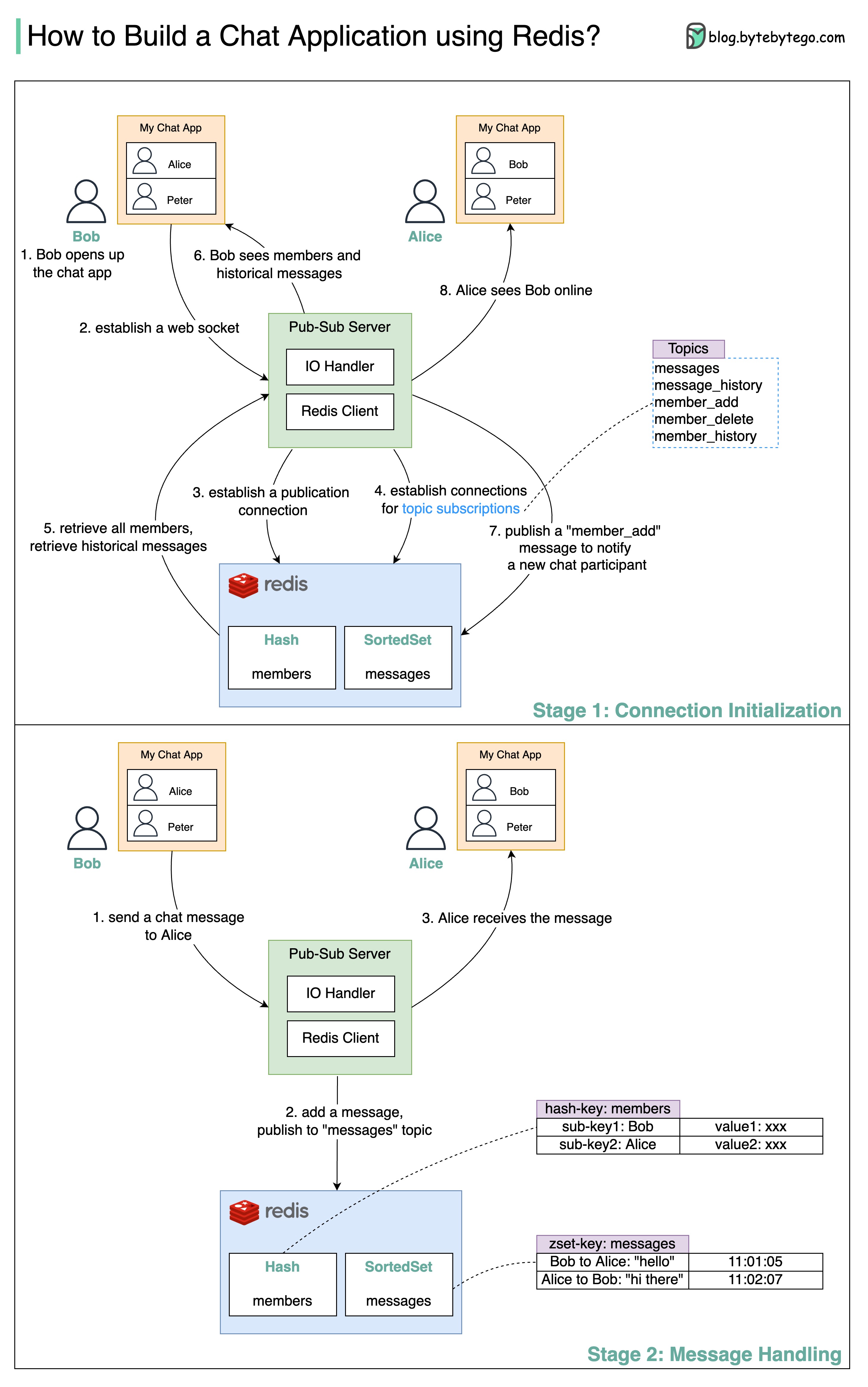

+ * [Build a Simple Chat Application with Redis](https://bytebytego.com/guides/build-a-simple-chat-application)

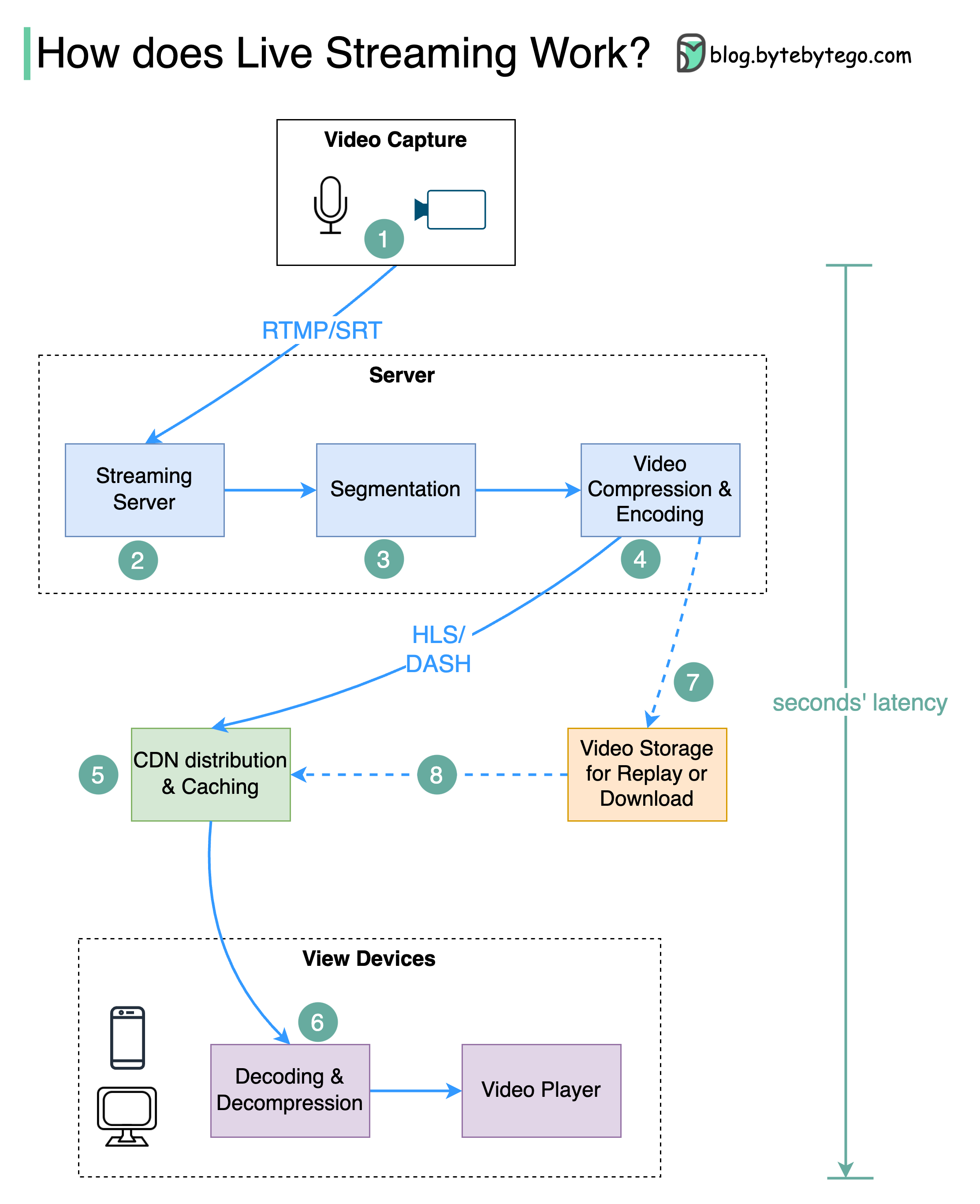

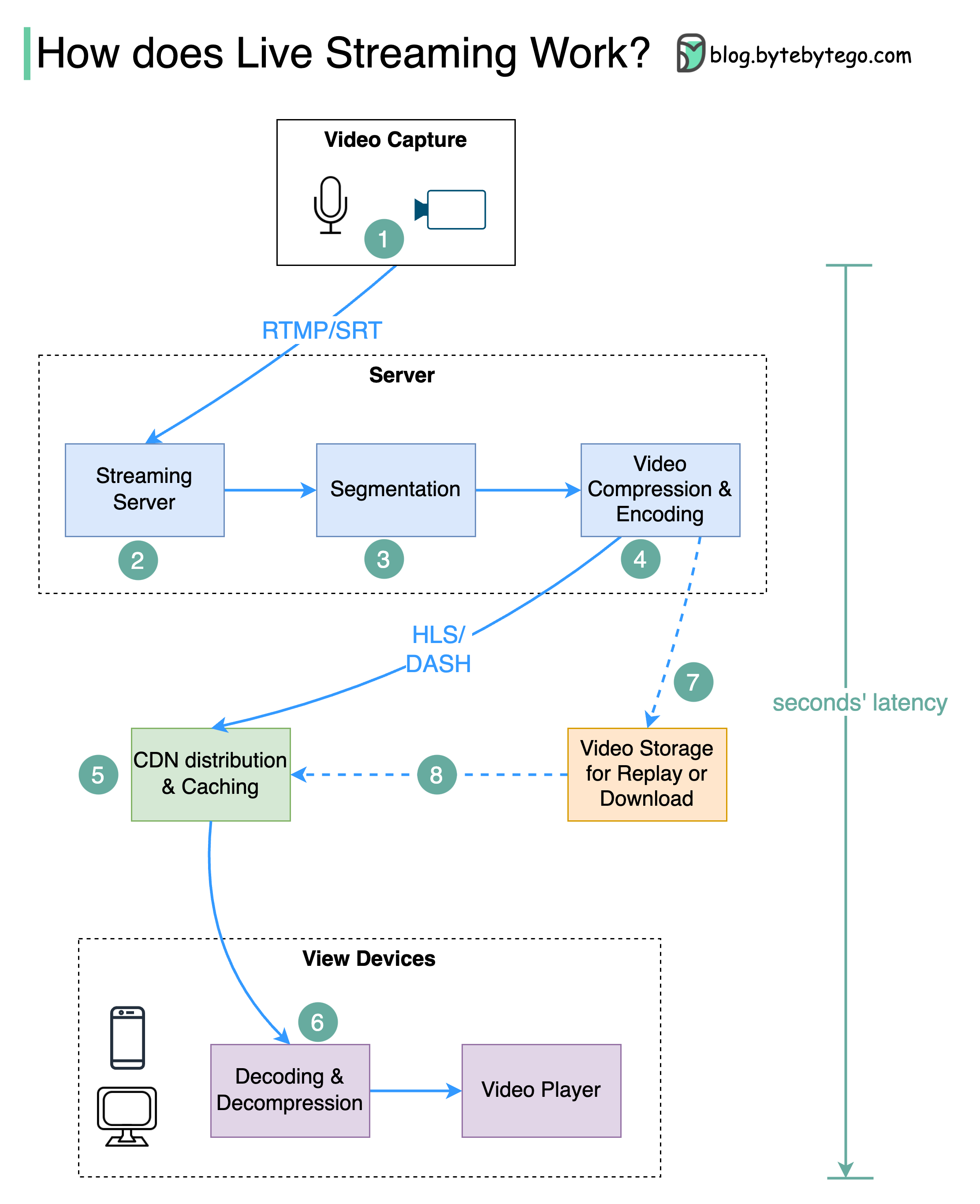

+ * [Live Streaming Explained](https://bytebytego.com/guides/live-streaming-explained)

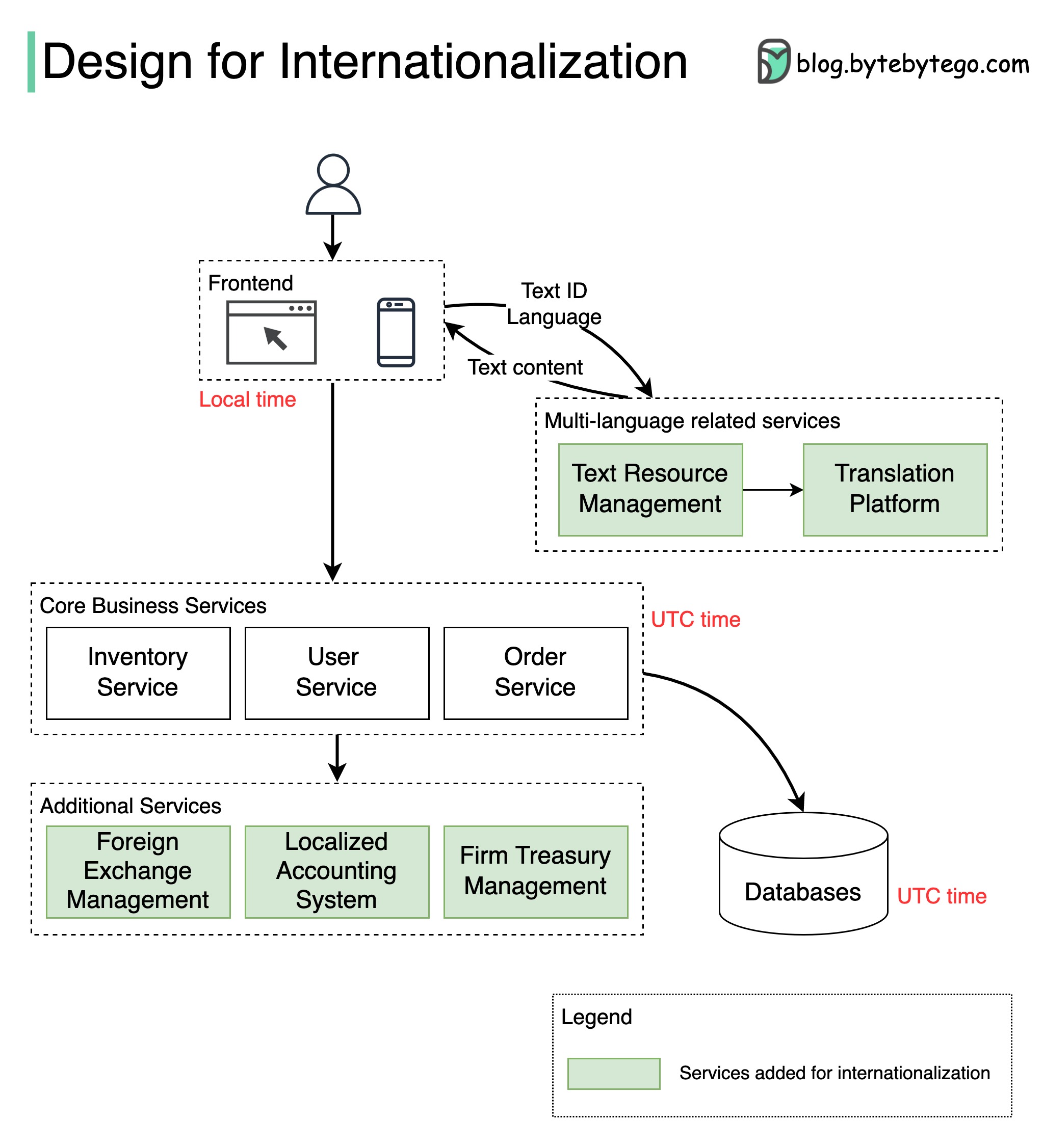

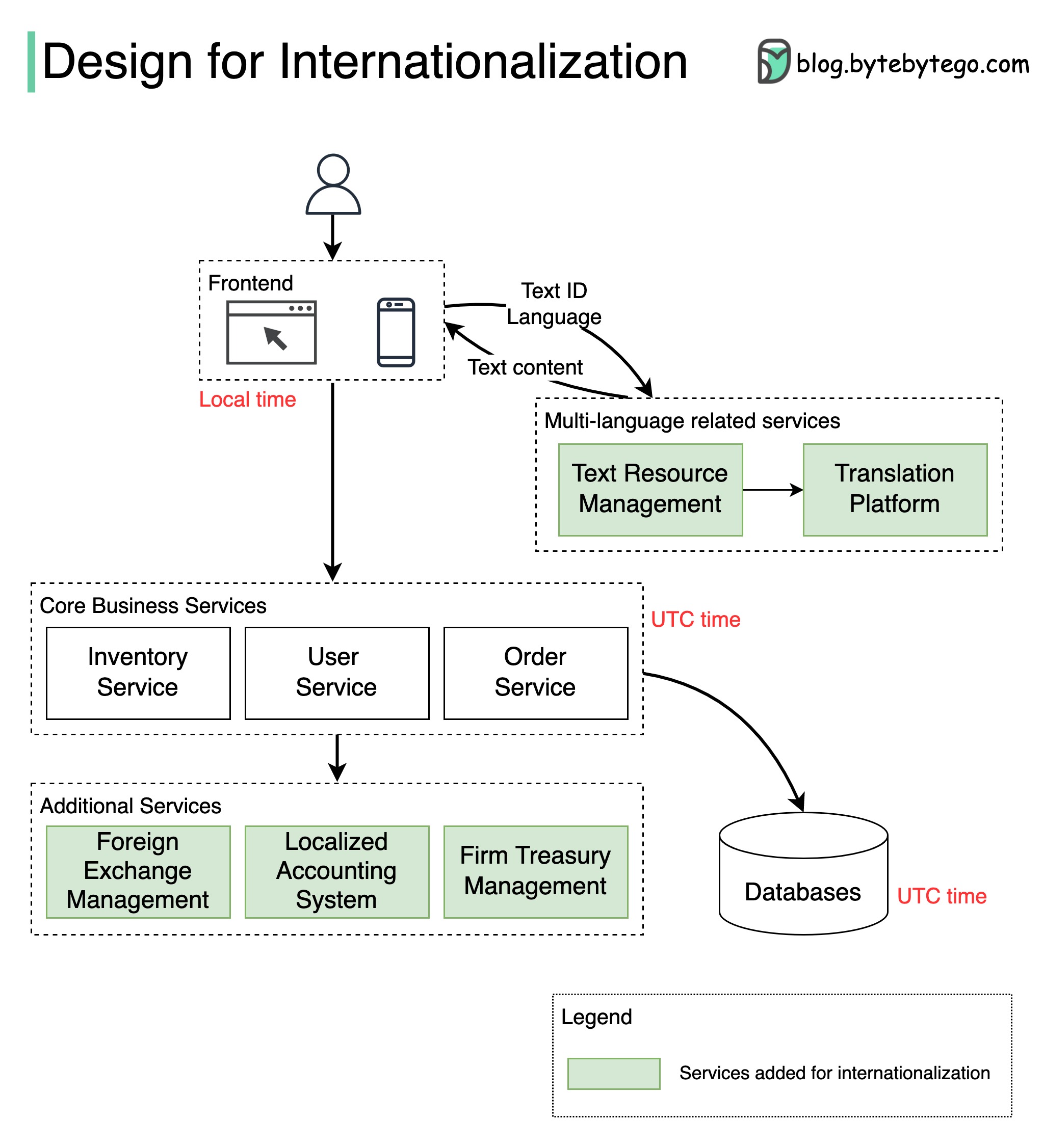

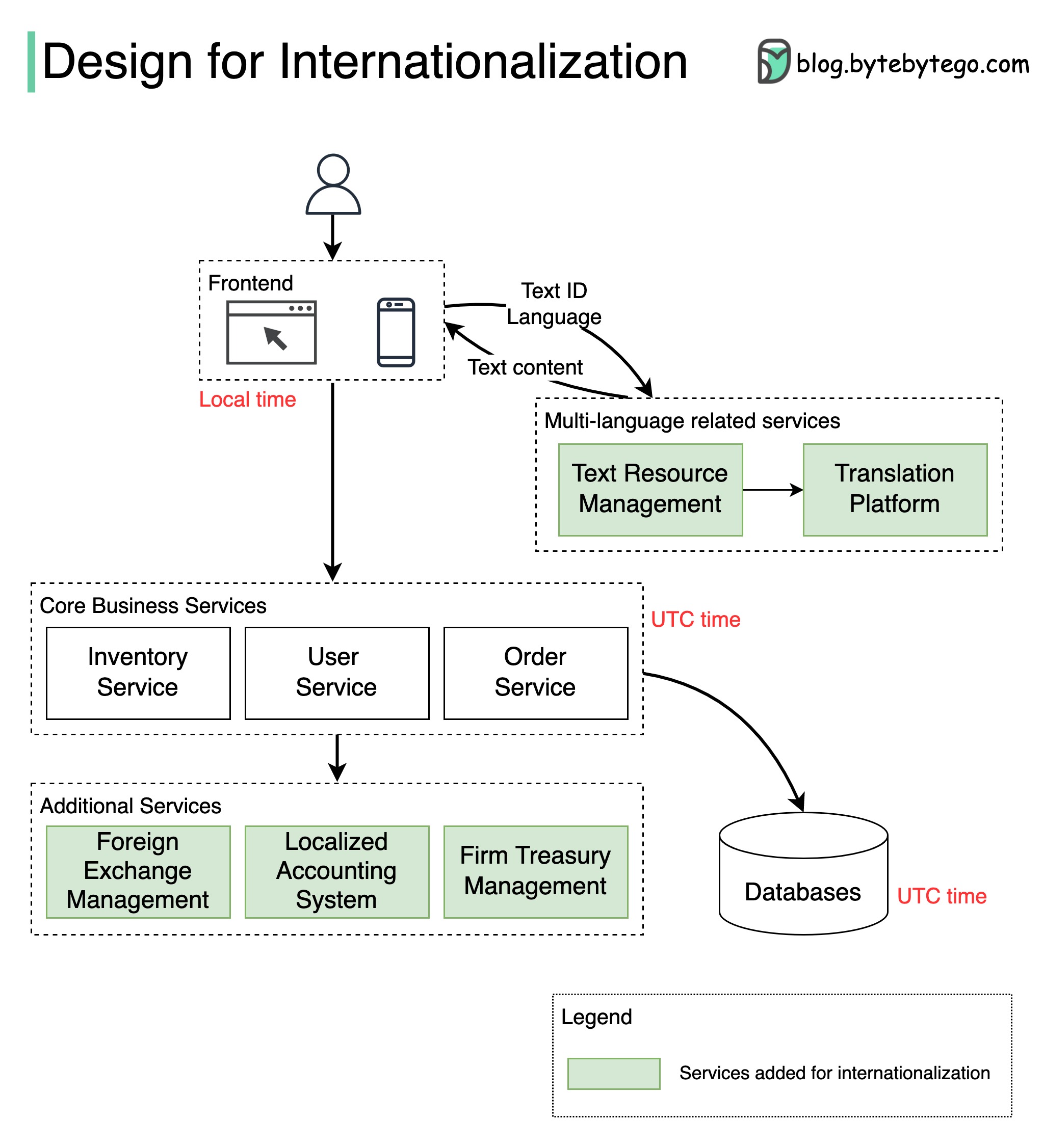

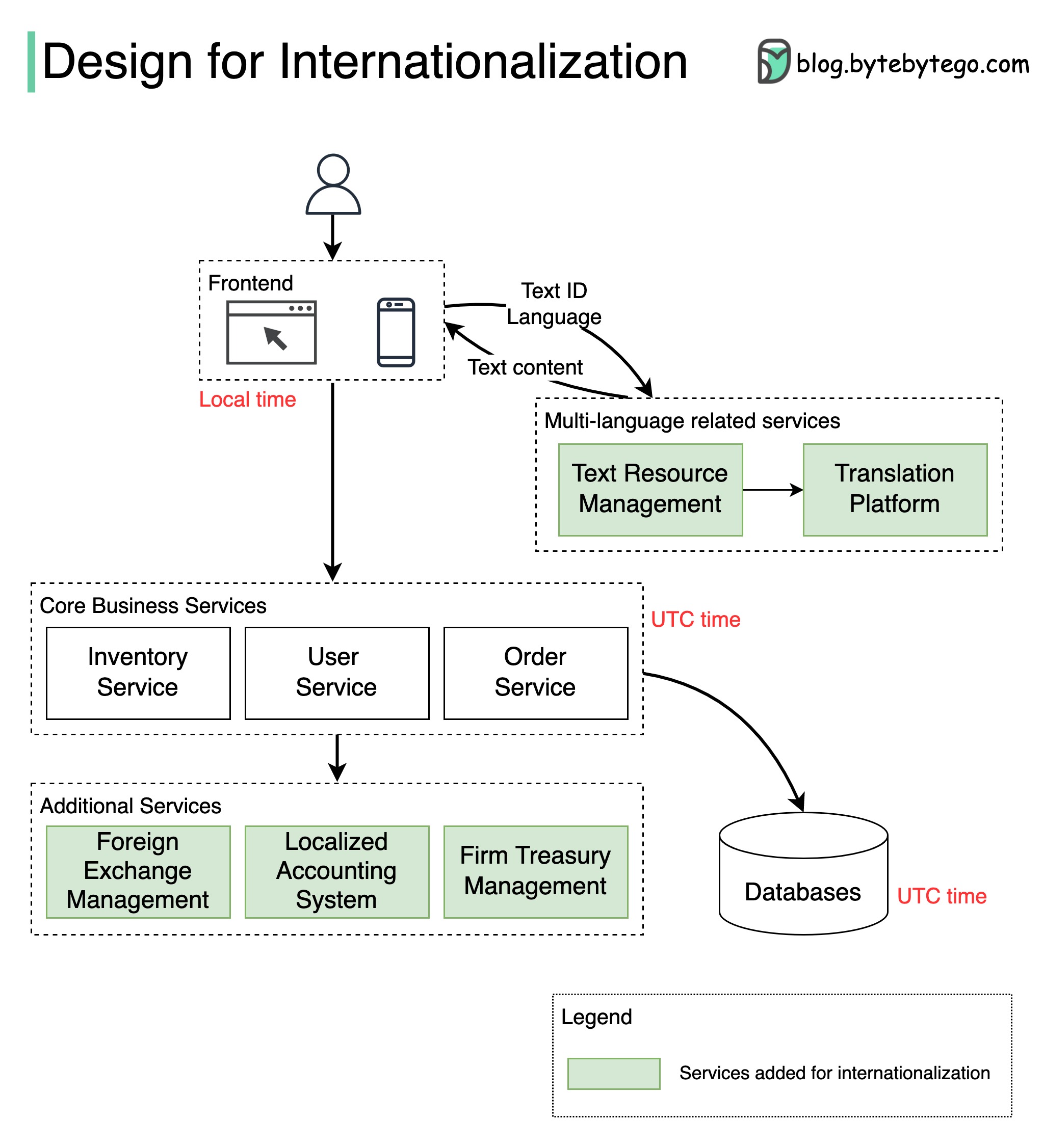

+ * [How to Design a System for Internationalization](https://bytebytego.com/guides/how-do-we-design-a-system-for-internationalization)

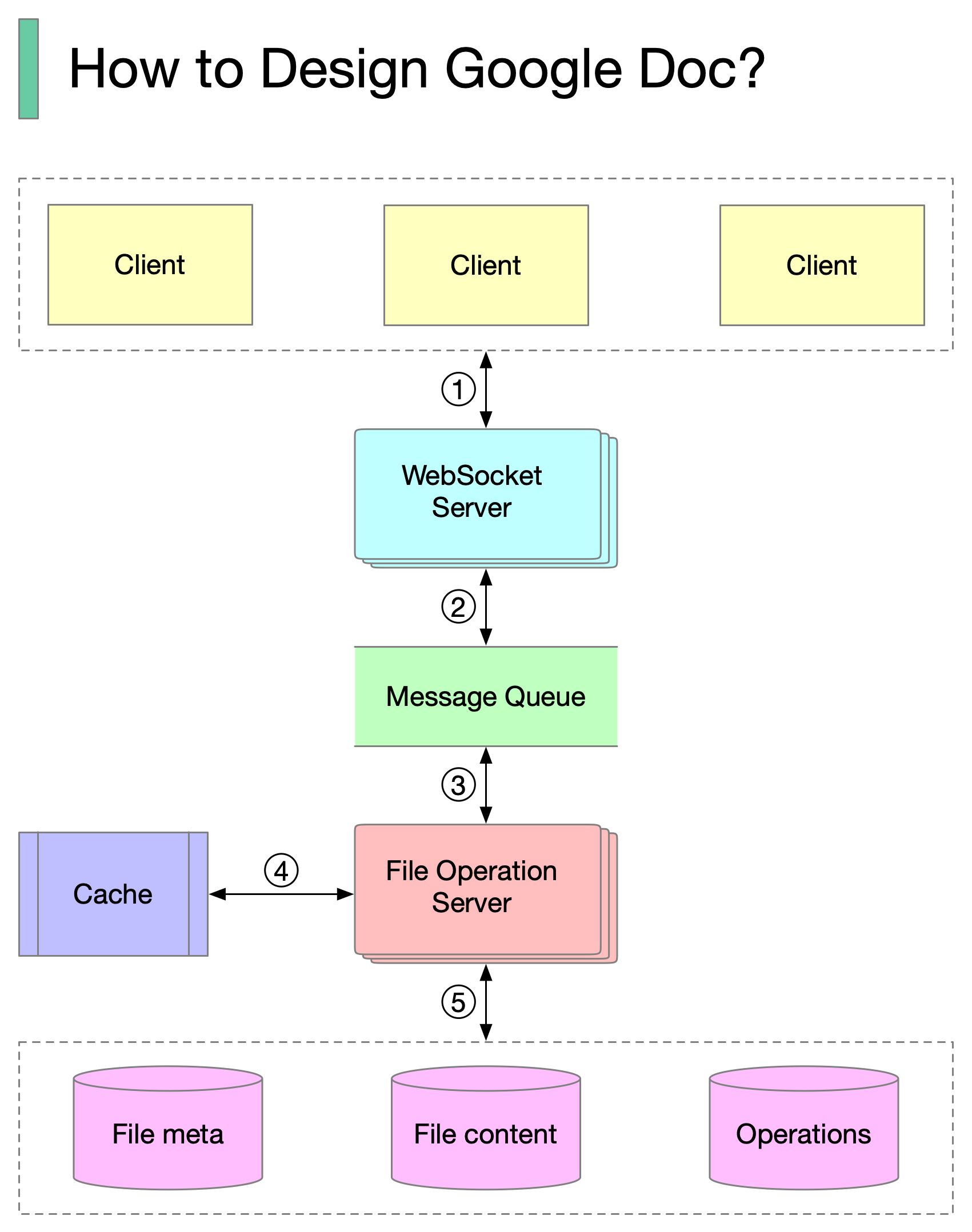

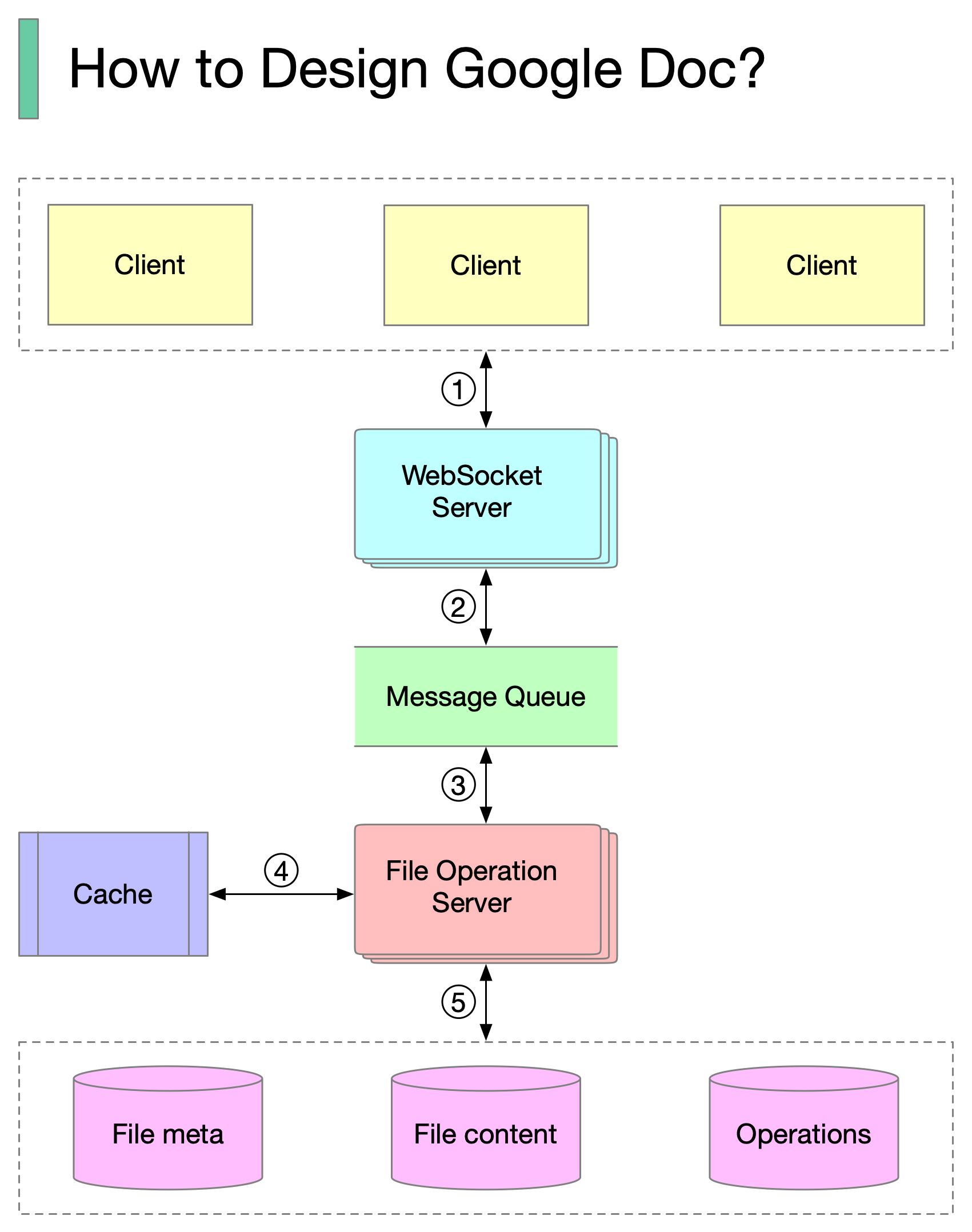

+ * [How to Design Google Docs](https://bytebytego.com/guides/how-to-design-google-docs)

+ * [Payment System](https://bytebytego.com/guides/payment-system)

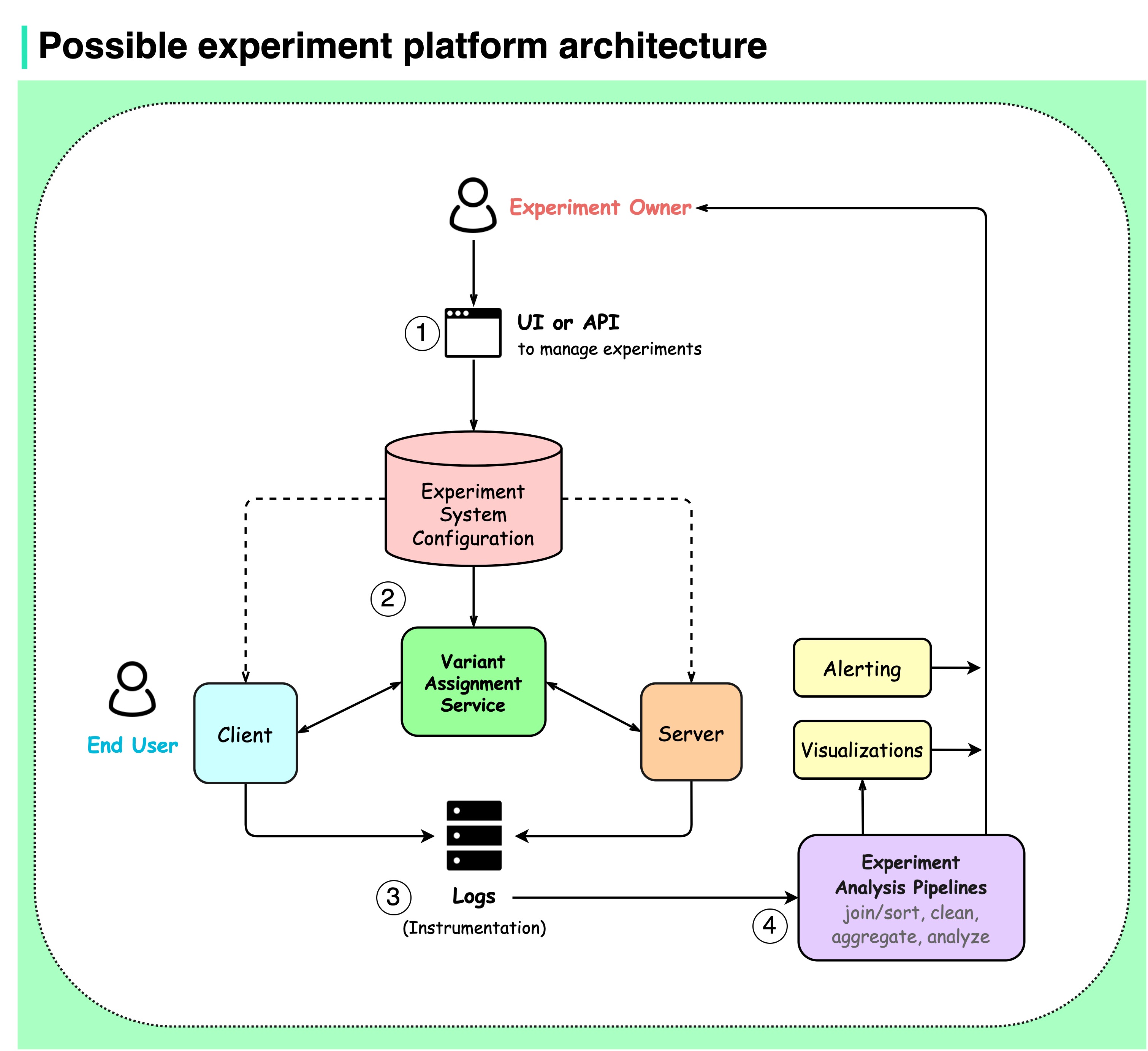

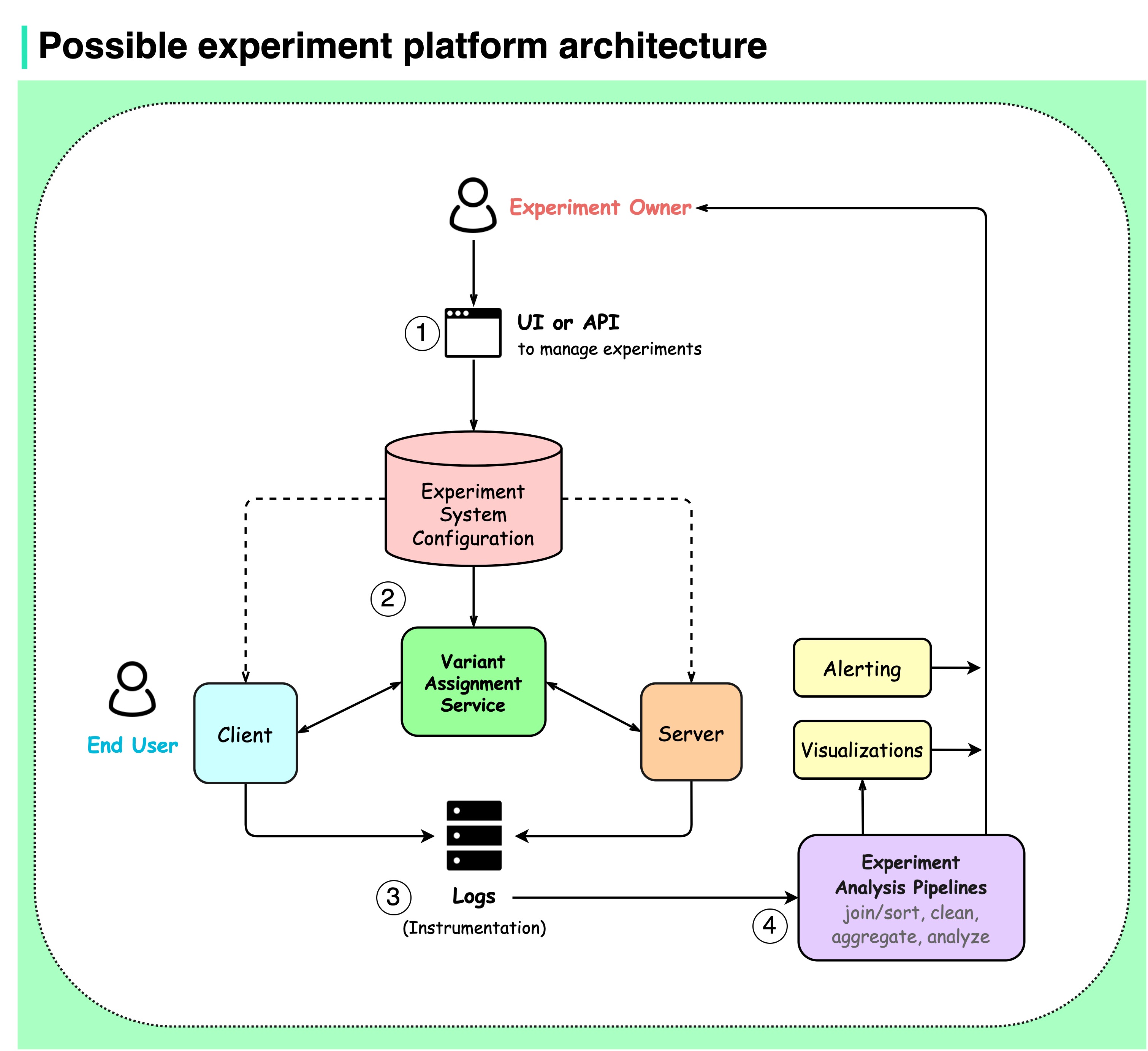

+ * [Experiment Platform Architecture](https://bytebytego.com/guides/possible-experiment-platform-architecture)

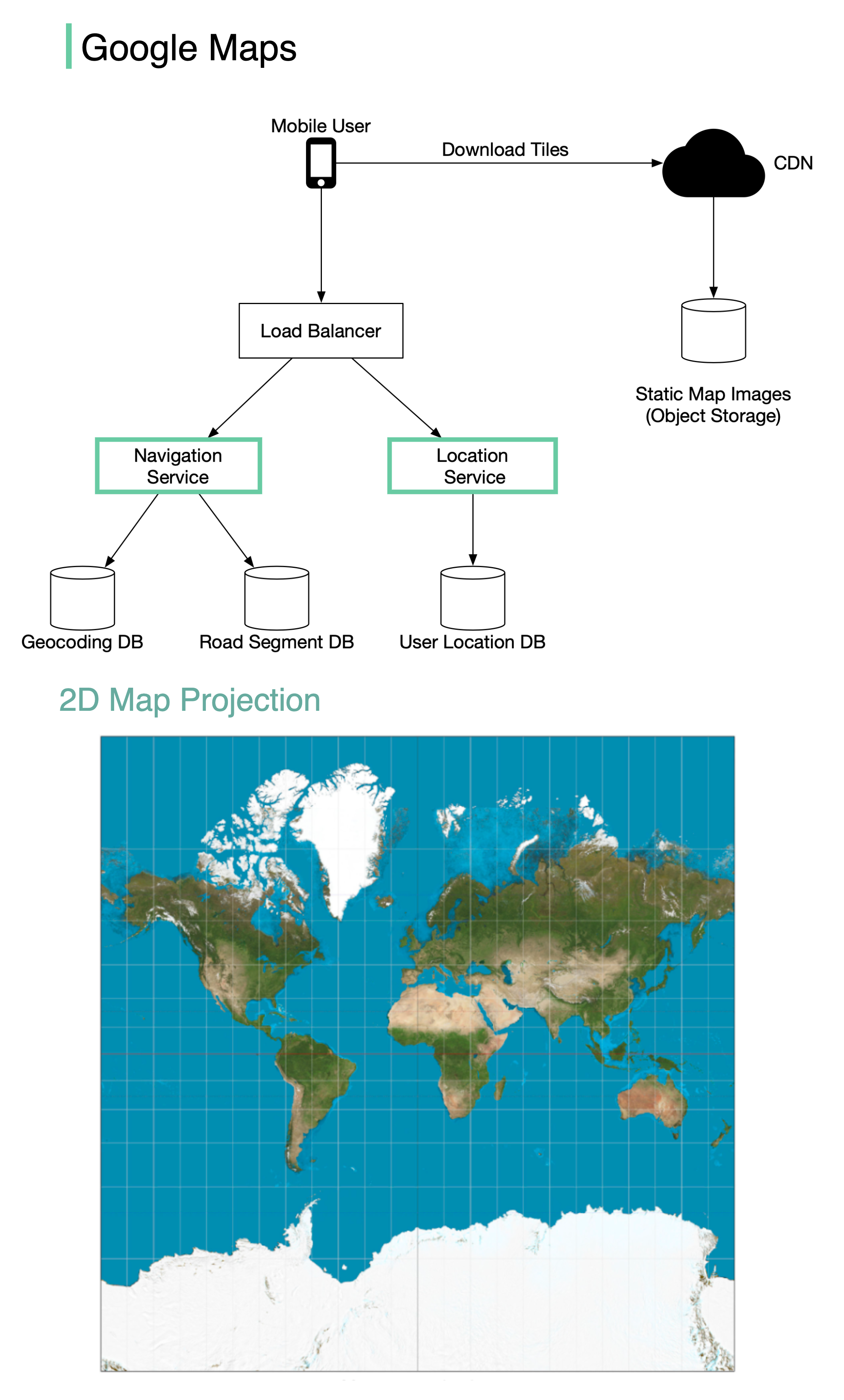

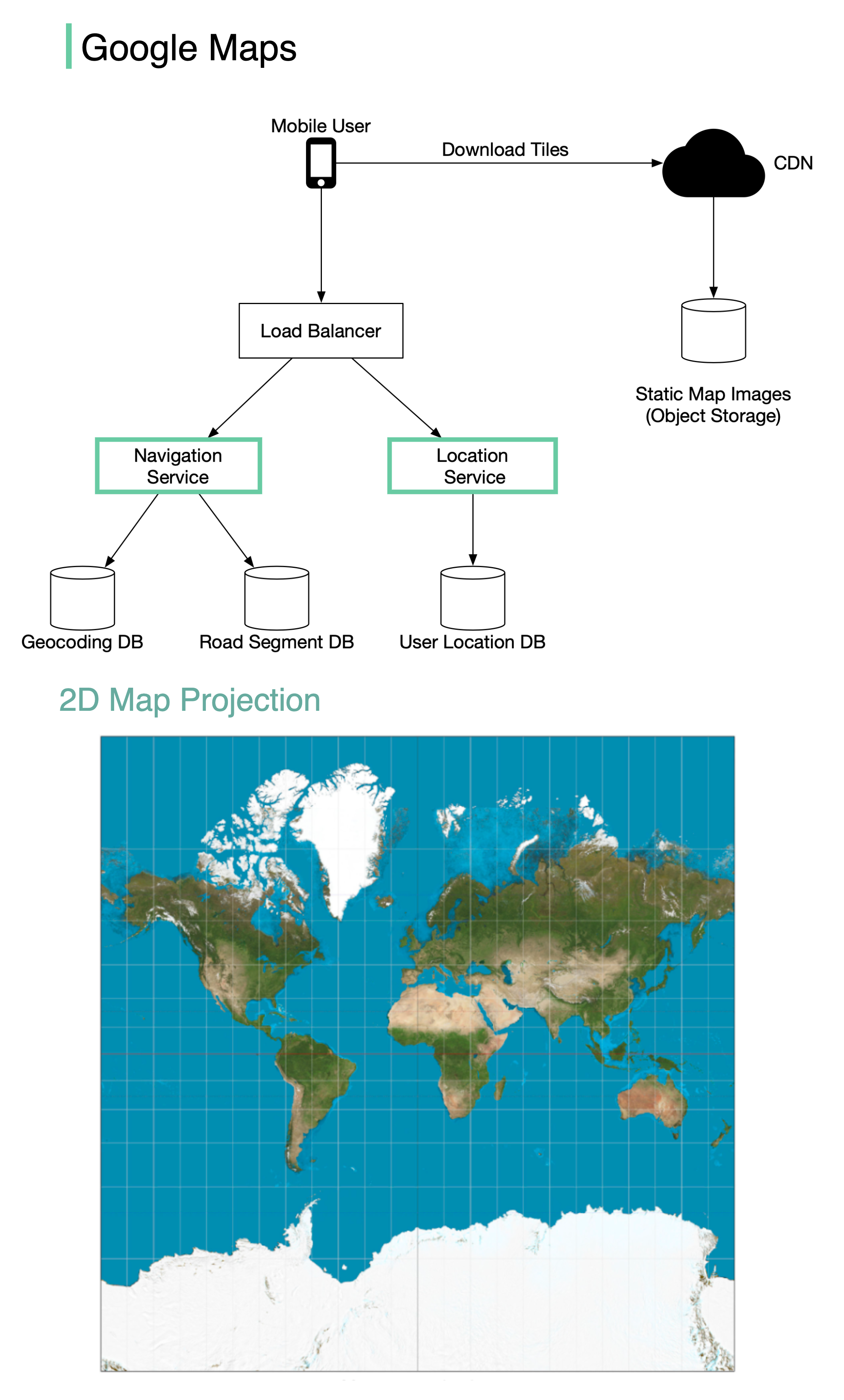

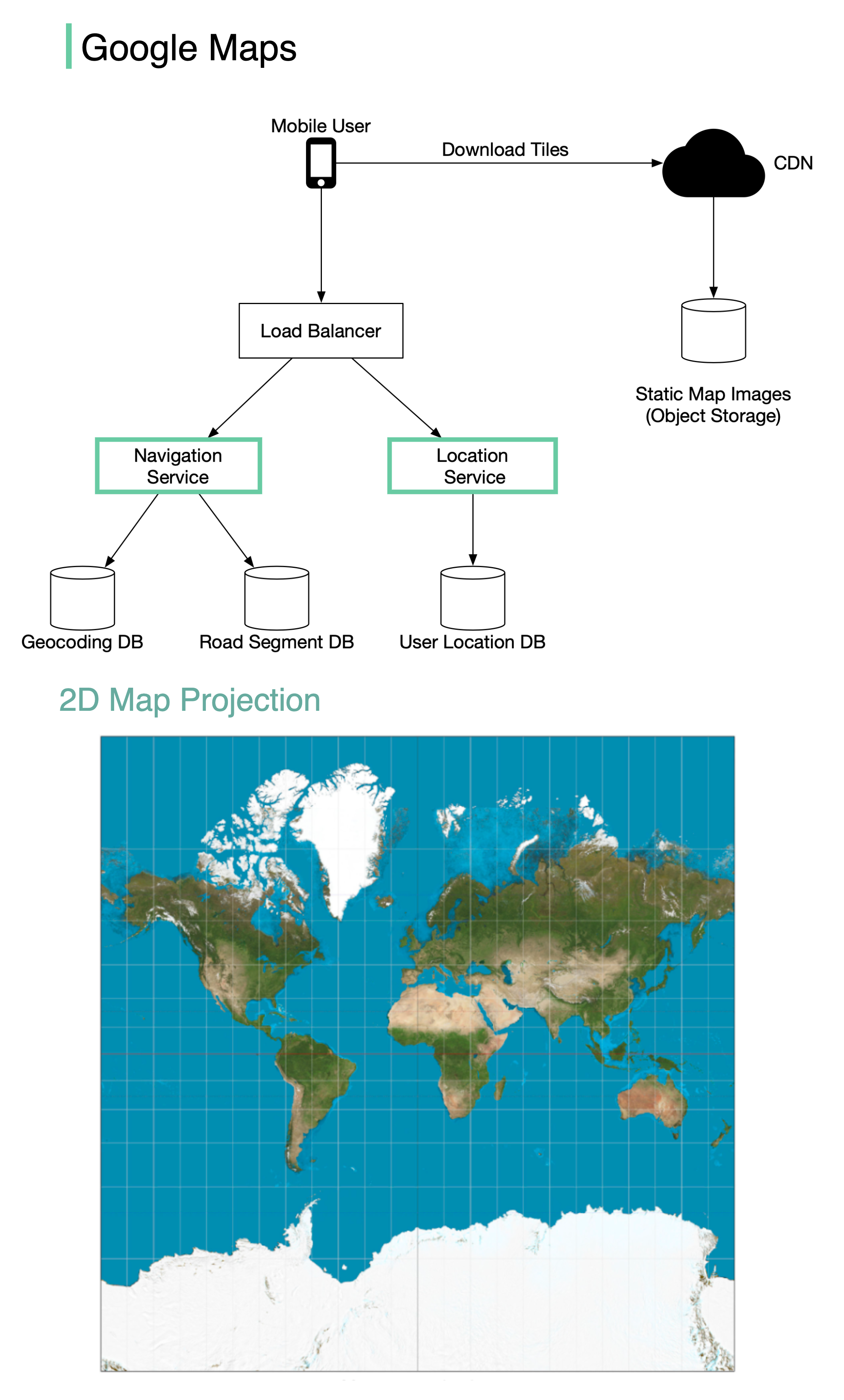

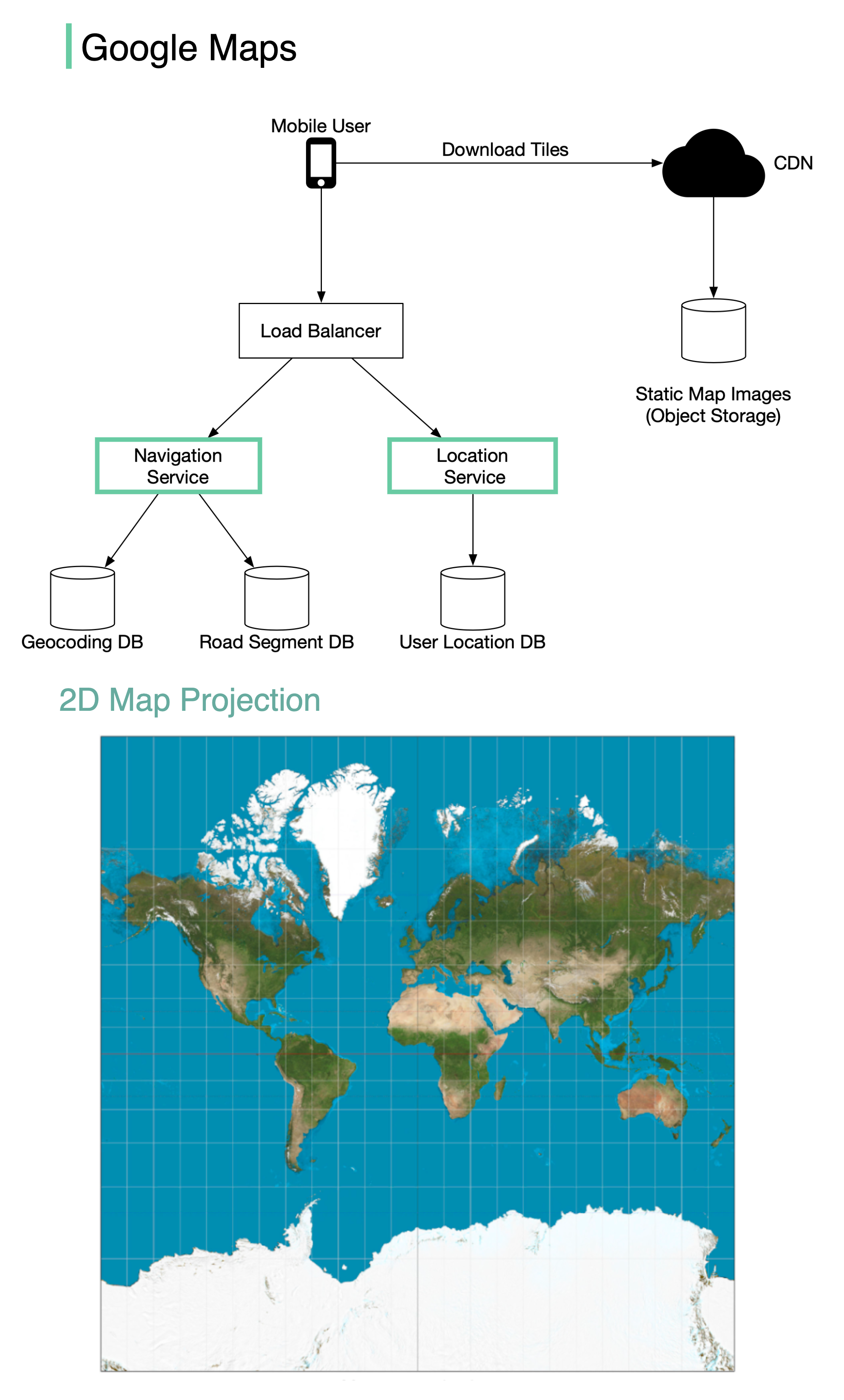

+ * [Design Google Maps](https://bytebytego.com/guides/design-google-maps)

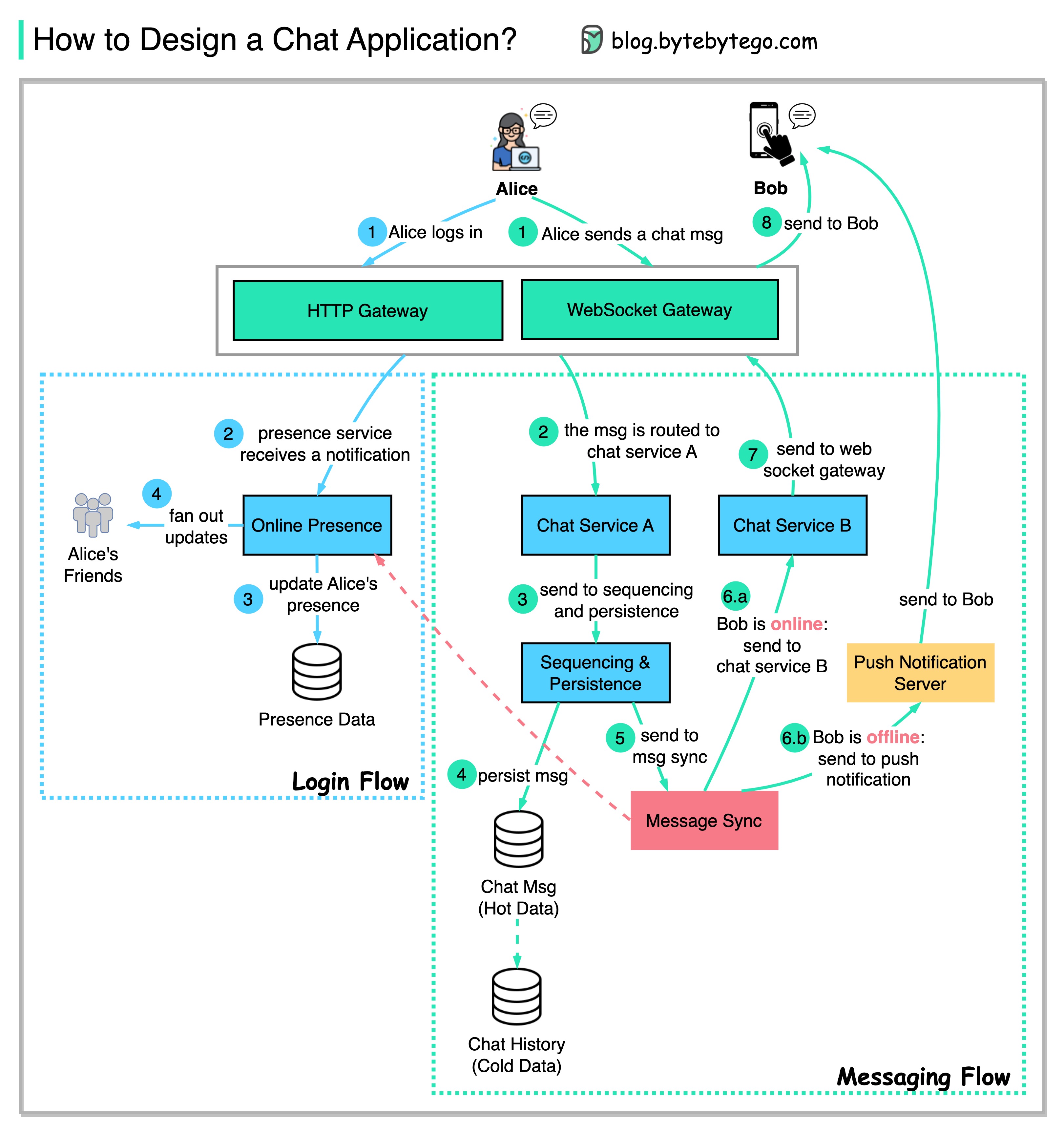

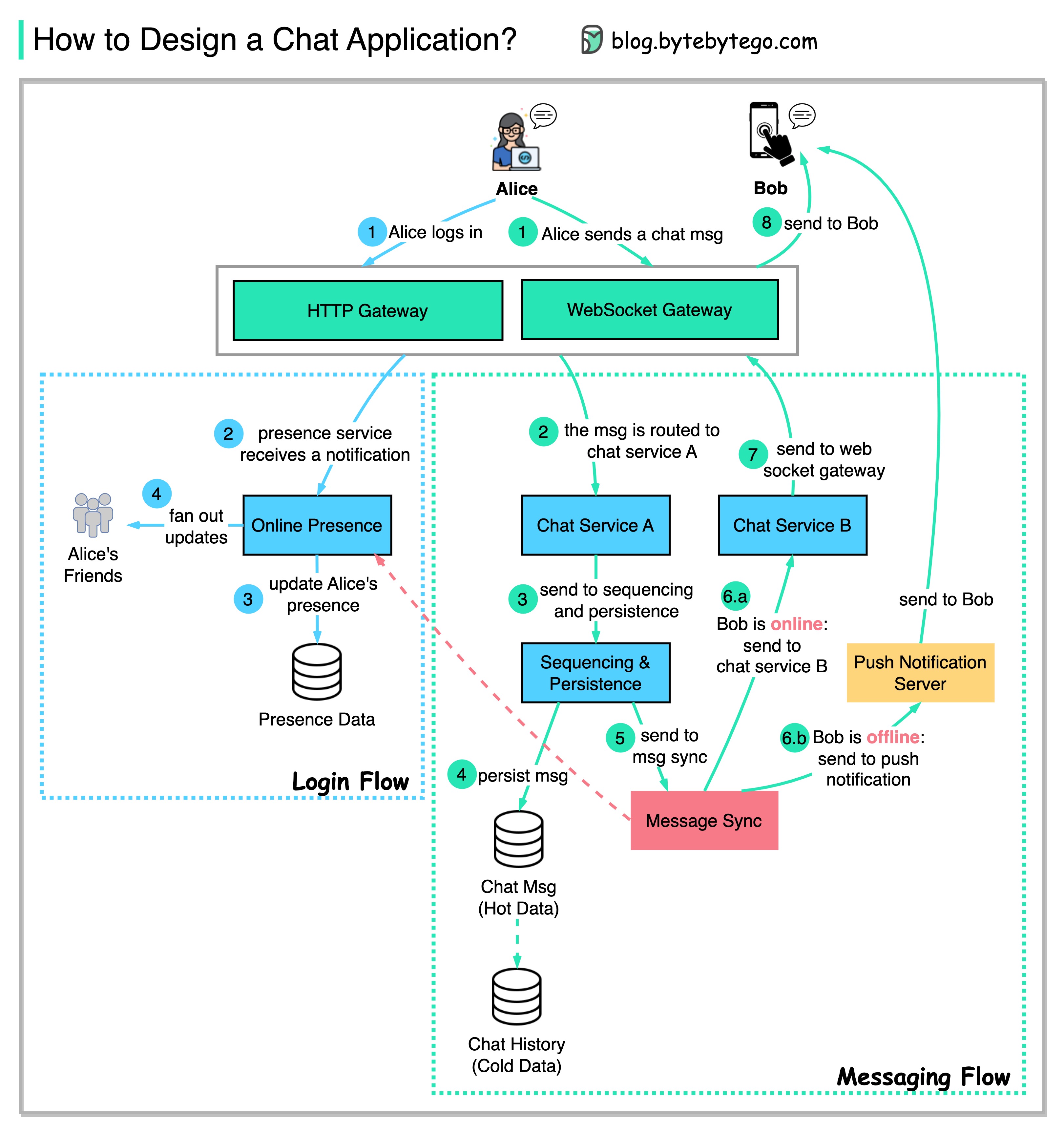

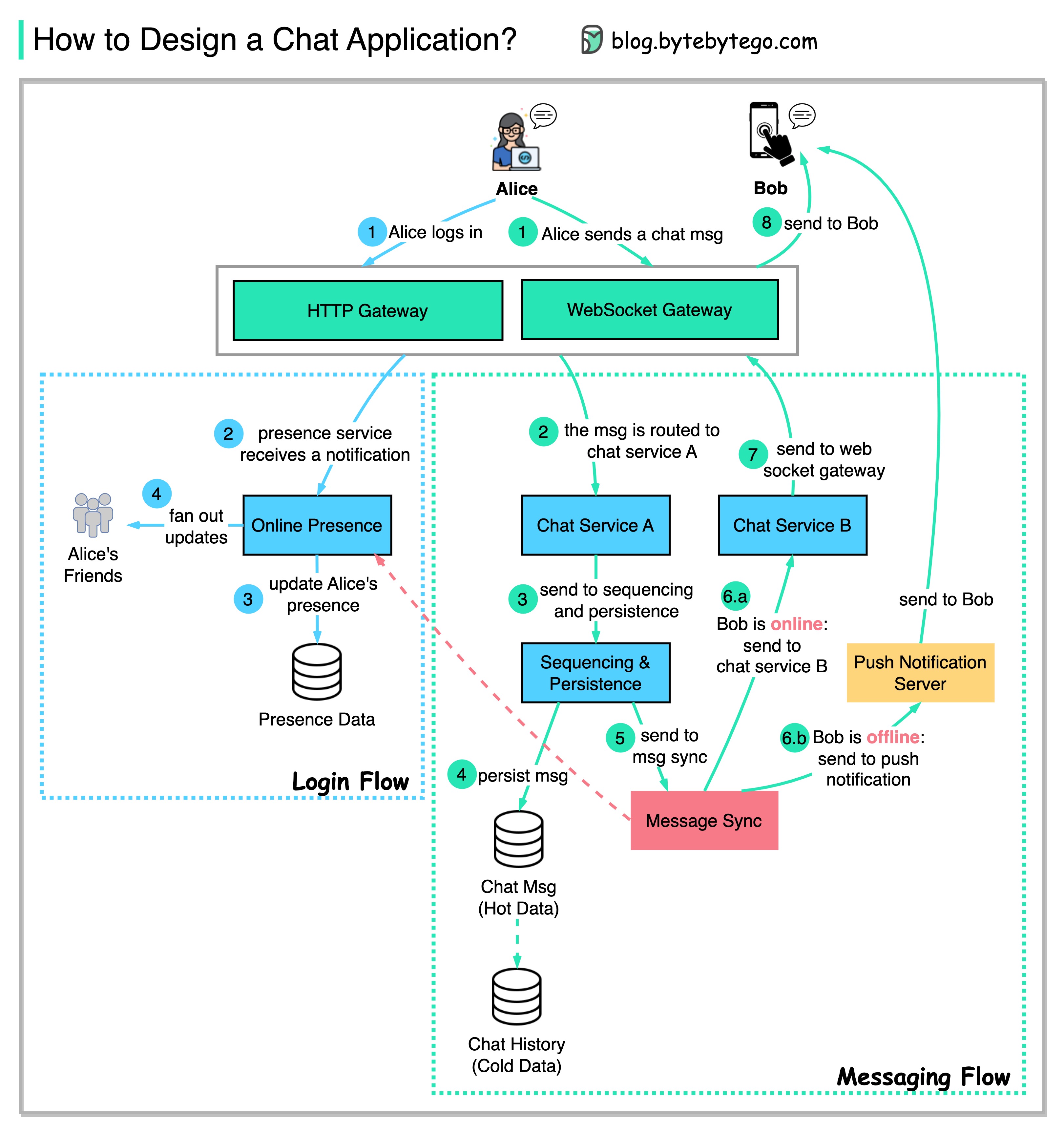

+ * [Designing a Chat Application](https://bytebytego.com/guides/how-do-we-design-a-chat-application-like-whatsapp-facebook-messenger-or-discord)

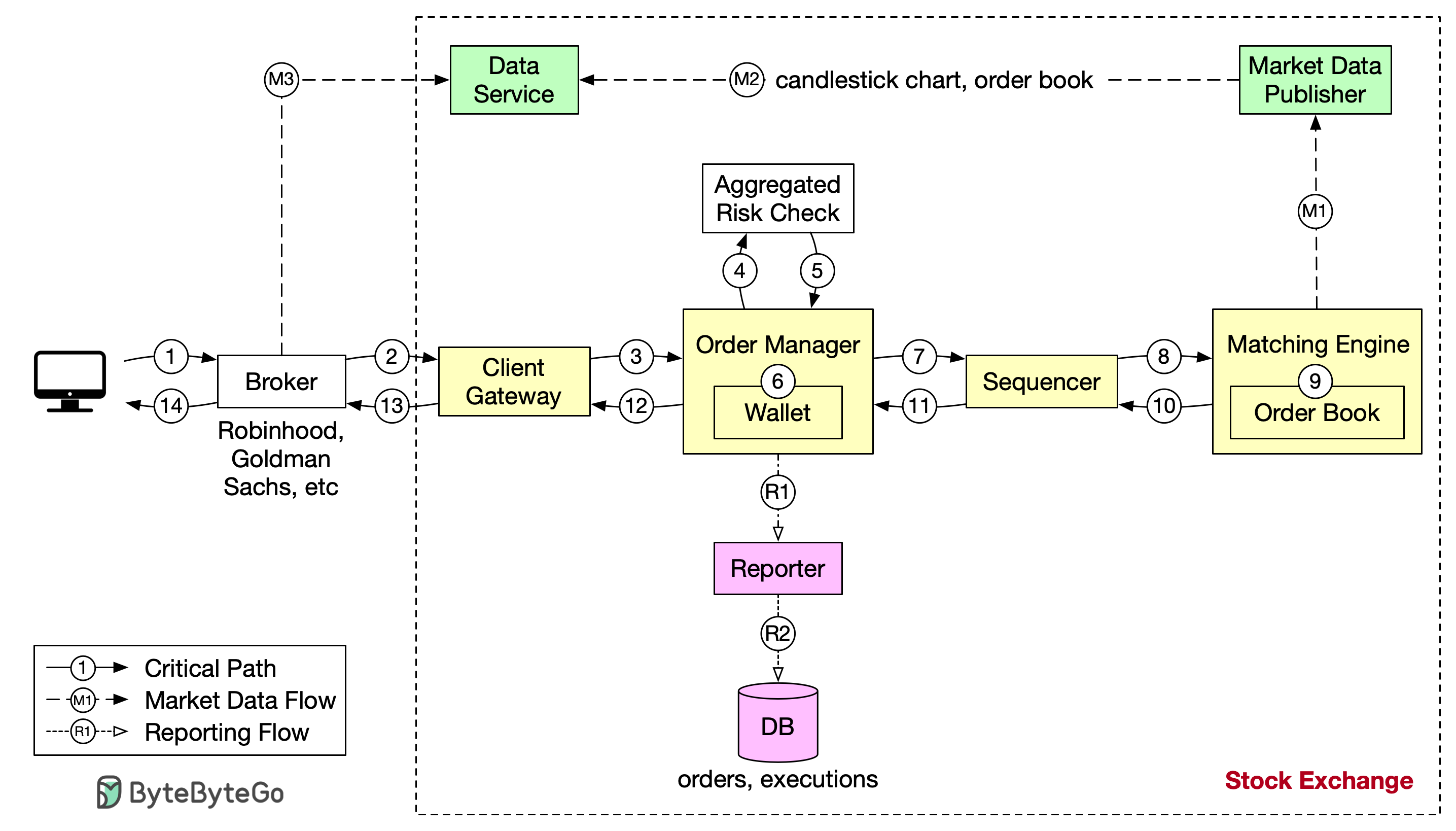

+ * [Design Stock Exchange](https://bytebytego.com/guides/design-stock-exchange)

+ * [How are Notifications Pushed to Our Phones or PCs?](https://bytebytego.com/guides/how-are-notifications-pushed-to-our-phones-or-pcs)

+ * [What Happens When You Upload a File to Amazon S3?](https://bytebytego.com/guides/what-happens-when-you-upload-a-file-to-amazon-s3)

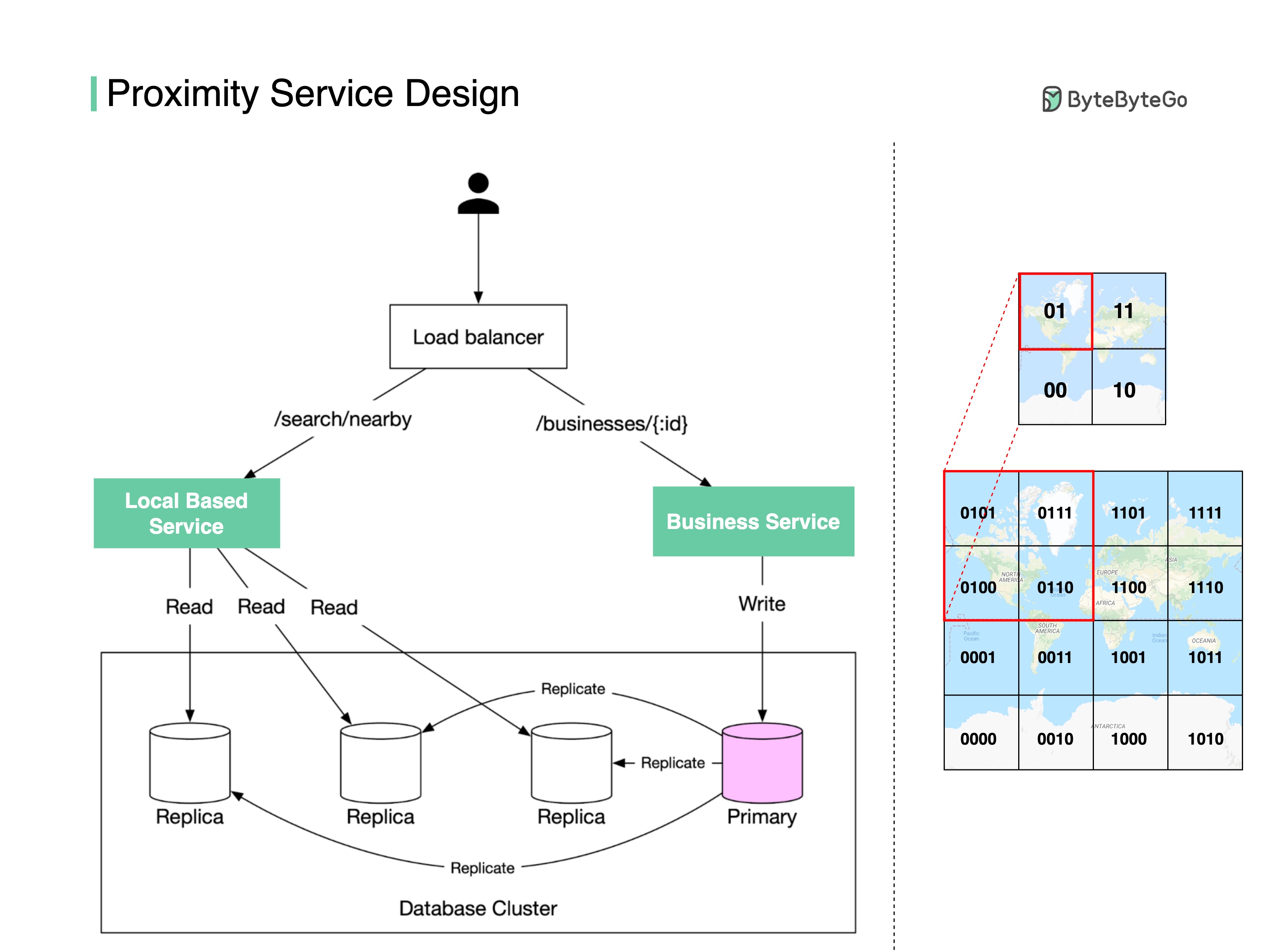

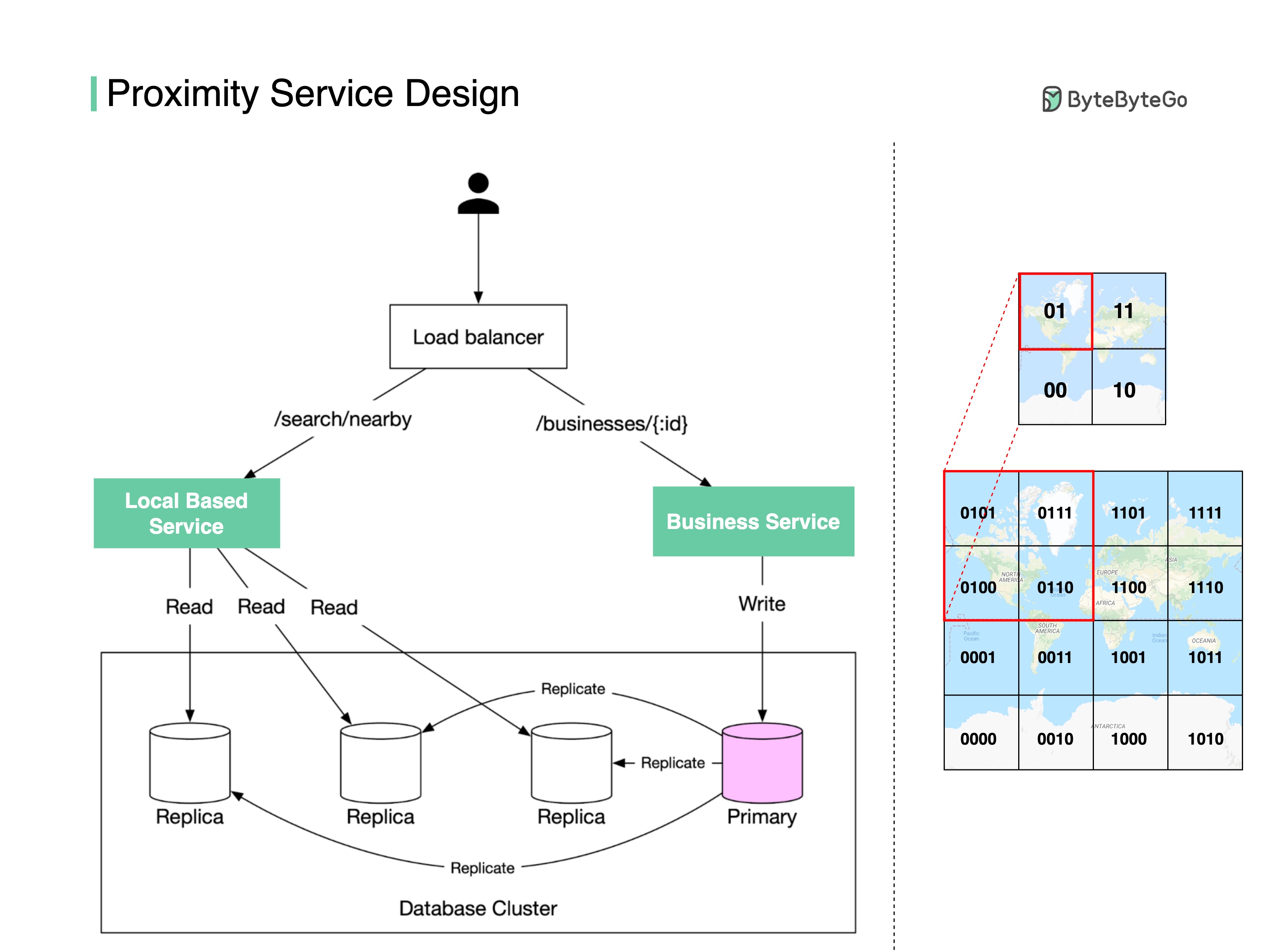

+ * [Proximity Service](https://bytebytego.com/guides/proximity-service)

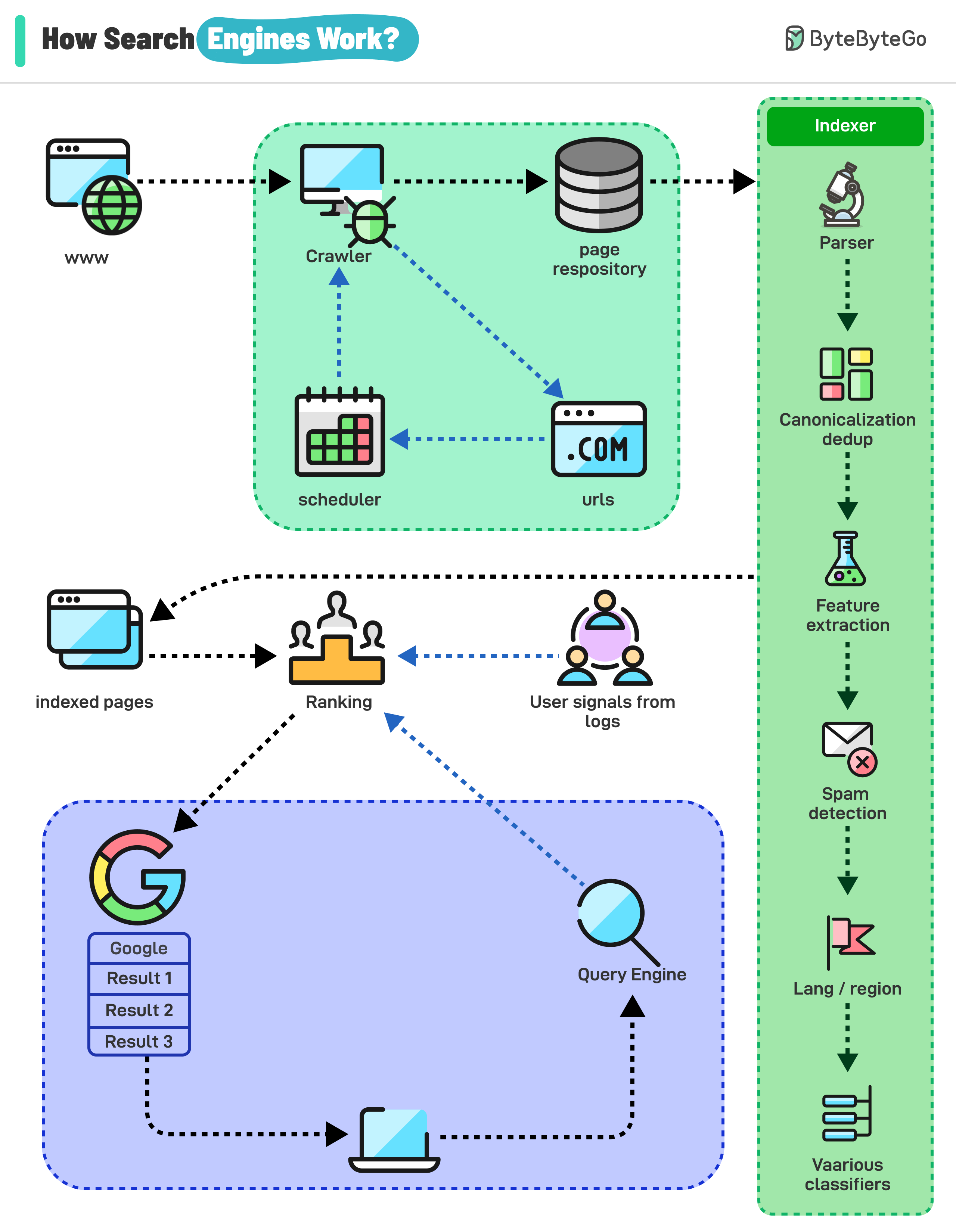

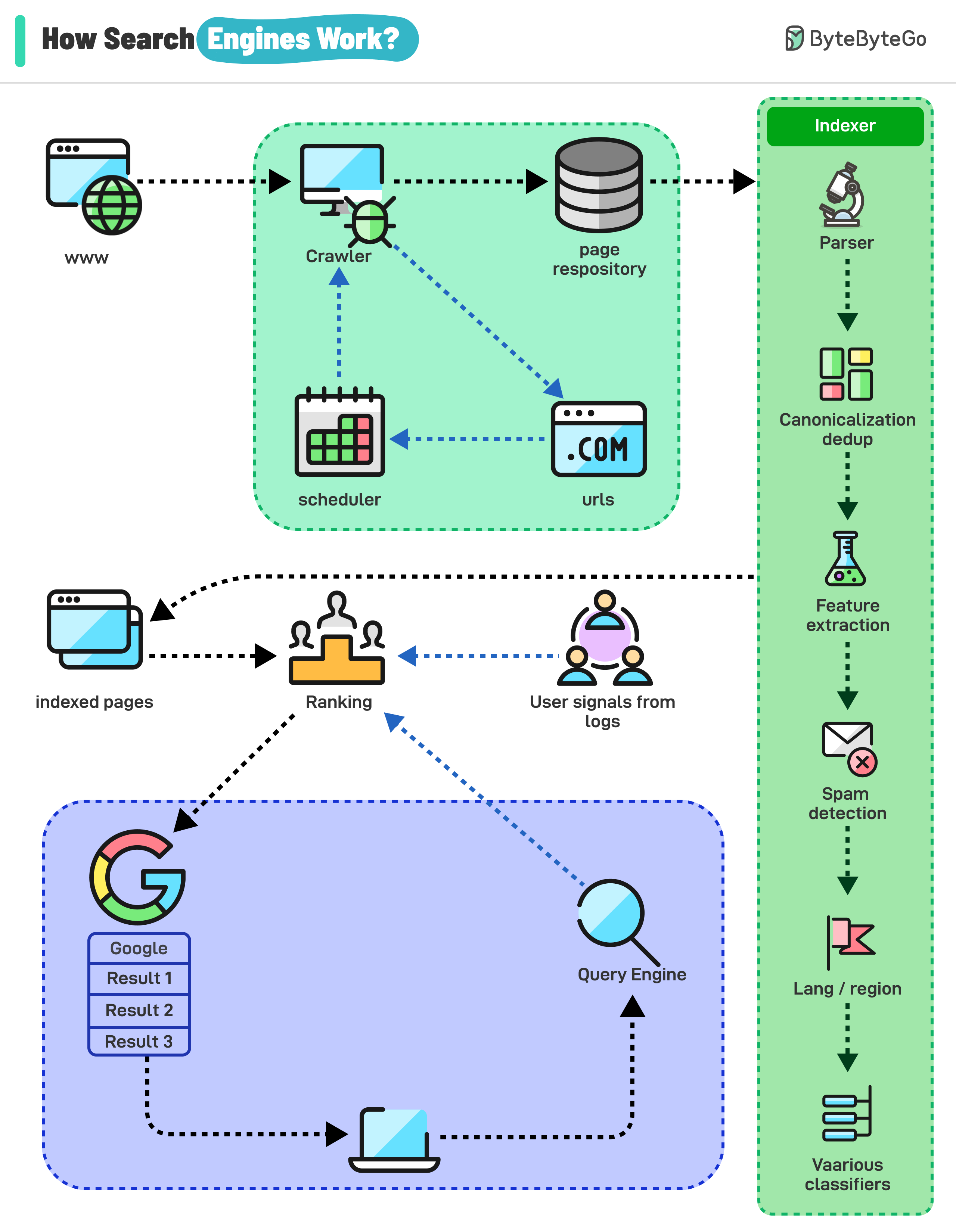

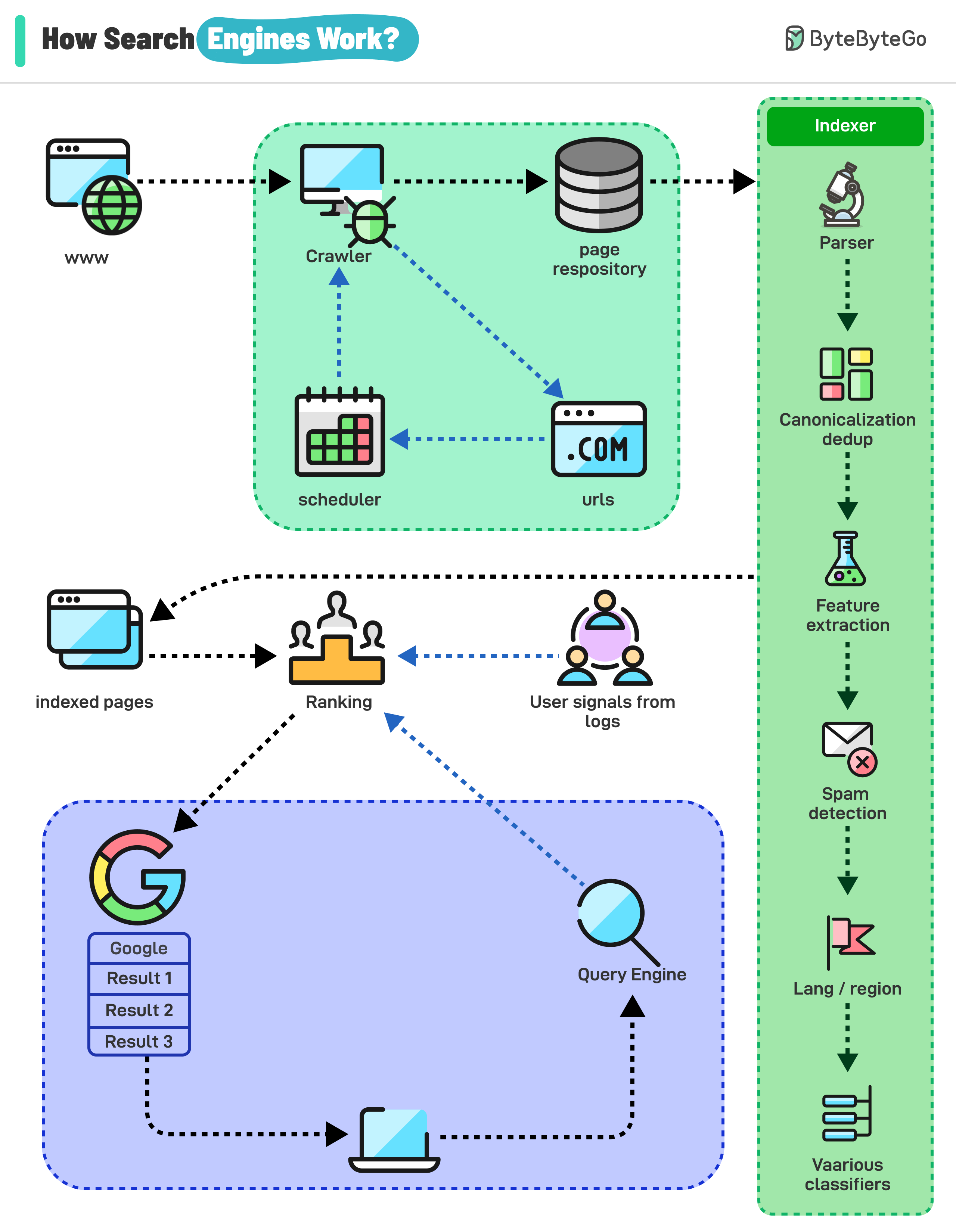

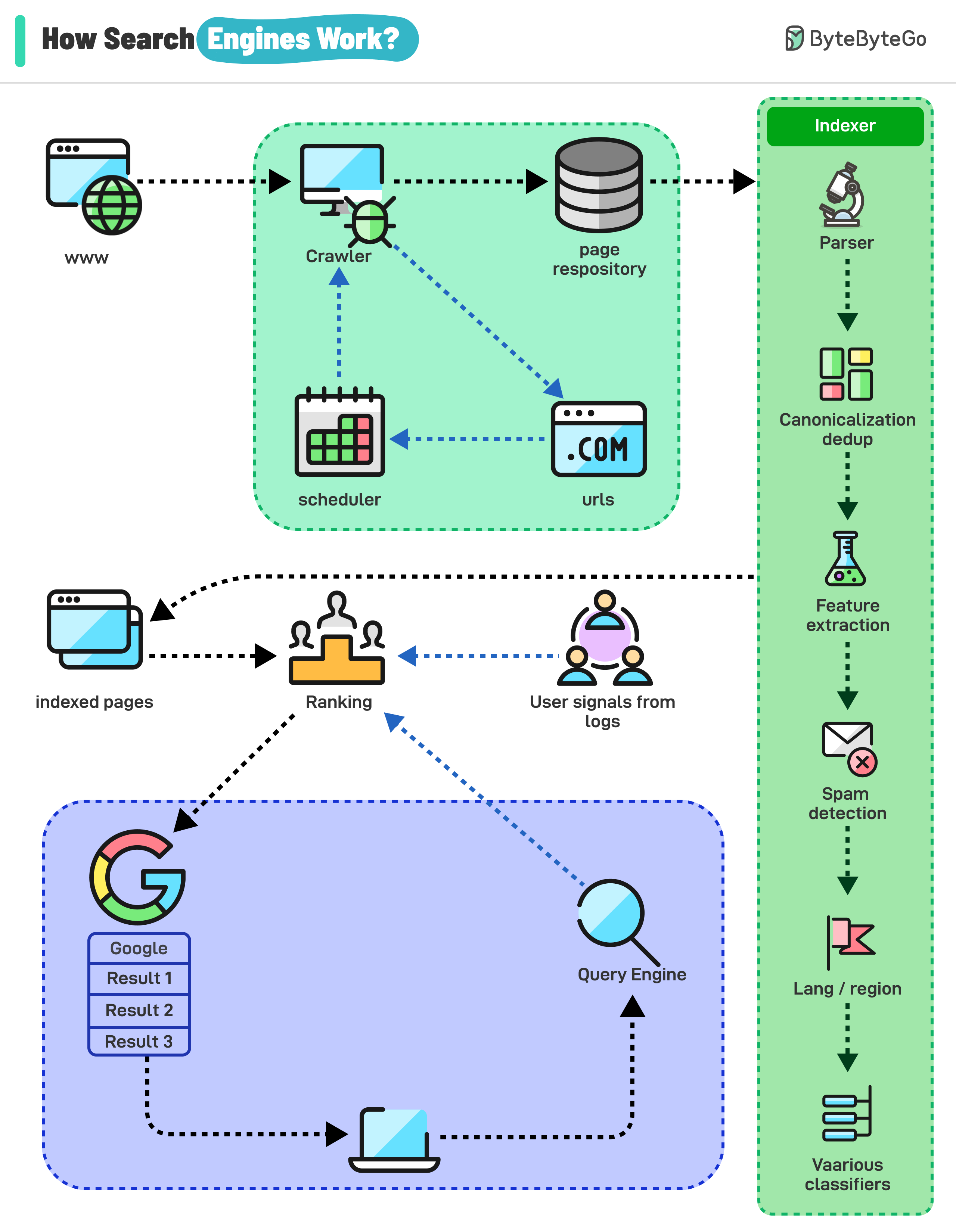

+ * [How Do Search Engines Work?](https://bytebytego.com/guides/how-do-search-engines-work)

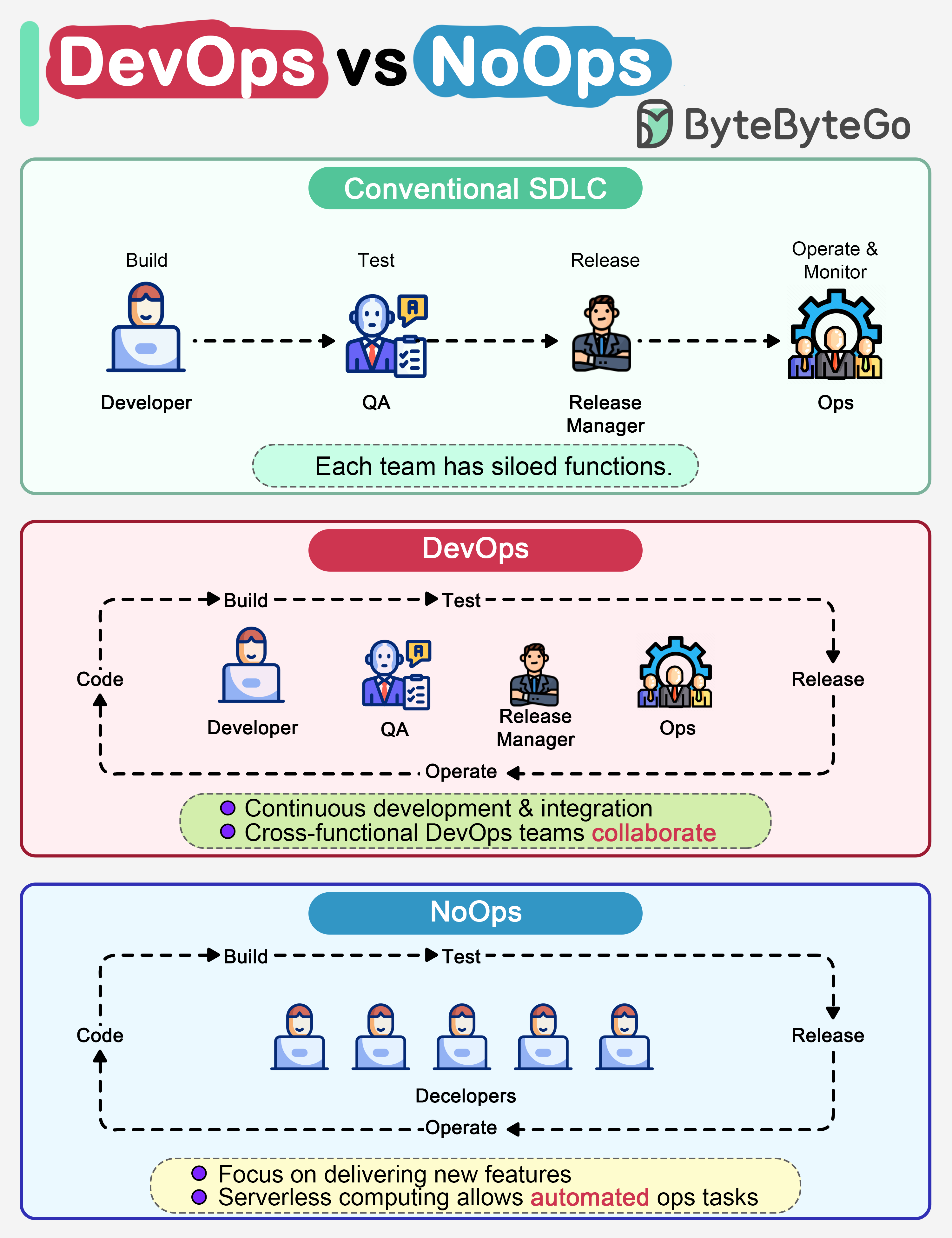

+* [DevOps and CI/CD](https://bytebytego.com/guides/devops-cicd)

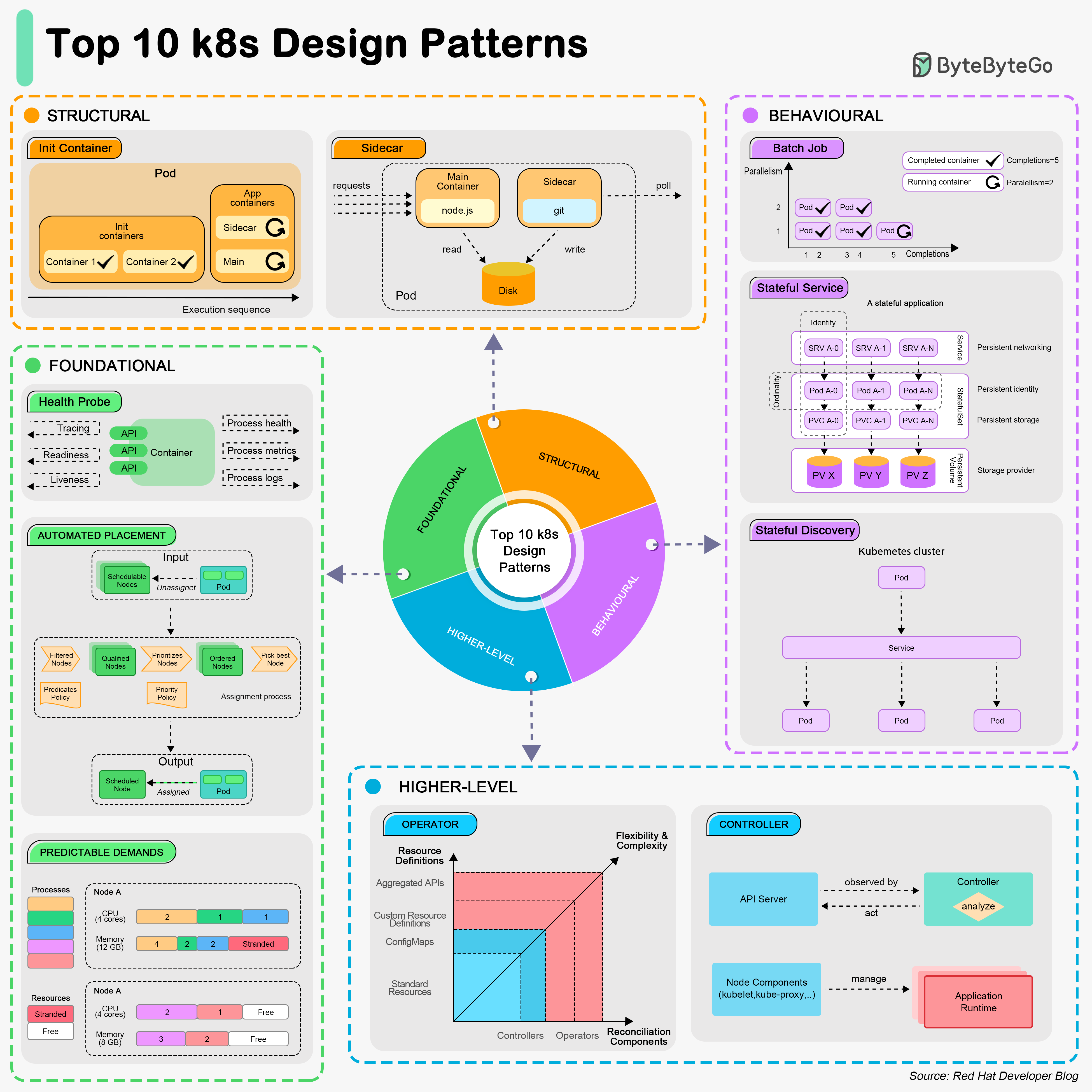

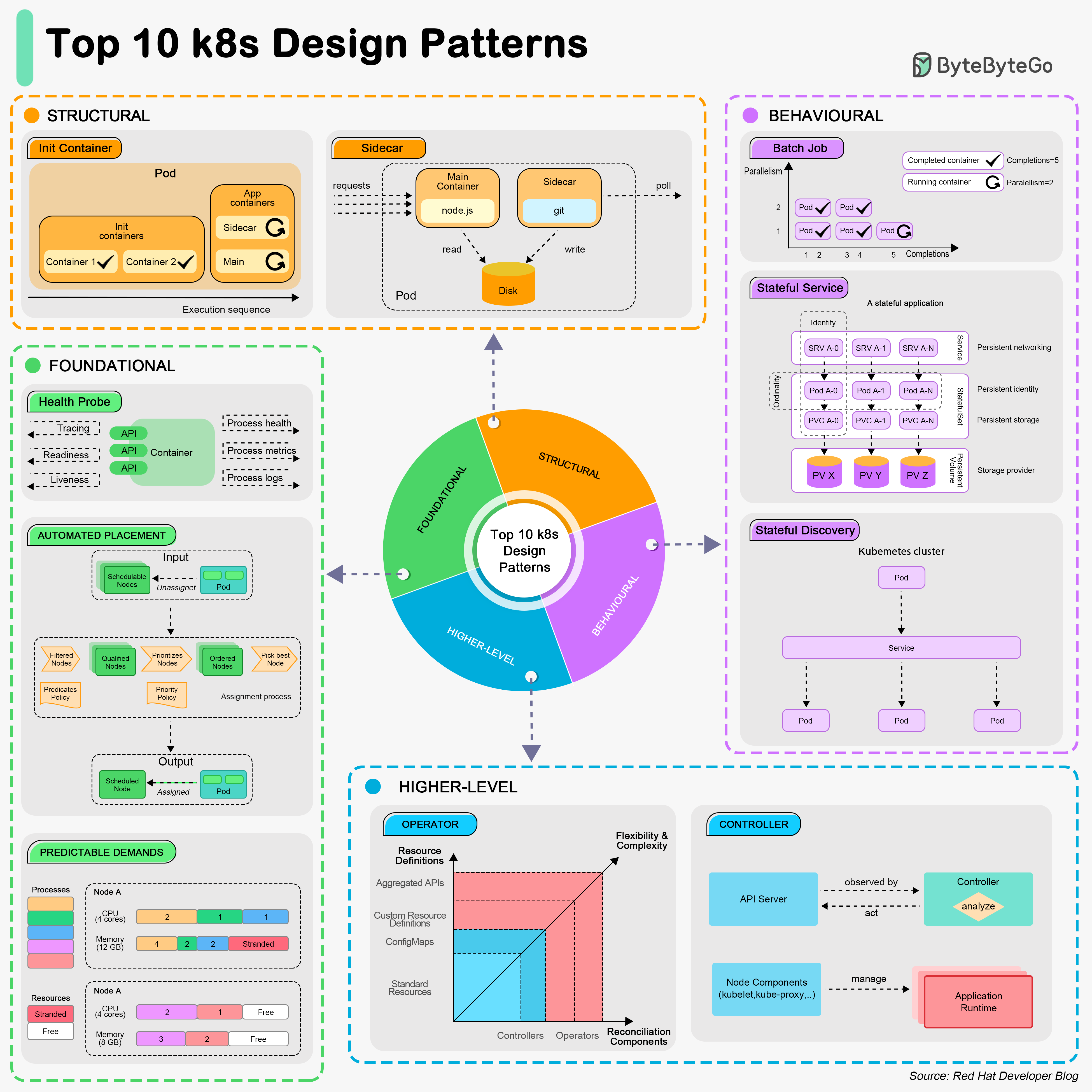

+ * [Top 10 Kubernetes Design Patterns](https://bytebytego.com/guides/top-10-k8s-design-patterns)

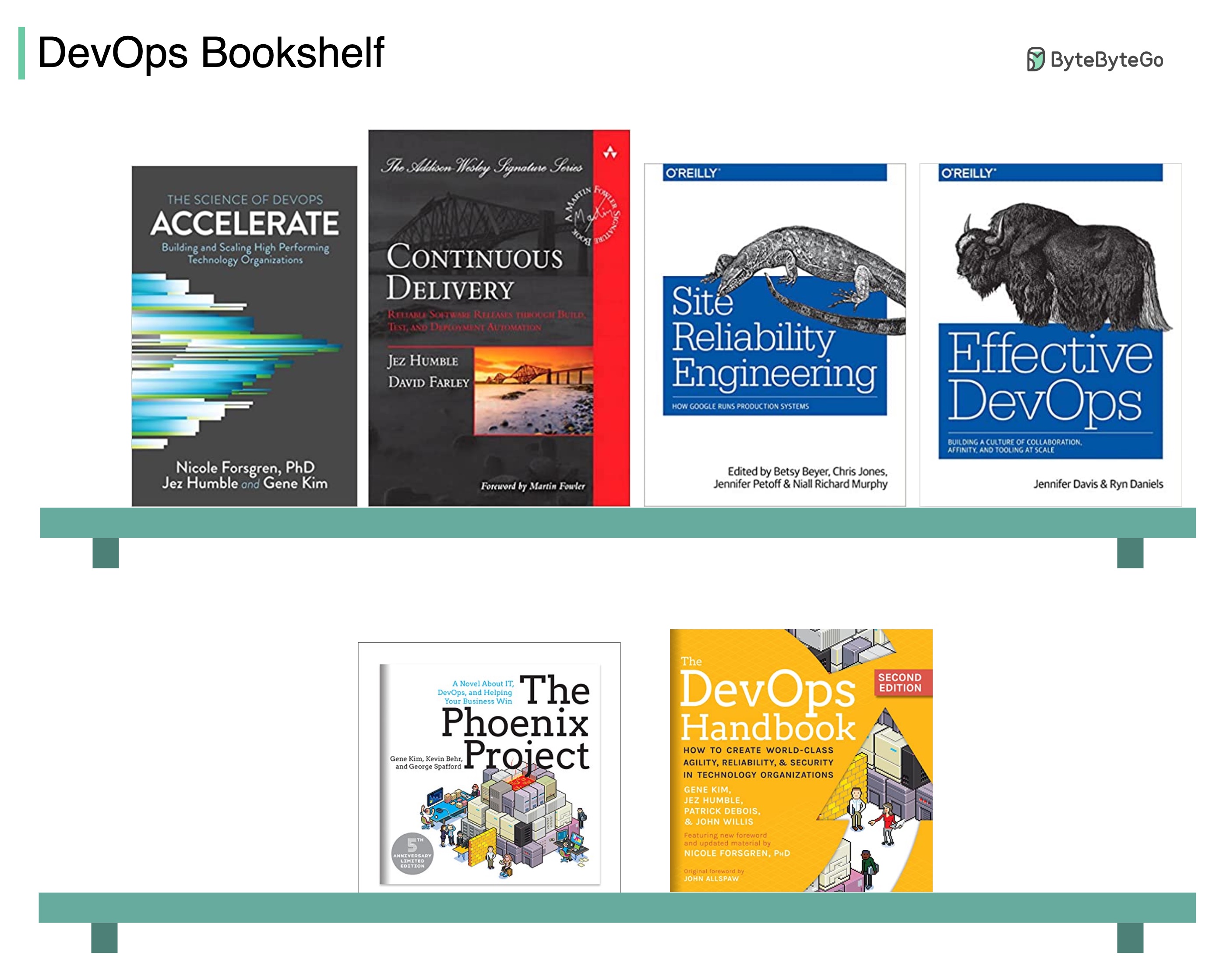

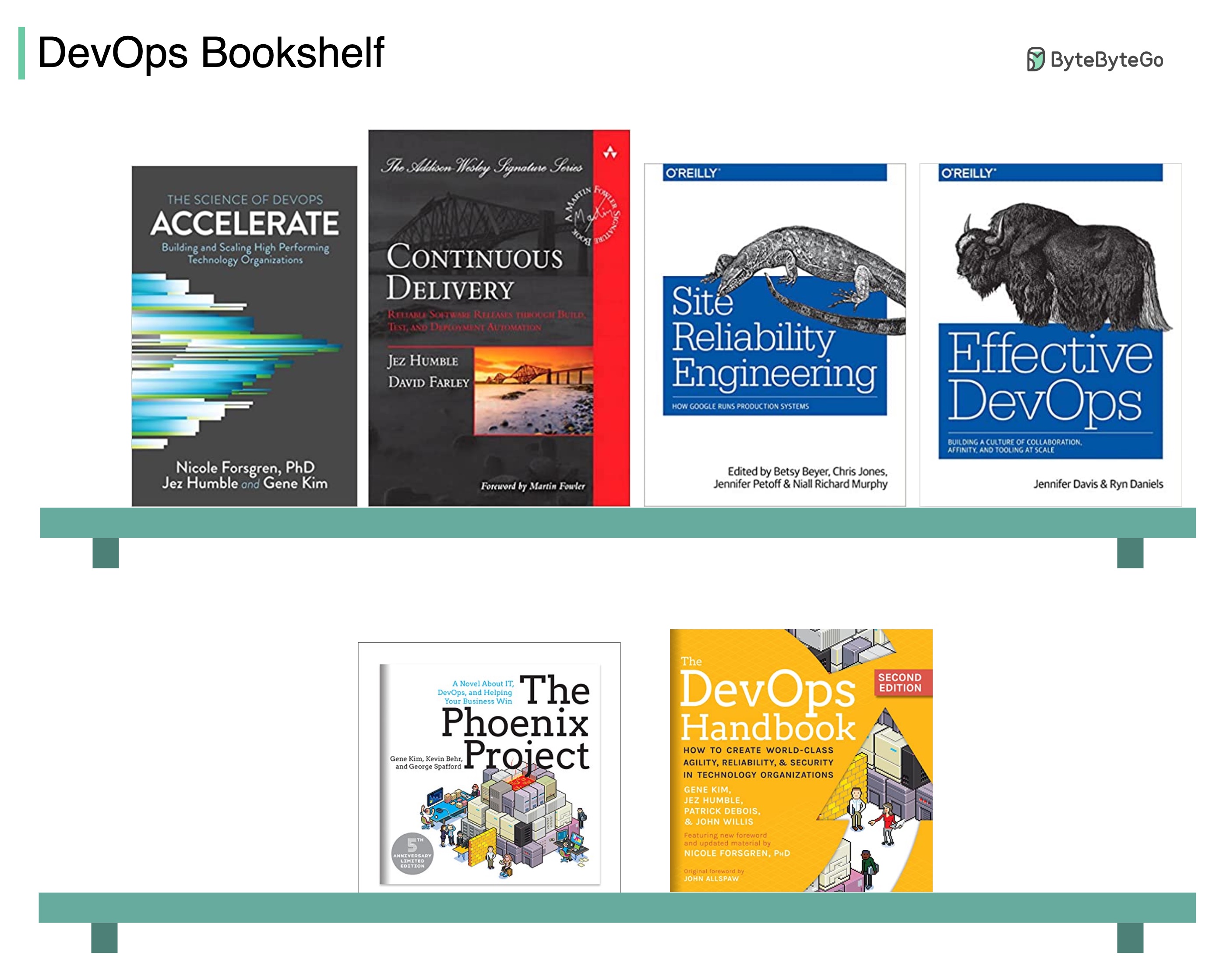

+ * [Some DevOps Books I Find Enlightening](https://bytebytego.com/guides/some-devops-books-i-find-enlightening)

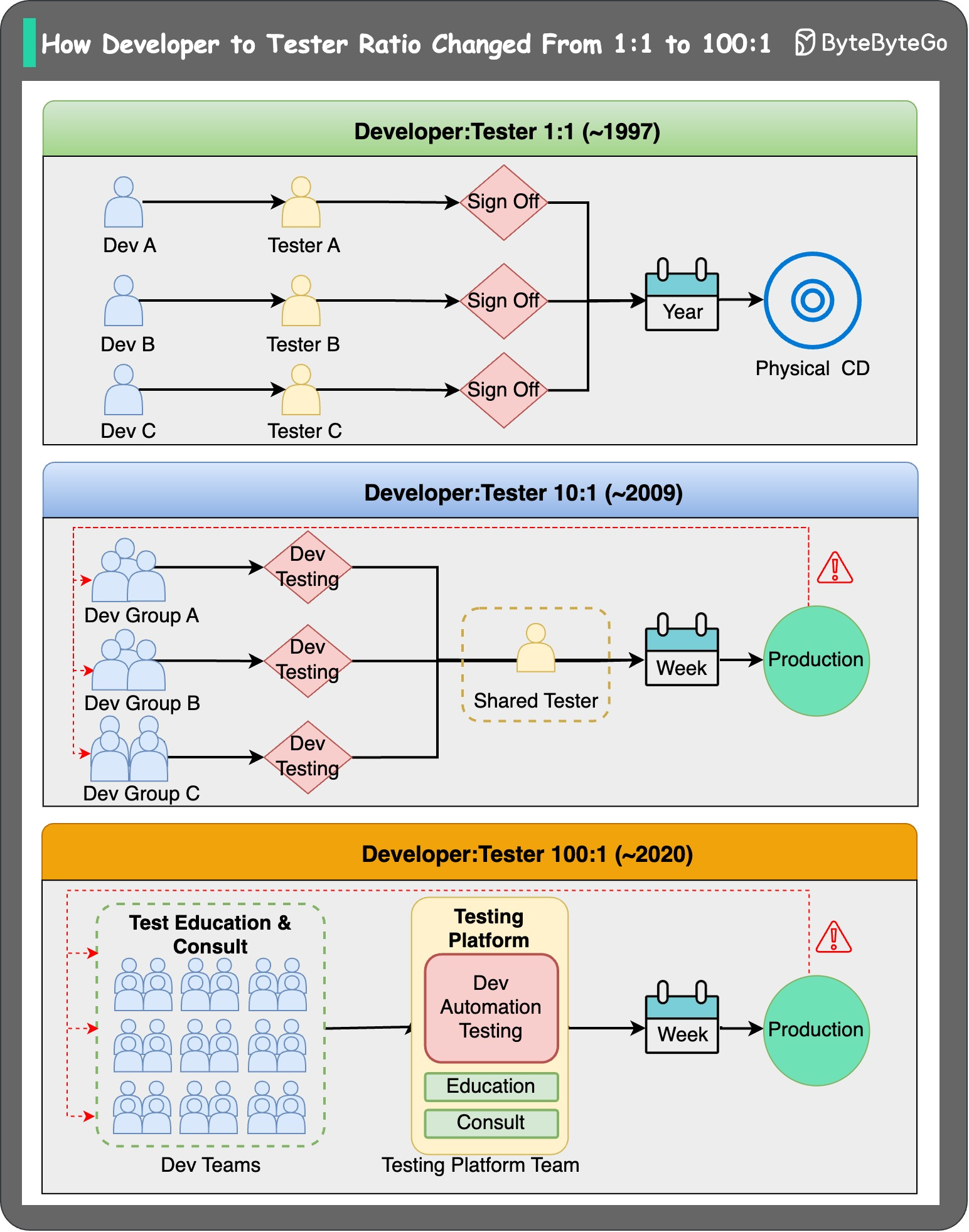

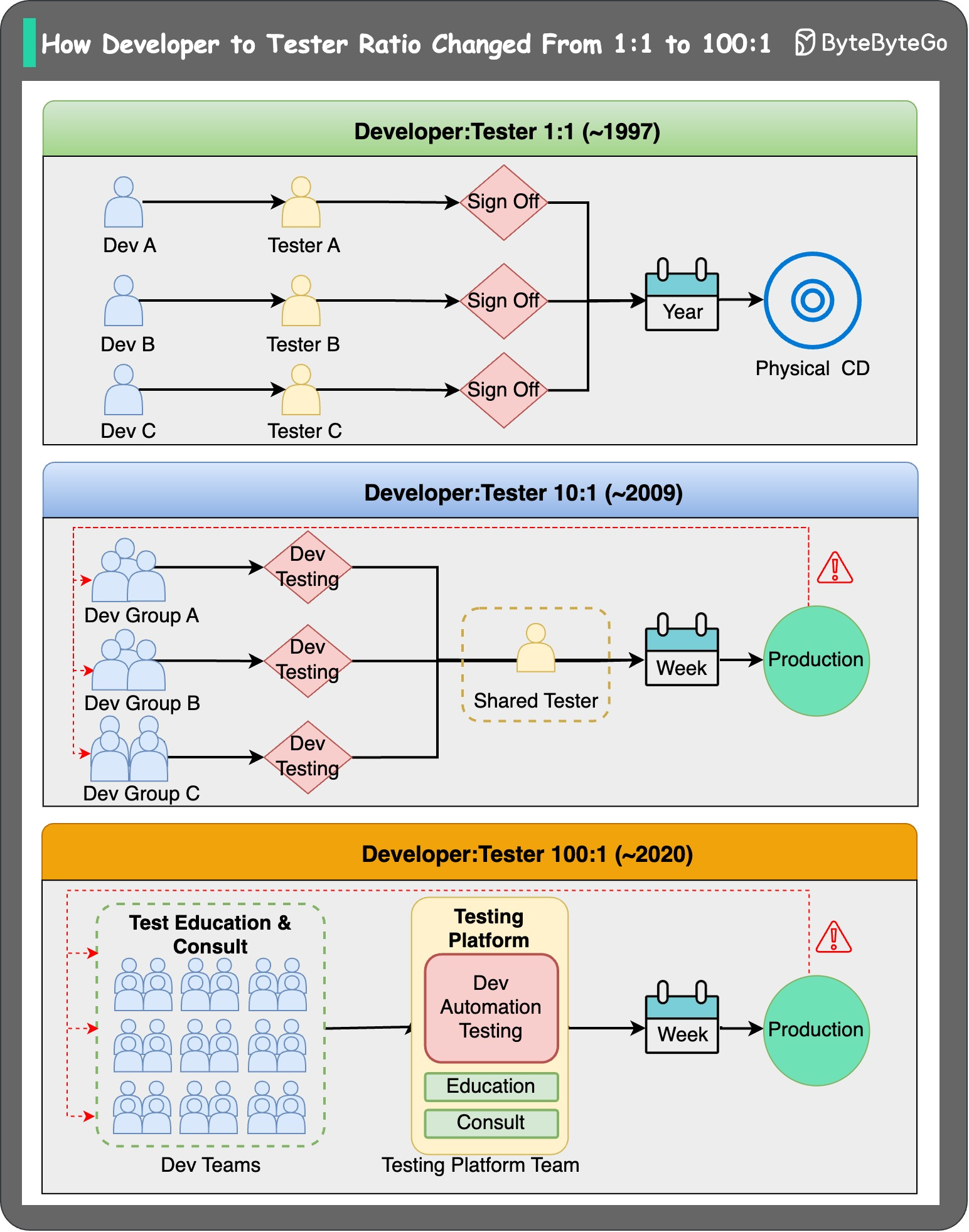

+ * [Paradigm Shift: Developer to Tester Ratio](https://bytebytego.com/guides/paradigm-shift-how-developer-to-tester-ratio-changed-from-11-to-1001)

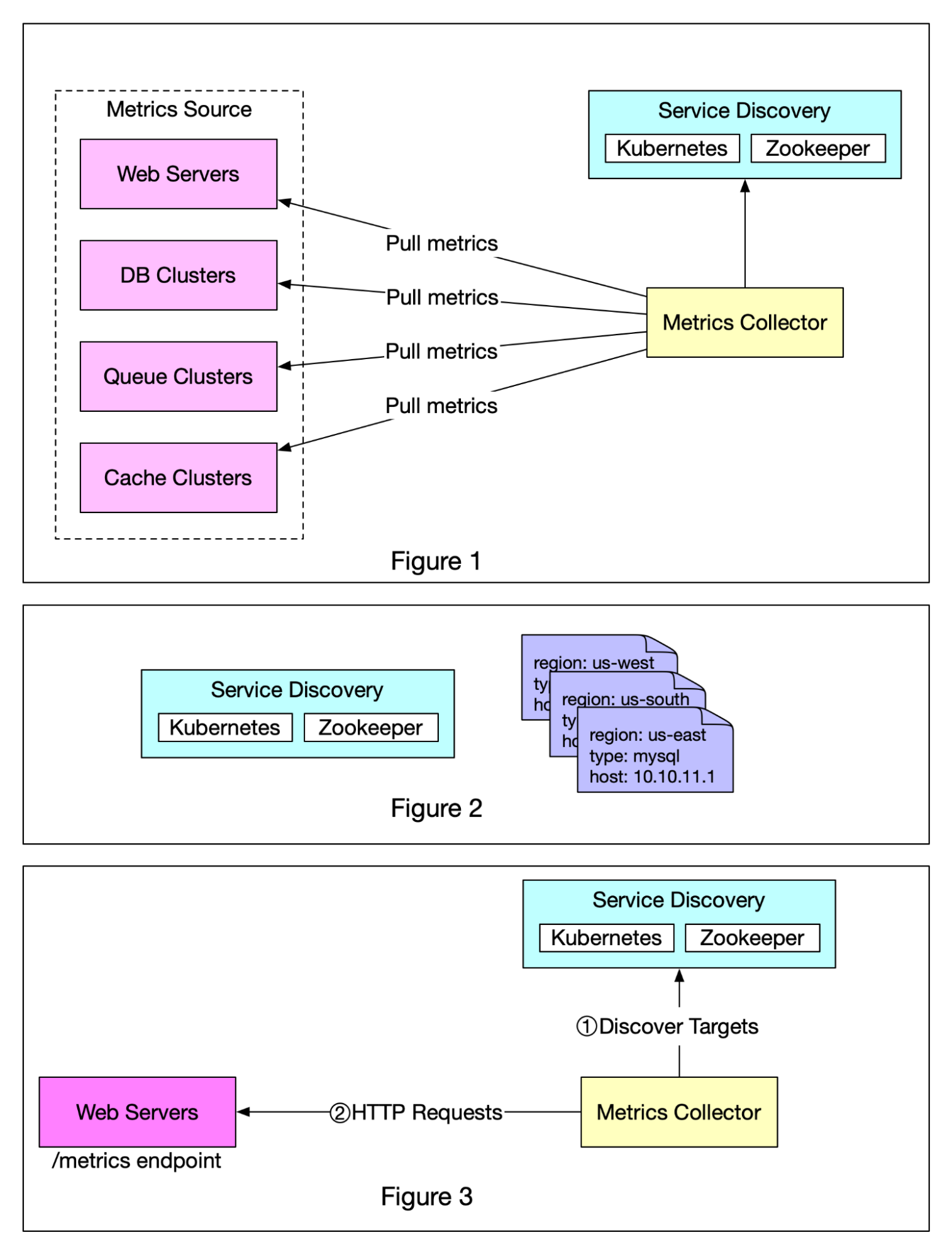

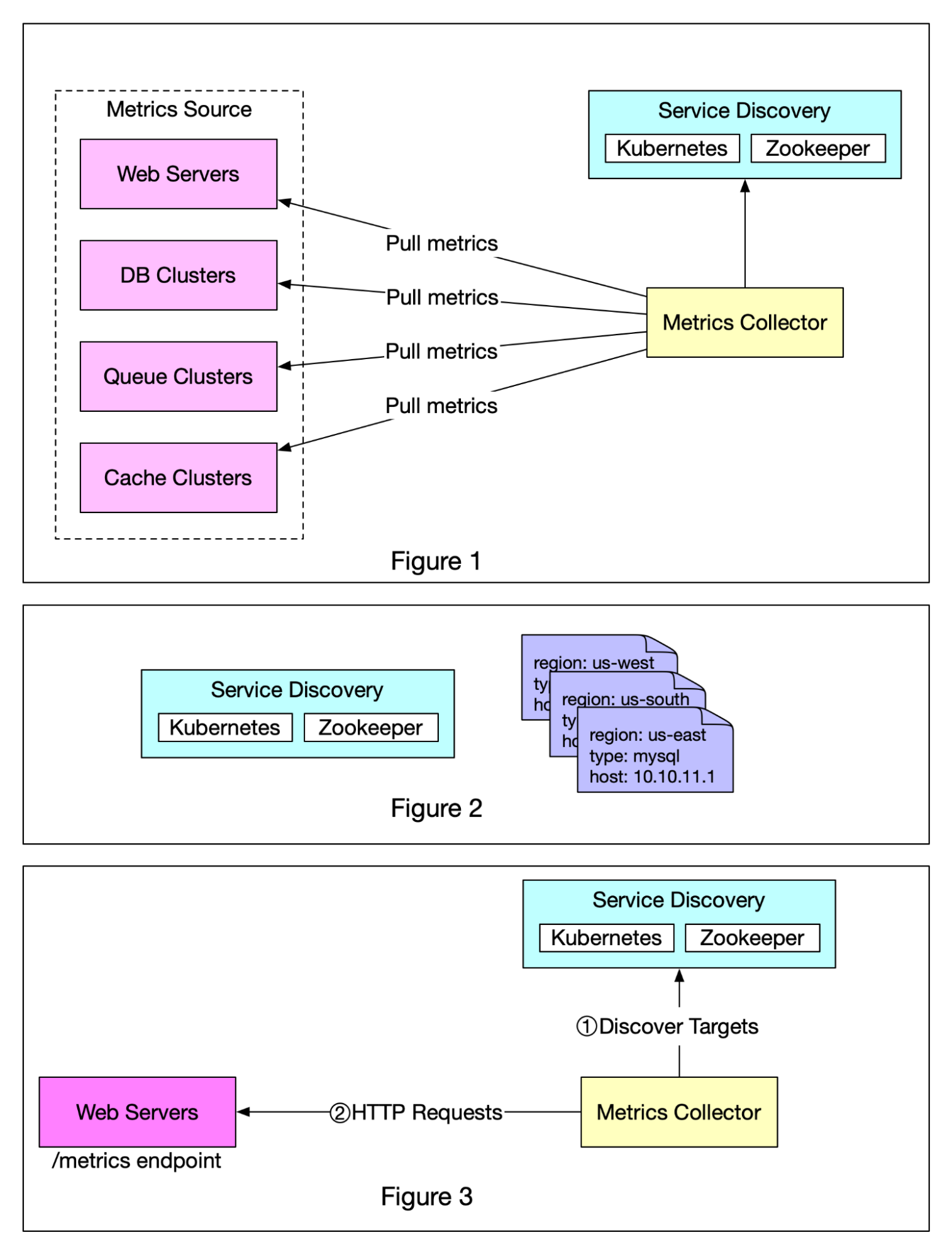

+ * [Push vs Pull in Metrics Collection Systems](https://bytebytego.com/guides/push-vs-pull-in-metrics-collecting-systems)

+ * [Choose the Right Database for Metric Collection](https://bytebytego.com/guides/choose-the-right-database-for-metric-collecting-system)

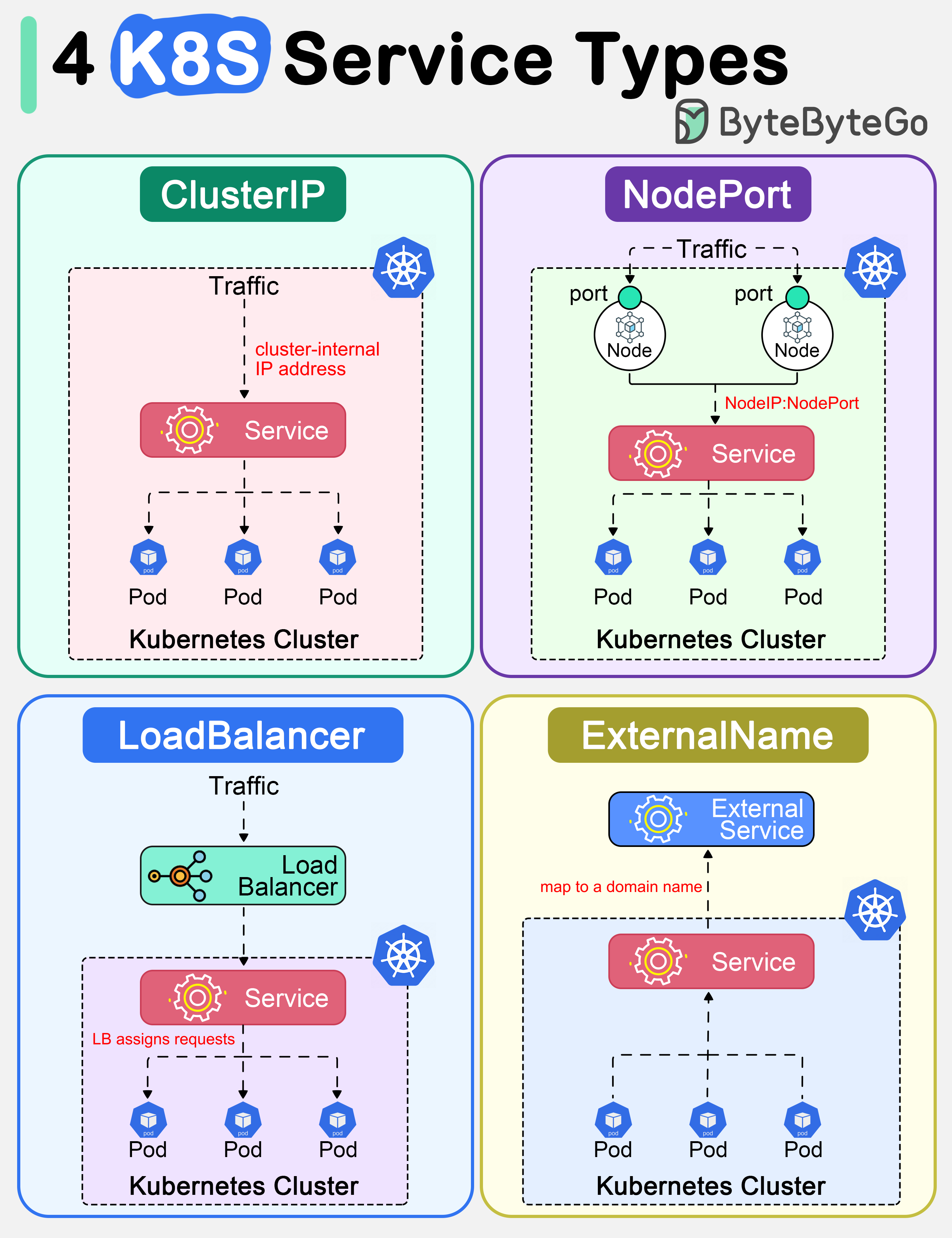

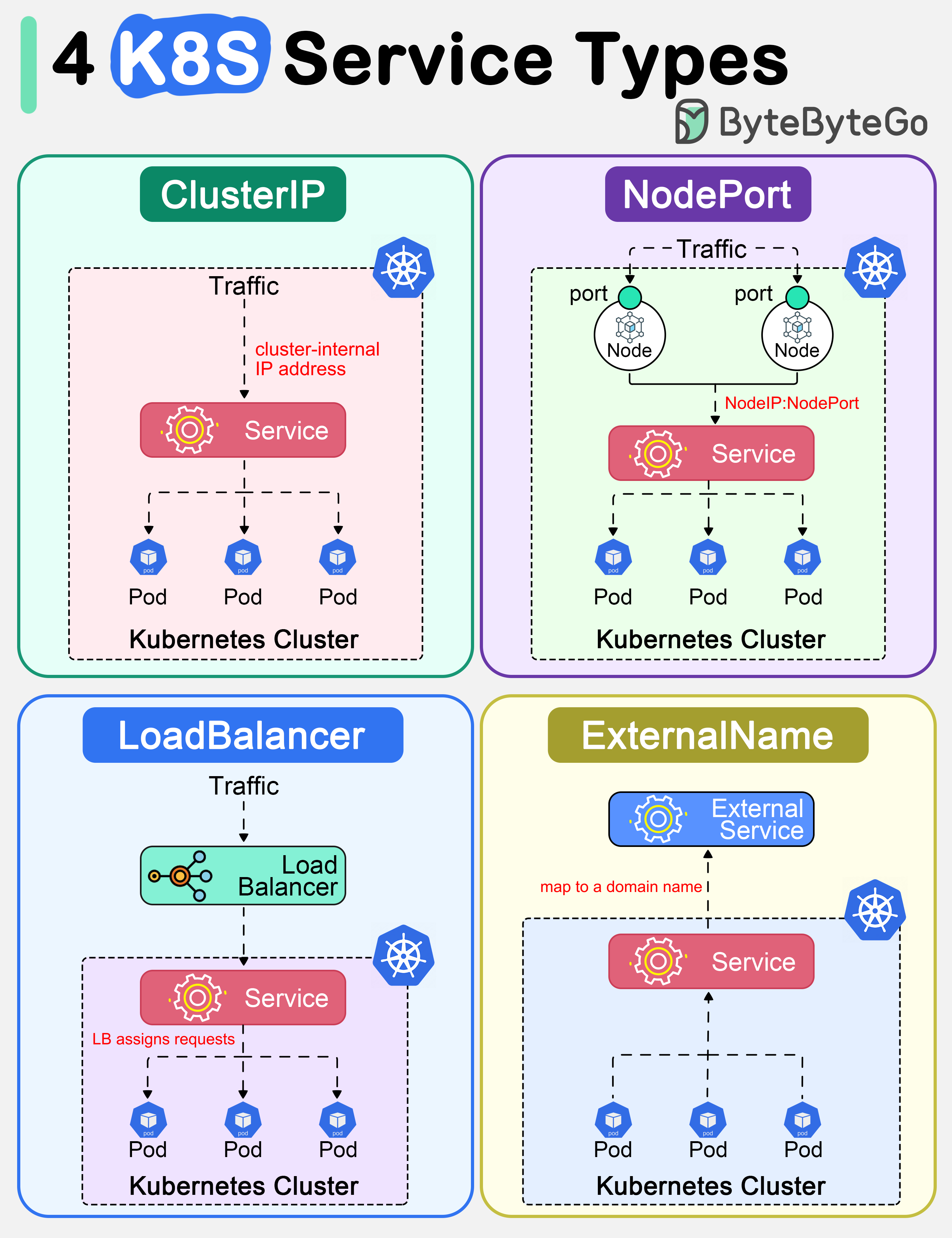

+ * [Top 4 Kubernetes Service Types](https://bytebytego.com/guides/top-4-kubernetes-service-types-in-one-diagram)

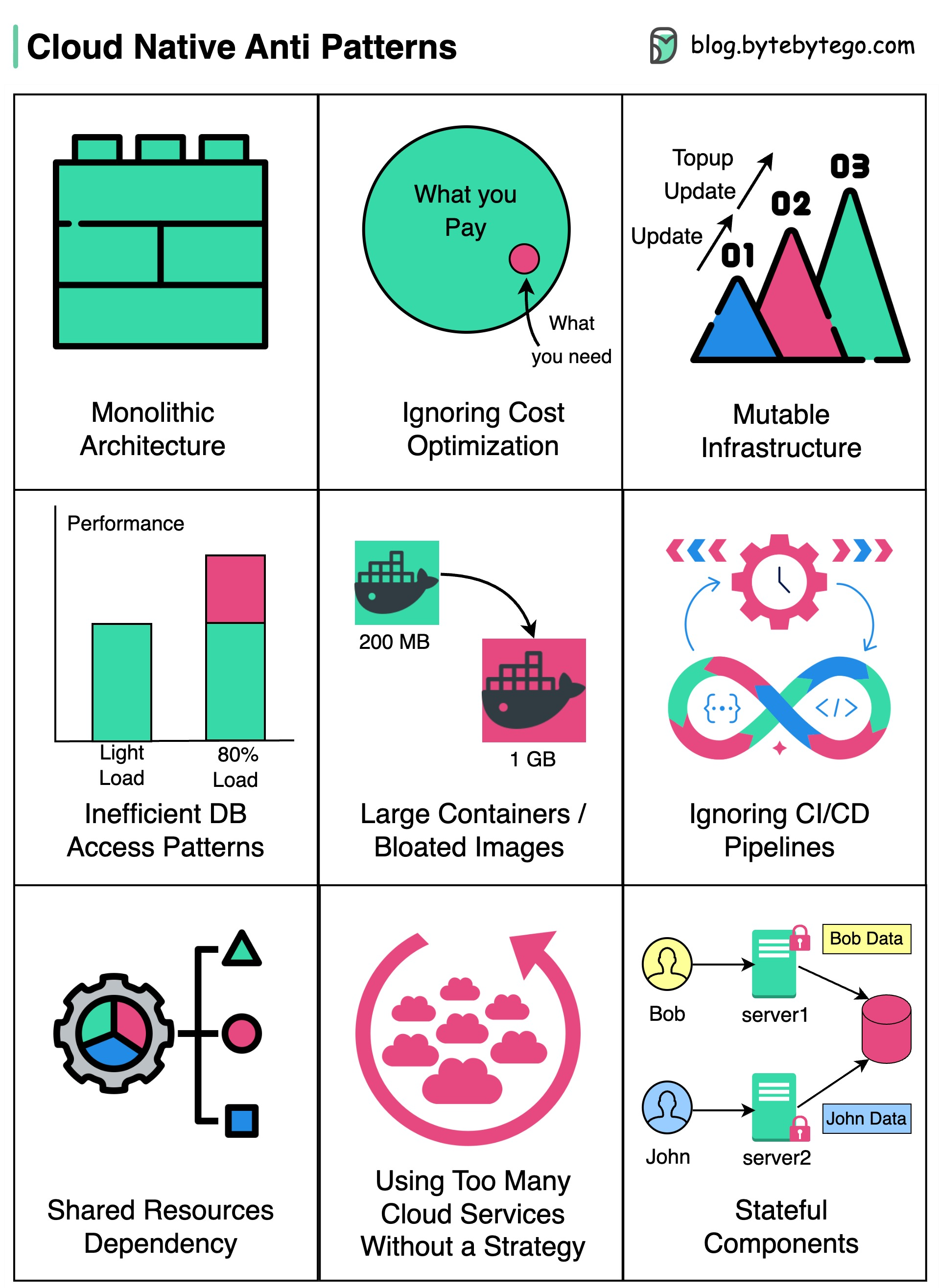

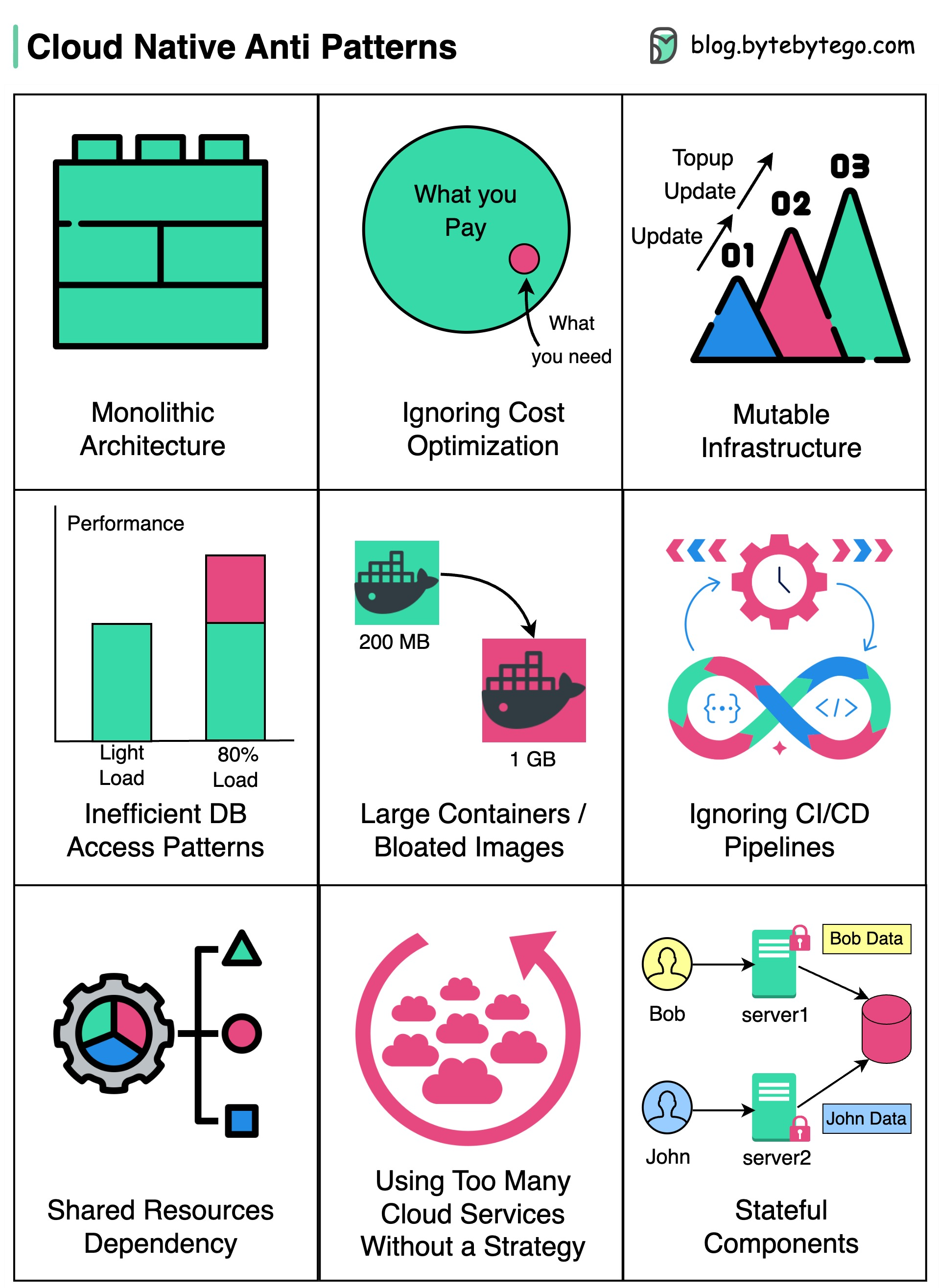

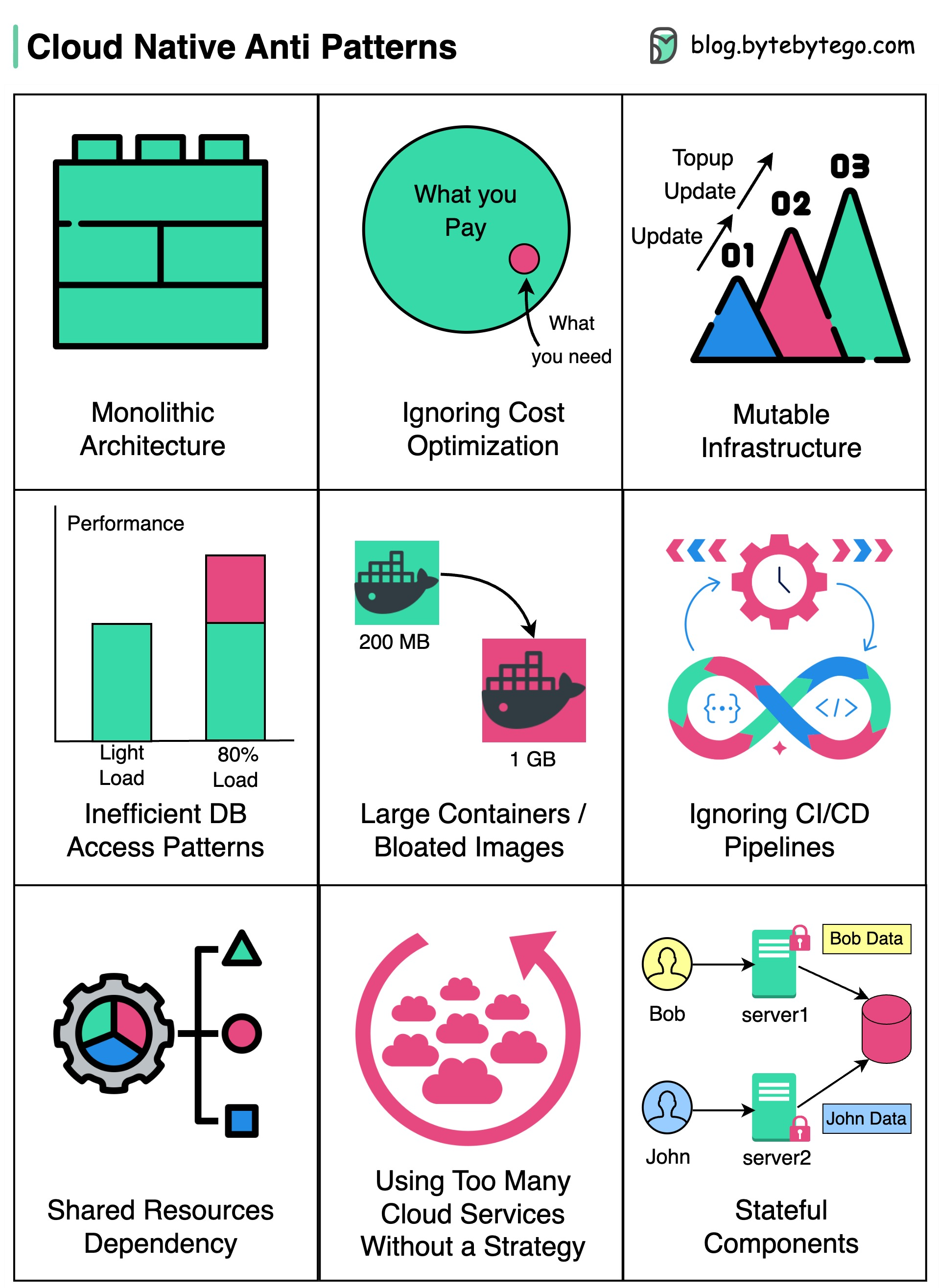

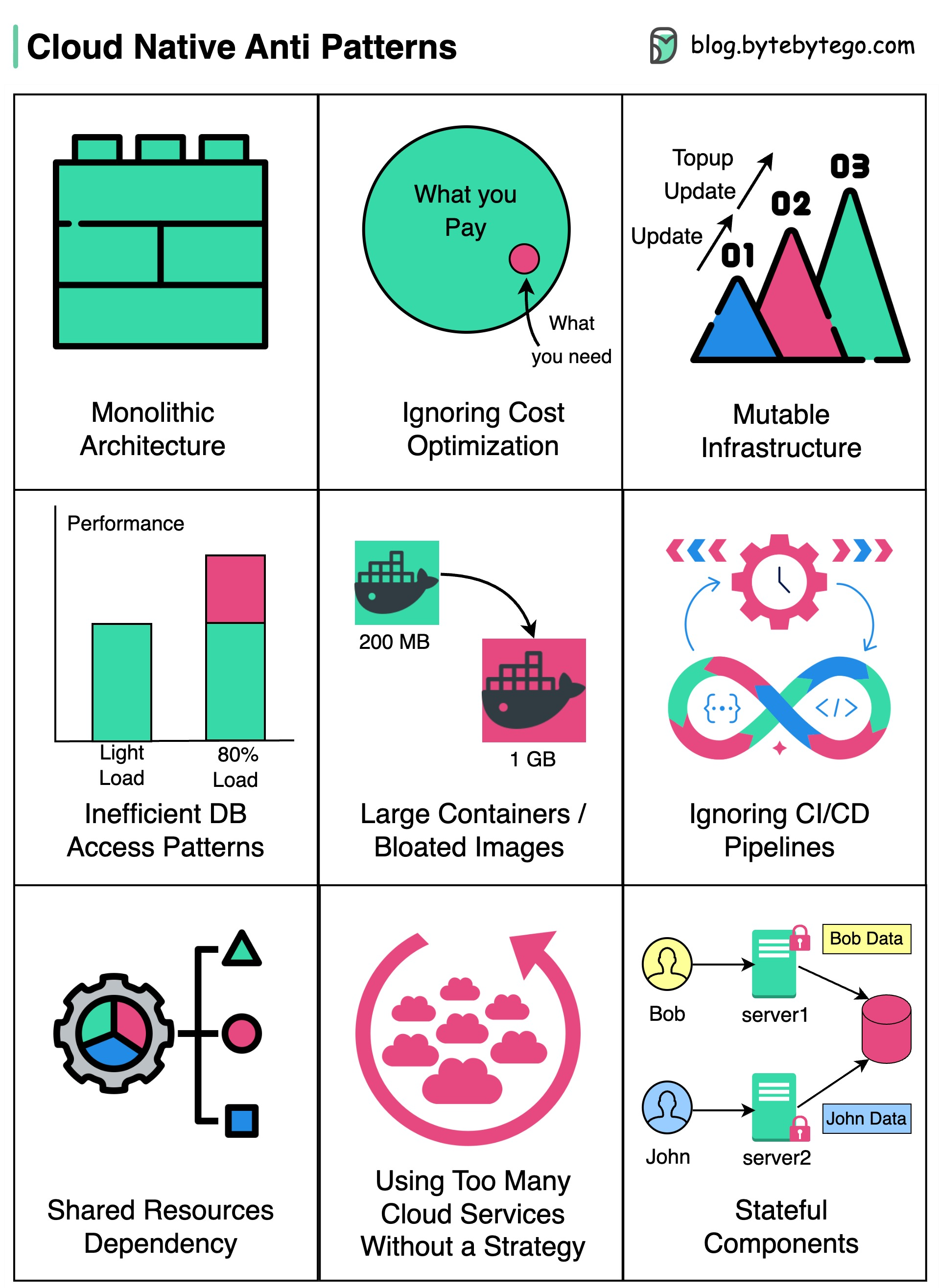

+ * [Cloud Native Anti-Patterns](https://bytebytego.com/guides/cloud-native-anti-patterns)

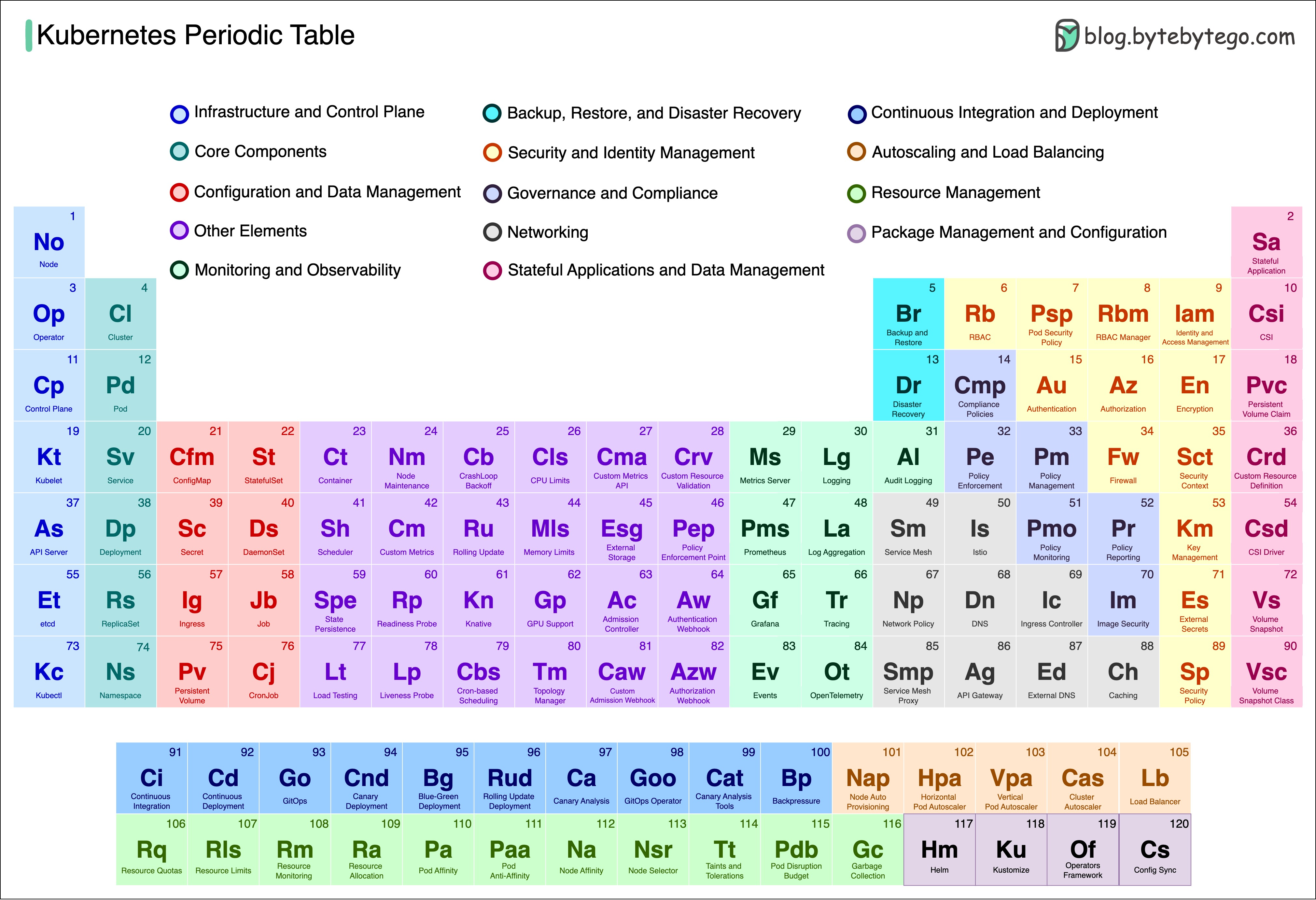

+ * [Kubernetes Tools Stack Wheel](https://bytebytego.com/guides/kubernetes-tools-stack-wheel)

+ * [Kubernetes Tools Ecosystem](https://bytebytego.com/guides/kubernetes-tools-ecosystem)

+ * [Kubernetes Periodic Table](https://bytebytego.com/guides/kubernetes-periodic-table)

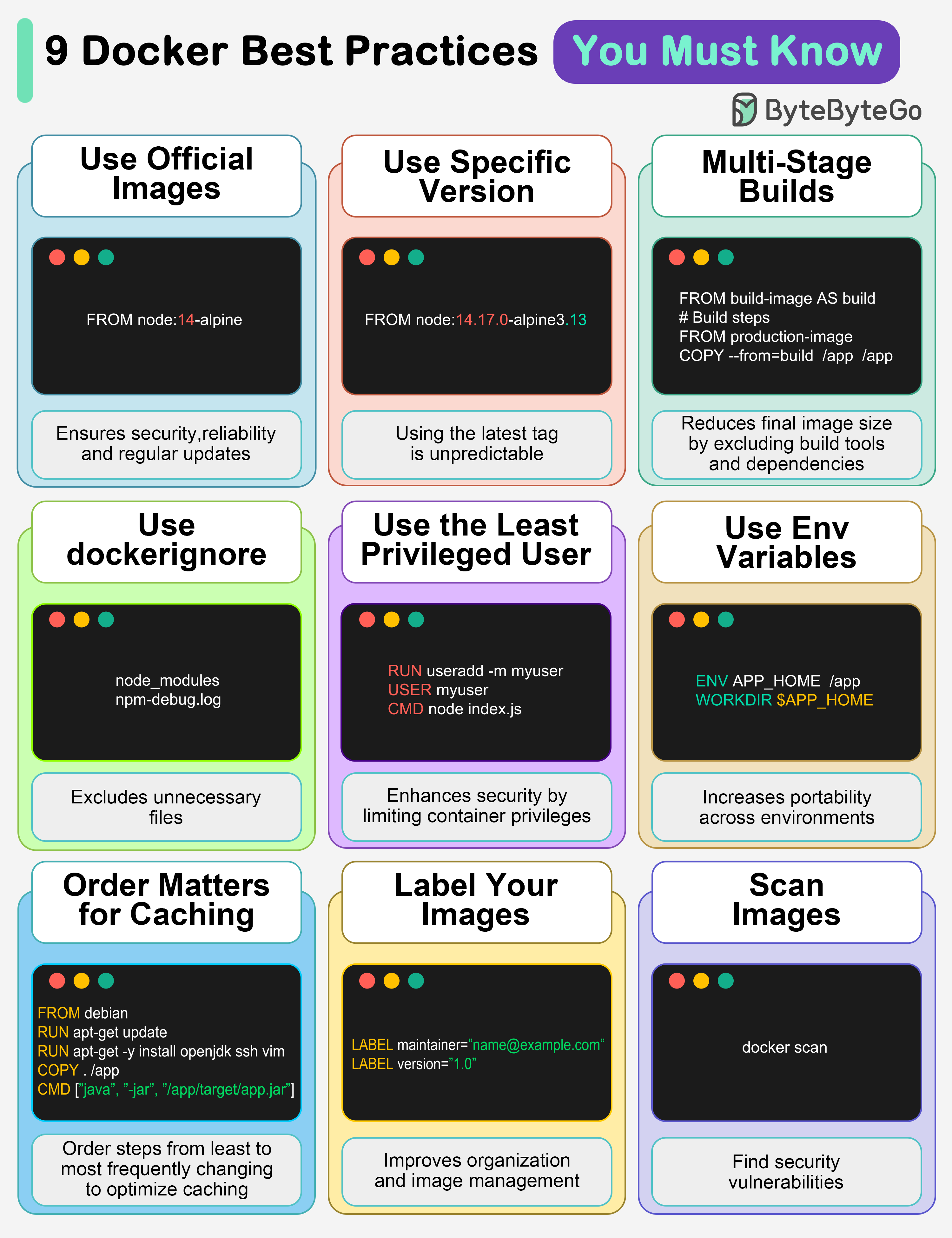

+ * [9 Docker Best Practices You Must Know](https://bytebytego.com/guides/9-docker-best-practices-you-must-know)

+ * [Netflix Tech Stack - CI/CD Pipeline](https://bytebytego.com/guides/netflix-tech-stack-cicd-pipeline)

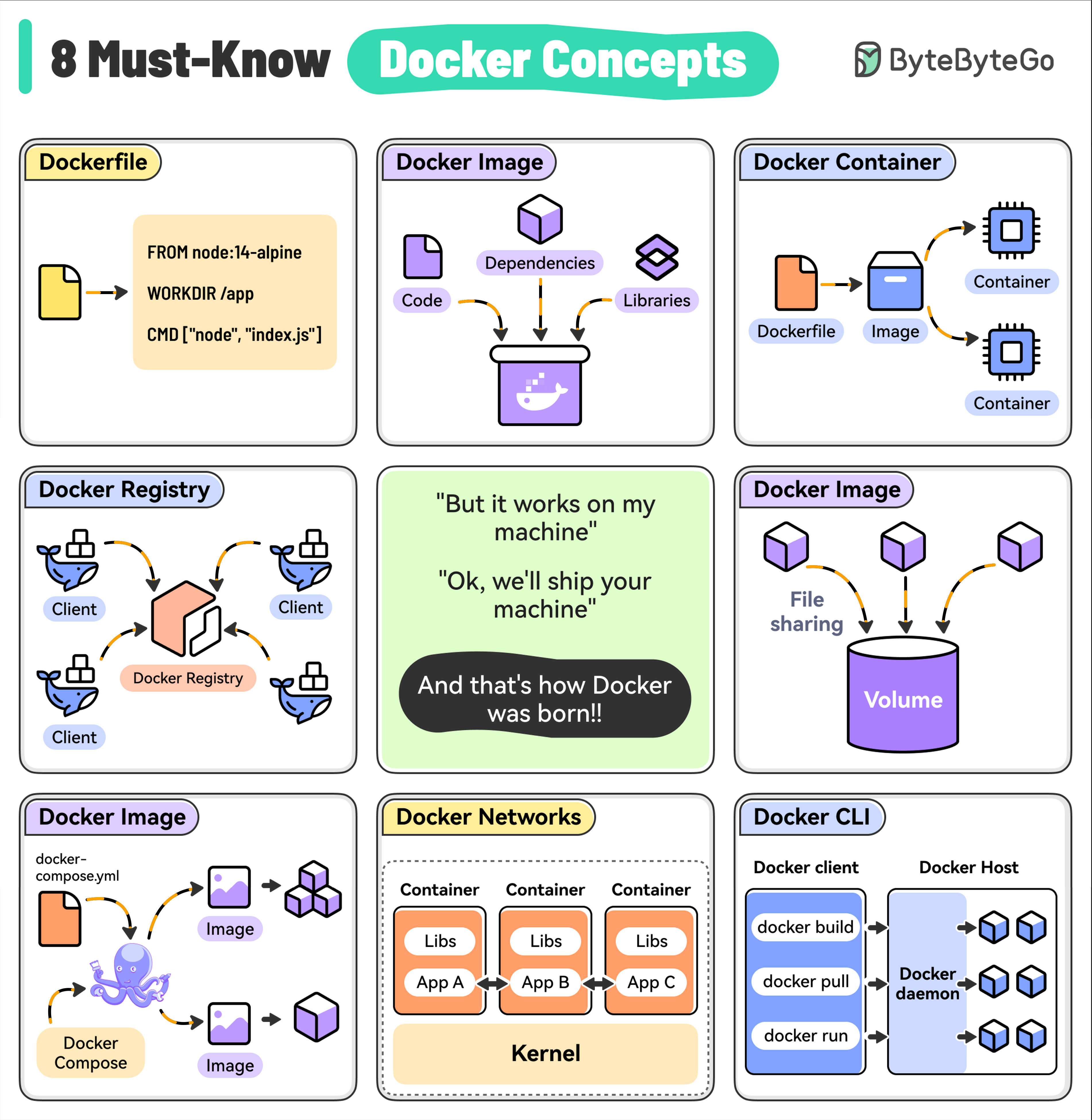

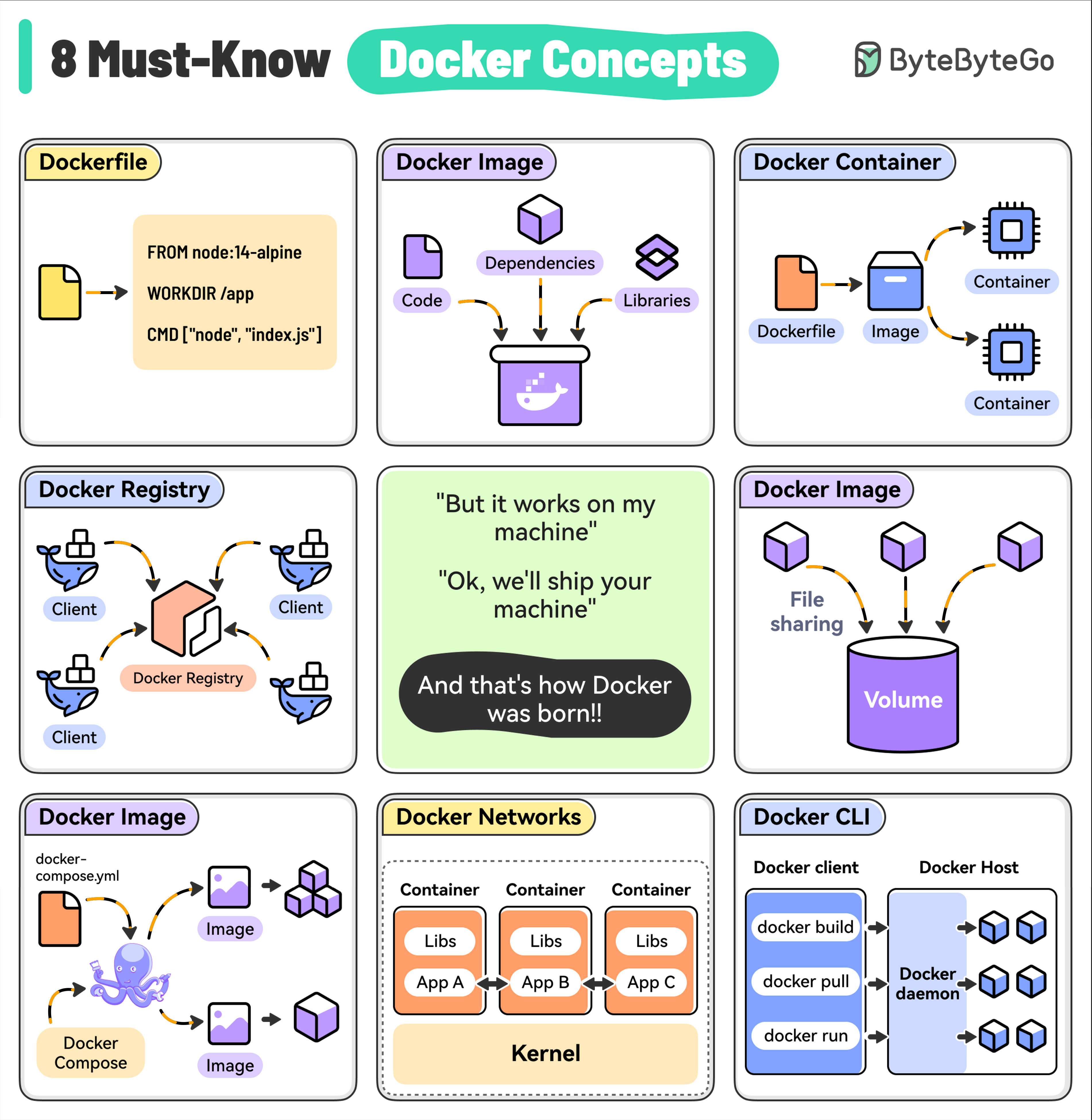

+ * [Top 8 Must-Know Docker Concepts](https://bytebytego.com/guides/top-8-must-know-docker-concepts)

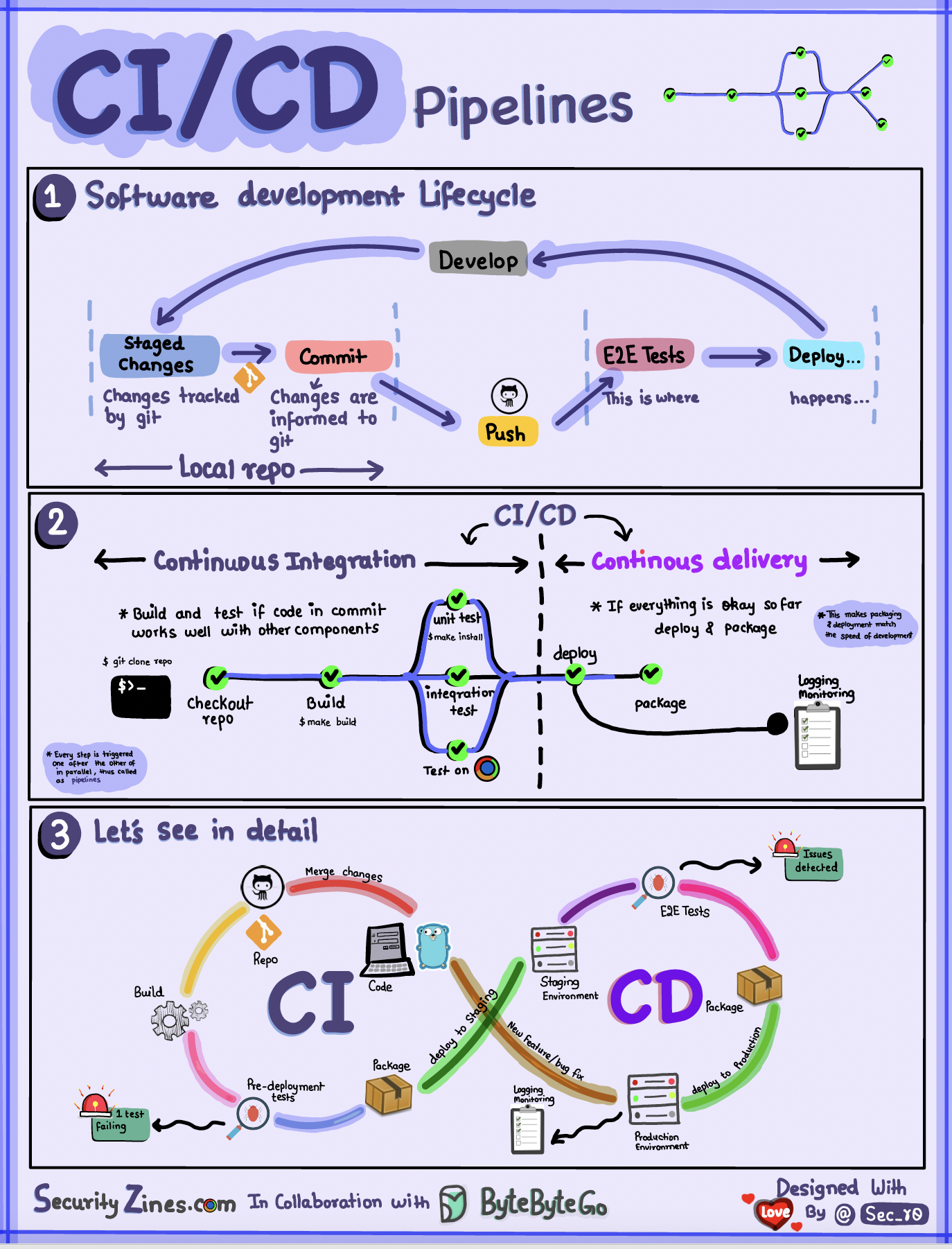

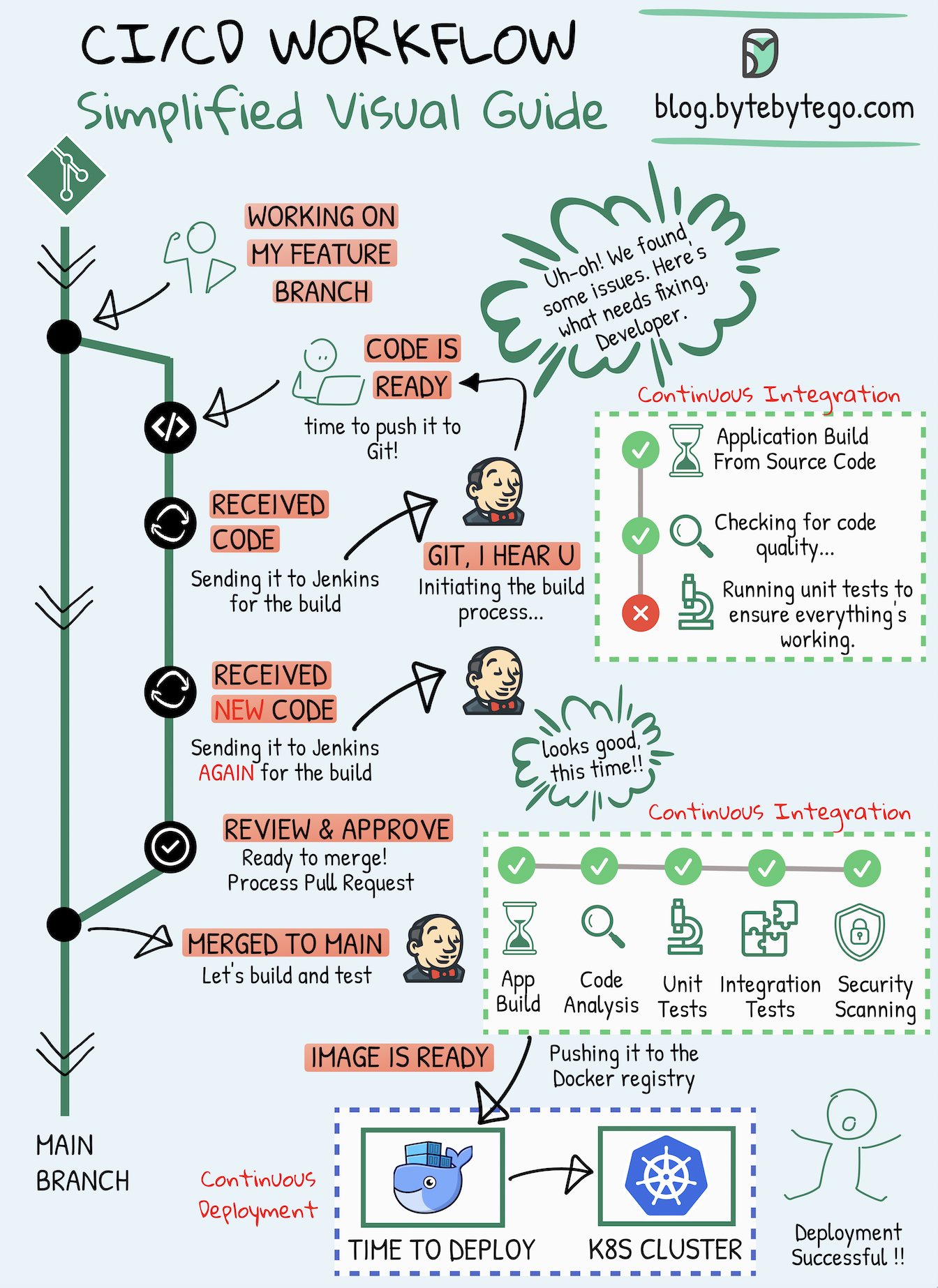

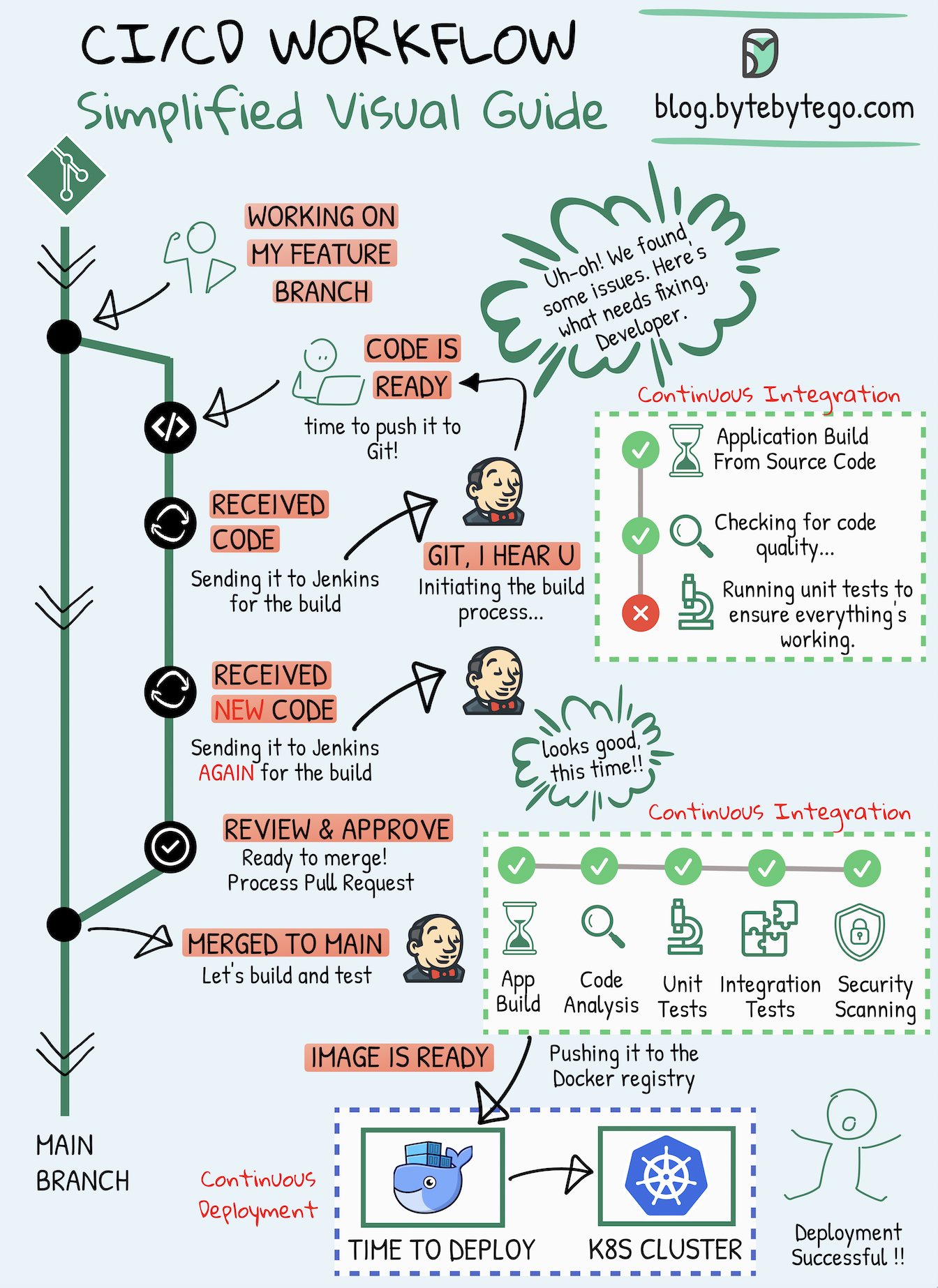

+ * [CI/CD Simplified Visual Guide](https://bytebytego.com/guides/cicd-simplified-visual-guide)

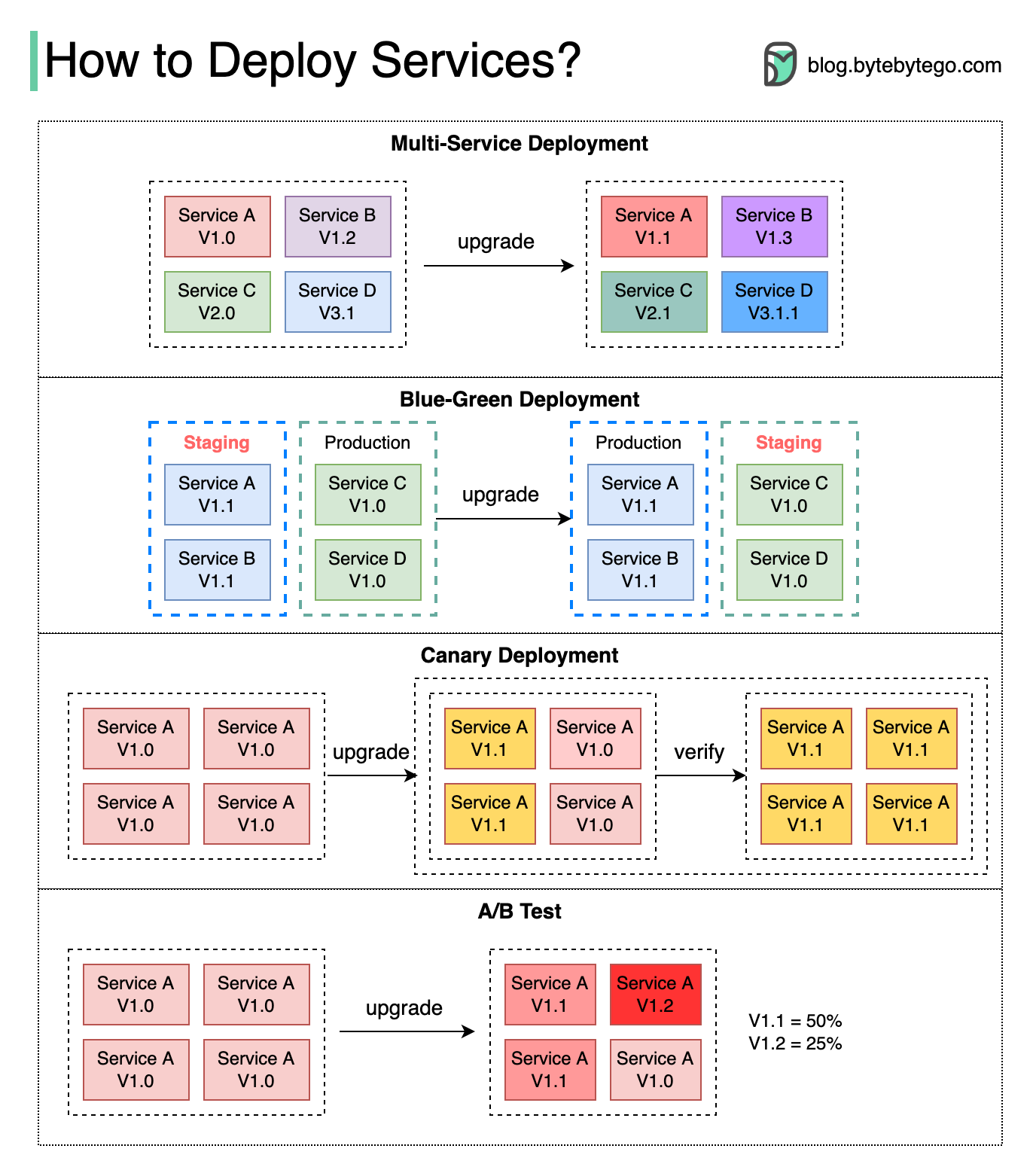

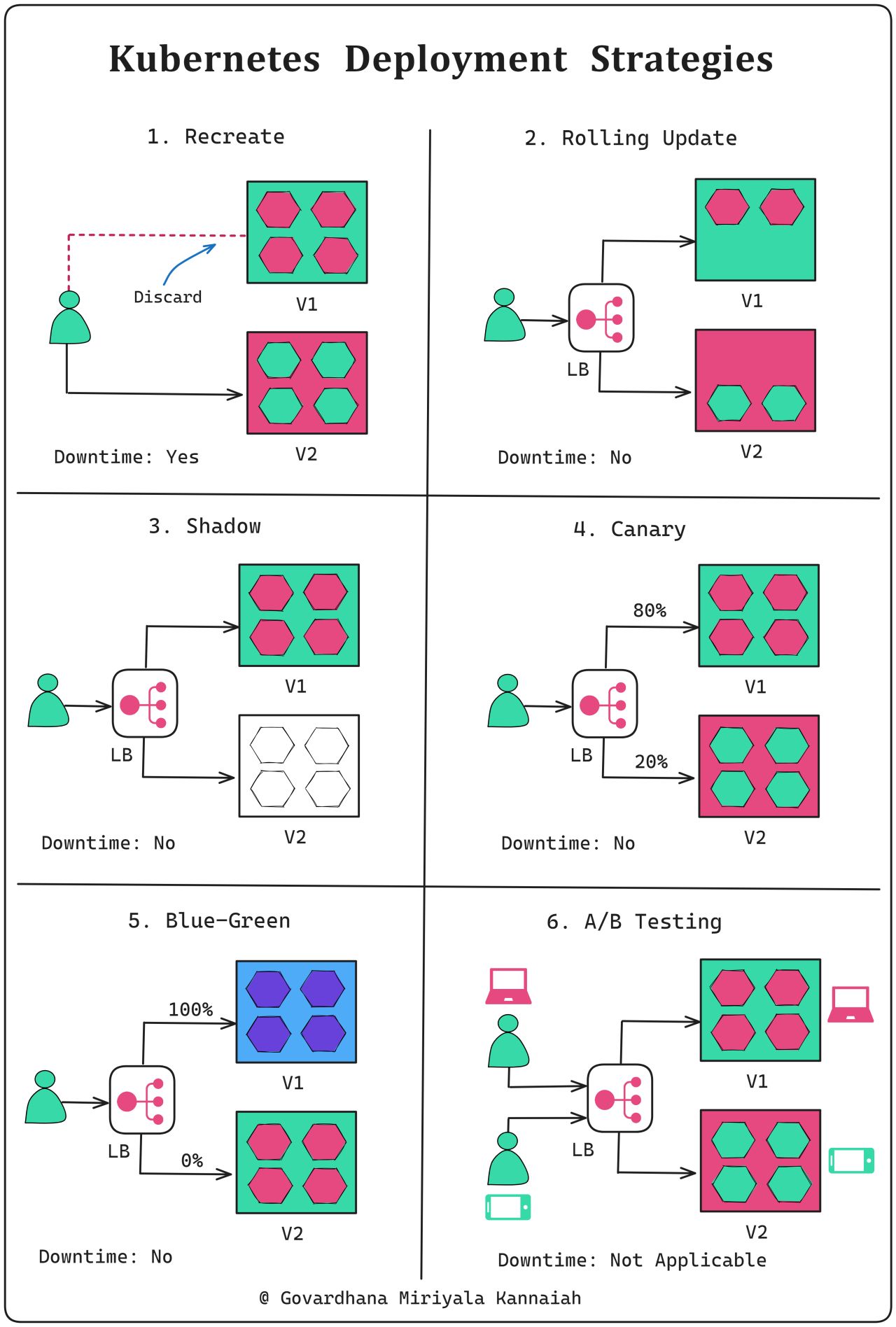

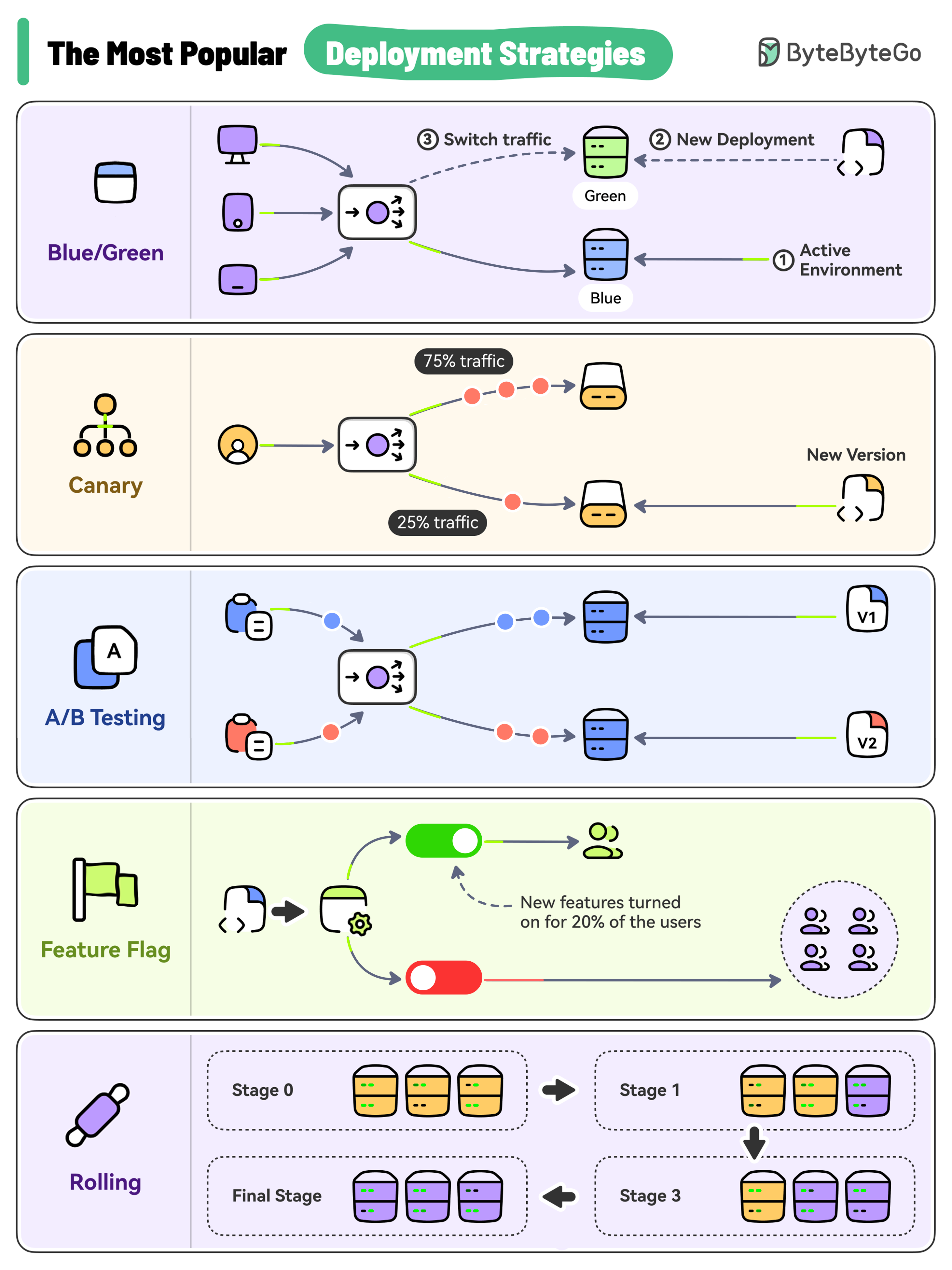

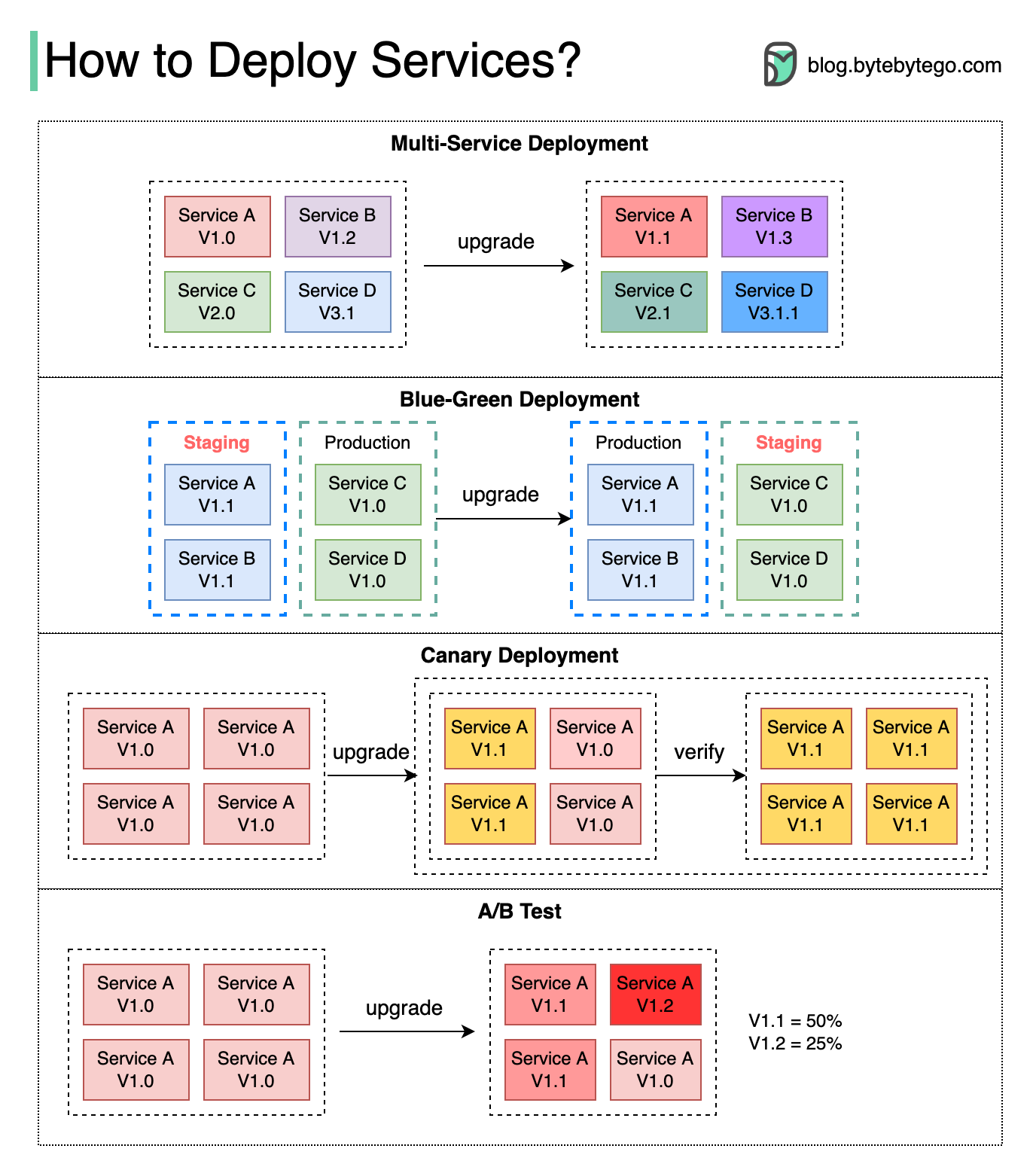

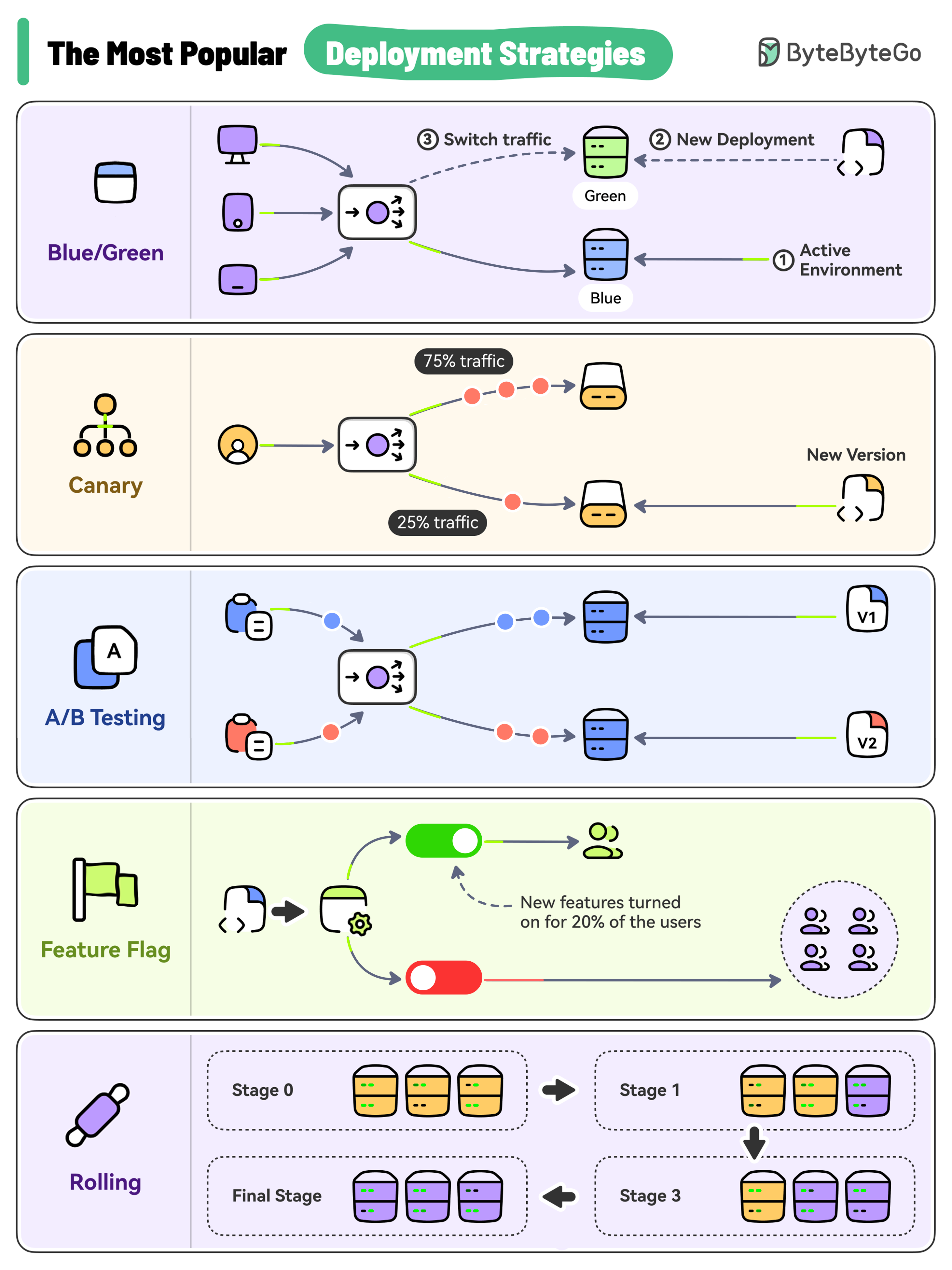

+ * [Top 5 Most-Used Deployment Strategies](https://bytebytego.com/guides/top-5-most-used-deployment-strategies)

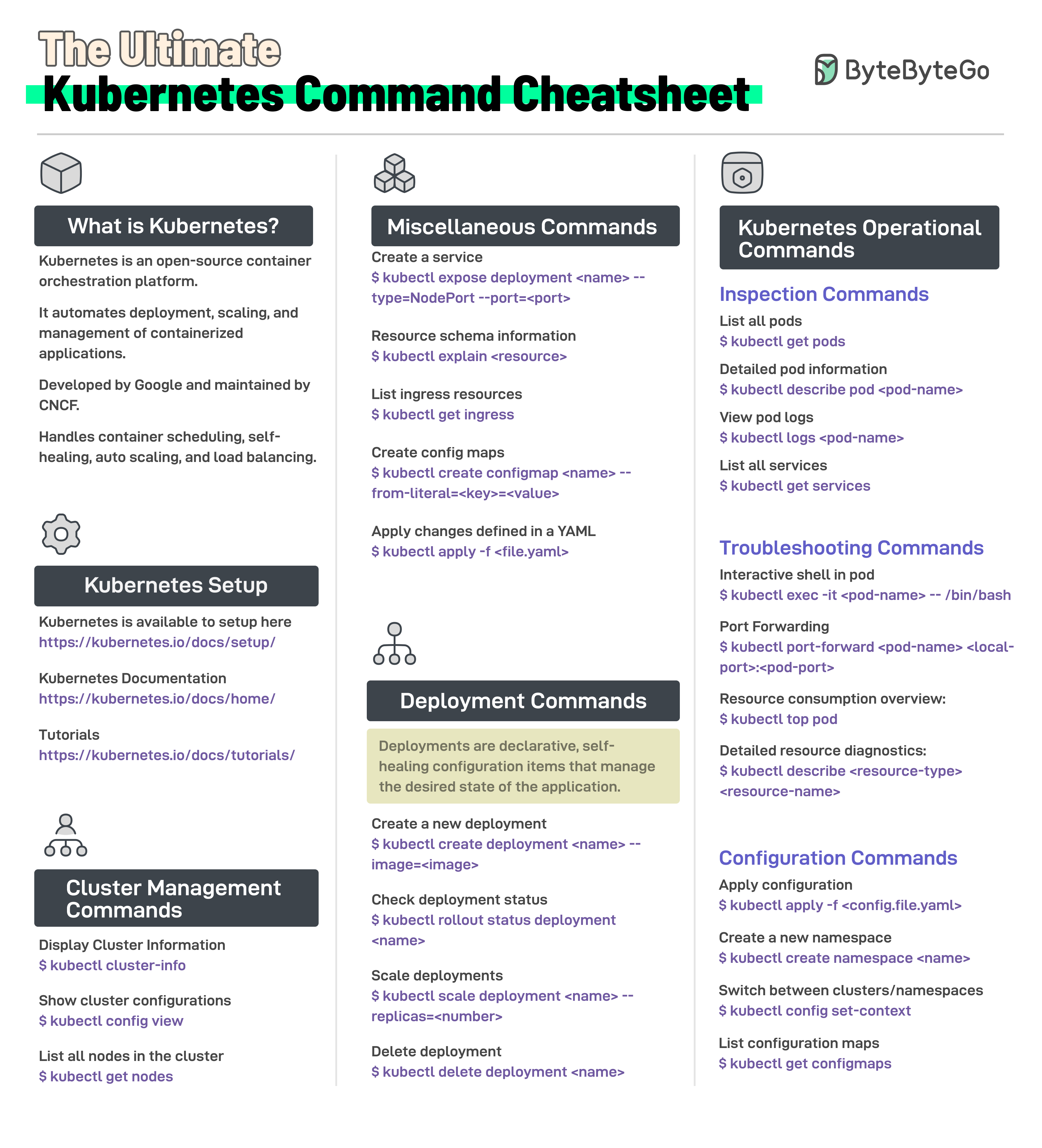

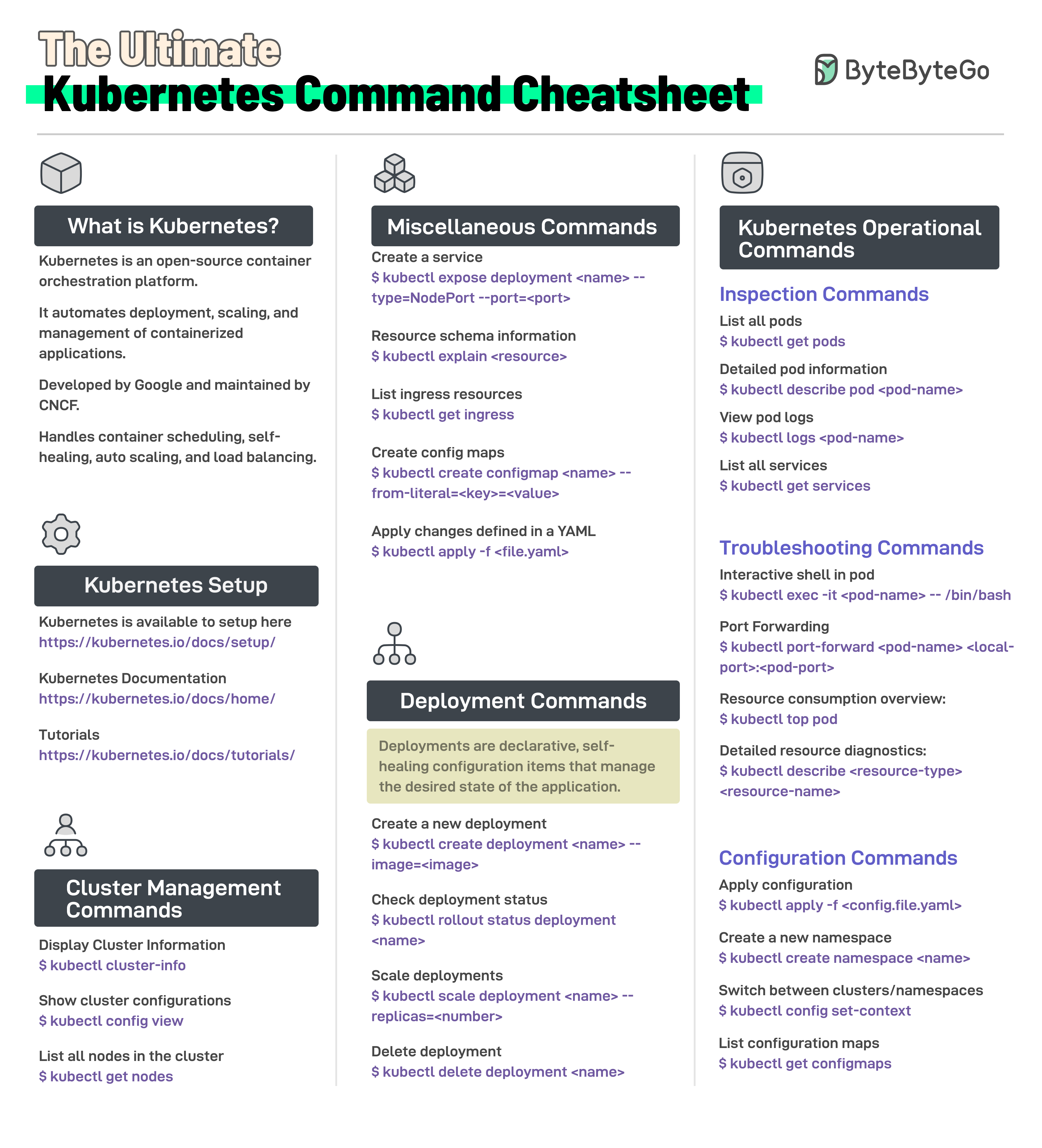

+ * [Kubernetes Command Cheatsheet](https://bytebytego.com/guides/the-ultimate-kubernetes-command-cheatsheet)

+ * [Kubernetes Deployment Strategies](https://bytebytego.com/guides/kubernetes-deployment-strategies)

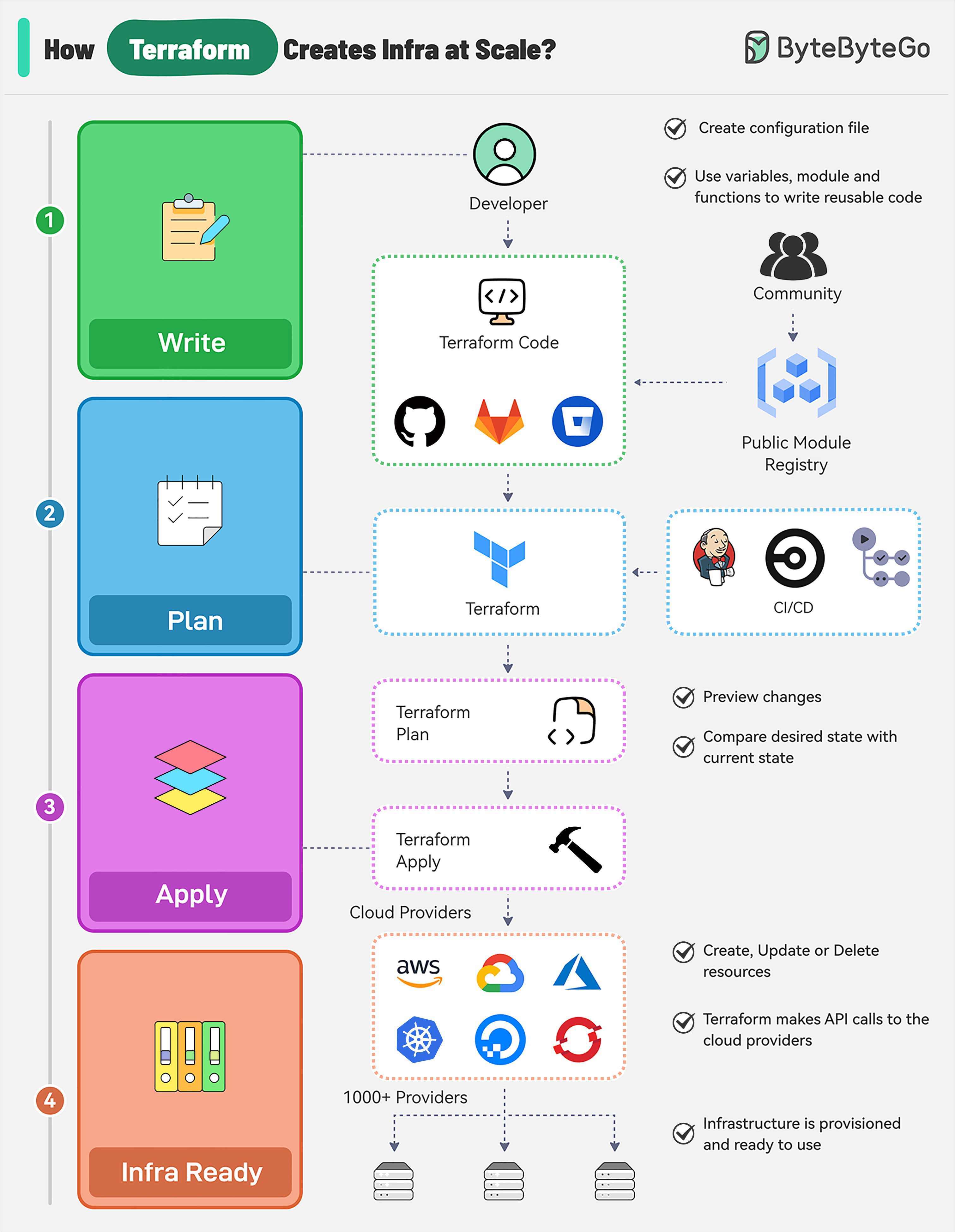

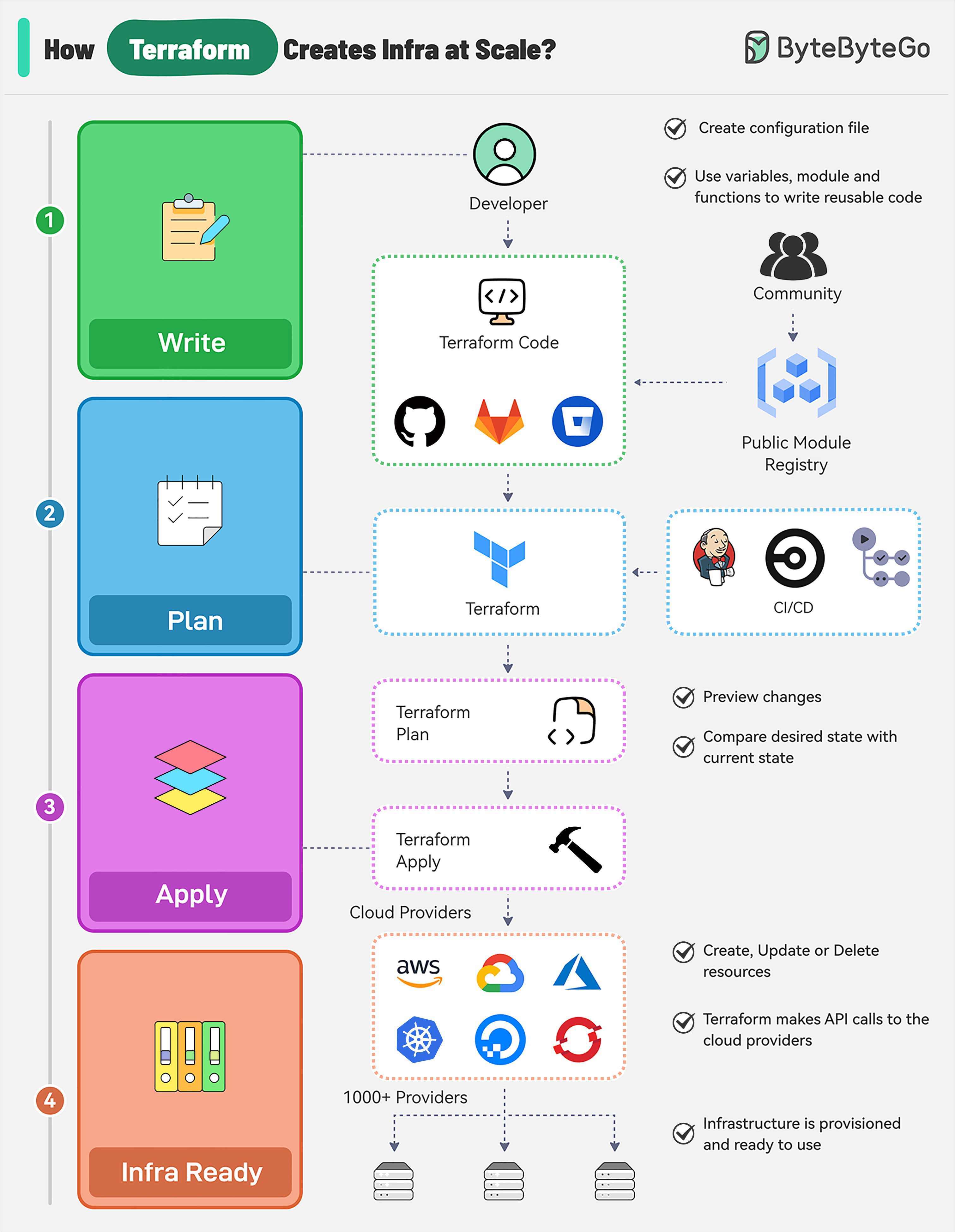

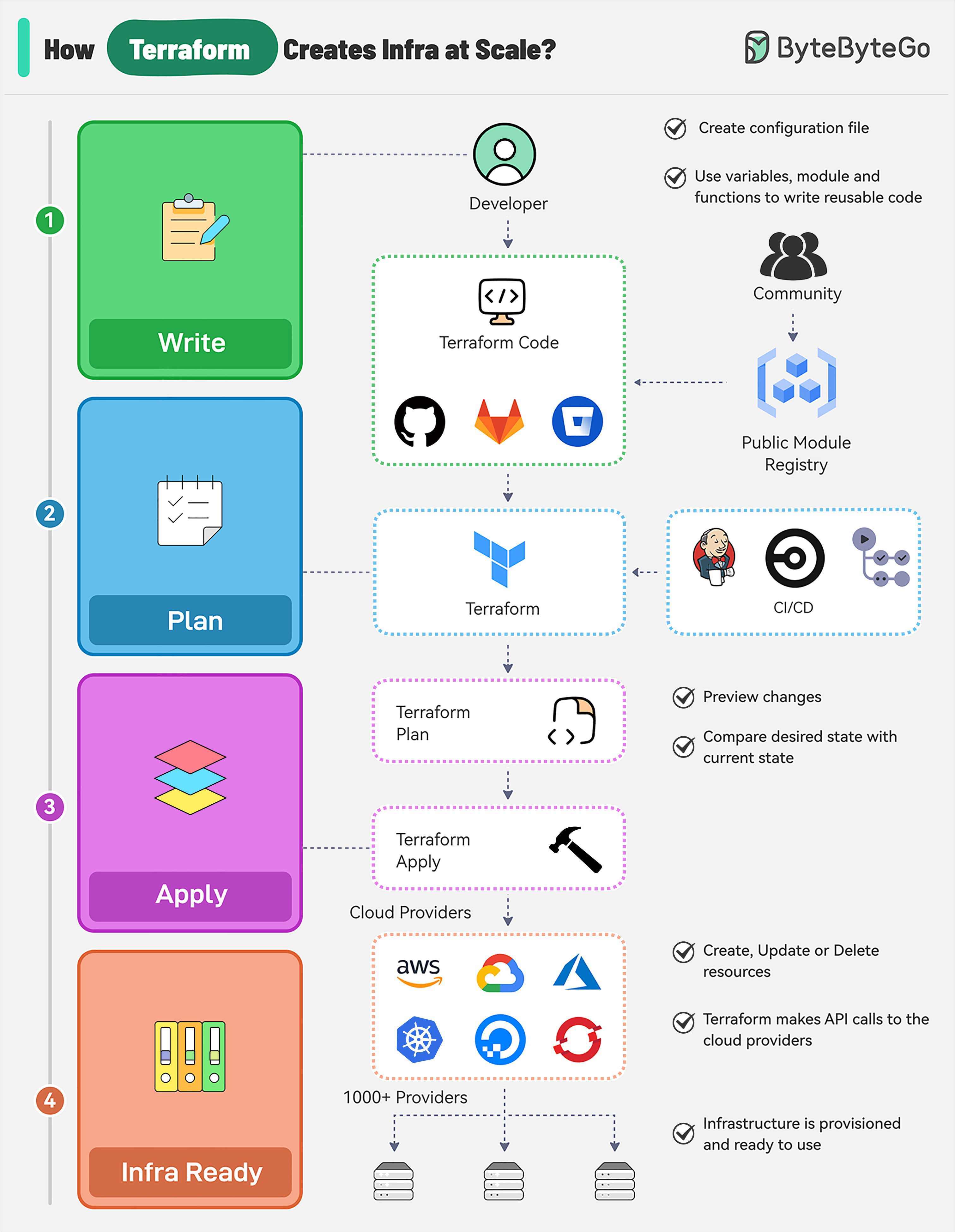

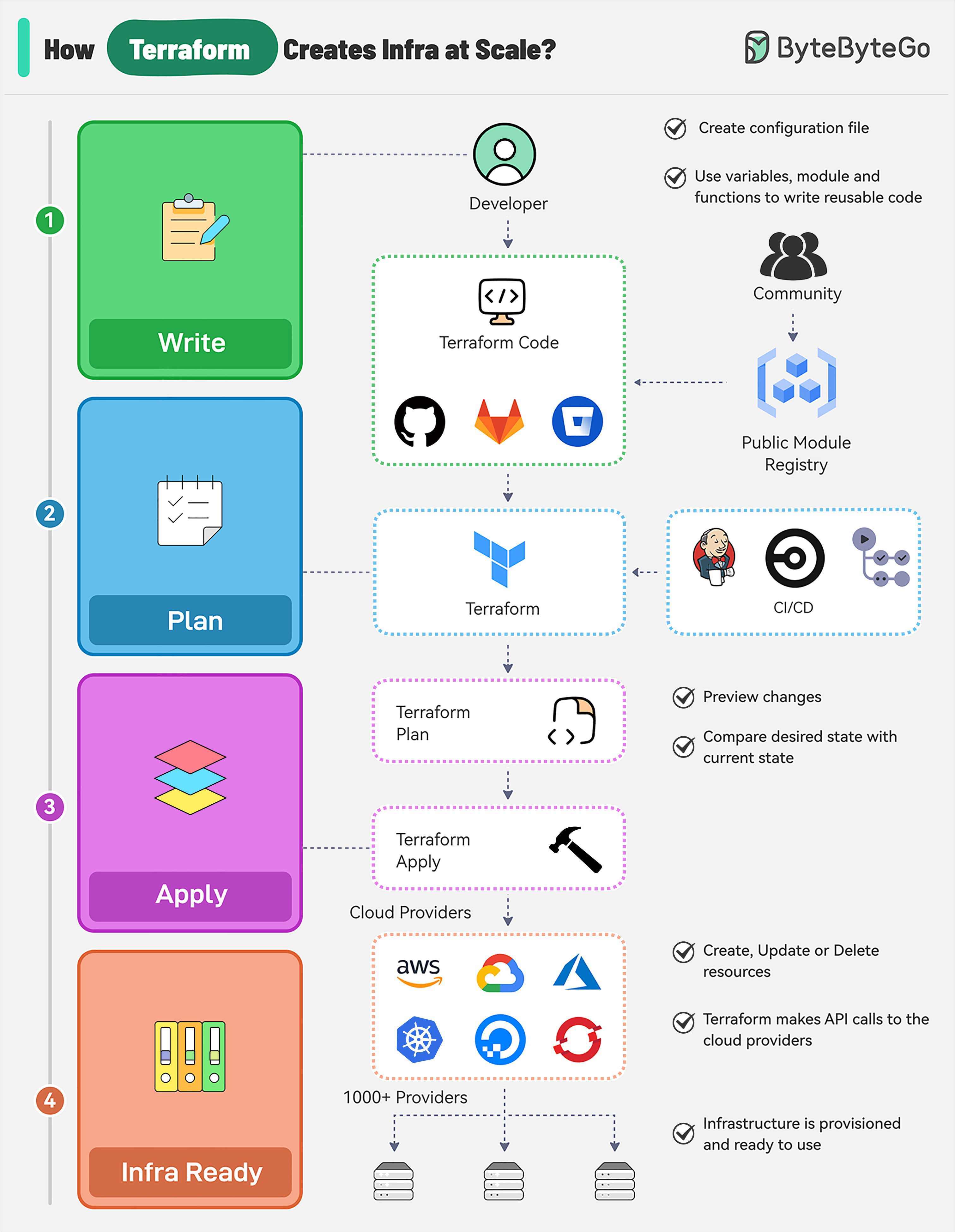

+ * [How does Terraform turn Code into Cloud?](https://bytebytego.com/guides/how-does-terraform-turn-code-into-cloud)

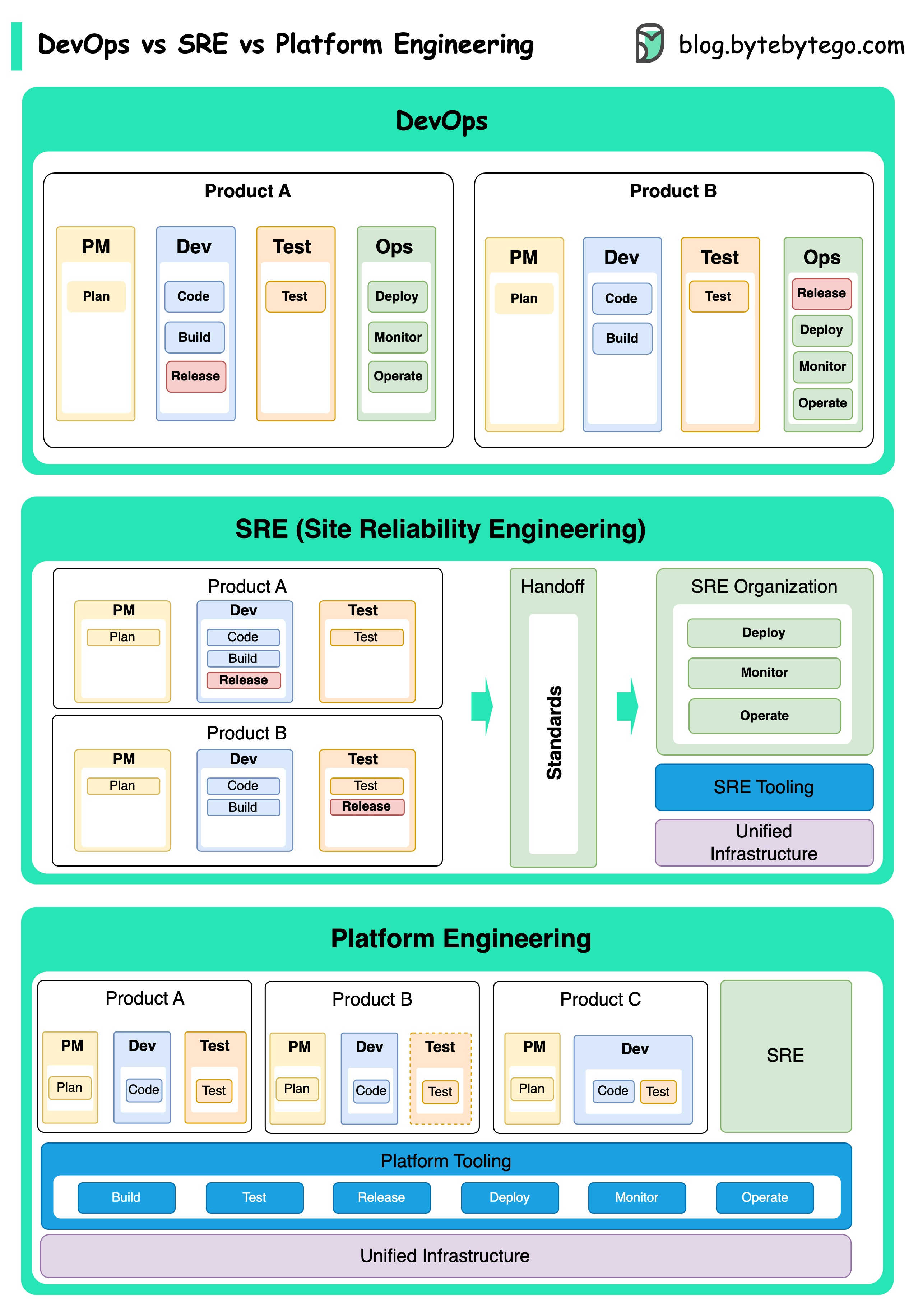

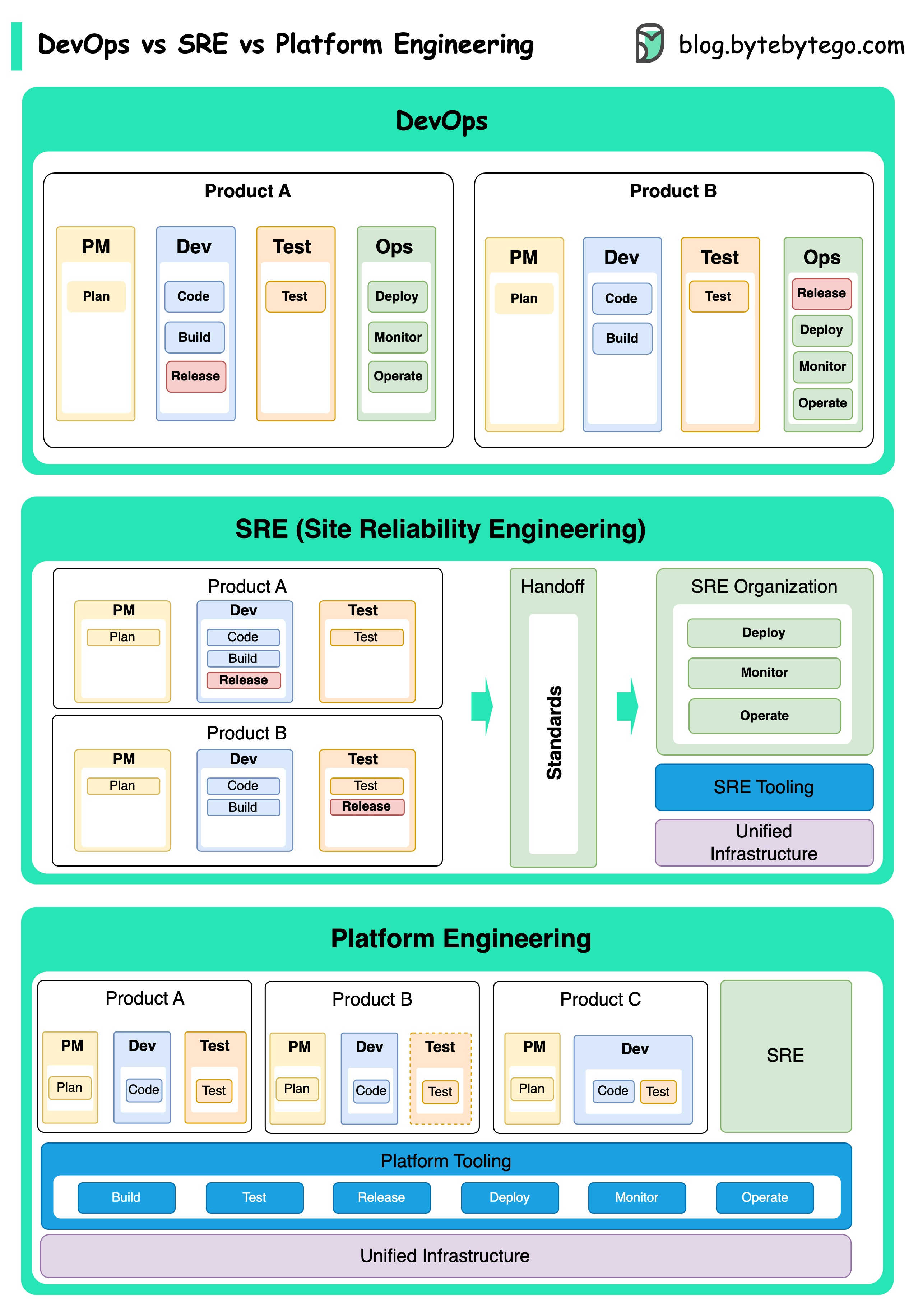

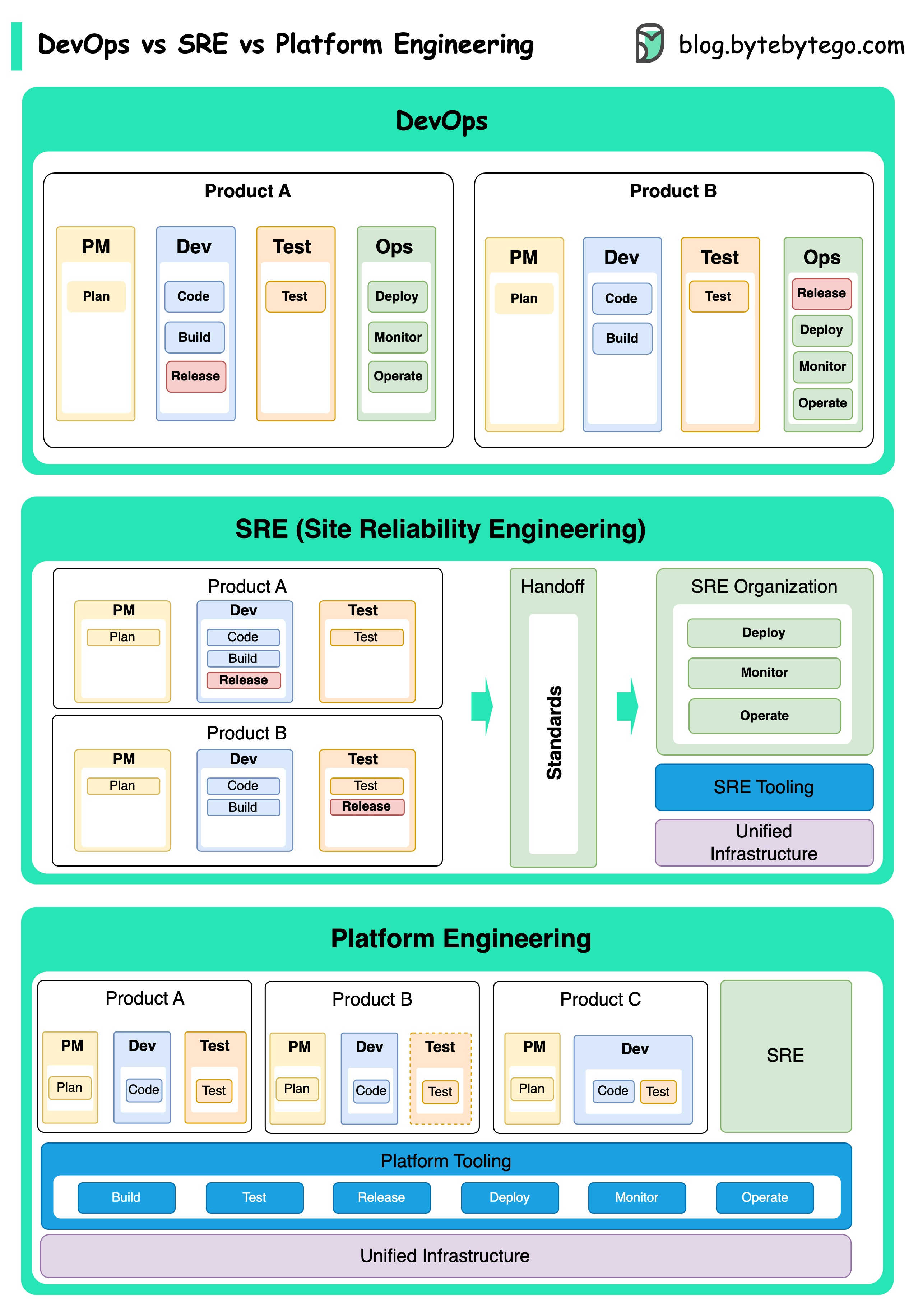

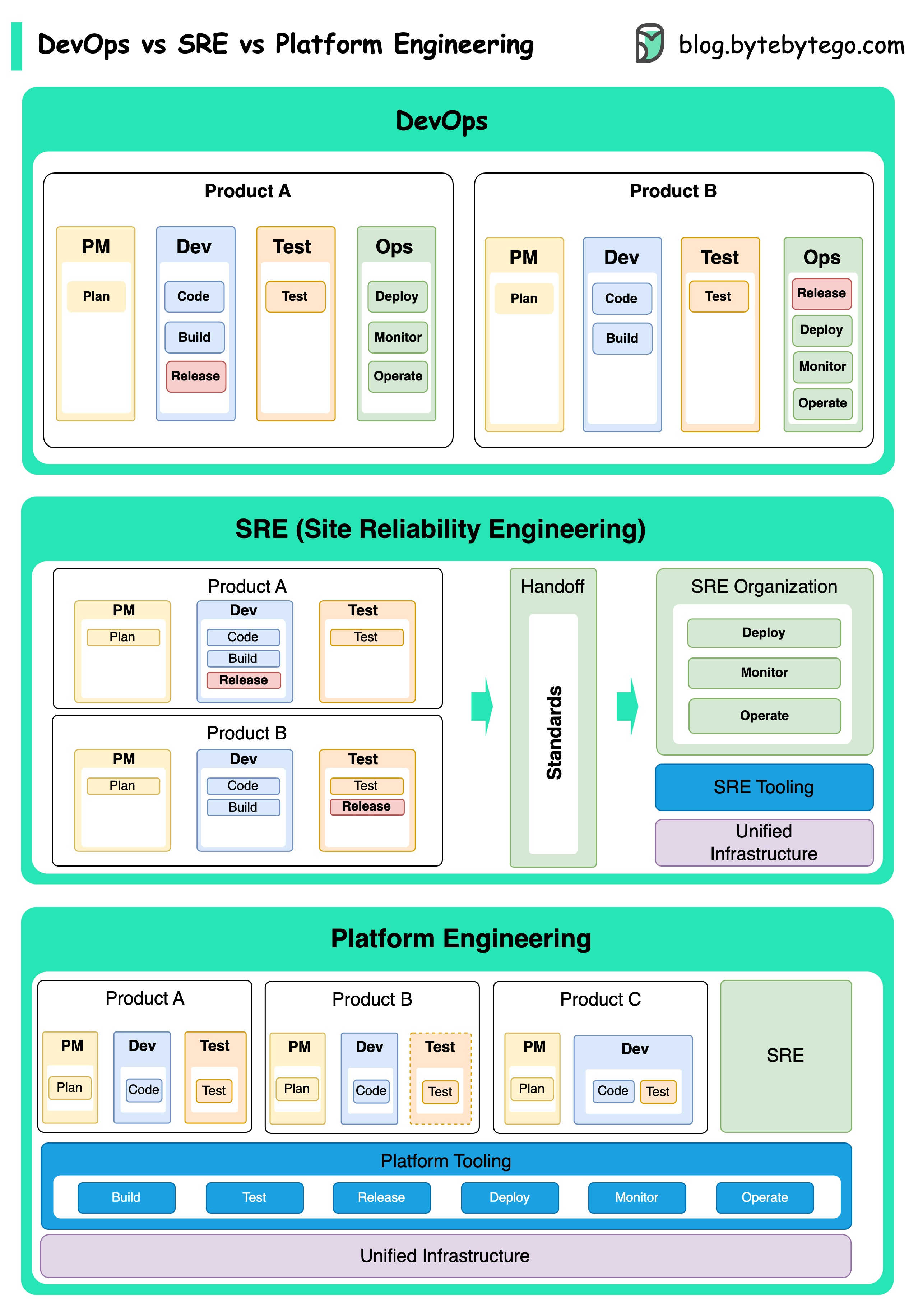

+ * [DevOps vs. SRE vs. Platform Engineering](https://bytebytego.com/guides/devops-vs-sre-vs-paltform-engg)

+ * [Deployment Strategies](https://bytebytego.com/guides/how-to-deploy-services)

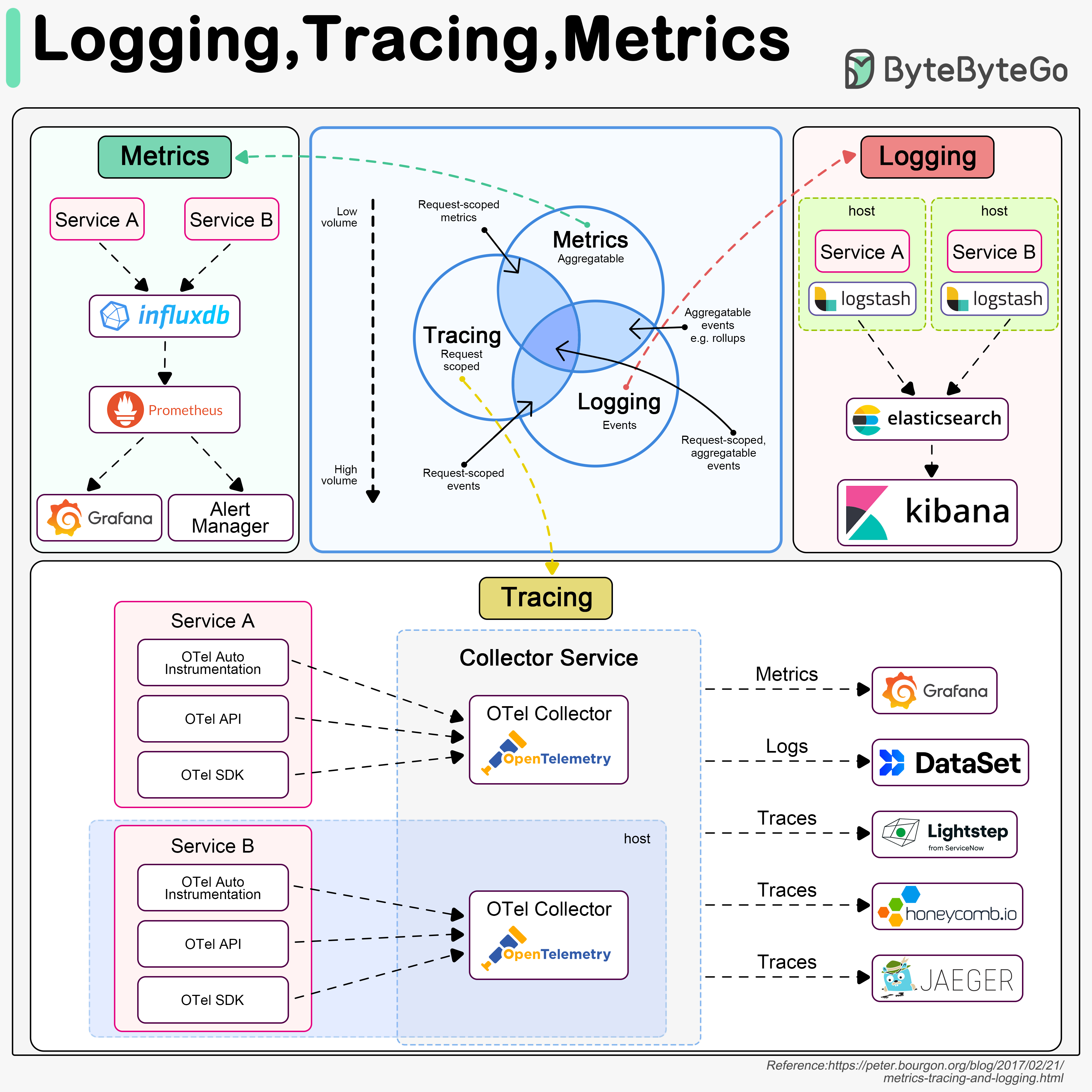

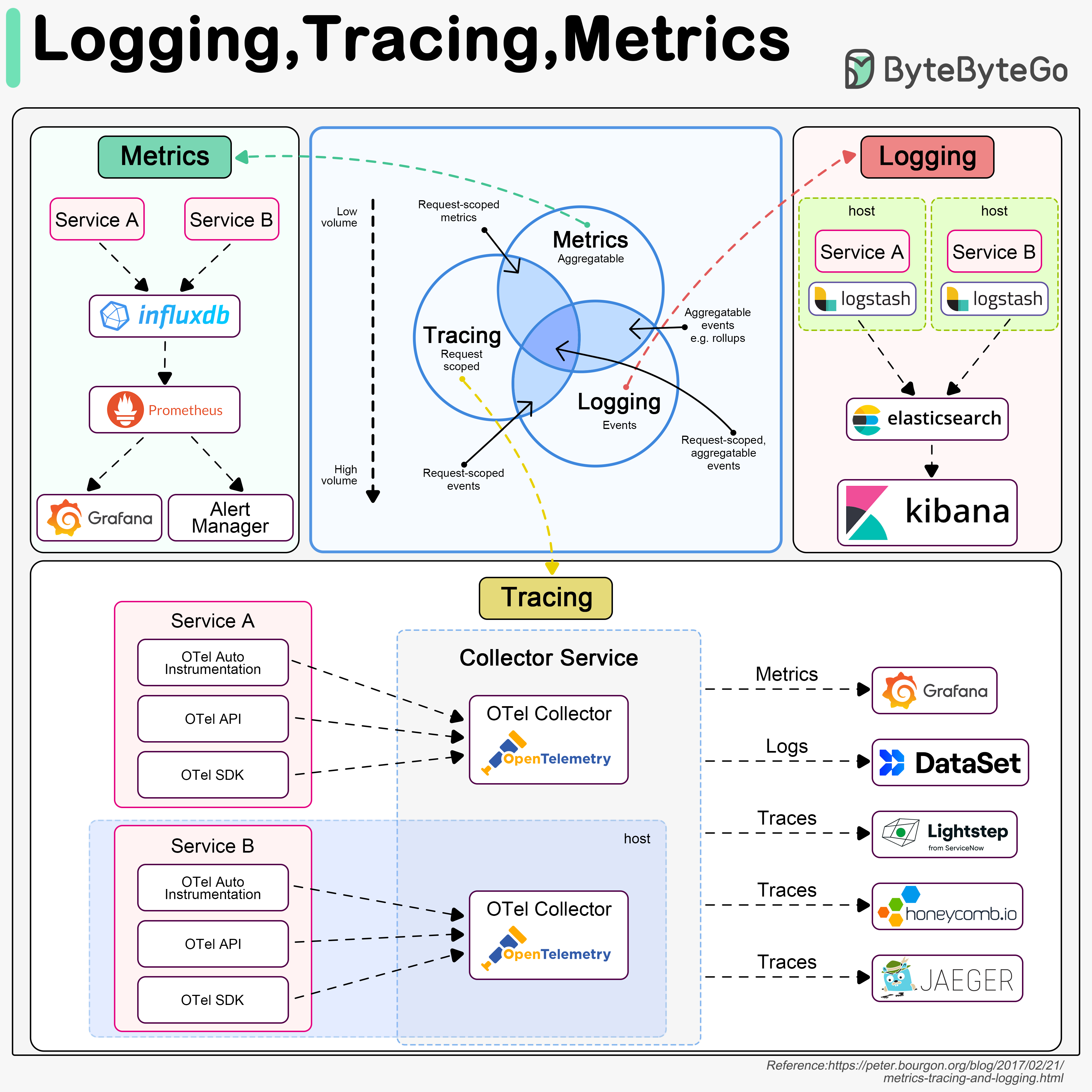

+ * [Logging, Tracing, and Metrics](https://bytebytego.com/guides/logging-tracing-metrics)

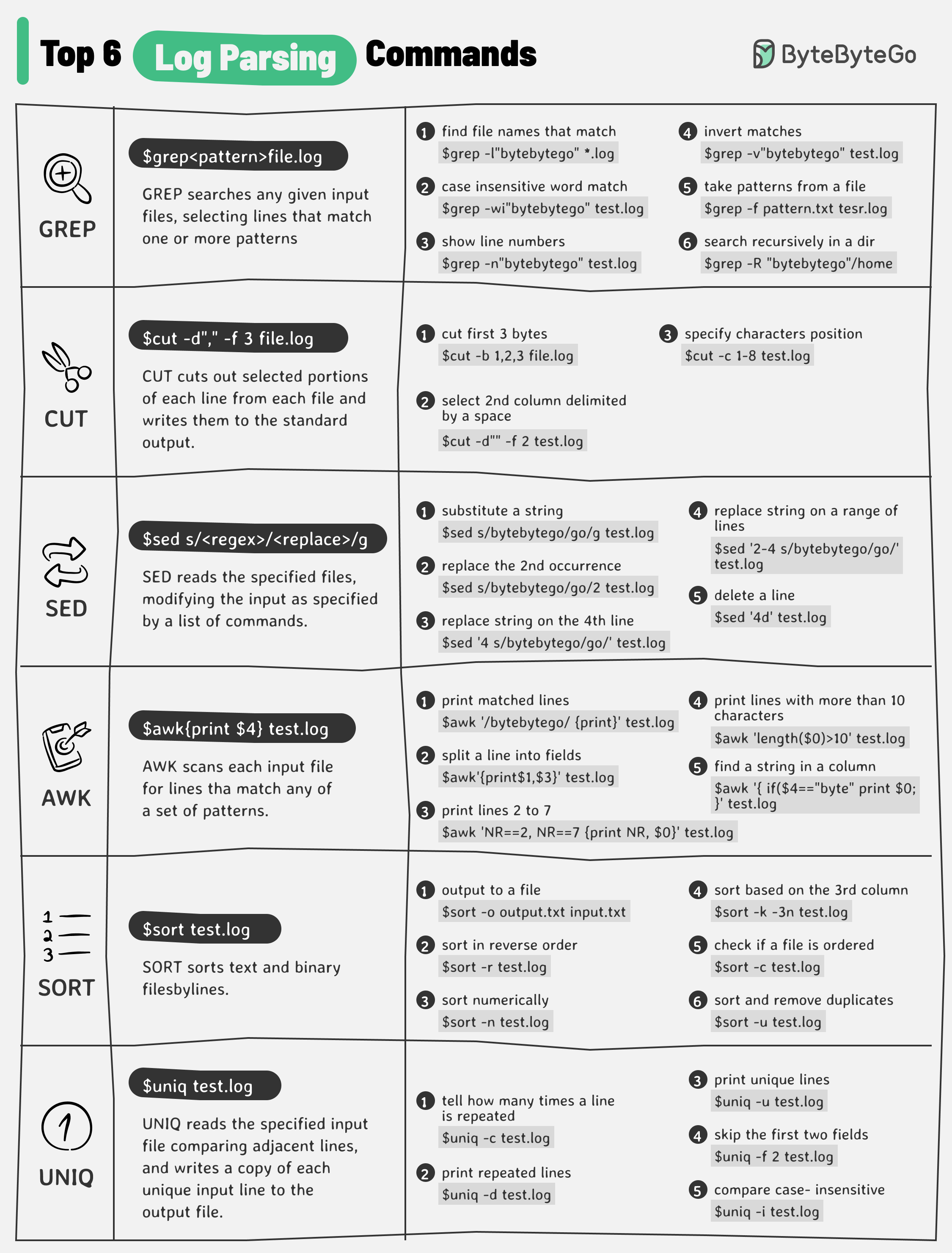

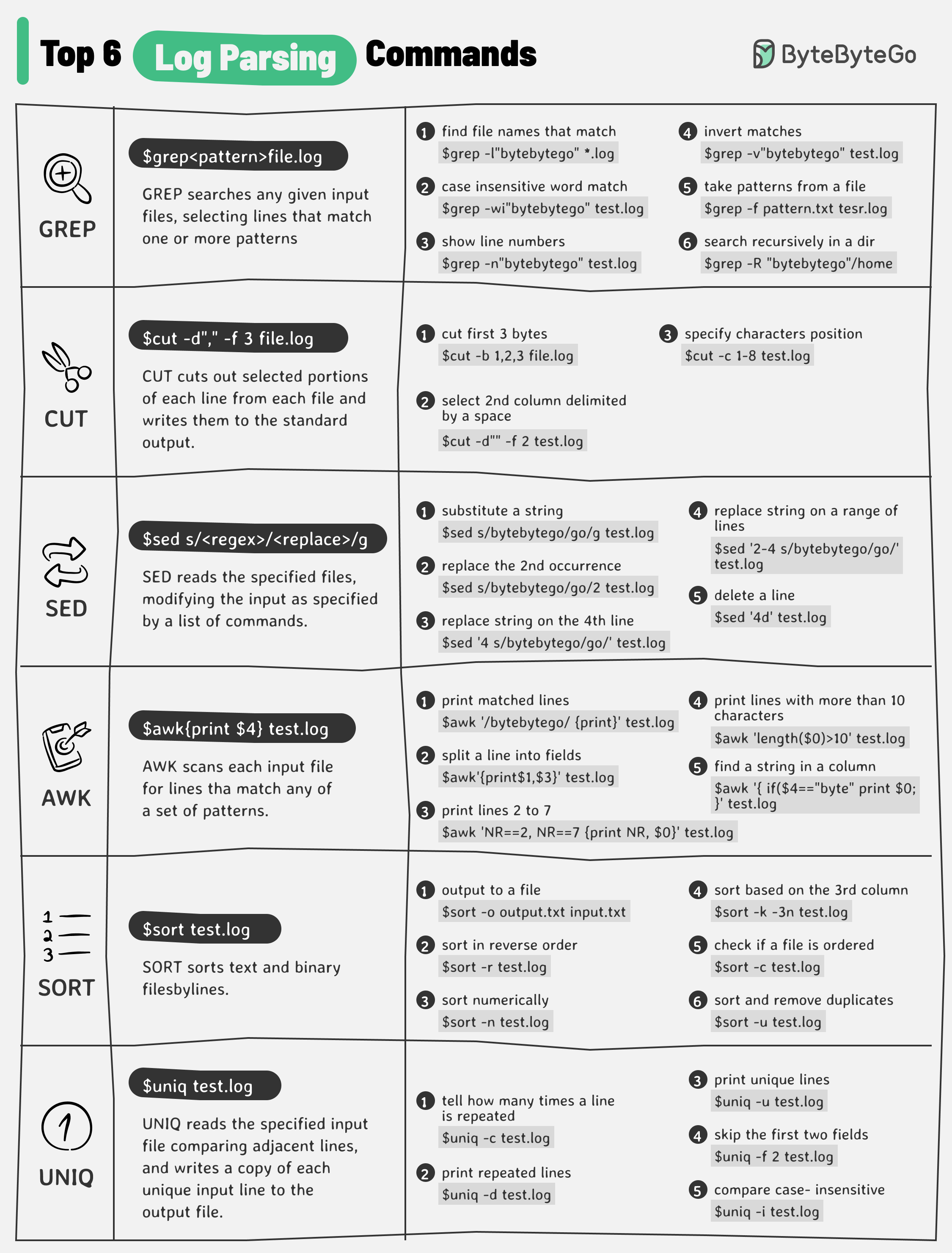

+ * [Log Parsing Cheat Sheet](https://bytebytego.com/guides/log-parsing-cheat-sheet)

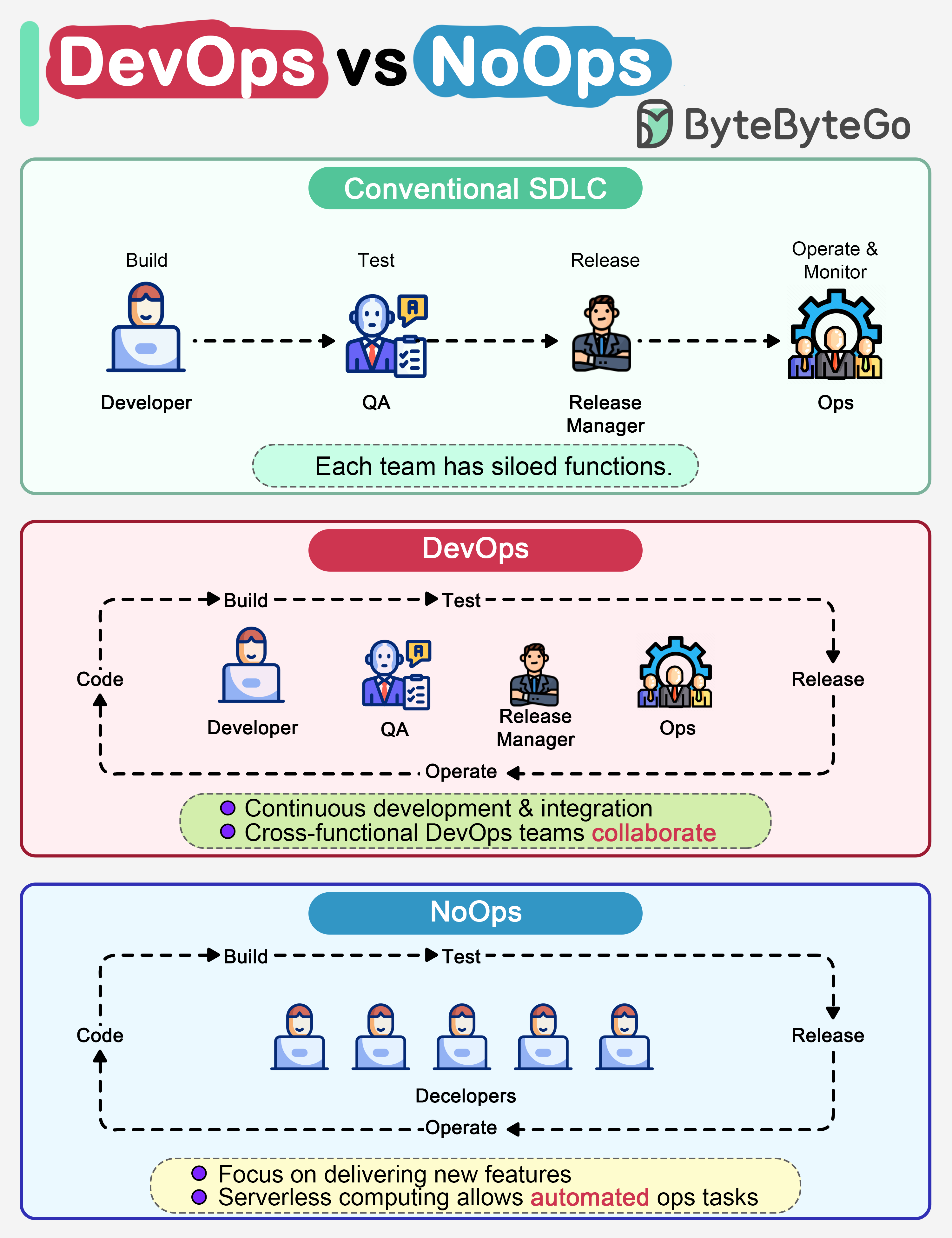

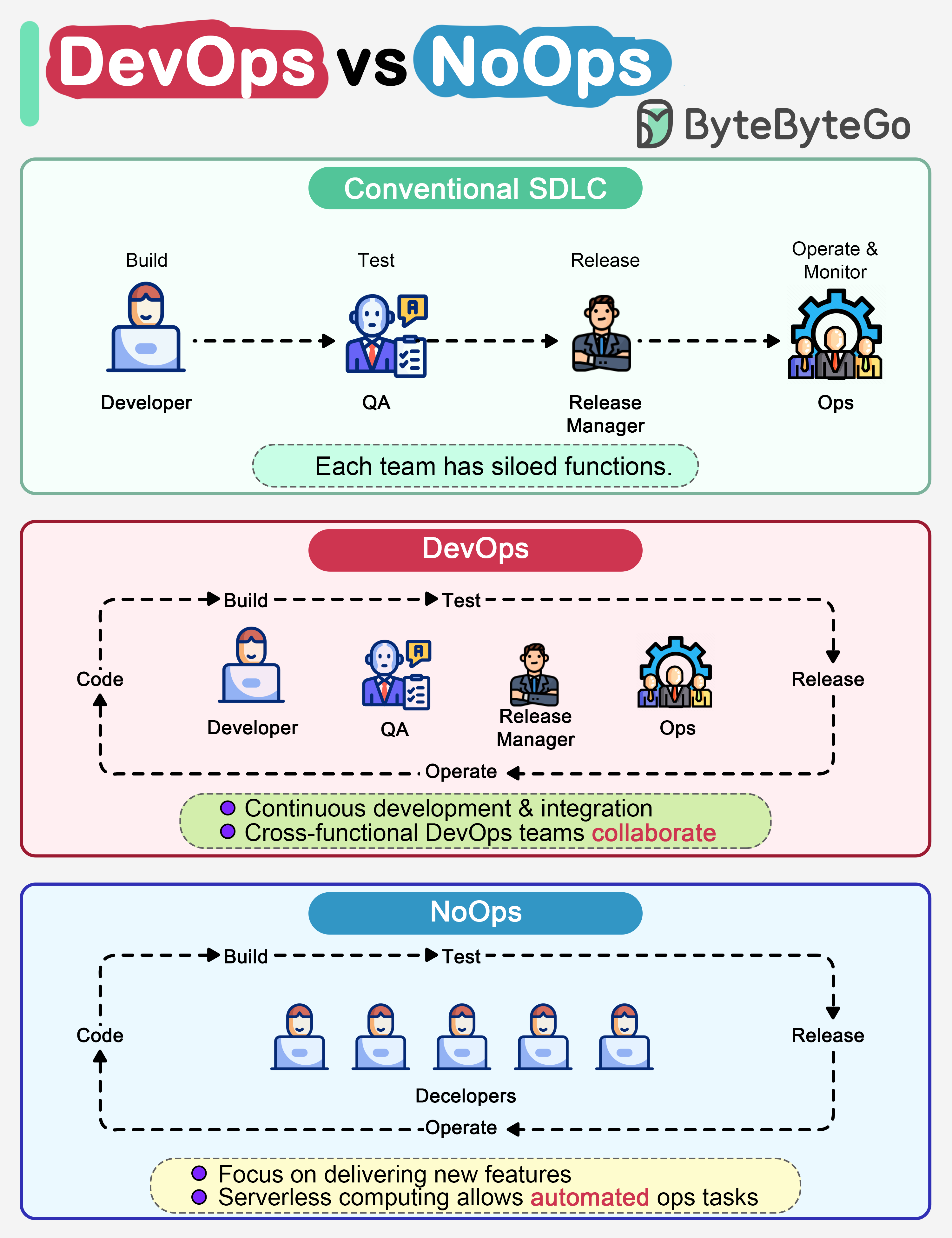

+ * [DevOps vs NoOps: What's the Difference?](https://bytebytego.com/guides/devops-vs-noops)

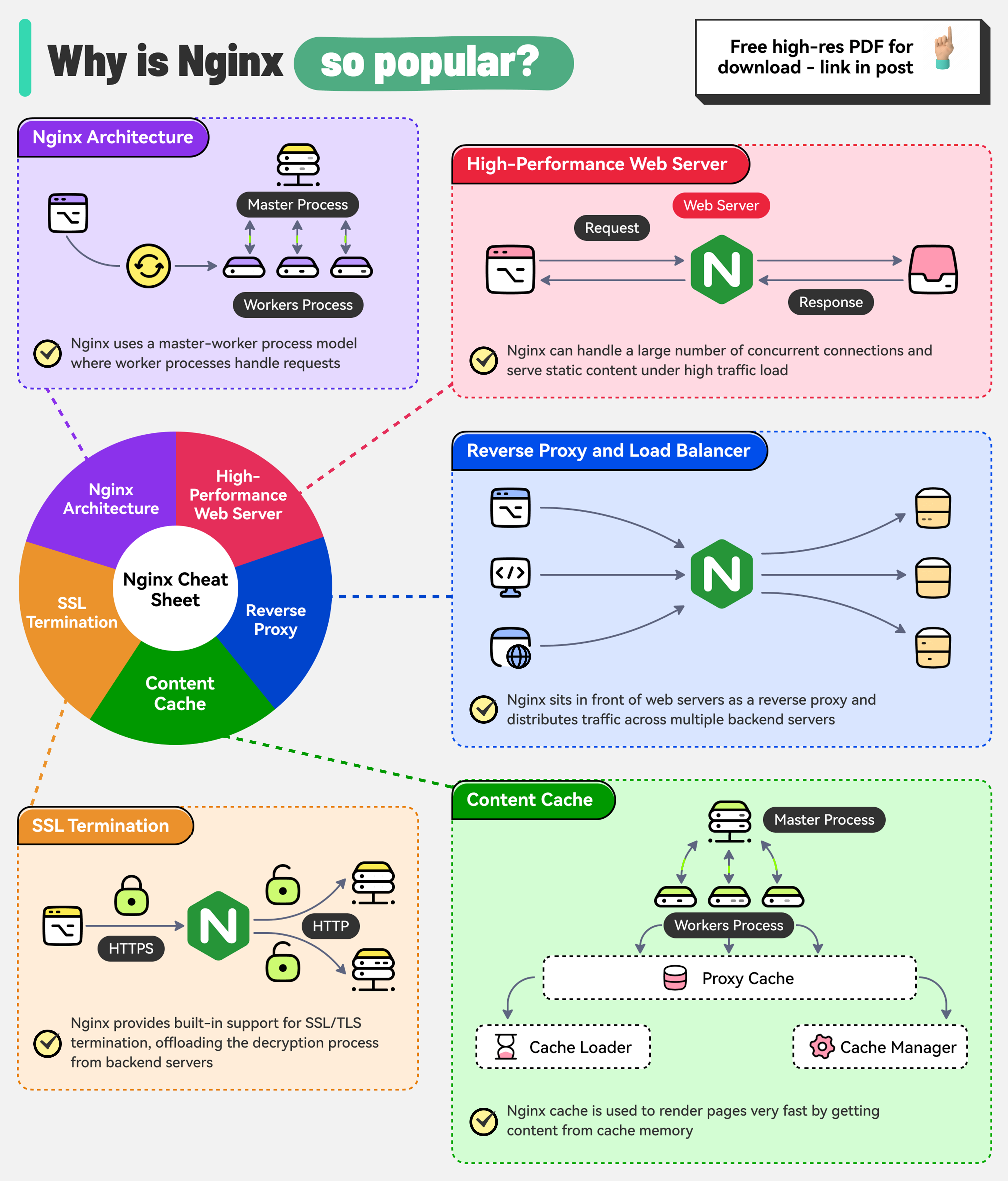

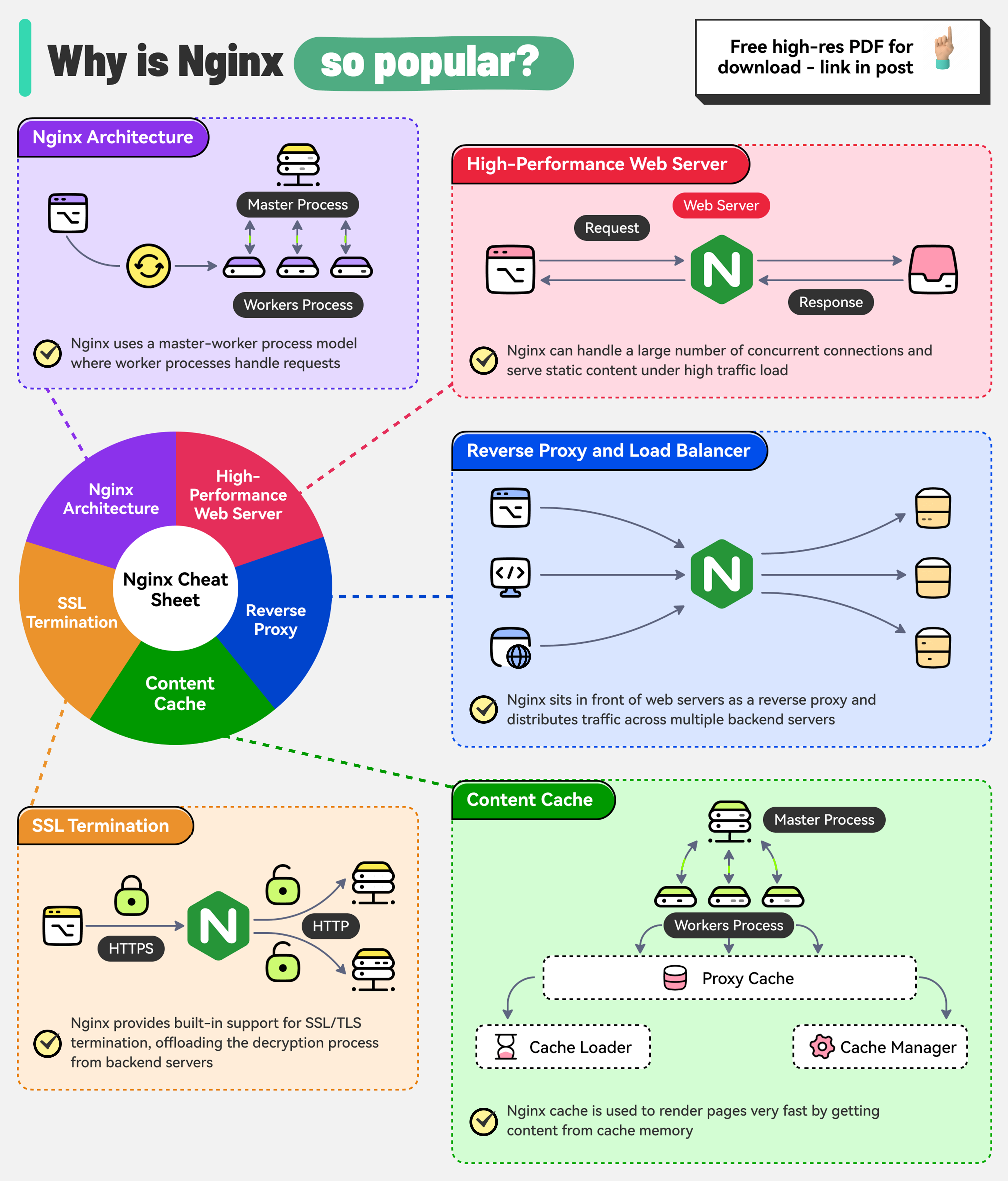

+ * [Why is Nginx so Popular?](https://bytebytego.com/guides/why-is-nginx-so-popular)

+ * [What is Kubernetes (k8s)?](https://bytebytego.com/guides/what-is-k8s-kubernetes)

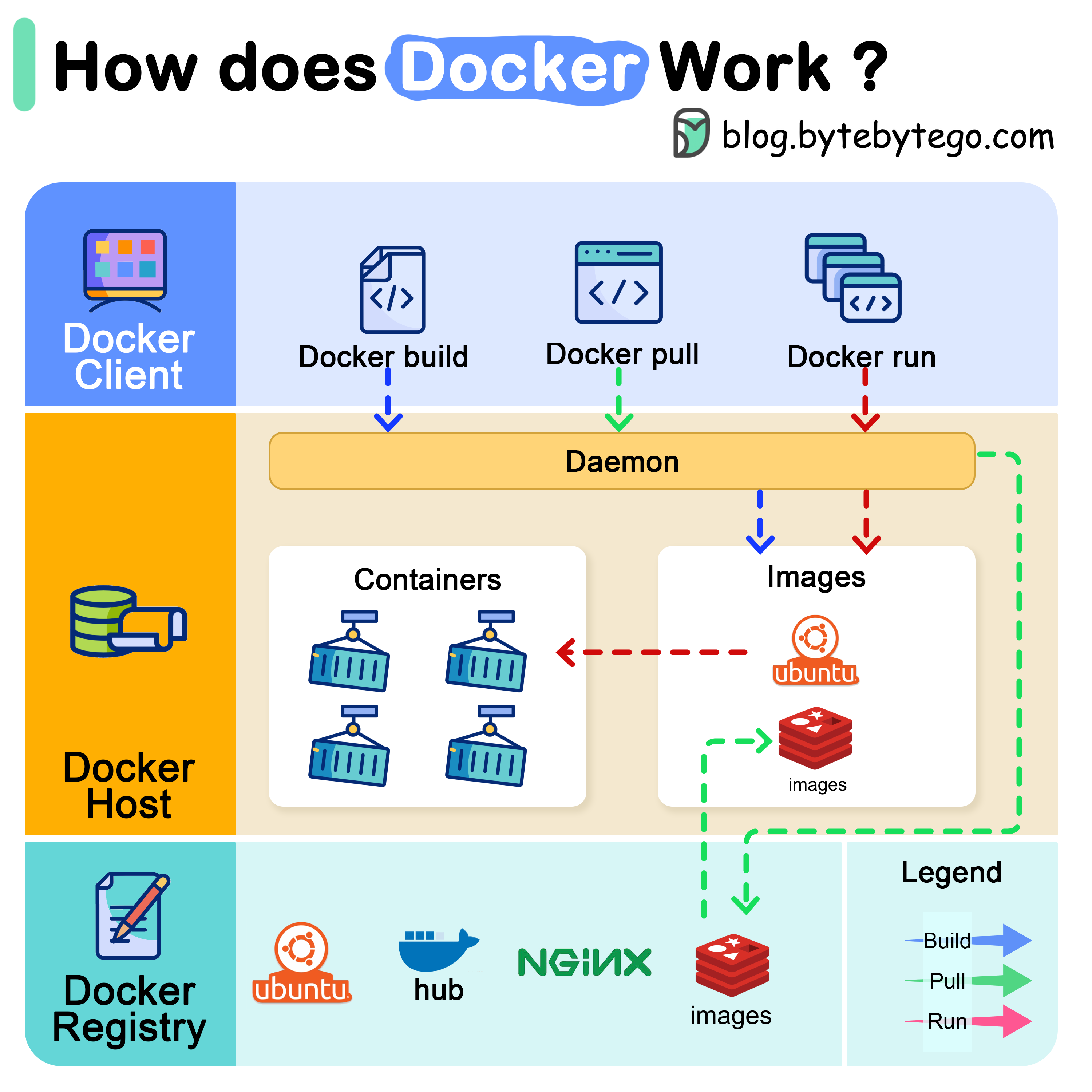

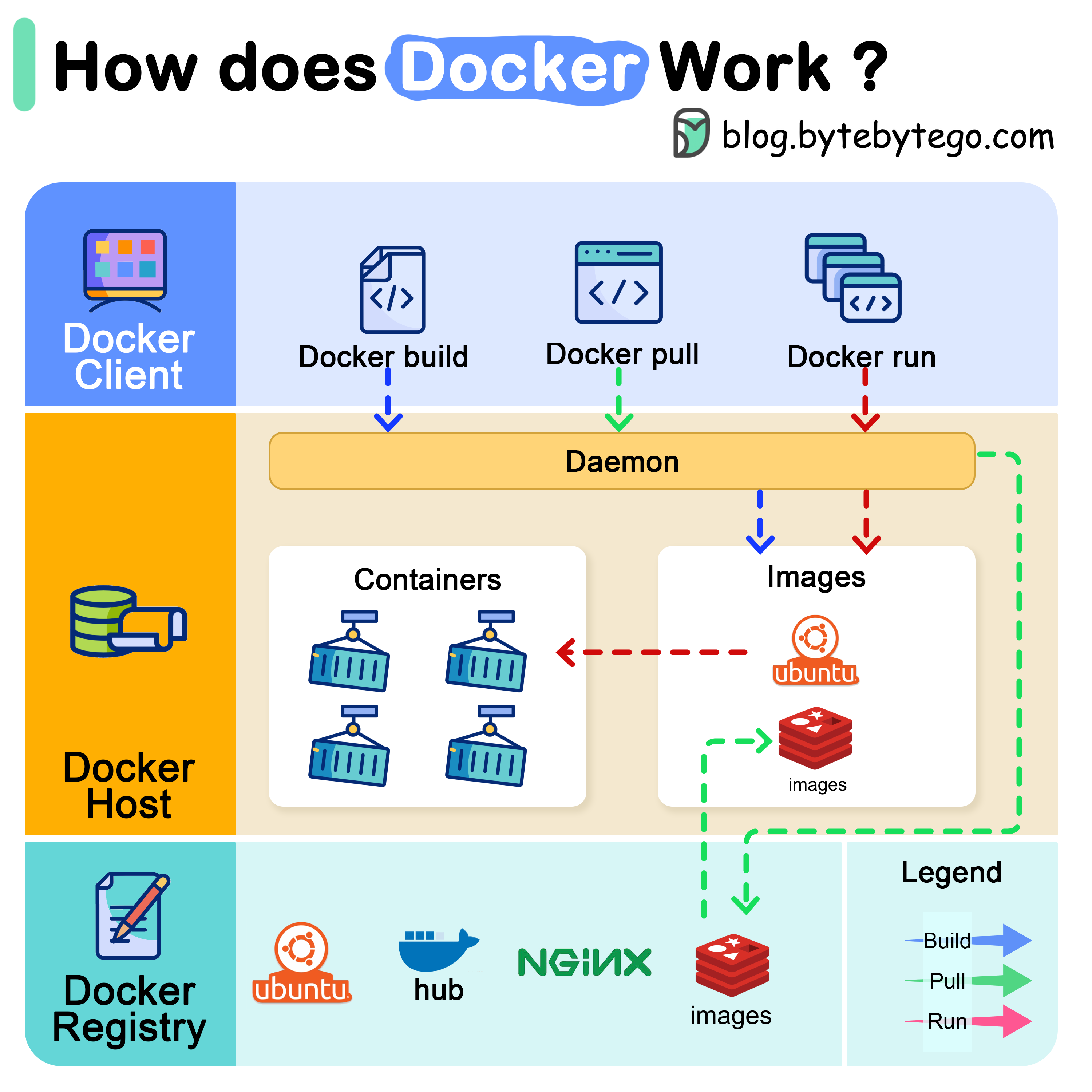

+ * [How does Docker work?](https://bytebytego.com/guides/how-does-docker-work)

+ * [CI/CD Pipeline Explained in Simple Terms](https://bytebytego.com/guides/cicd-pipeline-explained-in-simple-terms)

+* [Security](https://bytebytego.com/guides/security)

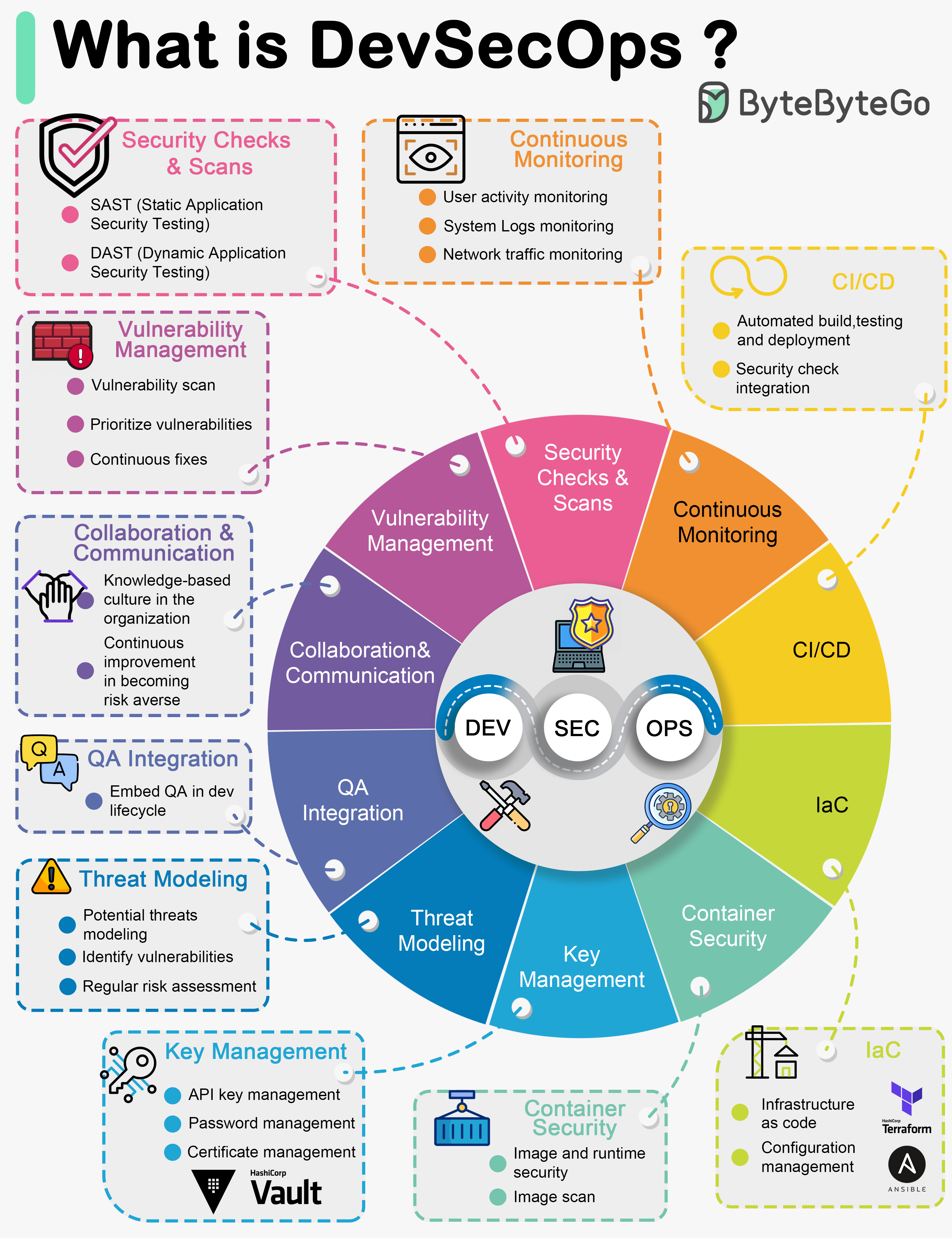

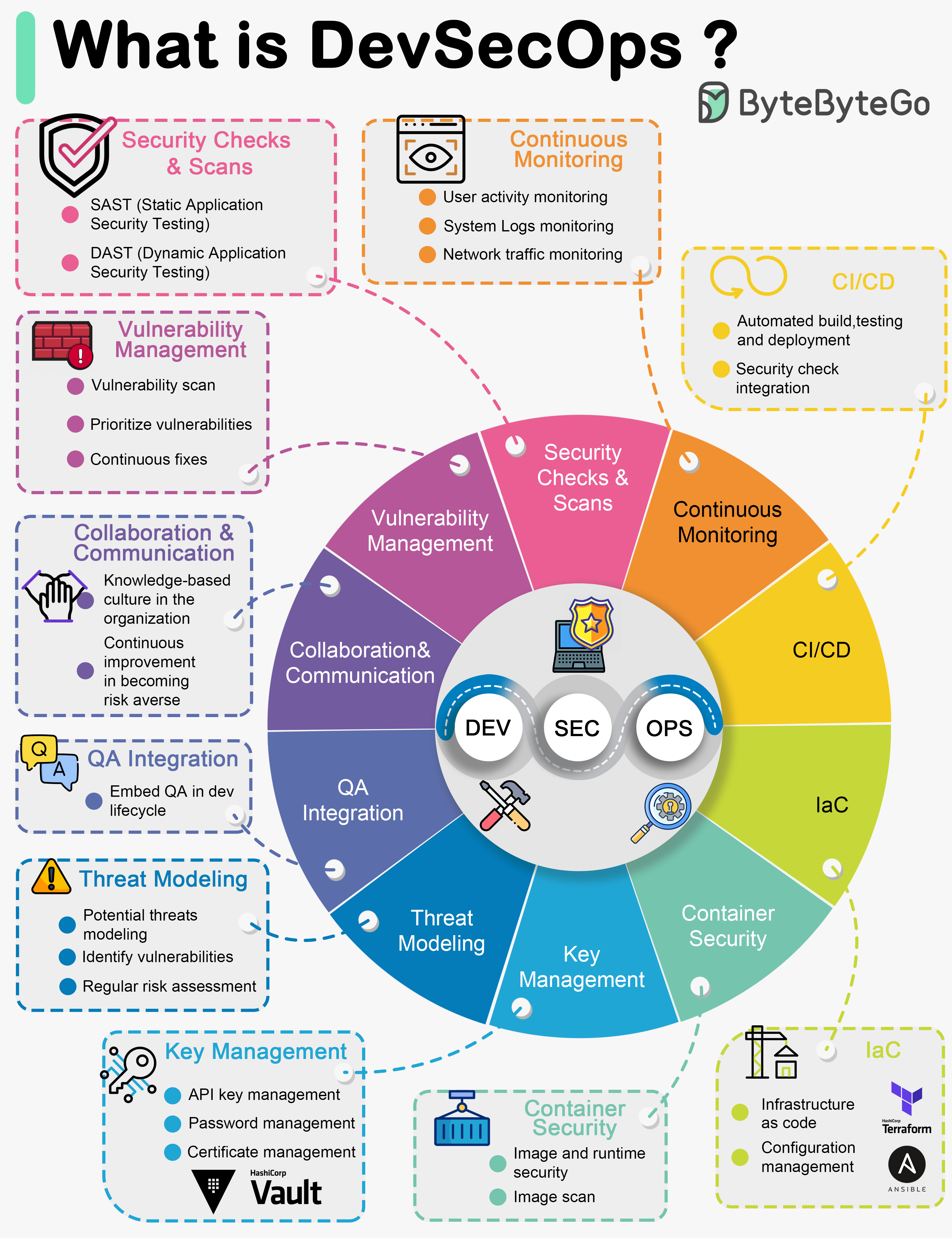

+ * [What is DevSecOps?](https://bytebytego.com/guides/what-is-devsecops)

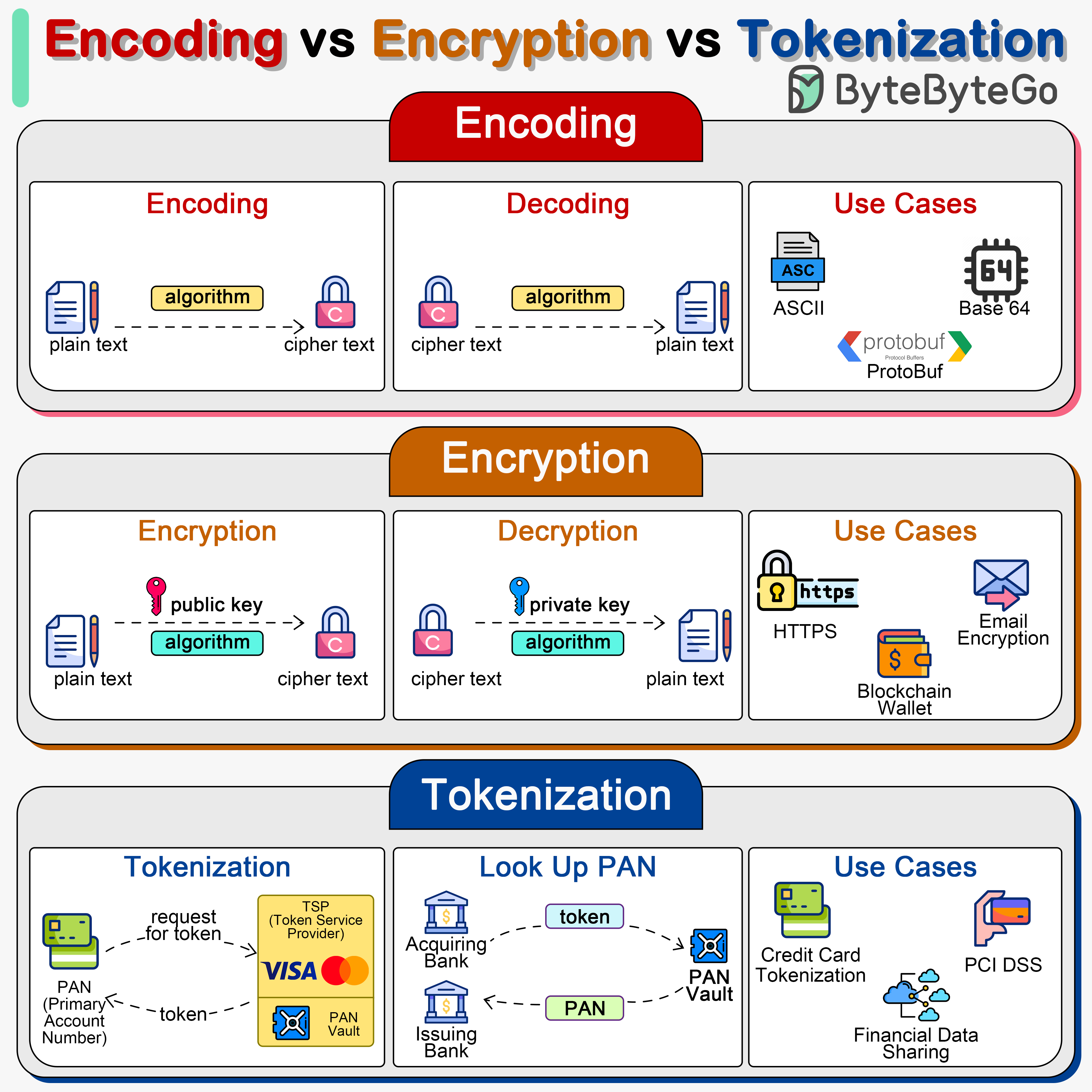

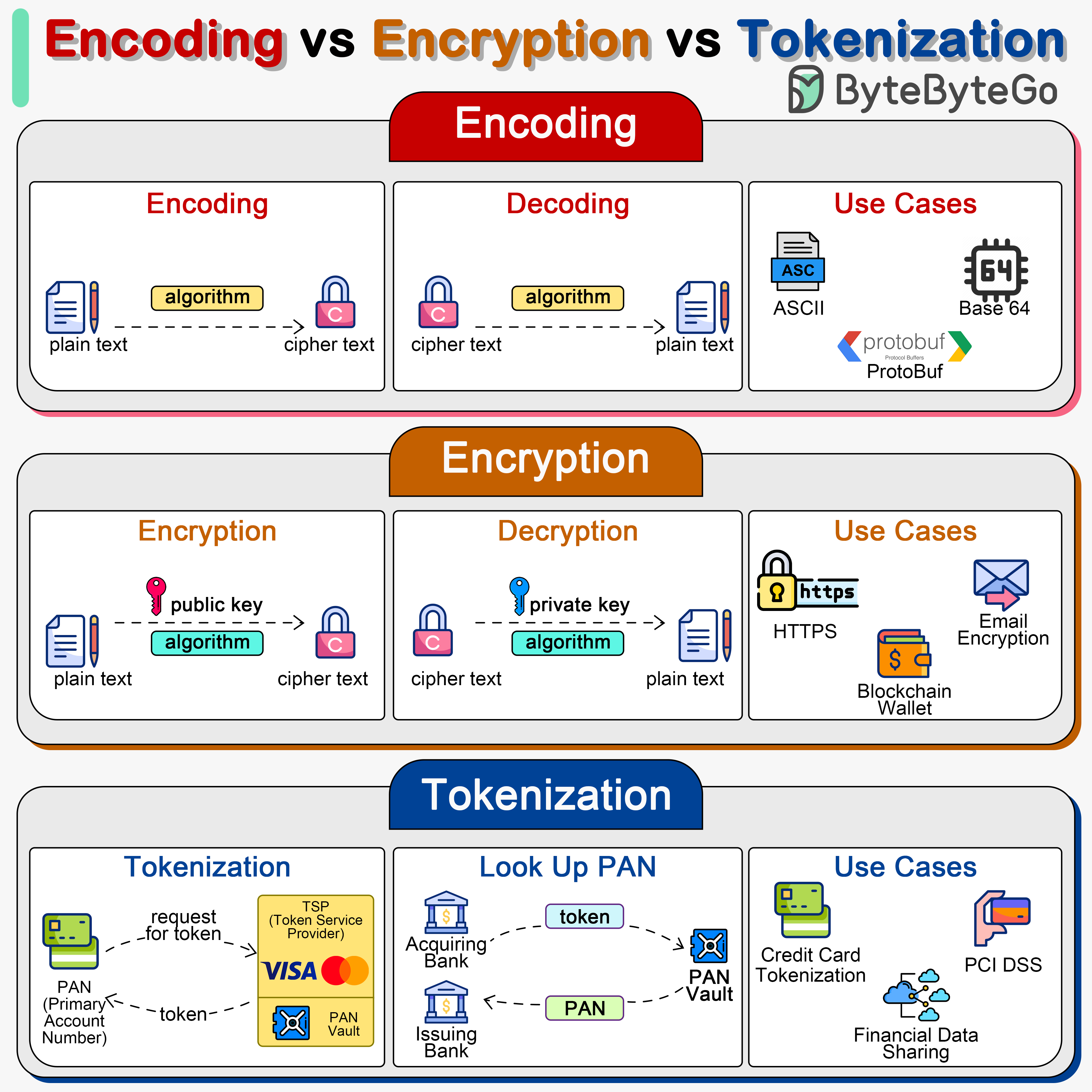

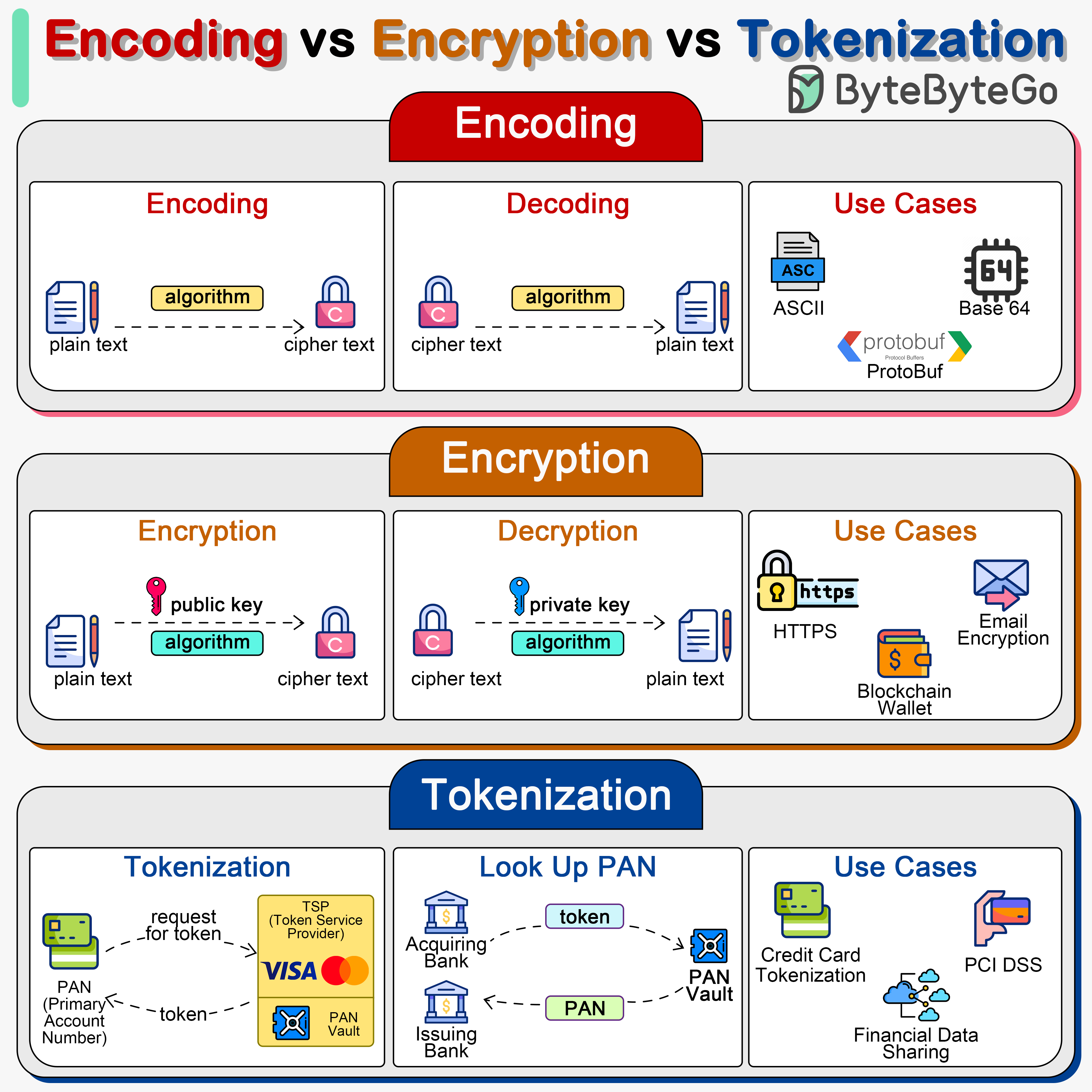

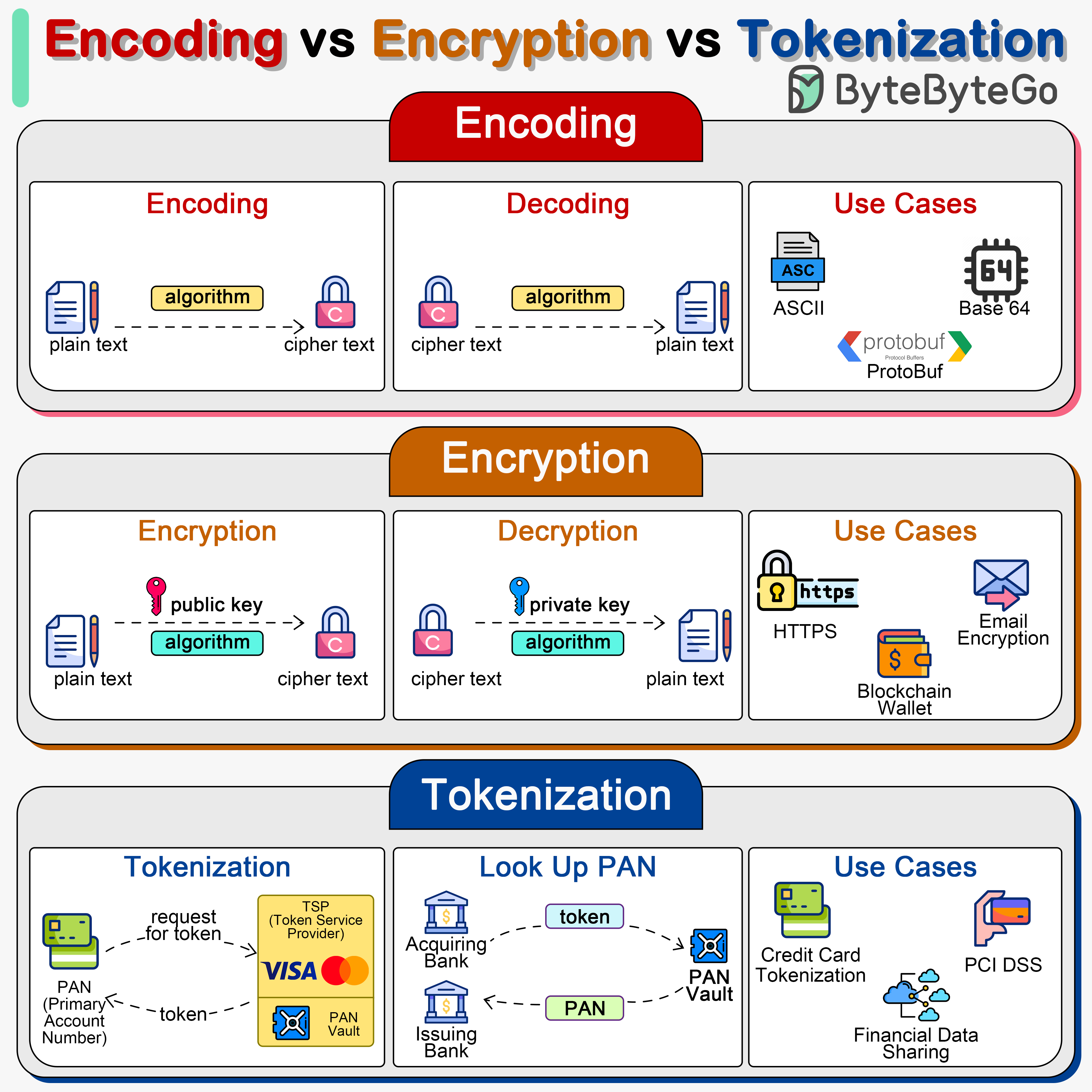

+ * [Encoding vs Encryption vs Tokenization](https://bytebytego.com/guides/encoding-vs-encryption-vs-tokenization)

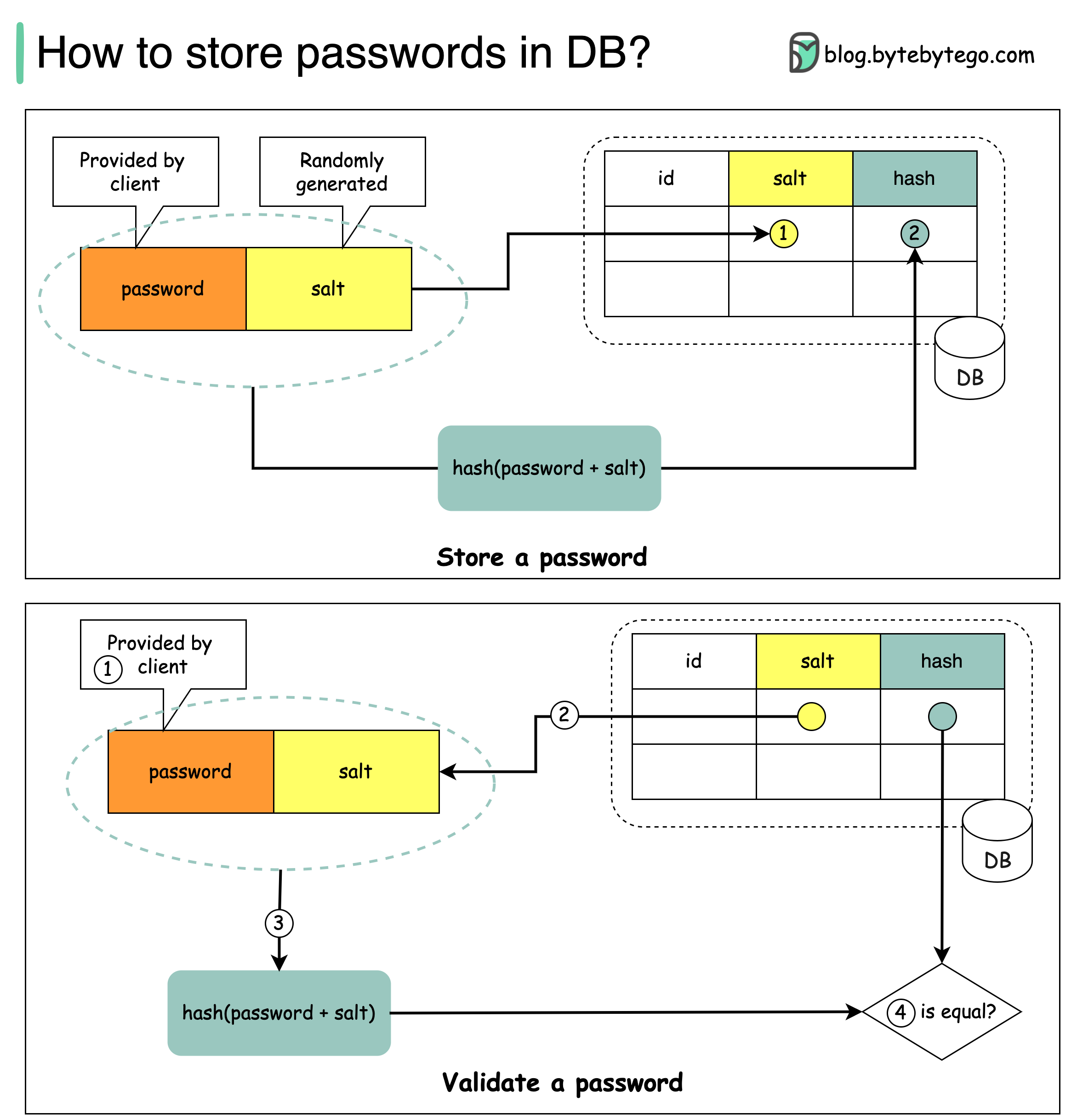

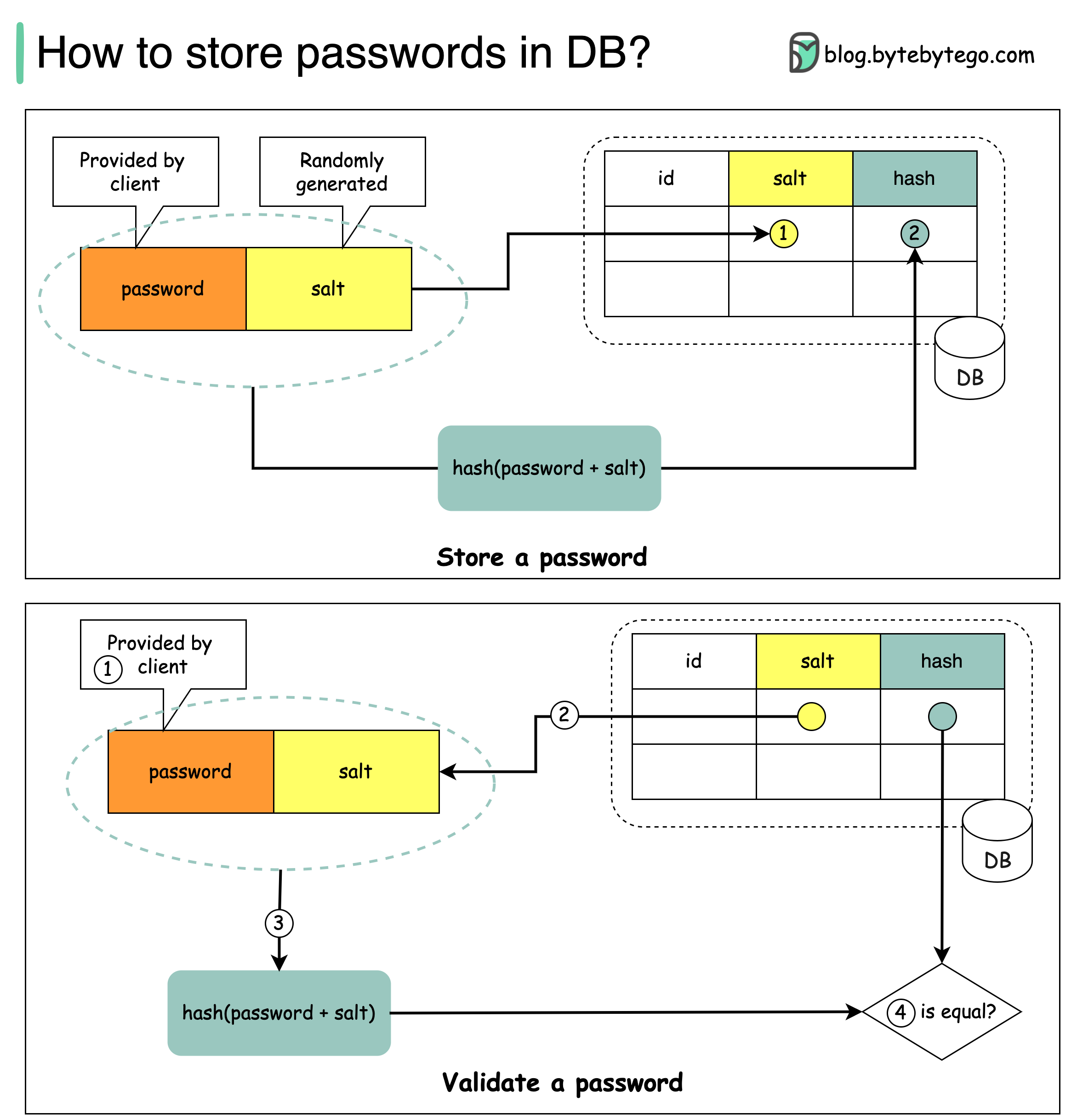

+ * [Storing Passwords Safely: A Comprehensive Guide](https://bytebytego.com/guides/how-to-store-passwords-in-the-database)

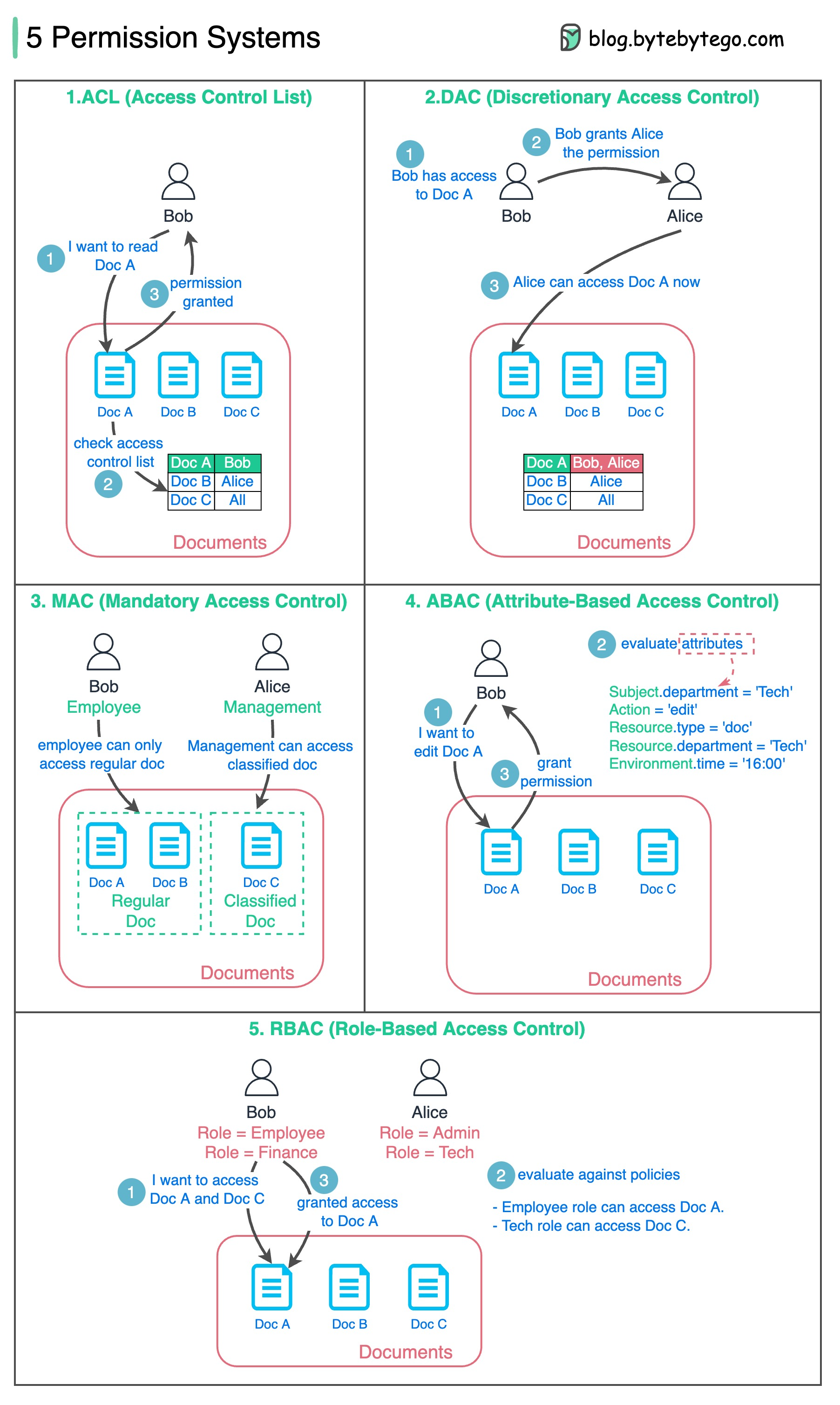

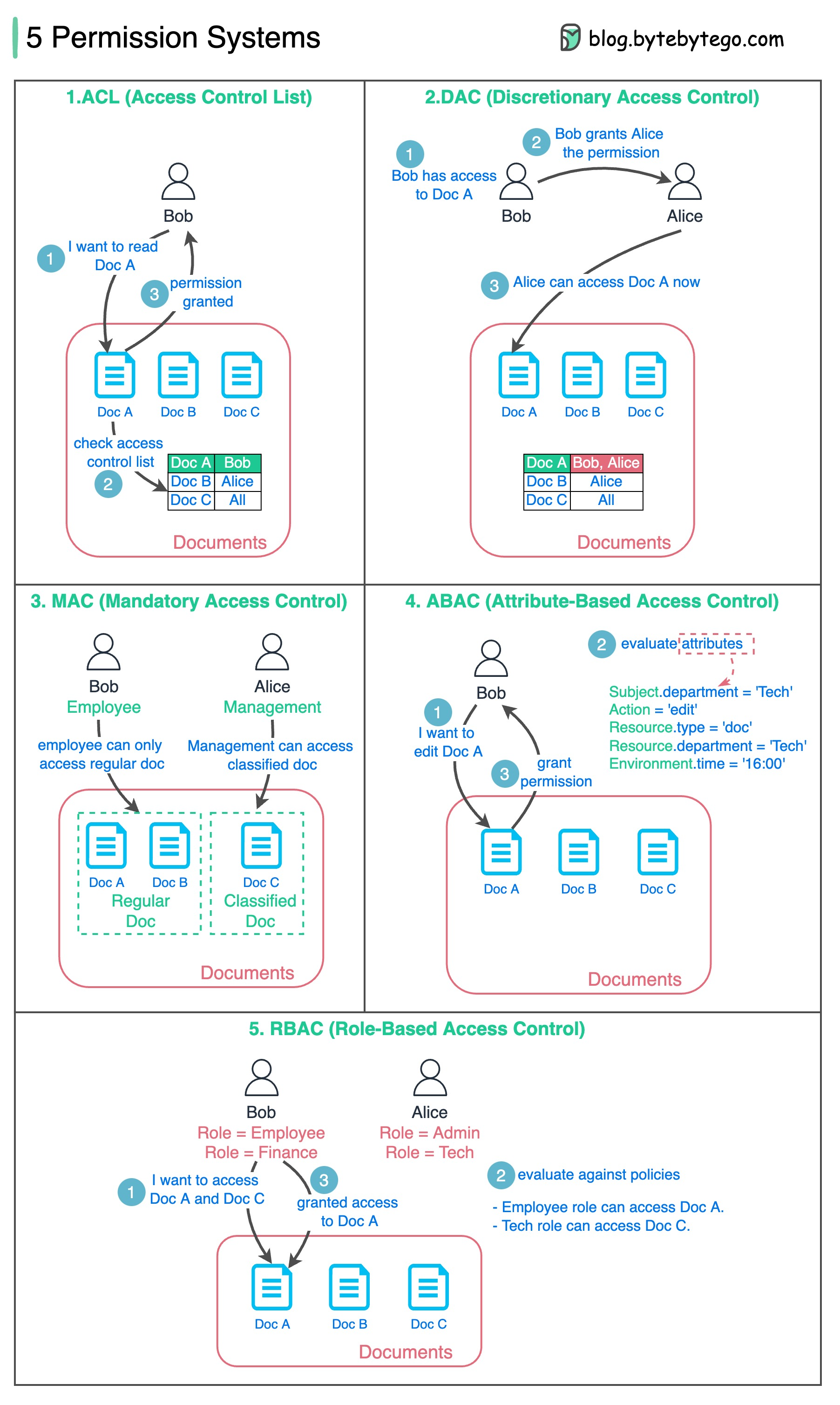

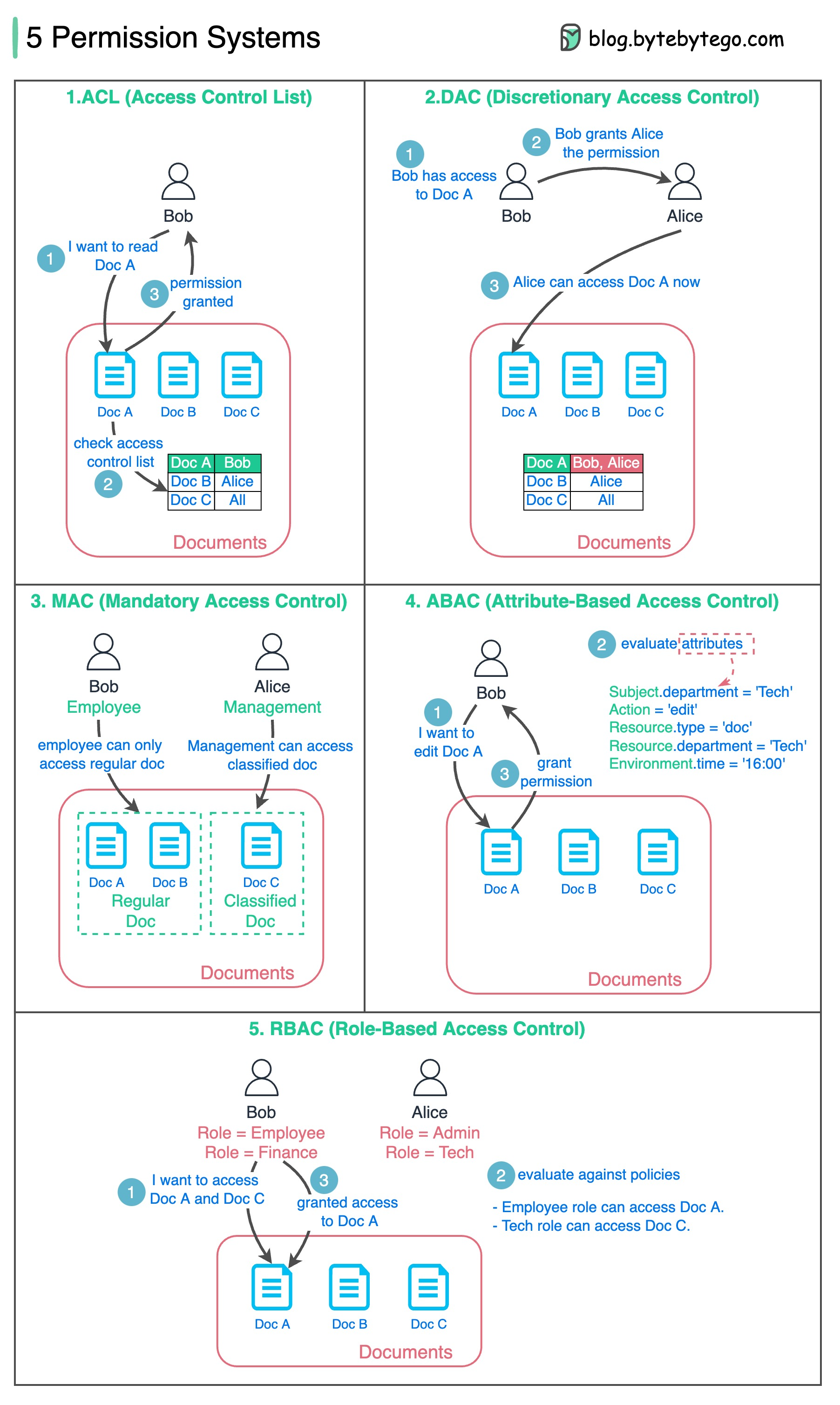

+ * [Designing a Permission System](https://bytebytego.com/guides/how-do-we-design-a-permission-system)

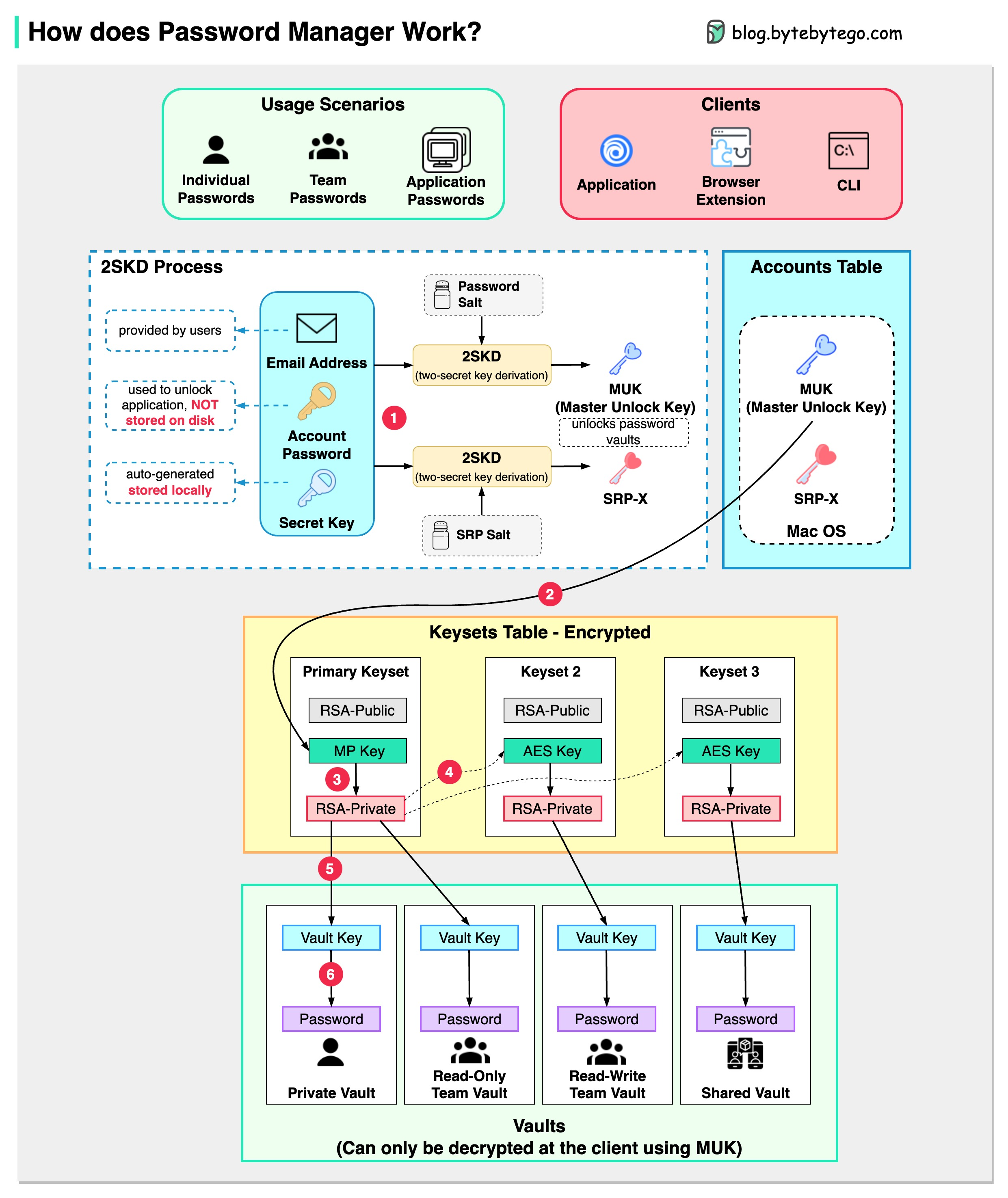

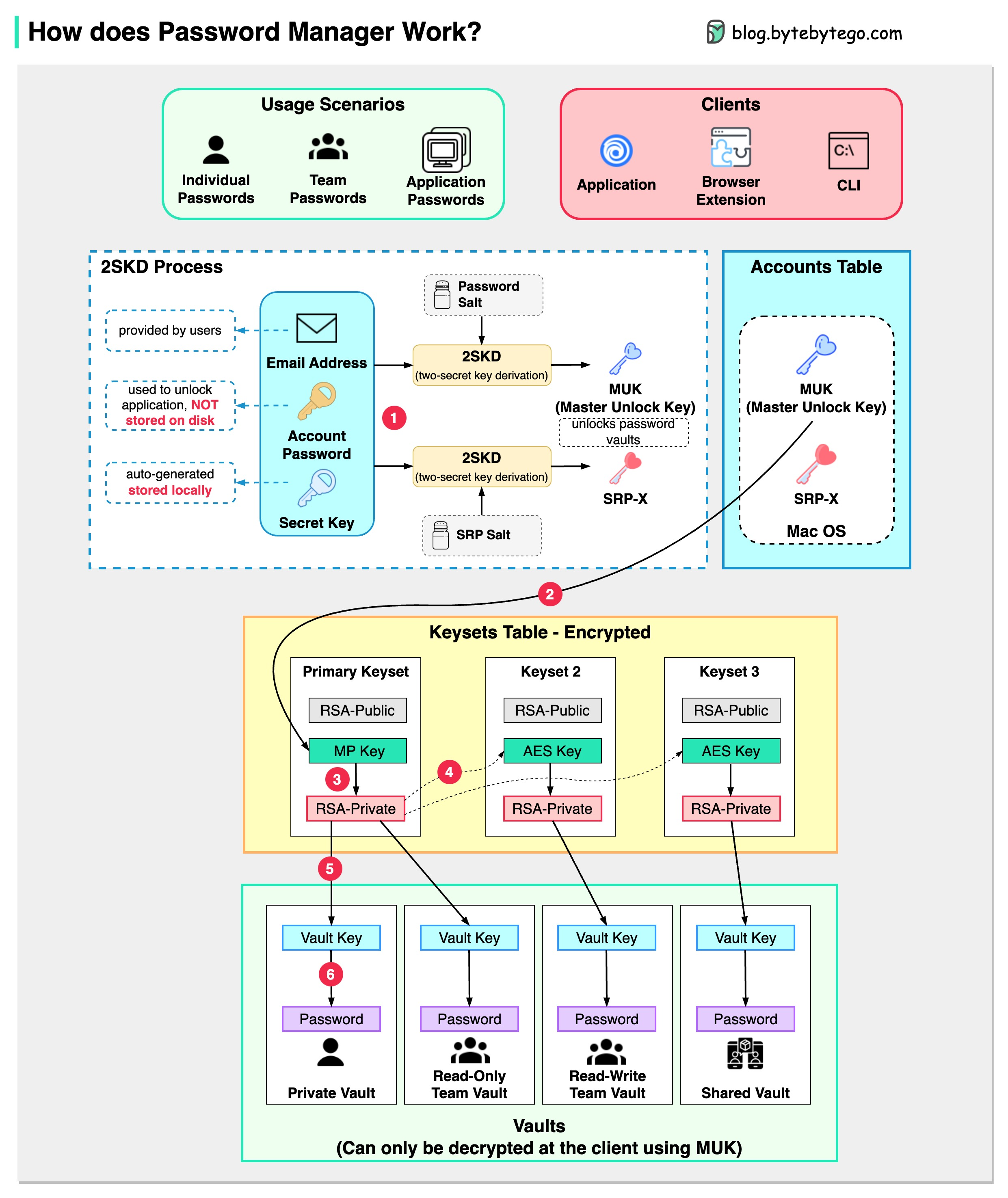

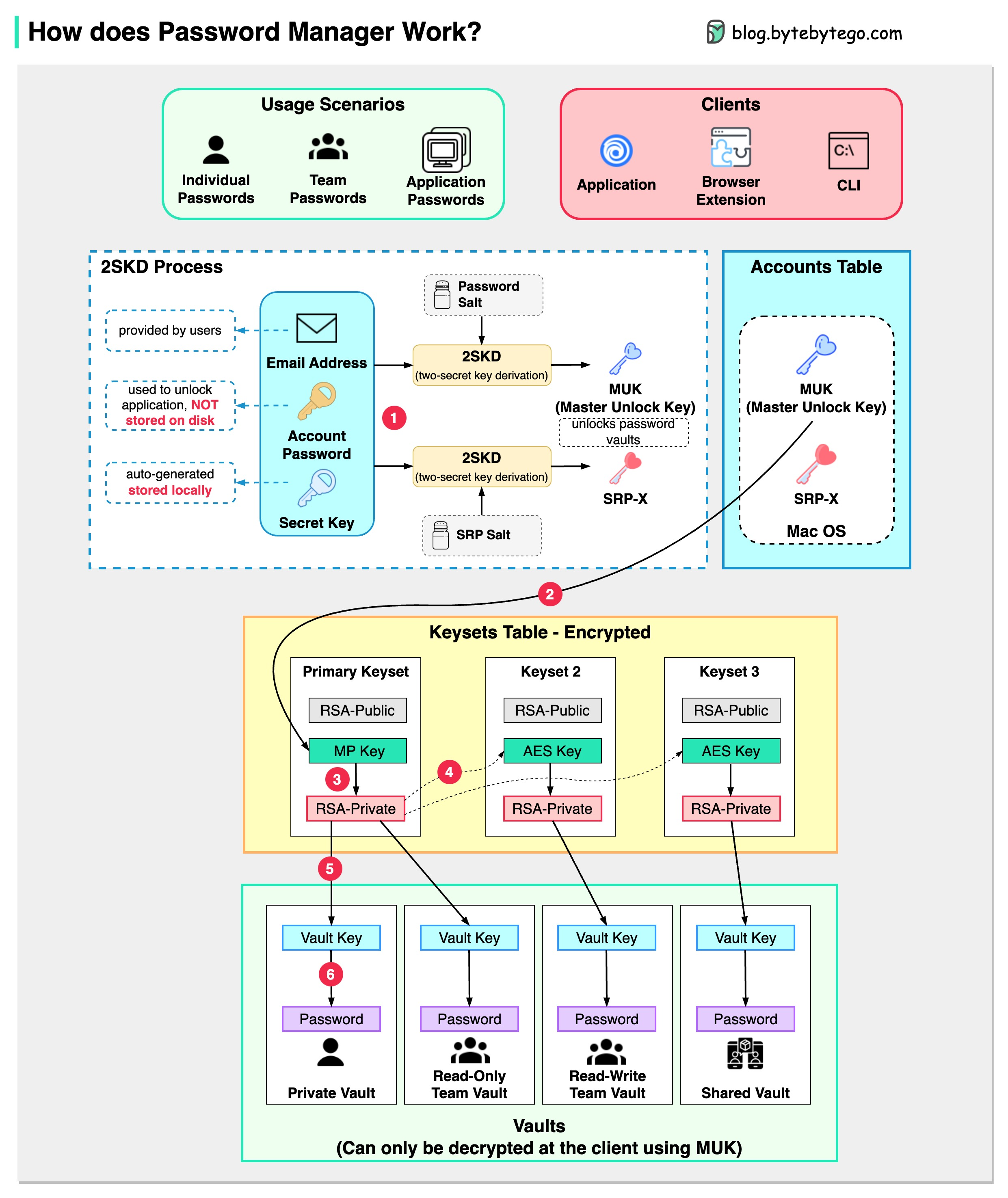

+ * [How Password Managers Work](https://bytebytego.com/guides/how-does-a-password-manager-such-as-1password-or-lastpass-work)

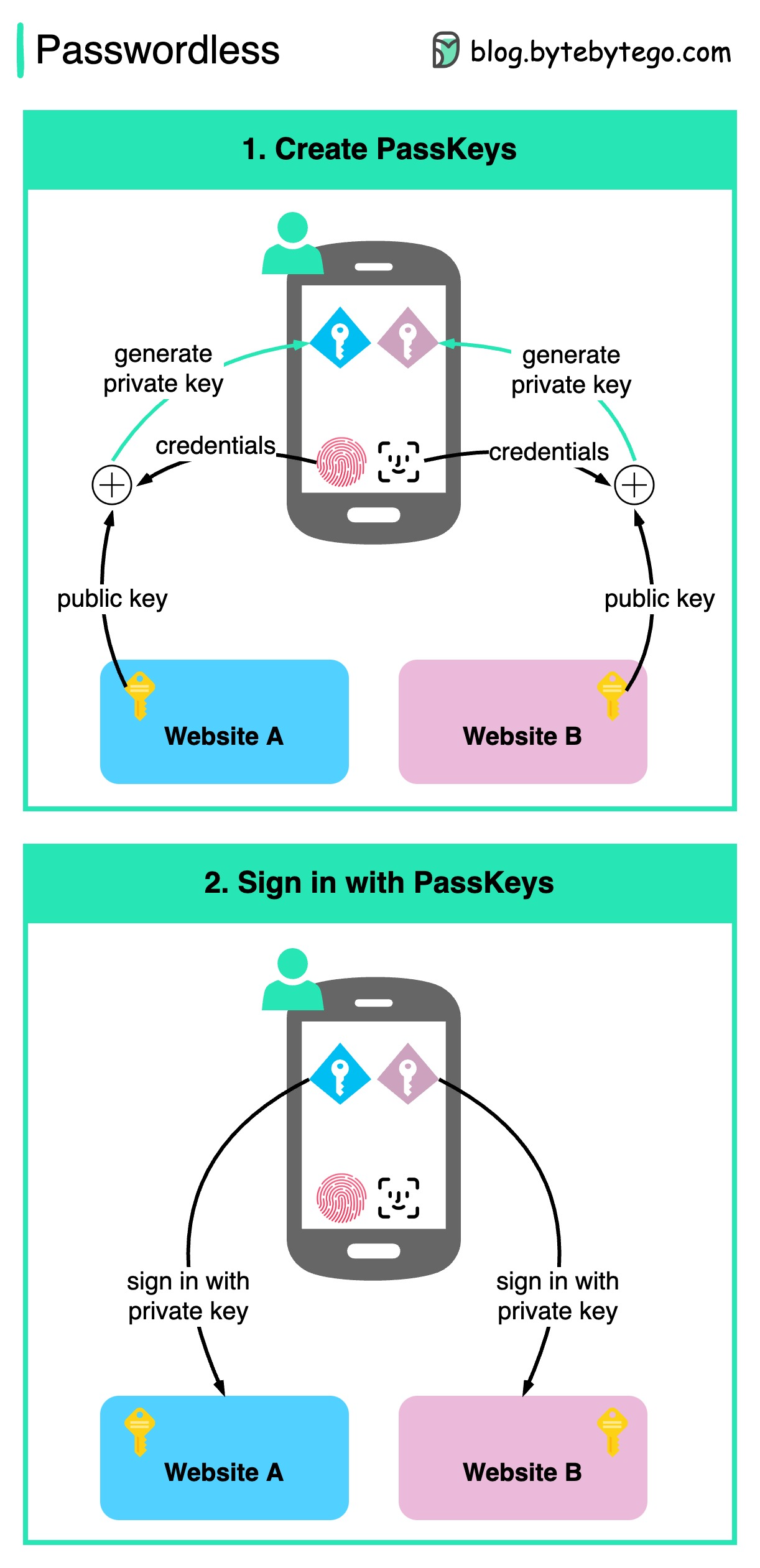

+ * [Is PassKey Shaping a Passwordless Future?](https://bytebytego.com/guides/is-passkey-shaping-a-passwordless-future)

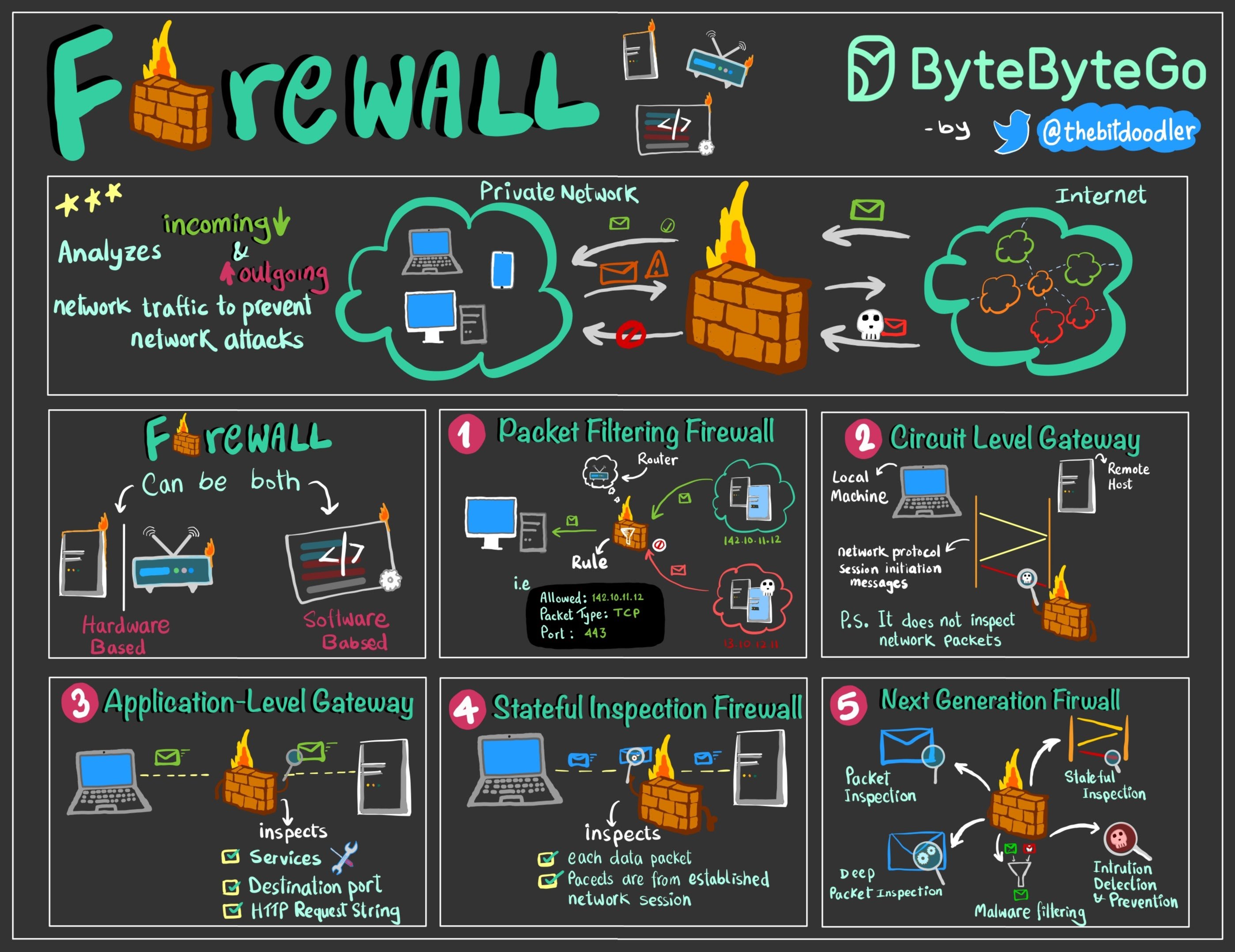

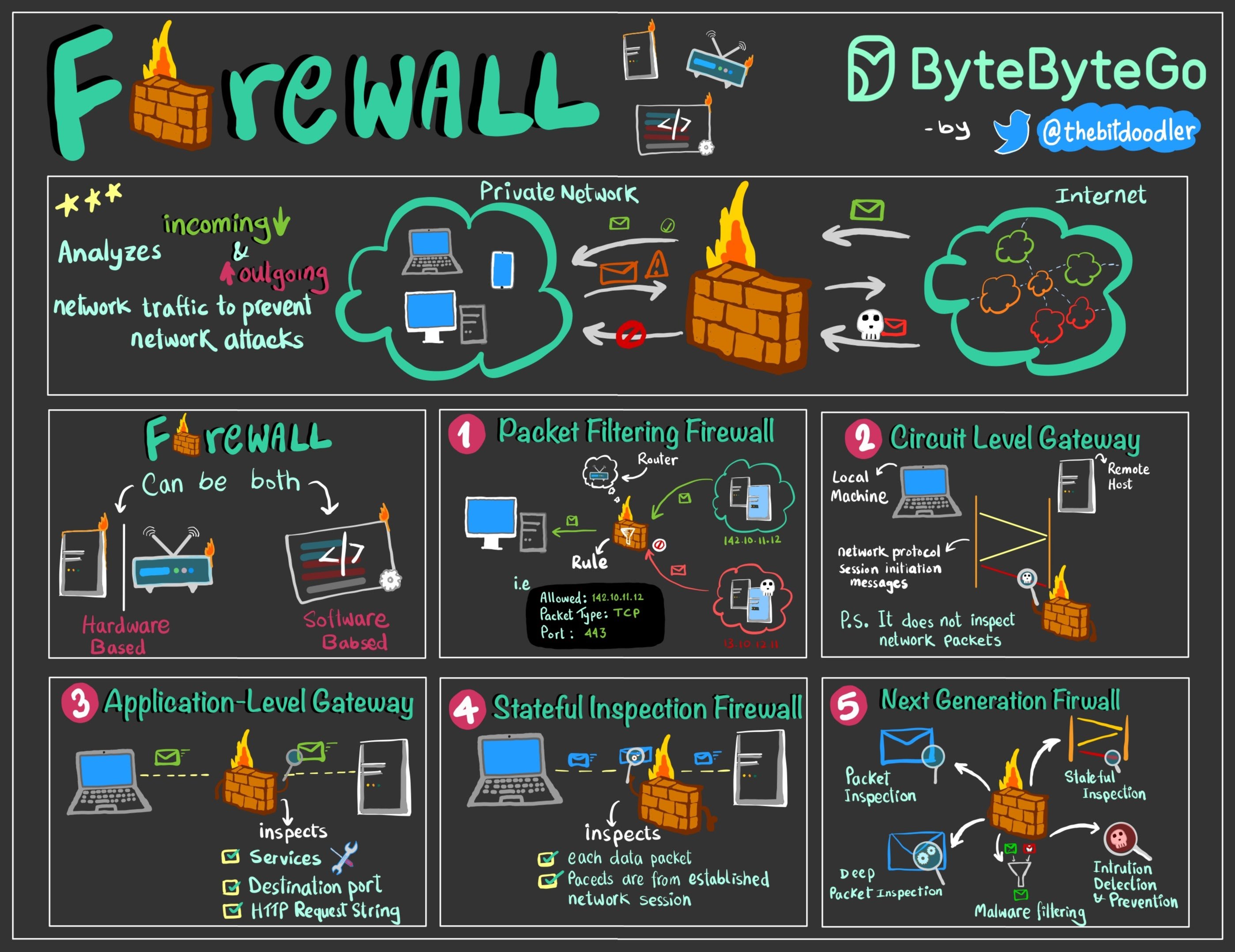

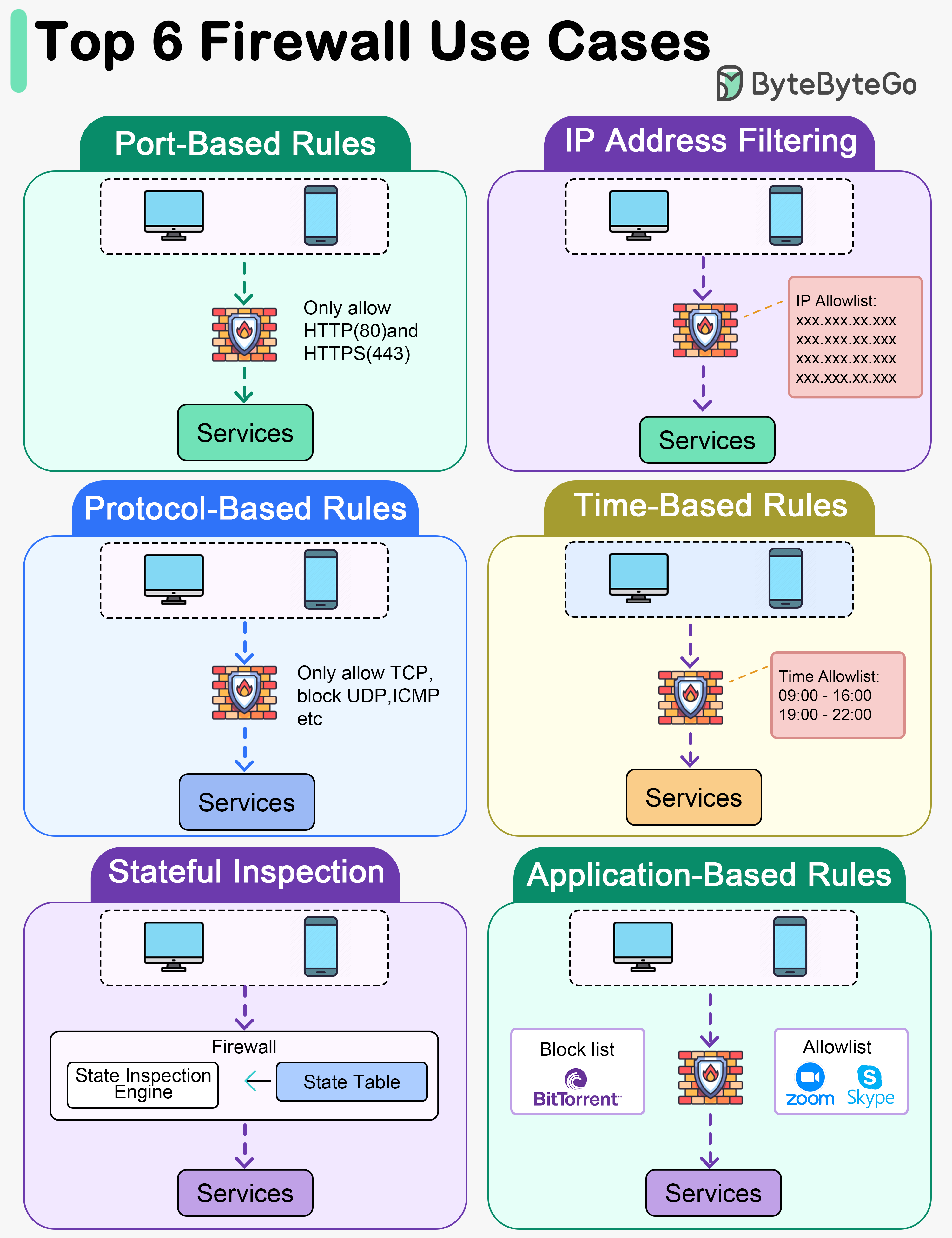

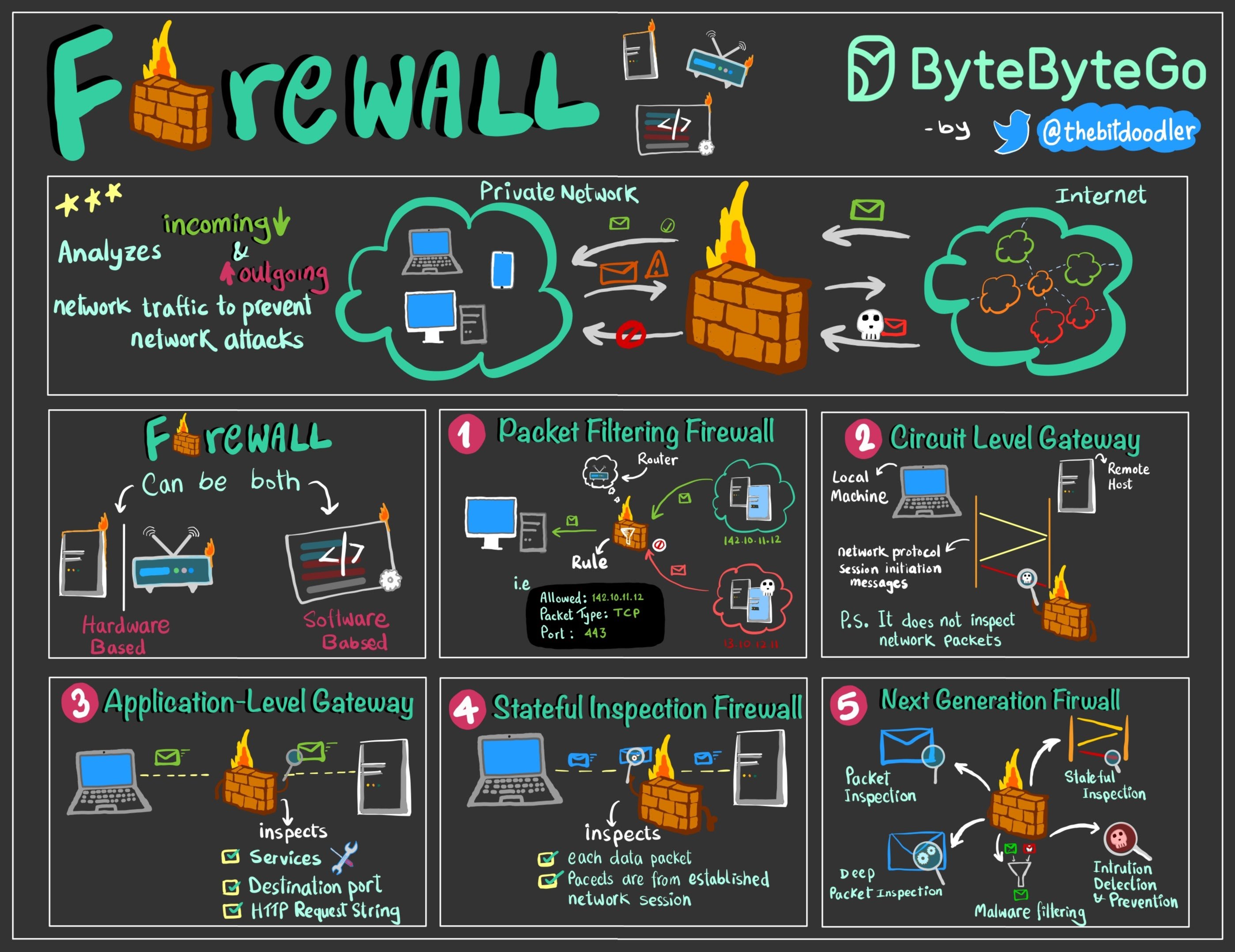

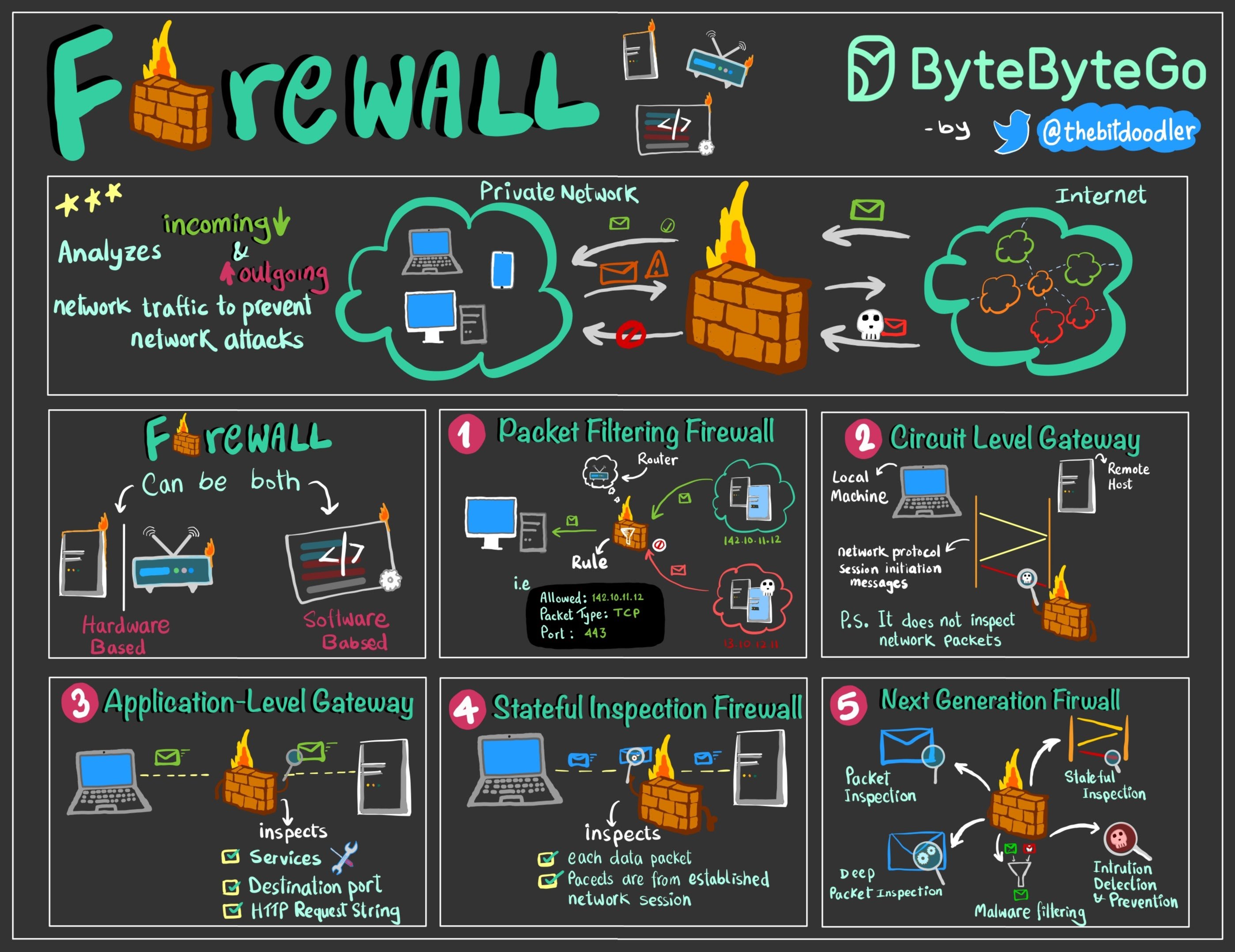

+ * [Firewall Explained to Kids and Adults](https://bytebytego.com/guides/firewall-explained-to-kids-and-adults)

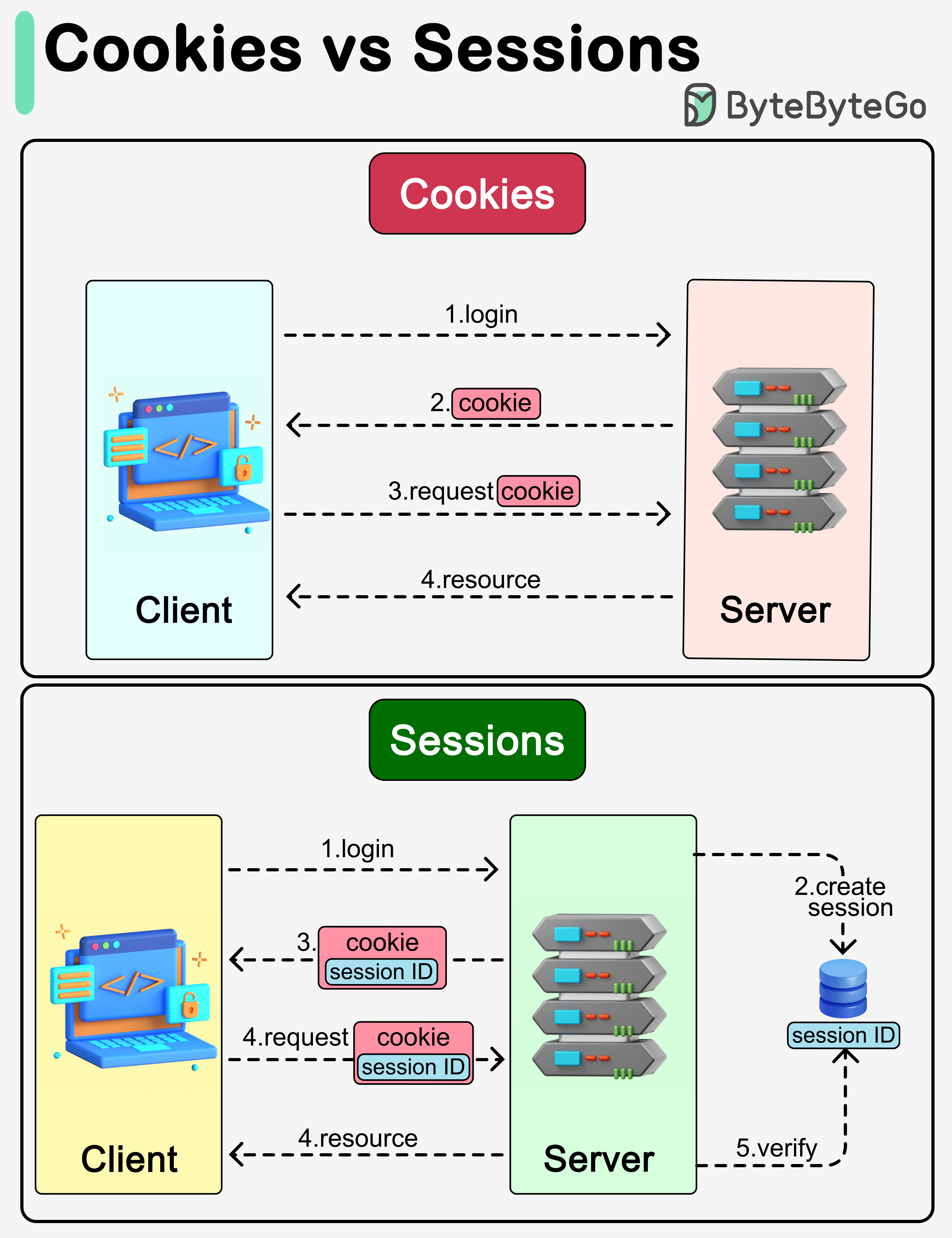

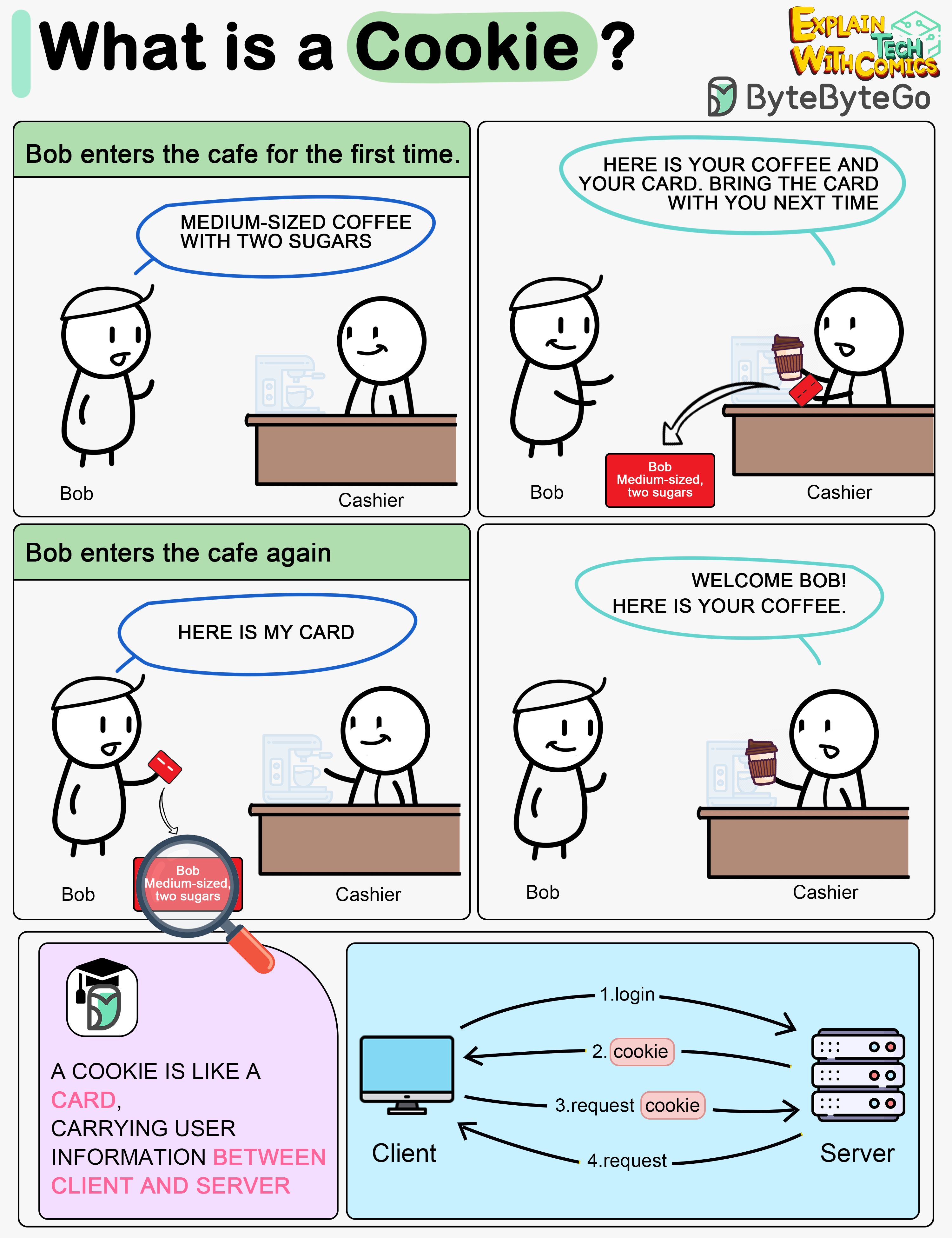

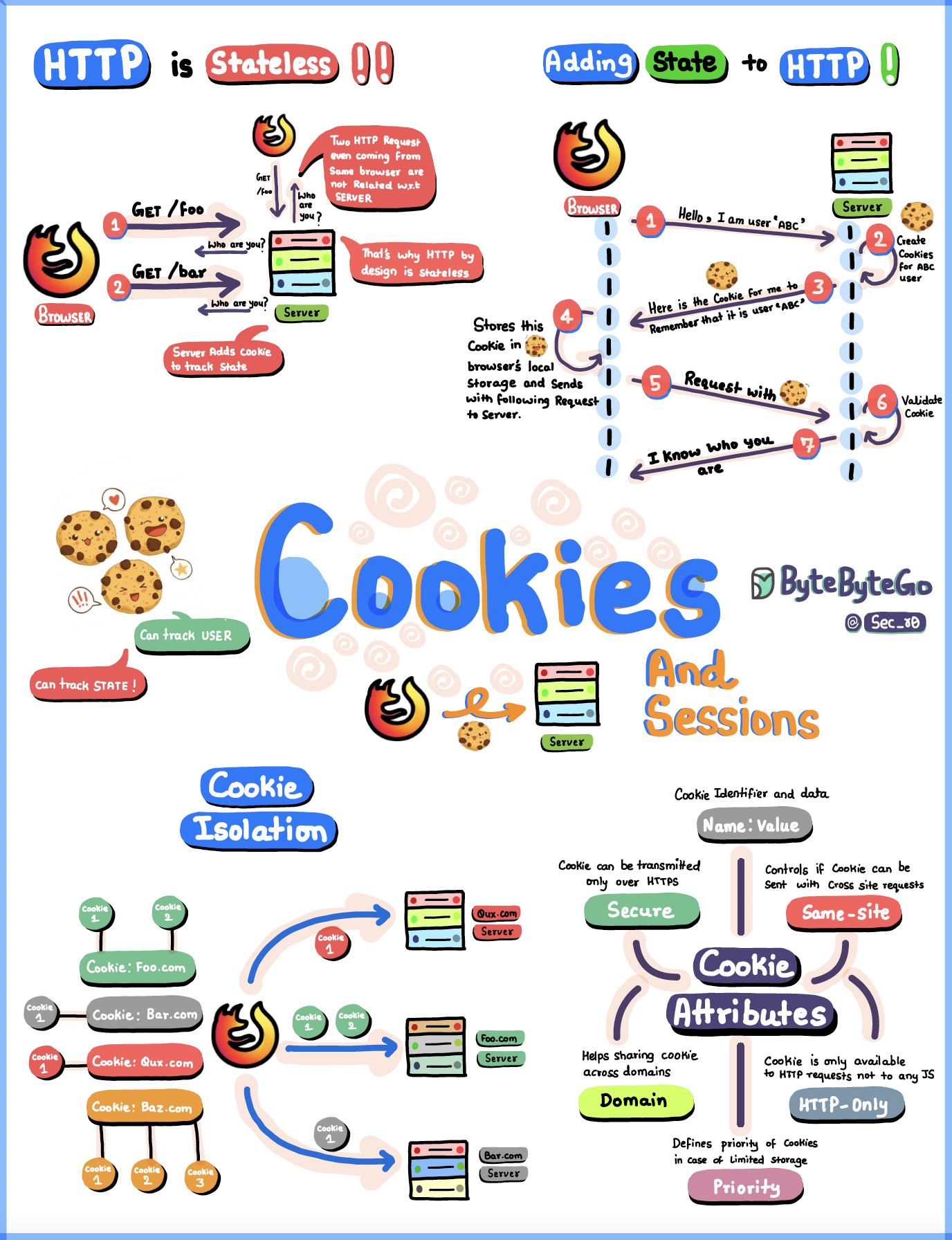

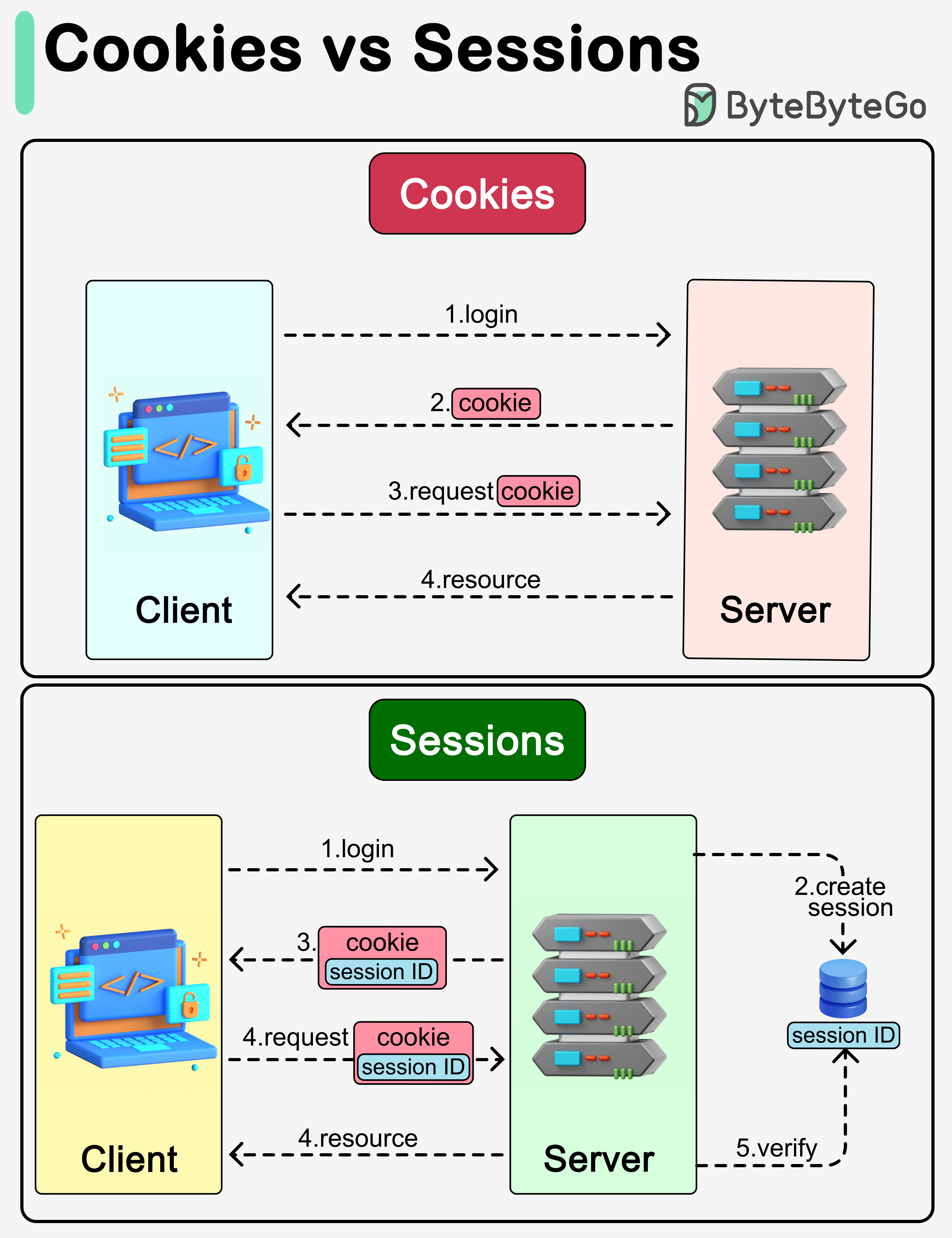

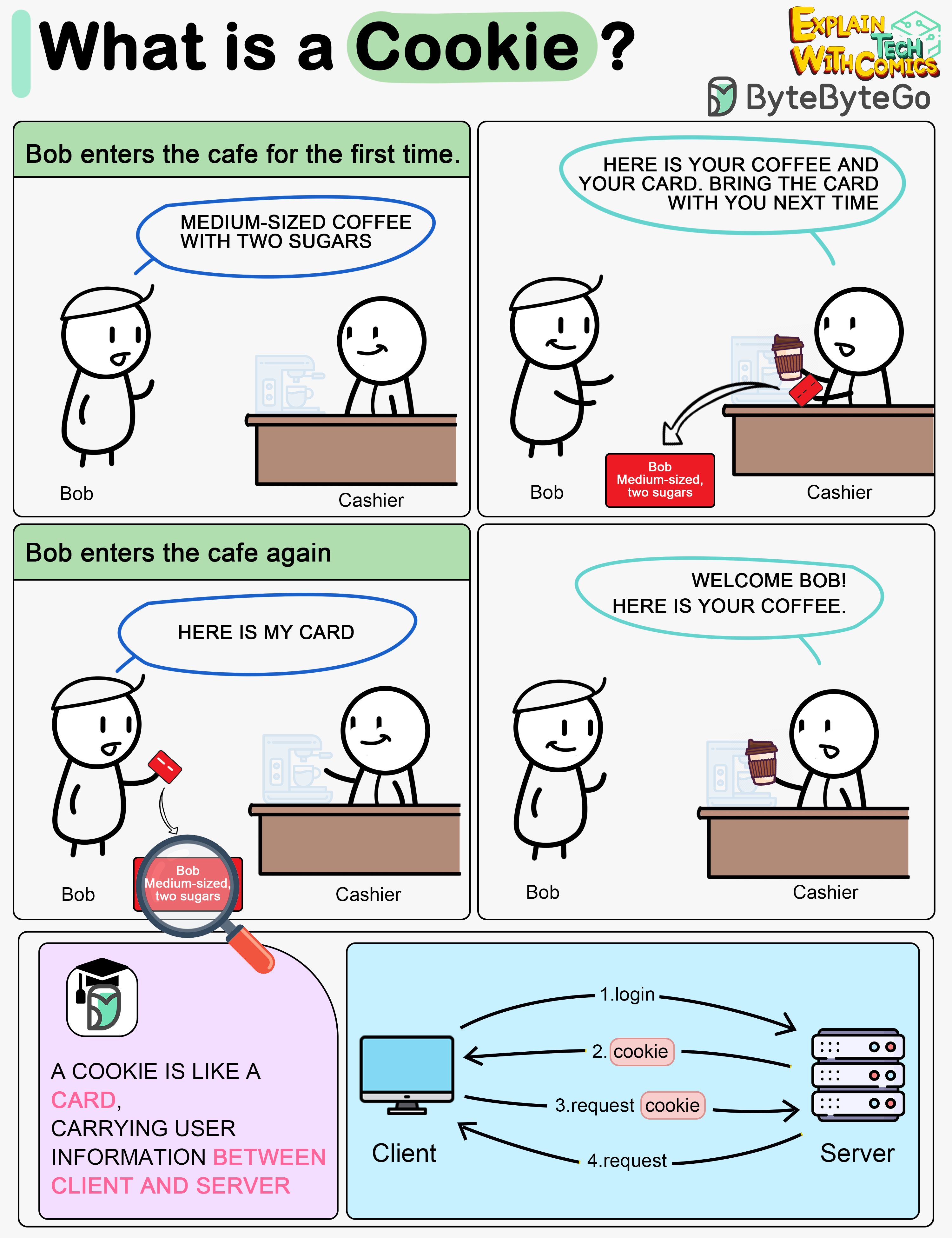

+ * [Cookies vs Sessions](https://bytebytego.com/guides/what-are-the-differences-between-cookies-and-sessions)

+ * [HTTP Cookies Explained With a Simple Diagram](https://bytebytego.com/guides/http-cookies-explained-with-a-simple-diagram)

+ * [Token, Cookie, Session](https://bytebytego.com/guides/token-cookie-session)

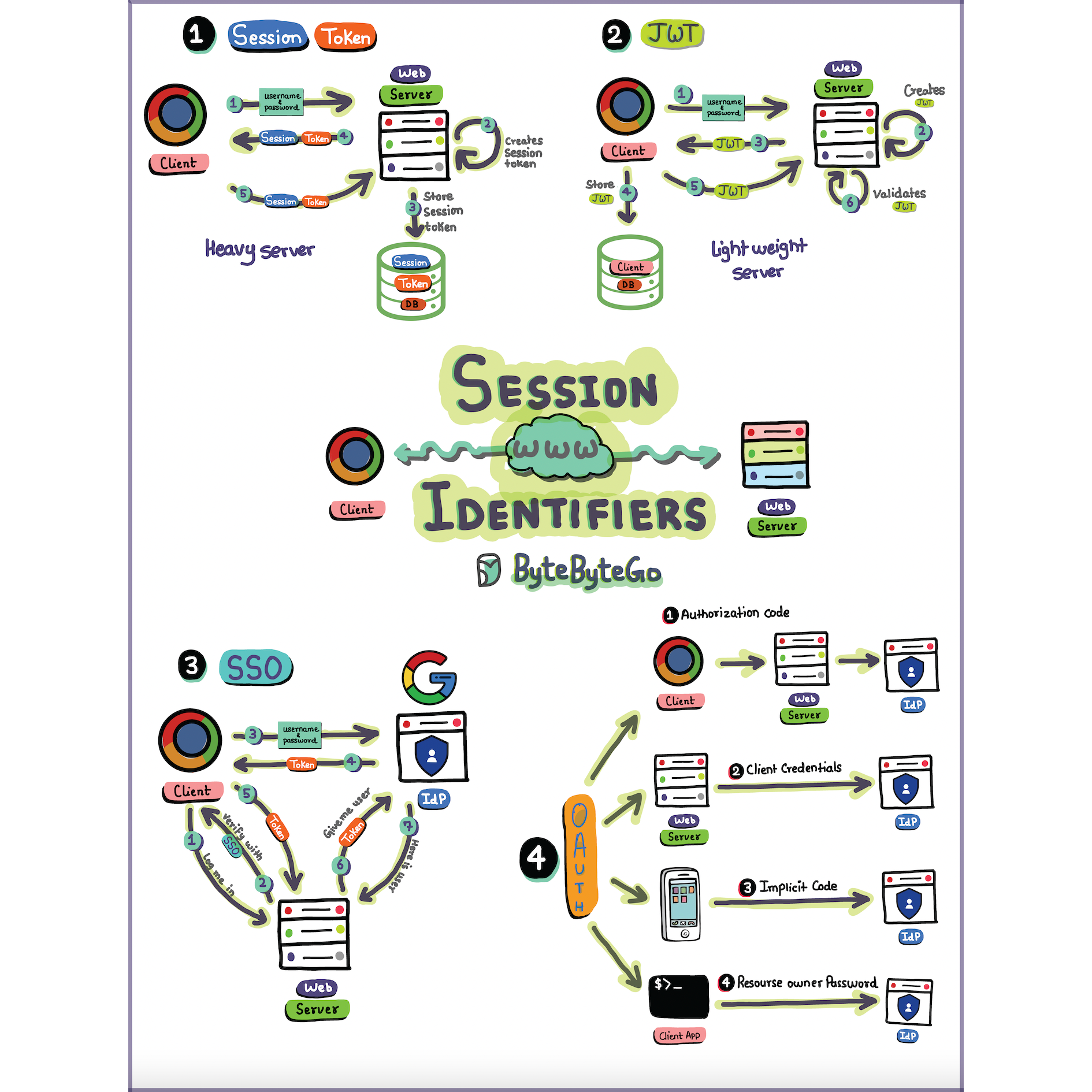

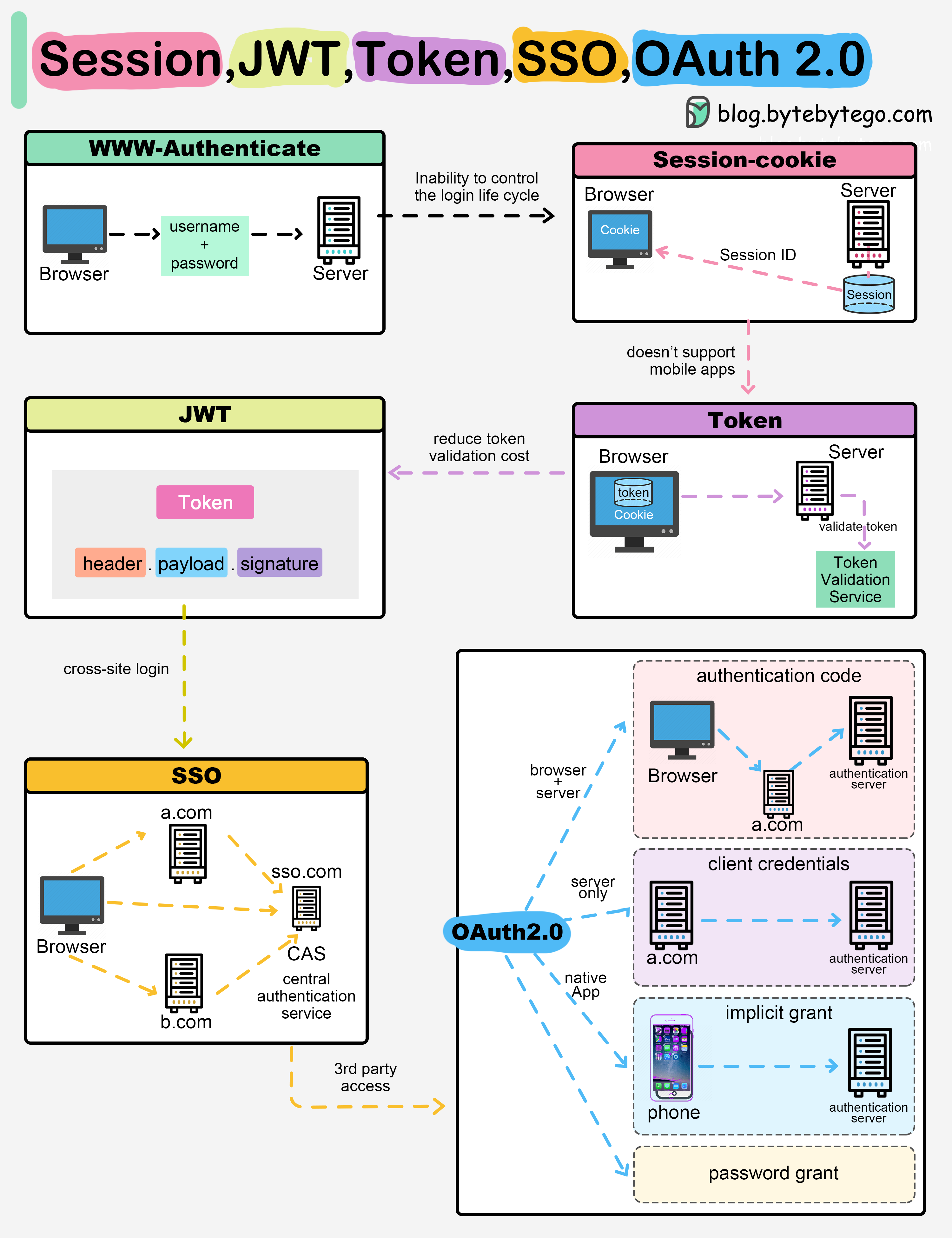

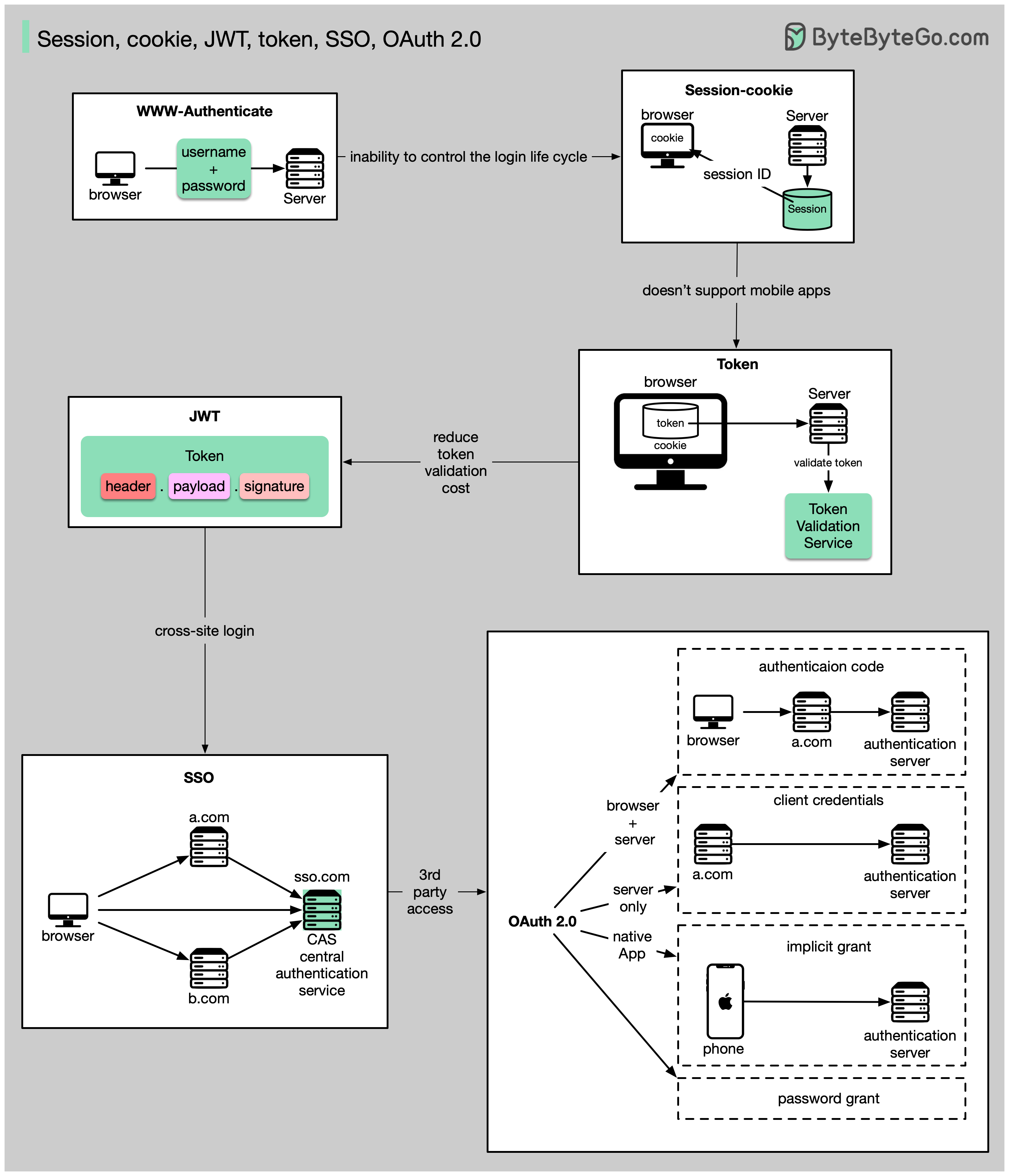

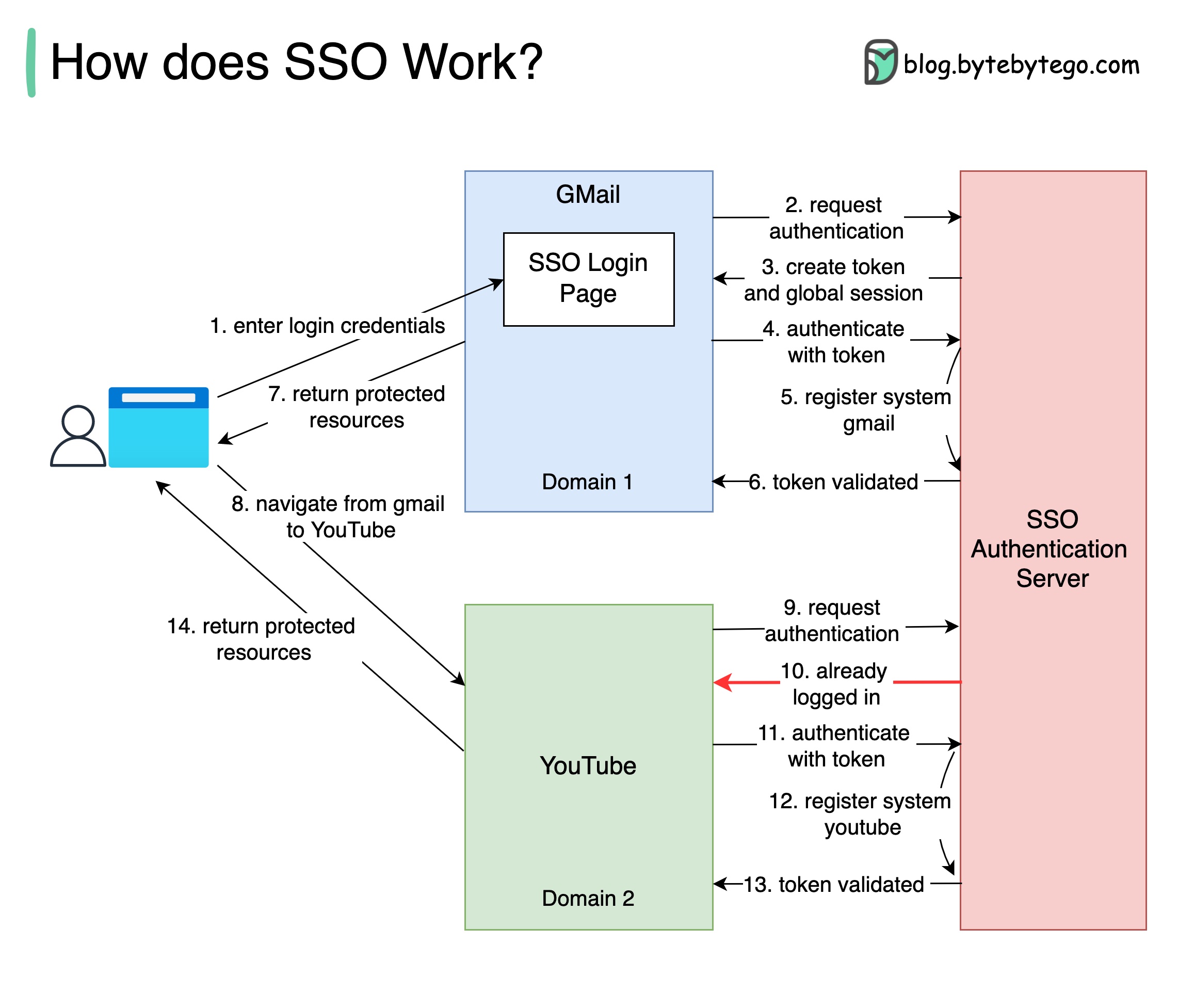

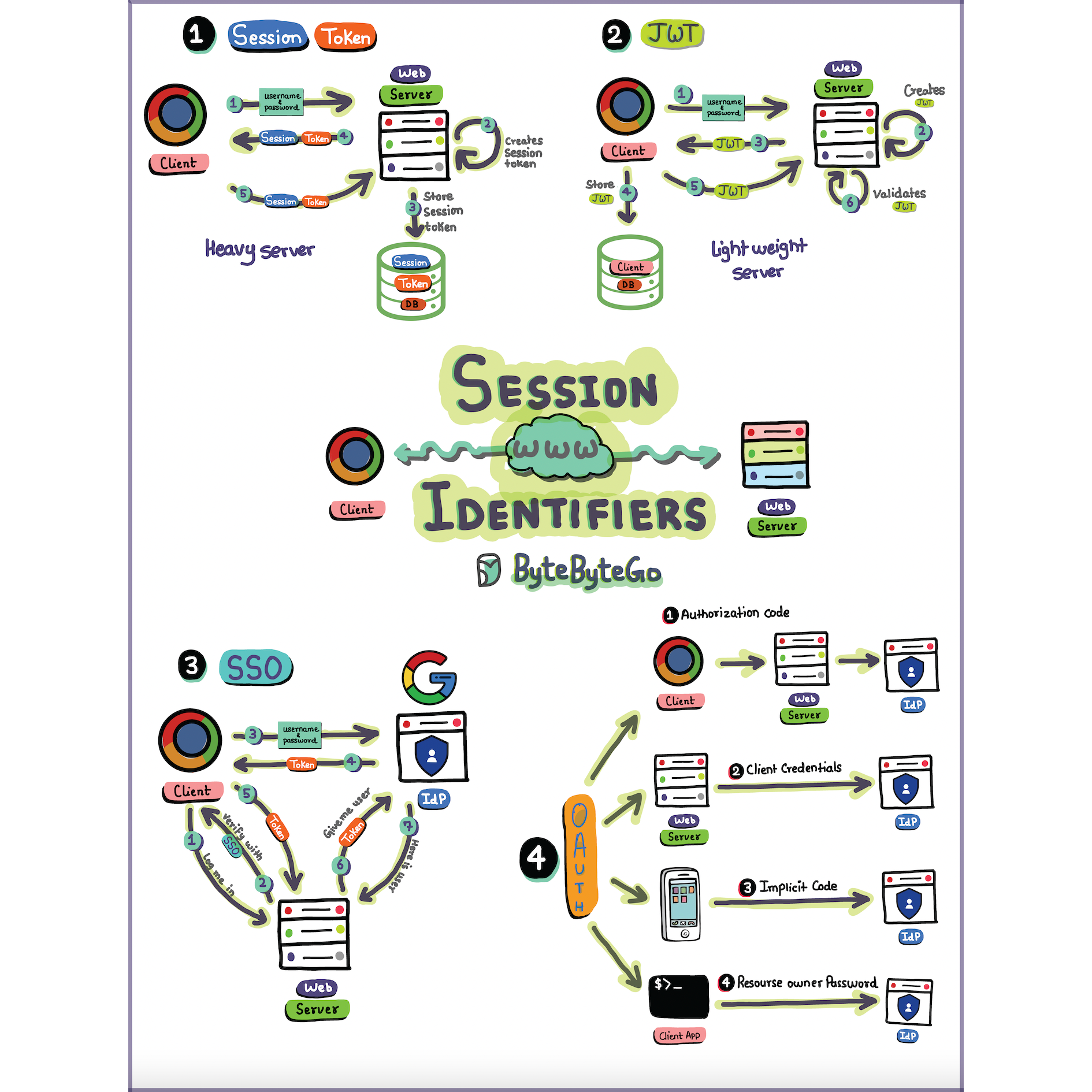

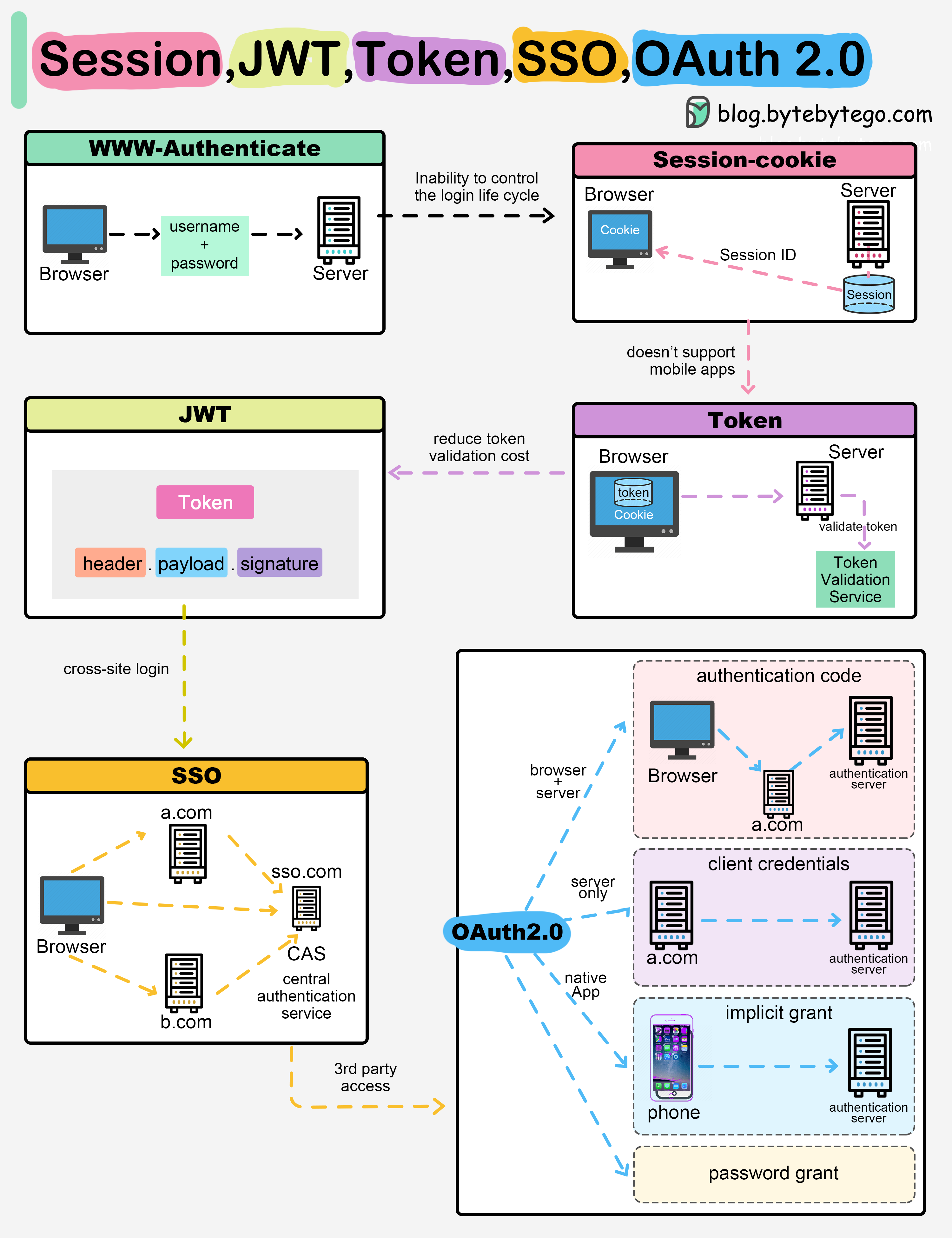

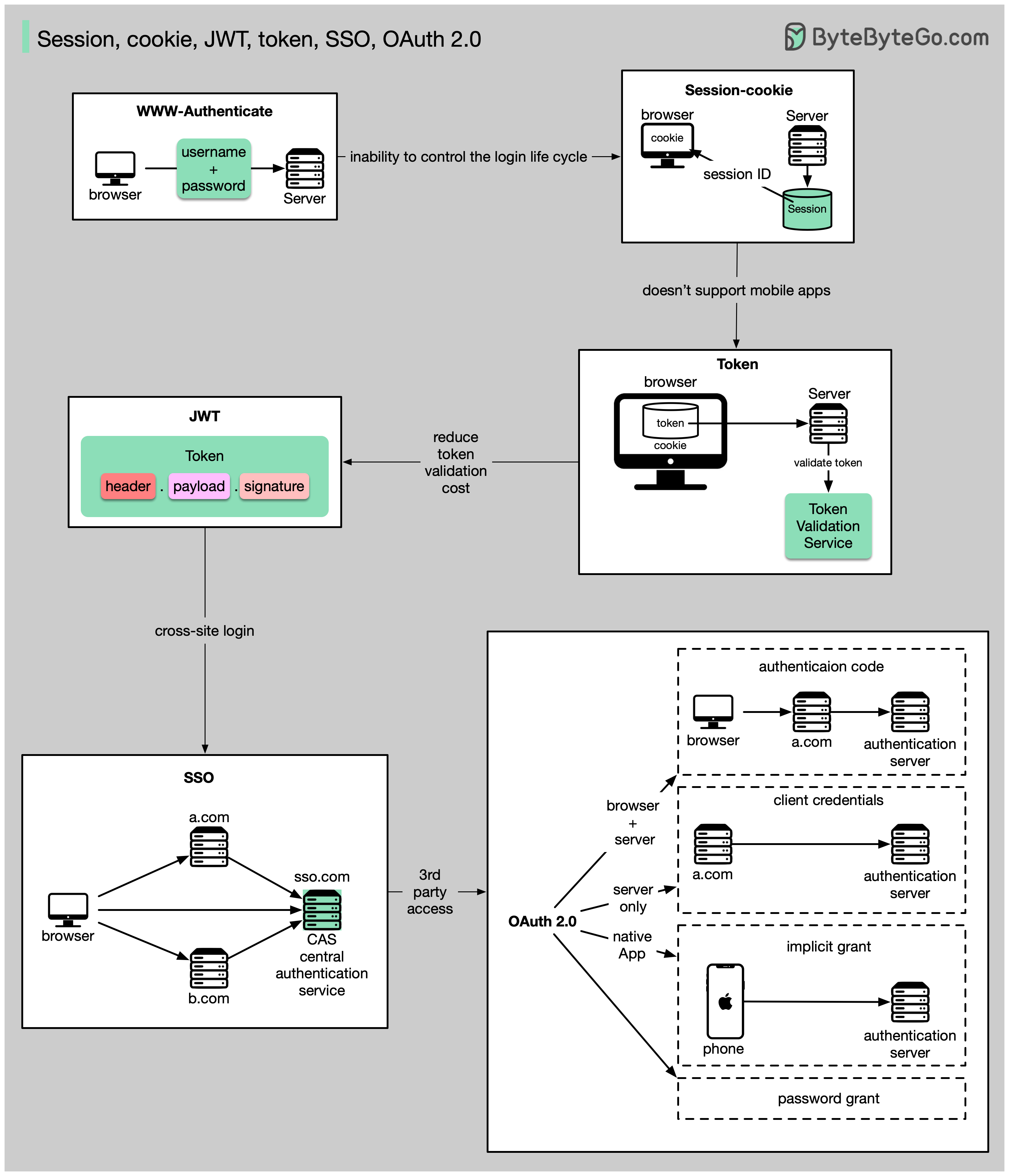

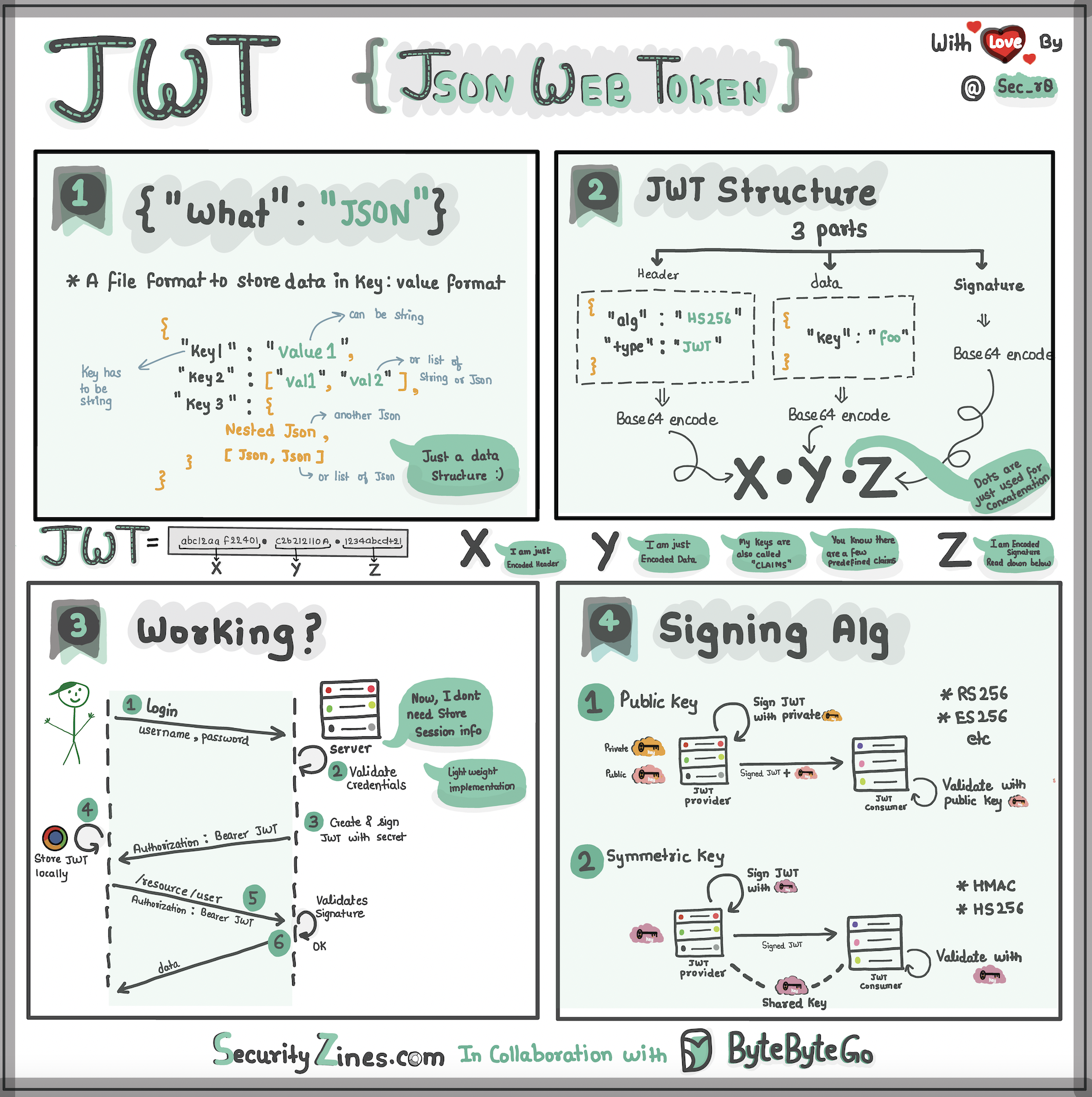

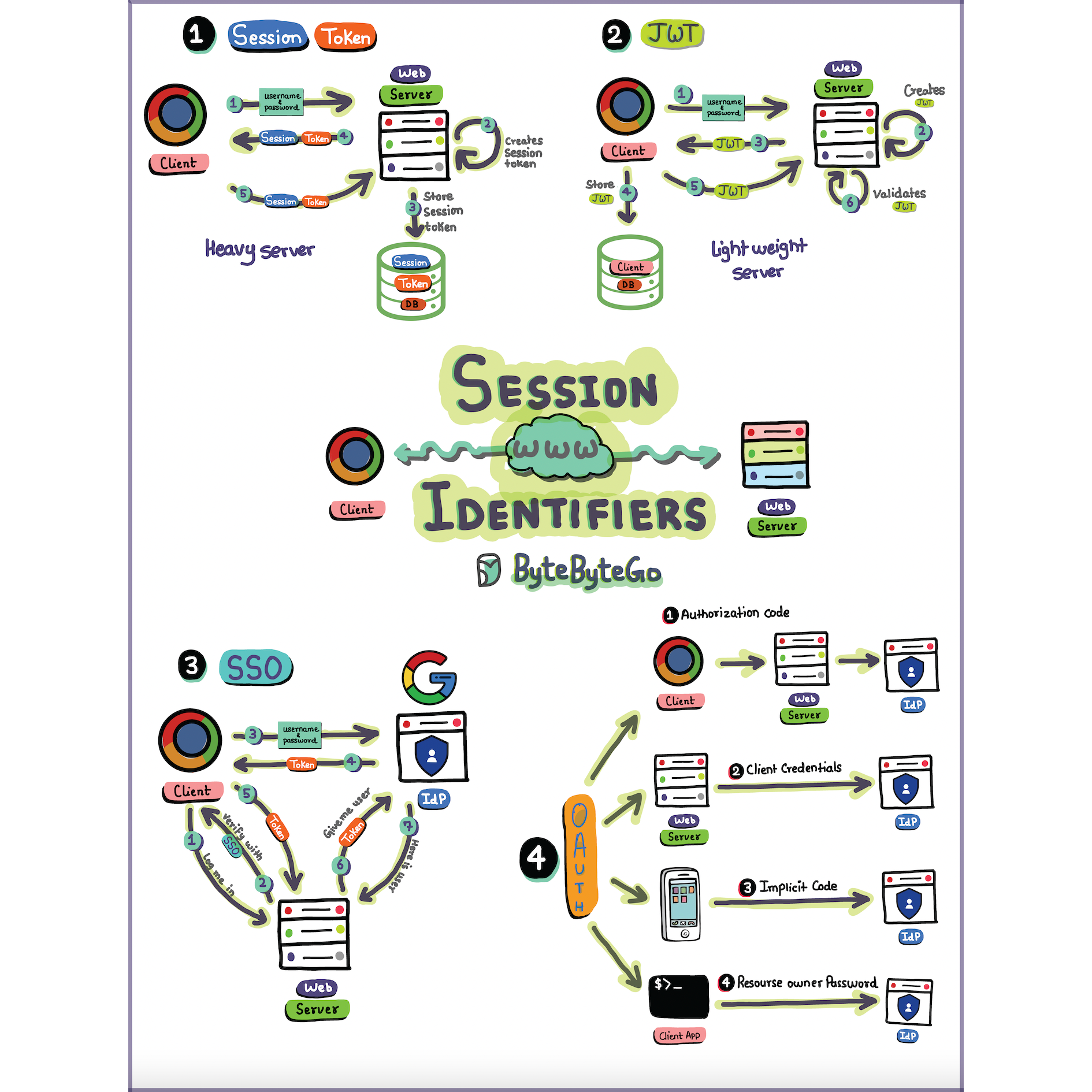

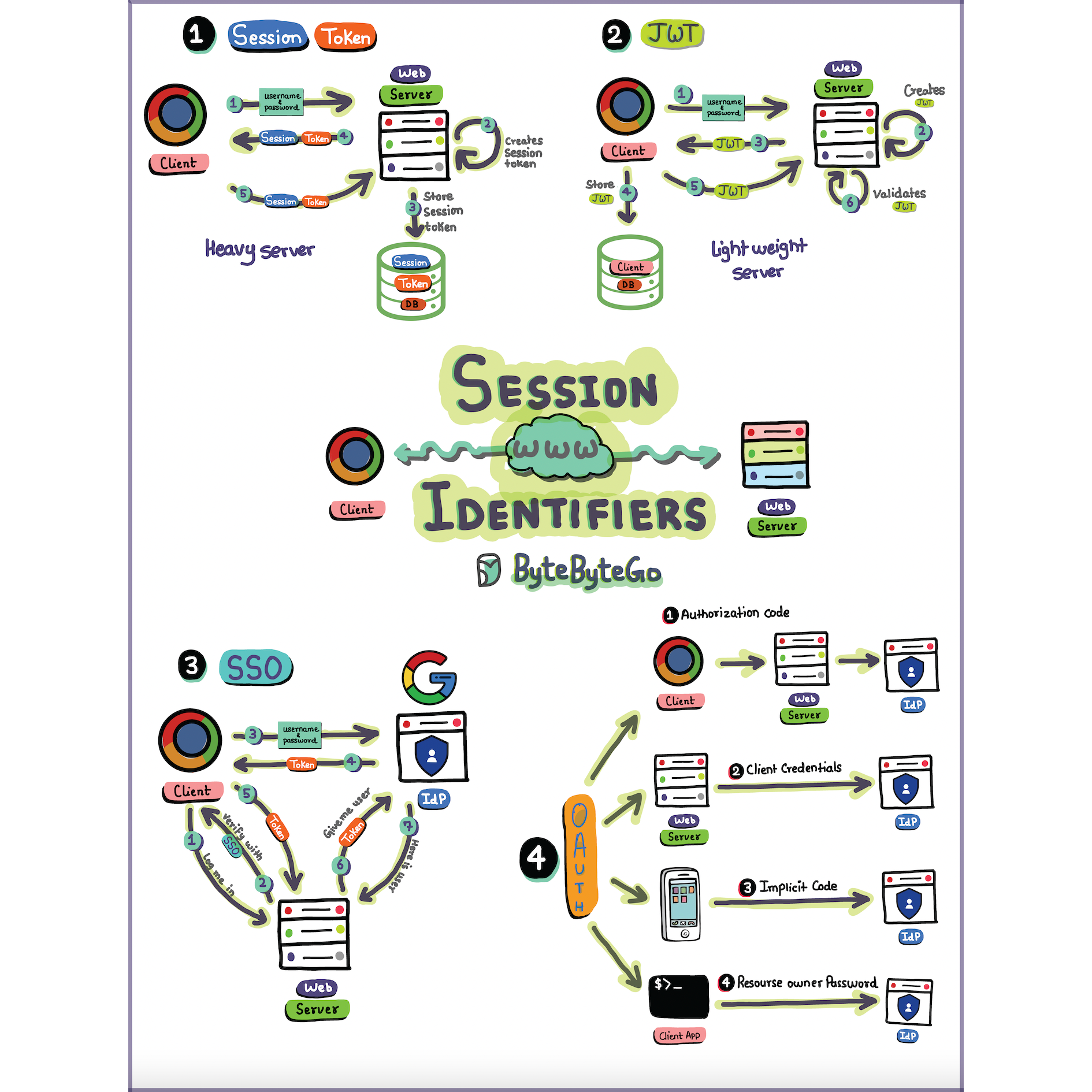

+ * [Sessions, Tokens, JWT, SSO, and OAuth Explained](https://bytebytego.com/guides/explaining-sessions-tokens-jwt-sso-and-oauth-in-one-diagram)

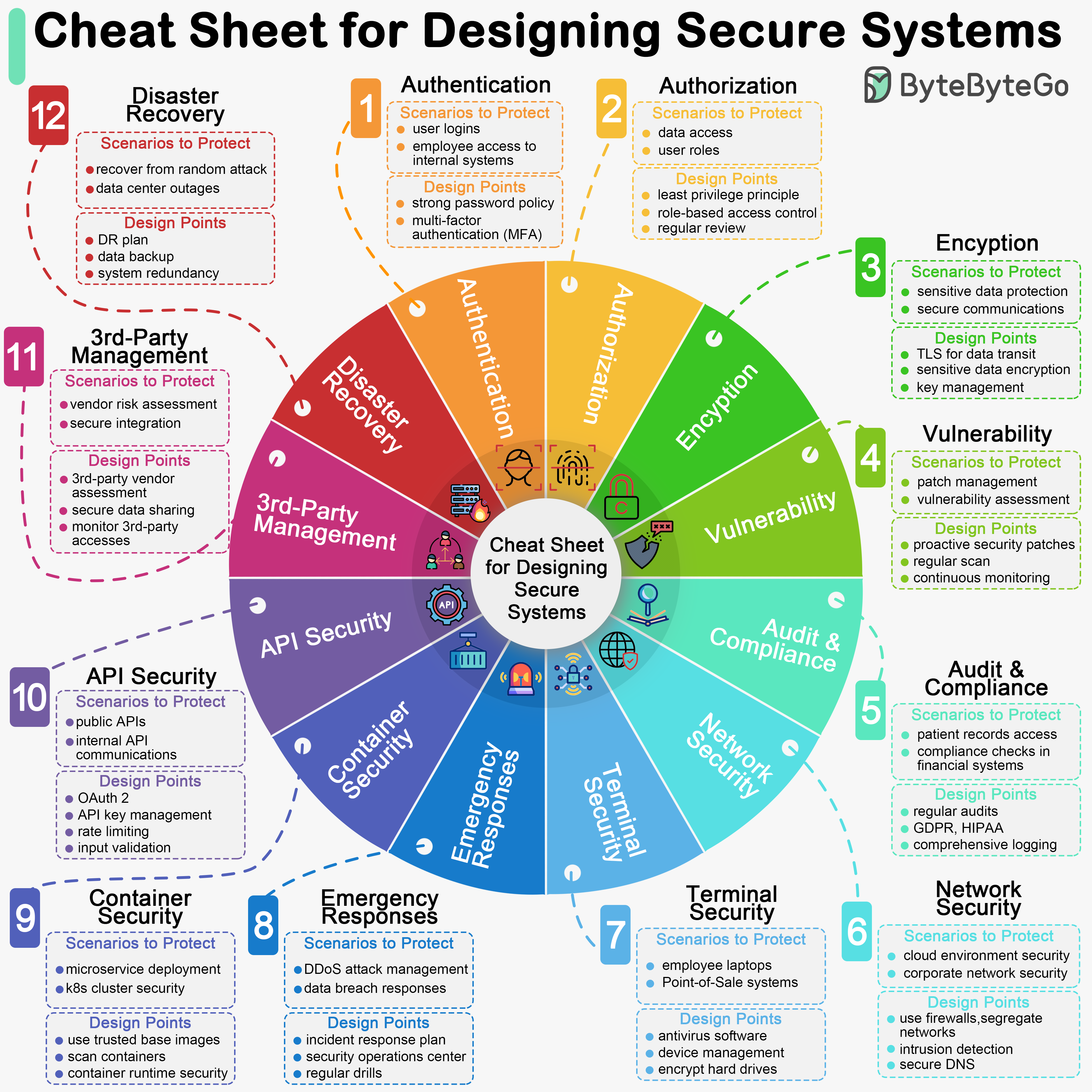

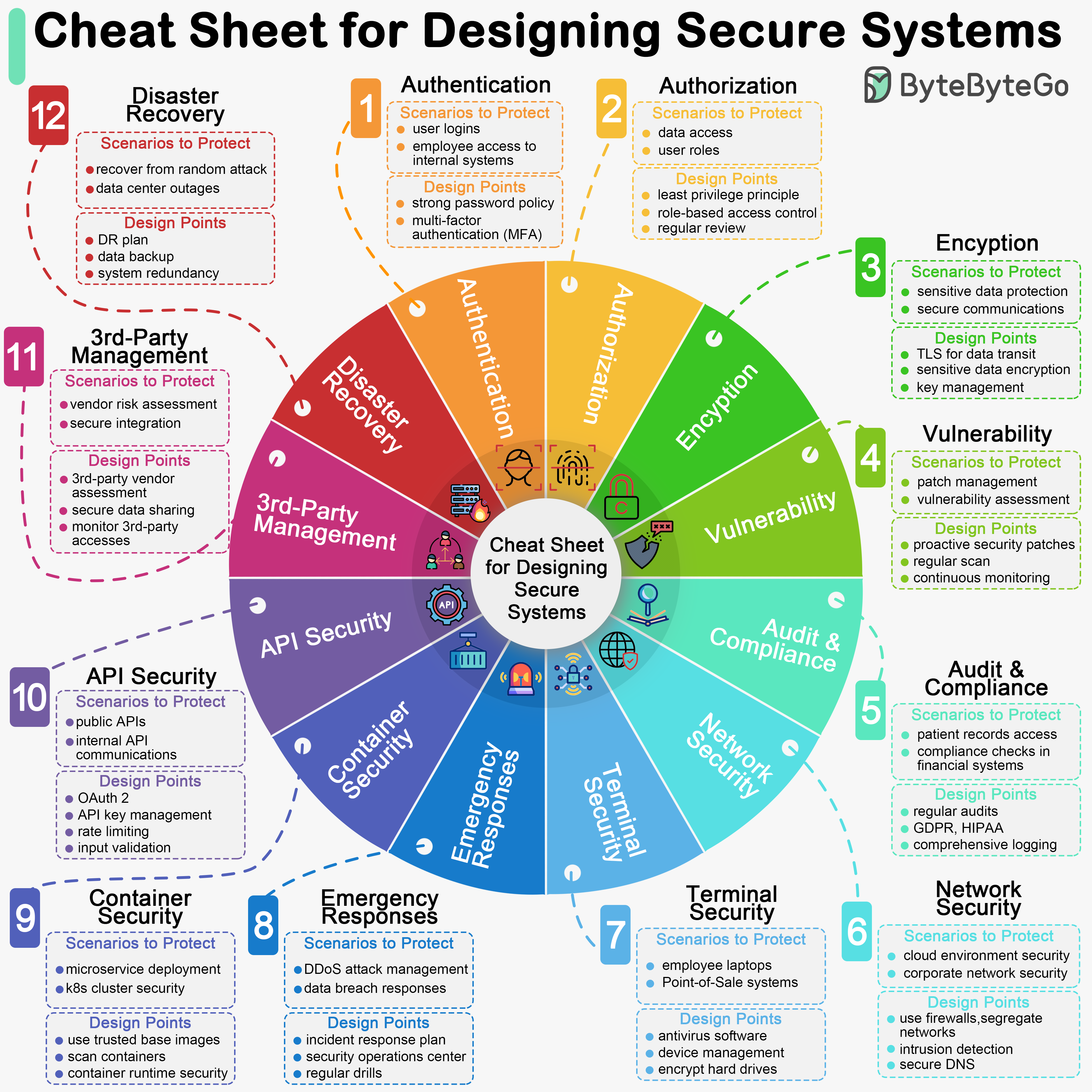

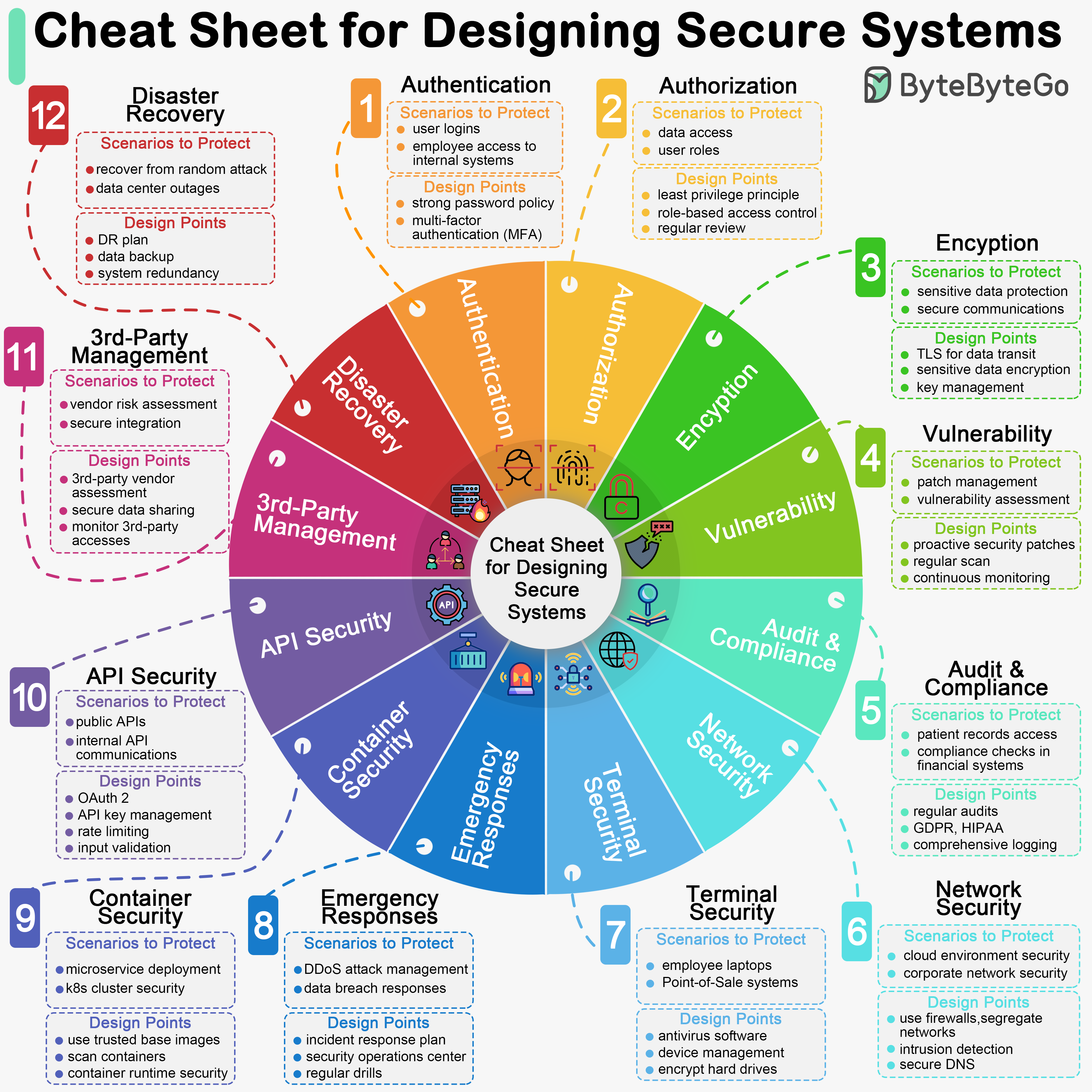

+ * [How to Design a Secure System](https://bytebytego.com/guides/how-do-we-design-a-secure-system)

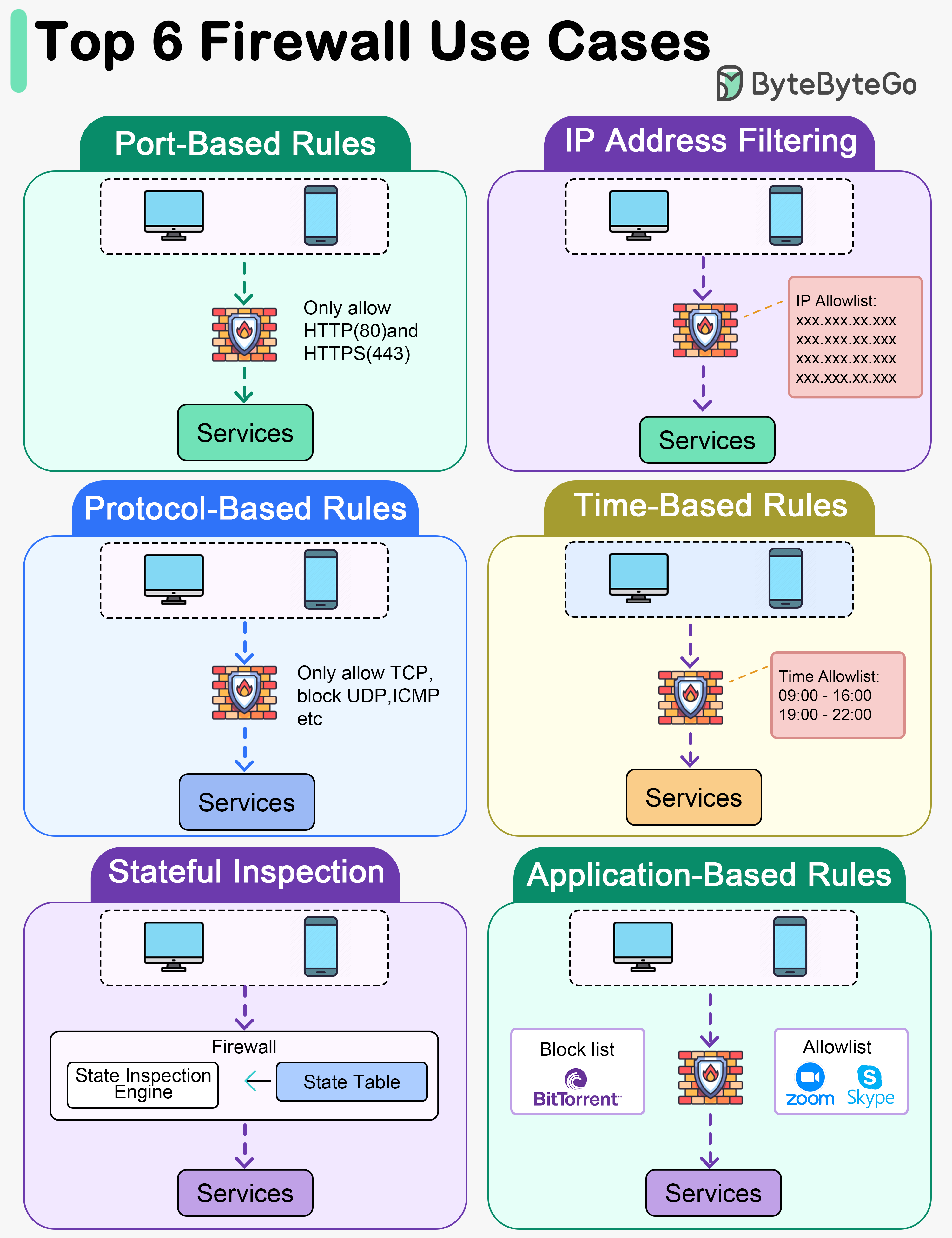

+ * [Top 6 Firewall Use Cases](https://bytebytego.com/guides/top-6-firewall-use-cases)

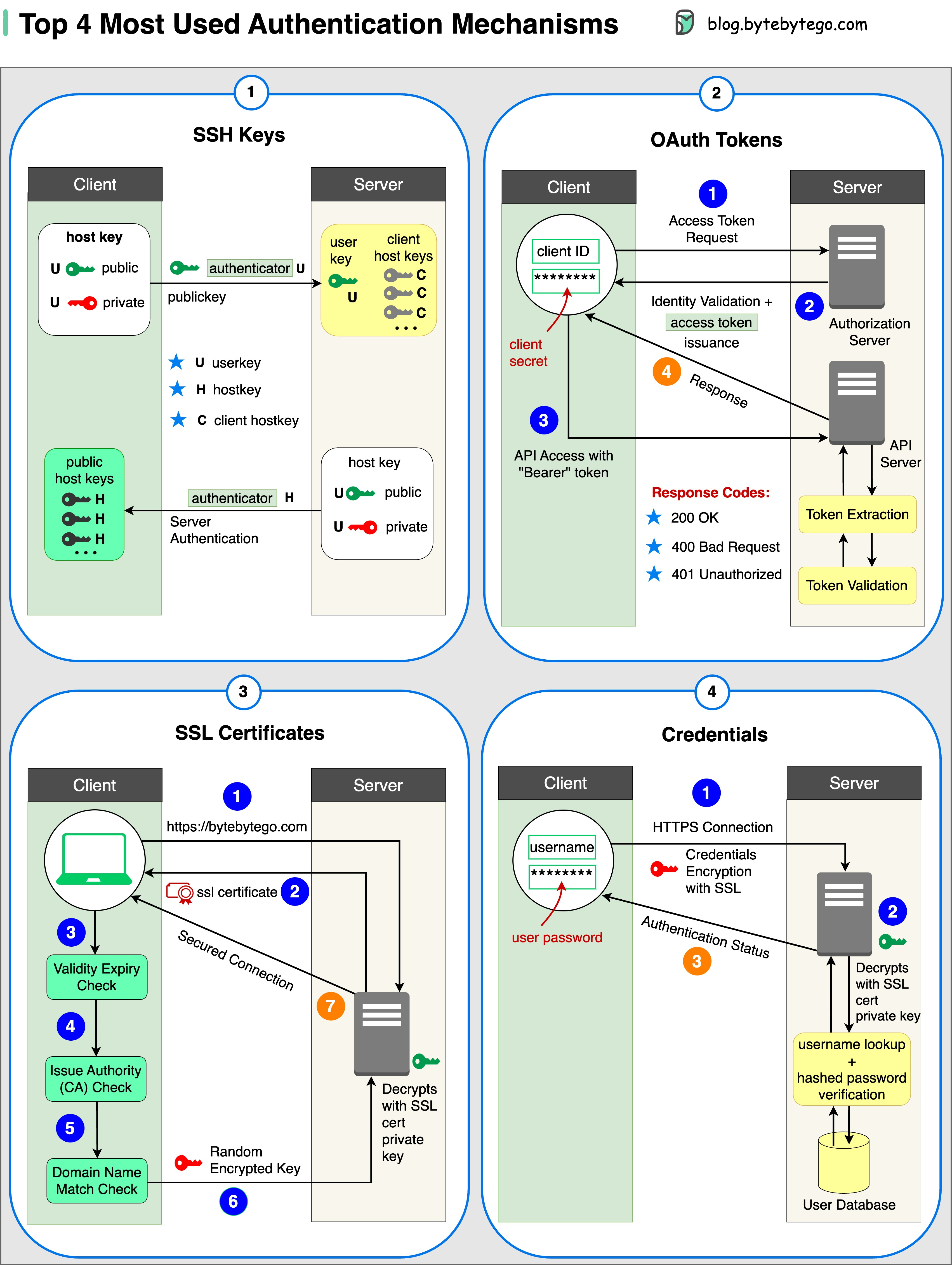

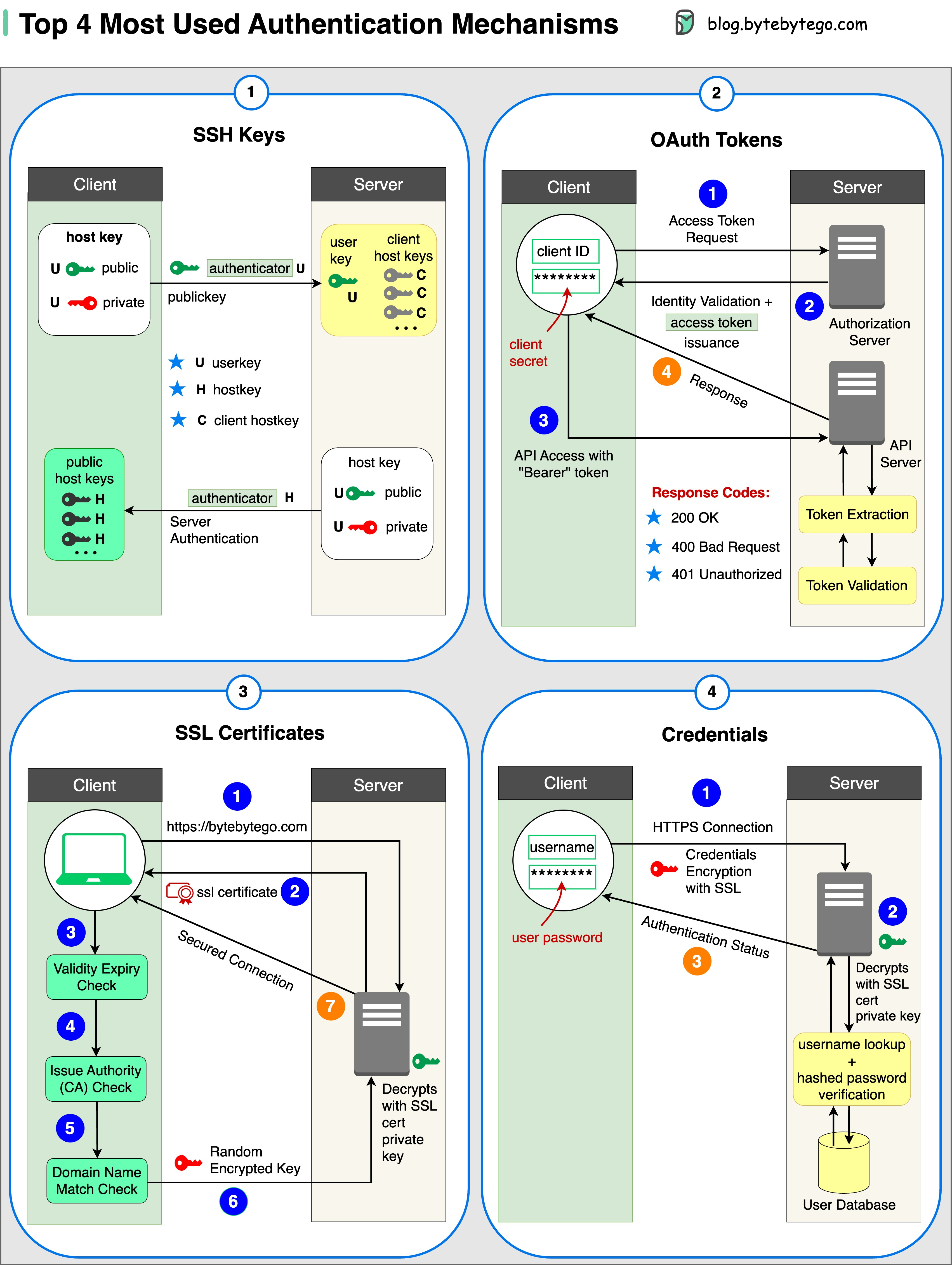

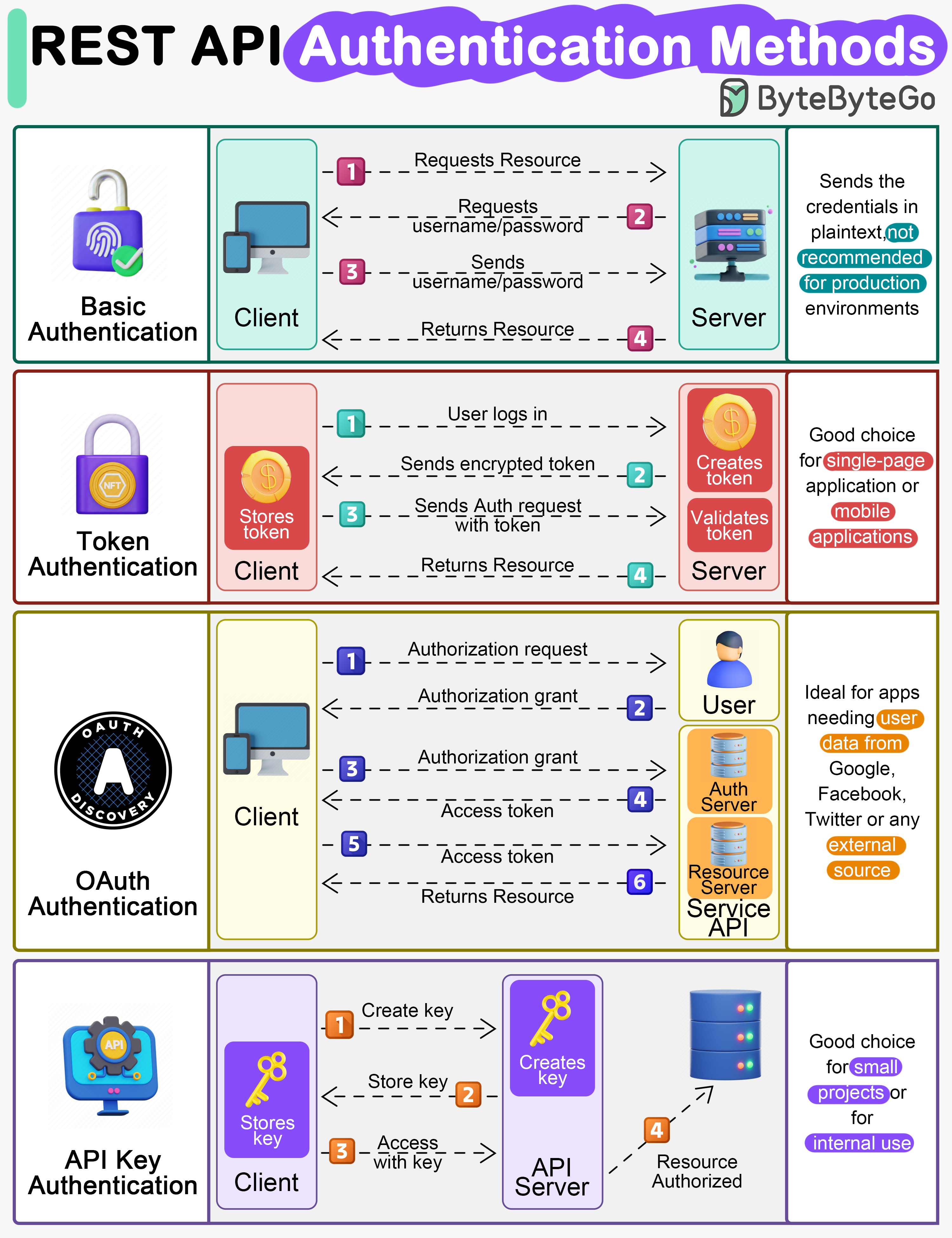

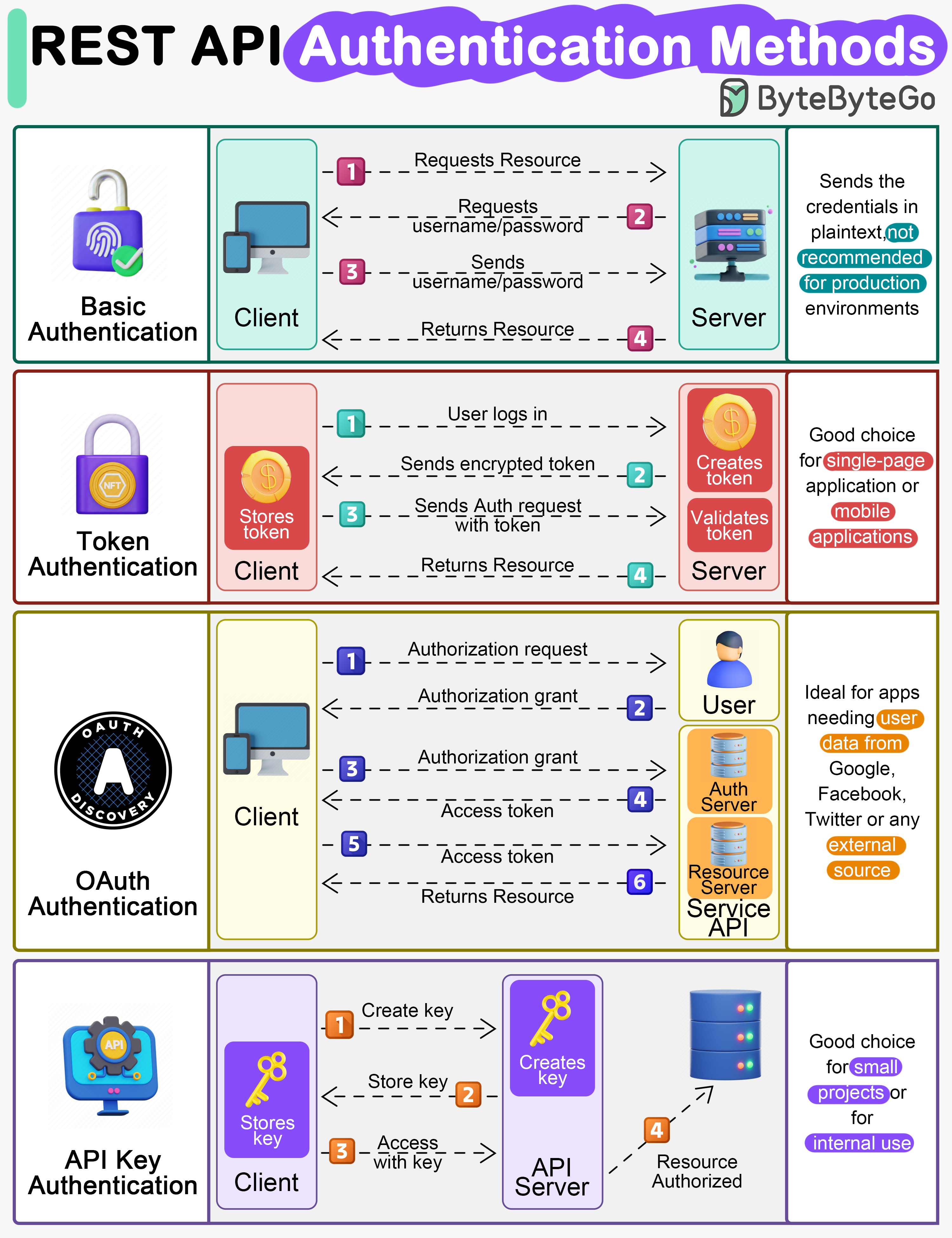

+ * [Top 4 Authentication Mechanisms](https://bytebytego.com/guides/top-4-forms-of-authentication-mechanisms)

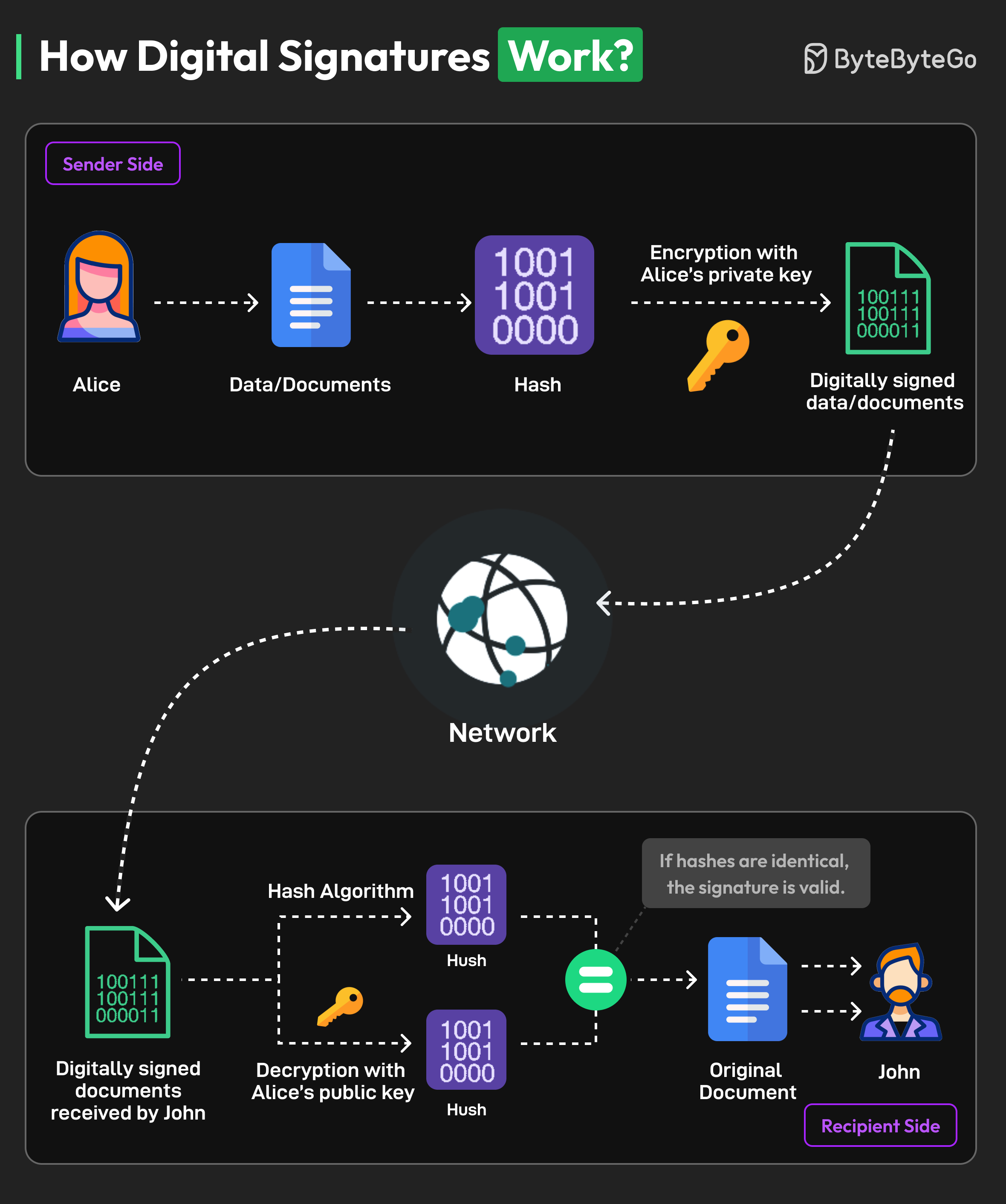

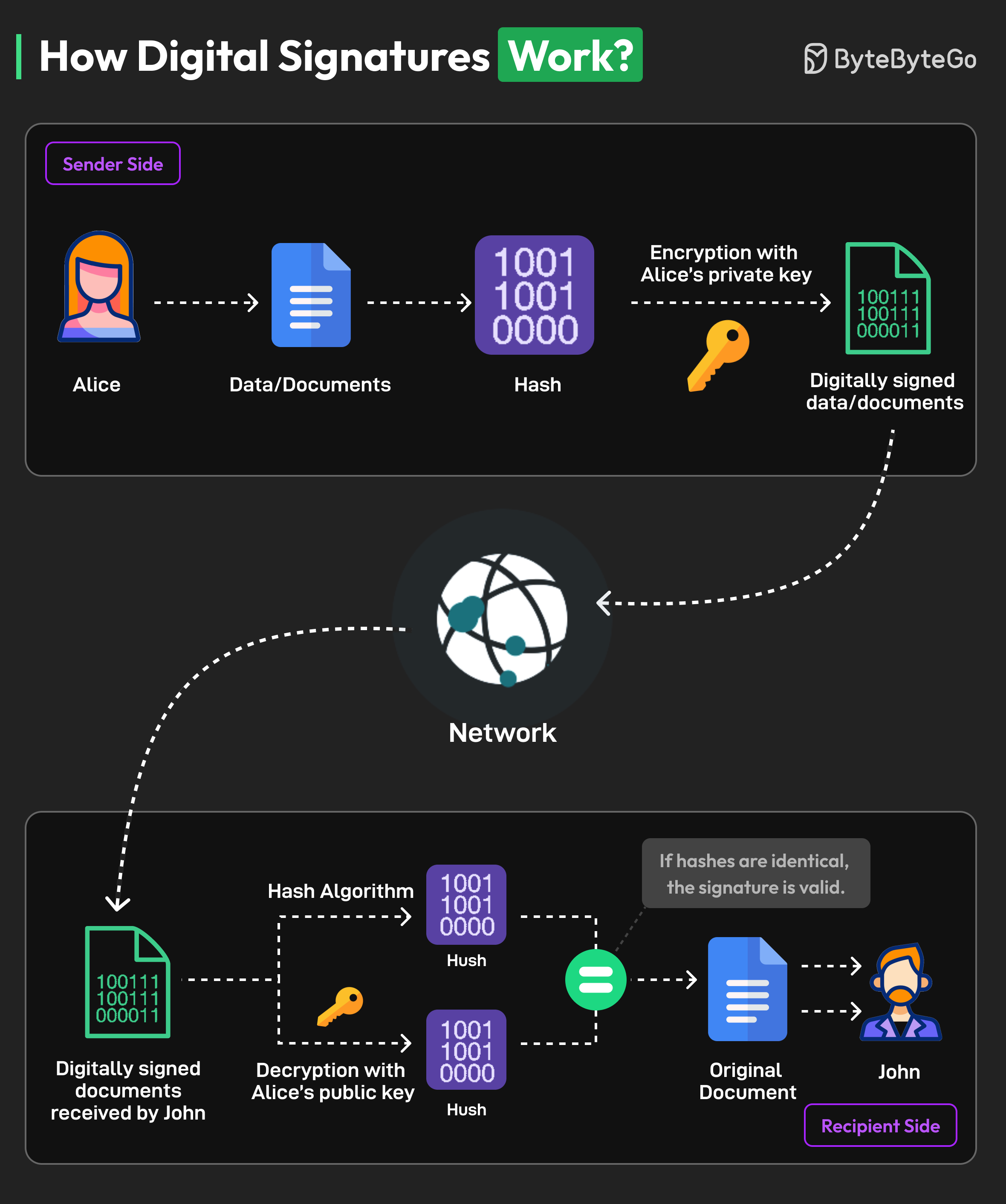

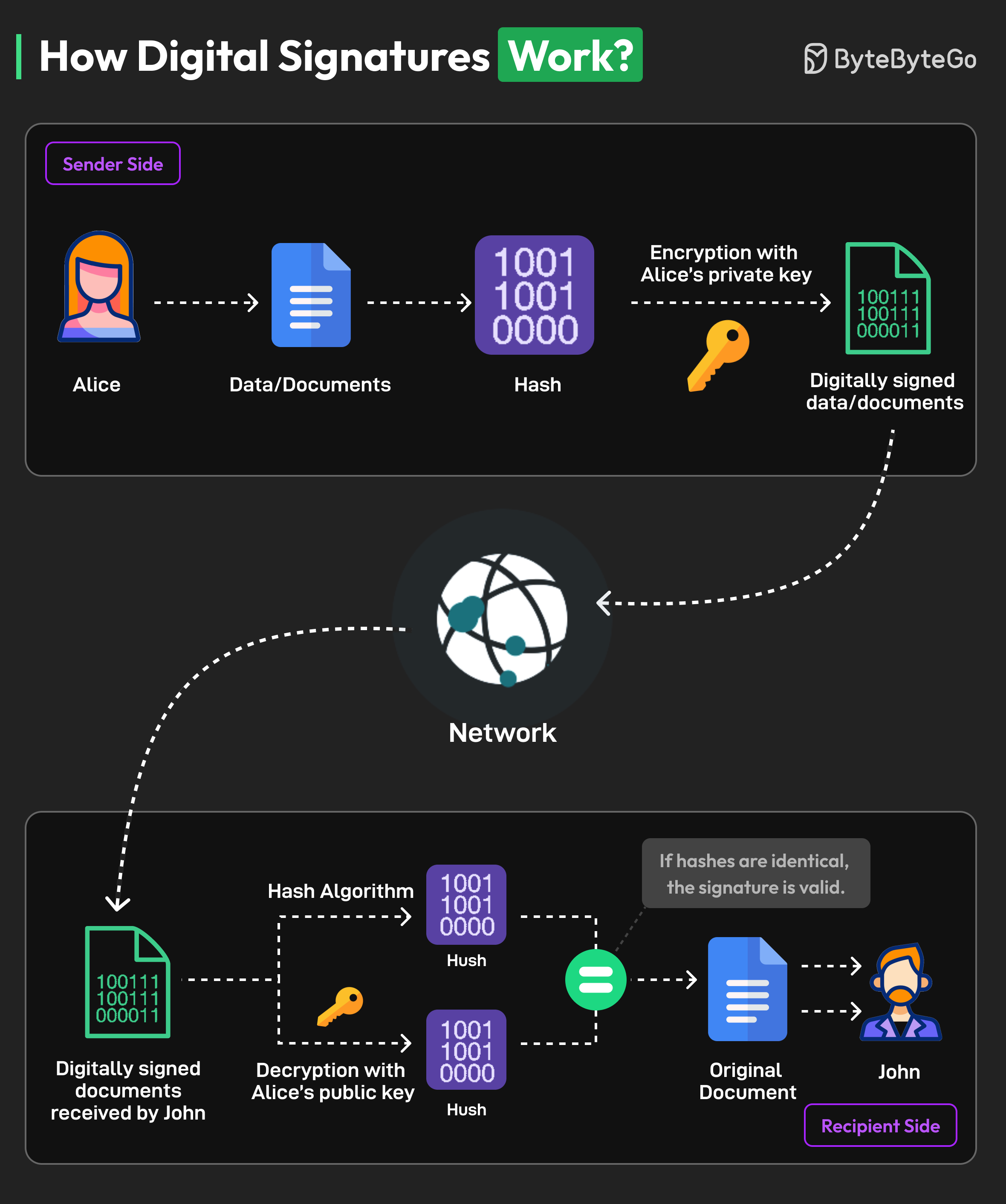

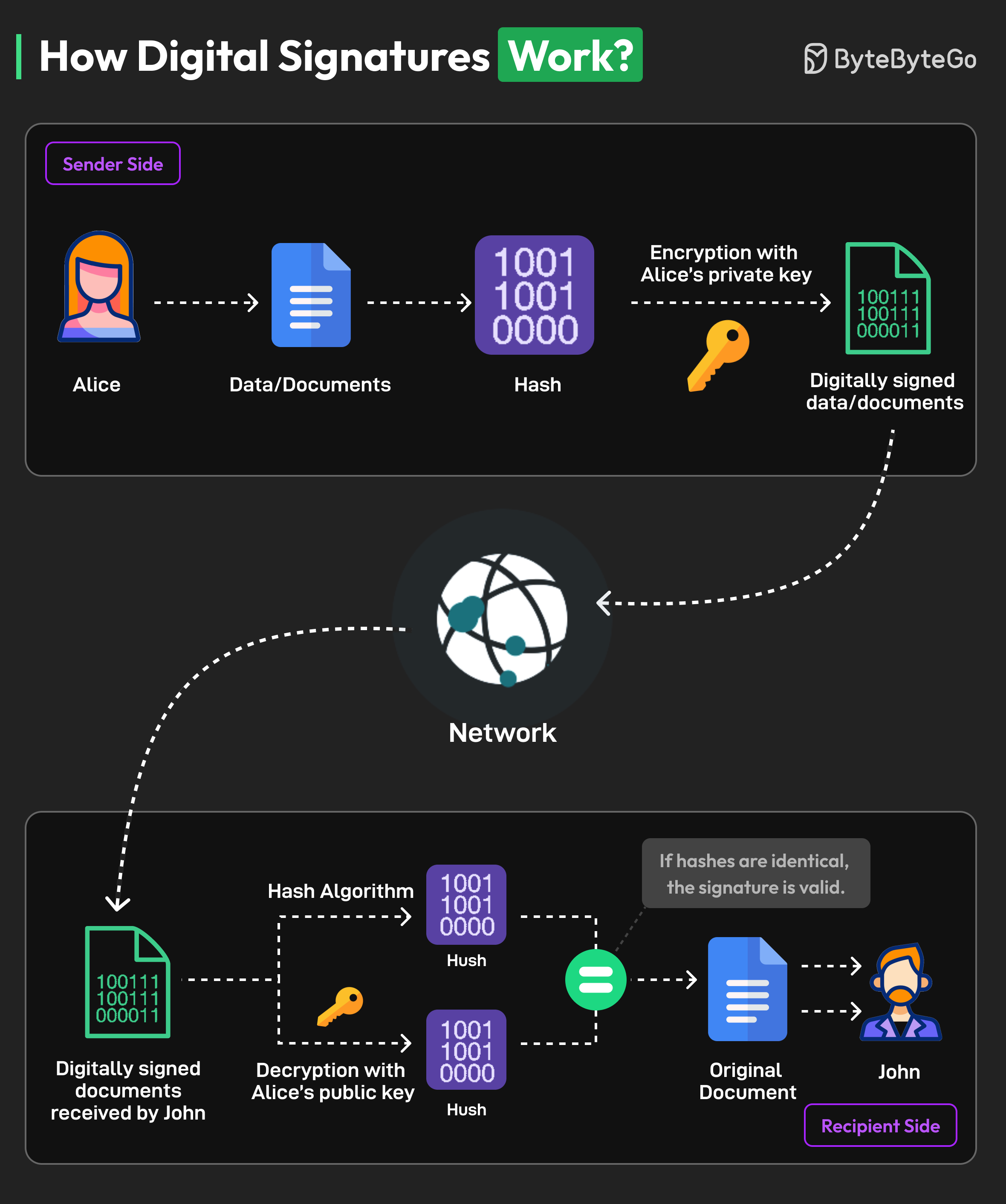

+ * [How Digital Signatures Work](https://bytebytego.com/guides/how-digital-signatures-work)

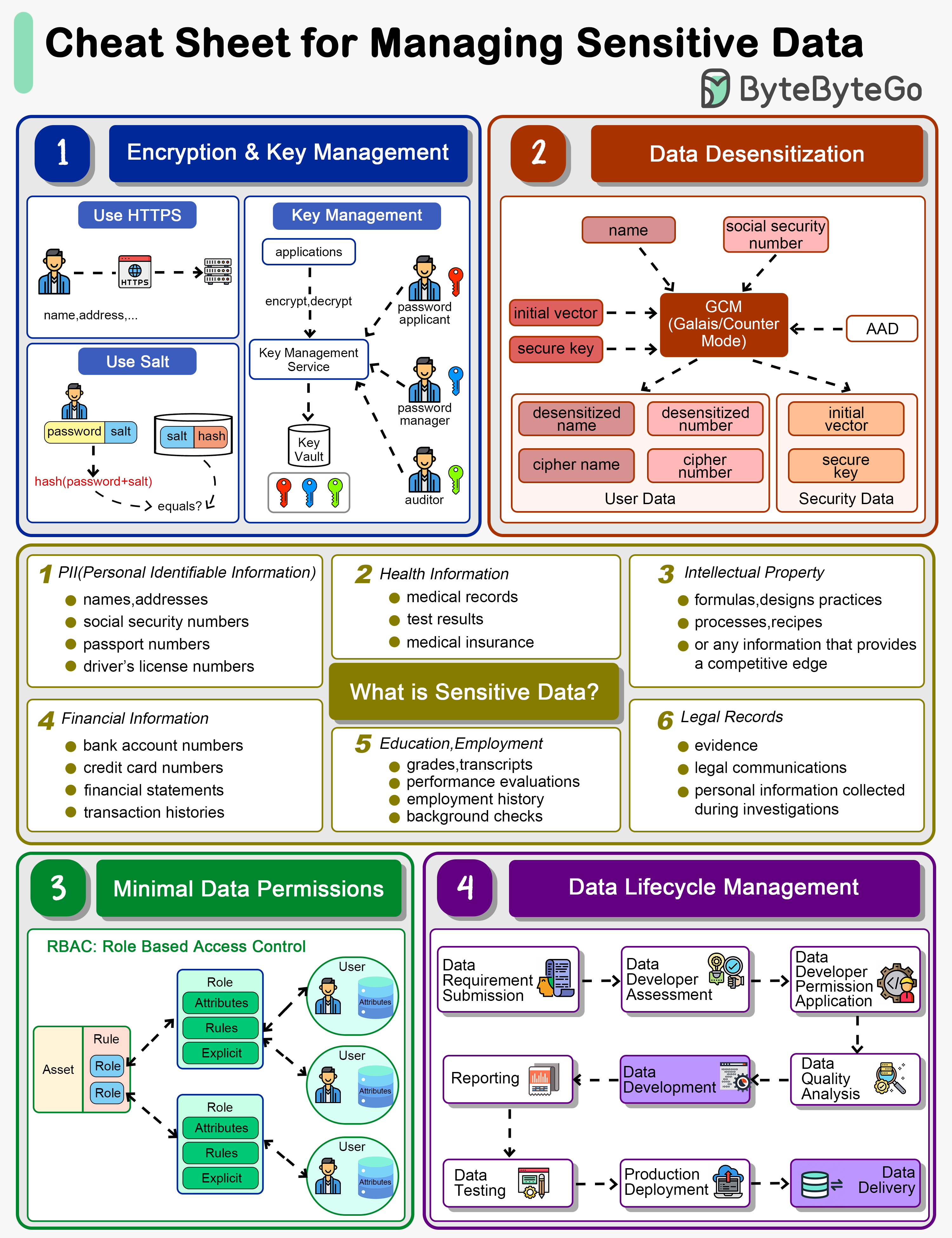

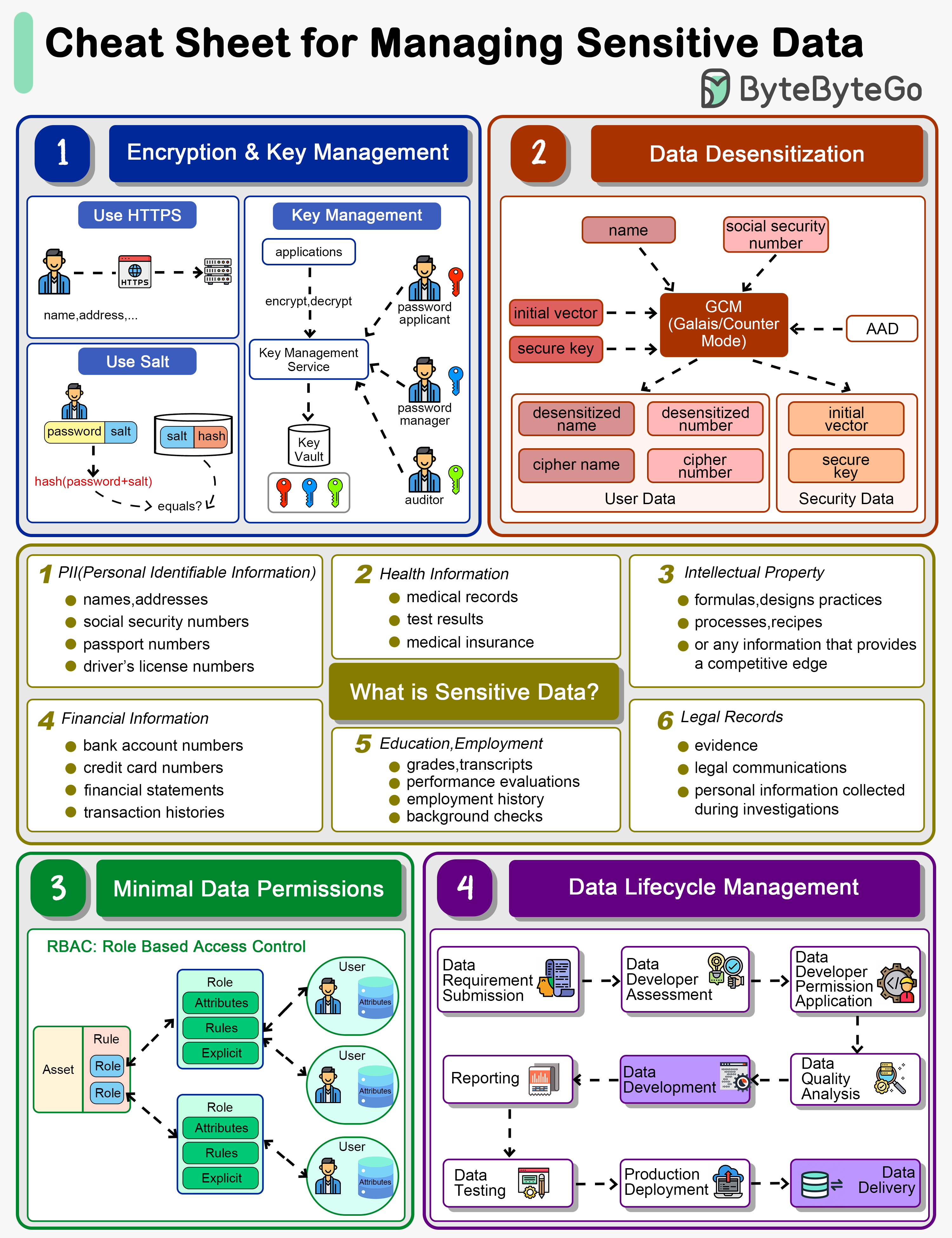

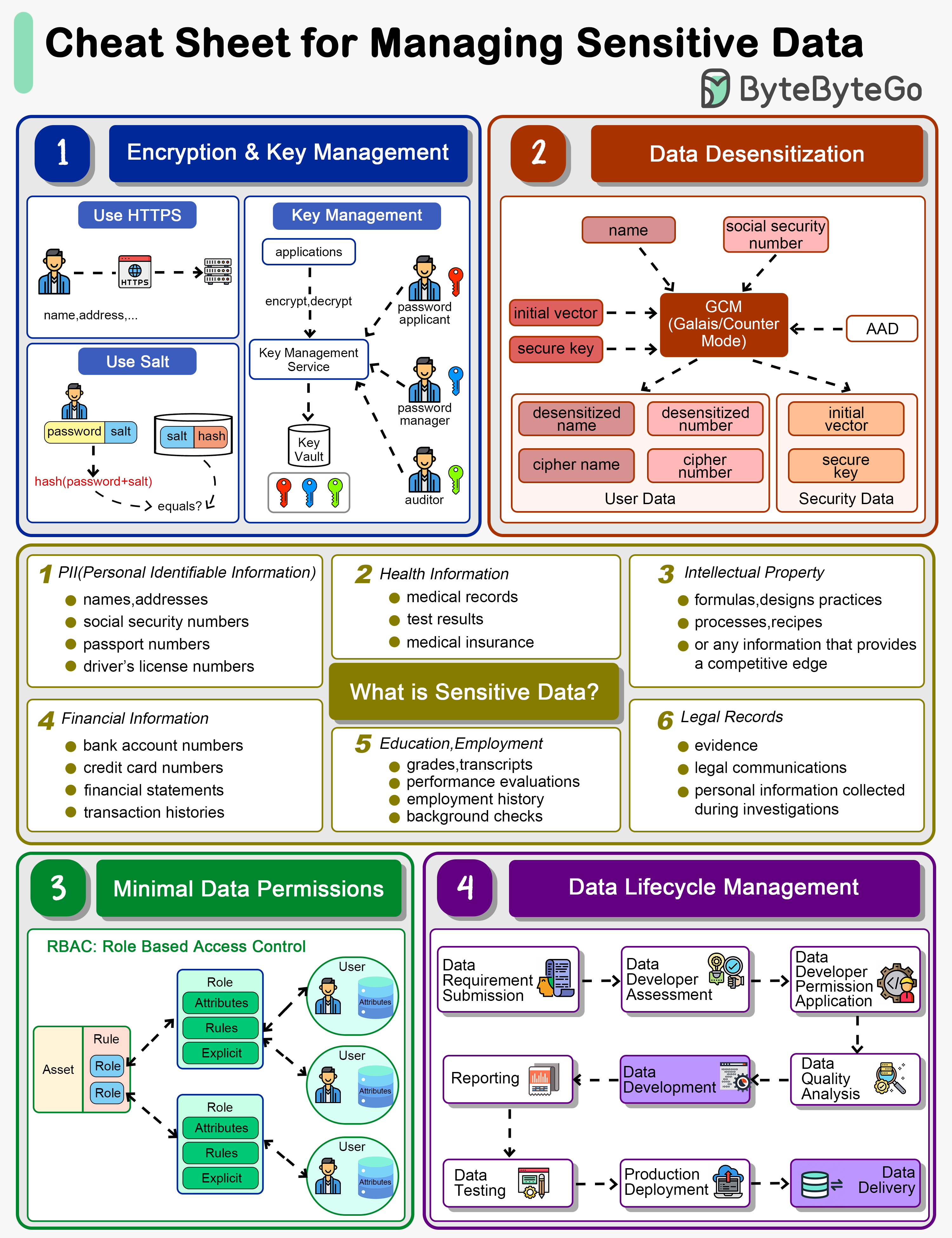

+ * [How do we manage sensitive data in a system?](https://bytebytego.com/guides/how-do-we-manage-sensitive-data-in-a-system)

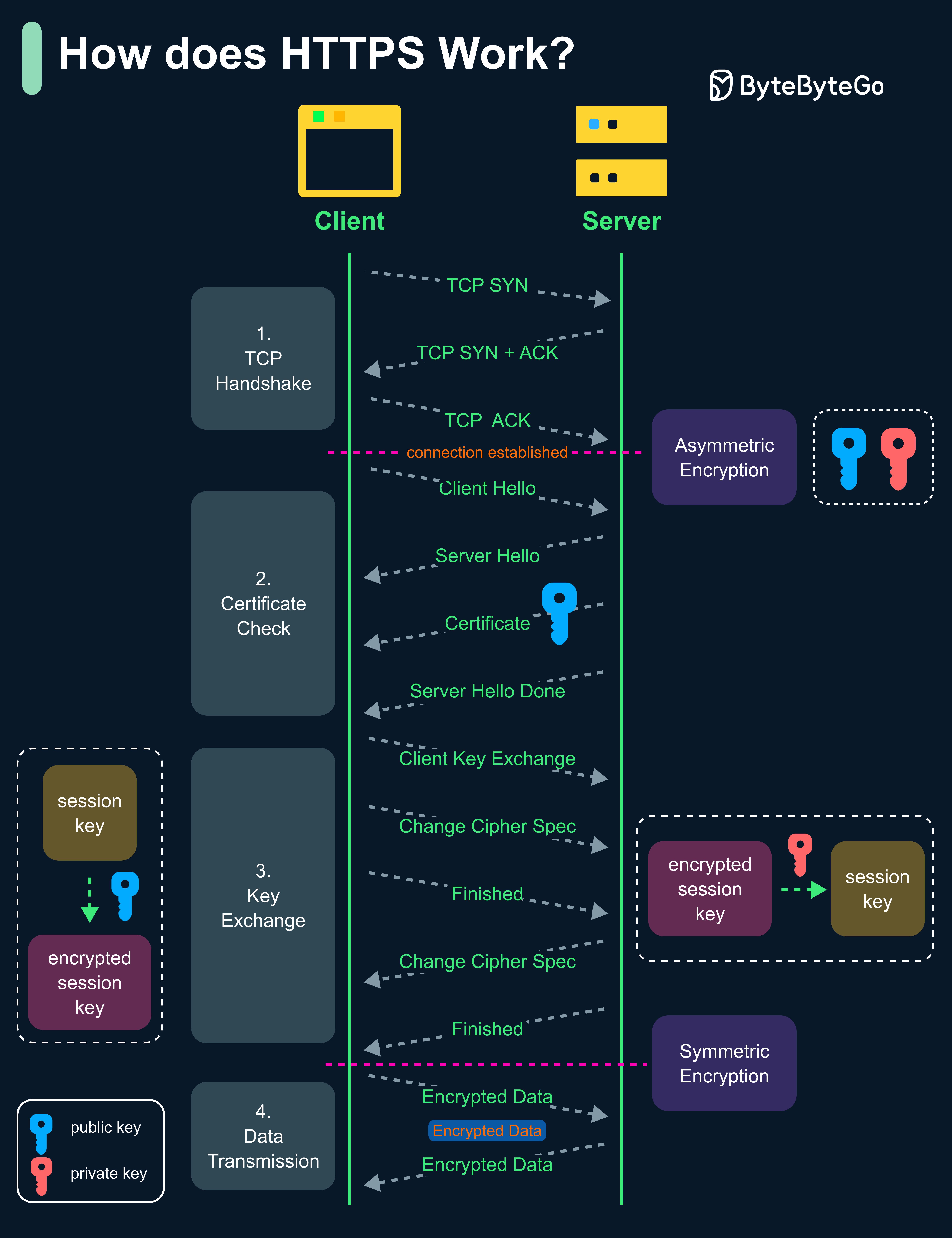

+ * [HTTPS, SSL Handshake, and Data Encryption Explained](https://bytebytego.com/guides/https-ssl-handshake-and-data-encryption-explained-to-kids)

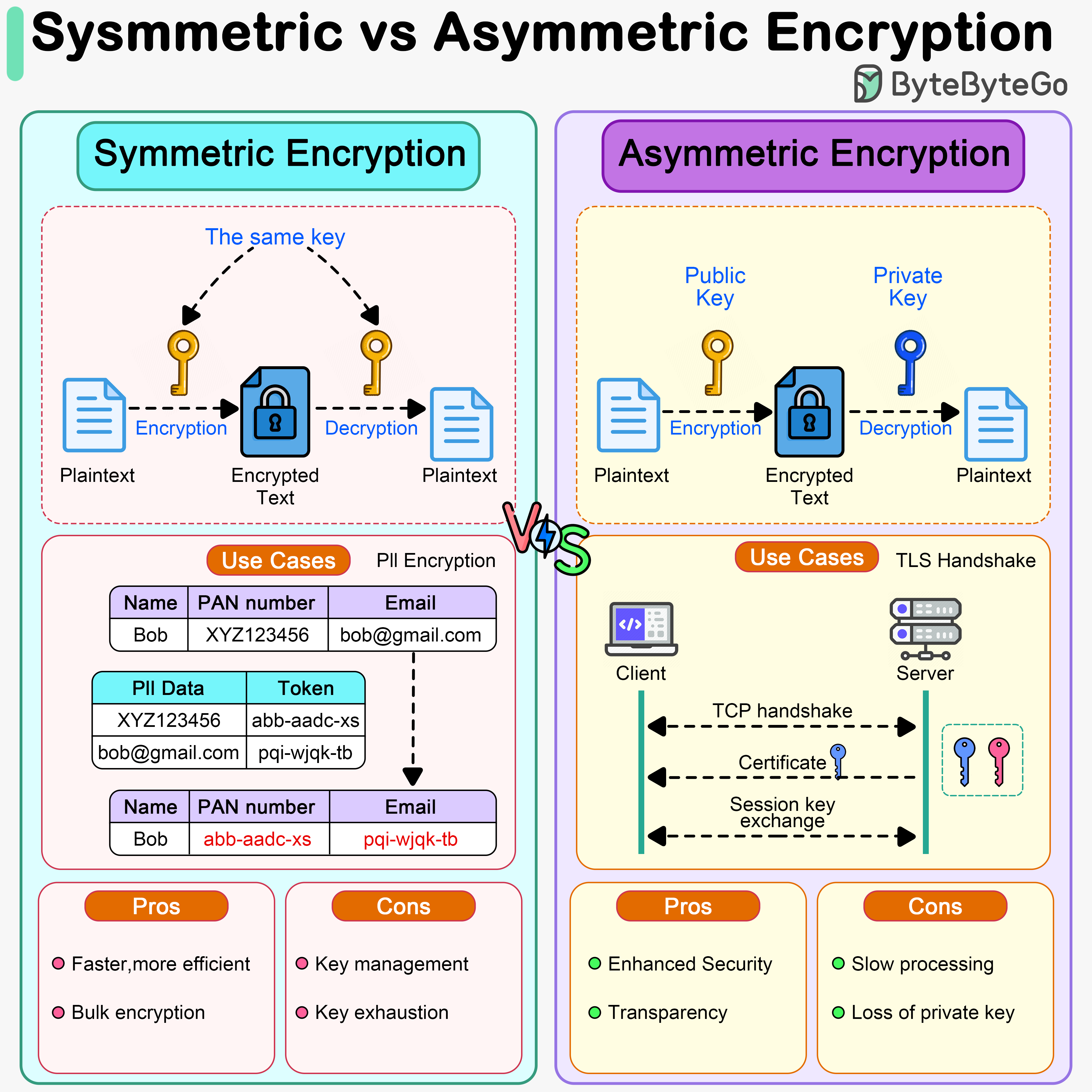

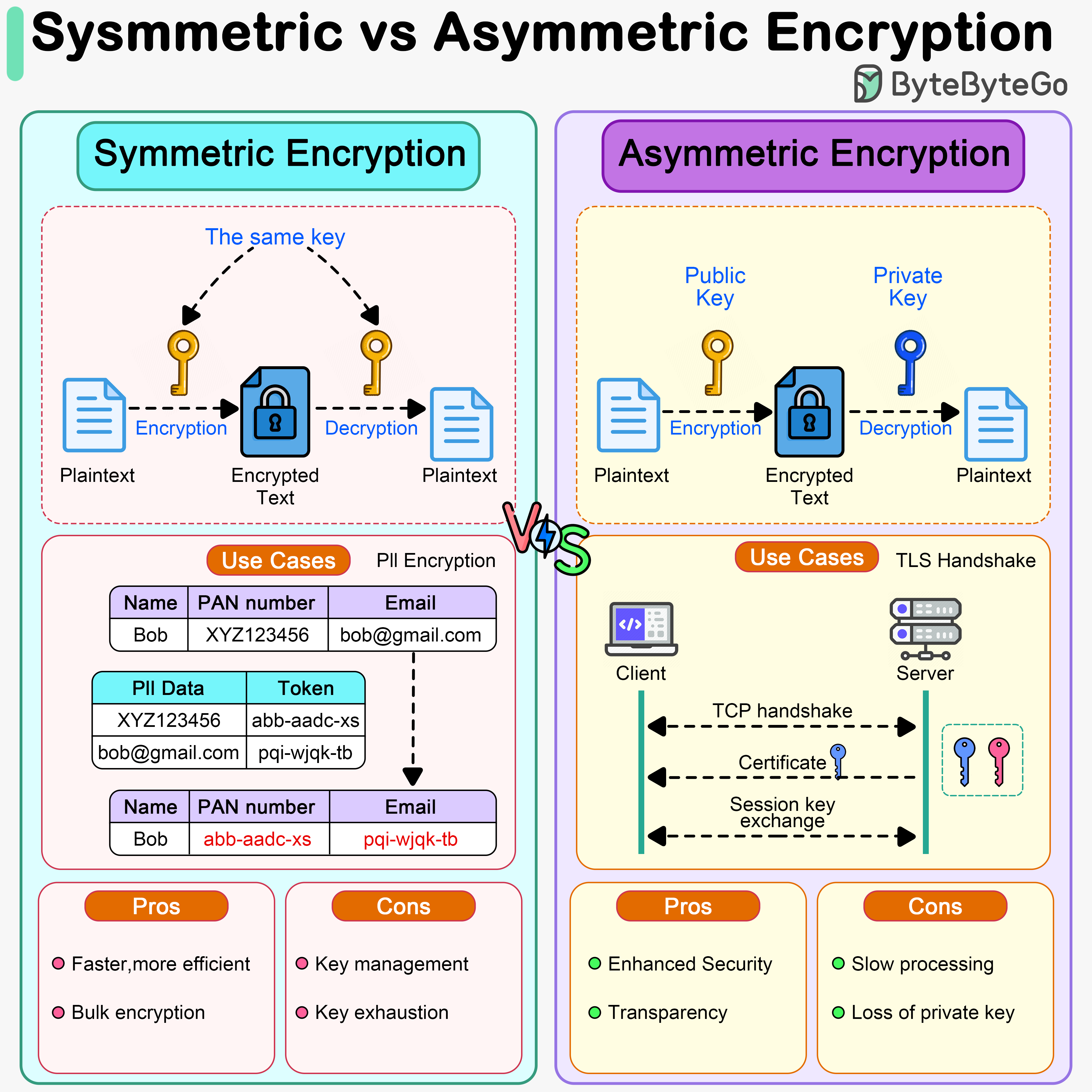

+ * [Symmetric vs Asymmetric Encryption](https://bytebytego.com/guides/symmetric-encryption-vs-asymmetric-encryption)

+ * [Session-based Authentication vs. JWT](https://bytebytego.com/guides/what's-the-difference-between-session-based-authentication-and-jwts)

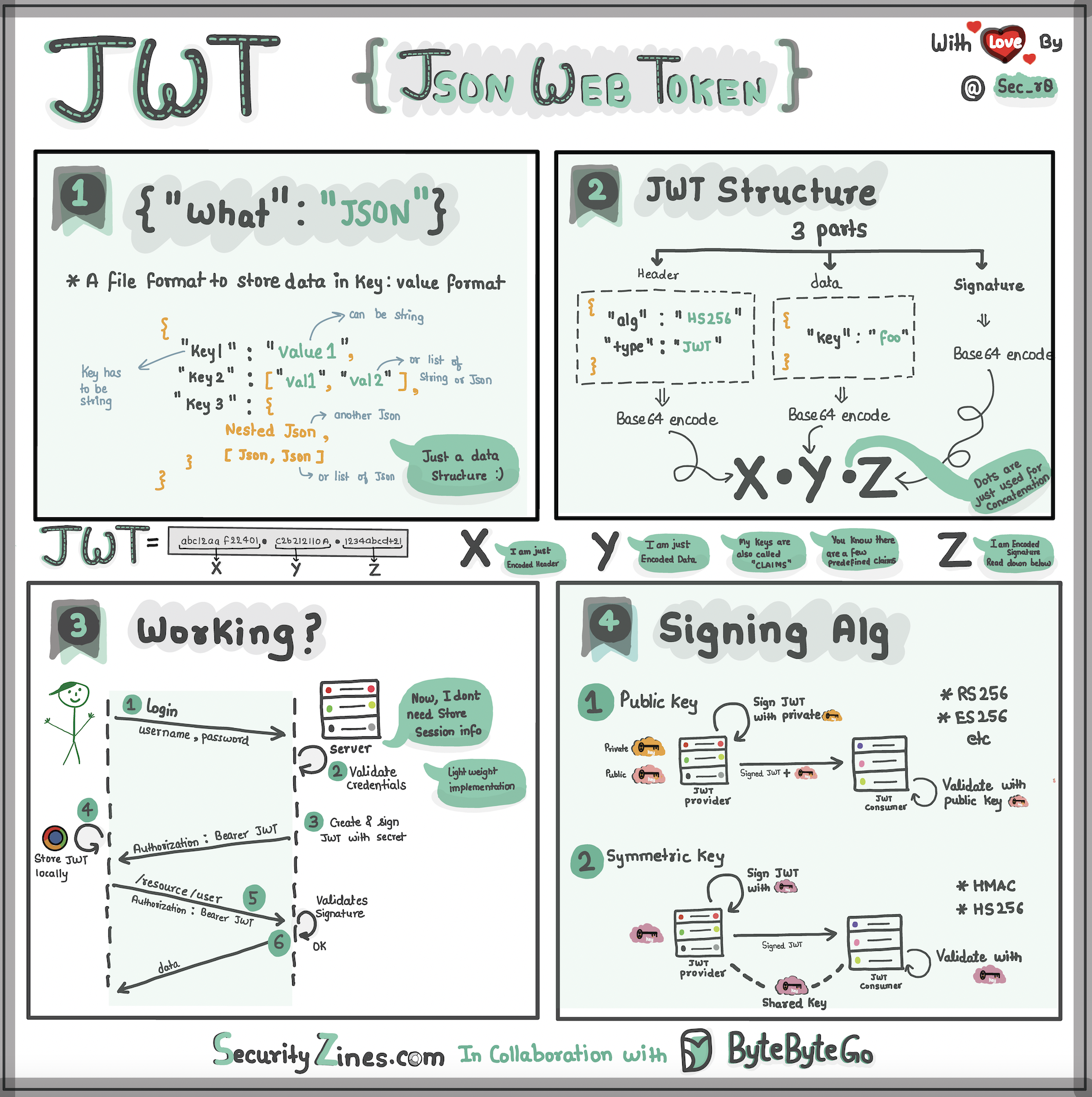

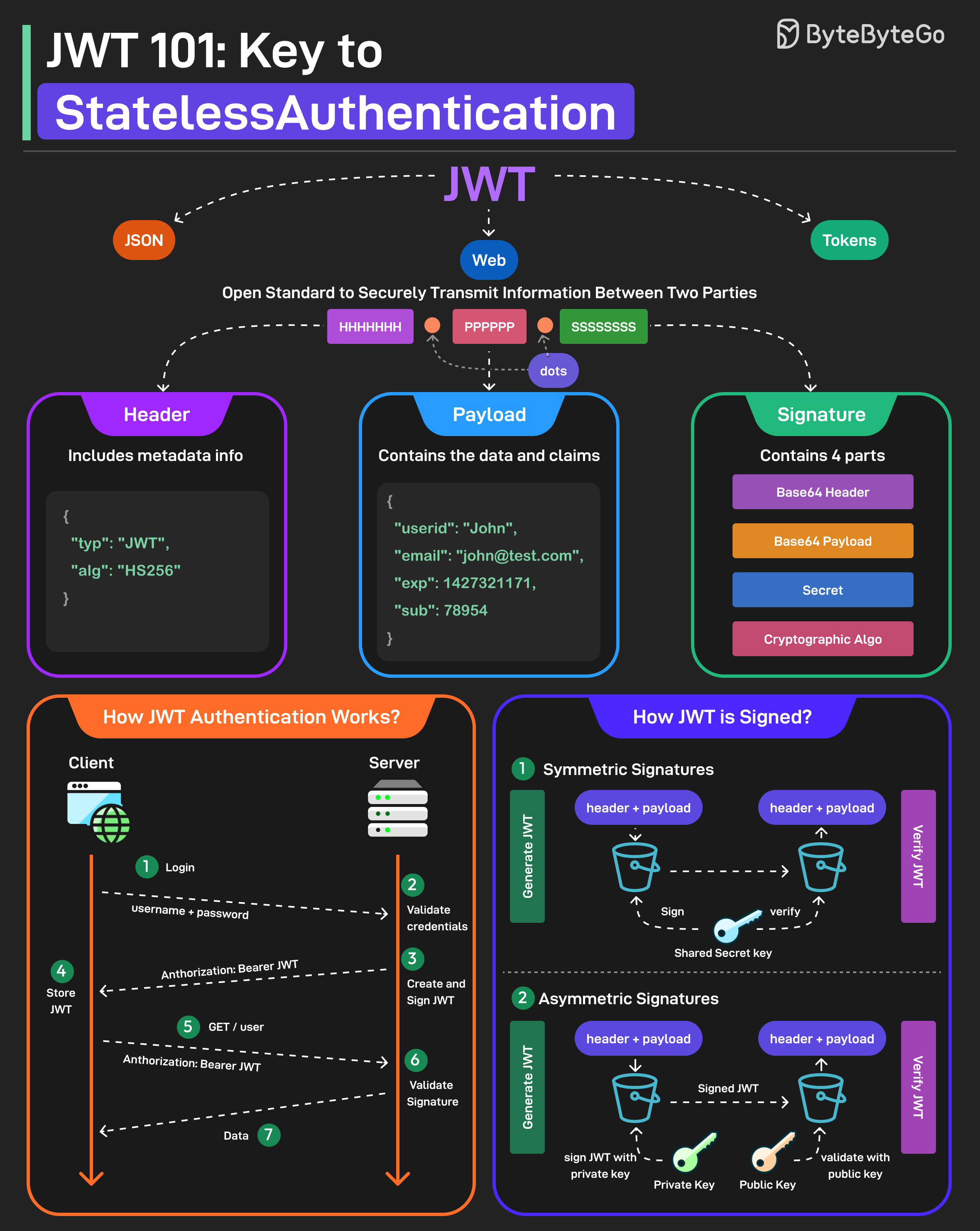

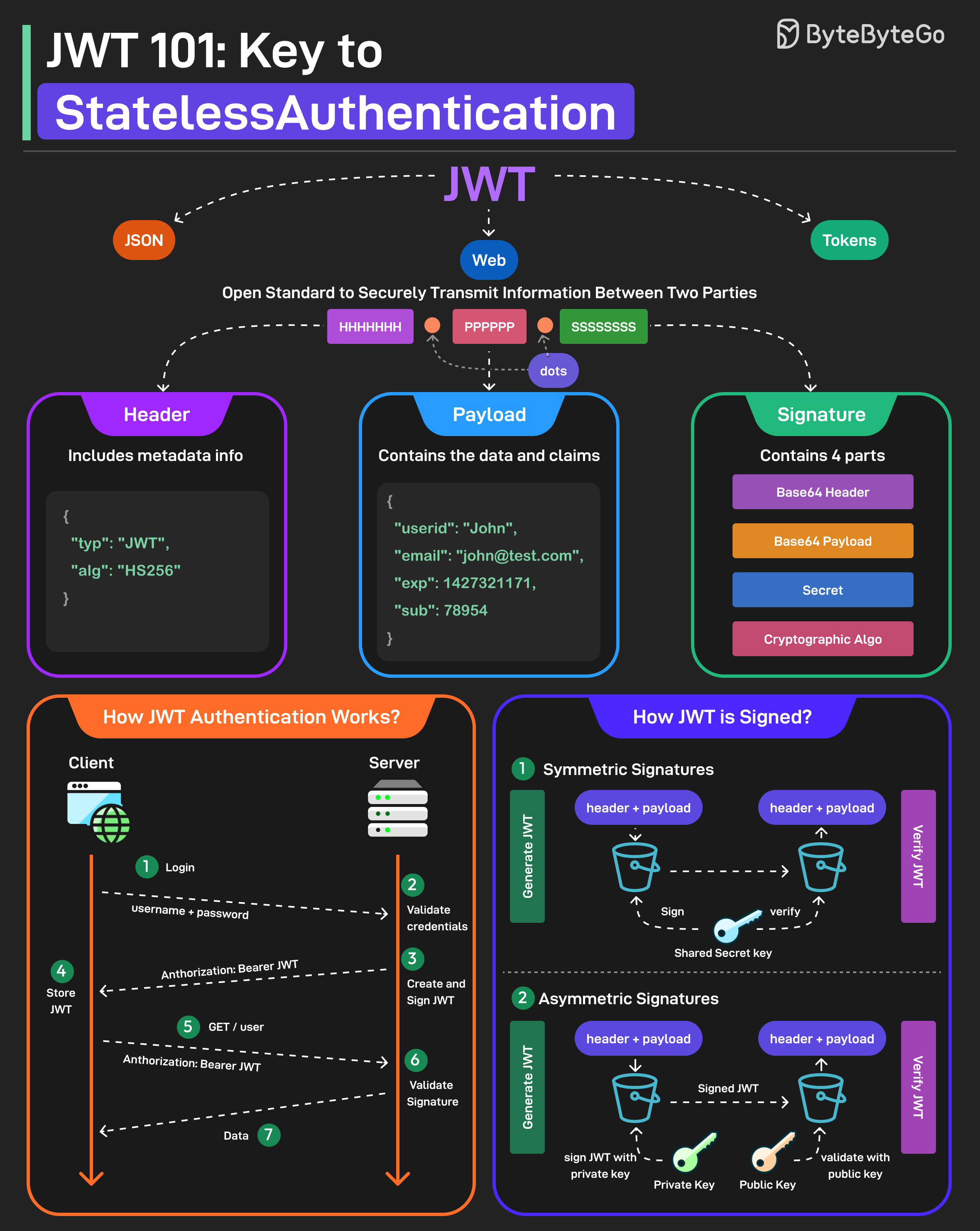

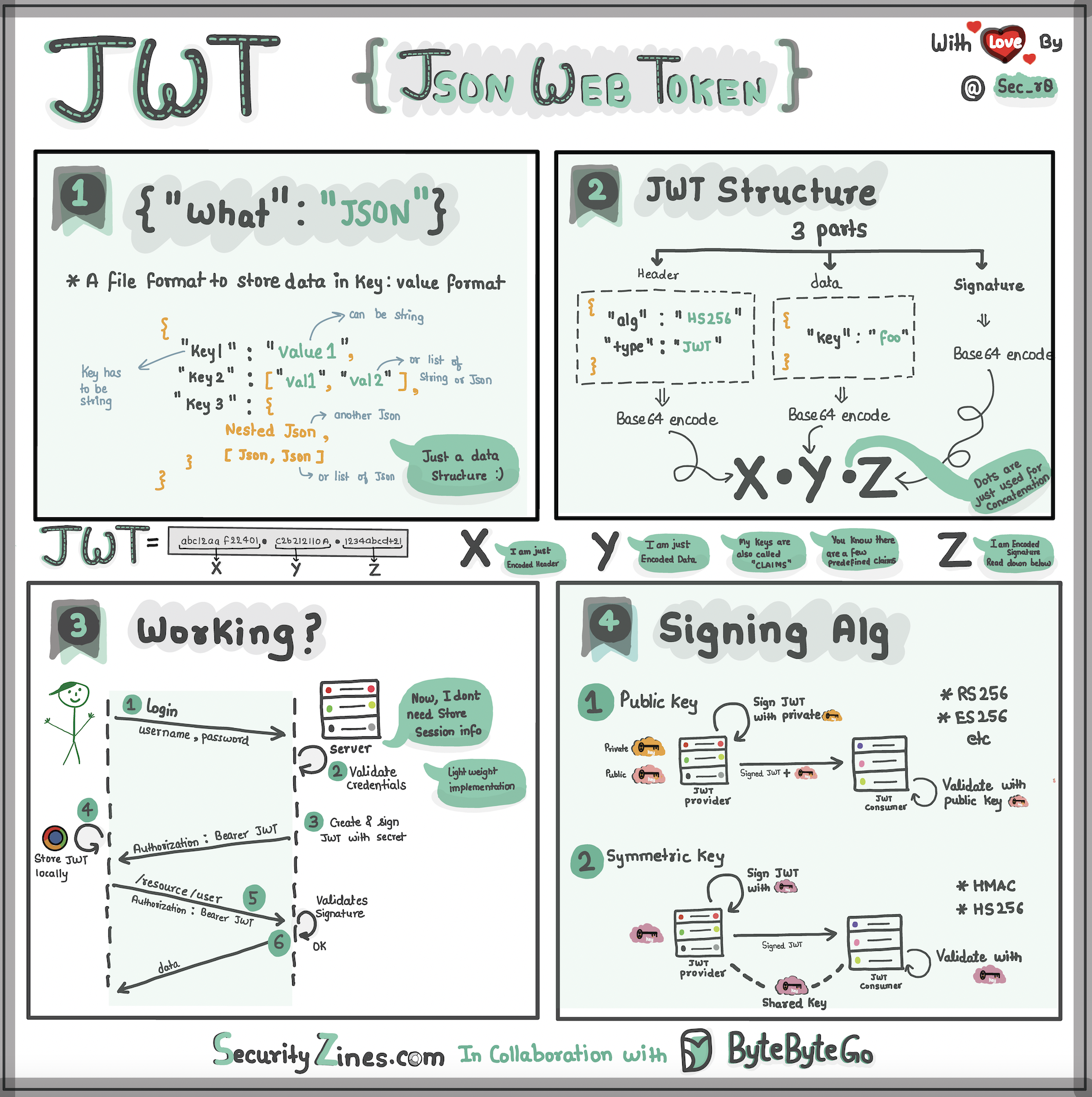

+ * [JWT 101: Key to Stateless Authentication](https://bytebytego.com/guides/jwt-101-key-to-stateless-authentication)

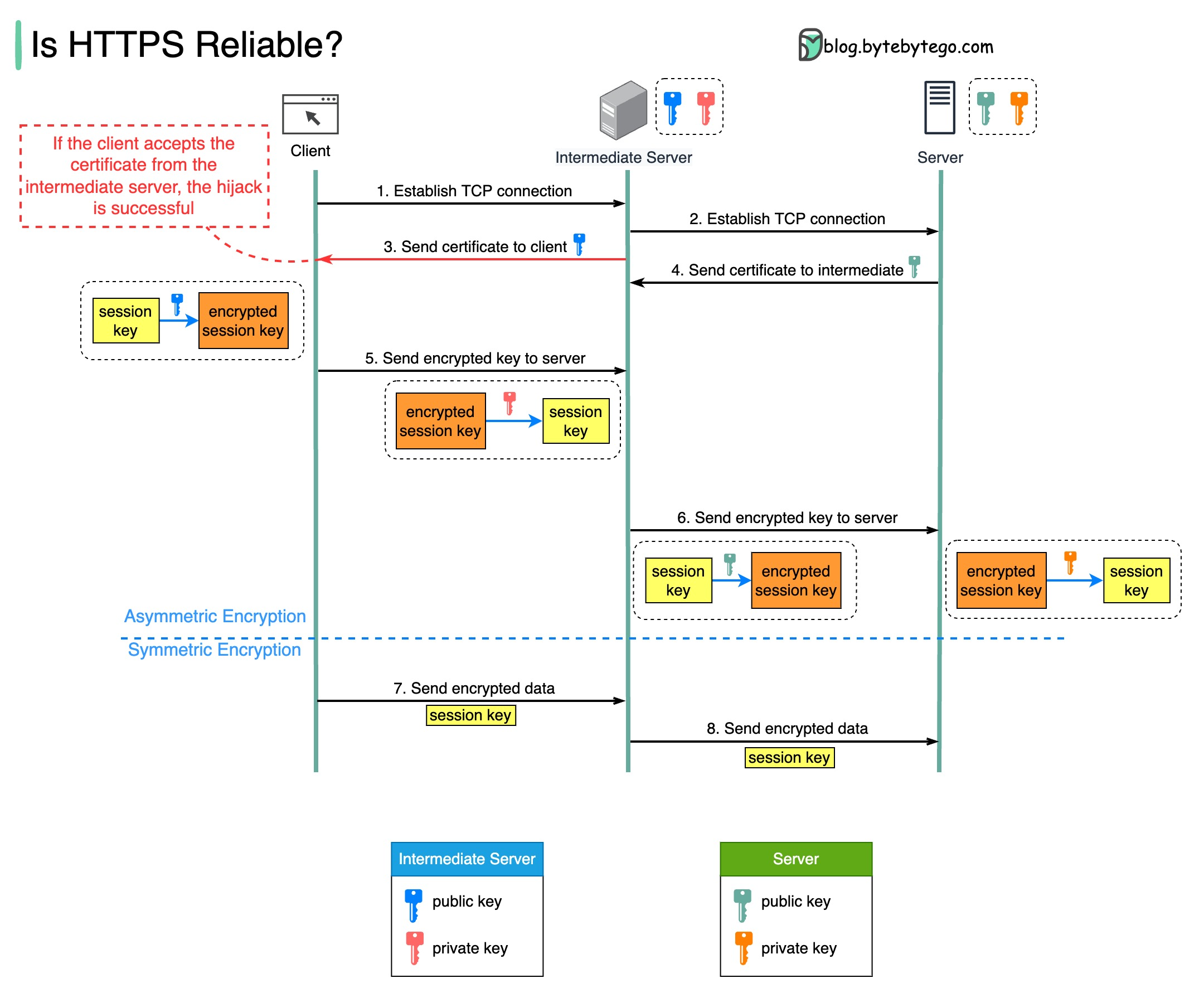

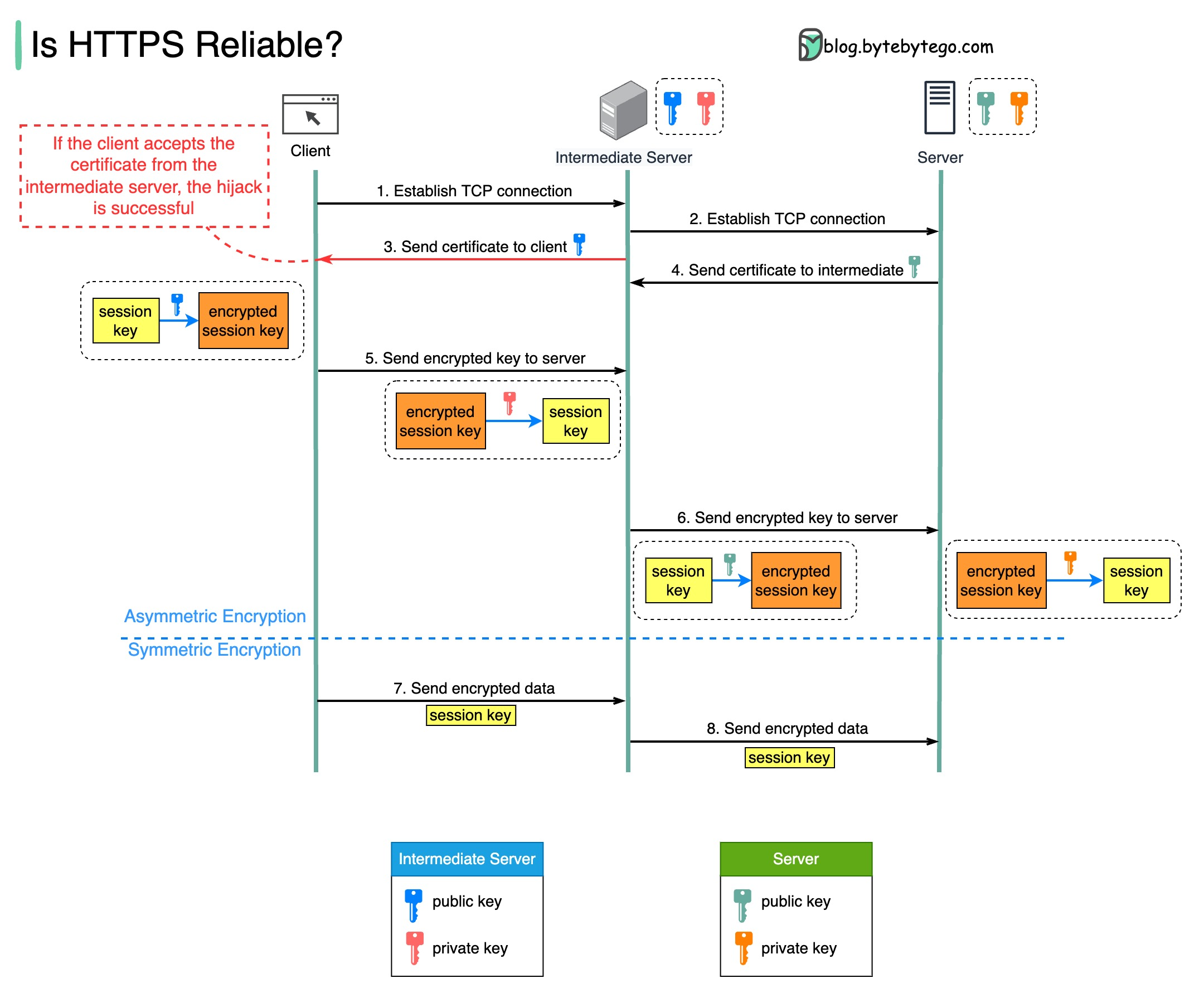

+ * [Is HTTPS Safe?](https://bytebytego.com/guides/is-https-safe)

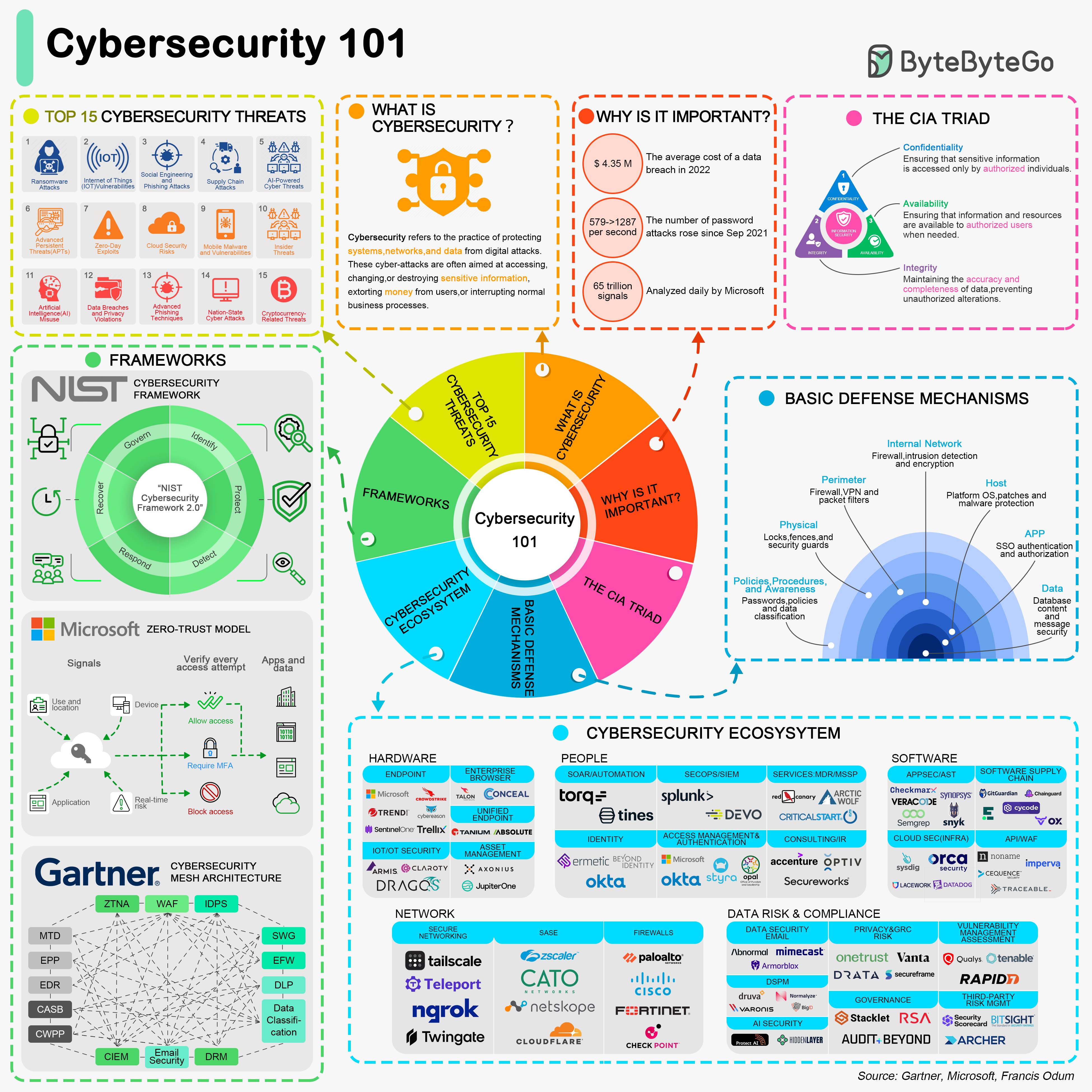

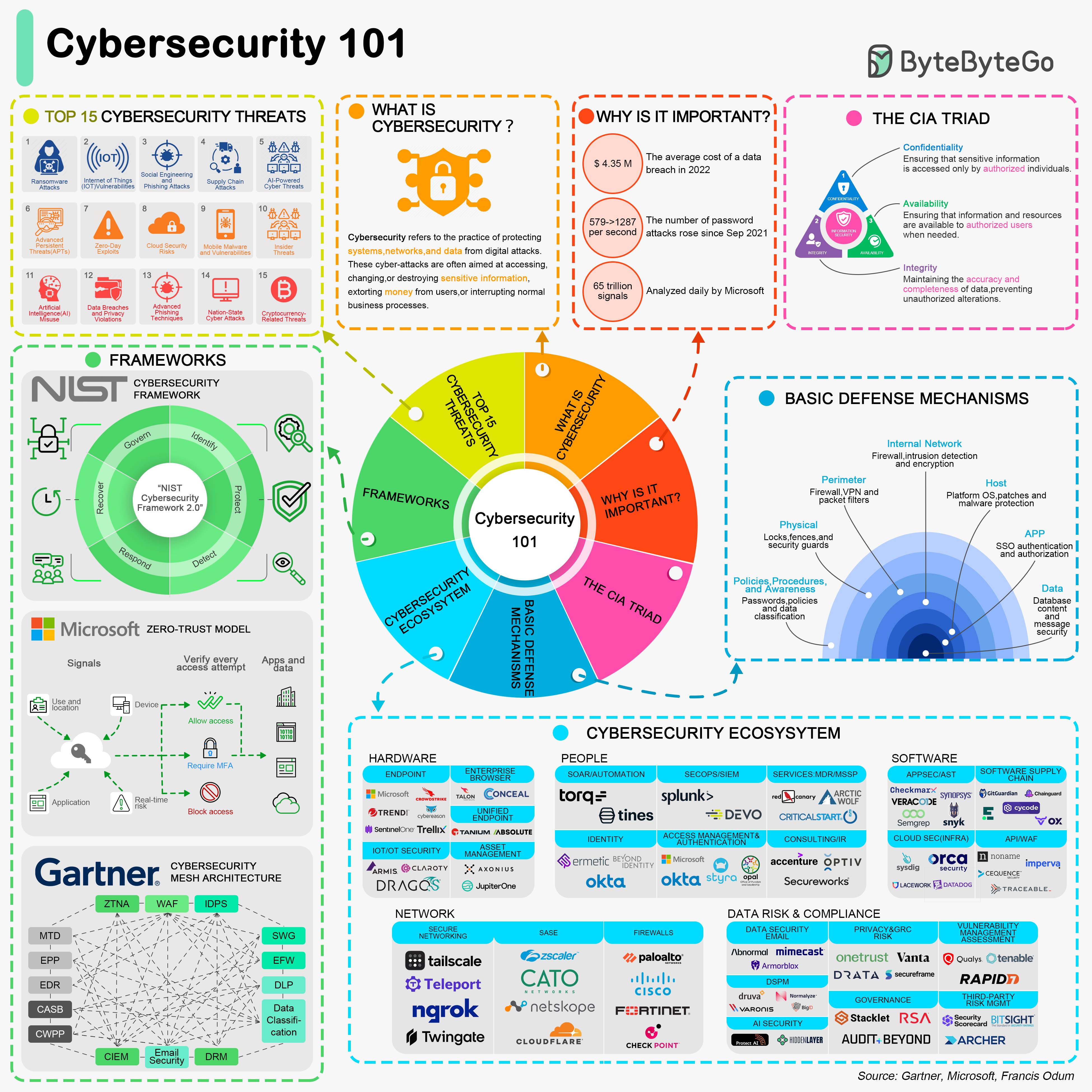

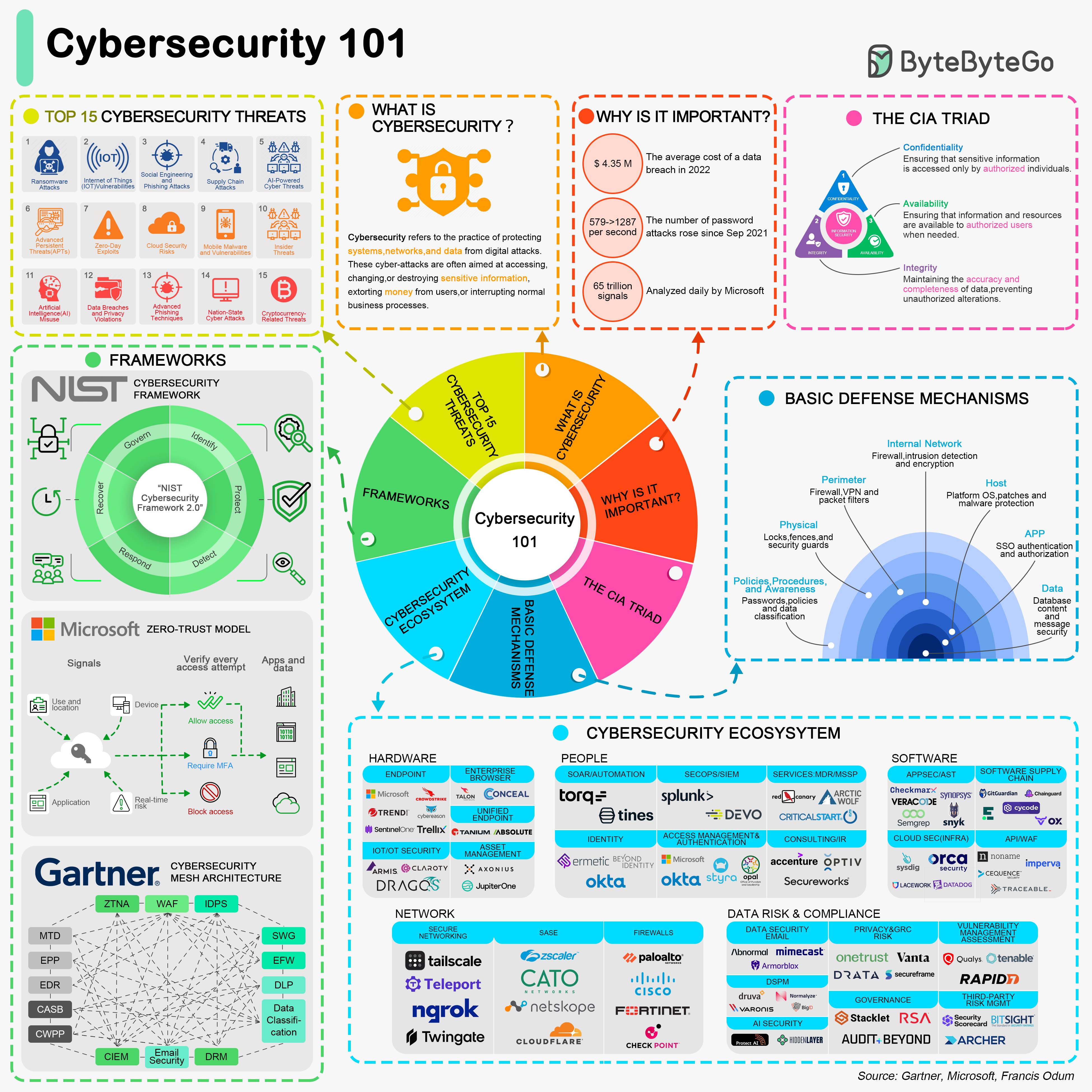

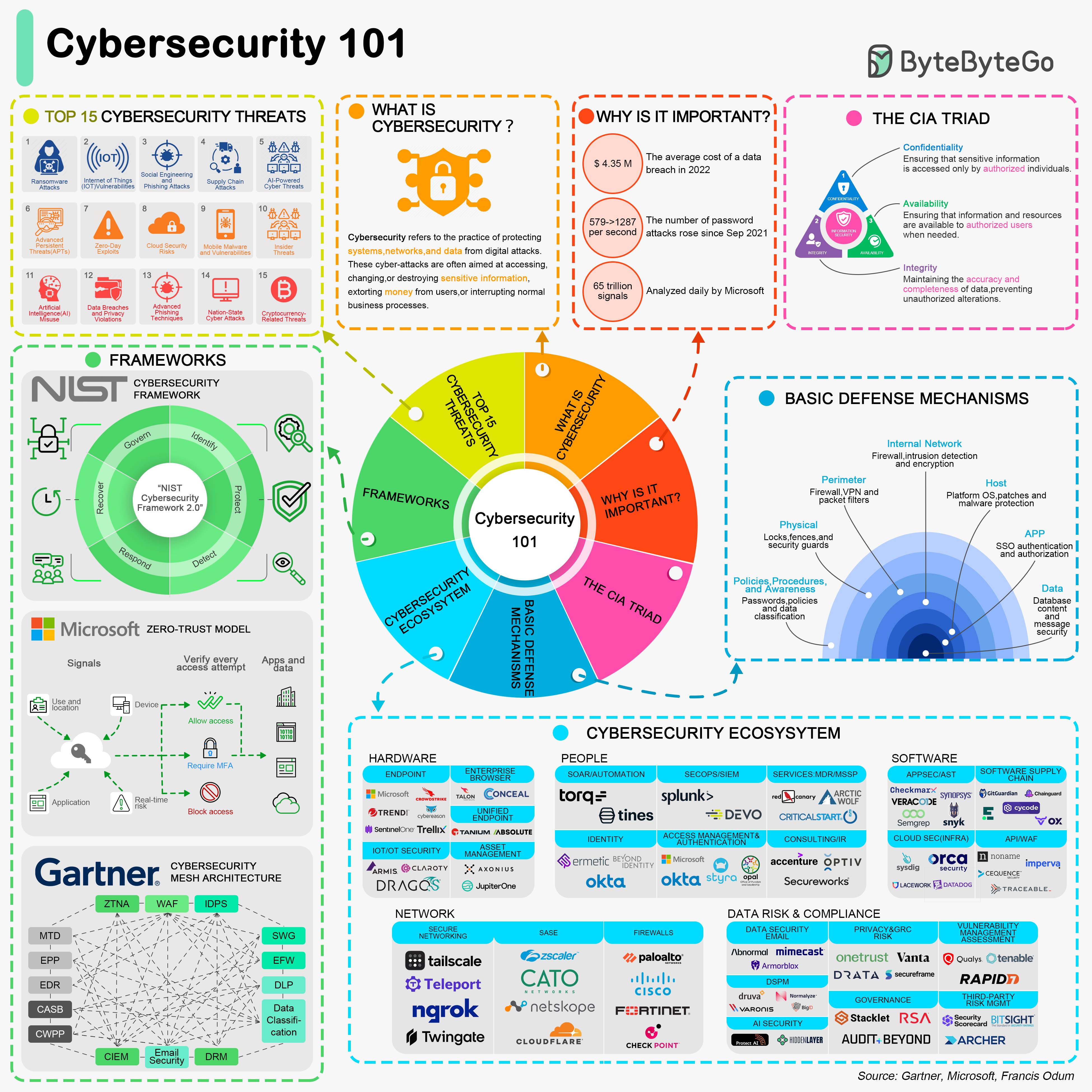

+ * [Cybersecurity 101](https://bytebytego.com/guides/cybersecurity-101-in-one-picture)

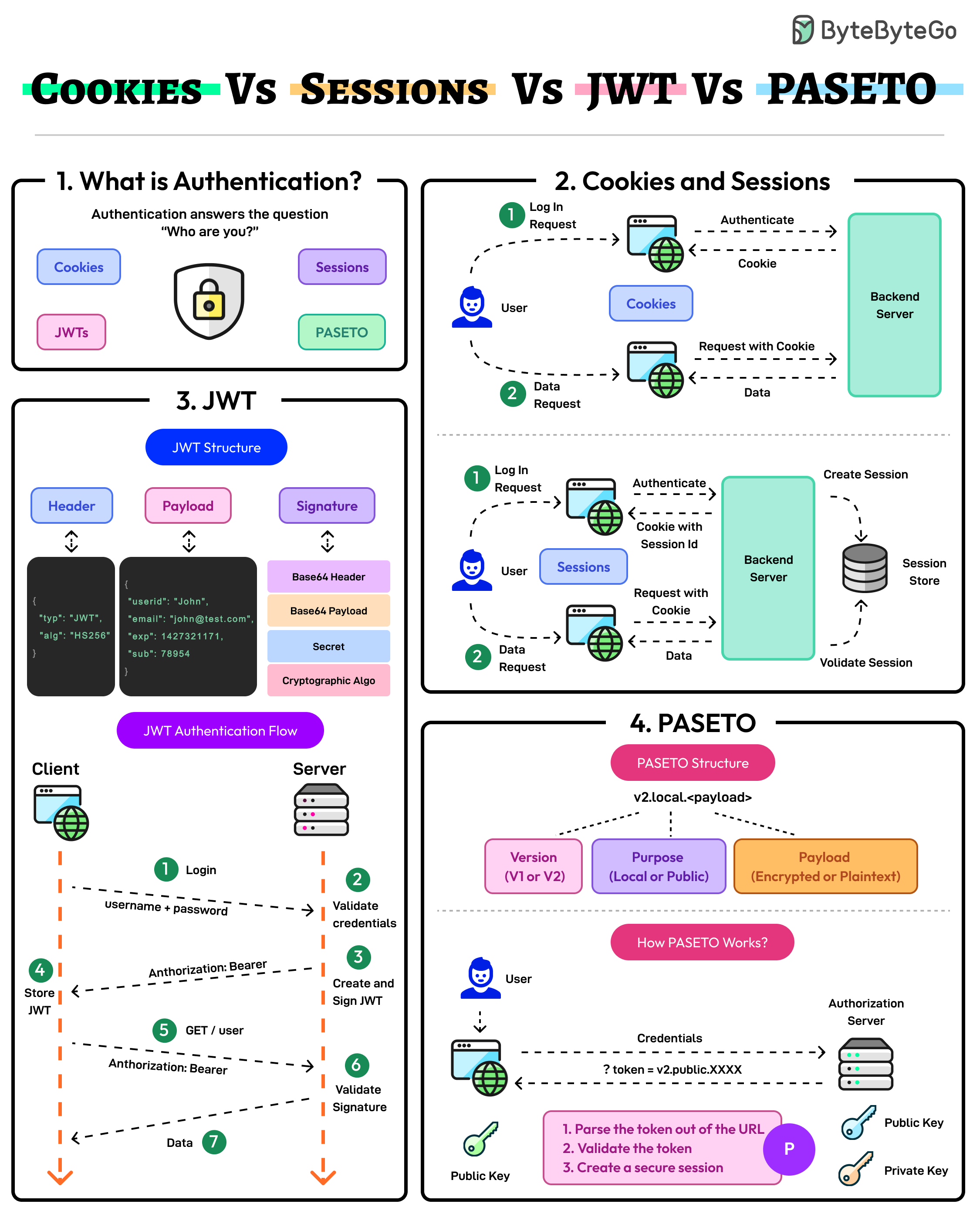

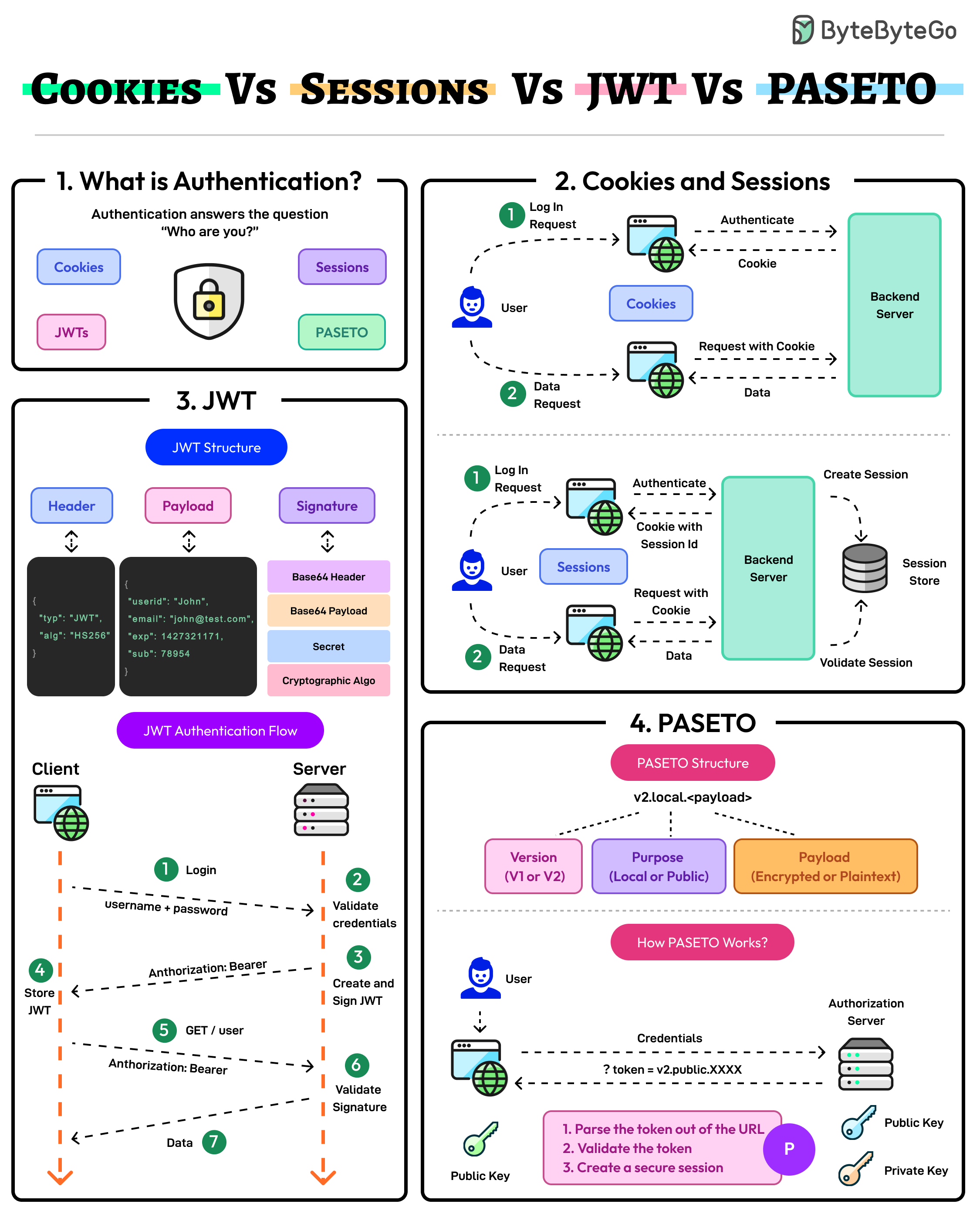

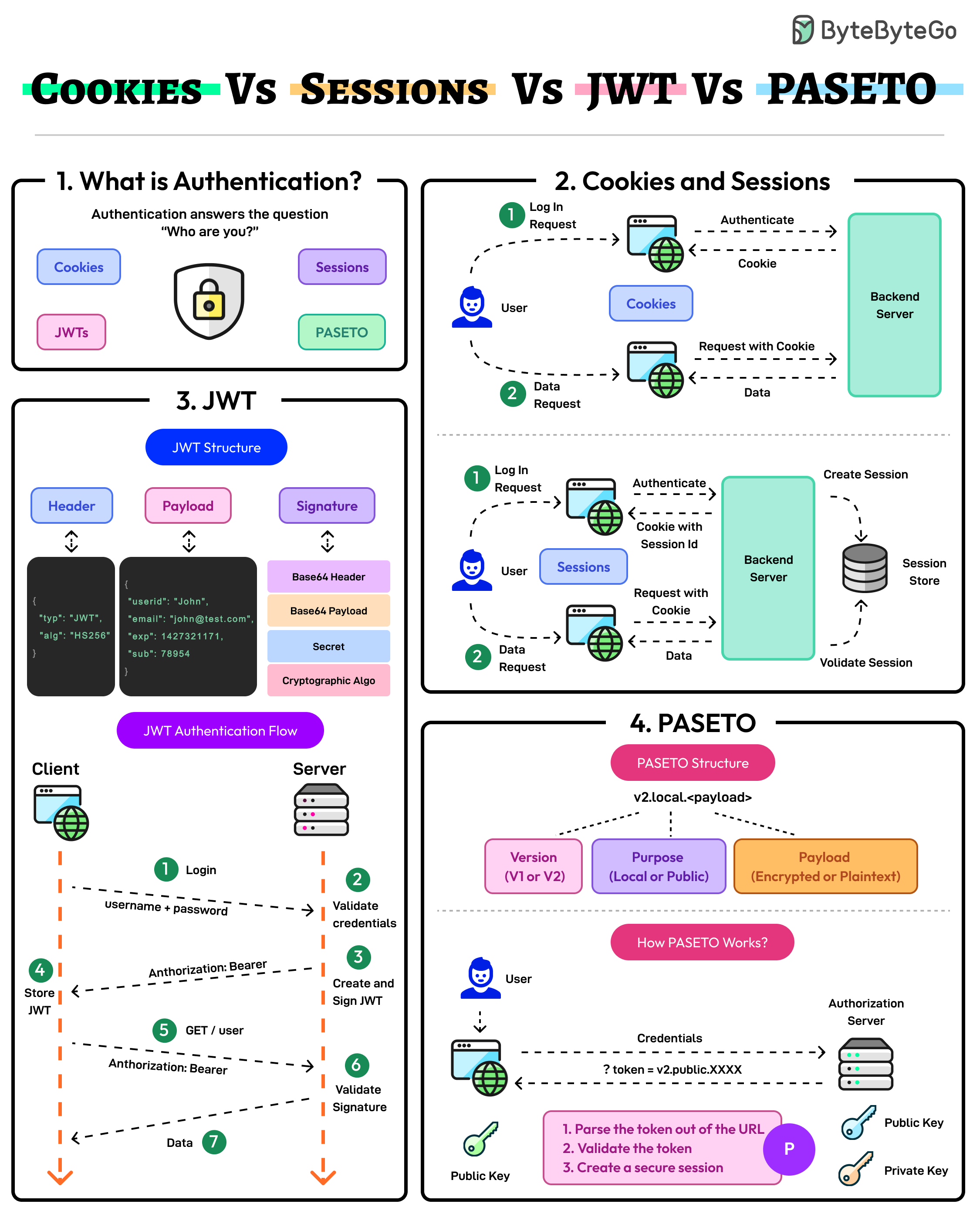

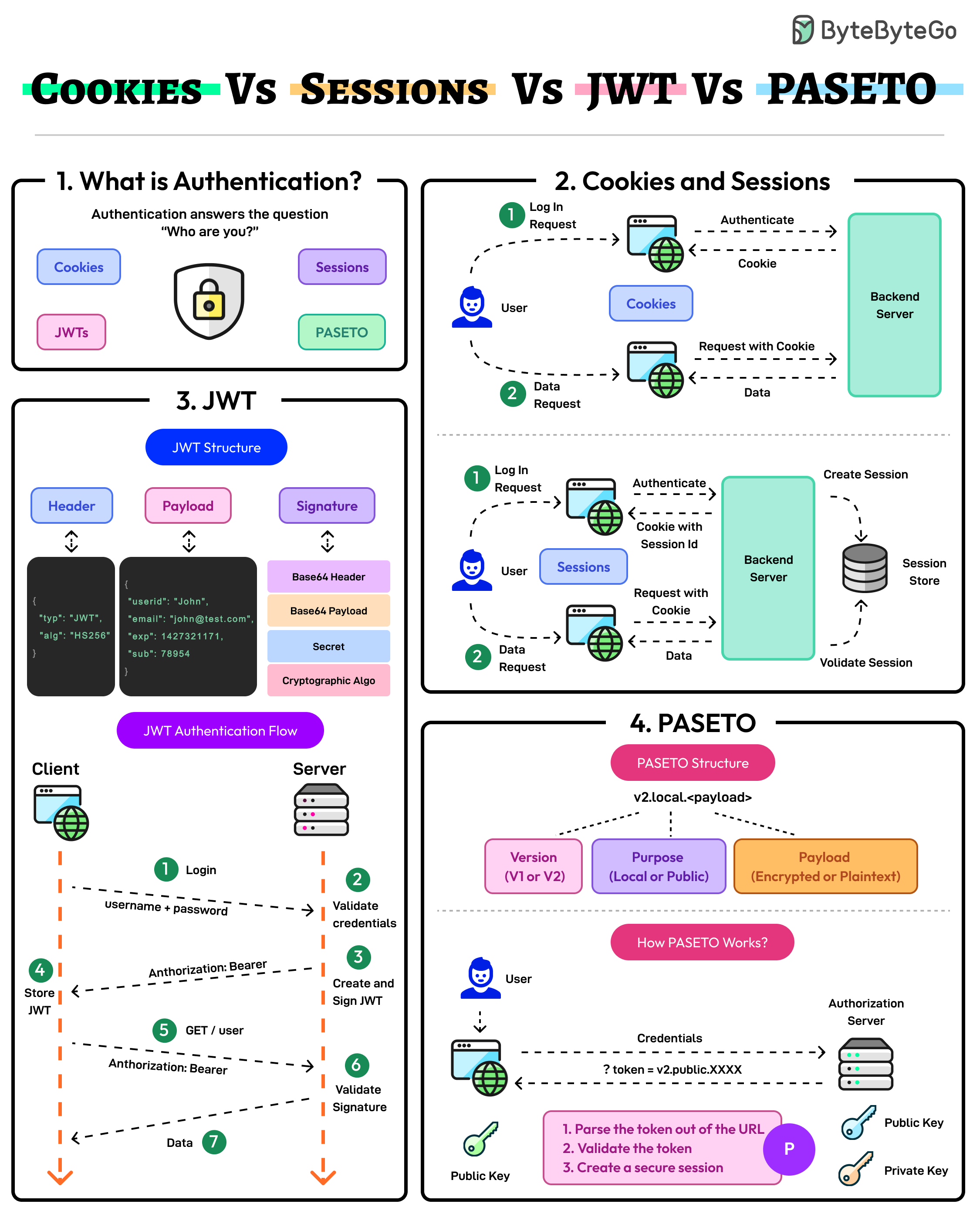

+ * [Cookies vs Sessions vs JWT vs PASETO](https://bytebytego.com/guides/cookies-vs-sessions-vs-jwt-vs-paseto)

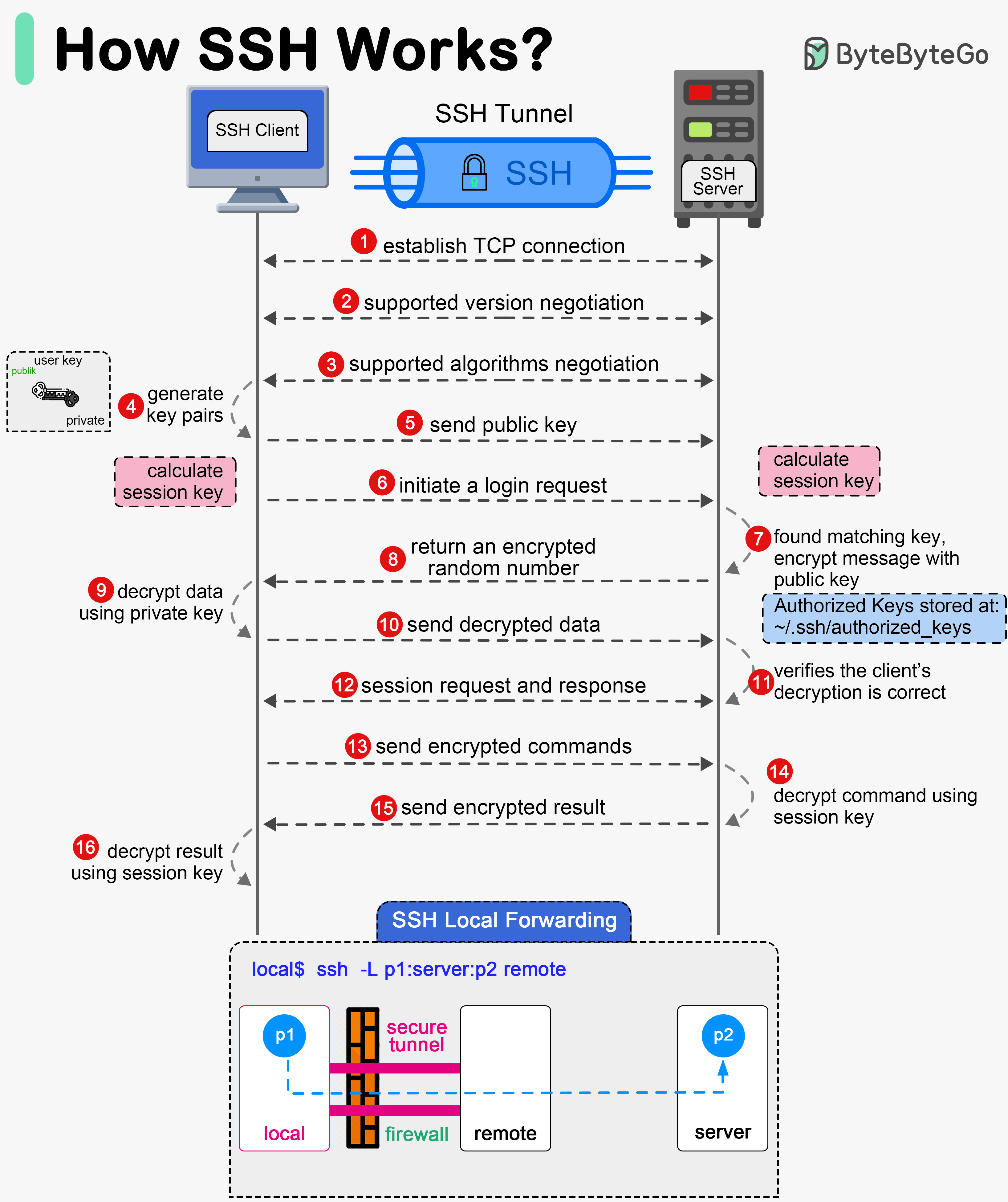

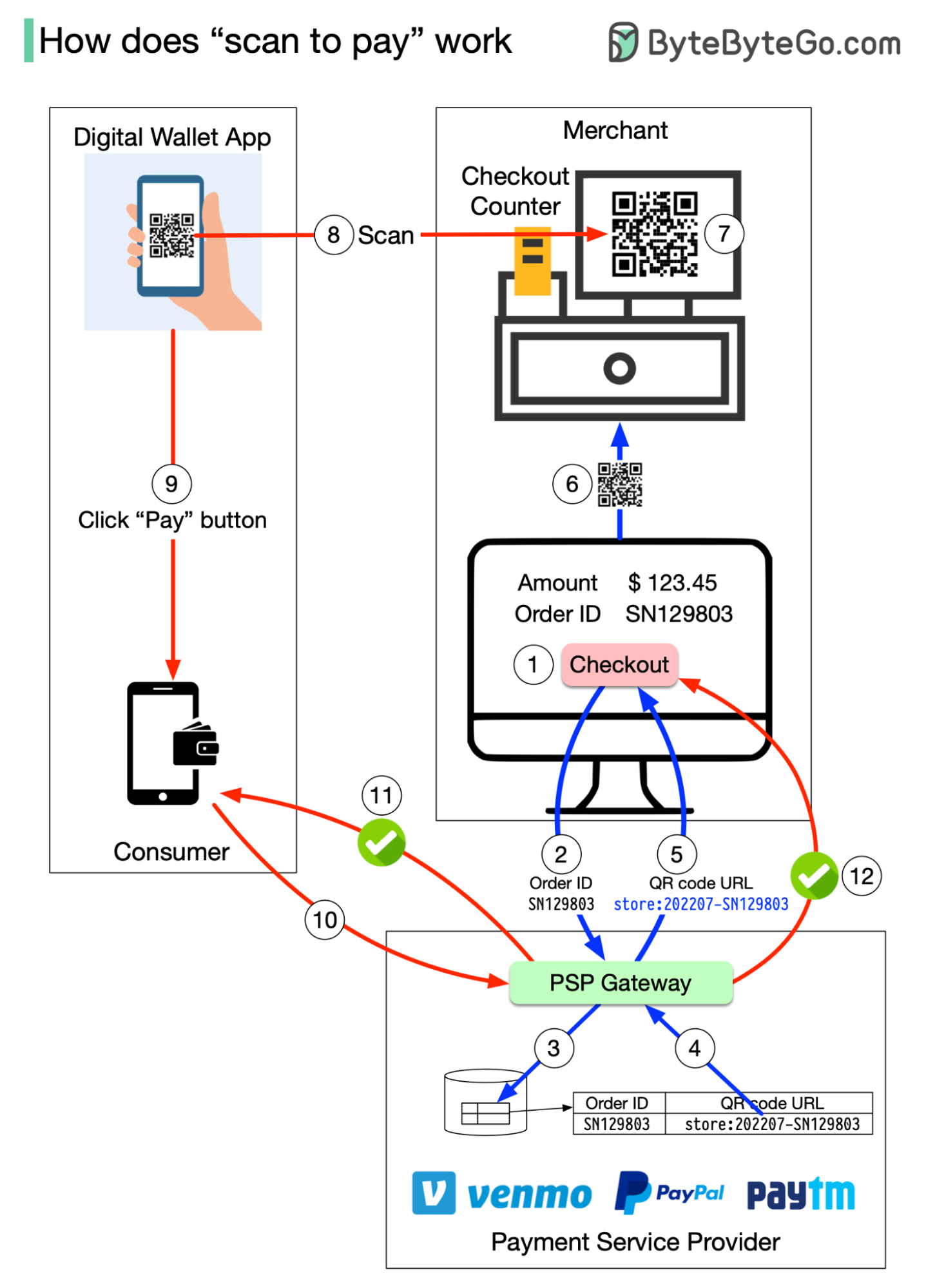

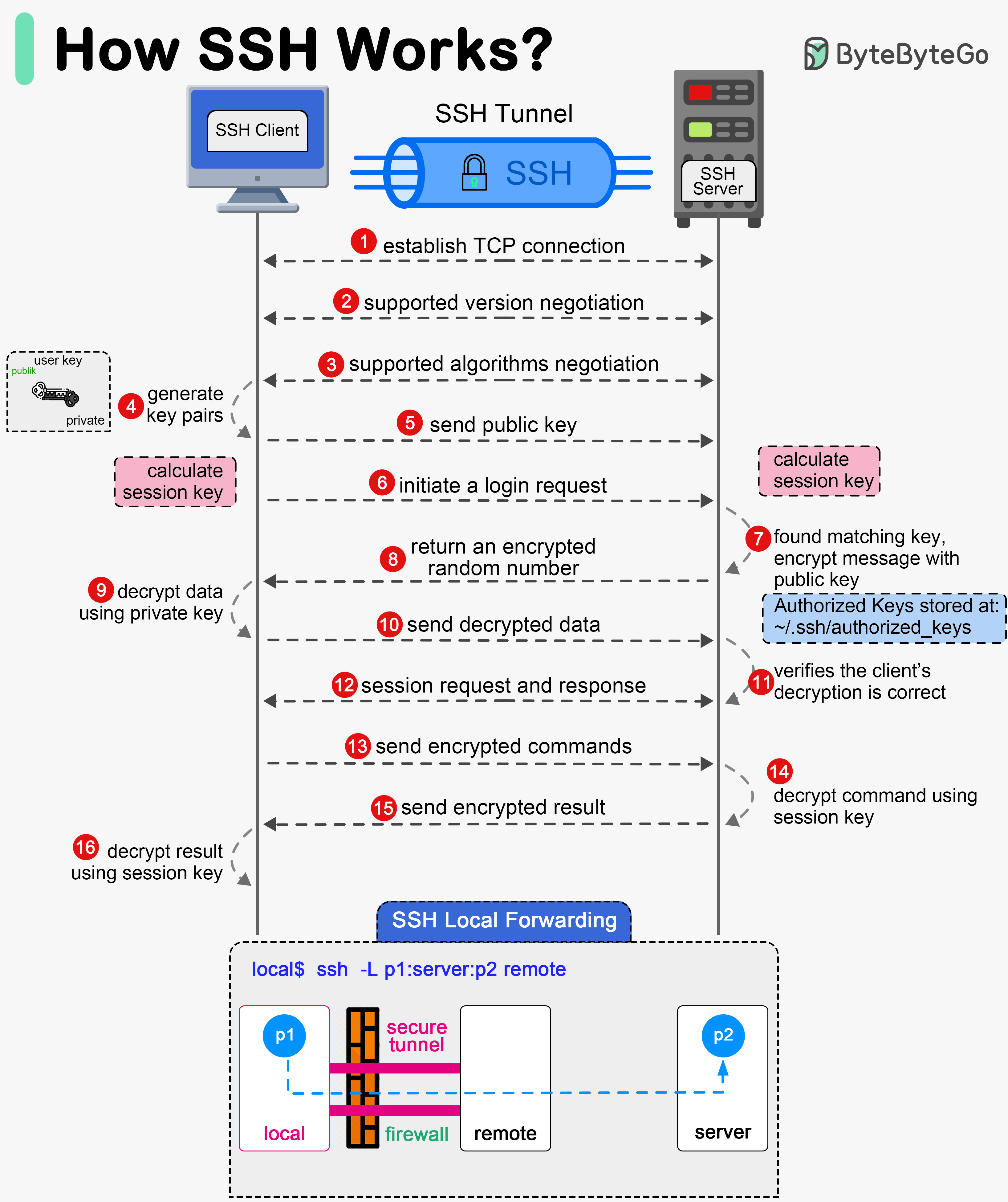

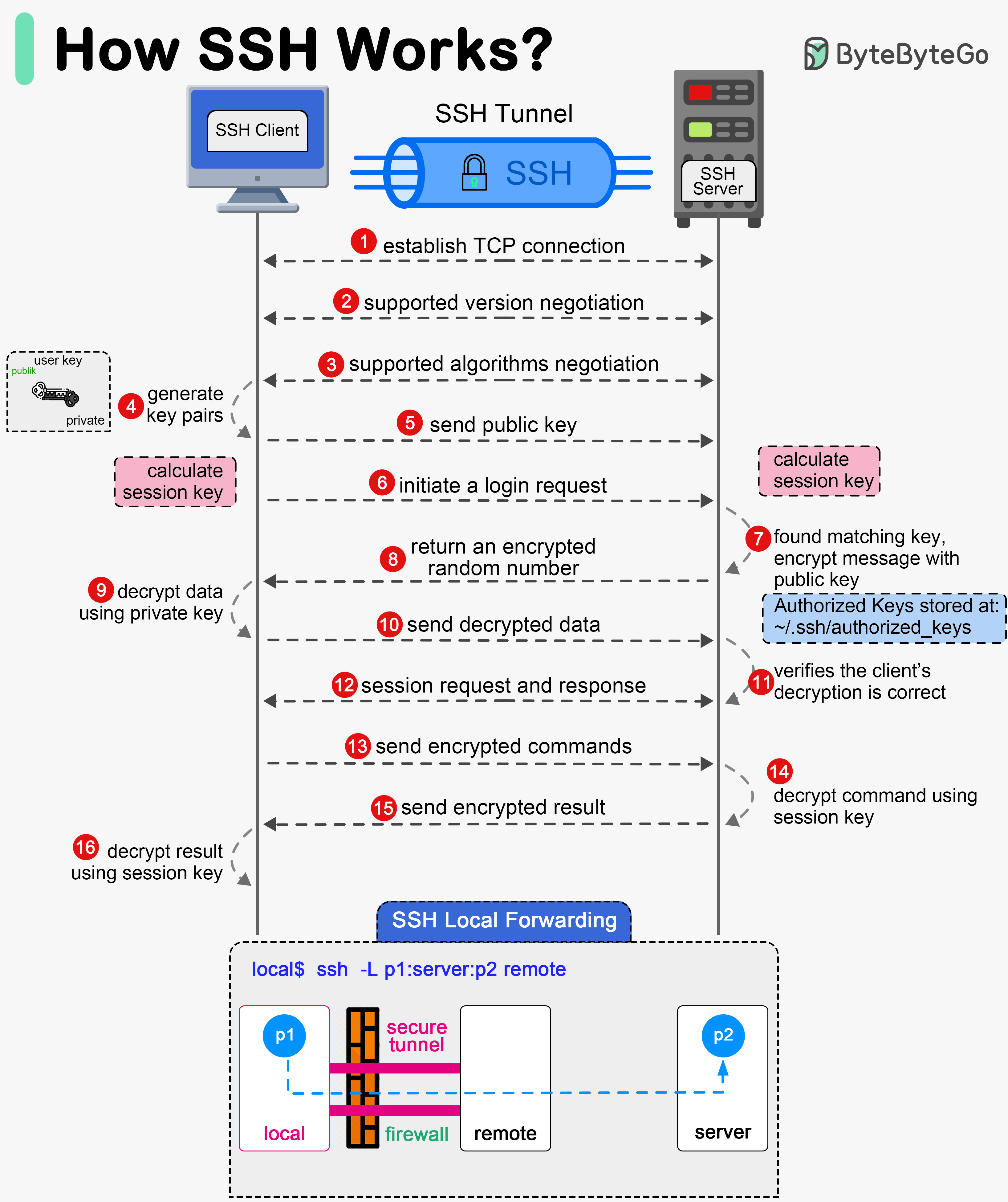

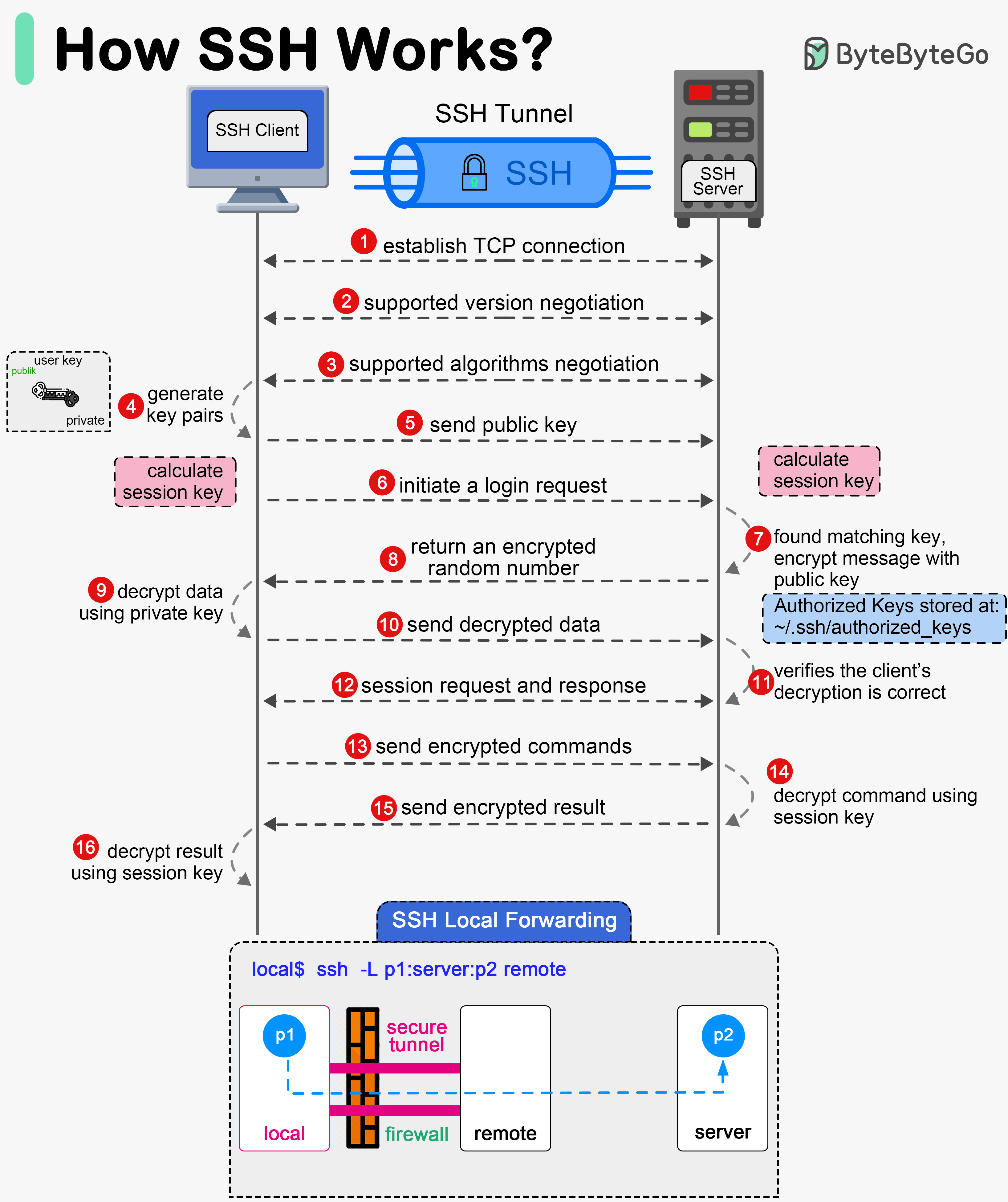

+ * [How does SSH work?](https://bytebytego.com/guides/how-does-ssh-work)

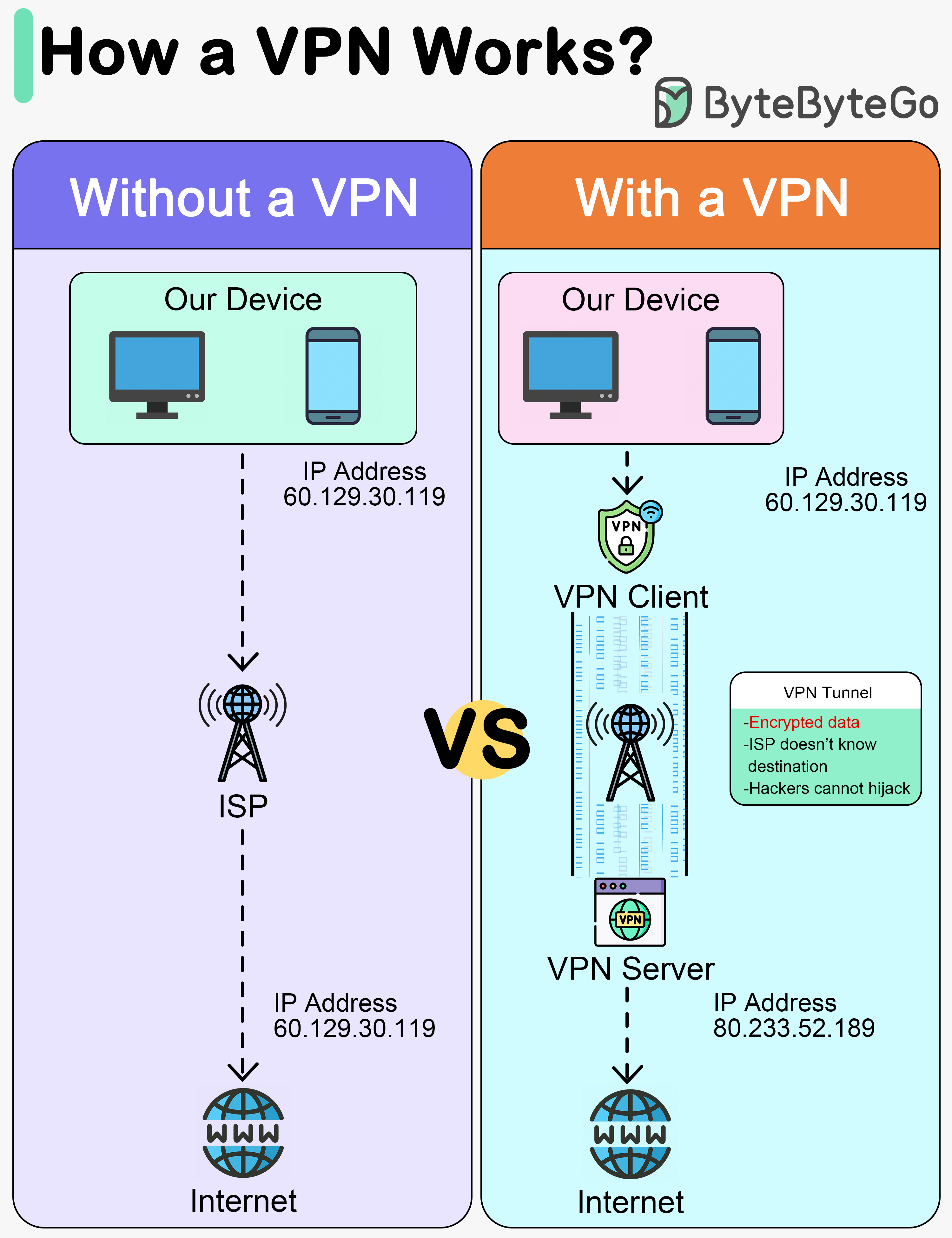

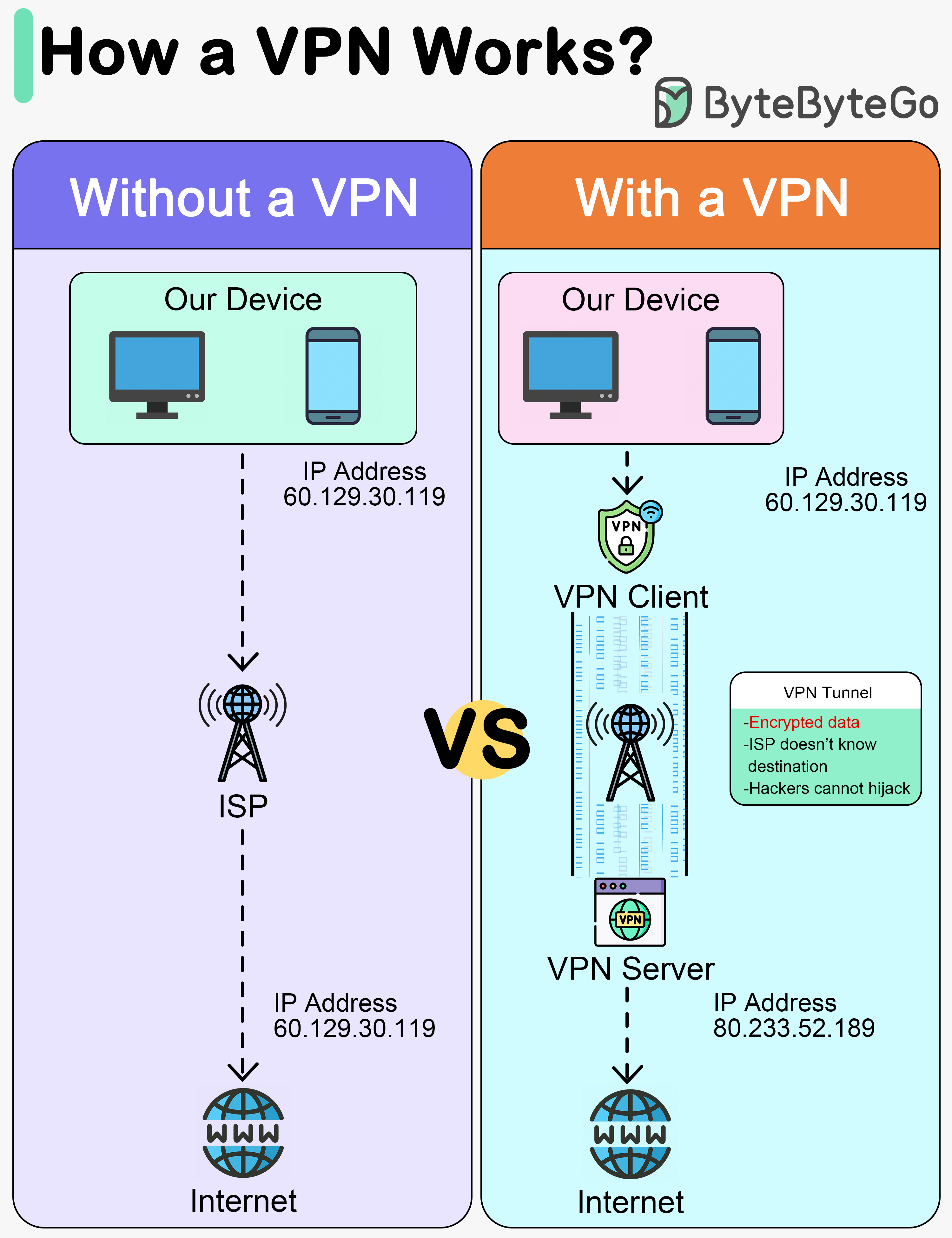

+ * [How Does a VPN Work?](https://bytebytego.com/guides/how-does-a-vpn-work)

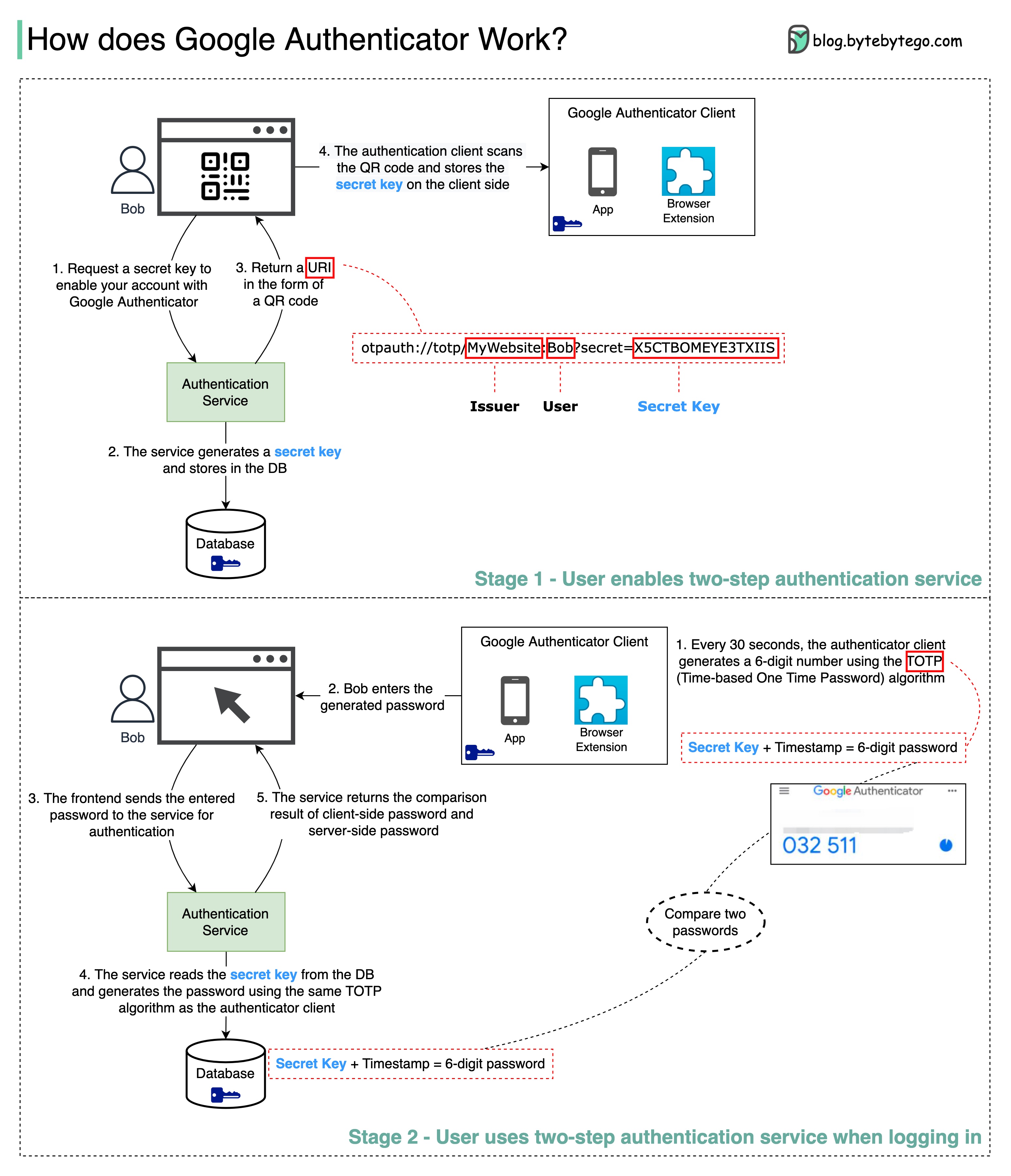

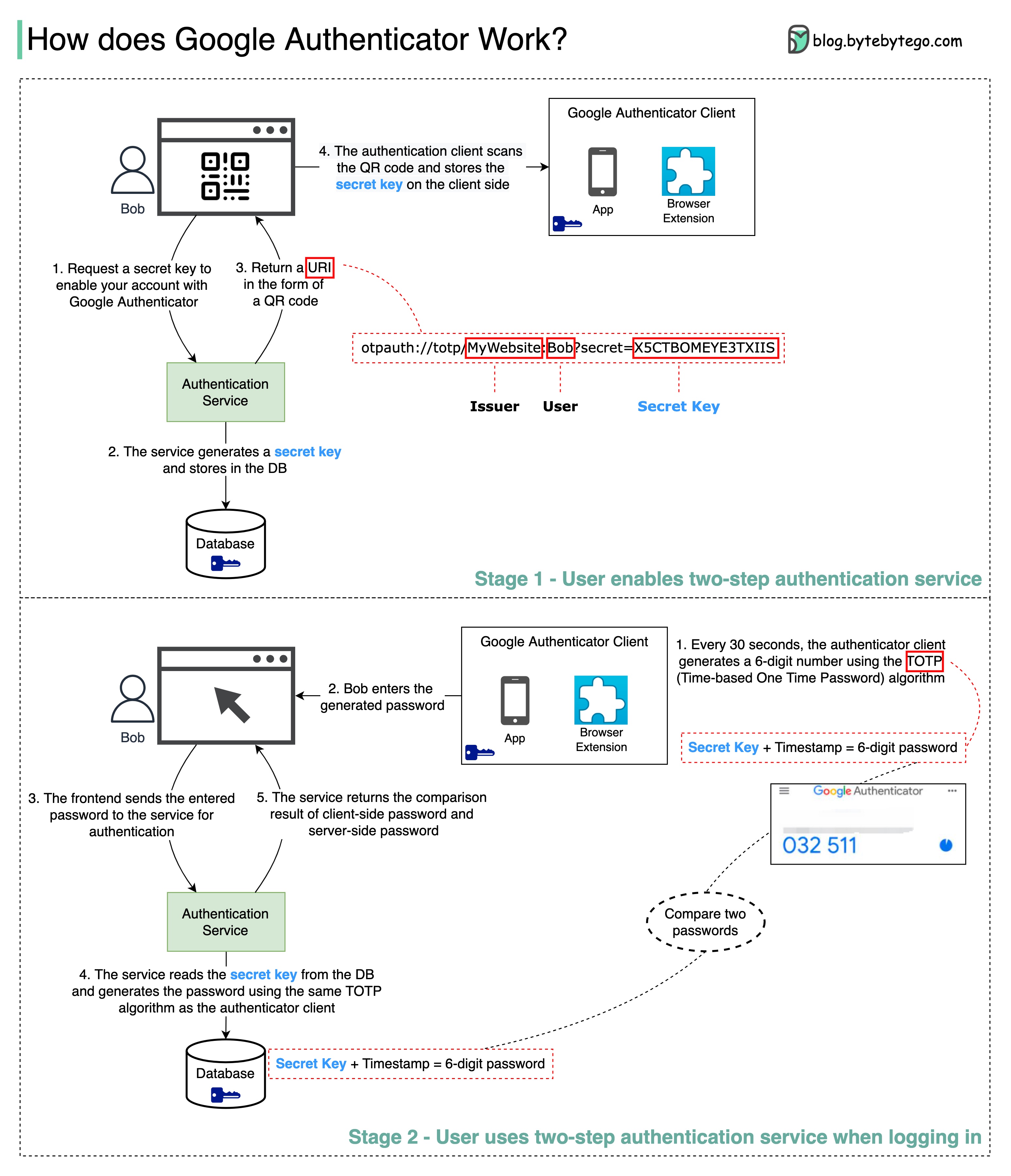

+ * [How Google Authenticator Works](https://bytebytego.com/guides/how-does-google-authenticator-or-other-types-of-2-factor-authenticators-work)

+ * [Types of VPNs](https://bytebytego.com/guides/types-of-vpns)

+ * [What is a Cookie?](https://bytebytego.com/guides/what-is-a-cookie)

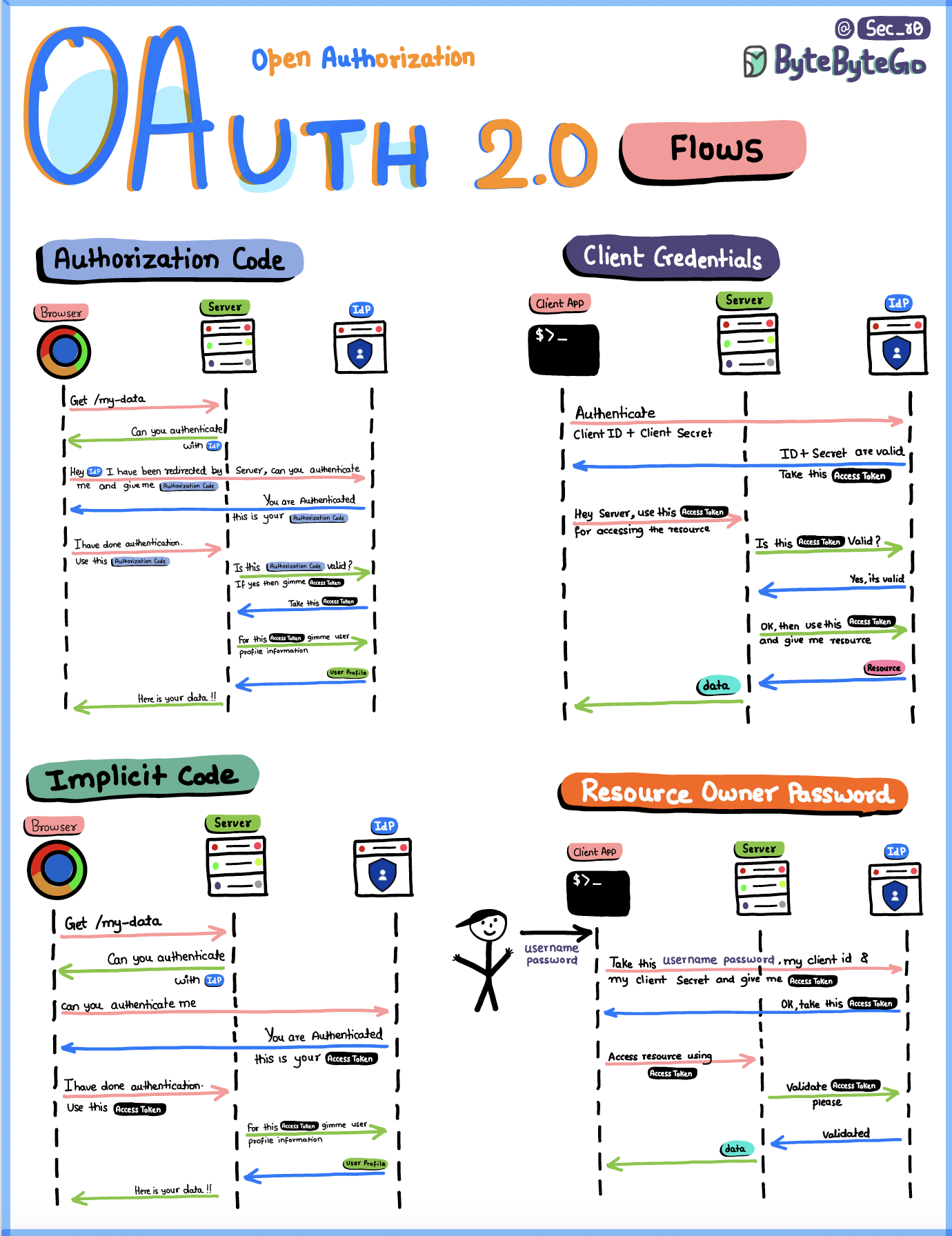

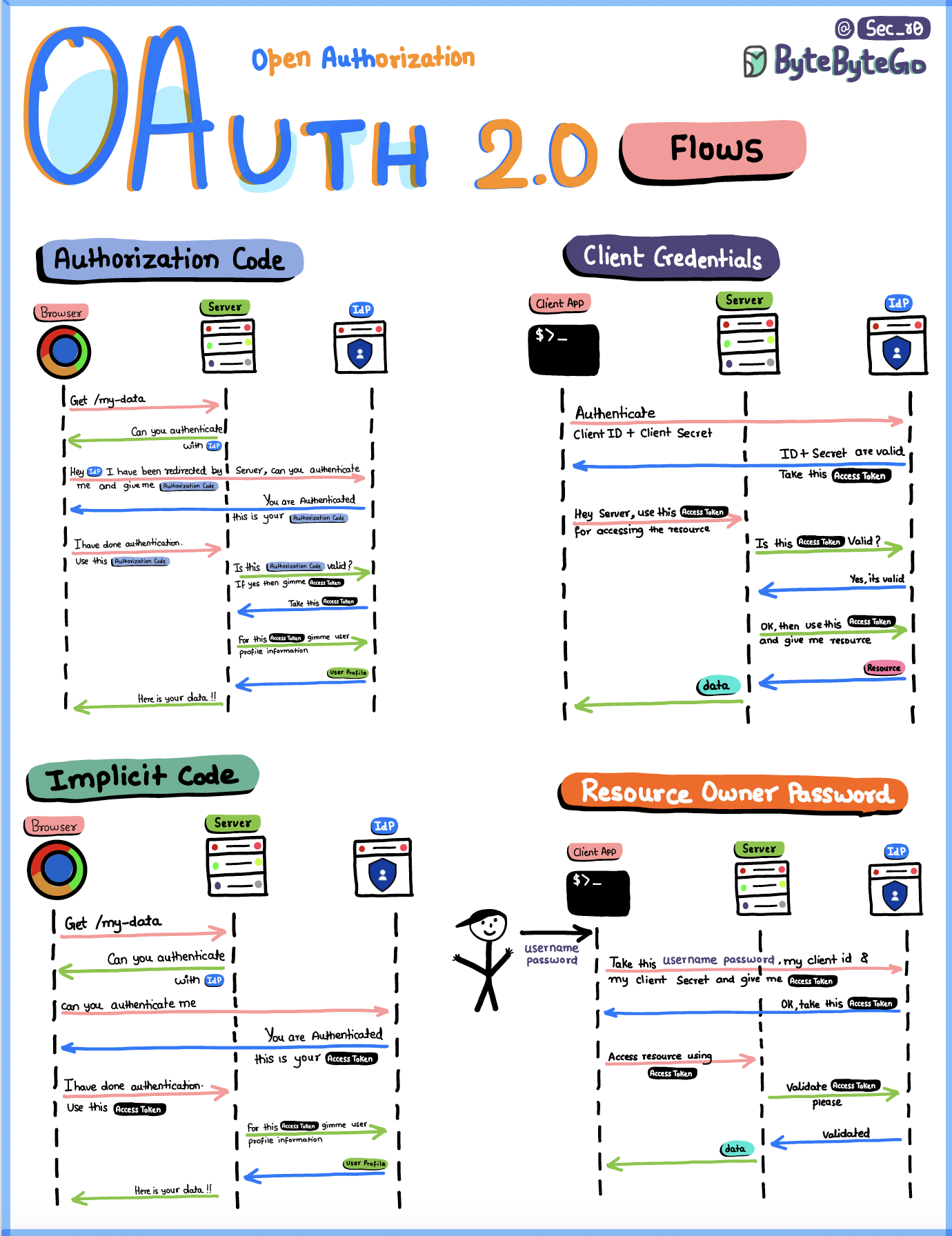

+ * [OAuth 2.0 Flows](https://bytebytego.com/guides/oauth-20-flows)

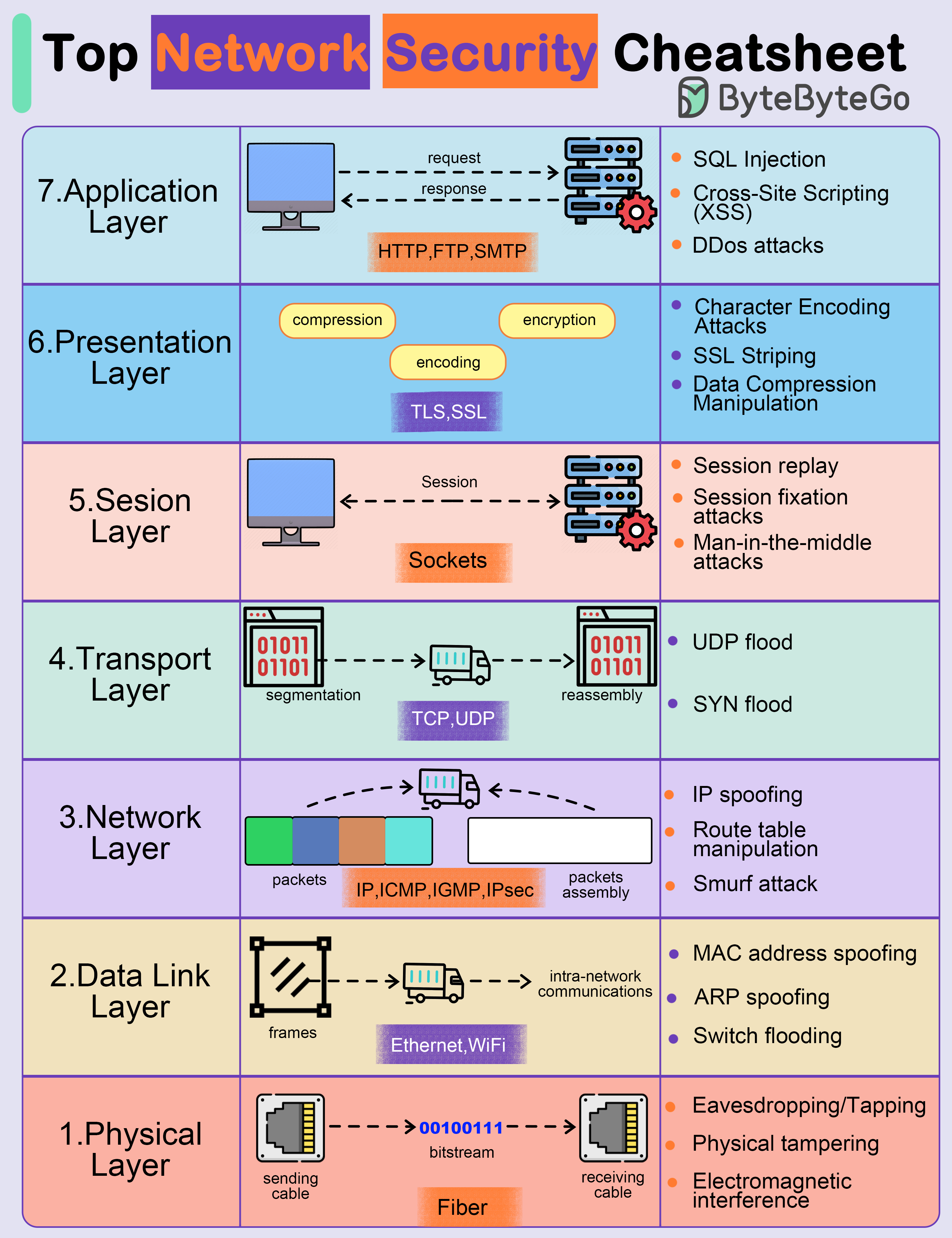

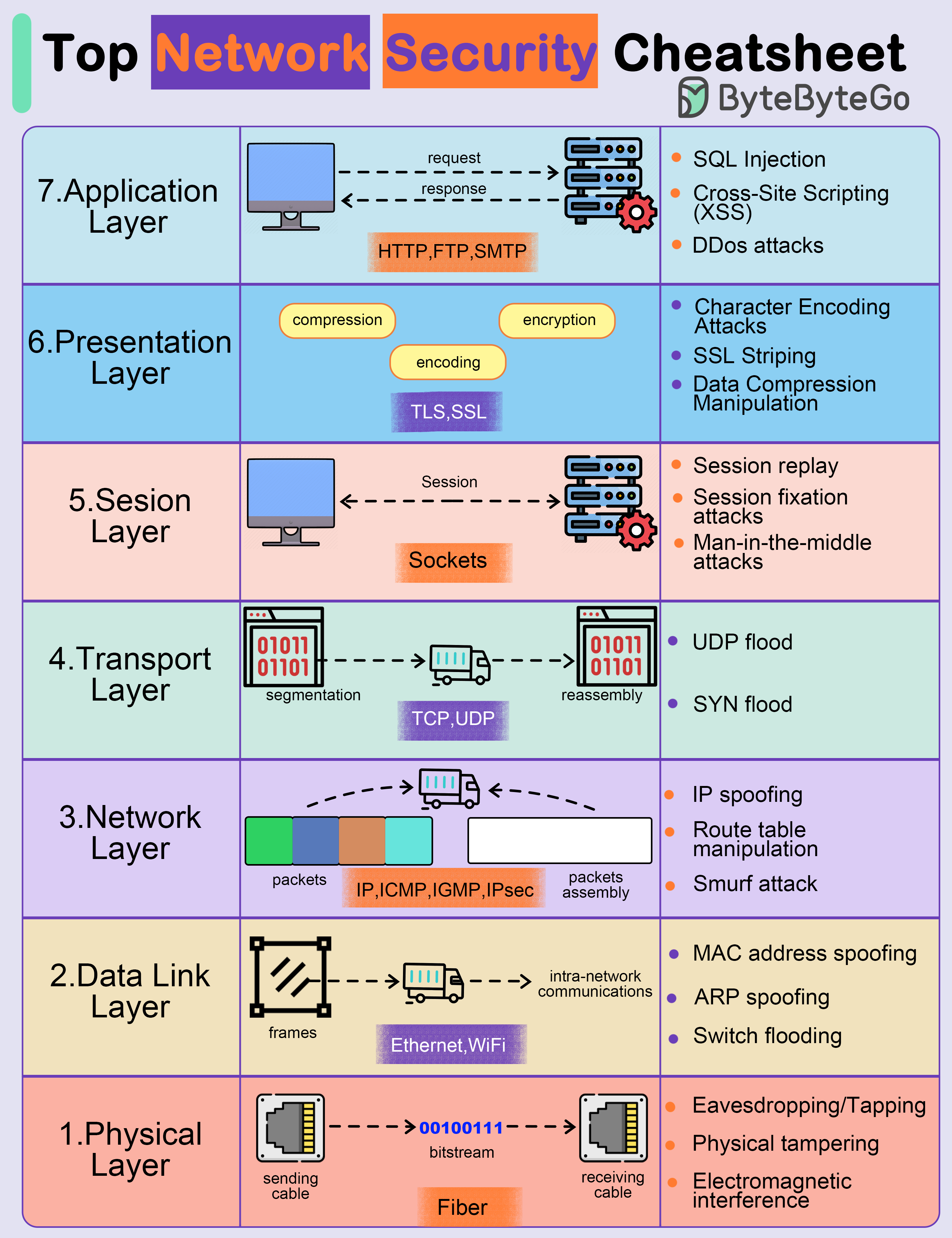

+ * [Top Network Security Cheatsheet](https://bytebytego.com/guides/top-network-security-cheatsheet)

+ * [What is SSO (Single Sign-On)?](https://bytebytego.com/guides/v1what-is-sso-single-sign-on)

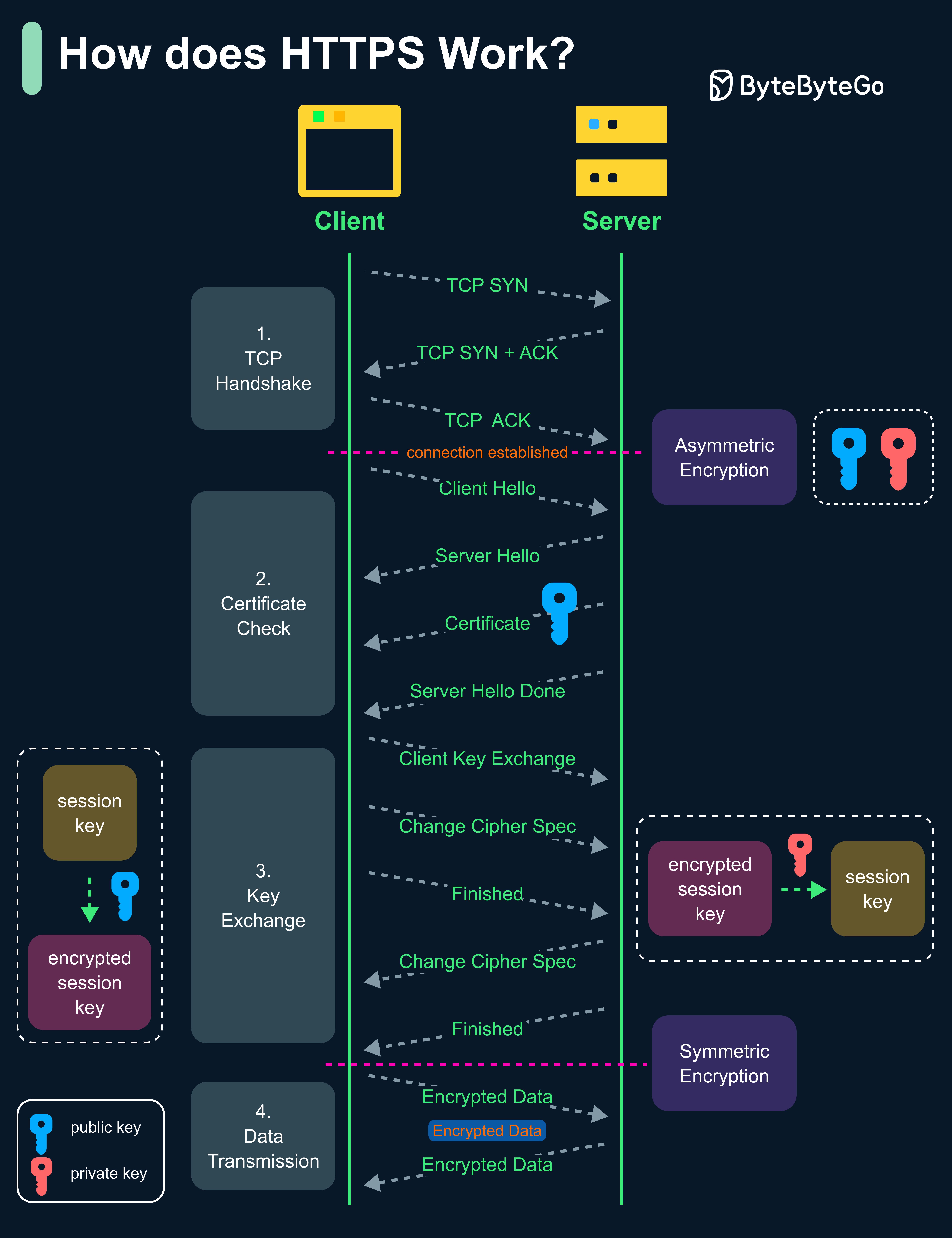

+ * [How does HTTPS work?](https://bytebytego.com/guides/how-does-https-work)

+ * [Session, Cookie, JWT, Token, SSO, and OAuth 2.0 Explained](https://bytebytego.com/guides/session-cookie-jwt-token-sso-and-oauth-2)

+ * [Explaining JSON Web Token (JWT) to a 10 Year Old Kid](https://bytebytego.com/guides/explaining-json-web-token-jwt-to-a-10-year-old-kid)

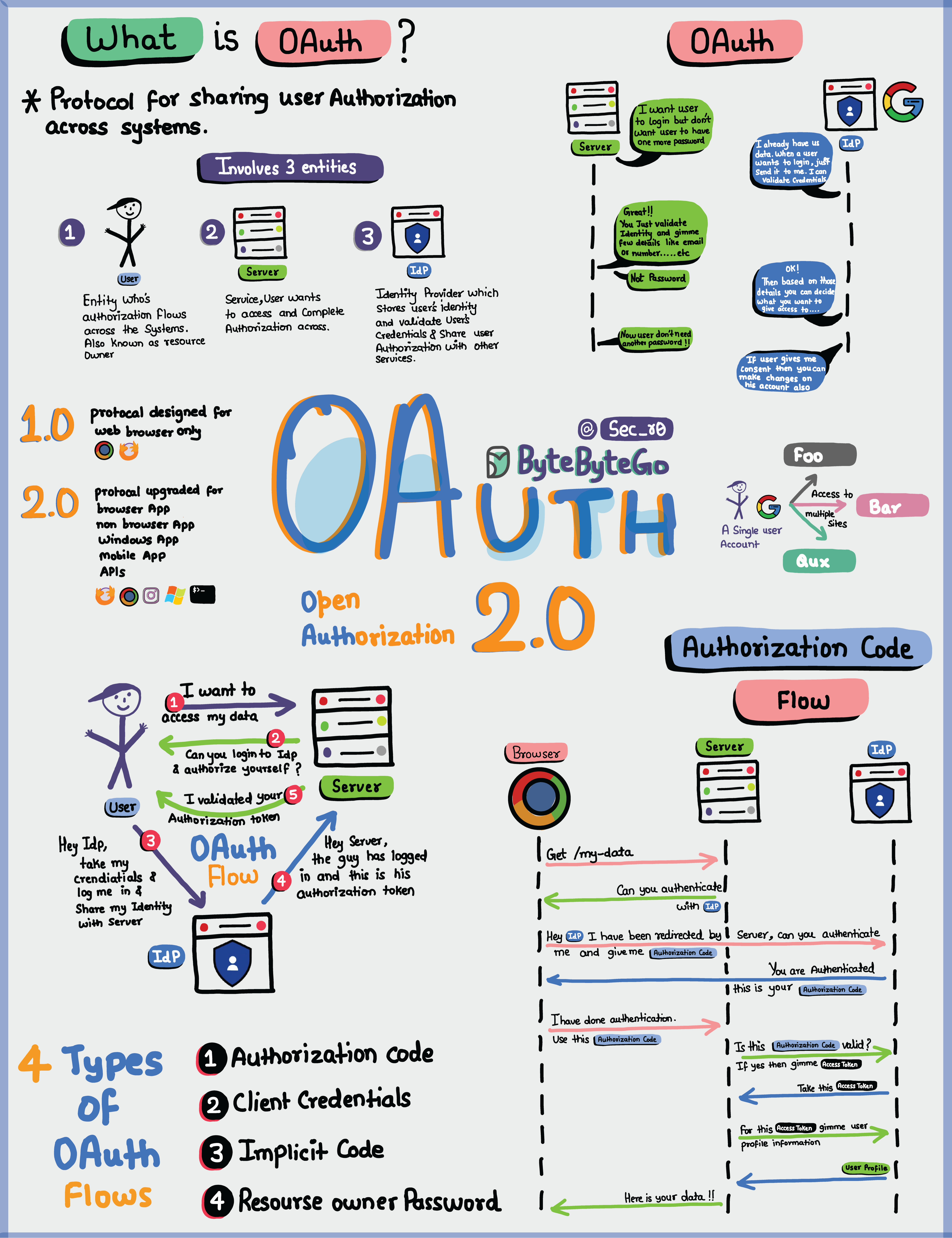

+ * [OAuth 2.0 Explained With Simple Terms](https://bytebytego.com/guides/oauth-2-explained-with-siple-terms)

+* [Computer Fundamentals](https://bytebytego.com/guides/computer-fundamentals)

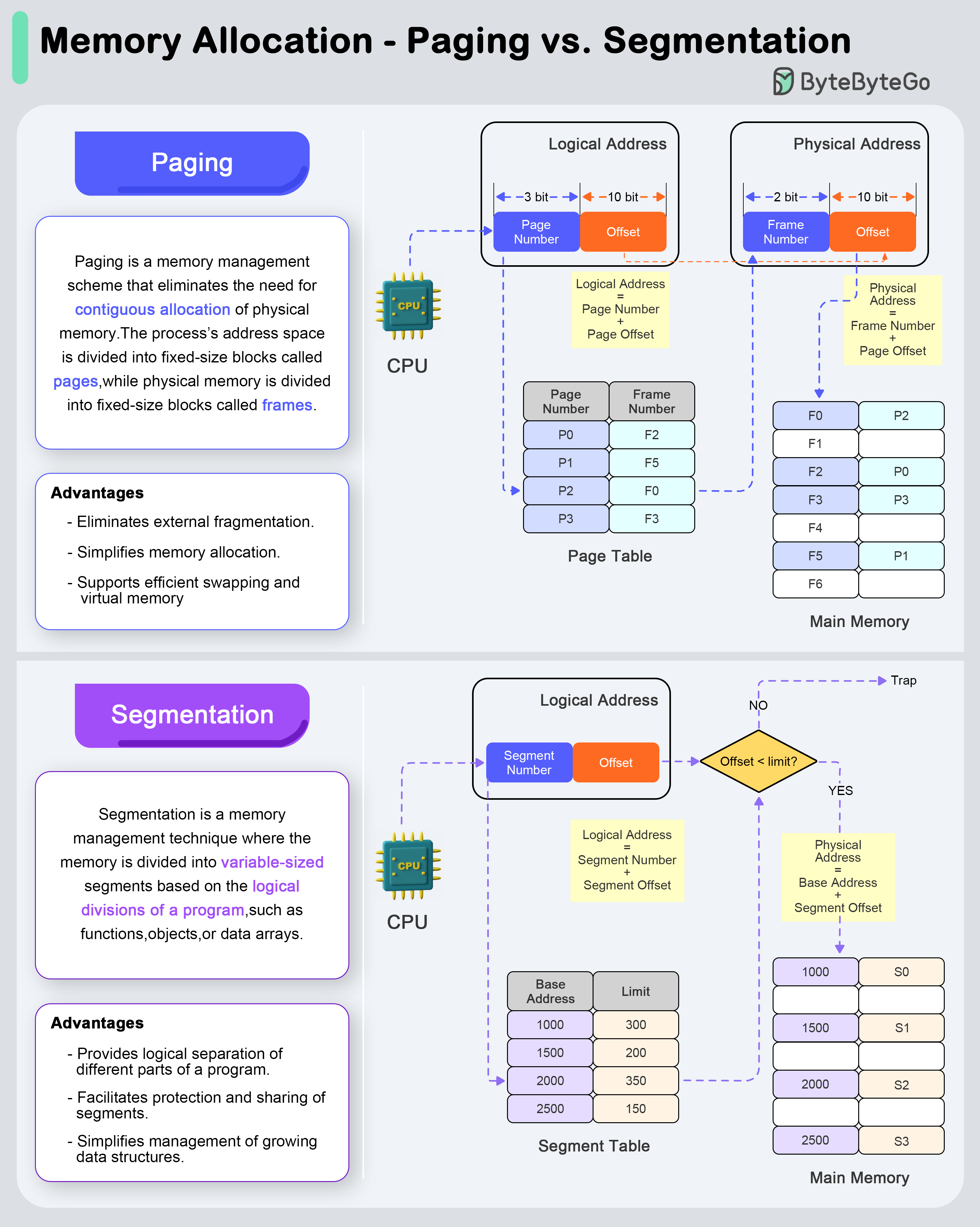

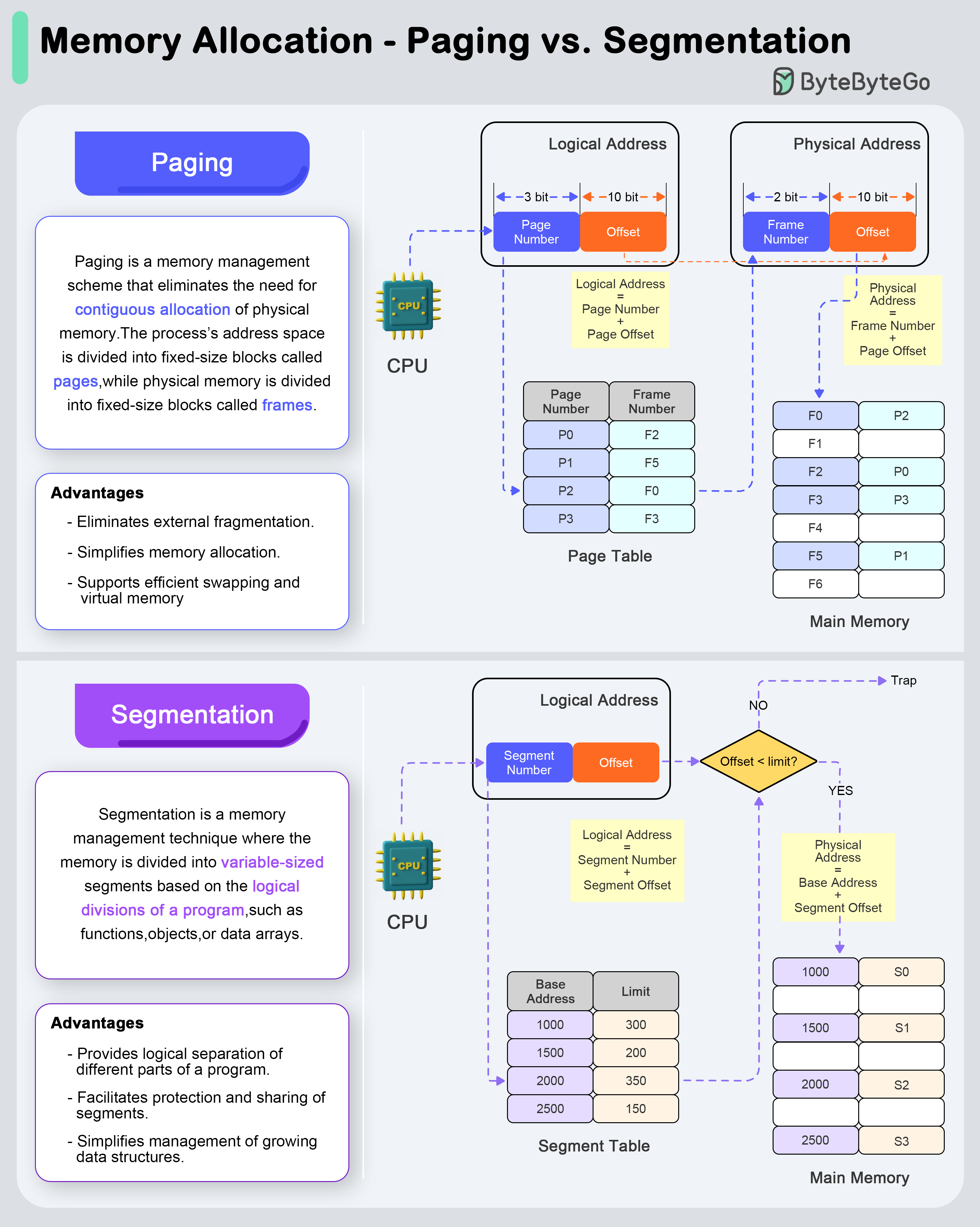

+ * [Paging vs Segmentation](https://bytebytego.com/guides/what-are-the-differences-between-paging-and-segmentation)

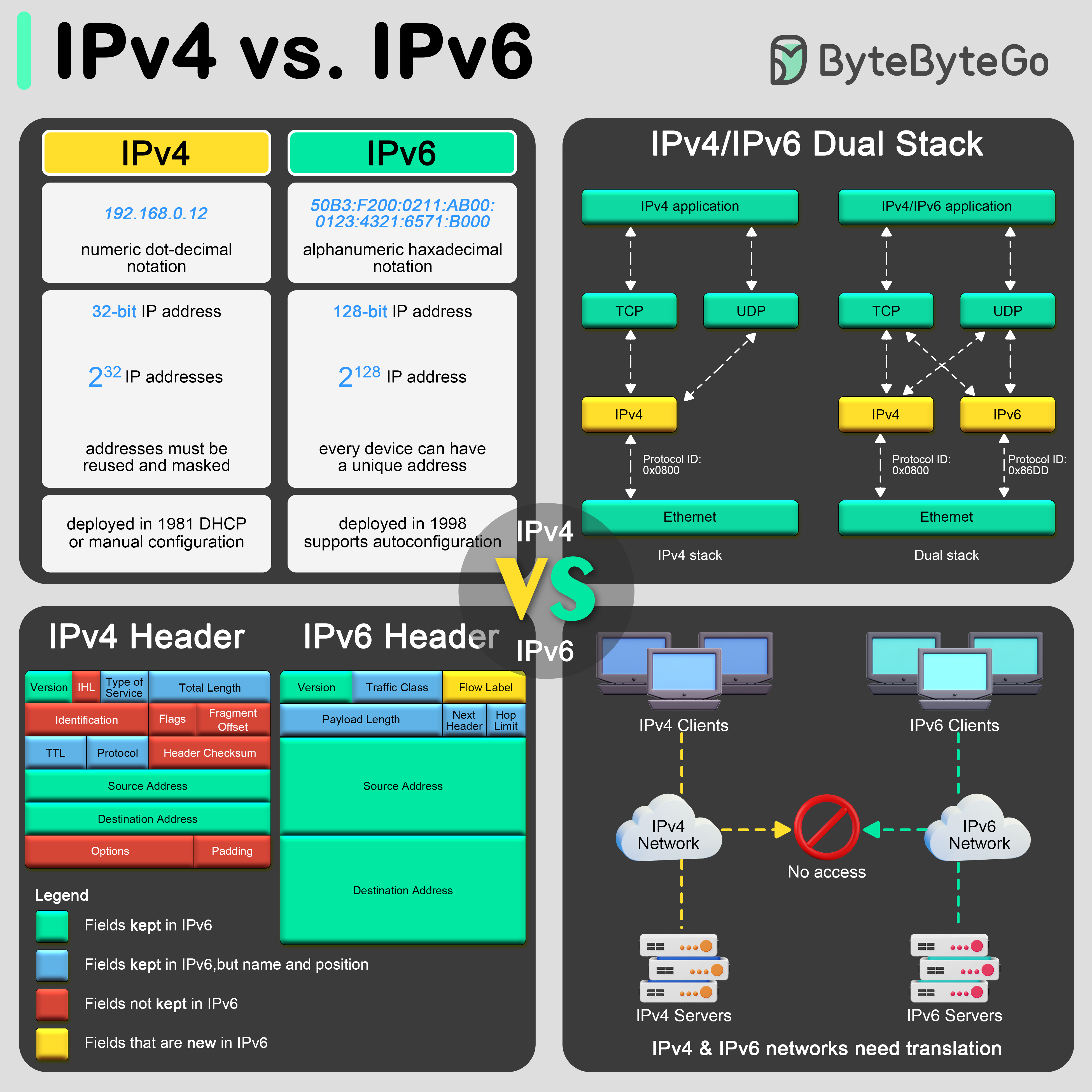

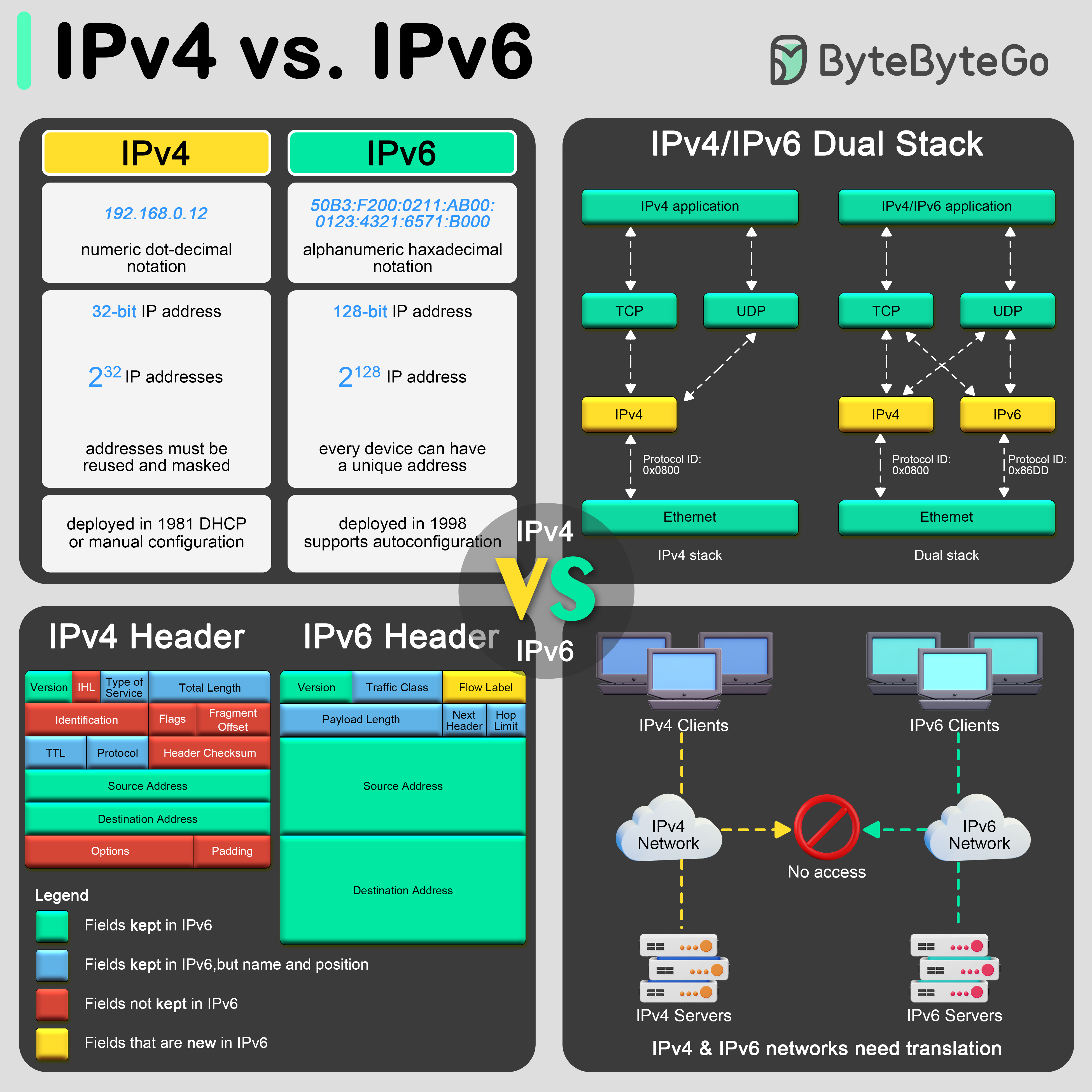

+ * [IPv4 vs. IPv6: Differences](https://bytebytego.com/guides/ipv4-vs-ipv6)

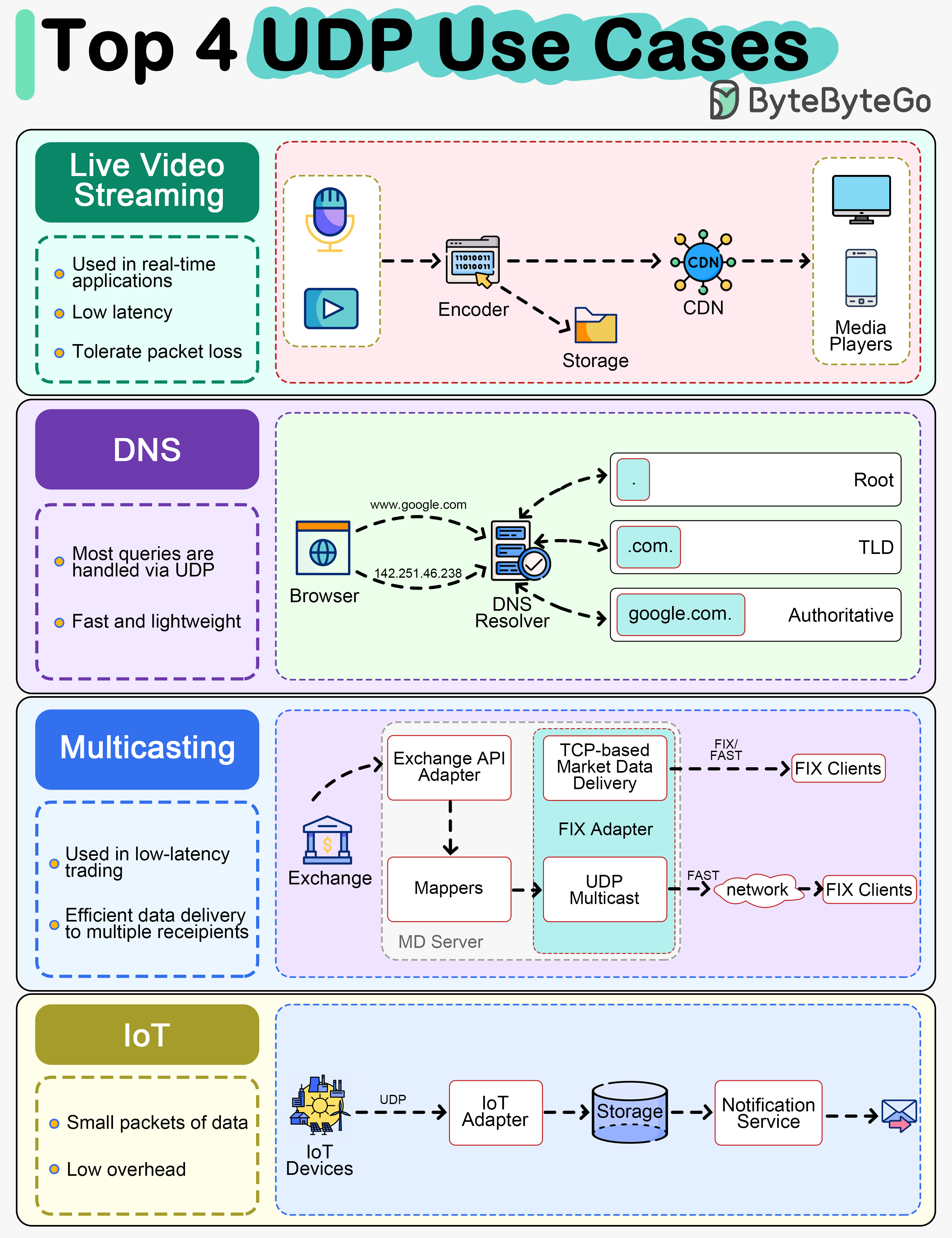

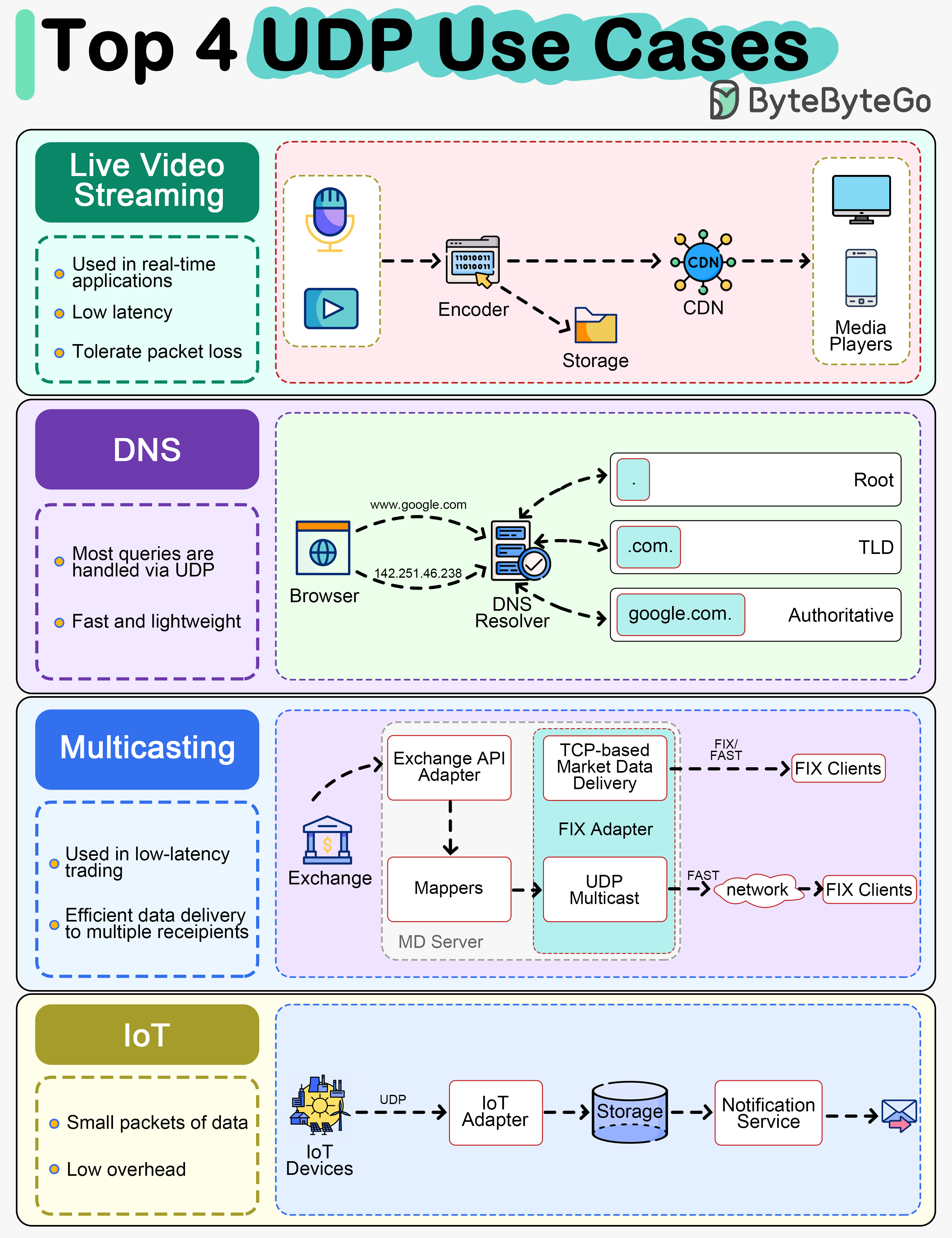

+ * [Top 4 Most Popular Use Cases for UDP](https://bytebytego.com/guides/top-4-most-popular-use-cases-for-udp)

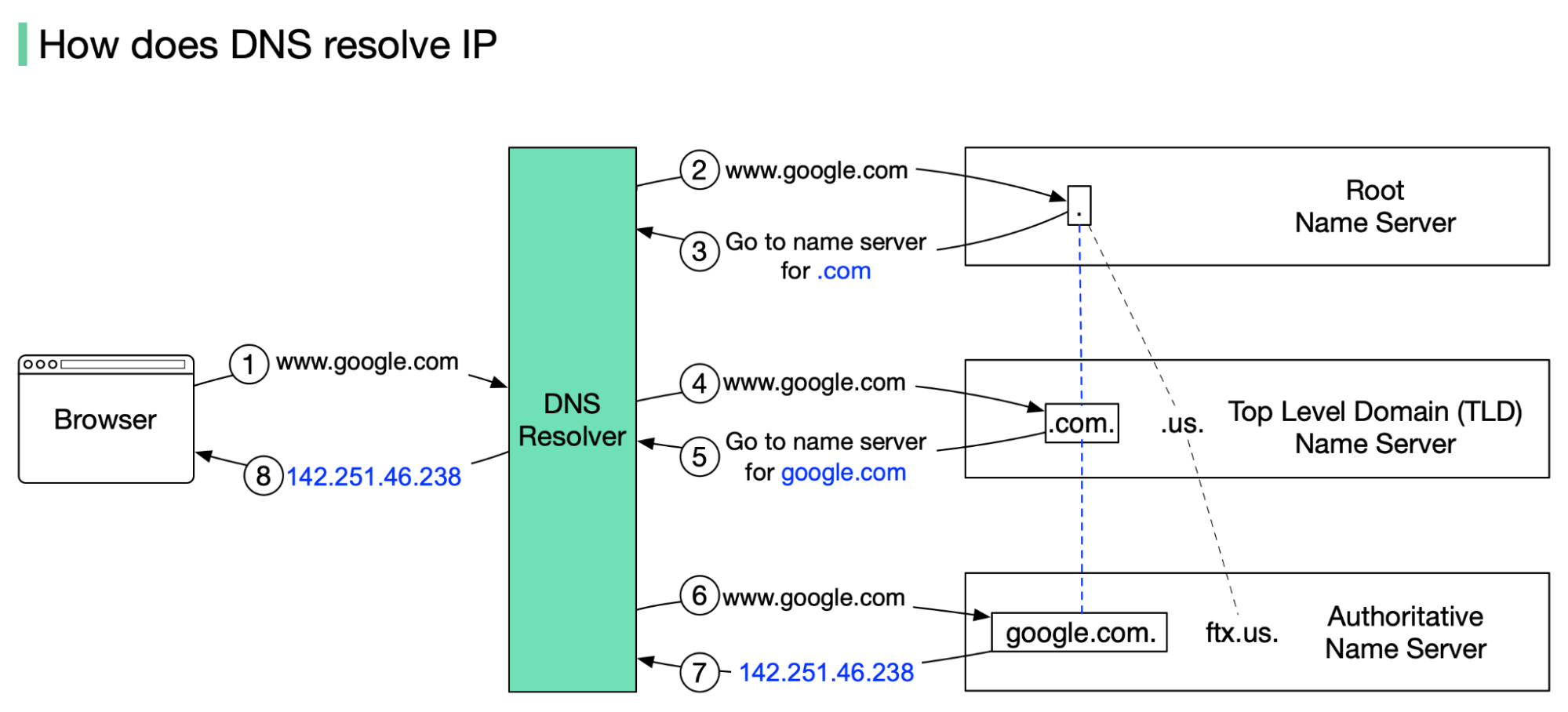

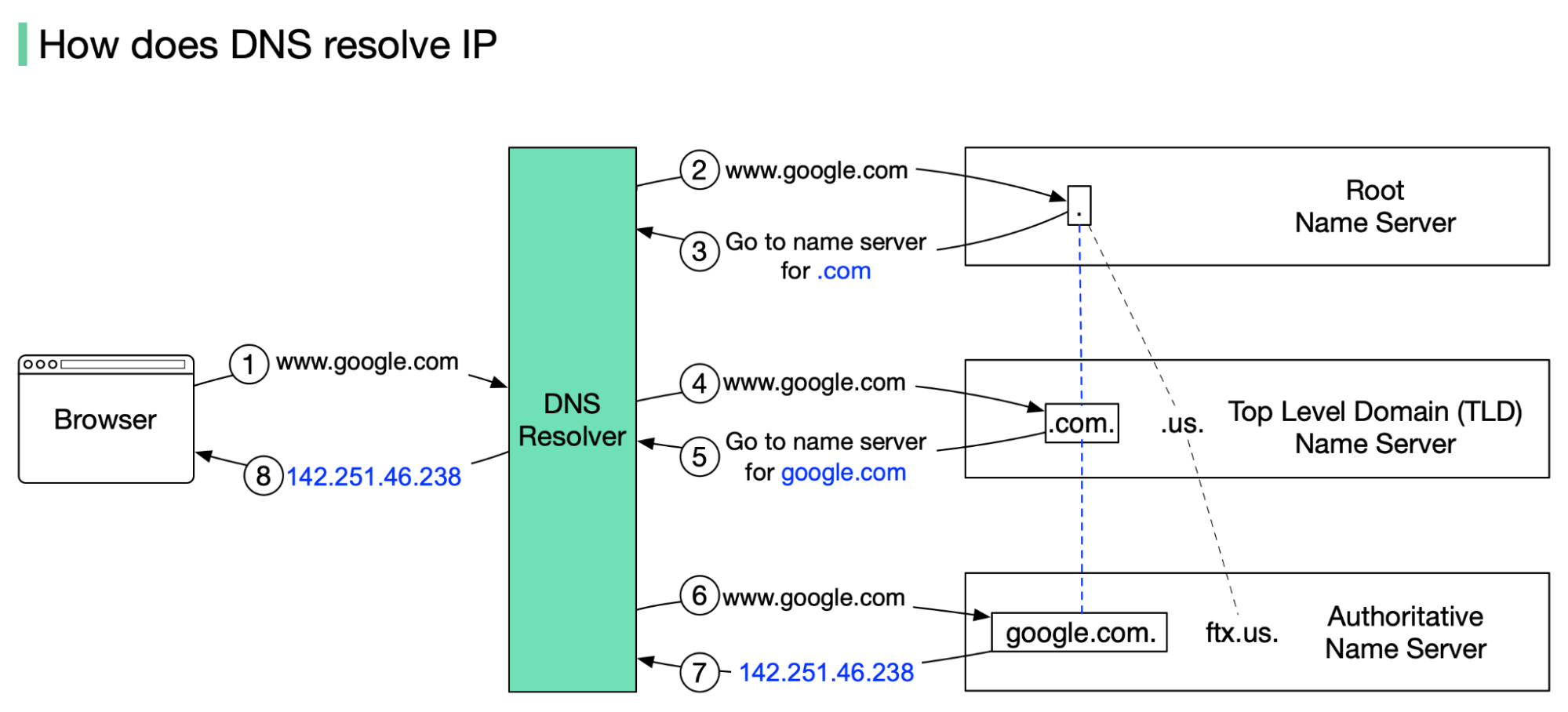

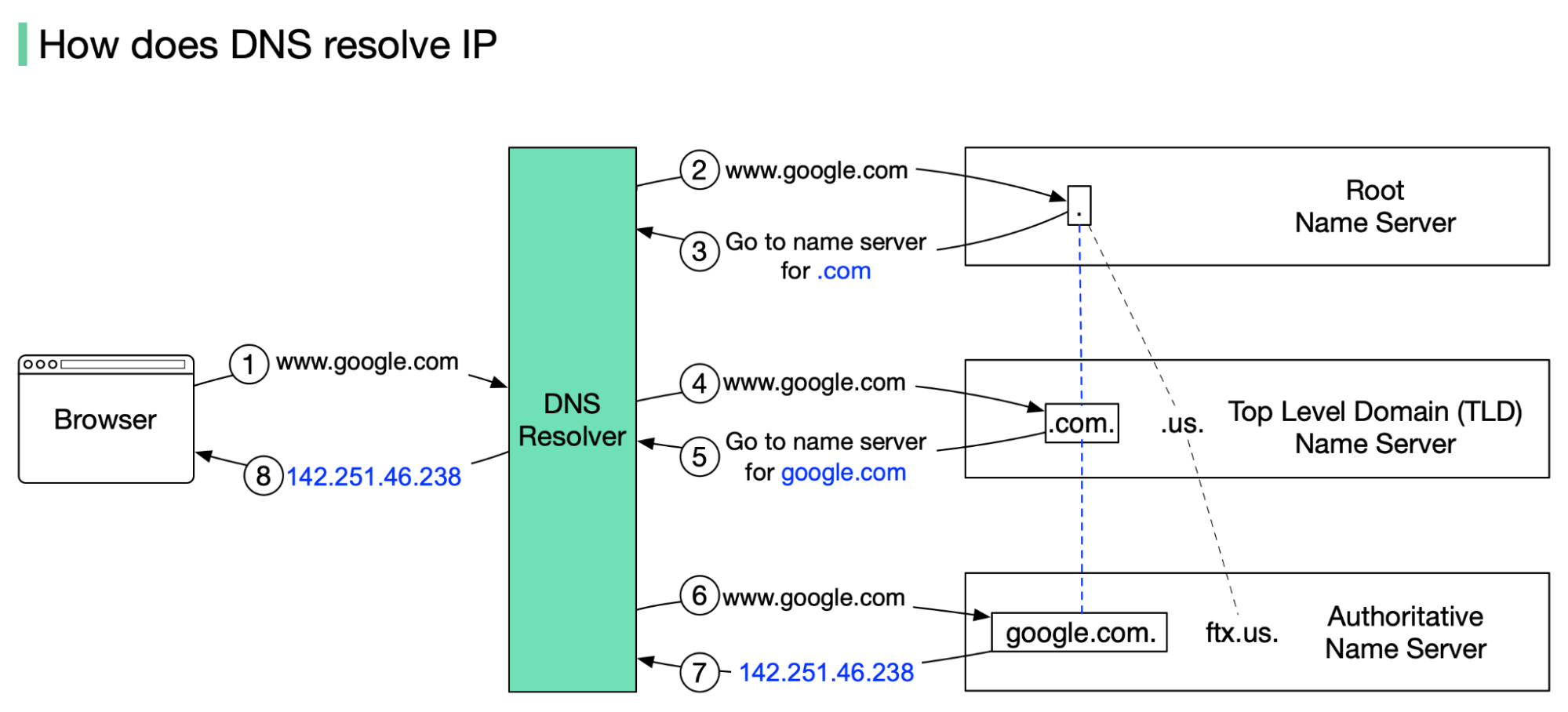

+ * [How Does the Domain Name System (DNS) Lookup Work?](https://bytebytego.com/guides/how-does-the-domain-name-system-dns-lookup-work)

+ * [DNS Record Types You Should Know](https://bytebytego.com/guides/dns-record-types-you-should-know)

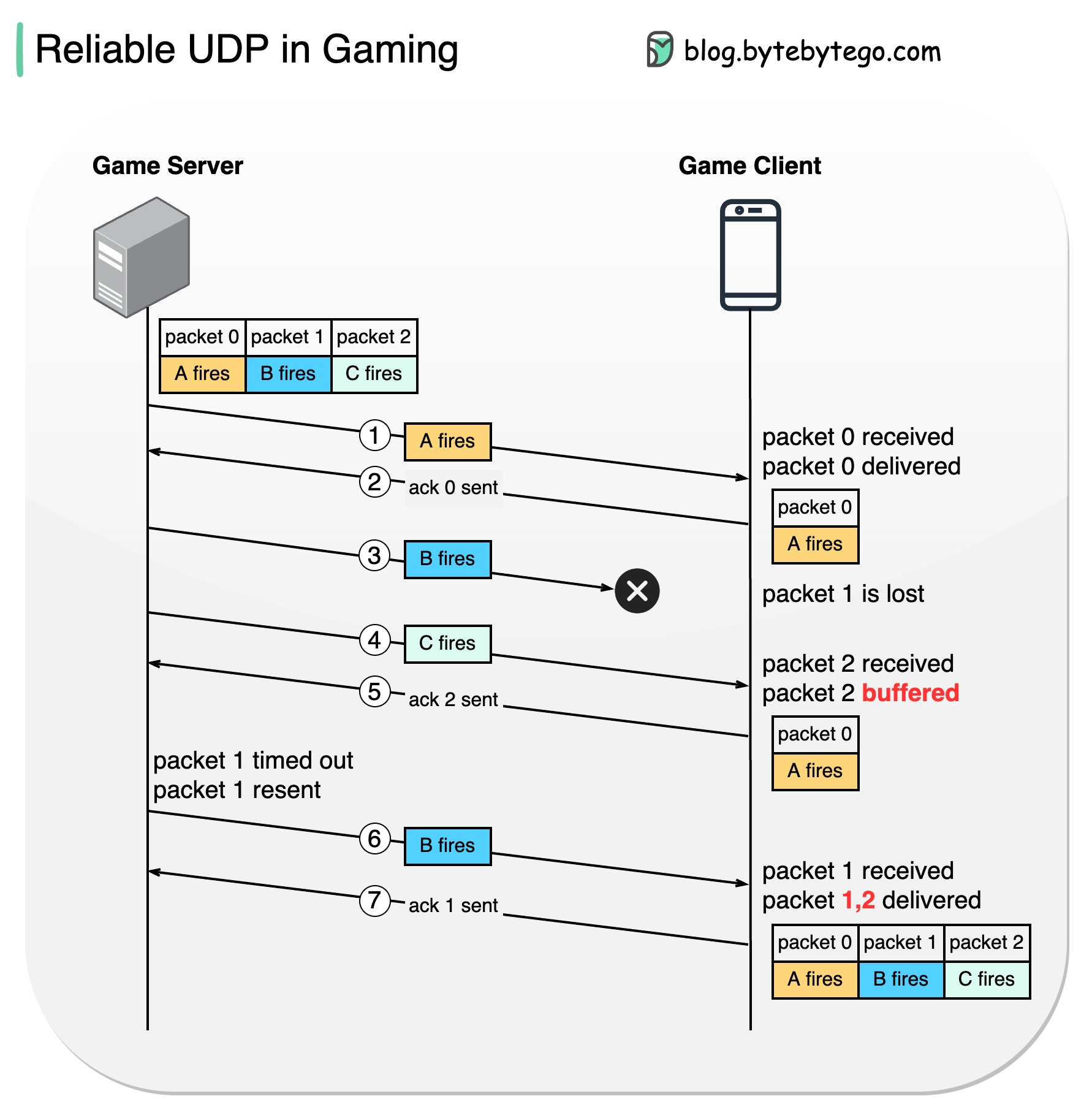

+ * [TCP vs UDP for Online Gaming](https://bytebytego.com/guides/what-protocol-does-online-gaming-use-to-transmit-data)

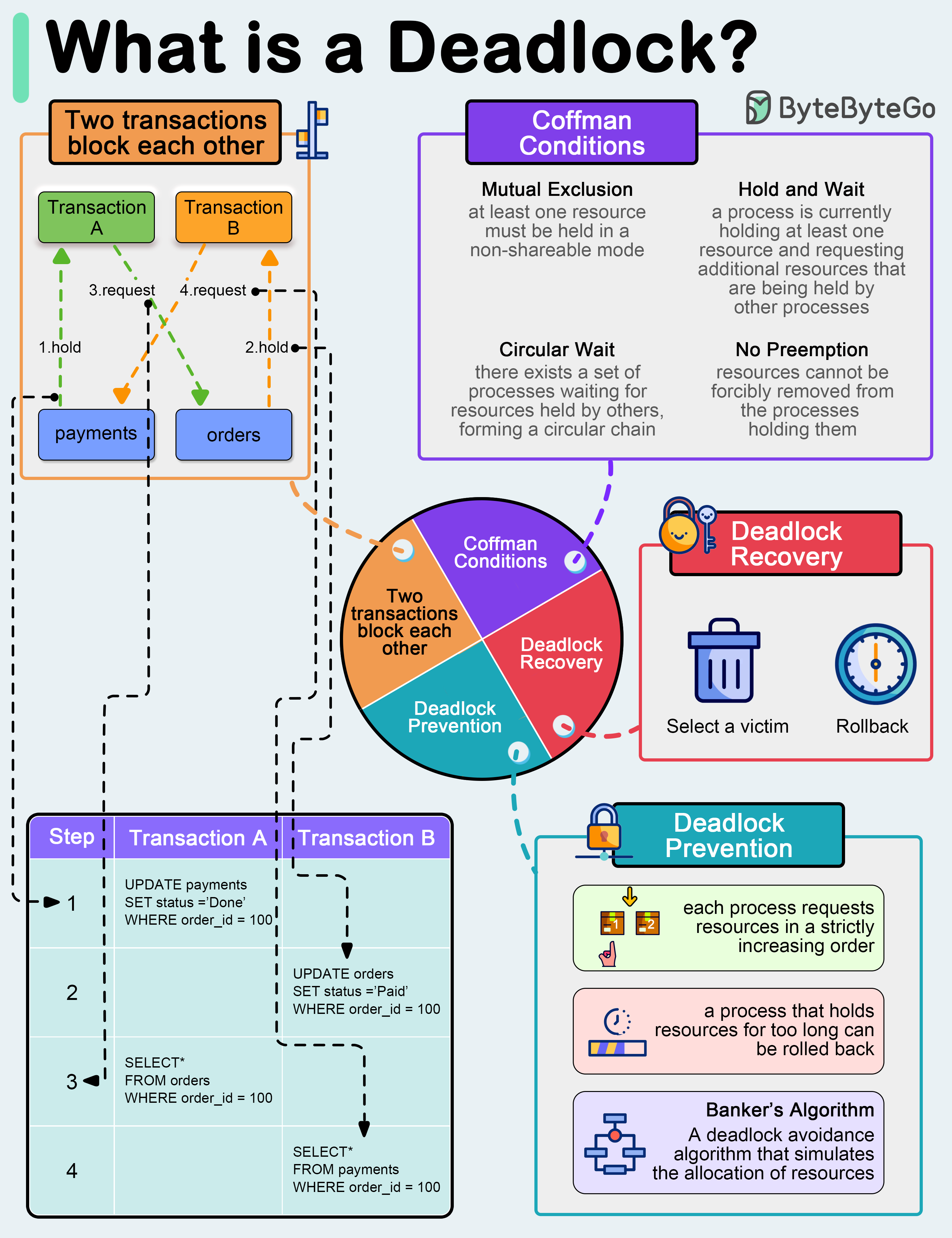

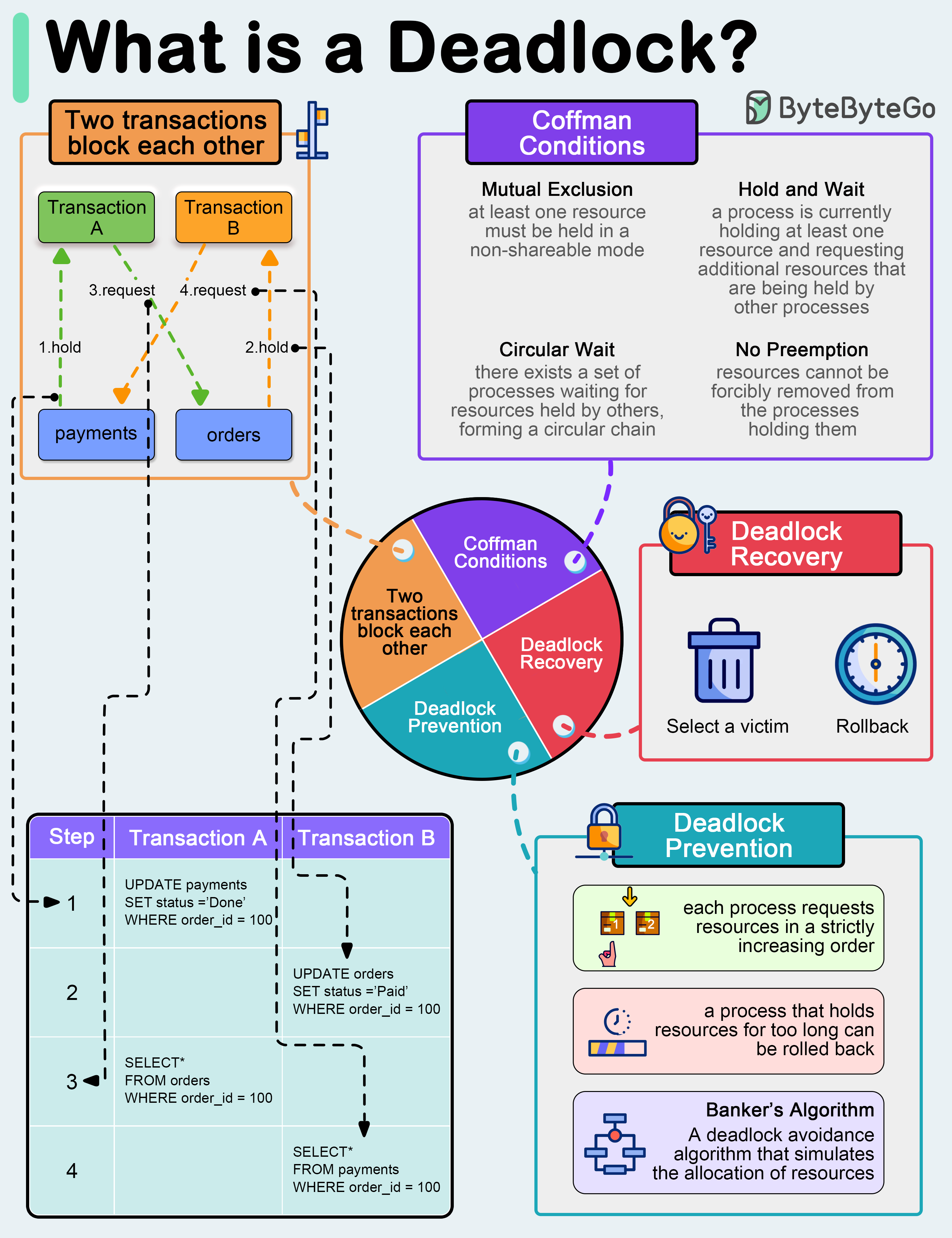

+ * [What is a Deadlock?](https://bytebytego.com/guides/what-is-a-deadlock)

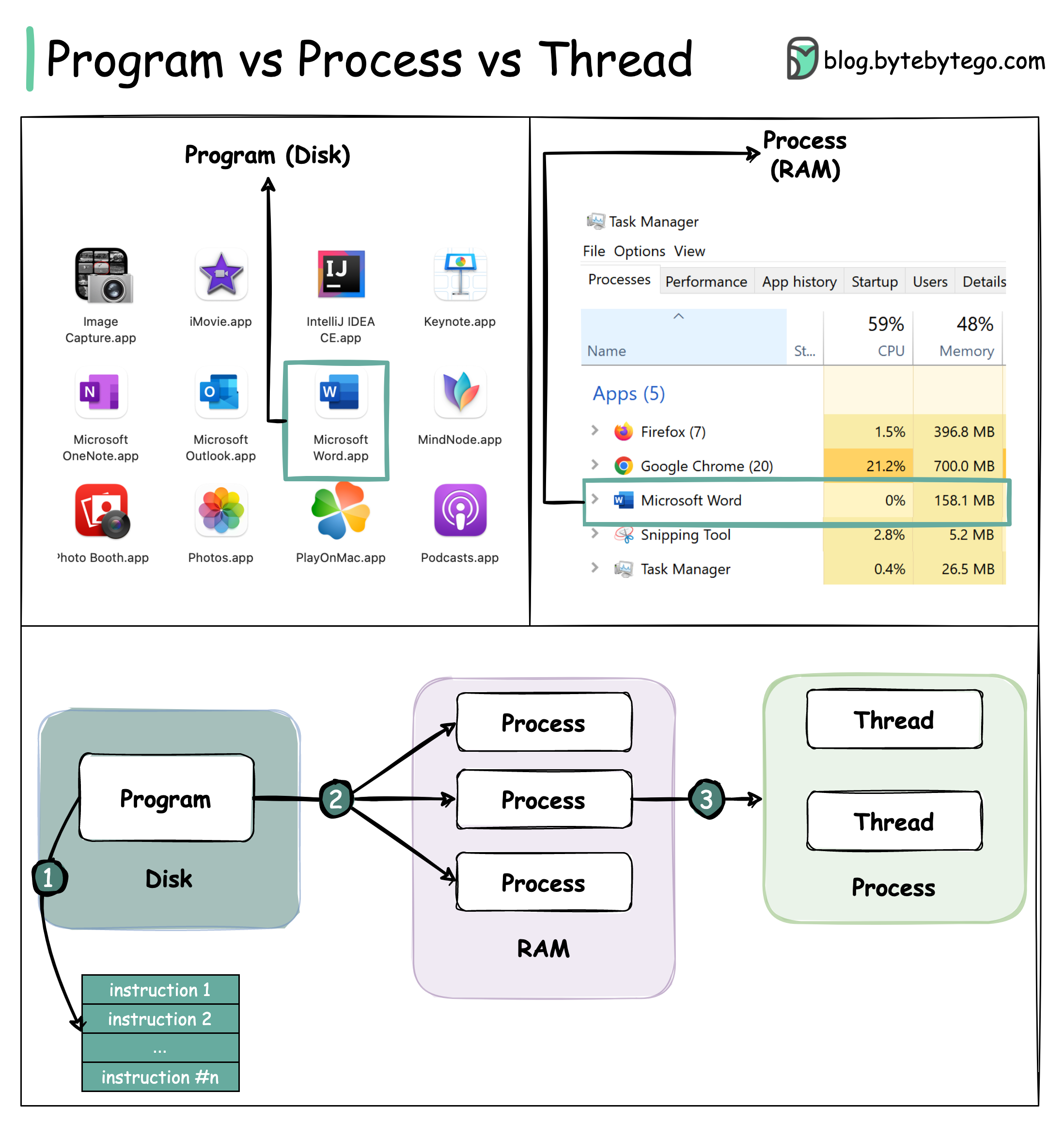

+ * [Process vs Thread: Key Differences](https://bytebytego.com/guides/what-is-the-difference-between-process-and-thread)

+ * [OSI Model Explained](https://bytebytego.com/guides/what-is-osi-model)

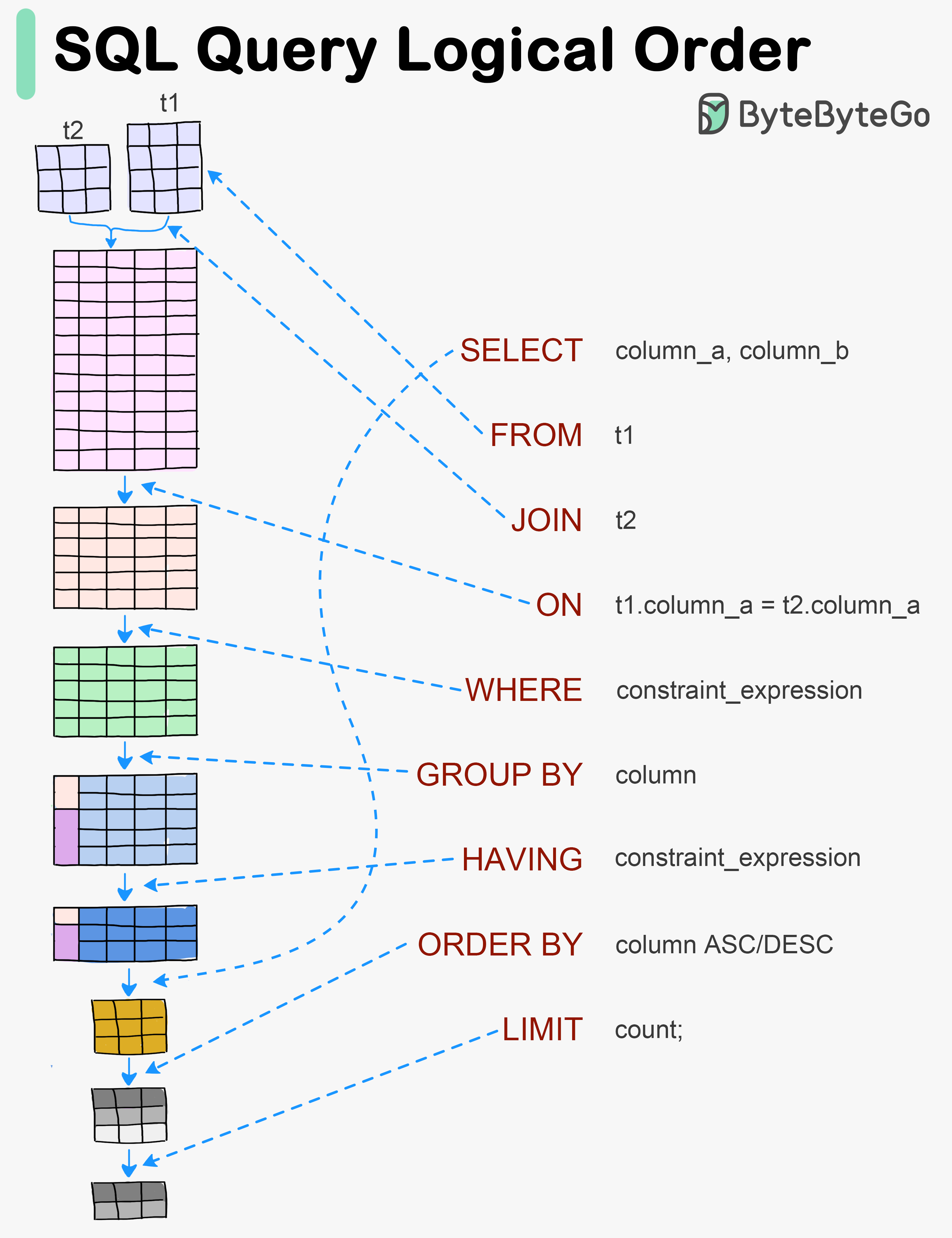

+ * [Visualizing a SQL Query](https://bytebytego.com/guides/visualizing-a-sql-query)

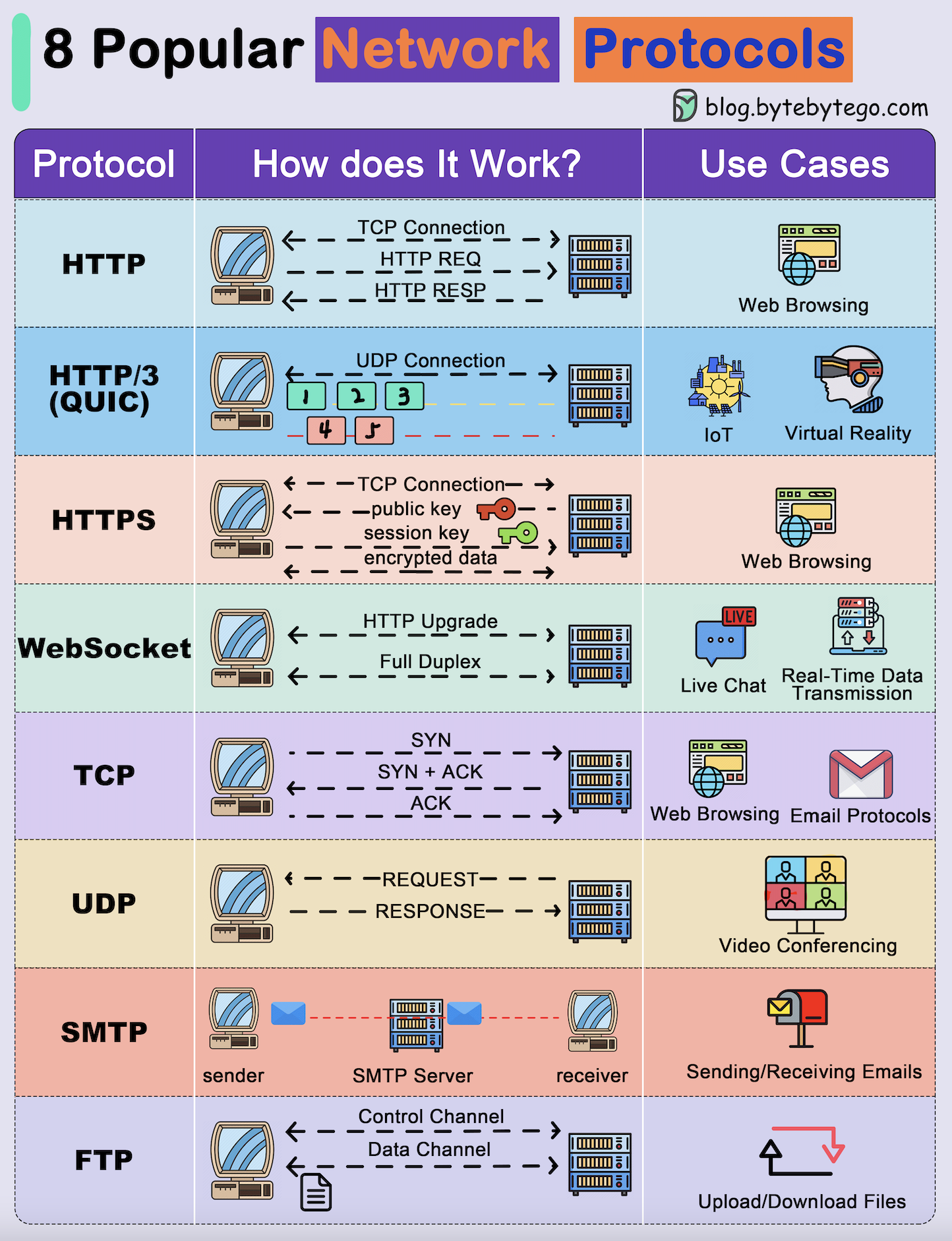

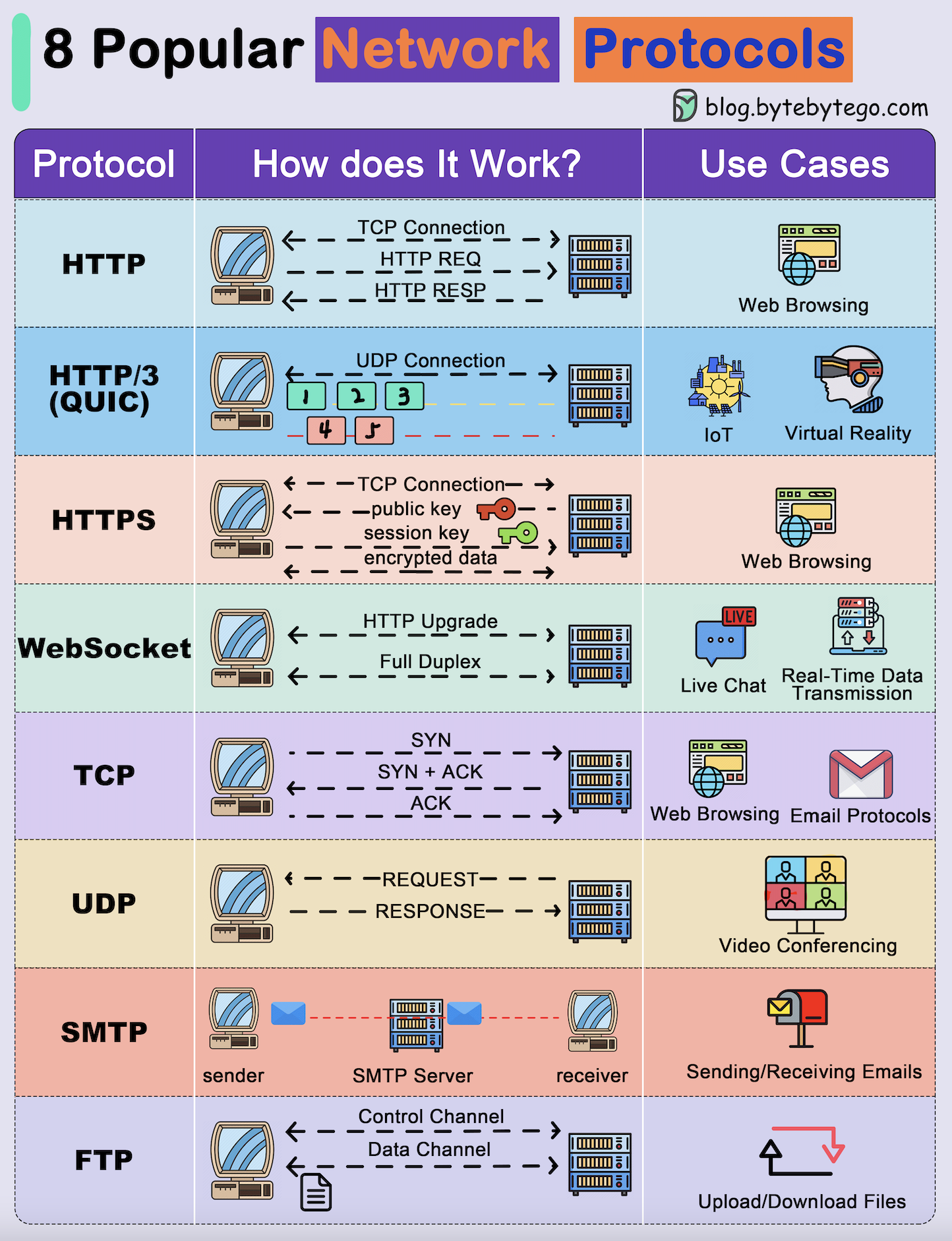

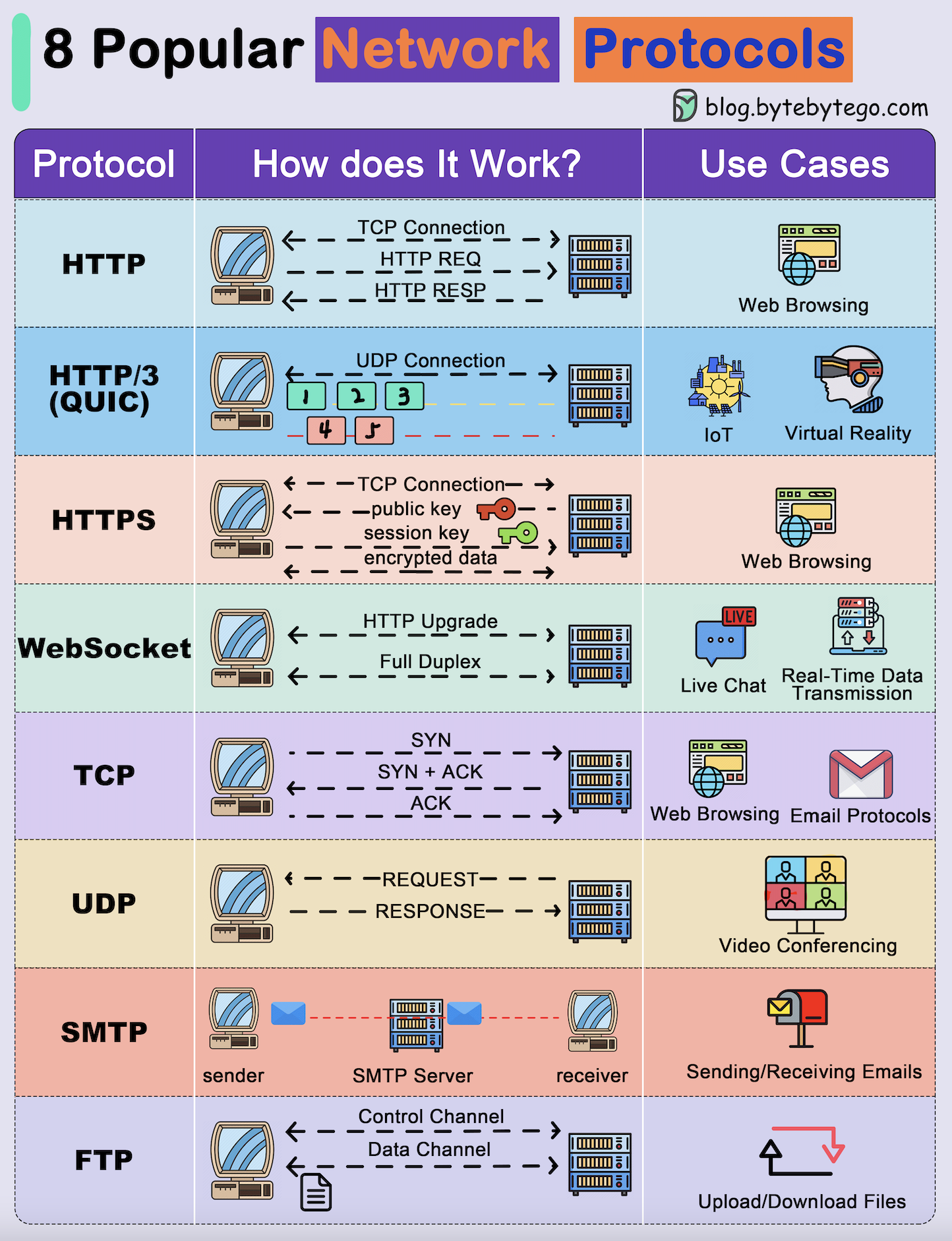

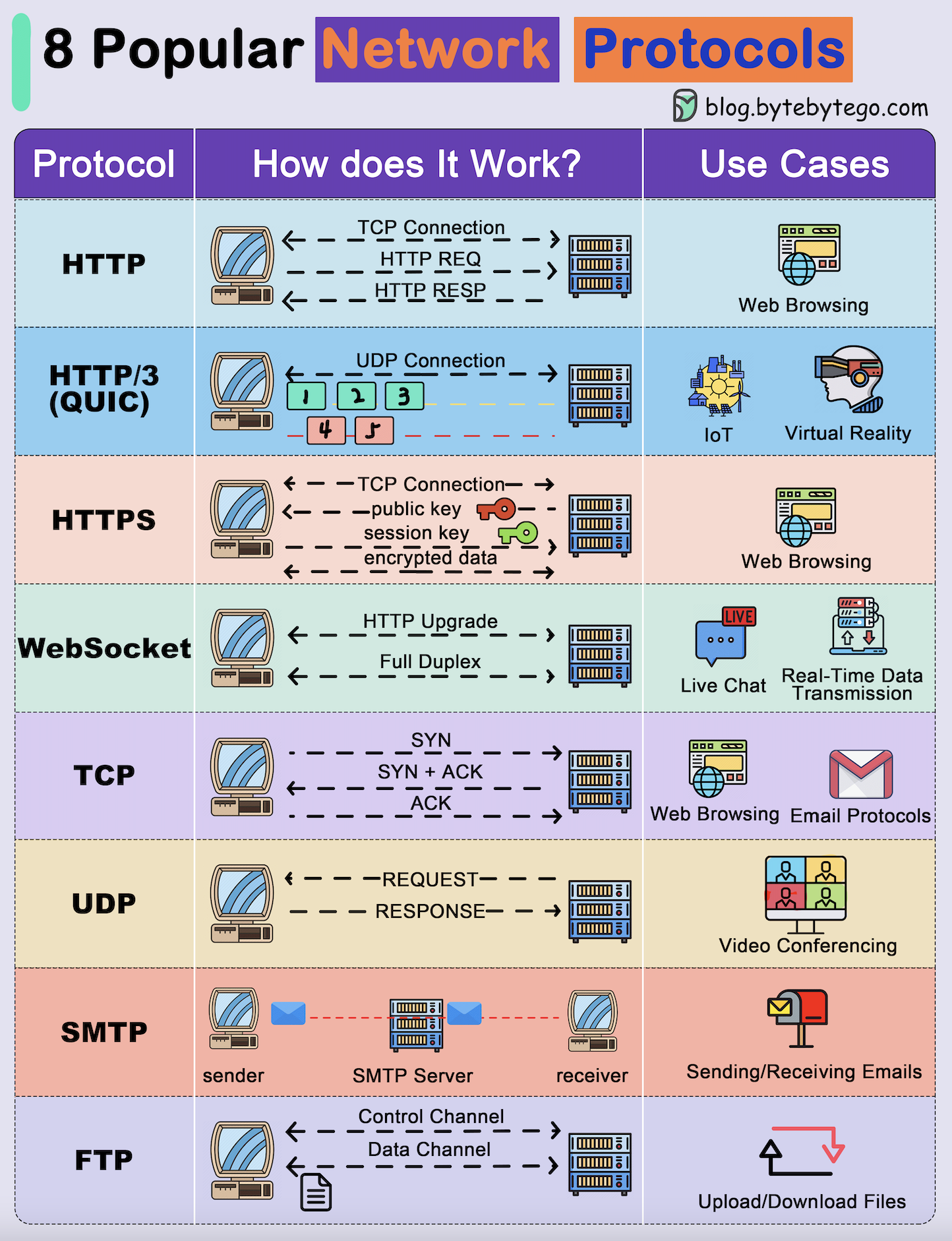

+ * [Explaining 8 Popular Network Protocols in 1 Diagram](https://bytebytego.com/guides/explaining-8-popular-network-protocols-in-1-diagram)

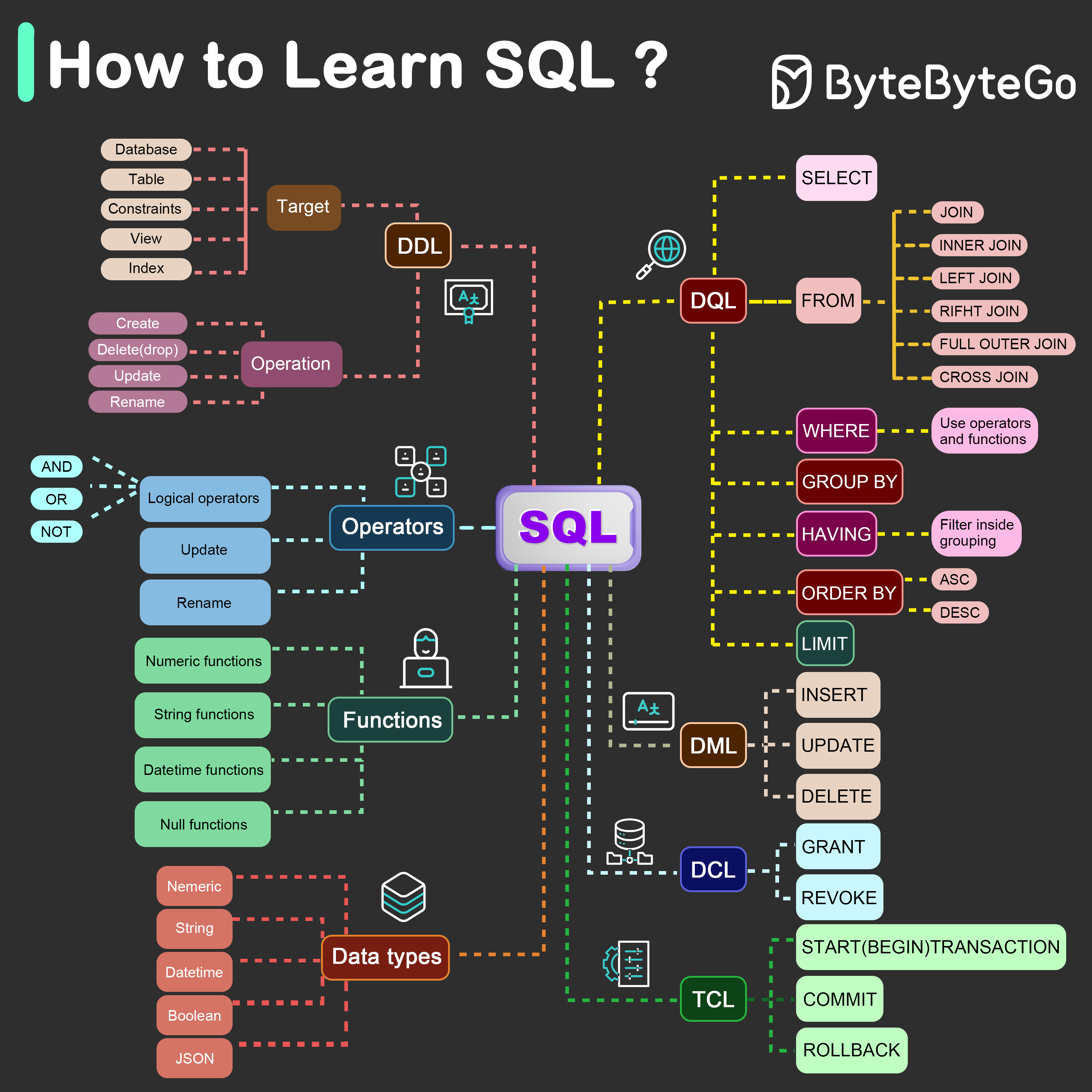

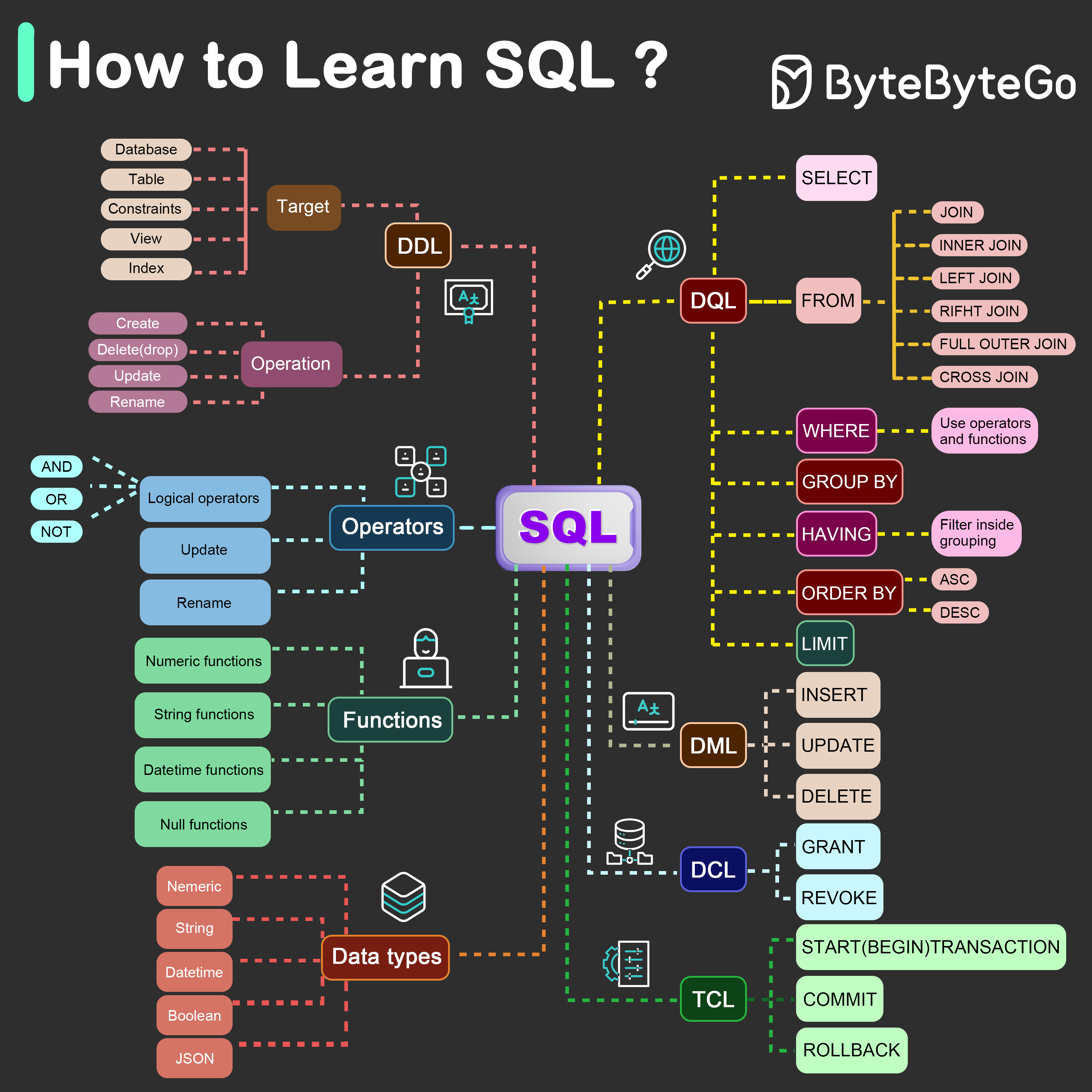

+ * [What is the Best Way to Learn SQL?](https://bytebytego.com/guides/what-is-the-best-way-to-learn-sql)

-- [Communication protocols](#communication-protocols)

- - [REST API vs. GraphQL](#rest-api-vs-graphql)

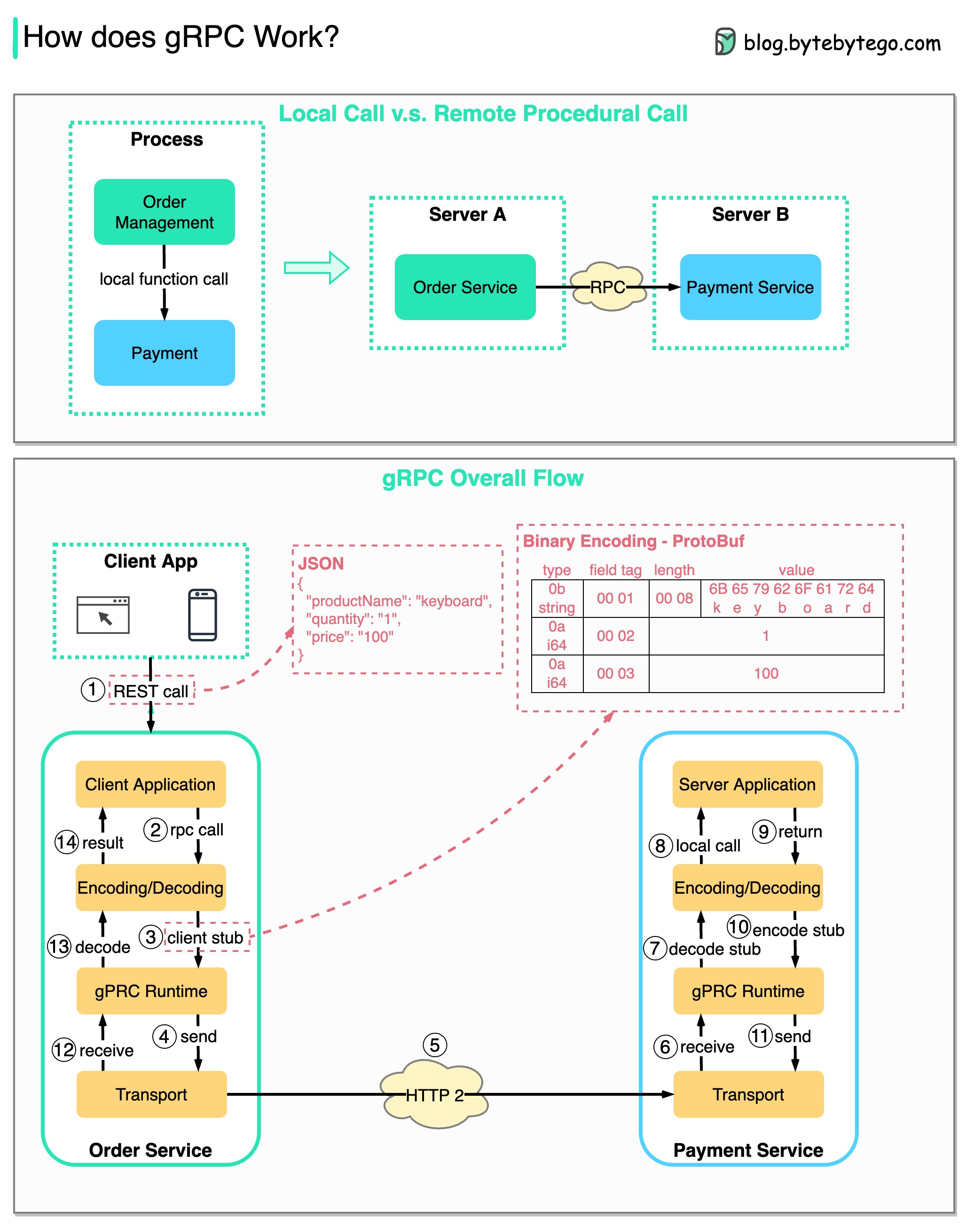

- - [How does gRPC work?](#how-does-grpc-work)

- - [What is a webhook?](#what-is-a-webhook)

- - [How to improve API performance?](#how-to-improve-api-performance)

- - [HTTP 1.0 -\> HTTP 1.1 -\> HTTP 2.0 -\> HTTP 3.0 (QUIC)](#http-10---http-11---http-20---http-30-quic)

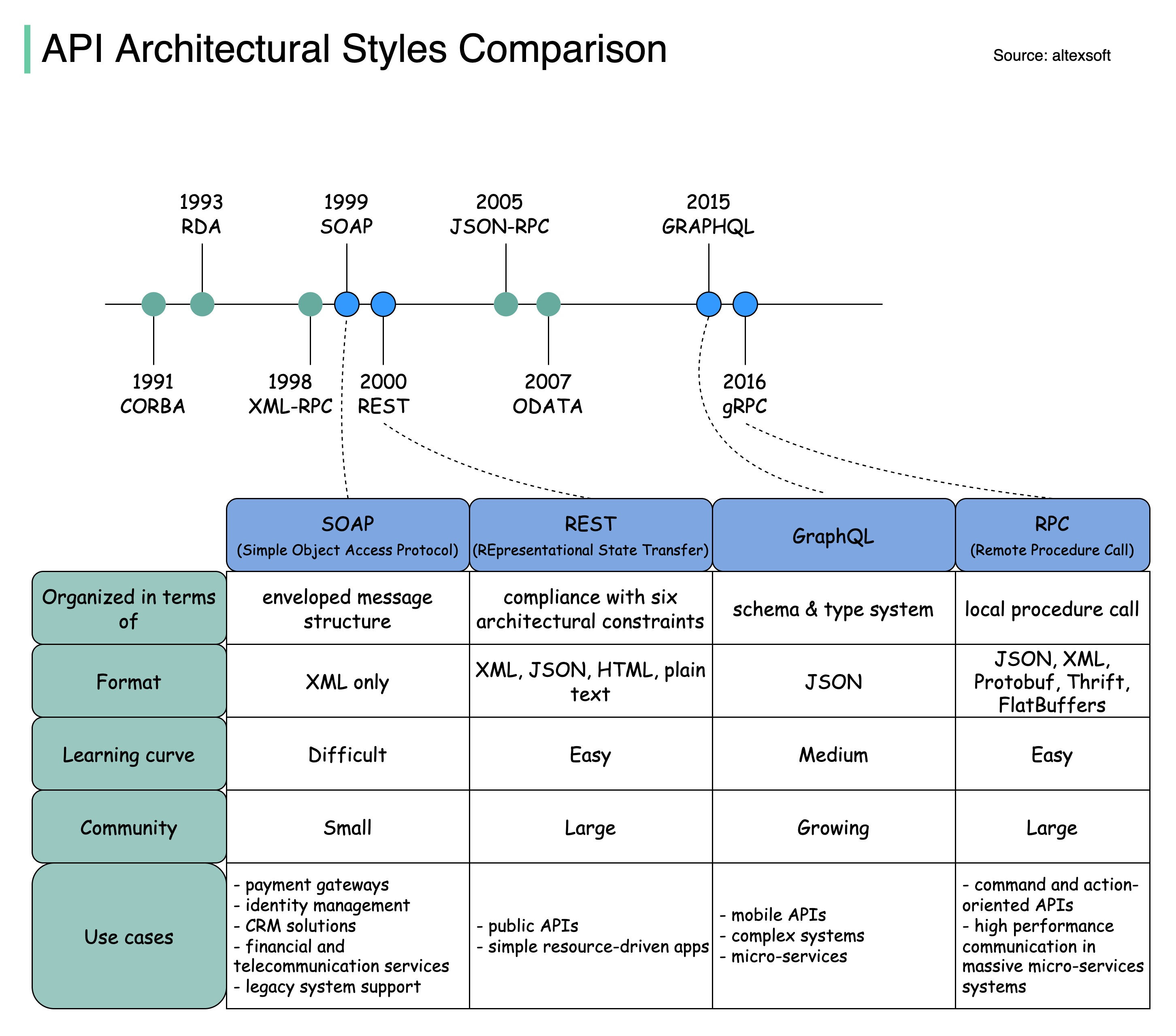

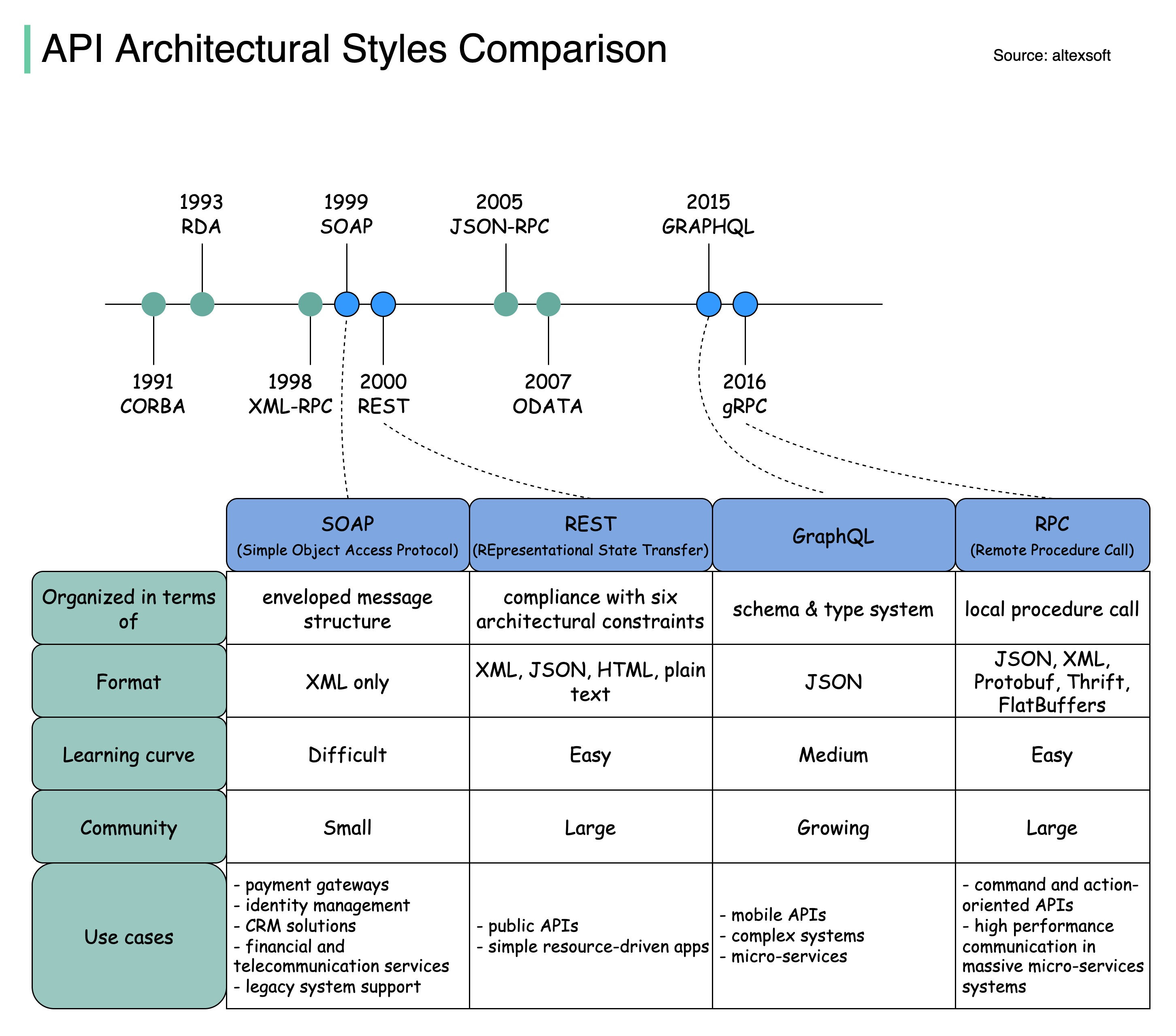

- - [SOAP vs REST vs GraphQL vs RPC](#soap-vs-rest-vs-graphql-vs-rpc)

- - [Code First vs. API First](#code-first-vs-api-first)

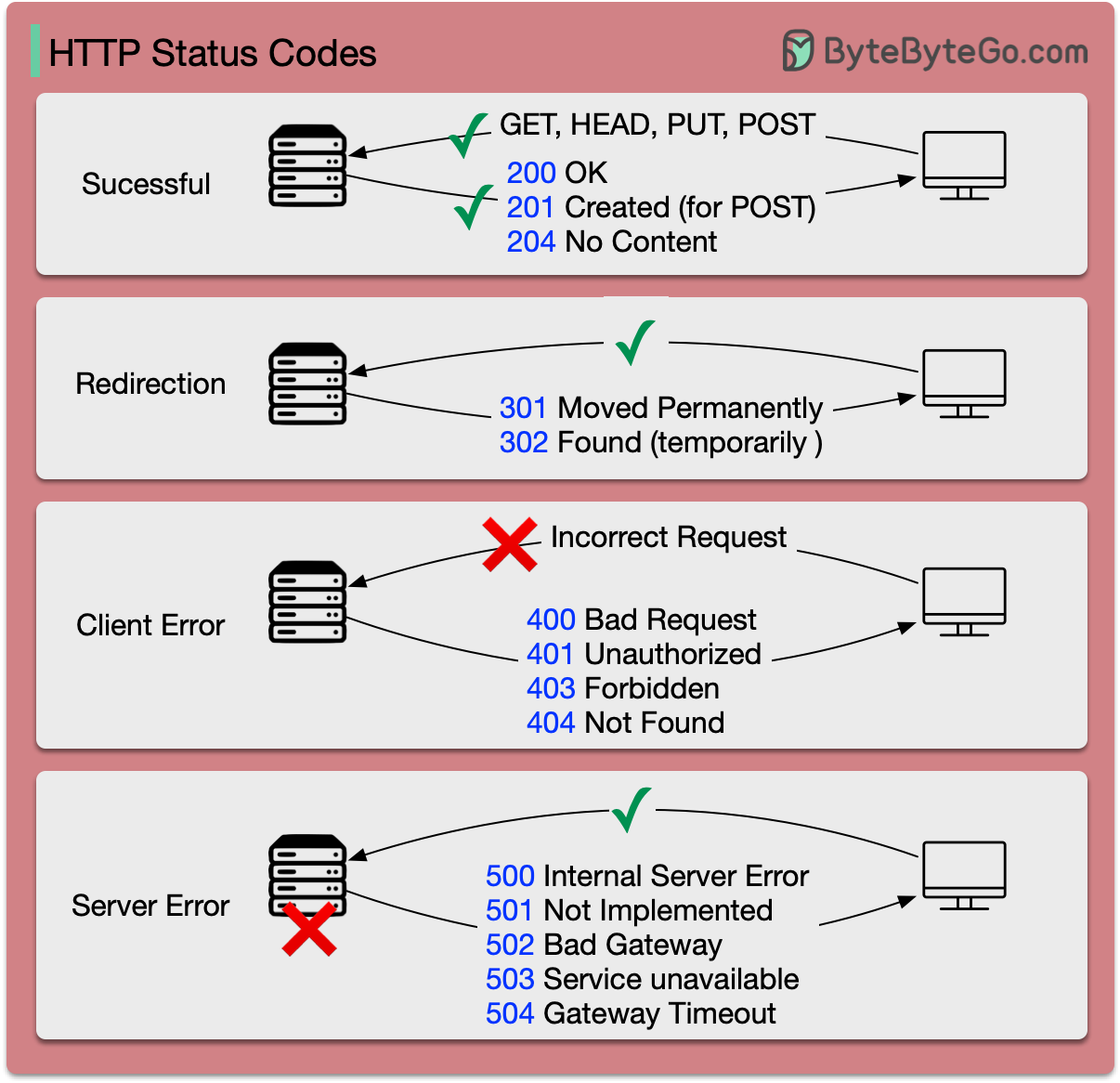

- - [HTTP status codes](#http-status-codes)

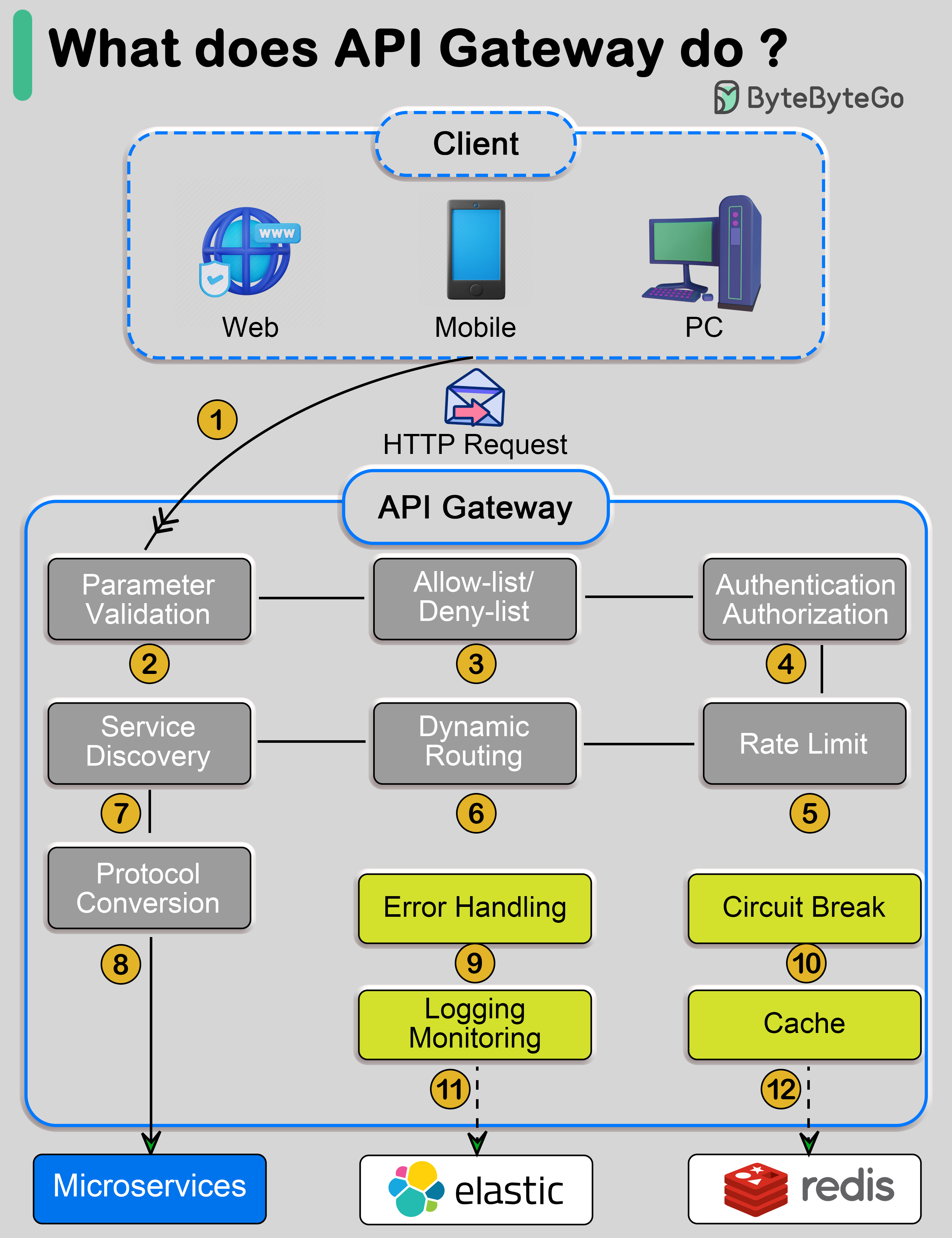

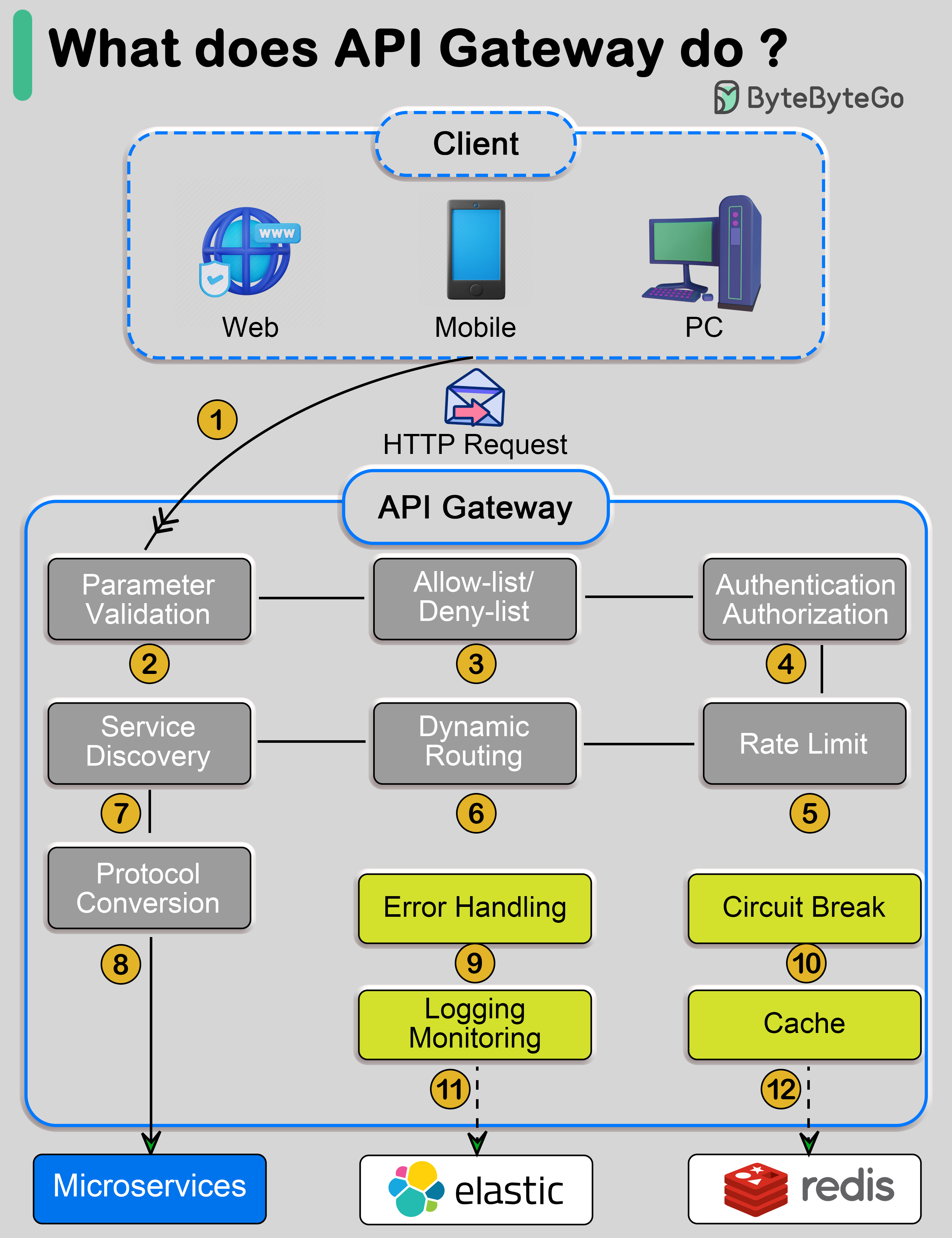

- - [What does API gateway do?](#what-does-api-gateway-do)

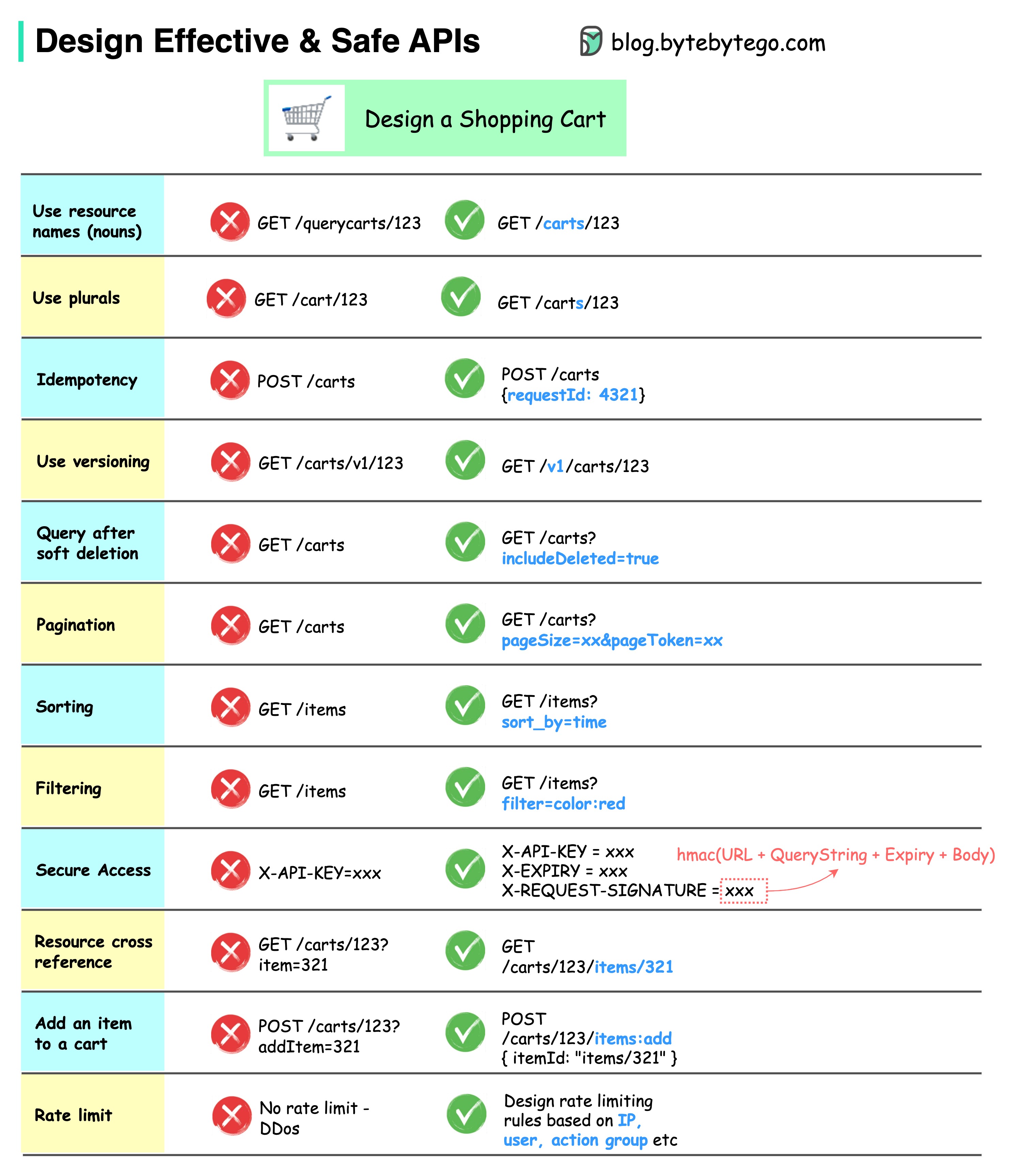

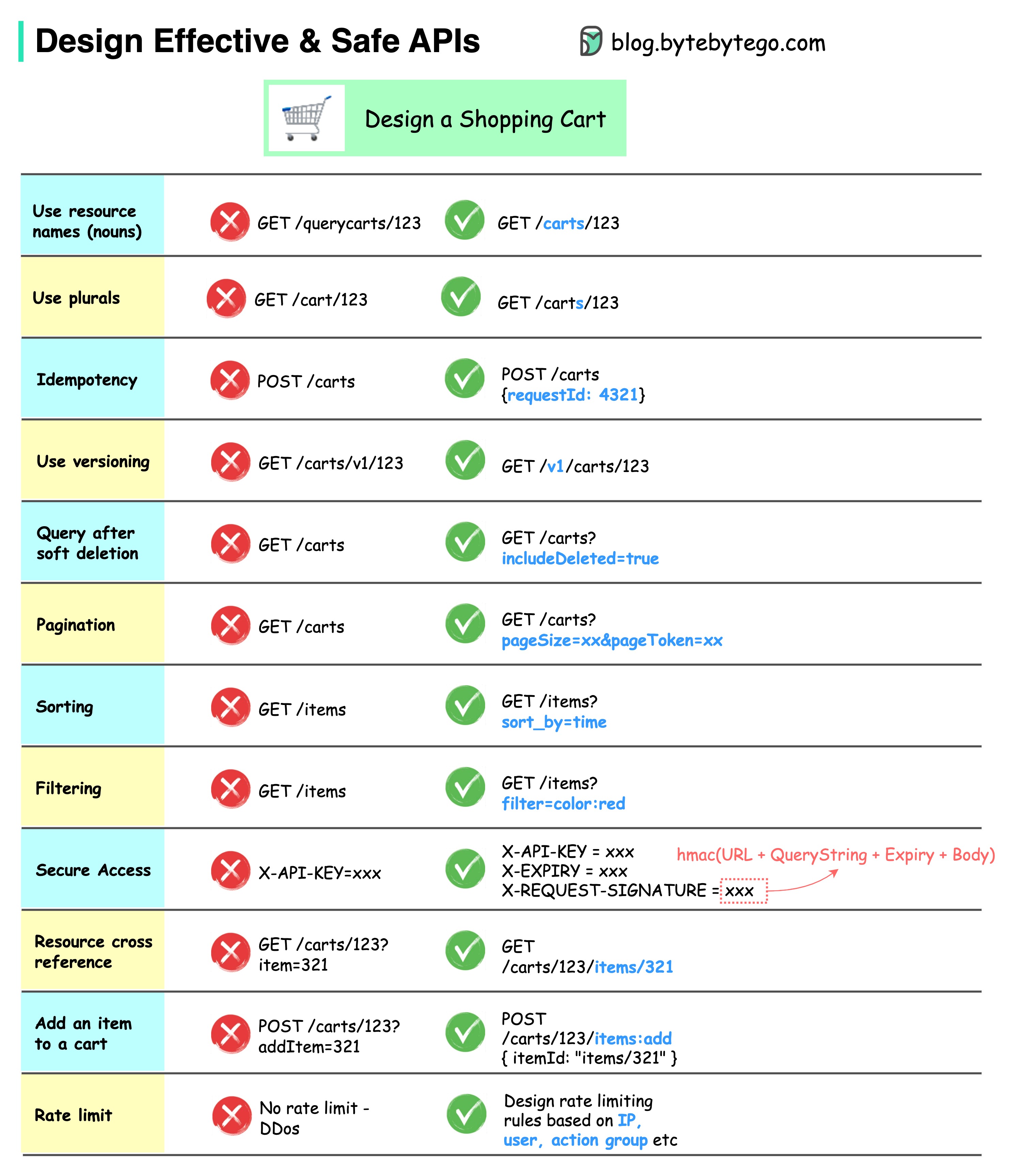

- - [How do we design effective and safe APIs?](#how-do-we-design-effective-and-safe-apis)

- - [TCP/IP encapsulation](#tcpip-encapsulation)

- - [Why is Nginx called a “reverse” proxy?](#why-is-nginx-called-a-reverse-proxy)

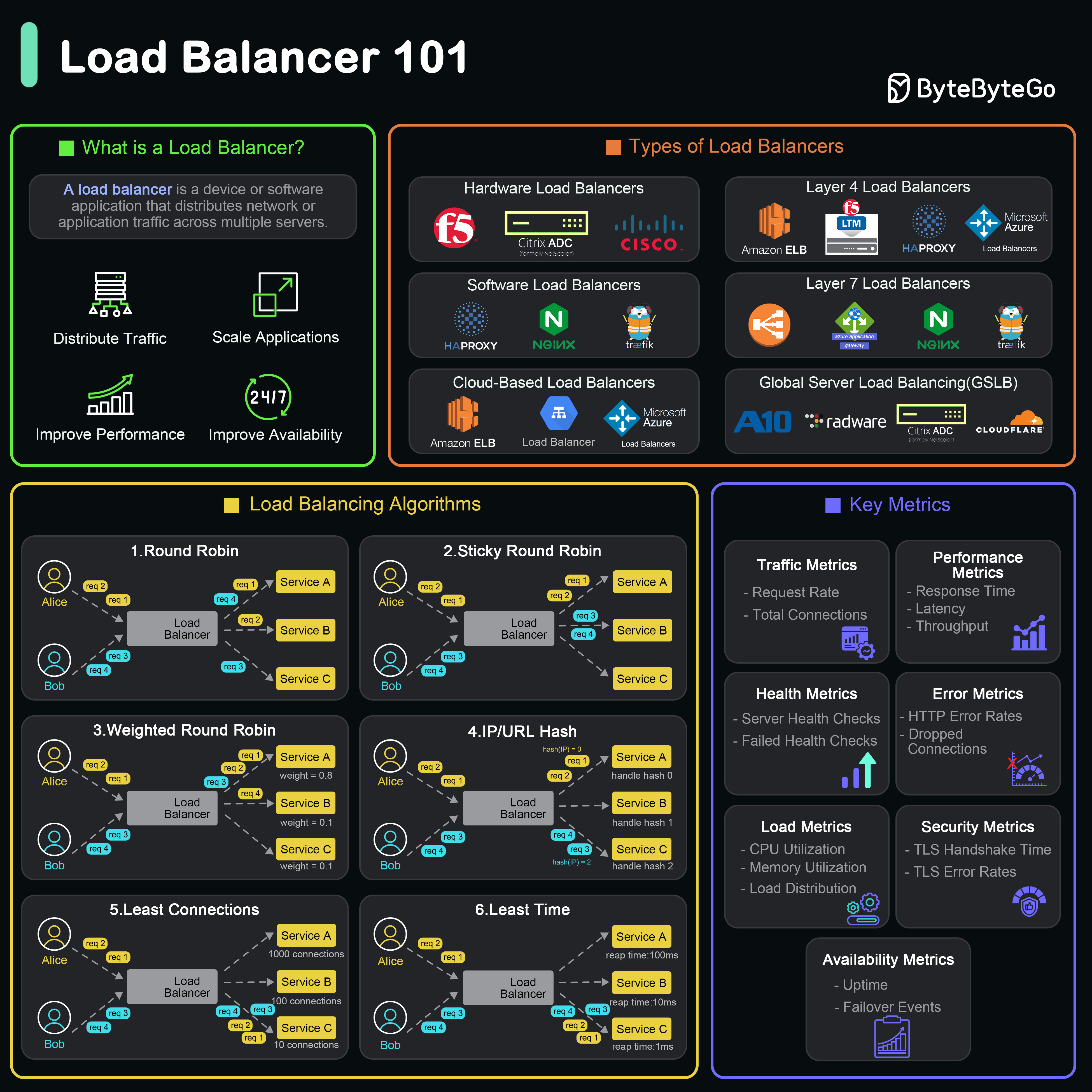

- - [What are the common load-balancing algorithms?](#what-are-the-common-load-balancing-algorithms)

- - [URL, URI, URN - Do you know the differences?](#url-uri-urn---do-you-know-the-differences)

-- [CI/CD](#cicd)

- - [CI/CD Pipeline Explained in Simple Terms](#cicd-pipeline-explained-in-simple-terms)

- - [Netflix Tech Stack (CI/CD Pipeline)](#netflix-tech-stack-cicd-pipeline)

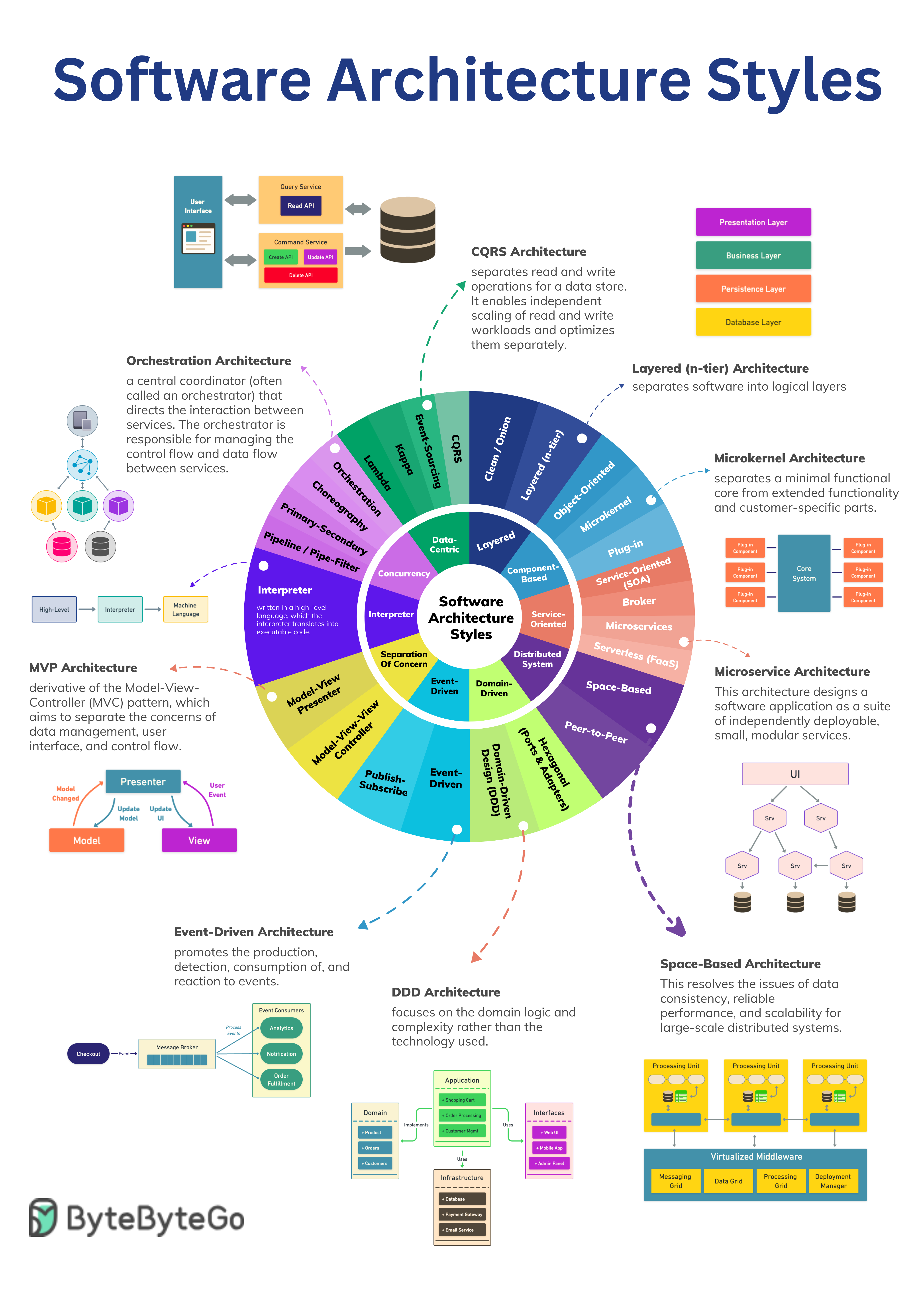

-- [Architecture patterns](#architecture-patterns)

- - [MVC, MVP, MVVM, MVVM-C, and VIPER](#mvc-mvp-mvvm-mvvm-c-and-viper)

- - [18 Key Design Patterns Every Developer Should Know](#18-key-design-patterns-every-developer-should-know)

-- [Database](#database)

- - [A nice cheat sheet of different databases in cloud services](#a-nice-cheat-sheet-of-different-databases-in-cloud-services)

- - [8 Data Structures That Power Your Databases](#8-data-structures-that-power-your-databases)

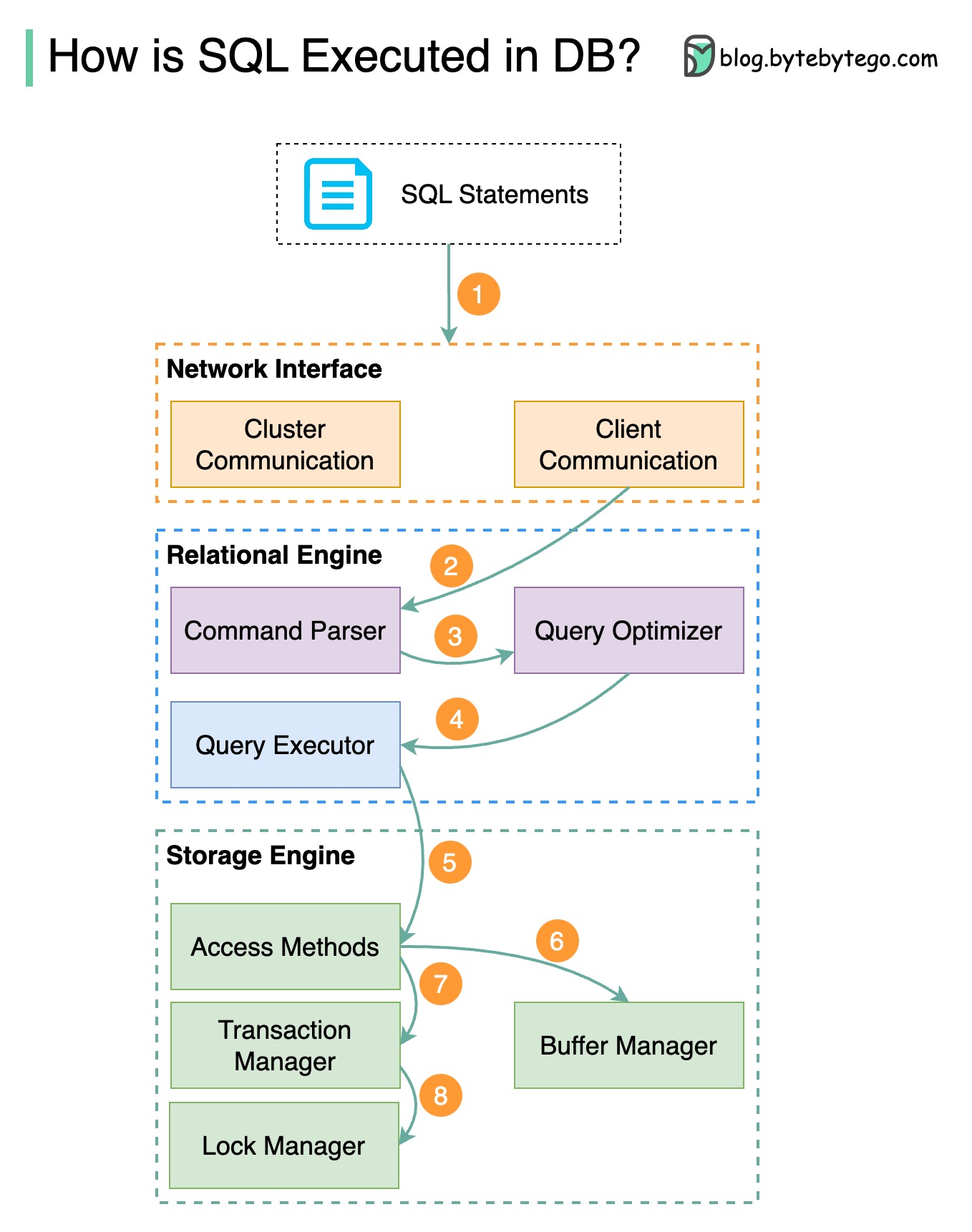

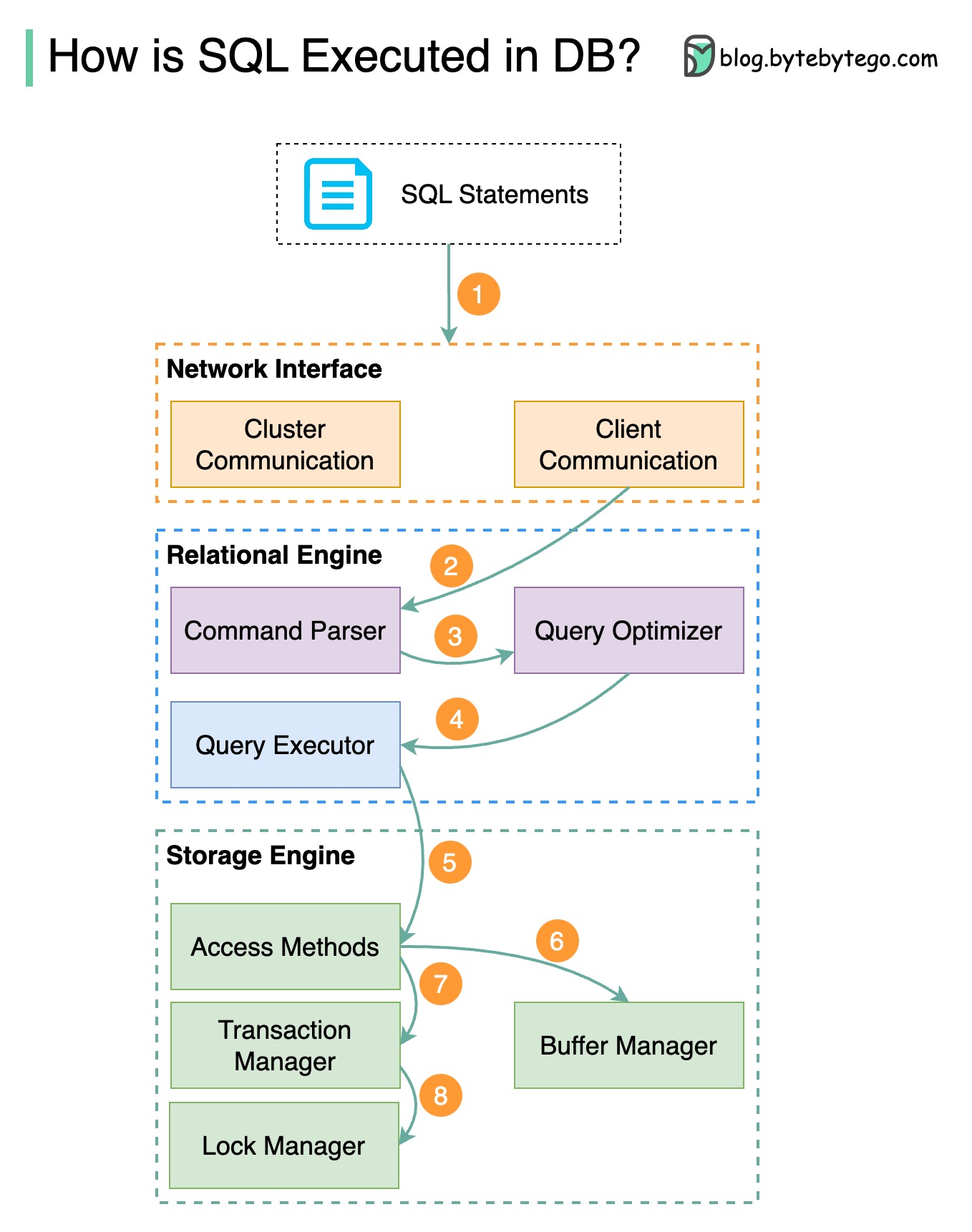

- - [How is an SQL statement executed in the database?](#how-is-an-sql-statement-executed-in-the-database)

- - [CAP theorem](#cap-theorem)

- - [Types of Memory and Storage](#types-of-memory-and-storage)

- - [Visualizing a SQL query](#visualizing-a-sql-query)

- - [SQL language](#sql-language)

-- [Cache](#cache)

- - [Data is cached everywhere](#data-is-cached-everywhere)

- - [Why is Redis so fast?](#why-is-redis-so-fast)

- - [How can Redis be used?](#how-can-redis-be-used)

- - [Top caching strategies](#top-caching-strategies)

-- [Microservice architecture](#microservice-architecture)

- - [What does a typical microservice architecture look like?](#what-does-a-typical-microservice-architecture-look-like)

- - [Microservice Best Practices](#microservice-best-practices)

- - [What tech stack is commonly used for microservices?](#what-tech-stack-is-commonly-used-for-microservices)

- - [Why is Kafka fast](#why-is-kafka-fast)

-- [Payment systems](#payment-systems)

- - [How to learn payment systems?](#how-to-learn-payment-systems)

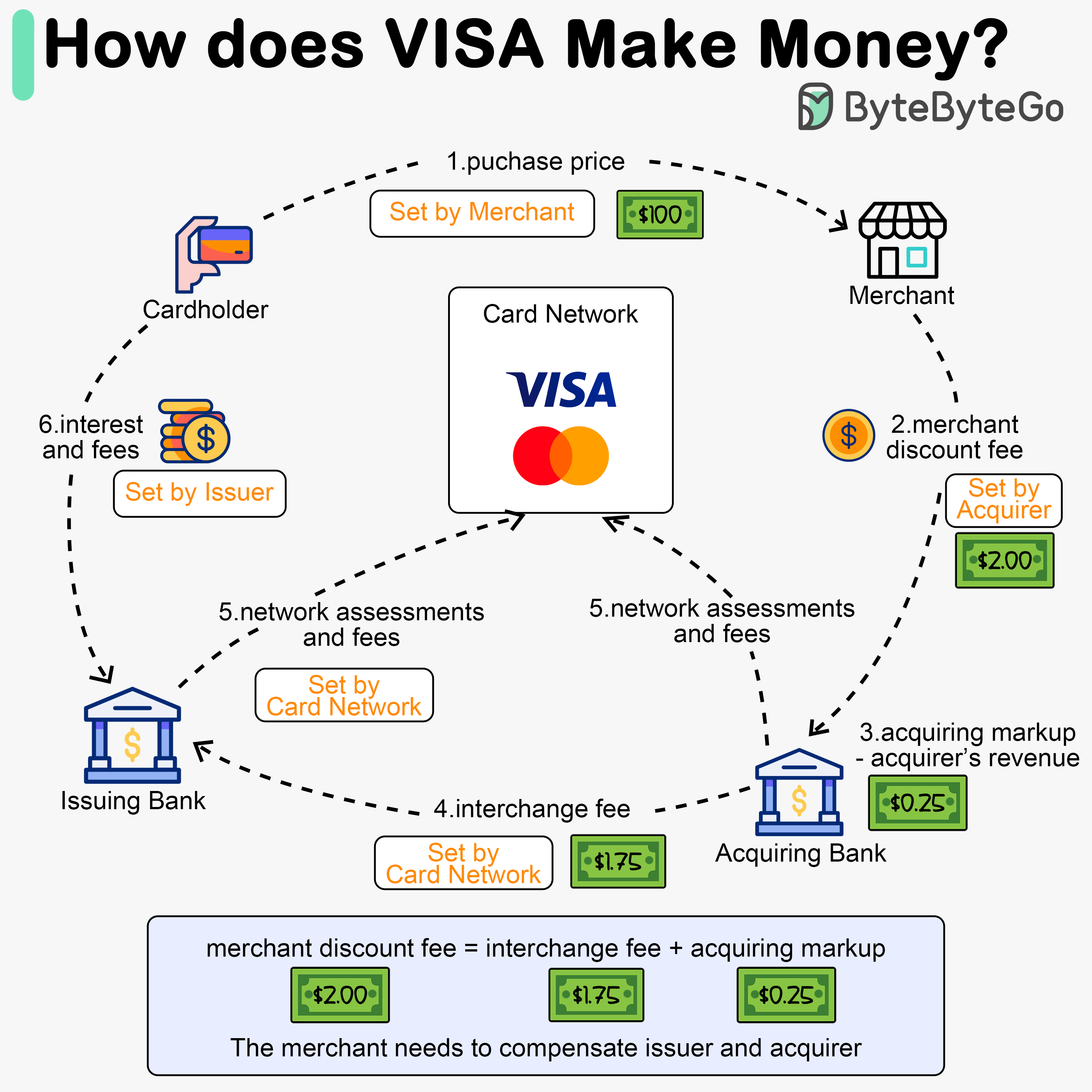

- - [Why is the credit card called “the most profitable product in banks”? How does VISA/Mastercard make money?](#why-is-the-credit-card-called-the-most-profitable-product-in-banks-how-does-visamastercard-make-money)

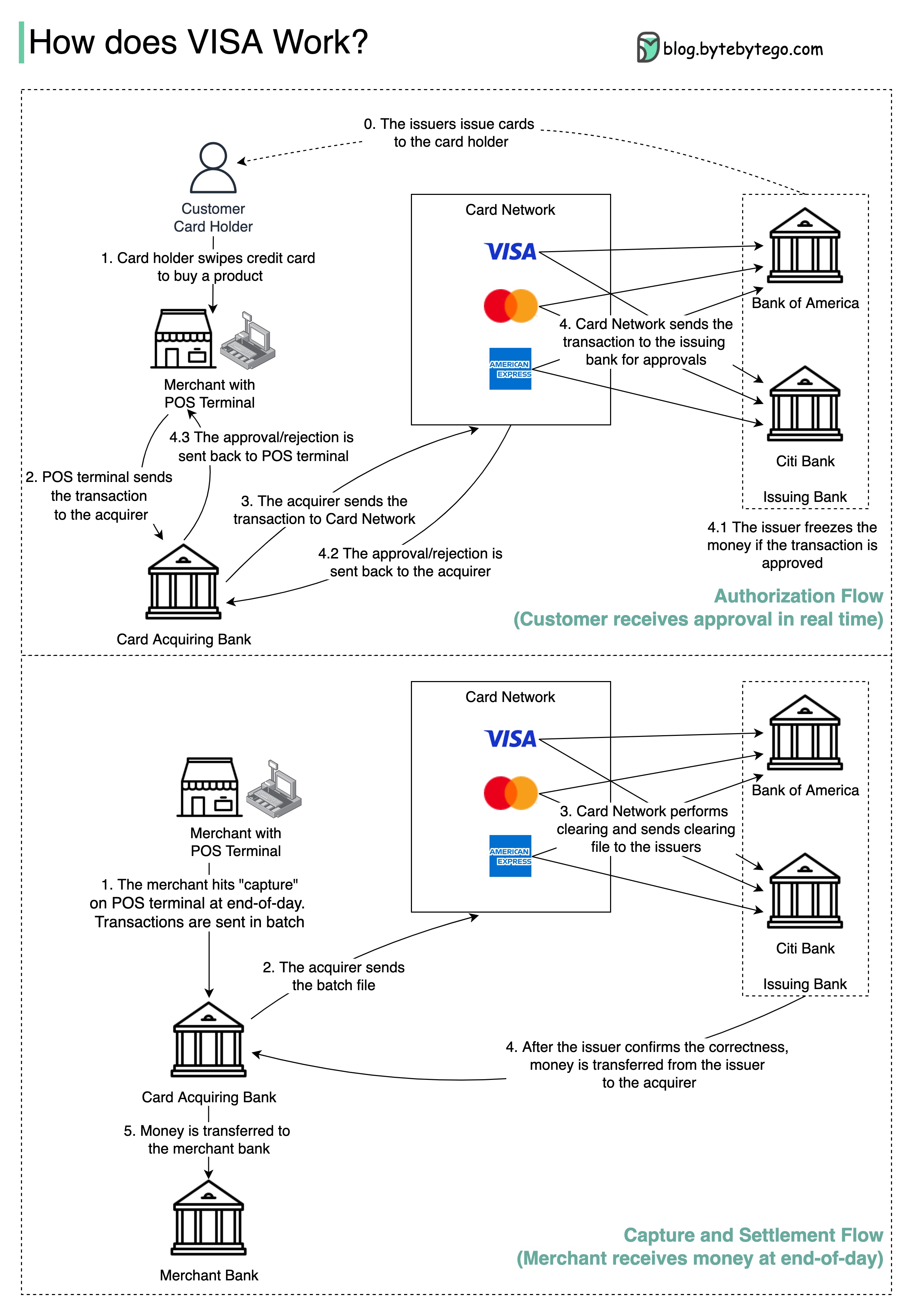

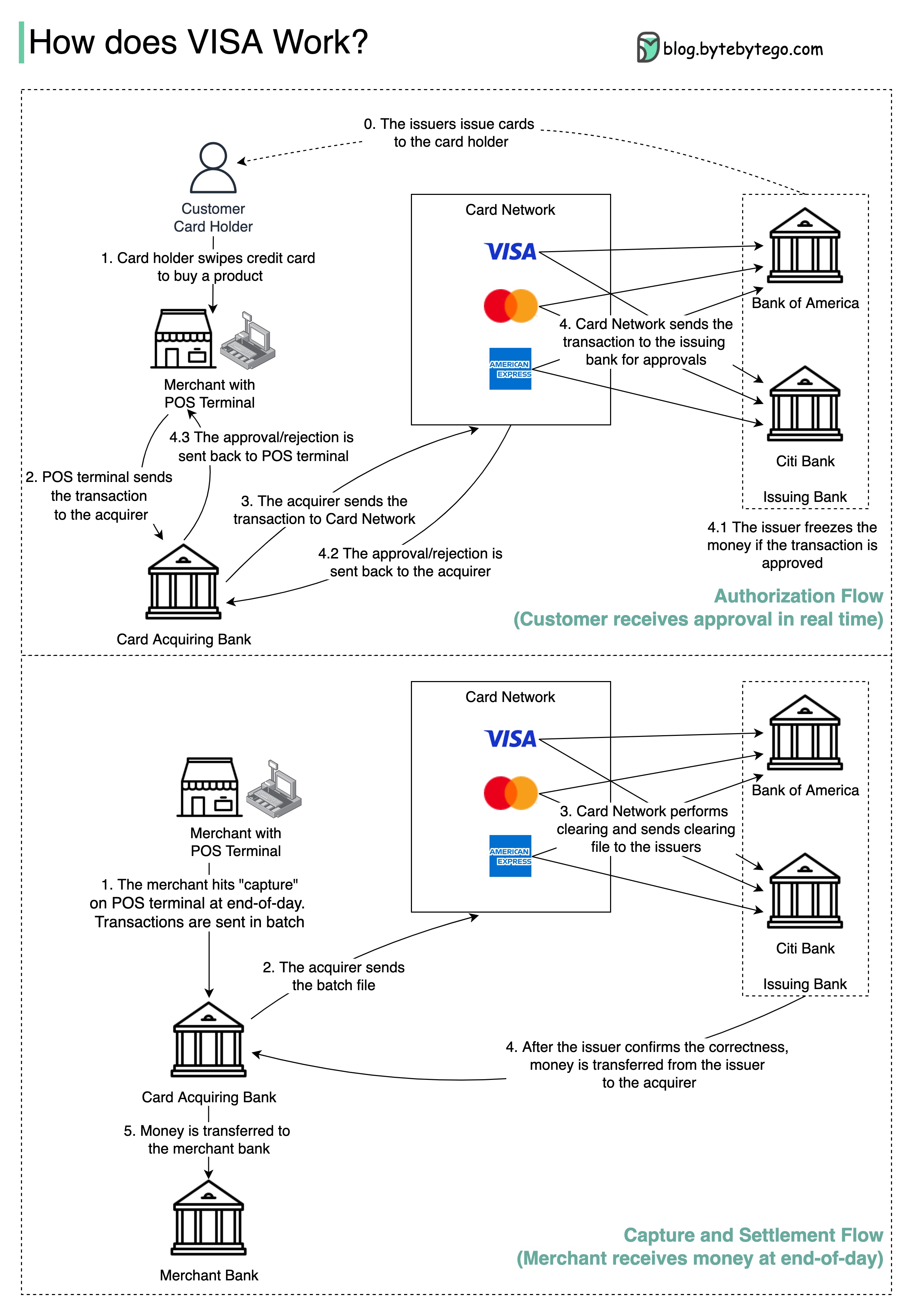

- - [How does VISA work when we swipe a credit card at a merchant’s shop?](#how-does-visa-work-when-we-swipe-a-credit-card-at-a-merchants-shop)

- - [Payment Systems Around The World Series (Part 1): Unified Payments Interface (UPI) in India](#payment-systems-around-the-world-series-part-1-unified-payments-interface-upi-in-india)

-- [DevOps](#devops)

- - [DevOps vs. SRE vs. Platform Engineering. What is the difference?](#devops-vs-sre-vs-platform-engineering-what-is-the-difference)

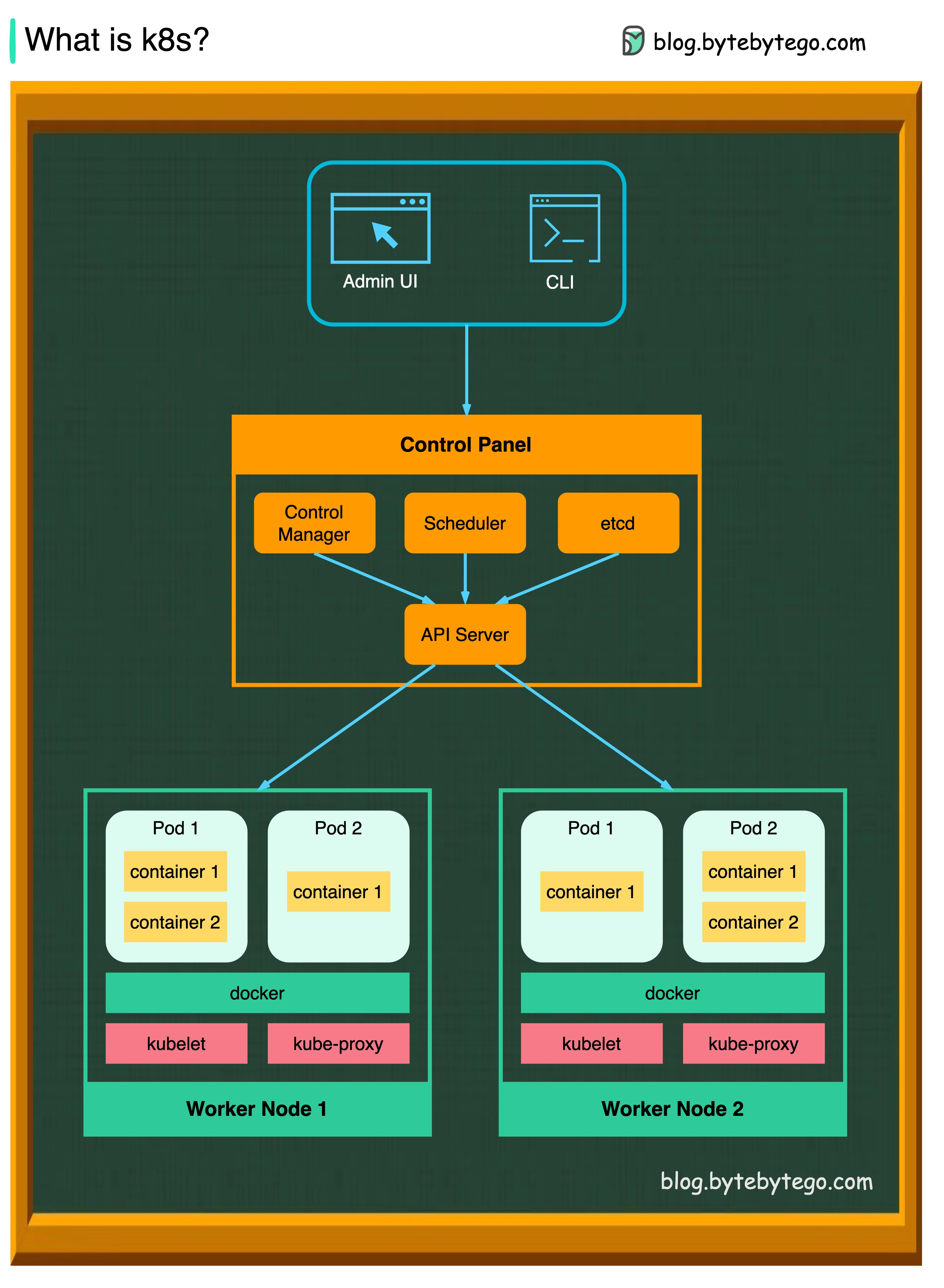

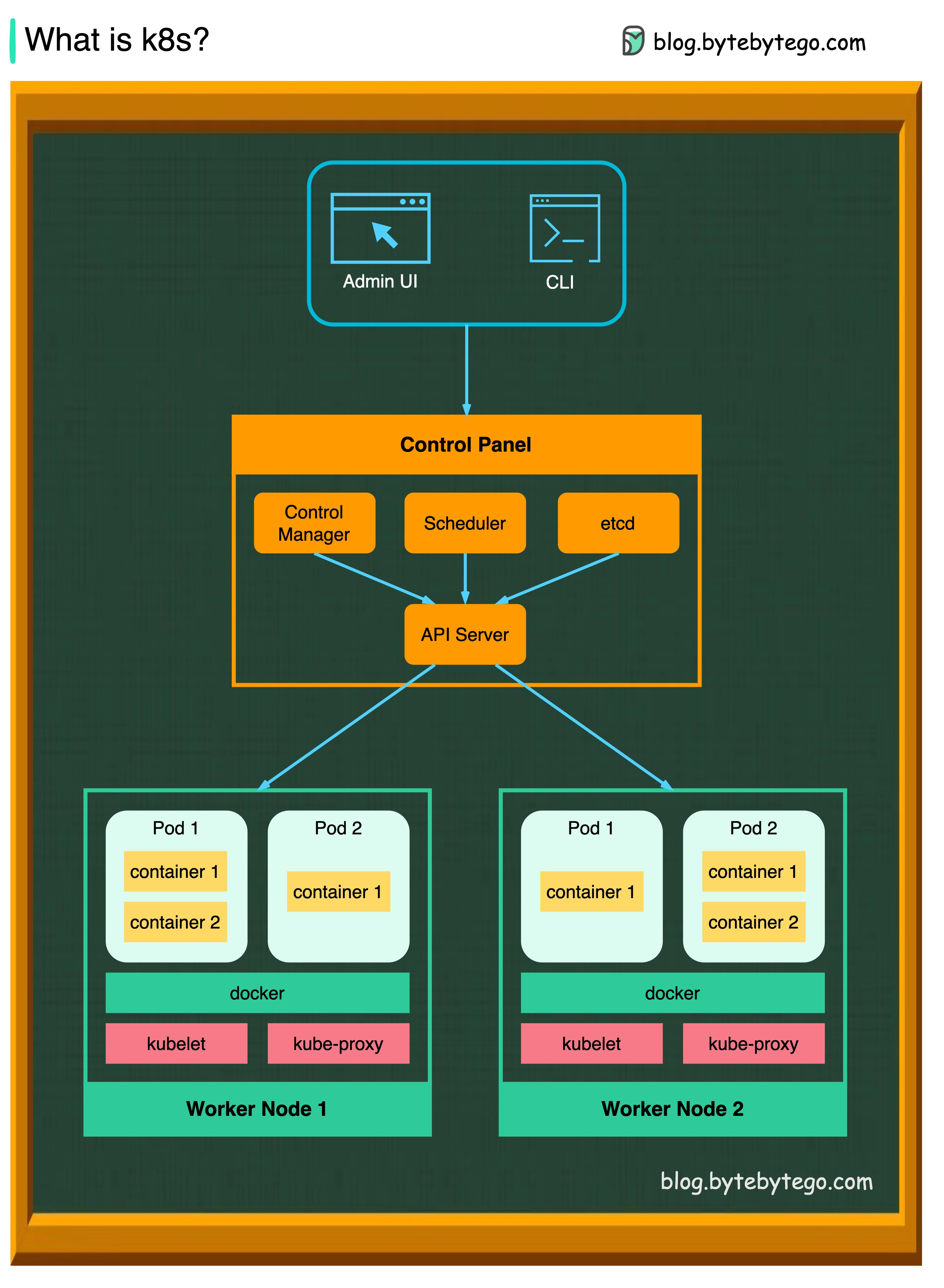

- - [What is k8s (Kubernetes)?](#what-is-k8s-kubernetes)

- - [Docker vs. Kubernetes. Which one should we use?](#docker-vs-kubernetes-which-one-should-we-use)

- - [How does Docker work?](#how-does-docker-work)

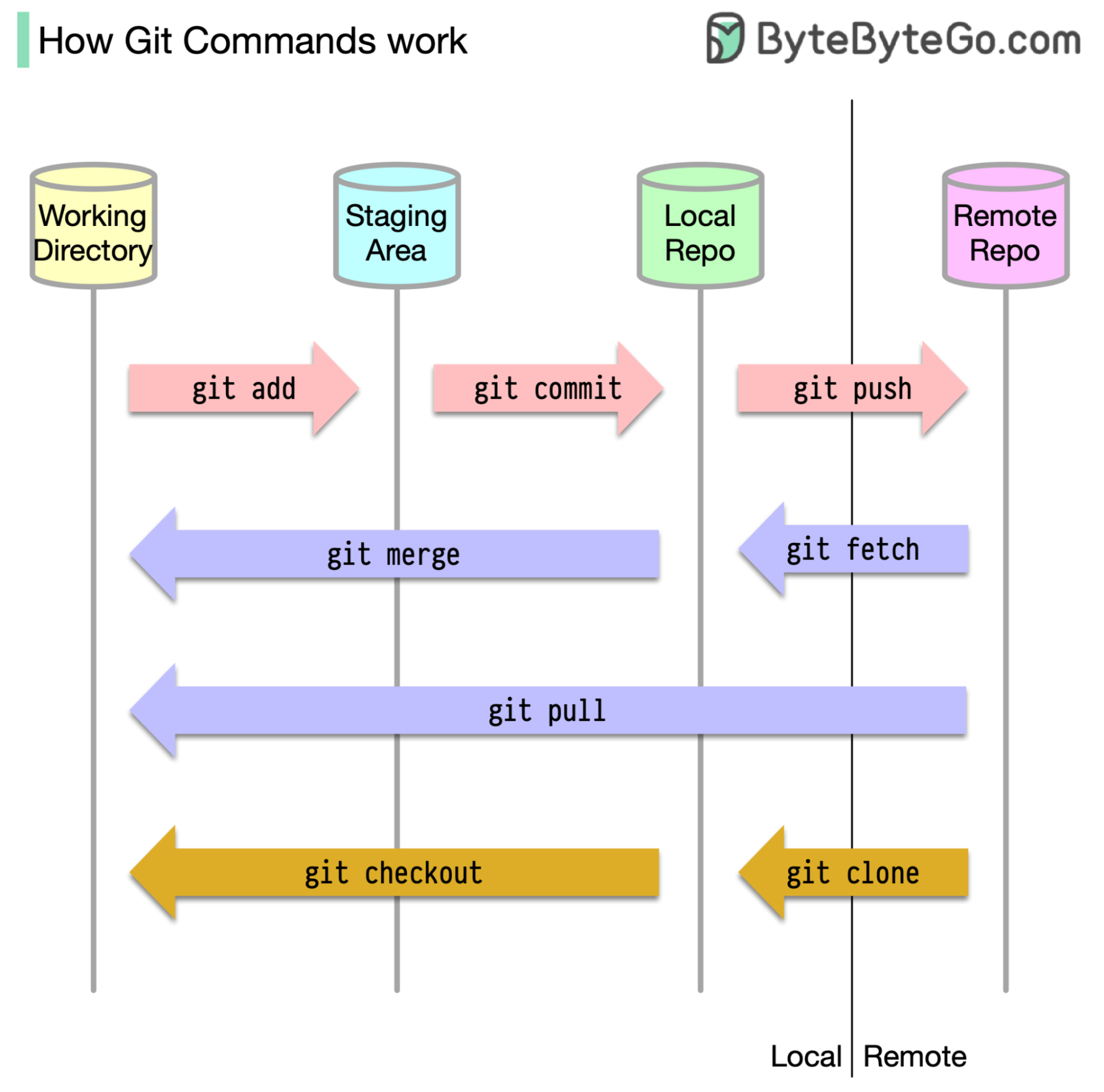

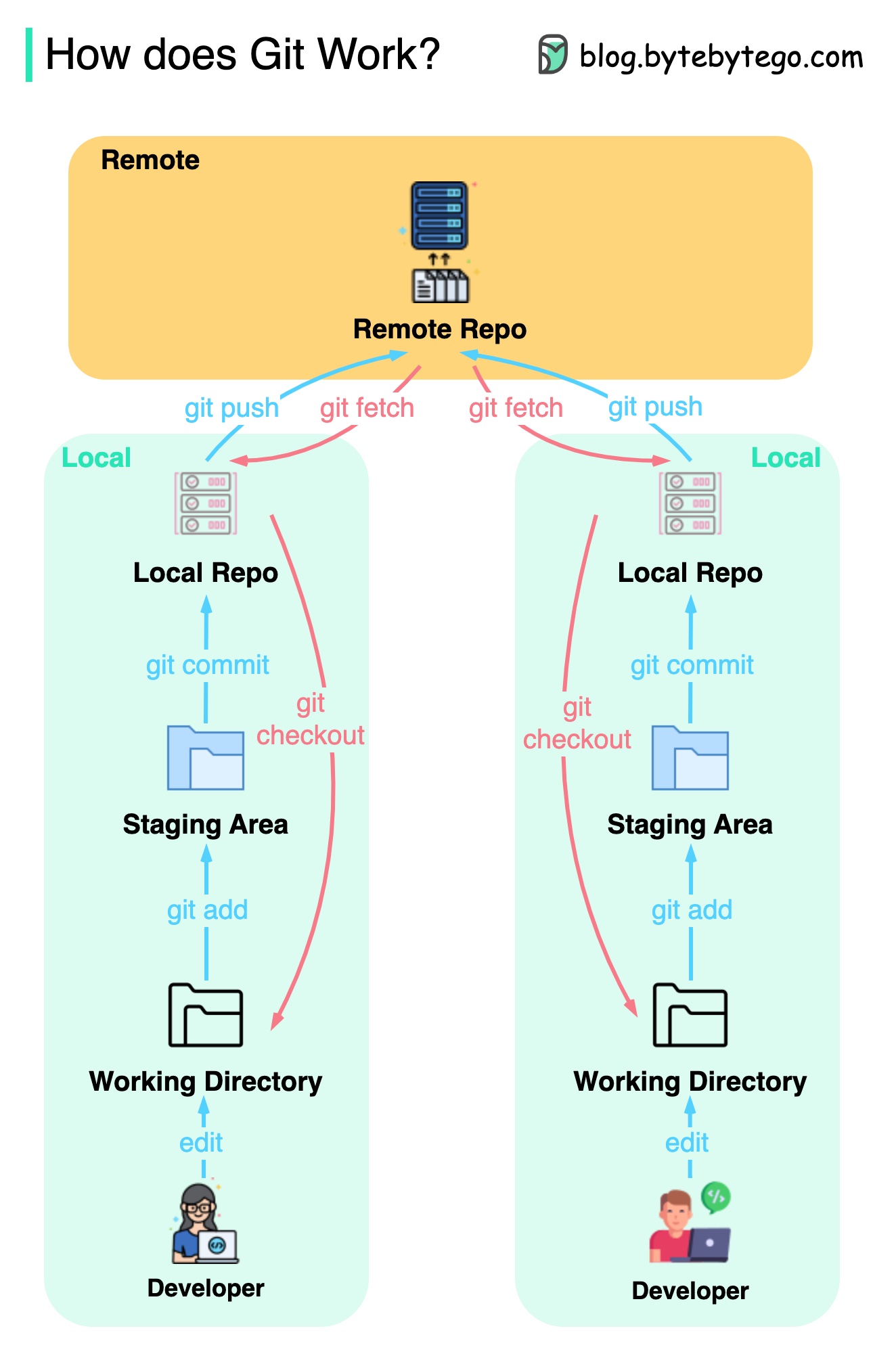

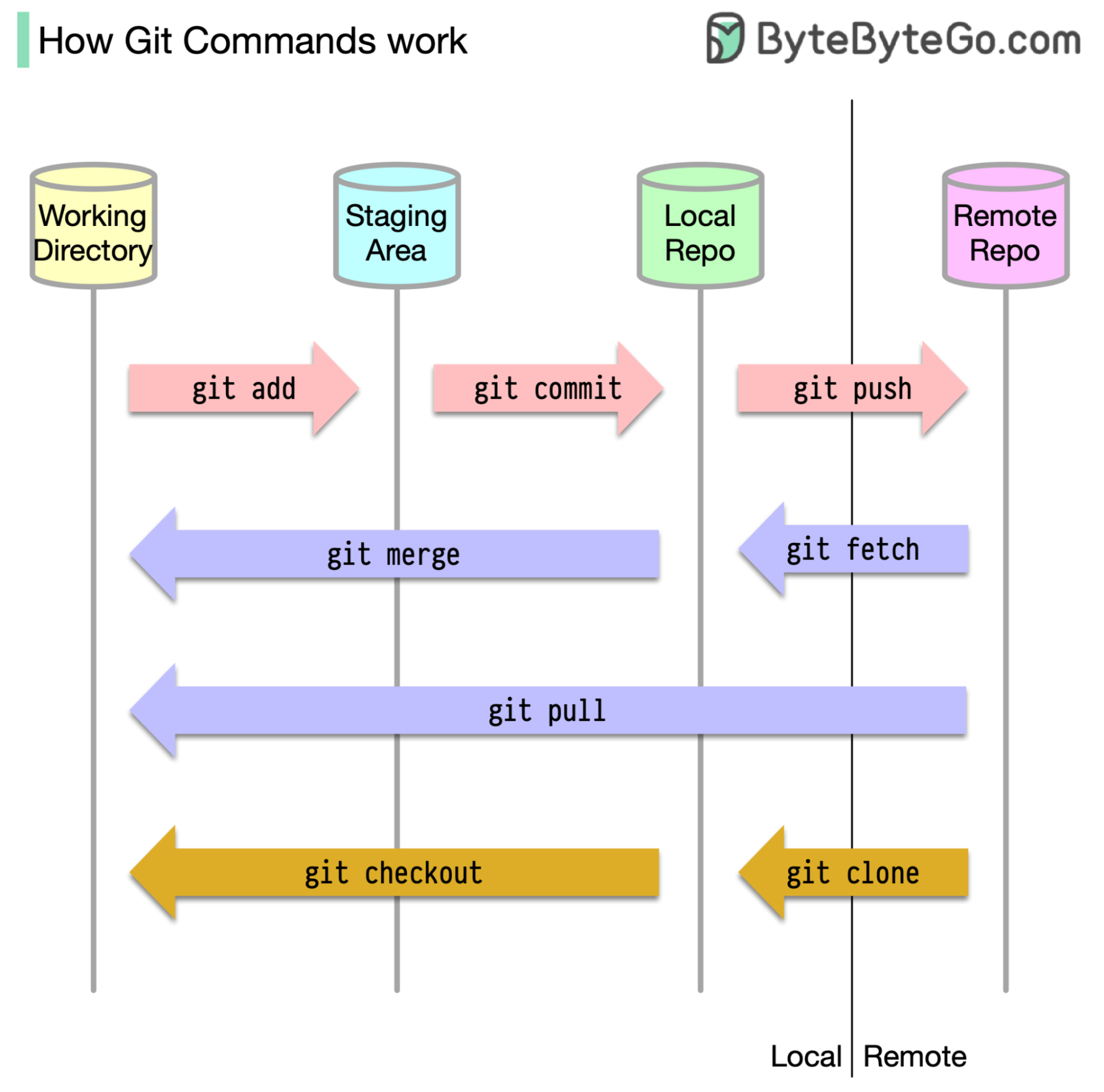

-- [GIT](#git)

- - [How Git Commands work](#how-git-commands-work)

- - [How does Git Work?](#how-does-git-work)

- - [Git merge vs. Git rebase](#git-merge-vs-git-rebase)

-- [Cloud Services](#cloud-services)

- - [A nice cheat sheet of different cloud services (2023 edition)](#a-nice-cheat-sheet-of-different-cloud-services-2023-edition)

- - [What is cloud native?](#what-is-cloud-native)

-- [Developer productivity tools](#developer-productivity-tools)

- - [Visualize JSON files](#visualize-json-files)

- - [Automatically turn code into architecture diagrams](#automatically-turn-code-into-architecture-diagrams)

-- [Linux](#linux)

- - [Linux file system explained](#linux-file-system-explained)

- - [18 Most-used Linux Commands You Should Know](#18-most-used-linux-commands-you-should-know)

-- [Security](#security)

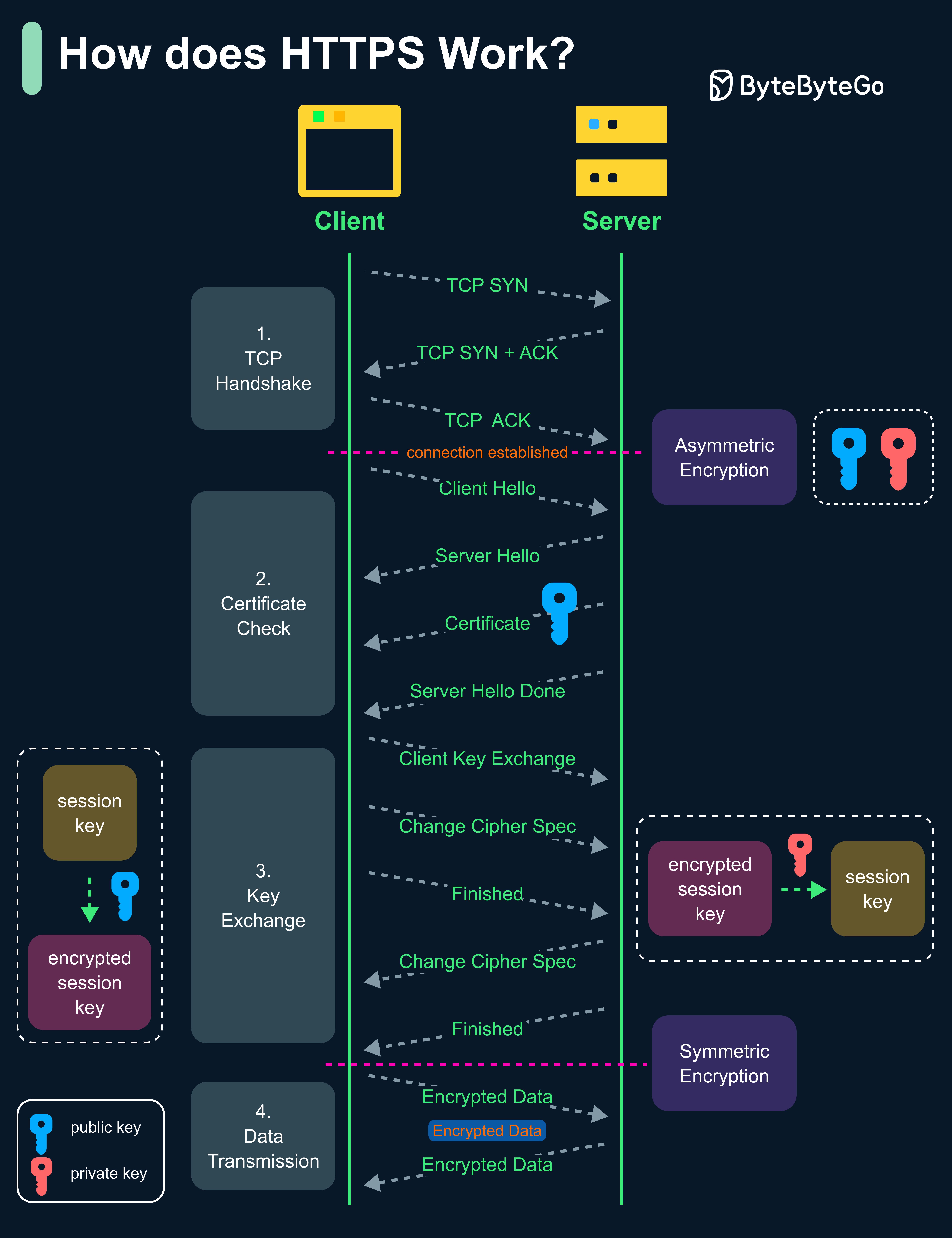

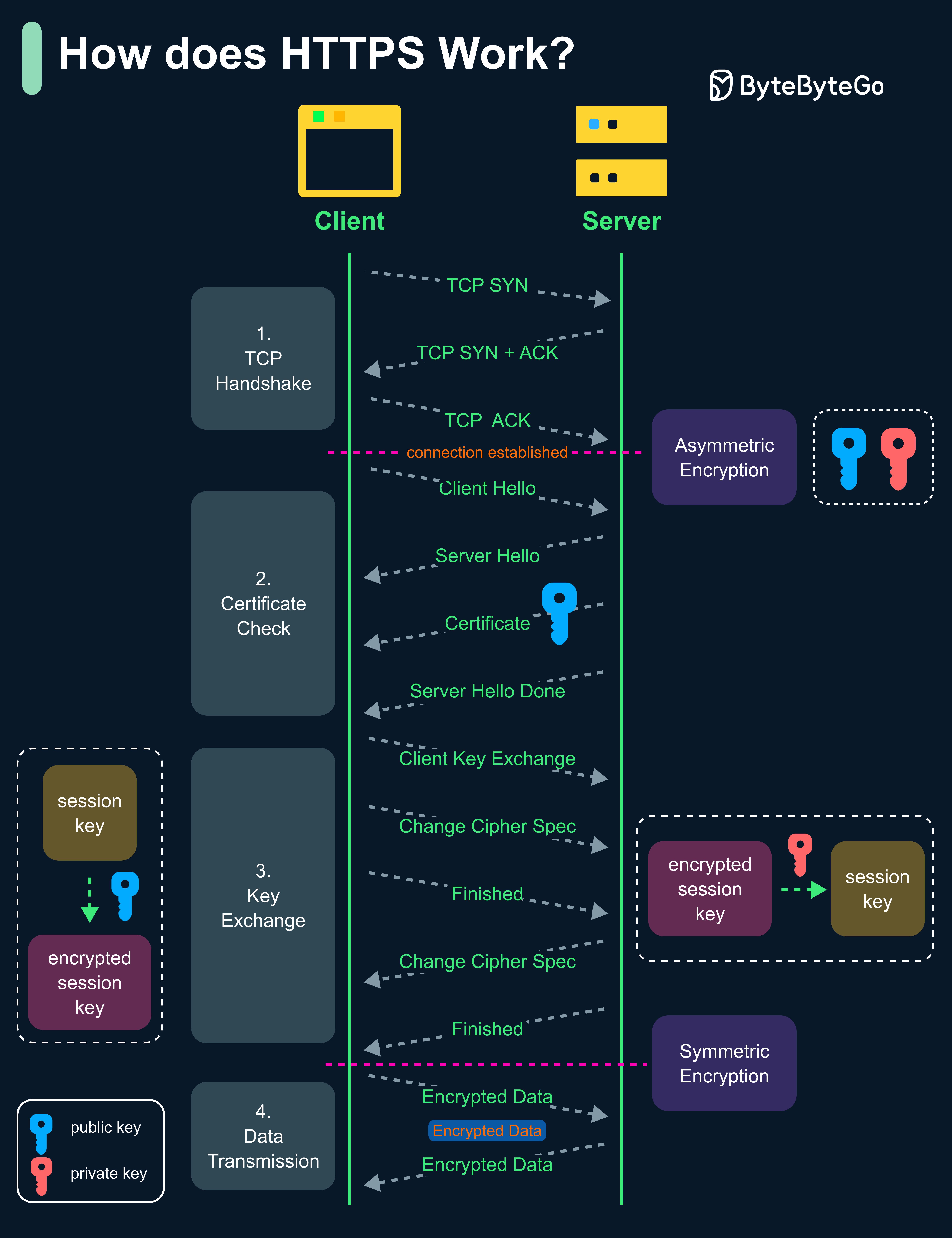

- - [How does HTTPS work?](#how-does-https-work)

- - [Oauth 2.0 Explained With Simple Terms.](#oauth-20-explained-with-simple-terms)

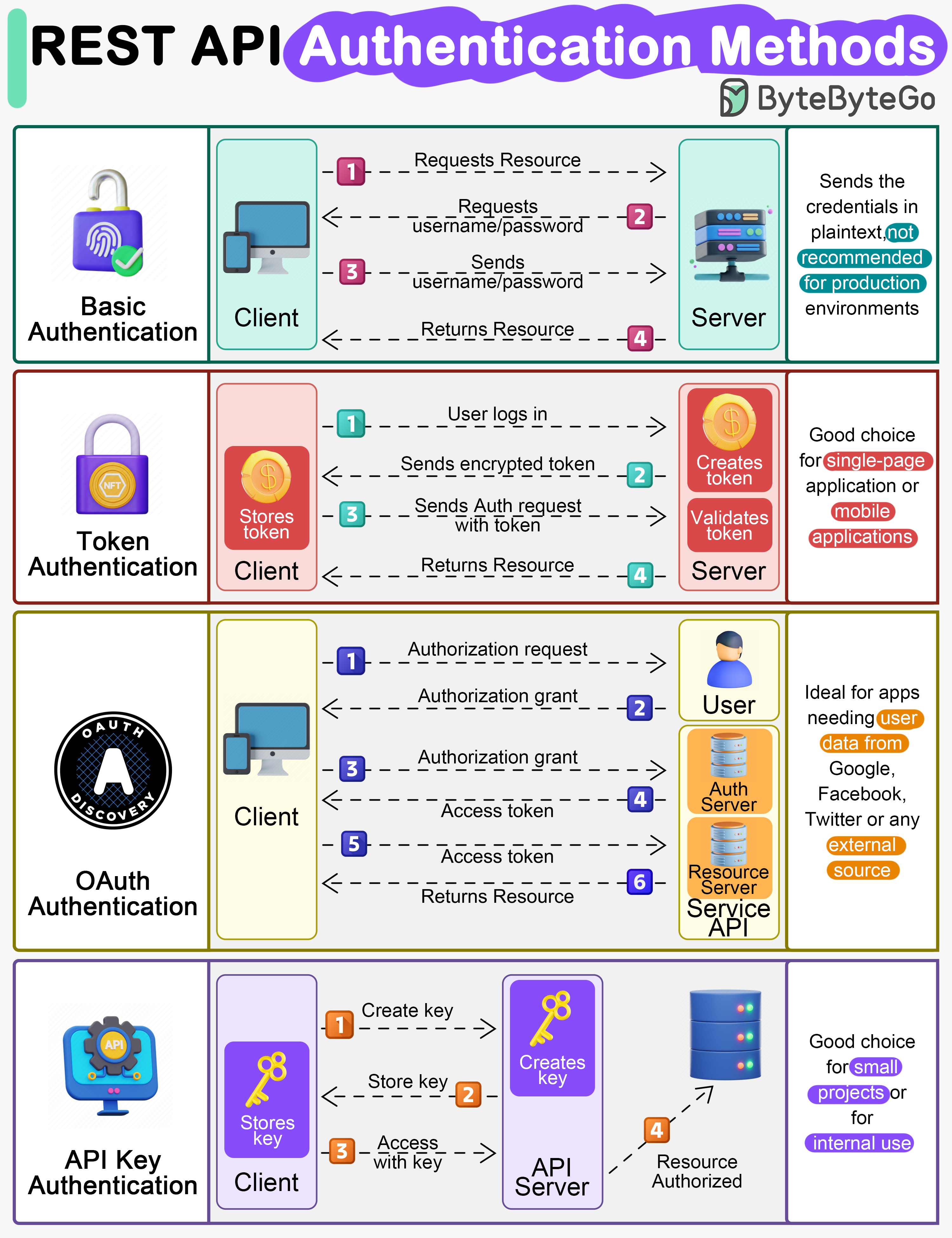

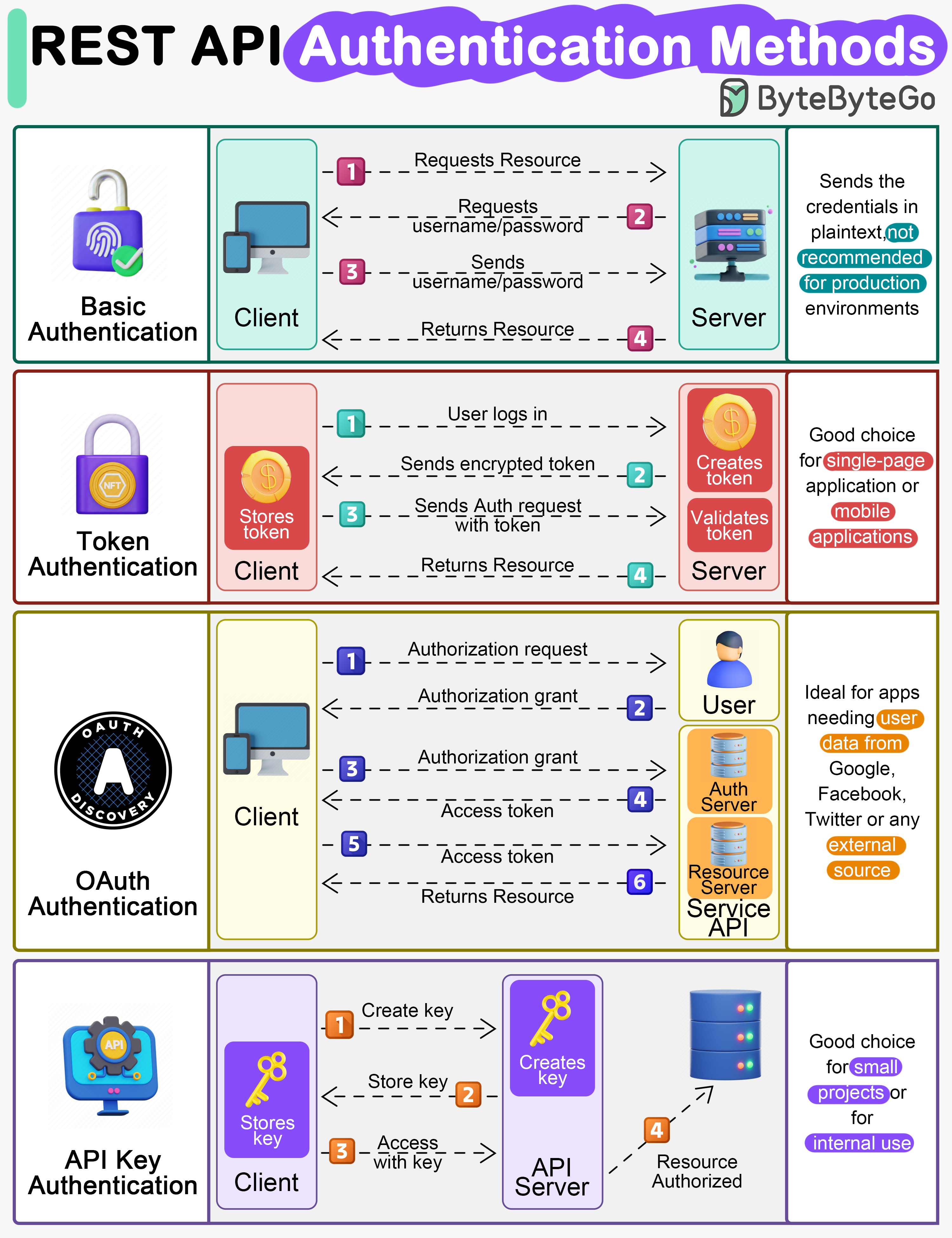

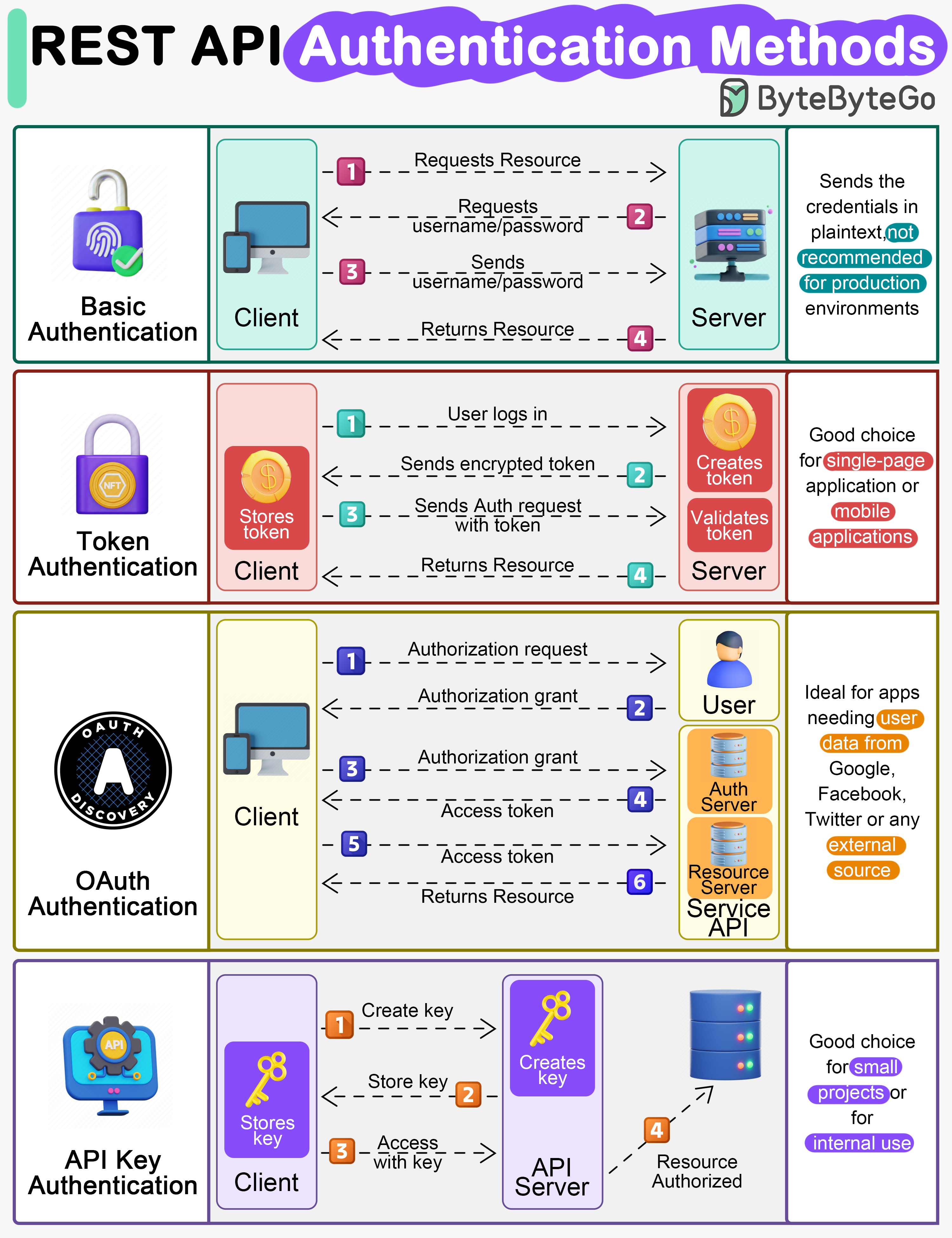

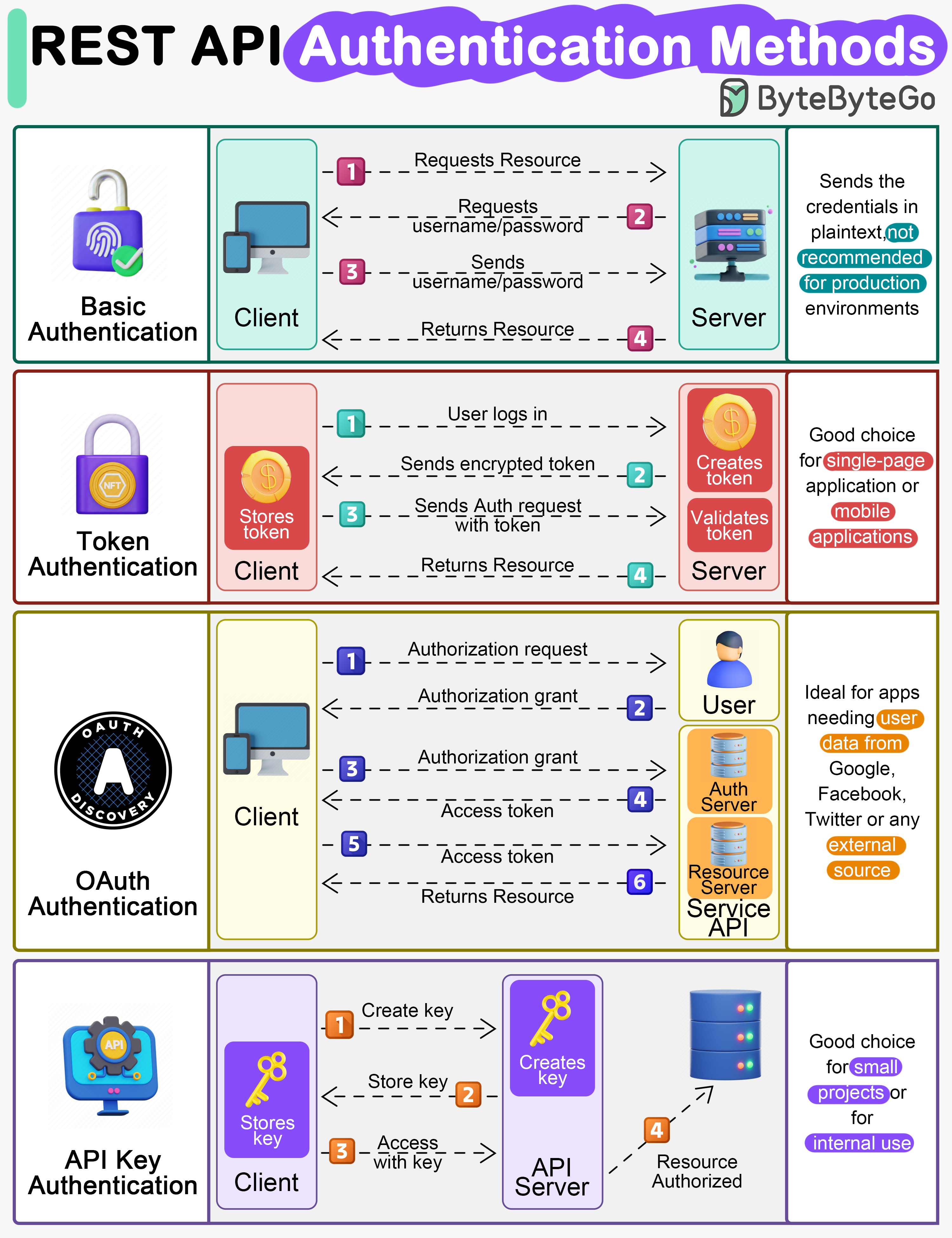

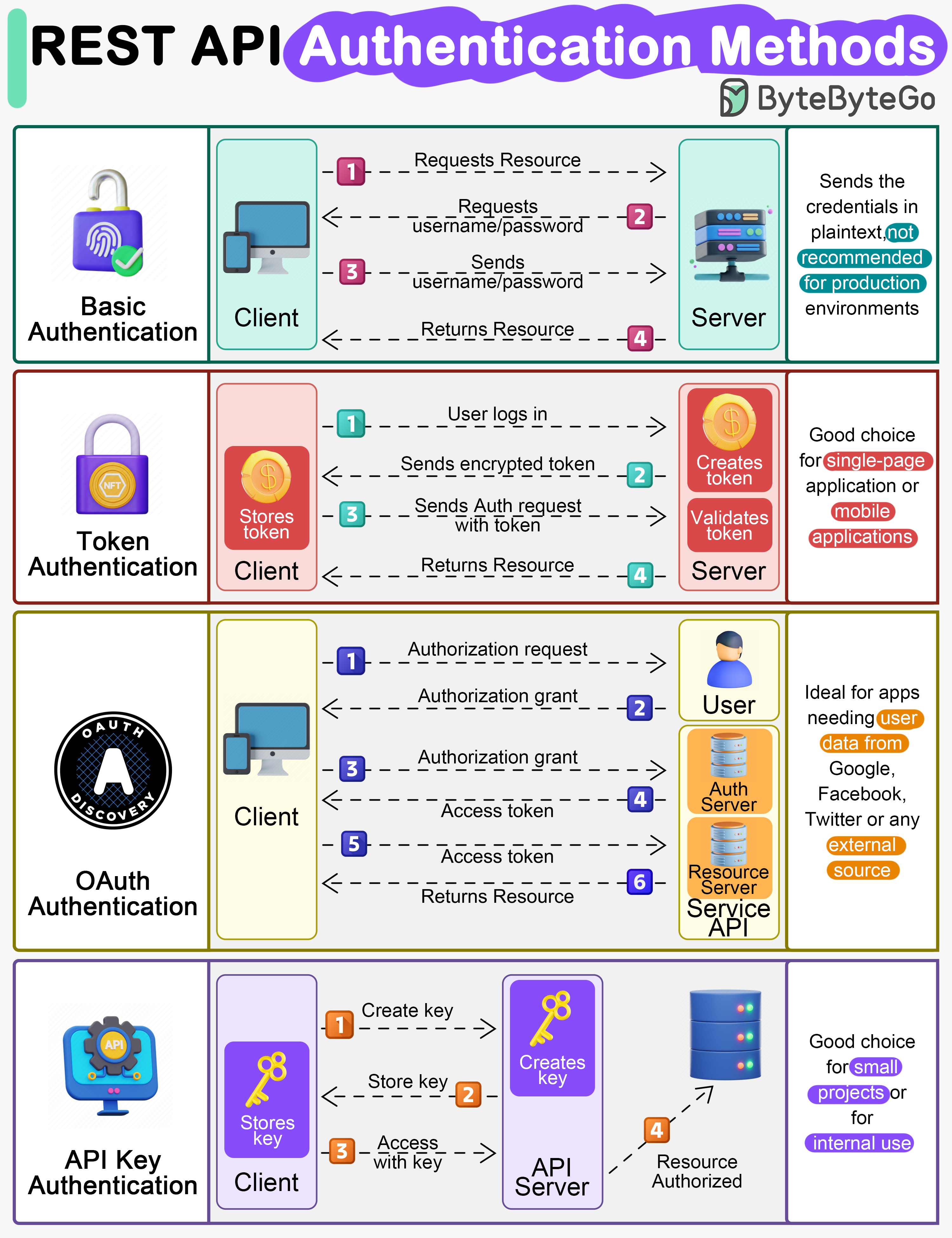

- - [Top 4 Forms of Authentication Mechanisms](#top-4-forms-of-authentication-mechanisms)

- - [Session, cookie, JWT, token, SSO, and OAuth 2.0 - what are they?](#session-cookie-jwt-token-sso-and-oauth-20---what-are-they)

- - [How to store passwords safely in the database and how to validate a password?](#how-to-store-passwords-safely-in-the-database-and-how-to-validate-a-password)

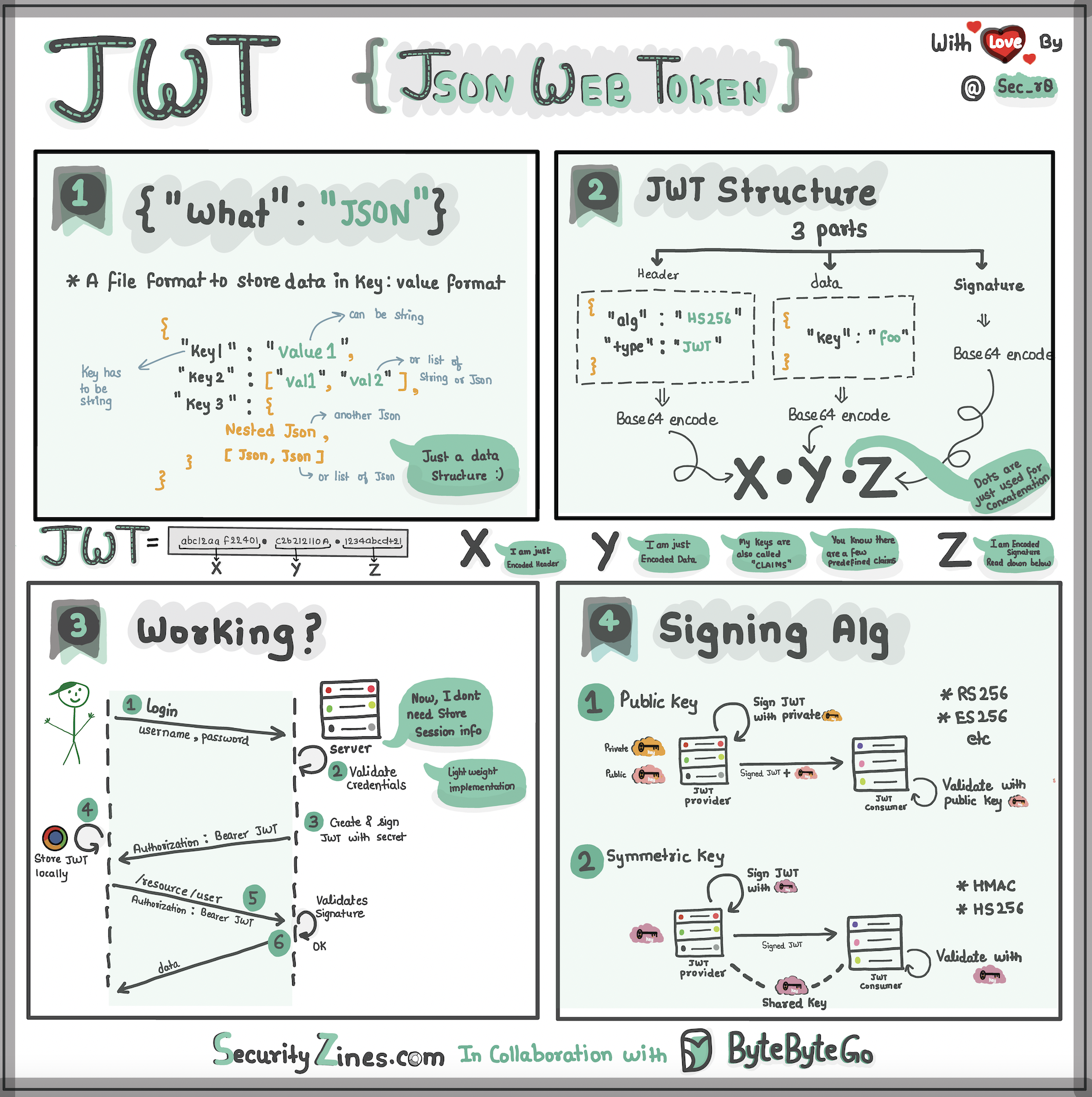

- - [Explaining JSON Web Token (JWT) to a 10 year old Kid](#explaining-json-web-token-jwt-to-a-10-year-old-kid)

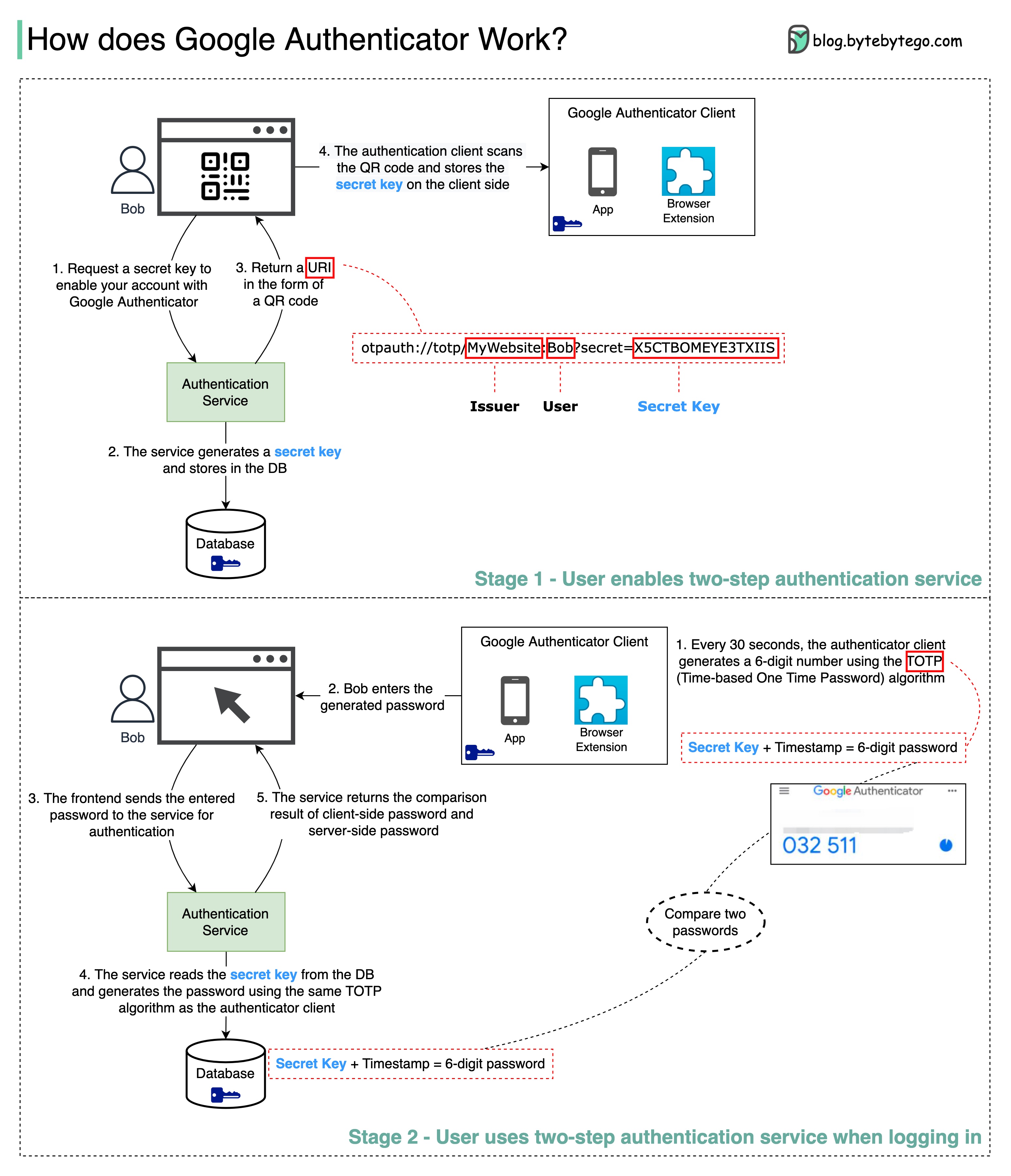

- - [How does Google Authenticator (or other types of 2-factor authenticators) work?](#how-does-google-authenticator-or-other-types-of-2-factor-authenticators-work)

-- [Real World Case Studies](#real-world-case-studies)

- - [Netflix's Tech Stack](#netflixs-tech-stack)

- - [Twitter Architecture 2022](#twitter-architecture-2022)

- - [Evolution of Airbnb’s microservice architecture over the past 15 years](#evolution-of-airbnbs-microservice-architecture-over-the-past-15-years)

- - [Monorepo vs. Microrepo.](#monorepo-vs-microrepo)

- - [How will you design the Stack Overflow website?](#how-will-you-design-the-stack-overflow-website)

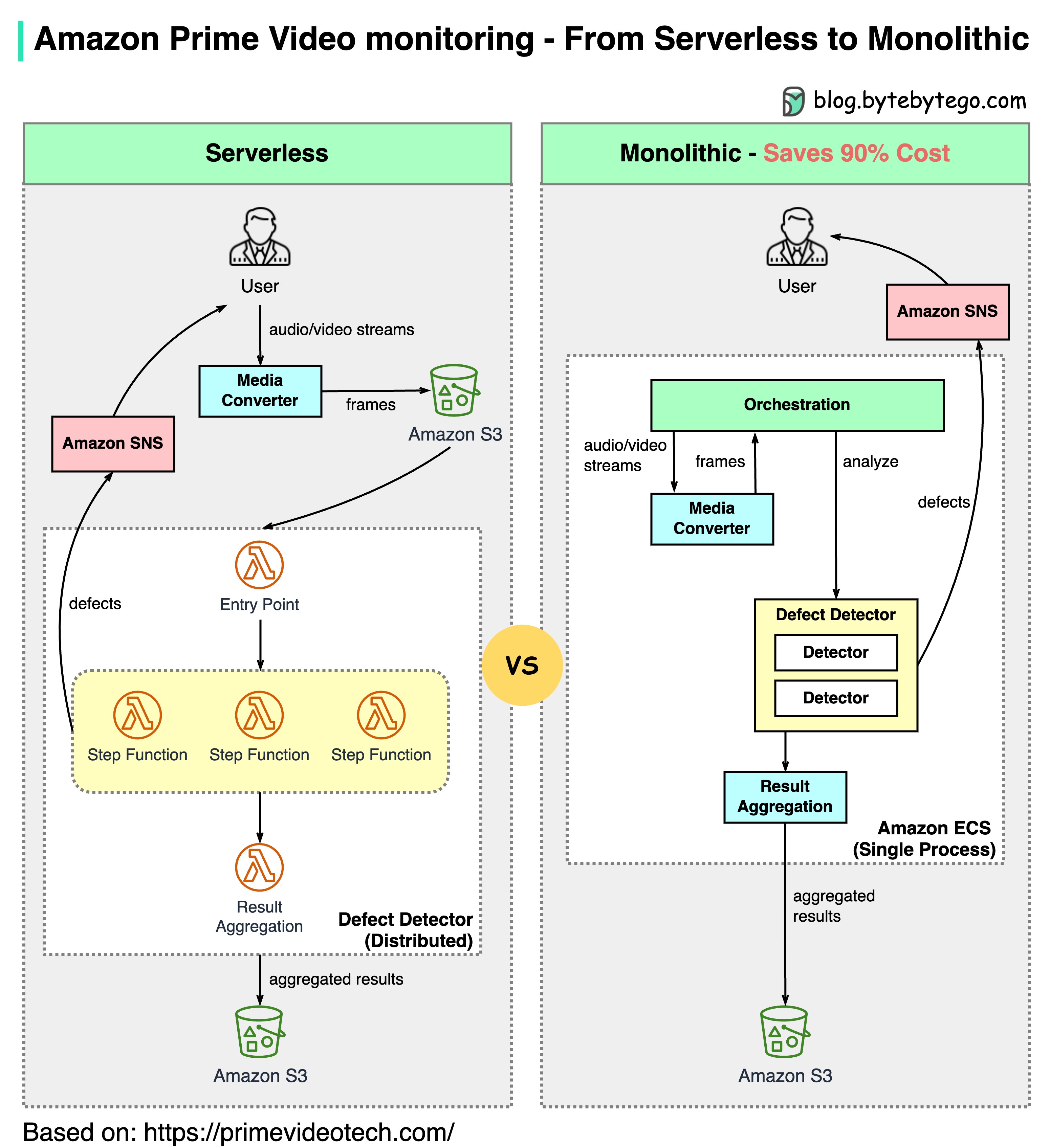

- - [Why did Amazon Prime Video monitoring move from serverless to monolithic? How can it save 90% cost?](#why-did-amazon-prime-video-monitoring-move-from-serverless-to-monolithic-how-can-it-save-90-cost)

- - [How does Disney Hotstar capture 5 Billion Emojis during a tournament?](#how-does-disney-hotstar-capture-5-billion-emojis-during-a-tournament)

- - [How Discord Stores Trillions Of Messages](#how-discord-stores-trillions-of-messages)

- - [How do video live streamings work on YouTube, TikTok live, or Twitch?](#how-do-video-live-streamings-work-on-youtube-tiktok-live-or-twitch)

-## Communication protocols

-

-Architecture styles define how different components of an application programming interface (API) interact with one another. As a result, they ensure efficiency, reliability, and ease of integration with other systems by providing a standard approach to designing and building APIs. Here are the most used styles:

-

-

-  -

-

-

-- SOAP:

-

- Mature, comprehensive, XML-based

-

- Best for enterprise applications

-

-- RESTful:

-

- Popular, easy-to-implement, HTTP methods

-

- Ideal for web services

-

-- GraphQL:

-

- Query language, request specific data

-

- Reduces network overhead, faster responses

-

-- gRPC:

-

- Modern, high-performance, Protocol Buffers

-

- Suitable for microservices architectures

-

-- WebSocket:

-

- Real-time, bidirectional, persistent connections

-

- Perfect for low-latency data exchange

-

-- Webhook:

-

- Event-driven, HTTP callbacks, asynchronous

-

- Notifies systems when events occur

-

-

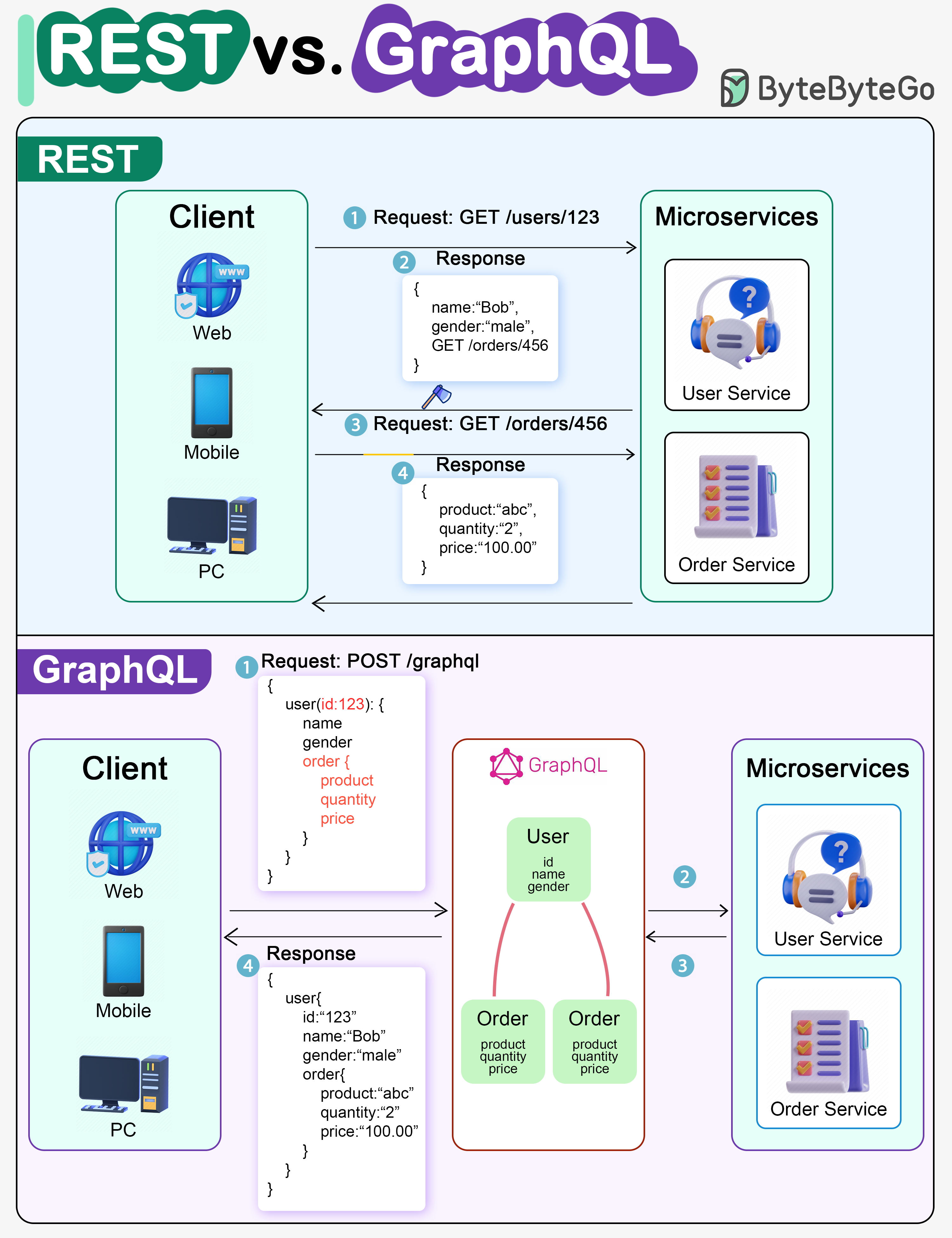

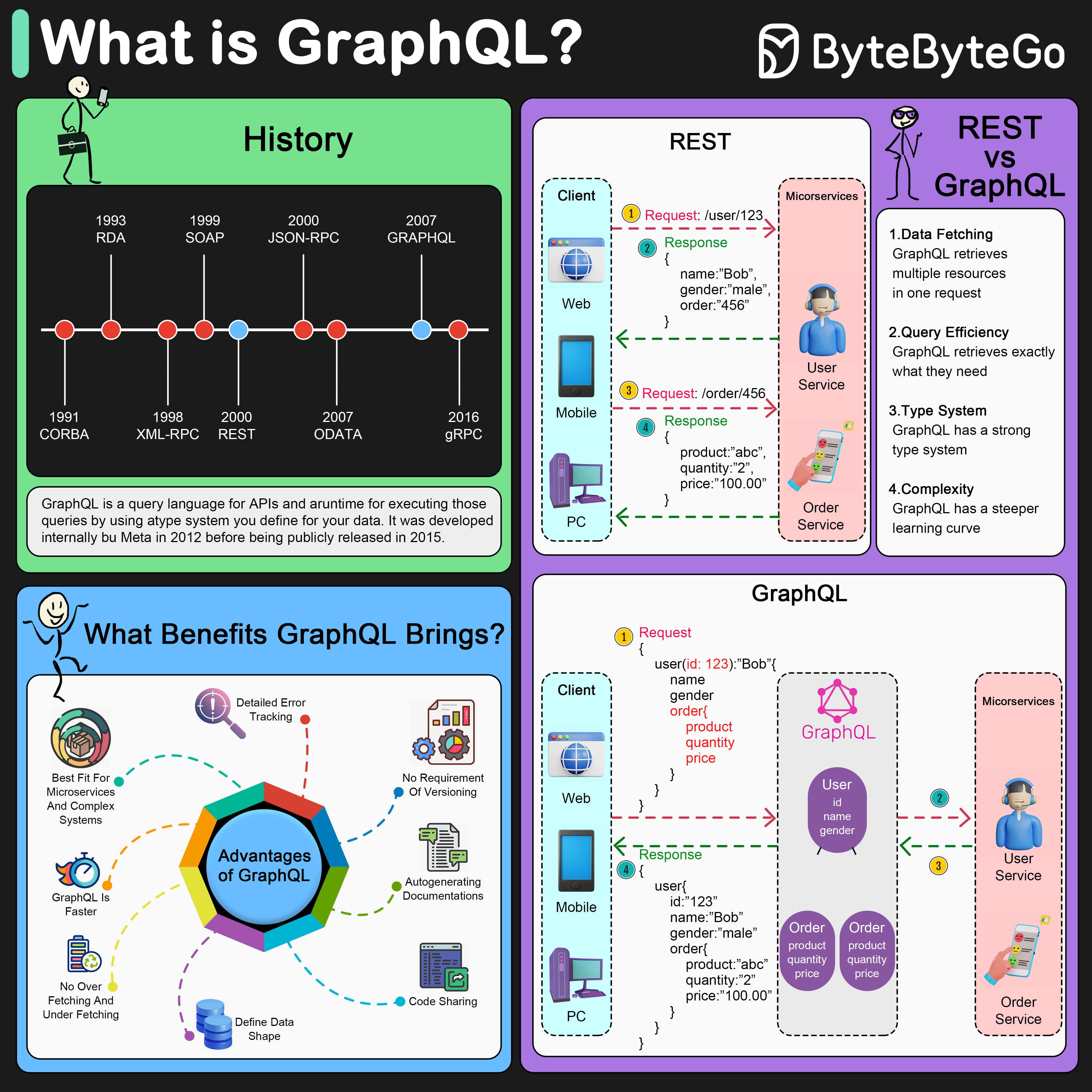

-### REST API vs. GraphQL

-

-When it comes to API design, REST and GraphQL each have their own strengths and weaknesses.

-

-The diagram below shows a quick comparison between REST and GraphQL.

-

-

-  -

-

-

-REST

-

-- Uses standard HTTP methods like GET, POST, PUT, DELETE for CRUD operations.

-- Works well when you need simple, uniform interfaces between separate services/applications.

-- Caching strategies are straightforward to implement.

-- The downside is it may require multiple roundtrips to assemble related data from separate endpoints.

-

-GraphQL

-

-- Provides a single endpoint for clients to query for precisely the data they need.

-- Clients specify the exact fields required in nested queries, and the server returns optimized payloads containing just those fields.

-- Supports Mutations for modifying data and Subscriptions for real-time notifications.

-- Great for aggregating data from multiple sources and works well with rapidly evolving frontend requirements.

-- However, it shifts complexity to the client side and can allow abusive queries if not properly safeguarded

-- Caching strategies can be more complicated than REST.

-

-The best choice between REST and GraphQL depends on the specific requirements of the application and development team. GraphQL is a good fit for complex or frequently changing frontend needs, while REST suits applications where simple and consistent contracts are preferred.

-

-Neither API approach is a silver bullet. Carefully evaluating requirements and tradeoffs is important to pick the right style. Both REST and GraphQL are valid options for exposing data and powering modern applications.

-

-

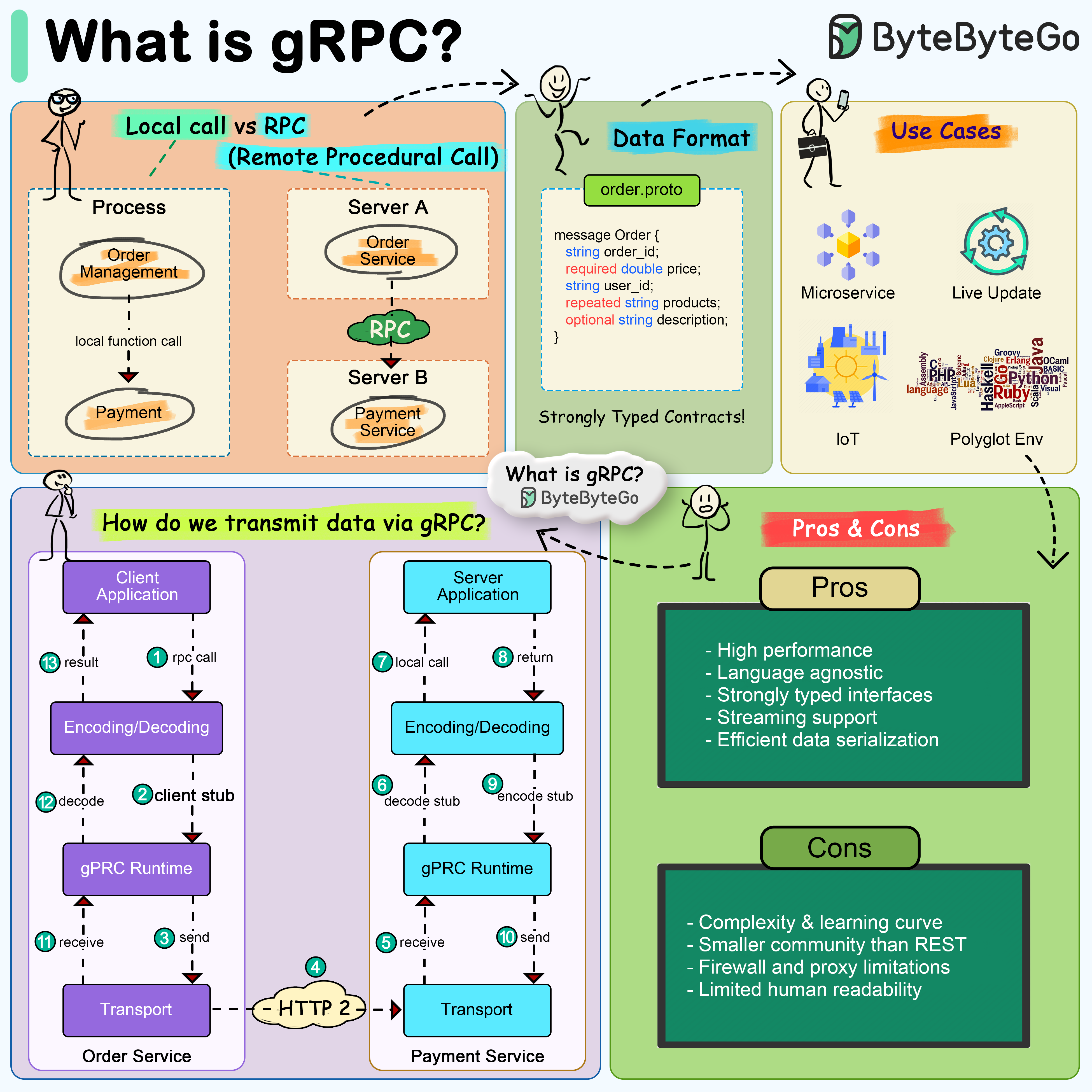

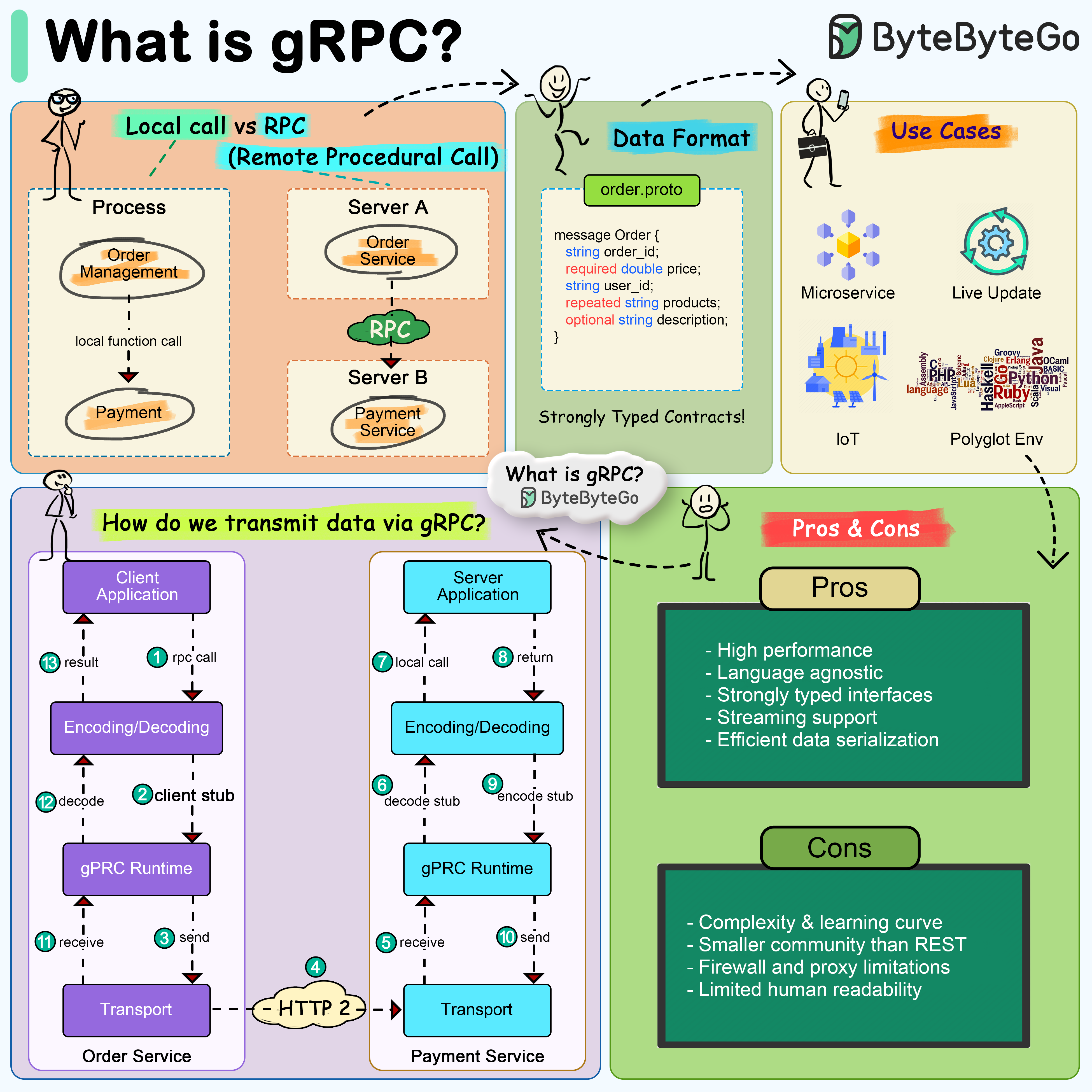

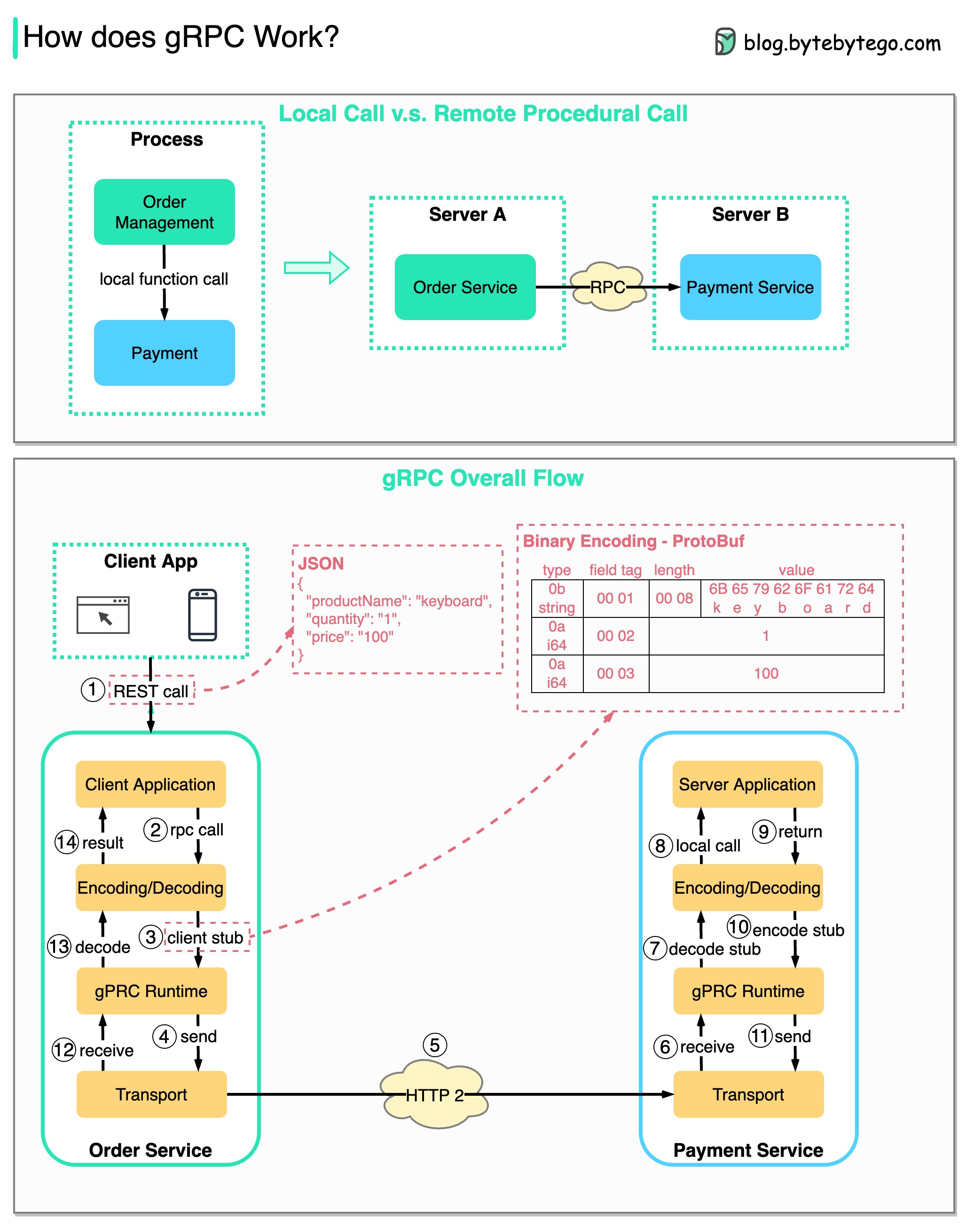

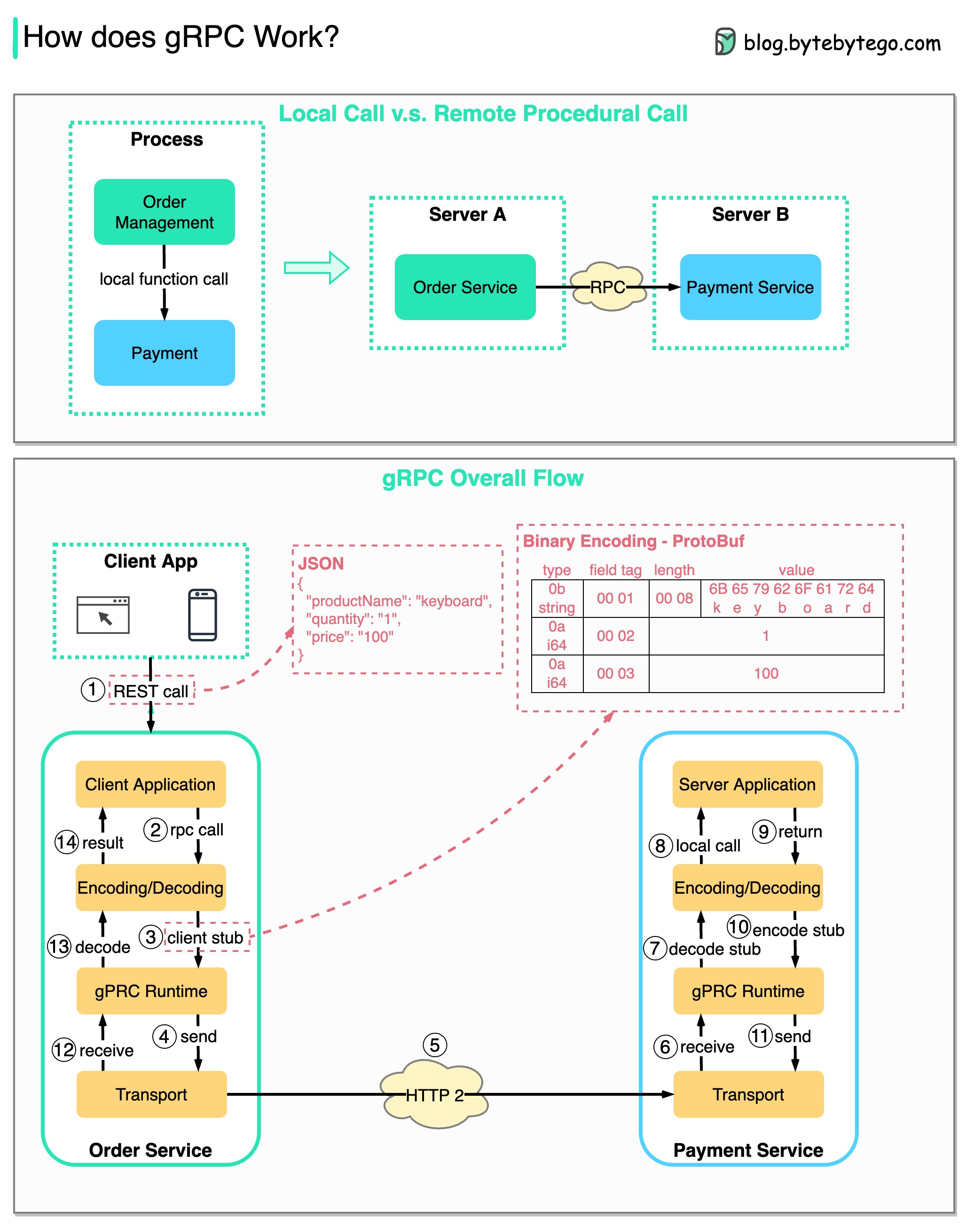

-### How does gRPC work?

-

-RPC (Remote Procedure Call) is called “**remote**” because it enables communications between remote services when services are deployed to different servers under microservice architecture. From the user’s point of view, it acts like a local function call.

-

-The diagram below illustrates the overall data flow for **gRPC**.

-

-

-  -

-

-

-Step 1: A REST call is made from the client. The request body is usually in JSON format.

-

-Steps 2 - 4: The order service (gRPC client) receives the REST call, transforms it, and makes an RPC call to the payment service. gRPC encodes the **client stub** into a binary format and sends it to the low-level transport layer.

-

-Step 5: gRPC sends the packets over the network via HTTP2. Because of binary encoding and network optimizations, gRPC is said to be 5X faster than JSON.

-

-Steps 6 - 8: The payment service (gRPC server) receives the packets from the network, decodes them, and invokes the server application.

-

-Steps 9 - 11: The result is returned from the server application, and gets encoded and sent to the transport layer.

-

-Steps 12 - 14: The order service receives the packets, decodes them, and sends the result to the client application.

-

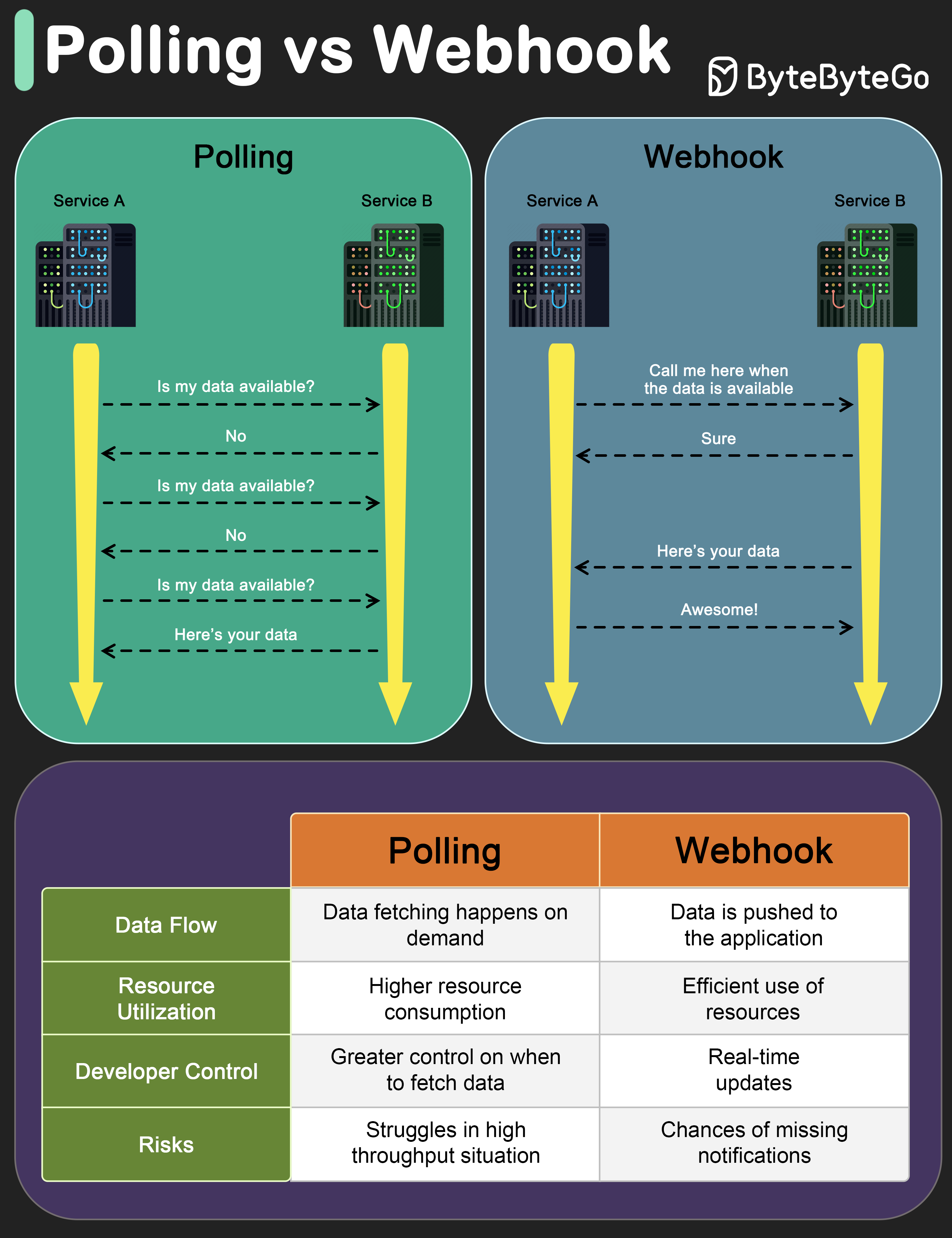

-### What is a webhook?

-

-The diagram below shows a comparison between polling and Webhook.

-

-

-  -

-

-

-Assume we run an eCommerce website. The clients send orders to the order service via the API gateway, which goes to the payment service for payment transactions. The payment service then talks to an external payment service provider (PSP) to complete the transactions.

-

-There are two ways to handle communications with the external PSP.

-

-**1. Short polling**

-

-After sending the payment request to the PSP, the payment service keeps asking the PSP about the payment status. After several rounds, the PSP finally returns with the status.

-

-Short polling has two drawbacks:

-* Constant polling of the status requires resources from the payment service.

-* The External service communicates directly with the payment service, creating security vulnerabilities.

-

-**2. Webhook**

-

-We can register a webhook with the external service. It means: call me back at a certain URL when you have updates on the request. When the PSP has completed the processing, it will invoke the HTTP request to update the payment status.

-

-In this way, the programming paradigm is changed, and the payment service doesn’t need to waste resources to poll the payment status anymore.

-

-What if the PSP never calls back? We can set up a housekeeping job to check payment status every hour.

-

-Webhooks are often referred to as reverse APIs or push APIs because the server sends HTTP requests to the client. We need to pay attention to 3 things when using a webhook:

-

-1. We need to design a proper API for the external service to call.

-2. We need to set up proper rules in the API gateway for security reasons.

-3. We need to register the correct URL at the external service.

-

-### How to improve API performance?

-

-The diagram below shows 5 common tricks to improve API performance.

-

-

-  -

-

-

-Pagination

-

-This is a common optimization when the size of the result is large. The results are streaming back to the client to improve the service responsiveness.

-

-Asynchronous Logging

-

-Synchronous logging deals with the disk for every call and can slow down the system. Asynchronous logging sends logs to a lock-free buffer first and immediately returns. The logs will be flushed to the disk periodically. This significantly reduces the I/O overhead.

-

-Caching

-

-We can store frequently accessed data into a cache. The client can query the cache first instead of visiting the database directly. If there is a cache miss, the client can query from the database. Caches like Redis store data in memory, so the data access is much faster than the database.

-

-Payload Compression

-

-The requests and responses can be compressed using gzip etc so that the transmitted data size is much smaller. This speeds up the upload and download.

-

-Connection Pool

-

-When accessing resources, we often need to load data from the database. Opening the closing db connections adds significant overhead. So we should connect to the db via a pool of open connections. The connection pool is responsible for managing the connection lifecycle.

-

-### HTTP 1.0 -> HTTP 1.1 -> HTTP 2.0 -> HTTP 3.0 (QUIC)

-

-What problem does each generation of HTTP solve?

-

-The diagram below illustrates the key features.

-

-

-  -

-

-

-- HTTP 1.0 was finalized and fully documented in 1996. Every request to the same server requires a separate TCP connection.

-

-- HTTP 1.1 was published in 1997. A TCP connection can be left open for reuse (persistent connection), but it doesn’t solve the HOL (head-of-line) blocking issue.

-

- HOL blocking - when the number of allowed parallel requests in the browser is used up, subsequent requests need to wait for the former ones to complete.

-

-- HTTP 2.0 was published in 2015. It addresses HOL issue through request multiplexing, which eliminates HOL blocking at the application layer, but HOL still exists at the transport (TCP) layer.

-

- As you can see in the diagram, HTTP 2.0 introduced the concept of HTTP “streams”: an abstraction that allows multiplexing different HTTP exchanges onto the same TCP connection. Each stream doesn’t need to be sent in order.

-

-- HTTP 3.0 first draft was published in 2020. It is the proposed successor to HTTP 2.0. It uses QUIC instead of TCP for the underlying transport protocol, thus removing HOL blocking in the transport layer.

-

-QUIC is based on UDP. It introduces streams as first-class citizens at the transport layer. QUIC streams share the same QUIC connection, so no additional handshakes and slow starts are required to create new ones, but QUIC streams are delivered independently such that in most cases packet loss affecting one stream doesn't affect others.

-

-### SOAP vs REST vs GraphQL vs RPC

-

-The diagram below illustrates the API timeline and API styles comparison.

-

-Over time, different API architectural styles are released. Each of them has its own patterns of standardizing data exchange.

-

-You can check out the use cases of each style in the diagram.

-

-

-  -

-

-

-

-### Code First vs. API First

-

-The diagram below shows the differences between code-first development and API-first development. Why do we want to consider API first design?

-

-

-  -

-

-

-

-- Microservices increase system complexity and we have separate services to serve different functions of the system. While this kind of architecture facilitates decoupling and segregation of duty, we need to handle the various communications among services.

-

-It is better to think through the system's complexity before writing the code and carefully defining the boundaries of the services.

-

-- Separate functional teams need to speak the same language and the dedicated functional teams are only responsible for their own components and services. It is recommended that the organization speak the same language via API design.

-

-We can mock requests and responses to validate the API design before writing code.

-

-- Improve software quality and developer productivity Since we have ironed out most of the uncertainties when the project starts, the overall development process is smoother, and the software quality is greatly improved.

-

-Developers are happy about the process as well because they can focus on functional development instead of negotiating sudden changes.

-

-The possibility of having surprises toward the end of the project lifecycle is reduced.

-

-Because we have designed the API first, the tests can be designed while the code is being developed. In a way, we also have TDD (Test Driven Design) when using API first development.

-

-### HTTP status codes

-

-

-  -

-

-

-

-The response codes for HTTP are divided into five categories:

-

-Informational (100-199)

-Success (200-299)

-Redirection (300-399)

-Client Error (400-499)

-Server Error (500-599)

-

-### What does API gateway do?

-

-The diagram below shows the details.

-

-

-  -

-

-

-Step 1 - The client sends an HTTP request to the API gateway.

-

-Step 2 - The API gateway parses and validates the attributes in the HTTP request.

-

-Step 3 - The API gateway performs allow-list/deny-list checks.

-

-Step 4 - The API gateway talks to an identity provider for authentication and authorization.

-

-Step 5 - The rate limiting rules are applied to the request. If it is over the limit, the request is rejected.

-

-Steps 6 and 7 - Now that the request has passed basic checks, the API gateway finds the relevant service to route to by path matching.

-

-Step 8 - The API gateway transforms the request into the appropriate protocol and sends it to backend microservices.

-

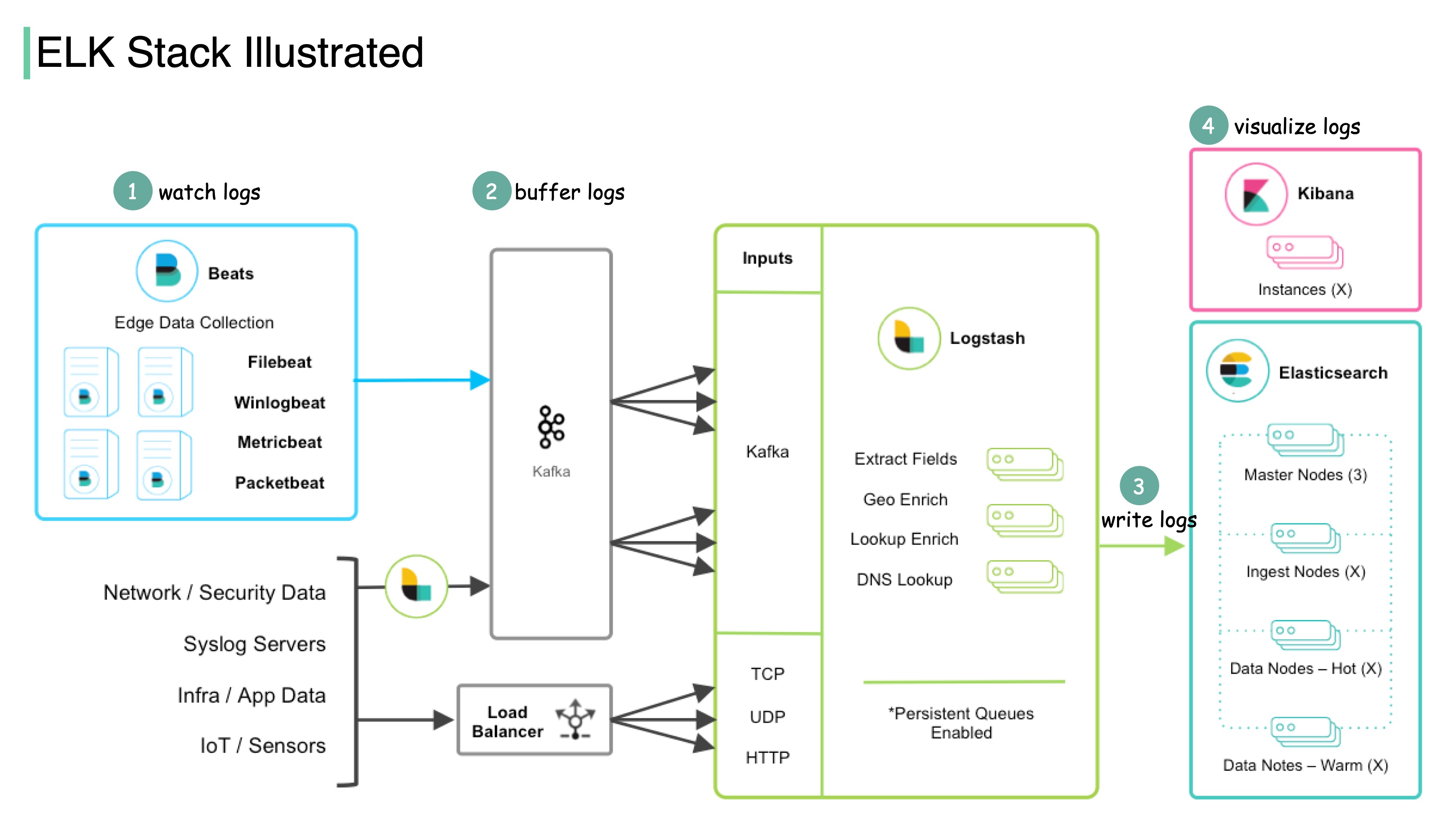

-Steps 9-12: The API gateway can handle errors properly, and deals with faults if the error takes a longer time to recover (circuit break). It can also leverage ELK (Elastic-Logstash-Kibana) stack for logging and monitoring. We sometimes cache data in the API gateway.

-

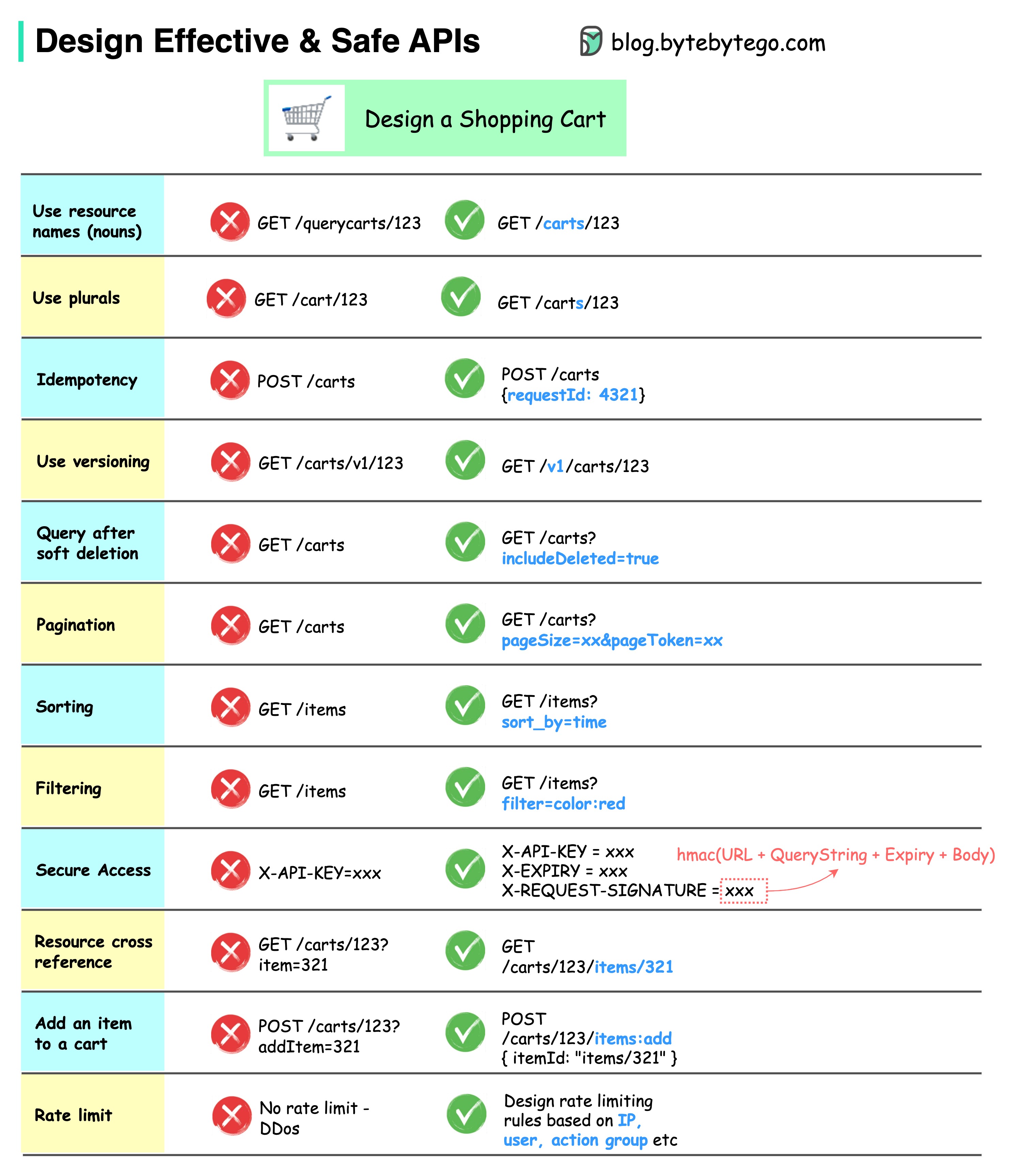

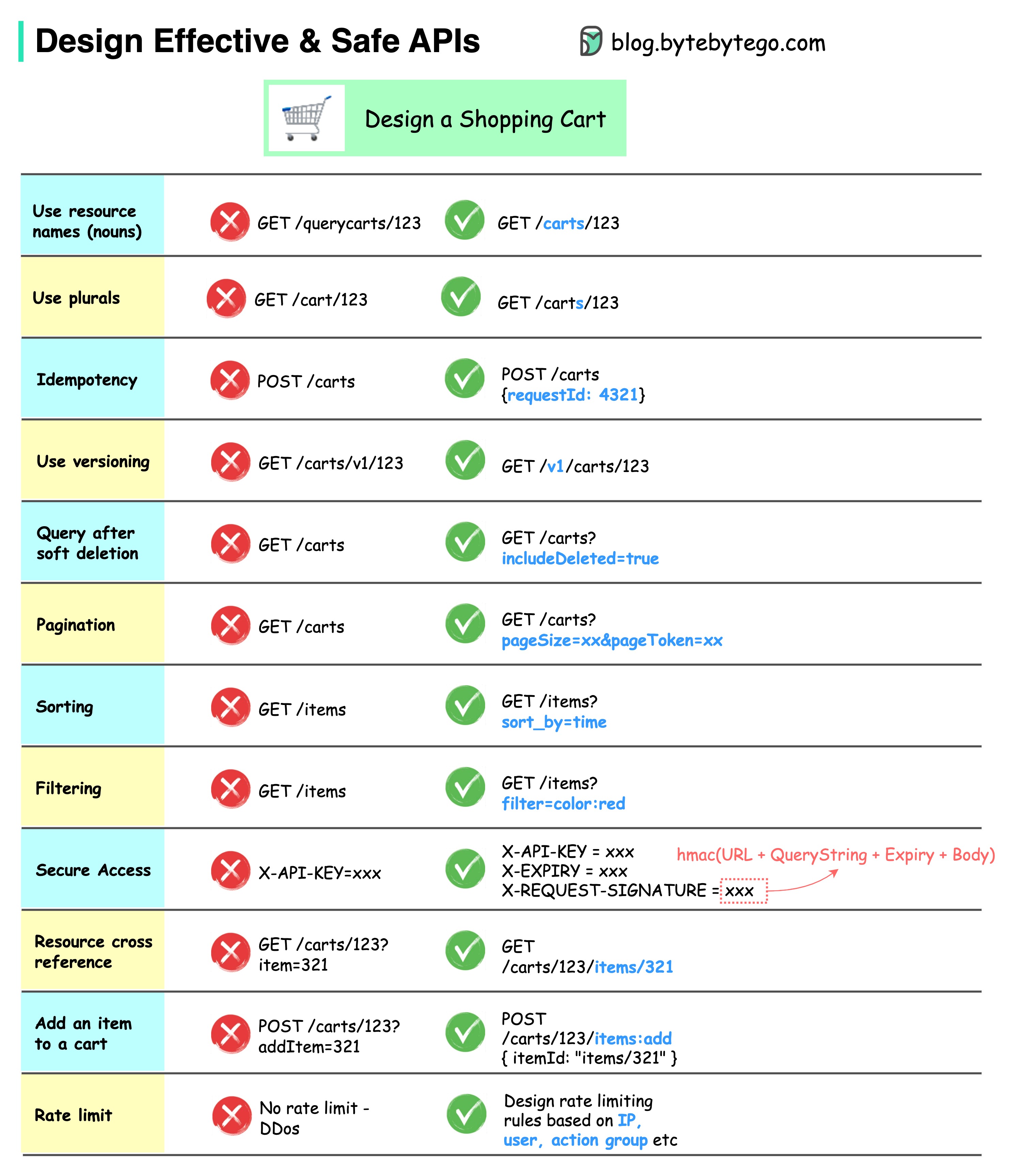

-### How do we design effective and safe APIs?

-

-The diagram below shows typical API designs with a shopping cart example.

-

-

-  -

-

-

-

-Note that API design is not just URL path design. Most of the time, we need to choose the proper resource names, identifiers, and path patterns. It is equally important to design proper HTTP header fields or to design effective rate-limiting rules within the API gateway.

-

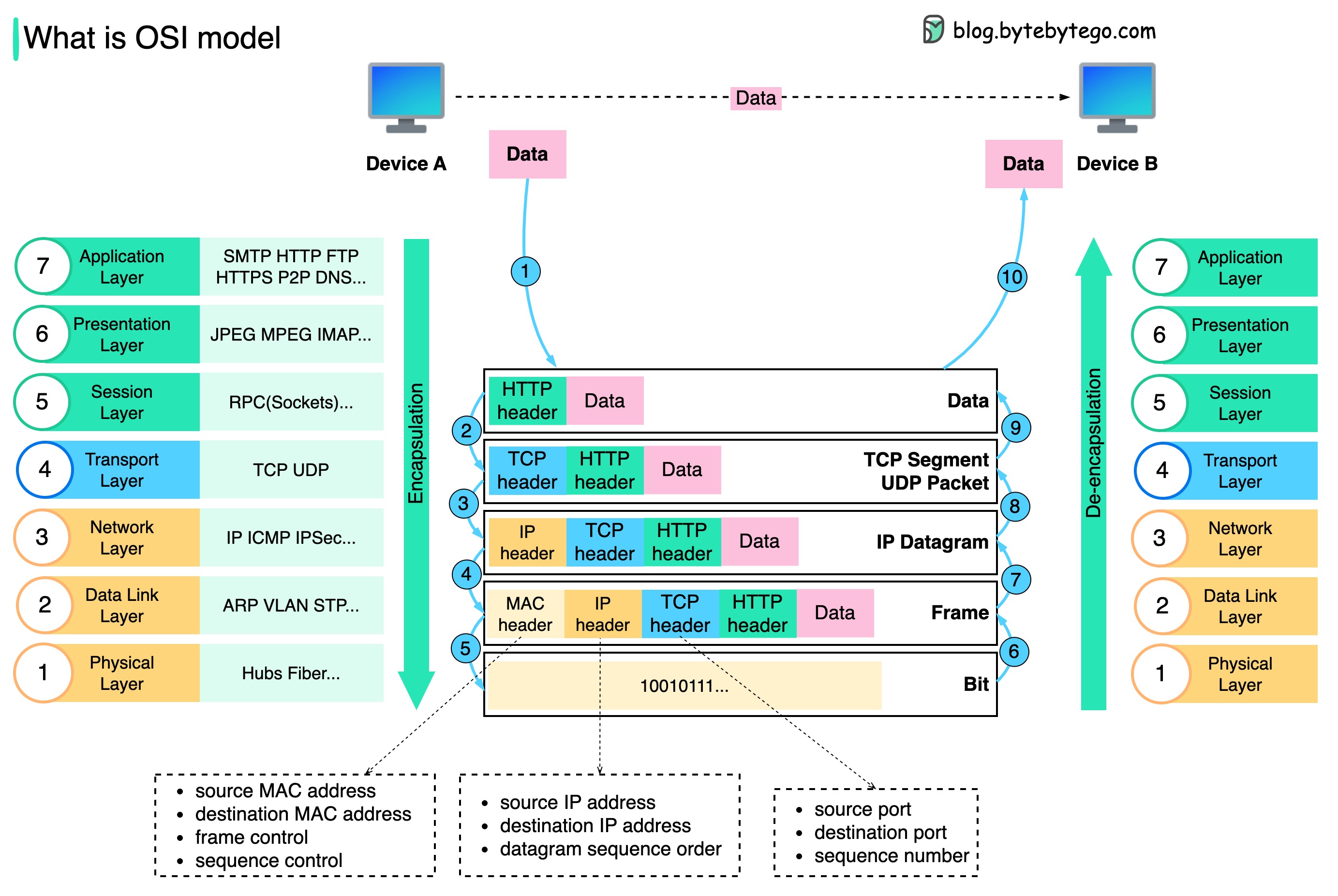

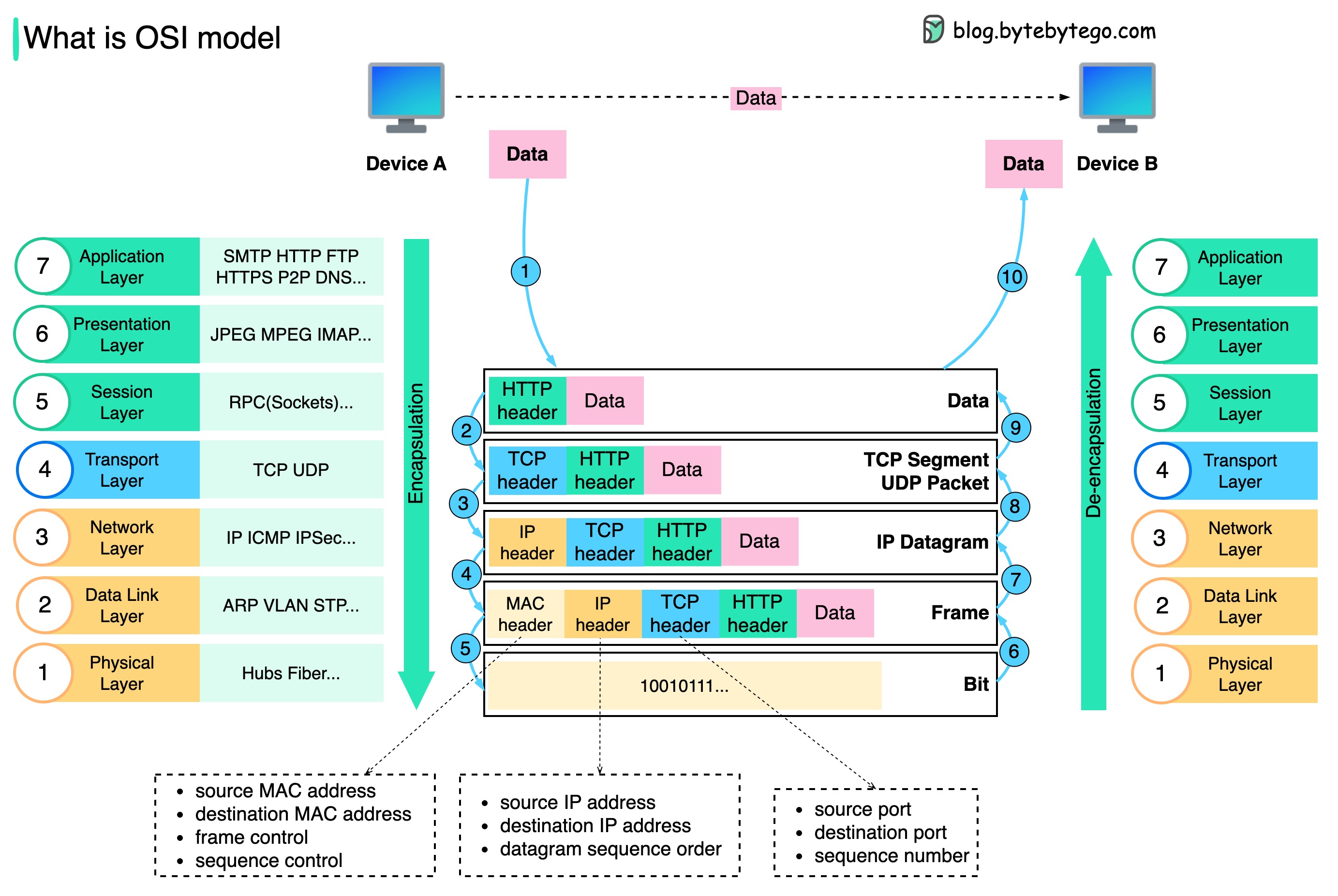

-### TCP/IP encapsulation

-

-How is data sent over the network? Why do we need so many layers in the OSI model?

-

-The diagram below shows how data is encapsulated and de-encapsulated when transmitting over the network.

-

-

-  -

-

-

-Step 1: When Device A sends data to Device B over the network via the HTTP protocol, it is first added an HTTP header at the application layer.

-

-Step 2: Then a TCP or a UDP header is added to the data. It is encapsulated into TCP segments at the transport layer. The header contains the source port, destination port, and sequence number.

-

-Step 3: The segments are then encapsulated with an IP header at the network layer. The IP header contains the source/destination IP addresses.

-

-Step 4: The IP datagram is added a MAC header at the data link layer, with source/destination MAC addresses.

-

-Step 5: The encapsulated frames are sent to the physical layer and sent over the network in binary bits.

-

-Steps 6-10: When Device B receives the bits from the network, it performs the de-encapsulation process, which is a reverse processing of the encapsulation process. The headers are removed layer by layer, and eventually, Device B can read the data.

-

-We need layers in the network model because each layer focuses on its own responsibilities. Each layer can rely on the headers for processing instructions and does not need to know the meaning of the data from the last layer.

-

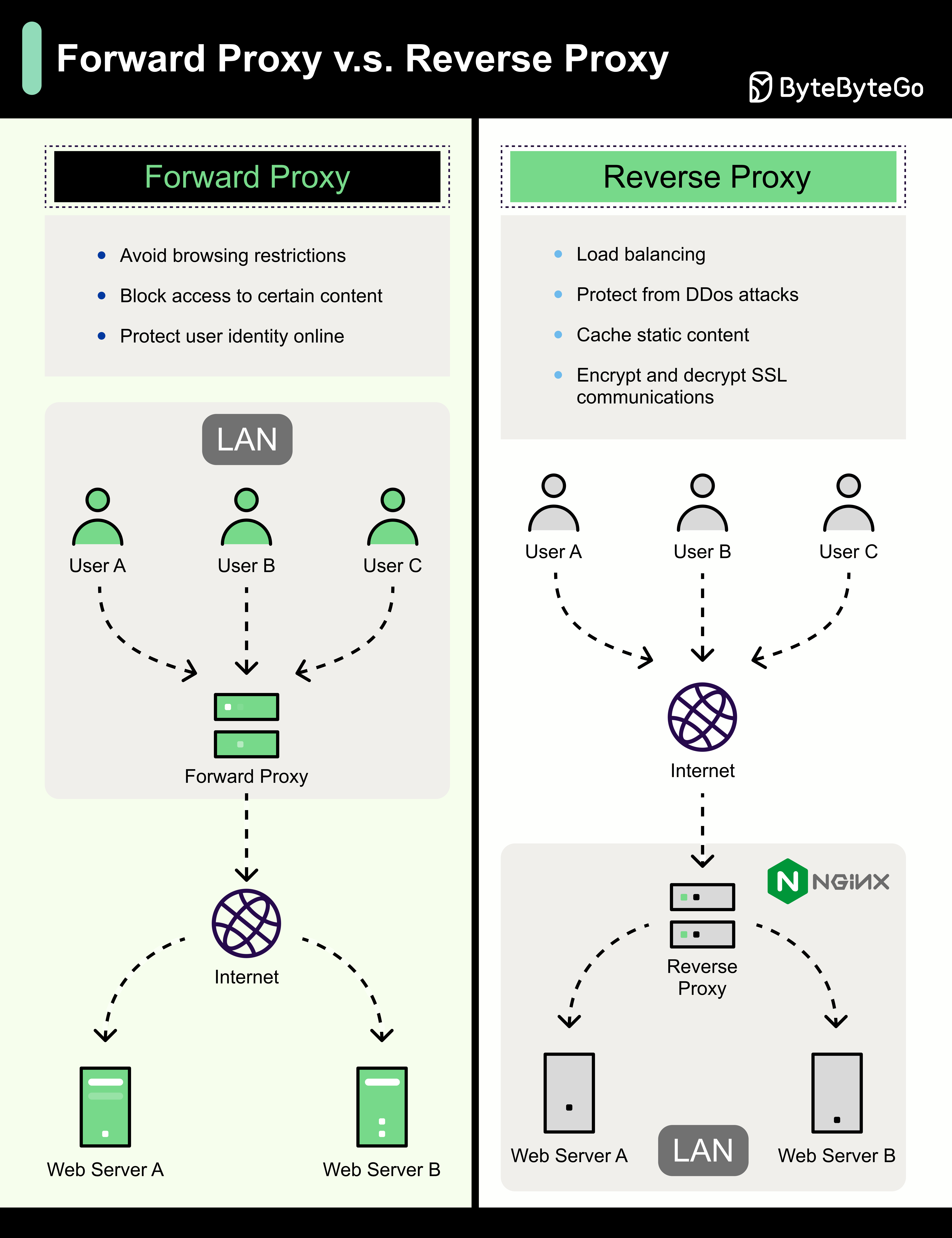

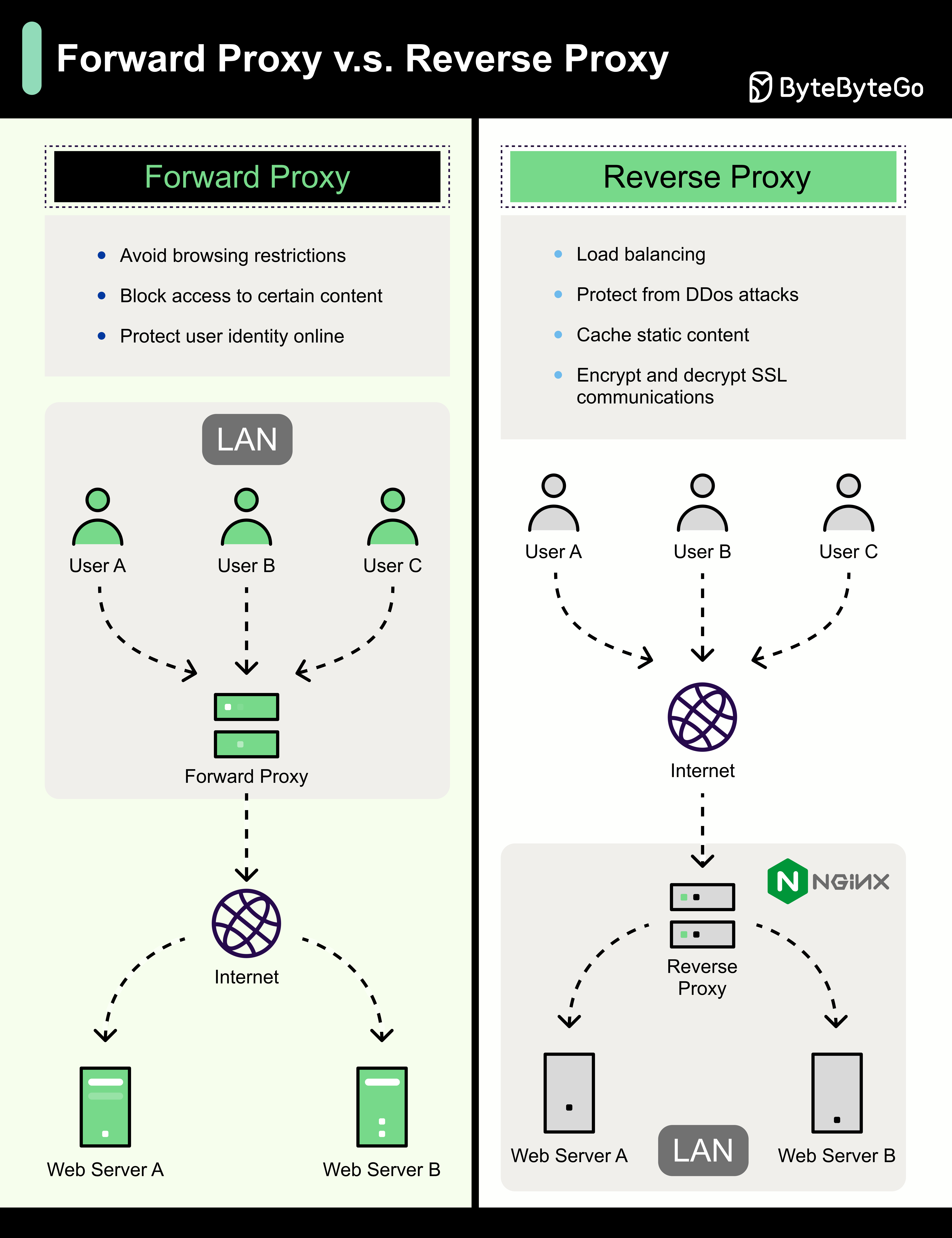

-### Why is Nginx called a “reverse” proxy?

-

-The diagram below shows the differences between a 𝐟𝐨𝐫𝐰𝐚𝐫𝐝 𝐩𝐫𝐨𝐱𝐲 and a 𝐫𝐞𝐯𝐞𝐫𝐬𝐞 𝐩𝐫𝐨𝐱𝐲.

-

-

-  -

-

-

-A forward proxy is a server that sits between user devices and the internet.

-

-A forward proxy is commonly used for:

-

-1. Protecting clients

-2. Circumventing browsing restrictions

-3. Blocking access to certain content

-

-A reverse proxy is a server that accepts a request from the client, forwards the request to web servers, and returns the results to the client as if the proxy server had processed the request.

-

-A reverse proxy is good for:

-

-1. Protecting servers

-2. Load balancing

-3. Caching static contents

-4. Encrypting and decrypting SSL communications

-

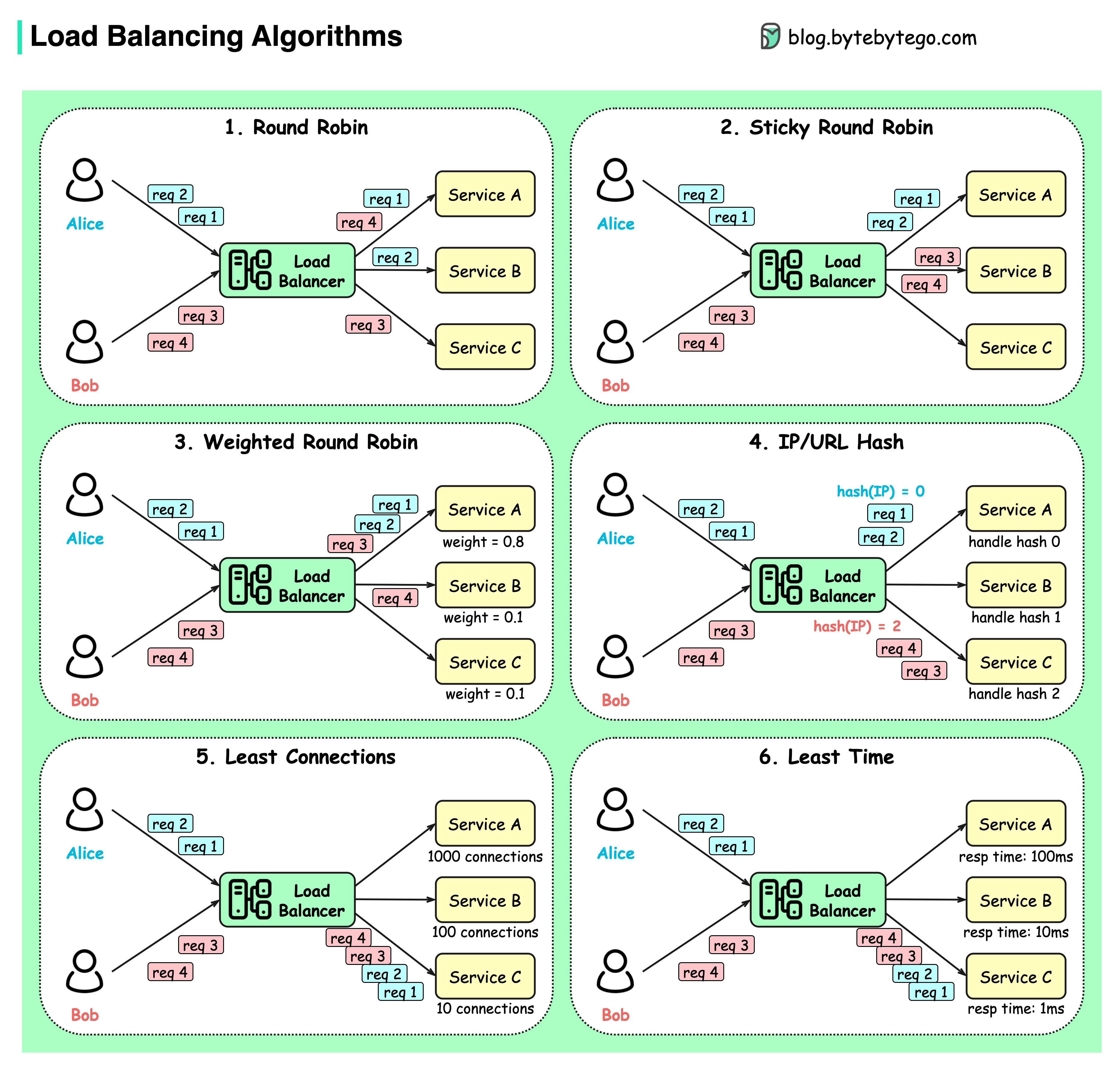

-### What are the common load-balancing algorithms?

-

-The diagram below shows 6 common algorithms.

-

-

-  -

-

-

-- Static Algorithms

-

-1. Round robin

-

- The client requests are sent to different service instances in sequential order. The services are usually required to be stateless.

-

-3. Sticky round-robin

-

- This is an improvement of the round-robin algorithm. If Alice’s first request goes to service A, the following requests go to service A as well.

-

-4. Weighted round-robin

-

- The admin can specify the weight for each service. The ones with a higher weight handle more requests than others.

-

-6. Hash

-

- This algorithm applies a hash function on the incoming requests’ IP or URL. The requests are routed to relevant instances based on the hash function result.

-

-- Dynamic Algorithms

-

-5. Least connections

-

- A new request is sent to the service instance with the least concurrent connections.

-

-7. Least response time

-

- A new request is sent to the service instance with the fastest response time.

-

-### URL, URI, URN - Do you know the differences?

-

-The diagram below shows a comparison of URL, URI, and URN.

-

-

-  -

-

-

-- URI

-

-URI stands for Uniform Resource Identifier. It identifies a logical or physical resource on the web. URL and URN are subtypes of URI. URL locates a resource, while URN names a resource.

-

-A URI is composed of the following parts:

-scheme:[//authority]path[?query][#fragment]

-

-- URL

-

-URL stands for Uniform Resource Locator, the key concept of HTTP. It is the address of a unique resource on the web. It can be used with other protocols like FTP and JDBC.

-

-- URN

-

-URN stands for Uniform Resource Name. It uses the urn scheme. URNs cannot be used to locate a resource. A simple example given in the diagram is composed of a namespace and a namespace-specific string.

-

-If you would like to learn more detail on the subject, I would recommend [W3C’s clarification](https://www.w3.org/TR/uri-clarification/).

-

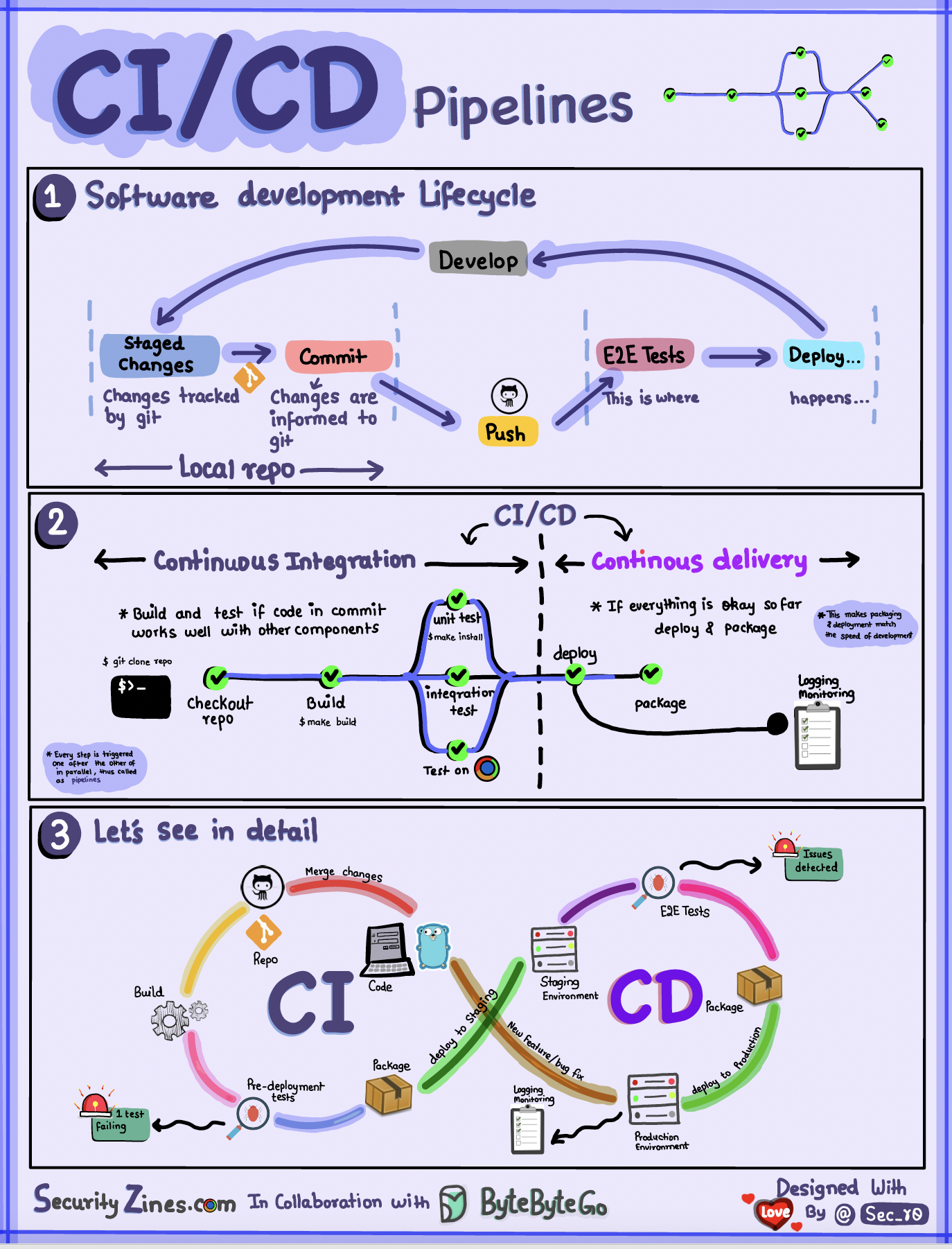

-## CI/CD

-

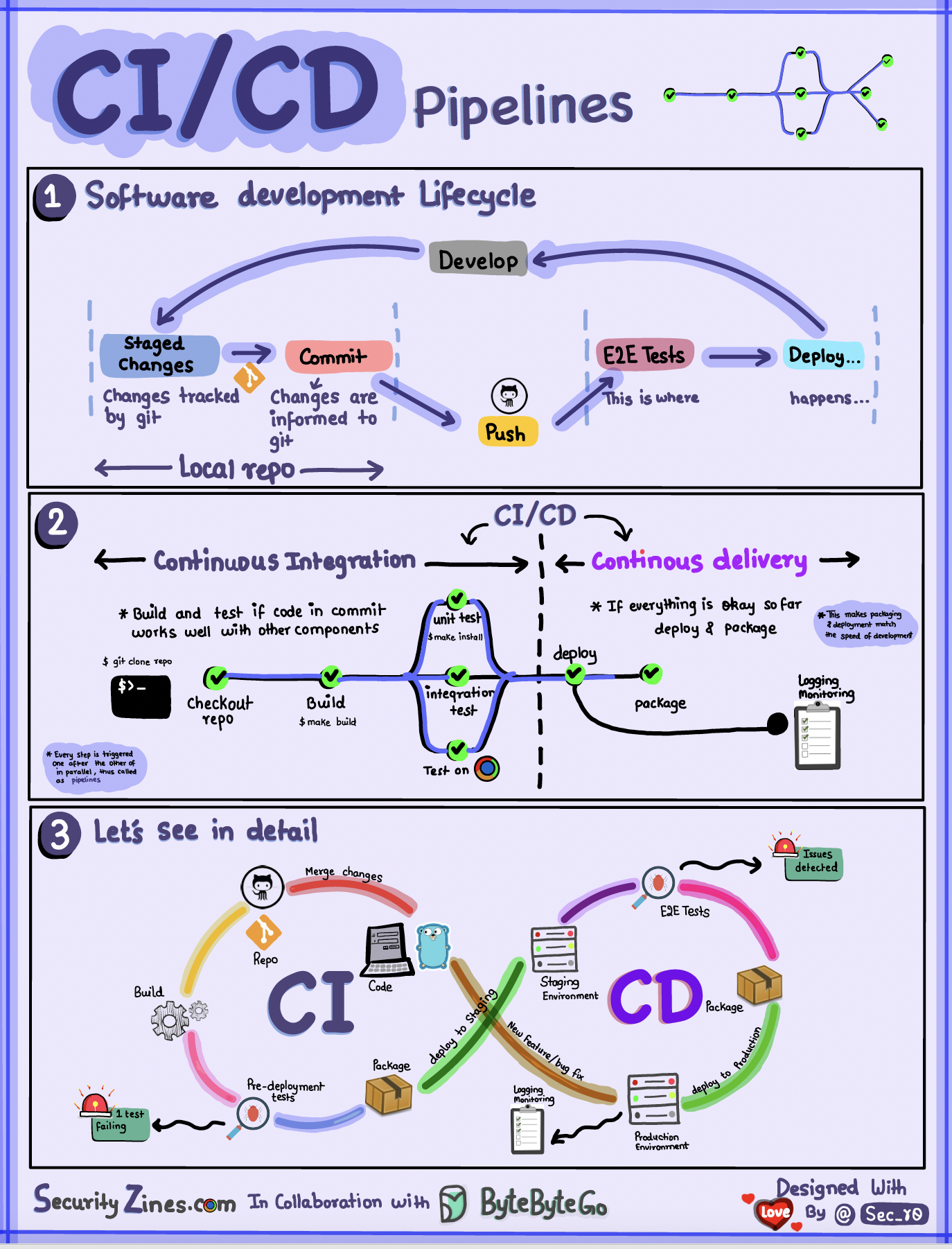

-### CI/CD Pipeline Explained in Simple Terms

-

-

-  -

-

-

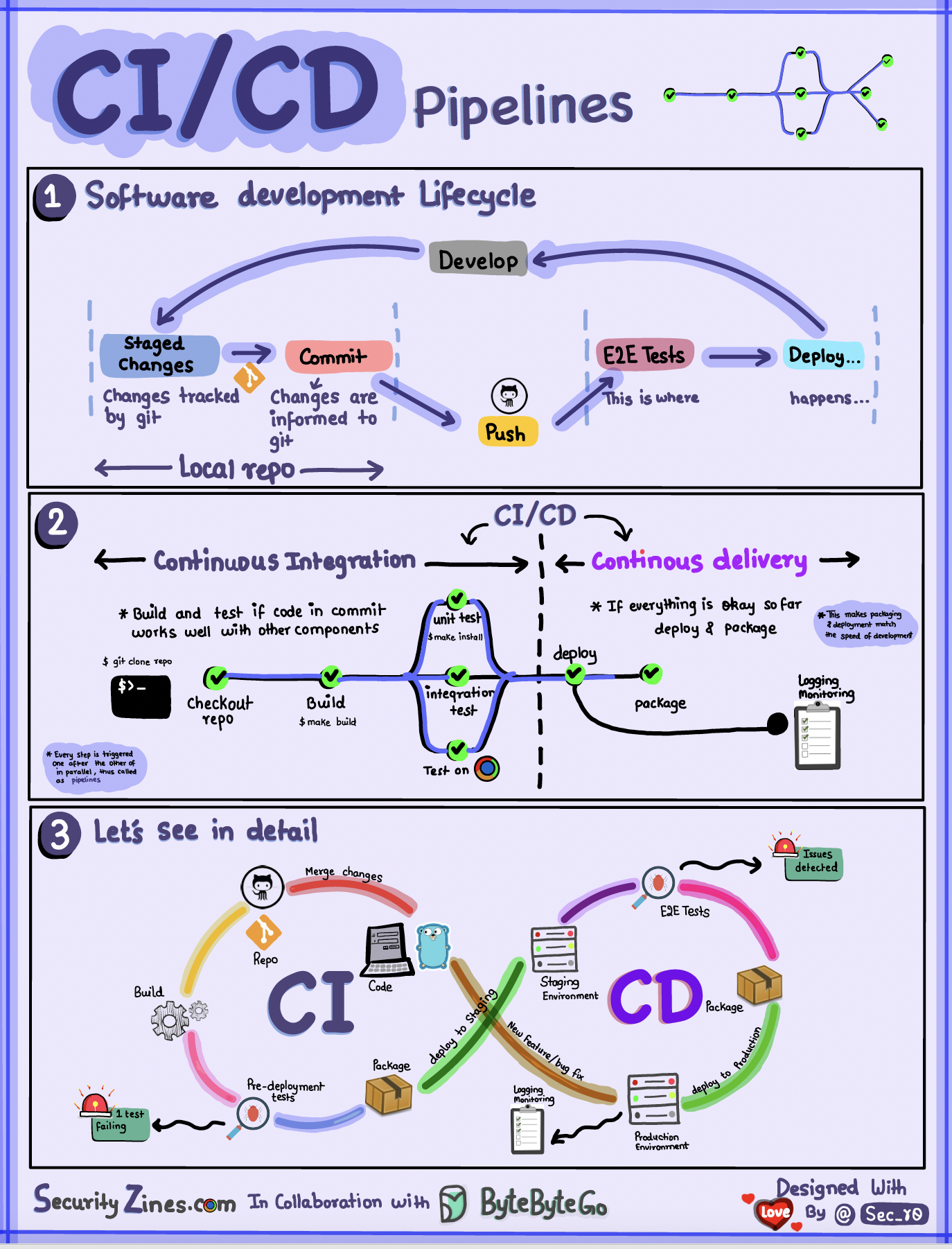

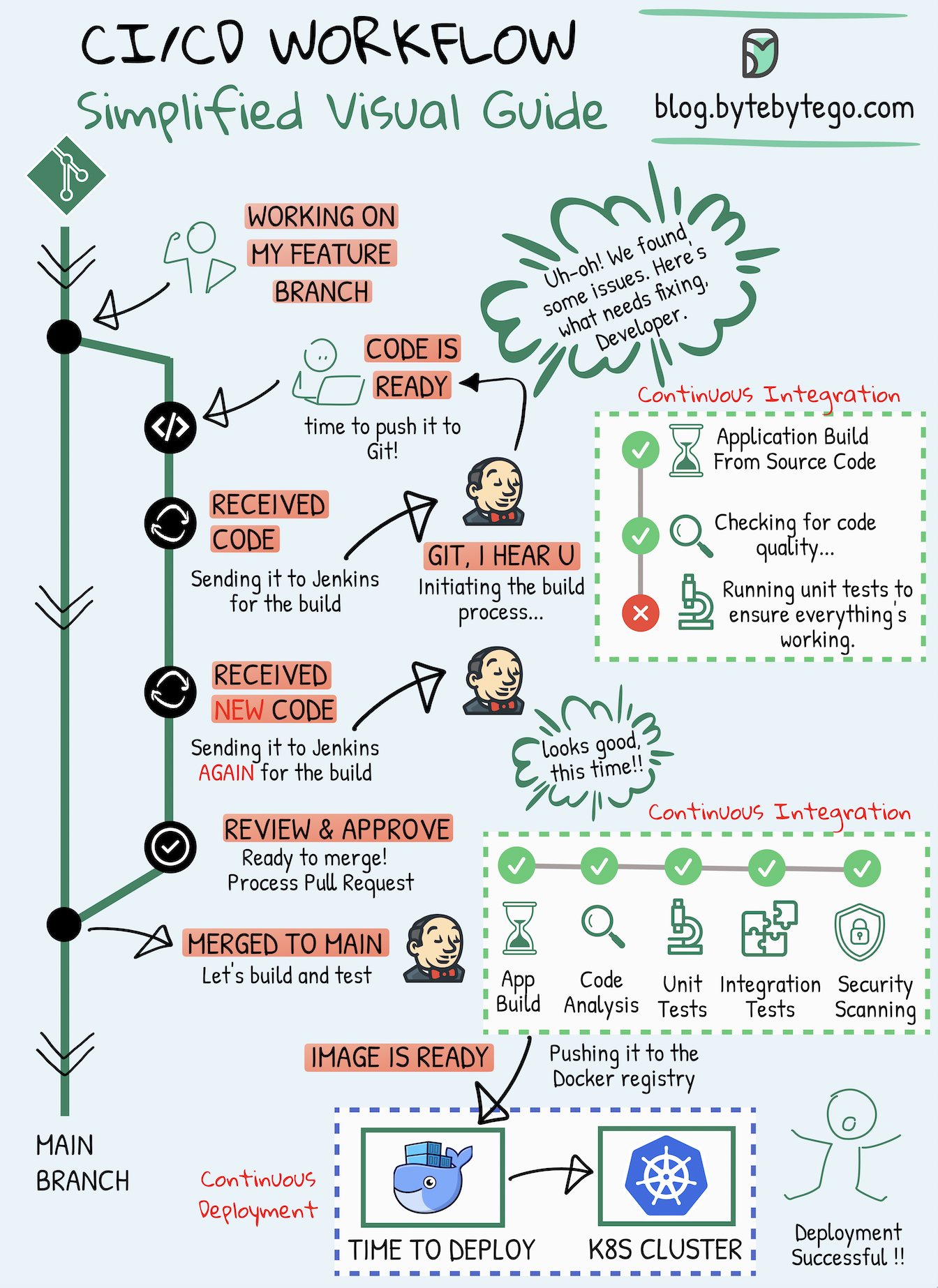

-Section 1 - SDLC with CI/CD

-

-The software development life cycle (SDLC) consists of several key stages: development, testing, deployment, and maintenance. CI/CD automates and integrates these stages to enable faster and more reliable releases.

-

-When code is pushed to a git repository, it triggers an automated build and test process. End-to-end (e2e) test cases are run to validate the code. If tests pass, the code can be automatically deployed to staging/production. If issues are found, the code is sent back to development for bug fixing. This automation provides fast feedback to developers and reduces the risk of bugs in production.

-

-Section 2 - Difference between CI and CD

-

-Continuous Integration (CI) automates the build, test, and merge process. It runs tests whenever code is committed to detect integration issues early. This encourages frequent code commits and rapid feedback.

-

-Continuous Delivery (CD) automates release processes like infrastructure changes and deployment. It ensures software can be released reliably at any time through automated workflows. CD may also automate the manual testing and approval steps required before production deployment.

-

-Section 3 - CI/CD Pipeline

-

-A typical CI/CD pipeline has several connected stages:

-- The developer commits code changes to the source control

-- CI server detects changes and triggers the build

-- Code is compiled, and tested (unit, integration tests)

-- Test results reported to the developer

-- On success, artifacts are deployed to staging environments

-- Further testing may be done on staging before release

-- CD system deploys approved changes to production

-

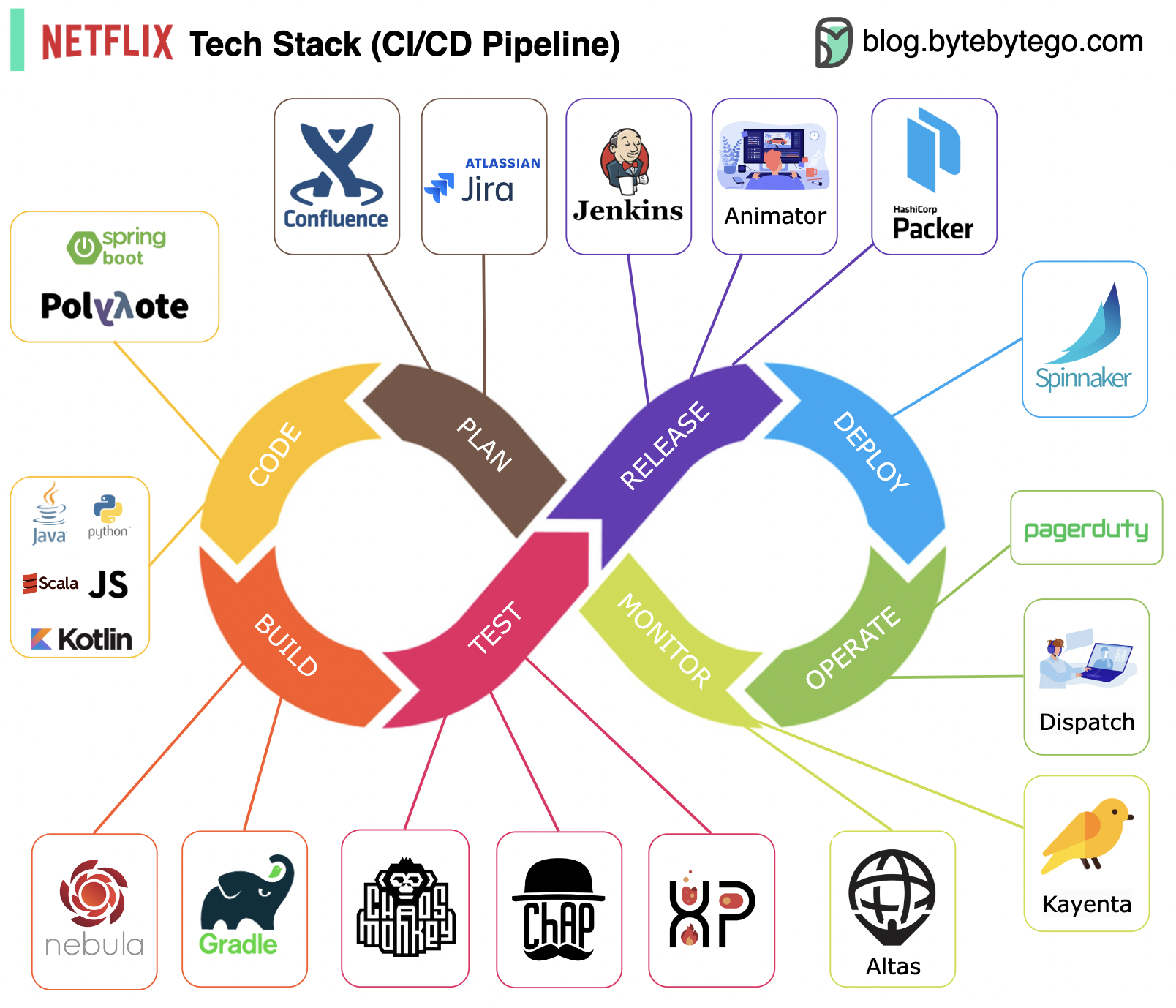

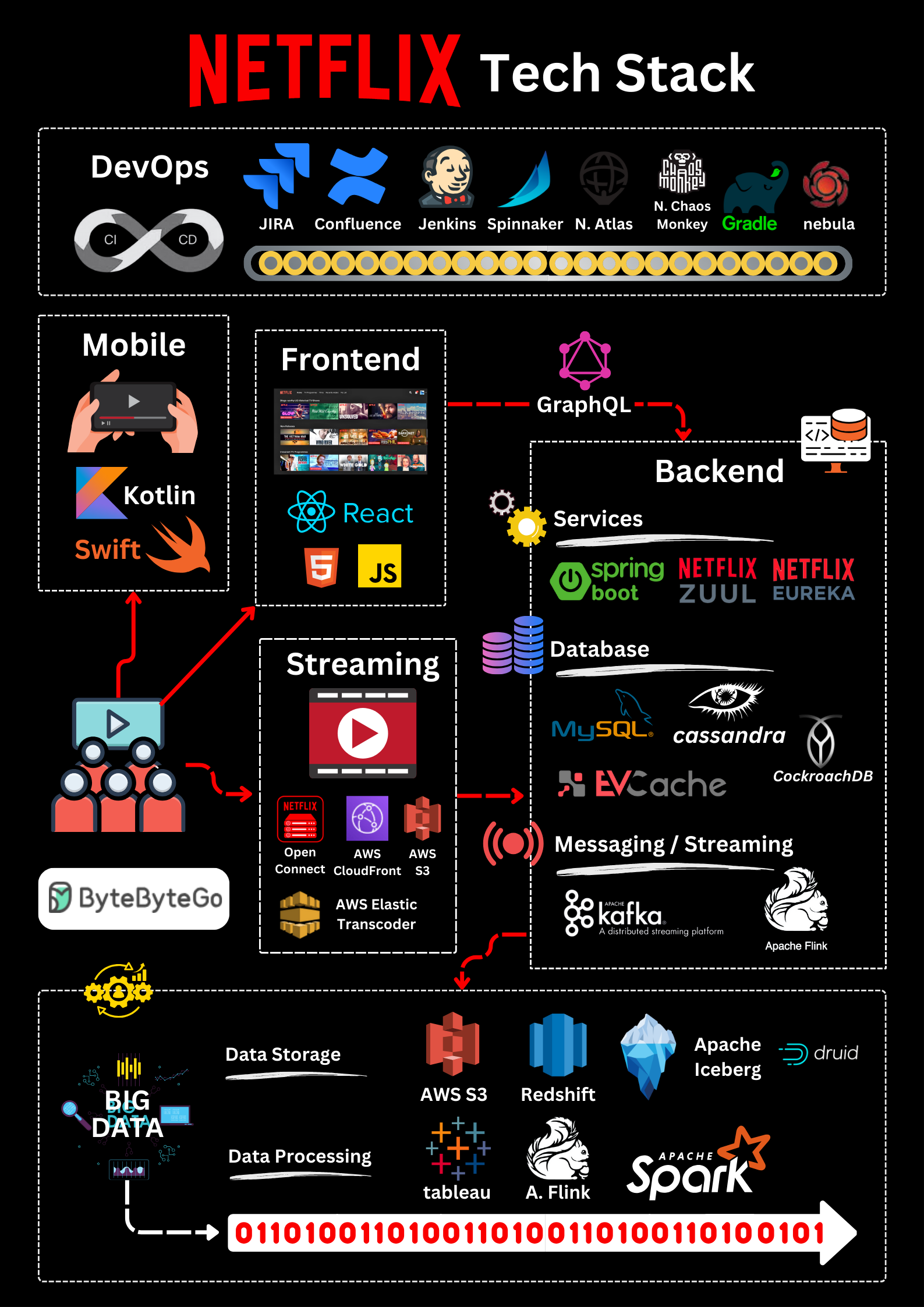

-### Netflix Tech Stack (CI/CD Pipeline)

-

-

-  -

-

-

-Planning: Netflix Engineering uses JIRA for planning and Confluence for documentation.

-

-Coding: Java is the primary programming language for the backend service, while other languages are used for different use cases.

-

-Build: Gradle is mainly used for building, and Gradle plugins are built to support various use cases.

-

-Packaging: Package and dependencies are packed into an Amazon Machine Image (AMI) for release.

-

-Testing: Testing emphasizes the production culture's focus on building chaos tools.

-

-Deployment: Netflix uses its self-built Spinnaker for canary rollout deployment.

-

-Monitoring: The monitoring metrics are centralized in Atlas, and Kayenta is used to detect anomalies.

-

-Incident report: Incidents are dispatched according to priority, and PagerDuty is used for incident handling.

-

-## Architecture patterns

-

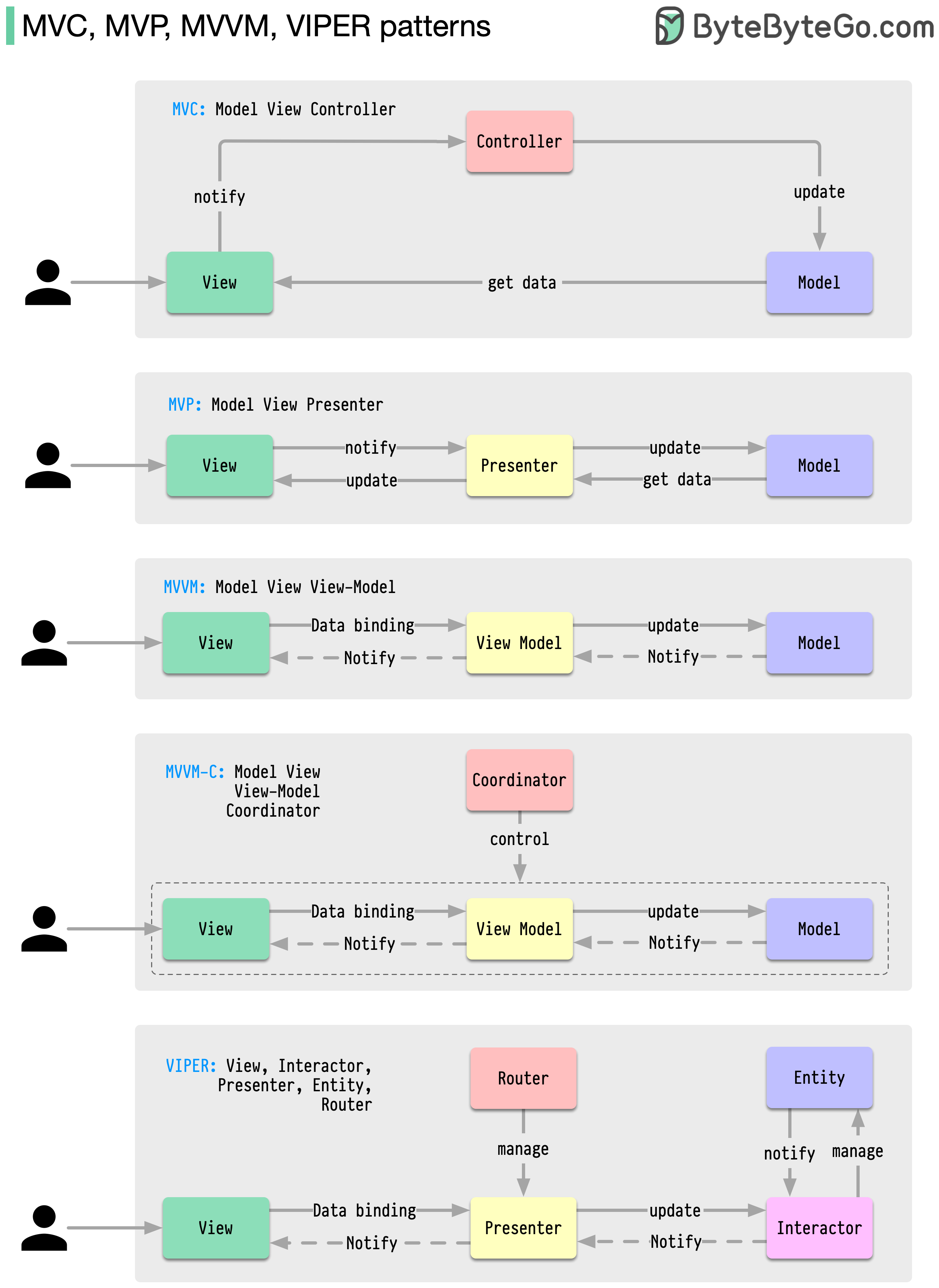

-### MVC, MVP, MVVM, MVVM-C, and VIPER

-These architecture patterns are among the most commonly used in app development, whether on iOS or Android platforms. Developers have introduced them to overcome the limitations of earlier patterns. So, how do they differ?

-

-

-  -

-

-

-- MVC, the oldest pattern, dates back almost 50 years

-- Every pattern has a "view" (V) responsible for displaying content and receiving user input

-- Most patterns include a "model" (M) to manage business data

-- "Controller," "presenter," and "view-model" are translators that mediate between the view and the model ("entity" in the VIPER pattern)

-

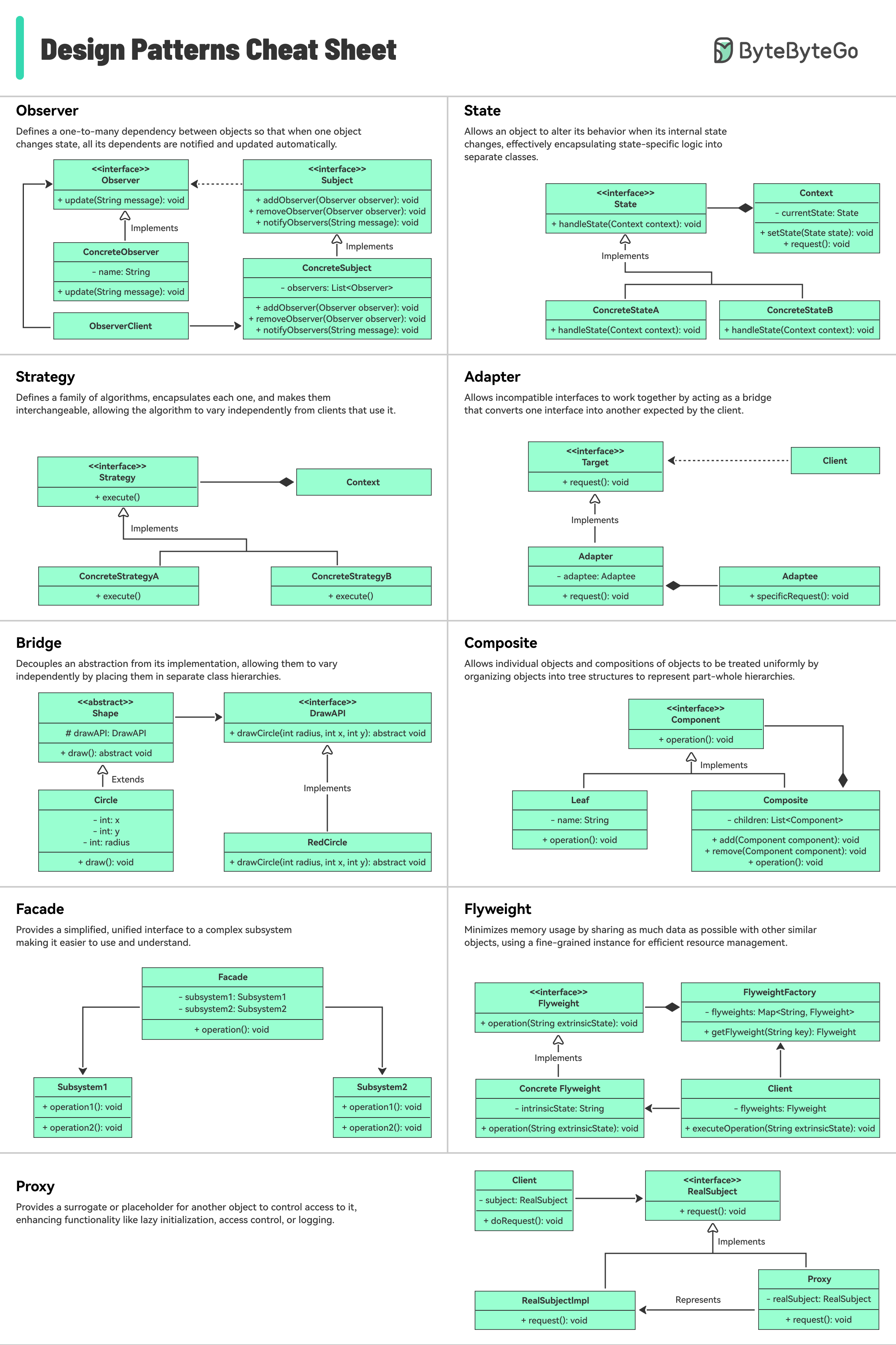

-### 18 Key Design Patterns Every Developer Should Know

-

-Patterns are reusable solutions to common design problems, resulting in a smoother, more efficient development process. They serve as blueprints for building better software structures. These are some of the most popular patterns:

-

-

-  -

-

-

-- Abstract Factory: Family Creator - Makes groups of related items.

-- Builder: Lego Master - Builds objects step by step, keeping creation and appearance separate.

-- Prototype: Clone Maker - Creates copies of fully prepared examples.

-- Singleton: One and Only - A special class with just one instance.

-- Adapter: Universal Plug - Connects things with different interfaces.

-- Bridge: Function Connector - Links how an object works to what it does.

-- Composite: Tree Builder - Forms tree-like structures of simple and complex parts.

-- Decorator: Customizer - Adds features to objects without changing their core.

-- Facade: One-Stop-Shop - Represents a whole system with a single, simplified interface.

-- Flyweight: Space Saver - Shares small, reusable items efficiently.

-- Proxy: Stand-In Actor - Represents another object, controlling access or actions.

-- Chain of Responsibility: Request Relay - Passes a request through a chain of objects until handled.

-- Command: Task Wrapper - Turns a request into an object, ready for action.

-- Iterator: Collection Explorer - Accesses elements in a collection one by one.

-- Mediator: Communication Hub - Simplifies interactions between different classes.

-- Memento: Time Capsule - Captures and restores an object's state.

-- Observer: News Broadcaster - Notifies classes about changes in other objects.

-- Visitor: Skillful Guest - Adds new operations to a class without altering it.

-

-## Database

-

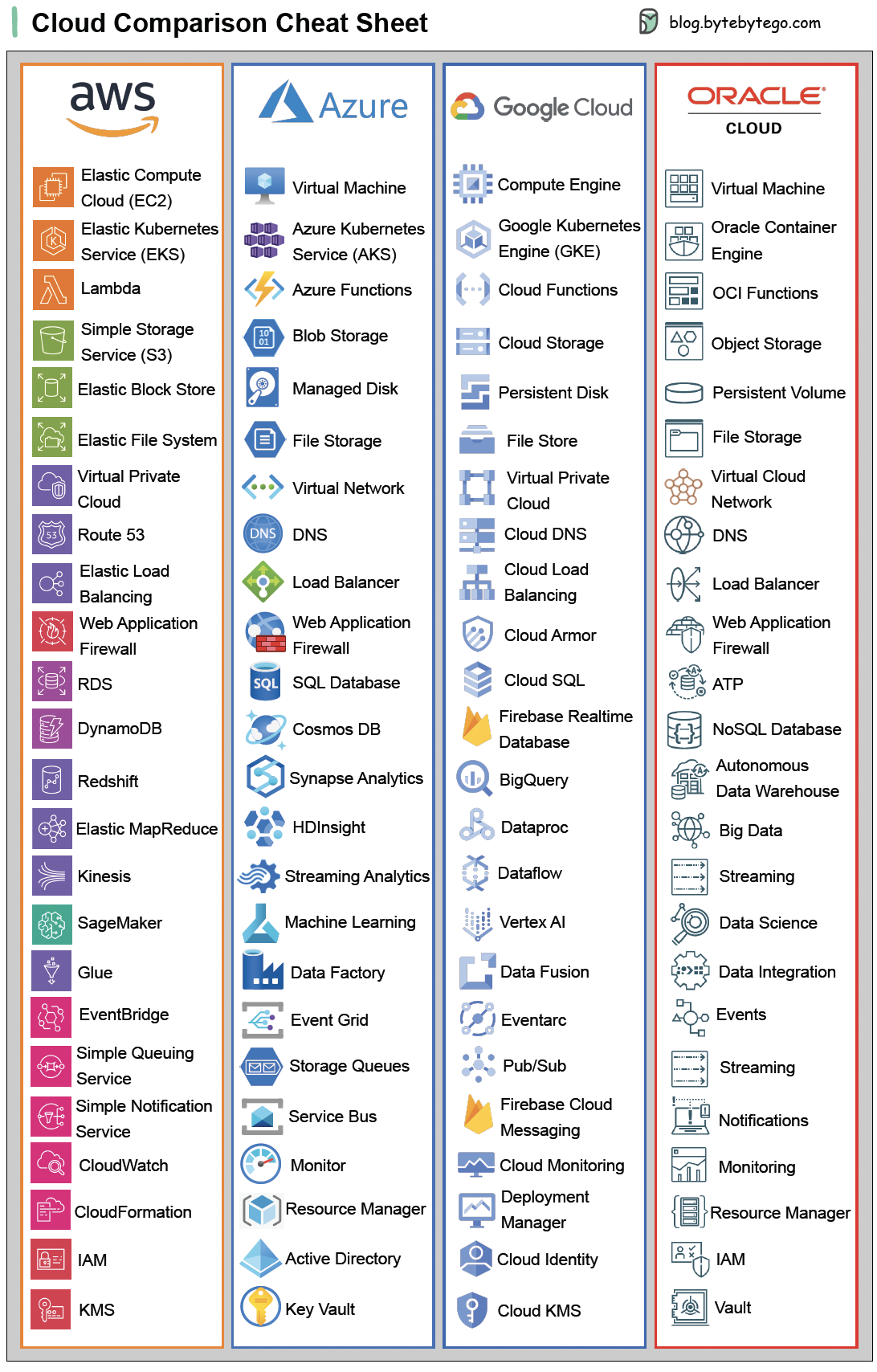

-### A nice cheat sheet of different databases in cloud services

-

-

-  -

-

-

-Choosing the right database for your project is a complex task. Many database options, each suited to distinct use cases, can quickly lead to decision fatigue.

-

-We hope this cheat sheet provides high-level direction to pinpoint the right service that aligns with your project's needs and avoid potential pitfalls.

-

-Note: Google has limited documentation for their database use cases. Even though we did our best to look at what was available and arrived at the best option, some of the entries may need to be more accurate.

-

-### 8 Data Structures That Power Your Databases

-

-The answer will vary depending on your use case. Data can be indexed in memory or on disk. Similarly, data formats vary, such as numbers, strings, geographic coordinates, etc. The system might be write-heavy or read-heavy. All of these factors affect your choice of database index format.

-

-

-  -

-

-

-The following are some of the most popular data structures used for indexing data:

-