This PR adds all the guides from [Visual Guides](https://bytebytego.com/guides/) section on bytebytego to the repository with proper links. - [x] Markdown files for guides and categories are placed inside `data/guides` and `data/categories` - [x] Guide links in readme are auto-generated using `scripts/readme.ts`. Everytime you run the script `npm run update-readme`, it reads the categories and guides from the above mentioned folders, generate production links for guides and categories and populate the table of content in the readme. This ensures that any future guides and categories will automatically get added to the readme. - [x] Sorting inside the readme matches the actual category and guides sorting on production

1.8 KiB

title, description, image, createdAt, draft, categories, tags

| title | description | image | createdAt | draft | categories | tags | |||

|---|---|---|---|---|---|---|---|---|---|

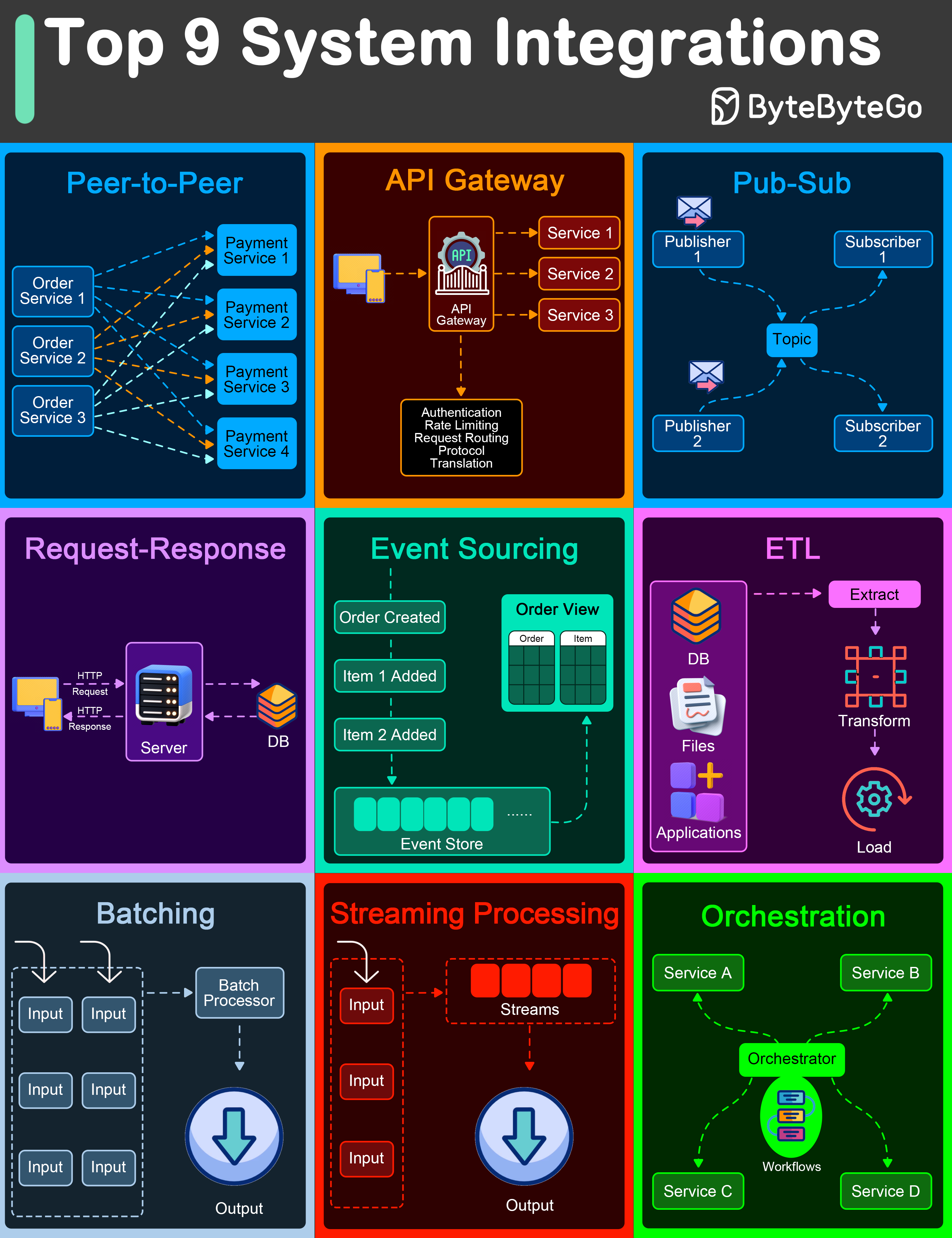

| Top 9 Architectural Patterns for Data and Communication Flow | Explore 9 key architectural patterns for efficient data and communication. | https://assets.bytebytego.com/diagrams/0387-top-9-system-integrations.png | 2024-01-31 | false |

|

|

-

Peer-to-Peer

The Peer-to-Peer pattern involves direct communication between two components without the need for a central coordinator.

-

API Gateway

An API Gateway acts as a single entry point for all client requests to the backend services of an application.

-

Pub-Sub

The Pub-Sub pattern decouples the producers of messages (publishers) from the consumers of messages (subscribers) through a message broker.

-

Request-Response

This is one of the most fundamental integration patterns, where a client sends a request to a server and waits for a response.

-

Event Sourcing

Event Sourcing involves storing the state changes of an application as a sequence of events.

-

ETL

ETL is a data integration pattern used to gather data from multiple sources, transform it into a structured format, and load it into a destination database.

-

Batching

Batching involves accumulating data over a period or until a certain threshold is met before processing it as a single group.

-

Streaming Processing

Streaming Processing allows for the continuous ingestion, processing, and analysis of data streams in real-time.

-

Orchestration

Orchestration involves a central coordinator (an orchestrator) managing the interactions between distributed components or services to achieve a workflow or business process.