Mirror of https://github.com/ByteByteGoHq/system-design-101.git


Adds ByteByteGo guides and links (#106)

This PR adds all the guides from the [Visual Guides](https://bytebytego.com/guides/) section on ByteByteGo to the repository, with proper links.

- [x] Markdown files for guides and categories are placed inside `data/guides` and `data/categories`.
- [x] Guide links in the README are auto-generated using `scripts/readme.ts`. Every time you run `npm run update-readme`, the script reads the categories and guides from the folders above, generates production links for them, and populates the table of contents in the README. This ensures that any future guides and categories are automatically added to the README.
- [x] Sorting inside the README matches the actual category and guide sorting in production.


data/guides/chatgpt-timeline.md


---
title: ChatGPT Timeline
description: A visual guide to the evolution of ChatGPT and its underlying tech.
image: 'https://assets.bytebytego.com/diagrams/0136-chatgpt-how-we-get-here.png'
createdAt: '2024-03-10'
draft: false
categories:
- ai-machine-learning
tags:
- AI History
- NLP
---

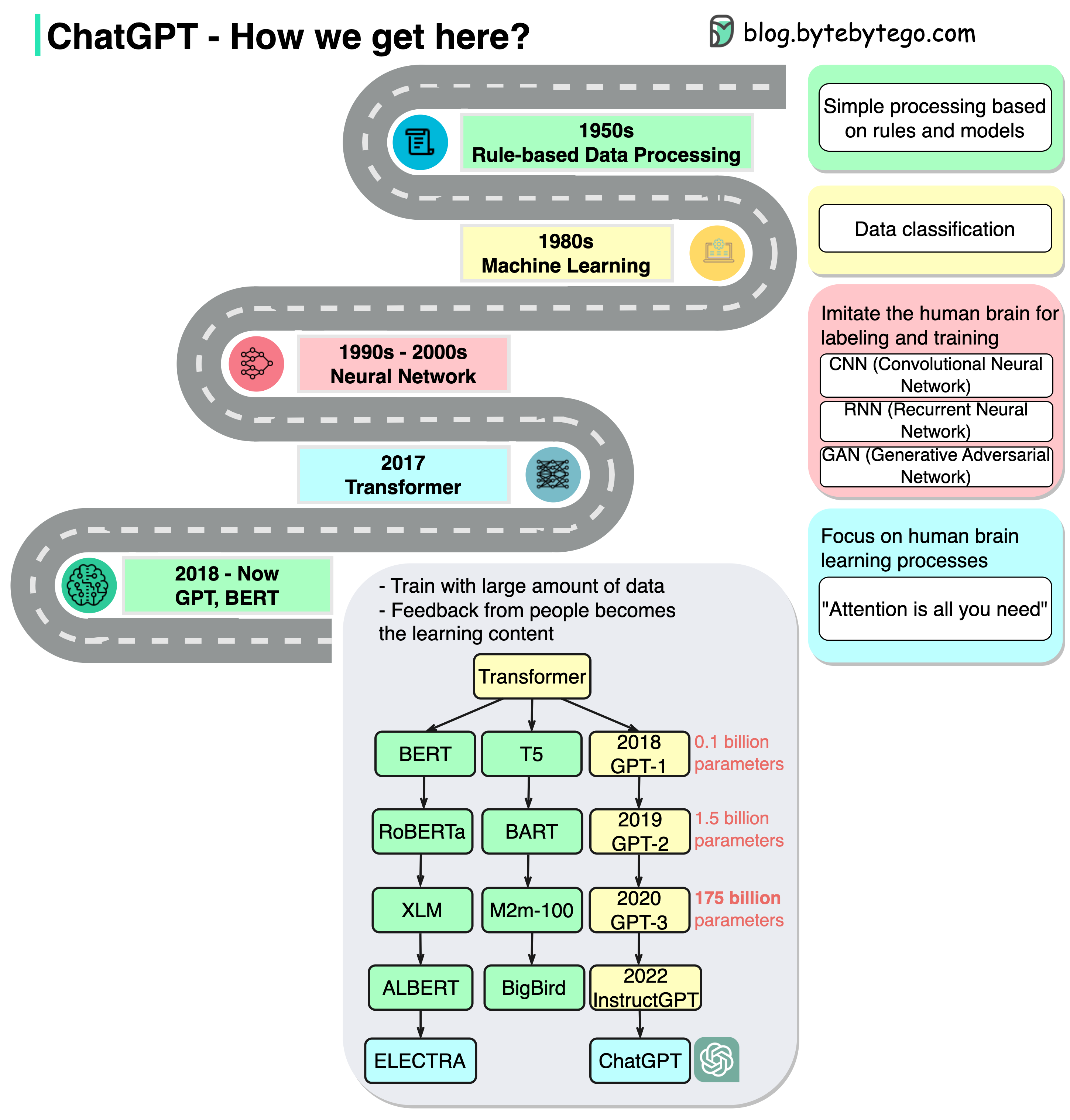

A picture is worth a thousand words. ChatGPT seemed to come out of nowhere, but it was built on top of decades of research.


The diagram above shows how we got here.


## 1950s


At this stage, people still relied on primitive rule-based models.


## 1980s


Starting in the 1980s, machine learning began to gain traction and was used for classification. Models were trained on relatively small datasets.


## 1990s - 2000s


Since the 1990s, neural networks, loosely inspired by the structure of the human brain, have been trained on labeled data. Three types are especially common:


- CNN (Convolutional Neural Network): often used in vision tasks such as image recognition.


- RNN (Recurrent Neural Network): useful in natural language tasks.


- GAN (Generative Adversarial Network): composed of two networks, a generator and a discriminator. This generative model can produce novel images that resemble the ones it was trained on.
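
To make the "recurrent" part concrete, here is a minimal sketch of a scalar Elman-style RNN. It is a deliberate simplification: real RNNs use weight matrices and vector hidden states, and `rnn_forward` and its parameters are hypothetical names chosen for illustration. Each hidden state is computed from the previous one, which lets the network carry context through a sentence but also forces the steps to run one after another.

```python
import math


def rnn_forward(inputs, w_x, w_h, b):
    """Minimal scalar RNN (illustrative only): h_t = tanh(w_x * x_t + w_h * h_{t-1} + b).

    Real RNNs use weight matrices and vector states; scalars keep the idea visible.
    """
    h = 0.0  # initial hidden state
    states = []
    for x in inputs:
        # h_t depends on h_{t-1}, so step t cannot start before step t-1 finishes.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


if __name__ == "__main__":
    print(rnn_forward([1.0, 0.0], w_x=1.0, w_h=0.5, b=0.0))
    print(rnn_forward([0.0, 1.0], w_x=1.0, w_h=0.5, b=0.0))
```

The two calls above end in different final states even though they see the same inputs, because the hidden state remembers the order in which the inputs arrived.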


## 2017


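The paper “Attention Is All You Need” introduced the transformer, which became the foundation of generative AI. The transformer greatly shortens training time because it processes all positions of a sequence in parallel instead of one step at a time.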
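
The core operation from that paper is scaled dot-product attention, softmax(QKᵀ / √d_k)V. Below is a minimal pure-Python sketch of it; the function names are our own, and real transformers add learned projections, multiple heads, and masking on top. It shows where the parallelism comes from: the output for each query depends only on the shared keys and values, never on another query's output.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    # Each iteration is independent of the others, so all queries
    # could be computed at the same time (e.g. as one matrix product).
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)  # attention weights sum to 1
        # Output is the attention-weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs


if __name__ == "__main__":
    # A zero query scores both keys equally, so it averages the two values.
    print(attention([[0.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]],
                    [[2.0, 0.0], [0.0, 4.0]]))  # → [[1.0, 2.0]]
```

The loop over queries is written sequentially for readability; in practice the same computation is expressed as matrix multiplications so GPUs can evaluate every position at once, which an RNN's step-by-step recurrence does not allow.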


## 2018 - Now


In this stage, thanks to major progress in transformer models, we see various models trained on massive amounts of data. Human demonstrations are used as training data for the model. We’ve seen many AI writers that can produce articles, news, technical docs, and even code. This has great commercial value and has set off a global whirlwind.
